Computational Chemistry Databases for Method Validation: A Practical Guide for Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging computational chemistry databases for robust method validation. It covers the foundational role of these databases, explores methodological applications in virtual screening and machine learning, addresses common troubleshooting and optimization challenges, and establishes best practices for comparative analysis and validation. The content synthesizes current trends to help scientists navigate the complexities of validation, ensuring computational tools truly accelerate hit discovery and lead optimization in biomedical research.

The Critical Role of Databases in Computational Chemistry Validation

Defining Validation and Verification (V&V) in Computational Chemistry

In computational chemistry, Validation and Verification (V&V) represent a fundamental framework for establishing the reliability and credibility of computational methods and results. Verification addresses the question "Are we solving the equations correctly?" by ensuring that computational implementations accurately represent their underlying theoretical models. Validation answers "Are we solving the correct equations?" by determining how well computational results correspond to physical reality through comparison with experimental data [1]. This distinction is particularly crucial as computational methods increasingly inform critical decisions in drug discovery, materials design, and energy technologies.

The expanding influence of artificial intelligence and machine learning in computational chemistry has further heightened the importance of robust V&V practices [2] [3]. As noted in a recent cross-disciplinary perspective, without proper validation, "impressive metrics [may] differ greatly from the quantity of interest," potentially leading to misdirected research resources [1]. This guide examines current V&V methodologies, benchmark databases, and experimental protocols that support reliable computational chemistry research.

Computational Chemistry Databases for V&V Research

The foundation of effective V&V in computational chemistry rests upon standardized, high-quality databases that serve as benchmarks for method comparison and validation. The table below summarizes key databases used in V&V research.

Table 1: Key Databases for Computational Chemistry Validation

| Database Name | Data Content & Size | Computational Methods | Primary V&V Applications |

|---|---|---|---|

| OMol25 (Open Molecules 2025) | >100 million 3D molecular snapshots; systems up to 350 atoms [4] | Density Functional Theory (DFT) | Training Machine Learning Interatomic Potentials (MLIPs); benchmarking across diverse chemical spaces [4] |

| QCML Dataset | 33.5M DFT + 14.7B semi-empirical calculations; molecules up to 8 heavy atoms [5] | DFT, Semi-empirical methods | Training foundation models; force field development; includes both equilibrium and off-equilibrium structures [5] |

| NIST CCCBDB (Standard Reference Database 101) | Experimental and computational thermochemical data [6] | Multiple quantum chemical methods | Method benchmarking; comparison with experimental values [6] |

| ChEMBL (benchmark subset used in [1]) | ~456,000 compounds, 1,300+ bioactivity assays [1] | Machine learning models for bioactivity prediction | Validation of ligand-based virtual screening methods [1] |

These databases enable researchers to perform systematic comparisons between computational methods and against experimental reference data, forming the empirical backbone of V&V processes.

Experimental Protocols for V&V in Computational Chemistry

Protocol 1: Benchmarking Quantum Chemistry Methods

Objective: To assess the accuracy and efficiency of quantum chemistry methods (e.g., DFT functionals, wavefunction methods) for predicting molecular properties.

Methodology:

- Reference Data Selection: Curate a set of molecular structures with reliable experimental data (e.g., from NIST CCCBDB) or high-level theoretical results [6].

- Computational Setup: Apply multiple quantum chemistry methods (e.g., different DFT functionals, MP2, CCSD(T)) to calculate target properties using consistent basis sets and convergence criteria [7].

- Error Quantification: Compute statistical measures (mean absolute error, root mean square error) between computational results and reference values.

- Performance Assessment: Evaluate computational cost (CPU time, memory requirements) alongside accuracy metrics.

Key Considerations: Method transferability across different chemical systems (e.g., organics vs. transition metal complexes) must be assessed, as performance can vary significantly [2].
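
The error quantification step of Protocol 1 can be sketched as follows. This is a minimal illustration, not a prescribed implementation: the method names and all numerical values are hypothetical placeholders, and the only assumption is that each method's results are stored in the same order as the reference values.

```python
import math

def error_stats(predicted, reference):
    """Return (MAE, RMSE, max absolute error) for paired values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    mae = sum(abs(e) for e in errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return mae, rmse, max(abs(e) for e in errors)

# Hypothetical reference values and method outputs (kcal/mol), for illustration only.
reference = [10.0, 20.0, 30.0, 40.0]
results = {
    "method_A": [11.0, 19.0, 31.0, 39.0],  # small errors on every molecule
    "method_B": [10.0, 20.0, 30.0, 44.0],  # exact except for one outlier
}

summary = {name: error_stats(values, reference) for name, values in results.items()}
for name, (mae, rmse, worst) in summary.items():
    print(f"{name}: MAE={mae:.2f}  RMSE={rmse:.2f}  max={worst:.2f}")
```

In this toy example both methods have the same MAE, but the RMSE and maximum error expose the outlier in the second method, which is one reason to report more than a single statistic.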

Protocol 2: Validating Machine Learning Potentials

Objective: To establish the reliability of Machine Learned Interatomic Potentials (MLIPs) for molecular dynamics simulations.

Methodology:

- Training Data Curation: Select diverse molecular configurations from databases like OMol25 or QCML that represent relevant chemical spaces [4] [5].

- Model Training: Develop MLIPs using architectures such as neural networks or kernel methods.

- Property Prediction: Use trained MLIPs to predict energies, forces, and molecular properties not included in the training set.

- Reference Comparison: Compare MLIP predictions with DFT calculations (for accuracy) and experimental data (for physical validity).

- Application Testing: Perform molecular dynamics simulations to assess stability and physical behavior in practical applications.

Evaluation Metrics: Force and energy predictions should approach the accuracy of the reference DFT calculations while demonstrating orders-of-magnitude improvement in computational efficiency [4].
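
As a rough sketch of the reference comparison step, the functions below compute two commonly reported quantities: the energy error per atom and the mean absolute error over force components. The data layout (plain nested lists) and all numbers are assumptions made for illustration; real workflows typically read these values from the MLIP and DFT outputs.

```python
def energy_mae_per_atom(pred_energies, ref_energies, n_atoms):
    """Mean absolute energy error, normalized by the size of each system."""
    per_atom = [abs(p - r) / n
                for p, r, n in zip(pred_energies, ref_energies, n_atoms)]
    return sum(per_atom) / len(per_atom)

def force_mae(pred_forces, ref_forces):
    """Mean absolute error over all Cartesian force components.

    Each argument is a list of structures; each structure is a list of
    [fx, fy, fz] entries, one per atom.
    """
    total, count = 0.0, 0
    for pred_struct, ref_struct in zip(pred_forces, ref_forces):
        for pred_atom, ref_atom in zip(pred_struct, ref_struct):
            for p, r in zip(pred_atom, ref_atom):
                total += abs(p - r)
                count += 1
    return total / count

# Hypothetical MLIP predictions and DFT references (eV and eV/Angstrom).
pred_e, ref_e, sizes = [-10.0, -20.5], [-10.2, -20.0], [2, 5]
pred_f = [[[0.1, 0.0, 0.0], [-0.1, 0.0, 0.0]]]
ref_f = [[[0.2, 0.0, 0.0], [-0.2, 0.0, 0.0]]]

e_err = energy_mae_per_atom(pred_e, ref_e, sizes)
f_err = force_mae(pred_f, ref_f)
print(f"energy MAE per atom: {e_err:.3f}, force MAE: {f_err:.3f}")
```

Normalizing energies by atom count keeps errors comparable across systems of different size, which matters when a test set mixes small and large molecules.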

Protocol 3: Assessing Virtual Screening Performance

Objective: To evaluate machine learning methods for bioactivity prediction in drug discovery.

Methodology:

- Data Preparation: Use curated bioactivity data (e.g., from ChEMBL) with appropriate train/test splits to avoid artificial performance inflation [1].

- Model Comparison: Test diverse algorithms (e.g., deep neural networks, support vector machines, random forests) using consistent molecular representations.

- Metric Selection: Evaluate performance using both ROC-AUC and precision-recall curves, particularly for imbalanced datasets common in drug discovery [1].

- Statistical Validation: Apply rigorous statistical testing with uncertainty quantification, such as nested cross-validation with multiple random seeds.

Critical Consideration: As noted in validation studies, "deep learning methods do not significantly outperform all competing methods" across all scenarios, highlighting the need for context-specific benchmarking [1].
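
To illustrate why the protocol asks for both ROC-AUC and precision-recall metrics, the sketch below computes each from scratch on a small, deliberately imbalanced ranking. The labels and scores are invented for the example; the functions are plain-Python stand-ins for what a statistics library would normally provide.

```python
def roc_auc(labels, scores):
    """Probability that a random active outranks a random inactive (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """Mean of the precision values measured at the rank of each active."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / hits

# Hypothetical screen: 2 actives among 20 compounds, ranked 3rd and 6th.
labels = [0, 0, 1, 0, 0, 1] + [0] * 14
scores = [20 - i for i in range(20)]  # strictly decreasing scores

auc = roc_auc(labels, scores)
ap = average_precision(labels, scores)
print(f"ROC-AUC = {auc:.3f}, average precision = {ap:.3f}")
```

On this toy ranking the ROC-AUC is about 0.83 while the average precision is about 0.33, showing how a model can look strong by one metric and modest by the other when actives are rare.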

Signaling Pathways and Workflows

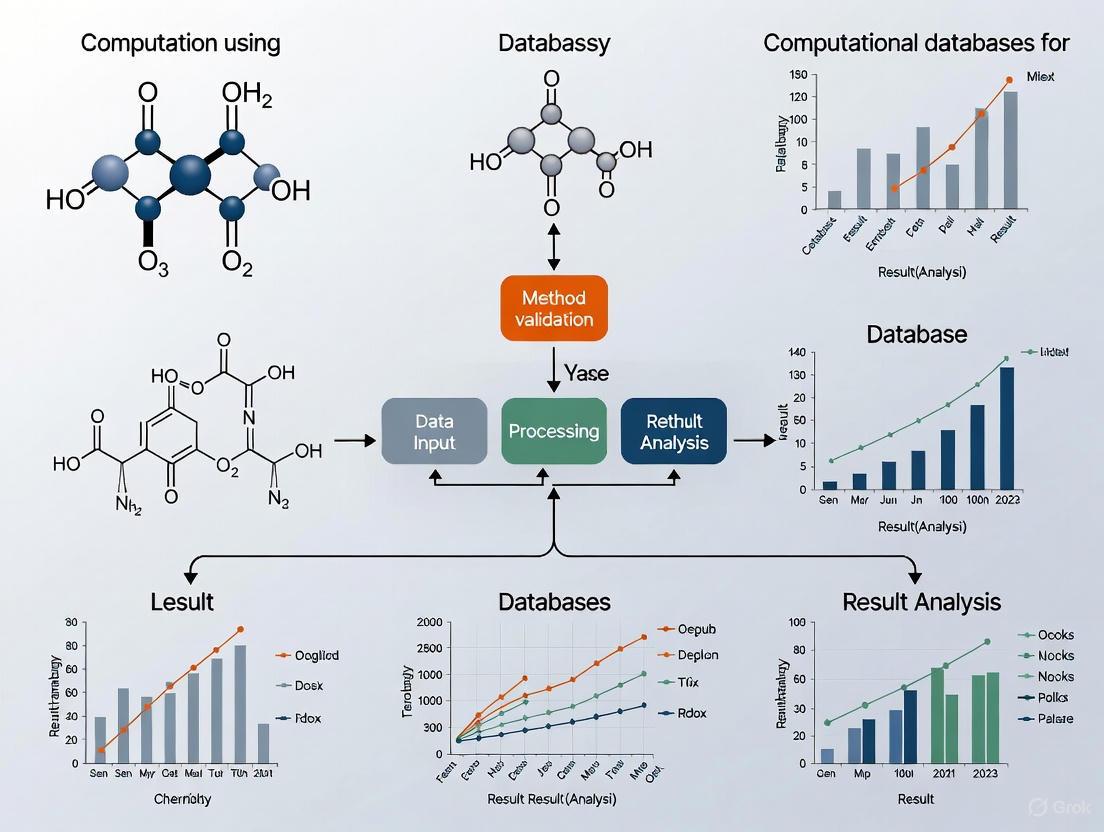

The following diagram illustrates the conceptual relationship between V&V components in computational chemistry and their role in ensuring predictive reliability.

Diagram 1: V&V Framework

The practical workflow for conducting V&V studies involves multiple stages from data preparation to final assessment, as shown in the following diagram.

Diagram 2: V&V Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Computational Tools for V&V Research

| Tool Category | Representative Examples | Primary Function in V&V |

|---|---|---|

| Quantum Chemistry Software | VASP (solids), Gaussian (molecules), ORCA, GAMESS (free alternatives) [7] [8] | Generate reference data; perform method comparisons; calculate molecular properties |

| Visualization & Analysis | VESTA (solids), Avogadro (molecules), GaussView [7] | Structure modeling; result interpretation; visual validation of molecular structures |

| Reference Databases | NIST CCCBDB, OMol25, QCML Dataset [4] [6] [5] | Provide benchmark data; training ML models; method validation against references |

| Python-Scriptable Quantum Chemistry Packages | PySCF, Psi4 (Python integration) [7] [8] | Generate reference data for ML potentials; automate workflows; integrate quantum chemistry methods with ML pipelines |

| Specialized Libraries | RDKit (chemoinformatics), NumPy/SciPy (numerical analysis) [1] | Molecular featurization; statistical analysis; data preprocessing |

Establishing robust Validation and Verification protocols is fundamental to maintaining scientific rigor in computational chemistry, particularly as the field increasingly relies on complex machine learning methods and high-throughput screening. The growing ecosystem of benchmark databases, standardized validation protocols, and specialized software tools provides researchers with a comprehensive framework for assessing computational methodologies. By systematically implementing these V&V practices, computational chemists can enhance the reliability of their predictions and accelerate the discovery of new molecules and materials with greater confidence.

Why Method Validation is Crucial for Reliable Drug Discovery

In the field of drug discovery, the journey from a theoretical compound to a life-saving medicine is fraught with complexity. Method validation serves as the critical foundation that ensures every step of this journey—from initial computational predictions to final laboratory assays—produces reliable, accurate, and interpretable data. It is the cornerstone that supports informed decision-making, reduces costly late-stage failures, and ultimately ensures the development of safe and effective therapeutics. This is particularly true for computational chemistry databases and prediction tools, where validation transforms speculative models into trusted research assets [9] [10].

The Critical Role of Validation in Computational Prediction

Computational methods, especially for target prediction, are powerful for generating hypotheses about a molecule's mechanism of action and potential for repurposing. However, their utility is entirely dependent on rigorous validation to assess their reliability and consistency [9].

A systematic comparison of seven target prediction methods, including stand-alone codes and web servers such as MolTarPred and PPB2, revealed significant performance variations. The evaluation used a shared benchmark dataset of FDA-approved drugs to ensure a fair comparison. Five of the methods are summarized in the table below [9].

Table 1: Performance Comparison of Selected Target Prediction Methods

| Method Name | Type | Underlying Algorithm | Key Database | Reported Performance Highlights |

|---|---|---|---|---|

| MolTarPred [9] | Ligand-centric | 2D similarity (Morgan fingerprints, Tanimoto) | ChEMBL 20 | Most effective method in the comparison; suitable for drug repurposing. |

| PPB2 [9] | Ligand-centric | Nearest neighbor/Naïve Bayes/Deep Neural Network | ChEMBL 22 | Uses multiple algorithms and fingerprints (MQN, Xfp, ECFP4). |

| RF-QSAR [9] | Target-centric | Random Forest | ChEMBL 20 & 21 | Uses ECFP4 fingerprints; model built for each target. |

| TargetNet [9] | Target-centric | Naïve Bayes | BindingDB | Utilizes multiple fingerprint types (FP2, MACCS, ECFP). |

| ChEMBL [9] | Target-centric | Random Forest | ChEMBL 24 | Uses Morgan fingerprints for its predictive models. |

The evaluation showed that MolTarPred emerged as the most effective method in this comparison. The study also highlighted that model optimization, such as using high-confidence data filters or selecting Morgan fingerprints over MACCS, can significantly impact performance. For applications like drug repurposing, where identifying all potential targets is key, a high-confidence filter that reduces recall might be less ideal [9].
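
The ligand-centric idea behind similarity-based target prediction can be sketched in a few lines. This is a simplified illustration and not the MolTarPred implementation: fingerprints are represented as Python sets of on-bit indices (in practice they would come from a cheminformatics toolkit), and the target names and bit sets are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def predict_targets(query_fp, reference, top_k=3):
    """Rank targets by the highest similarity of any annotated ligand to the query."""
    best = {}
    for fp, target in reference:
        sim = tanimoto(query_fp, fp)
        if sim > best.get(target, -1.0):
            best[target] = sim
    return sorted(best.items(), key=lambda item: -item[1])[:top_k]

# Hypothetical reference set of (fingerprint, annotated target) pairs.
reference = [
    ({1, 2, 3, 4}, "TARGET_A"),
    ({1, 2, 3, 9}, "TARGET_A"),
    ({7, 8, 9}, "TARGET_B"),
    ({2, 3, 4, 5, 6}, "TARGET_C"),
]
query = {1, 2, 3, 4, 5}

ranking = predict_targets(query, reference)
for target, sim in ranking:
    print(f"{target}: max Tanimoto = {sim:.2f}")
```

A high-confidence filter of the kind discussed above would correspond to discarding reference pairs or predictions below a similarity or data-quality cutoff, which trades recall for precision.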

Establishing Confidence with Experimental Validation

While computational models are invaluable for prioritization, their predictions must often be confirmed through experimental methods before progressing in the drug development pipeline. The validation of these analytical methods is a formal, regulated process to demonstrate they are suitable for their intended use [11] [12].

The core parameters assessed during analytical method validation, as per ICH Q2(R1) guidelines, are summarized below [11] [12].

Table 2: Core Parameters for Analytical Method Validation

| Validation Parameter | What It Assesses | Why It is Crucial |

|---|---|---|

| Accuracy [11] [12] | How close the results are to the true value. | Ensures the method provides a correct measurement of the analyte (e.g., drug concentration). |

| Precision [11] [12] | The consistency of results under normal operating conditions. | Confirms the method yields reproducible data across different runs, analysts, and days. |

| Specificity [11] [12] | The ability to measure the analyte accurately in the presence of other components. | Guarantees that the signal is from the target molecule only, and not from impurities or the sample matrix. |

| Linearity & Range [11] [12] | The ability to produce results proportional to the concentration of the analyte, across a specified range. | Defines the concentrations over which the method can be accurately and precisely applied. |

| Limit of Detection (LOD) & Quantification (LOQ) [11] [12] | The lowest amount of an analyte that can be detected (LOD) or reliably quantified (LOQ). | Essential for detecting and measuring low levels of impurities or degradants that could affect safety. |

| Robustness [11] [12] | The reliability of the method when small, deliberate changes are made to parameters (e.g., pH, temperature). | Ensures the method will perform consistently in different laboratories or over the method's lifetime. |
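
As a worked example of two parameters from the table, the sketch below fits a calibration line and estimates LOD and LOQ using the calibration-curve approach described in ICH Q2(R1), where LOD = 3.3σ/S and LOQ = 10σ/S (σ is the residual standard deviation of the regression and S is the slope). The concentrations and responses are hypothetical values chosen only to make the arithmetic visible.

```python
import math

def linear_fit(x, y):
    """Ordinary least-squares line; returns (slope, intercept, residual std dev)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    sigma = math.sqrt(sum(r * r for r in residuals) / (n - 2))  # n - 2 degrees of freedom
    return slope, intercept, sigma

def detection_limits(slope, sigma):
    """LOD and LOQ from the calibration curve: 3.3*sigma/S and 10*sigma/S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical calibration data: concentration (ug/mL) vs. detector response.
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
resp = [2.1, 3.9, 6.2, 7.8, 10.0]

slope, intercept, sigma = linear_fit(conc, resp)
lod, loq = detection_limits(slope, sigma)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, sigma={sigma:.3f}")
print(f"LOD={lod:.3f} ug/mL, LOQ={loq:.3f} ug/mL")
```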

A practical example of this process is the development and validation of a novel RP-HPLC method for quantifying the drug favipiravir. Using an Analytical Quality by Design (AQbD) approach, scientists systematically identified high-risk factors (like solvent ratio and column type) and optimized the method to ensure it was robust, precise, and accurate before its application for quality control [13].

Method Validation in Practice: Workflows and Metrics

Implementing method validation involves structured workflows and specialized metrics tailored to the challenges of biomedical data.

Diagram: Method Validation Workflow

For computational models in drug discovery, traditional metrics like simple accuracy can be misleading due to highly imbalanced datasets. The field therefore relies on more nuanced evaluation metrics [14].

Table 3: Key Metrics for Evaluating Computational Models in Drug Discovery

| Metric | Definition | Application in Drug Discovery |

|---|---|---|

| Precision-at-K [14] | Measures the proportion of true positives among the top K ranked predictions. | Crucial for virtual screening to ensure the top-ranked compounds are truly active. |

| Rare Event Sensitivity [14] | Assesses the model's ability to detect low-frequency but critical events. | Used to predict rare adverse drug reactions or identify compounds for rare diseases. |

| Pathway Impact Metrics [14] | Evaluates how well model predictions align with relevant biological pathways. | Ensures predictions are not just statistically sound but also biologically interpretable. |

| Recall (Sensitivity) [14] | Measures the proportion of actual positives that are correctly identified. | Prioritized when the cost of missing a true active compound (false negative) is very high. |
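
Two of the metrics in the table are simple enough to compute directly from a ranked hit list. The sketch below assumes the compounds are already sorted by model score, best first, and that each entry is 1 for an experimentally confirmed active and 0 otherwise; the example ranking is hypothetical.

```python
def precision_at_k(ranked_labels, k):
    """Fraction of the top-k ranked compounds that are true actives."""
    if k <= 0:
        raise ValueError("k must be positive")
    top = ranked_labels[:k]
    return sum(top) / len(top)

def recall_at_k(ranked_labels, k):
    """Fraction of all true actives that appear in the top-k ranked compounds."""
    total_actives = sum(ranked_labels)
    if total_actives == 0:
        return 0.0
    return sum(ranked_labels[:k]) / total_actives

# Hypothetical ranking of 10 compounds (1 = active, 0 = inactive), best score first.
ranked = [1, 1, 0, 1, 0, 0, 0, 1, 0, 0]

p5 = precision_at_k(ranked, 5)
r5 = recall_at_k(ranked, 5)
print(f"precision@5 = {p5:.2f}, recall@5 = {r5:.2f}")
```

Here K would normally be set to the number of compounds the laboratory can afford to test, so that the metric reflects the decision actually being made.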

Diagram: Ligand-Centric Target Prediction

The Scientist's Toolkit: Essential Reagents and Databases

The integrity of any validated method hinges on the quality of its underlying components. Below is a list of key research reagents and database solutions essential for method validation in computational and analytical chemistry.

Table 4: Essential Research Reagents and Database Solutions

| Item / Solution | Function in Method Validation |

|---|---|

| ChEMBL Database [9] | A manually curated database of bioactive molecules with drug-like properties. It provides experimentally validated bioactivity data (e.g., IC50, Ki) for building and benchmarking target prediction models. |

| PubChem [15] | A public repository of chemical substances and their biological activities. Used for chemical similarity searches, retrieving physicochemical properties, and accessing a vast amount of bioassay data for validation. |

| Reference Standards [11] | Highly characterized, pure chemical substances. Used to calibrate instruments, confirm the identity of analytes, and establish the accuracy and precision of analytical methods. |

| Certified Reference Materials (CRMs) | Real-world samples with certified values for specific properties. Used as a benchmark to test the overall accuracy and reliability of a newly validated method against a known standard. |

| High-Quality Solvents & Buffers [13] | Essential components of the mobile phase in chromatographic methods (e.g., HPLC). Their purity and consistency are critical for achieving robust and reproducible results, as per ICH guidelines. |

Method validation is the linchpin that connects innovation to application in drug discovery. It provides the documented evidence that a method—whether computational or analytical—is fit for its purpose, enabling researchers to trust their data, make go/no-go decisions with confidence, and design effective experiments. As computational models and databases grow in size and complexity, the principles of rigorous, transparent validation become even more critical. By adhering to these principles, the scientific community can ensure that the pursuit of new therapies is built upon a foundation of reliability and scientific rigor, accelerating the delivery of safe and effective drugs to patients.

In computational chemistry, particularly for drug discovery, the reliability of any method is contingent upon rigorous validation against empirical evidence. This process ensures that computational predictions not only align with physical reality but also provide actionable insights that can accelerate research and development. Validation transcends simple accuracy checks; it encompasses a comprehensive framework for assessing model robustness, generalizability, and predictive power. The cornerstone of this framework is the use of diverse, high-quality data types, each serving a distinct purpose in challenging and refining computational models [16] [17].

The critical data types for a robust validation strategy include experimental binding affinities, which provide a quantitative benchmark for predictive methods; negative data, which delineate the boundaries of a model's knowledge by defining what does not work; and large-scale reference datasets, which offer the breadth and chemical diversity needed to train and evaluate modern machine-learning potentials. This guide objectively compares the roles of these data types, the performance of methods that leverage them, and the detailed experimental protocols that underpin their generation.

Experimental Binding Affinity Data

The Role of Binding Affinities in Validation

The free energy of binding, or binding affinity, is a central quantitative measure in drug discovery, serving as a primary indicator of drug potency. It is the key experimental metric against which computational methods for predicting ligand-protein interactions are validated [18]. The accuracy of these computational predictions is vital for making reliable decisions in hit-to-lead and lead optimization stages. Even highly accurate experimental techniques like isothermal titration calorimetry (ITC) can have associated measurement errors, which underscores the importance of using computational methods that provide their own uncertainty quantification (UQ) for statistically robust validation [18].

Performance Comparison of Binding Affinity Prediction Methods

Computational methods for predicting binding affinity exhibit a wide range of performance characteristics, trading off between computational cost, throughput, and accuracy. The following table summarizes the key attributes of several prominent approaches.

Table 1: Performance Comparison of Binding Affinity Prediction Methods

| Method | Type | Key Metric (RMSE) | Computational Cost | Throughput | Key Advantage |

|---|---|---|---|---|---|

| FEP+ [19] | Alchemical Simulation | ~1.0 kcal/mol [19] | Very High | Low | High accuracy, considered near gold-standard |

| PBCNet [19] | AI (Graph Neural Network) | 1.11 - 1.49 kcal/mol [19] | Low | Very High | High speed and accuracy after fine-tuning |

| MM-GB/SA [19] | End-point Sampling | >1.49 kcal/mol [19] | Medium | Medium | Balanced cost and accuracy |

| DeltaDelta [19] | AI (Siamese Network) | >1.49 kcal/mol [19] | Low | High | Direct RBFE prediction |

| Glide SP [19] | Docking Score | Variable (lower ρ) [19] | Low | Very High | High-throughput screening |

Abbreviations: RMSE: Root-Mean-Square Error, RBFE: Relative Binding Free Energy.

As the data shows, FEP+ methods are highly accurate but computationally intensive, making them less suitable for rapid screening. In contrast, AI-based models like PBCNet offer a favorable balance, achieving accuracy close to FEP+ (1.11 kcal/mol on one test set) while operating at a fraction of the computational cost and with much higher throughput [19]. The performance of MM-GB/SA and older AI models like DeltaDelta is generally surpassed by these newer approaches.
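
Because the RMSE values being compared differ by only a few tenths of a kcal/mol, it helps to attach an uncertainty estimate to each one. The following is a minimal percentile-bootstrap sketch; the predicted and experimental values are hypothetical, and the resampling settings (2,000 resamples, 95% interval, fixed seed) are illustrative choices rather than recommendations from the cited studies.

```python
import math
import random

def rmse(pred, ref):
    """Root-mean-square error between paired predictions and references."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(pred))

def bootstrap_rmse_ci(pred, ref, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the RMSE."""
    rng = random.Random(seed)  # fixed seed so the interval is reproducible
    n = len(pred)
    samples = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample ligands with replacement
        samples.append(rmse([pred[i] for i in idx], [ref[i] for i in idx]))
    samples.sort()
    lower = samples[int((alpha / 2) * n_boot)]
    upper = samples[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper

# Hypothetical predicted vs. experimental binding free energies (kcal/mol).
pred = [-9.1, -8.4, -7.9, -10.2, -6.5, -8.8, -9.6, -7.2]
ref = [-8.5, -8.9, -7.0, -9.8, -7.4, -8.1, -10.3, -7.5]

point = rmse(pred, ref)
low, high = bootstrap_rmse_ci(pred, ref)
print(f"RMSE = {point:.2f} kcal/mol (95% CI {low:.2f} to {high:.2f})")
```

With small congeneric series the interval is often wide, which is a reminder that two methods whose intervals overlap substantially may not be distinguishable on that test set.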

Experimental Protocol for Binding Affinity Determination

For a computational method like PBCNet, validation relies on experimental binding affinity data obtained from established assays. The typical workflow for generating this validation data involves:

- Ligand Preparation: A congeneric series of ligands—sharing a core structure but differing in substituents—is synthesized.

- Binding Assay: The binding affinity of each ligand for the target protein is quantified experimentally.

  - Common Metrics: IC₅₀ (half-maximal inhibitory concentration) or Kd (dissociation constant).

  - Common Techniques: Enzymatic inhibition assays (for IC₅₀), Isothermal Titration Calorimetry (ITC), or Surface Plasmon Resonance (SPR) for more direct thermodynamic measurements [18].

- Data Conversion: The experimental results (e.g., IC₅₀) are often converted to pIC₅₀ values for modeling, where pIC₅₀ = -log₁₀(IC₅₀) and IC₅₀ is expressed in molar units (mol/L).

- Computational Validation: The experimental pIC₅₀ values are used as the ground truth to train and validate computational models. The relative binding affinity (ΔpIC₅₀) between ligand pairs is the key prediction target for models like PBCNet [19].
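
The data conversion and pairwise-difference steps above can be sketched as follows. The ligand names and IC₅₀ values are hypothetical. The conversion to an approximate free-energy difference additionally assumes that IC₅₀ ratios track dissociation-constant ratios at 298.15 K, which holds only under suitable assay conditions.

```python
import math
from itertools import combinations

# RT*ln(10) at 298.15 K in kcal/mol (R = 0.0019872 kcal/(mol*K)).
# Assumption: used only to give a rough free-energy scale for a pIC50 difference.
KCAL_PER_LOG_UNIT = 0.0019872 * 298.15 * math.log(10)

def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); for nanomolar input this equals 9 - log10(IC50_nM)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)

# Hypothetical congeneric series with IC50 values in nM.
ic50_nm = {"lig_1": 10.0, "lig_2": 100.0, "lig_3": 1000.0}
pic50 = {name: pic50_from_nm(value) for name, value in ic50_nm.items()}

# Relative affinities for every ligand pair (first minus second).
delta = {(a, b): pic50[a] - pic50[b] for a, b in combinations(sorted(pic50), 2)}
for (a, b), d in delta.items():
    ddg = -KCAL_PER_LOG_UNIT * d  # more potent ligand has the more negative free energy
    print(f"{a} vs {b}: delta pIC50 = {d:+.2f}, approx. ddG = {ddg:+.2f} kcal/mol")
```

One pIC₅₀ unit corresponds to roughly 1.36 kcal/mol at this temperature, which puts the RMSE values quoted for the prediction methods into the same scale as a tenfold change in potency.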

Negative Data: The Critical Role of Unsuccessful Results

Defining Negative Data and Its Importance

Negative data, which refers to information about unsuccessful experimental outcomes or non-binding molecule-protein pairs, is a significantly underutilized resource in computational chemistry. It is estimated that unsuccessful experimental outcomes are nearly an order of magnitude more common than positive results [20]. This data provides critical insights into the boundaries of chemical space, informing models about which interactions do not occur and which compounds do not bind. Harnessing this data is essential for refining AI/ML models, improving their predictive accuracy, and preventing them from generating false positives [21] [20].

Performance Advantages of Incorporating Negative Data

Integrating negative data into the validation and training pipeline addresses a key flaw in many virtual high-throughput screening (vHTS) workflows. Without high-quality negative data, performance metrics can be artificially inflated, leading to an overestimation of a pipeline's real-world utility [21]. The use of negative data enables a more realistic and rigorous assessment, helping to distinguish tools that truly accelerate discovery from those that do not. IBM research demonstrates that using reinforcement learning with negative data can strengthen model resilience and adaptability in the face of data inconsistencies [20].

Experimental Protocol for Generating Negative Data

Curating high-quality negative data from published literature can be challenging, as negative results are historically under-reported. The following workflow, derived from recent research, outlines a computational strategy for generating high-quality negative data without additional lab experiments [21]:

Diagram 1: Negative Data Generation Workflow

This method involves two primary techniques for generating negative data that closely matches positive data in molecular properties [21]:

- Ligand Randomization: Docking ligands from one published protein structure (e.g., PDB ID: 1G74) into the binding pocket of a different, unrelated protein structure (e.g., PDB ID: 1FCX). Because the ligand's source structure and the receptor structure differ (PDB ID XXXX ≠ PDB ID YYYY), the result is a presumed non-binding pair [21].

- Structural Isomer Generation: Using tools like MAYGEN to generate structural isomers of known binding ligands. These isomers have the same atomic composition but different connectivity, which typically disrupts binding [21].

The resulting sets of non-binding pairs and decoy molecules provide a robust, property-matched negative dataset. Running a vHTS pipeline on this combined positive/negative dataset allows for a definitive assessment of its ability to enrich true binders and reject non-binders at every stage of the workflow [21].
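
The pairing logic behind ligand randomization can be sketched without any docking software. In the illustration below, the complex identifiers, ligand names, and protein family labels are all hypothetical placeholders, and "unrelated" is approximated by a simple family comparison; the cited workflow may use a different relatedness criterion, and the docking step itself is not shown.

```python
def cross_pair(complexes, unrelated):
    """Pair each ligand with every protein judged unrelated to its native partner.

    `complexes` maps a complex ID to its ligand name; `unrelated` is a
    function taking two complex IDs and returning True if the proteins differ enough.
    """
    negatives = []
    for lig_id, ligand in complexes.items():
        for prot_id in complexes:
            if prot_id != lig_id and unrelated(lig_id, prot_id):
                negatives.append((ligand, prot_id))
    return negatives

# Hypothetical complexes and protein family annotations.
complexes = {"cplx_A": "lig_A", "cplx_B": "lig_B", "cplx_C": "lig_C"}
family = {"cplx_A": "family_1", "cplx_B": "family_1", "cplx_C": "family_2"}

def different_family(id_1, id_2):
    """Placeholder relatedness test: proteins are unrelated if their families differ."""
    return family[id_1] != family[id_2]

negatives = cross_pair(complexes, different_family)
for ligand, protein in negatives:
    print(f"presumed non-binder: {ligand} docked into {protein}")
```

Excluding same-family pairs reduces the chance of labeling a genuine cross-reactive binder as a negative, which is the main risk of this kind of generated data.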

Large-Scale Reference Datasets for Machine Learning

The Emergence of Large-Scale Benchmarking Datasets

The development of accurate machine-learned interatomic potentials (MLIPs) depends on vast amounts of high-quality quantum chemical data. These MLIPs aim to achieve Density Functional Theory (DFT)-level accuracy at a fraction of the computational cost, enabling simulations of large, chemically diverse systems that were previously infeasible [4]. The usefulness of an MLIP is directly tied to the amount, quality, and chemical breadth of the data it was trained on [4].

Performance and Scale of OMol25 and Other Datasets

The recent release of the Open Molecules 2025 (OMol25) dataset represents a significant leap in scale and diversity over previous resources. The table below quantifies this advancement.

Table 2: Comparison of Molecular Datasets for Training MLIPs

| Dataset | Size (Calculations) | Computational Cost | Atoms per System | Key Chemical Domains | Level of Theory |

|---|---|---|---|---|---|

| OMol25 [4] [22] | >100 million | 6 billion CPU hours | Up to 350 (roughly 10x larger) | Biomolecules, Electrolytes, Metal Complexes | ωB97M-V/def2-TZVPD |

| Previous SOTA (e.g., SPICE, ANI) [4] [22] | Millions | ~500 million CPU hours | 20-30 on average | Simple organic molecules | Lower (e.g., ωB97X/6-31G(d)) |

This scale and diversity have translated into strong model performance. For example, some researchers have reported that models trained on OMol25, such as eSEN and the Universal Models for Atoms (UMA), achieve "essentially perfect performance on all benchmarks" and provide "much better energies than the DFT level of theory I can afford," marking a significant step forward for the field [22].

Experimental and Computational Protocol for Dataset Creation

Creating a dataset like OMol25 involves a community-driven, multi-stage process that combines existing data with new, targeted calculations [4] [22]:

- Curation: The process starts with existing community datasets (e.g., SPICE, Transition-1x) to cover important, established chemical areas.

- Identification of Gaps: The team analyzes existing data to identify major types of chemistry that are underrepresented.

- Focused Generation: New molecular configurations are simulated to fill these gaps, with OMol25 focusing on:

  - Biomolecules: Structures from the PDB, with diverse protonation states and tautomers sampled using tools like Schrödinger's.

  - Electrolytes: Molecular dynamics simulations of aqueous solutions, ionic liquids, and clusters relevant to battery chemistry.

  - Metal Complexes: Combinatorial generation of structures using the Architector package with GFN2-xTB.

- High-Level Calculation: All molecular configurations are calculated at a consistent, high level of quantum theory (ωB97M-V/def2-TZVPD) to ensure data quality and uniformity. This step required billions of CPU hours (about 6 billion for the dataset as a whole) on Meta's computing infrastructure [4] [22].

The Scientist's Toolkit: Essential Research Reagents

The following table lists key databases, tools, and datasets that are indispensable for researchers conducting validation studies in computational chemistry.

Table 3: Essential Research Reagents for Validation Studies

| Reagent / Resource | Type | Primary Function in Validation | Key Features / Notes |

|---|---|---|---|

| OMol25 [4] [22] | Reference Dataset | Training & benchmarking ML interatomic potentials | 100M+ calculations, DFT-level, biomolecules/electrolytes/metals |

| PubChem [17] [15] | Public Database | Source of chemical structures & bioactivity data | More than 100 million compounds, essential for virtual screening |

| PDBbind [21] | Curated Dataset | Provides protein-ligand complexes for binding affinity studies | Used to generate positive/negative data pairs |

| MAYGEN [21] | Software Tool | Generates structural isomers for negative data creation | Creates non-binding decoys from active ligands |

| Schrödinger FEP+ [19] | Software Suite | Gold-standard for binding affinity prediction; a key benchmarking baseline | High accuracy, high computational cost |

| PBCNet Web Service [19] | AI Model (Web Tool) | Rapid prediction of relative binding affinity for lead optimization | User-friendly interface for RBFE prediction |

| QDB Platform [23] | Database | Validation of chemistry sets for plasma processes | Includes uncertainty quantification for reactions |

| Meta's UMA/eSEN Models [22] | Pre-trained MLIPs | Fast, accurate molecular energy & force calculations | Trained on OMol25; available for inference on platforms like HuggingFace |

A robust validation strategy for computational chemistry methods, especially in drug discovery, requires a multifaceted approach to data. Relying solely on one data type is insufficient. As this guide has detailed, experimental binding affinities provide the essential ground truth for predictive models; negative data are crucial for defining the boundaries of a model's knowledge and preventing over-optimistic performance estimates; and large-scale, diverse datasets are the foundation for developing the next generation of fast and accurate machine learning potentials.

The most reliable and actionable computational insights emerge from the integration of all these data types. This comprehensive approach to validation, which includes rigorous benchmarking against experimental data and the use of uncertainty quantification, is what ultimately builds trust in computational tools and allows them to become standard, relied-upon components in the scientific and industrial toolkit [16] [17] [18].

In the field of computational chemistry and drug discovery, databases containing protein-ligand structures and binding affinities are indispensable for developing and validating predictive models. These resources provide the experimental data necessary to train machine learning scoring functions, benchmark performance, and guide structure-based drug design. The quality, size, and diversity of these databases directly impact the real-world applicability of computational methods. Among the most critical resources are PDBbind, a manually curated database linking Protein Data Bank structures with binding affinity data, and ChEMBL, a large-scale repository of bioactive molecules with drug-like properties [24] [25]. However, as research advances, significant challenges have emerged regarding data quality, including structural artifacts, data leakage between training and test sets, and curation errors that can severely compromise model generalizability [24] [26] [27]. This guide provides a comparative analysis of key databases, highlighting their applications in method validation research while addressing critical data quality considerations that impact computational prediction reliability.

Quantitative Comparison of Major Databases

Table 1: Core Database Features and Applications

| Database | Primary Content | Size (Entries/Measurements) | Key Strengths | Primary Use Cases |

|---|---|---|---|---|

| PDBbind [24] [26] | Protein-ligand complex structures with binding affinities | ~19,500 complexes (v2020) [26] | Links 3D structures with affinity data; Basis for CASF benchmark [24] | Training scoring functions; Binding affinity prediction |

| ChEMBL [25] [28] | Bioactive molecules, drug-like compounds, target annotations | 20.7M+ bioactivities; 2.4M+ compounds (v34) [29] | Manually curated; Extensive target/disease annotations; 35+ years of data [25] [28] | Target identification/validation; Ligand-based screening; QSAR modeling |

| BindingDB [29] [26] | Binding affinity data for protein-ligand pairs | 2.9M+ binding data points; 9,300+ targets [29] | Focus on binding affinities from literature/patents [26] | Binding affinity prediction; Virtual screening |

| BindingNet v2 [29] | Modeled protein-ligand binding complexes | 689,796 complexes; 1,794 targets [29] | Expanded structural coverage via template-based modeling [29] | Data augmentation for pose prediction; Training on novel ligands |

Table 2: Specialized Structural and Quality-Focused Datasets

| Database/Dataset | Primary Purpose | Key Differentiators | Impact on Model Performance |

|---|---|---|---|

| PDBbind CleanSplit [24] | Minimize train-test data leakage in PDBbind | Structure-based filtering removes complexes similar to CASF test set [24] | Reduces overestimation of generalization; Performance of top models dropped when retrained [24] |

| HiQBind [26] | Provide high-quality, artifact-free structures | Corrects common PDB structural errors; Open-source workflow [26] | Aims to improve accuracy/reliability of scoring functions |

| OMol25 [22] | Quantum chemical calculations for NNPs | 100M+ calculations at ωB97M-V/def2-TZVPD level [22] | Enables highly accurate neural network potentials for molecular modeling |

Database Selection Workflow

The relationships between different database types and their primary applications in computational research can be visualized through the following workflow:

Database Selection Workflow for Method Validation

Critical Data Quality Challenges and Solutions

Data Leakage and Bias in Benchmarking

A significant challenge in method validation is train-test data leakage, which severely inflates performance metrics and leads to overestimation of model generalization capabilities. Research has revealed that nearly half (49%) of complexes in the commonly used CASF benchmark share exceptionally high similarity with structures in the PDBbind training set, creating an unrealistic testing scenario [24]. This leakage occurs when models encounter test complexes that share similar ligands, proteins, and binding conformations with training data, enabling prediction through memorization rather than genuine learning of protein-ligand interactions [24]. The PDBbind CleanSplit algorithm addresses this by implementing structure-based filtering that eliminates training complexes closely resembling any CASF test complex, including those with ligand Tanimoto similarity >0.9 [24]. When state-of-the-art models like GenScore and Pafnucy were retrained on CleanSplit, their benchmark performance dropped substantially, confirming that previous high scores were largely driven by data leakage rather than true generalization capability [24].
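The ligand-similarity part of this filtering can be sketched in a few lines. This is a minimal illustration, not the CleanSplit implementation: it covers only the ligand Tanimoto criterion (the published approach also considers protein and binding-conformation similarity), fingerprints are represented as plain sets of on-bit indices, and the function names and toy entries are hypothetical.

```python
from typing import Dict, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def remove_leaky_training_entries(
    train: Dict[str, Set[int]],
    test: Dict[str, Set[int]],
    threshold: float = 0.9,
) -> Dict[str, Set[int]]:
    """Keep training entries whose similarity to every test ligand is <= threshold."""
    return {
        name: fp
        for name, fp in train.items()
        if all(tanimoto(fp, test_fp) <= threshold for test_fp in test.values())
    }


# Toy fingerprints (hypothetical on-bit sets, not real complexes)
train_set = {
    "train_A": set(range(0, 20)),     # shares 19 of 20 bits with test_X
    "train_B": set(range(100, 120)),  # shares no bits with test_X
}
test_set = {"test_X": set(range(0, 19))}

filtered = remove_leaky_training_entries(train_set, test_set)
print(sorted(filtered))
```

In practice the fingerprints would come from a cheminformatics toolkit, and the threshold would be tuned together with the protein and pose similarity criteria.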

Structural Artifacts and Curation Errors

Beyond data leakage, structural quality issues present another critical challenge. The PDBbind database suffers from various structural artifacts including incorrect bond orders, steric clashes, and missing atoms that compromise scoring function accuracy [26]. A manual analysis of protein-protein PDBbind records revealed a ~19% curation error rate where reported dissociation constants (KD) were not supported by primary publications [27]. These errors included incorrect units, approximate values instead of precise measurements, and values belonging to different protein heterodimers [27]. Correcting these curation errors improved the Pearson correlation between measured and predicted log10(KD) values by approximately 8 percentage points in random forest models, highlighting the significant impact of data quality on predictive performance [27]. Solutions like the HiQBind workflow address these issues through automated correction of structural artifacts, filtering of covalent binders, and removal of structures with severe steric clashes [26].
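To see why a unit error matters so much, it helps to put K_D values on the log10 scale used for model training. The sketch below is illustrative only: the unit labels and the example record are assumptions, not entries from PDBbind.

```python
import math

# Conversion factors to molar; the unit labels are assumptions about how records are annotated
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def log10_kd(value: float, unit: str) -> float:
    """Return log10 of a dissociation constant after converting it to molar units."""
    if value <= 0:
        raise ValueError("K_D must be positive")
    return math.log10(value * UNIT_TO_MOLAR[unit])


# Hypothetical record annotated as 5 uM where the primary publication reports 5 nM
recorded = log10_kd(5.0, "uM")
published = log10_kd(5.0, "nM")
print(round(published - recorded, 6))  # shift in the training label, in log units
```

A single micromolar/nanomolar slip moves the label by three log units, which is large relative to the typical spread of affinity data and can noticeably distort a fitted correlation.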

Experimental Protocols for Database Validation

Protocol 1: Assessing Data Leakage with CleanSplit

Objective: Evaluate and mitigate train-test data leakage between PDBbind and CASF benchmarks to enable genuine assessment of model generalizability [24].

Methodology:

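- Similarity Calculation: Compute multimodal similarity between all CASF and PDBbind complexes, covering protein similarity, ligand similarity (Tanimoto coefficient), and binding conformation similarity [24]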

- Leakage Identification: Identify test-training pairs with Tanimoto >0.9 and structural similarity exceeding thresholds [24]

- Filtered Dataset Creation: Iteratively remove training complexes that closely resemble any CASF test complex [24]

- Redundancy Reduction: Apply adapted filtering thresholds to eliminate similarity clusters within training data [24]

- Model Validation: Retrain existing models on filtered dataset and evaluate performance on CASF benchmark [24]

Validation Metrics:

- Percentage of CASF complexes with similar training counterparts

- Performance change (Pearson R, RMSE) when models are retrained on CleanSplit

- Generalization capability on strictly independent test sets

Protocol 2: Assessing Impact of Structural Quality

Objective: Quantify how structural data quality impacts scoring function accuracy and reliability [26] [27].

Methodology:

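- Structure Preparation: Process PDB structures through the HiQBind-WF workflow, which corrects structural artifacts, filters out covalent binders, and removes structures with severe steric clashes [26]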

- Curation Verification: Manually verify binding affinity annotations against primary publications [27]

- Error Categorization: Classify discrepancies into categories (Units, Approximate, Different Heterodimer, etc.) [27]

- Model Training: Train scoring functions on original vs. corrected datasets [27]

- Performance Comparison: Evaluate models using clustering-based cross-validation to prevent artificial inflation [27]

Validation Metrics:

- Curation error rate (percentage of records with unsupported K_D values) [27]

- Improvement in Pearson correlation after error correction [27]

- Reduction in prediction variance across different protein families
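The clustering-based cross-validation mentioned in the methodology can be sketched as follows. This is one simple way to do it, assuming each record already carries a cluster label (for example a protein family); the greedy balancing rule and all names are illustrative choices rather than the procedure used in [27].

```python
from collections import defaultdict
from typing import Dict, List


def cluster_folds(cluster_of: Dict[str, str], n_folds: int) -> List[List[str]]:
    """Assign whole clusters to folds so that no cluster is split across folds."""
    members = defaultdict(list)
    for record, cluster in cluster_of.items():
        members[cluster].append(record)

    folds: List[List[str]] = [[] for _ in range(n_folds)]
    # Greedy balancing: place the largest clusters first into the currently smallest fold
    for cluster in sorted(members, key=lambda c: (-len(members[c]), c)):
        smallest = min(range(n_folds), key=lambda i: len(folds[i]))
        folds[smallest].extend(members[cluster])
    return folds


# Hypothetical records labelled with a cluster identifier
cluster_of = {
    "rec1": "kinase", "rec2": "kinase", "rec3": "kinase",
    "rec4": "protease", "rec5": "protease",
    "rec6": "nuclear_receptor",
}
folds = cluster_folds(cluster_of, n_folds=2)
for i, fold in enumerate(folds):
    print(i, sorted(fold))
```

Because similar records never straddle a fold boundary, the cross-validated score reflects performance on unseen clusters rather than on near-duplicates of the training data.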

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Databases for Method Validation

| Resource | Type | Primary Function | Application in Validation |

|---|---|---|---|

| CASF Benchmark [24] | Evaluation framework | Standardized assessment of scoring functions | Testing scoring, ranking, docking, and screening power |

| HiQBind-WF [26] | Data curation workflow | Corrects structural artifacts in PDB structures | Ensuring high-quality input data for model training |

| CleanSplit Algorithm [24] | Data splitting method | Structure-based clustering to prevent data leakage | Creating truly independent training/test sets |

| RF-score Features [27] | Molecular descriptor set | Structure-based features for machine learning | Training binding affinity prediction models |

| Uni-Mol [29] | Deep learning model | Protein-ligand binding pose generation | Evaluating generalization on novel ligands (Tc < 0.3) |

| ChEMBL Web Interface [25] | Data query platform | Access to bioactivity data and target annotations | Ligand-based screening and target prioritization |

The evolving landscape of computational chemistry databases reveals a critical transition from simply expanding dataset sizes to prioritizing data quality, diversity, and proper benchmarking methodologies. While established resources like PDBbind and ChEMBL provide invaluable foundations for method development, recent research has exposed significant challenges including data leakage, structural artifacts, and curation errors that compromise model validation [24] [26] [27]. Solutions such as PDBbind CleanSplit, HiQBind-WF, and BindingNet v2 represent important steps toward more rigorous validation standards by addressing these fundamental data quality issues [24] [29] [26]. For researchers in computational chemistry and drug development, successful method validation now requires careful database selection combined with critical assessment of data quality, appropriate splitting strategies to prevent leakage, and thorough benchmarking across multiple independent test sets. The integration of high-quality curated data with robust validation protocols will be essential for developing predictive models that genuinely generalize to novel targets and compound classes, ultimately accelerating computational drug discovery.

Applying Databases for Virtual Screening and AI Model Training

Generating High-Quality Negative Data for Robust Benchmarking

In computational chemistry, the ability of a model to identify not just active compounds, but also inactive ones, is a critical measure of its real-world utility. Generating high-quality negative data—reliable information on compounds that do not exhibit activity against a target—is therefore foundational for creating robust benchmarks in drug discovery research. Without carefully curated negative data, models can develop false confidence, leading to costly failures in experimental validation.

This guide objectively compares prevalent approaches and data sources used for this purpose, framed within the broader thesis of building reliable computational chemistry databases for method validation. We present an analysis of experimental protocols and quantitative data to help researchers select the most appropriate strategies for their specific validation contexts, focusing on practical applicability for scientists and drug development professionals.

The Critical Role of Negative Data in Model Validation

The Problem of Biased Benchmarking

Many existing benchmark datasets follow distributions that do not fully align with real-world scenarios, primarily because reliable negative data are difficult to curate [30]. Data from public resources like ChEMBL are often sparse, unbalanced, and sourced from multiple experimental protocols, which can introduce unintended biases [30]. For instance, the decoy-based approach used in datasets like DUD-E is useful for molecular docking benchmarks but yields lower-confidence labels for general activity prediction, because the decoys' activities are not experimentally measured [30]. This limitation can skew model evaluation and lead to overoptimistic performance estimates.

Real-World Data Characteristics

Analyses of real-world compound activity data reveal two distinct patterns corresponding to different drug discovery stages, each requiring tailored negative data strategies [30]:

- Virtual Screening (VS) Assays: Exhibit diffused, widespread compound distributions with lower pairwise similarities, typical of diverse screening libraries.

- Lead Optimization (LO) Assays: Show aggregated, concentrated distributions with high compound similarities, characteristic of congeneric series derived from hit or lead compounds.

This distinction is crucial when generating negative data, as the nature of inactive compounds differs significantly between these contexts, impacting model generalization.
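One way to make the VS/LO distinction operational is to look at the mean pairwise similarity of the compounds in an assay. The sketch below is a simplified illustration: fingerprints are plain sets of on-bit indices, and the 0.5 cutoff is a hypothetical value chosen for the example, not a threshold taken from [30].

```python
from itertools import combinations
from typing import List, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def mean_pairwise_similarity(fps: List[Set[int]]) -> float:
    """Average Tanimoto similarity over all unordered compound pairs in an assay."""
    pairs = list(combinations(fps, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


def label_assay(fps: List[Set[int]], cutoff: float = 0.5) -> str:
    """Label an assay LO when its compounds are tightly clustered, otherwise VS (cutoff is an assumption)."""
    return "LO" if mean_pairwise_similarity(fps) >= cutoff else "VS"


# Toy assays: a congeneric series with heavily overlapping bits, and a diverse library
congeneric = [set(range(0, 10)), set(range(1, 11)), set(range(2, 12))]
diverse = [set(range(0, 10)), set(range(20, 30)), set(range(40, 50))]

print(label_assay(congeneric), label_assay(diverse))
```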

Comparative Analysis of Data Generation Methodologies

The table below summarizes four principal methodologies for generating negative data, along with their comparative advantages and limitations.

Table 1: Comparison of Negative Data Generation Methodologies

| Methodology | Key Principle | Best-Suited Applications | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Decoy-Based Sampling [30] | Generation of physically similar but chemically distinct inactive compounds | Molecular docking validation; Structure-based virtual screening | Enhances benchmark dataset size; Controls for certain molecular properties | May introduce bias; Lower confidence as activities are not actually measured |

| Public Database Mining [15] | Curating confirmed inactive compounds from public databases (PubChem, ChEMBL, BindingDB) | Virtual screening assay benchmarks; Training machine learning classifiers | Utilizes experimentally validated negative data; High biological relevance | Data sparsity; Potential reporting biases across sources |

| Chemical Space Filtering [31] | Applying physicochemical and drug-likeness filters to exclude non-relevant compounds | Early-stage hit identification; Library enrichment tasks | Reduces search space efficiently; Incorporates medicinal chemistry knowledge | May exclude potentially active scaffolds; Filter thresholds can be arbitrary |

| Experimental Benchmark Transfer [30] | Leveraging assay type distinctions (VS/LO) to inform data splitting and negative sample selection | Lead optimization benchmarks; Few-shot learning scenarios | Mimics real-world data distribution patterns; Supports practical evaluation schemes | Requires careful assay characterization; More complex implementation |

Quantitative Performance Across Task Types

Recent benchmarking initiatives like CARA (Compound Activity benchmark for Real-world Applications) have enabled standardized evaluation of how different negative data strategies perform across various prediction tasks [30]. The findings reveal that methodology effectiveness varies significantly depending on the application context.

Table 2: Performance Comparison of Training Strategies with Different Negative Data Approaches

| Training Strategy | Virtual Screening Task Performance | Lead Optimization Task Performance | Recommended Negative Data Source |

|---|---|---|---|

| Meta-Learning [30] | Highly effective | Moderately effective | Decoy-based sampling; Public database mining |

| Multi-Task Learning [30] | Highly effective | Less effective | Public database mining |

| Single-Task QSAR Modeling [30] | Moderately effective | Highly effective | Chemical space filtering; Experimental benchmark transfer |

| Few-Shot Learning [30] | Performance varies | Performance varies | Experimental benchmark transfer |

Experimental Protocols for Robust Negative Data Generation

Protocol 1: Decoy-Based Generation for Virtual Screening

Application Context: This protocol is adapted from established benchmarks like DUD-E and is primarily valuable for evaluating structure-based virtual screening methods where true negative data is scarce [30].

Step-by-Step Methodology:

- Active Compound Selection: Curate a set of confirmed active compounds for the target of interest from reliable databases like ChEMBL or BindingDB [15].

- Decoy Generation Parameters:

- Match physical properties (e.g., molecular weight, logP) between actives and decoys while ensuring chemical dissimilarity

- Maintain similar numbers of rotatable bonds and hydrogen bond donors/acceptors

- Chemical Diversity Enforcement: Ensure decoys are topologically dissimilar to the active compounds to reduce the chance that a decoy is itself active

- Benchmark Construction: Combine confirmed actives with generated decoys in a predefined ratio (typically 1:10 to 1:100) for evaluation

Validation Approach: While decoys are presumed inactive, cross-reference with experimental databases where possible to identify false negatives [30].
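The property-matching and dissimilarity steps of this protocol can be sketched as a simple filter. This is a minimal sketch, not the DUD-E procedure: the tolerances, the similarity cap, the `Molecule` fields, and the candidate data are all hypothetical, and only molecular weight and logP are matched here.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Molecule:
    name: str
    mol_weight: float
    logp: float
    fingerprint: FrozenSet[int]  # on-bit indices of a topological fingerprint


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def select_decoys(
    active: Molecule,
    candidates: List[Molecule],
    mw_tol: float = 25.0,          # assumed tolerance in Da
    logp_tol: float = 0.5,         # assumed tolerance in logP units
    max_similarity: float = 0.35,  # assumed cap on topological similarity
) -> List[Molecule]:
    """Keep candidates that match the active's properties but differ in topology."""
    return [
        c for c in candidates
        if abs(c.mol_weight - active.mol_weight) <= mw_tol
        and abs(c.logp - active.logp) <= logp_tol
        and tanimoto(c.fingerprint, active.fingerprint) <= max_similarity
    ]


active = Molecule("active_1", 350.0, 3.0, frozenset(range(0, 20)))
candidates = [
    Molecule("cand_1", 360.0, 3.2, frozenset(range(100, 120))),  # matched and dissimilar
    Molecule("cand_2", 355.0, 2.9, frozenset(range(0, 18))),     # too similar to the active
    Molecule("cand_3", 500.0, 3.1, frozenset(range(200, 220))),  # molecular weight mismatch
]
decoys = select_decoys(active, candidates)
print([d.name for d in decoys])
```

A fuller version would also match rotatable bond and hydrogen bond donor/acceptor counts, then sample decoys to reach the chosen active-to-decoy ratio.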

Protocol 2: Experimental Data Curation for Lead Optimization

Application Context: This approach is particularly suited for lead optimization benchmarks where series of congeneric compounds with measured activities are available [30].

Step-by-Step Methodology:

- Assay Type Identification: Classify source assays as Lead Optimization (LO) type based on compound similarity patterns [30].

- Activity Threshold Definition: Establish context-appropriate activity thresholds (e.g., IC₅₀ < 1 μM for actives) based on experimental conditions and target biology.

- Negative Data Selection:

- Select compounds from the same assay with measured inactivity against the target

- Prioritize compounds with structural similarity to actives but confirmed inactivity to address activity cliffs

- Data Splitting Strategy: Implement assay-aware splitting to prevent information leakage:

- Keep each assay entirely within either the training set or the test set, so that no assay contributes to both, to mimic real-world generalization challenges

- For few-shot scenarios, ensure adequate representation of both active and inactive compounds across splits

Validation Approach: Use orthogonal assay data or literature validation to confirm true inactivity of selected negative examples.
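The thresholding and assay-aware splitting steps above can be sketched as follows. The record layout, assay identifiers, and IC₅₀ values are hypothetical, and the 1 μM cutoff simply reuses the example threshold from the protocol.

```python
from typing import List, Set, Tuple

Record = Tuple[str, str, float]  # (assay_id, compound_id, IC50 in micromolar)


def label_active(ic50_um: float, threshold_um: float = 1.0) -> bool:
    """A compound counts as active when its IC50 is below the threshold."""
    return ic50_um < threshold_um


def assay_aware_split(
    records: List[Record], test_assays: Set[str]
) -> Tuple[List[Record], List[Record]]:
    """Send every record of a held-out assay to the test set; all others go to training."""
    train = [r for r in records if r[0] not in test_assays]
    test = [r for r in records if r[0] in test_assays]
    return train, test


# Hypothetical measurements from three assays
records = [
    ("assay_1", "cpd_a", 0.2), ("assay_1", "cpd_b", 15.0),
    ("assay_2", "cpd_c", 0.05), ("assay_2", "cpd_d", 30.0),
    ("assay_3", "cpd_e", 0.8), ("assay_3", "cpd_f", 50.0),
]
train, test = assay_aware_split(records, test_assays={"assay_3"})
test_labels = [label_active(ic50) for _, _, ic50 in test]
print(len(train), len(test), test_labels)
```

Holding out whole assays means the test compounds come from an experimental context the model has never seen, which is closer to how a model is used on a new project.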

Visualization of Workflows and Logical Relationships

Negative Data Generation and Validation Workflow

The following diagram illustrates the comprehensive workflow for generating and validating high-quality negative data, integrating multiple strategies to maximize robustness:

Diagram 1: Negative Data Generation Workflow

Benchmark Validation Logic

The following diagram outlines the decision process for validating benchmarks using generated negative data:

Diagram 2: Benchmark Validation Logic

Computational Databases and Platforms

Table 3: Essential Research Resources for Negative Data Curation

| Resource Name | Type | Primary Function in Negative Data Generation | Access Information |

|---|---|---|---|

| ChEMBL [15] [30] | Public Database | Source of experimentally confirmed inactive compounds and activity data | https://www.ebi.ac.uk/chembl/ |

| PubChem [31] [15] | Public Database | Provides bioassay data including confirmed inactives for diverse targets | https://pubchem.ncbi.nlm.nih.gov/ |

| BindingDB [15] [30] | Public Database | Curated binding affinity data with both active and inactive measurements | https://www.bindingdb.org/ |

| RDKit [31] | Cheminformatics Toolkit | Calculates molecular descriptors and fingerprints for chemical space analysis | Open-source: http://www.rdkit.org/ |

| CARA Benchmark [30] | Specialized Benchmark | Reference implementation for assay-aware data splitting and evaluation | Described in Communications Chemistry, 2024 |

| ZINC [31] [15] | Compound Database | Source of purchasable compounds for virtual screening and decoy generation | http://zinc.docking.org/ |

The generation of high-quality negative data remains a complex but essential endeavor for creating robust benchmarks in computational chemistry. Through comparative analysis, we've demonstrated that the optimal strategy depends significantly on the specific application context—whether virtual screening or lead optimization—and the available experimental data. The methodologies and protocols presented here provide researchers with a structured approach to address this critical challenge, ultimately supporting the development of more reliable predictive models that translate more successfully to real-world drug discovery applications.

Molecular docking is a cornerstone computational technique in modern drug discovery, enabling researchers to predict how a small molecule (ligand) interacts with a target protein. The reliability of these predictions is paramount, which is why validation against experimental structures is a critical step. This process typically involves two main scenarios: self-docking, where a ligand is docked back into the protein structure from which it was extracted, and cross-docking, where a ligand is docked into a protein structure that was crystallized with a different ligand [32] [33].

Cross-docking presents a more rigorous and practically relevant validation test, as it assesses a method's ability to handle real-world challenges like protein flexibility and induced fit, where the binding site conformation may differ from the one used for docking [32]. This guide provides an objective comparison of current docking methodologies, focusing on their performance in these validation paradigms, and details the experimental protocols used for benchmarking.

Understanding Docking Validation Types

Benchmarking typically progresses from the least to the most challenging docking task. Self-docking (or re-docking) evaluates a method's pose reproduction capability under ideal conditions, serving as a sanity check [32] [33]. Cross-docking is a more practical test, simulating real-world scenarios where a protein's conformation may vary, making it a gold standard for assessing generalizability [34]. Apo-docking and blind docking represent even more challenging real-world conditions [32].

Performance Comparison of Docking Methods

Recent comprehensive benchmarks, particularly the PoseX study, have evaluated a wide array of docking methods across self-docking and cross-docking tasks [34]. The table below summarizes the relative performance of key method categories in qualitative terms.

Table 1: Performance Comparison of Docking Method Categories

| Method Category | Representative Tools | Self-Docking Success Rate (qualitative) | Cross-Docking Success Rate (qualitative) | Key Characteristics |

|---|---|---|---|---|

| Traditional Physics-Based | Glide, AutoDock Vina, MOE, Discovery Studio, GNINA [34] | Lower than AI | Lower than AI | Relies on force fields & sampling; better generalizability on unseen targets [34] |

| AI Docking Methods | DiffDock, EquiBind, TankBind, DeepDock [34] | High | High | Fast pose prediction from 3D protein structure & ligand SMILES [34] |

| AI Co-Folding Methods | AlphaFold3, RoseTTAFold-All-Atom, Chai-1, Boltz-1 [34] | Variable | Variable | Predicts joint structure of protein-ligand complex; often has ligand chirality issues [34] |

A key insight from recent benchmarks is that cutting-edge AI docking methods now dominate in overall docking accuracy, outperforming traditional physics-based approaches in terms of RMSD on standard tests [34]. However, traditional physics-based methods can exhibit stronger generalizability when applied to protein targets not seen during training, due to their physical nature [34].

The performance of AI methods can be significantly enhanced by a post-processing relaxation step (energy minimization), which refines the binding pose to improve physicochemical consistency and structural plausibility [34]. In contrast, AI co-folding methods, while powerful, commonly face issues with incorrect ligand chirality, which cannot be fixed through simple relaxation [34].

Experimental Protocols for Docking Validation

To ensure fair and meaningful comparisons, benchmarks must follow rigorous and standardized experimental protocols. The following workflow outlines the key steps for a comprehensive docking evaluation, based on established practices.

Dataset Curation and Preparation

The foundation of a robust benchmark is a carefully curated dataset. The PoseX benchmark, for example, uses a dataset containing 718 entries for self-docking and 1,312 entries for cross-docking, derived from experimentally determined structures in the Protein Data Bank (PDB) [34]. It is crucial to separate these sets to evaluate method performance under different difficulty levels.

Structure preparation involves several standardized steps:

- Protein Preparation: Adding hydrogen atoms, assigning partial charges, and handling missing residues or sidechains. For cross-docking, the ligand co-crystallized with the receptor structure is removed from the binding site before a different ligand is docked.

- Ligand Preparation: Generating likely tautomers and protonation states at physiological pH, and creating 3D conformers if needed [35] [33].

Docking Execution and Pose Evaluation

Each docking method is run according to its standard protocol. A critical step, particularly for AI-based methods, is post-processing relaxation. This involves a brief energy minimization of the predicted protein-ligand complex using a molecular mechanics force field, which alleviates steric clashes and improves stereochemical quality without significantly altering the binding pose [34].

The primary metric for pose evaluation is the Root-Mean-Square Deviation (RMSD) between the heavy atoms of the predicted ligand pose and the experimentally determined reference structure. A prediction is typically considered successful if the RMSD is below 2.0 Å, indicating high spatial accuracy [34].
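The RMSD criterion can be written out directly. The sketch below assumes the predicted and reference ligands list the same heavy atoms in the same order and already share the receptor's coordinate frame; it ignores ligand symmetry, which dedicated tools correct for. The coordinates and RMSD values are toy numbers.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def heavy_atom_rmsd(pred: Sequence[Coord], ref: Sequence[Coord]) -> float:
    """RMSD between matched heavy-atom coordinates, in the units of the input (Angstrom)."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("poses must contain the same, non-zero number of atoms")
    squared = sum((p[i] - r[i]) ** 2 for p, r in zip(pred, ref) for i in range(3))
    return math.sqrt(squared / len(pred))


def success_rate(rmsds: List[float], cutoff: float = 2.0) -> float:
    """Fraction of predictions with RMSD below the cutoff."""
    return sum(r < cutoff for r in rmsds) / len(rmsds)


# Toy three-atom ligand; the predicted pose is shifted by 1 Angstrom along y
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
predicted = [(0.0, 1.0, 0.0), (1.5, 1.0, 0.0), (3.0, 1.0, 0.0)]

rmsd = heavy_atom_rmsd(predicted, reference)
rate = success_rate([rmsd, 2.5, 0.8, 3.1])
print(rmsd, rate)
```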

Essential Research Reagents and Tools

This section details key software, datasets, and computational resources required for conducting docking validation studies.

Table 2: Research Reagent Solutions for Docking Validation

| Resource Type | Name | Key Function / Application |

|---|---|---|

| Commercial Docking Software | Schrödinger Glide, Molecular Operating Environment (MOE), Discovery Studio [34] | High-performance docking with sophisticated scoring functions and sampling algorithms. |

| Open-Source Docking Software | AutoDock Vina, GNINA, DOCK3.7 [35] [34] | Accessible docking tools; GNINA incorporates deep learning for scoring. |

| AI Docking Methods | DiffDock, EquiBind, TankBind [32] [34] | Deep learning-based pose prediction offering high speed and accuracy. |

| AI Co-Folding Methods | AlphaFold3, RoseTTAFold-All-Atom [34] | Predict the joint 3D structure of protein-ligand complexes. |

| Benchmarking Platforms | PoseX Benchmark [34] | Standardized dataset and leaderboard for fair comparison of docking methods. |

| Validation Datasets | PDBbind [32] | Curated database of protein-ligand complexes with binding affinity data for training and testing. |

| Force Field Software | Included in MOE, Discovery Studio, or OpenMM | Provides energy minimization for post-docking relaxation to refine poses. |

The field of molecular docking is undergoing a rapid transformation, driven by the advent of AI. Current benchmarks clearly demonstrate that AI-based docking methods have achieved superior accuracy in standard self-docking and cross-docking tests compared to traditional physics-based approaches [34]. However, this does not render traditional methods obsolete; their strong physical foundations continue to provide value, especially in terms of generalizability.

For researchers, the choice of method depends on the specific application. For high-throughput virtual screening where speed is critical, modern AI docking tools are increasingly advantageous. When docking to novel targets or those with high flexibility, a hybrid approach—using AI for initial pose prediction followed by physics-based refinement—may offer the best of both worlds. As the PoseX benchmark shows, post-docking relaxation is a simple yet highly effective step for improving the physicochemical realism of AI-generated poses [34]. Moving forward, the community's focus will likely remain on improving how these models handle the dynamic nature of proteins, a key to unlocking more reliable and predictive docking in real-world drug discovery.

In the landscape of computer-aided drug design, ligand-based approaches are indispensable when the three-dimensional structure of the biological target is unknown or uncertain. Pharmacophore modeling and Quantitative Structure-Activity Relationship (QSAR) analysis represent two foundational methodologies that leverage the known biological activities of small molecules to guide the discovery and optimization of new therapeutics [36] [37]. These techniques are particularly vital for validating new computational methods and databases, as they provide robust, data-driven frameworks for predicting compound activity based on chemical structure.

Pharmacophore models abstract the essential steric and electronic features necessary for a molecule to interact with its target, serving as a template for virtual screening [38] [39]. In parallel, QSAR modeling establishes a quantitative mathematical relationship between molecular descriptors and biological activity, enabling the predictive assessment of novel compounds [36] [37]. The integration of artificial intelligence and machine learning is now revolutionizing both fields, enhancing their predictive power, speed, and applicability across diverse chemical spaces [40] [37]. This guide provides a comparative analysis of these methodologies, detailing their experimental protocols, performance, and practical applications in modern drug discovery.

Comparative Analysis: Pharmacophore Modeling vs. QSAR

The table below summarizes the core characteristics, performance metrics, and optimal use cases for pharmacophore modeling and QSAR.

Table 1: Comparative overview of pharmacophore modeling and QSAR approaches.

| Aspect | Pharmacophore Modeling | QSAR Modeling |

|---|---|---|

| Core Principle | Abstraction of essential steric/electronic features for molecular recognition [38] | Mathematical relationship between molecular descriptors and biological activity [36] |

| Primary Application | Virtual screening, de novo molecular generation, and scaffold hopping [38] [41] | Activity prediction, lead optimization, and toxicity/environmental impact assessment [36] [37] |

| Key Strengths | Handles diverse chemotypes; interpretable; useful when target structure is unknown [41] | High predictive accuracy for congeneric series; quantitative activity estimates [36] |

| Common Software/Tools | ZINCPharmer, PharmaGist, Catalyst, Phase [41] [39] | PaDEL, BuildQSAR, DRAGON, QSARINS, ProQSAR [42] [36] [41] |

| Representative Performance | One synthesized compound showed 33% MAO-A inhibition; docking score prediction 1000x faster than classical docking [40] | R² of 0.7869 (training) and 0.7413 (test) for FGFR-1 inhibitors; strong correlation with experimental pIC₅₀ [43] |

| Data Requirements | A few known active molecules for model generation [41] | A larger set of compounds (typically >20) with consistent activity data [36] |

Experimental Protocols and Workflows

Pharmacophore Modeling and Virtual Screening Protocol

Ligand-based pharmacophore modeling involves deriving a set of essential interaction features from structurally diverse molecules known to be active against a common target. A typical workflow for identifying novel Dengue virus NS3 protease inhibitors is detailed below [41]:

- Data Set Curation: Select a set of known active compounds (e.g., 80 compounds with reported IC₅₀ values from a database like DenvInD). Convert IC₅₀ values to pIC₅₀ (pIC₅₀ = -log₁₀ IC₅₀, with IC₅₀ expressed in molar units) to place the activities on a common logarithmic scale [41].

- Pharmacophore Model Generation:

- Select the top 3-5 most active compounds.

- Minimize their molecular energies using software like Avogadro with the MMFF94 force field.

- Input the energy-minimized structures into a modeling tool like PharmaGist to generate multiple pharmacophore hypotheses.

- Select the best model based on its ability to discriminate between known active and inactive compounds.

- Database Screening:

- Use the selected pharmacophore model as a query in a screening tool like ZINCPharmer.

- Screen large chemical databases (e.g., ZINC) to retrieve compounds that match the pharmacophore features.

- Activity Prediction and Validation:

- Develop a QSAR model (see the QSAR Model Development and Validation Protocol below) to predict the activity of the hit compounds.

- Experimentally validate the top-ranking hits through in vitro assays.
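The pIC₅₀ conversion from the data set curation step is a one-line calculation, but the input unit matters. The helper below assumes IC₅₀ values are supplied in nanomolar; the function name is hypothetical.

```python
import math


def pic50_from_nm(ic50_nm: float) -> float:
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in molar)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    # -log10(x * 1e-9) rewritten as 9 - log10(x)
    return 9.0 - math.log10(ic50_nm)


print(pic50_from_nm(1000.0))  # 1 micromolar
print(pic50_from_nm(10.0))    # 10 nanomolar
```

Each tenfold gain in potency raises pIC₅₀ by one unit, which is why models are usually fitted to pIC₅₀ rather than to raw IC₅₀ values.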

QSAR Model Development and Validation Protocol

Developing a robust QSAR model is a multi-stage process that requires rigorous validation to ensure predictive reliability. The following protocol, exemplified by a study on FGFR-1 inhibitors, outlines the key steps [36] [43]:

- Data Collection and Curation:

- Compile a dataset of compounds with consistent experimental activity data (e.g., IC₅₀, Ki) from sources like ChEMBL.

- Curate the data by removing duplicates and compounds with ambiguous activity values.

- Chemical Structure and Descriptor Generation:

- Draw or retrieve 2D/3D structures of the compounds.

- Calculate molecular descriptors (e.g., topological, geometrical, electronic) using software such as PaDEL or alvaDesc.

- Data Splitting:

- Model Building and Training:

- Model Validation:

  - Internal Validation: Assess the model on the training data using cross-validation (e.g., 10-fold cross-validation) and report Q².

  - External Validation: Use the held-out test set to evaluate predictive performance, reporting R² and root mean square error (RMSE).

  - Applicability Domain: Define the chemical space where the model can make reliable predictions, for instance, using the leverage method [36].
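The validation quantities named in the protocol can be illustrated with a deliberately small sketch. It rests on several assumptions that are not in the cited studies: the model has a single descriptor, Q² is computed by leave-one-out rather than 10-fold cross-validation, external R² is taken relative to the test-set mean, and the leverage warning threshold uses the commonly quoted convention h* = 3(p + 1)/n. All data values are invented for illustration.

```python
import math


def fit_line(x, y):
    """Ordinary least squares for y = a + b*x (one descriptor)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - b * mx, b


def r2_rmse(y_true, y_pred):
    """Coefficient of determination and RMSE for a set of predictions."""
    n = len(y_true)
    mean = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot, math.sqrt(ss_res / n)


def q2_loo(x, y):
    """Leave-one-out Q2: refit without compound i, then predict compound i."""
    preds = []
    for i in range(len(x)):
        a, b = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        preds.append(a + b * x[i])
    return r2_rmse(y, preds)[0]


def leverages(x_train, x_query):
    """Leverage h = 1/n + (x - mean)^2 / Sxx for a one-descriptor model."""
    n = len(x_train)
    mx = sum(x_train) / n
    sxx = sum((xi - mx) ** 2 for xi in x_train)
    return [1.0 / n + (xq - mx) ** 2 / sxx for xq in x_query]


# Invented descriptor values and pIC50s, for illustration only.
x_train = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y_train = [5.1, 5.9, 7.2, 7.8, 9.1, 9.9]
x_test, y_test = [2.5, 4.5], [6.4, 8.6]

a, b = fit_line(x_train, y_train)
r2_train, _ = r2_rmse(y_train, [a + b * x for x in x_train])
q2 = q2_loo(x_train, y_train)
r2_test, rmse_test = r2_rmse(y_test, [a + b * x for x in x_test])

# Warning leverage h* = 3(p + 1)/n with p = 1 descriptor (assumed convention).
h_star = 3 * (1 + 1) / len(x_train)
inside_domain = [h <= h_star for h in leverages(x_train, x_test + [12.0])]
```

In this toy case the two test compounds fall inside the applicability domain, while the hypothetical query at a descriptor value of 12.0 lies far from the training range and is flagged as outside it. A multi-descriptor model would need the full hat matrix rather than the one-descriptor shortcut used here.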

The workflow for this protocol is visualized as follows:

Performance and Validation Data

Quantitative Performance Comparison

Both pharmacophore and QSAR approaches have demonstrated significant success in accelerating drug discovery campaigns. The table below summarizes key performance data from recent studies.

Table 2: Experimental performance data for pharmacophore modeling and QSAR.

| Method | Target / Application | Reported Performance | Key Findings / Experimental Outcome |
|---|---|---|---|
| Pharmacophore Modeling with ML [40] | Monoamine Oxidase (MAO) Inhibitors | Docking score prediction 1000x faster than classical docking. | 24 compounds synthesized; one showed 33% MAO-A inhibition at lowest tested concentration. |
| Ligand-based Pharmacophore [41] | Dengue Virus NS3 Protease | Identified ZINC22973642 with predicted pIC₅₀ of 7.872. | Molecular docking confirmed strong binding (affinity: -8.1 kcal/mol); promising ADMET profile. |
| MLR-based QSAR [43] | FGFR-1 Inhibitors | Training R² = 0.7869; Test R² = 0.7413. | Strong correlation between predicted and experimental pIC₅₀; Oleic acid identified as a potent hit. |
| ANN-based QSAR [36] | NF-κB Inhibitors | Model showed superior reliability and prediction vs. MLR. | Enabled efficient screening of new NF-κB inhibitor series with high accuracy. |
| Pharmacophore-Guided Generative AI (PGMG) [38] | De Novo Molecule Generation | High scores in validity, uniqueness, and novelty. | Generated molecules with strong docking affinities, matching given pharmacophore hypotheses. |

Integrated Workflow for Method Validation

The synergy between pharmacophore modeling and QSAR is powerful for validating new computational methods and databases. A combined workflow leverages the strengths of both: the scaffold-hopping capability of pharmacophore models and the quantitative predictive power of QSAR. This is particularly effective for screening large databases like ZINC [40] [41]. The integration of AI further enhances this pipeline; for example, machine learning models can be trained to predict docking scores based on molecular fingerprints, drastically accelerating virtual screening [40]. Furthermore, generative models like PGMG and DiffPhore use pharmacophores as input to create novel, active molecules, providing a robust test for the information content of a pharmacophore model and the chemical space covered by a training database [38] [39].
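To make the idea of predicting docking scores from fingerprints concrete, the sketch below uses a Tanimoto-weighted nearest-neighbour estimate. This is a simple baseline chosen for illustration and is not the model used in [40]; fingerprints are represented as sets of on-bit indices, and the bit sets and docking scores are invented.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_score(query: set, library: list, k: int = 3) -> float:
    """Similarity-weighted mean docking score of the k most similar compounds."""
    ranked = sorted(
        ((tanimoto(query, fp), score) for fp, score in library),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    total = sum(sim for sim, _ in ranked)
    if total == 0:
        # No shared bits with any neighbour: fall back to an unweighted mean.
        return sum(score for _, score in ranked) / len(ranked)
    return sum(sim * score for sim, score in ranked) / total


# Hypothetical library of (fingerprint bits, docking score in kcal/mol).
library = [
    ({1, 4, 9, 12}, -8.1),
    ({1, 4, 9, 20}, -7.6),
    ({2, 5, 30, 41}, -5.2),
    ({3, 6, 31, 44}, -4.9),
]
estimate = predict_score({1, 4, 9, 13}, library, k=2)
```

Such a surrogate is only useful once it has been validated against docking scores for held-out compounds; the speed gain comes from replacing each docking run with a handful of set operations.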

The following diagram illustrates how these methods can be integrated with AI and experimental validation:

Essential Research Reagent Solutions

The practical application of pharmacophore modeling and QSAR relies on a suite of software tools, databases, and computational resources. The table below lists key "research reagents" for conducting these studies.

Table 3: Essential resources for pharmacophore and QSAR research.

| Resource Name | Type | Primary Function | Relevance to Method Validation |
|---|---|---|---|
| ZINC Database [40] [41] | Chemical Database | Library of commercially available compounds for virtual screening. | Primary source for purchasable compounds to test model predictions. |
| ChEMBL Database [40] | Bioactivity Database | Curated database of bioactive molecules with drug-like properties. | Source of training data for QSAR and for benchmarking pharmacophore models. |
| PaDEL Software [41] | Descriptor Calculator | Computes molecular descriptors and fingerprints for QSAR. | Standardizes the descriptor calculation process, ensuring reproducibility. |
| BuildQSAR Tool [41] | QSAR Modeling | Builds QSAR models using Multiple Linear Regression (MLR). | Provides a dedicated platform for developing and validating QSAR models. |
| ProQSAR Framework [42] | QSAR Workbench | Modular, reproducible pipeline for end-to-end QSAR development. | Ensures best practices, formal validation, and provenance tracking. |
| PharmaGist / ZINCPharmer [41] | Pharmacophore Tools | Generates ligand-based pharmacophores and screens databases. | Allows for the creation and testing of pharmacophore hypotheses against large libraries. |
| RDKit [38] | Cheminformatics Toolkit | Open-source platform for cheminformatics and machine learning. | Provides fundamental functions for molecule handling, fingerprinting, and descriptor calculation. |

Training and Validating Machine Learning Models with Large-Scale Bioactivity Data

The application of machine learning (ML) in drug discovery has transformed the landscape of bioactivity prediction, offering the potential to significantly reduce the time and cost associated with experimental screening. As the volume of publicly available bioactivity data grows, so does the promise of developing more accurate and generalizable models. However, this promise is contingent on rigorous training and validation methodologies that can withstand the complexities and heterogeneities inherent in large-scale biological data. This guide provides an objective comparison of contemporary ML approaches, databases, and validation frameworks used in computational chemistry, synthesizing recent advances to equip researchers with the knowledge to build robust predictive tools.

Proper validation is essential. Models that demonstrate impressive metrics on biased benchmarks or improper train-test splits often fail in real-world virtual screening campaigns, leading to significant misdirection of resources [1]. This guide places special emphasis on the methodological rigor required for reliable model development, from data curation and feature selection to performance evaluation and error analysis, all within the context of the increasingly sophisticated ecosystem of computational chemistry databases.

Comparative Analysis of Machine Learning Models for Bioactivity Prediction

Model Performance Across Diverse Assays

Table 1: Comparative performance of machine learning models on bioactivity prediction tasks.

| Model/Algorithm | Primary Use Case | Reported Performance Metrics | Key Strengths | Key Limitations |
|---|---|---|---|---|
| LightGBM (Gradient Boosting) | Blastocyst yield prediction in IVF [44] | R²: 0.673–0.676; MAE: 0.793–0.809 [44] | High accuracy with fewer features, superior interpretability, fast training [44] | May underestimate yields in specific patient subgroups [44] |
| XGBoost (Gradient Boosting) | Antiproliferative activity prediction [45] | MCC > 0.58; F1-score > 0.8 [45] | High versatility, robust performance, handles diverse descriptors well [45] | Can suffer from misclassification without post-prediction filtering [45] |
| Support Vector Machines (SVM) | Drug target prediction on ChEMBL [1] | Competitive AUC-ROC with Deep Learning [1] | Strong performance on complex, non-linear data; effective with ECFP fingerprints [1] | Performance is highly competitive with modern deep learning methods [1] |
| Deep Neural Networks (FNN) | Large-scale multi-task target prediction [1] | Reported as superior, but reanalysis shows SVM is competitive [1] | Potential for capturing complex feature interactions in large datasets [1] | High computational cost; performance gains over simpler models not always significant [1] |
| Random Forest (RF) | General-purpose bioactivity classification [45] | Performance varies with feature type and dataset [45] | Good interpretability, less prone to overfitting than boosted trees [45] | May be outperformed by gradient boosting methods (GBM, XGBoost) [45] |

Critical Evaluation of Performance Metrics

The choice of evaluation metrics is paramount and should be aligned with the practical goal of the model. The area under the receiver operating characteristic curve (AUC-ROC) is commonly used but can be misleading in virtual screening, where class imbalance is the norm: inactive compounds vastly outnumber actives [1]. In such scenarios, the area under the precision-recall curve (AUC-PR) provides a more realistic picture of model performance [1]. Furthermore, the F1-score (the harmonic mean of precision and recall) and the Matthews Correlation Coefficient (MCC) are highly valuable because they offer a balanced view of model accuracy across imbalanced classes [45].
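A short sketch shows why imbalance-aware metrics matter. It compares plain accuracy with the F1-score and MCC on an invented screen of 1000 compounds containing 20 actives; the function names and labels are illustrative, and returning 0.0 when a denominator vanishes is a convention assumed here.

```python
import math


def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels where 1 = active."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def imbalance_aware_metrics(y_true, y_pred):
    """Accuracy, F1-score, and MCC; undefined ratios are reported as 0.0."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "f1": f1, "mcc": mcc}


# Invented screen: 20 actives followed by 980 inactives.
y_true = [1] * 20 + [0] * 980
# Model A labels every compound inactive.
all_inactive = [0] * 1000
# Model B recovers 10 of the 20 actives and raises 10 false positives.
partial_hits = [1] * 10 + [0] * 10 + [1] * 10 + [0] * 970

trivial = imbalance_aware_metrics(y_true, all_inactive)
screened = imbalance_aware_metrics(y_true, partial_hits)
```

Both models reach an accuracy of 0.98, yet the trivial model has an F1-score and MCC of 0, while the second model scores an F1 of 0.5 and an MCC of about 0.49. Accuracy alone cannot distinguish a useless screen from a moderately useful one.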

A reanalysis of a large-scale benchmark study cautions against over-reliance on p-values to declare a "best" model, as statistically significant differences may not translate to practical significance in a real-world drug discovery setting [1]. Model performance can vary dramatically from one assay to another due to factors like data set size and balance, underscoring the need for assay-specific validation and uncertainty quantification [1].
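One way to report an effect size with its uncertainty, rather than a p-value alone, is a paired percentile bootstrap over the test compounds. The sketch below is an assumption-laden illustration and not the procedure used in [1]: the function names, the choice of recall as the metric, and the toy labels are all hypothetical.

```python
import random


def recall(y_true, y_pred):
    """Fraction of true actives recovered; 0.0 if the sample has no actives."""
    positives = sum(y_true)
    hits = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    return hits / positives if positives else 0.0


def bootstrap_diff_ci(y_true, pred_a, pred_b, metric,
                      n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for metric(A) - metric(B) on one test set."""
    rng = random.Random(seed)
    n = len(y_true)
    diffs = []
    for _ in range(n_boot):
        # Resample compounds with replacement, keeping A and B paired.
        idx = [rng.randrange(n) for _ in range(n)]
        yt = [y_true[i] for i in idx]
        diff = metric(yt, [pred_a[i] for i in idx]) - metric(yt, [pred_b[i] for i in idx])
        diffs.append(diff)
    diffs.sort()
    lower = diffs[int((alpha / 2) * n_boot)]
    upper = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Invented test set: 10 actives, 90 inactives; model A finds one more active than B.
y_true = [1] * 10 + [0] * 90
pred_a = [1] * 7 + [0] * 93
pred_b = [1] * 6 + [0] * 94

observed = recall(y_true, pred_a) - recall(y_true, pred_b)
lower, upper = bootstrap_diff_ci(y_true, pred_a, pred_b, recall)
```

The width of the interval, judged against the smallest difference that would change a screening decision, is more informative than whether a significance test crosses a threshold. With only 10 actives, a single additional hit produces a wide interval, which is the kind of assay-specific uncertainty the reanalysis highlights.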

Essential Databases for Training and Validation

The quality and scope of the training data are as critical as the model architecture. The following databases are foundational for training and validating ML models in drug discovery.

Table 2: Key public databases for bioactivity data and molecular structures.

| Database Name | Primary Content | Scale (As of 2025) | Utility in ML Workflows |
|---|---|---|---|
| ChEMBL | Curated bioactivity data, drug-like molecules, ADME/Tox data [1] | > 456,000 compounds, > 1300 assays in one benchmark [1] | Primary source for building ligand-based target prediction models; highly heterogeneous [1] |
| PubChem | Chemical structures, bioactivities, screening data [15] | Thousands to billions of compounds [15] | Used for virtual screening via similarity searches, physicochemical filtering, and target-based selection [15] |
| OMol25 (Open Molecules 2025) | 3D molecular snapshots with DFT-calculated energies and forces [4] [22] | >100 million configurations; 6 billion CPU hours to generate [4] | Training Machine Learned Interatomic Potentials (MLIPs) for quantum-level accuracy at a fraction of the cost [4] |
| Other Key DBs (ZINC, ChEMBL, DrugBank) | Purchasable compounds, drug molecules, bioactive data [15] | Varies by database [15] | Provide diverse chemical structures and pharmacological properties for virtual screening [15] |

The recent release of the OMol25 dataset represents a paradigm shift, enabling the training of ML models that can simulate molecular systems with Density Functional Theory (DFT) level accuracy but thousands of times faster [4] [22]. This "AlphaFold moment" for computational chemistry unlocks the ability to model scientifically relevant systems of real-world complexity, from protein-ligand binding to electrolyte reactions in batteries [22].

Experimental Protocols for Robust Model Development

Protocol 1: Building a Classifier for Bioactivity Prediction

This protocol outlines the steps for developing a classifier to predict compound activity against a biological target, using tree-based models as an example [45].

- Data Curation and Preparation: Begin with a raw compound list from a source like ChEMBL. Use a tool like MEHC-curation to validate, clean, and normalize SMILES strings, remove duplicates, and track errors [46]. This step is crucial for model reliability.

- Feature Calculation (Featurization): Encode the chemical structures into numerical representations. Common choices include:

  - ECFP4/ECFP6 Fingerprints: Circular fingerprints encoding atom environments [1] [45].

  - RDKit Molecular Descriptors: A set of 200+ physicochemical and topological descriptors (e.g., molecular weight, logP, number of hydrogen bond donors/acceptors) [45].

  - MACCS Keys: A set of 166 binary structural keys [45].

- Data Splitting: Split the curated and featurized dataset into training and test sets using a scaffold split, which assigns all compounds sharing a molecular scaffold to the same set. The test set then contains only scaffolds absent from training, so the evaluation probes the model's ability to generalize to novel chemotypes and yields less optimistic performance estimates than a random split [1].

- Model Training and Hyperparameter Tuning: Train multiple algorithms (e.g., XGBoost, GBM, SVM) on the training set. Use cross-validation on the training set to optimize hyperparameters.

- Model Evaluation: Apply the final model to the held-out test set. Report a suite of metrics: AUC-ROC, AUC-PR, F1-score, and MCC to provide a comprehensive view of performance [1] [45].

- Error Analysis and Interpretation: Use eXplainable AI (XAI) techniques like SHAP (SHapley Additive exPlanations) to interpret predictions. SHAP quantifies the contribution of each feature to an individual prediction, helping to identify features driving misclassifications and build trust in the model's outputs [45].
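The scaffold split in the data splitting step can be sketched as a group-wise partition. The example assumes that a scaffold key has already been computed for each compound by a cheminformatics toolkit (for instance a Bemis-Murcko scaffold SMILES); the function name `scaffold_split`, the largest-groups-first heuristic, and the toy scaffold strings are illustrative choices rather than the procedure of [1] or [45].

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split compound indices so that no scaffold appears in both sets.

    scaffolds[i] is a precomputed scaffold key for compound i.
    """
    groups = defaultdict(list)
    for idx, key in enumerate(scaffolds):
        groups[key].append(idx)

    # Largest scaffold families go to training first, so rare scaffolds
    # tend to end up in the test set (ties broken by first index).
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    train_cutoff = (1.0 - test_fraction) * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


# Toy scaffold keys for ten compounds (illustrative strings only).
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCNCC1", "c1ccoc1"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)
```

Because whole scaffold groups are assigned together, the realized test fraction only approximates the requested one. The property to verify is that the sets of scaffold keys in the two partitions do not overlap.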

Protocol 2: Validating with a Multi-Center Framework

For maximum robustness and clinical translatability, a multi-center validation framework is recommended, as demonstrated in a metabolomics study for Rheumatoid Arthritis (RA) diagnosis [47].