Computational Chemistry Reproducibility: Foundational Concepts, FAIR Data, and Best Practices for Drug Discovery

Abstract

This article provides a comprehensive guide to computational chemistry reproducibility, a critical challenge with an estimated $200B annual global impact on scientific research. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles of reproducible science, including the FAIR data standards and the severity of the computational reproducibility crisis. The content details methodological applications from quantum chemistry to machine learning, offers troubleshooting strategies for common technical and data pipeline failures, and establishes robust validation frameworks through blind challenges and industry-proven practices like the 80:20 validation rule. By synthesizing insights from recent blind challenges, economic analyses, and AI-driven drug discovery case studies, this article equips teams with the practical knowledge to build more reliable, efficient, and trustworthy computational workflows.

The Reproducibility Crisis and FAIR Data Fundamentals: Why Computational Chemistry Fails and How to Fix It

The Scale of the Reproducibility Crisis

The reproducibility crisis represents a fundamental breakdown in scientific integrity, affecting nearly every field of human inquiry and wasting billions of research dollars annually. This crisis manifests when scientific findings cannot be independently verified or reproduced, leading to misdirected resources, delayed progress, and flawed policy decisions.

Quantifying the exact global financial impact of irreproducible research is challenging, but conservative estimates indicate it represents a multibillion-dollar problem annually across research fields. In biomedical research alone, irreproducible preclinical research misdirects approximately $28 billion in research and development funding each year [1]. When expanded to include all computational research fields—including economics, psychology, computer science, physics, climate science, and materials science—the cumulative global financial impact reaches an estimated $200+ billion annually in wasted research expenditure and misinformed policy decisions [1].

The scope of the problem extends beyond financial costs. The landmark Reproducibility Project in psychology found that between one-third and one-half of studies could not be successfully replicated [1]. Similar investigations in experimental economics revealed that nearly half of celebrated results vanished under systematic scrutiny [1]. This pattern of irreproducibility creates cascading effects throughout the scientific ecosystem, as each irreproducible study actively misleads other researchers across multiple fields [1].

Table 1: Documented Reproducibility Rates Across Scientific Disciplines

| Research Domain | Reproducibility Rate | Key Studies |
|---|---|---|
| Psychology | 50-67% | Reproducibility Project [1] |
| Experimental Economics | ~50% | Preregistered replications [1] |
| Cancer Biology (Preclinical) | Small fraction reproduced; only 2% of studies had open data | Center for Open Science replication study [2] |
| Computer Science | Varies significantly | ML Reproducibility Challenges [2] |
| Organic Chemistry | 88-92.5% | Organic Syntheses validation [3] |

Root Causes and Contributing Factors in Computational Research

Technical and Methodological Challenges

Computational research faces unique reproducibility challenges that stem from both technical complexities and scientific practices. Several interconnected factors contribute to this problem:

Insufficient documentation: Critical computational details, parameters, and manual processing steps often go undocumented, making exact reproduction impossible [4]. Research papers commonly leave out experimental details essential for reproduction, creating an "irreproducibility trap" for follow-up studies [5].

Software and environment instability: Computational research depends on specific software versions, library dependencies, and operating system components that frequently change over time [5]. Without archiving exact computational environments, results become irreproducible as software evolves.

Non-standardized workflows: The lack of standardized approaches to organizing computational projects means that data, code, and results often exist in fragmented repositories without clear connections [4]. This separation requires manual reconstruction of computational workflows that are rarely documented thoroughly.

Parallel computing complexities: High-performance computing introduces non-deterministic behavior through parallel processing, where floating-point arithmetic operations can produce different results due to their non-associative nature when executed in varying orders [6].

Inadequate randomization handling: Analyses involving random number generators often fail to record underlying random seeds, preventing exact reproduction of stochastic computational results [5].
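The last two points can be illustrated with a short sketch. It uses only the Python standard library; the function names `naive_sum` and `noisy_samples` are hypothetical and chosen for illustration. The first part shows that floating-point addition depends on grouping and order, which is why a parallel reduction can change a result. The second shows that a recorded seed makes a stochastic calculation repeatable.

```python
import math
import random


def naive_sum(values: list[float]) -> float:
    """Accumulate left to right, as a simple serial loop would."""
    total = 0.0
    for value in values:
        total += value
    return total


# Grouping matters: the two expressions round differently.
print((0.1 + 0.2) + 0.3 == 0.1 + (0.2 + 0.3))  # False on IEEE 754 doubles

# The same numbers in a different order, as a parallel reduction might see them.
ordered = [1e16, 1.0, -1e16, 1.0]
reordered = [1.0, 1e16, 1.0, -1e16]
print(naive_sum(ordered), naive_sum(reordered))   # the two results differ
print(math.fsum(ordered), math.fsum(reordered))   # fsum is order-independent: 2.0 2.0


def noisy_samples(seed: int, n: int = 5) -> list[float]:
    """Draw n Gaussian samples from a generator with a recorded seed."""
    rng = random.Random(seed)  # local generator; global state is untouched
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


# Re-running with the same recorded seed reproduces the samples exactly.
print(noisy_samples(42) == noisy_samples(42))  # True
```

Compensated or exact summation such as `math.fsum` removes the order dependence in this toy case, but it is not a general remedy for non-determinism in large parallel codes; recording the seed, the thread or process layout, and the numerical library versions remains necessary.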

Systemic and Cultural Barriers

Beyond technical challenges, significant systemic and cultural factors perpetuate the reproducibility crisis:

Lack of incentives: Researchers face insufficient motivation to dedicate time and effort to ensure reproducibility, as academic reward systems prioritize novel findings over verification [4]. The "publish or perish" culture dominates global academia, intrinsically contributing to the publication of non-reproducible research outcomes [3].

Inadequate training: Many computational researchers lack formal training in software engineering best practices, leading to software that is difficult to run, understand, test, or modify [4].

Fragmented solutions: Field-specific reproducibility initiatives create fragmentation, with physics, economics, and computer science communities developing isolated tools rather than unified frameworks [1].

Consequences Across Scientific Disciplines

Documented Case Studies of Reproducibility Failures

The impact of irreproducible research extends beyond theoretical concerns, with concrete examples demonstrating significant real-world consequences:

Economics: The austerity policy case - The influential paper "Growth in a Time of Debt", published in the American Economic Review, asserted a critical relationship between public debt and economic growth and directly influenced the austerity policies that governments worldwide adopted after the 2008 financial crisis [2]. Subsequent replication attempts revealed missing data and calculation errors in the original work, and the corrected analysis showed no evidence for the claimed relationship between debt and growth [2]. These irreproducible findings contributed to austerity measures linked to increased inequality and hundreds of thousands of excess deaths in the United Kingdom alone [2].

Cancer biology: The preclinical research gap - A comprehensive eight-year study by the Center for Open Science attempted to replicate 193 experiments from 53 high-impact cancer biology papers [2]. Only 2% of the studies had open data, none provided protocols complete enough to allow replication, and only a small fraction of the experiments could be successfully reproduced. This irreproducibility in preclinical research creates tremendous opportunity costs for cancer patients who participate in clinical trials based on potentially flawed preliminary findings [2].

Psychology: Theoretical foundations undermined - The "ego depletion" theory in psychology spawned thousands of studies and influenced public policy for decades before systematic replications revealed it was largely false [1]. Similarly, influential work on "priming" effects led to costly interventions that likely never worked, despite extensive implementation [1].

Materials science: Systematic overestimation - In hydrogen adsorption research for energy storage applications, studies have found systematic overestimation of results across multiple material classes including carbon nanotubes, metal-organic frameworks, and conducting polymers [3]. Similar issues were identified in CO₂ adsorption measurements in metal-organic frameworks, with approximately 20% of isotherms classified as outliers [3].

Table 2: Documented Impacts of Irreproducible Research Across Disciplines

| Discipline | Impact of Irreproducibility | Documented Consequences |
|---|---|---|
| Economics | Misguided macroeconomic policy | Austerity measures linked to increased inequality and excess deaths [2] |
| Cancer Biology | Inefficient drug development | Only 1 in 20 cancer drugs in clinical studies achieves licensing [2] |
| Psychology | Invalid behavioral interventions | Costly priming interventions based on false premises [1] |
| Materials Science | Misleading performance metrics | Systematic overestimation of hydrogen storage capabilities [3] |
| Computational Chemistry | Delayed innovation | Unverifiable simulations slowing materials development [3] |

Cumulative Impact on Scientific Progress

The collective impact of these reproducibility failures creates a substantial drag on scientific advancement:

Cascading misinformation: Each irreproducible study actively misleads other researchers across all fields, creating compound errors that propagate through citation networks [1].

Resource misallocation: Funding agencies and research institutions invest in dead-end research trajectories based on false premises, delaying genuine scientific breakthroughs.

Erosion of public trust: Highly publicized failures to replicate prominent findings diminish public confidence in scientific institutions and expertise.

Slowed innovation: In computational chemistry and materials science, irreproducible simulations and characterizations delay the development of new materials, catalysts, and compounds with potential applications in energy, medicine, and technology [3].

Methodologies for Assessing Reproducibility

Systematic Assessment Frameworks

Rigorous assessment of computational reproducibility requires structured methodologies and systematic approaches:

Large-scale replication studies: Initiatives like the Reproducibility Project in psychology and economics conduct preregistered direct replications of published findings using standardized protocols [1]. These studies typically attempt to replicate a representative sample of findings from a specific domain using the original materials and methods when available.

Multi-laboratory validation: In chemistry, journals like Organic Syntheses require independent reproduction of synthetic procedures in the laboratory of an editorial board member before publication, maintaining rejection rates of 7.5-12% for procedures that cannot be reproduced within a reasonable range [3].

Computational verification pipelines: Automated systems can parse computational papers, reconstruct computational environments, execute analyses, and flag irreproducible results using specialized AI agents [1]. These systems typically assign Green/Amber/Red badges to indicate levels of verification.

Metadata standards application: Reproducible computational research requires extensive metadata describing both scientific concepts and computing environments across an "analytic stack" consisting of input data, tools, reports, pipelines, and publications [7].

Practical Implementation Frameworks

Structured frameworks provide practical pathways for implementing reproducibility assessments:

The ENCORE framework: ENCORE (ENhancing COmputational REproducibility) provides a standardized approach to organizing computational projects through a standardized file system structure (sFSS) that serves as a self-contained project compendium [4]. This framework integrates all project components and uses predefined files as documentation templates while leveraging GitHub for version control.

Ten Simple Rules for Reproducible Research: Established guidelines include: (1) keeping track of how every result was produced; (2) avoiding manual data manipulation steps; (3) archiving exact versions of all external programs used; (4) version controlling all custom scripts; (5) recording intermediate results in standardized formats; (6) noting underlying random seeds for analyses involving randomness; and (7) storing raw data behind plots [5].

Workflow management systems: Platforms like Galaxy, GenePattern, and Taverna provide integrated frameworks that inherently support reproducible computational analyses by tracking parameters, software versions, and data provenance throughout analytical pipelines [5].

Research Reagent Solutions: A Computational Toolkit

Implementing reproducible computational research requires both conceptual frameworks and practical tools. The following toolkit provides essential components for establishing reproducible workflows in computational chemistry and related fields.

Table 3: Essential Tools for Reproducible Computational Research

| Tool Category | Specific Solutions | Function & Purpose |
|---|---|---|
| Version Control Systems | Git, Subversion, Mercurial | Track evolution of code and scripts throughout development, enabling backtracking to specific states [5] |
| Computational Environment Management | Docker, Singularity, Conda | Archive exact software versions and dependencies to recreate computational environments [5] |
| Workflow Management Systems | Galaxy, GenePattern, Taverna, Nextflow | Package full analytical pipelines from raw data to final results with automated provenance tracking [5] |
| Project Organization Frameworks | ENCORE (sFSS) | Standardize file system structure and documentation across research projects [4] |
| Metadata Standards | Research Object Crates (RO-Crate), Bioschemas | Annotate datasets, tools, and workflows with standardized metadata for discovery and reuse [7] |
| Data & Code Repositories | Zenodo, Figshare, GitHub | Provide persistent archiving of research components with digital object identifiers (DOIs) [4] |
| Literate Programming Tools | Jupyter Notebooks, R Markdown | Integrate code, results, and narrative explanation in executable documents [6] |

Implementation Protocols

Successful implementation of these tools requires systematic protocols:

Version control protocol: Initialize version control at project inception; commit frequently with descriptive messages; utilize branching for experimental features; maintain a canonical repository with protected main branch [5].

Environment documentation: Record exact versions of all software packages and dependencies; utilize containerization for complex environments; document operating system and hardware specifications where performance-critical [5].

Workflow implementation: Define workflows as executable specifications; parameterize analytical steps; record all intermediate results when storage-feasible; implement continuous integration testing for critical workflows [5].

Metadata annotation: Apply domain-specific metadata standards throughout project lifecycle; utilize persistent identifiers for datasets, instruments, and computational tools; expose metadata through standardized APIs for discovery [7].
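As a minimal sketch of the environment documentation step, the snippet below records the interpreter, operating system, and selected package versions in a JSON-serializable dictionary. The function name `snapshot_environment` and the record layout are assumptions made for illustration, not part of any cited framework. A container image or lock file captures far more than this and should be preferred for complex environments.

```python
import json
import platform
import sys
from importlib import metadata


def snapshot_environment(packages: list[str]) -> dict:
    """Record interpreter, OS, and the installed versions of named packages."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # requested but not installed here
    return {
        "python": sys.version.split()[0],
        "implementation": platform.python_implementation(),
        "platform": platform.platform(),
        "machine": platform.machine(),
        "packages": dict(sorted(versions.items())),
    }


# Package names below are examples; list whatever the analysis imports.
snapshot = snapshot_environment(["numpy", "rdkit"])
print(json.dumps(snapshot, indent=2))
```

Writing this record next to every set of results, and committing it with the code, gives a later reader the versions that were in use even if the original machine is gone.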

Emerging Solutions and Future Directions

AI-Powered Verification Systems

Advanced artificial intelligence systems present promising approaches to addressing reproducibility at scale:

Automated replication infrastructure: AI-powered systems can automatically reproduce scientific findings across computational research fields at the moment of publication [1]. These systems utilize multiple AI agents that parse papers, reconstruct computational environments, execute analyses, and flag irreproducible results.

Verification badging systems: Automated systems can assign Green/Amber/Red badges to computational analyses, where Green indicates full agreement between regenerated output and published results, Amber signals minor divergences requiring author attention, and Red flags blocking errors in the evidentiary chain [1].

Knowledge graph development: As verification data accumulates, systems can continuously update public knowledge graphs that trace how unverified claims propagate through citation networks and identify collaboration clusters with unusual fragility patterns [1].
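The badge logic described above can be sketched as a comparison between published and regenerated numbers. The source defines the three levels only qualitatively, so the tolerances, the function name `assign_badge`, and the rule that a missing result is Red are all assumptions made for illustration.

```python
import math
from enum import Enum


class Badge(Enum):
    GREEN = "green"  # regenerated output agrees with the published results
    AMBER = "amber"  # minor divergence that needs author attention
    RED = "red"      # blocking error in the evidentiary chain


def assign_badge(published: dict, regenerated: dict,
                 tight_tol: float = 1e-9, loose_tol: float = 1e-3) -> Badge:
    """Compare named numeric results; tolerances are illustrative only."""
    if published.keys() != regenerated.keys():
        return Badge.RED  # a result is missing or unexpected
    badge = Badge.GREEN
    for name, expected in published.items():
        actual = regenerated[name]
        if math.isclose(actual, expected, rel_tol=tight_tol, abs_tol=1e-12):
            continue
        if math.isclose(actual, expected, rel_tol=loose_tol, abs_tol=1e-12):
            badge = Badge.AMBER  # keep checking; a later value may still be Red
        else:
            return Badge.RED
    return badge


published = {"binding_energy": -7.25, "rmsd": 1.80}
print(assign_badge(published, {"binding_energy": -7.25, "rmsd": 1.80}))
print(assign_badge(published, {"binding_energy": -7.2501, "rmsd": 1.80}))
print(assign_badge(published, {"binding_energy": -6.0, "rmsd": 1.80}))
```

In practice the acceptable tolerance depends on the method: a converged quantum chemistry energy and a stochastic machine learning metric would not share one threshold.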

Institutional and Policy Initiatives

Systemic solutions require coordination across the research ecosystem:

Publisher integration: Major publishers are increasingly integrating automated reproducibility checks into manuscript submission systems, requiring computational code and data availability, and conducting pre-publication verification [1].

Funder requirements: Federal science agencies (NSF, NIH, DOE) are announcing plans to accept verification badges for grant reporting and eventually require them for funding continuation [1].

Standardization efforts: Cross-disciplinary publisher roundtables are establishing universal metadata standards for computational research, while field-specific specialists adapt verification criteria for different methodological approaches [1].

Cultural transformation: The most significant challenge remains the lack of incentives motivating researchers to dedicate sufficient time and effort to ensure reproducibility [4]. Addressing this requires fundamental shifts in academic reward structures, publication practices, and research training methodologies.

The reproducibility crisis in computational research represents a substantial drain on scientific progress and research resources, with an estimated global impact exceeding $200 billion annually. Addressing this crisis requires both technical solutions (standardized frameworks, robust tooling, and automated verification systems) and cultural transformation within the scientific community. For computational chemistry and related fields, implementing structured approaches like the ENCORE framework, adhering to established best practices, and adopting emerging AI-powered verification systems can significantly enhance research reproducibility. This multifaceted approach offers the promise of restoring scientific integrity, accelerating genuine discovery, and ensuring that research investments deliver meaningful returns.

The Findable, Accessible, Interoperable, and Reusable (FAIR) principles represent a transformative framework for scientific data management and stewardship, originally formalized in 2016 to enhance the reusability of data holdings and improve the capacity of computational systems to automatically find and use data [8]. In the specific context of computational chemistry and materials science, implementing FAIR principles addresses critical challenges including fragmented data systems, inefficiencies in data sharing, and limited reproducibility of scientific findings [9]. The core value of FAIR lies in its focus on making data machine-actionable, which is particularly relevant for computational chemistry where datasets are increasingly vast and complex, and where artificial intelligence (AI) and machine learning (ML) applications depend on high-quality, well-structured data [10] [8].

The FAIR principles are often discussed alongside open data, but they possess distinct characteristics. While open data focuses on making data freely available to anyone without restrictions, FAIR data emphasizes rich metadata, standardized formats, and machine-interpretability [8]. This distinction is crucial for computational chemistry, where data may be restricted due to intellectual property concerns but still needs to be structured for maximum utility and potential future sharing. The implementation of FAIR principles enables faster time-to-insight, improves data return on investment, supports AI and multi-modal analytics, ensures reproducibility and traceability, and enables better team collaboration across organizational silos [8].

The FAIR Principles Demystified

Core Principles and Definitions

The FAIR principles provide a systematic approach to managing digital research objects. Each component addresses specific aspects of the data lifecycle:

Findable: The first step in data reuse is discovery. Data and computational workflows must be easy to find for both humans and computers. This is achieved by assigning globally unique and persistent identifiers (PIDs) such as Digital Object Identifiers (DOIs) and ensuring datasets are described with rich, machine-readable metadata that is indexed in searchable resources [11] [8]. Metadata should include relevant context such as project names, funders, and subject keywords to enhance discoverability.

Accessible: Once found, data should be retrievable using standardized, open protocols. Accessibility does not necessarily mean openly available to everyone; rather, it emphasizes that even restricted data should have clear access protocols and authentication procedures [11] [8]. The general principle is that research data should be "as open as possible, as closed as necessary" with appropriate provisions for ethical, safety, or commercial constraints [11].

Interoperable: Data must be structured in ways that enable integration with other datasets and analysis tools. This requires using common data formats, standardized vocabularies, and community-adopted ontologies that allow machines to automatically process and combine data from diverse sources [11] [8]. In computational chemistry, this might involve using standardized file formats and semantic models that describe chemical entities and reactions unambiguously.

Reusable: The ultimate goal of FAIR is to optimize data reuse. Reusability depends on comprehensive documentation of research context, clear licensing information, and detailed provenance records that describe how data was generated and processed [11] [8]. Well-documented data enables researchers to understand, replicate, and build upon previous work without requiring direct communication with the original investigators.

FAIR for Research Software and Workflows

Computational chemistry relies heavily on specialized software and computational workflows, which themselves require FAIR implementation. The FAIR for Research Software (FAIR4RS) principles, established in 2022, address the unique characteristics of software as a research output, including its executability, modularity, and continuous evolution through versioning [12]. Computational workflows—defined as the formal specification of data flow and execution control between executable components—are particularly important digital objects in computational chemistry that benefit from FAIR implementation [13].

A key characteristic of workflows is the separation of the workflow specification from its execution, which makes the description of the process itself a form of data that describes a method [13]. Applying FAIR principles to computational workflows involves ensuring that both the workflow components and their composite structure are findable, accessible, interoperable, and reusable. This includes providing detailed metadata about each step's inputs, outputs, dependencies, and computational requirements, as well as the configuration files and software dependency lists needed for operational context [13].

Table 4: The Four FAIR Principles and Their Implementation Requirements

| Principle | Core Requirement | Key Implementation Methods |
|---|---|---|
| Findable | Easy discovery for researchers and computers | Persistent identifiers, rich machine-actionable metadata, indexed in searchable resources |
| Accessible | Retrievable via standardized protocols | Open or clearly defined access procedures, authentication where necessary, long-term preservation |
| Interoperable | Integration with other data and systems | Use of shared vocabularies, ontologies, and community standards; machine-readable formats |
| Reusable | Replication and reuse in new contexts | Clear licensing, detailed provenance, domain-relevant community standards, comprehensive documentation |

Implementing FAIR in Computational Chemistry: Practical Approaches

Metadata Standards and Ontologies

Effective implementation of FAIR principles in computational chemistry requires robust metadata standards and domain-specific ontologies. The use of semantic data models enables data from various origins to be analyzed collectively, significantly enhancing research potential [9]. For instance, the ioChem-BD platform for computational chemistry and materials science integrates semantic data models into its repository to enable collective analysis of diverse datasets [9].

The Swiss Cat+ West hub exemplifies advanced implementation of semantic modeling through its use of the Allotrope Foundation Ontology and other established chemical standards to transform experimental metadata into validated Resource Description Framework (RDF) graphs [10]. This ontology-driven approach enables sophisticated querying through SPARQL endpoints and facilitates integration with downstream AI and analysis pipelines. The platform employs a modular RDF converter to systematically capture each experimental step in a structured, machine-interpretable format, creating a scalable and interoperable data backbone [10].

Research Data Infrastructures (RDIs)

Research Data Infrastructures are community-driven platforms that progressively transform fragmented research outputs into reusable, findable, and interoperable resources [10]. In computational chemistry, RDIs like HT-CHEMBORD (High-Throughput Chemistry Based Open Research Database) provide specialized infrastructure for processing and sharing high-throughput chemical data [10]. These infrastructures are built on open-source technologies and deployed using containerized environments like Kubernetes, enabling scalable and automated data processing.

A key feature of advanced RDIs is their ability to capture complete experimental context, including negative results, branching decisions, and intermediate steps that are often excluded from traditional publications but are crucial for training robust AI models [10]. By systematically recording both successful and failed experiments, these infrastructures ensure data completeness, strengthen traceability, and enable the creation of bias-resilient datasets essential for robust AI model development in chemistry [10].

Table 5: Essential Research Reagent Solutions for FAIR Data in Computational Chemistry

| Tool Category | Specific Examples | Function in FAIR Implementation |
|---|---|---|
| Persistent Identifier Systems | DOI, UUID | Provide globally unique and persistent references to datasets, software, and workflows |
| Metadata Standards | Allotrope Foundation Ontology, Dublin Core | Enable rich description of research assets using community-agreed schemas |
| Workflow Management Systems | Nextflow, Snakemake, Apache Airflow | Automate and record computational processes, ensuring reproducibility and provenance tracking |
| Containerization Technologies | Docker, Singularity | Package software and dependencies to ensure portability and consistent execution environments |
| Semantic Platforms | RDF, SPARQL endpoints | Transform metadata into machine-interpretable knowledge graphs for advanced querying |
| Data Repositories | ioChem-BD, Zenodo, Open Reaction Database | Provide specialized platforms for storing, sharing, and discovering research assets |

Case Study: The HT-CHEMBORD Platform

Architecture and Workflow

The HT-CHEMBORD platform developed by Swiss Cat+ and the Swiss Data Science Center represents a state-of-the-art implementation of FAIR principles for high-throughput chemical data [10]. The platform's architecture is built on Kubernetes and utilizes Argo Workflows for orchestration, with scheduled synchronizations and backup workflows to ensure data reliability and accessibility [10]. The entire pipeline is designed as a modular, end-to-end digital workflow where each system component communicates through standardized metadata schemes.

The experimental workflow begins with digital initialization through a Human-Computer Interface that enables structured input of sample and batch metadata, formatted and stored in standardized JSON format [10]. Compound synthesis is then carried out using automated platforms like Chemspeed, with programmable parameters (temperature, pressure, light frequency, shaking, stirring) automatically logged using ArkSuite software, which generates structured synthesis data in JSON format [10]. This file serves as the entry point for the subsequent analytical characterization pipeline.

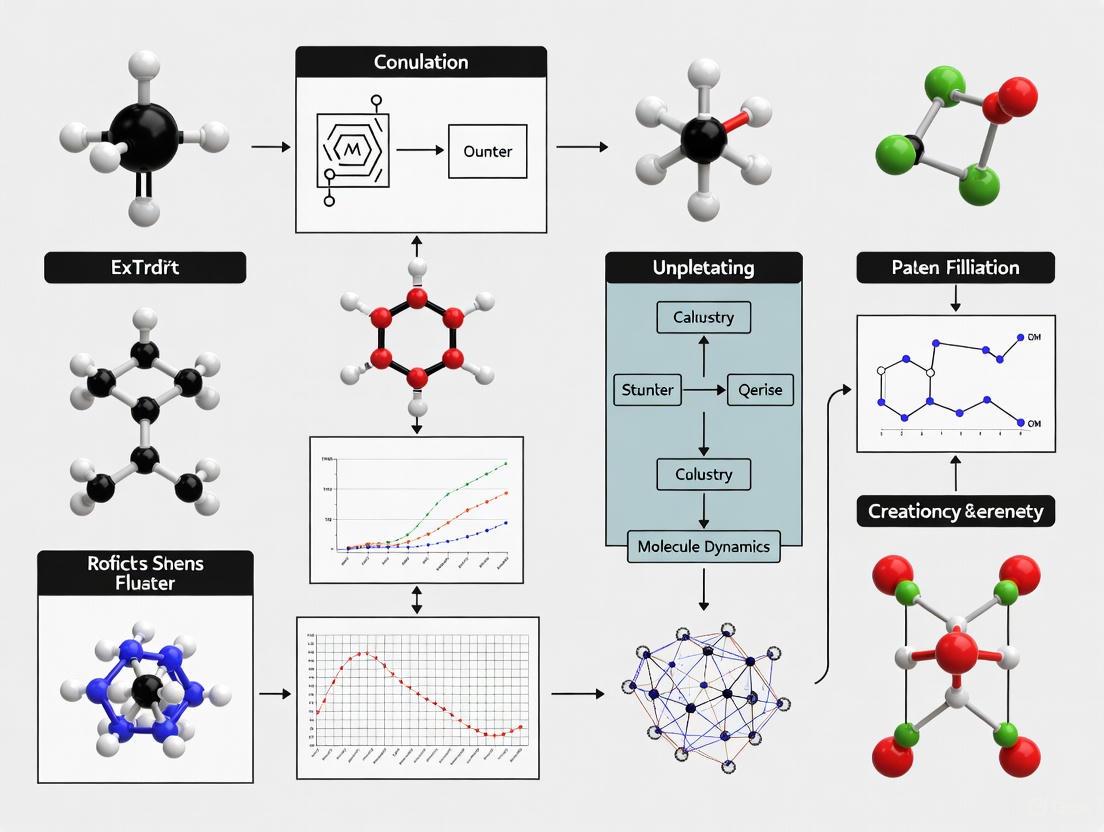

Diagram: FAIR Data Workflow in High-Throughput Computational Chemistry. This workflow illustrates the automated, multi-stage process for generating FAIR chemical data, from synthesis to semantic representation.

Data Capture and Transformation

A distinctive feature of the HT-CHEMBORD platform is its comprehensive approach to data capture throughout the experimental lifecycle. Upon completion of synthesis, compounds undergo a multi-stage analytical workflow with decision points that determine subsequent characterization paths based on properties of each sample [10]. The screening path rapidly assesses reaction outcomes through known product identification, semi-quantification, yield analysis, and enantiomeric excess evaluation, while the characterization path supports discovery of new molecules through detailed chromatographic and spectroscopic analyses.

Instrument-specific outputs are stored in structured formats depending on the analytical method: ASM-JSON, JSON, or XML [10]. This structured approach to data capture ensures consistency across analytical modules and enables automated data integration. Critically, even when no signal is observed from analytical methods, the associated metadata representing failed detection events is retained within the infrastructure for future analysis and machine learning training, addressing the common problem of publication bias in chemical research [10].

Experimental Protocols for FAIR Implementation

Protocol: Implementing Semantic Metadata Conversion

The transformation of experimental metadata into semantic formats is a cornerstone of FAIR implementation in computational chemistry. The following protocol outlines the systematic approach used by the HT-CHEMBORD platform:

Structured Data Capture: Initiate experiments through a Human-Computer Interface that enforces structured input of sample and batch metadata. Store this information in standardized JSON format containing reaction conditions, reagent structures, and batch identifiers to ensure traceability [10].

Automated Instrument Data Collection: Configure analytical instruments to output data in structured, machine-readable formats (ASM-JSON, JSON, or XML) depending on the analytical method and hardware supplier. Implement automated data transfer protocols to centralize storage upon experiment completion [10].

Semantic Transformation: Deploy a modular RDF converter to transform raw experimental metadata into validated Resource Description Framework graphs. Utilize domain-specific ontologies such as the Allotrope Foundation Ontology to ensure proper semantic mapping and interoperability [10].

Knowledge Graph Storage: Load the transformed RDF graphs into a semantic database equipped with a SPARQL endpoint for querying. Implement regular synchronization workflows (e.g., weekly) to ensure the knowledge graph remains current with newly generated experimental data [10].

Access Interface Deployment: Provide multiple access modalities including a user-friendly web interface for browsing and a SPARQL endpoint for programmatic querying by experienced users. Implement appropriate access controls based on licensing agreements and data sensitivity [10].

Protocol: Making Computational Workflows FAIR

Computational workflows are essential research assets in computational chemistry that require specific approaches for FAIR implementation:

Workflow Documentation: Create comprehensive documentation that includes the workflow's purpose, design, inputs, outputs, parameters, and dependencies. Use standard metadata schemas to describe the workflow and its components [13].

Component Identification: Assign persistent identifiers to all workflow components, including individual tools, scripts, and sub-workflows. Ensure each component is versioned and has its own metadata describing functionality, authorship, and requirements [13].

Execution Environment Specification: Use containerization technologies (Docker, Singularity) to capture the complete computational environment. Specify software dependencies, versions, and configuration requirements to ensure reproducibility across different computing platforms [13].

Provenance Capture: Implement mechanisms to automatically record provenance information during workflow execution, including data lineage, parameter values, and execution logs. Store this information alongside output data to enable traceability [13].

Registration and Publication: Deposit workflows and their associated components in recognized repositories that support versioning and assign persistent identifiers. Include appropriate licenses that clearly state conditions for reuse and modification [13] [14].
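
As a rough illustration of the provenance capture step, the sketch below assembles a small provenance record from run parameters, input checksums, and basic environment details. The record layout and the example parameter values are assumptions made for this example; they do not follow any particular provenance standard, and workflow managers usually offer richer built-in mechanisms.

```python
import hashlib
import json
import platform
import sys
from datetime import datetime, timezone


def sha256_of_bytes(data):
    """Return the hex SHA-256 digest of a bytes object."""
    return hashlib.sha256(data).hexdigest()


def build_provenance(inputs, parameters):
    """Build a provenance record.

    inputs maps a label (for example a file name) to the raw bytes used.
    parameters maps parameter names to the values used for this run.
    """
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "argv": list(sys.argv),
        "parameters": parameters,
        "input_sha256": {name: sha256_of_bytes(blob) for name, blob in inputs.items()},
    }


record = build_provenance(
    inputs={"molecule.smi": b"CCO\n"},
    parameters={"functional": "B3LYP", "basis": "6-31G*"},  # illustrative values
)
provenance_json = json.dumps(record, indent=2, sort_keys=True)
print(provenance_json)
```

Writing this JSON next to every output file gives later readers the parameters and input checksums needed to tell whether two runs were really the same calculation.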

Measuring and Maintaining FAIR Compliance

Assessment Tools and Metrics

Evaluating FAIR implementation requires specialized tools and metrics. The F-UJI assessment tool provides automated evaluation of published research data against FAIR principles [11]. Additionally, the FAIR-IMPACT project has defined 17 metrics for automated FAIR software assessment in disciplinary contexts, with ongoing work to implement these as practical tests by extending existing assessment tools [14].

For computational workflows, assessment should consider both the workflow specification as a digital object and its component parts. This includes evaluating the availability of persistent identifiers, richness of metadata, clarity of licensing, completeness of documentation, and adequacy of provenance information [13]. The FAIR-IMPACT cascading grants program includes specific pathways for assessment and improvement of existing research software using extended evaluation tools [14].

Organizational Strategies for FAIR Adoption

Successful FAIR implementation requires organizational commitment and cultural change. Research institutions and funders are increasingly developing policies that encourage FAIR adoption. One example is the Netherlands eScience Center's Software Management Plan Template, which has been updated to align with the FAIR4RS Principles [14]. The German Research Council has published guidelines for reviewing grant proposals that suggest compliance with the FAIR4RS Principles for archiving and reuse [14].

Training initiatives play a crucial role in building FAIR capabilities. Programs like the FAIR for Research Software Program at Delft University of Technology and the Research Software Support course developed by the Netherlands eScience Center provide researchers with essential tools for creating scientific software following FAIR4RS Principles [14]. Community forums such as the RDA Software Source Code Interest Group provide venues for discussing management, sharing, discovery, archival, and provenance of software source code, further normalizing FAIR adoption [14].

Table: FAIR Assessment Criteria for Computational Chemistry Assets

| FAIR Principle | Assessment Criteria | Evidence of Compliance |
|---|---|---|
| Findable | Persistent identifiers, Rich metadata, Resource indexing | DOI assignment, Structured metadata files, Repository indexing |
| Accessible | Standard protocols, Authentication/authorization, Persistent access | HTTPS API, Access control documentation, Long-term preservation plan |
| Interoperable | Standardized formats, Shared vocabularies, Qualified references | Use of community file formats, Ontology terms, Cross-references to other resources |
| Reusable | License clarity, Provenance information, Community standards | Clear usage license, Experimental protocols, Domain standards compliance |
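
The criteria in the table above can be turned into a simple self-assessment. The sketch below is only illustrative: the criterion keys paraphrase the table, the equal weighting is an assumption, and it is not a substitute for automated tools such as F-UJI.

```python
# Criterion keys paraphrase the table above; equal weighting is an assumption.
CRITERIA = {
    "findable": ["persistent_identifier", "rich_metadata", "indexed"],
    "accessible": ["standard_protocol", "access_documented", "preservation_plan"],
    "interoperable": ["standard_format", "shared_vocabulary", "qualified_references"],
    "reusable": ["clear_license", "provenance", "community_standards"],
}


def fair_self_assessment(evidence):
    """Return the fraction of satisfied criteria per principle (0.0 to 1.0)."""
    return {
        principle: sum(bool(evidence.get(item)) for item in items) / len(items)
        for principle, items in CRITERIA.items()
    }


def missing_criteria(evidence):
    """List every criterion for which no evidence was recorded."""
    return [
        item
        for items in CRITERIA.values()
        for item in items
        if not evidence.get(item)
    ]


# Hypothetical dataset that has a DOI, metadata, a license, and a standard format.
dataset = {
    "persistent_identifier": True,
    "rich_metadata": True,
    "clear_license": True,
    "standard_format": True,
}
scores = fair_self_assessment(dataset)
missing = missing_criteria(dataset)
print(scores)
print(missing)
```

The list of missing criteria is usually more useful than the score itself, because it states what to fix before deposition.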

The implementation of FAIR principles in computational chemistry represents a fundamental shift in how research data is managed, shared, and utilized. By making data and workflows Findable, Accessible, Interoperable, and Reusable, the research community can accelerate discovery, enhance collaboration, and maximize the value of research investments. Platforms like ioChem-BD for computational chemistry and HT-CHEMBORD for high-throughput experimental data demonstrate the practical application of FAIR principles through semantic data models, automated workflows, and specialized research data infrastructures [10] [9].

The journey toward comprehensive FAIR implementation requires coordinated efforts across multiple dimensions—including policy development, incentive structures, community building, training initiatives, and technical infrastructure [14]. As computational chemistry continues to generate increasingly complex and voluminous datasets, the FAIR principles provide an essential framework for ensuring that these valuable research assets remain discoverable, interpretable, and reusable for future scientific breakthroughs. By adopting the protocols, standards, and best practices outlined in this guide, researchers and institutions can contribute to a more open, reproducible, and collaborative research ecosystem in computational chemistry and materials science.

The development of computational methods for predicting physicochemical properties represents a mature scientific field, with techniques ranging from molecular mechanics and quantum calculations to empirical and machine learning models. A significant challenge, however, lies in the fair and unbiased evaluation of these diverse methodologies. Blind prediction challenges have emerged as a critical solution to this problem, enabling researchers from academia and industry to test their methods without prior knowledge of experimental results [15] [16].

The first euroSAMPL pKa blind prediction challenge (euroSAMPL1) introduced a novel dimension to this traditional framework by incorporating a comprehensive assessment of Research Data Management (RDM) practices alongside predictive accuracy [15] [17]. This challenge was explicitly designed to rank not only the predictive performance of computational models but also to evaluate participants' adherence to the FAIR principles (Findable, Accessible, Interoperable, Reusable) through a cross-evaluation system among participants themselves [16]. This dual-focused approach represents a significant advancement in establishing foundational concepts for computational chemistry reproducibility research.

This case study examines the design, execution, and outcomes of the euroSAMPL1 challenge, with particular emphasis on its innovative FAIRscore evaluation system. By analyzing both the statistical metrics of prediction quality and the newly defined FAIRscores, we aim to provide insights into the current state of pKa prediction methodologies and research data management standards in computational chemistry, offering valuable guidance for researchers and drug development professionals committed to reproducible science.

Background and Challenge Design

The FAIR Principles and Reproducible Research

The FAIR guiding principles, formally published in 2016, establish comprehensive standards for scientific data management and stewardship [18] [19]. These principles emphasize machine-actionability—the capacity of computational systems to find, access, interoperate, and reuse data with minimal human intervention—recognizing that researchers increasingly rely on computational support to manage the growing volume, complexity, and creation speed of scientific data [18].

The four pillars of FAIR include:

- Findable: Metadata and data should be easily discoverable by both humans and computers, with machine-readable metadata being essential for automatic discovery [18].

- Accessible: Once found, users should clearly understand how data can be accessed, including any authentication and authorization requirements [18].

- Interoperable: Data must be able to be integrated with other datasets and interoperate with applications or workflows for analysis, storage, and processing [18].

- Reusable: The ultimate goal of FAIR is to optimize data reuse through well-described metadata and data that can be replicated or combined in different settings [18].

The euroSAMPL1 challenge extended these principles to include reproducibility (FAIR+R), acknowledging that true scientific rigor requires that computational chemistry data be reproducible using only the information provided in publications and supporting information [16]. This expansion aligns with broader scientific definitions of reproducibility, which emphasize that independent groups should be able to obtain the same results using artifacts they develop independently [20].

euroSAMPL1 Challenge Architecture

The euroSAMPL1 challenge was organized as a use case for NFDI4Chem, the chemistry consortium of the German National Research Data Infrastructure (NFDI), with the explicit goal of testing RDM tools and community acceptance of RDM standards [16]. The challenge focused on predicting aqueous pKa values for 35 carefully selected drug-like molecules provided as SMILES strings [17] [16].

A critical design aspect was the selection of compounds exhibiting only a single macroscopic transition (change of charge) within the pH range of 2-12, with dominance of only a single tautomer in each charge state according to preliminary calculations [16]. This simplification allowed participation from diverse modeling communities—from atomistic quantum-mechanical methods to empirical rule-based and machine learning approaches—while requiring prediction only of macroscopic pKa values without addressing complex ensembles of coupled charge and tautomer transitions [16].

The challenge followed a structured timeline:

- Compound structure disclosure: February 19, 2024

- Prediction submission deadline: May 10, 2024

- Experimental data disclosure: May 10, 2024

- Peer evaluation period: May 10-29, 2024 [17]

This timeline ensured true blind prediction conditions while facilitating immediate cross-evaluation of methodologies once experimental results were revealed.

Experimental Protocols and Methodologies

Compound Selection and Experimental Measurements

The experimental foundation of the euroSAMPL1 challenge relied on a curated set of 35 compounds initially obtained from the research group of Ruth Brenk at the University of Bergen [16]. These compounds were purchased from Otava Chemicals as part of a fragment library, ensuring relevance to drug discovery applications.

Experimental pKa measurements were conducted using standardized methodologies, with ChemAxon's cx_calc software integrated into the cheminformatics pipeline to assign measured pKa values to respective titration sites [17]. This integration highlights the practical industry tools employed in challenge administration and underscores the importance of reproducible assignment protocols in experimental data processing.

Computational Prediction Methodologies

Participants employed diverse computational strategies for pKa prediction, reflecting the broad methodological spectrum in the field. These approaches can be categorized into several fundamental paradigms:

Table: Computational Methods for pKa Prediction

| Method Category | Underlying Principle | Strengths | Limitations | Representative Tools |
|---|---|---|---|---|
| Quantum Mechanics | Computes free energy difference between microstates using DFT or other quantum-chemical methods | Minimal parameterization to experimental data; generalizes well to new chemical spaces | Computationally expensive; requires extensive conformer searching | Schrödinger's Jaguar, Rowan's AIMNet2 workflow [21] |
| Explicit-Solvent Free-Energy Simulations | Uses molecular dynamics with Monte Carlo- or λ-dynamics to model protonation state changes | Directly accounts for solvation effects; suitable for protein environments | Resource-intensive; requires domain expertise | OpenMM, AMBER, CHARMM, NAMD [21] |
| Fragment-Based Methods | Applies Hammett/Taft-style linear free-energy relationships and curated fragment libraries | Very fast; highly accurate within domain of applicability | Poor generalization; may miss complex chemical motifs | ACD/Labs' pKa module, Schrödinger's Epik Classic [21] |
| Data-Driven Methods | Learns pKa relationships from structure/features using machine learning | High throughput; improves with additional training data | Data-hungry; unreliable for unexplored chemical spaces | Schrödinger's Epik, Rowan's Starling, MolGpka [21] |
| Hybrid Approaches | Combines physics-based features with machine learning | Physical inductive bias with data-driven improvement | Dependent on underlying physical model accuracy | ChemAxon's pKa plugin, QupKake [21] |

The winning submission employed a thermodynamics-informed neural network approach, specifically an S+pKa model, which demonstrated the effectiveness of integrating physical principles with data-driven methodologies [22].
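
For the physics-based rows of the table above, the link between a computed free energy and a pKa is the standard thermodynamic relation pKa = ΔG / (RT ln 10), where ΔG is the aqueous standard free energy of deprotonation. The sketch below shows only that conversion. How ΔG is obtained (thermodynamic cycles, the reference value for the solvated proton, empirical corrections) is method-specific and not covered here, and the input value of 6.5 kcal/mol is illustrative.

```python
import math

# Gas constant converted from J/(mol*K) to kcal/(mol*K).
R_KCAL = 8.314462618 / 4184.0


def pka_from_delta_g(delta_g_kcal, temperature_k=298.15):
    """Convert an aqueous deprotonation free energy (kcal/mol) to a pKa."""
    return delta_g_kcal / (R_KCAL * temperature_k * math.log(10))


# Free energy that corresponds to exactly one pKa unit at 298.15 K.
kcal_per_pka_unit = R_KCAL * 298.15 * math.log(10)

print(round(kcal_per_pka_unit, 3))
print(round(pka_from_delta_g(6.5), 2))  # illustrative input value
```

Because one pKa unit corresponds to roughly 1.36 kcal/mol at room temperature, a 1 kcal/mol error in the computed free energy already shifts the predicted pKa by about 0.7 units, which is one reason purely physics-based predictions are demanding.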

FAIRscore Evaluation Protocol

A novel aspect of euroSAMPL1 was the implementation of a structured peer evaluation process to assess adherence to FAIR+R principles. After the prediction phase concluded, participants anonymously evaluated each other's submissions using a standardized questionnaire [16]. This cross-evaluation system generated a quantitative FAIRscore for each submission, assessing:

- Findability: How easily data and metadata could be discovered

- Accessibility: Clarity of data access protocols

- Interoperability: Ability to integrate data with other resources

- Reusability: Potential for replication and application in new contexts

- Reproducibility: Completeness of computational environment documentation

This systematic evaluation represented a significant innovation in blind challenge design, explicitly linking methodological transparency with predictive performance assessment.
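
One way such peer ratings could be combined into a single number is sketched below. The source does not give the actual FAIRscore formula, so the 1 to 5 rating scale, the equal weighting of the five categories, and the use of a simple mean are all assumptions made for illustration, and the ratings are invented.

```python
from statistics import mean

CATEGORIES = [
    "findability",
    "accessibility",
    "interoperability",
    "reusability",
    "reproducibility",
]


def fair_score(reviews):
    """Average peer ratings per category, then average the category means.

    reviews is a list of dicts, one per reviewer, mapping each category
    to a numeric rating (assumed here to be on a 1 to 5 scale).
    """
    per_category = {c: mean(r[c] for r in reviews) for c in CATEGORIES}
    per_category["overall"] = mean(per_category[c] for c in CATEGORIES)
    return per_category


# Invented ratings from two anonymous reviewers of one submission.
reviews = [
    {"findability": 4, "accessibility": 5, "interoperability": 3,
     "reusability": 4, "reproducibility": 2},
    {"findability": 5, "accessibility": 4, "interoperability": 3,
     "reusability": 3, "reproducibility": 3},
]
result = fair_score(reviews)
print(result)
```

Keeping the per-category means alongside the overall value shows where a submission falls short, for example a well-documented data set whose computational environment cannot be rebuilt.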

Diagram: FAIRscore Evaluation Workflow. The process began with anonymous peer evaluation of submissions using a standardized questionnaire assessing FAIR principles and reproducibility, culminating in a quantitative FAIRscore that contributed to dual ranking.

Results and Analysis

Predictive Performance Assessment

The statistical evaluation of pKa predictions in euroSAMPL1 revealed that multiple methods could achieve chemical accuracy in their predictions [15]. Quantitative analysis demonstrated that consensus predictions constructed from multiple independent methods frequently outperformed individual submissions, highlighting the value of methodological diversity in computational chemistry [15] [16].

This finding aligns with established best practices in the field, where the choice between data-driven and physics-based methods often depends on specific research requirements. For structures containing common functional groups that are well represented in training databases, or in high-throughput virtual screening campaigns, machine-learning models typically offer the best combination of speed and reliability [21]. Conversely, for exotic functional groups or complex chemical effects, quantum-chemical methods provide greater resilience despite increased computational demands [21].
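
The consensus idea can be made concrete with a small sketch that averages several predictions per compound and compares root-mean-square errors. All numbers below are synthetic and chosen so that the individual errors partly cancel; they are not euroSAMPL1 data, and a consensus is not guaranteed to beat every individual method in practice.

```python
import math


def rmse(predicted, observed):
    """Root-mean-square error between two equally long sequences."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


# Synthetic values for illustration only; not challenge data.
experimental = [4.0, 7.0, 9.0]
submissions = {
    "method_a": [4.6, 7.6, 8.4],
    "method_b": [3.4, 6.4, 9.6],
    "method_c": [4.3, 7.3, 9.3],
}

# Unweighted mean of all methods for each compound.
consensus = [sum(values) / len(values) for values in zip(*submissions.values())]

errors = {name: rmse(pred, experimental) for name, pred in submissions.items()}
errors["consensus"] = rmse(consensus, experimental)

for name, value in errors.items():
    print(f"{name}: {value:.2f}")
```

Averaging helps most when methods err in different directions, which is why combining physics-based and data-driven predictions tends to be more useful than combining several near-identical models.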

Table: Performance Metrics in pKa Prediction

| Method Type | Typical RMSE (pKa units) | Appropriate Use Cases | Throughput | Domain of Applicability |
|---|---|---|---|---|
| Quantum Mechanics | Varies; can achieve chemical accuracy | Exotic functional groups, complex chemical effects | Low (hours to days per prediction) | Broad with physical principles |
| Data-Driven Methods | ~1.11 (e.g., ChemAxon on drug discovery set) [23] | "Normal" drug-like functional groups | High (thousands of compounds) | Limited to training data coverage |
| Fragment-Based Methods | Highly accurate within domain | Specific chemical series with established parameters | Very high | Narrow, domain-specific |
| Consensus Predictions | Often outperforms individual methods [15] [16] | Critical applications requiring high reliability | Medium (requires multiple methods) | Broad through method combination |

FAIRscore Implementation Outcomes

The introduction of the FAIRscore evaluation revealed significant variability in research data management practices across the computational chemistry community. Analysis of the peer evaluation results indicated that many models, along with their training data and generated outputs, fell short of one or multiple FAIR standards [15] [16].

The cross-evaluation process itself served as an educational intervention, raising community awareness about RDM standards and their importance in reproducible research. By requiring participants to critically assess their peers' methodologies and documentation practices, the challenge fostered collective reflection on implementation of FAIR principles in computational chemistry workflows [16].

Research Reagent Solutions

The euroSAMPL1 challenge utilized and evaluated various computational tools and resources that constitute essential "research reagents" in modern computational chemistry workflows. These reagents form the foundational toolkit for reproducible pKa prediction research.

Table: Essential Research Reagents for pKa Prediction

| Tool/Resource | Type | Function | Application in euroSAMPL1 |
|---|---|---|---|
| cx_calc (ChemAxon) | Cheminformatics Tool | Structure standardization and pKa prediction | Used by organizers to assign measured pKa to titration sites [17] |
| GitLab Repository | Data Management Infrastructure | Version control and collaboration | Hosted challenge compounds, data, and analysis scripts [17] |
| NFDI4Chem Infrastructure | Research Data Management | Persistent storage and metadata standards | Provided FAIR data infrastructure framework [16] |
| Thermodynamics-Informed S+pKa Model | Hybrid Prediction Method | Integrates physical principles with machine learning | Winning submission methodology [22] |
| FAIRscore Questionnaire | Evaluation Framework | Quantitative assessment of FAIR compliance | Standardized peer evaluation instrument [16] |

Discussion and Implications

Advancements in Reproducible Research Practices

The euroSAMPL1 challenge represents a significant milestone in computational chemistry's evolving approach to research reproducibility. By explicitly linking methodological evaluation with FAIR principles assessment, the challenge established a precedent for future community initiatives seeking to elevate both predictive accuracy and research transparency.

The finding that consensus predictions often outperform individual methods has profound implications for drug development workflows [15] [16]. Rather than relying on a single methodology, research groups can achieve more reliable results through method diversification and integration. This approach requires robust data management practices to ensure that outputs from different methods can be effectively compared and combined.

The FAIRscore implementation demonstrated that machine-actionable metadata is not merely an administrative concern but a fundamental enabler of methodological progress. When data and models are findable, accessible, interoperable, and reusable, the entire research community benefits from accelerated validation, integration, and improvement of computational approaches [18] [19].

Barriers and Implementation Challenges

Despite the demonstrated benefits of FAIR+R principles, significant implementation barriers persist in computational chemistry. These include technical challenges related to diverse data types and volumes, cultural resistance to shifting from "my data" to "our data" mindsets, and the need for domain-specific metadata standards that balance comprehensiveness with practicality [19] [16].

The chemistry community has traditionally lagged behind other disciplines in adopting FAIR culture, though initiatives like the Chemistry Implementation Network (ChIN) manifesto calling for the community to "Go FAIR" are driving gradual change [19]. Successful adoption requires coordinated development of supportive infrastructure, standardized nomenclature, and intuitive tools that integrate seamlessly into research workflows.

Future Directions

The euroSAMPL1 challenge establishes a framework for future competitions that could expand assessment to additional physicochemical properties, including solubility, partition coefficients, and binding affinities [23]. The FAIRscore methodology provides a transferable model for evaluating research data management practices across computational chemistry subdisciplines.

Future challenges could further refine the quantitative assessment of reproducibility, potentially incorporating automated verification of submitted computational workflows. As the field progresses, integration of FAIR principles into graduate education and professional training will be essential for cultivating a new generation of computational chemists equipped with both methodological expertise and data stewardship capabilities.

The euroSAMPL1 pKa blind prediction challenge successfully advanced both methodological development and research data management standards in computational chemistry. By integrating traditional predictive accuracy assessment with innovative FAIRscore evaluation, the challenge demonstrated that true scientific progress requires excellence in both computational methodology and research transparency.

The finding that consensus predictions frequently surpass individual methods underscores the collective nature of scientific advancement, while the variability in FAIRscores reveals significant opportunity for community growth in data management practices. As computational chemistry continues to play an expanding role in drug discovery and materials science, the principles exemplified by euroSAMPL1—rigorous blind validation, methodological diversity, and commitment to reproducible research—will be essential for translating computational predictions into reliable scientific insights.

For researchers and drug development professionals, this case study highlights the importance of selecting appropriate prediction methodologies based on specific chemical contexts while implementing robust data management practices that ensure research transparency and reproducibility. The continued evolution of this dual-focused approach will be essential for addressing the complex challenges at the frontiers of computational chemistry and drug discovery.

Computational reproducibility—the ability to regenerate specific results using the original data, code, and computational environment—represents a foundational pillar of scientific integrity, particularly in computational chemistry and drug development. Theoretically deterministic computational research faces a paradoxical crisis: despite its digital nature, consistent replication remains elusive. Recent quantitative assessments reveal the severity of this challenge across scientific computing domains. A systematic analysis of Jupyter notebooks in biomedical literature found that only 5.9% (245 of 4,169) produced similar results when re-executed, with failures attributed primarily to missing dependencies, broken libraries, and environment differences [24]. Similarly, an evaluation of R scripts in the Harvard Dataverse repository showed only 26% completed without errors, while a sobering assessment of bioinformatics studies indicated only 11% (2 of 18) could be successfully reproduced [25].

The economic impact of this irreproducibility is staggering, with estimates suggesting an annual global drain of $200 billion on scientific computing resources [24]. The pharmaceutical industry alone wastes approximately $40 billion annually on irreproducible computational research, with individual study replications requiring between 3 and 24 months and between $500,000 and $2 million in additional investment [24]. Beyond financial costs, this crisis undermines scientific progress, erodes public trust, and, in clinical research contexts, potentially jeopardizes patient safety when flawed computational analyses inform treatment decisions [25].

Quantifying the Problem: Economic and Scientific Costs

Table 1: Documented Reproducibility Failure Rates Across Computational Domains

| Domain | Reproducibility Rate | Sample Size | Primary Failure Causes |
|---|---|---|---|
| Bioinformatics Studies | 11% | 18 studies | Missing data, software, documentation [25] |
| Jupyter Notebooks (Biomedical) | 5.9% | 4,169 notebooks | Missing dependencies, broken libraries, environment differences [24] [25] |
| R Scripts (Harvard Dataverse) | 26% | N/A | Coding errors, missing resources [25] |
| Preclinical Cancer Studies | 46% | N/A (54% of studies failed replication) | Methodology issues, insufficient documentation [26] |
| Computational Physics Papers | ~26% | N/A | Software versions, environment configuration [24] |

Table 2: Economic Impact of Computational Irreproducibility

| Cost Category | Estimated Financial Impact | Scope |
|---|---|---|
| Total Global Scientific Impact | $200 billion annually | Worldwide [24] |
| Pharmaceutical Industry Losses | $40 billion annually | Sector-specific [24] |
| Individual Study Replication | $500,000 - $2,000,000 | Per study [24] |
| Computational Resource Waste | ~$3,600 per 1,000-core simulation | 24-hour run at commercial rates [24] |
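
The last row of the table implies a per-core-hour rate that can be recovered with simple arithmetic. The short sketch below does that calculation; the rate it prints is derived from the table's figures rather than quoted from the source, and the helper `wasted_cost` is a hypothetical name introduced for this example.

```python
cores = 1_000
hours = 24
run_cost_usd = 3_600  # approximate figure from the table above

# One 24-hour run on 1,000 cores consumes 24,000 core-hours.
core_hours = cores * hours

# Implied commercial rate in US dollars per core-hour.
implied_rate = run_cost_usd / core_hours


def wasted_cost(n_failed_runs):
    """Cost of re-running a simulation of this size n times (hypothetical helper)."""
    return n_failed_runs * run_cost_usd


print(core_hours)
print(implied_rate)
print(wasted_cost(5))
```

At this scale, a handful of reruns caused by an unrecorded parameter or library version quickly reaches the cost of tens of thousands of core-hours.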

Core Failure Points: Technical and Documentation Barriers

Missing Dependencies and Software Versioning Issues

Dependency management represents one of the most pervasive failure points in computational reproducibility. Modern computational chemistry workflows typically incorporate numerous software libraries, packages, and tools with complex, often undocumented, interdependencies. Version conflicts emerge when software packages require incompatible library versions, while "dependency hell" occurs when circular or conflicting requirements prevent environment setup altogether.

The Oak Ridge National Laboratory documented how GPU atomic operations can produce variations of several percent in Monte Carlo simulations depending on the specific GPU model and driver version [24]. Similarly, a landmark study in computational chemistry revealed how 15 different software packages, all widely used in pharmaceutical and materials development, generated divergent results when calculating properties of the same simple crystals [24]. These tools represented millions of dollars in development and decades of research, yet were initially unable to agree on basic elemental properties.

Inadequate Documentation and Methodology Reporting

Insufficient documentation creates critical knowledge gaps that prevent experiment replication. Common deficiencies include missing installation instructions, incomplete parameter specifications, omitted data preprocessing steps, and absent execution protocols. Traditional methods of documenting experiments through written descriptions or manually recorded steps are prone to human error and omission [27].

The transition from conceptual model to computational implementation presents particular documentation challenges. As noted in simulation research, this "translation" process represents a key failure point, where conceptual understanding fails to be fully encoded in executable instructions [28]. This problem is exacerbated when researchers with domain expertise (such as chemistry) lack computational background, while computationally skilled researchers may lack domain-specific knowledge.

Environmental and Hardware Variability

Computational environments introduce multiple reproducibility failure points, including operating system differences, hardware architecture variations, and containerization inconsistencies. High-performance computing environments face nondeterministic interactions where parallel execution order variations, floating-point arithmetic differences across architectures, and compiler optimization choices produce divergent results [24].
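
The floating-point effect mentioned above is easy to demonstrate: floating-point addition is not associative, so the order in which a parallel reduction combines partial sums can change the last digits of the result. The sketch below shows this with three numbers and contrasts it with `math.fsum`, which returns a correctly rounded sum regardless of order. It illustrates the mechanism only and does not model any specific HPC code.

```python
import math

values = [0.1, 0.2, 0.3]

# The same three numbers, added in two different groupings.
left_first = (values[0] + values[1]) + values[2]
right_first = values[0] + (values[1] + values[2])

print(left_first == right_first)
print(repr(left_first), repr(right_first))

# math.fsum tracks exact partial sums, so the order of the inputs
# does not affect the rounded result.
forward = math.fsum(values)
backward = math.fsum(reversed(values))
print(forward == backward)
```

In a large simulation the same effect is amplified across millions of additions, which is why bitwise-identical results often require fixing the reduction order or using compensated summation.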

The computational reproducibility framework identifies compute environment control as one of the five essential pillars for reproducible research [25]. Without precise specification of the computational environment, including operating system, library versions, environment variables, and system dependencies, otherwise sound code may produce different results or fail entirely when executed in different environments.

Data Accessibility and Integrity Issues

Data-related failures encompass multiple dimensions: raw data unavailability, insufficient data annotation, format incompatibilities, and data corruption during storage or transfer. The National Academies of Sciences, Engineering, and Medicine emphasize that complete data documentation must include "a clear description of all methods, instruments, materials, procedures, measurements, and other variables involved in the study" [29].

The case example of a retracted clinical genomics study highlights data integrity risks. Investigators discovered that patient response labels had been reversed, some patients were included multiple times (up to four repetitions) with inconsistent grouping, and results were ascribed to incorrect drugs [25]. These errors, which potentially affected patient treatment decisions, underscore the critical importance of rigorous data management throughout the computational workflow.
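
Errors of the kind described above (repeated patients, inconsistent labels) can often be caught by simple automated checks before any analysis runs. The sketch below is a minimal example on synthetic records; the field names `patient_id` and `response` are assumptions for illustration and are not taken from the retracted study.

```python
from collections import Counter


def find_duplicate_ids(records):
    """Return {patient_id: count} for every ID that appears more than once."""
    counts = Counter(r["patient_id"] for r in records)
    return {pid: n for pid, n in counts.items() if n > 1}


def find_conflicting_labels(records):
    """Return sorted IDs that appear with more than one response label."""
    labels = {}
    for r in records:
        labels.setdefault(r["patient_id"], set()).add(r["response"])
    return sorted(pid for pid, seen in labels.items() if len(seen) > 1)


# Synthetic records for illustration only.
records = [
    {"patient_id": "P1", "response": "sensitive"},
    {"patient_id": "P2", "response": "resistant"},
    {"patient_id": "P1", "response": "resistant"},
    {"patient_id": "P3", "response": "sensitive"},
    {"patient_id": "P3", "response": "sensitive"},
]

duplicates = find_duplicate_ids(records)
conflicts = find_conflicting_labels(records)
print(duplicates)
print(conflicts)
```

Running such checks as the first step of a pipeline, and failing loudly when they find anything, keeps silent data errors from propagating into downstream results.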

Code Quality and Maintenance Deficiencies

Code quality issues manifest as undiscovered bugs, poor code structure, inadequate error handling, and insufficient testing protocols. Unlike production software, research code often evolves rapidly with minimal engineering oversight, accumulating technical debt that compromises reproducibility. Applying "clean code" principles (readability, meaningful naming, and modular structure) is essential yet frequently overlooked in research environments [28].

Additionally, code maintenance creates long-term reproducibility challenges. Computational chemistry software stacks evolve, leaving older implementations incompatible with modern systems. One assessment found that many reproducibility tools themselves become outdated or unavailable over time, including CARE, CDE, Encapsulator, PARROT, Prune, reprozip-jupyter, ResearchCompendia, SOLE, and Umbrella [27].

Experimental Protocols for Assessing Reproducibility

Protocol for Dependency and Environment Mapping

Objective: Systematically identify all software dependencies and environment configuration requirements for a computational chemistry workflow.

Materials: Computational experiment codebase, system documentation, containerization tools (Docker, Singularity), dependency management tools (conda, pip).

Methodology:

- Execute automated dependency scanning using tools specific to the programming languages employed

- Document implicit dependencies through execution pathway analysis

- Capture environment variables and system configuration

- Generate dependency graph mapping all interconnections

- Create version-specific requirement specifications

- Verify completeness through isolated environment testing

This protocol aligns with the compute environment control pillar of reproducible computational research, which emphasizes precise specification of the computational environment to ensure consistent execution [25].
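
For Python-based workflows, the scanning and version-pinning steps of this protocol can be approximated with the standard library alone. The sketch below records the interpreter, operating system, and every installed distribution with its exact version. It covers only Python packages visible to the current interpreter; system libraries, compilers, and GPU drivers still need a container or a conda environment export.

```python
import platform
import sys
from importlib import metadata


def pinned_requirements():
    """Return sorted 'name==version' pins for all installed distributions."""
    pins = set()
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip distributions with broken metadata
            pins.add(f"{name}=={dist.version}")
    return sorted(pins, key=str.lower)


def environment_snapshot():
    """Collect interpreter, OS, and package versions in one dictionary."""
    return {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "os": platform.platform(),
        "executable": sys.executable,
        "requirements": pinned_requirements(),
    }


snapshot = environment_snapshot()
print(snapshot["python"], snapshot["os"])
print(f"{len(snapshot['requirements'])} pinned packages")
```

The final step of the protocol still applies: the pinned list is only trustworthy once the workflow has been re-run successfully in a fresh, isolated environment built from it.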

Protocol for Documentation Adequacy Assessment

Objective: Evaluate the completeness and accuracy of documentation supporting computational experiment replication.

Materials: Research publications, code comments, README files, methodology sections, lab notebooks.

Methodology:

- Create documentation checklist based on the Five Pillars framework [25]

- Conduct gap analysis between existing documentation and checklist requirements

- Verify documentation accuracy through execution against stated procedures

- Assess clarity for researchers from different domains or backgrounds

- Evaluate accessibility of documentation within the overall research package

The National Academies recommend that researchers "include a clear, specific, and complete description of how a reported result was reached," with details appropriate for the research type [29].
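The gap-analysis step of this protocol can be partly automated. The sketch below checks a research package directory for files that typically evidence each of the Five Pillars. The checklist contents (file names and patterns) are hypothetical choices for illustration, not a list taken from the framework itself, and the presence of a file says nothing about its accuracy, which still has to be verified by executing the documented procedures.

```python
from pathlib import Path

# Hypothetical checklist: pillar name -> file patterns that suggest it is covered.
CHECKLIST = {
    "Literate programming": ["*.ipynb", "*.Rmd"],
    "Code version control and sharing": [".git", ".gitignore"],
    "Compute environment control": ["Dockerfile", "environment.yml", "requirements.txt"],
    "Persistent data sharing": ["CITATION.cff", "DATA.md"],
    "Comprehensive documentation": ["README*", "docs"],
}


def gap_analysis(root, checklist=CHECKLIST):
    """Return, for each pillar, the matching entries found directly under root."""
    root = Path(root)
    report = {}
    for pillar, patterns in checklist.items():
        found = sorted(
            str(path.relative_to(root))
            for pattern in patterns
            for path in root.glob(pattern)
        )
        report[pillar] = found
    return report


def missing_pillars(report):
    """List the pillars for which no evidence was found."""
    return [pillar for pillar, found in report.items() if not found]


if __name__ == "__main__":
    result = gap_analysis(".")
    for pillar, found in result.items():
        status = ", ".join(found) if found else "MISSING"
        print(f"{pillar}: {status}")
```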

Visualization of Failure Points and Mitigation Strategies

Diagram 1: Computational reproducibility failure points and mitigation strategies.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Computational Research Reagents for Reproducibility

| Tool Category | Specific Solutions | Function & Purpose |
|---|---|---|
| Environment Control | Docker, Singularity, conda | Isolate and encapsulate computational environments for consistent execution across systems [27] [25] |
| Version Control | Git, GitHub, GitLab | Track code changes, enable collaboration, provide persistent identifiers for specific code versions [28] [25] |
| Literate Programming | Jupyter Notebooks, R Markdown, MyST | Combine executable code with explanatory text and results in integrated documents [25] |
| Workflow Management | Nextflow, Snakemake, CWL, WDL | Automate multi-step computational processes, ensure proper execution order, manage software dependencies [25] |
| Data Sharing | Zenodo, Figshare, Data Repositories | Provide persistent storage and digital object identifiers (DOIs) for research data [25] |
| Reproducibility Tools | SciConv, Code Ocean, RenkuLab, WholeTale | Package computational experiments for easier re-execution, often with user-friendly interfaces [27] |

Implementation Framework: The Five Pillars of Reproducible Computational Research

The "Five Pillars" framework provides a comprehensive structure for addressing common failure points in computational chemistry reproducibility [25]:

- Literate Programming: Combine analytical code with human-readable documentation in formats like Jupyter notebooks or R Markdown. This approach directly addresses inadequate documentation by integrating explanation with execution.

- Code Version Control and Sharing: Utilize systems like Git with platforms such as GitHub to track changes, enable collaboration, and provide persistent access to code. This practice mitigates code maintenance and availability issues.

- Compute Environment Control: Employ containerization (Docker, Singularity) or environment management (conda) to capture and replicate precise computational environments, resolving dependency and configuration conflicts.

- Persistent Data Sharing: Utilize certified repositories that provide digital object identifiers (DOIs) for datasets, ensuring long-term accessibility and addressing data availability failures.

- Comprehensive Documentation: Create detailed, accessible documentation that encompasses both the computational methods and the scientific context, bridging knowledge gaps between domain experts and computational practitioners.

Implementation of this framework requires both technical adoption and cultural shift within research organizations. As noted by the National Academies, "Researchers need to understand the complexity of computation and acknowledge when outside collaboration is necessary" [29].

Addressing the common failure points in computational reproducibility—from missing dependencies and software versions to inadequate documentation—requires systematic implementation of both technical solutions and methodological standards. The Five Pillars framework provides a comprehensive approach for overcoming these challenges, while specialized tools and protocols enable practical implementation. For computational chemistry and drug development, where research outcomes increasingly inform critical decisions in therapeutic development, embracing these practices is both a scientific and ethical imperative. Through adoption of containerization, version control, literate programming, automated workflows, and persistent data sharing, researchers can transform computational reproducibility from an occasional achievement into a consistent standard.

The integration of advanced computational methods, particularly artificial intelligence (AI), into drug development represents a paradigm shift with the potential to drastically reduce timelines and costs. However, this reliance on computation introduces significant new risks. This section quantifies the substantial economic and scientific costs arising from wasted computational resources, inefficient processes, and a lack of reproducibility in computational chemistry. Industry analyses reveal that pharmaceutical companies waste approximately $44.5 billion annually on underutilized cloud infrastructure, a cost ultimately borne by consumers and one that diverts funds from critical research [30]. Furthermore, the failure to adopt robust probabilistic models and FAIR (Findable, Accessible, Interoperable, Reusable) data principles leads to scientific waste: overconfident predictions, irreproducible results, and missed opportunities for innovation. By examining these challenges through the lens of computational reproducibility research, this section provides a framework for quantifying waste and implementing methodologies that enhance the reliability and efficiency of drug discovery.

The modern drug discovery process has become inextricably linked with high-performance computing. The field is undergoing a rapid transformation driven by AI and machine learning (ML), which are now deployed for genomics, proteomics, and molecular design [31]. This shift is dramatically increasing computational demand; for instance, training models like AlphaFold required thousands of GPU-years of compute [31]. The global industry is responding with massive infrastructure investments, with AI-related capital spending forecast to exceed $2.8 trillion by 2029 [31].

Despite this influx of resources and technological promise, the industry faces a critical challenge of efficiency. The goalposts for achievement are often defined by speed, potentially at the expense of pursuing the most impactful therapeutic targets [32]. This environment, described by experts as an "extreme hyper-phase," can lead to investment decisions clouded by fear of missing out (FOMO) rather than scientific rigor [33]. The convergence of escalating compute costs, inefficiencies in resource management, and foundational scientific uncertainties creates a perfect storm of waste that this section seeks to quantify and address.

Quantifying the Economic Cost of Computational Waste

Macro-Scale Financial Waste in Cloud Infrastructure

At the macroeconomic level, inefficiencies in computational resource management represent a staggering financial drain. A recent industry study concluded that pharmaceutical firms waste $44.5 billion annually on underutilized cloud resources [30]. This figure highlights a systemic failure to optimize the very infrastructure upon which modern computational chemistry and AI research depend.

Table 1: Primary Sources of Computational Waste in Pharma Cloud Infrastructure

| Source of Waste | Annual Financial Impact | Common Causes |
|---|---|---|
| Underutilized Compute Instances | Portion of $44.5B [30] | Instances running at full capacity during off-hours; over-provisioning for peak loads [30]. |
| Inefficient Data Storage | Portion of $44.5B [30] | Redundant or rarely accessed data in expensive storage tiers; failure to archive or delete temporary files [30]. |
| AI Compute Demand & Supply Mismatch | Global AI infrastructure spending may reach $2.8T by 2029 [31] | Exponential growth in compute demand for AI models rapidly outpacing optimized infrastructure supply [31]. |

The case of Takeda provides a microcosm of this industry-wide problem. An internal optimization project found that a significant portion of AWS cloud storage contained redundant or rarely accessed data, while compute machines (EC2 instances) ran at full capacity continuously, even during nights and weekends. By addressing these two areas—cleaning up redundant data and right-sizing compute resources—the company achieved a 40% reduction in cloud infrastructure costs while maintaining strict regulatory and compliance standards [30]. This case demonstrates that waste is not an inevitable cost of doing business but a manageable inefficiency.
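To make the "nights and weekends" pattern concrete, the following sketch estimates what share of an always-on instance's billed hours falls outside working hours, and flags instances that sit nearly idle during those hours. It is an illustration only: the 08:00 to 18:00 weekday schedule, the 5% CPU threshold, and the `(instance_id, timestamp, cpu_percent)` record layout are assumptions for this example and are not drawn from the case study in [30].

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def is_off_hours(ts, work_start=8, work_end=18):
    """True for weekends and for weekday hours outside [work_start, work_end)."""
    return ts.weekday() >= 5 or not (work_start <= ts.hour < work_end)


def off_hours_share(start, hours, work_start=8, work_end=18):
    """Fraction of hourly slots, beginning at start, that fall in off-hours."""
    off = sum(
        is_off_hours(start + timedelta(hours=h), work_start, work_end)
        for h in range(hours)
    )
    return off / hours


def idle_off_hours(samples, cpu_threshold=5.0):
    """Return ids of instances whose mean off-hours CPU% is below the threshold.

    samples: iterable of (instance_id, timestamp, cpu_percent) tuples.
    """
    readings = defaultdict(list)
    for instance_id, ts, cpu in samples:
        if is_off_hours(ts):
            readings[instance_id].append(cpu)
    return sorted(i for i, vals in readings.items() if mean(vals) < cpu_threshold)


if __name__ == "__main__":
    week_start = datetime(2024, 1, 1)  # a Monday
    print(f"Off-hours share of one week: {off_hours_share(week_start, 168):.1%}")
```

Under this assumed schedule, 118 of the 168 hours in a week count as off-hours, so an instance that never shuts down is billed mostly for time when no one is likely to be using it interactively. Batch workloads that legitimately run overnight would show high off-hours CPU and would not be flagged by `idle_off_hours`.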

The Ripple Effects: Rising Drug Development Costs and Stagnant ROI

The massive waste in computational resources directly contributes to the escalating cost of drug development, which now exceeds $2.23 billion per asset [34]. While R&D returns have recently seen a promising uptick to 5.9%, this follows a record low of 1.2% in 2022, indicating persistent underlying challenges [34]. Every dollar spent on redundant cloud storage or idle compute instances is a dollar not allocated to critical research, ultimately inflating the cost of developed therapies and reducing the industry's ability to fund innovative projects.

The Scientific Cost: Uncertainty, Irreproducibility, and Lost Creativity

Beyond direct financial costs, a deeper scientific toll is exacted by inadequate computational practices. These include the failure to quantify prediction uncertainty, poor research data management, and a culture that overhypes AI's current capabilities.

The High Cost of Ignoring Uncertainty

A critical source of scientific waste is the use of machine learning models that provide only a single best estimate, ignoring all sources of uncertainty. Predictions from these models are often over-confident, leading to the pursuit of compounds that are destined to fail. This puts patients at risk and wastes resources when these compounds enter expensive late-stage development [35].

Probabilistic predictive models (PPMs) are designed to incorporate all sources of uncertainty, returning a distribution of predicted values. The seven key sources of uncertainty in such models are:

- Data Uncertainty: Noise inherent in the experimental data used for training.

- Distribution Function Uncertainty: The choice of probability distribution for the data.

- Mean Function Uncertainty: The mathematical form of the relationship between inputs and outputs.

- Variance Function Uncertainty: How the variance of the data changes.

- Link Function Uncertainty: The function connecting the model to the data.

- Parameter Uncertainty: Uncertainty in the model's parameter estimates.

- Hyperparameter Uncertainty: Uncertainty in the settings that control the model's learning process [35].

Failure to account for these uncertainties, particularly in areas like toxicity prediction, can lead to costly late-stage failures. Incorporating PPMs provides a quantitative measure of confidence, allowing researchers to prioritize compounds with not just promising predicted activity, but also with well-understood risks.
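The difference between a single best estimate and a distribution of predicted values can be shown with a small sketch. The example below uses a bootstrap ensemble of straight-line fits, which is a simple stand-in technique and not the probabilistic predictive modeling approach of [35]: it reflects mainly parameter uncertainty (and, through resampling, some data noise) and ignores the other sources listed above. The function names and the synthetic data are assumptions for illustration.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("need at least two distinct x values")
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return slope, my - slope * mx


def bootstrap_predict(xs, ys, x_new, n_boot=500, seed=0):
    """Predict at x_new from n_boot resampled fits; return mean and ~95% interval."""
    rng = random.Random(seed)
    n = len(xs)
    preds = []
    while len(preds) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        bx = [xs[i] for i in idx]
        by = [ys[i] for i in idx]
        if len(set(bx)) < 2:
            continue  # degenerate resample: a line cannot be fitted
        slope, intercept = fit_line(bx, by)
        preds.append(slope * x_new + intercept)
    preds.sort()
    return {
        "mean": statistics.fmean(preds),
        "lower": preds[int(0.025 * n_boot)],
        "upper": preds[int(0.975 * n_boot) - 1],
    }


if __name__ == "__main__":
    # Synthetic data for illustration only (not experimental measurements).
    rng = random.Random(1)
    xs = [float(i) for i in range(10)]
    ys = [2.0 * x + 1.0 + rng.gauss(0, 0.5) for x in xs]
    print("inside training range: ", bootstrap_predict(xs, ys, 4.5))
    print("far outside the range: ", bootstrap_predict(xs, ys, 50.0))
```

The interval widens sharply for the input far outside the training range, which is the signal a point estimate hides: a compound unlike anything in the training set may receive an attractive predicted value with very little support behind it.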

The Reproducibility Crisis in Computational Chemistry

The lack of standardized research data management (RDM) is a major contributor to scientific waste. Without reproducibility, computational results cannot be trusted, built upon, or translated reliably into wet-lab experiments. Initiatives like the euroSAMPL pKa blind prediction challenge have highlighted that while multiple methods can predict a property like pKa to within chemical accuracy, the field still falls short of the FAIR standards [15].

Adhering to FAIR principles ensures that data and models are Findable, Accessible, Interoperable, and Reusable. The euroSAMPL challenge went beyond mere predictive accuracy, also evaluating participants' adherence to these principles through a cross-evaluation "FAIRscore" [15]. The findings suggest that "consensus" predictions constructed from multiple, independent methods can outperform any individual prediction, but only if the underlying data and methodologies are managed in a reproducible way [15]. As argued by advocates of Open Science, computational reproducibility is fundamental to preserving knowledge and enabling its future reuse and reinterpretation by new generations of researchers [36].
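The idea of a consensus prediction can be sketched as follows. This example simply averages per-molecule predictions from several methods and compares root-mean-square errors against reference values. It does not reproduce how the euroSAMPL organizers constructed their consensus [15]; the method names and numbers are hypothetical placeholders, not challenge data.

```python
import math
import statistics


def consensus(predictions):
    """Per-molecule mean across methods.

    predictions: dict mapping method name -> list of predicted values,
    with all lists ordered by the same molecule identifiers.
    """
    methods = list(predictions)
    n = len(predictions[methods[0]])
    if any(len(predictions[m]) != n for m in methods):
        raise ValueError("all methods must predict the same set of molecules")
    return [statistics.fmean([predictions[m][i] for m in methods]) for i in range(n)]


def rmse(predicted, reference):
    """Root-mean-square error between two equally long sequences."""
    if len(predicted) != len(reference):
        raise ValueError("length mismatch")
    return math.sqrt(
        statistics.fmean([(p - r) ** 2 for p, r in zip(predicted, reference)])
    )


if __name__ == "__main__":
    # Hypothetical pKa values for three molecules (illustration only).
    reference = [4.8, 9.2, 7.1]
    predictions = {
        "method_a": [5.3, 9.0, 7.8],
        "method_b": [4.4, 9.9, 6.7],
        "method_c": [4.9, 8.6, 7.2],
    }
    for name, values in predictions.items():
        print(f"{name}: RMSE = {rmse(values, reference):.2f}")
    print(f"consensus: RMSE = {rmse(consensus(predictions), reference):.2f}")
```

Averaging helps only when the methods' errors are at least partly independent, and it is only possible when every submission identifies its molecules, units, and conventions unambiguously, which is where FAIR data management becomes a practical precondition rather than a formality.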

The Opportunity Cost of Overhyped AI and Diminished Creativity

The hype surrounding AI in drug discovery carries its own cost. Scientists report that overhyping AI produces unrealistic expectations and is not conducive to sustainable development [33]. When AI is sold as a panacea, the inevitable failure to meet inflated promises can lead to a backlash, causing the field to be "put back quite a long way when people stop thinking it can work because they feel like they’ve tried it, and it didn’t work" [33].

This environment also diminishes opportunities for creative discovery. Medicinal chemists have expressed frustration that some AI applications draw them into mundane, soul-destroying work to produce data for training models, crushing creativity [33]. The real advantage of AI is not to replace human scientists but to empower them, acting as a "force multiplier in the hands of experienced scientists" [32]. The opportunity cost of misapplied AI is the breakthrough discovery that never occurs because human ingenuity was sidelined in favor of a conservative, data-driven process that "stick(s) too closely to what is already known" [33].

Experimental Protocols for Quantifying and Mitigating Waste

Protocol 1: Cloud Infrastructure Waste Audit and Optimization

This protocol provides a step-by-step methodology for quantifying and reducing financial waste in cloud computing environments, based on demonstrated industry success [30].

Objective: To identify and eliminate redundant cloud storage and compute resources, reducing costs while maintaining regulatory compliance.

Methodology:

- Profile Cloud Costs: Use native cloud tools (e.g., AWS Cost Explorer) to categorize spending by service (e.g., S3 storage, EC2 compute), region, and project tags.