Conquering Overfitting: Advanced Strategies for Molecular Property Prediction on Small Datasets


Abstract

This article addresses the critical challenge of overfitting in molecular property prediction, a major bottleneck in drug discovery where labeled data is often scarce and costly. We explore the foundational causes of overfitting, including dataset size limitations and data heterogeneity. The core of the article presents a methodological deep dive into state-of-the-art solutions such as multi-task learning, meta-learning, and specialized neural network architectures designed for low-data regimes. We further provide a practical troubleshooting guide for optimizing model performance and rigorously evaluate these strategies through comparative analysis and robustness checks on out-of-distribution data. Designed for researchers, scientists, and drug development professionals, this guide synthesizes the latest research to equip readers with actionable strategies for building more reliable and generalizable predictive models.

The Small Data Problem: Why Molecular Property Prediction is Inherently Prone to Overfitting

Troubleshooting Guides

Guide 1: Troubleshooting Negative Transfer in Multi-Task Learning

Problem: My Multi-Task Learning (MTL) model performance is worse than single-task models. I suspect negative transfer.

Application Context: This occurs when updates from one task degrade performance on another, often due to low task relatedness or imbalanced datasets [1].

| Step | Action & Diagnosis | Solution |

|---|---|---|

| 1 | Diagnose: Check for significant performance disparity between tasks, especially for low-data tasks. | Implement Adaptive Checkpointing with Specialization (ACS). Use a shared GNN backbone with task-specific heads and checkpoint the best backbone-head pair for each task when its validation loss minimizes [1]. |

| 2 | Diagnose: Confirm if tasks have vastly different numbers of labeled samples (task imbalance). | Apply the meta-learning framework. Use a meta-model to derive optimal weights for source data points during pre-training to mitigate negative transfer from irrelevant samples [2]. |

| 3 | Verify: After applying ACS, confirm which checkpoint is used for final inference. | Use the specialized backbone-head checkpoint saved for each task, not the shared model from the last training step [1]. |

Experimental Protocol for ACS [1]:

- Architecture Setup: Construct a model with a shared Graph Neural Network (GNN) backbone and independent Multi-Layer Perceptron (MLP) heads for each task.

- Training Loop: Train the model on all tasks simultaneously.

- Validation Monitoring: Continuously monitor the validation loss for every individual task throughout training.

- Checkpointing: For each task, save a checkpoint of the shared backbone parameters and its specific head whenever that task's validation loss hits a new minimum.

- Specialization: After training, for each task, load its best-performing checkpoint to create a specialized model for inference.
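The checkpointing and specialization steps above can be sketched in a framework-agnostic way. This is a minimal illustration, not the reference ACS implementation from [1]: the class name `PerTaskCheckpointer`, its `update` method, and the dict-based "states" are hypothetical stand-ins for real backbone and head parameters.

```python
import copy
import math


class PerTaskCheckpointer:
    """Keeps, for every task, the backbone-head snapshot with the lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: math.inf for task in task_names}
        self.best_state = {task: None for task in task_names}

    def update(self, epoch, val_losses, backbone_state, head_states):
        """Snapshot the backbone-head pair of each task that just hit a new minimum."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep copies so later training steps cannot overwrite the snapshot.
                self.best_state[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved


# Toy run: plain dicts stand in for real parameter tensors (assumption).
ckpt = PerTaskCheckpointer(["tox", "solubility"])
history = [
    {"tox": 0.90, "solubility": 0.80},
    {"tox": 0.70, "solubility": 0.85},  # only "tox" improves
    {"tox": 0.75, "solubility": 0.60},  # only "solubility" improves
]
for epoch, val_losses in enumerate(history):
    backbone = {"w": epoch}
    heads = {"tox": {"w": epoch * 10}, "solubility": {"w": epoch * 100}}
    ckpt.update(epoch, val_losses, backbone, heads)

print({task: state["epoch"] for task, state in ckpt.best_state.items()})
```

At inference time, each task would load its own entry from `best_state` rather than the parameters left over from the final epoch.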

Guide 2: Mitigating Overfitting in Non-Linear Models on Small Datasets

Problem: My non-linear model (e.g., Neural Network, Random Forest) shows a large gap between training and validation error.

Application Context: Non-linear models are prone to overfitting in low-data regimes, traditionally leading researchers to prefer linear models [3].

| Step | Action & Diagnosis | Solution |

|---|---|---|

| 1 | Diagnose: Use Repeated Cross-Validation (e.g., 10x 5-fold CV). If CV error is much higher than training error, overfitting is likely. | Integrate an overfitting metric directly into hyperparameter optimization. Use a combined RMSE score that averages both interpolation (standard CV) and extrapolation (sorted CV) performance [3]. |

| 2 | Diagnose: Check if your model fails to predict values outside the training data range. | Use automated workflows like ROBERT that employ Bayesian Optimization with the combined RMSE as the objective function, which penalizes models that extrapolate poorly [3]. |

| 3 | Verify: Perform y-scrambling (shuffle the target values) and retrain the model. | If the model still achieves high performance on scrambled targets, it is fitting noise or spurious correlations and should not be trusted [3]. |

Experimental Protocol for Robust Workflow [3]:

- Data Reservation: Reserve 20% of the data (min. 4 points) as an external test set, split with an "even" distribution of target values.

- Hyperparameter Optimization: Use Bayesian Optimization to tune model hyperparameters.

- Objective Function: For each hyperparameter set, calculate a combined RMSE: (RMSEInterpolation + RMSEExtrapolation) / 2.

- RMSEInterpolation: From a 10x repeated 5-fold cross-validation on the training/validation data.

- RMSEExtrapolation: From a sorted 5-fold CV, using the highest RMSE from the top and bottom partitions.

- Model Selection: Select the model with the best (lowest) combined RMSE score.

- Final Evaluation: Train the final model on the entire training set and evaluate once on the held-out test set.
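The objective function in this protocol can be sketched as follows. This is an illustrative reading of the description above, not ROBERT's actual code: the function names, the `fit`/`predict` callables, and the mean-value baseline model are assumptions made for the example.

```python
import math


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def sorted_folds(y, n_folds=5):
    """Split sample indices into folds of consecutive target values (lowest to highest)."""
    order = sorted(range(len(y)), key=lambda i: y[i])
    base, extra = divmod(len(order), n_folds)
    folds, start = [], 0
    for k in range(n_folds):
        size = base + (1 if k < extra else 0)
        folds.append(order[start:start + size])
        start += size
    return folds


def extrapolation_rmse(x, y, fit, predict, n_folds=5):
    """Hold out the bottom and the top fold in turn and return the worse RMSE."""
    folds = sorted_folds(y, n_folds)
    scores = []
    for held_out in (folds[0], folds[-1]):
        held = set(held_out)
        train = [i for i in range(len(y)) if i not in held]
        model = fit([x[i] for i in train], [y[i] for i in train])
        preds = [predict(model, x[i]) for i in held_out]
        scores.append(rmse([y[i] for i in held_out], preds))
    return max(scores)


def combined_rmse(rmse_interpolation, rmse_extrapolation):
    """Average of the interpolation and extrapolation errors."""
    return (rmse_interpolation + rmse_extrapolation) / 2


# Hypothetical baseline model: always predicts the mean of the training targets.
def fit_mean(xs, ys):
    return sum(ys) / len(ys)


def predict_mean(model, x):
    return model


x = [float(i) for i in range(1, 11)]
y = [float(i) for i in range(1, 11)]
print(extrapolation_rmse(x, y, fit_mean, predict_mean))
```

The interpolation term would come from a repeated k-fold loop over the same data. A hyperparameter optimizer then minimizes `combined_rmse` instead of the plain cross-validation error.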

Frequently Asked Questions (FAQs)

What are the most effective strategies when I have fewer than 50 labeled molecules?

With ultra-low data, leveraging transfer learning and pre-training on large, unlabeled datasets is critical. The key is a strategic two-stage pre-training process [4]:

| Strategy | Description | Function |

|---|---|---|

| Two-Stage Pre-training | A framework (e.g., MoleVers) that first learns general molecular representations from unlabeled data, then refines them using computationally derived auxiliary properties [4]. | Maximizes generalizability by learning both structural and property-based features. |

| Stage 1: Self-Supervised Learning | Train on large unlabeled datasets using tasks like Masked Atom Prediction (MAP) and extreme denoising of 3D coordinates [4]. | Learns robust, general-purpose molecular representations without labeled data. |

| Stage 2: Auxiliary Property Prediction | Further pre-train the model to predict properties calculated via Density Functional Theory (HOMO, LUMO, dipole moment) or relative rankings from Large Language Models [4]. | Provides a rich, physics-aware and context-aware initialization for fine-tuning. |

| Fine-Tuning | Finally, fine-tune the pre-trained model on your small, labeled target dataset [4]. | Adapts the general model to your specific property prediction task. |

How can I reliably estimate model performance with so little data?

Traditional single train-test splits are highly unreliable in low-data regimes. You must use rigorous validation techniques [3].

- Use Repeated Cross-Validation: Perform 10x repeated 5-fold cross-validation. This mitigates the variance caused by random data splitting and provides a more stable estimate of model performance [3].

- Evaluate Extrapolation Explicitly: Use a sorted cross-validation approach where data is partitioned based on the target value. This tests the model's ability to predict compounds outside the property range seen in training, which is a critical assessment of generalizability [3].

- Employ a Comprehensive Scoring System: Use tools that provide a multi-faceted score (e.g., on a scale of 10) evaluating predictive ability, overfitting, prediction uncertainty, and robustness to spurious correlations (e.g., via y-shuffling) [3].
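As a rough illustration of the repeated cross-validation item, the generator below produces the index splits for 10x repeated 5-fold CV using only the standard library. The function name and the interleaved fold assignment are choices made for this sketch.

```python
import random


def repeated_kfold(n_samples, n_splits=5, n_repeats=10, seed=0):
    """Yield (train_idx, val_idx) pairs for n_repeats reshuffled k-fold splits."""
    rng = random.Random(seed)
    for _ in range(n_repeats):
        indices = list(range(n_samples))
        rng.shuffle(indices)
        for k in range(n_splits):
            val_idx = indices[k::n_splits]  # every n_splits-th index, offset k
            val_set = set(val_idx)
            train_idx = [i for i in indices if i not in val_set]
            yield train_idx, val_idx


splits = list(repeated_kfold(n_samples=23))
print(len(splits))  # 10 repeats x 5 folds = 50 train/validation pairs
```

Averaging the validation error over all 50 pairs gives the more stable estimate described above. In practice, scikit-learn's `RepeatedKFold` provides the same kind of splitter.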

My experimental data has many "less than" or "greater than" values (censored data). Can I use it?

Yes, censored data contains valuable information and should not be discarded. Standard models cannot use it, but you can adapt Uncertainty Quantification (UQ) methods to learn from these thresholds [5].

- The Problem: Censored labels (e.g., IC50 > 10 μM) are common in early drug discovery but provide only partial information: they give a bound on the value rather than the value itself.

- The Solution: Adapt ensemble-based, Bayesian, or Gaussian models using the Tobit model from survival analysis. This allows the model to learn from the censored labels, leading to more reliable uncertainty estimates [5].

- The Benefit: This is essential for making optimal decisions in the drug discovery process, as it allows you to prioritize compounds where the model is confident, saving resources [5].
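To make the idea concrete, here is a minimal sketch of a Tobit-style negative log-likelihood for a model that predicts a Gaussian mean and standard deviation. It is an illustration under that assumption, not the implementation from [5]; the function name and the `censoring` argument are hypothetical.

```python
import math


def tobit_nll(y, mu, sigma, censoring="none"):
    """Negative log-likelihood of one label under a Gaussian prediction N(mu, sigma^2).

    censoring: "none"  -> y is an exact measurement
               "right" -> the true value is greater than y (e.g. IC50 > 10 uM)
               "left"  -> the true value is less than y
    """
    z = (y - mu) / sigma
    if censoring == "none":
        # Ordinary Gaussian negative log-density.
        return 0.5 * z * z + math.log(sigma) + 0.5 * math.log(2 * math.pi)
    if censoring == "right":
        # P(true value > y); erfc keeps precision in the tail.
        prob = 0.5 * math.erfc(z / math.sqrt(2.0))
    elif censoring == "left":
        # P(true value < y)
        prob = 0.5 * math.erfc(-z / math.sqrt(2.0))
    else:
        raise ValueError(f"unknown censoring type: {censoring}")
    return -math.log(max(prob, 1e-300))  # clamp to avoid log(0)


# A prediction above the threshold agrees with a "> 10" label; one below does not.
print(tobit_nll(10.0, 12.0, 1.0, "right"))
print(tobit_nll(10.0, 8.0, 1.0, "right"))
```

Summing this loss over exact and censored labels lets both kinds of measurement contribute to training, which is the core of the adaptation described above.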

Beyond graph-based models, what other molecular representations are useful?

While Graph Neural Networks (GNNs) are powerful, a diverse toolkit of representations exists, each with strengths. The choice depends on your specific task and data [6] [7].

| Representation | Description | Best Use Cases |

|---|---|---|

| Extended Connectivity Fingerprints (ECFP) [6] [2] | Circular fingerprints encoding molecular substructures as fixed-length bit vectors. | Similarity searching, virtual screening, QSAR modeling. Computationally efficient. |

| SMILES/String-Based [6] [7] | A string of characters representing the molecular structure. Can be processed by language models like Transformers. | De novo molecular design, generative tasks, leveraging NLP architectures. |

| 3D-Aware Representations [7] | Representations that incorporate the spatial 3D geometry of a molecule, often through denoising tasks or geometric GNNs. | Modeling molecular interactions, binding affinity prediction, quantum property prediction. |

| Multi-Modal Fusion [7] | Integrating multiple representation types (e.g., graphs, SMILES, descriptors) into a unified model. | Capturing complex molecular interactions for challenging prediction tasks where no single representation is sufficient. |

| Item | Function & Application |

|---|---|

| ROBERT Software [3] | An automated workflow for building ML models from CSV files, performing data curation, hyperparameter optimization with overfitting mitigation, and generating comprehensive reports. |

| Adaptive Checkpointing with Specialization (ACS) [1] | A training scheme for multi-task GNNs that mitigates negative transfer by checkpointing the best model parameters for each task individually during training. |

| MoleVers Model [4] | A versatile pre-trained model using a two-stage strategy (self-supervised learning + auxiliary property prediction) designed for extreme low-data regimes. |

| Meta-Transfer Learning Framework [2] | A meta-learning algorithm that identifies optimal source data samples and initializations for transfer learning, effectively mitigating negative transfer. |

| Tobit Model for Censored Data [5] | A statistical model from survival analysis adapted for UQ in drug discovery, enabling learning from censored experimental labels (e.g., IC50 > 10 μM). |

| Combined RMSE Metric [3] | An objective function used during hyperparameter optimization that combines interpolation and extrapolation errors to directly penalize and reduce overfitting. |

Overfitting occurs when a machine learning model learns the training data too well, capturing not only the underlying patterns but also the noise and random fluctuations. This results in a model that performs excellently on its training data but fails to generalize to new, unseen data [8]. In the context of molecular property prediction for drug development, this is a critical challenge. Traditional deep-learning models often produce overconfident mispredictions for out-of-distribution (OoD) samples—molecules that fall outside the coverage of the original training datasets [9] [10]. When these unreliable predictions enter the decision-making pipeline, they can lead to significant resource wastage and slow down the discovery of viable drug candidates [10].

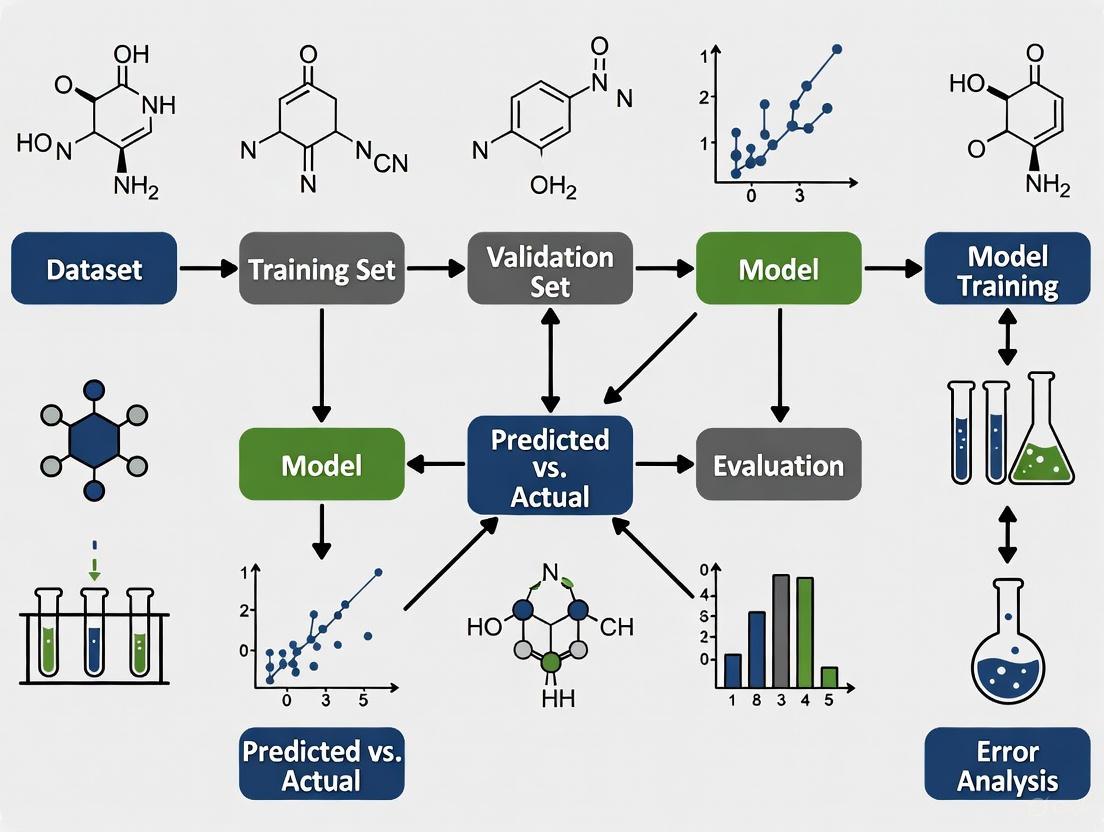

This technical support center is designed within the broader thesis of addressing overfitting in molecular property prediction, with a special focus on the complications introduced by small datasets. The following guides and FAQs provide actionable troubleshooting advice, detailed protocols, and visual resources to help researchers diagnose and mitigate overfitting in their experiments.

Troubleshooting Guides

Guide 1: Diagnosing and Addressing High Capacity Mismatch

Symptom: Your model shows a significant performance gap, with near-perfect accuracy on the training set but poor accuracy on the validation or test set. This is a classic sign of a model that is too complex for the amount of data available [8] [11].

Troubleshooting Steps:

- Simplify Your Model Architecture: Begin by reducing model complexity.

- For Neural Networks: Reduce the number of layers or hidden units. Implement dropout, which randomly deactivates a percentage of neurons during training to prevent co-adaptation and force the network to learn more robust features [8] [11].

- For Decision Trees: Apply pruning to remove branches that have low importance and do not contribute significantly to predictive accuracy [8].

- Apply Regularization: Introduce penalty terms to the model's loss function to discourage complexity.

- L2 Regularization (Ridge): Adds a penalty equal to the square of the magnitude of coefficients. This forces model weights to be small but rarely zero [8] [11].

- L1 Regularization (Lasso): Adds a penalty equal to the absolute value of the magnitude of coefficients. This can shrink some coefficients to zero, effectively performing feature selection [8].

- Use Early Stopping: Monitor the model's performance on a validation set during training. Halt the training process as soon as the performance on the validation set begins to degrade, even if performance on the training set is still improving [8].

- Switch to a More Data-Efficient Model: For molecular property prediction with limited data, consider models specifically designed for low-data regimes. Adaptive Checkpointing with Specialization (ACS) is a training scheme for multi-task graph neural networks that mitigates negative transfer, a phenomenon where learning from one task degrades performance on another, which is common in imbalanced datasets [1].
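The difference between the two penalties in the regularization step can be seen in a small sketch. The function names are illustrative. The two shrinkage functions are the standard proximal updates for each penalty, shown here only to illustrate why L1 produces exact zeros while L2 does not.

```python
def soft_threshold(w, t):
    """One proximal step for an L1 penalty: shrink by t and clip at zero."""
    if w > t:
        return w - t
    if w < -t:
        return w + t
    return 0.0


def l2_shrink(w, t):
    """One proximal step for an L2 penalty t * w**2: rescale toward zero."""
    return w / (1.0 + 2.0 * t)


def penalized_loss(data_loss, weights, l1=0.0, l2=0.0):
    """Data loss plus the L1 and L2 penalty terms described above."""
    l1_term = l1 * sum(abs(w) for w in weights)
    l2_term = l2 * sum(w * w for w in weights)
    return data_loss + l1_term + l2_term


weights = [0.05, -0.8, 1.5]
print([soft_threshold(w, 0.1) for w in weights])  # the small weight becomes exactly 0.0
print([l2_shrink(w, 0.1) for w in weights])       # every weight shrinks, none is zero
```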

Visual Guide: The Effect of Model Complexity. [Diagram not reproduced: how model capacity leads to underfitting, a good fit, or overfitting.]

Guide 2: Mitigating Noisy and Heterogeneous Data

Symptom: Model performance is unstable, and predictions seem to be based on spurious correlations that are not chemically meaningful. This often arises from datasets with high noise, significant class imbalance, or data collected from disparate sources (heterogeneous data) [12] [1].

Troubleshooting Steps:

- Data Cleaning and Curation:

- Audit Data Sources: Check for temporal or spatial disparities in your data. A model trained on data from one source may not generalize to data from another. Whenever possible, use time-split or source-specific validation to get a realistic performance estimate [1].

- Address Class Imbalance: For classification tasks, techniques like oversampling the minority class, undersampling the majority class, or using algorithmic approaches like SMOTE can help rebalance the dataset.

- Employ Multi-Task Learning (MTL) with Caution: MTL can improve data efficiency by leveraging correlations among related molecular properties. However, it can suffer from negative transfer (NT) when tasks are not sufficiently related or are imbalanced [1].

- Use methods like Adaptive Checkpointing with Specialization (ACS) which combines a shared, task-agnostic backbone with task-specific heads. It checkpoints the best model parameters for each task individually, protecting them from detrimental updates from other tasks [1].

- Implement Uncertainty Estimation: For molecular property prediction, use models that can quantify the confidence of their predictions.

Visual Guide: Workflow for Handling Noisy/Heterogeneous Data. [Diagram not reproduced: a protocol for preprocessing data and selecting a modeling strategy that is robust to noise and heterogeneity.]

Frequently Asked Questions (FAQs)

FAQ 1: What are the most straightforward indicators that my molecular property prediction model is overfitting?

The primary indicator is a large gap between performance on the training data and performance on a held-out validation or test set. For example, your model may have 99% accuracy on the training set but only 70% on the test set [8] [11]. In the context of molecular property prediction, a more nuanced sign is the production of overconfident false predictions on out-of-distribution molecules, where the model assigns a high probability to an incorrect prediction [10].

FAQ 2: I have very few labeled molecules for my property of interest. What is the best strategy to avoid overfitting?

With small datasets, the risk of overfitting is high. Key strategies include:

- Use Strong Regularization: Aggressively apply L2 regularization, dropout, and early stopping.

- Simplify the Model: Start with a simple model architecture and gradually increase complexity only if needed.

- Leverage Multi-Task Learning (MTL): If data for other related properties is available, MTL can help. However, to avoid negative transfer, use advanced schemes like ACS that are designed to handle task imbalance [1].

- Incorporate Uncertainty Quantification: Employ models like AttFpPost (which integrates Posterior Network) that are better at estimating prediction uncertainty. This allows you to filter out unreliable predictions for OoD samples, preventing them from affecting your downstream analysis [10].

FAQ 3: How can multi-task learning sometimes make overfitting worse?

While MTL aims to improve generalization by sharing representations across tasks, it can lead to negative transfer (NT). NT occurs when updates from one task are detrimental to another, often due to low task relatedness, severe imbalance in the amount of data per task, or differences in the optimal learning dynamics for each task [1]. This can manifest as worse performance on some tasks compared to single-task learning, which is a form of overfitting to the noisy or imbalanced training signals.

FAQ 4: My model's training loss is still decreasing, but the validation loss has started to increase. What should I do?

This is a textbook sign of overfitting. You should implement early stopping. Halt the training process immediately and revert to the model parameters that were saved when the validation loss was at its minimum [8]. Continuing to train will cause the model to further memorize the training data at the expense of generalization.
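A minimal early-stopping helper might look like the sketch below. The `patience` and `min_delta` parameters are assumptions added for illustration. Setting `patience=1` reproduces the "halt as soon as validation loss rises" behaviour described above, while a larger value tolerates short noisy upticks.

```python
import copy


class EarlyStopping:
    """Tracks the best validation loss and signals when to stop training."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.best_state = None
        self.bad_epochs = 0

    def step(self, epoch, val_loss, state):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.best_state = copy.deepcopy(state)  # parameters to revert to
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Toy validation curve: improves until epoch 2, then degrades.
val_curve = [1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 1.1]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, val_loss in enumerate(val_curve):
    if stopper.step(epoch, val_loss, state={"epoch": epoch}):
        stopped_at = epoch
        break

print(stopped_at, stopper.best_epoch)
```

After stopping, the model is restored from `stopper.best_state`, which holds the parameters from the epoch with the lowest validation loss.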

FAQ 5: What is the difference between aleatoric and epistemic uncertainty, and why does it matter for drug discovery?

- Aleatoric uncertainty is related to the inherent noise in the data. For example, it could stem from measurement errors in molecular property assays. This type of uncertainty cannot be reduced by collecting more data.

- Epistemic uncertainty is related to the model's lack of knowledge, often due to insufficient training data in certain regions of the chemical space. This uncertainty can be reduced by collecting more relevant data [10].

In drug discovery, distinguishing between the two is crucial. High epistemic uncertainty for a given molecule suggests that it lies outside the training distribution (an OoD sample), so its prediction should be treated with caution. Techniques like evidential deep learning can capture both types of uncertainty, providing a more reliable confidence measure for predictions [10].
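One simple way to see the two quantities side by side is the ensemble-based decomposition sketched below. This is a different technique from the evidential approach cited above and is shown only because it is easy to compute. It assumes each ensemble member outputs a predicted mean and a predicted variance for the same molecule; the function name is hypothetical.

```python
from statistics import fmean, pvariance


def decompose_uncertainty(member_means, member_variances):
    """Split predictive variance into aleatoric and epistemic parts (law of total variance)."""
    aleatoric = fmean(member_variances)  # average noise level the members predict
    epistemic = pvariance(member_means)  # disagreement between the members
    return aleatoric, epistemic


# Members agree on a familiar molecule but disagree on an unfamiliar one.
in_dist = decompose_uncertainty([5.0, 5.1, 4.9], [0.2, 0.2, 0.2])
out_dist = decompose_uncertainty([2.0, 5.0, 8.0], [0.2, 0.2, 0.2])
print(in_dist)
print(out_dist)
```

In this toy example the aleatoric term is identical for both molecules, while the epistemic term is far larger for the second one. That is the signal used to flag predictions that should not be trusted.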

Experimental Protocols & Data

Protocol: Evaluating the AttFpPost Model for Reducing Overconfident Predictions

This protocol outlines the procedure for evaluating an evidential deep learning model designed to reduce overconfident errors on out-of-distribution samples in molecular property classification [10].

1. Objective: To validate that the AttFpPost model effectively reduces overconfident mispredictions compared to a traditional Softmax-based model, especially on OoD samples.

2. Materials/Reagents:

| Item | Function/Specification |

|---|---|

| Datasets | Synthetic dataset (for controlled evaluation), ADMET-specific datasets, and ligand-based virtual screening (LBVS) datasets [10]. |

| Software Framework | Deep learning framework (e.g., PyTorch or TensorFlow) with support for normalizing flows [10]. |

| Baseline Model | A vanilla model using the Softmax function for classification (e.g., AttFp without PostNet) [10]. |

| Evaluation Metric | Rate of Overconfident False (OF) predictions, early enrichment capability in LBVS, Brier Score for calibration [10]. |

3. Methodology:

- Model Architecture:

- Global Feature Extraction: Use the Attentive FP (AttFp) framework as the backbone to generate molecular representations.

- Uncertainty Estimation Module: Replace the standard Softmax output layer with the Posterior Network (PostNet), which uses a normalizing flow to model the probability distribution in the latent space. This enhances the model's ability to estimate epistemic uncertainty [10].

- Experimental Scenarios:

- Synthetic Experiment: Train both AttFpPost and the baseline model on a carefully designed synthetic dataset. Evaluate their predictions on OoD samples deliberately excluded from the training domain.

- ADMET Prediction: Train and validate models on real-world ADMET property datasets.

- Ligand-Based Virtual Screening (LBVS): Assess the model's early enrichment capability, which measures its ability to identify active compounds early in a ranked list [10].

- Evaluation:

- Quantify and compare the number of OF predictions (where the model is highly confident but incorrect) between AttFpPost and the baseline.

- Compare the Brier Score, where a lower score indicates better-calibrated probabilities [10].

4. Expected Outcome: The AttFpPost model is expected to demonstrate a statistically significant reduction in OF predictions and improved early enrichment in LBVS, confirming its superior uncertainty estimation and robustness against overfitting on OoD samples [10].
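The two evaluation quantities in this protocol can be computed as in the sketch below for a binary classifier. The Brier score follows its standard definition. The 0.9 confidence threshold used to count overconfident false predictions is an assumption for illustration and may differ from the definition used in [10].

```python
def brier_score(probs, labels):
    """Mean squared difference between predicted probability and the 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)


def overconfident_false_rate(probs, labels, confidence=0.9):
    """Fraction of predictions that are wrong yet made with confidence >= threshold."""
    count = 0
    for p, y in zip(probs, labels):
        predicted = 1 if p >= 0.5 else 0
        conf = p if predicted == 1 else 1.0 - p
        if predicted != y and conf >= confidence:
            count += 1
    return count / len(labels)


# Predicted probability of the positive class, and the true labels.
probs = [0.95, 0.10, 0.60, 0.97]
labels = [1, 0, 0, 0]
print(brier_score(probs, labels))
print(overconfident_false_rate(probs, labels))
```

Here the last prediction is both wrong and highly confident, so it counts as overconfident false. The third is wrong but not confident, so it does not.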

Protocol: Applying ACS for Multi-Task Learning with Imbalanced Data

This protocol describes using the Adaptive Checkpointing with Specialization (ACS) method to train a multi-task graph neural network on datasets with severe task imbalance, thereby mitigating negative transfer [1].

1. Objective: To achieve accurate molecular property prediction across multiple tasks, even for tasks with very few labeled samples (e.g., ~29 samples), by preventing negative transfer.

2. Materials/Reagents:

| Item | Function/Specification |

|---|---|

| Datasets | Multi-task benchmarks (e.g., ClinTox, SIDER, Tox21) or custom datasets with imbalanced tasks. Use Murcko-scaffold splitting for evaluation [1]. |

| Model Architecture | A Graph Neural Network (GNN) backbone based on message passing, with task-specific Multi-Layer Perceptron (MLP) heads [1]. |

| Training Scheme | Adaptive Checkpointing with Specialization (ACS) code implementation. |

3. Methodology:

- Model Setup:

- A single, shared GNN backbone learns general-purpose molecular representations.

- Each prediction task has a dedicated MLP head that takes the backbone's latent representations as input.

- Training with ACS:

- Train the entire model (shared backbone + all task heads) on the multi-task dataset.

- Independently monitor the validation loss for each task throughout the training process.

- For each task, checkpoint (save) the specific backbone-head pair at the training epoch where that task's validation loss is minimized.

- Evaluation:

- After training, the final model for each task is its specialized checkpointed backbone-head pair.

- Compare the performance of ACS against baselines like Single-Task Learning (STL) and standard MTL without checkpointing. The key is to show improved performance on low-data tasks without sacrificing performance on high-data tasks [1].

4. Expected Outcome: ACS will match or surpass the performance of state-of-the-art supervised methods, demonstrating robust performance on tasks with ultra-low data (e.g., 29 samples) by effectively mitigating the negative transfer that plagues conventional MTL [1].

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details essential computational "reagents" and materials for conducting research on overfitting in molecular property prediction.

| Item | Brief Explanation & Function |

|---|---|

| Evidential Deep Learning (EDL) | A class of deep learning methods that model uncertainty by placing a higher-order distribution over the predictions of a neural network, avoiding the computational cost of Bayesian methods [10]. |

| Posterior Network (PostNet) | A specific EDL architecture that uses normalizing flows to model the latent distribution of data, providing high-quality uncertainty estimation for classification tasks [10]. |

| Normalizing Flow | A technique used in PostNet to transform a simple probability distribution into a complex one by applying a series of invertible transformations. It enhances the model's density estimation capabilities [10]. |

| Adaptive Checkpointing with Specialization (ACS) | A training scheme for multi-task GNNs that combats negative transfer by checkpointing the best model parameters for each task individually during training [1]. |

| Graph Neural Network (GNN) | A type of neural network that directly operates on the graph structure of a molecule, making it the standard architecture for molecular representation learning [1]. |

| Brier Score | A proper scoring rule that measures the accuracy of probabilistic predictions. It is the mean squared difference between the predicted probability and the actual outcome (0 or 1). Lower scores are better [10]. |

| Murcko-scaffold Split | A method of splitting a molecular dataset into training and test sets based on the molecular scaffold (core structure). This provides a more challenging and realistic estimate of a model's ability to generalize to novel chemotypes compared to random splitting [1]. |
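The scaffold-split entry above can be sketched as a grouped split. The example assumes the scaffold string of each molecule has already been computed elsewhere (for instance with a cheminformatics toolkit such as RDKit). The function name and the largest-groups-first heuristic are choices made for this sketch, not a prescribed procedure from [1].

```python
from collections import defaultdict


def scaffold_split(scaffolds, train_fraction=0.8):
    """Assign whole scaffold groups to train or test so no scaffold is shared."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest scaffold groups first, so rare scaffolds tend to land in the test set.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_idx, test_idx = [], []
    train_cap = train_fraction * len(scaffolds)
    for group in ordered:
        if len(train_idx) + len(group) <= train_cap:
            train_idx.extend(group)
        else:
            test_idx.extend(group)
    return train_idx, test_idx


# Precomputed scaffold SMILES for ten molecules (illustrative values).
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2
train_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, test_idx)
```

Because every scaffold appears on only one side of the split, the test set measures performance on chemotypes the model has never seen.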

Frequently Asked Questions

Q1: Why does my model, which achieves over 95% accuracy on my test set, perform poorly when given new, real-world data?

This is a classic sign of overfitting combined with distribution shift. Standard random train-test splits often create in-distribution test sets that share similar statistical properties with the training data. Your model has likely memorized these patterns instead of learning generalizable principles. Real-world data often comes from a different distribution (out-of-distribution, or OOD), causing the model's performance to drop significantly [13] [14].

Q2: How can I quickly test if my model is capable of learning a meaningful task and not just memorizing?

A common debugging practice is to attempt to overfit a very small dataset (e.g., 5-10 samples). A reasonably sized model should be able to memorize this small batch and achieve near-zero loss. If it cannot, this often indicates a bug in the model architecture or training loop rather than a lack of model capacity [13].

Q3: What are the most effective strategies to prevent overfitting when working with a small molecular dataset?

Key strategies include:

- Regularization: Applying techniques like L1 or L2 regularization, which penalize complex models by adding a term to the loss function, forcing the network to learn simpler and more robust features [14].

- Dropout: Randomly ignoring a subset of neurons during training, which prevents the network from becoming too dependent on any single neuron and encourages a more distributed representation [14].

- Data Augmentation: "Creating" new training samples by applying realistic transformations to your existing data. For molecular data, this could include valid SMILES string variations or small, structure-preserving perturbations [14].

- Early Stopping: Halting the training process when performance on a held-out validation set stops improving, which prevents the model from learning noise in the training data [14].

Q4: What is "transduction" in the context of OOD property prediction?

Transduction is an approach where the prediction for a new test sample is made based on its relationship to known training samples. Instead of learning a function that maps a material's structure directly to a property, a transductive model learns how property values change as a function of the difference between materials in representation space. This can enable better extrapolation to OOD property values [15].

Troubleshooting Guides

Problem: Poor OOD Generalization in Molecular Property Prediction

This guide addresses the issue where a predictive model fails to maintain accuracy on data outside its training distribution, a critical challenge in materials and drug discovery where the goal is often to find molecules with better properties than those already known.

Diagnosis Steps:

- Perform a Distribution Analysis: Compare the property value distributions of your training set and your real-world/test set. If the test set contains values outside the range or in underrepresented regions of the training distribution, you are dealing with an OOD problem [15].

- Check for "Shortcuts": Analyze your model's attention or feature importance to see if it is relying on spurious correlations in the training data that may not hold in the wider chemical space.

- Benchmark with OOD Splits: Instead of a random split, deliberately split your data so that certain property value ranges or structural scaffolds are absent from the training set and present only in the test set. Evaluate your model's performance on this challenging split [15].
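The distribution check and the OOD split from the diagnosis steps can be sketched as below. The function names and the choice to hold out the highest 20% of target values are assumptions for illustration. A scaffold-based split is another option.

```python
def property_range_split(y, holdout_fraction=0.2):
    """Put the samples with the highest target values in the test set."""
    order = sorted(range(len(y)), key=lambda i: y[i])
    n_test = max(1, round(holdout_fraction * len(y)))
    return order[:-n_test], order[-n_test:]


def out_of_range_fraction(y_train, y_test):
    """Fraction of test targets that fall outside the training target range."""
    lo, hi = min(y_train), max(y_train)
    return sum(1 for v in y_test if v < lo or v > hi) / len(y_test)


y = [3.1, 7.4, 5.0, 9.8, 1.2, 6.6, 8.9, 4.3, 2.7, 5.9]
train_idx, test_idx = property_range_split(y)
fraction = out_of_range_fraction([y[i] for i in train_idx], [y[i] for i in test_idx])
print(test_idx, fraction)
```

An out-of-range fraction near 1.0 confirms that the benchmark actually tests extrapolation rather than interpolation.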

Solutions:

- Solution 1: Implement a Transductive Learning Method

Adopt advanced methods like the Bilinear Transduction model, which reframes the prediction problem. It learns to predict a property based on a known training example and the difference in representation space between that example and the new sample [15].

- Experimental Protocol:

- Representation: Encode your molecular structures into a continuous representation (e.g., using a graph neural network).

- Training: For each pair of training samples (i, j), the model learns to predict the property difference (ΔPij) based on their representation difference (ΔRij).

- Inference: To predict the property of a new test molecule, select a similar training molecule, compute their representation difference, and use the model to predict the property difference, which is then added to the known training property.

- Expected Outcome: This method has been shown to significantly improve OOD prediction. For example, in materials and molecular datasets, it improved the True Positive Rate (TPR) of OOD classification by 3x and 2.5x, respectively, compared to non-transductive baselines [15].


- Solution 2: Enhance Model Regularization and Data Strategy

Strengthen your model's generalizability by making it harder to overfit the training data.

- Experimental Protocol:

- Architecture: Integrate dropout layers and L2 weight regularization into your neural network. For a CNN, this can be added to both convolutional and dense layers [14].

- Data: Employ a data augmentation strategy specific to your molecular representation (e.g., SMILES, graph). Use early stopping by monitoring loss on a validation set to terminate training once performance plateaus [14].

- Expected Outcome: These measures will reduce the gap between training and validation/test accuracy, leading to a more robust model that performs better on unseen data, though it may still struggle with extreme OOD extrapolation [14].
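The difference-based inference described in Solution 1 can be illustrated with a deliberately simplified sketch. It is not the Bilinear Transduction architecture from [15]: it assumes a one-dimensional representation and a linear map from representation differences to property differences, and all function names are hypothetical.

```python
def fit_difference_model(x, y):
    """Least-squares slope for predicting y_i - y_j from x_i - x_j over all pairs."""
    num = den = 0.0
    for i in range(len(x)):
        for j in range(len(x)):
            if i != j:
                dx, dy = x[i] - x[j], y[i] - y[j]
                num += dx * dy
                den += dx * dx
    return num / den


def predict_transductive(x_new, x, y, slope):
    """Anchor on the nearest training sample, then add the predicted property difference."""
    anchor = min(range(len(x)), key=lambda i: abs(x[i] - x_new))
    return y[anchor] + slope * (x_new - x[anchor])


# Training data cover x in [1, 4]; the query at x = 10 lies far outside that range.
x_train = [1.0, 2.0, 3.0, 4.0]
y_train = [3.0, 5.0, 7.0, 9.0]
slope = fit_difference_model(x_train, y_train)
print(predict_transductive(10.0, x_train, y_train, slope))
```

The prediction is built from a known training value plus a learned difference, so it is not limited to the range of property values seen during training.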


Problem: Model Fails to Overfit a Small Training Subset

If your model cannot achieve low loss on a very small dataset, it suggests a fundamental issue with the training setup rather than the model's capacity for the task.

Diagnosis Steps:

- Verify Data Loading: Ensure the data and labels are being loaded and passed to the model correctly.

- Check Loss Function: Confirm the loss function is appropriate for the task (e.g., cross-entropy for classification, MSE for regression).

- Inspect Optimization: Verify that the model's weights are being updated by checking the gradient flow. A common issue is an incorrectly set or overly high learning rate.

Solutions:

- Solution: Sanity Check and Debug the Training Loop

This is a diagnostic procedure to isolate the problem [13].

- Experimental Protocol:

- Create a Mini Dataset: Randomly select 5-10 samples from your training set.

- Simplify the Model: Temporarily reduce the model's size or remove strong regularization (like high dropout) to ensure sufficient capacity.

- Train to Zero Loss: Run the training for a large number of epochs (e.g., 1000). A correctly implemented model should be able to drive the loss on this tiny set very close to zero.

- Expected Outcome: If the loss does not decrease, it strongly indicates a bug in the code (e.g., in the data pipeline, loss calculation, or backpropagation). If it does overfit, the issue likely lies with the full dataset or model hyperparameters [13].
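The sanity check above can be sketched as follows. This is a minimal illustration in which a linear model trained by full-batch gradient descent stands in for the real network. The function name, learning rate, and epoch count are assumptions chosen for the example. With more features than samples, a correct training loop should drive the loss to nearly zero.

```python
import numpy as np

def overfit_sanity_check(X, y, lr=0.02, epochs=1000):
    """Train a linear model on a tiny subset and return the MSE loss per epoch."""
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    history = []
    for _ in range(epochs):
        err = X @ w + b - y                     # residuals on the mini dataset
        history.append(float(np.mean(err ** 2)))
        w -= lr * (2.0 / n) * (X.T @ err)       # gradient of the MSE w.r.t. w
        b -= lr * (2.0 / n) * err.sum()         # gradient of the MSE w.r.t. b
    return history
```

If the final entry of the returned history is not far below the first, inspect the data pipeline, the loss, and the update step before tuning anything else.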


Quantitative Data on OOD Prediction Performance

The following table summarizes the performance of different models on various material property prediction tasks, highlighting the effectiveness of a transductive OOD method compared to established baselines. Lower values are better for Mean Absolute Error (MAE).

Table 1: Performance Comparison on Materials Property Datasets (MAE ± Std Dev) [15]

| Dataset | Property | #Samples | Ridge Reg. | MODNet | CrabNet | Transductive [15] |

|---|---|---|---|---|---|---|

| AFLOW | Band Gap [eV] | 14,123 | 2.59 ± 0.03 | 2.65 ± 0.04 | 1.47 ± 0.03 | 1.51 ± 0.04 |

| AFLOW | Bulk Modulus [GPa] | 2,740 | 74.0 ± 3.8 | 93.06 ± 3.7 | 59.25 ± 3.2 | 47.4 ± 3.4 |

| AFLOW | Shear Modulus [GPa] | 2,740 | 0.69 ± 0.03 | 0.78 ± 0.04 | 0.55 ± 0.02 | 0.42 ± 0.02 |

| Matbench | Band Gap [eV] | 2,154 | 6.37 ± 0.28 | 3.26 ± 0.13 | 2.70 ± 0.13 | 2.54 ± 0.16 |

| Matbench | Yield Strength [MPa] | 312 | 972 ± 34 | 731 ± 82 | 740 ± 49 | 591 ± 62 |

| MP | Bulk Modulus [GPa] | 6,307 | 151 ± 14 | 60.1 ± 3.9 | 57.8 ± 4.2 | 45.8 ± 3.9 |

Experimental Workflow Visualization

[Figure: core workflow for troubleshooting and improving OOD generalization in molecular property prediction]

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Components for OOD Molecular Property Prediction Research

| Item | Function & Explanation |

|---|---|

| OOD Benchmark Datasets (e.g., from AFLOW, Matbench) | Curated datasets with predefined splits for testing extrapolation to property values or structural classes not seen during training. Critical for rigorous evaluation [15]. |

| Graph Neural Network (GNN) | A type of neural network that operates directly on graph structures, ideal for representing molecules where atoms are nodes and bonds are edges. |

| Transductive Model Framework | A software framework that implements transductive prediction, enabling models to reason about differences between samples for improved extrapolation [15]. |

| Regularization Techniques (L1/L2, Dropout) | Methods used during training to prevent overfitting by discouraging over-reliance on any single feature or neuron, promoting simpler models [14]. |

| Data Augmentation Library | A set of functions for generating valid variations of molecular data (e.g., SMILES augmentation, graph perturbations) to artificially expand training data [14]. |

| Automated Hyperparameter Optimization Tool | Software (e.g., Optuna) to systematically search for the best model parameters, which is crucial for balancing model complexity and generalization. |

Frequently Asked Questions (FAQs)

FAQ 1: What makes CYP2B6 and CYP2C8 particularly challenging targets for predictive modeling? The primary challenge is the severe scarcity of reliable experimental inhibition data. While other major CYP isoforms have thousands of data points, CYP2B6 and CYP2C8 datasets are orders of magnitude smaller. Furthermore, these small datasets often suffer from significant label imbalance, where the number of confirmed inhibitors is much lower than non-inhibitors, increasing the risk of model overfitting [16].

FAQ 2: My model for CYP2B6 inhibition achieves 95% training accuracy but performs poorly on new compounds. What is the most likely cause? This is a classic sign of overfitting: the model has memorized the noise and idiosyncrasies of the small training set instead of learning generalizable rules. It is a common pitfall when complex deep learning models are trained on limited data, such as the CYP2B6 dataset, which contains only 462 compounds [16] [17].

FAQ 3: What are the most effective strategies to build a robust model when I have fewer than 500 compounds, as in the CYP2B6 case? The most successful strategy is to leverage data from related tasks. Multi-task learning (MTL) is particularly effective, as it allows a model to learn simultaneously from a large dataset (e.g., CYP3A4 with over 9,000 compounds) and a small target dataset (e.g., CYP2B6). This technique, especially when combined with data imputation for missing values, has been shown to significantly improve prediction accuracy for small datasets [16] [18].

FAQ 4: How can I quantify whether my model's predictions for a new molecule are reliable? You should evaluate the molecule against your model's Applicability Domain (AD). The AD defines the chemical and response space where the model makes reliable predictions. If the new molecule's structural features are very different from those in the training set (i.e., it falls outside the AD), the prediction should be treated with low confidence. This is crucial for avoiding false leads in virtual screening [17].
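One simple way to approximate an Applicability Domain check is a nearest-neighbor similarity test. The sketch below assumes each molecule is already encoded as a set of "on" fingerprint bit indices (for example, from a circular fingerprint computed elsewhere). The function names and the 0.3 similarity threshold are hypothetical and should be tuned for your dataset. Other AD definitions exist and may suit your model better.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def max_train_similarity(query_fp, train_fps):
    """Similarity of the query to its nearest neighbor in the training set."""
    return max(tanimoto(query_fp, fp) for fp in train_fps)

def in_applicability_domain(query_fp, train_fps, threshold=0.3):
    """Flag a prediction as in-domain when a sufficiently similar training molecule exists."""
    return max_train_similarity(query_fp, train_fps) >= threshold
```

Predictions for molecules flagged as out-of-domain can then be reported with low confidence or routed to experimental follow-up.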

Troubleshooting Guides

Problem: Overfitting on Small CYP Datasets

Symptoms:

- High accuracy on training data but low accuracy on validation/test sets.

- Drastic performance drop when predicting compounds with novel scaffolds.

Solutions:

- Adopt Multi-Task Learning (MTL): Instead of building a single model for one CYP isoform, train a single model to predict inhibition for multiple CYP isoforms simultaneously. This forces the model to learn generalized features that are predictive across related tasks.

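- Apply Strong Regularization: Use techniques such as dropout and L1/L2 weight penalties to discourage the model from memorizing the small training set.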

- Use Early Stopping: Monitor the validation loss during training. Stop the training process as soon as the validation loss stops improving, preventing the model from over-optimizing on the training data [19].
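Early stopping is framework-independent and can be expressed as a small helper. The sketch below is illustrative: the class name, the `patience` and `min_delta` parameters, and their defaults are assumptions rather than settings taken from [19].

```python
class EarlyStopper:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, call `step` once per epoch after validation and restore the weights saved at `best_epoch` when it returns True.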

Problem: Severe Class Imbalance in a Small Dataset

Symptoms:

- Model bias towards the majority class (e.g., predicting "non-inhibitor" for most compounds).

- Poor recall for the minority class (e.g., inability to identify true inhibitors).

Solutions:

- Data Resampling:

- Oversampling: Randomly duplicate samples from the minority class (inhibitors) in the training set.

- Undersampling: Randomly remove samples from the majority class (non-inhibitors) to balance the distribution.

- Loss Function Modification:

- Use a weighted loss function (e.g., weighted binary cross-entropy) that assigns a higher penalty for misclassifying samples from the underrepresented inhibitor class. This ensures the model pays more attention to learning the inhibitor patterns [16].
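A weighted binary cross-entropy can be written directly from its definition. In this sketch the positive (inhibitor) class is up-weighted by the ratio of negatives to positives, which is one common heuristic and an assumption here, not a setting reported in [16]. The function names are illustrative.

```python
import numpy as np

def balanced_pos_weight(y_true):
    """Weight for the positive class: number of negatives divided by number of positives."""
    y = np.asarray(y_true)
    n_pos = int((y == 1).sum())
    n_neg = int((y == 0).sum())
    if n_pos == 0:
        raise ValueError("no positive samples; cannot compute a positive-class weight")
    return n_neg / n_pos

def weighted_bce(y_true, p_pred, pos_weight, eps=1e-7):
    """Mean binary cross-entropy with the positive-class term scaled by pos_weight."""
    y = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1 - eps)  # avoid log(0)
    per_sample = -(pos_weight * y * np.log(p) + (1 - y) * np.log(1 - p))
    return float(per_sample.mean())
```

With `pos_weight=1.0` this reduces to the standard binary cross-entropy, which makes it easy to compare weighted and unweighted training runs.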

Problem: Incorporating Large but Related Datasets with Missing Labels

Symptoms:

- A combined dataset for multiple CYPs has abundant data for some isoforms but >90% missing labels for others like CYP2B6 and CYP2C8 [16].

- Standard MTL fails due to the extreme task imbalance.

Solutions:

- Implement Data Imputation: Use techniques to fill in missing inhibition labels. This creates a more complete dataset for MTL, allowing the model to better leverage the shared molecular representations across all tasks. One study showed that MTL with data imputation provided a significant performance boost for CYP2B6 and CYP2C8 prediction [16].

- Apply Advanced MTL Schemes: Use methods like Adaptive Checkpointing with Specialization (ACS). This technique trains a shared backbone network but saves task-specific model checkpoints when each task's validation loss is at a minimum. This mitigates "negative transfer," where updates from data-rich tasks harm the performance of data-poor tasks [1].

Table 1: Summary of Dataset Sizes and Challenges for CYP Isoforms

| CYP Isoform | Number of Compounds | Key Challenge | Recommended Technique |

|---|---|---|---|

| CYP2B6 | 462 [16] | Ultra-small, imbalanced dataset | Multi-task learning with data imputation [16] |

| CYP2C8 | 713 [16] | Ultra-small, imbalanced dataset | Multi-task learning with data imputation [16] |

| CYP3A4 | 9,263 [16] | Large, can be used as source data | Use as complementary task in MTL [16] |

| CYP2C9 | 5,287 [16] | Large, can be used as source data | Use as complementary task in MTL [16] |

Experimental Protocols

Protocol: Multi-Task Learning with Graph Neural Networks for CYP Inhibition Prediction

Objective: To accurately predict the inhibition of data-scarce CYP isoforms (e.g., CYP2B6, CYP2C8) by jointly training a model with data-rich related CYP isoforms.

Methodology:

- Data Curation:

- Collect IC50 data from public databases like ChEMBL and PubChem for seven CYP isoforms (1A2, 2B6, 2C8, 2C9, 2C19, 2D6, 3A4).

- Apply a consistent threshold (e.g., pIC50 ≥ 5, equivalent to IC50 ≤ 10 µM) to label compounds as "inhibitor" or "non-inhibitor" [16].

- Handle missing labels using techniques like loss masking or data imputation [16].

- Model Architecture:

- Shared Backbone: A Graph Convolutional Network (GCN) that takes the molecular graph of a compound as input and generates a shared feature representation.

- Task-Specific Heads: Separate Multi-Layer Perceptrons (MLPs) for each CYP isoform that take the shared features and make the final binary classification [16] [1].

- Training Scheme (ACS):

- Train the entire model (shared backbone + all heads) simultaneously.

- The total loss is the sum of the binary cross-entropy losses for each task.

- Monitor the validation loss for each task individually.

- For each task, save a checkpoint of the shared backbone and its specific head whenever a new minimum validation loss is achieved for that task [1].

- Inference:

- To predict inhibition for a specific CYP, use the specialized checkpoint (backbone + head) saved for that task during training.
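Two steps of the data curation above lend themselves to a short sketch: converting IC50 values to binary labels, and masking missing labels in the multi-task loss. The code assumes IC50 values in µM, uses `None` for unmeasured compound-isoform pairs, and stores labels in a compounds x isoforms matrix with NaN for missing entries. All function names are illustrative.

```python
import math
import numpy as np

def pic50_from_ic50_um(ic50_um):
    """pIC50 = -log10(IC50 in mol/L); with IC50 in µM this is 6 - log10(IC50)."""
    return 6.0 - math.log10(ic50_um)

def label_inhibitor(ic50_um, threshold=5.0):
    """Return 1.0 (inhibitor), 0.0 (non-inhibitor), or NaN when there is no measurement."""
    if ic50_um is None:
        return float("nan")
    return 1.0 if pic50_from_ic50_um(ic50_um) >= threshold else 0.0

def masked_bce(labels, probs, eps=1e-7):
    """Mean binary cross-entropy over observed (non-NaN) label entries only."""
    labels = np.asarray(labels, dtype=float)
    probs = np.clip(np.asarray(probs, dtype=float), eps, 1 - eps)
    mask = ~np.isnan(labels)
    y = np.where(mask, labels, 0.0)             # placeholder value, zeroed by the mask
    per_entry = -(y * np.log(probs) + (1 - y) * np.log(1 - probs))
    return float((per_entry * mask).sum() / max(mask.sum(), 1))
```

Loss masking leaves missing entries out of the gradient entirely, whereas imputation would replace the NaN values with estimated labels before training.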

[Figure: MTL-ACS workflow for CYP inhibition prediction, illustrating the ACS training process]

Protocol: Data Augmentation for Molecular Datasets

Objective: Artificially expand the effective size of a small molecular dataset to improve model generalization.

Methodology:

- Identify Valid Transformations: For molecular data, augmentation must create new, plausible structures. Common techniques include:

- Atom/Bond Masking: Randomly mask a portion of atoms or bonds in a molecular graph, forcing the model to learn from incomplete information (a form of self-supervised learning) [18].

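- Stereoisomer Generation: Generate different stereoisomers of a chiral compound. Because stereoisomers can differ in biological activity, use this only when the label is expected to hold across isomers.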

- Tautomer Generation: Generate different tautomeric forms of the same molecule.

- Apply Augmentation: For each molecule in the original small dataset, generate a defined number of augmented variants. This creates a larger and more diverse training set.

- Model Training: Train the model on the combined original and augmented dataset. The augmented data acts as a regularizer, preventing the model from overfitting to the exact structures in the original small set [18].
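Of the transformations listed above, atom masking is the simplest to sketch without a cheminformatics toolkit. The example assumes each molecule is represented by a per-atom feature matrix (atoms x features) and that a masked atom is represented by an all-zero feature row. The function names, the 15% default mask rate, and the zero mask token are illustrative assumptions.

```python
import numpy as np

def mask_atoms(node_features, mask_rate=0.15, rng=None):
    """Return a copy of the atom feature matrix with a random subset of rows zeroed."""
    rng = np.random.default_rng() if rng is None else rng
    x = np.array(node_features, dtype=float, copy=True)
    n_atoms = x.shape[0]
    n_mask = max(1, int(round(mask_rate * n_atoms)))   # always mask at least one atom
    masked_idx = rng.choice(n_atoms, size=n_mask, replace=False)
    x[masked_idx] = 0.0
    return x, np.sort(masked_idx)

def augment(node_features, n_variants=4, mask_rate=0.15, seed=0):
    """Generate several independently masked variants of one molecule."""
    rng = np.random.default_rng(seed)
    return [mask_atoms(node_features, mask_rate, rng)[0] for _ in range(n_variants)]
```

Each variant keeps the original label when used for supervised training, so the mask rate should stay low enough that the property-determining substructure is usually preserved.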

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for CYP Inhibition Research

| Reagent / Resource | Function / Description | Example Use in Research |

|---|---|---|

| ChEMBL Database | A large-scale bioactivity database containing curated IC50 values for drug targets, including CYP isoforms [16]. | Primary source for compiling training and test datasets for CYP inhibition prediction models [16]. |

| PubChem Bioassay | A public repository of biological activity data from high-throughput screening efforts [17]. | Supplementary source for CYP inhibition data and compound structures [16]. |

| Graph Convolutional Network (GCN) | A deep learning model that operates directly on molecular graph structures, learning features from atoms and bonds [16]. | The core architecture for building multi-task prediction models that learn meaningful molecular representations [16] [1]. |

| UMAP (Uniform Manifold Approximation and Projection) | A dimensionality reduction technique for visualizing high-dimensional data in 2D or 3D [16]. | Used to visualize the chemical space of a dataset and identify clusters or outliers, helping to define the model's Applicability Domain [16]. |

In molecular property prediction, the reliability of a machine learning model is fundamentally constrained by the quality of its training data. Data misalignment—a divergence between the data's representation and the real-world context—poses a significant threat, particularly through inconsistent expert annotations. These inconsistencies introduce a form of "noise" that models can learn, compromising their ability to generalize to new, unseen molecules. For researchers working with small datasets, this peril is magnified, as the model has fewer examples from which to discern true signal from annotator-induced noise, directly impacting the pace and accuracy of AI-driven materials discovery and drug development [20] [1].

This guide provides troubleshooting resources to help researchers identify, diagnose, and mitigate the risks associated with inconsistent annotations in their experiments.

FAQs: Annotation Inconsistencies and Model Reliability

1. What are the primary sources of annotation inconsistency in molecular science? Even highly experienced experts can produce inconsistent labels due to several factors [20]:

- Inherent Subjectivity and Bias: Judgment calls in interpreting complex or ambiguous data.

- Human Error and "Slips": Mistakes due to cognitive overload or lapses in concentration.

- Insufficient Information: Poor quality data or unclear annotation guidelines.

- Interrater Variability: Different experts applying slightly different criteria for the same task.

2. How does inconsistent annotation differ from general "noisy data"? While both are data quality issues, inconsistent annotations are a specific form of noise originating from the human labelers themselves. This is particularly problematic because it represents a "shifting ground truth," where the ideal output a model should learn is not fixed, making it difficult for the model to establish a reliable mapping from input to output [20].

3. Why are small datasets in molecular property prediction especially vulnerable? With limited data, the influence of each individual annotation is magnified. A handful of inconsistent labels can significantly skew the learned pattern, leading the model to overfit to the annotation errors rather than the underlying chemistry or biology. This can render multi-task learning (MTL) strategies less effective due to negative transfer between tasks [1].

4. What are the observable symptoms of a model compromised by annotation inconsistencies?

- Poor Generalization: High performance on training data but significantly lower performance on validation or test sets, a classic sign of overfitting to the noisy training labels [21] [11].

- Low Inter-Model Agreement: Models trained on the same inputs but with labels from different experts produce divergent predictions on the same input [20].

- Unexplained Model Complexity: The model may learn overly complex rules to account for the contradictions in the training labels [20].

5. Beyond collecting more data, what are the key strategies to mitigate this issue?

- Robust Annotation Protocols: Establish clear, detailed guidelines and train all annotators thoroughly.

- Consensus Mechanisms: Use methods beyond simple majority voting, such as assessing annotation "learnability" to build optimal models [20].

- Advanced Training Schemes: Employ techniques like Adaptive Checkpointing with Specialization (ACS) for multi-task models, which helps mitigate negative transfer from imbalanced or noisy tasks [1].

- Regularization: Apply techniques like L1/L2 regularization or dropout to prevent the model from becoming overly complex and fitting the annotation noise [22] [11].

Troubleshooting Guides

Guide 1: Diagnosing Annotation Inconsistency in Your Dataset

Follow this workflow to assess the quality and consistency of your annotated dataset.

Objective: To quantify the level of disagreement among annotators and identify its sources.

Materials:

- Your raw, unlabeled molecular dataset.

- At least 2-3 domain experts (e.g., senior scientists, experienced researchers).

- IAA calculation software (e.g., using sklearn or nltk in Python).

Protocol:

- Independent Annotation: Provide each expert with the same set of molecules and a detailed annotation guideline. Ensure they work independently to assign property labels.

- Calculate Inter-Annotator Agreement (IAA): Use statistical measures to quantify consistency.

- For two annotators: Use Cohen's Kappa (κ).

- For more than two annotators: Use Fleiss' Kappa (κ).

- Interpret IAA Scores: Refer to the standard interpretation scale for these metrics [20]:

- 0.0 – 0.20: None to slight agreement.

- 0.21 – 0.39: Minimal agreement.

- 0.40 – 0.59: Weak agreement.

- 0.60 – 0.79: Moderate agreement.

- 0.80 – 1.00: Strong to almost perfect agreement.

- Root Cause Analysis: If scores indicate "minimal" or "weak" agreement, conduct interviews with annotators to understand discrepancies. Are the guidelines ambiguous? Is the molecular property inherently subjective?
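The agreement statistics in this protocol can be computed from their textbook definitions. The sketch below implements Cohen's and Fleiss' kappa in NumPy for transparency, plus a helper that maps a score onto the interpretation scale listed above. The function names are illustrative, and library implementations can be substituted. The Fleiss version assumes every item was rated by the same number of annotators.

```python
import numpy as np

def cohen_kappa(a, b):
    """Cohen's kappa for two annotators' label sequences over the same items."""
    a, b = np.asarray(a), np.asarray(b)
    cats = np.unique(np.concatenate([a, b]))
    p_o = np.mean(a == b)                                        # observed agreement
    p_e = sum(np.mean(a == c) * np.mean(b == c) for c in cats)   # chance agreement
    return float((p_o - p_e) / (1 - p_e))

def fleiss_kappa(counts):
    """Fleiss' kappa; counts[i, k] = number of annotators assigning item i to category k."""
    counts = np.asarray(counts, dtype=float)
    n_items = counts.shape[0]
    n_raters = counts[0].sum()
    p_cat = counts.sum(axis=0) / (n_items * n_raters)
    p_item = (np.sum(counts ** 2, axis=1) - n_raters) / (n_raters * (n_raters - 1))
    p_e = np.sum(p_cat ** 2)
    return float((p_item.mean() - p_e) / (1 - p_e))

def interpret_kappa(kappa):
    """Map a kappa score onto the interpretation scale used in this guide."""
    if kappa < 0.21:
        return "none to slight agreement"
    if kappa < 0.40:
        return "minimal agreement"
    if kappa < 0.60:
        return "weak agreement"
    if kappa < 0.80:
        return "moderate agreement"
    return "strong to almost perfect agreement"
```

Both statistics are undefined when chance agreement equals 1 (all annotators always use a single category), so check the label distribution before interpreting the score.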

Guide 2: Mitigating Inconsistency with Adaptive Checkpointing (ACS)

For projects using Multi-Task Learning (MTL), implement this training scheme to protect tasks from negative transfer caused by imbalanced or noisily annotated tasks.

Objective: To balance inductive transfer with task-specific specialization, preserving the best model for each task individually.

Materials:

- A multi-task graph neural network (GNN) architecture with a shared backbone and task-specific heads [1].

- An imbalanced molecular property dataset (e.g., where some properties have very few labeled samples).

Protocol:

- Model Architecture: Set up a GNN as the shared backbone to learn general molecular representations. Attach separate Multi-Layer Perceptron (MLP) "heads" for each specific property prediction task.

- Training with Validation Monitoring: Train the entire model on all tasks simultaneously. Crucially, monitor the validation loss for each task individually throughout the training process.

- Adaptive Checkpointing: For each task, implement a checkpointing rule: whenever the validation loss for that task reaches a new minimum, save the state of the shared backbone and its corresponding task-specific head.

- Obtain Specialized Models: After training is complete, you will have a set of saved checkpoints. The final model for any given task is the combination of the shared backbone and its specific head from its best-validation checkpoint [1].
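The checkpointing rule in this protocol reduces to a small amount of bookkeeping. The sketch below is framework-agnostic and assumes model parameters are available as plain Python objects (for example, state dictionaries). The class and method names are hypothetical, and the code illustrates the rule as described here rather than the reference implementation from [1].

```python
import copy

class ACSCheckpointer:
    """Keep, for each task, the backbone and head from its best-validation epoch."""

    def __init__(self, task_names):
        self.best_loss = {t: float("inf") for t in task_names}
        self.best_epoch = {t: None for t in task_names}
        self.best_state = {t: None for t in task_names}

    def update(self, epoch, val_losses, backbone_state, head_states):
        """Checkpoint every task whose validation loss reached a new minimum."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Deep copies keep the snapshot safe from later training updates.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved
```

Call `update` once per epoch after per-task validation. At inference, load `best_state[task]` to obtain the specialized backbone-head pair for that task.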

Quantitative Impact of Annotation Inconsistency

The table below summarizes key findings from a real-world study on the impact of expert annotation inconsistencies in a clinical setting, which is highly analogous to molecular property prediction with expert labels [20].

Table 1: Impact of Expert Annotation Inconsistencies on Model Performance

| Metric | Internal Validation (on QEUH ICU data) | External Validation (on HiRID dataset) |

|---|---|---|

| Inter-Annotator Agreement | Fleiss' κ = 0.383 (Fair agreement) | Not Applicable |

| Inter-Model Agreement | Not Reported | Average pairwise Cohen’s κ = 0.255 (Minimal agreement) |

| Agreement on Discharge Decisions | Not Applicable | Fleiss' κ = 0.174 (Slight agreement) |

| Agreement on Mortality Predictions | Not Applicable | Fleiss' κ = 0.267 (Minimal agreement) |

| Key Finding | Inconsistencies are present even in a controlled setting. | Models built from different experts' annotations show low consensus when applied to new data. |

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Resources for Mitigating Annotation and Overfitting Issues

| Item / Solution | Function / Description | Relevance to Small Datasets |

|---|---|---|

| K-fold Cross-Validation | A resampling procedure that splits data into 'k' groups to robustly estimate model performance and generalization [21] [22]. | Maximizes the use of limited data for both training and validation, providing a more reliable performance estimate. |

| Adaptive Checkpointing (ACS) | A training scheme for MTL that checkpoints model parameters to avoid negative transfer from imbalanced or noisy tasks [1]. | Protects tasks with very few labeled samples from being overwhelmed by updates from larger, potentially noisier tasks. |

| L1 / L2 Regularization | Techniques that add a penalty to the model's loss function to discourage overcomplexity and prevent overfitting to noise [22] [11]. | Constrains models from memorizing the small dataset, including annotation errors, by promoting simpler models. |

| Data Augmentation | The process of artificially increasing the size and diversity of a training dataset by creating modified versions of existing data [22]. | In molecular contexts, this could involve generating valid analogous molecular structures or using SMILES augmentation to create more examples. |

| Learnability-based Consensus | A method that selects annotations for a consensus model based on how well they can be learned, rather than simple majority vote [20]. | Helps build an optimal model from conflicting expert labels by focusing on consistent, learnable patterns. |

Beyond Basic Regularization: Advanced Architectures for Low-Data Regimes

Frequently Asked Questions (FAQs)

1. My multi-task model performs worse than single-task models. What is happening? You are likely experiencing Negative Transfer (NT). This occurs when tasks are not sufficiently related or when task imbalances cause one task to interfere with the learning of another. The solution is to implement task selection strategies or use training methods like Adaptive Checkpointing with Specialization (ACS), which saves the best model parameters for each task individually during training to prevent harmful interference [1].

2. How do I choose which tasks to learn together in an MTL model? Select tasks that are related or share common underlying factors. For molecular properties, this could be different ADMET endpoints influenced by similar biochemical mechanisms. You can quantitatively analyze task relationships by building a task association network—train models on individual and pairwise tasks to measure how learning one task affects performance on another [23].

3. How can I design my MTL model architecture to best share knowledge? The most common and effective approach is hard parameter sharing. This uses a shared backbone (like a Graph Neural Network for molecules) to learn a general representation, with task-specific heads (like small neural networks) that make final predictions for each property. This balances shared knowledge with task-specific needs [1] [24] [25].

4. My multi-task model converges, but performance is unbalanced across tasks. How can I fix this? This is a common issue addressed by loss balancing methods. Instead of using a simple sum of losses for each task, advanced techniques dynamically adjust the weight of each task's loss during training. This ensures no single task dominates the learning process and leads to more balanced and accurate models [25].

5. Can MTL really help when I have very little data for my primary task? Yes, this is a key strength of MTL. By leveraging data from related auxiliary tasks, an MTL model can learn a more robust data representation. For example, the UMedPT model in biomedical imaging maintained high performance on in-domain classification tasks using only 1% of the original training data by leveraging knowledge from other tasks [26].

Troubleshooting Guide

Issue 1: Diagnosing and Mitigating Negative Transfer

Symptoms: The MTL model's performance on one or more tasks is significantly worse than its single-task counterpart.

Diagnosis Steps:

- Check Task Relatedness: Calculate the correlation between task labels or their data distributions. Low correlation may signal poor relatedness [1].

- Analyze Gradient Conflicts: During training, monitor if gradients from different tasks point in opposing directions for shared parameters, which indicates optimization conflict [1].
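Gradient conflict can be quantified with the cosine similarity between per-task gradients of the shared parameters. The sketch below assumes you have already extracted each task's gradient and flattened it into a vector. The function names are illustrative, and treating any negative cosine as a conflict is a simplifying assumption.

```python
import numpy as np

def gradient_cosine(g_a, g_b, eps=1e-12):
    """Cosine similarity between two tasks' gradients on the shared parameters."""
    g_a, g_b = np.ravel(g_a), np.ravel(g_b)
    return float(g_a @ g_b / (np.linalg.norm(g_a) * np.linalg.norm(g_b) + eps))

def conflict_matrix(task_grads):
    """Pairwise cosine similarities, keyed by (task_a, task_b)."""
    names = list(task_grads)
    return {
        (a, b): gradient_cosine(task_grads[a], task_grads[b])
        for i, a in enumerate(names)
        for b in names[i + 1:]
    }

def conflicting_pairs(task_grads):
    """Task pairs whose shared-parameter gradients point in opposing directions."""
    return [pair for pair, cos in conflict_matrix(task_grads).items() if cos < 0.0]
```

A single negative value is not conclusive. Tracking the cosine over many training steps gives a more reliable picture of persistent conflict between two tasks.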

Solutions:

- Implement ACS (Adaptive Checkpointing with Specialization):

- Use a shared GNN backbone with task-specific heads.

- Independently monitor the validation loss for each task.

- For each task, save a checkpoint of the model whenever its validation loss hits a new minimum. This preserves the best shared representation for each task before negative interference occurs [1].

- Adopt a "One Primary, Multiple Auxiliaries" Paradigm:

- Formally select optimal auxiliary tasks for your primary task of interest.

- Use status theory from network science to identify "friendly" auxiliary tasks.

- Apply maximum flow algorithms to the task association network to estimate the potential performance gain for the primary task, and select the auxiliary set that maximizes this gain [23].

Issue 2: Managing Imbalanced and Sparse Data Across Tasks

Symptoms: The model performs well on tasks with abundant data but poorly on tasks with few labeled samples.

Solutions:

- Apply Loss Masking: Implement a loss function that ignores missing labels for a given task. This allows you to fully utilize all available data without imputation [1].

- Use Dynamic Loss Weighting: Employ methods that automatically adjust the contribution of each task's loss based on factors like:

- The task's homoscedastic uncertainty (task-dependent uncertainty).

- The rate of change (gradient magnitude) of each task. This prevents high-data tasks from dominating the learning process [25].
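Uncertainty-based weighting can be illustrated with one commonly used simplified form, in which each task i has a learnable scalar s_i (interpreted as a log-variance) and the total loss is the sum over tasks of exp(-s_i) * L_i + s_i. The sketch below evaluates that expression. The function names are illustrative, and the exact formulation in [25] may differ.

```python
import numpy as np

def uncertainty_weighted_loss(task_losses, log_vars):
    """Total loss: sum_i exp(-s_i) * L_i + s_i, where s_i is a learnable log-variance."""
    task_losses = np.asarray(task_losses, dtype=float)
    log_vars = np.asarray(log_vars, dtype=float)
    return float(np.sum(np.exp(-log_vars) * task_losses + log_vars))

def effective_weights(log_vars):
    """Multiplier currently applied to each task's loss."""
    return np.exp(-np.asarray(log_vars, dtype=float))
```

For fixed task losses, this expression is minimized at s_i = log(L_i), so tasks with larger losses receive smaller weights, while the additive s_i term keeps the weights from collapsing to zero.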

Performance of Different MTL Training Schemes on Molecular Benchmarks

The following table compares the average performance of different training schemes on standard molecular property prediction benchmarks (like ClinTox, SIDER, and Tox21), demonstrating the effectiveness of ACS in mitigating negative transfer [1].

| Training Scheme | Description | Average Performance (AUC/R²) |

|---|---|---|

| Single-Task Learning (STL) | Independent model for each task | Baseline |

| MTL (no checkpointing) | Standard joint training, shared parameters | +3.9% vs. STL |

| MTL with Global Loss Checkpointing | Saves one model when total loss is lowest | +5.0% vs. STL |

| ACS (Adaptive Checkpointing) | Saves best task-specific parameters | +8.3% vs. STL |

Issue 3: Adapting MTL for Heterogeneous Datasets

Symptoms: You have multiple separate datasets, each with labels for different tasks, and cannot build a single multi-label dataset.

Solution: Implement an Inter-Dataset MTL Framework (e.g., UNITI)

- Sequential Dataset Training: Instead of mixing data, train on one dataset at a time in sequence. This reduces catastrophic forgetting and feature interference [27].

- Feature-Level Knowledge Distillation:

- Train a separate "teacher" model on each individual dataset.

- Train a single "student" model to mimic the features extracted by all teacher models. This allows the student to integrate knowledge from all datasets without needing co-labeled examples [27].

Experimental Protocol: Implementing an MTL Workflow for Molecular Property Prediction

This protocol outlines the key steps for setting up a robust MTL experiment to predict molecular properties with limited data.

1. Task Selection and Data Preparation

- Objective: Define a primary task (e.g., predicting solubility) with scarce data.

- Procedure:

- Gather Candidate Tasks: Collect datasets for other molecular properties (e.g., permeability, metabolic stability, toxicity) that may share underlying structural determinants with solubility.

- Quantify Task Association: Pre-train single-task models and pairwise MTL models. Construct a task association network where nodes are tasks and link weights represent the performance gain from joint training [23].

- Select Auxiliary Tasks: Use the status theory and maximum flow algorithm on the association network to select the most beneficial auxiliary tasks for the primary task [23].

2. Model Architecture Setup

- Objective: Build a model that shares low-level features while allowing for task-specific adjustments.

- Procedure:

- Shared Backbone: Use a Graph Neural Network (e.g., Message Passing Neural Network) to encode the molecular graph. This GNN will learn a general-purpose representation of the molecules [28] [1].

- Task-Specific Heads: Attach separate, smaller neural networks (e.g., Multi-Layer Perceptrons) to the shared backbone. Each head will take the shared representation and make predictions for one specific property [1].

3. Training with Dynamic Balancing and Checkpointing

- Objective: Train the model effectively while preventing negative transfer and performance imbalance.

- Procedure:

- Initialize: Use standard initialization or pre-trained weights for the GNN.

- Choose a Loss Weighting Method: Implement a dynamic weighting strategy (e.g., uncertainty weighting) to automatically balance the loss terms.

- Implement ACS:

- For each task, maintain a variable for its best validation loss.

- During training, after each epoch, evaluate the model on the validation set for every task.

- If a task's validation loss is a new record, checkpoint the entire model (shared backbone + that task's specific head).

- Upon completion, you will have a specialized model for each task [1].

4. Model Evaluation and Interpretation

- Objective: Assess performance and gain insights into important molecular features.

- Procedure:

- Evaluate: Test the final checkpointed model for each task on a held-out test set. Compare against single-task baselines.

- Interpret (for GNNs): Use attention mechanisms or gradient-based techniques to analyze which atomic substructures the model deems important for each property prediction. For example, MTGL-ADMET uses atom aggregation weights to highlight crucial substructures related to specific ADMET properties [23].

MTL Performance in Data-Scarce Scenarios

This table summarizes results from various studies showing how MTL maintains performance with significantly less data for the primary task.

| Application Domain | Model / Strategy | Data Usage for Primary Task | Performance Result |

|---|---|---|---|

| Biomedical Imaging | UMedPT (Foundational Model) | 1% of training data | Matched best ImageNet-pretrained model performance [26] |

| Molecular Property Prediction | ACS on Fuel Ignition Data | 29 labeled samples | Achieved accurate predictions, unattainable by single-task learning [1] |

| Molecular Property Prediction | Hard Parameter Sharing & Loss Weighting | Varying reduced amounts | More accurate predictions with less computational cost vs. single-task [25] |

The Scientist's Toolkit: Essential Research Reagents for MTL Experiments

| Tool / Resource | Function / Description | Example Use in MTL |

|---|---|---|

| Graph Neural Network (GNN) | A neural network that operates directly on graph-structured data. | Serves as the shared backbone for learning universal molecular representations from molecular graphs [28] [1]. |

| Task Association Network | A graph where nodes are tasks and edges represent the benefit of training them together. | Used for the scientific selection of auxiliary tasks to maximize positive transfer to a primary task [23]. |

| Dynamic Loss Weighting Algorithm | An algorithm that automatically adjusts the weight of each task's loss during training. | Prevents model bias towards high-data tasks and ensures balanced optimization across all tasks [25]. |

| Adaptive Checkpointing (ACS) | A training scheme that saves the best model parameters for each task individually. | Mitigates negative transfer by preserving optimal shared representations for each task, despite gradient conflicts [1]. |

| Knowledge Distillation | A technique where a "student" model learns to mimic the outputs or features of a "teacher" model. | Enables inter-dataset MTL by transferring knowledge from dataset-specific teachers into a unified student model [27]. |

Workflow Diagram: Adaptive Checkpointing with Specialization (ACS)

Workflow Diagram: "One Primary, Multiple Auxiliaries" Task Selection

By integrating these strategies and tools, researchers can effectively leverage Multi-Task Learning to overcome data scarcity, build more robust and generalizable models, and accelerate discovery in molecular sciences and beyond.

Troubleshooting Guide: Common ACS Implementation Issues

This guide addresses specific, high-priority problems researchers may encounter when implementing the Adaptive Checkpointing with Specialization (ACS) method for molecular property prediction.

Q1: My model suffers from severe performance degradation on tasks with very few labels (e.g., fewer than 50 samples). What steps should I take?

This is a classic symptom of negative transfer, where updates from data-rich tasks interfere with the learning of data-scarce tasks. ACS is specifically designed to mitigate this.

- Diagnosis: Confirm the issue by comparing the task's validation loss in the ACS model against a single-task learning (STL) baseline. If ACS performance is significantly worse, negative transfer is likely occurring.

- Solution: Leverage ACS's core mechanism. The adaptive checkpointing should be saving the model parameters (backbone and head) specifically at the point of minimum validation loss for the affected task, insulating it from subsequent detrimental updates.

- Verification: Inspect the training logs and saved checkpoints. Ensure that for the low-data task, the training script correctly identifies and checkpoints the model at the appropriate epoch. The final specialized model for this task should be built from this specific checkpoint [29] [1].

Q2: During training, the validation loss for one task is highly unstable, while others learn smoothly. How can I stabilize it?

Unstable learning often stems from gradient conflicts between tasks with different complexities or data distributions.

- Diagnosis: Monitor the gradient norms for each task-specific head. A task with explosively large or oscillating gradient norms is likely causing instability.

- Solution:

  - Gradient Clipping: Apply gradient clipping in your training loop, after the backward pass and before each optimizer step. This caps the magnitude of the gradients, preventing unstable updates from one task from derailing the shared backbone [1].

  - Task-Balanced Learning Rates: Consider using a smaller learning rate for the shared backbone and larger rates for the task-specific heads. This allows the shared representation to evolve more stably while giving heads the flexibility to specialize [1].

- Verification: After implementing gradient clipping, the unstable validation loss curve should show smaller oscillations and a more consistent downward trend.
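As a rough illustration of what clipping does, the sketch below rescales a set of gradients so that their joint L2 norm never exceeds a threshold. It uses plain Python lists as stand-ins for real tensors; the function name `clip_by_global_norm` and the example values are hypothetical and not taken from the ACS code. In PyTorch the same effect is usually obtained with `torch.nn.utils.clip_grad_norm_`, and separate learning rates for backbone and heads can be set through optimizer parameter groups.

```python
import math


def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient vectors so their joint L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm measured before clipping.
    """
    total_norm = math.sqrt(sum(g * g for vec in grads for g in vec))
    if total_norm <= max_norm:
        # Already within bounds: return an unchanged copy.
        return [list(vec) for vec in grads], total_norm
    scale = max_norm / total_norm
    return [[g * scale for g in vec] for vec in grads], total_norm


# Hypothetical per-layer gradients of the shared backbone after a noisy task's update.
backbone_grads = [[3.0, 4.0], [0.0, 12.0]]
clipped, norm_before = clip_by_global_norm(backbone_grads, max_norm=1.0)
norm_after = math.sqrt(sum(g * g for vec in clipped for g in vec))
```

Clipping preserves the direction of the update and only shrinks its length, so a single noisy task can no longer push the shared backbone arbitrarily far in one step. Logging `norm_before` per task is also a simple way to carry out the diagnosis step above.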

Q3: After implementing ACS, the overall multi-task performance is on par with single-task learning, but does not exceed it. What might be wrong?

This suggests that the model is not effectively leveraging shared information across tasks, potentially due to low task-relatedness or implementation issues.

- Diagnosis: Check the correlation between the tasks in your dataset. ACS provides the most significant gains when tasks are related. Also, verify that your model architecture has sufficient capacity in the shared backbone to learn a useful general representation.

- Solution:

  - Architecture Adjustment: If tasks are indeed related, consider increasing the capacity (e.g., more layers or hidden units) of the shared Graph Neural Network (GNN) backbone. A model that is too small cannot capture complex, shared patterns [1].

  - Checkpointing Logic: Double-check the implementation of the adaptive checkpointing. Ensure that the best model for each task is saved independently based on its own validation loss, not a global average loss [29].

- Verification: Run a simple experiment on a dataset with known high task-relatedness (e.g., a benchmark from the original paper like Tox21) to confirm your ACS implementation can outperform STL [1].
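To make the checkpointing check concrete, here is a minimal sketch of per-task checkpoint bookkeeping, contrasted with selection by global average loss. It is not the reference ACS implementation: the class name `PerTaskCheckpointer`, the dictionary-based "weights", and the loss values are all hypothetical placeholders chosen for illustration.

```python
import copy


class PerTaskCheckpointer:
    """Keep, for each task, the parameters seen at that task's lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {t: float("inf") for t in task_names}
        self.best_epoch = {t: None for t in task_names}
        self.best_state = {t: None for t in task_names}

    def update(self, epoch, val_losses, backbone_state, head_states):
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Snapshot the shared backbone together with this task's own head.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }


# Hypothetical validation losses per epoch: task_a starts degrading after epoch 1,
# while task_b keeps improving until the end of training.
history = [
    {"task_a": 0.90, "task_b": 0.80},
    {"task_a": 0.60, "task_b": 0.50},
    {"task_a": 0.65, "task_b": 0.40},
    {"task_a": 0.95, "task_b": 0.30},
]

ckpt = PerTaskCheckpointer(["task_a", "task_b"])
for epoch, val_losses in enumerate(history):
    backbone_state = {"w": epoch}  # stand-in for real backbone weights
    head_states = {"task_a": {"w": epoch}, "task_b": {"w": epoch}}
    ckpt.update(epoch, val_losses, backbone_state, head_states)

# Selection by global average loss, for comparison.
mean_losses = [sum(v.values()) / len(v) for v in history]
global_best_epoch = mean_losses.index(min(mean_losses))
```

With these toy curves, the per-task tracker keeps epoch 1 for `task_a` and epoch 3 for `task_b`, whereas the global-average rule picks epoch 2, which is optimal for neither task. If your implementation yields the same epoch for every task on clearly divergent curves, the checkpointing is probably keyed on a global metric.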

Q4: My dataset has a high rate of missing labels for certain properties. How does ACS handle this?

ACS, like many MTL methods, uses a practical technique called loss masking.

- Explanation: During training, the loss for each molecule is computed only over the tasks for which a label is available. Entries with missing labels are masked out, so they contribute zero loss and zero gradient to the parameter update for that step. This lets the model use every available label without imputation, which can introduce bias [1].
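A minimal sketch of loss masking for binary tasks is shown below, assuming missing labels are encoded as `None` and predictions are probabilities strictly between 0 and 1. The function name `masked_bce` and the choice to average over all labelled entries are illustrative assumptions; the source does not specify how the ACS code normalizes the masked loss.

```python
import math


def masked_bce(probs, labels):
    """Mean binary cross-entropy over labelled entries only.

    probs[i][t] is the predicted probability for molecule i and task t (0 < p < 1);
    labels[i][t] is 0, 1, or None when the label is missing.
    """
    total, count = 0.0, 0
    for p_row, y_row in zip(probs, labels):
        for p, y in zip(p_row, y_row):
            if y is None:
                continue  # masked entry: contributes neither loss nor gradient
            total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
            count += 1
    return total / count if count else 0.0


# Two molecules, two tasks; each molecule has a label for only one task.
probs = [[0.9, 0.2], [0.4, 0.7]]
labels = [[1, None], [None, 0]]
loss = masked_bce(probs, labels)

# Changing a prediction at a masked position leaves the loss unchanged.
loss_perturbed = masked_bce([[0.9, 0.99], [0.4, 0.7]], labels)
```

Because the masked entries never enter the sum, the loss is independent of the predictions at those positions, which is exactly why no gradient flows from them.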

Frequently Asked Questions (FAQs)

Q: When should I choose ACS over a pre-trained model and fine-tuning approach?

A: The choice depends on your data context. ACS is a supervised multi-task learning method ideal when you have multiple related property prediction tasks and at least one task has extremely limited data (dozens of samples). Pre-trained models require large, unlabeled datasets for pre-training and can be great for initialization, but they may still struggle with domain-specific, sparse targets without significant fine-tuning data. ACS is designed to work reliably even with as few as two tasks, making it suitable for niche chemical domains where large-scale pre-training data is unavailable [1].

Q: How does ACS fundamentally differ from standard Multi-Task Learning (MTL) and MTL with Global Loss Checkpointing (MTL-GLC)?

A: The key difference lies in how model checkpoints are saved.

- Standard MTL trains a single shared backbone with task-specific heads and typically saves the final model or a checkpoint based on a single metric.

- MTL-GLC checkpoints the model when the average validation loss across all tasks reaches a minimum.

- ACS uses adaptive checkpointing, where it independently saves a specialized backbone-head pair for each task at the epoch where that specific task's validation loss is minimized.

This core mechanism allows ACS to shield each task from negative transfer by preserving its optimal parameters, even if continuing training would benefit other tasks but harm this one [1]. The following table summarizes the performance advantage of ACS over these baseline methods.

| Model | Core Checkpointing Strategy | Average Performance vs. STL | Key Advantage |

|---|---|---|---|

| Single-Task Learning (STL) | Each model saved at its best. | Baseline (0% improvement) | No negative transfer. |

| Standard MTL | Saves a single, final model. | +3.9% [1] | Basic parameter sharing. |

| MTL with Global Loss (MTL-GLC) | Saves one model when global average loss is lowest. | +5.0% [1] | Captures a globally optimal point. |

| ACS (Proposed Method) | Saves a specialized model per task at its individual best. | +8.3% [1] | Mitigates negative transfer; optimal for each task. |

Q: Can ACS be combined with techniques to prevent overfitting, which is a major concern in small datasets?

A: Yes, absolutely. Overfitting is a critical issue in low-data regimes, and ACS can be integrated with standard regularization techniques. The original ACS implementation and general machine learning practice suggest several complementary strategies [21] [30] [31]:

- Early Stopping: This is inherent to the ACS checkpointing process itself, as it saves models before they overfit to the training data for each task.

- Regularization: Applying L2 regularization (weight decay) to the model parameters.

- Dropout: Adding dropout layers within the GNN backbone or task-specific MLP heads.

- Data Augmentation: For molecular graphs, this could involve generating synthetic but valid molecular structures or using domain-specific transformations to artificially expand the training set [28] [30].

Experimental Protocols & Methodologies

Core ACS Training Workflow

The following diagram illustrates the key steps in the ACS training procedure.

Benchmarking Protocol: ClinTox Dataset

To quantitatively validate ACS against negative transfer, the original study used the ClinTox dataset with artificially induced task imbalance [1].

- Objective: To demonstrate that ACS effectively mitigates performance degradation on a task with ultra-low data.

- Dataset: ClinTox (1,478 molecules) with two binary classification tasks: FDA approval status (FDA_APPROVED) and clinical trial failure due to toxicity (CT_TOX).

- Imbalance Induction: The dataset was modified to create a severe imbalance, where the FDA approval task had only 29 labeled samples, while the toxicity task used all available data.

- Compared Models:

  - STL: Single-task learning as a baseline.

  - MTL: Standard multi-task learning.

  - MTL-GLC: MTL with checkpointing based on global validation loss.

  - ACS: The proposed adaptive checkpointing with specialization.

- Evaluation Metric: The primary metric was the performance (e.g., ROC-AUC) on the low-data FDA approval task. ACS showed significant improvement, confirming its utility in the ultra-low data regime [1].
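One simple way to reproduce this kind of induced imbalance is to hide all but a fixed number of labels for one task and rely on loss masking for the rest. The sketch below is an assumption-laden illustration: the source does not say how the retained samples were chosen (random, stratified, or restricted to the training split), and the helper `keep_n_labels` and the toy label vector are hypothetical.

```python
import random


def keep_n_labels(labels, n_keep, seed=0):
    """Return a copy of `labels` in which only n_keep randomly chosen entries stay labelled.

    All other entries are set to None so that a masked loss ignores them.
    """
    labelled = [i for i, y in enumerate(labels) if y is not None]
    if n_keep > len(labelled):
        raise ValueError("n_keep exceeds the number of labelled samples")
    rng = random.Random(seed)  # fixed seed so the reduced dataset is reproducible
    kept = set(rng.sample(labelled, n_keep))
    return [y if i in kept else None for i, y in enumerate(labels)]


# Toy stand-in for the low-data task: 1,478 molecules with synthetic binary labels.
full_labels = [i % 2 for i in range(1478)]
sparse_labels = keep_n_labels(full_labels, n_keep=29)
n_labelled = sum(y is not None for y in sparse_labels)
```

In a real benchmark the reduction would normally be applied only to the training portion of a scaffold split, with validation and test labels left intact so that the low-data task can still be evaluated.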

The table below details key computational tools and datasets used in developing and evaluating ACS for molecular property prediction.

| Item / Resource | Function / Description | Relevance to ACS Experiments |

|---|---|---|

| Graph Neural Network (GNN) | The shared backbone model that learns a general-purpose latent representation from molecular graphs [1]. | Core architectural component of ACS. Processes input molecules to create features for task-specific heads. |

| Multi-layer Perceptron (MLP) Heads | Task-specific neural network modules that take the shared GNN's output and make final property predictions [1]. | Enable specialization in the ACS architecture, allowing the model to tailor predictions for each property. |

| MoleculeNet Benchmarks | A collection of standardized molecular property prediction datasets (e.g., ClinTox, SIDER, Tox21) [1]. | Used for fair comparison and validation of ACS against other state-of-the-art supervised methods. |

| Sustainable Aviation Fuel (SAF) Dataset | A real-world, proprietary dataset of 15 physicochemical properties for fuel molecules [29] [1]. | Demonstrated the practical utility of ACS, achieving accurate predictions with as few as 29 labeled samples. |

| Murcko Scaffold Split | A method for splitting datasets based on molecular scaffolds, preventing data leakage and providing a more realistic evaluation [1]. | Used in benchmarking to ensure models are evaluated on structurally distinct molecules, not just random splits. |

| Loss Masking | A technique where the loss calculation ignores missing labels, allowing training to proceed with incomplete data [1]. | Critical for handling the pervasive issue of missing property labels in real-world molecular datasets. |

Troubleshooting Guide: Overcoming Critical Challenges

This guide addresses common pitfalls when using meta-learning frameworks like Meta-Mol for molecular property prediction, helping you diagnose issues related to overfitting, generalization, and model performance.

Q1: My model achieves near-perfect accuracy on my training tasks but fails on new, unseen tasks. What is happening?

This is a classic sign of meta-overfitting [32] [33]. Instead of learning a general strategy to adapt to new molecular tasks, your model has simply memorized the training tasks.

- Diagnosis: A large performance gap between your meta-training tasks (high accuracy) and meta-validation/meta-test tasks (low accuracy) [34] [32].

- Primary Cause: The model is solving the problem through "memorization" rather than "adaptation." In a non-mutually exclusive task setting, a single global function can fit all training tasks, removing the need for the model to learn how to use a new task's support set for quick adaptation [32].

- Solutions:

  - Introduce Bayesian Uncertainty: Implement a Bayesian meta-learning strategy, as in Meta-Mol, which learns a probabilistic distribution of model weights rather than a single set of point estimates. This makes the model less certain about spurious patterns in the training tasks and improves generalization [35].

  - Increase Task Diversity: Curate or augment your meta-training task distribution to include a wider variety of molecular properties and structures. This makes it harder for the model to find a single memorizing function [32] [33].

  - Use Dynamic Sampling: Employ a dynamic sampling strategy during meta-training that actively selects diverse subsets of molecules for each task, preventing the model from overfitting to a static set of patterns [35].
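The simplest form of resampling is to draw a fresh, disjoint support and query set for a randomly chosen task at every meta-training episode. The sketch below shows only that baseline idea; it is not Meta-Mol's dynamic sampling strategy, and the function name `sample_episode`, the task names, and the toy molecules are hypothetical.

```python
import random


def sample_episode(task_data, n_support, n_query, rng):
    """Draw disjoint support and query sets for one task.

    task_data is a list of (molecule, label) pairs; sampling is without replacement.
    """
    if n_support + n_query > len(task_data):
        raise ValueError("task has too few molecules for this episode size")
    picked = rng.sample(task_data, n_support + n_query)
    return picked[:n_support], picked[n_support:]


rng = random.Random(0)

# Toy meta-training tasks with placeholder molecule identifiers and binary labels.
tasks = {
    "task_a": [("mol_a%d" % i, i % 2) for i in range(40)],
    "task_b": [("mol_b%d" % i, i % 2) for i in range(60)],
}

# One episode: pick a task, then resample its support and query sets.
task_name = rng.choice(sorted(tasks))
support, query = sample_episode(tasks[task_name], n_support=5, n_query=10, rng=rng)
```

Because the support set changes every episode, the model cannot rely on a fixed set of examples per task and has to use whatever support set it is given. A diversity-aware sampler would replace the uniform `rng.sample` call with a selection rule of your choice.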

Q2: How can I verify that my meta-learning model has the capacity to learn, before running a full experiment?

The recommended practice is to perform a small-scale overfitting test [13].

- Protocol: