Cross-Validation for Predictive Models: A Complete Guide for Biomedical Researchers


Abstract

This guide provides a comprehensive framework for applying cross-validation in predictive model development, tailored for researchers and professionals in drug development and biomedical sciences. It covers the foundational principles of why cross-validation is essential for avoiding overfitting and obtaining realistic performance estimates. The article delivers practical, step-by-step methodologies for implementing various cross-validation techniques, addresses common pitfalls and optimization strategies specific to clinical data, and establishes rigorous protocols for model validation and comparison. By synthesizing statistical theory with applied examples, this resource aims to equip scientists with the knowledge to build more reliable, generalizable, and clinically impactful predictive models.

Why Cross-Validation is Non-Negotiable in Predictive Biomarker and Clinical Model Development

Overfitting remains one of the most pervasive and deceptive pitfalls in predictive modeling, particularly in clinical research and drug development [1]. It occurs when a statistical machine learning model learns the training data set so well that it performs poorly on unseen data sets [2]. This phenomenon happens when a model learns both the systematic patterns (signal) and random fluctuations (noise) present in the training data to the extent that it negatively impacts performance on new data [2]. The paradox of overfitting is that complex models contain more information about the training data but less information about the testing data (future data we want to predict) [2].

In clinical research, where predictive models are increasingly used for adverse event prediction, diagnostic support, and risk stratification, overfitting poses significant challenges to implementation and patient safety [3] [4]. The consequences of overfitted models in healthcare can be severe, leading to incorrect risk assessments, inappropriate clinical decisions, and ultimately, patient harm [3]. This application note explores the theoretical foundations of overfitting and its clinical consequences, and provides detailed protocols for detecting and preventing it with cross-validation.

Theoretical Foundations: Bias-Variance Tradeoff

The performance of machine learning models is fundamentally governed by the bias-variance tradeoff, which highlights the need for balance between model simplicity and complexity [5].

Table 1: Characteristics of Model Fitting States

| Fitting State | Bias-Variance Profile | Training Performance | Testing Performance | Model Characteristics |
|---|---|---|---|---|
| Underfitting | High bias, low variance | Poor | Poor | Too simple, fails to capture relevant patterns |
| Appropriate Fitting | Balanced bias and variance | Good | Good | Optimal complexity, generalizes well |
| Overfitting | Low bias, high variance | Excellent | Poor | Too complex, memorizes noise in addition to the true patterns |

Conceptual Understanding through Visualization

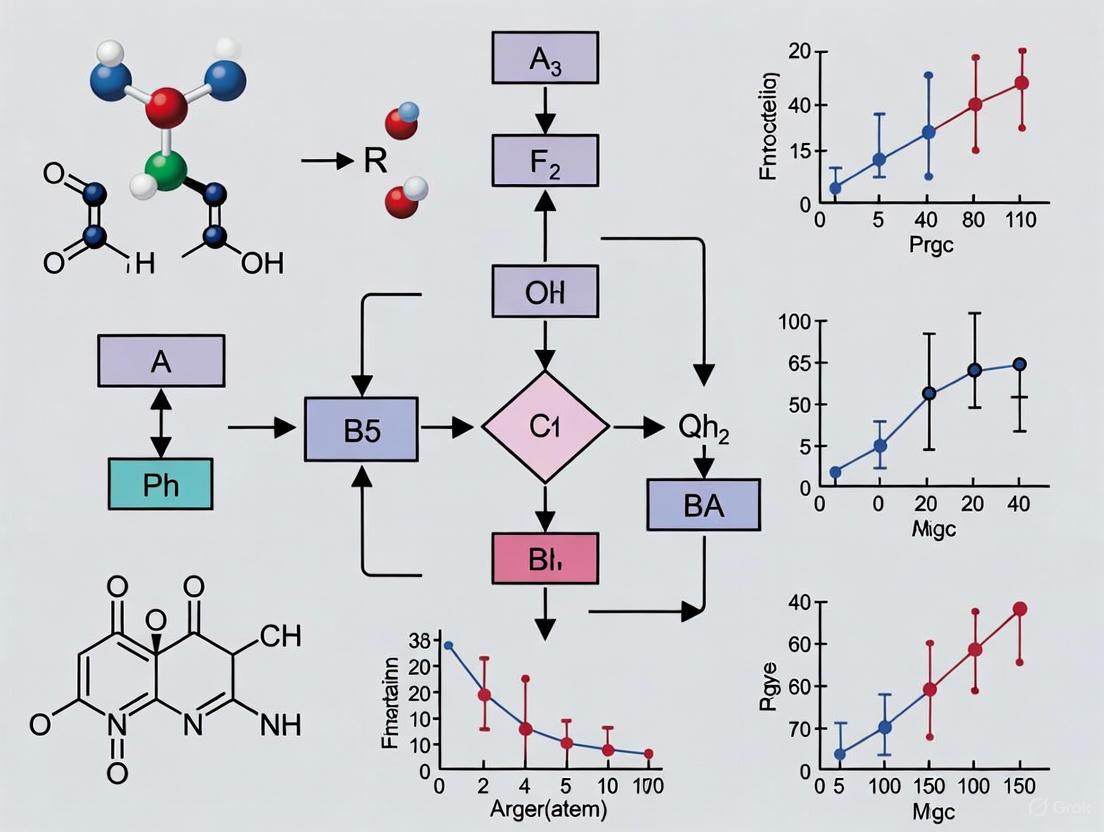

The following diagram illustrates the fundamental relationship between model complexity and error, highlighting the optimal zone for model performance:

According to the bias-variance tradeoff principle, increasing model complexity reduces bias but increases variance (risk of overfitting), while simplifying the model reduces variance but increases bias (risk of underfitting) [5]. Because the two cannot both be driven to zero at once, the goal is to find the level of complexity at which their combined contribution to the error is smallest, resulting in good generalization performance [5]. In explanatory modeling, the focus is on minimizing bias, whereas predictive modeling seeks to minimize the combination of bias and estimation variance [2].
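The relationship between complexity and error can be illustrated with a small simulation. The sketch below is not taken from the cited sources: the sine-shaped signal, the noise level, the sample sizes, and the helper `make_data` are all assumptions chosen for illustration. It fits polynomials of increasing degree and prints training and test error for each.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

rng = np.random.default_rng(0)


def make_data(n_samples):
    """Hypothetical data: a sine-shaped signal plus Gaussian noise."""
    x = rng.uniform(0, 1, size=(n_samples, 1))
    y = np.sin(2 * np.pi * x[:, 0]) + rng.normal(0, 0.3, size=n_samples)
    return x, y


x_train, y_train = make_data(30)    # small training set
x_test, y_test = make_data(1000)    # large set standing in for unseen data

errors = {}
for degree in (1, 4, 15):           # too simple, moderate, very flexible
    model = make_pipeline(PolynomialFeatures(degree), LinearRegression())
    model.fit(x_train, y_train)
    errors[degree] = (
        mean_squared_error(y_train, model.predict(x_train)),
        mean_squared_error(y_test, model.predict(x_test)),
    )
    print(f"degree={degree:2d}  train MSE={errors[degree][0]:.3f}  "
          f"test MSE={errors[degree][1]:.3f}")
```

In runs like this, the linear model typically shows high error on both sets (underfitting), while very high degrees tend to drive training error down without a matching improvement in test error.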

Clinical Consequences of Overfitting

In healthcare applications, overfitted models present significant risks that extend beyond statistical inaccuracy to direct patient safety concerns [3]. When AI-based clinical decision support (CDS) systems are overfitted, they threaten the generalizability of algorithms across different healthcare centers and patient populations [3] [4].

Specific Clinical Domains at Risk

Table 2: Clinical Consequences of Overfitting in Healthcare Applications

| Clinical Domain | Potential Impact of Overfitting | Real-World Example | Patient Safety Risk |
|---|---|---|---|
| Adverse Event Prediction | Wrongful determination of patient risk for adverse events [3] | Underestimation of risk when applied to different surgical specialties [3] | Failure to prevent medication side effects, physical injury, or death |
| Risk Stratification | Inaccurate mortality predictions that don't capture full spectrum of patient deterioration [3] | Over-reliance on mortality as primary outcome missing other deterioration indicators [3] | Missed detection of patient deterioration, delayed interventions |
| Diagnostic Support Tools | Reduced performance and reliability when applied to new populations [3] | Models trained on limited ICU data (<1,000 patients) overestimating performance [3] | Misdiagnosis, inappropriate treatment decisions |
| Treatment Response Prediction | Poor generalization to diverse patient demographics and comorbidities | Population shifts due to demographic region or hospital specialization [3] | Ineffective treatments, adverse drug reactions |

The problem is exacerbated in clinical settings because adverse event prediction does not occur in randomized controlled trials [3]. Whether a patient is assigned to the control group or suffers from an adverse event is not determined randomly, but is instead a result of a multitude of factors that may or may not have been observed during data acquisition [3]. Additionally, the definition of adverse events is subject to change and may be dependent on local hospital practices, creating further challenges for model generalizability [3].

Detection and Evaluation Protocols

Cross-Validation Framework for Clinical Predictive Models

Cross-validation is a vital statistical method that enhances model validation and evaluation by ensuring that the model performs well on unseen data [6]. This technique divides the dataset into multiple subsets, allowing for a more robust assessment of the model's predictive capabilities [6].

Performance Evaluation Metrics Table

Table 3: Quantitative Metrics for Detecting Overfitting in Clinical Models

| Metric Category | Specific Metrics | Expected Pattern Indicating Overfitting | Acceptable Threshold for Clinical Use |
|---|---|---|---|
| Performance Discrepancy | Training vs. testing accuracy difference | >10-15% performance drop in testing | <5% difference |
| Performance Discrepancy | Training vs. testing AUC-ROC difference | >0.1 AUC point decrease in testing | <0.05 AUC difference |
| Variance Indicators | Cross-validation fold performance variance | High standard deviation (>0.05) across folds | Standard deviation <0.03 |
| Variance Indicators | Confidence interval width for performance metrics | Widening confidence intervals in testing | Consistent interval width |
| Error Analysis | Training error trend vs. validation error trend | Validation error increases while training error decreases | Parallel decreasing trends |
| Clinical Calibration | Brier score degradation | Significant increase in testing Brier score | Minimal change (<0.02) |

Cross-validation helps to mitigate overfitting by ensuring that the model is validated against various data splits [6]. The primary benefits include improved model reliability and lower variance in performance estimates, making it a cornerstone technique for data scientists and machine learning engineers [6]. For clinical applications, it is recommended to use stratified sampling for imbalanced datasets and evaluate multiple performance metrics to gain a holistic understanding [6].
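Several of the quantities in Table 3 can be read directly from a cross-validation run. The following is a minimal sketch, assuming a synthetic imbalanced dataset and a random forest as a stand-in for a clinical model. It uses scikit-learn's `cross_validate` with `return_train_score=True` to obtain the training-versus-validation AUC gap, the fold-to-fold standard deviation, and the change in Brier score.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate

# Synthetic stand-in for a clinical dataset with roughly 20% event prevalence
X, y = make_classification(n_samples=300, n_features=20, n_informative=5,
                           weights=[0.8, 0.2], random_state=0)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_validate(
    RandomForestClassifier(n_estimators=100, random_state=0),
    X, y, cv=cv,
    scoring=["roc_auc", "neg_brier_score"],
    return_train_score=True,
)

train_auc = scores["train_roc_auc"].mean()
val_auc = scores["test_roc_auc"].mean()
auc_gap = train_auc - val_auc                   # performance discrepancy
fold_sd = scores["test_roc_auc"].std(ddof=1)    # variance indicator
# The scorer returns the negated Brier score, so flip the sign back
brier_change = (-scores["test_neg_brier_score"].mean()
                - -scores["train_neg_brier_score"].mean())

print(f"train AUC={train_auc:.3f}  validation AUC={val_auc:.3f}  gap={auc_gap:.3f}")
print(f"SD of validation AUC across folds={fold_sd:.3f}")
print(f"Brier score increase from training to validation={brier_change:.3f}")
```

The printed values can then be compared against whichever thresholds a project adopts, such as those proposed in Table 3.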

Prevention and Mitigation Strategies

Comprehensive Protocol for Overcoming Overfitting

The following workflow outlines a systematic approach to preventing overfitting in clinical predictive models, incorporating multiple mitigation strategies:

Research Reagent Solutions for Robust Clinical Predictive Modeling

Table 4: Essential Methodological Components for Overfitting Prevention

| Method Category | Specific Technique | Function and Purpose | Implementation Considerations |
|---|---|---|---|
| Data Preprocessing | Resampling strategies [3] | Address class imbalance in adverse event data | Apply higher weights to underrepresented groups or over-sample minority classes |
| Data Preprocessing | Data augmentation [3] | Mitigate data scarcity for rare events | Create synthetic data or apply appropriate transformations to increase dataset diversity |
| Data Preprocessing | Missing data imputation [3] | Handle incomplete clinical records | Remove or impute variables based on reason for missing data |
| Model Training | Regularization techniques [3] | Balance model complexity and generalizability | Apply L1 (Lasso) or L2 (Ridge) regularization to constrain model parameters |
| Model Training | Early stopping [3] | Prevent overfitting during training iterations | Monitor validation performance and stop training when performance degrades |
| Model Training | Dropout (for neural networks) [3] | Prevent co-adaptation of features | Randomly set a fraction of hidden unit activations to zero during training |
| Validation & Testing | External validation [3] | Assess generalizability across populations | Test model on completely separate datasets from different institutions or demographics |
| Validation & Testing | Out-of-distribution detection [3] | Alert clinicians to unfamiliar data patterns | Monitor when current patient data deviates significantly from training data |
| Validation & Testing | Hyperparameter tuning [6] | Optimize model settings systematically | Use cross-validation to explore different parameter settings without data leakage |
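One common way to combine imputation, regularization, and leakage-free hyperparameter tuning is to wrap all preprocessing in a pipeline and tune it inside an outer cross-validation loop (nested cross-validation). The sketch below is an illustration under assumptions rather than a prescribed protocol: the synthetic data, the 5% missingness, the grid of `C` values, and the fold counts are all hypothetical choices.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=200, n_features=15, n_informative=4,
                           random_state=1)
rng = np.random.default_rng(1)
X[rng.random(X.shape) < 0.05] = np.nan   # simulate incomplete records

# Imputation and scaling live inside the pipeline, so they are re-fitted on
# each training fold only and never see the corresponding validation fold.
pipe = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(max_iter=1000)),   # default penalty is L2
])

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop chooses the regularization strength; outer loop estimates
# the performance of the whole tuning procedure.
search = GridSearchCV(pipe, {"clf__C": [0.01, 0.1, 1, 10]},
                      cv=inner_cv, scoring="roc_auc")
nested_auc = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")

print(f"nested CV AUC: {nested_auc.mean():.3f} +/- {nested_auc.std(ddof=1):.3f}")
```

Keeping every data-dependent step inside the pipeline is the design choice that matters here. Fitting the imputer or scaler on the full dataset before splitting would let information from validation folds leak into training.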

Implementation Considerations for Clinical Settings

Successful implementation of predictive models in clinical practice requires careful attention to workflow integration and trust-building measures. The lack of interpretability in AI models poses trust and transparency issues, advocating for transparent algorithms and requiring rigorous testing on specific hospital populations before implementation [3] [4]. Additionally, emphasizing human judgment alongside AI integration is essential to mitigate the risks of deskilling healthcare practitioners [3].

Ongoing evaluation processes and adjustments to regulatory frameworks are crucial for ensuring the ethical, safe, and effective use of AI in clinical decision support [3]. This highlights the need for meticulous attention to data quality, preprocessing, model training, interpretability, and ethical considerations throughout the model development lifecycle [3]. By adopting the protocols and strategies outlined in this document, researchers, scientists, and drug development professionals can significantly reduce the risks associated with overfitting and develop more reliable, generalizable predictive models for healthcare applications.

In the domain of supervised machine learning, the bias-variance tradeoff represents a fundamental concept that describes the tension between two primary sources of prediction error that affect model generalization [7] [8]. This tradeoff directly influences a model's ability to capture underlying patterns in training data while maintaining performance on unseen data, making it particularly crucial for research applications where predictive accuracy is paramount, such as in drug development and scientific discovery [9] [10].

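The mathematical foundation of this tradeoff is formally expressed through the bias-variance decomposition of the mean squared error (MSE) [8] [11]. For a model prediction $\hat{f}(x)$ of the true function $f(x)$, and a new observation $y = f(x) + \varepsilon$ whose noise $\varepsilon$ has mean zero and variance $\sigma^2$, the expected prediction error on new data can be decomposed as follows:

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big] = \big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2 + \mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big] + \sigma^2
$$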

This decomposition reveals that the total prediction error comprises three distinct components [12] [10]:

- Bias²: Error resulting from overly simplistic model assumptions

- Variance: Error from model oversensitivity to training data fluctuations

- Irreducible Error: Innate noise in the data generation process that cannot be eliminated

Table 1: Mathematical Components of Prediction Error

| Component | Mathematical Definition | Interpretation |
|---|---|---|
| Bias² | $\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2$ | How much model predictions differ from true values on average |
| Variance | $\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]$ | How much predictions vary across different training sets |
| Irreducible Error | $\sigma^2$ | Inherent noise in the data generation process |
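The three components can be estimated empirically by refitting a model on many independently drawn training sets and inspecting its predictions at a fixed point. The sketch below is a simulation under assumptions: the true function `true_f`, the noise level `SIGMA`, the evaluation point `X0`, and the polynomial model family are all illustrative choices, not part of the cited material.

```python
import numpy as np

rng = np.random.default_rng(42)
SIGMA = 0.3                       # assumed noise standard deviation
X0 = 0.25                         # point at which the error is decomposed
N_TRAIN, N_REPEATS = 30, 2000


def true_f(x):
    """Assumed true function f(x); chosen only for illustration."""
    return np.sin(2 * np.pi * x)


def prediction_at_x0(degree):
    """Draw a fresh training set, fit a polynomial, and predict at X0."""
    x = rng.uniform(0, 1, N_TRAIN)
    y = true_f(x) + rng.normal(0, SIGMA, N_TRAIN)
    return np.polyval(np.polyfit(x, y, degree), X0)


results = {}
for degree in (1, 3, 7):
    preds = np.array([prediction_at_x0(degree) for _ in range(N_REPEATS)])
    bias_sq = (preds.mean() - true_f(X0)) ** 2   # (E[f_hat] - f)^2
    variance = preds.var()                        # E[(f_hat - E[f_hat])^2]
    # Independent check: squared error against fresh noisy observations at X0
    y_new = true_f(X0) + rng.normal(0, SIGMA, N_REPEATS)
    mse = np.mean((y_new - preds) ** 2)
    results[degree] = (bias_sq, variance, mse)
    print(f"degree={degree}  bias^2={bias_sq:.4f}  variance={variance:.4f}  "
          f"sum+sigma^2={bias_sq + variance + SIGMA**2:.4f}  simulated MSE={mse:.4f}")
```

Up to Monte Carlo error, the simulated MSE should agree with the sum of the three components, and the balance between the bias and variance terms should shift as the polynomial degree grows.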

Core Theoretical Framework

Defining Bias and Variance

Bias represents the systematic error introduced when a model makes oversimplified assumptions about the underlying data relationships [7] [9]. A high-bias model typically exhibits underfitting, where it fails to capture relevant patterns in the data, resulting in poor performance on both training and test datasets [7] [13]. Examples include using linear regression to model complex non-linear relationships or excluding important predictive features from the model [9] [10].

Variance quantifies a model's sensitivity to specific patterns and noise in the training data [7] [8]. A high-variance model typically exhibits overfitting, where it learns both the underlying signal and the random noise in the training data [7] [10]. While such models may achieve excellent performance on training data, they often generalize poorly to unseen data [9]. Examples include complex decision trees with excessive depth or neural networks with insufficient regularization [13] [10].

The Tradeoff Relationship

The bias-variance tradeoff emerges from the inverse relationship between these two error sources [7] [8]. As model complexity increases:

- Bias generally decreases as the model becomes more flexible

- Variance generally increases as the model becomes more sensitive to training data specifics

The optimal balance occurs at the level of model complexity that minimizes the total error, representing the point where the model has sufficient expressiveness to capture true data patterns without overfitting to noise [7] [9].

Diagnostic Framework for Model Validation

Performance Indicators and Diagnosis

Accurately diagnosing whether a model suffers from high bias, high variance, or both is essential for effective model development [13]. The following table summarizes key diagnostic indicators:

Table 2: Diagnostic Indicators for Bias and Variance Issues

| Condition | Training Error | Validation/Test Error | Error Gap | Primary Issue |
|---|---|---|---|---|
| High Bias | High | High | Small | Underfitting |
| High Variance | Low | High | Large | Overfitting |
| Optimal Model | Low | Low | Small | Balanced |

In practice, these patterns manifest through specific symptoms [9] [13]:

- High Bias Symptoms: Consistently poor performance across different datasets, failure to capture known relationships, similar performance across training and validation sets

- High Variance Symptoms: Significant performance degradation from training to validation, high sensitivity to small changes in training data, excellent performance on training data with poor generalization

Learning Curves as Diagnostic Tools

Learning curves provide a powerful visual diagnostic tool by plotting model performance against training set size or model complexity [13] [10]. These curves reveal characteristic patterns:

- High bias: Training and validation errors converge at high values

- High variance: A persistent gap between training error (low) and validation error (high)

- Optimal balance: Converging curves at low error levels with minimal gap
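A learning curve of this kind can be computed with scikit-learn's `learning_curve`. The sketch below assumes a synthetic dataset and an unpruned decision tree, chosen because it tends to show the high-variance pattern; plotting is omitted and the errors are printed instead.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=500, n_features=20, n_informative=5,
                           random_state=0)

# An unpruned tree can memorize its training data (a high-variance model)
sizes, train_scores, val_scores = learning_curve(
    DecisionTreeClassifier(random_state=0), X, y,
    train_sizes=np.linspace(0.2, 1.0, 5), cv=5, scoring="accuracy",
)

train_err = 1 - train_scores.mean(axis=1)   # mean training error per size
val_err = 1 - val_scores.mean(axis=1)       # mean validation error per size

for n, tr, va in zip(sizes, train_err, val_err):
    print(f"n_train={n:4d}  training error={tr:.3f}  validation error={va:.3f}")
```

A training error near zero alongside a clearly higher validation error, with a gap that narrows only slowly as the training size grows, corresponds to the high-variance pattern described above.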

Experimental Protocols for Cross-Validation

k-Fold Cross-Validation Protocol

k-Fold Cross-Validation is a widely used standard for internal model evaluation and bias-variance assessment in research settings [14] [15]. The following protocol provides a detailed methodology for implementation:

Objective: To obtain reliable estimates of model generalization error while diagnosing bias-variance characteristics.

Materials and Requirements:

- Labeled dataset with sufficient samples for k-fold partitioning

- Computational environment with necessary machine learning libraries

- Candidate models with varying complexity levels

Procedure:

- Dataset Preparation: Randomly shuffle the dataset to eliminate ordering effects

- Fold Generation: Partition the data into k equal-sized folds (typically k=5 or k=10)

- Iterative Validation: For each fold i (i = 1 to k):

  - Designate fold i as the validation set

  - Combine remaining k-1 folds as the training set

  - Train the model on the training set

  - Evaluate performance on the validation set, recording relevant metrics

- Performance Aggregation: Calculate mean and standard deviation of performance metrics across all k iterations

Interpretation Guidelines [14] [13]:

- Low Bias Indication: Low mean error across folds

- Low Variance Indication: Low standard deviation of errors across folds

- Optimal Model Selection: Choose model complexity that minimizes mean error while maintaining acceptable variance
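The procedure above can be written out as an explicit loop. This is a minimal sketch assuming a synthetic dataset, logistic regression as the candidate model, and AUC as the recorded metric; any of these can be swapped for the model and metric under study.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import KFold

X, y = make_classification(n_samples=250, n_features=10, n_informative=4,
                           random_state=0)

# Dataset preparation and fold generation: shuffle, then split into k=5 folds
kf = KFold(n_splits=5, shuffle=True, random_state=0)

fold_auc = []
for train_idx, val_idx in kf.split(X):
    # Fold i is the validation set; the remaining k-1 folds form the training set
    model = LogisticRegression(max_iter=1000)
    model.fit(X[train_idx], y[train_idx])
    val_prob = model.predict_proba(X[val_idx])[:, 1]
    fold_auc.append(roc_auc_score(y[val_idx], val_prob))

# Performance aggregation: mean and standard deviation across the k folds
mean_auc = np.mean(fold_auc)
sd_auc = np.std(fold_auc, ddof=1)
print(f"AUC per fold: {np.round(fold_auc, 3)}")
print(f"mean={mean_auc:.3f}  SD={sd_auc:.3f}")
```

Repeating the loop for candidate models of different complexity and comparing their means and standard deviations follows the interpretation guidelines listed above.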

Comparative Analysis of Validation Methods

Different validation approaches offer distinct tradeoffs between computational efficiency and statistical reliability [14] [15]:

Table 3: Comparison of Model Validation Techniques

| Method | Procedure | Advantages | Limitations | Bias-Variance Properties |
|---|---|---|---|---|
| Holdout Validation | Single split into train/test sets (typically 70/30 or 80/20) | Computationally efficient, simple to implement | High variance in error estimate, inefficient data usage | Potentially high bias if split unrepresentative |
| k-Fold Cross-Validation | Data divided into k folds; each fold used once as test set | Reduced variance compared to holdout, more reliable error estimate | k times more computationally intensive than holdout | Balanced bias-variance when k=5-10 |
| Leave-One-Out Cross-Validation (LOOCV) | Each data point used once as test set | Low bias, uses nearly all data for training | Computationally prohibitive for large datasets, high variance in error estimate | Minimal bias but high variance |
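The difference in stability between a single holdout split and k-fold cross-validation can be examined by repeating both with different random seeds on the same data. The sketch below is a simulation under assumptions (synthetic data, logistic regression, 20 seeds) and is not a reproduction of any cited study.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import (StratifiedKFold, cross_val_score,
                                     train_test_split)

X, y = make_classification(n_samples=200, n_features=10, n_informative=4,
                           random_state=0)
model = LogisticRegression(max_iter=1000)

holdout_auc, kfold_auc = [], []
for seed in range(20):
    # One 70/30 holdout estimate for this seed
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.3, stratify=y, random_state=seed)
    fitted = model.fit(X_tr, y_tr)
    holdout_auc.append(roc_auc_score(y_te, fitted.predict_proba(X_te)[:, 1]))

    # One 5-fold estimate (mean over folds) for the same seed
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    kfold_auc.append(cross_val_score(model, X, y, cv=cv, scoring="roc_auc").mean())

holdout_sd = np.std(holdout_auc, ddof=1)
kfold_sd = np.std(kfold_auc, ddof=1)
print(f"holdout: mean AUC={np.mean(holdout_auc):.3f}  SD across splits={holdout_sd:.3f}")
print(f"5-fold:  mean AUC={np.mean(kfold_auc):.3f}  SD across splits={kfold_sd:.3f}")
```

In this kind of experiment, the holdout estimate usually varies more from split to split than the k-fold average, which is the behavior summarized in the table.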

Advanced Cross-Validation Protocol: Stratified k-Fold

For classification problems with imbalanced class distributions, Stratified k-Fold Cross-Validation provides enhanced reliability [15]:

Objective: To maintain consistent class distribution across folds, ensuring representative training and validation splits.

Procedure:

- Calculate class distribution in the full dataset

- For each class, distribute samples across k folds while preserving overall class ratios

- Follow standard k-fold procedure with stratified folds

- Report class-specific performance metrics in addition to overall performance

Quality Control: Verify that each fold maintains approximately the same class distribution as the full dataset.
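The quality-control check can be automated by comparing the event rate in each validation fold with the overall rate. The sketch below assumes a hypothetical binary outcome with 10% prevalence and random placeholder features.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

rng = np.random.default_rng(0)
y = np.array([1] * 40 + [0] * 360)     # hypothetical outcome, 10% prevalence
X = rng.normal(size=(y.size, 3))       # placeholder features

skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
overall_rate = y.mean()

fold_rates = []
for fold, (train_idx, val_idx) in enumerate(skf.split(X, y), start=1):
    fold_rates.append(y[val_idx].mean())
    print(f"fold {fold}: n={len(val_idx)}  "
          f"event rate={fold_rates[-1]:.3f}  (overall {overall_rate:.3f})")
```

With an unstratified split, the same check would typically show event rates drifting between folds, especially when the minority class is small.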

Mitigation Strategies and Model Optimization

Addressing High Bias (Underfitting)

When diagnostic indicators suggest high bias, researchers can employ several strategies [9] [13]:

- Increase Model Complexity: Transition from linear models to polynomial models, decision trees, or neural networks with appropriate capacity

- Feature Engineering: Create additional relevant features, interaction terms, or transformed variables that may better capture underlying relationships

- Reduce Regularization: Decrease the strength of L1/L2 regularization penalties that may be overly constraining the model

- Algorithm Selection: Switch to more expressive model families that can capture complex patterns in the data

Addressing High Variance (Overfitting)

When diagnostic indicators suggest high variance, researchers can implement the following approaches [9] [13]:

- Gather More Training Data: Increasing dataset size remains one of the most effective approaches to reduce overfitting

- Regularization Techniques: Implement L1 (Lasso) or L2 (Ridge) regularization to penalize model complexity

- Feature Selection: Remove irrelevant or redundant features using techniques like recursive feature elimination

- Ensemble Methods: Employ bagging techniques (e.g., Random Forests) that average multiple models to reduce variance

- Early Stopping: For iterative algorithms, halt training when validation performance begins to degrade
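The effect of regularization strength on the training-validation gap can be inspected with scikit-learn's `validation_curve`. The sketch below assumes a synthetic dataset with many more features than informative signals and an L2-penalized logistic regression. In scikit-learn, a smaller `C` means stronger regularization.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import validation_curve

# Few samples relative to the number of features: a setting prone to overfitting
X, y = make_classification(n_samples=120, n_features=60, n_informative=5,
                           random_state=0)

C_values = np.logspace(-3, 3, 7)   # from strong to weak regularization
train_scores, val_scores = validation_curve(
    LogisticRegression(max_iter=5000), X, y,
    param_name="C", param_range=C_values, cv=5, scoring="accuracy",
)

train_mean = train_scores.mean(axis=1)
val_mean = val_scores.mean(axis=1)
for C, tr, va in zip(C_values, train_mean, val_mean):
    print(f"C={C:8.3f}  training accuracy={tr:.3f}  validation accuracy={va:.3f}")
```

A widening gap between training and validation accuracy as `C` grows suggests that the weaker penalty is allowing the model to fit noise.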

Advanced Techniques for Bias-Variance Optimization

Ensemble methods provide sophisticated approaches to managing the bias-variance tradeoff [9] [10]:

- Bagging (Bootstrap Aggregating): Reduces variance by averaging multiple models trained on different data subsets

- Boosting: Sequentially builds models that focus on previously misclassified examples, primarily reducing bias and, in many cases, variance as well

- Stacking: Combines multiple models through a meta-learner to leverage strengths of different algorithms
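A quick way to see how ensembles behave relative to a single high-variance learner is to score them under the same cross-validation scheme. The sketch below assumes a synthetic dataset and default hyperparameters. It covers bagging, random forests, and gradient boosting, and omits stacking for brevity.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (BaggingClassifier, GradientBoostingClassifier,
                              RandomForestClassifier)
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=300, n_features=20, n_informative=5,
                           random_state=0)

models = {
    "single tree": DecisionTreeClassifier(random_state=0),
    # Bagging: average many trees fitted on bootstrap resamples
    "bagging": BaggingClassifier(DecisionTreeClassifier(random_state=0),
                                 n_estimators=50, random_state=0),
    "random forest": RandomForestClassifier(n_estimators=100, random_state=0),
    # Boosting: shallow trees added sequentially to correct earlier errors
    "gradient boosting": GradientBoostingClassifier(random_state=0),
}

cv_summary = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=5, scoring="roc_auc")
    cv_summary[name] = (scores.mean(), scores.std(ddof=1))
    print(f"{name:18s} AUC={scores.mean():.3f} +/- {scores.std(ddof=1):.3f}")
```

The ranking depends on the data, so results from a synthetic example like this should be read as an illustration of the workflow rather than as evidence that one ensemble is better in general.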

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Bias-Variance Research

| Research Reagent | Function | Application Context | Implementation Examples |
|---|---|---|---|
| k-Fold Cross-Validator | Partition data into training/validation folds | Model evaluation protocol | Scikit-learn KFold, StratifiedKFold |
| Regularization Modules | Apply penalty terms to control model complexity | Overfitting mitigation | L1 (Lasso), L2 (Ridge), Elastic Net |
| Ensemble Algorithm Suite | Combine multiple models to improve generalization | Both bias and variance reduction | Random Forests, Gradient Boosting, Stacking |
| Learning Curve Generator | Visualize training vs. validation performance | Diagnostic assessment | Scikit-learn learning_curve |
| Hyperparameter Optimization | Systematic search for optimal model parameters | Bias-variance balancing | GridSearchCV, RandomizedSearchCV |
| Feature Selection Toolkit | Identify most relevant variables or reduce dimensionality | Variance reduction | Recursive Feature Elimination; PCA (dimensionality reduction rather than selection) |

The bias-variance tradeoff provides an essential theoretical framework for understanding model generalization in predictive modeling research [8] [11]. Through systematic application of cross-validation protocols and diagnostic techniques outlined in this document, researchers can develop models that optimally balance underfitting and overfitting tendencies [14] [15].

For scientific applications, particularly in high-stakes domains like drug development, rigorous validation using these principles ensures that predictive models will maintain performance on new data, ultimately supporting robust scientific conclusions and decision-making [13] [10]. The integration of these methodologies into the model development lifecycle represents a critical component of modern predictive analytics in research environments.

In the development of predictive models for critical applications such as drug development and clinical diagnostics, validating model performance is as crucial as model building itself. The holdout method, which involves splitting a dataset into separate training and testing subsets, has been a fundamental validation technique in machine learning due to its simplicity and computational efficiency [16] [17]. In this method, a typical split ratio of 70:30 or 80:20 is used, where the larger portion trains the model and the remaining holdout set tests its performance [16] [18].

However, the holdout method has significant limitations, especially for the small-sample-size datasets prevalent in biomedical research [19] [20]. These limitations include high variance in performance estimates caused by dependence on the specific data partition, inefficient use of scarce data because both the training and the testing sample are smaller than the full dataset, and performance estimates that may be overly optimistic or pessimistic [19] [21]. Simulation studies have demonstrated that in small datasets, using a holdout set or a very small external dataset results in performance estimates with large uncertainty, making cross-validation a preferred alternative for more reliable validation [19].

Quantitative Comparison of Validation Methods

The performance and stability of different validation methods can be quantitatively assessed through simulation studies. The tables below summarize key findings from such analyses, highlighting the impact of dataset size and validation technique on model performance metrics.

Table 1: Performance of internal validation methods on a simulated dataset of 500 patients (based on [19]). AUC = Area Under the Curve; SD = Standard Deviation.

| Validation Method | CV-AUC (Mean ± SD) | Calibration Slope | Key Characteristics |
|---|---|---|---|
| Holdout (n=100) | 0.70 ± 0.07 | Comparable | Higher uncertainty in performance estimate |
| Cross-Validation (5-fold) | 0.71 ± 0.06 | Comparable | More stable performance estimate |
| Bootstrapping | 0.67 ± 0.02 | Comparable | Lower AUC, less variable estimate |

Table 2: Impact of external test set size on model performance precision (based on [19]).

| Test Set Size | Impact on CV-AUC Estimate | Impact on Calibration Slope SD |
|---|---|---|
| n=100 | Less precise | Larger SD |
| n=200 | More precise | Smaller SD |
| n=500 | More precise | Smaller SD |

Experimental Protocols for Model Validation

This section provides detailed methodologies for key validation experiments, enabling researchers to implement robust evaluation frameworks for their predictive models.

Protocol: Holdout Validation for Model Evaluation

This protocol outlines the steps for a basic holdout validation, suitable for initial model assessment when data is relatively abundant [16] [17].

- Dataset Splitting: Randomly shuffle the entire dataset and split it into two mutually exclusive subsets using a typical ratio (e.g., 70% for training and 30% for testing). In Python's scikit-learn, this is achieved with the `train_test_split` function [16] [18].

- Model Training: Train the predictive model (e.g., Logistic Regression, Random Forest) using only the training subset.

- Model Testing & Evaluation: Use the trained model to generate predictions for the holdout test set. Calculate performance metrics (e.g., Accuracy, AUC) by comparing these predictions to the true labels.

- Final Model Training (Optional): For deployment, the final model may be retrained on the entire dataset to leverage all available information [17].
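The holdout protocol maps onto a few scikit-learn calls. The sketch below assumes a synthetic dataset, a 70/30 stratified split, and logistic regression as the example model. The variable names are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, roc_auc_score
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=1000, n_features=10, n_informative=4,
                           random_state=0)

# Dataset splitting: shuffled, stratified 70/30 split
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, shuffle=True, stratify=y, random_state=0)

# Model training: the model sees only the training subset
model = LogisticRegression(max_iter=1000).fit(X_train, y_train)

# Model testing and evaluation on the holdout set
test_prob = model.predict_proba(X_test)[:, 1]
test_auc = roc_auc_score(y_test, test_prob)
test_acc = accuracy_score(y_test, model.predict(X_test))
print(f"holdout AUC={test_auc:.3f}  accuracy={test_acc:.3f}")

# Final model training (optional): refit on all data for deployment.
# The holdout metrics above then describe the procedure, not this exact model.
final_model = LogisticRegression(max_iter=1000).fit(X, y)
```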

Protocol: k-Fold Cross-Validation for Small Datasets

This protocol is designed for small datasets where the holdout method is unreliable. It provides a more robust estimate of model performance by using data more efficiently [19] [15].

- Define Folds: Partition the entire dataset into k equal-sized folds (common choices are k=5 or k=10). For stratified k-fold, ensure each fold preserves the overall class distribution of the dataset [15].

- Iterative Training and Validation: Repeat the following process for each of the k folds:

  - Validation Set: Designate one fold as the validation set.

  - Training Set: Combine the remaining k-1 folds to form the training set.

  - Model Training & Evaluation: Train the model on the training set and evaluate it on the validation set. Record the chosen performance metric(s).

- Performance Aggregation: Calculate the final performance estimate by averaging the metric values obtained from all k iterations. This average provides a more stable and reliable measure of expected model performance [18] [15].
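For a small dataset, the whole protocol can be expressed compactly with `cross_val_score` and a stratified splitter. The sketch below assumes a hypothetical cohort of 60 samples generated synthetically and logistic regression as the model.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Hypothetical small cohort of 60 patients
X, y = make_classification(n_samples=60, n_features=8, n_informative=3,
                           random_state=0)

# Define folds: stratified so each fold keeps the overall class distribution
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Iterative training and validation is handled internally, one score per fold
scores = cross_val_score(LogisticRegression(max_iter=1000), X, y,
                         cv=cv, scoring="roc_auc")

# Performance aggregation
print(f"AUC per fold: {scores.round(3)}")
print(f"mean AUC={scores.mean():.3f}  SD={scores.std(ddof=1):.3f}")
```

With only about a dozen samples per validation fold, the per-fold scores can vary considerably, so reporting the spread alongside the mean is advisable.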

Protocol: Validation with an Independent External Test Set

This protocol simulates a true external validation, which is the gold standard for assessing a model's generalizability to new populations or settings, a critical step in clinical application [19] [21].

- Training Set Creation: Use the original, internal dataset (e.g., from a specific clinical trial) for model development. Internal validation techniques like k-fold CV can be applied here for model tuning.

- External Test Set Acquisition: Obtain a completely independent dataset, collected from a different center, population, or under different conditions (e.g., different PET reconstruction characteristics like EARL2) [19].

- Blinded Evaluation: Apply the final, fixed model (no retraining on the external set) to this independent test set to evaluate its performance.

- Analysis of Performance Shift: Compare the performance metrics from the external test set with those from the internal validation. A significant drop may indicate overfitting or limited generalizability due to population differences, which may require model adjustment or stratification [19].
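The external validation steps can be mimicked in simulation. The sketch below is hypothetical throughout: the "external" cohort is produced by adding an offset and extra measurement noise to part of a synthetic dataset, which only loosely stands in for differences between centers or acquisition settings.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
X, y = make_classification(n_samples=800, n_features=10, n_informative=4,
                           random_state=0)

# Training set creation: internal cohort used for development
X_int, y_int = X[:400], y[:400]
# External test set: same outcome mechanism, but features are shifted and noisier
X_ext = X[400:] + rng.normal(0.5, 1.0, size=X[400:].shape)
y_ext = y[400:]

# Internal validation with 5-fold cross-validation
internal_auc = cross_val_score(LogisticRegression(max_iter=1000),
                               X_int, y_int, cv=5, scoring="roc_auc").mean()

# Blinded evaluation: fix the final model, then apply it without retraining
final_model = LogisticRegression(max_iter=1000).fit(X_int, y_int)
external_auc = roc_auc_score(y_ext, final_model.predict_proba(X_ext)[:, 1])

# Analysis of performance shift
performance_shift = internal_auc - external_auc
print(f"internal CV AUC={internal_auc:.3f}  external AUC={external_auc:.3f}  "
      f"shift={performance_shift:.3f}")
```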

Workflow Visualization of Validation Strategies

The following diagram illustrates the logical flow and data usage of the three core validation strategies discussed in the protocols.

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential methodological components and their functions in the design and validation of robust predictive models.

Table 3: Essential methodological components for predictive model validation.

| Research Reagent | Function & Explanation |
|---|---|
| Stratified Splitting | Ensures that the distribution of the outcome variable (e.g., disease prevalence) is consistent across training and test splits. This is crucial for imbalanced datasets common in medical research (e.g., rare diseases) to avoid biased performance estimates [15]. |
| Calibration Analysis | Assesses the agreement between predicted probabilities and actual observed frequencies. A calibration slope of 1 indicates perfect calibration, while values <1 suggest overfitting (predictions are too extreme) [19]. |
| Performance Metrics (AUC) | The Area Under the Receiver Operating Characteristic curve measures discrimination: the model's ability to distinguish between classes (e.g., diseased vs. healthy). It is a core metric for binary classification problems [19] [22]. |
| Resampling Methods (Bootstrapping) | A validation technique that involves creating multiple new datasets by randomly sampling the original data with replacement. It is used to estimate the model's performance and stability, particularly useful with small datasets [19] [18]. |
| Logistic Regression | A best-practice, interpretable modeling technique often required in regulated contexts like credit lending and drug development. Its explainability is key for regulatory approval and understanding underlying risks [22]. |
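The calibration slope mentioned in the table is commonly estimated by regressing the observed outcome on the logit of the predicted probability. The sketch below is one way to do this with scikit-learn, under assumptions: the data are synthetic, the development set is deliberately small to provoke overfitting, and a very large `C` is used to approximate an unpenalized logistic fit.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=2000, n_features=30, n_informative=5,
                           random_state=0)
# Small development set (80 samples, 30 features); the rest serves as validation data
X_dev, X_val, y_dev, y_val = train_test_split(
    X, y, train_size=80, stratify=y, random_state=0)

# Weak regularization on few samples encourages overly extreme predictions
model = LogisticRegression(C=1e4, max_iter=5000).fit(X_dev, y_dev)

# Logit of the predicted probabilities, clipped to avoid infinite values
prob = np.clip(model.predict_proba(X_val)[:, 1], 1e-6, 1 - 1e-6)
logit = np.log(prob / (1 - prob)).reshape(-1, 1)

# Regress the outcome on the logit; the coefficient is the calibration slope
recalibration = LogisticRegression(C=1e6, max_iter=5000).fit(logit, y_val)
calibration_slope = recalibration.coef_[0, 0]
print(f"calibration slope on validation data: {calibration_slope:.2f}")
```

A slope well below 1 in this setup reflects predictions that are more extreme than the validation data support, which is the overfitting signature described in the table.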

In predictive model research, particularly within pharmaceutical drug discovery, robust validation frameworks are essential for developing models that generalize effectively to real-world scenarios. Cross-validation serves as a cornerstone methodology, addressing three interconnected core objectives: performance estimation, hyperparameter tuning, and algorithm selection. These practices directly counter the pervasive challenge of overfitting, where models perform well on training data but fail on unseen data, a critical concern in high-stakes fields like drug development [1].

The following application notes delineate structured protocols and quantitative frameworks to guide researchers in implementing cross-validation strategies that ensure model reliability, reproducibility, and translational utility.

Performance Estimation

Purpose and Significance

Performance estimation aims to provide an unbiased assessment of a predictive model's generalization error—its expected performance on unseen data. Accurate estimation is fundamental to evaluating a model's practical utility and is a critical checkpoint before deployment in drug discovery pipelines [1] [23].

Recommended Cross-Validation Techniques

The choice of technique depends on dataset size, structure, and computational constraints. Key methods include:

- K-Fold Cross-Validation: The dataset is randomly partitioned into k equally sized folds. The model is trained on k-1 folds and validated on the remaining fold. This process is repeated k times, with each fold used exactly once as the validation set. The final performance estimate is the average of the k validation scores [15] [23]. A value of k=10 is often recommended as a standard, providing a good balance between bias and variance [15].

- Stratified K-Fold Cross-Validation: A variation of K-Fold that preserves the percentage of samples for each class in every fold. This is crucial for imbalanced datasets, which are common in medical research, such as in predicting rare drug side effects [15].

- Leave-One-Out Cross-Validation (LOOCV): A special case of K-Fold where k equals the number of samples. While it leads to a low-bias estimate, it is computationally expensive and can yield high variance [15].
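The three splitters described above can be run side by side in scikit-learn. The sketch below is illustrative only: the synthetic dataset, the logistic regression model, and the accuracy metric are assumptions chosen to keep the example small, not part of any cited study.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, LeaveOneOut, StratifiedKFold, cross_val_score

# Synthetic, mildly imbalanced stand-in for a small clinical dataset (assumption).
X, y = make_classification(n_samples=120, n_features=10, weights=[0.8, 0.2],
                           random_state=0)
model = LogisticRegression(max_iter=1000)

splitters = {
    "k-fold (k=10)": KFold(n_splits=10, shuffle=True, random_state=0),
    "stratified k-fold (k=10)": StratifiedKFold(n_splits=10, shuffle=True, random_state=0),
    "LOOCV": LeaveOneOut(),
}

results = {}
for name, cv in splitters.items():
    # Accuracy is used because AUC is undefined on a single-sample LOOCV test fold.
    scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    results[name] = scores
    print(f"{name}: {len(scores)} model fits, mean accuracy = {scores.mean():.3f}")
```

The number of fits makes the cost difference concrete: 10 for either k-fold variant versus one fit per sample for LOOCV.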

Table 1: Comparison of Common Performance Estimation Techniques

| Technique | Best Use Case | Key Advantages | Key Disadvantages |

|---|---|---|---|

| Holdout Validation | Very large datasets; quick evaluation [15] | Simple and fast to compute [15] | High variance; unreliable estimate with a single split [15] |

| K-Fold CV (k=5 or 10) | Small to medium datasets; general purpose [15] | Lower bias than holdout; more reliable performance estimate [15] [23] | Slower than holdout; model is trained and evaluated k times [15] |

| Stratified K-Fold CV | Imbalanced classification problems [15] | Ensures representative class distribution in each fold; reduces bias [15] | Same computational cost as standard K-Fold |

| LOOCV | Very small datasets where data is precious [15] | Utilizes all data for training; low bias [15] | Computationally prohibitive for large datasets; high variance [15] |

Performance Metrics for Drug Discovery

The selection of evaluation metrics must align with the specific research goal. In drug discovery, common metrics include [24] [25]:

- For Regression Models: Root Mean Square Error (RMSE), Pearson Correlation Coefficient (Rpearson), and Spearman Rank Correlation Coefficient (Rspearman) [24].

- For Classification Models: Accuracy, Precision (Positive Predictive Value), Recall (Sensitivity), F1-Score (harmonic mean of precision and recall), and the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) [25].
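As a minimal sketch of how these metrics are computed in practice, the example below uses scipy and scikit-learn on small hand-made arrays. All values are hypothetical and serve only to show the function calls; the 0.5 classification threshold is likewise an assumption.

```python
import numpy as np
from scipy.stats import pearsonr, spearmanr
from sklearn.metrics import (accuracy_score, f1_score, mean_squared_error,
                             precision_score, recall_score, roc_auc_score)

# Regression: hypothetical measured vs. predicted drug activities.
y_true = np.array([0.10, 0.40, 0.35, 0.80, 0.95])
y_pred = np.array([0.15, 0.30, 0.45, 0.70, 0.90])
rmse = np.sqrt(mean_squared_error(y_true, y_pred))
r_pearson, _ = pearsonr(y_true, y_pred)
r_spearman, _ = spearmanr(y_true, y_pred)
print(f"RMSE={rmse:.3f}  Rpearson={r_pearson:.3f}  Rspearman={r_spearman:.3f}")

# Classification: hypothetical labels and predicted probabilities.
labels = np.array([0, 0, 1, 1, 0, 1, 0, 0])
probs = np.array([0.1, 0.4, 0.8, 0.6, 0.2, 0.3, 0.7, 0.1])
preds = (probs >= 0.5).astype(int)  # hard labels at an assumed 0.5 threshold

clf_metrics = {
    "accuracy": accuracy_score(labels, preds),
    "precision": precision_score(labels, preds),
    "recall": recall_score(labels, preds),
    "f1": f1_score(labels, preds),
    "auc_roc": roc_auc_score(labels, probs),  # AUC uses probabilities, not hard labels
}
for name, value in clf_metrics.items():
    print(f"{name}: {value:.3f}")
```

Note that AUC-ROC is computed from the continuous scores, while precision, recall, and F1 depend on the chosen threshold.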

Table 2: Quantitative Performance Metrics from a Drug Response Prediction Study

| Metric | All Drugs (Mean ± SD) | Selective Drugs (Mean ± SD) | Interpretation |

|---|---|---|---|

| Rpearson | 0.885 ± 0.021 | 0.781 ± 0.032 | Strong positive correlation between predicted and actual drug activity [24] |

| Rspearman | 0.891 ± 0.019 | 0.791 ± 0.029 | Strong rank-order correlation [24] |

| Hit Rate in Top 10 | 6.6 ± 0.5 | 4.3 ± 0.6 | Number of correctly identified highly active drugs in top 10 predictions [24] |

Hyperparameter Tuning

The Tuning Imperative

Hyperparameters are configuration settings chosen before training rather than learned from the data during model fitting (e.g., learning rate, number of trees in a random forest, regularization strength). Tuning them is vital for optimizing model performance and is a primary defense against overfitting [1].

Nested Cross-Validation Protocol

Using a standard K-Fold CV for both hyperparameter tuning and performance estimation leads to optimistically biased results because the test set information "leaks" into the model selection process [23]. Nested cross-validation rigorously addresses this issue by embedding two layers of cross-validation.

Objective: To identify the optimal hyperparameter set for a given algorithm without biasing the final performance estimate.

Procedure:

- Define an outer loop for performance estimation (e.g., 5-fold or 10-fold CV).

- Define an inner loop for hyperparameter tuning within each training fold of the outer loop.

- For each fold in the outer loop:

  a. Split the data into outer training and outer test sets.

  b. On the outer training set, perform a grid or random search using a CV method (e.g., 3-fold or 5-fold) to evaluate different hyperparameter combinations.

  c. Select the best-performing hyperparameters from the inner search.

  d. Retrain a model on the entire outer training set using these optimal hyperparameters.

  e. Evaluate this final model on the held-out outer test set to obtain an unbiased performance score.

- The final model's generalization performance is the average of the scores across all outer test folds.
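One common way to express this procedure in scikit-learn is to pass a GridSearchCV object (the inner loop) to cross_val_score (the outer loop). The sketch below assumes a synthetic dataset, an SVC classifier, and a small illustrative grid; none of these come from the cited studies.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.svm import SVC

# Synthetic placeholder data (assumption).
X, y = make_classification(n_samples=150, n_features=8, random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # tuning
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # estimation

# Inner loop: grid search. With the default refit=True, the best configuration
# is retrained on the whole outer training set (steps b-d).
search = GridSearchCV(
    SVC(),
    param_grid={"C": [0.1, 1, 10], "gamma": ["scale", 0.01]},
    cv=inner_cv,
    scoring="roc_auc",
)

# Outer loop: the search is cloned and refit on each outer training set,
# then scored on the corresponding held-out outer test fold (step e).
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")
print(f"Nested CV AUC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

Because each outer fold runs its own search, the selected hyperparameters may differ between folds; the reported score describes the tuning procedure as a whole rather than one fixed configuration.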

Experimental Protocol: Tuning a Random Forest for Drug Response Prediction

This protocol is based on methodologies successfully applied to predict drug responses in patient-derived cell cultures [24].

- Objective: Optimize the hyperparameters of a Random Forest model to maximize predictive accuracy for drug activity.

- Algorithm: Random Forest (as implemented in scikit-learn).

- Hyperparameter Search Space:

- n_estimators: [50, 100, 200] (Number of trees in the forest)

- max_depth: [5, 10, 20, None] (Maximum depth of the tree)

- min_samples_split: [2, 5, 10] (Minimum number of samples required to split a node)

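- Inner CV Method: 5-Fold Cross-Validation (stratified only if the response is discretized into classes, since stratification requires categorical labels).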

- Scoring Metric: Spearman Rank Correlation (Rspearman), to prioritize correct ranking of drug efficacies [24].

- Procedure:

- Within the outer training set, instantiate a GridSearchCV or RandomizedSearchCV object with the defined hyperparameter space.

- Fit the searcher object using the inner CV. The best hyperparameters (e.g., n_estimators=100, max_depth=10) will be identified based on the average Rspearman across the 5 inner folds.

- Proceed with the retraining and evaluation steps as described in the nested CV protocol.
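The inner search of this protocol might look like the following sketch. Several choices here are assumptions for illustration: the data are synthetic, RandomizedSearchCV with six sampled configurations is used to keep the run short, the helper name spearman_corr is hypothetical, and a plain KFold splitter is used because the target is continuous.

```python
from scipy.stats import spearmanr
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import make_scorer
from sklearn.model_selection import KFold, RandomizedSearchCV


def spearman_corr(y_true, y_pred):
    """Spearman rank correlation between observed and predicted activity."""
    return spearmanr(y_true, y_pred)[0]


spearman_scorer = make_scorer(spearman_corr, greater_is_better=True)

# Synthetic stand-in for one outer training set (assumption).
X_train, y_train = make_regression(n_samples=100, n_features=10, noise=10.0,
                                   random_state=0)

param_space = {
    "n_estimators": [50, 100, 200],
    "max_depth": [5, 10, 20, None],
    "min_samples_split": [2, 5, 10],
}

search = RandomizedSearchCV(
    RandomForestRegressor(random_state=0),
    param_distributions=param_space,
    n_iter=6,                      # sample 6 of the 36 combinations
    scoring=spearman_scorer,
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
    random_state=0,
)
search.fit(X_train, y_train)
print("Best hyperparameters:", search.best_params_)
print(f"Mean inner-fold Rspearman: {search.best_score_:.3f}")
```

Swapping RandomizedSearchCV for GridSearchCV (with param_grid in place of param_distributions and no n_iter) would evaluate all 36 combinations at a proportionally higher cost.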

Algorithm Selection

A Framework for Fair Comparison

Algorithm selection involves comparing different types of predictive models (e.g., Random Forest vs. Support Vector Machine vs. Logistic Regression) to determine the most suitable one for a given task. A fair comparison requires that all algorithms are evaluated on the same data splits and with their hyperparameters optimally tuned.

Protocol for Rigorous Algorithm Comparison

The nested cross-validation framework used for hyperparameter tuning naturally extends to algorithm selection. Each candidate algorithm undergoes the same nested CV process, and their final performance estimates (from the outer test folds) are compared.

- Objective: To compare the generalization performance of multiple machine learning algorithms and select the best one for a specific drug discovery problem.

- Procedure:

- Define Candidate Algorithms: Select a set of algorithms appropriate for the problem (e.g., Logistic Regression, Random Forest, Gradient Boosting, SVM).

- Define Tuning Strategy: For each algorithm, define a relevant hyperparameter search space for the inner loop.

- Execute Nested CV: Run the nested cross-validation protocol independently for each candidate algorithm.

- Compare Performance: Statistically compare the distribution of outer test scores (e.g., from 10 folds) across all algorithms. The algorithm with the best and most robust average performance is selected.
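The comparison procedure can be sketched as below. The two candidate algorithms, their small grids, and the synthetic data are illustrative assumptions. Fixing the random seed of the outer splitter gives every algorithm identical outer folds, which allows fold-by-fold (paired) comparison.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=200, n_features=12, n_informative=5,
                           random_state=0)

# Same seeded splitters for every candidate, so the folds are identical.
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)

candidates = {
    "logistic_regression": (
        make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
        {"logisticregression__C": [0.1, 1.0, 10.0]},
    ),
    "random_forest": (
        RandomForestClassifier(n_estimators=50, random_state=0),
        {"max_depth": [3, None]},
    ),
}

comparison = {}
for name, (estimator, grid) in candidates.items():
    search = GridSearchCV(estimator, grid, cv=inner_cv, scoring="roc_auc")
    comparison[name] = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")
    print(f"{name}: AUC {comparison[name].mean():.3f} +/- {comparison[name].std():.3f}")

# Paired fold-wise differences (same outer folds for both algorithms).
diff = comparison["random_forest"] - comparison["logistic_regression"]
print("Fold-wise AUC difference (RF - LR):", diff.round(3))
```

Fold scores from the same dataset are not independent, so any significance test applied to these differences should be read as approximate.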

Table 3: Example Algorithm Comparison for Side Effect Prediction

| Algorithm | Mean AUC | Std. Dev. AUC | Key Hyperparameters Tuned | Considerations for Drug Discovery |

|---|---|---|---|---|

| Random Forest | 0.89 | 0.03 | n_estimators, max_depth, min_samples_split | Handles high-dimensional data well; provides feature importance [24] |

| Support Vector Machine (SVM) | 0.87 | 0.04 | C, kernel, gamma | Can model complex interactions but less interpretable [23] |

| Logistic Regression | 0.85 | 0.05 | C, penalty (L1/L2) | Highly interpretable; good baseline model [25] |

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and data "reagents" essential for implementing robust cross-validation in predictive drug discovery research.

Table 4: Essential Research Reagents for Cross-Validation Studies

| Reagent / Tool | Function / Purpose | Example / Specification |

|---|---|---|

| scikit-learn Library | Provides unified API for models, CV splitters, and metrics [23] | GridSearchCV, cross_validate, KFold, StratifiedKFold |

| High-Performance Computing (HPC) Cluster | Manages computational load of nested CV and large-scale hyperparameter tuning [24] | Cloud-based (AWS, GCP) or on-premise cluster with multiple GPUs/CPUs |

| Structured Bioactivity Dataset | Serves as the foundational data for training and validating predictive models [24] | GDSC, TCGA, or in-house patient-derived cell culture (PDC) screens [24] |

| Molecular Descriptors & Fingerprints | Numerical representations of chemical structures used as model input features [24] | ECFP fingerprints, molecular weight, cLogP, etc. |

| Pipeline Tool | Encapsulates preprocessing and model steps to prevent data leakage during CV [23] | sklearn.pipeline.Pipeline |

| Version Control System | Tracks exact code, parameters, and data versions for full reproducibility [1] | Git repositories with detailed commit history |

A Practical Guide to Implementing Cross-Validation Techniques with Clinical Data

K-fold cross-validation is a fundamental resampling procedure used to evaluate the skill of machine learning models on a limited data sample. As a cornerstone of predictive model validation, it provides a more robust estimate of a model's expected performance on unseen data than a simple train/test split, thereby helping to identify and prevent overfitting [23] [26]. The procedure is widely adopted in applied machine learning and clinical prediction research because it is straightforward to understand and implement, and it generally yields skill estimates with lower bias than alternatives such as a single holdout validation [27] [26]. In drug development and healthcare modeling, datasets are often costly, restricted, and of small to moderate size, so making efficient use of all available data is paramount; this is a key advantage of k-fold cross-validation [27].

The core principle behind k-fold cross-validation is to split the available dataset into k groups, or "folds," of approximately equal size. The model is trained and evaluated k times, each time using a different fold as the validation set and the remaining k-1 folds as the training set. The final performance metric is then typically the average of the k validation scores [23] [28]. This process ensures that every observation in the dataset is used for validation exactly once and for training k-1 times, providing a comprehensive assessment of model performance [28].

The K-Fold Algorithm and Workflow

The Standard Procedure

The general procedure for k-fold cross-validation follows a standardized sequence of steps designed to ensure a robust evaluation [26]:

- Shuffle the dataset randomly. This step is crucial to minimize any bias that might be introduced by the initial order of the data.

- Split the dataset into k groups. The value k defines the number of folds and is a key choice, discussed in detail under "Choosing the Right Value of K".

- For each unique group:

  a. Take the group as a hold out or test data set.

  b. Take the remaining groups as a training data set.

  c. Fit a model on the training set and evaluate it on the test set. Any data preprocessing (e.g., standardization, feature selection) must be learned from the training set within the loop and then applied to the test set to prevent data leakage [23].

  d. Retain the evaluation score and discard the model. The model itself is a means to an end; the primary output is the performance score.

- Summarize the skill of the model using the sample of the k model evaluation scores, most commonly by reporting the mean and standard deviation of the scores [23] [26].
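Written out as an explicit loop, the steps above might look like the following sketch. The synthetic data, the logistic regression model, and the AUC metric are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import KFold
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=200, n_features=10, random_state=0)

# Steps 1-2: shuffle and split into k groups.
kf = KFold(n_splits=5, shuffle=True, random_state=0)

fold_scores = []
for train_idx, test_idx in kf.split(X):
    # Step 3c: preprocessing is learned from the training fold only...
    scaler = StandardScaler().fit(X[train_idx])
    model = LogisticRegression(max_iter=1000)
    model.fit(scaler.transform(X[train_idx]), y[train_idx])

    # ...and then applied, unchanged, to the held-out fold.
    probs = model.predict_proba(scaler.transform(X[test_idx]))[:, 1]

    # Step 3d: keep the score; the fitted model is discarded on the next pass.
    fold_scores.append(roc_auc_score(y[test_idx], probs))

# Step 4: summarize with mean and standard deviation.
print(f"AUC: {np.mean(fold_scores):.3f} +/- {np.std(fold_scores):.3f}")
```

In practice the scaler and model are usually wrapped in a Pipeline and passed to cross_val_score, which performs the same per-fold fitting automatically.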

Workflow Visualization

The following diagram illustrates the logical flow and data splitting protocol of the k-fold cross-validation algorithm.

Diagram: K-Fold Cross-Validation Workflow. This diagram illustrates the iterative process of model training and validation across K data partitions.

Key Considerations for Clinical and Healthcare Data

Applying k-fold cross-validation to real-world health care data, such as Electronic Health Records (EHR), introduces specific challenges that must be addressed to obtain valid performance estimates [27].

Subject-Wise vs. Record-Wise Splitting

Clinical data often contain multiple records or measurements per individual patient. A critical decision is whether to perform record-wise or subject-wise splitting [27].

- Record-wise splitting splits individual events or encounters randomly, which risks having records from the same patient in both the training and test sets. This can lead to data leakage and spuriously high performance, as the model may learn to identify patients rather than generalizable clinical patterns [27].

- Subject-wise splitting ensures all records from a single patient are kept within the same fold (either all in training or all in testing). This is generally the recommended and more conservative approach for prognosis over time, as it better simulates the real-world scenario of predicting for a new, unseen patient [27].

The choice depends on the modeling goal: record-wise validation might be acceptable for diagnosis at a specific encounter, but subject-wise is favorable for longitudinal prognosis [27].
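The difference between the two strategies can be checked directly by counting how many patients appear on both sides of each split. In this sketch the patient identifiers and feature values are synthetic, and the helper shared_patients is a hypothetical name introduced for illustration.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold

rng = np.random.default_rng(0)
n_patients, records_per_patient = 30, 4
patient_ids = np.repeat(np.arange(n_patients), records_per_patient)
X = rng.normal(size=(patient_ids.size, 5))  # placeholder features


def shared_patients(splitter, **split_kwargs):
    """Per fold, count patients present in both the training and test sets."""
    return [
        len(set(patient_ids[train]) & set(patient_ids[test]))
        for train, test in splitter.split(X, **split_kwargs)
    ]


# Record-wise: rows are shuffled without regard to patient identity.
record_wise = shared_patients(KFold(n_splits=5, shuffle=True, random_state=0))

# Subject-wise: GroupKFold keeps each patient's rows in a single fold.
subject_wise = shared_patients(GroupKFold(n_splits=5), groups=patient_ids)

print("Patients shared between train and test, record-wise: ", record_wise)
print("Patients shared between train and test, subject-wise:", subject_wise)
```

Any non-zero count in the record-wise result is a potential leakage path, since the model has already seen other records from the patients it is tested on.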

Handling Imbalanced Outcomes

Clinical outcomes, such as mortality or rare adverse events, are often imbalanced, with a low incidence rate (e.g., ≤1%) [27]. Randomly partitioning data can create folds with varying outcome rates or even folds with no positive instances, leading to unreliable performance estimates. Stratified k-fold cross-validation is a solution that ensures each fold has approximately the same proportion of the class labels as the complete dataset [27] [23]. This is considered necessary for highly imbalanced classification problems [27].
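The effect of stratification is easy to see by counting positive cases per test fold. The sketch below assumes a hypothetical outcome with 20 events in 1,000 records (2% incidence); the feature matrix is a placeholder because only the labels matter for the split.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Hypothetical rare outcome: 20 positives among 1,000 records.
y = np.zeros(1000, dtype=int)
y[:20] = 1
np.random.default_rng(0).shuffle(y)
X = np.zeros((1000, 1))  # placeholder features


def positives_per_fold(splitter):
    """Number of positive cases landing in each test fold."""
    return [int(y[test].sum()) for _, test in splitter.split(X, y)]


plain = positives_per_fold(KFold(n_splits=10, shuffle=True, random_state=0))
stratified = positives_per_fold(
    StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
)

print("Positives per test fold, plain k-fold:     ", plain)
print("Positives per test fold, stratified k-fold:", stratified)
```

With plain k-fold the counts vary from fold to fold by chance, whereas stratification spreads the 20 events evenly across the 10 folds.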

Choosing the Right Value of K

The choice of k is a critical decision in the cross-validation process, as it directly influences the bias and variance of the resulting performance estimate [29] [26].

The Bias-Variance Trade-off

The value of k governs a fundamental trade-off [29] [26]:

- Lower values of k (e.g., 2, 3, 5):

  - Result in higher bias. The training set in each fold is substantially smaller than the entire dataset, so the fitted models may be underfit and unrepresentative of a model trained on the full dataset. The performance estimate can thus be pessimistically biased.

  - Tend to result in lower variance. The training sets overlap less, so the fold-level errors are less correlated, and each validation fold is larger, which makes the per-fold score less noisy.

- Higher values of k (e.g., 10, n):

  - Result in lower bias. Each training set is very similar in size and content to the full dataset, so the performance of the surrogate models closely approximates the model trained on all available data. This reduces pessimistic bias.

  - Can result in higher variance. The training sets between folds overlap significantly, leading to highly correlated models and test errors. The average of these correlated scores can have higher variance [29]. Furthermore, with a larger k, the validation set is smaller, which can lead to a noisier estimate of performance in each fold.

Common Heuristics and Practical Recommendations

There is no universally optimal k, but several well-established tactics guide the choice [26]:

- k=10: This has become a widely used default in applied machine learning. Through extensive empirical experimentation, k=10 has been found to generally offer a good compromise, producing a model skill estimate with low bias and modest variance [26].

- k=5: Another very common and practical choice, offering a slightly more computationally efficient alternative to 10-fold CV while often still providing reliable estimates [26].

- Leave-One-Out Cross-Validation (LOOCV): This is the extreme case where k is set equal to the number of observations in the dataset (k=n). While LOOCV is nearly unbiased, it is computationally expensive for large datasets [28] [26], and its estimate may have higher variance than k-fold with a lower k [29].

The table below summarizes the quantitative and qualitative implications of different choices for k.

Table: Comparison of Common K Values in Cross-Validation

| Value of K | Typical Use Case | Relative Bias | Relative Variance | Computational Cost | Key Consideration |

|---|---|---|---|---|---|

| k=5 | Medium to large datasets | Medium | Medium | Low | A good compromise between cost and reliability [26]. |

| k=10 | General purpose default | Low | Medium | Medium | Established empirical standard; often recommended [26]. |

| k=n (LOOCV) | Very small datasets | Very Low | High | Very High | Nearly unbiased but can be unstable; use for small samples [28] [26]. |

Iterating and Repeating Cross-Validation

To obtain a more stable and reliable performance estimate and to mitigate the variance associated with a single random split into k folds, it is considered good practice to repeat the k-fold cross-validation process multiple times with different random shuffles of the data [29]. For example, a researcher might perform 10 repeats of 5-fold cross-validation, resulting in 50 performance metrics that can be aggregated (e.g., by taking the overall mean and standard deviation). This practice provides a better understanding of the variability of the model's performance [29].
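The 10 x 5 example above can be reproduced with scikit-learn's repeated splitters. This sketch assumes a synthetic binary classification task and uses the stratified variant, RepeatedStratifiedKFold; RepeatedKFold is the equivalent class for regression targets.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score

X, y = make_classification(n_samples=200, n_features=10, random_state=0)

# 10 repeats of 5-fold CV, each repeat with a different random shuffle.
rskf = RepeatedStratifiedKFold(n_splits=5, n_repeats=10, random_state=0)
scores = cross_val_score(LogisticRegression(max_iter=1000), X, y,
                         cv=rskf, scoring="roc_auc")

print(f"{scores.size} scores, AUC = {scores.mean():.3f} +/- {scores.std():.3f}")

# Splits are generated repeat by repeat, so each row below is one repeat.
per_repeat_means = scores.reshape(10, 5).mean(axis=1)
print("Spread of per-repeat means:", per_repeat_means.std().round(4))
```

The spread of the per-repeat means indicates how much a single 5-fold estimate depends on the particular random partition.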

Experimental Protocols and Implementation

A Standard Protocol for Predictive Modeling

This protocol outlines the application of k-fold cross-validation for a typical predictive modeling task in a research environment, such as mortality prediction or length-of-stay regression.

- Problem Formulation and Data Curation:

- Define the prediction target (e.g., binary mortality, continuous length of stay).

- Assemble the dataset, ensuring compliance with data governance and ethical guidelines [27].

- Perform initial data cleaning to handle obvious errors and anomalies.

- Preprocessing and Feature Engineering Strategy:

- Define all preprocessing steps (e.g., imputation for missing values, standardization, encoding of categorical variables).

- Critically, all these steps must be embedded within the cross-validation loop. The parameters for transformations (e.g., mean for imputation, standard deviation for scaling) must be learned from the training fold and then applied to the validation fold to prevent data leakage [23].

- Model and Validation Configuration:

- Select the candidate model(s) and their hyperparameter grids for evaluation.

- Choose a value for k (e.g., k=10). For classification, opt for Stratified K-Fold. For data with multiple records per subject, implement Subject-Wise Splitting.

- Decide on the number of repeats for repeated k-fold CV (e.g., 5-10 repeats).

- Execution and Scoring:

- For each repeat and fold, fit the model on the training data and generate predictions for the validation data.

- Calculate the chosen performance metric(s) (e.g., AUC, Accuracy, F1-score for classification; MSE, R² for regression) on the validation set [23].

- Results Aggregation and Analysis:

- Aggregate the scores from all folds and repeats.

- Report the mean performance as the central estimate and the standard deviation or confidence interval as a measure of variability [23] [26].

- The final model for deployment is typically retrained on the entire dataset using the hyperparameters that were found to be optimal during the cross-validation process.
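A compact version of this protocol for a binary outcome is sketched below. The dataset is synthetic with artificially injected missing values, and the specific choices (median imputation, logistic regression, AUC and F1) are assumptions for illustration rather than recommendations from the cited sources.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, imbalanced data with roughly 5% of values set to missing.
X, y = make_classification(n_samples=300, n_features=10, weights=[0.85, 0.15],
                           random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.05] = np.nan

# Preprocessing lives inside the pipeline, so it is refit on every training fold.
pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("model", LogisticRegression(max_iter=1000)),
])

cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
cv_results = cross_validate(pipeline, X, y, cv=cv, scoring=["roc_auc", "f1"])

for metric in ("roc_auc", "f1"):
    fold_scores = cv_results[f"test_{metric}"]
    print(f"{metric}: {fold_scores.mean():.3f} +/- {fold_scores.std():.3f}")

# Final step: retrain the whole pipeline on all available data for deployment.
final_model = pipeline.fit(X, y)
```

The cross-validated scores describe how this modeling procedure is expected to perform; final_model is the artifact that would actually be deployed.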

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and methodological components essential for implementing k-fold cross-validation in a scientific research pipeline.

Table: Essential Components for a K-Fold Cross-Validation Pipeline

| Tool/Component | Category | Function & Explanation |

|---|---|---|

| scikit-learn Library | Software Library | A cornerstone Python library for machine learning. It provides integrated implementations for KFold, StratifiedKFold, cross_val_score, and cross_validate, seamlessly combining CV with model fitting and scoring [23]. |

| Stratified K-Fold | Methodological Component | A variant of k-fold that returns stratified folds, preserving the percentage of samples for each class in every fold. Crucial for validating models on imbalanced datasets common in clinical research [27] [23]. |

| Pipeline Object | Software Component | An sklearn class used to chain together all preprocessing steps and the final model into a single unit. This is the primary and most robust mechanism to prevent data leakage during cross-validation by ensuring transformations are fit only on the training fold [23]. |

| Nested Cross-Validation | Methodological Protocol | A technique used when both model selection and evaluation are required. It features an outer loop for performance estimation and an inner loop for hyperparameter tuning. It reduces optimistic bias but adds significant computational cost [27]. |

| Performance Metrics | Evaluation Component | The specific measures used to quantify model performance (e.g., AUC-ROC, F1-score, Mean Squared Error). The choice of metric must align with the clinical or research objective [23]. |

K-fold cross-validation stands as an indispensable workhorse method in the development and validation of predictive models, especially within healthcare and drug development. Its strength lies in providing a robust, less biased estimate of model generalization performance by making efficient use of limited data. A deliberate choice of k, guided by an understanding of the bias-variance trade-off and contextualized by dataset specifics, is crucial. For most applied research settings, k=10 serves as a robust starting point. Furthermore, adherence to critical protocols—such as subject-wise splitting for patient data, stratification for imbalanced outcomes, and rigorous prevention of data leakage via pipelines—is non-negotiable for deriving valid and clinically meaningful performance estimates that can be trusted to inform decision-making.

In clinical prediction research, datasets often exhibit severe class imbalance, where critical outcomes such as disease severity, treatment response, or adverse events are inherently rare compared to more common outcomes [30]. This imbalance presents a fundamental challenge for predictive model development, as standard validation techniques can produce misleading performance estimates that fail to generalize to real-world clinical settings [27] [31].

Standard k-fold cross-validation randomly partitions data into folds, which with imbalanced classes can result in folds with few or no examples from the minority class. This leads to unreliable model evaluation, as some folds may not adequately represent the minority class patterns that are often most critical for clinical decision-making [31]. Stratified k-fold cross-validation addresses this limitation by preserving the original class distribution in each fold, providing more reliable performance estimation for imbalanced clinical outcomes [32].

This protocol details the implementation of stratified k-fold cross-validation specifically for clinical research contexts, where accurately identifying minority classes (e.g., patients with severe symptoms or treatment complications) is often the primary objective of predictive modeling.

Background and Theoretical Foundation

The Problem of Class Imbalance in Clinical Data

Clinical research datasets frequently exhibit skewed distributions where medically critical outcomes are underrepresented. For example, in Patient-Reported Outcomes (PROs) data from cancer patients undergoing radiation therapy, severe symptoms represent the minority class that requires heightened clinical attention [30]. Similar imbalance patterns occur outside medicine: in a bankruptcy prediction study of Taiwanese companies, bankrupt firms made up only 3.23% of the dataset [33].

When evaluating classifiers on imbalanced data, conventional k-fold cross-validation can break down because random partitioning may create folds with inadequate minority class representation. One study demonstrated that with a 1:100 class ratio, 5-fold cross-validation produced folds where the test set contained as few as zero minority class examples, making performance evaluation impossible for the most clinically relevant cases [31].

Stratified k-Fold Cross-Validation

Stratified k-fold cross-validation is a refinement that ensures each fold maintains approximately the same percentage of samples of each target class as the complete dataset [32] [28]. This preservation of class distribution addresses the critical flaw of standard cross-validation when applied to imbalanced data.

For binary classification, stratified cross-validation is particularly valuable when outcomes are rare at the health-system scale (e.g., ≤1% incidence) [27]. The method can be extended to multi-class problems, ensuring that all classes are properly represented in each fold regardless of their original frequency [30].

Table 1: Comparison of Cross-Validation Approaches for Imbalanced Data

| Method | Handling of Class Imbalance | Advantages | Limitations |

|---|---|---|---|

| Standard k-Fold | Random partitioning, may create folds without minority class samples | Simple implementation; standard practice for balanced data | Unreliable for imbalanced data; high variance in performance estimates |

| Stratified k-Fold | Preserves original class distribution in all folds | More reliable performance estimates; better for model comparison | Requires careful implementation to avoid data leakage |

| Repeated Stratified k-Fold | Multiple stratified splits with different randomizations | More stable performance estimates; reduces variance | Increased computational cost |

Experimental Protocol: Implementation for Clinical Data

Research Reagent Solutions

Table 2: Essential Tools for Implementing Stratified k-Fold Cross-Validation

| Tool/Category | Specific Implementation | Function in Protocol |

|---|---|---|

| Programming Environment | Python 3.7+ with scikit-learn | Primary implementation platform |

| Cross-Validation Class | StratifiedKFold from sklearn.model_selection | Creates stratified folds preserving class distribution |

| Data Preprocessing | StandardScaler, MinMaxScaler from sklearn.preprocessing | Normalizes features before model training |

| Classification Algorithms | LogisticRegression, RandomForestClassifier, SVC from sklearn | Benchmark models for evaluation |

| Performance Metrics | precision_score, recall_score, f1_score, roc_auc_score from sklearn.metrics | Evaluates model performance, especially on minority class |

Workflow Implementation

Diagram: Stratified k-fold cross-validation workflow for clinical data.

Detailed Step-by-Step Protocol

Step 1: Data Preparation and Preprocessing

Clinical data often requires specialized preprocessing before applying cross-validation:

Data Cleaning: Address missing values, outliers, and data quality issues specific to clinical datasets [27]. For PRO data, consider iterative imputation to handle missing item responses while preserving dataset structure [30].

Feature Scaling: Apply normalization to harmonize heterogeneous feature ranges. StandardScaler or MinMaxScaler should be fit only on the training fold within each cross-validation iteration to prevent data leakage [23] [32].

Step 2: Stratified Splitting with Clinical Considerations

Subject-Wise vs Record-Wise Splitting: For clinical data with multiple records per patient, use subject-wise splitting to ensure all records from the same patient are in either training or test sets [27].

Stratification for Multi-Class Problems: For outcomes with multiple severity levels, ensure all classes are represented proportionally in each fold [30].

Handling Extreme Imbalance: When minority classes have very few samples, increase k-value or use stratified repeated cross-validation to ensure adequate representation [31].

Step 3: Model Training and Evaluation

Algorithm Selection: Consider algorithms that handle imbalance well, such as Random Forest or XGBoost, which have demonstrated strong performance on imbalanced clinical data [30] [33].

Appropriate Performance Metrics: For imbalanced clinical outcomes, accuracy alone is misleading. Use precision, recall, F1-score, and AUROC to comprehensively evaluate model performance, particularly for the minority class [30] [33].

Statistical Aggregation: Calculate mean and standard deviation of performance metrics across all folds to estimate model stability and average performance [23] [26].

Application to Clinical Data: A Case Study

Experimental Setup and Materials

To illustrate the protocol, we describe an application using Patient-Reported Outcomes (PROs) data from cancer patients, where severe symptoms represent the minority class [30]:

- Dataset: PROs from cancer therapy patients with multi-class imbalance across pain, sleep disturbances, and depressive symptoms.

- Class Distribution: Highly skewed with disproportionately fewer patients reporting severe symptoms.

- Classification Algorithms: Random Forest, XGBoost, SVM, Logistic Regression, Gradient Boosting, and MLP-Bagging.

- Preprocessing Pipeline: Three-stage approach including iterative imputation, normalization, and strategic oversampling that maintains original skewed distribution.

Performance Comparison

The following table summarizes quantitative results from applying stratified cross-validation to imbalanced clinical data:

Table 3: Performance Comparison of Classifiers on Imbalanced Clinical Data Using Stratified k-Fold

| Classifier | Overall Accuracy (%) | Minority Class F1-Score | AUROC | Training Time (Relative) |

|---|---|---|---|---|

| Random Forest (RF) | 96.2 | 0.89 | 0.98 | 1.0x |

| XGBoost (XGB) | 95.8 | 0.87 | 0.97 | 1.2x |

| Support Vector Machine (SVM) | 93.1 | 0.79 | 0.94 | 3.5x |

| Logistic Regression (LR) | 92.6 | 0.76 | 0.93 | 0.3x |

| Gradient Boosting (GB) | 94.3 | 0.82 | 0.95 | 1.8x |

| MLP-Bagging | 94.7 | 0.84 | 0.96 | 4.2x |

Advanced Considerations for Clinical Research

Integration with Nested Cross-Validation

When both model selection and hyperparameter tuning are required, nested cross-validation provides less biased performance estimates. While computationally intensive, it reduces optimistic bias in performance estimation, which is particularly valuable for clinical prediction models [27] [33].

Complementary Approaches for Imbalanced Data

Stratified cross-validation can be enhanced with additional techniques for severe imbalance:

Strategic Oversampling: Techniques like SMOTE can augment minority classes [30]. Oversampling should be applied only to the training folds inside the cross-validation loop, so that each validation fold keeps the original class ratio.

Cost-Sensitive Learning: Assign higher misclassification penalties to minority classes to improve sensitivity for critical clinical outcomes [30].

Ensemble Methods: Bagging and boosting approaches can improve robustness against imbalance when combined with stratified sampling [30].
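Of the approaches above, cost-sensitive learning is the simplest to combine with stratified cross-validation, because many scikit-learn classifiers accept a class_weight argument. The following sketch compares minority-class recall with and without balanced class weights; the synthetic data (5% positives) and the logistic regression model are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic dataset with roughly 5% positive cases.
X, y = make_classification(n_samples=1000, n_features=10, weights=[0.95, 0.05],
                           random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

recall = {}
for label, weight in [("unweighted", None), ("balanced", "balanced")]:
    # class_weight="balanced" penalizes minority-class errors more heavily,
    # in inverse proportion to class frequency.
    model = LogisticRegression(max_iter=1000, class_weight=weight)
    recall[label] = cross_val_score(model, X, y, cv=cv, scoring="recall").mean()
    print(f"{label}: mean minority-class recall = {recall[label]:.3f}")
```

Higher recall from reweighting typically comes at the cost of lower precision, so both should be reported when judging clinical usefulness.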

Discussion and Best Practices

Interpretation of Results

When using stratified k-fold cross-validation with imbalanced clinical data:

- Focus on minority class performance metrics (precision, recall, F1-score) rather than overall accuracy

- Consider standard deviation across folds as an indicator of model stability

- Evaluate clinical utility rather than purely statistical performance

Common Pitfalls and Solutions

Data Leakage: Ensure all preprocessing steps are fit only on training folds within the cross-validation loop [23].

Insufficient Folds: With extreme class imbalance, increase k-value (e.g., k=10) or use stratified repeated cross-validation [31].

Subject-Level Data Leakage: For longitudinal data, implement subject-wise splitting to prevent correlated samples from appearing in both training and test sets [27].
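Both leakage safeguards can be sketched together: a pipeline keeps preprocessing inside each training fold, and a group-aware splitter keeps every subject on one side of each split. The subject IDs and data below are synthetic assumptions.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GroupKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_subjects, visits_per_subject = 20, 5

# One subject ID per row: repeated measurements share the same ID.
groups = np.repeat(np.arange(n_subjects), visits_per_subject)
X = rng.normal(size=(n_subjects * visits_per_subject, 4))
y = rng.integers(0, 2, size=n_subjects * visits_per_subject)

# The scaler is fit on training folds only because it is part of the pipeline.
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
cv = GroupKFold(n_splits=5)

# Count subjects that appear on both sides of each split (should be zero).
shared_subjects = [
    len(set(groups[train_idx]) & set(groups[test_idx]))
    for train_idx, test_idx in cv.split(X, y, groups)
]
scores = cross_val_score(pipe, X, y, groups=groups, cv=cv)
print("Subjects shared between train and test per fold:", shared_subjects)
```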

Recommendations for Clinical Researchers

Based on empirical evidence from clinical applications:

- Use stratified k-fold with k=5 or 10 as a standard practice for imbalanced clinical outcomes

- Employ nested cross-validation when both model selection and performance estimation are required

- Report both overall and class-wise performance metrics to provide a complete picture of model capability

- Consider computational efficiency when choosing between algorithms, as tree-based methods like Random Forest and XGBoost offer favorable performance-to-computation ratios [30] [33]

Stratified k-fold cross-validation provides a robust framework for evaluating predictive models on imbalanced clinical data, enabling more reliable assessment of how models will perform on real-world patient populations where accurately identifying rare but critical outcomes is paramount.

Leave-One-Out Cross-Validation (LOOCV) is a specialized resampling technique used to evaluate the predictive performance of statistical and machine learning models. As a special case of k-fold cross-validation where k equals the number of samples (n) in the dataset, LOOCV provides a nearly unbiased estimate of the true generalization error by leveraging almost the entire dataset for training in each iteration [34] [35]. This exhaustive approach makes it particularly valuable in research settings where data scarcity is a significant constraint, such as in early-stage drug discovery and biomedical studies [36].

The fundamental principle of LOOCV involves systematically iterating through each data point in a dataset of n observations. For each iteration i, the model is trained on n-1 data points and validated on the single remaining observation [35]. This process repeats n times until every sample has served exactly once as the test set. The overall performance metric is then calculated as the average of all n validation results, providing a comprehensive assessment of model robustness [37].

Mathematically, the LOOCV estimate for the prediction error, $E_{\mathrm{LOOCV}}$, can be expressed as:

$$E_{\mathrm{LOOCV}} = \frac{1}{n} \sum_{i=1}^{n} L\left(y_i, \hat{y}_{(i)}\right)$$

Where:

- $y_i$ represents the true value for the i-th observation

- $\hat{y}_{(i)}$ represents the predicted value when the model is trained excluding the i-th observation

- $L$ is the loss function (e.g., mean squared error for regression, 0-1 loss for classification) [35] [36]
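The LOOCV error estimate can be computed directly by looping over the n splits. The sketch below assumes squared-error loss, ordinary least squares, and synthetic regression data, and checks the manual loop against scikit-learn's built-in scorer.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import LeaveOneOut, cross_val_score

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 3))
y = X @ np.array([1.5, -2.0, 0.5]) + rng.normal(scale=0.3, size=30)

# One loss value per left-out observation.
losses = []
for train_idx, test_idx in LeaveOneOut().split(X):
    model = LinearRegression().fit(X[train_idx], y[train_idx])
    y_hat = model.predict(X[test_idx])[0]          # prediction without sample i
    losses.append((y[test_idx][0] - y_hat) ** 2)   # squared-error loss

e_loocv = float(np.mean(losses))                   # average over all n iterations

# Same quantity via scikit-learn (scores are negated MSE, hence the minus sign).
e_sklearn = -cross_val_score(LinearRegression(), X, y, cv=LeaveOneOut(),
                             scoring="neg_mean_squared_error").mean()
print(f"Manual: {e_loocv:.4f}  scikit-learn: {e_sklearn:.4f}")
```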

Theoretical Foundations and Trade-offs

Bias-Variance Characteristics

Understanding the bias-variance tradeoff is essential when selecting cross-validation strategies. LOOCV offers distinct advantages in bias reduction but presents challenges in variance stability [34] [38].

Table: Bias-Variance Profile of LOOCV Compared to Other Cross-Validation Methods

| Method | Bias | Variance | Computational Cost | Best For |

|---|---|---|---|---|

| LOOCV | Low | High | Very High | Small datasets [34] |

| 10-Fold CV | Balanced | Balanced | Moderate | Most problems [34] [39] |

| 5-Fold CV | High | Low | Moderate | General use [34] |

| Stratified K-Fold | Balanced | Balanced | Moderate | Classification, class imbalance [34] |

| Time Series CV | Varies | Varies | Moderate | Sequential, time-sensitive data [34] |

LOOCV provides an almost unbiased estimate of model performance because each training set utilizes n-1 samples, closely approximating the performance of a model trained on the entire dataset [34] [38]. This minimal bias comes at the cost of higher variance in performance estimates. Since the training sets in LOOCV overlap substantially (any two share n-2 observations), the fitted models and their error estimates become highly correlated, leading to increased variance when averaging these correlated estimates [39] [38].

The variance issue is particularly pronounced when datasets are small or contain highly influential points. In such cases, the removal of a single observation can significantly alter model parameters, resulting in unstable performance estimates across iterations [36].

Computational Complexity

The exhaustive nature of LOOCV results in significant computational demands. For a dataset with n samples, the method requires training the model n times. If a single fit costs at least O(n), the total cost is at least O(n²), and it grows faster for training algorithms that scale superlinearly with dataset size [35].

Table: Computational Requirements for LOOCV Implementation

| Dataset Size | Number of Models | Training Examples per Model | Relative Computational Cost |

|---|---|---|---|

| Small (n = 50) | 50 | 49 | Low |

| Medium (n = 1,000) | 1,000 | 999 | High |

| Large (n = 10,000) | 10,000 | 9,999 | Prohibitive |

For complex models with training algorithms that scale superlinearly with dataset size, LOOCV can become prohibitively expensive [35]. However, for certain model classes with efficient update mechanisms (such as linear regression, ridge regression, and some kernel methods), computational shortcuts exist that make LOOCV more feasible [36].
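For ordinary least squares, one such shortcut uses the diagonal of the hat matrix: the leave-one-out residual for sample i equals the ordinary residual divided by (1 - h_ii), so a single fit suffices. The sketch below uses synthetic data and verifies the shortcut against brute-force LOOCV.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import LeaveOneOut, cross_val_score

rng = np.random.default_rng(1)
X = rng.normal(size=(40, 3))
y = X @ np.array([2.0, -1.0, 0.5]) + rng.normal(scale=0.5, size=40)

# Design matrix with an intercept column, matching LinearRegression's default.
A = np.column_stack([np.ones(len(X)), X])
H = A @ np.linalg.pinv(A)          # hat matrix: fitted values = H @ y
h = np.diag(H)                     # leverage of each observation
residuals = y - H @ y              # residuals from a single full-data fit

# Leave-one-out residuals without refitting the model n times.
loo_residuals = residuals / (1.0 - h)
shortcut_mse = float(np.mean(loo_residuals ** 2))

# Brute-force LOOCV for comparison (n separate fits).
brute_mse = -cross_val_score(LinearRegression(), X, y, cv=LeaveOneOut(),
                             scoring="neg_mean_squared_error").mean()
print(f"Shortcut: {shortcut_mse:.6f}  Brute force: {brute_mse:.6f}")
```

The identity is exact for ordinary least squares; ridge regression has an analogous form with a regularized hat matrix, which is not shown here.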

When to Use LOOCV: Application Scenarios

Ideal Use Cases

LOOCV is particularly advantageous in several specific research scenarios:

Small Datasets: When working with limited data where maximizing training data utilization is critical, LOOCV provides more reliable performance estimates than k-fold methods with lower k values [34] [35]. This is common in biomedical research, specialized chemical studies, and rare disease classification where sample collection is challenging and expensive [36] [40].

Model Selection and Comparison: When comparing multiple algorithms or configurations, LOOCV's low bias helps ensure fair comparisons, especially with small to moderate dataset sizes [39]. This is valuable in drug discovery pipelines where selecting the most promising QSAR model early can significantly accelerate research [41].

Influential Point Detection: The iterative nature of LOOCV naturally facilitates identification of observations that disproportionately impact model performance, helping researchers detect outliers and influential cases [36].

High-Precision Requirements: In applications where prediction accuracy is critical and computational resources are sufficient, LOOCV provides the least biased performance estimate available through cross-validation, although the variance of that estimate should be considered alongside it [35].

When to Avoid LOOCV

LOOCV may be impractical or suboptimal in these scenarios:

Large Datasets: With large n, the computational cost becomes prohibitive without providing meaningful improvement over k-fold methods (typically k=5 or 10) [34] [39].

Time-Series Data: For temporal data, standard LOOCV violates time-ordering assumptions. Time-series cross-validation with rolling windows or forward chaining is more appropriate [34] [42].

High-Dimensional Data: When features significantly outnumber samples, LOOCV can exhibit instability, and specialized regularized approaches often perform better [41].

Imbalanced Classification: Each LOOCV test fold contains a single sample, so class distributions cannot be preserved within folds and per-fold metrics such as AUROC or F1 are undefined. Stratified or balanced k-fold approaches are preferable for imbalanced datasets [34] [43].
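For the time-series case above, forward chaining can be sketched with scikit-learn's `TimeSeriesSplit`, in which every training window ends before its test window begins. The 12-point series is a toy assumption.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Twelve time-ordered observations (index = time step).
X = np.arange(12).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=4)
splits = [(train.tolist(), test.tolist()) for train, test in tscv.split(X)]

for fold, (train, test) in enumerate(splits, start=1):
    # The training window grows over time; the test window always lies in the future.
    print(f"Fold {fold}: train up to t={train[-1]}, test t={test[0]}..{test[-1]}")
```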

LOOCV in Drug Discovery and Development

The pharmaceutical industry presents compelling use cases for LOOCV, particularly during early discovery phases where data is naturally limited. Several recent studies demonstrate its practical utility:

In antiviral discovery research, scientists successfully employed machine learning models trained on a small, imbalanced dataset (36 compounds, 5 of them active against EV71) using LOOCV for evaluation [40]. Despite the dataset limitations, their framework demonstrated significant predictive accuracy, with experimental validation confirming that five out of eight model-predicted compounds exhibited virucidal activity [40].

Similarly, AI-integrated QSAR modeling for enhanced drug discovery often relies on LOOCV for rigorous validation, especially when working with novel compound classes or rare targets where historical data is sparse [41]. This approach helps maximize the informational value from each expensive-to-acquire data point while providing realistic performance estimates for model selection.

LOOCV also finds application in ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction, where researchers must build reliable models from limited experimental data during lead optimization phases [41].

Experimental Protocols and Implementation

Core LOOCV Protocol for Predictive Modeling

This protocol provides a standardized methodology for implementing LOOCV in predictive modeling research, with special considerations for drug development applications.

Table: Research Reagent Solutions for LOOCV Implementation

| Component | Function | Implementation Examples |

|---|---|---|

| Data Splitting Module | Systematically partitions data into n train-test combinations | Scikit-learn LeaveOneOut, custom iterators |

| Model Training Framework | Trains the model on n-1 samples in each iteration | Scikit-learn, PyTorch, TensorFlow, R caret |

| Performance Metrics | Quantifies model performance on left-out samples | Accuracy, AUC-ROC, MSE, R², concordance index |

| Result Aggregation | Combines n performance estimates into overall metrics | Mean, standard deviation, confidence intervals |

| Statistical Validation | Assesses significance of performance differences | Paired t-tests, corrected repeated k-fold CV tests |

Procedure:

Data Preparation and Preprocessing

- Perform initial data cleaning, handle missing values, and detect outliers

- Critical: All preprocessing steps (normalization, feature scaling, etc.) must be performed within each LOOCV iteration to prevent data leakage [35] [43]

- For drug discovery applications: Compute molecular descriptors (1D, 2D, 3D, or 4D) or generate learned representations using graph neural networks [41]

LOOCV Iteration Process

- Initialize the LOOCV splitter: loo = LeaveOneOut()

- For each split (i = 1 to n):

  - Extract training set (all samples except i)

  - Extract test set (single sample i)

  - Apply preprocessing parameters derived from training set to test set

  - Train model on preprocessed training set

  - Generate prediction for left-out sample

  - Record performance metric for this iteration

- For large n or complex models, implement parallel processing to distribute iterations across multiple cores or nodes [35] [36]
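The iteration steps above can be sketched as an explicit loop. This version assumes a standard scaler as the only preprocessing step, a logistic regression classifier, and synthetic data; each of these is a placeholder for the study's own choices.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import LeaveOneOut
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=40, n_features=8, n_informative=3, random_state=0)

loo = LeaveOneOut()
predictions = np.empty_like(y)

for train_idx, test_idx in loo.split(X):
    # Fit preprocessing on the n-1 training samples only (prevents leakage).
    scaler = StandardScaler().fit(X[train_idx])
    X_train = scaler.transform(X[train_idx])
    X_test = scaler.transform(X[test_idx])   # reuse training-set parameters

    model = LogisticRegression(max_iter=1000).fit(X_train, y[train_idx])
    predictions[test_idx] = model.predict(X_test)   # record left-out prediction

accuracy = float(np.mean(predictions == y))
print(f"LOOCV accuracy over {len(y)} iterations: {accuracy:.3f}")
```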

Performance Aggregation and Analysis

- Calculate mean and standard deviation of performance metrics across all n iterations

- Generate model diagnostics: residual analysis, influential point detection, uncertainty quantification

- For classification: Compute confusion matrices, ROC curves, precision-recall curves

- For regression: Generate residual plots, prediction error distributions

Model Selection and Final Evaluation

- Compare LOOCV performance across different algorithms or hyperparameter settings

- Select optimal configuration based on LOOCV results

- Train final model on entire dataset using selected configuration

- Evaluate on completely independent external test set if available

Figure: LOOCV experimental workflow, a systematic n-iteration validation process

Protocol for Small Dataset Scenarios in Drug Discovery

This specialized protocol addresses the unique challenges of applying LOOCV to small datasets common in early-stage drug discovery.

Special Considerations:

- With small n, implement nested cross-validation for both model selection and evaluation to prevent overfitting [36] [39]

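- For imbalanced data (common in active/inactive compound classification), note that single-sample LOOCV folds cannot be stratified; pool the n left-out predictions before computing class-sensitive metrics, or use stratified k-fold approaches that maintain class ratios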

- Use regularization techniques (L1/L2 penalty, dropout in neural networks) to control model complexity

- Consider data augmentation strategies specific to chemical space (scaffold hopping, analog generation) to effectively increase dataset size [36]

Procedure:

Data Characterization

- Compute dataset statistics: size, feature-to-sample ratio, class distribution

- Perform exploratory analysis to identify potential outliers or clusters

Nested LOOCV Implementation

- Outer loop: Standard LOOCV for performance estimation

- Inner loop: Hyperparameter optimization using additional cross-validation on the n-1 training samples

- For each outer loop iteration:

- Further split n-1 training samples using k-fold CV (typically k=3 or 5 due to small size)

- Optimize hyperparameters on these inner splits

- Retrain on all n-1 samples with optimal hyperparameters

- Test on left-out sample
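A compact sketch of the nested procedure: `GridSearchCV` performs the inner k-fold tuning and refits on all n-1 samples, while `cross_val_predict` with `LeaveOneOut` supplies the outer loop. The dataset, model, and hyperparameter grid are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import (GridSearchCV, LeaveOneOut,
                                     StratifiedKFold, cross_val_predict)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Small synthetic dataset standing in for an early-stage compound set.
X, y = make_classification(n_samples=30, n_features=6, n_informative=3, random_state=0)

pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)

# Inner loop: tune C on the n-1 training samples, then refit with the best value.
search = GridSearchCV(pipe, {"logisticregression__C": [0.1, 1.0, 10.0]}, cv=inner_cv)

# Outer loop: each sample is predicted by a model tuned without seeing it.
outer_pred = cross_val_predict(search, X, y, cv=LeaveOneOut())
nested_accuracy = float(np.mean(outer_pred == y))
print(f"Nested LOOCV accuracy: {nested_accuracy:.3f}")
```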

Uncertainty Quantification

- Calculate confidence intervals for performance metrics using bootstrapping or analytical methods

- Perform sensitivity analysis to assess model stability
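One way to obtain a bootstrap interval is to resample the per-sample LOOCV outcomes, as sketched below on synthetic data. This is an approximation: LOOCV predictions are not independent, so the interval should be read as a rough indication of uncertainty rather than an exact confidence level.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import LeaveOneOut, cross_val_predict

X, y = make_classification(n_samples=50, n_features=6, n_informative=3, random_state=0)

# One left-out prediction per sample, then a 0/1 correctness indicator.
pred = cross_val_predict(LogisticRegression(max_iter=1000), X, y, cv=LeaveOneOut())
correct = (pred == y).astype(float)
accuracy = correct.mean()

# Percentile bootstrap over the per-sample outcomes.
rng = np.random.default_rng(0)
n_boot = 2000
boot_means = np.array([
    rng.choice(correct, size=correct.size, replace=True).mean()
    for _ in range(n_boot)
])
lower, upper = np.percentile(boot_means, [2.5, 97.5])
print(f"LOOCV accuracy: {accuracy:.3f} (95% bootstrap CI: {lower:.3f} to {upper:.3f})")
```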

Figure: Nested LOOCV for small datasets, with hyperparameter optimization inside each validation iteration

Python Implementation Code
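A minimal sketch of the core LOOCV protocol using scikit-learn follows. The synthetic dataset, the scaler, and the logistic regression classifier are assumptions chosen for illustration. Because each test fold holds a single sample, the left-out predictions are pooled before AUROC is computed.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, roc_auc_score
from sklearn.model_selection import LeaveOneOut, cross_val_predict
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a small biomedical dataset.
X, y = make_classification(n_samples=60, n_features=10, n_informative=4, random_state=42)

# Preprocessing inside the pipeline is refit on the n-1 training samples each time.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
loo = LeaveOneOut()

# Predicted probability of class 1 for every sample while it was left out.
proba = cross_val_predict(model, X, y, cv=loo, method="predict_proba")[:, 1]
pred = (proba >= 0.5).astype(int)

# Aggregate over the pooled left-out predictions.
loocv_accuracy = accuracy_score(y, pred)
loocv_auroc = roc_auc_score(y, proba)
print(f"LOOCV accuracy: {loocv_accuracy:.3f}, pooled AUROC: {loocv_auroc:.3f}")

# Final model: retrain on the entire dataset with the chosen configuration.
final_model = model.fit(X, y)
```

For large n, `cross_val_predict` accepts `n_jobs=-1` to distribute the iterations across available cores.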

Decision Framework and Best Practices

LOOCV Selection Guidelines