Cross-Validation in Computational Science: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide to cross-validation techniques tailored for researchers, scientists, and professionals in drug development and computational science. It covers foundational concepts, detailed methodologies, practical troubleshooting strategies, and comparative analyses of validation approaches. By addressing critical challenges like overfitting, data leakage, and computational efficiency, the content equips practitioners with the knowledge to build robust, generalizable predictive models essential for biomedical innovation and clinical translation. The guide integrates current research and practical implementation insights to enhance model reliability in complex research environments.

Understanding Cross-Validation: Core Principles and Critical Importance in Computational Science

In computational science research, particularly in fields requiring high-fidelity predictive modeling like drug development, the evaluation of model performance is paramount. Cross-validation (CV) stands as a cornerstone technique for assessing how the results of a statistical analysis will generalize to an independent data set, serving as a critical safeguard against overfitting—a scenario where a model learns the training data too well, including its noise and random fluctuations, but fails to predict new, unseen data effectively [1]. This methodological necessity arises from a fundamental machine learning principle: learning a model's parameters and testing its performance on the identical data constitutes a profound methodological error [1].

The computational necessity of cross-validation becomes evident when dealing with limited data, a common challenge in scientific research such as clinical trials or drug discovery where data collection is expensive, ethically constrained, or time-consuming [2]. By providing a robust framework for model assessment and selection, cross-validation enables researchers to make the most efficient use of available data, often eliminating the need for a separate validation set and allowing the entire dataset to be used for both training and validation [1] [3]. This article systematically compares cross-validation techniques, providing experimental protocols and quantitative analyses to guide researchers in selecting appropriate validation strategies for their specific computational challenges.

Core Concepts and Terminology

Understanding cross-validation requires precise terminology. A sample (or instance) refers to a single unit of observation [4]. The dataset represents the total collection of all available samples [4]. In k-fold cross-validation, the dataset is partitioned into folds—smaller subsets of approximately equal size [4]. A group comprises samples that share common characteristics (e.g., multiple measurements from the same patient) that must be kept together during splitting to prevent data leakage [4]. Stratification ensures that each fold maintains the same class distribution as the complete dataset, which is particularly crucial for imbalanced datasets common in medical research [3] [5].

The mathematical foundation of cross-validation connects to the bias-variance tradeoff. For a continuous outcome, the mean-squared error of a learned model can be decomposed into bias, variance, and irreducible error terms [2]. Cross-validation techniques directly influence this tradeoff: larger numbers of folds (fewer samples per fold) typically yield lower bias but higher variance, while smaller numbers of folds tend toward higher bias and lower variance [2].
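For reference, the decomposition described above is commonly written as follows for squared-error loss. The notation is an assumption introduced here rather than taken from the cited source: $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\mathrm{Var}(\varepsilon) = \sigma^2$, and $\hat{f}$ denotes the model learned from a random training set.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible error}}
```

The expectation is taken over training sets and noise; only the first two terms depend on the learning procedure.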

Comparative Analysis of Cross-Validation Techniques

Taxonomy of Methods

Cross-validation techniques broadly fall into two categories: exhaustive and non-exhaustive methods. Exhaustive methods test all possible ways to divide the original sample into training and validation sets, while non-exhaustive methods approximate this process through repeated sampling [6]. The following sections compare the most prominent techniques used in computational science.

Quantitative Comparison of Techniques

Table 1: Comparative Analysis of Primary Cross-Validation Techniques

| Technique | Key Characteristics | Computational Cost | Variance | Bias | Optimal Use Cases |
|---|---|---|---|---|---|
| Hold-Out [7] [5] | Single random split (typically 70-80% training, 20-30% testing) | Low (1 model training) | High | High (with small datasets) | Very large datasets, initial prototyping |
| K-Fold [1] [3] | Dataset divided into k folds of approximately equal size; each fold used once as validation | Moderate (k model trainings) | Moderate | Low | Small to medium datasets, general use |
| Stratified K-Fold [3] [5] | Preserves class distribution in each fold | Moderate (k model trainings) | Moderate | Low | Imbalanced classification problems |
| Leave-One-Out (LOOCV) [7] [5] | Each sample used once as validation; n-1 samples for training | High (n model trainings) | High | Low | Very small datasets |
| Leave-P-Out [6] [5] | All possible training sets containing n-p samples | Very High (C(n,p) trainings) | High | Low | Small datasets requiring robust estimates |
| Repeated K-Fold [6] [5] | Multiple rounds of k-fold with different random splits | High (k × rounds trainings) | Low | Low | Stabilizing performance estimates |
| Nested K-Fold [2] [4] | Outer loop for performance estimation, inner loop for model selection | Very High (k × m trainings per hyperparameter candidate, for k outer and m inner folds) | Low | Low | Hyperparameter tuning without overoptimistic bias |

Table 2: Performance Comparison on Representative Problems

| Technique | Stability (Score Variance) | Data Usage Efficiency | Handling Class Imbalance | Computational Tractability |
|---|---|---|---|---|
| Hold-Out | Low (highly variable) [7] | Poor (only uses portion of data) | Poor without stratification | High |
| K-Fold | Moderate [3] | Excellent (all data used) | Moderate | Moderate |
| Stratified K-Fold | Moderate [3] | Excellent | Excellent | Moderate |
| LOOCV | High (each estimate uses nearly identical training sets) [7] | Excellent | Good with stratification | Low for large n |
| Nested K-Fold | High [2] | Excellent | Good with stratification | Low |

Specialized Techniques for Domain-Specific Applications

Time-Series Cross-Validation: For temporal data in domains like clinical monitoring, standard random splitting violates temporal dependencies. Forward chaining methods (e.g., rolling-origin) train on chronological data and validate on subsequent periods, preserving time relationships [8] [9].
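A minimal sketch of forward chaining, assuming scikit-learn's `TimeSeriesSplit` as the splitter and a hypothetical series of 12 chronologically ordered measurements. It only prints the index sets, to show that each validation window lies strictly after its training window.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Hypothetical series: 12 measurements already sorted by time (assumption).
X = np.arange(12).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=3)
splits = list(tscv.split(X))

for fold, (train_idx, val_idx) in enumerate(splits, start=1):
    # The training window grows; the validation window always comes later in time.
    print(f"fold {fold}: train={train_idx.tolist()} validate={val_idx.tolist()}")
```

Because the training window expands with each fold, later folds are trained on more history than earlier ones, which is worth keeping in mind when comparing per-fold scores.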

Grouped Cross-Validation: When data contain natural groupings (e.g., multiple samples from the same patient), grouped CV ensures all samples from the same group are either in training or validation sets, preventing information leakage [2] [4].

Stratified Methods for Imbalanced Data: In drug discovery where positive hits are rare, stratified approaches maintain minority class representation across folds, providing more reliable performance estimates [10].

Experimental Protocols and Implementation

Standard K-Fold Cross-Validation Protocol

Objective: To evaluate model performance while minimizing variance and bias in performance estimates [3].

Methodology:

- Dataset Preparation: Preprocess data (handle missing values, normalize features) ensuring no preprocessing decisions incorporate information from the validation set [1].

- Fold Generation: Split dataset into k folds (typically k=5 or 10), randomly shuffling data while preserving class distributions in classification problems [3].

- Iterative Training-Validation: For each fold i (i = 1 to k):

  - Use fold i as the validation set

  - Use the remaining k-1 folds as the training set

  - Train the model on the training set

  - Validate on fold i, recording performance metric(s)

- Performance Aggregation: Calculate mean and standard deviation of performance metrics across all k iterations [1].

Implementation (scikit-learn):
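A minimal sketch of the protocol above. The synthetic dataset from `make_classification` and the logistic-regression classifier are stand-ins chosen for illustration; neither is specified in the text.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=200, n_features=10, random_state=0)

# Scaling sits inside the pipeline, so it is re-fitted on each training fold
# and never sees the corresponding validation fold.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Shuffled, class-preserving folds (k = 5).
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# One accuracy score per fold.
scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")

print(f"accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```

Wrapping preprocessing and estimator in one pipeline is what enforces the "no information from the validation set" requirement in the dataset preparation step.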

Nested Cross-Validation for Model Selection Protocol

Objective: To simultaneously evaluate model performance and optimize hyperparameters without optimistic bias [2].

Methodology:

- Outer Loop: Split data into k folds for performance estimation.

- Inner Loop: For each training set of the outer loop, perform k-fold CV to tune hyperparameters.

- Model Training: For each outer fold, train model with optimal hyperparameters on the entire training set.

- Performance Evaluation: Validate on the outer test set.

- Aggregation: Compute average performance across all outer test sets [2].

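Computational Considerations: Nested CV requires on the order of k × m model fits for each hyperparameter configuration evaluated (where k is the number of outer folds and m the number of inner folds), plus one refit per outer fold with the selected hyperparameters. This makes it computationally intensive but essential for reliable model evaluation in rigorous scientific applications [2].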
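One common way to express nested cross-validation in scikit-learn is to pass a `GridSearchCV` object (inner loop) to `cross_val_score` (outer loop). The sketch below assumes a synthetic dataset, an SVC classifier, and a three-value grid for `C`; all of these are illustrative choices, not part of the protocol.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data (assumption for illustration).
X, y = make_classification(n_samples=150, n_features=8, random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # m = 3
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # k = 5

pipeline = make_pipeline(StandardScaler(), SVC())
param_grid = {"svc__C": [0.1, 1, 10]}  # hypothetical search grid

# Inner loop: tunes C using only the outer training folds it is given,
# then refits the best configuration on that whole outer training set.
tuned_model = GridSearchCV(pipeline, param_grid, cv=inner_cv)

# Outer loop: each outer test fold is scored by a model tuned without it.
nested_scores = cross_val_score(tuned_model, X, y, cv=outer_cv)

print(f"nested accuracy: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")
```

With these settings the run performs 5 × (3 × 3 + 1) = 50 fits: nine inner fits plus one refit for each of the five outer folds.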

Validation Set Approach for Large-Scale Data

Objective: To efficiently evaluate models when computational resources are constrained [7].

Methodology:

- Single Split: Randomly partition data into training (typically 70-80%) and validation (20-30%) sets.

- Model Training: Train model on training set.

- Performance Evaluation: Evaluate on validation set.

Limitations: The validation set approach may produce highly variable estimates depending on the specific split, as demonstrated in polynomial regression experiments where different random splits suggested different optimal model complexities [7].
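The single-split procedure can be sketched as follows, assuming an 80/20 partition, a synthetic dataset, and a logistic-regression model as placeholders.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a large dataset (assumption for illustration).
X, y = make_classification(n_samples=1000, n_features=10, random_state=0)

# Single random split: 80% training, 20% validation, class proportions preserved.
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

model = LogisticRegression(max_iter=1000).fit(X_train, y_train)
val_accuracy = model.score(X_val, y_val)

print(f"validation accuracy: {val_accuracy:.3f}")
```

Changing `random_state` changes which samples land in the validation set, which is the source of the split-to-split variability noted under Limitations.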

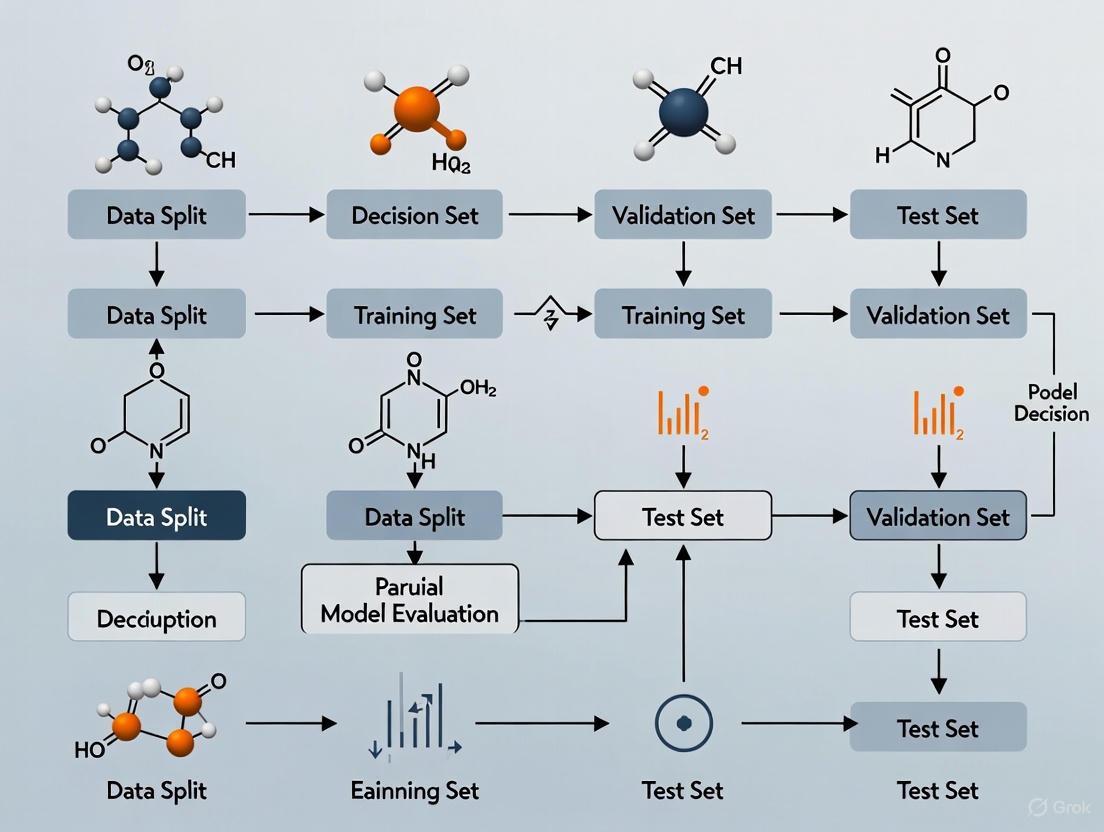

Workflow Visualization

[Figure: K-Fold Cross-Validation Workflow]

[Figure: Nested Cross-Validation Architecture]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Tools for Cross-Validation Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| scikit-learn [1] [3] | Python ML library providing cross_val_score, KFold, and other CV splitters | General machine learning, prototype development |
| StratifiedKFold [3] | Preserves class distribution in splits | Imbalanced classification problems (e.g., rare disease detection) |
| GroupKFold [2] | Ensures group integrity across splits | Clinical data with multiple samples per patient |
| TimeSeriesSplit [8] | Respects temporal ordering | Longitudinal studies, clinical monitoring data |
| Nested Cross-Validation [2] | Hyperparameter tuning without bias | Rigorous model selection for publication |
| Pipeline Class [1] | Prevents data leakage by binding preprocessing with estimation | All applied research contexts |
| cross_validate [1] | Multiple metric evaluation with timing information | Comprehensive model assessment |

Cross-validation represents a computational necessity in modern scientific research, particularly in domains like drug development where model decisions have significant real-world implications. The technique provides a principled approach to model evaluation that respects the fundamental statistical challenge of generalization [1] [2]. While computationally more intensive than simple hold-out validation, methods like k-fold cross-validation offer superior reliability in performance estimation, making them indispensable in rigorous scientific workflows [3].

The choice of specific cross-validation technique involves tradeoffs between computational efficiency, bias, and variance [2]. For most scientific applications, 5- or 10-fold cross-validation provides an optimal balance, though specialized scenarios (e.g., temporal data, grouped data, or severe class imbalance) require modified approaches [8] [10]. As computational science continues to evolve with increasingly complex models and datasets, cross-validation remains an essential methodology for ensuring that predictive models generalize beyond their training data to deliver reliable insights in critical research applications.

In scientific research and drug development, the reliability of computational models determines the success of subsequent experimental validation and clinical translation. Overfitting represents a fundamental challenge—a phenomenon where a model learns the specific patterns, including noise, in a training dataset rather than the underlying biological or chemical relationships that generalize to new data [11] [12]. An overfit model appears highly accurate during development but fails when applied to unseen data, potentially leading to misguided research directions and costly failed experiments in drug development pipelines.

Single split validation (holdout method), which partitions data once into training and testing sets, remains commonly used despite documented vulnerabilities [13]. This method provides only a single, often optimistic, performance estimate that is highly dependent on a particular random data partition [14]. When dataset size is limited—a common scenario in early-stage drug discovery with restricted samples or patient data—this approach can yield misleading performance estimates that mask poor generalization capability [15]. This article examines why single split validation fails as a robust validation strategy and presents comprehensive cross-validation techniques that offer more reliable alternatives for scientific research.

The Theoretical Framework: Overfitting and Validation Concepts

Defining Overfitting and Its Consequences

Overfitting occurs when a model learns the training data too closely, including its statistical noise and irrelevant features, rather than the true underlying signal [12]. Formally, an overfit model demonstrates significant disparity between its performance on training data versus unseen test data from the same distribution [11]. In scientific terms, such a model has memorized rather than learned, compromising its ability to extract meaningful patterns from new data.

The consequences are particularly severe in scientific domains. In chemometrics and quantitative structure-activity relationship (QSAR) modeling, overfit models may incorrectly predict compound activity, wasting synthetic chemistry resources [15]. In medical imaging artificial intelligence (AI), overfitting can create algorithms that perform excellently on historical data but fail clinically on new patient populations [14]. The model becomes overconfident about patterns that do not exist in the broader population, creating a false sense of predictive capability [12].

Measuring Generalization Performance

True generalization error represents a model's expected error on new data drawn from the same population as the training data [12]. Since we cannot typically access the entire population, we estimate this error through validation techniques:

- Training Error: Error calculated on the same data used for model development (often optimistically biased)

- Estimated Generalization Error: Error estimated through validation procedures on data not used in training

- True Generalization Error: The actual error on the population distribution (typically unknown in real-world applications) [12]

Single split validation provides a single estimate of generalization performance using a held-out test set, but this estimate suffers from high variance and depends heavily on the particular random partition [16].

Why Single Split Validation Fails: Experimental Evidence

Comparative Studies on Data Splitting Methods

A comprehensive comparative study examined multiple data splitting methods using simulated datasets with known misclassification probabilities. Researchers generated datasets of varying sizes (30, 100, and 1000 samples) using the MixSim model and applied partial least squares discriminant analysis (PLS-DA) and support vector machines for classification (SVC) [15]. The performance estimates from validation sets were compared against true performance on blind test sets generated from the same distribution.

Table 1: Performance Gap Between Validation Estimates and True Test Performance Across Dataset Sizes

| Dataset Size | Single Split | k-Fold CV (k=5) | k-Fold CV (k=10) | Bootstrap | Kennard-Stone |
|---|---|---|---|---|---|
| 30 samples | 22.5% gap | 15.3% gap | 14.1% gap | 16.2% gap | 28.7% gap |
| 100 samples | 12.8% gap | 8.7% gap | 7.9% gap | 9.1% gap | 19.4% gap |
| 1000 samples | 4.2% gap | 2.1% gap | 1.8% gap | 2.3% gap | 8.9% gap |

The results demonstrated a significant gap between the performance estimated from the validation set and the true test-set performance for all data splitting methods when applied to small datasets (30 samples) [15]. This disparity decreased with larger sample sizes (1000 samples), as sample statistics approached the behavior predicted by the central limit theorem. Crucially, single split validation was consistently among the methods with the largest performance gaps, exceeded only by Kennard-Stone, and its gap was larger than that of k-fold cross-validation or the bootstrap at every dataset size, particularly for the smaller samples common in early-stage research.

The Dataset Size Dilemma in Scientific Research

Single split validation performs particularly poorly with limited samples, a frequent scenario in scientific research where data may be scarce due to:

- High costs of experimental data collection (e.g., clinical trials, animal studies)

- Rare disease populations with limited patient data

- Novel compound testing with restricted synthesis capability

- Complex assays with technical or budgetary constraints

With small datasets, a single split must sacrifice either training data (increasing bias) or test data (increasing variance in performance estimation) [15] [17]. Holding out 20-30% of a small dataset for testing may leave insufficient data for proper model training, while using most data for training leaves a test set too small for reliable performance estimation [3].

Sensitivity to Data Partitioning

Single split validation produces performance estimates that vary significantly based on which specific samples are randomly assigned to training versus test sets [14]. In one partitioning, difficult-to-predict samples might be concentrated in the test set, yielding pessimistic performance estimates. In another partitioning, the test set might contain easier samples, creating optimistic performance estimates [16]. This high variance makes comparative model evaluation unreliable—researchers might select an inferior model simply because it was evaluated on a favorable test set partition.

Robust Alternatives: Cross-Validation Techniques

k-Fold Cross-Validation: A Standard Approach

k-Fold cross-validation addresses single split limitations by partitioning data into k equal-sized folds [3] [16]. In each of k iterations, k-1 folds serve as training data while the remaining fold serves as validation data. Each data point is used exactly once for validation, and the final performance estimate averages results across all k iterations [1].

Table 2: Comparison of Common k Values in k-Fold Cross-Validation

| k Value | Advantages | Disadvantages | Recommended Use Cases |
|---|---|---|---|
| k=5 | Lower computational cost, reasonable bias-variance tradeoff | Higher bias than larger k values | Medium to large datasets, initial model screening |
| k=10 | Lower bias, widely accepted standard | 10x computational cost vs single split | Most applications, final model evaluation |
| LOO (k=n) | Nearly unbiased estimate, uses maximum training data | Highest variance, computationally expensive | Very small datasets (<50 samples) |

Specialized Cross-Validation Methods for Scientific Data

Stratified Cross-Validation

For classification problems with imbalanced class distributions (common with rare disease outcomes or active compounds in drug discovery), stratified cross-validation preserves the original class proportions in each fold [3] [13]. This prevents scenarios where random partitioning creates folds missing representation from minority classes, which would lead to unreliable performance estimates.
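To make the effect concrete, the sketch below counts minority-class samples per validation fold under plain and stratified splitting. The labels (90 inactive, 10 active) are a hypothetical example, and the feature matrix is a placeholder because only the labels influence the split.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Hypothetical imbalanced labels: 90 inactive (0) and 10 active (1) compounds.
y = np.array([0] * 90 + [1] * 10)
X = np.zeros((len(y), 1))  # placeholder features; the split ignores their values


def positives_per_fold(splitter):
    """Return the number of minority-class samples in each validation fold."""
    return [int(y[val_idx].sum()) for _, val_idx in splitter.split(X, y)]


plain = positives_per_fold(KFold(n_splits=5, shuffle=True, random_state=0))
stratified = positives_per_fold(
    StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
)

print("KFold:          ", plain)
print("StratifiedKFold:", stratified)
```

With 10 positives spread over 5 folds, the stratified splitter places 2 in every validation fold, whereas the plain splitter's counts depend on the shuffle and can be uneven.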

Nested Cross-Validation

When both model selection and performance estimation are required, nested cross-validation provides an unbiased solution by implementing two layers of cross-validation [14] [13]. The inner loop performs hyperparameter optimization and model selection, while the outer loop provides performance estimation on completely held-out data. This approach prevents information leakage from the test set into model selection, a common pitfall when using single split validation [14].

Subject-Wise and Record-Wise Cross-Validation

For biomedical data with multiple measurements per subject, standard cross-validation can create bias if the same subject appears in both training and test sets [13]. Subject-wise cross-validation ensures all records from a single subject remain in either training or test folds, preventing artificially inflated performance from the model learning subject-specific correlations rather than generalizable patterns.

Experimental Protocols for Validation Strategy Comparison

Benchmarking Methodology for Validation Techniques

To quantitatively compare single split validation against cross-validation approaches, researchers can implement the following experimental protocol:

- Dataset Selection: Curate datasets of varying sizes (small: <100 samples, medium: 100-1000 samples, large: >1000 samples) relevant to the scientific domain

- Model Training: Apply consistent preprocessing and train identical model architectures using different validation strategies

- Performance Benchmarking: Compare validation estimates against performance on a completely held-out test set (or synthetic datasets with known ground truth)

- Stability Assessment: Repeat each validation method with multiple random seeds to measure estimate variance
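The stability-assessment step can be sketched as below, assuming a small synthetic dataset, a logistic-regression model, and 20 random seeds. These are illustrative choices, not the settings of any cited study. The sketch repeats a single 80/20 split and a 5-fold CV under each seed and compares the spread of the resulting estimates.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split

# Small synthetic dataset with some label noise (assumption for illustration).
X, y = make_classification(n_samples=100, n_features=10, flip_y=0.1, random_state=0)
model = LogisticRegression(max_iter=1000)

holdout_estimates, kfold_estimates = [], []
for seed in range(20):
    # Single split: one accuracy estimate per seed.
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=seed
    )
    holdout_estimates.append(model.fit(X_tr, y_tr).score(X_te, y_te))

    # 5-fold CV: the mean over folds is the estimate for this seed.
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    kfold_estimates.append(cross_val_score(model, X, y, cv=cv).mean())

print(f"hold-out  std across seeds: {np.std(holdout_estimates):.3f}")
print(f"5-fold CV std across seeds: {np.std(kfold_estimates):.3f}")
```

A smaller standard deviation across seeds indicates an estimate that depends less on the particular partition; comparing either set of estimates against a held-out test set covers the benchmarking step.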

Implementation Framework

Table 3: Research Reagent Solutions for Validation Experiments

| Tool/Technique | Function | Example Implementation |
|---|---|---|
| scikit-learn | Machine learning library with cross-validation utilities | cross_val_score, KFold, StratifiedKFold |
| MixSim | Generate datasets with known misclassification probabilities | Simulate data with controlled overlap between classes [15] |
| Early Stopping | Prevent overfitting during training by monitoring validation performance | Stop training when validation loss stops improving [17] |
| Data Augmentation | Artificially expand training data by creating modified versions | Image transformations, SMOTE for tabular data [17] |
| Regularization | Constrain model complexity to prevent overfitting | L1 (Lasso) or L2 (Ridge) regularization [17] |

Single split validation represents an inadequate approach for model evaluation in scientific research due to its high variance, sensitivity to data partitioning, and systematic overestimation of performance—particularly problematic with limited sample sizes common in early-stage research and drug development. Cross-validation techniques, particularly k-fold and stratified approaches, provide more reliable and stable performance estimates by leveraging multiple data partitions and incorporating all available data into both training and validation roles.

For researchers and drug development professionals, adopting robust cross-validation practices is essential for generating trustworthy computational models that translate successfully to experimental validation and clinical application. The choice of specific validation strategy should align with dataset characteristics, including size, class distribution, and subject structure, to ensure accurate estimation of true generalization performance and avoid the costly consequences of overfit models in scientific discovery pipelines.

In computational science research, robust model validation is paramount to ensuring that predictive findings are reliable and generalizable. Cross-validation stands as a cornerstone technique in this process, providing a framework for assessing how the results of a statistical analysis will generalize to an independent dataset [16]. The proper application of cross-validation requires a precise understanding of its core components: the folds, sets, samples, and groups that structure the validation workflow. Misunderstanding these elements can lead to data leakage, overfitting, and ultimately, non-reproducible research—a significant concern in fields like drug development where decisions have profound implications [18] [2].

This guide delineates these key terms within the context of cross-validation techniques, providing researchers with the conceptual clarity needed to implement validation protocols correctly. We objectively compare the performance outcomes associated with different validation approaches, supported by experimental data from published studies, to equip scientists with evidence-based recommendations for their analytical workflows.

Core Terminology and Definitions

Foundational Concepts

- Samples: Individual data points or observations within a dataset. In a study, the total number of samples is often denoted as n [16]. For example, a dataset with 150 iris plants contains 150 samples [1].

- Sets: Distinct partitions of the overall dataset created for machine learning purposes. The three critical sets are:

  - Training Set: The subset of data used exclusively to fit (train) the model [19]. The model learns parameters from this data.

  - Validation Set: A subset of data used to provide an unbiased evaluation of a model fit on the training dataset while tuning model hyperparameters [1]. It acts as a simulated test set during the model development cycle.

  - Test Set: A final, held-out subset of data used to provide an unbiased evaluation of the final model after training and hyperparameter tuning are complete [1] [16]. It is crucial for estimating the model's real-world performance and generalization error.

The Concept of Folds and Groups

- Folds: In k-fold cross-validation, the dataset is divided into k equal (or nearly equal) subsets, each of which is called a fold or group [16] [20]. These terms are often used interchangeably in this context. The value k is a user-defined parameter, with 5 and 10 being common choices [20].

- Groups: This term can also have a specific meaning in "group-wise" or "subject-wise" cross-validation, where data is partitioned such that all samples from a single group (e.g., a patient in a medical study) are kept together in either the training or test set to prevent data leakage [2].

The following workflow diagram illustrates how these components interact in a standard k-fold cross-validation process.

Comparative Analysis of Validation Techniques

Different validation strategies utilize the core terminology in distinct ways, leading to varying outcomes in model performance, computational cost, and reliability. The table below summarizes the key characteristics of the most common techniques.

Table 1: Comparison of Common Model Validation Techniques

| Technique | Definition | Key Parameters | Best-Suited Use Cases | Reported Performance & Experimental Findings |
|---|---|---|---|---|
| Holdout Validation | A simple split into a single training set and a single test set [16]. | test_size (e.g., 0.2 or 20%) | Large datasets, initial model prototyping [19]. | Prone to high variance in performance estimates based on a single random split [16] [2]. |
| k-Fold Cross-Validation | The dataset is divided into k folds. The model is trained and tested k times, each time using a different fold as the test set and the remaining k-1 folds as the training set [20]. | n_splits or k (e.g., 5 or 10) [1] | General-purpose model assessment and selection with limited data [16]. | Provides a more reliable and less biased performance estimate than a single holdout set. A study on credit scoring used this to identify and reduce overfitting [21]. |
| Stratified k-Fold | A variation of k-fold that ensures each fold has the same proportion of class labels as the complete dataset [19]. | n_splits, shuffle, random_state | Classification problems, especially with imbalanced datasets [19]. | Prevents optimistic bias from random sampling. In one example, it yielded an overall accuracy of ~96.7% with a standard deviation of ~0.02 on a breast cancer dataset [19]. |
| Leave-One-Out (LOOCV) | A special case of k-fold where k is equal to the number of samples (n) in the dataset. Each sample gets to be the test set exactly once [16]. | n_splits = n (number of samples) | Very small datasets where maximizing training data is critical [16]. | Computationally expensive but leads to a low-bias estimate of performance. However, it can have high variance [16] [19]. |
| Nested Cross-Validation | Uses an outer loop for performance estimation and an inner loop for hyperparameter tuning on the training folds, preventing optimistic bias [2]. | outer_cv, inner_cv | Rigorous model evaluation when hyperparameter tuning is required [2]. | Considered a gold standard; reduces optimistic bias but comes with significant computational costs [2]. |

Experimental Performance Data

The choice of validation technique directly impacts reported model performance and its real-world applicability.

- Healthcare Predictive Modeling: A study using the MIMIC-III dataset demonstrated that nested cross-validation, while computationally intensive, provides a less optimistic and more realistic estimate of model performance on unseen patient data compared to a simple holdout method [2]. This is critical for clinical decision-making.

- Contamination Classification: In an engineering application, researchers achieved accuracies consistently exceeding 98% for classifying contamination levels in high-voltage insulators. They validated these results using robust cross-validation techniques, underscoring the model's reliability [22].

- Stability in Reproducibility: Research highlights that ML models with stochastic initialization can suffer from reproducibility issues. A novel validation approach involving repeated trials (up to 400 per subject) was shown to stabilize predictive performance and feature importance, addressing the variability introduced by random seeds [18].

Implementation Protocols and Workflows

Standard k-Fold Cross-Validation Protocol

The following protocol details the steps for implementing k-fold cross-validation, a widely used method in computational research.

- Data Preparation: Shuffle the dataset randomly to avoid any order effects [20].

- Partitioning: Split the entire collection of samples into k folds (or groups) of approximately equal size.

- Iterative Training and Validation: For each unique fold i (where i = 1 to k):

  - a. Assign fold i to be the test set.

  - b. Assign the remaining k-1 folds to be the training set.

  - c. Train the model on the training set.

  - d. Evaluate the trained model on the test set and record the performance score (e.g., accuracy).

- Result Aggregation: Calculate the final model performance by averaging the k recorded scores. The standard deviation of these scores can also be reported as a measure of stability [1] [20].

This workflow ensures that every sample in the dataset is used exactly once for testing and k-1 times for training, maximizing data usage and providing a robust performance estimate.
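The protocol can also be written as an explicit loop, which makes the bookkeeping visible. This is a sketch under assumed inputs (synthetic data, logistic regression, k = 5). The `test_counts` and `train_counts` arrays are added here only to check the once-for-testing and (k-1)-times-for-training property described above.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold

# Synthetic stand-in data (assumption for illustration).
X, y = make_classification(n_samples=100, n_features=5, random_state=0)
base_model = LogisticRegression(max_iter=1000)

# Steps 1-2: shuffle and partition into k = 5 folds.
kf = KFold(n_splits=5, shuffle=True, random_state=0)

scores = []
test_counts = np.zeros(len(X), dtype=int)
train_counts = np.zeros(len(X), dtype=int)

# Step 3: each fold serves as the test set exactly once.
for train_idx, test_idx in kf.split(X):
    model = clone(base_model)  # fresh, unfitted copy for every fold
    model.fit(X[train_idx], y[train_idx])
    scores.append(model.score(X[test_idx], y[test_idx]))
    test_counts[test_idx] += 1
    train_counts[train_idx] += 1

# Step 4: aggregate the k scores.
mean_score, std_score = np.mean(scores), np.std(scores)
print(f"accuracy: {mean_score:.3f} +/- {std_score:.3f}")
```

In practice `cross_val_score` performs the same loop; the explicit version is mainly useful when per-fold predictions or custom diagnostics are needed.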

Protocol for Subject-Wise Validation

In domains like healthcare and drug development, where multiple records may belong to a single subject, a standard k-fold approach can lead to data leakage. The following subject-wise protocol is designed to prevent this.

Protocol Steps:

- Subject Identification: Identify all unique subjects (e.g., patient IDs) in the dataset.

- Subject-Level Splitting: Shuffle the list of unique subjects and split it into k folds.

- Record Assignment: For each fold i, assign all records belonging to the subjects in fold i to the test set. Assign all records from all remaining subjects to the training set.

- Modeling and Evaluation: Proceed with training and evaluation as in the standard k-fold protocol. This ensures no subject has their data in both the training and test set for a given fold, preventing optimistic bias and ensuring a more realistic evaluation [2].
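A minimal sketch of subject-wise splitting, assuming scikit-learn's `GroupKFold` and a hypothetical dataset of 6 patients with 3 records each. Note that `GroupKFold` as used here assigns groups deterministically, so the protocol's shuffling step is not reproduced in this sketch.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# Hypothetical data: 6 patients with 3 records each (assumption for illustration).
patient_ids = np.repeat(np.arange(6), 3)
X = np.random.default_rng(0).normal(size=(len(patient_ids), 4))
y = np.tile([0, 1, 0], 6)

gkf = GroupKFold(n_splits=3)

overlaps = []
for fold, (train_idx, test_idx) in enumerate(
    gkf.split(X, y, groups=patient_ids), start=1
):
    # Patients appearing on both sides of the split would indicate leakage.
    shared = set(patient_ids[train_idx]) & set(patient_ids[test_idx])
    overlaps.append(shared)
    print(f"fold {fold}: test patients={sorted(set(patient_ids[test_idx].tolist()))}")
```

The same `groups` argument can be passed to `cross_val_score` together with `cv=GroupKFold(...)`, so the subject-wise constraint carries over to the standard evaluation helpers.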

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines essential computational "reagents" and their functions for implementing robust validation strategies.

Table 2: Essential Tools and Packages for Validation Experiments

| Tool/Reagent | Function in Validation Protocol | Example Implementation |

|---|---|---|

| scikit-learn (sklearn) | A comprehensive Python library providing implementations for all major cross-validation techniques and data splitters [1]. | from sklearn.model_selection import KFold, StratifiedKFold, cross_val_score |

| Stratified Splitters | Specialized classes that preserve the percentage of samples for each class in the splits, crucial for imbalanced data [1] [19]. | skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=1) |

| Pipeline Class | Ensures that data preprocessing (e.g., scaling) is fitted only on the training fold and applied to the validation fold, preventing data leakage [1]. | make_pipeline(StandardScaler(), SVC(C=1)) |

| Random State Seed | An integer used to initialize the random number generator. Fixing this ensures that the same data splits are produced, making experiments reproducible [1] [18]. | KFold(n_splits=5, shuffle=True, random_state=42) |

| Hyperparameter Optimizers | Tools like GridSearchCV or RandomizedSearchCV that integrate with cross-validation for automated model tuning [1]. | GridSearchCV(estimator, param_grid, cv=5) |

| Performance Metrics | Functions to calculate evaluation scores (e.g., accuracy, F1, ROC-AUC) for each fold during cross-validation [1] [19]. | cross_val_score(clf, X, y, cv=5, scoring='f1_macro') |
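The tools in the table can be combined in a single experiment. The sketch below is one possible arrangement, not a prescribed recipe. The breast-cancer dataset, the grid of `C` values, and the choice to wrap the search in an outer cross-validation loop are all assumptions made for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)  # stand-in dataset (assumption)

# Pipeline: the scaler is refit on each training fold only.
pipe = make_pipeline(StandardScaler(), SVC())
param_grid = {"svc__C": [0.1, 1, 10]}  # illustrative grid

# Stratified splitters with fixed seeds for reproducible folds.
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# The inner loop tunes C; the outer loop scores the whole tuning procedure.
search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="f1_macro")
nested_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="f1_macro")

print(f"macro-F1: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")
```

Scoring the search object in an outer loop keeps hyperparameter selection out of the data used for the reported estimate.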

In machine learning (ML) and artificial intelligence (AI), the bias-variance tradeoff is a fundamental concept that governs the performance of any predictive model [23]. It describes the inherent tension between two sources of error that affect a model's predictions. Bias refers to the error that occurs due to overly simplistic assumptions in the learning algorithm, leading to underfitting. Variance refers to the error from being overly sensitive to small fluctuations in the training data, leading to overfitting [23] [24].

Striking the right balance between these two errors is not merely a theoretical exercise; it is essential for building robust, generalizable models, especially in scientific fields like computational biology and drug development. A model that overfits may appear perfect during training but will fail catastrophically when presented with new, unseen data from a real-world experiment [23] [25]. Cross-validation techniques provide the primary toolkit for diagnosing this tradeoff and guiding researchers toward models that will perform reliably in production [4] [25].

Theoretical Framework and Definitions

Decomposing Prediction Error

The total error of a machine learning model can be mathematically decomposed into three components [24]:

Total Error = Bias² + Variance + Irreducible Error

- Bias² (Squared Bias): This error arises from erroneous assumptions in the model. A high-bias model is too simplistic and fails to capture the underlying patterns in the data. For example, using a linear model to fit a complex, non-linear relationship will result in high bias [23] [24].

- Variance: This error measures the model's sensitivity to the specific training set. A high-variance model learns the training data too well, including its noise and random fluctuations. This causes the model's predictions to change significantly if it were trained on a slightly different dataset [23] [24].

- Irreducible Error: This is the inherent noise in the data itself. No model can reduce this error, as it represents the natural randomness and unmeasurable factors in the problem domain [24].
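For squared-error loss, the decomposition can be written out explicitly. The notation is an assumption introduced here, not taken from the cited sources: the data follow y = f(x) + ε with zero-mean noise of variance σ², f̂ is the model fitted on a random training set, and the expectation runs over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{Variance}}
  + \underbrace{\sigma^2}_{\text{Irreducible Error}}
```

The three terms correspond one-to-one to the three bullet points above.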

The Tradeoff in Model Complexity

The core of the bias-variance tradeoff is managed through model complexity. As a model becomes more complex, its ability to capture intricate patterns increases.

- Simple Models (e.g., Linear Regression): Typically have high bias and low variance. They make strong assumptions about the data, leading to consistent but potentially inaccurate predictions. This is known as underfitting [23].

- Complex Models (e.g., deep neural networks or high-degree polynomials): Typically have low bias and high variance. They are highly flexible and can fit the training data very closely, but may fail to generalize. This is known as overfitting [23] [26].

The goal is to find a "sweet spot" in complexity where the sum of bias² and variance is minimized, yielding the best predictive performance on new, unseen data [27] [24]. The following table summarizes the relationship between model complexity and error components.

Table 1: The Relationship Between Model Complexity and Error Components

| Model Complexity | Bias | Variance | Total Error | Phenomenon |

|---|---|---|---|---|

| Low | High | Low | High (dominated by bias) | Underfitting |

| Medium | Medium | Medium | Low (Optimal) | Balanced |

| High | Low | High | High (dominated by variance) | Overfitting |

| Very High | Very Low | Very High | Very High | Severe Overfitting |
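The pattern in the table can be reproduced on a toy problem. The sketch below assumes a noisy cosine curve with 30 points and polynomial degrees 1, 4, and 15. It follows the familiar scikit-learn underfitting/overfitting demonstration and is not drawn from the studies cited here.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

np.random.seed(0)
n_samples = 30
X = np.sort(np.random.rand(n_samples))
y = np.cos(1.5 * np.pi * X) + np.random.randn(n_samples) * 0.1  # signal + noise

cv_mse = {}
for degree in (1, 4, 15):  # low, medium, and very high complexity
    model = make_pipeline(
        PolynomialFeatures(degree, include_bias=False), LinearRegression()
    )
    scores = cross_val_score(
        model, X[:, np.newaxis], y, scoring="neg_mean_squared_error", cv=10
    )
    cv_mse[degree] = -scores.mean()  # flip sign: scikit-learn maximizes scores
    print(f"degree {degree:2d}: CV MSE = {cv_mse[degree]:.3g}")
```

On this data, the degree-1 model underfits, the degree-15 model overfits, and the intermediate degree gives the lowest cross-validated error.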

Cross-Validation: The Diagnostic Toolkit

Cross-validation (CV) is a family of statistical techniques used to estimate the robustness and generalization performance of a model [4]. It is the primary practical method for diagnosing the bias-variance tradeoff.

Core Concepts and Terminology

To ensure clarity, we define key terms used in cross-validation [4]:

- Sample: A single unit of observation or record within a dataset (synonyms: instance, data point).

- Dataset (D): The total set of all samples available.

- Fold: A batch of samples used as a subset of the dataset, typically in k-fold CV.

- Group (g): A sub-collection of samples that share a common characteristic (e.g., data from the same patient or laboratory).

Common Cross-Validation Methods

Different CV methods are suited for different data structures and scientific questions.

- k-Fold Cross-Validation: The dataset is randomly split into k roughly equal-sized folds. The model is trained on k-1 folds and validated on the remaining fold. This process is repeated k times, with each fold used exactly once as the validation set [4] [28]. The k results are then averaged to produce a single estimation. This method is the most common implementation of CV [25].

- Leave-One-Out Cross-Validation (LOOCV): A special case of k-fold CV where k equals the number of samples in the dataset. This means that each sample is used once as a single-item test set. It is computationally expensive but useful for very small datasets [4].

- Stratified Cross-Validation: A variation that ensures each fold represents the overall dataset well, particularly by preserving the percentage of samples for each class in classification problems. This is crucial for imbalanced datasets [25].

- Grouped and Time-Series Cross-Validation: When data have inherent groupings or temporal dependencies, standard random splits can lead to over-optimistic performance estimates. Methods like grouped CV ensure that all samples from the same group are either in the training or test set, preventing data leakage. Time-series splits respect the temporal order of data [29] [4].
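Two of the properties described in this list can be checked directly on the generated indices. The sketch below uses synthetic labels (90 negatives, 10 positives), an assumption chosen so the stratification is easy to see.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, TimeSeriesSplit

# Synthetic imbalanced labels: 90 negatives followed by 10 positives.
y = np.array([0] * 90 + [1] * 10)
X = np.arange(len(y)).reshape(-1, 1)  # placeholder features / time index

# Stratified CV: each test fold keeps the 10% positive rate.
skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
positives_per_fold = [int(y[test].sum()) for _, test in skf.split(X, y)]
print(positives_per_fold)

# Time-series CV: every training index precedes every test index.
tss = TimeSeriesSplit(n_splits=4)
train_before_test = [train.max() < test.min() for train, test in tss.split(X)]
print(train_before_test)
```

A plain shuffled `KFold` guarantees neither property, which is why the data structure should drive the choice of splitter.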

The following diagram illustrates the workflow of a standard k-fold cross-validation process.

Advanced Validation for Scientific Discovery

In scientific research, particularly in drug discovery, standard random-split CV may not sufficiently test a model's real-world applicability. Prospective validation—assessing performance on truly out-of-distribution data—is critical [29].

- k-Fold n-Step Forward Cross-Validation: Inspired by materials science, this method is useful when data are sorted by a meaningful property (e.g., molecular hydrophobicity, LogP). The dataset is sorted and divided into bins. The model is first trained on the first bin and tested on the second. The training set is then expanded step-wise to include the next bin, while testing on the subsequent one. This mimics a real-world scenario of optimizing compounds toward more drug-like properties [29].

- Cross-Cohort Validation: When multiple datasets (e.g., from different clinical sites or populations) are available, a model is trained on one cohort and tested on another. This rigorously tests whether the model has learned generalizable biological signals rather than cohort-specific noise [25].
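The step-forward scheme can be sketched as a small index generator. Everything in the example is an assumption for illustration and not the implementation used in [29]: the helper name `step_forward_splits`, the synthetic LogP-like column, the number of bins, and the random-forest model.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error


def step_forward_splits(sort_values, n_bins, descending=False):
    """Yield (train_idx, test_idx) pairs with an expanding training set."""
    order = np.argsort(sort_values)
    if descending:
        order = order[::-1]
    bins = np.array_split(order, n_bins)  # consecutive bins along the sort key
    for step in range(1, n_bins):
        # Train on all bins seen so far, test on the next one.
        yield np.concatenate(bins[:step]), bins[step]


# Synthetic stand-in data: 200 "compounds", 16 features, a LogP-like property.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 16))
logp = rng.uniform(-1, 6, size=200)
y = X[:, 0] + 0.5 * logp + rng.normal(scale=0.2, size=200)

train_sizes, step_mse = [], []
for train_idx, test_idx in step_forward_splits(logp, n_bins=5):
    model = RandomForestRegressor(n_estimators=50, random_state=0)
    model.fit(X[train_idx], y[train_idx])
    step_mse.append(mean_squared_error(y[test_idx], model.predict(X[test_idx])))
    train_sizes.append(len(train_idx))

print(train_sizes)  # training set grows by one bin per step
print([round(m, 3) for m in step_mse])
```

Because each test bin lies outside the property range seen in training, the per-step errors probe extrapolation rather than interpolation.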

Experimental Comparison: Validation in Bioactivity Prediction

To illustrate the practical implications of the bias-variance tradeoff and cross-validation, we examine an experimental case study from bioactivity prediction.

Experimental Protocol and Research Reagents

Table 2: The Scientist's Toolkit: Key Research Reagents and Computational Resources

| Item / Resource | Function / Description | Source / Implementation |

|---|---|---|

| hERG, MAPK14, VEGFR2 Datasets | Provide experimentally measured pIC50 values for model training and testing. | Sourced from Landrum et al. [29] |

| RDKit | Open-source cheminformatics library used for molecule standardization and featurization. | RDKit (version 2023.9.4) [29] |

| ECFP4 Fingerprints (2048-bit) | Encodes molecular structures into a fixed-length binary vector, serving as model input features. | Generated via RDKit [29] |

| Random Forest (RF) Regressor | An ensemble of decision trees that captures complex, non-linear relationships; averaging over many trees reduces the variance of any single tree. | scikit-learn [29] |

| Gradient Boosting | A powerful, sequential ensemble method that often has low bias but risks high variance. | scikit-learn [29] |

| Multi-Layer Perceptron (MLP) | A neural network model capable of learning highly complex functions. | scikit-learn [29] |

Methodology Summary [29]:

- Data Curation: Three datasets (hERG, MAPK14, VEGFR2) were sourced and curated. Molecules were standardized using RDKit, and their IC50 values (concentration for 50% inhibition) were converted to pIC50 (-log10(IC50)) for a more intuitive scale of potency.

- Featurization: Molecular structures were converted into numerical ECFP4 fingerprints.

- Model Training: Three model types—Random Forest (RF), Gradient Boosting, and Multi-Layer Perceptron (MLP)—were trained.

- Validation Protocols: Models were evaluated using two distinct methods:

- Conventional k-Fold CV: Data was split randomly into training and test sets.

- Sorted k-Fold n-Step Forward CV (SFCV): Data was sorted by LogP (a key drug-like property) and split sequentially to simulate prospective optimization.
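The pIC50 conversion in the data-curation step is a one-line calculation once units are fixed. The sketch below assumes IC50 values reported in nanomolar, a common convention but an assumption here, and converts them to molar before taking the negative base-10 logarithm. The function name is illustrative.

```python
import numpy as np


def ic50_nm_to_pic50(ic50_nm):
    """Convert IC50 values in nanomolar to pIC50 = -log10(IC50 in molar)."""
    ic50_molar = np.asarray(ic50_nm, dtype=float) * 1e-9
    return -np.log10(ic50_molar)


pic50 = ic50_nm_to_pic50([1.0, 1000.0, 10000.0])
print(pic50)  # 1 nM -> 9, 1 uM -> 6, 10 uM -> 5
```

Higher pIC50 means higher potency, and each unit corresponds to a tenfold change in IC50.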

Quantitative Results and Analysis

The following table summarizes the key performance data from the study, highlighting the differences observed between validation methods.

Table 3: Comparative Model Performance Using Different Cross-Validation Strategies

| Target Protein | Model Algorithm | Conventional k-Fold CV Performance (MSE) | Sorted Step-Forward CV (SFCV) Performance (MSE) | Implied Generalization Gap |

|---|---|---|---|---|

| hERG | Random Forest | Not Explicitly Reported | Higher than conventional CV | Larger gap for SFCV, indicating potential overfitting to random splits [29] |

| hERG | Gradient Boosting | Not Explicitly Reported | Higher than conventional CV | Larger gap for SFCV, indicating potential overfitting to random splits [29] |

| hERG | Multi-Layer Perceptron | Not Explicitly Reported | Higher than conventional CV | Larger gap for SFCV, indicating potential overfitting to random splits [29] |

| MAPK14 | Random Forest | Not Explicitly Reported | Higher than conventional CV | Larger gap for SFCV, indicating potential overfitting to random splits [29] |

| VEGFR2 | Random Forest | Not Explicitly Reported | Higher than conventional CV | Larger gap for SFCV, indicating potential overfitting to random splits [29] |

Key Findings [29]:

- The Sorted Step-Forward Cross-Validation (SFCV) consistently resulted in higher prediction errors (MSE) compared to conventional k-fold CV across all models and targets.

- This performance drop in SFCV is a critical indicator of a model's true generalizability. A model that performs well in random CV but poorly in SFCV has likely overfit to the specific distribution of the randomly split data and cannot extrapolate effectively to a structured, real-world task like molecular optimization.

- Therefore, SFCV provides a more realistic, more conservative estimate of model performance for drug discovery, helping researchers avoid deploying models that will fail prospectively.

The interplay between the bias-variance tradeoff and cross-validation has profound implications for computational science research.

- Informed Model Selection: Relying solely on a single metric like testing error from a random split is insufficient. Researchers must analyze the gap between training and validation error to diagnose overfitting (high variance) or underfitting (high bias). Techniques like learning curves are invaluable here [23] [24].

- The Peril of Data Leakage: A common mistake in scientific literature is performing feature selection or other preprocessing steps on the entire dataset before cross-validation. This allows information from the test set to "leak" into the training process, resulting in optimistically biased performance estimates [25]. All steps must be performed within the CV loop, using only the training portion.

- Choosing the Right Validation: There is no one-size-fits-all CV method. Researchers must select a validation strategy that mirrors the intended use case. For temporal data, use time-series splits; for data with groupings, use grouped CV; for discovery tasks aiming at novel regions of chemical space, use step-forward or cross-cohort validation [29] [4] [25].
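The data-leakage point can be demonstrated on pure noise. In the sketch below the features carry no information about the labels, so any accuracy clearly above chance is an artifact. The sample size, the number of features, and the choice of 20 selected features are assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5000))  # pure noise features
y = np.repeat([0, 1], 50)         # labels unrelated to X

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are selected using all labels, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv).mean()

# Correct: selection is refit inside each training fold via a pipeline.
honest_model = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
honest = cross_val_score(honest_model, X, y, cv=cv).mean()

print(f"selection outside CV: {leaky:.2f}")
print(f"selection inside CV:  {honest:.2f}")
```

The only difference between the two estimates is where feature selection happens, which is the distinction the leakage warning above is about.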

In conclusion, the bias-variance tradeoff is not a problem to be solved but a fundamental balance to be managed. Cross-validation provides the essential toolkit for diagnosing this balance. For scientists and drug developers, moving beyond simple random splits to more rigorous, prospective validation strategies is paramount for building models that deliver true predictive power and drive successful scientific outcomes.

The Role of Cross-Validation in CRISP-DM and Scientific Workflows

In computational science research, the reliability of data-driven models is paramount. The Cross-Industry Standard Process for Data Mining (CRISP-DM) provides a robust, iterative framework for analytics projects, with cross-validation serving as a critical technical procedure within its modeling and evaluation phases. Cross-validation estimates how well a model will generalize to unseen data, directly impacting the credibility of scientific findings [1]. This guide examines cross-validation's role within CRISP-DM, objectively comparing techniques and presenting experimental data to inform researchers and drug development professionals.

CRISP-DM's six-phase structure—Business Understanding, Data Understanding, Data Preparation, Modeling, Evaluation, and Deployment—creates a logical container for rigorous model validation [30] [31]. Within this framework, cross-validation specifically addresses the model generalization requirement during the Modeling phase and provides essential evidence for the performance assessment required in the Evaluation phase [32]. The following diagram illustrates how cross-validation is embedded within the broader CRISP-DM workflow:

Cross-Validation Within the CRISP-DM Lifecycle

Integration in Modeling and Evaluation Phases

In CRISP-DM, cross-validation is formally incorporated during the Modeling phase as part of the "Generate Test Design" task, where the validation strategy for model development is established [30]. The model performance metrics obtained through cross-validation then feed directly into the Evaluation phase, where researchers determine which model best meets business objectives and scientific requirements [33] [31].

This integration is crucial for maintaining scientific rigor, as it provides empirical evidence of model robustness before deployment. In scientific contexts like drug development, this process helps ensure that predictive models will perform reliably on new experimental data or patient populations, potentially reducing late-stage failure rates [34].

Iterative Nature and Feedback Loops

CRISP-DM is inherently iterative, and cross-validation results often trigger these iterations [30]. For example, poor cross-validation performance might necessitate returning to Data Preparation for additional feature engineering or to Modeling for algorithm selection [35]. This iterative process, when properly documented, creates an audit trail valuable for regulatory compliance in fields like pharmaceutical development [32].

Comparative Analysis of Cross-Validation Techniques

Different cross-validation techniques offer distinct tradeoffs between bias, variance, and computational expense, making them suitable for different research scenarios within the CRISP-DM framework.

Table 1: Cross-Validation Techniques Comparison

| Technique | Mechanism | Best For | Advantages | Limitations |

|---|---|---|---|---|

| k-Fold [1] [36] | Data divided into k approximately equal folds; each fold serves as validation once | Medium to large datasets, general use | Low bias, good data utilization | More pessimistic bias with small k; requires k model fits |

| Stratified k-Fold [4] | Preserves class distribution in each fold | Imbalanced datasets, classification | Reliable with class imbalance | Increased complexity |

| Leave-One-Out (LOO) [4] | Each sample serves as validation once | Very small datasets | Low bias, maximum training data | High computational cost, high variance |

| Leave-P-Out [4] | Leaves p samples out for validation | Small datasets, thorough validation | More thorough than LOO | Computationally prohibitive for large p |

| Subject-Wise [34] | Keeps all samples from same subject in same fold | Medical data with multiple samples per subject | Prevents data leakage, realistic clinical simulation | Requires subject identifiers |

| Time-Series Split [4] | Maintains temporal ordering | Time-series data, forecasting | Preserves temporal dependencies | Not for independent data |

Key Differentiating Factors

The choice between these techniques depends on several factors. The subject-wise versus record-wise distinction is particularly critical in medical research, where multiple measurements from the same patient violate the assumption of independent samples [34]. Similarly, stratification becomes crucial with imbalanced datasets common in rare disease research, where the event of interest is infrequent [4].

Experimental Protocols and Performance Data

Case Study: Parkinson's Disease Diagnosis

A 2021 study compared subject-wise and record-wise cross-validation for Parkinson's disease diagnosis using smartphone audio data, highlighting how validation methodology impacts reported performance [34].

Table 2: Cross-Validation Performance in Parkinson's Disease Detection

| Validation Method | Classifier | Reported Accuracy | True Holdout Accuracy | Error Underestimation |

|---|---|---|---|---|

| Record-Wise 10-Fold | Support Vector Machine | 78.3% | 62.1% | 16.2% |

| Subject-Wise 10-Fold | Support Vector Machine | 65.4% | 63.8% | 1.6% |

| Record-Wise 10-Fold | Random Forest | 82.7% | 64.9% | 17.8% |

| Subject-Wise 10-Fold | Random Forest | 67.2% | 65.3% | 1.9% |

Experimental Protocol: Researchers collected 848 audio recordings from 424 subjects (212 with Parkinson's, 212 healthy controls) [34]. The dataset was split using both subject-wise division (ensuring all recordings from a subject were in either training or test sets) and record-wise division (random splitting ignoring subject identity). For each splitting method, they evaluated Support Vector Machine and Random Forest classifiers using 10-fold cross-validation, then assessed final performance on a true holdout set.

Results Interpretation: Record-wise cross-validation overestimated accuracy by roughly 16 to 18 percentage points relative to the true holdout set, because recordings from the same subject appeared in both training and validation folds, violating the independence assumption [34]. Subject-wise cross-validation stayed within about 2 percentage points of the holdout result. This demonstrates how inappropriate cross-validation techniques can lead to overly optimistic performance estimates, with serious implications for clinical application.
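The mechanism behind this inflation can be reproduced with synthetic data. The sketch below is not the cited study's data or pipeline. It assumes 40 hypothetical subjects with 10 records each, labels assigned to subjects at random, and a 1-nearest-neighbour classifier as a stand-in model, so nothing can generalize to a new subject.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)
n_subjects, n_records, n_features = 40, 10, 20
subject_ids = np.repeat(np.arange(n_subjects), n_records)

# One random label per subject: there is no real signal to learn.
subject_labels = rng.permutation(np.repeat([0, 1], n_subjects // 2))
y = subject_labels[subject_ids]

# Each record = subject-specific signature + small measurement noise.
signatures = rng.normal(size=(n_subjects, n_features))
noise = 0.3 * rng.normal(size=(subject_ids.size, n_features))
X = signatures[subject_ids] + noise

clf = KNeighborsClassifier(n_neighbors=1)
record_wise = cross_val_score(
    clf, X, y, cv=KFold(n_splits=10, shuffle=True, random_state=0)
).mean()
subject_wise = cross_val_score(
    clf, X, y, cv=GroupKFold(n_splits=10), groups=subject_ids
).mean()

print(f"record-wise accuracy:  {record_wise:.2f}")
print(f"subject-wise accuracy: {subject_wise:.2f}")
```

Under record-wise splitting the classifier simply recognizes subjects it has already seen. Under subject-wise splitting that shortcut is unavailable and accuracy falls toward chance.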

k-Fold Performance Variability Study

A separate analysis of k value selection demonstrated how this parameter affects model evaluation reliability across different dataset sizes and algorithms.

Table 3: k-Fold Performance Variability Across Different Scenarios

| Dataset (size) | Algorithm | k=5 | k=10 | LOO (k=n) | Optimal k |

|---|---|---|---|---|---|

| California Housing (20k samples) [36] | Random Forest | 0.801±0.015 | 0.805±0.008 | 0.807±0.021 | 10 |

| Iris (150 samples) [1] | Linear SVM | 0.960±0.032 | 0.973±0.025 | 0.980±0.028 | LOO |

| Parkinson's Audio (848 records) [34] | Random Forest | 0.794±0.041 | 0.803±0.036 | 0.812±0.052 | 10 |

Experimental Protocol: Each study employed standardized k-fold cross-validation with different k values, recording mean performance metrics and standard deviations. The California Housing dataset used Random Forest with 100 trees [36], the Iris dataset used a Linear Support Vector Machine [1], and the Parkinson's data used Random Forest with subject-wise validation [34].

Results Interpretation: The optimal k value depends on both dataset size and algorithm complexity [37] [36]. For smaller datasets (like Iris with 150 samples), Leave-One-Out provided the best performance despite higher variance, while for larger datasets, k=10 offered a better balance between bias and variance [37].
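A comparison of this kind can be set up as follows. The sketch uses the Iris data with a linear SVM, as in the table, but the seeds and the value `C=1` are assumptions, so the numbers it prints will not necessarily match the reported values.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import KFold, LeaveOneOut, cross_val_score
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)
clf = SVC(kernel="linear", C=1)

schemes = {
    "k=5": KFold(n_splits=5, shuffle=True, random_state=0),
    "k=10": KFold(n_splits=10, shuffle=True, random_state=0),
    "LOO": LeaveOneOut(),
}

summary = {}
for name, cv in schemes.items():
    scores = cross_val_score(clf, X, y, cv=cv)
    summary[name] = (scores.mean(), scores.std(), len(scores))
    print(f"{name:>4}: {scores.mean():.3f} +/- {scores.std():.3f} ({len(scores)} fits)")
```

With leave-one-out, each fold score is either 0 or 1, so the per-fold standard deviation is not directly comparable to that of 5- or 10-fold runs.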

Implementation Guide for Scientific Workflows

The Researcher's Toolkit: Cross-Validation Solutions

Table 4: Essential Cross-Validation Implementation Tools

| Tool/Resource | Function | Implementation Example | Use Case |

|---|---|---|---|

| Scikit-learn KFold [1] [36] | Basic k-fold splitting | KFold(n_splits=5, shuffle=True) | Standard datasets without special structure |

| StratifiedKFold [4] | Preserves class distribution | StratifiedKFold(n_splits=5) | Classification with imbalanced classes |

| LeaveOneOut [4] | Leave-one-out validation | LeaveOneOut() | Very small datasets |

| GroupKFold [34] | Subject-wise splitting | GroupKFold(n_splits=5) | Medical data with multiple samples per subject |

| TimeSeriesSplit [4] | Temporal validation | TimeSeriesSplit(n_splits=5) | Time-series forecasting |

| cross_val_score [1] | Quick validation | cross_val_score(model, X, y, cv=5) | Rapid model evaluation |

| cross_validate [1] | Multiple metrics | cross_validate(model, X, y, scoring=metrics) | Comprehensive evaluation |

Decision Framework for Technique Selection

The following diagram outlines a systematic approach for selecting the appropriate cross-validation technique within a CRISP-DM project:

Implementation Protocol

A robust cross-validation implementation within CRISP-DM should follow this protocol:

- Preprocessing Lockstep: Ensure all preprocessing steps (scaling, imputation, feature selection) are performed within each cross-validation fold to prevent data leakage [1]. Use Scikit-learn's Pipeline for seamless integration.

- Stratification: For classification problems, use stratified splits to maintain class distribution in each fold [4].

- Multiple Metrics: Employ cross_validate with multiple scoring metrics to gain comprehensive model insights [1].

- Statistical Reporting: Always report both mean performance and standard deviation across folds to communicate estimate uncertainty [36].

- Final Holdout: Reserve a completely unseen test set for final model evaluation after cross-validation and model selection [1].
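The five protocol items can be combined into one short script. The sketch below is one way to arrange them. The dataset, model, metric list, and 80/20 holdout split are assumptions made for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_validate, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)  # stand-in dataset (assumption)

# Final holdout: set aside before any cross-validation or model selection.
X_dev, X_holdout, y_dev, y_holdout = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# Preprocessing lockstep: scaling lives inside the pipeline.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Stratification and multiple metrics.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)
metrics = ["accuracy", "f1_macro", "roc_auc"]
results = cross_validate(model, X_dev, y_dev, cv=cv, scoring=metrics)

# Statistical reporting: mean and standard deviation across folds.
for metric in metrics:
    fold_scores = results[f"test_{metric}"]
    print(f"{metric}: {fold_scores.mean():.3f} +/- {fold_scores.std():.3f}")

# One-time evaluation on the untouched holdout set.
holdout_accuracy = model.fit(X_dev, y_dev).score(X_holdout, y_holdout)
print(f"holdout accuracy: {holdout_accuracy:.3f}")
```

The holdout score is computed once, after all modelling decisions are fixed, so that it remains an unbiased check on the cross-validated estimates.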

Cross-validation serves as the critical bridge between model development and reliable deployment within the CRISP-DM framework. For scientific researchers and drug development professionals, technique selection is not merely a technical implementation detail but a fundamental methodological choice that directly impacts study validity. The experimental evidence presented demonstrates that inappropriate validation approaches can significantly overestimate performance, particularly in domains like healthcare with complex data dependencies.

By embedding rigorous, context-appropriate cross-validation within the structured CRISP-DM methodology, computational scientists can produce more reliable, reproducible models that truly generalize to real-world scenarios. This integration of process and validation represents best practice for any data-driven scientific workflow.

Why Cross-Validation Matters in Drug Development and Biomedical Research

In the field of drug development and biomedical research, the ability to build predictive models that generalize reliably to new, unseen data is paramount. Cross-validation is a statistical procedure used to evaluate the performance and generalizability of machine learning models, serving as a critical safeguard against overfitting—a scenario where a model performs well on its training data but fails to predict new samples accurately [1]. This is especially crucial in domains like bioactivity prediction and clinical prognostics, where models inform high-stakes decisions. Unlike a simple train-test split, which can yield optimistic and unstable performance estimates, cross-validation uses multiple data splits to provide a more robust assessment of how a model will perform in practice [38] [13].

The core principle of cross-validation is to give every data point a chance to be in the testing set. The model is trained and evaluated multiple times on different subsets of the available data. The final performance metric is an average across all these iterations, which provides a more reliable estimate of out-of-sample prediction error [38]. For biomedical researchers, this process is not just an academic exercise; it is a fundamental practice for developing models that can truly predict the properties of novel compounds or patient outcomes, thereby de-risking the costly and lengthy process of drug discovery and clinical translation.

Core Cross-Validation Techniques and Their Applications

Various cross-validation techniques have been developed, each with specific strengths tailored to different data structures and research questions common in biomedicine. The choice of method can significantly impact the reliability of a model's performance estimate.

k-Fold Cross-Validation is the most common approach. The dataset is randomly partitioned into k equal-sized folds (groups). The model is trained k times, each time using k-1 folds for training and the remaining one fold for testing. The performance scores from all k iterations are then averaged [38] [1]. This method provides a good balance between bias and variance and is widely applicable.

Stratified k-Fold Cross-Validation is a vital variant for classification problems with imbalanced datasets. Standard k-fold might by chance create folds with very few or no instances of a minority class. Stratified k-fold ensures that each fold maintains the same proportion of class labels as the original dataset, leading to a more representative and fair model evaluation [38] [13].

Leave-One-Out Cross-Validation (LOOCV) represents an extreme case of k-fold where k equals the number of data points. In each iteration, a single data point is used for testing, and the model is trained on all the others. While LOOCV is almost unbiased, it is computationally expensive and can have high variance, making it most suitable for very small datasets [38].

Time-Series Cross-Validation is essential for temporal data, such as longitudinal patient studies or sensor readings. Randomly shuffling such data would break temporal dependencies and cause data leakage. Instead, folds are built chronologically using an expanding or rolling window, ensuring that the model is always tested on data from a future time point compared to its training data [38].

Subject-Wise vs. Record-Wise Splitting is a critical consideration for electronic health record (EHR) data or any dataset with multiple records per individual. Record-wise splitting randomly assigns individual patient encounters to training or testing, which can lead to data leakage if records from the same patient appear in both sets. Subject-wise splitting ensures all records from a single patient are contained within either the training or test set, which is a more rigorous approach for developing models that generalize to new patients [13].

The following diagram illustrates the workflow of a typical k-fold cross-validation process, from data preparation to model evaluation.

Comparative Analysis of Validation Methods

Selecting an appropriate validation strategy is a critical step in the model development pipeline. The table below compares the key characteristics of common internal validation methods, highlighting their suitability for different scenarios in biomedical research.

Table 1: Comparison of Common Internal Validation Methods

| Method | Key Principle | Advantages | Disadvantages | Ideal Use Case in Biomedicine |

|---|---|---|---|---|

| Hold-Out Validation | Single random split into training and test sets. | Simple, fast, low computational cost. | High variance in performance estimate; inefficient data use. [39] | Preliminary model screening with very large datasets. |

| k-Fold Cross-Validation | Data divided into k folds; each fold serves as test set once. | More reliable performance estimate; better data utilization. [38] | Higher computational cost than hold-out. | General-purpose model evaluation for most tabular datasets. |

| Stratified k-Fold | k-Fold while preserving class distribution in each fold. | Better for imbalanced classes; more realistic for clinical outcomes. [38] [13] | Only applicable to classification tasks. | Predicting rare clinical events (e.g., disease progression). |

| Leave-One-Out (LOOCV) | Each sample is a test set once; model trained on all others. | Low bias; uses maximum data for training. [38] | Very high computational cost; high variance. | Very small datasets (e.g., early-stage preclinical studies). |

| Time-Series Split | Sequential splitting respecting time order. | Prevents data leakage; realistic for temporal data. [38] | Not for randomly sampled data. | Longitudinal EHR analysis or forecasting disease trajectories. |

| Subject-Wise Split | All records from a subject are in the same fold. | Prevents data leakage; generalizes to new patients. [13] | Requires subject identifiers; can be complex to implement. | All models based on EHR data or clinical trials with repeated measures. |

The performance and reliability of these methods can be quantified. A 2022 simulation study compared internal validation approaches on a clinical dataset of 500 patients predicting disease progression. The results, summarized in the table below, demonstrate that while k-fold cross-validation and a hold-out set produced comparable area under the curve (AUC) values, the hold-out method exhibited greater uncertainty due to its reliance on a single, arbitrary data split [39].

Table 2: Performance Comparison of Internal Validation Methods from a Clinical Simulation Study [39]

| Validation Method | Mean CV-AUC (± SD) | Calibration Slope | Key Finding |

|---|---|---|---|

| 5-Fold Repeated Cross-Validation | 0.71 ± 0.06 | Comparable | Reliable performance estimate. |

| Hold-Out Validation | 0.70 ± 0.07 | Comparable | Higher uncertainty than CV. |

| Bootstrapping | 0.67 ± 0.02 | Comparable | Slightly lower but stable estimate. |
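The difference in stability between a single hold-out split and repeated cross-validation can be explored on synthetic data. The sketch below does not reproduce the cited simulation study. The generated 500-sample dataset, the logistic-regression model, and the ten repetitions are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import (
    RepeatedStratifiedKFold,
    cross_val_score,
    train_test_split,
)

X, y = make_classification(
    n_samples=500, n_features=20, n_informative=5, random_state=0
)
model = LogisticRegression(max_iter=1000)

# Repeated 5-fold CV: 10 repeats x 5 folds, scored by AUC.
rkf = RepeatedStratifiedKFold(n_splits=5, n_repeats=10, random_state=0)
fold_auc = cross_val_score(model, X, y, cv=rkf, scoring="roc_auc")
cv_estimates = fold_auc.reshape(10, 5).mean(axis=1)  # one estimate per repeat

# Hold-out: 10 different random 80/20 splits, one AUC each.
holdout_estimates = []
for seed in range(10):
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=seed
    )
    model.fit(X_tr, y_tr)
    holdout_estimates.append(roc_auc_score(y_te, model.predict_proba(X_te)[:, 1]))

print(f"repeated CV: {np.mean(cv_estimates):.3f} +/- {np.std(cv_estimates):.3f}")
print(f"hold-out:    {np.mean(holdout_estimates):.3f} +/- {np.std(holdout_estimates):.3f}")
```

The spread of the hold-out estimates across seeds shows how much a single arbitrary split can move the reported AUC.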

Advanced and Domain-Specific Validation Techniques

Beyond standard methods, advanced cross-validation techniques address specific challenges in computational drug discovery and biomedical research, such as the need to predict properties for novel chemical structures or to optimize multiple compound properties simultaneously.

k-Fold n-Step Forward Cross-Validation

In drug discovery, the ultimate goal is often to predict the bioactivity of novel, more drug-like compounds that are structurally distinct from those in the training set. Conventional random-split cross-validation is often inadequate for this task: because randomly chosen test compounds tend to resemble the training compounds, it overestimates how well the model will perform on compounds that are very different from the training data [29].

Inspired by validation methods in materials science, k-fold n-step forward cross-validation provides a more realistic assessment. In this method, the dataset is sorted by a key physicochemical property relevant to drug-likeness, such as logP (the partition coefficient measuring hydrophobicity). The data are divided into bins in order of descending logP. The model is first trained on the bin with the highest-logP compounds and tested on the next bin. In each subsequent iteration, the bin just used for testing is added to the training set, and the model is tested on the next bin with lower logP values [29].

This process mimics the real-world drug optimization process, where chemists aim to improve properties like logP to achieve more moderate, drug-like values (typically between 1 and 3). This method more accurately reflects the challenge of extrapolating to new regions of chemical space and provides a better estimate of a model's prospective performance [29]. The following diagram contrasts this approach with a standard k-fold procedure.

Key Metrics for Prospective Validation

When using advanced methods like step-forward cross-validation, two specific metrics are particularly useful for evaluating a model's potential for prospective discovery in drug development [29]:

Discovery Yield: This metric assesses a model's ability to correctly identify molecules with desirable bioactivity compared to other small molecules. It is calculated as the proportion of true positives among the top-k ranked predictions, helping researchers understand the model's hit-finding capability in a virtual screen.

Novelty Error: This measures a model's performance on compounds that are structurally distinct from the training set, effectively quantifying its ability to generalize to new chemical series. A high novelty error indicates that the model's applicability domain is limited and that it may fail when applied to truly novel scaffolds.
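
As a minimal sketch, Discovery Yield as defined above (the proportion of true positives among the top-k ranked predictions) can be computed as follows. The function name, the toy scores, and the convention that a higher score means a more promising compound are assumptions for illustration; the cited study [29] may differ in detail.

```python
def discovery_yield(scores, is_active, k):
    """Fraction of the k highest-scored compounds that are truly active."""
    if not 0 < k <= len(scores):
        raise ValueError("k must be between 1 and the number of compounds")
    # Rank compound indices from highest to lowest predicted score.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    top_k = ranked[:k]
    return sum(1 for i in top_k if is_active[i]) / k

# Toy virtual screen: predicted scores and experimentally confirmed labels.
scores = [0.91, 0.15, 0.78, 0.66, 0.30, 0.85]
is_active = [True, False, False, True, False, True]

yield_at_3 = discovery_yield(scores, is_active, k=3)  # two of the top three are active
yield_at_6 = discovery_yield(scores, is_active, k=6)  # equals the overall hit rate
```

Comparing the value at a small k with the overall hit rate gives a rough sense of how much the ranking enriches actives near the top of the list.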

Experimental Protocols and Research Toolkit

Detailed Protocol for Step-Forward Cross-Validation

The following protocol outlines the steps for implementing a step-forward cross-validation study for bioactivity prediction, as described in the preprint [29].

Dataset Curation: Select a clean dataset of compounds with experimentally measured bioactivity values (e.g., IC50 for a protein target). Standardize molecular structures using a toolkit like RDKit to desalt, neutralize charges, and normalize tautomers. Use the median activity value for replicate measurements.

Data Featurization: Convert the standardized molecular structures into a numerical representation. A common method is to use 2048-bit ECFP4 fingerprints (Morgan fingerprints with radius 2), which encode circular substructures of the molecule into a binary bit vector.

Property Calculation and Sorting: Calculate the logP value for each compound using RDKit. Sort the entire dataset from the highest to the lowest logP value.

Data Binning: Divide the sorted dataset into k contiguous bins (e.g., 10 bins). Each bin will represent a block of compounds with similar logP values.

Iterative Training and Validation:

- Iteration 1: Train the model (e.g., Random Forest, Gradient Boosting) on Bin 1. Validate the model on Bin 2.

- Iteration 2: Train the model on Bins 1 and 2. Validate the model on Bin 3.

- Continue until the model is trained on Bins 1 through k-1 and validated on Bin k.

Performance Analysis: For each iteration, calculate performance metrics (e.g., Root Mean Squared Error, R²). Also, calculate the Discovery Yield and Novelty Error across the iterations to assess the model's utility for prospective compound identification.
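
The sorting, binning, and iterative training steps of this protocol can be sketched as an index generator. This is a hedged illustration, not the implementation from [29]: the function name `step_forward_splits` is hypothetical, the logP values are made-up numbers supplied directly (in the protocol they would be calculated with RDKit), and bins are cut to near-equal size.

```python
def step_forward_splits(logp_values, n_bins):
    """Yield (train_idx, test_idx) pairs for sorted step-forward CV."""
    n = len(logp_values)
    if not 2 <= n_bins <= n:
        raise ValueError("n_bins must be between 2 and the number of compounds")
    # Sort compound indices from highest to lowest logP.
    order = sorted(range(n), key=lambda i: logp_values[i], reverse=True)
    # Cut the sorted order into n_bins contiguous, near-equal bins.
    base, extra = divmod(n, n_bins)
    bins, start = [], 0
    for b in range(n_bins):
        size = base + (1 if b < extra else 0)
        bins.append(order[start:start + size])
        start += size
    # Iteration i trains on Bins 1..i and validates on Bin i+1.
    for i in range(1, n_bins):
        train_idx = [j for b in bins[:i] for j in b]
        yield train_idx, bins[i]

logp = [4.8, 1.2, 3.9, 2.5, 0.7, 3.1, 2.0, 4.1, 1.6]  # made-up values
splits = list(step_forward_splits(logp, n_bins=3))

# Every training compound has a logP at least as high as any test compound.
ordered = all(
    min(logp[j] for j in train) >= max(logp[j] for j in test)
    for train, test in splits
)
```

Each yielded pair can be passed to any regressor; the training set only ever grows, so later iterations test extrapolation toward lower logP with progressively more data.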

The Scientist's Computational Toolkit

Table 3: Essential Software and Tools for Cross-Validation in Biomedical Research

| Tool/Resource | Function | Application in Biomedicine |
|---|---|---|
| Scikit-learn (Python) | Provides implementations for k-fold, stratified k-fold, shuffle-split, LOOCV, and time-series splits. [40] [1] | General-purpose machine learning and model evaluation for diverse data types. |
| RDKit | Open-source cheminformatics toolkit. | Standardizing molecular structures, calculating descriptors (e.g., logP), and generating molecular fingerprints. [29] |
| DeepChem | Open-source toolkit for deep learning in drug discovery, materials science, and quantum chemistry. | Provides scaffold splitting methods and specialized featurizers for molecules. [29] |
| StratifiedKFold (Scikit-learn) | Ensures relative class frequencies are preserved in each fold. | Essential for modeling rare clinical events or imbalanced bioactivity data. [38] [13] |
| Pipeline (Scikit-learn) | Chains together data preprocessing (e.g., scaling) and model training. | Prevents data leakage by ensuring preprocessing is fitted only on the training fold during cross-validation. [1] |
| Cross-Validation Metrics (e.g., Discovery Yield, Novelty Error) | Domain-specific metrics for prospective validation. | Evaluating the real-world potential of predictive models in drug discovery. [29] |
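
The leakage that the Pipeline row guards against can be made concrete with a small standard-library sketch. It illustrates the principle only and does not reproduce scikit-learn's API; the helper names `fit_scaler` and `transform` and the toy feature values are assumptions for this example.

```python
from statistics import mean, pstdev

def fit_scaler(values):
    """Learn centering and scaling statistics from the given values."""
    mu = mean(values)
    sigma = pstdev(values) or 1.0  # guard against zero variance
    return mu, sigma

def transform(values, mu, sigma):
    """Standardize values with previously learned statistics."""
    return [(v - mu) / sigma for v in values]

feature = [2.0, 4.0, 6.0, 8.0, 100.0]  # toy feature; the last value is held out
train, test = feature[:4], feature[4:]

# Correct: statistics are learned from the training fold only,
# then reused unchanged on the held-out fold.
mu, sigma = fit_scaler(train)
train_scaled = transform(train, mu, sigma)
test_scaled = transform(test, mu, sigma)

# Anti-pattern: fitting on all data lets the held-out value shift the mean.
leaky_mu, _ = fit_scaler(feature)
```

Here the held-out outlier moves the mean from 5.0 to 24.0 when it is included in the fit, which is exactly the kind of information flow from test to training data that fold-wise fitting avoids.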

Cross-validation is a cornerstone of robust predictive modeling in drug development and biomedical research. Moving beyond simple hold-out validation to methods such as k-fold or stratified k-fold provides a more reliable and stable estimate of model performance, which is critical for informed decision-making. The biomedical domain poses its own challenges, such as imbalanced clinical outcomes, temporal data, and multiple records per patient; here, stratified splitting, time-series splitting, and subject-wise splitting are essential to avoid optimistic bias and data leakage.

Furthermore, the adoption of advanced, domain-aware validation strategies like k-fold n-step forward cross-validation represents a significant step toward more realistic model evaluation in drug discovery. By mimicking the real-world process of chemical optimization and incorporating metrics like discovery yield and novelty error, researchers can better assess a model's potential to identify truly novel and effective compounds. As predictive modeling continues to play an expanding role in biomedicine, the rigorous application of these cross-validation techniques will be fundamental to building trustworthy, generalizable, and impactful tools that can accelerate scientific discovery and improve patient outcomes.

Cross-Validation Techniques: Implementation Strategies for Scientific Applications

In computational science research, particularly with the expanding role of artificial intelligence (AI) in domains like medical imaging and drug discovery, the validation of predictive models is paramount. Overfitted models, which memorize dataset-specific noise rather than learning generalizable patterns, produce overoptimistic performance estimates that have become a common source of disappointment in clinical translation [14]. Cross-validation (CV) comprises a set of data sampling methods that algorithm developers use to guard against this overoptimism [14]. It is used to estimate the generalization performance of an algorithm, that is, how it will perform on unseen data, and it also serves critical roles in hyperparameter tuning and algorithm selection [14].

Among the various cross-validation techniques, K-Fold Cross-Validation has emerged as the standard approach for general-purpose modeling. This guide provides an objective comparison of K-Fold CV with other validation techniques, supported by experimental data and practical implementation protocols relevant to researchers, scientists, and drug development professionals.

Understanding K-Fold Cross-Validation: Principles and Process

The Core Mechanism of K-Fold CV

K-Fold Cross-Validation is a resampling procedure used to evaluate machine learning models on a limited data sample [20]. The procedure has a single parameter, k, which is the number of groups (folds) into which the data sample is split. When a specific value is chosen, it replaces k in the name of the procedure, so k=10 becomes 10-fold cross-validation [20].

The general procedure is as follows [20]:

- Shuffle the dataset randomly.

- Split the dataset into k groups (folds).

- For each unique group:

  - Take the group as a hold-out or test data set.

  - Take the remaining groups as a training data set.

  - Fit a model on the training set and evaluate it on the test set.

  - Retain the evaluation score and discard the model.

- Summarize the skill of the model using the sample of model evaluation scores.

Importantly, each observation in the data sample is assigned to exactly one group and stays in that group for the duration of the procedure. Each sample is therefore used in the hold-out set once and used to train the model k-1 times [20].
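
The general procedure above can be sketched with the standard library alone. The function names, the fixed seed, the toy targets, and the deliberately trivial "model" (predicting the training mean and scoring by mean absolute error) are assumptions chosen to keep the example self-contained.

```python
import random
from statistics import mean, stdev

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, test_idx) for each of the k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and the number of samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)       # shuffle the dataset
    folds = [indices[i::k] for i in range(k)]  # split into k groups
    for i in range(k):                         # each group is held out once
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, folds[i]

def evaluate(train_idx, test_idx, y):
    """Placeholder model: predict the training mean, score by MAE."""
    prediction = mean(y[j] for j in train_idx)
    return mean(abs(y[j] - prediction) for j in test_idx)

y = [3.1, 2.7, 3.8, 4.0, 2.9, 3.3, 3.6, 2.5, 3.0, 3.4]  # toy targets
splits = list(k_fold_indices(len(y), k=5))

# Retain only the score from each fold, then summarize across folds.
scores = [evaluate(train, test, y) for train, test in splits]
summary = (mean(scores), stdev(scores))
```

Scikit-learn's `KFold` offers the same splitting logic with additional options; the point of the sketch is that each index lands in exactly one test fold and in k-1 training sets.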

Visualizing the K-Fold Cross-Validation Workflow

The following diagram illustrates the standard K-Fold Cross-Validation workflow:

Research Reagent Solutions: Essential Components for Implementation

The table below details key computational tools and their functions for implementing K-Fold Cross-Validation in scientific research:

| Component | Function | Example Implementations |
|---|---|---|
| Data Splitting Library | Partitions the dataset into k folds; stratified variants also preserve class proportions in each fold | Scikit-learn KFold, StratifiedKFold [20] |
| Model Training Framework | Algorithm implementation and training execution | Scikit-learn, PyTorch, TensorFlow, XGBoost [41] [42] |
| Performance Metrics | Quantifies model performance across folds | Accuracy, AUC-ROC, F1-Score, MSE [41] [2] |
| Hyperparameter Tuning | Optimizes model parameters using validation folds | GridSearchCV, RandomizedSearchCV [14] |
| Statistical Testing | Assesses significance of performance differences | DeLong test, paired t-test [43] [44] |

Comparative Analysis of Cross-Validation Techniques

Quantitative Comparison of Cross-Validation Methods

The table below summarizes the key characteristics, advantages, and limitations of K-Fold CV compared to other common validation approaches:

| Method | Typical Use Case | Bias-Variance Tradeoff | Computational Cost | Data Efficiency |
|---|---|---|---|---|
| K-Fold Cross-Validation | General purpose modeling with limited data | Balanced: Moderate bias and variance [20] | Moderate (k model trainings) | High: All data used for training and testing [16] |
| Holdout Validation | Large datasets, initial prototyping | High variance with small test sets [14] | Low (single training) | Low: A portion of the data is withheld from training entirely |
| Leave-One-Out CV (LOOCV) | Very small datasets (<100 samples) [20] | Low bias, high variance [16] | High (n model trainings) | Maximum: Each observation used as test once [16] |
| Repeated Random Subsampling | Unbalanced datasets, complementary to k-fold | Similar to k-fold [16] | High (multiple random splits) | Medium: Some observations may never be selected for testing |
| Stratified K-Fold | Imbalanced classification problems | Reduces bias for minority classes | Similar to k-fold | High; class distribution preserved in each fold |
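
To make the Stratified K-Fold row concrete, the following sketch deals the samples of each class out to the folds in turn so that every fold keeps roughly the overall class ratio. The function name and the toy labels are assumptions for illustration, and the sketch omits the shuffling that a production splitter would normally apply.

```python
from collections import defaultdict

def stratified_folds(labels, k):
    """Assign sample indices to k folds, dealing each class out round-robin."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(k)]
    next_fold = 0
    for label in sorted(by_class):
        for idx in by_class[label]:
            folds[next_fold].append(idx)
            next_fold = (next_fold + 1) % k
    return folds

labels = [0] * 16 + [1] * 4  # toy outcome with a 20% event rate
folds = stratified_folds(labels, k=4)

fold_sizes = [len(fold) for fold in folds]
positives_per_fold = [sum(labels[i] for i in fold) for fold in folds]
```

With an unstratified random split of the same data, a fold could end up with no positive cases at all, which would make metrics such as AUC undefined for that fold.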

Experimental Performance Comparison

Recent studies have provided empirical evidence comparing the effectiveness of K-Fold CV against alternative approaches across various domains:

Performance in Bankruptcy Prediction Models

A 2025 study on bankruptcy prediction using random forest and XGBoost classifiers evaluated the validity of k-fold cross-validation for model selection [41]. The research employed a nested cross-validation framework to assess the relationship between cross-validation (CV) and out-of-sample (OOS) performance on 40 different train/test data partitions. Key findings included:

- On average, k-fold cross-validation was found to be a valid selection technique when applied within a model class.

- However, k-fold cross-validation may fail for specific train/test splits, with 67% of model selection regret variability explained by the particular train/test split.

- The correlation between CV and OOS performance differed between random forest and XGBoost models, suggesting the predictive power of CV performance is affected by both data samples and model types [41].

Step-Forward Cross-Validation for Bioactivity Prediction

A 2024 study on bioactivity prediction explored k-fold n-step forward cross-validation as an alternative to conventional random split cross-validation [29]. This approach sorted compounds by logP (a key drug-like property) and implemented forward-chaining validation to better simulate real-world drug discovery scenarios. The study found:

- Sorted step-forward CV provided a more realistic assessment of model performance for out-of-distribution compounds compared to random k-fold CV.

- Conventional random split k-fold CV typically suffers from a limited applicability domain because test compounds are often similar to training compounds.

- For tasks where temporal or property-based generalization is crucial, modified k-fold approaches like step-forward CV may be more appropriate than standard k-fold [29].

Practical Implementation Guide

Configuration Recommendations for k