Establishing Credibility: A Comprehensive Guide to Computational Model V&V Standards in Biomedical Research

This article provides a comprehensive guide to Verification and Validation (V&V) standards for computational models, tailored for researchers and professionals in drug development and biomedical fields.

Abstract

This article provides a comprehensive guide to Verification and Validation (V&V) standards for computational models, tailored for researchers and professionals in drug development and biomedical fields. It explores the foundational principles of model credibility, with a focus on the widely adopted ASME V&V 40 risk-based framework. The scope covers methodological applications across medical devices, drug design, and manufacturing, alongside troubleshooting common pitfalls in techniques like QSAR, molecular dynamics, and AI/ML. It further examines quantitative validation metrics, regulatory perspectives from the FDA and EMA, and comparative analysis of standards. The article synthesizes key takeaways to offer a clear path for implementing robust V&V practices, enhancing model reliability for high-stakes decision-making in research and regulatory submissions.

The Pillars of Model Credibility: Core Concepts and Governing Standards

In computational modeling and simulation (CM&S), Verification, Validation, and Uncertainty Quantification (VVUQ) is fundamental to establishing model credibility. As industries from medical devices to aerospace increasingly rely on computational predictions for critical decisions, rigorous VVUQ processes help ensure that simulations are fit for purpose and yield reliable results. This framework is particularly vital in regulatory contexts and high-consequence applications where model inaccuracies could lead to significant risks [1] [2].

Model credibility is not a binary attribute but a risk-informed judgment on whether a computational model's outputs are adequate to support decision-making for a specific Context of Use (COU). The ASME V&V 40 standard provides a foundational, risk-based framework for establishing these credibility requirements, which has become a key enabler for regulatory submissions, including those to the US FDA CDRH [3]. This guide details the core principles of VVUQ, providing researchers and drug development professionals with the methodologies and standards needed to credibly employ computational models in research and development.

Core Principles of VVUQ

Definitions and Terminology

VVUQ encompasses three distinct but interconnected processes:

- Verification is the process of determining that a computational model accurately represents the underlying mathematical model and its solution. It answers the question: "Are we solving the equations correctly?" This involves activities like code verification (ensuring the software is implemented without bugs) and calculation verification (ensuring the numerical solution is accurate for a specific set of inputs) [1] [2].

- Validation is the process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended uses of the model. It answers the question: "Are we solving the correct equations?" This is achieved by comparing computational results with experimental data specifically designed for validation purposes [1] [2].

- Uncertainty Quantification (UQ) is the process of characterizing and propagating the impact of uncertainties in input parameters, numerical approximations, and model form on the simulation outcomes. UQ quantifies the confidence in the model's predictions, which is essential for risk-informed decision-making [1] [3].

The VVUQ Process Workflow

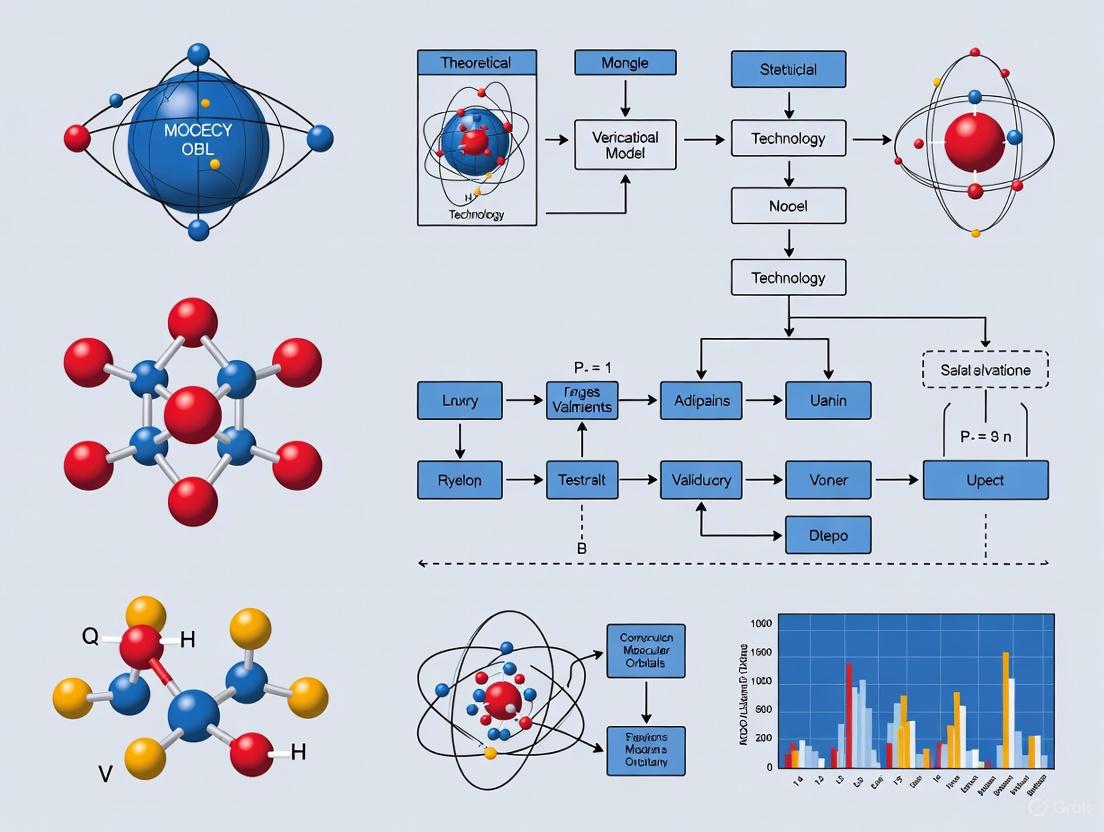

The following diagram illustrates the logical workflow and relationships between the core VVUQ activities, from problem definition to a credible model prediction.

Establishing Model Credibility: A Standards-Based Framework

The ASME V&V 40 Risk-Informed Framework

The ASME V&V 40-2018 standard provides a structured, risk-informed framework for establishing the credibility of a computational model. The core of this methodology is a risk analysis that considers the model's COU, the influence of the model on the decision, and the consequence of a wrong decision based on the model's output. The level of credibility required, and thus the rigor of the VVUQ activities, should be commensurate with the risk of an incorrect prediction [3].

The framework guides users through assessing credibility evidence, grouped here into six broad areas, each with a set of credibility activities that can be tiered based on the required level of rigor [3]:

- Model Development and Evaluation

- Code Verification

- Solution Verification

- Model Validation

- Uncertainty Quantification and Sensitivity Analysis

- Other Evidence (e.g., historical evidence, peer review)

Credibility Factors and Activities

The table below summarizes the key credibility factors and example activities as guided by ASME V&V 40.

Table 1: Credibility Factors and Example Activities Based on ASME V&V 40

| Credibility Factor | Objective | Example Activities |
|---|---|---|
| Model Validation | Determine model accuracy for the COU by comparing to experimental data. | Conduct a validation experiment; Use validation metrics (e.g., area metric, waveform metrics); Assess predictive capability [2] [3]. |
| Uncertainty Quantification | Quantify the impact of input and model uncertainties on output. | Propagate input uncertainties (Monte Carlo, Taylor Series); Distinguish aleatory and epistemic uncertainty; Perform sensitivity analysis [2]. |
| Solution Verification | Estimate numerical accuracy of a specific simulation (e.g., discretization error). | Perform grid convergence studies (systematic mesh refinement); Estimate iterative errors [2] [3]. |
| Code Verification | Ensure software correctly implements the mathematical model. | Use method of manufactured solutions; Perform benchmark comparisons [2]. |
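To make the code verification row concrete, the sketch below applies the method of manufactured solutions to a deliberately simple problem. It is an illustration under stated assumptions rather than a procedure taken from the standard: the 1D Poisson problem, the manufactured solution u(x) = sin(πx), and the helper names `solve_poisson_1d` and `max_error` are all chosen here for the example. The idea is to pick a solution, derive the source term that produces it, and check that the error shrinks at the rate the numerical scheme is designed to achieve (second order for this central-difference stencil).

```python
import numpy as np

def solve_poisson_1d(n):
    """Solve -u'' = f on [0, 1] with u(0) = u(1) = 0 using n interior points."""
    h = 1.0 / (n + 1)
    x = np.linspace(h, 1.0 - h, n)
    # Source term derived analytically from the manufactured solution sin(pi x).
    f = np.pi**2 * np.sin(np.pi * x)
    # Second-order central-difference approximation of -d^2/dx^2.
    a = (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2
    u = np.linalg.solve(a, f)
    return x, u, h

def max_error(n):
    """Return the grid spacing and the maximum error against the manufactured solution."""
    x, u, h = solve_poisson_1d(n)
    return h, float(np.max(np.abs(u - np.sin(np.pi * x))))

# Two systematically refined grids (spacing halves each time).
h_coarse, e_coarse = max_error(15)
h_fine, e_fine = max_error(31)

# Observed order of accuracy; it should approach the formal order of 2.
observed_order = np.log(e_coarse / e_fine) / np.log(h_coarse / h_fine)
print(f"observed order of accuracy: {observed_order:.2f}")
```

An observed order far from the formal order would point to an implementation error, which is exactly what code verification is meant to expose.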

Quantitative Data Analysis in VVUQ

Quantitative data analysis is the backbone of VVUQ, transforming raw numerical data into meaningful insights about model performance and reliability. The process relies on two main statistical approaches [4]:

- Descriptive Statistics summarize and describe the characteristics of a dataset using measures of central tendency (mean, median, mode) and dispersion (range, variance, standard deviation).

- Inferential Statistics use sample data to make generalizations or predictions about a larger population. Key techniques include hypothesis testing, regression analysis, and correlation analysis.

For VVUQ, specific quantitative techniques are applied at different stages:

- During Validation: Validation metrics are used to quantitatively compare computational results to experimental data. These can range from simple comparisons of scalar quantities to more complex metrics for waveforms or time-series data, such as the area metric or other deterministic and non-deterministic metrics discussed in standards like ASME V&V 10.1 and VVUQ 20.1 [2].

- During UQ: Statistical methods are used to represent uncertain inputs (e.g., probability density functions) and to propagate these uncertainties through the model. Sensitivity Analysis helps identify which input uncertainties contribute most to the uncertainty in the output, guiding resource allocation for better characterization [2].
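As an illustration of a validation metric, the sketch below computes an area metric as the area between the empirical cumulative distribution functions (CDFs) of model predictions and experimental observations. This is one common formulation, written here as a minimal example; the function name `area_metric` and the sample values are hypothetical and not drawn from any cited standard.

```python
import numpy as np

def area_metric(model_samples, experiment_samples):
    """Area between the empirical CDFs of two samples (same units as the data)."""
    model = np.sort(np.asarray(model_samples, dtype=float))
    exper = np.sort(np.asarray(experiment_samples, dtype=float))
    # Both empirical CDFs are piecewise constant between consecutive pooled points.
    grid = np.sort(np.concatenate([model, exper]))
    cdf_model = np.searchsorted(model, grid[:-1], side="right") / model.size
    cdf_exper = np.searchsorted(exper, grid[:-1], side="right") / exper.size
    return float(np.sum(np.abs(cdf_model - cdf_exper) * np.diff(grid)))

# Hypothetical data: the model output is shifted by one unit from the measurements.
predicted = [1.0, 2.0, 3.0]
measured = [2.0, 3.0, 4.0]
print(area_metric(predicted, measured))
```

Because the metric is expressed in the units of the quantity of interest, it can be compared directly against an acceptance threshold defined for the COU.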

Table 2: Key Quantitative Analysis Methods for VVUQ

| VVUQ Stage | Quantitative Method | Application in VVUQ |
|---|---|---|
| Verification | Grid Convergence Index (GCI) | Quantifies discretization error based on systematic mesh refinement [2]. |
| Validation | Validation Metrics (e.g., Area Metric) | Provides a quantitative measure of the difference between model predictions and experimental data [2]. |
| UQ | Monte Carlo Simulation | Propagates input uncertainties by repeatedly sampling from their distributions to build a distribution of the output [2]. |
| UQ | Sensitivity Analysis (e.g., Variance-Based) | Identifies which input parameters are the most significant contributors to output uncertainty [2]. |
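The Grid Convergence Index can be sketched in a few lines. The example below assumes three solutions obtained on grids with a constant refinement ratio `r`, monotonic convergence, and the commonly used safety factor of 1.25 for three-grid studies. The function name and the numerical values are illustrative assumptions, not results from this article.

```python
import math

def grid_convergence_index(f_fine, f_medium, f_coarse, r, safety_factor=1.25):
    """Return (observed order p, Richardson estimate, fine-grid GCI as a fraction)."""
    if f_medium == f_fine:
        raise ValueError("fine and medium solutions are identical")
    ratio = (f_coarse - f_medium) / (f_medium - f_fine)
    if ratio <= 1.0:
        raise ValueError("solutions are not converging monotonically")
    # Observed order of accuracy from the three solutions.
    p = math.log(ratio) / math.log(r)
    # Richardson extrapolation toward zero grid spacing.
    f_extrapolated = f_fine + (f_fine - f_medium) / (r**p - 1.0)
    # Relative error band on the fine-grid solution.
    gci_fine = safety_factor * abs((f_medium - f_fine) / f_fine) / (r**p - 1.0)
    return p, f_extrapolated, gci_fine

# Illustrative quantity-of-interest values on fine, medium, and coarse grids.
p, f_extrap, gci = grid_convergence_index(0.97050, 0.96854, 0.96178, r=2.0)
print(f"observed order p = {p:.3f}")
print(f"Richardson estimate = {f_extrap:.5f}")
print(f"fine-grid GCI = {100 * gci:.3f} %")
```

The GCI is reported as a band around the fine-grid result, which makes it straightforward to carry the discretization error into a later uncertainty budget.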

Experimental Protocols for Validation

A cornerstone of model credibility is a well-designed validation experiment. The protocol must provide high-quality, relevant data for comparing with computational results.

Validation Experiment Design

The design of a validation experiment must adhere to strict principles to ensure the data is suitable for assessing the model's predictive capability [2]:

- Correspondence with COU: The experimental conditions, boundary conditions, and physical quantities measured must closely match the intended Context of Use of the computational model.

- Uncertainty Characterization: All significant sources of experimental uncertainty must be identified, estimated, and documented. This includes measurement uncertainty, variability in test articles, and procedural uncertainties.

- Benchmark-Quality Data: The experiment should be designed to minimize uncertainty and be thoroughly documented to allow for replication and peer review. The data is often referred to as "benchmark" or "validation-grade."

The Validation Workflow

The process of executing a validation activity follows a structured workflow, from planning to final assessment, as shown in the diagram below.

Uncertainty Quantification and Sensitivity Analysis

Uncertainty Quantification Methodology

UQ is critical for understanding the reliability of model predictions. The process involves a systematic workflow [2]:

- Define Quantities of Interest (QoIs): Identify the key output variables critical for decision-making.

- Identify Sources of Uncertainty: Classify uncertainties as either aleatory (inherent random variation) or epistemic (reducible uncertainty due to lack of knowledge).

- Estimate Input Uncertainties: Characterize uncertain inputs using probability distributions, intervals, or other mathematical representations.

- Propagate Uncertainties: Use computational methods to propagate the input uncertainties through the model to determine their combined effect on the QoIs.

- Analyze and Interpret Results: Analyze the output uncertainty to inform decisions and identify key drivers of uncertainty.
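The workflow above can be sketched with a small Monte Carlo propagation. Everything specific in this example is a labeled assumption: the one-line steady-state concentration model, the lognormal between-patient variability in clearance (treated as aleatory), and the uniform interval on bioavailability (a simple stand-in for epistemic uncertainty) are hypothetical choices made only to show the mechanics.

```python
import numpy as np

rng = np.random.default_rng(seed=0)
n_samples = 20_000

# Step 3: characterize uncertain inputs (all values are hypothetical).
dose_rate = 100.0  # mg/h, treated as known exactly
clearance = rng.lognormal(mean=np.log(5.0), sigma=0.2, size=n_samples)  # L/h, aleatory
bioavailability = rng.uniform(0.7, 0.9, size=n_samples)  # unitless, epistemic interval

def steady_state_concentration(rate, f, cl):
    """Quantity of interest: steady-state concentration in mg/L (toy model)."""
    return f * rate / cl

# Step 4: propagate the sampled inputs through the model.
css = steady_state_concentration(dose_rate, bioavailability, clearance)

# Step 5: summarize the output distribution.
median = float(np.median(css))
lower, upper = np.percentile(css, [2.5, 97.5])
print(f"median = {median:.2f} mg/L, 95% interval = [{lower:.2f}, {upper:.2f}] mg/L")
```

In a real study the sampling would wrap the full simulation, so the number of samples is usually limited by the cost of a single model run.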

Key UQ and Sensitivity Analysis Techniques

Table 3: Methods for Uncertainty Quantification and Sensitivity Analysis

| Method | Description | Typical Application |
|---|---|---|
| Monte Carlo Simulation | A computational technique that uses random sampling of input distributions to simulate a large number of model evaluations and build an output distribution. | Robust and widely applicable for nonlinear models and large uncertainties. Computationally expensive [2]. |
| Taylor Series Method / Variance Transmission Equation | An approximation method that uses first-order derivatives to estimate the output variance based on input variances. | Efficient for models with small uncertainties and near-linear behavior [2]. |
| Bayesian Inference | A statistical method for updating the probability estimate for a hypothesis (e.g., model parameters) as more evidence or data becomes available. | Used for model calibration to estimate parameter uncertainties based on experimental data [2]. |
| Variance-Based Sensitivity Analysis (Sobol' Indices) | A global sensitivity analysis method that quantifies how much of the output variance each input parameter (or combination of parameters) is responsible for. | Identifies the most important sources of uncertainty to prioritize reduction efforts [2]. |
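For variance-based sensitivity analysis, the sketch below estimates first-order Sobol' indices with a pick-freeze Monte Carlo estimator. It is a minimal example under assumptions: the inputs are independent standard normal variables, the linear test model is hypothetical and chosen because its indices are known analytically (0.2 and 0.8), and the function names are illustrative.

```python
import numpy as np

def model(x):
    """Toy model Y = X1 + 2*X2; analytical first-order indices are 1/5 and 4/5."""
    return x[:, 0] + 2.0 * x[:, 1]

def first_order_sobol(func, n_inputs, n_samples, rng):
    """Estimate first-order Sobol' indices for independent standard normal inputs."""
    a = rng.standard_normal((n_samples, n_inputs))
    b = rng.standard_normal((n_samples, n_inputs))
    f_a, f_b = func(a), func(b)
    total_variance = np.var(np.concatenate([f_a, f_b]))
    indices = np.empty(n_inputs)
    for i in range(n_inputs):
        # Matrix A with column i taken from B, so only input i is shared with B.
        ab_i = a.copy()
        ab_i[:, i] = b[:, i]
        indices[i] = np.mean(f_b * (func(ab_i) - f_a)) / total_variance
    return indices

s1 = first_order_sobol(model, n_inputs=2, n_samples=50_000, rng=np.random.default_rng(0))
print("first-order Sobol' indices:", np.round(s1, 2))
```

The cost is `n_samples * (n_inputs + 2)` model evaluations, which is why surrogate models are often used when each simulation is expensive.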

The Researcher's Toolkit for VVUQ

Implementing VVUQ requires a combination of standards, software tools, and computational techniques. The following table details essential components of the VVUQ toolkit for researchers.

Table 4: Essential Research Tools and Resources for VVUQ

| Tool/Resource Category | Examples | Function in VVUQ |
|---|---|---|
| VVUQ Standards | ASME VVUQ 1 (Terminology), V&V 10 (Solid Mechanics), V&V 20 (CFD/Heat Transfer), V&V 40 (Medical Devices) [1] [3] | Provide standardized definitions, recommended practices, and risk-based frameworks for applying VVUQ in specific disciplines. |
| Software Tools (Statistical Analysis) | R, Python (Pandas, NumPy, SciPy), SPSS [5] [4] | Perform statistical analysis, uncertainty propagation, sensitivity analysis, and generate quantitative data visualizations. |
| Software Tools (Visualization) | Python (Matplotlib), ChartExpo, Ajelix BI [6] [4] | Create charts, graphs, and dashboards to communicate VVUQ results effectively, showing trends, comparisons, and uncertainties. |
| Computational Methods | Method of Manufactured Solutions (Code Verification), Systematic Mesh Refinement (Solution Verification) [2] [3] | Provide methodologies for verifying the numerical implementation and solution accuracy of computational models. |
| Challenge Problems | ASME VVUQ Symposium Workshop Problems [1] | Provide specific engineering problems to study, assess, and compare different VVUQ methods and approaches. |

The rigorous application of Verification, Validation, and Uncertainty Quantification is indispensable for establishing model credibility in computational research. Frameworks like ASME V&V 40 provide a structured, risk-informed approach to determine the necessary level of VVUQ effort, ensuring computational models are credible for their intended use. As computational methods continue to evolve, including the incorporation of Artificial Intelligence and Machine Learning, and as applications expand into high-stakes areas like In Silico Clinical Trials, the principles of VVUQ will remain the foundation for trustworthy and reliable simulation-based science and engineering [3] [7]. For researchers and drug development professionals, mastering these processes is no longer optional but a core competency for advancing innovation safely and effectively.

The Critical Role of Context of Use (COU) in Risk-Based V&V Planning

In computational modeling and simulation, the Context of Use (COU) is formally defined as a concise description of a model's specified role and scope within a development process [8] [9]. For computational models used in biomedical product development, the COU is the foundation for planning and executing risk-based Verification and Validation (V&V) activities. It states how the model will be applied to address a specific question or decision point, and so sets the boundaries for assessing model credibility.

The American Society of Mechanical Engineers (ASME) V&V 40 standard, recognized by the U.S. Food and Drug Administration (FDA), has established a risk-informed credibility assessment framework where the COU is central to determining the appropriate level of V&V evidence required [10] [11] [1]. This framework contends that the rigor of V&V activities should be commensurate with the risk associated with the model's application, with the COU directly informing this risk assessment [10]. Without a precisely defined COU, model developers and regulatory reviewers lack the necessary context to determine what constitutes sufficient evidence of model credibility for regulatory decision-making.

The ASME V&V 40 Risk-Informed Credibility Framework

The ASME V&V 40 standard provides a structured framework for assessing the credibility of computational models, with the COU serving as its cornerstone [10] [1]. This framework outlines a systematic process for establishing model credibility that is proportional to model risk, ensuring efficient resource allocation while maintaining scientific rigor.

Core Concepts of the V&V 40 Framework

The risk-informed credibility assessment framework comprises several interconnected concepts that guide the V&V process:

- Question of Interest: The specific question, decision, or concern that the modeling effort aims to address. This question may be broader than the model's specific application [9].

- Context of Use (COU): The detailed statement defining the specific role and scope of the computational model in addressing the question of interest [9] [10].

- Model Risk: The possibility that the computational model and its results may lead to an incorrect decision with adverse outcomes. Risk is determined by both model influence and decision consequence [9] [10].

- Model Credibility: The trust, established through evidence collection, in the predictive capability of a computational model for a specific COU [9] [10].

- Credibility Factors: Specific elements of the verification and validation process used to establish credibility, including code verification, validation testing, and applicability assessment [9].

The following diagram illustrates the logical workflow of the ASME V&V 40 risk-informed credibility assessment framework:

Relationship Between COU, Model Risk, and Credibility Requirements

The COU directly influences model risk assessment, which in turn determines the necessary level of credibility evidence. Model risk is evaluated based on two key factors:

- Model Influence: The weight of the computational model relative to other evidence in the decision-making process [9] [10]. A model with high influence carries greater risk than one used for supplementary information.

- Decision Consequence: The significance of an adverse outcome resulting from an incorrect decision based on the model [9] [10]. Decisions with serious patient safety implications have higher consequences.

The table below illustrates how different combinations of these factors determine overall model risk and corresponding credibility requirements:

Table 1: Model Risk Assessment Matrix and Corresponding Credibility Requirements

| Decision Consequence | Low Model Influence | Medium Model Influence | High Model Influence |
|---|---|---|---|
| Low | Low Risk: Basic V&V | Low-Medium Risk: Moderate V&V | Medium Risk: Substantial V&V |
| Medium | Low-Medium Risk: Moderate V&V | Medium Risk: Substantial V&V | Medium-High Risk: Rigorous V&V |
| High | Medium Risk: Substantial V&V | Medium-High Risk: Rigorous V&V | High Risk: Comprehensive V&V |
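The matrix can be encoded as a simple lookup so that risk assignments are applied consistently across projects. The sketch below transcribes the nine cells of the table; the dictionary and function names are illustrative, and the three-level scales are those of this table rather than a requirement of any standard.

```python
# Keys are (decision consequence, model influence); values are (model risk, V&V rigor).
RISK_MATRIX = {
    ("low", "low"): ("Low", "Basic"),
    ("low", "medium"): ("Low-Medium", "Moderate"),
    ("low", "high"): ("Medium", "Substantial"),
    ("medium", "low"): ("Low-Medium", "Moderate"),
    ("medium", "medium"): ("Medium", "Substantial"),
    ("medium", "high"): ("Medium-High", "Rigorous"),
    ("high", "low"): ("Medium", "Substantial"),
    ("high", "medium"): ("Medium-High", "Rigorous"),
    ("high", "high"): ("High", "Comprehensive"),
}

def assess_model_risk(decision_consequence, model_influence):
    """Return (model risk, V&V rigor) for the given consequence and influence levels."""
    key = (decision_consequence.strip().lower(), model_influence.strip().lower())
    if key not in RISK_MATRIX:
        raise ValueError(f"levels must be low, medium, or high; got {key}")
    return RISK_MATRIX[key]

risk, rigor = assess_model_risk("High", "Medium")
print(f"model risk: {risk}; V&V rigor: {rigor}")
```

Keeping the mapping in one place also makes it easy to document and review the rationale behind each cell.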

Defining the Context of Use: Methodology and Components

A well-defined COU is essential for establishing a model's purpose, boundaries, and applicability. The COU should be articulated with sufficient detail to guide all subsequent V&V activities and credibility assessments.

Core Components of a COU Statement

A comprehensive COU statement should explicitly address several key elements:

- Biomarker Category or Model Type: Specification of the model category (e.g., predictive biomarker, physiologically-based pharmacokinetic model, finite element analysis) [8] [9].

- Intended Drug Development Use: Clear description of how the model will inform development decisions (e.g., defining inclusion/exclusion criteria, supporting clinical dose selection, evaluating treatment response) [8].

- Patient Population and Disease Context: Description of the relevant patient population, disease stage, or model system [8].

- Stage of Drug Development: Identification of the development phase where the model will be applied (e.g., Phase 2/3 clinical trials) [8].

- Mechanism of Action: When relevant, description of the therapeutic intervention's mechanism of action [8].

- Model Inputs and Outputs: Specification of the data inputs required by the model and the outputs it will generate [12].

COU Definition Protocol

The following protocol provides detailed methodology for defining a comprehensive COU:

Identify the Regulatory or Development Question

- Formulate the specific question the model will help address

- Document the regulatory context and decision points

- Example: "How should the investigational drug be dosed when coadministered with CYP3A4 modulators?" [9]

Delineate Model Scope and Boundaries

- Specify the model's specific role in addressing the question

- Define what the model will and will not be used for

- Identify limitations and constraints on model application

Characterize Technical Specifications

- Define required model inputs and their sources

- Specify model outputs and their format

- Describe performance expectations and accuracy requirements

Document Implementation Context

- Identify the development stage where the model will be applied

- Specify the patient population, disease state, or biological system

- Describe how the model will interface with other data sources or decision-making processes

Review and Refine COU Statement

- Circulate the draft COU statement to all stakeholders

- Incorporate feedback to ensure clarity and completeness

- Finalize the COU statement and obtain formal approval
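One way to support the review step is to capture the COU as a structured record and check it for gaps before circulation. The sketch below is a hypothetical data structure, not a format prescribed by any guidance; the class name, field names, and completeness check are assumptions, and the example values reuse the CYP3A4 question quoted earlier in this protocol.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass
class ContextOfUse:
    """Hypothetical record of the core elements of a COU statement."""
    question_of_interest: Optional[str] = None
    model_type: Optional[str] = None
    intended_use: Optional[str] = None
    population: Optional[str] = None
    development_stage: Optional[str] = None
    inputs: Optional[str] = None
    outputs: Optional[str] = None

    def missing_elements(self):
        """List the elements that still need to be defined before review."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

draft = ContextOfUse(
    question_of_interest=(
        "How should the investigational drug be dosed when "
        "coadministered with CYP3A4 modulators?"
    ),
    model_type="physiologically-based pharmacokinetic (PBPK) model",
    intended_use="predict effects of CYP3A4 inhibitors and inducers on drug pharmacokinetics",
    population="adult patients",
)
print("still to define:", draft.missing_elements())
```

A draft that reports missing elements is not ready for stakeholder review, which gives the final approval step a simple, auditable entry criterion.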

Implementing Risk-Based V&V Planning Based on COU

Once the COU is clearly defined, it drives the planning and execution of risk-based V&V activities. The level of rigor applied to each credibility factor should be commensurate with the model risk determined through the COU.

Credibility Factors and Activities

The ASME V&V 40 standard identifies 13 credibility factors across verification, validation, and applicability activities that contribute to establishing overall model credibility [9]. The table below details these factors and provides examples of corresponding activities:

Table 2: Credibility Factors and Associated V&V Activities

| Activity Category | Credibility Factor | Example V&V Activities |
|---|---|---|
| Verification | Software Quality Assurance | Code validation; version control; bug tracking |
| Verification | Numerical Code Verification | Comparison to analytical solutions; order-of-accuracy testing |
| Verification | Discretization Error | Grid convergence studies; mesh refinement analysis |
| Verification | Numerical Solver Error | Iterative convergence analysis; solver parameter studies |
| Verification | Use Error | User training; interface design evaluation; workflow documentation |
| Validation | Model Form | Evaluation of mathematical foundations; comparison to alternative model structures |
| Validation | Model Inputs | Sensitivity analysis; uncertainty quantification of input parameters |
| Validation | Test Samples | Representative sampling; appropriate sample size determination |
| Validation | Test Conditions | Coverage of relevant operational conditions; boundary condition testing |
| Validation | Equivalency of Input Parameters | Assessment of parameter consistency between validation and application contexts |
| Validation | Output Comparison | Quantitative comparison to experimental data; acceptance criterion testing |
| Applicability | Relevance of Quantities of Interest | Assessment of output relevance to COU; uncertainty analysis for predicted quantities |
| Applicability | Relevance of Validation Activities to COU | Evaluation of how well validation conditions represent the actual COU |

Risk-Based V&V Implementation Protocol

The following protocol guides the implementation of risk-based V&V activities according to model risk:

Map Credibility Factors to COU Requirements

- Identify which credibility factors are most relevant to the specific COU

- Prioritize factors based on their importance to model predictions

- Allocate resources according to risk level and factor criticality

Establish Acceptance Criteria

- Define quantitative or qualitative metrics for each credibility factor

- Set acceptance thresholds commensurate with model risk

- Document rationale for all acceptance criteria

Execute Verification Activities

- Implement software quality assurance processes

- Perform code verification to ensure correct implementation of numerical algorithms

- Conduct numerical error estimation and uncertainty quantification

Execute Validation Activities

- Design validation experiments that reflect the COU

- Select appropriate comparator data sets (in vitro, in vivo, or clinical)

- Perform quantitative comparisons between model predictions and experimental data

Assess Applicability

- Evaluate relevance of validation activities to the COU

- Assess extrapolation beyond validated conditions

- Document limitations and boundaries of validated model use
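The protocol lends itself to a simple gap analysis that compares the rigor achieved for each credibility factor against the goal set from the model risk. The sketch below is a hypothetical bookkeeping aid; the five-level ordinal scale, the class and function names, and the example entries are assumptions made for illustration.

```python
from dataclasses import dataclass

# Ordinal rigor scale, lowest to highest (an assumed five-level scale).
RIGOR_LEVELS = ["basic", "moderate", "substantial", "rigorous", "comprehensive"]

@dataclass
class FactorStatus:
    factor: str    # credibility factor name
    goal: str      # rigor level required by the model risk
    achieved: str  # rigor level supported by the evidence collected so far

def credibility_gaps(statuses):
    """Return the factors whose achieved rigor falls short of the goal."""
    return [
        s.factor
        for s in statuses
        if RIGOR_LEVELS.index(s.achieved) < RIGOR_LEVELS.index(s.goal)
    ]

# Hypothetical status of three factors for a medium-high risk COU.
plan = [
    FactorStatus("Discretization Error", goal="rigorous", achieved="rigorous"),
    FactorStatus("Output Comparison", goal="rigorous", achieved="substantial"),
    FactorStatus("Relevance of Validation Activities to COU", goal="rigorous", achieved="moderate"),
]
print("factors needing more evidence:", credibility_gaps(plan))
```

Any factor returned by the check either needs additional evidence or a documented rationale for accepting the shortfall.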

Case Studies and Practical Applications

Case Study 1: PBPK Model for Drug-Drug Interactions and Pediatric Populations

A physiologically-based pharmacokinetic (PBPK) model for a small molecule drug eliminated primarily by cytochrome P450 3A4 demonstrates how the same model can have multiple COUs with different risk profiles [9]:

COU 1: Predict effects of weak and moderate CYP3A4 inhibitors and inducers on drug pharmacokinetics in adult patients

- Question of Interest: "How should the investigational drug be dosed when coadministered with CYP3A4 modulators?"

- Model Risk: Medium (complementary evidence available from clinical DDI studies)

- Credibility Activities: Validation against clinical DDI data with strong CYP3A4 modulators; verification of enzyme kinetics parameters

COU 2: Predict pharmacokinetic profiles in children and adolescent patients

- Question of Interest: "What is appropriate dosing for pediatric populations?"

- Model Risk: High (limited clinical data in pediatric populations; direct extrapolation from adults)

- Credibility Activities: Comprehensive validation against available pediatric PK data; uncertainty quantification for ontogeny functions; verification of physiological parameters

Case Study 2: Computational Fluid Dynamics Model for Blood Pump Safety

A computational fluid dynamics (CFD) model evaluating hemolysis levels in a centrifugal blood pump illustrates how the same model applied to different device classifications carries different risk levels [10]:

COU 1: Cardiopulmonary Bypass (CPB)

- Device Classification: II

- Decision Consequence: Medium

- Model Influence: Medium

- Model Risk: Medium

- Credibility Activities: Validation against particle image velocimetry data; comparison to in vitro hemolysis testing; verification of numerical methods

COU 2: Short-Term Ventricular Assist Device (VAD)

- Device Classification: III

- Decision Consequence: High

- Model Influence: Medium

- Model Risk: Medium-High

- Credibility Activities: Enhanced validation with multiple operating conditions; comprehensive uncertainty quantification; additional verification of turbulence modeling

The Scientist's Toolkit: Essential Research Reagents for V&V

Table 3: Essential Research Reagents and Resources for Computational Model V&V

| Resource Category | Specific Solution | Function in V&V Process |
|---|---|---|
| Software Tools | Commercial CFD/FEA Solvers (e.g., ANSYS) | Numerical simulation of physical phenomena [10] |
| Software Tools | PBPK Modeling Platforms (e.g., GastroPlus, Simcyp) | Prediction of pharmacokinetic behavior [9] |
| Software Tools | Statistical Analysis Software (e.g., R, SAS) | Quantitative comparison of model outputs to experimental data |
| Experimental Comparators | In Vitro Test Systems | Provide validation data under controlled conditions [10] |
| Experimental Comparators | Particle Image Velocimetry | Experimental flow field measurement for CFD validation [10] |
| Experimental Comparators | Clinical Data Sources | Gold-standard comparator for models predicting clinical outcomes [9] |
| Documentation Frameworks | ASME V&V 40 Standard | Risk-informed framework for establishing model credibility [10] [1] |
| Documentation Frameworks | FDA Guidance Documents | Regulatory expectations for model submission and evaluation [12] |
| Documentation Frameworks | Credibility Assessment Plan | Documented strategy for model V&V specific to COU [12] |
| Quality Management | Version Control Systems | Track model changes and ensure reproducibility |
| Quality Management | Uncertainty Quantification Tools | Quantify and communicate limitations in model predictions [1] |

The Context of Use is not merely an administrative requirement but a fundamental scientific concept that enables efficient, risk-informed V&V planning for computational models. By precisely defining the COU, model developers can establish a clear roadmap for credibility activities that addresses regulatory expectations while optimizing resource allocation. The ASME V&V 40 framework provides a standardized methodology for implementing this risk-based approach across diverse modeling applications, from medical devices to pharmaceutical development.

As computational models assume increasingly prominent roles in regulatory decision-making, the disciplined application of COU-driven V&V planning will be essential for demonstrating model credibility and ensuring the safety and efficacy of biomedical products. The continued evolution of standards and best practices in this area will further strengthen the scientific rigor of computational modeling in regulatory science.

The use of computational modeling and simulation (M&S) has become increasingly critical in both medical device and pharmaceutical development, enabling faster innovation and more robust evaluation of product safety and efficacy. However, the regulatory frameworks governing these computational approaches have evolved along distinct pathways for devices versus pharmaceuticals, creating a complex landscape for researchers and developers. The core regulatory challenge lies in establishing and demonstrating the credibility of computational models for specific decision-making contexts, a requirement now recognized by major regulatory bodies worldwide.

For medical devices, the American Society of Mechanical Engineers (ASME) V&V 40 standard provides the foundational risk-based framework for establishing model credibility. This FDA-recognized standard specifically addresses verification and validation (V&V) activities needed to build confidence in computational models used for medical device evaluation [11]. In parallel, the pharmaceutical domain has developed the Model-Informed Drug Development (MIDD) framework, which utilizes quantitative modeling to integrate nonclinical and clinical data. The International Council for Harmonisation (ICH) M15 guideline, released for public consultation in late 2024, now provides harmonized principles for MIDD applications across regulatory jurisdictions [13] [14].

The European Medicines Agency (EMA) complements this landscape with its own guidance on modeling and simulation, particularly emphasizing mechanistic models and pediatric applications [15] [16]. Together, these frameworks represent a transformative shift in regulatory science, moving toward standardized approaches for evaluating computational models across the product development lifecycle.

Detailed Analysis of Key Standards and Guidelines

ASME V&V 40 Standard: Risk-Based Framework for Medical Devices

The ASME V&V 40-2018 standard, titled "Assessing Credibility of Computational Modeling through Verification and Validation: Application to Medical Devices," provides a structured framework for establishing model credibility based on risk analysis [11] [14]. This standard has been formally recognized by the U.S. Food and Drug Administration (FDA) and is widely implemented across the medical device industry.

The fundamental principle of V&V 40 is that the extent of validation evidence required for a computational model should be commensurate with the risk associated with the decision the model informs. The standard introduces several key conceptual innovations, including:

- Context of Use (COU): A precise definition of how the model will be used to inform a specific decision, including all relevant boundary conditions and assumptions [11].

- Model Risk: The potential impact of an incorrect model outcome on decision-making and ultimately on patient safety and product efficacy [11].

- Credibility Goals: Target levels for validation activities determined through a structured risk assessment process [11].

The practical application of ASME V&V 40 is exemplified in cardiac device development. Dr. Tinen Iles demonstrated how the standard applies to finite element analysis (FEA) models of transcatheter aortic valves used for structural component stress/strain analysis in accordance with ISO 5840-1:2021 requirements [11]. In this context, the model credibility assessment directly supports design verification activities under 21 CFR 820.30(f). Similarly, Dr. Snehal Shetye from the FDA highlighted the importance of V&V 40 in evaluating lumbar interbody fusion devices, where subtle variations in contact friction and stiffness parameters significantly affect both global and local mechanical response predictions [11].

Table: Core Components of ASME V&V 40 Standard

| Component | Description | Application Example |

|---|---|---|

| Context of Use Definition | Precise statement of model purpose, boundaries, and decision role | FEA model for heart valve fatigue analysis |

| Risk Assessment | Evaluation of decision consequence and model influence | Determining validation rigor for implant stress predictions |

| Verification | Ensuring computational model is solved correctly | Code verification, calculation checks |

| Validation | Ensuring model accurately represents reality | Bench test comparison, clinical data correlation |

| Uncertainty Quantification | Characterizing statistical and modeling uncertainties | Sensitivity analysis, probabilistic methods |

FDA Regulatory Framework for Model Credibility

The FDA's approach to computational model credibility spans both device and pharmaceutical domains, with evolving frameworks that increasingly emphasize harmonization. For medical devices, the FDA has formally recognized ASME V&V 40 as a consensus standard and has published complementary guidelines that adopt its risk-based credibility assessment framework [14].

In the pharmaceutical sector, the FDA's Division of Pharmacometrics (DPM), established within the Office of Clinical Pharmacology, has pioneered the use of quantitative modeling approaches since the early 2000s [17]. The Division's 2020 strategic plan resulted in significant achievements, including the training of 91 pharmacometricians and the development of 14 disease-specific models to support trial design and regulatory decision-making [17]. These disease models span conditions from non-small cell lung cancer to rheumatoid arthritis and have been instrumental in supporting endpoint selection, patient enrichment strategies, and pediatric extrapolation.

The FDA's most recent contribution to harmonization is the December 2024 draft guidance on ICH M15 General Principles for Model-Informed Drug Development [13]. This document represents a multinational consensus on MIDD approaches and provides recommendations on planning, model evaluation, and evidence documentation. The ICH M15 framework explicitly adapts credibility assessment concepts from ASME V&V 40 to pharmaceutical applications, creating a bridge between the device and drug regulatory paradigms [14].

A critical innovation in the FDA's approach is the Model Analysis Plan (MAP), a comprehensive document that pre-specifies modeling objectives, data sources, methodologies, and evaluation criteria [14]. This structured documentation approach ensures transparency and reproducibility in regulatory submissions.

EMA Regulations and Guidelines on Modeling

The European Medicines Agency has developed a complementary but distinct regulatory framework for computational models, with particular emphasis on mechanistic models and pediatric applications. The EMA's 2025 concept paper on mechanistic models represents a significant milestone in regulatory science, providing guidance for assessing and reporting sophisticated modeling approaches such as Physiologically Based Pharmacokinetic (PBPK), Quantitative Systems Pharmacology (QSP), and multi-scale mechanistic models [16].

A distinctive feature of EMA's guidance is its detailed treatment of pediatric drug development, where practical and ethical limitations make computational approaches particularly valuable [15]. The EMA recommends specific methodologies for accounting for ontogeny and maturation effects, including:

- Allometric Scaling: Using theoretical exponents (0.75 for clearance, 1.0 for volume of distribution) to account for body size differences, with fixed exponents considered scientifically justified and practical [15].

- Maturation Functions: Implementing sigmoid Emax or Hill equation models to describe time-dependent maturation processes, particularly crucial for premature neonates and infants [15].

- Organ Function Ontogeny: Incorporating known patterns of renal function development and cytochrome P450 expression patterns to improve pediatric pharmacokinetic predictions [15].
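The size and maturation adjustments listed above can be combined into a single expression for pediatric clearance. The following is a minimal sketch, not a method taken from the EMA guidance: the 70 kg reference weight, the use of postmenstrual age (PMA) as the maturation variable, and the default `tm50_weeks` and `hill` values are illustrative assumptions, and all function names are hypothetical.

```python
def allometric_scale(adult_value: float, weight_kg: float, exponent: float,
                     ref_weight_kg: float = 70.0) -> float:
    """Scale an adult parameter to a given body weight with a fixed exponent."""
    return adult_value * (weight_kg / ref_weight_kg) ** exponent


def maturation_fraction(pma_weeks: float, tm50_weeks: float, hill: float) -> float:
    """Sigmoid (Hill) maturation: fraction of adult function reached at a given age."""
    return pma_weeks ** hill / (tm50_weeks ** hill + pma_weeks ** hill)


def pediatric_clearance(cl_adult: float, weight_kg: float, pma_weeks: float,
                        tm50_weeks: float = 50.0, hill: float = 3.0) -> float:
    """Clearance = size effect (exponent 0.75) x maturation fraction.

    tm50_weeks and hill are placeholder values for illustration only;
    real values are pathway-specific and must be justified per drug.
    """
    size_effect = allometric_scale(cl_adult, weight_kg, exponent=0.75)
    return size_effect * maturation_fraction(pma_weeks, tm50_weeks, hill)


def pediatric_volume(v_adult: float, weight_kg: float) -> float:
    """Volume of distribution scaled linearly with weight (exponent 1.0)."""
    return allometric_scale(v_adult, weight_kg, exponent=1.0)
```

Because the maturation fraction approaches 1 as age increases, the same function can be applied across the whole pediatric range, with the size term dominating in older children.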

The EMA also emphasizes the importance of visualization and documentation standards for regulatory evaluation. The agency recommends specific graphical representations showing exposure metrics versus body weight and age on continuous scales, with separate visualizations for children 0-1 years of age [15]. These visualization requirements facilitate transparent assessment of proposed dosing regimens across pediatric subpopulations.

Comparative Analysis and Implementation Framework

Side-by-Side Comparison of Regulatory Frameworks

Table: Comparative Analysis of Regulatory Frameworks for Computational Models

| Aspect | ASME V&V 40 | FDA ICH M15 | EMA Guidelines |

|---|---|---|---|

| Primary Scope | Medical Devices | Pharmaceuticals | Pharmaceuticals |

| Core Principle | Risk-based credibility | Model-informed development | Mechanistic model credibility |

| Key Innovation | Context of Use definition | Model Analysis Plan (MAP) | Pediatric ontogeny integration |

| Validation Approach | Credibility evidence matrix | Multidisciplinary assessment | Uncertainty quantification |

| Documentation | V&V protocol and report | MAP and summary documents | Model justification and reporting |

| Regulatory Status | FDA-recognized standard | Draft ICH guideline (2024) | Concept paper (2025) |

Integrated Workflow for Model Credibility Assessment

The following workflow diagram illustrates the integrated process for establishing model credibility across regulatory frameworks:

Model Credibility Assessment Workflow

This integrated workflow begins with precisely defining the Context of Use, which establishes the model's purpose, boundaries, and decision-making role across all regulatory frameworks [11] [14]. The subsequent risk assessment evaluates the consequences of an incorrect model output and the model's influence on the decision, determining the required level of validation evidence [11]. The planning phase then specifies the detailed verification, validation, and uncertainty quantification activities needed to meet credibility goals.

Experimental Protocols for Model Validation

Implementing the credibility assessment framework requires rigorous experimental protocols tailored to specific model types and contexts of use. Based on case studies from the regulatory standards, the following protocols provide detailed methodologies for key validation scenarios:

Protocol 1: Medical Device Component Stress Analysis

This protocol aligns with ASME V&V 40 requirements for finite element analysis of implantable device components, as demonstrated in transcatheter aortic valve development [11].

Model Verification

- Execute numerical code verification using standardized benchmark problems

- Perform spatial convergence analysis through mesh refinement studies

- Verify material model implementation through unit testing

Experimental Validation

- Conduct benchtop tests on physical prototypes using ASTM standard methods (e.g., F2077 for spinal devices)

- Instrument test specimens with strain gauges at critical locations

- Apply quasi-static loading conditions matching worst-case clinical scenarios

- Acquire force-displacement data and local strain measurements at a 1 kHz sampling rate

Model-Experiment Comparison

- Compare predicted versus experimental strain values at matched locations

- Establish acceptance criteria based on risk assessment (typically ±15% for high-risk predictions)

- Document validation evidence in standardized format linking to credibility goals
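The model-experiment comparison step can be sketched as a simple tolerance check. This is an illustrative example only: the `StrainComparison` class, the gauge labels, and the strain values are hypothetical, and the ±15% default simply mirrors the typical acceptance criterion quoted above. Measured values are assumed to be non-zero.

```python
from dataclasses import dataclass


@dataclass
class StrainComparison:
    """One matched location: FEA-predicted strain vs. strain-gauge measurement."""
    location: str
    predicted: float  # e.g. microstrain from the FEA model
    measured: float   # e.g. microstrain from the strain gauge (must be non-zero)

    @property
    def relative_error(self) -> float:
        return (self.predicted - self.measured) / self.measured


def evaluate_validation(comparisons, tolerance=0.15):
    """Return (all_passed, {location: passed}) for a relative-error tolerance."""
    results = {c.location: abs(c.relative_error) <= tolerance for c in comparisons}
    return all(results.values()), results


if __name__ == "__main__":
    # Hypothetical data for two gauge locations.
    data = [
        StrainComparison("gauge_A", predicted=1100.0, measured=1000.0),
        StrainComparison("gauge_B", predicted=800.0, measured=1000.0),
    ]
    passed, per_location = evaluate_validation(data)
    print(passed, per_location)
```

Reporting the per-location result alongside the overall verdict makes it easier to link each comparison back to the credibility goal it supports.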

Protocol 2: Pharmacokinetic Model Pediatric Extrapolation

This protocol follows EMA and ICH M15 requirements for leveraging adult data to predict pediatric exposures, particularly crucial for orphan diseases [15] [14].

Base Model Development

- Develop population PK model using rich adult data (8-12 samples per subject)

- Identify significant covariates using stepwise covariate modeling (p < 0.01 for forward inclusion, p < 0.001 for backward elimination)

- Validate model using visual predictive checks and bootstrap methods

Pediatric Extrapolation

- Implement allometric scaling using fixed exponents (CL: 0.75, Vd: 1.0)

- Incorporate maturation functions for relevant clearance pathways

- Generate virtual pediatric populations accounting for weight and age distributions

Dosing Regimen Optimization

- Simulate exposure distributions for candidate dosing regimens

- Compare pediatric exposure metrics to established adult therapeutic range

- Optimize dosing to achieve similar exposure targets across pediatric subgroups
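The extrapolation and dosing steps above can be sketched as a small simulation. This is a simplified illustration under stated assumptions, not the protocol's prescribed implementation: it assumes linear pharmacokinetics with AUC = dose / CL, log-normal between-subject variability on clearance, and a maturation fraction supplied by the caller. The function names, the variability value `omega_cl`, and the target range in the usage example are all hypothetical.

```python
import random


def pediatric_clearance(cl_adult, weight_kg, maturation=1.0, ref_weight_kg=70.0):
    """Typical clearance: fixed 0.75 allometric exponent times a maturation fraction (0-1)."""
    return cl_adult * (weight_kg / ref_weight_kg) ** 0.75 * maturation


def simulate_auc(dose_mg_per_kg, weights_kg, cl_adult, omega_cl=0.3,
                 maturation=1.0, seed=0):
    """Simulate one AUC (mg*h/L) per virtual subject, assuming AUC = dose / CL."""
    rng = random.Random(seed)  # seeded so the simulation is reproducible
    aucs = []
    for weight in weights_kg:
        typical_cl = pediatric_clearance(cl_adult, weight, maturation)
        # Log-normal between-subject variability on clearance (assumption).
        individual_cl = typical_cl * rng.lognormvariate(0.0, omega_cl)
        aucs.append(dose_mg_per_kg * weight / individual_cl)
    return aucs


def fraction_in_range(values, low, high):
    """Share of simulated exposures inside the adult therapeutic range."""
    return sum(low <= v <= high for v in values) / len(values)


if __name__ == "__main__":
    # Hypothetical virtual population and adult AUC target range.
    weights = [10.0, 15.0, 20.0, 30.0, 40.0]
    for dose in (1.0, 1.5, 2.0):
        aucs = simulate_auc(dose, weights, cl_adult=10.0)
        print(dose, fraction_in_range(aucs, low=5.0, high=10.0))
```

Comparing the in-range fraction across candidate mg/kg doses, and across weight or age bands, gives a simple basis for choosing a regimen that matches adult exposure.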

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of regulatory-compliant computational models requires carefully selected tools and methodologies. The following table catalogues essential research reagents and their functions in model development and validation:

Table: Essential Research Reagents for Computational Model Development

| Reagent/Material | Function | Regulatory Application |

|---|---|---|

| ASTM F2996 Standard | Guidance for finite element analysis of medical devices | ASME V&V 40 compliance for implant models |

| Physiological Bench Test Apparatus | Experimental validation under simulated physiological conditions | Device model validation per FDA recognized standards |

| Virtual Population Software | Generation of anthropometrically and physiologically diverse virtual subjects | Pediatric extrapolation for EMA submissions |

| PBPK Modeling Platform | Mechanistic prediction of absorption, distribution, metabolism, excretion | ICH M15 compliance for drug-drug interaction assessment |

| Uncertainty Quantification Toolkit | Propagation of parameter and model form uncertainties | Risk-informed credibility assessment across frameworks |

| Model Documentation Framework | Standardized reporting of assumptions, methods, and results | Regulatory submission preparation for all agencies |

The regulatory landscape for computational model verification and validation is rapidly converging toward harmonized principles centered on risk-informed credibility assessment. The ASME V&V 40 standard provides the foundational framework for medical devices, while the ICH M15 guideline extends similar principles to pharmaceutical development, creating unexpected alignment between previously separate regulatory pathways [11] [13] [14].

The most significant evolution in this landscape is the transition from validation as a checklist activity to a comprehensive credibility assessment that considers the decision context, risk profile, and available evidence [11] [14]. This evolution enables more efficient regulatory evaluation while maintaining rigorous standards for patient safety.

Future developments will likely focus on several key areas. First, the integration of artificial intelligence and machine learning components into computational models presents new challenges for verification and validation [14]. Second, regulatory agencies are increasingly emphasizing real-world evidence integration with computational models to enhance their predictive capability [14]. Finally, global harmonization initiatives will continue to align assessment criteria across FDA, EMA, and other international regulators, reducing the burden on developers seeking simultaneous market approval in multiple regions [16].

For researchers and drug development professionals, successfully navigating this landscape requires proactive engagement with regulatory agencies through pre-submission meetings and early dialogue about modeling strategies. By adopting the structured approaches outlined in ASME V&V 40, ICH M15, and EMA guidelines, developers can build robust evidence of model credibility that supports efficient regulatory review and, ultimately, accelerates patient access to innovative therapies.

The integration of computational modeling and simulation, particularly through advanced frameworks like Digital Twins, is poised to revolutionize precision medicine. These technologies promise to enable highly personalized treatment strategies by simulating patient-specific health trajectories and interventions. However, their potential cannot be realized without establishing rigorous, standardized Verification, Validation, and Uncertainty Quantification (VVUQ) processes. The current lack of specific guidance creates a critical gap, hindering the reliable adoption of in silico methods in drug development and regulatory decision-making. This section examines the urgent need for definitive V&V standards to ensure the safety, efficacy, and credibility of computational models, thereby accelerating the delivery of innovative therapies to patients.

The Evolving Regulatory and Technological Landscape

The context for drug development is shifting from a one-size-fits-all model toward precision medicine, which tailors health delivery to an individual's unique physiological and disease characteristics [18]. This paradigm shift is being accelerated by several key drivers:

- Complex New Therapies: The rise of Advanced Therapeutic Medicinal Products (ATMPs), including cell and gene therapies, presents unique manufacturing and validation challenges that traditional methods are ill-equipped to handle [19] [20]. These therapies often involve small batch production, complex biological processes, and a high degree of variability, necessitating a more robust approach to ensuring product quality.

- Data-Integrated Computational Models: The concept of the Digital Twin is gaining traction. Defined as a set of virtual information constructs that mimics a physical system, is dynamically updated with data, and informs decisions, it moves beyond simple simulation [18]. In healthcare, digital twins for patients can simulate interventions, but they introduce new challenges for validation due to their dynamic, continuously updated nature [18].

- Regulatory Modernization: The US FDA and other global agencies are emphasizing lifecycle-based, data-driven validation. There is a clear move away from static, paper-based protocols toward Continuous Process Verification (CPV), which uses real-time data to monitor processes throughout their lifecycle [21] [20]. Furthermore, regulators now expect a risk-based approach to validation, focusing resources on systems and processes most critical to product quality and patient safety [20].

Despite these advancements, a significant gap remains. While frameworks like the ASME V&V 40 standard provide a risk-based approach for establishing model credibility in medical devices [3] [22], similarly mature and specific guidance for the unique challenges of drug development and precision medicine is still emerging [18]. This lack of a standardized framework is the primary unmet need that must be addressed.

Core VVUQ Principles and Methodologies for Computational Models

Verification, Validation, and Uncertainty Quantification form the foundational trilogy for establishing confidence in computational models. Their definitions, while sometimes varying across disciplines, are critical for scientific rigor.

Table 1: Core Definitions in VVUQ for Computational Science

| Term | Definition | Core Question |

|---|---|---|

| Verification | The process of ensuring that the computational model is solved correctly. It assesses software correctness and numerical accuracy. [23] | "Are we solving the equations right?" |

| Code Verification | Assessing the reliability of the software coding and the numerical algorithms. [23] | "Is the software implemented correctly?" |

| Solution Verification | Assessing the numerical accuracy of the solution to a computational model (e.g., estimating discretization errors). [18] [23] | "What is the numerical error of this specific solution?" |

| Validation | The process of assessing a model's physical accuracy by comparing computational results with experimental data. [24] [23] | "Are we solving the right equations?" |

| Uncertainty Quantification (UQ) | The formal process of quantifying uncertainties in model inputs, parameters, and predictions. [18] | "What is the confidence bound on the prediction?" |

The ASME V&V 40 standard, though developed for medical devices, offers a valuable risk-informed framework that can be adapted for drug development. It scales the level of V&V effort to the Model Risk, the potential impact of an erroneous model result on the decision to be made. For a given Context of Use (COU), this risk combines the influence of the model result on the decision with the consequence of an incorrect decision [3]. This risk-based tiering is essential for allocating resources efficiently.

A critical methodology in validation is the use of quantitative validation metrics. These provide an objective, standardized measure of similarity between the computational model's output and experimental data, moving beyond subjective "face validity" checks [24]. The development of these metrics is an active area of research, as they are essential for both validating the virtual component of a Digital Twin and for supporting automated decision-making within a Digital Twin framework [24].
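One commonly used quantitative metric is the area between the empirical cumulative distribution functions (CDFs) of the model outputs and the experimental observations. The sketch below is an illustration of that idea, not necessarily the metric discussed in [24]. It assumes equal-sized samples, in which case the area between the two empirical CDFs reduces to the mean absolute difference between the sorted values; the function name is hypothetical.

```python
def area_metric(model_samples, experiment_samples):
    """Area between two empirical CDFs for equal-sized samples.

    With the same number of samples on both sides, this equals the mean
    absolute difference between the sorted values. The result is in the
    units of the quantity of interest; 0 means identical distributions.
    """
    if not model_samples or len(model_samples) != len(experiment_samples):
        raise ValueError("samples must be non-empty and of equal size")
    model_sorted = sorted(model_samples)
    experiment_sorted = sorted(experiment_samples)
    total = sum(abs(m - e) for m, e in zip(model_sorted, experiment_sorted))
    return total / len(model_sorted)


if __name__ == "__main__":
    # Hypothetical model predictions vs. measurements of the same quantity.
    print(area_metric([1.0, 2.0, 3.0], [2.0, 3.0, 4.0]))
```

Because the metric is expressed in the units of the quantity of interest, it can be compared directly against an acceptance threshold derived from the risk assessment.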

Diagram Title: VVUQ Process for Model Credibility

Implementing V&V: Protocols and Research Reagents

Translating V&V principles into practice requires structured protocols and tools. The following experimental workflow and "toolkit" outline a systematic approach.

Diagram Title: Validation Benchmarking Workflow

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions for V&V

| Category | Item | Function in V&V |

|---|---|---|

| Computational Tools | Software Quality Engineering (SQE) Tools | Automated testing suites for code verification, ensuring software performs as expected. [18] |

| Computational Tools | Grid Convergence Tools | Tools for systematic mesh refinement to perform solution verification and quantify numerical accuracy. [3] [23] |

| Computational Tools | Uncertainty Quantification (UQ) Software | Libraries (e.g., for Bayesian calibration, sensitivity analysis) to quantify input and predictive uncertainties. [18] |

| Experimental Benchmarks | Perturbed Parameter Ensembles (PPEs) | A suite of model runs with varying parameter values to expose systematic errors and assess model sensitivity. [25] |

| Experimental Benchmarks | Validation Databases & Benchmarks | Curated, high-quality experimental datasets with documented uncertainties for quantitative model validation. [23] |

| Experimental Benchmarks | Manufactured Analytical Solutions | Exact solutions to simplified versions of the governing equations, used for rigorous code verification. [23] |

| Methodological Frameworks | Risk-Based Credibility Framework (ASME V&V 40) | A structured framework to determine the necessary level of V&V effort based on the model's decision-making impact. [3] [22] |

| Methodological Frameworks | Digital Validation Management Systems (DVMS) | Paperless systems (e.g., ValGenesis, Kneat Gx) to automate and manage validation documentation and workflows. [21] |

A specific example of an advanced V&V protocol is the use of Perturbed Parameter Ensembles (PPEs). In this methodology, dozens of model parameters are systematically varied across a defined range to create an ensemble of hundreds of model variants [25]. This approach is highly effective for:

- Exposing Systematic Errors: An error is considered systematic if it persists across the entire parameter space, indicating a fundamental issue with the model's structural formulation, not just its parameter settings [25].

- Tracking Model Improvements: By comparing PPEs from different model versions, developers can unambiguously determine if updates genuinely improve the model's physical accuracy, separating this effect from parameter tuning that may mask underlying errors [25].
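The idea of a systematic error can be illustrated with a toy ensemble. The sketch below is a hypothetical example, not the procedure from [25]: `toy_model` and `reference` are invented stand-ins, the parameter ranges are arbitrary, and a small full-factorial grid is used only to keep the example deterministic (practical PPEs typically use space-filling sampling over many more parameters). An error is flagged as systematic when every ensemble member errs in the same direction at a given input.

```python
import itertools


def toy_model(x, gain, offset):
    """Hypothetical model under test: linear in x."""
    return gain * x + offset


def reference(x):
    """Hypothetical reference data with a quadratic term the model structurally lacks."""
    return x + 0.5 * x ** 2


def build_ensemble(param_ranges, levels=5):
    """Full-factorial grid of parameter sets over the given (low, high) ranges."""
    names = list(param_ranges)
    grids = [
        [low + (high - low) * i / (levels - 1) for i in range(levels)]
        for low, high in param_ranges.values()
    ]
    return [dict(zip(names, combo)) for combo in itertools.product(*grids)]


def systematic_error_points(ensemble, xs):
    """Inputs where all ensemble members over- or under-predict the reference."""
    flagged = []
    for x in xs:
        errors = [toy_model(x, **params) - reference(x) for params in ensemble]
        if all(e < 0 for e in errors) or all(e > 0 for e in errors):
            flagged.append(x)
    return flagged


if __name__ == "__main__":
    ensemble = build_ensemble({"gain": (0.8, 1.2), "offset": (-0.2, 0.2)})
    print(len(ensemble), systematic_error_points(ensemble, [0.0, 1.0, 2.0, 4.0]))
```

In this toy case no choice of `gain` or `offset` within the sampled ranges removes the under-prediction at larger inputs, which points to the missing quadratic term rather than to poor tuning.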

The Path Forward: Standardization and Integration

To close the current guidance gap, a concerted effort from researchers, industry, and regulators is required. Key priorities include:

- Develop Application-Specific V&V Protocols: Building on foundational standards like ASME V&V 40, there is a need to develop technical reports and best practices tailored to specific applications in drug development, such as In-Silico Clinical Trials (ISCTs) and patient-specific pharmacokinetic/pharmacodynamic (PK/PD) models [3] [18].

- Establish Standardized Validation Metrics and Benchmarks: The community must converge on a set of quantitative validation metrics and create curated, high-quality benchmark datasets for common challenges in pharmaceutical modeling, similar to the International Standard Problems (ISPs) used in nuclear reactor safety [24] [23].

- Integrate VVUQ into the Digital Twin Lifecycle: For dynamic models like Digital Twins, VVUQ cannot be a one-time activity. New methodologies for continuous and temporal validation are needed, where the model is repeatedly validated as new patient data is incorporated [18].

- Embrace Digital Validation Platforms: The industry must transition to Digital Validation Management Systems (DVMS) to manage the complexity of modern V&V, ensure data integrity, and enable continuous process verification in line with regulatory expectations [21] [20].

The adoption of computational modeling and Digital Twins represents a frontier for innovation in drug development. However, this potential is tethered to our ability to demonstrate that these complex models are credible, reliable, and fit for purpose. The current lack of specific V&V guidance is not merely an academic concern; it is a tangible barrier to translating cutting-edge science into safe and effective patient therapies. By championing the development and adoption of rigorous, standardized, and risk-informed VVUQ processes, the research community can provide the evidence base needed to build trust with regulators, clinicians, and patients. Addressing this unmet need is imperative for the future of precision medicine.

In the rapidly evolving field of computational modeling and simulation, the alignment of stakeholders through common standards has emerged as a critical enabler of technological progress and regulatory acceptance. The development and implementation of standards for verification, validation, and uncertainty quantification (VVUQ) create an essential framework that bridges the methodological gaps between regulators, academia, and industry. This alignment is particularly crucial in safety-critical sectors such as medical device development and pharmaceutical research, where computational models increasingly inform high-stakes decisions without traditional physical validation. The ASME V&V 40 standard, specifically developed for assessing credibility of computational models in medical devices, represents a paradigm of such stakeholder-driven standardization efforts [3]. This standard provides a risk-based framework that has become a key enabler for the US Food and Drug Administration's Center for Devices and Radiological Health (FDA CDRH) framework for evaluating computational modeling and simulation data in regulatory submissions [3]. Without such common frameworks, the assessment of computational model credibility remains fragmented, impeding innovation and potentially compromising patient safety through inconsistent evaluation methodologies.

The Standards Landscape: Frameworks for Credibility Assessment

Established VVUQ Standards and Their Applications

The current standards ecosystem for computational modeling encompasses a diverse portfolio of guidelines and technical reports addressing different aspects of verification, validation, and uncertainty quantification. These standards provide the foundational language and methodological approaches that enable consistent implementation across organizations and sectors.

Table: Key ASME VVUQ Standards and Their Applications

| Standard | Title | Focus Area | Status |

|---|---|---|---|

| VVUQ 1-2022 | Verification, Validation, and Uncertainty Quantification Terminology | Standardized terminology | Published |

| V&V 10-2019 | Standard for Verification and Validation in Computational Solid Mechanics | Solid mechanics | Published |

| V&V 20-2009 | Standard for Verification and Validation in Computational Fluid Dynamics and Heat Transfer | Fluid dynamics, heat transfer | Published |

| V&V 40-2018 | Assessing Credibility of Computational Modeling through Verification and Validation: Application to Medical Devices | Medical devices | Published |

| VVUQ 40.1-20XX | Tibial Tray Component Worst-Case Size Identification for Fatigue Testing | Medical device example | Coming Soon |

| VVUQ 50.1-20XX | Guide to a Model Life Cycle Approach that Incorporates VVUQ | Model life cycle | Coming Soon |

The ASME V&V 40 standard, initially published in 2018, provides a risk-based framework for establishing credibility requirements of computational models [3]. This standard has been particularly influential in medical device regulation, serving as the foundation for FDA CDRH's evaluation framework for computational modeling and simulation data in regulatory submissions. The standard's risk-based approach allows for scalable implementation, where the level of VVUQ rigor is proportionate to the model's intended use and the associated decision consequences [3].

Beyond the core standards, supplementary technical reports provide practical implementation guidance. The upcoming VVUQ 40.1 technical report offers an end-to-end example applying the ASME V&V 40-2018 standard to a computational model assessing the durability of a fictional tibial tray [3]. This report demonstrates the planning and execution of validation and verification activities for each credibility factor, including discussions on additional work that could be performed if greater credibility were required.

Emerging Standards for Advanced Applications

The standards landscape continues to evolve to address emerging computational methodologies and applications. Ongoing standardization efforts include:

Patient-Specific Computational Models: The ASME VVUQ 40 Sub-Committee is developing a new technical report applying the ASME V&V 40 standard to patient-specific applications, specifically femur-fracture prediction [3]. This effort includes developing a classification framework for comparators used to assess the credibility of patient-specific computational models, highlighting the strengths and weaknesses of each comparator type.

In Silico Clinical Trials (ISCT): Standards are being adapted for the emerging field of in silico clinical trials, where simulated patients augment or replace results from human patients [3]. These applications present unique credibility challenges, particularly regarding validation against human data, which is rarely possible for practical reasons.

Artificial Intelligence and Machine Learning: As computational modeling increasingly incorporates AI and machine learning components, standardization efforts are expanding to address the unique verification and validation challenges these technologies present [26].

Stakeholder Perspectives and Implementation Challenges

Regulatory Perspectives on Model Credibility

Regulatory agencies approach computational model credibility from a risk-management perspective, requiring sufficient evidence that model predictions are trustworthy for specific decision contexts. The FDA CDRH has formally incorporated the ASME V&V 40 standard into its evaluation framework for medical device submissions, creating a clear pathway for industry implementation [3]. This regulatory adoption provides a compelling case study in how standards can bridge the gap between innovation and public safety.

Regulators particularly value standards that provide:

- Risk-Based Frameworks: Approaches that scale validation efforts based on the model's role in decision-making and the associated consequences of incorrect predictions [3].

- Transparent Methodologies: Clearly documented verification and validation processes that enable regulatory assessment.

- Comparators for Validation: Well-defined approaches for comparing model predictions to experimental or clinical data [3].

For in silico clinical trials, regulators face the particular challenge of validating models against human data when such direct validation is rarely possible [3]. This necessitates specialized approaches to model credibility that may differ from traditional physical testing paradigms.

Industry Implementation and Practical Applications

Industry stakeholders implement VVUQ standards to streamline product development, reduce physical testing requirements, and strengthen regulatory submissions. The medical device industry has been particularly active in adopting these standards, with companies like Medtronic, Boston Scientific, and W. L. Gore & Associates actively contributing to standards development and implementation [3].

Industry implementation highlights include:

Medical Device Durability Assessment: The upcoming VVUQ 40.1 technical report provides a practical example of how the ASME V&V 40 standard can be applied to identify worst-case sizes for fatigue testing of tibial tray components [3]. This example demonstrates how standards enable efficient, targeted physical testing informed by computational models.

Multicore System Verification: In safety-critical applications such as aerospace systems, industry faces new verification challenges with the transition to multicore architectures [27]. Standards and best practices are emerging to address these challenges, including formal specifications of processor memory models and methodologies for bounding multicore interference [27].

Systematic Mesh Refinement: Industry practitioners emphasize the importance of systematic mesh refinement for code and calculation verification, particularly highlighting how misleading results can arise when systematic approaches are not applied [3].

Academic Research and Methodological Development

Academic institutions contribute to the standards ecosystem through fundamental research, methodological development, and educational initiatives. Researchers are extending VVUQ methodologies to address emerging challenges such as:

Patient-Specific Modeling: Academic researchers are developing classification frameworks for comparators used in validating patient-specific computational models [3]. These frameworks define, classify, and compare different types of comparators, providing rationale for selecting appropriate validation approaches based on model context and application.

Verification and Validation of Modeling Methods: Academic research is clarifying the distinctions between verification, validation, and evaluation (VVE) of modeling methods themselves [28]. This work adapts software engineering principles to modeling methods, asking "Am I building the method right?" (verification), "Am I building the right method?" (validation), and "Is my method worthwhile?" (evaluation) [28].

Formal Method Specifications: Academic-industry collaborations are advancing formal specifications of complex systems, such as the formal definition of Arm's architecture specification language and concurrency model [27]. These efforts improve the ability to verify programs running on complex modern processors.

Methodological Framework: Experimental Protocols for VVUQ

Risk-Based Credibility Assessment Protocol

The ASME V&V 40 standard provides a systematic, risk-based methodology for establishing model credibility requirements. The experimental protocol for implementing this framework involves sequential phases:

Phase 1: Context of Use Definition

- Clearly specify the model's intended application and the specific decisions it will inform

- Define the model boundaries, operating conditions, and relevant physical phenomena

- Document all assumptions and limitations

Phase 2: Model Risk Assessment

- Evaluate the model's influence on the decision-making process

- Assess the consequences of an incorrect model prediction

- Determine the model risk level based on the combination of influence and consequences

Phase 3: Credibility Factor Identification

- Identify which credibility factors (such as conceptual model, mathematical model, and numerical solution) are relevant for the specific context of use

- Prioritize factors based on their importance to model credibility

Phase 4: Credibility Plan Development

- Establish target credibility levels for each factor based on the risk assessment

- Define specific verification, validation, and uncertainty quantification activities to achieve these targets

- Allocate resources based on risk-based prioritization

This protocol concentrates VVUQ resources on the areas of highest risk and impact, avoiding both insufficient rigor in high-risk applications and excessive effort in low-risk ones [3].

Systematic Mesh Refinement Protocol for Code Verification

For computational models that rely on discretization methods such as finite element analysis, systematic mesh refinement is a critical verification methodology. The protocol involves five steps:

Step 1: Initial Mesh Generation

- Develop a baseline mesh with careful attention to boundary layers, discontinuities, and regions of high gradients

- Document mesh quality metrics including aspect ratio, skewness, and orthogonality

Step 2: Systematic Refinement

- Apply a refinement procedure that keeps the refinement ratio constant between successive mesh levels (typically √2 or 2)

- For unstructured meshes, maintain similar element size distributions and quality metrics across refinement levels

- Ensure geometric fidelity is preserved during refinement

Step 3: Solution Calculation

- Execute simulation on each mesh refinement level

- Monitor and ensure iterative convergence at each refinement level

- Extract key quantities of interest (observables) for each solution

Step 4: Discretization Error Estimation

- Apply Richardson extrapolation or similar techniques to estimate discretization error

- Calculate observed order of accuracy and compare to theoretical order

- Verify solutions are in the asymptotic convergence range

Step 5: Uncertainty Quantification

- Quantify numerical uncertainties resulting from discretization errors

- Document all verification activities and results

This methodology is particularly critical for avoiding misleading results in complex simulations, as demonstrated in applications such as blood hemolysis modeling where nonsystematic approaches can produce erroneous conclusions [3].
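The error-estimation step above can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: it assumes three meshes with a constant refinement ratio, a nonzero scalar quantity of interest, and monotonic convergence. It uses Roache's grid convergence index (GCI) with a safety factor of 1.25 as one common choice among the "similar techniques" mentioned in Step 4. The function name and the sample values are hypothetical.

```python
import math


def grid_convergence(f_fine, f_medium, f_coarse, r, safety_factor=1.25):
    """Estimate discretization error from three systematically refined meshes.

    f_fine, f_medium, f_coarse: the same quantity of interest on each mesh
    (assumed nonzero). r: constant refinement ratio between successive meshes.
    """
    d_fine = f_medium - f_fine      # change between the two finest meshes
    d_coarse = f_coarse - f_medium  # change between the two coarsest meshes
    if d_fine == 0 or d_coarse / d_fine <= 1:
        raise ValueError("solutions are not converging monotonically")

    # Observed order of accuracy, to be compared with the theoretical order.
    p = math.log(d_coarse / d_fine) / math.log(r)

    # Richardson extrapolation toward the zero-mesh-size limit.
    extrapolated = f_fine + (f_fine - f_medium) / (r**p - 1)

    # Grid convergence index: a relative numerical uncertainty band.
    gci_fine = safety_factor * abs((f_fine - f_medium) / f_fine) / (r**p - 1)
    gci_coarse = safety_factor * abs((f_medium - f_coarse) / f_medium) / (r**p - 1)

    # A value close to 1 suggests the solutions are in the asymptotic range.
    asymptotic_ratio = gci_coarse / (r**p * gci_fine)

    return {
        "observed_order": p,
        "extrapolated": extrapolated,
        "gci_fine": gci_fine,
        "asymptotic_ratio": asymptotic_ratio,
    }


# Synthetic values generated from f(h) = 1 + 0.5 * h**2 at h = 0.1, 0.2, 0.4.
result = grid_convergence(1.005, 1.02, 1.08, r=2.0)
print(result)
```

With these synthetic inputs the observed order is approximately 2 and the extrapolated value approximately 1.0, matching the function used to generate them. In a real study the observed order would be compared against the theoretical order of the discretization scheme.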

Table: Essential VVUQ Standards and Implementation Resources

| Resource | Type | Function | Access |
|---|---|---|---|
| ASME V&V 40-2018 | Standard | Provides risk-based framework for establishing credibility of computational models | ASME Standards |
| VVUQ 40.1 (Upcoming) | Technical Report | End-to-end example applying V&V 40 to medical device fatigue testing | ASME Publications |
| VVUQ 1-2022 | Terminology Standard | Standardized terminology for VVUQ activities | ASME Standards |
| SISAQOL-IMI Guidelines | Consensus Guidelines | Standardized PRO assessment in cancer clinical trials | The Lancet Oncology |
| Method VVE Framework | Methodological Framework | Verification, Validation, Evaluation for modeling methods | Springer Publications |

Computational and Methodological Tools

Systematic Mesh Refinement Tools: Software capabilities for controlled mesh refinement maintaining element quality and geometric fidelity, particularly important for unstructured meshes with nonuniform element sizes [3].

Formal Concurrency Modeling Tools: Specialized tools such as "herd7" for interpreting formal concurrency models and "litmus" for running concurrency tests, essential for verifying software on multicore architectures [27].

Uncertainty Quantification Frameworks: Methodologies for quantifying both aleatory and epistemic uncertainties and their propagation through computational models.

Multicore Interference Analysis Tools: Tooling solutions that combine targeted interference generators and measurement capabilities to analyze interference channels in multicore hardware platforms [27].

Reference Interpreters: Formally defined reference interpreters for architecture specification languages, such as those developed for Arm's Architecture Specification Language (ASL) [27].

The alignment of regulators, academia, and industry through common standards represents a critical enabling factor for the advancement and adoption of computational modeling in high-stakes applications. The ASME V&V 40 standard and its expanding ecosystem of technical reports and implementation guides demonstrate how risk-based, practical frameworks can bridge stakeholder perspectives while maintaining scientific rigor. As computational methods continue to evolve—embracing artificial intelligence, digital twins, and in silico clinical trials—the continued collaboration of stakeholders in standards development will be essential for ensuring both innovation and public safety. The upcoming ASME VVUQ Symposium in 2026 provides a forum for this ongoing collaboration, addressing emerging topics including AI/ML models, digital twins, and advanced manufacturing [26]. Through continued commitment to common standards, the computational modeling community can ensure that increasingly sophisticated models deliver trustworthy results that benefit researchers, regulators, and ultimately, the public they serve.

From Theory to Practice: Implementing V&V Frameworks Across Biomedical Domains

The ASME V&V 40-2018 standard, titled "Assessing Credibility of Computational Modeling through Verification and Validation: Application to Medical Devices," provides a structured, risk-informed framework for establishing the trustworthiness of computational models used in medical device development and regulatory evaluation [22] [30]. Developed through collaboration between the U.S. Food and Drug Administration (FDA), medical device companies, and software providers, this standard addresses a critical industry need for consensus on the evidentiary requirements for model validation [10] [31]. Unlike traditional V&V methodologies that prescribe specific technical procedures, V&V 40 introduces a risk-based approach that determines "how much" verification and validation evidence is sufficient based on the model's intended role and the potential consequences of an incorrect decision [10] [30].

The core tenet of the V&V 40 framework is that credibility requirements should be commensurate with model risk [10]. This principle acknowledges that different applications demand different levels of evidence, allowing organizations to allocate resources efficiently while ensuring patient safety. The standard has gained significant recognition since its publication, including FDA recognition, making it a critical tool for manufacturers seeking regulatory approval for devices developed or evaluated using computational modeling [11] [10]. The framework is flexible enough to accommodate various computational disciplines—including computational fluid dynamics, solid mechanics, heat transfer, and electromagnetism—across the total product life cycle [10].

Core Concepts and Terminology

Fundamental Definitions

Credibility: "The trust, obtained through the collection of evidence, in the predictive capability of a computational model for a context of use (COU)" [10]. This trust is established through structured V&V activities rather than assumed.

Context of Use (COU): A detailed statement that defines the specific role and scope of the computational model in addressing a question of interest [10]. The COU precisely specifies what the model will predict, under what conditions, and how the results will inform decision-making.

Verification: The process of determining that a computational model accurately represents the underlying mathematical model and its solution [1] [30]. It answers the question: "Are we solving the equations correctly?" Verification encompasses code verification (checking for programming errors) and calculation verification (estimating numerical errors).

Validation: The process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended applications [1] [30]. It answers the question: "Are we solving the correct equations?" Validation involves comparing computational results with experimental data.

Uncertainty Quantification (UQ): The process of characterizing and assessing uncertainties in modeling and simulation [1]. This includes identifying uncertainties in input parameters, numerical approximations, and physical experiments used for validation.
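As an illustration of the input-parameter part of UQ, the following sketch propagates uncertain inputs through a model by Monte Carlo sampling. Everything in it is an assumption made for the example: the toy model (Poiseuille pressure drop in a rigid tube), the nominal values, and the normal distributions with 5% and 2% relative standard deviation. A real study would substitute the actual computational model and characterized input distributions.

```python
import math
import random
import statistics


def pressure_drop(viscosity, radius, length=0.1, flow_rate=8.33e-5):
    """Toy model (hypothetical): Poiseuille pressure drop in Pa for a rigid tube."""
    return 8.0 * viscosity * length * flow_rate / (math.pi * radius**4)


def propagate(n_samples=10_000, seed=0):
    """Sample the uncertain inputs and summarize the resulting output spread."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    outputs = []
    for _ in range(n_samples):
        # Assumed input uncertainties: normal, 5% and 2% relative std. dev.
        viscosity = rng.gauss(3.5e-3, 0.05 * 3.5e-3)  # Pa*s
        radius = rng.gauss(5.0e-3, 0.02 * 5.0e-3)     # m
        outputs.append(pressure_drop(viscosity, radius))

    # quantiles(n=40) returns 39 cut points; the first and last are the
    # 2.5th and 97.5th percentiles, bounding a central 95% interval.
    cuts = statistics.quantiles(outputs, n=40)
    return {
        "mean": statistics.fmean(outputs),
        "stdev": statistics.stdev(outputs),
        "interval_95": (cuts[0], cuts[-1]),
    }


summary = propagate()
print(summary)
```

Because the output depends on the fourth power of the radius, a small uncertainty in radius dominates the output spread here. Sensitivities of this kind are what the input characterization is meant to expose.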

The Credibility Factors

The V&V 40 standard identifies 13 key factors that contribute to establishing model credibility, categorized under verification, validation, and applicability [30]. These factors provide a systematic way to plan and evaluate V&V activities:

Verification Factors:

- Mathematical Model Verification

- Numerical Algorithm Verification

- Software Quality Assurance

- Code Verification

- Calculation Verification

Validation Factors:

- Input Quantity Characterization

- Validation Experiments

- Validation Metrics

- Model Updating

Applicability Factors:

- Use Condition Analysis

- Extrapolation Analysis

- Applicability Domain Analysis

- Additional Evidence

The Step-by-Step Framework

Step 1: Define the Question of Interest

The first step involves precisely defining the fundamental question that the computational model will help address. This question typically relates to device safety, performance, or effectiveness. For example: "Are the flow-induced hemolysis levels of the centrifugal pump acceptable for the intended use?" [10]. The question should be specific, measurable, and directly relevant to the device's regulatory evaluation or design verification.

Step 2: Establish the Context of Use (COU)

The COU provides the critical foundation for all subsequent credibility assessment activities. A well-defined COU includes:

- The specific model predictions required (e.g., stress distributions, flow rates, temperature profiles)

- The boundary conditions and operating ranges

- The intended role of the model in decision-making (complementary to experimental data, replacement for certain tests, etc.)

- The device type and relevant anatomical or physiological systems

Table: Examples of Context of Use Statements for Different Applications

| Device Type | Sample Context of Use |
|---|---|
| Centrifugal Blood Pump (CPB) | "Use CFD to predict hemolysis index at the nominal operating condition (5 L/min, 3000 RPM) to complement in vitro hemolysis testing for cardiopulmonary bypass applications." [10] |
| Centrifugal Blood Pump (VAD) | "Use CFD to predict hemolysis index across the operating range (2.5-6 L/min, 2500-3500 RPM) to support device safety assessment for short-term ventricular assist device applications." [10] |
| Tibial Tray Component | "Use finite element analysis to identify worst-case size for fatigue testing of a tibial tray component." [3] |
| Hip Fracture Risk Prediction | "Use the Bologna Biomechanical Computed Tomography (BBCT) solution to predict the absolute risk of fracture at the femur for a subject." [32] |

Step 3: Assess Model Risk

The V&V 40 framework introduces a two-dimensional risk assessment approach that evaluates both the influence of the model on decision-making and the consequence of an incorrect decision [10].

Model Risk = f(Model Influence, Decision Consequence)

Model influence and decision consequence are each rated on a three-level scale:

Model Influence categories:

- High: The computational model provides the primary evidence for the decision, with little or no supporting experimental or clinical data.

- Medium: The computational model provides substantial evidence, complemented by some experimental or clinical data.

- Low: The computational model provides supporting evidence, with the decision primarily based on experimental or clinical data.

Decision Consequence categories:

- High: An incorrect decision could result in death or serious deterioration of health.

- Medium: An incorrect decision could result in minor deterioration of health or require medical intervention.

- Low: An incorrect decision is unlikely to impact health.

Table: Model Risk Assessment Matrix

| Decision Consequence | Low Model Influence | Medium Model Influence | High Model Influence |
|---|---|---|---|
| Low | Low Risk | Low Risk | Medium Risk |
| Medium | Low Risk | Medium Risk | High Risk |
| High | Medium Risk | High Risk | High Risk |
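The matrix above can be encoded as a simple lookup, which is convenient when risk assessments are recorded alongside other project metadata. The sketch below transcribes the table directly. The names `RISK_MATRIX` and `model_risk` are hypothetical, and a project using different category definitions would need its own table.

```python
# (decision consequence, model influence) -> model risk, copied from the table.
RISK_MATRIX = {
    ("low", "low"): "low",
    ("low", "medium"): "low",
    ("low", "high"): "medium",
    ("medium", "low"): "low",
    ("medium", "medium"): "medium",
    ("medium", "high"): "high",
    ("high", "low"): "medium",
    ("high", "medium"): "high",
    ("high", "high"): "high",
}


def model_risk(influence, consequence):
    """Return the model risk level for a given influence and consequence."""
    key = (consequence.strip().lower(), influence.strip().lower())
    if key not in RISK_MATRIX:
        raise ValueError(
            "influence and consequence must each be 'low', 'medium', or 'high'"
        )
    return RISK_MATRIX[key]


# Example: the model is the primary evidence, but a wrong decision is
# unlikely to affect health.
print(model_risk(influence="high", consequence="low"))  # medium
```

Keeping the table as data rather than nested conditionals makes it easy to review against the written matrix and to swap in a finer-grained scale if one is adopted.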

Step 4: Establish Credibility Goals