Existence and Uniqueness Analysis in ABM Verification: A Foundational Framework for Credible Computational Models in Biomedicine

Abstract

This article provides a comprehensive guide to existence and uniqueness analysis, a critical but often overlooked component of Agent-Based Model (ABM) verification. Written for researchers, scientists, and drug development professionals, it demystifies the foundational mathematical concepts and presents a step-by-step methodological framework for practical implementation. The content bridges theoretical principles with real-world application. It covers troubleshooting strategies for common numerical instabilities and validation techniques for integrating this analysis into broader model credibility assessments, such as verification, validation, and uncertainty quantification (VV&UQ). By establishing rigorous verification practices, this guide aims to support the development of high-fidelity, regulatory-ready in silico models for predictive oncology, immunology, and therapeutic development.

Why Existence and Uniqueness Matter: The Bedrock of Credible Agent-Based Models

Defining Existence and Uniqueness in the Context of Stochastic ABMs

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ: Core Theoretical Concepts

Q1: What do "existence and uniqueness" mean for a Stochastic Agent-Based Model? In the context of Stochastic ABMs, "existence" refers to the mathematical guarantee that a solution to the model's governing equations exists for a given set of initial conditions and parameters. "Uniqueness" means that this solution is the only possible one for a given realization of the random inputs. That realization is a given noise path in the underlying stochastic equations, or a given random seed in a simulation. No fundamentally different behavior can emerge from the same starting point and the same randomness, although different random realizations are expected to produce different trajectories. Establishing these properties is a foundational step in model verification, ensuring that your model's dynamics are well-defined and not subject to arbitrary numerical instabilities [1].

Q2: Why is proving existence and uniqueness particularly challenging for Stochastic ABMs compared to other model types? Stochastic ABMs present unique challenges due to their inherent nonlinearity, path-dependence, and the complex interactions between discrete agents. Unlike models with globally Lipschitz coefficients, the coefficients of their governing equations often satisfy only locally one-sided Lipschitz conditions. They also lack the global monotonicity that simplifies analysis in other dynamical systems. Furthermore, the discrete stochastic interactions can create discontinuities that violate the smoothness assumptions of classical theorems [1].
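A concrete example helps separate the two conditions. A global Lipschitz condition requires $|f(x) - f(y)| \le L|x - y|$ for all $x, y$. One common scalar form of the locally one-sided Lipschitz condition only requires $(x - y)(f(x) - f(y)) \le K_R |x - y|^2$ for $|x|, |y| \le R$. The Python sketch below samples pairs of points to estimate both constants for a hypothetical drift $f(x) = x - x^3$. This drift was chosen for illustration and is not taken from [1]. Because the method only samples, it gives lower bounds on the true constants. It is a screening aid, not a proof.

```python
import random


def drift(x: float) -> float:
    """Hypothetical drift f(x) = x - x**3: not globally Lipschitz."""
    return x - x * x * x


def estimate_constants(f, radius: float, n_pairs: int = 20000, seed: int = 0):
    """Sample pairs in [-radius, radius] and return the largest observed
    Lipschitz ratio and one-sided Lipschitz ratio (lower bounds on both constants)."""
    rng = random.Random(seed)
    lipschitz, one_sided = 0.0, float("-inf")
    for _ in range(n_pairs):
        x = rng.uniform(-radius, radius)
        y = rng.uniform(-radius, radius)
        if x == y:
            continue
        dx, df = x - y, f(x) - f(y)
        lipschitz = max(lipschitz, abs(df / dx))
        one_sided = max(one_sided, dx * df / (dx * dx))
    return lipschitz, one_sided


for radius in (1.0, 5.0, 25.0):
    lip, osl = estimate_constants(drift, radius)
    print(f"R = {radius:>4}: Lipschitz ~ {lip:8.1f}, one-sided ~ {osl:.3f}")
```

For this drift, the Lipschitz estimate grows roughly like $3R^2$ as $R$ increases, while the one-sided estimate never exceeds 1. Classical global-Lipschitz theorems do not apply to such a drift, but one-sided results can.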

Q3: My ABM produces chaotic-looking results. How can I tell if this is genuine complexity or a numerical artifact? This is a critical distinction in verification. Begin with a step-size sensitivity analysis: rerun the model with progressively smaller integrator step sizes and compare both the qualitative behavior and the summary statistics of the outputs. If these stabilize as the step size decreases, the complexity is likely genuine. If wild fluctuations persist or change unpredictably with each refinement, a numerical artifact is more likely. This indicates that your model may violate local uniqueness conditions or that the numerical method is inappropriate for your system's stiffness [2].

Troubleshooting Guide: Common Errors and Solutions

Problem: Simulation runs yield dramatically different outcomes from identical initial conditions and the same random seed. This symptom directly points to a potential failure of uniqueness or a severe numerical instability.

- Possible Cause 1: Violation of a local Lipschitz condition in the agent interaction rules.

- Solution: Check if your agent decision functions or interaction kernels have discontinuous jumps or are not locally one-sided Lipschitz. Reformulate these rules to be smooth, or apply a more general existence theorem that accommodates your specific condition [1].

- Possible Cause 2: Inadequate numerical method for the underlying stochastic differential equations.

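- Solution: A standard Euler-Maruyama run at a single fixed step size may not be enough to show whether a well-defined solution exists. Consider Euler's polygonal line method, which is the Euler scheme with linear interpolation between grid points. It has been used to establish existence and uniqueness under locally one-sided Lipschitz conditions [1], and evaluating it over a sequence of shrinking step sizes also serves as a numerical convergence check.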

- Investigation Protocol:

- Parameter Smoothing: Temporarily replace all if-then-else rules in agent decisions with smooth sigmoid functions and re-run the simulation.

- Path Sampling: If results converge, the original discontinuity is the cause. If not, sample and plot multiple agent trajectories to identify where they diverge.

- Method Comparison: Re-implement the model using a higher-order stochastic numerical method (e.g., the Milstein method) and compare the outcome distributions.
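The Parameter Smoothing step can be sketched in Python for a single hypothetical rule: "divide if the local signal exceeds a threshold." The names `hard_rule`, `smooth_rule`, and `width` are illustrative assumptions and do not belong to any particular ABM framework. The logistic replacement is continuous everywhere. Away from the threshold, it approaches the original rule as `width` shrinks. Rerunning the model with a decreasing `width` therefore tests whether the discontinuity drives the divergence.

```python
import math


def hard_rule(signal: float, threshold: float) -> float:
    """Original discontinuous if-then-else rule: 1 above the threshold, else 0."""
    return 1.0 if signal > threshold else 0.0


def smooth_rule(signal: float, threshold: float, width: float) -> float:
    """Logistic replacement for the hard rule; `width` controls transition sharpness."""
    z = (signal - threshold) / width
    # Numerically stable logistic: only ever exponentiate a non-positive number.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


threshold = 0.5
for width in (0.1, 0.01, 0.001):
    max_gap = max(
        abs(hard_rule(s, threshold) - smooth_rule(s, threshold, width))
        for s in (0.0, 0.25, 0.45, 0.55, 0.75, 1.0)  # signals away from the threshold
    )
    print(f"width={width}: max gap away from threshold = {max_gap:.2e}")
```

In a stochastic ABM, the smoothed value can be used directly as the per-step probability of the action, rather than being thresholded again.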

Problem: The model fails to produce a stable solution or "blows up" in finite time. This often indicates a failure of the existence conditions, where the model's dynamics do not permit a bounded solution.

- Possible Cause: The model's coefficients do not satisfy the necessary growth conditions, allowing agent states (e.g., wealth, social pressure) to become infinite.

- Solution: Introduce a mathematical constraint that bounds the agent state variables. This can often be achieved by adding a stabilizing term that mimics carrying capacity or budget constraints, ensuring the p-th moment boundedness of solutions [1].

- Investigation Protocol:

- Moment Tracking: Implement real-time tracking of the mean and variance of key agent state variables.

- Growth Analysis: Log the maximum and minimum values of these states. The point at which they trend towards infinity is the failure point.

- Constraint Implementation: Add a soft maximum limit to the critical variables using a bounding function and verify that the solution stabilizes.
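The three investigation steps can be sketched in Python for a hypothetical scalar agent state $X$, driven by $dX = b(X)\,dt + \sigma X\,dW$ and integrated with Euler-Maruyama. The drift $b(x) = x^2$ stands in for superlinear growth with no bound; its deterministic part blows up at $t = 1$ when started from $x_0 = 1$. The drift $b(x) = x(1 - x/K)$ with $K = 10$ stands in for a carrying-capacity term. All parameter values and the `blowup_level` cutoff are assumptions chosen for illustration.

```python
import math
import random


def explosive_drift(x):
    """Superlinear growth with no bound: dx/dt = x**2 blows up at t = 1/x0."""
    return x * x


def logistic_drift(x, capacity=10.0):
    """Carrying-capacity term that keeps the state near `capacity`."""
    return x * (1.0 - x / capacity)


def simulate(drift, sigma, x0=1.0, dt=1e-3, t_end=2.0, n_agents=100,
             blowup_level=1e6, seed=1):
    """Euler-Maruyama for dX = drift(X) dt + sigma * X dW, one path per agent.

    Records (mean, variance, max |X|) after every step and returns
    (failure_time, history); failure_time is None if the states stayed bounded."""
    rng = random.Random(seed)
    states = [x0] * n_agents
    history = []
    sqrt_dt = math.sqrt(dt)
    for step in range(1, int(round(t_end / dt)) + 1):
        states = [x + drift(x) * dt + sigma * x * rng.gauss(0.0, sqrt_dt)
                  for x in states]
        peak = max(abs(x) for x in states)          # growth analysis
        if not math.isfinite(peak) or peak > blowup_level:
            return step * dt, history               # first step past the cutoff
        mean = sum(states) / n_agents               # moment tracking
        var = sum((x - mean) ** 2 for x in states) / n_agents
        history.append((mean, var, peak))
    return None, history


t_fail, _ = simulate(explosive_drift, sigma=0.2)
print("explosive drift: failure at t =", t_fail)
t_fail, history = simulate(logistic_drift, sigma=0.2)
print("logistic drift: failure =", t_fail, "; final (mean, var, max) =", history[-1])
```

With the explosive drift, the tracked maximum crosses the cutoff close to the deterministic blow-up time. With the carrying-capacity term, mean, variance, and maximum stay bounded over the whole run.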

Problem: Difficulty in matching simulated data to real-world data for validation. This is a core challenge in moving from verification to validation.

- Possible Cause: The model has not been adequately validated through explicit comparison of real and simulated data. A model can be mathematically sound (existence and uniqueness) but still not reflect reality [3].

- Solution: Employ the validation framework of explicitly comparing real and simulated data. This process is more fundamental than calibration for ensuring a model tells you something about the real world [3].

- Investigation Protocol:

- Data Confrontation: Identify specific, quantifiable outputs from your model and obtain corresponding real-world data.

- Comparison and Iteration: Systematically compare these outputs in tables or figures. Use the discrepancies to refine the model's mechanisms, not just its parameters [3].

Experimental Protocols for Verification

Protocol 1: Numerical Verification of Existence via Euler's Polygonal Line Method

Objective: To provide numerical evidence for the existence of a solution by implementing a stable discretization method.

Methodology:

- Discretization: Apply Euler's polygonal line method to the stochastic differential equations governing agent dynamics. This method constructs an approximate solution by creating a polygonal line through computed points [1].

- Convergence Testing: Run simulations with a sequence of decreasing time steps (e.g., dt = 0.1, 0.01, 0.001).

- Analysis: Observe whether the sequence of approximate solutions converges to a limiting process. Qualitative and quantitative stability across step sizes supports the existence of a true, continuous-time solution.
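Protocol 1 can be sketched in Python for a hypothetical scalar SDE $dX = (X - X^3)\,dt + \sigma\,dW$. Its drift is locally one-sided Lipschitz but not globally Lipschitz. A fair comparison depends on one assumption: every step size is driven by the same Brownian path. The path is generated once at the finest step ($10^{-4}$) and summed into coarser increments, so any differences between runs reflect discretization only. Each Euler solution becomes a polygonal line through linear interpolation. That line is then compared with the finest one in the sup-norm on a common time grid.

```python
import math
import random


def drift(x):
    # Hypothetical drift: locally one-sided Lipschitz, not globally Lipschitz.
    return x - x * x * x


def brownian_increments(n_fine, dt_fine, seed=42):
    """One fine-grained Brownian path, shared by all step sizes."""
    rng = random.Random(seed)
    s = math.sqrt(dt_fine)
    return [rng.gauss(0.0, s) for _ in range(n_fine)]


def euler_nodes(x0, dw_fine, ratio, dt_fine, sigma):
    """Euler scheme with step ratio*dt_fine, driven by summed fine increments."""
    dt = ratio * dt_fine
    nodes = [x0]
    for k in range(0, len(dw_fine), ratio):
        dw = sum(dw_fine[k:k + ratio])
        x = nodes[-1]
        nodes.append(x + drift(x) * dt + sigma * dw)
    return nodes


def polygonal_line(nodes, dt, t):
    """Evaluate the piecewise-linear interpolant of the Euler nodes at time t."""
    k = min(int(t / dt), len(nodes) - 2)
    frac = (t - k * dt) / dt
    return nodes[k] + frac * (nodes[k + 1] - nodes[k])


T, dt_ref, sigma, x0 = 1.0, 1e-4, 0.5, 0.2
dw = brownian_increments(int(round(T / dt_ref)), dt_ref)
reference = euler_nodes(x0, dw, 1, dt_ref, sigma)
eval_times = [i * 1e-3 for i in range(1001)]   # common evaluation grid on [0, T]

errors = {}
for dt in (0.1, 0.01, 0.001):
    ratio = int(round(dt / dt_ref))
    nodes = euler_nodes(x0, dw, ratio, dt_ref, sigma)
    errors[dt] = max(abs(polygonal_line(nodes, dt, t)
                         - polygonal_line(reference, dt_ref, t)) for t in eval_times)
    print(f"dt={dt}: sup-norm gap to the dt={dt_ref} reference = {errors[dt]:.4f}")
```

The protocol is looking for a gap that shrinks steadily as `dt` decreases. A gap that stalls or grows points back to the causes listed in the troubleshooting guide.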

Protocol 2: Empirical Validation Through Real vs. Simulated Data Comparison

Objective: To validate the model by confronting its outputs with real-world data, moving beyond mere mathematical verification.

Methodology:

- Output Selection: Define one or more key outputs from your ABM (e.g., population distribution, market share over time).

- Data Collection: Gather corresponding empirical data from historical records, surveys, or other studies.

- Explicit Comparison: Create a combined figure or table that places the real data and the simulated data side-by-side. This direct visual and statistical comparison is the cornerstone of empirical validation [3].

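- Goodness-of-Fit: Quantify the fit between the simulated and real data with statistical tests (e.g., the two-sample Kolmogorov-Smirnov test for comparing output distributions) and error metrics (e.g., mean squared error for paired time points).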
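The goodness-of-fit step can be sketched without dependencies. The two-sample Kolmogorov-Smirnov statistic $D$ compares the empirical distributions of real and simulated outputs, for example final cell counts across patients and across stochastic runs. Mean squared error compares paired values, such as measurements at the same time points. The example data below are hypothetical. In practice, `scipy.stats.ks_2samp` computes the same statistic together with a p-value.

```python
import bisect


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic D = sup |F_a(x) - F_b(x)|.

    The supremum of the difference of two right-continuous empirical CDFs
    is attained at one of the pooled sample points, so only those are checked."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:
        fa = bisect.bisect_right(a, x) / len(a)
        fb = bisect.bisect_right(b, x) / len(b)
        d = max(d, abs(fa - fb))
    return d


def mean_squared_error(observed, simulated):
    """MSE between paired observations (e.g. the same time points)."""
    if len(observed) != len(simulated):
        raise ValueError("series must be paired and of equal length")
    return sum((o - s) ** 2 for o, s in zip(observed, simulated)) / len(observed)


real = [12.1, 14.3, 15.0, 16.8, 18.2]   # hypothetical observed values
sim = [11.8, 14.9, 15.2, 17.5, 17.9]    # hypothetical simulated values, same time points
print("MSE:", mean_squared_error(real, sim))
print("KS D:", ks_statistic(real, sim))
```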

Model Verification Workflow

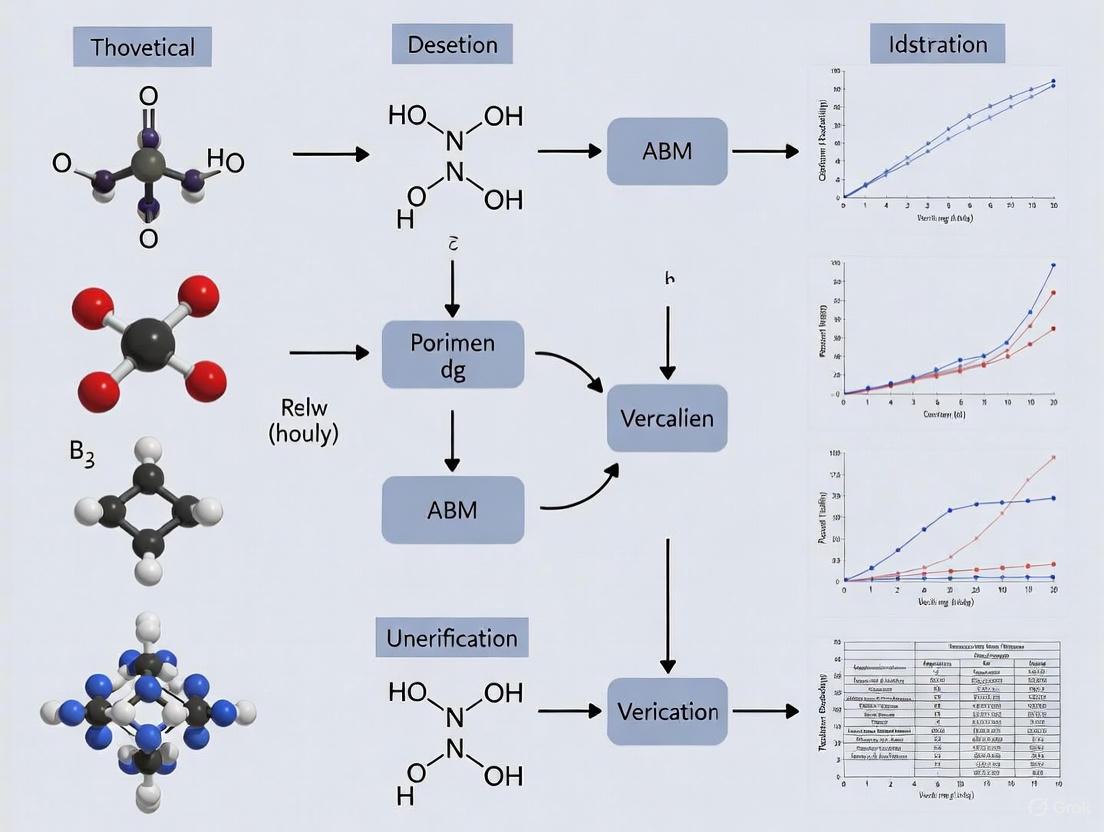

The following diagram illustrates the logical relationship and process flow for establishing the existence and uniqueness of solutions in Stochastic ABMs, leading to empirical validation.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological "reagents" and their function in the analysis of existence and uniqueness for Stochastic ABMs.

| Research Reagent | Function in Analysis |
|---|---|
| Locally One-Sided Lipschitz Condition | A generalized assumption on model coefficients that allows growth while still permitting the proof of existence and uniqueness, replacing the more restrictive monotone condition [1]. |
| Euler's Polygonal Line Method | A numerical technique used not just for simulation, but as a constructive method to prove the existence of solutions for SDEs with complex coefficients [1]. |
| p-th Moment Boundedness | A mathematical property demonstrating that the solution's statistical moments (mean, variance) remain finite over time, providing evidence of a stable, non-explosive solution [1]. |
| Empirical Validation Bibliography | A curated collection of ABM research that explicitly compares real and simulated data, serving as a benchmark for validation practices and methodology [3]. |
| Sensitivity Analysis | A process of testing the model's response to changes in parameters and numerical step sizes, helping to distinguish true emergent complexity from numerical artifacts [2]. |

Quantitative Data on Verification and Validation

The table below summarizes key concepts and their quantitative or qualitative benchmarks relevant to the verification process.

| Concept | Benchmark / Threshold | Purpose in Verification |
|---|---|---|
| Contrast (Enhanced) - WCAG AAA | 7:1 (standard text); 4.5:1 (large text) | A benchmark for ensuring visualizations and diagrams have sufficient color contrast for readability and accessibility, which is critical for accurately interpreting model output [4]. |
| Numerical Convergence | Stable solution with decreasing step size (e.g., dt → 0) | Provides numerical evidence for the existence of a solution. A model whose behavior wildly changes with step size may not have a unique solution. |
| p-th Moment Boundedness | Finite variance and higher moments over time | Demonstrates solution stability and is a key property often established alongside existence and uniqueness theorems [1]. |
| Empirical Validation | Explicit comparison in figure/table | The fundamental test for determining if a verified model actually tells us something about the real world, moving from mathematical correctness to scientific utility [3]. |

The Critical Role in Regulatory Acceptance and In Silico Trials

Foundational Concepts: Verification, Validation, and Credibility

What is the difference between verification and validation (V&V) for an Agent-Based Model (ABM)?

Verification and validation are distinct but complementary processes critical for establishing ABM credibility.

- Verification answers the question "Are we building the model right?" It is the process of ensuring that the computational model correctly implements its intended mathematical and conceptual design, and that there are no errors in the code or the numerical solutions [5] [2]. Key steps include checking for coding bugs and ensuring numerical accuracy.

- Validation answers the question "Are we building the right model?" It is the process of determining the degree to which the model is an accurate representation of the real-world biology or clinical outcome from the perspective of the model's intended use [2] [6]. This involves comparing model predictions with independent experimental or clinical data.

Why is the "Context of Use" (COU) so important for regulatory submission?

The Context of Use (COU) is a formal definition that specifies the role and scope of the computational model in addressing a regulatory question of interest [5] [6]. It is the foundational first step in any credibility assessment, as defined by standards like ASME V&V 40-2018 [5]. The COU dictates the required level of model credibility. For instance, a model used to inform a go/no-go decision on a drug target will generally require less extensive validation than a model used as primary evidence of efficacy in a marketing authorization application. All subsequent verification, validation, and uncertainty quantification activities are scaled to the COU and its associated risk [5] [6].

What is a "Credibility Assessment Plan" and what are its key components?

A Credibility Assessment Plan is a risk-informed strategy, often based on standards like ASME V&V 40-2018, that outlines the specific activities and acceptance criteria for demonstrating a model's fitness for its Context of Use [5] [6]. The core components are outlined in the workflow below:

Troubleshooting ABM Verification: Existence and Uniqueness

How do I verify the "existence" and "uniqueness" of my ABM's solution?

Existence and Uniqueness analysis is a core component of the deterministic verification of ABMs [7]. The following guide helps diagnose and resolve common failures in this analysis.

| Symptom | Potential Root Cause | Recommended Corrective Action |
|---|---|---|
| Simulation fails to produce an output or crashes for a valid input set. | Violation of Existence: Model rules or parameters lead to an unrecoverable state (e.g., division by zero, an agent type going extinct). | 1. Review agent interaction rules for logical errors. 2. Implement safeguards in the code (e.g., check for zero before division). 3. Validate input parameter ranges against known biological constraints [7]. |
| The same initial seed and inputs produce meaningfully different outputs across runs. | Violation of Uniqueness: Numerical instabilities, use of uninitialized variables, or parallel computing race conditions. | 1. Fix the random seed and verify it is correctly applied to all stochastic processes. 2. Check for floating-point rounding errors in critical calculations. 3. Ensure all variables are properly initialized before the main simulation loop [7]. |
| Small changes in an input parameter cause large, discontinuous jumps in model output. | Numerical Ill-Conditioning: The model is overly sensitive to specific parameters, indicating potential structural or stability issues. | 1. Perform a sensitivity analysis (e.g., LHS-PRCC) to identify problematic parameters. 2. Review the biological rationale for the sensitive parameters and interactions. 3. Consider model simplification or re-formulation in the sensitive areas [7]. |

What is the standard workflow for deterministic verification of an ABM?

The verification workflow involves several automated and manual checks to ensure model robustness. The following protocol, based on the Model Verification Tools (MVT) computational framework, outlines the key steps [7].

Protocol: Deterministic Verification Workflow for ABMs

Objective: To verify that the ABM is implemented correctly and operates in a robust, numerically stable manner.

Materials:

- The ABM software and computational environment.

- Model Verification Tools (MVT) suite or equivalent custom scripts [7].

- A defined set of model outputs of interest (e.g., cell count, cytokine concentration).

Method:

- Existence and Uniqueness Analysis:

- Run the model across the entire defined range of input parameters to ensure it always produces a valid output (Existence).

- Execute multiple runs with the exact same initial random seed and input parameters. Compare key outputs to ensure they are identical (Uniqueness). The tolerated variation should be minimal, attributable only to numerical rounding [7].

- Time Step Convergence Analysis:

- Run the same simulation scenario with progressively smaller time-step lengths (e.g., 1.0, 0.5, 0.1, 0.01).

- Calculate the percentage discretization error ($e_q^i$) for a key output quantity $q$ (e.g., peak value) at each time-step $i$, relative to the result $q^{*}$ from the smallest, computationally tractable reference time-step ($i^{*}$). The model is considered converged if $e_q^i < 5\%$ for the time-step used in production [7].

- Smoothness Analysis:

- For all output time series, compute the coefficient of variation (D) as the standard deviation of the first difference of the series, scaled by the absolute value of the mean of those differences.

- Use a moving window (e.g., k=3) for this calculation. A high value of D indicates a higher risk of stiffness, singularities, or discontinuities in the solution that may warrant investigation [7].

- Parameter Sweep Analysis:

- Use global sensitivity analysis techniques, such as Latin Hypercube Sampling combined with Partial Rank Correlation Coefficient (LHS-PRCC), to sample the input parameter space.

- The goal is to confirm the model is not ill-conditioned and to identify input parameters to which the outputs are abnormally sensitive [7].
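The time-step convergence step can be sketched in Python. A forward-Euler SIR model stands in for the ABM; this is a hypothetical choice, since a real ABM run would be far more expensive. The output quantity $q$ is the peak infected fraction, and the run at the smallest time-step serves as the reference $q^{*}$. The 5% threshold is the one quoted above, and MVT's own implementation may compute the error differently.

```python
def peak_infected(dt, beta=0.5, gamma=0.1, t_end=100.0):
    """Hypothetical stand-in model: forward-Euler SIR, returns the peak infected fraction."""
    s, i = 0.99, 0.01
    peak = i
    for _ in range(int(round(t_end / dt))):
        new_inf = beta * s * i * dt
        new_rec = gamma * i * dt
        s, i = s - new_inf, i + new_inf - new_rec
        peak = max(peak, i)
    return peak


def discretization_errors(q_by_dt):
    """e_q^i = |q* - q^i| / |q*| * 100, with q* from the smallest time-step."""
    dt_ref = min(q_by_dt)
    q_ref = q_by_dt[dt_ref]
    return {dt: abs(q_ref - q) / abs(q_ref) * 100.0
            for dt, q in q_by_dt.items() if dt != dt_ref}


time_steps = (1.0, 0.5, 0.1, 0.01)
results = {dt: peak_infected(dt) for dt in time_steps}
errors = discretization_errors(results)
for dt, e in sorted(errors.items(), reverse=True):
    status = "converged" if e < 5.0 else "not converged"
    print(f"dt={dt}: e_q = {e:.2f}% ({status})")
```

The production time-step would be the largest one whose error stays under the threshold. This keeps run time down without exceeding the tolerated discretization error.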

Tools and Materials for ABM Verification and Validation

What tools are available to help automate the verification of ABMs?

The Model Verification Tools (MVT) is an open-source software suite specifically designed to facilitate the verification of discrete-time stochastic models like ABMs [7]. It provides a user-friendly interface to perform key deterministic verification steps.

Research Reagent Solutions: Key Computational Tools for ABM Credibility

| Tool / Resource | Function | Relevance to ABM Credibility |
|---|---|---|
| Model Verification Tools (MVT) [7] | An open-source Python-based suite that automates key verification steps. | Provides automated analysis for Existence, Uniqueness, Time Step Convergence, Smoothness, and Parameter Sweeps. |
| ASME V&V 40-2018 Standard [5] [6] | A technical standard for assessing credibility of computational models in medical device development, adaptable to drug development. | Provides the overarching risk-informed framework for planning and reporting credibility assessments, including definitions for COU and model risk. |
| Latin Hypercube Sampling & PRCC (LHS-PRCC) [7] | A global sensitivity analysis technique implemented within MVT and other packages. | Used in Parameter Sweep analysis to identify which input parameters have the greatest influence on model outputs, highlighting potential ill-conditioning. |
| Universal Immune System Simulator (UISS) [6] | An agent-based modeling platform designed to simulate immune system responses. | Serves as an example of an ABM framework for which a comprehensive Credibility Assessment Plan has been developed for a specific Context of Use (TB treatment) [6]. |

How is a risk analysis performed for an ABM used in a regulatory submission?

The risk analysis is a critical step that directly influences the level of V&V required. Risk is defined as a combination of Model Influence and Decision Consequence [5] [6]. The following table helps categorize these elements.

Table: Framework for ABM Risk Analysis in Regulatory Submissions

| Model Influence (Contribution to Decision) | Decision Consequence (Impact of an Incorrect Prediction) |
|---|---|
| Low: The ABM provides supportive, exploratory insights. Other evidence is primary. | Low: Minor impact on development timeline or internal resource allocation. |
| Medium: The ABM is used to inform critical development choices (e.g., dose selection, trial design). | Medium: Potential for a large financial loss or a significant delay in a development program. |
| High: The ABM provides the primary or sole evidence of safety/efficacy for a regulatory decision. | High: Potential for adverse patient outcomes, misinformed clinical use, or product recall. |

The overall model risk is determined by considering both factors. A high-influence model supporting a high-consequence decision necessitates the most rigorous and extensive V&V activities.

Distinguishing Model Verification from Model Validation

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between verification and validation? Verification is the process of confirming that a computational model implements its underlying equations and intended behaviors correctly and without technical errors. It answers the question: "Are we building the model right?" In contrast, validation determines whether the model is an accurate representation of the real-world system it is intended to simulate. It answers the question: "Are we building the right model?" [8] [9]

FAQ 2: Why is the distinction especially critical for Agent-Based Models (ABMs) in biomedical research? ABMs simulate how population-level behaviors emerge from the interactions of individual components (agents) [10]. This complexity means that a model can be perfectly verified (bug-free code) yet still be invalid if the rules governing agent behavior do not reflect biological reality. Establishing that a model's outcomes are a unique and credible consequence of its mechanistic rules is a core challenge in ABM research [11] [12].

FAQ 3: How does the "Context of Use" influence validation? The level of rigor required for both verification and validation is determined by the model's Context of Use—the specific role and impact of the model in informing a decision, especially in a regulatory setting [8]. A model used for early-stage hypothesis generation will have different validation requirements than one used to support a clinical trial design or a regulatory submission for a new drug.

FAQ 4: What are common signs that my ABM may not be properly validated? Common indicators include an over-reliance on face-validity (the model "looks right" but isn't tested quantitatively) and outcome measures that are only loosely tied to the underlying mechanisms. Another sign is an inability to replicate core emergent phenomena observed in real-world data when initial conditions are slightly altered [11].

Troubleshooting Guides

Issue 1: Model Produces Unstable or Inconsistent Results

Problem: Your ABM generates vastly different outcomes across simulation runs with identical parameters, suggesting potential implementation errors or true stochasticity that needs characterization.

Investigation Protocol:

- Verification Check: Ensure all random number generators are properly seeded to allow for reproducible results during the debugging phase.

- Sensitivity Analysis: Systematically vary key parameters one at a time to identify if specific inputs are causing the instability. This helps distinguish between coding errors and highly sensitive model dynamics.

- State-Space Exploration: For ABMs, it is often necessary to run multiple simulations (ensemble runs) to understand the distribution of possible outcomes, rather than expecting a single result from one run [10].
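Seeding and ensemble runs can be combined by deriving one reproducible seed per run from a single base seed. The whole ensemble can then be regenerated exactly during debugging while still covering many stochastic realizations. The birth-death model below is a hypothetical stand-in for an ABM, and its parameters are assumptions.

```python
import random
import statistics


def run_model(seed, n0=50, p_div=0.05, p_death=0.04, steps=100):
    """Hypothetical discrete-time birth-death ABM; returns the final agent count."""
    rng = random.Random(seed)          # private generator: no hidden global state
    n = n0
    for _ in range(steps):
        births = sum(1 for _ in range(n) if rng.random() < p_div)
        deaths = sum(1 for _ in range(n) if rng.random() < p_death)
        n += births - deaths
    return n


def run_ensemble(base_seed, n_runs):
    """Derive one reproducible seed per run from a single base seed."""
    seeder = random.Random(base_seed)
    seeds = [seeder.randrange(2**32) for _ in range(n_runs)]
    return [run_model(seed) for seed in seeds]


outcomes = run_ensemble(base_seed=2024, n_runs=40)
cuts = statistics.quantiles(outcomes, n=20)   # 19 cut points: 5%, 10%, ..., 95%
print("mean:", statistics.fmean(outcomes))
print("median:", statistics.median(outcomes))
print("5th to 95th percentile:", cuts[0], "to", cuts[-1])
```

Reporting the spread of the ensemble, not a single run, is what separates characterized stochasticity from an implementation error.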

Issue 2: Failure to Replicate Key Empirical or Clinical Data

Problem: The macro-level patterns emerging from your ABM do not match the empirical data you are trying to model.

Investigation Protocol:

- Input Validation: Re-examine the empirical meaningfulness of your model's exogenous inputs, such as initial conditions, parameter estimates, and functional forms [9]. Are they grounded in appropriate biological data?

- Process Validation: Scrutinize the rules governing agent behavior and interaction. "If you didn't grow it, you didn't explain it" [9]. Ensure the mechanistic processes (biological, physical) represented in the model reflect real-world aspects critical for your context of use [9].

- Calibration and Fitting: Calibrate your model against a portion of your empirical data (in-sample fitting). Then, test its predictive power on a separate, withheld portion of the data (out-of-sample forecasting) to ensure you have not over-fitted the model [9].
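The in-sample/out-of-sample split can be sketched in Python. A closed-form logistic curve stands in for the ABM, and the "observations" are synthetic, generated from a known growth rate plus noise. The growth rate is calibrated by a simple grid search on the first 70% of the time series and then scored on the withheld remainder. All of these choices are assumptions for illustration.

```python
import math
import random


def logistic(t, r, k=100.0, n0=5.0):
    """Closed-form logistic growth; a stand-in for a (much more expensive) ABM run."""
    return k / (1.0 + (k / n0 - 1.0) * math.exp(-r * t))


def mse(pred, obs):
    return sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs)


# Synthetic, hypothetical observations: true r = 0.3 plus measurement noise.
rng = random.Random(0)
times = list(range(30))
observed = [logistic(t, 0.3) + rng.gauss(0.0, 2.0) for t in times]

split = int(0.7 * len(times))                  # first 70% for calibration
train_t, test_t = times[:split], times[split:]
train_y, test_y = observed[:split], observed[split:]

candidates = [0.01 * j for j in range(1, 101)]   # grid search over r in (0, 1]
best_r = min(candidates,
             key=lambda r: mse([logistic(t, r) for t in train_t], train_y))
in_sample = mse([logistic(t, best_r) for t in train_t], train_y)
out_of_sample = mse([logistic(t, best_r) for t in test_t], test_y)

print(f"calibrated r = {best_r:.2f}")
print("in-sample MSE:", in_sample)
print("out-of-sample MSE:", out_of_sample)
```

An out-of-sample error much larger than the in-sample error is the usual sign of over-fitting. Splitting by time rather than at random mirrors the forecasting use described in the protocol.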

Issue 3: Preparing an ABM for Regulatory Evaluation

Problem: You need to demonstrate the credibility of your model for use in the regulatory evaluation of a biomedical product.

Investigation Protocol:

- Define Context of Use: Formally document the specific regulatory question your model is intended to address. This is the foundational step that determines all subsequent V&V activities [8].

- Conduct Risk-Based Analysis: Perform an analysis to identify the model's high-risk assumptions—those that most significantly impact the output relevant to the Context of Use. Focus your V&V efforts on these areas [8].

- Execute a V&V Plan: Follow a structured framework for credibility assessment, which includes:

- Verification: Demonstrate the software is implemented correctly.

- Validation: Provide evidence the model is sufficiently accurate for its Context of Use.

- Uncertainty Quantification: Characterize the uncertainty in model predictions [8].

Core Concepts & Workflow

The relationship between verification and validation, and their role in establishing model credibility, can be visualized as a sequential workflow.

Comparative Analysis: Verification vs. Validation

The table below provides a structured comparison to help distinguish these two critical processes.

| Aspect | Model Verification | Model Validation |
|---|---|---|
| Core Question | Are we building the model right? [9] | Are we building the right model? [9] |
| Primary Focus | Internal correctness; code and implementation [8] | External accuracy; match to real-world data [8] |
| Key Methods | Unit testing, code review, debugging, ensuring solutions to equations are unique and stable [13] | Input, process, and output validation; calibration against empirical data; historical data matching [9] |
| Relationship to Context of Use | Largely independent of the specific application. | Entirely dependent on the model's intended Context of Use [8]. |
| Analogy | Confirming a blueprint is followed correctly during construction. | Confirming the finished building meets the occupants' needs. |

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological "reagents" and their functions in the verification and validation process.

| Research Reagent | Function in V&V Process |
|---|---|
| Sensitivity & Uncertainty Analysis (SA/UA) | A computational method to determine how variations in model inputs affect outputs. It is crucial for identifying high-risk parameters to target for validation [8]. |
| Ordinary/Partial Differential Equations (ODEs/PDEs) | Used in hybrid multi-scale models or to represent specific biological processes. Their well-established existence and uniqueness theorems provide a verification baseline for parts of the system [13] [12]. |
| Markov Decision Process (MDP) Formalism | A framework for modeling agent decision-making in uncertain environments. Formalizing agent rules as an MDP allows for rigorous analysis of emergent behavior and probabilistic verification [14]. |
| Empirical Validation Framework | A structured approach encompassing input, process, descriptive output, and predictive output validation to ensure the model is consistent with empirical data at multiple levels [9]. |
| In Silico Clinical Trials | The use of validated models to simulate clinical trials. This requires the highest degree of model credibility and is subject to rigorous regulatory scrutiny and V&V standards [8]. |

Frequently Asked Questions (FAQs)

Q1: What is solution verification in the context of Agent-Based Models (ABMs), and why is it critical for my research? Solution verification is the process of ensuring that your computational model is implemented correctly and produces numerically sound and reliable results. For ABMs, this involves specific analyses like existence and uniqueness. These check that a solution exists for your input parameters and that it is unique for a given set of inputs and random seed, which prevents ambiguous interpretations. Ignoring this can lead to instability and unreliable predictions. Your model might produce different outcomes under identical conditions or be overly sensitive to minor numerical changes, undermining its scientific and regulatory value [7] [15] [5].

Q2: I've validated my model against real-world data. Why do I still need to perform a uniqueness analysis? Validation checks if your model matches reality, while verification (including uniqueness analysis) checks if the model itself is built and solved correctly. A model can be well-validated but still be numerically unstable. Uniqueness analysis specifically guards against non-unique solutions and against round-off errors caused by the limited precision of computers. If it is skipped, your validated model could still produce different results on different computing platforms, or even on repeated runs with the same random seed. That would make its predictions fundamentally unreliable for high-stakes decisions like drug development [7] [16].

Q3: What are the most common symptoms of an unverified ABM that suffers from instability? If your ABM lacks proper solution verification, you may observe these common symptoms:

- Diverging Results: Simulation outputs differ significantly when run with the same parameters and random seed but on different machines or software versions.

- Extreme Sensitivity: Outputs change drastically with tiny variations in input parameters, indicating potential ill-conditioning.

- Non-Smooth Outputs: Time-series results show unexpected spikes, discontinuities, or signs of stiffness, suggesting numerical errors in the solution process [7].

- Failure to Converge: Key model outputs do not stabilize when you reduce the simulation time-step [7].

Troubleshooting Guides

Problem: Non-Unique or Non-Reproducible Model Outputs

Description: Your model produces different results for the same initial conditions and parameters, making conclusions unreliable.

Diagnosis: This is a classic failure in deterministic verification, specifically related to existence and uniqueness.

Solution:

- Existence Check: Verify the model returns an output for all reasonable values in your input parameter space [7].

- Uniqueness Analysis: Run the model multiple times with identical input sets and the same pseudo-random number generator seed. The outputs should be identical, with at most a minimal variation determined by the numerical rounding algorithm of your computing platform [7].

- Code Verification: Check for programming errors, such as uninitialized variables or the inadvertent use of non-fixed random seeds.
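The existence and uniqueness checks can be wrapped in a small harness. The Python sketch assumes a hypothetical model function that takes its parameters plus a `seed` and returns one scalar output. The example model, `toy_abm`, deliberately omits a guard against extinction so that the existence check has something to catch. Repeating runs with one seed also catches code that draws from an unseeded global generator instead of the model's own `rng`.

```python
import itertools
import math
import random


def toy_abm(p_death, p_div, seed, n0=20, steps=50, resource=100.0):
    """Hypothetical ABM returning resource per surviving agent.

    Deliberately unguarded: if every agent dies, the final division fails,
    which is the kind of existence failure the check below should catch."""
    rng = random.Random(seed)
    n = n0
    for _ in range(steps):
        survivors = 0
        for _ in range(n):
            if rng.random() >= p_death:                        # agent survives
                survivors += 2 if rng.random() < p_div else 1  # and may divide
        n = survivors
    return resource / n


def check_existence_and_uniqueness(model, grid, seed=123, repeats=3, rel_tol=1e-12):
    """Existence: each parameter set yields a finite output without raising.
    Uniqueness: repeated runs with the same seed give (near-)identical outputs."""
    report = {}
    for params in grid:
        try:
            outputs = [model(*params, seed=seed) for _ in range(repeats)]
        except (ArithmeticError, ValueError) as exc:
            report[params] = f"existence failure: {type(exc).__name__}"
            continue
        if not all(math.isfinite(y) for y in outputs):
            report[params] = "existence failure: non-finite output"
        elif not all(math.isclose(y, outputs[0], rel_tol=rel_tol) for y in outputs):
            report[params] = "uniqueness failure: outputs differ for one seed"
        else:
            report[params] = "ok"
    return report


grid = list(itertools.product((0.02, 0.6), (0.05, 0.1)))   # (p_death, p_div)
report = check_existence_and_uniqueness(toy_abm, grid)
for params, status in report.items():
    print(params, status)
```

The `rel_tol` argument is where the minimal rounding-level variation mentioned above would be tolerated. With a single process and a private generator, outputs are normally bit-identical.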

Problem: Model is Overly Sensitive or Yields Ill-Conditioned Results

Description: Small, scientifically insignificant changes to an input parameter cause large, unpredictable swings in model outcomes.

Diagnosis: The model may be numerically ill-conditioned. This requires a parameter sweep analysis to map the model's behavior across its input space.

Solution:

- Parameter Sweep: Systematically sample the entire input parameter space to identify regions where the model fails to produce a valid solution or where the solution is valid but outside the expected range [7].

- Sensitivity Analysis: Employ robust sensitivity analysis techniques like LHS-PRCC (Latin Hypercube Sampling - Partial Rank Correlation Coefficient) or Sobol' method to quantitatively estimate the influence of each input parameter on the output. This helps distinguish true biological sensitivity from numerical instability [7].
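A dependency-free sketch of LHS-PRCC follows. It draws a Latin hypercube sample and rank-transforms the inputs and the output. For each input, it then regresses both that input's ranks and the output ranks on the ranks of all other inputs, and correlates the two sets of residuals. The three-parameter `toy_model` is a hypothetical stand-in for an ABM, and the sample size is an assumption. PRCC is only meaningful for monotonic input-output relationships, and a vetted library is preferable for production work.

```python
import math
import random


def latin_hypercube(n, bounds, rng):
    """One point per stratum in every dimension, strata shuffled independently."""
    columns = []
    for lo, hi in bounds:
        strata = list(range(n))
        rng.shuffle(strata)
        columns.append([lo + (hi - lo) * (s + rng.random()) / n for s in strata])
    return [list(row) for row in zip(*columns)]


def ranks(values):
    """1-based average ranks; tied values share the mean rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            r[order[k]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return r


def residuals(y, predictors):
    """Residuals of an ordinary least-squares fit of y on predictors plus intercept."""
    X = [[1.0] + [p[i] for p in predictors] for i in range(len(y))]
    m = len(X[0])
    # Augmented normal equations [X^T X | X^T y], solved by Gauss-Jordan elimination.
    A = [[sum(row[a] * row[b] for row in X) for b in range(m)]
         + [sum(row[a] * yi for row, yi in zip(X, y))] for a in range(m)]
    for c in range(m):
        pivot = max(range(c, m), key=lambda r: abs(A[r][c]))
        A[c], A[pivot] = A[pivot], A[c]
        for r in range(m):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    beta = [A[c][m] / A[c][c] for c in range(m)]
    return [yi - sum(b * x for b, x in zip(beta, row)) for row, yi in zip(X, y)]


def pearson(u, v):
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    return cov / math.sqrt(sum((a - mu) ** 2 for a in u) * sum((b - mv) ** 2 for b in v))


def prcc(samples, outputs):
    """Partial rank correlation of each input with the output, controlling for the rest."""
    rank_cols = [ranks(list(col)) for col in zip(*samples)]
    ry = ranks(outputs)
    result = []
    for k, rk in enumerate(rank_cols):
        others = [c for j, c in enumerate(rank_cols) if j != k]
        result.append(pearson(residuals(rk, others), residuals(ry, others)))
    return result


def toy_model(p):
    # Hypothetical ABM stand-in: strong effect of p[0], weak of p[1], none of p[2].
    return 5.0 * p[0] + 0.5 * p[1] ** 2


rng = random.Random(11)
samples = latin_hypercube(200, [(0.0, 1.0)] * 3, rng)
outputs = [toy_model(p) for p in samples]
coefs = prcc(samples, outputs)
for name, coef in zip(("p0", "p1", "p2"), coefs):
    print(f"{name}: PRCC = {coef:+.3f}")
```

Here `p0` should dominate, and `p2`, which the output ignores, should stay near zero. An input whose PRCC swings widely between samples or seeds is a candidate for closer inspection.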

Experimental Protocols for Verification

The table below summarizes key quantitative analyses for assessing your ABM's stability and reliability.

| Analysis Type | Key Metric | Target Threshold | Methodology |
|---|---|---|---|
| Time-Step Convergence Analysis [7] | Percentage discretization error: $e_{q}^{i} = \frac{\lvert q^{i*} - q^{i} \rvert}{\lvert q^{i*} \rvert} \times 100$ | Error < 5% | Run the model with progressively smaller time-steps (i). Compare the output quantity ($q^{i}$) at each step to a reference value ($q^{i*}$) from the smallest tractable time-step. |
| Smoothness Analysis [7] | Coefficient of Variation (D) | Lower is better; no universal threshold. Evaluates risk. | Calculate the standard deviation of the first difference of the output time series, scaled by the absolute value of their mean, using a moving window (e.g., k=3). |
| Stochastic Verification (Consistency) [7] | Distributional similarity | Pass statistical tests (e.g., Kolmogorov-Smirnov) | Run multiple stochastic realizations (with different random seeds) and confirm that the outputs are consistent and belong to the same statistical distribution. |
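The Kolmogorov-Smirnov consistency check in the last row can be sketched with the standard library alone. The sketch below computes the two-sample KS statistic and compares it with the usual large-sample critical value $c(\alpha)\sqrt{(n+m)/(nm)}$. In practice, `scipy.stats.ks_2samp` returns the statistic together with a p-value. The toy replicate generator is a hypothetical stand-in for an ABM output.

```python
import bisect
import math
import random


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the two empirical CDFs."""
    sa, sb = sorted(a), sorted(b)
    d = 0.0
    for v in sa + sb:
        fa = bisect.bisect_right(sa, v) / len(sa)
        fb = bisect.bisect_right(sb, v) / len(sb)
        d = max(d, abs(fa - fb))
    return d


def ks_consistent(a, b, alpha=0.05):
    """True if the statistic stays below the large-sample critical value at level alpha."""
    n, m = len(a), len(b)
    c_alpha = math.sqrt(-math.log(alpha / 2) / 2)  # about 1.358 for alpha = 0.05
    return ks_statistic(a, b) <= c_alpha * math.sqrt((n + m) / (n * m))


def toy_replicates(seed, runs=200, steps=50):
    """Hypothetical stand-in for an ABM: final count of a +/-1 random walk, one value per run."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(runs):
        count = 100
        for _ in range(steps):
            count += rng.choice((-1, 1))
        outputs.append(count)
    return outputs


# Two batches of realizations generated with different seeds
print(ks_consistent(toy_replicates(seed=1), toy_replicates(seed=2)))
```

With a 5% significance level, about one comparison in twenty is expected to fail by chance even when the model is correct. An isolated failure should therefore prompt a rerun with more realizations, not an immediate bug hunt.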

The following workflow diagram illustrates the logical relationship between these verification steps and the consequences of their failure.

The Scientist's Toolkit: Essential Research Reagents for ABM Verification

This table details key computational "reagents" and tools essential for performing rigorous solution verification.

| Tool / Reagent | Function in Verification | Brief Explanation |
|---|---|---|
| Model Verification Tools (MVT) [7] | All-in-one suite for deterministic verification. | An open-source, Dockerized platform that automates key steps like existence, time-step convergence, smoothness, and parameter sweep analyses for discrete-time ABMs. |
| Pseudo-Random Number Generator (PRNG) | Uniqueness and stochastic verification. | A core component for stochastic ABMs. Fixing the PRNG seed is essential for testing deterministic uniqueness. Varying the seed is necessary for assessing stochastic consistency [7]. |
| LHS-PRCC Analysis [7] | Parameter sweep and sensitivity analysis. | A technique combining Latin Hypercube Sampling (LHS) with Partial Rank Correlation Coefficient (PRCC) to assess the influence of input parameters on outputs, crucial for identifying ill-conditioning. |
| ASME V&V 40 Standard [5] | Regulatory credibility framework. | A technical standard for assessing the credibility of computational models, providing a risk-informed framework for planning verification and validation activities for regulatory submission. |
| High-Fidelity Field Data [15] | Multi-level validation. | Real-world data used not only for overall model validation but also to validate the behavior of individual agents and their interactions, ensuring the model's emergent dynamics are realistic. |

Integrating Existence and Uniqueness within the Broader VV&UQ Framework

This section provides troubleshooting guides and FAQs for researchers, scientists, and drug development professionals conducting verification, validation, and uncertainty quantification (VV&UQ) for Agent-Based Models (ABMs), with a specific focus on existence and uniqueness analysis.

# Frequently Asked Questions (FAQs)

1. What are existence and uniqueness analyses, and why are they critical first steps in ABM verification?

Existence and uniqueness analyses are fundamental components of the deterministic verification of an ABM. They establish the model's numerical and computational robustness before more complex validation is attempted.

- Existence Analysis: This procedure verifies that the computational model produces an output for every reasonable input value within the defined parameter space. It checks that the simulation runs to completion without fatal errors across its intended operating range [7].

- Uniqueness Analysis: This test ensures that for an identical set of inputs (including the same random seed), the model produces the same outputs across different simulation runs, with at most minimal variations due to numerical rounding errors inherent to computing platforms. A failure of uniqueness suggests underlying instabilities or implementation errors in the code [7].

These analyses form the foundation of model credibility, especially for in-silico trials intended for regulatory evaluation of medicinal products. A model that fails these tests cannot be considered reliable for generating evidence on drug safety or efficacy [7].

2. Within the broader VV&UQ workflow, when should I perform existence and uniqueness analysis?

Existence and uniqueness analyses are not isolated activities. They are the initial, critical steps within a larger, iterative verification workflow. A typical structured approach includes [7]:

- Deterministic Verification:
  - Existence and Uniqueness Analysis
  - Time Step Convergence Analysis: Ensures the model's outputs are not overly sensitive to the discrete time-step length chosen for the simulation.
  - Smoothness Analysis: Checks for unnatural singularities or discontinuities in output time series that may indicate numerical errors.
  - Parameter Sweep Analysis: Tests the model across its input parameter space to identify regions of ill-conditioning or abnormal sensitivity.
- Stochastic Verification (for subsequent steps):
  - Consistency and Sample Size analyses, which confirm that outputs are consistent across random seeds and that the number of stochastic runs is sufficient.

3. My ABM is inherently stochastic. How can I test for uniqueness when outputs are supposed to vary?

This is a common point of confusion. The uniqueness test is part of deterministic verification. To perform it, you must temporarily remove the primary sources of stochasticity. This is typically done by initializing the model's pseudo-random number generator with a fixed seed. When the same initial seed is used, the sequence of "random" numbers is identical, and thus all model outputs should also be identical. A failure to produce identical outputs under a fixed seed indicates a non-determinism bug in the code, such as reliance on an unseeded system clock or uninitialized variables [7].
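The fixed-seed principle can be illustrated with a toy model. The random-walk "ABM" below is hypothetical. It routes every stochastic decision through a single `random.Random` instance, so fixing the seed fixes the whole run. Passing `seed=None` makes Python seed the generator from operating-system entropy, which reproduces the unseeded-generator bug described above.

```python
import random


def run_toy_abm(seed, n_agents=50, steps=100):
    """Hypothetical toy ABM: agents random-walk on a line; returns their final positions."""
    rng = random.Random(seed)  # one explicitly seeded generator drives every stochastic decision
    positions = [0] * n_agents
    for _ in range(steps):
        for i in range(n_agents):
            positions[i] += rng.choice((-1, 1))
    return positions


# Same seed -> same sequence of draws -> identical outputs (uniqueness holds)
print(run_toy_abm(seed=42) == run_toy_abm(seed=42))  # True

# seed=None draws fresh OS entropy each time, mimicking an unseeded or clock-seeded generator;
# the two runs will almost surely differ, which is exactly the failure the test should catch
print(run_toy_abm(seed=None) == run_toy_abm(seed=None))
```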

4. What are the most common root causes for uniqueness failures in an ABM?

Failures in uniqueness analysis often stem from implementation errors that introduce unintended non-determinism.

- Uncontrolled Random Number Generators: Using a random number generator that is not properly seeded or reseeded at the start of each run.

- Parallel Processing Race Conditions: In parallelized code, where multiple processing threads access and modify shared data or resources simultaneously without proper synchronization, leading to unpredictable execution orders.

- Floating-Point Non-Associativity: The order of arithmetic operations (especially addition) on floating-point numbers can yield slightly different results due to rounding. This can be triggered by non-deterministic thread execution orders.

- External Data or Timing Dependencies: The model relying on external data sources, system timestamps, or user input that may change between runs.
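The floating-point cause listed above is easy to reproduce. The sketch below sums the same three hypothetical per-agent contributions in every possible order, standing in for different thread completion orders. A plain accumulator gives order-dependent results, while `math.fsum` returns the correctly rounded sum and is therefore order-independent. Using an order-independent or fixed-order reduction is one possible remedy, not the only one.

```python
import itertools
import math

# Floating-point addition is not associative
print((0.1 + 0.2) + 0.3 == 0.1 + (0.2 + 0.3))  # False


def naive_sum(values):
    """Plain left-to-right accumulation, one rounding per addition (like a simple shared accumulator)."""
    # Written as an explicit loop because Python 3.12+ built-in sum() uses compensated summation for floats
    total = 0.0
    for v in values:
        total += v
    return total


# Per-agent contributions to a global quantity, summed in every possible "thread completion" order
contribs = [1e16, 1.0, -1e16]
naive = {naive_sum(order) for order in itertools.permutations(contribs)}
exact = {math.fsum(order) for order in itertools.permutations(contribs)}
print(sorted(naive))  # [0.0, 1.0]: the result depends on the order
print(sorted(exact))  # [1.0]: math.fsum is correctly rounded, hence order-independent
```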

# Troubleshooting Guides

# Problem: Model Fails Uniqueness Test (Outputs Diverge with Fixed Seed)

Symptoms: When running the ABM multiple times with an identical input parameter set and a fixed random seed, the output trajectories or final results are not identical.

Diagnosis and Resolution Table:

| Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| Slight numerical differences in outputs (e.g., at the 10th decimal place). | Expected numerical rounding errors from different operation orders on floating-point numbers. | Verify that the differences are within a defined tolerance level (e.g., $e_{q}^{i} < 5\%$) [7]. This may not be a critical failure. |
| Significant divergence in outputs from the first few time steps. | Unseeded or incorrectly seeded random number generator. | Implement a fixed seed for the model's primary and all secondary random number generators at the start of every simulation run. |
| Divergence occurs only when the model runs on multiple CPU cores. | Race condition in parallelized code. | Use debugging tools to identify shared resources. Implement mutex locks, semaphores, or redesign the algorithm to avoid shared state. |
| Outputs are "mostly" the same but show sporadic, unexpected jumps. | Reliance on system time or external input. | Refactor the code to remove dependencies on the system clock or external files for core model logic. Use the fixed random seed for all stochastic decisions. |
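One way to seed the "primary and all secondary" generators from a single recorded value in NumPy is to derive child streams with `SeedSequence.spawn`. The sketch below does this. The three stream roles (movement, division, placement) are hypothetical examples of model components that might each draw their own random numbers.

```python
import numpy as np


def make_generators(master_seed, n_streams):
    """Derive independent, reproducible child generators from a single recorded master seed."""
    children = np.random.SeedSequence(master_seed).spawn(n_streams)
    return [np.random.default_rng(child) for child in children]


def run(master_seed):
    # Hypothetical streams: movement, division, and initial placement each get their own generator
    move_rng, divide_rng, place_rng = make_generators(master_seed, 3)
    moves = move_rng.integers(0, 4, size=5).tolist()
    divisions = divide_rng.random(3).tolist()
    placement = place_rng.integers(0, 100, size=2).tolist()
    return moves, divisions, placement


print(run(123) == run(123))  # True: one master seed fixes every stream
```

Because each component owns its stream, adding draws to one component does not shift the random sequence seen by the others. This makes uniqueness failures easier to localize.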

# Problem: Model Fails Existence Test (Crashes or Hangs)

Symptoms: The ABM fails to complete a simulation run, resulting in a crash, hang, or fatal error for certain input parameter values.

Diagnosis and Resolution Table:

| Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| Crash occurs when accessing an array or list index. | Invalid agent state or out-of-bounds world interaction. The model attempts an operation that is not defined. | Add comprehensive input validation and state checks. Implement try-catch blocks to log the precise state of the model at the point of failure. |
| Simulation hangs indefinitely, often in a loop. | Violation of a model assumption leading to an infinite loop or deadlock (e.g., an agent cannot find a valid move). | Introduce loop counters with hard limits. Add detailed logging to identify the agent and its state when the hang occurs. Check agent decision logic for exit conditions. |
| Crash occurs for specific parameter combinations during a parameter sweep. | Numerical ill-conditioning, such as division by zero or arithmetic overflow, for extreme parameter values [7]. | Perform Parameter Sweep Analysis to map the "valid" and "invalid" regions of your input parameter space. Introduce safeguards (e.g., clipping extreme values) or redefine the model's domain to exclude non-physical parameter combinations. |
| The model runs but produces nonsensical outputs (e.g., negative population counts). | Logical error in agent rules or world dynamics that violates a fundamental constraint. | This is a verification failure. Implement "sanity check" rules for agent behaviors and environment updates to ensure they adhere to physical or logical constraints (e.g., population counts cannot go negative). |
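Two of the remedies in this table, a loop counter with a hard limit and a sanity check on model state, can be sketched as follows. The grid, the limit `MAX_MOVE_ATTEMPTS`, the exception class, and the population names are all hypothetical. The point is that an undefined situation is turned into a logged, catchable error instead of a silent hang or a negative count.

```python
import logging
import random

log = logging.getLogger("abm")

MAX_MOVE_ATTEMPTS = 100  # hypothetical hard limit; choose one suited to the model


class ModelStateError(RuntimeError):
    """Raised when the simulation reaches an undefined or non-physical state."""


def find_free_site(agent_id, occupied, grid_size, rng):
    """Sample candidate sites at random, but give up loudly instead of looping forever."""
    for _ in range(MAX_MOVE_ATTEMPTS):
        site = rng.randrange(grid_size)
        if site not in occupied:
            return site
    # Log the state needed to reproduce the failure before aborting the run
    log.error("agent %s found no free site after %d attempts (occupied %d/%d)",
              agent_id, MAX_MOVE_ATTEMPTS, len(occupied), grid_size)
    raise ModelStateError(f"agent {agent_id}: no valid move")


def check_invariants(step, populations):
    """Sanity check run after each update: population counts can never be negative."""
    for name, count in populations.items():
        if count < 0:
            raise ModelStateError(f"step {step}: {name} count is negative ({count})")


rng = random.Random(0)
print(find_free_site(agent_id=1, occupied={0, 1, 2}, grid_size=10, rng=rng))
check_invariants(step=0, populations={"tumor": 120, "T cell": 35})
```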

# Experimental Protocol: A Step-by-Step Guide for Existence and Uniqueness Analysis

This protocol provides a detailed methodology for conducting existence and uniqueness analysis, as adapted from the verification workflow for mechanistic ABMs [7].

Objective: To verify that the ABM produces a valid output for all intended inputs (existence) and that this output is reproducible for identical inputs (uniqueness).

Materials and Computational Tools:

- The ABM software and its runtime environment.

- A computing cluster or machine capable of running multiple model instances.

- (Recommended) Automated scripting to manage multiple runs (e.g., Python, Bash).

- (Recommended) Model Verification Tools (MVT) or similar frameworks for automating verification tests [7].

Procedure:

Part A: Existence Analysis

- Define the Input Parameter Space: Identify all input parameters for the model and define their plausible ranges based on theoretical or empirical grounds.

- Design a Sampling Strategy: Use a space-filling design like Latin Hypercube Sampling (LHS) to efficiently sample the multi-dimensional parameter space. This ensures broad coverage without the combinatorial explosion of a full factorial design [7].

- Execute Batch Runs: For each sampled parameter set, execute the model.

- Monitor for Failures: Record whether each run (a) completes successfully, (b) crashes, or (c) hangs.

- Analyze and Map Results: Identify regions in the parameter space where the model fails to run. Investigate and correct the root causes of these failures, as they indicate a violation of the model's "existence" condition.
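Part A can be sketched as a small batch driver. The example below implements a basic Latin Hypercube Sampler directly in NumPy, runs a model once per sampled point, and classifies each run as "ok", "invalid" (non-finite output), or a crash tagged with its exception type. The toy model and its bounds are hypothetical. Detecting hangs (outcome c) requires a wall-clock limit per run, for example by launching each run as a separate process with `subprocess.run(..., timeout=...)`, which this sketch omits.

```python
import math

import numpy as np


def latin_hypercube(n_samples, bounds, rng):
    """Basic LHS: exactly one point per equal-width stratum in every dimension."""
    d = len(bounds)
    # Independently permuted stratum indices per dimension, plus uniform jitter inside each stratum
    strata = np.column_stack([rng.permutation(n_samples) for _ in range(d)])
    unit = (strata + rng.random((n_samples, d))) / n_samples
    lo = np.array([b[0] for b in bounds], dtype=float)
    hi = np.array([b[1] for b in bounds], dtype=float)
    return lo + unit * (hi - lo)


def existence_sweep(model, bounds, n_samples=50, seed=0):
    """Run the model once per LHS point and classify each outcome."""
    rng = np.random.default_rng(seed)
    results = []
    for params in latin_hypercube(n_samples, bounds, rng):
        try:
            out = model(*params)
            status = "ok" if np.all(np.isfinite(out)) else "invalid"
        except Exception as exc:  # any crash is recorded as an existence failure
            status = f"crash: {type(exc).__name__}"
        results.append((tuple(params.tolist()), status))
    return results


def toy_model(birth_rate, death_rate):
    """Hypothetical model whose output is undefined when death_rate >= birth_rate."""
    return math.log(birth_rate - death_rate)


results = existence_sweep(toy_model, bounds=[(0.0, 1.0), (0.0, 1.0)])
failures = [r for r in results if r[1] != "ok"]
print(f"{len(failures)} of {len(results)} parameter sets failed")
```

Plotting the failing parameter tuples against the passing ones maps the "invalid" region that the step above asks you to investigate.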

Part B: Uniqueness Analysis

- Select Representative Parameter Sets: Choose a subset of parameter sets from Part A that led to successful model execution.

- Initialize with a Fixed Seed: For each selected parameter set, configure the model to use a single, fixed seed for its random number generator.

- Execute Replicated Runs: Run the model multiple times (e.g., 5-10 times) for each parameter set, using the same fixed seed every time.

- Compare Outputs: For each set of replicated runs, compare the key output variables. Use a tolerance-based comparison (e.g., $e_{q}^{i} < 5\%$ [7]) to account for negligible floating-point differences.

- Identify Non-Uniqueness: If outputs for identical input-seed pairs are not consistent, use the troubleshooting guide above to diagnose the source of non-determinism in the code.
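The comparison step of Part B can be automated with a helper like the one below. This is a sketch under stated assumptions. Each model call is assumed to return a list of floats, and both the helper and the toy models are hypothetical. The default `rel_tol=1e-12` is an assumed value close to floating-point precision. Passing `rel_tol=0.05` instead reproduces the looser 5% criterion mentioned above.

```python
import random


def uniqueness_check(model, params, seed, replicates=5, rel_tol=1e-12):
    """Replicate identical (params, seed) runs; report bitwise identity and the largest relative gap."""
    runs = [model(params, seed) for _ in range(replicates)]
    ref = runs[0]
    bitwise = all(run == ref for run in runs[1:])
    max_rel = 0.0
    for run in runs[1:]:
        for a, b in zip(ref, run):
            scale = max(abs(a), abs(b), 1e-300)  # guards against division by zero
            max_rel = max(max_rel, abs(a - b) / scale)
    return {"bitwise_identical": bitwise, "max_rel_diff": max_rel, "passed": max_rel <= rel_tol}


def good_model(params, seed):
    """Hypothetical model: noisy growth series driven by one seeded generator."""
    growth, steps = params
    rng = random.Random(seed)
    x, series = 1.0, []
    for _ in range(steps):
        x *= 1.0 + growth * rng.random()
        series.append(x)
    return series


def buggy_model(params, seed):
    """Same model with the seed silently ignored (the non-determinism bug to detect)."""
    return good_model(params, None)


print(uniqueness_check(good_model, (0.1, 20), seed=7))
print(uniqueness_check(buggy_model, (0.1, 20), seed=7))
```

Reporting bitwise identity separately from the tolerance test helps triage. A run that is not bitwise identical but passes the tolerance test usually points to operation-order rounding. A run that fails the tolerance test points to a seeding or race-condition bug.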

# Research Reagent Solutions: Essential Tools for ABM Verification

The following table details key computational tools and methodologies essential for conducting rigorous existence and uniqueness analysis and broader VV&UQ.

| Tool / Method | Function in Verification | Relevance to Existence/Uniqueness |
|---|---|---|
| Model Verification Tools (MVT) | An open-source software suite that automates key steps in the deterministic verification of discrete-time models, including ABMs [7]. | Provides a structured framework and automated procedures for running the parameter sweeps and replicated runs needed for existence and uniqueness testing. |
| Latin Hypercube Sampling (LHS) | A statistical method for generating a near-random sample of parameter values from a multidimensional distribution. It is efficient for exploring high-dimensional parameter spaces [7]. | Core to Existence Analysis. Used to systematically sample the input parameter space to test for model crashes or hangs. |
| Fixed Increment Time Advance (FITA) | The most common time-advance mechanism in ABMs, which progresses the simulation in discrete, fixed-time steps [7]. | The choice of time-step can indirectly affect uniqueness if it influences operation order. It is the subject of a separate Time Step Convergence Analysis. |
| Pseudo-Random Number Generator (PRNG) | An algorithm that generates a sequence of numbers that approximates the properties of random numbers. It can be initialized with a 'seed' [7]. | Critical for Uniqueness Analysis. Using a fixed, reproducible seed is the primary method for isolating and testing the model's deterministic core. |
| Parameter Sweep Analysis | A technique that involves running the model multiple times while systematically varying input parameters to assess the model's response [7]. | The primary methodology for conducting a comprehensive Existence Analysis to find regions of failure in the input space. |
| Sobol Sensitivity / LHS-PRCC | Variance-based and correlation-based sensitivity analysis techniques used to quantify how input uncertainty contributes to output uncertainty [7]. | While more common in later validation/UQ stages, these can help identify parameters that most influence model instability discovered during existence testing. |

A Practical Framework for Implementing Existence and Uniqueness Analysis

Before an Agent-Based Model (ABM) can be used for mission-critical applications, such as predicting the efficacy of a new drug in in silico trials, its credibility must be rigorously assessed. Deterministic verification is a fundamental part of this process, aiming to identify, quantify, and reduce the numerical errors associated with the model itself [17]. For ABMs, which often simulate complex, emergent behaviors from the bottom up, this presents a unique challenge. Unlike equation-based models where numerical error can be assessed against an analytical solution, the "local rules" of an ABM require a specialized verification framework [17] [7].

This guide, framed within broader research on existence and uniqueness analysis, provides a practical workflow to help researchers and scientists ensure their computational models are robust and numerically sound. A verified model is a reliable model, and in the context of drug development, this reliability is paramount for regulatory acceptance [7].

Frequently Asked Questions

Q: Why is deterministic verification separate from stochastic verification? A: Many ABMs use Pseudo-Random Number Generators (PRNGs) to simulate uncertainty. Deterministic verification involves running the model with a fixed random seed, ensuring that any variation in output is due to numerical approximation and not the model's inherent randomness. This isolation allows you to pinpoint numerical errors [17] [7].

Q: How does this relate to "existence and uniqueness" in my ABM? A: In mathematical modeling, existence and uniqueness theorems guarantee that a solution exists and is unique for a given set of inputs. In computational ABM verification, we adapt this concept. We check that the model produces a solution for all reasonable inputs (existence) and that, for the same fixed inputs and random seed, it produces the same solution every time, within a small tolerance defined by numerical precision (uniqueness) [7] [18].

Q: My ABM results are stochastic by nature. Is deterministic verification still relevant? A: Absolutely. Before you can trust the statistical distributions of your stochastic results, you must first verify that the underlying deterministic logic of your agent interactions and state changes is implemented correctly and consistently. Deterministic verification is the first step in establishing this trust [17].

The Deterministic Verification Workflow: A Step-by-Step Guide

The following workflow, synthesized from established verification procedures, is designed to be implemented systematically on your ABM [17] [7].

Step 1: Existence and Uniqueness Analysis

Objective: To verify that the model produces a valid output for a given input and that this output is reproducible.

Experimental Protocol:

- Define Input Space: Identify the key input parameters for your model. For a biological ABM, this could include initial agent counts, environmental factors, or kinetic parameters.

- Test for Existence: Sample across the admissible range of your input parameters. For each input set, run the model and verify that it completes without fatal errors and produces a logically valid output.

- Test for Uniqueness: Select a representative subset of input parameter sets. Run the model multiple times (e.g., 10-20 runs) for each input set using the same fixed random seed. The output across these repeated runs should be identical, with at most minimal variations due to floating-point rounding errors [7].

Troubleshooting Guide:

- Problem: The model crashes for specific input values.
  - Solution: Identify the root cause, often a division by zero, an out-of-bounds array access, or an invalid state transition. Implement safeguards or correct the model logic.
- Problem: The model runs but produces different results for the same input and random seed.
  - Solution: This indicates a violation of uniqueness, often caused by parallel processing race conditions, the use of non-fixed random seeds in parts of the code, or uninitialized variables. Review your code for these issues.

Step 2: Time Step Convergence Analysis

Objective: To ensure that the discrete-time approximation used in the simulation does not unduly influence the results.

Experimental Protocol:

- Select a Reference Output: Choose a key quantitative output from your model (e.g., final agent count, peak value of a critical variable, or mean value over time).

- Run with Decreasing Time Steps: Execute the model with a series of progressively smaller time steps (e.g., $dt$, $dt/2$, $dt/4$), keeping the random seed fixed.

- Calculate Discretization Error: For each run, calculate the percentage error of your output quantity relative to the result from the smallest computationally feasible time step (your reference solution, $q^{i*}$). The formula is $e_{q}^{i} = \frac{\lvert q^{i*} - q^{i} \rvert}{\lvert q^{i*} \rvert} \times 100$, where $q^{i*}$ is the reference output and $q^{i}$ is the output at time step $i$ [7].

- Check for Convergence: The model is considered converged when the error $e_{q}^{i}$ falls below a pre-defined tolerance (e.g., 5%).
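The convergence protocol can be tried on a deterministic stand-in before it is applied to a real ABM. In the sketch below, a hypothetical fixed-increment model (forward-Euler logistic growth) is run at halving time steps. The output at the finest step serves as the reference $q^{i*}$, and the percentage error defined above is reported for every coarser step, together with the 5% tolerance check.

```python
def simulate(dt, r=0.5, K=100.0, x0=5.0, t_end=10.0):
    """Hypothetical fixed-increment model: forward-Euler logistic growth; returns q = x(t_end)."""
    x = x0
    for _ in range(round(t_end / dt)):
        x += dt * r * x * (1.0 - x / K)
    return x


def discretization_errors(dts, model):
    """Percentage error e_q^i of each run against the finest time step (the reference q^{i*})."""
    dts = sorted(dts, reverse=True)
    q_ref = model(dts[-1])
    return {dt: abs(q_ref - model(dt)) / abs(q_ref) * 100.0 for dt in dts[:-1]}


errors = discretization_errors([1.0, 0.5, 0.25, 0.125, 0.0625], simulate)
for dt, e in errors.items():
    print(f"dt={dt:<7} e={e:.3f}%  {'converged' if e < 5.0 else 'not converged'}")
```

In a stochastic ABM, the seed is held fixed across these runs, as the protocol requires. Even so, changing the time step can change how many random numbers are drawn. Errors may therefore shrink less cleanly than in this deterministic example.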

Troubleshooting Guide:

- Problem: The discretization error does not decrease with a smaller time step.

- Solution: This may suggest an instability in the model's algorithm or a bug in the implementation of time-advancement logic. Review the model's core interaction and update functions.

Step 3: Smoothness Analysis

Objective: To identify unintended numerical stiffness, singularities, or discontinuities in the model output over time.

Experimental Protocol:

- Generate Output Time Series: Run the model and record a key output variable over time.

- Calculate the Coefficient of Variation (D): For the output time series, calculate the standard deviation of the first difference, scaled by the absolute mean of these differences. This is often done using a moving window (e.g., k=3 neighbors) to analyze local variation.

- Interpret the Results: A high value of D indicates a "bumpy" or discontinuous output, which may be a sign of numerical instability or unintended model behavior that requires investigation [7].
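The smoothness metric can be sketched as follows. This version takes the first differences of the series, then, for each position, computes the population standard deviation of the differences within $\pm k$ neighbours divided by the absolute value of their mean. The exact window convention is an assumption and may differ from the MVT implementation. The example series are hypothetical.

```python
import math
import statistics


def smoothness_D(series, k=3):
    """Moving-window coefficient of variation of the first differences of a time series."""
    diffs = [b - a for a, b in zip(series, series[1:])]
    D = []
    for i in range(len(diffs)):
        window = diffs[max(0, i - k): i + k + 1]  # up to k neighbours on each side
        mean = statistics.fmean(window)
        D.append(statistics.pstdev(window) / abs(mean) if mean != 0 else math.inf)
    return D


smooth = [float(t * t) for t in range(30)]  # steadily accelerating output
bumpy = list(smooth)
bumpy[15] += 50.0                           # a single spurious spike
print(round(max(smoothness_D(smooth)), 3), round(max(smoothness_D(bumpy)), 3))
```

The spike raises the peak D of the series even though the overall trend is unchanged. Inspecting the positions where D peaks is how such local discontinuities can be traced back to specific time steps.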

Step 4: Parameter Sweep Analysis

Objective: To ensure the model is not ill-conditioned, meaning it does not exhibit extreme sensitivity to tiny changes in inputs.

Experimental Protocol:

- Design the Sweep: Use sampling methods like Latin Hypercube Sampling (LHS) to efficiently explore the multi-dimensional input parameter space.

- Run the Ensemble: Execute the model for each sampled parameter set.

- Analyze Sensitivity and Validity: Check for input regions where the model fails or produces outputs outside expected biological or logical bounds. Use sensitivity analysis techniques, such as calculating Partial Rank Correlation Coefficients (PRCC), to quantify how much each input parameter influences the output. A model is ill-conditioned if a small parameter change causes a disproportionately large output shift [7].

The workflow for deterministic verification can be visualized as a sequential process where the output of one step informs the next, as shown in the following diagram:

Quantitative Criteria for Verification Success

The table below summarizes the key metrics and their success criteria for the deterministic verification workflow.

Table 1: Key Metrics for Deterministic Verification Steps

| Verification Step | Primary Metric | Success Criteria | Common Tools & Methods |
|---|---|---|---|
| Existence & Uniqueness | Output variability over repeated runs (fixed seed) | Zero variability (bit-wise identical outputs) or variation within floating-point error tolerance [7]. | Custom scripts, Unit tests |
| Time Step Convergence | Percentage discretization error ($e_{q}^{i}$) | Error below a set tolerance (e.g., < 5%) when compared to a reference solution with a finer time step [7]. | Model Verification Tools (MVT) [7] |
| Smoothness Analysis | Coefficient of Variation (D) | A low value of D, indicating a smooth output trajectory without abnormal buckling or discontinuities [7]. | Model Verification Tools (MVT) [7] |
| Parameter Sweep | Partial Rank Correlation Coefficient (PRCC) | No extreme, non-monotonic sensitivity; model outputs remain within valid bounds across the input space [7]. | LHS Sampling, PRCC Analysis, SALib [7] |

The Scientist's Toolkit: Essential Research Reagent Solutions

In computational science, "research reagents" refer to the software tools and libraries that enable verification. The following table lists key resources for implementing the workflow described above.

Table 2: Essential Computational Tools for ABM Verification

| Tool / Resource | Type | Primary Function in Verification |
|---|---|---|
| Model Verification Tools (MVT) [7] | Software Platform | An open-source toolkit that automates key steps like time step convergence and smoothness analysis. Essential for a standardized approach. |
| SALib [7] | Python Library | Provides robust algorithms for sensitivity analysis, including Sobol and Morris methods, crucial for parameter sweep analysis. |
| Pingouin & Scikit-learn [7] | Python Libraries | Used for statistical analysis, including calculating Partial Rank Correlation Coefficients (LHS-PRCC) for parameter sensitivity. |
| Latin Hypercube Sampling (LHS) | Methodology | An efficient statistical method for exploring the parameter space of a model with a limited number of runs [7]. |
| Fixed Increment Time Advance (FITA) | Core Algorithm | The standard time-advancement method in most ABM frameworks. Its configuration is the target of time step convergence analysis [7]. |

A rigorous, step-by-step deterministic verification workflow is not merely an academic exercise but a foundational practice for any researcher employing Agent-Based Models in high-stakes environments like drug development. By systematically confirming that your model produces unique and reproducible results, converges with appropriate time steps, produces smooth outputs, and responds reasonably to parameter changes, you build a bedrock of credibility upon which further validation and experimentation can safely rest.

Frequently Asked Questions (FAQs)

Q1: What is the primary purpose of the Mobile Verification Toolkit (MVT)? The Mobile Verification Toolkit shares its acronym with, but is distinct from, the Model Verification Tools (MVT) suite for ABMs described above. It is designed to facilitate the consensual forensic analysis of Android and iOS devices in order to identify traces of potential compromise [19] [20] [21].

Q2: Is MVT suitable for non-technical users to perform self-assessments? No. MVT is a forensic research tool intended for technologists and investigators. Using it requires an understanding of forensic analysis and command-line tools. It is not intended for end-user self-assessment [20].

Q3: Can MVT guarantee that a device is free of spyware? No. Public Indicators of Compromise (IOCs) alone are insufficient to determine that a device is "clean". Reliance on them can miss recent forensic traces and provide a false sense of security. Comprehensive analysis often requires access to non-public IOCs and threat intelligence [20].

Q4: What are the key capabilities of MVT? Key features include decrypting iOS backups, parsing records from iOS system and app databases, extracting apps from Android devices, comparing records against malicious IOCs, and generating JSON logs and chronological timelines of records [20].

Q5: Is it permissible to use MVT on devices without the user's consent? No. The use of MVT to extract or analyze data from devices of non-consenting individuals is explicitly prohibited by its license [20].

Troubleshooting Guides

Issue 1: Incomplete or Failed Data Acquisition from an Android Device

Problem: The mvt-android command fails to extract a complete set of installed applications or diagnostic information.

Solution:

- Verify USB Debugging: Ensure that USB debugging is enabled on the Android device within the "Developer options" menu.

- Check Device Connection: Use the adb devices command to confirm your computer recognizes the device and that you have authorized the connection.

- Review Permissions: Some information may require root access to extract. Note that MVT is designed for consensual forensics, and rooting a device may not always be feasible or desired [20].

- Re-run with Detailed Logging: Execute the command with the -v (verbose) flag to generate more detailed output, which can help pinpoint the stage at which the failure occurs.

Issue 2: Processing Encrypted iOS Backups

Problem: MVT is unable to decrypt an encrypted iOS backup.

Solution:

- Confirm Password: Ensure you have the correct backup password. MVT will prompt you for this password during the decryption process [20].

- Verify Backup Integrity: Confirm that the backup file is not corrupted. Try creating a new backup via iTunes or Finder.

- Use Latest MVT Version: Update to the latest version of MVT, as decryption capabilities are continuously improved [19].

Issue 3: Interpretation of IOC Scan Results

Problem: The tool outputs a list of potential malicious traces, but the significance is unclear.

Solution:

- Review IOC Source: Check the origin and date of the Indicator of Compromise file you are using. Older IOCs may not detect recent threats [20].

- Examine the JSON Logs: Generate and carefully review the detailed JSON logs of detected traces for additional context [20].

- Seek Expert Consultation: For reliable triage, seek support from organizations like Amnesty International's Security Lab or Access Now’s Digital Security Helpline, which have access to non-public research and intelligence [20].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key digital "reagents" and materials used in mobile forensic analysis with MVT.

| Research Reagent / Material | Function in Analysis |
|---|---|
| iOS Backup Image | A forensic copy of the device's file system and application data. Serves as the primary source for parsing records and logs [20]. |
| Android ADB Extraction | Diagnostic information and a list of installed applications extracted from an Android device via the Android Debug Bridge (adb) protocol [20]. |
| Indicators of Compromise (IOCs) - STIX2 Format | A standardized list of known malicious patterns (e.g., file hashes, domains). Used by MVT to scan device data and identify potential threats [20]. |
| Chronological Timeline | A unified timeline of system events generated by MVT. Allows the researcher to analyze the sequence and correlation of activities on the device [20]. |
| JSON Logs of Records | Structured logs of all extracted records from the device. Facilitates detailed manual review and automated processing of the data [20]. |

Experimental Protocols

Protocol 1: Forensic Acquisition of an iOS Device via Backup

Objective: To create a verifiable data image of an iOS device for subsequent forensic analysis.

Methodology:

- Create a Local Backup: Connect the iOS device to a trusted computer and use iTunes (on Windows) or Finder (on macOS) to create an encrypted local backup. Encryption is required to extract the most comprehensive data set.

- Verify Backup Completion: Ensure the backup process completes successfully without errors.

- Transfer Backup for Analysis: Locate the backup folder on the computer system. The path is typically:
  - macOS: ~/Library/Application Support/MobileSync/Backup/
  - Windows: \Users\(username)\AppData\Roaming\Apple Computer\MobileSync\Backup\

- Acquire with MVT: Use the mvt-ios command-line tool, pointing it to the location of the backup folder and providing the backup password for decryption [20].

Protocol 2: Indicator of Compromise (IOC) Scanning and Validation

Objective: To systematically scan acquired device data for known malicious indicators and validate the findings.

Methodology:

- IOC Sourcing: Obtain a current, reputable set of IOCs in the STIX2 format. This could be from public research reports or trusted threat intelligence feeds [20].

- Execute MVT Scan: Run the appropriate MVT command (e.g., mvt-ios check-backup or mvt-android check-iocs), specifying the path to the acquired data and the IOC file(s).

- Result Triage: MVT will generate two primary outputs [20]:
  - A full JSON log of all extracted records.
  - A separate JSON log of all detected malicious traces.

- Expert Analysis: Correlate the detected traces within the generated chronological timeline. Context is critical; a single match may not confirm a compromise, while a cluster of related IOCs is more significant. False positives must be ruled out [20].

Workflow Visualization

MVT Forensic Analysis Workflow

IOC Validation & Triage Logic

Troubleshooting Guide: Common UISS Platform Issues

Issue 1: Poor Visual Contrast in Simulation Outputs

Problem: Visualization outputs from the UISS platform lack sufficient contrast, making it difficult to distinguish between different agent types or states, especially in the simulated tissue environment [22].

Solution: Implement automated contrast checking.

- For Programmatic Generation: Calculate the luminance of the background color and set the text or agent color accordingly. In R, for example, the rule label_col <- ifelse(hcl[, "l"] > 50, "black", "white") selects black text on light backgrounds and white text on dark ones, keeping labels readable [23].

- For Manual Design: Adhere to WCAG 2.1 (Level AA) standards, which require a minimum 3:1 contrast ratio for meaningful graphics and large text [22]. Use online contrast checker tools to validate your color pairs.
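For an automated check against the WCAG threshold itself, the contrast ratio can be computed directly. The sketch below is a Python analogue of the R rule above, but it swaps the HCL-lightness cutoff for the WCAG 2.x relative-luminance and contrast-ratio definitions. It then picks whichever of black or white contrasts more with a given background. The navy agent color is a hypothetical example.

```python
def _linearize(channel):
    """sRGB channel (0-255) to linear light, per the WCAG 2.x relative-luminance definition."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg):
    """WCAG contrast ratio (L1 + 0.05) / (L2 + 0.05), with L1 the lighter color."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)


def label_color(bg):
    """Choose black or white text, whichever contrasts more with the background."""
    black, white = (0, 0, 0), (255, 255, 255)
    return black if contrast_ratio(black, bg) >= contrast_ratio(white, bg) else white


agent_fill = (0, 0, 128)  # hypothetical agent color (navy)
text = label_color(agent_fill)
print(text, round(contrast_ratio(text, agent_fill), 1), contrast_ratio(text, agent_fill) >= 3.0)
```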

Issue 2: Ineffective or Misleading Color Palettes

Problem: The chosen color palette for categorical data (e.g., different cell phenotypes) creates false associations or is not differentiable by users with color vision deficiencies [22].

Solution: Utilize a pre-validated, accessible categorical palette.

- Selection: Choose palettes that are both differentiated (sufficient visual contrast between colors) and diverse (avoid false correlations due to similar hues or brightness) [22].

- Validation: Use tools like Viz Palette to evaluate the palette's effectiveness. This tool generates reports on the "just-noticeable difference" (JND) between colors, helping to identify hues that are hard to tell apart [22].

- Fallbacks: Incorporate secondary accessibility cues like textures, shapes, or patterns to encode information, ensuring robustness against color vision deficiencies [22].
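Viz Palette's JND report remains the recommended check; as a rough, scriptable stand-in, pairwise color differences can be computed in CIELAB space. The sketch below uses the simple CIE76 ΔE (Euclidean distance in L*a*b*, D65 white point), which is an assumption and may differ from the metric Viz Palette uses, and the threshold of 10 is a hypothetical cut-off for categorical palettes. It does not simulate color vision deficiencies, so the fallbacks above still apply.

```python
import itertools
import math


def srgb_to_lab(hex_color: str) -> tuple:
    """Convert an sRGB hex color to CIELAB (D65 reference white)."""
    h = hex_color.lstrip("#")
    rgb = [int(h[i:i + 2], 16) / 255.0 for i in (0, 2, 4)]
    r, g, b = (c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4 for c in rgb)
    # Linear sRGB -> CIE XYZ
    x = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z = 0.0193 * r + 0.1192 * g + 0.9505 * b
    xn, yn, zn = 0.95047, 1.0, 1.08883  # D65 white point

    def f(t: float) -> float:
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d * d) + 4 / 29

    fx, fy, fz = f(x / xn), f(y / yn), f(z / zn)
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e76(c1: str, c2: str) -> float:
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(srgb_to_lab(c1), srgb_to_lab(c2))


def close_pairs(palette, threshold=10.0):
    """List color pairs whose difference falls below a (hypothetical) threshold."""
    flagged = []
    for a, b in itertools.combinations(palette, 2):
        d = delta_e76(a, b)
        if d < threshold:
            flagged.append((a, b, round(d, 1)))
    return flagged
```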

Issue 3: Graphviz Node Rendering Issue

Problem: When generating pathway diagrams using Graphviz DOT language, node fillcolor does not appear in the output.

Solution: Ensure the style=filled attribute is included for the node. The fillcolor attribute only takes effect when a fill style is applied [24].
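The fix can be built into whatever code generates the DOT file. The Python sketch below (function names and node IDs are illustrative) writes style=filled on every node that carries a fillcolor; setting node [style=filled] once as a graph-wide default would work equally well.

```python
def dot_node(node_id: str, label: str, fillcolor: str) -> str:
    """One DOT node statement; fillcolor only renders because style=filled is set."""
    return f'  {node_id} [label="{label}", style=filled, fillcolor="{fillcolor}"];'


def pathway_dot(nodes: dict, edges: list) -> str:
    """Build a DOT digraph from {node_id: (label, fillcolor)} and [(src, dst)] pairs."""
    lines = ["digraph pathway {", "  node [shape=box];"]
    for node_id, (label, color) in nodes.items():
        lines.append(dot_node(node_id, label, color))
    for src, dst in edges:
        lines.append(f"  {src} -> {dst};")
    lines.append("}")
    return "\n".join(lines)
```

For example, pathway_dot({"tgfb": ("TGF-beta", "#D55E00"), "treg": ("Treg", "#0072B2")}, [("tgfb", "treg")]) produces a two-node digraph whose nodes are both filled.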

Frequently Asked Questions (FAQs)

FAQ 1: What are the core principles for designing cognitively efficient ABM visualizations? Effective ABM visualizations should facilitate swift perceptual inferences. Key principles derived from Gestalt psychology and scientific visualization include [25]:

- Foreground/Background Segregation: Clearly distinguish agents from their environment.

- Informed Use of Visual Variables: Use variables like color, size, and shape in ways that match the data type (e.g., hue for categorical data).

- Removal of Visual Interference: Eliminate unnecessary visual elements that do not contribute to the model's message. The primary goals are to simplify, emphasize, and explain the model's key behaviors [25].

FAQ 2: How does the UISS platform handle the specific recognition between immune cells and pathogens? The UISS platform uses an abstraction based on binary strings to mimic the adaptive immune response. Epitopes on pathogens and receptors on immune system cells are both represented by binary strings. The probability of an immune cell recognizing a pathogen is proportional to the Hamming distance (the number of mismatching bits) between their respective strings: a mismatching bit models a complementary contact, so more mismatches mean a better fit. This approach efficiently reproduces features like immune memory, specificity, and tolerance [26].
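The following is a minimal sketch of the binary-string recognition scheme, using integers as bit strings. The bit length, the activation threshold, and the linear-above-threshold probability rule are hypothetical placeholders that follow the description above. They are not the affinity function actually implemented in UISS.

```python
import random


def hamming_distance(a: int, b: int, n_bits: int) -> int:
    """Count mismatching bits between two n_bits-long binary strings."""
    mask = (1 << n_bits) - 1
    return bin((a ^ b) & mask).count("1")


def binding_probability(receptor: int, epitope: int, n_bits: int, threshold: int) -> float:
    """Hypothetical affinity rule: zero below `threshold` mismatches,
    otherwise proportional to the Hamming distance."""
    d = hamming_distance(receptor, epitope, n_bits)
    return 0.0 if d < threshold else d / n_bits


def try_bind(receptor: int, epitope: int, n_bits: int, threshold: int,
             rng: random.Random) -> bool:
    """One stochastic recognition event between an immune cell and a pathogen."""
    return rng.random() < binding_probability(receptor, epitope, n_bits, threshold)
```

With 8-bit strings, a fully complementary pair such as 0b00001111 and 0b11110000 binds with probability 1.0, while identical strings never bind.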

FAQ 3: Why is deriving a mean-field limit important for a hybrid PDE-ABM in mathematical oncology? Deriving a mean-field limit is crucial for connecting stochastic microdynamics to deterministic macrodynamics. It reduces the hybrid PDE-ABM system to a single PDE system that is amenable to analysis. This allows researchers to [27] [28]:

- Rigorously link stochastic agent rules to continuum-scale descriptions.

- Perform formal mathematical analysis (e.g., well-posedness, stability) on the entire system.

- Develop more efficient hybrid numerical schemes that can use the PDE surrogate in non-critical regions, reducing computational cost.
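The idea of a mean-field limit can be illustrated with the simplest possible case: a non-spatial ABM in which each agent independently dies with probability q, divides with probability p, or persists in each time step. Because these rules are linear (agents do not interact), the expected population obeys exactly the deterministic recursion n_{t+1} = (1 + p - q) n_t, and the ensemble mean of stochastic runs should approach it. This toy model and its parameters are assumptions chosen for illustration. For interacting agents, such as the hybrid PDE-ABM systems cited above, the mean-field equations are only approximate and typically require closure assumptions.

```python
import random


def abm_step(n: int, p_divide: float, p_die: float, rng: random.Random) -> int:
    """Apply the agent rule once to each of n agents: die with prob p_die,
    divide with prob p_divide, otherwise persist (requires p_die + p_divide <= 1)."""
    new_n = 0
    for _ in range(n):
        u = rng.random()
        if u < p_die:
            continue  # agent dies
        new_n += 2 if u < p_die + p_divide else 1  # divides or persists
    return new_n


def abm_ensemble_mean(n0, p_divide, p_die, steps, runs, seed=0):
    """Mean final population over independent stochastic runs."""
    rng = random.Random(seed)
    finals = []
    for _ in range(runs):
        n = n0
        for _ in range(steps):
            n = abm_step(n, p_divide, p_die, rng)
        finals.append(n)
    return sum(finals) / runs


def mean_field(n0, p_divide, p_die, steps):
    """Deterministic mean-field recursion n_{t+1} = (1 + p_divide - p_die) n_t."""
    return n0 * (1 + p_divide - p_die) ** steps
```

For n0 = 100, p = 0.1, q = 0.05 and 10 steps, the mean-field value is about 162.9, and the mean over a few hundred seeded runs should lie within a few percent of it.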

Experimental Protocol: UISS In Silico Vaccine Trial

This protocol outlines the methodology for using the Universal Immune System Simulator (UISS) to predict the efficacy of a candidate vaccine or monoclonal antibody therapy against SARS-CoV-2 [26].

Objective Definition and Conceptual Mapping

- Define the Candidate: Specify the therapeutic agent (e.g., a monoclonal antibody like the one proposed by Wang et al. that targets a conserved epitope on the spike receptor-binding domain) [26].

- Develop a Conceptual Map: Aggregate existing knowledge and hypotheses about SARS-CoV-2 dynamics and the host immune response into a flow chart. This map should detail the cascade of biological events from infection to immune clearance [26].

Model Formulation and Computational Translation

- Agent and State Definition: Define the agents (e.g., SARS-CoV-2 virions, B cells, T cells, antibodies) and their possible states (e.g., naive, activated, memory) [26].

- Rule Specification: Codify the rules of interaction between agents. This includes:

- Integration of Continuum Fields (for hybrid models): If modeling diffusive substances (e.g., cytokines, oxygen), couple agent-based rules with reaction-diffusion equations (PDEs) to describe their spatial dynamics [27] [28].
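To make agent and state definitions checkable, the allowed state changes can be written down as an explicit transition table that the simulation enforces. The sketch below is hypothetical. The CellState names follow the examples in the text. The transition table, the ImmuneCell class, and its fields are illustrative assumptions rather than UISS's internal representation.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class CellState(Enum):
    NAIVE = auto()
    ACTIVATED = auto()
    MEMORY = auto()


# Hypothetical transition table: the only state changes the rules allow.
ALLOWED = {
    CellState.NAIVE: {CellState.ACTIVATED},
    CellState.ACTIVATED: {CellState.MEMORY},
    CellState.MEMORY: {CellState.ACTIVATED},  # re-activation on re-exposure
}


@dataclass
class ImmuneCell:
    kind: str                     # e.g. "B cell", "T cell"
    receptor: int                 # binary-string receptor
    state: CellState = CellState.NAIVE
    history: list = field(default_factory=list)

    def transition(self, new_state: CellState) -> None:
        """Move to new_state, rejecting transitions the rules do not allow."""
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.kind}: {self.state.name} -> {new_state.name} not allowed")
        self.history.append(self.state)
        self.state = new_state
```

An illegal transition raises immediately, so rule-coding errors surface during verification runs instead of silently corrupting the simulated population.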

Simulation Execution and In Silico Trial

- Calibrate Parameters: Use known biological data (e.g., viral load peaks in the first week, IgG/IgM antibody rise around day 10) to calibrate model parameters [26].

- Run Virtual Cohorts: Execute the model multiple times with a virtual population to account for stochasticity. This constitutes the "in silico trial" [26].

- Administer Virtual Intervention: Introduce the candidate vaccine or monoclonal antibody into the simulation according to the proposed treatment schedule [26].

Output Analysis and Efficacy Prediction

- Monitor Key Metrics: Track simulation outputs such as:

- Time to viral clearance.

- Peak viral load.

- Magnitude and duration of humoral (antibody) and cellular immune responses.

- Incidence of severe symptoms (modeled via cytokine storm markers like IL-6) [26].

- Compare to Control: Compare these metrics against a control cohort that did not receive the virtual intervention.

- Predict Efficacy: The platform predicts the therapy's efficacy based on its ability to significantly improve key metrics (e.g., reduce viral load, accelerate clearance) compared to the control [26].
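The efficacy comparison can be sketched as follows, assuming each virtual patient's simulated viral-load trajectory is available as paired lists of times and values. The clearance threshold, the function names, and the choice of a two-sided permutation test on mean time to clearance are assumptions for illustration. The source does not specify which statistical test the platform uses.

```python
import random
from statistics import mean


def time_to_clearance(times, viral_load, threshold):
    """First time at which viral load falls below `threshold` (None if never)."""
    for t, v in zip(times, viral_load):
        if v < threshold:
            return t
    return None


def permutation_test(treated, control, n_perm=5000, seed=0):
    """Two-sided permutation test on the difference in group means.

    Returns (observed difference treated - control, estimated p-value).
    """
    rng = random.Random(seed)
    observed = mean(treated) - mean(control)
    pooled = list(treated) + list(control)
    n_t = len(treated)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # random relabeling of patients
        diff = mean(pooled[:n_t]) - mean(pooled[n_t:])
        if abs(diff) >= abs(observed):
            extreme += 1
    return observed, (extreme + 1) / (n_perm + 1)
```

Patients who never clear (None) need an explicit policy before the test is applied, for example censoring at the end of the simulated follow-up.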

Diagram: UISS In Silico Trial Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table: Key Components for a Hybrid ABM-PDE Framework in Immunology/Oncology

| Research Reagent / Component | Function & Explanation |
|---|---|
| Agent-Based Model (ABM) | Core simulation engine for modeling discrete, stochastic entities (e.g., individual immune cells, tumor cells, vessel agents) and their rule-based interactions [26] [25]. |
| Partial Differential Equations (PDEs) | Describes the spatiotemporal dynamics of continuum fields (e.g., concentration gradients of oxygen, cytokines, drugs) within the tissue microenvironment [27] [28]. |
| Gillespie Algorithm | An exact stochastic simulation algorithm (a Monte Carlo method) used to model the timing and occurrence of random events, such as phenotypic switching in tumor cells or stochastic mutation events [27] [28]. |
| Mean-Field Limit Derivation | A mathematical technique (using moment-closure) to derive a deterministic PDE description from the stochastic ABM rules. This connects micro-scale randomness to macro-scale dynamics and aids in analysis [27] [28]. |
| Binary String Recognition | A computational method used in UISS to abstractly mimic the specific binding between immune cell receptors and pathogen epitopes, enabling simulation of adaptive immunity [26]. |
| Visualization & Gestalt Principles | Guidelines for creating cognitively efficient visualizations of ABM outputs, ensuring emergent behaviors and key model features are clearly communicated [25]. |
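As an illustration of the Gillespie algorithm listed in the table, the sketch below simulates reversible phenotypic switching between two hypothetical tumor-cell states A and B with per-cell rates k_ab and k_ba. The two-state model and the rate values are assumptions. The algorithm itself is the standard direct method: draw an exponential waiting time from the total propensity, then pick which reaction fires in proportion to its propensity.

```python
import math
import random


def gillespie_switching(n_a, n_b, k_ab, k_ba, t_max, rng):
    """Gillespie direct method for A -> B (rate k_ab per A cell) and
    B -> A (rate k_ba per B cell).

    Returns a list of (time, n_a, n_b) tuples, one per event, starting at t = 0.
    """
    t = 0.0
    history = [(t, n_a, n_b)]
    while True:
        a1 = k_ab * n_a  # propensity of A -> B
        a2 = k_ba * n_b  # propensity of B -> A
        a0 = a1 + a2
        if a0 == 0:
            break  # no reaction can fire
        tau = -math.log(1.0 - rng.random()) / a0  # exponential waiting time
        if t + tau > t_max:
            break
        t += tau
        if rng.random() * a0 < a1:  # choose reaction proportionally to propensity
            n_a, n_b = n_a - 1, n_b + 1
        else:
            n_a, n_b = n_a + 1, n_b - 1
        history.append((t, n_a, n_b))
    return history
```

For example, gillespie_switching(100, 0, 1.0, 1.0, 5.0, random.Random(3)) conserves the total of 100 cells, and its state-A count fluctuates around the mean-field steady state k_ba / (k_ab + k_ba) × 100 = 50.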

Diagram: Simplified Signaling in the Tumor Microenvironment

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What does a "No fixed point found" error mean for the validity of my Agent-Based Model? A "No fixed point found" error means that the solver could not locate an equilibrium of your model's mathematical formulation. A failed numerical search does not by itself prove that no equilibrium exists: an equilibrium may lie outside the searched region, or it may be unstable so that iterative methods move away from it. Nor does the result necessarily invalidate your model; it may indicate that the system is inherently unstable, oscillatory, or chaotic. First verify the correctness of your translation from the ABM to the mathematical equation system. If the translation is correct, the absence of a reachable equilibrium can be a significant finding about the system's dynamics, but it means techniques relying on equilibrium analysis are not applicable.
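The difference between "the solver found nothing" and "nothing exists" is easy to see on a textbook example that is not an ABM: the logistic map x -> r x (1 - x). For r = 2.5, plain fixed-point iteration converges to x* = 1 - 1/r = 0.6. For r = 3.5 the fixed point 1 - 1/r still exists but is unstable, so iteration settles on a cycle and reports failure. The function names and tolerances are illustrative assumptions.

```python
def fixed_point_iterate(f, x0, tol=1e-10, max_iter=1000):
    """Plain fixed-point iteration x_{n+1} = f(x_n).

    Returns the approximate fixed point, or None if |f(x) - x| never drops
    below `tol` within `max_iter` steps (the "no fixed point found" case).
    """
    x = x0
    for _ in range(max_iter):
        fx = f(x)
        if abs(fx - x) < tol:
            return fx
        x = fx
    return None


def logistic(r):
    """Logistic map x -> r x (1 - x) with growth parameter r."""
    return lambda x: r * x * (1 - x)
```

Calling fixed_point_iterate(logistic(3.5), 0.2) returns None even though logistic(3.5) maps 1 - 1/3.5 to itself, which is exactly the situation Q1 describes.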

Q2: My model's state space is vast and high-dimensional. How can I make fixed-point analysis computationally feasible? High dimensionality is a common challenge. Apply dimensionality reduction techniques like Principal Component Analysis (PCA) on sampled model states to identify a lower-dimensional manifold in which the system's essential dynamics occur. You can then search for fixed points within this reduced space. Furthermore, consider applying fixed-point theorems on simpler, abstracted versions of your model that capture its core interactions before scaling up to the full complexity.
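The PCA step can be sketched without external libraries: center the sampled states, form their covariance matrix, and extract the leading eigenvectors by power iteration with deflation. In practice a linear-algebra library (for example numpy.linalg) would replace these loops. The pure-Python version below is an illustrative assumption that keeps the example self-contained. The explained-variance ratios indicate how many components are needed before restricting the fixed-point search to that subspace.

```python
import math
import random


def center(X):
    """Subtract the column means from a list of equal-length state vectors."""
    n, d = len(X), len(X[0])
    mu = [sum(row[j] for row in X) / n for j in range(d)]
    return [[row[j] - mu[j] for j in range(d)] for row in X]


def covariance(Xc):
    """Sample covariance matrix of centered data."""
    n, d = len(Xc), len(Xc[0])
    return [[sum(r[i] * r[j] for r in Xc) / (n - 1) for j in range(d)] for i in range(d)]


def top_components(C, k, iters=1000, seed=0):
    """Leading k eigenpairs of a symmetric PSD matrix via power iteration + deflation."""
    rng = random.Random(seed)
    d = len(C)
    C = [row[:] for row in C]  # work on a copy
    pairs = []
    for _ in range(k):
        v = [rng.random() + 0.1 for _ in range(d)]
        s = math.sqrt(sum(x * x for x in v))
        v = [x / s for x in v]
        for _ in range(iters):
            w = [sum(C[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0.0:
                break  # remaining variance is zero
            v = [x / norm for x in w]
        lam = sum(v[i] * sum(C[i][j] * v[j] for j in range(d)) for i in range(d))
        pairs.append((lam, v))
        # Deflate: remove the found component before searching for the next one.
        C = [[C[i][j] - lam * v[i] * v[j] for j in range(d)] for i in range(d)]
    return pairs


def explained_variance(C, pairs):
    """Fraction of total variance captured by each component."""
    total = sum(C[i][i] for i in range(len(C)))
    return [lam / total for lam, _ in pairs]
```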

Q3: How do I handle non-continuous agent behaviors when applying Brouwer's theorem, which requires continuity? Brouwer's Fixed-Point Theorem requires a continuous mapping of a nonempty convex, compact set into itself. If agent behaviors are discrete or non-continuous, you have two primary paths:

- Smoothing: Replace discrete rules (e.g., step functions) with continuous, sigmoidal approximations. This creates a continuous model amenable to analysis, and you can study the convergence back to the discrete case.

- Alternative Formulation: Use the Kakutani Fixed-Point Theorem, which generalizes Brouwer's theorem to set-valued functions (correspondences). Provided the correspondence has a closed graph and nonempty, convex values, it can accommodate certain types of discontinuities and discrete choices.
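The smoothing strategy can be seen on a one-dimensional toy rule (hypothetical, chosen for illustration): the fraction of active agents in the next step is 1 if fewer than a fraction theta are active now, and 0 otherwise. This negative-feedback step rule has no fixed point at all on [0, 1]. Replacing it with a sigmoid of steepness k makes the map continuous, so a fixed point exists by the one-dimensional case of Brouwer's theorem (the intermediate value theorem) and can be located by bisection. As k grows, that fixed point approaches theta.

```python
import math


def step_rule(x, theta):
    """Discontinuous rule: all agents activate if fewer than theta are active."""
    return 1.0 if x < theta else 0.0


def smooth_rule(x, theta, k):
    """Sigmoidal approximation of step_rule; approaches it as k -> infinity."""
    z = k * (x - theta)
    if z >= 0:  # numerically stable form for large positive z
        e = math.exp(-z)
        return e / (1.0 + e)
    return 1.0 / (1.0 + math.exp(z))


def bisect_fixed_point(f, lo=0.0, hi=1.0, iters=100):
    """Find x with f(x) = x for continuous f: [0, 1] -> [0, 1] (1-D Brouwer / IVT)."""
    g = lambda x: f(x) - x  # g(lo) >= 0 and g(hi) <= 0 on [0, 1]
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if g(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)
```

With theta = 0.3 and k = 50, the smoothed rule has a fixed point near 0.315, whereas step_rule(x, 0.3) differs from x everywhere on [0, 1].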

Q4: What does it mean if my analysis finds multiple fixed points? Finding multiple fixed points is a critical insight. It means your ABM is multistable; depending on the initial conditions, the system can evolve to one of several distinct equilibrium states. For drug development, this could theoretically represent different disease outcomes (e.g., remission vs. chronic state). You must characterize the basin of attraction for each fixed point—the set of initial conditions that lead to each equilibrium—to understand the model's long-term behavior.
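Basins of attraction can be mapped by sampling initial conditions, running each to steady state, and recording which equilibrium it reaches. The sketch below uses a hypothetical one-dimensional bistable toy model, dx/dt = x(1 - x)(x - a), whose stable equilibria are 0 and 1 and whose unstable threshold is a. For an ABM you would replace settle with stochastic replicate runs and classify their end states.

```python
def allee_rhs(x, a):
    """Bistable toy dynamics dx/dt = x(1 - x)(x - a) with stable states 0 and 1."""
    return x * (1.0 - x) * (x - a)


def settle(x0, a, dt=0.01, steps=5000):
    """Forward-Euler integration long enough to approach a steady state."""
    x = x0
    for _ in range(steps):
        x += dt * allee_rhs(x, a)
    return x


def basin_label(x0, a, attractors=(0.0, 1.0)):
    """Return the stable equilibrium nearest to where the trajectory from x0 ends."""
    x_end = settle(x0, a)
    return min(attractors, key=lambda s: abs(x_end - s))


def basin_fractions(initial_conditions, a):
    """Fraction of sampled initial conditions ending in each attractor."""
    counts = {}
    for x0 in initial_conditions:
        label = basin_label(x0, a)
        counts[label] = counts.get(label, 0) + 1
    n = len(initial_conditions)
    return {k: v / n for k, v in counts.items()}
```

With a = 0.3, initial conditions below 0.3 end at 0 and those above it end at 1, so the basin boundary sits at the unstable equilibrium.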

Q5: How can I verify that a discovered fixed point is unique for my specific ABM? Proving uniqueness is often more challenging than proving existence. Strategies include:

- Contractive Mapping: Show that your model's state transition function is a contraction, i.e., Lipschitz continuous with a constant less than 1. If it also maps a complete metric space (e.g., a closed subset of R^n) into itself, Banach's Fixed-Point Theorem guarantees a unique fixed point.

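- Jacobian Analysis: Analyze the Jacobian matrix of the system's dynamics at the fixed point. If it is nonsingular (no zero eigenvalue for a continuous-time system dx/dt = f(x); no eigenvalue equal to 1 for a discrete-time map x -> F(x)), the inverse function theorem guarantees that the fixed point is isolated, i.e., locally unique.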

- Index Theory: In more complex cases, fixed-point index theory can be used to rule out the existence of additional fixed points within a defined region.
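The ABM itself is stochastic, so the contraction argument is usually applied to a deterministic map derived from it, such as a mean-field update. The sketch below uses a hypothetical affine map F(x) = A x + b. For this map, the induced max-row-sum norm of A serves as a Lipschitz constant in the max norm. If that constant is below 1, iteration from any starting point converges to the same fixed point. For a nonlinear map, the analogous bound is the supremum of the Jacobian norm over a convex domain.

```python
def mat_vec(A, x):
    """Matrix-vector product for lists of lists."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]


def inf_norm(A):
    """Induced infinity-norm (max absolute row sum): a Lipschitz constant of x -> A x + b."""
    return max(sum(abs(a) for a in row) for row in A)


def banach_iterate(A, b, x0, tol=1e-12, max_iter=10_000):
    """Iterate F(x) = A x + b from x0; converges to the unique fixed point if inf_norm(A) < 1."""
    L = inf_norm(A)
    if L >= 1:
        raise ValueError(f"Lipschitz bound {L} >= 1: contraction not established")
    x = list(x0)
    for n in range(max_iter):
        x_new = [ax + bi for ax, bi in zip(mat_vec(A, x), b)]
        if max(abs(u - v) for u, v in zip(x_new, x)) < tol:
            return x_new, n + 1
        x = x_new
    raise RuntimeError("no convergence within max_iter")
```

For A = [[0.5, 0.2], [0.1, 0.3]] and b = [1.0, 2.0], the bound is 0.7 and iterations started from [0, 0] and from [100, -50] both reach the unique fixed point (10/3, 10/3).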

Common Experimental Errors & Resolutions

| Error Message / Symptom | Likely Cause | Resolution Protocol |
|---|---|---|
| "Iteration limit exceeded without convergence." | The chosen numerical method (e.g., Newton-Raphson) is failing to find a fixed point within the allowed steps. | 1. If you are using plain fixed-point iteration, check the Lipschitz constant of your mapping; it may be too close to or exceed 1. 2. Switch to a more robust root-finding algorithm (e.g., Levenberg-Marquardt). 3. Verify the convexity and compactness of your defined state space. |
| "Solution violates model constraints." | The mathematical solver has found a fixed point, but it lies outside biologically or physically plausible ranges (e.g., negative cell counts). | 1. Reformulate your problem to explicitly include constraints (e.g., use Lagrange multipliers). 2. Redefine the state space to be a closed and bounded (compact) set that inherently respects the constraints (e.g., population fractions between 0 and 1). |
| High sensitivity to initial conditions or parameter values. | The model's dynamics are highly nonlinear, and some equilibria may have very small basins of attraction. | 1. Perform a global sensitivity analysis (e.g., Sobol method) to identify the most influential parameters. 2. Conduct an extensive sweep over initial conditions to map the basins of attraction and their boundaries, and over parameter values to locate where equilibria appear, disappear, or change stability (bifurcation analysis). |
| Fixed point is found but is unstable. | The equilibrium exists but is not robust to small perturbations. This is common in models representing transition states or pathological thresholds. | Analyze the eigenvalues of the Jacobian matrix at the fixed point. For continuous-time dynamics, an eigenvalue with a positive real part makes the point unstable (for a discrete-time map, an eigenvalue with modulus greater than 1). In a therapeutic context, such a point could represent a drug target where an intervention pushes the system away from this state. |
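The eigenvalue check in the last row can be automated with a finite-difference Jacobian. The sketch below handles a two-dimensional continuous-time system dx/dt = f(x, y). The toy vector field in the usage example, the step size h, and the tolerance are assumptions. Eigenvalues with real parts numerically indistinguishable from zero are reported as inconclusive, since linearization cannot decide stability in that case.

```python
import cmath


def jacobian_2d(f, x, y, h=1e-6):
    """Central finite-difference Jacobian of f(x, y) -> (f1, f2)."""
    fxp, fxm = f(x + h, y), f(x - h, y)
    fyp, fym = f(x, y + h), f(x, y - h)
    return [[(fxp[0] - fxm[0]) / (2 * h), (fyp[0] - fym[0]) / (2 * h)],
            [(fxp[1] - fxm[1]) / (2 * h), (fyp[1] - fym[1]) / (2 * h)]]


def eigenvalues_2x2(J):
    """Eigenvalues of a 2x2 matrix from its trace and determinant."""
    tr = J[0][0] + J[1][1]
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    disc = cmath.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2


def classify(f, x, y, tol=1e-8):
    """Linear stability of an equilibrium (x, y) of the ODE d(x, y)/dt = f(x, y)."""
    lams = eigenvalues_2x2(jacobian_2d(f, x, y))
    if any(lam.real > tol for lam in lams):
        return "unstable"
    if all(lam.real < -tol for lam in lams):
        return "stable"
    return "inconclusive (non-hyperbolic)"
```

For the toy field f(x, y) = (x - x**3, -y), the origin is classified as unstable (eigenvalues 1 and -1) and the equilibrium (1, 0) as stable (eigenvalues -2 and -1).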

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool | Function in ABM Verification |
|---|---|
| Banach Fixed-Point Theorem | Guarantees a unique fixed point, and convergence to it from any initial condition, when the model's state-transition function is a contraction mapping on a complete metric space. The proof is constructive: repeated iteration finds the fixed point. |
| Brouwer Fixed-Point Theorem | Used to prove the existence of at least one equilibrium point in continuous models defined on convex, compact sets, even when the exact point cannot be easily computed. |
| Kakutani Fixed-Point Theorem | Essential for extending existence proofs to ABMs with set-valued dynamics or discrete choices, generalizing Brouwer's theorem for correspondences. |
| Newton-Raphson Method | A powerful numerical algorithm for rapidly converging to a fixed point (applied as root-finding on F(x) - x = 0) when a good initial guess is available and the function is well-behaved. |
| Lipschitz Constant Analysis | Quantifies how strongly the model's state-transition map can amplify differences between states. A constant less than 1 makes the map a contraction, as required by the Contraction Mapping (Banach) Theorem, which guarantees convergence to a single equilibrium. |