Face Validity in Computer Simulation Models: A Practical Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide to face validity for researchers, scientists, and drug development professionals using computer simulation models. It covers the foundational principles of face validity—the subjective assessment of whether a model 'looks right' and plausibly represents the real-world system it is intended to simulate. The piece details methodological approaches for evaluating face validity, common pitfalls and optimization strategies, and situates face validity within the broader context of a multi-faceted validation framework that includes construct and predictive validity. By synthesizing these aspects, the article aims to equip modelers with the knowledge to enhance the credibility and utility of their simulations in biomedical and clinical research.

What is Face Validity? Defining the Cornerstone of Model Plausibility

In computer simulation research, face validity is a fundamental, if preliminary, step in model validation. A model has face validity when it appears to be a reasonable imitation of a real-world system to people who are knowledgeable about that system [1]. Unlike statistical forms of validation, face validity is inherently subjective: it relies on the qualitative judgment of experts and users to assess whether a model's behavior and outputs are plausible and consistent with their understanding of the real system [1]. This assessment is more than a check that a model "looks right"; it enhances the model's credibility, fosters user confidence, and identifies potential deficiencies early in the development cycle [2] [1]. In Naylor and Finger's widely adopted three-step approach to model validation, establishing face validity is the first step [1].

Methodological Framework for Establishing Face Validity

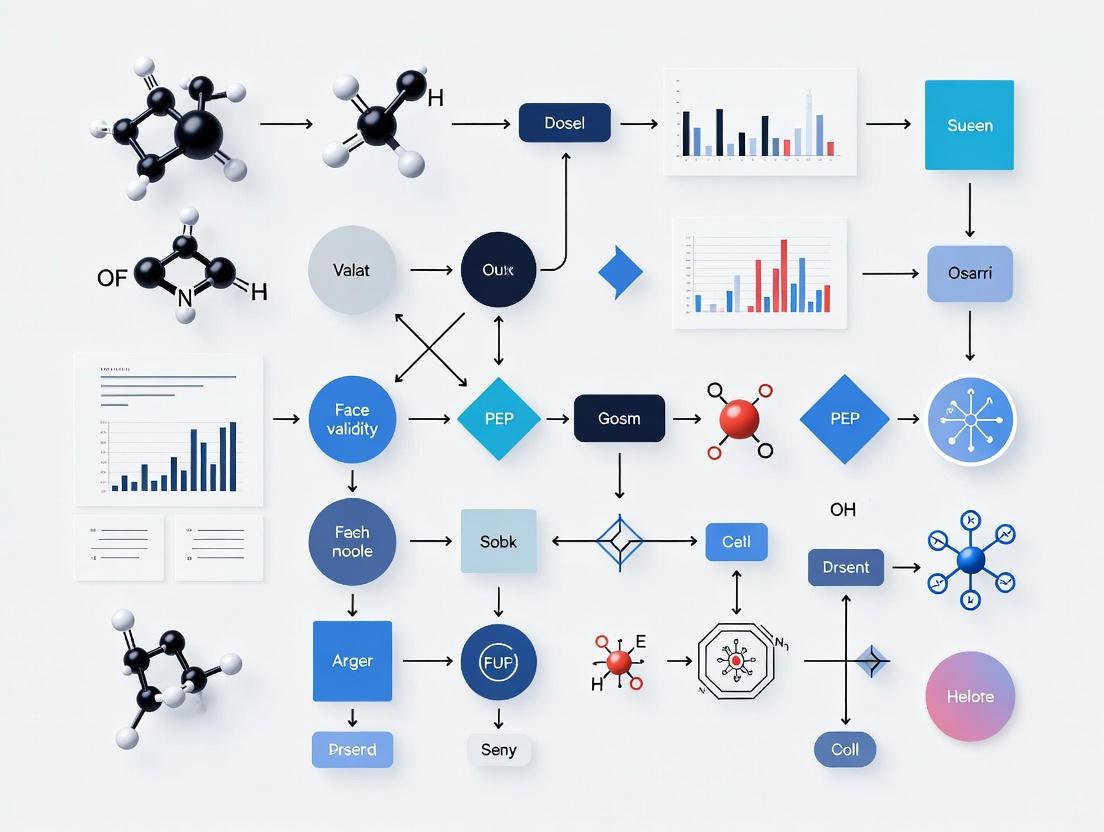

The process of establishing face validity is iterative and should be integrated throughout model development. The following workflow outlines a systematic methodology for achieving and documenting face validity.

Core Methodologies and Techniques

Expert Advisory Panels and Participatory Modeling

A robust method for establishing face validity involves the formation of a formal standing advisory group. This approach, exemplified in cancer epidemiology research, moves beyond one-off focus groups to create a structured forum for bidirectional learning [2]. The advisory group should comprise representatives from all key perspectives, including medical professionals, patients, and payors, ensuring that the model is vetted for clinical relevance and realism from multiple viewpoints [2]. This process not only tests the model's face validity but also improves its transparency and aids in the future dissemination of results.

Structured Examination of Model Output and Assumptions

Experts and end-users should examine the model's output for reasonableness under a variety of input conditions [1]. This involves:

- Sensitivity Analysis for Plausibility: Observing if model outputs change in a logical and expected manner when input parameters are varied. For instance, in a fast-food drive-through simulation, increasing the customer arrival rate should logically increase outputs like average wait time [1].

- Logic Flow Diagrams: Creating diagrams that map every logically possible action within the model to verify that the structure aligns with real-world processes [1].

- Assumption Testing: Subjecting the model's structural, data, and simplification assumptions to expert scrutiny to ensure they are justifiable and appropriate for the model's intended purpose [1].
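The sensitivity check described above can be illustrated with a small sketch. It assumes a single service window with exponentially distributed inter-arrival and service times and a hypothetical service rate of 50 customers per hour; none of these modeling choices come from the cited drive-through example, and `mean_wait_minutes` is an illustrative name.

```python
import random


def mean_wait_minutes(arrivals_per_hour, services_per_hour=50.0,
                      n_customers=20_000, seed=0):
    """Simulate a single-window queue and return the mean wait in minutes.

    Assumed model: exponential inter-arrival and service times, one server,
    first-come first-served. The service rate is a hypothetical value.
    """
    rng = random.Random(seed)
    wait = 0.0          # time the current customer waits before service (hours)
    total_wait = 0.0
    for _ in range(n_customers):
        total_wait += wait
        service = rng.expovariate(services_per_hour)
        gap = rng.expovariate(arrivals_per_hour)  # time until the next arrival
        # Lindley recursion: the next customer waits for whatever work is
        # still unfinished when they arrive.
        wait = max(0.0, wait + service - gap)
    return 60.0 * total_wait / n_customers


rates = [20, 30, 40]  # customer arrivals per hour
waits = [mean_wait_minutes(r) for r in rates]
for r, w in zip(rates, waits):
    print(f"{r} arrivals/hour -> mean wait {w:.2f} min")

# Plausibility check: mean wait should rise as the arrival rate rises.
trend_is_plausible = all(a < b for a, b in zip(waits, waits[1:]))
print("Trend plausible:", trend_is_plausible)
```

A reviewer would look at the printed trend rather than the exact numbers: if waits fell as arrivals rose, that would flag a structural problem worth investigating before any statistical validation.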

Quantitative Frameworks and Data Presentation

While face validity is qualitative, it can be informed and supported by quantitative data. The following table summarizes key quantitative aspects that experts evaluate when assessing face validity.

Table 1: Key Quantitative and Qualitative Dimensions for Face Validity Assessment

| Dimension | Description | Exemplary Data & Metrics | Validation Technique |
|---|---|---|---|
| Input-Output Transformations | Comparison of model outputs to real-system data for identical inputs [1]. | Mean customer wait time, throughput rates, disease prevalence [1]. | Statistical hypothesis testing (t-test), confidence intervals [1]. |
| Sensitivity Plausibility | Direction and magnitude of output changes in response to input variations [1]. | Correlation coefficients, sensitivity indices. | Expert judgment on whether observed sensitivities match real-world expectations [1]. |
| Data Assumption Validity | Appropriateness of the statistical distributions used for model inputs [1]. | Goodness-of-fit test results (e.g., Kolmogorov-Smirnov, Chi-square) [1]. | Graphical analysis (histograms, Q-Q plots), empirical distribution fitting. |
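As an illustration of the "Data Assumption Validity" row, the sketch below computes a one-sample Kolmogorov-Smirnov statistic by hand for a set of inter-arrival times. The data are synthetic, the 2-minute mean is a hypothetical value, and the `1.36 / sqrt(n)` cut-off is the usual large-sample approximation to the 5% critical value when the distribution's parameters are fixed in advance rather than estimated from the same data.

```python
import math
import random


def ks_statistic(sample, cdf):
    """Largest gap between the empirical CDF of `sample` and a candidate CDF."""
    xs = sorted(sample)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        # The empirical CDF jumps from i/n to (i+1)/n at x; check both sides.
        d = max(d, (i + 1) / n - f, f - i / n)
    return d


MEAN_GAP_MIN = 2.0  # hypothetical mean inter-arrival time (minutes)


def exp_cdf(x):
    """Exponential CDF with the assumed mean."""
    return 1.0 - math.exp(-x / MEAN_GAP_MIN)


def unif_cdf(x):
    """Uniform CDF on [0, 2 * mean], a deliberately poor alternative."""
    return min(max(x / (2 * MEAN_GAP_MIN), 0.0), 1.0)


# Synthetic "observed" inter-arrival times standing in for field data.
rng = random.Random(1)
observed = [rng.expovariate(1 / MEAN_GAP_MIN) for _ in range(500)]

d_exp = ks_statistic(observed, exp_cdf)
d_unif = ks_statistic(observed, unif_cdf)
critical = 1.36 / math.sqrt(len(observed))  # approximate 5% critical value

print(f"KS distance to exponential: {d_exp:.3f}")
print(f"KS distance to uniform:     {d_unif:.3f}")
print(f"Approximate 5% cut-off:     {critical:.3f}")
```

The statistic alone does not settle face validity; it gives experts a number to weigh alongside histograms and Q-Q plots when judging whether an input distribution is defensible.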

The assessment of face validity often employs standardized instruments. In a study comparing robotic surgery simulators, faculty members used a 5-point Likert-scale questionnaire to quantitatively rate aspects of realism (face validity) and the effectiveness of the simulator for teaching (content validity) [3]. This structured feedback allowed for a comparative analysis of the DaVinci and CMR simulators, demonstrating how qualitative judgments can be systematically captured and analyzed.

Establishing face validity requires specific methodological "reagents" — standardized components and procedures that ensure a rigorous and repeatable validation process.

Table 2: Essential Research Reagents for Face Validity Assessment

| Research Reagent | Function in Validation | Application Example |
|---|---|---|
| Structured Expert Elicitation Protocol | A standardized interview or survey guide to systematically gather expert opinion on model assumptions and outputs. | Using a Delphi method to achieve consensus on parameter values for unmeasurable inputs in a cancer model [2]. |
| Standardized Model Scenarios | A set of predefined input conditions and edge cases used to test model behavior across its expected domain of applicability. | Running a simulation model with high and low customer arrival rates to check if output trends are plausible [1]. |
| 5-Point Likert Scale Questionnaire | A psychometric instrument to quantify subjective expert assessments of realism and relevance [3]. | Surgical faculty rating a simulator's visual realism and tool behavior on a scale from "Very Poor" to "Excellent" [3]. |
| Formal Advisory Group Charter | A document defining the group's composition, roles, meeting frequency, and decision-making processes [2]. | Establishing a standing advisory group with representatives from medicine, patient advocacy, and payors for a thyroid cancer model [2]. |

Advanced Applications and Contemporary Case Studies

The application of rigorous face validity assessment is evident across multiple high-stakes research fields. The following diagram illustrates its role in a comprehensive validation framework, as applied in a recent cancer modeling study.

Case Study: Participatory Modeling in Cancer Epidemiology

A seminal example of advanced face validation is the development of the PATCAM (PApillary Thyroid CArcinoma Microsimulation) model. The researchers employed a participatory action research approach, establishing a formal standing advisory group [2]. This group provided critical input on six key unmeasurable modeling assumptions, including the role of nodule size in biopsy decisions, trends in provider biopsy behavior, and the population prevalence of thyroid cancer over time [2]. This process systematically incorporated clinical belief and practice into the model, thereby optimizing its face validity and clinical relevance for answering research and policy questions where prospective evidence is infeasible.

Case Study: Robotic Surgery Simulator Validation

Another clear application is found in the comparative validation of robotic surgery simulators. A 2025 descriptive analytical study assessed the face validity of the DaVinci Skills Simulator (dVSS) and the CMR Versius Simulator among surgical faculty [3]. Participants performed standardized tasks on both simulators and completed a 5-point Likert-scale questionnaire. The study concluded that the dVSS showed significantly higher face validity, meaning it was perceived as a more realistic imitation of actual robotic surgery [3]. This type of validation is crucial for guiding effective simulation-based training programs by ensuring that the training environment is a faithful representation of the real task.

In conclusion, face validity is a necessary and multifaceted component of model development that extends far beyond a superficial check. It is a systematic process that leverages expert knowledge and structured feedback to ensure a model is a plausible representation of the real-world system it intends to imitate. When integrated as the first step in a broader validation framework—followed by assumption validation and input-output transformation checks—it lays the groundwork for a credible and impactful model [1]. For researchers in drug development and other scientific fields, a documented and rigorous face validation process is not merely an academic exercise; it is a critical factor in building stakeholder trust, identifying model weaknesses early, and ultimately ensuring that model results can be confidently used to inform clinical decisions and policy [2].

The Role of Face Validity in the Broader Validation Framework

In the rapidly evolving field of computer simulation models, particularly within drug development and biomedical research, establishing the credibility of in silico methods is paramount. Face validity, defined as the subjective perception of how realistic a simulation appears to its users, serves as a critical initial gateway in the validation process [4]. While not a standalone measure of a model's predictive accuracy, it fosters crucial early-stage trust and acceptance among researchers, clinicians, and regulators [4]. This technical guide examines the nuanced role of face validity within a comprehensive validation framework, arguing that while it is a necessary component for user engagement, it must be systematically evaluated and complemented by more rigorous forms of validity to ensure the scientific credibility and regulatory acceptance of computer simulations.

The recent paradigm shift in biomedical research, marked by the increased adoption of in silico methodologies and AI-driven tools, has intensified the need for robust validation frameworks [5] [6]. As regulatory agencies like the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) begin to accept computational evidence in regulatory submissions, the principles of validation, including face validity, have moved from academic concerns to regulatory necessities [6] [7]. This guide provides researchers and drug development professionals with evidence-based methodologies for assessing face validity and integrating it effectively within a broader, multi-faceted validation strategy.

Defining Face Validity and Its Subtypes

Face validity represents the most accessible yet often misunderstood form of validity. It is the extent to which a simulation looks realistic to subject matter experts, end-users, and stakeholders [4]. This subjective assessment is influenced by a simulation's superficial visual features, its structural components, and its functional aspects, such as how user input relates to actions within the model [4].

Within the overarching concept of face validity, two critical subtypes can be distinguished, as outlined in Table 1.

Table 1: Subtypes of Face Validity in Simulation Models

| Subtype | Definition | Key Influencing Factors | Primary Assessment Method |
|---|---|---|---|
| Perceptual Fidelity | The degree to which a simulation recreates the visual, auditory, and haptic cues of the real-world system [4]. | Graphical realism, environmental detail, texture quality, auditory authenticity [4]. | Expert rating scales, user questionnaires focusing on sensory realism. |
| Functional Verisimilitude | The extent to which the simulation's input-response mechanisms mirror real-world interactions and cause-effect relationships [4]. | Model dynamics, response to interventions, accuracy of user interaction paradigms [4]. | Expert review of workflow logic, observation of user-task interactions. |

A common point of confusion in simulation design is the conflation of high-fidelity graphics with high functional validity. A model may possess stunning visual realism (high perceptual fidelity) yet fail to replicate the fundamental functional relationships of the target system, thereby offering poor training or predictive value [4]. Conversely, a simulation with rudimentary graphics but accurately modeled core mechanics can be a highly valid and effective tool [4]. This distinction is crucial for allocating development resources effectively.

The Face Validity Assessment Methodology

A structured, evidence-based approach to evaluating face validity moves the process beyond informal opinion gathering. The following protocol provides a reproducible methodology for research teams.

Systematic Protocol for Assessment

- Expert Panel Selection: Convene a panel of 5-10 subject matter experts (SMEs) who are deeply familiar with the real-world system being simulated. This should include end-users (e.g., clinicians for a surgical simulator) and potentially stakeholders from regulatory or operational backgrounds [4].

- Structured Exposure: SMEs interact with the simulation in a controlled environment. The interaction should cover all key functional aspects, not just visual inspection. A standardized checklist of system components and behaviors to be reviewed ensures consistency.

- Quantitative Data Collection: Utilize Likert-scale questionnaires (1-5 or 1-7 scales) to solicit standardized feedback. Key rating domains should include:

  - Visual realism of critical elements

  - Perceived accuracy of system dynamics and responses

  - Realism of user interaction and control

  - Overall plausibility of the simulated experience

- Qualitative Data Collection: Conduct structured debriefing interviews or facilitate focus group discussions following the exposure session. Prompt SMEs to identify specific elements that appeared unrealistic and to explain why.

- Data Synthesis and Iteration: Collate quantitative and qualitative data to identify consistent strengths and weaknesses. Prioritize identified issues based on their potential impact on the simulation's credibility and functional objectives. Use these findings to inform subsequent design iterations.
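The quantitative part of this protocol can be sketched as a short aggregation script. The ratings, the panel size of six, and the review cut-off of 3.5 are all hypothetical values chosen for illustration; the domain names simply mirror the rating domains listed in the protocol.

```python
from statistics import mean, stdev

# Hypothetical 1-5 Likert ratings from six subject matter experts.
ratings = {
    "visual_realism":       [4, 5, 4, 4, 5, 4],
    "system_dynamics":      [3, 2, 3, 4, 2, 3],
    "user_interaction":     [4, 4, 3, 5, 4, 4],
    "overall_plausibility": [4, 3, 4, 4, 3, 4],
}

REVIEW_THRESHOLD = 3.5  # assumed cut-off below which a domain is revisited


def summarize(ratings_by_domain, threshold):
    """Return per-domain mean, spread, and a review flag, weakest domain first."""
    rows = []
    for domain, scores in ratings_by_domain.items():
        domain_mean = mean(scores)
        rows.append({
            "domain": domain,
            "mean": round(domain_mean, 2),
            "sd": round(stdev(scores), 2),
            "needs_review": domain_mean < threshold,
        })
    return sorted(rows, key=lambda row: row["mean"])


summary = summarize(ratings, REVIEW_THRESHOLD)
for row in summary:
    print(row)

# Domains to prioritize in the debriefing interviews and next design iteration.
flagged = [row["domain"] for row in summary if row["needs_review"]]
print("Prioritize:", flagged)
```

Sorting by mean puts the weakest domain first, which matches the protocol's instruction to prioritize issues; the qualitative debrief then explains why a flagged domain scored poorly.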

The following diagram illustrates this multi-stage workflow, highlighting its iterative nature.

Face Validity within the Comprehensive Validation Framework

Face validity is one component in a hierarchy of validities required for a simulation to be deemed credible and fit for purpose. The forms of validity are interdependent: each addresses a different question, and strength in one does not guarantee strength in another.

Table 2: Hierarchy of Validities in Simulation Model Validation

| Validity Type | Core Question | Relationship to Face Validity | Primary Evidence |
|---|---|---|---|
| Face Validity | Does the simulation look and feel realistic to experts? [4] | Serves as the initial, subjective gateway to broader acceptance. | Expert opinion, user ratings, qualitative feedback. |
| Construct Validity | Does the simulation accurately measure the underlying theoretical constructs it purports to represent? [4] | A simulation with high face validity may lack construct validity if it fails to capture fundamental theoretical principles. | Statistical correlation with gold-standard measures, hypothesis testing. |
| Predictive Validity | Can the simulation accurately forecast future real-world outcomes? | Not guaranteed by face validity. A visually simplistic model can have high predictive power. | Correlation between simulated predictions and subsequent real-world observations. |
| Translational Validity | Do skills or insights gained in the simulation transfer effectively to the real world? [4] | Functional verisimilitude within face validity is a stronger predictor of transfer than perceptual fidelity. | Performance comparison in real-world tasks before and after simulation training. |

The ultimate test of a simulation's value, especially in training contexts, is the transfer of learning to the real world [4]. While face validity can enhance user engagement and buy-in, it is the coherence of psychological, affective, and ergonomic principles—often reflected in construct and translational validity—that determines successful transfer [4]. Therefore, establishing face validity should be viewed as a foundational step that enables and motivates the more rigorous and objective testing required for full validation.

Applications and Protocols in Drug Development

The principles of face validity and broader validation are critically applied in modern drug development, particularly with the rise of in silico trials and AI/ML models.

Validation of In Silico Clinical Trials

In silico clinical trials use computer models to simulate disease progression, drug effects, and virtual patient populations [5]. The face validity of a virtual patient or "digital twin" is assessed by how well its represented physiology and response to interventions mirror that of a real human patient, as perceived by clinical experts [5]. For example, in oncology, a digital twin of a patient's tumor must not only look anatomically plausible in a visualization but must also exhibit growth dynamics and response to therapy that clinicians would expect based on biological first principles and historical data [5].

Experimental Protocol for Validating a Disease Progression Model:

- Aims: To establish the face and construct validity of a computational model simulating Multiple Sclerosis (MS) progression.

- Data-Generating Mechanisms: Develop a model integrating multi-omics data, clinical biomarkers, and real-world data to simulate disease trajectories across diverse patient profiles [5].

- Estimands: The model's primary outputs are longitudinal disability scores (e.g., EDSS) and response to disease-modifying therapies.

- Methods: The simulation's outputs (e.g., virtual patient charts, progression curves) are presented to a panel of neurologists specializing in MS. They rate the plausibility of the simulated disease courses without knowing whether the data is from a real patient or the model.

- Performance Measures: Quantitative: Mean expert rating on a realism scale (1-5). Qualitative: Feedback on specific, unrealistic behaviors. Success is defined by a mean rating above a pre-specified threshold (e.g., 4.0) and no major qualitative challenges to the model's core mechanisms [4] [8].
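The success criterion in this protocol can be written down as a small check. The sketch below assumes that each blinded rating is stored together with its true source ("model" or "real"), which is revealed only at analysis time; the ratings, the function name `face_validity_check`, and the empty list of challenges are hypothetical.

```python
from statistics import mean

# Hypothetical blinded ratings: (true source of the chart, realism rating 1-5).
blinded_ratings = [
    ("model", 4), ("real", 5), ("model", 5), ("real", 4),
    ("model", 4), ("model", 4), ("real", 4), ("model", 5),
]

# Major qualitative objections to core mechanisms raised in debriefing.
major_challenges = []

THRESHOLD = 4.0  # pre-specified success threshold from the protocol


def face_validity_check(ratings, challenges, threshold):
    """Apply the protocol's rule: mean model rating above threshold, no major challenges."""
    model_scores = [score for source, score in ratings if source == "model"]
    real_scores = [score for source, score in ratings if source == "real"]
    model_mean = mean(model_scores)
    return {
        "model_mean": model_mean,
        "real_mean": mean(real_scores),  # reference point, not part of the rule
        "passes": model_mean > threshold and not challenges,
    }


result = face_validity_check(blinded_ratings, major_challenges, THRESHOLD)
print(result)
```

Reporting the mean for real charts alongside the model mean is a design choice of this sketch: it shows how the panel rates genuine patient courses on the same scale, which helps interpret a borderline model score.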

Regulatory Perspectives and AI/ML Model Validation

Regulatory agencies are developing frameworks that implicitly and explicitly address aspects of validation. The FDA's draft guidance on AI in drug development emphasizes a risk-based "credibility assessment framework" which, while focused on the entire AI model lifecycle, requires transparency and evidence that a model is fit for its context of use [6] [7]. Establishing face validity—ensuring the model's inputs, operations, and outputs are intelligible and plausible to regulatory reviewers—is a critical part of building this overall credibility [7]. The "black-box" nature of some complex AI models poses a significant challenge to demonstrating face and construct validity, highlighting the need for explainable AI (XAI) techniques to make model workings more accessible and assessable for human experts [5] [7].

The Scientist's Toolkit: Key Research Reagents and Materials

The following table details essential methodological "reagents" and tools for conducting rigorous face and construct validity testing in simulation research.

Table 3: Essential Methodological Toolkit for Simulation Validation

| Tool/Reagent | Function in Validation | Application Example |
|---|---|---|
| Subject Matter Expert (SME) Panel | Provides the authoritative subjective judgment required for face validity assessment and insights for construct definition [4]. | A panel of oncologists assesses the realism of a virtual tumor microenvironment's response to a simulated immunotherapy. |
| Structured Rating Scales (e.g., Likert) | Quantifies subjective perceptions of realism, allowing for pre-/post-comparison and statistical analysis of face validity [4]. | Experts rate the visual plausibility of a molecular dynamics simulation on a scale of 1 (Highly Implausible) to 5 (Highly Plausible). |
| ADEMP Framework | Provides a structured approach for planning simulation studies (Aims, Data-generating mechanisms, Estimands, Methods, Performance measures) [8]. | Used to design a rigorous simulation study to test a new AI model's predictive validity for drug toxicity. |
| Good Simulation Practice (GSP) Guidelines | Emerging standardized frameworks akin to Good Clinical Practice, intended to ensure consistency, quality, and trust in simulation methods [5]. | Following GSP principles when developing a digital twin library to ensure model reproducibility and regulatory-grade validation. |
| Explainable AI (XAI) Techniques | Makes the internal logic and decision-making processes of complex AI models interpretable to humans, enhancing face and construct validity [5]. | Using feature importance scores and saliency maps to show clinicians which patient data most influenced an AI model's treatment recommendation. |

Face validity is an indispensable, yet insufficient, component of the validation framework for computer simulation models. Its primary power lies in fostering initial trust, facilitating user acceptance, and identifying gross inconsistencies that can guide early development. However, an over-reliance on superficial visual realism or subjective appeal without rigorous construct and predictive validation is a critical scientific and operational risk. As in silico methodologies become central to drug development and regulatory decision-making, a balanced, evidence-based approach is required. Researchers must systematically assess face validity but must then pivot to the more demanding tasks of demonstrating that their models accurately embody theoretical constructs and can reliably predict real-world outcomes. The future of credible simulation science depends on a validation strategy that respects the intuitive appeal of face validity while demanding the empirical rigor of its counterparts.

Contrasting Face, Construct, and Predictive Validity

In the rigorous world of computer simulation models, particularly within pharmaceutical development and computational social science, validity is not merely a statistical formality but the foundational determinant of a model's utility and credibility. Validity refers to the extent to which an instrument, test, or simulation accurately measures what it purports to measure [9]. For researchers and drug development professionals, establishing validity is paramount for ensuring that simulation outputs can inform high-stakes decisions, from clinical trial designs to policy recommendations. The integration of complex computational models, including agent-based models and large language models, has intensified scrutiny on validation practices [10]. Within this landscape, face validity serves as the critical first gatekeeper—a preliminary assessment of whether a model's behavior appears plausible to subject matter experts [11]. This technical guide provides an in-depth examination and contrast of three fundamental validity types—face, construct, and predictive validity—framed within the pressing challenges of modern simulation research.

Defining the Validity Triad

Face Validity: The Plausibility Threshold

Face validity represents the most accessible, though least scientifically rigorous, form of validity assessment. It is a subjective judgment of whether a test or model appears to measure what it claims to measure, based on superficial inspection [12] [13]. In computer simulation modeling, face validity is demonstrated when the model's structure, inputs, processes, and outputs seem reasonable and credible to domain experts and stakeholders [11]. For instance, a simulation of fast-food restaurant drive-through operations would have face validity if, when customer arrival rates increased from 20 to 40 per hour, the model outputs showed corresponding increases in average wait times and maximum queue lengths [11]. This form of validity is particularly valuable in the early stages of model development and for building stakeholder confidence, though it never suffices as standalone validation [12] [13].

Construct Validity: The Theoretical Foundation

Construct validity assesses how well a test or instrument measures the abstract theoretical concept—or construct—it was designed to capture [14] [13]. Constructs are phenomena that cannot be directly observed or measured, such as intelligence, stress, market volatility, or disease severity [14]. Establishing construct validity requires demonstrating that the measurement tool's performance aligns with theoretical predictions about the construct [15] [13]. This involves gathering multiple forms of evidence, including convergent validity (high correlation with measures of the same construct) and discriminant validity (low correlation with measures of distinct constructs) [15] [14]. In pharmaceutical research, a disease progression model with strong construct validity would accurately reflect the underlying biological mechanisms and their interactions, not merely surface-level symptoms.

Predictive Validity: The Forecasting Benchmark

Predictive validity evaluates how well a measurement or simulation can forecast future outcomes or behaviors [15] [13]. It is a form of criterion-related validity, established by correlating current test scores with later outcomes measured by a respected benchmark or "gold standard" [15] [9]. For example, an aptitude test has predictive validity if it accurately forecasts which candidates will succeed in an educational program [15]. In drug development, a pharmacokinetic model demonstrates predictive validity when it can accurately forecast patient drug concentration levels over time based on dosage regimens. This forward-looking validation is especially crucial for models intended for prognostic applications or long-term strategic planning.

Comparative Analysis: A Technical Examination

The table below synthesizes the core characteristics, methodologies, and applications of these three validity types, highlighting their distinct roles in research validation.

Table 1: Comparative Analysis of Face, Construct, and Predictive Validity

| Aspect | Face Validity | Construct Validity | Predictive Validity |
|---|---|---|---|
| Core Definition | Superficial appearance of measuring the target concept [13] | Measurement of abstract theoretical constructs [14] | Accuracy in forecasting future outcomes [15] |
| Primary Question | "Does the test look like it measures the intended variable?" | "Does the test actually measure the theoretical concept?" | "Does the test predict future performance?" |
| Nature of Assessment | Subjective, intuitive judgment [12] | Theoretical and empirical [13] | Empirical and correlational [15] |
| Key Methods | Expert review, stakeholder feedback [11] | Convergent/discriminant validation, factor analysis, MTMM* [15] [14] | Correlation analysis, ROC curves, sensitivity/specificity [15] |
| Statistical Measures | None; relies on qualitative assessment | Pearson's correlation, factor loadings [15] | Pearson's correlation, AUC⁺, phi coefficient [15] |
| Strength | Quick to assess, builds stakeholder confidence [11] | Comprehensive, tests theoretical foundations [14] | Practical, directly tests real-world utility [15] |
| Limitation | Potentially misleading, vulnerable to bias [12] [13] | Complex to establish, requires multiple studies [14] | Depends on quality of criterion measure [15] |

*MTMM: Multitrait-Multimethod Matrix [15]; ⁺AUC: Area Under the Curve [15]

Methodological Protocols for Validation

Establishing Face Validity

The following workflow details the expert-driven process for establishing face validity in simulation models:

The protocol for establishing face validity involves convening a panel of domain experts and stakeholders to evaluate the model's conceptual structure and output reasonableness [11]. These experts examine whether the model's mechanisms and responses align with their understanding of the real-world system, providing qualitative feedback on perceived deficiencies. This iterative process continues until the model achieves sufficient face validity to proceed to more rigorous validation stages [11].

Establishing Construct Validity

Construct validation requires a multifaceted approach, as illustrated in the following methodological framework:

The construct validation process begins with precisely defining the theoretical construct and its hypothesized relationships with other variables (nomological network) [13]. Researchers then collect multiple forms of evidence: convergent validity through strong correlations (>0.7) with measures of the same construct; discriminant validity through weak correlations with unrelated constructs; factor analysis to confirm the underlying dimensional structure; and known-groups validation by testing whether the measure distinguishes between groups that should theoretically differ [15] [14] [13]. This evidentiary triangulation continues until sufficient construct validity is established.
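The convergent and discriminant checks can be sketched with a hand-written Pearson correlation. All scores below are invented for eight hypothetical virtual patients; the 0.7 cut-off follows the text above, while the 0.3 cut-off for a "weak" correlation is an assumption made for this illustration.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


# Hypothetical scores for eight virtual patients.
model_severity = [2.0, 3.5, 1.0, 4.5, 3.0, 5.0, 2.5, 4.0]     # simulation output
clinical_scale = [2.2, 3.1, 1.4, 4.8, 2.7, 4.9, 2.9, 3.8]     # same construct
unrelated_measure = [5.1, 4.9, 5.0, 4.8, 5.3, 5.1, 4.9, 5.2]  # distinct construct

convergent_r = pearson_r(model_severity, clinical_scale)
discriminant_r = pearson_r(model_severity, unrelated_measure)

CONVERGENT_MIN = 0.7    # cut-off stated in the text
DISCRIMINANT_MAX = 0.3  # assumed cut-off for a "weak" correlation

print(f"Convergent r:   {convergent_r:.2f}")
print(f"Discriminant r: {discriminant_r:.2f}")
print("Convergent evidence:  ", convergent_r > CONVERGENT_MIN)
print("Discriminant evidence:", abs(discriminant_r) < DISCRIMINANT_MAX)
```

With only eight observations the estimates are very uncertain, so a real study would use a larger sample and report confidence intervals alongside the coefficients.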

Establishing Predictive Validity

The protocol for predictive validation involves correlating test measurements with future outcomes:

Table 2: Predictive Validity Assessment Protocol

| Step | Action | Measurement | Statistical Analysis |
|---|---|---|---|
| 1. Criterion Selection | Identify and obtain a respected "gold standard" outcome measure [15] | Quality and acceptability of the criterion measure | Expert consensus on criterion appropriateness |
| 2. Baseline Measurement | Administer the test or simulation to participants [15] | Scores on the predictive instrument | Descriptive statistics (mean, standard deviation) |
| 3. Outcome Measurement | Collect outcome data after a specified time interval [15] | Performance on gold standard measure | Descriptive statistics of outcome measure |
| 4. Correlation Analysis | Calculate relationship between test scores and outcomes [15] | Strength and direction of association | Pearson's correlation for continuous variables; Sensitivity/Specificity for dichotomous outcomes [15] |
| 5. Validation Decision | Determine if predictive power meets requirements [15] | Practical significance of correlation | Statistical significance (p < 0.05) and effect size [14] |

For continuous variables, predictive validity is typically quantified using Pearson's correlation coefficient, with values greater than 0.7 generally considered strong [15] [14]. For dichotomous outcomes, sensitivity, specificity, and area under the ROC curve (AUC) provide measures of predictive accuracy [15].
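For the dichotomous case, the measures named above can be computed directly. The sketch below uses invented predicted risks and outcomes for eight hypothetical patients and an assumed classification cut-off of 0.5; the AUC is computed as the share of positive-negative pairs in which the positive case received the higher score, with ties counted as half.

```python
def sensitivity_specificity(scores, outcomes, cutoff):
    """Sensitivity and specificity when scores >= cutoff are called positive."""
    pairs = list(zip(scores, outcomes))
    tp = sum(1 for s, o in pairs if s >= cutoff and o == 1)
    fn = sum(1 for s, o in pairs if s < cutoff and o == 1)
    tn = sum(1 for s, o in pairs if s < cutoff and o == 0)
    fp = sum(1 for s, o in pairs if s >= cutoff and o == 0)
    return tp / (tp + fn), tn / (tn + fp)


def auc(scores, outcomes):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for s, o in zip(scores, outcomes) if o == 1]
    negatives = [s for s, o in zip(scores, outcomes) if o == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


# Hypothetical model-predicted risk and later observed outcome (1 = event occurred).
predicted_risk = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
observed_event = [1, 1, 0, 1, 1, 0, 0, 0]

CUTOFF = 0.5  # assumed classification threshold

sens, spec = sensitivity_specificity(predicted_risk, observed_event, CUTOFF)
area = auc(predicted_risk, observed_event)
print(f"Sensitivity: {sens:.2f}  Specificity: {spec:.2f}  AUC: {area:.3f}")
```

Sensitivity and specificity depend on the chosen cut-off, whereas the AUC summarizes discrimination across all cut-offs, which is why the two are usually reported together.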

Table 3: Essential Research Tools for Validity Assessment

| Tool Category | Specific Instrument/Method | Primary Application | Key Function |
|---|---|---|---|
| Statistical Software | R, Python (SciPy), SPSS, SAS | All validity types | Correlation analysis, factor analysis, regression modeling |
| Expert Panel | Domain specialists, end-users | Face validity | Qualitative assessment of model plausibility and structure [11] |
| Gold Standard Measures | Validated instruments, objective outcomes | Predictive validity | Criterion for evaluating predictive accuracy [15] |
| Multitrait-Multimethod Matrix | Campbell-Fiske methodology [15] | Construct validity | Simultaneous assessment of convergent and discriminant validity [15] |
| Factor Analysis | Exploratory (EFA) & Confirmatory (CFA) | Construct validity | Identification of latent constructs and dimensional structure [15] |
| ROC Analysis | Sensitivity/Specificity plots | Predictive validity | Optimization of classification thresholds [15] |

Integration in Computer Simulation Research

In computer simulation models, particularly agent-based models and generative social simulations, these validity types form a hierarchical validation framework [10] [11]. Face validity provides the initial credibility check, ensuring the model appears reasonable to stakeholders. Construct validity establishes that the model accurately represents underlying theoretical mechanisms, not merely surface phenomena. Predictive validity tests the model's practical utility in forecasting future system states [10].

The integration of large language models (LLMs) into agent-based modeling has complicated validation efforts, as their black-box nature, cultural biases, and stochastic outputs make traditional validation challenging [10]. In this context, face validity becomes increasingly important as an initial screening tool, while predictive and construct validity require more sophisticated approaches to address the unique characteristics of generative AI systems [10].

Each validity type addresses distinct aspects of model credibility: face validity establishes plausibility, construct validity ensures theoretical fidelity, and predictive validity demonstrates forecasting utility. Together, they form a comprehensive validation strategy essential for producing credible, actionable simulation results in scientific research and drug development.

Why Face Validity Matters for Model Credibility and Adoption

In the development of computer simulation models for biomedical research, face validity (the superficial, phenomenological similarity of a model to the human condition it represents) serves as a critical gateway for credibility and adoption. While not sufficient on its own, strong face validity fosters intuitive acceptance among researchers, clinicians, and stakeholders, facilitating model integration into the research workflow. This section delineates the role of face validity within a holistic validation framework, provides methodologies for its systematic assessment, and underscores its function in bridging laboratory research and clinical application.

The pursuit of effective treatments for human diseases relies heavily on preclinical research using experimentally tractable models, from animal subjects to in silico simulations. The utility of these models is governed by their validity, typically categorized into three primary criteria established by Willner and widely adopted across research fields [16]:

- Predictive Validity: The ability of a model to accurately predict unknown aspects of the disease or therapeutic outcomes in humans. This is often considered the most crucial criterion for translational research [17] [16].

- Construct Validity: The alignment between the model's underlying mechanisms (e.g., genetic, molecular) and the understood etiology of the human disease [17] [16].

- Face Validity: The phenomenological similarity between the model's presentation—its symptoms, phenotypes, or outputs—and the human disease [16] [18].

While predictive validity is the ultimate goal and construct validity provides the foundational rationale, face validity is frequently the starting point that grants a model its initial credibility and encourages its adoption by the scientific community [18].

Defining Face Validity and Its Context within a Validation Framework

Face validity is the extent to which a model "looks right" or appears to measure what it is supposed to measure based on its overt characteristics [17] [4]. In the context of computer simulation models, this translates to whether the model's outputs and behaviors are recognizably similar to the real-world phenomenon being simulated, as judged by domain experts.

It is crucial to recognize that face validity is a subjective assessment [4]. A simulation can possess high face validity yet be a poor predictor of real-world outcomes if it fails to capture functionally critical elements. Conversely, a model with low face validity can be highly predictive if it accurately captures key underlying principles [4]. For instance, a virtual reality simulation for surgical training might have visually stunning graphics (high face validity) but fail to teach correct surgical techniques, while a simpler model that accurately represents kinematic constraints could be far more effective for learning despite its basic appearance [4].

The different types of validity are interconnected rather than strictly hierarchical.

The Critical Importance of Face Validity for Credibility and Adoption

Despite its subjective nature, face validity plays several indispensable roles in the research ecosystem.

Facilitating Initial Model Acceptance and Buy-in: A model that recapitulates well-known features of a disease is more intuitively accepted by researchers and clinicians. This phenomenological similarity is often the starting point for establishing a preclinical test platform [18]. For example, a mouse model of Niemann-Pick disease type C (NPC) that exhibits cerebellar ataxia and Purkinje cell loss—key features of the human disease—immediately gains credibility for studying neurodegenerative aspects of the disorder [17].

Enhancing Communication and Stakeholder Engagement: Models with high face validity can serve as powerful communication tools. They make complex pathophysiological processes more tangible for a broader audience, including grant reviewers, pharmaceutical partners, and regulatory bodies, thereby facilitating funding and collaborative opportunities.

Guiding Experimental Design and Hypothesis Generation: The visible alignment between model outputs and clinical observations can help researchers formulate more relevant hypotheses. In virtual reality training simulations, face validity contributes to "plausibility," the user's subjective feeling that the depicted scenario is really occurring, which is critical for eliciting realistic behaviors and ensuring the training's ecological validity [4].

Quantitative and Qualitative Assessment of Face Validity

Assessing face validity requires a structured approach that combines qualitative expert judgment with quantitative metrics where possible.

Methodologies for Establishing Face Validity

The following experimental protocols are commonly employed to establish and quantify face validity in various model systems.

Protocol 1: Expert Consensus Rating

- Objective: To obtain a standardized assessment of phenotypic similarity from domain experts.

- Procedure:

- Assemble a panel of independent experts (e.g., clinicians, pathologists, biologists).

- Present them with a blinded set of data, including model outputs (e.g., behavioral readouts, histology slides, simulation logs) and equivalent human clinical data.

- Experts score the similarity for each predefined key phenotype (e.g., tremor, gait abnormality, cognitive deficit) using a Likert scale (e.g., 1=Not Similar to 5=Very Similar).

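- Analysis: Calculate inter-rater reliability (e.g., Cohen's kappa for two raters, or Fleiss' kappa or an intraclass correlation coefficient for larger panels) and average similarity scores for each phenotype. High mean scores combined with high agreement among raters indicate strong face validity.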
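The analysis step of Protocol 1 can be sketched as follows. The code assumes a panel of more than two experts and therefore uses Fleiss' kappa, which is one common choice for multi-rater agreement; it treats the Likert categories as nominal, so a weighted or ordinal statistic may be preferable in a real study. The phenotype names come from the protocol above, while the ratings and function names are hypothetical.

```python
from statistics import mean

def fleiss_kappa(ratings, categories=(1, 2, 3, 4, 5)):
    """Fleiss' kappa; ratings[i] lists the scores all raters gave to item i."""
    n_items = len(ratings)
    n_raters = len(ratings[0])
    # counts[i][j] = number of raters who assigned category j to item i
    counts = [[row.count(c) for c in categories] for row in ratings]
    # Overall proportion of assignments falling in each category
    p_cat = [sum(row[j] for row in counts) / (n_items * n_raters)
             for j in range(len(categories))]
    # Observed agreement per item, then averaged over items
    p_item = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
              for row in counts]
    p_obs = sum(p_item) / n_items
    p_exp = sum(p * p for p in p_cat)
    if p_exp == 1.0:
        return float("nan")  # every rating is identical; kappa is undefined
    return (p_obs - p_exp) / (1.0 - p_exp)

# Hypothetical similarity ratings (1-5) from four experts per phenotype.
panel = {
    "tremor": [5, 5, 4, 5],
    "gait abnormality": [4, 4, 4, 3],
    "cognitive deficit": [2, 3, 2, 2],
}
mean_scores = {phenotype: mean(scores) for phenotype, scores in panel.items()}
kappa = fleiss_kappa(list(panel.values()))
print(mean_scores, kappa)
```

Reporting the mean score and the agreement statistic together helps separate a phenotype that experts agree is a poor match from one on which they simply disagree.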

Protocol 2: Behavioral and Phenotypic Profiling

- Objective: To systematically compare and quantify disease-relevant phenotypes between the model and human condition.

- Procedure:

- Identify a battery of tests that capture the core features of the human disease.

- Apply this test battery to the model system (e.g., animal model, simulated patient cohort).

- For animal models, this may include motor function tests, cognitive assays, and physiological monitoring.

- For computer models, this involves running simulations to generate outcome data that can be compared to clinical datasets.

- Analysis: Use statistical methods (e.g., t-tests, ANOVA) to compare model data against control data and known clinical benchmarks. Effect sizes can be used as a measure of phenotypic congruence.
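As a rough sketch of the effect-size comparison in Protocol 2, the snippet below computes Cohen's d with a pooled standard deviation for two independent groups. The choice of Cohen's d, the variable names, and the sample values are assumptions for illustration; the protocol itself does not prescribe a specific effect-size measure.

```python
from math import sqrt
from statistics import mean, variance

def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample variance."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)

# Hypothetical outcome values: a simulated patient cohort vs. a clinical benchmark.
simulated_cohort = [2.0, 4.0, 6.0]
clinical_benchmark = [1.0, 3.0, 5.0]
print(cohens_d(simulated_cohort, clinical_benchmark))
```

When the comparison is model versus clinical benchmark, a small absolute d suggests the simulated phenotype sits close to the clinical one; when the comparison is model versus control, a large d in the clinically expected direction is the supporting evidence.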

Key Reagents and Tools for Assessment

The following reagents and tools are essential for conducting rigorous face validity assessments.

Table 1: Essential Research Reagents and Tools for Face Validity Assessment

| Item | Function in Assessment |

|---|---|

| Behavioral Test Battery | A standardized set of assays (e.g., open field, rotarod, Morris water maze) to quantify disease-relevant phenotypes in animal models. |

| Histological Stains & Kits | (e.g., H&E, Nissl, immunohistochemistry kits) used to visualize and compare tissue pathology and cellular morphology between model and human samples. |

| Clinical Scoring Scales | Validated clinical assessment tools (e.g., UPDRS for Parkinson's, MMSE for dementia) adapted for use in model systems to provide a direct comparison to human symptoms. |

| High-Content Imaging Systems | Automated microscopy platforms that allow for quantitative analysis of cellular and histological phenotypes in high throughput. |

| Data Logging & Simulation Software | Tools to record model outputs and run in silico experiments for comparison with real-world clinical or experimental data. |

Case Studies in Face Validity

The practical application and limitations of face validity are best understood through specific research examples.

Case Study 1: Neurological Disease Models. The mouse model of Niemann-Pick disease type C (NPC) with a spontaneous Npc1 mutation exhibits strong face validity for the human condition, including cholesterol accumulation and cerebellar ataxia due to Purkinje cell loss. However, a notable lack of face validity exists: the mouse models do not exhibit seizures, which are a common feature in human patients. This discrepancy highlights that while a model may be excellent for studying certain aspects of a disease (e.g., neurodegeneration), its lack of specific phenotypes can limit its utility for studying others (e.g., seizure management) [17].

Case Study 2: Virtual Reality Training Simulations. In VR, face validity is often conflated with graphical realism. However, studies show that psychological, affective, and ergonomic fidelity are more critical determinants of successful skill transfer than high-fidelity visuals. A VR surgical simulator with less photorealistic graphics but accurate haptic feedback and kinematic relationships will have more effective face validity for training purposes than a visually stunning but functionally inaccurate simulation [4].

Limitations and the Path Forward

An over-reliance on face validity carries risks. Judging a model primarily by its superficial appearance can lead to the dismissal of models that are highly predictive based on mechanisms not immediately visible, or the adoption of models that look convincing but are poor predictors [18]. The field must therefore move towards a multifactorial validation strategy.

No single model can perfectly recapitulate all aspects of a human disease [16]. The future of effective preclinical research lies in employing a combination of complementary models, each with its own strengths in face, construct, and predictive validity. This approach, combined with rigorous, evidence-based methods for establishing all forms of validity, will maximize the translational significance of data generated in any field, from immuno-oncology to neurodegenerative medicine [16].

Face validity, while a subjective and insufficient criterion in isolation, is a powerful catalyst for model credibility and adoption. It provides the intuitive bridge that connects complex models to human disease, fostering initial acceptance and guiding further investigation. Researchers must rigorously assess face validity using structured methodologies while remaining cognizant of its limitations. By integrating face validity into a comprehensive framework that also prioritizes construct and predictive validity, the scientific community can develop more reliable and translatable models, ultimately accelerating the path to effective therapies.

Historical Context and Evolution of Validity Concepts in Modeling

This section examines the historical context and conceptual evolution of validity frameworks within computer simulation modeling, with particular emphasis on face validity's role in biomedical and drug development research. We trace the philosophical development from early subjective assessments to contemporary multi-stage validation paradigms, documenting how face validity serves as the critical initial gatekeeper in model credibility assessment. Through analysis of experimental protocols and quantitative data from medical simulation studies, we demonstrate that while face validity remains a subjective judgment, its systematic implementation provides an essential foundation for establishing model credibility among researchers, clinicians, and regulatory professionals. The section further presents standardized methodologies for face validity assessment and introduces visualization frameworks to contextualize its position within comprehensive validation workflows for simulation-based medical research.

Verification and validation of computer simulation models are critical steps in model development, with the ultimate goal of producing accurate and credible models [11]. As simulation models increasingly inform decision-making in fields from drug development to medical education, establishing validity has become an ethical imperative for researchers and practitioners alike. The fundamental challenge stems from the nature of simulation models as approximate imitations of real-world systems: no model exactly reproduces the system it represents [11].

Within this context, face validity has emerged as the most accessible yet frequently misunderstood component of validation frameworks. Face validity refers to the extent to which a test or model is subjectively viewed as covering the concept it purports to measure [19]. It represents the transparency or relevance of a test as it appears to test participants and stakeholders [20]. In simulation contexts, face validity is often described as the degree to which a model "looks like" a reasonable imitation of the real-world system to people knowledgeable about that system [11].

The evolution of validity concepts has followed a trajectory from simple face-value assessments to sophisticated multi-stage frameworks. The contemporary understanding positions face validity not as a standalone validation measure, but as the initial step in a comprehensive process that establishes the foundation for more rigorous validation techniques.

Historical Development of Validity Frameworks

Early Conceptual Foundations

The formalization of validity concepts in modeling emerged from mid-20th century research methodology, with early frameworks drawing sharp distinctions between different validity types. During this period, face validity was often dismissed as "unscientific" due to its reliance on subjective judgment rather than statistical proof [20]. The earliest simulation models in medical education frequently employed simple decision trees that could be checked exhaustively for face validity, presenting students with limited choices that were clearly classified as "right" or "wrong" [21].

The philosophical shift toward structured validation frameworks began with Naylor and Finger (1967), who formulated a three-step approach to model validation that has been widely followed [11]:

- Build a model that has high face validity

- Validate model assumptions

- Compare the model input-output transformations to corresponding input-output transformations for the real system

This framework represented a significant advancement by positioning face validity as the essential starting point for comprehensive model validation rather than treating it as an optional or inferior form of assessment.

Evolution in Medical Simulation

The adoption of simulation in medical education and drug development accelerated the evolution of validity concepts. Early medical simulations focused primarily on instilling concrete measurable skills through vocational training approaches [21]. As simulations grew more sophisticated, attempting to model complex biological systems and clinical decision-making, validation requirements similarly expanded.

A significant challenge emerged in balancing biological realism with educational utility. Early models like the Oncology Thinking Cap (OncoTCap) revealed tensions between face validity and what developers termed "deep validity" - the accurate representation of underlying biological mechanisms rather than surface-level appearances [21]. This period saw recognition that good face validity could sometimes mask poor underlying model structure, particularly when systems presented with complex, non-linear behaviors that contradicted intuitive expectations.

Contemporary Integrated Frameworks

Modern validity frameworks for simulation models have evolved toward integrated approaches that position face validity within a broader validation ecosystem. Sargent's (2011) model identifies three primary components of simulation model validation [22]:

- Conceptual Model Validation: Determining that theories and assumptions underlying the conceptual model are correct

- Computerized Model Verification: Ensuring the computer program implements the conceptual model correctly

- Operational Validation: Substantiation that the model's output behavior has sufficient accuracy for its intended purpose

Within this framework, face validity primarily supports conceptual model validation, though it also informs initial assessments of operational validity.

Face Validity: Methodological Foundations

Definition and Conceptual Boundaries

Face validity is defined as the degree to which a test or model appears to measure what it purports to measure based on subjective judgment [20] [23]. It is characterized by:

- Subjectivity: Based on judgment rather than statistical analysis [20]

- Surface-Level Assessment: Concerned with appearance and relevance rather than comprehensive measurement [20]

- Accessibility: Can be evaluated by non-experts, including end-users [19]

- Context Dependence: Varies based on audience and application domain [23]

In simulation contexts, face validity is often described as the extent to which the task performance on the simulator appears representative of the real world it models [19]. This distinguishes it from the more rigorous content validity, which requires expert assessment of how well the model represents the entire domain of content [20].

Assessment Methodologies

The assessment of face validity employs distinct methodological approaches that prioritize subjective perception over objective measurement:

Table 1: Standard Methodologies for Face Validity Assessment

| Method | Description | Key Applications | Strengths |

|---|---|---|---|

| Expert Review | Subject matter experts provide subjective judgment on whether the model appears to measure the intended construct [23] | Early-stage model development; Medical simulation validation [24] | Leverages domain knowledge; Identifies obvious mismatches |

| User Pretesting | Small group of target users complete the simulation and provide feedback on perceived relevance [23] | End-user acceptance testing; Educational simulation development | Identifies usability issues; Assesses perceived relevance |

| Structured Observation | Researchers observe users interacting with the simulation and note difficulties or confusion [23] | Interface validation; Workflow assessment | Captures unprompted reactions; Identifies intuitive elements |

| Focus Groups | Structured discussions with representative users to gather feedback on perceived validity [23] | Complex system validation; Cross-cultural adaptation | Reveals group consensus; Uncovers diverse perspectives |

| Likert Scale Rating | Numerical ratings (e.g., 1-5) of specific simulation elements by experts or users [24] | Quantitative comparison of simulation elements; Iterative development | Provides quantitative data; Allows statistical comparison |

Quantitative Assessment in Practice: EndoSim Case Study

A recent study of the EndoSim virtual reality endoscopic simulator demonstrates the practical application of face validity assessment in medical simulation. In this validation study, experts completed 13 simulator-based endoscopy exercises and rated their face validity using a Likert scale (1-5) [24].

Table 2: Face Validity Ratings for Endoscopic Simulation Exercises [24]

| Simulation Exercise | Median Score | Interquartile Range (IQR) | Statistical Significance (P-value) |

|---|---|---|---|

| Mucosal Examination | 5 | 4.5-5 | 1.000 |

| Visualize Colon 1 | 4.5 | 4-5 | 1.00 |

| Visualize Colon 2 | 4.5 | 4-5 | 1.00 |

| Scope Handling | 4.5 | 3-5 | 0.796 |

| Examination | 4 | 4-5 | 0.796 |

| Navigation Skill | 4 | 4-5 | 0.853 |

| Knob Handling | 4 | 4-5 | 0.529 |

| Retroflexion | 4 | 2-5 | 0.218 |

| Navigation Tip/Torque | 3.75 | 3-4 | 0.105 |

| ESGE Photo | 3.75 | 3-4 | 0.105 |

| Intubation Case 3 | 3 | 2-3 | 0.004 |

| Loop Management | 3 | 1-3 | 0.001 |

The significant variation in scores across different exercises (P < 0.003) demonstrates that face validity is not uniform across all components of a single simulation platform. Exercises involving fundamental skills like mucosal examination received the highest scores, while more complex tasks like loop management received the lowest, highlighting how task complexity influences perceived validity [24].
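Summary statistics of the kind reported in Table 2 (median and interquartile range per exercise) can be computed as in the sketch below. The ratings shown are hypothetical and are not the EndoSim data; the quartile method ("inclusive") is also an assumption, since the source does not state how the IQR was derived.

```python
from statistics import median, quantiles

def summarize_likert(ratings):
    """Return the median and (Q1, Q3) for a list of Likert ratings."""
    q1, _, q3 = quantiles(ratings, n=4, method="inclusive")
    return {"median": median(ratings), "iqr": (q1, q3)}

# Hypothetical expert ratings per exercise, for illustration only.
hypothetical_ratings = {
    "Exercise A": [3, 4, 4, 5, 5],
    "Exercise B": [1, 3, 3, 2, 3],
}
for exercise, ratings in hypothetical_ratings.items():
    print(exercise, summarize_likert(ratings))
```

Medians and IQRs are generally preferred over means and standard deviations for Likert data because the scale is ordinal and often skewed toward one end.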

Experimental Protocols for Face Validity Assessment

Standardized Assessment Framework

Based on methodological review, we propose the following standardized protocol for assessing face validity in simulation models:

Phase 1: Expert Panel Formation

- Recruit 5-10 subject matter experts with comprehensive knowledge of the target domain [24]

- Ensure representation across relevant subdisciplines (e.g., for medical simulation: clinicians, educators, technicians)

- Exclude individuals with direct involvement in model development to minimize bias

Phase 2: Structured Evaluation Session

- Provide standardized orientation to simulation capabilities and limitations

- Allow hands-on interaction with simulation components

- Use structured evaluation instruments with Likert-scale ratings and open-ended feedback prompts [24]

Phase 3: Quantitative and Qualitative Data Collection

- Collect numerical ratings for specific simulation elements

- Document specific feedback regarding unrealistic elements or missing components

- Record observations of expert behavior during interaction

Phase 4: Iterative Refinement

- Identify common criticisms across multiple experts

- Prioritize modifications based on frequency and severity of feedback

- Conduct follow-up assessments after modifications
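The prioritization step in Phase 4 can be sketched as a simple ranking of feedback items. The scoring rule used here (number of experts raising an issue multiplied by its mean severity) is an assumption chosen for illustration, as are the issue labels and the 1-3 severity scale; a project may reasonably weight frequency and severity differently.

```python
from collections import defaultdict

def prioritize(feedback):
    """Rank issues by frequency x mean severity (an illustrative rule only).

    feedback: iterable of (issue_label, severity) pairs, one per expert comment.
    Returns a list of (issue_label, frequency, mean_severity), highest priority first.
    """
    by_issue = defaultdict(list)
    for issue, severity in feedback:
        by_issue[issue].append(severity)
    ranked = [(issue, len(sev), sum(sev) / len(sev)) for issue, sev in by_issue.items()]
    ranked.sort(key=lambda item: item[1] * item[2], reverse=True)
    return ranked

# Hypothetical expert comments with severity on a 1 (minor) to 3 (major) scale.
comments = [
    ("haptic feedback", 3),
    ("haptic feedback", 3),
    ("lighting", 1),
    ("loop behavior", 3),
]
for issue, frequency, mean_severity in prioritize(comments):
    print(issue, frequency, mean_severity)
```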

Domain-Specific Adaptation: Medical Education Protocol

In medical education simulations, the protocol is adapted to domain requirements, and the workflow emphasizes the cyclical nature of face validity assessment, particularly during simulation development. The EndoSim validation study employed this approach, using expert feedback to iteratively modify exercises between pilot and final validation phases [24].

The Relationship Between Face Validity and Other Validity Types

Comprehensive Validation Ecosystem

Face validity operates within a network of complementary validity types that together constitute comprehensive model validation. In this framework, face validity serves as the foundational layer upon which more rigorous validity assessments are built. While face validity alone is insufficient to establish overall model validity, its absence typically undermines credibility and user acceptance before other validity types can be assessed [20].

Distinguishing Characteristics

The differentiation between face validity and content validity deserves particular attention in simulation contexts:

Table 3: Face Validity vs. Content Validity Comparison

| Characteristic | Face Validity | Content Validity |

|---|---|---|

| Definition | The degree to which a test appears to measure what it claims to measure [20] | The extent to which a test samples the entire domain of content it intends to measure [20] |

| Focus | Superficial appearance and perceptions [20] | Comprehensive content coverage and representation [20] |

| Assessment Perspective | Test-takers, end-users, non-experts [19] | Subject matter experts [20] |

| Methodological Rigor | Subjective, less rigorous [20] | Objective, more rigorous [20] |

| Primary Function | Enhances credibility, user acceptance, and cooperation [20] | Ensures measurement comprehensiveness and relevance [20] |

| Dependency | Can exist without content validity [20] | Typically assumes at least minimal face validity [20] |

Table 4: Essential Research Reagents for Face Validity Assessment

| Resource Category | Specific Examples | Function in Validity Research |

|---|---|---|

| Expert Panels | Clinical specialists, Domain experts, End-user representatives | Provide subjective validity assessments; Identify content gaps; Evaluate relevance [24] [23] |

| Structured Rating Instruments | Likert scales (1-5), Semantic differential scales, Structured interview protocols | Quantify subjective perceptions; Enable statistical analysis; Standardize responses [24] |

| Simulation Platforms | EndoSim (Surgical Science), OncoTCap, Custom simulation environments | Provide testbeds for validity assessment; Enable iterative refinement [24] [21] |

| Statistical Analysis Tools | SPSS, R, MATLAB | Analyze rating data; Compute inter-rater reliability; Test significance of differences [24] [22] |

| Validation Frameworks | Naylor and Finger three-step approach, Sargent's validation model | Provide methodological structure; Guide comprehensive assessment [11] [22] |

Implementation Protocols

The practical implementation of face validity studies requires specific methodological components:

- Standardized Orientation Materials: Ensure consistent baseline understanding across evaluators

- Structured Feedback Mechanisms: Capture both quantitative ratings and qualitative insights

- Cross-Cultural Adaptation Protocols: Address potential cultural biases in perception [23]

- Iterative Refinement Workflows: Support continuous improvement based on feedback

Face validity remains an essential component of comprehensive simulation model validation, serving as the critical initial gateway to model credibility and acceptance. Its evolution from a dismissed, superficial assessment to a recognized foundational component of validation reflects a growing understanding of its role in user engagement and model utility. While methodological limitations prevent face validity from standing alone as sufficient evidence of model quality, its absence typically precludes meaningful adoption regardless of other validity evidence.

The future of face validity assessment lies in standardized methodologies that balance subjective perception with structured assessment protocols. Particularly in biomedical and drug development contexts, where model complexity increasingly exceeds intuitive verification, face validity provides the essential bridge between technical sophistication and practical utility. As simulation platforms grow more sophisticated, the continued development of robust face validity assessment methodologies will remain crucial to ensuring their successful implementation in research and practice.

How to Assess Face Validity: Methodologies and Real-World Applications

In computer simulation model research, particularly within drug development and healthcare, face validity is a fundamental component of model assessment. It represents whether subject matter experts (SMEs) perceive the model and its behavior as plausible and reasonable for its intended purpose [25]. Expert elicitation is the formal process of systematically capturing and quantifying these qualitative judgments from domain specialists. This guide details the core protocols, methodologies, and validation frameworks for integrating expert elicitation to establish and enhance the face validity of computer simulation models, supporting robust decision-making in the face of uncertain or incomplete data [26].

Structured expert elicitation (SEE) protocols are designed to minimize cognitive biases and improve the transparency, accuracy, and consistency of qualitative judgments obtained from experts [26]. These protocols transform expert knowledge into quantifiable probability distributions for use in decision-making models.

Commonly Used SEE Protocols

Several established protocols guide the design and execution of an elicitation. The table below summarizes the key characteristics of five prominent methods.

Table 1: Comparison of Structured Expert Elicitation Protocols

| Protocol Name | Level of Elicitation | Expert Interaction | Aggregation Method | Key Features |

|---|---|---|---|---|

| Sheffield Elicitation Framework (SHELF) [27] [26] | Group | Interactive discussion after individual estimation | Behavioral (consensus) | Includes facilitated discussion and use of performance weighting; well-suited for healthcare contexts. |

| Cooke’s Classical Method [27] [26] | Individual | No group discussion | Mathematical (performance-based weighting) | Uses empirical control questions to score and weight expert performance; highly mathematical. |

| Investigate, Discuss, Estimate, Aggregate (IDEA) [27] [26] | Group | Interactive discussion before and during estimation | Combination of behavioral and mathematical | "Investigate" and "Discuss" phases aim to reduce overconfidence. |

| Modified Delphi Method [27] [26] | Group | Anonymized, iterative feedback | Behavioral (consensus-seeking) | Involves multiple rounds of anonymous estimation with controlled feedback. |

| MRC Reference Protocol [27] [26] | Group | Interactive discussion | Behavioral (consensus) | Developed for healthcare decision-making; emphasizes evidence review and structured discussion. |

Selecting a Fit-for-Purpose Protocol

Protocol selection depends on the decision context and constraints. For model face validation, interactive protocols like SHELF, IDEA, and the MRC protocol are often advantageous because the group discussion allows experts to challenge assumptions and refine the model's conceptual structure collectively [27] [26]. In contrast, Cooke's method is preferable when mathematical aggregation and demonstrable performance calibration are required, minimizing the influence of dominant personalities [26].

Implementing a structured elicitation is a resource-intensive process that requires meticulous planning, execution, and reporting. The following workflow outlines the key phases.

Planning and Expert Selection

The foundation of a successful elicitation is careful preparation. This phase involves defining the Quantities of Interest (QoI)—specific, unambiguous questions about the model or its parameters that experts will assess [27]. An evidence dossier containing relevant background data and model specifications should be prepared to inform experts and align their understanding [27] [26].

Expert selection is critical. A diverse panel of 4 to 12 specialists is typical, balancing domain expertise with methodological knowledge. The selection process should be transparent, documenting expert credentials, years of experience, and relevance to the problem to establish credibility [25].

Once planning is complete, elicitation sessions typically begin with individual, private judgment collection to prevent biases such as anchoring or dominance in group settings [27] [26]. In interactive protocols, this is followed by a facilitated discussion in which experts share their reasoning, challenge assumptions, and debate differences. The facilitator's role is to manage the discussion neutrally and ensure all voices are heard. Finally, experts may provide revised estimates, either individually or as a consensus [27].

Aggregation and Reporting

Individual judgments must be combined into a single distribution for use in models. Behavioral aggregation seeks a consensus through discussion, while mathematical aggregation uses weighted averages of individual distributions [27] [26]. Transparent reporting is essential for credibility, especially in regulatory contexts like National Institute for Health and Care Excellence (NICE) submissions, where a lack of technical detail can hinder committee review [27]. Reports should document the protocol used, expert identities and credentials, elicited values, and how disagreements were handled.
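Mathematical aggregation by weighted averaging can be illustrated with a minimal sketch of a linear opinion pool. The bins, expert probabilities, and weights below are hypothetical and are not taken from any of the cited protocols; in practice the weights might be equal or derived from performance on calibration questions.

```python
from math import isclose


def linear_pool(expert_probs, weights=None):
    """Weighted average of expert probability vectors defined over the same bins."""
    n_experts = len(expert_probs)
    if weights is None:
        weights = [1.0 / n_experts] * n_experts  # default: equal weighting
    if len(weights) != n_experts or not isclose(sum(weights), 1.0):
        raise ValueError("need one weight per expert, summing to 1")
    n_bins = len(expert_probs[0])
    for probs in expert_probs:
        if len(probs) != n_bins or not isclose(sum(probs), 1.0):
            raise ValueError("each expert needs one probability per bin, summing to 1")
    return [
        sum(w * probs[b] for w, probs in zip(weights, expert_probs))
        for b in range(n_bins)
    ]


# Hypothetical quantity of interest: annual relapse rate falling in each bin.
bins = ["<10%", "10-20%", ">20%"]
experts = [
    [0.2, 0.6, 0.2],  # expert A
    [0.1, 0.5, 0.4],  # expert B
    [0.3, 0.6, 0.1],  # expert C
]

pooled_equal = linear_pool(experts)
# Illustrative performance-based weights (assumed, not elicited).
pooled_weighted = linear_pool(experts, weights=[0.5, 0.3, 0.2])

for label, p_eq, p_w in zip(bins, pooled_equal, pooled_weighted):
    print(f"{label:>7}: equal={p_eq:.3f} weighted={p_w:.3f}")
```

Reporting both the individual distributions and the pooled result keeps the aggregation step auditable, which supports the transparency requirements described above.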

A Framework for Face Validity Assessment

Face validity, though subjective, can be assessed systematically using a structured framework. The table below outlines key validity tests applied to expert-elicited models, such as Bayesian Networks.

Table 2: Applying a Validity Framework to Expert-Elicited Models

| Validity Type | Definition | Application in Expert Elicitation |
|---|---|---|
| Content Validity | The extent to which the model represents all key facets of the real-world system [25]. | Experts verify the model structure (nodes and relationships) is complete and relevant, with no critical factors missing [25]. |
| Construct Validity | The degree to which the model accurately measures the theoretical constructs it is intended to represent [25]. | Experts assess whether the model's conceptual framework and the discretization of node states are meaningful and appropriate [25]. |
| Convergent Validity | The model's outputs align with other methods or models measuring the same construct [25]. | Expert-derived model predictions are compared with known empirical data or outputs from established models for similar scenarios. |
| Discriminant Validity | The model can distinguish between different scenarios or populations where differences are expected [25]. | Experts review model outputs for a range of inputs to confirm it produces meaningfully different and clinically plausible results. |

This framework allows for a partitioned examination of uncertainty, ensuring that the model's structure, discretization, and parameterization are valid before its overall behavior is trusted [25].

The Scientist's Toolkit: Key Reagents and Materials

Conducting a rigorous expert elicitation requires both methodological and practical tools. The table below lists essential "research reagents" for the process.

Table 3: Essential Materials for Expert Elicitation Exercises

| Item | Function | Example/Description |
|---|---|---|
| Evidence Dossier | A pre-read document to align expert understanding and provide a common evidence base [27] [26]. | Contains summaries of relevant clinical data, literature, model specifications, and clear definitions of QoIs. |
| Structured Elicitation Protocol | The formal methodology governing the process to minimize bias [26]. | A predefined guide (e.g., SHELF or IDEA) detailing steps for individual estimation, discussion, and aggregation. |
| Training Materials | Resources to familiarize experts with probabilistic thinking and the elicitation process. | Slides or exercises on interpreting probabilities, quantifying uncertainty, and avoiding common cognitive heuristics. |
| Elicitation Instrument | The tool used to capture expert judgments. | Could be a software interface, a calibrated probability scale, or paper forms for specifying probability distributions. |
| Facilitator's Guide | A script or checklist for the session facilitator to ensure neutrality and protocol adherence. | Includes key questions to prompt discussion, timekeeping notes, and techniques for managing dominant personalities. |
| Validation Framework | A set of criteria to assess the quality and validity of the elicited judgments and the resulting model [25]. | The multi-dimensional framework (Table 2) used to test content, construct, convergent, and discriminant validity. |

The field of expert elicitation is being influenced by advances in Artificial Intelligence (AI). Emerging research explores the potential of Large Language Models (LLMs) to assist in, or serve as a proxy for, certain aspects of expert elicitation. Initial studies suggest that LLMs can generate causal structures like Bayesian Networks with high precision (low entropy), though they may also introduce "hallucinated" dependencies or reflect biases from their training data [28]. This suggests a future where AI systems could help draft initial model structures or identify potential biases in human judgments, though rigorous prospective validation against human expertise and clinical data remains essential [29] [28].

Systematic Protocols for Evaluating Model Outputs and Behaviors

In computational social science, healthcare, and drug development, simulation models have become indispensable for understanding complex systems, predicting outcomes, and informing policy decisions. These models range from traditional Agent-Based Models (ABMs) to the emerging class of Large Language Model (LLM)-powered generative simulations. Their scientific utility, however, depends on rigorous evaluation of their outputs and behaviors. Face validity, the apparent plausibility of a model as a representation of reality, is a foundational but insufficient first step in a comprehensive validation framework. Evaluation must progress beyond plausibility checks to establish empirical grounding, especially as LLM-integrated models introduce new challenges of stochasticity, cultural bias, and black-box opacity that complicate validation efforts [10]. This technical guide provides researchers, scientists, and drug development professionals with structured methodologies, quantitative benchmarks, and visualization tools for implementing robust evaluation protocols that support model reliability and trustworthiness in critical decision-making.

Foundational Concepts: VVE and Face Validity

A systematic approach to model assessment requires clear conceptual distinctions. The framework of Verification, Validation, and Evaluation (VVE) provides a structured methodology for assessing modeling methods, each addressing a distinct quality aspect [30]:

- Verification addresses "Am I building the method right?" It is the process of ensuring the computational model correctly implements its intended design and specifications, focusing on internal correctness and code quality.

- Validation addresses "Am I building the right method?" It assesses whether the model accurately represents the real-world system it is intended to simulate, establishing the credibility of its outputs.

- Evaluation addresses "Is my method worthwhile?" It determines the model's utility, effectiveness, and fitness for purpose within its specific application context [30].

Within this framework, face validity constitutes an initial, subjective assessment of whether a model's behavior and outputs appear plausible to domain experts. While easily critiqued for its subjectivity, face validity serves as a crucial first filter in model development, often prompting further, more rigorous validation. The integration of LLMs into agent-based modeling, for example, may enhance perceived behavioral realism (face validity) while potentially exacerbating challenges in empirical grounding and validation due to their black-box nature [10].

Table 1: Core Components of Model VVE

| Component | Core Question | Focus Area | Primary Methods |
|---|---|---|---|
| Verification | Am I building the method right? | Internal correctness & code | Debugging, unit testing, code review [30] |
| Validation | Am I building the right method? | Correspondence to real world | Face validation, empirical comparison, calibration [30] |
| Evaluation | Is my method worthwhile? | Utility & effectiveness | Cost-benefit analysis, impact assessment [30] |

Current Evaluation Challenges in Modern Modeling Paradigms

Traditional Agent-Based Models

Agent-Based Models have historically struggled with empirical grounding. Critics highlight a tendency to oversimplify human behavior and construct models based on ad-hoc intuitions rather than robust empirical data or established theory. Without standardized validation practices, concerns about reliability, reproducibility, and generalizability persist, limiting ABM adoption in mainstream social science [10].

Generative and LLM-Integrated Models

The advent of Large Language Models has revitalized ABMs through "generative simulations," where agents can plan, reason, and interact via natural language. While offering greater expressive power, these LLM-powered models introduce novel evaluation challenges [10]:

- Black-Box Structure: The internal decision-making processes of LLMs are often opaque, complicating interpretability and mechanistic understanding.

- Cultural Biases: LLMs trained on extensive corpora can perpetuate and amplify existing cultural and social biases present in the training data, potentially skewing simulation outcomes [10] [31].

- Stochastic Outputs: The inherent randomness in LLM generations creates challenges for reproducibility and reliability testing [10].

- Validation Gaps: A review of LLM-based health coaches found the evaluation landscape "fragmented and methodologically weak," with a median Evaluation Rigor Score of just 2.5 out of 5, highlighting significant methodological shortcomings [32].
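One common way to handle stochastic outputs is to run replicates under controlled random seeds and report the spread alongside the mean. The sketch below uses a hypothetical stand-in simulator (`simulate_response_rate`) rather than an actual LLM-driven model; the agent count and response probability are illustrative assumptions.

```python
import random
import statistics


def simulate_response_rate(seed, n_agents=200, p_respond=0.3):
    """Hypothetical stochastic simulation: fraction of agents that respond."""
    rng = random.Random(seed)  # a dedicated generator makes each run repeatable
    responders = sum(rng.random() < p_respond for _ in range(n_agents))
    return responders / n_agents


# Replicate the simulation across seeds and summarize run-to-run variability.
seeds = list(range(20))
rates = [simulate_response_rate(seed) for seed in seeds]
summary = {
    "mean": statistics.mean(rates),
    "sd": statistics.stdev(rates),
    "min": min(rates),
    "max": max(rates),
}
print(summary)

# Re-running with a recorded seed reproduces the same result exactly.
print(simulate_response_rate(7) == simulate_response_rate(7))
```

For LLM-backed agents, exact repeatability may not be achievable even with fixed settings, so recording seeds, configurations, and the distribution across replicates becomes the practical substitute.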

Systematic Evaluation Protocols and Experimental Methodologies

A Framework for Comprehensive Model Evaluation

Implementing a rigorous evaluation strategy requires a multi-faceted approach that moves progressively from basic checks to complex, real-world validation. The core methodologies below are grouped by stage: verification, face validation, empirical validation, and performance benchmarking.

Core Evaluation Methodologies

Verification Protocols

- Unit Testing for Model Components: Isolate and test individual agent behaviors, interaction rules, and environmental functions.

- Sensitivity Analysis: Systematically vary input parameters to assess their impact on outputs and identify critical dependencies.

- Boundary Testing: Evaluate model behavior under extreme or edge-case conditions to uncover instability or logical errors.
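The verification steps above can be sketched on a toy model. The example assumes a hypothetical one-compartment elimination model, C(t) = (dose / V) · exp(−k · t), with illustrative parameter values. It shows a one-at-a-time sensitivity analysis (one simple variant among many) and a few boundary checks.

```python
import math


def concentration(dose_mg, volume_l, k_per_h, t_h):
    """Toy one-compartment model: C(t) = (dose / V) * exp(-k * t)."""
    if volume_l <= 0:
        raise ValueError("volume must be positive")
    if dose_mg < 0 or k_per_h < 0 or t_h < 0:
        raise ValueError("dose, rate constant, and time must be non-negative")
    return dose_mg / volume_l * math.exp(-k_per_h * t_h)


def one_at_a_time_sensitivity(model, baseline, rel_step=0.10):
    """Relative change in output when each input is increased by rel_step."""
    base_out = model(**baseline)
    effects = {}
    for name, value in baseline.items():
        perturbed = dict(baseline, **{name: value * (1 + rel_step)})
        effects[name] = (model(**perturbed) - base_out) / base_out
    return effects


# Hypothetical baseline parameter set.
baseline = {"dose_mg": 100.0, "volume_l": 50.0, "k_per_h": 0.1, "t_h": 6.0}
effects = one_at_a_time_sensitivity(concentration, baseline)
for name, effect in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>9}: {effect:+.3f}")

# Boundary tests: behavior at the edges of the input space.
boundary = {
    "t=0 gives dose/V": math.isclose(concentration(100.0, 50.0, 0.1, 0.0), 2.0),
    "zero dose gives zero": concentration(0.0, 50.0, 0.1, 6.0) == 0.0,
    "long horizon decays toward zero": concentration(100.0, 50.0, 0.1, 1000.0) < 1e-12,
}
print(boundary)
```

One-at-a-time perturbation ignores interactions between parameters; global methods are usually preferred when interactions are expected, but this form is often enough to flag implementation errors early.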

Face Validation Protocols

- Expert Elicitation: Engage domain experts (e.g., clinical researchers, pharmacologists) to review model structures, assumptions, and output behaviors for plausibility.

- Scenario Walkthroughs: Present detailed model trajectories to experts who assess whether the sequences of events and emergent patterns align with real-world expectations.

- Pattern Validation: Compare qualitative patterns generated by the model (e.g., population distributions, response curves) with known phenomena from the target domain.
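Qualitative expectations voiced by experts during pattern validation can sometimes be encoded as simple automated checks, so they are re-tested whenever the model changes. The sketch below assumes a hypothetical Emax-style dose-response function and two illustrative expectations (monotonic increase and diminishing returns); the actual expectations should come from the expert panel.

```python
def emax_response(dose, emax=100.0, ec50=10.0):
    """Hypothetical saturating dose-response curve: E = Emax * d / (EC50 + d)."""
    return emax * dose / (ec50 + dose)


def is_monotonic_increasing(values):
    """True if each value is at least as large as the one before it."""
    return all(later >= earlier for earlier, later in zip(values, values[1:]))


def has_diminishing_returns(values):
    """True if successive increments shrink (assumes equally spaced doses)."""
    increments = [later - earlier for earlier, later in zip(values, values[1:])]
    return all(nxt <= prev for prev, nxt in zip(increments, increments[1:]))


doses = [0, 5, 10, 15, 20, 25, 30]  # equally spaced, arbitrary units
responses = [emax_response(d) for d in doses]

pattern_checks = {
    "response rises with dose": is_monotonic_increasing(responses),
    "response saturates": has_diminishing_returns(responses),
    "response never exceeds Emax": all(r <= 100.0 for r in responses),
}
for description, passed in pattern_checks.items():
    print(f"{'PASS' if passed else 'FAIL'}: {description}")
```

Checks of this kind complement, rather than replace, expert review: they confirm that previously agreed patterns still hold, while experts remain responsible for judging whether the patterns themselves are the right ones.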

Empirical Validation Protocols

- Historical Data Validation: Compare model predictions with historical datasets not used during model development.

- Cross-Validation: Employ k-fold or leave-one-out validation techniques to assess model generalizability.

- Out-of-Sample Testing: Reserve a portion of empirical data for exclusive use in final validation.

- Parameter Estimation: Find parameter values that best account for real behavioral data, using these parameters to succinctly summarize datasets and investigate individual differences [33].
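As an illustration of parameter estimation combined with k-fold cross-validation, the sketch below fits a straight line by ordinary least squares and scores each held-out fold by RMSE. The synthetic data, the linear model, and the helper names (`fit_line`, `k_fold_rmse`) are assumptions chosen for brevity; a real simulation model would substitute its own fitting routine.

```python
import random
from math import sqrt


def fit_line(xs, ys):
    """Ordinary least-squares estimates of slope and intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def k_fold_rmse(xs, ys, k=5, seed=0):
    """RMSE on each held-out fold after fitting on the remaining folds."""
    if not 2 <= k <= len(xs):
        raise ValueError("k must be between 2 and the number of observations")
    indices = list(range(len(xs)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k disjoint folds
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in indices if i not in held]
        slope, intercept = fit_line([xs[i] for i in train], [ys[i] for i in train])
        sq_err = [(ys[i] - (slope * xs[i] + intercept)) ** 2 for i in held_out]
        scores.append(sqrt(sum(sq_err) / len(sq_err)))
    return scores


# Synthetic data: y = 2x + 1 plus Gaussian noise (illustrative only).
rng = random.Random(42)
xs = [float(i) for i in range(30)]
ys = [2.0 * x + 1.0 + rng.gauss(0.0, 1.0) for x in xs]

fold_rmse = k_fold_rmse(xs, ys, k=5)
mean_rmse = sum(fold_rmse) / len(fold_rmse)
print([round(r, 3) for r in fold_rmse], round(mean_rmse, 3))
```

Because every observation is held out exactly once, the mean fold RMSE estimates out-of-sample error without setting aside a permanent test set; a final out-of-sample test on reserved data remains a separate step.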

Performance Benchmarking

- Metric Selection: Choose appropriate quantitative metrics aligned with model purpose (e.g., accuracy, recall, WSS@95, ATD).

- Comparative Analysis: Benchmark against established models or baseline approaches.

- Efficiency Assessment: Evaluate computational requirements, training time, and resource utilization, especially important for resource-intensive LLMs [31].

Table 2: Quantitative Metrics for Model Evaluation

| Metric Category | Specific Metrics | Application Context | Interpretation Guidelines |
|---|---|---|---|
| Accuracy Metrics | Recall, Precision, F1-Score, MAE, RMSE | General predictive performance | Higher recall, precision, and F1-score indicate better performance; lower MAE and RMSE indicate smaller prediction error [34] |
| Efficiency Metrics | WSS@95, Time to Discovery (TD) | Systematic review screening, resource allocation | WSS@95: work saved over sampling at 95% recall [34] |
| Workload Reduction | Average Time to Discovery (ATD) | Active learning models, screening prioritization | Lower ATD indicates faster discovery of relevant items [34] |
| Statistical Measures | R², Log-Likelihood, AIC, BIC | Model comparison, goodness-of-fit | Higher R² and log-likelihood indicate closer fit; lower AIC/BIC indicates a better balance between fit and complexity [33] |
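A few of the metrics in the table can be computed directly, as the sketch below shows. The WSS formula follows a commonly used definition (the fraction of records left unscreened when the target recall is reached, minus 1 − recall) and is stated here as an assumption rather than taken from the cited source. AIC and BIC use their standard definitions. All input values are hypothetical.

```python
import math


def rmse(observed, predicted):
    """Root mean squared error; lower is better."""
    sq_err = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(sq_err) / len(sq_err))


def wss_at_recall(ranked_labels, recall=0.95):
    """Work saved over sampling for a ranked list of 0/1 relevance labels."""
    n_total = len(ranked_labels)
    n_relevant = sum(ranked_labels)
    if n_relevant == 0:
        raise ValueError("at least one relevant record is required")
    needed = math.ceil(recall * n_relevant)
    found = 0
    for n_screened, label in enumerate(ranked_labels, start=1):
        found += label
        if found >= needed:
            return (n_total - n_screened) / n_total - (1 - recall)


def aic(log_likelihood, n_params):
    """Akaike information criterion: 2k - 2 ln L."""
    return 2 * n_params - 2 * log_likelihood


def bic(log_likelihood, n_params, n_obs):
    """Bayesian information criterion: k ln n - 2 ln L."""
    return n_params * math.log(n_obs) - 2 * log_likelihood


# Hypothetical screening order: 20 records, 4 relevant (ranks 1, 2, 4, and 8).
ranked = [1, 1, 0, 1, 0, 0, 0, 1] + [0] * 12
print(f"WSS@95 = {wss_at_recall(ranked):.2f}")

# Hypothetical model comparison on the same 100 observations.
candidates = {"simple": (-120.0, 3), "complex": (-118.0, 6)}
for name, (log_lik, k) in candidates.items():
    print(name, aic(log_lik, k), round(bic(log_lik, k, 100), 2))
```

In this example the more complex model has the higher log-likelihood but the worse AIC and BIC, illustrating how the information criteria penalize added parameters.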

Specialized Evaluation Strategies for LLM-Based Models

Addressing LLM-Specific Challenges

Evaluating LLM-integrated models requires specialized approaches beyond traditional validation:

- Bias and Fairness Audits: Implement rigorous testing across demographic groups and scenarios to detect discriminatory outputs or unfair biases [31].

- Factual Consistency Checks: Verify the accuracy of generated content against trusted knowledge sources.