From Battlefields to Breakthroughs: How the World Wars Forged Modern Pharmaceutical Chemistry

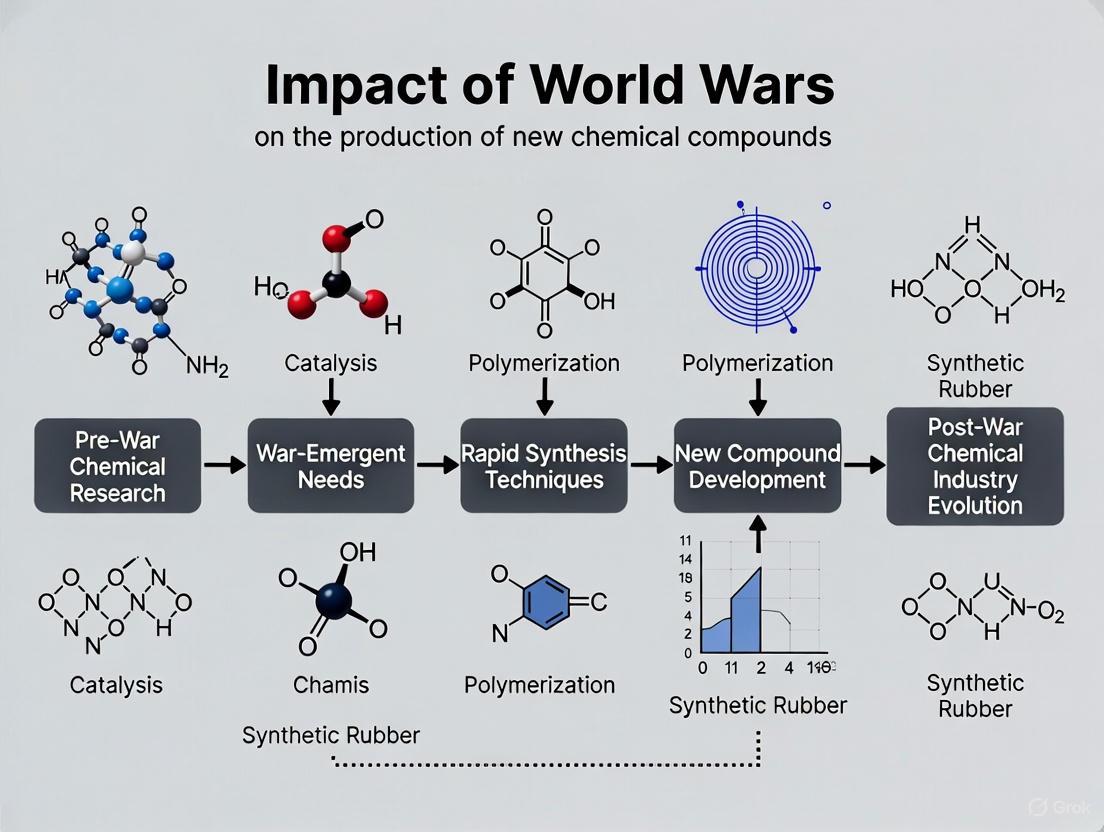



Abstract

This article explores the profound and lasting impact of the two World Wars on the development of new chemical compounds, with a specific focus on implications for drug development. It examines how the urgent demands of total war catalyzed the industrial-scale production of novel chemicals, from warfare agents to life-saving medicines. The analysis covers the foundational science of war-driven chemistry, the methodological shifts in large-scale production and research, the troubleshooting of efficacy and safety issues, and a comparative validation of these innovations against pre-war capabilities. For researchers and scientists, this historical overview provides critical insights into how crisis-driven innovation can accelerate chemical and pharmaceutical progress, shaping the modern landscape of drug discovery and production.

The Chemist's War: Foundational Discoveries in Chemical Synthesis and Production

The period of the World Wars marked a transformative era for chemical science, channeling industrial and academic research toward the development of novel chemical compounds for warfare. The First World War, in particular, witnessed the first large-scale deployment of chemical weapons, moving them from laboratory curiosities to products of industrialized manufacturing [1]. This shift was made possible by the advanced state of the European chemical industry, which provided the necessary expertise, infrastructure, and production capacity [2] [3]. The genesis of industrial chemical warfare during this period represents a pivotal moment in the relationship between scientific progress, industrial capacity, and military application, creating a legacy that would influence chemical research, drug development, and international policy for decades to follow.

Historical Context and Deployment

The First World War created a stalemate on the Western Front that military leaders sought to break through technological innovation. Chemical weapons emerged as a potential solution, offering a means to incapacitate entrenched defenders and create breakthroughs in fortified lines [4]. Germany, possessing the world's most advanced chemical industry at the time, took the lead in this new form of warfare under the scientific direction of Fritz Haber of the Kaiser Wilhelm Institute for Physical Chemistry and Electrochemistry in Berlin [3] [1].

The first significant deployment of chemical weapons occurred on April 22, 1915, near Ypres, Belgium, when German specialist troops opened 5,730 cylinders containing approximately 168 tons of chlorine gas [2] [5] [1]. The resulting greenish-yellow cloud drifted toward French and Algerian positions, causing approximately 1,100 fatalities and 4,000 injuries through asphyxiation and respiratory damage [1] [6]. This attack marked a turning point in military history, representing the first successful large-scale use of a traditional weapon of mass destruction [1].

The table below summarizes the key chemical agents deployed during World War I and their impact:

Table 1: Key Chemical Warfare Agents of World War I

| Agent | First Major Use | Primary Inventor/Program | Physiological Classification | Casualties (WWI) | Fatalities (WWI) |
|---|---|---|---|---|---|
| Chlorine | April 1915 at Ypres | Fritz Haber (Germany) | Lung irritant/Choking agent | Not specified | ~1,100 in first attack [7] [1] |
| Phosgene | December 1915 at Ypres | Fritz Haber (Germany) | Lung injurant/Choking agent | Not specified | ~85% of all gas fatalities [7] [3] (of ~90,000 total across all agents [1]) |
| Mustard Gas | July 1917 at Ypres | Germany | Vesicant/Blistering agent | >120,000 [3] | 2-3% mortality rate [7] |

The rapid escalation of chemical warfare prompted corresponding advances in defensive measures. Initial primitive protections such as urine-soaked rags quickly evolved into sophisticated filter respirators using charcoal and chemical neutralizers [5] [4]. This cycle of offensive innovation and defensive countermeasures characterized the chemical arms race throughout the war, with both sides investing significantly in research, development, and production capabilities [1].

Chemical Agent Profiles and Mechanisms of Action

Chlorine: The Prototypical Warfare Gas

Chlorine (Cl₂), a pale green diatomic gas approximately 2.5 times denser than air, was among the first chemical weapons deployed on an industrial scale [2] [1]. Its production was facilitated by the existing industrial infrastructure of German chemical companies BASF, Hoechst, and Bayer, which produced chlorine as a by-product of their dye manufacturing operations [5].

Physiological Action: When inhaled, chlorine reacts with water in the lungs to form hydrochloric acid (HCl) and hypochlorous acid (HClO), which destroy respiratory tissue and cause death by asphyxiation through chemical burns and pulmonary edema [2] [7]. The immediate irritant effects occur at concentrations as low as 3-5 parts per million (ppm), with concentrations of several hundred ppm proving rapidly fatal [2].

Chemical Properties and Limitations: Chlorine's distinct greenish color and strong bleach-like odor made detection straightforward, allowing for warning and potential evacuation [2]. Furthermore, its water solubility meant that simple countermeasures such as water- or urine-soaked cloths could provide some protection by dissolving the gas before inhalation [5].

Phosgene: The Silent Killer

Phosgene (COCl₂), a colorless gas with an odor often described as "musty hay," represented a significant advancement in chemical warfare agents due to its increased potency and stealth characteristics [7] [3]. It was six times more deadly than chlorine and presented particular danger because symptoms were often delayed for up to 48 hours after exposure [3].

Physiological Action: Phosgene reacts with proteins in the pulmonary alveoli, disrupting the blood-air barrier and leading to suffocation through gradual accumulation of fluid in the lungs (pulmonary edema) [7]. Immediate effects are minimal and mainly lachrymatory (tear-inducing), with severe respiratory distress developing hours after exposure [7].

Industrial Significance: Beyond its use as a weapon, phosgene served as an important industrial reagent and precursor to pharmaceuticals and other organic compounds, illustrating the dual-use nature of many chemical warfare agents [7].

Mustard Gas: The Persistent Vesicant

Mustard gas (bis(2-chloroethyl) sulfide), first deployed in July 1917, differed fundamentally from earlier chemical agents through its persistent vesicant (blistering) properties and multifactorial mechanism of action [3] [8]. In pure form, it is a colorless, oily liquid, but when used in warfare, it typically appeared yellow-brown with a garlic or horseradish-like odor [7] [8].

Physiological Action: Mustard gas acts as a potent alkylating agent, dissolving in skin and then producing severe chemical burns and blisters, particularly in moist areas of the body [8] [1]. Its effects are typically delayed for several hours, and by the time symptoms appear, preventative measures are ineffective [8]. The mortality rate was relatively low (2-3%), but those exposed suffered prolonged hospitalizations and increased cancer risk [7] [8].

Chemical Stability and Persistence: With a boiling point of 215-217°C and low solubility in water, mustard gas could persist in the environment for weeks, contaminating terrain and posing ongoing hazards [8] [1].

Table 2: Physicochemical Properties of Major Chemical Warfare Agents

| Property | Chlorine | Phosgene | Mustard Gas |
|---|---|---|---|
| Chemical Formula | Cl₂ | COCl₂ | C₄H₈Cl₂S or (ClCH₂CH₂)₂S |
| Molecular Weight | 70.9 g/mol | 98.92 g/mol | 159.07 g/mol |
| Physical State at Room Temp | Gas | Gas | Oily Liquid |
| Vapor Density (Air=1) | 2.5 [1] | 3.5 [1] | 5.5 (vapor) [1] |
| Odor | Bleach-like [3] | Musty Hay [3] | Garlic, Horseradish [7] |
| Rate of Action | Immediate (minutes) | Delayed (up to 48 hours) [3] | Delayed (2-24 hours) [8] |
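
The molecular weights and vapor densities in Table 2 are linked by a simple ideal-gas relationship: vapor density relative to air is approximately the compound's molar mass divided by the mean molar mass of air. The following is a minimal sketch of that check. The rounded atomic masses and the reference value of 28.97 g/mol for dry air are assumptions introduced for illustration, not figures taken from the cited sources, and the function names are hypothetical.

```python
# Rounded standard atomic masses in g/mol (assumed reference values).
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "O": 15.999, "S": 32.06, "Cl": 35.45}

# Mean molar mass of dry air in g/mol (assumed reference value).
AIR_MEAN_MOLAR_MASS = 28.97

# Elemental compositions taken from the formulas in Table 2.
COMPOSITION = {
    "chlorine": {"Cl": 2},
    "phosgene": {"C": 1, "O": 1, "Cl": 2},
    "sulfur mustard": {"C": 4, "H": 8, "Cl": 2, "S": 1},
}


def molar_mass(composition):
    """Sum atomic masses weighted by atom counts."""
    return sum(ATOMIC_MASS[element] * count for element, count in composition.items())


def relative_vapor_density(composition):
    """Ideal-gas estimate of vapor density relative to air (air = 1)."""
    return molar_mass(composition) / AIR_MEAN_MOLAR_MASS


for name, composition in COMPOSITION.items():
    print(
        f"{name}: M = {molar_mass(composition):.2f} g/mol, "
        f"vapor density = {relative_vapor_density(composition):.2f}"
    )
```

Under these assumptions the estimates land close to the tabulated values, which suggests the table's vapor densities are rounded ideal-gas ratios rather than independent measurements.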

Experimental Protocols and Molecular Mechanisms

Mustard Gas Synthesis: Levinstein Process

The primary industrial method for mustard gas production during WWI was the Levinstein Process, which involved the reaction of ethylene with sulfur dichloride [9] [8]. The fundamental reactions are as follows:

Addition of sulfur dichloride to ethylene to form 2-chloroethylsulfenyl chloride: SCl₂ + CH₂=CH₂ → ClCH₂CH₂SCl

Addition of this intermediate to a second molecule of ethylene: ClCH₂CH₂SCl + CH₂=CH₂ → (ClCH₂CH₂)₂S

The resulting Levinstein mustard gas contained approximately 70% sulfur mustard with various impurities, but physiological tests showed no appreciable difference in vesicant activity compared to purified material [9].

Hydrolysis Kinetics of Sulfur Mustard

The mechanism and kinetics of sulfur mustard hydrolysis have been extensively studied to understand its environmental persistence and biological activity [9]. The reaction proceeds through a transient cyclic sulfonium cation, which then reacts with water:

- Formation of cyclic sulfonium cation intermediate

- Reaction with water to form 2-chloroethyl 2-hydroxyethyl sulfide and a hydrogen ion

- Repeat sequence to form thiodiglycol

The rate constant for hydrolysis is markedly dependent on temperature and the presence of chloride ions, which retard the hydrolysis rate without altering reaction products [9]. At greater substrate concentrations, the reaction becomes more complex, with initial products reacting with the sulfonium cation to form dimeric sulfonium cations with their own notable toxicity [9].
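
The qualitative dependence on temperature and chloride described above can be illustrated with a generic kinetic sketch. It assumes a steady-state treatment of the sulfonium intermediate (chloride competes with water for the cation, which lowers the effective first-order rate constant without changing the products) and an Arrhenius temperature dependence. Every numerical parameter below is an arbitrary placeholder chosen for illustration, not a measured constant from [9], and the function names are hypothetical.

```python
import math

GAS_CONSTANT = 8.314  # J/(mol*K)


def ionization_rate_constant(temp_kelvin, pre_exponential, activation_energy):
    """Arrhenius form k1 = A * exp(-Ea / (R*T)) for the sulfonium-forming step."""
    return pre_exponential * math.exp(-activation_energy / (GAS_CONSTANT * temp_kelvin))


def effective_rate_constant(k1, chloride_molar, return_ratio):
    """Steady-state rate constant with chloride competing against water.

    return_ratio is k(chloride capture) / k(water capture) for the cation,
    in L/mol. With no chloride present the result reduces to k1.
    """
    return k1 / (1.0 + return_ratio * chloride_molar)


def half_life(rate_constant):
    """Half-life of a first-order process."""
    return math.log(2) / rate_constant


def fraction_remaining(rate_constant, elapsed):
    """Fraction of substrate left after `elapsed` time units."""
    return math.exp(-rate_constant * elapsed)


# Placeholder parameters (assumptions, not literature values).
A_PLACEHOLDER = 1.0e12      # 1/min
EA_PLACEHOLDER = 80_000.0   # J/mol
RATIO_PLACEHOLDER = 5.0     # L/mol

for temp in (283.15, 298.15):
    k1 = ionization_rate_constant(temp, A_PLACEHOLDER, EA_PLACEHOLDER)
    for chloride in (0.0, 0.5):
        k_eff = effective_rate_constant(k1, chloride, RATIO_PLACEHOLDER)
        print(f"T={temp:.2f} K, [Cl-]={chloride} M: half-life = {half_life(k_eff):.1f} min")
```

The sketch reproduces only the trends stated in the text: a higher temperature shortens the half-life, and added chloride lengthens it. It does not model the higher-concentration regime in which dimeric sulfonium cations form.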

DNA Alkylation and Cytotoxicity Mechanisms

The consensus scientific view holds that the cytotoxicity of sulfur mustard is primarily due to alkylation of DNA, with evidence first obtained in the late 1940s from studies with bacteria and transforming DNA [9]. The mechanism involves:

Alkylation Specificity: Sulfur mustard preferentially alkylates purine bases in DNA, primarily at the N-7 position of guanine and the N-3 position of adenine, though reactions with O-6 and N-2 of guanine and N-6 of adenine have also been reported [9].

Cross-link Formation: Due to its bifunctional nature, sulfur mustard can form interstrand cross-links between guanine bases in the DNA double helix, preventing strand separation during replication and transcription [9].

Cellular Consequences: Interstrand cross-links are the lesions that prove lethal at the lowest frequency of occurrence, meaning that relatively few of them per cell are needed to cause death. However, cell death from this lesion is delayed until the cell attempts DNA replication or division [9].

Diagram 1: Mustard Gas Mechanism of Cellular Toxicity

The Scientist's Toolkit: Key Research Reagents and Methodologies

The study of chemical warfare agents requires specific reagents and methodologies to understand their mechanisms and develop countermeasures. The following toolkit outlines essential materials and their research applications:

Table 3: Essential Research Reagents for Chemical Agent Studies

| Reagent/Material | Chemical Composition | Research Application | Mechanistic Function |
|---|---|---|---|
| Thiodiglycol | (HOCH₂CH₂)₂S | Mustard gas biomarker detection [9] [8] | Hydrolysis product used to confirm exposure through urine analysis |
| N-Acetyl-L-Cysteine (NAC) | C₅H₉NO₃S | Apoptosis inhibition studies [10] | Protects actin filaments from reorganization by mustard gas |
| Sodium Thiosulfate | Na₂S₂O₃ | Decontamination solution [8] | Nucleophile that reacts with mustard agent to form non-toxic products |
| Competitive Nucleophiles | Various (e.g., amines, thiols) | Reaction kinetics studies [9] | Quantitative measurement of sulfonium ion reactivity |
| Bleaching Powder | Ca(ClO)₂ | Surface decontamination [1] | Oxidizing agent that neutralizes mustard gas |
| Charcoal | C | Gas mask filtration [5] [4] | Adsorbent for chemical agent vapors |
| Silver Sulfadiazine | C₁₀H₉AgN₄O₂S | Treatment of skin lesions [8] | Antimicrobial for preventing infection of chemical burns |

Impact on Chemical Research and Pharmaceutical Development

The industrial-scale production of chemical warfare agents during the World Wars had profound and lasting impacts on chemical research and pharmaceutical development:

Acceleration of Industrial Chemical Processes

The war effort necessitated rapid scaling of chemical production, leading to innovations in industrial chemistry. Chlorine illustrates the trajectory: once a by-product of dye manufacture, it is now produced at a scale cited as approximately 55 million metric tons per year and ranks among the ten most produced chemicals in the United States [2]. The production capabilities built up during the war carried over directly into postwar chemical manufacturing and pharmaceutical synthesis.

From Chemical Warfare to Chemotherapy

The discovery that sulfur mustards caused bone marrow suppression and lymphocytotoxicity led directly to the development of nitrogen mustards as chemotherapeutic agents [9] [10]. Researchers recognized that the same alkylating mechanism that caused cellular damage could be harnessed to target rapidly dividing cancer cells, marking the birth of modern cancer chemotherapy.

Advancements in Toxicological Research

The need to understand the mechanisms of chemical agents accelerated developments in toxicology and biochemistry. Studies on the kinetics of mustard gas hydrolysis and its reaction with biological nucleophiles provided fundamental insights into chemical-biological interactions that informed both pharmaceutical development and safety assessment [9].

Diagram 2: Warfare Research to Pharmaceutical Applications

The genesis of industrial chemical warfare during the World Wars represents a complex legacy in the history of chemistry and pharmacology. While born from conflict and destruction, the large-scale production and mechanistic studies of compounds like chlorine, phosgene, and mustard gas ultimately contributed to significant scientific advances that transcended their military origins. The industrial processes developed for mass production of these agents formed the foundation for modern chemical manufacturing capabilities, while the investigation of their biological mechanisms provided crucial insights that enabled the development of life-saving chemotherapeutic agents. This duality illustrates the broader theme of how military research needs can drive scientific and industrial progress, with outcomes that extend far beyond their original destructive purposes to benefit pharmaceutical development and medical science.

The two World Wars of the 20th century represented pivotal turning points in the relationship between scientific research, industrial production, and military objectives. This period witnessed the systematic militarization of chemistry, transforming it from a primarily academic and commercial pursuit into a strategically directed enterprise focused on national security needs. The unprecedented scale of these conflicts necessitated the large-scale mobilization of scientific personnel, institutional reorganization of research, and massive government investment in chemical production capabilities. This section examines how this mobilization during World War I and World War II fundamentally redirected chemical research agendas, accelerated the development of new compounds, and established enduring frameworks for government-academic-industrial collaboration that continue to influence modern drug development and materials science.

The World Wars created what historians have termed "the chemists' war," particularly in reference to WWI, where the successful deployment of chemical weapons reflected the increasing sophistication of scientific and engineering practice [1]. By the time of the armistice in 1918, chemical weapons had resulted in more than 1.3 million casualties and approximately 90,000 deaths [11] [1]. This stark statistic underscores both the devastating application of chemical research and the immense resources dedicated to its development. The institutional frameworks established during this period enabled a unique environment of accelerated innovation, driven by military necessity rather than commercial markets or pure scientific curiosity.
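
As a quick worked calculation, the two figures quoted above imply an overall fatality fraction for gas casualties. The sketch below treats "more than 1.3 million" as exactly 1.3 million, which is an assumption that makes the result an upper-bound estimate. The function name is hypothetical.

```python
def fatality_fraction(deaths, casualties):
    """Share of all casualties that were fatal."""
    return deaths / casualties


GAS_CASUALTIES = 1_300_000  # "more than 1.3 million", treated here as a floor
GAS_DEATHS = 90_000         # "approximately 90,000"

print(f"Overall gas fatality fraction: {fatality_fraction(GAS_DEATHS, GAS_CASUALTIES):.1%}")
```

On these figures roughly one gas casualty in fourteen was fatal, which is consistent with the picture of chemical weapons as primarily casualty-producing rather than lethal.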

World War I: The Genesis of Modern Chemical Warfare

The Initial Deployment of Chemical Agents

World War I marked the first systematic, large-scale application of chemical weapons in modern warfare, beginning with the German chlorine gas attack at Ypres, Belgium, on April 22, 1915. This engagement demonstrated the tactical potential of chemical agents and initiated a rapid cycle of offensive and defensive innovation that would characterize the entire conflict. On that day, German military specialists opened roughly 6,000 steel cylinders containing about 160 tons of chlorine gas, creating a dense, poisonous cloud that drifted toward Allied positions [1] [6]. Within minutes, this first successful use of lethal chemical weapons on the battlefield killed more than 1,000 French and Algerian soldiers and wounded approximately 4,000 more [1].

The technological development behind this attack was spearheaded by Fritz Haber, a prominent German chemist and future Nobel laureate, who is now often referred to as the "father of chemical weapons" [1] [3]. Haber and his team at the Kaiser Wilhelm Institute in Berlin selected chlorine gas because it was readily available from Germany's advanced dye industry and could be weaponized effectively [12]. Haber's approach exemplified the new militarization of science: he moved enthusiastically between the front lines and his research institute, solving ongoing problems in chemical agent development and deployment while organizing the German chemical warfare program [11] [1].

The Escalating Arms Race of Chemical Agents

Following the initial deployment of chlorine gas, all major combatants rapidly developed and deployed increasingly sophisticated chemical agents throughout WWI, creating a technological arms race that drew heavily on academic and industrial resources.

Table 1: Major Chemical Warfare Agents of World War I

| Agent | Chemical Composition | First Major Use | Physiological Effects | Fatalities & Casualties |
|---|---|---|---|---|
| Chlorine | Cl₂ | April 1915 by Germany | Lung irritant, asphyxiation | ~1,000 deaths in initial attack [1] |
| Phosgene | COCl₂ | December 1915 by Germany | 6x more deadly than chlorine, pulmonary edema | Responsible for 85% of chemical weapons fatalities [3] |
| Mustard Gas | (ClCH₂CH₂)₂S | July 1917 by Germany | Vesicant (blistering), delayed symptoms | Highest casualties (~120,000) but few direct deaths [3] |
| Lewisite | ClCH=CHAsCl₂ | Developed 1918 by U.S. | Vesicant, systemic poison | Mass-produced but war ended before deployment [11] |

This technological competition extended beyond offensive capabilities to include defensive measures and detection systems. By 1915, Allied forces had developed rudimentary protective masks, which evolved into more sophisticated gas masks as the war progressed [6]. Nations also established specialized training programs, such as Germany's Royal Prussian Army Gas School, where soldiers learned "gas defense in the trenches," "first aid for gas illnesses," and "exercises for handling of gas masks" [6].

Institutional Mobilization for Chemical Warfare

The development and production of chemical weapons during WWI required an unprecedented mobilization of scientific resources across academic, industrial, and governmental sectors. Germany's initial leadership in this area reflected its pre-war dominance in academic and industrial chemistry, but Allied nations quickly responded with their own comprehensive mobilization efforts.

In the United States, the origins of an organized chemical warfare program emerged from the civilian sector. On February 8, 1917, Van H. Manning, director of the Bureau of Mines, offered the technical services of his agency to the Military Committee of the National Research Council [11]. This initiative evolved rapidly after the U.S. declaration of war on April 6, 1917, leading to the establishment of the National Research Council subcommittee on noxious gases tasked to "carry on investigations into noxious gases, generation, antidote for same, for war purposes" [11].

The American chemical warfare program eventually involved research at numerous prestigious universities and medical schools, including MIT, Johns Hopkins, Harvard, and Yale, in addition to leading industrial firms [11]. Directed from laboratories at American University in Washington, DC, the program encompassed investigations into "gas mask research; pyrotechnic research; small-scale manufacturing; mechanical research; pharmacological research" and related areas [11]. By July 1918, this research and development enterprise involved more than 1,900 scientists and technicians, making it the largest government research program in American history at that time [11].

Table 2: National Chemical Weapons Production in World War I

| Country | Tons Produced | Key Institutions Mobilized | Notable Scientists |
|---|---|---|---|
| Germany | 68,000 tons [6] | Kaiser Wilhelm Institute, University of Berlin | Fritz Haber, Walther Nernst |
| France | 36,000 tons [6] | University of Paris, leading medical schools | Not specified |
| Great Britain | 25,000 tons [6] | Oxford, Cambridge, University College London | Not specified |
| United States | Not specified | American University, Bureau of Mines, leading universities | James B. Conant, Wilfred Lee Lewis |
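
To make the relative scale of national production easier to read, the tonnages in Table 2 can be converted to shares of the documented total. This is a minimal sketch that covers only the three countries with a stated figure. The United States is left out because no tonnage is given, so the shares describe the documented subset rather than all wartime production. The function name is hypothetical.

```python
# Tonnages as listed in Table 2; the United States is omitted (no figure given).
PRODUCTION_TONS = {"Germany": 68_000, "France": 36_000, "Great Britain": 25_000}


def production_shares(tons_by_country):
    """Return each country's fraction of the summed tonnage."""
    total = sum(tons_by_country.values())
    return {country: tons / total for country, tons in tons_by_country.items()}


shares = production_shares(PRODUCTION_TONS)
for country, share in shares.items():
    print(f"{country}: {share:.1%} of {sum(PRODUCTION_TONS.values()):,} documented tons")
```

On these figures Germany accounts for slightly more than half of the documented tonnage, in line with the text's description of its initial lead.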

The French government took a more direct approach by militarizing the chemistry, pathology, and physiology departments of leading medical schools and institutes, while Britain enlisted scientists at Oxford, Cambridge, University College London, and other academic institutions to work on both offensive and defensive aspects of gas warfare [11]. Historians estimate that by war's end, more than 5,500 university-trained scientists and technicians and tens of thousands of industrial workers on both sides worked on chemical weapons [11].

World War II: Expanding the Model to Strategic Materials

The Synthetic Rubber Program

While chemical weapons played a less prominent role in WWII battlefield tactics, the mobilization model established during WWI expanded to address critical shortages in strategic materials, most notably in the development of synthetic rubber. With the natural rubber supply from Southeast Asia cut off at the beginning of WWII, the United States faced the loss of a material essential for military operations—a single battleship required 75 tons of rubber, a tank needed about one ton, and a military airplane used one-half ton [13].

In response to this crisis, the U.S. government established the Rubber Reserve Company (RRC) in June 1940 and eventually launched an unprecedented collaboration between government, industry, and academic researchers [13]. This consortium included the "big four" rubber companies (Firestone, B.F. Goodrich, Goodyear, and United States Rubber Company) along with petroleum companies like Standard Oil of New Jersey, working under government coordination to produce a general-purpose synthetic rubber designated GR-S (Government Rubber-Styrene) [13].

The scientific and engineering achievement was remarkable—the U.S. synthetic rubber industry expanded from an annual output of 231 tons of general purpose rubber in 1941 to 70,000 tons per month in 1945 [13]. This success demonstrated how effectively the militarized research model could be applied to strategic materials beyond weapons systems, establishing a template for large-scale directed research that would characterize much postwar industrial policy.
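
The scale-up quoted above mixes an annual figure (1941) with a monthly one (1945), so a like-for-like comparison needs a unit conversion first. The sketch below annualizes the 1945 monthly rate by multiplying by twelve, which assumes the monthly output was sustained for a full year. The four-year span used for the growth rate is likewise an assumption, and the function names are hypothetical.

```python
OUTPUT_1941_TONS_PER_YEAR = 231
OUTPUT_1945_TONS_PER_MONTH = 70_000
YEARS_ELAPSED = 4  # 1941 to 1945, an assumption for the growth estimate


def annualize(tons_per_month):
    """Convert a monthly production rate to an annual one."""
    return tons_per_month * 12


def scale_up_factor(start_per_year, end_per_year):
    """Ratio of final to initial annual output."""
    return end_per_year / start_per_year


def compound_annual_growth(start, end, years):
    """Constant yearly growth rate that would link start to end."""
    return (end / start) ** (1 / years) - 1


output_1945_per_year = annualize(OUTPUT_1945_TONS_PER_MONTH)
factor = scale_up_factor(OUTPUT_1941_TONS_PER_YEAR, output_1945_per_year)
growth = compound_annual_growth(OUTPUT_1941_TONS_PER_YEAR, output_1945_per_year, YEARS_ELAPSED)

print(f"1945 annualized output: {output_1945_per_year:,} tons")
print(f"Scale-up factor: about {factor:,.0f}x")
print(f"Implied compound growth: about {growth:.0%} per year")
```

Under these assumptions output rose by a factor of several thousand, equivalent to multiplying production many times over in each year of the program.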

The Penicillin Program

Another significant example of WWII scientific mobilization was the crash program to mass-produce penicillin, which represented a different application of the militarized chemistry model—addressing medical rather than tactical needs. When the war began, penicillin remained a laboratory curiosity with no commercial production method, despite its recognized antibacterial potential [14].

The U.S. government established a broad coalition involving multiple agencies, including the Office of Scientific Research and Development and the War Production Board (WPB), which coordinated efforts across 21 companies, 5 academic groups, and several government agencies [14]. Unlike traditional commercial development, this project emphasized open exchange of scientific information rather than proprietary control. The WPB worked "to circulate new information among its participants, lifting restrictions on scientific exchange induced by property rights" and even obtained exemptions from the Justice Department allowing companies to pool technical information without antitrust concerns [14].

The results were dramatic—where laboratory scientists initially could only produce minimal amounts of crude, unstable penicillin, by January 1945 U.S. production had soared to 4 million sterile packages per month, with the WPB releasing penicillin for commercial distribution to the public in March 1945 [14]. This success created the foundation for the modern antibiotic industry and demonstrated how government-directed collaboration could accelerate pharmaceutical development by years or even decades.

Methodological Approaches: Experimental Protocols and Research Tools

Chemical Agent Development and Testing Protocols

The rapid development of chemical agents during WWI followed systematic methodologies that established patterns for later military-directed research. The protocol for developing new chemical warfare agents typically involved multiple stages:

Compound Identification and Synthesis: Researchers systematically investigated toxic compounds available from industrial processes, particularly Germany's dye industry which provided chlorine and other potential warfare agents [12]. Later, more targeted synthesis programs emerged, such as the U.S. development of lewisite (β-chlorovinyldichloroarsine) by Wilfred Lee Lewis, which was mass-produced under the direction of chemist James B. Conant [11].

Efficacy Testing: Initial screening evaluated physiological effects on animal models, followed by controlled field tests to determine dispersal characteristics and environmental persistence. For example, Germany's first experimental work on chemical agents in late 1914, at the suggestion of University of Berlin chemist Walther Nernst, quickly produced an effective tear gas artillery shell [11].

Delivery System Development: Engineers worked to develop effective dispersal mechanisms, beginning with simple cylinder-based systems and advancing to specialized artillery shells and mortars. The Germans developed "projectors made from recalibrated 180 millimeter mortars with the capacity to launch between three to four gallons of chemical agent a distance of one to two miles" [12].

Defensive Countermeasure Development: Parallel programs focused on protective equipment including gas masks and protective clothing, as well as detection systems and decontamination procedures [6].

The Scientist's Toolkit: Key Research Reagents and Materials

The chemical warfare research conducted during the World Wars relied on specialized materials and reagents that formed the essential toolkit for investigators in this field.

Table 3: Essential Research Materials for Chemical Warfare Development

| Material/Reagent | Function | Application Example |
|---|---|---|
| Chlorine Gas (Cl₂) | Primary warfare agent, industrial chemical | First major chemical weapon deployed at Ypres [1] [3] |
| Phosgene (COCl₂) | More potent successor to chlorine | Responsible for 85% of chemical weapons fatalities in WWI [3] |
| Sulfur Mustard ((ClCH₂CH₂)₂S) | Persistent vesicant (blistering agent) | Caused the highest number of chemical casualties in WWI [3] |
| Lewisite (ClCH=CHAsCl₂) | Arsenic-based vesicant | Developed by U.S. in 1918 as potential new warfare agent [11] |
| Sodium Thiosulfate | Reducing agent for decontamination | Used in early protective solutions against chlorine [6] |
| Activated Charcoal | Adsorbent for gas masks | Critical component in respiratory protection systems [6] |
| Bleaching Powder | Oxidizing agent for decontamination | Used to neutralize mustard gas and other agents [1] |

Organizational Frameworks: Diagramming the Research Infrastructure

The militarization of chemistry during the World Wars required creating new organizational structures that could direct scientific research toward military objectives. The following diagram illustrates the integrated system that emerged, particularly in the United States, showing the relationships between governmental, academic, and industrial components.

Diagram 1: Organizational Structure of U.S. Chemical Mobilization

This organizational framework enabled unprecedented coordination across sectors that had previously operated independently. The government agencies served as both strategic directors and coordinators, ensuring that academic research addressed militarily relevant problems while industrial partners focused on scalable production methods.

The research and development workflow for specific chemical programs followed a systematic progression from basic research to mass production, as illustrated in the following diagram of the penicillin development process.

Diagram 2: Penicillin Development Workflow

Impact and Legacy: Transformation of Chemical Research

The militarization of academic and industrial chemistry during the World Wars created lasting impacts that transformed the practice and organization of chemical research in the postwar period:

Institutionalization of Large-Scale Collaborative Research: The success of the wartime research model established precedents for large-scale, mission-directed research projects that would characterize postwar science policy, including nuclear energy, space exploration, and later, initiatives like the Human Genome Project.

Transformation of the Pharmaceutical Industry: The penicillin program demonstrated the potential of systematic antibiotic development and created the template for postwar pharmaceutical research, transitioning the industry from small-scale operations to large, research-intensive enterprises [14].

Establishment of the Petrochemical Industry: The synthetic rubber program cemented the relationship between petroleum feedstocks and chemical production, establishing the foundation for the modern petrochemical industry [13].

Ethical Reconsiderations in Scientific Research: The development of chemical weapons, particularly following WWII, prompted ongoing debates about the ethical responsibilities of scientists and the appropriate boundaries between military and civilian research [3].

Creation of the Military-Academic-Industrial Complex: The organizational frameworks developed during the World Wars evolved into the permanent infrastructure of government-funded research that continues to influence scientific priorities and funding patterns to the present day.

The chemistries mobilized for warfare—whether destructive agents like mustard gas or beneficial products like penicillin and synthetic rubber—shared common developmental pathways rooted in the unique pressures and resources of wartime mobilization. This period represents a fundamental transformation point where chemical research became systematically aligned with national objectives, establishing patterns of innovation that would dominate much of 20th-century science and technology policy. For contemporary researchers and drug development professionals, understanding these historical foundations provides essential context for modern research ecosystems and their inherent tensions between scientific autonomy, commercial application, and public purpose.

The World Wars acted as a profound catalyst for industrial chemistry, compressing decades of peacetime research and development into a few years to meet urgent strategic needs. This period was defined by the intertwined activities of key individuals like Fritz Haber, corporate entities like IG Farben, and research institutions like the Kaiser Wilhelm Institute. Their collaboration led to groundbreaking technological advances, most notably the large-scale synthesis of ammonia and the development of chemical warfare, which fundamentally altered global food production and the conduct of war. This guide details the technical methodologies, institutional frameworks, and lasting impacts of these developments, providing a comprehensive resource for understanding this pivotal era in chemical research.

The outbreak of World War I presented Germany with an immediate and severe strategic challenge: a naval blockade that cut off vital supplies of raw materials, most notably Chilean saltpeter (sodium nitrate), which was essential for both fertilizers and the production of explosives [15] [16]. This crisis created an unprecedented demand for the synthetic production of key chemical compounds. In response, a powerful triad formed between ambitious scientists, state-supported research institutes, and large-scale industry. The Kaiser Wilhelm Institute for Physical Chemistry and Electrochemistry, under the leadership of Fritz Haber, became the central research hub for these efforts [17] [18]. BASF, which became a core company of the IG Farben conglomerate in 1925, was in turn responsible for scaling these discoveries to an industrial level, most famously with the Haber-Bosch process [19]. This collaboration between state, science, and industry not only sustained the German war effort but also laid the foundation for the modern chemical industry, demonstrating how national crisis can dramatically accelerate and redirect scientific research.

Key Institutional and Individual Profiles

Fritz Haber: The Scientist-Patriarch

Fritz Haber (1868–1934) was a German physical chemist whose work epitomizes the dual-use nature of chemical innovation. His legacy is a complex mixture of profound benefit and devastating application.

Scientific Contributions: In 1909, Haber achieved the catalytic synthesis of ammonia (NH₃) from nitrogen (N₂) and hydrogen (H₂) gases [16]. This process, which utilized high pressure (185 atmospheres) and high temperature (500-600°C) over a catalyst such as osmium or uranium, solved the critical problem of nitrogen fixation [16] [20]. For this work, which Carl Bosch later scaled industrially, Haber was awarded the Nobel Prize in Chemistry in 1918 [15] [20].

Wartime Role: Driven by intense patriotism, Haber redirected his institute entirely to the German war effort after 1914 [17] [18]. He is often called the "father of chemical warfare" for his pivotal role in developing and deploying weaponized chlorine gas [15] [21]. His personal motto, "In peace for mankind, in war for the fatherland," succinctly captures this ethical dichotomy [17].

Table 1: Key Figures in German Wartime Chemistry

| Name | Role & Affiliation | Key Contribution | Impact |
|---|---|---|---|
| Fritz Haber | Director, Kaiser Wilhelm Institute for Physical Chemistry [17] | Invention of the ammonia synthesis process; development and deployment of chemical weapons [17] [15] | Enabled Germany's prolonged war effort; revolutionized agriculture; introduced modern chemical warfare [16] |
| Carl Bosch | BASF/IG Farben [19] | Industrial scale-up of the Haber process (Haber-Bosch process) [15] | Made mass production of synthetic fertilizers and explosives feasible [15] |
| James Franck | Department Head, Kaiser Wilhelm Institute [18] | Research on atomic collisions; Nobel Prize in Physics (1925) [18] | Advanced fundamental understanding of atomic physics |

Kaiser Wilhelm Institute: The Research Engine

The Kaiser Wilhelm Institute for Physical Chemistry and Electrochemistry (founded 1911) was a premier research institution that became a central laboratory for Germany's wartime chemical research [22] [18].

Wartime Mobilization: At the outbreak of war, the institute was placed under military control and its research was almost entirely dedicated to the war effort [18]. Under Haber's direction, it transformed into the central development and testing facility for chemical weapons and protective measures like gas masks [17] [18].

Scale of Operations: The institute's staff grew rapidly during the war, reaching over 1,000 scientists and assistants working on warfare-related projects [18]. This included notable scientists like James Franck, Otto Hahn, and Herbert Freundlich [18].

IG Farben: The Industrial Powerhouse

IG Farben was a German chemical and pharmaceutical conglomerate formed in 1925 through the merger of several major companies, including BASF, Bayer, and Hoechst [19]. Its role was critical in bridging the gap between laboratory discovery and mass production.

Industrial Scale-Up: The challenge of scaling Haber's laboratory ammonia synthesis to an industrial level was immense. Carl Bosch of BASF (later part of IG Farben) led a team that conducted tens of thousands of experiments to find a commercially viable catalyst and build reactors capable of withstanding the high-pressure process [16]. This resulted in the Haber-Bosch process, a cornerstone of the modern chemical industry.

Strategic Production: During WWI, the Haber-Bosch plants at Oppau and Leuna provided Germany with the ammonia necessary for nitric acid production, which was used to make explosives and propellants, thus overcoming the Allied blockade on nitrates [16] [20].
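The conversion of ammonia to nitric acid mentioned above can be written out as a short reaction sequence. The source does not name the route; the equations below assume the Ostwald process, the standard industrial method for oxidizing ammonia, and are given as overall stoichiometry only.

```latex
\begin{align}
\mathrm{4\,NH_3 + 5\,O_2} &\rightarrow \mathrm{4\,NO + 6\,H_2O} \\
\mathrm{2\,NO + O_2} &\rightarrow \mathrm{2\,NO_2} \\
\mathrm{3\,NO_2 + H_2O} &\rightarrow \mathrm{2\,HNO_3 + NO}
\end{align}
```

The nitric oxide formed in the last step is reoxidized and returned to the absorption stage, so in principle nearly all of the fixed nitrogen ends up as nitric acid.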

Technical Methodologies and Breakthroughs

The Haber-Bosch Process for Ammonia Synthesis

The synthesis of ammonia was one of the most critical chemical breakthroughs of the early 20th century, driven by wartime necessity.

Experimental Protocol

The methodology for ammonia synthesis involves a high-pressure catalytic reaction.

- Feedstock Preparation: Hydrogen gas (H₂) is typically obtained through the steam reforming of methane or the gasification of coke. Nitrogen gas (N₂) is sourced from the fractional distillation of liquefied air [16].

- Gas Purification: The reactant gases must be meticulously purified to remove impurities such as sulfur compounds, oxygen, and carbon monoxide, which can poison the catalyst [20].

- Compression and Reaction: The purified gas mixture (in a 3:1 H₂ to N₂ ratio) is compressed to a high pressure, typically 150–200 atmospheres [20]. The compressed gas is then passed over a solid-bed catalyst at an elevated temperature of 400–500°C [16] [20].

- Catalyst Selection: Haber's initial laboratory setup used catalysts like osmium or uranium [16]. For industrial scale, Bosch's team developed a promoted iron catalyst, often referred to as "dirty iron," which is a mixture of iron oxide (Fe₃O₄) with promoters such as potassium oxide and aluminum oxide [16].

- Ammonia Recovery: The effluent gas from the reactor contains a percentage of ammonia, which is cooled and liquefied for separation from the unreacted hydrogen and nitrogen gases. The unreacted gases are recycled back into the reactor to improve overall yield [20].
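The 3:1 feed ratio and the recycle step described above lend themselves to a quick mass-balance check. The following is a minimal sketch rather than a plant model: the function names, the 15% single-pass conversion, and the assumption that ammonia is removed completely between passes are illustrative choices, not values taken from the cited sources.

```python
# Standard molar masses in g/mol.
M_N2 = 28.014
M_H2 = 2.016
M_NH3 = 17.031


def feed_per_tonne_ammonia():
    """Return (N2, H2) mass in tonnes consumed per tonne of NH3.

    Follows the stoichiometry N2 + 3 H2 -> 2 NH3, which is the origin
    of the 3:1 H2:N2 molar feed ratio.
    """
    mol_nh3 = 1.0 / M_NH3            # tonne-moles of NH3 in one tonne
    n2 = (mol_nh3 / 2.0) * M_N2      # 1 mol N2 per 2 mol NH3
    h2 = (mol_nh3 * 1.5) * M_H2      # 3 mol H2 per 2 mol NH3
    return n2, h2


def overall_conversion(single_pass, passes):
    """Cumulative conversion of fresh feed after a number of passes.

    Idealized: every pass converts the same fraction of the gas that
    remains, and all ammonia is condensed out between passes.
    """
    if not 0.0 <= single_pass <= 1.0:
        raise ValueError("single_pass must be a fraction between 0 and 1")
    if passes < 0:
        raise ValueError("passes must be non-negative")
    return 1.0 - (1.0 - single_pass) ** passes


n2_tonnes, h2_tonnes = feed_per_tonne_ammonia()
print(f"N2 per tonne NH3: {n2_tonnes:.3f} t, H2 per tonne NH3: {h2_tonnes:.3f} t")

ASSUMED_SINGLE_PASS = 0.15  # hypothetical value, for illustration only
for n in (1, 5, 10, 20):
    print(f"after {n:2d} passes: {overall_conversion(ASSUMED_SINGLE_PASS, n):.1%}")
```

The point of the sketch is qualitative: even a modest conversion per pass approaches complete use of the feed once unreacted gas is recycled, which is why the recycle loop matters as much as the catalyst.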

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential Materials for Ammonia Synthesis

| Material/Reagent | Function in the Process |
|---|---|
| Nitrogen Gas (N₂) | Primary reactant, sourced from the atmosphere. |
| Hydrogen Gas (H₂) | Primary reactant, typically derived from natural gas or coal. |
| Promoted Iron Catalyst | A solid catalyst that lowers the activation energy required for the reaction between N₂ and H₂, enabling practical reaction rates at moderate temperatures [16]. |
| High-Pressure Reactor | A specially designed steel vessel (converter) capable of withstanding extreme pressures and temperatures, which are necessary to achieve a favorable equilibrium concentration of ammonia [20]. |
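The reasoning behind the catalyst and high-pressure reactor entries can be stated compactly. The block below is a textbook summary, not something drawn from the cited sources; the enthalpy value is the commonly quoted standard figure for the reaction as written.

```latex
\begin{align}
\mathrm{N_2(g) + 3\,H_2(g)} &\rightleftharpoons \mathrm{2\,NH_3(g)},
  \qquad \Delta H^\circ \approx -92\ \mathrm{kJ\,mol^{-1}} \\
K_p &= \frac{p_{\mathrm{NH_3}}^{2}}{p_{\mathrm{N_2}}\, p_{\mathrm{H_2}}^{3}}
\end{align}
```

Four moles of gas become two, so raising the pressure shifts the equilibrium toward ammonia. The reaction is exothermic, so lower temperatures favor the equilibrium yield but slow the rate. The catalyst is what makes a compromise temperature workable.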

Development and Deployment of Chemical Weapons

The stalemate of trench warfare during WWI prompted the development of a new class of weaponry designed to overcome entrenched defenses.

Experimental Protocol: Chlorine Gas Cloud Attack

The first large-scale use of chemical weapons followed a specific military-operational protocol.

- Chemical Agent Selection: Chlorine (Cl₂) was chosen as the first agent because it is a dense, heavier-than-air gas that would flow into enemy trenches, causing suffocation through pulmonary edema [17].

- Delivery System Preparation: Approximately 5,730 steel cylinders (1,600 large and 4,130 small) were filled with liquefied chlorine and manually emplaced along a 6 km segment of the German front line near Ypres [17]. The cylinders were dug in upright, shielded with sandbags, and fitted with lead pipes directed toward Allied positions [17].

- Meteorological Assessment: The release was entirely dependent on wind conditions. Scientists, including meteorologists, were integrated into the gas units to advise on the optimal time for release. The attack at Ypres was preceded by seven aborted attempts due to unfavorable winds [17].

- Weapon Deployment: On April 22, 1915, at 17:00 GMT, the valves on the cylinders were opened simultaneously, releasing an estimated 150 tons of chlorine [17]. The gas formed a greenish-yellow cloud that drifted into French and Algerian positions.

- Tactical Evaluation: The attack caused widespread panic and significant casualties, with an estimated 5,000 injuries and 1,000 deaths, and created a major breach in the Allied line [17]. However, the German military was unprepared to fully exploit this tactical advantage, revealing the weapon's limitations as a decisive strategic tool [17].

Diagram: Chemical Weapon Deployment at Ypres (1915)

The Scientist's Toolkit: Chemical Warfare Agents

Table 3: Early Chemical Warfare Agents and Their Effects

| Agent (Code Name) | Chemical Composition | Physiological Effect | Limitations & Countermeasures |
|---|---|---|---|
| Chlorine (Cl₂) | Diatomic chlorine gas | Pulmonary irritant; causes suffocation through lung tissue damage and edema [17]. | Highly dependent on wind direction and speed; relatively easy to detect by sight and smell [17]. |
| Xylyl Bromide (T-Stoff) | C₆H₄(CH₃)CH₂Br | Potent lachrymator (tear-inducing agent) [17]. | Low vapor pressure in cold weather, rendering it ineffective on the Eastern front [17]. |
| Phosgene (COCl₂) | Carbonyl chloride | Severe pulmonary irritant, causing delayed-onset fatal pulmonary edema. | More lethal than chlorine but with a less immediate symptom onset, complicating tactical assessment. |

Quantitative Impact Assessment

The industrial and military chemical innovations of the World War era had quantifiable impacts on both the war efforts and global industry.

Table 4: Quantitative Impact of Wartime Chemical Innovations

| Parameter | Wartime Impact & Scale | Post-War Legacy & Global Significance |
|---|---|---|
| Ammonia Production | Enabled German munitions production after the blockade cut off Chilean saltpeter; Oppau and Leuna plants were critical [16] [20]. | An estimated one-third of annual global food production now relies on nitrogen fertilizers from the Haber-Bosch process, supporting nearly half the world's population [21]. |
| Chemical Warfare | First use at Ypres (1915): 150 tons of chlorine released, causing ~1,000 deaths and ~5,000 injuries [17]. Overall, chemical weapons killed ~92,000 and injured ~1.3 million in WWI [16]. | Led to the 1925 Geneva Protocol banning chemical weapons; established a new, horrific paradigm in warfare that persists as a global security concern. |
| Institutional Scale | Kaiser Wilhelm Institute staff grew to over 1,000 people during WWI [18]. | Evolved into the Fritz Haber Institute of the Max Planck Society (1953), continuing foundational research in physical chemistry and surface science [22] [23]. |

The collaboration between Fritz Haber, the Kaiser Wilhelm Institute, and IG Farben during the World Wars represents a paradigm of crisis-driven innovation. The imperative to overcome strategic shortages led to technological leaps, most notably the Haber-Bosch process, which permanently altered global agriculture and demographics. Simultaneously, the application of scientific research to chemical warfare introduced a new dimension of destruction, creating an enduring ethical dilemma for the scientific community. The legacy of this period is thus a dual one: foundational technologies that underpin modern civilization, born from an era of immense conflict and moral complexity. This history serves as a powerful case study on the profound responsibility that accompanies scientific and technological prowess.

The two World Wars of the twentieth century represented a pivotal transformation point for the chemical industry, particularly in Germany. What began as a sophisticated dye production infrastructure rapidly evolved into a centralized weapons development and manufacturing apparatus capable of altering modern warfare. This transition from civilian to military chemical production exemplifies how scientific innovation, industrial capacity, and geopolitical ambition can converge with devastating consequences. The German experience demonstrates how specialized chemical knowledge and industrial plants can be systematically repurposed to develop and deploy novel chemical weapons on an unprecedented scale [24] [1]. This transformation had profound implications for the pace of chemical discovery, with historical analysis revealing quantifiable dips in new compound discovery during both World Wars, followed by rapid recovery within five years after each conflict ended [25].

This technical analysis examines the strategic leveraging of Germany's chemical industry for warfare, focusing on the key technological breakthroughs, industrial reorganization, and production methodologies that enabled this conversion. The assessment extends to the lasting environmental and public health consequences of these activities, which continue to demand attention nearly a century later [24]. Understanding this historical transformation provides valuable insights for researchers, scientists, and drug development professionals studying the complex relationship between scientific progress, industrial capacity, and weapons development.

The Foundation: Germany's Pre-War Chemical Industry

The remarkable transformation of Germany's chemical industry from dyes to weapons was built upon a foundation of extraordinary scientific and industrial achievement that predated World War I. By the late 19th century, Germany had established global dominance in synthetic dye production, with its eight major firms producing nearly 90% of the world supply of dyestuffs and selling approximately 80% of their production abroad [26]. This commercial success was underpinned by significant advances in organic chemistry and the strategic utilization of coal tar, previously considered a waste product, which was discovered to contain aniline suitable for producing coal-tar dyes [27].

The industry's structure evolved toward increasing consolidation through complex business arrangements. The first major step toward consolidation occurred in 1904 when Bayer, BASF, and Agfa formed a profit-pooling alliance known as the Dreibund (Triple Confederation) or "little IG" [26] [28]. This was followed by a competing alliance between Hoechst and Cassella. These alliances represented early experiments in cooperation while maintaining operational independence, setting the stage for the more comprehensive consolidation that would follow.

Three key developments established the technological bridge between dye manufacturing and weapons production:

Dual-Use Chemical Processes: Many production processes developed for dyes proved readily adaptable to military applications. For instance, chlorine gas production utilized similar electrochemical processes already employed in dye manufacturing [27].

High-Pressure Chemical Engineering: The pioneering work of Fritz Haber and Carl Bosch in developing ammonia synthesis from nitrogen and hydrogen under high pressures (the Haber-Bosch process) represented a fundamental technological breakthrough with direct military applications [27]. This process, industrialized by BASF beginning in 1913, enabled Germany to produce ammonia for both nitrate fertilizers and explosives without relying on imported saltpeter [24] [27].

Organizational Innovation: German chemical companies pioneered the model of large-scale, managerial-industrial enterprises with professional management structures and integrated research and development capabilities [26]. This organizational sophistication would prove crucial for the rapid mobilization of chemical production for warfare.

Table 1: Major German Chemical Companies Before World War I

| Company | Year Founded | Primary Specialization | Later Role in IG Farben |
|---|---|---|---|
| BASF | 1865 | Dyes, ammonia synthesis | Core company (27.4% of equity) |
| Bayer | 1863 | Dyes, pharmaceuticals | Core company (27.4% of equity) |
| Hoechst | 1863 | Dyes, pharmaceuticals | Core company (27.4% of equity) |
| Agfa | 1873 | Photographic chemicals | Participant (9% of equity) |
| Griesheim-Elektron | 1863 | Electrochemicals | Participant (6.9% of equity) |
| Weiler Ter Meer | 1861 | Aniline production | Participant (1.9% of equity) |
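As a quick consistency check on the equity column, the shares can be summed to confirm they account for the whole company and to see how much the three core firms held together. This is a minimal sketch using only the percentages in the table above; the variable names are illustrative.

```python
import math

# Equity shares in percent, copied from the table above.
equity_shares = {
    "BASF": 27.4,
    "Bayer": 27.4,
    "Hoechst": 27.4,
    "Agfa": 9.0,
    "Griesheim-Elektron": 6.9,
    "Weiler Ter Meer": 1.9,
}

CORE_COMPANIES = ("BASF", "Bayer", "Hoechst")

total = sum(equity_shares.values())
core_total = sum(equity_shares[name] for name in CORE_COMPANIES)

# Compare with a tolerance because the shares are floating-point values.
shares_are_complete = math.isclose(total, 100.0, abs_tol=1e-9)

print(f"Total equity accounted for: {total:.1f}%")
print(f"Held by the three core companies: {core_total:.1f}%")
print(f"Shares sum to 100%: {shares_are_complete}")
```

The three dye majors together held a little over four-fifths of the merged company, which is consistent with their description as the core of the conglomerate.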

World War I: The Birth of Industrial Chemical Warfare

World War I marked the first systematic large-scale deployment of chemical weapons, transforming chemical warfare from a theoretical concept into a devastating battlefield reality. The conflict has been aptly described as "the chemist's war" due to the extensive mobilization of scientific and engineering resources for chemical weapons development and production [1]. The German chemical industry, with its sophisticated infrastructure and technical expertise, was uniquely positioned to lead this transformation.

The first major deployment of chemical weapons occurred on April 22, 1915, near Ypres, Belgium, when German forces released approximately 160 tons of chlorine gas from over 6,000 pre-positioned cylinders [1], although estimates of the quantity and the cylinder count vary between sources. This attack, planned and executed under the direction of chemist Fritz Haber, exploited wind patterns to carry the toxic cloud across French and Algerian positions, causing approximately 1,000 fatalities and 4,000 casualties in a matter of minutes [1]. The psychological impact of this new weapon far exceeded its tactical effectiveness, generating widespread "gas fright" and forcing rapid adaptations in military doctrine and personal protection.

The scale of chemical production during World War I was staggering. Throughout the war, Germany produced approximately 47,400 metric tons of chemical warfare agents across seven dedicated production facilities [24]. This industrial effort required massive quantities of intermediate products, some of which diverted resources from civilian needs, including limited food supplies [24]. The production network encompassed both state-controlled facilities and private chemical companies operating under military direction.

Table 2: Primary Chemical Warfare Agents of World War I

| Agent | Chemical Formula | Physiological Classification | First Major Use | Casualties and Notes |
|---|---|---|---|---|
| Chlorine | Cl₂ | Lung injurant | April 1915, Ypres | Contributed to WWI gas totals of ~90,000 deaths and >1,000,000 casualties (all agents combined) |
| Phosgene | COCl₂ | Lung injurant | 1915 | Highly lethal, caused most gas-related deaths |
| Mustard Gas | (ClCH₂CH₂)₂S | Vesicant (blistering agent) | July 1917, Ypres | Delayed action, persistent contamination |

The development and production of these chemical weapons relied heavily on the pre-existing infrastructure and expertise of the dye industry. For instance, chlorine production utilized electrochemical processes already established in dye manufacturing, while the chemical synthesis of more complex agents like mustard gas drew directly on organic chemistry expertise developed for dye research [27]. This synergy between dye chemistry and weapons development established a pattern that would continue and intensify in the subsequent decades.

Key Experimental Protocols and Methodologies

The rapid development of chemical weapons during WWI depended on standardized experimental approaches and production methodologies:

Protocol 1: Large-Scale Chlorine Production and Deployment

- Objective: Industrial-scale production and battlefield delivery of chlorine gas

- Method: Electrolytic production of chlorine using processes adapted from dye industry; compression and liquefaction for storage in steel cylinders; battlefield deployment via wind-dependent release from trench-positioned cylinders

- Key Parameters: Purity >98%; storage pressure 6-8 atm; meteorological assessment of wind patterns and velocity; concentration thresholds of 2.53 mg/L for 30-minute exposure (lethal to 50% of subjects) [1]

- Safety Considerations: Limited protective equipment for deploying forces; dependence on wind stability to prevent backflow

Protocol 2: Synthesis and Weaponization of Mustard Gas

- Objective: Development of persistent vesicant agent with delayed symptoms

- Method: Direct reaction of ethylene with sulfur chloride (the Levinstein process, a route associated mainly with Allied rather than German production); purification through distillation; deployment in artillery shells for area denial

- Key Parameters: Lethal concentration approximately 0.07 mg/L for a 30-minute exposure; persistency of 24 hours in the open and 1 week in woods; decontamination via bleaching powder (3% solution) or sodium sulfide [1]

- Characterization: Odor of garlic or horseradish; vapor density 5.5 (heavier than air); dissolution in skin followed by delayed burns

The Interwar Period: Consolidation and Preparation

The period between World Wars witnessed the further consolidation and strategic realignment of Germany's chemical industry, creating an even more powerful and centralized structure primed for military mobilization. The most significant development was the formation of IG Farbenindustrie AG on December 2, 1925, through the merger of six major German chemical companies: BASF, Bayer, Hoechst, Agfa, Griesheim-Elektron, and Weiler-ter-Meer [26]. This consolidation created the world's largest chemical and pharmaceutical conglomerate, with unprecedented technical and financial resources.

IG Farben's organizational structure balanced centralized policy-making with decentralized operations. The company divided production into five industrial zones (Upper Rhine, Middle Rhine, Lower Rhine, Middle Germany, and Berlin) and established specialized technical commissions to oversee different product ranges [28]. This sophisticated management structure enabled efficient resource allocation and strategic planning while maintaining operational flexibility. By 1938, IG Farben employed 218,090 people and maintained an extensive international network of trust arrangements and interests [26].

Throughout the 1930s, IG Farben underwent a process of political alignment with the rising Nazi regime. Despite initial tensions—the company had been accused by Nazis of being an "international capitalist Jewish company" in the 1920s—IG Farben became a significant Nazi Party donor and government contractor after 1933 [26]. The company systematically purged its Jewish employees, completing this process by 1938 following Hermann Göring's decree linking foreign exchange allocations to the removal of Jewish staff [26]. This political and ideological alignment facilitated deep integration with the Nazi war machine.

Technically, the interwar period saw significant advances in chemical research with direct military applications. IG Farben scientists made fundamental contributions across multiple chemical domains, including Otto Bayer's 1937 discovery of polyaddition for polyurethane synthesis [26]. The company also developed critical capabilities in synthetic fuel production through coal liquefaction processes, reducing dependence on imported petroleum [26]. Perhaps most significantly, IG Farben researchers discovered the first nerve agents, tabun in 1936 and sarin in 1938, which formed a new class of chemical weapons far more lethal than previous agents [26].

World War II: Industrialized Chemical Warfare Production

World War II witnessed the full mobilization of Germany's consolidated chemical industry for total war, with IG Farben at the center of an unprecedented expansion of chemical weapons production and related military materials. The scale of production dramatically exceeded World War I levels, with ten factories producing 69,500 metric tons of chemical warfare agents, alongside 977,500 metric tons of explosives and 974,000 metric tons of propellants [24]. This massive output required approximately 805,000 metric tons of intermediate products, demonstrating the extensive industrial infrastructure dedicated to wartime chemical production [24].

The production and deployment network for chemical weapons during World War II was highly systematized. Chemical warfare agents were manufactured at 24 production sites, though only 13 were responsible for the total output of 69,500 tons [24]. Five sites were operated directly by private companies, including two facilities each in Ludwigshafen and Uerdingen operated by IG Farben, leveraging existing chemical infrastructure [24]. Munitions filling occurred at seven specialized plants—five operated by the army and two by the air force—though particularly dangerous agents like phosgene and the modern nerve gas tabun were filled at their production facilities due to safety concerns [24].

The most notorious example of IG Farben's direct involvement in Nazi atrocities was the establishment of a synthetic rubber and oil plant at Auschwitz in 1941 to exploit slave labor from the concentration camp [26] [28]. The company used approximately 30,000 slave laborers from Auschwitz and conducted medical experiments on inmates at both Auschwitz and Mauthausen concentration camps [26]. One IG Farben subsidiary supplied Zyklon B, the poison gas used to murder over one million people in Holocaust gas chambers [26]. These activities led to the post-war IG Farben Trial (1947-1948), where 23 company directors were tried for war crimes and 13 were convicted [26].

Table 3: Chemical Weapons Production in Germany, World War I vs. World War II

| Aspect | World War I | World War II |
|---|---|---|
| Total Chemical Warfare Agents Produced | 47,400 tons | 69,500 tons |
| Number of Production Sites | 7 factories | 13 primary sites (of 24 total) |
| Explosives Production | 510,000 tons | 977,500 tons |
| Propellants Production | 285,000 tons | 974,000 tons |
| Primary Organizational Structure | Loose coordination between companies | Centralized through IG Farben |
| Notable New Agents | Chlorine, phosgene, mustard gas | Nerve agents (tabun, sarin) |
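The scale-up between the two wars is easier to compare as ratios than as raw tonnages. The snippet below is a minimal sketch that uses only the figures in the table above; the dictionary layout and the `growth_factor` helper are illustrative.

```python
# Production in metric tons as (World War I, World War II),
# copied from the table above.
production = {
    "chemical warfare agents": (47_400, 69_500),
    "explosives": (510_000, 977_500),
    "propellants": (285_000, 974_000),
}


def growth_factor(ww1_tons, ww2_tons):
    """Ratio of World War II output to World War I output."""
    if ww1_tons <= 0:
        raise ValueError("World War I tonnage must be positive")
    return ww2_tons / ww1_tons


factors = {name: growth_factor(*tons) for name, tons in production.items()}

for name, factor in factors.items():
    print(f"{name}: WWII output was {factor:.2f} times WWI output")
```

Seen this way, the largest relative increase was in propellants rather than in chemical warfare agents, whose output grew by roughly half.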

Technical Protocols for Nerve Agent Production

The development and production of nerve agents represented a significant technological advancement in chemical weapons during WWII:

Protocol 3: Synthesis and Weaponization of Tabun

- Objective: Industrial-scale production of organophosphate nerve agents

- Method: Multi-step synthesis involving dimethylamidophosphoric dichloride reaction with sodium cyanide and ethanol; quality control via infrared spectroscopy; direct filling into munitions at production facility (Dyhernfurth)

- Key Parameters: High purity requirements (>95%) to prevent decomposition; extreme toxicity (LCt50 ~200 mg·min/m³); rapid percutaneous absorption

- Safety Protocol: Closed-system production; remote handling equipment; atmospheric monitoring for leaks; rapid decontamination with alkaline solutions

Protocol 4: Synthetic Fuel Production

- Objective: Manufacture of liquid fuels from coal to support war effort

- Method: Coal liquefaction via high-pressure hydrogenation (Bergius process); catalytic refinement to gasoline specifications; quality assurance through distillation testing

- Key Parameters: Operation at 200-700 atm pressure; temperature range 380-550°C; catalytic systems (tin oxalate, molybdenum compounds); daily production capacity ~1,000 tons

- Process Flow: Coal preparation → slurry preparation with oil → catalytic hydrogenation → fractionation → product stabilization

The Scientist's Toolkit: Key Research Reagent Solutions

The transformation of Germany's chemical industry from dyes to weapons relied on specific chemical compounds and processes that served dual civilian and military purposes. Understanding these key reagents provides insight into the technical foundation that enabled this strategic pivot.

Table 4: Essential Research Reagents and Their Applications

| Reagent/Chemical | Primary Civilian Application | Military Adaptation | Key Properties |
|---|---|---|---|
| Aniline | Dyestuff intermediate | Explosives precursor | Amine group enables diazotization; basic building block |
| Chlorine | Bleaching agent in textile industry | Chemical warfare agent | High reactivity; pulmonary damage; dense gas cloud |
| Phosgene | Chemical intermediate for dyes | Chemical warfare agent | Delayed action; lower respiratory damage |
| Sulfur Chloride | Chemical intermediate | Mustard gas precursor | Reacts with ethylene to form vesicant agents |
| Hydrogen Cyanide | Fumigant, chemical synthesis | Zyklon B production | Rapid toxicity; interference with cellular respiration |
| Nitric Acid | Fertilizer production | Explosives manufacturing | Strong oxidizing agent; nitration of organic compounds |
| Ammonia | Fertilizer production | Explosives precursor | Produced by the Haber-Bosch process; oxidized to nitric acid |
| Dimethylamidophosphoric Dichloride | Chemical research | Nerve agent precursor | Precursor to tabun, which inhibits acetylcholinesterase by phosphorylation |

Analytical Approaches and Technical Visualization

The systematic transformation of Germany's chemical industry from dyes to weapons can be visualized through several key process flows and organizational structures. The following technical diagrams illustrate the critical pathways and relationships that enabled this strategic conversion.

Diagram: Chemical Industry Mobilization Pathway

Diagram: Dual-Use Chemical Production Process

Legacy and Environmental Impact

The legacy of Germany's weaponized chemical industry extends far beyond the conclusion of hostilities in 1945. The environmental and public health consequences of chemical weapons production and disposal continue to pose significant challenges nearly a century later. As noted in contemporary assessments, "the effects of chemical warfare agents—their production and deployment at the frontline—continue to pose a risk 100 years later" [24]. The cleanup costs for contaminated sites have proven enormous, with the former munitions site at Stadtallendorf alone requiring approximately 160 million euros for remediation [24].

The environmental impact stems from multiple sources: deliberate disposal of chemical weapons through detonation or burial, accidental releases during production, and residual contamination of production facilities. Many demolition sites remain unknown today, creating ongoing risks for development and land use [24]. Modern risk assessment methodologies for these sites typically involve phased approaches, beginning with historical documentation analysis (Phase I), proceeding to risk assessment through orientation and detailed studies (Phase II), and culminating in safety or cleanup measures (Phase III) [24].

The post-war period saw the deliberate dismantling of IG Farben by the Allies, with the conglomerate formally split into its constituent companies in 1951, eventually reemerging as BASF, Bayer, and Hoechst in West Germany [26] [28]. Despite this dissolution, the technical expertise and industrial capabilities that enabled the chemical warfare programs persisted, contributing significantly to West Germany's "Wirtschaftswunder" (economic miracle) in the postwar period [26].

From a research perspective, the World Wars had a measurable impact on the pace of chemical discovery. Analysis of chemical compound discovery rates reveals "two large dips over the past two centuries during the two world wars," though the pace recovered to original levels within five years after each conflict [25]. This suggests that while war temporarily redirected chemical research toward immediate military applications, it did not fundamentally diminish long-term chemical innovation capacity.

The transformation of Germany's chemical industry from dyes to weapons represents a compelling case study in the mobilization of scientific and industrial capacity for warfare. This technical analysis has demonstrated how existing chemical infrastructure, expertise, and organizational structures were systematically leveraged to develop and produce chemical weapons on an unprecedented scale. The continuity from the pre-war dye industry through the formation of IG Farben to the massive chemical weapons production of both World Wars reveals a pattern of increasing integration between industrial capability and military ambition.

For contemporary researchers, scientists, and drug development professionals, this historical example offers several crucial insights. First, it demonstrates the dual-use potential of chemical research and production capabilities, where similar technologies can serve both civilian and military purposes. Second, it highlights the critical importance of organizational structures in enabling the rapid redirection of scientific and industrial capacity. Finally, it underscores the long-term environmental and ethical consequences of weapons development programs, with contamination and disposal challenges persisting for generations.

The German experience with chemical weapons development remains highly relevant today, as advances in chemical and pharmaceutical research continue to present dual-use dilemmas. Understanding this historical trajectory provides valuable perspective for current professionals navigating the complex ethical landscape of chemical research and its potential applications.

The first large-scale use of chemical weapons in modern warfare occurred on April 22, 1915, when German forces released 160 tons of chlorine gas from approximately 6,000 pre-positioned cylinders at Ypres, Belgium [1]. This attack created an 8,000-yard gap in the Allied lines, causing approximately 1,000 fatalities and 4,000 casualties in a matter of minutes through asphyxiation and pulmonary damage [1] [5]. This event represented not merely a tactical challenge but a fundamental crisis in military science, demanding an immediate and multifaceted response from Allied nations.

The Allied response to chemical warfare encompassed two parallel trajectories: the rapid development of defensive countermeasures to protect soldiers, and the establishment of offensive capabilities to retaliate in kind. This technological and industrial mobilization reflected the first large-scale militarization of academic and industrial chemistry, creating a new paradigm for chemical research driven by military necessity [1]. The development of these capabilities during World War I illustrates how wartime imperatives can dramatically accelerate applied research in chemical synthesis, toxicology, and protective technology, ultimately shaping the landscape of chemical innovation for decades to follow.

Initial Defensive Countermeasures

Early Improvisation and Stopgap Solutions

Faced with an unprecedented threat, Allied forces initially resorted to improvised solutions based on available materials. The first response to the chlorine attacks involved simple water-soaked cloths held over the mouth and nose, capitalizing on chlorine's water solubility to reduce its effects [5]. Soldiers soon found that urine-soaked rags offered better protection, reportedly because the urea reacted with chlorine to form the less harmful dichlorourea [5]. These primitive methods provided limited protection but demonstrated the critical principle of chemical neutralization.

Within days, military medical services and engineering units organized the mass production of simple pad respirators. The British Army, for instance, distributed cotton waste pads soaked in bicarbonate solution, while French civilians were employed to manufacture rudimentary pads from muslin, flannel, and gauze [5]. The Royal Engineers established specialized companies for chemical warfare response, marking the beginning of an organized institutional approach to chemical defense [4].

Evolution of Gas Mask Technology

The rapid evolution of respiratory protection progressed through several distinct generations of technology, each improving upon the limitations of its predecessor:

Table: Evolution of Allied Gas Mask Technology During WWI

| Appearance Timeline | Device Name/Type | Key Components | Protection Mechanism | Limitations |
|---|---|---|---|---|
| April-May 1915 | Hypo Helmet [5] | Flannel bag, celluloid window | Chemical-soaked fabric neutralized gases | Limited field of vision, breathing resistance |
| Mid-1915 | Smoke Helmet (MacPherson) [5] | Flannel bag, celluloid window | Entire head coverage, chemical impregnation | Uncomfortable, fogging, limited duration |
| July 1915 | PH Helmet [5] | Cotton skull cap, eyepieces, mouth tube | Phenate hexamine solution for neutralization | Improved over predecessors but still limited |
| 1916 onward | Box Respirator [4] | Facepiece, corrugated tube, canister | Charcoal/chemical filters in separate canister | Comprehensive protection, longer duration |

The technological breakthrough came with the introduction of the box respirator, which represented a fundamental shift in protective design. This system separated the filtering apparatus from the facepiece, allowing for more effective filtration media and greater comfort during extended use [4]. The canister contained activated charcoal to absorb toxic gases and chemical sorbents to neutralize specific agents, creating a more comprehensive and reliable protection system [4]. This evolution from primitive pads to sophisticated respirators occurred within just eighteen months, demonstrating remarkable acceleration in applied chemical engineering under wartime pressure.

Development of Offensive Retaliatory Capabilities

Organizational and Industrial Mobilization

The psychological impact of chemical weapons created intense pressure for retaliation. As Lieutenant General Sir Charles Fergusson of the British II Corps stated: "We cannot win this war unless we kill or incapacitate more of our enemies than they do of us, and if this can only be done by our copying the enemy in his choice of weapons, we must not refuse to do so" [5]. This sentiment led to the rapid establishment of dedicated chemical warfare institutions. Britain formed specialized Royal Engineer companies responsible for offensive gas warfare, while the United States created the Chemical Warfare Service in 1918, consolidating research, development, and production [4] [3].

The Allied approach represented a full-scale mobilization of academic and industrial resources. Major chemical companies, academic institutions, and government laboratories were integrated into a coordinated research and production network. In the United States, research was centralized at American University in Washington, D.C., before moving to the Edgewood Arsenal in Maryland, where approximately 10% of all American artillery shells were eventually filled with chemical agents [3].

Chemical Agents and Delivery Systems

Allied offensive chemical capabilities evolved significantly throughout the war, both in the agents used and their methods of delivery:

Table: Primary Chemical Warfare Agents Deployed by Allied Forces

| Chemical Agent | First Used By | Physiological Classification | Allied Deployment Methods | Tactical Purpose |
|---|---|---|---|---|
| Chlorine [1] [3] | Germany, April 1915 | Lung injurant | Cylinder releases, artillery shells | Casualty agent, area denial |
| Phosgene [1] [3] | Germany, December 1915 | Lung injurant | Artillery shells | Primary lethal agent |
| Mustard Gas [1] [3] | Germany, July 1917 | Vesicant (blister agent) | Artillery shells, mortar bombs | Persistent casualty agent |

The British first used chlorine at the Battle of Loos on September 25, 1915, employing cylinder releases similar to the German method [3]. However, this approach proved problematic when wind conditions shifted, blowing gas back toward British positions [4]. This experience drove the transition to artillery-based delivery systems, which offered greater reliability and tactical flexibility. By 1916, gas was primarily delivered by shells, allowing attacks from greater ranges with far less dependence on wind direction [4].

The Allies particularly capitalized on phosgene, which became their primary lethal chemical agent and was responsible for approximately 85% of all chemical weapons fatalities during WWI [7] [3]. The development of mustard gas capabilities represented a further escalation: this persistent vesicant caused serious burns, respiratory damage, and long-term casualties, while also contaminating terrain [1] [3].

Research Methodologies and Experimental Protocols

Chemical Agent Development and Testing

The rapid development of new chemical agents and delivery systems required systematic research approaches that combined empirical testing with emerging toxicological principles. The experimental protocols for chemical agent evaluation encompassed several critical methodologies:

Toxicological Screening: Researchers exposed animal models (typically dogs, cats, or monkeys) to varying concentrations of candidate agents to determine median lethal concentrations (LC50) and median lethal doses (LD50). Exposure chambers were used to maintain precise atmospheric concentrations, with animals monitored for delayed effects over 48-72 hours, particularly for agents like phosgene with delayed symptom onset [1] [7].
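To make the idea of a median lethal concentration concrete, the sketch below estimates an LC50 by interpolating between the two tested concentrations that bracket 50% mortality on a logarithmic concentration scale. It is a minimal illustration, not a reconstruction of wartime practice: the function name `estimate_lc50` and the exposure data are hypothetical, and modern studies would normally use probit or logistic regression rather than simple interpolation.

```python
import math


def estimate_lc50(concentrations, mortality_fractions):
    """Estimate LC50 by linear interpolation of mortality vs. log10(concentration).

    Both lists must be ordered by increasing concentration; mortality values
    are fractions between 0 and 1. Raises ValueError if the data never
    bracket 50% mortality.
    """
    points = list(zip(concentrations, mortality_fractions))
    for (c_lo, m_lo), (c_hi, m_hi) in zip(points, points[1:]):
        if m_lo <= 0.5 <= m_hi and m_hi > m_lo:
            # Position of the 50% point between the two bracketing doses.
            frac = (0.5 - m_lo) / (m_hi - m_lo)
            log_lc50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_lc50
    raise ValueError("Mortality data do not bracket 50%")


# Hypothetical, purely illustrative exposure-chamber results
# (concentration in arbitrary units, fraction of animals that died).
concs = [100, 200, 400, 800]
mortality = [0.0, 0.25, 0.75, 1.0]

lc50 = estimate_lc50(concs, mortality)
print(f"Estimated LC50: {lc50:.1f} (arbitrary units)")
```

Interpolating on a log scale reflects the common observation that biological response tends to vary with the logarithm of dose rather than with dose itself.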

Environmental Behavior Studies: Scientists conducted field tests to understand how agents behaved under various meteorological conditions. This included evaluating vapor density (compared to air), persistence in different environments (open terrain vs. woods, summer vs. winter), and interaction with environmental factors like rain, temperature, and wind patterns [1].
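The comparison of vapor density with air can be approximated from molar masses alone, since for ideal gases at the same temperature and pressure density is proportional to molar mass. The short sketch below applies that approximation to the three agents discussed in this article. The molar masses are standard values; the mean molar mass of dry air (about 28.97 g/mol) and the ideal-gas assumption are simplifications, and the names used are illustrative.

```python
# Approximate mean molar mass of dry air in g/mol (assumed value).
AIR_MOLAR_MASS = 28.97

# Molar masses in g/mol: Cl2, COCl2, and bis(2-chloroethyl) sulfide.
AGENT_MOLAR_MASS = {
    "chlorine": 70.91,
    "phosgene": 98.92,
    "sulfur mustard": 159.07,
}


def relative_vapor_density(molar_mass):
    """Vapor density relative to air, assuming ideal-gas behavior."""
    return molar_mass / AIR_MOLAR_MASS


densities = {
    name: round(relative_vapor_density(mass), 2)
    for name, mass in AGENT_MOLAR_MASS.items()
}

for name, value in densities.items():
    print(f"{name}: {value} times the density of air")
```

Every value is well above 1, which is consistent with the tendency of these agents to sink into trenches, shell holes, and other low ground.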

Materials Compatibility Testing: Researchers assessed how candidate agents interacted with potential storage and delivery materials, including steel artillery shells, brass fittings, and various protective coatings. This determined shelf life and identified potential corrosion issues that could compromise weapon reliability [1].

Protective Equipment Evaluation

The development of effective countermeasures required rigorous testing methodologies to evaluate protective materials and designs:

Filtration Efficiency Protocols: Researchers constructed test chambers with precise agent concentrations, then measured breakthrough times for various filtration media including activated charcoal, chemical sorbents, and multi-layer fabric systems. Efficiency was measured against progressively more challenging agents, from tear gases to phosgene and eventually mustard gas [5].
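A breakthrough time can be read off a series of downstream concentration measurements as the moment the filtered air first reaches some small fraction of the challenge concentration. The sketch below shows one way to compute it with linear interpolation between readings. The function name, the 1% threshold, and the sample readings are all assumptions made for illustration; they are not taken from the historical test records.

```python
def breakthrough_time(times_min, downstream_conc, inlet_conc, threshold_fraction=0.01):
    """Return the time at which downstream concentration first reaches
    threshold_fraction * inlet_conc, interpolating linearly between readings.

    Returns None if the threshold is never reached during the test.
    """
    limit = threshold_fraction * inlet_conc
    prev_t, prev_c = None, None
    for t, c in zip(times_min, downstream_conc):
        if c >= limit:
            if prev_t is None:
                # The very first reading is already over the limit.
                return float(t)
            frac = (limit - prev_c) / (c - prev_c)
            return prev_t + frac * (t - prev_t)
        prev_t, prev_c = t, c
    return None


# Hypothetical readings: time in minutes, concentrations in arbitrary units.
times = [0, 10, 20, 30, 40]
downstream = [0, 0, 5, 15, 60]

bt = breakthrough_time(times, downstream, inlet_conc=1000)
print(f"Breakthrough at about {bt} minutes")
```

Comparing this figure across candidate filter media, under the same challenge concentration and flow rate, gives a simple ranking of how long each would protect the wearer.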

Human Factors Assessment: Prototype respirators were evaluated in simulated combat conditions to assess field of vision, breathing resistance, communication capability, and mobility. This iterative process led to design improvements such as separate filter canisters to reduce weight on the face and improve comfort during extended wear [5].

Neutralization Chemistry Verification: Laboratory protocols were established to test the efficacy of proposed neutralization methods. For example, researchers quantitatively measured the reaction rates between chlorine and various potential neutralizing agents, including bicarbonate solutions, sodium thiosulfate, and hexamethylenetetramine, to identify the most effective chemistries for impregnation into protective equipment [5].
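Comparing reaction rates of this kind usually comes down to fitting a rate constant to concentration measurements over time. As a minimal sketch, assuming the neutralizer is in large excess so that chlorine decays with pseudo-first-order kinetics, the rate constant is the negative slope of ln(concentration) against time. The function name and both data sets below are hypothetical and synthetic; "neutralizer A" and "neutralizer B" do not correspond to measured values for any real reagent.

```python
import math


def fit_first_order_rate(times_s, concentrations):
    """Fit k in C(t) = C0 * exp(-k * t) by least squares on ln(C) vs. t."""
    logs = [math.log(c) for c in concentrations]
    n = len(times_s)
    mean_t = sum(times_s) / n
    mean_y = sum(logs) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_s, logs))
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    return -sxy / sxx  # rate constant is the negative of the fitted slope


# Synthetic chlorine concentrations (arbitrary units) sampled every 10 s.
times = [0, 10, 20, 30]
neutralizer_a = [100 * math.exp(-0.05 * t) for t in times]
neutralizer_b = [100 * math.exp(-0.02 * t) for t in times]

k_a = fit_first_order_rate(times, neutralizer_a)
k_b = fit_first_order_rate(times, neutralizer_b)
faster = "A" if k_a > k_b else "B"
print(f"k_A = {k_a:.3f} 1/s, k_B = {k_b:.3f} 1/s, faster neutralizer: {faster}")
```

A larger fitted rate constant means the agent is consumed more quickly, which is the property a candidate impregnation chemistry would be screened for.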

The Scientist's Toolkit: Key Research Reagents and Materials

The chemical warfare research effort required specialized materials and reagents that formed the essential toolkit for scientists and engineers working on both offensive and defensive capabilities.

Table: Essential Research Materials for Chemical Warfare Development

| Research Material | Composition/Type | Primary Function | Application Context |
|---|---|---|---|
| Activated Charcoal [4] | High-surface-area carbon | Adsorption of toxic gases | Gas mask canister filters |
| Hypo Solution [5] | Sodium thiosulfate | Chlorine neutralization | Chemical impregnation for early respirators |
| Phenate-Hexamine [5] | Phenol & hexamethylenetetramine | Acid gas neutralization | PH Helmet impregnation solution |
| Bleaching Powder [1] | Calcium hypochlorite | Mustard gas neutralization | Decontamination of terrain and equipment |
| Chloropicrin [7] | Trichloronitromethane | Tear gas/mask breaker | Chemical weapon filler, training agent |
| Phosgene [1] | Carbonyl chloride | Lethal pulmonary agent | Chemical weapon filler, industrial precursor |

The research and development process also required specialized laboratory equipment for safe handling and testing of these hazardous materials. This included closed-system reaction vessels for chemical synthesis, gas-tight exposure chambers for toxicological evaluation, precision analytical instruments for quantifying agent concentrations, and environmental simulation equipment for studying agent behavior under various conditions. The development of these specialized tools and methodologies created a foundation for modern toxicology and chemical defense research that extended far beyond immediate military applications.