From Lab to License: A Practical Guide to Bayesian Models in Chemistry and Drug Development Validation


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the practical application of Bayesian statistical models in chemistry validation. It explores the foundational shift from frequentist to Bayesian reasoning, demonstrating how prior knowledge and existing data are formally incorporated to enhance decision-making. The content covers core methodological applications, including analytical method validation, pharmaceutical process development, and toxicological risk assessment. It further addresses common troubleshooting and optimization challenges, such as managing small datasets and model bias, and provides a framework for rigorous model validation and comparison with traditional statistical approaches. The insights are geared towards enabling more efficient, cost-effective, and robust validation processes across the chemical and pharmaceutical industries.

Bayesian Basics: Shifting from Frequentist Convention to Dynamic Reasoning in Chemistry

In statistical inference, the Bayesian and Frequentist paradigms represent two fundamentally different approaches to probability, distinguished primarily by their interpretation of probability itself and their treatment of unknown parameters. The core distinction can be summarized as P(H|D) (Bayesian) versus P(D|H) (Frequentist). The Frequentist approach defines probability as the long-run frequency of events, treating parameters as fixed but unknown quantities. Statistical inference in this framework relies on sampling distributions—what would happen if we repeated the data collection process numerous times. The Bayesian approach, in contrast, interprets probability as a measure of belief or certainty about propositions. It treats parameters as random variables with associated probability distributions, allowing direct probability statements about parameters [1] [2].

This article explores these foundational differences through conceptual explanations, practical applications in chemistry and drug development, quantitative comparisons, and experimental protocols. In Bayesian terms, P(H|D) is the posterior probability of a hypothesis (H) given the observed data (D); it directly quantifies our updated belief about the hypothesis after considering the evidence. In Frequentist terms, P(D|H) underlies the p-value: the probability of observing data at least as extreme as those actually obtained, assuming the null hypothesis (H) is true. This approach assigns probabilities not to hypotheses but to data under a fixed hypothesis [3] [4] [1].

Conceptual Foundation and Philosophical Differences

The Frequentist Approach: P-Value and Confidence Intervals

The Frequentist framework, dominant in 20th-century science, operates on the principle that probability refers to the relative frequency of an event over many repeated trials. In hypothesis testing, the p-value is calculated as P(D|H₀), the probability of observing the obtained data (or more extreme data) assuming the null hypothesis (H₀) is true [3] [4]. A small p-value indicates that the observed data would be unlikely if the null hypothesis were true, potentially leading to its rejection. However, this framework does not provide the probability that the null hypothesis is true or false. As noted in recent literature, "the p-value itself provides no information regarding the evidence in favor of an alternative hypothesis" [3]. Although widely used, this approach has significant limitations: sensitivity to sample size (large samples can yield significance for trivial effects), binary significant/not-significant conclusions that ignore the graded nature of evidence, and the inability to directly quantify evidence for hypotheses [3] [4].

Confidence intervals represent another cornerstone of Frequentist inference. A 95% confidence interval means that if we were to repeat the same study numerous times, 95% of the calculated intervals would contain the true population parameter. The confidence lies in the procedure, not in any specific interval. As one statistical explanation notes: "From the frequentist perspective, the unknown parameter θ is a number: either that number is in the interval or it's not; there's no probability to it" [1]. This contrasts sharply with the Bayesian interpretation of intervals, which directly addresses parameter uncertainty.
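The repeated-sampling meaning of a confidence interval can be checked by simulation. The sketch below is illustrative only: it assumes normally distributed measurements with a known standard deviation (so the 95% interval is x̄ ± 1.96σ/√n), and the true mean, σ, sample size, and number of repeated studies are hypothetical values.

```python
import random
import statistics

def ci_coverage(true_mean=10.0, sigma=0.5, n=8, n_studies=4000, seed=42):
    """Fraction of repeated studies whose 95% z-interval contains the true mean.

    Assumes a known sigma; all parameter values are hypothetical.
    """
    rng = random.Random(seed)
    z = statistics.NormalDist().inv_cdf(0.975)  # about 1.96
    half_width = z * sigma / n ** 0.5
    hits = 0
    for _ in range(n_studies):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        xbar = statistics.fmean(sample)
        # Any single interval either contains true_mean or it does not
        if xbar - half_width <= true_mean <= xbar + half_width:
            hits += 1
    return hits / n_studies

print(f"Empirical coverage: {ci_coverage():.3f}")  # should be near 0.95
```

The 95% describes the long-run success rate of the procedure across studies; no individual interval in the loop carries a 95% probability of its own.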

The Bayesian Approach: Posterior Probability and Bayes Factor

Bayesian statistics fundamentally updates beliefs by combining prior knowledge with new evidence. This process follows a simple yet powerful formula based on Bayes' theorem:

Posterior ∝ Likelihood × Prior

This translates to P(H|D) = [P(D|H) × P(H)] / P(D), where P(H) is the prior probability (belief before seeing data), P(D|H) is the likelihood (probability of data given hypothesis), P(D) is the marginal probability of the data, and P(H|D) is the posterior probability (updated belief after seeing data) [2].
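As a worked illustration of the formula, consider a hypothetical screening scenario: a prior probability of 0.05 that a production lot is contaminated (H), a test that flags contaminated lots with probability 0.95, and a false-positive rate of 0.10. These numbers are assumptions chosen for arithmetic clarity, not values from the cited sources; P(D) is obtained from the law of total probability.

```python
def posterior(prior_h, p_d_given_h, p_d_given_not_h):
    """Bayes' theorem for a binary hypothesis: returns P(H|D)."""
    # Marginal probability of the data, P(D), summed over H and not-H
    p_d = p_d_given_h * prior_h + p_d_given_not_h * (1 - prior_h)
    return p_d_given_h * prior_h / p_d

p = posterior(prior_h=0.05, p_d_given_h=0.95, p_d_given_not_h=0.10)
print(round(p, 3))  # 0.333
```

Here P(D|H) = 0.95 while P(H|D) is only about 0.33, because contamination was rare to begin with; the two conditional probabilities answer different questions.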

The Bayes Factor (BF) provides a specific Bayesian tool for hypothesis comparison, measuring the strength of evidence for one hypothesis over another. Formally, BF₁₀ = P(D|H₁) / P(D|H₀), representing how much more likely the data are under H₁ compared to H₀ [3] [4]. Unlike p-values, Bayes Factors directly quantify relative evidence between competing hypotheses. The interpretation follows continuous scales, such as: BF 1-3 provides "negligible evidence" for H₁; 3-10 "weak to moderate evidence"; 10-30 "moderate to strong evidence"; 30-100 "strong evidence"; and >100 "strong to very strong evidence" [3] [4].

Table 1: Interpretation of Bayes Factor Values

| Bayes Factor Value (BF₁₀) | Interpretation |
|---|---|
| <0.01 | Strong to very strong evidence for H₀ |
| 0.01-0.03 | Strong evidence for H₀ |
| 0.03-0.1 | Moderate to strong evidence for H₀ |
| 0.1-0.33 | Weak to moderate evidence for H₀ |
| 0.33-1 | Negligible evidence for H₀ |
| 1 | No evidence |
| 1-3 | Negligible evidence for H₁ |
| 3-10 | Weak to moderate evidence for H₁ |
| 10-30 | Moderate to strong evidence for H₁ |
| 30-100 | Strong evidence for H₁ |
| >100 | Strong to very strong evidence for H₁ |
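A Bayes factor can be computed exactly in simple conjugate settings. The sketch below assumes a hypothetical pass/fail experiment (15 successes in 20 trials) and compares H₀: success probability p = 0.5 against H₁: p ~ Uniform(0, 1); under that uniform prior the marginal likelihood of k successes in n trials is 1/(n + 1). The helper `bf_label` is hypothetical and maps a value onto the categories of Table 1; how exact cut points are assigned is our assumption.

```python
from math import comb

def binomial_bf10(k, n, p0=0.5):
    """BF10 for H0: p = p0 versus H1: p ~ Uniform(0, 1), binomial data."""
    marginal_h1 = 1.0 / (n + 1)  # integral of C(n,k) p^k (1-p)^(n-k) over p
    likelihood_h0 = comb(n, k) * p0 ** k * (1 - p0) ** (n - k)
    return marginal_h1 / likelihood_h0

# Upper cut points and labels following Table 1
_CATEGORIES = [
    (0.01, "Strong to very strong evidence for H₀"),
    (0.03, "Strong evidence for H₀"),
    (0.1, "Moderate to strong evidence for H₀"),
    (0.33, "Weak to moderate evidence for H₀"),
    (1.0, "Negligible evidence for H₀"),
    (3.0, "Negligible evidence for H₁"),
    (10.0, "Weak to moderate evidence for H₁"),
    (30.0, "Moderate to strong evidence for H₁"),
    (100.0, "Strong evidence for H₁"),
]

def bf_label(bf10):
    """Return the Table 1 interpretation for a BF10 value."""
    if bf10 == 1.0:
        return "No evidence"
    for upper, label in _CATEGORIES:
        if bf10 < upper:
            return label
    return "Strong to very strong evidence for H₁"

bf = binomial_bf10(k=15, n=20)
print(f"BF10 = {bf:.2f}: {bf_label(bf)}")  # BF10 = 3.22: Weak to moderate evidence for H₁
```

Note that BF₁₀ depends on the prior placed on p under H₁; a different prior would give a different value for the same data.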

Practical Applications in Chemistry Validation and Drug Development

Method Validation and Measurement Uncertainty

Bayesian approaches are revolutionizing analytical method validation by providing a holistic framework that treats the analytical method as a complete system. Rather than decomposing methods into individual steps, Bayesian validation utilizes accuracy profiles based on tolerance intervals to assess overall method performance [5]. This approach allows researchers to control the risk associated with future use of the analytical method through β-expectation tolerance intervals, which cover on average 100β% of the distribution given estimated parameters [5].

In one demonstrated application, Bayesian simulations were employed to validate quantitative analytical procedures across different instrumental techniques including spectrofluorimetry, liquid chromatography (LC–UV, LC–MS), capillary electrophoresis, and enzyme-linked immunosorbent assay (ELISA) [5]. The Bayesian accuracy profile procedure enables practical evaluation of measurement reliability, with studies showing that intervals calculated by conventional methods and Bayesian strategies are generally close, validating the Bayesian approach for diverse sectors including pharmacy, biopharmacy, and food processing [5].

Clinical Development and Regulatory Applications

Bayesian methods are gaining significant traction in drug development, particularly where traditional approaches face challenges. The U.S. Food and Drug Administration (FDA) recognizes that "when experts from various disciplines have determined that there is high-quality, relevant information external to a clinical trial, these methods may allow studies to be completed more quickly and with fewer participants" [6]. This advantage proves particularly valuable in several specialized applications:

Rare Disease Research: Bayesian approaches enable robust studies where traditional strategies would be unfeasible or unethical by incorporating external evidence and historical data [7] [8]. This allows for adequate statistical power with smaller sample sizes, crucial for ultra-rare conditions.

Dose-Finding Trials: In oncology and other fields, Bayesian designs improve accuracy in identifying maximum tolerated doses (MTD) and enhance study efficiency by linking toxicity estimation across doses [6]. The flexibility to adapt dosing based on accumulating evidence represents a significant advantage over traditional dose-escalation methods.

Pediatric Drug Development: Since pediatric development typically occurs after demonstrating safety and efficacy in adults, "Bayesian statistics can incorporate the information from adults that can be considered in understanding the effects of a drug in children" [6]. This enables more ethical and efficient pediatric studies.

Subgroup Analysis: Hierarchical Bayesian models provide more accurate estimates of drug effects in patient subgroups (defined by age, race, etc.) compared to analyzing each subgroup in isolation [6]. This supports personalized medicine approaches.
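The partial-pooling idea behind hierarchical subgroup models can be shown with a normal-normal model. This is a minimal sketch under stated assumptions: the subgroup effect estimates and standard errors are hypothetical, and the between-subgroup standard deviation τ is treated as known; a full analysis would place a prior on τ and estimate it jointly, typically by MCMC.

```python
def shrink_subgroups(estimates, std_errors, tau):
    """Posterior means of subgroup effects under theta_j ~ N(mu, tau^2).

    mu is the precision-weighted mean of the estimates with weights
    1 / (se^2 + tau^2); tau is treated as known (an assumption).
    """
    weights = [1.0 / (s ** 2 + tau ** 2) for s in std_errors]
    mu = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    shrunk = []
    for y, s in zip(estimates, std_errors):
        # Shrinkage factor: noisier estimates are pulled further toward mu
        b = s ** 2 / (s ** 2 + tau ** 2)
        shrunk.append((1 - b) * y + b * mu)
    return mu, shrunk

# Hypothetical treatment effects in four subgroups (e.g. age bands)
raw = [0.8, 0.2, 0.5, -0.1]
se = [0.3, 0.4, 0.2, 0.5]
mu, pooled = shrink_subgroups(raw, se, tau=0.2)
for y, t in zip(raw, pooled):
    print(f"raw {y:+.2f} -> partially pooled {t:+.2f}")
```

Each subgroup estimate moves part of the way toward the overall mean, with small or noisy subgroups moving furthest, which is what stabilizes estimates compared with analyzing each subgroup in isolation.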

The FDA is actively promoting Bayesian methodologies, with expectations to "publish draft guidance on the use of Bayesian methodology in clinical trials of drugs and biologics" by the end of FY 2025 [6] [8]. The Complex Innovative Designs (CID) Paired Meeting Program, established to facilitate novel clinical trial designs, has seen selected submissions predominantly utilizing Bayesian frameworks [6].

Quantitative Comparison and Simulation Studies

Comparative Behavior of P-Values and Bayes Factors

Simulation studies reveal crucial differences in how p-values and Bayes Factors behave under varying experimental conditions. Research comparing both measures in two-sample t-tests demonstrates that "BF is less sensitive to sample size in the presence of mild effects of 0.1 and 0.2" compared to p-values [3] [4]. This differential sensitivity has important practical implications:

With moderate effect sizes (0.5) and sample sizes of 150, p-values can reach extremely low values while Bayes Factors remain more cautious, indicating only moderate evidence for the alternative hypothesis. Similarly, with effect sizes of 0.5 and sample sizes of 100, p-values strongly support rejecting the null hypothesis while Bayes Factors show "barely worth mentioning" evidence for H₁ [3] [4]. The p-value demonstrates sensitivity to sample size primarily when the null hypothesis is false, whereas Bayes Factors appear affected by sample size regardless of whether true effects exist [3] [4].
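The contrast between the two measures can be explored in a simplified setting. This sketch is not the JZS t-test Bayes factor used in the cited simulation studies; it assumes a two-sample comparison with unit variance and n observations per group, so the observed standardized difference d is approximately N(δ, 2/n). H₀ is δ = 0, and H₁ places a normal prior δ ~ N(0, g) with a hypothetical g = 1, which gives closed forms for both the two-sided p-value and BF₁₀.

```python
from statistics import NormalDist

def p_value_and_bf10(d, n, g=1.0):
    """Two-sided z-test p-value and BF10 for an observed standardized difference d.

    Assumes unit variance, n observations per group, and delta ~ N(0, g) under H1.
    """
    v = 2.0 / n                        # sampling variance of d
    z = d / v ** 0.5
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    # Marginal density of d under each hypothesis
    like_h0 = NormalDist(0.0, v ** 0.5).pdf(d)
    like_h1 = NormalDist(0.0, (v + g) ** 0.5).pdf(d)
    return p, like_h1 / like_h0

for n in (20, 100, 1000, 10000):
    p, bf = p_value_and_bf10(d=0.1, n=n)
    print(f"n={n:>5}  p={p:.3g}  BF10={bf:.3g}")
```

Under these assumptions, with d = 0.1 and n = 1000 per group the p-value is about 0.025 while BF₁₀ is about 0.54, so conventionally "significant" data mildly favor H₀. This divergence for small effects in large samples is often discussed as Lindley's paradox.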

Table 2: Comparison of P-Value and Bayes Factor Properties

| Property | P-Value | Bayes Factor |
|---|---|---|
| Definition | P(D⁺\|H₀): probability of data at least as extreme as observed, given the null hypothesis | P(D\|H₁)/P(D\|H₀): relative evidence for competing hypotheses |
| Hypothesis Probability | Does not provide P(H₀\|D) or P(H₁\|D) | Yields P(H₁\|D) when combined with the prior odds of the hypotheses |
| Sample Size Sensitivity | Highly sensitive, especially when H₀ is false | Less sensitive to large samples for mild effects |
| Interpretation Scale | Usually dichotomized (significant/not significant) | Continuous evidence measure |
| Prior Information | Cannot incorporate prior knowledge | Explicitly incorporates prior knowledge |
| Result Communication | "We reject H₀ at the α = 0.05 level" | "The data are 10 times more likely under H₁ than H₀" |

Interval Estimation: Confidence vs. Credible Intervals

The distinction between Bayesian and Frequentist approaches extends to interval estimation, with confidence intervals representing the Frequentist approach and credible intervals the Bayesian alternative. The interpretation differs fundamentally:

Frequentist Confidence Intervals: A 95% confidence interval means that if the same study were repeated many times, 95% of the calculated intervals would contain the true parameter value. The probability refers to the procedure, not the specific interval [1] [2].

Bayesian Credible Intervals: A 95% credible interval means there is a 95% probability that the parameter lies within the specified interval, given the observed data and prior distribution. This direct probability statement about parameters aligns with intuitive interpretations [1] [2].

As one explanation summarizes: "The Bayesian approach provides probability statements about the parameter: There is a 98% chance that θ is between 0.718 and 0.771; our assessment is that θ is 49 times more likely to lie inside the interval than outside" [1].
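A credible interval can be read directly off a posterior distribution. The sketch assumes hypothetical binomial data (150 of 200 samples passing a specification) and a uniform Beta(1, 1) prior, so the posterior is Beta(151, 51). It approximates a 98% equal-tailed credible interval by Monte Carlo and converts the 98% posterior probability into odds, which is where a figure such as "49 times more likely" comes from (0.98 / 0.02 = 49).

```python
import random

def beta_credible_interval(successes, trials, level=0.98, a=1.0, b=1.0,
                           n_draws=50_000, seed=7):
    """Equal-tailed credible interval from Monte Carlo draws of a Beta posterior."""
    rng = random.Random(seed)
    post_a, post_b = a + successes, b + trials - successes
    draws = sorted(rng.betavariate(post_a, post_b) for _ in range(n_draws))
    k = round((1 - level) / 2 * (n_draws - 1))  # index of the lower tail cut
    return draws[k], draws[n_draws - 1 - k]

lo, hi = beta_credible_interval(150, 200)
odds_inside = 0.98 / 0.02   # posterior odds that theta lies inside the interval
print(f"98% credible interval: [{lo:.3f}, {hi:.3f}], odds inside = {odds_inside:.0f}")
```

With a conjugate prior the interval could also be computed from exact Beta quantiles; sampling is used here because it generalizes to posteriors obtained by MCMC.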

Experimental Protocols and Implementation

Protocol for Bayesian Method Validation in Analytical Chemistry

Objective: To validate an analytical method using Bayesian accuracy profiles for quantifying compound concentration in biological matrices.

Materials and Reagents:

- Reference Standard: High-purity analyte for calibration curves

- Quality Control (QC) Samples: Prepared at low, medium, and high concentrations

- Internal Standard: Stable isotopically labeled analog of analyte

- Mobile Phase: HPLC-grade solvents with appropriate modifiers

- Solid-Phase Extraction Plates: For sample clean-up and concentration

Experimental Design:

- Sample Preparation: Prepare QC samples at three concentration levels (n=6 each) across three independent runs

- Calibration Standards: Analyze nine non-zero concentrations in duplicate across the measurement range

- Data Collection: Perform liquid chromatography with tandem mass spectrometry (LC-MS/MS) analysis

Statistical Analysis Workflow:

- Model Specification: Use the one-way random effects model Yᵢⱼ = μ + bᵢ + eᵢⱼ, where Yᵢⱼ is the jth replicate in run i, μ is the overall mean, bᵢ ~ N(0, σ_b²) is the random effect of run i (σ_b² is the between-run variance), and eᵢⱼ ~ N(0, σ_e²) is the residual error (σ_e² is the within-run variance) [5]

- Prior Selection: Implement weakly informative priors for variance components (e.g., half-t distributions for standard deviations) to maintain conservatism

- Posterior Computation: Utilize Markov Chain Monte Carlo (MCMC) sampling with at least 10,000 iterations after burn-in

- Accuracy Profile Construction: Calculate β-expectation tolerance intervals (e.g., 80% or 95%) across the concentration range

- Method Validation: Verify that tolerance intervals remain within acceptance limits (typically ±15% for bioanalytical methods)
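The statistical workflow above can be sketched for a single concentration level with a small Gibbs sampler. This is a minimal illustration under stated assumptions, not the procedure of [5]: the data are hypothetical % recoveries (3 runs × 6 replicates), μ has a flat prior, and both variance components use inverse-gamma(2, 2) priors, swapped in for the half-t priors mentioned above because they give closed-form conditional draws. The β-expectation tolerance interval is taken as the central β range of the posterior predictive distribution of one future result from a new run; the helper names `gibbs_one_way` and `beta_expectation_interval` are hypothetical.

```python
import random
import statistics

def gibbs_one_way(runs, n_iter=12_000, burn_in=2_000, a=2.0, b=2.0, seed=1):
    """Gibbs sampler for Y_ij = mu + b_i + e_ij (hypothetical helper).

    Flat prior on mu; inverse-gamma(a, b) priors on sigma_b^2 and sigma_e^2.
    Returns (mu, sigma_b^2, sigma_e^2) draws kept after burn-in.
    """
    rng = random.Random(seed)
    n_runs = len(runs)
    n_total = sum(len(r) for r in runs)
    mu = statistics.fmean(y for r in runs for y in r)
    s2b = s2e = 1.0
    effects = [0.0] * n_runs
    draws = []
    for it in range(n_iter):
        # Run effects b_i | rest: normal, shrunk toward zero
        for i, r in enumerate(runs):
            prec = len(r) / s2e + 1.0 / s2b
            mean = sum(y - mu for y in r) / s2e / prec
            effects[i] = rng.gauss(mean, (1.0 / prec) ** 0.5)
        # Overall mean mu | rest: normal around the mean of y_ij - b_i
        resid = [y - effects[i] for i, r in enumerate(runs) for y in r]
        mu = rng.gauss(statistics.fmean(resid), (s2e / n_total) ** 0.5)
        # Variances | rest: inverse-gamma, drawn as 1 / Gamma(shape, scale=1/rate)
        sse = sum((y - mu - effects[i]) ** 2 for i, r in enumerate(runs) for y in r)
        s2e = 1.0 / rng.gammavariate(a + n_total / 2, 1.0 / (b + sse / 2))
        ssb = sum(e * e for e in effects)
        s2b = 1.0 / rng.gammavariate(a + n_runs / 2, 1.0 / (b + ssb / 2))
        if it >= burn_in:
            draws.append((mu, s2b, s2e))
    return draws

def beta_expectation_interval(draws, beta=0.95, seed=2):
    """Central beta range of the posterior predictive for one result in a new run."""
    rng = random.Random(seed)
    preds = sorted(mu + rng.gauss(0.0, s2b ** 0.5) + rng.gauss(0.0, s2e ** 0.5)
                   for mu, s2b, s2e in draws)
    k = round((1 - beta) / 2 * (len(preds) - 1))
    return preds[k], preds[len(preds) - 1 - k]

# Hypothetical % recovery at one QC level: 3 runs x 6 replicates
runs = [
    [99.2, 101.5, 100.8, 98.7, 100.1, 101.9],
    [102.3, 103.1, 101.4, 102.8, 100.9, 103.5],
    [98.4, 99.9, 97.6, 99.1, 100.2, 98.8],
]
draws = gibbs_one_way(runs)
lo, hi = beta_expectation_interval(draws, beta=0.95)
valid = 85.0 <= lo and hi <= 115.0     # ±15 % acceptance limits around 100 %
print(f"95% beta-expectation interval: [{lo:.1f}, {hi:.1f}] %, valid = {valid}")
```

In a full accuracy profile this calculation would be repeated at each QC concentration level, and the resulting intervals plotted against the acceptance limits. With only three runs, the between-run variance is weakly identified, so the choice of its prior can noticeably widen or narrow the interval.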

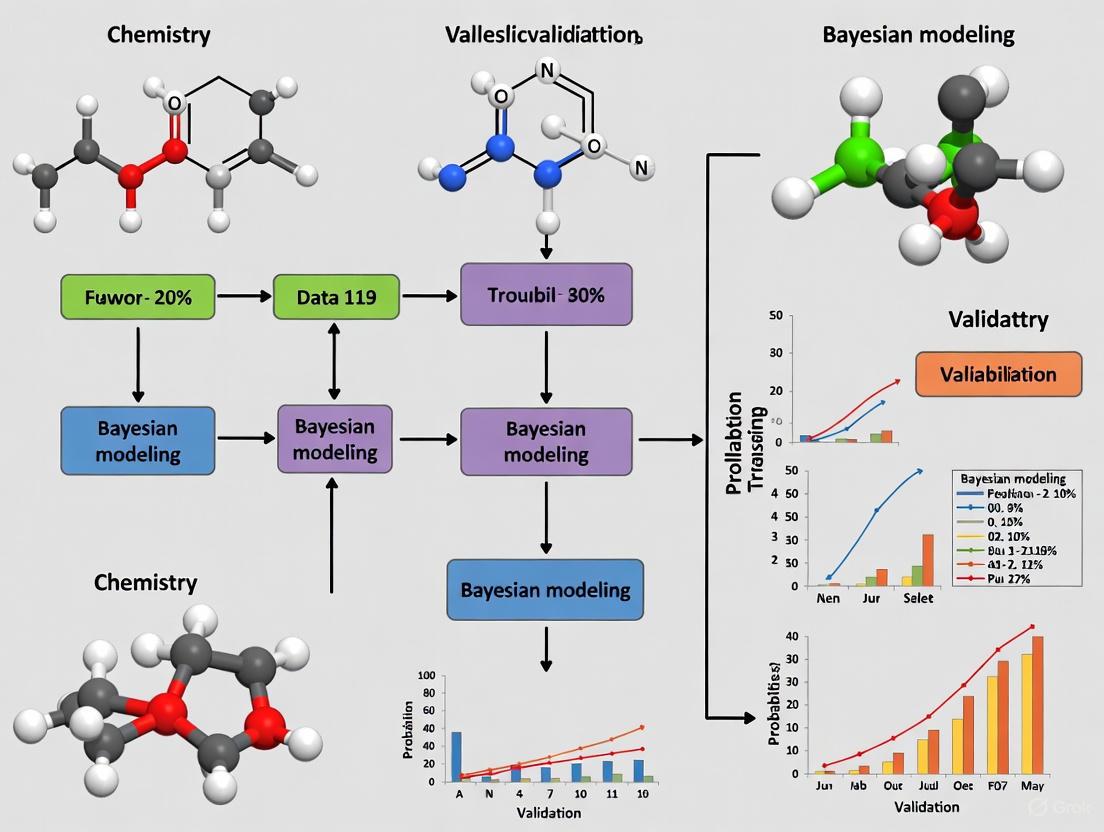

Diagram 1: Bayesian Method Validation Workflow. This flowchart illustrates the sequential process for validating analytical methods using Bayesian accuracy profiles.

Protocol for Bayesian Adaptive Dose-Finding in Clinical Trials

Objective: To identify the optimal dose using Bayesian adaptive design in early-phase clinical development.

Materials and Software:

- Statistical Software: R with Bayesian packages (RStan, rjags, brms) or equivalent

- Prior Data: Historical information on compound class toxicity and efficacy

- Dose Levels: Pre-specified range of doses to be evaluated

- Safety Monitoring: Standard operating procedures for adverse event reporting

Trial Design:

- Dose Selection: Define 4-6 dose levels based on preclinical data and allometric scaling

- Cohort Size: Plan for 3-6 patients per initial cohort with potential expansion at promising doses

- Endpoint Definition: Establish clear efficacy and toxicity endpoints with grading criteria

- Stopping Rules: Pre-specify criteria for dose escalation, de-escalation, and trial termination

Bayesian Analysis Workflow:

- Prior Elicitation: Define prior distributions for dose-toxicity and dose-efficacy relationships using historical data or expert opinion

- Model Structure: Implement logistic regression for dose-response: logit(P(response)) = α + β×dose with priors on α and β

- Posterior Updating: After each cohort, update posterior probabilities of toxicity and efficacy for all dose levels

- Adaptive Decisions: Allocate future patients to doses with optimal risk-benefit profiles based on posterior distributions

- Final Recommendation: Select recommended phase II dose based on pre-specified criteria (e.g., target efficacy with acceptable toxicity)
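The posterior-updating step for the logistic dose-toxicity model can be sketched with a grid approximation instead of MCMC, which is adequate for two parameters. Everything here is hypothetical: the five dose levels, the cohort outcomes, the normal priors on α and β, the 30% toxicity target, and the 0.25 overdose-probability threshold used to flag a dose. The sketch uses log-dose centred at a reference dose, a common parameterization; using raw dose as in the model above would only change `p_tox`.

```python
import math

DOSES = [10, 20, 40, 80, 160]   # hypothetical dose levels (mg)
REF = 40.0                       # reference dose used to centre log-dose
# Hypothetical results so far: (dose, patients treated, patients with a DLT)
DATA = [(10, 3, 0), (20, 3, 0), (40, 6, 1), (80, 3, 2)]

def p_tox(alpha, beta, dose):
    """logit P(DLT) = alpha + beta * log(dose / REF)."""
    return 1.0 / (1.0 + math.exp(-(alpha + beta * math.log(dose / REF))))

def log_normal_kernel(x, mean, sd):
    return -0.5 * ((x - mean) / sd) ** 2

def grid_posterior(data, n_grid=121):
    """Normalized posterior weights over an (alpha, beta) grid.

    Assumed priors: alpha ~ N(-1, 2^2), beta ~ N(1, 1) restricted to beta > 0.
    """
    alphas = [-6 + 12 * i / (n_grid - 1) for i in range(n_grid)]
    betas = [0.01 + 4 * j / (n_grid - 1) for j in range(n_grid)]
    points, log_w = [], []
    for a in alphas:
        for b in betas:
            lp = log_normal_kernel(a, -1.0, 2.0) + log_normal_kernel(b, 1.0, 1.0)
            for dose, n, tox in data:          # binomial log-likelihood
                p = p_tox(a, b, dose)
                lp += tox * math.log(p) + (n - tox) * math.log(1.0 - p)
            points.append((a, b))
            log_w.append(lp)
    top = max(log_w)                           # subtract max for numerical stability
    w = [math.exp(v - top) for v in log_w]
    total = sum(w)
    return points, [v / total for v in w]

def dose_summary(points, weights, target=0.30):
    """Posterior mean P(DLT) and posterior P(P(DLT) > target) per dose."""
    rows = []
    for dose in DOSES:
        probs = [p_tox(a, b, dose) for a, b in points]
        mean_tox = sum(w * p for w, p in zip(weights, probs))
        p_over = sum(w for w, p in zip(weights, probs) if p > target)
        rows.append((dose, mean_tox, p_over))
    return rows

points, weights = grid_posterior(DATA)
for dose, mean_tox, p_over in dose_summary(points, weights):
    status = "too toxic" if p_over > 0.25 else "admissible"
    print(f"{dose:>4} mg  E[P(DLT)] = {mean_tox:.2f}  P(P(DLT) > 0.30) = {p_over:.2f}  {status}")
```

After each new cohort, its outcome is appended to `DATA` and the posterior is recomputed; the next cohort would then be assigned among the doses still marked admissible, according to the pre-specified escalation rules.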

Diagram 2: Bayesian Adaptive Dose-Finding Trial. This workflow demonstrates the iterative process of Bayesian adaptive dose selection in early clinical development.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for Bayesian Analytical Methods

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Certified Reference Standards | Provides measurement traceability and calibration | Method validation, accuracy profiles [5] |
| Stable Isotope-Labeled Internal Standards | Corrects for analytical variability in sample preparation | Bioanalytical method validation, LC-MS/MS assays [5] |
| Quality Control Materials | Monitors method performance over time | Inter-day precision assessment, accuracy profiles [5] |
| Bayesian Statistical Software (R/Stan/PyMC) | Implements MCMC sampling and posterior inference | Bayesian modeling, prior-posterior analysis [3] [4] |
| Historical Control Data | Informs prior distributions in Bayesian models | Rare disease trials, pediatric extrapolation [6] [8] |
| Validated Structural Alert Libraries | Provides prior knowledge for toxicity assessment | Bayesian approaches in toxicology [9] |

The distinction between Bayesian P(H|D) and Frequentist P(D|H) represents more than a mathematical technicality—it embodies fundamentally different approaches to scientific reasoning and evidence assessment. While Frequentist methods dominate many established validation protocols, Bayesian approaches offer compelling advantages for modern chemical and pharmaceutical research: direct probability statements about parameters, formal incorporation of prior knowledge, natural handling of complex models, and adaptive decision-making frameworks.

The growing regulatory acceptance of Bayesian methods, particularly in specialized areas like rare diseases, pediatric research, and dose-finding, signals an important shift in statistical practice. As the FDA prepares new guidance on Bayesian methodologies [6] [8], researchers in chemistry validation and drug development would benefit from building proficiency in both paradigms, leveraging their complementary strengths to advance scientific discovery and product development.

The future of analytical science lies not in choosing one paradigm over the other, but in understanding when each approach provides the most appropriate framework for answering specific research questions, with Bayesian methods particularly valuable for complex inference problems where prior information exists and should be formally incorporated into the analytical framework.

In the field of chemistry and drug development, the Bayesian statistical framework provides a powerful paradigm for formally incorporating existing knowledge into new analyses. This approach allows researchers to move beyond treating each experiment in isolation, instead leveraging historical data and expert judgment to make more efficient and informed decisions. At the core of this methodology are prior distributions—mathematical representations of previous knowledge or beliefs about parameters of interest before observing new experimental data. When combined with new data through Bayes' theorem, these priors yield posterior distributions that form the basis for statistical inference [10].

The application of Bayesian methods is particularly valuable in chemistry validation research where data may be costly, time-consuming to acquire, or limited by ethical and practical constraints. In drug discovery, for instance, Bayesian models have demonstrated remarkable efficiency by leveraging public high-throughput screening (HTS) data to identify novel therapeutic candidates with hit rates exceeding typical HTS results by 1-2 orders of magnitude [11]. Similarly, in analytical chemistry, Bayesian approaches have revolutionized quantitative nuclear magnetic resonance (NMR) spectroscopy by enabling accurate quantification even at low signal-to-noise ratios where conventional methods fail [12].

Theoretical Framework

Types of Informative Priors

Bayesian methodology employs several classes of prior distributions to incorporate historical information, each with distinct characteristics and applications suitable for chemical validation research.

Table 1: Categories of Informative Priors for Chemical Applications

| Prior Type | Mechanism | Chemical Research Application |
|---|---|---|
| Power Prior [13] [10] | Historical data likelihood raised to a power a₀ (0 ≤ a₀ ≤ 1) | Down-weighting historical control data in clinical trials or previous batch analyses |
| Commensurate Prior [10] | Hierarchical model assessing similarity between historical and current data | Incorporating historical NMR spectral libraries when analyzing new samples |
| Meta-Analytic Predictive (MAP) [10] | Meta-analysis of historical data forms prior for current analysis | Combining results from multiple previous drug efficacy studies |
| Adaptive Bayesian Models [13] | Combines historical prior with variance-reducing shrinkage prior | Building mortality risk prediction models with additional biometric measurements |

Bayesian Formulation

The mathematical foundation of Bayesian analysis rests on Bayes' theorem:

Posterior ∝ Likelihood × Prior

In formal terms, for parameters θ and data D, this becomes: P(θ|D) = P(D|θ)P(θ) / P(D)

Where:

- P(θ|D) is the posterior distribution representing updated knowledge after observing data

- P(D|θ) is the likelihood function of the observed data

- P(θ) is the prior distribution encoding previous knowledge

- P(D) is the marginal likelihood serving as a normalizing constant

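The power prior formulation provides a specific mechanism for incorporating historical data D₀: P(θ|D₀, a₀) ∝ L(θ|D₀)^a₀ π₀(θ), where π₀(θ) is the initial prior before any data are seen and a₀ (0 ≤ a₀ ≤ 1) is the discounting parameter controlling the influence of historical data [13] [10]. Combining this prior with the current data D gives the posterior P(θ|D, D₀, a₀) ∝ L(θ|D) L(θ|D₀)^a₀ π₀(θ): a₀ = 0 discards the historical data, and a₀ = 1 pools it fully with the current data.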
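For binomial data with a Beta(a, b) initial prior π₀, the power prior stays conjugate, which makes the role of a₀ easy to see: historical successes and failures enter the posterior multiplied by a₀. The sketch uses hypothetical current and historical batch pass counts, and the function name `power_prior_posterior` is illustrative.

```python
def power_prior_posterior(x, n, x0, n0, a0, a=1.0, b=1.0):
    """Beta posterior parameters under a binomial power prior.

    x/n: current successes/trials; x0/n0: historical successes/trials;
    a0 in [0, 1] discounts the historical likelihood.
    """
    if not 0.0 <= a0 <= 1.0:
        raise ValueError("a0 must lie in [0, 1]")
    post_a = a + a0 * x0 + x
    post_b = b + a0 * (n0 - x0) + (n - x)
    return post_a, post_b

# Hypothetical: 18/20 current batches pass; 85/100 historical batches passed
for a0 in (0.0, 0.5, 1.0):
    pa, pb = power_prior_posterior(x=18, n=20, x0=85, n0=100, a0=a0)
    mean = pa / (pa + pb)
    # Effective sample size n + a0 * n0 (posterior counts minus Beta(1, 1) pseudo-counts)
    print(f"a0={a0:.1f}: Beta({pa:.1f}, {pb:.1f}), mean={mean:.3f}, "
          f"effective n={pa + pb - 2:.0f}")
```

Raising a₀ from 0 to 1 moves the posterior mean from the current-data estimate toward the historical rate and increases the effective sample size from 20 to 120, which is why a₀ is usually justified explicitly or given its own prior.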

Applications in Chemistry and Drug Development

Drug Discovery and Repurposing

Bayesian models have demonstrated exceptional utility in accelerating drug discovery pipelines. Researchers have leveraged public HTS data to build Bayesian models that distinguish between compounds with desired bioactivity and those with cytotoxicity. In one application, a dual-event Bayesian model successfully identified compounds with antitubercular activity and low mammalian cell cytotoxicity from a published set of antimalarials [11]. The most potent hit exhibited the in vitro activity and in vitro/in vivo safety profile of a drug lead, demonstrating how prior knowledge from related domains can be harnessed for novel therapeutic identification.

This approach offers significant economies in time and cost to drug discovery programs. By virtually screening commercial libraries, researchers have achieved experimental confirmation rates of 14%—dramatically higher than the typical hit rates of conventional HTS campaigns [11]. The model ranked compounds by Bayesian score, which relates to the likelihood of a compound being active through determination of its molecular features compared to features in the model's actives and inactives.

Analytical Chemistry and Spectroscopy

In analytical chemistry, Bayesian methods have transformed quantitative analysis, particularly in NMR spectroscopy. Traditional peak integration methods for quantitative NMR analysis are inherently limited in resolving overlapping peaks and are susceptible to noise. Bayesian approaches provide a principled framework that incorporates prior knowledge about the studied system, including spectral patterns, chemical shifts, and peak widths [12].

A Bayesian model for NMR quantification has demonstrated exceptional performance, achieving absolute accuracy of up to 0.01 mol/mol for mixture constituents at high signal-to-noise ratios (SNR>40dB) [12]. Even under challenging conditions with SNR<20dB, where precise phasing is practically impossible, the model maintained accuracy of 0.05-0.1 mol/mol. This robustness makes Bayesian approaches particularly valuable for benchtop NMR instruments with lower field strengths, where decreased signal-to-noise ratios and spectral resolution present challenges for conventional analysis.

Clinical Trial Design and Analysis

Bayesian methods are increasingly employed in clinical development to incorporate historical control data, particularly in orphan diseases and pediatric studies where patient populations are limited. Various Bayesian approaches exist to incorporate historical control data from single or multiple previous studies, including power priors, hierarchical power priors, modified power priors, and commensurate priors [10].

The meta-analytic predictive (MAP) approach has emerged as a gold standard, performing a meta-analysis of historical data to form an informative prior which is then combined with current trial data using Bayesian updating [10]. This methodology has been successfully applied in several published studies and is particularly valuable when randomized controls are ethically or practically challenging to obtain.

Experimental Protocols

Protocol: Building a Bayesian Model for Drug Discovery Screening

This protocol outlines the procedure for developing and validating a Bayesian model to prioritize compounds for experimental testing in drug discovery campaigns [11].

Materials and Equipment

- High-throughput screening data (public or proprietary)

- Compound libraries for virtual screening

- Cheminformatics software (e.g., Python/R with appropriate packages)

- Laboratory equipment for experimental validation (HTS robotics, plate readers, etc.)

Procedure

Data Collection and Curation

- Gather HTS data containing both active and inactive compounds

- Curate structures and standardize chemical representations

- Annotate compounds with additional assay data (e.g., cytotoxicity)

Descriptor Calculation

- Compute molecular descriptors or fingerprints for all compounds

- Common descriptors include molecular weight, logP, topological indices, etc.

Model Training

- Train a Bayesian classifier (for example, a naive Bayes model over the fingerprint features)

- The model learns structural features correlating with bioactivity

- Validate model using cross-validation techniques

Virtual Screening

- Apply trained model to rank compounds in screening library

- Select top-ranked compounds for experimental testing

Experimental Validation

- Test selected compounds in biological assays

- Compare hit rates with conventional HTS approaches

Key Calculations

Bayesian scores are calculated from the molecular features present in a compound: Score = Σ log(P(feature|active)/P(feature|inactive)) + log(prior odds), where the sum runs over the compound's features and higher scores indicate a greater probability of activity.
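The score above can be computed with a naive Bayes model over binary substructure features. The sketch assumes compounds are represented as sets of hypothetical feature identifiers and applies add-one (Laplace) smoothing so that features never seen in one class do not produce log(0); it follows the present-features form of the formula and is not necessarily the implementation used in the cited study. Features absent from the training data contribute zero to the score.

```python
import math
from collections import Counter

def train_feature_model(actives, inactives, alpha=1.0):
    """Per-feature log(P(feature|active) / P(feature|inactive)) with add-alpha smoothing."""
    n_act, n_inact = len(actives), len(inactives)
    count_act = Counter(f for mol in actives for f in mol)
    count_inact = Counter(f for mol in inactives for f in mol)
    log_ratio = {}
    for f in set(count_act) | set(count_inact):
        p_act = (count_act[f] + alpha) / (n_act + 2 * alpha)
        p_inact = (count_inact[f] + alpha) / (n_inact + 2 * alpha)
        log_ratio[f] = math.log(p_act / p_inact)
    prior_log_odds = math.log(n_act / n_inact)
    return log_ratio, prior_log_odds

def bayesian_score(mol, log_ratio, prior_log_odds):
    """Sum of log ratios for the features present, plus the log prior odds."""
    return prior_log_odds + sum(log_ratio.get(f, 0.0) for f in mol)

# Hypothetical training set: each compound is a set of substructure feature IDs
actives = [{"F1", "F2"}, {"F1", "F3"}, {"F1", "F2", "F4"}]
inactives = [{"F3", "F4"}, {"F4", "F5"}, {"F2", "F5"}, {"F5"}]
model = train_feature_model(actives, inactives)

library = {"cmpd_A": {"F1", "F2"}, "cmpd_B": {"F4", "F5"}, "cmpd_C": {"F1", "F5"}}
ranked = sorted(library, key=lambda c: bayesian_score(library[c], *model), reverse=True)
print(ranked)  # ['cmpd_A', 'cmpd_C', 'cmpd_B']
```

Ranking the screening library by this score and testing the top of the list corresponds to the virtual-screening step of the procedure above.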

Protocol: Bayesian Quantification in NMR Spectroscopy

This protocol details the application of Bayesian methods for quantitative analysis of chemical mixtures using NMR spectroscopy [12].

Materials and Equipment

- NMR spectrometer (high-field or benchtop)

- Reference standards for quantification

- Software for Bayesian analysis (e.g., custom Python/Matlab implementations)

- Standard NMR tubes and sample preparation materials

Procedure

Sample Preparation

- Prepare chemical mixtures with known concentrations for method validation

- Include internal standards when appropriate

Data Acquisition

- Acquire NMR spectra using standard pulse sequences

- Collect sufficient transients to achieve desired signal-to-noise ratio

- Record data without phase or baseline correction

Model Specification

- Define mathematical model for NMR signal including:

  - Chemical shifts for each species

  - Relaxation parameters

  - Lineshape imperfections

  - Baseline distortions

- Incorporate prior knowledge about chemical system

Parameter Estimation

- Implement Bayesian inference to estimate parameters

- Use Markov Chain Monte Carlo (MCMC) or variational inference

- Obtain posterior distributions for concentration estimates

Validation and Quality Control

- Compare results with traditional integration methods

- Assess accuracy using known standards

- Evaluate performance at different signal-to-noise ratios

Key Calculations

The NMR signal model incorporates chemical shifts (δ), relaxation (T₂), and amplitudes (A) related to concentration: s(t) = Σ Aₖ exp(iφ₀) exp(2πiδₖt - t/T₂ₖ) + baseline(t) + noise
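A minimal sketch of the time-domain model, under strong simplifying assumptions: two species with known frequencies (overlapping peaks 3 Hz apart), known T₂ values and zero-order phase φ₀, no baseline term, and complex Gaussian noise. Conditional on those nonlinear parameters the signal is linear in the amplitudes Aₖ, so with a flat prior the posterior mean of the complex amplitudes coincides with the linear least-squares solution. The full Bayesian model described above would also sample shifts, phases, lineshape, and baseline parameters rather than fixing them. All numerical values are hypothetical, and equal numbers of nuclei per signal are assumed when converting amplitudes to a mole fraction.

```python
import cmath
import math
import random

def fid(t, amps, freqs, t2s, phi0):
    """Noise-free s(t) = sum_k A_k exp(i*phi0) exp(2*pi*i*f_k*t - t/T2_k)."""
    return sum(a * cmath.exp(1j * phi0) * cmath.exp(2j * math.pi * f * t - t / t2)
               for a, f, t2 in zip(amps, freqs, t2s))

def ls_amplitudes(times, signal, freqs, t2s):
    """Complex least squares for two amplitudes via the 2x2 normal equations."""
    basis = [[cmath.exp(2j * math.pi * f * t - t / t2) for t in times]
             for f, t2 in zip(freqs, t2s)]
    g = [[sum(x.conjugate() * y for x, y in zip(bk, bl)) for bl in basis] for bk in basis]
    r = [sum(x.conjugate() * s for x, s in zip(bk, signal)) for bk in basis]
    det = g[0][0] * g[1][1] - g[0][1] * g[1][0]
    c0 = (r[0] * g[1][1] - g[0][1] * r[1]) / det   # Cramer's rule
    c1 = (g[0][0] * r[1] - g[1][0] * r[0]) / det
    return abs(c0), abs(c1)   # |A_k exp(i*phi0)| = A_k

rng = random.Random(3)
dwell = 1e-3                                 # 1 ms sampling interval
times = [k * dwell for k in range(2048)]
freqs, t2s = (100.0, 103.0), (0.3, 0.3)      # overlapping lines, 3 Hz apart
signal = [fid(t, (0.7, 0.3), freqs, t2s, phi0=0.4)
          + complex(rng.gauss(0, 0.05), rng.gauss(0, 0.05)) for t in times]
amp0, amp1 = ls_amplitudes(times, signal, freqs, t2s)
print(f"estimated mole fraction of species 1: {amp0 / (amp0 + amp1):.3f}")  # true value 0.700
```

Because the phase factor is common to both components, it is absorbed into the complex amplitudes, so no explicit phasing step is needed in this simplified case.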

Implementation Workflow

The process of implementing Bayesian methods with historical data and expert knowledge follows a systematic workflow that integrates multiple data sources and validation steps.

Research Reagent Solutions

Table 2: Essential Materials for Bayesian-Driven Chemical Research

| Reagent/Resource | Function/Application | Specifications |
|---|---|---|
| PubChem Database [14] | Source of chemical structures and bioactivity data for prior information | >230 million substance records, 90 million compounds |
| ChEMBL Database [14] | Provides small molecule bioactivity data for prior distributions | >2 million compound records, 1 million assays |
| NMR Reference Standards [12] | Quantitative validation of Bayesian NMR models | Certified reference materials with known purity |
| Analytical Balances [15] | Precise sample weighing for analytical validation | Readability of 0.0001 g (0.1 mg) with draft protection |
| Chemical Descriptor Software [11] | Calculation of molecular features for Bayesian models | Generates topological, electronic, and structural descriptors |
| Bayesian Modeling Software | Implementation of statistical models and inference | Python (PyMC, formerly PyMC3; TensorFlow Probability), R (rstan, brms) |
| High-Throughput Screening Plates [11] | Experimental validation of Bayesian predictions | 384-well or 1536-well format with appropriate coatings |

The formal incorporation of historical data and expert knowledge through Bayesian priors represents a transformative approach in chemistry validation research. By moving beyond the limitations of analyzing each experiment in isolation, researchers can dramatically improve the efficiency and success rates of drug discovery, enhance the accuracy of analytical methods, and optimize clinical development programs. The protocols and applications outlined in this article provide a practical foundation for implementation across various chemical and pharmaceutical domains. As the availability of chemical data continues to grow, Bayesian methods will play an increasingly vital role in extracting maximum knowledge from these valuable resources.

Posterior Distributions, Markov Chain Monte Carlo (MCMC), and Uncertainty Quantification

Application Note: Quantitative NMR Spectroscopy

Background and Protocol Objective

Nuclear magnetic resonance (NMR) spectroscopy serves as a powerful non-destructive technique for quantitative characterization of chemical mixtures. Traditional peak integration methods face limitations in resolving overlapping peaks and are susceptible to noise, particularly in low-field instruments where spectral resolution decreases. This protocol details a Bayesian model-based approach that incorporates lineshape imperfections, phasing, and baseline distortions directly into the quantification process, enabling accurate concentration determination even at low signal-to-noise ratios (SNR < 20 dB) and for overlapping peaks [12].

Experimental Design and Workflow

The methodology operates on time-domain NMR signals, treating the entire raw signal as an instance generated by a model with specific parameters. The quantification problem is thus reduced to parameter estimation, where Bayesian statistics provide a principled framework for incorporating prior knowledge about the system while estimating uncertainty [12].

Table: Key Parameters for Bayesian NMR Quantification

| Parameter | Description | Role in Quantification |
|---|---|---|
| Chemical Shifts | Resonance frequencies of nuclei | Define expected peak positions |
| Relaxation Times (T₁, T₂) | Longitudinal and transverse relaxation times | Affect signal decay and linewidth |
| Phase Parameters (φ₀, φ₁) | Zero-order and first-order phase corrections | Correct for instrumental imperfections |
| Baseline Parameters | Polynomial or spline coefficients | Model low-frequency baseline distortions |
| Linewidth Parameters | Gaussian/Lorentzian mixing ratios | Account for lineshape imperfections |

Research Reagent Solutions

Table: Essential Materials for Bayesian NMR Quantification

| Reagent/Software | Function | Application Context |
|---|---|---|
| Reference Standards | Concentration calibration | Provides absolute quantification reference |
| Deuterated Solvents | Signal locking and shimming | Ensures magnetic field homogeneity |
| Bayesian Modeling Software (e.g., PyMC3, Stan) | Posterior distribution computation | Implements MCMC sampling for parameter estimation |
| NMR Processing Software | Raw data conversion and basic processing | Handles Fourier transformation and initial phase correction |

Validation and Performance Metrics

In experimental validation, this Bayesian approach quantified mixture compositions with an accuracy of up to 10⁻⁴ mol/mol. At high SNR (>40 dB), it achieved an absolute accuracy of 0.01 mol/mol or better for all species concentrations, performing comparably to or slightly better than conventional peak integration, while remaining effective at low SNR, where conventional phasing becomes practically impossible [12].

Application Note: Automated Chemical Reaction Discovery

Background and Protocol Objective

Chemical discovery traditionally depends on human expertise for interpreting experimental outcomes, introducing hidden biases and limiting scalability. This protocol describes the implementation of a Bayesian Oracle that interprets chemical reactivity using probability, enabling standardized, bias-aware discovery processes. The system quantitatively formalizes expert intuition while retaining both positive and negative results, providing confidence values for deductions and automating experiment design [16].

Experimental Design and Workflow

The Bayesian Oracle employs a probabilistic model connecting reagents and process variables to observed reactivity. Compounds are assigned abstract properties (numerical values between 0 and 1), and prior distributions are established for the reactivity between sets of compounds. As the robotic platform performs experiments, the model continuously updates its beliefs using Bayes' theorem, with a high-performance numerical implementation via MCMC [16].

Table: Bayesian Oracle Parameters for Reaction Discovery

| Parameter | Description | Role in Discovery |
|---|---|---|
| Compound Properties | Abstract numerical descriptors (0-1) | Represent potential reactivity characteristics |
| Reactivity Priors | Initial belief strength about reactions | Encodes existing chemical knowledge |
| Likelihood Function | Probability of observations given parameters | Connects experimental outcomes to model |
| Posterior Reactivity | Updated belief after experiments | Quantifies discovery significance |

Research Reagent Solutions

Table: Essential Materials for Bayesian Reaction Discovery

| Reagent/Software | Function | Application Context |
|---|---|---|
| Chemical Stock Solutions | 24+ compound library | Provides diverse chemical space for exploration |
| Robotic Chemistry Platform (e.g., Chemputer) | Automated liquid handling | Ensures reproducibility and high-throughput experimentation |
| Online Analytics (HPLC, NMR, MS) | Real-time reaction monitoring | Provides observation data for Bayesian updates |
| Probabilistic Programming Framework | Implements Bayesian model | Handles MCMC sampling and posterior computation |

Validation and Performance Metrics

The Bayesian Oracle successfully rediscovered eight historically important reactions (aldol condensation, Buchwald-Hartwig amination, Heck, Mannich, Sonogashira, Suzuki, Wittig, and Wittig-Horner reactions) through analysis of >500 reactions. The system tracked observation likelihoods to identify anomalous results, quantitatively pinpointing when unexpected reactivity transitions from anomaly to validated discovery [16].

Application Note: CEST MRI Quantification

Background and Protocol Objective

Chemical exchange saturation transfer (CEST) magnetic resonance imaging provides valuable biomarkers for disease diagnosis but faces quantification challenges due to signal contamination from competing effects. This protocol outlines an MCMC-based Bayesian inference approach for estimating exchange parameters in CEST MRI, offering improved specificity to underlying biochemical exchange processes compared to conventional methods like magnetization transfer ratio asymmetry (MTRasym) and Lorentzian fitting [17].

Experimental Design and Workflow

The method uses the Bloch-McConnell equations, which describe CEST contrast through exchange among multiple proton pools, as the physical forward model. Bayesian inference combines this physical knowledge, through the likelihood of the measured Z-spectrum data, with prior distributions on the parameters, and MCMC sampling generates the posterior distribution for parameters including exchange rates, relaxation properties, and concentrations [17].

Table: CEST MRI Parameters for Bayesian Estimation

| Parameter | Description | Biological Significance |
|---|---|---|
| Exchange Rate (k_sw) | Proton transfer rate between pools | Sensitive to pH and temperature changes |
| Pool Concentration (f_s) | Relative concentration of CEST agents | Reflects metabolite levels (e.g., amides) |
| Relaxation Times (T₁, T₂) | Longitudinal and transverse relaxation | Characterizes local tissue environment |
| NOE Effects | Nuclear Overhauser enhancement signals | Represents competing signal contributions |

Research Reagent Solutions

Table: Essential Materials for Bayesian CEST MRI

| Reagent/Software | Function | Application Context |
|---|---|---|
| Phantom Solutions | Method validation | Provides ground truth for parameter estimation |
| Contrast Agents (endo-/exogenous) | CEST effect generation | Creates measurable chemical exchange signals |
| MCMC Sampling Software | Posterior distribution computation | Implements Metropolis-Hastings algorithm for parameter estimation |
| MRI Scanner with CEST Protocol | Data acquisition | Generates Z-spectra for Bayesian analysis |

Validation and Performance Metrics

In Bloch simulations, the MCMC method produced excellent fits for both 2-pool and 5-pool models, with sum-of-squares errors below 10⁻³, R² values close to 1, and parameter estimation errors below 0.5% relative to ground truth. In rat models of ischemic stroke, the method showed clear contrast between ischemic and contralateral regions, achieving the highest contrast-to-noise ratios (3.9, 2.73, and 3.93) and the lowest coefficients of variation across all stroke periods compared with conventional methods [17].

Fundamental Principles: Integration of Core Concepts

Theoretical Framework

The posterior probability distribution forms the foundation of Bayesian inference, containing everything knowable about uncertain parameters conditional on observed data. According to Bayes' theorem:

p(θ|X) = p(X|θ)p(θ)/p(X)

where p(θ|X) is the posterior distribution, p(X|θ) is the likelihood function, p(θ) is the prior distribution representing existing knowledge, and p(X) is the marginal likelihood or evidence [18] [19].

For complex models where analytical determination of the posterior is infeasible, Markov chain Monte Carlo (MCMC) methods enable numerical approximation by constructing a Markov chain whose stationary distribution matches the target posterior distribution. This allows sampling from the posterior even for high-dimensional parameter spaces [20].
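A random-walk Metropolis sampler is the simplest MCMC construction and shows why the evidence p(X) never has to be computed: it cancels in the acceptance ratio, so only the unnormalized posterior p(X|θ)p(θ) is needed. The sketch below is illustrative only; the data, the known measurement σ, the prior, the step size, and the function names are assumptions, and production work would typically use a probabilistic programming language such as Stan or PyMC.

```python
import numpy as np

def metropolis(log_post, x0, n_iter=20000, step=0.1, seed=0):
    """Random-walk Metropolis: propose x' ~ N(x, step^2) and accept with
    probability min(1, p(x'|X) / p(x|X)). The chain's stationary
    distribution is the target posterior."""
    rng = np.random.default_rng(seed)
    x, lp = x0, log_post(x0)
    chain = np.empty(n_iter)
    accepted = 0
    for i in range(n_iter):
        prop = x + step * rng.normal()
        lp_prop = log_post(prop)
        if np.log(rng.uniform()) < lp_prop - lp:   # accept/reject in log space
            x, lp = prop, lp_prop
            accepted += 1
        chain[i] = x
    return chain, accepted / n_iter

# Hypothetical example: mean of replicate measurements with known sigma = 0.2
# and a vague prior mu ~ N(0, 100^2). Only the unnormalized log posterior is used.
y = np.array([9.8, 10.1, 10.3, 9.9, 10.0])
sigma, prior_sd = 0.2, 100.0

def log_post(mu):
    return -0.5 * np.sum((y - mu) ** 2) / sigma**2 - 0.5 * mu**2 / prior_sd**2

chain, acc_rate = metropolis(log_post, x0=0.0)
draws = chain[2000:]                                # discard burn-in
```

Because this toy model is conjugate, the draws can be checked against the exact posterior (mean close to the sample mean of 10.02, standard deviation close to 0.2/√5). That kind of check is useful before trusting a sampler on a model with no closed form.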

Uncertainty Quantification Framework

Uncertainty quantification (UQ) specifies likelihoods and distributional forms explicitly so that the joint probabilistic behavior of all modeled factors can be inferred. In the Bayesian paradigm, UQ emerges naturally through the posterior distribution, which characterizes epistemic uncertainty about parameters conditional on observed data [21].

The posterior predictive distribution extends this uncertainty quantification to future observations:

p(y_rep | y) = ∫ p(y_rep | θ) p(θ | y) dθ

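This enables model evaluation by comparing replicated data drawn from the posterior predictive distribution with the actual observations. A Bayesian (posterior predictive) p-value is the probability that a chosen test statistic computed on replicated data equals or exceeds its value on the observed data; values close to 0 or 1 indicate that the model fails to reproduce that feature of the data [19].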
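A posterior predictive check can be sketched for a simple normal measurement model. Assumptions: the standard noninformative prior p(μ, σ²) ∝ 1/σ², which yields closed-form posterior draws; hypothetical replicate data containing one suspect value; and the largest studentized deviation as the test statistic, which is one illustrative choice among many. Function names are hypothetical.

```python
import numpy as np

def posterior_draws(y, n_draws, rng):
    """Posterior for a normal model with unknown mean and variance under
    p(mu, sigma^2) ∝ 1/sigma^2:
    sigma^2 | y ~ (n-1) s^2 / chi2_{n-1},  mu | sigma^2, y ~ N(ybar, sigma^2 / n)."""
    n, ybar, s2 = len(y), np.mean(y), np.var(y, ddof=1)
    sigma2 = (n - 1) * s2 / rng.chisquare(n - 1, size=n_draws)
    mu = rng.normal(ybar, np.sqrt(sigma2 / n))
    return mu, sigma2

def ppc_pvalue(y, stat, n_draws=4000, seed=0):
    """Posterior predictive p-value Pr(T(y_rep) >= T(y) | y)."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    mu, sigma2 = posterior_draws(y, n_draws, rng)
    # One replicated data set of size n for each posterior draw.
    y_rep = rng.normal(mu[:, None], np.sqrt(sigma2)[:, None], size=(n_draws, len(y)))
    t_rep = np.apply_along_axis(stat, 1, y_rep)
    return np.mean(t_rep >= stat(y))

def max_studentized_dev(v):
    """Test statistic: largest deviation above the mean, in units of the SD."""
    return (np.max(v) - np.mean(v)) / np.std(v, ddof=1)

y_obs = np.array([10.0, 10.1, 9.9, 10.05, 9.95, 10.02, 9.98, 12.0])  # hypothetical
p = ppc_pvalue(y_obs, max_studentized_dev)
```

With these data the p-value is close to zero: replicated normal samples almost never contain a point that extreme relative to their own spread, which flags the suspect value as something the normal model does not explain.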

Why Now? The Convergence of Computational Power, Regulatory Openness, and Complex Data

The practical application of Bayesian models in chemical and pharmaceutical research is experiencing a pivotal moment, driven by the simultaneous maturation of three critical factors: advanced computational power that can handle complex biological systems, a significant shift towards regulatory openness, and the proliferation of high-dimensional, complex data. This convergence is moving Bayesian methods from theoretical appeal to practical necessity, enabling researchers to tackle problems that were previously intractable. In validation research, where quantifying uncertainty is paramount, Bayesian frameworks provide a principled approach for model calibration, bias correction, and decision-making under uncertainty. This article details the protocols and applications demonstrating why now is the time for Bayesian methods in chemistry validation.

The following table summarizes the key quantitative evidence supporting the current rise of Bayesian methods, highlighting advances across computational, regulatory, and data complexity domains.

Table 1: Quantitative Evidence Driving the Adoption of Bayesian Methods

| Enabling Factor | Specific Advance | Quantitative Impact / Evidence | Source Domain |
|---|---|---|---|
| Computational Power & Algorithms | Sparse Axis-Aligned Subspace (SAAS) Priors | Enables identification of near-optimal candidates from chemical libraries of >100,000 molecules using <100 property evaluations [22]. | Molecular Design |
| | Bayesian Optimization (BO) for Synthesis | Overcomes inefficiencies of trial-and-error; achieves high predictive accuracy (e.g., R²=0.83 for ZIF-8 morphology prediction) [23]. | Materials Science |
| Regulatory Openness | FDA Draft Guidance | Specific FDA guidance on Bayesian methods for drugs and biologics expected by September 2025 [24] [25] [8]. | Drug Development |
| | Regulatory Pilot Programs | FDA's Complex Innovative Trial Design (CID) Pilot Program and C3TI demonstration project support Bayesian adaptive designs [25] [8]. | Clinical Trials |
| Complex Data Handling | Multi-task & Transfer Learning | Integration of these techniques enhances BO's versatility in addressing chemical synthesis challenges [26]. | Chemical Synthesis |
| | Validation for Dynamic Systems | Bayesian methods quantify model inadequacy and error for ODE models of biological networks, providing prediction bounds over entire time intervals [27]. | Systems Biology |

Application Note: Bayesian Validation of Dynamic Systems in Biological Networks

Background and Objective

Dynamic systems, often described by ordinary differential equations (ODEs), are crucial for modeling complex biological networks. However, these deterministic models often fail to fully capture the noisy and uncertain nature of biological data, leading to a discrepancy between the model and the actual biological process. This application note outlines a Bayesian protocol for validating such ODE models, explicitly addressing model inadequacy (represented as bias) to improve prediction accuracy and interpretive value [27].

Experimental Protocol

Protocol 1: Bayesian Validation for ODE Model Inadequacy

1. Problem Formulation and Priors Definition

- Define the ODE Model: Let the deterministic ODE model be represented as \( \frac{dy}{dt} = f(y, t, \theta) \), where \( y \) represents the state variables and \( \theta \) are the model parameters.

- Specify the Statistical Model: Acknowledge that the true biological process, \( z(t) \), differs from the ODE solution, \( y(t) \). Formulate the relationship as:

  \( z(t) = y(t) + \delta(t) + \epsilon \)

  where:

  - \( \delta(t) \) is a time-dependent bias function representing model inadequacy.

  - \( \epsilon \) is the observation error, typically \( \epsilon \sim N(0, \sigma^2) \).

- Define Prior Distributions:

  - Parameters (\( \theta \)): Assign weakly informative priors based on existing biological knowledge (e.g., log-normal for positive parameters).

  - Bias Function (\( \delta(t) \)): Place a Gaussian process prior over the bias: \( \delta(t) \sim \mathcal{GP}(0, k(t, t')) \), where \( k \) is a kernel function (e.g., squared-exponential).

  - Error Term (\( \sigma \)): Assign a half-Cauchy prior to \( \sigma \) or an inverse-Gamma prior to \( \sigma^2 \).

2. Inference and Model Fitting

- Data Collection: Collect time-course data \( D = \{ (t_i, z_i) \}_{i=1}^{n} \) for the state variables.

- Posterior Computation: Use Markov Chain Monte Carlo (MCMC) sampling (e.g., Hamiltonian Monte Carlo) or variational inference to approximate the joint posterior distribution: \( P(\theta, \delta, \sigma \mid D) \propto P(D \mid \theta, \delta, \sigma)\, P(\theta)\, P(\delta)\, P(\sigma) \)

- Tools: Implement in probabilistic programming languages like Stan, PyMC, or Turing.jl.

3. Validation and Prediction

- Bias Assessment: Examine the posterior distribution of \( \delta(t) \). If its credible band excludes zero over a sustained time interval, the ODE model is deemed inadequate in that region.

- Bias-Corrected Prediction: Generate predictive distributions for future observations from the full model \( y(t) + \delta(t) + \epsilon \); including the bias term yields more accurate predictions and better-calibrated uncertainty intervals.

- Model Iteration: Use the insights into \( \delta(t) \) to guide revisions and improvements to the underlying mechanistic ODE model.
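The bias-assessment step can be illustrated with a deliberately simplified sketch. Unlike the protocol, it fixes \( \theta \) at known values instead of inferring it jointly with \( \delta(t) \), uses fixed GP hyperparameters, and computes the GP posterior of \( \delta(t) \) in closed form rather than by MCMC. The exponential-decay ODE \( dy/dt = -ky \) (solution \( y(t) = y_0 e^{-kt} \)), the simulated bias bump, and all function names are hypothetical.

```python
import numpy as np

def sq_exp(a, b, amp, length):
    """Squared-exponential kernel k(t, t') = amp^2 exp(-(t - t')^2 / (2 length^2))."""
    d = a[:, None] - b[None, :]
    return amp**2 * np.exp(-0.5 * (d / length) ** 2)

def gp_bias_posterior(t_obs, resid, t_new, amp, length, noise_sd):
    """GP regression of the residuals z - y(t; theta) onto delta(t).
    Returns the posterior mean and standard deviation of delta at t_new."""
    K = sq_exp(t_obs, t_obs, amp, length) + noise_sd**2 * np.eye(len(t_obs))
    Ks = sq_exp(t_new, t_obs, amp, length)
    L = np.linalg.cholesky(K)                          # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, resid))
    mean = Ks @ alpha
    v = np.linalg.solve(L, Ks.T)
    var = amp**2 - np.sum(v**2, axis=0)                # prior variance minus explained part
    return mean, np.sqrt(np.maximum(var, 0.0))

# Hypothetical data: ODE solution with k = 0.5, y(0) = 1, plus a bias bump near t = 3.
rng = np.random.default_rng(1)
t = np.linspace(0.0, 6.0, 31)
ode_solution = np.exp(-0.5 * t)
true_bias = 0.2 * np.exp(-((t - 3.0) ** 2))
z = ode_solution + true_bias + rng.normal(0.0, 0.02, t.size)

mean, sd = gp_bias_posterior(t, z - ode_solution, t, amp=0.2, length=1.0, noise_sd=0.02)
lower, upper = mean - 1.96 * sd, mean + 1.96 * sd
inadequate = (lower > 0) | (upper < 0)                 # 95% band excludes zero
```

The flagged times cluster around the simulated bump, which is the signal the protocol uses to point model revision at a specific time window. Fixing \( \theta \) sidesteps the joint \( \theta \)-\( \delta \) inference that a full implementation in Stan or PyMC would perform.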

The following diagram illustrates the logical workflow and iterative nature of this validation protocol.

Application Note: Data-Efficient Molecular Property Optimization

Background and Objective

The discovery of molecules with optimal properties is a central challenge in drug development and materials science. Bayesian Optimization (BO) offers a principled framework for this sample-efficient discovery, but its effectiveness depends on the molecular representation. The MolDAIS (Molecular Descriptors with Actively Identified Subspaces) framework addresses this by adaptively identifying task-relevant subspaces within large descriptor libraries, making it exceptionally powerful in low-data regimes [22].

Experimental Protocol

Protocol 2: Molecular Optimization with MolDAIS

1. Problem Setup and Featurization

- Define Search Space: A discrete set of molecules \( \mathcal{M} \) (e.g., a chemical library with >100,000 compounds) [22].

- Define Objective: A black-box function \( F(m) \) mapping a molecule \( m \) to a property of interest (e.g., binding affinity, solubility).

- Featurization: Compute a comprehensive library of molecular descriptors for every molecule in \( \mathcal{M} \). This can include simple atom counts, topological indices, quantum-chemical descriptors, and more [22].

2. Initialize MolDAIS Optimization Loop

- Surrogate Model: Use a Gaussian Process (GP) with a SAAS (Sparse Axis-Aligned Subspace) prior. This prior induces sparsity, automatically focusing the model on the most relevant molecular descriptors.

- Acquisition Function: Select an acquisition function \( \alpha(m) \) such as Expected Improvement (EI) or Upper Confidence Bound (UCB).

3. Iterative Optimization

- For iteration \( t = 1, 2, \ldots \) until the budget is exhausted:

  - Fit Surrogate: Train the GP surrogate model on all observed data \( \{ (m_i, F(m_i)) \} \).

  - Maximize Acquisition: Find the molecule \( m_t \) that maximizes \( \alpha(m) \).

  - Evaluate & Update: Query the expensive function \( F(m_t) \) (via experiment or simulation) and add the new data point to the observation set.
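The loop above can be sketched in Python with one substitution stated plainly: the surrogate is an ordinary GP with a fixed isotropic RBF kernel rather than the SAAS-prior GP that MolDAIS fits, so no descriptor selection happens here. The descriptor matrix, the objective, the budget, and all function names are hypothetical; in practice \( F(m) \) would be an experiment or simulation.

```python
import math
import numpy as np

def rbf(A, B, length=0.5):
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length**2)

def gp_predict(X_obs, y_obs, X_cand, length=0.5, jitter=1e-6):
    """Zero-mean, unit-variance GP on standardized targets; returns the
    predictive mean and standard deviation at the candidate molecules."""
    K = rbf(X_obs, X_obs, length) + jitter * np.eye(len(X_obs))
    Ks = rbf(X_cand, X_obs, length)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))
    v = np.linalg.solve(L, Ks.T)
    var = np.maximum(1.0 - np.sum(v**2, axis=0), 1e-12)
    return Ks @ alpha, np.sqrt(var)

_erf = np.vectorize(math.erf)

def expected_improvement(mean, sd, best, xi=0.01):
    """EI for maximization: E[max(f - best - xi, 0)] under N(mean, sd^2)."""
    z = (mean - best - xi) / sd
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best - xi) * cdf + sd * pdf

# Hypothetical library: 500 molecules x 5 descriptors scaled to [0, 1];
# the hidden objective depends only on the first two descriptors.
rng = np.random.default_rng(0)
X = rng.uniform(size=(500, 5))
F = -((X[:, 0] - 0.7) ** 2 + (X[:, 1] - 0.3) ** 2)       # stands in for experiments

observed = list(rng.choice(len(X), size=5, replace=False))   # initial design
for _ in range(20):                                            # evaluation budget
    y = F[observed]
    y_std = (y - y.mean()) / y.std()                           # standardize targets
    cand = np.setdiff1d(np.arange(len(X)), observed)           # unevaluated molecules
    mean, sd = gp_predict(X[observed], y_std, X[cand])
    ei = expected_improvement(mean, sd, y_std.max())
    observed.append(int(cand[np.argmax(ei)]))                  # "run" the EI maximizer

best_found = F[observed].max()
```

Standardizing the observed targets before each fit keeps the zero-mean GP prior from treating every unexplored molecule as optimistic. Expected Improvement then trades off a high predicted mean (exploitation) against high predictive uncertainty (exploration).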

The workflow for this closed-loop Bayesian optimization is detailed below.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational and Data Resources for Bayesian Validation Research

| Research Reagent / Tool | Function / Application | Specific Examples / Notes |
|---|---|---|
| Gaussian Process (GP) with SAAS Prior | Surrogate model for high-dimensional optimization; actively identifies relevant features to prevent overfitting. | Core of the MolDAIS framework; enables efficient search in >100,000 molecule libraries [22]. |
| Probabilistic Programming Languages (PPLs) | Provide the computational backbone for flexible Bayesian model specification, inference, and validation. | Stan, PyMC, NumPyro, and Turing.jl are commonly used to implement protocols such as Bayesian validation for ODEs [27]. |
| Molecular Descriptor Libraries | Comprehensive featurization of molecules into numerical vectors, serving as the input for property prediction models. | Can include simple (atom counts) and complex (quantum-informed) features. MolDAIS adaptively selects relevant subsets [22]. |
| Bayesian Optimization Frameworks | Software packages that implement the BO loop, including surrogate models and acquisition functions. | Frameworks like Summit compare multiple strategies (e.g., TSEMO) for chemical reaction optimization [26]. |
| Historical & Real-World Data (RWD) | Used to construct informative priors, augment control arms in trials, and increase statistical power. | Critical for Bayesian trials in rare diseases; FDA guidance supports its use with robust borrowing methods [8]. |

Bayesian Methods in Action: Real-World Applications from Analytical Chemistry to Drug Development

Validating Quantitative Analytical Procedures using Bayesian Accuracy Profiles

The validation of quantitative analytical procedures demonstrates that analytical methods are suitable for their intended purpose, ensuring the reliability of measurements in pharmaceutical, biopharmaceutical, and chemical research. Traditional validation approaches, often based on frequentist statistics, typically require breaking down analytical methods into individual steps for separate validation. In contrast, the Bayesian accuracy profile offers a holistic validation framework that treats the analytical procedure as an integrated whole, directly controlling the risk associated with the method's future use [5] [28]. This approach aligns with the Analytical Quality by Design (AQbD) concept emerging in regulatory guidelines like ICH-Q14, emphasizing that the fundamental objective of any analytical procedure is to provide reportable values close enough to the true unknown quantity with high probability [29].

Bayesian accuracy profiles use β-expectation tolerance intervals constructed through Bayesian simulation as a visual decision-making tool. This method allows practitioners to verify that a defined proportion of future measurements (e.g., 95%) will fall within predefined acceptance limits across the method's range [5]. By incorporating prior knowledge and directly quantifying measurement uncertainty, the Bayesian framework offers more robust risk assessment than conventional methods, making it particularly valuable in highly regulated environments such as drug development [29].

Theoretical Foundation

Bayesian Framework for Analytical Validation

The Bayesian approach to analytical validation is built upon the one-way random effects model, which accurately represents data generated during method validation studies where measurements occur over multiple independent assay runs with replicate determinations within each run [5]. The model is specified as:

\( Y_{ij} = \mu + b_i + e_{ij} \)

where \( Y_{ij} \) represents the jth replicate in the ith run, \( \mu \) is the overall mean, \( b_i \) represents the between-run random effect (\( b_i \sim N(0, \sigma_b^2) \)), and \( e_{ij} \) represents the within-run random error (\( e_{ij} \sim N(0, \sigma_e^2) \)) [5].

In the Bayesian framework, prior distributions are established for model parameters (μ, σb², σe²), which are then updated through Bayesian simulation to generate posterior distributions. This process incorporates existing knowledge while giving more weight to the observed validation data [5]. The key output for constructing the accuracy profile is the β-expectation tolerance interval, which represents an interval that covers on average 100β% of the distribution of future results, given the estimated parameters [5].
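As a minimal sketch of this Bayesian simulation, the code below fits the one-way random effects model with a conjugate Gibbs sampler. It then forms the β-expectation tolerance interval as the central 100β% interval of the posterior predictive distribution of a single future result (a new run effect plus a new replicate error). Assumptions: a hand-written Gibbs sampler stands in for Stan, JAGS, or PyMC; the priors are μ ~ N(0, 10⁴) and inverse-Gamma(0.001, 0.001) on each variance, which matches the Γ(0.001, 0.001) precision prior in the computation table below; the data are simulated for one concentration level; and the function names are hypothetical.

```python
import numpy as np

def gibbs_one_way(y, run, n_iter=6000, burn=1000, seed=0,
                  m0=0.0, s0_sq=1e4, a=0.001, b=0.001):
    """Gibbs sampler for Y_ij = mu + b_i + e_ij with conjugate priors
    mu ~ N(m0, s0_sq), sigma_b^2 ~ IG(a, b), sigma_e^2 ~ IG(a, b).
    Returns post-burn-in draws of (mu, sigma_b^2, sigma_e^2)."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    runs = np.unique(run)
    idx = np.searchsorted(runs, run)                 # run label -> 0..I-1
    I, N = len(runs), len(y)
    n_i = np.bincount(idx, minlength=I)
    mu, sb2, se2 = y.mean(), y.var(), y.var()
    draws = []
    for it in range(n_iter):
        # Run effects b_i | rest: normal with precision n_i/se2 + 1/sb2.
        sum_i = np.bincount(idx, weights=y - mu, minlength=I)
        prec = n_i / se2 + 1.0 / sb2
        b_eff = rng.normal((sum_i / se2) / prec, np.sqrt(1.0 / prec))
        # Overall mean mu | rest.
        prec_mu = N / se2 + 1.0 / s0_sq
        mean_mu = (np.sum(y - b_eff[idx]) / se2 + m0 / s0_sq) / prec_mu
        mu = rng.normal(mean_mu, np.sqrt(1.0 / prec_mu))
        # Variances | rest: inverse-gamma draws as 1 / gamma(shape, scale=1/rate).
        resid = y - mu - b_eff[idx]
        se2 = 1.0 / rng.gamma(a + N / 2, 1.0 / (b + 0.5 * resid @ resid))
        sb2 = 1.0 / rng.gamma(a + I / 2, 1.0 / (b + 0.5 * b_eff @ b_eff))
        if it >= burn:
            draws.append((mu, sb2, se2))
    return np.array(draws)

def beta_expectation_interval(draws, beta=0.95, seed=1):
    """Central 100*beta% interval of the posterior predictive distribution
    of one future result from a new run."""
    rng = np.random.default_rng(seed)
    mu, sb2, se2 = draws.T
    y_new = mu + rng.normal(0.0, np.sqrt(sb2)) + rng.normal(0.0, np.sqrt(se2))
    return np.quantile(y_new, [(1 - beta) / 2, (1 + beta) / 2])

# Hypothetical validation data at one level: 5 runs x 3 replicates, nominal 50.
rng = np.random.default_rng(42)
run = np.repeat(np.arange(5), 3)
y = 50.0 + rng.normal(0.0, 0.5, 5)[run] + rng.normal(0.0, 0.4, run.size)
draws = gibbs_one_way(y, run)
lo, hi = beta_expectation_interval(draws, beta=0.95)
```

Repeating this at each concentration level and plotting the intervals against the acceptance limits produces the accuracy profile. With very few runs, results can be sensitive to the vague prior on σb², so that prior deserves a sensitivity check.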

Accuracy Profiles as Decision Tools

The accuracy profile graphically represents the total error (combining bias and precision) of an analytical method across different concentration levels. It plots the tolerance intervals against known concentrations, overlaying acceptance limits that represent the maximum acceptable deviation [5]. The method is considered valid if the tolerance intervals fall entirely within these acceptance limits across the validated range, ensuring that a specified proportion of future measurements will be acceptable [28].

Compared to the conventional method adopted by organizations like the Société Française des Sciences et Techniques Pharmaceutiques (SFSTP), the Bayesian approach demonstrates excellent agreement while offering advantages in risk control and holistic method assessment [5].

Experimental Protocol

Sample Preparation and Analysis

Table 1: Experimental Design for Bayesian Accuracy Profile Validation

| Component | Specifications | Considerations |
|---|---|---|
| Standard Solutions | Prepare at a minimum of 5 concentration levels across the claimed range | Cover the entire range from the lower to the upper limit of quantification |
| Quality Control (QC) Samples | Prepare in replicate (n=6) at each concentration level | Prepare from stock solutions independent of those used for calibration standards |
| Matrix | Use authentic matrix (e.g., plasma, formulation base) for QC samples | Ensure the matrix represents actual sample conditions |
| Analysis Runs | Conduct over a minimum of 3 independent runs (different days, analysts, equipment) | Ensure results reflect intermediate precision conditions |
| Replicates | Include a minimum of 2 replicates per concentration per run | Balance practical constraints with statistical requirements |

Computational Implementation

Table 2: Bayesian Computation Requirements

| Step | Method/Software | Specifications |
|---|---|---|
| Prior Specification | Non-informative or weakly-informative priors | e.g., μ ~ N(0, 10000), σ⁻² ~ Γ(0.001, 0.001) |
| Posterior Simulation | Markov Chain Monte Carlo (MCMC) | Minimum 3 chains, 10,000 iterations per chain after burn-in |
| Convergence Diagnostics | Gelman-Rubin statistic (R-hat), trace plots | R-hat < 1.05 for all parameters indicates convergence |
| Tolerance Interval Calculation | β-expectation tolerance intervals | Typically β = 0.95 for 95% future coverage |
| Software Tools | R/Stan, JAGS, Python/PyMC, specialized packages | Ensure implementation of one-way random effects model |
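The convergence criterion in the table can be computed directly from the chains. Below is a sketch of split-R-hat, the Gelman-Rubin statistic applied to chains split in half so that drift within a chain also inflates it. It omits the rank normalization used by current Stan and ArviZ versions, so values can differ slightly from theirs; the simulated chains and the function name are hypothetical.

```python
import numpy as np

def split_rhat(chains):
    """Split-R-hat for an array of shape (n_chains, n_draws)."""
    chains = np.asarray(chains, dtype=float)
    half = chains.shape[1] // 2
    x = np.concatenate([chains[:, :half], chains[:, half:2 * half]], axis=0)
    m, n = x.shape                              # m half-chains of n draws each
    W = x.var(axis=1, ddof=1).mean()            # mean within-chain variance
    B = n * x.mean(axis=1).var(ddof=1)          # between-chain variance
    var_hat = (n - 1) / n * W + B / n           # pooled estimate of posterior variance
    return np.sqrt(var_hat / W)

# Three chains of 10,000 draws, matching the table's minimum settings.
rng = np.random.default_rng(0)
mixed = rng.normal(size=(3, 10000))                  # all chains sample the same target
stuck = mixed + np.array([[0.0], [0.0], [2.0]])      # third chain sits in another mode
```

For the well-mixed chains the statistic is essentially 1, while the chain stuck in a different mode pushes it well above the 1.05 threshold, which is exactly the failure this diagnostic is designed to catch.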

Application Example: Pharmaceutical Compound Analysis

To demonstrate the practical implementation of Bayesian accuracy profiles, we present a validation study for a pharmaceutical compound assay using LC-UV, based on published data [5].

Method Parameters

Table 3: Validation Results for Pharmaceutical Compound

| Concentration (μg/mL) | Bayesian Tolerance Interval (μg/mL) | Mee's Method Interval (μg/mL) | Within Acceptance Limits? |
|---|---|---|---|
| 5.0 | 4.72 - 5.31 | 4.75 - 5.29 | Yes |
| 25.0 | 23.89 - 26.15 | 23.92 - 26.11 | Yes |
| 50.0 | 48.52 - 51.51 | 48.55 - 51.48 | Yes |
| 75.0 | 72.84 - 77.19 | 72.87 - 77.16 | Yes |
| 100.0 | 96.31 - 103.72 | 96.35 - 103.68 | Yes |

The table demonstrates excellent agreement between the Bayesian approach and conventional methods, with all tolerance intervals falling within typical ±15% acceptance limits for pharmaceutical quality control, confirming method validity across the entire range [5].
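The acceptance decision can be made explicit by re-expressing each Bayesian tolerance interval from Table 3 as a percentage deviation from its nominal concentration and comparing it with the ±15% limits. This sketch only reuses the published intervals and does not recompute them; the function name is hypothetical.

```python
levels = [5.0, 25.0, 50.0, 75.0, 100.0]                 # nominal concentrations, μg/mL
bayes_ti = [(4.72, 5.31), (23.89, 26.15), (48.52, 51.51),
            (72.84, 77.19), (96.31, 103.72)]             # Bayesian intervals from Table 3
LIMIT_PCT = 15.0                                         # ±15% acceptance limits

def accuracy_profile(levels, intervals, limit_pct):
    """Express each tolerance interval as % deviation from the nominal level
    and check that it lies entirely inside ±limit_pct."""
    rows = []
    for nominal, (lo, hi) in zip(levels, intervals):
        rel_lo = 100.0 * (lo - nominal) / nominal
        rel_hi = 100.0 * (hi - nominal) / nominal
        rows.append((nominal, rel_lo, rel_hi, -limit_pct <= rel_lo and rel_hi <= limit_pct))
    return rows

profile = accuracy_profile(levels, bayes_ti, LIMIT_PCT)
for nominal, rel_lo, rel_hi, ok in profile:
    print(f"{nominal:6.1f} μg/mL: [{rel_lo:+.2f}%, {rel_hi:+.2f}%] within limits: {ok}")
method_valid = all(row[3] for row in profile)
```

The widest relative interval occurs at the lowest level (about -5.6% to +6.2% at 5 μg/mL), which is where an accuracy profile most often approaches its limits.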

Measurement Uncertainty Estimation

The Bayesian framework simultaneously estimates measurement uncertainty using the same underlying model. The combined standard uncertainty can be obtained from the posterior distributions of variance components:

\( u_c = \sqrt{\sigma_b^2 + \sigma_e^2} \)

where \( \sigma_b^2 \) represents the between-run variance and \( \sigma_e^2 \) represents the within-run variance [5]. This approach provides a direct, holistic estimation of measurement uncertainty without requiring separate precision and trueness studies.
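Because \( \sigma_b^2 \) and \( \sigma_e^2 \) are available as posterior draws from the MCMC fit, \( u_c \) can be computed draw by draw. This propagates their joint uncertainty instead of plugging in point estimates. A minimal sketch follows; the draws in the usage line are illustrative values, not output from a real fit, and the function name is hypothetical.

```python
import numpy as np

def combined_uncertainty(sigma_b2_draws, sigma_e2_draws, level=0.95):
    """Per-draw u_c = sqrt(sigma_b^2 + sigma_e^2), summarized by the posterior
    median and a central credible interval."""
    uc = np.sqrt(np.asarray(sigma_b2_draws) + np.asarray(sigma_e2_draws))
    tail = (1 - level) / 2
    return np.median(uc), np.quantile(uc, [tail, 1 - tail])

# Illustrative paired draws only; each pair sums to 0.25, so u_c = 0.5 throughout.
med, ci = combined_uncertainty([0.09, 0.16, 0.25], [0.16, 0.09, 0.0])
```

Reporting the credible interval alongside the median makes the uncertainty about the uncertainty visible, which matters when only a few runs inform \( \sigma_b^2 \).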

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions

| Reagent/Tool | Function in Bayesian Validation | Implementation Notes |
|---|---|---|
| Statistical Software (R/Python) | Bayesian computation and visualization | R/Stan or Python/PyMC for MCMC sampling |
| Reference Standards | Establish ground truth for concentration levels | Certified reference materials with documented purity |
| Quality Control Samples | Generate validation data across concentration range | Prepared in authentic matrix, cover entire range |
| MCMC Diagnostics | Verify convergence of Bayesian simulations | Check R-hat statistics, effective sample size, trace plots |
| β-Expectation Tolerance Script | Calculate tolerance limits from posterior distributions | Custom code or specialized validation packages |
| Accuracy Profile Plotting | Visual decision-making tool | Graphical representation with acceptance limits |

Advanced Applications and Integration

Bioanalytical Method Validation

The Bayesian accuracy profile approach has been applied successfully to validate ligand-binding assays (e.g., ELISA) and chromatographic methods (LC-MS, LC-UV) in bioanalytical contexts [5]. For bioanalytical methods, the approach handles the additional variability inherent in biological matrices robustly.

Integration with Analytical Quality by Design

Bayesian accuracy profiles naturally support the Analytical Quality by Design (AQbD) framework referenced in ICH-Q14 by establishing a direct link between method performance criteria (Analytical Target Profile) and validation outcomes [29]. The approach formally quantifies the target measurement uncertainty (TMU) needed to ensure that the probability of incorrect decisions about product quality remains acceptable.

Bayesian accuracy profiles provide a statistically rigorous, holistic framework for validating quantitative analytical procedures. This approach offers substantial advantages over conventional methods, including direct risk quantification for future use, simultaneous uncertainty estimation, and natural alignment with quality by design principles. The methodology has proven applicable across diverse analytical techniques and sectors, including pharmaceutical, biopharmaceutical, and food processing industries [5].

By implementing the protocols and experimental designs outlined in this document, researchers and drug development professionals can establish robust, fit-for-purpose analytical methods with clearly defined performance characteristics and known risks, ultimately supporting the development of safer and more effective pharmaceutical products.

Accelerating Pharmaceutical Process Development and Optimization with Bayesian Models

The primary objectives of pharmaceutical development encompass identifying the routes, processes, and conditions for producing medicines while establishing a control strategy to ensure acceptable quality attributes throughout the commercial manufacturing lifecycle [30]. However, achieving these goals is challenged by inherent uncertainties surrounding design decisions for the manufacturing process and variations in manufacturing methods resulting in distributions of outcomes during production [30]. The application of Bayesian modeling approaches, which combine prior information with observed data to create probabilistic posterior distributions of target responses, provides a powerful framework to quantify these uncertainties and guide faster decision-making in process development [30] [31].

Over the last decade, Bayesian optimization (BO), a method for locating the global optimum of an expensive-to-evaluate function, has gained significant popularity in the early drug design phase [31] [32]. This approach is particularly valuable for pharmaceutical applications where traditional experimentation is often resource-intensive, expensive, and time-consuming [33]. By incorporating uncertainty quantification directly into the optimization process, Bayesian methods enable chemical engineers and pharmaceutical scientists to navigate complex decision landscapes and optimize processes for improved efficiency and reliability with significantly reduced experimental burden [30] [34].

Bayesian Foundations for Pharmaceutical Applications

Core Principles

The Bayesian approach combines information across observed data and current experiments to create probabilistic posterior distributions of target responses [30]. This methodology operates through several key principles:

- Prior Distributions: Initial distributions of parameters are determined by available data for possible parameter values before conducting new experiments [30]

- Posterior Distributions: Through Bayesian analysis, prior distributions are updated by combining weighted prior information with new experimental data to generate posterior distributions that reflect updated understanding [30]

- Markov Chain Monte Carlo (MCMC): This technique proves effective in estimating process reliability when analytical solutions are intractable [30]
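The prior-to-posterior update can be illustrated on the reliability question that arises in process characterization using the conjugate Beta-binomial model. Here MCMC is unnecessary because the posterior has a closed form. The prior (equivalent to one failure in 20 historical batches), the 30 new batches, the 5% failure-rate threshold, and the function name are hypothetical.

```python
import numpy as np

def beta_binomial_update(a_prior, b_prior, failures, n_batches):
    """Conjugate update: Beta(a, b) prior on the batch failure rate plus
    binomial data gives a Beta(a + failures, b + n_batches - failures) posterior."""
    return a_prior + failures, b_prior + n_batches - failures

# Hypothetical prior: historical data worth 1 failure in 20 batches.
# New data: 0 failures in 30 batches.
a_post, b_post = beta_binomial_update(1.0, 19.0, failures=0, n_batches=30)
posterior_mean = a_post / (a_post + b_post)           # 1 / 50 = 0.02

# Monte Carlo draws from the posterior give Pr(failure rate < 5% | data).
rng = np.random.default_rng(0)
p_below_5pct = np.mean(rng.beta(a_post, b_post, size=100_000) < 0.05)
```

For non-conjugate models, such as those linking process parameters to failure, the same posterior probability would instead be estimated from MCMC draws, which is where the sampling techniques listed above become necessary.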

Algorithmic Components

Bayesian optimization implementations typically incorporate several building blocks that make it particularly suitable for pharmaceutical development challenges:

- Surrogate Modeling: Gaussian processes, decision trees, and neural networks offer novel means to quantify uncertainty and have shown success in designing experimental plans that reduce the number of required experiments [30]

- Acquisition Functions: These guide the selection of subsequent experiments by balancing exploration of uncertain regions with exploitation of known promising areas [31]

- Sequential Design: The iterative process of updating models with new data points allows for continuous improvement of process understanding with minimal experimental effort [31] [35]

Application Notes: Bayesian Methods Across the Development Workflow

Bayesian approaches provide value throughout the pharmaceutical development continuum, from initial route selection to final process characterization. The table below summarizes key applications across this spectrum.

Table 1: Bayesian Model Applications in Pharmaceutical Development

| Development Stage | Primary Bayesian Application | Key Benefits | Representative Techniques |
|---|---|---|---|
| Route & Formulation Invention [30] | Molecular optimization & reaction scouting | Identifies promising chemical space with minimal experiments; optimizes multiple properties simultaneously | Gaussian processes with molecular descriptors [31] |
| Process Invention & Optimization [30] | Experimental design with Bayesian optimization | Finds optimum conditions with less experimental burden; handles mixed parameter types | Bayesian optimization with acquisition functions [34] [35] |
| Process Characterization [30] | Reliability estimation & failure prediction | Estimates distribution of outcomes; predicts failure rates against desired limits | Bayesian parametric models, MCMC [30] |
| Scale-up Translation [35] | Hybrid modeling with limited data | Manages uncertainty during technology transfer; reduces material requirements | Bayesian semi-mechanistic models [35] |

Route and Formulation Invention

In the route and formulation invention stage, Bayesian methods accelerate the selection of synthetic pathways and formulation components. For small molecule drugs, route invention involves selecting the sequence of chemical transformations that will enable commercial manufacturing, while for biologics, it encompasses designing the sequence for the host and the corresponding bioreactor conditions [30].

Bayesian optimization has demonstrated particular success in molecular optimization tasks, where it efficiently navigates high-dimensional chemical spaces to identify compounds with desired properties. The approach is especially valuable when balancing multiple objectives simultaneously, such as potency, solubility, and synthetic accessibility [31]. By employing acquisition functions that explicitly manage the trade-off between exploration and exploitation, BO algorithms can identify promising candidate molecules with far fewer synthetic iterations than traditional approaches [31] [32].

Process Invention and Optimization

Once the synthetic route is established, process invention focuses on finalizing unit operations and optimizing conditions against multiple constraints, including safety, impurity control, sustainability, and cost [30]. Bayesian optimization has proven particularly effective in this domain, as evidenced by recent industrial applications.

Notably, Merck, in collaboration with Sunthetics, received the 2025 ACS Green Chemistry Award for Algorithmic Process Optimization (APO), a proprietary machine learning platform that integrates Bayesian optimization to address complex optimization challenges in pharmaceutical R&D [34]. APO is positioned as a replacement for traditional Design of Experiments (DOE): it handles numeric, discrete, and mixed-integer problems with 11 or more input parameters, and its use has enabled significant reductions in hazardous reagent usage and material waste while accelerating development timelines [34].

Process Characterization and Scale-up

In the final stages of process development, Bayesian methods provide robust tools for characterizing and predicting the distribution of outcomes from the manufacturing process. During process characterization, Bayesian parametric models estimate failure rates against desired quality limits, providing crucial information for quality risk management [30].
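As a minimal sketch of how a parametric Bayesian model turns characterization data into a failure-rate estimate, the example below assumes a single CQA that is normally distributed across batches and uses the standard noninformative prior p(μ, σ²) ∝ 1/σ². Under those assumptions the posterior predictive distribution for the next batch is a Student-t, so the probability of falling outside the specification limits has a closed form. The function name, the assay values, and the 95 to 105% limits are hypothetical.

```python
import numpy as np
from scipy import stats


def predictive_failure_probability(measurements, lower_limit, upper_limit):
    """Posterior predictive probability that the next batch falls outside
    [lower_limit, upper_limit].

    Assumes normally distributed batch results and the noninformative prior
    p(mu, sigma^2) proportional to 1/sigma^2.
    """
    y = np.asarray(measurements, dtype=float)
    n = y.size
    if n < 2:
        raise ValueError("at least two measurements are required")
    mean = y.mean()
    s = y.std(ddof=1)
    # Under this prior, a new observation follows a Student-t distribution with
    # n - 1 degrees of freedom, centred on the sample mean, scale s*sqrt(1 + 1/n).
    predictive = stats.t(df=n - 1, loc=mean, scale=s * np.sqrt(1.0 + 1.0 / n))
    # Probability mass below the lower limit plus mass above the upper limit.
    return predictive.cdf(lower_limit) + predictive.sf(upper_limit)


# Hypothetical assay results (% of label claim) from ten characterization batches.
assay = [99.1, 100.4, 98.7, 101.2, 99.8, 100.9, 99.5, 100.1, 98.9, 100.6]
p_fail = predictive_failure_probability(assay, lower_limit=95.0, upper_limit=105.0)
print(f"Predicted probability of an out-of-specification batch: {p_fail:.2e}")
```

Because the predictive distribution integrates over uncertainty in both the mean and the variance, it has heavier tails than a normal curve fitted to the same ten values, so the failure estimate is appropriately more conservative when only a few batches are available.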

A recent case study demonstrated the application of Bayesian optimization to crystallisation process development using an automated scale-up DataFactory [35]. This approach employed a 5-point Latin hypercube design to investigate the effects of cooling rate, seed mass, and seed point supersaturation on nucleation, growth, and yield during the cooling crystallisation of lamivudine in ethanol. The screening data served as inputs for Bayesian optimisation, which determined the optimal next experiment aimed at achieving target process parameters and reducing uncertainty [35]. This data-driven methodology achieved an improvement of approximately 10% in the objective function value within a single iteration, highlighting the efficiency gains possible with Bayesian approaches [35].

Experimental Protocols

Protocol: Bayesian Optimization for Crystallization Process Development

This protocol outlines the methodology for applying Bayesian optimization to pharmaceutical crystallization process development, based on recent research employing automated scale-up crystallisation platforms [35].

Experimental Setup and Initialization

- Equipment Configuration: Establish a multi-vessel crystallisation platform equipped with peristaltic pump transfer, integrated HPLC, image-based process analytical technology (PAT), and an IoT control system built on single-board computers [35]

- Initial Design: Employ a 5-point Latin hypercube design to investigate critical process parameters (CPPs) including cooling rate, seed mass, and seed point supersaturation [35]

- Response Monitoring: Measure critical quality attributes (CQAs) including crystal size distribution, yield, purity, and polymorphic form using integrated PAT tools [35]
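The initial design step can be sketched with SciPy's `scipy.stats.qmc` module. The parameter names and ranges below are hypothetical placeholders, not values from the cited study [35]; in practice they would be set from solubility data and equipment limits for the system under study.

```python
import numpy as np
from scipy.stats import qmc

# Hypothetical bounds for the three CPPs (not taken from the cited study).
PARAMETERS = {
    "cooling_rate_K_per_min": (0.1, 1.0),
    "seed_mass_pct": (0.5, 5.0),
    "seed_supersaturation": (1.05, 1.50),
}


def latin_hypercube_design(n_points, bounds, seed=0):
    """Return an (n_points x d) array of experiments from a Latin hypercube."""
    lower = np.array([lo for lo, _ in bounds.values()])
    upper = np.array([hi for _, hi in bounds.values()])
    sampler = qmc.LatinHypercube(d=len(bounds), seed=seed)
    unit_samples = sampler.random(n=n_points)     # one point per stratum, in [0, 1)^d
    return qmc.scale(unit_samples, lower, upper)  # map to physical units


design = latin_hypercube_design(5, PARAMETERS)
for i, row in enumerate(design, start=1):
    settings = ", ".join(f"{name}={value:.3g}" for name, value in zip(PARAMETERS, row))
    print(f"Run {i}: {settings}")
```

A Latin hypercube places exactly one run in each of the five equal-width slices of every parameter range, which spreads a very small screening budget across the whole space better than a random draw of the same size.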

Bayesian Optimization Workflow

- Surrogate Model Selection: Implement Gaussian process regression to model the relationship between process parameters and quality attributes

- Acquisition Function: Apply expected improvement to balance exploration of uncertain parameter space with exploitation of known high-performance regions

- Iteration Cycle: Execute sequential experiments based on acquisition function recommendations, updating the surrogate model after each iteration
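A minimal sketch of one iteration of this cycle, assuming scikit-learn's `GaussianProcessRegressor` as the surrogate and a finite set of candidate conditions scored by expected improvement (EI) for a maximization objective. The Matérn kernel, the `xi` exploration margin, the helper names, and the toy objective are illustrative choices, not those of the cited work; process parameters are assumed to be scaled to [0, 1].

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel


def expected_improvement(X_candidates, gp, best_y, xi=0.01):
    """Expected improvement over best_y for a maximisation objective."""
    mu, sigma = gp.predict(X_candidates, return_std=True)
    sigma = np.maximum(sigma, 1e-12)      # avoid division by zero
    improvement = mu - best_y - xi        # xi adds a small exploration margin
    z = improvement / sigma
    ei = improvement * norm.cdf(z) + sigma * norm.pdf(z)
    return np.maximum(ei, 0.0)            # clip tiny negative round-off


def propose_next_experiment(X_observed, y_observed, X_candidates):
    """Fit a GP surrogate to the data so far and return the max-EI candidate."""
    kernel = (ConstantKernel(1.0)
              * Matern(length_scale=np.ones(X_observed.shape[1]), nu=2.5)
              + WhiteKernel(1e-3))        # white noise term for measurement error
    gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                                  n_restarts_optimizer=5, random_state=0)
    gp.fit(X_observed, y_observed)
    ei = expected_improvement(X_candidates, gp, best_y=y_observed.max())
    return X_candidates[np.argmax(ei)], ei.max()


rng = np.random.default_rng(1)
X_obs = rng.uniform(size=(5, 3))                # e.g. scaled screening runs
y_obs = -np.sum((X_obs - 0.6) ** 2, axis=1)     # hypothetical stand-in objective
X_cand = rng.uniform(size=(2000, 3))
x_next, ei_max = propose_next_experiment(X_obs, y_obs, X_cand)
print("Next settings (scaled units):", np.round(x_next, 3), "EI:", float(ei_max))
```

In a real campaign the returned `x_next` would be converted back to physical units before the experiment is run; once the result is measured it is appended to `X_observed` and `y_observed` and the cycle repeats.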

Termination Criteria

- Convergence Metric: Continue optimization until expected improvement falls below 1% of the current best performance

- Resource Limit: Alternatively, terminate after a predetermined number of iterations (typically 10-20) based on available resources

- Validation: Confirm optimal conditions through triplicate runs to ensure reproducibility
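The two quantitative termination criteria translate into a simple check that can be evaluated after each iteration. This is a sketch using the thresholds stated above (1% relative EI, 20-iteration budget); `should_stop` is a hypothetical helper name, and the relative rule assumes the current best value is nonzero.

```python
def should_stop(max_expected_improvement, best_value, iteration,
                relative_tol=0.01, max_iterations=20):
    """Return (stop, reason) according to the protocol's termination criteria."""
    # Resource limit: a fixed experimental budget.
    if iteration >= max_iterations:
        return True, "resource limit reached"
    # Convergence: best EI below 1% of the current best result
    # (abs() keeps the threshold positive for negative-valued objectives).
    if max_expected_improvement < relative_tol * abs(best_value):
        return True, "expected improvement below threshold"
    return False, "continue"


stop, reason = should_stop(max_expected_improvement=0.3, best_value=85.0, iteration=4)
print(stop, reason)
```

Whichever criterion triggers, the final triplicate validation runs are still performed at the recommended conditions.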

Protocol: Multi-Objective Formulation Optimization

This protocol describes the application of Bayesian optimization for pharmaceutical formulation development where multiple quality attributes must be balanced simultaneously.

Problem Formulation

- Parameter Definition: Identify critical formulation variables (e.g., excipient ratios, processing conditions) and their feasible ranges

- Objective Specification: Define multiple objectives (e.g., dissolution rate, stability, flow properties) and their relative priorities

- Constraint Identification: Specify any constraints (e.g., total tablet mass, manufacturing limitations)

Optimization Procedure

- Preference Modeling: Incorporate preference information using weighted scalarization or Pareto-based approaches

- Multi-Objective Acquisition: Implement q-Expected Hypervolume Improvement or other multi-objective acquisition functions

- Parallel Evaluation: Utilize batch Bayesian optimization to design multiple experiments for simultaneous execution
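One way to implement weighted scalarization is the augmented Chebyshev function used in ParEGO-style methods, which, unlike a plain weighted sum, can reach solutions on non-convex parts of the Pareto front. The sketch below assumes every objective is maximized and scaled to [0, 1] using user-supplied ranges; the function name, objectives, ranges, and weights are hypothetical. For the q-Expected Hypervolume Improvement route, libraries such as BoTorch provide ready-made acquisition functions rather than requiring a hand-written implementation.

```python
import numpy as np


def augmented_chebyshev_score(Y, weights, lower, upper, rho=0.05):
    """Scalarize several maximised objectives into one score per row.

    Y: (n, m) objective values; lower/upper: assumed ranges used to scale each
    objective to [0, 1]; weights: relative priorities (normalised to sum to 1).
    Higher is better and 0 corresponds to the ideal point.
    """
    Y = np.atleast_2d(np.asarray(Y, dtype=float))
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()
    lo, hi = np.asarray(lower, dtype=float), np.asarray(upper, dtype=float)
    scaled = np.clip((Y - lo) / (hi - lo), 0.0, 1.0)
    shortfall = w * (1.0 - scaled)        # weighted gap to the ideal point
    # Worst weighted gap dominates; the small sum term breaks ties.
    return -(shortfall.max(axis=1) + rho * shortfall.sum(axis=1))


# Hypothetical candidates: [dissolution (% released), stability (% assay remaining)]
Y = np.array([[85.0, 97.0],
              [92.0, 94.0],
              [78.0, 99.0]])
scores = augmented_chebyshev_score(Y, weights=[0.6, 0.4], lower=[70, 90], upper=[100, 100])
print(np.round(scores, 3), "best row:", int(np.argmax(scores)))
```

The resulting scalar can be modelled by a single-objective Gaussian process and optimized with expected improvement; drawing different weight vectors across iterations, as ParEGO does, traces out different regions of the trade-off surface.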

Decision Making

- Pareto Front Analysis: Identify the set of non-dominated solutions representing optimal trade-offs between competing objectives

- Posterior Sampling: Characterize uncertainty in the Pareto front using posterior samples from the Gaussian process

- Final Selection: Apply decision-maker preferences to select the most suitable formulation from the Pareto-optimal set
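The Pareto front analysis and posterior sampling steps can be sketched in NumPy: a dominance check identifies the non-dominated formulations, and repeating it over posterior draws estimates how often each candidate is Pareto-optimal. The objective values and the Gaussian noise used to mimic posterior draws are hypothetical; in practice the draws could come from the fitted Gaussian processes (for example scikit-learn's `GaussianProcessRegressor.sample_y`, one model per objective).

```python
import numpy as np


def pareto_mask(Y):
    """Boolean mask of non-dominated rows of Y, where every objective is maximised."""
    Y = np.asarray(Y, dtype=float)
    mask = np.ones(Y.shape[0], dtype=bool)
    for i in range(Y.shape[0]):
        # Row j dominates row i if it is at least as good on every objective
        # and strictly better on at least one.
        dominated_by = np.all(Y >= Y[i], axis=1) & np.any(Y > Y[i], axis=1)
        mask[i] = not dominated_by.any()
    return mask


def pareto_membership_probability(samples):
    """samples: (S, n, m) posterior draws of m objectives for n candidates.
    Returns the fraction of draws in which each candidate is non-dominated."""
    return np.mean([pareto_mask(draw) for draw in samples], axis=0)


# Hypothetical posterior means: [dissolution %, stability %] for four formulations.
Y = np.array([[85.0, 97.0], [92.0, 94.0], [78.0, 99.0], [80.0, 95.0]])
print("Non-dominated:", pareto_mask(Y))

rng = np.random.default_rng(0)
draws = Y + rng.normal(scale=1.5, size=(500, *Y.shape))  # stand-in posterior draws
print("P(Pareto-optimal):", np.round(pareto_membership_probability(draws), 2))
```

A candidate that is non-dominated at the posterior mean but rarely Pareto-optimal across draws is a fragile choice, which is useful context for the final decision-maker selection.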

Workflow Visualization

Diagram 1: Bayesian Optimization Workflow for Pharmaceutical Process Development. This diagram illustrates the iterative cycle of experimental design, data collection, model updating, and acquisition function evaluation that enables efficient process optimization.

Research Reagent Solutions and Essential Materials

The successful implementation of Bayesian optimization in pharmaceutical process development requires specific analytical technologies and computational tools. The table below details key resources and their functions.

Table 2: Essential Research Tools for Bayesian Process Optimization

| Tool Category | Specific Technologies | Function in Bayesian Optimization | Implementation Considerations |
|---|---|---|---|
| Process Analytical Technology (PAT) [35] | HPLC, FTIR, Raman spectroscopy, FBRM, imaging systems | Provides real-time quality attribute data for model training | Integration with data management systems; sampling frequency aligned with process dynamics |
| Automation Platforms [35] | Multi-vessel reactors with peristaltic pumps, IoT control systems | Enables high-throughput experimentation with minimal human intervention | Compatibility with existing equipment; reliability for extended unmanned operation |
| Computational Libraries [31] [32] | GAUCHE, scikit-learn, GPyTorch, Bayesian optimization packages | Implements surrogate modeling and acquisition function calculation | Scalability to problem dimension; handling of mixed parameter types |
| Data Management Systems | Laboratory Information Management Systems (LIMS), Electronic Lab Notebooks (ELN) | Maintains experimental records and ensures data integrity for model building | Interoperability with analytical instruments and modeling software |
| Surrogate Model Options [30] | Gaussian processes, Bayesian neural networks, random forests | Quantifies uncertainty and predicts process performance | Trade-offs between expressivity and computational requirements |

Implementation Considerations

Organizational Adoption

Successful implementation of Bayesian methods in pharmaceutical development requires addressing several organizational challenges. The industry has historically relied on largely empirical methods for process development, optimization, and control, and these heuristic approaches have contributed to an unsustainable number of drug shortages and recalls [33]. Transitioning to model-based approaches such as Bayesian optimization requires:

- Cross-functional Teams: Collaboration between chemical engineers, data scientists, analytical chemists, and manufacturing specialists [30] [36]

- Regulatory Alignment: Demonstrating model validity and alignment with Quality by Design (QbD) principles as outlined in ICH Q8, Q9, and Q10 guidelines [36]

- Change Management: Addressing cultural resistance through education and demonstration of successful case studies [33]

Technical Implementation

From a technical perspective, effective deployment of Bayesian optimization requires attention to several factors:

- Problem Formulation: Appropriate parameter space definition and objective function specification based on process understanding [31]

- Data Quality: Ensuring that initial training data are reliable and informative enough to build a useful model; Bayesian methods remain particularly valuable in data-scarce scenarios because they explicitly quantify the uncertainty arising from limited data [32]

- Computational Infrastructure: Adequate resources for model training and optimization, though cloud computing has made this increasingly accessible [30]

Bayesian models provide a powerful framework for accelerating pharmaceutical process development and optimization while effectively managing the uncertainties inherent in these complex systems. By enabling more efficient experimental designs, quantifying prediction uncertainty, and systematically balancing multiple objectives, these approaches can significantly reduce development timelines, material requirements, and environmental impact [30] [34] [35].

The continuing evolution of Bayesian methods, including advances in surrogate modeling, uncertainty quantification, and integration with mechanistic knowledge, promises to further enhance their utility across the pharmaceutical development lifecycle [30] [33]. As demonstrated by successful industrial implementations [34] [35], the strategic adoption of Bayesian approaches represents a valuable competitive advantage in the increasingly challenging landscape of pharmaceutical development.

The assessment of chemical safety is undergoing a paradigm shift. The traditional approach, which relies heavily on apical outcomes from in vivo testing, is increasingly viewed as unfit for purpose in the 21st century [9]. While the in vivo acute lethality test, first introduced in the 1920s and measuring the median lethal dose (LD50) in rodents, has long been the gold standard for acute toxicity evaluation, ethical concerns and scientific progress have motivated the development of alternative approaches [37] [38].

A range of New Approach Methodologies (NAMs), spanning in vitro and in silico techniques, has emerged to determine toxic effects [9]. However, regulatory adoption of these individual methods has been slow, partly because of concerns about their reliability and the lack of validation frameworks [9]. A proposed solution formalizes the combination of evidence from multiple sources through a "tiered assessment" approach, in which data gathered through a sequential NAM testing strategy are used to infer the properties of a compound of interest [9]. That work illustrates how such a scheme, underpinned by Bayesian statistical inference, can be developed and applied to the endpoint of rat acute oral lethality, enabling quantification of the degree of confidence that a substance belongs to a specific toxicity category [9].
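A minimal sketch of such a tiered scheme, assuming five ordered toxicity categories, a uniform prior, and test outcomes that are conditionally independent given the true category, so that each tier's result can be applied as a successive Bayes update. The likelihood matrices, their `sharpness` parameter, the helper names, and the observed outcomes are invented for illustration; in practice each method's likelihoods would be estimated from its performance on reference chemicals.

```python
import numpy as np

CATEGORIES = ["Cat 1", "Cat 2", "Cat 3", "Cat 4", "Cat 5 / not classified"]


def likelihood_matrix(sharpness, n=len(CATEGORIES)):
    """Hypothetical P(test outcome | true category): rows are true categories,
    columns are predicted categories; errors decay with category distance."""
    idx = np.arange(n)
    L = np.exp(-sharpness * np.abs(idx[:, None] - idx[None, :]))
    return L / L.sum(axis=1, keepdims=True)


def bayes_update(prior, likelihood, observed_category):
    """Posterior over true categories after a test reports `observed_category`."""
    posterior = prior * likelihood[:, observed_category]
    return posterior / posterior.sum()


# Tier 1: a less discriminating in silico model; tier 2: a sharper in vitro assay.
# Both report category index 3 ("Cat 4") in this invented example.
tiers = [("in silico", likelihood_matrix(0.7), 3),
         ("in vitro", likelihood_matrix(1.2), 3)]

belief = np.full(len(CATEGORIES), 1.0 / len(CATEGORIES))  # uniform prior
history = [belief]
for name, L, outcome in tiers:
    belief = bayes_update(belief, L, outcome)
    history.append(belief)
    print(f"After {name} tier: P({CATEGORIES[outcome]}) = {belief[outcome]:.2f}")
```

The posterior after each tier is a direct statement of confidence in category membership, so testing can stop once that confidence crosses a predefined decision threshold rather than after a fixed battery of tests.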

Background and Significance

The Need for Alternative Assessment Methods

The ubiquitous use of chemical substances across industries creates unavoidable opportunities for human exposure, necessitating robust hazard identification and assessment activities [38]. Despite the usefulness of LD50 data for chemical screening, triaging, and hazard classification, ethical considerations centered on dosing animals to the point of mortality have provided strong motivation to identify and validate alternative testing approaches [37] [38].

Furthermore, it is unrealistic to expect that a single alternative test might reliably reproduce the results of a complex animal study [9]. Toxicological outcomes rarely represent simple binary determinations; instead, they exist along a continuum, with "positive" and "negative" judgments often dictated by which side of a regulatory threshold a value falls [9].
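Because the underlying quantity is continuous, a Bayesian prediction of LD50 converts naturally into probabilities for each regulatory category rather than a single positive/negative call. The sketch below assumes the belief about log10(LD50) is normally distributed and uses the GHS acute oral toxicity cut-offs (5, 50, 300, 2000, and 5000 mg/kg body weight); the predicted value, its uncertainty, and the function name are hypothetical.

```python
import numpy as np
from scipy.stats import norm

# GHS acute oral toxicity cut-offs (mg/kg bw): Cat 1 <= 5, Cat 2 <= 50,
# Cat 3 <= 300, Cat 4 <= 2000, Cat 5 <= 5000, otherwise not classified.
CUTOFFS_MG_PER_KG = [5, 50, 300, 2000, 5000]
LABELS = ["Cat 1", "Cat 2", "Cat 3", "Cat 4", "Cat 5", "Not classified"]


def category_probabilities(mean_log10_ld50, sd_log10_ld50):
    """Probability mass in each category for a normal belief about log10(LD50)."""
    edges = np.concatenate(([-np.inf], np.log10(CUTOFFS_MG_PER_KG), [np.inf]))
    cdf = norm.cdf(edges, loc=mean_log10_ld50, scale=sd_log10_ld50)
    return dict(zip(LABELS, np.diff(cdf)))


# Hypothetical prediction: central estimate 250 mg/kg, SD of 0.3 log10 units.
probs = category_probabilities(np.log10(250.0), 0.3)
for label, p in probs.items():
    print(f"{label}: {p:.3f}")
```

With a central estimate of 250 mg/kg the point prediction falls in Category 3, yet a substantial share of the probability lies above the 300 mg/kg boundary in Category 4; this is the information a binary call made on one side of the threshold discards.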

Bayesian Inference in Predictive Toxicology