Generative AI vs. Active Learning: A Strategic Comparison for Accelerating Drug Discovery

This article provides a comprehensive comparison of Generative AI and Active Learning, two pivotal machine learning paradigms transforming pharmaceutical R&D.

Generative AI vs. Active Learning: A Strategic Comparison for Accelerating Drug Discovery

Abstract

This article provides a comprehensive comparison of Generative AI and Active Learning, two pivotal machine learning paradigms transforming pharmaceutical R&D. Tailored for researchers and drug development professionals, it explores the foundational principles of each approach, details their methodological applications in tasks from de novo molecular design to lead optimization, and addresses key implementation challenges. By presenting real-world case studies and validation metrics, it offers a strategic framework for selecting and integrating these technologies to enhance efficiency, reduce costs, and improve success rates in the drug discovery pipeline.

Core Concepts: Demystifying Generative AI and Active Learning in a Pharmaceutical Context

The pursuit of knowledge and innovation is undergoing a fundamental transformation, moving from serendipitous "Discovery by Luck" toward systematic "Discovery by Design." This paradigm shift is largely driven by the emergence of sophisticated computational approaches, particularly generative artificial intelligence (AI) and active learning methodologies. In scientific domains, especially drug development, this transition represents a move away from reliance on chance observations toward engineered, predictable discovery processes powered by algorithms that can explore complex spaces with unprecedented efficiency.

This guide provides an objective comparison of two leading computational approaches—generative AI and active learning—that are enabling this transition. We examine their performance characteristics, experimental protocols, and practical implementations to help researchers, scientists, and drug development professionals make informed decisions about integrating these technologies into their discovery workflows.

Quantitative Performance Comparison

Extensive research has quantified the performance characteristics of both generative AI and active learning approaches across multiple dimensions. The table below summarizes key findings from controlled studies and implementation data.

Table 1: Performance Metrics of Generative AI vs. Active Learning Approaches

| Performance Metric | Generative AI | Active Learning | Data Sources |

|---|---|---|---|

| Learning Gains/Effectiveness | Over double the median learning gains compared to in-class active learning [1] | 54% higher test scores than traditional passive learning [2] [3] | Randomized controlled trials [1] [3] |

| Time Efficiency | Learned significantly more in less time; median 49 minutes vs. 60 minutes for same material [1] | 13 times more learner talk time and 16 times more non-verbal engagement [3] | Time-on-task measurements [1] [3] |

| User Engagement & Motivation | Significantly higher engagement and motivation ratings [1] | 75% of students feel more motivated compared to 30% in traditional environments [2] | Likert-scale surveys and engagement tracking [1] [2] |

| Implementation Scale | Highly scalable; addresses limitations of one-teacher-to-many-students model [1] | 62.7% participation rate vs. 5% in lecture formats [3] | Large-scale educational studies [1] [3] |

| Resource Efficiency | Drastic reduction in inference costs (over 280-fold for equivalent performance) [4] | Enables better model performance with fewer labeled examples [5] | Market analysis and machine learning benchmarks [4] [5] |

| Risks & Limitations | Potential for over-reliance, decreased cognitive engagement, and superficial learning [6] | Requires careful implementation to overcome student perception gaps [3] | Controlled experiments and observational studies [6] [3] |

Experimental Protocols and Methodologies

Generative AI in Educational Intervention

A recent randomized controlled trial at Harvard University provides a robust protocol for evaluating generative AI effectiveness [1].

Population and Setting: The study involved 194 undergraduate students in a physics course, broadly representative of diverse institutional populations.

Experimental Design: A crossover design was implemented where students were divided into two groups. Each group experienced both teaching methodologies in consecutive weeks:

- Week 1: Group 1 used AI-supported lesson at home; Group 2 participated in active learning lesson in class

- Week 2: Conditions were reversed between groups

AI Intervention: The custom AI tutor was designed with specific pedagogical principles:

- Targeted prompt engineering to facilitate active learning

- Cognitive load management techniques

- Growth mindset promotion

- Scaffolding of content with timely, accurate feedback

- Self-paced progression through material

Measurement Instruments:

- Pre-test and post-test assessments for baseline knowledge and content mastery

- 5-point Likert scale surveys measuring engagement, enjoyment, motivation, and growth mindset

- Platform analytics tracking time-on-task for AI group

- Statistical analysis using two-sample rank-sum (Mann-Whitney) tests and linear regression models with multiple controls

Key Findings: The AI group demonstrated significantly higher post-test scores (median = 4.5 vs. 3.5) with less median time on task (49 minutes vs. 60 minutes), while reporting higher engagement and motivation [1].

Active Learning Implementation Framework

Research from Engageli and other institutions establishes a clear protocol for active learning implementation [3].

Setting and Participants: Studies span K-12, higher education, and corporate training environments with diverse participant populations.

Intervention Components:

- Transformation of passive lecture-based learning into participatory experiences

- Interactive techniques including discussions, polls, small-group activities

- Real-time adjustments based on student engagement levels

- Digital and collaborative elements to enhance participation

Measurement Approach:

- Comparison of test scores between active learning and traditional lecture formats

- Tracking of verbal and non-verbal engagement metrics

- Assessment of knowledge retention over time

- Measurement of participation rates and attendance patterns

- Longitudinal tracking of performance in follow-up courses

Key Outcomes: Active learning environments generated 13 times more learner talk time, 16 times higher non-verbal engagement, and 54% higher test scores compared to traditional lectures [3].

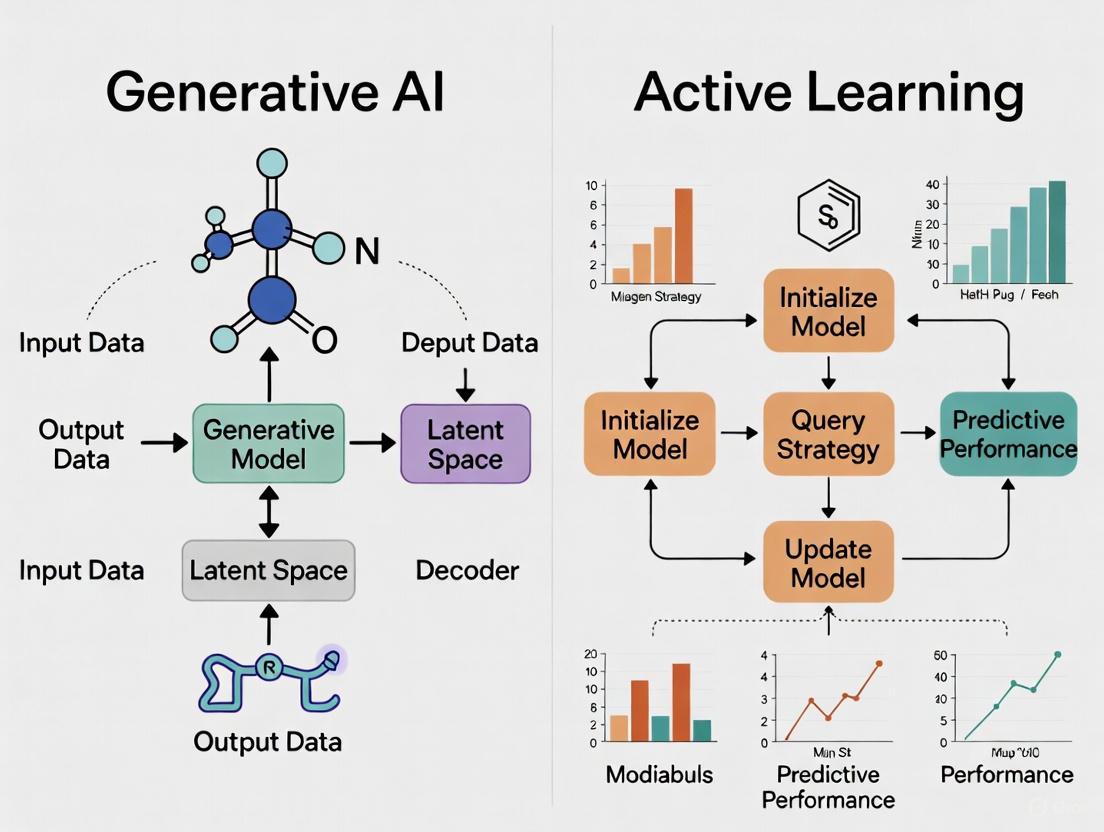

Visualization of Methodological Frameworks

Generative AI Tutoring Workflow

Active Learning Implementation Cycle

The Researcher's Toolkit: Essential Implementation Components

Successful implementation of either generative AI or active learning approaches requires specific "research reagent solutions" – the essential components that enable effective deployment.

Table 2: Essential Research Reagents for AI and Active Learning Implementation

| Component Category | Specific Tools & Solutions | Function & Purpose | Implementation Examples |

|---|---|---|---|

| Computational Infrastructure | GPUs/TPUs, Cloud Computing (AWS, Google Cloud, Azure), High-performance Hardware [7] | Handles large-scale parallel computations for model training and inference | Enables processing of complex AI algorithms and real-time interactions [7] |

| AI Models & Frameworks | Transformer Models (GPT), GANs, VAEs, PyTorch, TensorFlow, Keras [8] [7] | Provides foundation for generative AI capabilities and model development | Custom AI tutors for personalized learning [1] |

| Data Management Systems | Data Collection Tools, Preprocessing Pipelines, Annotation Platforms [7] [5] | Ensures quality, diversity, and appropriate labeling of training data | Critical for both AI training and active learning selection strategies [5] |

| Interaction & Engagement Platforms | Digital Polling, Chat Systems, Collaboration Tools, Video Conferencing with Engagement Features [3] | Facilitates real-time interaction and participation measurement | Enables 13x more learner talk time and 16x more non-verbal engagement [3] |

| Assessment & Analytics | Learning Management Systems, Analytics Dashboards, A/B Testing Frameworks [1] | Tracks effectiveness, engagement metrics, and learning outcomes | Measures learning gains, time-on-task, and knowledge retention [1] |

| Pedagogical Design Components | Prompt Engineering Templates, Cognitive Load Management, Scaffolding Sequences [1] | Ensures educational effectiveness and appropriate challenge levels | Key to successful AI tutor design and active learning activity structure [1] |

Analysis and Interpretation of Findings

The comparative data reveals distinct strengths and applications for each paradigm. Generative AI demonstrates remarkable efficiency in personalized knowledge transfer, enabling students to learn more in less time while providing scalability that addresses fundamental limitations of traditional education models [1]. This comes with the caveat that poorly implemented AI may foster over-reliance and reduce cognitive engagement, potentially undermining long-term knowledge retention [6].

Active learning approaches show consistent advantages in fostering deeper engagement and developing critical thinking skills through social learning and collaboration [3]. The methodology effectively addresses the "perception gap" where students may feel they learn less despite demonstrating significantly better actual retention and understanding [3].

In the context of "Discovery by Design," both approaches offer pathways toward more systematic innovation. Generative AI excels in exploring vast solution spaces and generating novel possibilities, while active learning provides frameworks for collaborative refinement and validation of discoveries. The emerging research suggests that hybrid approaches—leveraging the strengths of both paradigms—may represent the most promising direction for advanced research and development workflows.

The transition from "Discovery by Luck" to "Discovery by Design" is not merely about adopting new technologies, but about fundamentally reengineering how we approach knowledge creation and innovation. Both generative AI and active learning offer powerful methodologies for this transformation, each with distinct performance characteristics and implementation requirements.

Generative AI provides unprecedented scalability and personalization in exploration and content generation, while active learning creates environments conducive to deep engagement and collaborative problem-solving. The experimental evidence suggests context-appropriate application of these approaches—either individually or in combination—can significantly accelerate discovery processes across scientific domains.

For researchers and drug development professionals, the imperative is clear: deliberate design of discovery workflows, informed by robust experimental data and implementing appropriate technological solutions, can systematically enhance innovation outcomes. The paradigm of chance observations is giving way to engineered discovery processes, with generative AI and active learning serving as foundational methodologies in this transformative era.

Eroom's law—the observation that drug discovery is becoming slower and more expensive over time, despite technological improvements—presents a critical economic and innovative challenge for the pharmaceutical industry [9]. This review examines the potential of artificial intelligence (AI) to reverse this trend by comparing two dominant computational approaches: generative AI and active learning. We synthesize current data from 2024-2025, detailing experimental protocols, performance metrics, and practical workflows to guide researchers and drug development professionals in leveraging these technologies to address the productivity crisis.

Understanding Eroom's Law and the "Better than the Beatles" Problem

Eroom's law describes the adverse trend where the inflation-adjusted cost of developing a new drug roughly doubles every nine years [9]. This decline in R&D efficiency is attributed to several factors, including the "better than the Beatles" problem, where new drugs must demonstrate incremental benefit over already highly effective treatments, thereby requiring larger and more expensive clinical trials [9]. Other key causes include increasingly cautious regulators, inefficient resource allocation, and a bias towards basic research methods that often fail in clinical trials due to the complexity of whole organisms [9]. The field of cardiovascular therapeutics (CVD) exemplifies this crisis, with 33% fewer CVD therapeutics approved in the 2000s compared to the previous decade [10].

AI-driven discovery platforms claim to drastically shorten early-stage R&D timelines and cut costs by using machine learning and generative models to accelerate tasks traditionally reliant on cumbersome trial-and-error [11]. This review evaluates the evidence for this claim by comparing the capabilities of generative AI and active learning.

Comparative Analysis: Generative AI vs. Active Learning

The table below summarizes the core characteristics, performance data, and applications of these two approaches based on recent literature.

Table 1: Comparative Analysis of Generative AI and Active Learning in Drug Discovery

| Feature | Generative AI | Active Learning |

|---|---|---|

| Core Paradigm | "Describe first then design": Creates novel molecular structures from scratch [12]. | "Design first then predict": Iteratively selects informative candidates from a library for evaluation [12]. |

| Primary Objective | De novo design of novel, drug-like molecules with optimized properties [11] [12]. | Efficiently navigate vast chemical spaces to identify high-potential hits with minimal resource use [12]. |

| Key Strengths | - Explores novel chemical space beyond known scaffolds.- Can generate molecules tailored to specific target profiles.- High speed in ideation [13] [12]. | - High efficiency in resource-constrained settings.- Reduces number of costly assays or simulations.- Improves predictive model accuracy with each cycle [12]. |

| Reported Performance | - ISM001-055: From target to Phase I in 18 months (Insilico Medicine) [11].- CDK2 Inhibitors: 8 out of 9 synthesized molecules showed in vitro activity [12].- Exscientia: ~70% faster design cycles with 10x fewer synthesized compounds [11]. | - Achieves 5–10x higher hit rates than random selection in drug combination searches [12].- Significantly reduces number of docking or ADMET assays needed to identify top candidates [12]. |

| Institutional Examples | Insilico Medicine, Exscientia, Recursion, BenevolentAI, Schrödinger, MIT (BoltzGen) [11] [13]. | Commonly integrated into molecular modeling pipelines and QSAR/QSPR model development [12]. |

Experimental Protocols and Workflows

Protocol 1: A Generative AI Model for Novel Protein Binders

Recent work from MIT on the model BoltzGen provides a protocol for generating novel protein binders for "undruggable" targets [13].

- Aim: To generate novel, functional protein binders from scratch for challenging biological targets.

- Key Innovations:

- Unified Architecture: The model performs both protein design and structure prediction, maintaining state-of-the-art performance across tasks [13].

- Physics-Aware Constraints: Built-in constraints, designed with wet-lab collaborator feedback, ensure generated proteins are functional and physically plausible [13].

- Rigorous Validation: The model was tested on 26 therapeutically relevant targets, including those explicitly chosen for their dissimilarity to training data. Validation was conducted in eight independent wet labs [13].

- Outcome: BoltzGen successfully generated novel protein binders ready to enter the drug discovery pipeline, demonstrating the ability to address previously undruggable targets [13].

Protocol 2: A Hybrid Workflow Merging Generative AI with Active Learning

A 2025 study published in Communications Chemistry detailed a hybrid workflow that nests a generative model within an active learning framework to overcome the limitations of either method used in isolation [12].

- Aim: To generate diverse, drug-like molecules with high predicted affinity and synthesis accessibility for specific targets.

- Workflow Overview:

Diagram 1: VAE-Active Learning Workflow

- Key Steps & Methodology:

- Data Representation & Initial Training: A Variational Autoencoder (VAE) is initially trained on a general set of molecules, then fine-tuned on a target-specific set [12].

- Nested Active Learning Cycles:

- Inner Cycle (Chemical Optimization): The VAE generates molecules, which are evaluated by chemoinformatic oracles for drug-likeness, synthetic accessibility (SA), and novelty. Molecules passing these filters are used to fine-tune the VAE, creating a self-improving cycle focused on chemical desirability [12].

- Outer Cycle (Affinity Optimization): After several inner cycles, accumulated molecules undergo physics-based evaluation (e.g., molecular docking). Those with excellent docking scores are added to a permanent set used for further VAE fine-tuning, shifting the focus towards high-affinity binders [12].

- Candidate Selection: Promising candidates from the permanent set undergo more rigorous molecular modeling simulations (e.g., PELE, Absolute Binding Free Energy) before final selection for synthesis and in vitro testing [12].

- Experimental Validation:

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational and experimental resources integral to implementing the described AI-driven discovery workflows.

Table 2: Key Research Reagent Solutions for AI-Driven Drug Discovery

| Item / Solution | Function / Description | Example Use Case |

|---|---|---|

| Generative AI Platforms (e.g., Exscientia, Insilico, BoltzGen) | Software that uses AI to design novel molecular structures from scratch based on desired properties [11] [13]. | De novo design of protein binders (BoltzGen) [13] or small-molecule inhibitors (Insilico's ISM001-055) [11]. |

| Active Learning (AL) Framework | An iterative computational protocol that selects the most informative data points for evaluation to maximize learning efficiency [12]. | Prioritizing compounds for docking studies or bioassays to rapidly identify hits with minimal resource expenditure [12]. |

| Variational Autoencoder (VAE) | A type of generative model that learns a compressed, continuous representation (latent space) of molecular structures, enabling smooth interpolation and generation [12]. | Core component of the hybrid workflow for generating novel molecules; its latent space is iteratively refined via AL cycles [12]. |

| Physics-Based Oracle (e.g., Molecular Docking, PELE, ABFE) | Computational methods that use physical principles to predict the binding affinity and pose of a molecule to a target protein [12]. | Used in the outer AL cycle to evaluate and filter generated molecules for their predicted binding energy and mode of action [12]. |

| Chemoinformatic Oracle | Algorithms that predict chemical properties such as drug-likeness, synthetic accessibility (SA), and novelty [12]. | Used in the inner AL cycle to filter out generated molecules that are not synthesizable or do not adhere to drug-like criteria [12]. |

Integrated Workflow for Target Selection and Candidate Generation

For a research team aiming to initiate a new project, the following diagram outlines a logical decision pathway for selecting and applying these AI methodologies.

Diagram 2: Target Strategy Selection

The data and experimental protocols synthesized here demonstrate that AI methodologies, particularly generative AI and active learning, are transitioning from theoretical promise to tangible utility in combating Eroom's law. While no AI-discovered drug has yet gained full approval, the acceleration of candidates into clinical trials and the enhanced efficiency in pre-clinical stages provide compelling evidence of a paradigm shift [11]. The most powerful approach may not be a choice between generative AI or active learning, but their strategic integration. The hybrid VAE-AL workflow, which leverages the creative power of generative models guided by the efficient, physics-informed prioritization of active learning, offers a robust framework for generating high-quality, novel drug candidates [12]. For researchers and drug development professionals, mastering these tools and workflows is no longer a niche specialty but an economic and scientific imperative to ensure a future pipeline of innovative and accessible therapeutics.

The process of drug discovery has historically been characterized by high costs, extensive timelines, and low success rates. Traditional methods, which often rely on the exhaustive evaluation of molecular libraries, fundamentally limit the exploration of vast and diverse chemical spaces [12]. Generative Artificial Intelligence (GenAI) represents a disruptive paradigm shift, moving from a "design first, then predict" approach to an inverse "describe first, then design" methodology [12]. This allows researchers to algorithmically navigate the estimated 10^23 to 10^60 drug-like molecules in the chemical universe to create novel biological compounds from scratch [14] [15]. By learning the underlying patterns and rules of chemical and biological data, generative models can produce previously unseen molecular structures with tailored properties, dramatically accelerating the identification of promising therapeutic candidates [16] [15] [17].

This review positions generative AI as the creative engine for de novo molecular design, objectively comparing its performance and methodologies against and in conjunction with other computational approaches, particularly active learning (AL). Active learning is a specific instance of sequential experimental design that uses machine learning to intelligently choose the next batch of molecular structures for evaluation, closely mimicking the iterative design-make-test-analyze cycles of laboratory experiments [18]. We will explore how these approaches individually and synergistically address the core challenges of modern drug discovery.

Comparative Analysis of Generative AI Architectures

Various generative AI architectures have been developed, each with distinct strengths, limitations, and optimal applications. The table below provides a structured comparison of the primary model types used in de novo molecular design.

Table 1: Comparison of Key Generative AI Architectures for Molecular Design

| Model Architecture | Core Operating Principle | Key Advantages | Inherent Challenges | Exemplary Applications |

|---|---|---|---|---|

| Variational Autoencoders (VAEs) [18] [14] | Encodes input into a probabilistic latent space; decodes sampled points to generate new data [17]. | Continuous, interpretable latent space enabling smooth interpolation; robust and scalable training; fast parallelizable sampling [12]. | Can generate blurry or invalid structures; prior distribution may over-simplify complex data [14]. | Integration with active learning cycles; efficient exploration of chemical space [12]. |

| Generative Adversarial Networks (GANs) [14] [17] | Two neural networks (generator & discriminator) are trained adversarially [17]. | Capable of producing high yields of chemically valid molecules [12]. | Training instability and "mode collapse" (limited diversity) [12]. | Image-driven molecular design; creative content generation [17]. |

| Autoregressive Transformers [12] [17] | Models sequence data (e.g., SMILES) by predicting the next token based on all previous ones [17]. | Captures long-range dependencies in data; leverages powerful pre-trained chemical language models [12]. | Sequential decoding can make training and sampling slower [12]. | Goal-directed generation using large chemical corpora [19]. |

| Diffusion Models [14] [19] | Iteratively denoises random noise into valid molecular structures through a reversal process [12] [17]. | High sample quality and diversity; state-of-the-art performance in structured output generation [12] [19]. | Computationally intensive due to many sampling steps [12]. | 3D molecular structure generation [19]; high-fidelity inverse design [17]. |

Performance Benchmarking: Generative Models and Hybrid Workflows

The true test of these technologies lies in their ability to produce novel, valid, and effective molecular structures. The following table summarizes quantitative performance data from recent studies and workflows, highlighting the synergy between generative AI and active learning.

Table 2: Experimental Performance of Generative AI and Active Learning Workflows

| Study / Workflow | Core Methodology | Target(s) | Key Experimental Results & Performance Metrics |

|---|---|---|---|

| VAE with Nested Active Learning [12] | VAE integrated with inner (chemoinformatics) and outer (molecular modeling) active learning cycles. | CDK2, KRAS | CDK2: 9 molecules synthesized, 8 showed in vitro activity (1 with nanomolar potency). KRAS: 4 molecules identified with potential activity. Generated novel, diverse scaffolds with high predicted affinity and synthesis accessibility. |

| REINVENT + Free Energy Simulations [18] | Generative AI (REINVENT) combined with precise absolute binding free energy (ABFE) simulations in an active learning protocol. | 3CLpro, TNKS2 | Discovered ligands with higher scores than a baseline surrogate model for 3CLpro and compounds with experimentally determined affinities for TNKS2. Achieved high chemical diversity, exploring a different chemical space than the baseline. |

| Property-Guided Diffusion (GaUDI) [17] | Equivariant graph neural network for property prediction combined with a generative diffusion model. | Organic Electronic Materials | Achieved 100% validity in generated molecular structures while optimizing for single and multiple objectives. |

| Graph Convolutional Policy Network (GCPN) [17] | Reinforcement learning (RL) model that sequentially adds atoms and bonds to construct molecules. | General Molecular Properties | Demonstrated capability to generate molecules with desired chemical properties while ensuring high chemical validity. |

| GraphAF [17] | Autoregressive flow-based model fine-tuned with reinforcement learning. | General Molecular Properties | Combined efficient sampling from a learned distribution with targeted optimization towards desired molecular properties. |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the methodological rigour behind these approaches, we detail two of the most effective protocols from the benchmarked studies.

Protocol 1: VAE with Nested Active Learning Cycles

This workflow, which yielded experimentally validated hits for CDK2 and KRAS, integrates generative and discriminative models within an iterative refinement framework [12].

Data Representation and Initial Training:

- Representation: Training molecules are represented as SMILES strings, which are tokenized and converted into one-hot encoding vectors.

- Training: A VAE is first pre-trained on a general molecular dataset to learn the fundamental rules of chemical viability. It is then fine-tuned on a target-specific training set to bias the generation towards structures with increased target engagement.

Molecule Generation and Inner AL Cycle (Cheminformatics Oracle):

- Generation: The fine-tuned VAE is sampled to yield a batch of new molecular structures.

- Evaluation: Generated molecules are evaluated by cheminformatics "oracles" for key properties including:

- Drug-likeness: Adherence to rules like Lipinski's Rule of Five.

- Synthetic Accessibility (SA): Estimated ease of chemical synthesis.

- Novelty/Dissimilarity: Measured against the current training set to ensure exploration.

- Fine-tuning: Molecules meeting predefined thresholds are added to a "temporal-specific set," which is used to further fine-tune the VAE, creating a feedback loop that prioritizes desired chemical properties.

Outer AL Cycle (Physics-Based Affinity Oracle):

- After several inner cycles, accumulated molecules in the temporal-specific set undergo more computationally intensive evaluation via molecular docking simulations, which serve as a physics-based affinity oracle.

- Molecules with favorable docking scores are transferred to a "permanent-specific set," which is used for the next round of VAE fine-tuning, directly steering the generation toward structures with high predicted binding affinity.

Candidate Selection and Validation:

- The most promising candidates from the permanent-specific set undergo rigorous filtration and advanced molecular modeling, such as Protein Energy Landscape Exploration (PELE) simulations, for an in-depth assessment of binding interactions and stability [12].

- Top-ranked compounds are then selected for synthesis and in vitro bioassays for experimental validation.

The following workflow diagram illustrates this nested active learning process:

Protocol 2: REINVENT with Binding Free Energy Ranking

This GAL (Generative Active Learning) protocol demonstrates the powerful combination of AI-driven generation with high-accuracy physics-based simulations on high-performance computing systems [18].

Molecular Generation with REINVENT: The REINVENT algorithm, a specialized generative model for molecular design, is used to propose a large initial batch of candidate molecules conditioned on the target protein.

Surrogate Model Pre-screening: A faster, surrogate machine learning model (e.g., a QSAR or docking model) is used to screen the large generated library and select a smaller, top-ranking batch of molecules for more precise evaluation. This step optimizes computational efficiency.

Precise Affinity Ranking via Free Energy Simulations: The selected batch of molecules undergoes rigorous binding affinity assessment using Absolute Binding Free Energy (ABFE) calculations, specifically the ESMACS (Enhanced Sampling of Molecular dynamics with Approximation of Continuum Solvent) protocol. These physics-based molecular dynamics simulations provide a highly accurate ranking of candidates, far surpassing the precision of docking scores.

Active Learning Feedback Loop: The results from the ABFE calculations are fed back into the REINVENT model. This feedback informs and guides the subsequent generation cycle, creating a closed-loop system that iteratively improves the quality of the generated molecules toward higher-affinity ligands.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The experimental protocols outlined above rely on a suite of computational tools and resources. The following table details these key "research reagents" for implementing generative AI and active learning in molecular design.

Table 3: Essential Research Reagents and Computational Tools for AI-Driven Molecular Design

| Tool / Resource | Type | Primary Function in Workflows | Exemplary Use Case |

|---|---|---|---|

| SMILES/SELFIES [15] | Molecular Representation | String-based representations that encode molecular structure; the "language" for many generative models. | SMILES strings are tokenized and used as input for VAEs and Transformer models [12] [15]. |

| VAE (Variational Autoencoder) [12] | Generative Model Architecture | Learns a continuous latent representation of molecules; enables generation and interpolation in chemical space. | Core generator in the nested AL workflow for CDK2/KRAS [12]. |

| REINVENT [18] | Generative AI Software | A generative model specifically designed for de novo molecular design and optimization. | Used in the GAL protocol for generating ligands for 3CLpro and TNKS2 [18]. |

| Molecular Docking [12] | Physics-Based Simulation | Predicts the preferred orientation and preliminary binding affinity of a small molecule to a protein target. | Serves as the "affinity oracle" in the outer active learning cycle [12]. |

| ABFE (Absolute Binding Free Energy) [18] | Physics-Based Simulation | Provides highly accurate calculation of binding affinity using molecular dynamics; used for precise ranking. | Final rigorous assessment in the REINVENT GAL protocol [18]. |

| PELE (Protein Energy Landscape Exploration) [12] | Advanced Sampling Algorithm | Models protein-ligand binding pathways and induced-fit conformational changes for in-depth pose analysis. | Used for candidate refinement and selection after docking in the VAE-AL workflow [12]. |

Integrated Workflow Logic

The most successful strategies merge generative AI's creative power with active learning's strategic guidance. The following diagram synthesizes the core logical relationship between these components into a unified, iterative workflow for modern, data-driven drug discovery.

The empirical data and experimental protocols presented in this review compellingly argue that generative AI serves as the indispensable creative engine for de novo molecular design. However, its full potential is unlocked when coupled with the strategic, iterative refinement provided by active learning and robust physics-based validation [12] [18]. While standalone generative models can rapidly explore chemical space, they can struggle with challenges such as target engagement, synthetic accessibility, and the generalization beyond their training data [12]. Active learning frameworks directly address these limitations by embedding generative models within a closed-loop feedback system, leveraging both cheminformatics and physics-based oracles to steer the generation toward drug-like, synthesizable, and high-affinity ligands [12].

The synergy between these approaches is evident in the reported results. The VAE-AL workflow successfully generated novel scaffolds for CDK2 and KRAS, leading to experimentally confirmed active compounds, including a nanomolar-potency inhibitor [12]. Similarly, the GAL protocol combining REINVENT with free energy simulations discovered ligands with higher scores than baseline models and accessed diverse, unexplored regions of chemical space [18]. These successes demonstrate a powerful convergence of data-driven AI and physics-based modeling, creating a new paradigm for molecular design.

Future directions in this field point towards even greater integration and automation. This includes the convergence of generative models with Bayesian retrosynthesis planners, self-supervised pre-training on ultra-large chemical corpora, and the multimodal integration of omics-derived features for precision therapeutics [19]. The synthesis of generative AI, closed-loop automation, and advanced computing is paving the way for fully autonomous molecular design ecosystems, poised to radically accelerate the journey from concept to viable therapeutic candidate [19].

In the fields of materials science and drug development, researchers face a fundamental challenge: exploring vast experimental design spaces with limited time and financial resources. Exhaustive trial-and-error approaches are often impractical, creating a critical need for strategies that can maximize information gain from a minimal number of experiments. Active Learning (AL) has emerged as a powerful solution to this problem. AL is a subfield of machine learning that studies algorithms designed to select the most informative data points to improve their own models, forming an iterative refinement loop [20]. This guide provides an objective comparison of traditional AL models against a emerging paradigm: generative AI and Large Language Model-based Active Learning (LLM-AL). Benchmarked across diverse scientific domains, these approaches demonstrate how strategic data selection can dramatically accelerate discovery, potentially reducing the number of experiments needed by over 70% [21].

Foundational Active Learning Protocols and Performance

The Core Active Learning Workflow

The standard AL process is an iterative cycle comprising three critical stages, as established in computational biology and materials science reviews [20]:

- Model Training: An initial model is learned from a (often small) starting set of experimental data.

- Query Selection: The model generates hypotheses to propose the most informative subsequent experiments. The selection is based on criteria designed to reduce model uncertainty or maximize expected improvement.

- Model Update: New data from the performed experiment is used to update the model. This cycle repeats until a performance target is met or resources are exhausted.

This workflow can be visualized as a continuous loop of learning and experimentation.

Traditional Machine Learning Models in AL

Traditional AL relies on well-established machine learning models as its core "brain" for experiment selection. The table below summarizes four common models and their typical application in AL pipelines.

Table 1: Key Traditional Machine Learning Models for Active Learning

| Model | Primary Function in AL | Key Characteristic | Noted Challenge |

|---|---|---|---|

| Gaussian Process Regressor (GPR) | Models a distribution over functions to make predictions. | Provides native uncertainty quantification, crucial for query selection. | Hyperparameter tuning is brittle with scarce data [21]. |

| Random Forest Regressor (RFR) | Ensemble model using multiple decision trees. | Robust to outliers and handles mixed data types. | Lacks inherent, well-calibrated uncertainty estimates. |

| Bayesian Neural Network (BNN) | Neural network with probability distributions over weights. | Combines flexibility of NNs with Bayesian uncertainty. | Computationally intensive and complex to train. |

| eXtreme Gradient Boosting (XGBoost) | Optimized gradient-boosting library. | High predictive accuracy and execution speed. | Not inherently designed for uncertainty-aware query. |

Documented Performance of Traditional AL

When integrated into automated, closed-loop systems, traditional AL has demonstrated significant value. Studies and industrial applications highlight its impact on experimental efficiency:

- Order-of-Magnitude Efficiency Gains: AL implementations using Bayesian optimization have repeatedly demonstrated identifying optimal material candidates with up to 10 to 20 times fewer experimental iterations compared to unguided or random screening [21].

- Enhanced Learning Outcomes: In educational settings, which serve as a proxy for training efficiency, AI-enhanced active learning programs have shown a 54% increase in test scores and generate 10 times more engagement than traditional passive methods [2].

- Corporate Training Efficiency: In corporate settings, AI-powered training, which often leverages AL principles, leads to a 57% increase in learning efficiency, with employees completing training faster while demonstrating superior mastery and retention [2].

The Emerging Paradigm: LLM-Based Active Learning

The LLM-AL Framework and Benchmarking

A 2025 study introduced a training-free LLM-based Active Learning framework (LLM-AL) that operates in an iterative few-shot setting [21]. This approach leverages the pretrained knowledge and universal token-based representations of LLMs to propose experiments directly from text-based descriptions of experimental conditions and results. The researchers benchmarked LLM-AL against conventional ML models (GPR, BNN, RFR, XGBoost) across four diverse materials science datasets: matbench_steels (alloy design), P3HT/CNT (polymer nanocomposites), Perovskite, and Membrane optimization [21].

The study explored two prompting strategies:

- Parameter-Format: Inputs are structured as concise feature-value pairs.

- Report-Format: Inputs are rewritten into expanded descriptive text to provide additional experimental context.

Comparative Performance Data

The performance of LLM-AL and traditional ML models was measured by their efficiency in converging on optimal candidates within each dataset. The results demonstrate a strong advantage for the LLM-based approach.

Table 2: Experimental Efficiency: LLM-AL vs. Traditional ML Models

| Dataset | Primary Domain | Top Performing Model(s) | Key Performance Metric |

|---|---|---|---|

| matbench_steels | Alloy Design | LLM-AL (Parameter-Format) | Consistently reached optima using <30% of data. |

| P3HT/CNT | Polymer Nanocomposites | LLM-AL | Outperformed all traditional ML models. |

| Perovskite | Photovoltaic Materials | LLM-AL | Consistently reached optima using <30% of data. |

| Membrane | Membrane Optimization | LLM-AL (Report-Format) | Most notable improvement with descriptive prompts. |

| Across all datasets | Multiple | LLM-AL | >70% reduction in experiments needed to find top candidates [21]. |

The LLM-AL Experimental Workflow

The LLM-AL framework modifies the traditional AL loop by using a Large Language Model as the surrogate model for experiment selection. The process begins with a text-based prompt that contains prior experimental results and context, from which the LLM suggests the next most informative experiment.

Critical Analysis: LLM-AL vs. Traditional Machine Learning

Performance and Stability

The benchmark study yielded several critical findings for researchers considering these approaches [21]:

- Performance: LLM-AL consistently converged to optimal targets using less than 30% of the available data across most dataset and prompt format combinations. It consistently outperformed traditional ML models, requiring fewer iterations to reach the same performance level.

- Stability: Despite the inherent non-determinism of LLMs, the performance variability of LLM-AL across repeated runs was found to be broadly consistent and within the variability range typically observed for traditional ML approaches.

- Prompting Strategy: The optimal prompting format is context-dependent. The parameter-format (concise) was superior for datasets with many independent variables (e.g., compositions), while the report-format (descriptive) excelled for datasets with procedural descriptors, where added context revealed hidden relationships.

Advantages and Limitations

Table 3: Functional Comparison: LLM-AL vs. Traditional AL

| Feature | LLM-AL | Traditional AL |

|---|---|---|

| Generalizability | High; operates in universal token space, transferable across domains [21]. | Low; often requires problem-specific feature engineering [21]. |

| Cold-Start Performance | Strong; leverages pretrained knowledge to guide exploration with sparse data [21]. | Weak; suffers from the "cold-start" problem with low predictive accuracy initially [21]. |

| Input Representation | Flexible text-based inputs (descriptive or structured). | Rigid, fixed-length numerical feature vectors. |

| Interpretability | Potential for human-readable reasoning (e.g., via chain-of-thought). | Often a "black box"; decisions can be hard to interpret. |

| Computational Cost | Higher per-query cost due to model size. | Lower per-query cost. |

| Primary Bottleneck | Prompt design and context management. | Feature engineering and hyperparameter tuning. |

The Scientist's Toolkit: Essential Research Reagents

Implementing an effective AL pipeline, whether traditional or LLM-based, requires a suite of computational "reagents." The following tools are essential for conducting modern, data-efficient research.

Table 4: Key Research Reagent Solutions for Active Learning

| Reagent / Tool | Function | Relevance to AL |

|---|---|---|

| Large Language Model (e.g., GPT, Cohere) | Core surrogate model for experiment suggestion. | Serves as the "brain" in LLM-AL, interpreting prompts and proposing experiments based on learned knowledge [21]. |

| Traditional ML Libraries (e.g., Scikit-learn, XGBoost) | Provides algorithms for GPR, RFR, XGBoost, etc. | Forms the backbone of traditional AL pipelines for model training and prediction [21]. |

| Benchmark Datasets | Standardized data for model training and validation. | Critical for benchmarking AL performance across different strategies (e.g., matbench_steels, Perovskite) [21]. |

| Interactive Visualization Tools | Elucidates the model training and query selection process. | Helps researchers understand when and how AL works by tracking prediction changes across query stages [22]. |

| High-Contrast Accessibility Tools | Ensures software and visualizations are accessible. | Crucial for inclusive tool development, testing rendering in Windows High Contrast Mode, etc. [23] [24]. |

The empirical evidence clearly positions Active Learning as a transformative strategy for optimizing data efficiency in scientific discovery. The comparison between traditional ML and the emerging LLM-AL paradigm reveals a shifting landscape. While traditional models like GPR and BNN remain powerful, they are often constrained by their lack of generalizability and reliance on feature engineering. The LLM-AL framework demonstrates that leveraging the broad, pretrained knowledge of large language models can mitigate the cold-start problem and provide a more flexible, generalizable tool for guiding experimental design across diverse domains [21]. For researchers and drug development professionals, the choice of strategy will depend on the specific problem structure, data availability, and computational resources. However, the overarching conclusion is that integrating strategic data selection via Active Learning is no longer optional but is becoming essential infrastructure for efficient and accelerated research.

The research and development (R&D) landscape is being transformed by two powerful computational approaches: generative artificial intelligence (Gen-AI) and active learning. While often discussed separately, their combined potential within scientific workflows represents a frontier of innovation. Generative AI, a subset of artificial intelligence that utilizes machine learning models to create new, original content—from molecular structures to predictive text—operates by learning patterns and structures from existing data [25]. In contrast, active learning refers to AI systems that engage in an iterative process of selecting the most informative data points for human labeling or experimental validation, thereby maximizing learning efficiency from limited data [2]. Within the context of scientific R&D, particularly in fast-moving fields like drug development, understanding the distinct strengths, limitations, and synergistic potential of these approaches is critical for accelerating discovery. This guide provides an objective comparison of their performance, supported by experimental data and concrete protocols for integration.

Performance Comparison: Quantitative Analysis of Approaches

The table below summarizes key performance metrics for Generative AI and Active Learning, synthesized from recent studies and meta-analyses. This data provides a foundation for understanding their complementary roles.

Table 1: Comparative Performance of Generative AI and Active Learning in R&D Contexts

| Performance Metric | Generative AI | Active Learning | Comparative Insights |

|---|---|---|---|

| Learning Efficiency / Score Improvement | 30% improvement in student performance [26]; improves outcomes by up to 30% [2] | 54% higher test scores in AI-enhanced active learning environments [2] | Active learning demonstrates a significantly larger effect size for knowledge acquisition and retention. |

| Effect on Innovation & Creativity | 64% of data leaders say AI enables innovation [27]; 64% of organizations report AI enables innovation [28] | Generates 10x more engagement than passive learning [2] | Gen-AI is a direct catalyst for novel idea generation, while active learning sustains deep engagement necessary for innovation. |

| Impact on Cognitive Engagement | Can lower cognitive effort and "cognitive debt"; associated with less brain activity in writing tasks [25] | High cognitive engagement through iterative querying and problem-solving [2] | A key differentiator; active learning promotes deeper cognitive processing, while Gen-AI risks cognitive offloading. |

| Intervention Duration for Efficacy | Medium-term interventions (4–12 weeks) yielded higher effect sizes [26] | Effective in short-term, focused training initiatives [29] | Gen-AI may require longer integration to show stable benefits, while active learning can produce rapid gains. |

| Domain Specificity & Accuracy | May be less accurate for highly technical or niche tasks without fine-tuning [27] | Excels at identifying and addressing specific knowledge gaps [2] | Active learning is inherently designed to navigate complex, specific problem spaces efficiently. |

Experimental Protocols: Methodologies for Validation

To objectively evaluate these approaches, researchers have employed rigorous experimental designs. Below are detailed protocols from key studies.

Protocol 1: Randomized Controlled Trial on AI Reliance

- Objective: To measure the impact of unrestricted Gen-AI use on learning outcomes and student engagement in a technical subject.

- Source: Corvinus University of Budapest experiment on operations research students [6].

- Methodology:

- Population: Students enrolled in an operations research class.

- Randomization: Participants were randomly assigned to one of two groups using a random algorithm to prevent self-selection bias.

- Intervention Group: Permitted to use generative AI tools (e.g., ChatGPT) during classes and examinations.

- Control Group: Required to complete all work without the use of AI tools.

- Fairness Control: A point compensation mechanism was applied post-test to equalize the average performance between the two groups, ensuring neither was disadvantaged by the group assignment.

- Metrics: Measured understanding of material, engagement levels, and final exam performance.

- Key Findings: The experiment, though revised due to student complaints, indicated that uncontrolled use of AI tools led to disengaged students and a lower understanding of the material. The strong student reaction to the removal of AI access was itself evidence of deep reliance [6].

Protocol 2: Meta-Analysis on Generative AI Efficacy

- Objective: To comprehensively synthesize the effect of Generative AI on university students' learning outcomes across diverse domains.

- Source: Large-scale meta-analysis published in ScienceDirect [26].

- Methodology:

- Literature Search: Systematic search across multiple academic databases following PRISMA guidelines.

- Study Selection: Incorporated 57 qualified studies with 97 effect size estimations. Studies were required to control for baseline differences between experimental and control groups.

- Framework: Analysis was grounded in the Activity Theory-Mobile Computer-Supported Collaborative Learning (AT-MCSCL) framework, categorizing moderators into learner features, tools, roles, rules, and context.

- Outcome Measures: Academic achievement, affective-motivational status, higher-order thinking, language skills, and metacognition.

- Key Findings: Gen-AI produced a large overall positive effect on learning outcomes. The largest effect sizes were observed in language skills, followed by academic achievement, affective-motivational status, and higher-order thinking. The effect on metacognition was not statistically significant [26].

Synergistic Workflow Integration

The true power of Gen-AI and active learning emerges when they are integrated into a cohesive R&D workflow. The following diagram visualizes this synergistic cycle, where Gen-AI acts as a generator of possibilities and active learning as a mechanism for targeted validation and refinement.

This workflow can be operationalized in a drug discovery pipeline as follows:

- Generative AI for Candidate Generation: AI models like specialized LLMs (e.g., Merlyn Mind) or molecular structure generators propose initial compound structures or biological targets based on vast scientific literature and existing drug databases [30].

- Active Learning for Prioritization: The list of generated candidates is too large for experimental validation. An active learning algorithm queries a human expert (or a high-fidelity simulation) to label the most promising or diverse candidates, dramatically narrowing the list to a high-priority subset [2].

- Generative AI for Protocol Design: For the prioritized candidates, Gen-AI assists in drafting initial experimental protocols or suggesting necessary research reagents [25].

- Active Learning for Experimental Optimization: The experimental design is refined through an active learning loop that seeks to minimize the number of wet-lab experiments needed to reach a conclusion, efficiently exploring parameter spaces like concentration, temperature, and timing.

- Iterative Validation and Re-generation: Results from physical experiments are fed back into the generative model, fine-tuning it to produce more accurate and viable candidates in the next cycle, thus closing the loop [27].

The Scientist's Toolkit: Essential Research Reagents & Solutions

For researchers aiming to implement the hybrid workflow described above, the following table details key "reagents" — both computational and physical — that are essential for conducting experiments.

Table 2: Key Research Reagent Solutions for AI-Enhanced R&D

| Research Reagent / Tool | Type | Primary Function in Workflow |

|---|---|---|

| Large Language Models (e.g., GPT-4, Claude, Gemini) | Computational | Serves as a core Generative AI engine for brainstorming, literature synthesis, hypothesis generation, and initial code or protocol drafting [27] [30]. |

| Specialized AI Tutors (e.g., LearnLM, Physics Wallah's Model) | Computational | Provides domain-specific knowledge support and guided problem-solving, acting as an on-demand expert in fields like STEM [30]. |

| Active Learning Query Strategy Algorithms (e.g., Uncertainty Sampling, Diversity Sampling) | Computational | The core "logic" that decides which data points or experiments are most informative to perform next, optimizing resource allocation [2]. |

| Synthetic Data Generators | Computational | Creates statistically realistic datasets to augment small experimental datasets, used for training robust machine learning models without privacy concerns or high initial costs [27]. |

| High-Throughput Screening Assays | Wet-lab / Physical | Provides the rapid, automated experimental data generation required to feed and validate the active learning cycle, especially in biology and chemistry [28]. |

| Automated Lab Equipment & Lab Information Management Systems (LIMS) | Physical / Digital Infrastructure | Executes designed experiments and seamlessly logs structured, high-quality data back into the digital workflow, creating a closed-loop system [28]. |

Generative AI and active learning are not competing technologies but complementary forces in the modern R&D toolkit. The evidence shows that Generative AI excels as a force multiplier for creativity and content generation, while active learning provides the strategic focus and cognitive engagement needed for deep, efficient knowledge acquisition. The risks of over-reliance on Gen-AI, such as cognitive offloading and superficial understanding, can be mitigated by integrating it within an active learning framework that demands continuous validation and critical thinking. For research organizations, the imperative is to move beyond siloed experiments and strategically design workflows that harness this synergy. By doing so, they can unlock unprecedented acceleration in innovation, from the initial spark of an idea to its rigorous and efficient validation.

From Theory to Bench: Practical Applications of Generative AI and Active Learning in R&D

The pharmaceutical industry is undergoing a significant transformation through the integration of artificial intelligence (AI) into traditional drug discovery workflows. This evolution represents not a replacement of established approaches but rather the development of complementary tools that augment human expertise and computational chemistry methods refined over decades [31]. Two prominent AI paradigms have emerged: generative AI, which creates novel molecular structures from scratch, and active learning, which strategically selects experiments to maximize learning and optimize compounds. While both approaches leverage machine learning, they operate on fundamentally different principles and excel in distinct aspects of the drug discovery pipeline.

Generative AI involves algorithms that create new data based on learned patterns, with models like variational autoencoders (VAEs) and generative adversarial networks (GANs) being trained on chemical and biological datasets to propose novel molecules [32]. In contrast, active learning represents an iterative framework where the AI algorithm selectively identifies the most informative experiments to perform, thereby maximizing knowledge gain while minimizing resource expenditure [33]. This comparative guide examines the performance, applications, and practical implementation of these two approaches within modern pharmaceutical research and development.

Comparative Performance Analysis

The table below summarizes the key performance characteristics of generative AI versus active learning approaches across critical metrics relevant to drug discovery professionals.

Table 1: Performance Comparison of Generative AI and Active Learning in Drug Discovery

| Performance Metric | Generative AI | Active Learning |

|---|---|---|

| Primary Application | De novo molecular design; creating novel chemical entities [32] | Lead optimization; refining existing compounds [34] [33] |

| Data Efficiency | Requires large initial training datasets (~104-106 compounds) [31] | Highly efficient in low-data regimes; optimal for ~102 initially known compounds [33] |

| Success Rate | Can generate synthesizable candidates with drug-like properties (>70% success in some studies) [32] | Discovers 60% of synergistic drug pairs by exploring only 10% of combinatorial space [33] |

| Time Acceleration | Reduced target-to-candidate timeline from years to months (e.g., 18 months for INS018_055) [31] | Reduces experimental burden by 82% for synergy identification [33] |

| Key Advantage | Exploration of novel chemical space beyond human bias | Cost-effective exploitation of known chemical space |

| Clinical Validation | Multiple candidates in trials (e.g., rentosertib - Phase II; ISM-6631 - Phase I) [35] | Extensive retrospective validation; emerging prospective applications [34] |

Experimental Protocols and Workflows

Generative AI Protocol for De Novo Drug Design

The experimental workflow for generative AI in de novo drug design follows a multi-stage process that integrates deep learning with experimental validation [32] [31]:

Data Curation and Preprocessing: Collect and curate large-scale chemical databases (e.g., ChEMBL, ZINC) containing molecular structures and associated biological activities. Apply standardization, normalization, and chemical representation techniques (e.g., SMILES, molecular graphs).

Model Training: Implement and train generative models such as:

- Variational Autoencoders (VAEs): Learn continuous latent representations of molecular structures enabling interpolation and novel compound generation.

- Generative Adversarial Networks (GANs): Employ generator and discriminator networks in adversarial training to produce realistic molecular structures.

- Transformers: Utilize self-attention mechanisms to model complex molecular patterns and generate novel structures autoregressively.

Molecular Generation and Optimization: Generate novel compounds conditioned on specific target properties (e.g., high binding affinity, optimal physicochemical properties, selectivity). Apply transfer learning and reinforcement learning to fine-tune models for specific target classes.

In Silico Validation: Screen generated molecules using predictive models for key parameters including:

- Drug-target interaction predictions using deep learning classifiers

- ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiling

- Synthetic accessibility scoring

Experimental Validation: Synthesize top-ranking candidates and validate through in vitro assays (binding affinity, functional activity) and in vivo studies for promising leads.

Active Learning Protocol for Lead Optimization

The active learning framework for lead optimization employs an iterative, closed-loop design that efficiently guides experimental efforts [34] [33]:

Initial Model Training: Develop a preliminary machine learning model (e.g., random forest, neural network) using initially available compound activity data. Molecular representations may include Morgan fingerprints, MAP4 fingerprints, or graph-based embeddings.

Acquisition Function Design: Implement selection strategies to identify the most informative compounds for experimental testing, including:

- Exploration: Selecting compounds where the model is most uncertain (e.g., high prediction variance)

- Exploitation: Selecting compounds predicted to have high potency or desirable properties

- Hybrid Approaches: Balancing exploration and exploitation using methods like upper confidence bound

Iterative Experimentation Cycle:

- Batch Selection: Choose a limited set of compounds (batch size typically 10-100) based on the acquisition function

- Experimental Testing: Synthesize and evaluate selected compounds for target activity and properties

- Model Retraining: Update the predictive model with new experimental results

- Convergence Check: Repeat until performance criteria met or budget exhausted

Validation and Model Interpretation: Validate final model performance on hold-out test sets and apply explainable AI techniques to identify structural features driving compound activity.

AI Drug Discovery Workflow

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of AI-driven drug discovery requires specific computational tools, datasets, and experimental resources. The following table details key components of the modern AI drug discovery toolkit.

Table 2: Essential Research Reagents and Platforms for AI-Driven Drug Discovery

| Tool Category | Specific Examples | Function & Application |

|---|---|---|

| Generative AI Platforms | Insilico Medicine, Exscientia, Relay Therapeutics | End-to-end platforms for de novo molecular design and optimization [32] [35] |

| Active Learning Frameworks | RECOVER, DeepSynergy, Custom implementations | Iterative experimental design for lead optimization and synergy prediction [33] |

| Molecular Representations | Morgan Fingerprints, MAP4, Graph Neural Networks | Convert chemical structures into numerical features for machine learning [33] |

| Cellular Context Features | GDSC gene expression, CCLE omics data | Incorporate cellular environment into prediction models [33] |

| Benchmark Datasets | Oneil, ALMANAC, DrugComb | Curated drug combination screening data for training and validation [33] |

| Validation Assays | High-throughput screening, Binding assays, ADMET profiling | Experimental verification of AI-generated compounds [31] |

Signaling Pathways and Experimental Design

AI Method Selection Guide

Discussion and Future Perspectives

The integration of both generative AI and active learning into pharmaceutical R&D represents a paradigm shift in how drug discovery is conducted. Generative AI excels in the early discovery phase by exploring vast chemical spaces and generating novel molecular entities, while active learning provides superior efficiency in lead optimization by strategically guiding experimental resources [32] [33]. The most successful implementations increasingly leverage hybrid approaches that combine the creative capacity of generative models with the resource efficiency of active learning.

Current challenges include data quality and availability, model interpretability, regulatory acceptance, and integration with traditional medicinal chemistry expertise [31]. However, the field is advancing rapidly, with the 2025 FDA draft guidance establishing a risk-based credibility assessment framework for AI applications that complements existing regulatory frameworks [31]. As these technologies mature, they promise to significantly reduce the time and cost of drug development while increasing success rates, ultimately accelerating the delivery of innovative therapies to patients.

The future of AI in drug discovery lies in the development of more sophisticated agentic AI systems that can autonomously navigate discovery pipelines, the integration of multi-modal data (genomic, proteomic, clinical), and the creation of more accurate predictive models through advances in foundation models specifically trained on chemical and biological data [31]. For researchers and drug development professionals, understanding the complementary strengths of generative AI and active learning is crucial for selecting the appropriate tool for each stage of the drug discovery process.

The exploration of chemical space for novel drug candidates represents a monumental challenge in pharmaceutical research, with the space of synthesizable small molecules estimated to exceed 10^33 compounds [36]. Generative artificial intelligence (AI) has emerged as a transformative force in this domain, enabling researchers to design molecules with tailored properties rather than relying solely on exhaustive screening [37] [17]. Among the various architectures employed, Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformer-based models have demonstrated particular promise for molecular generation tasks. These architectures differ significantly in their theoretical foundations, operational mechanisms, and performance characteristics, making the choice between them critical for successful drug discovery applications.

When framed within broader research on generative AI combined with active learning approaches, these models take on enhanced significance. Active learning creates iterative feedback loops where models are refined based on computational or experimental evaluations, progressively improving the quality of generated molecules [38] [12]. This synergy addresses a key limitation of standalone generative models: poor generalization to new chemical spaces beyond the training data distribution. As the field advances toward increasingly automated drug discovery pipelines, understanding the comparative strengths and limitations of these architectures becomes essential for researchers and drug development professionals.

Architectural Frameworks and Mechanisms

Variational Autoencoders (VAEs)

VAEs are probabilistic generative models that learn to encode input molecules into a lower-dimensional latent space and then decode samples from this space to generate novel molecular structures [39] [37]. The architecture consists of two primary components: an encoder that maps input data to a probability distribution in latent space (typically characterized by mean and standard deviation parameters), and a decoder that reconstructs molecules from points sampled from this distribution [40]. The training objective combines reconstruction loss (ensuring input molecules can be accurately reconstructed) with a KL-divergence term that regularizes the latent space to approximate a standard normal distribution [40].

Modern VAE implementations for molecular generation have evolved significantly from early approaches. The STAR-VAE (Selfies-encoded, Transformer-based, AutoRegressive Variational Auto Encoder) framework exemplifies this evolution, incorporating a bi-directional Transformer encoder and an autoregressive Transformer decoder [36]. This architecture is trained on large-scale molecular datasets (e.g., 79 million drug-like molecules from PubChem) and uses SELFIES representations to guarantee 100% syntactic validity of generated molecules [36]. The latent-variable formulation provides a principled foundation for conditional generation, where property predictors supply conditioning signals that consistently shape the latent prior, inference network, and decoder [36].

Another innovative approach, the Transformer Graph Variational Autoencoder (TGVAE), employs molecular graphs as input data to capture complex structural relationships more effectively than string-based representations [41]. This model addresses common issues like over-smoothing in graph neural networks and posterior collapse in VAEs to ensure robust training and improve the generation of chemically valid and diverse molecular structures [41].

Generative Adversarial Networks (GANs)

GANs operate on a fundamentally different principle, framing molecular generation as an adversarial game between two competing neural networks: a generator that creates synthetic molecules from random noise, and a discriminator that distinguishes between real molecules from the training data and fake ones produced by the generator [39] [40]. Through this adversarial training process, the generator progressively improves its ability to produce realistic molecular structures that can fool the discriminator [40].

Despite their potential, GANs face significant challenges when applied to discrete molecular representations like SMILES strings, as the discrete nature of the data disrupts gradient-based optimization essential for GAN training [42]. Several architectures have been developed to address these limitations. RL-MolGAN introduces a novel Transformer-based discrete GAN framework that utilizes a first-decoder-then-encoder structure, diverging from traditional Transformer designs [42]. This framework integrates reinforcement learning (RL) with Monte Carlo Tree Search (MCTS) to stabilize GAN training and optimize the chemical properties of generated molecules [42]. An extended version, RL-MolWGAN, incorporates Wasserstein distance and mini-batch discrimination to further enhance training stability [42].

Another approach to addressing GAN limitations involves hybrid architectures. The LM-GAN framework combines a masked language model with a GAN, leveraging the language model's ability to learn common subsequences from training data and apply them as automated, generalized mutation operators [43]. This hybrid approach demonstrates superior performance over standalone masked language models, particularly for smaller population sizes [43].

Transformer-Based Models

Transformer architectures have revolutionized molecular generation by leveraging self-attention mechanisms to capture long-range dependencies in molecular representations [39]. Unlike sequential models that process tokens one by one, Transformers process all parts of an input sequence simultaneously, making them particularly effective at addressing the sensitivity of SMILES representations to small perturbations [42].

Transformer-based molecular generators are typically organized into decoder-only and encoder-decoder families [36]. Decoder-only models like MolGPT adapt the GPT-style autoregressive Transformer for SMILES, generating molecules token by token with high validity and support for property- and scaffold-conditioned sampling [36]. However, they lack an explicit encoder to structure latent representations, which limits controllable exploration of molecular space [36]. Encoder-decoder models like Chemformer and SELFIES-TED provide richer conditioning interfaces than decoder-only systems but often function as deterministic transducers rather than probabilistic latent-variable generators [36].

The STAR-VAE framework represents a hybrid approach that combines Transformer architectures with latent-variable modeling, unifying broad distribution learning with controllable conditional generation [36]. This model demonstrates how modernized, scale-appropriate VAEs with Transformer components remain competitive for molecular generation when paired with principled conditioning and parameter-efficient finetuning [36].

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Table 1: Benchmark Performance of Molecular Generation Architectures

| Architecture | Model Name | Validity (%) | Uniqueness (%) | Novelty (%) | Diversity | Property Optimization |

|---|---|---|---|---|---|---|

| VAE | STAR-VAE [36] | >99% (SELFIES) | High | High | 0.83 (GuacaMol) | Strong (Tartarus) |

| VAE | TGVAE [41] | High | High | High | Superior to baselines | Effective |

| GAN | RL-MolGAN [42] | High | - | - | - | Effective for target properties |

| GAN | LM-GAN [43] | - | - | - | Superior for small populations | Enhanced efficiency |

| Transformer | MolGPT [36] | High | High | Lower than other ML frameworks | - | Supports conditioned generation |

| Transformer | STAR-VAE [36] | >99% | - | - | - | Shifts docking score distributions |

Table 2: Conditional Generation Performance on Protein Targets

| Architecture | Model | Target Protein | Performance | Experimental Validation |

|---|---|---|---|---|

| VAE with AL | VAE-AL GM [12] | CDK2 | 8/9 synthesized molecules showed in vitro activity, 1 with nanomolar potency | Synthesized and tested |

| VAE with AL | VAE-AL GM [12] | KRAS | 4 molecules with predicted activity | In silico validation |

| Conditional VAE | STAR-VAE [36] | 1SYH, 6Y2F | Docking score distribution statistically stronger than baseline | In silico docking |

Analysis of Comparative Performance

The experimental data reveals distinct performance patterns across architectural families. VAE-based models consistently demonstrate strong performance across multiple benchmarks, with STAR-VAE matching or exceeding baselines on GuacaMol and MOSES benchmarks under comparable computational budgets [36]. The TGVAE similarly outperforms existing approaches, generating a larger collection of diverse molecules and discovering previously unexplored structures [41]. Notably, VAEs show particular strength in conditional generation tasks, with STAR-VAE successfully shifting docking-score distributions toward stronger predicted binding for specific protein targets [36].

GAN-based approaches show promising results in property optimization but face challenges in training stability and diversity. The RL-MolGAN framework demonstrates the ability to generate molecules with desired chemical properties by incorporating reinforcement learning and MCTS [42]. The LM-GAN hybrid architecture shows particular advantage in scenarios with smaller population sizes, addressing the mode collapse problem that often plagues traditional GANs [43].

Transformer-based models excel in capturing long-range dependencies in molecular sequences but may exhibit limitations in novelty compared to other approaches [36]. MolGPT demonstrates high validity and uniqueness but lower novelty scores compared to various modern machine learning frameworks [36]. However, Transformer architectures integrated with latent-variable formulations, as in STAR-VAE, overcome these limitations while maintaining the benefits of attention mechanisms [36].

Experimental Protocols and Methodologies

Benchmarking Standards

Experimental evaluation of molecular generative models typically follows established benchmarking protocols using standardized datasets and metrics. Common benchmarks include GuacaMol [36], MOSES [36], and Tartarus [36], which provide standardized frameworks for evaluating model performance across multiple dimensions including validity, uniqueness, novelty, and diversity.

The GuacaMol benchmark employs a suite of tasks designed to evaluate various aspects of generative model performance, including the ability to generate molecules with specific property profiles [36]. The MOSES benchmark provides standardized metrics and baselines to ensure fair comparison between different generative models [36]. The Tartarus benchmark specifically focuses on protein-ligand design, evaluating a model's ability to generate molecules with strong predicted binding affinities for specific protein targets [36].

Active Learning Integration Protocols

The integration of generative models with active learning frameworks follows specific experimental protocols that create iterative feedback loops. The VAE-AL GM workflow provides a representative example, featuring a VAE with two nested active learning cycles that iteratively refine predictions using chemoinformatics and molecular modeling predictors [12].

Table 3: Active Learning Cycle Components in Molecular Generation

| Cycle Type | Evaluation Oracles | Filtering Criteria | Output |

|---|---|---|---|

| Inner AL Cycle [12] | Chemoinformatic predictors (drug-likeness, synthetic accessibility) | Druggability, SA, similarity thresholds | Temporal-specific set |

| Outer AL Cycle [12] | Molecular modeling (docking simulations) | Docking score thresholds | Permanent-specific set |

| Full Pipeline [12] | Molecular dynamics (PELE), binding free energy simulations | Stringent filtration for candidate selection | Molecules for synthesis |

The experimental protocol typically involves these key steps:

- Initial Training: The generative model is initially trained on a general training set to learn fundamental chemical principles, then fine-tuned on a target-specific training set to enhance target engagement [12].

- Molecule Generation: The trained model samples new molecules from the learned chemical space [12].