Global Minimum Search of Molecular Clusters Using Genetic Algorithms: Principles, Optimization, and Applications in Drug Discovery

Abstract

This article provides a comprehensive overview of genetic algorithms (GAs) for locating the global minimum energy structures of molecular clusters, a critical task in computational chemistry and drug discovery. It covers foundational principles, including how GAs mimic natural selection to navigate complex energy landscapes. The article details methodological advances, such as specialized crossover operators and parallel computing implementations, which enhance efficiency and scalability. It also addresses common troubleshooting and optimization strategies to overcome convergence challenges and explores validation protocols and performance comparisons with other global optimization techniques. Aimed at researchers and drug development professionals, this review synthesizes current knowledge to guide the effective application of GAs in predicting stable molecular configurations and accelerating rational drug design.

The Foundations of Genetic Algorithms in Molecular Optimization

Global optimization methods are essential for predicting the most stable structure of a molecular cluster, which corresponds to the lowest-energy configuration on a complex potential energy surface (PES) [1] [2]. This technical support center addresses common computational challenges, with a specific focus on genetic algorithms (GAs) that evolve populations of candidate structures toward the global minimum [1] [3]. The FAQs and guides below synthesize current methodologies to help researchers avoid common pitfalls in cluster structure prediction.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between local and global optimization in this context?

A local minimum is a point where the energy is lower than that of all nearby structures but may be higher than that of a distant point on the PES. In contrast, the global minimum is the point with the lowest energy across the entire PES [4]. Standard local optimizers typically converge to the nearest local minimum, while global optimizers like GAs are designed to search across multiple "basins of attraction" to find the true global minimum [4].
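To make the distinction concrete, here is a minimal sketch on a one-dimensional toy function with two basins. The function, step size, and starting points are illustrative assumptions and are not taken from the cited work; a real PES has 3N coordinates.

```python
def energy(x):
    """Toy 1D 'PES' (assumed for illustration): deep basin at x < 0, shallow basin at x > 0."""
    return x**4 - 4.0 * x**2 + x


def gradient(x):
    """Analytic derivative of the toy energy."""
    return 4.0 * x**3 - 8.0 * x + 1.0


def local_minimize(x0, step=0.01, n_steps=5000):
    """Plain gradient descent: slides downhill into the basin that contains x0."""
    x = x0
    for _ in range(n_steps):
        x -= step * gradient(x)
    return x


right = local_minimize(2.0)    # ends in the shallow (local) minimum
left = local_minimize(-2.0)    # ends in the deep (global) minimum
print(f"start  2.0 -> x = {right:.4f}, E = {energy(right):.4f}")
print(f"start -2.0 -> x = {left:.4f}, E = {energy(left):.4f}")
```

The local optimizer never crosses the barrier between the basins, so its answer depends entirely on where it starts. That dependence is what a global method has to overcome.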

2. Why is population diversity critical in a Genetic Algorithm, and how can I maintain it?

Population diversity prevents the algorithm from getting trapped in a local minimum and ensures a representative exploration of the PES [1]. Loss of diversity is a common cause of stagnation. You can maintain it by:

- Incorporating random mutants: Continuously including randomly modified structures in the population, regardless of their immediate fitness [1].

- Structural similarity checks: Implementing measures to eliminate overly similar structures from the population. This can be based on topological information or connectivity tables [1].

- Operator management: Dynamically adjusting the usage rate of different genetic operators based on their performance in generating well-adapted offspring, which helps avoid inefficient search patterns [1] [2].

3. My simulation is not converging to a known global minimum. What could be wrong?

This is a complex issue with several potential causes, which are detailed in the Troubleshooting Guide below. Common problems include poor operator choice, insufficient population diversity, and inadequate sampling of the PES.

4. Which empirical potential should I use for my cluster system?

The choice depends on your system and the trade-off between computational cost and accuracy.

- Lennard-Jones: A simpler potential, often used for general case studies and method benchmarking (e.g., 26- and 55-atom clusters) [1] [2].

- Morse: Another analytic potential used for cluster studies [1].

- REBO (Reactive Empirical Bond Order): A more complex potential suitable for describing covalent systems like carbon clusters (e.g., 18-atom carbon cluster) and graphene PES [1] [2].

For final, high-accuracy results, the best candidates from a GA search are often refined using quantum mechanical approaches like Density Functional Theory (DFT) [3].
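As a rough illustration of how cheap the analytic pair potentials are to evaluate, the sketch below computes Lennard-Jones and Morse cluster energies. It assumes reduced units (epsilon = sigma = 1 for Lennard-Jones; D_e = a = r_e = 1 for Morse) and a single atom type; the function names are hypothetical.

```python
import math
from itertools import combinations


def lennard_jones(r, epsilon=1.0, sigma=1.0):
    """Pair energy 4*eps*[(sigma/r)^12 - (sigma/r)^6]; minimum of -eps at r = 2^(1/6)*sigma."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def morse(r, d_e=1.0, a=1.0, r_e=1.0):
    """Pair energy D_e*[(1 - exp(-a*(r - r_e)))^2 - 1]; minimum of -D_e at r = r_e."""
    x = 1.0 - math.exp(-a * (r - r_e))
    return d_e * (x * x - 1.0)


def cluster_energy(coords, pair_potential=lennard_jones):
    """Sum the pair potential over all unique atom pairs."""
    return sum(pair_potential(math.dist(p, q)) for p, q in combinations(coords, 2))


r_min = 2.0 ** (1.0 / 6.0)  # Lennard-Jones equilibrium distance in reduced units
dimer = [(0.0, 0.0, 0.0), (r_min, 0.0, 0.0)]
triangle = dimer + [(r_min / 2.0, r_min * math.sqrt(3.0) / 2.0, 0.0)]
print(cluster_energy(dimer))      # one pair at its optimum distance
print(cluster_energy(triangle))   # three pairs at their optimum distance
```

Because every pair in the dimer and the equilateral triangle sits at the optimum distance, their energies are -1 and -3 in reduced units, which makes them convenient sanity checks for an energy routine.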

Troubleshooting Guides

Table 1: Common GA Convergence Issues and Solutions

| Problem | Possible Cause | Recommended Solution |
|---|---|---|
| Premature Convergence | Loss of population diversity; inefficient genetic operators. | Introduce a "twist" operator; implement a dynamic operator management strategy; enforce a minimum structural difference between individuals [1] [2]. |
| Slow or No Convergence | Poor initial population; operators failing to generate low-energy offspring. | Pre-screen initial structures; increase the application rate of high-performance operators like "twist" or "cut-and-splice"; verify local minimizer settings [1]. |
| Consistently High-Energy Results | Inadequate sampling of the PES; getting stuck in a high-energy basin. | Combine with a basin-hopping methodology; use multiple, independent GA runs with different random seeds; increase population size [1] [3]. |

Table 2: Performance of Common Genetic Operators

The following table summarizes the relative performance of different operators used to create new candidate structures in a GA, as tested on Lennard-Jones clusters. A dynamic management strategy that favors the best-performing operators can significantly improve efficiency [1] [2].

| Operator Name | Type | Relative Performance | Key Characteristics |
|---|---|---|---|
| Twist | Mutation | Excellent | Found to be the fastest operator in generating new individuals [2]. |
| Deaven and Ho Cut-and-Splice | Crossover | Good (Commonly Used) | A standard crossover operator that cuts two parent clusters and splices halves together [1]. |
| Annihilator | Mutation | Good | A specialized operator efficient at escaping deep local minima [1]. |
| History | Memetic | Good | Utilizes information from previously visited structures to guide the search [1]. |
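The sketch below shows one simple reading of a twist-style mutation: atoms on one side of a plane through the centroid are rotated about the axis normal to that plane. It is an assumption-laden illustration, not the operator as implemented in the cited work; in particular the axis is fixed to z here, whereas a practical operator would pick the axis and angle at random.

```python
import math


def twist(coords, angle):
    """Rotate the atoms lying above the centroid plane (z > z_centroid) about the
    z-axis through the centroid by `angle` radians; the other atoms stay fixed."""
    n = len(coords)
    cx = sum(p[0] for p in coords) / n
    cy = sum(p[1] for p in coords) / n
    cz = sum(p[2] for p in coords) / n
    c, s = math.cos(angle), math.sin(angle)
    child = []
    for x, y, z in coords:
        if z > cz:
            dx, dy = x - cx, y - cy
            x, y = cx + c * dx - s * dy, cy + s * dx + c * dy
        child.append((x, y, z))
    return child


parent = [(1.0, 0.0, -1.0), (-1.0, 0.0, -1.0), (1.0, 0.0, 1.0), (-1.0, 0.0, 1.0)]
child = twist(parent, math.pi / 2.0)
print(child)
```

The twisted child is generally not a minimum of the PES, so it would still be passed through local minimization before its energy is used as fitness.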

Experimental Protocols

Standard Genetic Algorithm Workflow for Cluster Optimization

This protocol outlines the core steps for a GA aimed at finding the global minimum of a molecular cluster [1] [2].

1. Initialization: Generate an initial population of cluster structures, typically with random atomic coordinates.

2. Local Minimization: Relax every structure in the population to the nearest local minimum on the PES using a local optimizer (e.g., a gradient-based method) and an empirical potential (e.g., Lennard-Jones or REBO). The energy after minimization is the individual's "fitness."

3. Selection: Rank all individuals by their fitness (energy). Eliminate the worst-performing members (e.g., the worst 25%).

4. Creation of New Individuals: Apply genetic operators to the surviving individuals to generate new offspring. This is a critical step:

   - Crossover (e.g., Deaven and Ho): Combines parts of two parent structures.

   - Mutation (e.g., Twist, Annihilator): Randomly modifies a single parent structure.

   - Operator Management: Dynamically adjust the probability of using each operator based on its recent success in producing low-energy offspring.

5. Iteration: Return to Step 2 with the new, combined population (survivors + new offspring). Repeat for a set number of generations or until convergence criteria are met.
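The workflow above can be sketched end to end for a tiny Lennard-Jones cluster. Everything below is an illustrative assumption rather than the cited implementation: reduced units, a crude backtracking steepest-descent relaxer, a Gaussian-displacement mutation, a simplified z-sorted cut-and-splice, and a fixed 50/50 operator choice. Only new offspring are relaxed, since survivors are already at local minima.

```python
import math
import random
from itertools import combinations


def energy(coords):
    """Lennard-Jones energy in reduced units (epsilon = sigma = 1)."""
    e = 0.0
    for p, q in combinations(coords, 2):
        inv6 = 1.0 / math.dist(p, q) ** 6
        e += 4.0 * (inv6 * inv6 - inv6)
    return e


def gradient(coords):
    """Analytic gradient of the Lennard-Jones energy with respect to every coordinate."""
    g = [[0.0, 0.0, 0.0] for _ in coords]
    for i, j in combinations(range(len(coords)), 2):
        d = [a - b for a, b in zip(coords[i], coords[j])]
        r2 = sum(c * c for c in d)
        inv6 = 1.0 / r2 ** 3
        coef = -24.0 * (2.0 * inv6 * inv6 - inv6) / r2
        for k in range(3):
            g[i][k] += coef * d[k]
            g[j][k] -= coef * d[k]
    return g


def relax(coords, n_steps=300, max_move=0.1):
    """Crude local minimizer: normalized steepest descent that only accepts downhill steps."""
    e, move = energy(coords), max_move
    for _ in range(n_steps):
        g = gradient(coords)
        gmax = max(abs(c) for v in g for c in v)
        if gmax < 1e-8:
            break
        trial = [[x - move * gx / gmax for x, gx in zip(p, v)] for p, v in zip(coords, g)]
        e_trial = energy(trial)
        if e_trial < e:
            coords, e, move = trial, e_trial, min(max_move, 1.5 * move)
        else:
            move *= 0.5
    return coords, e


def centered(coords):
    """Shift a cluster so that its centroid sits at the origin."""
    c = [sum(p[k] for p in coords) / len(coords) for k in range(3)]
    return [[p[k] - c[k] for k in range(3)] for p in coords]


def mutate(parent, rng, sigma=0.3):
    """Displace every atom by Gaussian noise."""
    return [[x + rng.gauss(0.0, sigma) for x in p] for p in parent]


def crossover(a, b, rng):
    """Simplified cut-and-splice: the k highest atoms of a plus the n-k lowest atoms of b."""
    a, b = centered(a), centered(b)
    k = rng.randint(1, len(a) - 1)
    return sorted(a, key=lambda p: p[2])[-k:] + sorted(b, key=lambda p: p[2])[:len(b) - k]


def run_ga(n_atoms=4, pop_size=8, n_generations=10, seed=0, box=2.0):
    rng = random.Random(seed)
    # Steps 1-2: random initial structures, each relaxed to a nearby local minimum
    pop = [relax([[rng.uniform(0.0, box) for _ in range(3)] for _ in range(n_atoms)])
           for _ in range(pop_size)]
    pop.sort(key=lambda ind: ind[1])
    history = [pop[0][1]]
    n_keep = pop_size - pop_size // 4          # Step 3: drop the worst 25%
    for _ in range(n_generations):
        survivors, offspring = pop[:n_keep], []
        while len(survivors) + len(offspring) < pop_size:   # Step 4: refill the population
            if rng.random() < 0.5:
                child = mutate(rng.choice(survivors)[0], rng)
            else:
                pa, pb = rng.sample(survivors, 2)
                child = crossover(pa[0], pb[0], rng)
            offspring.append(relax(child))
        pop = sorted(survivors + offspring, key=lambda ind: ind[1])   # Step 5: iterate
        history.append(pop[0][1])
    return pop[0], history


best, history = run_ga()
print("best energy per generation:", [round(e, 4) for e in history])
```

For four atoms the Lennard-Jones energy cannot drop below -6 in reduced units (six pairs, each contributing at least -1), which gives a simple bound to check the output against. Because the best survivor is always kept, the best energy never increases from one generation to the next.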

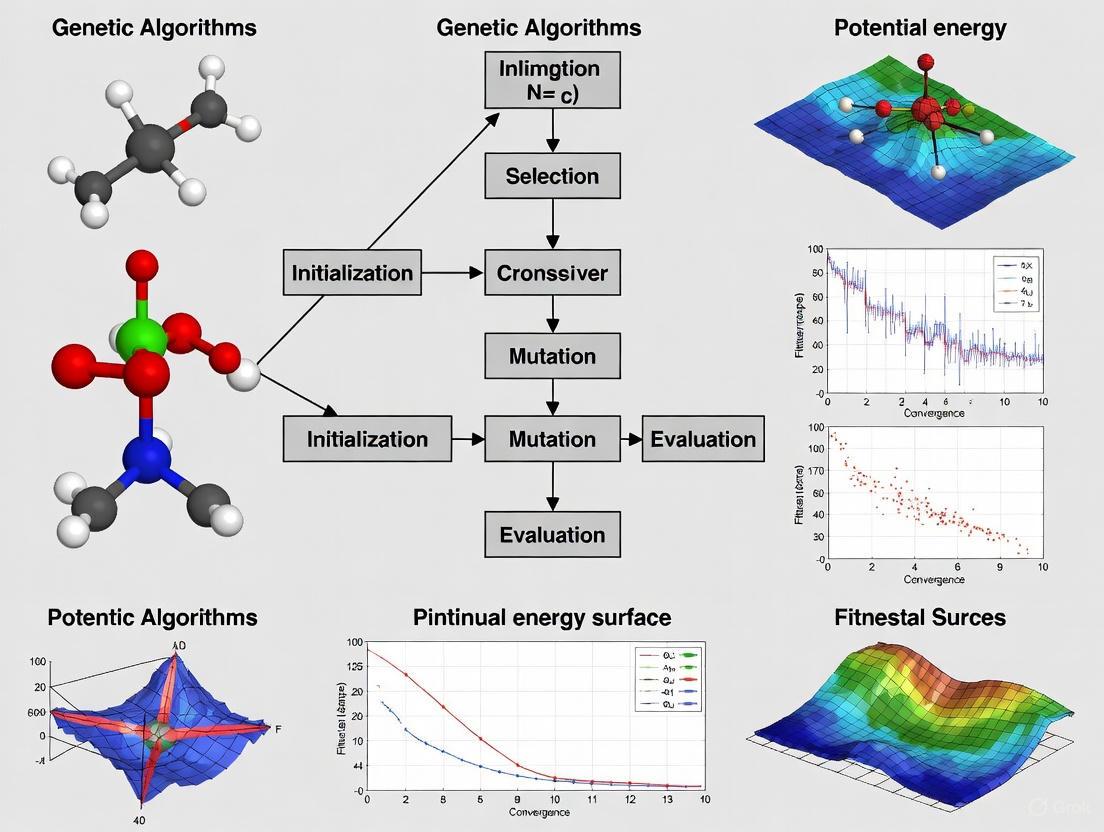

Workflow Diagram

Operator Management Strategy

This advanced protocol describes how to dynamically manage genetic operators to improve GA performance [1] [2].

- Operator Pool: Define a set of genetic operators (O1, O2, ..., O13).

- Track Success: For each operator, track its efficiency in generating well-adapted (low-energy) offspring over recent generations.

- Adjust Rates: Periodically calculate a performance score for each operator and update its selection probability accordingly.

- Apply New Rates: Use the updated probabilities to select operators for creating the next generation of offspring. This strategy automatically promotes effective operators and suppresses poor ones.
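One possible way to turn tracked successes into selection probabilities is sketched below. The scoring rule (a smoothed success fraction) and the probability floor are assumptions chosen for illustration; the source does not specify the formula used in the cited work, and the operator names and counts are placeholders.

```python
import random


def update_operator_rates(successes, attempts, floor=0.05):
    """Map per-operator success counts to selection probabilities that sum to 1.

    successes, attempts: dicts keyed by operator name (counts over recent generations).
    floor: minimum probability, so that no operator is switched off permanently.
    """
    n = len(attempts)
    if n * floor >= 1.0:
        raise ValueError("floor is too large for this many operators")
    # Smoothed success fraction; the +1/+2 avoids zero scores for unlucky operators
    scores = {op: (successes[op] + 1) / (attempts[op] + 2) for op in attempts}
    total = sum(scores.values())
    return {op: floor + (1.0 - n * floor) * s / total for op, s in scores.items()}


attempts = {"twist": 20, "cut_and_splice": 20, "annihilator": 20}     # placeholder counts
successes = {"twist": 12, "cut_and_splice": 6, "annihilator": 1}      # placeholder counts
rates = update_operator_rates(successes, attempts)

# Draw the operator for the next offspring according to the updated rates
rng = random.Random(0)
chosen = rng.choices(list(rates), weights=list(rates.values()), k=1)[0]
print(rates, chosen)
```

Keeping a nonzero floor is a design choice: an operator that performs poorly early in the search may become useful later, once the population has moved to a different region of the PES.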

Operator Management Diagram

The Scientist's Toolkit

Table 3: Research Reagent Solutions

This table lists key software and algorithmic "reagents" essential for conducting global optimization studies on molecular clusters.

| Item | Function | Example Use Case |
|---|---|---|
| Genetic Algorithm (GA) | An evolutionary algorithm that evolves a population of cluster structures toward the global minimum by applying selection, crossover, and mutation [1] [3]. | Primary engine for global search of molecular cluster PES. |
| Basin-Hopping (BH) | A stochastic global optimization method that transforms the PES into a collection of "basins" via random steps followed by local minimization [1] [5]. | Can be hybridized with GA or used as an alternative search strategy. |
| Lennard-Jones Potential | An empirical pair potential used to compute the binding energy of clusters for rapid energy evaluation during searches [1] [2]. | Benchmarking and testing new algorithms on model systems (e.g., 38-atom LJ clusters). |
| REBO Potential | A reactive empirical bond-order potential that describes covalent bonding, enabling the study of carbon-based systems like graphene and carbon clusters [1] [2]. | Searching for global minima of carbon nanostructures. |
| Twist Operator | A specific mutation operator that has demonstrated high efficiency in generating well-adapted cluster offspring within a GA [1] [2]. | Dynamic operator management to accelerate convergence. |
| Density Functional Theory (DFT) | A quantum mechanical method used for computing the electronic structure and highly accurate energy of a system [3]. | Final, high-fidelity refinement of low-energy candidate structures identified by the GA. |

A technical guide for researchers navigating the global optimization of molecular clusters

A Technical Support Center for Computational Researchers

This resource provides targeted troubleshooting guidance for researchers applying Genetic Algorithms (GAs) to a critical task in computational chemistry: finding the global minimum energy structure of molecular and atomic clusters. The complex, high-dimensional energy landscapes of these systems present unique challenges that this guide will help you overcome.

Frequently Asked Questions (FAQs)

FAQ 1: What makes GAs suitable for global minimum searches in molecular clusters?

GAs are well-suited for this task because they are a population-based metaheuristic, meaning they explore multiple areas of the potential energy surface (PES) simultaneously [6] [7]. This makes them less likely to become trapped in local minima compared to local optimization methods. By mimicking biological evolution through selection, crossover, and mutation, GAs can efficiently navigate the exponentially large number of possible cluster configurations [8] [7].

FAQ 2: How do I represent a molecular cluster structure within a GA?

A candidate solution (an "individual" in the population) must be encoded to represent a cluster's structure. Common representations include:

- Cartesian Coordinates: Directly using the x, y, z coordinates of all atoms. This is straightforward but can lead to a high-dimensional search space.

- Internal Coordinates: Using bond lengths, angles, and dihedrals.

- Symmetry-Constrained Structures: Forcing initial random structures to have certain known point group symmetries to reduce the search space.

The choice of representation is critical and can significantly impact the algorithm's performance [6].

FAQ 3: Why is my GA converging to a local minimum instead of the global minimum?

Premature convergence is often caused by a loss of population diversity. This can be remedied by:

- Increasing the Mutation Rate: A higher, yet still small, probability of mutation introduces new genetic material [6] [9].

- Using Speciation Heuristics: These techniques penalize crossover between solutions that are too similar, encouraging the population to explore diverse regions of the PES [6].

- Reviewing Selection Pressure: If your selection process is too aggressive (e.g., always selecting only the very best), the population can converge too quickly. Tournament selection with an appropriate size can help balance this [10].
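The effect of tournament size on selection pressure can be seen in a few lines. This is a generic sketch of tournament selection for energy minimization; the energies are placeholder values and the helper names are hypothetical.

```python
import random


def tournament_select(energies, k, rng):
    """Pick k distinct individuals at random and return the index of the lowest-energy one."""
    contestants = rng.sample(range(len(energies)), k)
    return min(contestants, key=lambda i: energies[i])


def best_fraction(energies, k, rng, trials=2000):
    """Fraction of tournaments won by the single best individual in the population."""
    best = min(range(len(energies)), key=lambda i: energies[i])
    return sum(tournament_select(energies, k, rng) == best for _ in range(trials)) / trials


rng = random.Random(0)
energies = [-3.0, -5.0, -1.0, -4.0, -2.0]   # placeholder fitness values
print("k=2:", best_fraction(energies, 2, rng))
print("k=4:", best_fraction(energies, 4, rng))
```

Larger tournaments select the current best individual more often, so they raise selection pressure; a small tournament (2 or 3 contestants) leaves more room for weaker but structurally different individuals to reproduce.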

FAQ 4: How do I define an effective fitness function for my cluster?

The fitness function is the driving force of the GA. For molecular cluster geometry optimization, the most common and effective fitness function is the potential energy of the cluster structure. The goal of the GA is to minimize this energy. The energy can be calculated using various methods, ranging from fast empirical potentials (e.g., Gupta, Lennard-Jones) for larger clusters, through semi-empirical methods such as Density-Functional Tight-Binding (DFTB), to more accurate but computationally expensive quantum chemical methods like Density Functional Theory (DFT) for smaller systems or final refinement [8] [7].

FAQ 5: My fitness function evaluations are computationally expensive. How can I make the search feasible?

This is a primary limitation of GAs in this field [6]. Strategies to manage this include:

- Hybrid Approach: Use a fast, less accurate method (e.g., an empirical force field) for the initial global search. The low-energy candidates found can then be re-optimized with a more accurate method (e.g., DFT) [8] [7].

- Tailored Methods: As demonstrated in recent research, tailoring the computational method to the specific cluster, such as using a tuned DFTB approach for sodium nanoclusters, can enhance reliability and efficiency without the cost of full ab-initio calculations [8].
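The hybrid idea amounts to ranking with a cheap model first and spending expensive evaluations only on a shortlist. The sketch below uses two toy functions as stand-ins; `cheap_energy` and `accurate_energy` are hypothetical callables that in practice would wrap an empirical potential and a DFT or DFTB calculation.

```python
def two_stage_ranking(candidates, cheap_energy, accurate_energy, n_refine):
    """Rank all candidates with the cheap model, then re-evaluate only the best n_refine
    with the accurate model. Returns (accurate_energy, candidate) pairs, lowest first."""
    shortlist = sorted(candidates, key=cheap_energy)[:n_refine]
    return sorted(((accurate_energy(c), c) for c in shortlist), key=lambda t: t[0])


calls = {"accurate": 0}   # counts how often the expensive model is invoked


def cheap(x):
    return (x - 1.0) ** 2          # toy stand-in for an empirical potential


def accurate(x):
    calls["accurate"] += 1
    return (x - 1.2) ** 2          # toy stand-in for a quantum chemical energy


candidates = [0.0, 0.5, 0.9, 1.3, 2.0, 3.0]   # toy one-parameter "structures"
ranked = two_stage_ranking(candidates, cheap, accurate, n_refine=3)
print(ranked[0], "expensive evaluations:", calls["accurate"])
```

Note that the two models can disagree on ordering within the shortlist, which is why several low-energy candidates, not only the single best one, are usually carried forward for refinement.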

Troubleshooting Guides

Issue 1: The Algorithm Is Not Improving from Generation to Generation

Problem: The average fitness of the population stagnates early in the search process.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Insufficient population diversity | Calculate the average pairwise distance between individuals in the population (e.g., Hamming distance for discrete encodings, or a structural distance such as RMSD for Cartesian coordinates). | Increase the population size. Implement a speciation heuristic that discourages mating between overly similar structures [6]. |
| Excessively high selection pressure | Check if the same few individuals are selected as parents repeatedly in early generations. | Switch to a less aggressive selection method (e.g., use a smaller tournament size in tournament selection) or increase the influence of crossover and mutation [10]. |
| Ineffective genetic operators | Manually inspect if offspring generated by crossover are valid and viable structures. | Adjust or change your crossover and mutation operators. For cluster optimization, a "surface-based perturbation operator" that randomly adjusts the positions of surface or central atoms has proven effective [11]. |

Issue 2: Finding Duplicate or Symmetrically Equivalent Structures

Problem: Computational resources are wasted on optimizing and evaluating the same fundamental cluster geometry multiple times.

Solution Protocol:

- After each local optimization step, normalize the cluster geometry. This typically involves centering the cluster and aligning it with a principal axis.

- Implement a comparison check. Before a new candidate is added to the population, compare its energy and a geometric fingerprint (e.g., root-mean-square deviation of atomic positions, after accounting for rotational symmetry) against all existing unique candidates.

- Employ a uniqueness threshold. Define a tolerance for energy and geometry differences below which two structures are considered duplicates. Discard duplicates to maintain a diverse set of unique low-energy candidates [7].
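A lightweight alternative to aligning structures and computing an RMSD is to compare sorted lists of interatomic distances, which are unchanged by translation, rotation, and atom relabeling. The sketch below swaps in that fingerprint; it assumes a single atom type, treats mirror images as duplicates, and uses tolerance values that are placeholders to be tuned.

```python
import math
from itertools import combinations


def fingerprint(coords):
    """Sorted list of all interatomic distances."""
    return sorted(math.dist(p, q) for p, q in combinations(coords, 2))


def is_duplicate(coords_a, energy_a, coords_b, energy_b, e_tol=1e-4, d_tol=1e-2):
    """Two structures count as duplicates if both their energies and their
    distance fingerprints agree within the given tolerances."""
    if abs(energy_a - energy_b) > e_tol:
        return False
    fa, fb = fingerprint(coords_a), fingerprint(coords_b)
    if len(fa) != len(fb):
        return False
    return max((abs(x - y) for x, y in zip(fa, fb)), default=0.0) <= d_tol


def rigid_move(coords, angle, shift):
    """Rotate about z by `angle` and translate by `shift` along every axis (demo helper)."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y + shift, s * x + c * y + shift, z + shift) for x, y, z in coords]


cluster = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.5, 0.866, 0.0), (0.5, 0.289, 0.816)]
moved = rigid_move(cluster, 0.7, 3.0)[::-1]               # rotated, shifted, relabeled
stretched = [(2 * x, 2 * y, 2 * z) for x, y, z in cluster]
e = -1.0                                                   # placeholder energy label
print(is_duplicate(cluster, e, moved, e), is_duplicate(cluster, e, stretched, e))
```

Checking the energy first is cheap and rejects most non-duplicates before any geometry is compared.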

Issue 3: Balancing Global Exploration and Local Refinement

Problem: The algorithm finds promising regions of the PES but fails to locate the precise local minimum, or it refines local minima without exploring enough.

Solution: Implement a Hybrid GA-Basin Hopping (BH) strategy. This is a highly effective and common practice in computational chemistry [8] [7].

- GA for Global Exploration: The genetic algorithm operates as the primary global search driver, maintaining a population and using crossover and mutation to explore widely.

- BH for Local Refinement: Offspring structures generated by the GA are not evaluated directly. Instead, each one is passed to a local optimizer (e.g., using energy gradients) to "quench" it to the nearest local minimum. This transforms the PES into a collection of "basins of attraction."

- Metropolis Criterion: The new, locally minimized structure is accepted or rejected based on the Metropolis criterion from Monte Carlo algorithms, which allows for occasional uphill moves to escape local minima.
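The Metropolis acceptance rule itself is short. The sketch below assumes the temperature is given in the same units as the energy (that is, it stands for k_B T) and that energies are compared after local minimization.

```python
import math
import random


def metropolis_accept(e_old, e_new, temperature, rng):
    """Always accept downhill moves; accept uphill moves with probability exp(-dE / T)."""
    if temperature <= 0.0:
        raise ValueError("temperature must be positive")
    if e_new <= e_old:
        return True
    return rng.random() < math.exp(-(e_new - e_old) / temperature)


rng = random.Random(0)
trials = 20000
uphill = sum(metropolis_accept(0.0, 1.0, 1.0, rng) for _ in range(trials)) / trials
print(f"uphill acceptance at dE = T: {uphill:.3f} (theory {math.exp(-1.0):.3f})")
```

A higher temperature accepts more uphill moves and therefore explores more widely; a temperature close to zero reduces the rule to pure greedy acceptance.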

The following workflow diagram illustrates this powerful hybrid approach:

The Scientist's Toolkit: Essential Reagents & Methods

The table below details key computational "reagents" used in genetic algorithm searches for molecular cluster structures.

| Item/Reagent | Function in the Experiment | Key Consideration for Researchers |
|---|---|---|
| Potential Energy Function | Acts as the fitness function; evaluates the energy of a given cluster geometry. | Choice is critical. Balance accuracy and speed (e.g., Lennard-Jones for benchmarking [8], Gupta for metals [11], DFTB [8] or DFT [7] for accuracy). |
| Local Optimizer | Refines raw candidate structures to the nearest local minimum on the PES. | Essential for hybrid GA-BH. Methods like L-BFGS are common. The efficiency of the local optimizer greatly impacts total computational cost [7]. |
| Initial Population Generator | Creates the starting set of candidate solutions for generation zero. | Can be purely random or "seeded" with common structural motifs (e.g., icosahedral, decahedral) to bias the search towards chemically plausible regions [6] [12]. |
| Genetic Operators (Crossover/Mutation) | Creates new offspring solutions by combining or perturbing parent solutions. | Must be tailored to the structure representation. Mutation often involves perturbing atomic coordinates, while crossover is more challenging but can swap sub-units of two parent clusters [6] [9]. |

Experimental Protocols & Data Presentation

Benchmarking Your GA: The Lennard-Jones Cluster Protocol

Before applying your GA to a novel chemical system, it is essential to validate it on a system with a known answer. The Lennard-Jones (LJ) cluster is the "fruit fly" of this field [8].

Detailed Methodology:

- Potential: Use the standard Lennard-Jones potential to calculate the cluster's energy.

- Representation: Encode the cluster using the Cartesian coordinates of all atoms.

- Algorithm Execution: Run your hybrid GA-Basin Hopping algorithm on a cluster size where the global minimum is well-established (e.g., LJ38, which is famous for its double-funnel energy landscape).

- Validation: Success is measured by your algorithm's ability to consistently find the known global minimum structure. The efficiency (number of energy evaluations) can be compared to published benchmarks [8].
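The validation step can be reduced to a success rate over independent runs. In the sketch below the reference value is the four-atom Lennard-Jones cluster, whose global minimum is a regular tetrahedron with all six pairs at the optimum distance, giving exactly -6 in reduced units; the list of run results is a hypothetical placeholder, not measured data.

```python
def success_rate(final_energies, reference_energy, tol=1e-3):
    """Fraction of independent runs whose final energy matches the known global minimum."""
    hits = sum(1 for e in final_energies if abs(e - reference_energy) <= tol)
    return hits / len(final_energies)


LJ4_REFERENCE = -6.0                       # six pairs, each at the pair minimum of -1
run_results = [-6.0, -6.0, -5.2, -6.0]     # placeholder outcomes of four seeded runs
print(f"success rate: {success_rate(run_results, LJ4_REFERENCE):.2f}")
```

For larger benchmark sizes such as LJ38, the reference energy would be taken from published tables of putative global minima rather than derived by hand.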

Parameter Tuning: A Quantitative Guide

The table below summarizes the effect of key GA parameters. Tuning these is often necessary for optimal performance on a specific cluster system.

| Parameter | Effect if Set Too Low | Effect if Set Too High | Recommended Starting Range for Clusters |
|---|---|---|---|
| Population Size | Insufficient exploration of PES; premature convergence. | High computational cost per generation; slow convergence. | 20 - 100 individuals [9]. |
| Mutation Rate | Loss of diversity; convergence to local minima. | Search becomes too random; good solutions are disrupted. | 0.01 - 0.1 (1% - 10%) [6] [12]. |
| Crossover Rate | Relies too heavily on mutation; slow convergence. | Population becomes too homogeneous too quickly. | 0.7 - 0.9 (70% - 90%) [6]. |
| Number of Generations | Algorithm terminates before finding a good solution. | Wasted computational resources on diminishing returns. | 100 - 1000+ (use a convergence criterion) [12]. |
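One way to keep these parameters in a single place is a small configuration object that rejects impossible values and flags settings outside the starting ranges in the table. The class name, field names, and default values below are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GAConfig:
    population_size: int = 50
    mutation_rate: float = 0.05
    crossover_rate: float = 0.8
    max_generations: int = 500

    def __post_init__(self):
        # Hard errors: values that cannot be valid for any GA
        if self.population_size < 2:
            raise ValueError("population_size must be at least 2")
        for name in ("mutation_rate", "crossover_rate"):
            if not 0.0 <= getattr(self, name) <= 1.0:
                raise ValueError(f"{name} must lie in [0, 1]")
        if self.max_generations < 1:
            raise ValueError("max_generations must be at least 1")

    def outside_recommended(self):
        """Names of parameters outside the starting ranges listed in the table above."""
        ranges = {
            "population_size": (20, 100),
            "mutation_rate": (0.01, 0.1),
            "crossover_rate": (0.7, 0.9),
            "max_generations": (100, float("inf")),   # the table gives 100 - 1000+
        }
        return [n for n, (lo, hi) in ranges.items() if not lo <= getattr(self, n) <= hi]


print(GAConfig().outside_recommended())
print(GAConfig(population_size=10, mutation_rate=0.3).outside_recommended())
```

The ranges are starting points, so values outside them are reported rather than rejected.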

Core Workflow of a Genetic Algorithm

The following diagram outlines the fundamental generational cycle of a standard genetic algorithm, which forms the backbone of the more sophisticated hybrid approaches.

Troubleshooting Guide: Common Issues in Genetic Algorithm Implementation

Problem 1: Premature Convergence

- Symptoms: The algorithm quickly converges on a solution that is a local, not global, minimum. Population diversity drops rapidly.

- Causes: High selection pressure from a few "super" individuals, or ineffective operators that fail to generate novel structures.

- Solutions:

- Implement a fitness scaling strategy during selection to temper dominance of high-fitness individuals early on [13]. The selection probability can be calculated as $p(s_h) = \exp(f(s_h)/T) \,/\, \sum_{j} \exp(f(s_j)/T)$, where the sum runs over the population and a cooling temperature $T$ helps maintain diversity.

- Dynamically manage operator rates, increasing the creation rate of operators that produce fit offspring and decreasing rates for poorly performing ones [2].

- Introduce a niche technique or enforce a minimum energy difference between structures to maintain population diversity [2].
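The temperature-scaled selection probability described under Problem 1 can be computed as in the sketch below. It assumes that a larger fitness value is better (for energy minimization one could pass the negative energy) and subtracts the maximum before exponentiating to avoid overflow; the fitness values are placeholders.

```python
import math


def boltzmann_probabilities(fitness, temperature):
    """Selection probabilities p_h = exp(f_h / T) / sum_j exp(f_j / T)."""
    if temperature <= 0.0:
        raise ValueError("temperature must be positive")
    scaled = [f / temperature for f in fitness]
    shift = max(scaled)                          # numerical stability; cancels in the ratio
    weights = [math.exp(s - shift) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


fitness = [1.0, 2.0, 3.0]                        # placeholder fitness values
p_hot = boltzmann_probabilities(fitness, 10.0)   # early search: nearly uniform
p_cold = boltzmann_probabilities(fitness, 0.1)   # late search: dominated by the best
print([round(p, 3) for p in p_hot])
print([round(p, 3) for p in p_cold])
```

Starting at a high temperature and lowering it over the generations keeps selection gentle while diversity matters most, then sharpens it as the search converges.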

Problem 2: Inefficient Exploration of the Potential Energy Surface (PES)

- Symptoms: The algorithm fails to find lower-energy regions, even after many generations.

- Causes: Operators lack the creativity to generate significantly new geometries; population becomes too homogeneous.

- Solutions:

- Incorporate a random mutation operator that guarantees a portion of the population is always composed of randomly modified structures, ensuring a baseline level of PES exploration [2].

- Use a similarity measure (e.g., based on cluster topology or connectivity tables) to identify and eliminate overly similar structures, forcing exploration of new regions [2].

- Combine GA with a local search method (e.g., gradient-based minimization) to refine individuals and quickly find the nearest local minimum on the PES [8] [2].

Problem 3: High Computational Cost of Fitness Evaluation

- Symptoms: Each generation takes prohibitively long, slowing overall research progress.

- Causes: Using computationally expensive ab initio methods for every energy evaluation on every candidate structure.

- Solutions:

- Employ a two-step optimization process. Use a fast, empirical potential (e.g., Lennard-Jones, REBO) or semi-empirical method (e.g., Density-Functional Tight-Binding, DFTB) for the global search, and refine the final candidates with more accurate (e.g., DFT) methods [8] [2].

- Implement a pre-screening step to eliminate structures with a high probability of convergence failure before they undergo costly local minimization [2].

Frequently Asked Questions (FAQs)

Q1: What is the most critical component for the success of a Genetic Algorithm in this field?

A1: While all components are important, maintaining population diversity is paramount. Without it, the algorithm cannot effectively explore the complex Potential Energy Surface and will likely become trapped in a local minimum. This requires a careful balance between the fitness function, selection pressure, and a set of operators that can generate novel structures [2].

Q2: How do I choose a fitness function for my molecular cluster system?

A2: The fitness function must be aligned with your research goal of finding the global minimum. For most studies, this is directly related to the potential energy of the cluster configuration. However, for specific applications, you might use a function that combines energy with other properties. In data clustering analysis, by contrast, a function like the Variance Ratio Criterion (VRC) can be used to maximize between-cluster variance and minimize within-cluster variance [13].

Q3: What is a good genetic representation for a nanocluster?

A3: A common and effective representation is the Cartesian coordinates of the atoms relative to the cluster's center of mass. This representation works well with crossover and mutation operators that manipulate atomic positions [2]. For medoid-based clustering problems, a string of cluster medoids [m1, m2, ..., mk] can be an effective representation [13].

Q4: My algorithm is stalling. Which operators should I try to improve performance?

A4: Research indicates that the twist operator can outperform other common operators. Also, do not hesitate to integrate operators from other optimization methods, such as basin-hopping, into your GA. The key is to use a diverse set of operators and consider implementing a management strategy that dynamically favors the ones proving most effective at generating low-energy offspring [2].

Q5: How can I validate that my GA has truly found the global minimum?

A5: There is no absolute guarantee for complex systems, but you can increase confidence by:

- Comparing your result against known global minima for standard test systems (e.g., Lennard-Jones clusters) [8].

- Running the algorithm multiple times with different random seeds and checking for consistency in the final result.

- Using the putative global minimum as a starting point for high-level, more accurate quantum chemical calculations to confirm its stability [8] [7].

Essential Components: Data Tables

Table 1: Common Genetic Operators for Molecular Clusters

| Operator Name | Type | Function | Key Parameter(s) |
|---|---|---|---|
| Deaven and Ho Cut-and-Splice [2] | Crossover | Cuts two parent clusters with a plane and splices halves to create an offspring. | Cutting plane position. |
| Twist [2] | Mutation | Rotates a subsection of a cluster. | Rotation axis and angle. |
| Mutation [13] [2] | Mutation | Randomly modifies an individual's genes (e.g., atom coordinates). | Mutation probability (pm). |
| Annihilator [2] | Specialized | Removes worst part of a cluster. | - |
| History [2] | Specialized | Reuses building blocks from successful historical structures. | - |
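A simplified cut-and-splice crossover is sketched below. It is a variant chosen for illustration, not the exact Deaven and Ho procedure: both parents are centered, a random plane orientation is drawn, and the child takes the k atoms of parent A lying highest along the plane normal plus the n - k atoms of parent B lying lowest, which keeps the atom count fixed. A single atom type is assumed.

```python
import math
import random


def centered(coords):
    """Shift a cluster so that its centroid sits at the origin."""
    n = len(coords)
    c = [sum(p[k] for p in coords) / n for k in range(3)]
    return [[p[k] - c[k] for k in range(3)] for p in coords]


def cut_and_splice(parent_a, parent_b, rng):
    """Combine the 'upper' part of parent_a with the 'lower' part of parent_b."""
    n = len(parent_a)
    a, b = centered(parent_a), centered(parent_b)
    # Random unit normal defining the orientation of the cutting plane
    v = [rng.gauss(0.0, 1.0) for _ in range(3)]
    norm = math.sqrt(sum(c * c for c in v))
    normal = [c / norm for c in v]

    def height(p):
        return sum(pc * nc for pc, nc in zip(p, normal))

    k = rng.randint(1, n - 1)                    # number of atoms taken from parent_a
    upper = sorted(a, key=height)[n - k:]        # k highest atoms of parent_a
    lower = sorted(b, key=height)[:n - k]        # n - k lowest atoms of parent_b
    return upper + lower


rng = random.Random(0)
parent_a = [(float(i), 0.0, 0.0) for i in range(4)]   # toy parent: atoms along x
parent_b = [(0.0, float(i), 5.0) for i in range(4)]   # toy parent: atoms along y
child = cut_and_splice(parent_a, parent_b, rng)
print(child)
```

Atoms from the two parents can end up close together near the cutting plane, so the child is normally relaxed with a local optimizer before its energy is evaluated.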

Table 2: Fitness Functions and Evaluation Methods

| Function Name | Formula / Description | Application Context |
|---|---|---|
| Potential Energy | $E_{\text{total}} = \sum_{i} \sum_{j>i} V(r_{ij})$ | Primary fitness function for global minimum search of molecular/atomic clusters [8] [2]. |
| Variance Ratio Criterion (VRC) | $f(s_h) = [\mathrm{trace}(B)/(k-1)] \,/\, [\mathrm{trace}(W)/(n-k)]$ | Fitness function for medoid-based clustering; maximizes between-cluster (B) vs. within-cluster (W) variance [13]. |
| DFTB Energy | DFTB method with tuned parameters. | Fast, semi-empirical electronic structure method for fitness evaluation in large sodium nanoclusters [8]. |

Experimental Protocol: A Standard GA for Cluster Optimization

The following methodology outlines a standard Genetic Algorithm procedure for finding the global minimum structure of a molecular cluster, as described in research [2].

Initialization

- Generate an initial population of p individuals (cluster structures). This is often done by creating random atomic coordinates within a defined volume.

- The population size p is a fixed parameter set by the researcher.

Evaluation and Selection

- Fitness Calculation: For each individual in the population, perform a local geometry optimization (e.g., using gradient descent) to find the nearest local minimum on the PES. The fitness is typically the potential energy of this minimized structure, calculated via an empirical potential or a quantum method [8] [2].

- Selection and Culling: Rank all individuals according to their fitness (lowest energy is best). A common strategy is to eliminate the worst 25% of the population [2].

Creation of New Individuals

- Operator Application: Use a set of genetic operators (see Table 1) on the surviving individuals to generate new offspring clusters and repopulate the system.

- Operator Management (Advanced): Implement a dynamic management system that tracks the performance of each operator in producing fit offspring. Adjust the rate at which each operator is used accordingly [2].

- Diversity Check: Employ a similarity measure (e.g., based on cluster topology or interatomic distances) to prevent the population from becoming saturated with identical or nearly identical structures. Replace duplicates with new random individuals [2].

Termination

- The process of evaluation, selection, and creation is repeated for a fixed number of generations (G) or until a convergence criterion is met (e.g., no improvement in the best fitness for a certain number of generations).

- The chromosome with the best fitness across all generations is reported as the putative global minimum [13].
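The termination rule can be expressed as a small helper that checks both the generation limit and a stagnation window. The parameter names `patience` and `tol` are assumptions for this sketch, and the history values are placeholders.

```python
def should_stop(best_energy_history, max_generations, patience, tol=1e-6):
    """Decide whether to terminate the GA.

    best_energy_history[g] is the best energy after generation g (index 0 = initial
    population). Stop when the generation limit is reached, or when the best energy
    has improved by no more than `tol` over the last `patience` generations.
    """
    generation = len(best_energy_history) - 1
    if generation >= max_generations:
        return True
    if generation < patience:
        return False
    improvement = best_energy_history[-patience - 1] - best_energy_history[-1]
    return improvement <= tol


stalled = [-5.0, -5.5, -6.0, -6.0, -6.0, -6.0]    # placeholder: no gain for 3 generations
improving = [-5.0, -5.5, -6.0, -6.0]              # placeholder: still improving recently
print(should_stop(stalled, max_generations=100, patience=3))
print(should_stop(improving, max_generations=100, patience=3))
```

Because the best energy found so far is tracked separately from the current population, the structure reported at termination is the best across all generations even if it was later displaced.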

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools

| Tool / "Reagent" | Function / Purpose | Key Feature |
|---|---|---|
| DFTB+ [8] | Energy & Force Calculation: A semi-empirical quantum method used for fitness evaluation. | Faster than DFT, with parameters tuned for specific clusters (e.g., sodium). |
| GATOR [8] | Crystal Structure Prediction: A first-principles genetic algorithm for molecular crystals. | Integrates GA with quantum mechanical calculations. |
| Birmingham Cluster GA (BCGA) [2] | Genetic Algorithm Engine: A specialized GA for cluster geometry optimization. | Can be coupled with electronic structure packages like PWscf. |
| SciPy [8] | Scientific Computing: Provides fundamental algorithms for optimization and linear algebra. | A core library for building or supporting custom GA workflows in Python. |
| FASP [8] | Parameterization: A framework for automating Slater-Koster file parameterization for DFTB. | Ensures reliability of the DFTB method for specific nanoclusters. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental principle behind the Building Block Hypothesis (BBH) in Genetic Algorithms?

The Building Block Hypothesis (BBH) proposes that Genetic Algorithms (GAs) achieve adaptation by implicitly identifying, combining, and promoting low-order, short, and highly fit schemata, known as "building blocks" [6]. Through iterative selection, crossover, and mutation, a GA samples these building blocks and recombines them into strings of potentially higher fitness [6]. This process is analogous to constructing a complex structure from simpler, high-quality components, thereby reducing the problem's complexity by leveraging the best partial solutions discovered in past generations [6].

Q2: Why is population diversity critical in GA for molecular cluster searches, and how can it be maintained?

Population diversity is essential to prevent premature convergence on local optima, which is a significant risk when navigating the complex, multimodal potential energy surfaces (PES) of molecular clusters [2] [6]. A diverse population ensures a more extensive exploration of the PES. Strategies to maintain diversity include:

- Speciation Heuristics: Penalizing crossover between overly similar candidate solutions to encourage population variety [6].

- Structural Similarity Checking: Using topological information or connectivity tables to estimate dissimilarity between cluster structures, helping to balance diversity and convergence efficiency [2].

- Managed Operators: Dynamically adjusting the application rate of different evolutionary operators based on their performance in generating well-adapted offspring, effectively phasing out ineffective operators [2].

Q3: My GA is converging rapidly but to a suboptimal solution. What could be going wrong?

Rapid convergence to a suboptimal solution often indicates that the algorithm is trapped in a local optimum [6]. This is a common limitation of GAs, particularly on complex fitness landscapes. Troubleshooting steps include:

- Increase Mutation Probability: A higher mutation rate can introduce new genetic material and help the population escape local optima. However, if set too high, it can destroy good solutions, so tuning is necessary [6].

- Review Selection Pressure: Ensure your selection process is not overly greedy. Incorporating less fit solutions into the breeding pool can preserve genetic diversity [6].

- Implement Diversity-Preserving Heuristics: Techniques like speciation or niching can explicitly maintain a diverse population [6].

- Adjust Operator Rates: A recombination (crossover) rate that is too high can lead to premature convergence. Experiment with balancing crossover and mutation rates [6].

Q4: How do I choose an appropriate fitness function for a molecular cluster optimization problem?

The fitness function is always problem-dependent [6]. For molecular cluster global minimum searches, the most common and direct fitness function is the potential energy of the cluster structure [2]. The objective is to find the global minimum on the Potential Energy Surface (PES). Therefore, the fitness function is typically the negative of the cluster's energy as computed by an empirical potential (e.g., Lennard-Jones, Morse, or REBO potentials) or a quantum mechanics method [2]. The algorithm then seeks to maximize this fitness (i.e., minimize the energy).
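
As a minimal sketch of this idea, the following Python snippet computes a Lennard-Jones energy in reduced units and defines the fitness as its negative. The function names and the default `epsilon = sigma = 1` are illustrative assumptions, not taken from the cited works.

```python
import itertools
import math

def lennard_jones_energy(coords, epsilon=1.0, sigma=1.0):
    """Total pairwise Lennard-Jones energy of a cluster (reduced units)."""
    energy = 0.0
    for a, b in itertools.combinations(coords, 2):
        r = math.dist(a, b)
        sr6 = (sigma / r) ** 6
        energy += 4.0 * epsilon * (sr6 * sr6 - sr6)
    return energy

def fitness(coords):
    """GA fitness: the negative of the potential energy (lower energy = fitter)."""
    return -lennard_jones_energy(coords)

# A dimer at the LJ equilibrium separation r = 2^(1/6) * sigma has energy -epsilon.
dimer = [(0.0, 0.0, 0.0), (2 ** (1 / 6), 0.0, 0.0)]
stretched = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
print(lennard_jones_energy(dimer), fitness(dimer) > fitness(stretched))
```

Maximizing `fitness` is then equivalent to minimizing the energy, so the equilibrium dimer scores higher than the stretched one.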

Troubleshooting Common GA Experimental Issues

Table 1: Common GA Issues and Proposed Solutions in Molecular Cluster Research

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Premature Convergence | Low population diversity, excessive selection pressure, insufficient mutation [6]. | Implement speciation heuristics [6]; use dynamic operator management [2]; increase mutation rate; introduce a portion of random mutants [2]. |

| Slow Convergence | Poorly performing operators, inadequate fitness function, population size too large [6]. | Profile and manage operator performance [2]; tune parameters (mutation probability, crossover rate) [6]; ensure fitness function is not computationally prohibitive [6]. |

| Failure to Find Global Minimum | Problem complexity, exponential search space, operators not effectively exploring PES [2] [6]. | Hybridize GA with local search methods (e.g., basin-hopping) [2]; use topological structure similarity checks to enhance PES exploration [2]; experiment with advanced operators (e.g., "twist" operator) [2]. |

| High Computational Cost | Expensive fitness function evaluations (e.g., quantum calculations for large clusters) [6]. | Use empirical potentials for initial screening [2]; employ fitness approximation models; pre-screen structures likely to have high energy before full evaluation [2]. |

Experimental Protocols & Workflows

Standard Genetic Algorithm Protocol for Cluster Optimization

The following outlines a standard GA procedure for finding the global minimum structure of atomic or molecular clusters, as drawn from the literature [2].

Initialization:

- Generate an initial population of candidate cluster structures.

Selection:

- Evaluate the fitness (e.g., the negative of the potential energy) of every individual in the population [2] [6].

- Rank all individuals according to their fitness.

- Select the fittest individuals for breeding. A common strategy is to eliminate a portion of the worst individuals (e.g., the worst 25%) [2].

Creation of New Individuals:

- Apply genetic operators to the selected individuals to generate new offspring for the next generation. Key operators include:

- Crossover (Recombination): Combine parts of two "parent" clusters to form a "child" cluster. The Deaven and Ho cut-and-splice crossover is a commonly used operator in this field [2].

- Mutation: Randomly alter a cluster's structure to introduce new genetic material. This can include atomic displacements or more complex transformations [6].

- Advanced Operators: The "twist" operator has been shown to perform well for cluster geometry optimization [2].

- A management strategy can be implemented to dynamically adjust the rate at which different operators are used based on their performance in creating well-adapted offspring [2].

Termination:

- This generational process (selection followed by creation of new individuals) is repeated until a termination condition is met. Common conditions include [6]:

- A solution is found that satisfies minimum criteria (e.g., energy below a threshold).

- A maximum number of generations has been produced.

- The highest ranking solution's fitness has reached a plateau.
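
The protocol above can be sketched in a few lines of Python. This is an illustrative toy, not the implementation from [2]: the crossover is a simplified cut-and-splice (a fixed cut along z instead of a random plane through the centre of mass), no local relaxation is performed, the run stops after a fixed number of generations, and all function names and parameter values are assumptions.

```python
import itertools
import math
import random

def lj_energy(coords):
    """Lennard-Jones energy in reduced units (epsilon = sigma = 1)."""
    total = 0.0
    for a, b in itertools.combinations(coords, 2):
        r = max(math.dist(a, b), 0.5)  # clamp so overlapping atoms give a large finite energy
        total += 4.0 * (r ** -12 - r ** -6)
    return total

def random_cluster(n_atoms, rng, box=2.0):
    return [tuple(rng.uniform(-box, box) for _ in range(3)) for _ in range(n_atoms)]

def cut_and_splice(parent_a, parent_b):
    """Simplified cut-and-splice: lower half of A (by z) joined to upper half of B."""
    cut = len(parent_a) // 2
    lower = sorted(parent_a, key=lambda p: p[2])[:cut]
    upper = sorted(parent_b, key=lambda p: p[2])[cut:]
    return lower + upper

def mutate(cluster, rng, step=0.3):
    """Displace one randomly chosen atom."""
    child = list(cluster)
    i = rng.randrange(len(child))
    child[i] = tuple(x + rng.uniform(-step, step) for x in child[i])
    return child

def run_ga(n_atoms=5, pop_size=20, generations=40, seed=0):
    rng = random.Random(seed)
    population = [random_cluster(n_atoms, rng) for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):  # termination: fixed number of generations
        # Selection: rank by energy (equivalently, by fitness) and drop the worst 25%.
        population.sort(key=lj_energy)
        survivors = population[: pop_size - pop_size // 4]
        best_history.append(lj_energy(survivors[0]))
        # Creation: refill the population with crossover or mutation offspring.
        offspring = []
        while len(survivors) + len(offspring) < pop_size:
            if rng.random() < 0.5:
                a, b = rng.sample(survivors, 2)
                offspring.append(cut_and_splice(a, b))
            else:
                offspring.append(mutate(rng.choice(survivors), rng))
        population = survivors + offspring
    population.sort(key=lj_energy)
    best_history.append(lj_energy(population[0]))
    return population[0], best_history

best, history = run_ga()
print(f"best energy after {len(history) - 1} generations: {history[-1]:.3f}")
```

Because the surviving 75% are carried over unchanged, the best energy in `history` can never increase from one generation to the next. A production code would normally relax every offspring with a local optimizer before ranking it.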

Protocol: Dynamic Management of Evolutionary Operators

This advanced protocol aims to improve GA efficiency by dynamically prioritizing the most effective operators [2].

Define Operator Pool: Assemble a set of evolutionary operators (e.g., o1, o2, ..., o13), which can include various crossover, mutation, and other structure-generating methods [2].

Initialization: Assign equal creation rates to all operators at the start of the algorithm.

Performance Tracking: During the GA run, track the performance of each operator by evaluating the fitness of the offspring it generates.

Dynamic Adjustment: Periodically adjust the creation rate of each operator:

- Increase the rate for operators that consistently produce well-adapted (high-fitness) offspring.

- Decrease the rate for operators that poorly fulfill the task of creating favorable new individuals.

Iteration: Continue the GA process with the updated operator creation rates, allowing the algorithm to automatically focus on the most effective search strategies for the specific PES.
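
One possible way to implement this bookkeeping is sketched below. The scoring rule (a Laplace-smoothed success ratio with a small floor) and the class and method names are assumptions chosen for illustration; the management scheme in [2] may differ in detail, and the recorded outcomes are invented for the demonstration.

```python
import random

class OperatorManager:
    """Tracks how often each operator yields improved offspring and adapts its rate."""

    def __init__(self, names, min_score=0.02):
        self.names = list(names)
        self.min_score = min_score  # floor so a poor operator is still tried occasionally
        self.rates = {n: 1.0 / len(self.names) for n in self.names}  # equal initial rates
        self.trials = {n: 0 for n in self.names}
        self.successes = {n: 0 for n in self.names}

    def choose(self, rng):
        """Draw an operator name with probability equal to its current rate."""
        weights = [self.rates[n] for n in self.names]
        return rng.choices(self.names, weights=weights, k=1)[0]

    def record(self, name, improved):
        """Log whether the offspring created by `name` was well adapted."""
        self.trials[name] += 1
        self.successes[name] += int(improved)

    def update_rates(self):
        scores = {}
        for name in self.names:
            # Laplace-smoothed fraction of successful offspring.
            ratio = (self.successes[name] + 1) / (self.trials[name] + 2)
            scores[name] = max(ratio, self.min_score)
        total = sum(scores.values())
        self.rates = {name: s / total for name, s in scores.items()}

# Invented outcomes for demonstration: 10 trials per operator.
manager = OperatorManager(["cut_and_splice", "twist", "displacement"])
outcomes = {
    "twist": [True] * 8 + [False] * 2,
    "cut_and_splice": [True] * 3 + [False] * 7,
    "displacement": [False] * 10,
}
for name, results in outcomes.items():
    for improved in results:
        manager.record(name, improved)
manager.update_rates()
picked = manager.choose(random.Random(0))
print(manager.rates, picked)
```

Calling `update_rates()` every few generations, rather than after every offspring, keeps the rates from reacting to noise in a handful of trials.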

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational "Reagents" for GA-based Cluster Optimization

| Item / Concept | Function / Role in the Experiment | Example / Notes |

|---|---|---|

| Potential Energy Surface (PES) | The hyper-surface representing the energy of a cluster as a function of its atomic coordinates. The landscape the GA navigates [2]. | Defined by empirical potentials (e.g., Lennard-Jones) or quantum mechanics methods [2]. |

| Genetic Representation (Genotype) | The encoding of a cluster's structure into a format manipulable by the GA [6]. | Can be a string of atomic coordinates, a bit string, or a more complex tree/graph representation [6]. |

| Fitness Function | The function that evaluates the quality of a candidate solution (cluster structure) [6]. | Typically the negative of the cluster's potential energy [2]. |

| Selection Operator | The mechanism for choosing which individuals from the current generation get to reproduce [6]. | Fitness-proportional selection, tournament selection, or truncation selection (e.g., removing the worst 25%) [2] [6]. |

| Crossover/Recombination Operators | Combine parts of two or more parent structures to create new offspring, exploiting existing building blocks [2] [6]. | Deaven and Ho cut-and-splice [2]; "Twist" operator [2]. |

| Mutation Operators | Introduce random changes to a single structure, exploring new regions of the PES and maintaining diversity [6]. | Atomic displacement, rotation of a sub-cluster, or bond alteration. |

Why Molecular Clusters? The Critical Importance of Global Minimum Structures in Drug Discovery

FAQs: Navigating Global Minimum Challenges in Molecular Clustering

1. Why is finding the global minimum (GM) structure so critical for molecular clusters in drug discovery?

The global minimum represents the most thermodynamically stable configuration of a molecular system. In drug discovery, accurately predicting this structure is essential because it dictates a molecule's properties, including its thermodynamic stability, reactivity, spectroscopic behavior, and, most importantly, its biological activity [7]. The equilibrium conformer, often the global minimum, is typically the most representative structure at room temperature and is key to understanding how a drug candidate will interact with its biological target [14].

2. How can I be confident that my computational search has found the true global minimum?

For non-trivial molecules, it is challenging to be absolutely certain. However, several strategies can build confidence:

- Restart from Different Points: Conduct multiple searches from diverse initial conformations. If different starting points converge on the same "global minimum," it indicates the algorithm is spanning the conformational space effectively [14].

- Compare Algorithms: Using a different search algorithm (e.g., comparing a Monte Carlo result with a systematic search) can serve as a validation check [14].

- Use Management Strategies: Advanced algorithms, like some modern Genetic Algorithms (GAs), dynamically manage evolutionary operators to avoid inefficiency and improve the exploration of the potential energy surface (PES) [2].

3. My search keeps getting stuck in local minima. What are my options?

This is a common challenge due to the exponentially growing number of local minima on the PES. Potential solutions include:

- Adjust Algorithm Parameters: Increase the search "fold count" or use simulated annealing to allow the system to escape local minima by initially accepting higher-energy configurations [14].

- Adopt a Hybrid Strategy: Combine global exploration with local refinement. A typical workflow involves a stochastic global search (e.g., Monte Carlo, Genetic Algorithm) to identify candidate structures, followed by local optimization to refine them to the nearest minimum [7].

- Employ Advanced Techniques: Methods like basin-hopping (BH) transform the PES into a collection of local minima, simplifying the landscape for more efficient global exploration [7] [15].
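
To make the basin-hopping idea concrete, here is a small sketch on a one-dimensional toy "PES" with two minima. The energy function, step sizes, and temperature are arbitrary assumptions; a real application would use cluster coordinates and a proper local optimizer.

```python
import math
import random

def energy(x):
    """Toy 1D landscape: local minimum near x = 1.35, deeper minimum near x = -1.47."""
    return x ** 4 - 4.0 * x ** 2 + x

def gradient(x):
    return 4.0 * x ** 3 - 8.0 * x + 1.0

def local_minimise(x, lr=0.01, steps=500):
    """Plain gradient descent to the nearest local minimum."""
    for _ in range(steps):
        x -= lr * gradient(x)
    return x

def basin_hopping(x0, n_hops=50, hop=2.0, temperature=1.0, seed=0):
    rng = random.Random(seed)
    current = local_minimise(x0)
    best = current
    for _ in range(n_hops):
        # Perturb, clamp to the sampled window, then relax to a local minimum.
        trial = max(-2.5, min(2.5, current + rng.uniform(-hop, hop)))
        trial = local_minimise(trial)
        delta = energy(trial) - energy(current)
        # Metropolis criterion applied to the energies of the minima.
        if delta <= 0.0 or rng.random() < math.exp(-delta / temperature):
            current = trial
        if energy(trial) < energy(best):
            best = trial
    return best

start = 1.5  # lies in the basin of the shallower minimum
best_x = basin_hopping(start)
print(f"start minimum: {local_minimise(start):.3f}, best found: {best_x:.3f}")
```

Because every trial point is relaxed before the acceptance test, the walk effectively moves between minima rather than across the raw surface.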

4. When should I use a stochastic method versus a deterministic one?

The choice depends on your system and the guarantee you need.

- Stochastic Methods (e.g., Genetic Algorithms, Simulated Annealing): These incorporate randomness and are ideal for exploring complex, high-dimensional energy landscapes. They do not guarantee finding the GM but are excellent for broad sampling and avoiding premature convergence [7].

- Deterministic Methods (e.g., some Single-Ended methods, Molecular Dynamics): These rely on defined rules and analytical information like energy gradients. They can offer precise convergence but may become computationally expensive for systems with many local minima and cannot guarantee finding the GM for large systems without exhaustive, and often infeasible, searching [7].

5. Is the global minimum always the most important structure for my study?

Not necessarily. While the global minimum is crucial for understanding thermodynamic stability, "what conformation is 'best' depends on what you are looking for" [14]. Other low-energy minima might be functionally relevant if they are accessible at room temperature. Furthermore, factors like solvent effects and the accuracy of the theoretical method used can shift the relative stability of conformers [14].

Troubleshooting Guide: Common Issues in Global Optimization

| Problem | Potential Cause | Recommended Solution |

|---|---|---|

| Failed Convergence | Algorithm stuck in a local minimum. | Switch from a purely local optimizer to a global search method. Combine stochastic global search (e.g., GA) with local refinement [7]. |

| Inconsistent Results | Search results vary with different starting conformations. | Perform multiple independent searches from random initial structures. If they yield the same GM, it increases confidence [14]. Use algorithms like CSA that explicitly control population diversity [16]. |

| Low Population Diversity (in GAs) | Population becomes too similar, halting exploration. | Implement a similarity check using connectivity tables or fingerprint-based distances to eliminate redundant structures [2]. Introduce random "mutant" structures to maintain diversity [2]. |

| Computational Cost Too High | Using high-level quantum methods (e.g., DFT) for the entire search. | Adopt a multi-step strategy: use a fast molecular mechanics force field (e.g., MMFF) to generate low-energy conformers, then re-optimize the promising candidates at the desired quantum mechanics (QM) level [14] [17]. |

| Invalid Structures Generated | Particularly when using SMILES-based GA with random crossover/mutation. | Use robust algorithms like MolFinder that employ sophisticated selection and distance constraints to maintain valid and diverse molecules [16]. |

Experimental Protocols & Workflows

Standard Two-Phase Global Optimization Protocol

This is a widely used framework that combines global search with local refinement [7].

Step 1: Initial Population Generation

- Generate a diverse set of initial candidate structures using random sampling, physically motivated perturbations, or heuristic design.

Step 2: Global Search

- Employ a stochastic algorithm (e.g., Genetic Algorithm, Monte Carlo, Particle Swarm Optimization) to broadly explore the Potential Energy Surface (PES).

- Genetic Algorithm Specifics: The population is evolved using operators like crossover (cut-and-splice) and mutation (twist, annihilator) to create new candidate structures [2].

Step 3: Local Refinement

- Each candidate structure from the global search is locally optimized using a method that leverages energy gradients (e.g., density functional theory - DFT) to find the nearest local minimum on the PES.

Step 4: Redundancy Removal and Validation

- Remove duplicate or symmetrically equivalent structures.

- Perform frequency analysis to confirm each candidate is a true minimum (not a saddle point).

- The structure with the lowest energy is designated the putative global minimum.

Advanced Genetic Algorithm with Operator Management

This protocol details a refined GA that dynamically optimizes its search operators for efficiency [2].

Step 1: Initialization and Selection

- Generate an initial population of cluster structures.

- Rank all individuals by their fitness (e.g., binding energy calculated via an empirical potential like Lennard-Jones or REBO).

- Eliminate the worst-performing individuals (e.g., bottom 25%).

Step 2: Managed Creation of New Individuals

- Operator Pool: Maintain a set of operators (e.g., Deaven and Ho cut-and-splice, twist, annihilator).

- Performance Tracking: Monitor the efficiency of each operator in generating well-adapted offspring.

- Dynamic Rates: Increase the application rate of high-performing operators and decrease that of poorly performing ones. This management strategy avoids bad operators and improves overall performance.

Step 3: Similarity Checking and Diversity Maintenance

- Apply a similarity check (e.g., using a connectivity table or ultrafast shape recognition - USR) to new structures [2] [15].

- Reject new structures that are too similar to existing ones in the population to maintain diversity and prevent stagnation.
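
A very simple similarity check of this kind can be sketched as follows. It builds a connectivity table from a distance cutoff and compares sorted coordination numbers; the cutoff value and function names are assumptions, and this fingerprint is deliberately crude (distinct structures can share it), so it illustrates the idea rather than the specific measure used in [2] or USR [15].

```python
import itertools
import math

def degree_fingerprint(coords, cutoff=1.5):
    """Sorted coordination numbers derived from a distance-cutoff connectivity table."""
    degrees = [0] * len(coords)
    for i, j in itertools.combinations(range(len(coords)), 2):
        if math.dist(coords[i], coords[j]) <= cutoff:
            degrees[i] += 1
            degrees[j] += 1
    return tuple(sorted(degrees))

def is_redundant(candidate, population, cutoff=1.5):
    """True if the candidate shares its fingerprint with any population member."""
    fp = degree_fingerprint(candidate, cutoff)
    return any(fp == degree_fingerprint(member, cutoff) for member in population)

# An equilateral triangle, a translated copy of it, and a linear chain.
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.5, math.sqrt(3) / 2, 0.0)]
shifted = [(x + 5.0, y - 2.0, z + 1.0) for x, y, z in triangle]
chain = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]

print(is_redundant(shifted, [triangle]), is_redundant(chain, [triangle]))
```

The fingerprint is invariant to translation, rotation, and atom ordering, which is why the shifted triangle is flagged as a duplicate while the chain is kept.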

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Global Minimum Search

| Tool / Resource | Function | Application Note |

|---|---|---|

| Genetic Algorithm (GA) | A population-based stochastic method inspired by evolution, using selection, crossover, and mutation. | Highly effective for atomic/molecular clusters and protein folding. Performance is enhanced by managing operator rates and ensuring population diversity [2]. |

| Basin-Hopping (BH) | Transforms the PES into a set of interpenetrating staircases, simplifying the search for minima. | Excellent for locating GM of Lennard-Jones clusters. Can be combined with xTB or DFT methods for more complex systems [7] [15]. |

| Monte Carlo with Simulated Annealing | A stochastic method that uses a temperature schedule to allow escape from local minima. | Useful for conformer sampling of drug-sized organic molecules. The temperature ramp helps explore the space broadly before cooling into low-energy wells [14]. |

| Machine Learning Potentials (e.g., NequIP) | Graph neural network potentials trained on DFT data for highly accurate and faster energy/force calculations. | Used for pre-screening and in simulated annealing procedures to discover new low-energy conformers of metal clusters like Pt19 and Pt20 [17]. |

| xTB (Extended Tight Binding) | A fast, semi-empirical quantum chemical method. | Serves as an efficient engine for local optimization within a BH or GA workflow, enabling the screening of thousands of structures before final refinement with higher-level DFT [15]. |

| Conformational Space Annealing (CSA) | A global optimization algorithm blending GA, simulated annealing, and Monte Carlo minimization. | The core of MolFinder, it extensively explores chemical space using SMILES representation and is effective for molecular property optimization without prior training [16]. |

Implementing Genetic Algorithms: From Theory to Practice in Molecular Design

Frequently Asked Questions (FAQs)

FAQ 1: Why should I use a Genetic Algorithm instead of a simpler local search method for my molecular cluster optimization?

Genetic Algorithms (GAs) are globally oriented search methods and are particularly useful when the objective function contains multiple local optima and other irregularities [18]. Unless an analytical solution exists, the only method guaranteed to find the global minimum is a complete search of the parameter space, which is often impractical for high-dimensional problems [19]. GAs can find objective function values close to the global minimum [18] and are less likely than simple descent algorithms to get trapped in suboptimal local minima. A descent algorithm that is not initialized in the region of the global optimum can converge on incorrect parameter values (e.g., double the correct spacing in a peak-fitting model) [19].

FAQ 2: My GA converges too quickly and seems stuck in a local optimum. What protocol changes can I make to improve exploration?

Quick convergence often indicates a lack of population diversity. The REvoLd algorithm implemented several protocol changes to offset this effect [20]:

- Increase Crossovers: Enforce more variance and recombination between well-suited ligands.

- Introduce Diversity Mutations: Add a mutation step that switches single fragments to low-similarity alternatives, keeping well-performing parts intact but enforcing significant changes on small parts.

- Vary Reactions: Implement a mutation that changes the reaction of a molecule and searches for similar fragments within the new reaction group to open larger parts of the combinatorial space.

- Promote Lower-Fitness Solutions: Introduce a second round of crossover and mutation that excludes the fittest molecules, allowing worse-scoring ligands to improve and carry their molecular information forward [20].

FAQ 3: How do I determine the right population size and number of generations for my run?

These parameters depend on your problem's complexity and computational budget. Based on benchmarks from a drug discovery context [20]:

- Population Size: A random start population of 200 ligands often provides enough variety to begin the optimization process. Larger sizes increase runtime costs, while smaller populations may be too homogeneous and hinder exploration.

- Generations: About 30 generations often strikes a good balance. Good solutions are usually found after 15 generations, but discovery rates flatten around 30. The algorithm may continue to find new molecules after many more generations, but hit rates diminish. For broader exploration, multiple independent runs are advised over a single, very long run [20].

FAQ 4: What are the most critical genetic operators to focus on for molecular optimization?

The core operators are selection, crossover, and mutation, and their balance is key.

- Selection: A portion of the existing population is selected to breed a new generation, typically based on a fitness-based process where fitter solutions are more likely to be selected [6].

- Crossover (Recombination): This operator combines the "genetic material" of two parent solutions to produce new "child" solutions [6]. For molecular problems, increasing crossovers between fit molecules can enforce more variance [20].

- Mutation: This operator randomly alters a candidate solution's genome to maintain genetic diversity [6]. It is crucial for jumping out of local minima and exploring new areas of the chemical space. Tuning the mutation probability is essential, as a rate that is too high may destroy good solutions, while a rate that is too low may lead to genetic drift [6].
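
The interplay of the three operators can be illustrated on a deliberately simple bit-string problem (maximizing the number of ones). Tournament selection, one-point crossover, single-bit mutation, and a single elite individual are assumptions chosen for brevity; they are generic GA choices, not the operators used by REvoLd [20].

```python
import random

def tournament_select(population, fitness, rng, k=3):
    """Selection: return the fittest of k randomly drawn individuals."""
    return max(rng.sample(population, k), key=fitness)

def one_point_crossover(a, b, rng):
    """Crossover: head of parent a joined to tail of parent b."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def flip_one_bit(genome, rng):
    """Mutation: flip a single randomly chosen bit."""
    i = rng.randrange(len(genome))
    return genome[:i] + [1 - genome[i]] + genome[i + 1:]

def next_generation(population, fitness, rng, mutation_prob=0.2, n_elite=1):
    ranked = sorted(population, key=fitness, reverse=True)
    new_population = ranked[:n_elite]  # elitism protects the best solution from mutation
    while len(new_population) < len(population):
        parent_a = tournament_select(population, fitness, rng)
        parent_b = tournament_select(population, fitness, rng)
        child = one_point_crossover(parent_a, parent_b, rng)
        if rng.random() < mutation_prob:
            child = flip_one_bit(child, rng)
        new_population.append(child)
    return new_population

rng = random.Random(1)
fitness = sum  # toy objective: number of ones in the bit string
population = [[rng.randint(0, 1) for _ in range(16)] for _ in range(12)]
best_per_generation = [max(map(fitness, population))]
for _ in range(10):
    population = next_generation(population, fitness, rng)
    best_per_generation.append(max(map(fitness, population)))
print(best_per_generation)
```

With elitism enabled, raising `mutation_prob` increases exploration without risking the loss of the current best individual, which is the trade-off described above.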

FAQ 5: My algorithm is not discovering the single best-scoring compound. Is this a failure?

Not necessarily. For structure-based computer-aided drug discovery campaigns, the goal is rarely to find a single best-scoring compound. It is more important to discover many promising compounds that will enrich hit rates in subsequent in-vitro experiments [20]. Meta-heuristic optimization algorithms like GAs often find close-to-optimal solutions, and the ruggedness of the scoring landscape can trap runs in local minima. Therefore, generating a diverse set of high-quality candidates is often more valuable than finding the theoretical global minimum.

Troubleshooting Guides

Issue 1: Premature Convergence

Problem: The algorithm's population becomes homogeneous too quickly, leading to convergence on a suboptimal solution.

| Checkpoint | Action |

|---|---|

| Population Diversity | Check the genetic diversity in the population. Introduce a speciation heuristic that penalizes crossover between candidate solutions that are too similar to encourage population diversity [6]. |

| Mutation Rate | Increase the mutation probability to introduce more random variations. Be cautious, as a rate that is too high may lead to the loss of good solutions unless elitist selection is employed [6]. |

| Selection Pressure | Review your selection method. If it is too aggressively biased towards the fittest individuals, the population may lose diversity rapidly. Allow some less-fit solutions to reproduce to maintain a diverse genetic pool [6]. |

Issue 2: Failure to Find High-Quality Hits

Problem: The algorithm runs but fails to produce candidate solutions with satisfactory fitness scores.

| Checkpoint | Action |

|---|---|

| Initial Population | Ensure your initial population is large enough (e.g., 200 individuals [20]) and is generated with sufficient randomness to cover a broad search space. Occasionally, solutions can be "seeded" in areas where optimal solutions are likely to be found [6]. |

| Fitness Function | Verify that your fitness function accurately reflects the problem's objectives. In some cases, a simulation may be used to determine fitness, but this can be computationally expensive [6]. |

| Genetic Operators | Re-evaluate the balance between crossover and mutation. Some research suggests that using more than two "parents" can generate higher quality chromosomes [6]. Also, consider implementing the advanced mutation steps from REvoLd that promote greater exploration [20]. |

Issue 3: Prohibitive Computational Time

Problem: The fitness function evaluation for complex molecular problems is too slow, making the GA run impractical.

| Checkpoint | Action |

|---|---|

| Fitness Evaluation | This is often the most prohibitive segment. Consider using an approximated fitness function that is computationally efficient, such as a pre-computed model or a less rigorous simulation [6]. |

| Termination Criteria | Implement clear and efficient termination conditions, such as a fixed number of generations, an allocated budget (computation time), or a plateau in the fitness of the highest-ranking solution [6]. |

| Protocol | Use a combination of an initial search using the GA for broad exploration, followed by fine-tuning using a more direct, local search technique to converge efficiently on the best candidates [18]. |

Experimental Protocols & Data

Table 1: Key Genetic Algorithm Parameters for Molecular Optimization

This table summarizes parameters and their recommended values based on benchmarking in evolutionary ligand docking [20].

| Parameter | Recommended Value | Function & Rationale |

|---|---|---|

| Population Size | 200 | Provides sufficient genetic variety to start optimization without excessive computational cost [20]. |

| Generations | 30 | A good balance between convergence and exploration; good solutions often appear by generation 15 [20]. |

| Individuals Advancing | 50 | Carries forward a pool of solutions that is large enough to avoid homogeneity but small enough to be effective [20]. |

| Mutation Probability | Problem-dependent | Must be tuned; too high loses good solutions, too low causes genetic drift. Elitist selection can help mitigate loss of good solutions [6]. |

| Crossover Probability | Problem-dependent | Must be tuned; a rate that is too high may lead to premature convergence [6]. |

Table 2: Benchmark Performance of an Evolutionary Algorithm (REvoLd) in Drug Discovery

This table shows the performance of the REvoLd evolutionary algorithm in a realistic benchmark on five drug targets using an ultra-large chemical space [20].

| Metric | Result | Context & Explanation |

|---|---|---|

| Molecules Docked per Target | 49,000 - 76,000 | Total unique molecules docked across 20 runs per target. The variation is due to the stochastic nature of the algorithm producing different numbers of duplicates [20]. |

| Hit Rate Improvement | 869x - 1622x | Improvement factor compared to random selection, demonstrating strong enrichment for promising compounds [20]. |

| New Scaffold Discovery | Ongoing after 30 generations | The algorithm does not fully converge and continues to discover new well-scored motifs in independent runs, so multiple runs are recommended for diverse results [20]. |

Workflow Visualization

Figure: Genetic Algorithm Workflow for Molecular Clusters

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents and Computational Tools for GA-driven Molecular Optimization

| Item Name | Function & Explanation |

|---|---|

| Rosetta Software Suite | A comprehensive platform for macromolecular modeling. It includes RosettaLigand, which allows for flexible protein-ligand docking, a common fitness evaluator in evolutionary drug discovery algorithms like REvoLd [20]. |

| Combinatorial Chemical Library | A make-on-demand library constructed from lists of substrates and chemical reactions (e.g., Enamine REAL Space). It defines the vast search space of synthesizable molecules that the GA will explore [20]. |

| Cheminformatics Toolkit | A software library (e.g., Avalon Cheminformatics Toolkit, RDKit) for parsing molecular SMILES codes, handling chemical representations, and depicting structures. Essential for processing the molecular data [21]. |

| Fitness Function | The objective function that the GA aims to optimize. In molecular docking, this is often a scoring function that estimates the binding affinity between a ligand and a target protein. Its design is critical to the algorithm's success [6]. |

| Clustering Algorithm | A method like γ-clustering used to analyze and preprocess complex molecular networks. It can identify dense substructures (clusters) to simplify the visualization and analysis of results, making them more interpretable [22]. |

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using a managed set of genetic operators over a fixed strategy?

Using a dynamic management strategy that evaluates and adjusts the application rate of operators (like cut-and-splice, twist, mutation) based on their performance in generating well-adapted offspring leads to a more efficient exploration of the potential energy surface. This avoids wasting computational resources on less productive operators and can significantly accelerate convergence to the global minimum structure. [2]

Q2: My algorithm is converging prematurely. How can I maintain population diversity?

Premature convergence is often a result of lost population diversity. Strategies to mitigate this include:

- Incorporating Random Mutants: Continuously introducing a set of randomly modified structures into the population throughout the evolutionary procedure. [2]

- Similarity Checking: Implementing measures based on topological information or energy differences to prevent overly similar structures from dominating the population. This ensures a balance between optimization performance and exploration. [2]

- Utilizing Niche Techniques: Distributing different types of geometries into niches based on structural characteristics to preserve a variety of solutions. [2]

Q3: How can Machine Learning be integrated to reduce the computational cost of fitness evaluations?

A Machine Learning-Accelerated Genetic Algorithm (MLaGA) trains a model (e.g., a Gaussian Process regression) on-the-fly to act as a cheap surrogate predictor for the potential energy. This model can be used to screen large numbers of candidate structures in a "nested GA" without performing expensive quantum mechanical calculations. Only the most promising candidates, as predicted by the model, are then passed to the high-level energy calculator (e.g., Density Functional Theory), leading to a dramatic reduction in the total number of energy evaluations required. [23]

Q4: What is a structural motif in the context of molecular clusters, and why is its preservation important?

A structural motif is a specific, often stable, arrangement of atoms within a cluster that can be considered a building block. In pre-nucleation clusters, for example, these can consist of specific solvated moieties rather than the units found in the final crystal. [24] Preserving these motifs during crossover and mutation can be critical because they may represent key low-energy configurations. Specialized operators that recognize and conserve these motifs can guide the search more effectively toward the global minimum.

Troubleshooting Guides

Problem: The genetic algorithm fails to find known stable structures.

- Potential Cause 1: Ineffective operator set and rates.

- Solution: Implement an operator management system. Monitor how successfully each operator (cut-and-splice, twist, annihilator, etc.) produces offspring with better fitness. Dynamically increase the application rate of high-performing operators and decrease the rate of poor performers. [2]

- Potential Cause 2: Lack of structural diversity in the population.

- Solution: Enforce diversity by integrating a similarity check. Reject new candidates that are structurally too similar to existing individuals in the population based on topological descriptors or energy thresholds. [2]

- Potential Cause 3: Over-reliance on random search due to an inefficient surrogate model.

- Solution: In an MLaGA, use the model's prediction uncertainty. Employ acquisition functions like the cumulative distribution function to balance exploration (trying uncertain regions) and exploitation (refining known good regions) during the surrogate-based search. [23]
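
As an illustration of a CDF-based acquisition function, the sketch below scores a candidate by the probability that its true energy lies below the best energy found so far, assuming the surrogate returns a Gaussian prediction with mean `mean` and standard deviation `std`. The function names and the numerical values are hypothetical.

```python
import math

def normal_cdf(z):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mean, std, best_energy):
    """Probability that a candidate's true energy is below the current best."""
    if std <= 0.0:
        return 1.0 if mean < best_energy else 0.0
    return normal_cdf((best_energy - mean) / std)

best_energy = -10.0
# Candidate A: slightly better predicted mean, but the model is very confident.
pi_confident = probability_of_improvement(mean=-9.9, std=0.01, best_energy=best_energy)
# Candidate B: slightly worse predicted mean, but large uncertainty.
pi_uncertain = probability_of_improvement(mean=-9.8, std=0.5, best_energy=best_energy)
print(pi_confident, pi_uncertain)
```

Candidate B receives the higher score even though its predicted mean is worse, which is how the uncertainty term steers the search toward unexplored regions.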

Problem: The computational cost of energy evaluations (e.g., DFT) is prohibitively high.

- Potential Cause: Using a traditional "brute-force" GA that requires thousands of energy evaluations.

- Solution: Adopt a Machine Learning-Accelerated Genetic Algorithm (MLaGA) framework. This involves:

- On-the-fly Model Training: Train a machine learning model (e.g., Gaussian Process) concurrently with the GA on the data from expensive calculations. [23]

- Surrogate-Based Screening: Use this model as a fast surrogate to evaluate and pre-select candidates in a nested genetic algorithm. [23]

- Selective Validation: Perform the high-cost energy calculation (e.g., DFT) only on the most promising candidates filtered by the surrogate model. This protocol can reduce the number of required energy calculations by 50-fold or more. [23]
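
The three steps above can be sketched schematically. To stay self-contained, this example replaces the Gaussian Process with a nearest-neighbour predictor and the DFT calculation with a cheap analytic function that counts its own calls; both stand-ins, and all names, are assumptions made for illustration and are not the method of [23].

```python
import math
import random

class CountingCalculator:
    """Stand-in for an expensive energy method; counts how often it is called."""
    def __init__(self):
        self.calls = 0

    def energy(self, x):
        self.calls += 1
        return sum((xi - 1.0) ** 2 for xi in x)

class NearestNeighbourSurrogate:
    """Toy surrogate: predicts the energy of the closest already-evaluated point."""
    def __init__(self):
        self.data = []

    def train(self, x, energy):
        self.data.append((tuple(x), energy))

    def predict(self, x):
        return min(self.data, key=lambda d: math.dist(d[0], x))[1]

def screen_and_evaluate(candidates, surrogate, calculator, n_keep):
    """Rank candidates with the surrogate, then evaluate only the top n_keep."""
    ranked = sorted(candidates, key=surrogate.predict)
    results = []
    for x in ranked[:n_keep]:
        e = calculator.energy(x)   # selective validation with the expensive method
        surrogate.train(x, e)      # on-the-fly model update
        results.append((e, x))
    return results

rng = random.Random(0)
calculator = CountingCalculator()
surrogate = NearestNeighbourSurrogate()
for _ in range(5):  # small initial training set
    x = (rng.uniform(-3, 3), rng.uniform(-3, 3))
    surrogate.train(x, calculator.energy(x))

n_screened = 0
for _ in range(3):  # three screening rounds of 50 candidates each
    candidates = [(rng.uniform(-3, 3), rng.uniform(-3, 3)) for _ in range(50)]
    n_screened += len(candidates)
    screen_and_evaluate(candidates, surrogate, calculator, n_keep=2)

print(f"screened {n_screened} candidates with {calculator.calls} expensive calls")
```

In this toy setup 150 candidates are screened at the cost of 11 expensive evaluations (5 for the initial training set plus 2 per round), which shows where the savings in an MLaGA come from.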

Problem: The algorithm gets stuck in local minima corresponding to common structural motifs.

- Potential Cause: The operators are disrupting key, stable motifs that are essential for reaching the global minimum.

- Solution: Develop or incorporate operators specifically designed to preserve beneficial structural motifs. For example, the "twist" operator has been shown to be highly effective in some systems. [2] Alternatively, implement a local search or "basin-hopping" step after genetic operations to quickly relax candidates to the nearest local minimum, ensuring that promising motifs are not lost due to small structural imperfections. [2]

Genetic Operator Performance Data

The following table compares a traditional GA with several MLaGA variants in locating the global minimum for a 147-atom Pt-Au icosahedral nanoparticle, a system with a vast search space of ~1.78x10^44 possible homotops. [23]

Table 1: Comparison of GA Strategies for Nanoparticle Alloy Optimization

| Strategy | Key Feature | Number of Energy Calculations (Approx.) | Relative Efficiency |
|---|---|---|---|
| Traditional GA | Standard operators with fixed rates. | 16,000 | Baseline |
| MLaGA (Generational) | ML surrogate screens a full generation of candidates. | 1,200 | ~13x |
| MLaGA (Pool-based) | A new ML model is trained after each energy calculation. | 310 | ~52x |
| MLaGA (With Uncertainty) | Uses prediction uncertainty to guide the search (Pool-based). | 280 | ~57x |

Experimental Protocol: Machine Learning-Accelerated Genetic Algorithm (MLaGA)

This protocol outlines the steps for implementing a generational MLaGA to efficiently search for the global minimum of a molecular cluster. [23]

Initialization:

- Generate an initial population of candidate cluster structures.

- Perform a high-level energy calculation (e.g., using Density Functional Theory - DFT) and local geometry optimization for each candidate in the population.

Master GA Loop (for each generation):

- Selection: Rank all individuals by their actual fitness (energy from DFT) and select the top performers. Eliminate the worst 25%. [2]

- Model Training: Train a Gaussian Process (GP) regression model on the entire dataset of calculated structures and their energies.

- Nested Surrogate GA:

- Create a copy of the current population.

- Run a separate GA using this population, but use the trained GP model to predict the fitness of candidates, not DFT.

- Perform crossover, mutation, and selection based on the predicted fitness for multiple generations within the nested GA.

- Energy Validation:

- Take the final population from the nested GA and perform actual DFT energy calculations and local minimization on these new candidates.

- Add these newly validated structures and their energies to the master population and the training dataset.

Convergence Check:

- The algorithm is considered converged when the nested GA, guided by the ML model, can no longer find new candidates predicted to have a lower energy than the existing best candidates.
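The protocol above can be sketched on a toy problem. This is an illustration under loud assumptions, not the implementation from [23]: `expensive_energy` is a hypothetical stand-in for a DFT calculation with local relaxation, a candidate is a plain coordinate vector rather than a cluster, the Gaussian Process is a bare RBF-kernel regressor written with NumPy, and selection simply keeps the lowest-energy individuals instead of eliminating the worst 25%.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the toy run is repeatable


def expensive_energy(x):
    """Hypothetical stand-in for a DFT energy with local relaxation."""
    return float(np.sum(x**2 - np.cos(3.0 * x)))


def fit_gp(X, y, length=1.0, jitter=1e-4):
    """Fit a GP with an RBF kernel; return a function predicting the mean."""
    mean = y.mean()
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    K = np.exp(-0.5 * d2 / length**2) + jitter * np.eye(len(X))
    alpha = np.linalg.solve(K, y - mean)

    def predict(Xq):
        d2q = ((Xq[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
        return mean + np.exp(-0.5 * d2q / length**2) @ alpha

    return predict


def nested_ga(pop, predict, generations=10, sigma=0.3):
    """Evolve a copy of the population using surrogate predictions only."""
    pop = pop.copy()
    n = len(pop)
    for _ in range(generations):
        parents_a = pop[rng.integers(n, size=n)]
        parents_b = pop[rng.integers(n, size=n)]
        take_a = rng.random(pop.shape) < 0.5                      # uniform crossover
        children = np.where(take_a, parents_a, parents_b)
        children = children + rng.normal(0.0, sigma, pop.shape)   # Gaussian mutation
        merged = np.vstack([pop, children])
        pop = merged[np.argsort(predict(merged))[:n]]             # keep predicted best
    return pop


def mlaga(dim=3, pop_size=8, max_generations=6):
    # Initialization: every starting candidate gets an "expensive" evaluation.
    X = rng.uniform(-3.0, 3.0, (pop_size, dim))
    y = np.array([expensive_energy(x) for x in X])
    for _ in range(max_generations):
        pop = X[np.argsort(y)[:pop_size]]        # selection on true energies
        predict = fit_gp(X, y)                   # model training on all data
        candidates = nested_ga(pop, predict)     # nested surrogate GA
        # Keep only candidates that are not already in the dataset.
        dist = np.linalg.norm(candidates[:, None] - X[None], axis=-1).min(axis=1)
        new = candidates[dist > 1e-3]
        # Convergence: no new candidate is predicted to beat the current best.
        if len(new) == 0 or predict(new).min() >= y.min():
            break
        new_y = np.array([expensive_energy(x) for x in new])  # energy validation
        X, y = np.vstack([X, new]), np.concatenate([y, new_y])
    return X, y


X, y = mlaga()
print(f"expensive evaluations: {len(y)}, best energy: {y.min():.4f}")
```

The point of the sketch is the division of labor: the inner loop calls only `predict`, while `expensive_energy` is called once per validated candidate.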

Workflow and Operator Function Diagrams

[Figure: MLaGA workflow diagram]

[Figure: Genetic operator functions diagram]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Cluster Structure Prediction

| Item / Software | Function / Application | Key Feature |
|---|---|---|
| Density Functional Theory (DFT) | High-accuracy electronic structure calculator for energy and geometry optimization. | Provides quantum-mechanical accuracy; computationally expensive. [23] |
| Empirical Potentials (Lennard-Jones, REBO) | Fast, approximate energy calculator for large systems or initial screening. | Allows for rapid evaluation of many structures; less accurate. [2] |
| Gaussian Process (GP) Regression | Machine learning model used as a surrogate energy predictor in MLaGA. | Provides both a predicted energy and an uncertainty estimate. [23] |
| Birmingham Cluster Genetic Algorithm (BCGA) | A genetic algorithm package specifically designed for cluster optimization. | Can be coupled with external energy calculators like DFT. [2] |
| Structural Alphabet (e.g., from SA-Motifbase) | A library of local structural motifs to classify and compare cluster geometries. | Converts 3D structure into a 1D sequence for efficient analysis and motif discovery. [25] |

Frequently Asked Questions (FAQs)

1. What is the primary advantage of using a Genetic Algorithm (GA) over other global optimization methods for molecular structure prediction?

Genetic Algorithms are stochastic, population-based methods that excel at exploring complex, high-dimensional potential energy surfaces (PES). They are less likely than deterministic methods to become trapped in local minima, which makes them particularly suitable for locating the global minimum of molecular clusters and surface reconstructions. Their evolutionary operations (selection, crossover, mutation) allow broad exploration of configurational space while exploiting promising regions [7] [8].

2. My GA simulation is converging too quickly to a structure that does not seem to be the global minimum. What parameters can I adjust to improve the search?

Premature convergence often indicates a lack of genetic diversity. You can troubleshoot this by:

- Increasing the Mutation Rate: A higher rate introduces more random structural perturbations, helping the population escape local funnels on the PES.

- Reviewing Selection Pressure: If your selection process is too aggressive (e.g., only selecting the very best candidates), the population diversity can drop rapidly. Consider implementing a roulette-wheel or tournament selection scheme that allows some less-fit individuals to survive and contribute to the gene pool [26].

- Checking Population Size: A small population may not adequately sample the configurational space. Increasing the population size can provide a more diverse starting point for the evolution [8].
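Tournament selection, mentioned above as a way to soften selection pressure, can be sketched in a few lines. This is a generic illustration, not code from [26]; the function name and the default tournament size `k=3` are assumptions.

```python
import random


def tournament_select(population, energies, k=3, rng=random):
    """Return the lowest-energy individual among k randomly drawn contestants."""
    contestants = rng.sample(range(len(population)), k)
    winner = min(contestants, key=lambda i: energies[i])
    return population[winner]


# Hypothetical population of labelled structures and their energies.
population = ["A", "B", "C", "D", "E"]
energies = [-5.0, -4.2, -4.9, -3.1, -4.6]
parent = tournament_select(population, energies, k=2)
print(parent)
```

A small `k` lets higher-energy structures win some tournaments and keeps diversity up; setting `k` equal to the population size always returns the best individual, which is the aggressive limit the text warns about.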

3. How can I balance computational cost with accuracy when using electronic structure methods in a GA search?

A common and effective strategy is to use a multi-level approach. The GA search itself can be performed using a fast, semi-empirical method (e.g., Density-Functional Tight-Binding, DFTB) to screen thousands of candidate structures. The most stable low-energy candidates identified by the GA are then refined using more accurate, but computationally expensive, first-principles methods like Density Functional Theory (DFT) [8]. This combines the broad exploration of GA with high-accuracy local refinement.

4. What does a "double-funnel" energy landscape mean, and why is it challenging for optimization?

A double-funnel energy landscape describes a PES with two distinct "funnels" or regions leading to different deep minima. Each funnel may contain many local minima, and the lowest minimum (the global minimum) might be in the narrower, harder-to-find funnel. This is challenging because algorithms can easily become trapped in the wider, more accessible funnel and report its lowest structure as the best one found [8]. GAs, with their stochastic nature, are among the preferred methods for navigating such complex landscapes.

Troubleshooting Guides

Issue 1: Poor Convergence and Stagnating Objective Function

Problem: The fitness of the best candidate in the population stops improving over multiple generations, indicating the search may be stuck.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Low population diversity | Calculate the average structural similarity (e.g., root-mean-square deviation) between population members. | Increase the mutation rate; introduce new random individuals ("immigration") into the population every few generations [26]. |
| Ineffective crossover operator | Visualize offspring structures to see if crossover produces reasonable, new configurations or mostly disordered structures. | Switch from a cut-and-splice crossover to a maximum common substructure (MCS)-based crossover, which is more chemically aware [27]. |
| Inadequate fitness function | Check if the fitness function is sensitive enough to distinguish between similar, low-energy structures. | Consider adding more terms to the objective function, such as a penalty for high symmetry if seeking defective surfaces, or incorporating multiple properties in a Pareto optimization scheme [27]. |
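The diversity diagnostic in the first row can be sketched as below. Note the swapped-in technique: instead of an aligned RMSD, this example compares sorted interatomic distance lists, which need no alignment and are unaffected by translation, rotation, or atom ordering. It assumes single-element clusters of equal size, and all function names are hypothetical.

```python
import math
from itertools import combinations


def fingerprint(coords):
    """Sorted list of all interatomic distances in one cluster."""
    return sorted(math.dist(a, b) for a, b in combinations(coords, 2))


def dissimilarity(coords_1, coords_2):
    """Root-mean-square difference between two distance fingerprints."""
    f1, f2 = fingerprint(coords_1), fingerprint(coords_2)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(f1, f2)) / len(f1))


def mean_population_diversity(population):
    """Average pairwise dissimilarity over the whole population."""
    pairs = list(combinations(population, 2))
    return sum(dissimilarity(a, b) for a, b in pairs) / len(pairs)


triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
shifted = [(x + 1.0, y + 2.0, z + 3.0) for x, y, z in triangle]
stretched = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
print(mean_population_diversity([triangle, shifted, stretched]))
```

A value that drifts toward zero over successive generations suggests the population is collapsing onto one structure; the same `dissimilarity` function could serve as a rejection test for near-duplicate candidates.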

Issue 2: Infeasible or Unphysical Candidate Structures

Problem: The GA generates a high number of molecular or cluster configurations that are chemically unreasonable, with unrealistic bond lengths or angles.

[Figure: Solution workflow diagram]

Explanation:

- Hard Constraints: During the structure generation and mutation phases, implement immediate checks and reject any structure that violates fundamental chemical rules (e.g., atoms too close, impossible bond angles) [8]. This prevents the population from being polluted with nonsensical candidates.

- Soft Constraints: For more subtle issues, add a penalty term to the fitness function (e.g., the total potential energy). For example, a penalty can be applied for significant angle strain or van der Waals overlaps, making such structures less likely to be selected for reproduction [27].
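The two constraint types can be illustrated with a short sketch. The thresholds `d_min` and `d_soft`, the penalty `weight`, and the quadratic penalty form are assumptions chosen for the example (distances in Å); appropriate values depend on the elements and the energy model.

```python
import math
from itertools import combinations


def passes_hard_constraints(coords, d_min=0.7):
    """Hard constraint: reject any structure with two atoms closer than d_min."""
    return all(math.dist(a, b) >= d_min for a, b in combinations(coords, 2))


def overlap_penalty(coords, d_soft=1.5, weight=10.0):
    """Soft constraint: quadratic penalty for pairs closer than d_soft."""
    penalty = 0.0
    for a, b in combinations(coords, 2):
        d = math.dist(a, b)
        if d < d_soft:
            penalty += weight * (d_soft - d) ** 2
    return penalty


def penalized_fitness(energy, coords):
    """Energy plus overlap penalty; lower values are fitter."""
    return energy + overlap_penalty(coords)


collapsed = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0)]   # fails the hard check
crowded = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]     # allowed but penalized
relaxed = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]     # no penalty
print(passes_hard_constraints(collapsed), penalized_fitness(-5.0, crowded))
```

The hard check would run immediately after structure generation or mutation, before any energy calculation is spent on the candidate; the penalty only changes how surviving candidates are ranked.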

Issue 3: High Computational Cost per Fitness Evaluation

Problem: The electronic structure calculation for each candidate is so slow that the GA cannot explore a sufficiently large area of the configurational space in a reasonable time.

| Strategy | Method | Application Note |
|---|---|---|
| Multi-level Approach | Use a fast method (DFTB, force field) for GA search, then re-optimize top candidates with accurate DFT [8]. | Confirm that the low-level method is parameterized for your specific system (e.g., sodium clusters) so that its results are reliable [8]. |
| Parallelization | Distribute fitness evaluations across multiple CPU cores or nodes. | GAs are "embarrassingly parallel" because each individual's fitness can be calculated independently, so this is usually the most direct way to reduce wall-clock time. |
| Machine Learning Potentials | Train a machine learning model on DFT data to replace the expensive quantum mechanics calculator [7] [27]. | Tools like STELLA integrate graph transformer models for fast property prediction, drastically accelerating the search [27]. |
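The parallelization strategy can be sketched with the standard library. This is a minimal example under assumptions: `lj_energy` is a reduced-unit Lennard-Jones stand-in for the real calculator, and a thread pool is used, which fits the common case where each evaluation launches an external program. For a CPU-bound pure-Python energy function, `concurrent.futures.ProcessPoolExecutor` is the usual substitute.

```python
import math
from concurrent.futures import ThreadPoolExecutor
from itertools import combinations


def lj_energy(coords):
    """Lennard-Jones energy in reduced units (stand-in for a real calculator)."""
    energy = 0.0
    for a, b in combinations(coords, 2):
        r = math.dist(a, b)
        energy += 4.0 * (r**-12 - r**-6)
    return energy


def evaluate_population(population, energy_fn=lj_energy, workers=4):
    """Evaluate every individual concurrently; results keep population order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(energy_fn, population))


r0 = 2.0 ** (1.0 / 6.0)  # Lennard-Jones equilibrium distance in reduced units
population = [
    [(0.0, 0.0, 0.0), (r0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (0.0, 1.5, 0.0)],
]
print(evaluate_population(population))
```

Because `pool.map` returns results in input order, the energies can be zipped back onto the population without extra bookkeeping.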

Experimental Protocols & Data

Detailed Methodology: GA for Sodium Nanoclusters

The following protocol is adapted from a study searching for the global minima of sodium nanoclusters [8].

1. Algorithm Initialization:

- Population Generation: Generate an initial population of candidate cluster structures using random seeding or based on known symmetric motifs (e.g., icosahedral, decahedral).

- Representation: Encode each cluster structure as a chromosome, typically represented by the 3D Cartesian coordinates of its atoms.

2. Core Evolutionary Loop:

- Fitness Evaluation: For each candidate in the population, perform a local geometry optimization using a tailored Density-Functional Tight-Binding (DFTB) method. The fitness (or objective function) is the final, optimized potential energy of the cluster. Lower energy indicates higher fitness.

- Selection: Select parent structures for reproduction using a fitness-proportional method (e.g., roulette wheel selection). Clustering-based selection can also be used to maintain structural diversity by picking the best candidate from each cluster [27].

- Crossover (Recombination): Create offspring by combining parts of two parent structures. A common method is "cut-and-splice," where two clusters are cut and recombined to form a new child cluster.

- Mutation: Apply random perturbations to offspring structures. This includes atom displacements, rotation of sub-clusters, or permutation of atom types in nanoalloys. A "gradient-adjusted" mutation that incorporates local gradient information can improve efficiency [8].
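The cut-and-splice crossover described above can be sketched with NumPy. This is one simple variant under stated assumptions, not the exact operator from [8]: it handles single-element clusters only, cuts along a random direction through each parent's centroid, and keeps the atom count fixed by taking `k` atoms from one parent and `n - k` from the other. The child would still need local relaxation and a check for atoms that end up too close.

```python
import numpy as np


def cut_and_splice(parent_a, parent_b, rng):
    """Combine the 'top' of parent A with the 'bottom' of parent B."""
    n = len(parent_a)
    # Center both parents so the cutting plane passes through their centroids.
    a = parent_a - parent_a.mean(axis=0)
    b = parent_b - parent_b.mean(axis=0)
    # Random unit vector normal to the cutting plane.
    normal = rng.normal(size=3)
    normal /= np.linalg.norm(normal)
    # Number of atoms inherited from parent A (between 1 and n - 1).
    k = int(rng.integers(1, n))
    # Rank atoms by their position along the normal and splice the two halves.
    top_a = a[np.argsort(a @ normal)[n - k:]]
    bottom_b = b[np.argsort(b @ normal)[: n - k]]
    return np.vstack([top_a, bottom_b])


rng = np.random.default_rng(1)
parent_a = rng.normal(size=(6, 3))
parent_b = rng.normal(size=(6, 3))
child = cut_and_splice(parent_a, parent_b, rng)
print(child.shape)
```

Splicing by rank rather than by a fixed plane position is a design choice that guarantees the child has the same number of atoms as its parents.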

3. Termination and Validation:

- The algorithm runs for a predefined number of generations or until the best fitness remains unchanged for a specified period.

- The putative global minimum is validated by comparing its energy and symmetry to known structures and by re-optimizing it with a higher-level DFT calculation.

Performance Comparison: STELLA Framework vs. REINVENT 4

The following data summarizes a case study comparing a metaheuristic framework (STELLA) with a deep learning-based tool (REINVENT 4) in a drug discovery application, highlighting the performance of evolutionary approaches [27].

Table 1: Hit Generation Performance in a PDK1 Inhibitor Case Study

| Metric | REINVENT 4 | STELLA (Genetic Algorithm) |
|---|---|---|
| Total Hit Compounds | 116 | 368 |
| Average Hit Rate | 1.81% per epoch | 5.75% per iteration |
| Mean Docking Score (GOLD PLP Fitness) | 73.37 | 76.80 |
| Mean QED (Drug-Likeness) | 0.75 | 0.75 |
| Unique Scaffolds | Baseline | 161% more |

Visualizing the Optimization Challenge