Graph Neural Networks for Molecular Property Prediction: A Complete Guide for Drug Discovery

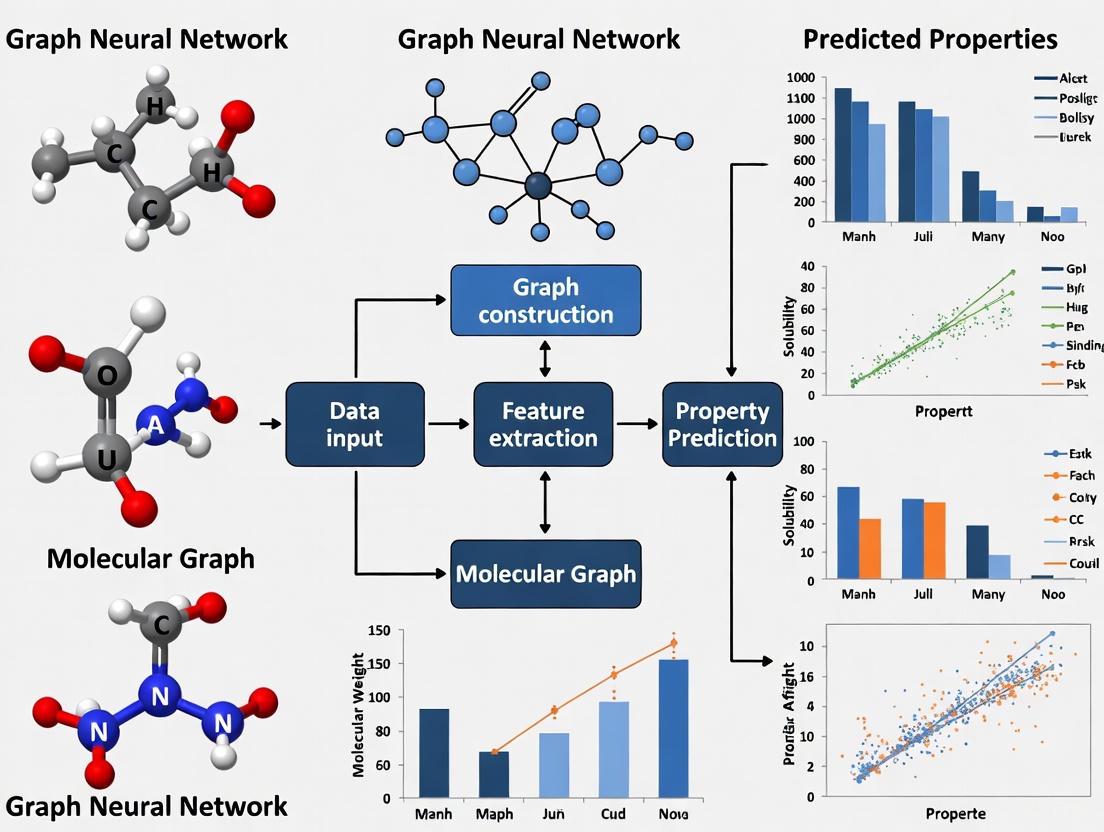

This article provides a comprehensive introduction to Graph Neural Networks (GNNs) for molecular property prediction, a transformative technology accelerating drug discovery and materials design.

Graph Neural Networks for Molecular Property Prediction: A Complete Guide for Drug Discovery

Abstract

This article provides a comprehensive introduction to Graph Neural Networks (GNNs) for molecular property prediction, a transformative technology accelerating drug discovery and materials design. We explore the foundational principles that make GNNs uniquely suited for modeling molecular graphs, where atoms are nodes and bonds are edges. The guide details core GNN architectures, including GCN, GAT, GIN, and emerging Kolmogorov-Arnold Networks (KANs), and their specific applications in predicting bioactivity, toxicity, and physicochemical properties. It further addresses critical real-world challenges such as data scarcity, through multi-task learning strategies suited to low-data regimes, and provides a framework for rigorous model validation and benchmarking against standardized datasets. Aimed at researchers, scientists, and drug development professionals, this resource synthesizes current methodologies, optimization strategies, and comparative analyses to empower the effective implementation of GNNs in biomedical research.

Why Graphs? The Foundational Bridge Between Molecules and Machine Learning

In the field of computer-aided drug discovery and materials science, the accurate prediction of molecular properties is a crucial task. The molecular graph paradigm, which represents atoms as nodes and bonds as edges in a graph structure, has emerged as a powerful framework for this purpose [1]. This approach provides a natural and expressive representation that allows machine learning models to directly learn from the intrinsic topological structure of molecules. Graph Neural Networks (GNNs) have particularly revolutionized this domain by enabling end-to-end learning from molecular graphs, significantly reducing reliance on manual feature engineering and opening new frontiers in molecular property prediction research [2] [3].

Molecular Representation in Graph Form

Graph Construction Fundamentals

In a molecular graph, each atom is represented as a node, characterized by features such as atomic number, chirality, formal charge, and whether it is part of a ring structure. Chemical bonds between atoms form the edges, annotated with properties including bond type (single, double, triple, aromatic) and conjugation [4]. This representation preserves the fundamental connectivity and functional relationships that define a molecule's chemical identity and behavior.

The translation from chemical structure to graph typically begins with a Simplified Molecular-Input Line-Entry System (SMILES) string, which is subsequently processed using toolkits like RDKit to generate the corresponding graph object [4]. This conversion establishes a standardized pipeline for preparing molecular data for GNN models.
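The conversion step can be sketched without external dependencies. In a real pipeline the atom and bond attributes would be read from RDKit (`Chem.MolFromSmiles`, then iterating over `mol.GetAtoms()` and `mol.GetBonds()`); here, as an assumption that keeps the example self-contained, the attributes for ethanol (SMILES `CCO`, heavy atoms only) are written out by hand. The function name `build_graph` and the dictionary keys are illustrative, loosely mirroring the `x` / `edge_index` / `edge_attr` naming used by PyTorch Geometric.

```python
from typing import Dict, List, Tuple

# Hypothetical hand-written attributes for ethanol (SMILES "CCO"), hydrogens omitted.
# Atom: (atomic_number, formal_charge, is_in_ring)
ATOMS: List[Tuple[int, int, bool]] = [(6, 0, False), (6, 0, False), (8, 0, False)]
# Bond: (begin_atom, end_atom, bond_order, is_conjugated)
BONDS: List[Tuple[int, int, float, bool]] = [(0, 1, 1.0, False), (1, 2, 1.0, False)]


def build_graph(atoms: List[Tuple[int, int, bool]],
                bonds: List[Tuple[int, int, float, bool]]) -> Dict[str, list]:
    """Turn atom and bond attribute lists into node features plus a directed edge list."""
    node_features = [[float(z), float(charge), float(in_ring)] for z, charge, in_ring in atoms]
    edge_index: List[Tuple[int, int]] = []
    edge_attr: List[List[float]] = []
    for begin, end, order, conjugated in bonds:
        # Each undirected bond becomes two directed edges so messages can flow both ways.
        for src, dst in ((begin, end), (end, begin)):
            edge_index.append((src, dst))
            edge_attr.append([order, float(conjugated)])
    return {"x": node_features, "edge_index": edge_index, "edge_attr": edge_attr}


graph = build_graph(ATOMS, BONDS)
print(graph["edge_index"])  # [(0, 1), (1, 0), (1, 2), (2, 1)]
```

Storing each bond in both directions is a design choice that lets a single directed message-passing routine serve undirected molecular graphs.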

Advanced Representations

While the basic node-edge model captures covalent bonding relationships, recent advancements have incorporated non-covalent interactions and 3D geometric information to create more expressive representations [5] [2]. These enriched representations have demonstrated notable performance improvements, particularly for properties sensitive to spatial molecular conformation.

Table 1: Standard Molecular Graph Datasets for Benchmarking

| Dataset Name | # Graphs | Avg. Nodes/Graph | Avg. Edges/Graph | Task Type | Primary Metric |
|---|---|---|---|---|---|
| ogbg-molhiv | 41,127 | 25.5 | 27.5 | Binary classification | ROC-AUC |
| ogbg-molpcba | 437,929 | 26.0 | 28.1 | 128 binary classification tasks | Average Precision |
| QM9 | ~134,000 | ~18.0 | ~18.0 | Regression (quantum properties) | MAE |
| ClinTox | 1,478 | - | - | Binary classification | ROC-AUC |

Graph Neural Network Architectures for Molecular Property Prediction

Core Architectural Concepts

GNNs operate on molecular graphs through a message-passing framework, where nodes iteratively aggregate information from their neighbors and update their own representations [6]. This fundamental mechanism allows the network to capture both local atomic environments and global molecular structure. Several specialized architectures have been developed to optimize this process for molecular tasks:

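- Graph Isomorphism Networks (GIN): Combine sum aggregation with multi-layer perceptrons, which makes them as expressive as the Weisfeiler-Lehman graph isomorphism test (the upper bound for standard message-passing GNNs); they capture local substructures effectively and serve as strong baselines for 2D topological analysis [2].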

- Equivariant GNNs (EGNN): Integrate 3D coordinate information while preserving Euclidean symmetries (translation, rotation, reflection), making them particularly valuable for geometry-sensitive properties [2].

- Graph Transformer Models: Incorporate global attention mechanisms to model long-range dependencies within molecules, even without explicit 3D information [2].

Emerging Hybrid Architectures

Recent research has explored hybrid models that combine the strengths of different paradigms. Kolmogorov-Arnold GNNs (KA-GNNs) integrate Fourier-based KAN modules into the three fundamental components of GNNs: node embedding, message passing, and readout [5]. This approach replaces conventional multi-layer perceptrons with learnable univariate functions based on Fourier series, enhancing both expressivity and interpretability while effectively capturing both low-frequency and high-frequency structural patterns in graphs [5].
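The core idea of a Fourier-based KAN module can be illustrated in a few lines. This is a simplified sketch, not the KA-GNN authors' implementation: it assumes each learnable univariate function has the form (\phi(x) = \sum_{k=1}^{K} \left(a_k \cos(kx) + b_k \sin(kx)\right)) and that a layer sums these functions over its inputs; normalization, bias terms, and the choice of (K) are left out. Terms with small (k) model slowly varying (low-frequency) behavior, while terms with large (k) model rapid (high-frequency) variation.

```python
import math
from typing import List


def fourier_phi(x: float, a: List[float], b: List[float]) -> float:
    """Univariate function phi(x) = sum_{k=1..K} a_k*cos(k*x) + b_k*sin(k*x); a, b are learnable."""
    return sum(a_k * math.cos(k * x) + b_k * math.sin(k * x)
               for k, (a_k, b_k) in enumerate(zip(a, b), start=1))


def fourier_kan_layer(x: List[float], a: List[List[List[float]]],
                      b: List[List[List[float]]]) -> List[float]:
    """KAN layer: output_j = sum_i phi_ij(x_i); a[j][i] and b[j][i] hold the K coefficients of phi_ij.

    Unlike an MLP layer (learned weights, fixed activation), every input-output
    pair gets its own learnable nonlinear function.
    """
    return [sum(fourier_phi(x_i, a[j][i], b[j][i]) for i, x_i in enumerate(x))
            for j in range(len(a))]


# Two inputs -> one output, K = 2 frequencies per function (coefficients chosen by hand).
a = [[[1.0, 0.0], [0.0, 0.5]]]
b = [[[0.0, 0.0], [1.0, 0.0]]]
print(fourier_kan_layer([0.0, math.pi / 2], a, b))
```

In a KA-GNN-style model, a layer of this kind would stand in for the MLPs used in node embedding, message passing, and readout, with the coefficients trained by gradient descent.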

Another innovative direction involves augmenting GNNs with knowledge from Large Language Models (LLMs), where domain-relevant knowledge and structural features are fused to create more robust molecular representations [3]. This integration helps address the long-tail distribution of molecular knowledge in LLMs by combining their conceptual understanding with structural information from GNNs.

Experimental Frameworks and Benchmarking

Standardized Evaluation Protocols

Rigorous evaluation of molecular property prediction models requires standardized benchmarks and appropriate dataset splits. The scaffold split, which groups molecules by their Bemis-Murcko scaffold (the two-dimensional core formed by ring systems and the linkers between them) and assigns each group entirely to the training, validation, or test set, provides a more realistic assessment of model generalization than a random split [4]. Because no scaffold appears in both training and test data, this approach tests a model's ability to extrapolate to structurally novel compounds, mirroring real-world discovery scenarios where models must predict properties for chemically distinct molecules.
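A scaffold split can be sketched as follows, assuming each molecule's scaffold has already been computed as a canonical string (in RDKit, `MurckoScaffoldSmiles` from `rdkit.Chem.Scaffolds.MurckoScaffold` is a common choice). The function name and the 80/10/10 default fractions are assumptions. Groups are placed largest-first, a common deterministic convention, so frequent chemotypes fill the training set and rarer scaffolds end up in validation and test.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(scaffolds: Sequence[str], frac_train: float = 0.8,
                   frac_valid: float = 0.1) -> Tuple[List[int], List[int], List[int]]:
    """Assign whole scaffold groups to train/valid/test so no scaffold spans two splits."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest groups first; ties broken by first index for reproducibility.
    ordered = sorted(groups.values(), key=lambda g: (len(g), g[0]), reverse=True)

    n = len(scaffolds)
    train_cut = frac_train * n
    valid_cut = train_cut + frac_valid * n
    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for group in ordered:
        if len(train) + len(group) <= train_cut:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cut:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Hypothetical precomputed scaffold strings for ten molecules.
scaffolds = ["c1ccccc1"] * 5 + ["C1CCNCC1"] * 3 + ["c1ccncc1", "C1CCOC1"]
train, valid, test = scaffold_split(scaffolds)
```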

Performance metrics are tailored to task characteristics: ROC-AUC for binary classification (e.g., ogbg-molhiv), Average Precision (AP) for highly imbalanced multi-task classification (e.g., ogbg-molpcba), and Mean Absolute Error (MAE) for regression tasks (e.g., QM9) [4].
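The three metrics can be written out directly for a single task. This dependency-free sketch assumes binary labels in {0, 1}, at least one positive and one negative example, and no tied scores in the AP computation; library implementations such as scikit-learn handle ties and edge cases more carefully. ROC-AUC is computed as the probability that a randomly chosen positive is scored above a randomly chosen negative, which is quadratic in the number of samples but easy to check by hand.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Fraction of (positive, negative) pairs ranked correctly; ties count as half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Mean of the precision values measured at the rank of each positive example."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions)


def mae(targets: Sequence[float], preds: Sequence[float]) -> float:
    """Mean absolute error for regression targets."""
    return sum(abs(t - p) for t, p in zip(targets, preds)) / len(targets)


labels, scores = [0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]
print(roc_auc(labels, scores), average_precision(labels, scores))  # 0.75, ~0.833
```

AP rewards ranking the rare positives at the very top of the list, which is why it is preferred over ROC-AUC when positives are scarce.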

Table 2: Performance Comparison of GNN Architectures on Molecular Property Prediction

| Model Architecture | ogbg-molhiv (ROC-AUC) | log Kow (MAE) | log Kaw (MAE) |
|---|---|---|---|
| Graph Isomorphism Network (GIN) | 0.763 (reported in related studies) | 0.29 | 0.41 |
| Equivariant GNN (EGNN) | - | 0.21 | 0.25 |
| Graphormer | 0.807 (reported in related studies) | 0.18 | 0.28 |
| KA-GNN (Kolmogorov-Arnold) | Consistently outperforms conventional GNNs (exact values dataset-dependent) [5] | - | - |

Addressing Data Scarcity Through Multi-Task Learning

Data scarcity remains a significant challenge in molecular property prediction, particularly for specialized domains with limited experimental measurements. Multi-task Learning (MTL) has emerged as a promising strategy to leverage correlations among related molecular properties, thereby improving data efficiency [6].

However, conventional MTL approaches can suffer from negative transfer, where updates from one task detrimentally affect another. Recent work has introduced Adaptive Checkpointing with Specialization (ACS), a training scheme that mitigates this issue by combining a shared, task-agnostic backbone with task-specific heads [6]. This approach checkpoints model parameters when negative transfer signals are detected, preserving the benefits of inductive transfer while protecting individual tasks from detrimental parameter updates. The ACS method has demonstrated particular utility in ultra-low data regimes, achieving accurate predictions with as few as 29 labeled samples in sustainable aviation fuel property prediction [6].
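The checkpointing idea behind ACS can be sketched in a simplified form. This is not the published ACS procedure: as an assumption, the negative-transfer signal is taken to be a task's validation loss failing to reach a new minimum, and for each task the checkpointer keeps a copy of the shared backbone together with that task's head from the epoch where the task's validation loss was lowest. The class name `TaskCheckpointer` and the dictionary parameter format are hypothetical.

```python
import copy
import math
from typing import Any, Dict


class TaskCheckpointer:
    """Keep, per task, the (shared backbone, task head) pair with the lowest validation loss."""

    def __init__(self) -> None:
        self.best_loss: Dict[str, float] = {}
        self.best: Dict[str, Dict[str, Any]] = {}

    def update(self, epoch: int, val_losses: Dict[str, float],
               backbone: Any, heads: Dict[str, Any]) -> None:
        for task, loss in val_losses.items():
            # A new minimum means shared training still helps this task; otherwise the
            # earlier snapshot is kept, shielding the task from later harmful updates.
            if loss < self.best_loss.get(task, math.inf):
                self.best_loss[task] = loss
                self.best[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(backbone),
                    "head": copy.deepcopy(heads[task]),
                }


# Simulated run: task "a" keeps improving, task "b" degrades after epoch 0.
ckpt = TaskCheckpointer()
history = [{"a": 1.0, "b": 0.5}, {"a": 0.8, "b": 0.6}, {"a": 0.6, "b": 0.9}]
for epoch, losses in enumerate(history):
    backbone = {"w": [float(epoch)]}  # stand-in for shared GNN weights
    heads = {"a": {"v": epoch}, "b": {"v": -epoch}}
    ckpt.update(epoch, losses, backbone, heads)

print({task: info["epoch"] for task, info in ckpt.best.items()})  # {'a': 2, 'b': 0}
```

Training continues for all tasks, so task "a" still benefits from later shared updates, while task "b" is served by the snapshot taken before its loss started to rise.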

Advanced Interpretability and Functional Group Analysis

Interpretable GNN Architectures

Model interpretability is crucial for scientific discovery and drug development, as it provides insights into the structural determinants of molecular properties. FragNet represents a significant advancement in this area, offering interpretability at four distinct levels: atoms, bonds, molecular fragments, and connections between fragments [7]. This multi-level interpretability helps researchers identify which substructures are significant for predicting specific molecular properties, facilitating scientific insight and hypothesis generation.

Similarly, KA-GNNs provide enhanced interpretability by highlighting chemically meaningful substructures through their learnable activation functions [5]. The Fourier-based KAN modules enable more transparent reasoning about which molecular patterns contribute most strongly to property predictions.

Functional Group-Centric Reasoning

Functional groups—specific groups of atoms that impart characteristic chemical properties—provide a natural bridge between molecular structure and property prediction. The recently introduced FGBench dataset enables molecular property reasoning at the functional group level, containing 625K molecular property reasoning problems with precise functional group annotations and localization [8].

This approach mirrors the reasoning process of human chemists, who typically analyze property changes through three steps: associating similar molecules, observing functional group differences, and rephrasing the problem using prior knowledge of functional groups [8]. By incorporating this fine-grained information, models can develop more interpretable, structure-aware reasoning capabilities that align with chemical intuition.

Essential Research Reagents and Computational Tools

Table 3: Essential Computational Tools for Molecular Graph Research

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics Library | SMILES to graph conversion, molecular descriptor calculation | Preprocessing molecular data, feature generation [4] |
| Open Graph Benchmark (OGB) | Benchmarking Suite | Standardized datasets (e.g., ogbg-molhiv, ogbg-molpcba) and evaluation | Model benchmarking and comparison [4] |
| PyTorch Geometric | Deep Learning Library | GNN model implementation and training | Building and experimenting with GNN architectures [4] |
| DGL | Deep Learning Library | Graph neural network implementation | Scalable GNN training on large molecular datasets [4] |
| OMol25 | Quantum Chemistry Dataset | High-accuracy DFT calculations for biomolecules, metal complexes | Training and validating foundational atomistic models [9] |
| Universal Model for Atoms (UMA) | Foundational Model | Machine learning interatomic potential | Accurate prediction of atomic interactions across materials [9] |
| FGBench | Specialized Dataset | Functional group-level property reasoning | Enhancing interpretability and structure-aware reasoning [8] |

The molecular graph paradigm continues to evolve with several promising research directions. 3D-aware GNN architectures that explicitly incorporate spatial geometry are showing superior performance for physics-sensitive properties like partition coefficients [2]. The integration of external knowledge sources through LLMs and knowledge graphs addresses the long-tail challenge of molecular data while enhancing model interpretability [3]. Furthermore, foundational models pre-trained on massive diverse molecular datasets, such as Meta's Universal Model for Atoms, are demonstrating remarkable transfer learning capabilities across diverse molecular tasks [9].

In conclusion, the representation of molecules as graphs with atoms as nodes and bonds as edges has established itself as a powerful paradigm for molecular property prediction. By directly encoding molecular topology into machine learning models, this approach has enabled significant advances in accuracy, interpretability, and data efficiency. As architectural innovations continue to emerge and computational resources grow, GNNs based on this paradigm are poised to play an increasingly central role in accelerating scientific discovery and molecular design across pharmaceuticals, materials science, and environmental chemistry.

Graph Neural Networks (GNNs) have emerged as a transformative technology for molecular property prediction, enabling researchers to learn directly from graph-structured representations of chemical compounds. This technical guide provides an in-depth examination of the three core mechanics underpinning modern GNNs: message passing, aggregation, and readout. Framed within the context of drug discovery research, we detail the mathematical foundations, architectural variants, and experimental methodologies that allow GNNs to capture complex molecular patterns for accurate property prediction. By integrating recent advances such as Kolmogorov-Arnold Networks and multi-level fusion approaches, this work equips computational researchers and drug development professionals with the technical understanding necessary to leverage and advance GNN architectures in molecular machine learning.

In computational drug discovery, molecules are naturally represented as graphs where atoms serve as nodes and chemical bonds as edges. This representation makes Graph Neural Networks particularly well-suited for molecular property prediction, as they operate directly on this relational structure [10] [11]. Unlike traditional neural networks designed for grid-like or sequential data, GNNs excel at capturing the complex topological features and dependencies inherent in molecular graphs [12]. The core innovation enabling this capability is a framework known as message passing, which allows nodes to iteratively exchange information with their neighbors, effectively learning representations that encode both local atomic environments and global molecular structure [13] [14].

The significance of GNNs in molecular research is demonstrated by their widespread adoption across various pharmaceutical applications, from predicting protein-ligand binding affinities to simulating molecular interactions [5] [1]. These models have fundamentally changed molecular structural and property analysis, ushering in a new era of data-driven drug design and discovery [5]. This technical guide examines the foundational mechanics of message passing, aggregation, and readout that enable these advancements, with particular emphasis on their implementation and optimization for molecular property prediction tasks.

Foundational Concepts and Mathematical Framework

Graph Representation of Molecules

In molecular graphs, we formally define a graph as (G = (V, E)), where (V) represents the set of nodes (atoms) and (E) represents the set of edges (chemical bonds) [15]. Each node (v \in V) is associated with a feature vector (X_v) encapsulating atomic attributes such as element type, charge, and hybridization state. Similarly, edges may possess feature vectors (e_{uv}) describing bond characteristics including type, length, and stereochemistry [14]. The graph structure is typically represented through an adjacency matrix (A), where (A_{ij} = 1) if nodes (i) and (j) are connected, and 0 otherwise [10].

The Message Passing Framework

The message passing framework, also referred to as Message Passing Neural Networks (MPNNs), forms the computational backbone of GNNs [14]. This iterative process enables nodes to incorporate information from their local neighborhoods, with each iteration extending the receptive field by one hop [11]. The framework consists of three fundamental operations executed sequentially at each layer:

- Message Creation: Each node computes messages to be sent to its neighbors

- Message Aggregation: Nodes collect and combine messages from neighbors

- Node Update: Nodes update their representations using aggregated messages [13]

Mathematically, for a node (i) at layer (l+1), the message passing process can be formalized as:

[ \begin{aligned} m_{ij}^{(l)} &= \text{Message}(h_i^{(l)}, h_j^{(l)}, e_{ij}) \quad \text{for } j \in N(i) \\ m_i^{(l)} &= \text{Aggregate}(\{m_{ij}^{(l)} : j \in N(i)\}) \\ h_i^{(l+1)} &= \text{Update}(h_i^{(l)}, m_i^{(l)}) \end{aligned} ]

where (h_i^{(l)}) is the feature vector of node (i) at layer (l), (N(i)) is the set of neighbors of node (i), and (e_{ij}) is the edge feature between nodes (i) and (j) [13].
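The three equations map directly onto three pluggable functions. The sketch below, written in plain Python with lists as vectors, runs one message-passing layer over a directed edge list; the function names and the toy choices of message (neighbor features scaled by bond order), sum aggregation, and additive update are illustrative assumptions rather than any specific published architecture.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Vector = List[float]


def message_passing_layer(
    h: Sequence[Vector],
    edges: Sequence[Tuple[int, int]],          # directed (source j, target i) pairs
    edge_feats: Sequence[Vector],              # e_ij for each directed edge
    message: Callable[[Vector, Vector, Vector], Vector],
    aggregate: Callable[[List[Vector], int], Vector],
    update: Callable[[Vector, Vector], Vector],
    msg_dim: int,
) -> List[Vector]:
    # Message: compute m_ij for every edge j -> i and file it under the receiving node i.
    inbox: Dict[int, List[Vector]] = {i: [] for i in range(len(h))}
    for (j, i), e_ij in zip(edges, edge_feats):
        inbox[i].append(message(h[i], h[j], e_ij))
    # Aggregate, then Update every node from its own state and its aggregated message.
    return [update(h[i], aggregate(inbox[i], msg_dim)) for i in range(len(h))]


def sum_aggregate(msgs: List[Vector], dim: int) -> Vector:
    return [sum(m[k] for m in msgs) for k in range(dim)]  # zeros if a node has no neighbors


def scaled_neighbor(h_i: Vector, h_j: Vector, e_ij: Vector) -> Vector:
    return [e_ij[0] * x for x in h_j]  # toy message: neighbor state times bond order


def add_update(h_i: Vector, m_i: Vector) -> Vector:
    return [a + b for a, b in zip(h_i, m_i)]


# Path graph 0-1-2 with one scalar feature per atom and single bonds.
h = [[1.0], [2.0], [3.0]]
edges = [(0, 1), (1, 0), (1, 2), (2, 1)]
bond = [[1.0]] * len(edges)
print(message_passing_layer(h, edges, bond, scaled_neighbor, sum_aggregate, add_update, msg_dim=1))
# [[3.0], [6.0], [5.0]]
```

Stacking (L) such layers lets information travel (L) bonds, which is the one-hop-per-layer growth of the receptive field described above.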

Table 1: Components of the Message Passing Framework

| Component | Mathematical Function | Role in Molecular Context |
|---|---|---|
| Message | (m_{ij}^{(l)} = M(h_i^{(l)}, h_j^{(l)}, e_{ij})) | Encodes interaction between adjacent atoms |
| Aggregate | (m_i^{(l)} = \sum_{j \in N(i)} m_{ij}^{(l)}) | Combines information from bonded neighbors |
| Update | (h_i^{(l+1)} = U(h_i^{(l)}, m_i^{(l)})) | Updates atomic representation with local context |

The following diagram illustrates the complete message passing process between two nodes in a molecular graph:

Core Mechanic I: Message Passing in Depth

Message Functions

The message function (M(\cdot)) transforms neighbor information into a transferable format. In molecular graphs, this function encodes the relationship between adjacent atoms and their bonding characteristics [14]. The design of the message function varies across GNN architectures:

Linear Transformation: Simple yet effective, using weight matrices and biases: [ m_{ij}^{(l)} = W_{\text{msg}} \cdot [h_j^{(l)} \| h_i^{(l)} \| e_{ij}] + b_{\text{msg}} ] where (\|) denotes concatenation [13].

Edge-Aware Functions: Incorporate bond features directly, particularly important for distinguishing single, double, and triple bonds in molecular graphs [14].

Kolmogorov-Arnold Networks (KANs): Recent advances replace traditional linear transformations with learnable univariate functions based on the Kolmogorov-Arnold representation theorem, offering improved expressivity and parameter efficiency [5].
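The linear message function can be made concrete in a few lines. In this sketch vectors are Python lists, so list concatenation implements (\|); the weight matrix and bias values are hand-picked for illustration. Because the bond features (e_{ij}) enter the concatenation, the same pair of atoms produces different messages across a single and a double bond, which is what makes the function edge-aware.

```python
from typing import List

Vector = List[float]


def linear_message(h_i: Vector, h_j: Vector, e_ij: Vector,
                   W: List[Vector], b: Vector) -> Vector:
    """m_ij = W_msg . [h_j || h_i || e_ij] + b_msg."""
    z = h_j + h_i + e_ij  # list concatenation implements [h_j || h_i || e_ij]
    if any(len(row) != len(z) for row in W):
        raise ValueError("each row of W must match the concatenated input length")
    return [sum(w * v for w, v in zip(row, z)) + b_k for row, b_k in zip(W, b)]


# Hand-picked parameters: 3 inputs (h_j, h_i, bond order) -> 2 message dimensions.
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 1.0]]
b = [0.0, 0.5]
single = linear_message([1.0], [2.0], [1.0], W, b)  # bond order 1
double = linear_message([1.0], [2.0], [2.0], W, b)  # bond order 2
print(single, double)  # [2.0, 2.5] [2.0, 3.5]
```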

Neighborhood Sampling Strategies

For large molecular graphs, complete neighborhood aggregation can be computationally expensive. Several sampling strategies address this challenge:

Full Neighborhood Aggregation: Utilizes all adjacent atoms, preserving complete local chemical environment information [13].

GraphSAGE Sampling: Uniformly samples a fixed number of neighbors to maintain computational consistency [15].

Attention-Based Sampling: Dynamically selects important neighbors based on learned attention weights [10].
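Fixed-size uniform neighbor sampling in the style of GraphSAGE can be sketched with the standard library. Implementations differ in how they treat nodes with fewer than (k) neighbors (some resample with replacement to always return exactly (k)); this sketch simply keeps all of them. The adjacency-list format and the function name are assumptions.

```python
import random
from typing import Dict, List


def sample_neighbors(adj: Dict[int, List[int]], node: int, k: int,
                     rng: random.Random) -> List[int]:
    """Return at most k neighbors of `node`, drawn uniformly without replacement."""
    neighbors = adj[node]
    if len(neighbors) <= k:
        return list(neighbors)  # low-degree node: keep the full neighborhood
    return rng.sample(neighbors, k)


# Hypothetical adjacency: node 0 is a hub with four neighbors, node 1 has one.
adj = {0: [1, 2, 3, 4], 1: [0], 2: [0], 3: [0], 4: [0]}
rng = random.Random(0)  # seeded so the sample is reproducible
print(sample_neighbors(adj, 0, k=2, rng=rng))
print(sample_neighbors(adj, 1, k=2, rng=rng))  # [0]
```

Since valence limits most atoms to a handful of bonds, sampling mainly pays off on large graphs with high-degree hubs rather than on typical small molecules.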

Core Mechanic II: Aggregation Operations

Aggregation Functions

The aggregation function combines multiple incoming messages into a single fixed-size vector. Common approaches include:

Sum Aggregation: Element-wise summation of neighbor messages; it is permutation invariant and, unlike mean or max, retains how many neighbors contributed (the node degree) [13] [15].

Mean Aggregation: Element-wise averaging, providing normalization for nodes with varying degrees [13].

Max Pooling: Element-wise maximum operation, capturing the most salient features from neighbors [13] [15].

Attention-Based Aggregation: Weighted combination where importance weights are learned dynamically, allowing the model to focus on more relevant neighbors [13] [10].

Table 2: Comparison of Aggregation Functions for Molecular Graphs

| Aggregation Type | Mathematical Form | Advantages in Molecular Context | Limitations |
|---|---|---|---|
| Sum | (m_i = \sum_{j \in N(i)} m_{ij}) | Preserves molecular bond count information | Sensitive to node degree |
| Mean | (m_i = \frac{1}{\lvert N(i) \rvert} \sum_{j \in N(i)} m_{ij}) | Normalizes for atom connectivity | May dilute strong signals |
| Max | (m_i = \max_{j \in N(i)} m_{ij}) | Identifies most influential interactions | Loses collective neighborhood information |
| Attention | (m_i = \sum_{j \in N(i)} \alpha_{ij} m_{ij}) | Adaptively weights atomic interactions | Increased computational complexity |
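A small experiment makes the trade-off in the table concrete. Below, an atom with two identical neighbors is compared with an atom that has a single such neighbor: only sum aggregation tells them apart, because mean and max return the same vector for both. The feature values are arbitrary toy numbers.

```python
from typing import Callable, Dict, List

Vector = List[float]


def sum_agg(msgs: List[Vector]) -> Vector:
    return [sum(col) for col in zip(*msgs)]


def mean_agg(msgs: List[Vector]) -> Vector:
    return [sum(col) / len(msgs) for col in zip(*msgs)]


def max_agg(msgs: List[Vector]) -> Vector:
    return [max(col) for col in zip(*msgs)]


AGGREGATORS: Dict[str, Callable[[List[Vector]], Vector]] = {
    "sum": sum_agg, "mean": mean_agg, "max": max_agg,
}

two_neighbors = [[1.0, 0.5], [1.0, 0.5]]  # e.g. an atom bonded to two identical groups
one_neighbor = [[1.0, 0.5]]               # the same group, but only once

for name, agg in AGGREGATORS.items():
    same = agg(two_neighbors) == agg(one_neighbor)
    print(f"{name}: distinguishes degree = {not same}")
# sum: distinguishes degree = True
# mean: distinguishes degree = False
# max: distinguishes degree = False
```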

Advanced Aggregation Techniques

Recent research has introduced sophisticated aggregation mechanisms tailored for molecular property prediction:

Multi-Level Fusion: Integrates both local atomic environments and global molecular structures through simultaneous aggregation at multiple topological levels [16].

Fourier-Based KAN Aggregation: Employs Fourier series as basis functions within KAN modules to capture both low-frequency and high-frequency structural patterns in molecular graphs, enhancing representation of periodic molecular properties [5].

Graph Attention Networks (GAT): Implement attention mechanisms where attention weights (\alpha_{ij}) are computed as: [ \alpha_{ij} = \frac{\exp(\text{LeakyReLU}(a^T[W h_i \| W h_j]))}{\sum_{k \in N(i)} \exp(\text{LeakyReLU}(a^T[W h_i \| W h_k]))} ] allowing each molecular node to attend to its neighbors with varying degrees of importance [10].

Core Mechanic III: Readout Functions

Graph-Level Readout for Molecular Property Prediction

The readout (or pooling) function generates graph-level representations from updated node embeddings, essential for molecular property prediction where the target property is a function of the entire molecular structure [15] [14]. Common readout operations include:

Sum/Mean/Max Readout: Simple permutation-invariant operations that combine node embeddings: [ h_G = \sum_{v \in V} h_v^{(L)} \quad \text{or} \quad h_G = \frac{1}{|V|} \sum_{v \in V} h_v^{(L)} \quad \text{or} \quad h_G = \max_{v \in V} h_v^{(L)} ] where (L) is the final GNN layer [15].

Hierarchical Readout: Performs pooling at multiple topological scales to capture both local functional groups and global molecular architecture [16].

Attention-Based Readout: Uses learned attention weights to emphasize chemically significant atoms in the final representation: [ h_G = \sum_{v \in V} \beta_v h_v^{(L)}, \quad \beta_v = \frac{\exp(w^T h_v^{(L)})}{\sum_{u \in V} \exp(w^T h_u^{(L)})} ] where (\beta_v) represents the importance of atom (v) to the molecular property [15].
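The attention-based readout can be sketched directly from the formula for (\beta_v). The scoring vector (w) would normally be learned; here it is a fixed, hypothetical value. Subtracting the maximum score inside the softmax is a standard numerical-stability trick that leaves the weights unchanged. Because every atom is scored independently and the results are summed, reordering the atoms does not change (h_G), which is the permutation invariance a readout needs.

```python
import math
from typing import List, Tuple

Vector = List[float]


def attention_readout(node_embs: List[Vector], w: Vector) -> Tuple[Vector, List[float]]:
    """h_G = sum_v beta_v * h_v with beta = softmax over atoms of w^T h_v."""
    scores = [sum(wk * hk for wk, hk in zip(w, h)) for h in node_embs]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # shift by max for numerical stability
    total = sum(exps)
    betas = [e / total for e in exps]
    dim = len(node_embs[0])
    h_graph = [sum(beta * h[k] for beta, h in zip(betas, node_embs)) for k in range(dim)]
    return h_graph, betas


atoms = [[1.0, 0.0], [3.0, 2.0], [0.5, 1.0]]  # final-layer embeddings h_v^(L)
w = [0.5, -1.0]                                 # hypothetical scoring vector
h_g, betas = attention_readout(atoms, w)
h_g_rev, _ = attention_readout(atoms[::-1], w)
print(betas)
print(all(math.isclose(a, b) for a, b in zip(h_g, h_g_rev)))  # True
```

The returned (\beta_v) values are what an interpretability analysis would inspect to see which atoms the model weights most heavily.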

Advanced Readout Architectures

For complex molecular properties, specialized readout architectures have demonstrated superior performance:

Fourier-KAN Readout: Replaces traditional MLP readout functions with Fourier-based Kolmogorov-Arnold Networks, providing stronger approximation capabilities for complex molecular property functions [5].

Interaction-Based Readout: Incorporates cross-modal interactions between different molecular representations (e.g., graph embeddings and molecular fingerprints) before final prediction [16].

Multi-Task Readout: Generates multiple property predictions simultaneously while sharing representation learning, particularly valuable in early drug discovery where multiple molecular characteristics need evaluation [1].

Experimental Protocols and Methodologies

Benchmarking GNN Architectures for Molecular Property Prediction

Rigorous experimental evaluation is essential for assessing GNN performance on molecular tasks. Standard protocols include:

Dataset Selection: Utilizing established molecular benchmarks such as MoleculeNet, which includes datasets for various properties like ESOL (solubility), FreeSolv (hydration free energy), and Tox21 (toxicity) [1].

Evaluation Metrics: Employing task-appropriate metrics including Root Mean Square Error (RMSE) for regression tasks, Area Under the ROC Curve (AUC-ROC) for classification tasks, and Mean Average Precision (MAP) for multi-label classification [5] [16].

Baseline Models: Comparing against traditional machine learning approaches (Random Forests, Support Vector Machines) trained on fixed molecular representations such as Morgan fingerprints to quantify GNN advantages [1].

KA-GNN Experimental Framework

Recent work on Kolmogorov-Arnold GNNs (KA-GNNs) provides a state-of-the-art experimental framework:

Architecture Variants: Implementing both KA-GCN (KAN-augmented Graph Convolutional Networks) and KA-GAT (KAN-augmented Graph Attention Networks) to evaluate KAN integration across different GNN backbones [5].

Ablation Studies: Systematically removing KAN components from node embedding, message passing, and readout to isolate their individual contributions to performance [5].

Interpretability Analysis: Visualizing learned KAN basis functions to identify chemically meaningful molecular substructures and patterns that drive predictions [5].

The following diagram illustrates the architecture of a KA-GNN integrating KAN modules into all core components:

Table 3: Research Reagent Solutions for Molecular GNN Experiments

| Component | Function in Molecular GNN Research | Example Implementations |
|---|---|---|
| Deep Learning Frameworks | Provides foundational tensor operations and automatic differentiation | PyTorch, TensorFlow |
| GNN Libraries | Offers optimized implementations of GNN layers and graph operations | PyTorch Geometric, Deep Graph Library (DGL) |
| Molecular Datasets | Standardized benchmarks for evaluating molecular property prediction | MoleculeNet, ZINC, QM9 |
| Cheminformatics Tools | Processes molecular structures into graph representations | RDKit, OpenBabel |
| KAN Implementations | Provides Kolmogorov-Arnold Network layers for integration into GNNs | PyKAN, KAN-Torch |

Results and Performance Analysis

Quantitative Evaluation of GNN Components

Experimental results across multiple molecular benchmarks demonstrate the impact of different message passing, aggregation, and readout designs:

Aggregation Function Performance: Attention-based aggregation consistently outperforms simple sum/mean/max operations on molecular classification tasks, with average improvements of 3-5% in AUC-ROC scores across Tox21 and MUV datasets [10] [16].

Message Passing Depth: Optimal performance typically occurs at 3-5 message passing layers, balancing local chemical environment capture with over-smoothing effects [13] [15].

KA-GNN Advantages: Kolmogorov-Arnold GNNs demonstrate superior accuracy and computational efficiency compared to conventional GNNs, achieving 5-15% improvement on regression tasks like solubility and energy prediction while using 20-30% fewer parameters [5].
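The over-smoothing effect behind the message-passing depth trade-off can be reproduced with parameter-free message passing. Assuming plain mean aggregation over each atom's neighbors plus itself, with no learned weights or nonlinearities (a deliberate simplification), repeated layers pull every node toward the same value, so after many rounds the atoms become nearly indistinguishable. The toy graph is a four-atom chain with a single distinctive atom at one end.

```python
from typing import Dict, List


def mean_layer(h: List[float], adj: Dict[int, List[int]]) -> List[float]:
    """One round of mean aggregation over each node's neighbors and itself."""
    return [sum(h[j] for j in adj[i] + [i]) / (len(adj[i]) + 1) for i in range(len(h))]


def spread(h: List[float]) -> float:
    return max(h) - min(h)  # 0 means all nodes carry the same value


adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}  # chain 0-1-2-3
h = [0.0, 0.0, 0.0, 1.0]                       # only atom 3 stands out

spreads = [spread(h)]
for _ in range(50):
    h = mean_layer(h, adj)
    spreads.append(spread(h))

print(spreads[0], spreads[1], spreads[3], spreads[50])
```

Each new value is a weighted average of old values, so the spread can never grow; learned weights and nonlinearities slow this collapse but do not remove it, which is one reason shallow stacks of a few layers tend to work best.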

Case Study: Multi-Level Fusion GNN

The Multi-Level Fusion Graph Neural Network (MLFGNN) is a recent state-of-the-art approach to molecular property prediction that integrates:

Local and Global Dependency Modeling: Simultaneously capturing atomic-level interactions through Graph Attention Networks and molecular-level patterns via Graph Transformers [16].

Multi-Modal Fusion: Incorporating molecular fingerprints as complementary features to graph representations, with adaptive fusion mechanisms [16].

Interpretable Predictions: Identifying chemically meaningful substructures that contribute to property predictions, validated by domain experts [5] [16].

Experimental results on seven benchmark datasets show that MLFGNN consistently outperforms baseline methods in both classification and regression tasks, with particularly strong performance on complex properties like drug efficacy and toxicity [16].

The core mechanics of message passing, aggregation, and readout form the computational foundation of modern Graph Neural Networks for molecular property prediction. Through iterative neighborhood information exchange, sophisticated aggregation schemes, and hierarchical readout functions, GNNs effectively capture the complex structural determinants of molecular properties. Recent advances such as Kolmogorov-Arnold Networks and multi-level fusion architectures further enhance the representational power, efficiency, and interpretability of these models.

Future research directions include developing more dynamic message passing schemes that adapt to molecular context, creating specialized aggregation functions for capturing non-covalent interactions, and designing hierarchical readout operations that explicitly model molecular substructures at multiple scales. As these technical innovations mature, GNNs will continue to transform computational drug discovery, enabling more accurate, efficient, and interpretable molecular property prediction.

Graph Neural Networks (GNNs) have emerged as a transformative technology for molecular property prediction, a critical task in modern drug discovery and materials science [1] [17]. Unlike traditional convolutional neural networks designed for grid-like data such as images, GNNs specialize in processing graph-structured data where entities (nodes) are connected by relationships (edges) [18]. This capability makes them uniquely suited for representing molecular structures, where atoms serve as nodes and chemical bonds as edges [19]. The inherent ability of GNNs to learn from both node features and topological relationships has positioned them as powerful tools for predicting molecular properties including solubility, toxicity, and biological activity [1] [17].

This technical guide provides an in-depth examination of four foundational GNN architectures that have proven particularly effective for molecular property prediction: Graph Convolutional Networks (GCN), Graph Attention Networks (GAT), Graph Isomorphism Networks (GIN), and Message Passing Neural Networks (MPNN). We explore their architectural principles, implementation methodologies, and comparative performance across various molecular prediction tasks, with a specific focus on their application within pharmaceutical research and development contexts.

Core Architectural Principles

The Message-Passing Framework

Most modern GNNs operate on a message-passing paradigm, where information is iteratively exchanged and aggregated between neighboring nodes in a graph [17] [18]. In this framework, each node updates its representation by combining its current state with aggregated information from its neighbors. This process enables nodes to incorporate contextual information from their local graph neighborhoods, with each iteration extending the receptive field by one hop [18]. The message-passing mechanism can be formally described through three key functions:

- Message Function: Defines what information is sent between nodes

- Aggregation Function: Specifies how incoming messages are combined

- Update Function: Determines how a node updates its state based on aggregated messages

This fundamental mechanism provides the foundation upon which the specialized architectures of GCN, GAT, GIN, and MPNN are built.

Figure 1: High-level abstraction of the GNN message-passing framework for molecular property prediction.

Molecular Graph Representation

In computational chemistry, molecules are naturally represented as graphs where atoms correspond to nodes and chemical bonds to edges [19]. Each atom node contains feature information such as atom type, hybridization state, and formal charge, while bond edges contain features such as bond type, conjugation, and stereochemistry [19] [17]. This representation allows GNNs to learn patterns directly from the structural composition of molecules, capturing complex relationships that traditional descriptor-based methods might miss [17].

Figure 2: Molecular graph representation process from chemical structure to GNN-processable format.

Architectural Blueprints

Graph Convolutional Network (GCN)

GCNs adapt convolutional operations from traditional CNNs to graph-structured data by performing localized filtering operations directly on graph nodes and their neighborhoods [17] [18]. The GCN layer operates by normalizing and transforming neighborhood information using a spectral graph theory-inspired approach that approximates first-order Chebyshev polynomial filters [18].

Key Architectural Features:

- Uses symmetric normalization to maintain numerical stability

- Employs degree-based scaling to handle variable node connectivity

- Applies feature transformation through learnable weight matrices

Mathematical Formulation: For a GCN layer, the node representation update is computed as:

\[ H^{(l+1)} = \sigma\left(\tilde{D}^{-\frac{1}{2}}\tilde{A}\tilde{D}^{-\frac{1}{2}}H^{(l)}W^{(l)}\right) \]

where \(\tilde{A} = A + I\) is the adjacency matrix with self-connections, \(\tilde{D}\) is the diagonal degree matrix of \(\tilde{A}\), \(H^{(l)}\) are the node representations at layer \(l\), \(W^{(l)}\) is the trainable weight matrix, and \(\sigma\) is a nonlinear activation function.
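The propagation rule can be checked by hand on a tiny graph. The sketch below is a dense, pure-Python rendering of the formula, using a hypothetical `gcn_layer` helper with ReLU as \(\sigma\). Real implementations use sparse matrix operations, but the arithmetic is the same.

```python
import math

def gcn_layer(adj, H, W, activation=lambda z: max(z, 0.0)):
    """Dense GCN propagation rule: sigma(D^-1/2 (A+I) D^-1/2 H W).
    adj: n x n 0/1 adjacency (no self-loops); H: n x f; W: f x f_out."""
    n = len(adj)
    a_tilde = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_tilde]                     # degrees of A + I
    norm = [[a_tilde[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]
    # Aggregate neighbors: norm @ H
    agg = [[sum(norm[i][k] * H[k][c] for k in range(n)) for c in range(len(H[0]))]
           for i in range(n)]
    # Transform with W, then apply the nonlinearity elementwise: sigma(agg @ W)
    return [[activation(sum(agg[i][c] * W[c][o] for c in range(len(W))))
             for o in range(len(W[0]))] for i in range(n)]

# Three-atom chain 0-1-2 with 1-dimensional features and an identity weight.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
H = [[1.0], [0.0], [0.0]]
W = [[1.0]]
out = gcn_layer(adj, H, W)
print(out)   # node 2 is two bonds from node 0, so it stays at 0.0 after one layer
```

Node 0 keeps \(1/\sqrt{2 \cdot 2} = 0.5\) of its own signal, node 1 receives \(1/\sqrt{3 \cdot 2}\) of it, and node 2 receives nothing after a single layer.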

Graph Attention Network (GAT)

GATs introduce an attention mechanism that assigns learned importance weights to neighboring nodes during aggregation, allowing the model to focus on more relevant neighbors when updating node representations [17]. This addresses a limitation of GCNs, whose neighbor weights are fixed by node degrees rather than learned, so neighbors cannot be weighted by their relevance to the task.

Key Architectural Features:

- Uses self-attention mechanism to compute attention coefficients between connected nodes

- Supports multi-head attention to stabilize learning

- Does not require expensive matrix operations or eigen-decompositions

Mathematical Formulation: The attention mechanism in GAT computes the normalized attention coefficients:

\[ \alpha_{ij} = \frac{\exp\left(\text{LeakyReLU}\left(\mathbf{a}^T[W\mathbf{h}_i \| W\mathbf{h}_j]\right)\right)}{\sum_{k \in \mathcal{N}(i)} \exp\left(\text{LeakyReLU}\left(\mathbf{a}^T[W\mathbf{h}_i \| W\mathbf{h}_k]\right)\right)} \]

where \(\mathbf{a}\) is a learnable attention vector, \(W\) is a shared weight matrix, \(\|\) denotes concatenation, and \(\mathcal{N}(i)\) represents the neighbors of node \(i\). The node update then becomes a weighted sum:

\[ \mathbf{h}'_i = \sigma\left(\sum_{j \in \mathcal{N}(i)} \alpha_{ij} W \mathbf{h}_j\right) \]
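Below is a single-head version of these two equations, written in pure Python as a sketch. The `gat_layer` helper and the toy weights are assumptions made for illustration. Following the original GAT formulation, each neighborhood includes the node itself, and the LeakyReLU negative slope is 0.2, as in the original GAT paper. The softmax subtracts the maximum score for numerical stability, which does not change the result.

```python
import math

def leaky_relu(z, slope=0.2):
    return z if z > 0 else slope * z

def matvec(W, h):
    return [sum(w * x for w, x in zip(row, h)) for row in W]

def gat_layer(h, neighbors, W, a, activation=lambda z: z):
    """Single-head GAT layer. W: f_out x f_in; a: length 2 * f_out.
    neighbors[i] should include i itself (self-loop)."""
    Wh = [matvec(W, h_i) for h_i in h]
    out, alphas = [], []
    for i, nbrs in enumerate(neighbors):
        # e_ij = LeakyReLU(a^T [W h_i || W h_j]); list "+" is concatenation here
        scores = [leaky_relu(sum(p * q for p, q in zip(a, Wh[i] + Wh[j]))) for j in nbrs]
        m = max(scores)                                   # for numerical stability
        exps = [math.exp(s - m) for s in scores]
        alpha = [e / sum(exps) for e in exps]             # softmax over the neighborhood
        alphas.append(dict(zip(nbrs, alpha)))
        out.append([activation(sum(alpha_j * Wh[j][c] for alpha_j, j in zip(alpha, nbrs)))
                    for c in range(len(Wh[i]))])
    return out, alphas

h = [[1.0], [2.0], [0.0]]
neighbors = [[0, 1], [0, 1, 2], [1, 2]]     # chain 0-1-2 plus self-loops
W = [[1.0]]
a = [0.0, 1.0]        # toy choice: score depends only on the neighbor's transformed feature
out, alphas = gat_layer(h, neighbors, W, a)
print({j: round(a_ij, 3) for j, a_ij in alphas[0].items()})   # {0: 0.269, 1: 0.731}
```

With this toy attention vector, node 0 attends more strongly to node 1 because node 1 has the larger transformed feature. A GCN would instead use fixed, degree-based weights.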

Graph Isomorphism Network (GIN)

GINs are theoretically motivated by the Weisfeiler-Lehman graph isomorphism test, designed to maximize discriminative power between different graph structures [17]. GINs use a simple sum aggregator combined with multi-layer perceptrons to achieve high expressive power.

Key Architectural Features:

- Uses sum aggregation, which, when followed by an MLP, can model injective functions over multisets of neighbor features and so preserves distinct neighborhood structures

- Incorporates MLPs for increased model capacity

- Includes a learnable parameter \(\epsilon\) to balance self-information and neighborhood information

Mathematical Formulation: The GIN update function is defined as:

\[ \mathbf{h}_v^{(k)} = \text{MLP}^{(k)}\left((1 + \epsilon^{(k)}) \cdot \mathbf{h}_v^{(k-1)} + \sum_{u \in \mathcal{N}(v)} \mathbf{h}_u^{(k-1)}\right) \]

where \(\epsilon\) is a learnable or fixed parameter, and MLP represents a multi-layer perceptron.
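Here is a pure-Python sketch of the GIN update. The `gin_layer` helper and the fixed-weight `toy_mlp` are hypothetical stand-ins; in a trained model, the MLP weights and, optionally, \(\epsilon\) are learned. The self term is scaled by \(1 + \epsilon\) and added to the plain sum of neighbor states before the MLP is applied.

```python
def gin_layer(h, neighbors, mlp, eps=0.0):
    """GIN update: h_v' = MLP((1 + eps) * h_v + sum of neighbor states)."""
    out = []
    for v, h_v in enumerate(h):
        s = [(1.0 + eps) * x for x in h_v]           # weighted self term
        for u in neighbors[v]:
            s = [a + b for a, b in zip(s, h[u])]     # sum aggregation over neighbors
        out.append(mlp(s))
    return out

def toy_mlp(z):
    """Hypothetical 1 -> 2 -> 1 MLP with fixed weights and ReLU (learned in practice)."""
    hidden = [max(0.0, 1.0 * z[0] - 1.0), max(0.0, 2.0 * z[0])]
    return [hidden[0] + 0.5 * hidden[1]]

neighbors = [[1], [0, 2], [1]]      # chain 0-1-2
h = [[1.0], [1.0], [1.0]]
print(gin_layer(h, neighbors, toy_mlp))            # [[3.0], [5.0], [3.0]]
print(gin_layer(h, neighbors, toy_mlp, eps=0.5))   # [[4.0], [6.0], [4.0]]
```

Changing \(\epsilon\) shifts how much weight a node's own state receives relative to its neighbors, which is the balancing role described for \(\epsilon\) above.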

Message Passing Neural Network (MPNN)

MPNNs provide a general framework that unifies many graph neural architectures under the message-passing paradigm [20] [17]. The framework explicitly defines message and update functions that can be customized for specific applications.

Key Architectural Features:

- Generalizes various GNN architectures through customizable functions

- Supports edge features in addition to node features

- Enables flexible design of message passing steps

Mathematical Formulation: Each message-passing step \(t\) of the MPNN framework consists of two parts. The full framework then adds a readout phase that pools node states into a graph-level prediction.

- Message Passing Phase: \[ \mathbf{m}_v^{(t+1)} = \sum_{w \in \mathcal{N}(v)} M_t\left(\mathbf{h}_v^{(t)}, \mathbf{h}_w^{(t)}, \mathbf{e}_{vw}\right) \]

- Update Phase: \[ \mathbf{h}_v^{(t+1)} = U_t\left(\mathbf{h}_v^{(t)}, \mathbf{m}_v^{(t+1)}\right) \]

where \(M_t\) is the message function, \(U_t\) is the update function, and \(\mathbf{e}_{vw}\) represents edge features.
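The two MPNN parts can be sketched with pluggable message and update functions. The choices in this pure-Python example are assumptions for illustration and are not the functions of any specific paper. \(M_t\) scales the neighbor state by a scalar edge feature standing in for bond order. \(U_t\) averages the old state with the aggregated message. The original MPNN work uses learned neural networks for both.

```python
def mpnn_step(h, edges, message_fn, update_fn):
    """One MPNN round. edges: directed (v, w, e_vw) triples meaning w sends to v.
    m_v = sum over w of M(h_v, h_w, e_vw);  h_v' = U(h_v, m_v)."""
    dim = len(h[0])
    m = [[0.0] * dim for _ in h]
    for v, w, e_vw in edges:
        msg = message_fn(h[v], h[w], e_vw)
        m[v] = [a + b for a, b in zip(m[v], msg)]
    return [update_fn(h_v, m_v) for h_v, m_v in zip(h, m)]

# Hypothetical message: neighbor state scaled by a scalar bond feature (bond order).
def bond_scaled_message(h_v, h_w, e_vw):
    return [e_vw * x for x in h_w]

# Hypothetical update: average the old state and the aggregated message.
def average_update(h_v, m_v):
    return [(a + b) / 2 for a, b in zip(h_v, m_v)]

# Toy three-atom graph: a double bond 0=1 and a single bond 0-2 (both directions listed).
h = [[1.0], [2.0], [4.0]]
edges = [(0, 1, 2.0), (1, 0, 2.0), (0, 2, 1.0), (2, 0, 1.0)]
h_next = mpnn_step(h, edges, bond_scaled_message, average_update)
print(h_next)   # [[4.5], [2.0], [2.5]]
```

Because the edge feature enters the message directly, the same neighbor contributes differently over a double bond than over a single bond, which is the edge-feature support listed above.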

Table 1: Comparative Analysis of GNN Architectures for Molecular Property Prediction

| Architecture | Core Mechanism | Key Advantages | Molecular Applications | Computational Complexity |
|---|---|---|---|---|
| GCN [17] [18] | Spectral graph convolution with normalization | Computational efficiency, stable training | Molecular property classification, toxicity prediction | O(\|E\|d + \|V\|d^2) |
| GAT [17] | Attention-weighted neighborhood aggregation | Adaptive neighbor importance, improved interpretability | Protein-ligand interaction, reaction yield prediction | O(\|V\|d^2 + \|E\|d) |
| GIN [17] | Sum aggregation with MLP transformation | Maximum discriminative power, theoretical guarantees | Molecular graph classification, functional group detection | O(\|E\|d + \|V\|d^2 + Kd^2) |
| MPNN [20] [17] | Customizable message and update functions | Flexibility, support for edge features | Reaction yield prediction (R²=0.75 [20]), molecular optimization | O(T(\|E\|d + \|V\|d^2)) |

Experimental Protocols & Performance Assessment

Benchmarking Methodologies

Comprehensive evaluation of GNN architectures for molecular property prediction requires standardized benchmarking protocols. Recent research has employed rigorous methodologies to assess model performance across diverse molecular tasks [20] [21].

Dataset Considerations: Molecular property prediction utilizes specialized datasets such as those available through MoleculeNet [17] and the Therapeutic Data Commons (TDC) [21]. These datasets encompass various property types including:

- Physicochemical Properties: ESOL (water solubility), FreeSolv (hydration free energy), Lipophilicity (octanol/water distribution coefficient) [17]

- Biological Activity: BACE (β-secretase inhibition), HIV (antiviral activity) [17] [21]

- Toxicity: SIDER (drug side effects), Tox21 (toxicity across 12 targets) [17] [21]

Splitting Strategies: Performance evaluation must consider different data splitting approaches to assess model generalization [21]:

- Random Splitting: Basic evaluation assuming independent and identically distributed data

- Scaffold Splitting: Groups molecules by Bemis-Murcko scaffolds to test generalization to novel chemotypes

- Cluster Splitting: Uses chemical similarity clustering (e.g., UMAP-based with ECFP4 fingerprints) to create challenging out-of-distribution tests
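Scaffold splitting can be sketched independently of any toolkit if each molecule's scaffold is computed beforehand, for example with RDKit's Bemis-Murcko scaffold utilities. The `scaffold_split` helper below is a hypothetical sketch of one common heuristic. Whole scaffold groups are assigned, largest first, to the training set until it is full, then to validation, then to test, so no scaffold is shared across splits. The scaffold strings in the example are placeholders; an empty string stands for acyclic molecules.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test, largest groups first.
    `scaffolds` holds one precomputed scaffold string per molecule."""
    groups = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)
    # Largest scaffold sets first; ties broken by first occurrence for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train += group
        elif len(valid) + len(group) <= frac_valid * n:
            valid += group
        else:
            test += group
    return train, valid, test

scaffolds = ["c1ccccc1", "c1ccccc1", "c1ccncc1", "c1ccccc1", "C1CCCCC1",
             "c1ccncc1", "c1ccccc1", "", "c1ccccc1", "c1ccc2ccccc2c1"]
train, valid, test = scaffold_split(scaffolds)
print(train, valid, test)   # [0, 1, 3, 6, 8, 2, 5, 4] [7] [9]
```

Because the largest scaffold families land in training, the held-out sets are enriched in rare chemotypes. This is what makes scaffold splits harder than random ones.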

Comparative Performance Analysis

Recent studies have provided quantitative comparisons of GNN architectures across various molecular prediction tasks. A 2025 study evaluating yield prediction in cross-coupling reactions demonstrated that MPNN achieved the highest predictive performance with an R² value of 0.75, outperforming other architectures including GCN, GAT, and GIN [20].

The consistency-regularized GNN (CRGNN) approach has shown particular promise for scenarios with limited labeled data, addressing the common challenge of small datasets in molecular discovery [22]. By applying consistency regularization between differently augmented views of molecular graphs, CRGNNs improve robustness without altering intrinsic molecular properties [22].

Table 2: Performance Metrics Across Molecular Property Prediction Tasks

| Architecture | Yield Prediction (R²) [20] | Classification (ROC-AUC) [21] | Data Efficiency [22] | OOD Robustness [21] |
|---|---|---|---|---|
| GCN | 0.68 | 0.79 ± 0.04 | Moderate | Low on cluster splits |
| GAT/GATv2 | 0.71 | 0.81 ± 0.03 | Moderate | Medium on cluster splits |
| GIN | 0.69 | 0.80 ± 0.05 | High | Medium on scaffold splits |
| MPNN | 0.75 | 0.83 ± 0.03 | High | High on scaffold splits |

Advanced Architectural Extensions

Recent research has developed sophisticated GNN extensions to address specific challenges in molecular property prediction:

Geometry-Enhanced Molecular Representation Learning (GEM)

The GEM framework incorporates molecular geometry (3D spatial structure) through dedicated graph neural architectures and self-supervised learning tasks [19]. This approach models atom-bond-angle relationships using dual graph representations:

- Atom-Bond Graph \(G = (\mathcal{V}, \mathcal{E})\): Atoms as nodes, bonds as edges

- Bond-Angle Graph \(H = (\mathcal{E}, \mathcal{A})\): Bonds as nodes, bond angles as edges
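The bond-angle graph can be derived mechanically from the atom-bond graph plus 3D coordinates. This pure-Python sketch uses a hypothetical `bond_angle_graph` helper and is not GEM's own code. It creates one edge for every pair of bonds that share an atom and labels that edge with the angle at the shared atom. The example uses a water-like geometry with an assumed H-O-H angle of 104.5 degrees and unit bond lengths.

```python
import math
from itertools import combinations

def bond_angle_graph(bonds, coords):
    """Nodes are bond indices; an edge joins two bonds that share exactly one atom
    and is labelled with the angle (degrees) formed at that shared atom."""
    angle_edges = []
    for (b1, (i, j)), (b2, (k, l)) in combinations(enumerate(bonds), 2):
        shared = {i, j} & {k, l}
        if len(shared) != 1:
            continue
        c = shared.pop()
        p = j if i == c else i            # outer atom of bond b1
        q = l if k == c else k            # outer atom of bond b2
        u = [coords[p][d] - coords[c][d] for d in range(3)]
        v = [coords[q][d] - coords[c][d] for d in range(3)]
        cos_t = sum(a * b for a, b in zip(u, v)) / (math.hypot(*u) * math.hypot(*v))
        # Clamp to [-1, 1] to guard against rounding before acos
        angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))
        angle_edges.append((b1, b2, angle))
    return angle_edges

theta = math.radians(104.5)
coords = [(0.0, 0.0, 0.0),                          # O
          (1.0, 0.0, 0.0),                          # H
          (math.cos(theta), math.sin(theta), 0.0)]  # H
bonds = [(0, 1), (0, 2)]
edges = bond_angle_graph(bonds, coords)
print([(b1, b2, round(a, 1)) for b1, b2, a in edges])   # [(0, 1, 104.5)]
```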

GEM employs geometry-level self-supervised tasks including bond length prediction, bond angle prediction, and atomic distance matrix prediction to leverage unlabeled molecular data [19]. This approach has demonstrated state-of-the-art performance on 14 of 15 molecular property prediction benchmarks [19].

Multi-Level Fusion Graph Neural Network (MLFGNN)

MLFGNN integrates Graph Attention Networks with Graph Transformers to simultaneously capture local and global molecular dependencies [16]. By incorporating molecular fingerprints as a complementary modality and introducing cross-representation attention mechanisms, MLFGNN achieves consistent performance improvements across both classification and regression tasks [16].

Implementation Guide

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Resources for GNN-Based Molecular Property Prediction

| Resource Category | Specific Tools/Datasets | Function/Purpose | Access Reference |
|---|---|---|---|
| Benchmark Datasets | ESOL, FreeSolv, Lipophilicity, BBBP, BACE, Tox21 [17] | Standardized benchmarks for model evaluation | MoleculeNet [17] |
| ADMET/Toxicity Data | CYP450 isoforms, hERG, Ames [21] | Prediction of pharmacokinetics and safety profiles | TDC [21] |
| Reaction Datasets | Cross-coupling reactions (Suzuki, Sonogashira, etc.) [20] | Reaction yield prediction and optimization | Custom curation [20] |
| Cheminformatics Tools | RDKit [19] | Molecular graph construction, feature calculation, 3D structure generation | [19] |
| Evaluation Metrics | RMSE, MAE, R², ROC-AUC, PRC-AUC [17] | Quantitative performance assessment | Standard practice [20] [17] [21] |
| Splitting Strategies | Random, Scaffold, Cluster-based [21] | Generalization capability assessment | TDC, MoleculeNet [21] |

Experimental Protocol for Molecular Property Prediction

A standardized experimental protocol for GNN-based molecular property prediction includes the following key steps:

Data Preparation:

- Obtain molecular structures in SMILES format

- Generate molecular graphs using RDKit or similar tools [19]

- Compute atom features (type, degree, hybridization, etc.)

- Compute bond features (type, conjugation, stereochemistry, etc.)

- For geometry-aware models, generate 3D conformations and compute spatial parameters [19]

Model Configuration:

- Select appropriate GNN architecture based on task requirements

- Implement network with 3-6 message passing layers

- Set hidden dimensions between 64-512 based on dataset size

- Choose readout function (sum, mean, attention) for graph-level representation
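For the readout step, a minimal sketch of sum and mean pooling over a batch of molecules follows. It uses the same convention as batched graph libraries such as PyTorch Geometric, where a `batch` vector maps each node to its graph. The `readout` helper itself is hypothetical. An attention-based readout would replace the plain sum with learned per-node weights.

```python
def readout(node_embeddings, batch, num_graphs, mode="sum"):
    """Pool node embeddings into one vector per graph.
    batch[i] is the index of the graph that node i belongs to."""
    dim = len(node_embeddings[0])
    sums = [[0.0] * dim for _ in range(num_graphs)]
    counts = [0] * num_graphs
    for h, g in zip(node_embeddings, batch):
        sums[g] = [a + b for a, b in zip(sums[g], h)]
        counts[g] += 1
    if mode == "sum":
        return sums
    if mode == "mean":
        # max(c, 1) avoids division by zero for a graph with no nodes
        return [[x / max(c, 1) for x in s] for s, c in zip(sums, counts)]
    raise ValueError(f"unsupported readout: {mode}")

emb = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
batch = [0, 0, 1]    # nodes 0-1 belong to molecule 0, node 2 to molecule 1
print(readout(emb, batch, 2, "sum"))    # [[4.0, 6.0], [5.0, 6.0]]
print(readout(emb, batch, 2, "mean"))   # [[2.0, 3.0], [5.0, 6.0]]
```

Sum pooling keeps information about molecule size, while mean pooling normalizes it away. Which one works better depends on whether the target property scales with molecular size.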

Training Procedure:

Evaluation and Interpretation:

- Calculate task-appropriate metrics on test sets

- Perform ablation studies to assess component contributions

- Use interpretability methods (e.g., integrated gradients) to identify important substructures [20]

- Analyze failure cases and performance across molecular scaffolds

The four GNN architectural blueprints examined—GCN, GAT, GIN, and MPNN—provide a comprehensive foundation for molecular property prediction in drug discovery and materials science. Each architecture offers distinct advantages: GCN for computational efficiency, GAT for adaptive neighbor weighting, GIN for maximal discriminative power, and MPNN for flexibility and strong performance on reaction prediction tasks [20] [17].

Recent advances including geometry-aware models [19], consistency regularization for small datasets [22], and multi-level fusion approaches [16] demonstrate the ongoing evolution of GNN architectures to address specific challenges in molecular modeling. As the field progresses, the integration of 3D structural information, improved out-of-distribution generalization, and enhanced interpretability will continue to expand the utility of GNNs in accelerating molecular discovery and optimization pipelines.

The experimental protocols and performance benchmarks outlined in this guide provide researchers with standardized methodologies for evaluating and implementing these architectures in real-world molecular property prediction applications.

The Shift from Handcrafted Descriptors to End-to-End Deep Learning

The field of computational chemistry and drug discovery has undergone a profound transformation in its approach to molecular property prediction. For decades, scientists relied on handcrafted molecular descriptors or fingerprints, which were manually engineered features derived from chemical structures. These included topological indices, physicochemical properties, and fragment-based counts. While effective to a degree, these representations often failed to capture the full complexity of molecular systems and were not optimized for specific predictive tasks [5] [23].

The emergence of graph neural networks (GNNs) has ushered in a new paradigm: end-to-end deep learning. This approach operates directly on the molecular graph structure, where atoms naturally represent nodes and bonds represent edges. The model itself learns optimal representations from these "raw" structural inputs, simultaneously discovering relevant features and performing the target prediction. This shift has significantly advanced molecular property prediction, a crucial task in rational compound design for the chemical and pharmaceutical industries [23] [24].

This technical guide examines this fundamental transition, framing it within the broader context of GNN applications for molecular property research. We will explore the architectural principles underpinning this shift, provide detailed experimental protocols, and quantify the performance gains achieved through end-to-end deep learning.

From Traditional Feature Engineering to Geometric Deep Learning

The Regime of Handcrafted Descriptors

Traditional machine learning models for molecular property prediction operated on precomputed features. The model's predictive capability was inherently limited by the quality and completeness of these human-designed descriptors.

- Molecular Fingerprints: These are bit-vectors indicating the presence or absence of specific substructures or paths within the molecule. Examples include Extended-Connectivity Fingerprints (ECFPs), which are circular topological fingerprints [23].

- Molecular Descriptors: These are numerical values capturing specific physicochemical properties (e.g., logP, molecular weight, polar surface area) or topological features (e.g., Wiener index, Zagreb index) derived from the molecular graph [1] [25].

A significant limitation of this approach was that these features were not optimized for the specific prediction task and could include redundant or irrelevant information, creating a bottleneck on model performance [23].
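As a concrete example of a handcrafted topological descriptor, the Wiener index mentioned above is the sum of shortest-path distances, counted in bonds, over all pairs of heavy atoms. The `wiener_index` helper below is a small breadth-first-search sketch. It assumes a connected, hydrogen-suppressed graph given as an adjacency list.

```python
from collections import deque

def wiener_index(neighbors):
    """Sum of shortest-path (bond-count) distances over all unordered atom pairs."""
    total = 0
    for source in range(len(neighbors)):
        dist = {source: 0}
        queue = deque([source])
        while queue:                       # breadth-first search from `source`
            v = queue.popleft()
            for u in neighbors[v]:
                if u not in dist:
                    dist[u] = dist[v] + 1
                    queue.append(u)
        total += sum(dist.values())
    return total // 2                      # each pair was counted from both ends

butane = [[1], [0, 2], [1, 3], [2]]        # n-butane heavy-atom skeleton
isobutane = [[1], [0, 2, 3], [1], [1]]     # 2-methylpropane
print(wiener_index(butane), wiener_index(isobutane))   # 10 9
```

The value is fixed by the skeleton alone. It separates n-butane (10) from isobutane (9), but it stays the same number whatever property is being predicted, which is the limitation described above.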

The Rise of End-to-End Graph Neural Networks

End-to-end learning with GNNs eliminates the feature engineering bottleneck by allowing the model to learn the most informative representations directly from the graph structure. Molecules are intuitively represented as graphs, making GNNs a natural and powerful fit for this domain [24].

GNNs leverage a message-passing framework to learn node (atom) embeddings that encode each atom's chemical environment. Each layer widens this environment by one bond, so deeper models capture progressively larger substructures. In this paradigm, each node's features are updated by aggregating information from its neighboring nodes [26]. The core operation for a node \(v\) at layer \(k\) can be summarized as:

\[ a_v^{(k)} = \text{aggregate}^{(k)}\left(\{ h_u^{(k-1)} : u \in N(v) \}\right) \]

\[ h_v^{(k)} = \text{combine}^{(k)}\left(h_v^{(k-1)}, a_v^{(k)}\right) \]

where \(h_v^{(k)}\) is the embedding of node \(v\) at layer \(k\), \(N(v)\) is the set of neighbors of node \(v\), aggregate is a permutation-invariant function (e.g., sum, mean, max), and combine is often a neural network layer such as a multilayer perceptron (MLP) [26].

This message-passing mechanism lets the model learn complex, non-linear relationships between molecular structure and properties directly from data, rather than being limited to the substructures that a fixed fingerprint was designed to encode.

Architectural Evolution of GNNs for Molecular Property Prediction

The development of GNN architectures has been driven by the need for greater expressive power, which is the ability to distinguish between different molecular graph structures.

Foundational GNN Architectures

Table 1: Key GNN Architectures for Molecular Property Prediction

| Architecture | Core Mechanism | Advantages | Limitations |
|---|---|---|---|
| Graph Convolutional Network (GCN) [26] [23] | Applies a degree-normalized sum over the features of a node and its neighbors. | Simple, computationally efficient. | Its normalized, mean-like aggregation is not injective and can fail to distinguish some non-isomorphic graphs. |
| Graph Isomorphism Network (GIN) [26] [23] | Uses a sum aggregation followed by an MLP. Provably as powerful as the Weisfeiler-Lehman graph isomorphism test. | High expressive power; can distinguish a broader class of graph structures than GCN. | More parameter-heavy than GCN due to the integrated MLP. |
| Message Passing Neural Network (MPNN) [25] | A general framework that encompasses many GNNs. It explicitly defines a message function and an update function. | Highly flexible; can be tailored to specific molecular representations. | Design choices for message and update functions are critical and can be complex. |
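The injectivity point in the GCN row can be illustrated with two neighborhoods that mean and max aggregation cannot tell apart. In this pure-Python sketch, which uses scalar features and illustrative helper names, a node with one neighbor of a given type and a node with two such neighbors receive identical mean and max aggregates. The sum still differs, and this is the argument behind GIN's choice of sum aggregation.

```python
def mean_agg(msgs):
    return sum(msgs) / len(msgs)

def max_agg(msgs):
    return max(msgs)

def sum_agg(msgs):
    return sum(msgs)

one_neighbor = [1.0]          # e.g., a node bonded to one atom of a given type
two_neighbors = [1.0, 1.0]    # a node bonded to two atoms of that same type

for name, agg in [("mean", mean_agg), ("max", max_agg), ("sum", sum_agg)]:
    print(name, agg(one_neighbor), agg(two_neighbors))
# mean 1.0 1.0
# max 1.0 1.0
# sum 1.0 2.0
```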

Advanced and Hybrid Architectures

Recent research has focused on enhancing GNNs through novel integration and learning paradigms.

- Kolmogorov-Arnold GNNs (KA-GNNs): This architecture integrates Kolmogorov-Arnold Networks (KANs) into the fundamental components of GNNs: node embedding, message passing, and readout. KA-GNNs replace the linear transformations and fixed activation functions of traditional MLPs with learnable univariate functions (e.g., based on Fourier series or B-splines). This leads to improved expressivity, parameter efficiency, and interpretability, as demonstrated by superior performance on molecular benchmarks [5].

- Contrastive Dual-Interaction GNNs (DIG-Mol): This framework addresses the challenge of limited labeled data by employing self-supervised learning. It uses a dual-interaction graph contrastive learning mechanism with a novel molecular graph augmentation strategy. This allows the model to learn robust molecular representations from unlabeled data, significantly improving generalization, especially in few-shot learning scenarios [27].

- Quantized GNNs: To address the high computational and memory footprint of GNNs for deployment on resource-constrained devices, quantization techniques like DoReFa-Net have been applied. This involves representing model parameters (weights, activations) in lower bit-widths (e.g., INT8, INT4). The effectiveness is architecture- and task-dependent; for instance, predicting quantum mechanical dipole moments maintains strong performance up to 8-bit precision [23].
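A minimal sketch of DoReFa-style k-bit weight quantization follows, based on the weight transform described for DoReFa-Net. Weights are squashed with tanh, rescaled to [0, 1], rounded uniformly to \(2^k\) levels, and mapped back to [-1, 1]. The helper names are hypothetical. Activation quantization and the straight-through gradient used during training are omitted. Python's `round` uses round-half-to-even, which may differ from a given framework's rounding.

```python
import math

def quantize_k(r, k):
    """Uniformly quantize r in [0, 1] to one of 2**k levels."""
    levels = 2 ** k - 1
    return round(levels * r) / levels

def dorefa_weights(weights, k):
    """DoReFa-style k-bit weight quantization (k > 1):
    w_q = 2 * quantize_k(tanh(w) / (2 * max|tanh(w)|) + 0.5) - 1.
    Assumes at least one nonzero weight."""
    t = [math.tanh(w) for w in weights]
    scale = 2 * max(abs(x) for x in t)
    return [2 * quantize_k(x / scale + 0.5, k) - 1 for x in t]

w = [-1.5, -0.2, 0.0, 0.3, 2.0]
w4 = dorefa_weights(w, k=4)     # at most 16 distinct values, all in [-1, 1]
w8 = dorefa_weights(w, k=8)     # finer grid, closer to the unquantized tanh scaling
print(w4)
```

Lower bit-widths give a coarser grid and larger rounding error. This is consistent with the observation that the impact of quantization depends on both the architecture and the task.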

The following diagram illustrates the core workflow of a modern, end-to-end GNN for molecular property prediction.

Experimental Protocols and Performance Benchmarking

Standardized Evaluation Benchmarks

Rigorous evaluation relies on public benchmarks. MoleculeNet provides a comprehensive collection of datasets for molecular machine learning [23]. Key datasets include:

Table 2: Key Molecular Property Prediction Benchmarks

| Dataset | Property Type | Property Description | Dataset Size (molecules) | Metric |
|---|---|---|---|---|
| QM9 | Quantum Mechanics | Multiple properties (e.g., HOMO-LUMO gap, dipole moment) for small organic molecules. | 130,831 | MAE / RMSE |
| ESOL | Physical Chemistry | Water solubility (log solubility in mol/L). | 1,128 | RMSE / MAE |
| FreeSolv | Physical Chemistry | Hydration free energy (kcal/mol). | 642 | RMSE / MAE |
| BBBP (Blood-Brain Barrier) | Physiology | Permeability (binary classification). | 2,050 | ROC-AUC |
| Lipophilicity (Lipo) | Physical Chemistry | Octanol/water distribution coefficient (logD). | 4,200 | RMSE / MAE |

Detailed Protocol: Implementing a KA-GNN

The following protocol details the implementation of a Kolmogorov-Arnold Graph Convolutional Network (KA-GCN), a state-of-the-art architecture [5].

Data Preparation and Featurization:

- Input: SMILES strings of molecules.

- Graph Construction: Use a toolkit like RDKit to convert SMILES into molecular graphs.

- Node Features: For each atom (node), create a feature vector encoding atomic properties (e.g., atomic number, number of valence electrons, number of attached hydrogens, hybridization state). Categorical properties are often one-hot encoded [25].

- Edge Features: For each bond (edge), create a feature vector encoding bond properties (e.g., bond type: single, double, triple, aromatic; conjugation) [25].

- Graph Pooling: Apply a pooling operation (e.g., mean, sum) to the final node embeddings to generate a single graph-level representation for property prediction [26].

Model Architecture Configuration:

- Node Embedding: Pass the initial atom feature matrix through a Fourier-based KAN layer instead of a standard linear layer.

- Message Passing: Implement graph convolutional layers. The node update in each layer uses a residual KAN module to transform and combine features, replacing the standard activation functions.

- Readout/Global Pooling: After the final message-passing layer, apply a pooling operation (e.g., mean) to the node embeddings to obtain a graph-level embedding. Pass this graph-level embedding through a final KAN layer for the property prediction output.

Training Procedure:

- Loss Function: For regression tasks, use Mean Squared Error (MSE) or Mean Absolute Error (MAE). For classification, use Cross-Entropy loss.

- Optimization: Use the Adam optimizer with an initial learning rate of 0.001 and a batch size suited to the dataset and model size (e.g., 32-128).

- Validation: Use a separate validation set for hyperparameter tuning and early stopping to prevent overfitting.
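The early-stopping rule in the validation step can be sketched as a function over a recorded validation-loss curve. The `early_stopping_epoch` helper, its `patience` and `min_delta` parameters, and the example losses are assumptions for illustration; the protocol above does not specify a patience value.

```python
def early_stopping_epoch(val_losses, patience=10, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for per-epoch validation losses.
    Training stops once `patience` epochs pass without an improvement larger
    than `min_delta`; the checkpoint from best_epoch is the one kept."""
    best_loss, best_epoch = float("inf"), -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch      # new best: save a checkpoint here
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch                 # patience exhausted
    return best_epoch, len(val_losses) - 1

losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.75, 0.74, 0.9]
print(early_stopping_epoch(losses, patience=3))    # (2, 5)
print(early_stopping_epoch(losses, patience=10))   # (2, 7)
```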

Quantitative Performance Comparison

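Reported results indicate that end-to-end GNNs generally outperform traditional descriptor-based methods, with further gains from advanced architectures. Several entries in Table 3 are estimates rather than directly reported values; these are marked "est." and should be treated as indicative only.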

Table 3: Performance Comparison of Different Modeling Approaches

| Model / Approach | ESOL (RMSE, log mol/L) | FreeSolv (RMSE, kcal/mol) | QM9 (Dipole Moment MAE, D) | BBBP (ROC-AUC) |
|---|---|---|---|---|
| Traditional ML with Descriptors (e.g., Random Forest) | ~1.0 [23] | ~2.5 [23] | ~0.5 [23] | ~0.85 [25] |
| Basic GCN [26] [23] | 0.87 [23] | 2.15 [23] | 0.30 (est.) | 0.89 [25] |
| GIN [26] [23] | 0.85 [23] | 2.10 [23] | 0.28 (est.) | 0.90 (est.) |
| KA-GNN (Kolmogorov-Arnold) [5] | ~0.78 (est., based on reported improvements) | ~1.95 (est., based on reported improvements) | ~0.25 (est., based on reported improvements) | ~0.92 (est., based on reported improvements) |
| Quantized GNN (8-bit) [23] | Performance similar to full-precision | Slight degradation vs. full-precision | Performance similar to full-precision | Slight degradation vs. full-precision |

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Software and Computational Tools for GNN Research

| Tool / Resource | Type | Primary Function | Application in Protocol |
|---|---|---|---|
| RDKit | Cheminformatics Library | Converts SMILES strings to molecular objects; computes molecular descriptors and fingerprints. | Used in the initial graph construction and featurization step to generate node and edge features from SMILES [24] [25]. |
| PyTorch Geometric (PyG) | Deep Learning Library | A library built upon PyTorch specifically for deep learning on graphs. Provides implementations of GCN, GIN, MPNN, and other layers and datasets. | Used to define the GNN model architecture, handle graph batching, and manage the training loop [26] [23]. |
| MoleculeNet | Benchmark Suite | A standardized benchmark for molecular machine learning, providing access to multiple datasets. | Used to obtain standardized training, validation, and test splits for fair model evaluation and comparison [23]. |
| DoReFa-Net Algorithm | Quantization Algorithm | A method for quantizing weights and activations of neural networks to low-bit widths. | Applied in a post-training or training-aware manner to reduce the model's memory footprint and computational cost for deployment [23]. |

Interpretability and Explainable AI (XAI)

The "black-box" nature of deep learning models is a significant concern in scientific applications. Explainable AI (XAI) methods have been developed to interpret GNN predictions by identifying which atoms, bonds, or substructures were most influential.

- Substructure Mask Explanation (SME): This method provides chemistry-intuitive explanations by attributing predictions to chemically meaningful substructures (e.g., BRICS fragments, Murcko scaffolds, functional groups). It works by systematically masking out these predefined substructures and measuring the change in the model's prediction. This aligns with how chemists reason about structure-activity relationships (SAR) [28].

- Quantitative Evaluation: Studies have established benchmarks to quantitatively assess XAI methods. Results show that methods like SME can deliver reliable and informative answers that complement existing classical fingerprints and can even be used to improve molecular property predictions [29].
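The masking idea behind SME can be sketched as the prediction on the full molecule minus the prediction with one substructure masked. This is a simplified pure-Python version. A hypothetical additive model (`toy_predict`, a sum of made-up per-atom contributions) stands in for a trained GNN, and masking simply drops the atoms from the sum. The actual SME method masks substructures inside the GNN and uses chemically defined fragments such as BRICS fragments or Murcko scaffolds.

```python
def substructure_attribution(predict, atoms, substructures):
    """Attribution of each substructure = prediction on the full molecule
    minus prediction with that substructure's atoms masked out."""
    full = predict(atoms, masked=set())
    return {name: full - predict(atoms, masked=set(idx))
            for name, idx in substructures.items()}

# Hypothetical stand-in for a trained GNN: a sum of made-up per-atom contributions.
CONTRIB = {"C": 0.1, "O": -0.5, "N": 0.3}

def toy_predict(atoms, masked):
    return sum(CONTRIB[a] for i, a in enumerate(atoms) if i not in masked)

# Toy molecule: a three-carbon chain carrying a hydroxyl oxygen and an amine nitrogen.
atoms = ["C", "C", "C", "O", "N"]
substructures = {"hydroxyl": [3], "amine": [4], "carbon_chain": [0, 1, 2]}
scores = substructure_attribution(toy_predict, atoms, substructures)
print({name: round(s, 3) for name, s in scores.items()})
# {'hydroxyl': -0.5, 'amine': 0.3, 'carbon_chain': 0.3}
```

A negative score means the substructure pushes the prediction down, which maps naturally onto the way chemists read structure-activity relationships.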

The following diagram contrasts the traditional and end-to-end paradigms, highlighting the role of interpretability in the latter.

The shift from handcrafted descriptors to end-to-end deep learning represents a fundamental advancement in molecular property prediction. GNNs, by learning task-specific representations directly from molecular graphs, have frequently outperformed traditional methods in both accuracy and generalization. This transition is marked by several key developments: the move from fixed features to learned embeddings, the architectural evolution from simple GCNs to more powerful and efficient models like KA-GNNs and GINs, and the growing emphasis on model interpretability through XAI techniques like SME.

Looking forward, the field continues to evolve rapidly. Key research directions include overcoming data scarcity through self-supervised and few-shot learning frameworks like DIG-Mol, enhancing computational efficiency via quantization and other optimization techniques, and improving real-world applicability by generating novel molecular structures with desired properties through inverse design. This end-to-end paradigm, powered by GNNs, is poised to remain a cornerstone of AI-driven drug discovery and materials science.

Architectures in Action: From GCN to KANs for Real-World Property Prediction

Classification and Regression in Molecular Property Prediction

Molecular property prediction is a fundamental task in computational chemistry and drug discovery, where the goal is to map a molecule's structure to its experimental or quantum-chemical properties. Graph Neural Networks (GNNs) have emerged as a powerful framework for this task because they can naturally represent molecules as graph structures, with atoms as nodes and bonds as edges [5] [30]. Property prediction tasks are typically framed as either classification (predicting discrete labels, such as toxicity presence/absence) or regression (predicting continuous values, such as energy levels or solubility) [6] [31]. The performance of these models is crucial for accelerating material design and reducing reliance on costly experimental measurements.

Core Architectures and Methodologies

Kolmogorov-Arnold Graph Neural Networks (KA-GNNs)

A recent advancement integrates Kolmogorov-Arnold Networks (KANs) into GNNs. A conventional multilayer perceptron applies fixed activation functions at its neurons. A KAN instead places learnable univariate functions on the connections between neurons; these are the "edges" of the network, not the bonds of the molecular graph. Building GNNs from such layers gives KA-GNNs improved expressivity, parameter efficiency, and interpretability [5]. The KA-GNN framework systematically integrates Fourier-based KAN modules into the three core components of a GNN:

- Node Embedding: Initial atom representations are generated using KAN layers that process atomic and local bond features [5].

- Message Passing: Feature transformations during neighbor aggregation are handled by KAN layers, enhancing the learning of complex feature interactions [5].

- Readout: The step for generating a graph-level representation from updated node embeddings also employs KANs for a more expressive aggregation [5].

Two primary variants, KA-Graph Convolutional Networks (KA-GCN) and KA-Graph Attention Networks (KA-GAT), have been developed. The Fourier-series-based functions used in these KANs help capture both low-frequency and high-frequency structural patterns in molecular graphs, which is beneficial for predicting a wide range of molecular properties [5].
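A Fourier-series KAN layer can be sketched in a few lines. Every input-output pair gets its own learnable function \(\phi_{ij}(x) = \sum_{k=1}^{K} a_{ijk}\cos(kx) + b_{ijk}\sin(kx)\), and each output sums these functions over the inputs. This pure-Python `FourierKANLayer` is an illustrative assumption, not the KA-GNN authors' implementation. It only shows the forward pass with randomly initialized coefficients; in training, the coefficients would be optimized by gradient descent.

```python
import math
import random

class FourierKANLayer:
    """Each (input i, output j) pair has its own univariate function
        phi_ij(x) = sum_{k=1..K} a_ijk * cos(k x) + b_ijk * sin(k x);
    output j is the sum over inputs i of phi_ij(x_i), plus a bias."""

    def __init__(self, in_dim, out_dim, num_frequencies=4, seed=0):
        rng = random.Random(seed)
        scale = 1.0 / math.sqrt(in_dim * num_frequencies)
        # coeffs[j][i][k] = (a, b) coefficients for frequency k + 1
        self.coeffs = [[[(rng.gauss(0, scale), rng.gauss(0, scale))
                         for _ in range(num_frequencies)]
                        for _ in range(in_dim)]
                       for _ in range(out_dim)]
        self.bias = [0.0] * out_dim

    def phi(self, j, i, x):
        return sum(a * math.cos((k + 1) * x) + b * math.sin((k + 1) * x)
                   for k, (a, b) in enumerate(self.coeffs[j][i]))

    def __call__(self, x):
        return [sum(self.phi(j, i, x_i) for i, x_i in enumerate(x)) + self.bias[j]
                for j in range(len(self.coeffs))]

layer = FourierKANLayer(in_dim=3, out_dim=2, num_frequencies=4)
y = layer([0.5, -1.0, 2.0])
print(len(y))   # 2
```

Low frequencies \(k\) give smooth responses and higher ones give sharper variations. This is the sense in which a Fourier basis can capture both low- and high-frequency patterns.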

Multi-Task Learning with Adaptive Checkpointing

Data scarcity is a major challenge in molecular machine learning. Multi-task learning (MTL) addresses this by training a single model on multiple related properties simultaneously, leveraging correlations to improve generalization [6] [31]. However, negative transfer can occur, where learning one task detrimentally affects another, especially under imbalanced data [6].

Adaptive Checkpointing with Specialization (ACS) is a training scheme designed to mitigate negative transfer [6]. It employs a shared GNN backbone to learn general molecular representations, coupled with task-specific multi-layer perceptron (MLP) heads. During training, ACS monitors the validation loss for each task and checkpoints the best-performing backbone-head pair for a task whenever its validation loss reaches a new minimum. This ensures each task gets a specialized model that benefits from shared learning where helpful, but is shielded from harmful interference [6].
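The checkpointing rule of ACS can be sketched as bookkeeping over per-task validation losses. The `acs_checkpoints` helper and the loss values below are hypothetical. In a real run, each new-minimum event would save a copy of the shared backbone together with that task's head.

```python
def acs_checkpoints(val_loss_history):
    """val_loss_history[epoch][task] -> validation loss of that task.
    Returns, per task, the epoch whose (backbone, task head) pair would be
    checkpointed: the epoch at which the task's validation loss was lowest."""
    num_tasks = len(val_loss_history[0])
    best_loss = [float("inf")] * num_tasks
    best_epoch = [None] * num_tasks
    for epoch, losses in enumerate(val_loss_history):
        for t, loss in enumerate(losses):
            if loss < best_loss[t]:          # new minimum for task t
                best_loss[t] = loss
                best_epoch[t] = epoch        # a real run saves (backbone, head_t) here
    return best_epoch

history = [
    [0.70, 0.65],
    [0.60, 0.66],
    [0.55, 0.72],   # task 1 starts to degrade (e.g., negative transfer)
    [0.52, 0.80],
]
print(acs_checkpoints(history))   # [3, 0]
```

Task 0 keeps improving and is checkpointed at the last epoch. Task 1 keeps its epoch-0 checkpoint, saved before its validation loss started rising under shared training.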

Table 1: Core GNN Architectures for Molecular Property Prediction

| Architecture | Key Principle | Best Suited For | Key Advantage |
|---|---|---|---|
| KA-GNN [5] | KAN layers with learnable univariate activation functions on network connections | General-purpose classification & regression | High expressivity and interpretability |
| ACS-MTL [6] | Shared backbone with task-specific heads & checkpointing | Multi-task learning with imbalanced data | Mitigates negative transfer; effective in low-data regimes |

Experimental Protocols and Performance Benchmarking

Benchmarking on Classification Tasks

Classification tasks often involve predicting toxicological or physiological endpoints. The ACS method was evaluated on several MoleculeNet benchmarks, including:

- ClinTox: Classifies drugs as FDA-approved or failed in clinical trials due to toxicity.

- Tox21: Predicts 12 different nuclear receptor and stress response toxicity endpoints.

- SIDER: Classifies the presence or absence of side effects across 27 system organ classes [6].

The standard protocol uses Murcko-scaffold splitting, which separates molecules based on their core structure. This provides a more challenging and realistic assessment of model generalizability compared to random splitting [6]. Models are typically evaluated using the ROC-AUC metric.
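ROC-AUC equals the probability that a randomly chosen positive is scored above a randomly chosen negative, which gives a simple exact sketch for small sets. For multi-task benchmarks such as Tox21 and SIDER, this sketch assumes a common convention: ROC-AUC is computed per task on the labeled molecules only and then averaged across tasks. The helper names are hypothetical.

```python
def roc_auc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def mean_task_auc(label_rows, score_rows):
    """Average per-task ROC-AUC, skipping missing labels (None) and single-class tasks."""
    num_tasks = len(label_rows[0])
    aucs = []
    for t in range(num_tasks):
        pairs = [(y[t], s[t]) for y, s in zip(label_rows, score_rows) if y[t] is not None]
        ys = [y for y, _ in pairs]
        if 0 in ys and 1 in ys:
            aucs.append(roc_auc(ys, [s for _, s in pairs]))
    return sum(aucs) / len(aucs)

print(roc_auc([1, 0, 1, 0], [0.9, 0.3, 0.4, 0.6]))   # 0.75
labels = [[1, 0], [0, None], [1, 1], [0, 0]]
scores = [[0.9, 0.2], [0.3, 0.5], [0.4, 0.8], [0.6, 0.1]]
print(mean_task_auc(labels, scores))                  # 0.875
```

This pairwise form is O(P × N) and is meant for illustration only. Library implementations compute the same quantity by sorting the scores.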

Table 2: Performance (Avg. ROC-AUC) on Classification Benchmarks

| Method | ClinTox | SIDER | Tox21 | Notes |
|---|---|---|---|---|
| Single-Task Learning (STL) | 0.839 | 0.681 | 0.819 | Separate model for each task |
| Multi-Task Learning (MTL) | 0.854 | 0.689 | 0.826 | Standard joint training |
| ACS [6] | 0.892 | 0.693 | 0.828 | Mitigates negative transfer |

Benchmarking on Regression Tasks

Regression tasks predict continuous molecular properties. The KA-GNN architecture was tested on seven molecular benchmarks, demonstrating consistent improvements in prediction accuracy and computational efficiency over conventional GNNs [5]. In a separate study focusing on charge-related properties, various models were benchmarked on two key regression tasks:

- Reduction Potential: The voltage at which a molecule gains an electron in solution.

- Electron Affinity: The energy change when a molecule gains an electron in the gas phase [32].

These properties are sensitive probes for evaluating a model's ability to handle changes in charge and spin state. Performance is typically measured by Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) [32].
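Here is a pure-Python sketch of MAE and RMSE for a regression benchmark, plus R², which appears elsewhere in this guide. The `regression_metrics` helper and the example values are hypothetical. Because RMSE squares errors before averaging, it is never smaller than MAE and penalizes a few large misses more heavily.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R^2 for paired true/predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mean_t = sum(y_true) / n
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all true values are equal")
    return {"MAE": mae, "RMSE": rmse, "R2": 1.0 - ss_res / ss_tot}

# Hypothetical reduction potentials (V): true values and model predictions.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 5.0]
metrics = regression_metrics(y_true, y_pred)
print({k: round(v, 4) for k, v in metrics.items()})
# {'MAE': 0.5, 'RMSE': 0.6124, 'R2': 0.7}
```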

Table 3: Performance on Regression Benchmarks for Reduction Potential

| Method | OROP (Main-Group) MAE (V) | OMROP (Organometallic) MAE (V) | Notes |
|---|---|---|---|
| B97-3c (DFT) | 0.260 | 0.414 | Traditional computational method |
| GFN2-xTB (SQM) | 0.303 | 0.733 | Semi-empirical method |
| UMA-S (OMol25 NNP) | 0.261 | 0.262 | Neural network potential; excels on organometallics |

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Resources for Molecular Property Prediction Research

| Resource Name | Type | Function in Research |
|---|---|---|
| MoleculeNet [8] [6] | Dataset Collection | Standardized benchmarks for fair model comparison. |
| QM9 [31] [33] | Dataset | ~134k small organic molecules with quantum chemical properties. |
| FGBench [8] | Dataset | Provides functional-group-annotated data for interpretable reasoning. |
| OMol25 [32] | Dataset & Model | Large-scale dataset and pre-trained models for molecular energy. |
| Graph Convolutional Network (GCN) | Model Architecture | Base model for many molecular GNNs [5] [30]. |
| Graph Isomorphism Network (GIN) | Model Architecture | Powerful GNN variant for capturing graph structure [33]. |
| Multi-Task Learning (MTL) | Training Paradigm | Improves data efficiency by learning related tasks jointly [6] [31]. |
| Murcko Scaffold Split | Data Protocol | Splits data by molecular core to test generalization [6]. |

Workflow Visualization

KA-GNN Architectural Framework

The following diagram illustrates the flow of information in a Kolmogorov-Arnold Graph Neural Network, highlighting the integration of KAN layers into the core GNN components.
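The key change a KAN layer introduces can be sketched in code. A fixed activation is replaced by a learnable univariate function on every input-output connection. This sketch assumes a truncated Fourier series as the function basis, which is one choice used in KAN variants. The class name `FourierKANLayer`, the initialization, and the "aggregate, then transform" placement inside message passing are illustrative assumptions, not the exact KA-GNN parameterization of [5].

```python
import numpy as np


class FourierKANLayer:
    """KAN-style layer (illustrative): output o is a sum over inputs i of
    learnable univariate functions
        phi_{o,i}(x) = sum_k a[o,i,k] * cos(k x) + b[o,i,k] * sin(k x).
    """

    def __init__(self, in_dim, out_dim, num_freqs=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * num_freqs)
        self.a = rng.normal(0.0, scale, size=(out_dim, in_dim, num_freqs))
        self.b = rng.normal(0.0, scale, size=(out_dim, in_dim, num_freqs))
        self.freqs = np.arange(1, num_freqs + 1)  # k = 1..K

    def __call__(self, x):
        # x: (n, in_dim) -> kx: (n, in_dim, K)
        kx = x[:, :, None] * self.freqs
        # Contract over inputs i and frequencies k for each output o.
        return (np.einsum("nik,oik->no", np.cos(kx), self.a)
                + np.einsum("nik,oik->no", np.sin(kx), self.b))


# Hypothetical message-passing step on a 3-atom chain: sum the features of
# each atom and its bonded neighbors, then apply the KAN transform.
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
H = np.random.default_rng(1).normal(size=(3, 5))
kan = FourierKANLayer(in_dim=5, out_dim=8)
H_next = kan((A + np.eye(3)) @ H)
print(H_next.shape)  # (3, 8)
```

Because each learned function is one-dimensional, it can be plotted per connection. This is one reason KAN-based models are described as more interpretable than MLPs with fixed activations.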

ACS Training Scheme

This diagram outlines the adaptive checkpointing with specialization (ACS) process for multi-task learning, showing how task-specific checkpoints are managed.
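The per-task checkpointing idea can be sketched as follows. This is a minimal sketch under our own assumptions. The model state is a plain dictionary with a shared `backbone` and per-task `heads`, and `train_one_epoch` and `evaluate` are hypothetical callbacks. It covers only the checkpoint bookkeeping: each task keeps the snapshot from its best validation epoch, even if later joint training hurts that task. It does not reproduce the full ACS procedure of [6], which may include further specialization steps.

```python
import copy


def train_with_task_checkpoints(state, train_one_epoch, evaluate, tasks, num_epochs):
    """Joint multi-task training that snapshots the shared backbone plus the
    task's head whenever that task reaches a new best validation score."""
    best_score = {t: float("-inf") for t in tasks}
    best_ckpt = {t: None for t in tasks}
    for epoch in range(num_epochs):
        state = train_one_epoch(state)   # one joint update over all tasks
        scores = evaluate(state)         # {task: validation metric}
        for t in tasks:
            if scores[t] > best_score[t]:
                best_score[t] = scores[t]
                # Deep copies, so later in-place training does not alter the snapshot.
                best_ckpt[t] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(state["backbone"]),
                    "head": copy.deepcopy(state["heads"][t]),
                }
    return best_ckpt, best_score


# Toy run: task_a peaks early (later epochs hurt it), task_b keeps improving.
val_curves = {"task_a": [0.70, 0.78, 0.75, 0.74], "task_b": [0.60, 0.65, 0.71, 0.73]}
state = {"backbone": {"step": 0}, "heads": {"task_a": {"w": 0.0}, "task_b": {"w": 0.0}}}


def toy_train(s):
    s["backbone"]["step"] += 1
    for head in s["heads"].values():
        head["w"] += 0.1
    return s


def toy_eval(s):
    return {t: curve[s["backbone"]["step"] - 1] for t, curve in val_curves.items()}


ckpts, scores = train_with_task_checkpoints(state, toy_train, toy_eval, ["task_a", "task_b"], 4)
print(ckpts["task_a"]["epoch"], ckpts["task_b"]["epoch"])  # 1 3
```

In the toy run, `task_a` keeps its epoch-1 snapshot even though the shared backbone continues training for `task_b`. This is how per-task checkpointing limits the damage from negative transfer.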

Future Outlook

The field of molecular property prediction is rapidly evolving. Key future directions include enhancing model interpretability to identify chemically meaningful substructures, as seen in KA-GNNs [5], and developing methods for the ultra-low data regime [6]. Furthermore, incorporating finer-grained chemical knowledge, such as functional group-level information [8], and improving the physical grounding of models, particularly for charge-related properties [32], represent critical frontiers for building more predictive, reliable, and trustworthy models for real-world scientific discovery and drug development.

Graph Neural Networks (GNNs) have fundamentally transformed molecular property prediction by providing an end-to-end learning framework that operates directly on molecular graph representations. In this paradigm, atoms naturally correspond to nodes and chemical bonds to edges, eliminating the dependency on manual feature engineering required by traditional descriptor-based methods [2] [3]. The capacity of GNNs to capture both local chemical environments and global molecular structure has established them as indispensable tools across computational chemistry, drug discovery, and materials science [5] [2]. This technical guide examines four foundational GNN architectures—Graph Convolutional Networks (GCN), Graph Attention Networks (GAT), Graph Isomorphism Networks (GIN), and Graph Transformers—within the context of molecular property prediction. We provide a comprehensive analysis of their underlying mechanisms, comparative performance across standardized benchmarks, detailed experimental protocols, and emerging research directions that are shaping the next generation of molecular machine learning models.

Architectural Fundamentals and Molecular Applications

Graph Convolutional Network (GCN)

GCNs use a spectral convolution built from a first-order approximation of Chebyshev polynomial filters to aggregate neighbor information [23]. In molecular graphs, each atom updates its representation by combining its own features with those of the atoms bonded to it. The layer-wise propagation rule is:

[H^{(l+1)} = \sigma\left(\hat{D}^{-\frac{1}{2}}\hat{A}\hat{D}^{-\frac{1}{2}}H^{(l)}W^{(l)}\right)]

where (\hat{A} = A + I) is the adjacency matrix with self-loops, (\hat{D}) is the diagonal degree matrix of (\hat{A}), (H^{(l)}) contains the node embeddings at layer (l), (W^{(l)}) is the trainable weight matrix, and (\sigma) denotes the activation function [23]. The symmetric normalization rescales each message by the degrees of both endpoint atoms. This keeps feature magnitudes stable across layers regardless of how many bonds an atom has. For molecular property prediction, GCNs capture local chemical environments well but struggle with long-range interactions: each layer aggregates only directly bonded neighbors, so information from distant atoms must pass through many stacked layers [34].
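The propagation rule translates almost line by line into NumPy. The sketch below assumes dense matrices, which are adequate for small molecules; graph libraries typically use sparse operations instead. The example uses the heavy atoms of ethanol (C-C-O) with one-hot [C, O] features and an identity weight matrix, so the output isolates the normalized neighbor mixing.

```python
import numpy as np


def gcn_layer(A, H, W, activation=lambda z: np.maximum(z, 0.0)):
    """sigma(D_hat^{-1/2} A_hat D_hat^{-1/2} H W) with A_hat = A + I (ReLU by default)."""
    A_hat = A + np.eye(A.shape[0])                 # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))  # diagonal of D_hat^{-1/2}
    A_norm = A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return activation(A_norm @ H @ W)


# Ethanol heavy atoms C0-C1-O2; features are one-hot [is_C, is_O].
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
H = np.array([[1, 0], [1, 0], [0, 1]], dtype=float)
W = np.eye(2)
print(gcn_layer(A, H, W).round(3))
```

After one layer the oxygen row mixes in part of its carbon neighbor, while the terminal carbon C0 receives no oxygen signal yet. It would need a second layer for that. This is the one-hop locality described above.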

Graph Attention Network (GAT)

GATs replace the static normalization of GCNs with an attention mechanism that computes adaptive, weighted averages of neighbor features [35]. Each node pair's attention coefficients are calculated as:

[\alpha_{ij} = \frac{\exp\left(\text{LeakyReLU}\left(\mathbf{a}^T [W\mathbf{h}_i \,\|\, W\mathbf{h}_j]\right)\right)}{\sum_{k\in\mathcal{N}(i)}\exp\left(\text{LeakyReLU}\left(\mathbf{a}^T [W\mathbf{h}_i \,\|\, W\mathbf{h}_k]\right)\right)}]

where (\mathbf{a}) is a learnable attention vector, (W) is a shared weight matrix, (\mathbf{h}_i) and (\mathbf{h}_j) are node features, (\mathcal{N}(i)) is the neighborhood of node (i) (including (i) itself), and (\|) denotes concatenation [35]. The updated representation of node (i) is the attention-weighted sum (\sigma\left(\sum_{j\in\mathcal{N}(i)} \alpha_{ij} W\mathbf{h}_j\right)). Multi-head attention runs several such mechanisms in parallel, letting each head capture a different aspect of molecular interactions. The adaptive weighting allows GATs to prioritize chemically significant substructures and functional groups during message passing, which is particularly valuable for predicting properties influenced by specific molecular regions [5].
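A single attention head can be sketched in NumPy. As in the original GAT formulation, each atom's neighborhood includes the atom itself. Splitting (\mathbf{a}^T[W\mathbf{h}_i \| W\mathbf{h}_j]) into a source term plus a target term is an implementation convenience. The function name and random parameters are illustrative, and the output nonlinearity (\sigma) is omitted.

```python
import numpy as np


def gat_attention(A, H, W, a, negative_slope=0.2):
    """Single-head GAT: returns alpha (n x n) and alpha @ (H W) before the nonlinearity."""
    Z = H @ W                                    # W h_j for every atom, shape (n, F')
    f = Z.shape[1]
    # a^T [z_i || z_j] = a[:f] . z_i + a[f:] . z_j, computed for all pairs at once
    e = (Z @ a[:f])[:, None] + (Z @ a[f:])[None, :]
    e = np.where(e > 0, e, negative_slope * e)   # LeakyReLU
    neighbors = (A + np.eye(A.shape[0])) > 0     # N(i) includes i itself
    e = np.where(neighbors, e, -np.inf)          # non-bonded atoms get zero weight
    e = e - e.max(axis=1, keepdims=True)         # stabilize the softmax
    alpha = np.exp(e)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)
    return alpha, alpha @ Z


rng = np.random.default_rng(0)
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)  # C-C-O chain
H = rng.normal(size=(3, 4))
W = rng.normal(size=(4, 6))
a = rng.normal(size=12)                                        # length 2 * F'
alpha, H_next = gat_attention(A, H, W, a)
print(alpha.round(3))
```

Each row of `alpha` sums to one over the atom and its bonded neighbors. The zeros for non-bonded pairs confirm that attention in a GAT stays local, unlike the global attention in Graph Transformers.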

Graph Isomorphism Network (GIN)

GINs were designed to match the discriminative power of the Weisfeiler-Lehman (WL) graph isomorphism test, which upper-bounds the expressiveness of standard message-passing GNNs [33] [2]. The GIN update applies a multi-layer perceptron (MLP) to a sum over the neighborhood, so that the overall update can model injective functions:

[\mathbf{h}_v^{(k)} = \text{MLP}^{(k)}\left((1 + \epsilon^{(k)}) \cdot \mathbf{h}_v^{(k-1)} + \sum_{u\in\mathcal{N}(v)}\mathbf{h}_u^{(k-1)}\right)]

where (\epsilon^{(k)}) is a learnable (or fixed) scalar that adjusts the weight of the center node relative to its neighbors [33]. Because sum aggregation preserves how many neighbors of each type an atom has, GIN can distinguish substructures that mean- or max-style aggregators map to the same representation, for example an atom bonded to two carbons versus one carbon. On molecular symmetry prediction, GIN reaches 92.7% accuracy for point group classification on the QM9 dataset [33].
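The sketch below implements the GIN update with a two-layer MLP in NumPy and shows why sum aggregation matters. An oxygen bonded to two carbons and an oxygen bonded to one carbon have the same mean neighbor feature but different summed features. Identity weights and zero biases are used only to make the aggregation visible; in a trained model they are learned.

```python
import numpy as np


def gin_layer(A, H, W1, b1, W2, b2, eps=0.0):
    """h_v' = MLP((1 + eps) * h_v + sum_{u in N(v)} h_u), MLP = Linear-ReLU-Linear."""
    agg = (1.0 + eps) * H + A @ H            # unnormalized sum keeps neighbor counts
    hidden = np.maximum(agg @ W1 + b1, 0.0)
    return hidden @ W2 + b2


I2, z2 = np.eye(2), np.zeros(2)
# Graph 1: an O atom (node 0) bonded to two C atoms. Features: one-hot [is_C, is_O].
A_two = np.array([[0, 1, 1], [1, 0, 0], [1, 0, 0]], dtype=float)
H_two = np.array([[0, 1], [1, 0], [1, 0]], dtype=float)
# Graph 2: an O atom bonded to a single C atom.
A_one = np.array([[0, 1], [1, 0]], dtype=float)
H_one = np.array([[0, 1], [1, 0]], dtype=float)

print(gin_layer(A_two, H_two, I2, z2, I2, z2)[0])  # [2. 1.]
print(gin_layer(A_one, H_one, I2, z2, I2, z2)[0])  # [1. 1.]
```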

Graph Transformer Architecture

Graph Transformers adapt the self-attention mechanism to graph-structured data, enabling global information exchange between all node pairs regardless of connectivity [34] [35]. The core self-attention mechanism is computed as:

[\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}} + \mathbf{M}\right)V]

where (Q, K, V) are query, key, and value matrices derived from node embeddings, and (\mathbf{M}) is an attention mask that can incorporate structural information [35]. To preserve molecular graph structure, Graph Transformers integrate specialized encodings including spatial encodings (based on inter-atomic distances), structural encodings (based on graph connectivity measures), and edge encodings (representing bond information) [35]. Architectures like Graphormer and MolGraphormer have demonstrated state-of-the-art performance on molecular benchmarks by effectively capturing long-range dependencies between atoms that conventional message-passing GNNs struggle to model [36] [2].
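One common way to fill (\mathbf{M}) is with a bias indexed by the shortest-path (hop) distance between atoms, in the spirit of Graphormer's spatial encoding. The sketch below makes several simplifying assumptions: a single head, a connected molecule, and a fixed hand-chosen bias per hop distance (`spd_bias` is hypothetical; such biases are learned in practice). It shows that every atom attends to every other atom, with graph structure entering only through the bias.

```python
import numpy as np
from collections import deque


def hop_distances(A):
    """All-pairs shortest-path lengths (in bonds) by BFS; -1 marks unreachable pairs."""
    n = A.shape[0]
    dist = -np.ones((n, n), dtype=int)
    for s in range(n):
        dist[s, s] = 0
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v in np.nonzero(A[u])[0]:
                if dist[s, v] < 0:
                    dist[s, v] = dist[s, u] + 1
                    queue.append(v)
    return dist


def biased_self_attention(X, Wq, Wk, Wv, M):
    """softmax(Q K^T / sqrt(d_k) + M) V for a single head."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[1]) + M
    scores -= scores.max(axis=1, keepdims=True)   # numerical stability
    P = np.exp(scores)
    P /= P.sum(axis=1, keepdims=True)
    return P @ V, P


# 4-atom chain (e.g. the heavy atoms of butane); bias shrinks with hop distance.
A = np.array([[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 1], [0, 0, 1, 0]], dtype=float)
dist = hop_distances(A)
spd_bias = np.array([0.0, -0.5, -1.0, -1.5])   # hypothetical bias for 0..3 hops
M = spd_bias[dist]
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 8))
Wq, Wk, Wv = (rng.normal(size=(8, 8)) for _ in range(3))
out, P = biased_self_attention(X, Wq, Wk, Wv, M)
print(dist[0])  # [0 1 2 3]
```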

Table 1: Core Architectural Components of GNN Variants

| Architecture | Key Mechanism | Molecular Relevance | Complexity |
|---|---|---|---|
| GCN | Spectral graph convolution with fixed, degree-normalized neighbor weights | Captures local chemical environments | (\mathcal{O}(\lvert\mathcal{E}\rvert)) |
| GAT | Attention-weighted neighborhood aggregation | Adaptively focuses on chemically significant regions | (\mathcal{O}(\lvert\mathcal{E}\rvert)) per head (attention restricted to bonded neighbors) |
| GIN | MLP-based injective aggregation | As expressive as the WL test, the maximum for message-passing GNNs | (\mathcal{O}(\lvert\mathcal{E}\rvert)) |
| Graph Transformer | Global self-attention with structural encodings | Models long-range interatomic interactions | (\mathcal{O}(\lvert\mathcal{V}\rvert^2)) |

Comparative Performance Analysis

Benchmark Results Across Molecular Tasks

Evaluations on standardized molecular benchmarks reveal performance patterns that align with each architecture's strengths. On quantum mechanical property prediction (QM9 dataset), GIN performs strongly on symmetry-related tasks, reaching 92.7% accuracy in molecular point group prediction [33]. Equivariant GNNs such as EGNN, which incorporate 3D coordinate information, excel at geometry-sensitive properties. They achieve the lowest mean absolute error on partition coefficients including log K_aw (MAE = 0.25) and log K_d (MAE = 0.22) [2].

Graph Transformer architectures tend to perform best on tasks that require global molecular context. On the OGB-MolHIV benchmark for bioactivity classification, Graphormer achieves an ROC-AUC of 0.807, outperforming both GIN (0.799) and geometric models [2]. Graphormer also attains the best log K_ow prediction (MAE = 0.18) [2]. Integrating Kolmogorov-Arnold Networks (KANs) into GNN frameworks is a promising recent direction: KA-GNNs consistently outperform conventional GNNs across multiple molecular benchmarks while improving interpretability by highlighting chemically meaningful substructures [5].

Table 2: Performance Comparison Across Molecular Benchmarks

| Architecture | QM9 (Point Group Accuracy) | OGB-MolHIV (ROC-AUC) | log K_ow (MAE) | log K_aw (MAE) |
|---|---|---|---|---|
| GIN | 92.7% [33] | 0.799 [2] | 0.24 [2] | 0.31 [2] |
| EGNN | - | - | 0.21 [2] | 0.25 [2] |
| Graphormer | - | 0.807 [2] | 0.18 [2] | 0.27 [2] |
| KA-GNN | Superior to conventional GNNs [5] | Consistently outperforms [5] | - | - |

Limitations and Failure Modes

Each architecture has specific limitations in certain molecular contexts. GCNs suffer from over-smoothing as layers are added: repeated neighborhood averaging pushes atom embeddings toward nearly identical values, which limits model depth and the capacity to capture complex molecular patterns [34]. Both GCN and GAT face over-squashing when modeling long-range interatomic interactions. Information from a rapidly growing set of distant atoms must be compressed into fixed-size node vectors over many message-passing steps [35]. Graph Transformers circumvent this bottleneck through global attention, but they incur a cost quadratic in the number of atoms ((\mathcal{O}(|\mathcal{V}|^2))) and require complex structural encodings to retain the graph's inductive bias [35]. Recent hybrid approaches such as the Local-Global Transformer (LGT) address these limitations by combining efficient local message passing with sparse global attention, achieving state-of-the-art results on the QM9 and ZINC benchmarks [34].
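Over-smoothing can be observed directly by repeatedly applying the normalized adjacency from the GCN propagation rule. This sketch is a deliberate simplification that drops the weight matrices and nonlinearities. It tracks the largest distance between unit-normalized atom embeddings of a C-C-O chain. That distance shrinks toward zero as layers are stacked, meaning the atoms become indistinguishable.

```python
import numpy as np


def normalized_adjacency(A):
    """D_hat^{-1/2} (A + I) D_hat^{-1/2}."""
    A_hat = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def embedding_spread(H):
    """Largest pairwise distance between L2-normalized node embeddings."""
    U = H / np.linalg.norm(H, axis=1, keepdims=True)
    return np.linalg.norm(U[:, None, :] - U[None, :, :], axis=2).max()


A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)  # C-C-O
H0 = np.array([[1, 0], [1, 0], [0, 1]], dtype=float)          # one-hot [is_C, is_O]
P = normalized_adjacency(A)
for depth in (0, 1, 2, 5, 20):
    Hk = np.linalg.matrix_power(P, depth) @ H0
    print(depth, round(float(embedding_spread(Hk)), 6))
```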

Experimental Protocols and Methodologies

Standardized Evaluation Frameworks

Robust evaluation of GNN architectures for molecular property prediction requires standardized datasets, splitting strategies, and performance metrics. The MoleculeNet benchmark provides curated datasets including QM9 (quantum mechanical properties), ESOL (water solubility), FreeSolv (hydration free energy), and Lipophilicity (octanol/water distribution coefficient) [2] [23]. Dataset splitting typically follows random splits (80/10/10) for smaller datasets, while scaffold split strategies based on molecular substructures create more challenging generalization tests [37]. Performance metrics include Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) for regression tasks, and ROC-AUC for classification benchmarks like OGB-MolHIV [2].

Recent advancements incorporate uncertainty quantification techniques including Monte Carlo Dropout and Temperature Scaling to improve model calibration and reliability in downstream decision-making [36]. The MolGraphormer architecture, for instance, employs these techniques for toxicity prediction, achieving an F1-Score of 0.6697 and AUC-ROC of 0.7806 on the Tox21 benchmark while providing calibrated uncertainty estimates [36].
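Temperature scaling fits a single scalar (T) on held-out data and divides every logit by it. This leaves the ranking of predictions, and hence ROC-AUC, unchanged while softening or sharpening the probabilities. The sketch below is a simplified binary version that picks (T) by grid search over validation negative log-likelihood. The standard recipe optimizes the same objective with a gradient-based optimizer. The logits and labels are made up.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def binary_nll(logits, labels, T):
    """Mean negative log-likelihood of labels under sigmoid(logit / T)."""
    total = 0.0
    for z, y in zip(logits, labels):
        p = min(max(sigmoid(z / T), 1e-12), 1.0 - 1e-12)  # guard against log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(logits)


def fit_temperature(logits, labels, grid=None):
    """Choose T from a grid (default 0.25, 0.5, ..., 10.0) minimizing validation NLL."""
    grid = grid or [0.25 * i for i in range(1, 41)]
    return min(grid, key=lambda T: binary_nll(logits, labels, T))


# Hypothetical over-confident validation logits with two confident mistakes.
val_logits = [8.0, 6.0, -7.0, 5.0, -6.0, 7.0]
val_labels = [1, 0, 0, 1, 1, 1]
T = fit_temperature(val_logits, val_labels)
print(T, binary_nll(val_logits, val_labels, 1.0), binary_nll(val_logits, val_labels, T))
```

A fitted (T > 1) signals that the raw model was over-confident; at test time the same (T) is applied to every new logit.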

Pre-training Strategies

Large-scale pre-training has emerged as a powerful paradigm for enhancing GNN generalization across diverse molecular properties. The Self-Conformation-Aware Graph Transformer (SCAGE) implements a multi-task pre-training framework (M4) incorporating four supervised and unsupervised tasks: molecular fingerprint prediction, functional group prediction using chemical prior information, 2D atomic distance prediction, and 3D bond angle prediction [37]. This approach, pre-trained on approximately 5 million drug-like compounds, enables learning of comprehensive conformation-aware representations that transfer effectively to downstream molecular property tasks [37].

Similarly, GROVER uses self-supervised graph transformer pre-training on 10 million molecules with context-based and motif-based objectives, addressing limited labeled data and poor generalization to newly synthesized compounds [37]. Together, these pre-training strategies indicate that incorporating chemical prior knowledge, such as functional groups, molecular conformations, and physicochemical principles, can substantially improve both model performance and interpretability.

Implementation and Optimization

The Scientist's Toolkit: Essential Research Components

Table 3: Essential Experimental Components for Molecular GNN Research