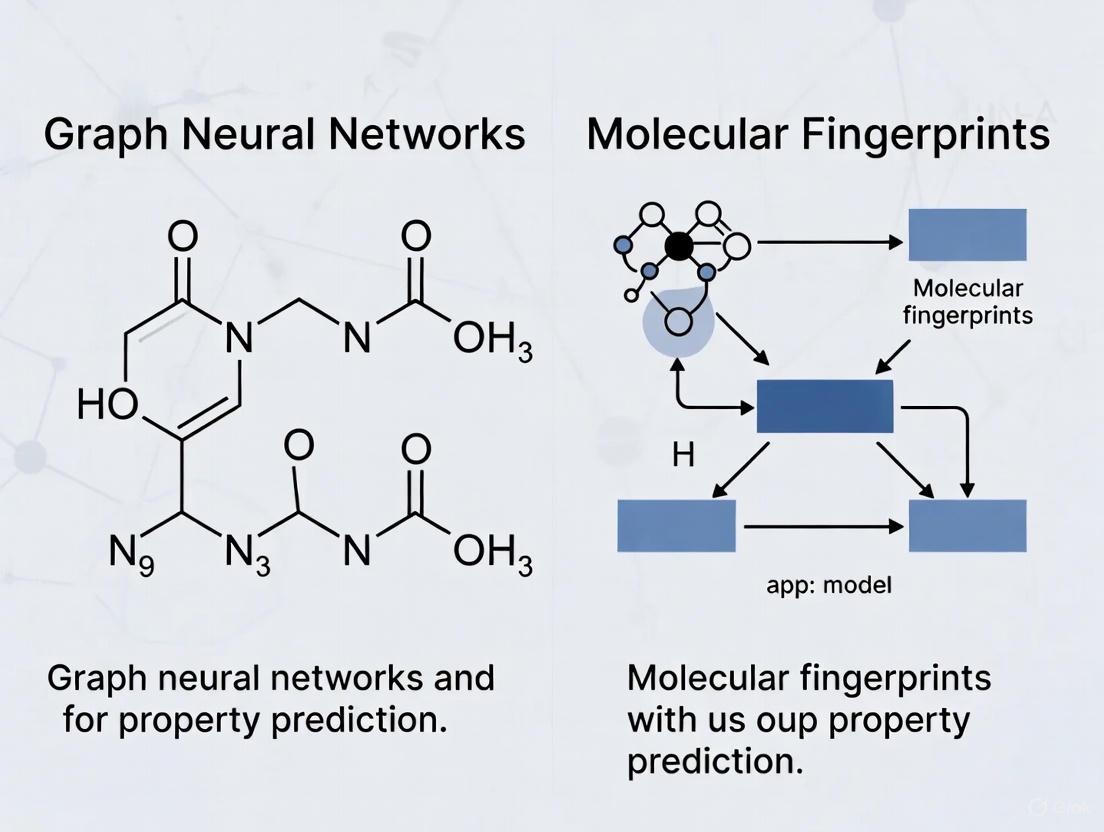

Graph Neural Networks vs. Molecular Fingerprints: A 2025 Benchmark for Molecular Property Prediction

Abstract

Molecular property prediction is a cornerstone of modern drug discovery and materials science. This article provides a comprehensive analysis and benchmark of two dominant approaches: traditional molecular fingerprints and advanced graph neural networks (GNNs). Drawing on the latest research, we explore the foundational principles of both methods, detail cutting-edge hybrid architectures like FP-GNN and KA-GNN, and address critical practical considerations such as data requirements, uncertainty estimation, and model interpretability. Through a rigorous comparative validation across diverse property endpoints—from ADMET and toxicity to taste and odor perception—we synthesize evidence-based guidelines for researchers and development professionals to select and optimize the right model for their specific predictive task. The findings reveal a nuanced landscape where the 'best' model is highly context-dependent, and hybrid strategies often provide the most robust solution.

Understanding the Core Technologies: From Handcrafted Fingerprints to Learned Graph Representations

In the field of cheminformatics and drug discovery, molecular fingerprints are a fundamental tool for converting the complex structure of a molecule into a fixed-length, machine-readable bit vector. They enable rapid similarity searching, virtual screening, and the prediction of molecular properties by capturing key structural features. Among the numerous available fingerprints, the Extended-Connectivity Fingerprint (ECFP), the Morgan fingerprint (a common implementation of ECFP), and the PubChem fingerprint have emerged as industry standards. This guide provides a detailed, objective comparison of these fingerprints, frames their performance against modern Graph Neural Networks (GNNs), and outlines the experimental protocols used for their evaluation.
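Similarity searching with fingerprints most commonly relies on the Tanimoto (Jaccard) coefficient, |A ∩ B| / |A ∪ B|, computed over the bits that are set in each vector. The sketch below stores each fingerprint as the set of its "on" bit indices; the bit sets are invented for illustration and do not correspond to real molecules.

```python
def tanimoto(bits_a: set[int], bits_b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two binary fingerprints.

    Each fingerprint is given as the set of indices of its 'on' bits.
    """
    if not bits_a and not bits_b:
        return 1.0  # convention: two empty fingerprints count as identical
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)


def rank_by_similarity(query: set[int],
                       library: dict[str, set[int]]) -> list[tuple[str, float]]:
    """Return library entries sorted from most to least similar to the query."""
    scores = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Hypothetical 'on'-bit sets, invented for illustration only.
query = {1, 5, 9, 42}
library = {"mol_a": {1, 5, 9, 42}, "mol_b": {1, 5, 100}, "mol_c": {7, 8}}
print(rank_by_similarity(query, library))
# [('mol_a', 1.0), ('mol_b', 0.4), ('mol_c', 0.0)]
```

Storing only the "on" bits suits sparse fingerprints such as ECFP; production code would normally use a cheminformatics toolkit's optimized bit-vector routines instead.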

Fingerprint Definitions and Algorithms

The following table summarizes the core characteristics and generation algorithms of these fingerprints; ECFP and Morgan share a row because the Morgan fingerprint is a common implementation of ECFP.

Table 1: Definition and Key Characteristics of Standard Molecular Fingerprints

| Fingerprint | Type | Core Algorithm | Key Features | Common Uses |
|---|---|---|---|---|
| ECFP / Morgan [1] | Circular (Topological) | Morgan algorithm; iteratively captures circular atom neighborhoods, hashes them into a bit vector [1]. | Captures molecular topology independent of atom numbering; excellent for identifying structurally similar molecules. | Structure-activity modeling, virtual screening, molecular similarity. |
| PubChem Fingerprint [2] | Substructure-based | Encodes the presence or absence of 881 predefined chemical substructures derived from the PubChem database [2]. | Provides a direct, interpretable mapping between bits and specific functional groups or substructures. | Bioactivity prediction, high-throughput screening. |

The generation of these fingerprints, particularly the circular ECFP/Morgan, follows a systematic workflow. The diagram below illustrates the key steps involved in creating an ECFP/Morgan fingerprint.
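The same steps can be mimicked in a few lines of plain Python. This is a simplified, hypothetical re-implementation for illustration only: atoms start from an invariant that is just the element symbol, each iteration hashes an atom's identifier together with the sorted identifiers of its neighbours, and every identifier is folded into a fixed-length vector by taking it modulo the vector length. Real ECFP/Morgan implementations (for example RDKit's) use richer atom invariants and bond types and also remove duplicate environments, so the bit positions here will not match theirs.

```python
import hashlib


def stable_hash(text: str) -> int:
    """Deterministic 32-bit hash (Python's built-in hash() is salted per process)."""
    return int.from_bytes(hashlib.sha1(text.encode()).digest()[:4], "big")


def circular_fingerprint(atoms: list[str], bonds: list[tuple[int, int]],
                         radius: int = 2, n_bits: int = 1024) -> set[int]:
    """Toy ECFP-style fingerprint: returns the set of 'on' bit indices."""
    neighbours = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbours[a].append(b)
        neighbours[b].append(a)

    # Iteration 0: initial identifier from a simple atom invariant (element only).
    identifiers = [stable_hash(symbol) for symbol in atoms]
    on_bits = {ident % n_bits for ident in identifiers}

    for _ in range(radius):
        updated = []
        for i, ident in enumerate(identifiers):
            # Sort neighbour identifiers so the result ignores atom numbering.
            neighbour_ids = sorted(identifiers[j] for j in neighbours[i])
            updated.append(stable_hash(f"{ident}|{neighbour_ids}"))
        identifiers = updated
        # Fold every new environment identifier into the fixed-length vector.
        on_bits.update(ident % n_bits for ident in identifiers)
    return on_bits


# Ethanol heavy atoms C-C-O, listed in two different atom orders.
fp1 = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
fp2 = circular_fingerprint(["O", "C", "C"], [(0, 1), (1, 2)])
print(fp1 == fp2)  # True: same molecule, same fingerprint
```

Because neighbour identifiers are sorted before hashing, the result does not depend on atom numbering, which is the property listed for ECFP/Morgan above; with `radius=2` the sketch mirrors the ECFP4 setting.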

Fingerprints vs. Graph Neural Networks: A Performance Benchmark

A critical question in modern cheminformatics is whether complex deep learning models like GNNs outperform traditional fingerprint-based methods. Evidence from comprehensive studies reveals a nuanced performance landscape.

Table 2: Comparative Performance of Fingerprint-Based Models vs. Graph Neural Networks

| Model Category | Representative Examples | Key Findings from Experimental Benchmarks | Relative Advantages |
|---|---|---|---|
| Fingerprint-Based Models | SVM, Random Forest (RF), XGBoost using ECFP and other fingerprints [3]. | On average, descriptor-based models (using fingerprints) outperformed graph-based models on 11 public datasets in terms of prediction accuracy and computational efficiency [3]. SVM performed best on regression tasks, while RF and XGBoost were top classifiers [3]. | Computational efficiency, interpretability, and state-of-the-art performance on many ADMET prediction tasks [4]. |
| Graph Neural Networks (GNNs) | GCN, GAT, MPNN, Attentive FP [3]. | Some GNNs (e.g., Attentive FP, GCN) achieved outstanding performance on specific, larger or multi-task datasets [3]. Newer architectures like KA-GNNs show consistent improvements over conventional GNNs [5]. | Potential to automatically learn task-specific features; strong performance on unstructured data like 3D molecular shape [4]. |
| Hybrid Models | FH-GNN (Fingerprint-enhanced Hierarchical GNN) [6]. | Models that integrate hierarchical graph structures with fingerprint features outperformed baseline models, demonstrating the complementary strengths of both approaches [6]. | Combines learned representations from GNNs with expert knowledge from fingerprints. |

The typical methodology for a comparative study involves a direct, standardized evaluation across multiple datasets and model types, as shown in the workflow below.

Essential Research Reagents and Tools

To implement the experimental protocols cited in this guide, researchers require the following key software tools and databases.

Table 3: Key Research Reagents and Computational Tools

| Item Name | Function / Purpose | Relevant Context / Use Case |
|---|---|---|
| RDKit | An open-source cheminformatics toolkit. | Used for generating Morgan/ECFP fingerprints, reading SMILES strings, and calculating molecular descriptors [3] [7]. |
| MoleculeNet / TDC | Curated benchmarks for molecular machine learning. | Provides standardized datasets (e.g., ESOL, FreeSolv, BBBP) for fair model comparison [3] [4]. |
| DeepPurpose | A molecular modeling and prediction toolkit. | Facilitates the implementation and comparison of various molecular representation methods, including multiple fingerprints and GNNs [2]. |
| ChEMBL / PubChem | Large-scale databases of bioactive molecules. | Sources for experimental bioactivity data used for training and validating predictive models [2] [8]. |

The ECFP/Morgan and PubChem fingerprints remain powerful, efficient, and often superior choices for many molecular property prediction tasks, especially when combined with traditional machine learning models like XGBoost. The choice between fingerprints and GNNs is not a simple dichotomy. Fingerprints excel in computational efficiency and performance on structured data and well-defined tasks, while GNNs show promise in handling unstructured data and for large, multi-task datasets. The most powerful emerging trend is hybrid modeling, which integrates the interpretability and robust performance of fingerprints with the automatic feature-learning capacity of GNNs, offering a synergistic path forward for computational drug discovery [6].

The accurate prediction of molecular properties is a cornerstone of modern drug discovery and materials science, where computational models are essential for reducing the costs and risks of experimental trials. For years, the dominant paradigm relied on molecular fingerprints—expert-crafted vectors encoding specific structural patterns—combined with traditional machine learning models like Random Forest or XGBoost [3] [4]. However, the emergence of Graph Neural Networks (GNNs) has introduced a powerful alternative: end-to-end deep learning models that learn task-specific representations directly from the molecular graph structure itself [3] [9].

This paradigm shift raises a critical question for researchers and development professionals: which approach delivers superior performance for specific property prediction tasks? The answer is not absolute but depends on dataset characteristics, property types, and resource constraints. While GNNs automatically learn hierarchical features from atomic interactions, fingerprints offer computational efficiency and strong baselines, especially on smaller datasets [3] [4]. This guide provides an objective comparison of these competing methodologies, supported by recent experimental data and detailed protocols to inform your research decisions.

How GNNs Learn from Molecular Structures

Fundamental Architecture of Graph Neural Networks

GNNs are specifically designed to process data represented as graphs, making them naturally suited for molecules where atoms constitute nodes and bonds form edges. The core operation of a GNN is the message-passing mechanism, where each atom's representation is iteratively updated by aggregating information from its neighboring atoms [3]. This process enables GNNs to capture the complex topological environment of each atom within the molecular structure.
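The update can be sketched in plain Python. The message function (neighbour state scaled by a bond weight) and update function (average of the old state and the summed messages) below are hypothetical placeholders acting on scalar atom states; real GNNs learn vector-valued versions of both.

```python
def message_passing_step(h: list[float],
                         edges: list[tuple[int, int, float]]) -> list[float]:
    """One round of message passing on an undirected molecular graph.

    h     -- current scalar state of each atom (node)
    edges -- (i, j, bond_weight) triples; each bond sends messages both ways
    """
    incoming = [0.0] * len(h)
    for i, j, w in edges:
        # Hypothetical message function: neighbour state scaled by a bond feature.
        incoming[i] += w * h[j]
        incoming[j] += w * h[i]
    # Hypothetical update function combining the old state with summed messages.
    return [0.5 * (h_i + m_i) for h_i, m_i in zip(h, incoming)]


# Toy three-atom chain 0-1-2 with unit bond weights.
h = [1.0, 0.0, 0.0]
edges = [(0, 1, 1.0), (1, 2, 1.0)]
for step in range(2):
    h = message_passing_step(h, edges)
    print(step + 1, h)
# 1 [0.5, 0.5, 0.0]
# 2 [0.5, 0.5, 0.25]
```

After two rounds, atom 2 has picked up signal from atom 0, two bonds away: each additional layer extends an atom's receptive field by one bond.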

Advanced GNN Architectures for Molecular Property Prediction

Recent research has produced specialized GNN architectures that extend beyond basic message-passing:

- Fingerprint-Enhanced Hierarchical GNNs (FH-GNN): These models integrate traditional fingerprint features with hierarchical graph learning through adaptive attention mechanisms, simultaneously learning from atomic-level, motif-level, and graph-level information [6].

- Kolmogorov-Arnold GNNs (KA-GNN): This framework replaces standard multilayer perceptrons in GNNs with Kolmogorov-Arnold networks using Fourier-series-based functions, enhancing expressivity and parameter efficiency while improving interpretability by highlighting chemically meaningful substructures [5].

- Equivariant GNNs (EGNN): These models incorporate 3D molecular coordinates while preserving Euclidean symmetries (translation, rotation, reflection), showing particular strength for geometry-sensitive properties like partition coefficients [9].

- Graph Transformers (Graphormer): Applying global attention mechanisms to graph structures, these models excel at capturing long-range dependencies within molecules, achieving state-of-the-art performance on various benchmarks [9].
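For the KA-GNN entry, the core idea of a Fourier-based Kolmogorov-Arnold layer can be sketched as follows. Instead of a weight followed by a fixed activation, every input-output connection carries its own learnable univariate function, here a truncated Fourier series \( \phi(x) = \sum_{k=1}^{K} a_k \cos(kx) + b_k \sin(kx) \), and each output sums these functions over its inputs. This is a generic illustration under those assumptions, not the KA-GNN authors' implementation; the coefficients are arbitrary placeholders that a real model would learn.

```python
import math


def fourier_basis_fn(x: float, a: list[float], b: list[float]) -> float:
    """Univariate function phi(x) = sum_k a_k cos(kx) + b_k sin(kx), k = 1..K."""
    return sum(a_k * math.cos(k * x) + b_k * math.sin(k * x)
               for k, (a_k, b_k) in enumerate(zip(a, b), start=1))


def fourier_kan_layer(x: list[float],
                      coeffs: list[list[tuple[list[float], list[float]]]]) -> list[float]:
    """Kolmogorov-Arnold style layer: output_j = sum_i phi_ij(x_i).

    coeffs[j][i] holds the (a, b) Fourier coefficients of the function
    on the connection from input i to output j.
    """
    return [sum(fourier_basis_fn(x_i, a, b) for x_i, (a, b) in zip(x, row))
            for row in coeffs]


# Placeholder coefficients: 2 inputs -> 1 output, K = 2 frequencies per connection.
coeffs = [[([1.0, 0.0], [0.0, 0.5]),   # phi_00(x) = cos(x) + 0.5 sin(2x)
           ([0.0, 0.0], [1.0, 0.0])]]  # phi_01(x) = sin(x)
print(fourier_kan_layer([0.0, math.pi / 2], coeffs))  # [2.0]
```

Because each connection has its own small set of coefficients, the learned functions can be plotted individually, which is one reason KAN-style layers are described as more interpretable than standard multilayer perceptrons.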

Experimental Comparison: GNNs vs. Molecular Fingerprints

Benchmarking Protocols and Dataset Specifications

To ensure fair comparisons, researchers typically employ standardized benchmark datasets and evaluation protocols:

Commonly Used Datasets:

- MoleculeNet: A comprehensive collection including QM9 (quantum properties), ESOL (solubility), FreeSolv (solvation energy), Lipop (lipophilicity), and Tox21 (toxicity) [6] [9] [3].

- OGB-MolHIV: A realistic bioactivity classification dataset from the Open Graph Benchmark [9].

- ToxCast: A toxicity dataset with up to 617 assay-based tasks; subsets of as few as 19 tasks have been used to assess performance in real-world scenarios [10] [3].

Standard Evaluation Metrics:

- Regression Tasks: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE)

- Classification Tasks: Area Under ROC Curve (AUROC), Area Under Precision-Recall Curve (AUPRC), Accuracy, Precision, Recall [11] [9]
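The two area-based classification metrics can be computed from scratch as a sanity check. The sketch below is a minimal version for a single binary label: AUROC via the Mann-Whitney formulation (the probability that a random positive is scored above a random negative), and AUPRC estimated by average precision, one common estimator; other interpolation schemes give slightly different AUPRC values. The labels and scores are toy values.

```python
def auroc(labels: list[int], scores: list[float]) -> float:
    """Area under the ROC curve via the Mann-Whitney statistic.

    Equals the probability that a random positive is scored above a random
    negative; ties count as half.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels: list[int], scores: list[float]) -> float:
    """Average precision, a common estimator of the area under the PR curve.

    Assumes no tied scores, for simplicity.
    """
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    n_pos = sum(labels)
    hits, ap = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            ap += hits / rank  # precision at each point where recall increases
    return ap / n_pos


y_true = [1, 0, 1, 0, 0]
y_score = [0.9, 0.8, 0.4, 0.3, 0.1]
print(auroc(y_true, y_score), average_precision(y_true, y_score))
```

For these toy values both metrics come out at 5/6 (about 0.833). A random classifier scores 0.5 AUROC but only the positive-class prevalence in AUPRC, which is why AUPRC values on rare labels tend to look much lower than AUROC.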

Experimental protocols typically involve stratified k-fold cross-validation (commonly k=5) with maintained train/test splits to ensure reliable generalization estimates. For GNN training, standard practice includes early stopping based on validation performance and multiple random initializations to account for variability [11] [3].
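A minimal, dependency-free sketch of stratified k-fold splitting for a single binary label follows (in practice a library routine such as scikit-learn's `StratifiedKFold` would normally be used). Indices of each class are shuffled and dealt round-robin across folds, so every test fold keeps roughly the overall class ratio; the 20% positive rate is a toy assumption.

```python
import random
from collections import defaultdict


def stratified_kfold(labels: list[int], k: int = 5, seed: int = 0) -> list[list[int]]:
    """Split sample indices into k folds that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)

    folds = [[] for _ in range(k)]
    offset = 0
    for y in sorted(by_class):
        members = by_class[y]
        rng.shuffle(members)
        for pos, idx in enumerate(members):
            # Continue the round-robin where the previous class stopped,
            # so fold sizes stay balanced overall.
            folds[(offset + pos) % k].append(idx)
        offset += len(members)
    return folds


labels = [1] * 20 + [0] * 80          # toy data: 20% positives
folds = stratified_kfold(labels, k=5)
for i, test_idx in enumerate(folds):
    train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
    pos_rate = sum(labels[j] for j in test_idx) / len(test_idx)
    print(i, len(train_idx), len(test_idx), pos_rate)  # each fold: 80 / 20, rate 0.2
```

Multi-task datasets such as Tox21 or ToxCast need a multi-label variant (or scaffold-based splits), which this single-label sketch does not cover.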

Quantitative Performance Comparison

Table 1: Performance Comparison Across Various Molecular Property Prediction Tasks

| Model Category | Specific Model | Dataset | Property Type | Performance Metrics |
|---|---|---|---|---|
| Fingerprint-Based | Morgan FP + XGBoost | Odor Prediction | Multi-label Classification | AUROC: 0.828, AUPRC: 0.237 [11] |
| Fingerprint-Based | Morgan FP + RF | Odor Prediction | Multi-label Classification | AUROC: 0.784, AUPRC: 0.216 [11] |
| GNN | FH-GNN | Multiple MoleculeNet | Classification/Regression | Outperformed baselines on 8 datasets [6] |
| GNN | KA-GNN | 7 Molecular Benchmarks | Multiple | Superior accuracy & computational efficiency vs conventional GNNs [5] |
| GNN | Graphormer | OGB-MolHIV | Bioactivity Classification | ROC-AUC: 0.807 [9] |
| GNN | EGNN | QM9 (log K_d) | Environmental Partitioning | MAE: 0.22 [9] |
| GNN | GPS + Knowledge Graph | Tox21 (NR-AR) | Toxicity Classification | AUC: 0.956 [12] |

Table 2: Computational Efficiency and Data Requirements

| Model Type | Training Time | Data Efficiency | Interpretability | Best-Suited Applications |
|---|---|---|---|---|
| Fingerprint + Traditional ML | Seconds to minutes, even on large datasets [3] | Excellent on small datasets [4] | High (via SHAP, feature importance) [3] | Small datasets, rapid prototyping, structured data [4] |
| Standard GNNs | Hours to days | Requires larger datasets [13] | Moderate (attention weights) | General-purpose property prediction [3] |
| Advanced GNNs (Hierarchical, KA) | Similar to standard GNNs | Improved via pre-training | Enhanced (meaningful substructures) [5] | Complex properties requiring hierarchical understanding [6] [5] |
| 3D-Aware GNNs (EGNN) | Higher due to 3D processing | Requires 3D structural data | Moderate | Geometry-sensitive properties (partition coefficients) [9] |

Analysis of Performance Patterns

The experimental data reveals several key patterns:

Fingerprint advantages: On structured data modalities, traditional fingerprints combined with gradient-boosted trees consistently achieve competitive results, with Morgan-fingerprint-based XGBoost delivering an AUROC of 0.828 in odor prediction tasks [11]. The Therapeutic Data Commons (TDC) ADMET benchmark shows that approximately 75% of state-of-the-art results are achieved using "old-school" gradient-boosted trees with molecular fingerprints [4].

GNN strengths: GNNs excel in capturing complex spatial relationships and hierarchical structures, with specialized architectures demonstrating particular advantages:

- FH-GNN outperforms baselines by integrating hierarchical molecular graphs with fingerprint features through adaptive attention [6].

- KA-GNN consistently surpasses conventional GNNs in both prediction accuracy and computational efficiency across seven molecular benchmarks [5].

- Knowledge-Enhanced GNNs integrating biological mechanism information (e.g., compound-gene-pathway associations) achieve exceptional performance on toxicity prediction, with the GPS model reaching an AUC of 0.956 on the Tox21 NR-AR task [12].

Data size dependency: GNNs tend to underperform on small datasets, with one comprehensive study finding that descriptor-based models generally outperformed graph-based models across 11 public datasets [3]. Consistency-regularized approaches (CRGNN) have been developed specifically to address this limitation by better utilizing molecular graph augmentation during training [13].

Methodology Deep Dive: Experimental Protocols

Molecular Fingerprint Implementation Protocol

Feature Extraction Process:

- Input Representation: Molecules are represented as SMILES strings or MolBlock representations [11].

- Feature Generation:

  - Morgan Fingerprints: Computed using the Morgan algorithm from optimized molecular structures, typically with radius 2 (equivalent to ECFP4) [11].

  - Functional Group Fingerprints: Generated by detecting predefined substructures using SMARTS patterns [11].

  - Molecular Descriptors: Calculated using RDKit, including molecular weight, hydrogen bond donors/acceptors, topological polar surface area (TPSA), logP, rotatable bonds, heavy atom count, and ring count [11].

- Model Training: Fingerprints are used as input features for traditional machine learning models such as XGBoost, LightGBM, and Random Forest [11] [3].

Advantages: This approach benefits from computational efficiency, with XGBoost and Random Forest requiring only seconds to train models even on large datasets [3].

Graph Neural Network Implementation Protocol

Standard GNN Training Workflow:

- Graph Construction: Atoms represented as nodes (with features: atom type, hybridization, valence), bonds as edges (with features: bond type, conjugation) [3].

- Message Passing: Multiple layers (typically 3-6) of neighborhood aggregation, using architectures such as GCN, GAT, or MPNN [3].

- Readout Phase: Atom representations are aggregated into molecular-level representations using summation, averaging, or attention-based pooling [3].

- Property Prediction: The molecular representation is passed through fully connected layers for final property prediction [6].
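The readout and prediction steps can be sketched in plain Python, assuming the atom embeddings have already been produced by the message-passing layers. The attention query vector and the linear-head weights are placeholder values rather than learned parameters, and the single linear unit stands in for the fully connected layers.

```python
import math


def sum_pool(node_vecs: list[list[float]]) -> list[float]:
    """Sum readout: add atom vectors dimension by dimension."""
    return [sum(dim) for dim in zip(*node_vecs)]


def mean_pool(node_vecs: list[list[float]]) -> list[float]:
    """Mean readout: like sum, but independent of molecule size."""
    return [v / len(node_vecs) for v in sum_pool(node_vecs)]


def attention_pool(node_vecs: list[list[float]], query: list[float]) -> list[float]:
    """Attention readout: softmax-weighted sum of atom vectors, with scores from
    a dot product against a (normally learned) query vector."""
    scores = [sum(q * x for q, x in zip(query, vec)) for vec in node_vecs]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * vec[d] for w, vec in zip(weights, node_vecs)) / total
            for d in range(len(node_vecs[0]))]


def predict(mol_vec: list[float], weights: list[float], bias: float) -> float:
    """Stand-in for the fully connected head: a single linear unit."""
    return sum(w * x for w, x in zip(weights, mol_vec)) + bias


atoms = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # three atom embeddings
print(sum_pool(atoms))                          # [2.0, 2.0]
print(mean_pool(atoms))
print(attention_pool(atoms, [0.5, -0.5]))
print(predict(sum_pool(atoms), [0.5, -0.25], 0.1))
```

Sum and mean pooling give the same molecular vector regardless of the order in which atoms are listed, which is what keeps the final prediction independent of atom numbering.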

Advanced GNN Training Strategies:

- Consistency Regularization (CRGNN): Applied for small datasets by creating strongly and weakly-augmented views of molecular graphs and encouraging consistent predictions between them [13].

- Knowledge Integration: Incorporating external biological knowledge through heterogeneous graph structures that connect compounds to genes and pathways [12].

- Self-Supervised Pre-training: Leveraging large unlabeled molecular datasets to learn generalizable representations before fine-tuning on specific property prediction tasks [14].
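The consistency-regularization idea can be sketched as an extra loss term. Everything here is a hypothetical stand-in: random bond dropping plays the role of graph augmentation (the weak and strong views differ only in drop probability), `toy_model` is a fixed placeholder for a trainable GNN, and the weight `lam` and supervised loss value are assumed numbers. CRGNN's actual augmentations and loss may differ [13].

```python
import random


def drop_edges(edges: list[tuple[int, int]], p: float,
               rng: random.Random) -> list[tuple[int, int]]:
    """Graph augmentation: remove each bond independently with probability p."""
    return [e for e in edges if rng.random() >= p]


def toy_model(n_atoms: int, edges: list[tuple[int, int]]) -> float:
    """Placeholder 'GNN': predicts from mean atom degree (stands in for a real network)."""
    degree = [0] * n_atoms
    for i, j in edges:
        degree[i] += 1
        degree[j] += 1
    return sum(degree) / n_atoms


def consistency_loss(n_atoms: int, edges: list[tuple[int, int]], rng: random.Random,
                     p_weak: float = 0.1, p_strong: float = 0.4) -> float:
    """Squared difference between predictions on a weakly and a strongly
    augmented view of the same molecule."""
    weak = toy_model(n_atoms, drop_edges(edges, p_weak, rng))
    strong = toy_model(n_atoms, drop_edges(edges, p_strong, rng))
    return (weak - strong) ** 2


rng = random.Random(0)
ring = [(i, (i + 1) % 6) for i in range(6)]   # six-membered ring skeleton
supervised = 0.3                               # placeholder supervised loss value
lam = 0.5                                      # assumed weight of the consistency term
total = supervised + lam * consistency_loss(6, ring, rng)
print(total)
```

During training, the consistency term would be minimized alongside the supervised loss, pushing the network toward predictions that are stable under small perturbations of the molecular graph; this extra signal is what helps when labelled data are scarce.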

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Molecular Property Prediction

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, SMILES processing [11] | Fundamental preprocessing for both fingerprint and GNN approaches |
| OGB/MoleculeNet | Benchmark Datasets | Standardized molecular property datasets for fair model comparison [9] | Model evaluation and benchmarking across diverse property types |
| PyTorch Geometric | Deep Learning Library | GNN implementation and training with molecular graph support [9] | Developing and training custom GNN architectures |
| XGBoost/LightGBM | Traditional ML Library | Gradient boosting implementations for fingerprint-based modeling [11] [3] | Building high-performance fingerprint-based predictors |
| Chemprop | Specialized GNN Framework | Message-passing neural networks specifically designed for molecular property prediction [10] | Rapid implementation of state-of-the-art GNN models |
| TDC (Therapeutic Data Commons) | Benchmark Platform | ADMET-specific benchmarks and datasets [4] | Real-world drug development property prediction |
| Neo4j | Graph Database | Storage and querying of knowledge graphs for biological information [12] | Integrating heterogeneous knowledge into GNN models |

Based on the comprehensive experimental evidence:

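Choose Molecular Fingerprints with Traditional ML when:

- Datasets are small, where fingerprint-based models provide strong baselines [3] [4]

- Computational efficiency or rapid prototyping is a priority [3] [4]

- High interpretability via feature importance or SHAP analysis is required [3]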

Choose Graph Neural Networks when:

- Larger datasets are available (> 10,000 compounds) for sufficient training [13] [3]

- Predicting complex properties requiring 3D spatial understanding or hierarchical reasoning [6] [9]

- Integrating heterogeneous biological knowledge is beneficial for the task [12]

- Capturing long-range dependencies or complex molecular interactions is essential [5] [9]

Consider Hybrid Approaches when:

- Seeking to balance predictive performance with uncertainty estimation (neural fingerprints with Random Forest) [10]

- Addressing data scarcity issues while leveraging GNN strengths (consistency-regularized GNNs) [13]

- Combining structural information with external knowledge for mechanistically informed predictions [14] [12]

The most advanced applications are increasingly leveraging integrated approaches, such as fingerprint-enhanced hierarchical GNNs or knowledge graph-augmented networks, which combine the complementary strengths of both paradigms [6] [12]. As the field evolves, the optimal solution typically involves selecting architectures based on specific dataset characteristics, property complexity, and available computational resources rather than adhering to a one-size-fits-all approach.

In the field of molecular property prediction, a central debate exists between traditional descriptor-based methods using molecular fingerprints and modern graph neural networks. Molecular fingerprints represent molecules as fixed-length vectors, either by encoding predefined structural keys or, as in Extended Connectivity Fingerprints (ECFPs), by hashing enumerated substructures [15] [3]. In contrast, GNNs learn representations directly from the molecular graph structure, where atoms constitute nodes and bonds form edges [16]. While early studies suggested GNNs might universally outperform fingerprint-based approaches, more comprehensive benchmarks reveal a nuanced reality where model performance depends significantly on dataset characteristics, task requirements, and architectural selection [3].

This guide provides an objective comparison of three fundamental GNN architectures—MPNN, GAT, and GCN—within this broader context, presenting experimental data to inform researchers' model selection for drug discovery applications.

Core Architectural Principles and Mechanisms

Table 1: Core Computational Mechanisms of GNN Architectures

| Architecture | Core Operational Principle | Mathematical Formulation | Key Hyperparameters |
|---|---|---|---|
| GCN | Spectral-based convolution with symmetric normalization [17] | \( H^{(l+1)} = \sigma\left(\tilde{D}^{-\frac{1}{2}}\tilde{A}\tilde{D}^{-\frac{1}{2}} H^{(l)} W^{(l)}\right) \) | Number of graph convolution layers, Units per layer (e.g., [64,64], [128,128]) [16] |
| GAT | Attention-weighted neighborhood aggregation [16] [17] | \( \alpha_{ij} = \mathrm{softmax}_j\left(\mathrm{LeakyReLU}\left(\vec{a}^{T}[W h_i \Vert W h_j]\right)\right) \) | Attention heads, Dropout rate [16] |
| MPNN | Message functions with permutation-invariant aggregation [17] | \( m_{ij} = f_e(h_i, h_j, e_{ij});\ h_i' = f_h\left(h_i, \sum_{j \in \mathcal{N}_i} m_{ij}\right) \) | Number of atom output features, Message passing steps [16] |

Figure 1: Computational workflows of GCN, GAT, and MPNN architectures illustrating their distinct approaches to graph-based feature learning.

Key Differentiating Architectural Features

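GCN derives its propagation rule from a first-order approximation of spectral graph convolutions, which reduces to localized aggregation over each node's immediate neighbors using a symmetrically normalized adjacency matrix. The renormalization trick \( I_N + D^{-1/2}AD^{-1/2} \rightarrow \tilde{D}^{-1/2}\tilde{A}\tilde{D}^{-1/2} \), where \( \tilde{A} = A + I_N \) adds self-loops and \( \tilde{D} \) is the degree matrix of \( \tilde{A} \), avoids the numerical instabilities and exploding or vanishing gradients that repeated application of the original operator causes in deeper architectures [17].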
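The GCN propagation rule can be written out for a tiny graph in plain Python. The weight matrix is a placeholder (a trained GCN learns \( W^{(l)} \)) and ReLU stands in for \( \sigma \).

```python
import math


def gcn_layer(adj: list[list[int]], h: list[list[float]],
              w: list[list[float]]) -> list[list[float]]:
    """One GCN layer: H' = ReLU(D~^(-1/2) A~ D~^(-1/2) H W), with A~ = A + I."""
    n = len(adj)
    out_dim = len(w[0])
    # A~ = A + I: add a self-loop to every node.
    a_tilde = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    # D~: degree of each node in A~.
    deg = [sum(row) for row in a_tilde]
    # Symmetric normalization D~^(-1/2) A~ D~^(-1/2).
    norm = [[a_tilde[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]
    # H W: project node features with the (placeholder) weight matrix.
    hw = [[sum(h[i][k] * w[k][c] for k in range(len(w))) for c in range(out_dim)]
          for i in range(n)]
    # Aggregate normalized neighbour features, then apply ReLU as sigma.
    return [[max(0.0, sum(norm[i][j] * hw[j][c] for j in range(n)))
             for c in range(out_dim)] for i in range(n)]


adj = [[0, 1], [1, 0]]        # two bonded atoms
h = [[1.0], [3.0]]            # one input feature per atom
w = [[1.0]]                   # placeholder 1x1 weight matrix
print(gcn_layer(adj, h, w))   # [[2.0], [2.0]]
```

In the two-atom example both atoms end up with the symmetric average of their features; in larger molecules the \( 1/\sqrt{\tilde{d}_i \tilde{d}_j} \) factor down-weights contributions from highly connected atoms.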

GAT introduces attention mechanisms that compute adaptive weights for neighborhood aggregation, allowing the model to prioritize more influential neighboring nodes. Multi-head attention extends this capability to capture different aspects of structural relationships [16] [17].
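A single attention head can be sketched as below, following the GAT attention formula given earlier: scores come from LeakyReLU applied to \( \vec{a}^{T}[W h_i \Vert W h_j] \) and are normalized with a softmax over the node's neighbours plus itself, as in the original GAT formulation. The projected features `wh` (already multiplied by W) and the attention vector `a` are placeholder values, not learned parameters.

```python
import math


def leaky_relu(x: float, slope: float = 0.2) -> float:
    return x if x > 0 else slope * x


def gat_attention(i: int, neighbours: list[int], wh: list[list[float]],
                  a: list[float]) -> dict[int, float]:
    """Attention weights alpha_ij of node i over itself and its neighbours.

    wh -- node features already multiplied by the shared weight matrix W
    a  -- attention vector of length 2 * d, applied to [W h_i || W h_j]
    """
    candidates = [i] + neighbours  # include a self-loop
    # wh[i] + wh[j] is list concatenation, i.e. [W h_i || W h_j].
    logits = {j: leaky_relu(sum(a_k * v for a_k, v in zip(a, wh[i] + wh[j])))
              for j in candidates}
    m = max(logits.values())
    exp = {j: math.exp(e - m) for j, e in logits.items()}
    total = sum(exp.values())
    return {j: v / total for j, v in exp.items()}


def gat_update(i: int, neighbours: list[int], wh: list[list[float]],
               a: list[float]) -> list[float]:
    """New representation of node i: attention-weighted sum of W h_j (activation omitted)."""
    alpha = gat_attention(i, neighbours, wh, a)
    return [sum(alpha[j] * wh[j][c] for j in alpha) for c in range(len(wh[i]))]


# Placeholder values: 3 atoms with 2-dimensional W h vectors; atom 0 bonded to 1 and 2.
wh = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
a = [0.0, 0.0, 1.0, 1.0]   # with this a, scores depend only on the neighbour's features
alpha = gat_attention(0, [1, 2], wh, a)
print(alpha)               # atom 2 receives the largest weight
print(gat_update(0, [1, 2], wh, a))
```

Multi-head attention runs several such heads with independent W and \( \vec{a} \), then concatenates or averages their outputs.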

MPNN provides a generalized framework for message passing where each node receives messages from its neighbors, aggregates them, and updates its state accordingly. This approach explicitly separates message construction, aggregation, and node update functions, offering greater flexibility in modeling complex molecular interactions [17].

Experimental Performance Comparison

Quantitative Benchmarking Across Molecular Prediction Tasks

Table 2: Performance Comparison of GNN Architectures on Molecular Property Prediction Tasks

| Task Domain | Best Performing Architecture | Key Metric | Comparative Performance | Reference Dataset |
|---|---|---|---|---|
| Cross-Coupling Reaction Yield Prediction | MPNN | R² = 0.75 | Outperformed GCN, GAT, GraphSAGE, GIN [18] | Diverse transition metal-catalyzed cross-coupling reactions [18] |
| Acute Toxicity Prediction | Attentive FP (GAT variant) | Lowest MSE | 12.3-13.3% improvement over second-best GCN [16] | Fish, Daphnia magna, Tetrahymena pyriformis [16] |
| Activity Cliff Sensitivity | ECFP Fingerprints | Slope >1 in dissimilarity analysis | GCN, GAT, MPNN all showed lower sensitivity to subtle structural changes [15] | MoleculeACE benchmark [15] |
| General Molecular Property Prediction | Descriptor-based models (SVM, XGBoost) | Prediction accuracy | Outperformed GCN, GAT, MPNN on average across 11 datasets [3] | MoleculeNet benchmarks [3] |

Task-Specific Performance Patterns

Reaction Yield Prediction: In heterogeneous datasets encompassing Suzuki, Sonogashira, and other cross-coupling reactions, MPNN demonstrated superior predictive capability (R²=0.75), potentially due to its flexible message functions effectively capturing complex reaction pathways [18].

Toxicity Prediction: For acute toxicity tasks across four different species, Attentive FP (a GAT variant) achieved the lowest prediction error, with attention mechanisms providing interpretable atomic heatmaps highlighting chemically significant substructures [16].

Handling Subtle Structural Changes: Traditional ECFPs demonstrated greater sensitivity to minor structural modifications that cause significant potency differences (activity cliffs), with graph embeddings from GCN, GAT, and MPNN showing compressed representational distances between structurally similar molecules [15].

Experimental Protocols and Methodologies

Standardized Evaluation Frameworks

Dataset Splitting: Common practice employs random splits with 80% for training and 20% for testing, with five-fold cross-validation applied on the training set, effectively creating 64%/16%/20% divisions for training/validation/testing respectively [16].
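Spelled out, the proportions follow from splitting the 80% training portion five ways, with four folds used for fitting and one for validation in each round:

$$
\text{train} = 0.80 \times \tfrac{4}{5} = 0.64, \qquad
\text{validation} = 0.80 \times \tfrac{1}{5} = 0.16, \qquad
\text{test} = 0.20
$$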

Hyperparameter Tuning: Critical parameters include batch size (typically 32-128), dropout rate (0-0.2), number of GNN layers (1-4), and hidden layer dimensions (64-256 units). Systematic exploration of full parameter combinations is recommended for optimal performance [16].
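A full grid over the ranges quoted above can be enumerated with the standard library. The specific grid points chosen inside each range are assumptions; each configuration would then be trained and scored on the validation folds by a training routine that is not shown here.

```python
from itertools import product

# Search space based on the quoted ranges; the exact grid points are assumptions.
grid = {
    "batch_size": [32, 64, 128],
    "dropout": [0.0, 0.1, 0.2],
    "n_layers": [1, 2, 3, 4],
    "hidden_dim": [64, 128, 256],
}


def all_configs(grid: dict[str, list]) -> list[dict]:
    """Enumerate every combination of hyperparameter values (full grid search)."""
    keys = list(grid)
    return [dict(zip(keys, values)) for values in product(*grid.values())]


configs = all_configs(grid)
print(len(configs))   # 108
print(configs[0])     # {'batch_size': 32, 'dropout': 0.0, 'n_layers': 1, 'hidden_dim': 64}
```

The grid grows multiplicatively (3 × 3 × 4 × 3 = 108 configurations here), so random or Bayesian search is a common alternative when the space is larger or each GNN training run is expensive.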

Performance Metrics: Standard evaluation employs Mean Squared Error (MSE), Mean Absolute Percentage Error (MAPE), and R² values for regression tasks, with attention to both predictive accuracy and model interpretability [18] [16].
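The three regression metrics are short enough to write out directly. The sketch below uses toy values and assumes no true value is zero, since MAPE divides by it.

```python
def mse(y_true: list[float], y_pred: list[float]) -> float:
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mape(y_true: list[float], y_pred: list[float]) -> float:
    """Mean absolute percentage error (in %); undefined if any true value is 0."""
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true: list[float], y_pred: list[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


y_true = [2.0, 4.0, 6.0, 8.0]
y_pred = [2.5, 3.5, 6.0, 8.0]
print(mse(y_true, y_pred), mape(y_true, y_pred), r2(y_true, y_pred))
```

For these values MSE = 0.125, MAPE = 9.375% and R² = 0.975. R² compares the residual error with the variance of the targets, so it is comparable across datasets with different units, whereas MSE is not.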

Emerging Hybrid and Enhanced Architectures

Recent architectural innovations address limitations of standard GNNs:

KA-GNNs: Kolmogorov-Arnold GNNs integrate Fourier-based KAN modules into node embedding, message passing, and readout components, demonstrating enhanced accuracy and computational efficiency on molecular benchmarks [5].

FH-GNN: Fingerprint-enhanced hierarchical GNNs combine atomic-level, motif-level, and graph-level information with chemical fingerprints using adaptive attention mechanisms, outperforming baseline models on multiple MoleculeNet datasets [6].

GraphCliff: Specifically designed to address activity cliff challenges through short-long range gating mechanisms, improving discrimination of structurally similar molecules with different properties [15].

Table 3: Essential Computational Tools for GNN Molecular Property Prediction Research

| Tool Category | Specific Implementation | Research Function | Application Context |
|---|---|---|---|
| Graph Representation | RDKit [3] | Molecular graph construction from SMILES | Preprocessing pipeline for all GNN architectures |
| Fingerprint Generation | ECFP [15] [3] | Baseline molecular representation | Comparative performance benchmarking |
| Core GNN Frameworks | MPNN, GCN, GAT [16] | Fundamental architectural implementations | Baseline model development and ablation studies |
| Advanced Architectures | Attentive FP [16], KA-GNN [5] | Specialized property prediction | High-accuracy molecular modeling |
| Interpretability Tools | Integrated Gradients [18], SHAP [3] | Model decision explanation | Mechanistic insight and validation |
| Benchmark Datasets | MoleculeNet [3], MoleculeACE [15] | Standardized performance evaluation | Comparative architecture assessment |

The comparative analysis reveals that no single GNN architecture universally dominates molecular property prediction. MPNNs demonstrate particular strength for reaction yield prediction, likely due to their flexible message-passing mechanisms capturing complex transformation pathways [18]. GAT-based models like Attentive FP excel in toxicity prediction tasks where attention mechanisms provide both performance and interpretability benefits [16]. GCNs offer solid baseline performance with computational efficiency but may lack sensitivity to subtle structural changes critical for activity cliff identification [15].

For researchers navigating the GNN versus molecular fingerprints decision, the experimental evidence suggests that traditional fingerprint-based methods remain competitive, particularly for smaller datasets or when subtle structural changes significantly impact properties [3]. However, GNNs offer advantages in end-to-end learning without manual feature engineering and increasingly outperform fingerprints as dataset size and structural complexity increase, especially with emerging hybrid architectures that integrate fingerprint-enhanced approaches [6] and novel mechanisms like Fourier-based KAN modules [5].

Strategic architecture selection should consider dataset size, structural complexity, requirement for interpretability, and computational resources, with the understanding that the field continues to evolve through architectures specifically designed to address current limitations in molecular representation learning.

The accurate prediction of molecular properties is a critical task in computational drug discovery and materials science, driving the need for robust and efficient machine learning models. The central challenge lies in selecting the optimal molecular representation, which dictates how the raw structural information of a compound is encoded for a machine learning algorithm. This guide provides an objective comparison between two dominant paradigms: molecular fingerprints, which are fixed, hand-crafted vectors representing predefined substructures, and graph neural networks (GNNs), which learn representations directly from the atomic graph structure of a molecule. The choice between these input modalities involves fundamental trade-offs between representational capacity, data efficiency, computational demand, and interpretability, which this article explores through recent experimental evidence and detailed methodological breakdowns.

Core Concepts and Methodologies

Molecular Fingerprints: Structured Vector Representations

Molecular fingerprints are fixed-length vector representations where specific bits or components correspond to the presence or absence of predefined molecular substructures or features [19].

- Extended-Connectivity Fingerprints (ECFP): Among the most common, ECFPs are circular fingerprints that capture atom environments within a specified radius. A hashing procedure converts each identified substructure into an integer identifier, which is then folded into a bit position of the fixed-length vector [19] [11].

- Generation Workflow: The typical generation process begins with a molecule's SMILES string. The Morgan algorithm, often implemented via toolkits like RDKit, is then used to enumerate all circular substructures around each atom up to a given radius. These substructures are then hashed into a bit vector of fixed length [11].

- Model Integration: The resulting fingerprint vector serves as a high-dimensional input feature for classical machine learning models. Common algorithms include Random Forest (RF), Support Vector Machines (SVM), and gradient-boosting frameworks like XGBoost and LightGBM [11] [10].

Graph Neural Networks: Learned Graph Representations

Graph Neural Networks (GNNs) are a class of deep learning models designed to operate directly on graph-structured data. A molecule is naturally represented as a graph, where atoms are nodes and bonds are edges [20].

- Model Architecture: A typical GNN for molecules consists of several key layers [21]:

  - Node Embedding: Initializes each atom node with a feature vector (e.g., atom type, charge).

  - Message Passing: In multiple stacked layers, each node aggregates feature information from its neighboring nodes. This step allows the model to capture the local chemical environment of each atom [20].

  - Graph Pooling (Readout): After several message-passing layers, the node representations are aggregated into a single, fixed-dimensional vector that represents the entire molecule. This vector is then passed to a standard neural network to make a property prediction [21].

- Permutation Invariance: A key property of GNNs is that they are designed to be invariant to the ordering of atoms and bonds, ensuring the same molecule always produces the same representation regardless of how its graph is presented [20].
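The three architectural stages and the permutation-invariance property can be sketched in plain Python. This is a minimal illustration that assumes sum aggregation and mean pooling and omits learned weights and nonlinearities (a real GNN layer applies trainable transformations at every step); the function names are hypothetical.

```python
def message_passing(node_feats, edges, n_layers=2):
    """Sum-aggregation message passing with no learned weights (illustrative)."""
    n = len(node_feats)
    neighbors = [[] for _ in range(n)]
    for i, j in edges:  # bonds are undirected
        neighbors[i].append(j)
        neighbors[j].append(i)
    h = [list(f) for f in node_feats]  # node embedding: start from atom features
    for _ in range(n_layers):
        # New state of atom i = its own state + the sum of its neighbors' states
        h = [[h[i][d] + sum(h[j][d] for j in neighbors[i]) for d in range(len(h[i]))]
             for i in range(n)]
    return h


def mean_readout(h):
    """Average node states into one molecule-level vector (order-independent)."""
    return [sum(node[d] for node in h) / len(h) for d in range(len(h[0]))]


C, O = [1.0, 0.0], [0.0, 1.0]  # one-hot element features
# Ethanol numbered C0-C1-O2, and the same molecule renumbered O0-C1-C2
g1 = mean_readout(message_passing([C, C, O], [(0, 1), (1, 2)]))
g2 = mean_readout(message_passing([O, C, C], [(2, 1), (1, 0)]))
print(g1 == g2)  # True
```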

Performance Benchmarking and Comparative Analysis

Predictive Performance on Standard Tasks

Recent comparative studies provide quantitative benchmarks for fingerprint-based and GNN-based models across various molecular property prediction tasks.

Table 1: Performance Comparison on Odor Prediction (Multi-label Classification)

| Model Combination | Feature Set | AUROC | AUPRC | Accuracy (%) | Precision (%) | Recall (%) |
|---|---|---|---|---|---|---|
| XGBoost | Morgan Fingerprints (ST) | 0.828 | 0.237 | 97.8 | 41.9 | 16.3 |
| XGBoost | Molecular Descriptors (MD) | 0.802 | 0.200 | - | - | - |
| XGBoost | Functional Groups (FG) | 0.753 | 0.088 | - | - | - |
| Random Forest | Morgan Fingerprints (ST) | 0.784 | 0.216 | - | - | - |
| LightGBM | Morgan Fingerprints (ST) | 0.810 | 0.228 | - | - | - |

Source: Adapted from a comparative study on odor decoding using a dataset of 8,681 compounds [11].

The data in Table 1 demonstrates that a classical machine learning model (XGBoost) paired with Morgan fingerprints achieved the highest discrimination performance on this complex sensory prediction task, surpassing descriptor-based models and other tree-based algorithms [11].

Table 2: Performance and Uncertainty on ToxCast Tasks

| Model | Average Balanced Accuracy | Uncertainty Estimation Quality |
|---|---|---|
| Chemprop (GNN) | ~0.6 - 0.8 | Moderate |
| Random Forest + Neural Fingerprints | Slightly lower than Chemprop | High and robust |
| SVM + Neural Fingerprints | Comparable to RF+FP | High |

Source: Summarized from an analysis of uncertainty on 19 ToxCast datasets [10].

Table 2 highlights a different trade-off. While a native GNN model (Chemprop) may have a slight edge in pure predictive performance on some toxicity tasks, fingerprint-based models combined with classical ML methods can provide significantly better and more robust uncertainty estimates, a critical feature for real-world industrial decision-making [10].

Advanced GNN Architectures and Hybrid Approaches

Researchers have developed advanced GNN frameworks to overcome limitations of basic models. For instance, Stable-GNN (S-GNN) was proposed to address the performance degradation of GNNs under Out-of-Distribution (OOD) data, a common scenario in real-world applications. By using a feature sample weighting decorrelation technique, S-GNN aims to extract genuine causal features and eliminate spurious correlations, thereby improving generalization and stability on unseen data distributions [21].

Another powerful approach is the integration of GNNs with external biological knowledge. One study constructed a Toxicological Knowledge Graph (ToxKG) incorporating entities like genes and pathways. Heterogeneous GNN models (e.g., GPS, HGT) that leveraged this structured biological knowledge significantly outperformed traditional models using only structural fingerprints on the Tox21 dataset, achieving an AUC of up to 0.956 for specific toxicity endpoints [12].

Experimental Protocols and Workflows

Protocol A: Fingerprint-Based Model Training

The following is a standard workflow for building a molecular property predictor using fingerprints [19] [11].

- Data Curation: Assemble a dataset of molecules, typically represented by SMILES strings, alongside their experimentally measured properties.

- Fingerprint Generation: Use a cheminformatics toolkit like RDKit to compute fingerprints (e.g., ECFP4, Morgan) for every molecule in the dataset. This results in a feature matrix (X) and a target vector (y).

- Model Training: Split the data into training and test sets. Train a classical machine learning model, such as Random Forest or XGBoost, on the training fingerprint vectors.

- Evaluation: Use the held-out test set to evaluate the model's performance using metrics like AUC, accuracy, or mean squared error, depending on the task.

Diagram 1: Fingerprint-based model workflow.
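Protocol A can be sketched end to end without third-party packages, under one loudly stated substitution: instead of Random Forest or XGBoost, the model below is a 1-nearest-neighbor classifier on Tanimoto similarity, a classic fingerprint baseline chosen here only to keep the example dependency-free. The toy 8-bit fingerprints are synthetic and all function names are hypothetical. In a real pipeline, scikit-learn's `train_test_split(X, y, test_size=0.2, stratify=y)` and `RandomForestClassifier` would typically replace these helpers.

```python
import random


def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two binary fingerprints."""
    both = sum(x & y for x, y in zip(a, b))
    either = sum(x | y for x, y in zip(a, b))
    return both / either if either else 1.0


def stratified_split(labels, test_frac=0.2, seed=0):
    """Return (train_idx, test_idx), keeping each class's proportion similar."""
    rng = random.Random(seed)
    train, test = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = round(len(idx) * test_frac)
        test += idx[:n_test]
        train += idx[n_test:]
    return train, test


def predict_1nn(X_train, y_train, x):
    """Label of the most similar training fingerprint (stand-in for RF/XGBoost)."""
    best = max(range(len(X_train)), key=lambda i: tanimoto(X_train[i], x))
    return y_train[best]


# Data curation + fingerprint generation (synthetic): two classes with
# different "substructure" bits, each fingerprint with one random bit flipped
rng = random.Random(42)
X, y = [], []
for label in (0, 1):
    for _ in range(10):
        fp = [1, 1, 1, 1, 0, 0, 0, 0] if label else [0, 0, 0, 0, 1, 1, 1, 1]
        fp[rng.randrange(8)] ^= 1
        X.append(fp)
        y.append(label)

# Model training (memorize the training set) and evaluation on the held-out set
train_idx, test_idx = stratified_split(y)
X_tr = [X[i] for i in train_idx]
y_tr = [y[i] for i in train_idx]
preds = [predict_1nn(X_tr, y_tr, X[i]) for i in test_idx]
accuracy = sum(p == y[i] for p, i in zip(preds, test_idx)) / len(test_idx)
print(len(train_idx), len(test_idx), accuracy)  # 16 4 1.0
```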

Protocol B: Graph Neural Network Training

The workflow for a GNN-based predictor involves an end-to-end learning process [20] [21].

- Graph Construction: Convert each molecule's SMILES string into a graph object where nodes (atoms) are labeled with features (e.g., element, degree) and edges (bonds) are labeled with their type (e.g., single, double).

- Model Definition: Instantiate a GNN architecture. This typically includes:

  - An encoder to create initial node embeddings.

  - Multiple message-passing layers to refine node features.

  - A global pooling (readout) layer to create a graph-level representation.

  - A final feed-forward network for the prediction.

- End-to-End Training: The model is trained via backpropagation. The loss is computed by comparing the model's predictions against the true properties, and the weights across the entire network are updated simultaneously.

Diagram 2: GNN-based model workflow.
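End-to-end training can be illustrated with a deliberately tiny model: one linear message-passing layer with a learned self weight `a` and a learned neighbor weight `b`, followed by a sum readout, fitted by full-batch gradient descent on mean squared error. Because the model is linear, the gradients are written by hand; real GNNs rely on automatic differentiation in PyTorch or TensorFlow. The three toy molecules (scalar atom features) and their targets, generated with a = 1.0 and b = 0.5, are invented for this sketch.

```python
def forward(params, x, edges):
    """Linear message passing + sum readout on scalar atom features.

    h_i = a * x_i + b * sum_{j in N(i)} x_j   and   prediction = sum_i h_i.
    Returns the prediction and its partial derivatives (d/da, d/db).
    """
    a, b = params
    neighbors = [[] for _ in x]
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)
    self_term = sum(x)  # equals d(prediction)/da
    msg_term = sum(x[j] for i in range(len(x)) for j in neighbors[i])  # d/db
    return a * self_term + b * msg_term, (self_term, msg_term)


def train(dataset, lr=0.04, epochs=2000):
    """Full-batch gradient descent on mean squared error; both weights learned."""
    params = [0.0, 0.0]
    for _ in range(epochs):
        grad = [0.0, 0.0]
        for x, edges, target in dataset:
            pred, (d_a, d_b) = forward(params, x, edges)
            err = pred - target  # d(err**2)/d(pred) = 2 * err
            grad[0] += 2 * err * d_a / len(dataset)
            grad[1] += 2 * err * d_b / len(dataset)
        # Update every weight of the (tiny) network simultaneously
        params = [p - lr * g for p, g in zip(params, grad)]
    return params


data = [
    ([1.0, 1.0, 2.0], [(0, 1), (1, 2)], 6.5),  # 3-atom chain
    ([1.0], [], 1.0),                          # single atom
    ([1.0, 2.0], [(0, 1)], 4.5),               # 2-atom molecule
]
a, b = train(data)
print(round(a, 3), round(b, 3))  # 1.0 0.5
```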

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Data Resources for Molecular Property Prediction

| Resource Name | Type | Primary Function | Relevance |
|---|---|---|---|
| RDKit | Cheminformatics Library | Generation of molecular fingerprints (ECFP, Morgan), descriptors, and graph structures from SMILES. | Foundational for both fingerprint and GNN data preprocessing [19] [11]. |
| PyTorch / TensorFlow | Deep Learning Framework | Provides building blocks and auto-differentiation for constructing and training custom GNN architectures. | Essential for implementing and training GNN models [22]. |
| TUDatasets / OGB | Graph Dataset Repositories | Curated benchmarks for graph machine learning, including molecular datasets like Tox21 and QM9. | Standardized datasets for training and fair model evaluation [21]. |
| PubChem | Chemical Database | Source of SMILES strings, compound identifiers (CIDs), and associated biological assay data. | Primary source for curating custom datasets and gathering molecular structures [12]. |
| Neo4j | Graph Database | Storage and querying of large-scale knowledge graphs (e.g., ToxKG) that integrate chemical and biological data. | Enables advanced GNN models that incorporate external biological context [12]. |

The choice between molecular fingerprints and graph neural networks is not a matter of declaring one universally superior. The optimal input modality is dictated by the specific research context. Molecular fingerprints paired with classical ML models offer a robust, computationally efficient, and often highly competitive baseline, especially on tasks where well-defined substructures are strong predictors and where reliable uncertainty quantification is paramount [11] [10]. In contrast, GNNs excel at end-to-end representation learning from raw structural data, can capture complex topological patterns beyond predefined substructures, and provide a flexible framework for integrating multimodal data, as evidenced by knowledge-graph-enhanced models [12]. For applications demanding maximum predictive performance and where large, high-quality datasets are available, GNNs present a powerful solution. However, for many practical scenarios, particularly those with limited data or a need for high interpretability and robust uncertainty, fingerprint-based models remain a formidable and pragmatic choice. A promising future direction lies in hybrid approaches that leverage the structured prior knowledge of fingerprints within the flexible, learning-based framework of GNNs.

Implementation and Advanced Architectures: From Standalone Models to Hybrid Frameworks

In the field of computational chemistry and drug discovery, the accurate prediction of molecular properties from chemical structure is a fundamental task that directly impacts the efficiency of identifying promising therapeutic candidates. Historically, this challenge has been approached through two primary paradigms: descriptor-based methods that rely on expert-crafted molecular features and fingerprints, and graph-based methods that utilize end-to-end deep learning models such as graph neural networks (GNNs) to automatically learn representations from molecular graphs. While GNNs have garnered significant attention in recent literature, extensive benchmarking studies reveal that traditional fingerprint-based approaches, particularly when combined with powerful tree-based algorithms like XGBoost and Random Forest (RF), remain highly competitive and often superior in terms of predictive accuracy, computational efficiency, and interpretability [3].

The core premise of this guide aligns with emerging evidence that questions the automatic superiority of GNNs. A comprehensive 2021 comparison study concluded that "on average the descriptor-based models outperform the graph-based models in terms of prediction accuracy and computational efficiency" [3]. Similarly, a 2025 study on odor perception modeling found that a Morgan-fingerprint-based XGBoost model achieved the highest discrimination (AUROC 0.828), consistently outperforming descriptor-based models [11]. This guide provides a detailed methodological framework for implementing high-performance fingerprint-based pipelines, objectively compares their performance against contemporary GNN alternatives, and contextualizes these findings within the broader landscape of molecular property prediction research.

Experimental Benchmarking: Fingerprint-Based Models vs. Graph Neural Networks

Quantitative Performance Comparison

Recent comparative studies across diverse molecular property prediction tasks provide compelling evidence for the continued competitiveness of fingerprint-based approaches paired with tree-based models. The following table synthesizes key performance metrics from multiple benchmark studies:

Table 1: Performance comparison of fingerprint-based models versus GNNs across various benchmark datasets

| Dataset/Task | Best Fingerprint-Based Model | Performance | Best GNN Model | Performance | Performance Advantage |
|---|---|---|---|---|---|
| Odor Perception [11] | Morgan Fingerprint + XGBoost | AUROC: 0.828, AUPRC: 0.237 | - | - | Fingerprint + XGBoost |
| BBB Permeability [23] | MACCS Fingerprint + DNN | Accuracy: 97.8%, ROC-AUC: 0.98 | - | - | Fingerprint + DNN |
| Molecular Property Prediction (11 datasets) [3] | Descriptors+Fingerprints + SVM/XGBoost/RF | Best overall average across regression and classification | Attentive FP, GCN | Variable performance across datasets | Fingerprint-Based Models |
| ToxCast (19 datasets) [10] | Neural Fingerprint + Random Forest/SVC | Competitive performance with improved uncertainty | Native Chemprop GNN | Slightly higher prediction performance | Context-Dependent |

The advantage of fingerprint-based approaches is clearest in specific domains such as odor prediction, where Morgan-fingerprint-based XGBoost achieved the highest discrimination metrics in a 2025 benchmark study [11]. Similarly, for blood-brain barrier (BBB) permeability prediction, a fingerprint-based deep neural network achieved an accuracy of 97.8% [23]. Table 1 lists no GNN result for these two tasks, however, so they establish strong fingerprint baselines rather than head-to-head wins over GNNs. In direct comparisons with specialized GNN architectures such as Attentive FP and GCN, traditional descriptor-based models frequently matched or exceeded graph-based performance across multiple benchmark datasets [3].

Computational Efficiency Assessment

Beyond raw predictive accuracy, computational requirements present another critical dimension for model evaluation, particularly in large-scale virtual screening scenarios:

Table 2: Computational efficiency comparison between fingerprint-based and GNN approaches

| Model Type | Training Time | Inference Speed | Resource Requirements | Implementation Complexity |
|---|---|---|---|---|
| Fingerprint + XGBoost/RF | Seconds to minutes for large datasets [3] | Very fast | Moderate CPU/Memory | Low (established libraries) |
| Graph Neural Networks (GCN, GAT, MPNN) | Hours to days [3] | Slower due to graph processing | High (GPU acceleration beneficial) | High (specialized expertise) |
| Hybrid Models (GNN + Fingerprints) | Moderate to high [6] [24] | Moderate | High (GPU typically required) | Very High |

As evidenced by multiple studies, tree-based models like XGBoost and Random Forest demonstrate exceptional computational efficiency, requiring "only a few seconds to train a model even for a large dataset" [3]. This efficiency advantage extends beyond training to inference phases, making fingerprint-based approaches particularly suitable for high-throughput virtual screening applications where computational resources or time may be constrained.

Uncertainty Estimation and Interpretability

The reliability of predictive models depends not only on accuracy but also on calibrated uncertainty estimates and interpretability. A 2025 study examining uncertainty estimation found that "neural fingerprints combined with classical machine learning methods exhibit a slight decrease in prediction performance compared to the native Chemprop model," but importantly, "provide significantly improved uncertainty estimates" [10]. This characteristic is particularly valuable in real-world industrial applications where understanding prediction confidence directly impacts decision-making.
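One common way to read an uncertainty estimate out of an ensemble such as a Random Forest is the disagreement among its members. The sketch below assumes the per-tree predicted probabilities for a single molecule are already available (in scikit-learn they can be collected from the fitted `estimators_`); it is a generic illustration, not the specific uncertainty procedure of the cited study [10], and the function names and the `max_std` threshold are hypothetical.

```python
from statistics import mean, pstdev


def ensemble_uncertainty(member_probs):
    """Mean predicted probability and spread across ensemble members.

    A large spread means the members (e.g. trees) disagree about this molecule,
    i.e. the prediction should be treated as less certain.
    """
    return mean(member_probs), pstdev(member_probs)


def flag_uncertain(member_probs, max_std=0.15):
    """Flag predictions whose member spread exceeds a (hypothetical) threshold."""
    _, spread = ensemble_uncertainty(member_probs)
    return spread > max_std


agreeing = [0.90, 0.92, 0.88, 0.90]     # trees broadly agree
disagreeing = [0.10, 0.90, 0.20, 0.95]  # trees split between classes
print(flag_uncertain(agreeing), flag_uncertain(disagreeing))  # False True
```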

For interpretability, fingerprint-based models benefit from well-established feature importance methods and model explanation techniques like SHAP (SHapley Additive exPlanations), which can effectively "explore the established domain knowledge for the descriptor-based models" [3]. This interpretability advantage facilitates deeper chemical insights and supports rational molecular design in ways that are often more challenging with complex GNN architectures.

Methodological Framework: Implementing a Fingerprint-Based Pipeline

Experimental Workflow for Fingerprint-Based Modeling

The following diagram illustrates the comprehensive workflow for building a fingerprint-based molecular property prediction pipeline, integrating feature engineering with RDKit and model training with XGBoost/RF:

Molecular Property Prediction Pipeline: From chemical structures to validated predictive models

Detailed Experimental Protocols

Data Curation and Preprocessing

Robust model development begins with rigorous data curation. For molecular property prediction, this involves:

- Data Sourcing: Assemble datasets from multiple expert-curated sources such as ChEMBL, PubChem, or specialized databases relevant to the target property [11] [25]. For odor prediction, researchers unified "ten expert-curated sources" to create a dataset of 8,681 unique odorants [11].

- Standardization: Apply consistent processing to molecular structures using tools like RDKit, including normalization of tautomeric states, removal of duplicates, and standardization of stereochemistry representation [23].

- Descriptor Calculation: Compute comprehensive molecular descriptors using RDKit's built-in functionality, including key physicochemical properties such as molecular weight (MolWt), topological polar surface area (TPSA), octanol-water partition coefficient (MolLogP), hydrogen bond donors/acceptors, rotatable bond count, and ring systems [11] [25].

- Class Imbalance Handling: For classification tasks with significant class imbalance, apply resampling techniques such as oversampling with SMOTE or ADASYN, or undersampling with Tomek links, as demonstrated in BBB permeability prediction research [23].
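The idea behind SMOTE can be shown in a few lines: synthetic minority-class samples are placed on line segments between a minority sample and one of its nearest minority neighbors. This is a minimal sketch on numeric feature vectors (for example, descriptors); the function name and defaults are hypothetical, and in practice the imbalanced-learn package provides `SMOTE`, `ADASYN`, and `TomekLinks`.

```python
import math
import random


def smote(minority, n_new, k=3, seed=0):
    """Generate n_new synthetic minority samples by SMOTE-style interpolation.

    For a random minority sample x, pick one of its k nearest minority
    neighbors z and return x + u * (z - x) with u drawn uniformly from [0, 1).
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        x = rng.choice(minority)
        neighbors = sorted((p for p in minority if p is not x),
                           key=lambda p: math.dist(p, x))[:k]
        z = rng.choice(neighbors)
        u = rng.random()
        synthetic.append([xi + u * (zi - xi) for xi, zi in zip(x, z)])
    return synthetic


minority = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
new_samples = smote(minority, n_new=10)  # appended to the minority class
```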

Feature Engineering with RDKit

Effective feature engineering forms the foundation of successful fingerprint-based models:

- Morgan Fingerprints (ECFP-like): Generate circular topological fingerprints using the Morgan algorithm, typically with radius 2 (equivalent to ECFP4) and bit lengths of 1024 or 2048 [11] [25]. These fingerprints effectively capture molecular substructures and have demonstrated superior performance in benchmarking studies [11].

- MACCS Keys: Implement predefined structural keys (166 bits) that encode specific chemical substructures and functional groups, particularly valuable for capturing pharmacophoric features [23].

- Functional Group Fingerprints: Create binary fingerprints indicating the presence or absence of specific functional groups using SMARTS patterns to define relevant chemical substructures [11].

- Descriptor Computation: Calculate comprehensive molecular descriptors using RDKit, including topological, constitutional, and physicochemical properties that provide complementary information to structural fingerprints [3].

Model Training with XGBoost and Random Forest

Optimized training protocols for tree-based models:

- Data Splitting: Implement stratified splitting (typically 80:20 train:test ratio) to maintain class distribution, with optional nested cross-validation for hyperparameter optimization [11] [23].

- Hyperparameter Tuning: For XGBoost, optimize critical parameters including learning rate (η), maximum tree depth, subsampling rates, and L1/L2 regularization strengths [11]. For Random Forest, tune the number of trees, maximum features per split, and maximum depth [25].

- Multi-label Support: Configure models to support multi-label classification where molecules can simultaneously exhibit multiple properties (e.g., "Floral" and "Spicy" odor characteristics) using One-vs-Rest or specialized multi-output strategies [11].

- Evaluation Metrics: Employ comprehensive evaluation metrics including Area Under the Receiver Operating Characteristic (AUROC), Area Under the Precision-Recall Curve (AUPRC), accuracy, precision, recall, and F1-score, with particular emphasis on AUROC and AUPRC for imbalanced datasets [11] [25].
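For reference, AUROC and AUPRC can be computed from predicted scores as follows. AUROC is written in its rank form (the probability that a random positive outscores a random negative, ties counted as one half), and AUPRC is estimated as average precision. Both are minimal sketches with hypothetical names; scikit-learn's `roc_auc_score` and `average_precision_score` are the usual library equivalents. The toy example has 2 positives among 10 molecules, the kind of imbalance for which AUPRC is the more informative metric.

```python
def auroc(y_true, scores):
    """Area under the ROC curve via the rank (Mann-Whitney U) formulation."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(y_true, scores):
    """AUPRC estimate: mean of the precision measured at each positive's rank."""
    ranked = sorted(zip(scores, y_true), key=lambda t: -t[0])
    hits, precisions = 0, []
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions)


y_true = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.1, 0.1, 0.05, 0.05, 0.02]
print(auroc(y_true, scores))  # 0.9375
ap = average_precision(y_true, scores)  # (1/1 + 2/3) / 2
```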

Table 3: Essential tools and resources for implementing fingerprint-based molecular property prediction pipelines

| Tool/Resource | Type | Primary Function | Application Notes |
|---|---|---|---|
| RDKit [11] [25] | Open-source Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, structural standardization | Core dependency for feature engineering; provides multiple fingerprint types and 200+ molecular descriptors |
| XGBoost [11] | Machine Learning Library | Gradient boosting implementation for model training | Excels with high-dimensional fingerprint data; superior performance with Morgan fingerprints |
| Random Forest [25] | Machine Learning Algorithm (e.g., scikit-learn's RandomForestClassifier) | Ensemble learning with decision trees | Robust to noise; provides native feature importance estimates |
| Scikit-learn [25] | Machine Learning Library | Data preprocessing, model evaluation, auxiliary algorithms | Essential for data splitting, preprocessing, and performance metrics calculation |
| SHAP [25] | Model Interpretation Library | Explainable AI for model predictions | Identifies influential molecular features and substructures driving predictions |
| PubChem [11] | Chemical Database | Compound information and structure retrieval | Source for canonical SMILES and compound metadata via PUG-REST API |
| PyRfume [11] | Data Repository | Curated olfactory datasets | Example of domain-specific data source for model development |

Comparative Analysis: When to Choose Fingerprint-Based Approaches vs. GNNs

Technical and Practical Considerations

The choice between fingerprint-based pipelines and graph neural networks involves multiple technical and practical considerations:

Table 4: Decision framework for selecting between fingerprint-based and GNN approaches

| Consideration | Fingerprint + XGBoost/RF | Graph Neural Networks |
|---|---|---|
| Data Efficiency | Excellent performance with small to medium datasets (n < 10,000) [3] | Typically requires larger datasets for optimal performance |
| Computational Resources | Suitable for CPU-based environments; minimal hardware requirements [3] | GPU acceleration strongly recommended; substantial memory requirements |
| Interpretability Needs | High (native feature importance + SHAP explanations) [25] [3] | Moderate to Low (requires specialized interpretation techniques) |
| Implementation Timeline | Rapid prototyping and iteration (hours to days) [3] | Extended development cycles (weeks to months) |
| Representation Flexibility | Fixed molecular representation | Adaptive, task-specific representation learning |
| Uncertainty Estimation | Improved uncertainty estimates reported for RF/SVC on neural fingerprints [10] | Variable calibration; may require specialized techniques |

Emerging Hybrid Approaches

Recent research has explored hybrid architectures that combine the strengths of both paradigms. One such model learns simultaneously "from hierarchical molecular graphs and fingerprints", using an "adaptive attention mechanism to balance the importance of hierarchical graphs and fingerprint features" [6]. Similarly, the Multi-Level Fusion Graph Neural Network (MLFGNN) incorporates "molecular fingerprints as a complementary modality" to enhance model performance [24]. Such hybrid strategies represent a promising direction for future methodology development, potentially mitigating the limitations of both pure fingerprint-based and pure graph-based approaches.
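The idea of an adaptive attention mechanism that balances graph and fingerprint features can be sketched generically: score each embedding against a query vector, turn the scores into softmax weights, and take the weighted sum. This is an assumption-laden illustration, not the actual formulation of FH-GNN or MLFGNN; in a trained model the `query` vector would be learned, and the fingerprint would first be projected to the same dimension as the graph embedding. The function name is hypothetical.

```python
import math


def attention_fuse(graph_vec, fp_vec, query):
    """Fuse two same-sized embeddings with softmax attention weights.

    score_m = query . v_m ;  alpha = softmax(scores) ;  fused = sum_m alpha_m * v_m
    """
    scores = [sum(q * v for q, v in zip(query, vec)) for vec in (graph_vec, fp_vec)]
    peak = max(scores)  # subtract the max before exp for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    alphas = [e / total for e in exps]
    fused = [alphas[0] * g + alphas[1] * f for g, f in zip(graph_vec, fp_vec)]
    return fused, alphas


# A neutral query weights both modalities equally
fused, alphas = attention_fuse([2.0, 0.0], [0.0, 2.0], [0.0, 0.0])
print(fused, alphas)  # [1.0, 1.0] [0.5, 0.5]
```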

Empirical evidence from recent benchmarking studies consistently demonstrates that fingerprint-based pipelines utilizing RDKit for feature engineering and XGBoost/Random Forest for model training remain highly competitive for molecular property prediction tasks. These approaches offer compelling advantages in terms of predictive performance, computational efficiency, implementation simplicity, and model interpretability. While graph neural networks provide valuable capabilities for automated feature learning and may excel in specific scenarios, fingerprint-based methods establish a strong baseline that should be included in any comprehensive molecular property prediction workflow. The continued development of hybrid approaches that combine molecular fingerprints with graph-based architectures represents a promising research direction that may further advance the state of the art in computational molecular modeling.

The accurate prediction of molecular properties is a cornerstone of modern chemical and pharmaceutical research. The fundamental challenge lies in identifying optimal representations of molecular structure that can be leveraged by machine learning models. Two dominant paradigms have emerged: molecular fingerprints, which are human-engineered vector representations encoding specific chemical substructures and properties, and graph neural networks (GNNs), which learn representations directly from the molecular graph structure where atoms constitute nodes and bonds form edges [2]. This guide provides a practical examination of implementing GNN models, with particular focus on the critical steps of atom and bond featureization and the construction of effective training loops, while contextualizing performance against traditional fingerprint-based approaches.

Molecular Representation Methodologies

Molecular Fingerprints: Traditional Feature Engineering

Molecular fingerprints represent molecules as fixed-length vectors encoding structural information through predefined rules. Several fingerprinting methods are commonly employed, each capturing different aspects of molecular structure [2]:

- Morgan Fingerprints (Circular Fingerprints): Encode structural information by considering substructures at different radii around each atom, providing a conformation-independent representation of molecular topology.

- PubChem Fingerprints: Binary fingerprints derived from the PubChem Compound database, representing molecular structural features based on a large dictionary of predefined chemical substructures.

- RDKit Fingerprints: A path-based (Daylight-like) fingerprint built into the RDKit cheminformatics package, which hashes small subgraphs of the molecule into a bit vector.

- ErG Fingerprints: Extended reduced graph fingerprints that replace atoms with pharmacophore-type node descriptions and encode pairs of these nodes together with the topological distances between them, capturing different chemical information than path-based fingerprints.

These hand-crafted representations have demonstrated utility in Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) studies, but inherently limit the model to pre-specified chemical patterns and may fail to capture novel structural motifs relevant to specific property prediction tasks [2].

Graph Neural Networks: Learned Representations

GNNs operate directly on the molecular graph structure, learning task-specific representations through message passing between connected nodes (atoms). The fundamental operation of a GNN layer involves aggregating information from a node's neighbors and updating the node's feature representation accordingly [26]. A basic Graph Convolutional Network (GCN) layer does this by combining each node's features with those of its neighbors under degree-based normalization, then applying a learned linear transformation and a nonlinearity [26].
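As an illustration, the widely used GCN propagation rule of Kipf and Welling, $H' = \mathrm{ReLU}(\hat{D}^{-1/2}(A + I)\hat{D}^{-1/2} H W)$, where $\hat{D}$ is the degree matrix of $A + I$, can be written with plain Python lists. This is a generic sketch rather than the code from the cited tutorial [26]; `W` would normally be a trainable weight matrix, and the function name is hypothetical.

```python
import math


def gcn_layer(adj, H, W):
    """One GCN layer: ReLU(D^-1/2 (A + I) D^-1/2 H W).

    adj: n x n 0/1 adjacency matrix, H: n x f node features, W: f x f_out weights.
    """
    n = len(adj)
    a_hat = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]  # degree including the self-loop
    # Symmetric normalization: entry (i, j) is scaled by 1 / sqrt(d_i * d_j)
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]
    # Linear transformation H W
    HW = [[sum(H[i][k] * W[k][c] for k in range(len(W))) for c in range(len(W[0]))]
          for i in range(n)]
    # Neighborhood aggregation with the normalized adjacency
    out = [[sum(norm[i][j] * HW[j][c] for j in range(n)) for c in range(len(W[0]))]
           for i in range(n)]
    return [[max(0.0, v) for v in row] for row in out]  # ReLU nonlinearity


# Two bonded atoms with scalar features 1.0 and 3.0 and an identity weight
print(gcn_layer([[0, 1], [1, 0]], [[1.0], [3.0]], [[1.0]]))  # [[2.0], [2.0]]
```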

Advanced GNN architectures have been developed specifically for molecular property prediction. The Atomistic Line Graph Neural Network (ALIGNN) explicitly models both two-body (bond) and three-body (angle) interactions by performing message passing on both the atomistic bond graph and its line graph corresponding to bond angles [27]. This approach directly incorporates angular information critical for many molecular properties, moving beyond distance-based representations to capture more complex geometric features.

Atom and Bond Featureization Strategies

Atom Featureization

The initial step in GNN implementation involves representing atoms as feature vectors encoding chemically relevant information. The ALIGNN framework utilizes 9 input node features based on atomic species [27]:

- Electronegativity

- Group number

- Covalent radius

- Valence electrons

- First ionization energy

- Electron affinity

- Block (s, p, d, f)

- Atomic volume

These features provide the model with fundamental chemical information about each atom, enabling it to learn relationships between elemental properties and molecular behavior.
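A minimal sketch of this kind of species-based featurization, covering only five of the listed properties (electronegativity, group number, covalent radius, valence electrons, and block) for four elements. The values are standard tabulated ones (Pauling electronegativity, covalent radii in Å), but the table, its ordering, and the absence of normalization are assumptions for illustration, not ALIGNN's actual feature table; the names are hypothetical.

```python
# Hypothetical lookup table; a real model would cover every element it sees
ELEMENT_PROPS = {
    # symbol: (Pauling electronegativity, group, covalent radius in Å,
    #          valence electrons, block)
    "H": (2.20, 1, 0.31, 1, "s"),
    "C": (2.55, 14, 0.76, 4, "p"),
    "N": (3.04, 15, 0.71, 5, "p"),
    "O": (3.44, 16, 0.66, 6, "p"),
}
BLOCKS = ("s", "p", "d", "f")


def atom_features(symbol):
    """Numeric feature vector for one atom: 4 scalars + one-hot orbital block."""
    en, group, radius, valence, block = ELEMENT_PROPS[symbol]
    return [en, group, radius, valence] + [1 if block == b else 0 for b in BLOCKS]


node_features = [atom_features(s) for s in ["C", "O", "H"]]  # e.g. one per atom
```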

Bond and Angle Featureization

Beyond atom features, bond representations are crucial for capturing molecular topology. ALIGNN initializes edge features as interatomic bond distances, expanded using a radial basis function (RBF) expansion with support between 0-8 Å for crystals and up to 5 Å for molecules [27]. For angle information, ALIGNN uses an RBF expansion of bond angle cosines, where the bond angle is $\theta_{ijk} = \arccos\left(\frac{\mathbf{r}_{ij} \cdot \mathbf{r}_{jk}}{|\mathbf{r}_{ij}|\,|\mathbf{r}_{jk}|}\right)$ and $\mathbf{r}_{ij}$ and $\mathbf{r}_{jk}$ are atomic displacement vectors between atoms $i$, $j$, and $k$ [27].
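The edge and angle featurization can be sketched as follows: a Gaussian RBF expansion maps a scalar (a bond length or an angle cosine) onto evenly spaced centers, and the angle cosine is computed from atomic positions. The number of centers, the width `gamma`, and the function names are assumptions. One sign convention matters: here both vectors point away from the central atom j, so a linear i-j-k arrangement gives cos θ = -1 (180°); if $\mathbf{r}_{ij}$ is instead taken as the displacement from i to j, one vector must be negated to recover the same bond angle.

```python
import math


def rbf_expand(value, vmin=0.0, vmax=8.0, bins=16, gamma=None):
    """Gaussian radial-basis expansion of a scalar onto evenly spaced centers."""
    step = (vmax - vmin) / (bins - 1)
    centers = [vmin + k * step for k in range(bins)]
    if gamma is None:
        gamma = 1.0 / step  # width tied to the center spacing (assumption)
    return [math.exp(-gamma * (value - c) ** 2) for c in centers]


def bond_angle_cosine(pos_i, pos_j, pos_k):
    """Cosine of the angle at atom j formed by bonds j-i and j-k."""
    u = [a - b for a, b in zip(pos_i, pos_j)]  # vector from j to i
    v = [a - b for a, b in zip(pos_k, pos_j)]  # vector from j to k
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


distance_features = rbf_expand(1.5)  # a 1.5 Å bond, 0-8 Å support
cos_t = bond_angle_cosine((-1.0, 0.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0))
angle_features = rbf_expand(cos_t, vmin=-1.0, vmax=1.0)  # cosines lie in [-1, 1]
print(round(math.degrees(math.acos(cos_t))))  # 180
```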

The ALIGNN update mechanism alternates between graph convolution on the bond graph and its line graph, enabling the propagation of bond angle information through interatomic bond representations to the atom-wise representations and vice versa [27]. This dual-graph approach allows the model to explicitly leverage angular information that is critical for many molecular properties but challenging to capture in standard GNN architectures.

Experimental Comparison: GNNs vs. Fingerprints

Performance Benchmarking

A comparative analysis evaluated various molecular representations on taste prediction tasks using a dataset of 2,601 molecules [2]. Among the individual representations, GNN-based models performed best:

Table 1: Performance comparison of molecular representation methods for taste prediction [2]

| Representation Method | Prediction Accuracy | Key Advantages | Limitations |
|---|---|---|---|
| GNN-based Models | Highest accuracy among single representations | Learns task-specific features directly from graph structure; captures complex topological patterns | Computationally intensive; requires careful hyperparameter tuning |
| Morgan Fingerprints | Competitive performance | Conformation-independent; well-established interpretation methods | Limited to predefined substructures; may miss novel patterns |
| PubChem Fingerprints | Moderate performance | Large dictionary of chemical substructures | Dependent on PubChem's specific substructure definitions |
| RDKit Fingerprints | Moderate performance | Integrated with popular cheminformatics toolkit | Similar limitations to other predefined fingerprint methods |
| Consensus Models (Fingerprints + GNN) | Improved performance over individual methods | Combines strengths of engineered and learned features | Increased model complexity |

The study found that consensus models combining GNNs with molecular fingerprints demonstrated the best performance, highlighting the complementary strengths of learned and engineered features [2]. This suggests that fingerprint features may capture some chemical information not immediately accessible to GNNs from graph structure alone, possibly due to the predefined chemical knowledge embedded in fingerprint designs.

Advanced GNN Architectures and Their Performance

Recent GNN advancements have further improved molecular property prediction capabilities:

Fingerprint-enhanced Hierarchical GNN (FH-GNN): Integrates hierarchical molecular graphs (atomic-level, motif-level, graph-level) with traditional fingerprint features, using an adaptive attention mechanism to balance their importance. This architecture outperformed baseline models on eight benchmark datasets from MoleculeNet [6].

Quantized GNN Models: Address computational efficiency concerns by integrating GNN models with quantization algorithms like DoReFa-Net, reducing memory footprint and computational demands while maintaining predictive performance. Studies show that 8-bit quantization preserves performance on quantum mechanical property prediction tasks, though aggressive 2-bit quantization significantly degrades performance [28].

ALIGNN for Materials Properties: Demonstrates improved performance on 52 solid-state and molecular properties from JARVIS-DFT, Materials Project, and QM9 databases, outperforming previous GNN models while maintaining comparable training speed. The explicit incorporation of angle information provides particular benefits for electronic properties sensitive to geometric distortions [27].
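The weight-quantization step of DoReFa-Net can be sketched as follows: weights are squashed with tanh, rescaled to [0, 1], rounded to one of 2^k levels, and mapped back to [-1, 1]. This covers only the forward quantization of weights (no activation or gradient quantization, and no straight-through estimator for training), and the helper names are hypothetical.

```python
import math


def quantize_k(r, k):
    """Map r in [0, 1] onto one of 2**k evenly spaced levels."""
    levels = 2 ** k - 1
    return round(levels * r) / levels


def dorefa_weights(weights, k):
    """DoReFa-Net-style k-bit weight quantization (forward pass only)."""
    t = [math.tanh(w) for w in weights]
    scale = 2 * max(abs(x) for x in t)
    # tanh(w) / (2 max|tanh|) + 1/2 lies in [0, 1]; quantize, then map to [-1, 1]
    return [2 * quantize_k(x / scale + 0.5, k) - 1 for x in t]


w = [0.8, -0.3, 0.05, -1.2, 0.6]
q8 = dorefa_weights(w, 8)  # 256 possible levels
q2 = dorefa_weights(w, 2)  # only 4 possible levels
```

Comparing `q8` with `q2` gives an intuition for the reported trend: with 8 bits the rounding error on the normalized weights is at most 1/255, whereas 2 bits collapses them onto four values.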

Implementation Guide: Training Loops and Optimization

GNN Training Workflow

The training loop for GNNs follows the standard deep learning paradigm with graph-specific considerations [26]:

- Graph Representation: Convert molecular structures to graph representations with node features (atoms), edge indices (bonds), and optional edge attributes.

- Mini-batching: Combine multiple graphs into a single batch, either by zero-padding or, more efficiently, by stacking adjacency matrices in block-diagonal form.

- Forward Pass: Perform message passing through multiple GNN layers to update node representations based on neighborhood information.

- Readout Phase: Aggregate node representations into a graph-level representation for molecular property prediction (using global average pooling, attention-based pooling, or other methods).

- Loss Computation and Backpropagation: Calculate the loss between predictions and targets, then update model parameters through backpropagation.
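The block-diagonal batching strategy amounts to shifting node indices so that each molecule's edges stay inside its own block, plus a batch vector recording which molecule every atom belongs to; PyTorch Geometric's mini-batching follows the same idea. A minimal sketch with hypothetical function names:

```python
def collate(graphs):
    """Merge several molecular graphs into one disconnected batch graph.

    Each graph is (node_features, edge_list). Node indices of later graphs are
    shifted so their edges stay inside their own block, which is equivalent to
    placing the adjacency matrices on the diagonal of one large matrix.
    """
    feats, edges, batch = [], [], []
    offset = 0
    for g_id, (x, e) in enumerate(graphs):
        feats.extend(x)
        edges.extend((i + offset, j + offset) for i, j in e)
        batch.extend([g_id] * len(x))  # which molecule each node belongs to
        offset += len(x)
    return feats, edges, batch


def mean_pool(feats, batch, n_graphs):
    """Per-molecule mean readout using the batch assignment vector."""
    dim = len(feats[0])
    sums = [[0.0] * dim for _ in range(n_graphs)]
    counts = [0] * n_graphs
    for f, g in zip(feats, batch):
        counts[g] += 1
        for d in range(dim):
            sums[g][d] += f[d]
    return [[s / counts[g] for s in sums[g]] for g in range(n_graphs)]


C, O = [1.0, 0.0], [0.0, 1.0]
ethanol = ([C, C, O], [(0, 1), (1, 2)])
methanol = ([C, O], [(0, 1)])
feats, edges, batch = collate([ethanol, methanol])
print(edges, batch)  # [(0, 1), (1, 2), (3, 4)] [0, 0, 0, 1, 1]
pooled = mean_pool(feats, batch, n_graphs=2)
```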

The following diagram illustrates the complete ALIGNN training workflow, from data preparation to model deployment:

ALIGNN-Specific Implementation

Implementing ALIGNN models requires specific considerations for handling both the atomistic graph and its line graph. The key implementation steps include [27] [29]:

- Graph Construction: Create the atomistic bond graph with atoms as nodes and bonds as edges, then generate the line graph, where nodes correspond to bonds in the original graph and edges correspond to bond angles.

- Alternating Updates: Implement the ALIGNN update, which composes edge-gated graph convolution on both graphs: a convolution on the line graph first updates the bond and angle features, and a convolution on the bond graph then updates the atom and bond features using the refreshed bond representations.

- Progressive Training: Use N layers of ALIGNN updates followed by M layers of edge-gated graph convolution updates on the bond graph alone.
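Line-graph construction can be sketched for a small molecule: each bond becomes a line-graph node, and every pair of bonds sharing an atom becomes a line-graph edge, i.e. a bond angle. For simplicity this sketch uses undirected chemical bonds; how the actual ALIGNN implementation selects neighbors and handles edge direction may differ, and the function name is hypothetical.

```python
from itertools import combinations


def line_graph(n_atoms, bonds):
    """Build the line graph of a bond graph.

    Line-graph nodes are the bonds (indexed as in `bonds`); two bonds are joined
    when they share an atom, so each line-graph edge is one bond angle i-j-k,
    returned as (bond_a, bond_b, shared_atom_j).
    """
    bonds_at = [[] for _ in range(n_atoms)]
    for b, (i, j) in enumerate(bonds):
        bonds_at[i].append(b)
        bonds_at[j].append(b)
    angles = []
    for atom, incident in enumerate(bonds_at):
        for b1, b2 in combinations(incident, 2):
            angles.append((b1, b2, atom))
    return angles


# Water: O0 bonded to H1 and H2 gives a single H-O-H angle
print(line_graph(3, [(0, 1), (0, 2)]))  # [(0, 1, 0)]
```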

The ALIGNN repository provides comprehensive training scripts supporting various tasks [29]:

- Regression: `train_alignn.py --root_dir "sample_data" --config "config_example.json"`
- Classification: `train_alignn.py --root_dir "sample_data" --classification_threshold 0.01`
- Multi-output prediction: `train_alignn.py --root_dir "sample_data_multi_prop"`
- Force-field training: `train_alignn.py --root_dir "sample_data_ff"`

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential tools and libraries for GNN implementation in molecular property prediction

| Tool/Library | Function | Application Context |
|---|---|---|
| PyTorch Geometric | Specialized extension of PyTorch for GNNs | Provides datasets, transforms, and GNN layers optimized for graph learning; includes molecular datasets like QM9 [26] [28] |
| Deep Graph Library (DGL) | Python package for deep learning on graphs | Supports various GNN models; used by ALIGNN for efficient message passing [27] |
| RDKit | Cheminformatics and machine learning software | Molecular manipulation, fingerprint generation, and descriptor calculation [2] |
| ALIGNN Framework | Specialized implementation for materials and molecules | Provides pretrained models and training scripts for molecular property prediction [29] |
| JARVIS-Tools | Materials informatics toolkit | Database access and tools for materials property prediction [27] |
| DeepPurpose | Molecular modeling and prediction toolkit | Integrates multiple molecular representation methods including fingerprints, CNN, and GNN [2] |

Inverse Design: From Prediction to Generation

A notable application of GNNs extends beyond property prediction to molecular generation. Recent work shows that because GNNs are differentiable, molecular graphs can be optimized directly toward target properties by gradient ascent [22]. This approach, termed Direct Inverse Design (DIDgen), starts from a random graph or an existing molecule. It keeps the trained GNN's weights fixed and optimizes the molecular graph itself toward desired electronic properties such as the HOMO-LUMO gap. The method generates molecules whose energy gaps match the specified targets when verified by density functional theory (DFT). Its success rates are comparable to or better than those of state-of-the-art generative models, and the molecules it produces are more diverse [22].
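The core idea of optimizing the input graph while the model stays frozen can be shown with a toy example. This is not DIDgen. The frozen "model" is a hypothetical linear function of a relaxed (continuous) adjacency matrix rather than a trained GNN. A practical method must also keep the optimized graph chemically valid (for example, respecting valences) and map it back to a discrete molecule, which the naive rounding at the end does not guarantee. The goal here is to hit a target value, so the sketch minimizes a squared error by gradient descent; maximizing a property would instead use gradient ascent on the prediction.

```python
import numpy as np

# Hypothetical frozen "model": property(A) = sum_ij A[i, j] * M[i, j].
# M stands in for a trained, differentiable GNN whose weights are never updated.
M = np.array([
    [0.0, 1.0, -0.5, 0.2],
    [1.0, 0.0, 0.3, -0.8],
    [-0.5, 0.3, 0.0, 0.6],
    [0.2, -0.8, 0.6, 0.0],
])
N_ATOMS = M.shape[0]
IU = np.triu_indices(N_ATOMS, k=1)           # candidate bonds (i < j)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def relaxed_adjacency(theta):
    """Symmetric soft adjacency with a zero diagonal; entries lie in (0, 1)."""
    A = np.zeros((N_ATOMS, N_ATOMS))
    A[IU] = sigmoid(theta)
    return A + A.T

def predict(A):
    return float(np.sum(A * M))

def inverse_design(target, steps=3000, lr=0.5):
    """Gradient descent on (prediction - target)^2 with respect to the graph only."""
    theta = np.zeros(len(IU[0]))             # every candidate bond starts at 0.5
    for _ in range(steps):
        s = sigmoid(theta)
        error = predict(relaxed_adjacency(theta)) - target
        # Each bond appears twice in the symmetric A, so dp/dtheta = 2 * M_ij * s * (1 - s)
        grad = 2.0 * error * 2.0 * M[IU] * s * (1.0 - s)
        theta -= lr * grad                   # only the graph changes; M stays fixed
    return relaxed_adjacency(theta)

A_opt = inverse_design(target=3.0)
bonds = A_opt > 0.5                          # naive rounding back to a discrete bond matrix
```

Bonds with positive weights in `M` are pushed toward 1 and bonds with negative weights toward 0 until the prediction reaches the target.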

This inverse design capability represents a significant advancement beyond traditional fingerprint-based methods, which lack the differentiable pathway necessary for direct gradient-based optimization of molecular structures.

GNNs represent a powerful paradigm for molecular property prediction, offering advantages over traditional fingerprint-based methods through their ability to learn task-specific representations directly from molecular graph structure. The implementation of effective GNN models requires careful attention to atom and bond featurization strategies, with advanced architectures like ALIGNN demonstrating the value of explicitly incorporating angular information beyond simple connectivity. While GNNs generally outperform fingerprint-based approaches, consensus models combining both representations often achieve the best performance, leveraging the complementary strengths of learned and engineered features. As the field advances, techniques such as hierarchical GNNs, model quantization, and inverse design applications are further expanding the capabilities and efficiency of graph-based molecular machine learning.

The accurate prediction of molecular properties is a critical task in drug discovery, traditionally approached through two main paradigms: models based on expert-crafted molecular fingerprints and graph neural networks (GNNs) that learn directly from molecular structure. This guide focuses on a recent advancement in GNN architectures: Kolmogorov-Arnold Graph Neural Networks (KA-GNNs). KA-GNNs integrate the mathematical foundations of the Kolmogorov-Arnold representation theorem with GNNs, and they are reported to consistently outperform both traditional GNNs and fingerprint-based models in molecular property prediction. The following sections compare their performance, experimental methodologies, and architectural innovations.

Understanding the Core Technologies

Molecular Fingerprints: The Knowledge-Driven Approach

Molecular fingerprints are human-engineered representations that encode molecular structures into fixed-length bit strings. They function as expert-crafted features, where each bit typically indicates the presence or absence of a specific chemical substructure or descriptor [14]. While effective and interpretable, their performance is inherently limited by the quality and completeness of the pre-defined features and can introduce human bias [14]. Methods like the Fingerprint-enhanced Hierarchical GNN (FH-GNN) have sought to combine these fingerprints with GNNs, using attention mechanisms to balance the importance of learned and engineered features [6].

Graph Neural Networks: The Structure-Driven Approach

GNNs represent molecules natively as graphs, with atoms as nodes and bonds as edges. They learn representations end-to-end through message-passing mechanisms, capturing complex, non-linear structure-property relationships directly from data [30] [14]. Prior to KA-GNNs, models like Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), and Message Passing Neural Networks (MPNNs) were established as state-of-the-art, with GATs often showing a slight edge in accuracy and generalizability in benchmark studies [31] [32].

The KA-GNN Innovation: A Hybrid Paradigm

KA-GNNs represent a fusion of mathematical theory and deep learning. They are built upon the Kolmogorov-Arnold representation theorem, which states that any continuous multivariate function on a bounded domain can be written as a finite sum of compositions of continuous univariate functions [33] [34]. KA-GNNs instantiate this theorem within a GNN framework. They replace the fixed activation functions and linear weight matrices of traditional GNN layers with learnable univariate functions placed on the edges of the neural network, meaning the connections between neurons rather than the bonds of the molecular graph [5] [34]. According to its authors, this core innovation yields enhanced expressivity, parameter efficiency, and interpretability.
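For reference, here is the standard explicit form of the theorem. For a continuous function $f$ on $[0,1]^n$, there exist continuous univariate functions $\Phi_q$ and $\phi_{q,p}$ such that:

$$
f(x_1, \dots, x_n) = \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right)
$$

A Kolmogorov-Arnold network layer generalizes the inner sum: each output $j$ is a sum of learnable univariate functions of the inputs, $y_j = \sum_{i=1}^{n_{\text{in}}} \phi_{j,i}(x_i)$. Stacking such layers plays the role of the inner and outer functions. The per-connection functions $\phi_{j,i}$ are the learnable "edge" functions described above. This notation is our own, not that of the KA-GNN papers.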

A key advancement within the KA-GNN family is the use of Fourier-series-based univariate functions. This replaces other basis functions like B-splines, allowing the network to effectively capture both low-frequency and high-frequency structural patterns in molecular graphs, which is crucial for accurate property prediction [5].
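A single Fourier-KAN layer can be sketched in NumPy to make the idea concrete. This is an illustrative assumption, not the KA-GNN authors' implementation. The class name, the initialization scale, the frequency range $k = 1, \dots, K$, and the absence of a constant or bias term are all choices made here. Each (input, output) pair has its own truncated Fourier series, and each output sums those series over the inputs. Small-$k$ terms vary slowly with the input while large-$k$ terms capture rapid variation, which is the low- and high-frequency behaviour described above.

```python
import numpy as np

class FourierKANLayer:
    """Maps (batch, in_dim) -> (batch, out_dim) with learnable univariate functions.

    phi_{j,i}(x) = sum_k a[j, i, k] * cos(k x) + b[j, i, k] * sin(k x)
    y_j          = sum_i phi_{j,i}(x_i)
    """

    def __init__(self, in_dim, out_dim, num_frequencies, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * num_frequencies)
        self.a = rng.normal(scale=scale, size=(out_dim, in_dim, num_frequencies))
        self.b = rng.normal(scale=scale, size=(out_dim, in_dim, num_frequencies))
        self.k = np.arange(1, num_frequencies + 1)       # frequencies 1..K

    def __call__(self, x):
        kx = x[:, :, None] * self.k                       # (batch, in_dim, K)
        # Contract over inputs i and frequencies k for every output j
        return (np.einsum("nik,jik->nj", np.cos(kx), self.a)
                + np.einsum("nik,jik->nj", np.sin(kx), self.b))

layer = FourierKANLayer(in_dim=3, out_dim=2, num_frequencies=4)
x = np.random.default_rng(1).normal(size=(5, 3))
y = layer(x)                                              # shape (5, 2)
```

In a KA-GNN, a layer like this takes the place of the MLP blocks used for embedding, message updates, and readout.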

Performance Comparison: KA-GNNs vs. Alternatives

Extensive benchmarking across public molecular datasets reveals the performance profile of KA-GNNs relative to other methods. The following tables summarize the reported results qualitatively.

Table 1: Performance Comparison on Molecular Property Prediction Tasks (Classification & Regression)

| Model Category | Example Models | Reported Performance | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Traditional Fingerprint-Based | RF/SVM with ECFP [31] [14] | Lower than GNNs (baseline) | High interpretability, low computational cost [14] | Relies on expert knowledge, may not capture complex patterns [6] [14] |
| Standard GNNs | GCN, GAT, MPNN [31] | Outperforms fingerprint baselines [31] [32] | End-to-end learning, captures structural information [30] | Can be a "black box"; may struggle with activity cliffs [35] |
| Enhanced GNN (for reference) | FH-GNN (with fingerprints) [6] | Outperforms baseline GNNs on 8 datasets [6] | Integrates hierarchical and fingerprint information [6] | Increased model complexity |
| KA-GNN (Fourier-Based) | KA-GCN, KA-GAT [5] | Consistently outperforms standard GNNs [5] [34] | High accuracy and parameter efficiency, improved interpretability [5] | Emerging technology, less established than traditional GNNs |

Table 2: Detailed Benchmarking of GNN Variants on ADME/Toxicity Datasets

| Model | Accuracy (Internal Test Set) | Generalizability (External Test Set) | Computational Efficiency |
|---|---|---|---|
| GCN [31] [32] | Moderate | Moderate | High |
| GAT [31] [32] | High (Best among standard GNNs) | High (Best among standard GNNs) | Moderate |
| MPNN [31] | Moderate | Moderate | Moderate |
| AttentiveFP [31] | Moderate | Moderate | Lower |
| KA-GNN (e.g., KA-GAT) [5] | Higher than GAT | Reported high generalizability | High (parameter-efficient) |

Experimental Protocols and Methodologies

KA-GNN Architecture and Workflow

The reported advantage of KA-GNNs rests on experiments built around one core methodology: systematically replacing standard GNN components with Kolmogorov-Arnold network (KAN) modules.

Diagram 1: KA-GNNs integrate KAN modules into all three core GNN components.

Key Architectural Components:

- Node Embedding Initialization: Atomic and bond features are concatenated and processed by a Fourier-KAN layer instead of a standard MLP, creating a rich initial node representation that encodes local chemical context [5].

- Message Passing: The core GNN operations (e.g., aggregation and update functions in GCN or GAT) are augmented with KA modules. For example, node features are updated using residual Fourier-KAN layers, enhancing the expressiveness of feature interactions [5] [34].

- Graph Readout: The final graph-level representation, often created by pooling node embeddings, is passed through a final KAN layer for the property prediction task, replacing the typical MLP classifier/regressor [5] [33].
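The three stages above can be combined into a toy forward pass. This is a hedged NumPy sketch, not the published KA-GCN. The following are all assumptions chosen for brevity:

- the helper names (`init_fkan`, `fkan`, `ka_gcn_forward`);
- using the mean of each atom's incident bond features in the embedding step;
- GCN-style symmetric normalization;
- mean-pooling readout.

The Fourier-KAN map is redefined compactly so the block runs on its own.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_fkan(in_dim, out_dim, K=4):
    """Coefficients of a Fourier-KAN map: one K-term Fourier series per (input, output) pair."""
    s = 1.0 / np.sqrt(in_dim * K)
    return {"a": rng.normal(scale=s, size=(out_dim, in_dim, K)),
            "b": rng.normal(scale=s, size=(out_dim, in_dim, K))}

def fkan(params, x):
    """Apply the Fourier-KAN map to x of shape (n, in_dim); returns (n, out_dim)."""
    kx = x[:, :, None] * np.arange(1, params["a"].shape[2] + 1)
    return (np.einsum("nik,jik->nj", np.cos(kx), params["a"])
            + np.einsum("nik,jik->nj", np.sin(kx), params["b"]))

def ka_gcn_forward(atom_x, bond_x, edge_index, embed, layers, head):
    src, dst = edge_index
    n = atom_x.shape[0]
    deg = np.bincount(dst, minlength=n)[:, None].astype(float)
    # 1) Node embedding: atom features + mean of incident bond features -> Fourier-KAN
    bond_mean = np.zeros((n, bond_x.shape[1]))
    np.add.at(bond_mean, dst, bond_x)
    bond_mean /= np.maximum(deg, 1.0)
    h = fkan(embed, np.hstack([atom_x, bond_mean]))
    # 2) Message passing: GCN-normalized aggregation with self-loops,
    #    then a residual Fourier-KAN update in place of linear layer + activation
    norm = 1.0 / np.sqrt((deg[src, 0] + 1.0) * (deg[dst, 0] + 1.0))
    for params in layers:
        agg = h / (deg + 1.0)
        np.add.at(agg, dst, h[src] * norm[:, None])
        h = h + fkan(params, agg)
    # 3) Readout: mean-pool atom embeddings, then a Fourier-KAN prediction head
    return fkan(head, h.mean(axis=0, keepdims=True))

# Toy 3-atom chain: 5 atom features, 2 bond features, bonds stored in both directions
atom_x = rng.normal(size=(3, 5))
bond_x = rng.normal(size=(4, 2))
edge_index = np.array([[0, 1, 1, 2], [1, 0, 2, 1]])
embed = init_fkan(5 + 2, 8)
layers = [init_fkan(8, 8) for _ in range(2)]
head = init_fkan(8, 1)
y = ka_gcn_forward(atom_x, bond_x, edge_index, embed, layers, head)   # shape (1, 1)
```

Every step uses symmetric aggregation and mean pooling, so relabeling the atoms leaves the prediction unchanged. This is a quick sanity check for any GNN implementation.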

Key Experimental Details

- Datasets: Models are typically validated on seven public benchmark datasets from MoleculeNet (e.g., HIV, BACE, FreeSolv) for various classification and regression tasks [5] [33] [34].

- Evaluation Protocol: Standard practice uses k-fold cross-validation (e.g., 10-fold) to obtain more robust performance estimates. ROC-AUC is used for classification tasks, and RMSE and MAE are used for regression tasks [5] [6].

- Baselines: Performance is compared against a suite of established models, including fingerprint-based methods (e.g., Random Forests with ECFP) and state-of-the-art GNNs like GCN, GAT, and MPNN [5] [31].

- Fourier-KAN Formulation: The learnable activation functions in KA-GNNs are implemented as truncated Fourier series, $f(x) = \sum_{k=1}^{K} \left( a_k \cos(kx) + b_k \sin(kx) \right)$, with learnable coefficients $a_k$ and $b_k$. This provides a theoretically grounded and flexible basis for function approximation, which the authors report captures complex patterns effectively [5].
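The evaluation protocol can be sketched without any ML library. The helper names are hypothetical, and the ordinary-least-squares model is only a placeholder for a KA-GNN or baseline. The split here is random, whereas MoleculeNet-style benchmarks often use scaffold splits and imbalanced classification sets are commonly split with stratification. ROC-AUC is computed as the probability that a randomly chosen positive scores above a randomly chosen negative, which equals the area under the ROC curve.

```python
import numpy as np

def kfold_indices(n, k=10, seed=0):
    """Shuffle sample indices once and split them into k near-equal folds."""
    idx = np.random.default_rng(seed).permutation(n)
    return np.array_split(idx, k)

def rmse(y, p):
    return float(np.sqrt(np.mean((y - p) ** 2)))

def mae(y, p):
    return float(np.mean(np.abs(y - p)))

def roc_auc(y, score):
    """P(random positive outscores random negative); ties count as 1/2."""
    diff = score[y == 1][:, None] - score[y == 0][None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))

def cross_validate(X, y, fit, predict, metric, k=10, seed=0):
    """Train on k-1 folds, score on the held-out fold; return mean and std over folds."""
    folds = kfold_indices(len(y), k, seed)
    scores = []
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        model = fit(X[train_idx], y[train_idx])
        scores.append(metric(y[test_idx], predict(model, X[test_idx])))
    return float(np.mean(scores)), float(np.std(scores))

def fit_ols(X_tr, y_tr):                       # placeholder regression model
    return np.linalg.lstsq(X_tr, y_tr, rcond=None)[0]

def predict_ols(w, X_te):
    return X_te @ w

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.1, size=100)
mean_rmse, std_rmse = cross_validate(X, y, fit_ols, predict_ols, rmse, k=10)
auc = roc_auc(np.array([0, 0, 1, 1]), np.array([0.1, 0.4, 0.35, 0.8]))   # 0.75
```

Reporting the spread across folds alongside the mean makes comparisons between models less sensitive to a single lucky split.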

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Datasets for Molecular Property Prediction

| Item Name | Function / Description | Relevance to KA-GNN Research |
|---|---|---|
| MoleculeNet Benchmarks | A collection of standardized public datasets for molecular machine learning [6]. | Essential for training and benchmarking KA-GNN models against state-of-the-art alternatives. |
| Extended Connectivity Fingerprints (ECFPs) | A circular fingerprint that captures molecular substructures and is widely used in chemoinformatics [35]. | Serves as a primary baseline and can be integrated into hybrid models (e.g., FH-GNN) for comparison [6]. |
| Graph Attention Network (GAT) | A GNN that uses attention mechanisms to weigh the importance of neighboring nodes [31]. | A key baseline and backbone architecture for one of the main KA-GNN variants (KA-GAT) [5]. |
| Message Passing Neural Network (MPNN) | A general framework for GNNs that encompasses many message-passing algorithms [35]. | A standard model used in benchmarks; its explainability is a focus in frameworks like ACES-GNN [35]. |
| Activity Cliff (AC) Datasets | Curated datasets containing pairs of structurally similar molecules with large potency differences [35]. | Used to stress-test model interpretability and generalization, as done in explainable GNN frameworks [35]. |

Interpretation and Broader Context

The following diagram situates KA-GNNs within the broader research landscape of molecular property prediction.

Diagram 2: KA-GNNs represent a convergence of knowledge-driven and structure-driven paradigms.

The emergence of KA-GNNs is part of a larger trend to overcome the limitations of pure data-driven models. This includes other advanced frameworks like ACES-GNN, which uses explanation-guided learning on activity cliffs to make model decisions more transparent and chemically intuitive [35], and approaches that integrate knowledge from Large Language Models (LLMs) to augment structural information with human prior knowledge [14]. Together, these approaches signal a move towards more powerful, efficient, and interpretable models for drug discovery.

The accurate prediction of molecular properties is a cornerstone of modern drug discovery and materials science, where reducing the costs and risks of trials depends on selecting compounds with ideal characteristics. For decades, two dominant paradigms have existed in molecular property prediction: molecular fingerprints and graph neural networks (GNNs). Molecular fingerprints, such as Extended Connectivity Fingerprints (ECFP), provide fixed-length vector representations encoding chemical structures through predefined rules and hash-based functions, offering interpretability and computational efficiency but limited adaptability to specific tasks [36]. In contrast, GNNs operate directly on molecular graphs, treating atoms as nodes and bonds as edges, enabling end-to-end learning of structure-property relationships without manual feature engineering [5].
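The hash-based construction behind circular fingerprints such as ECFP can be illustrated with a toy version. This sketch is a simplification, not RDKit's Morgan implementation:

- atoms are described only by element labels;
- bond orders are ignored;
- duplicate substructures are not removed;
- the helper names and SHA-1-based hashing are assumptions.

Each iteration hashes an atom's identifier together with its neighbours' identifiers. Identifiers at radius r therefore describe the environment up to r bonds around each atom, and every identifier is folded into a fixed-length bit vector.

```python
import hashlib
import numpy as np

def stable_hash(obj):
    """Deterministic 32-bit hash (Python's built-in hash() of str is randomized per process)."""
    return int.from_bytes(hashlib.sha1(repr(obj).encode()).digest()[:4], "little")

def circular_fingerprint(atom_labels, bonds, radius=2, n_bits=64):
    """Toy ECFP-style bit vector from element labels and an undirected bond list."""
    neighbours = {i: [] for i in range(len(atom_labels))}
    for i, j in bonds:
        neighbours[i].append(j)
        neighbours[j].append(i)
    ids = [stable_hash(label) for label in atom_labels]      # radius-0 identifiers
    fp = np.zeros(n_bits, dtype=np.uint8)
    for r in range(radius + 1):
        for ident in ids:
            fp[ident % n_bits] = 1                            # fold identifier into the fixed-length vector
        if r < radius:
            # Grow each environment by one bond: hash own id with sorted neighbour ids
            ids = [stable_hash((r, ids[i], tuple(sorted(ids[j] for j in neighbours[i]))))
                   for i in range(len(atom_labels))]
    return fp

ethanol = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
renumbered = circular_fingerprint(["O", "C", "C"], [(0, 1), (1, 2)])   # same molecule, atoms relabeled
```

Sorting the neighbour identifiers makes the result independent of atom numbering, which is why the two calls above produce identical vectors. Folding identifiers into a fixed number of bits keeps the vector length constant, at the cost of occasional collisions between unrelated substructures.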