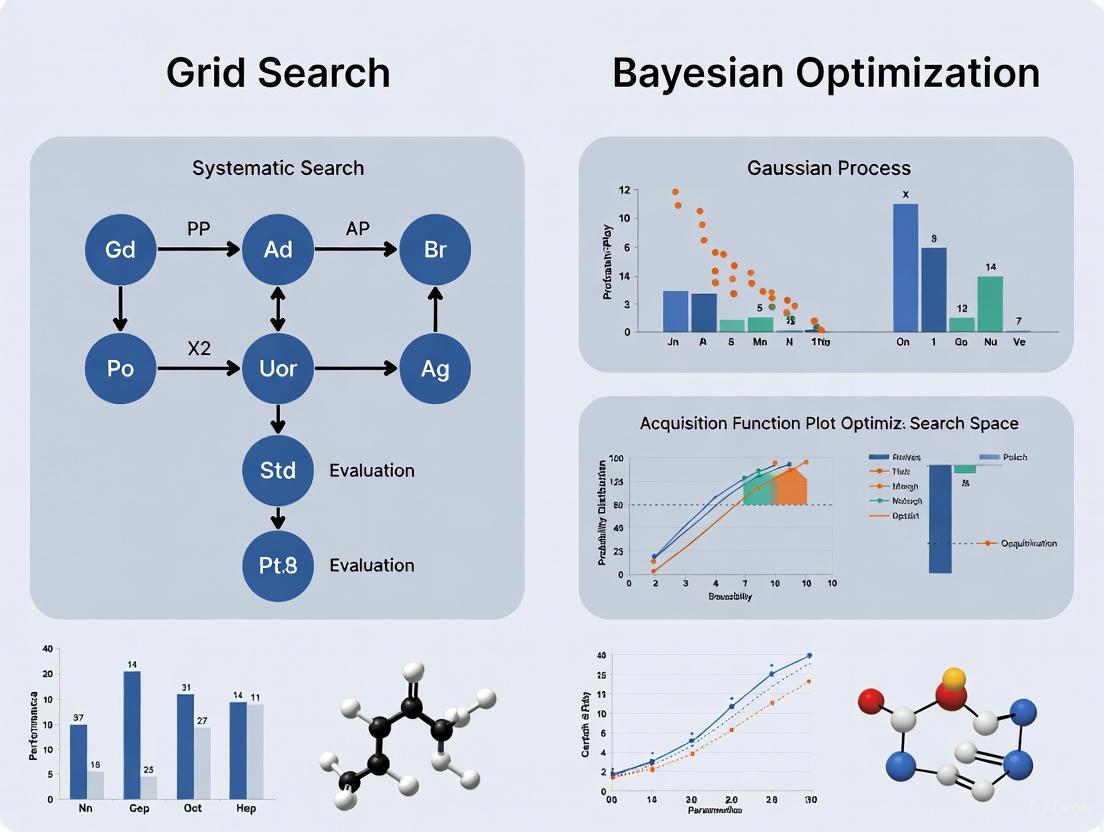

Grid Search vs. Bayesian Optimization: A Practical Guide to Hyperparameter Tuning for Molecular Property Prediction


Abstract

Accurate molecular property prediction is crucial for accelerating drug discovery, yet its success heavily depends on selecting optimal machine learning model hyperparameters. This article provides a comprehensive guide for researchers and drug development professionals on two fundamental hyperparameter optimization strategies: the exhaustive Grid Search and the efficient Bayesian Optimization. We explore their core mechanisms, practical implementation in cheminformatics, and performance across various real-world scenarios, including low-data regimes and complex molecular representations. Drawing on recent benchmark studies and case studies, we deliver actionable insights for choosing and applying the right tuning method to build more predictive and reliable models, ultimately enhancing the efficiency of AI-driven molecular design.

Hyperparameter Tuning Fundamentals: Why Your Model's Settings Matter in Drug Discovery

In molecular property prediction (MPP), the performance of a deep learning model is determined not only by its architecture and training data but also, critically, by its hyperparameters: the knobs and dials that control the learning process itself. Unlike model parameters learned during training, hyperparameters are set beforehand and govern aspects such as model structure and learning algorithm behavior [1] [2]. For researchers and scientists in drug development, selecting an efficient strategy to tune these hyperparameters is paramount, as it directly influences the accuracy and computational cost of predicting vital properties like the melt index of polymers or the glass transition temperature ($T_g$) [2]. While traditional methods like Grid Search offer a straightforward approach, advanced techniques like Bayesian Optimization have demonstrated superior efficiency in navigating the complex hyperparameter landscapes typical of molecular deep learning models [2] [3] [4].

This guide provides an objective comparison of these optimization methods, supported by experimental data and detailed protocols tailored for MPP research.

What are Hyperparameters and Why Do They Matter?

In machine learning, particularly in deep neural networks (DNNs) for molecular property prediction, hyperparameters are broadly categorized into two types [2]:

- Structural Hyperparameters: These define the blueprint of the neural network. Examples include the number of hidden layers, the number of units (neurons) per layer, and the choice of activation function (e.g., ReLU, sigmoid). Selecting different values results in fundamentally different network structures.

- Learning Algorithm Hyperparameters: These govern how the model is trained. Key examples are the learning rate (the size of steps taken during optimization), the number of epochs (complete passes through the dataset), and the batch size (number of samples used to compute the gradient in one iteration) [5] [2].

The choice of hyperparameters profoundly impacts both the predictive accuracy and the computational efficiency of the resulting model. A poorly chosen learning rate, for instance, can cause the training process to become unstable and diverge, or to converge so slowly that it becomes impractical [5]. In the context of MPP, where training can be computationally expensive and datasets are often complex, systematic Hyperparameter Optimization (HPO) is not a luxury but a necessity to achieve state-of-the-art results [2].
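The learning-rate behavior described above can be reproduced on a toy problem. The sketch below runs plain gradient descent on f(w) = w², which is a stand-in chosen for illustration rather than a molecular model; the function name `gradient_descent` and the three rates are assumptions of this example.

```python
def gradient_descent(learning_rate, steps=20, w0=1.0):
    """Minimize f(w) = w**2 with plain gradient descent; return the final w."""
    w = w0
    for _ in range(steps):
        grad = 2.0 * w                 # derivative of w**2
        w -= learning_rate * grad      # each step multiplies w by (1 - 2 * learning_rate)
    return w

# Too small: barely moves. Moderate: converges. Too large: diverges.
for lr in (0.001, 0.1, 1.1):
    print(f"lr={lr}: |w| after 20 steps = {abs(gradient_descent(lr)):.4g}")
```

With the smallest rate the iterate barely moves from its starting value, with the moderate rate it approaches the minimum at zero, and with the largest rate each step overshoots by more than the previous one, so the iterate grows without bound.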

Comparing Hyperparameter Optimization Methods

Several strategies exist for HPO, ranging from brute-force approaches to more intelligent, adaptive methods. The table below summarizes the core characteristics of the three primary techniques.

| Method | Core Principle | Key Advantages | Key Limitations |
|---|---|---|---|
| Grid Search [1] [6] | Exhaustively evaluates all possible combinations within a pre-defined grid. | Simple to implement and parallelize; guaranteed to find the best combination within the specified grid. | Computationally intractable for high-dimensional spaces; search time grows exponentially with each new hyperparameter. |
| Random Search [5] [3] | Evaluates a fixed number of random combinations from the search space. | More efficient than Grid Search; better suited for high-dimensional spaces; easy to parallelize. | No guarantee of finding the optimal configuration; can miss important regions of the search space; does not learn from past evaluations. |
| Bayesian Optimization [1] [7] [6] | Builds a probabilistic model (surrogate) of the objective function to intelligently select the most promising hyperparameters to evaluate next. | Highly sample-efficient; requires fewer evaluations to find a good optimum; well-suited for expensive-to-evaluate functions. | Higher per-iteration overhead; more complex to implement; sequential nature can make parallelization less straightforward. |

Performance Comparison in Molecular Property Prediction

The theoretical advantages and disadvantages of these methods are borne out in practical studies, both in MPP and in other application domains. The following table summarizes key experimental findings from recent research.

| Study / Context | Optimization Method | Key Performance Findings | Computational Efficiency |
|---|---|---|---|
| DNNs for Molecular Property Prediction (e.g., Melt Index, $T_g$) [2] | Random Search, Bayesian Optimization, Hyperband | Hyperband was found to be the most computationally efficient, delivering optimal or near-optimal prediction accuracy. Bayesian Optimization also showed strong performance. | Hyperband > Bayesian Optimization > Random Search |
| Predicting Heart Failure Outcomes (SVM, RF, XGBoost) [3] | Grid Search, Random Search, Bayesian Search | Bayesian Search consistently required less processing time than both Grid and Random Search, while achieving competitive model performance (AUC scores >0.66). | Bayesian Search > Random Search > Grid Search |
| Oligomer Search for Organic Photovoltaics [4] | Random Search, Bayesian Optimization | Bayesian Optimization identified a thousand times more promising molecules with desired properties compared to Random Search using the same computational resources. | Bayesian Optimization >> Random Search |
| General Model Tuning (Digits Dataset) [6] | Grid Search, Random Search, Bayesian Optimization | Grid Search and Bayesian Optimization achieved the highest F-1 score (0.985), but Bayesian Optimization found this optimum in 67 iterations, versus Grid Search's 810. | Bayesian Optimization (by iterations) > Random Search > Grid Search |

Experimental Protocols for Hyperparameter Optimization

To ensure reproducible and effective hyperparameter tuning in MPP research, a structured experimental protocol is essential. The following workflow, adapted from studies using tools like KerasTuner and Optuna, outlines a standard methodology [2] [7].

Standard HPO Workflow for Molecular Property Prediction

The diagram below illustrates the iterative workflow for Bayesian Optimization, which incorporates learning from past trials.

Detailed Methodology

Problem Formulation:

- Define the Search Space: Specify the hyperparameters to be tuned and their value ranges (e.g., learning rate: [0.0001, 0.1], number of layers: [2, 5], units per layer: [32, 64, 128]) [7]. The search space must be broad enough to contain optimal values but constrained to maintain computational feasibility.

- Define the Objective Function: This function takes a set of hyperparameters, builds and trains a model (e.g., a Dense DNN or Convolutional Neural Network), and returns a performance metric to be optimized (e.g., validation set Mean Squared Error for regression tasks like predicting melt index) [2] [7].
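The two problem-formulation steps above can be sketched in plain Python. The search space mirrors the example ranges quoted in the text; everything else is an assumption of this sketch, including the names `SEARCH_SPACE`, `sample_config`, and `objective`, and in particular the synthetic loss formula, which stands in for "build, train, and return validation MSE" so that the example runs without a deep learning framework.

```python
import math
import random

# Search space mirroring the example ranges in the text.
SEARCH_SPACE = {
    "learning_rate": (1e-4, 1e-1),      # continuous, sampled on a log scale
    "num_layers": (2, 5),               # integer range, inclusive
    "units_per_layer": [32, 64, 128],   # categorical choices
}

def sample_config(rng):
    """Draw one random configuration from SEARCH_SPACE."""
    lo, hi = SEARCH_SPACE["learning_rate"]
    n_lo, n_hi = SEARCH_SPACE["num_layers"]
    return {
        "learning_rate": 10 ** rng.uniform(math.log10(lo), math.log10(hi)),
        "num_layers": rng.randint(n_lo, n_hi),
        "units_per_layer": rng.choice(SEARCH_SPACE["units_per_layer"]),
    }

def objective(config):
    """Synthetic stand-in for validation MSE (lower is better).

    Assumption: the loss is smallest at learning_rate=1e-2, 3 layers, 64 units.
    A real objective would train a network and evaluate it on held-out data.
    """
    lr_term = (math.log10(config["learning_rate"]) + 2.0) ** 2
    depth_term = 0.1 * (config["num_layers"] - 3) ** 2
    width_term = 0.05 * abs(math.log2(config["units_per_layer"]) - 6)
    return lr_term + depth_term + width_term

# A 50-trial random search over the space, as a simple baseline.
rng = random.Random(0)
trials = [sample_config(rng) for _ in range(50)]
best = min(trials, key=objective)
print(best, round(objective(best), 4))
```

Sampling the learning rate on a log scale is a common design choice because its plausible values span several orders of magnitude; a uniform draw on [0.0001, 0.1] would place almost all samples above 0.01.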

Select and Run HPO Algorithm:

- Grid Search: Systematically iterate over every combination in the pre-defined grid. This is often used as a baseline but is only feasible for small search spaces [3].

- Random Search: Randomly sample a pre-determined number of configurations from the search space. This is a robust baseline for larger spaces [2].

- Bayesian Optimization: Using a framework like Optuna or KerasTuner, run the iterative process shown in the workflow diagram. The algorithm uses a surrogate model (like a Gaussian Process or Tree-structured Parzen Estimator) to model the objective function and an acquisition function (like Expected Improvement) to balance exploration and exploitation when selecting the next hyperparameters to evaluate [2] [7].

Model Validation and Selection:

- Use techniques like k-fold cross-validation (e.g., 10-fold) during the HPO process to obtain a robust estimate of model performance and mitigate overfitting [3].

- Once the HPO process is complete, the best-performing set of hyperparameters is used to train a final model on the full training set.
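The validation step can be illustrated with a minimal k-fold splitter. This is a sketch under stated assumptions: `k_fold_indices` and `cross_val_score` are hypothetical helper names, the folds are contiguous (real pipelines usually shuffle or stratify first), and `evaluate` is whatever user-supplied function trains on the training indices and scores on the validation indices.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs for k contiguous, near-equal folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, val_idx
        start += size

def cross_val_score(evaluate, n_samples, k=10):
    """Average a user-supplied evaluate(train_idx, val_idx) over k folds."""
    scores = [evaluate(train, val) for train, val in k_fold_indices(n_samples, k)]
    return sum(scores) / len(scores)

# 25 samples split into 10 folds: every sample is validated exactly once.
folds = list(k_fold_indices(25, 10))
print([len(val) for _, val in folds])
```

During HPO, each candidate configuration would be scored with `cross_val_score`, and only the winning configuration is retrained on the full training set.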

Essential Research Reagent Solutions for HPO

The following software tools are critical for implementing hyperparameter optimization in molecular property prediction research.

| Tool / Resource | Function in HPO | Relevance to MPP Research |
|---|---|---|
| KerasTuner [2] | An intuitive Python library that integrates with TensorFlow/Keras workflows to perform HPO. | Recommended for its user-friendliness, making it accessible to chemical engineers and scientists without extensive computer science backgrounds. Supports Random Search, Bayesian Optimization, and Hyperband. |
| Optuna [2] [7] | A flexible Python framework for automated HPO, known for its efficient algorithms and distributed computing support. | Used for more advanced HPO, including the combination of Bayesian Optimization with Hyperband (BOHB). Its define-by-run API allows for dynamic search spaces. |
| Scikit-learn [8] [6] | A core Python library for machine learning that provides simple implementations of GridSearchCV and RandomizedSearchCV. | Ideal for initial experiments and optimizing traditional ML models (e.g., Random Forests, SVMs) on smaller datasets or with simpler neural networks. |
| Hyperband [2] | A bandit-based approach that uses early-stopping to speed up the random search through adaptive resource allocation. | Identified in recent MPP studies as the most computationally efficient algorithm, providing optimal or nearly optimal accuracy faster than other methods. |
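The early-stopping idea behind Hyperband can be sketched with successive halving, the subroutine that Hyperband runs repeatedly with different starting budgets. The code below is not the full Hyperband algorithm and not the KerasTuner or Optuna implementation; `successive_halving` and `toy_loss` are hypothetical names, and the loss formula is a synthetic assumption in which more budget (for example, more epochs) lowers the loss toward a floor set by the learning rate.

```python
def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Keep the best 1/eta of the configs each round, giving survivors eta times more budget.

    evaluate(config, budget) must return a loss (lower is better).
    """
    budget = min_budget
    survivors = list(configs)
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda config: evaluate(config, budget))
        survivors = ranked[: max(1, len(ranked) // eta)]   # discard the weakest early
        budget *= eta                                      # spend more on the rest
    return survivors[0]

def toy_loss(learning_rate, budget):
    """Synthetic loss: decays with budget toward a floor that is lowest at 0.01."""
    return abs(learning_rate - 0.01) + 1.0 / budget

learning_rates = [0.0001, 0.001, 0.01, 0.03, 0.1, 0.3, 0.5, 0.7, 1.0]
print(successive_halving(learning_rates, toy_loss))
```

With nine candidates and `eta=3`, only three configurations receive the second, larger budget, which is where the savings over training every configuration to completion come from.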

For researchers in drug development and molecular science, the choice of hyperparameter optimization strategy has a direct and significant impact on research outcomes. While Grid Search is a valuable tool for small-scale problems, its computational cost makes it impractical for tuning complex deep learning models. Random Search offers a powerful and easily parallelized alternative that is generally superior to Grid Search.

However, evidence from molecular property prediction and other scientific domains strongly suggests that Bayesian Optimization and Hyperband represent a more efficient and intelligent class of solutions [2] [3] [4]. Bayesian Optimization's sample efficiency makes it ideal when each model training is computationally expensive, as it can find excellent hyperparameters in fewer trials. For the utmost in speed and efficiency, Hyperband is highly recommended, as it has been shown to deliver top-tier results for MPP in the least amount of time [2].

A practical strategy for researchers is to adopt a hybrid approach: using Bayesian Optimization or Hyperband to efficiently explore a large search space and identify promising regions, followed by a more focused, fine-grained search (or even a local Grid Search) around the best configuration found to refine the results [5]. By leveraging modern software tools like KerasTuner and Optuna, scientists can integrate these advanced HPO methods into their workflows, accelerating the discovery of accurate models for molecular property prediction.

The Critical Role of Tuning in Molecular Property Prediction

In the field of molecular property prediction, the development of robust machine learning models is crucial for accelerating drug discovery and materials science. The performance of these models is highly dependent on their hyperparameters—the configuration settings that govern the learning process. Unlike model parameters learned during training, hyperparameters are set beforehand and can dramatically influence predictive accuracy, training stability, and generalization capability [1]. Selecting appropriate hyperparameter optimization strategies is therefore not merely a technical detail but a critical determinant of research outcomes in computational chemistry and pharmaceutical development.

The challenge is particularly acute in molecular property prediction, where datasets are often characterized by high dimensionality, significant noise, and limited sample sizes—sometimes containing as few as 29 labeled examples [9]. Within this context, two fundamentally different approaches have emerged as standards: the exhaustive Grid Search and the adaptive Bayesian Optimization. This article provides a comprehensive comparison of these methods, examining their theoretical foundations, practical performance, and suitability for molecular informatics tasks through experimental data and detailed methodological analysis.

Understanding the Optimization Methods

Grid Search: The Systematic Explorer

Grid Search represents the most straightforward approach to hyperparameter tuning. It operates by systematically evaluating a predefined set of hyperparameter combinations across a multidimensional grid [1] [6]. Imagine a scenario where a researcher is tuning a random forest model for toxicity prediction with two hyperparameters: the number of trees in the forest (`n_estimators`) and the maximum depth of each tree (`max_depth`). If `n_estimators` has three possible values [50, 100, 200] and `max_depth` has four [None, 10, 20, 30], Grid Search would train and evaluate 3 × 4 = 12 separate models to identify the optimal combination [10].
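The 3 × 4 grid from this example can be enumerated directly. In the sketch below the grid values come from the text, while `toy_score` is a hypothetical scoring function that stands in for training and validating a random forest; its formula is an assumption made so the example runs without a machine learning library.

```python
from itertools import product

param_grid = {
    "n_estimators": [50, 100, 200],
    "max_depth": [None, 10, 20, 30],
}

def toy_score(n_estimators, max_depth):
    """Hypothetical validation score; a real run would train and evaluate a model."""
    depth = 40 if max_depth is None else max_depth   # treat None as "very deep"
    return 1.0 - abs(n_estimators - 100) / 1000 - abs(depth - 20) / 100

# Build every combination in the grid, then keep the highest-scoring one.
names = list(param_grid)
combinations = [dict(zip(names, values)) for values in product(*param_grid.values())]
best = max(combinations, key=lambda params: toy_score(**params))
print(len(combinations), best)
```

Each combination is scored independently of the others, which is why the loop is trivial to parallelize and also why it cannot skip regions that are already known to perform poorly.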

The primary strength of Grid Search lies in its comprehensive coverage of the specified search space. When dealing with a small number of hyperparameters with limited possible values, this brute-force method guarantees finding the best combination within the defined grid [1]. Additionally, its simplicity and deterministic nature make it easily implementable and reproducible, appealing qualities for researchers without extensive optimization expertise [10].

However, Grid Search suffers from the "curse of dimensionality"—as the number of hyperparameters increases, the search space grows exponentially [11]. For a model with ten hyperparameters, each with just five possible values, Grid Search would require evaluating 5¹⁰ = 9,765,625 combinations, becoming computationally prohibitive. Furthermore, this method treats each hyperparameter combination independently without learning from previous evaluations, potentially wasting computational resources on poorly performing regions of the search space [1] [6].
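The exponential growth is easy to check numerically. In this short sketch `grid_size` is a hypothetical helper, and the one-minute training cost per model is an assumption used only to put the counts on a time scale.

```python
import math

def grid_size(values_per_hyperparameter):
    """Number of models an exhaustive grid must train."""
    return math.prod(values_per_hyperparameter)

MINUTES_PER_MODEL = 1  # hypothetical training cost, for illustration only

for n_hyperparameters in (2, 5, 10):
    size = grid_size([5] * n_hyperparameters)
    days = size * MINUTES_PER_MODEL / (60 * 24)
    print(f"{n_hyperparameters} hyperparameters: {size:,} models, about {days:,.1f} days")
```

Under that assumed cost, the ten-hyperparameter grid would take thousands of days of sequential compute, which is why exhaustive search is usually restricted to two or three hyperparameters.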

Bayesian Optimization: The Adaptive Learner

Bayesian Optimization takes a fundamentally different approach by building a probabilistic model of the objective function and using it to direct the search toward promising hyperparameter configurations [1] [11]. This method operates sequentially, using past evaluation results to inform future selections through a two-component framework:

- Surrogate Model: Typically a Gaussian Process, this model approximates the unknown relationship between hyperparameters and model performance, providing predictions and uncertainty estimates for unevaluated configurations [11] [3].

- Acquisition Function: This utility function (e.g., Expected Improvement) uses the surrogate's predictions to balance exploration of uncertain regions with exploitation of known promising areas, determining the next hyperparameters to evaluate [11].
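To show how an acquisition function trades off the two goals, the sketch below implements the standard closed-form Expected Improvement for a maximization problem, assuming the surrogate returns a Gaussian prediction (mean and standard deviation) at each candidate. The function name, the `xi` margin parameter, and the example numbers are assumptions of this illustration.

```python
import math

def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Expected Improvement over best_so_far for a Gaussian prediction (maximization)."""
    if std <= 0.0:
        return max(0.0, mean - best_so_far - xi)   # no uncertainty: plain improvement
    z = (mean - best_so_far - xi) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best_so_far - xi) * cdf + std * pdf

# Candidate A: predicted equal to the current best, almost no uncertainty.
# Candidate B: predicted slightly worse, but highly uncertain.
ei_a = expected_improvement(mean=0.80, std=0.01, best_so_far=0.80)
ei_b = expected_improvement(mean=0.78, std=0.10, best_so_far=0.80)
print(f"EI(A) = {ei_a:.4f}, EI(B) = {ei_b:.4f}")
```

Candidate B receives the higher score even though its predicted mean is lower, because its uncertainty leaves room for a large improvement; this is the exploration side of the trade-off.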

The Bayesian optimization cycle begins with a few initial random samples. After each iteration, the surrogate model updates its understanding of the objective function, and the acquisition function suggests the most informative point to evaluate next [11]. This adaptive approach allows Bayesian Optimization to typically converge to high-performing hyperparameters with far fewer evaluations than Grid Search, making it particularly valuable for optimizing complex models with many hyperparameters or when each evaluation is computationally expensive [6] [3].

A potential limitation is that each iteration requires additional computation to update the surrogate model and optimize the acquisition function [6]. However, this overhead is generally negligible compared to the cost of training complex machine learning models for molecular property prediction.

Table 1: Fundamental Comparison of Optimization Methods

| Characteristic | Grid Search | Bayesian Optimization |
|---|---|---|
| Search Strategy | Exhaustive search across a predefined grid | Adaptive sampling guided by a probabilistic model |
| Parameter Learning | Does not learn from previous evaluations | Actively uses past results to inform next selection |
| Theoretical Basis | Brute-force enumeration | Bayes' theorem, Gaussian processes |
| Key Parameters | Grid resolution, parameter bounds | Acquisition function, surrogate model, initial samples |
| Optimality Guarantee | Finds best point within the defined grid | No guarantee, but typically finds good solutions efficiently |

Comparative Performance Analysis

Computational Efficiency and Model Performance

Multiple empirical studies have demonstrated the superior efficiency of Bayesian Optimization compared to Grid Search, including in applied prediction tasks outside molecular science. In a comprehensive study focused on predicting heart failure outcomes, researchers evaluated Grid Search, Random Search, and Bayesian Search across three machine learning algorithms: Support Vector Machine (SVM), Random Forest (RF), and eXtreme Gradient Boosting (XGBoost) [3]. The dataset included 167 features from 2008 patients, with models built to predict all-cause readmission and mortality.

The study revealed that while all optimization methods could find hyperparameters yielding competitive model performance, Bayesian Search consistently required less processing time than both Grid and Random Search methods [3]. This computational advantage was achieved without sacrificing predictive performance, as measured by accuracy, sensitivity, and AUC scores. After rigorous 10-fold cross-validation, Random Forest models demonstrated superior robustness with an average AUC improvement of 0.03815, whereas SVM models showed potential for overfitting [3].

In a separate case study comparing hyperparameter tuning approaches for a random forest classifier, Bayesian Optimization found hyperparameters yielding the highest F-1 score after just 67 iterations—far fewer than the 680 iterations Grid Search required to find its best combination [6]. Although each Bayesian Optimization iteration requires more computation than a Grid Search evaluation, the dramatically reduced number of needed evaluations results in significantly shorter overall run times for complex problems [6].

Table 2: Experimental Results from Heart Failure Prediction Study [3]

| Optimization Method | Best Accuracy (SVM) | Robustness (Avg. AUC Δ post-CV) | Computational Efficiency |
|---|---|---|---|
| Grid Search | 0.6294 | Potential overfitting (SVM: -0.0074) | Highest processing time |
| Random Search | Competitive with GS | Moderate improvement (XGBoost: +0.01683) | Medium processing time |
| Bayesian Search | Competitive with GS | Superior robustness (RF: +0.03815) | Best (lowest processing time) |

Performance in High-Dimensional and Low-Data Regimes

Molecular property prediction often involves navigating high-dimensional chemical spaces with limited experimental data. In such challenging regimes, the advantages of Bayesian Optimization become particularly pronounced.

Researchers successfully applied Bayesian Optimization to parameterize a 41-dimensional coarse-grained model of Pebax-1657, a copolymer composed of alternating polyamide and polyether segments [12]. The optimization framework simultaneously targeted multiple physical properties—density, radius of gyration, and glass transition temperature—achieving convergence in fewer than 600 iterations and producing a model that accurately reproduced key properties of its atomistic counterpart [12]. This demonstrates Bayesian Optimization's capability to handle complex, high-dimensional parameter spaces that would be computationally intractable for Grid Search.

In ultra-low data regimes, where labeled molecular properties are exceptionally scarce, adaptive optimization methods show particular promise. One study demonstrated that advanced multi-task learning approaches could learn accurate models with as few as 29 labeled samples [9]. While this research focused on model architecture rather than hyperparameter optimization, it highlights the critical importance of data-efficient methods throughout the machine learning pipeline in molecular informatics.

Optimization Workflows in Molecular Property Prediction

The fundamental difference between Grid Search and Bayesian Optimization is best understood through their distinct workflows, particularly in the context of molecular property prediction.

Grid Search Workflow

Diagram 1: Grid Search Iteration Process

The Grid Search workflow follows a strictly predetermined path. After researchers define the hyperparameter grid, the method systematically generates all possible combinations [1] [10]. For each combination, it trains a model (such as a graph neural network for molecular properties) and evaluates its performance using predefined metrics like AUC or accuracy [3]. This process continues exhaustively until all combinations have been evaluated, finally selecting the combination that yielded the best performance [6]. The workflow does not incorporate knowledge from previous evaluations when selecting subsequent hyperparameters, making it simple but inefficient for high-dimensional spaces.

Bayesian Optimization Workflow

Diagram 2: Bayesian Optimization Iteration Cycle

Bayesian Optimization employs a fundamentally different, adaptive approach. The process begins with a small set of random initial samples to build a preliminary surrogate model of the objective function [11] [3]. Based on this model, an acquisition function determines the most promising hyperparameters to evaluate next by balancing exploration of uncertain regions with exploitation of known promising areas [11]. After evaluating the selected hyperparameters (by training and testing a model), the results update the surrogate model, refining its understanding of the hyperparameter-performance relationship [11] [3]. This iterative process continues until convergence criteria are met, efficiently guiding the search toward optimal regions of the hyperparameter space.
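The cycle described above (initial random samples, surrogate fit, acquisition, evaluation, update) can be sketched end to end in plain Python for a single hyperparameter scaled to [0, 1]. Several simplifications are assumptions of this sketch rather than anything prescribed by the cited studies: the surrogate is a zero-mean Gaussian Process with a fixed RBF kernel, the acquisition function is an Upper Confidence Bound evaluated on a fixed candidate grid, the stopping rule is a fixed iteration count, and `objective` is a synthetic score with its peak at 0.7.

```python
import math
import random

def rbf(a, b, length_scale=0.15):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)

def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(row) + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x

def gp_posterior(xs, ys, x_new, jitter=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    alpha = solve(K, ys)        # K^-1 y
    v = solve(K, k_star)        # K^-1 k*
    mean = sum(k * a for k, a in zip(k_star, alpha))
    var = 1.0 - sum(k * w for k, w in zip(k_star, v))   # rbf(x, x) equals 1
    return mean, math.sqrt(max(var, 0.0))

def objective(x):
    """Synthetic validation score to maximize; a real one would train a model."""
    return math.exp(-((x - 0.7) ** 2) / 0.02)

def bayesian_optimize(n_initial=3, n_iterations=10, kappa=2.0, seed=0):
    rng = random.Random(seed)
    xs = [rng.random() for _ in range(n_initial)]       # initial random samples
    ys = [objective(x) for x in xs]
    candidates = [i / 100 for i in range(101)]
    for _ in range(n_iterations):
        def ucb(x):
            mean, std = gp_posterior(xs, ys, x)         # surrogate prediction
            return mean + kappa * std                   # exploit + explore
        x_next = max(candidates, key=ucb)               # acquisition step
        xs.append(x_next)
        ys.append(objective(x_next))                    # evaluate, then update data
    return xs, ys

xs, ys = bayesian_optimize()
best_index = max(range(len(ys)), key=ys.__getitem__)
print(f"best x = {xs[best_index]:.2f}, score = {ys[best_index]:.3f}")
```

Libraries such as Optuna or scikit-optimize handle kernel fitting, multi-dimensional and categorical spaces, and acquisition optimization; the hand-rolled linear solver here exists only to keep the sketch dependency-free.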

Essential Research Reagent Solutions

Implementing effective hyperparameter optimization requires both software tools and methodological components. Below are key "research reagents" for molecular property prediction studies:

Table 3: Essential Research Reagent Solutions for Hyperparameter Optimization

| Research Reagent | Function | Example Tools/Packages |
|---|---|---|
| Bayesian Optimization Frameworks | Provides algorithms for efficient hyperparameter search | Ax, BoTorch, Optuna, Scikit-optimize [11] |
| Molecular Representation Libraries | Converts chemical structures to machine-readable formats | RDKit, SMILES enumeration tools [13] |
| Surrogate Models | Approximates the objective function for Bayesian methods | Gaussian Processes, Random Forests [11] [3] |
| Acquisition Functions | Guides parameter selection by balancing exploration/exploitation | Expected Improvement, Upper Confidence Bound [11] |
| Multi-Objective Optimization | Handles optimization of multiple conflicting properties | Hypervolume-based methods, scalarization approaches [14] |

The critical role of tuning in molecular property prediction cannot be overstated, as hyperparameter selection directly influences model reliability and consequently decision-making in drug discovery and materials design. Through comparative analysis, Bayesian Optimization emerges as the superior approach for most molecular informatics applications, particularly given the field's characteristic high-dimensional problems and limited data regimes.

Bayesian Optimization demonstrates consistently better computational efficiency than Grid Search while achieving comparable or superior model performance [6] [3]. Its ability to navigate complex parameter spaces with fewer evaluations makes it particularly valuable for optimizing contemporary deep learning architectures used in molecular property prediction [13] [12]. Furthermore, its principled balance of exploration and exploitation aligns well with the need to extract maximum insights from often scarce and noisy experimental data [9].

Grid Search retains utility for simpler models with few hyperparameters or when exhaustive search is computationally feasible [1] [10]. However, for the increasingly complex prediction tasks in modern chemical and pharmaceutical research—such as multi-property optimization, transfer learning, and few-shot learning scenarios—Bayesian Optimization provides the sophisticated toolkit necessary to advance the field efficiently [14] [15]. As molecular property prediction continues to evolve toward more data-efficient and robust methodologies, Bayesian Optimization stands as an essential component in the researcher's toolkit.

In the landscape of modern drug discovery, molecular representation serves as the foundational bridge between chemical structures and their predicted biological activity or physical properties. The rapid evolution of Artificial Intelligence (AI) has positioned AI-assisted drug design as a prominent research area, where the critical first step is translating molecules into a computer-readable format [16]. This process, known as molecular representation, enables machine learning (ML) and deep learning (DL) models to process, analyze, and predict molecular behavior [16]. The choice of representation directly influences model performance in crucial tasks like virtual screening, activity prediction, and scaffold hopping—the strategic modification of core molecular structures while retaining biological activity [16].

Within this context, hyperparameter optimization becomes paramount for developing accurate predictive models. As highlighted in recent methodology reviews, "hyperparameter optimization is often the most resource-intensive step in model training," and most prior molecular property prediction studies have paid limited attention to this process, resulting in suboptimal predictions [2]. This guide objectively compares predominant molecular representation methods, examining their performance characteristics and integration with optimization protocols like Grid Search and Bayesian Optimization to empower researchers in making informed methodological choices.

Molecular Representation Methods: A Comparative Analysis

Molecular representations can be broadly categorized into traditional expert-defined features and modern learned representations. The following sections provide a detailed comparison of their methodologies, strengths, and limitations.

Traditional Molecular Representations

Traditional methods rely on predefined rules and expert knowledge to convert molecular structures into quantitative descriptors.

Molecular Fingerprints: These are binary bit strings encoding the presence or absence of specific molecular substructures or patterns. The most widely used method is Extended Connectivity Fingerprints (ECFP), which captures local atomic environments in a compact, efficient manner [16] [17]. ECFP and similar fingerprints are particularly effective for similarity searching and clustering due to their computational efficiency [16]. Studies have found MACCS fingerprints to be surprisingly effective overall despite their simplicity [17].
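Similarity searching with bit-string fingerprints is commonly done with the Tanimoto (Jaccard) coefficient, which the sketch below computes on sets of "on" bit positions. The bit indices are made up for illustration and do not come from a real ECFP or MACCS calculation, and returning 1.0 for two empty fingerprints is a convention chosen for this example.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of 'on' bit indices."""
    a, b = set(fp_a), set(fp_b)
    if not a and not b:
        return 1.0                      # convention chosen for this sketch
    return len(a & b) / len(a | b)      # shared bits divided by all bits set in either

# Hypothetical fingerprints: the query shares two of four distinct bits with candidate 1.
query = {12, 87, 301}
candidate_1 = {87, 301, 455}
candidate_2 = {3, 19, 640}
print(tanimoto(query, candidate_1), tanimoto(query, candidate_2))
```

Because the computation reduces to set intersections and unions, it scales to very large compound libraries, which is part of why fingerprints remain popular for similarity search and clustering.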

Molecular Descriptors: These quantify physical or chemical properties of molecules, such as molecular weight, hydrophobicity, or topological indices [16] [17]. Descriptors from libraries like PaDEL have proven particularly well-suited for predicting physical properties of molecules [17]. They are extensively used in Quantitative Structure-Activity Relationship (QSAR) modeling [16].

String-Based Representations: The Simplified Molecular Input Line Entry System (SMILES) provides a compact method to encode chemical structures as strings of ASCII characters [16]. Despite limitations in capturing molecular complexity, SMILES remains mainstream due to its human-readability and simplicity [16]. Improved versions like CXSMILES and SMARTS have been developed to extend its functionality [16].

Modern AI-Driven Molecular Representations

Modern approaches employ deep learning to automatically learn feature representations directly from data, moving beyond predefined rules.

Graph-Based Representations: These treat molecules as graphs with atoms as nodes and bonds as edges. Graph Neural Networks (GNNs), particularly Message Passing Neural Networks (MPNNs), process these graphs to capture both local and global molecular features [16] [9] [18]. A 2025 study introduced adaptive checkpointing with specialization (ACS) for multi-task GNNs, effectively mitigating "negative transfer" in scenarios with imbalanced training data [9].
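The atoms-as-nodes, bonds-as-edges view can be made concrete with a toy message-passing step. This sketch uses the heavy atoms of ethanol with the atomic number as the only node feature, and a fixed "add the sum of neighbor features" update; real MPNNs use learned message and update functions, so the arithmetic here is an assumption for illustration only.

```python
# Heavy atoms of ethanol: C0 - C1 - O2, one feature per atom (atomic number).
features = {0: 6.0, 1: 6.0, 2: 8.0}
bonds = [(0, 1), (1, 2)]

def build_adjacency(bonds):
    """Undirected adjacency list from a list of bonded atom index pairs."""
    adjacency = {}
    for u, v in bonds:
        adjacency.setdefault(u, []).append(v)
        adjacency.setdefault(v, []).append(u)
    return adjacency

def message_passing_step(features, adjacency):
    """New feature of each atom = its own feature + sum of its neighbors' features."""
    return {
        atom: value + sum(features[nbr] for nbr in adjacency.get(atom, []))
        for atom, value in features.items()
    }

adjacency = build_adjacency(bonds)
after_one = message_passing_step(features, adjacency)
after_two = message_passing_step(after_one, adjacency)
graph_embedding = sum(after_two.values())   # simple sum readout over atoms
print(after_one, after_two, graph_embedding)
```

After one step each atom only knows about its direct neighbors; after two steps the terminal carbon's feature already depends on the oxygen, which is how stacked layers capture progressively more global structure.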

Language Model-Based Representations: Inspired by natural language processing, models like Transformers have been adapted to process molecular sequences (e.g., SMILES or SELFIES) by treating them as a specialized chemical language [16]. These models tokenize molecular strings at the atomic or substructure level and process them using architectures like Transformers or BERT [16].
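Atom-level tokenization of a SMILES string can be sketched with a regular expression. The pattern below is a simplified assumption written for this example, not the tokenizer of any particular published model: it keeps bracket atoms and the two-letter halogens Cl and Br together and treats every other symbol as its own token, without checking chemical validity.

```python
import re

# Order matters: bracket atoms and two-letter elements must match before single letters.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+()/\\.@:~*$]")

def tokenize_smiles(smiles):
    """Split a SMILES string into atom-level tokens; raise if any character is skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens

print(tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O"))   # aspirin
print(tokenize_smiles("C[C@H](N)C(=O)O"))         # alanine with a stereocenter
```

The resulting token list is what a Transformer-style model would map to integer IDs and embeddings, in the same way words or subwords are handled in natural language processing.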

Multimodal Representations: Recent approaches integrate multiple representation types to leverage complementary information. The Multimodal Cross-Attention Molecular Property Prediction (MCMPP) model innovatively integrates SMILES, ECFP fingerprints, molecular graphs, and 3D molecular conformations through a cross-attention mechanism [18]. Tests on benchmark datasets demonstrate how MCMPP improves prediction accuracy by using complementary effects across modalities [18].

Table 1: Comparative Analysis of Molecular Representation Methods

| Representation Type | Key Examples | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|---|
| Fingerprints | ECFP, MACCS [17] | Computational efficiency; interpretability; effective for similarity search [16] | Limited to predefined features; may miss complex patterns [16] | Virtual screening, QSAR, clustering [16] |
| Descriptors | PaDEL, alvaDesc [17] | Direct encoding of physicochemical properties; interpretable [16] [17] | Feature engineering requires domain expertise; may not capture structural nuances [16] | Physical property prediction, QSAR [17] |
| SMILES/Strings | SMILES, SELFIES [16] | Simple, compact, human-readable [16] | Struggles with structural complexity; variance problem [16] | Sequence-based model input, simple database storage |
| Graph-Based | GNNs, MPNNs [16] [9] | Naturally represents molecular structure; captures local/global features [16] | Computationally intensive; complex architecture [17] | Complex property prediction, structure-function studies [16] |
| Multimodal | MCMPP [18] | Leverages complementary information; superior accuracy [18] | High complexity; integration challenges [18] | Challenging prediction tasks where accuracy is paramount [18] |

Experimental Comparison and Performance Benchmarks

Quantitative Performance Across Benchmark Datasets

Comprehensive comparisons of molecular feature representations on multiple benchmark datasets reveal nuanced performance patterns. A broad evaluation on 11 benchmark datasets for predicting properties like mutagenicity, melting points, and solubility showed that several molecular features perform similarly well overall [17]. Specifically, molecular descriptors from the PaDEL library excelled for predicting physical properties, while MACCS fingerprints performed robustly despite their simplicity [17]. Notably, learnable representations achieved competitive performance compared to expert-based representations, though task-specific representations like graph convolutions rarely offered substantial benefits given their higher computational demands [17].

The MCMPP multimodal model demonstrated significant advantages on established benchmarks including Delaney (solubility), Lipophilicity, SAMPL, and BACE datasets [18]. By integrating SMILES, ECFP fingerprints, molecular graphs, and 3D conformations processed through specialized encoders (Transformer-Encoder, BiLSTM, GCN, reduced Unimol+), MCMPP achieved the lowest Root-Mean-Square Error (RMSE) compared to single-modality models and other fusion techniques [18]. This demonstrates that effectively leveraging complementary information across modalities can substantially enhance prediction accuracy.

Performance in Low-Data Regimes

Data scarcity remains a major obstacle in molecular property prediction, particularly for pharmaceutical applications. Modern multi-task learning approaches address this by leveraging correlations among related properties. The recently developed ACS method for multi-task GNNs effectively mitigates detrimental "negative transfer," where updates from one task harm another [9]. In practical validation, ACS enabled accurate predictions with as few as 29 labeled samples in a sustainable aviation fuel property prediction task—capabilities unattainable with single-task learning or conventional multi-task learning [9].

The Critical Role of Data Consistency

Beyond model architecture and representation choice, data quality profoundly impacts performance. A 2025 analysis of public ADME (Absorption, Distribution, Metabolism, Excretion) datasets uncovered significant distributional misalignments and inconsistent property annotations between gold-standard and popular benchmark sources [19]. These discrepancies, arising from differences in experimental conditions and chemical space coverage, can introduce noise and degrade model performance [19]. The findings emphasize that data consistency assessment is a crucial prerequisite for reliable modeling, leading to the development of tools like AssayInspector to systematically identify outliers, batch effects, and dataset discrepancies before model training [19].

Hyperparameter Optimization Frameworks for Molecular Property Prediction

Hyperparameter optimization is essential for developing accurate and efficient deep learning models for molecular property prediction. Comparative studies have evaluated several HPO algorithms, including Grid Search, Random Search, Bayesian Optimization, and Hyperband [2].

Comparison of HPO Methodologies

Grid Search: This exhaustive method evaluates every combination in a predefined hyperparameter grid. It is methodical and guaranteed to find the best combination among the grid points, although it can miss better values that lie between them, and it becomes computationally prohibitive as the number of hyperparameters increases [2].

Bayesian Optimization: This sequential strategy uses probabilistic models to make informed decisions about which hyperparameters to test next, balancing exploration of new combinations with exploitation of known good regions [2] [20]. It typically requires fewer evaluations than Grid Search and is particularly valuable for complex models with multiple hyperparameters [2].

Hyperband: This algorithm combines random search with early-stopping to accelerate the optimization process, making it highly computationally efficient [2]. Recent research concludes that "the hyperband algorithm, which has not been used in previous MPP studies, is most computationally efficient; it gives MPP results that are optimal or nearly optimal in terms of prediction accuracy" [2].
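To make the "random search with early-stopping" idea concrete, the sketch below implements a single round-based elimination loop (successive halving), which is the building block Hyperband repeats with different budget trade-offs. It is a simplified illustration, not the KerasTuner implementation; `toy_loss` is a hypothetical stand-in for training a model for a given number of epochs.

```python
import math
import random

def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Keep the best 1/eta of the configurations each round, growing the budget by eta."""
    survivors = list(configs)
    budget = min_budget
    while len(survivors) > 1:
        # Evaluate every survivor at the current (cheap) budget; lower loss is better.
        ranked = sorted(survivors, key=lambda cfg: evaluate(cfg, budget))
        survivors = ranked[:max(1, len(survivors) // eta)]
        budget *= eta  # the remaining configurations earn a larger budget
    return survivors[0]

def toy_loss(learning_rate, epochs):
    """Hypothetical validation loss: best near lr = 1e-3, improves with more epochs."""
    return (math.log10(learning_rate) + 3.0) ** 2 + 1.0 / epochs

rng = random.Random(0)
configs = [10 ** rng.uniform(-5, -1) for _ in range(9)]  # randomly sampled learning rates
best_lr = successive_halving(configs, toy_loss)
print(f"selected learning rate: {best_lr:.2e}")
```

With nine starting configurations and `eta=3`, the loop trains nine models at budget 1, three at budget 3, and returns the single survivor. The risk noted in Table 2 is visible here: a configuration that only performs well at larger budgets would be eliminated in the first round.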

Table 2: Hyperparameter Optimization Methods for Molecular Property Prediction

| Optimization Method | Mechanism | Advantages | Disadvantages | Recommended Context |
|---|---|---|---|---|
| Grid Search [2] | Exhaustive search over defined space | Simple; finds best in-grid combination; easily parallelized [2] | Computationally intractable for high dimensions [2] | Small hyperparameter spaces with limited resources |
| Random Search [2] | Random sampling from parameter distributions | More efficient than grid search; good for high dimensions [2] | May miss important regions; no learning from past trials [2] | Moderate-dimensional spaces with limited computational budget |
| Bayesian Optimization [2] [20] | Probabilistic model-guided sequential search | Sample-efficient; balances exploration/exploitation [2] [20] | Complex implementation; sequential nature can limit parallelism [2] | Complex models with costly evaluations and limited parameters |
| Hyperband [2] | Random search with early-stopping | High computational efficiency; effective resource allocation [2] | May terminate promising configurations prematurely [2] | Large-scale models with many hyperparameters and limited resources |
| BOHB [2] | Bayesian Optimization + Hyperband | Combines efficiency of Hyperband with guidance of BO [2] | Increased implementation complexity [2] | Diverse molecular representations requiring robust optimization |

Integrated Workflow for Representation and Optimization

The relationship between molecular representation selection and hyperparameter optimization follows a logical sequence, where choices in one area influence decisions in the other.

Essential Research Reagents and Computational Tools

Successful implementation of molecular representation methods requires specific computational tools and resources. The following table catalogs key solutions referenced in recent literature.

Table 3: Essential Research Reagent Solutions for Molecular Representation Research

| Tool/Resource | Type | Primary Function | Relevant Context |
|---|---|---|---|
| RDKit [19] | Software Library | Calculates molecular descriptors, fingerprints, and processing | Used in AssayInspector for descriptor calculation [19] |
| KerasTuner [2] | HPO Library | Hyperparameter optimization for deep learning models | Recommended for HPO of DNNs for molecular property prediction [2] |
| AssayInspector [19] | Data Quality Tool | Identifies dataset discrepancies and distribution misalignments | Critical for data consistency assessment before model training [19] |
| FGBench [21] | Specialized Dataset | Provides functional group-level molecular property reasoning | Enhances interpretability and structure-aware reasoning in LLMs [21] |
| PaDEL [17] | Descriptor Software | Calculates comprehensive molecular descriptors | Particularly effective for predicting physical properties [17] |

The landscape of molecular representation has evolved significantly from traditional fingerprints and descriptors to modern graph-based and multimodal approaches. Each representation offers distinct advantages: fingerprints and descriptors provide computational efficiency and interpretability, graph-based methods naturally capture molecular structure, and multimodal approaches deliver superior accuracy by integrating complementary information [16] [17] [18].

The choice of representation must align with the specific prediction task, dataset characteristics, and computational resources. For low-data regimes, multi-task learning with methods like ACS demonstrates remarkable efficacy [9]. Regardless of the representation selected, rigorous hyperparameter optimization is essential, with Hyperband emerging as a particularly efficient algorithm for molecular property prediction [2]. Furthermore, data consistency assessment must precede modeling to ensure reliable performance [19].

As the field advances, the integration of specialized chemical knowledge—such as functional group information from resources like FGBench—with sophisticated representation learning and efficient optimization protocols will continue to enhance the accuracy, interpretability, and impact of molecular property prediction in accelerating drug discovery and materials design [21].

In the field of molecular property prediction, the accuracy of machine learning models is critical for accelerating drug discovery and materials science. These models depend heavily on their hyperparameters—the configuration settings that govern the learning process itself. Unlike model parameters learned from data, hyperparameters are set prior to training and significantly influence predictive performance. The challenge of identifying optimal hyperparameter configurations is a fundamental step in developing reliable predictive models for applications ranging from drug efficacy studies to organic photovoltaic material design.

Among the various strategies available, Grid Search represents the most straightforward and systematic approach. As a brute-force method, it exemplifies exhaustive exploration of predefined hyperparameter spaces. This guide examines Grid Search's methodology, performance, and practical implementation within molecular property prediction research, providing a direct comparison with the increasingly prevalent Bayesian Optimization approach. Through experimental data and detailed protocols, we equip researchers with the knowledge to select appropriate tuning strategies for their specific computational challenges.

Understanding Grid Search: Mechanism and Workflow

Grid Search operates on a simple yet exhaustive principle: it performs an organized exploration of every combination within a user-defined hyperparameter grid. Imagine specifying a set of values for several hyperparameters, such as the learning rate (e.g., 0.01, 0.001) and the number of layers in a neural network (e.g., 2, 3, 4). Grid Search would systematically construct and evaluate a model for each possible combination of these values—(0.01, 2), (0.01, 3), (0.01, 4), (0.001, 2), (0.001, 3), (0.001, 4)—resulting in six distinct models in this example [1].
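The enumeration described above can be sketched in a few lines with `itertools.product`. The dictionary keys are illustrative names; only the values come from the example in the text.

```python
from itertools import product

# Grid from the example above: 2 learning rates x 3 layer counts.
grid = {
    "learning_rate": [0.01, 0.001],
    "num_layers": [2, 3, 4],
}

# Cartesian product of all value lists, one dict per combination.
combinations = [dict(zip(grid, values)) for values in product(*grid.values())]

for config in combinations:
    print(config)
print(len(combinations), "models to train")
```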

This method guarantees that the best configuration within the specified grid will be found, as no combination is left unevaluated. Its implementation is conceptually simple and easily parallelized, as each point in the grid can be evaluated independently of the others. However, this exhaustive nature is also its primary drawback: the total number of evaluations is the product of the number of values per hyperparameter, so it grows exponentially with the number of hyperparameters, a phenomenon known as the "curse of dimensionality." This can make Grid Search computationally prohibitive for tuning a large number of hyperparameters or when model evaluation is inherently expensive, as is often the case with complex graph neural networks predicting molecular properties [1] [22].
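A quick calculation shows how fast the grid grows. The sketch assumes, purely for illustration, five candidate values per hyperparameter.

```python
from math import prod

def grid_size(values_per_hyperparameter):
    """Number of models a full grid search must train."""
    return prod(values_per_hyperparameter)

# Assumed for illustration: 5 candidate values for each hyperparameter.
for n_hyperparameters in (2, 4, 6, 8):
    size = grid_size([5] * n_hyperparameters)
    print(f"{n_hyperparameters} hyperparameters -> {size} combinations")
```

Going from two to eight hyperparameters raises the count from 25 to 390,625 combinations, before any repeats for cross-validation.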

The following diagram illustrates the systematic workflow of a Grid Search.

Experimental Comparison: Grid Search vs. Bayesian Optimization

Performance Metrics and Experimental Data

The comparative efficiency of Grid Search and Bayesian Optimization has been quantified across various studies. In one HVAC system modeling study, researchers developed and tested 288 unique hyperparameter configurations using Grid Search, with each configuration trained three times, resulting in a total of 864 artificial neural network models [23]. This highlights the resource-intensive nature of a comprehensive Grid Search.

When compared directly, Bayesian Optimization has demonstrated superior sample efficiency. One report found that it reached the same performance level as Grid Search with 7x fewer iterations and 5x faster execution [22]. This efficiency stems from its informed search strategy, which uses earlier results to steer evaluations away from unpromising regions of the search space.

In molecular discovery, the performance gap can be even more significant. A 2025 study comparing search approaches for discovering organic solar cell molecules found that in a vast chemical space of over 10^14 molecules, Bayesian Optimization identified a thousand times more promising molecules with the desired properties compared to random search (a simpler alternative to Grid Search) using the same computational resources [24]. Another molecular optimization framework, MolDAIS, demonstrated that Bayesian Optimization could identify near-optimal candidates from chemical libraries of over 100,000 molecules using fewer than 100 property evaluations [25].

Table 1: Experimental Performance Comparison of Tuning Methods

| Metric | Grid Search | Bayesian Optimization | Experimental Context |
|---|---|---|---|
| Number of Evaluations | 288 configurations (864 models) [23] | Fewer than 100 evaluations [25] | Separate studies: neural network tuning for HVAC modeling [23] vs. molecular library search [25] |
| Computational Efficiency | Baseline (1x) | 5x faster execution [22] | Achieving equivalent model performance |
| Sample Efficiency | Exhaustive | 7x fewer iterations [22] | Achieving equivalent model performance |
| Discovery Rate | Not specifically tested | 1000x more promising molecules than random search [24] | Exploration of a chemical space of >10^14 molecules |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the standard protocols for implementing Grid Search and Bayesian Optimization in a molecular property prediction context.

Grid Search Experimental Protocol

The following steps detail a rigorous methodology for conducting a Grid Search, as exemplified in building energy prediction research [23].

- Hyperparameter Space Definition: Define a discrete grid of hyperparameter values. For example:

  - Number of epochs: 100, 200, 500

  - Network size (hidden layers): 5, 10, 17

  - Learning rate: 1e-3, 1e-4, 5e-5

  - Optimizer: Adam, SGD, RMSprop

- Combinatorial Evaluation: Train and validate a separate model for every possible combination of the hyperparameters listed in the grid.

- Cross-Validation: For each combination, perform k-fold cross-validation (e.g., k=3) to obtain a robust performance estimate and mitigate the influence of random data splitting [23].

- Performance Logging: Store the performance metric (e.g., Mean Squared Error, ROC-AUC) for every trained model.

- Optimal Configuration Selection: After all evaluations are complete, identify the hyperparameter combination that yielded the best average validation performance.
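The protocol above can be sketched end to end in plain Python. To keep the example self-contained and fast, a toy one-dimensional ridge regression stands in for the neural network, and the grid uses two hypothetical hyperparameters (`l2`, `fit_intercept`) rather than the epochs, layers, learning rate, and optimizer listed above. The structure (grid definition, combinatorial evaluation, k-fold cross-validation, logging, selection) is what matters here.

```python
from itertools import product
from statistics import mean

def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs for k contiguous folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        val = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train, val
        start += size

def ridge_fit_and_score(config, x_train, y_train, x_val, y_val):
    """Toy 1-D ridge regression; returns validation mean squared error."""
    if config["fit_intercept"]:
        x_bar, y_bar = mean(x_train), mean(y_train)
    else:
        x_bar, y_bar = 0.0, 0.0
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(x_train, y_train))
    sxx = sum((x - x_bar) ** 2 for x in x_train)
    slope = sxy / (sxx + config["l2"])
    intercept = y_bar - slope * x_bar
    return mean((slope * x + intercept - y) ** 2 for x, y in zip(x_val, y_val))

def grid_search_cv(grid, fit_and_score, X, y, k=3):
    """Evaluate every grid combination with k-fold CV and return the best one."""
    log = []  # performance log: (mean validation error, config)
    for values in product(*grid.values()):
        config = dict(zip(grid, values))
        fold_scores = [
            fit_and_score(config,
                          [X[i] for i in train], [y[i] for i in train],
                          [X[i] for i in val], [y[i] for i in val])
            for train, val in k_fold_indices(len(X), k)
        ]
        log.append((mean(fold_scores), config))
    best_score, best_config = min(log, key=lambda entry: entry[0])
    return best_config, best_score, log

# Hypothetical data: y = 2x + 1, so an unregularized fit with intercept is exact.
X = [float(i) for i in range(9)]
y = [2.0 * x + 1.0 for x in X]
grid = {"l2": [0.0, 1.0, 10.0], "fit_intercept": [False, True]}

best_config, best_score, log = grid_search_cv(grid, ridge_fit_and_score, X, y, k=3)
print(best_config, best_score)
```

Each of the six combinations is trained three times (once per fold), so the log holds six averaged scores built from 18 model fits. The same multiplication of grid size by fold count applies to the neural network grid in the protocol.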

Bayesian Optimization Experimental Protocol

The protocol for Bayesian Optimization is inherently adaptive, using information from past experiments to inform the next. This description is based on implementations used for molecular property optimization [24] [25].

1. Initialization: Select a small initial set of hyperparameter configurations via random sampling.

2. Model Training and Evaluation: Train and evaluate models for the initial set.

3. Surrogate Model Update: Use the collected performance data to build or update a probabilistic surrogate model, typically a Gaussian Process (GP), which models the function mapping hyperparameters to model performance [25].

4. Acquisition Function Maximization: Use an acquisition function (e.g., Expected Improvement), which balances exploration and exploitation, to determine the most promising hyperparameter configuration to evaluate next [25].

5. Iteration: Repeat steps 2-4, evaluating the newly proposed configuration each time, for a predefined number of iterations or until performance converges.
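The loop described in this protocol can be sketched without external libraries for a single hyperparameter. Everything below is an illustrative assumption rather than the cited implementations: a one-dimensional Gaussian Process with a fixed-length-scale squared-exponential kernel, Expected Improvement evaluated on a discrete candidate grid, and `validation_score`, a hypothetical stand-in for training a model and returning its validation score.

```python
import math
import random

def rbf(a, b, length=0.5):
    """Squared-exponential kernel on scalars (fixed length scale, assumed)."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular L with L L^T = A for a positive definite matrix A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(max(s, 1e-12)) if i == j else s / L[j][j]
    return L

def chol_solve(L, b):
    """Solve (L L^T) x = b by forward, then backward substitution."""
    n = len(b)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_posterior(X, y, x_new, noise=1e-4):
    """GP posterior mean and standard deviation at x_new (targets mean-centered)."""
    y_mean = sum(y) / len(y)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    k_star = [rbf(a, x_new) for a in X]
    alpha = chol_solve(L, [v - y_mean for v in y])
    mu = y_mean + sum(k * a for k, a in zip(k_star, alpha))
    var = 1.0 - sum(k * v for k, v in zip(k_star, chol_solve(L, k_star)))
    return mu, math.sqrt(max(var, 1e-12))

def expected_improvement(mu, std, best):
    """Closed-form Expected Improvement for a maximization problem."""
    z = (mu - best) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + std * pdf

def validation_score(log10_lr):
    """Hypothetical objective: peak validation score at a learning rate of 1e-3."""
    return -(log10_lr + 3.0) ** 2

rng = random.Random(0)
candidates = [-5.0 + 0.05 * i for i in range(81)]   # log10(learning rate) in [-5, -1]
n_init = 3
X_obs = rng.sample(candidates, n_init)              # step 1: random initialization
y_obs = [validation_score(x) for x in X_obs]        # step 2: evaluate the initial set

for _ in range(10):                                 # step 5: iterate
    best = max(y_obs)
    untried = [c for c in candidates if c not in X_obs]
    # Steps 3-4: refit the surrogate on all data and maximize the acquisition function.
    x_next = max(untried,
                 key=lambda c: expected_improvement(*gp_posterior(X_obs, y_obs, c), best))
    X_obs.append(x_next)
    y_obs.append(validation_score(x_next))          # step 2 again, for the new point

best_score = max(y_obs)
best_x = X_obs[y_obs.index(best_score)]
print(f"best log10(lr) = {best_x:.2f}, score = {best_score:.4f}")
```

The sketch refits the surrogate for every candidate, which is wasteful but keeps the code short. Libraries such as BoTorch, listed in the tools table of this guide, handle multi-dimensional inputs, kernel hyperparameter fitting, and continuous acquisition optimization.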

The logical relationship and core components of the Bayesian Optimization process are summarized below.

Implementing and comparing hyperparameter tuning methods requires a suite of software tools and computational resources. The following table details key "reagent solutions" essential for experiments in this field.

Table 2: Essential Research Tools for Hyperparameter Tuning in Molecular Property Prediction

| Tool / Resource | Type | Primary Function in Research | Relevance to Tuning Methods |
|---|---|---|---|
| stk-search [24] | Python Package | Searches the chemical space of molecules built from smaller blocks. | Provides infrastructure to compare Bayesian Optimization and evolutionary algorithms against baselines like random search. |
| BoTorch [24] | Python Library | A framework for Bayesian Optimization research and implementation. | Serves as the core Bayesian Optimization engine, providing surrogate models and acquisition functions. |
| Graph Neural Networks (GNNs) [26] [9] | Model Architecture | Learns representations from molecular graph structures for property prediction. | The model whose hyperparameters (e.g., layers, hidden dimensions) are being tuned. A key application for these methods. |
| MoleculeNet [9] | Benchmark Dataset | A standardized benchmark for molecular property prediction tasks. | Provides consistent datasets (e.g., Tox21, SIDER) for fair comparison of tuning methods and model performance. |
| MolDAIS [25] | Optimization Framework | A Bayesian Optimization framework for data-efficient molecular design. | An example of a state-of-the-art Bayesian Optimization method that adaptively identifies task-relevant descriptor subspaces. |

Grid Search remains a valuable, systematic brute-force approach for hyperparameter tuning, particularly when the hyperparameter space is small or computational resources are abundant. Its exhaustive nature guarantees finding the best point within a pre-defined grid and its simplicity makes it easy to implement and parallelize [1].

However, for the vast and complex landscapes common in molecular property prediction, Bayesian Optimization offers a more efficient and powerful alternative. Its ability to leverage past evaluations to make informed decisions about the next hyperparameters to test results in significant savings in both time and computational cost [25] [22]. As molecular datasets grow and models become more complex, the sample efficiency of adaptive methods like Bayesian Optimization positions them as the leading approach for accelerating drug development and materials discovery. The future of hyperparameter tuning in this field lies in these intelligent, data-efficient strategies that can navigate high-dimensional spaces where Grid Search is simply infeasible.

In the fields of drug discovery and materials science, researchers face a formidable challenge: navigating vast, high-dimensional molecular spaces to find compounds with optimal properties, all while constrained by extremely limited experimental resources. Traditional optimization methods often fall short in these complex landscapes. Bayesian optimization (BO) has emerged as a powerful, adaptive alternative that intelligently guides the search for optimal molecules by leveraging probabilistic models to balance exploration of unknown regions with exploitation of promising areas [20] [11]. This approach is particularly valuable for "black-box" functions where the relationship between inputs and outputs is unknown, complex, or expensive to evaluate—characteristics common to molecular property prediction tasks [20].

The fundamental advantage of BO lies in its data efficiency. By building a probabilistic model of the objective function and using it to select the most informative experiments, BO can identify optimal candidates with far fewer evaluations than traditional methods [27] [25]. This efficiency is critical in molecular research where each experiment—whether computational simulation or wet-lab testing—carries significant time and resource costs. As research increasingly moves toward automated workflows and self-driving laboratories, Bayesian optimization provides the intelligent decision-making core that enables truly autonomous scientific discovery [28].

Optimization Methods: A Comparative Framework

Grid Search: The Systematic Brute-Force Approach

Grid Search (GS) operates on a simple brute-force principle: define a grid of possible values for each hyperparameter and exhaustively evaluate every combination within this predefined space [3]. Think of it as a systematic treasure hunt where you methodically check every marked location on a map without any guidance about where treasure is more likely to be found [1]. The key advantage of GS is its comprehensiveness—it guarantees finding the best combination within the specified parameter grid, making it suitable for low-dimensional problems with small search spaces [3].

However, GS suffers from the "curse of dimensionality"—as the number of parameters increases, the search space grows exponentially [1] [11]. For molecular property prediction involving multiple parameters (e.g., composition, synthesis conditions, structural features), this method becomes computationally prohibitive. Furthermore, GS treats each parameter combination independently without learning from previous evaluations, potentially wasting valuable experimental resources on poor-performing configurations [1].

Random Search: The Stochastic Alternative

Random Search (RS) addresses some limitations of GS by randomly sampling parameter combinations from the search space according to a specified distribution [3]. This stochastic approach has proven surprisingly effective in practice, often outperforming GS in high-dimensional spaces because it has a better chance of stumbling into productive regions without being constrained by a rigid grid structure [3]. Studies have shown that RS can achieve comparable or better performance than GS while requiring less processing time [3].

The primary limitation of RS is its lack of intelligence—it doesn't learn from previous results to inform future sampling. Each evaluation is independent, so the method cannot strategically focus on promising regions of the search space or avoid redundant experiments [1]. While more efficient than GS for high-dimensional problems, RS still wastes significant resources on unproductive regions of the molecular design space.
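A minimal sketch of Random Search sampling follows. The hyperparameter names and ranges are assumptions chosen for illustration; the learning rate is drawn log-uniformly, a common choice when a value spans several orders of magnitude.

```python
import random

def sample_config(rng):
    """Draw one configuration; each draw ignores all previous results."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -2),  # log-uniform between 1e-5 and 1e-2
        "num_layers": rng.randint(2, 6),             # integer, inclusive bounds
        "dropout": rng.uniform(0.0, 0.5),
    }

rng = random.Random(0)  # fixed seed for reproducibility
trials = [sample_config(rng) for _ in range(20)]

for config in trials[:3]:
    print(config)
```

Nothing in `sample_config` depends on earlier trials, which is exactly the limitation described above: the sampler cannot concentrate on regions that have already produced good results.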

Bayesian Optimization: The Intelligent Approach

Bayesian optimization takes a fundamentally different approach by building a probabilistic model of the objective function and using it to guide the search process [27] [11]. The core components of BO include:

- Surrogate Model: Typically a Gaussian Process (GP) that approximates the unknown objective function and provides both predictions and uncertainty estimates [29] [27]

- Acquisition Function: A decision policy that uses the surrogate model's predictions to select the most promising next experiment by balancing exploration and exploitation [27]

Unlike GS and RS, BO learns from previous evaluations to make informed decisions about where to sample next [1] [3]. This sequential model-based approach is particularly advantageous for optimizing expensive black-box functions, making it ideally suited for molecular property prediction where each evaluation is computationally or experimentally costly [20] [11].

Table 1: Core Components of Bayesian Optimization

| Component | Function | Common Variants |
|---|---|---|
| Surrogate Model | Approximates the objective function; provides uncertainty quantification | Gaussian Process (GP), Random Forest (RF), Bayesian Neural Networks |
| Acquisition Function | Balances exploration vs. exploitation to select next experiment | Expected Improvement (EI), Upper Confidence Bound (UCB), Probability of Improvement (PI) |
| Kernel Function | Defines similarity between data points; encodes assumptions about function smoothness | Radial Basis Function (RBF), Matérn, Automatic Relevance Determination (ARD) |
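The kernel variants in the table can be written out directly. The sketch below uses the standard RBF and Matérn 5/2 formulas with one length scale per input dimension, which is the ARD idea: a very large length scale makes a dimension nearly irrelevant to the similarity. Function names are illustrative.

```python
import math

def scaled_distance(x, y, length_scales):
    """Euclidean distance after dividing each dimension by its own length scale."""
    return math.sqrt(sum(((a - b) / l) ** 2 for a, b, l in zip(x, y, length_scales)))

def rbf_kernel(x, y, length_scales):
    """Radial Basis Function kernel: assumes a very smooth objective."""
    r = scaled_distance(x, y, length_scales)
    return math.exp(-0.5 * r * r)

def matern52_kernel(x, y, length_scales):
    """Matern 5/2 kernel: allows rougher (twice-differentiable) functions."""
    s = math.sqrt(5.0) * scaled_distance(x, y, length_scales)
    return (1.0 + s + s * s / 3.0) * math.exp(-s)

a, b = [0.0, 0.0], [1.0, 0.0]
print(rbf_kernel(a, b, [1.0, 1.0]))        # similarity at unit distance
print(matern52_kernel(a, b, [1.0, 1.0]))   # decays faster than RBF at this distance

# ARD effect: a huge length scale on dimension 2 makes differences there negligible.
print(rbf_kernel([0.0, 0.0], [0.0, 5.0], [1.0, 1e6]))
```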

Quantitative Performance Comparison

Computational Efficiency and Performance Metrics

Multiple studies across different domains have demonstrated Bayesian optimization's superior efficiency compared to traditional methods. In direct comparisons for heart failure prediction models, Bayesian Search consistently required less processing time than both Grid and Random Search methods while achieving competitive predictive performance [3]. This computational efficiency becomes increasingly significant as the dimensionality of the problem grows.

In molecular optimization tasks, BO's advantage is even more pronounced. When applied to optimizing limonene production in E. coli through four-dimensional transcriptional control, a BO policy converged to near-optimal performance after investigating just 18 unique points—approximately 22% of the experiments required by the grid search approach used in the original study [20]. This represents a 4-5x reduction in experimental effort, demonstrating BO's potential to dramatically accelerate research cycles in biological domains.
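The percentages quoted here follow from the two experiment counts. The figure of 83 grid-search experiments is taken from the comparison table later in this section.

```python
bo_points = 18      # unique points investigated by the BO policy [20]
grid_points = 83    # experiments in the original grid search (from the table below)

fraction = bo_points / grid_points    # share of the grid-search effort
reduction = 1.0 - fraction            # relative saving
speedup = grid_points / bo_points     # reduction factor

print(f"fraction of experiments: {fraction:.1%}")
print(f"reduction: {reduction:.1%}")
print(f"reduction factor: {speedup:.1f}x")
```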

Robustness and Generalization Performance

Beyond raw optimization speed, model robustness is crucial for practical applications. Comparative studies have shown that while some methods may achieve high initial performance on training data, they often exhibit significant performance degradation under cross-validation. In healthcare prediction tasks, Random Forest models optimized with Bayesian methods demonstrated superior robustness with an average AUC improvement of 0.03815 after 10-fold cross-validation, while Support Vector Machine models showed signs of overfitting [3].

BO's robustness stems from its principled handling of uncertainty through the surrogate model. By explicitly modeling uncertainty and using it to guide exploration, BO avoids overcommitting to potentially suboptimal regions early in the search process. This systematic uncertainty quantification makes BO particularly effective for noisy experimental data common in molecular sciences [27].

Table 2: Experimental Performance Comparison Across Domains

| Application Domain | Optimization Method | Key Performance Metrics | Experimental Budget Required |
|---|---|---|---|
| Limonene Production in E. coli [20] | Grid Search | Baseline performance | 83 experiments |
| | Bayesian Optimization | Equivalent performance | 18 experiments (78% reduction) |
| Heart Failure Prediction [3] | Grid Search | Accuracy: 0.6294, Sensitivity: >0.61 | Highest processing time |
| | Random Search | Moderate processing time | 20-30% faster than Grid Search |
| | Bayesian Search | Competitive accuracy | Lowest processing time |
| Molecule Design [27] | Genetic Algorithms/RL | Baseline performance | Varies |
| | Properly Tuned BO | Highest performance on PMO benchmark | Similar experimental budget |

Advanced Bayesian Optimization Frameworks for Molecular Science

Adaptive Representation Learning

A critical challenge in molecular optimization is selecting appropriate representations or features that capture the relevant chemical information. Traditional approaches rely on fixed representations chosen by domain experts or through separate feature selection processes. However, Feature Adaptive Bayesian Optimization (FABO) introduces a framework that dynamically identifies the most informative molecular representations during the optimization process itself [28].

FABO operates by starting with a comprehensive, high-dimensional feature set and iteratively refining the representation using feature selection methods like Maximum Relevancy Minimum Redundancy (mRMR) or Spearman ranking [28]. This adaptive approach has demonstrated superior performance across multiple molecular optimization tasks, including metal-organic framework (MOF) discovery for CO₂ adsorption and electronic band gap optimization [28]. By automatically tailoring the representation to the specific optimization task, FABO eliminates the need for prior feature engineering expertise and ensures the optimization process focuses on the most relevant molecular characteristics.
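The Spearman-ranking step mentioned above can be sketched as follows. This is not FABO's implementation; it only shows how features might be ranked by the absolute Spearman correlation with the measured property so that the top few are kept for the surrogate model. Feature names and values are hypothetical.

```python
import math

def ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def pearson(a, b):
    """Pearson correlation; returns 0.0 if either input is constant."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b) if var_a and var_b else 0.0

def spearman(a, b):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(ranks(a), ranks(b))

def top_k_features(features, target, k):
    """Names of the k features with the largest absolute Spearman correlation."""
    scores = {name: abs(spearman(column, target)) for name, column in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Hypothetical descriptors for five materials and one measured property.
features = {
    "pore_volume": [1.0, 2.0, 3.0, 4.0, 5.0],
    "unrelated": [3.0, 1.0, 4.0, 1.0, 5.0],
    "density": [5.0, 4.0, 3.0, 2.0, 1.0],
}
target = [1.0, 4.0, 9.0, 16.0, 25.0]

selected = top_k_features(features, target, k=2)
print(selected)
```

In an adaptive scheme of the kind described above, a selection step like this would be rerun as new measurements arrive, so the feature subset can change during the optimization campaign.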

Sparse Subspace Optimization

The MolDAIS framework addresses high-dimensional challenges by adaptively identifying task-relevant subspaces within large molecular descriptor libraries [25]. By incorporating sparsity-inducing priors, particularly the Sparse Axis-Aligned Subspace (SAAS) prior, MolDAIS constructs parsimonious Gaussian process models that focus computational resources on the most informative features [25].

This approach consistently outperforms state-of-the-art molecular property optimization methods across benchmark and real-world tasks, identifying near-optimal candidates from chemical libraries containing over 100,000 molecules using fewer than 100 property evaluations [25]. The method's efficiency stems from its ability to ignore irrelevant dimensions while progressively refining its understanding of which features drive property variations—a crucial advantage when working with comprehensive molecular descriptor sets that may contain many redundant or uninformative features.

Experimental Protocols and Methodologies

Standard Bayesian Optimization Workflow

The Bayesian optimization process follows a systematic, iterative workflow that combines statistical modeling with experimental design:

[Figure: Bayesian Optimization Workflow]

Step 1: Initialization - The process begins with a small set of initial experiments, typically selected via Latin Hypercube Sampling or random sampling to ensure broad coverage of the design space [29].

Step 2: Surrogate Modeling - A Gaussian Process (GP) is trained on all available data to build a probabilistic model of the objective function. The GP provides both a prediction (mean) and uncertainty estimate (variance) for any point in the design space [27]. Key considerations include:

- Kernel Selection: The Matérn kernel is often preferred over the Radial Basis Function (RBF) for modeling physical phenomena as it accommodates less smooth functions [29]

- Hyperparameter Tuning: Kernel parameters (length scales, amplitude) are typically optimized by maximizing the marginal likelihood [27]

- Anisotropic Kernels: GP with Automatic Relevance Determination (ARD) allows different length scales for each input dimension, significantly improving performance on high-dimensional problems [29]

Step 3: Acquisition Function Optimization - An acquisition function (e.g., Expected Improvement, Upper Confidence Bound) uses the surrogate model's predictions to balance exploration of uncertain regions with exploitation of promising areas [27]. The point maximizing the acquisition function is selected as the next experiment.

Step 4: Experimental Evaluation & Update - The selected experiment is performed, and the results are added to the dataset. The surrogate model is updated with the new information, and the cycle repeats until convergence or exhaustion of the experimental budget [11].
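For Step 1, Latin Hypercube Sampling can be sketched in a few lines: each dimension is split into as many equal strata as there are samples, one point is drawn per stratum, and the strata are shuffled independently per dimension. The bounds below are assumptions for illustration (a dropout rate and a log10 learning rate).

```python
import random

def latin_hypercube(n_samples, bounds, rng):
    """Return n_samples points with exactly one point per stratum in every dimension."""
    columns = []
    for low, high in bounds:
        # One uniformly drawn point inside each of the n_samples equal-width strata.
        points = [low + (high - low) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        rng.shuffle(points)  # decouple the stratum order across dimensions
        columns.append(points)
    return [tuple(column[i] for column in columns) for i in range(n_samples)]

rng = random.Random(0)
n_samples = 5
bounds = [(0.0, 0.5), (-5.0, -1.0)]  # assumed: dropout rate, log10(learning rate)
samples = latin_hypercube(n_samples, bounds, rng)

for point in samples:
    print(point)
```

Compared with plain random sampling, this guarantees that no region of any single dimension is left empty in the initial design, which is the "broad coverage" property mentioned in Step 1.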

Molecular Representation and Feature Selection

For molecular optimization problems, the representation of chemical structures is a critical factor influencing BO performance [28]. The following workflow illustrates the adaptive representation approach:

[Figure: Adaptive Molecular Representation]

Common molecular representations include:

- Fixed Descriptors: Traditional molecular fingerprints (ECFP, MACCS), physicochemical properties, and topological descriptors [25]

- Adaptive Descriptors: Frameworks like FABO and MolDAIS that dynamically select relevant features from a comprehensive descriptor library during optimization [28] [25]

- Learned Representations: Embeddings from graph neural networks or other deep learning models, though these may require substantial data for training [30]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Bayesian Optimization

| Tool/Resource | Function | Application Context |
|---|---|---|
| Gaussian Process Regression | Probabilistic surrogate modeling | Core statistical model for predicting molecular properties with uncertainty |
| Expected Improvement (EI) | Acquisition function | Balances exploration and exploitation; widely used default choice |
| Automatic Relevance Determination (ARD) | Kernel feature selection | Identifies relevant molecular descriptors automatically |
| Molecular Descriptor Libraries | Feature generation | Comprehensive sets of chemical features (e.g., RACs, topological indices) |
| Sparse Axis-Aligned Subspace (SAAS) Prior | High-dimensional modeling | Enables efficient optimization in large descriptor spaces [25] |
| Maximum Relevancy Minimum Redundancy (mRMR) | Feature selection | Identifies informative, non-redundant molecular features [28] |

Bayesian optimization represents a paradigm shift in how researchers approach complex optimization problems in molecular sciences. By intelligently leveraging probabilistic models to guide experimental campaigns, BO dramatically reduces the number of experiments required to identify optimal compounds or conditions. The method's superior data efficiency makes it particularly valuable for resource-constrained scenarios common in drug discovery and materials research.

As the field advances, several promising directions are emerging. Multi-objective optimization extends BO to handle competing objectives simultaneously—essential for balancing efficacy, toxicity, and synthesizability in drug candidates [31] [11]. Transfer learning and meta-learning approaches enable knowledge transfer between related optimization tasks, potentially reducing the initialization cost for new campaigns [30]. Hybrid human-AI collaboration frameworks are developing to incorporate expert knowledge into the optimization process, creating synergistic partnerships between human intuition and machine efficiency [28].

For researchers embarking on molecular optimization projects, the evidence strongly suggests adopting Bayesian optimization as the default approach for expensive black-box functions. While Grid Search retains utility for low-dimensional problems with cheap evaluations, and Random Search provides a simple baseline, BO's superior sample efficiency and adaptive search make it the preferred choice for most real-world molecular design challenges. As automated research platforms become more prevalent, Bayesian optimization is likely to form a core computational component of autonomous discovery pipelines.

Implementing Tuning Strategies: A Step-by-Step Guide for Cheminformatics

Selecting the right hyperparameter tuning method is a critical strategic decision in molecular property prediction (MPP). This choice directly impacts not only predictive accuracy but also the computational efficiency of your research pipeline. For researchers and drug development professionals working with often scarce and costly experimental data, an efficient tuning process is paramount. This guide objectively compares the performance of Grid Search, Random Search, and Bayesian Optimization, providing the experimental data and protocols needed to inform your MPP workflow.

The core of an effective tuning pipeline lies in selecting a method that intelligently navigates the hyperparameter space. The three predominant strategies each employ a distinct search philosophy.

The diagram above illustrates the fundamental logical relationship between the main tuning strategies and their core search principles. As detailed in the subsequent table, each method operates on a distinct core principle, leading to significant differences in application and performance [1] [6] [10].

| Method | Core Principle | Best-Suited Scenarios | Key Advantages |
|---|---|---|---|
| Grid Search | Exhaustively evaluates every combination in a predefined grid [6] [10]. | Small, discrete hyperparameter spaces (typically <4 parameters) [1] [8]. | Guaranteed to find the best combination within the defined grid; simple to implement and understand [1] [10]. |
| Random Search | Randomly samples a fixed number of combinations from defined distributions [6]. | Higher-dimensional spaces (>4-5 parameters) or when computational resources are limited [6] [10]. | More efficient than Grid Search; can explore a wider range and works with continuous distributions [6] [10]. |
| Bayesian Optimization | Builds a probabilistic model to intelligently select the most promising hyperparameters to evaluate next, based on previous results [1] [6]. | Complex models, large hyperparameter spaces, or when each model evaluation is computationally expensive [1] [6] [2]. | Highly data-efficient; often finds optimal parameters in far fewer iterations; effective in high-dimensional spaces [1] [6]. |
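
To make the first two search principles in the table concrete, here is a minimal from-scratch sketch using only the Python standard library. The `objective` function is a hypothetical stand-in for a cross-validated score, and the hyperparameter names (`learning_rate`, `dropout`) and their ranges are assumptions chosen for illustration, not values from the cited studies.

```python
import itertools
import random


def objective(learning_rate, dropout):
    """Toy validation score (higher is better), peaking at lr=0.03, dropout=0.25."""
    return 1.0 - 100 * (learning_rate - 0.03) ** 2 - (dropout - 0.25) ** 2


def grid_search(grid):
    """Evaluate every combination of the listed values."""
    names = list(grid)
    trials = [dict(zip(names, values)) for values in itertools.product(*grid.values())]
    best = max(trials, key=lambda params: objective(**params))
    return objective(**best), best, len(trials)


def random_search(bounds, n_trials, seed=0):
    """Sample each hyperparameter uniformly from a continuous range."""
    rng = random.Random(seed)
    trials = [
        {name: rng.uniform(low, high) for name, (low, high) in bounds.items()}
        for _ in range(n_trials)
    ]
    best = max(trials, key=lambda params: objective(**params))
    return objective(**best), best, len(trials)


grid = {"learning_rate": [0.001, 0.01, 0.1], "dropout": [0.0, 0.25, 0.5]}
bounds = {"learning_rate": (0.001, 0.1), "dropout": (0.0, 0.5)}

grid_score, grid_params, grid_trials = grid_search(grid)
rand_score, rand_params, rand_trials = random_search(bounds, n_trials=9)

print(f"grid:   {grid_trials} trials, best {grid_params}, score {grid_score:.3f}")
print(f"random: {rand_trials} trials, best {rand_params}, score {rand_score:.3f}")
```

Both searches use the same budget of nine evaluations. The grid can only return values that were listed in advance, whereas the random search can land anywhere inside the continuous ranges; which one scores higher on a given run depends on the objective and the seed.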

Quantitative Performance Comparison in Molecular Property Prediction

Theoretical advantages must be validated by empirical performance. Controlled experiments, particularly within MPP, provide clear evidence of how these methods compare in practice.

Case Study: Tuning a Random Forest Model

One systematic comparison tuned a Random Forest classifier using all three methods on a classification dataset, with the goal of maximizing the F1 score [6]. The hyperparameter search space consisted of 810 unique combinations. The results are summarized in the table below.

| Method | Total Trials | Trials to Find Optimum | Best F1 Score | Run Time |
|---|---|---|---|---|
| Grid Search | 810 | 680 | 0.94 | Longest |
| Random Search | 100 | 36 | 0.91 | Shortest |
| Bayesian Optimization | 100 | 67 | 0.94 | Moderate |

The data shows that Bayesian Optimization matched the best F1 score found by Grid Search (0.94) with roughly 8x fewer trials (100 vs. 810) and a shorter run time [6]. Random Search was the fastest but did not find the best-performing hyperparameters, reaching a lower F1 score of 0.91 [6]. In this case study, Bayesian Optimization offered the best balance of efficiency and effectiveness.

Performance in Deep Learning for MPP

Research focusing specifically on deep neural networks for MPP reinforces these findings. One study concluded that for developing accurate and efficient models, it is critical to "optimize as many hyperparameters as possible" [2]. The study compared Random Search, Bayesian Optimization, and the Hyperband algorithm, finding that Hyperband (a modern advanced method) was the most computationally efficient and yielded optimal or nearly optimal prediction accuracy [2]. This highlights a shift away from traditional Grid Search towards more sophisticated algorithms in modern MPP research.

Experimental Protocols for Method Comparison

To ensure reproducible and fair comparisons between hyperparameter tuning methods, a structured experimental protocol is essential. The following workflow outlines the key steps from initial data preparation to final evaluation.

Detailed Methodological Steps

- Data Splitting: Split the dataset into three parts: a Training Set (for model fitting during cross-validation), a Validation Set (for guiding the hyperparameter search and avoiding overfitting), and a Hold-out Test Set (for the final, unbiased evaluation of the model tuned with the selected hyperparameters). Cross-validation (e.g., 5-fold) on the training set is typically used during the tuning process itself [10] [8].

- Define Search Space: Clearly specify the hyperparameters and their ranges for the model being tuned.

  - For Random Forest [6] [10]: `n_estimators` (e.g., 50 to 200), `max_depth` (e.g., 5 to 30), `min_samples_split` (e.g., 2 to 10).

  - For Deep Neural Networks in MPP [2]: Structural hyperparameters (number of layers, units per layer, activation functions) and learning hyperparameters (learning rate, optimizer, batch size, dropout rate).

- Configure Tuner: Initialize the three tuning methods with comparable budgets.

- Model Training & Validation: For each hyperparameter set proposed by the tuner, train a model on the training set and evaluate its performance on the validation set. The key metric (e.g., F1 score, Mean Squared Error) is reported back to the tuner [6].

- Optimal Model Selection & Final Evaluation: After the tuning loop completes, each method identifies its best hyperparameter configuration. A model is then trained on the entire training+validation set using these optimal hyperparameters and evaluated on the held-out test set to obtain the final, unbiased performance metric [6] [10].
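
The protocol above can be sketched with scikit-learn's `GridSearchCV` and `RandomizedSearchCV`. This is a minimal illustration under stated assumptions, not the setup of the cited studies: the data is a synthetic stand-in for a featurized molecular dataset, the grid is deliberately tiny, and 3-fold cross-validation on the development split plays the role of the validation set. Bayesian Optimization is left out because it needs a third-party tuner (for example Optuna); it would plug into the same loop with the same budget.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV, train_test_split

# Synthetic binary classification data (assumption: stands in for fingerprints/descriptors).
X, y = make_classification(n_samples=300, n_features=20, random_state=0)

# Step 1: hold out a test set that the tuners never see.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Step 2: search spaces. The grid lists discrete values; the random search
# samples integers from the ranges given in the text (upper bound exclusive).
grid = {"n_estimators": [50, 100], "max_depth": [5, 15], "min_samples_split": [2, 10]}
distributions = {
    "n_estimators": randint(50, 201),
    "max_depth": randint(5, 31),
    "min_samples_split": randint(2, 11),
}

# Step 3: comparable budgets (8 grid points vs. 8 random samples).
tuners = {
    "grid": GridSearchCV(
        RandomForestClassifier(random_state=0), grid, scoring="f1", cv=3
    ),
    "random": RandomizedSearchCV(
        RandomForestClassifier(random_state=0), distributions,
        n_iter=8, scoring="f1", cv=3, random_state=0,
    ),
}

# Steps 4-5: tune with cross-validation, then score the refit model on the test set.
results = {}
for name, tuner in tuners.items():
    tuner.fit(X_dev, y_dev)  # refit=True (default) retrains the best config on all of X_dev
    results[name] = {
        "params": tuner.best_params_,
        "cv_f1": float(tuner.best_score_),
        "test_f1": float(f1_score(y_test, tuner.predict(X_test))),
    }

for name, row in results.items():
    print(name, row)
```

The cross-validated score guides the search, while the test-set F1 is computed once per method at the end, which keeps the final comparison unbiased by the tuning process.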

Building an effective MPP tuning pipeline requires both computational tools and domain-specific data. The following table details key resources mentioned in experimental research.

| Tool / Resource | Function in the Tuning Pipeline | Relevance to MPP |
|---|---|---|
| Scikit-learn (GridSearchCV, RandomizedSearchCV) [10] | Provides easy-to-implement, standardized classes for conducting Grid and Random Search with cross-validation. | Ideal for benchmarking against traditional ML models (e.g., Random Forest, SVM) on fingerprint or descriptor data [32]. |
| Optuna [6] [2] | A dedicated Bayesian optimization framework that simplifies defining the search space and objective function, supporting advanced algorithms like BOHB. | Enables efficient tuning of complex models, including deep neural networks and GNNs, which are common in modern MPP [2]. |
| KerasTuner [2] | A user-friendly hyperparameter tuning library compatible with Keras and TensorFlow models. | Recommended in MPP research for its intuitive interface, which is valuable for chemical engineers and scientists without extensive CS backgrounds [2]. |
| QM9 Dataset [33] | A widely used benchmark dataset containing quantum mechanical properties for ~130,000 small organic molecules. | Serves as a standard for controlled experiments and for pre-training models in low-data regimes, as used in multi-task learning studies [33]. |
| Molecular Graph Representations | Represents a molecule as a graph (atoms=nodes, bonds=edges) for direct consumption by Graph Neural Networks (GNNs). | The natural representation for molecules; allows GNNs to learn directly from molecular structure, forming the basis for many state-of-the-art MPP models [33] [32]. |

The experimental data and protocols presented lead to a clear conclusion: while Grid Search offers simplicity and thoroughness within a defined space, its computational cost is often prohibitive for tuning complex MPP models. Random Search provides a faster, more efficient alternative but risks missing optimal configurations due to its random nature.

For most modern MPP research involving deep learning, Graph Neural Networks (GNNs), or large hyperparameter spaces, Bayesian Optimization and related adaptive methods (such as the bandit-based Hyperband and the hybrid BOHB) offer a superior approach [2]. They typically achieve high predictive accuracy with significantly greater computational efficiency, making them the recommended choice for structuring a robust and effective tuning pipeline in molecular property prediction.

In molecular property prediction, the performance of a machine learning model is highly dependent on its hyperparameters. These settings, fixed before the training process begins, control the learning algorithm's behavior. Grid Search and Bayesian Optimization represent two philosophically distinct approaches to this critical optimization problem. For researchers in computational chemistry and drug development, the choice between an exhaustive, systematic search and an intelligent, adaptive one has significant implications for both computational resource expenditure and research outcomes. This guide provides an objective comparison of these methods to inform your experimental design.

Methodological Comparison: Grid Search vs. Bayesian Optimization

Understanding the core mechanics of each hyperparameter tuning method is fundamental to selecting the right tool for your research.

Grid Search: A Systematic Approach

Grid Search is a traditional, exhaustive algorithm for hyperparameter tuning [34]. Its operation is methodical:

- Parameter Grid Definition: The researcher defines a discrete set of values for each hyperparameter to be tuned [35]. For instance, when tuning a Support Vector Machine, one might specify `'C': [0.1, 1, 10, 100]` and `'gamma': [1, 0.1, 0.01, 0.001]`.

- Grid Formation: The algorithm constructs a "grid" where each point represents a unique combination of these hyperparameters [36].

- Exhaustive Evaluation: It then trains and evaluates a model for every single combination in this grid, typically using cross-validation to ensure robustness [37] [38].

- Selection: The hyperparameter set yielding the best cross-validated performance (e.g., highest accuracy or lowest error) is selected as optimal [34].

The primary advantage of Grid Search is its thoroughness; given enough time and resources, it will find the best combination within the pre-defined search space [8]. However, this completeness is also its major drawback. The total number of models to evaluate is the product of the number of values for each parameter, leading to a combinatorial explosion with many hyperparameters—a challenge known as the "curse of dimensionality" [34].
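
The combinatorial growth is easy to check by hand. The short sketch below counts the models Grid Search would train for the SVM grid given earlier, then shows what happens when a third hyperparameter is added. The 5-fold setting and the extra `kernel` values are assumptions for illustration.

```python
import itertools
import math

# SVM grid from the example above.
param_grid = {"C": [0.1, 1, 10, 100], "gamma": [1, 0.1, 0.01, 0.001]}


def grid_size(grid):
    """Number of grid points = product of the number of values per hyperparameter."""
    return math.prod(len(values) for values in grid.values())


combinations = list(itertools.product(*param_grid.values()))
n_folds = 5  # assumed cross-validation setting
total_fits = grid_size(param_grid) * n_folds

# Adding one more hyperparameter with three candidate values triples the cost.
larger_grid = dict(param_grid, kernel=["rbf", "poly", "sigmoid"])

print(len(combinations))                 # 16 combinations (4 x 4)
print(total_fits)                        # 80 model fits with 5-fold CV
print(grid_size(larger_grid))            # 48 combinations (4 x 4 x 3)
print(grid_size(larger_grid) * n_folds)  # 240 model fits
```

Each additional hyperparameter multiplies the cost rather than adding to it, which is why grids that look modest per dimension quickly become expensive for models with many tunable settings.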

Bayesian Optimization: An Adaptive Strategy

Bayesian Optimization takes a probabilistic and adaptive approach [8]. Instead of evaluating all possibilities, it builds a probabilistic model, called a surrogate model, of the objective function (the model's performance as a function of its hyperparameters) [22].

- Surrogate Model: A probabilistic model (often a Gaussian Process) is used to approximate the complex relationship between hyperparameters and model performance.

- Acquisition Function: An acquisition function uses the surrogate model to decide which hyperparameter combination to test next. It balances exploring uncertain regions of the parameter space and exploiting areas known to perform well.

- Iterative Update: The algorithm iteratively tests the hyperparameters suggested by the acquisition function, then updates the surrogate model with the new results. This process informs each subsequent step [22].
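
The three components above can be sketched in a few dozen lines of NumPy. This is a simplified illustration rather than a production implementation: it tunes a single hypothetical hyperparameter on a toy `objective`, keeps the kernel length scale fixed instead of fitting it, uses a zero-mean Gaussian Process, and maximizes Expected Improvement over a fixed candidate grid. All function names and constants are assumptions introduced here.

```python
import math

import numpy as np


def rbf_kernel(a, b, length_scale=0.15):
    """Squared-exponential kernel between two sets of 1-D points (unit variance)."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale**2)


def gp_posterior(x_obs, y_obs, x_new, noise=1e-6):
    """Surrogate model: posterior mean and standard deviation of a zero-mean GP."""
    k_obs = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    k_cross = rbf_kernel(x_obs, x_new)
    chol = np.linalg.cholesky(k_obs)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_obs))
    mean = k_cross.T @ alpha
    v = np.linalg.solve(chol, k_cross)
    var = np.clip(1.0 - np.sum(v**2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


def expected_improvement(mean, std, best, xi=0.01):
    """Acquisition function: expected gain over the best score seen so far."""
    z = (mean - best - xi) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best - xi) * cdf + std * pdf


def objective(x):
    """Toy stand-in for a validation score as a function of one hyperparameter."""
    return math.exp(-((x - 0.7) ** 2) / 0.02) + 0.5 * math.exp(-((x - 0.2) ** 2) / 0.01)


candidates = np.linspace(0.0, 1.0, 201)
x_obs = np.array([0.1, 0.5, 0.9])  # initial design
y_obs = np.array([objective(x) for x in x_obs])
initial_best = float(y_obs.max())

# Iterative update: fit surrogate, pick the EI maximizer, evaluate, append.
for _ in range(12):
    mean, std = gp_posterior(x_obs, y_obs, candidates)
    ei = expected_improvement(mean, std, y_obs.max())
    x_next = candidates[int(np.argmax(ei))]
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, objective(x_next))

best_x = float(x_obs[int(np.argmax(y_obs))])
best_y = float(y_obs.max())
print(f"best x = {best_x:.3f}, best score = {best_y:.3f} after {len(x_obs)} evaluations")
```

In practice, libraries fit the kernel hyperparameters at every iteration and optimize the acquisition function with a continuous optimizer rather than a fixed grid, but the loop structure is the same.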