High-Performance Computing for Ab Initio Simulations: Revolutionizing Drug Discovery and Materials Science

Abstract

This article explores the transformative impact of high-performance computing (HPC) on ab initio simulations, which provide quantum-mechanically accurate insights into molecular and material behavior. Aimed at researchers, scientists, and drug development professionals, it covers foundational principles and the pressing challenges of achieving exascale performance. The article details key methodological advances, including machine learning-accelerated molecular dynamics and specialized software, alongside their concrete applications in drug discovery and materials design. It further provides a practical guide for troubleshooting performance bottlenecks and optimizing simulations on modern, heterogeneous HPC architectures. Finally, it examines validation frameworks and comparative analyses of different computational approaches, offering a comprehensive resource for leveraging HPC to push the boundaries of computational chemistry and biology.

Ab Initio Principles and the Drive to Exascale HPC

Ab initio molecular dynamics (AIMD) is a powerful computational method that simulates the physical movements of atoms over time based on first-principles quantum mechanics, without relying on empirical potentials [1]. This approach bridges molecular dynamics with quantum mechanics by calculating the forces acting on atoms directly from quantum-mechanical principles [2]. Density Functional Theory (DFT) serves as the foundational quantum mechanical theory for most modern AIMD simulations, providing a framework for determining the electronic structure of many-body systems [3]. The integration of these methodologies enables researchers to study complex systems undergoing chemical reactions, phase transitions, and other dynamic processes with quantum mechanical accuracy, making AIMD particularly valuable for systems where chemical bond breaking and forming occur [1].

Theoretical Foundations

Density Functional Theory Fundamentals

Density Functional Theory (DFT) begins with the Hohenberg-Kohn theorems, which demonstrate that all ground-state properties of a many-electron system are uniquely determined by its electron density, a function of only three spatial coordinates [3]. This revolutionary concept reduces the intractable many-body problem of N electrons with 3N spatial coordinates to a problem dealing with just three spatial coordinates through the use of functionals of the electron density [3].

The Kohn-Sham equations, developed later, form the practical basis for most DFT calculations by introducing a system of non-interacting electrons that produce the same density as the real system [2] [3]. The total energy functional in Kohn-Sham DFT is expressed as:

\[ E_{\text{KS}}[\rho(\mathbf{r})] = \int d\mathbf{r}\, \rho(\mathbf{r}) V_{\text{ext}}(\mathbf{r},\mathbf{R}) + K[\rho(\mathbf{r})] + V_{\text{ee}}[\rho(\mathbf{r})] + E_{\text{xc}}[\rho(\mathbf{r})] \]

where the terms represent, respectively: the interaction of electrons with an external potential (typically from nuclei), the kinetic energy of a non-interacting reference system, the electron-electron Coulombic energy, and the exchange-correlation energy [2]. The exchange-correlation functional \( E_{\text{xc}}[\rho(\mathbf{r})] \) encompasses all quantum mechanical effects not captured by the other terms and must be approximated in practical calculations [2] [3].
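The simplest approximation to the exchange-correlation term is the local density approximation (LDA). As a concrete illustration, the sketch below evaluates only its exchange part, \( E_x^{\text{LDA}}[\rho] = -\frac{3}{4}\left(\frac{3}{\pi}\right)^{1/3} \int d\mathbf{r}\, \rho(\mathbf{r})^{4/3} \) in Hartree atomic units, for a spherically symmetric, spin-unpolarized density sampled on a radial grid. The hydrogen 1s density is used only as a test input because the integral then has a closed form, \( -\frac{81}{256}\, 3^{1/3} \pi^{-2/3} \approx -0.2127 \) Ha. The grid, the function name, and the omission of correlation are illustrative assumptions, not how any particular package implements LDA.

```python
import numpy as np

# Dirac/Slater exchange prefactor for a spin-unpolarized density (Hartree atomic units)
C_X = -0.75 * (3.0 / np.pi) ** (1.0 / 3.0)


def lda_exchange_energy(r, rho):
    """LDA exchange energy of a spherically symmetric density rho(r).

    r   : uniform radial grid in bohr, starting at 0
    rho : electron density on that grid (electrons / bohr^3)
    """
    integrand = 4.0 * np.pi * r**2 * rho ** (4.0 / 3.0)  # 4*pi*r^2 dr is the shell volume element
    h = r[1] - r[0]
    # trapezoidal rule on the uniform grid, written out explicitly
    integral = h * (integrand.sum() - 0.5 * (integrand[0] + integrand[-1]))
    return C_X * integral


r = np.linspace(0.0, 30.0, 200_001)
rho_1s = np.exp(-2.0 * r) / np.pi  # hydrogen 1s density, normalized to one electron
print(f"E_x^LDA = {lda_exchange_energy(r, rho_1s):.4f} Ha")
```

Swapping `rho_1s` for a density from a real calculation (and adding a correlation term) is all that changes in practice; the structure of a local functional, an integral over a function of \( \rho(\mathbf{r}) \) alone, stays the same.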

Ab Initio Molecular Dynamics Methodologies

AIMD simulations generate finite-temperature dynamical trajectories using forces obtained directly from electronic structure calculations performed "on the fly" as the simulation proceeds [2]. The classical dynamics of the nuclei follows Newton's equations:

\[ M_I \frac{\partial^2 \mathbf{R}_I}{\partial t^2} = -\nabla_I \left[ \epsilon_0(\mathbf{R}) + V_{nn}(\mathbf{R}) \right], \quad (I = 1, \ldots, N_n) \]

where \( M_I \) and \( \mathbf{R}_I \) are the mass and coordinates of nucleus \( I \), \( N_n \) is the number of nuclei, \( \epsilon_0(\mathbf{R}) \) is the electronic ground-state energy at nuclear configuration \( \mathbf{R} \), and \( V_{nn} \) represents the nuclear-nuclear Coulomb repulsion [2].
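AIMD codes integrate these equations numerically, and velocity Verlet is a widely used scheme for doing so. The sketch below is a minimal NumPy version, assuming a callable `force_fn(R)` that returns the forces \( -\nabla_I [\epsilon_0 + V_{nn}] \) on every nucleus. In a real AIMD run that call is the on-the-fly electronic-structure calculation; here it is a hypothetical stand-in (a harmonic restoring force in arbitrary units), so only the integrator logic is shown.

```python
import numpy as np


def velocity_verlet(positions, velocities, masses, force_fn, dt, n_steps):
    """Integrate M_I d^2R_I/dt^2 = F_I(R) with the velocity Verlet scheme.

    positions, velocities : (N, 3) arrays; masses : (N,) array
    force_fn(R) -> (N, 3) forces; in AIMD this would be an electronic-structure call.
    Returns the trajectory of positions, shape (n_steps + 1, N, 3), and final velocities.
    """
    r = np.array(positions, dtype=float)
    v = np.array(velocities, dtype=float)
    m = np.asarray(masses, dtype=float)[:, None]  # broadcast masses over x, y, z
    f = force_fn(r)
    trajectory = [r.copy()]
    for _ in range(n_steps):
        v += 0.5 * dt * f / m   # half-step velocity update with current forces
        r += dt * v             # full-step position update
        f = force_fn(r)         # "on the fly" force evaluation at the new geometry
        v += 0.5 * dt * f / m   # second half-step with the new forces
        trajectory.append(r.copy())
    return np.array(trajectory), v


# Usage with a hypothetical harmonic force F = -R (unit mass, arbitrary units)
traj, v = velocity_verlet([[1.0, 0.0, 0.0]], [[0.0, 0.0, 0.0]], [1.0],
                          lambda r: -r, dt=0.01, n_steps=1000)
energy = 0.5 * np.sum(v**2) + 0.5 * np.sum(traj[-1] ** 2)  # stays close to the initial 0.5
```

The scheme needs only one force evaluation per step, which matters when each evaluation is a full electronic-structure calculation, and its good long-time energy conservation is easy to check with the harmonic test above.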

Two primary algorithmic approaches dominate the AIMD landscape:

Born-Oppenheimer Molecular Dynamics (BOMD): This approach treats the electronic structure problem within the time-independent Schrödinger equation, requiring explicit electronic minimization at each molecular dynamics time step [1].

Car-Parrinello Molecular Dynamics (CPMD): This method explicitly includes electronic degrees of freedom as fictitious dynamical variables through an extended Lagrangian, avoiding the need for self-consistent iterative minimization at each step [1]. The Car-Parrinello Lagrangian is defined as:

\[ \mathcal{L} = \frac{1}{2} \left( \sum_{I}^{\text{nuclei}} M_I \dot{\mathbf{R}}_I^2 + \mu \sum_{i}^{\text{orbitals}} \int d\mathbf{r}\, |\dot{\psi}_i(\mathbf{r},t)|^2 \right) - E\left[\{\psi_i\},\{\mathbf{R}_I\}\right] + \sum_{ij} \Lambda_{ij} \left( \int d\mathbf{r}\, \psi_i \psi_j - \delta_{ij} \right) \]

where \( \mu \) is a fictitious mass parameter assigned to the electronic orbitals and \( \Lambda_{ij} \) are Lagrange multipliers that enforce orthonormality of the orbitals [1].

Table 1: Comparison of AIMD Methodological Approaches

| Feature | Born-Oppenheimer MD | Car-Parrinello MD |
|---|---|---|
| Electronic minimization | Required at each time step | Avoided after initial step |
| Electronic degrees of freedom | Treated implicitly | Explicit dynamical variables |
| Time steps | Larger (1-10 fs) [1] | Smaller (due to fictitious electron mass) [1] |
| Computational cost per step | Higher | Lower |
| Typical systems | Wide range | Metallic systems can be challenging |

Computational Implementation

Essential Software Solutions

Several specialized software packages have been developed to implement AIMD simulations, each with particular strengths and specializations. These packages implement the complex algorithms necessary to solve the Kohn-Sham equations efficiently on high-performance computing architectures.

Table 2: Prominent Software Packages for AIMD Simulations

| Software | License | Key Features | Basis Set |
|---|---|---|---|
| VASP [4] [5] | Commercial | Robust pseudopotential library; hybrid functionals; GW methods | Plane waves |
| Quantum ESPRESSO [5] | Open-source | Car-Parrinello implementation; phonon calculations; TDDFPT | Plane waves |
| CP2K [5] [6] | Open-source | Quickstep module; mixed Gaussian/plane waves; good for large systems | Gaussian and plane waves |
| CPMD [1] [5] | Open-source | Original Car-Parrinello code; QM/MM capabilities | Plane waves |
| ABINIT [5] | Open-source | Many-body perturbation theory; excited states; wavelets | Plane waves/wavelets |
| SIESTA [7] | Open-source | Linear-scaling; numerical atomic orbitals | Numerical atomic orbitals |

Selection of appropriate software depends on multiple factors including system size, elemental composition, properties of interest, and available computational resources [5]. Key considerations include the availability of pseudopotentials for all elements in the system, parallel scalability, and the specific physical properties needing investigation [5].

Performance Considerations on HPC Systems

AIMD simulations are computationally demanding, with traditional DFT calculations scaling as N³ with system size (where N is the number of atoms) [2]. This computational complexity has driven the development of novel algorithms and their implementation on modern high-performance computing (HPC) systems.

Performance analyses of electronic structure codes on HPC architectures reveal several critical considerations:

- Strong scaling (fixed system size, increasing cores) efficiency typically decreases with rising core counts, but this decrease is less pronounced for larger systems [7].

- Weak scaling (increasing system size proportionally with cores) performance varies significantly with supercomputer topology and network architecture [7].

- System size dependence must be considered when evaluating parallel performance, as efficiency generally improves with larger simulation sizes [7].
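Amdahl's law gives a simple way to see the first and third trends. It is a deliberately crude model that ignores communication and network topology (so it says nothing about the weak-scaling point): if a fraction \( f \) of each step cannot be parallelized, the speedup on \( p \) cores is \( S(p) = 1/(f + (1-f)/p) \) and the strong-scaling efficiency is \( S(p)/p \). Assuming, hypothetically, that a larger system has a smaller serial fraction because its parallel work grows faster, its efficiency decays more slowly with core count:

```python
def amdahl_speedup(cores: int, serial_fraction: float) -> float:
    """Amdahl's law: speedup on `cores` cores when `serial_fraction` of the work is serial."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / cores)


def strong_scaling_efficiency(cores: int, serial_fraction: float) -> float:
    """Speedup divided by the ideal speedup (the core count)."""
    return amdahl_speedup(cores, serial_fraction) / cores


# Hypothetical serial fractions: the larger system spends relatively less time in serial work.
for label, f in (("small system", 1e-3), ("large system", 1e-4)):
    effs = [strong_scaling_efficiency(p, f) for p in (64, 512, 4096)]
    print(label, ", ".join(f"{e:.2f}" for e in effs))
```

Real codes deviate from this picture because communication cost grows with core count, but the qualitative conclusion carries over: benchmark at the system size you actually intend to run.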

Recent algorithmic advances have focused on achieving linear-scaling (O(N)) methods that take advantage of the "nearsightedness" of quantum mechanical systems, where local electronic properties depend predominantly on nearby atoms [2]. These approaches, such as the embedded divide-and-conquer scheme, enable simulations of increasingly large systems (up to 19,000 atoms demonstrated) [2].
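The payoff of nearsightedness can be seen with a toy cost model. The sketch assumes, hypothetically, that the system is split into domains of a fixed number of atoms, each solved together with a fixed-size buffer region at \( O(n^3) \) cost; because the number of domains grows linearly with N, so does the total cost. The domain and buffer sizes are illustrative, and the model ignores prefactors and the cost of assembling the global density, so it is not the embedded divide-and-conquer implementation of [2].

```python
import math


def cubic_cost(n_atoms: int) -> float:
    """Relative cost of a conventional O(N^3) DFT step (arbitrary units)."""
    return float(n_atoms) ** 3


def divide_and_conquer_cost(n_atoms: int, core_atoms: int = 100, buffer_atoms: int = 200) -> float:
    """Relative cost when the system is split into domains of `core_atoms` atoms, each solved
    together with `buffer_atoms` surrounding atoms (both sizes hypothetical).
    Each domain costs O((core + buffer)^3), but the number of domains grows linearly with N."""
    n_domains = math.ceil(n_atoms / core_atoms)
    return n_domains * float(core_atoms + buffer_atoms) ** 3


for n in (500, 1_000, 5_000, 19_000):
    ratio = cubic_cost(n) / divide_and_conquer_cost(n)
    print(f"N = {n:>6}: cubic / divide-and-conquer cost = {ratio:.1f}")
```

Doubling N multiplies the cubic cost by 8 but the domain-based cost only by 2; the buffer overhead makes the domain approach more expensive for small systems, so a crossover size exists.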

Application Notes: Phase-Change Memory Materials

Experimental Background and Objectives

Phase-change random access memory (PRAM) utilizes the dramatic contrast in electrical resistance between amorphous and crystalline states of chalcogenide materials for data storage [8]. While Ge₂Sb₂Te₅ has served as the core material in commercial PRAM devices, its relatively low crystallization temperature (~150°C) makes it unsuitable for embedded memory applications requiring high-temperature stability, such as automotive electronics that must endure temperatures above 300°C [8].

This application note details a comprehensive AIMD study investigating Ge-rich Ge-Sb-Te alloys as potential high-temperature alternatives, focusing on the compositional range from Ge₂Sb₁Te₂ to Ge₇Sb₁Te₂ [8]. The research aimed to elucidate the atomic-scale structural features and bonding nature responsible for enhanced amorphous-phase stability in these materials.

Computational Methodology and Workflow

The investigation employed AIMD simulations based on density functional theory to generate structural models of various GST compositions using a melt-quench protocol [8]. The specific workflow encompassed:

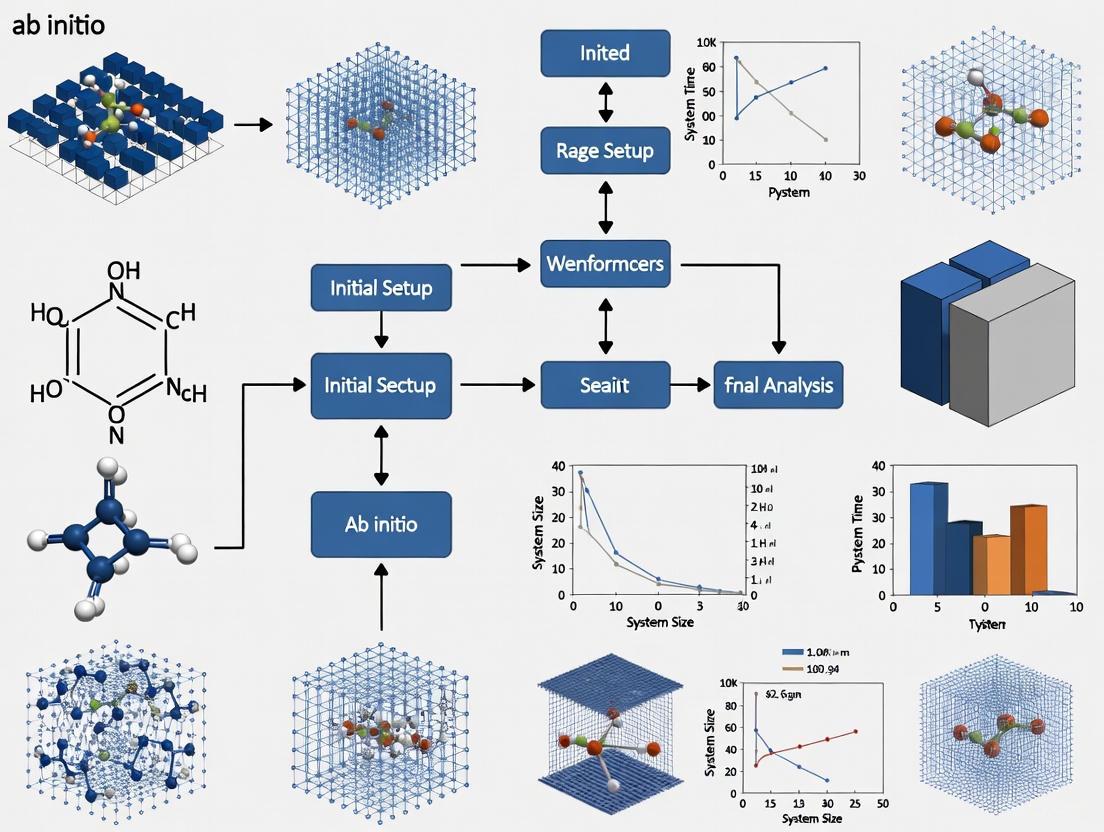

Figure 1: AIMD melt-quench protocol workflow for modeling amorphous materials [8].

Step 1: Model Generation

- Created cubic supercells containing 189-540 atoms representing 3×3×3 expansions of the cubic rocksalt crystal structure [8]

- Systematically varied Ge content while maintaining fixed Sb and Te atom counts [8]

- Adjusted simulation box sizes during quenching to minimize internal pressure [8]
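Choosing an initial box follows from simple arithmetic: a cubic cell holding N atoms at number density ρ has edge length \( L = (N/\rho)^{1/3} \). The sketch below applies this to the number densities reported under Key Findings; the 540-atom count is only an example taken from the stated 189-540 range, and in the study itself the box was further adjusted during quenching to minimize internal pressure.

```python
def cubic_box_edge(n_atoms: int, number_density: float) -> float:
    """Edge length (Å) of a cubic cell containing n_atoms at number_density (atoms per Å^3)."""
    return (n_atoms / number_density) ** (1.0 / 3.0)


# Number densities (Å^-3) reported for the amorphous models; 540 atoms is an example size.
densities = {"Ge2Sb2Te5": 0.0278, "Ge2Sb1Te2": 0.0310, "Ge4Sb1Te2": 0.0327, "Ge7Sb1Te2": 0.0358}
for name, rho in densities.items():
    print(f"{name}: L = {cubic_box_edge(540, rho):.2f} Å")
```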

Step 2: Melt-Quench Protocol

- Employed AIMD simulations to melt structures at high temperature (2000 K) [8]

- Quenched systems to 300 K at approximately 50 K/ps [8]

- Performed three independent runs for each composition to ensure statistical reliability [8]
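The quench duration follows directly from the numbers above: cooling from 2000 K to 300 K at 50 K/ps takes (2000 - 300)/50 = 34 ps. The sketch below encodes the protocol as a piecewise-linear thermostat set-point; the melt hold time (`hold_ps`) and the MD time step (`dt_fs`) are hypothetical values that the source does not give.

```python
import numpy as np


def melt_quench_schedule(t_melt=2000.0, t_final=300.0, rate=50.0, hold_ps=10.0):
    """Breakpoints (time in ps, temperature in K) of a hold-then-linear-quench protocol.

    `rate` is the cooling rate in K/ps; `hold_ps` (melt equilibration time) is hypothetical.
    """
    quench_ps = (t_melt - t_final) / rate
    times = np.array([0.0, hold_ps, hold_ps + quench_ps])
    temps = np.array([t_melt, t_melt, t_final])
    return times, temps


def target_temperature(t_ps, times, temps):
    """Thermostat set-point at time t_ps, linearly interpolated between breakpoints
    (held at the final temperature after the quench ends)."""
    return float(np.interp(t_ps, times, temps))


times, temps = melt_quench_schedule()
dt_fs = 2.0  # hypothetical MD time step
n_quench_steps = int(round((times[2] - times[1]) * 1000.0 / dt_fs))
print(f"quench lasts {times[2] - times[1]:.0f} ps = {n_quench_steps} steps of {dt_fs} fs")
# prints: quench lasts 34 ps = 17000 steps of 2.0 fs
```

Running three independent quenches, as in the protocol, would typically mean repeating this schedule from melts equilibrated with different random seeds.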

Step 3: Structural and Electronic Analysis

- Calculated radial distribution functions to characterize atomic structure [8]

- Performed chemical bonding analysis using crystal orbital overlap population (COOP) method [8]

- Applied Smooth Overlap of Atomic Positions (SOAP) similarity analysis to compare local environments [8]
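For reference, the total radial distribution function of a single frame can be computed as in the minimal NumPy sketch below. It assumes a cubic periodic box, applies the minimum-image convention, and requires `r_max` to be at most half the box length; a production analysis would typically also resolve species pairs such as Ge-Te and Sb-Te and average over many frames. The function and its parameters are illustrative, not the analysis code used in [8].

```python
import numpy as np


def radial_distribution(positions, box_length, r_max, n_bins=100):
    """Total g(r) for one frame of N atoms in a cubic periodic box (lengths in Å)."""
    pos = np.asarray(positions, dtype=float)
    n = len(pos)
    i, j = np.triu_indices(n, k=1)                 # every unique pair once
    d = pos[i] - pos[j]
    d -= box_length * np.round(d / box_length)     # minimum-image convention
    dist = np.linalg.norm(d, axis=1)
    counts, edges = np.histogram(dist, bins=n_bins, range=(0.0, r_max))
    density = n / box_length**3
    shell_volumes = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    # each pair was counted once, so 2 * counts / n is the mean neighbor count per atom per shell
    g = 2.0 * counts / (n * density * shell_volumes)
    centers = 0.5 * (edges[1:] + edges[:-1])
    return centers, g


# Sanity check: uncorrelated (ideal-gas) positions should give g(r) close to 1.
rng = np.random.default_rng(0)
r, g = radial_distribution(rng.uniform(0.0, 10.0, size=(1000, 3)), box_length=10.0, r_max=5.0, n_bins=50)
```

The O(N^2) pair list is fine for a few thousand atoms; larger models usually call for cell or neighbor lists.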

Computational Details:

- Software: Density functional theory code (specific package not identified) [8]

- Basis and Pseudopotentials: Plane-wave basis set with the pseudopotential approximation [2]

- Exchange-Correlation: Semi-local PBE functional [8]

- Simulation Cell: Periodic boundary conditions [8]

- Temperature Control: Langevin dynamics or Nosé-Hoover thermostat [8]

The Researcher's Toolkit

Table 3: Essential Computational Materials for AIMD Simulations of Phase-Change Materials

| Component | Function/Role | Specific Examples |
|---|---|---|
| Pseudopotentials | Represent core electrons and reduce computational cost | Norm-conserving, ultrasoft, PAW datasets [2] |
| Basis Set | Expand electronic wavefunctions | Plane waves, numerical atomic orbitals, Gaussians [2] |
| Exchange-Correlation Functional | Approximate quantum interactions | PBE, LDA, hybrid functionals [8] |
| Molecular Dynamics Ensemble | Control simulation conditions | NVE, NVT, NPT ensembles [8] |
| Analysis Tools | Extract structural and electronic properties | RDF, COOP, SOAP similarity [8] |

Key Findings and Materials Design Principles

The AIMD simulations revealed that increasing Ge content in GST alloys significantly enhances the stability of the amorphous phase while systematically altering structural properties:

- Structural Homogeneity: All generated amorphous models, including Ge-rich compositions, maintained homogeneous phases without substantial de-mixing [8]

- Density Evolution: Theoretical number density increased progressively with Ge content: 0.0278 Å⁻³ (Ge₂Sb₂Te₅) → 0.0310 Å⁻³ (Ge₂Sb₁Te₂) → 0.0327 Å⁻³ (Ge₄Sb₁Te₂) → 0.0358 Å⁻³ (Ge₇Sb₁Te₂) [8]

- Bonding Analysis: COOP calculations showed no strong antibonding interactions at the Fermi level, indicating chemical stability of the amorphous models [8]

- Compositional Threshold: Identified a maximum Ge concentration threshold beyond which phase separation occurs, compromising device performance [8]

Based on these atomic-scale insights, the research established concrete materials design principles for embedded phase-change memories:

- Balance Ge content to achieve high crystallization temperature while maintaining reasonable switching speed [8]

- Avoid exceeding the compositional threshold for homogeneous amorphous phase formation [8]

- Utilize the identified "golden composition" region (marked in the ternary phase diagram) for optimal performance [8]

Figure 2: Composition-property relationships for Ge-rich phase-change materials [8].

Ab initio molecular dynamics integrated with density functional theory provides a powerful framework for investigating and designing advanced materials at the atomic scale. The case study of Ge-rich phase-change memory materials demonstrates how AIMD simulations can reveal fundamental structure-property relationships and establish practical design principles for technological applications. As computational methods continue to advance, with improved exchange-correlation functionals, linear-scaling algorithms, and enhanced performance on extreme-scale computing architectures, AIMD will play an increasingly vital role in materials discovery and optimization across diverse fields including energy storage, catalysis, and electronic devices.

The field of high-performance computing (HPC) is undergoing a transformative shift, moving from traditional CPU-based clusters to heterogeneous architectures that integrate GPU acceleration, a change that is particularly consequential for ab initio simulation research. This evolution, marked by the deployment of pre-exascale systems, enables scientists to tackle problems of unprecedented complexity in materials science and drug development. These advanced computational platforms provide the foundation for exploring biological and chemical systems with quantum mechanical accuracy, significantly accelerating the pace of discovery. This article details the current HPC landscape, provides actionable protocols for leveraging these systems, and showcases their application through a case study on simulating phase-change materials, offering a blueprint for researchers in computational chemistry and physics.

The Evolving HPC Hardware Landscape

The hardware underpinning modern HPC has diversified beyond homogeneous CPU clusters. Today's systems are characterized by a hybrid architecture that combines CPUs with GPUs, designed to handle specific workloads with optimal efficiency.

Central Processing Unit (CPU) clusters have been the traditional workhorses of HPC, excellent for handling tasks that require complex, serial processing and for managing the orchestration of large-scale simulations. In contrast, Graphics Processing Units (GPUs) are designed for massive parallelism, making them ideal for accelerating the computationally intensive, matrix-based mathematical operations that are fundamental to ab initio methods and molecular dynamics. The core distinction lies in their design philosophy: CPUs have a few complex cores optimized for single-thread performance, while GPUs contain thousands of simpler cores designed for parallel execution [9].

The emergence of pre-exascale systems, such as the pan-European supercomputer LEONARDO, represents the current frontier. LEONARDO is an exemplar of this modern architecture, featuring a partition with nearly 14,000 NVIDIA Ampere A100 GPUs alongside a robust CPU partition, all interconnected with high-speed fabrics to support both traditional HPC and emerging AI applications [10]. This convergence of AI and HPC is a key trend, with AI-optimized hardware being increasingly utilized for both AI training and traditional simulation workloads [11].

Table 1: Key Hardware Considerations for Scientific Simulations

| Hardware Component | Key Consideration | Relevance to Ab Initio Simulations |
|---|---|---|
| GPU (Graphics Processing Unit) | Parallel processing capability; single (FP32) vs. double (FP64) precision | Crucial for accelerating quantum chemistry calculations (e.g., density functional theory). FP64 is often mandatory for accuracy [9]. |
| CPU (Central Processing Unit) | Single-thread performance; core count | Manages serial portions of code, input/output operations, and coordinates parallel tasks across GPUs. |
| Interconnect | Bandwidth and latency (e.g., InfiniBand, Ultra Ethernet) | Critical for performance in multi-node simulations, affecting how quickly data is exchanged between GPUs/CPUs [11]. |
| Memory (VRAM) | Capacity and bandwidth | Limits the maximum system size (number of atoms) that can be simulated on a single GPU node. |

A critical decision point for researchers is the precision requirement of their computational code. Many research codes can operate effectively in mixed precision, but methods like Density Functional Theory (DFT) in codes such as CP2K, Quantum ESPRESSO, and VASP often mandate true double precision (FP64) throughout [9]. Consumer-grade GPUs (e.g., GeForce RTX series) have intentionally limited FP64 throughput, making them a poor fit for such workloads. For FP64-dominated codes, data-center GPUs like the NVIDIA A100/H100 or AMD Instinct MI300X are necessary to achieve maximum performance [9] [12].
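A toy illustration of why precision matters: when many small contributions are accumulated onto a large running total, as in energy or force summations over atoms and grid points, FP32 rounding errors build up quickly. The sketch below adds one million increments of 0.001 to a value of 10,000, sequentially, in each precision; the numbers are arbitrary and are not drawn from any DFT code.

```python
import numpy as np


def accumulated_error(dtype, offset=1.0e4, increment=1.0e-3, n_terms=1_000_000):
    """Add n_terms small increments to a large offset one at a time in `dtype`
    and return the deviation from the exact result."""
    values = np.full(n_terms + 1, increment, dtype=dtype)
    values[0] = offset
    # np.cumsum accumulates sequentially in the requested dtype (no pairwise summation)
    total = np.cumsum(values, dtype=dtype)[-1]
    exact = offset + increment * n_terms
    return float(total) - exact


print(f"FP32 error: {accumulated_error(np.float32):.4f}")
print(f"FP64 error: {accumulated_error(np.float64):.2e}")
```

With these parameters the FP32 total drifts by tens of units from the exact 11,000, because each increment is rounded to the nearest representable step near 10,000, while the FP64 error is negligible.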

Performance Metrics and Comparison Frameworks

Evaluating the performance of HPC systems, particularly when comparing CPU and GPU architectures, requires metrics that provide fair and actionable insights. Traditional metrics like simple speedup ratios can be misleading as they are highly dependent on the specific workload size.

To address this, recent research proposes two peak-based performance metrics [13]:

- Peak Ratio Crossover (PRC): The problem size at which the performance of a GPU begins to surpass that of a CPU.

- Peak-to-Peak Ratio (PPR): The ratio of the best achievable performance on a GPU to the best achievable performance on a CPU for a given application.

These metrics help researchers make informed decisions about which hardware is best suited for their specific problem size and performance goals. For instance, a benchmark study on the Cloud Layers Unified by Binormals (CLUBB) model demonstrated how these metrics can guide execution strategy and prioritize optimization efforts [13].
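Given measured throughputs (for example atoms × steps per second, where higher is better) at several problem sizes, both metrics take only a few lines to compute. The sketch below is one possible reading of the definitions above: PRC is estimated by linear interpolation of the GPU/CPU throughput ratio between the two sizes that bracket a ratio of 1, and PPR as the ratio of the best GPU and best CPU throughputs over the sizes tested. At a fixed size, this throughput ratio equals the CPU-time/GPU-time speedup used in the benchmarking protocol below. The interpolation choice and the example numbers are assumptions, not taken from [13].

```python
import numpy as np


def peak_ratio_crossover(sizes, cpu_throughput, gpu_throughput):
    """Problem size at which GPU throughput first exceeds CPU throughput.

    Linearly interpolates the GPU/CPU ratio between the two bracketing sizes;
    returns None if the GPU never overtakes the CPU in the sizes tested.
    """
    sizes = np.asarray(sizes, dtype=float)
    ratio = np.asarray(gpu_throughput, dtype=float) / np.asarray(cpu_throughput, dtype=float)
    for k in range(len(sizes)):
        if ratio[k] > 1.0:
            if k == 0:
                return float(sizes[0])  # GPU already ahead at the smallest size tested
            frac = (1.0 - ratio[k - 1]) / (ratio[k] - ratio[k - 1])
            return float(sizes[k - 1] + frac * (sizes[k] - sizes[k - 1]))
    return None


def peak_to_peak_ratio(cpu_throughput, gpu_throughput):
    """Best GPU throughput divided by best CPU throughput over all sizes tested."""
    return max(gpu_throughput) / max(cpu_throughput)


sizes = [1_000, 10_000, 100_000, 1_000_000]  # hypothetical problem sizes (e.g., atoms)
cpu = [4.0e6, 5.0e6, 5.2e6, 5.0e6]           # hypothetical CPU throughput
gpu = [1.0e6, 4.0e6, 2.0e7, 6.0e7]           # hypothetical GPU throughput
print(peak_ratio_crossover(sizes, cpu, gpu), peak_to_peak_ratio(cpu, gpu))
```

Because problem sizes often span orders of magnitude, interpolating in log(size) instead of size is a reasonable alternative.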

Table 2: Performance Results from Real-World Applications

| Application / Case Study | Hardware Configuration | Performance Result | Key Implication |
|---|---|---|---|
| Aerospace CFD (Ansys Fluent) | 8x AMD Instinct MI300X GPUs | Simulated 5 sec of physical flow time in 3.7 hrs (single precision) [12]. | GPU acceleration compresses simulation time from weeks to hours, enabling more design iterations. |
| Aerospace CFD (Ansys Fluent) | 16x AMD Instinct MI300X GPUs | Simulation completed in under 4.4 hrs (double precision) [12]. | Confirms feasibility of high-fidelity, FP64-required simulations on modern GPU clusters in practical timeframes. |
| Phase-Change Materials (GST-ACE-24) | ARCHER2 CPU-based HPC system | Achieved >400x higher efficiency compared to previous model (GST-GAP-22) [14]. | Algorithmic and model improvements (ML potentials) can yield performance gains that rival or exceed hardware upgrades. |

Experimental Protocols for HPC Simulations

Protocol: Benchmarking CPU-GPU Performance

Objective: To determine the optimal hardware (CPU vs. GPU) for a specific scientific application and problem size.

- Hardware Selection: Identify representative CPU and GPU nodes available within your HPC ecosystem.

- Workload Definition: Create a set of input files that represent a range of typical problem sizes for your application (e.g., small, medium, large).

- Baseline Measurement: For each workload, run the application on the CPU node and record the wall-clock time to completion. Ensure the run utilizes the CPU fully (e.g., using all available cores).

- GPU Measurement: For each workload, run the same application on the GPU node, making sure to use a GPU-accelerated version of the code and to transfer all computationally intensive kernels to the GPU.

- Data Analysis: Calculate the speedup (CPU time / GPU time) for each workload size. Plot the speedup against the problem size to identify the Peak Ratio Crossover (PRC) point [13].

- Reporting: Document the exact software versions, compiler flags, input parameters, and wall-clock times to ensure reproducibility.
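The timing steps of this protocol can be scripted. A minimal sketch, assuming each run is launched as a separate command: it records the median wall-clock time over a few repeats, which damps run-to-run noise from shared file systems and other jobs. The placeholder command only starts a Python interpreter; substitute the actual CPU and GPU launch lines for each workload.

```python
import statistics
import subprocess
import sys
import time


def wall_time(cmd, repeats=3):
    """Median wall-clock time in seconds of running `cmd` (a list of arguments) to completion."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        subprocess.run(cmd, check=True)  # raises if the application exits with an error
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


if __name__ == "__main__":
    # Placeholder workload; replace with the real launch command for each input size.
    print(f"{wall_time([sys.executable, '-c', 'pass']):.3f} s")
```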

Protocol: Running a Molecular Dynamics Simulation with GPU Acceleration

Objective: To efficiently run a molecular dynamics simulation using a code like GROMACS on a GPU-equipped HPC node.

- Environment Setup: Begin by loading the necessary environment modules on the HPC cluster. This typically includes the compiler (e.g., GCC), MPI library (e.g., OpenMPI), CUDA toolkit, and the GROMACS installation compiled with GPU support.

- Job Configuration: Prepare a job submission script for the cluster's job scheduler (e.g., Slurm, PBS). Request access to a node with one or more GPUs.

- Execution Command: Use explicit flags to ensure that all possible computation is offloaded to the GPU. A typical GROMACS run passes the following options to `gmx mdrun`: `-nb gpu` offloads short-range non-bonded forces, `-pme gpu` handles the Particle Mesh Ewald calculation, and `-update gpu` and `-bonded gpu` offload coordinate updates and bonded forces, respectively [9].

- Performance Monitoring: During the run, monitor performance metrics such as nanoseconds-per-day and the load distribution between CPU and GPU cores to identify potential bottlenecks.

- Reproducibility: Record a "run card" – a one-page text file containing the input dataset hash, all solver parameters (.mdp file for GROMACS), command line, environment variables, and software version details [9].
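A run card can be generated automatically at submission time. The sketch below is one possible layout using only the Python standard library: SHA-256 hashes of the input files (for GROMACS, for example, the `.mdp` parameter file and the run input), the command line, selected environment variables, and user-supplied version strings. The environment-variable prefixes, field names, and file names are hypothetical; adapt them to the local software stack.

```python
import hashlib
import json
import os
import platform
from pathlib import Path


def sha256_of(path):
    """SHA-256 hex digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def make_run_card(input_files, command, versions, env_prefixes=("OMP_", "CUDA_", "GMX_")):
    """Collect the information needed to reproduce a run into a JSON-serializable dict."""
    return {
        "inputs": {str(p): sha256_of(p) for p in input_files},
        "command": " ".join(command),
        "environment": {k: v for k, v in sorted(os.environ.items()) if k.startswith(env_prefixes)},
        "software_versions": dict(versions),
        "host": platform.node(),
        "python": platform.python_version(),
    }


def write_run_card(card, path="run_card.json"):
    """Write the run card as indented JSON text next to the simulation output."""
    Path(path).write_text(json.dumps(card, indent=2) + "\n")
```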

Case Study: Full-Cycle Device-Scale Simulations of Phase-Change Materials

This case study illustrates the convergence of advanced algorithms and HPC hardware to solve a problem previously considered intractable.

5.1 Background and Objective

Phase-change materials (PCMs) like Ge–Sb–Te (GST) alloys are crucial for non-volatile memory and neuromorphic computing. Understanding their switching mechanisms (SET crystallisation and RESET amorphisation) requires atomistic simulations that cover the entire device programming cycle. The challenge was simultaneously reaching the necessary length scales (millions of atoms) and time scales (nanoseconds) for realistic device simulation, which was prohibitively expensive with previous methods like Density-Functional Theory (DFT) or even earlier machine-learned potentials [14].

5.2 Methodology and HPC Implementation

The research team developed an ultra-fast machine-learned interatomic potential using the Atomic Cluster Expansion (ACE) framework, known as GST-ACE-24 [14].

- Training: The potential was trained on a comprehensive DFT dataset, followed by several iterations of "self-correction" to include configurations from melt-quenched and phase-transition processes, ensuring robustness and accuracy.

- Software and Workflow: The custom ACE potential was integrated with molecular dynamics software to simulate the current pulses that induce Joule heating and phase transitions in a realistic device geometry containing hundreds of thousands of atoms.

- HPC Execution: The simulations were performed on the ARCHER2 supercomputer. The computational efficiency of the ACE framework allowed for excellent strong scaling, meaning the simulation speed increased efficiently when using more CPU cores, up to 65,536 cores for a 1-million-atom model [14].

5.3 Key Findings and HPC Impact

The GST-ACE-24 potential demonstrated a more than 400-fold increase in computational efficiency compared to its predecessor (GST-GAP-22) on the same CPU-based ARCHER2 system [14]. This dramatic improvement was not due to new hardware but to a more efficient algorithm. This efficiency enabled the first full-cycle simulation of a PCM device, including the time-consuming crystallisation process, which would have been infeasible in terms of time, cost, and carbon emissions with prior methods. This showcases how algorithmic advances, leveraged on pre-exascale HPC systems, can open new frontiers in computational materials science.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Hardware for HPC-Accelerated Ab Initio Research

| Item | Function / Description | Example Use Case |
|---|---|---|
| Machine-Learned Interatomic Potentials (MLIPs) | Fast, accurate force fields trained on DFT data; bridge the gap between quantum accuracy and classical MD scale. | Enabling large-scale, long-time-scale molecular dynamics simulations of materials, as in the PCM case study [14]. |
| GPU-Accelerated Simulation Codes | Scientific software (e.g., GROMACS, LAMMPS, Ansys Fluent) compiled to offload computations to GPUs. | Dramatically reducing time-to-solution for MD and CFD simulations compared to CPU-only execution [9] [12]. |
| Container Technology (e.g., Docker, Singularity) | Packages code, libraries, and dependencies into a single, reproducible, and portable image. | Ensuring simulation reproducibility and simplifying the deployment of complex software stacks on diverse HPC systems [9]. |
| Pre-Exascale Supercomputers | Large-scale HPC systems (e.g., LEONARDO) integrating many thousands of GPUs and CPUs with high-speed interconnects. | Providing the aggregate compute power and memory required for device-scale or system-scale ab initio quality simulations [10]. |
| Performance Profiling Tools | Software (e.g., NVIDIA Nsight, ARM MAP) to identify computational bottlenecks in code. | Guiding optimization efforts by pinpointing the specific functions or kernels that consume the most time [13]. |

Visualizations

Diagram 1: The evolution of HPC system architecture and applications over time.

Diagram 2: A simplified workflow of a typical GPU-accelerated scientific simulation.

In the field of high-performance computing for ab initio simulations, researchers face three interconnected fundamental challenges: achieving scalable performance across thousands of compute cores, managing communication overhead in distributed memory systems, and optimizing data movement across complex memory hierarchies. These challenges become particularly acute as scientific inquiries expand to larger molecular systems, more complex materials, and longer time scales requiring statistically significant sampling. The pursuit of predictive accuracy in applications ranging from drug discovery to materials design necessitates constant advancement in computational methods that address these bottlenecks directly. This document outlines specific computational challenges, presents quantitative performance data, and provides detailed protocols for optimizing simulations, framed within the context of modern computational research infrastructure.

Quantitative Analysis of Computational Performance

Table 1: Performance Comparison of Machine-Learning Potentials for Molecular Dynamics

| Potential Type | Computational Framework | Speedup Factor | System Size Demonstrated | Parallel Efficiency | Key Limitation |
|---|---|---|---|---|---|
| Gaussian Approximation Potential (GAP) [15] | DFT-based MD | 1x (Baseline) | ~500,000 atoms | Not Reported | ~150M CPU hours for 10 ns simulation |
| Atomic Cluster Expansion (ACE) [15] | DFT-based MD | >400x vs. GAP | >1,000,000 atoms | Good scaling to 65,536 cores | Performance drop for small systems on many nodes |
| ViSNet / AI2BMD [16] | AI-driven MD | "Orders of magnitude" faster than DFT | >10,000 atoms | Not Reported | Higher cost than classical MD, but much lower than DFT |
| Neural Network Quantum States [17] | Quantum Chemistry | 8.41x speedup with optimized framework | Large molecules on Fugaku supercomputer | 95.8% on 1,536 nodes | Exponentially growing cost with system size |

Table 2: Communication and Scaling Performance of ab Initio Software

| Software / Method | Communication Library | Parallelization Strategy | Scaling Performance | Key Optimization |
|---|---|---|---|---|
| VASP (Hybrid DFT) [18] | NVIDIA NCCL | Multi-node GPU | >80% efficiency on 32 nodes; Good to 256 nodes | GPU-initiated, stream-aware communication hiding |
| CPMD (USPP) [19] [20] | Hybrid MPI+OpenMP | Multi-node CPU | Demonstrated for 32-2048 water molecules | Overlapped computation/communication; Batched 3D FFTs |
| Neural Network Quantum States [17] | Custom | Multi-level Sampling Parallelism | 95.8% efficiency on 1,536 nodes | Cache-centric optimization for transformer ansatz |

Detailed Experimental Protocols

Protocol 1: Full-Device Phase-Change Memory Simulation using ACE

Objective: To simulate the full SET-RESET cycle of a Ge-Sb-Te (GST) based phase-change memory device, encompassing the computationally intensive crystallisation process.

Background: Simulating the crystallisation (SET operation) of GST has been prohibitively expensive, requiring hundreds of millions of CPU core hours with previous ML potentials [15].

Materials and Reagents:

- Software: LAMMPS or similar MD engine integrated with the ACE potential.

- Computational Resources: High-performance computing cluster with CPU-based nodes (e.g., ARCHER2). The protocol is optimized for ~65,536 CPU cores.

- Initial Structure: Atomic coordinate file for a cross-point or mushroom-type device geometry containing 500,000 to 1,000,000 atoms.

Procedure:

- Potential Initialization: Load the trained GST-ACE-24 potential, which has undergone iterative refinement (iter-0 to iter-5) on a dataset including melt-quenched disordered structures and phase-transition intermediates [15].

- System Equilibration:

  - Thermalize the system to the target temperature (e.g., 300 K) using an NVT ensemble for 100 ps.

  - Apply a Langevin thermostat or Nosé-Hoover chain to maintain temperature control.

- RESET Operation (Amorphisation):

  - Apply a steep temperature ramp to 900-1000 K over 10 ps to simulate Joule heating-induced melting.

  - Hold the system at the peak temperature for 5 ps.

  - Quench the system rapidly to 300 K over 15 ps to vitrify the molten region. The total RESET simulation should be ~50 ps [15].

- SET Operation (Crystallisation):

  - Apply a moderate, sub-melting temperature pulse (e.g., 600-700 K) for a significantly longer duration (10-50 ns) to induce nucleation and crystal growth.

  - Monitor the potential energy and radial distribution function (RDF) to track the phase transition.

- Data Analysis:

  - Use common neighbor analysis or bond-order parameters to distinguish between crystalline and amorphous atoms.

  - Calculate the electrical conductivity contrast between the two states to confirm the switching event.
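As an illustration of the bond-order-parameter route, the local Steinhardt parameter \( q_l \) of an atom can be computed from the unit vectors to its \( N_b \) neighbors via the spherical-harmonic addition theorem, \( q_l^2 = N_b^{-2} \sum_{j,k} P_l(\cos\theta_{jk}) \), which avoids evaluating spherical harmonics explicitly. The sketch assumes the neighbor list has already been built (for example with a cutoff at the first minimum of the RDF); the classification threshold is a hypothetical, system-dependent choice that would need calibrating against known crystalline and amorphous references. In rocksalt GST the first coordination shell is octahedral, the same six-neighbor geometry used as the check below.

```python
import numpy as np
from numpy.polynomial import legendre


def steinhardt_q(neighbor_vectors, l):
    """Local bond-order parameter q_l from the vectors pointing to one atom's neighbors."""
    b = np.asarray(neighbor_vectors, dtype=float)
    u = b / np.linalg.norm(b, axis=1, keepdims=True)  # unit bond directions
    cos_theta = np.clip(u @ u.T, -1.0, 1.0)           # all pairwise bond angles, including j == k
    coeffs = np.zeros(l + 1)
    coeffs[l] = 1.0                                    # coefficient vector selecting P_l
    total = legendre.legval(cos_theta, coeffs).sum()
    return float(np.sqrt(max(total, 0.0)) / len(b))


def is_crystalline(neighbor_vectors, l=4, threshold=0.6):
    """Hypothetical classifier: label an atom crystalline when q_l exceeds `threshold`."""
    return steinhardt_q(neighbor_vectors, l) > threshold


octahedral = [[1, 0, 0], [-1, 0, 0], [0, 1, 0], [0, -1, 0], [0, 0, 1], [0, 0, -1]]
print(steinhardt_q(octahedral, 4), steinhardt_q(octahedral, 6))  # sqrt(21)/6 and sqrt(4.5)/6
```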

Protocol 2: Scaling VASP Hybrid-DFT Calculations with NCCL

Objective: To efficiently compute the electronic band structure of a doped HfO₂ (hafnia) system using hybrid density functional theory (HSE06) on a multi-node, GPU-accelerated supercomputer.

Background: Hybrid-DFT provides superior accuracy for band gaps but is computationally demanding. Efficient scaling is essential for practical system sizes [18].

Materials and Reagents:

- Software: Vienna Ab initio Simulation Package (VASP) compiled with NVIDIA HPC SDK and support for NCCL.

- Computational Resources: GPU-accelerated cluster (e.g., nodes with 8x A100/A800 GPUs) interconnected with high-bandwidth fabric (InfiniBand).

- Input File: A VASP input set (INCAR, POSCAR, KPOINTS, POTCAR) for a doped HfO₂ supercell (e.g., 256-atom Si-doped cell).

Procedure:

- Baseline Calculation:

- Run a single ionic step on a single node (8 GPUs) with NCCL enabled to establish a reference runtime.

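- NCCL is enabled when VASP is compiled, not through the INCAR file: add the NCCL precompiler option to makefile.include (e.g., -DUSENCCL in the OpenACC templates distributed with VASP 6) before building.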

- Strong Scaling Test:

- Run the same calculation on 4, 8, 16, 32, 64, and 128 nodes (32, 64, 128, 256, 512, 1024 GPUs).

- Use mpirun or srun to launch VASP across all allocated nodes.

- For each run, record the total wall time and the time spent in the electronic minimization loop.

- Performance Analysis:

- Calculate the Speedup as (Reference Runtime on 1 node) / (Runtime on N nodes).

- Calculate the Parallel Efficiency as (Speedup on N nodes) / (Ideal Speedup on N nodes) * 100%.

- A reasonable target is >80% parallel efficiency on 32 nodes for a system of this size [18].

- Result Extraction: Upon completion, analyze the OUTCAR file for the final total energy and the calculated band gap.
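The speedup and efficiency definitions above, together with total-energy extraction, can be scripted as in the Python sketch below. The wall times are hypothetical placeholders, and the regular expression assumes the usual "free energy TOTEN" line that VASP writes to OUTCAR; band-gap extraction is left out because its output format depends on the VASP version and settings.

```python
import re

# Matches OUTCAR lines such as "  free  energy   TOTEN  =      -1234.56789 eV"
TOTEN_RE = re.compile(r"free\s+energy\s+TOTEN\s+=\s+(-?\d+\.\d+)")


def last_total_energy(outcar_text):
    """Return the last 'free energy TOTEN' value (eV) found in OUTCAR text."""
    matches = TOTEN_RE.findall(outcar_text)
    if not matches:
        raise ValueError("no 'free energy TOTEN' line found")
    return float(matches[-1])


def strong_scaling(runtimes, ref_nodes=1):
    """runtimes: {n_nodes: wall time in s}. Returns {n_nodes: (speedup, efficiency %)}."""
    t_ref = runtimes[ref_nodes]
    results = {}
    for nodes in sorted(runtimes):
        speedup = t_ref / runtimes[nodes]
        ideal = nodes / ref_nodes  # ideal speedup relative to the reference run
        results[nodes] = (speedup, 100.0 * speedup / ideal)
    return results


if __name__ == "__main__":
    # Hypothetical wall times (s) for one ionic step; replace with measured values.
    measured = {1: 3200.0, 4: 820.0, 8: 420.0, 16: 220.0, 32: 120.0}
    for nodes, (s, eff) in strong_scaling(measured).items():
        flag = "meets" if eff > 80.0 else "below"
        print(f"{nodes:4d} nodes  speedup {s:6.2f}  efficiency {eff:5.1f}%  ({flag} 80% target)")
```

Checking the 32-node result against the >80% target is then a single dictionary lookup.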

Protocol 3: Large-Scale Ab Initio MD of an Electrochemical Interface

Objective: To characterize the atomistic structure of the electric double layer (EDL) at a metal-water electrolyte interface under potential control.

Background: Reliable modeling requires statistical sampling of the liquid electrolyte, necessitating long simulation times (>>100 ps) for large systems (>500 atoms) to achieve converged properties [21].

Materials and Reagents:

- Software: Ab initio molecular dynamics package (e.g., CPMD, VASP) with capabilities for applied potential or fixed-charge simulations.

- Model System: A slab model of a Pt(111) electrode in contact with ~30 layers of liquid water, totaling ~1000 atoms.

- Methodology: Plane-wave Density Functional Theory (DFT) with a hybrid functional (e.g., PBE0) for improved accuracy.

Procedure:

- System Preparation:

- Construct the metal slab with a vacuum layer >15 Å.

- Insert pre-equilibrated water molecules using classical MD.

- Apply the desired electrode potential using an implicit solvent method or an explicit countercharge [21].

- Equilibration:

- Run >20 ps of AIMD in the NVT ensemble (T=300 K) to equilibrate the water structure, discarding this data from production analysis.

- Production Run:

- Extend the simulation for a minimum of 100-200 ps to ensure proper sampling of water orientations and ion distributions in the EDL.

- Write trajectory frames every 5-10 fs.

- Post-Processing and Analysis:

- Averaging: Calculate the time-averaged number density profiles for water oxygen and hydrogen atoms along the surface normal (z-axis).

- Orientation: Compute the distribution of water dipole moments as a function of distance from the electrode.

- Validation: Compare the simulated X-ray reflectivity or capacitance with experimental data, if available.
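The averaging and orientation analyses both reduce to binning coordinates along the surface normal. A NumPy sketch is shown below. It assumes the trajectory has already been loaded into arrays, that z is the surface normal, that molecules are whole (not split across periodic boundaries), and that the lateral cell area is constant; the function names are hypothetical, and the dipole direction is approximated by the vector from O to the midpoint of the two H atoms.

```python
import numpy as np


def number_density_profile(z_frames, area_xy, z_lo, z_hi, bin_width=0.1):
    """Time-averaged number density (atoms per cubic angstrom) along z.

    z_frames: array (n_frames, n_atoms) of z coordinates (angstrom) for one
    species, e.g. water O. area_xy: lateral cell area (angstrom^2).
    """
    z = np.asarray(z_frames, dtype=float)
    n_bins = int(round((z_hi - z_lo) / bin_width))
    edges = np.linspace(z_lo, z_hi, n_bins + 1)
    counts, _ = np.histogram(z.ravel(), bins=edges)
    bin_volume = area_xy * np.diff(edges)
    density = counts / (z.shape[0] * bin_volume)  # divide by n_frames to average
    return 0.5 * (edges[:-1] + edges[1:]), density


def dipole_cos_theta(o_xyz, h1_xyz, h2_xyz):
    """cos(angle) between each water dipole (O -> H midpoint) and the +z axis."""
    o = np.asarray(o_xyz, dtype=float)
    mid_h = 0.5 * (np.asarray(h1_xyz, dtype=float) + np.asarray(h2_xyz, dtype=float))
    d = mid_h - o
    return d[..., 2] / np.linalg.norm(d, axis=-1)


def orientation_profile(z_o, cos_theta, z_lo, z_hi, bin_width=0.5):
    """Mean cos(theta) per z bin of the oxygen position; empty bins are NaN."""
    n_bins = int(round((z_hi - z_lo) / bin_width))
    edges = np.linspace(z_lo, z_hi, n_bins + 1)
    counts, _ = np.histogram(z_o, bins=edges)
    sums, _ = np.histogram(z_o, bins=edges, weights=cos_theta)
    with np.errstate(invalid="ignore", divide="ignore"):
        mean_cos = sums / counts
    return 0.5 * (edges[:-1] + edges[1:]), mean_cos
```

Using the same z bins for both profiles makes it straightforward to overlay density peaks and preferred orientations in the first water layers.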

Visualization of Optimization Strategies and Relationships

The following diagram illustrates the logical relationships between the key computational challenges, the optimization strategies employed to address them, and the resulting performance outcomes.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Library Solutions for High-Performance Ab Initio Simulation

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| VASP [22] [18] | Electronic Structure Code | Performs DFT calculations and AIMD simulations for materials science. | Predicting material properties (band gaps, phase stability) and simulating solid-state and molecular systems. |
| CPMD [19] [20] | Ab Initio MD Code | Specialized in plane-wave/pseudopotential AIMD simulations. | Simulating condensed phase systems, including liquids and electrochemical interfaces. |
| ACE Framework [15] | Machine-Learning Potential | Provides ultra-fast, scalable force fields trained on DFT data. | Enabling device-scale MD simulations of phase-change materials and other complex systems. |
| GAP Framework [15] | Machine-Learning Potential | Creates highly accurate interatomic potentials with high data efficiency. | Initial model development and simulation of complex alloys and functional materials. |
| NVIDIA NCCL [18] | Communication Library | Optimizes multi-GPU and multi-node collective communications. | Scaling VASP and other CUDA-aware codes on GPU-based supercomputers with high parallel efficiency. |
| ViSNet / AI2BMD [16] | AI-driven MD System | Provides a machine-learned force field for proteins with ab initio accuracy. | Performing high-accuracy biomolecular dynamics for drug discovery and protein interaction studies. |
| Neural Network Quantum States [17] [23] | Quantum Chemistry Solver | Solves the electronic Schrödinger equation for quantum many-body problems. | High-accuracy ab initio calculations of strongly correlated molecular systems. |

Application Notes: The State of Quantum Computing in Scientific Simulation

The integration of quantum computing with high-performance computing (HPC) for ab initio simulations represents a paradigm shift, moving from theoretical promise to tangible experimental utility in 2025. The field is characterized by rapid hardware scaling, intensified investment, and the demonstration of early quantum advantages for specific scientific problems, particularly in molecular simulation.

Market and Hardware Landscape

The quantitative landscape of the quantum computing sector reflects its transition into a commercially relevant technology. The data below summarizes key market and hardware metrics.

Table 1: Quantum Computing Market and Investment Landscape (2025)

| Metric | Value / Status | Source/Projection |
|---|---|---|
| Global Market Size (2025) | $1.8 - $3.5 billion | Industry Report [24] |
| Projected Market (2029) | $5.3 billion (32.7% CAGR) | Industry Projection [24] |
| Venture Capital (2024) | ~$2.0 billion | McKinsey Analysis [25] |
| Government Investment (2024) | $1.8 billion | McKinsey Analysis [25] |
| Quantum Computing Revenue (2024) | $650 - $750 million | McKinsey Analysis [25] |

Table 2: Recent Quantum Hardware Breakthroughs and Roadmaps

| Company/Institution | Breakthrough / System | Key Specification |
|---|---|---|
| Google | Willow Chip | 105 superconducting qubits; demonstrated a calculation 13,000x faster than a classical supercomputer [24] |
| IBM | Quantum Starling (Roadmap) | Target: 200 logical qubits by 2029 [24] |
| Fujitsu & RIKEN | Superconducting System | 256-qubit system; 1,000-qubit machine planned for 2026 [24] |
| Microsoft & Atom Computing | Neutral-Atom Logical Qubits | Demonstrated 28 logical qubits with 1,000-fold error reduction [24] |
| Pasqal | Neutral-Atom Quantum Computer (Orion) | Used for first quantum algorithm for protein hydration analysis [26] |

Application in Drug Discovery and Materials Science

Quantum computing is demonstrating practical value in simulating molecular systems, a core task of ab initio simulation research.

- Protein-Ligand Binding and Hydration: A collaboration between Pasqal and Qubit Pharmaceuticals has established a hybrid quantum-classical approach to analyze the distribution of water molecules within protein pockets, a critical input for accurately predicting drug binding affinity. Their quantum algorithm, run on Pasqal's Orion neutral-atom computer, is reported to evaluate numerous molecular configurations more efficiently than classical approaches, marking a significant step in computational drug discovery [26].

- Quantum-Enhanced CADD: Computer-Assisted Drug Discovery (CADD) tools like molecular dynamics (MD) and density functional theory (DFT) are limited on classical HPC for medium-to-large molecules. Quantum computing is poised to enhance CADD by enabling more accurate prediction of molecular properties, protein folding, and protein-ligand interactions. This could significantly shorten screening times and reduce dead-end research cycles [27].

- Demonstrated Quantum Advantage: In March 2025, IonQ and Ansys reported running a medical device simulation on a 36-qubit computer that outperformed classical HPC by 12%, providing one of the first documented cases of practical quantum advantage in a real-world application [24].

Experimental Protocols

This section provides detailed methodologies for implementing novel quantum computing paradigms in scientific research workflows.

Protocol 1: Hybrid Quantum-Classical Approach for Protein Hydration Site Analysis

This protocol details the methodology pioneered by Pasqal and Qubit Pharmaceuticals for determining the location and energetics of water molecules in protein binding pockets [26].

Objective: To accurately and efficiently map the distribution and free energy of water molecules within protein cavities using a hybrid quantum-classical computational workflow.

Materials and Reagents:

- Protein Structure File: An experimentally determined (e.g., X-ray crystallography) or computationally predicted (e.g., AlphaFold) 3D structure of the target protein in PDB format.

- Classical HPC Resources: For running molecular dynamics (MD) or classical Monte Carlo simulations.

- Quantum Computing Access: Quantum-as-a-Service (QaaS) platform with access to a neutral-atom quantum processor (e.g., Pasqal's Orion) or an equivalent gate-based system.

- Software Stack: Classical molecular simulation software (e.g., GROMACS, AMBER) and quantum algorithm development kits (e.g., Qiskit, Cirq, Pulser).

Procedure:

- Classical Pre-Processing (Water Density Generation):

- Solvate the protein structure in a virtual water box using classical MD simulation software.

- Run an MD simulation to generate an ensemble of water molecule configurations around the protein.

- From the simulation trajectories, calculate the classical water density map within the protein's binding pocket of interest.

Quantum Algorithm Execution (Water Placement):

- Formulate the water placement problem as a quantum optimization problem, where the goal is to find the lowest-energy configuration of water molecules within the pocket.

- Encode the classical water density data and protein atomic positions into the quantum processor. This involves mapping possible water sites to qubits and their interactions to quantum gates or entanglement.

- Implement a quantum algorithm, such as the Quantum Approximate Optimization Algorithm (QAOA) or a variational quantum eigensolver (VQE), on the quantum processor to find the optimal water molecule positions, particularly in challenging, buried regions of the pocket.

- Execute the quantum circuit and perform multiple measurements to obtain a statistical distribution of results.
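The formulation step can be made concrete with a small quadratic unconstrained binary optimization (QUBO) model. The sketch below is a hypothetical formulation, not the encoding used by Pasqal and Qubit Pharmaceuticals: each candidate site i gets a binary occupancy x_i, a site free energy ε_i = -kT ln(ρ_i/ρ_bulk) derived from the classical density map, and a penalty J_ij for pairs of sites closer than an assumed 2.5 Å exclusion distance. The objective E(x) = Σ ε_i x_i + Σ_{i<j} J_ij x_i x_j is the kind of cost function that QAOA-style algorithms sample; here it is minimized by brute force, which is feasible only for a handful of sites.

```python
import itertools

import numpy as np

KT_300K = 0.596  # kT at ~300 K in kcal/mol (0.0019872 kcal/mol/K * 300 K)


def site_free_energies(density_ratio, kt=KT_300K):
    """eps_i = -kT ln(rho_i / rho_bulk): enriched sites (ratio > 1) are favourable."""
    return -kt * np.log(np.asarray(density_ratio, dtype=float))


def clash_penalties(coords, d_min=2.5, penalty=10.0):
    """Upper-triangular J_ij: large penalty for site pairs closer than d_min (angstrom)."""
    xyz = np.asarray(coords, dtype=float)
    dist = np.linalg.norm(xyz[:, None, :] - xyz[None, :, :], axis=-1)
    return np.triu(np.where(dist < d_min, penalty, 0.0), k=1)  # drops the diagonal


def qubo_energy(x, eps, J):
    """E(x) = sum_i eps_i x_i + sum_{i<j} J_ij x_i x_j."""
    x = np.asarray(x, dtype=float)
    return float(eps @ x + x @ J @ x)


def brute_force_minimum(eps, J):
    """Exhaustive search over all 2**n occupancy patterns (tiny n only)."""
    best_e, best_x = None, None
    for bits in itertools.product((0, 1), repeat=len(eps)):
        e = qubo_energy(bits, eps, J)
        if best_e is None or e < best_e:
            best_e, best_x = e, bits
    return best_e, best_x
```

Because the clash term forbids neighbouring occupied sites, this cost function has the structure of a weighted independent-set problem; the brute-force minimum serves as a reference against which sampled quantum results can be checked on small pockets.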

Classical Post-Processing and Validation:

- Decode the quantum processor's output to determine the predicted coordinates and orientations of water molecules.

- Calculate the binding free energy of the predicted water molecules.

- Validate the quantum-derived hydration sites by comparing them to experimental data (e.g., from high-resolution X-ray structures) or against results from highly detailed, computationally expensive classical methods (e.g., 3D-RISM).

Figure 1: Hybrid Quantum-Classical Workflow for Protein Hydration Analysis.

Protocol 2: Topologically Protected Grover's Oracle for the Partition Problem

This protocol outlines a novel hardware-specific approach developed at Los Alamos National Laboratory that rethinks quantum algorithm implementation to reduce errors and complexity [28].

Objective: To implement Grover's algorithm for an unstructured search problem (e.g., the partition problem) using a hybrid hardware design that replaces complex quantum gate sequences with a natural physical interaction, thereby achieving topological protection against control errors.

Materials and Reagents:

- Specialized Quantum Processor: A quantum system designed for this protocol, where one "spin" qubit can naturally interact with a set of computational qubits without requiring direct qubit-qubit gates.

- Control System: Hardware for applying simple, time-dependent external magnetic field pulses to rotate the single spin qubit.

Procedure:

- Problem Initialization:

- Initialize the register of computational qubits to a superposition of all possible states, as per the standard Grover's algorithm initialization.

- Initialize the single, dedicated "oracle spin" qubit.

Topologically Protected Oracle Implementation:

- Instead of constructing the oracle—the black-box function that identifies the solution—using a complex circuit of two-qubit gates, allow the computational qubits to naturally interact with the oracle spin.

- Apply a sequence of simple, time-dependent external field pulses that rotate the oracle spin. The entire oracle operation is achieved through this natural interaction and the pulse sequence, requiring no direct interactions between the computational qubits.

- This method is "topologically protected," meaning its success is robust against small imprecisions in the control fields and other physical parameters, even in the absence of full quantum error correction.

Grover Iteration and Measurement:

- Complete the Grover iteration by applying the standard diffusion operator (inversion about the mean) to the computational qubits.

- Repeat the iteration the optimal number of times to amplify the solution state(s).

- Measure the computational qubits to read out the solution to the search problem.
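The "optimal number" of iterations is not arbitrary: for N basis states of which M are solutions, the success probability peaks after about ⌊(π/4)√(N/M)⌋ Grover iterations. The sketch below simulates the textbook algorithm classically with NumPy on a small hypothetical partition instance (weights 4, 5, 6, 7, 8, where the subsets {4, 5, 6} and {7, 8} each sum to 15). It uses an ordinary phase-flip oracle in place of the spin-mediated, topologically protected oracle of [28], so it illustrates the algorithm's logic rather than the hardware protocol.

```python
import math

import numpy as np


def partition_mask(weights):
    """Boolean mask over all 2**n bitstrings: True where the subset sums to half the total."""
    n = len(weights)
    total = sum(weights)
    mask = np.zeros(2 ** n, dtype=bool)
    if total % 2:
        return mask  # odd total: no equal partition exists
    for idx in range(2 ** n):
        subset_sum = sum(w for bit, w in enumerate(weights) if (idx >> bit) & 1)
        mask[idx] = 2 * subset_sum == total
    return mask


def grover_probabilities(mask, iterations=None):
    """Statevector simulation of Grover search; returns (probabilities, iterations)."""
    n_states = mask.size
    n_marked = int(mask.sum())
    if n_marked == 0:
        raise ValueError("no marked states: the oracle has no solution to amplify")
    if iterations is None:
        iterations = math.floor(math.pi / 4 * math.sqrt(n_states / n_marked))
    amp = np.full(n_states, 1.0 / math.sqrt(n_states))  # uniform superposition
    for _ in range(iterations):
        amp[mask] *= -1.0             # oracle: phase flip on solution states
        amp = 2.0 * amp.mean() - amp  # diffusion: inversion about the mean
    return amp ** 2, iterations


if __name__ == "__main__":
    mask = partition_mask([4, 5, 6, 7, 8])
    probs, k = grover_probabilities(mask)
    print(f"{k} iterations, success probability {probs[mask].sum():.3f}")
```

For this instance (N = 32, M = 2) the formula gives three iterations, which raises the probability of measuring a valid partition from 2/32 to roughly 0.96; iterating further would start to rotate amplitude away from the solutions again.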

Figure 2: Topologically Protected Grover's Algorithm Workflow.

The Scientist's Toolkit: Essential Reagents for Quantum Ab Initio Research

This table details key resources required to conduct quantum computing research in the field of ab initio simulations.

Table 3: Key Research Reagents and Platforms

| Item / Resource | Type | Function / Application | Example Providers / Instances |
|---|---|---|---|
| Quantum-as-a-Service (QaaS) | Platform | Cloud-based access to quantum processors and simulators; democratizes experimental use. | IBM Quantum, Microsoft Azure Quantum, Amazon Braket, SpinQ [24] |
| Neutral-Atom Quantum Computer | Hardware | Uses laser-cooled atoms as qubits; suitable for quantum simulation and optimization problems. | Pasqal's Orion, Atom Computing [24] [26] |
| Superconducting Quantum Processor | Hardware | Uses superconducting circuits as qubits; a leading platform for gate-based quantum computing. | Google's Willow, IBM's Kookaburra, Fujitsu [24] |
| Ab Initio Molecular Dynamics Software | Software | Performs first-principles MD simulations on classical HPC; used for pre/post-processing. | CP2K, VASP [4] [6] |
| Replacement-Type Quantum Gates | Novel Hardware Component | A new class of bias-preserving gates that reduce quantum error correction overhead. | ParityQC [29] |
| Post-Quantum Cryptography (PQC) | Security | Quantum-safe key-establishment and digital-signature algorithms to secure data against future quantum attacks. | NIST Standards (ML-KEM, ML-DSA, SLH-DSA) [24] |
| Federated Learning with FHE and QC | Software Framework | A privacy-preserving ML paradigm that integrates quantum layers with homomorphic encryption. | Emerging research framework [30] |

HPC-Accelerated Methods and Real-World Applications in Biomedicine

Molecular dynamics (MD) simulation serves as a "computational microscope," providing atomic-level insights into the dynamic behavior of molecular systems, from small organic compounds to massive biomolecular complexes [31]. The choice of software platform is critical, as it directly influences the accuracy, scale, and type of scientific questions researchers can address. Within the realm of high-performance computing (HPC) for ab initio simulations, the software ecosystem has diversified into specialized tools catering to different methodological approaches. This application note details four leading platforms—GROMACS, AMBER, CP2K, and DeePMD-kit—contrasting their capabilities, optimal use cases, and implementation protocols to guide researchers in selecting and effectively employing the right tool for their specific research objectives in computational chemistry, structural biology, and drug development.

The MD software landscape encompasses highly optimized classical simulators, advanced ab initio packages, and innovative machine-learning driven platforms.

AMBER (Assisted Model Building with Energy Refinement) is a highly respected suite renowned for its precision in simulating biomolecular systems, with a strong focus on the development of robust force fields for proteins, nucleic acids, and carbohydrates [32] [33]. Its comprehensive tools for parameterization, free energy calculations, and hybrid quantum mechanics/molecular mechanics (QM/MM) simulations make it indispensable for researchers demanding high accuracy [32].

GROMACS (GROningen MAchine for Chemical Simulations) is a powerful and versatile molecular dynamics engine celebrated for its exceptional speed and efficiency in parallel computations [32] [34]. It is optimized for both CPUs and GPUs, making it one of the fastest MD programs available, and is an excellent choice for large-scale simulations and high-throughput studies [32].

CP2K is a comprehensive software package that performs atomistic simulations using ab initio electronic structure methods like Density-Functional Theory (DFT), Hartree-Fock (HF), and second-order Møller-Plesset perturbation theory (MP2) [35] [36]. It is especially aimed at massively parallel and linear scaling electronic structure methods and state-of-the-art ab initio molecular dynamics (AIMD) simulations, offering capabilities that classical force fields cannot provide, such as modeling chemical reactions and electronic properties [36].

DeePMD-kit represents a paradigm shift, employing deep learning to construct potential energy models trained on first-principles data [37] [38]. It aims to resolve the accuracy-versus-efficiency dilemma by providing ab initio accuracy at a computational cost that is several orders of magnitude lower than conventional ab initio methods, enabling simulations of large biomolecules with quantum-chemical fidelity [31] [38].

Table 1: Quantitative Comparison of Key MD Simulation Platforms

| Platform | Primary Methodology | Computational Scaling | Key Strength | Typical System Size | Accuracy Level |
|---|---|---|---|---|---|
| GROMACS | Classical MD | ~O(N log N) with PME (highly optimized) | Speed & Scalability | >100,000 atoms | Force field accuracy |
| AMBER | Classical MD | ~O(N log N) with PME (biomolecule-optimized) | Biomolecular force fields | ~10,000-100,000 atoms | High for proteins/nucleic acids |
| CP2K | Ab Initio MD (DFT, MP2) | O(N³) for DFT | Electronic structure | ~100-1,000 atoms | DFT-level (functional-dependent) |
| DeePMD-kit | Machine Learning Potential | Near-linear | Accuracy & Efficiency | ~1,000-100,000 atoms | Ab initio accuracy |

Table 2: Specialized Capabilities and Force Field Support

| Platform | QM/MM Support | Free Energy Methods | Supported Force Fields | ML Integration |
|---|---|---|---|---|
| GROMACS | Limited | Thermodynamic integration | AMBER, CHARMM, OPLS, GROMOS | Traditional ML potentials |
| AMBER | Excellent (Native) | MM-PBSA, TI, FEP | AMBER (ff14SB, GAFF) | DeePMD-kit, QM/MM-ΔMLP |
| CP2K | Native (QM/MM) | Metadynamics | AMBER, CHARMM (in MM region) | Internal ML workflows |
| DeePMD-kit | Via interfaces (e.g., AMBER) | Via MD engine | Trained from ab initio data | Native (Deep Potential models) |

Methodologies and Experimental Protocols

The following diagram illustrates the high-level workflow common to molecular dynamics studies, highlighting the parallel pathways for different simulation methodologies.

Protocol 1: Classical MD for Protein-Ligand Binding (AMBER/GROMACS)

Objective: Characterize binding dynamics and affinity between a protein and small molecule ligand using classical force fields.

Required Research Reagents:

Table 3: Essential Components for Classical MD Simulations

| Component | Function | Example/Format |
|---|---|---|
| Protein Structure | Simulation template | PDB ID or experimental structure |
| Ligand Parameterization | Define non-standard residues | antechamber (AMBER) or CGenFF (GROMACS) |
| Force Field | Potential energy function | AMBER ff19SB or CHARMM36m |
| Solvation Model | Aqueous environment | TIP3P water box, 10-12 Å padding |
| Neutralizing Ions | Physiological ionic concentration | Na⁺, Cl⁻ ions (~150 mM) |

Step-by-Step Procedure:

System Preparation:

- Obtain initial coordinates from experimental structures (e.g., PDB) or homology modeling.

- For AMBER: Use the tLEaP module to load the protein, standard residues, and force fields (e.g., ff19SB). For non-standard ligands, use antechamber to generate GAFF parameters. Solvate the system in a TIP3P water box with 10-12 Å padding and add neutralizing ions [33].

- For GROMACS: Use pdb2gmx to process the protein and apply a force field. For ligands, use acpype or similar tools to generate parameters. Solvate using gmx solvate and add ions with gmx genion.
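The ~150 mM target in Table 3 has to be translated into a number of ions. One common quick estimate scales the number of salt pairs by the ratio of salt to water molarity (about 55.5 mol/L for pure water) and then adds counterions to neutralize the solute. The Python sketch below implements that approximation; the function names are hypothetical, and other conventions (for example, counting from the total box volume) give slightly different numbers.

```python
WATER_MOLARITY = 55.5  # mol/L, approximate molarity of pure water


def salt_pairs(n_waters, conc_molar=0.150):
    """Number of NaCl pairs for conc_molar, estimated from the water count."""
    return round(n_waters * conc_molar / WATER_MOLARITY)


def ion_counts(n_waters, solute_charge, conc_molar=0.150):
    """Return (n_Na, n_Cl) that neutralize solute_charge and add ~conc_molar NaCl."""
    pairs = salt_pairs(n_waters, conc_molar)
    n_na = pairs + max(0, -solute_charge)  # extra cations for a negatively charged solute
    n_cl = pairs + max(0, solute_charge)   # extra anions for a positively charged solute
    return n_na, n_cl


if __name__ == "__main__":
    # Hypothetical system: 10,000 waters around a protein with net charge -5.
    print(ion_counts(10_000, -5))
```

The resulting counts can then be passed to the ion-placement step of either tLEaP or gmx genion.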

Energy Minimization:

- Perform steepest descent followed by conjugate gradient minimization to remove bad contacts and high-energy configurations.

- Typical protocol: 5,000 steps of solvent minimization with protein heavy atoms restrained, followed by 10,000 steps of full-system minimization.

System Equilibration:

- NVT Ensemble: Gradually heat the system from 0 K to 300 K over 100 ps using a Langevin thermostat or velocity rescaling, with position restraints on protein and ligand heavy atoms.

- NPT Ensemble: Equilibrate the system density for 100-500 ps at 1 bar using a Parrinello-Rahman or Berendsen barostat, maintaining position restraints.

Production MD:

- Run unrestrained simulation for 100 ns to 1 µs (depending on system size and research question) with a 2-fs time step. Use LINCS (GROMACS) or SHAKE (AMBER) constraints on hydrogen-heavy atom bonds.

- For binding affinity calculations, use the AMBER MMPBSA.py script or GROMACS with g_mmpbsa to compute binding free energies from trajectory snapshots [33].
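In the single-trajectory MM-PBSA approach, the binding free energy is estimated for each snapshot as ΔG = G_complex - G_receptor - G_ligand and then averaged over frames. The NumPy sketch below shows only that bookkeeping, assuming per-frame free energies (kcal/mol) have already been computed by a tool such as MMPBSA.py. The function name is hypothetical, the entropy term is omitted, and the standard error assumes uncorrelated frames; block averaging is safer for closely spaced snapshots.

```python
import numpy as np


def mmpbsa_delta_g(g_complex, g_receptor, g_ligand):
    """Per-frame and averaged binding free energy from single-trajectory MM-PBSA terms.

    Returns (mean dG, standard error of the mean, per-frame dG array).
    """
    dg = (np.asarray(g_complex, dtype=float)
          - np.asarray(g_receptor, dtype=float)
          - np.asarray(g_ligand, dtype=float))
    n = dg.size
    sem = float(dg.std(ddof=1) / np.sqrt(n)) if n > 1 else float("nan")
    return float(dg.mean()), sem, dg


if __name__ == "__main__":
    # Hypothetical per-frame free energies (kcal/mol) for three snapshots.
    mean_dg, sem, _ = mmpbsa_delta_g([-100.0, -102.0, -101.0],
                                     [-60.0, -61.0, -60.5],
                                     [-20.0, -20.5, -20.2])
    print(f"dG_bind = {mean_dg:.2f} +/- {sem:.2f} kcal/mol")
```

Because receptor and ligand energies are taken from the same complex trajectory, much of the conformational noise cancels frame by frame, which is the usual motivation for the single-trajectory variant.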

Protocol 2: Ab Initio Biomolecular Dynamics with AI2BMD (DeePMD-kit)

Objective: Simulate protein dynamics with ab initio accuracy using a machine learning force field.

Required Research Reagents:

Table 4: Essential Components for AI-Driven MD Simulations

| Component | Function | Example/Format |
|---|---|---|
| Reference Data | Training ML potentials | DFT-level energies/forces for fragments |
| Fragmentation Scheme | Divide-and-conquer approach | 21 standard protein dipeptide units |
| ML Potential | Energy/force predictor | ViSNet model (in AI2BMD) |
| Polarizable Solvent | Explicit solvent model | AMOEBA force field |

Step-by-Step Procedure:

Data Generation and Preparation:

- Fragment the target protein into standardized dipeptide units. For generalizable accuracy, AI2BMD uses a universal set of 21 protein units [31].

- Generate comprehensive training data by scanning main-chain dihedrals of all protein units and running AIMD simulations at the DFT level (e.g., M06-2X/6-31G*) to obtain energies and forces [31].

- Use dpdata to convert the ab initio data (from VASP, CP2K, ABACUS, etc.) into DeePMD-kit's NumPy-based (deepmd/npy) format, split into training and validation sets (e.g., training_data/ and validation_data/) [38].

Model Training:

- Prepare an input.json file specifying the neural network architecture (e.g., descriptor, fitting network), training parameters (learning rate, loss function), and training/validation data paths [38].

- Run dp train input.json to train the Deep Potential model. Monitor the loss and validation error to ensure proper convergence.
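A minimal input.json for the training step might be generated as follows. This sketch assumes the DeePMD-kit v2 se_e2_a descriptor and energy loss; the type map, neighbour selection (sel), cutoffs, network widths, learning-rate schedule, and step count are illustrative placeholders that should be checked against the DeePMD-kit documentation for the installed version, and make_deepmd_input is a hypothetical helper.

```python
import json


def make_deepmd_input(type_map, sel, train_systems, valid_systems,
                      rcut=6.0, numb_steps=1_000_000):
    """Hypothetical helper: assemble a DeePMD-kit v2-style training config as a dict."""
    if len(sel) != len(type_map):
        raise ValueError("sel needs one maximum-neighbour count per element in type_map")
    return {
        "model": {
            "type_map": type_map,
            "descriptor": {
                "type": "se_e2_a",        # smooth two-body embedding descriptor
                "sel": sel,               # max neighbours of each type within rcut
                "rcut_smth": 0.5,
                "rcut": rcut,
                "neuron": [25, 50, 100],  # embedding-net widths
                "axis_neuron": 16,
            },
            "fitting_net": {"neuron": [240, 240, 240]},
        },
        "learning_rate": {"type": "exp", "start_lr": 1e-3, "stop_lr": 3.5e-8, "decay_steps": 5000},
        "loss": {
            "type": "ener",
            # Prefactors move from start_* toward limit_* as the learning rate decays.
            "start_pref_e": 0.02, "limit_pref_e": 1,
            "start_pref_f": 1000, "limit_pref_f": 1,
            "start_pref_v": 0, "limit_pref_v": 0,
        },
        "training": {
            "training_data": {"systems": train_systems, "batch_size": "auto"},
            "validation_data": {"systems": valid_systems, "batch_size": 1},
            "numb_steps": numb_steps,
            "disp_file": "lcurve.out",
            "disp_freq": 1000,
            "save_freq": 10000,
        },
    }


if __name__ == "__main__":
    config = make_deepmd_input(["H", "C", "N", "O", "S"], [60, 40, 10, 15, 2],
                               ["training_data"], ["validation_data"])
    print(json.dumps(config, indent=2))  # redirect to input.json
```

Starting with a large force weight and a small energy weight lets early training exploit the many force labels per frame, while the later shift toward energy keeps total energies consistent.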


Model Freezing and Compression:

- Freeze the trained model into a standardized format for efficient inference: dp freeze -o model.pb.

- (Optional) Compress the model to accelerate inference 4-15 times using dp compress -i model.pb -o model_compressed.pb [37].


Molecular Dynamics Simulation:

- Use the DeePMD-kit interface with LAMMPS, GROMACS, or AMBER to perform production MD. In LAMMPS, this involves using the pair_style deepmd command and providing the path to the frozen model [38].

- Run the simulation with an explicit solvent model (e.g., AMOEBA) [31]. The ML potential will provide energies and forces with ab initio accuracy at a fraction of the computational cost.


Performance Benchmarks and Hardware Considerations

The computational performance and hardware requirements vary significantly across the different platforms, directly impacting research feasibility and cost.

Table 5: Hardware Recommendations and Performance Characteristics

| Platform | Recommended CPU | Recommended GPU | Parallelization Strategy | Typical Performance |
|---|---|---|---|---|
| GROMACS | High clock speed, 32-64 cores | RTX 4090, RTX 6000 Ada | Excellent multi-core CPU & GPU | ~100 ns/day for 100k atoms |
| AMBER | Mid-range, 2 cores/GPU | RTX 4090, RTX 6000 Ada | Primarily GPU-accelerated | ~50-100 ns/day on single GPU |
| CP2K | High core count, fast memory | GPU support for specific kernels | MPI for DFT, hybrid for MM | Minutes/step for 500 atoms |
| DeePMD-kit | Standard HPC node | High-end GPU for training/inference | MPI, GPU, linear scaling | Near-DFT accuracy, 10⁶ speedup |

The following diagram illustrates the relative positioning of each platform in the critical trade-off between computational accuracy and efficiency for biomolecular simulations.

Quantitative benchmarks demonstrate the transformative efficiency of machine learning approaches. AI2BMD, which replaces DFT with a machine-learned potential (ViSNet), can perform energy calculations for a protein like Trp-cage (281 atoms) in 0.072 seconds per step, compared with 21 minutes for DFT, a speedup of roughly four orders of magnitude [31]. For larger systems like aminopeptidase N (13,728 atoms), DFT calculations become infeasible (estimated at >254 days), while AI2BMD requires only 2.61 seconds per step [31].
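The speedup factors behind these comparisons follow directly from the quoted timings. The second line assumes, as the comparison implies, that the 254-day DFT estimate refers to a single step; because that estimate is itself a lower bound, so is the resulting ratio.

```latex
\[
\begin{aligned}
\text{Trp-cage: } & \frac{21\ \text{min} \times 60\ \text{s/min}}{0.072\ \text{s}}
  = \frac{1260\ \text{s}}{0.072\ \text{s}} = 1.75 \times 10^{4} \\
\text{aminopeptidase N: } & \frac{254\ \text{d} \times 86{,}400\ \text{s/d}}{2.61\ \text{s}}
  = \frac{2.19456 \times 10^{7}\ \text{s}}{2.61\ \text{s}} \gtrsim 8.4 \times 10^{6}
\end{aligned}
\]
```

The gap widens with system size because the ML potential's cost grows roughly linearly with atom count, whereas conventional DFT scales roughly cubically.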

Integrated Research Applications and Future Directions

Application Note: Multi-Scale Drug Discovery Pipeline

An integrated pipeline leveraging the strengths of multiple platforms accelerates structure-based drug discovery:

Rapid Screening with GROMACS: Use GROMACS to run short, high-throughput MD simulations of docked compound poses (generated beforehand with a docking program) against a protein target, leveraging its exceptional simulation speed to filter out unstable candidates during initial screening [32].

Binding Affinity Refinement with AMBER: Employ AMBER's advanced free energy perturbation (FEP) and MM-PBSA capabilities on top-ranked hits to obtain accurate binding free energy estimates, capitalizing on its highly accurate biomolecular force fields [32] [33].

Reaction Mechanism Studies with CP2K: For covalent inhibitors or enzymatic reactions, use CP2K to model the electronic structure changes and reaction pathways at the DFT or QM/MM level, providing insights into chemical mechanisms [36].

High-Fidelity Dynamics with DeePMD-kit: For particularly challenging systems where classical force fields may be inadequate, perform final validation simulations using DeePMD-kit with a potential trained on ab initio data of the specific binding pocket, achieving near-DFT accuracy at MD speeds [31].

Emerging Frontiers: Interoperability and Machine Learning

The future of MD software ecosystems lies in enhanced interoperability and the pervasive integration of machine learning:

DeePMD-GNN Plugin: This extension of DeePMD-kit enables seamless integration of popular graph neural network potentials (e.g., NequIP, MACE) within the DeePMD-kit ecosystem, facilitating consistent benchmarking and application [39]. It also supports the development of range-corrected ΔMLP models for QM/MM applications within AMBER, correcting inexpensive semiempirical QM methods to reproduce target ab initio accuracy [39].

Automated Parameterization and Active Learning: Tools like dpdata streamline the conversion between different MD data formats, while active learning platforms (e.g., DP-GEN) automate the process of generating robust ML potentials by intelligently sampling new configurations for ab initio labeling [38] [39].
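Active learning in DP-GEN hinges on model deviation: several models trained on the same data with different random seeds evaluate each explored configuration, and the spread of their predicted forces flags configurations worth labeling with ab initio calculations. The NumPy sketch below computes the maximum per-atom force deviation for each frame and bins frames using a lower and an upper trust level; frames above the upper level are typically treated as unreliable rather than labeled. The function names and the 0.05/0.15 eV/Å thresholds are illustrative assumptions, not DP-GEN defaults.

```python
import numpy as np


def max_force_deviation(forces):
    """Max over atoms of the ensemble standard deviation of the force vector.

    forces: array (n_models, n_frames, n_atoms, 3) of predicted forces (eV/angstrom).
    Returns one deviation value per frame.
    """
    f = np.asarray(forces, dtype=float)
    mean_f = f.mean(axis=0)                    # ensemble-mean force per frame/atom
    sq_dev = ((f - mean_f) ** 2).sum(axis=-1)  # |F_k - <F>|^2 per model/frame/atom
    per_atom = np.sqrt(sq_dev.mean(axis=0))    # average over models, then sqrt
    return per_atom.max(axis=-1)               # worst atom in each frame


def classify_frames(deviation, trust_lo=0.05, trust_hi=0.15):
    """Split frame indices into accurate / candidates (to label) / failed."""
    dev = np.asarray(deviation, dtype=float)
    accurate = np.flatnonzero(dev < trust_lo)
    candidates = np.flatnonzero((dev >= trust_lo) & (dev < trust_hi))
    failed = np.flatnonzero(dev >= trust_hi)
    return accurate, candidates, failed
```

Only the candidate frames are sent for DFT labeling, so the ab initio budget is spent where the current models disagree but the configurations are still physically reasonable.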

These advancements are democratizing access to high-accuracy simulations, enabling drug development professionals to routinely incorporate ab initio quality insights into their research workflows, ultimately accelerating the discovery of novel therapeutics.

Ab initio molecular dynamics (AIMD) serves as a cornerstone computational method in materials science, chemistry, and drug development, enabling the study of atomic-scale dynamics with quantum mechanical accuracy. However, its prohibitive computational cost has historically restricted accessible timescales to the picosecond range, making it challenging to study complex phenomena such as chemical reactions, phase transitions, and protein folding that require nanosecond-scale simulations or longer. The emergence of machine learning interatomic potentials (MLIPs) has revolutionized this landscape by bridging the gap between the high accuracy of quantum mechanics and the computational efficiency of classical force fields. These potentials leverage machine learning algorithms to construct accurate representations of potential energy surfaces from AIMD data, enabling nanosecond-scale simulations with ab initio fidelity [40]. This paradigm shift is particularly transformative for fields like drug development, where understanding molecular interactions at biologically relevant timescales is crucial for rational drug design.

The integration of MLIPs with high-performance computing (HPC) resources, especially GPU acceleration, has been instrumental in achieving these advances. By combining innovative ML architectures with optimized simulation packages, researchers can now access previously unreachable spatiotemporal scales while maintaining the precision required for predictive simulations. This Application Note details the protocols, benchmarks, and implementation strategies that empower researchers to leverage MLIPs for nanosecond-scale AIMD simulations, with specific attention to performance optimization and validation within HPC environments.

Key Advances in Machine Learning Interatomic Potentials

From Specific to Universal Potentials

The development of MLIPs has evolved from system-specific potentials to universal models (uMLIPs) capable of handling diverse chemistries and crystal structures. Early MLIPs were typically trained for specific chemical systems with limited transferability, but recent advances have produced foundational models like M3GNet, CHGNet, and MACE-MP-0 that demonstrate remarkable accuracy across broad domains of materials science [41]. These uMLIPs are trained on extensive datasets containing numerous elements and crystal structures, enabling their application to novel systems without retraining. Benchmark studies reveal that these universal models can predict harmonic phonon properties—which depend on the curvature of the potential energy surface—with accuracy comparable to the variability between different density functional theory approximations [41].

Architectural Innovations

Several key architectural innovations have driven improvements in MLIP accuracy and efficiency:

- Message-Passing Neural Networks: Frameworks that update atomic representations by iteratively passing information between neighboring atoms, effectively capturing many-body interactions [41].

- Equivariant Models: Architectures whose internal features transform consistently (equivariantly) under rotations and translations, yielding energies that are invariant to these operations and to permutations of identical atoms, which leads to better data efficiency and accuracy [42].

- Higher-Order Representations: Models incorporating three-body and higher-order interactions that more accurately describe complex bonding environments [41].
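To make the message-passing and symmetry ideas concrete, the NumPy toy model below performs invariant message passing in the spirit of continuous-filter networks: each atom's feature vector is updated from its neighbours' features, weighted by radial basis functions of the interatomic distance, and the energy is a sum of per-atom contributions. Because it uses only distances and sums over atoms, its energy is invariant to rotation, translation, and permutation of atoms; equivariant architectures go further by also carrying direction-dependent features. All weights are random and untrained, and the function names are hypothetical; this is an illustration, not any of the cited models.

```python
import numpy as np


def make_params(n_species, dim=8, n_rbf=6, cutoff=5.0, n_layers=2, seed=0):
    """Random (untrained) weights for a toy invariant message-passing model."""
    rng = np.random.default_rng(seed)
    return {
        "cutoff": cutoff,
        "centers": np.linspace(0.0, cutoff, n_rbf),
        "embed": rng.normal(size=(n_species, dim)),
        "layers": [(0.3 * rng.normal(size=(dim, dim)), 0.3 * rng.normal(size=(n_rbf, dim)))
                   for _ in range(n_layers)],
        "readout": rng.normal(size=dim),
    }


def toy_energy(positions, species, params, gamma=4.0):
    pos = np.asarray(positions, dtype=float)
    n = len(pos)
    cutoff = params["cutoff"]
    r = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)  # (n, n) distances
    neighbors = (r < cutoff) & ~np.eye(n, dtype=bool)
    f_cut = np.where(neighbors, 0.5 * (np.cos(np.pi * r / cutoff) + 1.0), 0.0)
    # Gaussian radial-basis expansion of each distance, smoothly switched off at the cutoff.
    edge = np.exp(-gamma * (r[..., None] - params["centers"]) ** 2) * f_cut[..., None]
    h = params["embed"][np.asarray(species)]  # (n, dim) initial atom features
    for w_msg, w_rbf in params["layers"]:
        filters = edge @ w_rbf                                      # (n, n, dim) distance filters
        messages = (filters * (h @ w_msg)[None, :, :]).sum(axis=1)  # sum over neighbours j
        h = h + np.tanh(messages)                                   # residual feature update
    return float((h @ params["readout"]).sum())                     # sum of per-atom energies
```

Rotating, translating, or relabelling the atoms leaves the returned energy unchanged up to floating-point error, which is exactly the property that lets such models learn from far fewer reference configurations than a symmetry-agnostic network.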

These architectural advances have substantially improved the data efficiency of MLIPs, reducing the amount of expensive ab initio reference data required for training while improving generalization to unseen configurations.
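To make the message-passing idea concrete, here is a minimal, framework-free sketch of one update round. It makes several assumptions for illustration only. The features are plain scalar lists. The neighbor weight is a fixed Gaussian of the interatomic distance rather than a learned function. The helper names are hypothetical. Because only distances enter the update, this toy is rotation-invariant rather than equivariant. Models such as MACE or M3GNet use learned and much richer update functions.

```python
import math


def gaussian(r, mu=1.0, sigma=0.5):
    """Smooth, distance-only edge weight (an illustrative, non-learned choice)."""
    return math.exp(-((r - mu) ** 2) / (2 * sigma ** 2))


def message_passing_step(positions, features, cutoff=3.0):
    """One round of invariant message passing.

    Each atom i sums its neighbors' feature vectors, weighted by a function of
    the distance r_ij, and adds that message to its own features. Only
    distances are used, so the result is unchanged by rotating or translating
    the whole structure.
    """
    updated = []
    for i, pi in enumerate(positions):
        msg = [0.0] * len(features[i])
        for j, pj in enumerate(positions):
            if i == j:
                continue
            r = math.dist(pi, pj)
            if r < cutoff:
                w = gaussian(r)
                msg = [m + w * h for m, h in zip(msg, features[j])]
        updated.append([h + m for h, m in zip(features[i], msg)])
    return updated


def rotate_z(p, theta):
    """Rotate a 3D point about the z axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1], s * p[0] + c * p[1], p[2])


# Toy three-atom system with two-component features
pos = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.5, 0.5)]
feat = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
h1 = message_passing_step(pos, feat)
h2 = message_passing_step([rotate_z(p, 0.7) for p in pos], feat)
print(h1)
print(h2)  # same values up to floating-point rounding
```

Applying the step repeatedly lets information travel beyond immediate neighbors. This is how stacked message-passing layers capture many-body correlations.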

Performance Benchmarks and Hardware Considerations

MLIP Model Performance Comparison

Table 1: Performance comparison of universal machine learning interatomic potentials for phonon property prediction.

| Model | Energy MAE (eV/atom) | Forces MAE (eV/Å) | Structure Relaxation Failure Rate (%) | Phonon Accuracy |
|---|---|---|---|---|
| M3GNet | 0.035 | - | 0.22 | Medium |
| CHGNet | 0.086 | - | 0.09 | Medium |
| MACE-MP-0 | - | - | 0.21 | High |
| SevenNet-0 | - | - | 0.22 | Medium |
| MatterSim-v1 | - | - | 0.10 | High |
| ORB | - | - | 0.47 | Medium-High |
| eqV2-M | - | - | 0.85 | High |

Hardware Performance for Molecular Dynamics Simulations

Table 2: GPU performance benchmarks for molecular dynamics simulations (throughput in ns/day).

| GPU Model | ~44K Atoms (OpenMM) | ~24K Atoms (AMBER) | ~1M Atoms (AMBER) | Relative Cost Efficiency |
|---|---|---|---|---|
| NVIDIA H200 | 555 | - | 114.16 | 1.13x |
| NVIDIA L40S | 536 | - | - | 1.60x |
| NVIDIA RTX 5090 | - | 1632.97 | 109.75 | Best value |
| NVIDIA H100 PCIe | - | 1500.37 | 74.50 | Medium |
| NVIDIA A100 | 250 | - | - | 1.25x |
| NVIDIA V100 | 237 | - | - | 0.77x |
| NVIDIA T4 | 103 | - | - | Baseline |

The benchmarking data reveal several critical considerations for HPC resource allocation. First, the L40S GPU demonstrates exceptional cost-efficiency for traditional MD workloads. It delivers nearly H200-level throughput (536 vs. 555 ns/day on the ~44K-atom OpenMM system) at a significantly lower cost [43]. Second, the RTX 5090 offers the best performance per unit cost, particularly for single-GPU workstations, though it lacks multi-GPU scalability [44]. For large-scale simulations exceeding one million atoms, the B200 SXM and H200 GPUs deliver the highest absolute performance. This makes them suitable for resource-intensive production runs where time-to-solution is critical [44].

A crucial technical consideration is I/O optimization. Studies show that frequent trajectory saving (e.g., every 10 steps) can reduce GPU utilization by up to 4× due to data transfer overhead between GPU and CPU memory [43]. Optimizing output intervals to every 1,000-10,000 steps maintains high GPU utilization and significantly improves simulation throughput, especially for shorter simulations where I/O represents a larger fraction of total runtime.
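A simple cost model makes the effect of output frequency concrete. The sketch assumes that every saved frame stalls the GPU for a fixed time (device-to-host copy plus disk write) and that this time does not overlap with computation. The 1 ms step time and 30 ms write time are invented for illustration and are not taken from [43]. The function name is hypothetical.

```python
def effective_throughput(step_time_s, write_time_s, save_interval):
    """MD steps per second when one frame is written every `save_interval` steps.

    Assumes each write blocks the GPU for `write_time_s` seconds and that
    writes never overlap with force evaluation.
    """
    time_per_step = step_time_s + write_time_s / save_interval
    return 1.0 / time_per_step


# Hypothetical costs: 1 ms per MD step, 30 ms per trajectory frame written
step, write = 1e-3, 30e-3
for interval in (10, 100, 1000, 10000):
    rate = effective_throughput(step, write, interval)
    utilization = step / (step + write / interval)
    print(f"save every {interval:>5} steps: {rate:7.1f} steps/s, GPU busy {utilization:.0%}")
```

With these made-up numbers, saving every 10 steps leaves the GPU busy only 25% of the time, which is a 4× slowdown. Saving every 1,000 steps keeps it about 97% busy. Real write costs depend on system size, file format, and storage, so the interval is best tuned with a short benchmark run.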

Application Notes and Protocols

Protocol 1: MLIP-Accelerated Electrochemical Interface Simulation

The ElectroFace dataset provides an exemplary framework for implementing MLIP-accelerated AIMD for complex interfaces [45]. The following protocol details the workflow for simulating solid-liquid electrochemical interfaces:

Step 1: System Preparation

- Construct a slab-vacuum model by cleaving the bulk material along the desired facet (e.g., Pt(111), SnO2(110))

- Ensure slab symmetry along the surface normal to avoid spurious dipole interactions under periodic boundary conditions

- Maintain stoichiometry to prevent introduction of excess charge carriers

- Determine slab thickness through convergence tests of band alignment and water adsorption energies
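The symmetry and stoichiometry requirements of Step 1 can be checked automatically before any expensive calculation is run. The sketch below works on a hypothetical list of (element, z) pairs. It compares only atomic heights per element, so it is a necessary but not sufficient test of slab symmetry, because in-plane positions are ignored. The function names and the toy layer heights are illustrative assumptions, not part of the ElectroFace workflow.

```python
from collections import Counter


def is_stoichiometric(slab_elements, bulk_formula):
    """True if element counts are an integer multiple of the bulk formula unit.

    bulk_formula: e.g. {"Sn": 1, "O": 2} for SnO2.
    """
    counts = Counter(slab_elements)
    if set(counts) != set(bulk_formula):
        return False
    ref_el = next(iter(bulk_formula))
    units, rem = divmod(counts[ref_el], bulk_formula[ref_el])
    if rem:
        return False
    return all(counts[el] == units * n for el, n in bulk_formula.items())


def is_mirror_symmetric_in_z(atoms, tol=1e-3):
    """Check per-element heights are symmetric about the slab mid-plane.

    atoms: list of (element, z) tuples in Å. A symmetric slab has no net
    dipole along the surface normal under periodic boundary conditions.
    """
    zs = [z for _, z in atoms]
    z_mid = 0.5 * (min(zs) + max(zs))
    for el in {e for e, _ in atoms}:
        heights = sorted(z for e, z in atoms if e == el)
        mirrored = sorted(2 * z_mid - z for z in heights)
        if any(abs(a - b) > tol for a, b in zip(heights, mirrored)):
            return False
    return True


# Toy layer heights (Å) for a symmetric, stoichiometric SnO2-like slab
slab = [("O", 0.0), ("Sn", 1.0), ("O", 2.0), ("O", 4.0), ("Sn", 5.0), ("O", 6.0)]
print(is_mirror_symmetric_in_z(slab), is_stoichiometric([e for e, _ in slab], {"Sn": 1, "O": 2}))
```

In practice, the atom list would come from whatever structure-building tool was used to cleave the slab.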

Step 2: Solvation and Equilibration

- Create an orthorhombic simulation box matching the slab's lateral dimensions with approximately 25 Å height in the z-dimension

- Fill the box with water molecules using PACKMOL to achieve a density of 1 g/cm³

- Equilibrate the water box using classical MD with the SPC/E force field in the NVT ensemble

- Merge the slab and water box, saturating under-coordinated surface atoms with water molecules when possible
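The number of water molecules required for 1 g/cm³ follows directly from the box volume: N = ρ · V · N_A / M(H₂O). The sketch below computes N and emits a matching PACKMOL input. The lateral cell dimensions, file names, and helper names are hypothetical. Shrinking the packing region by half the tolerance on each face is a common precaution for periodic boxes, not a step stated in the protocol.

```python
AVOGADRO = 6.02214076e23      # mol^-1
WATER_MOLAR_MASS = 18.015     # g/mol
A3_TO_CM3 = 1e-24             # 1 Å^3 = 1e-24 cm^3


def n_water(a, b, c, density=1.0):
    """Number of H2O molecules giving `density` (g/cm^3) in an a x b x c Å box."""
    volume_cm3 = a * b * c * A3_TO_CM3
    return round(density * volume_cm3 * AVOGADRO / WATER_MOLAR_MASS)


def packmol_input(a, b, c, n, tolerance=2.0, water_file="water.xyz", out="water_box.xyz"):
    """PACKMOL input that packs n waters into the a x b x c Å box.

    The packing region is shrunk by tolerance/2 on every face so that molecules
    near opposite faces stay at least `tolerance` apart across the periodic
    boundary.
    """
    m = tolerance / 2
    return "\n".join([
        f"tolerance {tolerance}",
        "filetype xyz",
        f"output {out}",
        f"structure {water_file}",
        f"  number {n}",
        f"  inside box {m} {m} {m} {a - m} {b - m} {c - m}",
        "end structure",
    ])


# Hypothetical 11.2 x 9.7 Å lateral cell with the ~25 Å water region
n = n_water(11.2, 9.7, 25.0)
print(n)
print(packmol_input(11.2, 9.7, 25.0, n))
```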

Step 3: AIMD Production and Active Learning

- Perform a 20-30 ps AIMD simulation using CP2K/QUICKSTEP with PBE functional and D3 dispersion correction

- Use Gaussian-type DZVP basis sets together with an auxiliary plane-wave density cutoff of 400-600 Ry

- Maintain temperature at 330 K using a Nosé-Hoover thermostat (the elevated temperature offsets the over-structured, glassy behavior of PBE water at room temperature)

- Extract 50-100 evenly distributed structures from the AIMD trajectory as initial training data
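Selecting evenly distributed structures reduces to choosing evenly spaced frame indices. The helper below is a hypothetical sketch. It also discards an initial equilibration segment, which is an assumption rather than a stated part of the protocol. The example trajectory length assumes 30 ps at a 0.5 fs time step, saved every 10 steps, which gives 6,000 frames.

```python
def evenly_spaced_frames(n_frames, n_select, skip=0):
    """Indices of n_select frames spread evenly over frames [skip, n_frames).

    skip: number of initial (equilibration) frames to discard.
    """
    usable = n_frames - skip
    if not 0 < n_select <= usable:
        raise ValueError("n_select must be between 1 and the number of usable frames")
    stride = usable / n_select  # >= 1, so the indices are strictly increasing
    return [skip + int(i * stride) for i in range(n_select)]


# Hypothetical: 6,000 saved frames, drop the first 1,000, keep 80 structures
idx = evenly_spaced_frames(6000, 80, skip=1000)
print(idx[:3], idx[-1])
```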

Step 4: MLIP Training via Active Learning

- Implement concurrent learning using DP-GEN or ai2-kit packages

- Train multiple MLIPs (typically four) with different initializations on the current dataset

- Use one MLIP for exploration through molecular dynamics sampling

- Screen sampled structures based on maximum force disagreement among MLIPs

- Label 50 structures from the "decent" category (moderate disagreement) with first-principles (DFT) single-point calculations

- Add newly labeled structures to the training set and iterate until >99% of sampled structures fall into the "good" category over consecutive iterations
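The screening and convergence logic of Step 4 can be sketched in a few lines. The deviation measure is the ensemble standard deviation of each atom's predicted force vector, maximized over atoms. Several details are illustrative assumptions: the thresholds (0.10 and 0.25 eV/Å), the label "poor" for the high-deviation class, the random selection of candidates, and the function names. DP-GEN and ai2-kit configure these trust levels in their own settings.

```python
import math
import random


def max_force_deviation(forces_by_model):
    """max_i sqrt(mean_k |F_i^k - <F_i>|^2) over atoms i and ensemble members k.

    forces_by_model: one list of (fx, fy, fz) tuples (eV/Å) per model.
    """
    n_models = len(forces_by_model)
    worst = 0.0
    for i in range(len(forces_by_model[0])):
        mean = [sum(f[i][d] for f in forces_by_model) / n_models for d in range(3)]
        var = sum(
            sum((f[i][d] - mean[d]) ** 2 for d in range(3)) for f in forces_by_model
        ) / n_models
        worst = max(worst, math.sqrt(var))
    return worst


def classify(deviation, trust_lo=0.10, trust_hi=0.25):
    """Bin a structure by model disagreement (thresholds are hypothetical)."""
    if deviation < trust_lo:
        return "good"
    if deviation < trust_hi:
        return "decent"
    return "poor"


def select_for_labeling(deviations, n_label=50, seed=0, **thresholds):
    """Randomly pick up to n_label 'decent' structures for DFT labeling."""
    decent = [k for k, d in enumerate(deviations) if classify(d, **thresholds) == "decent"]
    rng = random.Random(seed)
    return sorted(rng.sample(decent, min(n_label, len(decent))))


def converged(deviations, frac=0.99, **thresholds):
    """True when more than `frac` of sampled structures are 'good'."""
    good = sum(classify(d, **thresholds) == "good" for d in deviations)
    return good / len(deviations) > frac
```

In a full loop, `max_force_deviation` would be evaluated on every structure sampled by the exploration MLIP. `select_for_labeling` would feed the DFT step. Iteration would stop once `converged` holds over consecutive rounds.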

Step 5: MLMD Production Simulation

- Execute nanosecond-scale MLMD simulations using LAMMPS with the trained DeePMD-kit potential

- Analyze interfacial properties using specialized toolkits (e.g., ECToolkits for water density profiles, ai2-kit for proton transfer pathways)
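A water density profile is a time-averaged histogram of oxygen heights converted to mass density. The sketch below is an independent illustration of that calculation and does not reproduce the ECToolkits API. It counts each oxygen as one H₂O and assumes the lateral cell area is constant. The function name and inputs are hypothetical.

```python
import math

AVOGADRO = 6.02214076e23      # mol^-1
WATER_MOLAR_MASS = 18.015     # g/mol


def water_density_profile(oxygen_z_by_frame, area_a2, z_min, z_max, n_bins):
    """Time-averaged water density (g/cm^3) in n_bins slabs between z_min and z_max.

    oxygen_z_by_frame: list of frames, each a list of O heights in Å.
    area_a2: lateral cell area in Å^2.
    """
    dz = (z_max - z_min) / n_bins
    counts = [0] * n_bins
    for frame in oxygen_z_by_frame:
        for z in frame:
            k = math.floor((z - z_min) / dz)  # floor, so z < z_min is excluded
            if 0 <= k < n_bins:
                counts[k] += 1
    n_frames = len(oxygen_z_by_frame)
    bin_volume_cm3 = area_a2 * dz * 1e-24
    centers = [z_min + (k + 0.5) * dz for k in range(n_bins)]
    density = [
        c / n_frames * WATER_MOLAR_MASS / AVOGADRO / bin_volume_cm3 for c in counts
    ]
    return centers, density
```

As a sanity check, 10 water molecules in a 100 Å² × 3 Å slab correspond to about 0.997 g/cm³, close to bulk water.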

Protocol 2: Dynamic Training for Enhanced MLIP Accuracy

Conventional MLIP training minimizes errors on individual configurations but may accumulate errors during extended MD simulations. Dynamic training (DT) addresses this limitation by incorporating temporal sequence information:

Step 1: Data Preprocessing from AIMD Trajectories

- Extract unit cell parameters, atomic positions, and elemental types for each AIMD structure

- Form atomic graphs using radius-based neighbor criteria with a suitable cutoff

- Store reference energies, forces, positions, and velocities for each configuration

- For each data point, include the subsequent S_max - 1 configurations from the AIMD trajectory, so that every data point is a time-ordered subsequence of length S_max
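The two preprocessing operations of Step 1, building radius-based graphs and forming subsequences, can be sketched without any framework. The code assumes an orthorhombic cell and the minimum-image convention, which requires the cutoff to be below half the shortest cell length. Non-orthogonal cells need a more general neighbor search. The function names are hypothetical.

```python
import math


def radius_graph(positions, cell, cutoff):
    """Directed edges (i, j, r_ij) for atoms closer than `cutoff` in an orthorhombic cell.

    positions: list of (x, y, z) in Å; cell: (Lx, Ly, Lz) in Å.
    """
    if cutoff >= 0.5 * min(cell):
        raise ValueError("cutoff too large for the minimum-image convention")
    edges = []
    for i, pi in enumerate(positions):
        for j, pj in enumerate(positions):
            if i == j:
                continue
            d = [pj[k] - pi[k] for k in range(3)]
            d = [dk - L * round(dk / L) for dk, L in zip(d, cell)]  # minimum image
            r = math.sqrt(sum(x * x for x in d))
            if r < cutoff:
                edges.append((i, j, r))
    return edges


def subsequences(trajectory, s_max):
    """Each data point is a frame plus its s_max - 1 successors."""
    return [trajectory[t:t + s_max] for t in range(len(trajectory) - s_max + 1)]


# Two atoms that are neighbors only through the periodic boundary
print(radius_graph([(0.5, 0.0, 0.0), (9.5, 0.0, 0.0)], (10.0, 10.0, 10.0), 2.0))
print(subsequences(["f0", "f1", "f2", "f3", "f4"], 3))
```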

Step 2: Model Architecture Selection

- Implement an Equivariant Graph Neural Network (EGNN) to respect physical symmetries

- Use one-hot vectors for atomic element representation as node features

- Encode interatomic distances as edge features

- Set global learning targets as DFT-computed energies and forces
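The input features of Step 2 can be illustrated as follows. One-hot vectors encode elements, as stated above. For the edge features, the sketch assumes a Gaussian radial-basis expansion of each distance, which is a common choice but is not specified in the protocol. The number of basis functions, their width, and the helper names are hypothetical.

```python
import math


def one_hot(element, elements):
    """Node feature: 1.0 at the element's position in `elements`, 0.0 elsewhere."""
    return [1.0 if e == element else 0.0 for e in elements]


def gaussian_rbf(r, cutoff, n_basis=8, width=None):
    """Edge feature: distance r expanded on n_basis Gaussians centered on [0, cutoff]."""
    spacing = cutoff / (n_basis - 1)
    centers = [k * spacing for k in range(n_basis)]
    gamma = 1.0 / (width or spacing) ** 2
    return [math.exp(-gamma * (r - mu) ** 2) for mu in centers]


elements = ["H", "C", "Pd"]          # hypothetical element list
print(one_hot("Pd", elements))       # node feature for a Pd atom
print(gaussian_rbf(1.2, cutoff=5.0)) # edge feature for a 1.2 Å bond
```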

Step 3: Progressive Dynamic Training

- Phase 1 (S=1): Train on individual structures using standard energy and force loss minimization

- Phase 2 (S>1): Incrementally increase subsequence length during training

- For each training iteration with subsequence length S:

  - Predict energies and forces for the initial structure

  - Propagate dynamics using the velocity Verlet algorithm

  - Generate subsequent structures using predicted forces

  - Compute loss against reference AIMD data for the entire subsequence

  - Employ neighbor lists from reference AIMD calculations to maintain differentiability
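The rollout at the heart of dynamic training can be shown on a toy problem. In the sketch below, a one-dimensional harmonic "potential" stands in for the EGNN. The rollout starts from the reference positions and velocities, and velocity Verlet propagates it with model forces only. Each step is then scored against the reference energies and forces. The exact loss used in [42] may weight terms differently. In real training, the same loop would be written in an autodiff framework so that gradients flow through the whole subsequence. All names are hypothetical.

```python
def velocity_verlet(x, v, force_fn, mass, dt, n_steps):
    """Positions [x_0, ..., x_n] from velocity Verlet; force_fn(x) -> (E, F)."""
    traj = [list(x)]
    _, f = force_fn(x)
    for _ in range(n_steps):
        x = [xi + vi * dt + 0.5 * fi / mass * dt * dt for xi, vi, fi in zip(x, v, f)]
        _, f_new = force_fn(x)
        v = [vi + 0.5 * (fi + fn) / mass * dt for vi, fi, fn in zip(v, f, f_new)]
        f = f_new
        traj.append(list(x))
    return traj


def harmonic(k):
    """Toy potential: independent 1D springs, E = sum(k x^2 / 2), F = -k x."""
    def energy_forces(x):
        return 0.5 * k * sum(xi * xi for xi in x), [-k * xi for xi in x]
    return energy_forces


def rollout_errors(model_fn, reference, x0, v0, mass, dt, w_e=1.0, w_f=1.0):
    """Per-step loss of a model rollout against a reference subsequence.

    reference: list of (energy, forces), one per step; its length is S.
    S = 1 reduces to the conventional single-structure loss.
    """
    traj = velocity_verlet(x0, v0, model_fn, mass, dt, len(reference) - 1)
    errors = []
    for x, (e_ref, f_ref) in zip(traj, reference):
        e, f = model_fn(x)
        f_err = sum((a - b) ** 2 for a, b in zip(f, f_ref)) / len(f)
        errors.append(w_e * (e - e_ref) ** 2 + w_f * f_err)
    return errors


# Reference "AIMD" data from k = 1.0; an imperfect model uses k = 1.2
x0, v0 = [1.0, -0.5], [0.0, 0.3]
ref_fn = harmonic(1.0)
reference = [ref_fn(x) for x in velocity_verlet(x0, v0, ref_fn, 1.0, 0.1, 9)]
errs = rollout_errors(harmonic(1.2), reference, x0, v0, 1.0, 0.1)
print(sum(errs) / len(errs))  # subsequence loss for S = 10
```

Calling the same function with a longer reference subsequence, such as the 10× length suggested for validation, returns a per-step error curve. That curve shows how errors propagate over time.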

Step 4: Validation with Extended Sequences

- Use longer subsequences for validation (typically 10× training sequence length)

- Monitor error propagation across time steps

- Evaluate stability of long simulations beyond training data duration

This approach demonstrates superior accuracy for challenging systems such as H₂ interaction with Pd₆ clusters on graphene vacancies, maintaining stability over extended simulation timescales [42].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential software tools and resources for MLIP-driven molecular dynamics.

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| CP2K/QUICKSTEP | Software | AIMD production with mixed Gaussian/plane-wave basis | Generating reference data for MLIP training [45] |
| DeePMD-kit | Software | Training and running deep neural network potentials | MLIP implementation with high accuracy [45] |
| LAMMPS | Software | Large-scale MD simulations with MLIP support | Production MLMD simulations [46] |
| DP-GEN/ai2-kit | Software | Active learning workflow automation | Efficient and robust MLIP training [45] |
| ElectroFace | Dataset | AI²MD trajectories for electrochemical interfaces | Benchmarking and training for interface systems [45] |
| ML-IAP-Kokkos | Interface | PyTorch-LAMMPS integration for MLIPs | Deployment of custom ML models in MD [46] |
| MACE-MP-0 | ML Model | Universal MLIP with atomic cluster expansion | High-accuracy materials simulations [41] |
| CHGNet | ML Model | Universal MLIP that uses magnetic moments as a proxy for atomic charge states | Materials simulations where charge or valence states matter [41] |

Implementation Guide: ML-IAP-Kokkos Interface

The ML-IAP-Kokkos interface enables seamless integration of PyTorch-based MLIPs with LAMMPS for scalable, GPU-accelerated simulations [46]. Implementation requires the following steps:

Environment Configuration

- Install LAMMPS with Kokkos, MPI, ML-IAP, and Python support

- Ensure Python environment with PyTorch and necessary dependencies

- Prepare trained PyTorch MLIP model (optionally with cuEquivariance support)

Model Implementation

- Implement the `MLIAPUnified` abstract class from LAMMPS

- Define the `compute_forces` function to infer energies and forces from LAMMPS data

- Specify element types and cutoff radius during initialization

- Serialize the model using `torch.save()` for LAMMPS loading

LAMMPS Integration

- Use the `pair_style mliap unified` command to load the serialized model

- Map element types to LAMMPS atom types using `pair_coeff`

- Execute with Kokkos acceleration for GPU utilization

This interface maintains full GPU acceleration across the simulation workflow while providing flexibility for custom model architectures. The implementation handles distributed memory parallelism through LAMMPS's built-in communication capabilities, enabling large-scale simulations across multiple GPUs.
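The exact attributes of the data object that LAMMPS passes to `compute_forces` are not reproduced here and should be taken from the LAMMPS ML-IAP documentation. The framework-free sketch below only illustrates the per-pair arithmetic that such a function performs. Given displacement vectors r_ij = x_j - x_i for all pairs within the cutoff, it returns pair energies and pair forces. A Lennard-Jones model stands in for a trained network, and the helper names are hypothetical.

```python
import math


def lj_pair_energy_forces(rij_list, epsilon=1.0, sigma=1.0):
    """Pair energies E_ij and forces f_ij = -dE_ij/dr_ij for r_ij = x_j - x_i.

    f_ij is the force acting on atom j; atom i feels -f_ij (Newton's third law).
    """
    energies, forces = [], []
    for rij in rij_list:
        r = math.sqrt(sum(c * c for c in rij))
        sr6 = (sigma / r) ** 6
        energies.append(4 * epsilon * (sr6 * sr6 - sr6))
        dE_dr = 4 * epsilon * (-12 * sr6 * sr6 + 6 * sr6) / r
        forces.append([-dE_dr * c / r for c in rij])
    return energies, forces


def accumulate(pairs, pair_forces, n_atoms):
    """Sum pair forces onto atoms: +f_ij on j, -f_ij on i."""
    total = [[0.0, 0.0, 0.0] for _ in range(n_atoms)]
    for (i, j), f in zip(pairs, pair_forces):
        for d in range(3):
            total[j][d] += f[d]
            total[i][d] -= f[d]
    return total


pairs = [(0, 1), (1, 2)]
e, f = lj_pair_energy_forces([[2 ** (1 / 6), 0.0, 0.0], [1.0, 0.0, 0.0]])
print(e, accumulate(pairs, f, 3))
```

Returning per-pair quantities and applying Newton's third law conserves total momentum by construction. This is one reason pairwise force decompositions are convenient for distributed MD codes.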

Validation and Best Practices

Technical Validation

Robust validation is essential for ensuring MLIP reliability:

- Geometry Optimization: Verify force convergence below 0.005 eV/Å for relaxed structures [41]

- Phonon Dispersion: Compare with DFT-calculated phonon spectra to validate potential energy surface curvature [41]

- Thermodynamic Properties: Evaluate consistency of derived properties (free energy, thermal expansion) with experimental or high-level theoretical data

- Transferability Testing: Assess performance on structures far from training data distribution
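The first two validation checks reduce to simple arithmetic once forces and phonon frequencies are available. The sketch below tests the 0.005 eV/Å force criterion and computes MAE and RMSE between MLIP and DFT phonon frequencies. It assumes the frequencies are already matched one-to-one at the same q-points and band indices. Band crossings can break naive index matching. The function names are hypothetical.

```python
import math


def fmax(forces):
    """Largest atomic force norm (eV/Å) from a list of (fx, fy, fz)."""
    return max(math.sqrt(fx * fx + fy * fy + fz * fz) for fx, fy, fz in forces)


def is_relaxed(forces, threshold=0.005):
    """Force-convergence criterion for a relaxed structure."""
    return fmax(forces) < threshold


def frequency_errors(mlip_freqs, dft_freqs):
    """MAE and RMSE between matched phonon frequencies (same units, e.g. THz)."""
    if len(mlip_freqs) != len(dft_freqs):
        raise ValueError("frequency lists must be matched one-to-one")
    diffs = [a - b for a, b in zip(mlip_freqs, dft_freqs)]
    mae = sum(abs(d) for d in diffs) / len(diffs)
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return mae, rmse
```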

Optimization Guidelines

- I/O Optimization: Balance trajectory saving frequency (typically every 1,000-10,000 steps) to minimize GPU idle time [43]

- Hardware Selection: Choose GPUs based on both performance and cost-effectiveness for specific system sizes [44]

- Active Learning: Implement iterative data collection to focus computational resources on chemically relevant configurations [45]

- Model Selection: Evaluate universal MLIPs for broad applicability or system-specific models for targeted studies

The integration of machine learning potentials with high-performance computing represents a paradigm shift in computational molecular dynamics, enabling nanosecond-scale simulations with ab initio accuracy. As MLIP methodologies continue to mature and HPC resources become increasingly accessible, these techniques will play an indispensable role in accelerating materials discovery and drug development across diverse scientific domains.

The process of drug discovery is characterized by significant challenges, including high costs (often exceeding one billion dollars), low success rates (typically below 10%), and extremely long development cycles (frequently over a decade) [47]. Computer-aided drug discovery (CADD) has become an indispensable tool in the pharmaceutical industry to address these challenges. Within this field, virtual screening and binding affinity prediction are critical computational techniques for identifying and optimizing potential drug candidates. These methods are increasingly reliant on high-performance computing (HPC) to perform the computationally intensive simulations required for accurate predictions [48]. This case study examines the application of HPC-powered virtual screening and binding affinity prediction, detailing protocols, performance benchmarks, and practical implementations.

Quantitative Performance Benchmarks

Accuracy in virtual screening and binding affinity prediction is paramount. The tables below summarize key performance metrics for various state-of-the-art methods and datasets.

Table 1: Virtual Screening Performance on the DUD-E Dataset [49]

| Method | AUC | ROC Enrichment | Notes |
|---|---|---|---|
| RosettaVS (VSH Mode) | 0.80 | 35.2 | Highest accuracy, models receptor flexibility |
| RosettaVS (VSX Mode) | 0.76 | 28.5 | Rapid initial screening |
| AutoDock Vina | 0.72 | ~20.0 (est.) | Widely used free program |
| Schrödinger Glide | High | N/A | Leading commercial solution |
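The screening metrics in these tables can be computed from a ranked library in a few lines. The sketch below computes the ROC AUC as the probability that a randomly chosen active outscores a randomly chosen decoy, with ties counted as one half. It also computes the enrichment factor at the top x% of the ranking, EF_x = (actives in top x / compounds in top x) / (all actives / all compounds). It assumes that a higher score means a better predicted binder. Docking energies from programs such as Vina or Rosetta, where lower is better, should therefore be negated first. The function names are hypothetical.

```python
def roc_auc(scores, labels):
    """P(random active outscores random decoy), ties counted as 1/2.

    labels: 1 = active, 0 = decoy. O(N_actives * N_decoys), fine for a sketch.
    """
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for a in actives:
        for d in decoys:
            wins += 1.0 if a > d else 0.5 if a == d else 0.0
    return wins / (len(actives) * len(decoys))


def enrichment_factor(scores, labels, fraction=0.01):
    """EF at the top `fraction` of the ranked library (EF1% for fraction=0.01)."""
    n = len(scores)
    n_top = max(1, int(round(fraction * n)))
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    hits = sum(y for _, y in ranked[:n_top])
    return (hits / n_top) / (sum(labels) / n)
```

For a 100-compound library with 5 actives, placing an active in the single top-1% slot gives EF1% = 1 / 0.05 = 20. This illustrates why EF values depend strongly on the active-to-decoy ratio of the benchmark.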

Table 2: Binding Affinity Prediction Performance on CASF-2016 Benchmark [49]

| Method | Docking Power (Success Rate) | Screening Power (EF1%) | Ranking Power (ρ) |
|---|---|---|---|
| RosettaGenFF-VS | 87.5% | 16.72 | 0.731 |
| GenScore | ~80% (on biased data) | <10.0 (on CleanSplit) | Lower on CleanSplit |
| Pafnucy | ~75% (on biased data) | <10.0 (on CleanSplit) | Lower on CleanSplit |