High-Throughput Validation of Chemical Probes: A Comprehensive Protocol for Robust Assay Development and Target Engagement

Abstract

This article provides a detailed protocol for the high-throughput validation of chemical probes, essential tools for drug discovery and basic research. It addresses the critical need for robust, reproducible methods to confirm probe selectivity, efficacy, and biological relevance. Covering foundational principles, advanced methodological applications, troubleshooting of common pitfalls like false positives, and rigorous validation strategies, this guide integrates techniques such as covalent chemoproteomics, cell-based assays, and AI-driven data analysis. Aimed at researchers and drug development professionals, it synthesizes current best practices to accelerate the development of reliable chemical tools for interrogating disease mechanisms and target validation.

Chemical Probes and High-Throughput Screening: Defining the Landscape

Chemical probes are highly selective, cell-permeable small molecules designed to perturb the function of a specific protein or protein family in a biological system. They represent indispensable tools in chemical biology and drug discovery for target validation, mechanistic studies, and pathway analysis [1] [2]. Unlike drugs, which are optimized for pharmacokinetics and safety, chemical probes are engineered for maximum selectivity and potency to generate confident biological conclusions with minimal off-target effects.

The development of high-quality chemical probes follows established criteria including: 1) potent biochemical activity (typically ≤100 nM), 2) demonstrated cellular activity (≤1 μM), 3) target engagement in cells, and 4) substantial selectivity (>30-fold against related targets) [3]. Probes must be thoroughly characterized through counter-screens and orthogonal assays to exclude pan-assay interference compounds (PAINS) and other artifacts [4].

Table 1: Key Characteristics of High-Quality Chemical Probes

| Characteristic | Minimum Standard | Validation Methods |

|---|---|---|

| Biochemical Potency | IC50/Kd ≤ 100 nM | Enzymatic assays, binding studies |

| Cellular Activity | IC50/EC50 ≤ 1 μM | Cell-based assays, phenotypic readouts |

| Selectivity | >30-fold against related targets | Panel screening, proteomics |

| Target Engagement | Demonstrated in cells | Cellular thermal shift assay (CETSA) |

| Solubility/Stability | Suitable for biological studies | LC-MS, kinetic solubility assays |

| Chemical Tractability | Defined structure-activity relationship | Analog synthesis, medicinal chemistry |

Covalent Chemical Probes

Fundamental Principles and Applications

Covalent chemical probes form irreversible or reversible covalent bonds with their target proteins, typically through electrophilic warheads that react with nucleophilic amino acid residues (e.g., cysteine, serine). Historically avoided due to toxicity concerns, covalent targeting has gained renewed interest driven by clinical successes including aspirin, penicillin, omeprazole, and ibrutinib [1]. These probes offer unique advantages including prolonged duration of action, increased potency, and the ability to trap transient molecular interactions.

The resurgence of covalent probes has been enabled by advanced screening technologies that facilitate identification of selective warheads and characterization of their reactivity profiles. Modern approaches emphasize the rational design of covalent inhibitors with tuned reactivity to minimize off-target effects while maintaining efficient target engagement [1]. Covalent probes uniquely enable high-throughput biochemistry, discovery of post-translational modifications, and trapping of non-covalent interactions via latent electrophiles.

Experimental Protocol: Development of Covalent Probes

Materials and Reagents:

- Purified target protein(s)

- Compound library with diverse electrophilic warheads

- Activity-based protein profiling (ABPP) reagents

- LC-MS/MS instrumentation

- Reaction quenchers (e.g., iodoacetamide for cysteine)

- Cellular lysates or live cells for validation

Procedure:

Warhead Screening and Selection

- Screen potential warheads (acrylamides, vinyl sulfonamides, aldehydes, etc.) against target protein using biochemical assays

- Assess inherent reactivity using glutathione trapping assays

- Prioritize warheads with balanced reactivity and selectivity potential

Covalent Docking and Design

- Perform molecular docking with covalent bonding considerations

- Optimize non-covalent interactions for binding affinity and orientation

- Design synthetic routes for candidate probes

Kinetic Characterization

- Determine IC50 values under pre-incubation conditions

- Measure kinact/KI values to assess efficiency of covalent modification

- Assess reversibility through dilution or competing ligand experiments

Selectivity Profiling

Cellular Target Engagement

- Implement cellular thermal shift assays (CETSA) [4]

- Use activity-based probes in live cells to monitor target modification

- Confirm functional effects through downstream pathway analysis
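The kinact/KI measurement in the Kinetic Characterization step can be illustrated with a short calculation. The sketch below assumes the common two-step model for covalent inhibition, in which the observed inactivation rate follows k_obs = kinact·[I] / (KI + [I]), and estimates both parameters from a double-reciprocal linear regression. The function names and the synthetic data are hypothetical and do not come from the cited work. With real, noisy data a nonlinear fit is usually preferable to the linearized form used here.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_kinact_ki(inhibitor_conc_uM, k_obs_per_s):
    """Estimate kinact (s^-1) and KI (uM) from k_obs = kinact*[I] / (KI + [I]).

    Uses the double-reciprocal form 1/k_obs = (KI/kinact) * (1/[I]) + 1/kinact.
    """
    inv_conc = [1.0 / c for c in inhibitor_conc_uM]
    inv_kobs = [1.0 / k for k in k_obs_per_s]
    slope, intercept = linear_fit(inv_conc, inv_kobs)
    kinact = 1.0 / intercept
    ki = slope * kinact
    return kinact, ki


# Synthetic, noise-free example data (hypothetical values, not from the source).
true_kinact, true_ki = 0.005, 2.0  # s^-1, uM
concs = [0.25, 0.5, 1.0, 2.0, 4.0, 8.0]
kobs = [true_kinact * c / (true_ki + c) for c in concs]

kinact, ki = fit_kinact_ki(concs, kobs)
efficiency = kinact / (ki * 1e-6)  # convert uM to M, giving M^-1 s^-1
print(f"kinact = {kinact:.4g} s^-1, KI = {ki:.3g} uM, kinact/KI = {efficiency:.3g} M^-1 s^-1")
```

The ratio kinact/KI is reported in M⁻¹ s⁻¹ so that candidates with different binding affinities and inactivation rates can be compared on one scale.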

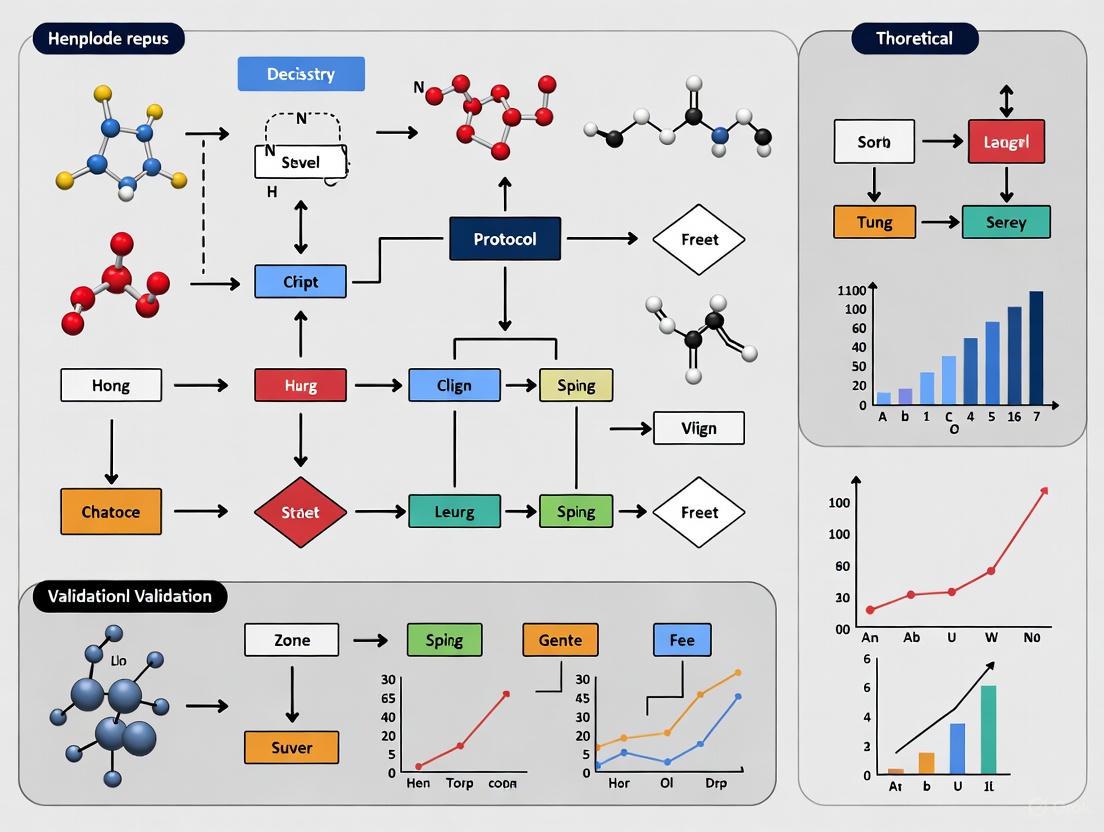

Figure 1: Workflow for Covalent Chemical Probe Development

Activity-Based Protein Profiling (ABPP) Probes

Principles and Design Strategies

Activity-based probes (ABPs) are chemical tools that covalently label enzymes based on their catalytic activity rather than mere abundance. These probes typically consist of three key elements: 1) a reactive electrophile (warhead) that covalently modifies active site residues, 2) a recognition element (scaffold) that provides binding affinity and selectivity, and 3) a reporter tag (e.g., biotin, fluorophore) for detection and enrichment [5]. ABPP enables quantitative assessment of enzyme activity states in complex biological systems, making it particularly valuable for identifying enzymatic alterations in disease states and monitoring inhibitor engagement.

ABPP has been extensively applied to kinase profiling using ATP-analog probes that desthiobiotinylate lysine residues near the active site, facilitating avidin-biotin capture and subsequent LC-MS/MS analysis [5]. This approach allows simultaneous assessment of hundreds of ATP-utilizing enzymes, providing a systems-level view of enzymatic activity changes in response to pharmacological perturbations or disease progression.

Experimental Protocol: ABPP for Kinase Profiling

Materials and Reagents:

- Cell lines or tissue samples of interest

- Pierce Kinase Enrichment Kit with ActivX Probes (or equivalent)

- Lysis buffer (e.g., Pierce IP Lysis Buffer with protease inhibitors)

- Streptavidin beads

- Desthiobiotin-ATP or desthiobiotin-ADP probes

- Kinase inhibitors for treatment controls

- Mass spectrometry-grade trypsin

- LC-MS/MS system

Procedure:

Sample Preparation

- Culture cells to 70% confluence and treat with compounds or vehicle control

- Wash cells with ice-cold PBS containing 1 mM sodium orthovanadate

- Lyse cells in IP Lysis Buffer with protease inhibitors

- Clear lysates by centrifugation at 18,000 × g for 20 minutes at 4°C

- Desalt using Zeba Spin Columns and determine protein concentration

Activity-Based Labeling

- Incubate 1 mg total protein with 20 mM MnCl₂ for 5 minutes at room temperature

- Pre-treat with kinase inhibitors (10 μM) or DMSO for 10 minutes

- Add desthiobiotin-ATP probe (10 μM final concentration) and incubate for 10 minutes

- Include negative controls without probe for background subtraction

Streptavidin Enrichment

- Denature labeled proteins in 5 M urea with 5 mM DTT for 30 minutes at 65°C

- Alkylate with 40 mM iodoacetamide for 30 minutes in the dark

- Desalt into HEPES digestion buffer and digest with trypsin (1:50 w/w) overnight at 37°C

- Incubate with streptavidin beads for 2 hours at room temperature

- Wash beads sequentially with lysis buffer, PBS, and water

- Elute labeled peptides with 50% acetonitrile/0.1% TFA

Mass Spectrometric Analysis

- Reconstitute peptides in 2% ACN/0.1% formic acid with retention time calibrants

- Analyze by LC-MS/MS using DDA, DIA, MRM, or PRM acquisition [5]

- For DIA: Create spectral library from DDA data, then analyze samples with 30-40 m/z windows

- For targeted: Develop MRM/PRM transitions for kinases of interest

Data Analysis

- Identify and quantify desthiobiotinylated peptides

- Normalize to internal standards and protein loading

- Compare abundance between treatment conditions

- Validate key findings with orthogonal methods
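The comparison between treatment conditions in the Data Analysis step can be sketched as a per-peptide calculation of how much probe labeling is lost when an inhibitor occupies the active site. The example below is a minimal illustration that assumes intensities have already been normalized. The function name, peptide names, and intensity values are hypothetical.

```python
def percent_labeling_inhibition(dmso, inhibitor, no_probe=0.0):
    """Percent loss of probe labeling for one peptide.

    dmso, inhibitor: normalized peptide intensities with probe added.
    no_probe: intensity from the no-probe control, used as background.
    """
    dmso_signal = dmso - no_probe
    if dmso_signal <= 0:
        raise ValueError("DMSO signal must exceed the no-probe background")
    inhibitor_signal = max(inhibitor - no_probe, 0.0)
    return 100.0 * (1.0 - inhibitor_signal / dmso_signal)


# Hypothetical intensities: (DMSO, inhibitor-treated, no-probe background).
peptides = {
    "kinase_A_peptide": (1000.0, 150.0, 50.0),
    "kinase_B_peptide": (800.0, 780.0, 40.0),
}

inhibition = {
    name: percent_labeling_inhibition(*values) for name, values in peptides.items()
}
# Rank peptides from most to least competed by the inhibitor.
for name, value in sorted(inhibition.items(), key=lambda item: -item[1]):
    print(f"{name}: {value:.1f}% inhibition of labeling")
```

Peptides with a large loss of labeling point to kinases engaged by the inhibitor, while peptides with little change suggest the kinase is not a target at the tested concentration.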

Table 2: Comparison of Mass Spectrometry Platforms for ABPP

| Platform | Identification Rate | Quantitative Precision | Throughput | Best Application |

|---|---|---|---|---|

| DDA (Data-Dependent) | Moderate (~100 kinases) | Variable, missing data | High | Discovery profiling |

| DIA (Data-Independent) | High (~21% increase vs DDA) [5] | Improved consistency | High | Comprehensive quantification |

| MRM (Multiple Reaction Monitoring) | Targeted (pre-defined) | Excellent precision | Medium | Validation of specific targets |

| PRM (Parallel Reaction Monitoring) | Targeted (pre-defined) | High accuracy with HRAM | Medium | Targeted verification |

Figure 2: ABPP Experimental Workflow for Kinase Profiling

High-Throughput Screening and Validation

Integrated Screening Approaches

Modern chemical probe discovery increasingly leverages integrated screening strategies that combine experimental high-throughput screening with computational approaches. As demonstrated in the development of aldehyde dehydrogenase (ALDH) probes, quantitative high-throughput screening (qHTS) of ~13,000 compounds against multiple ALDH isoforms can be combined with machine learning and pharmacophore modeling to virtually screen larger chemical libraries (~174,000 compounds) [3]. This integrated approach significantly expands accessible chemical diversity while optimizing resource utilization.

The power of computational prediction enables identification of novel chemotypes beyond those present in initial screening libraries. Following virtual screening, selected compounds undergo rigorous validation in both biochemical and cell-based assays, with confirmation of selective target engagement using techniques such as cellular thermal shift assays (CETSA) and split-luciferase systems [3]. This strategy has successfully yielded selective probe candidates for ALDH1A2, ALDH1A3, ALDH2, and ALDH3A1 isoforms.

Experimental Protocol: qHTS with ML Integration

Materials and Reagents:

- Compound library (diverse chemical structures)

- Target protein(s) and assay reagents

- qHTS-compatible instrumentation (automated liquid handling)

- Cell lines for phenotypic assays

- Computational resources for machine learning

Procedure:

Primary qHTS

- Format compounds in 1536-well plates using acoustic dispensing

- Perform concentration-response testing (typically 7-15 points)

- Use assay conditions with substrate at or near Km (at or below Km when competitive inhibitors are sought)

- Maintain reaction conversion below 20% for linear kinetics

- Include reference compounds as controls

Data Processing and Hit Identification

- Fit concentration-response curves and assign curve classes

- Apply quality control metrics (Z'-factor >0.5)

- Identify hits based on potency, efficacy, and curve quality

- Exclude promiscuous compounds and frequent hitters

Machine Learning Model Development

- Use qHTS data as training set for QSAR models

- Generate molecular descriptors and fingerprints

- Train random forest, neural network, or other ML algorithms

- Validate model performance with test set compounds

Virtual Screening

- Apply trained models to larger virtual compound libraries

- Rank compounds by predicted activity and selectivity

- Apply pharmacophore filters to enrich for desired properties

- Select diverse chemotypes for experimental testing

Experimental Validation

- Source or synthesize predicted active compounds

- Test in confirmatory biochemical assays

- Assess selectivity against related targets

- Evaluate cellular activity and target engagement

- Iterate with expanded analog by catalog (ABC) purchases
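The Virtual Screening step of ranking compounds and then selecting diverse chemotypes can be sketched as a greedy pick by predicted score that skips near-duplicates. This is one simple way to do it, not necessarily the method used in the cited study. Fingerprints are represented here as plain sets of on-bit indices, which in practice would come from a cheminformatics toolkit. The similarity cutoff of 0.6, the scores, and all names are illustrative assumptions.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def pick_diverse(candidates, max_picks, similarity_cutoff=0.6):
    """Greedy selection: best predicted score first, skipping near-duplicates.

    candidates: list of (compound_id, predicted_score, fingerprint_bit_set).
    """
    picked = []
    for cid, score, bits in sorted(candidates, key=lambda c: c[1], reverse=True):
        # Keep a compound only if it is dissimilar to everything already picked.
        if all(tanimoto(bits, other) < similarity_cutoff for _, _, other in picked):
            picked.append((cid, score, bits))
        if len(picked) == max_picks:
            break
    return [cid for cid, _, _ in picked]


# Hypothetical model scores and toy fingerprints.
library = [
    ("cpd_1", 0.95, {1, 2, 3, 4, 5}),
    ("cpd_2", 0.93, {1, 2, 3, 4, 6}),  # close analog of cpd_1
    ("cpd_3", 0.80, {10, 11, 12, 13}),
    ("cpd_4", 0.60, {20, 21, 22}),
]
selection = pick_diverse(library, max_picks=3)
print(selection)
```

In this toy library the second-ranked compound is skipped because it is too similar to the top-ranked one, so the purchase list covers three distinct scaffolds instead of two.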

Hit Validation and Triaging

Following primary screening, rigorous hit validation is essential to eliminate false positives and identify genuine probe candidates. Key steps include:

Orthogonal Assays: Confirm activity using different detection technologies (e.g., fluorescence, luminescence, radioactivity) to exclude technology-specific artifacts [4].

Specificity Testing:

- Assess detergent sensitivity to identify aggregators

- Perform enzyme concentration shift assays (IC50 should be independent of enzyme concentration)

- Determine Hill coefficients; values significantly different from 1 may indicate non-specific mechanisms

- Test for redox cycling activity using horseradish peroxidase/phenol red assays [4]

Chemical Analysis:

- Confirm compound identity and purity using LC-MS and NMR

- Resynthesize or repurify compounds to eliminate potential contaminants

- Purchase analogs to establish preliminary structure-activity relationships

Target Engagement:

- Utilize biophysical methods (SPR, DSF, ITC, MST) to confirm direct binding

- Implement cellular target engagement assays such as the cellular thermal shift assay (CETSA)

- For covalent binders, demonstrate time-dependent inhibition and confirm modification via mass spectrometry
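The Specificity Testing checks listed above lend themselves to simple automated flags during triage. The sketch below encodes three of them: detergent sensitivity, dependence of IC50 on enzyme concentration, and Hill slope. The 3-fold shift limit and the 0.5 to 2.0 Hill slope range are illustrative assumptions, not values from the source, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HitProfile:
    compound_id: str
    ic50_uM: float                 # standard assay conditions
    ic50_with_detergent_uM: float  # same assay plus non-ionic detergent
    ic50_high_enzyme_uM: float     # same assay at higher enzyme concentration
    hill_slope: float


def triage_flags(hit, max_fold_shift=3.0, hill_range=(0.5, 2.0)):
    """Return a list of warning flags; thresholds are illustrative assumptions."""
    flags = []
    if hit.ic50_with_detergent_uM / hit.ic50_uM > max_fold_shift:
        flags.append("detergent-sensitive (possible aggregator)")
    if hit.ic50_high_enzyme_uM / hit.ic50_uM > max_fold_shift:
        flags.append("IC50 depends on enzyme concentration")
    if not hill_range[0] <= hit.hill_slope <= hill_range[1]:
        flags.append("atypical Hill slope")
    return flags


# Hypothetical hits: one well-behaved, one showing all three warning signs.
clean = HitProfile("cpd_A", 0.8, 1.0, 0.9, 1.1)
suspect = HitProfile("cpd_B", 2.0, 40.0, 15.0, 3.2)
for hit in (clean, suspect):
    print(hit.compound_id, triage_flags(hit) or ["no flags"])
```

A flag is a prompt for follow-up rather than an automatic rejection, since tight-binding or covalent inhibitors can legitimately show some of these behaviors.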

Table 3: Essential Research Reagent Solutions for Probe Validation

| Reagent/Category | Specific Examples | Primary Function | Key Applications |

|---|---|---|---|

| Activity-Based Probes | Desthiobiotin-ATP probes, FP-rhodamine | Covalent labeling of active enzymes | ABPP, target identification |

| Detection Systems | Thioflavin T, FRET biosensors, SplitLuc | Signal generation and detection | HTS, cellular assays, engagement |

| Selectivity Panels | Kinase panels, GPCR arrays, safety screens | Profiling against multiple targets | Selectivity assessment |

| Mass Spec Standards | SILAC, TMT, iRT peptides | Quantitative reference standards | Proteomics, quantification |

| Cellular Assay Tools | CETSA, BioID, APEX2 | Monitoring intracellular target engagement | Cellular validation |

| Computational Tools | Probe Miner, ChEMBL, canSAR | Data-driven probe assessment | Objective quality evaluation [2] |

Objective Assessment and Data-Driven Evaluation

Quantitative Assessment Frameworks

The quality of chemical probes varies considerably in published literature, necessitating objective assessment frameworks. Probe Miner represents a valuable resource that capitalizes on public medicinal chemistry data to enable quantitative, data-driven evaluation of chemical probes against 2,220 human targets [6] [2]. This approach systematically analyzes >1.8 million compounds to assess their suitability as chemical tools based on potency, selectivity, and cellular activity.

Systematic analysis has exposed serious limitations in current chemical probe coverage. Although 11% (2,220 proteins) of the human proteome has been liganded, only 4% (795 proteins) can be probed with compounds that satisfy minimal potency (≤100 nM) and selectivity (≥10-fold) criteria. Adding a cellular activity requirement (≤10 μM) reduces coverage to just 1.2% (250 proteins) of the human proteome [2]. These findings highlight critical gaps in the chemical toolset available for functional genomics and target validation.

Criteria for Probe Assessment

Potency Assessment:

- Biochemical IC50/Kd ≤ 100 nM

- Cellular IC50/EC50 ≤ 1 μM (preferably ≤ 100 nM)

- Demonstration of saturation or full efficacy in concentration-response

Selectivity Evaluation:

- ≥10-fold selectivity against anti-targets (minimal)

- ≥30-fold selectivity within target family (preferred)

- Broad profiling against related targets (kinases, GPCRs, etc.)

- Assessment using diverse assay formats (binding, functional)

Cellular Activity:

- Target engagement demonstrated in live cells

- Functional modulation of pathway or phenotype

- Appropriate pharmacokinetics for intended application

- Exclusion of cytotoxicity at effective concentrations

Chemical Properties:

- Defined structure-activity relationship

- Solubility ≥ 10 μM in aqueous buffer

- Chemical stability under assay conditions

- Synthetic tractability for analog development
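The numerical thresholds in the criteria above can be collected into a simple checklist function. The sketch below is a minimal illustration, assuming that selectivity is summarized by the single most potent related off-target. The function name, argument names, and example values are hypothetical.

```python
def assess_probe(biochem_ic50_nM, cell_ec50_nM, off_target_ic50_nM,
                 solubility_uM, engages_target_in_cells):
    """Check a candidate against the thresholds listed above.

    off_target_ic50_nM: IC50 of the most potent related off-target.
    Returns a dict mapping each criterion to True or False.
    """
    fold_selectivity = off_target_ic50_nM / biochem_ic50_nM
    return {
        "biochemical potency <= 100 nM": biochem_ic50_nM <= 100,
        "cellular activity <= 1 uM": cell_ec50_nM <= 1000,
        "selectivity >= 10-fold (minimal)": fold_selectivity >= 10,
        "selectivity >= 30-fold (preferred)": fold_selectivity >= 30,
        "solubility >= 10 uM": solubility_uM >= 10,
        "cellular target engagement": bool(engages_target_in_cells),
    }


# Hypothetical candidate: potent and cell-active, but only 20-fold selective.
report = assess_probe(
    biochem_ic50_nM=25,
    cell_ec50_nM=400,
    off_target_ic50_nM=500,
    solubility_uM=50,
    engages_target_in_cells=True,
)
for criterion, passed in report.items():
    print(f"{'PASS' if passed else 'FAIL'}  {criterion}")
```

Reporting each criterion separately, instead of a single pass or fail, shows where a candidate falls short and what optimization would be needed.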

Emerging Technologies and Future Directions

Advanced Screening Platforms

Recent technological advances have enabled novel screening approaches for chemical probe discovery. Flow cytometry-based high-throughput screening now permits simultaneous measurement of multiple metabolites in live cells using FRET biosensors for glucose, ATP, and glycosomal pH, facilitating identification of metabolic probes [7]. Similarly, protein-adaptive differential scanning fluorimetry (paDSF) enables rapid screening of fluoroprobe collections against protein targets, as demonstrated by the discovery of amyloid fibril-binding fluoroprobes using the 300+ compound Aurora dye library [8].

Multiplexed screening approaches provide internal validation of active compounds and offer clues regarding potential mechanisms of action. For example, pooling sensor cell lines (e.g., glucose, ATP, pH sensors) and analyzing them by flow cytometry enables identification of compounds with specific metabolic effects while excluding non-selective agents [7]. These multiplexed systems typically achieve Z'-factor values acceptable for high-throughput screening (>0.5), with hit rates of 0.2-0.4% in primary screens.

Specialized Probe Classes

Fluoroprobes: Advanced fluoroprobes with specificity for protein polymorphs represent powerful tools for studying pathological aggregates. Screening diverse dye collections against tau fibril polymorphs has identified both pan-fibril binders and conformation-selective fluoroprobes, including compounds with coumarin and polymethine scaffolds that were previously underrepresented in amyloid-binding dyes [8]. These selective imaging tools enable discrimination between structurally distinct aggregates that may underlie different disease states.

Covalent Aptamers: Expanding beyond small molecules, covalent aptamers represent emerging targeting modalities. Selection of antibody-binding covalent aptamers combines the specificity of nucleic acid aptamers with the irreversible binding of covalent inhibitors, creating novel recognition elements for biological applications [1].

Covalent Peptide Inhibitors: mRNA display with genetically encoded electrophilic warheads enables discovery of covalent cyclic peptide inhibitors, as demonstrated for peptidyl arginine deiminase 4 (PADI4) [1]. This approach merges the specificity of peptide-protein interactions with the sustained target engagement of covalent modifiers.

The continued evolution of chemical probe development promises to close critical gaps in the liganded proteome while providing increasingly sophisticated tools for biological investigation and therapeutic discovery.

The Role of High-Throughput Screening (HTS) in Probe Validation and Discovery

High-Throughput Screening (HTS) represents a foundational methodology in modern drug discovery and chemical probe development, enabling the rapid experimental assessment of thousands to millions of chemical compounds against biological targets. This approach is particularly valuable when prior structural or mechanistic knowledge of the target is limited, making structure-based design strategies less feasible [9]. In the specific context of chemical probe research, HTS serves as the critical initial filter for identifying promising "hit" compounds from vast libraries that can be refined into selective molecular tools for deconvoluting biological pathways and validating targets [10].

The core principle of HTS involves the use of automated, miniaturized assays alongside specialized data analysis pipelines to rapidly identify novel compounds that modulate a specific biological target or pathway [9]. The transition from traditional screening methods to HTS has fundamentally accelerated early discovery timelines, with modern systems capable of testing 10,000–100,000 compounds per day, allowing researchers to identify starting points for probe development campaigns more efficiently than ever before [9]. This accelerated identification process is crucial for building a robust pipeline of chemical tools that can be used to interrogate biological systems with high precision.

HTS Technologies and Methodological Framework

Core Screening Technologies and Assay Formats

HTS methodologies can be broadly categorized into several technological approaches, each with distinct advantages for different stages of probe discovery and validation. The choice of assay format dictates the type of information obtained about compound activity and is therefore a critical consideration in experimental design.

Table 1: High-Throughput Screening Assay Technologies and Applications

| Technology Type | Detection Method | Throughput Capacity | Primary Applications in Probe Discovery | Key Advantages |

|---|---|---|---|---|

| Biochemical Assays | Fluorescence, Luminescence, Mass Spectrometry [9] | 100,000+ compounds/day [9] | Target-based screening, Enzyme inhibition [9] | Direct target engagement assessment, Well-defined molecular mechanisms |

| Cell-Based Assays | High-content imaging, Label-free detection, Reporter systems [11] [12] | 50,000-100,000 compounds/day [9] | Phenotypic screening, Functional response assessment [11] | Cellular context preservation, Detection of functional outcomes |

| Ultra-High-Throughput Screening (uHTS) | Fluorescence intensity, Miniaturized sensor systems [9] | 300,000+ compounds/day [9] | Primary screening of very large libraries (>1 million compounds) [9] | Maximum efficiency for library coverage, Minimal reagent consumption |

| Label-Free Technologies | Dynamic mass redistribution, Impedance-based systems [12] | Moderate to high | Pathway analysis, Functional cellular responses [12] | Non-perturbing to native biology, Rich phenotypic information |

Biochemical assays typically utilize purified protein targets and are ideal for understanding direct molecular interactions between compounds and their intended targets. These assays often employ enzymatic readouts, such as the fluorescence-based assays developed for histone deacetylase (HDAC) inhibitors, where a peptide substrate coupled to a fluorescent leaving group allows quantification of enzymatic activity [9]. In contrast, cell-based assays provide crucial information about compound behavior in a more physiologically relevant environment, capturing aspects of cell permeability, cytotoxicity, and functional efficacy [11]. Recent advancements have seen the expansion of HTS beyond traditional small molecules into biologics, cell and gene therapy screening, and complex phenotypic models that better reflect disease states [11].

Automation and Miniaturization Infrastructure

The practical implementation of HTS relies heavily on integrated automation systems that enable the rapid processing of compound libraries. Central to this infrastructure are automated liquid-handling robots capable of dispensing nanoliter aliquots with high precision, significantly minimizing assay setup times and reagent consumption [9]. These systems interface with microplate handlers and detection instruments to create seamless screening workflows.

Modern HTS is characterized by progressive miniaturization, with assays routinely run in 384-well and 1536-well formats, dramatically reducing reagent requirements and costs while increasing throughput [9]. The development of microfluidic systems and high-density microwell plates with volumes of 1–2 µL has been particularly instrumental in enabling ultra-high-throughput screening (uHTS) approaches [9]. This miniaturization is complemented by sophisticated compound management systems that provide highly automated procedures for compound storage, retrieval, solubilization, and quality control, ensuring compound integrity throughout the screening process [9].

HTS Workflow for Probe Discovery

Quantitative Performance Metrics in HTS

The successful implementation of HTS in probe discovery requires careful consideration of performance metrics that determine screening quality and efficiency. These quantitative parameters guide assay optimization and provide benchmarks for comparing different screening approaches.

Table 2: Key Performance Metrics for HTS in Probe Discovery

| Performance Parameter | Target Value/Range | Calculation Method | Impact on Probe Discovery Quality |

|---|---|---|---|

| Z'-Factor | >0.5 [12] | $1 - \dfrac{3(\sigma_{\text{pos}} + \sigma_{\text{neg}})}{\lvert \mu_{\text{pos}} - \mu_{\text{neg}} \rvert}$, where $\sigma$ and $\mu$ are the standard deviation and mean of the positive (pos) and negative (neg) controls | Assay robustness and quality assessment |

| Hit Rate | Typically 0.1-1% [12] | (Number of hits / Total compounds screened) × 100 | Library diversity and screening stringency |

| Coefficient of Variation (CV) | <10% | (Standard deviation / Mean) × 100 | Assay precision and reproducibility |

| Signal-to-Noise Ratio | >5:1 | $\mu_{\text{signal}} / \mu_{\text{noise}}$ | Assay sensitivity and detection window |

| False Positive Rate | Minimize through counterscreens [9] | False positives / Total hits | Screening efficiency and downstream resource allocation |

| False Negative Rate | Minimize through assay optimization [9] | False negatives / Total active compounds | Comprehensive coverage of chemical space |

The Z'-factor is particularly important because it provides a quantitative measure of assay quality and robustness, incorporating both the dynamic range of the assay and the data variation associated with the positive and negative controls [12]. Assays with Z'-factors above 0.5 are considered excellent for HTS applications, values between 0 and 0.5 indicate a marginal assay, and values at or below 0 mean the control distributions overlap too much for the screen to be useful. Understanding these metrics allows researchers to optimize screening conditions specifically for probe discovery, where the goal is not merely identifying any modulator but finding compounds with sufficient potency and selectivity to serve as useful biological tools.
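As a concrete illustration, the Z'-factor can be computed from a plate's control wells in a few lines. The sketch below uses the standard definition, one minus three times the summed control standard deviations divided by the absolute difference of the control means. It assumes sample standard deviations (n − 1 denominator), and the control values are hypothetical.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor = 1 - 3*(SD_pos + SD_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(positive_controls) - mean(negative_controls))
    if window == 0:
        raise ValueError("control means are identical; Z' is undefined")
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1.0 - 3.0 * spread / window


# Hypothetical raw signals from one plate's control wells.
pos = [98.0, 102.0, 100.0, 101.0, 99.0]
neg = [10.0, 12.0, 9.0, 11.0, 8.0]
print(f"Z' = {z_prime(pos, neg):.3f}")
```

Because the value depends only on control wells, it can be calculated for every plate in a campaign without reference to the test compounds.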

The economic and temporal impacts of HTS implementation are significant, with studies indicating that HTS can reduce development timelines by approximately 30% and identify potential drug targets up to 10,000 times faster than traditional methods [12]. These efficiency gains are particularly valuable in probe discovery, where rapid iteration between screening and validation accelerates the development of high-quality chemical tools.

Experimental Protocols for Probe Discovery and Validation

Protocol 1: Primary uHTS Campaign for Hit Identification

Objective: To perform an ultra-high-throughput screen of a diverse compound library to identify initial hits against a defined molecular target.

Materials:

- Compound library (50,000-500,000 compounds)

- 1536-well microplates

- Automated liquid handling system

- Target protein or cell line

- Assay-specific reagents and detection system

- Plate reader compatible with 1536-well format

Procedure:

- Assay Optimization: Prior to primary screening, optimize assay conditions in 1536-well format, including reagent concentrations, incubation times, and DMSO tolerance. Determine Z'-factor using positive and negative controls to ensure robustness [12].

- Compound Transfer: Using automated liquid handling, transfer 10-20 nL of compound solutions (typically 1-10 mM in DMSO) to assay plates, maintaining final DMSO concentration ≤0.5%.

- Reagent Addition: Add assay reagents simultaneously or sequentially using dispensers capable of 1-2 µL additions with high precision.

- Incubation: Incubate plates under appropriate conditions (time, temperature, CO₂ for cell-based assays) as determined during optimization.

- Signal Detection: Read plates using appropriate detection method (fluorescence, luminescence, absorbance) with instrumentation capable of 1536-well format reading.

- Data Capture: Export raw data to HTS data management system for analysis.

Validation Parameters:

- Include control wells on each plate (16-32 wells each of positive and negative controls)

- Calculate plate-wise Z'-factors to monitor screening quality throughout the campaign

- Implement quality control thresholds and flag plates falling outside acceptable parameters
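The Compound Transfer step involves a small piece of arithmetic that is worth checking before a campaign: the final compound concentration and DMSO percentage that result from a given transfer volume. The sketch below assumes the stock is in 100% DMSO and that the stated assay volume is the total well volume after all additions. The 4 µL volume in the example is an assumption, not a value from the protocol.

```python
def final_assay_conditions(stock_mM, transfer_nL, assay_volume_uL):
    """Final compound concentration (uM) and DMSO percentage (v/v) in the well.

    Assumes the compound stock is in 100% DMSO and that assay_volume_uL is the
    total well volume after all additions.
    """
    transfer_uL = transfer_nL / 1000.0
    dilution = transfer_uL / assay_volume_uL
    final_uM = stock_mM * 1000.0 * dilution
    dmso_percent = 100.0 * dilution
    return final_uM, dmso_percent


# 20 nL of a 10 mM stock into an assumed 4 uL total assay volume.
conc_uM, dmso = final_assay_conditions(stock_mM=10, transfer_nL=20, assay_volume_uL=4)
print(f"{conc_uM:.1f} uM compound, {dmso:.2f}% DMSO")
```

The same 20 nL transfer into a 2 µL well would give 1% DMSO, above the ≤0.5% limit, so the transfer volume and the total assay volume need to be chosen together.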

Protocol 2: Hit Confirmation and Counterscreening

Objective: To validate primary screening hits and eliminate false positives arising from assay interference.

Materials:

- Hit compounds from primary screen (500-2,000 compounds)

- Source plates containing hit compounds

- Counterscreen assay reagents (e.g., for detergent sensitivity, fluorescence interference)

- Dose-response plates (384-well format)

Procedure:

- Hit Picking: Cherry-pick hit compounds from source plates and reformat them into 384-well plates for dose-response testing.

- Dose-Response Confirmation: Test each hit compound in 8-point, 1:3 serial dilution series to confirm concentration-dependent activity.

- Counterscreening: Implement orthogonal assays to identify compounds with undesirable mechanisms:

  - Aggregation Detection: Include non-ionic detergents (e.g., 0.01% Triton X-100) to disrupt colloidal aggregators [9]

  - Fluorescence Interference: Test compounds alone in assay buffer to identify auto-fluorescent compounds

  - Redox Cycling: Assess activity in presence of antioxidant enzymes (catalase, superoxide dismutase)

  - Cytotoxicity: For cell-based assays, include general viability assessment

- Selectivity Assessment: Test confirmed hits against related targets or family members to assess initial selectivity profile.

Validation Parameters:

- Calculate IC₅₀/EC₅₀ values from dose-response curves

- Apply compound quality filters (e.g., pan-assay interference compound (PAINS) filters) [9]

- Prioritize hits based on potency, efficacy, and clean counterscreening profile
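The 8-point, 1:3 dilution series and the IC₅₀ calculation in this protocol can be sketched as follows. The example builds the concentration series and then estimates IC₅₀ by log-linear interpolation between the two points that bracket 50% inhibition. This interpolation is a deliberate simplification, since a four-parameter logistic fit is the usual approach in practice. The 30 µM top concentration and the simulated responses are hypothetical.

```python
import math


def serial_dilution(top_uM, points=8, factor=3.0):
    """Concentrations for a serial dilution, highest first (1:3 by default)."""
    return [top_uM / factor ** i for i in range(points)]


def interpolate_ic50(concs_uM, percent_inhibition):
    """Estimate IC50 by log-linear interpolation between the points bracketing 50%.

    Returns None if the curve never crosses 50% within the tested range.
    """
    pairs = sorted(zip(concs_uM, percent_inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(pairs, pairs[1:]):
        if y_lo < 50.0 <= y_hi:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_lo, log_hi = math.log10(c_lo), math.log10(c_hi)
            return 10 ** (log_lo + frac * (log_hi - log_lo))
    return None


concs = serial_dilution(30.0)
# Hypothetical responses simulated from a Hill curve with IC50 = 1 uM, slope 1.
responses = [100.0 * c / (c + 1.0) for c in concs]
ic50 = interpolate_ic50(concs, responses)
print(f"Interpolated IC50 ~ {ic50:.2f} uM")
```

Returning `None` for curves that never reach 50% keeps inactive or weakly active compounds from being assigned a misleading potency value.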

Protocol 3: Advanced Probe Characterization

Objective: To comprehensively characterize validated hits for development as chemical probes.

Materials:

- Validated hit compounds (20-100 compounds)

- Secondary assay systems (pathway-specific reporters, orthogonal binding assays)

- Selectivity screening panels (related targets, diverse target classes)

- Solubility, stability, and preliminary ADMET assessment tools

Procedure:

- Mechanism of Action Studies:

  - For enzyme targets: Perform kinetic studies (time-dependent inhibition, reversibility)

  - For cellular targets: Assess target engagement using cellular thermal shift assays (CETSA) or bioluminescence resonance energy transfer (BRET)

- Selectivity Profiling:

  - Screen against panel of 50-100 diverse targets (kinases, GPCRs, ion channels, etc.)

  - Perform structural similarity searches against known probe compounds

- Preliminary ADMET Assessment:

  - Determine solubility in physiological buffers

  - Assess metabolic stability in liver microsomes

  - Evaluate membrane permeability (PAMPA, Caco-2)

- Chemical Optimization:

  - Synthesize or acquire structural analogs to establish initial structure-activity relationships (SAR)

  - Identify potential sites for chemical modification to improve properties

Validation Parameters:

- Establish comprehensive profile including potency (Kd, Ki, IC₅₀), selectivity (10-100 fold over related targets), and developability criteria

- Compare to literature standards and known probe compounds

- Prioritize 1-3 lead series for further optimization

Research Reagent Solutions for HTS

The successful implementation of HTS for probe discovery requires specialized reagents and tools designed for automation, miniaturization, and high-quality data generation.

Table 3: Essential Research Reagents and Materials for HTS in Probe Discovery

| Reagent Category | Specific Examples | Function in HTS Workflow | Key Considerations |

|---|---|---|---|

| Compound Libraries | Diverse small molecules, Targeted collections, Natural product extracts [9] | Source of chemical starting points for probe discovery | Library diversity, chemical tractability, physicochemical properties |

| Detection Reagents | Fluorescent probes, Luminescent substrates, Antibody-based detection systems [9] | Signal generation for quantifying biological activity | Sensitivity, stability, compatibility with automation |

| Cell Lines | Engineered reporter lines, Primary cells, iPSC-derived models [11] | Biologically relevant screening systems | Relevance to physiology, robustness in screening, reproducibility |

| Assay Kits | Commercial optimized kits for specific target classes | Streamlined assay implementation | Validation data, compatibility with HTS formats, reliability |

| Microplates | 384-well, 1536-well plates with various surface treatments [9] | Miniaturized reaction vessels | Well-to-well uniformity, binding characteristics, evaporation control |

| Automation Consumables | Tips, reservoirs, tubing, seals | Enable automated liquid handling | Precision, compatibility with instrumentation, lot-to-lot consistency |

The selection of appropriate research reagents is critical for generating high-quality HTS data. Compound libraries should balance structural diversity with favorable physicochemical properties to maximize the identification of developable probe candidates [9]. Detection reagents must provide sufficient sensitivity for miniaturized formats while minimizing interference from test compounds. Recent trends include the development of more physiologically relevant cell models, such as 3D cell cultures and organ-on-chip systems, that better mimic human biology and may improve the translational potential of probes identified through HTS campaigns [11].

Data Management and Analysis Framework

The volume and complexity of data generated in HTS necessitate sophisticated data management and analysis strategies. A typical HTS campaign can generate millions of data points that must be processed, normalized, and interpreted to identify legitimate probe candidates.

HTS Data Analysis Workflow

The data analysis workflow begins with raw data acquisition from plate readers, followed by normalization to correct for plate-to-plate variability and systematic errors. Common normalization approaches include percentage of control (positive and negative controls on each plate) and Z-score normalization [12]. Quality control metrics, particularly the Z'-factor, are calculated for each plate to identify potential issues requiring re-testing [12].

Hit identification typically employs statistical thresholds, such as values falling beyond three standard deviations from the mean or percentage inhibition/activation thresholds (e.g., >50% inhibition). More sophisticated approaches use B-score normalization to correct for spatial effects within plates [12].
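As an illustration of the spatial correction mentioned above, the sketch below computes B-scores using a two-way median polish followed by scaling with the median absolute deviation (MAD). It is a simplified version under stated assumptions: a fixed number of polish iterations, the conventional 1.4826 scaling constant, and a plate supplied as a list of rows. The function names are hypothetical.

```python
from statistics import median


def median_polish(plate, n_iter=10):
    """Return residuals after repeatedly removing row and column medians."""
    resid = [list(row) for row in plate]
    n_rows, n_cols = len(resid), len(resid[0])
    for _ in range(n_iter):
        # Remove row effects.
        for r in range(n_rows):
            row_med = median(resid[r])
            resid[r] = [v - row_med for v in resid[r]]
        # Remove column effects.
        for c in range(n_cols):
            col_med = median(resid[i][c] for i in range(n_rows))
            for i in range(n_rows):
                resid[i][c] -= col_med
    return resid


def b_scores(plate, n_iter=10):
    """Scale median-polish residuals by 1.4826 * MAD of the residuals."""
    resid = median_polish(plate, n_iter)
    flat = [v for row in resid for v in row]
    center = median(flat)
    mad = median(abs(v - center) for v in flat)
    if mad == 0:
        raise ValueError("MAD is zero; residuals show no variation")
    scale = 1.4826 * mad
    return [[v / scale for v in row] for row in resid]
```

Because medians are used throughout, a small number of strongly active wells has little influence on the estimated row and column effects, which is the main reason this approach is preferred over mean-based corrections.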

A critical step in HTS data analysis is compound triage, which involves filtering out promiscuous, reactive, or otherwise undesirable compounds using computational approaches. These include pan-assay interference compound (PAINS) filters, which identify substructures associated with assay interference rather than specific target engagement [9]. Additional cheminformatic filters address compounds with unfavorable physicochemical properties, potential toxicity, or synthetic complexity that would hinder optimization.

Machine learning and AI are increasingly applied to HTS data analysis, with models trained on historical screening data to predict compound behavior, prioritize candidates for follow-up, and even design optimized compound libraries for future screens [13]. These approaches help address the significant challenge of false positives in HTS, which can arise from various forms of assay interference, including chemical reactivity, metal impurities, autofluorescence, and colloidal aggregation [9].

High-Throughput Screening remains an indispensable component of the chemical probe discovery pipeline, providing an efficient method for surveying vast chemical space to identify starting points for tool development. The continued evolution of HTS technologies—including further miniaturization, enhanced detection methods, and more physiologically relevant assay systems—promises to increase both the efficiency and quality of probes identified through these approaches.

The integration of artificial intelligence and machine learning throughout the HTS process represents perhaps the most significant advancement, with potential applications in assay design, hit identification, and compound prioritization [13]. These computational approaches, combined with experimental innovations in 3D cell culture, organ-on-chip technology, and label-free detection methods, are creating a new generation of HTS platforms capable of identifying more relevant and useful chemical probes [11].

For researchers engaged in probe development, a comprehensive understanding of HTS principles, methodologies, and data analysis approaches is essential for designing effective screening strategies and interpreting results in the context of probe qualification. The protocols and frameworks outlined here provide a foundation for implementing HTS in probe discovery campaigns, with appropriate attention to quality control, validation, and the specific requirements of chemical tool development rather than just drug discovery. As these technologies continue to advance, HTS will remain a cornerstone methodology for generating the high-quality chemical probes essential for deciphering complex biological systems and validating novel therapeutic targets.

High-Throughput Screening (HTS) is a foundational methodology in modern drug discovery and chemical biology, enabling the rapid experimental testing of hundreds of thousands of compounds against biological targets [9]. The power of HTS lies in its integration of automation, miniaturized assays, and robust data management to accelerate the identification of novel chemical probes and drug candidates [13] [14]. This application note details the core components of an HTS workflow, framed within the context of a protocol for the high-throughput validation of covalent chemical probes [15]. It provides actionable methodologies and standards for researchers, scientists, and drug development professionals aiming to establish or refine HTS operations in their laboratories.

Core HTS Workflow and Integration

A successful HTS workflow is a tightly integrated, sequential process that transforms a library of compounds into validated hits. The entire operation is orchestrated by automation and informatics systems to ensure speed, accuracy, and reproducibility.

Figure 1: The integrated High-Throughput Screening (HTS) workflow. This sequential process transforms a compound library into validated hits through automated and standardized steps [13] [9] [14].

Key Component 1: Automation and Robotics

Automation is the physical engine of the HTS workflow, providing the precision, speed, and reproducibility required for large-scale screening campaigns.

Robotic Modules and Their Functions

Integrated robotics handle the movement of microplates between specialized functional modules, enabling continuous, unattended operation [14]. The key modules are detailed in Table 1.

Table 1: Key Robotic Modules in an HTS Platform and Their Functions

| Module Type | Primary Function | Critical Requirement in HTS |

|---|---|---|

| Liquid Handler | Precise fluid dispensing and aspiration | Sub-microliter accuracy; low dead volume [14] |

| Microplate Reader | Signal detection (e.g., fluorescence, luminescence) | High sensitivity and rapid data acquisition [14] |

| Plate Incubator | Temperature and atmospheric control | Uniform heating/cooling across all microplates [14] |

| Plate Washer | Automated washing cycles | Minimal residual volume and cross-contamination control [14] |

| Robotic Arm | Moves microplates between modules | High reliability and precision for 24/7 operation [14] |

Protocol for Automated Screening Execution

Objective: To automate the setup and execution of a cell-based enzymatic assay in a 384-well format for the identification of covalent kinase inhibitors.

Materials: Compound library (10 mM in DMSO), assay reagents (buffer, substrate, enzyme/cells), 384-well microplates, integrated HTS platform (e.g., with liquid handler, incubator, plate reader).

System Initialization:

- Power on all robotic modules and the scheduling software (scheduler).

- Execute priming routines for liquid handling systems to purge air and ensure fluidic path integrity.

- Pre-warm incubators and plate readers to the assay-required temperature (e.g., 37°C).

Plate Replication and Compound Transfer:

- The robotic arm retrieves a source microplate from the stacker.

- The liquid handler transfers nanoliter volumes of compounds from the source library plate to the assay plate using positive displacement tips [14].

- Critical Note: Include controls on each plate: positive control (inhibitor), negative control (DMSO only), and a reference covalent probe (e.g., known kinase inhibitor) [15].

Assay Reagent Dispensing:

- The liquid handler adds the enzyme or cell suspension to the assay plate.

- The plate is transferred to the incubator for a pre-determined incubation time (e.g., 30 minutes) to allow for covalent binding [15].

- The substrate is subsequently dispensed into all wells to initiate the reaction.

Signal Detection and Data Output:

- After a defined reaction period, the robotic arm moves the assay plate to the microplate reader.

- The reader measures the signal (e.g., fluorescence, luminescence) according to the assay protocol.

- Raw data files (e.g., intensity values per well) are automatically sent to the data management system for analysis [14].
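The compound transfer step implies a simple dilution calculation. The sketch below estimates the final compound concentration and DMSO content in an assay well. The 10 mM stock concentration comes from the materials list; the 50 nL transfer volume and 49.95 µL assay volume are hypothetical values chosen for illustration, and the function name is not part of any instrument software.

```python
def final_assay_conditions(stock_mm, transfer_nl, assay_volume_ul):
    """Return (final compound concentration in µM, final DMSO in % v/v).

    Assumes the compound stock is 100% DMSO and volumes are additive.
    """
    total_nl = assay_volume_ul * 1000.0 + transfer_nl
    final_um = stock_mm * 1000.0 * transfer_nl / total_nl
    dmso_pct = 100.0 * transfer_nl / total_nl
    return final_um, dmso_pct


# Hypothetical example: 50 nL of 10 mM stock into 49.95 µL of assay mix.
conc_um, dmso_pct = final_assay_conditions(10.0, 50.0, 49.95)
```

Checking the DMSO percentage alongside the concentration is useful because the DMSO-only negative control should match the vehicle content of the compound wells.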

Key Component 2: Assay Development and Validation

The biological or biochemical assay is the core of any HTS campaign, where the interaction between compound and target is measured.

Assay Types and Miniaturization

HTS assays are broadly categorized as biochemical (cell-free) or cell-based [9]. The drive for efficiency has led to widespread miniaturization, using 96-, 384-, and 1536-well microplates to conserve expensive reagents and enable smaller reaction volumes, often in the microliter to nanoliter range [9] [14].

Protocol for Assay Validation and Robustness Testing

Objective: To validate a biochemical assay for its suitability in an HTS campaign by determining its robustness and reproducibility.

Materials: Assay reagents, positive/negative controls, low-volume 384-well plates, liquid handler, microplate reader.

Plate Design:

- Design a plate map where positive and negative controls are interspersed across the entire plate (e.g., in a checkerboard pattern) to account for spatial biases.
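A checkerboard control layout of this kind can be generated programmatically. The following is a minimal sketch for a 384-well plate (16 rows by 24 columns); the labels "POS" and "NEG" and the function name are assumptions made for illustration.

```python
import string


def checkerboard_plate_map(n_rows=16, n_cols=24):
    """Map well IDs (e.g. 'A1') to 'POS' or 'NEG' in an alternating pattern."""
    plate_map = {}
    for r in range(n_rows):
        for c in range(n_cols):
            well = f"{string.ascii_uppercase[r]}{c + 1}"
            # Neighbouring wells in any row or column get opposite controls.
            plate_map[well] = "POS" if (r + c) % 2 == 0 else "NEG"
    return plate_map


plate_map = checkerboard_plate_map()
```

With this pattern, each control type appears in every row and every column, so edge or gradient effects affect both controls about equally.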

Assay Performance Run:

- Using the automated liquid handler, dispense controls and reagents into the plate according to the final HTS protocol.

- Run the assay on the plate reader and collect data as intended for the full screen. Repeat this process for a minimum of three independent runs.

Data Analysis and Robustness Calculation:

- For each control group (positive and negative), calculate the mean signal and standard deviation (SD).

- Calculate the Z'-factor, a key metric for assessing assay quality and robustness, using the formula:

Z' = 1 - [ (3*SD_positive + 3*SD_negative) / |Mean_positive - Mean_negative| ] [14].

- An assay with a Z'-factor ≥ 0.5 is considered excellent and robust for HTS. A Z'-factor between 0 and 0.5 is marginal and may require optimization [14].

Table 2: Key Quality Metrics for HTS Assay Validation

| Metric | Formula/Description | Acceptance Criterion |

|---|---|---|

| Z'-factor | 1 - [ (3*SD_positive + 3*SD_negative) / \|Mean_positive - Mean_negative\| ] | ≥ 0.5 [14] |

| Signal-to-Background Ratio (S/B) | Mean_positive / Mean_negative | > 3 [9] |

| Coefficient of Variation (CV) | (Standard Deviation / Mean) * 100% | < 10% for controls [14] |
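The quality metrics in Table 2 can be computed directly from the control wells of a validation plate. The sketch below uses only the Python standard library and assumes the sample standard deviation (n - 1 denominator); the function name and the example signal values are hypothetical.

```python
from statistics import mean, stdev


def assay_quality(pos, neg):
    """Compute Z'-factor, S/B, and control CVs from control-well signals."""
    mean_pos, mean_neg = mean(pos), mean(neg)
    sd_pos, sd_neg = stdev(pos), stdev(neg)  # sample SD (assumption)
    separation = abs(mean_pos - mean_neg)
    if separation == 0:
        raise ValueError("controls have identical means; Z' is undefined")
    return {
        "z_prime": 1 - (3 * sd_pos + 3 * sd_neg) / separation,
        "s_over_b": mean_pos / mean_neg,
        "cv_pos_pct": 100 * sd_pos / mean_pos,
        "cv_neg_pct": 100 * sd_neg / mean_neg,
    }


# Illustrative control signals (arbitrary units), not real assay data.
metrics = assay_quality(pos=[100, 102, 98, 100], neg=[10, 11, 9, 10])
plate_passes = (metrics["z_prime"] >= 0.5
                and metrics["s_over_b"] > 3
                and max(metrics["cv_pos_pct"], metrics["cv_neg_pct"]) < 10)
```

Running the same function on each of the three independent validation runs gives a quick view of run-to-run consistency.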

Key Component 3: Data Management and Analysis

The massive volume of data generated by HTS demands a sophisticated informatics infrastructure to transform raw values into scientifically meaningful results.

The HTS Informatics Pipeline

A typical HTS data analysis workflow involves multiple steps of processing and triage to minimize false positives and identify true hits.

Figure 2: The HTS data analysis and hit identification pipeline. This multi-step process ensures data quality and minimizes false positives through statistical and cheminformatics methods [13] [9] [14].

Protocol for Hit Identification and Triage

Objective: To process raw HTS data, identify initial hits, and triage them to remove likely false positives.

Materials: Raw data file from plate reader, Laboratory Information Management System (LIMS), chemical structures of screening library, data analysis software (e.g., KNIME, R).

Data Normalization:

- Normalize raw well signals to plate controls using the formula:

% Inhibition = (1 - (Raw_well - Mean_positive) / (Mean_negative - Mean_positive)) * 100.

Quality Control Check:

- Calculate the Z'-factor for each plate. Flag or exclude from analysis any plates that fail the quality threshold (e.g., Z' < 0.5) [14].

Primary Hit Identification:

- Apply a hit threshold, typically 3 standard deviations from the plate mean or a specific percentage of inhibition (e.g., >50% inhibition) [9]. Compounds exceeding this threshold are designated as "primary hits."

Hit Triage using Cheminformatics:

- Subject the list of primary hits to computational filters to identify compounds with undesirable features.

- Apply pan-assay interference compound (PAINS) filters to remove compounds known to cause false positives through non-specific mechanisms [9].

- Filter out compounds with problematic chemical functionalities or poor physicochemical properties that make them unsuitable as chemical probe starting points [9] [15].
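The normalization and primary hit-calling steps of this protocol can be sketched as follows. The example assumes an inhibition assay in which the positive control (inhibitor) gives the low signal and the negative control (DMSO) gives the high signal, and it applies the percentage-inhibition threshold from the protocol. The function names, well IDs, and signal values are hypothetical.

```python
from statistics import mean


def percent_inhibition(raw, mean_pos, mean_neg):
    """% Inhibition = (1 - (raw - mean_pos) / (mean_neg - mean_pos)) * 100."""
    return (1 - (raw - mean_pos) / (mean_neg - mean_pos)) * 100


def call_hits(wells, pos, neg, min_inhibition=50.0):
    """Return {well: % inhibition} for wells above the inhibition threshold."""
    mean_pos, mean_neg = mean(pos), mean(neg)
    hits = {}
    for well, raw in wells.items():
        pct = percent_inhibition(raw, mean_pos, mean_neg)
        if pct > min_inhibition:
            hits[well] = pct
    return hits


# Illustrative signals only: DMSO wells read ~110, inhibitor wells ~10.
sample_wells = {"A1": 110.0, "A2": 60.0, "A3": 30.0}
hits = call_hits(sample_wells, pos=[10.0, 10.0], neg=[110.0, 110.0])
```

In practice this step would run only on plates that passed the Z'-factor check, and the resulting hit list would then go to the cheminformatic filters.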

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials essential for implementing a covalent chemical probe HTS protocol.

Table 3: Essential Research Reagent Solutions for Covalent Probe HTS

| Item | Function in HTS Workflow |

|---|---|

| Covalent Compound Library | A curated collection of small molecules bearing reactive electrophiles (e.g., acrylamides, sulfonyl fluorides) for targeting nucleophilic amino acids (e.g., cysteine) in proteins [15]. |

| Activity-Based Probes (ABPs) | Covalent chemical probes used for target identification and validation; often contain a reactive warhead, a linker, and a reporter tag (e.g., biotin, fluorophore) [15]. |

| Microplates (96-, 384-, 1536-well) | Standardized platforms for assay miniaturization, enabling high-density, low-volume reactions crucial for HTS throughput and cost-effectiveness [9] [14]. |

| Positive/Negative Controls | Well-characterized compounds (e.g., a known covalent inhibitor and an inert vehicle) used on every plate to normalize data and calculate assay performance metrics like the Z'-factor [14]. |

| Label-Free Detection Reagents | Reagents for technologies like Differential Scanning Fluorimetry (DSF) that monitor target engagement without the need for a labeled substrate, useful for challenging targets [9]. |

Troubleshooting Common HTS Challenges

| Challenge | Potential Cause | Solution |

|---|---|---|

| High False Positive Rate | Chemical reactivity, assay interference, colloidal aggregation [9]. | Implement rigorous cheminformatic triage (e.g., PAINS filters) and use orthogonal, non-biochemical assays for hit confirmation [9]. |

| Poor Assay Robustness (Low Z'-factor) | High signal variability, insufficient separation between controls, liquid handling inaccuracy [14]. | Optimize assay conditions, check liquid handler calibration and precision, and use fresh reagent batches. |

| Integration Bottlenecks | Legacy instruments with proprietary communication protocols [14]. | Invest in vendor-agnostic scheduling software or custom middleware to unify the workflow. |

High-Throughput Screening (HTS) is an indispensable, automated methodology in modern drug discovery, enabling researchers to rapidly test tens of thousands to hundreds of thousands of chemical compounds against a biological target [9]. This approach is fundamental for identifying novel hit compounds, especially when little is known about the pharmacological target, making structure-based drug design unfeasible [9]. The core promise of HTS lies in its ability to accelerate the early stages of drug discovery, compressing timelines and delivering diverse drug leads faster than rational design approaches [9]. However, this speed and scale come with significant inherent challenges. The technical complexity and high upfront costs of establishing HTS platforms are considerable, but perhaps the most insidious challenge is the generation of false positives—compounds that appear active in the primary screen but are ultimately assay artifacts [16] [9]. These false positives can mimic a desired biological response through various interference mechanisms, leading to wasted resources and misguided research efforts if not properly identified and triaged [16]. This application note examines the critical balance between the throughput and cost of HTS campaigns and the pervasive challenge of false positives, providing detailed protocols and computational tools for their effective validation and mitigation.

Quantitative Landscape of HTS Performance

The performance and resource demands of HTS can be quantified across different operational scales. The table below summarizes key attributes of standard HTS and its more advanced counterpart, Ultra-High-Throughput Screening (uHTS), based on current industry capabilities [9].

Table 1: Comparison of HTS and uHTS Capabilities and Challenges

| Attribute | HTS | uHTS | Comments |

|---|---|---|---|

| Throughput (assays/day) | < 100,000 | >300,000 | uHTS can achieve significantly higher throughput [9]. |

| Complexity & Cost | High | Significantly Greater | uHTS requires more advanced instrumentation and infrastructure [9]. |

| Data Quality Requirements | High | High | Both formats require rigorous internal standards to reduce false positives [9]. |

| False Positive/Negative Bias | Present | Present | No obvious reduction in false positive rates from increased throughput alone [9]. |

| Ability to Monitor Multiple Analytes | Limited | Enhanced | uHTS necessitates miniaturized, multiplexed sensor systems for parallel measurement [9]. |

A primary advantage of HTS is its speed in identifying potential hits from vast chemical libraries [9]. Furthermore, HTS supports "fast to failure" strategies, allowing researchers to reject unsuitable candidates early, thereby saving time and resources in later development stages [9]. The main disadvantages include high costs, technical complexity, and the generation of false positives and negatives [9]. Specifically, HTS approaches can result in libraries with inflated physicochemical properties (e.g., high lipophilicity), which contribute to poor aqueous solubility and high attrition rates in clinical development [9].

Experimental Protocol: A Dual-Color Fluorescent Assay for Primary Screening

The following protocol details a robust dual-color fluorescent assay for the high-throughput screening of anti-chikungunya virus (CHIKV) compounds. This assay simultaneously evaluates antiviral efficacy and cytotoxicity, streamlining the primary screening process and providing early data to triage false positives caused by general cellular toxicity [17].

Background and Principle

This cell-based immunofluorescence assay (IFA) uses a double-staining technique to quantify both the number of infected cells and the total number of cells in a single well. The principle relies on specific antibody binding to viral antigens and a general nuclear stain, enabling automated image acquisition and analysis to determine the percentage of infected cells and compound cytotoxicity [17].

Materials and Reagents

Table 2: Research Reagent Solutions for Dual-Color Fluorescent Assay

| Item | Function/Description |

|---|---|

| Vero Cells | African green monkey kidney cell line; deficient in interferon production, allowing efficient CHIKV replication [17]. |

| CHIKV ECSA Strain | Arthropod-borne virus belonging to the East/Central/South African clade; the target pathogen for the assay [17]. |

| Anti-CHIKV Polyclonal Antibody | Primary antibody that specifically binds to viral antigens expressed in infected cells [17]. |

| Fluorophore-Conjugated Secondary Antibody | Labels the primary antibody, producing a fluorescent signal to quantify infected cells. |

| DAPI (4',6-Diamidino-2-Phenylindole) | Fluorescent stain that binds strongly to DNA in the cell nucleus; used to count the total number of cells and assess compound cytotoxicity [17]. |

| Cycloheximide (CHX) | Known translation inhibitor; used as a positive control for antiviral activity [17]. |

| Acyclovir (ACY) | Anti-herpes simplex virus drug with no activity against CHIKV; used as a negative control [17]. |

| Cell Culture Microplates | Typically 96- or 384-well formats, suitable for automation and miniaturized assays [9]. |

Step-by-Step Procedure

- Host Cell Seeding: Seed Vero cells at an optimized density of 10,000 cells per well in a microplate. Culture the cells for 48 hours to reach approximately 87% confluency, which ensures uniform infection without overconfluency [17].

- Viral Infection: Infect the cells with CHIKV ECSA at a low Multiplicity of Infection (MOI) of 0.1. This MOI was selected to minimize cytopathic effect on host cells while maintaining an excellent discrimination power (Z' factor > 0.5) between infected and uninfected wells [17].

- Compound Application: Co-incubate the virus with the library of test compounds. Include control wells: infected cells with DMSO (CVD), non-infected cells with DMSO (CD), and controls with the reference compounds CHX (positive) and ACY (negative) [17].

- Incubation and Fixation: Incubate the plate for 24 hours to allow viral replication. Afterwards, fix and permeabilize the cells to preserve cellular architecture and allow antibody access for staining.

- Dual-Color Immunofluorescence Staining: a. Viral Antigen Staining: Incubate with an anti-CHIKV polyclonal primary antibody, followed by a fluorophore-conjugated secondary antibody. This stains the infected cells. b. Nuclear Staining: Counterstain the cells with DAPI to label the nucleus of every cell.

- Image Acquisition and Analysis: Acquire fluorescence images using a high-throughput imager. Analyze the images with a dedicated algorithm to quantify the number of infected cells (via the viral stain) and the total number of cells (via DAPI) in each well [17].

- Data Calculation:

- % Inhibition: Calculate the reduction in the number of infected cells in compound-treated wells compared to the CVD control.

- % Cells Left: Calculate the ratio of total cells in compound-treated wells compared to the CD control, serving as an approximation of cell viability/cytotoxicity.

Validation and Data Interpretation

The assay's validation demonstrated its power to correctly discriminate active from inactive compounds. CHX treatment resulted in 100% inhibition with no significant cytotoxicity, while ACY showed no inhibition [17]. The assay showed high reproducibility across three independent rounds with no significant variation [17]. When benchmarked against standard methods (plaque assay for inhibition and MTS assay for viability), the dual-color assay showed excellent performance, with an Area Under the Curve (AUC) of 0.962 for inhibition and 0.876 for viability in Receiver Operating Characteristic (ROC) analysis [17]. A cutoff of 80% inhibition is recommended for hit identification to ensure stringency, alongside a careful review of the "% Cells Left" data to eliminate cytotoxic false positives [17].
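The two readouts and the recommended hit criterion can be expressed in a few lines. The sketch below assumes that inhibition is computed from infected-cell counts relative to the CVD control and that viability is computed from total-cell counts relative to the CD control. The 80% inhibition cutoff comes from the text; the 70% "cells left" cutoff, the function names, and the example counts are hypothetical.

```python
def dual_color_metrics(infected, total, cvd_infected, cd_total):
    """Return (% inhibition, % cells left) for one compound-treated well."""
    pct_inhibition = 100.0 * (1 - infected / cvd_infected)
    pct_cells_left = 100.0 * total / cd_total
    return pct_inhibition, pct_cells_left


def is_hit(pct_inhibition, pct_cells_left,
           min_inhibition=80.0, min_cells_left=70.0):
    """Apply the inhibition cutoff and a (hypothetical) viability cutoff."""
    return pct_inhibition >= min_inhibition and pct_cells_left >= min_cells_left


# Illustrative counts only: mean CVD control has 1000 infected cells,
# mean CD control has 10000 total cells.
inhibition, cells_left = dual_color_metrics(
    infected=100, total=9000, cvd_infected=1000, cd_total=10000)
active_and_viable = is_hit(inhibition, cells_left)
cytotoxic_false_positive = is_hit(95.0, 30.0)
```

Applying both conditions together reflects the point made above: a compound that removes infected cells simply by killing the host cells should not be counted as an antiviral hit.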

Diagram 1: Dual-color fluorescent assay workflow for HTS.

Computational Protocol: Triage of False Positives with Liability Predictor

False positives in HTS arise from various assay interference mechanisms, including chemical reactivity, reporter enzyme inhibition, and compound aggregation [16]. The "Liability Predictor" is a freely available webtool that uses Quantitative Structure-Interference Relationship (QSIR) models to predict such nuisance behaviors, offering a more reliable alternative to oversensitive PAINS (Pan-Assay INterference compoundS) filters [16].

Background and Principle

"Liability Predictor" was developed using curated HTS datasets for thiol reactivity, redox activity, and luciferase (firefly and nano) inhibitory activity [16]. The underlying QSIR models account for the interplay between a chemical fragment and its structural surroundings, providing a more nuanced prediction of interference potential than simple substructure alerts [16]. The model demonstrated balanced accuracies of 58-78% on external test sets [16].

Step-by-Step Procedure for Computational Triage

- Data Input Preparation: Prepare a list of hit compounds identified from your HTS campaign in a compatible chemical structure file format (e.g., SDF, SMILES).

- Access the Webtool: Navigate to the "Liability Predictor" website at https://liability.mml.unc.edu/ [16].

- Compound Submission: Upload the structure file or input the SMILES strings of the hit compounds into the webtool.

- Model Selection and Prediction: The tool will process the compounds through its pre-trained QSIR models for thiol reactivity, redox activity, and luciferase interference.

- Result Interpretation: The output provides a prediction for each compound regarding its potential to act as an assay artifact in each interference category.

- Hit List Triaging: Use the predictions to prioritize compounds for confirmatory assays. Compounds flagged as high-risk interference liabilities can be deprioritized or subjected to specific counter-screens.
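The final triaging step can be sketched in code once predictions have been exported. The example below does not reproduce the actual output format of "Liability Predictor"; it assumes the predictions have been transcribed by hand into a hypothetical dictionary of boolean flags per compound, with liability names chosen to mirror the three interference categories described above.

```python
# Hypothetical liability labels mirroring the categories named in the text.
LIABILITIES = ("thiol_reactivity", "redox_activity", "luciferase_inhibition")


def triage(predictions):
    """Split compounds into a prioritized list and a dict of flagged liabilities."""
    prioritized, flagged = [], {}
    for compound_id, flags in predictions.items():
        found = [name for name in LIABILITIES if flags.get(name, False)]
        if found:
            flagged[compound_id] = found  # route to matching counter-screens
        else:
            prioritized.append(compound_id)
    return prioritized, flagged


# Illustrative, made-up predictions for three hypothetical compounds.
example_predictions = {
    "cmpd_001": {"thiol_reactivity": False, "redox_activity": False,
                 "luciferase_inhibition": False},
    "cmpd_002": {"thiol_reactivity": True, "redox_activity": False,
                 "luciferase_inhibition": False},
    "cmpd_003": {"redox_activity": True, "luciferase_inhibition": True},
}
prioritized, flagged = triage(example_predictions)
```

Keeping the flagged liabilities per compound, rather than discarding flagged compounds outright, makes it easier to choose the specific counter-screen for each one.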

Integration into HTS Workflow

This computational triage should be performed after the primary screening and before committing significant resources to hit confirmation. It is a cost-effective step that helps focus experimental efforts on the most promising, high-quality hits with a lower likelihood of being artifacts [16].

Diagram 2: Computational triage of HTS hits for assay interference.

Discussion and Concluding Remarks

Navigating the trade-offs between throughput, cost, and data fidelity is central to a successful HTS campaign. While uHTS offers unparalleled scale, it does not inherently solve the false positive problem and introduces higher complexity and cost [9]. The strategic integration of robust, multi-parameter primary assays, like the dual-color fluorescent protocol, with advanced computational triage tools, like "Liability Predictor", creates a powerful framework for enhancing the validation of chemical probes.

The future of HTS validation is increasingly digital and computational. The adoption of AI and machine learning is poised to become integral to validation processes, handling large datasets and performing predictive modeling to identify risks earlier [18]. Furthermore, the industry is shifting towards continuous validation practices, where validation is integrated throughout the product lifecycle, allowing for real-time monitoring and updates [18]. By embracing these trends—digital tools, robust primary assays, and intelligent computational triage—researchers can build more resilient HTS processes. This ensures that the high throughput of modern screening translates into genuine discoveries, efficiently navigating the challenges of cost and false positives to deliver safer and more effective therapeutic candidates.

Advanced Methodologies for Probe Validation and Application

In the disciplined pursuit of chemical probes for research, robust assay development forms the critical foundation of any successful high-throughput screening (HTS) campaign. Assays function as the essential tools that translate biological phenomena into quantifiable data, enabling researchers to distinguish promising hits from false positives and to understand the kinetic behavior of novel inhibitors [19]. Within the context of a thesis on high-throughput validation of chemical probes, this document provides detailed application notes and protocols for designing, optimizing, and validating both biochemical and cell-based assays compatible with HTS requirements.

The Assay Guidance Manual from the National Center for Advancing Translational Sciences (NCATS) serves as a crucial resource for this endeavor. This manual, a collaborative effort of over 100 global experts, provides comprehensive guidelines for developing assay formats compatible with HTS and Structure-Activity Relationship (SAR) measurements, making it indispensable for academic, non-profit, and industrial research laboratories [20]. The following sections synthesize these established principles into actionable protocols, ensuring that the resulting data is reproducible, statistically sound, and physiologically relevant for the rigorous validation of chemical probes.

Core Concepts and Assay Selection

Biochemical vs. Cell-Based Assays: A Strategic Comparison

Selecting the appropriate assay type is a fundamental strategic decision. The choice between biochemical and cell-based formats depends on the biological question, the desired information about the chemical probe, and the available resources. Biochemical assays measure interactions or enzyme activity in a purified, cell-free system, while cell-based assays utilize live cells to quantify responses in a more physiologically complex environment [19] [21].

Table 1: Strategic Comparison of Biochemical and Cell-Based Assays for HTS

| Feature | Biochemical Assays | Cell-Based Assays |

|---|---|---|

| Biological Relevance | Direct target engagement; simplified system. | Higher; preserves cellular context, signaling pathways, and mimics disease states more accurately [21]. |

| Primary Applications | Screening for enzyme inhibitors, binding partners, and initial mechanism of action (MOA) studies [20]. | Assessing functional cellular responses (viability, proliferation, toxicity), pathway modulation, and complex phenotypic changes [22]. |

| Throughput | Typically very high. | High, but can be more complex than biochemical formats [23]. |

| Complexity & Cost | Lower; uses purified components. | Higher; requires cell culture and more complex optimization [22]. |

| Artifact Potential | Susceptible to compound interference (e.g., fluorescence, reactivity). | Can identify membrane-impermeant compounds and capture complex biology, reducing certain artifacts [20]. |

| Key Readouts | Fluorescence Polarization (FP), Time-Resolved FRET (TR-FRET), luminescence, absorbance [20] [19]. | Luminescence (e.g., ATP levels), fluorescence (e.g., calcium flux, reporter genes), high-content imaging [22]. |

Universal Assay Platforms for Efficiency

A powerful strategy to accelerate research is the adoption of universal assay platforms. These assays detect a common product of an enzymatic reaction, allowing multiple targets within an enzyme family to be studied with the same detection chemistry. For example, the Transcreener ADP² Assay detects ADP, a universal product of kinase, ATPase, and other nucleotide-utilizing enzymes, providing a broad applicability across target classes [19]. This "mix-and-read" homogeneous format simplifies automation, reduces variability, and increases throughput, making it ideal for HTS and lead discovery campaigns focused on generating SAR data.

Protocols for Biochemical Assay Development

This protocol outlines the development of a robust, homogeneous biochemical assay suitable for HTS, using a universal detection method as a model system.

Protocol: Development of a Universal Biochemical Activity Assay

Objective: To design, optimize, and validate a biochemical assay for measuring enzyme activity and inhibitor potency in a high-throughput format.

Principle: The assay directly measures the formation of a universal enzymatic product (e.g., ADP for kinases) using competitive immunodetection in a homogeneous, "mix-and-read" format compatible with fluorescence intensity (FI), fluorescence polarization (FP), or TR-FRET readouts [19].

Workflow Overview:

The following diagram illustrates the key stages of the biochemical assay development process.

Materials and Reagents:

- The Scientist's Toolkit: Key Reagents for Biochemical Assay Development is provided in Section 3.2.

Procedure:

Define Biological Objective and Reaction Conditions:

- Identify the enzyme target and understand its reaction type (e.g., kinase, methyltransferase).

- Clarify the functional outcome to be measured (e.g., product formation, substrate consumption).

- Prepare a reaction buffer. A typical starting point is 50 mM HEPES pH 7.5, 10 mM MgCl₂, 1 mM DTT, and 0.01% BSA. The final composition will be optimized in later steps [20].
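The starting buffer above can be turned into pipetting volumes with the dilution relation C1 × V1 = C2 × V2. The sketch below is illustrative only: the target concentrations come from the protocol, but the stock concentrations (1 M HEPES, 1 M MgCl₂, 1 M DTT, 10% BSA), the 100 mL batch size, and the function name `stock_volumes` are assumptions chosen for the example.

```python
def stock_volumes(final_volume_ml, targets, stocks):
    """Return the volume (mL) of each stock needed, using C1*V1 = C2*V2.

    `targets` and `stocks` map component name -> concentration. Each component
    must use the same unit in both dicts (mM for salts, % w/v for BSA here).
    """
    volumes = {}
    for name, final_conc in targets.items():
        stock_conc = stocks[name]
        if final_conc > stock_conc:
            raise ValueError(f"{name}: stock is too dilute for the target")
        volumes[name] = final_volume_ml * final_conc / stock_conc
    water = final_volume_ml - sum(volumes.values())
    if water < 0:
        raise ValueError("stock volumes exceed the final volume")
    volumes["water"] = water
    return volumes


# Target composition taken from the protocol (mM, except BSA in % w/v).
TARGETS = {"HEPES pH 7.5": 50.0, "MgCl2": 10.0, "DTT": 1.0, "BSA": 0.01}
# Hypothetical stock concentrations (assumptions, not from the protocol).
STOCKS = {"HEPES pH 7.5": 1000.0, "MgCl2": 1000.0, "DTT": 1000.0, "BSA": 10.0}

recipe = stock_volumes(100.0, TARGETS, STOCKS)
for name, volume in recipe.items():
    print(f"{name}: {volume:.2f} mL")
```

Keeping the recipe in code makes it easy to recompute volumes when the buffer composition changes during optimization.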

Select and Optimize Detection Method:

- Choose a homogeneous, "mix-and-read" detection chemistry (e.g., FP or TR-FRET) compatible with your plate reader and the enzymatic product [19].

- Following the kit manufacturer's guidelines, establish the initial concentration of detection reagents (antibody, tracer).

Optimize Assay Components:

- Enzyme Titration: Perform a serial dilution of the enzyme in reaction buffer. Incubate with a fixed, saturating concentration of substrate and cofactors for a defined time (e.g., 1 hour). Stop the reaction with detection reagents and measure the signal. Select an enzyme concentration that produces a robust signal (70-80% of the maximum signal) while conserving protein [19].

- Substrate Titration: Repeat the assay with the fixed enzyme concentration chosen in the previous step and varying substrate concentrations. Determine the apparent K_M (Michaelis constant) and use a substrate concentration at or below K_M for inhibitor screening, which keeps the assay sensitive to competitive inhibitors [20].

- Buffer and Cofactor Optimization: Systematically vary buffer pH, ionic strength, and concentration of essential cofactors (e.g., ATP) to find conditions for maximal enzyme activity and stability.
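As a rough illustration of the substrate titration analysis, the sketch below estimates an apparent K_M from initial rates using the Hanes-Woolf linearization, [S]/v = [S]/V_max + K_M/V_max. This is a swapped-in, dependency-free technique chosen for brevity; nonlinear regression on the untransformed Michaelis-Menten equation is generally preferred for real data. The data are synthetic and noise-free, and the function names are hypothetical.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hanes_woolf(substrate, rates):
    """Estimate apparent (K_M, V_max) from the Hanes-Woolf plot of [S]/v vs [S]."""
    ratios = [s / v for s, v in zip(substrate, rates)]
    slope, intercept = linear_fit(substrate, ratios)
    v_max = 1.0 / slope          # slope = 1 / V_max
    k_m = intercept / slope      # intercept = K_M / V_max
    return k_m, v_max


# Synthetic rates generated with K_M = 10 uM and V_max = 100 (arbitrary units).
substrate_um = [1.0, 2.5, 5.0, 10.0, 25.0, 50.0, 100.0]
rates = [100.0 * s / (10.0 + s) for s in substrate_um]

k_m, v_max = hanes_woolf(substrate_um, rates)
print(f"apparent K_M = {k_m:.2f} uM, V_max = {v_max:.1f}")
```

The recovered K_M then sets the substrate concentration for screening (at or below K_M, as described above).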

Validate Assay Performance:

- In a 96-well or 384-well plate, set up the following controls in replicates of at least 8:

- High Signal Control (100% Activity): Enzyme + Substrate.

- Low Signal Control (0% Activity): No enzyme, or a well-characterized potent inhibitor at a concentration 10x its IC50.

- Run the optimized assay protocol and calculate the following statistical parameters [19]:

- Signal-to-Background (S/B) Ratio: S/B = Mean_High / Mean_Low. A ratio >2 is generally acceptable.

- Z′-Factor: Z′ = 1 − (3 × SD_High + 3 × SD_Low) / |Mean_High − Mean_Low|, where SD is the standard deviation of the respective control wells. A Z′ ≥ 0.5 indicates an excellent, robust assay suitable for HTS [19].
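The two statistics above are simple to compute from the control wells. The following is a minimal sketch using only the Python standard library; the well values are hypothetical, and the sample standard deviation (`statistics.stdev`) is assumed to be the intended SD.

```python
from statistics import mean, stdev


def signal_to_background(high, low):
    """S/B = mean of high-signal controls / mean of low-signal controls."""
    return mean(high) / mean(low)


def z_prime(high, low):
    """Z' = 1 - (3*SD_high + 3*SD_low) / |mean_high - mean_low|."""
    separation = abs(mean(high) - mean(low))
    return 1.0 - (3.0 * stdev(high) + 3.0 * stdev(low)) / separation


# Hypothetical raw signals from 8 replicate wells per control.
high_wells = [980, 1010, 995, 1005, 990, 1000, 1015, 1005]
low_wells = [102, 98, 100, 101, 99, 97, 103, 100]

sb = signal_to_background(high_wells, low_wells)
zp = z_prime(high_wells, low_wells)
print(f"S/B = {sb:.1f}, Z' = {zp:.3f}")
```

Computing both values per plate, rather than once per campaign, makes it easier to flag individual plates that drift below the acceptance thresholds.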

Scale and Automate for HTS:

- Miniaturize the validated assay to the desired HTS format (e.g., 384-well or 1536-well plates).

- Adapt the protocol for automated liquid handling systems, ensuring consistency in dispensing and incubation times.

The Scientist's Toolkit: Key Reagents for Biochemical Assay Development

Table 2: Essential Reagents and Their Functions in Biochemical Assays

| Reagent / Solution | Function / Purpose |
|---|---|
| Universal Assay Kits (e.g., Transcreener) | Detects common products (e.g., ADP, SAH); enables broad screening across enzyme families with a single, optimized platform [19]. |
| Purified Target Enzyme | The key reagent; quality (identity, mass purity, enzymatic purity) is critical for generating reliable data [20]. |
| Substrates & Cofactors | Reactants required for the enzymatic reaction (e.g., ATP, peptide substrates for kinases; SAM for methyltransferases). |
| Detection Antibody & Tracer | Antibody specific to the product (e.g., ADP) and a fluorescently labeled tracer; binding competition generates the detectable signal in FP/TR-FRET [19]. |
| Optimized Reaction Buffer | Maintains optimal pH, ionic strength, and enzyme stability; may include cofactors (Mg²⁺, Mn²⁺) and reducing agents (DTT) [20]. |
| Reference Inhibitors | Well-characterized potent inhibitors for use as positive controls and for assay validation. |

Protocols for Cell-Based Assay Development

This protocol details the creation of a robust cell-based viability assay, a common starting point in HTS, emphasizing physiological relevance and reproducibility.

Protocol: Development of a Cell-Based Viability Assay for HTS

Objective: To establish a reproducible, scalable cell-based assay for quantifying compound effects on cell viability, proliferation, or cytotoxicity in a high-throughput format.

Principle: The assay measures the amount of ATP present, which indicates the presence of metabolically active cells. The luciferase enzyme uses ATP to convert luciferin to oxyluciferin, producing a luminescent signal proportional to the number of viable cells [22].

Workflow Overview:

The multi-stage process for developing a robust cell-based assay is outlined below.

Materials and Reagents:

- The Scientist's Toolkit: Key Reagents for Cell-Based Assay Development is provided in Section 4.2.

Procedure:

Cell Line Selection and Culture:

- Select a cell line relevant to the biological question or disease (e.g., a cancer cell line for an oncology probe) [22]. Preferably use human cell lines for clinical translational value [21].

- Maintain cells in recommended culture media and conditions (37°C, 5% CO₂). Ensure cells are healthy and in the logarithmic growth phase at the time of assay.

Optimize Cell Seeding Density:

- Harvest and count cells. Prepare a series of dilutions to seed in a 96-well tissue culture-treated plate (e.g., 1,000; 5,000; 10,000; 20,000 cells per well in 100 µL medium). Incubate for 24 hours.

- After incubation, add a homogeneous ATP-based luminescence reagent (e.g., CellTiter-Glo) according to the manufacturer's instructions. Measure the luminescent signal.

- Select a cell density that produces a robust signal and is within the linear range of the detection method, avoiding both overcrowding and under-representation [22].
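One simple way to judge the linear range in the seeding-density experiment is to check whether the signal per cell stays roughly constant as density increases. The sketch below assumes background-subtracted luminescence values and uses the lowest density as the reference; the 15% tolerance, the example readings, and the function name `linear_range` are all illustrative assumptions rather than part of the protocol.

```python
def linear_range(densities, signals, tolerance=0.15):
    """Return the densities whose signal-per-cell stays within `tolerance`
    (fractional deviation) of the value at the lowest density.

    Scanning stops at the first density that departs from linearity.
    """
    pairs = sorted(zip(densities, signals))
    reference = pairs[0][1] / pairs[0][0]
    in_range = []
    for cells, signal in pairs:
        per_cell = signal / cells
        if abs(per_cell - reference) / reference > tolerance:
            break
        in_range.append(cells)
    return in_range


# Hypothetical background-subtracted luminescence (RLU) at each density.
densities = [1000, 5000, 10000, 20000]
signals = [52_000, 255_000, 498_000, 760_000]

usable = linear_range(densities, signals)
print("densities within the linear range:", usable)
```

In this made-up data set the signal per cell drops markedly at 20,000 cells per well, so that density would be excluded. Because the reference is a single low-density measurement, replicate wells should be averaged before applying a check like this.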

Establish Assay Window and Dynamics:

- Seed cells at the optimized density in 96-well plates. After 24 hours, treat with a positive control for cytotoxicity (e.g., 1 µM Staurosporine) and a negative control (vehicle, e.g., DMSO) for 24, 48, 72, and 96 hours [22].

- At each time point, perform the viability assay. Determine the optimal incubation time that provides the largest dynamic range (difference between positive and negative controls) and aligns with the biological model.

Define Controls and Validate Performance:

- In the final HTS plate format (e.g., 384-well), include on-plate controls in replicates:

- Negative Control (100% Viability): Cells + Vehicle (e.g., 0.1% DMSO).

- Positive Control (0% Viability): Cells + a cytotoxic agent (e.g., 1 µM Staurosporine).

- Calculate the Z′-factor as described in Section 3.1. A Z′ ≥ 0.5 confirms the assay is robust for HTS.

HTS Implementation and Data Analysis:

- Use automated liquid handlers to dispense cells, compounds, and reagents uniformly.

- For dose-response studies, generate 10-point, half-log dilution series of test compounds.

- Normalize raw data to the negative and positive controls on each plate (0% and 100% inhibition, respectively).

- Fit the normalized dose-response data to a 4-parameter logistic (4PL) model to determine IC50/EC50 values [21].
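The data-handling steps above can be sketched as three small functions: one to generate a half-log dilution series, one to normalize raw signals to the plate controls, and one that evaluates the 4PL model. This is a minimal sketch with hypothetical names and example numbers; the parameterization of the 4PL curve shown here (response rising from `bottom` to `top` with concentration) is one common convention, and parameter estimation itself is left to a nonlinear least-squares routine.

```python
def half_log_series(top_conc, n_points=10):
    """Concentrations descending from `top_conc` in half-log (10**0.5-fold) steps."""
    return [top_conc / (10 ** (0.5 * i)) for i in range(n_points)]


def percent_inhibition(raw, neg_mean, pos_mean):
    """Scale a raw signal so vehicle wells read 0% and cytotoxic-control wells read 100%."""
    return 100.0 * (neg_mean - raw) / (neg_mean - pos_mean)


def four_pl(conc, bottom, top, ic50, hill):
    """4PL model: response rises from `bottom` to `top` as concentration increases,
    reaching the midpoint at conc == ic50."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)


# Hypothetical example: 10 uM top concentration and made-up control means (RLU).
concentrations = half_log_series(10.0)
neg_mean, pos_mean = 50_000.0, 2_000.0

print([round(c, 4) for c in concentrations])
print(percent_inhibition(26_000.0, neg_mean, pos_mean))
print(four_pl(1.0, bottom=0.0, top=100.0, ic50=1.0, hill=1.0))
```

In practice, `four_pl` would be passed to a fitting routine such as `scipy.optimize.curve_fit` together with the normalized data; that step is omitted here to keep the example free of third-party dependencies.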

The Scientist's Toolkit: Key Reagents for Cell-Based Assay Development

Table 3: Essential Reagents and Their Functions in Cell-Based Assays

| Reagent / Solution | Function / Purpose |
|---|---|
| Relevant Cell Line | The biological model; should be disease-relevant and properly authenticated (e.g., by STR DNA profiling) to ensure validity [20] [21]. |
| Cell Culture Media & Supplements | Provides nutrients and growth factors to maintain cell health and proliferation before and during compound treatment. |
| ATP-based Viability Reagents (e.g., CellTiter-Glo) | Homogeneous, "add-measure" lytic reagents that generate luminescence proportional to ATP concentration (a marker of metabolically active cells) [22]. |
| Reference Standard / Control Compounds | A cytotoxic agent for positive control (e.g., Staurosporine) and a vehicle for negative control (e.g., DMSO); critical for plate normalization and quality control [22] [21]. |
| Multi-Well Plates (TC-Treated) | 96-, 384-, or 1536-well plates treated for optimal cell adhesion; designed for compatibility with automation and plate readers [22]. |

Assay Validation and Data Analysis

Statistical Validation for HTS Robustness

The ultimate test of an HTS-ready assay is its statistical robustness. The Z′-factor is the key metric for this assessment. It evaluates the quality of the assay by incorporating both the dynamic range (separation between high and low controls) and the data variation (standard deviation of the controls) into a single parameter [19]. An assay with a Z′ ≥ 0.5 is considered excellent and suitable for high-throughput screening, as it has a wide separation band between controls and low variability.

Advanced Considerations for Chemical Probe Validation

Once a primary HTS assay is established, validating a chemical probe requires orthogonal assays (those that use a different detection technology or biological readout) to confirm the activity of initial "hits." Furthermore, for cell-based assays intended for later-stage development (e.g., lot-release testing of biologics), rigorous validation under Good Manufacturing Practice (GMP) principles may be required. This involves demonstrating accuracy, precision, linearity, specificity, and robustness across multiple operators, equipment, and critical reagent lots [21]. The data analysis often involves calculating the Relative Potency (RP) of a test sample compared to a reference standard, typically using a 4-parameter logistic (4PL) model or parallel line analysis (PLA) to determine EC50 values and ensure biological similarity [21].
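As a small illustration of the relative potency calculation, the sketch below assumes the common convention RP = EC50(reference) / EC50(test), so that a test sample needing twice the concentration of the reference has an RP of 0.5. The slope-similarity check, its 1.25-fold limit, and the function names are hypothetical placeholders; actual similarity criteria are assay-specific and are not defined in this protocol.

```python
def relative_potency(ec50_reference, ec50_test):
    """RP = EC50 of the reference standard / EC50 of the test sample (assumed convention)."""
    if ec50_reference <= 0 or ec50_test <= 0:
        raise ValueError("EC50 values must be positive")
    return ec50_reference / ec50_test


def slopes_similar(hill_reference, hill_test, max_ratio=1.25):
    """Crude parallelism check: Hill slopes must agree within `max_ratio`-fold.

    The 1.25-fold limit is an illustrative assumption, not a validated criterion.
    """
    ratio = hill_test / hill_reference
    return 1.0 / max_ratio <= ratio <= max_ratio


# Hypothetical fitted values for a reference standard and a test sample.
if slopes_similar(hill_reference=1.0, hill_test=1.1):
    print(f"RP = {relative_potency(ec50_reference=2.0, ec50_test=4.0):.2f}")
else:
    print("Curves are not parallel; RP is not reportable")
```

Gating the RP calculation on a parallelism check reflects the point made above: an EC50 ratio is only meaningful when the two curves are biologically similar.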

Leveraging Covalent Chemistry for Irreversible Target Engagement and Profiling

Covalent chemical probes are small, biologically active molecules designed to form an irreversible or reversible covalent bond with a specific target protein [15]. This mechanism offers a significant advantage: prolonged and sustained target engagement, which can lead to more profound and durable pharmacological effects [24] [15]. Historically, the pursuit of covalent drugs was approached with caution due to concerns about off-target reactivity and potential toxicity. However, inspired by blockbuster drugs like aspirin and penicillin, covalency has made a powerful comeback in both basic research and clinical therapy [15]. The rational design of Targeted Covalent Inhibitors (TCIs) now allows for the precise targeting of non-catalytic cysteine residues and other nucleophilic amino acids, enabling highly selective inhibition of proteins previously considered "undruggable" [24] [25].

Within a high-throughput validation pipeline, covalent probes are invaluable. They enable researchers to conclusively link an observed cellular phenotype to the modulation of a specific protein target [25]. The critical parameters for a high-quality covalent probe extend beyond simple potency to include efficient inactivation kinetics, demonstrated selectivity over closely related proteins, and direct evidence of target engagement in a cellular environment [24] [25]. The following sections detail the key quantitative parameters, provide protocols for their determination, and visualize the integrated workflow for validating these essential research tools.

Key Quantitative Parameters for Covalent Probes

The characterization of covalent probes relies on specific kinetic and potency parameters that differentiate them from traditional reversible inhibitors. The two most critical parameters for evaluating Targeted Covalent Inhibitors (TCIs) are the inactivation efficiency (k_inact/K_I) and the IC50 value. Optimization efforts should focus on balancing, not merely maximizing, the k_inact/K_I ratio to achieve selectivity, particularly for mutants over the wild-type protein [24].
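To make the meaning of k_inact/K_I concrete, the sketch below uses the commonly applied two-step model for irreversible inhibition (reversible binding followed by covalent bond formation), in which the observed inactivation rate is k_obs = k_inact × [I] / (K_I + [I]). The model choice, the numerical values, and the function names are assumptions introduced for illustration; they are not taken from the cited sources.

```python
import math


def k_obs(inhibitor_conc, k_inact, K_I):
    """Observed pseudo-first-order inactivation rate for the two-step model."""
    return k_inact * inhibitor_conc / (K_I + inhibitor_conc)


def fraction_active(inhibitor_conc, time_s, k_inact, K_I):
    """Fraction of enzyme still active after `time_s` seconds of exposure."""
    return math.exp(-k_obs(inhibitor_conc, k_inact, K_I) * time_s)


def inactivation_efficiency(k_inact, K_I):
    """Second-order rate constant k_inact/K_I (M^-1 s^-1 for the units used here)."""
    return k_inact / K_I


# Hypothetical probe: k_inact = 0.01 s^-1, K_I = 1 uM (expressed in M).
K_INACT, K_I_M = 0.01, 1e-6

print(f"k_inact/K_I = {inactivation_efficiency(K_INACT, K_I_M):.3g} M^-1 s^-1")
for minutes in (5, 30, 60):
    remaining = fraction_active(1e-7, minutes * 60, K_INACT, K_I_M)
    print(f"{minutes} min at 100 nM: {remaining:.1%} enzyme active")
```

Because the fraction of active enzyme keeps falling with incubation time, an IC50 measured for a covalent probe depends on the pre-incubation period, which is one reason k_inact/K_I is reported alongside it.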

The table below summarizes the essential parameters and recommended benchmarks for a high-quality chemical probe, synthesized from current literature.

Table 1: Key Quantitative Parameters for High-Quality Covalent Probes

| Parameter | Description | Recommended Benchmark | Application & Significance |

|---|---|---|---|