Hybrid Physics-Informed Models: A Framework for Robust Transferability Assessment in Biomedical Research

This article explores the emerging paradigm of hybrid physics-informed models, which integrate mechanistic knowledge with data-driven machine learning to overcome the limitations of purely physics-based or black-box AI approaches.

Abstract

This article explores the emerging paradigm of hybrid physics-informed models, which integrate mechanistic knowledge with data-driven machine learning to overcome the limitations of purely physics-based or black-box AI approaches. We provide a comprehensive framework for assessing the transferability of these models—their ability to maintain predictive accuracy and physical consistency when applied to new, unseen data scenarios, a critical challenge in drug development and clinical translation. Drawing on cutting-edge applications from adjacent fields like energy systems and advanced manufacturing, we detail methodological innovations, troubleshooting strategies for domain shift and data scarcity, and rigorous validation techniques. The synthesized insights offer researchers and drug development professionals a practical guide for building more generalizable, interpretable, and reliable predictive models for complex biomedical systems.

The Principles and Imperative of Hybrid Modeling for Biomedical Generalizability

In the quest to advance computational modeling across scientific domains, researchers are increasingly moving beyond the traditional dichotomy of purely physics-based "white box" models and purely data-driven "black box" models. Hybrid physics-informed models represent a sophisticated "gray box" paradigm that systematically integrates first-principles knowledge with data-driven machine learning. This approach leverages the complementary strengths of both methodologies: the generalizability and theoretical grounding of physical laws, together with the flexibility and pattern recognition capabilities of modern machine learning. For researchers and drug development professionals, these hybrid frameworks offer a promising path toward more predictive, data-efficient, and physically plausible models, particularly when dealing with complex, multi-scale systems where first-principles understanding may be incomplete or computational costs prohibitive [1] [2].

The fundamental motivation for this hybrid approach lies in its ability to address critical limitations inherent in either method used in isolation. First-principles models, derived from fundamental physical laws, provide strong generalization guarantees and interpretability but often struggle with computational complexity and may fail to capture poorly understood phenomena. Conversely, purely data-driven models excel at identifying complex patterns from data but often require large datasets, lack physical consistency, and may generalize poorly beyond their training distribution. By embedding physical knowledge directly into the learning process, hybrid models can achieve superior performance with less data, produce more physically realistic predictions, and ultimately enhance trust in their outputs for critical applications in fields like drug development and biomedical engineering [1] [2] [3].

Comparative Analysis of Hybrid Modeling Approaches

Taxonomy and Architectural Frameworks

The landscape of hybrid physics-informed models encompasses several distinct architectural philosophies, each with unique mechanisms for integrating physical knowledge. The three predominant frameworks are Physics-Informed Neural Networks (PINNs), Hybrid Semi-Parametric Models, and Neural Operators.

Physics-Informed Neural Networks (PINNs) embed physical laws directly into the neural network's loss function, typically by penalizing violations of governing Partial Differential Equations (PDEs) at collocation points within the domain. This approach ensures the model satisfies known physics while fitting available data, making it particularly valuable for inverse problems where parameters of physical laws must be inferred from sparse observations [1] [2]. A representative application includes reconstructing cerebrospinal fluid flow fields in biomedical imaging, where PINNs integrate Navier-Stokes equations with sparse particle tracking velocimetry data [1].

Hybrid Semi-Parametric Models adopt a modular structure, explicitly combining a first-principles component (often derived from mechanistic understanding) with a data-driven component (typically a neural network) that learns the residual phenomena or uncertain parameters. This structure provides inherent interpretability, as the contributions of physics and data remain distinct, and allows domain experts to incorporate well-established physical relationships directly [4].

Neural Operators represent a more recent advancement, learning mappings between infinite-dimensional function spaces rather than specific instances. This enables zero-shot generalization to new system configurations or boundary conditions without retraining, offering significant computational advantages for multi-query scenarios in parametric studies [1]. For instance, neural operators can map initial conditions in aortic aneurysm progression to future states across multiple patient-specific risk factors [1].

Table 1: Comparative Overview of Major Hybrid Modeling Frameworks

| Framework | Integration Mechanism | Primary Strengths | Ideal Application Scenarios |
|---|---|---|---|
| Physics-Informed Neural Networks (PINNs) | Physics as soft constraints via loss function | Handles incomplete physics; Mesh-free; Suitable for inverse problems | Systems with known governing equations but unknown parameters/boundary conditions [1] |
| Hybrid Semi-Parametric Models | Explicit separation of physics and data components | High interpretability; Data efficiency; Stable training | Processes with partially known mechanics where data fills gaps [4] |
| Neural Operators | Learning solution operators of PDEs | Fast inference; Resolution invariance; Transferable across configurations | Multi-query simulations; Systems requiring real-time prediction [1] |

Performance Benchmarking and Quantitative Comparisons

Empirical studies consistently demonstrate that the choice of hybrid architecture significantly impacts model performance, particularly in data-limited regimes and for ensuring physical consistency.

A comparative study on a pilot-scale bubble column aeration unit provides direct, quantitative evidence of these trade-offs. The research evaluated a Hybrid Semi-Parametric structure (combining first-principles with a feed-forward neural network) against Physics-Informed Recurrent Neural Networks (PIRNNs). The key findings revealed that while both approaches benefited from physical grounding, the hybrid semi-parametric model generally delivered superior prediction accuracy, better adherence to the governing physics, and more robust performance when the quantity of training data was reduced. This advantage was attributed to its structured decomposition of the problem into well-understood and data-driven components [4].
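To make the semi-parametric structure concrete, the sketch below couples a first-principles mass balance with a small data-driven sub-model. Everything in it is an illustrative assumption rather than the model used in [4]: the oxygen balance dC/dt = kLa(u)(C_sat − C), the power-law form of kLa, the function names `fit_power_law` and `simulate_oxygen`, and all numerical values are hypothetical. A feed-forward network could take the place of the power-law fit without changing the structure.

```python
import math

def fit_power_law(u_values, kla_values):
    """Data-driven part: least-squares fit of kLa = a * u**b in log space."""
    xs = [math.log(u) for u in u_values]
    ys = [math.log(k) for k in kla_values]
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = math.exp(y_mean - b * x_mean)
    return lambda u: a * u ** b

def simulate_oxygen(kla_model, u_gas, c_sat, c0, dt, n_steps):
    """Physics part: explicit Euler on dC/dt = kLa(u) * (C_sat - C)."""
    kla = kla_model(u_gas)          # learned transfer coefficient
    c, trajectory = c0, [c0]
    for _ in range(n_steps):
        c = c + dt * kla * (c_sat - c)
        trajectory.append(c)
    return trajectory

# Hypothetical calibration data, generated here from kLa = 0.4 * u**0.7
u_obs = [0.01, 0.02, 0.05, 0.1]
kla_obs = [0.4 * u ** 0.7 for u in u_obs]

kla_model = fit_power_law(u_obs, kla_obs)
traj = simulate_oxygen(kla_model, u_gas=0.03, c_sat=8.0, c0=0.0,
                       dt=1.0, n_steps=200)
```

Because the mass balance is hard-wired, the predicted concentration can only approach saturation from below for a sufficiently small time step, whatever the data-driven part learns. This is the kind of built-in physical consistency the decomposition is meant to provide.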

Similar performance characteristics extend to other domains. In computational chemistry, a machine-learned coarse-grained (CG) model for proteins demonstrated the power of hybrid approaches. This model was trained on all-atom simulation data but learned to represent effective physical interactions, enabling it to predict metastable states of folded and unfolded proteins while being several orders of magnitude faster than all-atom molecular dynamics. Crucially, it maintained this accuracy on new protein sequences not seen during training, showcasing superior generalization rooted in its physical basis [5].

Furthermore, studies on air quality prediction have shown that integrating physical laws via PINNs significantly enhances reliability over conventional machine learning models, which often neglect the underlying physical constraints governing pollutant dispersion [3].

Table 2: Experimental Performance Comparison of Hybrid vs. Pure Data-Driven Models

| Application Domain | Model Type | Key Performance Metrics | Quantitative Results |
|---|---|---|---|
| Bubble Column Aeration [4] | Hybrid Semi-Parametric | Prediction Accuracy, Data Efficiency | Superior accuracy and robustness with reduced training data |
| Bubble Column Aeration [4] | PIRNN | Adherence to Physics, Measurement Frequency Sensitivity | Good physics adherence, higher sensitivity to low measurement frequency |
| Protein Simulation [5] | Machine-Learned Coarse-Grained (CG) Model | Speed vs. All-Atom MD, Prediction Accuracy | Several orders of magnitude faster, quantitatively accurate for folding free energies |
| Gaze Point Prediction [6] | Physics-Informed Neural Network (PINN) | Mean Absolute Error (MAE), Predictive Accuracy (R²) | MAE (X: 0.61, Y: 0.35); R² (Y): 0.91 vs. 0.85 for conventional NN |
| Air Quality Index Prediction [3] | PINN (AirSense-X) | Accuracy, Precision, Recall, F1 Score | Accuracy: 98%, Precision: 97%, Recall: 95%, F1: 0.96 |

Experimental Protocols and Implementation Methodologies

Workflow for Hybrid Model Development

Implementing a successful hybrid model requires a structured pipeline that systematically integrates physical knowledge with data-driven learning. The following workflow, common across many applications, outlines the key stages:

1. Problem Definition and Physics Formulation: The process begins with a clear articulation of the system to be modeled and the identification of relevant first-principles knowledge. This typically involves specifying governing equations (e.g., PDEs, ODEs), conservation laws, symmetry properties, or known boundary conditions. In computational chemistry, for example, this might involve the Hamiltonian formulation or molecular force field equations [7] [5].

2. Data Collection and Synthesis: The next stage involves gathering experimental or high-fidelity simulation data for training and validation. For the machine-learned coarse-grained protein model, this entailed generating an extensive dataset of all-atom explicit solvent simulations of small proteins with diverse folded structures [5]. The quality and diversity of this data directly affect the model's ability to learn the residual phenomena not captured by the physics component.

3. Hybrid Architecture Selection: Based on the problem structure, an appropriate hybrid framework is selected (e.g., PINN, Semi-Parametric). This choice involves designing the network architecture, determining how physical knowledge will be embedded (e.g., via the loss function or model structure), and defining the interface between physics and data components.

4. Physics-Informed Loss Design: This critical step involves formulating the loss function to balance data fidelity with physical consistency. A typical composite loss function might be: L = λ_data L_data + λ_physics L_physics + λ_BC L_BC, where:

- L_data ensures fit with observed data

- L_physics penalizes violations of governing equations

- L_BC (also written L_BC/IC) enforces boundary and initial conditions

- The λ terms are weighting parameters that balance these objectives [1] [3]

5. Model Training and Validation: The model is trained using appropriate optimization algorithms, often combining first-order methods like Adam with quasi-Newton methods like L-BFGS for improved convergence [1]. Validation against held-out data and physical plausibility checks are essential.

6. Deployment and Interpretation: The trained model is deployed for prediction, with particular attention to interpreting results, quantifying uncertainty, and potentially enabling scientific discovery through analysis of the learned data-driven components.
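The composite loss from step 4 can be sketched on a toy problem. The example assumes a first-order decay law du/dt = −k·u with initial condition u(0) = u0, which is not taken from the cited studies. The derivative is approximated by central finite differences in place of the automatic differentiation a PINN would normally use. The function name `composite_loss` and all values are hypothetical.

```python
import math

def composite_loss(model, t_data, u_data, t_colloc, k, u0,
                   lam_data=1.0, lam_physics=1.0, lam_bc=1.0, h=1e-4):
    """L = lam_data*L_data + lam_physics*L_physics + lam_bc*L_BC for du/dt = -k*u."""
    # L_data: mean squared misfit to the observations
    l_data = sum((model(t) - u) ** 2 for t, u in zip(t_data, u_data)) / len(t_data)

    # L_physics: mean squared ODE residual at the collocation points
    def residual(t):
        dudt = (model(t + h) - model(t - h)) / (2 * h)  # central difference
        return dudt + k * model(t)
    l_physics = sum(residual(t) ** 2 for t in t_colloc) / len(t_colloc)

    # L_BC: squared violation of the initial condition u(0) = u0
    l_bc = (model(0.0) - u0) ** 2

    return lam_data * l_data + lam_physics * l_physics + lam_bc * l_bc

k, u0 = 0.5, 2.0
t_data = [0.5, 1.0, 2.0]                       # sparse observations
u_data = [u0 * math.exp(-k * t) for t in t_data]
t_colloc = [0.1 * i for i in range(1, 30)]     # collocation points

def exact(t):
    return u0 * math.exp(-k * t)               # satisfies the ODE

def linear(t):
    return u0 - 0.5 * t                        # matches u(0) but violates the ODE

loss_exact = composite_loss(exact, t_data, u_data, t_colloc, k, u0)
loss_linear = composite_loss(linear, t_data, u_data, t_colloc, k, u0)
```

The linear candidate meets the initial condition exactly, so its loss comes entirely from the data and physics terms. In practice the λ weights usually need tuning, since the three terms can differ by orders of magnitude.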

Specialized Computational Techniques

Successful implementation of hybrid models often requires specialized computational methods, particularly for problems involving quantum effects or multi-scale phenomena:

Path Integral Molecular Dynamics (PIMD) for Quantum Systems: The calculation of heat capacity in water from first principles demonstrates a sophisticated hybrid approach. To accurately capture nuclear quantum effects, researchers employed PIMD simulations with machine-learned high-dimensional neural network potentials (HDNNPs). This methodology required:

- Advanced Sampling: Using 128 beads (imaginary time slices) in the path integral to model quantum nuclei

- Efficient Algorithms: Implementing a highly parallel PIMD algorithm to compute energies, forces, and time evolution efficiently

- Extensive Sampling: Running 4 ns simulations to achieve statistical convergence for energy fluctuations from which heat capacity is derived [7]
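For orientation, the textbook canonical-ensemble relation between energy fluctuations and the constant-volume heat capacity is shown below. It is included as background only: the source does not state which energy estimator was used in [7], and path-integral simulations typically rely on dedicated estimators whose fluctuation formulas may carry additional terms.

```latex
C_V = \frac{\langle E^2 \rangle - \langle E \rangle^2}{k_B T^2}
```

Here E is the total energy, T the temperature, k_B the Boltzmann constant, and the angle brackets denote ensemble averages. Because the variance of E converges slowly, long trajectories such as the 4 ns runs mentioned above are needed for a stable estimate.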

Transfer Learning for Enhanced Efficiency: A common strategy to reduce training cost involves transfer learning, where a model pre-trained on one system or set of parameters is fine-tuned for a related problem. This is particularly effective in PINNs, where a network trained for one set of boundary conditions or source terms can be rapidly adapted to new scenarios, significantly accelerating the training process [8].
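The warm-start idea can be illustrated with a deliberately small stand-in for a PINN: a linear model trained by gradient descent. The source and target relationships, the step counts, and the helper names `mse` and `gradient_descent` are all hypothetical. The point is only that, for the same small fine-tuning budget, starting from parameters learned on a related problem leaves less error than starting from scratch.

```python
def mse(w, b, xs, ys):
    """Mean squared error of the linear model y = w*x + b."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient_descent(w, b, xs, ys, lr=0.1, steps=100):
    """Plain batch gradient descent on the MSE, starting from (w, b)."""
    n = len(xs)
    for _ in range(steps):
        errs = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = 2 * sum(e * x for e, x in zip(errs, xs)) / n
        grad_b = 2 * sum(errs) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

# Source problem: plenty of data from y = 2.0*x + 1.0
x_src = [i / 10 for i in range(11)]
y_src = [2.0 * x + 1.0 for x in x_src]

# Related target problem: only three samples from y = 2.2*x + 0.9
x_tgt = [0.0, 0.5, 1.0]
y_tgt = [2.2 * x + 0.9 for x in x_tgt]

# Pre-train on the source, then fine-tune briefly on the target
w_pre, b_pre = gradient_descent(0.0, 0.0, x_src, y_src, steps=2000)
w_warm, b_warm = gradient_descent(w_pre, b_pre, x_tgt, y_tgt, steps=20)

# Baseline: same fine-tuning budget, but from a cold start
w_cold, b_cold = gradient_descent(0.0, 0.0, x_tgt, y_tgt, steps=20)

loss_warm = mse(w_warm, b_warm, x_tgt, y_tgt)
loss_cold = mse(w_cold, b_cold, x_tgt, y_tgt)
```

The benefit depends on how closely the source and target problems are related. If they differ too much, the pre-trained starting point can be worse than a cold start.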

Implementing hybrid physics-informed models requires both domain-specific knowledge and specialized computational tools. The following table catalogues key "research reagents" essential for experimental work in this field.

Table 3: Essential Research Reagents and Computational Tools for Hybrid Modeling

| Tool Category | Specific Examples | Function and Application |
|---|---|---|
| Governing Equation Frameworks | Navier-Stokes, Euler Equations, Schrödinger Equation, Darcy's Law [1] [9] | Provide first-principles foundation; Encode core physical constraints into model structure |
| Data Generation Tools | All-Atom Molecular Dynamics (e.g., GROMACS) [5], High-Fidelity CFD Solvers, Experimental Sensing Platforms | Generate training data; Provide ground truth for model validation; Capture system behavior across scales |
| Machine Learning Architectures | Feed-Forward Neural Networks, Recurrent Neural Networks (RNNs), High-Dimensional Neural Network Potentials (HDNNPs) [7] [5] | Learn residual phenomena; Represent complex non-linear mappings; Capture multi-body interactions |
| Quantum Computing Elements | Parameterized Quantum Circuits (PQC) [9] | Enhance feature representation; Model harmonic solutions; Explore quantum-enhanced learning |
| Optimization & Training Tools | Adam Optimizer, L-BFGS [1], Automatic Differentiation (e.g., in PyTorch, TensorFlow) [8] | Solve optimization problem; Compute precise derivatives; Balance multiple loss components |
| Validation Metrics | Mean Absolute Error (MAE), R² Score, Physical Constraint Violation, Relative Error | Quantify model performance; Assess physical plausibility; Compare against traditional solvers |

Hybrid physics-informed models represent a fundamental shift in computational science, moving beyond the artificial separation of first-principles modeling and data-driven approaches. As the comparative analysis demonstrates, each hybrid architecture—from PINNs to semi-parametric models and neural operators—offers distinct advantages depending on the application context, data availability, and completeness of physical knowledge [4] [1] [2].

For researchers and drug development professionals, these approaches open new possibilities for creating predictive digital twins of complex biological systems, from protein folding dynamics [5] to physiological flows [1]. The key insight emerging from recent studies is that the most successful implementations often leverage the complementary strengths of different hybrid approaches—using semi-parametric models for their data efficiency and interpretability [4], while employing neural operators for rapid exploration of parameter spaces [1].

As the field evolves, critical challenges remain in improving training efficiency, especially for problems with multi-scale dynamics, enhancing uncertainty quantification, and developing more interpretable architectures. Nevertheless, the continued development of hybrid physics-informed models promises to accelerate scientific discovery across biomedical science, materials design, and drug development by creating more faithful, data-efficient, and physically plausible computational representations of complex natural systems.

In the pursuit of robust predictive models across scientific and engineering disciplines, researchers increasingly face the transferability challenge—the frustrating phenomenon where models demonstrating exceptional performance in their original domain fail dramatically when applied to new environments, conditions, or systems. This challenge represents a critical bottleneck in fields ranging from drug development to industrial prognostics, where accurate predictions in novel scenarios directly impact economic, safety, and health outcomes. The core of this problem lies in the fundamental limitations of two dominant modeling paradigms: purely physics-based approaches and purely data-driven methods. Physics-based models, grounded in established first principles, often struggle to capture the full complexity of real-world systems, while data-driven models frequently fail when confronted with data distributions different from their training sets [10] [11].

The domain shift phenomenon undermines model reliability precisely when it is most needed—during deployment in real-world conditions that inevitably differ from those encountered during development. In industrial prognostics, for instance, a remaining useful life (RUL) prediction model trained on one type of equipment under specific operating conditions may perform poorly when applied to similar equipment in different environments or with varying usage patterns [10]. Similarly, in pharmaceutical development, models predicting compound efficacy may fail when applied to novel chemical structures or different biological systems [12]. This article systematically analyzes why these two dominant modeling approaches fail in cross-domain applications and examines how emerging hybrid methodologies are addressing these fundamental limitations.

The Pitfalls of Purely Physics-Based Models

Pure physics-based models leverage established scientific principles and mathematical equations to represent system behavior, providing interpretability and theoretical grounding. However, their reliance on precise parameterization and simplifying assumptions renders them particularly vulnerable in cross-domain applications.

Fundamental Limitations in New Domains

Physics-based models encounter several critical challenges when transferred to new domains:

Ill-posed inverse problems: In many complex systems, multiple combinations of input parameters can generate nearly identical outputs, creating ambiguity in parameter estimation and model inversion. In plant trait estimation using PROSPECT-D radiative transfer models, for instance, different combinations of biochemical parameters can produce virtually identical canopy reflectance spectra, leading to non-unique solutions and significant uncertainty in trait retrieval [11].

Parameterization dependency: Model accuracy depends critically on accurate prior knowledge of system-specific parameters, which may be unavailable or inaccurate across diverse environments. As systems become more complex or move into novel operating regimes, the required parameterization becomes increasingly difficult to obtain, limiting practical application [11] [13].

Computational intractability: High-fidelity physics-based simulations often require substantial computational resources, making them impractical for real-time applications or large-scale system analysis, particularly when rapid iterations are needed across multiple domains [12].
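The ill-posedness described above can be shown with a toy forward model in which only the product of two parameters affects the output. This is not PROSPECT-D; the function `forward` and its parameters are hypothetical and serve only to show why inversion from outputs alone cannot be unique.

```python
def forward(a, b, xs):
    """Toy forward model: the output depends on a and b only through a*b."""
    return [a * b * x for x in xs]

xs = [0.0, 0.5, 1.0, 2.0]

out_1 = forward(2.0, 3.0, xs)   # parameter set 1
out_2 = forward(1.5, 4.0, xs)   # different parameters, same product

# Largest difference between the two simulated outputs
max_gap = max(abs(p - q) for p, q in zip(out_1, out_2))
```

Both parameter sets reproduce the same observations, so no amount of fitting to these outputs can separate them. Resolving the ambiguity requires extra information such as priors, additional measurements, or constraints learned from data.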

Case Study: Radiative Transfer Models in Plant Science

The application of PROSPECT-D models for cross-ecosystem plant trait estimation illustrates these limitations starkly. When estimating crucial plant functional traits like chlorophyll content (CHL), equivalent water thickness (EWT), and leaf mass per area (LMA), these physics-based models demonstrate significant performance degradation when applied across different ecosystems, species compositions, and environmental conditions [11]. The models' rigid parameterization, optimized for specific plant species or canopy structures, fails to accommodate the natural variations present in heterogeneous environments. This limitation fundamentally constrains their utility for large-scale ecosystem monitoring and precision agriculture applications where conditions vary substantially across domains.

The Shortcomings of Purely Data-Driven Models

Data-driven approaches, particularly modern deep learning architectures, have demonstrated remarkable success within their training domains but face distinct challenges when confronted with domain shifts.

Critical Vulnerabilities to Domain Shift

The performance of data-driven models degrades in cross-domain scenarios due to several inherent characteristics:

Feature representation mismatch: Models trained on source domain data learn feature representations that may become suboptimal or misleading when the underlying data distribution changes. In industrial Prognostics and Health Management (PHM), for instance, sensor data relationships that indicate degradation in one operating condition may differ significantly under different conditions, causing models to miss critical patterns or generate false alarms [10].

Data volume requirements: Deep learning models typically require extensive labeled datasets for training, which are economically and practically infeasible to collect for every possible domain, especially for applications involving rare events or expensive measurements [10] [13].

Black-box nature: The opaque decision-making processes of complex data-driven models make it difficult to diagnose failure modes or understand why performance degrades in new domains, complicating remediation efforts [10].

Empirical Evidence: Building Energy Prediction

A comprehensive study on building energy prediction demonstrates these limitations concretely. When a data-driven model pre-trained on 327 buildings was applied to new buildings without adaptation, it exhibited significant performance degradation, with median Mean Absolute Percentage Error (MAPE) values as high as 18.31% [13]. This performance drop occurred despite the substantial volume of source domain data, highlighting how even models trained on extensive datasets can fail when faced with domain shifts. The study further revealed that negative transfer—where transfer learning actually worsens performance—occurred in a subset of cases, though fortunately at a relatively low rate unrelated to data volume [13].
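The two quantities discussed here, MAPE and negative transfer, are straightforward to compute. The sketch below uses the standard MAPE definition and a simple comparison rule for flagging negative transfer. The function names and all numbers are hypothetical and are not the data from [13].

```python
def mape(y_true, y_pred):
    """Mean Absolute Percentage Error in percent; assumes no zero targets."""
    n = len(y_true)
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / n

def is_negative_transfer(mape_direct, mape_transfer):
    """Flag cases where the transferred model is worse than the direct one."""
    return mape_transfer > mape_direct

# Hypothetical energy readings and two sets of predictions
y_true = [100.0, 200.0, 400.0]
pred_direct = [120.0, 160.0, 480.0]     # model applied without adaptation
pred_transfer = [110.0, 190.0, 400.0]   # model after fine-tuning

mape_direct = mape(y_true, pred_direct)
mape_transfer = mape(y_true, pred_transfer)
negative = is_negative_transfer(mape_direct, mape_transfer)
```

Running this check per target building, rather than only on the aggregate, is what makes it possible to report a negative transfer rate like the one described above.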

Table 1: Performance Comparison of Modeling Approaches in Cross-Domain Applications

| Application Domain | Model Type | Performance Metric | Source Domain | Target Domain | Cross-Domain Outcome |
|---|---|---|---|---|---|
| Building Energy Prediction | Data-Driven (Bi-LSTM) | MAPE | 327 buildings | New buildings | Median MAPE of 18.31% without adaptation, 7.76% after transfer [13] |
| Plant Trait Estimation | Physics-Based (PROSPECT-D) | R² | Synthetic spectra | Field measurements | Significant degradation without adaptation [11] |
| Industrial PHM | Data-Driven (LLM-based) | RUL Prediction Accuracy | Original equipment | Different operating conditions | Requires dual-score sample selection to maintain accuracy [10] |
| Drug Development | Physics-Based | Attrition Rate | Pre-clinical models | Human trials | 96% candidate attrition [12] |

Hybrid Physics-Informed Approaches: A Path Forward

Hybrid methodologies that integrate physics-based principles with data-driven learning have emerged as a promising direction for addressing transferability challenges. These approaches aim to leverage the complementary strengths of both paradigms while mitigating their individual weaknesses.

Methodological Framework and Integration Strategies

Successful hybrid frameworks typically employ several key strategies:

Physical priors for data efficiency: Incorporating physical knowledge as constraints or regularization within data-driven models significantly reduces dependency on large labeled datasets. In plant trait estimation, PPADA-Net integrates PROSPECT-D radiative transfer modeling with adversarial domain adaptation, using synthetic spectra from physical models to pre-train residual networks that capture biophysical relationships between leaf traits and spectral signatures [11].

Adversarial domain adaptation: This technique employs domain-discriminative networks to reduce discrepancies between source and target feature spaces, aligning feature distributions across domains. The integration of adversarial learning enables models to learn domain-invariant representations that maintain performance across different ecosystems or operating conditions [11].

Transfer learning with physical guidance: Model-based transfer learning uses physically-informed pre-trained models as starting points, which are then fine-tuned using limited target domain data. This approach has demonstrated remarkable efficiency—in building energy prediction, transfer learning with just 7 days of target data outperformed direct prediction using 180 days of data [13].

Experimental Protocols in Hybrid Modeling

Research into hybrid approaches has established several rigorous experimental protocols for evaluating cross-domain performance:

Cross-dataset validation: Models are trained on one dataset and tested on completely different datasets collected under varying conditions. For example, PPADA-Net was validated on four public datasets and one field-measured dataset, demonstrating consistent performance with R² values of 0.72 (CHL), 0.77 (EWT), and 0.86 (LMA) across domains [11].

Progressive data scarcity testing: Studies evaluate how model performance degrades as target domain data becomes increasingly limited, testing the lower bounds of data requirements for effective transfer. This protocol has demonstrated that hybrid models maintain robustness even with very limited target data [13].

Temporal robustness evaluation: Transfer procedures are repeated at multiple time nodes to assess whether performance improvements are consistent or fluctuate based on temporal factors, with studies conducting evaluations at 20 different time nodes to establish robustness [13].

Table 2: Hybrid Model Performance Across Applications

| Hybrid Framework | Application Domain | Physics Component | Data-Driven Component | Cross-Domain Performance |
|---|---|---|---|---|
| PPADA-Net [11] | Plant Trait Estimation | PROSPECT-D Radiative Transfer | Adversarial Domain Adaptation ResNet | Mean R²: 0.72 (CHL), 0.77 (EWT), 0.86 (LMA) across 5 datasets |
| SRPTL [10] | Industrial RUL Prediction | Physical degradation patterns | Transferable LLM (GPT-2) with dual-score sample selection | State-of-the-art across diverse operating conditions with single hyperparameter configuration |
| Digital Twin Framework [12] | Pharmaceutical Development | Physiological models | AI/ML for real-time monitoring | Predicts optimal dosages within 7% of clinical outcomes |
| Bi-LSTM Transfer [13] | Building Energy Prediction | Building thermal dynamics | Bi-directional LSTM with fine-tuning | Median MAPE improvement from 18.31% to 7.76% with 7 days data |

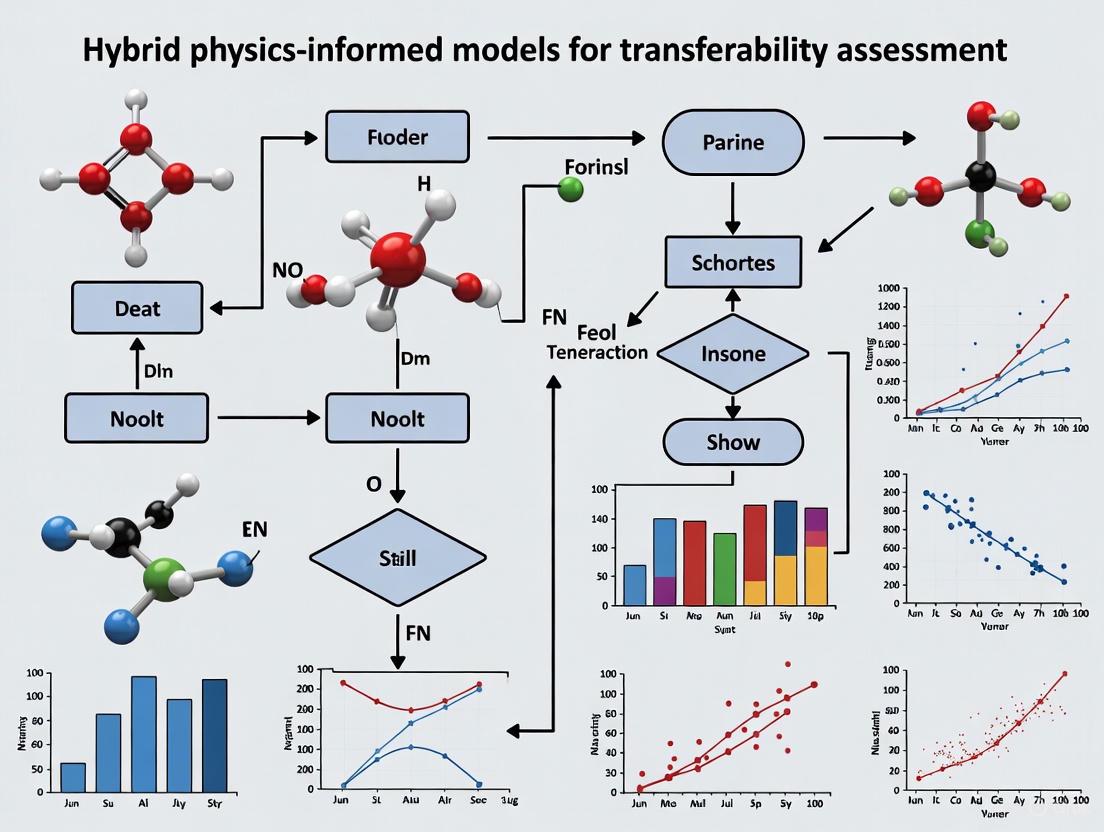

Conceptual Workflow of a Hybrid Physics-Informed Framework

The following diagram illustrates the integrated workflow of a successful hybrid physics-informed model that maintains performance across domains:

This workflow demonstrates how hybrid models leverage physical knowledge throughout both pre-training and adaptation phases, creating a continuous integration of first principles with data-driven learning that enhances cross-domain robustness.

The Scientist's Toolkit: Essential Research Reagents for Transferability Assessment

Implementing and evaluating hybrid physics-informed models requires specialized methodological approaches and validation strategies. The following toolkit outlines key components for effective transferability assessment research:

Table 3: Research Toolkit for Transferability Assessment

| Tool/Component | Category | Function in Transferability Research | Example Implementation |
|---|---|---|---|
| PROSPECT-D | Physics-Based Simulator | Generates synthetic training data based on radiative transfer physics | Creating synthetic spectra for plant trait estimation [11] |
| Dual-Score Sample Selection | Data Strategy | Identifies most informative samples for transfer learning based on influence and effort metrics | Improving sample efficiency in LLM-based RUL prediction [10] |
| Adversarial Domain Adaptation | Algorithmic Framework | Aligns feature distributions between source and target domains | Domain-Adversarial Neural Networks (DANN) for cross-ecosystem trait prediction [11] |
| Bi-directional LSTM | Model Architecture | Captures temporal dependencies in sequential data while accommodating domain shift | Building energy prediction across 327 buildings [13] |
| Digital Twin Framework | Hybrid Platform | Creates virtual replicas that integrate physical models with real-time data | Pharmaceutical development from discovery to manufacturing [12] |
| Negative Transfer Monitoring | Evaluation Metric | Tracks when transfer learning worsens performance rather than improving it | Assessing robustness in building energy prediction [13] |

The transferability challenge represents a fundamental limitation of both purely physics-based and purely data-driven modeling paradigms. Physics-based models fail in new domains due to their dependency on precise parameterization, simplifying assumptions, and difficulties with ill-posed inverse problems. Data-driven models exhibit performance degradation due to their sensitivity to domain shift, data volume requirements, and opaque decision processes. Hybrid physics-informed models emerge as a promising path forward, leveraging physical principles to guide and constrain data-driven approaches, resulting in improved sample efficiency, interpretability, and cross-domain robustness. As research in this area advances, the integration of physical knowledge with adaptive learning continues to show promise for developing models that maintain reliability when deployed in the novel conditions inevitably encountered in real-world applications.

In the evolving landscape of computational science, hybrid physics-informed models have emerged as a transformative paradigm for enhancing predictive accuracy and generalization across diverse application domains. Transferable hybrid models are characterized by their core capability to integrate well-established physical principles with data-driven machine learning (ML) methodologies, creating systems that are both physically plausible and adaptable to real-world observed data. This integration is paramount for applications where purely data-driven models fail due to sparse data or where traditional physics-based models lack the flexibility to capture complex, non-linear system behaviors. The transferability of these models—their ability to perform robustly under varying conditions, geographic locations, or system configurations—hinges critically on three foundational components: the strategic implementation of physical constraints, robust data integration frameworks, and flexible model architecture.

The necessity for such models is particularly acute in fields like drug development, where accurately predicting ligand-protein binding affinity is crucial for identifying hit compounds yet challenging due to the scarcity of data for novel molecular structures [14]. Similarly, in environmental sciences like hydrology, while traditional physical models are interpretable, they often suffer from rigidity and simplifying assumptions, whereas black-box ML methods like Long Short-Term Memory (LSTM) networks, despite exceptional predictive performance, face criticism for lack of interpretability and potential failure in extrapolation [15]. Hybrid modeling aims to reconcile these approaches by embedding physical laws into learning frameworks, thus enhancing both performance and trustworthiness for critical scientific and industrial decisions.

Architectural Patterns for Hybrid Integration

The architecture of a hybrid model defines how physical knowledge and data-driven components are interconnected. Across different domains, several effective patterns have emerged, each with distinct mechanisms for ensuring physical consistency and improving transferability.

Physics-Informed Loss Functions and Input Constraints

A prevalent architectural pattern involves using physical laws to constrain the learning process of a neural network, typically through the loss function or input features. This pattern is exemplified in a study on hydrodynamic prediction for water transfer systems, where an LSTM-based physics-constrained neural network (PcNN) was developed [16]. The hybrid model couples a 1D physics-based model built on the Saint-Venant equations (SVE) with the PcNN for real-time prediction of offtake discharges. The physical constraints are embedded in two ways: first, in the input layer, where features are engineered or selected based on prior physical knowledge of the canal system; and second, in the loss function, where physical governing equations or conservation laws are incorporated to penalize physically implausible predictions [16]. This approach improved offtake discharge prediction by 30%–70% over the baseline and enhanced water level forecasting, demonstrating effective integration of system hydrodynamics with data patterns.
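To make the loss-function route concrete, the sketch below adds a conservation penalty to an ordinary data-misfit term. It is a minimal illustration, not the loss used in [16]: the steady-state mass balance (inflow equals outflow plus the sum of offtakes, with storage change ignored), the function name, the argument names, and the weight `lam` are all assumptions made for this example.

```python
import numpy as np

def physics_informed_loss(q_pred, q_obs, q_in, q_out, lam=0.1):
    """Data misfit plus a penalty for violating a simplified mass balance.

    q_pred : predicted offtake discharges, shape (T, n_offtakes)
    q_obs  : observed offtake discharges, same shape
    q_in   : inflow at the upstream end of the reach, shape (T,)
    q_out  : outflow at the downstream end of the reach, shape (T,)
    lam    : hypothetical weight on the physics term
    """
    # Ordinary supervised term: mean squared error against observations.
    data_loss = np.mean((q_pred - q_obs) ** 2)
    # Assumed steady-state continuity: inflow = outflow + sum of offtakes.
    residual = q_in - q_out - q_pred.sum(axis=1)
    physics_loss = np.mean(residual ** 2)
    return data_loss + lam * physics_loss
```

A prediction that fits the observations but breaks the balance is still penalized, which is the mechanism the paragraph above describes. In practice the weight on the physics term usually needs tuning against the data term.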

Physics-Guided Model Replacement and Hybrid Backbones

Another architectural strategy involves replacing specific components of a physics-based model with data-driven surrogates or constructing hybrid backbones that leverage the efficiency of different paradigms. For instance, in drop-on-demand inkjet printing, a hybrid modeling framework was developed by integrating continuous equivalent circuit models (ECMs) with data-driven adjusters [17]. The ECMs, derived from prior knowledge of nozzle geometries and ink properties, simulate the continuous drop growth within the nozzle. However, to address discrepancies at the critical pinch-off moment, data-driven adjusters (modeled as linear functions) are incorporated to refine the estimations of in-flight drop volume and jetting velocity [17]. This architecture leverages the physical model for the core dynamic process while using data-driven components to correct for phenomena that are poorly described by first principles.
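The idea of a linear data-driven adjuster on top of a physics model can be sketched in a few lines. This is an illustrative reading of the pattern, assuming the adjuster has the form `measured ≈ a * ecm_estimate + b` and is fitted by ordinary least squares; the function names and fitting procedure are not taken from [17].

```python
import numpy as np

def fit_linear_adjuster(ecm_estimate, measured):
    """Least-squares fit of measured ≈ a * ecm_estimate + b."""
    # np.polyfit returns coefficients from highest degree to lowest.
    a, b = np.polyfit(ecm_estimate, measured, deg=1)
    return a, b

def adjusted_prediction(ecm_estimate, a, b):
    """Apply the fitted correction to new physics-model estimates."""
    return a * np.asarray(ecm_estimate, dtype=float) + b
```

The physics model still produces the estimate; the adjuster only rescales and shifts it, so the correction stays interpretable and needs few calibration measurements.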

Similarly, in large language models (LLMs), the LightTransfer framework transforms standard transformer models into hybrid variants to improve inference efficiency [18]. It identifies "lazy layers" that focus primarily on recent or initial tokens and replaces their full attention mechanism with memory-efficient streaming attention. The non-lazy layers retain standard attention to preserve global context understanding. This hybrid attention architecture achieves up to a 2.17× throughput improvement with minimal performance loss, showcasing a transferable design that balances efficiency and capability [18].
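One way to picture the layer-selection step is to score each layer by how much attention the final query token places on the first few and the most recent tokens, then replace the highest-scoring layers. The sketch below follows that reading. The scoring rule, the window sizes `n_initial` and `n_recent`, and both function names are assumptions for illustration and may differ from the procedure in [18].

```python
import numpy as np

def lazy_ratio(attn, n_initial=4, n_recent=64):
    """Mean share of attention placed on initial and recent key positions.

    attn : array of shape (n_heads, seq_len) holding the attention weights
           of the final query token; each row is assumed to sum to 1.
    """
    seq_len = attn.shape[-1]
    mask = np.zeros(seq_len, dtype=bool)
    mask[:n_initial] = True                      # initial tokens
    mask[max(0, seq_len - n_recent):] = True     # most recent tokens
    return float(attn[:, mask].sum(axis=-1).mean())

def select_lazy_layers(per_layer_attn, n_replace, **window):
    """Indices of the n_replace layers with the highest lazy ratio."""
    ratios = np.array([lazy_ratio(a, **window) for a in per_layer_attn])
    return sorted(np.argsort(-ratios)[:n_replace].tolist())
```

Layers selected this way would receive streaming attention, while the remaining layers keep full attention.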

Parameterized Physics-Informed Networks

A more deeply integrated pattern involves using the neural network to predict the parameters of physical equations rather than directly predicting the target output. A seminal example is PIGNet (Physics-Informed Graph Neural Network), developed for molecular docking in drug development [14]. PIGNet does not directly predict ligand-protein binding affinity. Instead, the graph neural network learns to predict intermediate parameters that are fed into established physical equations for van der Waals energy, hydrogen bonding, and hydrophobic interactions. The final binding energy is calculated by summing these physics-informed components [14]. This architecture directly embeds physical laws like the Lennard-Jones potential into the model's forward pass, ensuring that the predictions are always physically grounded. This mitigates the overfitting problems of pure data-driven approaches and improves generalization, especially for novel molecular structures not seen during training.
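The parameterized pattern can be illustrated with the van der Waals term alone. In the sketch below, arrays of per-pair `epsilon` and `sigma` stand in for the outputs of a graph neural network, and the energy is computed with the standard 12-6 Lennard-Jones form. Both the function names and the use of the unmodified 12-6 potential are assumptions; the exact functional forms in [14] may differ.

```python
import numpy as np

def lennard_jones(r, epsilon, sigma):
    """Standard 12-6 Lennard-Jones pair energy."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 ** 2 - sr6)

def vdw_energy(distances, predicted_epsilon, predicted_sigma):
    """Sum of pair energies whose parameters come from a learned model.

    distances         : interatomic distances for ligand-protein atom pairs
    predicted_epsilon : well depths (placeholder for network outputs)
    predicted_sigma   : zero-crossing distances (placeholder for network outputs)
    """
    pair_energies = lennard_jones(distances, predicted_epsilon, predicted_sigma)
    return float(np.sum(pair_energies))
```

Because the network only supplies parameters, the output always has the shape of the physical potential: repulsive at short range, attractive at intermediate range, and vanishing at long range, whatever the training data looked like.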

Table 1: Comparison of Hybrid Model Architectural Patterns

| Architectural Pattern | Core Mechanism | Key Advantage | Exemplar Model/Domain |

|---|---|---|---|

| Physics-Informed Loss/Input | Physical laws embedded in loss function or input features [16]. | Enhances physical plausibility of predictions without altering model structure. | Hydrodynamic prediction in canals [16]. |

| Physics-Guided Replacement | Replacing specific model components with data-driven surrogates [17] [18]. | Boosts efficiency and addresses specific model shortcomings. | Inkjet printing [17], Long-context LLMs [18]. |

| Parameterized Physics-Informed Networks | NN predicts parameters for physical equations [14]. | Ensures outputs are structurally grounded in physical laws, improving transferability. | Molecular docking (PIGNet) [14]. |

Quantitative Performance Comparison

The efficacy of hybrid models is best demonstrated through rigorous quantitative comparison against pure physics-based and pure data-driven alternatives across various benchmarks. The following tables summarize experimental data from multiple domains, highlighting the performance gains offered by hybrid approaches.

Table 2: Performance Comparison in Environmental Science and Hydrology

| Model Type | Model Name / Description | Performance Metrics | Key Findings |

|---|---|---|---|

| Hybrid (Physical + ML) | Hybrid model for daily ET estimation [19]. | R²: 0.9, RMSE: 0.5 mm/day, BIAS: 0.2 mm/day, KGE: 0.9 [19]. | Outperformed both pure physical and pure ML models, showing superior accuracy and lower bias. |

| Physics-Based | Penman-Monteith (P-M) and Priestley-Taylor (P-T) algorithms [19]. | Lower R² and higher RMSE than the hybrid model [19]. | Static parameters limit dynamic capture of ET across different plant functional types. |

| Pure Machine Learning | Random Forest (RF), Extreme Gradient Boosting (XGB), K-Nearest Neighbors (KNN) [19]. | High performance but potential for physically implausible results; poor transferability to data-sparse regions [19]. | May not be suitable for ET estimation in regions with different underlying surface conditions and sparse data. |

| Hybrid (Physical + ML) | Physics-Constrained Neural Network (PcNN) for water level prediction [16]. | Nash-Sutcliffe Efficiency (NSE): 0.84 (upstream), 0.92 (downstream) [16]. | Improved offtake discharge prediction by 30%–70% over the baseline model. |

Table 3: Performance Comparison in Drug Development and LLMs

| Model Type | Model Name / Description | Performance Metrics | Key Findings |

|---|---|---|---|

| Hybrid (Physical + ML) | PIGNet for binding affinity prediction [14]. | Pearson Correlation: >2x higher than conventional docking tools (e.g., Glide, GOLD, AutoDock Vina) [14]. | Achieves high generalization and accuracy, addressing the data scarcity challenge in drug discovery. |

| Hybrid (Physical + ML) | PIGNet for virtual screening [14]. | Enrichment Factor (EF): Up to 2x higher than conventional methods across various datasets [14]. | Doubles the probability of identifying active compounds in virtual screening. |

| Hybrid Architecture | LightTransfer for LLMs (e.g., LLaMA) [18]. | Throughput: Up to 2.17x improvement; Performance: <1.5% drop on LongBench [18]. | Enables efficient long-context handling by creating a hybrid attention mechanism. |

| Physics-Based | Traditional physics-based methods (e.g., molecular docking, FEP+) [14]. | High computational demand, slower speed; accuracy compromised by approximations for speed [14]. | Universally applicable but often requires expert setup and significant resources. |

Experimental Protocols for Model Validation

Validating the transferability and robustness of hybrid models requires carefully designed experimental protocols. The methodologies from the cited studies provide a blueprint for rigorous evaluation.

Cross-Validation with Diverse Datasets

A critical step is to test the model on datasets that differ from the training data in terms of location, time, or system properties. For the evapotranspiration (ET) hybrid model, the protocol involved coupling physical constraints with machine learning to estimate daily ET in the Heihe River Basin [19]. The validation process included comparing the hybrid model's performance against five other models (two physical and three pure ML) using metrics like R², RMSE, BIAS, and Kling-Gupta efficiency (KGE). The model was tested across different time scales and its spatial ET patterns were validated against regional vegetation changes [19]. This multi-faceted validation protocol is essential for confirming that the model's performance gains are consistent and transferable across temporal and spatial domains.
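The metrics named above have standard definitions, collected in the sketch below for reference. The function name is hypothetical, R² is computed here as the squared Pearson correlation (some studies use the coefficient of determination instead), and KGE follows the common 2009 formulation. Nash-Sutcliffe Efficiency is included because it is reported for the PcNN study.

```python
import numpy as np

def evaluation_metrics(obs, sim):
    """Common goodness-of-fit metrics for observed vs. simulated series."""
    obs = np.asarray(obs, dtype=float)
    sim = np.asarray(sim, dtype=float)
    err = sim - obs
    r = np.corrcoef(obs, sim)[0, 1]      # Pearson correlation
    alpha = sim.std() / obs.std()        # variability ratio
    beta = sim.mean() / obs.mean()       # mean (bias) ratio
    return {
        "R2": float(r ** 2),
        "RMSE": float(np.sqrt(np.mean(err ** 2))),
        "BIAS": float(np.mean(err)),
        "NSE": float(1.0 - np.sum(err ** 2) / np.sum((obs - obs.mean()) ** 2)),
        "KGE": float(1.0 - np.sqrt((r - 1) ** 2 + (alpha - 1) ** 2 + (beta - 1) ** 2)),
    }
```

Computing the same dictionary separately for each held-out site, season, or plant functional type makes it easier to see whether a gain holds across domains or comes from a single subset.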

Ablation Studies and Component Analysis

To understand the contribution of each component, ablation studies are indispensable. In the hydrodynamic modeling study, the authors evaluated the effect of feature extraction using AutoEncoders by comparing prediction errors using raw inputs versus encoded features [16]. The results showed that models using encoded inputs yielded lower prediction errors and narrower error distributions, with a notable 22.7% reduction in Mean Absolute Error (MAE) in one of the tested reaches [16]. Similarly, the hydrological study [15] proposed an entropy-based metric to quantitatively evaluate the relative contributions of the physics-based and data-driven components. This type of analysis helps determine whether the physical constraints are genuinely informing the model or if the data-driven component is overriding them for the sake of performance.

Performance Benchmarking Against Established Baselines

A standard protocol involves benchmarking the hybrid model against established state-of-the-art pure physics-based and pure data-driven models. For PIGNet, this involved two key experiments [14]:

- Binding Affinity Prediction of Derivatives: The accuracy was quantified through the Pearson correlation between experimental values (IC₅₀, Kᵢ) and predicted values. PIGNet demonstrated more than twice the accuracy of other leading docking methodologies.

- Virtual Screening Performance: Measured through the Enrichment Factor (EF), the ratio of the fraction of active compounds recovered in the top-ranked subset to the fraction expected from random selection. PIGNet showed up to twice the EF across various datasets, indicating a higher hit rate in early-stage screening.

The following workflow diagram generalizes the experimental and validation protocol for developing a transferable hybrid model:

Diagram 1: Hybrid Model Development and Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

The development and application of transferable hybrid models rely on a suite of computational and methodological "reagents." The table below details key resources essential for research in this field.

Table 4: Essential Research Reagents and Solutions for Hybrid Modeling

| Tool/Reagent Name | Type | Primary Function | Relevance to Hybrid Modeling |

|---|---|---|---|

| Long Short-Term Memory (LSTM) Network [16] [15] | Algorithm/Model | Models temporal dependencies and long-term memories in sequential data. | Core data-driven component for time-series forecasting in hydrology [16] and other dynamic systems. |

| Graph Neural Network (GNN) [14] | Algorithm/Model | Learns from graph-structured data by propagating information between nodes. | Ideal for modeling molecular structures (atoms as nodes, bonds as edges) in drug discovery [14]. |

| Physics-Informed Loss Function [16] | Methodological Framework | Incorporates physical equations (e.g., ODEs, PDEs) as constraints in the model's optimization objective. | Ensures model predictions adhere to known physical laws and conservation principles [16]. |

| Equivalent Circuit Model (ECM) [17] | Physics-Based Model | Represents complex physical systems (e.g., fluid dynamics in a printhead) using electrical analogies. | Provides a simplified, interpretable physical backbone for the hybrid framework [17]. |

| Monte Carlo Simulation [17] | Statistical Method | Uses random sampling to understand the impact of uncertainty and variability in a model. | Used for model validation and to assess the impact of parameter uncertainties on hybrid framework outputs [17]. |

| AutoEncoder [16] | Algorithm/Model | Neural network for unsupervised learning of efficient data codings. | Used for feature extraction and dimensionality reduction to improve the performance of the data-driven component [16]. |

The following diagram illustrates how these core components interact within a generalized hybrid model architecture, such as that used in PIGNet or a physics-constrained LSTM:

Diagram 2: Core Architecture of a Parameterized Physics-Informed Hybrid Model

The comparative analysis presented in this guide indicates that hybrid physics-informed models, when constructed with the right balance of physical constraints, data integration, and architectural design, can achieve superior performance and enhanced transferability compared to purely physics-based or purely data-driven approaches. Core components such as physics-informed loss functions, hybrid backbones that efficiently combine global and local processing, and parameterized networks that embed physical equations directly into the forward pass are key to this success.

However, challenges remain. As highlighted in hydrological research, there is a need for critical evaluation of whether physical constraints genuinely enhance the model or simply make the learning problem harder, with data-driven components sometimes finding ways to bypass prescribed physics [15]. Future research should focus on developing more nuanced and adaptive constraint mechanisms, improving model interpretability, and establishing robust regulatory frameworks for the use of hybrid models in critical fields like drug development [20]. As these models continue to evolve, they are likely to become an important tool for researchers and scientists aiming to solve complex problems where both physical principle and empirical observation are paramount.

The integration of artificial intelligence (AI) into drug discovery represents a paradigm shift, offering the potential to dramatically accelerate therapeutic development. However, this promise is constrained by a fundamental biomedical imperative: the need to overcome significant data limitations while ensuring models produce interpretable, reliable results for critical decision-making. AI models, particularly deep learning approaches, are notoriously data-hungry, yet drug discovery often operates in low-data regimes, especially for novel targets or rare diseases [21]. Furthermore, the black-box nature of many complex AI models limits their adoption in pharmaceutical development, where understanding a drug's mechanism of action is paramount for efficacy and safety assessments [22]. These challenges are compounded by the structural complexity of biological systems and the high stakes of regulatory approval [23].

In response, hybrid physics-informed models have emerged as a transformative approach, integrating data-driven machine learning with established physical and biological principles. This methodology enhances model generalization capability in data-scarce environments and provides a more transparent, mechanistically grounded framework for prediction [14]. By leveraging physical laws, these models achieve greater transferability—the ability to maintain accuracy when applied to novel molecular structures or biological contexts beyond their initial training data. This article examines the current state of hybrid modeling, providing a comparative analysis of emerging solutions and the experimental frameworks validating their potential to overcome the most pressing challenges in computational drug development.

Experimental Protocols: Assessing Hybrid Model Performance

To objectively evaluate the performance of hybrid physics-informed models against conventional approaches, researchers employ standardized experimental protocols focusing on two critical tasks in early drug discovery: binding affinity prediction for molecular derivatives and virtual screening enrichment.

Binding Affinity Prediction for Derivatives

Objective: To quantify a model's accuracy in predicting the binding affinity (e.g., IC50, Ki values) of various molecular derivatives, assessing its utility in lead optimization [14].

Methodology:

- Dataset Curation: A diverse set of ligand-protein complexes with experimentally determined binding affinities is compiled from public databases such as PDBBind.

- Derivative Selection: A series of molecular derivatives are selected from the dataset, ensuring structural diversity while maintaining a common scaffold.

- Model Benchmarking: Predictions from the hybrid model (e.g., PIGNet) are compared against those from traditional docking software (e.g., AutoDock Vina, Glide) and purely data-driven deep learning models.

- Performance Quantification: The Pearson correlation coefficient between experimental values and model predictions is calculated. A value closer to 1 indicates superior predictive accuracy.

Virtual Screening Enrichment

Objective: To evaluate a model's effectiveness in identifying active compounds from large libraries of decoys, a crucial capability for hit identification [14].

Methodology:

- Dataset Preparation: Known active compounds and inactive decoy molecules for a specific protein target are gathered from benchmark sets such as the Directory of Useful Decoys (DUD).

- Screening Simulation: Each model ranks the entire compound library (actives and decoys) based on predicted binding affinity.

- Enrichment Calculation: The Enrichment Factor (EF) is computed, measuring the concentration of active compounds found in the top-ranked fraction of the library compared to a random selection.

- Formula:

EF = (Number of actives in top X% / Total number of actives) / X%

- Comparative Analysis: Enrichment factors across different datasets and target classes are compared between hybrid and conventional models.
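The enrichment calculation in the protocol above can be sketched as follows. The function and argument names are hypothetical. The `higher_is_better` flag is an added convenience, since docking scores are often energies where lower values indicate stronger predicted binding.

```python
import numpy as np

def enrichment_factor(scores, is_active, top_fraction=0.01, higher_is_better=True):
    """EF = (actives in top X% / total actives) / X%."""
    scores = np.asarray(scores, dtype=float)
    is_active = np.asarray(is_active, dtype=bool)
    n_total = len(scores)
    # Size of the top-ranked subset (at least one compound).
    n_top = max(1, int(round(top_fraction * n_total)))
    ranking = np.argsort(-scores if higher_is_better else scores, kind="stable")
    actives_in_top = is_active[ranking[:n_top]].sum()
    # Use the realized fraction n_top / n_total in the denominator.
    return float((actives_in_top / is_active.sum()) / (n_top / n_total))
```

An EF of 1 corresponds to random selection, and the maximum attainable value is bounded by 1 divided by the top fraction (for example, 100 at the top 1%).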

Comparative Performance Analysis of Drug Discovery Models

The experimental protocols outlined above generate quantitative data that reveal significant performance differences between modeling approaches. The following tables summarize key findings from recent studies, highlighting the advantage of hybrid methodologies.

Table 1: Performance Comparison in Binding Affinity Prediction

| Model Type | Model Name | Performance Metric (Pearson Correlation) | Key Characteristic |

|---|---|---|---|

| Hybrid Physics-Informed | PIGNet | > 2x accuracy vs. conventional docking [14] | Integrates physical equations with deep learning |

| Traditional Docking | AutoDock Vina, Glide, GOLD | Baseline | Physics-based with approximations |

| Purely Data-Driven AI | Various Deep Learning Models | High for similar molecules; drops for novel scaffolds [14] | Learns exclusively from data patterns |

Table 2: Performance Comparison in Virtual Screening

| Model Type | Model Name | Performance Metric (Enrichment Factor - EF) | Key Characteristic |

|---|---|---|---|

| Hybrid Physics-Informed | PIGNet | Up to 2x higher EF across various datasets [14] | Superior identification of active compounds |

| Traditional Docking | AutoDock Vina, Glide, GOLD | Baseline EF | Standard for virtual screening |

| Explainable Graph-Based | XGDP | Enhanced prediction accuracy vs. pioneering works [24] | Identifies salient functional groups and genes |

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of hybrid models and experimental validation relies on specific computational tools and data resources.

Table 3: Key Research Reagents and Computational Tools

| Item Name | Function/Brief Explanation | Example Sources/Software |

|---|---|---|

| Molecular Graph Converter | Converts linear molecular notations (e.g., SMILES) into graph structures for GNN processing. | RDKit library [24] |

| Curated Bioactivity Dataset | Provides experimental data (IC50, Ki) for model training and benchmarking. | GDSC database, CCLE [24] |

| Explainable AI (XAI) Tool | Interprets model predictions, highlighting influential molecular features or genes. | GNNExplainer, Integrated Gradients [24] |

| Federated Learning Framework | Enables collaborative model training on distributed datasets without sharing private data. | Emerging tool for pharma collaborations [21] |

| Graph Neural Network (GNN) Library | Implements deep learning on graph-structured data like molecular graphs. | DeepChem [24] |

Workflow Visualization: Hybrid Physics-Informed Model Architecture

The following diagram illustrates the integrated architecture of a hybrid physics-informed model, such as PIGNet, showcasing how data-driven and physics-based components interact.

Addressing Data Scarcity: Strategies for Robust Model Training

The challenge of data scarcity is paramount in AI-driven drug discovery. Beyond hybrid modeling, several strategic frameworks are employed to maximize learning from limited datasets:

- Transfer Learning (TL): This technique involves initial training of a model on a large, generalizable dataset from a related task, followed by fine-tuning on the specific, smaller drug discovery dataset. This process transfers the fundamental information learned from the large dataset, allowing the model to perform well even with limited target-specific data [21].

- Multi-Task Learning (MTL): MTL improves model performance and data efficiency by simultaneously learning several related tasks that share underlying representations. In drug discovery, a single model can be trained to predict multiple molecular properties (e.g., toxicity, solubility, binding affinity) concurrently. This shared learning allows information from each task to reinforce the others, leading to more robust models, especially when data for any single task is limited [21].

- Data Augmentation (DA) and Synthesis (DS): These methods artificially expand the effective size of a training set. Data augmentation creates modified versions of existing training examples, while data synthesis uses AI algorithms like Generative Adversarial Networks (GANs) to generate entirely new, biologically plausible molecular data. This is particularly valuable for simulating scenarios with limited experimental data, such as for rare diseases [21].

The Critical Role of Explainability and Transferability

For AI models to gain trust and be adopted in high-stakes drug development, they must be both interpretable and generalizable.

- Explainable AI (XAI) for Actionable Insights: Explainable Artificial Intelligence (XAI) addresses the "black-box" problem by clarifying the decision-making mechanisms behind AI predictions. Methods like SHAP and LIME estimate the contribution of each input feature to the output. In practice, models like the eXplainable Graph-based Drug response Prediction (XGDP) use GNNExplainer and Integrated Gradients to identify which functional groups in a drug molecule and which genes in a cancer cell line are most significant for the predicted drug response. This provides researchers with actionable insights for rational drug design and understanding mechanisms of action [24] [22].

- A Multidimensional View of Transferability: In the context of hybrid models and drug discovery, transferability extends beyond simple application in a new context. A robust framework for assessing transferability can be conceptualized in three dimensions: 1) Applicability: The practical utility of a model or finding in a different setting; 2) Theoretical Engagement: How the work connects to and informs broader theoretical knowledge; and 3) Resonance: Its ability to generate meaningful insight and impact for researchers and practitioners in other contexts [25]. This comprehensive view ensures that models are not just technically portable but also scientifically valuable.

The integration of hybrid physics-informed models represents a significant advancement in addressing the core challenges of data scarcity, complexity, and interpretability in drug development. By synergistically combining data-driven learning with established physical principles, these models achieve superior predictive accuracy and generalization, as evidenced by their performance in binding affinity prediction and virtual screening. The continued development and standardization of these approaches, supported by rigorous experimental protocols and explainable AI frameworks, are paving the way for more efficient, reliable, and transparent drug discovery pipelines. This progress is crucial for accelerating the delivery of novel therapeutics to patients.

Architectures and Implementation: Building Transferable Hybrid Models

Physics-Informed Neural Networks (PINNs) have emerged as a powerful deep learning framework for solving forward and inverse problems involving partial differential equations (PDEs). At their core, PINNs integrate physical laws, typically described by PDEs, directly into the learning objective of a neural network by embedding these equations into the loss function. This approach represents a significant shift from traditional numerical methods and purely data-driven machine learning models. Unlike traditional neural networks that rely solely on data patterns, PINNs leverage the known physical constraints of a system to guide the training process, resulting in solutions that are not only accurate but also physically consistent. The methodology was fully formulated by Raissi et al. (2019) and has since seen rapid adoption across scientific domains including fluid dynamics, biomechanics, and electromagnetics [26] [27].

The fundamental architecture of a PINN consists of a deep neural network (typically a feedforward multilayer perceptron) that takes spatial and temporal coordinates as input and outputs the approximate solution of the PDE. The network is trained by minimizing a composite loss function that incorporates the PDE residuals, initial conditions, boundary conditions, and any available observational data. This mesh-free methodology is particularly advantageous for problems with complex geometries or high-dimensional parameter spaces where traditional discretization-based methods struggle. Because automatic differentiation evaluates the derivatives of the network output to machine precision, PINNs avoid the truncation error of finite-difference stencils (though not the approximation error of the network itself) and can address both forward problems (where the solution is unknown) and inverse problems (where parameters of the PDE are unknown) within the same framework [26].

Architectural Framework and Key Methodologies

Core PINN Architecture and Loss Function Composition

The standard PINN architecture transforms the problem of solving a PDE into an unconstrained optimization problem. Consider a general PDE of the form:

[ u_t + \mathcal{N}[u] = 0, \quad x \in \Omega, \quad t \in [0, T] ]

with initial conditions ( u(0, x) = u_0(x) ) and boundary conditions ( u(t, x) = g(t, x) ) for ( x \in \partial \Omega ), where ( \mathcal{N} ) is a nonlinear differential operator and ( \Omega ) is the spatial domain. A PINN ( \hat{u}(t, x; \theta) ) with parameters ( \theta ) approximates the solution ( u(t, x) ). The network is trained by minimizing a loss function ( \mathcal{L}(\theta) ) composed of multiple terms [26]:

[ \mathcal{L}(\theta) = \lambda_r \mathcal{L}_r(\theta) + \lambda_b \mathcal{L}_b(\theta) + \lambda_i \mathcal{L}_i(\theta) + \lambda_d \mathcal{L}_d(\theta) ]

where:

- ( \mathcal{L}_r ) is the PDE residual loss enforcing the governing equation

- ( \mathcal{L}_b ) is the boundary condition loss

- ( \mathcal{L}_i ) is the initial condition loss

- ( \mathcal{L}_d ) is the data loss (when measurements are available)

- ( \lambda_r, \lambda_b, \lambda_i, \lambda_d ) are weighting coefficients balancing the different loss components
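The composite loss can be written out for a concrete case. The sketch below uses the 1D heat equation (so that N[u] = -ALPHA * u_xx) and evaluates the four terms for any candidate function `u(t, x)`. Two simplifications are assumptions of this example: central finite differences stand in for the automatic differentiation a real PINN would use, and a closed-form candidate replaces the neural network. The diffusivity, point counts, and all names are hypothetical.

```python
import numpy as np

ALPHA = 0.1  # hypothetical diffusivity for the illustrative heat equation

def pde_residual(u, t, x, h=1e-3):
    """Residual u_t + N[u] with N[u] = -ALPHA * u_xx.

    Central finite differences replace automatic differentiation here.
    """
    u_t = (u(t + h, x) - u(t - h, x)) / (2.0 * h)
    u_xx = (u(t, x + h) - 2.0 * u(t, x) + u(t, x - h)) / h ** 2
    return u_t - ALPHA * u_xx

def composite_loss(u, pts, lam_r=1.0, lam_b=1.0, lam_i=1.0, lam_d=1.0):
    """Weighted sum of residual, boundary, initial, and data losses."""
    t_r, x_r = pts["residual"]
    t_b, x_b, g_b = pts["boundary"]
    x_i, u0 = pts["initial"]
    t_d, x_d, u_d = pts["data"]
    loss_r = np.mean(pde_residual(u, t_r, x_r) ** 2)
    loss_b = np.mean((u(t_b, x_b) - g_b) ** 2)
    loss_i = np.mean((u(np.zeros_like(x_i), x_i) - u0) ** 2)
    loss_d = np.mean((u(t_d, x_d) - u_d) ** 2)
    return lam_r * loss_r + lam_b * loss_b + lam_i * loss_i + lam_d * loss_d

def exact(t, x):
    """Analytical solution for u(0, x) = sin(pi x), u = 0 at x = 0 and x = 1."""
    return np.exp(-ALPHA * np.pi ** 2 * t) * np.sin(np.pi * x)

def no_decay(t, x):
    """A wrong candidate that satisfies the initial and boundary conditions only."""
    return np.sin(np.pi * x) + 0.0 * t

rng = np.random.default_rng(0)
t_d, x_d = rng.uniform(0, 1, 20), rng.uniform(0, 1, 20)
x_i = rng.uniform(0, 1, 50)
pts = {
    "residual": (rng.uniform(0, 1, 200), rng.uniform(0, 1, 200)),
    "boundary": (rng.uniform(0, 1, 50), rng.integers(0, 2, 50).astype(float), np.zeros(50)),
    "initial": (x_i, np.sin(np.pi * x_i)),
    "data": (t_d, x_d, exact(t_d, x_d)),  # synthetic measurements
}
loss_exact = composite_loss(exact, pts)
loss_wrong = composite_loss(no_decay, pts)
print(loss_exact, loss_wrong)
```

The exact solution drives every term close to zero. The second candidate matches the initial and boundary conditions but is penalized through the residual and data terms, which shows why all four components are needed to pin down the solution.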

The following diagram illustrates the fundamental architecture and information flow of a PINN:

Advanced PINN Variants and Methodological Innovations

S-PINN: Stabilized Physics-Informed Neural Networks

The S-PINN framework addresses key limitations in traditional PINNs related to conservation laws and stability. While standard PINNs enforce physical laws only at individual collocation points, S-PINN incorporates local subdomains around these points for evaluating residuals of conserved quantities. These subdomains are flexibly established without complex meshing, and during training, S-PINN minimizes a novel loss function based on the cumulative residuals in all subdomains. This approach significantly enhances conservation properties and has demonstrated notable improvements in both accuracy and stability for incompressible fluid problems, including the Navier-Stokes equations [28].

Ψ-NN: Physics Structure-Informed Neural Network Discovery

The Ψ-NN method represents a paradigm shift by automatically discovering and embedding physically meaningful structures directly into the neural network architecture, rather than relying solely on external loss functions. This approach uses a knowledge distillation framework with separate teacher and student networks to decouple physical regularization from parameter regularization. After distillation, clustering and parameter reconstruction extract and embed physically consistent structures. This method has shown improved accuracy and training efficiency while enhancing structural adaptability across different physical problems including Laplace, Burgers, and Poisson equations [29].

Hybrid Adaptive PINNs with Advanced Sampling

Recent innovations in adaptive sampling techniques have addressed training inefficiencies in PINNs. Hybrid adaptive methods dynamically resample spatiotemporal residual points during training, balancing randomness with focused attention on regions exhibiting large PDE residuals. When combined with feature embedding layers that transform raw inputs into higher-dimensional spaces, these approaches have reduced L2 relative errors by approximately 1-2 orders of magnitude compared to vanilla PINN implementations. This significantly improves accuracy with reduced reliance on the number of sampling points [27].
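A common way to balance randomness against focus on high-residual regions is to draw new collocation points from a mixture of a uniform distribution and a distribution proportional to the residual magnitude. The sketch below implements that generic scheme. It is not claimed to be the specific method of [27], and the function name and the `uniform_share` parameter are assumptions.

```python
import numpy as np

def resample_collocation(candidates, residuals, n_points, uniform_share=0.5, rng=None):
    """Pick collocation points, favoring candidates with large PDE residuals.

    candidates    : array of shape (N, d) with candidate space-time points
    residuals     : array of shape (N,) with PDE residuals at those points
    uniform_share : probability mass spread uniformly to preserve exploration
    """
    rng = np.random.default_rng() if rng is None else rng
    n = len(candidates)
    weights = np.abs(np.asarray(residuals, dtype=float))
    total = weights.sum()
    # Fall back to uniform sampling if every residual is zero.
    focused = weights / total if total > 0 else np.full(n, 1.0 / n)
    probs = uniform_share / n + (1.0 - uniform_share) * focused
    idx = rng.choice(n, size=n_points, replace=False, p=probs)
    return candidates[idx]
```

Calling this every few hundred optimizer steps with freshly evaluated residuals concentrates points near sharp fronts or boundary layers while the uniform share keeps some coverage of the rest of the domain.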

Comparative Performance Analysis

Quantitative Comparison of PINN Variants

Table 1: Performance comparison of different PINN architectures across various benchmark problems

| PINN Variant | Key Innovation | Test Equations | Accuracy Improvement | Computational Efficiency | Conservation Properties |

|---|---|---|---|---|---|

| Standard PINN | Base architecture integrating PDEs into loss | Burgers, Navier-Stokes, Heat Equation | Baseline | Baseline | Limited to collocation points |

| S-PINN [28] | Local subdomains for residual evaluation | Navier-Stokes, Burgers, Heat Diffusion | Notable improvements in conservation and accuracy | Comparable to standard PINN | Significantly enhanced through cumulative residuals |

| Ψ-NN [29] | Automatic structure discovery via distillation | Laplace, Burgers, Poisson, Fluid Mechanics | Enhanced accuracy through physically consistent structures | Improved training efficiency | Built into network architecture |

| Hybrid Adaptive PINN [27] | Adaptive sampling + feature embedding | Various forward and inverse PDE problems | 1-2 orders of magnitude error reduction | Reduced points needed for same accuracy | Similar to standard PINN |

| BridgeNet [30] | CNN integration for spatial features | High-dimensional Fokker-Planck | Significantly lower error metrics | Faster convergence | Enhanced through physical constraints |

Comparison with Alternative Modeling Approaches

Table 2: PINNs versus other hybrid modeling approaches for integrating physical knowledge

| Modeling Approach | Physical Knowledge Integration | Data Requirements | Interpretability | Inverse Problem Capability | Transferability |
|---|---|---|---|---|---|
| Pure Data-Driven NN | None | Large amounts of labeled data | Low (black box) | Limited | Poor to moderate |
| Traditional Numerical Methods (FEM, FVM) | Complete (governing equations) | None for forward problems | High | Limited (requires separate formulation) | High within domain |
| Hybrid Semi-Parametric [4] | Mechanistic model + NN residual | Moderate | Moderate | Good | Superior in data-sparse regimes |
| Process-Informed NN [31] | Process knowledge in NN structure | Low to moderate | Moderate to high | Good | Good for high-transfer tasks |
| Standard PINN | PDE constraints in loss function | Low to moderate | Moderate | Excellent (native capability) | Moderate |
| Advanced PINN Variants (S-PINN, Ψ-NN) | Enhanced physical structure | Low to moderate | High | Excellent with improved stability | Improved |

Domain-Specific Performance Metrics

Table 3: Performance of PINNs across different application domains

| Application Domain | Specific Problem | PINN Variant | Key Performance Metrics | Comparison to Traditional Methods |
|---|---|---|---|---|
| Fluid Dynamics [28] | Navier-Stokes equations | S-PINN | Improved conservation properties, accurate velocity/pressure fields | Comparable accuracy to FVM with better conservation |
| Pharmacokinetics [32] | PBPK brain model | PBPK-iPINN | Accurate parameter estimation (Cmax, Tmax, AUC), concentration profiles | Alternative to traditional compartmental modeling |
| Porous Media Flow [33] | Two-phase Buckley-Leverett problem | MLP & Attention PINN | Accurate saturation front prediction with spatial-dependent diffusion | Validated with laboratory experimental data |
| Ecology [31] | Carbon flux prediction | Process-Informed NN | Superior prediction in data-sparse regimes, high-transfer tasks | Outperforms both process-based and pure NN models |
| Electromagnetics [34] | Eddy current analysis | Transfer Learning PINN | 80.2% reduction in learning time | Enables practical application to transient phenomena |

Experimental Protocols and Methodologies

Protocol 1: S-PINN for Fluid Dynamics

The S-PINN methodology employs a specialized approach for evaluating residuals in fluid dynamics problems [28]:

Domain Discretization: Create collocation points throughout the spatial and temporal domain, then establish local subdomains around each point without complex meshing.

Residual Calculation: Compute residuals of conserved quantities (mass, momentum) not just at points but integrated over each local subdomain using Gaussian quadrature.

Loss Function Construction: Formulate a novel loss function \( \mathcal{L}_{S\text{-}PINN} = \sum_{k=1}^{N_d} \omega_k \mathcal{L}_{domain_k} + \mathcal{L}_{BC} + \mathcal{L}_{IC} + \mathcal{L}_{data} \), where \( N_d \) is the number of subdomains and \( \omega_k \) are weighting factors.

Optimization: Minimize the loss with the Adam (adaptive moment estimation) optimizer, using Swish activation functions in the network.

Validation: Benchmark against traditional numerical methods (Finite Volume Method) for accuracy and conservation properties.
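The subdomain residual evaluation can be sketched in one dimension as follows. This is an illustration under stated assumptions rather than the implementation in [28]: the 3-point Gauss-Legendre rule, the squared form of the per-subdomain loss, and the names `subdomain_residual` and `subdomain_loss` are all choices made here for clarity.

```python
import math

# 3-point Gauss-Legendre rule on [-1, 1] (exact for polynomials up to degree 5).
GL_NODES = (-math.sqrt(3.0 / 5.0), 0.0, math.sqrt(3.0 / 5.0))
GL_WEIGHTS = (5.0 / 9.0, 8.0 / 9.0, 5.0 / 9.0)

def subdomain_residual(residual_fn, center, half_width):
    """Integrate residual_fn over [center - half_width, center + half_width]."""
    total = 0.0
    for node, weight in zip(GL_NODES, GL_WEIGHTS):
        x = center + half_width * node   # map the node from [-1, 1] to the subdomain
        total += weight * residual_fn(x)
    return half_width * total            # Jacobian of the affine map

def subdomain_loss(residual_fn, centers, half_width, weights=None):
    """Weighted sum over subdomains of the squared integrated residual (assumed form)."""
    if weights is None:
        weights = [1.0] * len(centers)
    return sum(w * subdomain_residual(residual_fn, c, half_width) ** 2
               for w, c in zip(weights, centers))
```

Because the residual is integrated before it is penalized, the loss measures how well the conserved quantity balances over each small region, not only at isolated points.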

Protocol 2: Ψ-NN for Automatic Structure Discovery

The Ψ-NN framework implements a three-stage knowledge distillation process for discovering physically meaningful network structures [29]:

Physics-Informed Distillation: Train a teacher network with physical regularization (governing equations) and a student network with parameter regularization separately to avoid constraint conflicts.

Network Parameter Matrix Extraction: After distillation, apply clustering algorithms to the trained parameters of the student network to identify recurring structural patterns.

Structured Network Reconstruction: Reinitialize the network using the identified cluster centers as fixed parameters, embedding the discovered physical structure directly into the architecture.

Transfer Learning: Apply the discovered structure to related problems with different parameters to validate generalizability.
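The parameter-clustering and reconstruction steps can be illustrated with a toy sketch. The source does not specify which clustering algorithm Ψ-NN uses, so the plain 1D k-means below, the quantile initialization, and the helper names `kmeans_1d` and `snap_to_centers` are assumptions for illustration only.

```python
def kmeans_1d(values, k, n_iter=50):
    """Plain Lloyd's algorithm on scalar weights; returns sorted cluster centers."""
    ordered = sorted(values)
    # Initialise centers at evenly spaced quantiles of the sorted values.
    centers = [ordered[(2 * i + 1) * len(ordered) // (2 * k)] for i in range(k)]
    for _ in range(n_iter):
        groups = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda j: abs(v - centers[j]))
            groups[nearest].append(v)
        # Keep the old center if a cluster ends up empty.
        new_centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
        if new_centers == centers:
            break
        centers = new_centers
    return sorted(centers)

def snap_to_centers(values, centers):
    """Replace each weight by its nearest cluster center (structure reconstruction)."""
    return [min(centers, key=lambda c: abs(v - c)) for v in values]
```

Applied to a student network whose weights scatter around a few values (for example -1, 0, and 1), the snapped weights expose sign patterns and shared parameters that can then be fixed in the reconstructed network.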

Protocol 3: PBPK-iPINN for Pharmacokinetic Modeling

The PBPK-iPINN approach addresses parameter estimation in physiologically based pharmacokinetic (PBPK) models [32]:

Problem Formulation: Represent the PBPK model as a system of parametric ODEs with mass balance equations for each compartment (e.g., brain blood, brain mass, CSF).

Network Architecture: Design a fully connected neural network with input (time) and outputs (drug concentrations in each compartment).

Loss Function: Incorporate data loss (available concentration measurements), residual loss (ODE system), and initial condition loss with careful weighting to handle stiffness.

Hyperparameter Tuning: Systematically optimize layers, neurons, activation functions, learning rate, and collocation points for convergence.

Validation: Compare parameter estimates and concentration profiles against gold-standard commercial software (Simcyp Simulator) and traditional numerical methods.
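The three-term loss can be sketched on a deliberately simplified problem. The example below assumes a single compartment with first-order elimination, dC/dt = -k C, which is far simpler than the brain PBPK system in [32]; the function name `ipinn_loss`, the weights `w_data`, `w_res`, `w_ic`, and the finite-difference derivative are illustrative assumptions (a real PINN would obtain the derivative by automatic differentiation and train the network jointly with k).

```python
import math

def ipinn_loss(conc_fn, k_elim, t_data, c_data, t_colloc, c0,
               w_data=1.0, w_res=1.0, w_ic=1.0, h=1e-4):
    """Composite loss for the one-compartment ODE dC/dt = -k_elim * C."""
    # Data loss: mismatch with measured concentrations.
    data = sum((conc_fn(t) - c) ** 2 for t, c in zip(t_data, c_data)) / len(t_data)
    # Residual loss: ODE residual at collocation points (central finite difference).
    res = 0.0
    for t in t_colloc:
        dcdt = (conc_fn(t + h) - conc_fn(t - h)) / (2.0 * h)
        res += (dcdt + k_elim * conc_fn(t)) ** 2
    res /= len(t_colloc)
    # Initial-condition loss.
    ic = (conc_fn(0.0) - c0) ** 2
    return w_data * data + w_res * res + w_ic * ic

# Usage: with a surrogate that matches the true curve, scanning candidate rates
# shows that the residual term singles out the true elimination rate.
true_k, c0 = 0.3, 10.0
surrogate = lambda t: c0 * math.exp(-true_k * t)
t_data = [1.0, 2.0, 4.0]
c_data = [surrogate(t) for t in t_data]
t_colloc = [0.5 * i for i in range(1, 17)]
losses = {k: ipinn_loss(surrogate, k, t_data, c_data, t_colloc, c0)
          for k in (0.2, 0.3, 0.4)}
best_k = min(losses, key=losses.get)
```

The weights are exposed as arguments because, for stiff systems, the relative scale of the data and residual terms usually has to be tuned before training converges.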

The following diagram illustrates the workflow for inverse problems in pharmacokinetic modeling using PBPK-iPINN:

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential computational tools and frameworks for PINN research

| Tool Name | Type | Primary Function | Key Features | Application Context |
|---|---|---|---|---|
| DeepXDE [27] | Software Library | PINN implementation and testing | Comprehensive framework for solving forward/inverse PDE problems | General PDE problems, educational research |
| PyTorch/TensorFlow [26] | Deep Learning Framework | Neural network construction and training | Automatic differentiation, GPU acceleration, flexibility | Custom PINN implementations, experimental architectures |
| Adam Optimizer [28] [29] | Optimization Algorithm | Neural network parameter optimization | Adaptive learning rates, momentum | Standard choice for most PINN implementations |
| Swish Activation [28] | Activation Function | Neural network nonlinearity | Smooth, non-monotonic, avoids vanishing gradients | Fluid dynamics, stiff equations |
| Adaptive Sampling Algorithms [27] | Sampling Method | Dynamic point selection during training | Focuses on high-residual regions, improves efficiency | Problems with sharp gradients or discontinuities |
| Knowledge Distillation Framework [29] | Training Methodology | Network structure discovery | Decouples physical and parameter regularization | Automatic discovery of physically meaningful structures |
| Gaussian Quadrature [28] | Numerical Integration | Residual evaluation over subdomains | High accuracy with few points | S-PINN for conservation laws |

Physics-Informed Neural Networks represent a transformative approach to integrating physical knowledge with data-driven modeling through the loss function. The comparative analysis presented in this guide demonstrates that while standard PINNs provide a solid foundation, recent variants address key limitations in conservation, stability, and efficiency. S-PINN enhances conservation properties through local domain residuals, Ψ-NN automates the discovery of physically consistent network structures, and hybrid adaptive methods significantly improve accuracy through dynamic sampling.

For researchers and drug development professionals, these advancements offer promising tools for tackling complex problems where traditional methods face challenges. In pharmacological applications specifically, PBPK-iPINN provides a robust framework for parameter estimation in complex compartmental models, enabling more accurate prediction of drug concentration profiles and key pharmacokinetic parameters. The continued development of PINN methodologies holds particular promise for inverse problems where parameters cannot be directly measured and for multiscale phenomena where traditional discretization methods struggle.

As PINN methodologies mature, we anticipate increased integration with traditional numerical methods, enhanced robustness for high-dimensional problems, and more sophisticated approaches for balancing multiple physical constraints. The field is moving toward more automated physics-informed learning systems that require less manual tuning and can discover relevant physical structures directly from data and fundamental principles.

Physics-Informed Neural Networks (PINNs) have emerged as a powerful framework for solving forward and inverse problems involving partial differential equations (PDEs). A significant limitation of standard PINNs is their problem-specific nature; any change in boundary conditions, geometry, or material properties typically necessitates retraining from scratch. This constraint is particularly challenging in scientific and industrial contexts where rapid predictions for similar but distinct scenarios are required. To address this limitation, Transfer Learning (TL) has been introduced to enhance PINNs, creating a paradigm known as Transfer Learning-enhanced PINNs (TL-PINNs). This approach leverages knowledge from a pre-trained model (source domain) to accelerate training and improve performance on a new, related task (target domain).

TL-PINNs are a critical component in the broader research on hybrid physics-informed models for transferability assessment, which aims to develop robust, generalizable models that reduce computational costs while maintaining physical consistency. This guide provides a comparative analysis of TL-PINN methodologies, their experimental protocols, and performance across various applications.

Comparative Analysis of TL-PINN Methods and Performance

Transfer learning strategies for PINNs can be categorized into several distinct approaches, each with unique mechanisms and advantages. The following table summarizes the primary methods, their core principles, and reported performance gains.

Table 1: Comparison of Primary Transfer Learning Methods for PINNs

| Method Category | Core Principle | Key Advantages | Reported Performance Gains | Application Contexts |
|---|---|---|---|---|
| Full Fine-Tuning [35] | All parameters of a pre-trained model are updated for the new task. | Can achieve high accuracy; flexible adaptation. | Significant improvement in convergence speed; slight accuracy enhancement [35]. | General-purpose; different boundary conditions, materials, geometries [35]. |
| Lightweight Fine-Tuning [35] | Initial layers are frozen; only later layers are re-trained. | Reduced computational cost; faster than full fine-tuning. | Less effective than full fine-tuning and LoRA in some studies [35]. | Tasks where source and target domains share fundamental features [35]. |
| Low-Rank Adaptation (LoRA) [35] | Uses low-rank matrices to adapt pre-trained weights, keeping original weights frozen. | Highly parameter-efficient; reduces computational cost; flexible. | Significantly improves convergence speed; performance comparable to full fine-tuning [35]. | Effective across boundary conditions, materials, and geometries [35]. |
| Sequential Fine-Tuning (for High-Freq.) [36] | Model is first trained on a low-frequency problem, then fine-tuned on the target high-frequency problem. | Overcomes spectral bias; improves robustness and convergence. | Effectively approximates solutions from low to high frequencies; requires fewer data points and less training time [36]. | High-frequency and multi-scale problems like wave propagation [36]. |
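To make the LoRA row concrete, the sketch below shows a single linear layer with a frozen weight matrix and a trainable low-rank update. It uses plain Python lists so that it is self-contained; a practical implementation would use a tensor library. The class name `LoRALinear`, the `alpha` scaling, and the initialization scale are illustrative assumptions and are not taken from [35].

```python
import random

def matvec(matrix, vector):
    """Matrix-vector product for nested lists."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight          # frozen, taken from the source-domain PINN
        self.scale = alpha / rank
        # A starts small and random, B starts at zero, so the update is zero at first.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameters(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])
```

For a layer with `d_out` outputs and `d_in` inputs, only `rank * (d_in + d_out)` values are trained instead of `d_out * d_in`, which is where the parameter efficiency comes from when the rank is small.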

Beyond the core methods, performance is also measured through specialized frameworks and domain-specific applications:

Table 2: Performance of TL-PINNs in Specific Frameworks and Applications

| Framework/Application | TL Strategy | Quantitative Performance Improvement | Domain Similarity Measure |
|---|---|---|---|
| Finite Element-Integrated NN (FEINN) [37] | Scale, Material, and Load Transfer Learning. | Significantly improved accuracy and training efficiency [37]. | Explored transfers from coarse to fine mesh, elastic to elastoplastic material, and between load conditions [37]. |
| Nuclear Reactor Transients [38] | Pre-training on one transient, fine-tuning on another. | Up to two orders of magnitude acceleration in training; prediction error for neutron densities < 1% [38]. | Correlation between TL performance gain and similarity of transients (Hausdorff, Fréchet distance) [38]. |
| Fracture Mechanics [39] | Transfer learning for variational energy-based PINN. | More efficient crack path prediction; better accuracy than conventional residual-based PINNs [39]. | Knowledge transfer for different crack initiation scenarios. |
| Battery Degradation Diagnosis [40] | Fine-tuning a cloud-based pre-trained model for a local deployment. | Improved degradation mode estimation and phase detection in target scenario [40]. | From protocol cycling scenarios (source) to dynamic cycling scenarios (target) [40]. |
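One of the similarity measures named in the table, the Hausdorff distance, can be computed for two sampled curves as in the sketch below. This is the standard discrete definition applied to lists of (time, value) points; how [38] samples or normalizes the transients before comparing them is not stated in the source, so the usage is an assumption.

```python
import math

def directed_hausdorff(curve_a, curve_b):
    """Largest distance from a point of curve_a to its nearest point of curve_b."""
    return max(min(math.dist(p, q) for q in curve_b) for p in curve_a)

def hausdorff(curve_a, curve_b):
    """Symmetric discrete Hausdorff distance between two sampled curves."""
    return max(directed_hausdorff(curve_a, curve_b),
               directed_hausdorff(curve_b, curve_a))
```

A small distance between a source and a target transient would suggest, under this measure, that the pre-trained model is a good starting point; in practice the axes usually need to be rescaled first so that time and amplitude contribute comparably.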

Experimental Protocols and Methodologies

The experimental validation of TL-PINNs involves a structured pipeline and specific protocols to ensure robust performance.

General TL-PINN Workflow

The following diagram illustrates the standard workflow for implementing transfer learning in PINNs.

Detailed Methodological Steps

Base Model Training (Source Domain): A conventional PINN is first trained to solve a source problem. The loss function typically incorporates the PDE residuals, boundary conditions (BCs), and initial conditions (ICs):

Loss_total = λ_phys * Loss_PDE + λ_BC * Loss_BC + λ_IC * Loss_IC [36]

The model's parameters (weights and biases, θ_source) are stored.

Target Domain Definition and Model Initialization: A new, related target problem is defined, which may involve changes in:

- Geometry: Altering the spatial domain (e.g., size of a hole in a plate) [35].

- Boundary Conditions: Changing the applied loads or constraints [35] [37].

- Material Properties: Modifying constitutive parameters (e.g., elastic modulus) [35] [41].

- Physical Parameters: Varying coefficients in the governing PDEs (e.g., viscosity in Burgers equation) [29] [38].