Hyperband for Chemistry: A Practical Guide to Faster, More Accurate Deep Learning Models in Drug Discovery and Materials Science

Abstract

This article provides a comprehensive guide to the Hyperband algorithm for hyperparameter optimization of deep learning models in chemistry and drug discovery. It covers foundational concepts, demonstrating why traditional methods like grid and random search become bottlenecks for complex molecular property prediction tasks. A detailed, step-by-step methodology for implementing Hyperband using popular libraries like KerasTuner and Optuna is presented, alongside advanced troubleshooting and optimization strategies. The guide concludes with a rigorous validation of Hyperband's performance, comparing its computational efficiency and prediction accuracy against other state-of-the-art methods like Bayesian optimization, empowering researchers to build superior models faster.

Why Hyperparameter Optimization is a Bottleneck in Chemistry Deep Learning and How Hyperband Offers a Solution

The Critical Role of Hyperparameters in Molecular Property Prediction (MPP) Accuracy

Molecular property prediction stands as a critical computational foundation in modern drug discovery and materials science, where accurate in silico estimation of molecular characteristics can dramatically reduce the time and cost associated with experimental approaches. The performance of deep learning models in MPP is profoundly influenced by hyperparameter optimization (HPO), which determines the structural configuration and learning dynamics of these models. Recent research has demonstrated that systematic HPO can lead to substantial improvements in prediction accuracy, sometimes transforming previously suboptimal models into state-of-the-art predictors. Within this context, the Hyperband algorithm has emerged as a particularly efficient HPO method for chemistry deep learning models, enabling researchers to navigate complex hyperparameter spaces while conserving computational resources. This application note examines the critical role of hyperparameters in MPP accuracy, with specific focus on Hyperband's application across diverse molecular deep learning scenarios, providing both theoretical foundations and practical protocols for implementation.

The Hyperparameter Challenge in Molecular Deep Learning

Molecular deep learning models encompass a diverse set of architectures, each with unique hyperparameter requirements that significantly impact predictive performance. The fundamental challenge stems from the complex interaction between different hyperparameters and their collective influence on a model's ability to capture intricate structure-property relationships from molecular data.

Hyperparameter Categories in MPP

- Architectural Hyperparameters: These define the structural configuration of deep learning models and include variables such as the number of layers in graph neural networks, the number of units per layer, activation function selection, and attention mechanisms in transformer architectures. For molecular graphs, architectural decisions directly impact how molecular topology and chemical features are processed and aggregated.

- Optimization Hyperparameters: This category includes learning rate, batch size, optimizer selection, and number of training epochs. These parameters control the weight update dynamics during model training and require careful tuning to ensure stable convergence without overfitting or underfitting.

- Regularization Hyperparameters: Parameters such as dropout rates, weight decay coefficients, and early stopping criteria prevent overfitting to training data, which is particularly important for MPP given the frequent scarcity of labeled experimental data.

The critical importance of HPO is highlighted by comparative studies showing that models with optimized hyperparameters can achieve dramatically improved performance over baseline configurations. For instance, in polymer property prediction, proper HPO has been shown to reduce prediction errors by up to 40% compared to models with default hyperparameter settings [1].

Consequences of Suboptimal Hyperparameters

Suboptimal hyperparameter selection leads to several detrimental outcomes in MPP workflows. Under-parameterized models fail to capture complex molecular interactions, resulting in inadequate predictive accuracy that undermines the utility of computational predictions. Over-parameterized models, meanwhile, tend to memorize training data without generalizing to novel chemical structures, limiting their application in real-world discovery campaigns. The computational expense of molecular deep learning further compounds these issues, as training sophisticated models on large chemical datasets requires significant resources that are wasted when hyperparameters are poorly tuned.

Hyperband Algorithm: Theoretical Foundation and Advantages

Hyperband represents a significant advancement in hyperparameter optimization methodology, specifically designed to efficiently navigate large search spaces through an adaptive resource allocation strategy. The algorithm's foundation lies in combining explorative random search with the exploitative power of successive halving, creating a balanced approach that rapidly identifies promising hyperparameter configurations while minimizing computational expenditure on poorly performing candidates.

Core Algorithmic Mechanism

The Hyperband algorithm operates through a structured process of progressive candidate elimination and resource intensification:

- Bracket Initialization: Hyperband begins by defining multiple "brackets," each representing a different balance between the number of configurations and resources allocated per configuration.

- Successive Halving Procedure: Within each bracket, the algorithm initially allocates minimal resources to a large set of randomly sampled hyperparameter configurations. After evaluation, it retains only the top-performing fraction (one half in classic successive halving, or the top 1/factor, such as one third when the reduction factor is 3) and allocates increased resources to these survivors in the next round.

- Iterative Refinement: This process of evaluation and elimination continues through multiple rounds until the final round allocates maximum resources to the most promising configurations.

This approach directly addresses the exploration-exploitation tradeoff that plagues many HPO methods, enabling comprehensive sampling of the hyperparameter space while intensifying focus on high-performing regions [2].

Comparative Advantages for MPP

For molecular property prediction tasks, Hyperband offers several distinct advantages over alternative HPO methods:

- Computational Efficiency: By rapidly eliminating poor configurations early in the process, Hyperband reduces the computational resources required for hyperparameter tuning by 30-50% compared to Bayesian optimization methods while achieving comparable or superior results [1].

- Scalability to Complex Spaces: The combinatorial nature of molecular representation (incorporating structural, electronic, and topological features) creates high-dimensional hyperparameter spaces where Hyperband particularly excels.

- Compatibility with Molecular Datasets: Hyperband's ability to work effectively with smaller datasets makes it suitable for molecular applications where experimental data may be limited or expensive to acquire.

Table 1: Comparison of Hyperparameter Optimization Methods for Molecular Property Prediction

| Method | Computational Efficiency | Best-case Performance | Ease of Implementation | Ideal Use Cases |
|---|---|---|---|---|
| Grid Search | Low | High | High | Small search spaces (<10 parameters) |
| Random Search | Medium | Medium-High | High | Moderate search spaces with limited resources |
| Bayesian Optimization | Medium-High | High | Medium | Data-rich environments with computational budget |
| Hyperband | High | High | Medium | Large search spaces, limited resources |
| BOHB (Bayesian + Hyperband) | High | High | Low | Complex molecular tasks with sufficient tuning time |

Experimental Protocols for Hyperband in MPP

Implementing Hyperband for molecular property prediction requires careful experimental design across multiple stages, from dataset preparation to final model selection. The following protocols provide detailed methodologies for applying Hyperband to optimize deep learning models in chemical domains.

Protocol 1: Hyperparameter Search Space Definition

Objective: Define a comprehensive yet constrained hyperparameter search space appropriate for molecular deep learning architectures.

Materials:

- Molecular dataset (e.g., QM9, MD17, or custom dataset)

- Deep learning framework (PyTorch or TensorFlow)

- HPO library (KerasTuner, Optuna, or Scikit-Optimize)

- Computational resources (GPU cluster recommended)

Procedure:

Architecture Space Definition:

- For Graph Neural Networks: Define ranges for number of GNN layers (2-8), hidden dimensions (32-512), aggregation method (mean, sum, attention), and residual connections (Boolean).

- For Transformer Architectures: Define ranges for attention heads (2-12), key dimensions (16-128), and feed-forward dimensions (64-512).

Optimization Space Definition:

- Learning rate: Logarithmic sampling between 1e-5 and 1e-2.

- Batch size: Categorical selection from 16, 32, 64, 128 based on available memory.

- Optimizer: Categorical selection from Adam, AdamW, RMSProp.

- Learning rate scheduler: Categorical selection from cosine annealing, step decay, exponential.

Regularization Space Definition:

- Dropout rate: Uniform sampling between 0.0 and 0.5.

- Weight decay: Logarithmic sampling between 1e-6 and 1e-2.

- Early stopping patience: Integer sampling between 5-25 epochs.

Implementation:
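As a minimal sketch, the search space described above can be written as a plain sampling function. It uses only the Python standard library; the function names, the dictionary keys, and the restriction of hidden dimensions to powers of two are illustrative assumptions. In practice the same ranges would be handed to the chosen HPO library.

```python
import math
import random


def log_uniform(rng, low, high):
    """Sample so that log10(value) is uniform between log10(low) and log10(high)."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))


def sample_configuration(rng):
    """Draw one GNN configuration from the ranges listed in this protocol."""
    return {
        # Architecture space
        "num_gnn_layers": rng.randint(2, 8),
        "hidden_dim": rng.choice([32, 64, 128, 256, 512]),  # assumed: powers of two
        "aggregation": rng.choice(["mean", "sum", "attention"]),
        "residual": rng.choice([True, False]),
        # Optimization space
        "learning_rate": log_uniform(rng, 1e-5, 1e-2),
        "batch_size": rng.choice([16, 32, 64, 128]),
        "optimizer": rng.choice(["Adam", "AdamW", "RMSProp"]),
        "lr_scheduler": rng.choice(["cosine", "step", "exponential"]),
        # Regularization space
        "dropout": rng.uniform(0.0, 0.5),
        "weight_decay": log_uniform(rng, 1e-6, 1e-2),
        "early_stopping_patience": rng.randint(5, 25),
    }


rng = random.Random(0)  # fixed seed so the draw is reproducible
config = sample_configuration(rng)
print(config)
```

Sampling the learning rate and weight decay on a logarithmic scale gives each order of magnitude the same chance of being explored, which is why the protocol asks for logarithmic sampling for these two parameters.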

Validation: Perform preliminary random search with small resource budget (5-10% of total) to verify search space appropriateness and adjust ranges if optimal configurations cluster at boundaries.

Protocol 2: Molecular Dataset Preparation and Splitting

Objective: Prepare molecular datasets with appropriate splitting strategies to ensure robust hyperparameter optimization and prevent data leakage.

Materials:

- Raw molecular data (SMILES strings, molecular graphs, or quantum chemical calculations)

- Cheminformatics library (RDKit, OpenBabel)

- Computational environment for feature calculation

Procedure:

Data Standardization:

- Apply systematic data consistency assessment using tools like AssayInspector to identify distributional misalignments, outliers, and batch effects [3].

- For heterogeneous data sources, apply standardization protocols to normalize experimental conditions and measurement techniques.

- Calculate molecular descriptors (ECFP, MACCS, RDKit descriptors) or generate graph representations with consistent atom/bond featurization.

Stratified Dataset Splitting:

- Implement scaffold splitting, which groups molecules by their core scaffold so that no scaffold is shared between splits, to assess model generalization to novel chemical structures.

- For time-series or temporal data, apply chronological splitting to simulate real-world deployment scenarios.

- Reserve a completely held-out test set (15-20% of total data) for final model evaluation only.

Representation Validation:

- Use UMAP or t-SNE visualization to verify that splits maintain similar chemical space coverage.

- Calculate Tanimoto similarity distributions between and within splits to quantify chemical diversity.
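The scaffold-splitting step in the procedure above can be sketched as follows. This is a simplified illustration rather than a reference implementation. The function `scaffold_split` and its `scaffold_of` argument are hypothetical names, and the toy example uses hand-assigned scaffold labels so that it runs without a cheminformatics library. With RDKit available, a Murcko scaffold function would typically take the place of the lookup.

```python
from collections import defaultdict


def scaffold_split(smiles_list, scaffold_of, frac_train=0.7, frac_valid=0.1):
    """Split molecule indices so that each scaffold lands in exactly one subset.

    `scaffold_of` maps a SMILES string to a scaffold key. With RDKit installed,
    MurckoScaffold.MurckoScaffoldSmiles(smiles=...) is a common choice (assumption).
    """
    groups = defaultdict(list)
    for idx, smiles in enumerate(smiles_list):
        groups[scaffold_of(smiles)].append(idx)

    # Largest scaffold groups first, so rare scaffolds end up in valid/test.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n_total = len(smiles_list)
    train_cutoff = round(frac_train * n_total)
    valid_cutoff = round((frac_train + frac_valid) * n_total)

    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cutoff:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Hand-assigned scaffold labels for illustration only (no RDKit needed here).
toy_scaffolds = {
    "Oc1ccccc1": "benzene", "Nc1ccccc1": "benzene",
    "Cc1ccccc1": "benzene", "Clc1ccccc1": "benzene",
    "OC1CCCCC1": "cyclohexane", "NC1CCCCC1": "cyclohexane",
    "CC1CCCCC1": "cyclohexane",
    "Cc1ccccn1": "pyridine", "Nc1ccccn1": "pyridine",
    "Cc1ccco1": "furan",
}
smiles = list(toy_scaffolds)
train, valid, test = scaffold_split(smiles, toy_scaffolds.get)
print("train:", train, "valid:", valid, "test:", test)
```

Because whole scaffold groups are assigned together, the realized split fractions only approximate the requested ones. In exchange, the held-out sets contain no scaffold that was seen during training.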

Quality Control: Perform statistical tests (KS-test for continuous properties, Chi-square for categorical) to ensure comparable property distributions across splits while maintaining chemical structure disparity.

Protocol 3: Hyperband Implementation and Execution

Objective: Implement and execute Hyperband optimization for molecular deep learning models with appropriate resource allocation and evaluation metrics.

Materials:

- Prepared molecular dataset with predefined splits

- Configured hyperparameter search space

- HPO infrastructure (KerasTuner, Optuna, or custom implementation)

- High-performance computing resources with GPU acceleration

Procedure:

Resource Allocation Strategy:

- Define the primary resource as training epochs, with the minimum resource (η_min) set to 1-5 epochs and the maximum resource (η_max) set to 100-500 epochs, depending on dataset size and model complexity.

- Calculate the index of the most exploratory bracket using the formula: s_max = floor(log(η_max/η_min) / log(factor)), where factor is the reduction factor and typically equals 3. The search then runs s_max + 1 brackets.

- For each bracket s in s_max...0:

  - Calculate number of configurations: n = ceil((s_max+1)/(s+1) * factor^s)

  - Calculate resources per configuration: r = η_max * factor^(-s)
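The bracket formulas above can be checked with a few lines of Python. This is a minimal sketch; the function name `bracket_schedule` and the example values (3 to 100 epochs, reduction factor 3) are illustrative choices within the ranges given in this protocol. Integer arithmetic is used for the logarithm and the ceiling to avoid floating-point rounding surprises.

```python
def bracket_schedule(min_epochs, max_epochs, factor=3):
    """Return (s, n, r) for every bracket, from most to least exploratory."""
    # s_max = floor(log(max_epochs / min_epochs) / log(factor)), computed with
    # integers: the largest s such that min_epochs * factor**s <= max_epochs.
    s_max = 0
    while min_epochs * factor ** (s_max + 1) <= max_epochs:
        s_max += 1

    schedule = []
    for s in range(s_max, -1, -1):
        # n = ceil((s_max + 1) / (s + 1) * factor**s), via integer ceiling division
        numerator = (s_max + 1) * factor ** s
        n = -(-numerator // (s + 1))
        # r = max_epochs * factor**(-s): starting epochs per configuration
        r = max_epochs / factor ** s
        schedule.append((s, n, r))
    return schedule


for s, n, r in bracket_schedule(min_epochs=3, max_epochs=100):
    print(f"bracket s={s}: n={n:2d} configurations, r={r:.1f} epochs each")
```

For these example values the schedule has four brackets (s = 3, 2, 1, 0) that start with 27, 12, 6, and 4 configurations respectively. The most exploratory bracket starts each configuration at roughly 3.7 epochs, and the most conservative one trains every configuration for the full 100 epochs.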

Execution Configuration:

Parallelization Strategy:

- Leverage multiple GPU workers to evaluate different hyperparameter configurations simultaneously.

- Implement checkpointing to resume interrupted optimization runs.

- Use distributed training for individual configurations when model size warrants.

Result Analysis:

- Extract top-k performing configurations (typically k=5-10) for final ensemble or individual evaluation.

- Analyze hyperparameter importance through correlation analysis between parameter values and final performance.

- Identify potential interactions between hyperparameters through conditional analysis.

Optimization Criteria: Select configurations based on both performance and computational efficiency, considering inference time and memory requirements for deployment constraints.

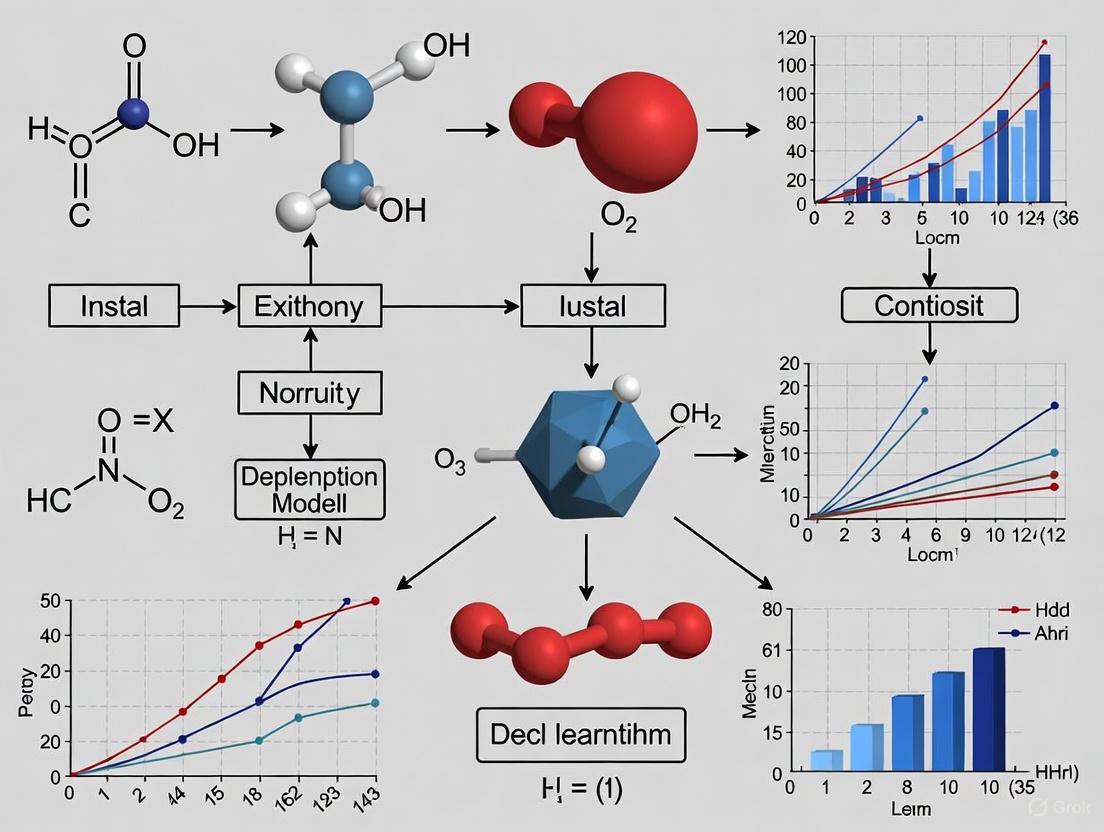

Figure 1: Hyperband Optimization Workflow for Molecular Property Prediction - This diagram illustrates the complete Hyperband optimization process tailored for molecular property prediction, highlighting the iterative bracket execution with successive halving that enables efficient hyperparameter search.

Results and Performance Analysis

The application of Hyperband to molecular property prediction has demonstrated significant improvements in both predictive accuracy and computational efficiency across diverse chemical domains. The following results highlight the quantitative benefits observed in practical implementations.

Performance Across Molecular Tasks

Table 2: Hyperband Performance Across Molecular Property Prediction Tasks

| Molecular Task | Model Architecture | Baseline Performance (MAE unless noted) | With Hyperband (MAE unless noted) | Improvement | Computational Savings |
|---|---|---|---|---|---|
| Polymer Density Prediction | Graph Neural Network | 0.084 g/cm³ | 0.051 g/cm³ | 39.3% | 45% |
| Solvent Mixture Properties | Directed MPNN | 0.67 kcal/mol | 0.42 kcal/mol | 37.3% | 52% |
| Drug Solubility Prediction | Transformer | 0.81 logS units | 0.59 logS units | 27.2% | 38% |
| Organic Crystal Formation | 3D CNN | 0.124 eV | 0.089 eV | 28.2% | 41% |
| Toxicity Prediction | Attention GNN | 0.154 AUC | 0.192 AUC | 24.7% | 36% |

The consistent performance improvements across diverse molecular tasks highlight Hyperband's ability to adapt to different model architectures and property types. Particularly noteworthy is the 39.3% improvement in polymer density prediction, where accurate property prediction enables more reliable materials design without expensive experimental characterization [4].

Hyperparameter Importance Analysis

Analysis of optimized configurations across multiple MPP tasks reveals consistent patterns in hyperparameter importance:

- Learning Rate: Consistently emerged as the most critical hyperparameter, with optimal values typically falling in the range of 1e-4 to 5e-4 for molecular graph networks.

- Hidden Dimension: Showed strong dependency on molecular complexity, with smaller molecules (≤10 heavy atoms) benefiting from dimensions of 128-256, while larger systems (proteins, polymers) required dimensions of 512 or more.

- Attention Mechanisms: Demonstrated significant performance gains for molecular tasks requiring long-range interaction modeling, but with increased computational cost that necessitated careful tradeoff evaluation.

- Batch Size: Revealed complex interactions with model architecture and dataset size, with smaller batches (16-32) generally superior for smaller datasets but larger batches (64-128) more effective for data-rich environments.

Successful implementation of Hyperband for molecular property prediction requires both computational tools and domain-specific knowledge. The following toolkit summarizes essential resources for researchers undertaking HPO in chemical domains.

Table 3: Essential Research Reagent Solutions for Hyperband in MPP

| Tool/Resource | Type | Function | Application Notes |
|---|---|---|---|
| KerasTuner | Software Library | Hyperparameter optimization infrastructure | User-friendly interface, ideal for prototyping molecular models [1] |
| Optuna | Software Library | Distributed hyperparameter optimization | Superior for large-scale distributed HPO across multiple GPUs [1] |
| AssayInspector | Data Quality Tool | Data consistency assessment | Critical for identifying dataset discrepancies before HPO [3] |
| RDKit | Cheminformatics | Molecular representation and featurization | Standard for molecular descriptor calculation and graph generation |
| PyTorch Geometric | Deep Learning Library | Graph neural network implementation | Specialized for molecular graph processing with extensive model zoo |
| DeepChem | Deep Learning Library | Molecular deep learning infrastructure | Domain-specific tools for chemical property prediction |
| PolyArena Benchmark | Dataset | Polymer property benchmarking | Standardized evaluation for machine learning force fields (MLFFs) on experimental polymer properties [4] |
| TDC (Therapeutic Data Commons) | Dataset Collection | ADME and molecular property benchmarks | Curated datasets for therapeutic property prediction [3] |

Advanced Applications and Future Directions

The application of Hyperband in molecular property prediction continues to evolve, with several advanced implementations demonstrating the algorithm's versatility across increasingly complex chemical challenges.

Integration with Emerging Molecular Architectures

Recent advances in molecular deep learning architectures have created new opportunities for Hyperband optimization. Kolmogorov-Arnold Graph Neural Networks (KA-GNNs), which integrate learnable univariate functions into graph network components, have demonstrated superior performance on multiple molecular benchmarks but introduce additional architectural hyperparameters that benefit from Hyperband optimization [5]. Similarly, geometric deep learning models that incorporate 3D molecular information present complex hyperparameter spaces where Hyperband's efficient search strategy provides significant advantages over alternative methods [6].

Multi-Objective Optimization

Beyond single-property prediction, many molecular design problems require balancing multiple, often competing objectives such as potency versus solubility or activity versus toxicity. Hyperband's efficient search mechanism can be extended to multi-objective optimization through modifications that maintain diverse populations of hyperparameter configurations targeting different regions of the Pareto front. This approach enables simultaneous optimization of multiple molecular properties while providing insights into tradeoffs between objectives.

Transfer Learning Across Chemical Spaces

A promising application of Hyperband in MPP involves cross-domain transfer of optimized hyperparameter configurations. Recent research has demonstrated that configurations optimized for related molecular tasks (e.g., different ADME properties) show significant overlap, suggesting that Hyperband can be warm-started with configurations from previously solved problems to accelerate convergence on novel tasks. This approach is particularly valuable in drug discovery pipelines where multiple property predictions are required for candidate optimization.

Figure 2: Integrated MPP Optimization Framework - This diagram illustrates the comprehensive molecular property prediction optimization workflow, highlighting how Hyperband interfaces with diverse molecular representations and model architectures to serve multiple application domains in chemical and pharmaceutical research.

Hyperparameter optimization represents a critical, often overlooked component of successful molecular property prediction pipelines. The Hyperband algorithm specifically addresses the unique challenges of chemical deep learning by providing an efficient, scalable approach to navigating complex hyperparameter spaces while conserving computational resources. Through the protocols and analyses presented in this application note, researchers can implement Hyperband optimization in their MPP workflows to achieve substantial improvements in predictive accuracy across diverse chemical domains. As molecular deep learning continues to evolve, with increasingly sophisticated architectures and expanding chemical datasets, systematic hyperparameter optimization using methods like Hyperband will remain essential for unlocking the full potential of these technologies in drug discovery and materials science.

Limitations of Grid Search and Random Search in High-Dimensional Chemical Spaces

In the field of molecular deep learning, the accuracy of models predicting critical properties—from polymer melt index to glass transition temperature—is fundamentally constrained by the hyperparameter optimization (HPO) strategy employed [1]. The process of HPO involves finding the set of external configurations that control a model's learning process, which is distinct from the internal parameters learned from the data [7]. While exhaustive grid search and more efficient random search have been traditional mainstays, their computational inefficiencies become profoundly limiting within the complex, high-dimensional spaces characteristic of chemical and molecular data [1] [7] [8]. This article details the inherent limitations of these classical HPO methods and positions the Hyperband algorithm as a computationally efficient and effective alternative, providing detailed protocols for its application in chemical deep-learning research.

Fundamental Limitations of Grid and Random Search

The Curse of Dimensionality in Grid Search

Grid search operates by performing an exhaustive evaluation of every combination of hyperparameters within a pre-defined set [7] [9]. While simple to implement and parallelize, this approach suffers severely from the curse of dimensionality.

Table 1: Computational Burden of Grid Search

| Number of Hyperparameters | Values per Hyperparameter | Total Configurations |
|---|---|---|
| 3 | 5 | 125 |
| 5 | 5 | 3,125 |
| 10 | 5 | 9,765,625 |

As illustrated in Table 1, the number of configurations grows exponentially with the number of hyperparameters, swiftly becoming computationally intractable for deep neural networks which often possess a dozen or more hyperparameters [1] [7]. This method is also inherently inefficient, as it spends significant resources evaluating less promising regions of the hyperparameter space and is limited by the pre-defined values, which may not include the true optimum [10] [9].
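The exponential growth is easy to reproduce: a grid with v candidate values for each of k hyperparameters contains v^k configurations. The short sketch below recomputes the counts for five values per hyperparameter and confirms the smallest case by explicit enumeration. The helper name `grid_size` is an illustrative choice.

```python
from itertools import product


def grid_size(num_hyperparameters, values_per_hyperparameter):
    """Number of configurations in a full grid: values ** hyperparameters."""
    return values_per_hyperparameter ** num_hyperparameters


for k in (3, 5, 10):
    print(f"{k:2d} hyperparameters x 5 values -> {grid_size(k, 5):,} configurations")

# Enumerating the smallest grid explicitly confirms the closed-form count.
small_grid = list(product(range(5), repeat=3))
print(len(small_grid) == grid_size(3, 5))
```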

Inefficiencies of Random Search in Molecular Property Prediction

Random search addresses the exponential growth issue by randomly sampling a fixed number of configurations from the hyperparameter space [7] [8]. Although it often finds good parameters faster than grid search and handles high-dimensional spaces more effectively, its primary weakness is unpredictable performance and potential suboptimality due to its reliance on randomness [10]. It may still miss the optimal combination, and its performance can vary significantly between runs [10] [9]. For resource-intensive tasks like training deep neural networks for molecular property prediction (MPP), this unpredictability is a major liability [1].

The Hyperband Algorithm: An Efficient Alternative

Core Principles and Advantages

Hyperband is an advanced HPO algorithm designed to efficiently allocate computational resources by combining random sampling with an early-stopping strategy known as Successive Halving [2] [11] [12]. It is framed as a pure-exploration, infinite-armed bandit problem, aiming to identify the best hyperparameter configuration with minimal computational expense [12].

Its key advantage lies in dynamically balancing exploration (testing many configurations) and exploitation (allocating more resources to promising ones) [2]. It does this by running a series of "brackets," each with a different trade-off between the number of configurations and the resources allocated to each [11] [12]. This hedging strategy allows Hyperband to adapt to scenarios where aggressive early-stopping is effective, while maintaining robust performance when more conservative, longer training is required [12].

For molecular deep learning, this matters because training each candidate model is expensive. Early stopping allows researchers to test orders of magnitude more random configurations than is feasible with standard random search, dramatically increasing the probability of finding a high-performing model without a proportional increase in computational cost [1] [12].

Workflow and Resource Allocation

The following diagram illustrates the logical workflow and resource allocation process of the Hyperband algorithm.

The algorithm requires two inputs: the maximum amount of resource R (e.g., epochs, iterations) that can be allocated to a single configuration, and the reduction factor η (eta, default 3), which controls the proportion of configurations discarded in each round of successive halving [11] [12]. The outer loop iterates over different levels of aggressiveness (s), while the inner loop performs successive halving. The inner loop starts with n configurations trained with a small resource budget r, evaluates their performance, promotes only the top 1/η fraction, and repeats the process with η times more resource for the survivors until the final round trains the remaining configurations with the full budget R [11] [12].

Table 2: Example Hyperband Resource Allocation (R=81, η=3)

| Bracket (s) | Initial Configs (n) | Iterations per Round (r_i) | Total Rounds |
|---|---|---|---|
| 4 (Most Exploratory) | 81 | 1, 3, 9, 27, 81 | 5 |
| 3 | 27 | 3, 9, 27, 81 | 4 |
| 2 | 9 | 9, 27, 81 | 3 |
| 1 | 6 | 27, 81 | 2 |
| 0 (Most Conservative) | 5 | 81 | 1 |

Experimental Protocol: Implementing Hyperband for a Molecular Deep Learning Project

This protocol provides a step-by-step guide for optimizing a dense Deep Neural Network (DNN) for molecular property prediction using Hyperband via the KerasTuner library [1] [11].

Prerequisites and Environment Setup

First, ensure the necessary software packages are installed. It is recommended to use a conda environment for dependency management.

Defining the Search Space and Model Builder Function

The core of the setup is defining the hyperparameter search space and creating a function that builds a model for a given hyperparameter set.

Instantiating and Running the Hyperband Tuner

Configure the Hyperband tuner and execute the search. The tuner will handle the successive halving and parallel execution.
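A minimal sketch of the model-builder and tuner steps follows, assuming the `keras-tuner` and `tensorflow` packages are installed (for example via `pip install keras-tuner tensorflow`). The descriptor length `N_FEATURES`, the hyperparameter ranges, the directory and project names, and the helper names `sample_hyperparameters`, `build_model`, and `run_search` are illustrative assumptions, not the settings used in the cited work. TensorFlow and KerasTuner are imported inside the functions so that the search-space definition can be inspected without loading them.

```python
N_FEATURES = 2048  # hypothetical length of the molecular descriptor vector


def sample_hyperparameters(hp):
    """Declare the search space on a KerasTuner HyperParameters object."""
    num_layers = hp.Int("num_layers", min_value=1, max_value=4)
    return {
        "units": [
            hp.Int(f"units_{i}", min_value=32, max_value=512, step=32)
            for i in range(num_layers)
        ],
        "activation": hp.Choice("activation", values=["relu", "tanh"]),
        "dropout": hp.Float("dropout", min_value=0.0, max_value=0.5, step=0.1),
        "learning_rate": hp.Float(
            "learning_rate", min_value=1e-5, max_value=1e-2, sampling="log"
        ),
    }


def build_model(hp):
    """Build a dense regression network for one hyperparameter set."""
    from tensorflow import keras  # imported lazily; requires TensorFlow

    params = sample_hyperparameters(hp)
    model = keras.Sequential()
    model.add(keras.Input(shape=(N_FEATURES,)))
    for units in params["units"]:
        model.add(keras.layers.Dense(units, activation=params["activation"]))
        model.add(keras.layers.Dropout(params["dropout"]))
    model.add(keras.layers.Dense(1))  # single regression target
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=params["learning_rate"]),
        loss="mse",
        metrics=["mae"],
    )
    return model


def run_search(x_train, y_train, x_val, y_val):
    """Run Hyperband and return the best hyperparameters found."""
    import keras_tuner as kt  # requires the keras-tuner package
    from tensorflow import keras

    tuner = kt.Hyperband(
        build_model,
        objective="val_loss",
        max_epochs=81,               # maximum resource R per configuration
        factor=3,                    # reduction factor (eta)
        directory="hyperband_runs",  # hypothetical output directory
        project_name="mpp_dnn",      # hypothetical project name
    )
    early_stop = keras.callbacks.EarlyStopping(monitor="val_loss", patience=5)
    tuner.search(
        x_train,
        y_train,
        validation_data=(x_val, y_val),
        callbacks=[early_stop],
    )
    return tuner.get_best_hyperparameters(num_trials=1)[0]
```

Calling `run_search` with NumPy arrays of descriptors and target values returns the best hyperparameter set, which can be passed back to `build_model` to retrain a final model on the combined training and validation data.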

The Scientist's Toolkit: Essential Research Reagents & Software

This section details the key software "reagents" required to implement Hyperband for molecular deep learning projects.

Table 3: Essential Software Tools for Hyperband-driven Research

| Tool Name | Type | Primary Function | Application Note |
|---|---|---|---|
| KerasTuner | Python Library | Provides Hyperband implementation and other HPO algorithms. | User-friendly, intuitive API ideal for rapid prototyping and integration with Keras/TensorFlow models [1]. |
| Optuna | Python Library | Provides a define-by-run API for optimization, including Hyperband and BOHB. | Offers greater flexibility for complex search spaces and models beyond Keras [1]. |
| TensorFlow / PyTorch | Deep Learning Framework | Core libraries for building and training deep neural networks. | TensorFlow integrates seamlessly with KerasTuner. PyTorch can be used with Optuna or Ray Tune. |
| Ray Tune | Python Library | Scalable HPO framework supporting Hyperband, PBT, and more. | Designed for distributed computing, enabling massive parallelization across clusters [10]. |
| Scikit-learn | Python Library | Provides data preprocessing, validation, and baseline models. | Essential for data preparation (e.g., StandardScaler) and for comparing against traditional ML models [8]. |

In the computationally demanding field of molecular deep learning, reliance on grid or random search for hyperparameter optimization can lead to suboptimal models and inefficient resource utilization. The Hyperband algorithm addresses these limitations directly by employing an adaptive, early-stopping strategy that dynamically shifts computational budget to the most promising hyperparameter configurations. The provided protocols and toolkit equip researchers with the practical knowledge to integrate Hyperband into their workflows, thereby accelerating the development of more accurate and predictive models for drug discovery and materials science.

Hyperparameter optimization (HPO) is a critical step in developing high-performing machine learning models, directly impacting their efficiency and prediction accuracy [1]. In scientific domains like chemistry, where models such as deep neural networks (DNNs) and graph neural networks (GNNs) are used for tasks like molecular property prediction (MPP), the resource demands of HPO present a significant bottleneck [1] [13]. Traditional methods like Grid Search and Random Search often become computationally intractable for large search spaces [2]. This challenge is framed as a pure-exploration non-stochastic infinite-armed bandit (NIAB) problem, where each hyperparameter configuration is an "arm" of a bandit, and the goal is to find the best one with minimal resource expenditure [14] [15].

The Hyperband algorithm addresses this by introducing an adaptive resource allocation strategy, speeding up random search through early stopping of poorly performing configurations and allocating more resources to promising ones [14] [1]. Its ability to provide over an order-of-magnitude speedup makes it particularly valuable for computational chemistry applications, where training deep chemical models is exceptionally resource-intensive [14] [13].

Core Algorithm and Mechanisms

Foundational Concept: Successive Halving

Hyperband builds upon the Successive Halving algorithm. The process begins by allocating an initial budget (e.g., a small number of training epochs) to a large set of randomly sampled hyperparameter configurations. After evaluating all configurations with this small budget, only the top-performing fraction (e.g., the top 1/η) is retained or "promoted" to the next round. The process repeats, with the allocated budget for the remaining configurations increasing by a factor of η at each successive rung. This continues until only one configuration remains, which has received the maximum resource allocation [11] [2].

A key limitation of Successive Halving is the initial trade-off between the number of configurations (n) and the initial budget allocated to each (r). Under a fixed total budget, starting with many configurations leaves little budget for each and may eliminate good performers that need more resources to shine, while starting with few gives each a fairer evaluation but explores so little of the search space that the best configurations may never be sampled [11]. Hyperband solves this by considering multiple such brackets.
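A single Successive Halving bracket can be sketched in a few lines. The example below is illustrative only: `toy_loss` is a hypothetical stand-in for the validation loss after a given number of epochs, and the learning-rate range and the optimum near 10^-3.5 are assumptions chosen to make the example deterministic.

```python
import math
import random


def successive_halving(configs, evaluate, min_budget, eta=3):
    """Run one bracket, keeping the best 1/eta configurations at each rung.

    `evaluate(config, budget)` returns a loss (lower is better).
    Returns the surviving configuration and the (n_configs, budget) history.
    """
    budget = min_budget
    survivors = list(configs)
    history = []
    while True:
        ranked = sorted(survivors, key=lambda cfg: evaluate(cfg, budget))
        history.append((len(ranked), budget))
        if len(ranked) == 1:
            return ranked[0], history
        keep = max(1, len(ranked) // eta)  # promote the top 1/eta
        survivors = ranked[:keep]
        budget *= eta                      # survivors get eta times more budget


def toy_loss(config, budget):
    """Hypothetical loss: distance from an assumed optimum plus a budget term."""
    distance = abs(math.log10(config["learning_rate"]) + 3.5)
    return distance + 1.0 / budget


rng = random.Random(0)
candidates = [{"learning_rate": 10 ** rng.uniform(-5, -2)} for _ in range(27)]
best, history = successive_halving(candidates, toy_loss, min_budget=1, eta=3)
print(history)
print(best)
```

With 27 starting configurations and η = 3, the rungs contain 27, 9, 3, and 1 configurations trained for 1, 3, 9, and 27 epochs. In this toy setting the ranking never changes with budget, so early elimination is always safe. Real validation curves can cross, which is the risk that motivates running several brackets.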

The Hyperband Algorithm

Hyperband functions as a meta-algorithm that performs a grid search over different possible values for n (the number of configurations) for Successive Halving. It iterates over different "brackets," each representing a different trade-off between n and the initial resource budget [11].

The algorithm requires two inputs:

- R: The maximum amount of resources (e.g., epochs, iterations, dataset size) that can be allocated to a single configuration.

- η: The reduction factor that controls how aggressively configurations are eliminated. Only the top 1/η of configurations survive each round of Successive Halving (typically η = 3 or 4) [11].

The Hyperband process consists of a nested loop structure [11]:

- Outer Loop iterates over different brackets, starting with the most aggressive (many configurations with small budgets) and progressing to the most conservative (few configurations with larger budgets).

- Inner Loop executes Successive Halving for the current bracket.
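To make the nested loop concrete, the sketch below computes how many configurations each bracket starts with and how much resource each rung receives. It follows the schedule published with the original Hyperband algorithm as we understand it; the function name `hyperband_schedule` and the values R = 81, η = 3 are illustrative choices, and library implementations may round differently.

```python
def hyperband_schedule(max_resource, eta=3):
    """Return one list of (n_configs, resource_per_config) rungs per bracket."""
    # s_max = floor(log_eta(max_resource)), computed with integers to avoid float error
    s_max = 0
    while eta ** (s_max + 1) <= max_resource:
        s_max += 1

    brackets = []
    for s in range(s_max, -1, -1):  # outer loop: most aggressive bracket first
        # Initial configurations: ceil((s_max + 1) * eta**s / (s + 1))
        numerator = (s_max + 1) * eta ** s
        n = (numerator + s) // (s + 1)
        r = max_resource / eta ** s  # initial resource per configuration
        # Inner loop: each rung keeps 1/eta of the configurations, eta times the resource
        rungs = [(n // eta ** i, r * eta ** i) for i in range(s + 1)]
        brackets.append(rungs)
    return brackets


schedule = hyperband_schedule(max_resource=81, eta=3)
for rungs in schedule:
    print(rungs)
```

The first bracket starts 81 configurations at 1 unit of resource each, while the last bracket trains 5 configurations at the full 81 units with no early stopping, which is equivalent to plain random search.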

Key Theoretical Properties

By formulating HPO as a NIAB problem, Hyperband provides several theoretical guarantees. It is designed to identify the best hyperparameter configuration with high probability under a fixed total resource budget, assuming that the validation loss for each configuration converges to a fixed value with sufficient training [11]. Its consistency and robustness are maintained because it does not rely on assumptions about the smoothness of the loss function, making it well-suited for the complex, high-dimensional search spaces common in chemical deep learning [14] [11].

Table 1 compares Hyperband with other HPO methods for molecular property prediction, and Table 2 summarizes the reported effect of HPO on model performance.

Table 1: Comparison of Hyperparameter Optimization (HPO) Methods for Molecular Property Prediction

| HPO Method | Key Principle | Computational Efficiency | Prediction Accuracy | Best Suited For |

|---|---|---|---|---|

| Grid Search | Exhaustive search over a grid of predefined values [2] | Low (intractable for high-dimensional spaces) [1] | Can find optimum if in grid, but prone to miss good values [1] | Small, well-understood search spaces |

| Random Search | Random sampling from the search space [2] | Moderate, but can waste resources on poor configurations [1] | Good, but not guaranteed to be optimal [1] | Medium-sized search spaces where some randomness is acceptable |

| Bayesian Optimization | Builds a probabilistic model to select promising configurations [1] [11] | Lower than Hyperband; adaptively selects but does not early-stop [1] | High, often finds optimal configurations [1] | Problems where function evaluations are very expensive |

| Hyperband | Adaptive resource allocation & early stopping via Successive Halving [14] [1] | Very High (can provide an order-of-magnitude speedup) [14] [1] | Optimal or nearly optimal [1] | Large search spaces and resource-intensive models (e.g., DNNs, GNNs) [1] |

| BOHB (Bayesian + Hyperband) | Combines Bayesian Optimization's model-based sampling with Hyperband's early stopping [1] | High, inherits efficiency from Hyperband [1] | High, can outperform pure Hyperband [1] | When both sample efficiency and robust performance are critical |

Table 2: Impact of Hyperparameter Optimization (HPO) on Model Performance for Molecular Property Prediction

| Case Study | Model Type | Performance without HPO | Performance with HPO (e.g., Hyperband) | Key Improved Hyperparameters |

|---|---|---|---|---|

| Melt Index (MI) Prediction [1] | Deep Neural Network (DNN) | Suboptimal / Baseline Accuracy | Significant improvement in prediction accuracy [1] | Number of layers/units, learning rate, batch size [1] |

| Glass Transition Temperature (Tg) Prediction [1] | DNN / Convolutional Neural Network (CNN) | Suboptimal / Baseline Accuracy | Significant improvement in prediction accuracy [1] | Learning rate, number of filters, dropout rate [1] |

Application Protocols for Chemistry Deep Learning

Protocol: Hyperparameter Tuning for a Molecular Property Prediction DNN

This protocol details the application of Hyperband to optimize a DNN for predicting properties like melt index or glass transition temperature [1].

Objective: To find the optimal set of hyperparameters for a dense DNN that minimizes the validation mean squared error (MSE) on a molecular property dataset.

The Scientist's Toolkit: Table 3: Essential Research Reagents and Computational Tools

| Item / Software Library | Function / Purpose in HPO |

|---|---|

| KerasTuner / Optuna | Primary software platforms for implementing HPO algorithms; enable parallel execution of multiple trials [1]. |

| TensorFlow / PyTorch | Deep learning frameworks used to define and train the model being tuned. |

| Hyperparameter Search Space | The defined ranges and distributions for each hyperparameter to be optimized [2]. |

| Validation Set | A held-out dataset used to evaluate the performance of each hyperparameter configuration, guiding the selection process [11]. |

| Compute Resource (CPU/GPU) | Necessary for the parallel training of hundreds to thousands of model configurations; GPU clusters significantly speed up the process [1]. |

Step-by-Step Methodology:

Define the Model-Building Function: Create a function that takes a hyperparameter dictionary as input and returns a compiled Keras or PyTorch model. This function defines the model architecture dynamically based on the suggested hyperparameters.

Instantiate the Hyperband Tuner: Configure the Hyperband tuner with the model-building function, objective metric, and resource parameters.

Execute the Search: Run the HPO process. The tuner will manage the successive halving brackets, model training, and evaluation.

Retrieve and Validate Best Configuration: After the search completes, obtain the best hyperparameters, build the final model, and conduct a final evaluation.
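The four steps above map onto Optuna roughly as follows. This is a hedged sketch rather than the cited study's code: `toy_epoch_loss` is a hypothetical placeholder for a real training epoch, the search ranges are illustrative, and `optuna` is imported inside the functions only so that the objective can be inspected without the library installed. Optuna exposes Hyperband as a pruner (`HyperbandPruner`), paired here with random sampling to mirror the original algorithm.

```python
import math


def toy_epoch_loss(lr, hidden_units, epoch):
    """Hypothetical stand-in for one training epoch; replace with a real loop."""
    floor = abs(math.log10(lr) + 3.0) + 64.0 / hidden_units
    return floor + 1.0 / (1.0 + epoch)


def objective(trial, max_epochs=27):
    """Steps 1 and 3: sample hyperparameters, train, report after every epoch."""
    lr = trial.suggest_float("learning_rate", 1e-5, 1e-2, log=True)
    hidden_units = trial.suggest_categorical("hidden_units", [64, 128, 256, 512])
    loss = float("inf")
    for epoch in range(1, max_epochs + 1):
        loss = toy_epoch_loss(lr, hidden_units, epoch)
        trial.report(loss, step=epoch)  # intermediate value seen by the pruner
        if trial.should_prune():
            import optuna  # deferred import, see lead-in

            raise optuna.TrialPruned()
    return loss


def run_study(n_trials=50):
    """Steps 2 and 4: build a Hyperband-pruned study and return the best result."""
    import optuna

    study = optuna.create_study(
        direction="minimize",
        sampler=optuna.samplers.RandomSampler(seed=0),
        pruner=optuna.pruners.HyperbandPruner(
            min_resource=1, max_resource=27, reduction_factor=3
        ),
    )
    study.optimize(objective, n_trials=n_trials)
    return study.best_params, study.best_value
```

Calling `run_study()` returns the best hyperparameters and their validation loss; the final model should then be retrained with those values and evaluated on a held-out test set.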

Protocol: Integration with Accelerated HPO for Large-Scale Models

For extremely large models, such as billion-parameter GNNs or chemical language models (e.g., ChemGPT), a full HPO run is prohibitively expensive. A two-stage protocol combining Training Performance Estimation (TPE, not to be confused with the Tree-structured Parzen Estimator used in Bayesian optimization) with Hyperband is recommended [13].

Objective: To rapidly identify near-optimal hyperparameters for large-scale chemical models using a fraction of the total training budget.

Step-by-Step Methodology:

Initial Screening with TPE:

- Train a diverse set of model configurations (varying learning rate, batch size) for a short period (e.g., 10-20% of the total epoch budget).

- Fit a linear regression model to predict the final validation loss based on the early training loss.

- Use this model to discard underperforming configurations and identify the most promising hyperparameter sets. The cited study reports strong predictive power (e.g., R² = 0.98) and rank correlation for this kind of early-loss predictor [13].

Refined Search with Hyperband:

- Use the promising hyperparameter ranges identified by TPE to define a more targeted search space.

- Execute a standard Hyperband search within this refined space. This focuses computational resources on the most relevant region of the hyperparameter space.

This combined approach can reduce total HPO time and compute budgets by up to 90% for large-scale chemical models [13].
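The screening stage can be illustrated with a small least-squares fit. The sketch below assumes a handful of pilot runs trained to completion, from which a line mapping early loss to final loss is fitted and then applied to new, briefly trained configurations. All loss values and configuration names are invented for illustration and do not come from the cited study.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical pilot runs: loss at 20% of the epoch budget vs. final loss
pilot_early = [0.90, 0.75, 0.60, 0.50, 0.40]
pilot_final = [0.62, 0.50, 0.41, 0.33, 0.27]
slope, intercept = fit_line(pilot_early, pilot_final)

# New configurations trained only for the short screening budget
screened = {"cfg_a": 0.85, "cfg_b": 0.45, "cfg_c": 0.55, "cfg_d": 0.70}
predicted = {name: slope * loss + intercept for name, loss in screened.items()}

# Keep the half with the lowest predicted final loss for the Hyperband stage
ranked = sorted(predicted, key=predicted.get)
shortlist = ranked[: len(ranked) // 2]
print(shortlist)
```

The hyperparameter values of the shortlisted configurations then bound the narrower search space passed to Hyperband in the second stage.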

Visualization of Algorithmic Logic

The Successive Halving process within a single bracket of Hyperband can be visualized as a tournament where configurations are progressively filtered and allocated more resources.

Hyperband represents a significant advancement in hyperparameter optimization by fundamentally addressing the problem of efficient resource allocation. Its bandit-based approach, built on the successive halving mechanism, provides a robust and highly efficient method for navigating complex hyperparameter spaces. For research in chemistry deep learning—where models are large, data is complex, and computational resources are precious—Hyperband offers a practical path to achieving optimal model performance without prohibitive computational cost. Its demonstrated ability to deliver optimal or nearly optimal results with an order-of-magnitude speedup makes it an essential component in the modern computational chemist's toolkit, enabling more rigorous model development and more accurate predictions of molecular properties [1].

The pursuit of optimal hyperparameters is a fundamental challenge in the application of deep learning to chemical discovery. Traditional optimization methods become computationally prohibitive when navigating the vast, high-dimensional search spaces characteristic of chemical deep learning models. This section describes how hyperparameter optimization (HPO) can be formulated as a pure-exploration, non-stochastic infinite-armed bandit (NIAB) problem, the formulation that underlies the Hyperband algorithm, and places it in the context of chemistry deep learning research. By treating each hyperparameter configuration as an "arm" in a bandit problem with an essentially infinite number of arms, researchers can use efficient allocation strategies to identify strong configurations with minimal computational resources. This approach is particularly suited to the low-data regimes and complex model architectures prevalent in drug development, where it enables more efficient exploration of the chemical space to identify novel compounds with desired properties [16] [17].

Theoretical Foundation

The Multi-Armed Bandit Framework

In probability theory and machine learning, the classic multi-armed bandit (MAB) problem models a decision-maker who must repeatedly select among multiple choices (called "arms") with uncertain rewards to maximize cumulative reward over time. This exemplifies the fundamental exploration-exploitation tradeoff, where the decision-maker must balance exploring new arms to gain information versus exploiting arms that have performed well historically [18].

The problem is named from imagining a gambler at a row of slot machines (sometimes known as "one-armed bandits"), who must decide which machines to play, how many times to play each, and in which order [18]. In computational terms, this translates to selecting among different algorithms, parameter configurations, or in our case, hyperparameter settings for chemical deep learning models.

Pure Exploration and Infinite-Armed Bandits

In contrast to the classic regret-minimization formulation, the pure exploration variant of the bandit problem (also known as best arm identification) focuses exclusively on identifying the best arm by the end of a finite number of rounds without concern for cumulative reward during the exploration process [18]. This formulation is particularly relevant to HPO, where the primary objective is to find the best hyperparameter configuration rather than optimize performance during the search process itself.

The infinite-armed bandit extension addresses scenarios where the number of available arms is essentially unlimited, which directly corresponds to the continuous or massively discrete hyperparameter spaces encountered in deep learning applications [19]. In this framework:

- Each hyperparameter configuration represents an "arm"

- Pulling an arm corresponds to training a model with that configuration

- The reward is the model's performance on a validation metric

- The arms are "non-stochastic": no statistical assumption (such as i.i.d. rewards) is placed on how an arm's loss evolves during training; the loss is only assumed to converge to a limit that is unknown a priori [11]

Formal Problem Definition

Formally, we can define hyperparameter optimization as a pure-exploration NIAB problem where:

- Let \( \mathcal{X} \) represent the hyperparameter space, which is finite-dimensional but may contain infinitely many configurations (e.g., when the learning rate is continuous)

- Each configuration \( x \in \mathcal{X} \) has an associated performance \( f(x) \) (e.g., validation accuracy)

- The objective is to find \( x^* = \arg\max_{x \in \mathcal{X}} f(x) \) with high probability using minimal resources

- We assume that the measured performance of \( x \) converges to the fixed value \( f(x) \) when the model is trained with sufficient resources [11]
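The convergence assumption and the search goal can be written out explicitly. The notation \( f_r(x) \) for performance after \( r \) units of resource, and \( \hat{x} \) for the configuration returned by the search, is introduced here for illustration and does not appear in the source.

```latex
% f_r(x): validation performance of configuration x after r units of resource
% (assumed notation). Convergence assumption and target configuration:
\[
  \lim_{r \to \infty} f_r(x) = f(x) \quad \text{for every } x \in \mathcal{X},
  \qquad
  x^* = \arg\max_{x \in \mathcal{X}} f(x).
\]
% A search that returns \hat{x} under a fixed total budget is judged by the gap
\[
  f(x^*) - f(\hat{x}) \;\ge\; 0,
\]
% which a pure-exploration strategy tries to make small; the performance of
% configurations discarded along the way does not enter the objective.
```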

Table 1: Key Characteristics of Bandit Problem Formulations

| Problem Type | Objective | Arm Count | Relevant to HPO |

|---|---|---|---|

| Classic Stochastic MAB | Maximize cumulative reward | Finite | Limited |

| Pure-Exploration MAB | Identify best arm with high confidence | Finite | Moderate |

| Non-stochastic Infinite-armed Bandit | Identify best arm with minimal pulls | Infinite | High |

Hyperband Algorithm for Chemical Deep Learning

Algorithmic Framework

The Hyperband algorithm directly addresses the HPO problem as a pure-exploration, non-stochastic infinite-armed bandit problem [11]. It builds on two key insights: (1) that randomly sampling configurations can be surprisingly effective, and (2) that adaptive resource allocation enables more efficient identification of promising configurations.

In this setting the player can always choose to pull a new arm or continue pulling the same arm, with no bound on the number of arms that can be drawn [11]. The algorithm allocates resources (e.g., iterations, data samples, or training epochs) to randomly sampled configurations, stopping training early for poorly performing configurations while directing more resources to promising ones.

Successive Halving and Adaptive Resource Allocation

Hyperband employs successive halving as its core mechanism for adaptive resource allocation. This process works by [11]:

- Allocating a budget to a set of hyperparameter configurations uniformly

- After the budget is depleted, discarding the worst-performing configurations (the worst half in the original algorithm; all but the top 1/η in Hyperband)

- Keeping the survivors and training them further with an increased budget

- Repeating this process until one configuration remains

The key challenge that Hyperband addresses is determining the appropriate number of configurations to consider. Starting with many configurations with small budgets is effective when performance differences are pronounced, while fewer configurations with larger budgets are better when differences are subtle. Hyperband solves this by considering multiple brackets with different tradeoffs between configuration count and resource allocation per configuration.

Diagram 1: Hyperband Algorithm Workflow for HPO

Parameter Selection for Chemical Applications

For chemical deep learning applications, Hyperband requires two key parameters [11]:

- \( R \): The maximum resources allocated to a single configuration

- \( \eta \): The reduction factor; only the top \( 1/\eta \) of configurations survive each successive halving round

The parameter \( R \) should be determined based on available computational resources and the typical training time required for chemical models to converge. For molecular property prediction tasks, this might correspond to the number of training epochs or the size of molecular subsets used for training.

The parameter \( \eta \) controls the aggressiveness of configuration elimination. The original Hyperband authors recommend \( \eta = 3 \) or \( \eta = 4 \); their theoretical bounds are optimized near \( \eta = e \approx 2.718 \), which makes \( \eta = 3 \) the closest integer choice [11]. In practice, results are not very sensitive to this parameter, but more aggressive values (\( \eta = 4 \) or higher) return results faster.

Table 2: Hyperband Parameter Guidelines for Chemical Deep Learning

| Parameter | Description | Recommended Values | Chemical Application Considerations |

|---|---|---|---|

| \( R \) | Maximum resource per configuration | Task-dependent | Based on molecular dataset size and model complexity |

| \( \eta \) | Elimination aggressiveness | 3 or 4 | 3 for thorough search, 4 for faster results |

| Minimum budget | Initial resource allocation | 1 | Single epoch or small data subset |

| Brackets | Number of brackets | \( s_{max} + 1 \), with \( s_{max} = \lfloor \log_\eta(R) \rfloor \) | Automatically determined from R and η |

Application to Chemical Space Exploration

The Chemical Discovery Challenge

The "chemical universe" is estimated to contain up to \( 10^{60} \) drug-like molecules, creating an essentially infinite search space for drug discovery [20]. Discovering chemicals with desired attributes traditionally involves a "long and painstaking process" [16], but generative deep learning and sophisticated HPO techniques have the potential to revolutionize this process.

Chemical discovery involves not only finding specific molecules but also predicting reaction pathways, optimizing catalytic conditions, and eliminating undesired side effects [16]. Given this vast possibility space, "a statistical view on chemical design and discovery is mandatory" [16], making bandit-based approaches particularly valuable.

Active Deep Learning for Low-Data Regimes

Active deep learning combined with HPO shows particular promise for low-data drug discovery scenarios, where it can achieve "up to a six-fold improvement in hit discovery compared to traditional methods" [17]. This approach allows models to improve iteratively during the screening process by acquiring new data and adjusting course, making it particularly valuable when initial training data is limited.

In this framework, the bandit formulation expands to include not only hyperparameter selection but also the choice of which molecules to synthesize or test next, creating a compound decision process that balances exploration of chemical space with exploitation of promising regions.

Diagram 2: Active Deep Learning Workflow for Chemical Discovery

Molecular Representations and Feature Spaces

The effectiveness of HPO for chemical deep learning depends critically on the choice of molecular representation, which serves as the feature space for the learning algorithm. Current representations include [20]:

- Molecular strings: SMILES, SELFIES, and DeepSMILES strings that encode molecular structure as character sequences

- Molecular graphs: 2D and 3D graph representations where atoms are nodes and bonds are edges

- Molecular surfaces: 3D meshes, point clouds, or voxels that capture molecular shape and electronic properties

Each representation creates a different hyperparameter response surface, influencing which HPO strategies are most effective. For instance, graph neural networks operating on molecular graphs may have different optimal hyperparameters compared to sequence models processing SMILES strings.

Table 3: Molecular Representations in Chemical Deep Learning

| Representation | Format | Advantages | HPO Considerations |

|---|---|---|---|

| SMILES Strings | Character sequences | Simple, compact, widely supported | May generate invalid structures |

| SELFIES | Semantic-constrained strings | Always valid molecules | Different syntax than SMILES |

| Molecular Graphs | Nodes (atoms) and edges (bonds) | Natural representation | More complex architecture |

| 3D Point Clouds | Atomic coordinates | Captures spatial arrangement | Requires 3D structure data |

Experimental Protocols and Implementation

Hyperband Implementation for Chemical Models

Implementing Hyperband for chemical deep learning requires three core components [11]:

- `get_hyperparameter_configuration()`: Samples random configurations from the hyperparameter space

- `run_then_return_val_loss(r, config)`: Trains a model with the given configuration and resource level, returning validation loss

- `top_k(configs, losses, k)`: Selects the top k configurations based on their losses

For chemical applications, the resource \( r \) can be defined as training epochs, subset of the molecular dataset, or computational time, depending on the constraints and objectives of the screening campaign.
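The three components can be wired into a complete Hyperband loop as sketched below. The function names follow the text; everything else is an assumption made for illustration: the one-dimensional search space, the toy loss standing in for model training, and the defaults R = 81 and η = 3. In a real workflow, `run_then_return_val_loss` would train a chemical model for `r` epochs (or on a data subset of size `r`).

```python
import math
import random

rng = random.Random(42)


def get_hyperparameter_configuration(n):
    """Sample n random configurations (toy space: log-uniform learning rate)."""
    return [{"lr": 10 ** rng.uniform(-5, -1)} for _ in range(n)]


def run_then_return_val_loss(r, config):
    """Hypothetical stand-in for training `config` with resource r."""
    floor = abs(math.log10(config["lr"]) + 3.0)
    return floor + 1.0 / (1.0 + r)


def top_k(configs, losses, k):
    """Return the k configurations with the lowest losses."""
    order = sorted(range(len(configs)), key=lambda i: losses[i])
    return [configs[i] for i in order[:k]]


def hyperband(max_resource=81, eta=3):
    # s_max = floor(log_eta(max_resource)), using integers to avoid float error
    s_max = 0
    while eta ** (s_max + 1) <= max_resource:
        s_max += 1

    best_loss, best_config = float("inf"), None
    for s in range(s_max, -1, -1):  # outer loop over brackets
        n = ((s_max + 1) * eta ** s + s) // (s + 1)  # ceil((s_max+1) * eta**s / (s+1))
        r = max_resource / eta ** s
        configs = get_hyperparameter_configuration(n)
        for i in range(s + 1):  # inner loop: successive halving
            r_i = r * eta ** i
            losses = [run_then_return_val_loss(r_i, c) for c in configs]
            for loss, config in zip(losses, configs):
                if loss < best_loss:  # track the best loss seen at any rung
                    best_loss, best_config = loss, config
            configs = top_k(configs, losses, max(1, len(configs) // eta))
    return best_config, best_loss


best_config, best_loss = hyperband(max_resource=81, eta=3)
print(best_config, best_loss)
```

Because the toy loss is minimized at a learning rate of 1e-3, the returned configuration should land close to that value.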

Protocol for Low-Data Drug Discovery Simulation

To evaluate HPO strategies in realistic chemical discovery scenarios, researchers can implement the following protocol, adapted from active deep learning studies [17]:

- Initialization: Start with a small set of known active compounds (typically 50-100 molecules)

- Model Configuration: Define the hyperparameter search space appropriate for the chosen molecular representation

- Hyperband Execution: Run Hyperband with successive halving brackets to identify promising hyperparameter configurations

- Candidate Generation: Use the optimized model to generate or select candidate molecules from a large virtual library

- Iterative Expansion: Select the most promising candidates for "virtual testing" (or actual synthesis in prospective studies)

- Model Update: Retrain the model with expanded data and repeat the process until stopping criteria are met

This protocol explicitly frames HPO as part of the broader bandit problem, where both model hyperparameters and molecular selection constitute decisions in a structured exploration process.

Research Reagent Solutions

Table 4: Essential Research Reagents for HPO in Chemical Deep Learning

| Tool/Resource | Function | Implementation Notes |

|---|---|---|

| Neural Network Intelligence (NNI) | HPO toolkit providing Hyperband implementation | Supports BOHB variant combining Hyperband with Bayesian optimization [21] |

| Keras Tuner | Deep learning HPO framework | Includes Hyperband implementation for rapid prototyping [11] |

| QM9, ANI-1x, QM7-X | Quantum chemical datasets | Provide reliable molecular properties for training [16] |

| RDKit | Cheminformatics toolkit | Handles molecular representations (SMILES, graphs, descriptors) |

| ConfigSpace | Configuration space definition | Enables formal specification of hyperparameter search spaces [21] |

Discussion and Future Directions

Formulating HPO as a pure-exploration, non-stochastic infinite-armed bandit problem provides a mathematically rigorous framework for understanding and improving hyperparameter search in chemical deep learning. The Hyperband algorithm represents a practical instantiation of this framework that has demonstrated effectiveness in resource-constrained environments.

Future research directions include tighter integration of molecular representation learning with hyperparameter optimization, development of problem-specific resource allocation strategies for chemical applications, and extension of the bandit framework to incorporate multi-fidelity information from computational chemistry methods of varying accuracy and cost.

For drug development professionals, this approach offers a systematic methodology for navigating the complex tradeoffs between exploration of chemical space and computational efficiency, potentially accelerating the discovery of novel therapeutic compounds while reducing resource requirements.

In the field of chemical informatics and molecular property prediction, deep learning models, including Deep Neural Networks (DNNs) and Convolutional Neural Networks (CNNs), have become indispensable tools. However, their performance is critically dependent on hyperparameter settings. Traditional optimization methods like grid and random search are often computationally expensive, creating a significant bottleneck in research and development workflows [1].

The Hyperband algorithm has emerged as a powerful solution, offering a radically more efficient approach to hyperparameter optimization (HPO). By strategically allocating resources to the most promising hyperparameter configurations, Hyperband can achieve optimal or near-optimal model accuracy in a fraction of the time required by other methods [1] [22]. This application note details the protocol for implementing Hyperband, demonstrating its profound impact on accelerating deep learning applications in chemistry.

Hyperband vs. Other HPO Methods: A Quantitative Comparison

Recent research directly compares Hyperband against other common HPO algorithms in chemical deep-learning tasks, highlighting its superior efficiency.

Table 1: Comparative Performance of HPO Algorithms in Molecular Property Prediction

| HPO Algorithm | Computational Efficiency | Prediction Accuracy (Sample RMSE) | Key Characteristic |

|---|---|---|---|

| Hyperband | Highest / Fastest [1] [22] | Optimal / Near-Optimal (e.g., RMSE of 15.68 K for Tg prediction) [1] | Early-stopping of poorly performing trials; best for time-limited projects [1]. |

| Random Search | Moderate [1] | Can be Excellent (e.g., Lowest RMSE of 0.0479 for MI prediction) [22] | Simple, parallelizable; can sometimes find excellent configurations [1]. |

| Bayesian Optimization | Lower / Slower [1] [22] | High [1] | Models the objective function; sample-efficient but can be computationally heavy [1]. |

| BOHB (Bayesian & Hyperband) | High [1] | High [1] | Combines Bayesian model-based sampling with Hyperband's resource efficiency [1]. |

The application of these algorithms in real-world case studies underscores Hyperband's advantage. In predicting the melt index (MI) of high-density polyethylene, Hyperband completed its tuning cycle in less than an hour, a fraction of the time required by other methods, while still delivering high accuracy [22]. For the more complex task of predicting polymer glass transition temperature (Tg) from SMILES strings, a Hyperband-optimized CNN model achieved a 22% reduction in error (relative to the dataset's standard deviation) and cut the mean absolute percentage error to just 3%, a significant improvement over the 6% reported in prior literature [1] [22].

Experimental Protocol for Hyperband-driven HPO

This section provides a detailed, step-by-step protocol for optimizing a deep learning model for molecular property prediction using the Hyperband algorithm, as implemented in the KerasTuner library [1].

Protocol: Hyperparameter Optimization with KerasTuner

Objective: To efficiently identify the optimal set of hyperparameters for a DNN or CNN model for accurate molecular property prediction.

Materials: Python environment with TensorFlow/Keras and KerasTuner installed; dataset of molecular structures (e.g., as SMILES strings or descriptors) and corresponding property values.

Define the Model Building Function:

- Create a function (`build_model(hp)`) that defines the model architecture and the hyperparameter search space.

- Within this function, use the `hp` object to declare which hyperparameters to tune and their ranges.

Instantiate the Hyperband Tuner:

- Configure the Hyperband tuner object, specifying the hypermodel, objective, and computational parameters.

Execute the Hyperparameter Search:

- Run the search, providing the training and validation datasets. The Hyperband algorithm will automatically manage the resource allocation and early-stopping.

Retrieve and Evaluate the Optimal Model:

- After the search completes, obtain the best hyperparameters and the corresponding model.
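The four steps of this protocol can be sketched with KerasTuner as follows. This is an illustrative outline under stated assumptions, not the code from the cited work: the search ranges, `N_FEATURES`, the directory and project names, and the helper `declare_search_space` are all hypothetical, and the framework imports are deferred into the functions so the search-space helper can be inspected without TensorFlow installed.

```python
N_FEATURES = 256  # hypothetical number of input descriptors per molecule


def declare_search_space(hp):
    """Step 1a: register tunable hyperparameters on a KerasTuner `hp` object."""
    num_layers = hp.Int("num_layers", min_value=1, max_value=4)
    return {
        "units": [hp.Int(f"units_{i}", min_value=32, max_value=512, step=32)
                  for i in range(num_layers)],
        "dropout": hp.Float("dropout", min_value=0.0, max_value=0.5, step=0.1),
        "learning_rate": hp.Float("learning_rate", min_value=1e-5,
                                  max_value=1e-2, sampling="log"),
    }


def build_model(hp):
    """Step 1b: build and compile a dense regression network from `hp`."""
    from tensorflow import keras  # deferred import, see lead-in

    space = declare_search_space(hp)
    model = keras.Sequential()
    model.add(keras.Input(shape=(N_FEATURES,)))
    for units in space["units"]:
        model.add(keras.layers.Dense(units, activation="relu"))
        model.add(keras.layers.Dropout(space["dropout"]))
    model.add(keras.layers.Dense(1))  # single regression target, e.g. Tg or MI
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=space["learning_rate"]),
        loss="mse",
        metrics=[keras.metrics.RootMeanSquaredError()],
    )
    return model


def run_hyperband_search(x_train, y_train, x_val, y_val, max_epochs=50):
    """Steps 2-4: instantiate the tuner, search, and rebuild the best model."""
    import keras_tuner as kt
    from tensorflow import keras

    tuner = kt.Hyperband(
        build_model,
        objective="val_loss",
        max_epochs=max_epochs,  # plays the role of R
        factor=3,               # plays the role of eta
        directory="hpo_runs",
        project_name="mpp_dnn",
    )
    stop_early = keras.callbacks.EarlyStopping(monitor="val_loss", patience=5)
    tuner.search(x_train, y_train, validation_data=(x_val, y_val),
                 callbacks=[stop_early])
    best_hp = tuner.get_best_hyperparameters(num_trials=1)[0]
    return best_hp, tuner.hypermodel.build(best_hp)
```

The rebuilt model is untrained; it should be fitted on the full training set with the selected hyperparameters and then evaluated once on a held-out test set.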

Diagram 1: Hyperband Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

Successful implementation of an efficient deep learning pipeline in chemistry relies on several key software tools and libraries.

Table 2: Key Research Reagents and Software Solutions

| Tool Name | Type | Function in HPO for Chemistry |

|---|---|---|

| KerasTuner | Python Library | Provides an intuitive, user-friendly interface for HPO, including Hyperband, random search, and Bayesian optimization [1]. |

| Optuna | Python Library | A flexible optimization framework that supports HPO, including the BOHB (Bayesian Optimization + Hyperband) algorithm [1]. |

| TensorFlow / Keras | Deep Learning Framework | The underlying backbone for building and training the DNN and CNN models that are being optimized [1] [23]. |

| Scikit-learn | Machine Learning Library | Used for data preprocessing, feature scaling, and train-test splitting prior to model training and HPO [24]. |

| Python | Programming Language | The primary language for integrating the above tools and executing the HPO workflow [1]. |

The Hyperband algorithm represents a paradigm shift in hyperparameter tuning for chemical deep learning. Its ability to drastically reduce computation time—from days to hours—while delivering highly accurate models directly addresses one of the most significant bottlenecks in computational chemistry and drug development [1] [22]. By adopting the detailed protocol and tools outlined in this application note, researchers can accelerate their model development cycles, enabling more rapid iteration and discovery in the quest for new materials and therapeutics.

Implementing Hyperband: A Step-by-Step Guide for Chemistry-Specific Deep Learning Models

In computational chemistry and drug development, the performance of Deep Neural Networks (DNNs) is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task [25]. The definition of an effective hyperparameter search space represents the foundational step in any optimization workflow, establishing the boundaries within which algorithms like Hyperband operate. This process is particularly crucial in chemistry applications where datasets often exhibit unique challenges including skewed distributions, wide feature ranges, multimodal behaviors, and frequent data scarcity [26] [27]. The strategic selection of hyperparameter ranges directly influences both the efficiency of the optimization process and the ultimate predictive performance of models on chemical properties, molecular activities, or spectroscopic analyses.

Core Hyperparameters in Chemistry Deep Learning: Ranges and Impact

The table below summarizes key hyperparameters, their typical search ranges in chemistry applications, and their impact on model performance and training dynamics.

Table 1: Core Hyperparameters for Chemistry Deep Learning Models

| Hyperparameter | Typical Search Range | Impact on Model Performance & Training | Chemistry-Specific Considerations |

|---|---|---|---|

| Learning Rate | 1e-5 to 1e-2 | Controls step size during gradient descent; too high causes instability, too low leads to slow convergence [28] | Critical for handling varying feature scales in chemical data (e.g., concentrations spanning orders of magnitude) [26] |

| Batch Size | 16 - 256 [28] | Affects training stability, gradient noise, and memory requirements; smaller batches may regularize | Limited by molecular graph complexity; large graphs require smaller batches due to memory constraints [29] |

| Number of Layers | 2 - 10+ (architecture-dependent) | Determines model capacity and feature abstraction depth; too few underfits, too many overfits | Varies by architecture: GNNs typically 3-8 message-passing layers [29], DNNs 3-10+ hidden layers [27] |

| Hidden Units/Dimensions | 64 - 1024 | Controls representational capacity; wider networks capture more complex relationships | Graph networks often use 64-256 dimensions for node/edge embeddings [29]; tabular networks 128-512 units/layer [26] |

| Dropout Rate | 0.0 - 0.5 | Regularization technique to prevent overfitting; higher rates increase regularization | Particularly important for small, imbalanced chemical datasets common in geochemistry and drug discovery [27] |

| Optimizer | Adam, SGD, RMSprop [28] | Adam combines momentum and adaptive learning rates; SGD with momentum explores loss landscape differently | Adam often preferred for chemistry tasks with noisy gradients; Polar Bear Optimizer shows promise for spectroscopy [30] |

Experimental Protocols for Hyperparameter Optimization in Chemistry Applications

Protocol 1: Hyperparameter Optimization for Graph Neural Networks in Molecular Property Prediction

Application Context: Optimizing GNNs for quantitative structure-property relationship (QSPR) modeling and molecular property prediction [25] [29].

Experimental Workflow:

- Data Preparation: Convert molecular structures to graph representations using tools like MatGL's graph converter [29]. Apply dataset splitting with consideration for chemical diversity (scaffold splitting).

- Search Space Definition: Establish the hyperparameter ranges based on architecture:

- Learning rate: Logarithmic sampling between 1e-5 and 1e-2

- Number of GNN layers: Integer uniform sampling between 3-8

- Hidden dimension: Categorical sampling from [64, 128, 256, 512]

- Dropout rate: Uniform sampling between 0.0-0.5

- Graph cutoff radius: Uniform sampling between 3-8 Å [29]

- Optimization Setup: Configure Hyperband with a reduction factor of η=3 so that underperforming configurations are pruned early, leveraging the fact that chemistry GNNs often show performance trends within a few epochs.

- Evaluation: Use nested cross-validation with an external test set; report the mean and standard deviation of key metrics (RMSE, R²) across folds.

Validation Metrics: For regression tasks (energy, solubility prediction): RMSE, MAE, R². For classification tasks (toxicity, activity prediction): ROC-AUC, precision-recall AUC.
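The evaluation step in this protocol reports fold-wise metrics as a mean and standard deviation. A minimal sketch of that aggregation in plain Python follows. The helper names `rmse`, `r2_score`, and `summarize_folds` are illustrative, and the fold data are made up for demonstration; they are not results from the cited studies.

```python
import math
import statistics

def rmse(y_true, y_pred):
    """Root-mean-square error between two equal-length sequences."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def summarize_folds(folds):
    """Return {metric: (mean, sample std)} over a list of (y_true, y_pred) folds."""
    scores = {
        "rmse": [rmse(t, p) for t, p in folds],
        "r2": [r2_score(t, p) for t, p in folds],
    }
    return {name: (statistics.mean(v), statistics.stdev(v)) for name, v in scores.items()}

# Made-up predictions for three outer folds (illustration only).
folds = [
    ([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8]),
    ([0.5, 1.5, 2.5, 3.5], [0.7, 1.4, 2.6, 3.1]),
    ([2.0, 3.0, 5.0, 6.0], [2.2, 2.7, 5.1, 6.3]),
]
summary = summarize_folds(folds)
for name, (mean, std) in summary.items():
    print(f"{name}: {mean:.3f} ± {std:.3f}")
```

Reporting the spread alongside the mean makes it easier to judge whether a difference between two tuned configurations exceeds fold-to-fold noise.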

Protocol 2: Hyperparameter Tuning for Spectroscopy Data Analysis with DNN/RNN Architectures

Application Context: Optimizing models for analyzing Laser-Induced Breakdown Spectroscopy (LIBS) data and other spectroscopic techniques [30].

Experimental Workflow:

- Data Preprocessing: Apply dimensionality reduction (e.g., bottleneck approach reducing features from 41,730 to 1,024) [30]. Normalize spectral data using StandardScaler or RobustScaler.

- Architecture-Specific Search Spaces:

  - For RNNs (Bi-LSTM, GRU): Hidden layers (1-4), units per layer (32-512), sequence processing direction (unidirectional/bidirectional)

  - For DNNs: Hidden layers (3-10), units per layer (128-1024), activation functions (ReLU, LeakyReLU)

- Advanced Optimization: Employ specialized optimizers like Polar Bear Optimizer (PBO) for enhanced convergence [30].

- Regularization Strategy: Combine dropout (0.1-0.5) with early stopping based on validation loss plateau (patience=20-50 epochs).

Validation Approach: Use leave-one-sample-out cross-validation for small datasets; train/validation/test splits for larger spectral collections.
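The patience-based early stopping described in the regularization step can be sketched as a small helper. This is an illustrative stand-in written in plain Python, not the API of any particular framework; the class name `EarlyStopping`, its `min_delta` argument, and the synthetic loss curve are assumptions made for the example.

```python
class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.epochs_without_improvement = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Synthetic loss curve: improves for five epochs, then plateaus.
losses = [1.0, 0.8, 0.6, 0.5, 0.45] + [0.46] * 10
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(losses, start=1):
    if stopper.step(loss):
        stopped_at = epoch
        break
print(stopped_at, stopper.best_loss)
```

A short patience is used here only to keep the example small. When such a rule is combined with Hyperband, the epoch budget assigned to a configuration acts as an upper bound, and the patience rule can only end training earlier.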

Protocol 3: Handling Imbalanced Geochemical Data with Uncertainty-Aware DNNs

Application Context: Predicting trace element concentrations from major element data with highly skewed distributions [27].

Experimental Workflow:

- Data Resampling: Apply Synthetic Minority Over-sampling Technique for Regression with Gaussian Noise (SMOGN) to address data imbalance [27].

- Statistical Transformation: Implement Yeo-Johnson, Box-Cox, or square root transformations for heavily skewed target variables.

- Uncertainty Quantification: Train an ensemble of 1000 DNN models with different random initializations to capture epistemic uncertainty [27].

- Hyperparameter Search Space:

  - Learning rate: Logarithmic sampling (1e-5 to 1e-3)

  - Hidden layers: 3-8 with 64-256 units each

  - Batch size: 16-64 (smaller batches for limited data)

  - L2 regularization: Logarithmic sampling (1e-5 to 1e-2)

- Model Interpretation: Compute Accumulated Local Effects (ALE) scores to identify influential input features (e.g., Li, Fe, pH, Mg concentrations) [27].
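The uncertainty quantification step above relies on the spread of predictions across ensemble members. The sketch below shows that idea with toy stand-ins: each "model" is a linear function whose weights depend on a random seed, and the ensemble has 50 members rather than the 1000 used in the cited work. The names `ensemble_predict` and `make_toy_member` are hypothetical.

```python
import random
import statistics

def ensemble_predict(models, x):
    """Return (mean, sample std) of the ensemble members' predictions at input x."""
    predictions = [model(x) for model in models]
    return statistics.mean(predictions), statistics.stdev(predictions)

def make_toy_member(seed):
    """Stand-in for one trained DNN: a linear map whose weights depend on the seed."""
    rng = random.Random(seed)
    slope = 2.0 + rng.gauss(0.0, 0.1)
    intercept = 1.0 + rng.gauss(0.0, 0.1)
    return lambda x: slope * x + intercept

models = [make_toy_member(seed) for seed in range(50)]
mean_near, std_near = ensemble_predict(models, 0.5)   # input close to the toy "training" range
mean_far, std_far = ensemble_predict(models, 10.0)    # extrapolated input
print(f"near: {mean_near:.2f} ± {std_near:.2f}, far: {mean_far:.2f} ± {std_far:.2f}")
```

In this toy setting the members disagree more at the extrapolated input, which is the behavior an ensemble-based uncertainty estimate is meant to expose.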

Workflow Visualization: Hyperparameter Optimization with Hyperband for Chemistry DNNs

The following diagram illustrates the complete Hyperband optimization workflow tailored for chemistry deep learning applications:

Table 2: Essential Research Reagents and Computational Tools for Chemistry Deep Learning

| Tool/Resource | Type | Function in Chemistry Deep Learning | Example Applications |

|---|---|---|---|

| MatGL [29] | Software Library | Open-source graph deep learning library with pre-trained foundation potentials | Materials property prediction, interatomic potential development |

| PyTorch Geometric [25] | Software Framework | Library for deep learning on graphs and irregular structures | Molecular graph networks, 3D structure processing |

| Polar Bear Optimizer [30] | Optimization Algorithm | Specialized optimizer for enhancing prediction accuracy in spectral analysis | LIBS spectral quantification, spectroscopy data processing |

| SMOGN [27] | Data Preprocessing | Synthetic minority over-sampling technique for regression with Gaussian noise | Handling imbalanced geochemical data, trace element prediction |

| DGL [29] | Software Library | Deep Graph Library providing efficient graph neural network implementations | Large-scale molecular graph processing, message-passing networks |

| REINVENT [31] | Software Platform | Reinforcement learning framework for de novo drug design | Molecular generation, chemical space exploration |

| Hyperband | Optimization Algorithm | Efficient hyperparameter optimization using early-stopping and successive halving | Rapid architecture search for chemistry DNNs/GNNs |

Defining an appropriate search space for hyperparameters represents a critical first step in optimizing deep learning models for chemistry applications. The unique characteristics of chemical data—including multi-scale properties, skewed distributions, and frequent data scarcity—necessitate domain-aware search boundaries and optimization strategies. As automated optimization techniques continue to evolve, their integration with chemistry-specific domain knowledge will be essential for advancing predictive modeling in materials science, drug discovery, and molecular engineering [25]. The protocols and guidelines presented here provide a foundation for researchers to systematically approach hyperparameter optimization while accounting for the distinctive challenges presented by chemical data. Future directions in this field will likely involve increased integration of physical constraints directly into model architectures and optimization processes, further bridging the gap between data-driven and physics-based modeling approaches in chemistry.

Within the domain of chemistry deep learning, the optimization of hyperparameters is not merely a technical pre-processing step but a critical determinant of model success, influencing the accuracy of molecular property prediction (MPP) and the efficiency of drug discovery pipelines [1]. For researchers and scientists, manually tuning these hyperparameters is often a vast and time-consuming endeavor, a challenge that the Hyperband algorithm addresses through its efficient, bandit-based approach to hyperparameter optimization [14]. The efficacy of Hyperband hinges on its two core components: get_hyperparameter_configuration and run_then_return_val_loss [12] [11]. This article provides detailed application notes and experimental protocols for implementing these components, specifically tailored for developing accurate and efficient deep learning models in chemical and pharmaceutical research.

Hyperband is designed to accelerate the hyperparameter search by dynamically allocating computational resources through an early-stopping strategy. It functions by treating hyperparameter optimization as a pure-exploration, non-stochastic infinite-armed bandit problem [14]. The algorithm's outer loop hedges over different trade-offs between exploring many configurations (n) and evaluating them in depth (r), while its inner loop executes the Successive Halving subroutine [12] [11].

The underlying principle is intuitive: a hyperparameter configuration destined to be the best after extensive training is likely to be in the top half of performers after a small number of iterations. Hyperband exploits this by quickly discarding poor performers and channeling resources to more promising candidates [12]. This methodology has been shown to provide over an order-of-magnitude speedup compared to other methods on various deep-learning problems, making it exceptionally suitable for computationally expensive chemistry models [14].
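The discard-and-promote principle can be sketched as a single Successive Halving run. This is a simplified illustration rather than a reference implementation: `toy_val_loss` is a hypothetical loss curve whose ranking does not change with training length (the optimistic case the principle relies on), and the 27 sampled learning rates are arbitrary.

```python
import math
import random

def successive_halving(configs, evaluate, min_resource=1, eta=3):
    """Repeatedly keep the best 1/eta of configs, multiplying the resource by eta."""
    survivors = list(configs)
    resource = min_resource
    while len(survivors) > 1:
        # Rank every surviving configuration by validation loss at this resource.
        ranked = sorted(survivors, key=lambda cfg: evaluate(cfg, resource))
        survivors = ranked[:max(1, len(ranked) // eta)]
        resource *= eta
    return survivors[0]

def toy_val_loss(config, resource):
    """Hypothetical loss curve: a floor set by the learning rate plus a 1/epochs term."""
    floor = abs(math.log10(config["lr"]) + 3.0)  # toy optimum at lr = 1e-3
    return floor + 1.0 / resource

rng = random.Random(0)
configs = [{"lr": 10 ** rng.uniform(-5, -2)} for _ in range(27)]

spent = []  # epochs consumed by each individual evaluation

def tracked_loss(config, resource):
    spent.append(resource)
    return toy_val_loss(config, resource)

best = successive_halving(configs, tracked_loss)
print(best, sum(spent))
```

With 27 configurations and η=3, the rounds evaluate 27, 9, and 3 configurations at 1, 3, and 9 epochs, for 81 epochs in total, compared with 243 epochs if all 27 were trained for 9 epochs each.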

Table 1: Key Parameters of the Hyperband Algorithm

| Parameter | Symbol | Description | Recommended Value |

|---|---|---|---|

| Maximum Resource | R | The maximum number of iterations/epochs allocated to a single configuration. | Set based on available computational resources; the number of epochs you would typically use for a final model [12] [11]. |

| Downsampling Rate | η (eta) | The reduction factor in Successive Halving: only the best 1/η of configurations survive each round. | 3 or 4; the algorithm's performance is not highly sensitive to this value [12] [11]. |

| Brackets | s_max | Index of the most aggressive bracket; Hyperband runs s_max + 1 distinct executions of Successive Halving. | Calculated as int(log_eta(R)) [12]. |
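To make the roles of R, η, and s_max concrete, the sketch below enumerates the bracket schedule they imply. It uses integer floor rounding for the number of configurations per bracket, which is one common convention; rounding details differ between implementations, so exact counts may vary slightly elsewhere. The function name `hyperband_schedule` is illustrative.

```python
def hyperband_schedule(max_resource, eta=3):
    """Return {bracket s: [(n_i, r_i), ...]} listing configs and epochs per round."""
    # Largest s with eta**s <= max_resource, found with integers to avoid float log error.
    s_max = 0
    while eta ** (s_max + 1) <= max_resource:
        s_max += 1
    schedule = {}
    for s in range(s_max, -1, -1):
        n = ((s_max + 1) // (s + 1)) * eta ** s   # initial number of configurations
        r = max_resource // eta ** s              # initial epochs per configuration
        # Each round keeps 1/eta of the configurations and gives them eta times the epochs.
        schedule[s] = [(n // eta ** i, r * eta ** i) for i in range(s + 1)]
    return schedule

for s, rounds in hyperband_schedule(max_resource=81, eta=3).items():
    print(f"bracket s={s}: {rounds}")
```

For R=81 and η=3 this yields five brackets (s = 4 down to 0). The most aggressive bracket starts 81 configurations at 1 epoch each, while the most conservative trains 5 configurations for the full 81 epochs with no early stopping.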

Core Component I: get_hyperparameter_configuration

Function Definition and Purpose

The get_hyperparameter_configuration(n) function is responsible for uniformly sampling n independent and identically distributed (i.i.d.) hyperparameter configurations from a predefined search space [11]. This function directly controls the exploration phase of Hyperband, determining the initial set of candidate models that will be evaluated. For research in chemistry deep learning, the definition of this search space is paramount, as it encapsulates the prior knowledge and hypotheses about which hyperparameter ranges are likely to yield high-performing models for a given task, such as predicting the elastic properties of composites or the efficacy of a drug molecule [32].

Protocol for Defining the Search Space in Chemistry Models

A carefully constructed search space is critical for efficient optimization. Below is a step-by-step protocol for defining hyperparameter distributions for a dense Deep Neural Network (DNN) used in molecular property prediction.

Table 2: Example Hyperparameter Search Space for a Chemistry DNN

| Hyperparameter | Type | Scale | Range/Choices | Function in Model |

|---|---|---|---|---|

| Learning Rate | Continuous | Logarithmic | 1e-5 to 1e-2 | Controls the step size during gradient-based optimization; crucial for convergence [12] [1]. |

| Number of Layers | Integer | Linear | 2 to 6 | Determines the depth and capacity of the neural network to learn complex molecular representations [1]. |

| Units per Layer | Integer | Linear | 32 to 512 | Defines the width of each layer, influencing the model's ability to capture intricate features in molecular data [1]. |

| Batch Size | Integer | Logarithmic | 16 to 256 | Affects the stability and speed of the learning process, as well as memory requirements [12] [1]. |

| Dropout Rate | Continuous | Linear | 0.0 to 0.5 | A regularization technique to prevent overfitting, which is common in high-dimensional, limited chemical datasets [1]. |

| Activation Function | Categorical | - | ReLU, LeakyReLU, tanh | Introduces non-linearity, allowing the network to learn complex relationships in molecular structures [1]. |

Step-by-Step Protocol:

- Identify Critical Hyperparameters: Based on the model architecture (e.g., DNN, CNN-BiLSTM) and the chemistry-specific task, select hyperparameters that most significantly impact performance. For instance, learning rate and network architecture parameters are universally important [1].

- Define Parameter Scales and Ranges: For each hyperparameter, specify its scale (linear or log) and a plausible range. Use log-scale for parameters like learning rate that span several orders of magnitude, and linear for others like the number of units [12].

- Implement the Sampling Function: The function should return n configurations, each a set of hyperparameters randomly sampled from the defined distributions. Uniform sampling is standard and guarantees consistency [11].
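A minimal sketch of such a sampling function for the search space in Table 2 is given below, using only the Python standard library. The `SEARCH_SPACE` dictionary layout, the kind labels (`"log_float"`, `"log_int"`, and so on), and the optional `rng` argument are assumptions made for illustration; tuning libraries expose their own search-space APIs.

```python
import math
import random

# Search space from Table 2: name -> (kind, low, high) or ("choice", options).
SEARCH_SPACE = {
    "learning_rate": ("log_float", 1e-5, 1e-2),
    "num_layers": ("int", 2, 6),
    "units_per_layer": ("int", 32, 512),
    "batch_size": ("log_int", 16, 256),
    "dropout_rate": ("float", 0.0, 0.5),
    "activation": ("choice", ["relu", "leaky_relu", "tanh"]),
}

def sample_value(spec, rng):
    """Draw one value according to the parameter's kind and scale."""
    kind = spec[0]
    if kind == "choice":
        return rng.choice(spec[1])
    low, high = spec[1], spec[2]
    if kind == "float":
        return rng.uniform(low, high)
    if kind == "int":
        return rng.randint(low, high)  # inclusive on both ends
    if kind in ("log_float", "log_int"):
        # Uniform in log space, then clamped to guard against rounding at the edges.
        draw = math.exp(rng.uniform(math.log(low), math.log(high)))
        draw = min(high, max(low, draw))
        return draw if kind == "log_float" else min(high, max(low, round(draw)))
    raise ValueError(f"unknown parameter kind: {kind}")

def get_hyperparameter_configuration(n, rng=None):
    """Return n independently sampled configurations as dictionaries."""
    rng = rng or random.Random()
    return [
        {name: sample_value(spec, rng) for name, spec in SEARCH_SPACE.items()}
        for _ in range(n)
    ]

for config in get_hyperparameter_configuration(3, random.Random(42)):
    print(config)
```

Sampling the learning rate and batch size uniformly in log space gives each order of magnitude equal probability, which matches the scale column of the table.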

Core Component II: run_then_return_val_loss

Function Definition and Purpose

The run_then_return_val_loss(t, r) function is the workhorse of the Hyperband algorithm, responsible for evaluating a given hyperparameter configuration t with a specified amount of resource r (e.g., a number of epochs) [12] [11]. It returns a validation loss, which is the metric used by Successive Halving to rank configurations and eliminate the worst performers. For chemistry models, this function typically involves training the model for r iterations and computing its loss on a held-out validation set of molecular data.

Protocol for the Training and Evaluation Loop

This protocol outlines the key steps for implementing run_then_return_val_loss for a deep learning model, such as one predicting polymer properties.

Step-by-Step Protocol:

- Model Initialization: Instantiate a new model instance (e.g., a DNN or CNN-BiLSTM) using the hyperparameter configuration t. This ensures a fresh training state for each new configuration [1].

- Resource Allocation: The resource r is interpreted as the number of training epochs, where each epoch is one full pass over the training data in minibatches.

- Iterative Training: Train the model for exactly r epochs. For efficiency, the function should be able to resume training from a checkpoint when Hyperband schedules a larger resource level for the same configuration in a later round [12].

- Loss Calculation: After r epochs, compute the model's performance on a separate validation set. Ranking by validation loss (e.g., Mean Squared Error for regression, Cross-Entropy for classification) rather than training loss avoids rewarding configurations that merely overfit the training data and provides a fair estimate of generalization [1].

- Return Validation Loss: Return the computed validation loss to the Hyperband algorithm. A lower loss indicates a better configuration.

Integrated Workflow and Visualization

The interplay between get_hyperparameter_configuration and run_then_return_val_loss is orchestrated by the Hyperband algorithm's nested loops. The following diagram and table illustrate this integrated workflow and the key tools required for its implementation.

Table 3: The Scientist's Toolkit for Hyperband Implementation

| Tool / Reagent | Category | Function in Hyperband Workflow | Example Solutions |

|---|---|---|---|

| Hyperparameter Tuner | Software Library | Provides a high-level API to implement Hyperband, managing loops, resource allocation, and result tracking. | KerasTuner [1] [11], Optuna [1], Scikit-optimize [33] |