Hyperparameter Optimization for Chemical Machine Learning: A Practical Guide for Drug Discovery

This article provides a comprehensive introduction to Hyperparameter Optimization (HPO) for chemical machine learning (ML) models, a critical step for enhancing prediction accuracy in drug discovery.

Abstract

This article provides a comprehensive introduction to Hyperparameter Optimization (HPO) for chemical machine learning (ML) models, a critical step for enhancing prediction accuracy in drug discovery. Tailored for researchers and drug development professionals, it covers the foundational role of HPO in predicting molecular properties and drug-target interactions. The scope extends from core concepts and a comparison of HPO algorithms like Hyperband and Bayesian optimization to their practical application in pipelines for tasks such as molecular property prediction. It further addresses advanced strategies for overcoming computational challenges and includes a framework for the rigorous validation and comparative analysis of optimized models to ensure robust, reliable performance in biomedical research.

Why Hyperparameter Optimization is a Keystone of Chemical Machine Learning

In the development of machine learning (ML) models for chemical sciences, such as predicting molecular properties or optimizing reaction conditions, configuring the learning algorithm is as crucial as the data itself. This configuration hinges on understanding two distinct classes of variables: model parameters and hyperparameters. The precise distinction between them forms the foundational knowledge required for effective model tuning and, ultimately, for achieving state-of-the-art performance in applications like drug discovery and material design [1] [2].

This guide provides an in-depth technical explanation of model parameters and hyperparameters, framed within the context of hyperparameter optimization (HPO) for chemical machine learning. We will define these concepts, illustrate their differences, and detail modern methodologies for optimizing hyperparameters to enhance the efficiency and accuracy of deep chemical models.

Core Definitions and Conceptual Distinctions

What are Model Parameters?

Model parameters are internal variables that the machine learning model learns autonomously from the training data. They are not set manually by the practitioner but are instead estimated or learned by the optimization algorithm (e.g., Gradient Descent, Adam) during the training process [3] [4]. These parameters quantitatively capture the relationships between input features and the target output.

Examples in different models:

- Linear Regression: The slope (m) and intercept (c) of the regression line [3] [5].

- Neural Networks: The weights and biases connecting the neurons across different layers [3] [1].
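As a minimal illustration, the sketch below estimates m and c for a straight line using NumPy's least-squares solver. It assumes synthetic data generated with a true slope of 2 and intercept of 1. Neither value is set by hand; both are learned from the data.

```python
import numpy as np

# Synthetic data from y = 2x + 1 plus small Gaussian noise (illustrative assumption)
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 10.0, size=200)
y = 2.0 * x + 1.0 + rng.normal(0.0, 0.1, size=x.size)

# Design matrix [x, 1]; the least-squares solution is the learned parameter vector (m, c)
A = np.column_stack([x, np.ones_like(x)])
(m, c), *_ = np.linalg.lstsq(A, y, rcond=None)

print(f"learned slope m = {m:.3f}, intercept c = {c:.3f}")
```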

What are Hyperparameters?

Hyperparameters are external configuration variables that control the overarching behavior of the learning algorithm. They are set before the training process begins and remain fixed throughout it. These variables govern the process of learning itself, influencing how the model parameters are estimated [6] [3] [4].

Examples in different models:

- General Learning Algorithms: Learning rate, number of training iterations (epochs), and batch size [3] [1].

- Neural Networks: Number of hidden layers, number of neurons per layer, choice of activation function, and dropout rate [1] [4].

- Tree-based Models: Maximum depth of the tree, minimum samples per leaf, and the criterion for splitting (e.g., Gini or entropy) [6] [5].
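Hyperparameters, by contrast, are passed to the model before training. The sketch below uses scikit-learn's `DecisionTreeClassifier` on a synthetic dataset, which stands in as an assumption for real molecular descriptors. `max_depth`, `min_samples_leaf` and `criterion` are fixed up front. The split thresholds are learned during `fit` and play the role of model parameters.

```python
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier

# Toy classification data standing in for molecular descriptors (illustrative assumption)
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# Hyperparameters: chosen before training and kept fixed while the tree is grown
clf = DecisionTreeClassifier(max_depth=3, min_samples_leaf=5,
                             criterion="entropy", random_state=0)

# Training learns the tree's split features and thresholds (its "parameters")
clf.fit(X, y)

print("max_depth hyperparameter:", clf.get_params()["max_depth"])
print("depth of the fitted tree:", clf.get_depth())
print("first learned split thresholds:", clf.tree_.threshold[:3])
```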

The following diagram illustrates the fundamental relationship between data, hyperparameters, the learning algorithm, and the resulting model parameters.

The table below provides a structured comparison to crystallize the differences.

Table 1: A comparative summary of model parameters versus hyperparameters.

| Aspect | Model Parameters | Hyperparameters |
|---|---|---|
| Definition | Internal variables learned from data [3]. | External configuration variables set before training [3]. |
| Purpose | Used to make predictions on new data [3]. | Control the learning process and how parameters are estimated [3]. |
| Determination | Learned automatically by optimization algorithms during training [3] [4]. | Set manually by the researcher or determined via HPO [3] [4]. |
| Examples | Weights in a neural network; coefficients in linear regression [3] [5]. | Learning rate; number of layers in a neural network; regularization strength [3] [1]. |
| Influence | Determine the performance of the final model on unseen data [3]. | Determine the efficiency and effectiveness of the training process [3]. |

The Critical Role of Hyperparameters in Chemical Machine Learning

In scientific machine learning, particularly in chemistry, the cost of data acquisition can be high and models must be both accurate and generalizable. Proper hyperparameter tuning is not merely a technical step but a fundamental research activity for several reasons:

- Improved Model Performance: Finding the optimal combination of hyperparameters can significantly boost model accuracy and robustness, which is critical for reliable molecular property prediction (MPP) [6] [1].

- Prevention of Overfitting and Underfitting: Chemical data can be complex and high-dimensional. Tuning hyperparameters like regularization strength or network size helps create a well-balanced model that generalizes well to new, unseen molecules [6] [5].

- Optimized Resource Utilization: Training large-scale chemical models like deep neural networks is computationally intensive. Efficient HPO avoids unnecessary work and can lead to massive savings in time and compute resources [6] [2]. A study on deep chemical models highlighted that HPO is often the most resource-intensive step in model training, making its efficiency paramount [1].
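The overfitting/underfitting trade-off can be seen numerically in the NumPy sketch below. It fits a deliberately flexible degree-9 polynomial with ridge regularization to a small noisy dataset and sweeps the regularization strength `alpha`. The data, polynomial degree and `alpha` grid are illustrative assumptions. Training error can only grow as `alpha` increases. Validation error typically falls and then rises again, and the `alpha` at its minimum is the value an HPO procedure would select.

```python
import numpy as np

rng = np.random.default_rng(42)

def make_data(n):
    # Noisy 1-D signal; a stand-in for a small, expensive dataset (assumption)
    x = rng.uniform(-1.0, 1.0, n)
    return x, np.sin(3.0 * x) + rng.normal(0.0, 0.2, n)

def poly_features(x, degree=9):
    # Deliberately over-flexible model: polynomial features up to x**degree
    return np.vander(x, degree + 1, increasing=True)

def fit_ridge(X, y, alpha):
    # Closed-form ridge regression: w = (X^T X + alpha * I)^(-1) X^T y
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def mse(X, y, w):
    return float(np.mean((X @ w - y) ** 2))

x_tr, y_tr = make_data(30)
x_va, y_va = make_data(200)
X_tr, X_va = poly_features(x_tr), poly_features(x_va)

alphas = [1e-6, 1e-3, 1e-1, 1e1, 1e3]  # regularization strengths to compare
train_err, val_err = [], []
for a in alphas:
    w = fit_ridge(X_tr, y_tr, a)
    train_err.append(mse(X_tr, y_tr, w))
    val_err.append(mse(X_va, y_va, w))
    print(f"alpha={a:g}: train MSE={train_err[-1]:.3f}, validation MSE={val_err[-1]:.3f}")

best_alpha = alphas[int(np.argmin(val_err))]
print("alpha with the lowest validation error:", best_alpha)
```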

Techniques for Hyperparameter Optimization (HPO)

The process of finding the optimal set of hyperparameters is known as Hyperparameter Optimization (HPO). Several strategies have been developed, ranging from brute-force to sophisticated learning-based approaches [6] [7].

Common HPO Algorithms

Table 2: Summary of key Hyperparameter Optimization (HPO) techniques and their characteristics.

| Technique | Core Principle | Advantages | Disadvantages |
|---|---|---|---|
| Grid Search [6] | Exhaustively searches over a predefined set of hyperparameter values. | Guaranteed to find the best combination within the grid. | Computationally prohibitive for high-dimensional spaces; inefficient. |
| Random Search [6] | Randomly samples hyperparameter combinations from defined distributions. | More efficient than Grid Search; better at exploring large spaces. | No guarantee of finding the optimum; can miss important regions. |
| Bayesian Optimization [6] [1] | Builds a probabilistic model (surrogate) of the objective function to guide the search. | Smarter and more sample-efficient than random/grid search. | Higher computational overhead per trial; complex to implement. |
| Hyperband [1] | Uses an adaptive resource allocation and early-stopping strategy to speed up random search. | High computational efficiency; fast identification of promising configurations. | Does not use a predictive model like Bayesian optimization. |
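The practical difference between grid and random search shows up even on a toy problem. In the stdlib-only sketch below, the objective is hypothetical and depends almost entirely on the learning rate. Both strategies get the same budget of nine trials. The 3 x 3 grid only ever tries three learning rates, while random search tries nine distinct ones.

```python
import itertools
import random

def toy_validation_loss(lr, dropout):
    # Hypothetical objective: dominated by the learning rate, dropout barely matters
    return (lr - 0.0031) ** 2 * 1e4 + 0.001 * dropout

budget = 9  # same number of trials for both strategies

# Grid search: a 3 x 3 grid, so only 3 distinct learning rates are ever evaluated
grid_lrs = [1e-4, 1e-3, 1e-2]
grid_dropouts = [0.0, 0.25, 0.5]
grid_trials = list(itertools.product(grid_lrs, grid_dropouts))

# Random search: 9 independent draws (log-uniform learning rate, uniform dropout)
rng = random.Random(0)
random_trials = [(10 ** rng.uniform(-4, -2), rng.uniform(0.0, 0.5)) for _ in range(budget)]

for name, trials in [("grid", grid_trials), ("random", random_trials)]:
    best = min(trials, key=lambda t: toy_validation_loss(*t))
    n_lrs = len({lr for lr, _ in trials})
    print(f"{name}: {len(trials)} trials, {n_lrs} distinct learning rates, best={best}")
```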

Recent research on HPO for deep neural networks in molecular property prediction has concluded that the Hyperband algorithm is particularly advantageous due to its computational efficiency, providing results that are optimal or nearly optimal in terms of prediction accuracy [1]. Another promising approach is the combination of Bayesian Optimization with Hyperband (BOHB), which aims to leverage the strengths of both methods [1].

Practical HPO Workflow

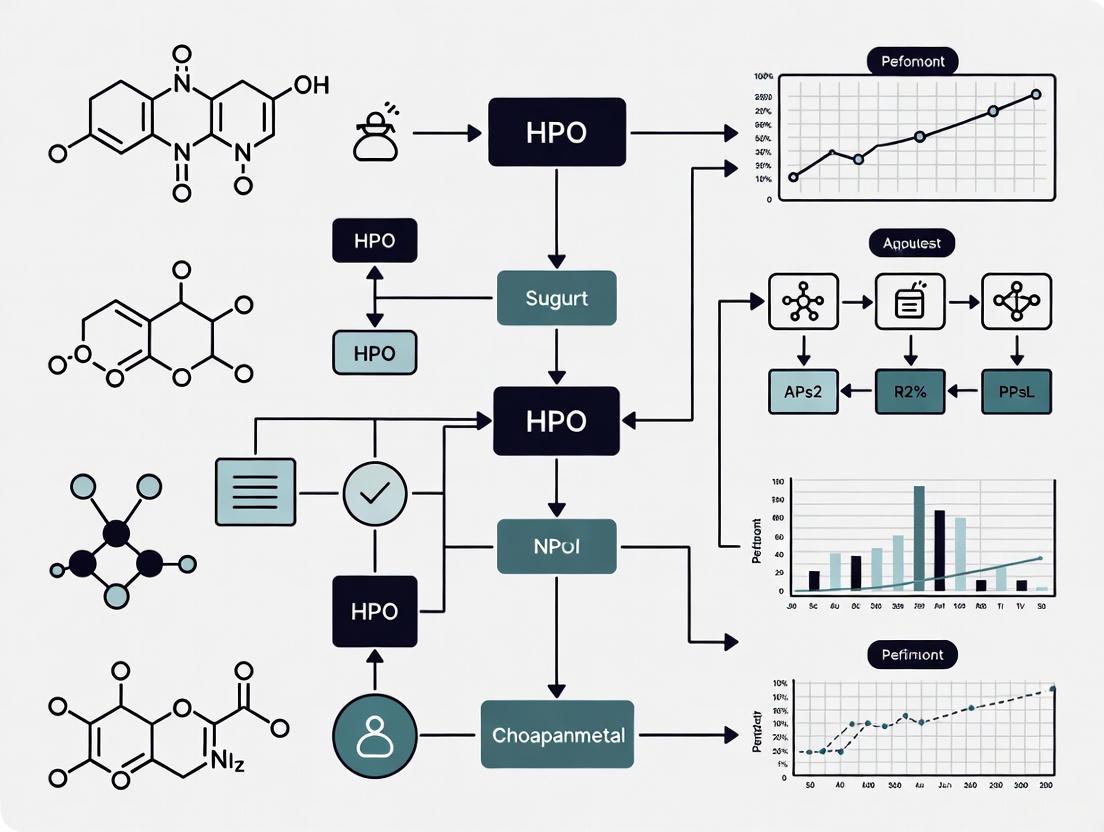

A standardized workflow for HPO is essential for reproducible and successful results in chemical ML research. The following diagram outlines a generalized protocol for conducting HPO, from problem definition to model deployment.

Experimental Protocols and Research Reagents for HPO

Case Study: Accelerated HPO for Large Chemical Models

A seminal study on the neural scaling of deep chemical models introduced a methodology called Training Performance Estimation (TPE) to drastically accelerate HPO [2]. This is critical when dealing with large models and datasets where full training is computationally expensive.

Methodology:

- Objective: Quickly identify hyperparameter settings (e.g., learning rate, batch size) that lead to optimal model convergence without completing the full training.

- Procedure: Train multiple model instances with different hyperparameter configurations for only a small fraction of the total training budget (e.g., 10-20% of the total epochs).

- Estimation: Use the learning curves from this short training to predict the final performance of each configuration. The study achieved a high rank correlation (Spearman's ρ = 1.0 for ChemGPT) between predicted and final loss, allowing for the early discarding of poor configurations [2].

- Outcome: This approach reduced the total time and compute budgets for HPO by up to 90%, enabling the subsequent large-scale scaling experiments for ChemGPT and graph neural network interatomic potentials [2].
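The estimation idea can be sketched in a few lines. This is a simplification under stated assumptions, not the estimator used in the cited study:

- the learning curves are synthetic power laws;
- the "prediction" of final performance is simply the loss after 20% of the epochs;
- Spearman's rank correlation is implemented directly, assuming no ties. It measures how well the early ranking matches the final one before the worst configurations are discarded.

```python
import random

def spearman_rho(a, b):
    # Spearman rank correlation; assumes no tied values in either list
    def ranks(values):
        order = sorted(range(len(values)), key=lambda i: values[i])
        r = [0] * len(values)
        for rank, i in enumerate(order):
            r[i] = rank
        return r
    ra, rb = ranks(a), ranks(b)
    n = len(a)
    d2 = sum((x - y) ** 2 for x, y in zip(ra, rb))
    return 1.0 - 6.0 * d2 / (n * (n * n - 1))

def learning_curve(floor, scale, decay, epochs):
    # Hypothetical power-law training curve: loss(t) = floor + scale * t**(-decay)
    return [floor + scale * t ** (-decay) for t in range(1, epochs + 1)]

rng = random.Random(7)
total_epochs, fraction = 100, 0.2
configs = [{"floor": rng.uniform(0.1, 1.0), "scale": rng.uniform(0.5, 1.5),
            "decay": rng.uniform(0.3, 0.8)} for _ in range(12)]
curves = [learning_curve(epochs=total_epochs, **c) for c in configs]

cutoff = int(fraction * total_epochs)
predicted = [curve[cutoff - 1] for curve in curves]  # loss after 20% of the epochs
final = [curve[-1] for curve in curves]              # loss after the full budget

rho = spearman_rho(predicted, final)
# Keep the most promising quarter of configurations for full training
keep = sorted(range(len(configs)), key=lambda i: predicted[i])[: len(configs) // 4]
print(f"Spearman rho between early and final loss: {rho:.2f}")
print("configurations kept for full training:", keep)
```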

The Scientist's Toolkit: Essential Software for HPO

Implementing advanced HPO algorithms requires robust software libraries. The table below details key tools that have become essential in the modern chemical ML researcher's toolkit.

Table 3: Key software tools and platforms for Hyperparameter Optimization.

| Tool / Library | Type | Key Features | Recommended Use Case |
|---|---|---|---|
| Optuna [8] [9] | Open-source Python framework | Define-by-run API; efficient sampling & pruning algorithms; supports distributed optimization [9]. | General-purpose HPO for ML/DL; user-friendly for Python developers. |
| KerasTuner [1] | Open-source Python library | Intuitive, user-friendly, and easy to code; integrates seamlessly with Keras and TensorFlow. | HPO for dense DNNs and CNNs, particularly in chemical ML [1]. |
| Ray Tune [9] | Open-source Python library | Scalable to distributed computing; integrates with many optimization libraries (Ax, HyperOpt, etc.). | Large-scale HPO requiring distributed computing across multiple nodes/GPUs. |
| HyperOpt [9] | Open-source Python library | Bayesian optimization using Tree of Parzen Estimators (TPE); supports conditional search spaces. | HPO over complex, conditional parameter spaces. |

The distinction between model parameters and hyperparameters is a fundamental concept in machine learning. For researchers in chemistry and drug development, mastering this distinction and the subsequent practice of hyperparameter optimization is no longer optional but a prerequisite for building competitive and reliable models. As chemical models grow in size and complexity, exemplified by billion-parameter networks, the adoption of efficient, automated HPO methodologies—such as Hyperband and Bayesian Optimization—becomes critical to harness the full potential of deep learning for scientific discovery. By leveraging modern software frameworks and accelerated protocols, scientists can systematically navigate the hyperparameter space, leading to more accurate, robust, and generalizable chemical models that accelerate innovation.

The Critical Impact of HPO on Prediction Accuracy in Molecular Property Prediction

The escalating energy crisis and the demands of modern drug discovery have intensified the search for highly functional organic compounds, making the accurate prediction of molecular properties more critical than ever [10]. Traditional trial-and-error methods for discovering these compounds are notoriously expensive and time-consuming, creating an urgent need for efficient computational approaches [10]. In this context, Hyperparameter Optimization (HPO) has emerged as a pivotal process in machine learning (ML) that significantly affects prediction accuracy, especially for Molecular Property Prediction (MPP) [11] [1].

HPO refers to the automated process of selecting the values of all relevant hyperparameters before the training phase so that the model achieves the best possible performance on a dataset within a reasonable time [1]. In deep learning, hyperparameters fall broadly into two types:

(1) those describing the structural configuration of Deep Neural Networks (DNNs), such as the number of layers, neurons per layer, and activation functions;

(2) those associated with the learning algorithm, such as learning rate, number of epochs, and batch size [1].

The values chosen for these hyperparameters strongly affect how well a neural network model can perform.

Despite its importance, HPO is often the most resource-intensive step in model training. As a result, many prior MPP studies have paid limited attention to it [1]. This neglect typically leaves predictive accuracy below what the model could achieve. As Boldini et al. concluded from their comprehensive evaluation, "the relevance of each hyperparameter varies greatly across datasets and that it is crucial to optimize as many hyperparameters as possible to maximize the predictive performance" [1]. The transition from manual trial-and-error hyperparameter adjustment to automated HPO represents a fundamental shift toward more robust, accurate, and efficient molecular property prediction.

The Necessity of HPO in MPP: Overcoming Traditional Limitations

Limitations of Manual Hyperparameter Tuning

Traditional approaches to hyperparameter tuning in machine learning models have relied heavily on manual adjustment through trial and error. This method presents significant limitations that are particularly pronounced in the complex domain of molecular property prediction. Manual tuning is inherently subjective and often results in only locally optimal solutions rather than globally optimal configurations [11]. The process is exceptionally time-consuming, requiring extensive computational resources and expert knowledge, which creates substantial bottlenecks in model development pipelines [1]. Furthermore, the manual approach struggles to explore the complex, high-dimensional hyperparameter spaces with interactions that are difficult to understand, making it virtually impossible to exhaustively search the entire parameter space [11] [1].

The consequences of inadequate hyperparameter optimization are clearly demonstrated in comparative studies. As shown in Table 1, models without proper HPO consistently deliver suboptimal performance across various molecular property prediction tasks. This performance gap becomes increasingly critical in applications with real-world implications, such as drug discovery and materials science, where accurate predictions can significantly accelerate research and development timelines.

Table 1: Comparative Performance of ML Models Without and With HPO for MPP

| Molecular Property | Model Type | Performance Without HPO | Performance With HPO | Improvement in R² (absolute / relative) |
|---|---|---|---|---|
| Melt Index (MI) of HDPE | Dense DNN | R²: 0.847 | R²: 0.920 | +0.073 / +8.6% |
| Glass Transition Temperature (Tg) | Dense DNN | R²: 0.769 | R²: 0.893 | +0.124 / +16.1% |
| Polymer Property Prediction | CNN | Suboptimal | Optimal | Significant [1] |
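An R² gain can be quoted either as an absolute difference or relative to the baseline, so it helps to compute both explicitly. The short sketch below uses only the R² values reported above.

```python
def r2_gain(before, after):
    # Absolute change in R^2 and the same change relative to the baseline R^2
    absolute = after - before
    return absolute, absolute / before

reported = [("Melt index (MI) of HDPE", 0.847, 0.920),
            ("Glass transition temperature (Tg)", 0.769, 0.893)]
for name, before, after in reported:
    abs_gain, rel_gain = r2_gain(before, after)
    print(f"{name}: +{abs_gain:.3f} R^2 absolute, {rel_gain:+.1%} relative")
```

For MI the two conventions give +0.073 and +8.6%. For Tg they give +0.124 and +16.1%. It therefore matters which convention a reported percentage uses.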

Impact on Prediction Accuracy and Model Reliability

The implementation of systematic HPO directly addresses the limitations of manual approaches by substantially enhancing both prediction accuracy and model reliability. Comprehensive HPO enables ML models to capture complex, nonlinear relationships between molecular structures and their properties more effectively [11]. This capability is particularly valuable in molecular property prediction, where such relationships are often governed by intricate quantum mechanical and structural factors.

Proper hyperparameter optimization also improves model generalizability. It reduces the risk of overfitting to training data, a common challenge in chemical informatics where datasets may be limited [1]. By selecting hyperparameter configurations that perform well on validation data, HPO helps models maintain robust performance on unseen molecular structures, enhancing their utility in practical screening scenarios. Furthermore, optimized models demonstrate increased consistency and reproducibility. These are crucial factors for scientific applications where reliable predictions inform experimental design and resource allocation [1].

The importance of HPO also extends to advanced molecular representation learning approaches. The Org-Mol pre-trained model uses a 3D transformer-based algorithm. With appropriate fine-tuning, it predicts various physical properties of pure organics accurately, with test set R² values exceeding 0.92 [10]. Fine-tuning is not itself HPO, since it updates model parameters on task-specific data. Its outcome does, however, depend on well-chosen hyperparameters such as the learning rate and the number of training epochs.

HPO Methodologies: Algorithms and Technical Approaches

The evolution of HPO has produced several distinct algorithmic approaches, each with unique strengths and limitations for molecular property prediction. Understanding these methods is essential for selecting appropriate optimization strategies for specific MPP tasks.

Grid Search (GS) represents one of the most straightforward approaches, systematically working through multiple combinations of hyperparameter values. While simple to implement and parallelize, GS suffers from the "curse of dimensionality," becoming computationally prohibitive as the hyperparameter space grows [1]. Random Grid Search (RGS) addresses this limitation by sampling hyperparameter combinations randomly, often achieving comparable results to GS with significantly fewer iterations [11].

More sophisticated approaches include Bayesian Optimization, which builds a probabilistic model of the objective function to direct the search toward promising hyperparameters. This method is particularly effective for expensive-to-evaluate functions, as it balances exploration and exploitation of the search space [1]. The Tree-structured Parzen Estimator (TPE) is a Bayesian optimization variant that has performed strongly in HPO-ML approaches for spatial prediction tasks [11]. It should not be confused with Training Performance Estimation, the early-stopping method discussed earlier that shares the same acronym.
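The TPE idea can be made concrete with a deliberately simplified, one-dimensional sketch in plain Python. It assumes a fixed Gaussian kernel bandwidth, no priors, and a hypothetical objective over log10 of the learning rate. The algorithm works as follows:

- past trials are split into a "good" group (the best `gamma` fraction) and a "bad" group;
- each group is modeled with a Parzen (kernel density) estimator, l(x) and g(x);
- the next trial is the candidate that maximizes l(x)/g(x), which under the TPE model is equivalent to maximizing expected improvement.

Production libraries such as Optuna or HyperOpt add adaptive bandwidths, priors and support for conditional spaces.

```python
import math
import random

def objective(log_lr):
    # Hypothetical validation loss as a function of log10(learning rate)
    return (log_lr + 2.5) ** 2 + 0.1 * math.sin(5 * log_lr)

def kde_pdf(x, centers, bandwidth):
    # Gaussian kernel density estimate (the "Parzen estimator")
    norm = 1.0 / (len(centers) * bandwidth * math.sqrt(2 * math.pi))
    return norm * sum(math.exp(-0.5 * ((x - c) / bandwidth) ** 2) for c in centers)

def tpe_minimize(f, low, high, n_startup=10, n_iter=30, gamma=0.25,
                 n_candidates=24, bandwidth=0.3, seed=0):
    rng = random.Random(seed)
    xs = [rng.uniform(low, high) for _ in range(n_startup)]  # random warm-up trials
    ys = [f(x) for x in xs]
    for _ in range(n_iter):
        # Split past observations into "good" (lowest gamma fraction) and "bad"
        order = sorted(range(len(xs)), key=lambda i: ys[i])
        n_good = max(1, int(gamma * len(xs)))
        good = [xs[i] for i in order[:n_good]]
        bad = [xs[i] for i in order[n_good:]]
        # Draw candidates from l(x), the density of good points, clipped to the bounds
        cands = [min(high, max(low, rng.gauss(rng.choice(good), bandwidth)))
                 for _ in range(n_candidates)]
        # Choose the candidate with the largest ratio l(x) / g(x)
        x_next = max(cands, key=lambda x: kde_pdf(x, good, bandwidth)
                     / (kde_pdf(x, bad, bandwidth) + 1e-12))
        xs.append(x_next)
        ys.append(f(x_next))
    best = min(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best]

best_x, best_y = tpe_minimize(objective, low=-5.0, high=0.0)
print(f"best log10(lr) = {best_x:.2f}, loss = {best_y:.3f}")
```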

The Hyperband algorithm introduces a novel approach by leveraging early-stopping to dynamically allocate resources to the most promising configurations. This method has shown remarkable computational efficiency, providing MPP results that are optimal or nearly optimal in terms of prediction accuracy [1]. For particularly challenging optimization problems, combinations of these methods such as Bayesian Optimization with Hyperband (BOHB) can leverage the strengths of multiple approaches [1].
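Hyperband's resource-allocation logic can be written compactly. The stdlib sketch below first computes the bracket schedule: how many configurations each round of successive halving starts with, and how much budget each one receives. It then runs that schedule against a hypothetical learning-curve function. With a maximum budget of R = 81 epochs and reduction factor eta = 3, the schedule reproduces the table given in the original Hyperband paper. The search space and `evaluate` function are illustrative assumptions.

```python
import random

def ceil_div(a, b):
    # Integer ceiling division, avoiding floating-point log/ceil round-off
    return -(-a // b)

def hyperband_schedule(R, eta=3):
    """Return the Hyperband brackets as lists of (n_configs, resource_per_config) rungs."""
    s_max = 0
    while eta ** (s_max + 1) <= R:  # integer floor(log_eta(R))
        s_max += 1
    brackets = []
    for s in range(s_max, -1, -1):
        n = ceil_div((s_max + 1) * eta ** s, s + 1)  # initial number of configurations
        r = R / eta ** s                               # initial resource per configuration
        brackets.append([(n // eta ** i, r * eta ** i) for i in range(s + 1)])
    return brackets

def hyperband(sample_config, evaluate, R, eta=3, seed=0):
    """Run every bracket with successive halving; return (best_loss, best_config)."""
    rng = random.Random(seed)
    best = (float("inf"), None)
    for bracket in hyperband_schedule(R, eta):
        configs = [sample_config(rng) for _ in range(bracket[0][0])]
        for n_i, r_i in bracket:
            # Evaluate all surviving configurations with r_i units of resource (e.g. epochs)
            scored = sorted(((evaluate(c, r_i), c) for c in configs), key=lambda t: t[0])
            best = min(best, scored[0], key=lambda t: t[0])
            # Successive halving: only the best n_i // eta configurations continue
            configs = [c for _, c in scored[: n_i // eta]]
    return best

def sample_config(rng):
    # Hypothetical search space: log10(learning rate) and dropout rate
    return {"log_lr": rng.uniform(-5.0, -1.0), "dropout": rng.uniform(0.0, 0.5)}

def evaluate(config, resource):
    # Hypothetical learning curve: loss decays with training budget toward a
    # configuration-dependent floor (stands in for actually training a model)
    floor = 0.1 * (config["log_lr"] + 3.0) ** 2 + 0.2 * config["dropout"]
    return floor + 1.0 / resource

for bracket in hyperband_schedule(R=81, eta=3):
    print(bracket)

loss, cfg = hyperband(sample_config, evaluate, R=81, eta=3)
print(f"best loss {loss:.3f} with {cfg}")
```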

Table 2: Comparison of HPO Algorithms for Molecular Property Prediction

| Algorithm | Key Mechanism | Advantages | Limitations | Best Suited MPP Tasks |
|---|---|---|---|---|
| Grid Search (GS) | Exhaustive search over specified values | Simple, parallelizable | Computationally expensive for large spaces | Small hyperparameter spaces |
| Random Grid Search (RGS) | Random sampling of combinations | Better efficiency than GS | May miss important regions | Moderate-dimensional spaces |
| Bayesian Optimization | Probabilistic model of objective function | Efficient for expensive functions | Complex implementation | Continuous spaces of low to moderate dimension with expensive evaluations |
| Tree-structured Parzen Estimator (TPE) | Sequential model-based optimization | Handles complex conditional spaces | Requires careful initialization | Spatial prediction of molecular properties [11] |
| Hyperband | Early-stopping with successive halving | High computational efficiency | Limited by minimum resources | Large-scale screening projects [1] |

HPO-Enhanced Machine Learning Frameworks

The implementation of HPO in molecular property prediction has given rise to specialized frameworks that integrate optimization algorithms with machine learning models. The HPO-ML approach represents a comprehensive methodology that combines automatic hyperparameter optimization with ML models such as Random Forest (RF) and Extreme Gradient Boosting (XGBoost) [11]. This framework uses search algorithms to identify optimal hyperparameters automatically, significantly enhancing prediction accuracy on the tasks studied.
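The simplest version of this tree-ensemble-plus-search pattern is scikit-learn's `GridSearchCV` wrapped around a random forest, sketched below. The data are synthetic placeholders and the grid is deliberately small. XGBoost could be substituted through its scikit-learn-compatible `XGBRegressor`, but it is omitted to keep dependencies minimal.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, KFold

# Synthetic feature matrix and target; placeholders for real descriptors and properties
X, y = make_regression(n_samples=300, n_features=15, noise=5.0, random_state=0)

# Small, illustrative grid (2 x 2 x 2 = 8 configurations)
param_grid = {
    "n_estimators": [50, 150],
    "max_depth": [None, 8],
    "min_samples_leaf": [1, 5],
}

search = GridSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid,
    scoring="r2",
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
)
search.fit(X, y)  # 8 configurations x 5 folds = 40 fits, then a refit on all data

print("best hyperparameters:", search.best_params_)
print(f"mean cross-validated R^2: {search.best_score_:.3f}")
```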

In practice, HPO-empowered machine learning has performed well across diverse prediction tasks. One example is the spatial prediction of soil heavy metals, a regression problem analogous to molecular property prediction. There, the TPE-XGBoost model achieved the highest accuracy for predicting the concentrations of several elements, including As (R² = 70.35%), Cd (R² = 75.43%), and Cr (R² = 82.11%) [11]. These models substantially outperformed counterparts without systematic HPO, highlighting the importance of proper hyperparameter optimization.

For deep learning applications in MPP, combining HPO with DNNs has likewise produced significant improvements in prediction accuracy [1]. As evidenced in Table 1, comprehensive HPO for dense DNNs increased R² from 0.847 to 0.920 for predicting the melt index of HDPE and from 0.769 to 0.893 for glass transition temperature prediction [1]. Taken together with the tree-based results above, these improvements indicate that systematic HPO pays off across quite different ML architectures. It is an important ingredient in achieving state-of-the-art performance in molecular property prediction.

Experimental Protocols and Implementation Guidelines

Step-by-Step HPO Methodology for MPP

Implementing effective hyperparameter optimization for molecular property prediction requires a systematic approach. The following methodology provides a comprehensive framework for integrating HPO into MPP workflows:

Step 1: Problem Formulation and Objective Definition. Clearly define the molecular property prediction task and establish evaluation metrics. For MPP, common objectives include regression metrics (R², RMSE) for continuous properties or classification metrics (AUROC, accuracy) for categorical properties. The selection of appropriate metrics directly influences the optimization trajectory and final model performance [1].

Step 2: Hyperparameter Space Configuration. Establish the bounds and distributions for all hyperparameters to be optimized. This includes structural hyperparameters (number of layers, units per layer, activation functions) and algorithmic hyperparameters (learning rate, batch size, optimizer settings) [1]. The definition of this search space should incorporate domain knowledge about molecular representations and their relationship to target properties.

Step 3: Selection of HPO Algorithm. Choose an appropriate optimization algorithm based on the problem characteristics, computational resources, and search space dimensionality. For most MPP tasks, Hyperband is recommended due to its computational efficiency, while Bayesian methods are preferable for limited data scenarios [1].

Step 4: Implementation with Parallel Execution. Utilize HPO software platforms that enable parallel execution of multiple hyperparameter instances, significantly reducing optimization time. Recommended platforms include KerasTuner for its user-friendly interface and Optuna for advanced functionality [1]. Parallelization is particularly valuable for MPP, where model training can be computationally intensive.

Step 5: Iterative Optimization and Evaluation. Execute the HPO process, continuously evaluating candidate configurations using cross-validation to ensure robustness. For molecular data, stratified splitting methods that maintain similar distributions of key molecular features across folds are essential [1].

Step 6: Final Model Selection and Validation. Select the best-performing hyperparameter configuration and perform comprehensive validation on held-out test sets containing diverse molecular scaffolds not seen during optimization. This step verifies the generalizability of the optimized model [1].
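Steps 5 and 6 hinge on how molecules are split. The sketch below assumes each molecule already carries a scaffold label. In practice this could be, for example, a Bemis-Murcko scaffold computed with a cheminformatics toolkit such as RDKit. Here the labels, descriptors and targets are random placeholders. scikit-learn's `GroupShuffleSplit` holds out whole scaffolds as the final test set, and `GroupKFold` then provides scaffold-disjoint folds for the HPO loop. This is a grouped split, which complements rather than replaces the stratification mentioned in Step 5.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, GroupShuffleSplit

rng = np.random.default_rng(0)
n_molecules = 200
X = rng.normal(size=(n_molecules, 16))                 # placeholder descriptors
y = rng.normal(size=n_molecules)                       # placeholder property values
scaffolds = rng.integers(0, 40, size=n_molecules)      # placeholder scaffold label per molecule

# Step 6: reserve whole scaffolds (about 20% of them) as the final held-out test set
outer = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
dev_idx, test_idx = next(outer.split(X, y, groups=scaffolds))
X_dev, y_dev, g_dev = X[dev_idx], y[dev_idx], scaffolds[dev_idx]

# Step 5: scaffold-disjoint cross-validation folds for scoring HPO candidates
for fold, (tr, va) in enumerate(GroupKFold(n_splits=5).split(X_dev, y_dev, groups=g_dev)):
    shared = set(g_dev[tr]) & set(g_dev[va])
    print(f"fold {fold}: {len(tr)} train / {len(va)} validation, shared scaffolds: {len(shared)}")

overlap = set(scaffolds[dev_idx]) & set(scaffolds[test_idx])
print("scaffolds shared by development and test sets:", len(overlap))
```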

Research Reagent Solutions: Software Tools for HPO in MPP

Successful implementation of HPO for molecular property prediction requires specialized software tools that facilitate efficient optimization workflows. The table below details essential "research reagents" in the form of software platforms and their specific functions in the HPO process.

Table 3: Essential Software Tools for HPO in Molecular Property Prediction

| Tool/Platform | Type | Primary Function | Advantages for MPP | Implementation Example |
|---|---|---|---|---|
| KerasTuner | HPO Library | Automated hyperparameter tuning for Keras models | User-friendly, intuitive API suitable for chemical engineers | Hyperparameter optimization for DNNs predicting polymer properties [1] |
| Optuna | HPO Framework | Define-by-run API for automated hyperparameter optimization | Flexible search spaces and efficient algorithms for complex molecular representations | Bayesian Optimization with Hyperband (BOHB) for property prediction [1] |
| Scikit-learn | ML Library | Traditional ML models with built-in HPO utilities | Comprehensive traditional ML algorithms for baseline comparisons | Random Forest and XGBoost with GridSearchCV [11] |
| Python | Programming Language | Implementation environment for custom HPO workflows | Extensive ecosystem for cheminformatics and machine learning | Custom HPO-ML pipelines for spatial prediction [11] |
| DNN Frameworks (TensorFlow, PyTorch) | Deep Learning Platforms | Neural network construction and training | State-of-the-art architectures for molecular graph processing | Dense DNN and CNN models for property prediction [1] |

Case Studies and Performance Analysis

HPO in Polymer Property Prediction

The impact of comprehensive hyperparameter optimization is powerfully demonstrated in polymer property prediction, where accurate models are essential for materials design and selection. A recent systematic study investigated HPO for deep neural networks predicting key polymer properties, including melt index (MI) of high-density polyethylene (HDPE) and glass transition temperature (Tg) [1].

In this study, researchers implemented a rigorous HPO methodology comparing random search, Bayesian optimization, and hyperband algorithms within the KerasTuner framework. The base case without HPO utilized a dense DNN with an input layer of 9 nodes, three hidden layers with 64 nodes each, and ReLU activation functions [1]. Through systematic HPO, the optimal architecture and training parameters were identified, resulting in dramatic improvements in prediction accuracy.
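The base-case architecture also shows how structural hyperparameters fix the number of learnable parameters. Counting weights and biases for a fully connected network takes one line. The sketch assumes a single output unit (one property predicted at a time). The second configuration is a hypothetical alternative that an HPO run might propose.

```python
def dense_param_count(layer_sizes):
    # Each dense layer contributes (inputs x outputs) weights plus one bias per output
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Base case described above: 9 inputs, three hidden layers of 64 units,
# plus an assumed single regression output
base = [9, 64, 64, 64, 1]
print(dense_param_count(base))         # 9025

# Hypothetical alternative: two hidden layers of 128 units
alternative = [9, 128, 128, 1]
print(dense_param_count(alternative))  # 17921
```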

The findings revealed that the hyperband algorithm was most computationally efficient, providing MPP results that were optimal or nearly optimal in terms of prediction accuracy [1]. For MI prediction, the R² value improved from 0.847 without HPO to 0.920 with HPO, while for Tg prediction, the improvement was even more substantial—from 0.769 to 0.893 [1]. These results underscore that even well-conceived initial architectures can benefit significantly from systematic hyperparameter optimization, with performance gains that could substantially impact materials development timelines.

Large-Scale Molecular Screening with Optimized Models

The practical value of HPO-optimized models extends beyond individual property prediction to large-scale molecular screening applications. The Org-Mol pre-trained model exemplifies this capability. It uses a 3D transformer-based molecular representation learning algorithm trained on 60 million semi-empirically optimized small organic molecule structures [10]. The model was then fine-tuned with public experimental data, a training step whose success depends on well-chosen hyperparameters. After fine-tuning, it achieved high accuracy in predicting various physical properties of pure organics, with test set R² values exceeding 0.92 [10].

This optimized model enabled high-throughput screening of millions of ester molecules to identify novel immersion coolants, resulting in the experimental validation of two promising candidates [10]. The success of this large-scale screening effort depended directly on the accuracy of the property predictions. That accuracy in turn relied on an appropriately configured fine-tuning procedure. A poorly tuned model would lack the precision needed to reliably distinguish promising from unsuitable candidates across a vast chemical space.

Fine-tuning in this context also addressed the challenge of predicting bulk properties from single-molecule inputs, a fundamental limitation in molecular property prediction. The fine-tuned model bridged static molecular geometry with bulk phenomena. In doing so, it corrected for single-molecule limitations and enabled accurate predictions despite the complexity of collective effects [10]. This case study shows how well-optimized models can turn molecular property prediction from a theoretical exercise into a practical tool for accelerated molecular discovery.

Emerging Trends in HPO for MPP

The field of hyperparameter optimization for molecular property prediction continues to evolve, with several emerging trends shaping its future development. Automated Machine Learning (AutoML) systems represent a natural extension of HPO, seeking to automate the entire ML pipeline from data preprocessing to model selection and deployment [11]. These systems are particularly valuable for molecular property prediction, where they can help domain experts without extensive ML expertise leverage advanced prediction models.

Multi-fidelity optimization methods, which use cheaper approximations of the objective function to guide the search process, are gaining traction for computationally intensive molecular simulations [1]. These approaches enable more efficient exploration of hyperparameter spaces when full model training is prohibitively expensive. Similarly, meta-learning approaches that transfer knowledge from previously solved MPP tasks to new problems show promise for reducing the computational burden of HPO [1].

The integration of HPO with explainable AI (XAI) techniques represents another important direction. Methods like SHapley Additive exPlanations (SHAP) are being used not only to interpret model predictions but also to understand the influence of different hyperparameters on model behavior [11] [12]. This integration is particularly valuable in scientific contexts where interpretability is as important as accuracy.

Hyperparameter Optimization has established itself as a critical component of accurate molecular property prediction. Evidence from multiple studies shows that systematic HPO can substantially improve prediction accuracy. In the polymer case studies above, R² rose by 0.073 and 0.124, about 9% and 16% relative to the baselines [1]. These improvements are not merely statistical artifacts. They translate to practical advantages in real-world applications, from polymer design to molecular screening for energy applications.

The implementation of HPO requires careful consideration of algorithmic choices, with Hyperband emerging as particularly efficient for many MPP tasks, while Bayesian methods offer advantages in sample-efficient optimization [1]. The development of user-friendly software tools like KerasTuner and Optuna has made sophisticated HPO accessible to researchers without extensive machine learning expertise, further accelerating adoption across chemical and materials science domains [1].

As molecular property prediction continues to evolve, HPO will play an increasingly central role in ensuring model reliability and accuracy. The growing complexity of molecular representations and the expanding scale of chemical space exploration make efficient optimization not merely desirable but essential. By embracing systematic HPO methodologies, researchers can unlock the full potential of machine learning for molecular property prediction, accelerating the discovery of novel compounds with tailored properties for energy, healthcare, and materials applications.

The application of machine learning (ML) in chemical research represents a paradigm shift from traditional Edisonian approaches to data-driven discovery. Progress in this transition is hampered by two interconnected core challenges: the high dimensionality of chemical space and the prohibitive cost of experimental data generation. This whitepaper details these challenges within the context of hyperparameter optimization (HPO) for chemical ML models, framing them as a dual problem of model and experimental efficiency. We present a technical analysis of advanced strategies, including innovative HPO methods, Bayesian optimization, and high-throughput experimentation, that are proving effective in navigating this complex landscape. The discussion is supported by summarized quantitative data, detailed experimental protocols, and visual workflows, providing researchers and drug development professionals with a practical guide for accelerating ML-driven chemical innovation.

The discovery and development of new molecules and materials are fundamentally constrained by the vastness of chemical space, estimated to exceed 10^60 for drug-like molecules and 10^100 for materials, making brute-force exploration impossible [13]. Traditional research relies on costly, laborious trial-and-error, exemplified by the thousands of experiments required for historic breakthroughs like the Haber-Bosch catalyst [13]. Machine learning promises to traverse this space more efficiently but introduces its own set of challenges. The performance and generalizability of ML models are critically dependent on their hyperparameters, the configuration settings not learned from data. The process of Hyperparameter Optimization (HPO) is thus an essential yet computationally demanding step in building reliable chemical ML models. This whitepaper examines how the core challenges of chemical ML (high-dimensional spaces and costly experiments) are intrinsically linked and how advanced HPO and experimental design strategies are creating a path forward.

Core Challenge 1: Navigating High-Dimensional Chemical Spaces

The Problem of Dimensionality

In chemical ML, molecular structures are represented using numerical descriptors or features. These can include physicochemical properties, structural fingerprints, or quantum chemical calculations, often resulting in hundreds or thousands of dimensions [13] [14]. This high dimensionality leads to the "Curse of Dimensionality," where the data becomes sparse, and the distance between points becomes less meaningful, severely impacting model performance [14].

- Sparse Data Coverage: The volume of space grows exponentially with dimensionality, meaning available datasets cover a minuscule fraction of the possible chemical space.

- Increased Model Complexity and Overfitting: Models with fixed training data size become more prone to overfitting as dimensionality increases, learning noise rather than underlying patterns.

- Computational Intractability: Many ML algorithms suffer from computational costs that scale poorly with the number of features.

Strategies for Dimensionality Management

Several strategies are employed to mitigate the curse of dimensionality in chemical ML:

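- Dimensionality Reduction Techniques: Algorithms like Principal Component Analysis (PCA) and t-Distributed Stochastic Neighbor Embedding (t-SNE) project high-dimensional data into a lower-dimensional space while preserving meaningful structure [15] [14]. PCA is a linear projection that can also supply compact model inputs, whereas standard t-SNE is nonlinear and used mainly for visualization, because it does not learn a mapping that can be reapplied to new molecules.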

- Chemical Space Visualization: As highlighted by Sosnin (2025), new methods for large-scale visualization of chemical space are crucial for human-in-the-loop navigation of these vast datasets, allowing researchers to identify clusters and trends [15].

- Automated Feature Selection: Instead of using all available descriptors, algorithms can automatically identify and retain the most informative features for a given prediction task, reducing noise and computational load.
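A minimal sketch of two of these ideas, assuming descriptors are already in a NumPy matrix (molecules as rows). PCA is computed directly from the singular value decomposition, and a simple variance filter stands in for the more sophisticated automated feature selection described above. The data are random placeholders, and `pca_reduce` and `drop_low_variance` are hypothetical helper names.

```python
import numpy as np

def drop_low_variance(X, threshold=1e-8):
    """Filter-style feature selection: drop near-constant descriptor columns."""
    keep = X.var(axis=0) > threshold
    return X[:, keep], keep

def pca_reduce(X, n_components):
    """Project rows of X onto the top principal components via SVD."""
    X_centered = X - X.mean(axis=0)              # PCA requires mean-centred features
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]               # rows are directions, largest variance first
    explained_var = S ** 2 / (X.shape[0] - 1)
    ratio = explained_var[:n_components] / explained_var.sum()
    return X_centered @ components.T, ratio

rng = np.random.default_rng(0)
# Hypothetical descriptor matrix: 200 molecules x 50 descriptors, one column constant
X = rng.normal(size=(200, 50))
X[:, 10] = 1.0

X_sel, kept = drop_low_variance(X)               # removes the constant column
Z, ratio = pca_reduce(X_sel, n_components=5)     # 5-dimensional representation
print(X_sel.shape, Z.shape, ratio.round(3))
```

The explained-variance ratio returned alongside the projection is a common way to decide how many components to keep.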

Table 1: Impact of High Dimensionality on Chemical ML Models

| Aspect | Challenge in High Dimensions | Potential Solution |
|---|---|---|
| Data Sparsity | Data points are isolated; models cannot reliably infer patterns. | Dimensionality reduction (PCA, t-SNE) [15] [14]. |
| Model Performance | Increased overfitting and reduced generalizability to new compounds. | Regularization, feature selection, and ensemble methods. |
| Computational Cost | Training times and resource demands increase dramatically. | Efficient HPO and feature selection algorithms. |
| Human Interpretation | Difficulty in understanding model decisions and chemical patterns. | Visual navigation tools and interpretable ML [15] [16]. |

Core Challenge 2: The High Cost of Experimental Data

The Bottleneck of Experimentation

The "Big Data" era in medicinal chemistry is paradoxically constrained by the difficulty of obtaining high-quality, relevant experimental data. Generating data for chemical ML models involves real-world experiments that can be slow, resource-intensive, and expensive. Some experiments, particularly in fields like battery development or catalysis, can take "weeks or months and significant resources to carry out" [17]. This creates a critical bottleneck, as the accuracy of ML models depends strongly on the quantity and quality of the data on which they are trained.

Strategies for Experimental Efficiency

To overcome this bottleneck, researchers are developing methods to extract maximum information from a minimal number of experiments.

- Bayesian Optimization (BO): BO is a powerful statistical technique for guiding experimentation. It builds a probabilistic model (a surrogate model) that relates experimental parameters (e.g., temperature, concentration) to an outcome (e.g., yield, purity). It then uses an acquisition function to recommend the next most informative experiment, balancing exploration (testing uncertain conditions) and exploitation (refining known promising conditions) [17].

- Advanced BO Algorithms: Recent research, such as the award-winning work by Folch et al., adapts BO to real-world chemical R&D. Their algorithm handles "multi-fidelity" data (integrating cheap, approximate experiments with costly, accurate ones) and "asynchronous batch" experiments (where different experiments have varying completion times), maximizing the use of available resources [17].

- High-Throughput Experimentation (HTE) Integrated with ML: Automated HTE platforms can conduct hundreds or thousands of reactions in parallel [18]. When coupled with ML, these platforms can become self-optimizing systems. The ML model guides the HTE platform on which experiments to run, and the data generated by the platform is used to refine the ML model, creating a closed-loop discovery cycle [18].
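To make the exploration/exploitation balance concrete, one widely used acquisition function is expected improvement (EI). The sources cited here do not state which acquisition function they use, so treat this as an illustrative choice. Assume a surrogate whose prediction at experimental condition x has mean μ(x) and standard deviation σ(x), let f* be the best outcome observed so far (for example, the highest yield), let Φ and φ be the standard normal CDF and PDF, and let ξ ≥ 0 be a small optional exploration margin:

```latex
\mathrm{EI}(x) =
\begin{cases}
\big(\mu(x) - f^{*} - \xi\big)\,\Phi(z) + \sigma(x)\,\varphi(z), & \sigma(x) > 0,\\[4pt]
\max\big(\mu(x) - f^{*} - \xi,\ 0\big), & \sigma(x) = 0,
\end{cases}
\qquad
z = \frac{\mu(x) - f^{*} - \xi}{\sigma(x)}
```

The first term grows when the surrogate predicts a better outcome than f* (exploitation); the second grows with the surrogate's uncertainty (exploration). The next experiment is the condition that maximizes EI.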

Optimizing the Model: Hyperparameter Optimization (HPO) in Chemical ML

Hyperparameter optimization is the process of searching for the optimal configuration of a machine learning model's hyperparameters to maximize its predictive performance on a given task. In chemical ML, this is especially critical because a well-tuned model can mean the difference between identifying a promising candidate molecule and wasting costly experimental resources on a false lead.

The Computational Burden of HPO

Standard HPO practices like manual or grid search are simple but computationally expensive, often requiring the training and validation of a model hundreds or thousands of times. This "poses a notable challenge to ML applications, as suboptimal hyperparameter selections curtail the potential of ML model performance" [19].

A Case Study in Efficient HPO: The Two-Step Method

To address this, researchers at Pacific Northwest National Laboratory (PNNL) developed a two-step HPO method that drastically reduces computation time. The protocol is detailed below [19].

Experimental Protocol: Two-Step Hyperparameter Optimization

- Objective: To accelerate the hyperparameter search for a neural network model designed to predict aerosol activation.

- Principle: Identify promising hyperparameter configurations using a tiny fraction of the training data before committing resources to full training.

Step 1: Preliminary Screening

- A wide search over the hyperparameter space is conducted.

- For each hyperparameter set, the model is trained and validated on a very small, representative subset of the full training dataset (e.g., 0.0025%, or a few thousand samples).

- The performance of each model from this initial screening is evaluated and ranked.

- Output: A shortlist of the top-performing hyperparameter configurations from the initial screen.

Step 2: Full-Dataset Validation

- Only the shortlisted, top-performing models from Step 1 are retrained from scratch using the entire training dataset.

- The final performance of these fully-trained models is evaluated on a held-out test set.

- Output: The best-performing model from this final group is selected for deployment.

- Result: This method achieved a 135x speed-up in finding the optimal hyperparameter configuration compared to a full search, with minimal drop in final model accuracy [19]. This approach efficiently identifies the minimal model complexity required for the best performance, which is crucial for deploying models in resource-intensive environments like global climate simulations.
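The two-step idea can be sketched as follows. This is an illustration under stated assumptions, not the PNNL code: a closed-form ridge regression on polynomial features stands in for the neural network, the data are synthetic, and `fit_ridge`, `evaluate`, and the grid values are hypothetical. The screening subset here is 1.25% of a small synthetic training set rather than the tiny fraction of a very large dataset used in the study, and the final choice is made on a validation split so that a separate test set could still give an unbiased estimate.

```python
import itertools
import numpy as np

def poly_features(x, degree):
    # Polynomial expansion of a 1-D input, including a bias column
    return np.vander(x, degree + 1, increasing=True)

def fit_ridge(X, y, alpha):
    # Closed-form ridge regression: w = (X^T X + alpha I)^-1 X^T y (bias is also penalised)
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def evaluate(config, x_tr, y_tr, x_val, y_val):
    """Train one configuration and return its validation RMSE (lower is better)."""
    degree, alpha = config
    w = fit_ridge(poly_features(x_tr, degree), y_tr, alpha)
    pred = poly_features(x_val, degree) @ w
    return float(np.sqrt(np.mean((pred - y_val) ** 2)))

rng = np.random.default_rng(1)
x = rng.uniform(-1, 1, 20_000)
y = np.sin(3 * x) + 0.1 * rng.normal(size=x.size)      # hypothetical target
x_tr, y_tr, x_val, y_val = x[:16_000], y[:16_000], x[16_000:], y[16_000:]

grid = list(itertools.product([1, 3, 5, 7, 9], [1e-4, 1e-2, 1.0, 10.0]))

# Step 1: screen every configuration on a small random subset of the training data
subset = rng.choice(x_tr.size, size=200, replace=False)
ranked = sorted(grid, key=lambda c: evaluate(c, x_tr[subset], y_tr[subset], x_val, y_val))
shortlist = ranked[:3]

# Step 2: retrain only the shortlisted configurations on the full training set
final = {c: evaluate(c, x_tr, y_tr, x_val, y_val) for c in shortlist}
best = min(final, key=final.get)
print(best, round(final[best], 3))
```

The saving comes from Step 1: all 20 configurations are trained on 200 points, and only 3 are ever trained on the full 16,000.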

Case Study: Integrated ML for Flame Retardant Discovery

A groundbreaking study by Chen et al. provides a comprehensive example of overcoming both high-dimensional and experimental cost challenges in practice. They developed a novel ML framework to discover high-performance flame retardants for epoxy resins, a task traditionally reliant on empirical methods [20].

Experimental Protocol: ML-Driven Molecular Generation and Screening

- Data Curation: Two datasets were constructed: a labeled dataset with known Limiting Oxygen Index (LOI) values and associated features (chemical structures, addition amounts, curing agent details), and an unlabeled dataset of typical flame retardant functional groups.

- Model Framework:

- Unsupervised Learning: The labeled dataset was initially clustered using the K-Means algorithm to identify inherent structure-property relationships.

- Supervised Learning: A predictive model was trained, achieving a high coefficient of determination (R² = 0.83) for LOI.

- Molecular Generation and Virtual Screening: Molecular generation techniques were used to create a diverse library of over 860,000 candidate molecules. The trained model was then used to screen this entire library in silico, prioritizing only 8 high-potential candidates for experimental validation.

- Experimental Validation: The top candidates were synthesized and tested. Results were remarkable: a compounded flame retardant system achieved a record LOI of 42.5, placing it in the top 0.4% of reported benchmarks. Crucially, this was achieved with a 95.6% reduction in material cost and a 30% formulation cost reduction [20].

This case study exemplifies the power of integrated ML to navigate high-dimensional molecular space and drastically reduce the number of required lab experiments, accelerating discovery while cutting costs.
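The virtual-screening step amounts to scoring every generated candidate with the trained model and keeping only the few highest predictions. A minimal sketch, assuming the model's predictions are already available as an array; the values below are random placeholders, `select_top_k` is a hypothetical helper, and the study's actual model and any additional filters (for example, synthesizability) are not reproduced.

```python
import numpy as np

def select_top_k(scores, k):
    """Return indices of the k highest predicted scores, best first."""
    idx = np.argpartition(scores, -k)[-k:]        # O(n) partial selection of the top k
    return idx[np.argsort(scores[idx])[::-1]]     # sort only the k survivors

rng = np.random.default_rng(42)
# Placeholder predicted LOI values for a hypothetical 860,000-molecule library
predicted_loi = rng.normal(loc=28.0, scale=4.0, size=860_000)
shortlist = select_top_k(predicted_loi, k=8)
print(shortlist, predicted_loi[shortlist].round(1))
```

Partial selection avoids fully sorting the library, which matters when the candidate pool runs to hundreds of thousands of molecules.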

Table 2: Key Research Reagents and Solutions in Chemical ML

| Reagent / Solution | Function in Chemical ML Research |
|---|---|
| High-Throughput Experimentation (HTE) Platforms [18] | Automated systems that conduct many reactions in parallel, generating large, uniform datasets for training ML models. |
| Bayesian Optimization (BO) Algorithms [17] | A statistical framework that guides experimenters on which parameters to test next to find an optimum with the fewest experiments. |
| Gaussian Process (GP) Surrogate Models [18] | A probabilistic model used within BO to relate input variables (e.g., reaction conditions) to the objective (e.g., yield). |
| Variational Autoencoder (VAE) [18] | A type of neural network that can compress high-dimensional molecular representations into a lower-dimensional latent space for more efficient search and generation. |
| Open Reaction Database [18] | A community-driven initiative to standardize and share chemical reaction data, addressing data scarcity and quality issues. |

The Critical Role of Interpretability and Future Outlook

As ML models become more complex, their "black-box" nature poses a significant barrier to adoption in risk-averse chemical and pharmaceutical industries. Interpretable ML is therefore not a luxury but a necessity. It is "the degree to which a human can understand the cause of a decision" [16]. In chemical contexts, interpretability tools like SHAP (SHapley Additive exPlanations) help researchers:

- Debug and Audit Models: Identify if a model is relying on spurious correlations (e.g., a husky/wolf classifier using snow as a feature) [16].

- Build Trust and Gain Insights: Understand the molecular features driving property predictions, leading to new scientific knowledge and more reliable safety protocols [21].

- Ensure Fairness and Robustness: Detect unintended bias and ensure model predictions are robust to small changes in input [16].
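SHAP values are Shapley values from cooperative game theory. The sketch below computes them exactly for a tiny hypothetical property model by enumerating every coalition of features, which shows the quantity that SHAP tools estimate efficiently. Assumptions: features outside a coalition are set to a single baseline vector (the shap package typically averages over background data and uses faster approximations), and `exact_shapley` and `model` are hypothetical, not part of any library.

```python
import itertools
import math

def exact_shapley(predict, x, baseline):
    """Exact Shapley values of predict(x) relative to a baseline input.

    Features outside a coalition take their baseline value. The cost grows
    as 2^n, so exact enumeration is only feasible for a handful of features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for coalition in itertools.combinations(others, size):
                with_i = [x[j] if (j in coalition or j == i) else baseline[j] for j in range(n)]
                without_i = [x[j] if j in coalition else baseline[j] for j in range(n)]
                phi[i] += weight * (predict(with_i) - predict(without_i))
    return phi

def model(v):
    # Hypothetical property model with an interaction between features 0 and 2
    return 2.0 * v[0] - 1.0 * v[1] + 0.5 * v[0] * v[2]

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = exact_shapley(model, x, baseline)
print([round(p, 3) for p in phi])   # [2.75, -2.0, 0.75]
```

The attributions sum to model(x) - model(baseline) = 1.5, and the interaction term's contribution of 1.5 is split equally between features 0 and 2.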

The future of chemical ML lies in the tighter integration of robust HPO, interpretable models, and self-driving experimental platforms. This will create a virtuous cycle where models guide experiments, and experiments enrich models, systematically accelerating the journey from a hypothesis to a validated material or molecule.

Hyperparameter Optimization (HPO) is a critical, yet often overlooked, process that directly addresses the core challenges of time and cost in AI-driven drug discovery. By systematically tuning the configuration settings of deep learning models, HPO transitions AI from an experimental curiosity to a reliable engine for clinical candidate identification. This whitepaper details how HPO compresses early-stage research and development (R&D) timelines, which traditionally take approximately five years, down to as little as 18 months for AI-designed candidates, while simultaneously improving the predictive accuracy of molecular property models. We present a step-by-step methodology and comparative data demonstrating that modern HPO algorithms, particularly Hyperband, achieve optimal or near-optimal prediction accuracy with superior computational efficiency, thereby delivering a faster, more cost-effective path to investigational new drug (IND) approval [1] [22].

The application of artificial intelligence (AI) in drug discovery has surged, with over 75 AI-derived molecules reaching clinical stages by the end of 2024 [22]. AI platforms claim to drastically shorten early-stage R&D, with notable examples like Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis (IPF) drug progressing from target discovery to Phase I trials in just 18 months—a fraction of the typical 5-year timeline [22]. However, this acceleration is contingent on the performance and reliability of the underlying machine learning (ML) models. The design of these models is governed by hyperparameters—configuration settings that must be set before the training process begins. These include structural hyperparameters (e.g., number of layers and neurons in a deep neural network) and algorithmic hyperparameters (e.g., learning rate, batch size) [1].

Most prior applications of deep learning to molecular property prediction (MPP) have paid only limited attention to HPO, resulting in models with suboptimal predictive accuracy [1]. As "hyperparameter optimization is often the most resource-intensive step in model training," it is frequently bypassed, undermining the potential of AI in this high-stakes field [1]. This whitepaper establishes the business case for HPO as a non-negotiable step, demonstrating through experimental data and case studies how a rigorous HPO strategy is fundamental to realizing the promised efficiencies of AI in drug discovery.

The Direct Impact of HPO on Discovery Efficiency

Quantitative Gains in Model Accuracy

Ignoring HPO leads to inaccurate molecular property predictions, which can misdirect entire research programs. Conversely, a comprehensive HPO process directly and significantly enhances model performance. The table below summarizes the quantitative improvement in prediction accuracy for two molecular property prediction case studies after implementing HPO [1].

Table 1: Impact of HPO on Model Accuracy for Molecular Property Prediction

| Molecular Property | Model Type | Performance Metric | Without HPO | With HPO | Improvement |
|---|---|---|---|---|---|
| Melt Index (MI) of HDPE | Dense DNN | Mean Absolute Error (MAE) | 0.92 | 0.27 | ~70% reduction in error [1] |
| Glass Transition Temp (Tg) | Convolutional Neural Network (CNN) | Mean Absolute Error (MAE) | 16.5 | 6.5 | ~61% reduction in error [1] |
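The Improvement column is the relative reduction in MAE, which can be checked from the table's own values:

```latex
\text{Improvement} = \frac{\mathrm{MAE}_{\text{without}} - \mathrm{MAE}_{\text{with}}}{\mathrm{MAE}_{\text{without}}},
\qquad
\text{MI: } \frac{0.92 - 0.27}{0.92} \approx 0.71,
\qquad
T_g\text{: } \frac{16.5 - 6.5}{16.5} \approx 0.61
```

The MI figure of about 71% is consistent with the rounded "~70%" reported in the table.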

Compression of Discovery Timelines

The accuracy gains from HPO directly translate into faster and more reliable decision-making throughout the discovery pipeline:

- Accelerated Design-Make-Test-Analyze (DMTA) Cycles: Companies like Exscientia report that their AI-powered platforms, which rely on optimized models, can complete in silico design cycles approximately 70% faster and require 10 times fewer synthesized compounds than industry norms [22]. This represents a dramatic compression of the iterative DMTA cycle.

- From Target to Clinic in Record Time: The high predictive accuracy of well-tuned models enables more confident selection of candidate molecules for synthesis and testing. This efficiency is evidenced by Insilico Medicine's ISM001-055, which progressed from target discovery to Phase I clinical trials for idiopathic pulmonary fibrosis in only 18 months [22].

- Efficient Resource Allocation: By reducing the number of compounds that need to be synthesized and tested experimentally, HPO directs financial and laboratory resources toward the most promising candidates, lowering overall R&D costs [22].

HPO Methodologies: Algorithms and Comparative Performance

Selecting the right HPO algorithm is crucial for balancing computational cost with model performance. The following section details the primary HPO methods and their applicability to drug discovery.

- Random Search: Evaluates random combinations of hyperparameters within a defined search space. It is more efficient than an exhaustive grid search and serves as a reliable baseline [1].

- Bayesian Optimization: A sequential model-based optimization technique that builds a probabilistic model of the function mapping hyperparameters to model performance. It uses this model to select the most promising hyperparameters to evaluate next, making it more sample-efficient than random search [1].

- Hyperband: An innovative algorithm that accelerates random search through adaptive resource allocation and early-stopping of poorly performing trials. It uses a multi-fidelity approach, quickly evaluating a large number of configurations with small resources (e.g., few training epochs) and then allocating more resources only to the most promising candidates [1].

- BOHB (Bayesian Optimization and HyperBand): A hybrid algorithm that combines the robustness of Hyperband with the guidance of Bayesian optimization. It uses the Hyperband structure to manage resource allocation while leveraging a probabilistic model to select promising configurations, aiming for the best of both worlds [1].
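To make Hyperband's early-stopping idea concrete, here is a minimal sketch of successive halving, the subroutine Hyperband runs inside each of its brackets (Hyperband itself repeats it with different trade-offs between the number of configurations and their starting budget). The toy loss, the 27 log-uniform learning rates, and the function names are hypothetical; this is not the KerasTuner or Optuna implementation.

```python
import math
import random

def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """One successive-halving bracket.

    evaluate(config, budget) returns a validation loss (lower is better).
    After each rung only the best 1/eta of configurations survive, and
    each survivor's budget (e.g. epochs) is multiplied by eta.
    """
    budget = min_budget
    survivors = list(configs)
    history = []   # (budget, number of configurations evaluated at that budget)
    while True:
        losses = {c: evaluate(c, budget) for c in survivors}
        history.append((budget, len(survivors)))
        if len(survivors) == 1:
            return survivors[0], losses[survivors[0]], history
        keep = max(1, len(survivors) // eta)
        survivors = sorted(survivors, key=losses.get)[:keep]
        budget *= eta

def toy_loss(lr, epochs):
    # Hypothetical learning curve: best learning rate near 1e-3, loss falls with epochs
    return (math.log10(lr) + 3) ** 2 + 1.0 / epochs

rng = random.Random(0)
configs = [10 ** rng.uniform(-5, -2) for _ in range(27)]   # log-uniform learning rates
best, best_loss, history = successive_halving(configs, toy_loss, min_budget=1, eta=3)
print(f"best lr = {best:.2e}; rungs = {history}")
```

With 27 configurations and eta = 3, the rungs are 27 configurations at 1 epoch, 9 at 3, 3 at 9, and 1 at 27, so most of the budget goes to the few configurations that looked promising early.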

Comparative Performance of HPO Algorithms

A comparative study on molecular property prediction tasks provides clear evidence for algorithm selection based on the goals of accuracy and efficiency.

Table 2: Comparative Performance of HPO Algorithms on Molecular Property Prediction

| HPO Algorithm | Computational Efficiency | Prediction Accuracy | Key Strengths | Recommended Use Case |
|---|---|---|---|---|
| Hyperband | Highest | Optimal or Nearly Optimal | Dramatically reduces computation time via early-stopping | Default choice for most MPP tasks [1] |
| Bayesian Optimization | Medium | High | High sample-efficiency; finds excellent configurations | When computational budget is moderate and high accuracy is critical [1] |
| BOHB (Hybrid) | High | High | Combines robustness of Hyperband with guidance of BO | Complex search spaces where pure Hyperband may be less effective [1] |
| Random Search | Low | Variable, Suboptimal | Simple to implement and parallelize | Useful as a baseline to benchmark more advanced methods [1] |

Based on this empirical evidence, the study concludes that "we recommend the use of the hyperband algorithm... it gives MPP results that are optimal or nearly optimal in terms of prediction accuracy" and is the most computationally efficient [1].

Experimental Protocol: Implementing HPO for Molecular Property Prediction

This section provides a detailed, step-by-step methodology for implementing HPO to develop accurate Deep Neural Network (DNN) models for predicting molecular properties.

The following diagram illustrates the end-to-end HPO workflow for an AI-driven drug discovery project, from data preparation to the deployment of an optimized model.

Step-by-Step Methodology

Step 1: Establish a Base-Case Model

Before beginning HPO, establish a baseline model for performance comparison. A typical base-case DNN for MPP might consist of an input layer, three densely connected hidden layers with 64 nodes each using ReLU activation, and an output layer with a linear activation. The Adam optimizer and Mean Squared Error (MSE) loss function are common starting points [1].
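The base case can be written in framework-agnostic NumPy to separate what is fixed beforehand (layer sizes and activations, which are hyperparameters) from what training would learn (weights and biases, which are model parameters). This is a sketch under assumptions: 9 input descriptors are assumed for illustration, the helper names are hypothetical, and training with Adam and an MSE loss is omitted; in practice the model would be built in Keras.

```python
import numpy as np

def dense_param_count(layer_sizes):
    """Weights plus biases for a stack of fully connected layers."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))

def init_base_case(layer_sizes, seed=0):
    rng = np.random.default_rng(seed)
    # He-style initialisation, a common choice for ReLU hidden layers
    return [(rng.normal(0.0, np.sqrt(2.0 / n_in), (n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])]

def forward(params, X):
    h = X
    for i, (W, b) in enumerate(params):
        h = h @ W + b
        if i < len(params) - 1:      # ReLU on hidden layers, linear output layer
            h = np.maximum(h, 0.0)
    return h

layers = [9, 64, 64, 64, 1]          # assumed 9 inputs, three 64-unit hidden layers, 1 output
params = init_base_case(layers)
print(dense_param_count(layers))                 # 9025 trainable parameters
print(forward(params, np.ones((4, 9))).shape)    # (4, 1)
```

Every entry in `layers` is a hyperparameter that HPO may change; the 9,025 numbers inside `params` are what gradient descent would fit.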

Step 2: Define the Hyperparameter Search Space

The next step is to define the range of values for the hyperparameters to be optimized. The following table outlines a recommended search space for a DNN for MPP.

Table 3: Example Hyperparameter Search Space for a Dense DNN

| Hyperparameter Category | Hyperparameter | Recommended Search Space |
|---|---|---|
| Structural Configuration | Number of Hidden Layers | Int[1, 5] |
| | Number of Neurons per Layer | Int[32, 512] |
| | Activation Function | Choice['relu', 'tanh', 'selu'] |
| | Dropout Rate | Float[0.0, 0.5] |
| Algorithmic Configuration | Learning Rate | Float[1e-5, 1e-2] (log scale) |
| | Batch Size | Choice[32, 64, 128, 256] |
| | Optimizer | Choice['adam', 'rmsprop', 'sgd'] |
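A search space like Table 3 can be encoded as plain data and sampled. The dictionary layout and `sample_config` below are hypothetical helpers, not a library API; the point is that the learning rate is drawn uniformly in log space, as the table's "log scale" note implies, so each decade between 1e-5 and 1e-2 is equally likely.

```python
import math
import random

# Search space mirroring Table 3; the (kind, ...) tuple layout is an illustrative assumption
SEARCH_SPACE = {
    "num_layers": ("int", 1, 5),
    "units": ("int", 32, 512),
    "activation": ("choice", ["relu", "tanh", "selu"]),
    "dropout": ("float", 0.0, 0.5),
    "learning_rate": ("log_float", 1e-5, 1e-2),
    "batch_size": ("choice", [32, 64, 128, 256]),
    "optimizer": ("choice", ["adam", "rmsprop", "sgd"]),
}

def sample_config(space, rng):
    """Draw one random configuration from the search space."""
    config = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "int":
            config[name] = rng.randint(spec[1], spec[2])       # inclusive bounds
        elif kind == "float":
            config[name] = rng.uniform(spec[1], spec[2])
        elif kind == "log_float":
            # Uniform in log10 space: 1e-5..1e-4 is as likely as 1e-3..1e-2
            lo, hi = math.log10(spec[1]), math.log10(spec[2])
            config[name] = 10 ** rng.uniform(lo, hi)
        elif kind == "choice":
            config[name] = rng.choice(spec[1])
    return config

print(sample_config(SEARCH_SPACE, random.Random(0)))
```

In KerasTuner the same ranges would typically be declared inside the model-building function with `hp.Int`, `hp.Float(..., sampling="log")`, and `hp.Choice`.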

Step 3: Execute the HPO Algorithm

Using a software library like KerasTuner, execute the chosen HPO algorithm (e.g., Hyperband). Configure the tuner to run multiple trials in parallel to reduce optimization time. A key parameter for Hyperband is the max_epochs, which defines the maximum resources allocated to a single model configuration [1].
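How max_epochs shapes the search can be seen from the bracket schedule of the original Hyperband formulation, sketched below with reduction factor `eta` (KerasTuner's Hyperband exposes a comparable `factor` argument, default 3). Treat the numbers as illustrative: library implementations may round or allocate the budget differently, and `hyperband_schedule` is a hypothetical helper.

```python
def hyperband_schedule(max_epochs, eta=3):
    """(n_configs, starting_epochs) for each bracket of the original Hyperband scheme."""
    # s_max = floor(log_eta(max_epochs)), found with integer arithmetic to avoid float error
    s_max = 0
    while eta ** (s_max + 1) <= max_epochs:
        s_max += 1
    schedule = []
    for s in range(s_max, -1, -1):
        # n = ceil((s_max + 1) * eta**s / (s + 1)): many configurations when s is large...
        n = ((s_max + 1) * eta ** s + s) // (s + 1)
        # ...each started with a small budget r = max_epochs / eta**s
        r = max_epochs / eta ** s
        schedule.append((n, r))
    return schedule

for n, r in hyperband_schedule(max_epochs=81, eta=3):
    print(f"{n:3d} configurations starting at {r:g} epoch(s)")
```

With max_epochs = 81 and eta = 3 the five brackets range from 81 configurations started at 1 epoch (aggressive early stopping) to 5 configurations trained for the full 81 epochs (essentially random search), which hedges against early epochs being a poor predictor of final accuracy.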

Step 4: Evaluate and Validate

Once the HPO process is complete, retrieve the top-performing model configurations. It is critical to retrain these configurations from scratch on the full training dataset and compare them on validation data. The configuration with the best validation performance is selected as the "champion", and only that model is then evaluated on the held-out test set to confirm performance before final training and deployment. Keeping the test set out of the selection step avoids an optimistically biased performance estimate.

Implementing a successful HPO strategy requires both software tools and computational resources. The table below catalogs the essential components of the HPO toolkit for AI-driven drug discovery.

Table 4: Research Reagent Solutions for HPO in AI-Driven Drug Discovery

| Tool Category | Specific Tool / Resource | Function and Application |
|---|---|---|
| HPO Software Libraries | KerasTuner | User-friendly Python library ideal for HPO of Keras and TensorFlow models. Recommended for its intuitiveness and ease of coding [1]. |
| | Optuna | A more flexible, define-by-run Python library for HPO. Suitable for complex search spaces and supports advanced algorithms like BOHB [1]. |
| Machine Learning Frameworks | TensorFlow / Keras | Core frameworks for building, training, and deploying deep learning models for MPP [1]. |
| Data Generation & Validation | High-Throughput Molecular Dynamics (MD) Simulations | Generates comprehensive, consistent datasets of molecular properties (e.g., ~30,000 solvent mixtures) to train and benchmark ML models when experimental data is scarce [23]. |
| Computational Infrastructure | Cloud Platforms (e.g., AWS) | Provides scalable computing power for the parallel execution of multiple HPO trials, which is essential for searching large parameter spaces efficiently [1] [22]. |
| Robotic Automation | Integrated platforms (e.g., Exscientia's AutomationStudio) | Use robotics to synthesize and test AI-designed molecules, creating a closed-loop "design-make-test-learn" cycle [22]. |

Hyperparameter Optimization is not a mere technical refinement but a strategic imperative that directly accelerates AI-driven drug discovery. By systematically implementing modern HPO algorithms like Hyperband, research organizations can build more accurate and reliable AI models, leading to faster identification of clinical candidates and significant reductions in R&D costs. The experimental evidence is clear: HPO delivers measurable improvements in predictive accuracy, which in turn compresses discovery timelines from years to months. As the industry moves forward, integrating HPO into a seamless, automated workflow—from AI design to robotic synthesis and testing—will be the hallmark of the most efficient and successful drug discovery enterprises.

A Practical Guide to HPO Algorithms and Their Implementation

In the field of chemical machine learning (ML), the prediction of molecular properties, reaction outcomes, and catalyst performance has become increasingly reliant on sophisticated algorithms like deep neural networks and graph neural networks. The performance of these models is critically dependent on their hyperparameters—the configuration variables that control the learning process itself. These include settings for model architecture (e.g., number of layers, neurons per layer) and learning algorithms (e.g., learning rate, batch size), which must be set before training begins [1]. Unlike model parameters (e.g., weights and biases) that are learned from data, hyperparameters are not learned and thus require alternative optimization strategies.

Hyperparameter Optimization (HPO) presents a significant challenge in computational chemistry and drug discovery. The process is inherently computationally expensive, with evaluation times ranging from hours to days for large models and datasets. Furthermore, the configuration space is often complex, high-dimensional, and may contain conditional parameters, making exhaustive search infeasible [24]. For chemical ML applications, where datasets may be small and overfitting is a major concern, proper HPO becomes even more critical [25]. This technical guide provides an in-depth analysis of three core HPO algorithms—Grid Search, Random Search, and Bayesian Optimization—framed within the context of chemical ML research for molecular property prediction and related tasks.

Core HPO Algorithms: Theoretical Foundations and Mechanisms

Grid Search

Grid Search (GS) represents the most straightforward approach to HPO, operating as a systematic brute-force method that evaluates every possible combination within a user-defined hyperparameter grid [26] [27]. Imagine a multi-dimensional grid where each axis represents a hyperparameter, and every intersection point corresponds to a unique model configuration awaiting evaluation [27].

The algorithm functions by creating a discrete grid from predefined hyperparameter values and executing a comprehensive search across this grid. For each combination, it trains a model and assesses performance using a validation protocol such as cross-validation. The configuration yielding the optimal performance is selected [26]. While GS is thorough and deterministic, its computational cost grows exponentially with the number of hyperparameters, a phenomenon known as the "curse of dimensionality" [24].
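The exponential growth is simple arithmetic: with k candidate values for each of d hyperparameters, the grid holds k^d points, and each point costs a full training run (multiplied again by the number of folds if cross-validation is used). A short sketch with a hypothetical 5-value axis:

```python
import itertools

values_per_axis = [0.1, 0.3, 1.0, 3.0, 10.0]   # hypothetical: 5 candidate values per hyperparameter

def grid_size(n_hyperparameters, values_per_hp=len(values_per_axis)):
    # k values on each of d axes gives k**d grid points, each needing a full training run
    return values_per_hp ** n_hyperparameters

for d in range(1, 7):
    print(d, grid_size(d))   # 5, 25, 125, 625, 3125, 15625

grid = list(itertools.product(values_per_axis, repeat=3))   # explicit 3-D grid
print(len(grid))             # 125
```

Adding a sixth hyperparameter to a 5-value grid multiplies the cost by five, which is why grid search rarely scales beyond a few dimensions.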

Random Search

Random Search (RS) addresses GS's computational limitations by adopting a probabilistic sampling approach. Rather than exhaustively evaluating all combinations, RS randomly samples configurations from specified distributions over the hyperparameter space for a fixed number of iterations [26] [27].

This method benefits from the empirical observation that in high-dimensional spaces, hyperparameters exhibit varying levels of importance—some parameters significantly influence performance while others have minimal effect. By randomly sampling across the entire space, RS has a higher probability of finding good configurations with far fewer evaluations than GS, making it particularly efficient for high-dimensional problems [27].
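The argument can be checked with a small count. Assume two hyperparameters scaled to [0, 1], of which only the first matters (a hypothetical setup), and a budget of nine training runs. A 3 x 3 grid tests only three distinct values of the important hyperparameter, while nine random trials test nine.

```python
import itertools
import random

BUDGET = 9

# Grid search: 3 x 3 grid over (important, unimportant) hyperparameters in [0, 1]
levels = [0.0, 0.5, 1.0]
grid_trials = list(itertools.product(levels, levels))

# Random search: the same budget, sampled uniformly
rng = random.Random(0)
random_trials = [(rng.random(), rng.random()) for _ in range(BUDGET)]

# Distinct values of the *important* hyperparameter actually tested by each method
grid_distinct = len({a for a, _ in grid_trials})
random_distinct = len({a for a, _ in random_trials})
print(grid_distinct, random_distinct)   # 3 9
```

The grid spends six of its nine runs repeating values of the important hyperparameter while varying one that does not matter.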

Bayesian Optimization

Bayesian Optimization (BO) represents a more sophisticated, sequential model-based approach that builds a probabilistic surrogate model to approximate the objective function [26]. Unlike the model-free GS and RS methods, BO uses past evaluation results to inform future selections [27].

The algorithm operates through an iterative process: initially sampling a few random points, constructing a surrogate model (typically a Gaussian Process) of the objective function, and employing an acquisition function to determine the most promising next point to evaluate by balancing exploration (testing uncertain regions) and exploitation (refining known promising areas) [26] [7]. This adaptive learning mechanism enables BO to often find high-performing configurations with significantly fewer evaluations than GS or RS [26].
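The loop just described can be sketched in one dimension with NumPy. Assumptions: a zero-mean GP with a fixed RBF length scale (production libraries also fit the kernel's own hyperparameters), expected improvement as the acquisition function, a grid of 501 candidate points, and a hypothetical objective standing in for "train the model and return its validation score". All function names are illustrative.

```python
import math
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    # Squared-exponential covariance between two 1-D arrays of points (unit prior variance)
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior(x_obs, y_obs, x_query, noise=1e-6):
    """Zero-mean GP regression: posterior mean and standard deviation at x_query."""
    K = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    K_s = rbf_kernel(x_obs, x_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)

_erf = np.vectorize(math.erf)

def expected_improvement(mean, std, best, xi=0.01):
    # Maximisation: first term rewards a high predicted mean (exploitation),
    # second term rewards high uncertainty (exploration)
    gap = mean - best - xi
    z = gap / std
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return gap * cdf + std * pdf

def objective(x):
    # Hypothetical validation score for one hyperparameter rescaled to [0, 1]
    return -(x - 0.7) ** 2 + 0.05 * np.sin(20 * x)

rng = np.random.default_rng(0)
candidates = np.linspace(0.0, 1.0, 501)
x_obs = rng.uniform(0.0, 1.0, 3)      # a few random initial evaluations
y_obs = objective(x_obs)

for _ in range(12):
    mean, std = gp_posterior(x_obs, y_obs, candidates)
    ei = expected_improvement(mean, std, y_obs.max())
    x_next = candidates[np.argmax(ei)]           # most promising point under the surrogate
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, objective(x_next))  # the only "expensive" call per iteration

print(f"best x = {x_obs[np.argmax(y_obs)]:.3f}, best score = {y_obs.max():.4f}")
```

Each iteration refits the surrogate to every result so far, which is what makes BO sample-efficient but also inherently sequential.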

Comparative Analysis of HPO Algorithms

Qualitative Comparison of Algorithm Characteristics

Table 1: Qualitative comparison of core HPO algorithms

| Characteristic | Grid Search | Random Search | Bayesian Optimization |
|---|---|---|---|
| Search Strategy | Exhaustive, systematic | Randomized sampling | Sequential, model-based |
| Parameter Space Exploration | Uniform, structured | Random, unstructured | Adaptive, informed |
| Theoretical Guarantees | Finds the best point in the grid | Probabilistic convergence | Convergence guarantees only under surrogate-model assumptions |
| Handling of Conditional Parameters | Difficult | Straightforward | Possible with tailored surrogates |
| Implementation Complexity | Low | Low | High |
| Parallelization Potential | High | High | Limited |

Quantitative Performance Comparison

Table 2: Empirical performance comparison across different domains

| Study Context | Grid Search Performance | Random Search Performance | Bayesian Optimization Performance | Key Metrics |
|---|---|---|---|---|
| Molecular Property Prediction [1] | - | Suboptimal | Optimal/Nearly Optimal (with Hyperband) | Prediction Accuracy |
| Heart Failure Prediction [26] | Accuracy: 0.6294 (SVM) | Similar to GS | Best computational efficiency | Accuracy, AUC, Processing Time |
| General ML Classification [27] | Best CV score: 0.9043 (108 combinations) | Best CV score: 0.9129 (30 combinations) | - | Cross-validation Score |
| Computational Complexity [28] | High computational cost | Moderate computational cost | Variable (lower with good surrogate) | Execution Time, Resource Usage |

Chemical ML Case Study: Molecular Property Prediction

Recent research specifically addressing HPO for molecular property prediction (MPP) provides compelling evidence for algorithm selection. A comprehensive methodology applied to deep neural networks for MPP compared Random Search, Bayesian Optimization, and Hyperband (a multi-fidelity extension of Random Search). The study concluded that the Hyperband algorithm—which has not been widely used in previous MPP studies—demonstrated superior computational efficiency while delivering optimal or nearly optimal prediction accuracy [1].

The researchers recommended the Python library KerasTuner for implementing HPO in chemical ML applications, noting its user-friendly interface and support for parallel execution, which significantly reduces optimization time [1]. This finding is particularly relevant for drug development professionals working with large chemical datasets or complex molecular representations like Graph Neural Networks (GNNs), where HPO is essential for achieving state-of-the-art performance [29].

Experimental Protocols for HPO in Chemical ML

Methodology for Molecular Property Prediction with DNNs

A rigorous experimental protocol for HPO in molecular property prediction was outlined in a recent study that established a step-by-step methodology [1]:

Base Case Establishment: Begin with a baseline dense Deep Neural Network (DNN) without HPO. A typical architecture includes an input layer (e.g., 9 nodes for molecular features), three densely connected hidden layers (e.g., 64 nodes each), and an output layer with linear activation for regression tasks. The ReLU activation function and Adam optimizer are commonly employed [1].
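To make the parameter/hyperparameter distinction concrete for this baseline, the sketch below counts the trainable parameters of the example 9-64-64-64-1 dense network. The layer widths and depth are hyperparameters fixed before training; the weights and biases counted here are the model parameters that Adam learns. The helper name `count_dense_params` is hypothetical and assumes plain fully connected layers with one bias per unit.

```python
def count_dense_params(layer_sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    # Each layer maps n_in inputs to n_out units: n_in * n_out weights and n_out biases.
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Baseline from the text: 9 input features, three hidden layers of 64 units, 1 linear output.
baseline = [9, 64, 64, 64, 1]
print(count_dense_params(baseline))  # 9025
```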

HPO Implementation: Implement three primary HPO algorithms—Random Search, Bayesian Optimization, and Hyperband—using the KerasTuner library with parallel execution capabilities.

Performance Validation: Compare results against the base case using appropriate validation protocols, such as repeated k-fold cross-validation, to ensure robustness, particularly in low-data regimes common in chemical applications [1] [25].
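A minimal sketch of repeated k-fold splitting in pure Python, assuming the model is retrained on each `train` index list and scored on the matching `test` list. The function name is hypothetical; scikit-learn's `RepeatedKFold` provides an equivalent splitter for production use. Averaging the score over all k × repeats splits reduces the dependence of the HPO objective on one particular random partition, which matters most when only a few dozen molecules are available.

```python
import random

def repeated_kfold(n_samples, k=5, repeats=10, seed=0):
    """Yield (train_idx, test_idx) pairs for `repeats` independent shuffles of k-fold CV."""
    rng = random.Random(seed)
    indices = list(range(n_samples))
    for _ in range(repeats):
        rng.shuffle(indices)  # new random partition for every repeat
        for j in range(k):
            # Contiguous slice of the shuffled order forms the held-out fold.
            test = indices[j * n_samples // k:(j + 1) * n_samples // k]
            held_out = set(test)
            train = [i for i in indices if i not in held_out]
            yield train, test

# Example: 3 repeats of 5-fold CV on 23 data points gives 15 train/test splits.
splits = list(repeated_kfold(23, k=5, repeats=3, seed=1))
print(len(splits))  # 15
```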

Advanced Techniques: For enhanced performance, combine Bayesian Optimization with Hyperband (BOHB) using libraries like Optuna, which integrates the adaptive strength of BO with the efficiency of multi-fidelity approaches [1].

Workflow for Low-Data Chemical Scenarios

Chemical ML often faces data scarcity challenges, where overfitting is a significant concern. The ROBERT software framework introduces a specialized workflow for such scenarios [25]:

Data Splitting: Reserve 20% of initial data (minimum four data points) as an external test set using an "even" distribution split to ensure balanced representation of target values.
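One plausible reading of an "even" split is sketched below: sort the data by target value, cut the sorted list into as many contiguous bins as there are test points, and take the middle point of each bin, so that the test set spans the whole target range. This is a hypothetical reconstruction, not ROBERT's documented algorithm; the function name and binning rule are assumptions, while the 20% fraction and the four-point minimum come from the text.

```python
def even_split(y, test_fraction=0.2, min_test=4):
    """Hypothetical target-stratified split: one test point from each bin of sorted targets."""
    n = len(y)
    n_test = max(min_test, round(n * test_fraction))
    if n_test >= n:
        raise ValueError("not enough data points for the requested test set")
    order = sorted(range(n), key=lambda i: y[i])  # indices ordered by target value
    test = []
    for j in range(n_test):
        bin_ = order[j * n // n_test:(j + 1) * n // n_test]  # non-empty because n_test < n
        test.append(bin_[len(bin_) // 2])  # middle point of the bin
    held_out = set(test)
    train = [i for i in range(n) if i not in held_out]
    return train, sorted(test)

train, test = even_split([i * 1.5 for i in range(20)])
print(test)  # [2, 7, 12, 17]
```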

Combined Metric Formulation: Create an objective function that combines interpolation performance (assessed via 10-times repeated 5-fold cross-validation) with extrapolation capability (evaluated through selective sorted 5-fold CV based on target value) [25].
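The combined objective can be sketched as follows, under stated assumptions: a hypothetical one-feature linear model stands in for the real learner, the extrapolation term is taken as the worse RMSE of the lowest- and highest-target folds after sorting, and the two terms are averaged with equal weight. ROBERT's exact weighting and fold selection may differ, and all function names here are hypothetical.

```python
import random
from statistics import mean

def fit_line(xs, ys):
    """Hypothetical stand-in learner: ordinary least squares for y = a*x + b."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = my - a * mx
    return lambda x: a * x + b

def fold_rmse(train, test, xs, ys):
    """Fit on the train indices and return the RMSE on the test indices."""
    model = fit_line([xs[i] for i in train], [ys[i] for i in train])
    return mean([(model(xs[i]) - ys[i]) ** 2 for i in test]) ** 0.5

def contiguous_folds(order, k):
    """Split an index list into k contiguous, nearly equal folds."""
    n = len(order)
    return [order[j * n // k:(j + 1) * n // k] for j in range(k)]

def interpolation_rmse(xs, ys, k=5, repeats=10, seed=0):
    """Mean RMSE over `repeats` shuffled k-fold cross-validations (10 x 5-fold by default)."""
    rng = random.Random(seed)
    idx = list(range(len(xs)))
    scores = []
    for _ in range(repeats):
        rng.shuffle(idx)
        for fold in contiguous_folds(idx, k):
            held_out = set(fold)
            scores.append(fold_rmse([i for i in idx if i not in held_out], fold, xs, ys))
    return mean(scores)

def extrapolation_rmse(xs, ys, k=5):
    """Assumed rule: sort by target, hold out the lowest and highest folds, keep the worse RMSE."""
    order = sorted(range(len(ys)), key=lambda i: ys[i])
    folds = contiguous_folds(order, k)
    scores = []
    for fold in (folds[0], folds[-1]):
        held_out = set(fold)
        scores.append(fold_rmse([i for i in order if i not in held_out], fold, xs, ys))
    return max(scores)

def combined_rmse(xs, ys):
    """Assumed equal-weight average of the interpolation and extrapolation terms."""
    return (interpolation_rmse(xs, ys) + extrapolation_rmse(xs, ys)) / 2

xs = list(range(20))
ys = [2.0 * x + 1.0 + (0.5 if x % 3 else -0.5) for x in xs]
print(combined_rmse(xs, ys))
```

A model that fits the middle of the target range well but degrades at the extremes is penalized through the extrapolation term, which is the overfitting signal this objective is meant to expose.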

Bayesian HPO: Execute Bayesian optimization using this combined RMSE metric as the objective function, systematically exploring the hyperparameter space while penalizing overfitting.
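To illustrate what the Bayesian step does, here is a minimal, self-contained sketch of Bayesian optimization over one hyperparameter. It assumes a Gaussian-process surrogate with a squared-exponential kernel, the expected-improvement acquisition function maximized over a fixed grid, and a hypothetical quadratic objective standing in for the combined RMSE. It is not ROBERT's implementation, whose surrogate and acquisition settings are not described here.

```python
import math
import random

def rbf(a, b, length=1.0):
    # Squared-exponential (RBF) kernel with unit signal variance.
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(A, y):
    # Gaussian elimination with partial pivoting; adequate for the tiny systems here.
    n = len(A)
    M = [row[:] + [v] for row, v in zip(A, y)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def gp_posterior(X, Y, x, noise=1e-6):
    # Posterior mean and standard deviation at x of a GP fitted to mean-centred targets.
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)] for i, a in enumerate(X)]
    k = [rbf(a, x) for a in X]
    y_mean = sum(Y) / len(Y)
    alpha = solve(K, [v - y_mean for v in Y])
    mu = y_mean + sum(ki * ai for ki, ai in zip(k, alpha))
    v = solve(K, k)
    var = 1.0 - sum(ki * vi for ki, vi in zip(k, v))
    return mu, math.sqrt(max(var, 1e-12))

def expected_improvement(mu, sigma, best):
    # Expected improvement for minimization of the objective.
    z = (best - mu) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (best - mu) * cdf + sigma * pdf

def bayes_opt(objective, lo, hi, n_init=3, n_iter=10, seed=0):
    rng = random.Random(seed)
    X = [rng.uniform(lo, hi) for _ in range(n_init)]  # random initial design
    Y = [objective(x) for x in X]
    grid = [lo + (hi - lo) * i / 200 for i in range(201)]  # candidate points for the acquisition
    for _ in range(n_iter):
        best = min(Y)
        x_next = max(grid, key=lambda x: expected_improvement(*gp_posterior(X, Y, x), best))
        X.append(x_next)
        Y.append(objective(x_next))  # one expensive evaluation per iteration
    i_best = min(range(len(Y)), key=Y.__getitem__)
    return X[i_best], Y[i_best]

# Hypothetical objective: validation error as a function of one log-scaled hyperparameter.
x_best, y_best = bayes_opt(lambda x: (x + 3.0) ** 2, -5.0, -1.0)
print(x_best, y_best)
```

The surrogate is refitted after every evaluation, which is why Bayesian optimization is naturally sequential and spends extra computation to save expensive model trainings.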

Model Scoring: Implement a comprehensive scoring system (on a scale of ten) that evaluates predictive ability, overfitting, prediction uncertainty, and robustness against spurious correlations [25].

Implementation Framework

The Scientist's Toolkit: Essential Software and Libraries

Table 3: Essential tools for implementing HPO in chemical ML research

| Tool/Library | Primary Function | Key Features | Chemical ML Applicability |
|---|---|---|---|
| KerasTuner [1] | HPO for Keras models | User-friendly, parallel execution, supports RS, BO, Hyperband | Molecular property prediction with DNNs |
| Optuna [1] | Hyperparameter optimization | Define-by-run API, efficient sampling, pruning | BOHB for complex chemical models |
| ROBERT [25] | Automated ML workflows for chemistry | Data curation, Bayesian HPO, model selection, specialized for small datasets | Low-data chemical scenarios, reaction optimization |
| Scikit-learn [26] [27] | Traditional ML with HPO | GridSearchCV, RandomizedSearchCV | Preprocessing and baseline model development |

Decision Framework for Algorithm Selection

Based on the comparative analysis, the following decision framework is recommended for chemical ML researchers:

Grid Search: Suitable only for low-dimensional hyperparameter spaces (typically ≤3 dimensions) with discrete values where computational cost is not prohibitive [27] [24].

Random Search: Recommended as the default starting point for most chemical ML applications, particularly when exploring high-dimensional spaces (≥4 hyperparameters) or when computational resources are limited [1] [27].

Bayesian Optimization: Ideal for expensive model evaluations where the number of trials must be minimized, and when sufficient computational resources are available for the sequential optimization process [26].

Hyperband/BOHB: Recommended for large-scale chemical ML projects involving deep neural networks or graph neural networks, where it provides the best balance of efficiency and performance [1].
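The usual argument for preferring Random Search beyond about three hyperparameters can be illustrated with a small count. The sketch below builds a hypothetical 3 × 4 × 3 × 3 grid (108 combinations, the same count as the grid-search run in the empirical comparison table, although the values are illustrative and not taken from that study) and compares it with 30 random trials over the same ranges. The grid tries only three distinct learning rates, whereas random sampling tries a new learning rate in every trial, which pays off when only one or two hyperparameters dominate performance.

```python
import itertools
import random

# Hypothetical search space; values are illustrative only.
grid_space = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "units": [32, 64, 128, 256],
    "dropout": [0.0, 0.2, 0.4],
    "batch_size": [16, 32, 64],
}
grid = [dict(zip(grid_space, values)) for values in itertools.product(*grid_space.values())]

rng = random.Random(0)
random_trials = [
    {
        "learning_rate": 10 ** rng.uniform(-4, -2),  # log-uniform over the same range
        "units": rng.choice([32, 64, 128, 256]),
        "dropout": rng.uniform(0.0, 0.4),
        "batch_size": rng.choice([16, 32, 64]),
    }
    for _ in range(30)
]

def distinct(trials, key):
    """Number of distinct values a hyperparameter takes across the trials."""
    return len({t[key] for t in trials})

print(len(grid), distinct(grid, "learning_rate"))                    # 108 3
print(len(random_trials), distinct(random_trials, "learning_rate"))  # 30 30
```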

For chemical ML applications specifically, recent research emphasizes the importance of optimizing as many hyperparameters as possible and selecting software platforms that enable parallel execution to manage computational demands [1].

Visualizations

HPO Algorithm Decision Flowchart

Bayesian Optimization Workflow

The comparative analysis of core HPO algorithms reveals a clear progression from brute-force methods (Grid Search) through stochastic approaches (Random Search) to adaptive strategies (Bayesian Optimization). For chemical ML applications, including molecular property prediction and reaction optimization, the selection of an HPO algorithm must balance computational efficiency with prediction accuracy. Recent research indicates that Grid Search provides a straightforward baseline and Random Search explores high-dimensional spaces efficiently, while Bayesian Optimization and multi-fidelity methods (particularly Hyperband and its Bayesian hybrid, BOHB) deliver the best balance of efficiency and accuracy for complex chemical models. As automated ML workflows become increasingly integrated into chemical research, the strategic implementation of these HPO algorithms will play a pivotal role in accelerating drug discovery and materials development.

In the field of chemical machine learning (ML), the performance of models predicting molecular properties, reaction outcomes, or optimizing synthesis pathways is highly sensitive to hyperparameter settings. Hyperparameters are configuration variables that control the ML training process itself, such as learning rate, network architecture, or batch size, and cannot be learned directly from the data [30] [31]. Hyperparameter optimization (HPO) is the process of finding the optimal set of these variables to maximize model performance. For chemical researchers, this often translates to more accurate predictions of yield, selectivity, or other critical reaction objectives, directly impacting experimental efficiency and resource allocation [32] [33].

Traditional HPO methods like grid search—which exhaustively evaluates a Cartesian product of hyperparameter values—become computationally intractable for high-dimensional search spaces common in complex chemical models [31]. Random search, while more efficient, can still waste significant resources evaluating poor-performing configurations [34] [35]. This has spurred the adoption of advanced strategies, including the highly efficient Hyperband algorithm and robust Genetic Algorithms (GAs), which are particularly suited to the challenges of chemical ML, such as noisy experimental data and complex, multi-objective optimization landscapes [32] [36].

Hyperband: A Strategy for Computational Efficiency

Core Principles and Mechanics

Hyperband is an HPO algorithm designed to increase efficiency through adaptive resource allocation and early stopping of underperforming trials [34] [37] [35]. It is built on two key ideas: treating HPO as a configuration evaluation problem rather than a selection problem (that is, speeding up the evaluation of randomly sampled configurations instead of adaptively choosing which configurations to try, as Bayesian optimization does), and leveraging the Successive Halving procedure.

Successive Halving starts by allocating a minimal budget (e.g., a small number of training epochs) to a large set of randomly sampled hyperparameter configurations. After evaluating all configurations with this budget, it discards the worst-performing half and allocates a larger budget to the remaining top half (in the general form used by Hyperband, only the best 1/η of the configurations are kept and the budget grows by a factor of η). This process repeats until only one configuration remains [34] [35]. A critical challenge in Successive Halving is choosing the initial number of configurations (n): for a fixed total budget, a large n gives each configuration only a small budget, so a slow-starting but ultimately good configuration may be discarded too early. Hyperband addresses this by considering several values of n in a single run, hedging between exploring many configurations (large n) and evaluating a few more thoroughly (small n) [37].
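A minimal sketch of Successive Halving in pure Python. It generalizes halving to keeping the best 1/η of configurations each round (η = 2 recovers halving; η = 3 is used here), and it assumes a hypothetical `toy_loss` learning curve over a single hyperparameter, log10 of the learning rate, in place of actually training a model for `epochs` epochs.

```python
import random

def successive_halving(configs, evaluate, min_resource=1, eta=3):
    """Evaluate survivors at the current budget, keep the best 1/eta, multiply the budget by eta."""
    if not configs:
        raise ValueError("need at least one configuration")
    survivors = list(configs)
    resource = min_resource
    history = []  # (number of configs evaluated, budget per config) for each round
    while True:
        ranked = sorted(survivors, key=lambda c: evaluate(c, resource))  # lower loss is better
        history.append((len(ranked), resource))
        if len(ranked) == 1:
            return ranked[0], history
        survivors = ranked[:max(1, len(ranked) // eta)]
        resource *= eta

def toy_loss(log10_lr, epochs):
    # Hypothetical learning curve: distance from an assumed optimum at log10(lr) = -3,
    # plus a term that shrinks as training continues.
    return (log10_lr + 3) ** 2 + 1.0 / epochs

rng = random.Random(0)
configs = [rng.uniform(-5, -1) for _ in range(81)]
best, history = successive_halving(configs, toy_loss, min_resource=1, eta=3)
print(history)  # [(81, 1), (27, 3), (9, 9), (3, 27), (1, 81)]
```

With 81 starting configurations and η = 3, the rounds evaluate 81, 27, 9, 3, and 1 configurations at budgets of 1, 3, 9, 27, and 81 epochs, which corresponds to Hyperband's most exploratory bracket.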

The algorithm requires two inputs:

- R: The maximum amount of resources (e.g., epochs, training time) that can be allocated to a single configuration.

- η: The downsampling rate (aggression factor). Each round of Successive Halving keeps only the best 1/η of the configurations and multiplies the per-configuration budget by η. A value of 3 or 4 is typically recommended, as performance is not highly sensitive to this parameter [37] [35].
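Hyperband's bracket sizes follow directly from R and η. The sketch below computes them with the formulas of the original Hyperband formulation, s_max = ⌊log_η R⌋, n = ⌈(s_max + 1)·η^s / (s + 1)⌉ and r = R·η^(−s), where round i of a bracket evaluates ⌊n·η^(−i)⌋ configurations with r·η^i resources each. Function names are hypothetical and no model is trained. For R = 81 and η = 3 it gives starting bracket sizes of 81, 34, 15, 8, and 5 configurations, with each bracket consuming a comparable total budget of 351 to 405 epochs.

```python
def hyperband_schedule(R, eta=3):
    """Return the Successive Halving brackets Hyperband runs for maximum resource R."""
    # s_max = floor(log_eta(R)), computed with integers to avoid floating-point log error.
    s_max = 0
    while eta ** (s_max + 1) <= R:
        s_max += 1
    brackets = []
    for s in range(s_max, -1, -1):
        # n = ceil((s_max + 1) * eta**s / (s + 1)), via integer ceiling division.
        n = ((s_max + 1) * eta ** s + s) // (s + 1)
        r = R / eta ** s  # initial budget per configuration in this bracket
        rounds = [(n // eta ** i, r * eta ** i) for i in range(s + 1)]
        brackets.append({"s": s, "n": n, "r": r, "rounds": rounds})
    return brackets

def bracket_budget(bracket):
    """Total resource (e.g., epochs) consumed by one bracket."""
    return sum(n_i * r_i for n_i, r_i in bracket["rounds"])

for b in hyperband_schedule(81, eta=3):
    print(b["s"], b["n"], [n_i for n_i, _ in b["rounds"]], bracket_budget(b))
# 4 81 [81, 27, 9, 3, 1] 405.0
# 3 34 [34, 11, 3, 1] 363.0
# 2 15 [15, 5, 1] 351.0
# 1 8 [8, 2] 378.0
# 0 5 [5] 405.0
```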

The following diagram illustrates the logical workflow of the Hyperband algorithm.

Hyperband in Practice: An Example for a Chemical ML Model

The table below outlines a hypothetical resource allocation for a Hyperband run with R=81 and η=3, targeting a chemical property prediction model. This demonstrates how Hyperband dynamically allocates resources across different "brackets" (values of s).

Table 1: Example Hyperband Resource Allocation (R=81, η=3)

| Bracket (s) | Initial Configs (n) | Resource per Config (r_i) in Successive Rounds | Configs Evaluated in Each Round (n_i) |
|---|---|---|---|
| s=4 (Most exploratory) | 81 | 1, 3, 9, 27, 81 | 81 → 27 → 9 → 3 → 1 |
| s=3 | 34 | 3, 9, 27, 81 | 34 → 11 → 3 → 1 |
| s=2 | 15 | 9, 27, 81 | 15 → 5 → 1 |
| s=1 | 8 | 27, 81 | 8 → 2 |
| s=0 (Most conservative) | 5 | 81 | 5 |

This strategy allows Hyperband to explore a large hyperparameter space efficiently. With the budget a naive method would spend training 5 configurations for 81 epochs each (405 epochs), Hyperband's most aggressive bracket (s=4) screens 81 different configurations (81×1 + 27×3 + 9×9 + 3×27 + 1×81 = 405 epochs), starting with a single epoch each and quickly weeding out non-viable options [37].

Key Parameters and Research Reagents

Implementing Hyperband requires defining key components and their functions, analogous to research reagents in a laboratory setting.

Table 2: Hyperband "Research Reagent" Solutions

| Component/Reagent | Function & Description | Typical Specification |
|---|---|---|
| Resource (r) | The budget allocated to a configuration (e.g., number of training epochs, dataset subset size). Determines the fidelity of the performance evaluation. | Defined by R (max) and scaled by η. |
| Configuration Sampler | A function that draws random hyperparameter configurations from a predefined search space. | Uniform random sampling is standard, but can be informed by prior knowledge. |
| Validation Loss Function | The objective function that quantifies model performance (e.g., mean squared error for yield prediction). Used to rank configurations. | Must be carefully chosen to reflect the primary chemical ML objective. |
| Aggression Factor (η) | Controls the proportion of configurations discarded in each Successive Halving round. A higher η leads to more aggressive pruning. | Default value of 3 or 4. |

Genetic Algorithms: A Strategy for Robustness

Core Principles and Mechanics

Genetic Algorithms (GAs) are population-based, metaheuristic optimization algorithms inspired by the process of natural selection [36] [31]. Whereas Hyperband focuses on resource efficiency, GAs excel at robustly navigating complex, noisy, and highly structured search spaces, which are precisely the characteristics often found in chemical kinetics and reaction optimization problems [36]. They are less prone than gradient-based methods to becoming trapped in local optima.

GAs operate on a population of candidate solutions (individual hyperparameter sets). This population evolves over generations through the application of genetic operators:

- Selection: Individuals are selected for reproduction based on their fitness (e.g., the inverse of the validation loss). Fitter individuals have a higher probability of being selected.

- Crossover (Recombination): Pairs of selected "parent" solutions combine their "genes" (hyperparameters) to produce "offspring" solutions. This exploits existing good solutions.

- Mutation: Random alterations are applied to some offspring with a small probability. This introduces new genetic material and explores new regions of the search space, aiding robustness [36] [31].
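The loop described by these operators can be sketched in pure Python as follows. It assumes a hypothetical three-gene search space (number of layers, units per layer, and log10 learning rate) and a hypothetical `toy_validation_loss` that stands in for training and validating a chemical ML model. Selection is fitness-proportional (roulette-wheel, via `random.choices`), crossover is uniform, and mutation resamples or perturbs genes with a small probability. The two-individual elitism step is a common design choice added here rather than something the text prescribes; it guarantees that the best fitness never decreases between generations.

```python
import math
import random

SPACE = {  # hypothetical search space
    "layers": (1, 5),                  # integer range, inclusive
    "units": [16, 32, 64, 128, 256],   # categorical
    "log10_lr": (-5.0, -1.0),          # continuous
}

def random_individual(rng):
    return {"layers": rng.randint(*SPACE["layers"]),
            "units": rng.choice(SPACE["units"]),
            "log10_lr": rng.uniform(*SPACE["log10_lr"])}

def toy_validation_loss(ind):
    # Hypothetical stand-in for training a model and measuring its validation loss.
    return ((ind["layers"] - 3) ** 2 + (math.log2(ind["units"]) - 6) ** 2
            + (ind["log10_lr"] + 3) ** 2)

def fitness(ind):
    return 1.0 / (1.0 + toy_validation_loss(ind))  # higher is better

def crossover(a, b, rng):
    # Uniform crossover: each gene is copied from one of the two parents.
    return {k: (a[k] if rng.random() < 0.5 else b[k]) for k in a}

def mutate(ind, rng, rate=0.2):
    child = dict(ind)
    if rng.random() < rate:
        child["layers"] = rng.randint(*SPACE["layers"])
    if rng.random() < rate:
        child["units"] = rng.choice(SPACE["units"])
    if rng.random() < rate:
        lo, hi = SPACE["log10_lr"]
        child["log10_lr"] = min(hi, max(lo, child["log10_lr"] + rng.gauss(0.0, 0.5)))
    return child

def genetic_search(pop_size=30, generations=25, elite=2, seed=0):
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    history = []  # best fitness in each generation
    for _ in range(generations):
        scored = sorted(population, key=fitness, reverse=True)
        history.append(fitness(scored[0]))
        weights = [fitness(ind) for ind in scored]
        next_gen = scored[:elite]  # elitism: best individuals survive unchanged
        while len(next_gen) < pop_size:
            p1, p2 = rng.choices(scored, weights=weights, k=2)  # fitness-proportional selection
            next_gen.append(mutate(crossover(p1, p2, rng), rng))
        population = next_gen
    return max(population, key=fitness), history

best, history = genetic_search()
print(best, round(fitness(best), 3))
```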

The following diagram illustrates the iterative workflow of a standard Genetic Algorithm.

GAs in Practice: Application in Chemical Kinetics

GAs have proven highly effective for solving the "inverse problem of chemical kinetics," which involves finding the optimal reaction rate coefficients for a given reaction mechanism [36]. This is a complex, high-dimensional optimization problem where objective functions can have multiple ridges and valleys, and gradient information is often unavailable.

In one documented application, a multi-objective GA was used to optimize reaction mechanisms for hydrogen and methane combustion. The algorithm incorporated data from both Perfectly Stirred Reactors (PSR) and laminar premixed flames, producing reaction mechanisms with improved predictive capabilities. The GA successfully handled the complex trade-offs between fitting different types of experimental data, a task with which traditional gradient-based methods struggle because the problem is ill-posed and the measurements are noisy [36].

Key Parameters and Research Reagents

Implementing a GA for HPO requires careful setting of its own hyperparameters and components.

Table 3: Genetic Algorithm "Research Reagent" Solutions

| Component/Reagent | Function & Description | Typical Specification |
|---|---|---|
| Population | A set of candidate hyperparameter configurations (individuals). The diversity of the population is key to exploration. | Size typically ranges from tens to hundreds. |
| Fitness Function | The objective function that evaluates the performance of a configuration (e.g., model accuracy). Guides the selection process. | Must be designed to accurately reflect the ultimate goal of the chemical ML model. |