Hyperparameter Optimization for Chemistry Models: A Comparative Guide to Bayesian, Evolutionary, and Gradient-Based Methods

Abstract

This article provides a comprehensive comparison of hyperparameter optimization (HPO) methods tailored for machine learning models in chemistry and drug discovery. It covers foundational concepts of HPO and its critical role in enhancing model performance for applications like molecular property prediction and virtual screening. We explore the mechanics, strengths, and weaknesses of key methodologies—including Bayesian optimization, evolutionary algorithms, and gradient-based techniques—with specific examples from recent cheminformatics research. The article further offers practical troubleshooting advice for overcoming common optimization challenges and presents a framework for the rigorous validation and benchmarking of HPO techniques to guide researchers and professionals in selecting the most efficient and effective strategy for their projects.

Why Hyperparameter Optimization is Crucial for Modern Cheminformatics

The Role of Machine Learning in Scalable Chemical Data Analysis

The field of chemistry is undergoing a profound transformation, driven by the convergence of automation, big data, and artificial intelligence. Where traditional chemical research relied heavily on manual experimentation and theoretical calculations, the emergence of high-throughput digital chemistry now generates volumes of experimental data that far exceed human analytical capacity [1]. This data explosion has created a critical need for scalable analysis methods, positioning machine learning (ML) as an indispensable tool for modern chemical research and development. By leveraging ML algorithms, researchers can now predict molecular properties, optimize synthetic pathways, and extract meaningful patterns from complex spectroscopic data at unprecedented speeds [2] [3].

The integration of ML is particularly transformative for drug discovery, where it accelerates the iterative Design-Make-Test-Analyze (DMTA) cycle through improved predictive accuracy and reduced experimental overhead [4]. From predicting reaction outcomes to optimizing hyperparameters for chemical models, ML methods are enabling a shift from traditional trial-and-error approaches to targeted, intelligent experimentation. This article examines the current state of ML in chemical data analysis, comparing performance across different applications and providing experimental protocols for implementing these methods in research workflows.

ML Applications in Chemical Data Analysis: Methods and Performance

Machine learning has penetrated nearly every subdomain of chemical research, from fundamental property prediction to complex synthesis planning. The following sections explore key applications, comparing model performance across different chemical tasks.

Molecular Property Prediction

Predicting molecular properties from chemical structure represents one of the most established ML applications in chemistry. Different molecular representations and algorithms yield varying performance across property types:

Table 1: Performance Comparison of ML Models for Molecular Property Prediction

| Prediction Task | Best Model | Key Metric | Performance | Reference |
|---|---|---|---|---|
| Odor Perception | Morgan-fingerprint-based XGBoost | AUROC | 0.828 | [5] |
| Odor Perception | Morgan-fingerprint-based XGBoost | AUPRC | 0.237 | [5] |
| pKa Prediction | Thermodynamic-principle-integrated ML | Accuracy | Superior to ab initio methods | [6] |
| Reaction Outcome | Graph-convolutional neural networks | Accuracy | Expert-level | [6] |
| Free Energy/Kinetics | Hybrid QM/ML models | Computational Cost | Significant reduction vs. high-precision ab initio | [6] |

The superior performance of Morgan fingerprints combined with XGBoost for odor prediction highlights how structural fingerprints effectively capture essential olfactory cues [5]. For acid-base properties such as pKa, incorporating thermodynamic principles directly into ML architectures helps ensure physical consistency while maintaining accuracy [6].

Hyperparameter Optimization for Chemical Models

Selecting appropriate hyperparameter optimization methods significantly impacts model performance in chemical applications. Comparative studies reveal method-specific strengths:

Table 2: Hyperparameter Optimization Method Performance Across Domains

| Optimization Method | Application Domain | Best For | Performance Advantages | Reference |
|---|---|---|---|---|
| Bayesian Optimization | Air Quality Prediction | CO, NO₂, PM₁₀ | Superior performance for most pollutants | [7] |
| Hyperband Search | Air Quality Prediction | NOₓ | Best for specific pollutant types | [7] |
| Bayesian Search | Heart Failure Prediction | Computational Efficiency | Fastest processing time | [8] |
| Random Search | Heart Failure Prediction | Simplicity | Better than Grid Search for large parameter spaces | [8] |

Bayesian Optimization builds a surrogate model of the objective and uses it to decide which configuration to evaluate next; in these comparisons it generally offered the best trade-off between performance and computational efficiency [7] [8]. Although these studies come from air-quality and clinical prediction rather than chemistry, the same approach is a reasonable default for chemical models with complex parameter spaces.
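The surrogate-model loop can be sketched in dependency-free Python. This is a minimal illustration, not the setup used in [7] or [8]: it assumes a single hyperparameter scaled to [0, 1], a zero-mean Gaussian Process surrogate with a fixed RBF kernel (length scale 0.2), expected improvement (EI) as the acquisition function, and a hypothetical `toy_validation_loss` standing in for an expensive train-and-validate run. All helper names are illustrative.

```python
import math

def rbf(a, b, length_scale=0.2):
    """Squared-exponential (RBF) kernel between two scalars."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)

def cholesky(m):
    """Lower-triangular Cholesky factor of a small symmetric positive-definite matrix."""
    n = len(m)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(m[i][i] - s) if i == j else (m[i][j] - s) / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution for L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def solve_upper_t(L, y):
    """Back substitution for L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_fit(xs, ys, noise=1e-6):
    """Factor the kernel matrix once per iteration; noise is a small jitter term."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    return L, solve_upper_t(L, solve_lower(L, ys))

def gp_predict(xs, L, alpha, x):
    """Posterior mean and standard deviation of the surrogate at x."""
    k_star = [rbf(x, a) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = max(1.0 - sum(vi * vi for vi in v), 1e-12)
    return mean, math.sqrt(var)

def expected_improvement(mean, std, best):
    """EI for minimization: expected amount by which a point beats the current best."""
    z = (best - mean) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (best - mean) * cdf + std * pdf

def toy_validation_loss(x):
    """Hypothetical stand-in for an expensive training run (minimum at x = 0.3)."""
    return (x - 0.3) ** 2

def bayes_opt(objective, n_iter=10):
    xs = [0.0, 1.0]                      # two initial probes
    ys = [objective(x) for x in xs]
    candidates = [i / 100 for i in range(101)]
    for _ in range(n_iter):
        L, alpha = gp_fit(xs, ys)
        best = min(ys)
        # Evaluate next wherever the surrogate expects the largest improvement.
        x_next = max(candidates,
                     key=lambda c: expected_improvement(*gp_predict(xs, L, alpha, c), best))
        xs.append(x_next)
        ys.append(objective(x_next))
    i_best = min(range(len(ys)), key=ys.__getitem__)
    return xs[i_best], ys[i_best]
```

Because EI rewards both a low predicted loss and high uncertainty, early trials spread across the interval and later ones concentrate near the low-loss region. In practice a dedicated HPO library would replace this hand-rolled loop.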

Reaction Prediction and Retrosynthetic Planning

ML approaches have dramatically advanced synthetic chemistry through improved reaction prediction and planning:

- Neural-symbolic frameworks and Monte Carlo Tree Search (MCTS) integrated with deep neural networks revolutionize retrosynthetic planning, generating expert-quality routes at unprecedented speeds [6]

- Transformer architectures with denoising diffusion models generate synthetic routes rather than just molecular structures, ensuring synthetic feasibility by operating within chemical spaces defined by purchasable building blocks [2]

- Machine learning models based on molecular orbital reaction theory achieve remarkable accuracy and generalizability in predicting organic reaction outcomes [6]

Experimental Protocols and Methodologies

Implementing effective ML solutions for chemical data analysis requires careful experimental design and methodological rigor. This section details protocols for key applications.

Protocol for ML-Based Odor Prediction

The odor prediction study [5] provides a comprehensive methodology for structure-property relationship modeling:

Dataset Curation:

- Unified 10 expert-curated sources containing 8,681 unique odorants and 200 candidate descriptors

- Standardized descriptor labels through a controlled vocabulary of 201 labels (200 specific odors plus "Others")

- Retrieved canonical SMILES via PubChem's PUG-REST API using PubChem CIDs
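A request of this kind can be sketched with the Python standard library. The URL follows PUG-REST's `compound/cid/<cids>/property/<properties>/JSON` pattern; the property name `CanonicalSMILES` is an assumption, since PubChem has revised its SMILES property names over time, so check the current PUG-REST documentation before relying on it. The helper names are hypothetical, and the network call is left to the caller.

```python
import json
from urllib.parse import quote

PUG_REST_BASE = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"

def smiles_property_url(cids, prop="CanonicalSMILES"):
    """Build a PUG-REST property request for one or more PubChem CIDs (JSON output)."""
    cid_list = ",".join(str(int(c)) for c in cids)
    return f"{PUG_REST_BASE}/compound/cid/{cid_list}/property/{quote(prop)}/JSON"

def parse_property_table(payload, prop="CanonicalSMILES"):
    """Map CID -> SMILES from a PUG-REST PropertyTable JSON response body."""
    rows = json.loads(payload)["PropertyTable"]["Properties"]
    return {row["CID"]: row[prop] for row in rows if prop in row}

# Usage (requires network access, not executed here):
# from urllib.request import urlopen
# with urlopen(smiles_property_url([702, 887])) as resp:
#     smiles_by_cid = parse_property_table(resp.read().decode("utf-8"))
```

Batching many CIDs into one comma-separated request keeps the number of calls to the service down when resolving thousands of odorants.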

Feature Extraction:

- Functional Group (FG) features: Generated by detecting predefined substructures using SMARTS patterns

- Molecular Descriptors (MD): Calculated using RDKit library including molecular weight, hydrogen bond donors/acceptors, topological polar surface area, logP, rotatable bonds, heavy atom count, and ring count

- Structural Fingerprints (ST): Computed with the Morgan algorithm from MolBlock representations, which were generated from SMILES strings and geometry-optimized with the Universal Force Field (UFF)

Model Training and Evaluation:

- Implemented multi-label classification using Random Forest, XGBoost, and LightGBM

- Applied stratified fivefold cross-validation with an 80:20 train:test split

- Used scikit-learn's MultiLabelBinarizer to encode the presence or absence of each odor category

- Evaluated models using Accuracy, AUROC, AUPRC, Specificity, Precision, and Recall
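The label encoding and two of the ranking metrics can be illustrated on a single odor label. The sketch below uses made-up labels and scores: `binarize` mimics the output of scikit-learn's `MultiLabelBinarizer` (one 0/1 column per descriptor), AUROC is computed in its rank (Mann-Whitney) form, and AUPRC is estimated as average precision, one common estimator. With one positive among ten molecules ranked third, AUROC stays high (7/9) while average precision drops to 1/3, one reason AUPRC values on sparse odor labels can sit far below AUROC values.

```python
def binarize(label_sets, classes):
    """One 0/1 column per class, like scikit-learn's MultiLabelBinarizer output."""
    return [[1 if c in labels else 0 for c in classes] for labels in label_sets]

def auroc(y_true, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """AUPRC estimate: mean precision at the rank of each positive."""
    ranked = sorted(zip(scores, y_true), key=lambda t: -t[0])
    hits, precisions = 0, []
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / max(hits, 1)

classes = ["fruity", "musky", "woody"]
Y = binarize([{"fruity"}, {"woody", "musky"}, set()], classes)
# Y == [[1, 0, 0], [0, 1, 1], [0, 0, 0]]

# One column of Y on a larger, imbalanced toy set: a single positive ranked third.
y_true = [0, 0, 1, 0, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
print(auroc(y_true, scores), average_precision(y_true, scores))  # 0.777... and 0.333...
```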

Protocol for Hyperparameter Optimization in Chemical Models

The air quality prediction study [7] provides a validated protocol for hyperparameter optimization:

Data Preprocessing:

- Addressed missing data through comparative analysis of mean imputation and k-Nearest Neighbors (kNN) imputation

- Applied z-score normalization to standardize continuous features

- Used one-hot encoding for categorical variables
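These preprocessing steps are simple enough to sketch without dependencies. The functions below are illustrative, not the code from [7]: `knn_impute` assumes the non-target columns are fully observed and averages the target over the k nearest rows that have it, and `z_score` uses the population standard deviation, the same convention as scikit-learn's `StandardScaler`.

```python
import math

def mean_impute(column):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in column if v is not None]
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in column]

def knn_impute(rows, target, k=2):
    """Fill missing rows[i][target] with the mean target of the k nearest rows that
    have it, using squared Euclidean distance on the other (fully observed) columns."""
    donors = [r for r in rows if r[target] is not None]
    filled = []
    for r in rows:
        r = list(r)  # copy so the input rows are not modified
        if r[target] is None:
            nearest = sorted(
                donors,
                key=lambda d: sum((r[j] - d[j]) ** 2 for j in range(len(r)) if j != target),
            )[:k]
            r[target] = sum(d[target] for d in nearest) / len(nearest)
        filled.append(r)
    return filled

def z_score(column):
    """Standardize to zero mean and unit (population) standard deviation."""
    mean = sum(column) / len(column)
    std = math.sqrt(sum((v - mean) ** 2 for v in column) / len(column))
    return [(v - mean) / std for v in column]

def one_hot(values):
    """One 0/1 column per category, in sorted category order."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values], categories

print(mean_impute([1.0, None, 3.0]))  # [1.0, 2.0, 3.0]
```

In a cross-validated setting, the means, neighbors, and standardization statistics should be computed from the training fold only and then applied to the held-out fold, to avoid leaking information from validation data.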

Optimization Methods:

- Random Search: Defined parameter distributions, performed random sampling of 100 configurations

- Bayesian Optimization: Built a Gaussian Process surrogate model and used the expected improvement (EI) acquisition function

- Hyperband Search: Implemented successive halving with aggressive early-stopping
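Random search and Hyperband's core subroutine, successive halving, can be combined in a short sketch. Everything here is illustrative: `toy_train_and_score` is a hypothetical stand-in for training a model for `budget` epochs and returning a validation loss, and only a single successive-halving bracket is shown, whereas Hyperband runs several brackets that trade off the number of configurations against the starting budget.

```python
import math
import random

def sample_configs(n, rng):
    """Random search: draw learning rates log-uniformly from [1e-5, 1e-1]."""
    return [{"lr": 10 ** rng.uniform(-5, -1)} for _ in range(n)]

def successive_halving(configs, train_and_score, min_budget=1, eta=3):
    """Score every surviving config at the current budget, keep the best 1/eta,
    multiply the budget by eta, and repeat until one config remains."""
    budget = min_budget
    survivors = list(configs)
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: train_and_score(c, budget))
        survivors = ranked[: max(1, len(survivors) // eta)]
        budget *= eta
    return survivors[0]

def toy_train_and_score(config, budget):
    """Hypothetical validation loss: best near lr = 1e-2, higher at small budgets."""
    return abs(math.log10(config["lr"]) + 2) + 1.0 / budget

# 27 random configurations -> 9 -> 3 -> 1, at budgets of 1, 3, and 9 epochs.
best = successive_halving(sample_configs(27, random.Random(0)), toy_train_and_score)
```

The saving comes from spending the largest budgets only on the few configurations that survived the cheap early rounds; the "aggressive early stopping" mentioned above corresponds to a large `eta`.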

Model Validation:

- Evaluated performance using 5-fold cross-validation

- Compared results against baseline models with default parameters

- Assessed computational efficiency through training time measurements
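The 5-fold validation step reduces to generating disjoint validation folds. A minimal sketch, assuming a hypothetical `fit_predict_loss(train_idx, val_idx)` callback that trains on one index set and returns the loss on the other; reusing the same seed lets the tuned configuration and the default-parameter baseline be scored on identical folds, which keeps the comparison fair.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Shuffle once, then yield (train, validation) index lists for each of k folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, folds[i]

def cross_val_loss(fit_predict_loss, n_samples, k=5, seed=0):
    """Mean validation loss over k folds; a fixed seed keeps folds identical across models."""
    losses = [fit_predict_loss(tr, va) for tr, va in k_fold_indices(n_samples, k, seed)]
    return sum(losses) / len(losses)
```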

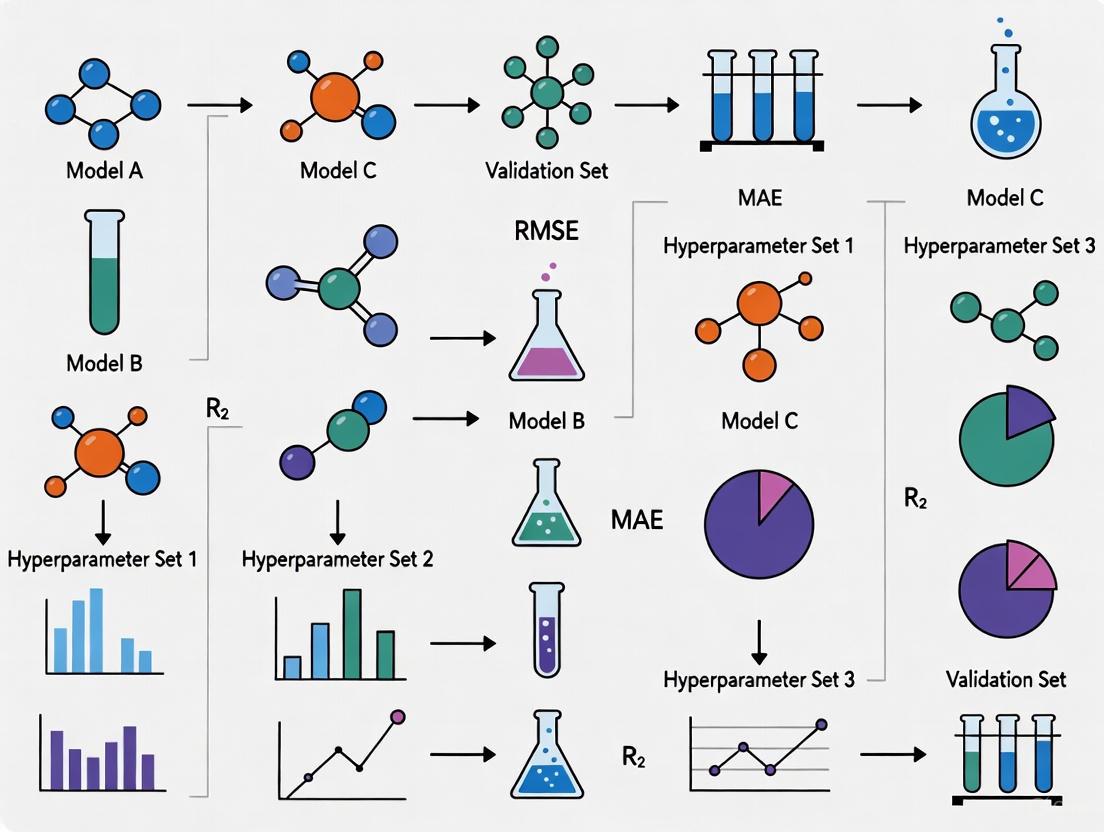

Hyperparameter Optimization Workflow: This diagram illustrates the three primary optimization methods compared in chemical ML applications, showing distinct approaches for efficient parameter tuning.

Research Infrastructure and Data Management

Effective ML implementation in chemistry requires robust data infrastructure and specialized software solutions.

FAIR Data Infrastructure for Chemical ML

The HT-CHEMBORD (High-Throughput Chemistry Based Open Research Database) project exemplifies modern research data infrastructure (RDI) designed specifically for ML-ready chemical data [1]:

Key Components:

- Automated Synthesis Platforms: Chemspeed systems for programmable chemical synthesis with parameter logging

- Multi-stage Analytical Workflow: LC-DAD-MS-ELSD-FC, GC-MS, SFC-DAD-MS-ELSD for comprehensive characterization

- Semantic Data Modeling: Converts experimental metadata to Resource Description Framework (RDF) using ontology-driven models

- Standardized Data Formats: ASM-JSON, JSON, or XML formats depending on analytical method

FAIR Principles Implementation:

- Findability: Rich metadata indexed in searchable front-end interface

- Accessibility: Controlled data access through licensing agreements

- Interoperability: Mapping to structured ontologies incorporating Allotrope Foundation Ontology

- Reusability: Detailed, standardized metadata with clear provenance

Chemical Data Analysis Pipeline: This workflow shows the automated, multi-stage process for chemical data generation and analysis, highlighting decision points that ensure comprehensive data capture including negative results.

Essential Software Solutions for Chemical ML

Table 3: Key Software Platforms for ML-Driven Chemical Research

| Software Platform | Primary Application | Key ML Features | Licensing Model | Reference |
|---|---|---|---|---|
| Schrödinger | Quantum Mechanics & Free Energy | DeepAutoQSAR, GlideScore | Modular | [9] |
| deepmirror | Hit-to-Lead Optimization | Generative AI Engine | Single Package | [9] |
| Chemaxon | Compound Design | Plexus Suite, Design Hub | Pay-per-use | [9] |
| Cresset | Protein-Ligand Modeling | Free Energy Perturbation (FEP) | Modular | [9] |
| BIOVIA | Molecular Modeling | AI-powered data analysis | Enterprise | [10] |
| Benchling | Biopharma R&D | AI-powered data insights | Subscription | [10] |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementing ML-driven chemical research requires both computational and experimental resources:

Table 4: Essential Research Reagents and Solutions for ML-Chemistry Integration

| Reagent/Solution | Function | Application Example | Reference |
|---|---|---|---|
| Morgan Fingerprints | Molecular representation | Capturing structural features for odor prediction | [5] |
| SMILES Strings | Chemical structure encoding | Input for graph neural networks | [2] |
| Allotrope Foundation Ontology | Semantic data modeling | Standardizing experimental metadata | [1] |
| ASM-JSON Format | Analytical data storage | Instrument output standardization | [1] |
| RDKit Library | Molecular descriptor calculation | Feature extraction for QSAR models | [5] |
| Purchasable Building Blocks | Synthetic feasibility constraint | Ensuring tractable generative designs | [2] |

Machine learning has fundamentally transformed scalable chemical data analysis, enabling researchers to extract meaningful insights from increasingly large and complex datasets. Through comparative analysis of methods and applications, several key principles emerge:

First, model performance is highly dependent on appropriate molecular representations, with Morgan fingerprints demonstrating particular efficacy for sensory property prediction [5]. Second, hyperparameter optimization methods show domain-specific strengths, with Bayesian Optimization generally providing the best balance of performance and efficiency in the comparative studies reviewed here [7]. Third, successful ML implementation requires robust data infrastructure that adheres to FAIR principles and captures both positive and negative results [1].

Looking forward, several trends will shape the future of ML in chemical data analysis: increased integration of generative AI for molecular design [2], broader adoption of equivariant neural networks that respect physical symmetries [2], development of autonomous experimentation systems [1], and improved multi-omics data integration for drug discovery [9]. As these technologies mature, they will further accelerate the transition from data-rich to knowledge-rich chemical research, enabling more efficient discovery across pharmaceuticals, materials, and sustainable chemistry.

In the fields of cheminformatics and drug discovery, Graph Neural Networks (GNNs) have emerged as a powerful tool for molecular property prediction, drug-target interaction analysis, and reaction yield forecasting. Unlike traditional neural networks that process grid-like data, GNNs operate directly on graph-structured data, making them particularly suited for representing molecular structures where atoms serve as nodes and chemical bonds as edges. However, the performance of GNNs is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task that significantly impacts model accuracy, generalizability, and computational efficiency [11]. This sensitivity stems from multiple factors, including the fundamental trade-offs between different GNN architectures' expressive power, the complex interplay between hyperparameters, and the specific characteristics of chemical datasets, which are often smaller than typical deep learning benchmarks [12].

The challenge is particularly pronounced in chemistry applications, where researchers must navigate competing priorities: model expressiveness must be balanced against risks of overfitting on limited datasets, computational constraints must be considered alongside prediction accuracy requirements, and interpretability needs must be addressed without sacrificing performance. Understanding these configuration sensitivities is essential for researchers aiming to deploy GNNs effectively in molecular property prediction, drug discovery, and materials science applications [13].

Core Architectural Factors Influencing GNN Performance

Aggregation Mechanisms and Expressive Power

The core operation in GNNs is message passing, where information is aggregated from neighboring nodes to update each node's representation. The choice of aggregation function fundamentally impacts a GNN's discriminative power:

Sum aggregation, followed by a multilayer perceptron, can represent injective functions over multisets of neighbor features, enabling GNNs to distinguish different neighborhood structures. Graph Isomorphism Networks (GINs) use this approach and achieve maximal expressive power within the conventional neighborhood aggregation paradigm, matching the ability of the 1-dimensional Weisfeiler-Lehman (1-WL) isomorphism test to distinguish non-isomorphic graphs [14].

Mean and max aggregation are not injective over multisets and can collapse non-isomorphic structures into identical embeddings. For instance, Graph Convolutional Networks (GCNs) use (degree-normalized) mean aggregation and some GraphSAGE variants use max pooling; neither captures fine-grained structural differences as effectively as sum aggregation [14].
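The difference can be seen with scalar features. In the sketch below (illustrative only: real GNNs aggregate feature vectors, and GIN passes the sum through an MLP as h_v' = MLP((1 + ε)·h_v + Σ_u h_u)), a node with one carbon neighbor and a node with two carbon neighbors look identical under mean and max aggregation but not under sum. The 1.0/0.0 atom encoding is a toy assumption.

```python
def aggregate(neighbor_features, how):
    """Combine a multiset of scalar neighbor features with sum, mean, or max."""
    if how == "sum":
        return sum(neighbor_features)
    if how == "mean":
        return sum(neighbor_features) / len(neighbor_features)
    if how == "max":
        return max(neighbor_features)
    raise ValueError(f"unknown aggregation: {how}")

def distinguishes(nbhd_a, nbhd_b, how):
    """True if the aggregator maps the two neighborhoods to different values."""
    return aggregate(nbhd_a, how) != aggregate(nbhd_b, how)

# Toy encoding: 1.0 = carbon neighbor, 0.0 = hydrogen neighbor.
one_c, two_c, c_and_h = [1.0], [1.0, 1.0], [1.0, 0.0]
for how in ("sum", "mean", "max"):
    print(how, distinguishes(one_c, two_c, how), distinguishes(c_and_h, two_c, how))
# sum True True
# mean False True
# max False False
```

Sum separates both pairs, mean loses neighbor counts, and max loses both counts and composition, which mirrors the expressiveness ordering described above.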

The theoretical expressiveness translates into practical performance differences. On graph classification benchmarks, GINs achieve state-of-the-art or tied accuracy, including on the bioinformatics datasets MUTAG (≈89%) and PROTEINS (≈76%) and the social network dataset IMDB-BINARY (≈75%) [14]. However, this expressiveness comes at a cost: GINs require careful hyperparameter tuning and regularization, particularly in data-scarce regimes, where they may be outperformed by less expressive but more stable architectures such as GATs [14].

Attention Mechanisms and Neighborhood Prioritization

Graph Attention Networks (GATs) introduce attention mechanisms that assign learned, input-dependent weights to neighboring nodes during aggregation, allowing the model to focus on more relevant neighbors [15]. This dynamic weighting is particularly valuable in molecular graphs, where certain atomic interactions influence molecular properties more strongly than others. However, attention adds parameters that must be optimized and increases computational cost [16].
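The attention step can be sketched for a single head, following the scoring function of the original GAT formulation, e_ij = LeakyReLU(aᵀ[W·h_i ‖ W·h_j]), with a softmax over each node's neighbors (self-loops included). The weight matrix `W`, attention vector `a`, and toy graph are made-up values for illustration; in a trained model both `W` and `a` are learned, and the output nonlinearity is omitted here.

```python
import math

def matvec(W, x):
    """Matrix-vector product for small dense lists."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def gat_layer(h, neighbors, W, a):
    """One single-head GAT-style layer.
    h: node -> feature list; neighbors: node -> neighbor list (including the node itself).
    Returns (attention weights alpha[i][j], updated features)."""
    z = {v: matvec(W, x) for v, x in h.items()}
    alpha, out = {}, {}
    for i, nbrs in neighbors.items():
        # Unnormalized score a^T [z_i || z_j] (list concatenation), then LeakyReLU.
        e = [leaky_relu(sum(ak * c for ak, c in zip(a, z[i] + z[j]))) for j in nbrs]
        m = max(e)                       # subtract the max for numerical stability
        w = [math.exp(s - m) for s in e]
        total = sum(w)
        alpha[i] = {j: wj / total for j, wj in zip(nbrs, w)}
        # Attention-weighted sum of transformed neighbor features.
        out[i] = [sum(alpha[i][j] * z[j][d] for j in nbrs) for d in range(len(W))]
    return alpha, out

# Toy 3-atom chain 0-1-2 with 2-dimensional features and self-loops.
h = {0: [1.0, 0.0], 1: [2.0, 0.0], 2: [0.5, 1.0]}
neighbors = {0: [0, 1], 1: [0, 1, 2], 2: [1, 2]}
W = [[1.0, 0.0], [0.0, 1.0]]   # identity transform, for readability
a = [0.0, 0.0, 1.0, 0.0]       # score depends only on the neighbor's first feature
alpha, out = gat_layer(h, neighbors, W, a)
```

With these values, node 1 attends most to itself (score 2.0), then to node 0 (1.0), then to node 2 (0.5); the extra parameters in `a` are exactly what the text refers to as needing optimization.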

The Message Passing Neural Network (MPNN) framework provides a generalized approach to message passing that encompasses many GNN variants. In comparative studies on cross-coupling reaction yield prediction, MPNNs achieved the highest predictive performance with an R² value of 0.75 across diverse datasets encompassing various transition metal-catalyzed reactions including Suzuki, Sonogashira, and Buchwald-Hartwig couplings [17]. This superior performance suggests that the flexible message functions and update mechanisms in MPNNs are particularly well-suited for capturing complex relationships in chemical reaction data.

Architectural Depth and Oversmoothing

Unlike convolutional neural networks for images, which benefit from substantial depth, most message-passing GNNs suffer from the oversmoothing problem – where node representations become indistinguishable as the number of layers increases [18]. This phenomenon fundamentally limits the effective depth of GNNs and varies in impact across architectures.
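Oversmoothing can be reproduced with a parameter-free caricature of a GCN layer: replace each node's value by the mean over itself and its neighbors, and repeat. The sketch below uses a hypothetical four-atom chain with a single scalar feature (1.0 on the terminal oxygen, 0.0 elsewhere). As depth grows, every node converges to the same value, so the atoms can no longer be told apart. Real layers add learned weights and nonlinearities; this sketch only isolates the repeated averaging.

```python
def mean_propagate(features, neighbors, n_layers):
    """Apply n_layers of mean aggregation over each node and its neighbors."""
    h = dict(features)
    for _ in range(n_layers):
        # The comprehension reads the previous layer's h, so all nodes update together.
        h = {v: (h[v] + sum(h[u] for u in neighbors[v])) / (len(neighbors[v]) + 1)
             for v in h}
    return h

def spread(h):
    """Range of node values: 0 means all nodes are indistinguishable."""
    return max(h.values()) - min(h.values())

# Hypothetical chain C-C-C-O; the feature is 1.0 on the oxygen only.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
x = {0: 0.0, 1: 0.0, 2: 0.0, 3: 1.0}
for depth in (1, 2, 4, 8, 16, 32):
    print(depth, round(spread(mean_propagate(x, neighbors, depth)), 4))
```

The spread shrinks geometrically with depth, which is why the recommended layer counts in the table below stay small for architectures without dense or residual connections.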

Table: Comparison of GNN Architectures and Their Sensitivity to Depth

| Architecture | Recommended Layers | Oversmoothing Sensitivity | Mitigation Strategies |
|---|---|---|---|
| GIN | 2-7 [14] | High with excessive stacking | Deeper MLPs within layers, jumping knowledge connections |
| GCN | 2-5 | Very high | Residual connections, dense connections |
| GAT | 2-5 | Moderate | Attention-guided neighborhood prioritization |
| DenseGNN | 5+ [18] | Low | Dense connectivity networks, hierarchical residual networks |

Novel architectures like DenseGNN address the depth limitation through Dense Connectivity Networks (DCN) and hierarchical node-edge-graph residual networks (HRN), enabling deeper GNNs without performance degradation [18]. This approach allows for more direct and dense information propagation throughout the network, reducing information loss during message passing and effectively combating oversmoothing. On several benchmark datasets including JARVIS-DFT, Materials Project, and QM9, DenseGNN achieved state-of-the-art performance while supporting substantially deeper architectures than conventional GNNs [18].

Hyperparameter Sensitivity and Optimization Strategies

Critical Hyperparameters and Their Impact

GNN performance depends on the careful configuration of numerous hyperparameters, each exhibiting complex interactions:

Learning Rate: Optimal values typically range between 0.01 and 0.02 for GINs, with Adagrad often outperforming Adam in molecular property prediction tasks [14].

Embedding Dimension: Typical values range from 32 to 128, with higher dimensions increasing model capacity but also raising overfitting risks, particularly on small datasets [14].

MLP Depth within GIN Layers: Deeper MLPs (2-5 layers) within each GIN layer often yield more benefit than simply stacking more GIN layers [14].

Batch Normalization and Dropout: Essential for stabilizing training, especially for expressive models like GINs in small-data regimes [14].

The sensitivity of these hyperparameters is exacerbated by the characteristics of molecular datasets, which are often far smaller than typical deep learning benchmarks in other domains [12]. This data scarcity amplifies the variance introduced by suboptimal hyperparameter choices and necessitates careful regularization strategies.
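These ranges can be written down as an explicit search space that any HPO method can sample from. The encoding below is a hypothetical sketch: the learning-rate window brackets the 0.01-0.02 range quoted above, the embedding sizes, MLP depth, and layer counts follow the text and the depth table, and the dropout grid and batch-normalization switch are illustrative assumptions. Random sampling is shown; TPE or CMA-ES would consume the same space.

```python
import math
import random

# Hypothetical GIN search space: each (kind, ...) tuple says how to sample that entry.
GIN_SPACE = {
    "learning_rate": ("log_uniform", 1e-3, 5e-2),
    "optimizer": ("choice", ["adam", "adagrad"]),
    "embedding_dim": ("choice", [32, 64, 128]),
    "mlp_layers_per_gin_layer": ("int", 2, 5),
    "num_gin_layers": ("int", 2, 7),
    "dropout": ("choice", [0.0, 0.2, 0.5]),
    "batch_norm": ("choice", [True, False]),
}

def sample_config(space, rng):
    """Draw one configuration; log-uniform sampling suits scale-type parameters."""
    cfg = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "log_uniform":
            cfg[name] = math.exp(rng.uniform(math.log(spec[1]), math.log(spec[2])))
        elif kind == "int":
            cfg[name] = rng.randint(spec[1], spec[2])  # inclusive bounds
        else:
            cfg[name] = rng.choice(spec[1])
    return cfg

rng = random.Random(42)
trials = [sample_config(GIN_SPACE, rng) for _ in range(20)]
```

Sampling the learning rate on a log scale spends as many trials between 0.001 and 0.01 as between 0.01 and 0.05's decade, which matters when, as here, the sensitive region spans an order of magnitude.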

Hyperparameter Optimization Methods

Given the multidimensional hyperparameter space and expensive evaluation costs, systematic Hyperparameter Optimization (HPO) is essential. Research has compared several HPO methods specifically for GNNs in molecular property prediction:

Table: Comparison of Hyperparameter Optimization Methods for GNNs

| Method | Key Principle | Strengths | Limitations |
|---|---|---|---|
| Random Search (RS) | Random sampling of hyperparameter space [12] | Good baseline, parallelizable | Inefficient for high-dimensional spaces |
| Tree-structured Parzen Estimator (TPE) | Sequential model-based optimization [12] | Efficient for limited budgets | Can get stuck in local minima |
| Covariance Matrix Adaptation Evolution Strategy (CMA-ES) | Evolutionary strategy [12] | Effective for ill-conditioned problems | Higher computational overhead |

No single HPO method dominates across all molecular tasks. Experimental studies on MoleculeNet benchmarks indicate that RS, TPE, and CMA-ES each have individual advantages for tackling different specific molecular problems [12]. The optimal choice depends on factors including dataset size, molecular complexity, and computational budget.
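To show what distinguishes TPE from random search, here is a deliberately simplified one-dimensional version: past trials are split at a quantile gamma of the loss into "good" and "bad" sets, each set is modeled with a Gaussian kernel density (l(x) and g(x)), candidates are drawn around the good points, and the candidate with the largest ratio l(x)/g(x) is evaluated next. The fixed bandwidth, candidate count, and toy objective over log10(learning rate) are assumptions; production implementations such as Optuna's `TPESampler` use more elaborate density estimators and also handle categorical and conditional parameters.

```python
import math
import random

def parzen(points, bandwidth):
    """Gaussian kernel density estimate over 1D points."""
    norm = 1.0 / (len(points) * bandwidth * math.sqrt(2.0 * math.pi))
    return lambda x: norm * sum(math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in points)

def tpe_minimize(objective, low, high, n_startup=10, n_iter=30, gamma=0.25,
                 n_candidates=24, rel_bandwidth=0.1, seed=0):
    rng = random.Random(seed)
    bw = rel_bandwidth * (high - low)
    xs = [rng.uniform(low, high) for _ in range(n_startup)]  # random warm-up trials
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):
        order = sorted(range(len(xs)), key=ys.__getitem__)
        n_good = max(1, int(gamma * len(xs)))
        good = [xs[i] for i in order[:n_good]]
        bad = [xs[i] for i in order[n_good:]]
        l, g = parzen(good, bw), parzen(bad, bw)
        # Candidates come from l(x): perturb a random good point, clip to the bounds.
        cands = [min(high, max(low, rng.choice(good) + rng.gauss(0.0, bw)))
                 for _ in range(n_candidates)]
        x_next = max(cands, key=lambda c: l(c) / (g(c) + 1e-300))
        xs.append(x_next)
        ys.append(objective(x_next))
    i_best = min(range(len(ys)), key=ys.__getitem__)
    return xs[i_best], ys[i_best]

def toy_loss(log10_lr):
    """Hypothetical validation loss over log10(learning rate), best at 1e-2."""
    return (log10_lr + 2.0) ** 2

x_best, y_best = tpe_minimize(toy_loss, -5.0, -1.0)
```

The l(x)/g(x) ratio is what lets TPE exploit a limited budget: it favors regions dense in good trials and sparse in bad ones, which is also why it can linger around an early local optimum.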

Experimental Protocols and Performance Benchmarking

Standardized Evaluation Methodologies

Robust evaluation of GNN configurations requires standardized protocols across several dimensions:

Dataset Partitioning: Molecular datasets are typically split using scaffold splitting, which groups molecules by their Bemis-Murcko scaffolds and assigns each group entirely to either the training or the test set, so that test molecules carry scaffolds not seen during training. This provides a more challenging and realistic assessment of generalization than random splitting [16].
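Scaffold splitting can be sketched once each molecule's Bemis-Murcko scaffold is available (for example from RDKit's `MurckoScaffold` utilities, which are not called here). The function below assumes precomputed scaffold SMILES and applies a common greedy heuristic: scaffold groups are visited largest first, and each goes to training if it still fits within the target training size and to the test set otherwise. The example scaffolds are illustrative, with the empty string standing for acyclic molecules.

```python
from collections import defaultdict

def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.
    scaffolds[i] is a precomputed scaffold key (e.g. SMILES) for molecule i."""
    groups = defaultdict(list)
    for i, scaf in enumerate(scaffolds):
        groups[scaf].append(i)
    # Largest scaffold families first; ties broken by first occurrence.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_train_target = round((1 - test_fraction) * len(scaffolds))
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return sorted(train), sorted(test)

scaffolds = ["c1ccccc1", "c1ccccc1", "c1ccccc1", "c1ccncc1", "c1ccncc1",
             "C1CCCCC1", "", "c1ccc2ccccc2c1", "c1ccccc1", "C1CCNCC1"]
train, test = scaffold_split(scaffolds)
# train == [0, 1, 2, 3, 4, 5, 6, 8], test == [7, 9]
```

Because rare scaffolds end up in the test set, test molecules tend to be structurally unlike the training data, which is what makes this split harder than a random one.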

Evaluation Metrics: Appropriate metrics must be selected based on task type:

- Regression Tasks (e.g., yield prediction, solubility): Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and R² [15]

- Classification Tasks (e.g., toxicity prediction): ROC-AUC, PRC-AUC, Balanced Accuracy [15]

- Generation Tasks (e.g., molecule generation): Validity, Uniqueness, Novelty, Quantitative Estimation of Drug-likeness (QED) [15]
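The regression metrics are short enough to define directly. A minimal sketch with made-up values; note that R² compares the model's squared error with that of always predicting the mean of the observed values, so a model no better than the mean scores 0, and RMSE weights large errors more heavily than MAE.

```python
import math

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

y_true = [1.0, 2.0, 3.0, 4.0]   # e.g. measured log-solubility values (made up)
y_pred = [1.5, 2.0, 2.5, 4.0]
print(mae(y_true, y_pred), rmse(y_true, y_pred), r2(y_true, y_pred))
```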

Benchmark Datasets: Commonly used benchmarks include:

- MoleculeNet collections (ESOL, FreeSolv, Lipophilicity, Tox21) [15]

- Chemical Reaction Datasets encompassing various coupling reactions [17]

- Materials Project datasets for crystal property prediction [18]

The following diagram illustrates a typical experimental workflow for evaluating GNN configurations in chemical applications:

Quantitative Performance Comparisons

Experimental studies consistently demonstrate significant performance variations across GNN architectures:

Table: GNN Architecture Performance on Chemical Tasks

| Architecture | Reaction Yield Prediction (R²) | Molecular Classification (AUC) | QSAR Regression (MAE) | Computational Cost |
|---|---|---|---|---|
| MPNN | 0.75 [17] | - | - | Medium |
| GIN | - | 0.793-0.849 [14] | 0.44 [14] | High |
| GAT | - | Moderate [14] | - | Medium-High |
| GCN | - | Lower than GIN [14] | - | Low |
| ECFP-MLP | - | - | 0.42 [14] | Low |

The performance hierarchy varies substantially across task types. For reaction yield prediction on heterogeneous datasets encompassing various cross-coupling reactions, MPNNs achieve superior performance [17]. For molecular classification tasks on toxicological assays, GINs typically outperform GCNs and GATs in data-rich environments [14]. However, in quantitative structure-activity relationship (QSAR) regression, classical ECFP-MLP baselines can sometimes outperform GIN-based models, highlighting that the optimal architecture is highly task-dependent [14].

Implementing and optimizing GNNs for chemical applications requires leveraging specialized tools, datasets, and methodologies:

Table: Essential Research Reagents for GNN Experimentation

| Resource Category | Specific Examples | Function | Access/Implementation |
|---|---|---|---|
| Molecular Datasets | ESOL, FreeSolv, Lipophilicity, Tox21 [15] | Benchmark performance across chemical domains | MoleculeNet |
| Chemical Features | Circular Atomic Features [16], Daylight atomic invariants [16] | Enhanced node/edge representations for molecules | RDKit, DeepChem |
| HPO Algorithms | TPE, CMA-ES, Random Search [12] | Efficient navigation of hyperparameter space | Optuna (TPESampler, CmaEsSampler, RandomSampler); scikit-optimize for random and GP-based search |
| Interpretability Methods | GNNExplainer, Integrated Gradients [16] | Identify salient molecular substructures and features | PyTorch Geometric |
| Architecture Variants | DenseGNN, ALIGNN, GIN, MPNN [18] [17] [14] | Address specific limitations like oversmoothing | Various GitHub repositories |

The performance sensitivity of GNNs to their configuration is not merely an implementation challenge but stems from fundamental architectural trade-offs. The most expressive architectures (e.g., GINs) typically require the most careful regularization and hyperparameter tuning, particularly in the data-scarce environments common in chemical research. Meanwhile, architectures with inherent constraints may offer more stable performance at the cost of representational power.

Successful deployment of GNNs in chemical applications requires a methodical approach: (1) establishing clear performance requirements and constraints, (2) selecting architectures aligned with both data characteristics and task objectives, (3) implementing systematic hyperparameter optimization informed by dataset size and complexity, and (4) incorporating interpretability techniques to validate model behavior against chemical intuition.

As the field evolves, emerging techniques including automated Neural Architecture Search (NAS), self-supervised pretraining strategies, and novel architectures that explicitly balance expressiveness with stability are poised to reduce the configuration burden while maintaining performance. However, understanding the fundamental sources of configuration sensitivity will remain essential for researchers aiming to leverage GNNs effectively in drug discovery, materials science, and chemical synthesis prediction.

Defining Hyperparameter Optimization (HPO) and Neural Architecture Search (NAS)

In the competitive landscape of modern computational research, particularly in chemistry and drug development, the manual design and tuning of machine learning models are no longer sufficient. The pursuit of higher accuracy, greater efficiency, and more interpretable models has given rise to Automated Machine Learning (AutoML). Two core pillars of AutoML are Hyperparameter Optimization (HPO) and Neural Architecture Search (NAS). While often mentioned together, they address distinct aspects of the model creation process. This guide provides a definitive comparison of HPO and NAS, framing them within the context of chemistry model research. We will dissect their definitions, methodologies, and practical applications, supported by experimental data and protocols relevant to scientists and researchers in drug discovery.

Core Definitions and Comparative Framework

What is Hyperparameter Optimization (HPO)?

Hyperparameter Optimization (HPO) is the automated process of finding the optimal set of hyperparameters for a given machine learning algorithm. Hyperparameters are configuration settings that are not learned from the data but are set prior to the training process. They control the learning process itself, such as the learning rate, the number of layers in a neural network, or the batch size. The goal of HPO is to find the combination of these settings that results in the best model performance, typically measured by accuracy or another relevant metric on a validation set [19] [20]. In essence, HPO tunes the "knobs" of a fixed model architecture.

What is Neural Architecture Search (NAS)?

Neural Architecture Search (NAS) is the automated process of designing the architecture of a neural network. Instead of just tuning the parameters of a fixed structure, NAS searches for the structure itself. This involves making fundamental decisions about the network's composition, such as the types of operations (e.g., convolution, pooling, attention), how they are connected (e.g., sequential, residual, branching), and the overall depth and width of the network [11] [21]. NAS automates the design of the model's blueprint, a task that traditionally requires significant human expertise and trial and error.

HPO vs. NAS: A Side-by-Side Comparison

The table below summarizes the key distinctions between HPO and NAS.

Table 1: Fundamental Comparison Between HPO and NAS

| Aspect | Hyperparameter Optimization (HPO) | Neural Architecture Search (NAS) |
|---|---|---|
| Primary Goal | Tune the settings of a fixed model architecture [21]. | Find the optimal model structure itself [21]. |
| What is Searched | Learning rate, number of epochs, optimizer type, batch size, number of neurons in a fixed layer [20] [19]. | Types of layers (convolution, pooling), connectivity patterns (skip connections), number of layers [11] [21]. |
| Search Space | Often a predefined set of values or ranges for specific parameters. | A space of possible neural network architectures, often represented as a directed acyclic graph (DAG) [21]. |
| Typical Scope | A component of the model training process. | Encompasses model design and can include HPO within its process. |
| Computational Cost | Can be high, but generally lower than NAS. | Often very high, though advanced methods like weight-sharing aim to reduce this [21]. |

Diagram 1: HPO and NAS Decision Flow

Key Methodologies and Experimental Protocols

The effectiveness of HPO and NAS hinges on the strategies used to navigate their respective search spaces. The following section details common optimization methods and experimental frameworks.

Common HPO and NAS Search Strategies

Researchers and engineers employ various strategies to automate the search for optimal configurations.

Table 2: Comparison of Primary Search Strategies

| Search Strategy | Description | Typical Use Case |
|---|---|---|
| Grid Search | An exhaustive search that tests all possible combinations of hyperparameter values within a predefined set. It is guaranteed to find the best combination within the grid but is computationally very expensive [19]. | HPO with a small, well-defined search space. |
| Random Search | Randomly selects hyperparameter combinations from a defined range. It is more efficient than grid search and often finds good solutions faster, as it does not waste resources on evaluating every single combination [19]. | HPO with a larger search space where computational budget is limited. |
| Bayesian Optimization | A sequential model-based optimization technique. It uses the results of past evaluations to build a probabilistic model of the objective function and selects the next hyperparameters to evaluate that are most likely to improve performance [19]. | Both HPO and NAS for efficient search in complex, expensive-to-evaluate spaces. |
| Max-Flow Based Search (MF-NAS/MF-HPO) | A novel approach that formulates the search for an optimal architecture or hyperparameters as a max-flow problem on a graph. The "capacity" of edges represents the importance of different operations or hyperparameter intervals, guiding the search efficiently [21]. | NAS and HPO, particularly when the search space can be naturally represented as a graph. |
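A small experiment illustrates the usual argument for random over grid search when only some hyperparameters matter. With the same budget of nine trials over a learning rate and a dropout rate, a 3 × 3 grid tries only three distinct learning rates, while random search tries nine. The ranges, and the assumption that learning rate is the influential parameter, are illustrative.

```python
import itertools
import random

def grid_configs(lrs, dropouts):
    """Every combination of the listed values (Cartesian product)."""
    return [{"lr": lr, "dropout": d} for lr, d in itertools.product(lrs, dropouts)]

def random_configs(n, rng):
    """n independent draws: log-uniform learning rate, uniform dropout."""
    return [{"lr": 10 ** rng.uniform(-4, -1), "dropout": rng.uniform(0.0, 0.5)}
            for _ in range(n)]

grid = grid_configs([1e-4, 1e-3, 1e-2], [0.0, 0.25, 0.5])
rand = random_configs(9, random.Random(0))
print(len(grid), len({c["lr"] for c in grid}))   # 9 trials, 3 distinct learning rates
print(len(rand), len({c["lr"] for c in rand}))   # 9 trials, 9 distinct learning rates
```

If validation loss depends mostly on the learning rate, the grid spends six of its nine trials re-testing learning rates it has already seen, which is the inefficiency the table attributes to grid search.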

Diagram 2: Generic Search Strategy Workflow

Experimental Protocol: HPO for Chemical Reaction Prediction

A practical example of HPO in chemistry research comes from a study optimizing a neural network to predict coefficients for the decay plots of Methylene Blue (MB) absorbance during its reduction by Ascorbic Acid [22].

Objective: To predict the coefficients (A, B, C) in the exponential decay equation A + B · e^(-x/C) that describes the reduction reaction of Methylene Blue.
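For context, A, B, and C are the quantities a conventional nonlinear fit would extract from each absorbance trace; the network in [22] learns to predict them directly. The sketch below fits one synthetic trace with SciPy's `curve_fit`, assuming x is reaction time. The "true" coefficients and noise level are hypothetical, not values from the study.

```python
import numpy as np
from scipy.optimize import curve_fit

def decay(x, A, B, C):
    """Absorbance model A + B * exp(-x / C); x is time, C is a time constant."""
    return A + B * np.exp(-x / C)

# Synthetic absorbance trace (hypothetical values, not data from [22])
rng = np.random.default_rng(0)
t = np.linspace(0, 300, 61)          # time points, e.g. seconds
true = (0.05, 1.2, 60.0)             # hypothetical A, B, C
y = decay(t, *true) + rng.normal(0, 0.005, t.size)

# Fit the three coefficients; p0 is a rough initial guess
params, cov = curve_fit(decay, t, y, p0=(0.0, 1.0, 30.0))
A_fit, B_fit, C_fit = params
print(f"A={A_fit:.3f}, B={B_fit:.3f}, C={C_fit:.1f}")
```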

Methodology:

- Data Collection: 220 spectral files were obtained from reactions with varying concentrations of MB, Ascorbic Acid, and solvents. After filtering, 180 records were used for training.

- HPO Setup: A grid search was employed to explore the hyperparameter space.

- Search Space:
  - Number of hidden layers: 1 to 5
  - Number of neurons per layer: 16 to 256
  - Activation function: ReLU, Sigmoid, Tanh, Leaky ReLU, ELU, Swish
  - Training epochs: 50 or 100
  - Batch size: Varied

Result: The optimal architecture identified was a network with five hidden layers, each containing sixteen neurons, and using the Swish activation function. This model achieved low normalized mean square errors (NMSE) for predicting the decay coefficients [22].
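The size of such a grid is easy to underestimate. The sketch below enumerates it with `itertools.product`, assuming five neuron counts (16, 32, 64, 128, 256), since the study reports only the 16 to 256 range, and leaving batch size out. The `evaluate` function is a hypothetical placeholder for training and scoring one network.

```python
from itertools import product

# Hypothetical discretization of the search space described above
hidden_layers = [1, 2, 3, 4, 5]
neurons = [16, 32, 64, 128, 256]          # assumed values within 16..256
activations = ["relu", "sigmoid", "tanh", "leaky_relu", "elu", "swish"]
epochs = [50, 100]

grid = [
    {"layers": l, "neurons": n, "activation": a, "epochs": e}
    for l, n, a, e in product(hidden_layers, neurons, activations, epochs)
]
print(len(grid))  # 5 * 5 * 6 * 2 = 300 configurations per batch size

def evaluate(config):
    """Hypothetical placeholder: train a network with `config`, return validation NMSE."""
    raise NotImplementedError

# A grid search then amounts to: best = min(grid, key=evaluate)
```

Every additional batch size multiplies the 300 trainings, which is why grid search is usually reserved for small spaces like this one.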

Experimental Protocol: NAS and Novel Architecture Design in Cheminformatics

NAS is increasingly used to develop novel, high-performing model architectures for molecular property prediction, a key task in drug discovery.

Objective: Design a Graph Neural Network (GNN) that surpasses the performance of manually designed architectures for molecular property prediction.

Methodology (KA-GNN):

- Search Space Definition: The search space integrates Kolmogorov-Arnold Networks (KANs) into the fundamental components of a GNN: node embedding, message passing, and readout [23].

- Architectural Innovation: The proposed KA-GNN replaces standard multi-layer perceptrons (MLPs) in GNNs with Fourier-series-based KAN modules. This enhances the model's ability to capture complex, non-linear patterns in molecular graph data [23].

- Evaluation: Two variants, KA-GCN and KA-GAT, were developed and evaluated across seven benchmark molecular datasets.
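To make the Fourier-series KAN idea concrete, the sketch below implements a single layer in NumPy: each input-output edge carries its own learnable function built from sines and cosines, instead of a fixed activation applied after a linear map. It is an illustrative forward pass only (no training, no graph message passing); the class name and initialization are hypothetical, and this is not the KA-GNN authors' code.

```python
import numpy as np

class FourierKANLayer:
    """Minimal Fourier-series KAN layer (illustrative sketch).

    Edge (i, j) carries phi_ij(x) = sum_k a[j,i,k] cos(k x) + b[j,i,k] sin(k x),
    and output j is the sum of phi_ij(x_i) over all inputs i, plus a bias.
    """

    def __init__(self, in_dim, out_dim, n_freq=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * n_freq)
        self.a = rng.normal(0, scale, (out_dim, in_dim, n_freq))  # cosine coefficients
        self.b = rng.normal(0, scale, (out_dim, in_dim, n_freq))  # sine coefficients
        self.bias = np.zeros(out_dim)
        self.k = np.arange(1, n_freq + 1)  # frequencies 1..K

    def __call__(self, x):
        # x: (batch, in_dim) -> angles: (batch, in_dim, n_freq)
        angles = x[..., None] * self.k
        cos, sin = np.cos(angles), np.sin(angles)
        # Sum over inputs i and frequencies k for every output j
        out = np.einsum("bik,jik->bj", cos, self.a) + np.einsum("bik,jik->bj", sin, self.b)
        return out + self.bias

# Usage: transform 32-dim node features for a batch of 10 atoms
layer = FourierKANLayer(in_dim=32, out_dim=64)
h = np.random.default_rng(1).normal(size=(10, 32))
print(layer(h).shape)  # (10, 64)
```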

Result: The NAS-derived KA-GNN architectures consistently outperformed conventional GNNs in both prediction accuracy and computational efficiency. They also offered improved interpretability by highlighting chemically meaningful molecular substructures [23].

Performance Data and Comparison

The true value of HPO and NAS is demonstrated through quantitative performance gains. The table below summarizes key results from the cited experiments.

Table 3: Experimental Performance Comparison

| Experiment | Method | Key Performance Metric | Result | Comparative Outcome |
|---|---|---|---|---|
| Chemical Reaction Prediction [22] | HPO (Grid Search) | Normalized Mean Square Error (NMSE) | NMSE of 0.05, 0.03, and 0.04 for coefficients A, B, and C, respectively. | A 5-layer Swish network was optimal. |
| Molecular Property Prediction [23] | NAS (KA-GNN) | Prediction Accuracy & Efficiency | Consistently higher accuracy and better computational efficiency across 7 molecular benchmarks. | Outperformed conventional GNNs (GCN, GAT). |
| General AutoML [21] | MF-NAS / MF-HPO | Search Efficacy & Efficiency | Competitive results across diverse datasets and search spaces. | Matched or exceeded state-of-the-art methods. |

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers looking to replicate or build upon HPO and NAS experiments in chemistry, the following tools and "reagents" are essential.

Table 4: Key Research Reagents and Solutions for HPO/NAS Experiments

| Item / Tool | Function / Description | Example Use in Context |
|---|---|---|
| Benchmark Datasets | Standardized datasets used to evaluate and compare model performance fairly. | Molecular datasets (e.g., from MoleculeNet) for drug discovery [11]; ImageNet for computer vision [24]. |
| HPO/NAS Frameworks | Software libraries that automate the search process. | Optuna, HyperOpt, Ray Tune for HPO [20]; frameworks supporting DARTS or weight-sharing for NAS. |
| Graph Neural Network (GNN) | A deep learning model that operates directly on graph-structured data. | The base model for molecular property prediction, as molecules are naturally represented as graphs [11] [23]. |
| Methylene Blue (MB) & Ascorbic Acid (AA) | Chemical reagents in a model reaction system for kinetic studies. | Used to generate spectroscopic data for training the HPO-tuned neural network in [22]. |
| Spectrophotometer | An instrument that measures the absorption of light by a chemical substance. | Used to track the concentration of Methylene Blue over time by measuring absorbance at λ=665 nm [22]. |

In the field of chemical and molecular informatics, machine learning models are increasingly employed for critical tasks such as molecular property prediction, toxicity classification, and de novo molecular design. The performance of these models is highly sensitive to their hyperparameters—the configuration variables that govern the learning process itself. Hyperparameter optimization (HPO) is the systematic process of finding the optimal set of these hyperparameters to maximize model performance on a given task. However, HPO presents significant challenges in computational cost, scalability, and navigating the curse of dimensionality, particularly when dealing with the high-dimensional feature spaces common in chemical data. The curse of dimensionality refers to various phenomena that arise when analyzing data in high-dimensional spaces, including increased computational complexity and the counterintuitive nature of geometric relationships, which severely impact the performance of machine learning algorithms [25] [26].

This guide provides a comprehensive comparison of HPO methods specifically within the context of chemistry models, evaluating their performance against quantitative metrics and providing detailed experimental protocols. As the complexity of chemical datasets and models continues to grow, selecting an appropriate HPO strategy becomes paramount for researchers aiming to develop accurate, efficient, and scalable machine learning solutions for drug discovery and materials science.

Theoretical Foundations: The HPO Problem and Its Challenges

Defining the Hyperparameter Optimization Problem

In supervised machine learning, an algorithm ingests training data and outputs a predictor. The quality of this predictor is measured on validation data using an evaluation metric, such as error rate or accuracy. Since the predictor depends on the chosen hyperparameters, the validation performance also depends on those hyperparameters. The mapping from hyperparameter values to validation performance is termed the response function. HPO consists of finding the hyperparameters that optimize this response function [27].

The HPO problem is distinguished from conventional optimization by its nested structure: evaluating the response function for a given hyperparameter configuration requires executing the learning algorithm, which typically involves solving another optimization problem to fit a model to the training data. This characteristic means the response function is rarely available in closed form and is often stochastic, non-convex, and computationally expensive to evaluate—sometimes requiring hours or days of computation for a single configuration [27].
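Written out, this nested structure is a bilevel optimization problem. The notation is introduced here for illustration: λ is a hyperparameter configuration from the search space Λ, w denotes the model weights, and the response function is the map λ ↦ L_val(w*(λ), λ).

$$
\lambda^{\star} = \arg\min_{\lambda \in \Lambda} \; \mathcal{L}_{\text{val}}\big(w^{\star}(\lambda), \lambda\big)
\quad \text{subject to} \quad
w^{\star}(\lambda) = \arg\min_{w} \; \mathcal{L}_{\text{train}}(w, \lambda)
$$

Every evaluation of the outer objective requires solving the inner training problem, which is what makes each query expensive.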

Key Challenges in High-Dimensional Spaces

The curse of dimensionality manifests in HPO through several interconnected challenges. As the number of hyperparameters increases, the search space grows exponentially, a phenomenon known as combinatorial explosion. For example, if each of 10 hyperparameters has just 5 possible values, the grid contains 5^10 = 9,765,625 combinations, nearly 10 million. This exponential growth makes exhaustive search strategies computationally infeasible [27] [26].

High-dimensional spaces also exhibit sparse sampling; data points tend to reside in the corners of the space rather than the center, and distance measures become less meaningful as dimensionality increases. These factors severely impact the performance of machine learning models applied to chemical data, such as molecular fingerprints, which are inherently high-dimensional [25]. Additionally, the hyperparameter search space is often complex and heterogeneous, containing continuous, integer, and categorical variables, some of which may only be relevant conditionally based on the values of others [27].
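The loss of distance contrast can be checked numerically. The sketch below draws uniform random points in a unit hypercube and measures (max - min)/min of their Euclidean distances to a random query point; as the dimension grows the ratio shrinks, so "nearest" and "farthest" neighbors become hard to tell apart. Uniform points and Euclidean distance are simplifying assumptions; binary fingerprints are usually compared with Tanimoto similarity instead.

```python
import numpy as np

def relative_contrast(dim, n_points=500, seed=0):
    """(max - min) / min of distances from a random query point to
    n_points points drawn uniformly in the unit hypercube of size `dim`."""
    rng = np.random.default_rng(seed)
    points = rng.random((n_points, dim))
    query = rng.random(dim)
    d = np.linalg.norm(points - query, axis=1)
    return (d.max() - d.min()) / d.min()

for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(dim), 3))
```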

Comparative Analysis of Hyperparameter Optimization Methods

Traditional HPO Methods

Grid Search exhaustively explores a predefined set of hyperparameter values. For example, when tuning a Random Forest with three hyperparameters (n_estimators, max_depth, min_samples_split), each with three possible values, Grid Search would train and evaluate 3×3×3=27 separate models [28]. While thorough, this approach becomes computationally prohibitive as the number of hyperparameters increases, and it does not use information from previous evaluations.
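In scikit-learn this example corresponds to `GridSearchCV`. The sketch uses a synthetic regression dataset as a hypothetical stand-in for molecular descriptors and a measured property; the specific values in the grid are illustrative.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for a descriptor matrix X and a property y (hypothetical data)
X, y = make_regression(n_samples=200, n_features=20, noise=0.1, random_state=0)

param_grid = {
    "n_estimators": [10, 25, 50],
    "max_depth": [4, 8, None],
    "min_samples_split": [2, 5, 10],
}  # 3 x 3 x 3 = 27 configurations

search = GridSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid,
    cv=3,                              # 27 configurations x 3 folds = 81 fits
    scoring="neg_mean_squared_error",
)
search.fit(X, y)
print(len(search.cv_results_["params"]), search.best_params_)
```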

Random Search randomly samples a fixed number of hyperparameter configurations from specified distributions. Rather than trying all combinations, it selects random combinations, which can be more efficient, especially with many parameters or large ranges. However, it performs a blind search with no learning from previous trials and may miss optimal configurations due to its random nature [28].
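`RandomizedSearchCV` is the scikit-learn counterpart; `n_iter` fixes the budget no matter how many hyperparameters are searched. The data and distributions below are hypothetical, chosen only to illustrate the API (a binary label could stand in for, e.g., a toxicity endpoint).

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

# Synthetic binary-classification data (hypothetical stand-in)
X, y = make_classification(n_samples=200, n_features=20, random_state=0)

param_distributions = {
    "n_estimators": randint(10, 100),        # integers sampled uniformly
    "max_depth": randint(2, 20),
    "min_samples_split": randint(2, 11),
    "max_features": ["sqrt", "log2", None],  # lists are sampled uniformly
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions,
    n_iter=10,            # fixed budget: 10 sampled configurations
    cv=3,
    scoring="roc_auc",
    random_state=0,
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```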

Bayesian Optimization and Modern Frameworks

Bayesian Optimization represents a more intelligent approach that builds a probabilistic model of the objective function to guide the search process efficiently. The core components include a surrogate model, typically a Gaussian Process or Tree-structured Parzen Estimator (TPE), which approximates the unknown objective function, and an acquisition function that determines the next hyperparameters to evaluate by balancing exploration and exploitation [28] [29].

Optuna is a powerful HPO framework that implements Bayesian optimization with several enhancements. It employs a "define-by-run" API that allows users to dynamically construct the search space and uses TPE for modeling the objective function. Optuna also incorporates pruning to automatically terminate unpromising trials early, significantly reducing computational waste [28].

Table 1: Comparison of Hyperparameter Optimization Methods

| Method | Search Strategy | Scalability | Best For | Key Limitations |
|---|---|---|---|---|
| Grid Search | Exhaustive search over predefined grid | Poor with high-dimensional spaces | Small search spaces with few hyperparameters | Computationally expensive; fails to leverage past evaluations |
| Random Search | Random sampling from distributions | Moderate improvement over Grid Search | Medium-dimensional spaces with limited budget | Blind search; may miss optima; performance depends on luck |
| Bayesian Optimization (e.g., Optuna) | Sequential model-based optimization using surrogate models and acquisition functions | Sample-efficient in low- to moderate-dimensional spaces; standard GP-based variants degrade in very high dimensions | Expensive black-box functions with complex search spaces | Overhead of maintaining model; can over-exploit |

Experimental Protocols and Performance Benchmarks

Protocol for Bayesian Optimization

A standardized protocol for implementing Bayesian Optimization with Optuna involves several key steps [28] [29]:

- Define the Objective Function: Create a function that takes a set of hyperparameters, builds and trains a model with those hyperparameters, and returns a validation performance metric.

- Configure the Tuner: Initialize the Bayesian optimization tuner, specifying parameters such as the number of initial random points and the total number of trials.

- Execute the Search: Run the optimization process, where the algorithm selects hyperparameters, evaluates them, and updates its probabilistic model.

- Retrieve Results: Extract the best-performing hyperparameters and the corresponding model.

Diagram: Bayesian Optimization Workflow

Quantitative Performance Comparison

In practical applications, Bayesian optimization often outperforms traditional methods. In a fraud detection case study, Bayesian optimization tuned a deep learning model and improved recall from 0.66 to 0.84, at the expected cost of some precision and accuracy [29].

Table 2: Performance Comparison of HPO Methods on Model Tuning Tasks

| Method | Best Validation Recall | Computational Time (Relative) | Number of Trials to Convergence | Key Hyperparameters Identified |
|---|---|---|---|---|
| Grid Search | 0.745 | 100% (baseline) | ~200 (exhaustive) | Fixed grid values |
| Random Search | 0.792 | ~65% | ~130 | Random sampling |
| Bayesian Optimization (Optuna) | 0.840 | ~45% | ~75 | neurons_1: 40, dropout_rate_2: 0.4, learning_rate: 0.004 |

For chemical domain tasks, a study comparing embedding techniques for toxicity classification provides further evidence. When optimizing classifiers on different molecular representations, models leveraging modern HPO techniques demonstrated superior performance across multiple toxicity endpoints, with Matthews Correlation Coefficient values improving by 0.1-0.3 compared to baseline methods [25].

Specialized Applications in Chemical Informatics

Combating Dimensionality in Molecular Representation

Chemical datasets often suffer from extreme dimensionality, particularly when using molecular fingerprints or descriptor-based representations. Dimensionality reduction techniques serve as crucial preprocessing steps to mitigate the curse of dimensionality before model training and HPO. Principal Component Analysis (PCA) provides a linear projection that maximizes explained variance but may miss nonlinear relationships. Uniform Manifold Approximation and Projection (UMAP) is a nonlinear method that uses local manifold approximations and topological representations. Variational Autoencoders (VAEs) use deep learning to learn compressed representations in an unsupervised manner and often preserve more chemical information [25].
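As a minimal illustration of the linear case, the sketch below compresses a fingerprint-like binary matrix with scikit-learn's `PCA`. The random bits are a hypothetical stand-in for 2048-bit Morgan fingerprints (which would normally come from RDKit), so the explained variance here says nothing about real chemical data; UMAP and VAEs are not shown.

```python
import numpy as np
from sklearn.decomposition import PCA

# Hypothetical stand-in for 2048-bit fingerprints of 300 molecules (~5% bits set)
rng = np.random.default_rng(0)
fingerprints = (rng.random((300, 2048)) < 0.05).astype(float)

pca = PCA(n_components=50, random_state=0)
embedding = pca.fit_transform(fingerprints)   # (300, 50) features for the model
print(embedding.shape, round(pca.explained_variance_ratio_.sum(), 3))
```

The 50-dimensional `embedding` would then replace the raw bits as model input, shrinking the space the downstream model and its HPO must cope with.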

In toxicity classification benchmarks, using VAE embeddings as features for optimized classifiers consistently showed advantages in accuracy over PCA and UMAP approaches, particularly for complex toxicity endpoints like NR-AR and NR-AR-LBD, where VAE-based models achieved MCC values above 0.60 [25].

Advanced Architectures for High-Dimensional Problems

Novel neural architectures specifically designed for high-dimensional problems have emerged recently. Anant-Net addresses the curse of dimensionality in solving high-dimensional partial differential equations by using tensor product structures and dimension-wise sweeps. This approach efficiently incorporates boundary conditions and minimizes PDE residuals at collocation points, successfully solving PDEs up to 300 dimensions on a single GPU [30] [26].

For molecular systems, AlphaNet represents a local-frame-based equivariant model for interatomic potentials that achieves both computational efficiency and predictive precision. By constructing equivariant local frames with learnable geometric transitions, AlphaNet enhances representational capacity while maintaining scalability across diverse system sizes [31].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools for Hyperparameter Optimization in Chemistry Research

| Tool Name | Type | Primary Function | Application in Chemistry Models |
|---|---|---|---|
| Optuna | Hyperparameter Optimization Framework | Implements Bayesian optimization with pruning and dynamic search spaces | Tuning neural networks for molecular property prediction and toxicity classification |
| Scikit-learn | Machine Learning Library | Provides implementations of GridSearchCV and RandomizedSearchCV | Baseline HPO for traditional QSAR models using random forests and SVMs |
| KerasTuner | Deep Learning HPO Library | Bayesian optimization for Keras/TensorFlow models | Architecture search for deep neural networks processing chemical structures |
| RDKit | Cheminformatics Library | Generates molecular fingerprints and descriptors | Creates high-dimensional features from molecular structures that require optimization |
| Dimensionality Reduction | Preprocessing | Techniques like PCA, UMAP, VAE to reduce feature space | Compresses molecular fingerprints before model training to mitigate curse of dimensionality |

The effective optimization of hyperparameters presents significant challenges in computational cost, scalability, and navigating the curse of dimensionality, particularly for chemistry models operating on high-dimensional molecular representations. Traditional methods like Grid Search and Random Search provide baseline approaches but become computationally prohibitive as model complexity increases. Bayesian optimization frameworks like Optuna offer substantial improvements in efficiency and effectiveness by leveraging probabilistic models to guide the search process intelligently.

When combined with dimensionality reduction techniques and specialized neural architectures, modern HPO methods enable researchers to develop more accurate and scalable models for chemical informatics tasks. As the field advances, the integration of these approaches will be crucial for tackling increasingly complex problems in drug discovery and materials science, where both data dimensionality and model complexity continue to grow.

A Deep Dive into HPO Algorithms: From Bayesian Optimization to Evolutionary Strategies

Bayesian Optimization (BO) is a powerful machine learning approach for finding the global optimum of black-box functions that are expensive, difficult, or noisy to evaluate [32] [33]. This makes BO particularly valuable for scientific and engineering applications where each function evaluation consumes substantial computational resources or requires physical experiments. In chemistry and drug discovery, BO has emerged as a transformative technology, enabling researchers to navigate complex experimental spaces—such as chemical synthesis parameters or molecular combinations—with dramatically fewer experiments than traditional approaches [34] [35] [36].

Unlike gradient-based optimization methods that require derivative information, BO constructs a probabilistic surrogate model of the objective function and uses an acquisition function to guide the search process [33] [37]. This sequential model-based optimization strategy is especially effective for problems with costly evaluations, which are common in hyperparameter tuning for chemistry models and drug discovery pipelines [34] [35]. In very high-dimensional parameter spaces, however, standard GP-based BO becomes less effective and usually needs specialized variants.

Core Principles of Bayesian Optimization

The Bayesian Optimization Framework

At its core, Bayesian Optimization operates on the principle of iterative refinement. The algorithm begins with an initial set of observations and progressively selects new evaluation points that balance exploration of uncertain regions with exploitation of known promising areas [37]. This process is formalized through Bayes' theorem, which relates conditional probabilities and lets the algorithm update a prior belief about the objective function with observed data to obtain a posterior belief [34]. The BO framework can be summarized as follows [34] [33]:

- Initialization: Start with a small set of initial observations of the objective function.

- Surrogate Modeling: Build a probabilistic surrogate model that approximates the objective function.

- Acquisition: Use an acquisition function to determine the most promising point to evaluate next.

- Evaluation: Evaluate the objective function at the selected point.

- Update: Incorporate the new observation into the surrogate model.

- Iteration: Repeat steps 2-5 until convergence or budget exhaustion.
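The loop above can be sketched with scikit-learn's `GaussianProcessRegressor` as the surrogate and UCB as the acquisition function. Assumptions: a 1-D toy objective with its optimum at x = 0.3, a discretized candidate grid in place of a continuous acquisition optimizer, and λ = 2; none of these come from the cited studies.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern

def objective(x):
    """Hypothetical expensive black-box function to maximize (optimum at x = 0.3)."""
    return -(x - 0.3) ** 2

candidates = np.linspace(0, 1, 201).reshape(-1, 1)  # discretized search space
X = np.array([[0.0], [0.5], [1.0]])                 # step 1: initial observations
y = objective(X).ravel()

for _ in range(15):                                 # step 6: fixed evaluation budget
    # Steps 2 and 5: (re)fit the GP surrogate to all observations so far
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-6, normalize_y=True)
    gp.fit(X, y)
    mu, sigma = gp.predict(candidates, return_std=True)
    ucb = mu + 2.0 * sigma                          # step 3: UCB acquisition
    x_next = candidates[np.argmax(ucb)]
    X = np.vstack([X, x_next])                      # step 4: evaluate and record
    y = np.append(y, objective(x_next))

best_x = X[np.argmax(y), 0]
print(f"best x = {best_x:.3f}, f = {y.max():.5f}")
```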

The Exploration-Exploitation Tradeoff

A fundamental principle underlying BO is the exploration-exploitation tradeoff [37]. Exploitation involves sampling areas where the surrogate model predicts high performance, while exploration targets regions with high uncertainty where surprising improvements might be found [33] [37]. The acquisition function quantitatively balances these competing objectives, ensuring the algorithm neither converges prematurely to local optima nor wastes excessive resources on unpromising regions of the search space [37].

Surrogate Models in Bayesian Optimization

Gaussian Process Regression

The Gaussian Process (GP) is the most widely used surrogate model in Bayesian Optimization [32] [38]. A GP defines a distribution over functions, where any finite collection of function values follows a multivariate Gaussian distribution [38]. Formally, a Gaussian Process is fully specified by a mean function μ(·) and a kernel function K(·, ·):

f(·) ∼ GP(μ(·), K(·, ·))

The mean function is often set to zero or a constant, while the kernel function encodes assumptions about the function's smoothness and continuity [38]. Through Bayesian inference, the GP posterior distribution provides both mean predictions and uncertainty estimates for unseen data points, which is crucial for the acquisition function's decision-making process [33] [38].
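The posterior mean and uncertainty follow from standard Gaussian conditioning. This NumPy sketch assumes a zero mean function, an RBF kernel with a fixed lengthscale of 0.2, and a small noise term; libraries such as GPyTorch or BoTorch would additionally fit the kernel hyperparameters, typically by maximizing the marginal likelihood.

```python
import numpy as np

def rbf(A, B, length=0.2, outputscale=1.0):
    """RBF (squared-exponential) kernel between row sets A (n, d) and B (m, d)."""
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return outputscale * np.exp(-0.5 * sq / length**2)

def gp_posterior(X_train, y_train, X_test, noise=1e-6):
    """Zero-mean GP posterior mean and standard deviation at X_test."""
    K = rbf(X_train, X_train) + noise * np.eye(len(X_train))
    L = np.linalg.cholesky(K)                          # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))  # K^{-1} y
    K_s = rbf(X_train, X_test)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(rbf(X_test, X_test)) - (v**2).sum(0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

X = np.array([[0.1], [0.4], [0.9]])
y = np.sin(6 * X).ravel()
# Query at a training point (0.4) and in a gap between observations (0.65)
mean, std = gp_posterior(X, y, np.array([[0.4], [0.65]]))
print(mean, std)
```

The uncertainty collapses at observed points and grows in the gap, which is exactly the signal acquisition functions use.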

Table 1: Common Kernel Functions in Gaussian Processes

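| Kernel Name | Mathematical Form | Key Properties |
|---|---|---|
| Radial Basis Function (RBF) | \( k_{\text{RBF}}(\bm{x},\bm{x}') = \theta_{\text{out}}\exp\left(-\frac{1}{2}r^2\right) \) | Infinitely differentiable, produces smooth functions |
| Matérn | \( k_\nu(\bm{x}, \bm{x}') = \theta_{\text{out}}\frac{2^{1 - \nu}}{\Gamma(\nu)}\left(\sqrt{2\nu}\,r\right)^\nu K_\nu\left(\sqrt{2\nu}\,r\right) \) | More flexible than RBF, with parameter ν controlling smoothness |

Here \( r = \lVert \bm{x} - \bm{x}' \rVert / \ell \) is the distance scaled by a lengthscale \( \ell \), \( \theta_{\text{out}} \) is the output scale, and \( K_\nu \) is the modified Bessel function of the second kind.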
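Both kernels are straightforward to evaluate. The sketch below writes them as functions of the scaled distance r, uses SciPy's modified Bessel function `kv`, and checks the general Matérn form against the closed forms for ν = 1/2 (the exponential kernel) and ν = 5/2 (a frequently used choice in BO). The output scale defaults to 1.

```python
import numpy as np
from scipy.special import gamma, kv

def rbf_kernel(r, outputscale=1.0):
    """RBF kernel as a function of the scaled distance r = ||x - x'|| / l."""
    return outputscale * np.exp(-0.5 * r**2)

def matern_general(r, nu, outputscale=1.0):
    """Matérn kernel in its Bessel-function form (valid for r > 0)."""
    z = np.sqrt(2 * nu) * r
    return outputscale * (2 ** (1 - nu) / gamma(nu)) * z**nu * kv(nu, z)

def matern52(r, outputscale=1.0):
    """Closed form of the Matérn kernel for nu = 5/2."""
    z = np.sqrt(5.0) * r
    return outputscale * (1 + z + z**2 / 3) * np.exp(-z)

r = np.array([0.1, 0.5, 1.0, 2.0])
print(np.round(rbf_kernel(r), 4))
print(np.round(matern52(r), 4), np.allclose(matern52(r), matern_general(r, 2.5)))
```

Lower ν gives rougher sample functions: at ν = 1/2 the kernel reduces to exp(-r), whose samples are continuous but not differentiable.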

Alternative Surrogate Models

While Gaussian Processes are the standard choice for BO, other surrogate models can be employed, particularly in high-dimensional settings or with large datasets:

- Random Forests: Often used in the Sequential Model-Based Algorithm Configuration (SMAC) framework, random forests can handle categorical parameters more naturally than GPs [39].

- Bayesian Neural Networks: These provide greater scalability to high dimensions and large datasets, though they can be more complex to implement and train [32].

Acquisition Functions

Acquisition functions are the decision-making engine of Bayesian Optimization, quantifying the potential utility of evaluating different points in the search space [33]. They use the surrogate model's predictions to balance exploration and exploitation [37].

Common Acquisition Functions

Table 2: Comparison of Acquisition Functions

| Acquisition Function | Mathematical Form | Strengths | Weaknesses |
|---|---|---|---|
| Upper Confidence Bound (UCB) | \( a(x;\lambda) = \mu(x) + \lambda \sigma(x) \) | Simple, explicit exploration-exploitation parameter λ | Requires careful tuning of λ |
| Probability of Improvement (PI) | \( \text{PI}(x) = \Phi\left(\frac{\mu(x)-f(x^\star)}{\sigma(x)}\right) \) | Intuitive, focuses on probability of improvement | Tends to over-exploit, ignores improvement magnitude |
| Expected Improvement (EI) | \( \text{EI}(x) = \left(\mu(x)- f(x^\star)\right) \Phi\left(\frac{\mu(x)-f(x^\star)}{\sigma(x)}\right) + \sigma(x)\, \varphi\left(\frac{\mu(x) - f(x^\star)}{\sigma(x)}\right) \) | Considers both probability and magnitude of improvement | More computationally intensive than PI |

Here \( f(x^\star) \) is the best objective value observed so far, and \( \Phi \) and \( \varphi \) are the standard normal CDF and PDF; all three forms assume maximization.
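The three formulas translate directly into code. In the hypothetical two-candidate example below, candidate A is almost certain to beat the incumbent by a tiny margin, while candidate B is uncertain but could improve substantially. PI prefers A, whereas EI and UCB prefer B, which illustrates why PI is said to over-exploit. Maximization is assumed, as in the table.

```python
import numpy as np
from scipy.stats import norm

def ucb(mu, sigma, lam=2.0):
    return mu + lam * sigma

def probability_of_improvement(mu, sigma, f_best):
    return norm.cdf((mu - f_best) / sigma)

def expected_improvement(mu, sigma, f_best):
    z = (mu - f_best) / sigma
    return (mu - f_best) * norm.cdf(z) + sigma * norm.pdf(z)

# Hypothetical surrogate predictions for two candidates: A (safe) and B (uncertain)
mu = np.array([1.02, 0.90])
sigma = np.array([0.01, 0.30])
f_best = 1.0   # incumbent (best value observed so far)

pi_vals = probability_of_improvement(mu, sigma, f_best)
ei_vals = expected_improvement(mu, sigma, f_best)
ucb_vals = ucb(mu, sigma)
print(np.round(pi_vals, 3), np.round(ei_vals, 4), np.round(ucb_vals, 2))
```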

Optimization of Acquisition Functions

A critical step in the BO cycle is maximizing the acquisition function to select the next evaluation point [32]. While gradient-based methods like L-BFGS-B are commonly used, they can converge to local optima [32]. Recent research has explored mixed-integer programming (MIP) approaches that provide global optimality guarantees for acquisition function optimization [32]. The Piecewise-linear Kernel Mixed Integer Quadratic Programming (PK-MIQP) formulation, for example, introduces a piecewise-linear approximation for GP kernels and admits a corresponding MIQP representation for acquisition functions with theoretical regret bounds [32].
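A simple and widely used mitigation for local optima is multi-start L-BFGS-B. The sketch maximizes a hypothetical two-peak acquisition surface with SciPy's `minimize`: a single start lands on the lower peak, while starts spread across the domain find the higher one. This is a heuristic, not the PK-MIQP method, and it offers no global optimality guarantee.

```python
import numpy as np
from scipy.optimize import minimize

def acquisition(x):
    """Hypothetical two-peak acquisition surface on [0, 1] (to be maximized)."""
    return 0.5 * np.exp(-((x - 0.2) ** 2) / 0.02) + np.exp(-((x - 0.8) ** 2) / 0.02)

def neg_acq(x):
    return -acquisition(x[0])   # minimize the negative to maximize

def maximize(starts):
    """Run L-BFGS-B from each start and keep the best local optimum."""
    results = [minimize(neg_acq, x0=[s], method="L-BFGS-B", bounds=[(0.0, 1.0)])
               for s in starts]
    best = min(results, key=lambda r: r.fun)
    return best.x[0], -best.fun

x_single, a_single = maximize([0.25])               # one start: lower peak
x_multi, a_multi = maximize(np.linspace(0, 1, 11))  # 11 starts across the domain
print(f"single start: x={x_single:.3f}, a={a_single:.3f}")
print(f"multi-start:  x={x_multi:.3f}, a={a_multi:.3f}")
```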

Performance Comparison of Optimization Methods

Comparative Studies in Healthcare and Chemistry

Multiple studies have systematically compared Bayesian Optimization against alternative hyperparameter optimization methods across different domains:

Table 3: Performance Comparison of Optimization Methods in Healthcare Applications

| Study Context | Optimization Methods | Key Performance Findings | Reference |
|---|---|---|---|
| Heart Failure Prediction | Grid Search (GS), Random Search (RS), Bayesian Search (BS) | BS had best computational efficiency; Random Forest with BS showed superior robustness with AUC improvement of 0.03815 | [8] |
| Predicting High-Need Healthcare Users | 9 HPO methods for XGBoost | All HPO methods improved AUC (0.82 to 0.84) and calibration vs. default parameters; similar gains across methods attributed to large sample size and strong signal-to-noise ratio | [39] |
| Mechanical Properties of Nanocomposites | BO, Simulated Annealing (SA), Genetic Algorithm (GA) | GA consistently outperformed BO and SA for most mechanical properties; BO achieved highest R² (0.9776) for modulus of elasticity prediction | [40] |

In chemistry and drug discovery, Bayesian Optimization has demonstrated remarkable efficiency. In one prospective study screening 206 drugs across 16 cancer cell lines, a Bayesian active learning platform (BATCHIE) accurately predicted unseen combinations and detected synergies after exploring only 4% of the 1.4 million possible experiments [36]. The platform identified a panel of effective combinations for Ewing sarcomas, including the clinically relevant combination of PARP plus topoisomerase I inhibition [36].

Multifidelity Bayesian Optimization

Multifidelity Bayesian Optimization (MF-BO) extends standard BO by incorporating information from experimental sources of differing cost and accuracy [35]. This approach mirrors the traditional experimental funnel in pharmaceutical discovery, where low-fidelity assays screen large compound libraries, and higher-fidelity assays validate promising candidates [35].

In drug discovery applications, MF-BO has been shown to outperform experimental funnels, transfer learning with low-fidelity data, and Bayesian optimization using only high-fidelity data [35]. By optimally allocating resources across docking scores (low-fidelity), single-point percent inhibitions (medium-fidelity), and dose-response IC₅₀ values (high-fidelity), MF-BO accelerates the discovery of potent drug molecules while reducing experimental costs [35].

Experimental Protocols and Methodologies

Standard Bayesian Optimization Workflow

Bayesian Optimization Workflow: This diagram illustrates the iterative process of Bayesian Optimization, showing how the surrogate model and acquisition function guide the selection of evaluation points until convergence.

Protocol for Comparative Studies

Robust experimental comparison of optimization methods requires careful protocol design. A typical methodology includes:

- Dataset Preparation: Use real-world datasets with known ground truth or well-established benchmarks [8] [35].

- Optimization Methods: Implement multiple optimization algorithms with appropriate hyperparameter spaces [8] [39].

- Evaluation Metrics: Define objective functions based on relevant performance metrics (AUC, RMSE, R², etc.) [8] [40].

- Validation: Employ cross-validation or hold-out test sets to assess generalization performance [8].

- Budgeting: Specify computational budgets (number of trials or function evaluations) for each method [39].

- Multiple Runs: Conduct multiple independent runs with different random seeds to account for stochasticity [40].
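The budgeting and multiple-runs steps can be illustrated on a toy problem. The sketch gives grid search and random search the same budget of 25 evaluations of a hypothetical response surface over two log-scaled hyperparameters, runs random search with 10 seeds, and reports mean ± standard deviation. The surface and ranges are assumptions, and no general conclusion about either method should be drawn from this single toy case.

```python
import numpy as np

def validation_loss(lr_exp, reg_exp):
    """Hypothetical response surface over log10(learning rate) and log10(regularization)."""
    return (lr_exp + 2.7) ** 2 + 0.5 * (reg_exp + 4.2) ** 2

def grid_search(budget):
    side = int(round(budget ** 0.5))          # 25 evaluations -> 5 x 5 grid
    lr_vals = np.linspace(-5, -1, side)
    reg_vals = np.linspace(-6, -2, side)
    return min(validation_loss(a, b) for a in lr_vals for b in reg_vals)

def random_search(budget, seed):
    rng = np.random.default_rng(seed)
    lr = rng.uniform(-5, -1, budget)
    reg = rng.uniform(-6, -2, budget)
    return validation_loss(lr, reg).min()

budget, seeds = 25, range(10)                 # identical budget for both methods
rs = np.array([random_search(budget, s) for s in seeds])
gs = grid_search(budget)                      # deterministic, so one run suffices
print(f"grid: {gs:.3f}  random: {rs.mean():.3f} +/- {rs.std(ddof=1):.3f}")
```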

For example, in the heart failure prediction study, researchers evaluated GS, RS, and BS across SVM, RF, and XGBoost algorithms using real patient data with 167 features from 2008 patients [8]. The study implemented multiple imputation techniques for missing values and employed 10-fold cross-validation to assess model robustness [8].

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools for Bayesian Optimization

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| BoTorch | Software Library | Bayesian Optimization research framework with Monte Carlo acquisition functions | General-purpose BO, multi-objective optimization [32] |
| GPyTorch | Software Library | Gaussian Process modeling with GPU acceleration | Large-scale GP regression for BO [38] |
| BATCHIE | Software Platform | Bayesian active learning for combination drug screens | Adaptive design of drug combination experiments [36] |
| Optuna | Software Framework | Hyperparameter optimization with efficient sampling algorithms | Automated ML pipeline tuning [39] |
| Gaussian Process | Surrogate Model | Probabilistic function approximation with uncertainty quantification | Standard surrogate model for BO [33] [38] |
| Morgan Fingerprints | Molecular Representation | Molecular structure encoding using circular fingerprints | Chemical compound representation in drug discovery BO [35] |

Bayesian Optimization represents a powerful paradigm for optimizing expensive black-box functions, with particular relevance to chemistry and drug discovery applications. The method's strength lies in its principled balance of exploration and exploitation through acquisition functions, and its ability to quantify uncertainty through surrogate models, typically Gaussian Processes.

While comparative studies show that BO often outperforms simpler alternatives like Grid Search and Random Search, its performance relative to other sophisticated optimizers like Genetic Algorithms appears context-dependent [8] [40]. In scenarios with large sample sizes, low-dimensional feature spaces, and strong signal-to-noise ratios, multiple optimization methods may achieve similar performance [39]. However, BO's sample efficiency makes it particularly valuable for applications with expensive function evaluations, such as experimental chemistry and clinical prediction models.

Emerging directions in Bayesian Optimization include multifidelity approaches that leverage experiments of varying cost and accuracy [35], Bayesian active learning for large-scale experimental design [36], and improved global optimization of acquisition functions using mixed-integer programming [32]. These advances promise to further expand BO's applicability and effectiveness in chemical and pharmaceutical research.

In the field of computational intelligence, Evolutionary Algorithms (EA) provide powerful tools for solving complex optimization problems where traditional mathematical methods fall short. Among the most prominent EAs are Genetic Algorithms (GA) and Particle Swarm Optimization (PSO), which draw inspiration from different natural phenomena. GA mimics the process of natural selection and evolution, operating through selection, crossover, and mutation on a population of potential solutions. In contrast, PSO simulates social behavior, such as bird flocking or fish schooling, where particles navigate the solution space by adjusting their positions based on individual and collective experiences [41] [42].

These algorithms have found significant application in chemistry and drug discovery, where they help researchers navigate vast chemical spaces to identify compounds with desirable properties. The performance of these algorithms is highly dependent on their parameter configurations and problem-aware designs, making understanding their comparative strengths crucial for effective implementation in research settings [43] [11].

Algorithmic Fundamentals: How GA and PSO Work

Genetic Algorithm Workflow

GAs operate through a cycle inspired by biological evolution, maintaining a population of candidate solutions that undergo selective pressure and genetic operations over multiple generations [44] [41]:

- Initialization: Creating an initial population of random solutions (chromosomes)

- Evaluation: Assessing solution quality using a fitness function

- Selection: Choosing the fittest solutions as parents for reproduction

- Crossover: Combining parent solutions to create offspring

- Mutation: Introducing random changes to maintain diversity

- Replacement: Forming the next generation from parents and offspring
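The GA workflow above can be sketched as a minimal real-valued GA for tuning a small vector of continuous hyperparameters. This is an illustrative sketch, not the implementation used in [44] or [41]. The following design choices are assumptions made for brevity:

- 3-way tournament selection
- arithmetic (blend) crossover
- Gaussian mutation scaled to each gene's range
- elitist replacement

`toy_validation_error` is a hypothetical stand-in for a real model's cross-validation error.

```python
import random
from typing import Callable, List, Tuple

Individual = List[float]


def genetic_algorithm(
    fitness: Callable[[Individual], float],
    bounds: List[Tuple[float, float]],
    pop_size: int = 30,
    generations: int = 60,
    crossover_rate: float = 0.9,
    mutation_rate: float = 0.2,
    elite: int = 2,
    seed: int = 0,
) -> Tuple[Individual, float]:
    """Minimize `fitness` over the box `bounds` with a simple real-valued GA."""
    rng = random.Random(seed)
    # Initialization: random chromosomes inside the search bounds.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    for _ in range(generations):
        # Evaluation: score each chromosome once; lower is better here.
        scored = sorted(((fitness(ind), ind) for ind in pop), key=lambda s: s[0])

        def select() -> Individual:
            # Selection: 3-way tournament on the precomputed scores.
            return min(rng.sample(scored, 3), key=lambda s: s[0])[1]

        # Replacement (elitist): the best `elite` chromosomes survive unchanged.
        next_pop = [list(ind) for _, ind in scored[:elite]]
        while len(next_pop) < pop_size:
            p1, p2 = select(), select()
            # Crossover: arithmetic blend of the two parents.
            if rng.random() < crossover_rate:
                t = rng.random()
                child = [t * a + (1.0 - t) * b for a, b in zip(p1, p2)]
            else:
                child = list(p1)
            # Mutation: Gaussian noise scaled to each gene's range, then clip to bounds.
            for i, (lo, hi) in enumerate(bounds):
                if rng.random() < mutation_rate:
                    child[i] += rng.gauss(0.0, 0.1 * (hi - lo))
                child[i] = min(max(child[i], lo), hi)
            next_pop.append(child)
        pop = next_pop
    best = min(pop, key=fitness)
    return best, fitness(best)


def toy_validation_error(h: Individual) -> float:
    # Hypothetical error surface: optimum at log10(learning rate) = -3, dropout = 0.2.
    return (h[0] + 3.0) ** 2 + (h[1] - 0.2) ** 2


best, err = genetic_algorithm(toy_validation_error, bounds=[(-6.0, 0.0), (0.0, 0.8)])
print(best, err)
```

Encoding the learning rate on a log10 scale is a common choice because useful values span several orders of magnitude. In a real study, each fitness call would train and validate a model, which is why population size and generation count dominate the cost.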

Particle Swarm Optimization Workflow

PSO operates through a different paradigm, where potential solutions (particles) fly through the solution space, adjusting their trajectories based on personal and collective experiences [42]:

- Initialization: Initialize particle positions and velocities randomly

- Evaluation: Calculate fitness for each particle

- Update Personal Best: Track best position encountered by each particle

- Update Global Best: Track best position found by entire swarm

- Velocity Update: Adjust velocity based on personal and global bests

- Position Update: Move particles to new positions

Position Update Mechanisms

The core PSO position update equations demonstrate how social information guides the search process [45]:

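Velocity Update Equation:

$$v_i(k+1) = w \, v_i(k) + c_1 r_1 \left( \mathrm{pbest}_i - x_i(k) \right) + c_2 r_2 \left( \mathrm{gbest} - x_i(k) \right)$$

Position Update Equation:

$$x_i(k+1) = x_i(k) + v_i(k+1)$$

Where:

- v_i(k) is particle i's velocity at iteration k

- x_i(k) is particle i's position at iteration k

- w is the inertia weight controlling momentum

- c₁, c₂ are acceleration coefficients

- r₁, r₂ are random numbers drawn uniformly between 0 and 1

- pbest_i is particle i's personal best position

- gbest is the swarm's global best position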
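The PSO workflow and the velocity and position updates can be sketched directly in code. This is a minimal global-best PSO under assumed settings: w = 0.7, c₁ = c₂ = 1.5, zero initial velocities, positions clipped to the bounds, and the global best refreshed once per iteration. It is not the configuration used in [42] or [45], and `toy_validation_error` is a hypothetical objective.

```python
import random
from typing import Callable, List, Tuple

Position = List[float]


def pso(
    objective: Callable[[Position], float],
    bounds: List[Tuple[float, float]],
    n_particles: int = 20,
    iterations: int = 100,
    w: float = 0.7,
    c1: float = 1.5,
    c2: float = 1.5,
    seed: int = 0,
) -> Tuple[Position, float]:
    """Minimize `objective` over the box `bounds` with global-best PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Initialization: random positions, zero velocities.
    x = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    # Evaluation and initial personal/global bests.
    pbest = [list(p) for p in x]
    pbest_val = [objective(p) for p in x]
    g = min(range(n_particles), key=pbest_val.__getitem__)
    gbest, gbest_val = list(pbest[g]), pbest_val[g]
    for _ in range(iterations):
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                # Velocity update: inertia + cognitive (pbest) + social (gbest) terms.
                v[i][d] = (w * v[i][d]
                           + c1 * r1 * (pbest[i][d] - x[i][d])
                           + c2 * r2 * (gbest[d] - x[i][d]))
                # Position update, clipped to the search bounds.
                x[i][d] = min(max(x[i][d] + v[i][d], lo), hi)
            # Update personal best.
            val = objective(x[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = list(x[i]), val
        # Update global best once per iteration (synchronous variant).
        g = min(range(n_particles), key=pbest_val.__getitem__)
        if pbest_val[g] < gbest_val:
            gbest, gbest_val = list(pbest[g]), pbest_val[g]
    return gbest, gbest_val


def toy_validation_error(h: Position) -> float:
    # Hypothetical error surface: optimum at log10(learning rate) = -3, dropout = 0.2.
    return (h[0] + 3.0) ** 2 + (h[1] - 0.2) ** 2


best, err = pso(toy_validation_error, bounds=[(-6.0, 0.0), (0.0, 0.8)])
print(best, err)
```

Updating gbest after every particle has moved keeps the code aligned with the equations, where all particles in iteration k see the same gbest. An asynchronous variant that updates gbest immediately is also common.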

Performance Comparison: Quantitative Analysis

Benchmark Performance Analysis

Testing on standard benchmark functions reveals distinct performance characteristics for each algorithm. Table 1 summarizes key comparative metrics from the cited empirical studies. The execution-time and ranking figures come from a single benchmark study [46].

Table 1: Performance Comparison on Standard Benchmark Functions

| Performance Metric | Genetic Algorithm (GA) | Particle Swarm Optimization (PSO) | Hybrid IGA-IPSO |
|---|---|---|---|
| Average Execution Time (s) | 5.1059 [46] | 4.5632 [46] | 1.8527 [46] |
| Friedman Rank | Not specified | Not specified | 1.2308 (top rank) [46] |
| Convergence Speed | Moderate [42] | Fast [42] | Fastest [46] |
| Global Search Capability | Good [41] | Good [41] | Superior [46] |
| Local Optima Avoidance | Mutation operators help escape local optima [41] | Social learning helps escape local optima [41] | Enhanced via constriction coefficient and chaotic search [46] |

Application-Specific Performance

The relative performance of GA and PSO varies significantly across application domains, with each demonstrating strengths in different contexts:

Table 2: Domain-Specific Performance Comparison

| Application Domain | Genetic Algorithm Performance | Particle Swarm Optimization Performance | Remarks |
|---|---|---|---|
| Optimal Power Flow | Slightly better accuracy [42] | Less computational burden [42] | Both offer remarkable accuracy [42] |
| High-Dimensional Feature Selection | Not specified | Superior balance between feature number and classification accuracy with PAPSO variant [43] | Problem-aware hyperparameter design crucial [43] |
| Molecular Optimization | Used in earlier de novo design approaches [47] | Effective in continuous latent spaces (Molecule Swarm Optimization) [47] | PSO enables flexible objective functions [47] |
| Stochastic Biochemical Systems | Suitable for parameter estimation [48] | More suitable for parameter estimation [48] | PSO reliably reconstructs system dynamics [48] |

Chemistry Applications: Case Studies and Experimental Protocols

Molecular Optimization with Particle Swarm Optimization

The application of PSO to molecular optimization, known as Molecule Swarm Optimization (MSO), represents a significant advancement in de novo drug design. This approach operates in a continuous latent space of chemical structures, allowing efficient navigation of the chemical landscape [47].

Experimental Protocol for MSO:

Latent Space Representation: Encode chemical structures into continuous vectors using a variational autoencoder trained on SMILES notations [47]

Swarm Initialization: Initialize particle positions randomly in the latent space, with each position decodable to a molecular structure [47]

Objective Function Definition: Define a composite objective function incorporating:

- Predicted biological activity from QSAR models

- ADME properties (solubility, metabolic stability)

- Drug-likeness metrics (QED)

- Synthetic accessibility scores

- Substructure constraints or penalties [47]

Iterative Optimization:

- Evaluate each particle's position by decoding to molecular structure and computing objective function

- Update personal best (pbest) and global best (gbest) positions

- Update particle velocities and positions using standard PSO equations [47]

Termination and Validation:

- Continue for fixed iterations or until convergence

- Validate top-ranking molecules through synthetic testing [47]
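The composite objective from the Objective Function Definition step can be sketched as a weighted sum of normalized scores minus a substructure penalty. This scoring scheme is hypothetical, not the one used in [47]. The `MoleculeScores` container, the weights, and the rescaling are assumptions. In a real MSO run, these scores would come from QSAR and ADME models and from QED and synthetic-accessibility calculators, applied to the molecule decoded from each particle's latent position.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass
class MoleculeScores:
    """Hypothetical per-molecule predictions for one decoded particle."""
    activity: float             # QSAR-predicted activity, rescaled to [0, 1]
    solubility: float           # predicted solubility, rescaled to [0, 1]
    metabolic_stability: float  # predicted stability, rescaled to [0, 1]
    qed: float                  # QED drug-likeness, defined on [0, 1]
    sa_score: float             # synthetic accessibility, 1 (easy) to 10 (hard)
    forbidden_substructure: bool


# Assumed weights; in practice these encode project priorities.
DEFAULT_WEIGHTS: Mapping[str, float] = {"activity": 0.4, "adme": 0.2, "qed": 0.2, "sa": 0.2}


def composite_objective(
    s: MoleculeScores,
    weights: Mapping[str, float] = DEFAULT_WEIGHTS,
    penalty: float = 1.0,
) -> float:
    """Higher is better; a PSO that minimizes should use the negative."""
    adme = 0.5 * (s.solubility + s.metabolic_stability)
    sa = (10.0 - s.sa_score) / 9.0  # rescale so 1.0 means easiest to synthesize
    score = (weights["activity"] * s.activity
             + weights["adme"] * adme
             + weights["qed"] * s.qed
             + weights["sa"] * sa)
    if s.forbidden_substructure:
        score -= penalty  # substructure constraint applied as a soft penalty
    return score


example = MoleculeScores(activity=0.8, solubility=0.5, metabolic_stability=0.5,
                         qed=0.6, sa_score=1.0, forbidden_substructure=False)
print(composite_objective(example))
```

The penalty is soft: an active molecule with a flagged substructure can still beat a poor one when the penalty is small. Raising `penalty` makes the constraint effectively hard.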

FACTS Device Optimization with Hybrid Approach

A hybrid Improved Genetic Algorithm-Improved Particle Swarm Optimization (IGA-IPSO) has demonstrated exceptional performance in optimizing Flexible AC Transmission Systems (FACTS) devices, showcasing how hybrid approaches can leverage the strengths of both algorithms [46].

Experimental Protocol for IGA-IPSO:

Algorithm Enhancement:

- Improve PSO using constriction coefficient for better convergence

- Implement chaotic search in PSO for enhanced exploration

- Enhance GA with improved genetic operators [46]

Validation:

- Test on 13 standard benchmark functions

- Evaluate on 4 CEC2020 test functions

- Apply to two engineering design problems [46]

Application:

- Optimize Static Synchronous Compensator (STATCOM) location and size

- Test on IEEE 33-, 69-, and 118-bus systems [46]

Performance Metrics:

- Measure power loss reduction

- Evaluate voltage profile improvement

- Compare computation time with other algorithms [46]

Results: The IGA-IPSO approach achieved power loss reductions of 21.09% (IEEE 33-bus), 43.34% (IEEE 69-bus), and 8.08% (IEEE 118-bus) while achieving the lowest average execution time across benchmarks (1.8527 seconds) compared to GA-PSO (4.0083 s), PSO (4.5632 s), and GA (5.1059 s) [46].
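The two PSO enhancements listed under Algorithm Enhancement can be illustrated with standard textbook forms. The first is the Clerc-Kennedy constriction coefficient χ = 2 / |2 − φ − √(φ² − 4φ)| with φ = c₁ + c₂ > 4, which scales the whole velocity update. The second is a logistic-map chaotic local search around the current global best. These are generic versions assumed for illustration. The exact formulations, radii, and schedules used in IGA-IPSO [46] are not given here, and the parameters of `chaotic_local_search` are hypothetical.

```python
import math
from typing import Callable, List, Tuple


def constriction_coefficient(c1: float, c2: float) -> float:
    """Clerc-Kennedy constriction factor; requires phi = c1 + c2 > 4."""
    phi = c1 + c2
    if phi <= 4.0:
        raise ValueError("constriction requires c1 + c2 > 4")
    return 2.0 / abs(2.0 - phi - math.sqrt(phi * phi - 4.0 * phi))


def logistic_map(z0: float, n: int) -> List[float]:
    """Chaotic sequence z_{k+1} = 4 z_k (1 - z_k); z0 should avoid 0, 0.25, 0.5, 0.75, 1."""
    seq, z = [], z0
    for _ in range(n):
        z = 4.0 * z * (1.0 - z)
        seq.append(z)
    return seq


def chaotic_local_search(
    objective: Callable[[List[float]], float],
    center: List[float],
    radius: float,
    steps: int = 50,
    z0: float = 0.37,
) -> Tuple[List[float], float]:
    """Probe points center + radius * (2z - 1) driven by the logistic map; keep the best."""
    best, best_val = list(center), objective(center)
    dims = len(center)
    chaos = logistic_map(z0, steps * dims)
    for k in range(steps):
        cand = [c + radius * (2.0 * chaos[k * dims + d] - 1.0)
                for d, c in enumerate(center)]
        val = objective(cand)
        if val < best_val:
            best, best_val = cand, val
    return best, best_val


chi = constriction_coefficient(2.05, 2.05)
# Constricted velocity update: v <- chi * (v + c1 r1 (pbest - x) + c2 r2 (gbest - x))
print(round(chi, 4))
```

With c₁ = c₂ = 2.05, χ is about 0.7298. This is equivalent to an inertia weight of about 0.73 with effective acceleration coefficients of about 1.50, which is why constricted and inertia-weight PSO often behave similarly. The chaotic search only accepts improvements, so it refines gbest without degrading it.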

Advanced Hybridization Techniques

Problem-Aware Hyperparameter Design

Recent advances in PSO focus on problem-aware hyperparameter design that adapts to specific dataset characteristics rather than using predefined settings. The PAPSO (Problem-Aware PSO) variant introduces two key innovations [43]:

Dynamic Inertia Weight Adjustment:

- Inertia weight determined by evaluating feature importance based on partial instances and all instances

- Incorporates optimizable parameters that are refined during the PSO process

- Enables automatic refinement and improved search efficiency [43]

Statistical Initialization for Acceleration Coefficients:

- Coefficients derived from statistical properties of feature importance measures

- Uses ReliefF and Mutual Information (MI) metrics to guide initialization

- Assigns unique coefficients to each feature, enhancing convergence behavior [43]
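The statistical initialization idea can be sketched as scoring each feature's relevance to the class label and mapping those scores onto a per-feature coefficient range. The sketch uses only a plug-in mutual-information estimate for discrete features, and ReliefF is omitted. The linear mapping onto [c_min, c_max], with assumed defaults of 1.5 and 2.5, is a hypothetical choice, not PAPSO's published formula [43].

```python
import math
from collections import Counter
from typing import Hashable, List, Sequence


def mutual_information(feature: Sequence[Hashable], labels: Sequence[Hashable]) -> float:
    """Plug-in MI estimate (in nats) between a discrete feature and class labels."""
    n = len(labels)
    joint = Counter(zip(feature, labels))
    px = Counter(feature)
    py = Counter(labels)
    mi = 0.0
    for (x, y), nxy in joint.items():
        # p(x,y) * log[p(x,y) / (p(x) p(y))], written with counts.
        mi += (nxy / n) * math.log(nxy * n / (px[x] * py[y]))
    return mi


def per_feature_coefficients(
    columns: Sequence[Sequence[Hashable]],
    labels: Sequence[Hashable],
    c_min: float = 1.5,
    c_max: float = 2.5,
) -> List[float]:
    """Hypothetical mapping: more informative features get larger coefficients."""
    scores = [mutual_information(col, labels) for col in columns]
    lo, hi = min(scores), max(scores)
    if hi - lo < 1e-12:
        # All features equally informative: fall back to the midpoint.
        return [0.5 * (c_min + c_max)] * len(scores)
    return [c_min + (c_max - c_min) * (s - lo) / (hi - lo) for s in scores]


labels = [0, 0, 1, 1]
columns = [
    [0, 0, 1, 1],  # identical to the label: maximally informative
    [0, 0, 0, 0],  # constant: uninformative
    [0, 1, 0, 1],  # independent of the label: uninformative
]
print(per_feature_coefficients(columns, labels))
```

Plug-in MI is biased upward for features with many distinct values. For continuous descriptors, a binned or nearest-neighbor estimator would be the more usual choice.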

Quantum-Inspired Hybrid Approaches

Quantum-inspired algorithms represent another frontier in evolutionary computation optimization. The Quantum-Inspired Gravitationally Guided PSO (QIGPSO) combines elements from Quantum PSO and Gravitational Search Algorithm to overcome limitations of conventional methods [45].

Key Innovations in QIGPSO:

- Replaces acceleration factors with absolute Gaussian random variables

- Modifies position update equations

- Uses contraction-expansion coefficient adaptive tuning

- Implements wrapper-based approach with Support Vector Machine for feature selection [45]
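The quantum-behaved PSO (QPSO) update that QIGPSO builds on can be sketched as follows. Each coordinate is resampled around a local attractor p = φ·pbest + (1 − φ)·gbest as x = p ± β·|mbest − x|·ln(1/u), where mbest is the mean of all personal bests and β is the contraction-expansion coefficient. This is standard QPSO with uniform φ and a linearly decreasing β (1.0 to 0.5, an assumed schedule). QIGPSO's gravitational guidance, absolute-Gaussian factors, adaptive β tuning, and SVM wrapper [45] are not reproduced.

```python
import math
import random
from typing import Callable, List, Tuple


def qpso(
    objective: Callable[[List[float]], float],
    bounds: List[Tuple[float, float]],
    n_particles: int = 20,
    iterations: int = 200,
    beta_start: float = 1.0,
    beta_end: float = 0.5,
    seed: int = 0,
) -> Tuple[List[float], float]:
    """Minimize `objective` with standard quantum-behaved PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    x = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    pbest = [list(p) for p in x]
    pbest_val = [objective(p) for p in x]
    g = min(range(n_particles), key=pbest_val.__getitem__)
    gbest, gbest_val = list(pbest[g]), pbest_val[g]
    for t in range(iterations):
        # Contraction-expansion coefficient, decreased linearly over the run.
        beta = beta_start - (beta_start - beta_end) * t / max(1, iterations - 1)
        # Mean of the personal-best positions ("mbest").
        mbest = [sum(p[d] for p in pbest) / n_particles for d in range(dim)]
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                phi = rng.random()
                attractor = phi * pbest[i][d] + (1.0 - phi) * gbest[d]
                u = 1.0 - rng.random()  # in (0, 1], so log(1/u) is finite
                step = beta * abs(mbest[d] - x[i][d]) * math.log(1.0 / u)
                new = attractor + step if rng.random() < 0.5 else attractor - step
                x[i][d] = min(max(new, lo), hi)
            val = objective(x[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = list(x[i]), val
                if val < gbest_val:  # asynchronous global-best update
                    gbest, gbest_val = list(x[i]), val
    return gbest, gbest_val


best, val = qpso(lambda p: sum(c * c for c in p), bounds=[(-5.0, 5.0), (-5.0, 5.0)])
print(best, val)
```

Unlike classical PSO, QPSO has no velocity term. β is the single control parameter, which is why adaptive tuning of β is a natural target for variants such as QIGPSO.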

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Evolutionary Algorithm Implementation

| Tool/Component | Function | Example Applications |
|---|---|---|
| Continuous Molecular Representation | Encodes discrete molecular structures into continuous vectors | Enables continuous (e.g., swarm-based or gradient-based) optimization in chemical space [47] |
| Variational Autoencoder | Learns compressed latent representations of chemical structures | Creates continuous chemical space for molecular optimization [47] |
| QSAR Models | Predicts biological activity based on chemical structure | Provides objective function for optimization [47] |
| ADME Prediction Models | Estimates pharmacokinetic properties | Ensures drug-like characteristics in optimized molecules [47] |
| Synthetic Accessibility Score | Evaluates ease of molecule synthesis | Maintains practical utility of designed molecules [47] |
| Benchmark Function Suites | Standardized test problems for algorithm validation | Enables fair comparison between algorithms (e.g., CEC2020) [46] |
| Problem-Aware Hyperparameters | Algorithm parameters adapted to specific dataset characteristics | Improves performance on high-dimensional feature selection [43] |

The comparative analysis reveals that both Genetic Algorithms and Particle Swarm Optimization offer distinct advantages for different optimization scenarios in chemistry research:

Choose PSO when working with continuous representations, when computational efficiency is prioritized, and for problems where social information sharing can effectively guide the search process [42] [47].

Choose GA when dealing with highly discrete optimization problems, when maintaining population diversity is crucial, and when the problem benefits from genetic operators like crossover and mutation [44] [41].

Consider Hybrid Approaches like IGA-IPSO for superior performance on complex, multi-faceted optimization problems, as hybrids can leverage the strengths of both algorithms while mitigating their individual limitations [46].

Implement Problem-Aware Designs like PAPSO for domain-specific applications, as adaptive hyperparameters tuned to dataset characteristics outperformed fixed parameter configurations in the reported experiments [43].

As optimization challenges in chemistry research continue to grow in complexity, the strategic selection and implementation of these evolutionary algorithms will play an increasingly important role in accelerating drug discovery and materials design.

Hyperparameter optimization (HPO) is a critical step in developing robust machine learning models, especially in scientific fields like chemistry and drug development where data is often limited and costly. While Bayesian optimization has been a popular choice for HPO in materials research, gradient-based methods offer a compelling alternative. This guide compares the performance of gradient-based HPO using reversible learning against other established methods, providing experimental data and implementation protocols to help researchers select the appropriate technique for their specific applications.

Understanding Hyperparameter Optimization Methods

Methodologies and Theoretical Foundations

Gradient-Based Hyperparameter Optimization with Reversible Learning represents a significant advancement in HPO methodology. Unlike conventional approaches that treat hyperparameter tuning as a black-box optimization, this method computes exact gradients of cross-validation performance with respect to hyperparameters by chaining derivatives backward through the entire training procedure. This approach enables optimization of thousands of hyperparameters simultaneously, including step-size and momentum schedules, weight initialization distributions, and richly parameterized regularization schemes [49] [50]. The core innovation lies in exactly reversing the dynamics of stochastic gradient descent with momentum, making it particularly valuable for complex neural network architectures common in chemical property prediction.
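The reversal idea can be illustrated on a toy problem. The sketch runs SGD with momentum in the form v ← γv − (1 − γ)∇L(w), w ← w + αv. It then recovers each earlier (w, v) by running the updates backward, while accumulating the gradient of the validation loss with respect to the learning rate α.

Assumptions:

- The quadratic training and validation losses, the constants `A`, `B`, `C`, and the fixed scalar α and γ are hypothetical.
- Ordinary floating-point arithmetic is used. The published method [49] [50] instead uses exact fixed-point arithmetic and stores the bits discarded by the momentum decay so that reversal is exact. Over a short horizon, the error here is negligible.

```python
from typing import List, Tuple

Vector = List[float]

# Hypothetical training loss L(w) = 0.5 * sum_i A_i (w_i - B_i)^2 and
# validation loss f(w) = 0.5 * sum_i (w_i - C_i)^2.
A = [1.0, 3.0]
B = [2.0, -1.0]
C = [1.5, -0.5]


def train_grad(w: Vector) -> Vector:
    return [a * (wi - b) for a, wi, b in zip(A, w, B)]


def train_hvp(w: Vector, u: Vector) -> Vector:
    # Hessian-vector product; the Hessian of this quadratic is diag(A).
    return [a * ui for a, ui in zip(A, u)]


def val_loss(w: Vector) -> float:
    return 0.5 * sum((wi - c) ** 2 for wi, c in zip(w, C))


def val_grad(w: Vector) -> Vector:
    return [wi - c for wi, c in zip(w, C)]


def sgd_momentum(w0: Vector, alpha: float, gamma: float, steps: int) -> Tuple[Vector, Vector]:
    w, v = list(w0), [0.0] * len(w0)
    for _ in range(steps):
        g = train_grad(w)
        v = [gamma * vi - (1.0 - gamma) * gi for vi, gi in zip(v, g)]
        w = [wi + alpha * vi for wi, vi in zip(w, v)]
    return w, v


def lr_hypergradient(w0: Vector, alpha: float, gamma: float, steps: int) -> Tuple[float, Vector]:
    """d f(w_T) / d alpha via reverse-mode through the reversed training run."""
    w, v = sgd_momentum(w0, alpha, gamma, steps)
    dw = val_grad(w)          # adjoint of the current w
    dv = [0.0] * len(w)       # adjoint of the current v (excluding the w path)
    d_alpha = 0.0
    for _ in range(steps):
        d_alpha += sum(dwi * vi for dwi, vi in zip(dw, v))
        # Reverse the position update, then the velocity update.
        w = [wi - alpha * vi for wi, vi in zip(w, v)]
        g = train_grad(w)
        v = [(vi + (1.0 - gamma) * gi) / gamma for vi, gi in zip(v, g)]
        # Propagate adjoints backward through the same step.
        dv = [dvi + alpha * dwi for dvi, dwi in zip(dv, dw)]
        hv = train_hvp(w, dv)
        dw = [dwi - (1.0 - gamma) * hvi for dwi, hvi in zip(dw, hv)]
        dv = [gamma * dvi for dvi in dv]
    return d_alpha, w  # w should now match w0 up to rounding


w0, alpha, gamma, steps = [0.0, 0.0], 0.1, 0.9, 30
hypergrad, w_recovered = lr_hypergradient(w0, alpha, gamma, steps)
h = 1e-6  # central finite difference as a sanity check
fd = (val_loss(sgd_momentum(w0, alpha + h, gamma, steps)[0])
      - val_loss(sgd_momentum(w0, alpha - h, gamma, steps)[0])) / (2 * h)
print(hypergrad, fd, w_recovered)
```

Memory use is the point of the reversal. The backward pass needs no stored trajectory of weights, only the final (w, v), so thousands of hyperparameters can in principle be differentiated at roughly the cost of a second training run.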

Bayesian Optimization (BO) operates on fundamentally different principles. As a sequential model-based optimization strategy, BO fits a probabilistic surrogate model (commonly a Gaussian process) that provides a posterior distribution over the objective function. It then uses an acquisition function to decide which hyperparameters to evaluate next. This makes it particularly effective for black-box functions whose derivatives are unavailable [34] [51]. In chemical applications, BO has succeeded in domains ranging from materials discovery to battery aging diagnostics [34] [52].

Evolutionary Algorithms represent another important class of HPO methods. These population-based, nature-inspired metaheuristics include Genetic Algorithms (GA), Differential Evolution (DE), and Covariance Matrix Adaptation Evolution Strategy (CMA-ES). They encode domain-specific knowledge as heuristics and balance exploration (diversification) against exploitation (intensification) [51].

Comparative Workflow Visualization

Diagram: fundamental differences in workflow between gradient-based HPO using reversible learning and Bayesian optimization.

Experimental Comparison and Performance Benchmarking

Quantitative Performance Analysis

Table 1: Comparative Performance of HPO Methods Across Different Domains