Hyperparameter Optimization for Chemists: A Practical Guide to Boosting ML Model Performance

Abstract

This guide provides chemists and drug development researchers with a comprehensive framework for applying hyperparameter optimization (HPO) to machine learning models in chemical research. It covers foundational concepts, from defining hyperparameters and their impact on models like Graph Neural Networks and Support Vector Machines, to practical methodologies including Bayesian optimization and automated workflows. The content addresses critical challenges such as overfitting in low-data regimes, a common scenario in experimental chemistry, and offers troubleshooting strategies for real-world applications like reaction optimization and molecular property prediction. By comparing optimization techniques and validating model performance, this guide empowers scientists to enhance the accuracy, efficiency, and reliability of their data-driven research.

Why Hyperparameters Matter: The Foundation of Chemical Machine Learning

In cheminformatics, where machine learning models are increasingly deployed for molecular property prediction, drug discovery, and materials science, understanding the distinction between model parameters and hyperparameters is fundamental to building effective predictive systems. This section delineates these core concepts and frames them within the process of hyperparameter optimization. For chemists and drug development professionals, mastering these "tunable knobs" is not merely a technical exercise but a prerequisite for developing robust, reliable, and interpretable models that can shorten research and development timelines. The discussion is supplemented with structured data, experimental protocols, and practical toolkits tailored for scientific applications.

Machine learning models, particularly Graph Neural Networks (GNNs) adept at handling molecular structures, have revolutionized cheminformatics by offering data-driven approaches to uncover complex patterns in vast chemical datasets [1]. The performance of these models, however, is highly sensitive to two distinct types of variables: model parameters and hyperparameters.

A simple analogy is to consider model parameters as the engine of a car: internal components such as piston positions and valve timings that are adjusted automatically during operation. Hyperparameters, in contrast, are the control panel: the gear shift, accelerator sensitivity, and cruise-control settings that the driver (the researcher) must configure before the journey begins to ensure optimal performance. Confusing the two is a common pitfall that can hinder model efficacy [2] [3].

Core Definitions and Distinctions

Model Parameters: The Learned Internals

Model parameters are the internal variables of a model that are learned directly from the training data during the optimization process [2] [4]. They are not set manually by the researcher and are fundamental to the model's predictive function.

- In Linear Regression: The coefficients (weights) and the intercept (bias) are the parameters. For a model y = mx + c, m (slope) and c (intercept) are the parameters estimated by minimizing an error function like Root Mean Squared Error (RMSE) [2] [5].

- In Neural Networks: The weights and biases connecting neurons across layers are the model parameters [2] [4].
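A minimal sketch of the linear-regression case, using NumPy and an invented five-point dataset generated from y = 2x + 1: `np.polyfit` estimates the slope and intercept from the data, so neither value is chosen by the researcher. Minimizing the sum of squared errors, as `polyfit` does, yields the same solution as minimizing RMSE because the square root is monotonic.

```python
import numpy as np

# Invented, noise-free data generated from y = 2x + 1 (illustration only)
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 2.0 * x + 1.0

# Least-squares fit of a straight line; returns [slope, intercept]
m, c = np.polyfit(x, y, deg=1)

# m and c are the model parameters: estimated from the data, not set by hand
rmse = np.sqrt(np.mean((m * x + c - y) ** 2))
print(f"m = {m:.3f}, c = {c:.3f}, RMSE = {rmse:.2e}")
```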

Hyperparameters: The Tunable Knobs

Hyperparameters are external configuration variables whose values are set prior to the commencement of the learning process [2] [6]. They control the overarching behavior of the training algorithm and the model's structure itself. They are not learned from the data but are instead "tuned" by the experimenter.

- Examples: Learning rate, number of training epochs, batch size, number of layers in a neural network, number of neurons per layer, and regularization strength [4] [6] [5].
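In scikit-learn the two kinds of variables are visibly separated: hyperparameters are constructor arguments, while learned parameters appear only after `fit`, as attributes with a trailing underscore. The sketch below uses `Ridge`, whose `alpha` argument is the regularization strength listed above; the descriptor matrix and target are synthetic.

```python
import numpy as np
from sklearn.linear_model import Ridge

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))                 # stand-in for three molecular descriptors
true_w = np.array([1.5, -2.0, 0.5])
y = X @ true_w + 0.1 * rng.normal(size=50)

# Hyperparameter: fixed by the researcher before training
model = Ridge(alpha=1.0)
print(model.get_params()["alpha"])           # 1.0

# Parameters: learned during fit and stored as coef_ and intercept_
model.fit(X, y)
print(model.coef_, model.intercept_)

# Changing the hyperparameter changes what is learned: stronger
# regularization shrinks the coefficient vector toward zero
strong = Ridge(alpha=1000.0).fit(X, y)
print(np.linalg.norm(strong.coef_) < np.linalg.norm(model.coef_))  # True
```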

The table below provides a consolidated comparison for clarity.

Table 1: Fundamental Differences Between Model Parameters and Hyperparameters

| Aspect | Model Parameters | Hyperparameters |
|---|---|---|
| Definition | Internal variables learned from data [4] | External configurations set before training [2] |
| Set By | Optimization algorithm (e.g., Gradient Descent, Adam) [2] | Researcher or automated tuning process [2] |
| Purpose | Used for making predictions on new data [2] | Control the process of learning parameters [2] |
| Examples | Weights & biases in Neural Networks; Coefficients in Linear Regression [2] [4] | Learning rate, number of epochs, batch size, number of layers [4] [6] |
| Determination | Estimated by fitting the model to training data [2] | Determined via hyperparameter tuning (e.g., Grid Search, Bayesian Optimization) [2] [3] |

Key Hyperparameters in Cheminformatics Models

The selection of hyperparameters is highly algorithm-dependent. In cheminformatics, tree-based ensembles and GNNs are particularly prevalent. The following tables detail critical hyperparameters for these model classes.

Table 2: Key Hyperparameters for Tree-Based Ensemble Models [5]

| Hyperparameter | Function | Impact on Model |
|---|---|---|
| Number of Estimators | Defines the number of trees in the ensemble (e.g., Random Forest). | A higher number generally improves accuracy and stability but increases computational cost [5]. |
| Maximum Depth | The maximum allowed depth for each tree. | Limits model complexity; high values risk overfitting, low values risk underfitting [5]. |
| Learning Rate (Boosting) | Controls the contribution of each weak learner in sequential models like Gradient Boosting. | A lower rate often leads to better generalization but requires more estimators (trees) to converge [5]. |
| Minimum Samples per Leaf | The minimum number of samples required to be at a leaf node. | A higher value regularizes the model, preventing it from learning overly specific patterns from noise [5]. |
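As a sketch of how Table 2 maps onto code, the scikit-learn estimators below expose each hyperparameter as a constructor argument (`n_estimators`, `max_depth`, `learning_rate`, `min_samples_leaf`). The random bit vectors only stand in for molecular fingerprints and the target is invented, so the printed RMSE values carry no chemical meaning.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(42)
# Random 128-bit vectors standing in for fingerprints; invented property values
X = rng.integers(0, 2, size=(200, 128)).astype(float)
y = X[:, :5].sum(axis=1) + 0.2 * rng.normal(size=200)

models = {
    "random_forest": RandomForestRegressor(
        n_estimators=100,     # Number of Estimators
        max_depth=10,         # Maximum Depth
        min_samples_leaf=3,   # Minimum Samples per Leaf
        random_state=0,
    ),
    "gradient_boosting": GradientBoostingRegressor(
        n_estimators=300,     # more trees to compensate for the small learning rate
        learning_rate=0.05,   # Learning Rate (Boosting)
        max_depth=3,
        min_samples_leaf=3,
        random_state=0,
    ),
}

results = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=5, scoring="neg_root_mean_squared_error")
    results[name] = -scores.mean()
    print(f"{name}: 5-fold CV RMSE = {results[name]:.3f}")
```

The boosting model pairs a small `learning_rate` with a larger `n_estimators`, reflecting the trade-off described in the table.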

Table 3: Key Hyperparameters for Neural Network Training [6]

| Hyperparameter | Function | Impact on Training |
|---|---|---|
| Learning Rate | Controls the step size during weight updates in gradient descent. | Too high: model may never converge or diverge. Too low: training is slow and may get stuck in a suboptimal state [6]. |
| Batch Size | Number of training examples used to compute one gradient update. | Smaller batches introduce noise that can help generalization but are less computationally efficient. Larger batches provide a more stable gradient estimate [6]. |
| Number of Epochs | Number of complete passes through the entire training dataset. | Too few: underfitting. Too many: overfitting to the training data [2]. |
| Number of Layers/Neurons | Defines the architecture and capacity of the network. | Increasing layers/neurons allows the model to learn more complex patterns but increases the risk of overfitting [4]. |
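The learning-rate row can be checked on the simplest possible loss, f(w) = 0.5·a·w², whose gradient is a·w. Each update multiplies w by (1 - lr·a), so plain gradient descent converges only when 0 < lr < 2/a. The toy function below is an illustration of that arithmetic, not a neural network.

```python
def gradient_descent(lr, steps=50, a=1.0, w0=10.0):
    """Minimise f(w) = 0.5 * a * w**2 (minimum at w = 0); return the final w."""
    w = w0
    for _ in range(steps):
        grad = a * w        # df/dw
        w -= lr * grad      # update scaled by the learning-rate hyperparameter
    return w


for lr in (0.001, 0.5, 2.5):
    print(f"lr = {lr}: |w| after 50 steps = {abs(gradient_descent(lr)):.3g}")
```

With a = 1, a rate of 0.001 leaves w close to its starting value after 50 steps (too slow), 0.5 drives it essentially to zero, and 2.5 makes |w| grow without bound because |1 - 2.5| > 1.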

Hyperparameter Optimization: Methodologies and Protocols

Relying on default hyperparameters is a significant risk in real-world applications, as optimal configurations are highly dependent on the specific dataset and problem [3]. Hyperparameter Optimization (HPO) is the formal process of searching for the optimal set of hyperparameters.

Core Tuning Algorithms

Several automated strategies exist for HPO, each with its own strengths and weaknesses.

- Grid Search: An exhaustive search over a predefined set of hyperparameter values. It is guaranteed to find the best combination within the grid but becomes computationally intractable as the number of hyperparameters grows [3].

- Random Search: Randomly samples hyperparameter combinations from specified distributions. It often finds good configurations much faster than Grid Search, especially when some hyperparameters are more important than others [3].

- Bayesian Optimization: A sequential model-based approach that uses the results from previous trials to inform the next hyperparameter set to evaluate. It is typically more sample-efficient than random or grid search, making it suitable for expensive-to-train models like large GNNs [3] [7].
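A hedged scikit-learn sketch contrasting the first two strategies on a support vector regressor with synthetic descriptors. Both searches get the same budget of 16 configurations, but random search draws `C` and `gamma` from continuous log-uniform distributions instead of a fixed grid. Bayesian optimization would instead choose each configuration using the results of the previous ones.

```python
import numpy as np
from scipy.stats import loguniform
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.svm import SVR

rng = np.random.default_rng(0)
X = rng.normal(size=(120, 4))                       # synthetic descriptors
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + 0.1 * rng.normal(size=120)

# Grid search: every combination is trained (4 x 4 = 16 configurations)
grid = GridSearchCV(
    SVR(kernel="rbf"),
    param_grid={"C": [0.1, 1, 10, 100], "gamma": [0.01, 0.1, 1, 10]},
    cv=5,
    scoring="neg_mean_squared_error",
)
grid.fit(X, y)

# Random search: same budget of 16 draws from continuous log-uniform ranges
rand = RandomizedSearchCV(
    SVR(kernel="rbf"),
    param_distributions={"C": loguniform(0.1, 100), "gamma": loguniform(0.01, 10)},
    n_iter=16,
    cv=5,
    scoring="neg_mean_squared_error",
    random_state=0,
)
rand.fit(X, y)

print("grid  :", grid.best_params_, "CV MSE", -grid.best_score_)
print("random:", rand.best_params_, "CV MSE", -rand.best_score_)
```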

Advanced methods are also emerging. The Multi-Strategy Parrot Optimizer (MSPO), for example, integrates strategies such as Sobol sequence initialization and a nonlinearly decreasing inertia weight to enhance global exploration and convergence stability in complex tasks like medical image classification [7]. Another paradigm, E2ETune, uses a fine-tuned generative language model to learn a direct mapping from workload features to optimal configurations [8]; by analogy, characteristics of a molecular dataset could serve as such features, potentially eliminating iterative tuning for new but similar tasks.

Experimental Protocol for HPO

A standardized protocol ensures reproducible and efficient model tuning.

- Define the Search Space: Select the hyperparameters to tune and define their value ranges (e.g., learning rate: [0.001, 0.01, 0.1], number of layers: [2, 4, 6]). This requires domain knowledge and an educated compromise between completeness and computational feasibility [3].

- Choose an Optimization Metric: Select a primary metric to evaluate model performance (e.g., validation accuracy, F1-score, mean squared error). This metric will guide the optimization process [3].

- Select a Tuning Algorithm: Choose a method (e.g., Bayesian Optimization) based on the size of the search space and available computational resources.

- Configure Tuning Run Parameters:

- Maximum Trials: The total number of hyperparameter combinations to evaluate.

- Early Stopping Rounds: The number of epochs without improvement in the optimization metric after which a single training run can be terminated early to save resources [3].

- Parallel Trials: The number of trials to run concurrently, if resources allow [3].

- Execute and Monitor: Launch the tuning job, tracking all runs, metadata, and artifacts using a robust experimentation framework (e.g., MLRun, Weights & Biases) for traceability and collaboration [3].
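The five protocol steps can be strung together in plain Python. This is a framework-free sketch: `validation_loss` is a hypothetical toy curve standing in for real training and validation, the trial settings are illustrative, and in practice a tool such as Optuna or a managed service would handle sampling, pruning, and tracking.

```python
import itertools
import math
import random
from concurrent.futures import ThreadPoolExecutor

# Step 1: search space (the example values from the protocol above)
SEARCH_SPACE = {"learning_rate": [0.001, 0.01, 0.1], "num_layers": [2, 4, 6]}

# Step 4: tuning-run settings (illustrative values)
MAX_TRIALS = 6
EARLY_STOPPING_ROUNDS = 3
PARALLEL_TRIALS = 2
MAX_EPOCHS = 50


def validation_loss(config, epoch):
    """Hypothetical stand-in for 'train one epoch, then score on validation data'.

    Toy curve: best near lr = 0.01 and 4 layers; the loss falls, then rises
    once a made-up overfitting onset is passed.
    """
    base = abs(math.log10(config["learning_rate"]) + 2) + 0.1 * abs(config["num_layers"] - 4)
    onset = 5 * config["num_layers"]
    return base + 1.0 / (epoch + 1) + 0.02 * max(0, epoch - onset)


def run_trial(config):
    """Train one configuration with patience-based early stopping.

    Step 2 metric: validation loss (lower is better).
    """
    best, stale = float("inf"), 0
    for epoch in range(MAX_EPOCHS):
        loss = validation_loss(config, epoch)
        if loss < best:
            best, stale = loss, 0
        else:
            stale += 1
            if stale >= EARLY_STOPPING_ROUNDS:
                break  # no improvement for EARLY_STOPPING_ROUNDS epochs
    return {"config": config, "best_val_loss": best, "epochs_run": epoch + 1}


# Step 3: random search, sampling MAX_TRIALS distinct configurations
all_configs = [dict(zip(SEARCH_SPACE, values)) for values in itertools.product(*SEARCH_SPACE.values())]
trials = random.Random(0).sample(all_configs, MAX_TRIALS)

# Step 5: execute PARALLEL_TRIALS at a time and keep every result for traceability
with ThreadPoolExecutor(max_workers=PARALLEL_TRIALS) as pool:
    results = list(pool.map(run_trial, trials))

best = min(results, key=lambda r: r["best_val_loss"])
print(best)
```

Threads are used only to keep the example self-contained; CPU-bound training in Python would normally be parallelized across processes or machines.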

Case Study: Hyperparameter Optimization in Action

A compelling example of HPO's impact comes from breast cancer image classification, a task analogous to the analysis of histopathological images in drug safety assessment. Research has shown that deep learning model performance heavily relies on the proper configuration of hyperparameters like learning rate, batch size, and network depth [7].

In one study, a ResNet18 model was applied to the BreaKHis breast cancer image dataset. When tuned with the Multi-Strategy Parrot Optimizer (MSPO), the model outperformed both the non-optimized version and models optimized with other algorithms on four key metrics: accuracy, precision, recall, and F1-score [7]. Although this result comes from medical imaging rather than chemistry, it suggests that advanced HPO can translate into measurable performance gains in similarly data-sensitive cheminformatics applications.

The Scientist's Toolkit: Essential Reagents for HPO

For chemists and researchers venturing into model tuning, the following "reagent solutions" are essential components of the experimental workflow.

Table 4: Essential Software Tools for Hyperparameter Optimization

| Tool / "Reagent" | Function | Relevance to Cheminformatics |
|---|---|---|
| Scikit-learn | A core machine learning library in Python providing implementations of GridSearchCV and RandomizedSearchCV. | Ideal for tuning traditional models (e.g., Random Forests, SVMs) on molecular fingerprint data [5]. |
| Hyperopt | A Python library for distributed asynchronous Bayesian optimization. | Well-suited for defining complex, conditional search spaces for neural networks and GNNs [3]. |
| Optuna | A hyperparameter optimization framework featuring a define-by-run API that allows for dynamic search spaces. | Excellent for large-scale tuning studies; its efficiency benefits computationally expensive molecular property predictions [3]. |
| Managed ML Services (e.g., AWS SageMaker, Google Vizier) | Cloud-based services that automate the infrastructure for running large-scale HPO jobs. | Reduces operational overhead, allowing researchers to focus on model design and analysis [3]. |
| MLRun | An open-source MLOps framework that manages the entire lifecycle of HPO experiments, from tracking to production. | Ensures reproducibility and collaboration across research teams, a critical need in regulated drug development environments [3]. |

The distinction between model parameters and hyperparameters is a cornerstone of effective machine learning practice in cheminformatics. Model parameters are the internal, learned essence of the model, while hyperparameters are the external, tunable knobs that govern the learning process itself. As the field increasingly relies on complex models like GNNs for molecular property prediction and drug discovery, the systematic optimization of these hyperparameters transitions from a best practice to an absolute necessity. By adopting the methodologies, protocols, and tools outlined in this guide, chemists and research scientists can ensure their models are not only powerful but also robust, efficient, and reliably tuned to deliver actionable scientific insights.

The Impact of Hyperparameters on Model Performance and Generalization in Chemical Tasks

In modern computational chemistry and drug discovery, machine learning (ML) models, particularly Graph Neural Networks (GNNs), have become indispensable tools for tasks ranging from molecular property prediction to drug-target interaction forecasting. Because the effectiveness of these models is highly sensitive to architectural choices and hyperparameters, selecting an optimal configuration is a non-trivial task that directly affects both predictive accuracy and generalizability [1]. Hyperparameter Optimization (HPO) and Neural Architecture Search (NAS) have therefore emerged as critical processes for developing models that are accurate and that generalize well to unseen chemical data. This guide examines how hyperparameter selection affects model performance and generalization in chemical tasks, and provides chemists and researchers with experimentally grounded methodologies for model optimization.

Hyperparameter Influence in Chemical Machine Learning

Core Hyperparameters and Their Chemical Relevance

In chemical ML tasks, different categories of hyperparameters exert distinct influences on model behavior:

Architectural Hyperparameters: These include parameters such as the number of graph convolutional layers, attention heads in transformer-based models, and the dimensionality of atomic embeddings. In GNNs for molecular graphs, the depth of the network directly controls the receptive field—the number of bond hops across which atomic information can be propagated. This is particularly crucial for capturing long-range interactions in large, flexible pharmaceutical compounds [1].
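The receptive-field argument can be made concrete with an adjacency matrix. The sketch assumes each message-passing layer combines an atom's own features with those of its directly bonded neighbours, as many common GNN variants do, and tracks which atoms can have influenced which after k layers; the n-pentane graph is only an example.

```python
import numpy as np


def receptive_field(adj, num_layers):
    """(i, j) is True if atom j's features can reach atom i after num_layers rounds.

    Assumes each layer aggregates over bonded neighbours plus the atom itself.
    """
    n = len(adj)
    step = (np.asarray(adj) > 0) | np.eye(n, dtype=bool)   # one bond hop, plus self
    reach = np.eye(n, dtype=bool)                           # 0 layers: each atom sees itself
    for _ in range(num_layers):
        reach = (reach.astype(int) @ step.astype(int)) > 0
    return reach


# Heavy-atom graph of n-pentane: a 5-carbon chain with graph diameter 4
pentane = np.eye(5, k=1) + np.eye(5, k=-1)
for k in range(5):
    n_seen = receptive_field(pentane, k)[0].sum()
    print(f"{k} layers: terminal carbon sees {n_seen} of 5 atoms")
```

Only at four layers, equal to the chain's graph diameter, does the terminal carbon receive information from the far end of the molecule; a shallower network cannot capture that interaction however long it is trained.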

Regularization Hyperparameters: Parameters like dropout rates, weight decay coefficients, and batch normalization settings control model complexity and prevent overfitting. Given that many chemical datasets are characterized by limited samples (often only hundreds of compounds), appropriate regularization is essential for maintaining generalization capability [9] [10].

Optimization Hyperparameters: Learning rates, batch sizes, and scheduler parameters govern the training dynamics. The learning rate is especially critical when fine-tuning pretrained models on small, specialized chemical datasets, as overly aggressive rates can cause catastrophic forgetting of valuable pretrained chemical knowledge [11].

Quantifying Hyperparameter Impact on Model Performance

The table below summarizes empirical findings on how key hyperparameters affect specific chemical prediction tasks:

Table 1: Hyperparameter Impact on Chemical Model Performance

| Hyperparameter | Chemical Task | Performance Impact | Optimal Range | Generalization Effect |
|---|---|---|---|---|
| Learning Rate | Reaction Yield Prediction [9] | ±15% RMSE variation | 1e-4 to 1e-3 | Critical for extrapolation to new reaction classes |
| GNN Depth (Layers) | Molecular Property Prediction [1] | ±12% MAE variation | 3-6 layers | Deeper models degrade on small molecules |
| Dropout Rate | Low-Data Regimes (≤50 samples) [9] | ±20% prediction error | 0.3-0.5 | Prevents overfitting to noise in experimental data |
| Attention Heads | Protein-Ligand Binding Affinity [10] | ±8% ROC-AUC | 8-16 heads | Improves interpretation of key molecular interactions |
| Batch Size | Quantum Property Prediction [12] | ±5% MAE variation | 32-128 | Smaller batches improve out-of-distribution generalization |
| Embedding Dimension | Formation Energy Prediction [12] | ±10% MAE variation | 128-256 | Larger dimensions help with unseen elements |

Methodologies for Hyperparameter Optimization in Chemical Tasks

Bayesian Optimization for Chemical Workflows

Bayesian Optimization (BO) has emerged as a particularly effective approach for HPO in chemical ML applications due to its sample efficiency. The ROBERT software package implements BO with a specialized objective function that combines interpolation and extrapolation performance metrics, specifically designed for chemical data characteristics [9]:

Problem Formulation: Define hyperparameter search space Θ and objective function f(θ) based on chemical performance metrics.

Surrogate Modeling: Employ Gaussian processes to model the posterior distribution of f(θ) based on observed evaluations.

Acquisition Function: Use Expected Improvement (EI) or Upper Confidence Bound (UCB) to select the most promising hyperparameter configurations for evaluation.

Parallelization: Implement synchronous or asynchronous parallel evaluation to accelerate the optimization process using distributed computing resources.
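The formulation, surrogate, and acquisition steps can be sketched with scikit-learn's Gaussian process and an Expected Improvement acquisition function. This is a generic, sequential illustration rather than ROBERT's implementation: the one-dimensional search space (log10 of the learning rate) and the quadratic `objective` are hypothetical, and the parallelization step is omitted. Replacing `expected_improvement` with a confidence-bound rule (mu - kappa * sigma, when minimizing) would give the UCB-style variant.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr):
    """Hypothetical f(theta): validation error vs. log10(learning rate), minimum at -2.5.

    In practice each call would be a full train-and-validate run.
    """
    return (log_lr + 2.5) ** 2


def expected_improvement(mu, sigma, y_best, xi=0.01):
    """EI for minimisation: expected amount by which a candidate beats y_best."""
    sigma = np.maximum(sigma, 1e-12)
    improvement = y_best - mu - xi
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
low, high = -5.0, -1.0                               # search space: log10(lr) in [-5, -1]
X = rng.uniform(low, high, size=(3, 1))              # a few random initial evaluations
y = objective(X).ravel()
candidates = np.linspace(low, high, 500).reshape(-1, 1)

for _ in range(12):
    # Surrogate: GP posterior over f(theta) given all evaluations so far
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-6,
                                  normalize_y=True, random_state=0)
    gp.fit(X, y)
    mu, sigma = gp.predict(candidates, return_std=True)
    # Acquisition: evaluate the candidate with the highest expected improvement next
    x_next = candidates[[np.argmax(expected_improvement(mu, sigma, y.min()))]]
    X = np.vstack([X, x_next])
    y = np.append(y, objective(x_next).ravel())

best_log_lr = X[np.argmin(y), 0]
print(f"best log10(lr) = {best_log_lr:.2f} after {len(y)} evaluations")
```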

For chemical reaction optimization, BO has proven effective at discovering general, transferable parameters that enable high yields across related transformations without the need for laborious re-optimization [13].

Addressing Low-Data Regimes in Chemical Applications

Chemical research often operates in low-data regimes (frequently 18-50 data points), where traditional HPO approaches risk overfitting. Specialized workflows have been developed to address this challenge [9]:

Combined Validation Metric: Implement a combined Root Mean Squared Error (RMSE) calculated from different cross-validation methods:

- Interpolation performance assessed via 10-times repeated 5-fold CV

- Extrapolation performance evaluated via selective sorted 5-fold CV based on target value
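A sketch of the combined metric, under stated assumptions: the description above names the two cross-validation schemes but not the exact formula, so here the extrapolation term sorts the data by target, tests only on the lowest- and highest-valued folds, and keeps the worse RMSE, while the combined score is the simple average of the two terms. ROBERT's actual definitions may differ.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import RepeatedKFold, cross_val_score


def interpolation_rmse(model, X, y):
    """Mean RMSE over 10-times repeated 5-fold CV."""
    cv = RepeatedKFold(n_splits=5, n_repeats=10, random_state=0)
    scores = cross_val_score(model, X, y, cv=cv, scoring="neg_root_mean_squared_error")
    return float(-scores.mean())


def extrapolation_rmse(model, X, y):
    """Sorted 5-fold CV on the target (assumed form): worse RMSE of the two extreme folds."""
    order = np.argsort(y)
    folds = np.array_split(order, 5)          # contiguous blocks of sorted y
    worst = 0.0
    for test_idx in (folds[0], folds[-1]):
        train_idx = np.setdiff1d(order, test_idx)
        fitted = clone(model).fit(X[train_idx], y[train_idx])
        rmse = np.sqrt(mean_squared_error(y[test_idx], fitted.predict(X[test_idx])))
        worst = max(worst, float(rmse))
    return worst


def combined_rmse(model, X, y):
    """Assumed combination: simple average of interpolation and extrapolation RMSE."""
    return 0.5 * (interpolation_rmse(model, X, y) + extrapolation_rmse(model, X, y))


# Usage on an invented 30-point dataset, typical of the low-data regime
rng = np.random.default_rng(1)
X = rng.normal(size=(30, 3))
y = X @ np.array([1.0, -0.5, 0.2]) + 0.1 * rng.normal(size=30)
interp = interpolation_rmse(Ridge(alpha=1.0), X, y)
extrap = extrapolation_rmse(Ridge(alpha=1.0), X, y)
print(f"interpolation {interp:.3f}, extrapolation {extrap:.3f}")
```

Used as the objective in a hyperparameter search, a score like this penalizes configurations that interpolate well but extrapolate poorly to the extremes of the target range.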

Data Splitting Protocol: Reserve 20% of initial data (minimum 4 points) as an external test set with even distribution of target values to prevent data leakage and ensure balanced representation.
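One way to realize this splitting rule, assuming "even distribution" means one test point per equal-sized bin of the sorted targets. The helper `even_target_test_split` is hypothetical, not ROBERT's code; taking the middle point of each bin keeps the most extreme values in the training set.

```python
import numpy as np


def even_target_test_split(y, test_fraction=0.2, min_test=4):
    """Hypothetical helper: choose test points spread evenly over the target range."""
    y = np.asarray(y, dtype=float)
    n_test = max(min_test, int(round(test_fraction * len(y))))
    order = np.argsort(y)
    bins = np.array_split(order, n_test)                    # n_test bins of sorted targets
    test_idx = np.array([b[len(b) // 2] for b in bins])     # middle point of each bin
    train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
    return train_idx, test_idx


yields = np.linspace(12.0, 95.0, 18)        # invented yields (%) for 18 reactions
train_idx, test_idx = even_target_test_split(yields)
print(len(train_idx), "train /", len(test_idx), "test:", yields[np.sort(test_idx)])
```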

Regularization-Centric HPO: Prioritize optimization of regularization hyperparameters (dropout, weight decay) over architectural parameters when data is severely limited.

Table 2: Automated Workflow for Low-Data Chemical Applications

| Workflow Stage | Components | Chemical Application Considerations |
|---|---|---|
| Data Preprocessing | Feature selection, normalization | Domain-informed descriptors (electronic, steric) |
| Hyperparameter Space Definition | Search boundaries, distributions | Chemistry-aware constraints (e.g., GNN depth vs. molecular size) |
| Objective Formulation | Combined RMSE metric | Balance of interpolation and extrapolation performance |
| Model Selection | Cross-validation, scoring system | Integration of chemical interpretability criteria |
| Validation | External test set, y-shuffling | Assessment of physicochemical consistency |
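The y-shuffling check in the validation stage can be sketched in a few lines: retrain the same model on randomly permuted targets and confirm that the error rises sharply. If a model scores nearly as well on shuffled targets, it is probably fitting noise or leaked information. The data and model choice below are illustrative.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
X = rng.normal(size=(40, 4))                                  # synthetic descriptors
y = X @ np.array([2.0, -1.0, 0.5, 0.0]) + 0.2 * rng.normal(size=40)


def cv_rmse(X, y):
    scores = cross_val_score(Ridge(alpha=1.0), X, y, cv=5, scoring="neg_root_mean_squared_error")
    return -scores.mean()


real_rmse = cv_rmse(X, y)
# Repeat with permuted targets: any remaining "skill" would indicate a problem
shuffled_rmse = np.mean([cv_rmse(X, rng.permutation(y)) for _ in range(5)])
print(f"CV RMSE: real {real_rmse:.2f}, y-shuffled {shuffled_rmse:.2f}")
```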

Advanced HPO Strategies for Specific Chemical Applications

Graph Neural Networks for Molecular Property Prediction

GNNs represent molecules as graphs where atoms correspond to nodes and bonds to edges. The HPO for GNNs in cheminformatics requires special consideration of graph-specific parameters [1]:

- Message Passing Steps: Optimize the number of graph convolutional layers based on the diameter of target molecules.

- Edge Feature Encoding: Tune parameters for bond type representation and directional messaging.

- Global Readout Functions: Optimize aggregation methods (sum, mean, attention) for molecular-level property prediction.
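The three readout choices differ only in how per-atom embeddings are pooled into a molecule-level vector. A NumPy sketch, with `attention_vector` standing in for what would be a learned parameter in a real GNN:

```python
import numpy as np


def readout(node_embeddings, method="sum", attention_vector=None):
    """Pool per-atom embeddings of shape (n_atoms, d) into a molecule vector of shape (d,)."""
    H = np.asarray(node_embeddings, dtype=float)
    if method == "sum":
        return H.sum(axis=0)
    if method == "mean":
        return H.mean(axis=0)
    if method == "attention":
        scores = H @ np.asarray(attention_vector, dtype=float)   # one score per atom
        weights = np.exp(scores - scores.max())
        weights /= weights.sum()                                # softmax over atoms
        return weights @ H
    raise ValueError(f"unknown readout method: {method}")


H = np.array([[1.0, 0.0], [3.0, 2.0]])   # invented embeddings for a 2-atom molecule
for method in ("sum", "mean"):
    print(method, readout(H, method))
print("attention", readout(H, "attention", attention_vector=[0.5, -0.5]))
```

A common rule of thumb is that sum pooling suits size-extensive targets, since it grows with the number of atoms, while mean pooling is size-invariant; which works better for a given property is itself a hyperparameter choice.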

Out-of-Distribution Generalization with Elemental Features

For formation energy prediction and other materials properties, models must generalize to compounds containing elements not seen during training. Incorporating elemental features significantly enhances Out-of-Distribution (OoD) generalization [12]:

- Feature Integration: Augment node representations with elemental descriptors including atomic radius, electronegativity, valence electrons, and periodicity information.

- Transfer Learning: Pre-train on diverse chemical spaces before fine-tuning on target domain with limited elements.

- Active Learning: Implement uncertainty-aware acquisition functions to strategically expand training data to cover chemical diversity.
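To illustrate why elemental descriptors help with unseen elements, the sketch below builds atom features from a one-hot element code plus two standard descriptors (Pauling electronegativity and valence electron count). The element vocabulary and descriptor set are deliberately tiny and hypothetical, and fluorine is treated as absent from the training data.

```python
import numpy as np

# Pauling electronegativity and valence electron count (standard values)
ELEMENT_DESCRIPTORS = {
    "H": (2.20, 1),
    "C": (2.55, 4),
    "N": (3.04, 5),
    "O": (3.44, 6),
    "F": (3.98, 7),
}
TRAINING_ELEMENTS = ["C", "H", "N", "O"]   # hypothetical: fluorine never seen in training


def atom_features(symbol):
    """One-hot over training elements, followed by elemental descriptors."""
    one_hot = [1.0 if symbol == e else 0.0 for e in TRAINING_ELEMENTS]
    electronegativity, valence = ELEMENT_DESCRIPTORS[symbol]
    return np.array(one_hot + [electronegativity, valence], dtype=float)


for sym in ("O", "F"):
    print(sym, atom_features(sym))
```

For fluorine the one-hot block is all zeros, so a one-hot-only model would receive no information about it, whereas the descriptor columns still place it next to oxygen.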

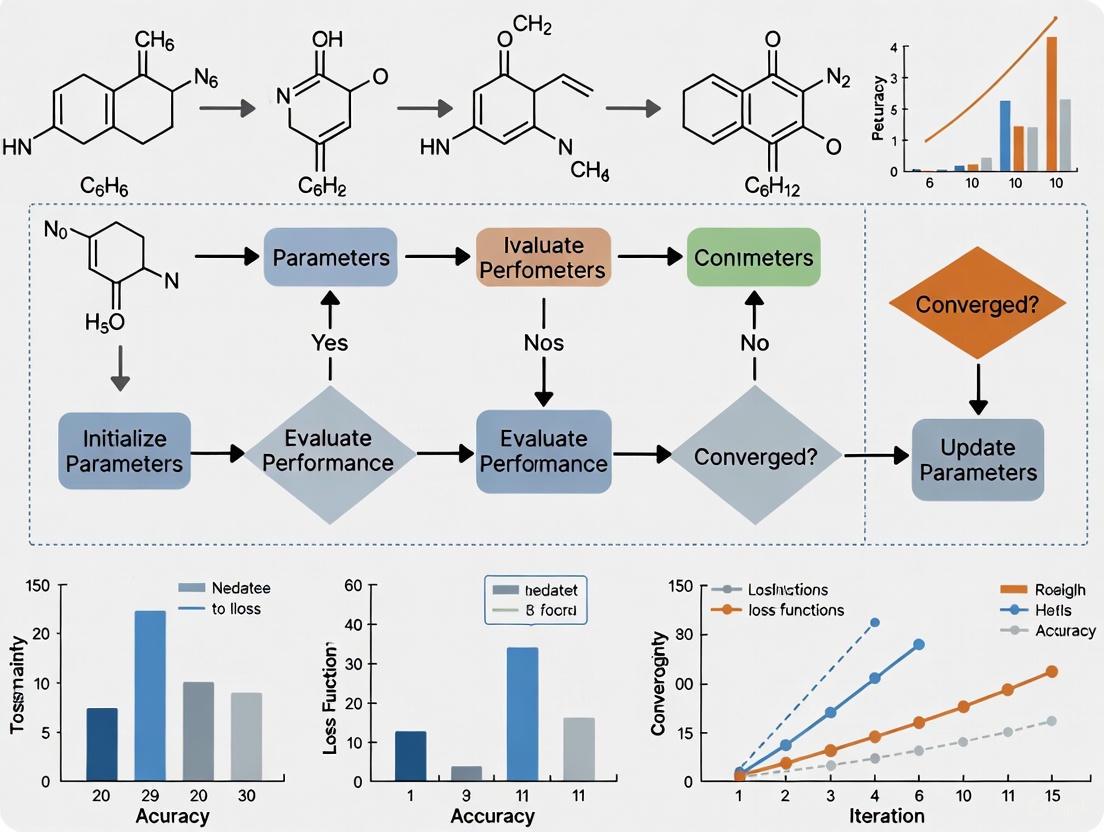

The following workflow diagram illustrates the automated HPO process for chemical applications in low-data regimes:

Experimental Protocols and Benchmarking

Rigorous Evaluation Practices

Current research reveals that common but unrealistic benchmarking practices, such as providing ground-truth atom-to-atom mappings or 3D geometries at test time, lead to overly optimistic performance estimates [14]. The ChemTorch framework proposes more rigorous evaluation standards:

End-to-End Evaluation: Models must operate on readily available 2D chemical structures, without relying on inputs that are expensive to compute or unavailable at prediction time, such as ground-truth atom mappings or 3D geometries.

Realistic Data Splits: Implement scaffold-based splits that separate compounds by structural similarity to better simulate real discovery scenarios.

Extrapolation Assessment: Systematically evaluate performance on compounds outside the training distribution in chemical space.
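A scaffold split assigns every molecule sharing a core scaffold to the same side of the split, so test scaffolds are never seen during training. The sketch takes precomputed scaffold keys (in practice these would come from a toolkit, for example RDKit's `MurckoScaffold.MurckoScaffoldSmiles`) and fills the training set with the largest scaffold groups first, a common heuristic rather than the only option.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold key appears in both train and test."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    n_train_target = len(scaffolds) - round(test_fraction * len(scaffolds))
    train, test = [], []
    # Largest scaffold families go to training first; rarer scaffolds end up in test
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return sorted(train), sorted(test)


# Invented scaffold keys; "" denotes an acyclic molecule with no ring scaffold
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1", ""]
train_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, test_idx)
```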

Benchmarking Results

The table below summarizes hyperparameter optimization results across diverse chemical tasks:

Table 3: Hyperparameter Optimization Performance Across Chemical Tasks

| Chemical Task | Dataset Size | Baseline Model | Optimized Model | Performance Improvement | Key Hyperparameters |
|---|---|---|---|---|---|
| Reaction Yield Prediction [9] | 21-44 compounds | Linear Regression | Neural Network | 15-30% RMSE reduction | Learning rate, hidden layers, dropout |
| Formation Energy Prediction [12] | 132,752 structures | SchNet (default) | SchNet (optimized) | 8-12% MAE improvement | Embedding dim, radial basis, cutoff distance |
| Drug-Target Interaction [15] | 11,000 compounds | Standard Classifier | CA-HACO-LF | 18% accuracy gain | Feature selection, tree depth, ensemble size |
| Molecular Property Prediction [10] | 18-44 compounds | Random Forest | Gradient Boosting | 10-25% error reduction | Tree depth, learning rate, subsample ratio |
| Aqueous Solubility [16] | 464 compounds | Default GNN | Optimized GNN | 20% improvement | Attention heads, message passing steps |

Research Reagent Solutions: Software Tools for Chemical HPO

The following table details essential computational tools and their applications in hyperparameter optimization for chemical tasks:

Table 4: Essential Software Tools for Hyperparameter Optimization in Chemical Research

| Tool Name | Application Domain | Key Features | Chemical Task Specialization |
|---|---|---|---|
| ROBERT [9] | Low-data chemical ML | Automated Bayesian HPO, combined RMSE metric, overfitting detection | Reaction optimization, molecular property prediction |
| ChemTorch [14] | Reaction property prediction | Unified benchmarking, end-to-end evaluation protocols | Reaction yield, barrier height prediction |
| fastprop [10] | Molecular property prediction | Fast hyperparameter optimization, Mordred descriptors | ADMET, physicochemical properties |
| XenonPy [12] | Materials informatics | Elemental feature integration, OoD generalization | Formation energy prediction with unseen elements |
| CA-HACO-LF [15] | Drug-target interaction | Ant colony optimization for feature selection | Virtual screening, binding affinity prediction |
| Gnina 1.3 [10] | Structure-based drug design | CNN scoring functions, covalent docking | Protein-ligand pose prediction, scoring |

Visualization of Model Selection Criteria

The following diagram illustrates the multi-faceted scoring system used for model selection in chemical applications, particularly in low-data regimes:

Hyperparameter optimization represents a critical dimension in developing high-performing, generalizable machine learning models for chemical tasks. The specialized methodologies outlined in this guide—particularly Bayesian optimization with chemistry-aware objective functions, rigorous evaluation protocols that prevent overfitting in low-data regimes, and strategic incorporation of domain knowledge through elemental features and molecular representations—provide a robust framework for optimizing chemical models. As the field progresses, automated HPO and NAS are expected to play increasingly pivotal roles in advancing GNN-based solutions across cheminformatics, ultimately accelerating drug discovery, materials design, and chemical synthesis optimization. Future directions will likely focus on transfer learning across chemical domains, multi-objective optimization for conflicting property balances, and uncertainty-aware optimization for high-risk chemical applications.

In the modern drug discovery pipeline, the integration of artificial intelligence has become a transformative force. For chemists and drug development researchers, achieving precise control over AI-driven molecular design requires a fundamental understanding of three interconnected optimization targets: model parameters, model hyperparameters, and the molecular structures themselves. Model parameters are learned from data during training and hyperparameters are set before training begins, yet both directly influence the quality, efficacy, and synthesizability of generated molecular candidates. This section examines these core concepts in technical depth, framed within practical cheminformatics applications, to equip scientists with the knowledge needed to optimize generative AI models for advanced molecular design.

The significance of hyperparameter optimization (HPO) is particularly pronounced in graph neural networks (GNNs), which have emerged as powerful tools for modeling molecular structures. As noted in a comprehensive review, "the performance of GNNs is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task" [1]. The careful tuning of these external configurations becomes a critical step in developing reliable in-silico molecular design tools.

Foundational Concepts: Parameters vs. Hyperparameters

Definitions and Core Differences

In machine learning, particularly in the context of molecular design, a clear distinction exists between model parameters and model hyperparameters. Model parameters are internal variables that the model learns automatically from the training data during the optimization process. These are estimated by fitting the model to the data and are fundamental to making predictions on new data. In contrast, model hyperparameters are external configurations whose values are set prior to the commencement of the learning process [2] [17]. They control the very process of how the model learns its parameters.

Table 1: Comparative Analysis of Model Parameters vs. Hyperparameters

| Characteristic | Model Parameters | Model Hyperparameters |
|---|---|---|
| Definition | Internal variables learned from data | External configurations set before training |
| Determination | Estimated by optimization algorithms (e.g., Gradient Descent, Adam) [2] | Set manually or via hyperparameter tuning [2] |
| Role | Required for making predictions; define model skill [17] | Control the learning process; determine how parameters are learned [18] |
| Examples in ML | Weights & biases in neural networks; coefficients in linear regression [2] [17] | Learning rate; number of hidden layers; number of epochs [2] |
| Examples in Molecular AI | Learned representations of molecular structures in Graph Neural Networks [1] | Architecture choices in GNNs; reinforcement learning settings such as reward-function weights [19] |

Interrelationship in Molecular Design

The relationship between hyperparameters and parameters is hierarchical and crucial for successful generative models in chemistry. Hyperparameters dictate how the learning algorithm will discover parameters during training. As one technical explanation notes: "In ML/DL, a model is defined or represented by the model parameters. However, the process of training a model involves choosing the optimal hyperparameters that the learning algorithm will use to learn the optimal parameters" [18]. This relationship is particularly important in molecular design, where the choice of hyperparameters can significantly impact the quality, diversity, and synthesizability of generated compounds.

The optimization process can be visualized as follows, showing how hyperparameters control the learning of parameters which ultimately define the molecular generation capabilities:

Hyperparameter Optimization in Molecular Generative AI

Advanced HPO Techniques

Hyperparameter optimization in molecular generative AI employs several sophisticated techniques, each with distinct advantages for drug discovery applications:

Bayesian Optimization (BO): This approach is particularly valuable when the objective function is expensive to evaluate, as with docking simulations or quantum chemical calculations [19]. BO builds a probabilistic model of the objective function and uses it to decide which configurations to evaluate next. Beyond tuning hyperparameters, BO in generative models often operates directly in the latent space of architectures such as Variational Autoencoders (VAEs), proposing latent vectors that are likely to decode into desirable molecular structures [19].

Reinforcement Learning (RL) Approaches: RL frameworks train an agent to navigate through molecular space by optimizing a reward function that incorporates desired chemical properties. "In this context, reward function shaping is crucial for guiding RL agents toward desirable chemical properties such as drug-likeness, binding affinity, and synthetic accessibility" [19]. Models like MolDQN and Graph Convolutional Policy Networks (GCPN) use RL to iteratively modify or construct molecules with targeted properties [19].
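A hypothetical shaped reward shows how such properties can be folded into one scalar. The weights are illustrative and act as hyperparameters of the RL setup; the input ranges follow common conventions (QED in [0, 1], synthetic-accessibility score from 1 for easy to 10 for hard, docking score in kcal/mol where more negative is better). Published frameworks such as MolDQN and GCPN define their own rewards.

```python
def shaped_reward(qed, sa_score, docking_score, w_qed=1.0, w_sa=0.5, w_dock=0.1):
    """Hypothetical scalar reward for an RL molecule generator.

    qed: drug-likeness in [0, 1], higher is better
    sa_score: synthetic accessibility, 1 (easy) to 10 (hard)
    docking_score: kcal/mol, more negative means stronger predicted binding
    The weights w_* are themselves hyperparameters to be tuned.
    """
    sa_term = (10.0 - sa_score) / 9.0      # rescale so 1 -> 1.0 (easy) and 10 -> 0.0 (hard)
    dock_term = -docking_score             # flip sign so stronger binding earns more reward
    return w_qed * qed + w_sa * sa_term + w_dock * dock_term


print(shaped_reward(qed=0.8, sa_score=1.0, docking_score=-9.0))
print(shaped_reward(qed=0.8, sa_score=10.0, docking_score=-9.0))
```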

Multi-objective Optimization: Real-world drug discovery requires balancing multiple, often competing objectives. Recent approaches leverage "multi-objective optimization methods to help the design of novel small molecules optimised for conflicting pharmacological attributes with generative models" [20]. This allows for the generation of compounds that balance requirements for potency, safety, metabolic stability, and pharmacodynamic profile.
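Rather than collapsing objectives into one weighted score, multi-objective methods often work with the Pareto front: the candidates that no other candidate beats on every objective at once. A NumPy sketch, assuming all columns are to be maximized (negate any objective that should be minimized, such as predicted toxicity); the candidate values are invented.

```python
import numpy as np


def pareto_front(objectives):
    """Indices of non-dominated rows; every column is assumed to be maximised."""
    F = np.asarray(objectives, dtype=float)
    front = []
    for i in range(len(F)):
        # Row j dominates row i if it is at least as good everywhere and strictly better somewhere
        dominated = np.any(np.all(F >= F[i], axis=1) & np.any(F > F[i], axis=1))
        if not dominated:
            front.append(i)
    return front


# Invented candidates: columns = predicted potency (pIC50), predicted metabolic stability (0-1)
candidates = [[7.5, 0.2], [6.8, 0.7], [7.0, 0.5], [6.5, 0.6], [7.5, 0.1]]
print(pareto_front(candidates))   # [0, 1, 2]: candidates 3 and 4 are dominated
```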

Property-Guided Generation

Property-guided generation represents a significant advancement in molecular design, offering a directed approach to generating molecules with desirable characteristics. For instance, the Guided Diffusion for Inverse Molecular Design (GaUDI) framework "combines an equivariant graph neural network for property prediction with a generative diffusion model" [19]. This approach proved effective in designing molecules for organic electronic applications, producing structures with 100% validity while optimizing for both single and multiple objectives.

Another innovative approach utilizes VAEs for property-guided generation. The integration of property prediction into the latent representation of VAEs "allows for a more targeted exploration of molecular structures with desired properties" [19]. This enables researchers to navigate the vast chemical space more efficiently by focusing on regions with higher probabilities of containing molecules with the target characteristics.

Experimental Protocols and Workflows

Integrated VAE with Active Learning Cycles

A sophisticated workflow for generative molecular design integrates Variational Autoencoders (VAEs) with nested active learning (AL) cycles [21]. This methodology aims to overcome common limitations of generative models, including insufficient target engagement, lack of synthetic accessibility, and limited generalization. The protocol consists of the following key stages:

Data Representation and Initial Training: Molecular structures are represented as SMILES strings, tokenized, and converted into one-hot encoding vectors before input into the VAE. The VAE is initially trained on a general training set to learn viable chemical structures, then fine-tuned on a target-specific training set to enhance target engagement [21].
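The sketch below illustrates the representation step. It tokenizes SMILES strings and builds a padded one-hot tensor with shape (molecules, positions, vocabulary). The regular expression is a simplified assumption that handles bracket atoms, Br/Cl, and two-digit ring closures, and treats everything else as single characters. It is not the tokenizer used in [21].

```python
import re

import numpy as np

# Simplified SMILES tokenizer (assumption): bracket atoms, Br/Cl, %nn ring closures, else one character.
SMILES_TOKEN = re.compile(r"(\[[^\]]+\]|Br|Cl|%\d{2}|.)")


def tokenize(smiles: str) -> list[str]:
    return SMILES_TOKEN.findall(smiles)


def one_hot_encode(smiles_list, pad="<pad>"):
    """Return a (n_molecules, max_len, vocab_size) one-hot array and the vocabulary."""
    token_lists = [tokenize(s) for s in smiles_list]
    vocab = [pad] + sorted({tok for toks in token_lists for tok in toks})
    index = {tok: i for i, tok in enumerate(vocab)}
    max_len = max(len(toks) for toks in token_lists)
    X = np.zeros((len(smiles_list), max_len, len(vocab)), dtype=np.float32)
    for i, toks in enumerate(token_lists):
        padded = toks + [pad] * (max_len - len(toks))  # right-pad shorter molecules
        for j, tok in enumerate(padded):
            X[i, j, index[tok]] = 1.0
    return X, vocab


X, vocab = one_hot_encode(["CCO", "c1ccccc1Cl", "C[NH3+]"])
print(X.shape, vocab)
```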

Nested Active Learning Cycles: The workflow implements two nested feedback loops:

- Inner AL Cycles: Generated molecules are evaluated for druggability, synthetic accessibility, and similarity to training data using chemoinformatic predictors. Molecules meeting threshold criteria are added to a temporal-specific set for VAE fine-tuning.

- Outer AL Cycles: After a set number of inner cycles, the accumulated molecules undergo docking simulations, which serve as an affinity oracle. Molecules with favorable docking scores are transferred to a permanent-specific set for VAE fine-tuning [21].

Candidate Selection and Validation: Following multiple AL cycles, stringent filtration processes identify promising candidates. Advanced molecular modeling simulations, such as Protein Energy Landscape Exploration (PELE), provide in-depth evaluation of binding interactions and stability within protein-ligand complexes [21].
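The control flow of the nested cycles can be sketched as follows. Every function here (generate, passes_chem_filters, docking_score, fine_tune) is a hypothetical placeholder that returns random values or does nothing. The batch size, cycle counts, docking cutoff, and the choice to reset the temporal set at each outer cycle are also assumptions for illustration, not settings taken from [21].

```python
import random

random.seed(0)


# --- HYPOTHETICAL stand-ins: replace with a real VAE, predictors and docking engine ---
def generate(n_samples):
    """Sample molecules from the current VAE (here: random placeholder IDs)."""
    return [f"mol_{random.randrange(10**6)}" for _ in range(n_samples)]


def passes_chem_filters(mol):
    """Druggability / synthetic accessibility / novelty thresholds (placeholder)."""
    return random.random() < 0.4


def docking_score(mol):
    """Affinity oracle; more negative means stronger predicted binding (placeholder)."""
    return random.uniform(-12.0, -4.0)


def fine_tune(training_set):
    """Fine-tune the VAE on the given set (no-op placeholder)."""


def nested_active_learning(n_outer=3, n_inner=4, batch_size=50, dock_cutoff=-9.0):
    permanent_set = []
    for _ in range(n_outer):
        temporal_set = []  # assumption: reset at the start of each outer cycle
        for _ in range(n_inner):
            # Inner cycle: cheap chemoinformatic filters on every generated molecule.
            accepted = [m for m in generate(batch_size) if passes_chem_filters(m)]
            temporal_set.extend(accepted)
            fine_tune(temporal_set)
        # Outer cycle: the expensive affinity oracle only sees molecules that passed the filters.
        binders = [m for m in temporal_set if docking_score(m) <= dock_cutoff]
        permanent_set.extend(binders)
        fine_tune(permanent_set)
    return permanent_set


selected = nested_active_learning()
print(f"{len(selected)} molecules promoted to the permanent-specific set")
```

The nesting controls cost. The cheap filters run on every generated molecule, and the expensive docking oracle runs only on the survivors.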

The complete workflow can be visualized as follows:

Deep Reinforcement Learning for Flow Chemistry Optimization

Recent advances demonstrate the application of Deep Reinforcement Learning (DRL) for self-optimization of chemical reactions, particularly in flow chemistry. One notable protocol employed a Deep Deterministic Policy Gradient (DDPG) agent to optimize imine synthesis in flow reactors [22]. The experimental framework included:

Agent Design and Training: A DDPG agent was designed to iteratively interact with the flow reactor environment and learn optimal operating conditions. The agent was trained on a mathematical model of the reactor developed from experimental data.

Hyperparameter Optimization Methods: The protocol compared different hyperparameter tuning methods for the DDPG agent, including trial-and-error, Bayesian optimization, and a novel adaptive dynamic hyperparameter tuning approach to enhance training performance [22].

Experimental Validation: The performance of the DRL strategy was compared against state-of-the-art gradient-free methods (SnobFit and Nelder-Mead). The DRL approach demonstrated superior performance, offering better tracking of global optima while reducing required experiments by approximately 50-75% compared to traditional methods [22].

Synthesizability Optimization with Retrosynthesis Models

Addressing synthesizability remains a pressing challenge in generative molecular design. A recently developed protocol directly optimizes for synthesizability using retrosynthesis models rather than relying solely on heuristics-based metrics [23]. The methodology includes:

Retrosynthesis Integration: Unlike traditional approaches that use retrosynthesis models as post-hoc filters, this protocol incorporates them directly into the optimization loop despite their computational cost.

Sample-Efficient Generation: The approach employs a sufficiently sample-efficient generative model to enable direct optimizations for synthesizability within constrained computational budgets.

Multi-Parameter Optimization: The model generates molecules satisfying multi-parameter drug discovery optimization tasks while maintaining synthesizability as determined by retrosynthesis models [23].

This protocol demonstrated that while common synthesizability heuristics correlate well with retrosynthesis model solvability for known bio-active molecules, this correlation diminishes for other molecular classes (e.g., functional materials), highlighting the importance of direct retrosynthesis integration in these cases [23].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Computational Tools in AI-Driven Molecular Design

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Variational Autoencoder (VAE) | Learns continuous latent representation of molecular structures; enables generation and interpolation [19] [21] | Core architecture for molecular generation; provides balanced sampling speed and interpretable latent space |
| Graph Neural Networks (GNNs) | Models molecular structures as graphs; captures structural relationships [1] | Molecular property prediction; representation learning for chemical structures |
| Retrosynthesis Models | Predicts synthetic pathways for generated molecules [23] | Assessing and optimizing synthesizability during molecular generation |
| Bayesian Optimization | Efficiently explores hyperparameter spaces with probabilistic modeling [19] [22] | Hyperparameter tuning; optimization in high-dimensional chemical spaces |
| Deep Reinforcement Learning | Trains agents to navigate chemical space via reward maximization [19] [22] | Goal-directed molecular optimization; chemical reaction optimization |
| Active Learning Frameworks | Iteratively refines models by selecting informative candidates [21] | Reducing computational costs; improving model performance with limited data |
| Molecular Dynamics Simulations | Provides physics-based evaluation of binding interactions [21] | Candidate validation; binding affinity and stability assessment |

The strategic optimization of model parameters and hyperparameters represents a critical pathway toward advancing AI-driven molecular design. As the field evolves, several emerging trends promise to further enhance our capabilities: the integration of adaptive hyperparameter tuning that dynamically adjusts during training, the development of more sample-efficient generative architectures, and the creation of unified frameworks that simultaneously optimize multiple competing objectives in drug discovery.

For chemists and drug development researchers, mastering these optimization targets is no longer optional but essential for leveraging the full potential of generative AI in molecular design. The experimental protocols and methodologies outlined in this whitepaper provide a foundation for developing more efficient, reliable, and practical AI-driven approaches to address the complex challenges of modern drug discovery. As these technologies continue to mature, they hold the promise of significantly accelerating the identification and optimization of novel therapeutic compounds with tailored properties.

In the field of machine learning (ML) for chemistry, the performance of models predicting molecular properties, toxicity, or binding affinities is highly sensitive to architectural choices and hyperparameter settings [1]. Hyperparameters are the configuration variables that govern the training process itself, such as the learning rate or the number of layers in a neural network. Unlike model parameters, which are learned from the data, hyperparameters are set prior to the training process and guide how the learning occurs.

Choosing these hyperparameters judiciously is a non-trivial task that significantly impacts a model's ability to generalize. A poor choice can lead either to overfitting, where the model memorizes the training data including its noise, or to underfitting, where the model is too simplistic to capture the underlying patterns in the data [10]. For chemists and drug development professionals, this balance is paramount: a model that overfits may appear promising during validation but will fail to predict the activity of novel compounds accurately, potentially derailing a discovery project. This guide examines the relationship between hyperparameter choices and model fit, providing a technical framework for optimization within cheminformatics workflows.

Core Concepts: Overfitting and Underfitting

The ultimate goal of a machine learning model is generalization—the ability to make accurate predictions on new, unseen data based on patterns learned from a training dataset [24]. The concepts of overfitting and underfitting describe the failure to achieve this goal.

Overfitting occurs when a model is excessively complex. It learns not only the underlying pattern of the training data but also its noise and random fluctuations [24] [25]. Imagine a student who memorizes a textbook word-for-word but cannot apply the concepts to new problems [24]. In technical terms, an overfit model has low bias but high variance, meaning it is highly sensitive to the specific training set used [24]. The hallmark sign is a very low error on the training data but a high error on the test (or validation) data [25] [26].

Underfitting occurs when a model is too simple to capture the underlying trends in the data [24] [25]. Using a linear model for a complex, non-linear problem is a classic cause [24]. An underfit model has high bias and low variance, resulting in poor performance on both the training data and any new, unseen data [24] [26]. It fails to learn enough from the data and makes overly generalized predictions [26].

The following table summarizes the key characteristics:

Table 1: Diagnosing Overfitting and Underfitting

| Feature | Underfitting | Overfitting | Good Fit |
|---|---|---|---|
| Performance on Training Data | Poor [25] | Excellent / Too Good [24] [25] | Good [24] |
| Performance on Test/New Data | Poor [24] [25] | Poor [24] [25] | Good [24] |
| Model Complexity | Too Simple [24] | Too Complex [24] | Balanced [24] |
| Analogy | Only knows chapter titles [24] | Memorized the whole book [24] | Understands the concepts [24] |

The Bias-Variance Tradeoff

The tension between overfitting and underfitting is governed by the bias-variance tradeoff, a fundamental challenge in machine learning [24]. Bias is the error from erroneous assumptions in the model; high bias can cause an algorithm to miss relevant relationships, leading to underfitting. Variance is the error from sensitivity to small fluctuations in the training set; high variance can cause the algorithm to fit the random noise, leading to overfitting [24]. The goal is to find a model complex enough to capture the underlying patterns (low bias) but not so complex that it memorizes the noise (low variance) [24].
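One way to see the tradeoff is to vary a single complexity hyperparameter and compare training and validation error. The sketch below uses NumPy's polyfit to fit polynomials of increasing degree to synthetic noisy data. The data is an assumed stand-in for a nonlinear structure-property relationship with a small training set.

```python
import numpy as np

rng = np.random.default_rng(0)


def make_data(n):
    """Synthetic 1D data: nonlinear trend sin(3x) plus Gaussian noise (assumed)."""
    x = rng.uniform(-1.0, 1.0, n)
    y = np.sin(3.0 * x) + rng.normal(0.0, 0.2, n)
    return x, y


def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


x_train, y_train = make_data(20)  # small training set, as is common in experimental chemistry
x_val, y_val = make_data(200)

results = {}
for degree in (1, 4, 15):  # polynomial degree acts as the complexity hyperparameter
    coeffs = np.polyfit(x_train, y_train, degree)
    results[degree] = (rmse(y_train, np.polyval(coeffs, x_train)),
                       rmse(y_val, np.polyval(coeffs, x_val)))
    print(f"degree {degree:2d}: train RMSE {results[degree][0]:.3f}, "
          f"validation RMSE {results[degree][1]:.3f}")
```

In a setup like this, the degree-1 fit has high error on both sets (high bias). The degree-15 fit has the lowest training error but a much larger validation error (high variance).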

Hyperparameters and Their Impact on Model Fit

Hyperparameters provide the primary levers for managing the bias-variance tradeoff. They can be categorized based on their primary influence, though their effects are often interconnected.

Table 2: Key Hyperparameters and Their Influence on Model Fit

| Hyperparameter | Primary Influence | How It Affects Fit | Common Pitfalls in Chemical ML |
|---|---|---|---|
| Model Complexity (e.g., max_depth in trees, number of layers/units in NN) | Underfitting / Overfitting | Increasing complexity reduces bias (helps avoid underfitting) but increases risk of overfitting [25]. | A graph neural network with too few layers may fail to capture complex molecular interactions [1]. |
| Learning Rate | Underfitting / Overfitting | A rate too high can prevent convergence (underfitting); a rate too low can lead to overfitting to the training data [27]. | Poor convergence during training of a molecular property predictor, failing to minimize the loss function effectively [27]. |
| Regularization Strength (e.g., L1/L2, Dropout rate) | Overfitting | Increasing strength reduces variance by penalizing complexity, helping prevent overfitting. Too much can cause underfitting [24] [25]. | Overly aggressive L2 regularization on molecular descriptors simplifies the model to the point of missing key structure-activity relationships [24]. |
| Number of Training Epochs | Overfitting | Training for too many epochs can lead the model to over-optimize and memorize the training data [24] [25]. | A molecular classifier's performance on a validation set degrades after continued training, even as training accuracy improves [25]. |
| Batch Size | Underfitting / Overfitting | Affects the noise and convergence of the gradient estimate. Smaller batches can have a regularizing effect but may increase training time [27]. | - |
| Number of Features | Overfitting | Including too many irrelevant features or descriptors increases the risk of the model latching onto spurious correlations [25] [26]. | Using all possible Mordred descriptors without selection can cause a QSAR model to learn noise instead of the true signal [10]. |

Hyperparameter Optimization (HPO) Methodologies

Hyperparameter optimization is the process of systematically searching for the optimal combination of hyperparameters that minimizes a pre-defined loss function on a validation set. For chemists, this is crucial for developing robust models for tasks like molecular property prediction [1].

Experimental Protocols for HPO

Several strategies exist for HPO, ranging from straightforward to sophisticated. The choice often depends on the computational cost of model training and the size of the hyperparameter space.

- Manual Search: The initial, intuitive approach where a researcher uses domain knowledge and intuition to adjust a few hyperparameters based on validation performance. While a necessary starting point, it is inefficient and non-exhaustive [28].

- Grid Search: An exhaustive search over a pre-defined set of values for each hyperparameter. It is simple to implement and parallelize but becomes computationally intractable as the number of hyperparameters grows (the "curse of dimensionality") [27].

- Random Search: Instead of an exhaustive grid, random search samples hyperparameter combinations from a specified distribution. It has been shown to find good hyperparameters more efficiently than grid search, as it better explores the search space without being confined to a grid [27].

- Bayesian Optimization: A more advanced, sequential model-based optimization technique. It builds a probabilistic model of the function mapping hyperparameters to the objective function (e.g., validation loss) and uses this model to decide the most promising hyperparameters to evaluate next [28] [27]. This approach is particularly well-suited for optimizing expensive-to-evaluate functions, such as training large Graph Neural Networks (GNNs) on cheminformatics datasets [28] [1]. Frameworks like Optuna facilitate the implementation of Bayesian optimization [28].
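For models that train quickly, scikit-learn provides both grid search and random search. The sketch below compares them on a synthetic regression problem that stands in for a descriptor matrix. The random forest, the parameter ranges, and the evaluation budget (9 candidates each, 5-fold cross-validation) are illustrative assumptions.

```python
from scipy.stats import loguniform, randint
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

# Synthetic stand-in for 200 molecules x 50 descriptors (assumption, not real data).
X, y = make_regression(n_samples=200, n_features=50, n_informative=10, noise=10.0, random_state=0)
model = RandomForestRegressor(n_estimators=50, random_state=0)

# Grid search: every combination of the listed values (3 x 3 = 9 candidates).
grid = GridSearchCV(
    model,
    param_grid={"max_depth": [3, 6, None], "min_samples_leaf": [1, 5, 10]},
    scoring="neg_mean_absolute_error",
    cv=5,
)
grid.fit(X, y)

# Random search: the same budget of 9 candidates, sampled from distributions.
rand = RandomizedSearchCV(
    model,
    param_distributions={
        "max_depth": randint(2, 20),
        "min_samples_leaf": randint(1, 20),
        "max_features": loguniform(0.05, 1.0),
    },
    n_iter=9,
    scoring="neg_mean_absolute_error",
    cv=5,
    random_state=0,
)
rand.fit(X, y)

print("grid search  :", grid.best_params_, f"MAE={-grid.best_score_:.2f}")
print("random search:", rand.best_params_, f"MAE={-rand.best_score_:.2f}")
```

With an equal budget, random search tries many distinct values for each hyperparameter and also covers a third, continuous one (max_features). The grid only tests the three values listed for each of its two hyperparameters.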

The following diagram illustrates the logical workflow of a systematic HPO process, which is agnostic to the specific search algorithm chosen.

HPO Workflow Logic

A Protocol for HPO in Cheminformatics

A practical HPO experiment for a molecular property prediction task can be structured as follows, using a Graph Neural Network (GNN) as an example:

- Objective: Minimize the Mean Absolute Error (MAE) on a held-out validation set for a molecular solubility prediction task.

- Model: A Graph Neural Network (GNN) architecture.

- Define Search Space:

- num_layers: [2, 3, 4, 5] (number of GNN layers)

- hidden_channels: [64, 128, 256] (dimensionality of node features)

- learning_rate: continuous range from 1e-4 to 1e-2, sampled log-uniformly

- dropout_rate: [0.0, 0.1, 0.2, 0.5] (probability of dropping a neuron)

- Optimization Strategy: Employ a Bayesian optimization framework like Optuna for 100 trials [28]. Each trial consists of a unique set of hyperparameters sampled from the search space.

- Evaluation Protocol: For each trial (hyperparameter set):

- Initialize the GNN model with the sampled hyperparameters.

- Train the model on the training dataset for a fixed number of epochs (e.g., 500).

- Use a validation set to compute the MAE after each epoch.

- Implement early stopping if the validation MAE does not improve for 50 consecutive epochs, to prevent overfitting during the HPO itself and save computational resources [25].

- Report the best validation MAE achieved during training for that trial.

- Selection: Upon completion of all trials, select the hyperparameter configuration that achieved the lowest validation MAE.

- Final Assessment: Retrain the model on the combined training and validation data using the optimal hyperparameters, and report its final performance on a completely held-out test set.

For researchers implementing HPO in cheminformatics, a suite of software tools and resources is essential. The following table details key "research reagents" for this computational work.

Table 3: Essential Computational Tools for Hyperparameter Optimization

| Tool / Resource | Function | Relevance to Chemical ML |
|---|---|---|
| Optuna [28] | A hyperparameter optimization framework that supports define-by-run APIs and various samplers like Bayesian optimization. | Efficiently navigates vast hyperparameter search spaces for GNNs and other models, saving significant time and computational resources [28] [1]. |
| RDKit [29] | An open-source toolkit for cheminformatics. | Used for generating molecular descriptors, fingerprints, and graph representations that serve as input features for ML models, directly influencing the feature space [29]. |
| ChemProp [10] [30] | A message-passing neural network for molecular property prediction. | A specialized GNN that is a common target for HPO; its performance is sensitive to hyperparameters like depth, hidden size, and dropout [10] [30]. |
| scikit-learn | A core Python library for machine learning. | Provides implementations of models (like Random Forests), evaluation tools (like cross-validation), and basic HPO methods (GridSearchCV, RandomizedSearchCV). |
| TensorBoard / Weights & Biases [25] | Tools for visualizing the training process. | Monitor training and validation metrics in real-time to diagnose overfitting/underfitting and manage training dynamics [25]. |

Advanced Considerations and Future Directions

While HPO is powerful, it is not a silver bullet. Several advanced considerations must be taken into account for rigorous model development.

- The Risk of Overfitting with HPO: Extensively tuning hyperparameters on a fixed validation set can itself lead to overfitting to that validation set [10]. Using techniques like nested cross-validation provides a more robust framework for both model selection and evaluation, ensuring that the reported performance generalizes [25].

- Data-Centric AI: The paradigm is shifting from solely model-centric optimization to a data-centric approach. The quality and representativeness of the training data are foundational [25]. For cheminformatics, this means that addressing data imbalance (e.g., few active compounds in a screening library) through techniques like focal loss or data augmentation can be as important as HPO [10].

- The Role of Expert Knowledge: In molecular optimization, leveraging human expert knowledge can refine the selection of molecules during active learning, leading to more navigable chemical space and compounds with favorable properties [10]. Furthermore, interpreting models with tools like SHAP (SHapley Additive exPlanations) is crucial for building trust and generating actionable hypotheses in high-stakes domains like drug discovery [31].
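The nested cross-validation mentioned in the first point above can be written compactly with scikit-learn. A GridSearchCV forms the inner loop and is wrapped inside cross_val_score, which forms the outer loop. The sketch uses synthetic data and ridge regression as assumptions.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score

X, y = make_regression(n_samples=150, n_features=30, noise=15.0, random_state=0)  # synthetic data

inner_cv = KFold(n_splits=5, shuffle=True, random_state=1)  # used only to pick alpha
outer_cv = KFold(n_splits=5, shuffle=True, random_state=2)  # used only to estimate performance

tuned_ridge = GridSearchCV(
    Ridge(),
    param_grid={"alpha": [0.01, 0.1, 1.0, 10.0, 100.0]},
    scoring="neg_mean_absolute_error",
    cv=inner_cv,
)

# Each outer training split reruns the whole inner search, so the outer test fold
# never influences which alpha is chosen.
outer_mae = -cross_val_score(tuned_ridge, X, y, cv=outer_cv, scoring="neg_mean_absolute_error")
print(f"nested CV MAE: {outer_mae.mean():.2f} +/- {outer_mae.std():.2f}")
```

For molecular datasets, scaffold- or cluster-based splits are often preferred over the random KFold shown here. Random splits can overstate performance on structurally novel compounds.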

The following diagram synthesizes the interconnected concepts discussed in this guide, showing how HPO is part of a larger, iterative process for building robust chemical ML models.

Chemical ML Model Development Cycle

Poor hyperparameter choices are a primary conduit to the pitfalls of overfitting and underfitting, which can compromise the utility of machine learning models in chemical research. A nuanced understanding of how hyperparameters like model complexity, learning rate, and regularization strength influence the bias-variance tradeoff is essential. By adopting systematic Hyperparameter Optimization methodologies, such as Bayesian optimization with tools like Optuna, and integrating them within a rigorous, data-centric validation framework, chemists can build more reliable, generalizable, and impactful predictive models. This disciplined approach is key to accelerating innovation in drug discovery and materials science.

In the realm of optimization for chemical research, the conflict between exploration and exploitation represents a fundamental strategic dilemma. Exploration involves gathering new information by testing unknown parameterizations, while exploitation leverages known information to refine parameterizations that have previously shown good performance [32]. This trade-off is particularly crucial in pharmaceutical and materials science research where experimental evaluations are expensive, time-consuming, and resource-intensive [33]. With the emergence of automated research workflows and high-throughput experimentation, data-driven optimization algorithms have become essential tools for accelerating discovery while promoting sustainable research practices through reduced experimental burden [9].

Bayesian optimization (BO) has emerged as a powerful machine learning approach that systematically balances this exploration-exploitation dilemma for global optimization problems [34]. This sequential model-based strategy is particularly valuable for chemists facing high-dimensional problems with numerous parameters—such as temperature, catalyst, solvent, and concentration—where traditional trial-and-error approaches become prohibitively expensive [35]. By transforming chemical intuition into computable mathematical principles, Bayesian optimization enables researchers to navigate complex experimental landscapes with significantly fewer experiments while reducing the risk of becoming trapped in local optima [35].

Mathematical Foundations of Bayesian Optimization

At the heart of Bayesian optimization lies Bayes' theorem, which relates conditional probabilities and provides the rule for updating prior beliefs about the objective function as new observations arrive [33]. The Bayesian optimization framework employs two key components: a surrogate model to approximate the objective function, and an acquisition function to guide the selection of subsequent experiments [34].

The process begins by building a surrogate model, typically a Gaussian Process (GP), which defines a probability distribution over possible functions that fit the observed data points [34] [36]. This model generates predictions with uncertainty estimates for unexplored regions of the parameter space. The surrogate model provides both a predicted mean μ(x) and variance σ²(x) for each data point x, where the mean indicates the expected performance and the variance quantifies the uncertainty in the prediction [36].
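The sketch below makes μ(x) and σ²(x) concrete. It computes the posterior of a zero-mean Gaussian Process in one dimension, using a squared-exponential (RBF) kernel and the standard Cholesky-based equations. The kernel choice, the fixed kernel hyperparameters, and the toy data (normalized yields at three scaled temperatures) are assumptions. Libraries such as BoTorch or scikit-learn would also fit the kernel hyperparameters to the data.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=1.0, signal_var=1.0):
    """Squared-exponential covariance between 1D input arrays a and b."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return signal_var * np.exp(-0.5 * sq_dist / length_scale**2)


def gp_posterior(x_train, y_train, x_query, length_scale=1.0, signal_var=1.0, noise_var=1e-6):
    """Posterior mean mu(x) and variance sigma^2(x) of a zero-mean GP."""
    K = rbf_kernel(x_train, x_train, length_scale, signal_var) + noise_var * np.eye(len(x_train))
    K_star = rbf_kernel(x_train, x_query, length_scale, signal_var)
    L = np.linalg.cholesky(K)                                   # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))   # K^-1 y
    mu = K_star.T @ alpha
    v = np.linalg.solve(L, K_star)
    var = signal_var - np.sum(v**2, axis=0)                     # k(x,x) - k*^T K^-1 k*
    return mu, np.maximum(var, 0.0)


# Toy data: normalized yields at three scaled temperatures (assumed values).
x_obs = np.array([0.0, 1.0, 2.0])
y_obs = np.array([0.2, 0.9, 0.4])
mu, var = gp_posterior(x_obs, y_obs, np.array([1.0, 1.5, 5.0]))
print(np.round(mu, 3), np.round(var, 3))
```

At an observed point the variance collapses toward zero. Far from the data it returns to the prior variance, and this is the uncertainty signal that the acquisition function uses.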

The acquisition function uses these predictions to quantify the utility of evaluating unknown parameterizations by balancing the predicted mean (exploitation) and uncertainty (exploration) [34]. This function is optimized to suggest the most promising experiment to perform next. The newly observed outcome is then added to the dataset, and the surrogate model is updated, creating an iterative feedback loop that progressively refines understanding of the experimental landscape [34].

Acquisition Functions: Strategic Balancing Mechanisms

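Acquisition functions are mathematical formulations that implement specific strategies for balancing exploration and exploitation. The following table summarizes four principal acquisition functions used in Bayesian optimization for a maximization problem. In the formulas, μ(x) and σ(x) are the surrogate's predicted mean and standard deviation, f(x⁺) is the best objective value observed so far, and Φ is the standard normal cumulative distribution function: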

Table 1: Comparison of Key Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mathematical Formulation | Strategy | Best-Suited Applications |
|---|---|---|---|
| Probability of Improvement (PI) | PI(x) = Φ((μ(x) - f(x⁺)) / σ(x)) [35] | Conservative approach focusing on regions near current optimum [35] | Unimodal landscapes; fine-tuning known good conditions [35] |
| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x⁺), 0)] [34] | Balances probability and magnitude of improvement [35] | Complex multi-extremal landscapes; general-purpose optimization [35] [34] |
| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + βσ(x) [36] | Explicitly quantifies uncertainty; proactively explores high-variance regions [35] | Early-stage optimization; rapid mapping of global response surfaces [35] |
| Thompson Sampling (TS) | Samples from posterior distribution [35] | Adaptive randomness through probability matching [35] | Noisy, dynamic systems; real-time optimization [35] |
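The closed-form expressions behind PI, EI, and UCB are short enough to write out directly. The sketch below implements them for a maximization problem using only the Python standard library. The EI expression is the closed form of the expectation in the table when the posterior is Gaussian. The optional margin xi is a common addition that does not appear in the table; xi = 0 reproduces the table's formulas. The two candidate conditions use made-up numbers.

```python
import math


def norm_pdf(z):
    """Standard normal probability density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z):
    """Standard normal cumulative distribution (Phi)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def probability_of_improvement(mu, sigma, f_best, xi=0.0):
    if sigma <= 0.0:
        return float(mu > f_best + xi)
    return norm_cdf((mu - f_best - xi) / sigma)


def expected_improvement(mu, sigma, f_best, xi=0.0):
    # Closed form of E[max(f(x) - f(x+), 0)] when f(x) ~ N(mu, sigma^2)
    improvement = mu - f_best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    return improvement * norm_cdf(z) + sigma * norm_pdf(z)


def upper_confidence_bound(mu, sigma, beta=2.0):
    return mu + beta * sigma


# Current best yield 0.60; A sits next to it, B lies in an unexplored region (made-up values).
f_best = 0.60
candidates = {"A": (0.62, 0.01), "B": (0.55, 0.15)}
for name, (mu, sigma) in candidates.items():
    print(name,
          f"PI={probability_of_improvement(mu, sigma, f_best):.3f}",
          f"EI={expected_improvement(mu, sigma, f_best):.4f}",
          f"UCB={upper_confidence_bound(mu, sigma):.3f}")
```

With these numbers, PI favors the low-uncertainty candidate A next to the current best. EI and UCB (with β = 2) favor the uncertain candidate B. This mirrors the conservative versus exploratory behavior described below.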

In-Depth Analysis of Acquisition Strategies

Probability of Improvement (PI) adopts a strategy of steady, incremental progress by prioritizing regions near the current optimal solution where improvements are likely [35]. This approach is analogous to fine-tuning parameters within a familiar reaction system. For instance, if researchers have identified a catalyst achieving 60% yield, PI would guide optimization around this condition by testing similar catalysts or adjusting temperature [35]. The primary limitation of PI is its tendency to become trapped in local optima due to limited exploration of uncharted regions [35].

Expected Improvement (EI) represents a more balanced approach that comprehensively evaluates both the probability and magnitude of improvement [35]. This dual consideration allows EI to dynamically balance exploring unknown regions against exploiting existing results. EI is particularly well-suited for complex scenarios where the objective function has multiple potential extrema, such as multi-step reactions or multi-component systems [35]. Its neutral strategic positioning makes it appropriate for most chemical optimization scenarios, especially when reaction mechanisms are unclear [35].

Upper Confidence Bound (UCB) embraces a strategy of frontier expansion by proactively exploring high-uncertainty regions through the upper bound of confidence intervals [35] [36]. The hyperparameter β controls the exploration weight, typically decaying over time [35] [36]. This approach is particularly valuable in early optimization stages for rapidly mapping the global response surface, similar to extensively exploring a new city to identify promising neighborhoods before focusing on specific areas [35].

Thompson Sampling (TS) employs a strategy of adaptive randomness through probability matching, where multiple potential models are sampled from the posterior distribution [35]. This approach demonstrates strong robustness to experimental noise and adapts well to stochastic environments, making it suitable for dynamic scenarios with random perturbations, such as yield fluctuations due to manual operations or catalyst activity decay over time [35].
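Thompson Sampling is easiest to see in a discrete setting. The sketch below replaces the Gaussian Process with a Beta-Bernoulli model. Each of three hypothetical catalysts has an unknown probability of exceeding a target yield. At every run, one plausible value per catalyst is drawn from the posterior, and the catalyst with the highest draw is tested. The success probabilities and the number of runs are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# HYPOTHETICAL: unknown probability that each catalyst exceeds the target yield.
true_success = np.array([0.35, 0.55, 0.45])
alpha = np.ones(3)  # Beta(1, 1) prior: alpha = 1 + observed successes
beta = np.ones(3)   # beta = 1 + observed failures

for run in range(300):
    draws = rng.beta(alpha, beta)                        # one plausible value per catalyst
    choice = int(np.argmax(draws))                       # test the catalyst that looks best in this draw
    success = int(rng.random() < true_success[choice])   # noisy yes/no experimental outcome
    alpha[choice] += success
    beta[choice] += 1 - success

pulls = (alpha + beta - 2).astype(int)
print("runs per catalyst:", pulls, "posterior means:", np.round(alpha / (alpha + beta), 2))
```

Choices are driven by random posterior draws, so a catalyst with few runs keeps a wide posterior and is still tried occasionally. This makes the strategy tolerant of noisy outcomes.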

Experimental Protocols for Chemical Applications

Workflow for Molecular Geometry Optimization

The application of Bayesian optimization to molecular geometry searches involves a structured five-step protocol that has been successfully implemented for locating global minima and conical intersections [36]:

Diagram 1: Geometry optimization workflow using Bayesian optimization

Step 0: Initial Dataset Preparation - Collect diverse molecular structures using low-computational cost methods such as the single-component artificial force-induced reaction (SC-AFIR) method. For formaldehyde, this approach identified 21 reaction pathways yielding 71 unique structures after excluding physically improbable configurations [36].

Step 1: Gaussian Process Regression Model Construction - Build a surrogate model using internal coordinates (distances, angles, dihedral angles) as explanatory variables. For global minimum searches, the objective variable is -E(S₀) to transform minimization into a maximization problem. For conical intersection searches, use a cost function that balances energy degeneracy and minimization: C = (E(S₀) + E(S₁))/2 + (E(S₁) - E(S₀))²/α [36].

Step 2: Candidate Geometry Identification - Calculate the acquisition function (e.g., UCB, EI) across the parameter space and select the geometry with the maximum value for subsequent evaluation [36].

Step 3: Quantum Chemical Calculation - Perform energy evaluations at the selected geometry using appropriate theoretical methods (e.g., DFT/TDDFT with ωB97XD functional and cc-pVDZ basis set) [36].

Step 4: Termination Check - Continue iterations until convergence criteria are satisfied, such as minimal improvement between cycles or reaching a maximum iteration count [36].
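The two objective variables from Step 1 can be written as small functions. This sketch assumes that the S₀ and S₁ energies come from the quantum chemical calculation in Step 3. Returning the negative cost for the conical intersection search mirrors the -E(S₀) transformation used for the global minimum; that choice is an assumption here. The example energies and the value of α are also assumptions.

```python
def ci_cost(e_s0, e_s1, alpha):
    """C = (E(S0) + E(S1))/2 + (E(S1) - E(S0))^2 / alpha; lower C is better."""
    return 0.5 * (e_s0 + e_s1) + (e_s1 - e_s0) ** 2 / alpha


def target_global_minimum(e_s0):
    """Quantity maximized by BO in the global-minimum search: -E(S0)."""
    return -e_s0


def target_conical_intersection(e_s0, e_s1, alpha):
    """Assumed maximization target for the conical intersection search: -C."""
    return -ci_cost(e_s0, e_s1, alpha)


ALPHA = 0.02                  # hypothetical weight; smaller alpha penalizes the S1-S0 gap more strongly
geom_1 = (-114.30, -114.10)   # low S0 energy but a large S1-S0 gap (hartree, made-up)
geom_2 = (-114.25, -114.24)   # nearly degenerate S0/S1 (made-up)
for name, (e0, e1) in {"geom_1": geom_1, "geom_2": geom_2}.items():
    print(name, round(target_conical_intersection(e0, e1, ALPHA), 4))
```

The nearly degenerate geometry scores higher despite its higher S₀ energy, because the gap penalty dominates when α is small.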

Workflow for Reaction Condition Optimization

For optimizing chemical reaction conditions, Bayesian optimization follows a similar iterative process tailored to experimental constraints:

Diagram 2: Reaction optimization workflow for experimental chemistry

This workflow has demonstrated significant efficiency improvements in pharmaceutical applications, potentially reducing the number of required experiments from 25 to 10 in traditional drug development scenarios [35]. The sequential model-based strategy allows researchers to efficiently navigate high-dimensional parameter spaces where numerous factors simultaneously influence reaction outcomes.
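An end-to-end loop of this kind might look like the sketch below. It uses scikit-learn's GaussianProcessRegressor as the surrogate and selects experiments by Expected Improvement over a discrete grid of temperatures and concentrations. The run_experiment function is hypothetical: a synthetic yield surface with noise that stands in for the laboratory. The grid, kernel, five-point initial design, and 15-iteration budget are also assumptions.

```python
import warnings

import numpy as np
from scipy.stats import norm
from sklearn.exceptions import ConvergenceWarning
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel

warnings.filterwarnings("ignore", category=ConvergenceWarning)
rng = np.random.default_rng(0)


def run_experiment(temp_c, conc_m):
    """HYPOTHETICAL experiment: synthetic yield (%) peaking near 75 C and 0.6 M, plus noise."""
    signal = 80.0 * np.exp(-((temp_c - 75.0) / 20.0) ** 2 - ((conc_m - 0.6) / 0.3) ** 2)
    return float(signal + rng.normal(0.0, 1.0))


# Discrete design space: 21 temperatures x 15 concentrations.
candidates = np.array([(t, c) for t in np.linspace(25, 125, 21) for c in np.linspace(0.1, 1.5, 15)])
scale = np.array([100.0, 1.4])  # put both inputs on a comparable numeric range

tested = [int(i) for i in rng.choice(len(candidates), size=5, replace=False)]  # initial design
y = np.array([run_experiment(*candidates[i]) for i in tested])

kernel = Matern(length_scale=[0.3, 0.3], nu=2.5) + WhiteKernel(noise_level=1e-2)
gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True, random_state=0)

for _ in range(15):
    gp.fit(candidates[tested] / scale, y)                  # update the surrogate
    mu, sigma = gp.predict(candidates / scale, return_std=True)
    sigma = np.maximum(sigma, 1e-9)
    improvement = mu - y.max()
    z = improvement / sigma
    ei = improvement * norm.cdf(z) + sigma * norm.pdf(z)   # Expected Improvement
    ei[tested] = -np.inf                                   # do not repeat conditions
    nxt = int(np.argmax(ei))
    tested.append(nxt)
    y = np.append(y, run_experiment(*candidates[nxt]))     # "run" the suggested experiment

best = int(np.argmax(y))
t_best, c_best = candidates[tested[best]]
print(f"best observed yield {y[best]:.1f}% at {t_best:.0f} C, {c_best:.2f} M after {len(y)} experiments")
```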

Successful implementation of Bayesian optimization in chemical research requires both software tools and strategic knowledge. The following table catalogs essential resources:

Table 2: Bayesian Optimization Software Tools for Chemical Research

| Tool Name | Key Features | License | Chemical Applications |
|---|---|---|---|
| BoTorch [33] | Flexible framework for Bayesian optimization; multi-objective optimization | MIT | Materials synthesis, molecular design [33] |
| Ax [33] [34] | Modular platform built on BoTorch; adaptive experimentation | MIT | Concrete formulation, dye laser molecules [34] |
| NEXTorch [33] | User-friendly interface; specialized for chemical applications | MIT | Reaction optimization, automated workflows [33] |
| GPyOpt [33] | Gaussian process-based optimization; parallel experimentation | BSD | High-throughput screening [33] |
| ROBERT [9] | Automated workflows for low-data regimes; overfitting prevention | - | Chemical reaction optimization [9] |

Strategic Implementation Guidelines

Choosing an appropriate acquisition function depends on both the experimental context and available resources:

Probability of Improvement is recommended when experimental costs are high and the objective function has obvious extrema [35]. This approach aligns with a mechanism-first conservative mindset.

Expected Improvement represents a robust default choice for most chemical optimization scenarios due to its balanced approach [34]. It embodies a philosophy of data-mechanism integration.

Upper Confidence Bound is particularly effective in early-stage optimization when rapidly mapping the parameter space is prioritized [35]. This strategy reflects the exploratory spirit of bold hypothesis-testing.

Thompson Sampling excels in noisy, dynamic systems where experimental conditions fluctuate [35]. It simulates the adaptive art of flexible trial-and-error.

For low-data regimes common in chemical research, specialized workflows that incorporate measures to prevent overfitting are essential. The ROBERT software, for instance, employs a combined root mean squared error metric that evaluates both interpolation and extrapolation performance during Bayesian hyperparameter optimization [9].

The balance between exploration and exploitation is a cornerstone of efficient experimental design in chemical research. Bayesian optimization formalizes this trade-off through acquisition functions, each of which encodes a different strategic priority. As automated chemistry platforms become more prevalent, these strategies let researchers build digital twins of reaction systems through systematic data accumulation [35].

When facing high-dimensional optimization problems, from molecular geometry prediction to reaction condition screening, chemists should keep asking, from a Bayesian perspective, whether the model at the current experimental stage should explore the boundaries of the unknown or exploit what is already known [35]. By choosing appropriate acquisition functions and software tools, researchers can accelerate discovery while reducing the number of experiments required, which also makes research practice more sustainable.

HPO in Action: A Toolbox of Optimization Methods for Chemical Data

In machine learning, hyperparameters are configuration settings that control the learning process itself. Unlike model parameters, which are learned automatically from the data, hyperparameters are set prior to training and guide how the model learns. The process of finding the optimal set of hyperparameters for a given model and dataset is known as hyperparameter optimization or hyperparameter tuning [37]. For chemists and drug development researchers, this process is crucial for building accurate predictive models for tasks such as quantitative structure-activity relationship (QSAR) modeling, molecular property prediction, and spectral classification [38] [39] [40].
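The distinction between parameters and hyperparameters can be seen in a few lines of scikit-learn. In this minimal sketch, the regularization strength alpha of a ridge regression is a hyperparameter chosen before training, while the regression coefficients (coef_) are parameters learned by fit(). The synthetic data from make_regression is only a stand-in for a descriptor matrix and a measured property.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge

# Synthetic stand-in for molecular descriptors X and a measured property y
X, y = make_regression(n_samples=50, n_features=5, noise=5.0, random_state=0)

# Hyperparameter: set by the user BEFORE training
model = Ridge(alpha=1.0)

# Parameters: learned from the data DURING training
model.fit(X, y)
print("hyperparameter alpha:", model.get_params()["alpha"])
print("learned coefficients:", model.coef_)

# Changing the hyperparameter changes what the model learns:
# stronger regularization shrinks the learned coefficients toward zero
strong = Ridge(alpha=100.0).fit(X, y)
print(np.linalg.norm(strong.coef_) < np.linalg.norm(model.coef_))  # True
```

Hyperparameter optimization is the search over values such as alpha. The coefficients are then refit for each candidate value.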

The goal of hyperparameter optimization is to search through an n-dimensional space (where each dimension represents a different hyperparameter) to find the point that results in the best model performance, as measured by a specific evaluation metric like accuracy or mean absolute error [37]. Two of the most fundamental and widely used approaches for this search are Grid Search and Random Search, both of which provide systematic methodologies for exploring hyperparameter configurations [41].

This guide examines these core techniques within the context of chemical research, providing detailed methodologies, comparisons, and implementation protocols to equip scientists with practical knowledge for optimizing machine learning models in materials chemistry and drug discovery applications.

Core Concepts and Definitions

Hyperparameter Tuning

Hyperparameter tuning consists of systematically searching for the best combination of hyperparameter values to boost a model's performance [41]. It is essential because the choice of hyperparameters can dramatically influence a model's predictive accuracy and generalization capability. For chemistry applications, this might involve tuning models to predict binding affinities, optimize synthetic conditions, or classify spectroscopic data [38] [39] [42].

Search Space

The search space defines the volume of possible hyperparameter combinations to be explored during optimization. It can be thought of geometrically as an n-dimensional volume, where each hyperparameter represents a different dimension and the scale of the dimension represents the values that the hyperparameter may take on (real-valued, integer-valued, or categorical) [37].
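A search space with all three kinds of dimensions can be written as a dictionary and sampled with scikit-learn's ParameterSampler. The hyperparameter names and ranges below (learning_rate, n_layers, activation) are hypothetical and not tied to a particular chemistry model. The example only shows how real-valued, integer-valued, and categorical dimensions are declared.

```python
from scipy.stats import loguniform, randint
from sklearn.model_selection import ParameterSampler

# Hypothetical 3-D search space (names and ranges are illustrative)
search_space = {
    "learning_rate": loguniform(1e-4, 1e-1),  # real-valued, sampled on a log scale
    "n_layers": randint(1, 6),                # integer-valued: 1..5 (upper bound exclusive)
    "activation": ["relu", "tanh", "gelu"],   # categorical
}

# Draw five points from this n-dimensional volume
points = list(ParameterSampler(search_space, n_iter=5, random_state=0))
for p in points:
    print(p)
```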

Grid Search: Systematic Exploration

Fundamental Principles

Grid Search is a conventional exhaustive algorithm used in machine learning for hyperparameter tuning. It meticulously evaluates every possible combination of hyperparameters from a pre-defined grid to identify the configuration that yields the best model performance [41] [43]. The algorithm operates by constructing a grid of hyperparameter values and systematically evaluating the model performance for each position in this grid [43].

For example, if a grid provides 3 values for n_estimators (e.g., 50, 100, and 500) and 3 values for max_depth (e.g., None, 1, and 4), Grid Search will evaluate 3 × 3 = 9 possible hyperparameter configurations [41]. For each combination, it typically trains and evaluates a machine learning model using k-fold cross-validation, calculating the average performance across all folds to provide a final score [41].
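The arithmetic in this example can be checked with scikit-learn's ParameterGrid, which enumerates every combination in a grid. The sketch also shows how quickly the cost grows. With 5-fold cross-validation, each configuration needs five model fits, and adding a third hyperparameter with three values (min_samples_leaf, chosen here only for illustration) triples the number of configurations.

```python
from sklearn.model_selection import ParameterGrid

param_grid = {
    "n_estimators": [50, 100, 500],
    "max_depth": [None, 1, 4],
}
grid = list(ParameterGrid(param_grid))
n_folds = 5
print(len(grid))            # 9 configurations (3 x 3)
print(len(grid) * n_folds)  # 45 model fits with 5-fold cross-validation

# Adding one more hyperparameter with 3 values multiplies the cost by 3
param_grid_3d = {**param_grid, "min_samples_leaf": [1, 2, 5]}
print(len(ParameterGrid(param_grid_3d)))  # 27 configurations
```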

Workflow and Implementation

The following diagram illustrates the systematic workflow of the Grid Search hyperparameter optimization process:

Experimental Protocol for Grid Search:

1. Define the hyperparameter grid: create a dictionary whose keys are hyperparameter names and whose values are lists of possible settings.

2. Initialize the model: define the base model to be optimized.

3. Configure GridSearchCV: set up the search with a cross-validation scheme and a scoring metric.

4. Execute the search: fit the GridSearchCV object to the training data.

5. Extract optimal parameters: retrieve the best-performing hyperparameter combination.
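The five protocol steps map directly onto scikit-learn's GridSearchCV. The following is a minimal sketch under stated assumptions: a support vector regressor stands in for a property-prediction model, synthetic data replaces a real descriptor set, and the grid values for C and gamma are illustrative. Scaling is placed inside a pipeline so that each cross-validation fold is standardized using only its own training portion.

```python
from sklearn.datasets import make_regression
from sklearn.model_selection import GridSearchCV, KFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Synthetic stand-in for a descriptor matrix X and a measured property y
X, y = make_regression(n_samples=120, n_features=8, noise=10.0, random_state=0)

# 1. Define the grid (keys are prefixed with the pipeline step name "svr")
param_grid = {
    "svr__C": [0.1, 1, 10, 100],
    "svr__gamma": ["scale", 0.01, 0.1],
}

# 2. Initialize the model: scaler + SVR in one pipeline to avoid fold leakage
model = make_pipeline(StandardScaler(), SVR(kernel="rbf"))

# 3. Configure the search: 5-fold CV, scored by (negated) mean absolute error
search = GridSearchCV(
    model,
    param_grid,
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="neg_mean_absolute_error",
)

# 4. Execute the search: 4 x 3 = 12 configurations x 5 folds = 60 fits
search.fit(X, y)

# 5. Extract the best configuration and its mean cross-validated MAE
print(search.best_params_)
print("CV MAE:", -search.best_score_)
```

Because the grid evaluations are independent, passing n_jobs=-1 to GridSearchCV spreads them across all available CPU cores.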

Random Search: Stochastic Sampling

Fundamental Principles

Random Search represents a different approach to hyperparameter optimization. Instead of exhaustively trying all possible combinations, it randomly samples a predefined number of configurations from specified distributions of hyperparameter values [41] [43]. The key distinction from Grid Search lies in both the input (distributions of values rather than discrete lists) and the search methodology (random sampling rather than exhaustive evaluation) [41].

In Random Search, the hyperparameter space is defined by specifying probability distributions for each hyperparameter. These distributions can be uniform, log-uniform, normal, or explicitly defined categorical values [41]. The number of random combinations to test is explicitly controlled by the user through a parameter such as n_iter in scikit-learn, allowing for a direct balance between computational cost and search thoroughness [41].

Studies suggest that by testing approximately 60 randomly selected combinations, Random Search has a high probability of finding a near-optimal hyperparameter configuration for many machine learning models [44]. This efficiency comes from exploring the search space more broadly, without being constrained to a predefined grid structure.
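The figure of roughly 60 trials is usually explained by a simple probability argument, given here as an assumption rather than as the exact derivation in [44]. Suppose "near-optimal" means landing in the best 5% of the search space. Each independent random draw then succeeds with probability 0.05, so the chance that at least one of n draws succeeds is 1 - 0.95^n. Requiring this chance to be at least 0.95 gives n >= ln(0.05) / ln(0.95), which is about 58.4. The sketch computes this; both the 5% and 95% thresholds are adjustable choices.

```python
import math


def trials_needed(top_fraction=0.05, confidence=0.95):
    """Smallest n such that P(at least one of n independent random draws
    lands in the best `top_fraction` of the space) >= confidence."""
    return math.ceil(math.log(1 - confidence) / math.log(1 - top_fraction))


def hit_probability(n, top_fraction=0.05):
    """Probability that at least one of n random draws is in the top fraction."""
    return 1 - (1 - top_fraction) ** n


print(trials_needed())                # 59
print(round(hit_probability(60), 3))  # 0.954
```

The argument does not depend on the number of hyperparameters. It does, however, assume that a "top 5%" region exists and that being inside it is good enough, which may not hold for sharply peaked objectives.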

Workflow and Implementation

The following diagram illustrates the stochastic sampling workflow of the Random Search hyperparameter optimization process:

Experimental Protocol for Random Search:

1. Define the hyperparameter distributions: create a dictionary whose keys are hyperparameter names and whose values are distributions to sample from.

2. Initialize the model: define the base model to be optimized.

3. Configure RandomizedSearchCV: set up the search with a cross-validation scheme, a scoring metric, and the number of iterations.

4. Execute the search: fit the RandomizedSearchCV object to the training data.

5. Extract optimal parameters: retrieve the best-performing hyperparameter combination.
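The same five steps map onto scikit-learn's RandomizedSearchCV. In this sketch the discrete grid is replaced by distributions. Log-uniform distributions (scipy.stats.loguniform) are a common choice for scale-type parameters such as C and gamma, and a plain list is sampled uniformly. The model, ranges, and synthetic data are illustrative assumptions. n_iter fixes the evaluation budget directly, and random_state makes the sampled configurations reproducible.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_regression
from sklearn.model_selection import KFold, RandomizedSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

X, y = make_regression(n_samples=120, n_features=8, noise=10.0, random_state=0)

# 1. Define distributions instead of fixed lists
param_distributions = {
    "svr__C": loguniform(1e-1, 1e3),      # continuous, log scale
    "svr__gamma": loguniform(1e-4, 1e0),  # continuous, log scale
    "svr__epsilon": [0.01, 0.1, 1.0],     # a list is sampled uniformly
}

# 2. Initialize the model
model = make_pipeline(StandardScaler(), SVR(kernel="rbf"))

# 3. Configure the search: n_iter sets the number of sampled configurations
search = RandomizedSearchCV(
    model,
    param_distributions,
    n_iter=20,
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="neg_mean_absolute_error",
    random_state=42,  # fix the seed so the same 20 configurations are drawn
)

# 4. Execute the search: 20 configurations x 5 folds = 100 fits
search.fit(X, y)

# 5. Extract the best configuration and its mean cross-validated MAE
print(search.best_params_)
print("CV MAE:", -search.best_score_)
```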

Comparative Analysis: Grid Search vs. Random Search

Performance and Efficiency Comparison

The following table summarizes the key characteristics and comparative performance of Grid Search and Random Search:

Table 1: Comprehensive Comparison of Grid Search vs. Random Search

| Aspect | Grid Search | Random Search |
|---|---|---|
| Search Methodology | Exhaustive search over all specified combinations [41] [43] | Random sampling from specified distributions [41] [43] |
| Parameter Space Definition | Discrete values for each hyperparameter [41] | Probability distributions for each hyperparameter [41] |
| Computational Efficiency | Less efficient for large parameter spaces; scales poorly with dimensionality [43] | More efficient; can find good solutions with fewer evaluations [41] [44] |
| Optimal Solution Guarantee | Finds best combination within defined grid [41] | Probabilistic; finds near-optimal solutions with high probability [44] |
| Ideal Use Cases | Small parameter spaces (few hyperparameters with limited values) [43] | Large parameter spaces and high-dimensional searches [41] |
| Parallelization | Highly parallelizable since all evaluations are independent [43] | Highly parallelizable since all evaluations are independent [41] |
| User Control | Complete control over specific values to test [41] | Control over distributions and number of iterations [41] |

Search Space Coverage Comparison

The visual representation below illustrates the fundamental difference in how Grid Search and Random Search explore the hyperparameter space, explaining why Random Search can often find good solutions more efficiently in high-dimensional spaces:
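The coverage difference can also be checked numerically. This sketch gives both strategies the same budget of nine evaluations over two hyperparameters rescaled to [0, 1]. It counts how many distinct values of each hyperparameter are actually tested. If only one of the two hyperparameters strongly affects performance, which is common in practice, the grid has tried just three settings of it, while random search has tried nine.

```python
import itertools

import numpy as np

budget = 9  # same number of model evaluations for both strategies

# Grid search: 3 x 3 points over two hyperparameters scaled to [0, 1]
levels = np.linspace(0.0, 1.0, 3)
grid_points = np.array(list(itertools.product(levels, levels)))

# Random search: 9 points drawn uniformly from the same square
rng = np.random.default_rng(0)
random_points = rng.uniform(0.0, 1.0, size=(budget, 2))

for name, pts in [("grid", grid_points), ("random", random_points)]:
    distinct = [len(np.unique(pts[:, d])) for d in range(2)]
    print(name, "distinct values per hyperparameter:", distinct)
# grid   -> [3, 3]
# random -> [9, 9]
```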

Key Advantages and Limitations

Grid Search Advantages:

- Comprehensive within grid: Guaranteed to find the best combination within the specified parameter values [41]

- Simple implementation: Easy to understand, implement, and interpret results [43]

- Reproducible: Always produces the same results when repeated with the same grid [41]

Grid Search Limitations:

- Computationally expensive: High time and resource consumption with increasing dimensions [43]

- Suffers from curse of dimensionality: Becomes infeasible as the number of hyperparameters increases [43]

- Discrete sampling: Cannot explore continuous parameter spaces effectively [45]

Random Search Advantages:

- Computational efficiency: Can discover good hyperparameters with fewer iterations [41] [44]

- Better for high-dimensional spaces: Explores more diverse values for each hyperparameter [41]

- Flexible parameter definitions: Supports both discrete and continuous distributions [41]

Random Search Limitations:

- No optimality guarantee: May miss important regions of the search space due to random sampling [41]

- Requires careful distribution specification: Poorly chosen distributions may lead to suboptimal results [41]

- Less reproducible: Results vary between runs because of random sampling unless the random seed is fixed (e.g., random_state in scikit-learn) [41]

Applications in Chemistry and Materials Science

Case Studies and Research Applications

Hyperparameter optimization plays a critical role in various chemistry and materials science applications. The following case studies demonstrate practical implementations:

1. Raman Spectroscopy Classification: A study on colorectal cancer detection using Raman spectroscopy implemented a custom grid search approach to optimize both model hyperparameters and preprocessing parameters. The researchers prioritized balanced accuracy on the test set to reduce bias toward the dominant class, with Decision Tree and Support Vector Classifier models achieving the highest balanced accuracy (71.77% for DT and 70.77% for SVC) [39].

2. Materials Property Prediction: In materials chemistry, machine learning applications for predicting properties of perovskites (piezoelectric coefficient, band gap, energy storage) have utilized grid search hyperparameter optimization for both classical and quantum machine learning models, including Support Vector Regressors (SVR) and Gaussian Process Regressors (GPR) [46].

3. Drug Discovery and QSAR Modeling: Generative machine learning approaches in drug discovery construct smooth chemical search spaces where small moves correspond to small changes in properties like binding affinity and ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity). These approaches enable efficient optimization over large chemical spaces comprising tens of billions of compounds [40].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools for Hyperparameter Optimization in Chemical Research

| Tool/Category | Function | Example Applications |
|---|---|---|
| Scikit-learn [41] [37] | Python library providing GridSearchCV and RandomizedSearchCV implementations | General-purpose ML model tuning for spectroscopic data and QSAR models |
| Cross-Validation [41] [37] | Technique for robust performance estimation; RepeatedStratifiedKFold for classification, RepeatedKFold for regression | Preventing overfitting in small chemical datasets |
| Performance Metrics [39] [37] | Evaluation criteria: accuracy, balanced accuracy, neg_mean_absolute_error | Handling class imbalance in biological datasets; regression tasks |
| Hyperparameter Distributions [41] | Probability distributions (uniform, log-uniform, normal) for random search | Efficient exploration of continuous parameters like regularization strength |
| Bayesian Optimization [45] | Advanced optimization using probabilistic models to guide search | Intermediate/large models where grid and random search are too costly |
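Several of these tools combine naturally in an imbalanced classification setting, such as the spectroscopy case study above. The sketch below makes three assumptions. First, synthetic data with roughly an 85%/15% class split stands in for a real spectral dataset. Second, an SVC is the model. Third, class_weight is included in the grid for illustration. RepeatedStratifiedKFold keeps the class ratio in every fold, and scoring="balanced_accuracy" averages recall over classes so that the majority class cannot dominate the selection.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, RepeatedStratifiedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Imbalanced synthetic stand-in for spectral features (about 85% / 15% classes)
X, y = make_classification(
    n_samples=150, n_features=10, weights=[0.85, 0.15], random_state=0
)

# Stratified folds preserve the class ratio; repeats reduce split-to-split noise
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=0)

search = GridSearchCV(
    make_pipeline(StandardScaler(), SVC()),
    {"svc__C": [0.1, 1, 10], "svc__class_weight": [None, "balanced"]},
    cv=cv,
    scoring="balanced_accuracy",  # mean recall over classes, robust to imbalance
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```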

Advanced Considerations and Future Directions

Alternative Optimization Techniques

While Grid Search and Random Search represent foundational approaches, more advanced techniques are gaining adoption in chemical research:

Bayesian Optimization uses probabilistic models to predict promising hyperparameter configurations from previous evaluations and typically requires fewer iterations than random search [45]. Unlike Grid and Random Search, which choose each configuration without regard to earlier results, Bayesian Optimization takes informed steps based on what it has already observed, allowing it to discard non-optimal configurations more efficiently [45].
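The sketch below shows the informed-step loop from scratch. A Gaussian process surrogate (scikit-learn's GaussianProcessRegressor) is refit after every evaluation, and the Expected Improvement acquisition function chooses the next configuration to try. For simplicity it tunes a single hyperparameter, log10 of the ridge regularization strength alpha, over an assumed range of -3 to 3. It uses synthetic data, three random starting points, and ten iterations; all of these are illustrative choices. Dedicated tools such as GPyOpt provide more complete implementations.

```python
import numpy as np
from scipy.stats import norm
from sklearn.datasets import make_regression
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score

X, y = make_regression(n_samples=80, n_features=20, noise=20.0, random_state=0)


def objective(log_alpha):
    """Mean 5-fold CV score (negative MAE; higher is better) for Ridge."""
    model = Ridge(alpha=10.0 ** log_alpha)
    return cross_val_score(model, X, y, cv=5, scoring="neg_mean_absolute_error").mean()


def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected Improvement for maximization."""
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm.cdf(z) + sigma * norm.pdf(z)


candidates = np.linspace(-3, 3, 200).reshape(-1, 1)  # log10(alpha) search range
rng = np.random.default_rng(0)
X_obs = rng.uniform(-3, 3, size=(3, 1))              # random initial design
y_obs = np.array([objective(x[0]) for x in X_obs])

gp = GaussianProcessRegressor(
    kernel=Matern(nu=2.5) + WhiteKernel(), normalize_y=True, random_state=0
)
for _ in range(10):
    gp.fit(X_obs, y_obs)                              # update surrogate with all results
    mu, sigma = gp.predict(candidates, return_std=True)
    ei = expected_improvement(mu, sigma, y_obs.max())
    x_next = candidates[np.argmax(ei)]                # informed next step
    X_obs = np.vstack([X_obs, x_next])
    y_obs = np.append(y_obs, objective(x_next[0]))

best = X_obs[np.argmax(y_obs), 0]
print(f"best alpha ~ {10 ** best:.3g}, CV MAE = {-y_obs.max():.2f}")
```

Each iteration spends one expensive evaluation (here, a cross-validation run) where the surrogate expects the most improvement. This is how Bayesian Optimization avoids revisiting regions that earlier results have already ruled out.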

Quantum Active Learning represents an emerging frontier where quantum algorithms are integrated within active learning frameworks. Recent explorations have utilized quantum support vector regressors (QSVR) and quantum Gaussian process regressors (QGPR) with various quantum kernels for materials design and discovery tasks [46].

Best Practices for Chemical Applications

Based on the reviewed literature and applications, the following recommendations emerge for chemists implementing hyperparameter optimization: