Hyperparameter Optimization for Drug Discovery ML Models: Methods, Applications, and Best Practices

Abstract

This article provides a comprehensive guide to hyperparameter optimization (HPO) for machine learning (ML) models in drug discovery. Tailored for researchers and drug development professionals, it covers the foundational principles of HPO, explores advanced methodological frameworks like Hierarchically Self-Adaptive PSO (HSAPSO) and Bayesian Optimization, and addresses critical troubleshooting challenges such as data imbalance and overfitting. It further details validation strategies and comparative analyses of HPO techniques, illustrating their impact on key tasks including target identification, ADMET prediction, and drug-target interaction forecasting. By synthesizing the latest research and real-world case studies, this resource aims to equip scientists with the knowledge to build more accurate, robust, and efficient ML models, ultimately accelerating the pharmaceutical R&D pipeline.

Why Hyperparameter Optimization is a Game-Changer in AI-Driven Drug Discovery

The modern drug discovery landscape is characterized by a critical paradox: unprecedented scientific innovation coincides with mounting economic pressures and development risks. While technological advances like artificial intelligence (AI) and novel therapeutic modalities open new treatment possibilities, the industry faces a clinical trial success rate that has plummeted to 6.7% for Phase 1 drugs in 2024, down from 10% a decade ago [1]. This high attrition rate, combined with escalating development costs, places immense strain on research and development (R&D) budgets, with the internal rate of return for R&D investment falling to 4.1% – significantly below the cost of capital [1]. This application note quantifies these stakes, providing structured data and actionable protocols to inform the optimization of machine learning (ML) models, which are increasingly vital for navigating this complex environment. By framing these challenges within the context of hyperparameter optimization for predictive ML, we aim to equip researchers with the data and methodologies necessary to enhance the precision and efficiency of the drug discovery pipeline.

Quantitative Landscape: Costs, Success Rates, and Expenditures

A data-driven understanding of the industry's economic and attrition metrics is fundamental for setting realistic benchmarks and optimization goals for ML models. The following tables synthesize the current quantitative landscape.

Table 1: Global Pharmaceutical R&D and Clinical Success Metrics (2024-2025)

| Metric | Value | Source/Context |
|---|---|---|
| Drug Candidates in Development | 23,000 | Global R&D pipeline [1] |
| Annual R&D Spending | >$300 Billion | Global biopharma investment [1] |
| Phase 1 Success Rate (2024) | 6.7% | Down from 10% a decade ago [1] |
| Internal Rate of R&D Return | 4.1% | Below the cost of capital [1] |
| AI Impact on Preclinical Timelines | 25-50% Reduction | Estimated reduction in time and cost [2] |
| Projected AI-Discovered New Drugs | 30% | Proportion of new drugs by 2025 [2] |

Table 2: U.S. Pharmaceutical Expenditure Trends and Projections

| Sector | 2024 Expenditure (Change from 2023) | 2025 Projected Growth | Key Drivers |
|---|---|---|---|
| Overall U.S. Market | $805.9 Billion (+10.2%) | 9.0% to 11.0% | Utilization (7.9% increase) and new drugs (2.5% increase) [3] |
| Clinic Settings | $158.2 Billion (+14.4%) | 11.0% to 13.0% | Primarily increased utilization [3] |
| Non-Federal Hospitals | $39.0 Billion (+4.9%) | 2.0% to 4.0% | Modest contributions from new products, price, and volume [3] |

These figures highlight the intense pressure to improve R&D productivity. The low success rates, particularly in early phases, underscore the need for more predictive models to identify failures earlier and prioritize the most promising candidates.

Experimental Protocols for Key Emerging Modalities

Protocol: Target Engagement Validation Using Cellular Thermal Shift Assay (CETSA)

Principle: CETSA measures drug-target engagement in intact cells and native tissues by detecting thermal stabilization of a protein target upon ligand binding, providing a direct readout of pharmacological activity [4].

Materials: (See Section 6: The Scientist's Toolkit)

Method:

- Cell Preparation and Dosing: Culture adherent or suspension cells in appropriate medium. Treat with the compound of interest across a range of concentrations (e.g., 1 nM - 100 µM) and a vehicle control (DMSO) for a predetermined time (e.g., 1-2 hours).

- Heat Challenge: Harvest cells and divide into aliquots in PCR tubes. Subject each aliquot to a range of elevated temperatures (e.g., 45°C - 65°C) for 3-5 minutes in a thermal cycler to denature and precipitate un-stabilized proteins.

- Cell Lysis and Clarification: Lyse cells using a non-denaturing buffer supplemented with protease inhibitors. Centrifuge at high speed (e.g., 20,000 x g for 20 minutes) to separate the soluble protein fraction (containing stabilized target) from precipitated aggregates.

- Target Quantification: Analyze the soluble fraction by Western blot, immunoassay, or high-resolution mass spectrometry (as in Mazur et al., 2024) to quantify the remaining intact target protein [4].

- Data Analysis: Plot the fraction of remaining soluble protein against temperature for each compound concentration. Calculate the melting temperature (Tm) shift (ΔTm) between treated and vehicle-control samples. A concentration-dependent increase in Tm indicates direct target engagement.
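The ΔTm calculation in the Data Analysis step can be illustrated with a small sketch. It assumes Tm is taken as the temperature at which half of the target remains soluble and estimates it by linear interpolation between the two bracketing measurements; a sigmoidal curve fit is the more common choice in practice. The function name and all melt-curve values below are hypothetical.

```python
def melting_temperature(temps, soluble_fraction):
    """Estimate Tm as the temperature where the soluble fraction crosses 0.5."""
    points = list(zip(temps, soluble_fraction))
    for (t_lo, f_lo), (t_hi, f_hi) in zip(points, points[1:]):
        # Find the pair of measurements that brackets the 50% level.
        if f_lo >= 0.5 >= f_hi and f_lo != f_hi:
            return t_lo + (f_lo - 0.5) * (t_hi - t_lo) / (f_lo - f_hi)
    raise ValueError("soluble fraction never crosses 0.5 in the measured range")


# Hypothetical melt curves (fraction of target remaining soluble at each temperature).
temps_c = [45, 49, 53, 57, 61, 65]
vehicle = [1.00, 0.90, 0.50, 0.15, 0.05, 0.00]
treated = [1.00, 0.98, 0.85, 0.50, 0.12, 0.02]

tm_vehicle = melting_temperature(temps_c, vehicle)
tm_treated = melting_temperature(temps_c, treated)
delta_tm = tm_treated - tm_vehicle  # positive shift suggests thermal stabilization
print(f"Tm vehicle = {tm_vehicle:.1f} C, Tm treated = {tm_treated:.1f} C, dTm = {delta_tm:.1f} C")
```

Repeating this per compound concentration gives the concentration-dependent ΔTm series described above.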

Protocol: In Silico Screening and AI-Driven Hit Identification

Principle: This protocol leverages machine learning and molecular docking to virtually screen large compound libraries, prioritizing molecules with high predicted binding affinity and favorable drug-like properties for experimental validation [4] [5].

Materials: (See Section 6: The Scientist's Toolkit)

Method:

- Library and Target Preparation:

  - Obtain a small molecule library in a suitable format (e.g., SDF, SMILES).

  - Prepare the 3D structure of the target protein (e.g., from Protein Data Bank or homology modeling). Define the binding site coordinates and generate necessary grid parameter files.

- Feature Extraction and Model Training (for ML approaches):

  - Extract molecular features (e.g., molecular weight, logP, topological descriptors, pharmacophoric features) from the compound library. Ahmadi et al. (2025) demonstrated that integrating these features can boost hit enrichment by over 50-fold [4].

  - Train a machine learning model (e.g., a context-aware hybrid model like CA-HACO-LF, which uses ant colony optimization for feature selection and a logistic forest classifier) on known active and inactive compounds to predict bioactivity [6].

- Virtual Screening:

  - Perform molecular docking (e.g., using AutoDock Vina) of the compound library against the target protein to predict binding poses and affinity scores (e.g., predicted Kd) [4].

  - Use the trained ML model to score and rank compounds based on predicted activity and desired properties.

- Prioritization and Triaging:

  - Apply filters for drug-likeness (e.g., Lipinski's Rule of Five) and predicted ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties using platforms like SwissADME [4].

  - Visually inspect the top-ranking compounds' binding poses. Select a final, diverse subset of hits for purchase or synthesis and subsequent in vitro validation.
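As a rough illustration of the prioritization step, the sketch below applies Lipinski's Rule of Five to precomputed descriptors and then ranks the surviving compounds by a model score. The `Compound` class, the `triage` helper, the one-violation tolerance, and every descriptor value are assumptions made for this example; in a real pipeline the descriptors would come from a cheminformatics toolkit and the score from the trained model.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    name: str
    mol_weight: float       # Da
    logp: float             # octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int
    ml_score: float         # hypothetical predicted probability of activity


def lipinski_violations(c: Compound) -> int:
    """Count how many of the four Rule-of-Five criteria a compound violates."""
    return sum([
        c.mol_weight > 500,
        c.logp > 5,
        c.h_bond_donors > 5,
        c.h_bond_acceptors > 10,
    ])


def triage(compounds, max_violations=1, top_n=2):
    """Keep drug-like compounds, then rank them by predicted activity."""
    drug_like = [c for c in compounds if lipinski_violations(c) <= max_violations]
    return sorted(drug_like, key=lambda c: c.ml_score, reverse=True)[:top_n]


# Hypothetical library entries for illustration only.
library = [
    Compound("cmpd_A", 342.4, 2.1, 2, 5, 0.91),
    Compound("cmpd_B", 612.8, 6.3, 4, 9, 0.97),   # two violations: filtered out
    Compound("cmpd_C", 455.0, 4.8, 1, 7, 0.64),
    Compound("cmpd_D", 298.3, 1.2, 3, 4, 0.78),
]
hits = triage(library)
print([c.name for c in hits])
```

Filtering before ranking keeps a high-scoring but poorly drug-like compound (here `cmpd_B`) from crowding out candidates that are more likely to survive later ADMET checks.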

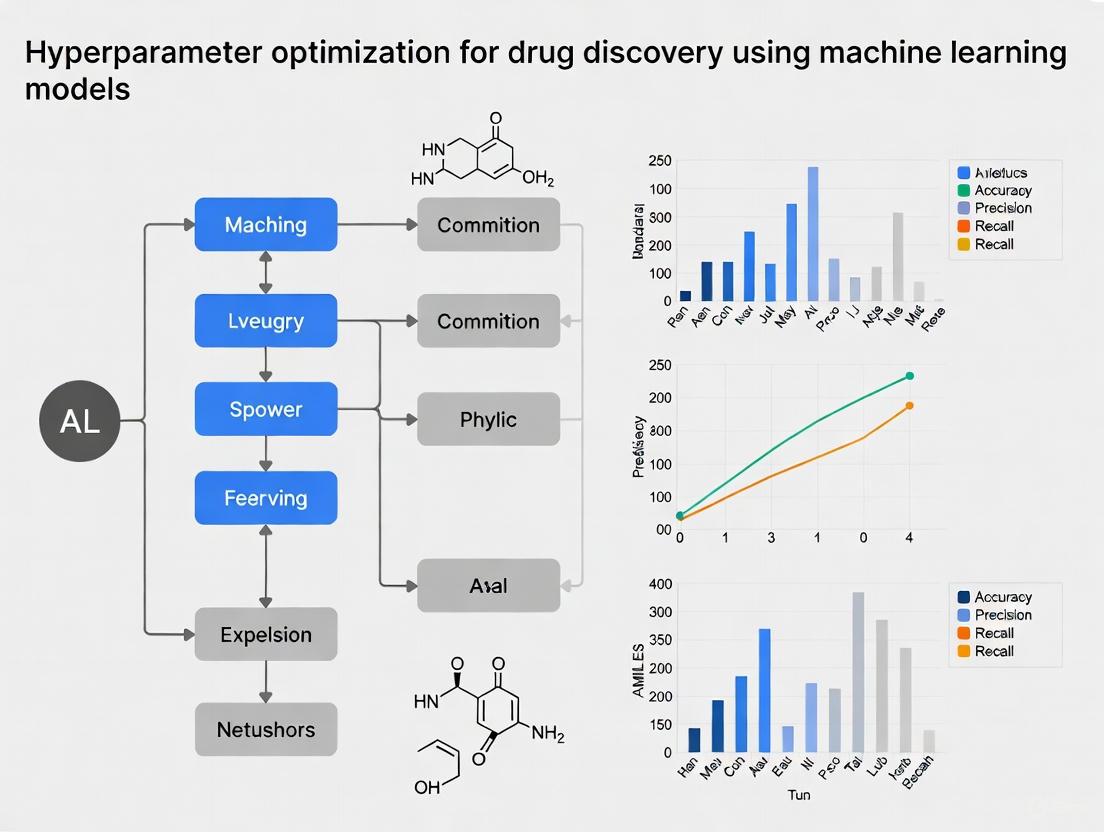

Visualization of Key Workflows and Pathways

AI-Optimized Drug Discovery Workflow

PROTAC-Mediated Protein Degradation Pathway

Key Signaling Pathways and Biological Networks in Emerging Therapies

CAR-T Cell Signaling and Engineering Platforms

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Modern Drug Discovery

| Reagent / Platform | Function / Application | Specific Example / Note |
|---|---|---|
| CETSA Kits | Validates direct drug-target engagement in physiologically relevant cellular contexts, bridging biochemical and cellular efficacy [4]. | Used to confirm dose-dependent stabilization of DPP9 in rat tissue [4]. |
| AI/ML Drug Discovery Platforms | Accelerates target prediction, compound prioritization, PK/PD modeling, and clinical trial simulation. | Context-Aware Hybrid Ant Colony Optimized Logistic Forest (CA-HACO-LF) for drug-target interaction prediction [6]. |
| Virtual Screening Software | Enables in silico docking of compound libraries to target proteins for hit identification. | AutoDock, SwissADME for predicting binding potential and drug-likeness [4]. |
| PROTAC E3 Ligase Toolbox | Provides ligands and building blocks for recruiting specific E3 ubiquitin ligases in proteolysis-targeting chimera design. | Moving beyond Cereblon/VHL to ligands for DCAF16, KEAP1, FEM1B [7]. |
| Digital Twin Platforms | Generates AI-powered virtual patient cohorts to augment control arms in clinical trials, reducing required patient numbers. | Unlearn.ai demonstrated this in Alzheimer's trials, reducing placebo group size [7]. |
| CRISPR Gene Editing Tools | Enables rapid in vivo and ex vivo gene editing for target validation and therapeutic development. | Lipid nanoparticles for in vivo delivery (e.g., CTX310 for lowering LDL) [7]. |

Defining Hyperparameters vs. Model Parameters in Machine Learning

Core Definitions and Distinctions

In machine learning, model parameters and hyperparameters represent two distinct classes of variables that govern model behavior, each with a different role in the learning process.

Model parameters are internal variables whose values are learned directly from the training data during the model fitting process. These parameters are not set manually but are estimated by optimization algorithms to map input data to the correct output. Examples include the weights and biases in a neural network or the slope and intercept in a linear regression model [8] [9]. They are essential for making predictions on new, unseen data.

In contrast, hyperparameters are external configuration variables whose values are set prior to the commencement of the training process. They control the overarching behavior of the learning algorithm itself and cannot be learned from the data. Examples include the learning rate for gradient descent, the number of layers in a neural network, or the number of trees in a random forest [8] [9]. The choice of hyperparameters directly influences how effectively the model parameters are learned.

Table 1: Fundamental Differences Between Parameters and Hyperparameters

| Feature | Model Parameters | Model Hyperparameters |
|---|---|---|
| Definition | Internal variables learned from data [9] | External configuration variables set before training [8] |
| Purpose | Used for making predictions on new data [9] | Control the process of learning model parameters [8] [9] |
| Determined By | Optimization algorithms (e.g., Gradient Descent, Adam) [9] | The researcher via manual setting or hyperparameter tuning [8] [9] |
| Examples | Weights & biases in Neural Networks; Slope & intercept in Linear Regression [9] | Learning rate, number of model layers, number of epochs, regularization strength [8] [9] |
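The distinction can be made concrete with a minimal sketch of linear regression trained by gradient descent. Here `learning_rate` and `n_epochs` are hyperparameters fixed before training, while `slope` and `intercept` are parameters learned from the data. The function name, the toy data, and the chosen values are illustrative assumptions.

```python
def fit_linear_regression(xs, ys, learning_rate=0.05, n_epochs=2000):
    """Fit y = slope * x + intercept by batch gradient descent on mean squared error."""
    slope, intercept = 0.0, 0.0          # model parameters: learned from data
    n = len(xs)
    for _ in range(n_epochs):            # n_epochs: hyperparameter, set before training
        errors = [slope * x + intercept - y for x, y in zip(xs, ys)]
        grad_slope = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_intercept = 2 / n * sum(errors)
        slope -= learning_rate * grad_slope          # learning_rate: hyperparameter
        intercept -= learning_rate * grad_intercept
    return slope, intercept


# Toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
slope, intercept = fit_linear_regression(xs, ys)
print(f"learned slope = {slope:.3f}, learned intercept = {intercept:.3f}")
```

Changing `learning_rate` or `n_epochs` alters how well (or whether) the parameters converge, which is why hyperparameters are tuned in an outer loop around training.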

Hyperparameters in Drug Discovery ML

In the context of drug discovery, the performance of machine learning models is highly sensitive to hyperparameter configuration. The complex, high-dimensional nature of pharmaceutical data—ranging from molecular structures to 'omics' profiles—makes optimal hyperparameter selection a non-trivial yet critical task for building predictive and generalizable models [10] [11].

Hyperparameters in this domain can be broadly categorized to better understand their function:

- Architecture Hyperparameters: These define the model's structure. Examples include the number of layers in a Graph Neural Network (GNN) or the number of neurons per layer, which control the model's capacity to learn complex representations from molecular graphs [8] [12].

- Optimization Hyperparameters: These govern the training process. The learning rate and batch size are prime examples, critically affecting the speed, stability, and ultimate success of the optimization process [8] [13].

- Regularization Hyperparameters: These are designed to prevent overfitting, a common risk with limited bioactivity data. They include the dropout rate and the strength of L1/L2 regularization [8].

Experimental Protocols for Hyperparameter Optimization

Protocol: Bayesian Hyperparameter Optimization for a Molecular Property Predictor

This protocol outlines the use of Bayesian optimization to tune a deep learning model for predicting molecular properties, a common task in early-stage drug discovery [13].

1. Objective: Identify the optimal set of hyperparameters for a Convolutional Neural Network (CNN) model that predicts molecular properties (e.g., solubility, permeability) from SMILES strings [13].

2. Experimental Setup:

- Model: Fully Convolutional Sequence-to-Sequence (ConvS2S) model.

- Representation: SMILES strings are used as the molecular representation.

- Technique: Bayesian Optimization is employed as an efficient strategy for hyperparameter search.

3. Procedure:

- Step 1 - Define Search Space: Specify the hyperparameter ranges to be explored. Key hyperparameters include:

  - Learning Rate (Logarithmic): 1e-5 to 1e-2

  - Batch Size (Categorical): 32, 64, 128, 256

  - Number of CNN Layers: 4 to 8

  - Number of Epochs: 50 to 200 [13]

- Step 2 - Configure Optimization: Use a Bayesian optimization framework (e.g., Ax, Scikit-optimize) with a Gaussian Process or Tree-structured Parzen Estimator as the surrogate model. The objective metric is the root mean squared error (RMSE) on the validation set.

- Step 3 - Iterate and Evaluate: Run the optimization for a predetermined number of trials (e.g., 50-100). In each trial, the algorithm selects a hyperparameter combination, trains the model, and evaluates it on the validation set to update the surrogate model [13].

- Step 4 - Final Model Training: Train the final model on the combined training and validation data using the best-found hyperparameters, and evaluate its performance on a held-out test set.
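One way to encode the Step 1 search space is sketched below, using only the Python standard library. It shows how a single candidate configuration might be drawn, with the learning rate sampled uniformly in log space. This covers only the sampling of candidates (as Bayesian frameworks typically do for their first few trials); the surrogate model and acquisition function are not implemented here. The dictionary layout and the `sample_configuration` helper are assumptions for illustration, not the API of Ax or Scikit-optimize.

```python
import math
import random

# Search space from Step 1; the tuple encoding is a hypothetical convention.
SEARCH_SPACE = {
    "learning_rate": ("log_uniform", 1e-5, 1e-2),
    "batch_size": ("categorical", [32, 64, 128, 256]),
    "num_cnn_layers": ("int", 4, 8),
    "num_epochs": ("int", 50, 200),
}


def sample_configuration(space, rng):
    """Draw one hyperparameter configuration from the search space."""
    config = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "log_uniform":
            low, high = spec[1], spec[2]
            # Sample the exponent uniformly so each decade is equally likely.
            config[name] = 10 ** rng.uniform(math.log10(low), math.log10(high))
        elif kind == "categorical":
            config[name] = rng.choice(spec[1])
        elif kind == "int":
            config[name] = rng.randint(spec[1], spec[2])  # inclusive bounds
        else:
            raise ValueError(f"unknown parameter type: {kind}")
    return config


rng = random.Random(42)
initial_trials = [sample_configuration(SEARCH_SPACE, rng) for _ in range(5)]
for trial in initial_trials:
    print(trial)
```

Sampling the learning rate on a log scale matters because values such as 1e-5 and 1e-4 would otherwise almost never be drawn from a linear range ending at 1e-2.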

Protocol: Hierarchically Self-Adaptive PSO for a Stacked Autoencoder

This protocol describes an advanced optimization method applied to a deep learning model for drug classification and target identification [14].

1. Objective: Optimize the hyperparameters of a Stacked Autoencoder (SAE) model to achieve high accuracy in classifying druggable protein targets.

2. Experimental Setup:

- Model: Stacked Autoencoder (SAE) for feature extraction, followed by a classifier.

- Algorithm: Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO), an evolutionary algorithm that dynamically balances exploration and exploitation [14].

3. Procedure:

- Step 1 - Particle Initialization: Initialize a population of particles, where each particle's position vector represents a candidate set of SAE hyperparameters (e.g., number of neurons per layer, learning rate, dropout rate).

- Step 2 - Fitness Evaluation: For each particle, train the SAE with its hyperparameters and evaluate the fitness, defined as the classification accuracy on the validation set.

- Step 3 - Position and Velocity Update: The HSAPSO algorithm updates each particle's velocity and position based on:

  - Its personal best position (pbest).

  - The global best position (gbest) found by the swarm.

  - Hierarchically adaptive parameters that control the exploration-exploitation trade-off [14].

- Step 4 - Termination and Selection: Repeat Steps 2-3 until a convergence criterion is met (e.g., a maximum number of iterations or no improvement in gbest). The hyperparameters represented by the final gbest are selected as optimal.

4. Outcome: The proposed optSAE+HSAPSO framework achieved a classification accuracy of 95.52% on DrugBank and Swiss-Prot datasets, demonstrating the efficacy of this optimization protocol [14].
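The particle update in Steps 1-4 can be sketched with a plain PSO loop. This is not the HSAPSO algorithm of [14]: the hierarchical self-adaptation is replaced here by a simple linearly decaying inertia weight, and the objective is a toy stand-in for validation error (minimized, rather than accuracy maximized) so the example runs without training a model. The function names, coefficient values, and the two-dimensional search space (log10 learning rate, dropout rate) are all assumptions.

```python
import random


def pso_minimize(objective, bounds, n_particles=15, n_iterations=40, seed=0):
    """Basic particle swarm optimization with a linearly decaying inertia weight."""
    rng = random.Random(seed)
    dim = len(bounds)
    positions = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    velocities = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in positions]
    pbest_scores = [objective(p) for p in positions]
    g = min(range(n_particles), key=pbest_scores.__getitem__)
    gbest, gbest_score = pbest[g][:], pbest_scores[g]

    for it in range(n_iterations):
        # Simple adaptation stand-in: inertia decays from 0.9 (explore) to 0.4 (exploit).
        inertia = 0.9 - 0.5 * it / max(1, n_iterations - 1)
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                velocities[i][d] = (
                    inertia * velocities[i][d]
                    + 1.5 * r1 * (pbest[i][d] - positions[i][d])   # pull toward pbest
                    + 1.5 * r2 * (gbest[d] - positions[i][d])      # pull toward gbest
                )
                # Clamp the new position to the search bounds.
                positions[i][d] = min(hi, max(lo, positions[i][d] + velocities[i][d]))
            score = objective(positions[i])
            if score < pbest_scores[i]:
                pbest[i], pbest_scores[i] = positions[i][:], score
                if score < gbest_score:
                    gbest, gbest_score = positions[i][:], score
    return gbest, gbest_score


def toy_validation_error(position):
    """Hypothetical error surface with its minimum at lr = 1e-3, dropout = 0.3."""
    log10_lr, dropout = position
    return (log10_lr + 3.0) ** 2 + (dropout - 0.3) ** 2


bounds = [(-5.0, -1.0), (0.0, 0.8)]   # log10(learning rate), dropout rate
best_position, best_error = pso_minimize(toy_validation_error, bounds)
print(f"best log10(lr) = {best_position[0]:.3f}, best dropout = {best_position[1]:.3f}")
```

In a real run, `toy_validation_error` would be replaced by a function that trains the SAE with the particle's hyperparameters and returns one minus the validation accuracy.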

The Scientist's Toolkit: Key Reagents and Computational Tools

Table 2: Essential Research Reagents and Tools for ML in Drug Discovery

| Tool/Reagent | Function/Description | Application in Drug Discovery |
|---|---|---|
| Bayesian Optimization Framework | An efficient hyperparameter tuning strategy that builds a probabilistic model of the objective function to direct the search [13]. | Optimizing deep learning models for molecular property prediction (e.g., solubility, toxicity) [13]. |
| Particle Swarm Optimization (PSO) | An evolutionary optimization algorithm inspired by social behavior, useful for high-dimensional problems [14]. | Tuning complex models like Stacked Autoencoders for drug-target identification [14]. |
| Graph Neural Network (GNN) | A deep learning architecture that operates directly on graph-structured data [10] [12]. | Modeling molecular graphs for drug response prediction and molecular property analysis [10] [12]. |
| Stacked Autoencoder (SAE) | A neural network composed of multiple autoencoder layers for unsupervised feature learning [14]. | Dimensionality reduction and feature extraction from high-dimensional pharmaceutical data [14]. |
| SMILES/String Representations | A string-based notation for representing molecular structures [13]. | Input for sequence-based deep learning models (e.g., CNNs, RNNs) in chemical property prediction [13]. |
| Molecular Graph Representations | Represents atoms as nodes and bonds as edges in a graph [12]. | Native input format for GNNs, preserving structural information for more accurate modeling [12]. |

Performance Data and Comparison

The critical impact of hyperparameter optimization is quantified through improved model performance on key pharmaceutical tasks.

Table 3: Impact of Hyperparameter Optimization on Model Performance

| Model | Optimization Technique | Reported Performance | Application/Task |
|---|---|---|---|
| Stacked Autoencoder (SAE) [14] | Hierarchically Self-Adaptive PSO (HSAPSO) | Accuracy: 95.52%; Computational Speed: 0.010 s/sample | Drug classification and target identification |
| Graph Neural Network (GNN) [12] | Attribution Algorithms (GNNExplainer, Integrated Gradients) | Enhanced prediction accuracy vs. pioneering works; Captured salient molecular features | Drug response prediction (IC50) with mechanism interpretation |
| Convolutional Neural Network (CNN) [13] | Bayesian Optimization & Dynamic Batch Size | General performance benefit across multiple molecular properties | Prediction of solubility, lipophilicity, etc. |

The integration of artificial intelligence (AI) and machine learning (ML) has revolutionized pharmaceutical research, enabling the precise simulation of receptor–ligand interactions and the optimization of lead compounds [15]. However, the efficacy of these algorithms is intrinsically linked to the quality and volume of training data [15]. Real-world drug discovery data is often characterized by three fundamental challenges: class imbalance, significant noise, and high-dimensionality [16] [17]. These issues can lead to biased models, poor generalization, and ultimately, costly failures in the drug development pipeline, which typically spans over 12 years with cumulative expenditures exceeding $2.5 billion [15]. This application note details these core data challenges and provides practical, experimentally-validated protocols to mitigate them, with a specific focus on optimizing machine learning models for pharmaceutical applications.

The following table summarizes the primary data challenges in drug discovery, their impact on ML model performance, and the key mitigation strategies explored in this note.

Table 1: Core Data Challenges in AI-Driven Drug Discovery

| Challenge | Manifestation in Drug Discovery | Impact on ML Models | Primary Mitigation Strategies |
|---|---|---|---|
| Data Imbalance | • Active compounds significantly outnumbered by inactive ones in screening [16]. • Binding sites correspond to less than 5% of all amino acids in proteins [17]. | • Biased predictions favoring majority classes [16]. • Failure to identify critical minority classes (e.g., toxic compounds) [16]. | Resampling (SMOTE, NearMiss) [16], Cost-sensitive learning [16], Data augmentation [17] |
| Data Noise | • Experimental errors in high-throughput screening and ADMET assays [17]. • Inconsistent or missing biochemical annotations. | • Reduced predictive accuracy and model reliability [17]. • Overfitting to spurious correlations. | Robust loss functions (e.g., Focal Loss) [17], Data cleaning pipelines, Ensemble methods |
| High-Dimensionality | • Thousands of molecular descriptors and fingerprints [14]. • High-dimensional 'omics' data and protein sequences [18]. | • Increased computational complexity and risk of overfitting ("curse of dimensionality") [14]. • Difficulties in model interpretation. | Dimensionality reduction (PCA, UMAP) [17], Autoencoders for feature extraction [14], Feature selection |

Application Notes & Experimental Protocols

Protocol 1: Addressing Data Imbalance with Advanced Resampling

Principle: Data imbalance, where certain classes are significantly underrepresented, is a widespread ML challenge in chemistry [16]. For instance, in drug discovery, active drug molecules are often drastically outnumbered by inactive ones, and toxicity datasets often contain far more non-toxic substances than toxic ones [16]. Models trained on such data tend to neglect the minority classes, which are frequently the classes of greatest interest.

Experimental Protocol: A Hybrid Resampling Workflow

This protocol uses a combination of oversampling the minority class and undersampling the majority class to create a balanced dataset for training.

Step 1: Data Preprocessing and Feature Engineering

- Standardize molecular representations (e.g., SMILES, fingerprints).

- Perform feature scaling to normalize the range of independent variables.

Step 2: Apply Synthetic Minority Over-sampling Technique (SMOTE)

- SMOTE generates new synthetic samples for the minority class by interpolating between existing minority class instances [16].

- Implementation: Use the `imbalanced-learn` (v0.12.0) Python library. Key hyperparameters to optimize include:

  - `k_neighbors`: The number of nearest neighbors used to construct synthetic samples. A lower value may be needed for high-dimensional data.

  - `sampling_strategy`: The desired ratio of the number of samples in the minority class over the number in the majority class after resampling.

Step 3: Apply NearMiss Algorithm for Informed Undersampling

- NearMiss reduces the number of majority class samples by selecting those closest to the minority class in the feature space, preserving key distribution characteristics [16].

- Implementation: Using `imbalanced-learn`, select the version of NearMiss (e.g., NearMiss-2). The primary hyperparameter is the `sampling_strategy`, defining the final desired ratio.

Step 4: Model Training with Balanced Data

- Train a classifier (e.g., Random Forest, XGBoost) on the resampled dataset.

- Validation: Use stratified k-fold cross-validation and focus on metrics like Balanced Accuracy, F1-score, and Area Under the Precision-Recall Curve (AUPRC), as accuracy can be misleading with imbalanced data [16].
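To show what SMOTE's interpolation in Step 2 does, the sketch below generates synthetic minority samples in pure Python. It is a simplified illustration of the idea, not the `imbalanced-learn` implementation the protocol recommends; the function name, the toy two-dimensional points, and the parameter defaults are assumptions.

```python
import math
import random


def smote_sketch(minority, n_synthetic, k_neighbors=2, seed=0):
    """Create synthetic minority samples by interpolating toward near neighbors."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        # The k nearest minority-class neighbors of the chosen base sample.
        neighbors = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k_neighbors]
        neighbor = rng.choice(neighbors)
        gap = rng.random()  # position along the segment between base and neighbor
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbor)])
    return synthetic


# Hypothetical minority-class feature vectors (e.g., two scaled descriptors).
minority_samples = [[1.0, 1.0], [1.2, 0.9], [0.8, 1.1], [1.1, 1.3]]
new_samples = smote_sketch(minority_samples, n_synthetic=6)
for sample in new_samples:
    print([round(v, 3) for v in sample])
```

Because every synthetic point lies on a segment between two real minority samples, a smaller `k_neighbors` keeps new points closer to existing ones, which is the reasoning behind lowering it for sparse, high-dimensional data.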

Diagram: Hybrid Resampling Workflow for Imbalanced Data

Protocol 2: Mitigating Noise with Robust Model Architectures

Principle: Noise in drug discovery data arises from experimental variability, measurement errors in assays like hERG toxicity or DILI (Drug-Induced Liver Injury), and inconsistent biological annotations [17]. This can cause models to learn spurious patterns.

Experimental Protocol: Implementing a Noise-Robust Training Loop

Step 1: Data Curation and Cleaning

- Identify and filter out obvious outliers using statistical methods (e.g., Isolation Forest).

- Cross-reference experimental data from multiple public sources (e.g., ChEMBL, PubChem) to flag inconsistencies.

Step 2: Utilize Robust Loss Functions

- Standard cross-entropy loss is sensitive to noisy labels. Implement Focal Loss to down-weight the loss assigned to well-classified examples, focusing the model on harder, potentially more informative samples [17].

- Hyperparameters: The `alpha` (balancing factor) and `gamma` (focusing parameter) in Focal Loss are critical and should be tuned for the specific dataset.

Step 3: Employ Ensemble Methods

- Train multiple models (e.g., Bagging of Neural Networks) and aggregate their predictions. Ensemble methods like Random Forest are inherently more robust to noise [16].

- Implementation: Use `scikit-learn` for Bagging or Random Forest classifiers. The number of base estimators (`n_estimators`) is a key hyperparameter.

Step 4: Model Interpretation and Noise Audit

- Use SHAP (SHapley Additive exPlanations) or model-specific interpretation methods (e.g., attention mechanisms in Transformers [17]) to identify which data points the model relies on most. Predictions based on nonsensical features may indicate noisy samples or dataset artifacts.
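The role of `alpha` and `gamma` in the Focal Loss from Step 2 can be seen in a minimal per-sample sketch for binary classification, following the standard form FL = -alpha_t (1 - p_t)^gamma log(p_t). The function name, the clipping constant, and the default values are illustrative assumptions, not tuned settings.

```python
import math


def binary_focal_loss(p, y, alpha=0.25, gamma=2.0, eps=1e-12):
    """Focal loss for one sample; p is the predicted probability of class 1."""
    p = min(max(p, eps), 1.0 - eps)              # avoid log(0)
    p_t = p if y == 1 else 1.0 - p               # probability assigned to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha   # class balancing factor
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy = binary_focal_loss(0.9, 1)   # confidently correct: strongly down-weighted
hard = binary_focal_loss(0.1, 1)   # confidently wrong: keeps most of its loss
print(f"easy example loss = {easy:.6f}, hard example loss = {hard:.6f}")

# With gamma = 0 the focusing term vanishes and the loss is alpha-weighted cross-entropy.
plain = binary_focal_loss(0.9, 1, alpha=0.5, gamma=0.0)
print(f"gamma = 0 loss = {plain:.6f}")
```

Raising `gamma` shrinks the contribution of well-classified samples further, so the two values are usually searched jointly during hyperparameter optimization.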

Protocol 3: Managing High-Dimensionality via Deep Feature Extraction

Principle: Drug discovery data is inherently high-dimensional, encompassing thousands of molecular descriptors, protein sequences, and complex interaction fingerprints [14]. This can lead to the "curse of dimensionality," where model performance degrades and the risk of overfitting increases.

Experimental Protocol: Dimensionality Reduction with Stacked Autoencoders

This protocol uses a Stacked Autoencoder (SAE), an unsupervised deep learning model, to learn a compressed, informative representation of high-dimensional input data [14].

Step 1: Data Preparation

- Input high-dimensional features (e.g., Mordred descriptors, extended-connectivity fingerprints).

- Handle missing values and normalize the data.

Step 2: Construct the Stacked Autoencoder Architecture

- The encoder network progressively compresses the input into a lower-dimensional "bottleneck" layer.

- The decoder network attempts to reconstruct the original input from this compressed representation.

- Hyperparameters: The structure of the encoder/decoder layers (number and size) and the size of the bottleneck layer are the most critical to optimize.

Step 3: Optimize Hyperparameters with Hierarchically Self-Adaptive PSO (HSAPSO)

- Particle Swarm Optimization (PSO) is an evolutionary algorithm that optimizes a problem by iteratively trying to improve a candidate solution [14]. HSAPSO enhances PSO by dynamically adapting its parameters during the search.

- Implementation: The HSAPSO algorithm is used to find the optimal hyperparameters for the SAE (e.g., learning rate, number of units per layer). The objective is to minimize the reconstruction loss.

Step 4: Extract Features and Train Predictor

- Once trained, discard the decoder. Use the encoder to transform the original high-dimensional data into the low-dimensional latent space.

- Use this new, reduced feature set to train a downstream ML model (e.g., a classifier for target identification). This framework (optSAE + HSAPSO) has been shown to achieve high accuracy (95.52%) in drug classification tasks [14].

Diagram: High-Dimensionality Reduction with an Optimized Autoencoder

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Addressing Drug Discovery Data Challenges

| Tool / Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| imbalanced-learn [16] | Python Library | Provides a suite of algorithms for resampling imbalanced datasets (SMOTE, NearMiss). | Mitigating class imbalance in virtual screening and toxicity prediction. |
| HSAPSO Algorithm [14] | Optimization Algorithm | Hierarchically Self-Adaptive Particle Swarm Optimization for hyperparameter tuning. | Optimizing complex models like Stacked Autoencoders where grid search is computationally prohibitive. |
| Stacked Autoencoder (SAE) [14] | Deep Learning Architecture | Unsupervised learning of compressed, meaningful data representations from high-dimensional inputs. | Feature extraction and dimensionality reduction for molecular and protein data. |
| Focal Loss [17] | Loss Function | A dynamically scaled cross-entropy loss that reduces the influence of easy-to-classify samples. | Training robust models on noisy datasets, such as imperfect biological assay data. |
| UMAP [17] | Dimensionality Reduction | Non-linear dimensionality reduction for visualization and creating challenging data splits. | Dataset analysis and creating realistic benchmarking splits for model evaluation. |
| ChemProp [17] | Graph Neural Network | A message-passing neural network for molecular property prediction directly from molecular graphs. | Accurately modeling physicochemical and ADMET properties while learning from molecular structure. |

The Critical Impact of HPO on Model Accuracy, Generalization, and Computational Efficiency

Hyperparameter optimization (HPO) is a cornerstone of developing effective machine learning (ML) models, serving as a critical bridge between algorithmic potential and real-world performance. In the high-stakes field of drug discovery, the precise calibration of these hyperparameters transcends technical refinement, becoming a fundamental determinant of a model's ability to identify viable therapeutic candidates. This document establishes application notes and protocols for implementing HPO within drug discovery ML workflows, addressing its multifaceted impact on predictive accuracy, compositional generalization, and operational computational efficiency.

The shift from traditional single-target paradigms to multi-target drug discovery, which addresses the complex, multifactorial nature of diseases like cancer and neurodegenerative disorders, has rendered model configuration increasingly challenging [19]. Within this context, HPO evolves from a peripheral task to a strategic imperative, enabling researchers to navigate the high-dimensional, nonlinear space of drug-target-disease interactions and systematically engineer models with enhanced therapeutic relevance.

Quantitative Impact of HPO: A Comparative Analysis

The following tables synthesize empirical data from various studies, illustrating the measurable impact of advanced HPO techniques on model performance and resource utilization in scientific applications.

Table 1: Impact of HPO Techniques on Model Accuracy and Generalization

| Application Domain | Model Type | HPO Technique | Performance Metric | Baseline Performance | Post-HPO Performance |
|---|---|---|---|---|---|
| Financial Forecasting (Nifty BeEs ETF) [20] | LSTM | Optuna (TPE) | Directional Accuracy | Not Specified | 63% |
| Financial Forecasting (Nifty BeEs ETF) [20] | 1D-CNN | Optuna (TPE) | Directional Accuracy | Not Specified | 61% |
| Sentiment Analysis [21] | Logistic Regression | Not Specified | Accuracy | Not Specified | Comparable to State-of-the-Art |
| Chemical Synthesis [22] | Deep Deterministic Policy Gradient (DDPG) | Bayesian Optimization | Achievement of Global Optima | Suboptimal with Fixed Hyperparameters | Superior Tracking & Solution Quality |

Table 2: Impact of HPO on Computational and Experimental Efficiency

| Application Domain | HPO Technique | Computational/Experimental Load | Key Efficiency Outcome |
|---|---|---|---|
| Chemical Synthesis in Flow [22] | DDPG with Adaptive Tuning | Number of Required Experiments | ~50% and ~75% reduction vs. Nelder–Mead & SnobFit |
| Hyperparameter Optimization [23] | EvoContext (LLM + GA) | Evaluation Budget | Superior performance under limited budget vs. traditional methods |
| General ML [24] | RandomizedSearchCV | Number of Combinations Evaluated | Explores fewer combinations than GridSearchCV for similar results |

HPO Methodologies: Protocols and Applications

Protocol: Randomized Search for Predictive Modeling

RandomizedSearchCV offers an efficient alternative to exhaustive grid search by sampling a fixed number of hyperparameter combinations from predefined distributions [24].

Application Procedure:

- Define the Search Space: Specify the hyperparameters and their probability distributions.

- Initialize the Model and Search: Set up the estimator and the `RandomizedSearchCV` object, defining the number of iterations (`n_iter`) and cross-validation folds (`cv`).

- Execute Search and Validate: Fit the search object to the training data and retrieve the optimal hyperparameters.

- Final Model Evaluation: Train a final model using `best_params_` on the entire training set and evaluate its performance on a held-out test set to estimate generalization error.
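The four steps of this protocol can be sketched with scikit-learn as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the synthetic dataset stands in for a featurized compound set, and the random forest, the parameter ranges, and the ROC AUC scoring are illustrative choices that do not come from the cited studies.

```python
from scipy.stats import randint, uniform
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV, train_test_split

# Assumption: synthetic data standing in for molecular descriptors and activity labels.
X, y = make_classification(n_samples=300, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Step 1: define the search space as distributions rather than fixed grids.
param_distributions = {
    "n_estimators": randint(20, 80),    # integers in [20, 80)
    "max_depth": randint(2, 10),        # integers in [2, 10)
    "max_features": uniform(0.2, 0.6),  # floats in [0.2, 0.8]
}

# Step 2: initialize the estimator and the search object.
search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions=param_distributions,
    n_iter=10,          # number of sampled configurations
    cv=3,               # cross-validation folds per configuration
    scoring="roc_auc",
    random_state=0,
)

# Step 3: run the search on the training data only.
search.fit(X_train, y_train)
best_params = search.best_params_

# Step 4: with the default refit=True, best_estimator_ has already been retrained
# on the full training set; score() reports accuracy on the held-out test set.
test_accuracy = search.best_estimator_.score(X_test, y_test)
```

Because `refit=True` is the default, no separate retraining call is needed for the final evaluation step. The test set is touched exactly once, after the search has finished.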

Protocol: Bayesian Optimization for Complex Drug Discovery Pipelines

Bayesian optimization is a powerful, model-driven HPO technique that builds a probabilistic surrogate model to approximate the relationship between hyperparameters and model performance [24] [22]. It is particularly suited for optimizing expensive-to-evaluate functions, such as training large deep learning models on massive bio-assay datasets.

Application Procedure:

- Surrogate Model Selection: Choose a probabilistic model, typically a Gaussian Process, to model the objective function.

- Acquisition Function Selection: Define an acquisition function (e.g., Expected Improvement) to guide the search by balancing exploration and exploitation.

- Iterative Optimization Loop:

- Propose Configuration: Use the acquisition function to select the next hyperparameter set to evaluate.

- Evaluate Configuration: Train and validate the ML model with the proposed hyperparameters.

- Update Surrogate Model: Incorporate the new performance data to refine the surrogate model.

- Termination and Validation: After a fixed number of iterations or upon convergence, validate the best-performing hyperparameter configuration on a held-out test set.
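The loop above can be illustrated with a small, self-contained sketch using NumPy. Everything specific here is an assumption made for illustration: `validation_loss` is a hypothetical stand-in for an expensive train-and-validate run, the search is over a single hyperparameter (log10 of the learning rate), the Gaussian Process uses a fixed RBF kernel, and the acquisition function is maximized over a fixed candidate grid.

```python
import math
import numpy as np

def validation_loss(log_lr):
    """Hypothetical stand-in for 'train the model and return validation loss'."""
    return (log_lr + 3.0) ** 2 + 0.1 * math.sin(5.0 * log_lr)

def rbf_kernel(a, b, length_scale=0.7):
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior(x_obs, y_obs, x_new, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean, unit-variance GP."""
    k = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    k_s = rbf_kernel(x_obs, x_new)
    mean = k_s.T @ np.linalg.solve(k, y_obs)
    var = 1.0 - np.sum(k_s * np.linalg.solve(k, k_s), axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """Expected Improvement for a minimization problem."""
    z = (best - mean) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (best - mean) * cdf + std * pdf

rng = np.random.default_rng(0)
candidates = np.linspace(-5.0, -1.0, 201)   # grid over log10(learning rate)
x_obs = rng.uniform(-5.0, -1.0, size=3)     # small random initial design
y_obs = np.array([validation_loss(x) for x in x_obs])

for _ in range(12):
    # Standardize targets so the unit-variance GP prior is reasonable.
    y_std = (y_obs - y_obs.mean()) / (y_obs.std() + 1e-9)
    # Update surrogate, then propose the configuration with the highest EI.
    mean, std = gp_posterior(x_obs, y_std, candidates)
    ei = expected_improvement(mean, std, y_std.min())
    x_next = candidates[int(np.argmax(ei))]
    # Evaluate the proposed configuration and add it to the observations.
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, validation_loss(x_next))

best_log_lr = float(x_obs[np.argmin(y_obs)])
best_loss = float(y_obs.min())
```

In practice the surrogate's kernel hyperparameters would be fitted rather than fixed, and a dedicated library would typically handle this loop; the sketch only makes the propose, evaluate, and update cycle explicit.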

Advanced Application: Adaptive HPO for Deep Reinforcement Learning in Flow Chemistry

Deep Reinforcement Learning (DRL) can be applied to self-optimize chemical reaction conditions in flow reactors, a promising application in pharmaceutical synthesis [22]. The performance of the DRL agent itself is highly sensitive to its hyperparameters.

Workflow Diagram: Adaptive HPO for DRL in Flow Chemistry

Application Notes:

- Objective: The DRL agent learns a policy to manipulate reactor conditions (e.g., temperature, flow rate) to maximize a reward (e.g., reaction yield).

- Challenge: Fixed hyperparameters can lead to suboptimal policies and poor convergence [22].

- Solution: An outer loop of adaptive HPO (e.g., using Bayesian optimization) dynamically tunes the DRL agent's hyperparameters (e.g., learning rate, discount factor) based on its cumulative performance, requiring roughly 50% and 75% fewer experiments than the Nelder–Mead and SnobFit baselines [22].

The Scientist's Toolkit: Essential Research Reagents & Solutions

This section catalogs key computational tools and data resources critical for conducting HPO in drug discovery ML research.

Table 3: Key Research Reagents & Solutions for HPO in Drug Discovery

| Tool/Resource Name | Type | Function in HPO Workflow | Relevance to Drug Discovery |

|---|---|---|---|

| DrugBank [19] | Database | Provides comprehensive drug, target, and mechanism data to create accurate labels and features for model training. | Essential for building accurate drug-target interaction (DTI) predictors. |

| ChEMBL [19] | Database | A manually curated repository of bioactive molecules with drug-like properties, used for training compound property predictors. | Provides high-quality bioactivity data for model training and validation. |

| TTD [19] | Database | Details therapeutic protein and nucleic acid targets, associated diseases, and pathways for network pharmacology models. | Informs multi-target drug discovery and polypharmacology predictions. |

| ESM/ProtBERT [19] | Pre-trained Model | Generates informative vector representations (embeddings) of protein sequences from amino acid sequences. | Encodes biological targets for models predicting drug-protein interactions. |

| GridSearchCV [24] | HPO Algorithm | Exhaustive search over a specified parameter grid. Best for small, discrete search spaces. | Good for initial exploration of a limited number of key hyperparameters. |

| RandomizedSearchCV [25] [24] | HPO Algorithm | Randomly samples hyperparameters from distributions. More efficient than grid search for large spaces. | General-purpose tuning for a wide range of models, including random forests. |

| Bayesian Optimization [21] [22] | HPO Algorithm | Model-based approach that balances exploration and exploitation. Efficient for expensive function evaluations. | Ideal for tuning complex, computationally intensive models like graph neural networks. |

| Optuna [20] | HPO Framework | Defines and optimizes hyperparameter search spaces, supporting state-of-the-art algorithms like TPE. | Used for tuning deep learning models (LSTM, CNN) on complex datasets. |

Advanced Techniques and Emerging Frontiers

Integrating Knowledge Graphs with HPO

Knowledge graphs (KGs) provide a powerful framework for integrating heterogeneous biological data, and KG-based methods have emerged as powerful tools for modeling and predicting drug-disease relationships [26]. The effectiveness of these models depends on their hyperparameters.

Workflow Diagram: HPO for KG-Based Drug Repurposing Models

Application Notes:

- Objective: Discover novel drug-disease relationships (link prediction) within a knowledge graph.

- Model: Use a Graph Neural Network (GNN) or other KG embedding technique.

- Critical Hyperparameters: Embedding dimension, number of GNN layers, learning rate, and dropout rate. Optimizing these is crucial for the model's ability to learn meaningful representations and generalize to unseen links [26].

Frontier Protocol: LLM-Driven HPO with EvoContext

Large Language Models (LLMs) can be leveraged for HPO by using their in-context learning capabilities to generate promising hyperparameter configurations [23]. A key challenge is the repetition issue, where LLMs get stuck generating similar configurations. EvoContext addresses this by integrating genetic algorithms.

Application Procedure:

- Initialization: Generate an initial set of contextual examples (hyperparameter-performance pairs) via cold start (random) or warm start (from historical data).

- Iterative Optimization Loop:

- Genetic Evolution Phase: Apply crossover and mutation to the current set of examples to create a new, diverse population of contextual examples. This breaks the self-reinforcing loop and encourages global exploration.

- LLM Generation Phase: The LLM, prompted with the evolved examples, generates new hyperparameter configurations based on these diverse patterns.

- Evaluation and Selection: The newly generated configurations are evaluated, and the best performers are used to update the example pool for the next iteration.

- Termination: The loop continues until the evaluation budget is exhausted, and the best-performing configuration is returned.

This hybrid approach balances the global exploration capability of genetic algorithms with the local refinement and knowledge-based reasoning of LLMs, demonstrating superior HPO performance on benchmark datasets [23].
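The control flow of this procedure can be sketched in plain Python. This is a structural illustration only and not the EvoContext implementation: `propose_with_llm` is a clearly labeled placeholder that makes no LLM call, `evaluate` is a hypothetical objective, and the choice to evaluate evolved configurations so they can serve as hyperparameter-performance pairs is an assumption of this sketch.

```python
import random

rng = random.Random(0)
BOUNDS = {"log_lr": (-5.0, -1.0), "dropout": (0.0, 0.6)}  # illustrative search space

def evaluate(cfg):
    """Hypothetical objective (higher is better) standing in for a validation score."""
    return -((cfg["log_lr"] + 3.0) ** 2) - (cfg["dropout"] - 0.2) ** 2

def random_config():
    return {k: rng.uniform(lo, hi) for k, (lo, hi) in BOUNDS.items()}

def clip(cfg):
    return {k: min(max(v, BOUNDS[k][0]), BOUNDS[k][1]) for k, v in cfg.items()}

def evolve(examples, n_children):
    """Genetic phase: uniform crossover followed by Gaussian mutation."""
    children = []
    for _ in range(n_children):
        a, b = rng.sample(examples, 2)
        child = {k: (a[0][k] if rng.random() < 0.5 else b[0][k]) for k in BOUNDS}
        child = {
            k: v + rng.gauss(0.0, 0.1 * (BOUNDS[k][1] - BOUNDS[k][0]))
            for k, v in child.items()
        }
        children.append(clip(child))
    return children

def propose_with_llm(context, n):
    """PLACEHOLDER, not an LLM call: perturbs the best in-context example slightly."""
    best_cfg = max(context, key=lambda e: e[1])[0]
    return [clip({k: v + rng.gauss(0.0, 0.05) for k, v in best_cfg.items()}) for _ in range(n)]

# Initialization: cold start with random (configuration, score) examples.
pool = [(c, evaluate(c)) for c in (random_config() for _ in range(6))]
initial_best = max(score for _, score in pool)

budget = 40  # remaining number of evaluations
while budget > 0:
    # Genetic evolution phase: diversify the contextual examples.
    evolved = [(c, evaluate(c)) for c in evolve(pool, 4)]
    budget -= len(evolved)
    # Generation phase: the placeholder proposer sees the evolved context.
    proposals = [(c, evaluate(c)) for c in propose_with_llm(pool + evolved, 4)]
    budget -= len(proposals)
    # Evaluation and selection: keep the best examples for the next round.
    pool = sorted(pool + evolved + proposals, key=lambda e: e[1], reverse=True)[:6]

best_config, best_score = pool[0]
```

Replacing `propose_with_llm` with a real prompt-and-parse step is where an actual LLM would enter; the surrounding loop would stay the same.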

Target Identification and Validation

Target identification is the foundational first step in the drug discovery pipeline, aiming to pinpoint biologically relevant molecules, typically proteins, whose modulation is expected to have a therapeutic effect. Modern artificial intelligence (AI) and machine learning (ML) approaches have revolutionized this process by shifting from a reductionist, single-target view to a holistic, systems-level analysis of complex biological networks [19] [27].

AI-Driven Methodologies

Multi-Modal Data Integration: Advanced platforms integrate massive-scale, heterogeneous datasets to build comprehensive biological knowledge graphs. For instance, the PandaOmics system leverages 1.9 trillion data points from over 10 million biological samples (e.g., RNA sequencing, proteomics) and 40 million documents (patents, clinical trials) [27]. This allows for the identification of novel therapeutic targets based on a confluence of genetic, functional, and textual evidence.

Deep Learning for Druggability Prediction: Supervised learning models are trained to classify and prioritize druggable targets. The optSAE + HSAPSO framework, which integrates a stacked autoencoder for feature extraction with a hierarchically self-adaptive particle swarm optimization algorithm, has demonstrated a classification accuracy of 95.52% on datasets from DrugBank and Swiss-Prot [14]. This method significantly reduces computational complexity and improves stability for large-scale target identification tasks.

Cellular Target Engagement Validation: Once a target is identified, confirming that a drug candidate physically binds to it in a physiologically relevant context is critical. The Cellular Thermal Shift Assay (CETSA) and its quantitative proteomics variations are used to validate direct target engagement within intact cells and tissues, providing system-level confirmation of mechanistic hypotheses [4].

Table 1: Key Data Sources for AI-Driven Target Identification

| Database Name | Data Type | Description | URL/Reference |

|---|---|---|---|

| TTD | Therapeutic targets, drugs, diseases | Information on therapeutic targets, diseases, pathways, and drugs. | https://idrblab.org/ttd/ |

| DrugBank | Drug-target, chemical, pharmacological data | Comprehensive resource combining drug data with target and pathway information. | https://go.drugbank.com |

| ChEMBL | Bioactivity, chemical, genomic data | Manually curated database of bioactive drug-like small molecules. | https://www.ebi.ac.uk/chembl/ |

| KEGG | Genomics, pathways, diseases, drugs | Knowledge base linking genomic information with pathways and drug networks. | https://www.genome.jp/kegg/ |

Experimental Protocol: AI-Guided Target Prioritization and Validation

Objective: To computationally identify and experimentally validate a novel therapeutic target for a specified complex disease.

Materials:

- Hardware: High-performance computing cluster or cloud instance with GPU acceleration.

- Software: AI platform with multi-modal data integration capabilities (e.g., knowledge graph, NLP tools).

- Data: Relevant omics datasets (e.g., transcriptomics from patient tissues), literature/patent corpora, and protein-protein interaction networks.

- Biological Reagents: Cell lines or primary cells relevant to the disease, antibodies for target protein detection, qPCR reagents, siRNA or CRISPR-Cas9 components for gene knockdown/knockout.

Procedure:

- Hypothesis-Free Target Discovery: Input disease phenotype or key terms into the AI platform (e.g., PandaOmics). The system will use NLP to mine literature and patents and perform multi-omics analysis to generate a ranked list of potential novel targets associated with the disease [27].

- Target Prioritization: Apply a druggability classification model (e.g., optSAE + HSAPSO) to the candidate list. The model evaluates features like protein structure, known ligandability, and functional annotations to score and prioritize targets with a high potential for successful intervention [14].

- In Silico Validation: Place the top target candidates within their broader biological context using the platform's knowledge graph to assess network connectivity, potential for off-pathway effects, and therapeutic novelty [19] [27].

- Experimental Validation (Wet-Lab): a. Genetic Perturbation: Knock down or knock out the expression of the prioritized target gene in a disease-relevant cellular model using siRNA or CRISPR-Cas9. b. Phenotypic Assessment: Measure the impact of genetic perturbation on disease-relevant phenotypic endpoints (e.g., cell viability, cytokine release, tau phosphorylation). c. Target Engagement Confirmation: Treat cells with a lead compound and apply CETSA. Incubate cells at different temperatures, lyse them, and quantify the stabilization of the target protein (indicative of binding) via Western blot or high-resolution mass spectrometry [4].

AI Target Identification Workflow

Molecular Design and Lead Optimization

The hit-to-lead and lead optimization phases are being radically accelerated by AI, compressing timelines from months to weeks through generative models and high-throughput in silico screening [4].

AI-Driven Methodologies

Generative Chemistry: Models like Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and reinforcement learning are used for de novo molecular design. These systems can generate novel, synthetically accessible compounds optimized for multiple parameters simultaneously, such as binding affinity, metabolic stability, and novelty [28] [27]. For example, Insilico Medicine's Chemistry42 platform uses a combination of these techniques to design drug-like molecules [27].

AI-Enhanced Structural Modeling: Tools like NeuralPLexer (Iambic Therapeutics) represent a significant advance by predicting the 3D structure of protein-ligand complexes directly from protein sequence and ligand graph input. This provides critical insights for structure-based drug design, informing on target engagement and binding specificity [27].

High-Throughput Virtual Screening: Classical computational methods like molecular docking and QSAR modeling have become frontline tools for triaging vast virtual compound libraries. Platforms such as Gnina employ convolutional neural networks (CNNs) as scoring functions to improve the accuracy of binding pose prediction and active molecule identification [17]. A study by Ahmadi et al. (2025) demonstrated that integrating pharmacophoric features with protein-ligand interaction data could boost hit enrichment rates by more than 50-fold compared to traditional methods [4].

Table 2: Performance of Selected AI-Designed Molecules in Clinical Trials (as of 2025)

| Small Molecule | Company | Target | Stage | Indication |

|---|---|---|---|---|

| INS018-055 | Insilico Medicine | TNIK | Phase 2a | Idiopathic Pulmonary Fibrosis (IPF) |

| ISM3091 | Insilico Medicine | USP1 | Phase 1 | BRCA mutant cancer |

| RLY-2608 | Relay Therapeutics | PI3Kα | Phase 1/2 | Advanced Breast Cancer |

| EXS4318 | Exscientia | PKC-theta | Phase 1 | Inflammatory/Immunologic diseases |

| REC-3964 | Recursion | C. diff Toxin Inhibitor | Phase 2 | Clostridioides difficile Infection |

Experimental Protocol: AI-Driven Design-Make-Test-Analyze (DMTA) Cycle

Objective: To rapidly optimize a hit compound into a lead candidate with improved potency and desired drug-like properties.

Materials:

- Software: Generative AI chemistry platform (e.g., Chemistry42, Magnet); molecular docking software (e.g., AutoDock, Gnina); ADMET prediction tools (e.g., Deep-PK, AttenhERG).

- Data: Initial hit compound structure(s); target protein structure or sequence; assay data for model fine-tuning.

- Chemical Reagents: Building blocks for combinatorial chemistry or automated synthesis; solvents.

Procedure:

- Design: Input the structure of the initial hit and desired target profile (e.g., IC50 < 100 nM, logP < 3, no hERG liability) into the generative AI platform. The model will propose a library of thousands of virtual analogs [4].

- In Silico Screening: Subject the generated virtual library to a multi-step computational filter: a. Virtual Screening: Use AI-powered docking (e.g., Gnina 1.3) or affinity prediction models (e.g., DeepTGIN) to score compounds for predicted binding affinity and pose [17]. b. ADMET Prediction: Screen the top-scoring compounds for predicted pharmacokinetic and toxicity properties using specialized models (e.g., predict hERG blockade with AttenhERG, or other endpoints with MolGPS and MolE models) [17] [27].

- Make: Synthesize the top-ranking, synthetically accessible virtual candidates (typically tens to hundreds) using high-throughput and automated chemistry platforms [4].

- Test: Experimentally test the synthesized compounds in biochemical and cellular assays to determine actual potency (e.g., IC50), selectivity, and early cytotoxicity.

- Analyze: Feed the experimental results back into the AI models as new training data. This active learning loop retrains and refines the models, improving the quality of the next cycle of compound generation [27]. The cycle repeats until a lead candidate meeting all criteria is identified.

AI-Driven DMTA Cycle

ADMET Prediction

Predicting the Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) profile of compounds in silico is crucial for reducing late-stage attrition due to poor pharmacokinetics or safety issues [28].

AI-Driven Methodologies

Graph Neural Networks (GNNs) for Molecular Property Prediction: GNNs, such as Attentive FP and ChemProp, naturally operate on the graph structure of molecules, learning representations that lead to state-of-the-art accuracy in predicting properties like solubility, permeability, and toxicity [17]. The AttenhERG model, based on Attentive FP, has achieved the highest accuracy in external benchmarking studies for predicting hERG channel blockade, a key cause of cardiotoxicity [17].

Multi-Task and Transfer Learning: These approaches train a single model on multiple related ADMET endpoints simultaneously. This allows the model to learn generalized features from diverse, noisy preclinical datasets, improving prediction accuracy, especially for endpoints with limited data [5] [15]. The Enchant model (Iambic Therapeutics) uses a multi-modal transformer and transfer learning to predict human pharmacokinetics with high accuracy from minimal clinical data [27].

Platforms for Integrated Prediction: Comprehensive platforms like Deep-PK and DeepTox leverage graph-based descriptors and multi-task learning to provide a unified suite of ADMET predictions, integrating them early into the molecular design process [28].

Table 3: Benchmarking of Machine Learning Models for Key ADMET Properties

| Property/Endpoint | Exemplary AI Model | Key Model Architecture | Reported Performance |

|---|---|---|---|

| hERG Toxicity | AttenhERG | Graph Neural Network (GNN) | Highest accuracy in external benchmarking [17] |

| Drug-Induced Liver Injury (DILI) | StreamChol | Not Specified | User-friendly web tool for cholestasis risk estimation [17] |

| Aqueous Solubility | fastprop | Molecular Descriptors (Mordred) + DNN | Comparable to GNNs (e.g., ChemProp) with 10x faster computation [17] |

| Human Pharmacokinetics | Enchant | Multi-modal Transformer + Transfer Learning | High predictive accuracy with minimal clinical data [27] |

Experimental Protocol: In Silico ADMET Profiling

Objective: To computationally predict the ADMET profile of a series of lead compounds to prioritize the safest candidates for in vivo studies.

Materials:

- Software: ADMET prediction software (e.g., AttenhERG, StreamChol, fastprop, or commercial platforms).

- Hardware: Standard computer workstation.

- Input Data: Chemical structures of compounds in SMILES or SDF format.

Procedure:

- Data Preparation: Convert the chemical structures of all lead compounds into a standardized format (e.g., SMILES strings).

- Model Selection: Choose appropriate pre-trained models for the ADMET endpoints most critical to the project. Essential endpoints often include:

- Absorption: Caco-2 permeability, P-glycoprotein inhibition.

- Distribution: Plasma Protein Binding, Blood-Brain Barrier Penetration.

- Metabolism: Cytochrome P450 Inhibition (e.g., CYP3A4, CYP2D6).

- Excretion: Total Clearance.

- Toxicity: hERG inhibition (using AttenhERG), Drug-Induced Liver Injury (using StreamChol), and Ames mutagenicity [17].

- Prediction and Analysis: Run the prepared structures through the selected models. Compile the results into a profile for each compound.

- Ranking and Prioritization: Rank the compounds based on a composite score that weights the importance of each ADMET property relative to the therapeutic target and intended route of administration. For example, a CNS drug candidate would be rewarded for high predicted BBB penetration, whereas the same property would be penalized for a peripherally acting drug, where unnecessary brain exposure increases the risk of CNS side effects.
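A composite score of this kind can be as simple as a weighted sum over predicted endpoints. The sketch below is illustrative only: the compound names, the predicted values (assumed to be scaled to the range 0 to 1), and the weights are all hypothetical, and real projects would set weights from the target product profile.

```python
# Hypothetical model outputs scaled to [0, 1]; not real predictions.
predictions = {
    "cmpd_A": {"herg_risk": 0.10, "dili_risk": 0.30, "caco2_permeability": 0.80, "clearance": 0.40},
    "cmpd_B": {"herg_risk": 0.70, "dili_risk": 0.20, "caco2_permeability": 0.90, "clearance": 0.30},
    "cmpd_C": {"herg_risk": 0.20, "dili_risk": 0.60, "caco2_permeability": 0.50, "clearance": 0.20},
}

# Assumed project-specific weights: positive rewards a property, negative penalizes it.
weights = {
    "herg_risk": -3.0,
    "dili_risk": -2.0,
    "caco2_permeability": 1.0,
    "clearance": -0.5,
}

def composite_score(profile, weights):
    """Weighted sum of predicted ADMET endpoints for one compound."""
    return sum(weights[name] * profile[name] for name in weights)

scores = {name: composite_score(profile, weights) for name, profile in predictions.items()}
ranking = sorted(scores, key=scores.get, reverse=True)  # best candidate first
```

Changing the sign or magnitude of a single weight is enough to adapt the ranking to a different therapeutic context, which is why the weights are kept separate from the scoring function.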

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Tools for AI-Enhanced Drug Discovery

| Research Reagent / Tool | Function / Application | Example Use Case |

|---|---|---|

| CETSA Kits | Validate direct drug-target engagement in physiologically relevant cellular environments. | Confirming compound binding to DPP9 in rat tissue lysates or intact cells [4]. |

| siRNA/CRISPR-Cas9 Libraries | Perform high-throughput genetic perturbation to validate novel AI-predicted targets. | Knocking down candidate genes in disease models to assess impact on phenotype [27]. |

| PandaOmics | AI-powered target identification platform integrating multi-omics and textual data. | Generating a ranked list of novel therapeutic targets for a complex disease [27]. |

| Chemistry42 / Magnet | Generative AI platforms for de novo design of novel, synthetically accessible small molecules. | Generating lead-like compounds optimized for multiple parameters (potency, ADMET) [27]. |

| Gnina 1.3 | Open-source molecular docking software with CNN-based scoring functions. | Screening large virtual compound libraries and predicting accurate binding poses [17]. |

| AttenhERG & StreamChol | Specialized AI models for predicting specific toxicity endpoints (cardiotoxicity, liver injury). | Early triaging of compounds with high hERG or DILI liability during lead optimization [17]. |

| QDπ Dataset | A large, accurate quantum chemical dataset for training machine learning potentials (MLPs). | Developing universal MLPs for highly accurate molecular simulation in drug discovery [29]. |

Advanced HPO Techniques and Their Implementation in Pharmaceutical Research

Hyperparameter optimization (HPO) is a critical step in developing machine learning (ML) models for drug discovery, where predicting molecular properties with high accuracy is paramount for successful outcomes in areas like de novo molecular design and chemical reaction modeling [10]. The performance of sophisticated models, including Graph Neural Networks (GNNs) and deep neural networks, is highly sensitive to their architectural and training hyperparameters [10] [30]. This application note establishes a comprehensive framework for HPO, contextualized specifically for cheminformatics. It provides detailed protocols, from data preprocessing to final model validation, to equip researchers with the methodologies necessary to build robust, efficient, and accurate predictive models for molecular property prediction (MPP).

Background and Significance in Cheminformatics

Cheminformatics bridges chemistry and information science, playing a critical role in drug discovery and material science [10]. Traditional machine learning applications in MPP have often paid limited attention to HPO, resulting in suboptimal prediction of crucial properties [30]. The process of HPO involves selecting the best set of hyperparameters, which are configuration settings that must be specified before the training process begins. These are distinct from model parameters (e.g., weights and biases) that the algorithm learns from the data [31].

Hyperparameters are broadly categorized into two types:

- Structural hyperparameters, which describe the model's architecture, such as the number of layers in a neural network, the number of units per layer, and the type of activation function.

- Algorithmic hyperparameters, which are associated with the learning algorithm itself, such as the learning rate, the number of training epochs, and the batch size [30].

Optimizing as many of these hyperparameters as possible is crucial for maximizing the predictive performance of ML models in MPP [30].
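To make the two categories concrete, the sketch below writes them down as a single search space and draws random configurations from it. The hyperparameter names, ranges, and the small spec format (`"int"`, `"choice"`, `"log_uniform"`) are assumptions chosen for illustration and are not taken from any particular library.

```python
import math
import random

SEARCH_SPACE = {
    # Structural hyperparameters (model architecture)
    "num_layers": ("int", 1, 4),
    "units_per_layer": ("choice", [32, 64, 128, 256]),
    "activation": ("choice", ["relu", "tanh", "elu"]),
    # Algorithmic hyperparameters (learning procedure)
    "learning_rate": ("log_uniform", 1e-4, 1e-2),
    "batch_size": ("choice", [16, 32, 64]),
}

def sample_config(space, rng):
    """Draw one hyperparameter configuration from the search space."""
    config = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "int":
            config[name] = rng.randint(spec[1], spec[2])  # both ends inclusive
        elif kind == "choice":
            config[name] = rng.choice(spec[1])
        elif kind == "log_uniform":
            # Sample the exponent uniformly so each decade is equally likely.
            lo, hi = math.log10(spec[1]), math.log10(spec[2])
            config[name] = 10 ** rng.uniform(lo, hi)
        else:
            raise ValueError(f"unknown spec type: {kind}")
    return config

rng = random.Random(42)
configs = [sample_config(SEARCH_SPACE, rng) for _ in range(5)]
```

Sampling the learning rate on a log scale is a common choice because its useful values typically span several orders of magnitude.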

A Structured HPO Framework for Drug Discovery

The following workflow outlines the core stages of implementing HPO for a drug discovery ML project. The process begins with data preparation and moves iteratively through model configuration, validation, and final evaluation.

Data Preprocessing and Splitting

The foundation of any reliable ML model is a robust dataset. In cheminformatics, data often comes from molecular structures and must be transformed into a suitable format for learning algorithms.

- Molecular Representation: For GNNs, molecules are naturally represented as graphs, where atoms are nodes and bonds are edges. Features can include atom type, charge, and bond type [10].

- Data Splitting: To ensure an unbiased evaluation of the model's generalization error, the data must be split appropriately. A common strategy is to create three distinct sets:

- Training Set: Used to train the model with a given hyperparameter configuration (HPC).

- Validation Set: Used to evaluate the performance of the model trained with a specific HPC. This evaluation guides the HPO search.

- Hold-Out Test Set: Used only once, at the very end of the process, to provide a final, unbiased estimate of the generalization error of the model trained with the best-found HPC on the full training data [32]. Resampling strategies like k-fold cross-validation can be used within the training set for a more robust validation during HPO [30] [32].
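A three-way split can be sketched in a few lines of standard-library Python. The 70/15/15 proportions and the purely random shuffle are assumptions for illustration; scaffold-based or time-based splits are also common in cheminformatics when structurally similar molecules would otherwise leak across sets.

```python
import random

def three_way_split(items, val_fraction=0.15, test_fraction=0.15, seed=0):
    """Shuffle once, then carve out disjoint test, validation, and training subsets."""
    indices = list(range(len(items)))
    random.Random(seed).shuffle(indices)
    n_test = int(round(test_fraction * len(items)))
    n_val = int(round(val_fraction * len(items)))
    test_idx = indices[:n_test]
    val_idx = indices[n_test:n_test + n_val]
    train_idx = indices[n_test + n_val:]
    return (
        [items[i] for i in train_idx],
        [items[i] for i in val_idx],
        [items[i] for i in test_idx],
    )

# Hypothetical molecule identifiers standing in for a real dataset.
molecules = [f"mol_{i}" for i in range(100)]
train, val, test = three_way_split(molecules)
```

The test subset returned here should be stored away and left untouched until the final evaluation.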

Defining the HPO Search

The core of HPO involves defining the search space and selecting an optimization algorithm.

- Search Space Definition: This is the set of all hyperparameters and their possible values to be explored. It is crucial to define a sensible range for each hyperparameter based on prior knowledge or literature.

- Optimization Algorithms: Several strategies exist, each with trade-offs between computational efficiency and the likelihood of finding the global optimum.

Table 1: Comparison of Primary HPO Algorithms

| Algorithm | Key Principle | Advantages | Disadvantages | Recommended Use in MPP |

|---|---|---|---|---|

| Grid Search [31] | Exhaustively searches over a predefined set of values for all hyperparameters. | Simple to implement and parallelize; guaranteed to find the best point in the grid. | Computationally intractable for high-dimensional spaces; curse of dimensionality. | Not recommended for complex models with many hyperparameters. |

| Random Search [30] [31] | Randomly samples hyperparameter configurations from predefined distributions. | More efficient than grid search; better at exploring high-dimensional spaces. | No guarantee of finding the optimum; may still miss important regions. | Good initial baseline or for a wide initial search. |

| Bayesian Optimization [30] [31] | Builds a probabilistic model (surrogate) of the objective function to direct the search towards promising configurations. | Sample-efficient; often finds good configurations with fewer iterations. | Higher computational overhead per iteration; complex to implement. | Effective when model training is very expensive. |

| Hyperband [30] | A bandit-based approach that uses adaptive resource allocation and early-stopping to speed up the search. | Highly computationally efficient; does not require a surrogate model. | Can discard promising configurations that start poorly. | Recommended for MPP due to its efficiency and accuracy [30]. |

| BOHB (Bayesian Opt. & Hyperband) [30] | Combines Hyperband's efficiency with Bayesian Optimization's sample-efficiency. | Leverages strengths of both Bayesian and bandit-based approaches. | More complex than individual methods. | Powerful alternative to Hyperband for improved performance. |
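The adaptive resource allocation behind Hyperband builds on successive halving, which can be sketched for a single bracket as follows. This is a simplified illustration under stated assumptions: `partial_loss` is a hypothetical learning curve rather than real training, and full Hyperband runs several such brackets with different trade-offs between the number of configurations and the budget per configuration.

```python
import math
import random

def partial_loss(config, epochs):
    """Hypothetical learning curve: loss decays toward a configuration-specific floor."""
    floor = (math.log10(config["learning_rate"]) + 3.0) ** 2
    return floor + 1.0 / epochs

def successive_halving(configs, min_epochs=1, max_epochs=27, eta=3):
    """Keep the best 1/eta of configurations each round while multiplying the budget by eta."""
    survivors = list(configs)
    epochs = min_epochs
    history = []  # (epochs per configuration, number of configurations) per round
    while True:
        scored = sorted(survivors, key=lambda c: partial_loss(c, epochs))
        history.append((epochs, len(scored)))
        if len(scored) == 1 or epochs >= max_epochs:
            return scored[0], history
        survivors = scored[: max(1, len(scored) // eta)]
        epochs = min(max_epochs, epochs * eta)

rng = random.Random(0)
configs = [{"learning_rate": 10 ** rng.uniform(-5, -1)} for _ in range(27)]
best, history = successive_halving(configs)

# Total training cost if every round retrains from scratch.
total_epochs = sum(epochs * count for epochs, count in history)
full_budget_epochs = len(configs) * 27
```

With 27 starting configurations and `eta=3`, the bracket spends 108 epochs in total, compared with 729 if every configuration were trained for the full 27 epochs. In this toy objective the ranking never changes with more epochs, so the sketch does not show the disadvantage listed in the table: a configuration that starts poorly but would finish well can be discarded early.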

Key Considerations and Pitfalls

- Overtuning: A critical risk in HPO is "overtuning," a form of overfitting at the HPO level. This occurs when the HPO process over-optimizes for the validation set estimate, which is inherently stochastic, leading to the selection of an HPC that performs worse on truly unseen data (the test set) [32]. Overtuning is more common in small-data regimes but can occur in various scenarios [32].

- Computational Efficiency: HPO is often the most resource-intensive step in model training [30]. Using software platforms that allow for parallel execution of multiple HPO trials is essential for reducing the total time required [30].

Experimental Protocols for HPO in Molecular Property Prediction

This section provides a detailed, step-by-step protocol for performing HPO using the Hyperband algorithm, which has been identified as particularly effective for MPP tasks [30].

Protocol: Hyperparameter Optimization with KerasTuner for a Dense Deep Neural Network

Aim: To optimize the hyperparameters of a Dense Deep Neural Network (Dense DNN) for predicting the melt index of a polymer or a similar molecular property.

Materials and Software:

- Python 3.7+

- Libraries: TensorFlow/Keras, KerasTuner, Pandas, NumPy, Scikit-learn

- A dataset of molecular structures or descriptors and their corresponding target property (e.g., melt index, glass transition temperature).

Table 2: The Scientist's Toolkit: Essential Research Reagents & Software

| Item Name | Type | Function / Description | Example / Specification |

|---|---|---|---|

| KerasTuner [30] | Software Library | An intuitive, user-friendly HPO library that integrates with Keras/TensorFlow workflows. | Python library; supports RandomSearch, Hyperband, Bayesian Optimization. |

| Optuna [30] | Software Library | A define-by-run HPO framework that allows for more flexible and complex search spaces. | Python library; supports various samplers and pruners, including BOHB. |

| Training/Validation/Test Split [32] | Data Protocol | Partitioning data to tune models without biasing the final performance estimate. | Typical split: 60/20/20 or 70/15/15; crucial for avoiding data leakage. |

| Hyperband Algorithm [30] | HPO Method | A bandit-based resource allocation method that quickly discards poor configurations. | Implemented in KerasTuner (Hyperband class) and Optuna. |

| Resampling Strategy [32] | Validation Protocol | Estimating the generalization error of an inducer configured by an HPC. | e.g., k-fold Cross-Validation, hold-out validation. |

Procedure:

Data Preprocessing and Splitting: a. Load your molecular dataset (e.g., a CSV file containing molecular descriptors/fingerprints and a target property column). b. Perform necessary cleaning, handling of missing values, and feature scaling (e.g., standardization). c. Split the dataset into three parts: Training (70%), Validation (15%), and Hold-Out Test (15%) sets. The test set should be set aside and not used during the HPO process.

Define the Model-Building Function: a. Within the KerasTuner framework, define a function that builds and compiles a Keras model. This function takes an `hp` argument from which you can sample hyperparameters.

Instantiate the Hyperband Tuner: a. Create an instance of the `Hyperband` tuner, specifying the model-building function, the objective to optimize, and the maximum number of epochs to train for a single configuration.

Execute the HPO Search: a. Run the search, providing the training and validation data. The tuner will automatically manage the adaptive resource allocation and early stopping.

Retrieve the Optimal Hyperparameters: a. After the search completes, obtain the best hyperparameter configuration(s).

Train and Validate the Final Model: a. Use the best hyperparameters to build the final model. b. Train this model on the combined training and validation data. c. Evaluate its performance on the held-out test set to obtain an unbiased estimate of its generalization error.

Protocol Validation: Case Study Results

The effectiveness of this HPO protocol is demonstrated in a study comparing HPO algorithms for molecular property prediction. The results, summarized in Table 3, show that Hyperband provides an excellent balance of computational efficiency and predictive accuracy.

Table 3: Comparison of HPO Algorithm Performance in Molecular Property Prediction [30]

| HPO Algorithm | Prediction Accuracy (e.g., MSE) | Computational Efficiency (Time) | Key Findings / Recommendation |

|---|---|---|---|

| No HPO (Base Case) | Suboptimal / Higher MSE | N/A (Baseline) | Results in suboptimal values of predicted properties [30]. |

| Random Search | Good improvement over baseline | Moderate | Better than grid search, but can be inefficient. |

| Bayesian Optimization | Optimal or near-optimal | Lower than Hyperband | Sample-efficient but computationally intensive per trial. |

| Hyperband | Optimal or near-optimal | Highest | Most computationally efficient; recommended for MPP [30]. |

| BOHB (Bayesian & Hyperband) | Optimal or near-optimal | High | Combines strengths of both methods; a powerful alternative. |

Model Validation and Mitigating Overtuning

After completing the HPO process and training the final model, rigorous validation is essential. The hold-out test set, which has not been used in any way during model selection or HPO, provides the final performance metric.

To mitigate the risk of overtuning, where the selected hyperparameter configuration is overfitted to the validation data so that its validation score overstates true performance, researchers should:

- Use Nested Cross-Validation: For a more robust evaluation, especially in smaller datasets, a nested cross-validation protocol can be used, where an inner loop performs HPO and an outer loop provides an unbiased performance estimate [32].

- Limit the HPO Budget: Avoid an excessively large number of HPO trials, particularly on small datasets, as this increases the chance of overtuning [32].

- Validate on External Temporal or Spatial Data: If possible, validate the final model on a completely independent dataset collected at a different time or from a different source [33].
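The nested cross-validation idea from the list above can be sketched in plain Python. This is a minimal illustration under stated assumptions: the model is a one-parameter ridge regression through the origin, the data are synthetic, and the grid of regularization values `lam_grid` is hypothetical. The point is only the structure, in which the inner loop selects the hyperparameter and the outer loop scores the whole selection procedure on data the inner loop never saw.

```python
import random
from statistics import mean


def k_fold_splits(n, k, seed):
    """Return k (train_indices, test_indices) pairs that together cover range(n)."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    return [
        ([j for m, fold in enumerate(folds) if m != i for j in fold], folds[i])
        for i in range(k)
    ]


def fit_ridge_slope(xs, ys, lam):
    """One-parameter ridge regression through the origin."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def mse(slope, xs, ys):
    return mean((slope * x - y) ** 2 for x, y in zip(xs, ys))


def inner_cv_score(xs, ys, lam, k, seed):
    """Mean validation MSE of one hyperparameter value over k inner folds."""
    scores = []
    for train, val in k_fold_splits(len(xs), k, seed):
        slope = fit_ridge_slope([xs[i] for i in train], [ys[i] for i in train], lam)
        scores.append(mse(slope, [xs[i] for i in val], [ys[i] for i in val]))
    return mean(scores)


def nested_cv(xs, ys, lam_grid, outer_k=5, inner_k=3, seed=0):
    """Outer loop estimates generalization error; inner loop selects lam."""
    outer_scores = []
    for train, test in k_fold_splits(len(xs), outer_k, seed):
        x_tr, y_tr = [xs[i] for i in train], [ys[i] for i in train]
        best_lam = min(lam_grid, key=lambda lam: inner_cv_score(x_tr, y_tr, lam, inner_k, seed + 1))
        slope = fit_ridge_slope(x_tr, y_tr, best_lam)  # refit on the full outer-training fold
        outer_scores.append(mse(slope, [xs[i] for i in test], [ys[i] for i in test]))
    return outer_scores


rng = random.Random(1)
xs = [rng.uniform(0.0, 1.0) for _ in range(60)]
ys = [2.0 * x + rng.gauss(0.0, 0.1) for x in xs]  # synthetic data, for illustration only
scores = nested_cv(xs, ys, lam_grid=[0.0, 0.1, 1.0, 10.0])
print(f"outer-fold MSE: mean={mean(scores):.4f}")
```

Because each outer test fold is untouched by the inner search, the averaged outer score is not inflated by the tuning itself.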

A systematic framework for HPO is indispensable for building high-performing ML models in drug discovery and cheminformatics. This application note has outlined a comprehensive pathway from data preprocessing to final model validation, emphasizing the importance of using efficient HPO algorithms like Hyperband. By following the detailed protocols and being mindful of pitfalls such as overtuning, researchers and scientists can significantly enhance the accuracy and reliability of their molecular property predictions, thereby accelerating the drug discovery pipeline.

The integration of evolutionary and swarm intelligence with deep learning architectures is revolutionizing the development of machine learning models for pharmaceutical research. Hyperparameter optimization presents a significant bottleneck in deploying deep learning models like Stacked Autoencoders (SAE) for critical drug discovery tasks such as drug-target interaction prediction and molecular property classification. Traditional optimization methods, including grid search and manual tuning, are often slow, suboptimal, and require extensive expert knowledge. The Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO) algorithm addresses these limitations by providing an efficient, adaptive framework for simultaneously optimizing SAE architecture and training parameters. This protocol details the application of the HSAPSO-Optimized Stacked Autoencoder (optSAE + HSAPSO) framework, a novel approach that has demonstrated state-of-the-art performance of 95.52% accuracy in drug classification tasks while reducing computational time to just 0.010 seconds per sample [14] [34].

Performance Comparison of Optimization Methods for SAE in Drug Discovery

Table 1: Quantitative performance comparison of HSAPSO-optimized SAE versus other methods on drug discovery datasets

| Method | Reported Accuracy (%) | Computational Time (s/sample) | Stability (±) | Key Advantages |

|---|---|---|---|---|

| optSAE + HSAPSO [14] [34] | 95.52 | 0.010 | 0.003 | Fast convergence, high stability, superior accuracy |

| XGB-DrugPred [14] | 94.86 | N/R | N/R | Optimized DrugBank features |

| Bagging-SVM with GA [14] | 93.78 | N/R | N/R | Enhanced computational efficiency |

| DrugMiner (SVM/NN) [14] | 89.98 | N/R | N/R | Leverages 443 protein features |

| MPSO-SAE (Chaotic Time Series) [35] | N/R | N/R | N/R | Effective for high-dimensional data |

| SAAE with Cultural Algorithm [36] | 9.54% improvement over baseline | N/R | N/R | Prevents over-fitting/under-fitting |

N/R = Not Reported in the cited sources

Experimental Protocol: Implementing HSAPSO for SAE Optimization

Phase 1: Data Preparation and Preprocessing

Objective: Prepare pharmaceutical data for effective feature extraction by the Stacked Autoencoder.

Materials:

- DrugBank and Swiss-Prot datasets [14]

- Python preprocessing libraries (NumPy, Pandas, Scikit-learn)

- Computational environment: Standard workstation with 16GB RAM [37]

Procedure:

- Data Normalization: Apply min-max scaling to transform all features to the [0, 1] range using the formula:

$$v' = \frac{v - \min_A}{\max_A - \min_A}$$

where v is the original value, v' is the normalized value, and min_A and max_A are the minimum and maximum of feature A [37].

- Outlier Removal: Implement the Isolation Forest algorithm with a contamination parameter of 0.02 to identify and remove anomalous data points [38].

- Data Partitioning: Split the normalized dataset into training (80%) and testing (20%) sets using random sampling [38].

- Feature Dimension Analysis: Conduct principal component analysis (PCA) to estimate initial latent dimension requirements for SAE configuration.
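As a worked illustration of the normalization and partitioning steps, the following minimal sketch applies min-max scaling to a single feature column and draws an 80/20 random split. The feature values, the helper names, and the seed are hypothetical; outlier removal and PCA are omitted because they depend on external libraries.

```python
import random


def min_max_scale(column):
    """Scale one feature column to [0, 1]: v' = (v - min) / (max - min)."""
    lo, hi = min(column), max(column)
    if hi == lo:  # constant feature: avoid division by zero
        return [0.0 for _ in column]
    return [(v - lo) / (hi - lo) for v in column]


def train_test_indices(n_samples, test_fraction=0.2, seed=42):
    """Randomly partition sample indices into training and testing sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


# Hypothetical descriptor values for four compounds
feature = [100, 150, 200, 300]
scaled = min_max_scale(feature)
print(scaled)  # [0.0, 0.25, 0.5, 1.0]

train_idx, test_idx = train_test_indices(n_samples=10)
print(len(train_idx), len(test_idx))  # 8 2
```

A common variant computes the minimum and maximum on the training partition only and reuses them for the test partition, so that no information from the test set enters preprocessing.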

Phase 2: Stacked Autoencoder Architecture Configuration

Objective: Establish the initial SAE architecture for feature extraction and drug classification.

Materials:

- Deep learning framework (TensorFlow or PyTorch)

- Python 3.7+ environment

Procedure:

Base Architecture Setup:

- Configure input layer dimensions matching the preprocessed feature set

- Initialize encoder pathway with progressively decreasing layer dimensions (e.g., 512 → 256 → 128 → 64 neurons)

- Create symmetric decoder pathway for reconstruction

- Set output layer with softmax activation for classification tasks

Parameter Initialization:

- Initialize weights using He normal initialization

- Set biases to zero

- Configure ReLU activation functions for hidden layers [36]

Pretraining Setup:

- Implement layer-wise unsupervised pretraining

- Configure reconstruction loss (Mean Squared Error)

- Set initial learning rate to 0.001
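The architecture and initialization steps of this phase can be sketched independently of a deep learning framework. The example below is an illustration only: it derives the symmetric layer sizes from the example encoder (512 → 256 → 128 → 64) and draws He normal weights, which have zero mean and standard deviation sqrt(2 / fan_in). The input dimension of 1024 and the helper names are assumptions; in practice the framework's own initializers would be used.

```python
import math
import random


def sae_layer_sizes(input_dim, encoder_sizes=(512, 256, 128, 64)):
    """Layer widths for an encoder followed by a mirrored (symmetric) decoder."""
    encoder = [input_dim, *encoder_sizes]
    decoder = encoder[-2::-1]  # mirror of the encoder without repeating the bottleneck
    return encoder + decoder


def he_normal(fan_in, fan_out, rng):
    """Weight matrix (fan_in rows, fan_out columns) with std = sqrt(2 / fan_in)."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_out)] for _ in range(fan_in)]


sizes = sae_layer_sizes(input_dim=1024)  # 1024 is a hypothetical feature count
print(sizes)  # [1024, 512, 256, 128, 64, 128, 256, 512, 1024]

rng = random.Random(0)
weights = he_normal(fan_in=64, fan_out=128, rng=rng)
biases = [0.0] * 128  # biases start at zero, as in the protocol
```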

Phase 3: HSAPSO Hyperparameter Optimization

Objective: Optimize SAE hyperparameters using Hierarchically Self-Adaptive PSO.

Materials:

- High-performance computing cluster or GPU-enabled workstation

- Custom HSAPSO implementation [14]

Table 2: HSAPSO optimization parameters and search space

| Hyperparameter | Search Space | Optimal Value Range | Optimization Frequency |

|---|---|---|---|

| Learning Rate | [0.0001, 0.01] | 0.001-0.005 | Global level |

| Number of Hidden Layers | [3, 7] | 4-6 | Hierarchical level |

| Neurons per Layer | [64, 1024] | 128-512 | Hierarchical level |

| Batch Size | [32, 256] | 64-128 | Global level |

| Regularization Factor | [0.0001, 0.1] | 0.001-0.01 | Global level |

| Activation Function | {ReLU, Sigmoid, TanH} | ReLU | Hierarchical level |

Procedure:

HSAPSO Initialization:

- Set swarm size to 50 particles

- Configure hierarchical topology with 3 sub-swarms

- Initialize particle positions randomly within search space bounds

- Set initial velocity vectors to zero
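The initialization step can be sketched as follows. This is a minimal illustration, not the custom HSAPSO implementation cited in the protocol: the dictionary layout, the round-robin assignment of particles to sub-swarms, and the seed are assumptions. Only the numeric ranges from Table 2 are encoded; the categorical activation function would need a separate encoding, and integer-valued hyperparameters would be rounded before a model is built.

```python
import random

# Numeric search-space bounds taken from Table 2
SEARCH_SPACE = {
    "learning_rate": (1e-4, 1e-2),
    "num_hidden_layers": (3, 7),
    "neurons_per_layer": (64, 1024),
    "batch_size": (32, 256),
    "regularization": (1e-4, 1e-1),
}


def init_swarm(n_particles=50, n_subswarms=3, seed=0):
    """Random positions inside the bounds, zero velocities, round-robin sub-swarm labels."""
    rng = random.Random(seed)
    swarm = []
    for i in range(n_particles):
        position = {name: rng.uniform(lo, hi) for name, (lo, hi) in SEARCH_SPACE.items()}
        velocity = {name: 0.0 for name in SEARCH_SPACE}
        swarm.append({"position": position, "velocity": velocity, "subswarm": i % n_subswarms})
    return swarm


swarm = init_swarm()
print(len(swarm), swarm[0]["subswarm"], swarm[0]["velocity"]["learning_rate"])
```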

Fitness Evaluation:

- For each particle position (hyperparameter set):

- Configure SAE with proposed hyperparameters

- Train on 80% of training data for 50 epochs

- Validate on remaining 20% of training data

- Calculate fitness as (1 - validation accuracy) + 0.001 * training time, where lower values are better

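The fitness expression above is simple enough to work through numerically. In the sketch below, the unit of training time (seconds) and the two example configurations are assumptions made for illustration; the source does not state the unit.

```python
def fitness(validation_accuracy, training_time_s, time_weight=0.001):
    """Fitness to minimize: classification error plus a small training-time penalty."""
    return (1.0 - validation_accuracy) + time_weight * training_time_s


# Two hypothetical configurations: B is more accurate but three times slower.
fitness_a = fitness(validation_accuracy=0.95, training_time_s=20.0)  # about 0.05 + 0.02
fitness_b = fitness(validation_accuracy=0.96, training_time_s=60.0)  # about 0.04 + 0.06
print(f"A: {fitness_a:.3f}  B: {fitness_b:.3f}")
```

Under these assumed numbers, configuration A has the lower fitness (about 0.07 versus 0.10) despite its lower accuracy, which shows how the time penalty steers the swarm towards cheaper models when the accuracy gain is small.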

Hierarchical Optimization:

- Execute PSO with adaptive inertia weights within each sub-swarm

- Implement migration operator every 20 iterations for information exchange between sub-swarms

- Apply dynamic sub-swarm regrouping based on fitness similarity

Convergence Monitoring:

- Track global best fitness across all sub-swarms

- Terminate after 100 iterations or when fitness improvement < 0.001 for 10 consecutive iterations
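The optimization and convergence steps can be sketched with a generic sub-swarm PSO on a toy objective. This is not the HSAPSO algorithm of the cited work: the linearly decaying inertia weight is a simple stand-in for adaptive inertia, the migration rule (copying the overall best solution into the worst particle of each sub-swarm) is one possible choice, dynamic regrouping is omitted, and the acceleration coefficients of 1.5 are assumed. The iteration limit, migration interval, and stopping rule follow the values listed above.

```python
import random


def sphere(x):
    """Toy objective with minimum 0 at the origin (stands in for the SAE fitness)."""
    return sum(v * v for v in x)


def subswarm_pso(objective, dim=2, bounds=(-5.0, 5.0), n_particles=30, n_subswarms=3,
                 max_iters=100, migrate_every=20, tol=1e-3, patience=10, seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_f = [objective(p) for p in pos]
    groups = [[i for i in range(n_particles) if i % n_subswarms == g]
              for g in range(n_subswarms)]
    history = []  # global best fitness after each iteration
    global_best, stall = min(pbest_f), 0
    for iteration in range(1, max_iters + 1):
        inertia = 0.9 - 0.5 * iteration / max_iters  # decays linearly towards 0.4
        for members in groups:
            # Each sub-swarm follows its own best particle
            leader = pbest[min(members, key=lambda i: pbest_f[i])][:]
            for i in members:
                for d in range(dim):
                    r1, r2 = rng.random(), rng.random()
                    vel[i][d] = (inertia * vel[i][d]
                                 + 1.5 * r1 * (pbest[i][d] - pos[i][d])
                                 + 1.5 * r2 * (leader[d] - pos[i][d]))
                    pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
                value = objective(pos[i])
                if value < pbest_f[i]:
                    pbest[i], pbest_f[i] = pos[i][:], value
        if iteration % migrate_every == 0:
            # Migration: share the overall best solution with every sub-swarm
            champion = min(range(n_particles), key=lambda i: pbest_f[i])
            for members in groups:
                worst = max(members, key=lambda i: pbest_f[i])
                pbest[worst], pbest_f[worst] = pbest[champion][:], pbest_f[champion]
        new_best = min(pbest_f)
        stall = stall + 1 if global_best - new_best < tol else 0
        global_best = new_best
        history.append(global_best)
        if stall >= patience:  # improvement below tol for `patience` consecutive iterations
            break
    best_index = min(range(n_particles), key=lambda i: pbest_f[i])
    return pbest[best_index], pbest_f[best_index], history


best_position, best_fitness, history = subswarm_pso(sphere)
print(f"stopped after {len(history)} iterations, best fitness {best_fitness:.6f}")
```

In an actual run, `sphere` would be replaced by a function that trains the SAE with the decoded hyperparameters and returns the fitness defined in the previous step.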

Phase 4: Model Validation and Interpretation

Objective: Validate optimized model performance and extract biological insights.

Materials:

- Held-out test dataset (20% of original data)

- Model interpretation libraries (SHAP, LIME)

Procedure:

Performance Assessment:

- Load HSAPSO-optimized SAE model with best hyperparameters

- Evaluate on completely held-out test set

- Calculate accuracy, precision, recall, F1-score, and AUC-ROC

Robustness Analysis:

- Execute 5-fold cross-validation with different random seeds

- Calculate performance variance across folds

- Compare training vs. test performance to detect overfitting

Biological Interpretation:

- Extract feature importance scores from encoder layers

- Identify molecular descriptors most influential in classification

- Map significant features to known biological pathways
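The performance and robustness calculations in this phase reduce to a few lines of arithmetic. The sketch below computes accuracy, precision, recall, and F1-score from binary predictions and summarizes the spread of scores across folds. The labels, predictions, and fold scores are placeholder values for illustration, not results from the cited studies; AUC-ROC is omitted because it requires predicted scores rather than hard labels.

```python
from statistics import mean, stdev


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}


def fold_summary(fold_scores):
    """Mean and sample standard deviation of a metric across cross-validation folds."""
    return mean(fold_scores), stdev(fold_scores)


# Placeholder labels and predictions: 3 TP, 1 FN, 1 FP, 5 TN
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)  # accuracy 0.8; precision, recall, and f1 all 0.75

# Placeholder fold accuracies, not results from the source
fold_mean, fold_std = fold_summary([0.90, 0.92, 0.94])
print(f"{fold_mean:.2f} +/- {fold_std:.2f}")
```

A large standard deviation across folds, or a training score well above the test score, is the signal the robustness analysis is looking for.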


Table 3: Key research reagents and computational resources for implementing HSAPSO-optimized SAE

| Resource | Type/Example | Function in Protocol | Implementation Notes |

|---|---|---|---|

| Pharmaceutical Datasets | DrugBank, Swiss-Prot [14] | Model training and validation | Curated datasets with drug-target annotations |

| Deep Learning Framework | TensorFlow, PyTorch | SAE implementation | GPU acceleration recommended |

| Optimization Library | Custom HSAPSO [14] | Hyperparameter optimization | Requires parallel processing capability |

| Data Preprocessing Tools | Scikit-learn, Pandas | Data normalization and cleaning | Includes Isolation Forest for outlier detection |

| Validation Metrics | Accuracy, AUC-ROC, F1-score | Performance assessment | Critical for model comparison |

| High-Performance Computing | GPU cluster (NVIDIA Tesla) | Accelerate training | Reduces optimization time from days to hours |

| Model Interpretation | SHAP, LIME [17] | Biological insight extraction | Links model decisions to domain knowledge |

Troubleshooting and Technical Notes

Common Implementation Challenges

Premature Convergence: If HSAPSO converges too quickly to suboptimal solutions:

- Increase swarm size from 50 to 100 particles

- Increase the mutation rate in the hierarchical sub-swarms

- Implement chaotic mapping for particle initialization as demonstrated in MPSO variants [35]

Overfitting: If validation performance lags training performance:

- Increase regularization factor through HSAPSO search space

- Implement early stopping with patience of 10 epochs

- Add dropout layers to SAE architecture
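The early-stopping rule with a patience of 10 epochs can be expressed as a small framework-independent function. This is a minimal sketch: the function name, the `min_delta` parameter, and the validation-loss curve are hypothetical, and deep learning frameworks provide their own equivalents.

```python
def early_stopping_epoch(val_losses, patience=10, min_delta=0.0):
    """Return (epoch at which training stops, epoch with the best validation loss)."""
    best_loss, best_epoch, wait = float("inf"), 0, 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    return len(val_losses), best_epoch


# Hypothetical curve: improves for 5 epochs, then plateaus at a worse value
val_losses = [1.0, 0.8, 0.6, 0.5, 0.45] + [0.5] * 20
stop_epoch, best_epoch = early_stopping_epoch(val_losses)
print(stop_epoch, best_epoch)  # 15 5
```

Training halts 10 epochs after the last improvement, and the weights from the best epoch (epoch 5 here) would normally be restored.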

Computational Bottlenecks: For datasets exceeding 50,000 samples:

Adaptation to Specific Drug Discovery Applications

The optSAE + HSAPSO framework can be adapted to various pharmaceutical applications:

- Drug-Target Interaction Prediction: Modify output layer for binary classification and incorporate protein sequence descriptors [14]

- Molecular Property Optimization: Implement regression output layer for quantitative property prediction (e.g., solubility, toxicity) [17]

- Binding Affinity Prediction: Incorporate 3D structural information through graph-based representations [17]

The HSAPSO-optimized Stacked Autoencoder represents a significant advancement in hyperparameter optimization for drug discovery machine learning models. By integrating the adaptive exploration-exploitation balance of hierarchical particle swarm optimization with the powerful feature extraction capabilities of deep stacked autoencoders, this protocol enables researchers to achieve state-of-the-art performance in pharmaceutical classification tasks. The method's demonstrated efficiency (0.010 s/sample) and high accuracy (95.52%) on benchmark datasets position it as a valuable tool for accelerating early-stage drug discovery while reducing computational overhead.

Bayesian Optimization for Efficient Search in High-Dimensional Spaces

The application of machine learning (ML) in drug discovery has revolutionized the process of candidate screening and optimization. However, the performance of these ML models is highly sensitive to their architectural choices and hyperparameters [10]. Navigating these high-dimensional hyperparameter spaces to find optimal configurations is a complex, computationally expensive challenge. Bayesian Optimization (BO) has emerged as a powerful strategy for the efficient global optimization of such expensive black-box functions, demonstrating particular value in drug discovery pipelines by requiring an order of magnitude fewer experiments than traditional methods [39] [40]. This Application Note details the theoretical underpinnings, practical protocols, and key applications of BO for hyperparameter optimization of ML models in high-dimensional drug discovery contexts.