Hyperparameter Optimization in Molecular Property Prediction: A Complete Guide for Drug Discovery

Abstract

This article provides a comprehensive guide to hyperparameters in molecular property prediction, a critical factor for developing accurate AI models in drug discovery and materials science. It establishes a foundational understanding of what hyperparameters are and why they are vital for model performance. The guide then explores practical methodologies and algorithms for hyperparameter optimization (HPO), detailing their application with popular deep learning architectures like Message Passing Neural Networks (MPNNs) and Graph Neural Networks (GNNs). Furthermore, it addresses common challenges and solutions for optimizing models in low-data regimes and for complex multi-task problems. Finally, the article covers rigorous validation techniques and presents comparative analyses of different HPO methods, offering actionable insights for researchers and professionals aiming to build reliable and chemically accurate predictive models.

What Are Hyperparameters? The Foundation of Accurate Molecular AI Models

In the domain of molecular property prediction, a field critical to accelerating drug discovery and materials science, building robust machine learning models hinges on two distinct kinds of variables: model parameters, which are learned, and model hyperparameters, which are configured. Understanding their difference is not merely an academic exercise; it is a foundational principle that separates a poorly performing model from an accurate predictor of molecular behavior. Model parameters are the internal variables that the model learns autonomously from the training data, such as the weights and biases in a neural network [1]. In contrast, model hyperparameters are external configuration variables whose values are set before training begins. They govern the architecture of the model itself and the dynamics of the learning algorithm [2] [1]. In molecular property prediction, where data is often scarce and the cost of error is high, rigorous hyperparameter optimization has been identified as a crucial step for developing accurate and efficient deep learning models [2]. This guide examines this distinction in depth, framing it within the practical challenges of cheminformatics research.

Definitions and Core Concepts

Model Parameters: The Learned Knowledge

Model parameters are the internal variables of a model that are learned directly and automatically from the provided training data. They are essentially the "knowledge" that the model extracts from the dataset, and they are used to make predictions on new, unseen data.

- Nature: Learned and adapted during the training process.

- Examples: Weight coefficients and bias terms in linear regression, neural networks, or support vector machines; split points in a decision tree.

- In Molecular Property Prediction: In a Graph Neural Network (GNN) trained to predict toxicity, the parameters are the weights applied to atom and bond features as messages are passed through the molecular graph. These weights are iteratively updated to minimize prediction error.

Model Hyperparameters: The Architectural Blueprint

Model hyperparameters are configuration variables that are set before the learning process begins. They are not learned from the data but act as the "architect's blueprint," controlling the structure of the model and the behavior of the learning algorithm itself.

- Nature: Set by the researcher prior to training.

- Examples: Number of layers in a deep neural network, number of hidden units per layer, learning rate, choice of activation function, dropout rate, and batch size [2].

- In Molecular Property Prediction: For a GNN, critical hyperparameters include the number of message-passing steps (which controls how far information travels across the molecular graph) and the architecture of the task-specific prediction heads [3] [4].

Table 1: Comparative Analysis of Parameters and Hyperparameters

| Feature | Model Parameters | Model Hyperparameters |
|---|---|---|
| Purpose | Define the learned mapping from input features to output prediction. | Control the model's structure and the learning process. |
| Determination | Automatically learned and optimized from training data. | Set by the researcher, either heuristically or via an optimization algorithm. |
| Dependency | Dependent on the specific training dataset used. | Not learned from the data, although their optimal values depend on the dataset and task. |
| Examples | Weights, biases, split points. | Learning rate, number of layers, number of estimators, activation function. |
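To make the distinction concrete, here is a minimal sketch, assuming a toy one-variable linear model rather than a molecular model. The learning rate and number of epochs are fixed before training (hyperparameters), while the weight `w` and bias `b` are learned by gradient descent (parameters). The function name `train_linear_model` and the data are illustrative assumptions.

```python
def train_linear_model(xs, ys, learning_rate, epochs):
    """Fit y = w * x + b by full-batch gradient descent on mean squared error.

    learning_rate and epochs are hyperparameters: fixed before training starts.
    w and b are parameters: their values are learned from the data.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Toy data generated from y = 3x + 1 (a stand-in for a descriptor-property relationship)
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [3 * x + 1 for x in xs]

hyperparameters = {"learning_rate": 0.1, "epochs": 500}  # chosen by the researcher
w, b = train_linear_model(xs, ys, **hyperparameters)    # learned by the algorithm
print(f"learned parameters: w={w:.3f}, b={b:.3f}")
```

Changing a hyperparameter changes what is learned: on this data, the same loop with `learning_rate=0.5` diverges instead of converging.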

The Critical Role of Hyperparameters in Molecular Property Prediction

The performance of models in molecular property prediction is highly sensitive to their architectural choices and hyperparameters, making optimal configuration a non-trivial task [5]. The application of Hyperparameter Optimization (HPO) is therefore not a luxury but a necessity for achieving state-of-the-art performance.

Research has demonstrated that conducting HPO can lead to significant improvements in prediction accuracy. A comparative study on deep neural networks for molecular property prediction confirmed that models with HPO achieved markedly lower prediction errors than those without, validating that overlooking this step results in suboptimal models [2]. The challenge is pronounced in Graph Neural Networks (GNNs), where hyperparameters can be categorized into those belonging to graph-related layers and those of task-specific layers. Studies show that while optimizing these separately yields gains, optimizing both types simultaneously leads to the largest improvements in model performance [4].

Quantitative Insights and Methodologies

Key HPO Algorithms and Performance

Several HPO algorithms are employed to navigate the complex search space of hyperparameters. A comparative study of these methods for deep neural networks in molecular property prediction provides clear guidance on their efficacy.

Table 2: Comparison of Hyperparameter Optimization Algorithms [2]

| HPO Algorithm | Key Principle | Computational Efficiency | Prediction Accuracy | Recommended Use Case |
|---|---|---|---|---|
| Grid Search | Exhaustive search over a predefined set of values. | Low | High, if the space is well defined | Small, well-understood hyperparameter spaces. |
| Random Search | Random sampling from predefined distributions. | Medium | Often better than grid search | Good baseline method for moderate-sized spaces. |
| Bayesian Optimization | Builds a probabilistic model to direct the search. | High | High | Effective for expensive-to-evaluate objectives. |
| Hyperband | Uses adaptive resource allocation and early stopping. | Very High | Optimal or nearly optimal | Recommended for most molecular property prediction tasks for its efficiency. |
| BOHB (Bayesian + Hyperband) | Combines Bayesian optimization with Hyperband. | High | Optimal | When both robustness and top accuracy are critical. |

The Hyperband algorithm, in particular, has been highlighted as the most computationally efficient method, delivering optimal or nearly optimal prediction accuracy, and is recommended for molecular property prediction tasks [2].

Experimental Protocol for Hyperparameter Optimization

For researchers aiming to implement HPO, a detailed, step-by-step methodology is essential. The following protocol, adapted from current literature, outlines a robust process using modern tools.

Define the Model Architecture and Hyperparameter Search Space:

- Specify the type of model (e.g., Dense DNN, GNN, CNN) and define the hyperparameters to be tuned. The search space should be broad but realistic.

- Example Search Space for a GNN [4]:

  - Graph-related layers: Number of message-passing layers, aggregation function (sum, mean, max), hidden layer dimension, dropout rate.

  - Task-specific layers: Size of the fully connected layers, learning rate, batch size.
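A search space like this can be written down as plain data and sampled from. The sketch below assumes a hypothetical dictionary format of its own (it is not KerasTuner's or Optuna's API), and the ranges are illustrative rather than values taken from [4].

```python
import math
import random

# Hypothetical search space mirroring the GNN example above (illustrative ranges)
SEARCH_SPACE = {
    # Graph-related layers
    "num_message_passing_layers": ("int", 2, 8),
    "aggregation": ("choice", ["sum", "mean", "max"]),
    "hidden_dim": ("choice", [64, 128, 256, 512]),
    "dropout": ("float", 0.0, 0.5),
    # Task-specific layers
    "fc_size": ("choice", [32, 64, 128, 256]),
    "learning_rate": ("log_float", 1e-5, 1e-2),
    "batch_size": ("choice", [16, 32, 64, 128]),
}

def sample_config(space, rng):
    """Draw one hyperparameter configuration from the search space."""
    config = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "int":
            config[name] = rng.randint(spec[1], spec[2])  # inclusive bounds
        elif kind == "float":
            config[name] = rng.uniform(spec[1], spec[2])
        elif kind == "log_float":
            # Uniform in log space, so every decade is equally likely
            config[name] = math.exp(rng.uniform(math.log(spec[1]), math.log(spec[2])))
        elif kind == "choice":
            config[name] = rng.choice(spec[1])
        else:
            raise ValueError(f"unknown spec kind: {kind}")
    return config

rng = random.Random(0)
print(sample_config(SEARCH_SPACE, rng))
```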

Select an HPO Algorithm and Software Platform:

- Choose an algorithm from Table 2 (e.g., Hyperband). For efficiency, use a software platform that allows parallel execution of multiple hyperparameter trials.

- Recommended Platforms: KerasTuner (user-friendly, intuitive for chemical engineers) or Optuna (highly configurable) [2].

Implement the HPO Process:

- The chosen software will automatically manage the iterative process of training multiple model instances with different hyperparameter combinations, evaluating them on a validation set, and seeking the best configuration.

Evaluate and Validate:

- Once the HPO process is complete, retrain a model with the best-found hyperparameters on the combined training and validation data.

- The final model's performance is then assessed on a held-out test set to provide an unbiased estimate of its generalization ability.
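The protocol depends on keeping the three data splits strictly apart. Below is a minimal sketch, assuming a one-dimensional ridge regression as a cheap stand-in for a GNN and the L2 strength as the only hyperparameter; the data, names, and candidate values are all hypothetical.

```python
import random

def fit_ridge_1d(data, l2):
    """Closed-form ridge regression for y = w * x (no intercept); l2 is the penalty strength."""
    sxx = sum(x * x for x, _ in data)
    sxy = sum(x * y for x, y in data)
    return sxy / (sxx + l2)

def mse(data, w):
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

# Synthetic stand-in for a molecular dataset: y = 2x + Gaussian noise
rng = random.Random(42)
data = [(x, 2 * x + rng.gauss(0, 0.5)) for x in (rng.uniform(-1, 1) for _ in range(200))]
rng.shuffle(data)
train, val, test = data[:120], data[120:160], data[160:]

# HPO loop: every trial is trained on `train` and scored on `val` only
candidates = [0.0, 0.1, 1.0, 10.0, 100.0]
best_l2 = min(candidates, key=lambda l2: mse(val, fit_ridge_1d(train, l2)))

# Evaluate and Validate: retrain with the best hyperparameter on train + val,
# then use the held-out test set exactly once
final_w = fit_ridge_1d(train + val, best_l2)
print(f"best l2={best_l2}, test MSE={mse(test, final_w):.3f}")
```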

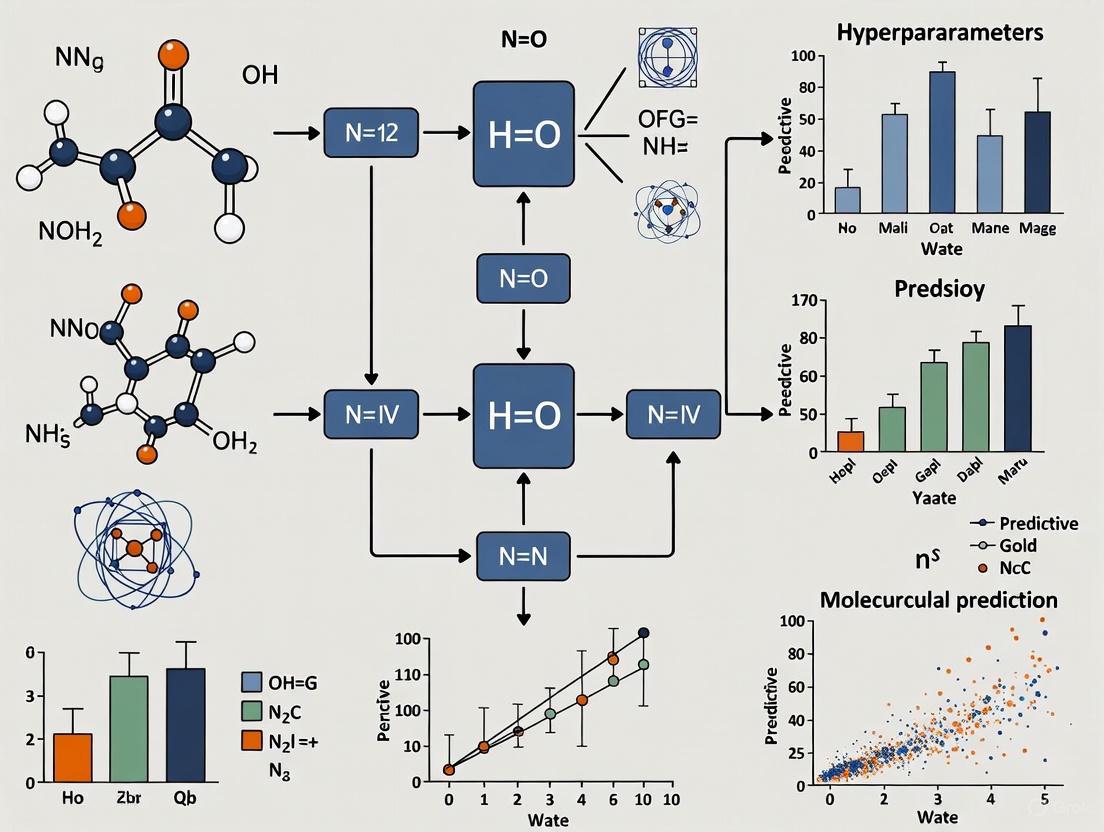

Visualizing the Hierarchy and Optimization Workflow

The relationship between hyperparameters, model parameters, and the final output can be conceptualized as a hierarchical process. Diagram 1 illustrates this workflow and the role of HPO in the context of molecular property prediction.

Diagram 1: The Molecular Property Prediction Modeling Hierarchy. Hyperparameters (red) define the blueprint, guiding the training process to learn model parameters (red), resulting in an optimized model (green).

The Scientist's Toolkit: Essential Research Reagents & Materials

Beyond algorithmic choices, successful molecular property prediction relies on a suite of computational "reagents" and benchmarks.

Table 3: Essential Research Tools for Molecular Property Prediction

| Tool / Resource | Type | Function in Research |
|---|---|---|
| MoleculeNet | Benchmark Dataset Suite | A standardized collection of datasets for fair evaluation and benchmarking of ML models on molecular properties [6]. |
| Graph Neural Network (GNN) | Model Architecture | A neural network class that operates directly on molecular graph structures, mirroring the underlying chemistry [5] [3]. |
| KerasTuner / Optuna | HPO Software Platform | User-friendly Python libraries that automate the hyperparameter search process, enabling parallel trials and efficient optimization [2]. |
| RDKit | Cheminformatics Toolkit | An open-source toolkit for calculating molecular descriptors (e.g., 2D descriptors, ECFP fingerprints) and handling chemical data [6]. |
| Hyperband | HPO Algorithm | An optimization algorithm that uses adaptive resource allocation and early stopping to identify high-performing hyperparameters quickly [2]. |

The clear distinction between hyperparameters and model parameters forms the bedrock of effective machine learning in molecular property prediction. Hyperparameters act as the architect's blueprint, defining the model's potential, while parameters are the knowledge it acquires. As the field advances with techniques like Automated Machine Learning (AutoML) [7], the necessity for a deep understanding of these concepts only intensifies. By systematically applying robust Hyperparameter Optimization protocols and leveraging modern tools, researchers can transform this theoretical blueprint into predictive models that reliably accelerate the discovery of new drugs and materials.

In molecular property prediction, hyperparameters are not merely technical settings but pivotal factors that determine the success of machine learning models in accelerating drug discovery and materials design. These predefined configurations govern how models learn from inherently complex chemical data, directly impacting their ability to predict critical properties such as binding affinity, solubility, and toxicity with the accuracy required for scientific application [5] [2]. The performance of Graph Neural Networks (GNNs)—which have emerged as a premier architecture for modeling molecular structures—is exceptionally sensitive to these hyperparameter choices, making their systematic optimization a fundamental research activity rather than an afterthought [4] [8].

The process of hyperparameter optimization (HPO) presents unique challenges in computational chemistry. Experimental data on molecular properties is often scarce, with high-quality labeled datasets sometimes containing only thousands of samples, in stark contrast to the millions of images available in computer vision benchmarks [8]. This data scarcity, combined with the high computational cost of training complex deep learning models, necessitates efficient and deliberate HPO strategies to build models that are both accurate and resource-efficient [2]. This guide provides a comprehensive technical framework for understanding and optimizing hyperparameters specifically within the context of molecular property prediction.

Core Hyperparameter Categories

Hyperparameters can be functionally divided into three primary categories that collectively control a model's structure, learning dynamics, and generalization behavior. This taxonomy is particularly useful for methodically organizing the optimization process for graph neural networks and other deep learning architectures used in cheminformatics.

Model Architecture Hyperparameters

Architecture hyperparameters define the structural blueprint of a machine learning model. They determine its capacity to represent complex functions and capture intricate patterns in molecular data [9] [10].

For Graph Neural Networks, which operate directly on molecular graph structures, these hyperparameters control how information is propagated and aggregated between atoms and bonds [8]. Their configuration directly influences whether a model can effectively learn relevant chemical patterns, such as functional group interactions and spatial relationships.

Table: Key Architecture Hyperparameters for GNNs and DNNs in Molecular Property Prediction

| Hyperparameter | Description | Impact on Model Performance | Typical Values/Range |
|---|---|---|---|
| Number of GNN Layers | Depth of the graph network; determines how many bonds away (hops) each atom gathers information from. | Too few layers limit the receptive field; too many can lead to over-smoothing, where all node representations become similar [8]. | 2-8 layers |
| Hidden Layer Dimension | Size of the feature vector for each atom/node after each graph convolution. | Larger dimensions capture more features but increase computational cost and the risk of overfitting, especially with small datasets [10]. | 64-512 dimensions |
| Graph Readout Function | Operation (e.g., sum, mean, max) that combines node embeddings into a single graph-level representation. | Affects the discriminative power of the molecule-level representation; sum often performs well for molecular properties [8]. | Sum, Mean, Max |
| Number of Hidden Layers (in task-specific heads) | Depth of the fully connected network following graph feature extraction. | Deeper networks can model complex property relationships but may overfit on small molecular datasets [2]. | 1-3 layers |
| Units per Layer (in task-specific heads) | Width of the fully connected layers in the prediction head. | Similar to hidden layer dimension; balances model expressiveness with parameter efficiency [10]. | 32-256 units |
| Activation Function | Non-linear function (e.g., ReLU, Tanh) applied after layers. | ReLU and its variants are common; the choice can affect learning dynamics and gradient flow [10]. | ReLU, Leaky ReLU |
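Two of these hyperparameters, the number of layers and the readout function, can be explored on a toy graph. This is a minimal sketch, assuming parameter-free mean aggregation (real GNN layers also apply learned weights and nonlinearities) and one hypothetical scalar feature per heavy atom of 1-propanol. Each layer extends an atom's receptive field by one bond, and stacking many mean-aggregation layers drives all atom features toward the same value, a simple picture of over-smoothing.

```python
# Toy molecular graph: heavy atoms of 1-propanol, C0-C1-C2-O3 (hydrogens omitted)
ADJACENCY = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
# One scalar feature per atom (a hypothetical stand-in for a learned embedding)
features = {0: 1.0, 1: 1.0, 2: 1.0, 3: 5.0}

def mean(values):
    return sum(values) / len(values)

def message_passing_layer(adj, h, aggregate):
    """One parameter-free step: combine each atom's feature with its neighbours'."""
    return {v: aggregate([h[v]] + [h[u] for u in adj[v]]) for v in adj}

def receptive_field(adj, atom, num_layers):
    """Atoms whose features can reach `atom` after num_layers steps (its k-hop neighbourhood)."""
    seen = {atom}
    for _ in range(num_layers):
        seen |= {u for v in seen for u in adj[v]}
    return seen

def readout(h, how):
    """Graph-level readout over node features."""
    values = list(h.values())
    return {"sum": sum(values), "mean": mean(values), "max": max(values)}[how]

print(receptive_field(ADJACENCY, 0, 2))  # → {0, 1, 2}

# Over-smoothing: repeated mean aggregation makes atom features nearly identical
h = dict(features)
for _ in range(30):
    h = message_passing_layer(ADJACENCY, h, mean)
spread = max(h.values()) - min(h.values())
print(f"feature spread after 30 layers: {spread:.6f}")
```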

Optimization Hyperparameters

Optimization hyperparameters govern the training process itself, controlling how the model learns from data by adjusting internal parameters to minimize prediction error [9] [10]. These settings are crucial for achieving stable convergence to a good solution in a reasonable time frame, which is particularly important given the computational expense of training on molecular datasets.

Table: Optimization Hyperparameters for Training Deep Learning Models in Cheminformatics

| Hyperparameter | Description | Impact on Training & Performance | Recommended Tuning Approach |
|---|---|---|---|
| Learning Rate | Step size for updating model parameters during optimization. | Too high causes divergence; too low leads to slow training or convergence to poor local minima [10]. | Log-uniform sampling (e.g., 1e-5 to 1e-2) [11] |
| Batch Size | Number of samples (molecules) processed before a model update. | Affects training stability and speed; smaller batches provide noisy gradients that can help escape local minima [10]. | Powers of 2 (e.g., 16, 32, 64, 128) [11] |
| Number of Epochs | Number of complete passes through the training dataset. | Too few result in underfitting; too many lead to overfitting [10]. | Use early stopping based on validation performance |
| Optimizer Algorithm | Optimization method (e.g., Adam, SGD) used to update weights. | Adam is commonly used; different optimizers have different convergence properties and sensitivity to learning rates [2]. | Adam, SGD with Momentum |
| Learning Rate Schedule | Strategy to adjust the learning rate during training (e.g., exponential decay). | Helps refine learning in later stages; warm-up can stabilize early training [10]. | Cosine decay, Exponential decay |
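Two entries in this table, log-uniform sampling of the learning rate and a cosine decay schedule with warm-up, are short enough to write out. This is a sketch under stated assumptions: the function names are hypothetical, and the warm-up plus cosine formula below is one common variant; exact formulas differ between libraries.

```python
import math
import random

def sample_log_uniform(rng, low, high):
    """Sample so that every decade between low and high is equally likely."""
    return 10 ** rng.uniform(math.log10(low), math.log10(high))

def cosine_decay_with_warmup(step, total_steps, base_lr, warmup_steps=0, min_lr=0.0):
    """Learning rate at `step`: linear warm-up, then cosine decay toward min_lr."""
    if warmup_steps > 0 and step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + math.cos(math.pi * progress))

rng = random.Random(0)
lrs = [sample_log_uniform(rng, 1e-5, 1e-2) for _ in range(1000)]
# Roughly a third of the samples should land in each decade
counts = (
    sum(lr < 1e-4 for lr in lrs),
    sum(1e-4 <= lr < 1e-3 for lr in lrs),
    sum(lr >= 1e-3 for lr in lrs),
)
print(counts)

schedule = [cosine_decay_with_warmup(s, 100, base_lr=1e-3, warmup_steps=10) for s in range(100)]
print(f"start={schedule[0]:.1e}, peak={schedule[10]:.1e}, end={schedule[-1]:.1e}")
```

Sampling uniformly on a linear scale between 1e-5 and 1e-2 would instead put about 90% of trials above 1e-3, leaving small learning rates almost untested.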

Regularization Hyperparameters

Regularization hyperparameters are designed to prevent overfitting, a significant risk when training complex models on limited molecular data [9]. These techniques constrain the learning process to produce models that generalize better to unseen molecules, which is the ultimate goal in predictive cheminformatics.

Table: Regularization Hyperparameters for Improving Model Generalization

| Hyperparameter | Description | Mechanism of Action | Typical Values/Range |
|---|---|---|---|
| Dropout Rate | Fraction of randomly selected neurons that are ignored during a training step. | Prevents complex co-adaptations of neurons, forcing the network to learn robust features [10]. | 0.1-0.5 |
| L2 Regularization Strength | Weight penalty added to the loss function to discourage large parameter values. | Shrinks weight parameters toward zero, effectively reducing model complexity [10]. | 1e-5 to 1e-2 |
| Early Stopping Patience | Number of epochs to wait without validation improvement before stopping training. | Halts training when validation performance plateaus, preventing overfitting to training data [11]. | 10-50 epochs |
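Early stopping with a patience setting reduces to a small counter. This is a minimal sketch, assuming the per-epoch validation losses are already available (in a real run each one would come from evaluating the model after an epoch). The function name is hypothetical, and `patience=3` keeps the example short, whereas the table suggests 10-50 epochs for real training.

```python
def train_with_early_stopping(val_losses, patience):
    """Replay per-epoch validation losses and apply patience-based early stopping.

    Returns (best_epoch, stopped_epoch).
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0  # in practice, checkpoint the weights here
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

# Validation loss falls, then rises as the model starts to overfit
losses = [1.00, 0.80, 0.65, 0.58, 0.55, 0.56, 0.57, 0.59, 0.62, 0.66, 0.70]
print(train_with_early_stopping(losses, patience=3))  # → (4, 7)
```

The model restored for evaluation is the one checkpointed at `best_epoch`, not the one from the epoch at which training stopped.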

Diagram: Integrated Hyperparameter Optimization Workflow for GNNs. This diagram illustrates the systematic process of tuning architecture, optimization, and regularization hyperparameters in Graph Neural Networks for molecular property prediction, culminating in the selection of an optimal configuration through an efficient optimization algorithm like Hyperband.

Experimental Protocols for Hyperparameter Optimization

Selecting appropriate methodologies for hyperparameter optimization is essential for balancing computational efficiency with resulting model performance. The following protocols detail established and emerging techniques specifically valuable in the context of molecular property prediction.

Established HPO Algorithms and Their Application

Grid Search: This exhaustive strategy involves specifying a finite set of values for each hyperparameter and evaluating every possible combination [12]. While guaranteed to find the best combination within the predefined set, grid search becomes computationally prohibitive for tuning more than 2-3 hyperparameters simultaneously, making it poorly suited for comprehensive GNN tuning where the search space is high-dimensional [2].

Random Search: Instead of exhaustive enumeration, random search samples hyperparameter combinations randomly from predefined distributions over the search space [12]. This approach often finds high-performing configurations more efficiently than grid search. When only a few hyperparameters strongly affect performance, a grid spends most of its budget repeating the same few values of those important hyperparameters while varying unimportant ones, whereas every random trial tests a new value of each hyperparameter [10].
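The budget argument can be checked with a small experiment. This is a minimal sketch, assuming a hypothetical validation loss that depends strongly on the learning rate and hardly at all on dropout. With the same nine trials, a 3 x 3 grid tests only three learning rates, while random search tests nine. Random search is not guaranteed to win on any particular seed; its advantage holds in expectation.

```python
import itertools
import random

def objective(log_lr, dropout):
    """Hypothetical validation loss: sensitive to log10(learning rate), nearly flat in dropout."""
    return (log_lr + 3.3) ** 2 + 0.01 * dropout

budget = 9

# Grid search: a 3 x 3 grid tries only 3 distinct learning-rate values
grid = list(itertools.product([-5.0, -3.5, -2.0], [0.1, 0.3, 0.5]))

# Random search: 9 independent draws try 9 distinct learning-rate values
rng = random.Random(1)
rand = [(rng.uniform(-5.0, -2.0), rng.uniform(0.1, 0.5)) for _ in range(budget)]

for name, trials in [("grid", grid), ("random", rand)]:
    best = min(trials, key=lambda t: objective(*t))
    distinct_lr = len({t[0] for t in trials})
    print(f"{name}: {distinct_lr} distinct learning rates, best loss {objective(*best):.4f}")
```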

Bayesian Optimization: This sequential model-based optimization technique builds a probabilistic surrogate model (e.g., a Gaussian process or a Tree-structured Parzen Estimator) of how model performance depends on the hyperparameters, including how uncertain that estimate is in each region of the search space [12] [10]. An acquisition function then uses the surrogate to balance exploration (trying hyperparameters in uncertain regions) and exploitation (focusing on regions likely to yield improvement). For resource-intensive GNN training, Bayesian optimization can significantly reduce the number of trials needed to find optimal configurations by leveraging information from previous evaluations [2].
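Bayesian optimization is easiest to see in one dimension. The sketch below is a simplified TPE-style loop, not Optuna's or Hyperopt's implementation. It splits past trials into a "good" and a "bad" group, models each with a Parzen (kernel) density, and picks the candidate with the highest good-to-bad density ratio, which is TPE's acquisition criterion. Sampling around good points is the exploitation part; the kernel bandwidth and the bad-group density keep some exploration. The objective and all settings are hypothetical.

```python
import math
import random

def objective(log_lr):
    """Hypothetical expensive validation loss as a function of log10(learning rate)."""
    return (log_lr + 3.3) ** 2

def parzen_density(x, centers, bandwidth):
    """Parzen estimator: an equal-weight mixture of Gaussians centred on observed points."""
    norm = 1.0 / (bandwidth * math.sqrt(2 * math.pi))
    return sum(norm * math.exp(-0.5 * ((x - c) / bandwidth) ** 2) for c in centers) / len(centers)

def tpe_minimize(obj, low, high, n_trials=30, n_startup=8, gamma=0.25, n_candidates=24, seed=0):
    """Simplified TPE-style loop over one continuous hyperparameter."""
    rng = random.Random(seed)
    history = []  # (x, loss) pairs the surrogate is built from
    bandwidth = 0.1 * (high - low)
    for trial in range(n_trials):
        if trial < n_startup:
            x = rng.uniform(low, high)  # random start-up trials seed the surrogate
        else:
            ranked = sorted(history, key=lambda p: p[1])
            n_good = max(1, int(gamma * len(ranked)))
            good = [p[0] for p in ranked[:n_good]]  # l(x): density of the best trials
            bad = [p[0] for p in ranked[n_good:]]   # g(x): density of the rest
            # Draw candidates around good points, then keep the one maximizing l(x)/g(x)
            candidates = [min(high, max(low, rng.gauss(rng.choice(good), bandwidth)))
                          for _ in range(n_candidates)]
            x = max(candidates,
                    key=lambda c: parzen_density(c, good, bandwidth)
                    / (parzen_density(c, bad, bandwidth) + 1e-12))
        history.append((x, obj(x)))
    return min(history, key=lambda p: p[1])

best_x, best_loss = tpe_minimize(objective, -5.0, -2.0)
print(f"best log10(lr) = {best_x:.2f}, loss = {best_loss:.4f}")
```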

Evolutionary Algorithms: These methods maintain a population of hyperparameter sets that is improved over generations through selection, recombination, and mutation [4]. CMA-ES (Covariance Matrix Adaptation Evolution Strategy), for example, samples each generation from a multivariate normal distribution whose mean and covariance are adapted toward the best-performing candidates. Such methods are particularly effective for complex, non-convex search spaces and can handle both continuous and discrete hyperparameters, making them suitable for simultaneously optimizing the graph-related and task-specific layers of GNNs [4].
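The selection, recombination, and mutation cycle can be sketched as a plain elitist evolutionary loop. This is not CMA-ES, which additionally adapts the full covariance matrix of its sampling distribution; it only illustrates the population mechanics. The two-dimensional search space, the fitness function, and all settings are hypothetical.

```python
import random

# Hypothetical 2-D search space: (log10 learning rate, dropout rate)
BOUNDS = [(-5.0, -1.0), (0.0, 0.5)]

def fitness(config):
    """Hypothetical validation loss; lower is better (optimum at log_lr=-3, dropout=0.2)."""
    log_lr, dropout = config
    return (log_lr + 3.0) ** 2 + (dropout - 0.2) ** 2

def clip(config):
    """Keep a configuration inside the search-space bounds."""
    return tuple(min(hi, max(lo, v)) for v, (lo, hi) in zip(config, BOUNDS))

def evolve(generations=40, pop_size=12, n_parents=4, sigma=0.3, seed=0):
    rng = random.Random(seed)
    population = [tuple(rng.uniform(lo, hi) for lo, hi in BOUNDS) for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: the best-scoring configurations survive as parents (elitism)
        parents = sorted(population, key=fitness)[:n_parents]
        children = []
        while len(children) < pop_size - n_parents:
            a, b = rng.sample(parents, 2)
            # Recombination (average two parents) followed by Gaussian mutation
            child = tuple((x + y) / 2 + rng.gauss(0, sigma) for x, y in zip(a, b))
            children.append(clip(child))
        population = parents + children
        sigma *= 0.93  # shrink the mutation step as the population converges
    return min(population, key=fitness)

best = evolve()
print(f"log10(lr)={best[0]:.2f}, dropout={best[1]:.2f}, loss={fitness(best):.4f}")
```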

Advanced Protocols: Hyperband and BOHB

Hyperband: This state-of-the-art algorithm reduces the computational cost of HPO through a multi-fidelity approach: it first evaluates configurations with limited resources (e.g., fewer training epochs or a subset of the data) and advances only promising candidates to more expensive training runs [2]. The method combines random search with successive halving, in which the number of configurations is repeatedly reduced while the resources allocated to each remaining configuration are increased. Because the best trade-off between many cheap evaluations and few expensive ones is unknown in advance, Hyperband runs several successive-halving brackets, ranging from many configurations with small budgets to a few configurations trained in full. Recent studies recommend Hyperband for molecular property prediction due to its superior computational efficiency while delivering optimal or near-optimal prediction accuracy [2].
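The bracket structure of Hyperband is compact enough to sketch directly. The following is a minimal pure-Python rendering of the schedule described by Li et al. in the original Hyperband paper, assuming a hypothetical one-dimensional search space and a synthetic learning curve in place of real GNN training. `max_resource` plays the role of the maximum number of epochs and `eta` is the factor by which each round shrinks the candidate pool.

```python
import random

def hyperband(sample_config, evaluate, max_resource=81, eta=3, seed=0):
    """Run several successive-halving brackets, each trading the number of
    configurations against the resource (e.g. epochs) given to each one."""
    rng = random.Random(seed)
    s_max = 0
    while eta ** (s_max + 1) <= max_resource:  # s_max = floor(log_eta(max_resource))
        s_max += 1
    best = (float("inf"), None)
    total_cost = 0.0
    for s in range(s_max, -1, -1):  # one bracket per value of s
        n = ((s_max + 1) * eta ** s + s) // (s + 1)  # ceil((s_max + 1) * eta**s / (s + 1))
        r = max_resource / eta ** s  # starting resource per configuration
        configs = [sample_config(rng) for _ in range(n)]
        for i in range(s + 1):  # successive halving inside the bracket
            r_i = r * eta ** i
            scored = sorted(((evaluate(c, r_i), c) for c in configs), key=lambda p: p[0])
            total_cost += r_i * len(configs)
            best = min(best, scored[0], key=lambda p: p[0])
            configs = [c for _, c in scored[: max(1, len(configs) // eta)]]  # keep top 1/eta
    return best, total_cost

def sample_config(rng):
    # Hypothetical one-dimensional search space over log10(learning rate)
    return {"log_lr": rng.uniform(-5.0, -1.0)}

def evaluate(config, epochs):
    # Hypothetical learning curve: final loss depends on the learning rate,
    # plus a penalty for short training that shrinks as epochs grow
    return (config["log_lr"] + 3.0) ** 2 + 1.0 / epochs

(best_loss, best_config), total_cost = hyperband(sample_config, evaluate)
print(f"best log_lr={best_config['log_lr']:.2f}, loss={best_loss:.4f}, total epochs={total_cost:.0f}")
```

With `max_resource=81` and `eta=3`, this schedule samples 143 configurations and spends 1,902 epochs in total, whereas training all 143 configurations for 81 epochs each would cost 11,583 epochs.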

Bayesian Optimization and Hyperband (BOHB): This hybrid approach combines the strengths of Bayesian optimization and Hyperband by using a Bayesian probabilistic model to guide the selection of configurations which are then evaluated using Hyperband's multi-fidelity resource allocation strategy [2]. BOHB achieves state-of-the-art performance by leveraging the sample efficiency of Bayesian models while benefiting from Hyperband's resource efficiency.

Case Study: HPO Impact on Molecular Property Prediction

A comparative study of HPO algorithms for deep neural networks applied to molecular property prediction revealed significant practical insights [2]. When optimizing dense neural networks and convolutional neural networks for predicting properties like polymer melt index and glass transition temperature, researchers implemented the following experimental protocol:

- Base Case Definition: Established a baseline model without systematic HPO, using heuristic or default hyperparameter values.

- Search Space Definition: Defined appropriate search spaces for all essential hyperparameters, including the number of layers, units per layer, learning rate, batch size, and dropout rate.

- Parallel Implementation: Executed HPO using KerasTuner and Optuna frameworks, enabling parallel evaluation of multiple hyperparameter configurations to reduce total optimization time.

- Algorithm Comparison: Systematically applied and compared random search, Bayesian optimization, Hyperband, and BOHB using identical computational budgets and evaluation metrics.

- Validation: Assessed final model performance on held-out test sets using domain-relevant metrics such as Mean Squared Error (MSE) for regression tasks.

The study concluded that the Hyperband algorithm demonstrated superior computational efficiency while achieving optimal or nearly optimal prediction accuracy, making it particularly recommended for molecular property prediction tasks where training resources are a constraint [2].

Successful hyperparameter optimization in molecular property prediction requires both specialized software tools and strategic methodological approaches. The following table catalogues essential "research reagents" for implementing effective HPO workflows.

Table: Essential Tools and Resources for Hyperparameter Optimization in Molecular Property Prediction

| Tool/Resource | Type | Primary Function | Application Notes |
|---|---|---|---|
| KerasTuner | Python Library | User-friendly HPO framework that integrates with Keras/TensorFlow models. | Recommended for its intuitiveness and ease of coding, especially for researchers without extensive computer science backgrounds [2]. |
| Optuna | Python Library | Define-by-run API for automated HPO, supporting various samplers and pruning algorithms. | Excels in flexibility and supports BOHB-style optimization (a TPE sampler combined with Hyperband pruning); well suited to complex search spaces [2]. |
| Azure Machine Learning SweepJob | Cloud Service | Automated hyperparameter tuning service with support for various sampling methods and early termination policies. | Enables scalable parallel HPO experiments with integrated job scheduling and resource management [11]. |
| Scikit-learn | Python Library | Provides GridSearchCV and RandomizedSearchCV for simpler models. | Good foundation for understanding HPO concepts; often used with traditional machine learning models before moving to deep learning [12]. |
| Cross-Validation with Structural Splits | Methodology | Data splitting strategy based on molecular scaffolds rather than random splits. | More accurately estimates model generalizability to novel chemotypes, crucial for real-world drug discovery applications [8]. |
| Regression Enrichment Factor EFχ(R) | Evaluation Metric | Measures early enrichment of computational models for chemical data. | Newly introduced metric that provides additional insight into model performance beyond standard correlation coefficients [8]. |
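A scaffold split assigns whole scaffold groups, never individual molecules, to each partition, so test-set chemotypes are unseen during training. This is a minimal sketch, assuming scaffolds are precomputed strings (for example Bemis-Murcko scaffold SMILES, which RDKit can generate) and using a greedy largest-group-first filling rule, which is one common heuristic rather than a standard. The molecule IDs are hypothetical.

```python
from collections import defaultdict

def scaffold_split(molecules, frac_train=0.8, frac_valid=0.1):
    """Group molecules by scaffold and assign whole groups to train/valid/test.

    `molecules` is a list of (molecule_id, scaffold) pairs with precomputed scaffolds.
    """
    groups = defaultdict(list)
    for mol_id, scaffold in molecules:
        groups[scaffold].append(mol_id)
    # Largest scaffold groups are placed first (a common deterministic heuristic)
    ordered = sorted(groups.values(), key=len, reverse=True)
    n = len(molecules)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test

# Hypothetical molecules with precomputed scaffolds ("" = acyclic, no ring scaffold)
molecules = [
    ("mol1", "c1ccccc1"), ("mol2", "c1ccccc1"), ("mol3", "c1ccccc1"),
    ("mol4", "c1ccccc1"), ("mol5", "c1ccccc1"), ("mol6", "c1ccncc1"),
    ("mol7", "c1ccncc1"), ("mol8", "C1CCCCC1"), ("mol9", "c1ccoc1"), ("mol10", ""),
]
train, valid, test = scaffold_split(molecules)
print(len(train), len(valid), len(test))  # → 8 1 1
```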

Diagram: Hyperparameter-Driven Molecular Property Prediction Pipeline. This workflow illustrates how different hyperparameter categories integrate into an end-to-end pipeline for predicting molecular properties, emphasizing the iterative refinement cycle based on validation performance.

In molecular property prediction, hyperparameters transcend their role as mere technical configurations to become fundamental determinants of model success. The interplay between architecture, optimization, and regularization hyperparameters collectively shapes a model's capacity to learn meaningful representations from molecular structures and generalize to novel chemical entities. As the field advances, automated optimization techniques like Hyperband and BOHB are proving essential for efficiently navigating complex hyperparameter spaces, enabling researchers to extract maximum predictive power from often limited experimental data. By adopting a systematic approach to hyperparameter optimization—leveraging appropriate tools, methodologies, and domain-aware validation strategies—researchers can develop more accurate and reliable models that accelerate the pace of artificial intelligence-driven drug discovery and materials design.

The Critical Impact of Hyperparameters on Prediction Accuracy and Generalization

Hyperparameter optimization has emerged as a critical determinant of model performance in molecular property prediction, directly impacting the accuracy, generalization capability, and practical utility of AI-driven drug discovery pipelines. This technical review systematically evaluates the profound influence of hyperparameter selection on prediction accuracy across diverse molecular representations, including graph-based models, fingerprint-based approaches, and sequential representations. By synthesizing evidence from large-scale empirical studies and methodological innovations, we demonstrate that strategic hyperparameter tuning can yield performance improvements of 1.5-2.5% in absolute accuracy metrics while significantly enhancing model robustness against activity cliffs and dataset artifacts. The analysis further reveals that the relationship between hyperparameters and model performance exhibits task-specific characteristics that necessitate tailored optimization strategies rather than universal presets. This comprehensive assessment provides researchers with structured frameworks for hyperparameter selection, evidence-based optimization protocols, and practical guidance for maximizing predictive performance in real-world molecular property prediction applications.

In artificial intelligence-driven drug discovery, hyperparameters represent the foundational configuration elements that govern how machine learning models learn from chemical data, distinguishing them from parameters that models learn during training [13] [14]. These predefined settings control critical aspects of the learning process, including model architecture complexity, optimization behavior, and regularization intensity. Within molecular property prediction—a fundamental task in computer-aided drug discovery—hyperparameter selection has demonstrated profound implications for prediction accuracy, generalization capability, and ultimately, the practical utility of models in identifying viable drug candidates [15] [6].

The escalating complexity of molecular representation learning approaches, including graph neural networks (GNNs), transformer architectures, and various fingerprint-based methods, has greatly expanded the hyperparameter search space, making systematic optimization increasingly challenging yet indispensable [6] [5]. Contemporary research indicates that suboptimal hyperparameter configuration is a predominant factor behind the performance disparities observed between reported state-of-the-art results and the practical outcomes achieved in many drug discovery environments [16] [6]. This whitepaper synthesizes current evidence regarding hyperparameter impacts, evaluates optimization methodologies, and provides structured guidance for researchers seeking to maximize predictive performance in molecular property prediction tasks.

Molecular Representations and Their Hyperparameter Landscapes

The selection of molecular representation fundamentally reshapes the hyperparameter optimization landscape, imposing distinct constraints and opportunities for model configuration. Molecular property prediction employs three primary representation paradigms, each with associated hyperparameter considerations.

Fixed Molecular Representations

Fixed representations, including molecular fingerprints and structural keys, encode molecules as fixed-length vectors capturing predefined chemical features. Extended Connectivity Fingerprints (ECFP) represent the de facto standard, with critical hyperparameters including radius size (typically 2-3, designated ECFP4 or ECFP6) and vector size (commonly 1024 or 2048 bits) [6]. These fingerprints operate by iteratively updating atom identifiers to reflect neighborhood structures, followed by duplicate removal to generate final feature lists [6]. Traditional machine learning models applied to fixed representations (e.g., Random Forests, Support Vector Machines) introduce additional hyperparameters, including the number of estimators, maximum depth, and regularization constants, which collectively control model capacity and generalization behavior [12] [13].
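The iterative procedure described above can be sketched in a few lines. This is a deliberately simplified, hypothetical ECFP-style algorithm, not RDKit's Morgan implementation: initial atom invariants are only the element symbol and heavy-atom degree, duplicates are removed by identifier alone, and a SHA-256-based hash stands in for the real hashing scheme. Here `radius=2` corresponds to ECFP4 and `n_bits` to the vector size.

```python
import hashlib

def stable_hash(*items):
    """Deterministic integer hash (Python's built-in hash() is salted for strings)."""
    digest = hashlib.sha256(repr(items).encode()).digest()
    return int.from_bytes(digest[:8], "little")

def morgan_like_fingerprint(atoms, bonds, radius=2, n_bits=2048):
    """Simplified ECFP-style bit vector.

    atoms: list of element symbols; bonds: list of (i, j, bond_order) tuples.
    """
    neighbours = {i: [] for i in range(len(atoms))}
    for i, j, order in bonds:
        neighbours[i].append((j, order))
        neighbours[j].append((i, order))
    # Iteration 0: initial identifiers from simple atom invariants
    ids = [stable_hash(sym, len(neighbours[i])) for i, sym in enumerate(atoms)]
    features = set(ids)
    for _ in range(radius):
        # New identifier = hash of own id plus sorted (bond order, neighbour id) pairs
        ids = [stable_hash(ids[i], tuple(sorted((order, ids[j]) for j, order in neighbours[i])))
               for i in range(len(atoms))]
        features.update(ids)  # set semantics drop duplicate identifiers
    # Fold the unique identifiers into a fixed-length bit vector
    bits = [0] * n_bits
    for f in features:
        bits[f % n_bits] = 1
    return bits

ethanol_atoms = ["C", "C", "O"]
ethanol_bonds = [(0, 1, 1), (1, 2, 1)]
fp = morgan_like_fingerprint(ethanol_atoms, ethanol_bonds, radius=2, n_bits=2048)  # ECFP4-like
print(sum(fp), "bits set out of", len(fp))
```

Folding many identifiers into a fixed number of bits can map different substructures to the same bit, which is why the vector size is itself a tunable hyperparameter.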

Graph-Based Representations

Graph representations conceptualize molecules as topological structures with atoms as nodes and bonds as edges, processed predominantly via Graph Neural Networks (GNNs) [15] [6]. This representation introduces architectural hyperparameters including GNN depth (number of message-passing layers), hidden layer dimensionality, aggregation functions (sum, mean, max), and the choice of nonlinear activation [5]. GNN performance is exceptionally sensitive to these configurations: the performance lost to suboptimal hyperparameter choices frequently exceeds the gains attributed to architectural innovations themselves [6] [5]. For instance, the GSL-MPP framework demonstrates that integrating graph structure learning with conventional GNNs requires careful tuning of similarity thresholds and iteration counts to balance intra-molecular and inter-molecular information [15].

Sequential Representations

Simplified Molecular-Input Line-Entry System (SMILES) strings represent molecules as sequential data, processed via recurrent neural networks, transformers, or convolutional architectures [6]. Critical hyperparameters include tokenization strategies, sequence length limitations, positional encoding schemes, and attention mechanisms [6]. The canonicalization of SMILES strings introduces additional preprocessing decisions that effectively function as hyperparameters by influencing the consistency of representation across similar molecular structures [6].
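Tokenization strategy and maximum sequence length are easiest to see in code. The sketch below uses a widely circulated atom-level SMILES regex, which turns bracket atoms, two-letter halogens, and two-digit ring closures into single tokens. The pattern, the special tokens, and the helper names are assumptions. None of them is the tokenizer of any model cited here.

```python
import re

# Atom-level SMILES tokenization pattern (a common choice; treat it as an assumption).
SMILES_PATTERN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles):
    tokens = SMILES_PATTERN.findall(smiles)
    # Guard against characters the pattern does not cover.
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable SMILES: {smiles}")
    return tokens


def build_vocab(smiles_list, specials=("<pad>", "<unk>")):
    # Vocabulary size is determined by the training corpus plus special tokens.
    tokens = sorted({t for s in smiles_list for t in tokenize(s)})
    return {tok: i for i, tok in enumerate(list(specials) + tokens)}


def encode(smiles, vocab, max_len=16, pad="<pad>", unk="<unk>"):
    """Map tokens to ids, truncating or padding to max_len (the sequence-length limit)."""
    tokens = tokenize(smiles)[:max_len]
    tokens += [pad] * (max_len - len(tokens))
    return [vocab.get(t, vocab[unk]) for t in tokens]


print(tokenize("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin
```

Character-level tokenization would instead split `Cl` into `C` and `l`. That choice changes both the vocabulary and the sequence lengths the model must handle.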

Table 1: Critical Hyperparameter Categories in Molecular Property Prediction

| Category | Specific Examples | Impact on Learning Process |
|---|---|---|
| Model Architecture | GNN layers, hidden dimensions, attention heads | Controls model capacity and feature extraction capability |
| Optimization | Learning rate, batch size, optimizer selection | Governs convergence behavior and final solution quality |
| Regularization | Dropout, weight decay, label smoothing | Mitigates overfitting and enhances generalization |
| Data Representation | Fingerprint radius, graph connectivity, SMILES tokenization | Determines informational content available for learning |

Quantitative Impact of Hyperparameters on Prediction Accuracy

Empirical evidence consistently demonstrates that hyperparameter selection directly controls prediction accuracy, with optimized configurations delivering substantial performance improvements across diverse molecular property prediction tasks.

Performance Gains from Systematic Optimization

Benchmarking reported in [16] shows that hyperparameter optimization can yield absolute accuracy improvements of 1.5-2.5% across model architectures and datasets. That study concerns lightweight image-classification models rather than chemical data, so its figures indicate the scale of possible gains rather than transferring directly to molecular tasks. Adjusting the initial learning rate from 0.001 to 0.1 increased Top-1 accuracy for ConvNeXt-T from 77.61% to 81.61%, while TinyViT-21M improved from 85.49% to 89.49% [16]. Beyond learning rates, a data augmentation strategy combining RandAugment, Mixup, CutMix, and Label Smoothing delivered consistent gains, raising MobileViT v2 (S) from 85.45% to 89.45% relative to the baseline configuration [16]. In drug discovery applications, comparable gains in prediction accuracy may translate into meaningful reductions in experimental validation costs.

The Dataset Size Interaction

The relationship between hyperparameter optimality and dataset size exhibits non-linear characteristics with profound implications for resource allocation [6]. Representation learning models particularly benefit from extensive hyperparameter tuning in low-data regimes, where appropriate regularization and model capacity settings can mitigate overfitting [6]. However, as dataset size increases, the marginal utility of extensive hyperparameter optimization diminishes, with default configurations often achieving competitive performance given sufficient training examples [6]. This interaction underscores the importance of considering dataset characteristics when determining appropriate optimization intensity.

Table 2: Hyperparameter Impact on Model Performance Across Architectures

| Model Architecture | Key Hyperparameters | Performance Variation Range | Most Influential Parameter |
|---|---|---|---|
| GNN-based Models | Message-passing layers, hidden dimensions, graph pooling | 3-8% AUC variation | Graph attention mechanisms |
| Fingerprint-based Models | Fingerprint radius, vector size, estimator count | 2-5% AUC variation | ECFP radius size |
| Transformer Models | Attention heads, learning rate, warmup steps | 4-9% AUC variation | Learning rate schedule |
| CNN-based Models | Convolutional layers, kernel size, dropout rate | 2-6% AUC variation | Dropout probability |

Hyperparameter Optimization Methodologies: From Theory to Practice

Effective hyperparameter optimization requires methodological rigor beyond naive trial-and-error approaches. Contemporary optimization strategies span efficiency-effectiveness tradeoffs, with selection criteria dependent on computational constraints, search space complexity, and performance requirements.

Exhaustive and Stochastic Search Strategies

GridSearchCV represents the traditional exhaustive approach, systematically evaluating all combinations within a predefined hyperparameter grid [12] [13]. While methodologically sound for low-dimensional spaces, this approach suffers from the curse of dimensionality, becoming computationally prohibitive as hyperparameter counts increase [12] [13]. RandomizedSearchCV offers a scalable alternative by sampling random combinations from specified distributions, often identifying competitive configurations with significantly reduced computational expenditure [12] [13]. Empirical evidence suggests random search explores hyperparameter spaces more efficiently than grid search, particularly when only a small subset of hyperparameters meaningfully impacts final performance [13].
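A small stdlib-only sketch makes the "few hyperparameters matter" argument concrete. Take a budget of nine trials over two hyperparameters. A 3×3 grid tests only three distinct learning rates, while random search tests nine. The toy objective depends on the learning rate alone and is a hypothetical stand-in for validation error. Random search usually, though not always, lands closer to the optimum under these conditions.

```python
import itertools
import random


def toy_validation_error(log_lr, dropout):
    # Hypothetical objective: only log10(learning rate) matters; dropout is irrelevant.
    return (log_lr + 2.7) ** 2


budget = 9
grid_trials = list(itertools.product([-4.0, -2.5, -1.0], [0.0, 0.25, 0.5]))

rng = random.Random(0)
random_trials = [(rng.uniform(-4.0, -1.0), rng.uniform(0.0, 0.5)) for _ in range(budget)]


def summarize(trials):
    # Distinct values tried for the one hyperparameter that matters, and the best error found.
    distinct_lr = len({lr for lr, _ in trials})
    best = min(toy_validation_error(lr, d) for lr, d in trials)
    return distinct_lr, best


print("grid:  ", summarize(grid_trials))
print("random:", summarize(random_trials))
```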

Bayesian Optimization and Advanced Approaches

Bayesian optimization employs probabilistic surrogate models to guide hyperparameter selection. It balances exploration of regions where the surrogate is uncertain against exploitation of regions it already predicts to perform well [12] [13] [14]. This approach models the function mapping hyperparameters to validation performance, using acquisition functions to select subsequent evaluations [13] [14]. Implementations like Optuna, Hyperopt, and Scikit-Optimize provide accessible interfaces for Bayesian optimization, often achieving superior performance with fewer evaluations compared to exhaustive or random strategies [14]. For molecular property prediction specifically, recent advancements incorporate problem-specific knowledge through transfer learning, where optimization histories from similar datasets warm-start the search process, potentially reducing required evaluations by 30-50% [5].
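The surrogate-plus-acquisition loop can be sketched compactly in the standard library. A Gaussian-process surrogate with a fixed RBF kernel models validation loss as a function of log10(learning rate), and a lower-confidence-bound acquisition picks each next trial. The objective, kernel length scale, noise level, candidate grid, and `kappa` are all illustrative assumptions. Optuna and Hyperopt default to Tree-structured Parzen Estimators rather than a GP, so this is a generic instance of Bayesian optimization, not their internals.

```python
import math
import random


def rbf(a, b, length=0.5):
    # Squared-exponential kernel with unit signal variance (fixed by assumption).
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(K):
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(K[i][i] - s) if i == j else (K[i][j] - s) / L[j][j]
    return L


def solve_lower(L, b):  # solves L x = b
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def solve_upper(L, b):  # solves L^T x = b
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_fit(X, y, noise=1e-3):
    """Condition a GP (constant mean = sample mean) on observations (X, y)."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    y_mean = sum(y) / len(y)
    alpha = solve_upper(L, solve_lower(L, [v - y_mean for v in y]))
    return list(X), L, alpha, y_mean


def gp_predict(model, x_new):
    X, L, alpha, y_mean = model
    k_star = [rbf(x_new, a) for a in X]
    mu = y_mean + sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = max(1.0 - sum(t * t for t in v), 1e-12)
    return mu, math.sqrt(var)


def objective(log_lr):
    # Hypothetical validation loss, minimised at learning rate 10**-2.5.
    return (log_lr + 2.5) ** 2


def bayes_opt(n_init=3, n_iter=10, kappa=2.0, seed=0):
    rng = random.Random(seed)
    candidates = [-5.0 + 0.05 * i for i in range(81)]  # log10(lr) grid on [-5, -1]
    X = rng.sample(candidates, n_init)
    y = [objective(x) for x in X]
    for _ in range(n_iter):
        model = gp_fit(X, y)
        remaining = [c for c in candidates if c not in X]

        # Lower confidence bound: prefer low predicted loss (exploit) or high uncertainty (explore).
        def lcb(c):
            mu, sd = gp_predict(model, c)
            return mu - kappa * sd

        x_next = min(remaining, key=lcb)
        X.append(x_next)
        y.append(objective(x_next))
    best = min(range(len(X)), key=y.__getitem__)
    return X[best], y[best]


best_log_lr, best_loss = bayes_opt()
print(f"best learning rate ~ 10**{best_log_lr:.2f}, loss {best_loss:.3f}")
```

Larger `kappa` values push the search toward unexplored regions, while smaller ones concentrate trials near the current predicted optimum.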

Emerging Frontiers: Neural Architecture Search and Multi-Fidelity Optimization

Neural Architecture Search (NAS) extends hyperparameter optimization to architectural dimensions, automatically discovering optimal GNN configurations for specific molecular prediction tasks [5]. While computationally intensive, NAS has demonstrated capability to identify novel architectures that outperform human-designed counterparts on specific molecular datasets [5]. Multi-fidelity optimization approaches, including Hyperband and Successive Halving, accelerate search processes by early termination of unpromising configurations based on intermediate performance metrics [13] [16]. These approaches strategically allocate computational resources toward hyperparameter combinations with the highest potential, making comprehensive optimization feasible under constrained resources.
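Below is a minimal, stdlib-only sketch of Successive Halving, the building block of Hyperband. Every candidate first receives a small budget. Only the best 1/eta advance, and the survivors' budget grows by a factor of eta. The learning-curve function is hypothetical; in practice `evaluate` would train a model for `budget` epochs. Hyperband itself, which runs several such brackets with different starting budgets, is not shown.

```python
import math
import random


def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Evaluate all configs on a small budget, keep the best 1/eta,
    multiply the budget by eta, and repeat until one config remains.

    evaluate(config, budget) -> validation loss after `budget` epochs (lower is better)
    """
    survivors, budget, history = list(configs), min_budget, []
    while len(survivors) > 1:
        ranked = sorted(range(len(survivors)), key=lambda i: evaluate(survivors[i], budget))
        history.append((budget, len(survivors)))
        survivors = [survivors[i] for i in ranked[: max(1, len(survivors) // eta)]]
        budget *= eta
    return survivors[0], history


def toy_learning_curve(config, budget):
    # Hypothetical: loss shrinks with more epochs and is lowest near lr = 10**-2.5.
    return (math.log10(config["lr"]) + 2.5) ** 2 + 1.0 / budget


rng = random.Random(0)
configs = [{"lr": 10 ** rng.uniform(-5, -1)} for _ in range(27)]
best, history = successive_halving(configs, toy_learning_curve)
print(best, history)  # rungs: 27 configs x 1 epoch, 9 x 3, 3 x 9
```

Training all 27 configurations for 9 epochs would cost 243 epochs, whereas this halving schedule spends 81. Scikit-Learn offers a related estimator, `HalvingGridSearchCV`, which must first be enabled through `sklearn.experimental.enable_halving_search_cv`.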

Special Considerations for Molecular Property Prediction

Molecular property prediction introduces domain-specific challenges that necessitate specialized hyperparameter strategies beyond conventional machine learning practice.

Addressing Activity Cliffs and Dataset Artifacts

Activity cliffs—where structurally similar molecules exhibit significant property differences—present particular challenges for molecular property prediction [15] [6]. Models with inappropriate smoothing hyperparameters may either over-smooth these critical regions or overfit to spurious correlations [15]. The GSL-MPP framework addresses this through molecule-level graph structure learning that explicitly models both intra-molecular and inter-molecular relationships, requiring careful tuning of similarity thresholds to balance these information sources [15]. Additionally, dataset splitting strategies introduce implicit hyperparameters, with random splits potentially overstating performance compared to more challenging temporal or scaffold-based splits that better simulate real-world generalization [6].
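A scaffold split assigns every molecule that shares a core scaffold to the same partition, so the test set contains chemotypes the model never saw during training. The stdlib sketch below assumes scaffolds are precomputed, for example with RDKit's Bemis-Murcko scaffold utilities. It follows a common heuristic that sends the largest scaffold groups to training. The ordering rule and split fractions are assumptions.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Group molecule indices by scaffold and assign whole groups to splits.

    scaffolds: one scaffold string per molecule (e.g., precomputed Bemis-Murcko scaffolds)
    Largest groups fill the training set first, so rarer scaffolds end up in
    validation/test and evaluation reflects generalization to unseen chemotypes.
    """
    groups = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1", "c1ccc2ccccc2c1"]
train_idx, valid_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, valid_idx, test_idx)
```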

Evaluation Rigor and Metric Selection

Hyperparameter optimization requires rigorous evaluation methodologies to prevent optimistic performance estimates [6]. Nested cross-validation provides the gold standard, with inner loops dedicated to hyperparameter optimization and outer loops delivering unbiased performance estimates [13] [6]. Metric selection further influences optimal configurations; while AUROC predominates literature reports, practitioners may prefer metrics emphasizing true positive rates or early enrichment in virtual screening contexts [6]. The recent emphasis on reporting variability across multiple random seeds represents an important advancement in evaluation rigor, revealing the stability of hyperparameter selections under different initializations [6].
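Here is a minimal nested cross-validation sketch with Scikit-Learn. `GridSearchCV` serves as the inner loop and `cross_val_score` as the outer loop, and the whole procedure is repeated over several random seeds so the spread of scores can be reported. Data from `make_classification` stands in for fingerprint features and activity labels. The grid and fold counts are kept small only to make the example fast; they are assumptions, not recommendations.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

# Synthetic stand-in for a fingerprint matrix and binary activity labels (assumption).
X, y = make_classification(n_samples=200, n_features=32, random_state=0)

param_grid = {"n_estimators": [25, 50], "max_depth": [None, 4]}

scores_per_seed = []
for seed in (0, 1, 2):
    inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=seed)
    outer = StratifiedKFold(n_splits=3, shuffle=True, random_state=seed)
    # Inner loop: GridSearchCV tunes hyperparameters using each outer training fold only.
    search = GridSearchCV(
        RandomForestClassifier(random_state=seed),
        param_grid,
        cv=inner,
        scoring="roc_auc",
    )
    # Outer loop: scores the entire tuning procedure on folds it never saw.
    outer_scores = cross_val_score(search, X, y, cv=outer, scoring="roc_auc")
    scores_per_seed.append(outer_scores.mean())

print(f"nested-CV AUROC: {np.mean(scores_per_seed):.3f} +/- {np.std(scores_per_seed):.3f}")
```

If the same folds were used both to choose hyperparameters and to report performance, the reported score would inherit the optimism of the selection step. The outer loop exists to remove that bias.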

Experimental Protocols and Implementation Guidelines

Translating hyperparameter optimization theory into practice requires structured experimental protocols and implementation decisions.

Structured Optimization Protocol

- Search Space Definition: Delineate hyperparameter bounds based on architectural constraints, prior knowledge, and computational limitations. Include both continuous (learning rate, dropout) and categorical (optimizer selection, activation functions) parameters.

- Evaluation Framework Selection: Implement nested cross-validation with appropriate splitting strategies (random, temporal, or scaffold-based) aligned with intended use cases.

- Optimization Algorithm Configuration: Select optimization methods commensurate with available computational resources and search space complexity.

- Convergence Monitoring: Track performance improvement trajectories, terminating optimization when marginal gains fall below predefined thresholds.

- Final Model Assessment: Report performance on held-out test sets using multiple random seeds to quantify variability.
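The convergence-monitoring step can be as simple as a patience rule over the trial history. The sketch below is one hypothetical stopping criterion, in which `patience` and `min_delta` play the role of the predefined thresholds and must be chosen per project. Optimization frameworks such as Optuna provide their own callback mechanisms, which are not shown here.

```python
def should_stop(history, patience=10, min_delta=1e-3):
    """Return True when the best score (higher is better) has not improved by at
    least `min_delta` during the last `patience` trials."""
    if len(history) <= patience:
        return False
    best_before = max(history[:-patience])
    best_recent = max(history[-patience:])
    return best_recent - best_before < min_delta


trial_scores = [0.70, 0.75, 0.80] + [0.8001] * 10
print(should_stop(trial_scores))
```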

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools for Hyperparameter Optimization in Molecular Property Prediction

| Tool Category | Specific Implementations | Primary Function | Application Context |
|---|---|---|---|
| Optimization Frameworks | Optuna, Hyperopt, Scikit-Optimize | Bayesian optimization implementation | Large search spaces with limited evaluations |
| Molecular Representations | RDKit, DeepChem, Mordred | Molecular fingerprint and descriptor calculation | Feature engineering for traditional ML |
| Deep Learning Platforms | PyTorch Geometric, Deep Graph Library | GNN implementation and training | Graph-based molecular representation |
| Benchmarking Suites | MoleculeNet, Therapeutics Data Commons | Standardized dataset collections | Method comparison and validation |

Hyperparameter optimization represents an indispensable component of modern molecular property prediction pipelines, with demonstrated impact exceeding that of many architectural innovations. The evidence reviewed establishes that systematic hyperparameter selection directly controls prediction accuracy, generalization capability, and practical utility in drug discovery applications. As the field progresses, emerging techniques including transfer learning across molecular datasets, meta-learning for optimization warm-starting, and multi-objective optimization balancing accuracy with computational efficiency promise to further enhance optimization effectiveness. For contemporary researchers, allocating sufficient resources to hyperparameter optimization remains not merely advisable but essential for realizing the full potential of molecular property prediction models in accelerating drug discovery and development.

- PMC (2024). Molecular property prediction based on graph structure learning. Bioinformatics.

- GeeksforGeeks. Hyperparameter Tuning.

- Wikipedia. Hyperparameter optimization.

- Kumar, V. et al. (2024). Impact of Hyperparameter Optimization on the Accuracy of Lightweight Deep Learning Models. arXiv.

- JIVP (2019). Machine learning hyperparameter selection for Contrast Limited Adaptive Histogram Equalization.

- Nature Communications (2023). A systematic study of key elements underlying molecular property prediction.

- Babu, A. (2024). A Comprehensive Guide to Hyperparameter Tuning in Machine Learning. Medium.

- Neurocomputing (2026). Importance estimation of hyperparameters in reinforcement learning.

- arXiv (2024). Accessible Color Sequences for Data Visualization.

- ScienceDirect (2025). Hyperparameter optimization and neural architecture search algorithms for graph Neural Networks in cheminformatics.

In molecular property prediction research, hyperparameters are the configuration settings that govern the learning process of a machine learning model, as opposed to the parameters that the model learns from the data itself. The choice and tuning of these hyperparameters are critical, as they directly control model complexity, learning efficiency, and ultimately, predictive performance. Within cheminformatics, the optimal hyperparameter landscape is profoundly influenced by the type of molecular representation used—be it graphs, SMILES strings, or fingerprints—as each representation encodes chemical information through fundamentally different data structures and inductive biases. This technical guide provides an in-depth examination of the core hyperparameters associated with these predominant molecular representations, framing them within the experimental protocols and empirical findings from contemporary research to equip practitioners with methodologies for optimizing predictive performance in drug development.

Molecular Graph Representations

Molecular graphs represent atoms as nodes and chemical bonds as edges, providing an intuitive structure for graph neural networks (GNNs). The hyperparameters for these models can be categorized into architectural, training, and graph-specific parameters.

Core Hyperparameters and Experimental Protocols

Architectural Hyperparameters:

- Number of GNN Layers: Determines the depth of the network and the range of atomic interactions captured. Shallow networks may fail to capture long-range dependencies, while deep networks can suffer from over-smoothing, where node representations become indistinguishable [17].

- Hidden Dimension Size: Controls the width of each layer and the richness of the learned atomic embeddings.

- Aggregation Function: The method (e.g., sum, mean, max) for combining messages from a node's neighbors, influencing how local chemical environments are summarized.

Training Hyperparameters:

- Learning Rate: Crucial for convergence; often tuned on a logarithmic scale.

- Batch Size: Affects the stability and speed of training.

Graph-Specific Hyperparameters:

- Jumping Knowledge: A technique that aggregates information from all GNN layers to combat over-smoothing and capture both local and global structural patterns [17]. Its use and style (e.g., concatenation, max-pooling) are key tunable choices.
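Below is a stdlib-only sketch of the two Jumping Knowledge styles named above, applied to one node's per-layer representations. The function name and list-based inputs are assumptions made for illustration. PyTorch Geometric provides a `JumpingKnowledge` layer with `cat`, `max`, and `lstm` modes for real models.

```python
def jumping_knowledge(layer_outputs, mode="cat"):
    """Combine one node's representations from every GNN layer.

    layer_outputs: list (one entry per layer) of equal-length feature lists
    mode: "cat" concatenates all layers; "max" takes the element-wise maximum
    """
    if mode == "cat":
        return [x for layer in layer_outputs for x in layer]
    if mode == "max":
        return [max(vals) for vals in zip(*layer_outputs)]
    raise ValueError(f"unknown mode: {mode}")


layers = [[0.1, 0.9], [0.5, 0.2], [0.3, 0.4]]  # three layers, hidden size 2
print(jumping_knowledge(layers, "cat"), jumping_knowledge(layers, "max"))
```

Concatenation multiplies the output width by the number of layers, which changes the input size of any downstream readout. Max pooling keeps the width fixed.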

Advanced GNN architectures introduce specialized hyperparameters. The MolGraph-xLSTM model, which integrates GNNs with extended Long Short-Term Memory (xLSTM) networks to address long-range dependencies, requires configuration of its xLSTM modules (sLSTM and mLSTM) and the integration points between the GNN and xLSTM components [17].

Optimization Methodologies

The performance of GNNs is highly sensitive to architectural choices and hyperparameters, making automated optimization a necessity [5]. Neural Architecture Search (NAS) and Hyperparameter Optimization (HPO) are crucial strategies. Common HPO algorithms include:

- Bayesian Optimization: Models the performance landscape to select promising hyperparameters efficiently.

- Random Search: A simple yet effective baseline.

- Evolutionary Algorithms: Inspired by natural selection to evolve hyperparameter sets.

These methods can be applied to search spaces encompassing architectural depth, hidden dimensions, and learning rates to automate the discovery of high-performing model configurations [5].
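Evolutionary HPO can be sketched in a few lines: keep the fittest half of a population of configurations, refill the population with mutated copies, and repeat. The search space and fitness function below are hypothetical placeholders; in practice, fitness would be a validation score obtained by training a GNN. This mutation-only scheme with elitism is just one of many evolutionary variants, not a specific published method.

```python
import random

SEARCH_SPACE = {
    "n_layers": [2, 3, 4, 5, 6],
    "hidden_dim": [64, 128, 256, 512],
    "log_lr": [-4.0, -3.5, -3.0, -2.5, -2.0],
}


def fitness(cfg):
    # Hypothetical stand-in for a validation score (higher is better);
    # in practice this would train and evaluate a GNN configured by `cfg`.
    return -(abs(cfg["n_layers"] - 4) + abs(cfg["hidden_dim"] - 256) / 128
             + abs(cfg["log_lr"] + 3.0))


def mutate(cfg, rng):
    # Resample one randomly chosen hyperparameter from its allowed values.
    child = dict(cfg)
    key = rng.choice(sorted(SEARCH_SPACE))
    child[key] = rng.choice(SEARCH_SPACE[key])
    return child


def evolve(pop_size=8, generations=15, seed=0):
    rng = random.Random(seed)
    population = [{k: rng.choice(v) for k, v in SEARCH_SPACE.items()} for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        # Selection with elitism: the fittest half survives unchanged.
        parents = sorted(population, key=fitness, reverse=True)[: pop_size // 2]
        best_per_generation.append(fitness(parents[0]))
        children = [mutate(rng.choice(parents), rng) for _ in range(pop_size - len(parents))]
        population = parents + children
    return max(population, key=fitness), best_per_generation


best, trace = evolve()
print(best, trace[-1])
```

Because the best configurations are always carried forward, the best fitness per generation can never decrease. Whether the search reaches the global optimum depends on the budget and on the mutation scheme.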

SMILES-Based Representations

SMILES (Simplified Molecular-Input Line-Entry System) strings represent molecular graphs as sequences of characters, enabling the use of natural language processing (NLP) models like Transformers and LSTMs.

Core Hyperparameters and Experimental Protocols

Model Architecture Hyperparameters:

- Vocabulary Size: The number of unique tokens in the SMILES vocabulary.

- Sequence Length: The maximum length of SMILES strings, with longer sequences required for complex molecules.

- Embedding Dimension: The size of the vector representing each token.

- Number of Attention Heads / LSTM Units: Determines the model's capacity to capture complex, long-range dependencies within the sequence [18].

Training Hyperparameters:

- Learning Rate Scheduler: A warm-up scheduler is often used to stabilize early training.

- Batch Size: Typically tuned in powers of two (e.g., 32, 64, 128).
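The warm-up scheduler mentioned above can take several shapes. One common shape, popularized by the original Transformer paper, is linear warm-up followed by inverse-square-root decay. It is shown here as an assumption, not as the schedule used by any model cited in this guide. Both `peak_lr` and `warmup_steps` become tunable hyperparameters.

```python
import math


def warmup_inverse_sqrt(step, peak_lr=1e-3, warmup_steps=4000):
    """Linear warm-up to peak_lr, then decay proportional to 1/sqrt(step).

    step is 1-indexed; peak_lr and warmup_steps are tunable hyperparameters.
    """
    if step < 1:
        raise ValueError("step must be >= 1")
    if step <= warmup_steps:
        return peak_lr * step / warmup_steps
    return peak_lr * math.sqrt(warmup_steps / step)


for s in (1, 2000, 4000, 16000):
    print(s, warmup_inverse_sqrt(s))
```

To use this with a framework scheduler such as PyTorch's `LambdaLR`, one would pass the ratio to the optimizer's base learning rate as the multiplicative factor. `LambdaLR` counts steps from 0, so the step number would need to be shifted by one.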

Pretraining is a powerful strategy for sequence-based and graph-based models alike. The Self-Conformation-Aware Graph Transformer (SCAGE) is a graph transformer rather than a SMILES model, but it illustrates the hyperparameters that such pretraining introduces. SCAGE uses a multitask pretraining framework (M4) that incorporates tasks like molecular fingerprint prediction and 3D bond angle prediction [18]. Key hyperparameters here include the weights assigned to each pretraining task and the type of conformational information used during training, for example conformations generated with the MMFF94 force field [18].

Table 1: Key Hyperparameters for SMILES-Based Models

| Hyperparameter Category | Specific Parameters | Influence on Model Performance |
|---|---|---|
| Model Architecture | Vocabulary Size, Sequence Length, Embedding Dimension, Number of Attention Heads/LSTM Units | Controls model capacity and ability to capture syntactic and semantic rules of SMILES notation [18]. |
| Training Strategy | Learning Rate Scheduler, Batch Size, Pretraining Task Weights | Affects training stability, convergence speed, and the balance of learned molecular features [18]. |
| Data Representation | Use of Conformational Information (e.g., from MMFF94) | Enhances model by incorporating spatial structural information beyond the 1D sequence [18]. |

Molecular Fingerprint Representations

Molecular fingerprints, such as Extended-Connectivity Fingerprints (ECFPs), are fixed-length vectors encoding the presence of chemical substructures.

Core Hyperparameters and Experimental Protocols

The definition of a fingerprint itself involves critical hyperparameters:

- Radius: The maximum distance (in bonds) from an atom to define its local environment. ECFP4 (radius=2) is a common standard [19] [20].

- Length: The size of the bit vector. Common lengths are 1024, 2048, or 4096 bits. A longer vector reduces hash collisions but increases computational cost [20].

- Use of Counts vs. Bits: Determines if the fingerprint records the frequency of a substructure or merely its presence.

When fingerprints are used with traditional machine learning models like Gaussian Processes (GPs), the kernel function is a central hyperparameter. The Tanimoto kernel is a standard and often optimal choice for fingerprint vectors [20]. For models like feedforward neural networks, standard hyperparameters like learning rate, number of hidden layers, and layer sizes apply.
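For binary fingerprints, the Tanimoto kernel is |A∩B|/|A∪B|. Its usual count-vector extension, often called MinMax, replaces intersections and unions with element-wise minima and maxima. Below is a stdlib sketch of both, plus the Gram matrix a GP would consume. Treating two all-zero vectors as having similarity 1 is an arbitrary convention chosen here. On binary vectors the Tanimoto kernel is positive semi-definite, which is what makes it a valid GP covariance.

```python
def tanimoto(a, b):
    """Tanimoto similarity for binary vectors; for count vectors this is the
    MinMax generalization sum(min) / sum(max)."""
    num = sum(min(x, y) for x, y in zip(a, b))
    den = sum(max(x, y) for x, y in zip(a, b))
    return 1.0 if den == 0 else num / den  # all-zero pair: convention chosen here


def tanimoto_gram(fps):
    # Kernel matrix for a GP: K[i][j] = Tanimoto(fps[i], fps[j]).
    return [[tanimoto(a, b) for b in fps] for a in fps]


print(tanimoto([1, 1, 0, 1], [1, 0, 0, 1]))  # 2 shared bits / 3 bits set in either
```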

Impact of Hash Collisions and Mitigation

A key finding from recent research is that hash collisions in folded fingerprints can degrade model performance. Collisions occur when distinct substructures are mapped to the same bit, causing an overestimation of molecular similarity [20]. Studies using Gaussian Processes on docking score data (e.g., from the DOCKSTRING benchmark) show that using exact fingerprints (which avoid collisions) yields a small but consistent improvement in predictive accuracy (e.g., R² score improvements of 0.006 to 0.017) compared to standard compressed fingerprints [20]. Alternative methods like Sort&Slice, which selects the most frequent substructures from a reference dataset, can also reduce collisions and offer a performance trade-off [20].
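The sketch below shows why folding causes collisions and how a Sort&Slice-style vocabulary avoids them. It folds hypothetical 32-bit substructure identifiers with a modulo and, separately, builds a frequency-ranked vocabulary. It simplifies Sort&Slice considerably: breaking ties by identifier value and using binary features are assumptions here, and this is not the reference implementation.

```python
import zlib
from collections import Counter


def hashed_ids(n, seed="frag"):
    # Hypothetical 32-bit substructure identifiers, standing in for unfolded ECFP ids.
    return [zlib.crc32(f"{seed}-{i}".encode()) for i in range(n)]


def count_collisions(ids, n_bits):
    """Number of distinct identifiers that lose their own bit after modulo folding."""
    distinct = set(ids)
    return len(distinct) - len({i % n_bits for i in distinct})


def sort_and_slice_vocab(training_sets, n_bits):
    """Simplified Sort&Slice: keep the n_bits identifiers that occur in the most
    training molecules and give each its own position (no collisions by design)."""
    freq = Counter(i for ids in training_sets for i in set(ids))
    ranked = sorted(freq, key=lambda i: (-freq[i], i))
    return {ident: pos for pos, ident in enumerate(ranked[:n_bits])}


def featurize(ids, vocab):
    vec = [0] * len(vocab)
    for i in set(ids):
        if i in vocab:  # identifiers outside the vocabulary are dropped, not folded
            vec[vocab[i]] = 1
    return vec


ids = hashed_ids(2000)
for n_bits in (1024, 2048, 4096):
    print(n_bits, count_collisions(ids, n_bits))
```

Because 1024 divides 4096, any two identifiers that collide at 4096 bits also collide at 1024 bits. Under modulo folding, the longer vector can therefore never have more collisions. Sort&Slice avoids collisions entirely, at the cost of discarding rare substructures.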

Table 2: Key Hyperparameters and Performance for Fingerprint-Based Models

| Hyperparameter | Typical Values | Impact and Considerations |
|---|---|---|
| Radius (for ECFP) | 2 (ECFP4), 3, 4 | Larger radii capture larger substructures and more global molecular features [19]. |
| Fingerprint Length | 1024, 2048, 4096 | Longer lengths reduce hash collisions and improve model accuracy at the cost of memory [20]. |
| Fingerprint Type | Binary, Count-based | Count-based fingerprints retain more structural information and can lead to better performance [20]. |
| Kernel Function (for GPs) | Tanimoto, RBF | The Tanimoto kernel is specifically designed for binary/count vectors and is often the best performer [20]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Datasets for Molecular Representation Research

| Tool / Resource | Type | Primary Function |
|---|---|---|
| RDKit | Open-source Cheminformatics Library | Generation of molecular graphs, computation of fingerprints (ECFP), and SMILES parsing [20] [21]. |
| MoleculeNet | Benchmark Dataset Collection | Standardized datasets (e.g., BBBP, ESOL) for training and evaluating molecular property prediction models [17] [22]. |
| Therapeutics Data Commons (TDC) | Benchmark Dataset Collection | Datasets focused on ADMET and other therapeutic property predictions [17]. |
| DOCKSTRING | Benchmark Dataset | Provides docking scores for over 260,000 molecules against 58 protein targets for benchmarking [20]. |
| ZINC | Molecular Database | A large database of commercially available compounds, often used for pretraining and as a source of chemical space [19]. |

Comparative Workflows and Hyperparameter Interplay

The journey from a molecular structure to a property prediction involves a sequence of critical steps, and the optimal path depends heavily on the chosen representation. Graph, SMILES, and fingerprint workflows proceed in parallel, each with its own key decision points and hyperparameters.

The landscape of hyperparameters in molecular property prediction is vast and intimately tied to the chosen representation. Graph-based models require careful balancing of architectural depth and message-passing mechanisms. SMILES-based models depend on sequence-modeling capacities and effective pretraining strategies. Fingerprint-based approaches, while conceptually simpler, demand careful specification of the fingerprint itself and an understanding of the trade-offs involving information loss through hashing. A unifying theme is the critical role of automated optimization techniques like NAS and HPO in navigating this complex space. As the field evolves towards multi-modal representations that combine graphs, sequences, and 3D spatial information, the challenge of hyperparameter tuning will only grow in importance, solidifying its status as a cornerstone of modern, data-driven molecular design.

Hyperparameter Optimization Methods: From Grid Search to Bayesian Optimization

In the field of molecular property prediction, hyperparameters are crucial configuration variables that govern the learning process of machine learning models. Unlike model parameters, which are learned during training, hyperparameters are set prior to the training process and control key aspects of the model's behavior and performance [2]. These include structural configurations such as the number of layers in a neural network, the number of units per layer, and the type of activation functions, as well as learning algorithm parameters such as learning rate, number of training iterations (epochs), and batch size [2]. The optimization of these hyperparameters is particularly vital in molecular property prediction, where accurately mapping chemical structures to properties like lipophilicity, solubility, or biological activity forms the cornerstone of efficient drug discovery and materials design [23] [6].

The process of finding optimal hyperparameter values, known as hyperparameter optimization (HPO), presents significant challenges in computational chemistry. Molecular datasets are often far smaller than those in typical deep learning applications, which amplifies the impact of proper hyperparameter selection on model generalizability [24]. Furthermore, the computational cost of training complex models like Graph Neural Networks (GNNs) on molecular structures makes inefficient HPO strategies prohibitively expensive [5] [2]. As noted in recent literature, "hyperparameter optimization is often the most resource-intensive step in model training," and many prior molecular property prediction studies have paid limited attention to systematic HPO, resulting in suboptimal predictive performance [2].

Within this context, manual search and automated baseline strategies like grid search and random search form the foundation of HPO in molecular informatics. This whitepaper provides an in-depth technical examination of these core methods, offering structured comparisons, implementation protocols, and practical guidance for researchers engaged in molecular property prediction.

Understanding Core Hyperparameter Optimization Strategies

Manual Search

Manual search represents the most fundamental approach to hyperparameter tuning, relying on domain expertise, intuition, and iterative experimentation. Researchers make educated guesses for hyperparameter values based on prior experience, literature recommendations, or understanding of the model's behavior, then manually adjust these values based on model performance.

- Methodology: The process typically begins with establishing baseline performance using default hyperparameter values or settings from similar published studies. Researchers then adjust one or two hyperparameters at a time while observing the impact on validation performance. This iterative process continues until performance plateaus or meets project requirements.

- Applications in Molecular Property Prediction: Manual search is often employed in preliminary investigations or when computational resources are severely constrained. It can be effective when tuning a small number of hyperparameters with well-understood effects on model behavior. For instance, a researcher might manually adjust the learning rate or batch size of a neural network model predicting molecular lipophilicity [23] based on training convergence behavior.

- Limitations: The approach becomes impractical as model complexity increases. Modern deep learning architectures for molecular property prediction, such as Graph Neural Networks (GNNs) or complex transformers, may have dozens of interacting hyperparameters [5] [24]. Manual search cannot systematically explore these high-dimensional spaces, often missing optimal configurations and introducing researcher bias.

Grid Search

Grid search is a systematic, exhaustive approach to HPO that involves specifying a finite set of values for each hyperparameter and evaluating every possible combination within this predefined grid.

- Technical Methodology: For each hyperparameter, researchers define a discrete set of values to explore. The algorithm then trains and evaluates a model for every combination of these values, typically using cross-validation to ensure robust performance estimation. The combination achieving the best validation performance is selected as optimal.

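- Implementation Example: In Scikit-Learn, grid search for a random forest model predicting molecular properties is implemented with `GridSearchCV`. It takes the estimator, a dictionary mapping each hyperparameter name to its candidate values, a cross-validation setting, and a scoring metric.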

- Strengths and Weaknesses: Grid search is guaranteed to find the best combination within the specified grid, making it comprehensive and straightforward to implement. However, it suffers from the "curse of dimensionality" – the number of required evaluations grows exponentially with each additional hyperparameter, making it computationally prohibitive for high-dimensional spaces [25] [26].
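Here is a hedged sketch of such a grid search. Synthetic binary features stand in for fingerprints, which is an assumption; real work would compute ECFPs with RDKit. A held-out test split stays outside the search entirely, and the grid values are illustrative only.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic stand-in for a binary fingerprint matrix and a continuous property (assumption).
rng = np.random.default_rng(0)
X = rng.integers(0, 2, size=(300, 128)).astype(float)
y = X[:, :8].sum(axis=1) + rng.normal(scale=0.5, size=300)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

param_grid = {
    "n_estimators": [30, 60],
    "max_depth": [None, 10],
    "min_samples_leaf": [1, 3],
}  # 2 x 2 x 2 = 8 combinations, each fitted once per CV fold

grid = GridSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid,
    cv=3,
    scoring="neg_mean_squared_error",
)
grid.fit(X_train, y_train)  # tuning sees the training split only

print("best params:", grid.best_params_)
print("CV MSE:", -grid.best_score_)
# refit=True (the default) retrains the best configuration on all of X_train.
test_mse = np.mean((grid.best_estimator_.predict(X_test) - y_test) ** 2)
print("held-out test MSE:", test_mse)
```

Adding one more hyperparameter with three values would triple the number of fits. This is the exponential growth that limits grid search. Passing `n_jobs=-1` spreads those fits across CPU cores.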

Random Search

Random search addresses the computational limitations of grid search by randomly sampling hyperparameter combinations from specified distributions over a fixed number of iterations.

- Technical Methodology: Instead of discrete value sets, researchers define probability distributions for each hyperparameter. The algorithm then randomly samples from these distributions for a predetermined number of trials (`n_iter`), training and evaluating a model for each sampled combination.

- Theoretical Foundation: Random search is particularly effective because most hyperparameter spaces have low effective dimensionality, meaning only a few parameters significantly impact model performance [26]. By randomly sampling across all parameters simultaneously, it explores the space more efficiently than grid search and has a high probability of finding good combinations with far fewer evaluations.

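- Implementation Example: For the same random forest model, Scikit-Learn's `RandomizedSearchCV` accepts distributions (for example, from `scipy.stats`) or lists instead of a fixed grid, and it evaluates `n_iter` sampled combinations.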
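A corresponding random-search sketch follows. Integer hyperparameters are sampled from `scipy.stats.randint`, and categorical ones are drawn uniformly from lists. For a continuous hyperparameter such as a learning rate, `scipy.stats.loguniform` would be the usual choice. The data, ranges, and budget are illustrative assumptions.

```python
import numpy as np
from scipy.stats import randint
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import RandomizedSearchCV

# Synthetic stand-in for a binary fingerprint matrix and a continuous property (assumption).
rng = np.random.default_rng(0)
X = rng.integers(0, 2, size=(300, 128)).astype(float)
y = X[:, :8].sum(axis=1) + rng.normal(scale=0.5, size=300)

param_distributions = {
    "n_estimators": randint(20, 101),   # integers 20..100 (upper bound exclusive)
    "max_depth": [None, 5, 10, 20],     # lists are sampled uniformly
    "min_samples_leaf": randint(1, 6),  # integers 1..5
    "max_features": ["sqrt", 0.3, 1.0],
}

search = RandomizedSearchCV(
    RandomForestRegressor(random_state=0),
    param_distributions,
    n_iter=8,  # evaluation budget, fixed regardless of how many hyperparameters are searched
    cv=3,
    scoring="neg_mean_squared_error",
    random_state=0,
)
search.fit(X, y)
print(search.best_params_, -search.best_score_)
```

Widening the search space here adds no cost. Only `n_iter` and `cv` determine how many models are trained.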

Comparative Analysis of HPO Methods

Performance and Efficiency Comparison

The following table synthesizes quantitative findings comparing grid search and random search, drawing on general HPO benchmarks as well as molecular property prediction studies:

Table 1: Empirical Comparison of Grid Search and Random Search

| Metric | Grid Search | Random Search | Context and Evidence |
|---|---|---|---|
| Computational Time | Significantly higher | Lower and more efficient | A study on SGDClassifier showed grid search took 4.23 seconds for 60 candidates vs. 0.78 seconds for random search with 15 candidates [27]. |
| Parameter Space Exploration | Exhaustive but limited to predefined grid | Broad, stochastic exploration of the entire space | Random search can explore a larger, potentially continuous parameter space by sampling from distributions, unlike the fixed grid [25] [26]. |
| Best Found Performance | Finds best point on the grid | Often finds comparable or better configurations | Research on GNNs for molecular property prediction concluded that different HPO methods have individual advantages, with random search often performing well [24]. |
| Scalability to High-Dimensional Spaces | Poor; cost grows exponentially with added parameters | Good; cost is set by the number of sampled candidates (n_iter), not by the number of parameters | In a Random Forest example, random search efficiently explored a large space with n_iter=100, while an equivalent grid search would have been infeasible [25]. |
| Risk of Overfitting | Potentially higher on validation set | More resilient due to non-exhaustive search | By not exhaustively searching, random search reduces the risk of overfitting to the validation set [26]. |

Workflow and Logical Relationships

Selecting between these baseline strategies in a molecular property prediction pipeline comes down to the criteria compared in Table 1: the number of hyperparameters to tune, whether they are discrete or continuous, and the available computational budget.

Experimental Protocol for Molecular Property Prediction

Implementing a rigorous HPO strategy requires a systematic, reproducible protocol. The following steps outline a generalized methodology applicable to various molecular prediction tasks.

Prerequisite Data Preparation

- Molecular Representation: Convert molecular structures into a machine-readable format. Common approaches include:

- SMILES Strings: Linear notations of molecular structure [23] [28].

- Molecular Graphs: Represent atoms as nodes and bonds as edges, suitable for Graph Neural Networks (GNNs) [5] [6].

- Fingerprints and Descriptors: Fixed-length vectors encoding structural features (e.g., ECFP, RDKit 2D descriptors) [23] [6].

- Dataset Splitting: Partition data into three distinct sets:

- Training Set: Used for model training with different hyperparameters.

- Validation Set: Used for evaluating hyperparameter performance during HPO.

- Test Set: Held out entirely from the HPO process and used only for the final evaluation of the model trained with the selected optimal hyperparameters.

- Performance Metric Selection: Choose an appropriate metric aligned with the research goal (e.g., Mean Squared Error for regression tasks like predicting lipophilicity [23], or AUC-ROC for classification tasks).

Step-by-Step HPO Protocol

- Define the Search Space:

  - For Grid Search: Create a discrete parameter grid. Example for a DNN: `{'learning_rate': [0.001, 0.01, 0.1], 'n_layers': [1, 2, 3], 'units_per_layer': [64, 128, 256]}`, which yields 3 × 3 × 3 = 27 combinations.

  - For Random Search: Define sampling distributions. Example: `{'learning_rate': loguniform(1e-4, 1e-1), 'n_layers': randint(1, 5), 'units_per_layer': randint(50, 300)}`. Note that `scipy.stats.randint` excludes its upper bound, so `randint(1, 5)` samples 1 to 4 layers.

- Configure the Search Algorithm:

  - Use `GridSearchCV` or `RandomizedSearchCV` from Scikit-Learn, specifying the model, search space, cross-validation strategy, number of iterations (for random search), and performance metric.

  - Leverage parallelization (`n_jobs=-1`) to distribute computations across available CPU cores [2].

- Execute the Search:

  - Fit the search object to the training data only. Its internal cross-validation repeatedly splits this data into training and validation folds, so the held-out folds play the role of the validation set for each hyperparameter combination.

  - Monitor progress to identify any immediate failures or trends.

- Validate and Select:

  - Once the search completes, the search object's `best_params_` attribute contains the hyperparameters with the best mean cross-validation score.

  - It is good practice to inspect the full results (`cv_results_`) to understand how sensitive the model is to different hyperparameters.

- Final Evaluation:

  - Retrain a final model on the entire training set using `best_params_`. With Scikit-Learn's default `refit=True`, this refitted model is already available as `best_estimator_`.

  - Evaluate this final model on the held-out test set to obtain an unbiased estimate of its generalization ability for molecular property prediction.
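The protocol above can be sketched end to end with Scikit-Learn's `RandomizedSearchCV`. This is an illustration under stated assumptions, not a recipe tied to any cited study. Synthetic data from `make_regression` stands in for featurized molecules (for example, fingerprint vectors paired with lipophilicity values). A `RandomForestRegressor` replaces the DNN. The search space, `n_iter=10`, and `cv=3` are illustrative choices.

```python
from scipy.stats import randint
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import RandomizedSearchCV, train_test_split

# Stand-in for featurized molecules: X plays the role of descriptors, y of a property.
X, y = make_regression(n_samples=300, n_features=20, noise=10.0, random_state=0)

# Hold out a test set that the HPO process never sees.
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Define the search space as sampling distributions (randint excludes `high`).
param_distributions = {
    "n_estimators": randint(50, 201),
    "max_depth": randint(2, 11),
    "min_samples_leaf": randint(1, 6),
}

# Configure the search; n_jobs=-1 uses all available CPU cores.
search = RandomizedSearchCV(
    RandomForestRegressor(random_state=0),
    param_distributions=param_distributions,
    n_iter=10,
    scoring="neg_mean_squared_error",  # Scikit-Learn maximizes scores, so MSE is negated
    cv=3,
    n_jobs=-1,
    random_state=0,
)

# Execute: fit on training data only; CV folds act as validation sets.
search.fit(X_train, y_train)

# Validate and select: best configuration plus per-configuration CV MSE.
print(search.best_params_)
print(-search.cv_results_["mean_test_score"])

# Final evaluation: with refit=True (the default), best_estimator_ has been
# retrained on the whole training set; score it once on the held-out test set.
test_mse = mean_squared_error(y_test, search.best_estimator_.predict(X_test))
print(f"Test MSE: {test_mse:.2f}")
```

Replacing `RandomizedSearchCV` with `GridSearchCV` and `param_distributions` with a `param_grid` of explicit value lists gives the grid-search variant, whose cost then multiplies with every added hyperparameter.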

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and resources essential for implementing HPO in molecular property prediction research.

Table 2: Essential Computational Tools for HPO in Molecular Property Prediction

| Tool / Resource | Type | Primary Function | Relevance to HPO |
|---|---|---|---|
| Scikit-Learn [25] [27] | Python Library | Machine Learning | Provides GridSearchCV and RandomizedSearchCV for easy implementation of baseline HPO strategies. |
| RDKit [6] | Cheminformatics Library | Molecular Informatics | Generates molecular representations (SMILES, fingerprints, 2D descriptors) from which features for model training are derived. |
| KerasTuner / Optuna [2] | HPO Library | Hyperparameter Optimization | Offers advanced, scalable HPO algorithms (e.g., Hyperband, Bayesian Optimization) for more complex tuning needs beyond baseline methods. |
| MoleculeNet [6] [24] | Benchmark Suite | Standardized Datasets | Provides curated molecular property prediction datasets (e.g., QM9) for fair benchmarking and model evaluation. |
| Graph Neural Networks (GNNs) [5] [6] | Model Architecture | Deep Learning on Graphs | A key model type for molecular graphs; their performance is highly sensitive to architectural and training hyperparameters. |

Manual search, grid search, and random search represent foundational strategies for hyperparameter optimization in molecular property prediction. While manual search relies on expert intuition and grid search offers exhaustive but computationally expensive exploration, random search typically provides a superior balance of efficiency and effectiveness, especially in higher-dimensional spaces. The choice among them should be guided by project-specific constraints, including the number of hyperparameters, available computational resources, and prior knowledge of the model's behavior. As the field advances towards more complex models and larger chemical datasets, these baseline methods continue to serve as critical starting points and benchmarks against which more advanced optimization techniques must be measured. A rigorous, systematic application of these HPO strategies is indispensable for building robust, high-performing models that can accelerate drug discovery and materials design.

In the field of molecular property prediction and drug discovery, researchers are perpetually faced with the challenge of optimizing complex, expensive-to-evaluate functions within vast chemical spaces. Whether tuning hyperparameters of machine learning models, identifying molecular structures with desired properties, or parameterizing coarse-grained force fields, the underlying problem remains the same: finding the optimal input to an unknown function with minimal evaluations. Bayesian optimization (BO) has emerged as a powerful framework for addressing these challenges, offering a sample-efficient approach to global optimization of black-box functions [29]. This is particularly valuable in molecular sciences where each evaluation may represent an expensive wet-lab experiment or a computationally intensive quantum chemistry calculation.

The core premise of BO is its ability to balance exploration and exploitation through a probabilistic model. Unlike grid or random search, which are uninformed by past evaluations, BO builds a surrogate model of the objective function and uses it to select the most promising parameters to evaluate next [30] [13]. Because each new choice is informed by all previous evaluations, BO often finds better solutions in fewer iterations, making it indispensable for applications ranging from hyperparameter tuning of deep learning models to the autonomous design of functional materials and pharmaceuticals [31] [29].

Fundamental Principles of Bayesian Optimization

The Bayesian optimization algorithm is built upon two foundational components: a surrogate model for probabilistic inference and an acquisition function to guide the search strategy.

The Surrogate Model

The surrogate model, often a Gaussian Process (GP), serves as a probabilistic approximation of the true, unknown objective function. A GP defines a prior over functions and can be updated with observational data to form a posterior distribution. For any set of input hyperparameters x, the GP provides a mean prediction μ(x) and a predictive variance σ²(x) that quantifies its uncertainty [29]. This posterior predictive distribution is updated after each new observation, allowing the model to become "less wrong" with more data [30]. Alternative surrogate models include Random Forest regression and Tree-structured Parzen Estimators (TPE), each with distinct advantages for different problem types [30] [13].

Acquisition Functions

The acquisition function α(x) uses the surrogate's predictions to determine the next most promising point to evaluate by balancing exploration (sampling regions with high uncertainty) and exploitation (sampling regions with promising predicted values) [29]. Common acquisition functions include:

- Expected Improvement (EI): Selects points that offer the highest expected improvement over the current best observation [30] [32].

- Upper Confidence Bound (UCB): Uses a confidence parameter to balance mean performance and uncertainty [29].

- Bayesian Active Learning by Disagreement (BALD): Maximizes the information gain about model parameters [33].

Table 1: Common Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mathematical Formulation | Key Principle |
|---|---|---|
| Expected Improvement (EI) | EI(x) = E[max(0, f(x) - f(x*))] | Expected improvement over current best |
| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + κσ(x) | Optimism in the face of uncertainty |
| Probability of Improvement | PI(x) = P(f(x) ≥ f(x*) + ξ) | Probability of improving current best |
| Entropy Search | Maximizes information gain about optimum | Reduction in uncertainty of optimum location |

Here x* is the best point observed so far, κ ≥ 0 weights the uncertainty term, and ξ ≥ 0 is a small improvement margin.
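For a Gaussian posterior with mean μ(x) and standard deviation σ(x), EI and PI have closed forms in terms of the standard normal CDF Φ and PDF φ. With Z = (μ - f_best - ξ)/σ, EI = (μ - f_best - ξ)Φ(Z) + σφ(Z) and PI = Φ(Z). The standard-library sketch below evaluates the three analytic acquisition functions for a maximization problem. Here `f_best` stands for f(x*). The margin `xi` defaults to 0 (matching the EI row) and `kappa` defaults to 2; both defaults are illustrative assumptions. Entropy Search has no comparably short closed form and is omitted.

```python
import math

def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, f_best, xi=0.0):
    """EI for maximization under a Gaussian posterior N(mu, sigma**2)."""
    gap = mu - f_best - xi
    if sigma <= 0.0:
        return max(0.0, gap)  # no uncertainty: the improvement is deterministic
    z = gap / sigma
    return gap * norm_cdf(z) + sigma * norm_pdf(z)

def probability_of_improvement(mu, sigma, f_best, xi=0.0):
    """PI = P(f(x) >= f_best + xi) under N(mu, sigma**2)."""
    if sigma <= 0.0:
        return 1.0 if mu >= f_best + xi else 0.0
    return norm_cdf((mu - f_best - xi) / sigma)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB = mu + kappa * sigma; a larger kappa favors exploration."""
    return mu + kappa * sigma

# Two hypothetical candidates: A is confident and slightly better, B is uncertain.
f_best = 0.80
candidates = {"A": (0.82, 0.01), "B": (0.75, 0.10)}
for name, (mu, sigma) in candidates.items():
    print(name,
          round(expected_improvement(mu, sigma, f_best), 4),
          round(probability_of_improvement(mu, sigma, f_best), 4),
          round(upper_confidence_bound(mu, sigma), 4))
```

With these numbers, EI narrowly and PI clearly prefer A, while UCB with κ = 2 prefers the more uncertain B. This shows how the choice of acquisition function shifts the exploration-exploitation balance.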

Bayesian Optimization Workflow

The complete BO process follows a sequential, iterative cycle that integrates the surrogate model and acquisition function.

Bayesian Optimization Cycle - The iterative process of model building, acquisition, and evaluation continues until convergence.

Step-by-Step Algorithm

- Initialization: Start with a small initial dataset of evaluated points, often selected via random sampling or Latin hypercube design.

- Surrogate Modeling: Fit the surrogate model (e.g., a Gaussian Process) to all observed data D = {X, y}. The model learns p(y | X), mapping hyperparameters to the probability of a score on the objective function [30].

- Acquisition Optimization: Find the next point x_next that maximizes the acquisition function α(x), which uses the surrogate's predictive distribution p(y | x, D) [33] [30].

- Objective Evaluation: Evaluate the expensive black-box function f(x_next) at the selected point (e.g., train a model with hyperparameters x_next and measure validation performance).

- Data Update: Augment the dataset D with the new observation (x_next, f(x_next)).

- Termination Check: Repeat the surrogate modeling, acquisition, evaluation, and update steps until convergence or until a predetermined budget is exhausted.

This workflow's efficiency stems from its informed selection of evaluation points, dramatically reducing the number of expensive function evaluations required compared to uninformed methods [30] [13].
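The cycle can be illustrated with a compact NumPy sketch under several simplifying assumptions. The surrogate is a zero-mean Gaussian Process with a fixed-length-scale RBF kernel. The acquisition function is Expected Improvement, maximized over a dense candidate grid rather than with a numerical optimizer. A cheap 1D toy function stands in for the expensive objective. The kernel length scale, noise jitter, and budget are illustrative. Practical BO libraries also fit kernel hyperparameters by maximizing the marginal likelihood, which this sketch omits.

```python
import math

import numpy as np


def objective(x):
    """Toy 1D stand-in for an expensive evaluation such as a validation score."""
    return -np.sin(3 * x) - x**2 + 0.7 * x


def rbf_kernel(a, b, length_scale=0.3):
    """Squared-exponential kernel matrix between 1D point arrays a and b."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale**2)


def gp_posterior(x_obs, y_obs, x_query, noise=1e-6):
    """Mean and standard deviation of a zero-mean GP posterior at x_query."""
    K = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))  # jitter for stability
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))     # K^{-1} y
    K_s = rbf_kernel(x_obs, x_query)
    mu = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v**2, axis=0), 1e-12, None)     # RBF prior variance is 1
    return mu, np.sqrt(var)


def expected_improvement(mu, sigma, f_best):
    """Closed-form EI for maximization, vectorized over candidates."""
    gap = mu - f_best
    z = gap / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return gap * cdf + sigma * pdf


rng = np.random.default_rng(0)
candidates = np.linspace(-1.0, 2.0, 601)  # domain discretized to keep the sketch short

# Initialization: a few random evaluations.
x_obs = rng.uniform(-1.0, 2.0, size=3)
y_obs = objective(x_obs)

for _ in range(12):  # a fixed evaluation budget serves as the termination rule
    mu, sigma = gp_posterior(x_obs, y_obs, candidates)   # surrogate modeling
    ei = expected_improvement(mu, sigma, y_obs.max())    # acquisition function
    x_next = candidates[np.argmax(ei)]                   # acquisition optimization
    y_next = objective(x_next)                           # objective evaluation
    x_obs = np.append(x_obs, x_next)                     # data update
    y_obs = np.append(y_obs, y_next)

best = int(np.argmax(y_obs))
print(f"best x = {x_obs[best]:.3f}, f(x) = {y_obs[best]:.3f}")
```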

Bayesian Optimization for Molecular Property Prediction

In molecular property prediction research, hyperparameters control critical aspects of machine learning models that map molecular structures to target properties. BO provides an efficient framework for tuning these hyperparameters and directly optimizing molecular properties.

Hyperparameters in Molecular Machine Learning

Molecular property prediction models contain numerous hyperparameters that significantly impact performance. For graph neural networks, these include architectural hyperparameters (message-passing layers, aggregation functions), optimization hyperparameters (learning rate, batch size), and molecular representation parameters (fingerprint radius, descriptor types) [33] [34]. Traditional tuning methods like grid search become computationally prohibitive given the high dimensionality of these spaces and the expense of model training and validation.

Active Learning for Drug Discovery

BO principles extend naturally to active learning for molecular screening. In this context, the "hyperparameters" become the molecular structures themselves, and the objective function is the experimental measurement of a target property. A notable implementation combines pretrained molecular BERT representations with Bayesian active learning, achieving equivalent toxic compound identification with 50% fewer iterations compared to conventional approaches on the Tox21 and ClinTox datasets [33]. This demonstrates BO's capability to strategically select the most informative molecules for experimental testing, dramatically reducing resource requirements in early drug discovery.
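BALD scores a candidate by the mutual information between its predicted label and the model parameters. Given T stochastic predictions for the same molecule (for example, from Monte Carlo dropout or an ensemble), a common estimate is the entropy of the mean prediction minus the mean entropy of the individual predictions. The standard-library sketch below applies that estimator to binary toxicity probabilities for three hypothetical pool molecules. The probabilities are made up for illustration, and the code is not the implementation used in [33].

```python
import math

def binary_entropy(p, eps=1e-12):
    """Entropy (in nats) of a Bernoulli(p) prediction."""
    p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))

def bald_score(prob_samples):
    """Mutual-information estimate from T sampled P(toxic) values for one molecule."""
    mean_p = sum(prob_samples) / len(prob_samples)
    expected_entropy = sum(binary_entropy(p) for p in prob_samples) / len(prob_samples)
    return binary_entropy(mean_p) - expected_entropy

# Hypothetical pool: 4 sampled probabilities of being toxic per molecule.
pool = {
    "mol_confident": [0.95, 0.96, 0.94, 0.95],  # samples agree and are confident
    "mol_ambiguous": [0.50, 0.52, 0.48, 0.50],  # uncertain, but samples agree
    "mol_disputed": [0.05, 0.95, 0.10, 0.90],   # samples disagree: most informative
}
scores = {name: bald_score(ps) for name, ps in pool.items()}
next_molecule = max(scores, key=scores.get)
print(next_molecule)  # mol_disputed
```

The ambiguous molecule has high predictive entropy yet a near-zero BALD score, because its uncertainty does not come from disagreement between plausible models; only the disputed molecule is expected to teach the model much.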

Table 2: Bayesian Optimization Performance in Molecular Discovery

| Application Domain | Dataset/System | Performance Improvement | Key Metric |
|---|---|---|---|
| Toxic Compound Identification | Tox21 & ClinTox | 50% fewer iterations | Equivalent identification rate |
| Coarse-Grained Model Parameterization | Pebax-1657 Polymer | Convergence in <600 iterations | Accuracy vs. atomistic model |
| Target-Oriented Materials Discovery | Shape Memory Alloy | 2.66°C from target in 3 iterations | Transformation temperature |
| Hyperparameter Optimization | SVM on Breast Cancer | Test accuracy: 99.1% (vs. 94.7% baseline) | Classification accuracy |

Advanced BO Strategies for Molecular Optimization

Recent research has introduced specialized BO variants to address challenges specific to chemical spaces:

Rank-Based Bayesian Optimization (RBO): Replaces regression surrogates with ranking models that learn the relative ordering of molecules rather than exact property values. This approach proves particularly effective for rough structure-property landscapes with activity cliffs, where small structural changes cause large property fluctuations [34].

Target-Oriented Bayesian Optimization: Modifies the acquisition function to efficiently find materials with specific target property values rather than simply maximizing or minimizing properties. This approach discovered the shape memory alloy Ti₀.₂₀Ni₀.₃₆Cu₀.₁₂Hf₀.₂₄Zr₀.₀₈, whose transformation temperature was only 2.66°C from the target, in just 3 experimental iterations [32].
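To illustrate what "target-oriented" means for the acquisition step, the standard-library sketch below scores candidates by the expected reduction in distance to a target value t. The score is E[max(0, best_gap - |Y - t|)] with Y ~ N(μ, σ²), estimated by Monte Carlo, where best_gap is the smallest distance to the target observed so far. This is a generic illustration under those assumptions, not the specific acquisition function proposed in [32]. The target, best_gap, and candidate means and standard deviations are hypothetical.

```python
import random

def target_improvement(mu, sigma, target, best_gap, n_samples=20000, seed=0):
    """Monte Carlo estimate of E[max(0, best_gap - |Y - target|)], Y ~ N(mu, sigma**2).

    The score rewards candidates likely to land closer to the target than
    the closest observation so far, instead of simply maximizing Y.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        y = rng.gauss(mu, sigma)
        total += max(0.0, best_gap - abs(y - target))
    return total / n_samples

target = 100.0   # hypothetical target transformation temperature (deg C)
best_gap = 8.0   # closest observation so far was 8 deg C from the target
candidates = {
    "near_target": (99.0, 3.0),   # predicted close to target, modest uncertainty
    "high_value": (130.0, 3.0),   # large predicted property, far from target
    "uncertain": (90.0, 15.0),    # off-target mean, wide uncertainty
}
scores = {k: target_improvement(mu, s, target, best_gap) for k, (mu, s) in candidates.items()}
print(max(scores, key=scores.get))  # near_target
```

A maximizing acquisition function would favor "high_value" here; the target-oriented score ranks it last because it is almost certainly far from the desired temperature.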

Experimental Protocols and Implementation

Protocol 1: Hyperparameter Optimization for Molecular Property Prediction

Objective: Optimize hyperparameters of a machine learning model for molecular property prediction.

Materials:

- Molecular dataset (e.g., Tox21, ClinTox) with labeled properties [33]

- Molecular representation (ECFP fingerprints, graph representations)

- Machine learning model (Graph Neural Network, Random Forest, SVM)
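Fingerprint representations such as ECFP are binary bit vectors, so GP surrogates for molecules often replace the RBF kernel with the Tanimoto (Jaccard) similarity, k(a, b) = |a ∩ b| / (|a| + |b| - |a ∩ b|). The following is a minimal standard-library sketch. It assumes each fingerprint is stored as the set of its "on" bit indices. The bit sets are hypothetical rather than computed with RDKit.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0  # convention: two empty fingerprints are treated as identical
    common = len(a & b)
    return common / (len(a) + len(b) - common)

def tanimoto_gram(fps):
    """Kernel (Gram) matrix over a list of fingerprints, as a GP surrogate would use."""
    return [[tanimoto(a, b) for b in fps] for a in fps]

# Hypothetical on-bit sets for three molecules.
fp1 = {1, 5, 9, 42, 77}
fp2 = {1, 5, 9, 42, 80}
fp3 = {3, 11, 200}

print(tanimoto(fp1, fp2))  # 4 / (5 + 5 - 4) = 0.666...
print(tanimoto(fp1, fp3))  # 0.0, no shared bits
for row in tanimoto_gram([fp1, fp2, fp3]):
    print([round(v, 3) for v in row])
```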

Procedure:

- Define Hyperparameter Search Space: Specify distributions for each hyperparameter (e.g., learning rate: log-uniform between 1e-6 and 1e-1, hidden layers: integer between 1 and 5) [35].

- Select Surrogate Model: Choose appropriate surrogate (e.g., Gaussian Process with Tanimoto kernel for molecular fingerprints) [34].

- Configure Acquisition Function: Set the acquisition function's parameters (e.g., the exploration-exploitation parameter κ for UCB).

- Initialize with Random Points: Evaluate 10-20 random configurations to build the initial dataset.