Hyperparameter Optimization in QSAR Models: A Guide for Robust and Predictive Drug Discovery

Abstract

Hyperparameter tuning is a critical, yet often overlooked, step in developing reliable Quantitative Structure-Activity Relationship (QSAR) models. This article provides a comprehensive guide for researchers and drug development professionals on the strategic role of hyperparameters across classical and machine learning-based QSAR workflows. We explore foundational concepts, detailing how parameters like the number of trees in a Random Forest or the learning rate in XGBoost directly influence model performance and interpretability. The article then delves into methodological applications, demonstrating optimization techniques such as Grid Search and Bayesian Optimization with real-world case studies from recent literature. A dedicated troubleshooting section addresses common pitfalls like overfitting and underfitting, offering practical solutions for model refinement. Finally, we cover rigorous validation protocols and comparative analyses of different algorithms, emphasizing how proper hyperparameter configuration is indispensable for building models that are not only predictive but also mechanistically insightful and reliable for decision-making in biomedical research.

The Building Blocks: Understanding Hyperparameters in QSAR Model Architecture

In the field of Quantitative Structure-Activity Relationship (QSAR) modeling, the distinction between model parameters and hyperparameters is fundamental to developing robust, predictive tools for drug discovery and toxicological assessment. Model parameters are the internal variables that the machine learning algorithm learns automatically from the training data, such as weights in a neural network or coefficients in a regression model. In contrast, hyperparameters are external configuration variables that are set prior to the training process and cannot be learned directly from the data. These tunable settings control the very structure of the learning algorithm and the nature of the learning process itself, profoundly impacting model performance, generalizability, and ultimately, the reliability of scientific conclusions drawn from QSAR predictions [1] [2].

The optimization of hyperparameters has emerged as a critical step in QSAR workflow, particularly as researchers increasingly employ complex machine learning algorithms to model intricate relationships between chemical structure and biological activity. Proper hyperparameter configuration can mean the difference between a model that accurately generalizes to new chemical entities and one that fails to provide meaningful predictions, a consideration of paramount importance when these models inform decisions in drug development pipelines or safety assessments [2] [1].

Theoretical Foundation: Parameters vs. Hyperparameters

Fundamental Definitions and Distinctions

In machine learning-based QSAR modeling, the clear conceptual and practical separation between parameters and hyperparameters guides both model development and interpretation:

Model Parameters: These are internally learned variables that define the specific relationship between molecular descriptors and the biological endpoint. Examples include the weights connecting neurons in an Artificial Neural Network (ANN), the support vectors in a Support Vector Machine (SVM), or the coefficients in a linear regression model. These parameters are optimized during the training process through algorithms like gradient descent and are unique to each trained model [1].

Hyperparameters: These are externally set configuration variables that control the learning process itself. They are not learned from the data but are specified beforehand by the researcher. Hyperparameters determine the architecture of the model (e.g., number of layers in a neural network) and how the learning algorithm behaves (e.g., learning rate). The process of finding optimal hyperparameters is called hyperparameter optimization (HPO) or tuning [2].
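The distinction can be made concrete with a small sketch. It assumes scikit-learn's `Ridge` regressor and a synthetic descriptor matrix as stand-ins (neither comes from the studies cited here): `alpha` is a hyperparameter fixed before training, while `coef_` and `intercept_` are parameters learned from the data.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Synthetic stand-in for a descriptor matrix: 50 compounds x 3 descriptors
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
true_coef = np.array([1.5, -2.0, 0.5])
y = X @ true_coef + rng.normal(scale=0.1, size=50)

# Hyperparameter: chosen by the researcher before training starts
model = Ridge(alpha=1.0)

# Parameters: estimated from the data inside fit()
model.fit(X, y)

print("hyperparameter alpha:", model.get_params()["alpha"])
print("learned coefficients:", model.coef_)
print("learned intercept:", model.intercept_)
```

Changing `alpha` and refitting yields different learned coefficients from the same data, which is why hyperparameters have to be tuned from outside the training loop.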

Examples in Common QSAR Algorithms

The specific nature of hyperparameters varies significantly across different machine learning algorithms commonly used in QSAR modeling:

Table 1: Key Hyperparameters in Common QSAR Machine Learning Algorithms

| Algorithm | Key Hyperparameters | Impact on Model Performance |
|---|---|---|
| Random Forest (RF) | Number of trees (n_estimators), maximum tree depth (max_depth), minimum samples per split (min_samples_split) | Controls model complexity and overfitting; deeper trees can capture more patterns but may overfit to training data [3] [4]. |
| Support Vector Machine (SVM) | Regularization parameter (C), kernel coefficient (gamma), kernel type (e.g., RBF) | C trades off misclassification of training examples against simplicity of decision surface; gamma defines influence of a single training example [4]. |
| Artificial Neural Network (ANN) | Number of hidden layers and neurons, activation function (e.g., ReLU), optimizer (e.g., Adam), learning rate | Determines capacity to learn complex non-linear relationships; insufficient neurons may underfit, while too many may overfit [4]. |
| Gradient Boosting (XGBoost) | Learning rate, number of boosting rounds, maximum depth, subsample ratio | Learning rate shrinks feature weights to make boosting more robust; subsample ratio prevents overfitting [5]. |

Hyperparameter Optimization Methodologies and Experimental Protocols

Optimization Algorithms and Workflows

Selecting appropriate hyperparameter optimization strategies is essential for balancing computational efficiency with model performance in QSAR studies. Below are the detailed methodologies for the primary optimization approaches cited in current literature.

Bayesian Optimization with Tree-Structured Parzen Estimator (TPE)

Bayesian optimization, particularly with TPE, has become a cornerstone of efficient HPO in QSAR research due to its ability to model the performance of hyperparameters and focus on promising regions of the search space [2] [6].

- Objective Function Definition: The process begins by defining an objective function that takes a set of hyperparameters as input and returns a performance metric (e.g., cross-validated RMSE or AUC). For a QSAR model, this function typically includes steps for model initialization, training, and validation [2].

- Surrogate Model: TPE works by modeling the probability \( p(x|y) \) of the hyperparameters \( x \) given the loss \( y \). It constructs two density estimates: \( l(x) \) for the distribution of hyperparameters when the loss is below a threshold \( y^* \), and \( g(x) \) for the distribution when the loss is above \( y^* \) [6].

- Acquisition Function: The algorithm uses the Expected Improvement (EI) acquisition function to decide which hyperparameters to evaluate next: \( EI_{y^*}(x) = \int_{-\infty}^{y^*} (y^* - y)\, p(y|x)\, dy \). This balances exploration and exploitation by favoring hyperparameters likely to yield improvements over \( y^* \) [6].

- Iteration: The process iterates, updating the surrogate model with each new observation until a stopping criterion is met (e.g., maximum number of trials or convergence) [2].
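The four steps above can be sketched in a few lines of NumPy. This is a simplified, one-dimensional illustration and not the Hyperopt implementation: the quadratic `objective`, the fixed kernel bandwidth, the 25% quantile used for \( y^* \), and the candidate count are all assumptions made for the example. It relies on the standard TPE result that maximizing the ratio \( l(x)/g(x) \) corresponds to maximizing Expected Improvement.

```python
import numpy as np

def objective(x):
    # Hypothetical stand-in for a cross-validated loss (minimum at x = 2)
    return (x - 2.0) ** 2

def kde(points, x, bandwidth=0.5):
    # Gaussian kernel density estimate built from `points`, evaluated at each x
    diffs = (x[:, None] - points[None, :]) / bandwidth
    return np.exp(-0.5 * diffs**2).mean(axis=1) / (bandwidth * np.sqrt(2 * np.pi))

rng = np.random.default_rng(0)
low, high = -5.0, 5.0
xs = list(rng.uniform(low, high, size=10))  # random start-up trials
ys = [objective(x) for x in xs]

for _ in range(40):
    x_arr, y_arr = np.array(xs), np.array(ys)
    y_star = np.quantile(y_arr, 0.25)   # threshold y*
    good = x_arr[y_arr <= y_star]       # observations used to build l(x)
    bad = x_arr[y_arr > y_star]         # observations used to build g(x)
    # Draw candidates near the good observations, i.e. roughly from l(x)
    candidates = rng.choice(good, size=24) + rng.normal(scale=0.5, size=24)
    candidates = np.clip(candidates, low, high)
    # Pick the candidate with the largest density ratio l(x) / g(x)
    ratio = kde(good, candidates) / (kde(bad, candidates) + 1e-12)
    x_next = float(candidates[np.argmax(ratio)])
    xs.append(x_next)
    ys.append(objective(x_next))

best_x = xs[int(np.argmin(ys))]
print("best x found:", best_x, "loss:", min(ys))
```

In a real QSAR workflow `objective` would train and cross-validate a model, and a library such as Hyperopt would handle multi-dimensional and conditional search spaces.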

Grid Search and Random Search

While Bayesian methods are often more efficient, traditional Grid Search and Random Search remain relevant, especially for smaller hyperparameter spaces or when computational resources are ample [2].

- Grid Search Protocol: This method involves specifying a discrete set of values for each hyperparameter and exhaustively evaluating every possible combination. For example, optimizing an SVM for a QSAR model might involve evaluating all combinations of C = [0.1, 1, 10, 100] and gamma = [0.001, 0.01, 0.1, 1]. The model with the best average performance on cross-validation is selected. While thorough, this approach becomes computationally prohibitive as the number of hyperparameters grows [2] [1].

- Random Search Protocol: Instead of an exhaustive search, random search randomly samples hyperparameter combinations from predefined distributions for a fixed number of trials. This method often outperforms grid search when some hyperparameters are more important than others, as it explores the space more broadly and is less likely to get stuck in suboptimal regions [2].
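The difference in cost between the two protocols can be seen by enumerating the candidates directly. The sketch below uses scikit-learn's `ParameterGrid` and `ParameterSampler` with the SVM values from the grid search example; the log-uniform distributions and the budget of 8 random trials are assumptions chosen for illustration.

```python
from scipy.stats import loguniform
from sklearn.model_selection import ParameterGrid, ParameterSampler

# Grid search: every combination of the listed values is evaluated
grid = ParameterGrid({"C": [0.1, 1, 10, 100], "gamma": [0.001, 0.01, 0.1, 1]})
print("grid candidates:", len(grid))  # 4 x 4 = 16

# Random search: a fixed number of draws from continuous distributions
# spanning the same ranges (assumed log-uniform here)
sampler = ParameterSampler(
    {"C": loguniform(1e-1, 1e2), "gamma": loguniform(1e-3, 1e0)},
    n_iter=8,
    random_state=0,
)
random_trials = list(sampler)
print("random candidates:", len(random_trials))
```

Adding a third hyperparameter with four values would multiply the grid to 64 candidates, while the random search budget stays at whatever `n_iter` is set to.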

Evolutionary and Multi-Fidelity Methods

For particularly complex search spaces or large-scale QSAR problems, advanced methods like evolutionary algorithms and multi-fidelity approaches offer alternatives.

- Evolutionary Algorithms: These methods maintain a population of hyperparameter sets that undergo selection, recombination, and mutation over generations, mimicking natural evolution. They are effective for optimizing both the architecture and hyperparameters of complex models like Graph Neural Networks [7].

- Successive Halving and Hyperband: These are multi-fidelity methods that allocate more resources to promising configurations. They initially evaluate many configurations on a small budget (e.g., few training epochs or limited data) and only the best-performing configurations are advanced to the next round with a larger budget. This allows for a more efficient exploration of the hyperparameter space [6].
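A minimal sketch of successive halving, assuming a toy `evaluate` function in place of real model training, is shown below. The learning-rate search space, the halving factor `eta=2`, and the starting budget are illustrative choices; Hyperband additionally repeats this procedure with different trade-offs between the number of configurations and the starting budget.

```python
import random

def evaluate(config, budget):
    # Hypothetical stand-in for validation loss after `budget` epochs:
    # the loss shrinks as the budget grows and is lowest for lr near 0.1
    return abs(config["lr"] - 0.1) + 1.0 / budget

def successive_halving(configs, min_budget=1, eta=2):
    """Keep the best 1/eta of the configurations each round, growing the budget."""
    budget = min_budget
    while len(configs) > 1:
        ranked = sorted(configs, key=lambda c: evaluate(c, budget))
        configs = ranked[: max(1, len(configs) // eta)]
        budget *= eta
    return configs[0], budget

random.seed(0)
# 16 candidate learning rates sampled on a log scale between 1e-4 and 1
candidates = [{"lr": 10 ** random.uniform(-4, 0)} for _ in range(16)]
best, final_budget = successive_halving(candidates)
print("selected config:", best, "final budget:", final_budget)
```

With 16 starting configurations the survivors shrink as 16, 8, 4, 2, 1, so only the final candidates are ever evaluated at the largest budgets. In this toy objective the ranking does not depend on the budget; with real training curves, low-budget rankings are noisy, which is the main risk of the method.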

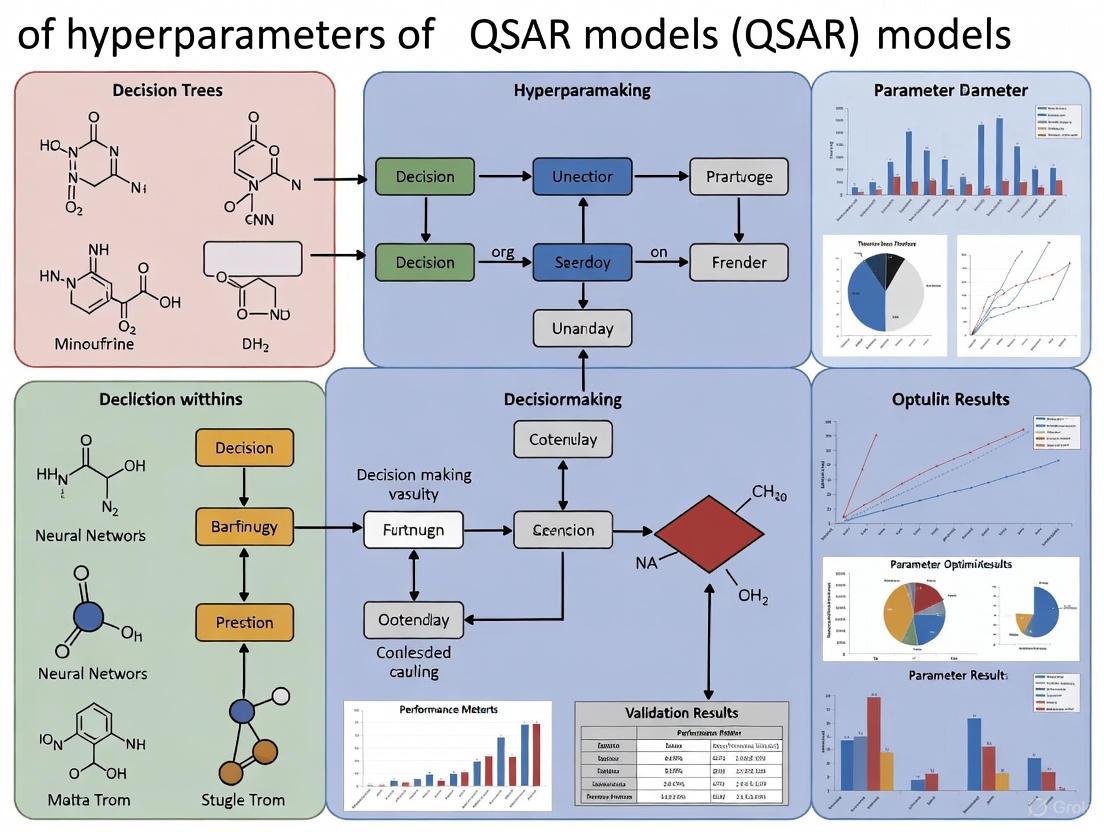

Visualization of a Standard Hyperparameter Optimization Workflow for QSAR

The following diagram illustrates the iterative workflow for optimizing a machine learning-based QSAR model, integrating the methodologies described above.

Diagram 1: Hyperparameter Optimization Workflow for QSAR. This diagram outlines the iterative process of tuning a QSAR model, from defining the search space to deploying the best-performing configuration. CV = Cross-Validation; TPE = Tree-Structured Parzen Estimator.

Quantitative Analysis of Hyperparameter Impact on QSAR Performance

Case Studies and Performance Metrics

The critical importance of hyperparameter optimization is demonstrated by its tangible impact on key performance metrics in published QSAR studies. The following table synthesizes quantitative evidence from recent research.

Table 2: Impact of Hyperparameter Optimization on QSAR Model Performance

| QSAR Study Focus | Algorithm | Key Hyperparameters Tuned | Reported Performance (post-HPO) | Citation |
|---|---|---|---|---|
| Repeat Dose Toxicity POD | Random Forest | n_estimators, max_depth, others (study type/species as descriptors) | External Test Set: RMSE = 0.71 log10-mg/kg/day, R² = 0.53 | [3] |
| T. cruzi Inhibitors | Artificial Neural Network | Number of neurons, activation function (ReLU), optimizer (Adam) | Training set Pearson R = 0.9874, Test set Pearson R = 0.6872 | [4] |
| T. cruzi Inhibitors | Support Vector Machine | Regularization (C), kernel coefficient (gamma) | Optimized via grid-based tuning and cross-validation | [4] |
| T. cruzi Inhibitors | Random Forest | n_estimators, tree depth, min_samples_split | Optimized via grid-based tuning and cross-validation | [4] |
| hERG Blockage | Multiple (RF, SVM, etc.) | Algorithm-specific parameters | Classification accuracy for blockers/non-blockers: 0.83–0.93 on external set | [8] |
| Drug Discovery Datasets | Multiple (BNB, LLR, ABDT, RF, SVM, DNN) | Comprehensive algorithm-specific parameters | Hyperopt models achieved better/comparable performance on 33 of 36 models vs. referenced baselines | [2] |

The studies summarized above indicate that systematically tuned models can reach performance levels that are useful in practice. For instance, the optimization of a random forest model for predicting repeat-dose point-of-departure (POD) values resulted in a model capable of identifying 80% of the most potent chemicals in the top 20% of predictions, demonstrating high value for screening-level risk assessments [3]. Furthermore, a large-scale benchmark study across six drug discovery datasets found that models built with Hyperopt for HPO outperformed or matched baseline models in 33 out of 36 cases, underscoring the broad benefit of systematic tuning across different algorithms and endpoints [2].

The Scientist's Toolkit: Essential Software for Hyperparameter Optimization

Implementing effective hyperparameter optimization requires specialized software tools. The table below details key libraries and platforms used by QSAR researchers.

Table 3: Essential Software Tools for Hyperparameter Optimization in QSAR Research

| Tool Name | Type/Function | Key Features | Application in QSAR |
|---|---|---|---|
| Hyperopt | Python library for HPO | Uses Tree of Parzen Estimators (TPE), defines space with domain-specific language, supports conditional spaces [2] [6]. | Successfully applied to optimize multiple ML algorithms (BNB, ABDT, RF, SVM, DNN) on drug discovery datasets [2]. |
| Optuna | Python framework for HPO | Uses sequential model-based optimization, define-by-run API for dynamic search spaces, efficient pruning of trials [6]. | Not reported in the QSAR studies reviewed here, but a state-of-the-art alternative to Hyperopt. |
| Scikit-learn | Python ML library | Provides GridSearchCV and RandomizedSearchCV for basic HPO integrated with cross-validation [1]. | Widely used for model development and tuning in QSAR studies, such as in the development of T. cruzi inhibitor models [4]. |
| PaDEL-Descriptor | Molecular descriptor calculator | Calculates 1,024 CDK fingerprints and 780 atom pair 2D fingerprints for molecular representation [4]. | Critical pre-HPO step: generating features for the QSAR model. Used to calculate descriptors for T. cruzi inhibitors [4]. |

A comparative analysis of Hyperopt and Optuna reveals differences in design philosophy and implementation. Hyperopt requires pre-defining the search space and uses a Trials object to track results, while Optuna employs a "define-by-run" approach where the search space is defined dynamically within the objective function, offering greater flexibility for complex conditional spaces [6]. One analysis noted that Optuna's API involves slightly less boilerplate code and provides more flexibility for on-the-fly sampling decisions, which can be advantageous for intricate optimization procedures [6].

The precise definition and methodological optimization of hyperparameters are not merely technical exercises but fundamental components of rigorous QSAR research. As evidenced by case studies across toxicology and drug discovery, systematically tuned hyperparameters significantly enhance model predictability, reliability, and translational utility. The evolution of sophisticated HPO frameworks like Hyperopt and Optuna enables researchers to efficiently navigate complex parameter spaces, transforming hyperparameter tuning from an art into a science. For QSAR practitioners, adopting robust HPO protocols as detailed in this review is essential for building models that truly fulfill the promise of in silico methods in accelerating drug development and improving chemical safety assessments.

The predictive performance, interpretability, and generalizability of Quantitative Structure-Activity Relationship (QSAR) models are profoundly influenced by the careful selection of hyperparameters. As QSAR modeling has evolved from classical statistical approaches to sophisticated machine learning (ML) and deep learning (DL) algorithms, the complexity of hyperparameter optimization has increased correspondingly [9]. In modern computational drug discovery, where models must extract meaningful patterns from high-dimensional chemical data, understanding and tuning algorithm-specific hyperparameters is not merely a technical refinement but a fundamental requirement for building robust predictive systems [7] [9].

This technical guide provides a comprehensive categorization of essential hyperparameters across the algorithm spectrum commonly employed in QSAR research, with a particular focus on Random Forests, Support Vector Machines, and Graph Neural Networks. By framing this discussion within experimental protocols and practical optimization methodologies relevant to cheminformatics, we aim to equip researchers with the systematic approaches needed to maximize the potential of their QSAR models while maintaining scientific rigor and interpretability.

Foundational Concepts in Hyperparameter Optimization

Hyperparameters are configuration variables external to the model itself that govern the learning process. Unlike model parameters learned during training, hyperparameters must be set prior to the learning process and significantly impact model performance, stability, and generalization capability [10] [11]. In QSAR modeling, proper hyperparameter configuration helps balance the bias-variance tradeoff, particularly crucial when working with the limited datasets common in chemical informatics [9] [12].

Two primary algorithmic approaches dominate hyperparameter optimization in QSAR workflows: GridSearchCV, which exhaustively searches through a predefined hyperparameter space, and RandomizedSearchCV, which samples a fixed number of parameter settings from specified distributions [10]. The latter often proves more efficient for high-dimensional parameter spaces or when computational resources are constrained. For complex architectures like Graph Neural Networks, more advanced techniques including Bayesian optimization and evolutionary algorithms are increasingly employed [7].

A critical consideration in QSAR is the relationship between hyperparameter tuning and model interpretability. While complex ensembles and neural networks can achieve high predictive accuracy, their "black-box" nature poses challenges for regulatory acceptance and scientific insight [9]. Thus, hyperparameter selection must balance predictive performance with the need for mechanistic interpretation in drug discovery applications.

Random Forest Hyperparameters in QSAR Applications

Random Forest (RF) algorithms have gained prominence in QSAR studies due to their robustness against overfitting, native feature selection capabilities, and ability to model complex nonlinear relationships without demanding extensive feature engineering [13] [14] [15]. These characteristics make them particularly valuable for cheminformatics tasks where molecular descriptors frequently outnumber compounds in the training set.

Core Hyperparameters and Their Effects

Table 1: Key Random Forest Hyperparameters and Their Impact on QSAR Modeling

| Hyperparameter | Description | Default Value | QSAR-Specific Considerations |
|---|---|---|---|
| n_estimators | Number of decision trees in the forest | 100 [10] | Higher values improve performance but increase computational cost; particularly important for large chemical libraries [10] |
| max_features | Number of features considered for splitting | "sqrt" [10] (classifier default; recent scikit-learn regressors default to 1.0, i.e., all features) | Controls feature randomness; "sqrt" or "log2" reduce overfitting with high-dimensional molecular descriptors [10] [11] |
| max_depth | Maximum depth of each tree | None [10] | Shallower trees may underfit; deeper trees may capture complex structure-activity relationships but risk overfitting [10] |
| min_samples_split | Minimum samples required to split a node | 2 [10] | Higher values regularize the model; useful for noisy bioactivity data [10] |
| min_samples_leaf | Minimum samples required at a leaf node | 1 [10] | Prevents overfitting to outlier compounds in training data [10] |
| bootstrap | Whether to use bootstrap sampling | True [10] | Introduces diversity through bagging; improves model robustness [10] |

Experimental Protocol for RF Hyperparameter Optimization in QSAR

The following protocol outlines a systematic approach for optimizing Random Forest hyperparameters in QSAR workflows, adaptable for both classification (e.g., active/inactive classification) and regression (e.g., pIC50 prediction) tasks:

Data Preparation: Calculate molecular descriptors (e.g., using RDKit, PaDEL, or DRAGON) or fingerprints (e.g., ECFP, SubstructureCount) for all compounds [14] [9]. Split the data into training (70-80%), validation (10-15%), and hold-out test sets (10-15%) using stratified splitting based on the target variable to maintain activity distribution.

Baseline Establishment: Train a Random Forest model with default scikit-learn parameters (n_estimators=100, max_features="sqrt", etc.) and evaluate its performance on the validation set using appropriate metrics (e.g., RMSE, R² for regression; AUC-ROC, accuracy for classification) [10].

Define Search Space: Create a parameter grid specifying ranges for the key hyperparameters listed in Table 1 (n_estimators, max_features, max_depth, min_samples_split, min_samples_leaf, and bootstrap).

Execute Hyperparameter Search: Employ either GridSearchCV or RandomizedSearchCV from scikit-learn with 5-10 fold cross-validation on the training set to identify the optimal combination [10]. Use the validation set for early stopping if applicable.

Final Model Evaluation: Retrain the model on the combined training and validation data using the optimal hyperparameters. Assess the final model performance on the hold-out test set to estimate generalization error [13].

Variable Importance Analysis: Extract and interpret feature importance scores (e.g., Gini importance or permutation importance) to identify molecular descriptors most predictive of bioactivity, providing chemical insights [13] [11].
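The protocol above can be sketched end to end with scikit-learn. The example is a simplified illustration under several assumptions: the data are synthetic (`make_regression` stands in for descriptors and pIC50-like values), the search ranges are illustrative rather than recommended, and 5-fold cross-validation on the training portion takes the place of a separate validation set.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import RandomizedSearchCV, cross_val_score, train_test_split

# Synthetic stand-in for a descriptor matrix X and activity values y
X, y = make_regression(n_samples=200, n_features=30, n_informative=8,
                       noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Baseline: default hyperparameters, scored by cross-validated RMSE
baseline_rmse = -cross_val_score(
    RandomForestRegressor(random_state=0), X_train, y_train,
    cv=5, scoring="neg_root_mean_squared_error",
).mean()

# Search space (illustrative values, not taken from the cited studies)
param_distributions = {
    "n_estimators": [50, 100, 200],
    "max_depth": [None, 5, 10],
    "max_features": ["sqrt", "log2", 1.0],
    "min_samples_split": [2, 5, 10],
    "min_samples_leaf": [1, 2, 4],
}
search = RandomizedSearchCV(
    RandomForestRegressor(random_state=0), param_distributions,
    n_iter=8, cv=5, scoring="neg_root_mean_squared_error", random_state=0,
)
search.fit(X_train, y_train)

# Final evaluation: best_estimator_ is refit on all training data by default
best_model = search.best_estimator_
test_rmse = mean_squared_error(y_test, best_model.predict(X_test)) ** 0.5

# Variable importance: indices of the five most important descriptors
top_descriptors = np.argsort(best_model.feature_importances_)[::-1][:5]
print("baseline CV RMSE:", baseline_rmse)
print("best params:", search.best_params_)
print("hold-out RMSE:", test_rmse, "top descriptors:", top_descriptors)
```

The hold-out set is touched only once, after the search has finished, so the reported RMSE is not biased by the tuning itself.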

Impact on Variable Selection in QSAR

Hyperparameter settings significantly influence RF-based variable selection methods like Vita and Boruta, which are crucial for identifying meaningful molecular descriptors in QSAR studies [11]. Research indicates that the proportion of splitting candidates (mtry.prop) and sample fraction (sample.fraction) particularly affect sensitivity in detecting important variables. For weakly correlated molecular descriptors, smaller values of sample.fraction can increase sensitivity, while for strongly correlated descriptors, the default values often suffice [11]. This nuanced understanding enables more effective identification of physicochemically meaningful descriptors linked to bioactivity.

Support Vector Machine Hyperparameters in Cheminformatics

Support Vector Machines (SVM) remain a robust choice for QSAR modeling, particularly effective in high-dimensional descriptor spaces common in cheminformatics [16]. Their effectiveness stems from the kernel trick, which allows them to handle nonlinear relationships in molecular data by projecting descriptors into higher-dimensional feature spaces where separation becomes feasible [16].

Core Hyperparameters and Their Effects

Table 2: Key Support Vector Machine Hyperparameters and Their Impact on QSAR Modeling

| Hyperparameter | Description | Common Values | QSAR-Specific Considerations |
|---|---|---|---|
| C (Regularization) | Controls trade-off between maximizing margin and minimizing classification error | 0.1, 1, 10, 100 [16] | Lower values prevent overfitting with noisy bioactivity data; higher values fit training data more closely [16] |
| kernel | Determines the nonlinear mapping function | "rbf", "linear", "poly", "sigmoid" [16] | RBF kernel effectively captures complex nonlinear structure-activity relationships [16] |
| gamma (RBF kernel) | Defines the influence of a single training example | "scale", "auto", or numerical values [16] | Low gamma values improve generalization across diverse chemical series [16] |
| epsilon (Regression) | Specifies the margin of error tolerance in SVM regression | 0.1, 0.2, 0.5 [16] | Larger values create more generalized models tolerant to noise in activity measurements [16] |
| degree (Polynomial kernel) | Sets the degree of the polynomial function | 2, 3, 4, 5 [16] | Higher degrees increase model complexity but risk overfitting to training compounds [16] |

Experimental Protocol for SVM Hyperparameter Optimization

SVM implementation in QSAR requires careful attention to data preprocessing and parameter tuning:

Data Preprocessing: Standardize all molecular descriptors (mean of 0, standard deviation of 1) to ensure features with larger numerical ranges don't dominate the optimization. For imbalanced datasets (common in virtual screening), apply appropriate sampling techniques or class weighting.

Kernel Selection: Begin with the Radial Basis Function (RBF) kernel, which effectively handles nonlinear relationships in molecular data. For largely linear problems or when interpretability is paramount, consider the linear kernel [16].

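Parameter Grid Definition: Establish a comprehensive search space covering C, kernel, and gamma (plus epsilon for regression and degree for polynomial kernels), starting from the common values listed in Table 2.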

Cross-Validation Strategy: Implement stratified k-fold cross-validation (k=5 or 10) to account for variability in compound selection and activity distribution [16].

Model Interpretation: For linear kernels, examine feature weights directly. For nonlinear kernels, utilize model-agnostic interpretation tools like SHAP or LIME to identify influential molecular descriptors [9].
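A compact sketch of this protocol for a classification task is given below. It assumes synthetic, mildly imbalanced data from `make_classification` and illustrative grid values based on Table 2. Placing the scaler inside a `Pipeline` is a design choice of the example: it ensures standardization is re-fit on each training fold, so no information leaks from the validation folds.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for an imbalanced active/inactive dataset
X, y = make_classification(n_samples=200, n_features=20,
                           weights=[0.7, 0.3], random_state=0)

# Standardize descriptors, then fit an SVM with class weighting for imbalance
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("svm", SVC(class_weight="balanced")),
])

# Separate sub-grids so gamma is only searched where it has an effect (RBF)
param_grid = [
    {"svm__kernel": ["linear"], "svm__C": [0.1, 1, 10, 100]},
    {"svm__kernel": ["rbf"], "svm__C": [0.1, 1, 10, 100],
     "svm__gamma": ["scale", 0.001, 0.01, 0.1]},
]

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(pipe, param_grid, cv=cv, scoring="roc_auc")
search.fit(X, y)

print("candidates evaluated:", len(search.cv_results_["params"]))  # 4 + 16 = 20
print("best params:", search.best_params_)
print("best cross-validated AUC:", search.best_score_)
```

For regression endpoints the same structure applies with `SVR`, an added `svm__epsilon` entry in the grid, and a regression metric in place of `roc_auc`.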

Graph Neural Network Hyperparameters in Molecular Property Prediction

Graph Neural Networks (GNNs) represent a paradigm shift in QSAR modeling by directly operating on molecular graph structures, naturally representing atoms as nodes and bonds as edges [7]. This architecture aligns with the fundamental nature of molecular structures and eliminates the need for manual descriptor engineering.

Core Hyperparameters and Architectural Considerations

GNN hyperparameters encompass both traditional neural network parameters and graph-specific architectural elements:

Table 3: Key Graph Neural Network Hyperparameters for Molecular Property Prediction

| Hyperparameter Category | Specific Parameters | Influence on QSAR Modeling |
|---|---|---|
| Architectural Parameters | Number of message passing layers, Graph pooling operation, Hidden dimension size [7] | Deeper networks capture larger molecular motifs but may suffer from over-smoothing; pooling operations affect whole-molecule representation [7] |
| Optimization Parameters | Learning rate, Batch size, Dropout rate [7] | Critical for stable training with limited chemical data; dropout regularizes against overfitting small compound sets [7] |
| Graph-Specific Parameters | Neighborhood aggregation function, Edge feature handling, Atomic representation dimension [7] | Aggregation functions (sum, mean, max) affect how atomic environments are represented; edge features encode bond characteristics [7] |

Neural Architecture Search and Hyperparameter Optimization for GNNs

The performance of GNNs is highly sensitive to architectural choices and hyperparameters, making optimal configuration a non-trivial task [7]. Neural Architecture Search (NAS) and Hyperparameter Optimization (HPO) have emerged as crucial methodologies for automating this process:

Search Space Definition: Define flexible architectural templates including ranges for GNN depth (typically 3-8 layers), hidden dimensions (64-512 units), aggregation functions (mean, sum, max), and readout functions [7].

Optimization Strategy: Employ Bayesian optimization with multi-fidelity methods (e.g., Hyperband) to efficiently navigate the complex joint space of architectural and training parameters while managing computational costs [7].

Regularization Techniques: Implement graph-specific regularization including node dropout, edge dropout, and node feature masking to improve generalization given limited molecular training data [7].

Transfer Learning: Leverage pre-training on larger molecular datasets (e.g., ChEMBL, ZINC) followed by fine-tuning on target-specific bioactivity data to mitigate data scarcity issues [7] [12].
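The search space described in the first step can be written down as a plain data structure and sampled from, independent of any particular GNN library. In the sketch below, the ranges for depth, hidden dimension, and aggregation follow the text; the readout options, dropout range, and learning-rate range are assumptions added for illustration.

```python
import random

# Discrete choices; depth, hidden size and aggregation follow the text above,
# while the readout options are an assumption
SEARCH_SPACE = {
    "num_layers": [3, 4, 5, 6, 7, 8],
    "hidden_dim": [64, 128, 256, 512],
    "aggregation": ["mean", "sum", "max"],
    "readout": ["mean", "sum", "max"],
}

def sample_config(rng):
    """Draw one candidate GNN configuration at random."""
    config = {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}
    # Assumed continuous ranges for the training hyperparameters
    config["dropout"] = rng.uniform(0.0, 0.5)
    # Learning rate sampled uniformly on a log scale between 1e-4 and 1e-2
    config["learning_rate"] = 10 ** rng.uniform(-4, -2)
    return config

rng = random.Random(0)
configs = [sample_config(rng) for _ in range(5)]
for cfg in configs:
    print(cfg)
```

Each sampled dictionary would then be passed to a model-building and training routine, with a Bayesian or multi-fidelity optimizer replacing the purely random sampling shown here.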

The QSAR Researcher's Toolkit

Table 4: Essential Research Reagent Solutions for Hyperparameter Optimization in QSAR

| Tool/Category | Specific Examples | Function in Hyperparameter Optimization |
|---|---|---|
| Machine Learning Libraries | scikit-learn [10] [16], Deep Graph Library [7] | Provide implemented algorithms and hyperparameter tuning utilities (GridSearchCV, RandomizedSearchCV) [10] |
| Molecular Descriptor Tools | RDKit [9], PaDEL [9], DRAGON [9] | Calculate 1D-3D molecular descriptors and fingerprints for feature-based models [9] |
| Hyperparameter Optimization Frameworks | Optuna, Scikit-optimize, Weights & Biases | Automate search for optimal hyperparameters using advanced algorithms like Bayesian optimization |
| Cheminformatics Databases | ChEMBL [12], NPASS [12], CMNPD [12] | Provide bioactivity data for training and validating QSAR models with appropriate hyperparameters [12] |
| Visualization & Interpretation | SHAP [9], LIME [9], t-SNE [12] | Interpret model predictions and guide hyperparameter adjustments for improved explainability [9] |

Systematic hyperparameter optimization transcends mere model refinement in QSAR research—it constitutes an essential methodology for building predictive, interpretable, and generalizable models that accelerate drug discovery. As the field advances toward increasingly complex architectures including Graph Neural Networks and multi-task learning systems, the development of efficient, automated hyperparameter optimization strategies will grow correspondingly more crucial. By categorizing key hyperparameters across the algorithm spectrum and providing structured experimental protocols, this guide establishes a foundation for rigorous, reproducible QSAR research that effectively bridges computational methodology and chemical insight.

In the field of Quantitative Structure-Activity Relationship (QSAR) modeling, the strategic selection of hyperparameters has evolved from a mere technical consideration to a fundamental determinant of predictive success. As drug discovery increasingly relies on machine learning to navigate complex chemical spaces, the deliberate tuning of hyperparameters provides researchers with precise control over the bias-variance tradeoff, ultimately dictating a model's ability to generalize from training data to novel therapeutic compounds. The transition from classical statistical methods in QSAR—such as Multiple Linear Regression (MLR) and Partial Least Squares (PLS)—to advanced machine learning algorithms like Random Forests, Support Vector Machines, and deep neural networks has dramatically expanded the hyperparameter landscape [9]. This expansion offers unprecedented modeling flexibility but simultaneously introduces critical challenges in optimization that directly impact model efficacy in predicting biological activity, toxicity, and pharmacokinetic properties.

The significance of hyperparameter tuning extends beyond technical optimization; it represents a core component of robust QSAR workflow that affects the very validity of computational findings. As noted in recent studies, improper hyperparameter selection can lead to models that either oversimplify complex structure-activity relationships (high bias) or memorize dataset noise (high variance), both yielding misleading predictions with substantial consequences in downstream experimental validation [17] [18]. Within the context of pharmaceutical development, where QSAR models guide costly synthesis and testing decisions, understanding the direct mechanistic link between hyperparameters and model behavior becomes not merely academic but essential to reducing attrition in the drug discovery pipeline.

Theoretical Foundation: Bias, Variance, and the Bias-Variance Tradeoff

The performance of any predictive model in QSAR modeling is fundamentally governed by its bias and variance characteristics. Bias refers to the error introduced by approximating a real-world problem, which may be complex, with a simplified model. A model with high bias pays little attention to the training data and makes strong assumptions, leading to consistent underprediction or overprediction of biological activity values [19]. In practical QSAR terms, this might manifest as a model that systematically underestimates the potency of certain chemical scaffolds due to oversimplified feature representations.

Conversely, variance describes the model's sensitivity to fluctuations in the training data. A high variance model captures noise and random fluctuations in the training set—such as experimental measurement errors in activity data—that do not represent the true underlying structure-activity relationship [20] [19]. When deployed for virtual screening, such a model would demonstrate excellent performance on known compounds but fail catastrophically when predicting novel chemotypes outside its narrow training distribution.

The mathematical decomposition of the expected prediction error formally captures this relationship, expressed as: Error = Bias² + Variance + Irreducible Error [20]. The irreducible error stems from noise inherent in the data generation process itself, such as experimental variability in bioactivity assays. The bias-variance tradeoff describes the tension where decreasing bias typically increases variance, and vice versa [20]. The fundamental goal of hyperparameter optimization in QSAR is to navigate this tradeoff to minimize the total error, thereby creating models that are complex enough to capture genuine structure-activity patterns yet robust enough to ignore dataset-specific noise.
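The decomposition can be made concrete with a small simulation. The sketch below is illustrative only: it assumes a hypothetical one-descriptor activity curve (`true_activity`), Gaussian assay noise, and polynomial regression as a stand-in for a QSAR model whose complexity is set by a single hyperparameter (the polynomial degree). It refits the model on many resampled training sets and estimates bias² and variance from the spread of predictions.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)

def true_activity(x):
    # Hypothetical smooth structure-activity curve over one scaled descriptor.
    return np.sin(2 * np.pi * x)

def decompose(degree, n_train=30, n_repeats=200, noise_sd=0.3):
    """Estimate average bias^2 and variance of a polynomial fit of a given degree."""
    x_test = np.linspace(0.05, 0.95, 50)
    preds = np.empty((n_repeats, x_test.size))
    for i in range(n_repeats):
        # Draw a fresh noisy training set on every repeat.
        x = rng.uniform(0.0, 1.0, n_train)
        y = true_activity(x) + rng.normal(0.0, noise_sd, n_train)
        model = Polynomial.fit(x, y, degree, domain=[0, 1])
        preds[i] = model(x_test)
    mean_pred = preds.mean(axis=0)
    bias_sq = np.mean((mean_pred - true_activity(x_test)) ** 2)
    variance = np.mean(preds.var(axis=0))
    return bias_sq, variance

bias_lo, var_lo = decompose(degree=1)   # rigid model
bias_hi, var_hi = decompose(degree=7)   # flexible model
print(f"degree 1: bias^2={bias_lo:.3f}, variance={var_lo:.3f}")
print(f"degree 7: bias^2={bias_hi:.3f}, variance={var_hi:.3f}")
```

Under these assumptions the rigid model carries most of its error as bias, while the flexible model trades that bias for higher variance. The irreducible term is the fixed `noise_sd ** 2` in both cases.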

Key Hyperparameters and Their Direct Effects on Model Behavior

Hyperparameters serve as the primary mechanism for researchers to exert control over model complexity, directly influencing where a QSAR model lands on the bias-variance spectrum. Unlike model parameters learned during training, hyperparameters are set prior to the learning process and govern the learning process itself [21]. The following section details critical hyperparameters across common algorithms in QSAR research, with their specific effects summarized in Table 1.

Table 1: Key Hyperparameters and Their Influence on QSAR Models

| Algorithm | Hyperparameter | Direct Effect on Bias | Direct Effect on Variance | Mechanism in QSAR Context |
|---|---|---|---|---|
| K-Nearest Neighbors | n_neighbors (K) | ↑ K → ↑ Bias | ↑ K → ↓ Variance | Determines how many similar compounds influence activity prediction |
| Decision Trees/Random Forests | max_depth | ↑ Depth → ↓ Bias | ↑ Depth → ↑ Variance | Controls how many molecular feature splits are considered |
| Decision Trees/Random Forests | min_samples_split | ↑ Samples → ↑ Bias | ↑ Samples → ↓ Variance | Prevents splits based on too few compounds, reducing noise capture |
| Support Vector Machines | C (Regularization) | ↑ C → ↓ Bias | ↑ C → ↑ Variance | Balances margin maximization against training error tolerance |
| Support Vector Machines | gamma (Kernel) | ↑ Gamma → ↓ Bias | ↑ Gamma → ↑ Variance | Controls influence radius of individual compounds in feature space |
| Neural Networks | learning_rate | ↑ Rate → ↓ Bias (initially) | ↑ Rate → ↑ Variance | Governs optimization convergence during training on chemical data |
| Gradient Boosting | n_estimators | ↑ Estimators → ↓ Bias | ↑ Estimators → ↑ Variance* | Increases sequential learning from residual errors of previous models |
| All Regularized Models | Regularization Strength | ↑ Strength → ↑ Bias | ↑ Strength → ↓ Variance | Constrains model coefficients to prevent overfitting to descriptor noise |

*Note: When coupled with techniques like subsampling, increasing n_estimators in ensemble methods can sometimes reduce variance through averaging.

For Random Forests, which are extensively used in modern QSAR due to their robustness with high-dimensional descriptor data [9], the max_depth parameter exemplifies this direct control. A shallow tree (low max_depth) may only utilize a few molecular descriptors, potentially missing critical interactions (high bias), while an excessively deep tree might create decision paths that are overly specific to the training compounds (high variance) [21]. Similarly, the min_samples_split parameter ensures that splits in the tree are based on sufficient data points, preventing the model from learning spurious relationships from small clusters of compounds.

In Support Vector Machines, the regularization parameter C directly determines the trade-off between achieving a low training error and maintaining a simple decision boundary [21]. A low C value creates a simple hyperplane that may inadequately separate active from inactive compounds in descriptor space (high bias), while a high C value allows the model to accommodate outliers and noise in the activity data (high variance). The gamma parameter in radial basis function (RBF) kernels controls the influence distance of a single training compound, where high values can lead to complex boundaries that perfectly separate training data but fail to generalize [21].
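The effect of a complexity hyperparameter is easiest to see by comparing training and held-out scores as it varies. The following is a minimal sketch, assuming scikit-learn is available and using synthetic data from `make_regression` in place of a real descriptor matrix and activity vector. A single decision tree is used rather than a forest so that the influence of `max_depth` is not masked by averaging.

```python
from sklearn.datasets import make_regression
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in for a descriptor matrix X and an activity vector y.
X, y = make_regression(n_samples=300, n_features=20, n_informative=5,
                       noise=20.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0)

results = {}
for depth in (2, 5, None):  # None lets the tree grow until leaves are pure
    tree = DecisionTreeRegressor(max_depth=depth, random_state=0)
    tree.fit(X_train, y_train)
    results[depth] = (tree.score(X_train, y_train),
                      tree.score(X_test, y_test))
    print(f"max_depth={depth}: train R2={results[depth][0]:.2f}, "
          f"test R2={results[depth][1]:.2f}")
```

A widening gap between training and test R² as depth increases is the practical signature of the high-variance regime described above.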

Experimental Protocols for Hyperparameter Optimization in QSAR

Establishing a Robust Validation Framework

The optimization of hyperparameters requires a rigorous experimental protocol to ensure that observed improvements generalize beyond the specific data used for tuning. A critical first step involves implementing appropriate data splitting strategies that reflect the ultimate goal of QSAR models: predicting activities for entirely new chemical structures. Recent benchmarking studies suggest that scaffold splits—where compounds are divided based on their core molecular frameworks—provide a more challenging and realistic assessment of generalization compared to simple random splits [18]. This approach tests the model's ability to extrapolate to novel chemotypes, a common scenario in lead optimization.

Following data splitting, cross-validation provides the mechanism for robust hyperparameter evaluation. The standard k-fold cross-validation (typically 5-fold or 10-fold) estimates how the model would perform on unseen data while mitigating the influence of particular data partitions. For QSAR applications, it is crucial that the cross-validation procedure maintains the same compound separation principle (e.g., scaffold-based) as the ultimate test set division to prevent optimistic performance estimates [20].
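One way to keep scaffold separation inside cross-validation is to pass scaffold labels as groups to a group-aware splitter. The sketch below assumes scikit-learn's `GroupKFold`. The scaffold names, descriptor matrix, and activity values are hypothetical placeholders, and in practice the labels might be Bemis-Murcko scaffold strings computed with a cheminformatics toolkit.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# Hypothetical scaffold label for each compound (one entry per row of X).
scaffolds = np.array(["benzene", "benzene", "indole", "indole", "indole",
                      "pyridine", "pyridine", "quinoline", "piperidine",
                      "piperidine", "thiophene", "furan"])
X = np.arange(len(scaffolds)).reshape(-1, 1)  # placeholder descriptor matrix
y = np.linspace(5.0, 8.0, len(scaffolds))     # placeholder pIC50 values

cv = GroupKFold(n_splits=3)
folds = list(cv.split(X, y, groups=scaffolds))
for i, (train_idx, test_idx) in enumerate(folds):
    held_out = sorted(set(scaffolds[test_idx]))
    print(f"fold {i}: held-out scaffolds = {held_out}")
```

The same `cv` object and `groups` array can be handed to a hyperparameter search so that tuning and final evaluation follow the same separation principle.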

Optimization Methodologies

Several systematic approaches exist for navigating the hyperparameter space, each with distinct advantages for QSAR applications:

Grid Search: This exhaustive method evaluates all possible combinations within a predefined hyperparameter grid. While computationally expensive for high-dimensional spaces, it provides comprehensive coverage and is suitable when dealing with a limited number of critical hyperparameters [22] [21].

Random Search: Unlike grid search, random search samples hyperparameter combinations randomly from specified distributions. This approach often outperforms grid search in efficiency, particularly when only a subset of hyperparameters significantly impacts performance [22]. For QSAR tasks with many potential molecular descriptors, random search can effectively identify promising regions in the hyperparameter space without exhaustive computation.

Bayesian Optimization: This more sophisticated approach builds a probabilistic model of the objective function (e.g., cross-validation score) and uses it to direct subsequent evaluations toward promising hyperparameter combinations [22]. Bayesian optimization is particularly valuable for QSAR applications involving complex models like deep neural networks, where each training cycle is computationally intensive.

Recent advances in automated hyperparameter optimization for QSAR include the use of Hyperband and successive halving algorithms, which dynamically allocate computational resources to the most promising hyperparameter configurations through early-stopping of poorly performing trials [22]. These methods can significantly reduce tuning time for large-scale QSAR modeling efforts.
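The core loop of successive halving is short enough to sketch in plain Python. This is a simplified illustration rather than a reference implementation. `toy_score` is a hypothetical, noise-free stand-in for a validation score after training with a given budget (epochs or trees), and `eta` is the assumed reduction factor.

```python
import math
import random

def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Keep the top 1/eta of configurations each round and multiply the budget by eta."""
    survivors = list(configs)
    budget = min_budget
    history = []  # (budget, configurations evaluated, configurations kept)
    while len(survivors) > 1:
        scored = sorted(survivors, key=lambda c: evaluate(c, budget), reverse=True)
        keep = max(1, len(scored) // eta)
        history.append((budget, len(survivors), keep))
        survivors = scored[:keep]
        budget *= eta
    return survivors[0], history

def toy_score(config, budget):
    # Hypothetical score: best near learning_rate = 0.01, improving with budget.
    # A real objective would train the QSAR model for `budget` epochs or trees.
    ceiling = 1.0 - abs(math.log10(config["learning_rate"]) + 2.0) / 4.0
    return ceiling * (1.0 - math.exp(-budget / 3.0))

random.seed(0)
candidates = [{"learning_rate": 10 ** random.uniform(-4, 0)} for _ in range(27)]
best, history = successive_halving(candidates, toy_score)
print(history)
print(best)
```

With 27 candidates and `eta=3`, the schedule evaluates 27, then 9, then 3 configurations at budgets 1, 3, and 9. Real validation scores are noisy, so early rounds can discard configurations that would have improved later. Hyperband addresses this by running several such brackets with different starting budgets.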

Visualization of Hyperparameter Effects on Model Complexity

The relationship between hyperparameter values, model complexity, and prediction error can be effectively visualized through a conceptual diagram that captures their interconnected nature. The following Graphviz representation illustrates how different hyperparameters influence the bias-variance tradeoff:

Diagram 1: Hyperparameter Influence on Model Behavior. This visualization shows how hyperparameters control model complexity, creating an inverse relationship with bias and a direct relationship with variance, ultimately determining total prediction error.

A complementary experimental approach involves empirically measuring the effect of specific hyperparameters on model performance. The following workflow represents a typical hyperparameter optimization experiment in QSAR:

Diagram 2: Hyperparameter Optimization Workflow. This experimental protocol outlines the systematic process for identifying optimal hyperparameters in QSAR modeling, from search space definition to final evaluation.

Case Studies & Research Reagent Solutions

Case Study: Hyperparameter Optimization in Deep Learning QSAR

A recent investigation into deep learning applications for QSAR provides a compelling case study on hyperparameter optimization. Researchers developing the ChemProp model—a graph neural network specifically designed for molecular property prediction—conducted extensive hyperparameter tuning to balance model capacity with generalization [18]. The study revealed that default hyperparameters often yielded suboptimal performance, but surprisingly, extensive optimization could lead to overfitting on small datasets. The researchers ultimately recommended a preselected set of hyperparameters that provided consistently strong performance across diverse chemical endpoints without requiring dataset-specific tuning [18].

In a separate study focused on toxicity prediction, the AttenhERG model—based on the Attentive FP algorithm—achieved state-of-the-art accuracy in predicting hERG channel blockage, a critical cardiotoxicity endpoint [18]. This success was attributed to careful tuning of attention mechanisms and network depth, allowing the model to identify toxicophores without overfitting to chemical noise in the training data. The interpretable nature of the attention weights further validated the hyperparameter choices, as they highlighted structurally meaningful atom contributions to toxicity predictions.

Table 2: Essential Tools for Hyperparameter Optimization in QSAR Research

| Tool Name | Type | Primary Function | QSAR Application Example |
|---|---|---|---|
| Scikit-learn | Software Library | Provides implementations of GridSearchCV and RandomizedSearchCV | Systematic evaluation of classical ML algorithms (RF, SVM) with molecular descriptors |
| Optuna | Hyperparameter Optimization Framework | Defines and optimizes hyperparameter search spaces using Bayesian optimization | Efficient tuning of deep learning models for large-scale virtual screening |
| ChemProp | Specialized Software | Graph neural network with built-in hyperparameter optimization for molecular properties | Predicting ADMET properties with message-passing neural networks |
| fastprop | Descriptor-based Modeling | Rapid machine learning with Mordred descriptors using preset hyperparameters | Quick baseline models for molecular property prediction without extensive tuning |
| Hyperopt | Optimization Library | Distributed asynchronous hyperparameter optimization | Large-scale QSAR model tuning across multiple computing nodes |
| TensorBoard | Visualization Toolkit | Tracking and visualizing training metrics across hyperparameter experiments | Monitoring neural network training convergence for deep learning QSAR |

The direct mechanistic link between hyperparameters, model bias, variance, and complexity establishes hyperparameter optimization as a non-negotiable discipline in contemporary QSAR research. As pharmaceutical discovery increasingly leverages complex machine learning algorithms to navigate expansive chemical spaces, the deliberate calibration of hyperparameters provides the necessary control mechanism to balance model flexibility with generalization power. The transition from classical QSAR methods to advanced deep learning architectures has not diminished the importance of this balance but has rather made it more critical—and more computationally challenging—to achieve.

Looking forward, the integration of automated hyperparameter optimization into end-to-end AI-driven drug discovery platforms represents the next frontier in computational chemistry [23]. As these platforms increasingly incorporate multi-objective optimization—simultaneously balancing potency, selectivity, and ADMET properties—the role of hyperparameters will expand from controlling single-model performance to orchestrating complex tradeoffs across multiple prediction tasks. For research scientists and drug development professionals, mastering the relationship between hyperparameters and model behavior will remain an essential competency, ensuring that QSAR models deliver not just predictive accuracy but chemically meaningful insights that successfully translate to clinical candidates.

In modern Quantitative Structure-Activity Relationship (QSAR) modeling, hyperparameters transcend their traditional role as mere performance optimizers to become critical factors influencing model interpretability. These configuration settings—which control learning algorithm behavior—fundamentally shape how models arrive at predictions and consequently, how we extract meaningful biological or chemical insights from them. The rise of complex machine learning approaches in drug discovery, including Random Forests, Gradient Boosting, and Support Vector Machines, has amplified the importance of understanding this relationship [24] [25]. As QSAR applications expand from predicting protein adsorption capacities to assessing environmental toxicity of chemicals, researchers require sophisticated tools to peer inside these increasingly complex models [24] [26].

This technical guide examines how SHapley Additive exPlanations (SHAP) and complementary interpretability methods reveal the intricate connections between hyperparameter choices and model reasoning. We explore experimental evidence demonstrating that hyperparameters not only affect predictive accuracy but fundamentally alter which molecular descriptors models prioritize, ultimately changing the scientific narratives derived from QSAR analyses. Within the broader thesis on hyperparameters' role in QSAR research, we establish that interpretability-aware hyperparameter tuning is not optional but essential for producing chemically plausible and biologically meaningful models.

Theoretical Foundations: SHAP and Hyperparameter Interactions

SHAP Methodology in QSAR Context

SHAP provides a unified approach to feature importance based on cooperative game theory, allocating credit for predictions among input features by computing their marginal contributions across all possible feature combinations. In QSAR applications, SHAP bridges the gap between model complexity and chemical interpretability by quantifying how much each molecular descriptor contributes to predicted bioactivities or properties [27]. The mathematical foundation lies in Shapley values, which ensure fair attribution satisfying properties of efficiency, symmetry, dummy, and additivity.

For a given QSAR model and prediction, SHAP values represent the deviation from the average model output attributable to each feature. When applied to QSAR models, these values transform black-box predictions into actionable insights by identifying which structural features (e.g., rotatable bond count, hydrophobic surface area, electrostatic properties) drive particular activity predictions [28]. This capability is particularly valuable when comparing models with different hyperparameter configurations, as it reveals how tuning alters the fundamental reasoning patterns the model employs.
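For a handful of descriptors, Shapley values can be computed exactly by enumerating feature subsets, which makes the efficiency property easy to verify. The sketch below is a simplification under stated assumptions. `toy_model` is a hypothetical three-descriptor activity function, and "absent" features are replaced by a single baseline vector, whereas the SHAP library typically averages over a background dataset and uses model-specific approximations.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values for one prediction; absent features take baseline values."""
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

def toy_model(d):
    # Hypothetical activity model: logP, H-bond donors, rotatable bonds.
    logp, hbd, rotb = d
    return 0.8 * logp - 0.5 * hbd + 0.1 * logp * rotb

compound = [3.0, 2.0, 4.0]
baseline = [2.0, 1.0, 5.0]  # e.g. mean descriptor values of the training set
phi = shapley_values(toy_model, compound, baseline)
print(phi, sum(phi), toy_model(compound) - toy_model(baseline))
```

The attributions sum to the difference between the prediction and the baseline output (efficiency). The purely additive H-bond donor term receives exactly its own contribution of -0.5, while the logP and rotatable-bond attributions share the interaction term.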

Hyperparameters as Interpretability Gatekeepers

Hyperparameters in QSAR models operate as gatekeepers controlling both model complexity and interpretability fidelity. Key hyperparameter categories include:

- Complexity controllers: Maximum tree depth (Random Forests, XGBoost), number of trees, minimum samples per leaf

- Regularization parameters: Learning rate (boosting methods), penalty terms (SVM, neural networks)

- Kernel selections: Radial basis function versus linear kernels in SVM

- Architecture decisions: Number of layers and neurons in neural networks

Each hyperparameter category influences how models capture and prioritize relationships between molecular structure and activity. For instance, increasing tree depth in ensemble methods enables capture of more complex descriptor interactions but may overemphasize subtle correlations that lack chemical relevance. Similarly, SVM kernel selection fundamentally alters the feature space in which similarity is computed, thereby changing which molecular features appear most significant [26].

Experimental Evidence: Documented Cases of Hyperparameter Influence on SHAP Interpretations

Case Study 1: Fluorocarbon Inhalation Toxicity Prediction

A systematic QSAR study predicting acute inhalation toxicity (LC50) of fluorocarbon insulating gases demonstrated pronounced hyperparameter influence on SHAP interpretations [26]. Researchers developed models using both SVM-RBF and XGBoost algorithms, with each requiring distinct hyperparameter tuning strategies. The SHAP analysis revealed that despite similar predictive performance (SVM-RBF: R²test = 0.7532; XGBoost: R²test = 0.7185), the two models prioritized different molecular descriptors as toxicity drivers.

Table 1: Hyperparameter Settings and Their Impact on SHAP Results in Fluorocarbon Toxicity Study

| Model | Key Hyperparameters | Top SHAP Descriptors | Mechanistic Interpretation |
|---|---|---|---|
| SVM-RBF | C=10, γ=0.1, kernel=RBF | ATS0v, GGI2, MDEC-23 | Emphasized electronic structure and charge distribution |
| XGBoost | max_depth=7, learning_rate=0.1, n_estimators=150 | SpMaxB(p), SM6B, ATS0v | Prioritized topological and steric parameters |

The researchers noted that hyperparameter configurations directly influenced the descriptor importance rankings produced by SHAP analysis, with certain descriptors appearing significant in one model configuration but not in others. This highlights that hyperparameter choices can lead to different mechanistic interpretations of the same endpoint [26].

Case Study 2: Protein Adsorption Capacity Prediction

Research on predicting protein adsorption capacities on mixed-mode resins employed Random Forest and Gradient Boosting methods with SHAP interpretation [24]. The study demonstrated that hyperparameter tuning affected not only prediction accuracy but also the stability of SHAP explanations across different validation splits.

Table 2: Hyperparameter Impact on Model Performance and SHAP Stability in Protein Adsorption Study

| Model | Hyperparameter Settings | R² Test | SHAP Stability* | Key Descriptors Identified |
|---|---|---|---|---|
| Random Forest | n_estimators=200, max_depth=15 | 0.90-0.93 | Medium | Protein charge, hydrophobicity index |
| Gradient Boosting | n_estimators=150, learning_rate=0.1, max_depth=5 | 0.90-0.93 | High | Hydrophobicity, structural fingerprints |

*Stability measured by consistency of top-5 descriptors across multiple training-test splits

The two-step descriptor elimination method employed in this study, combined with SHAP analysis, revealed that more constrained models (lower max_depth, higher regularization) produced more consistent descriptor importance rankings that aligned better with known protein adsorption mechanisms [24].

Experimental Protocols for Assessing Hyperparameter Influence

Based on the reviewed studies, the following protocol systematically evaluates hyperparameter impact on SHAP interpretations:

Protocol 1: Hyperparameter-Influenced Interpretability Analysis

- Data Preparation: Curate standardized QSAR dataset with sufficient size (n>100 recommended) and compute comprehensive molecular descriptors (e.g., using PaDEL, RDKit) [27]

- Model Training with Varied Hyperparameters: Train multiple model instances with systematically varied hyperparameters while keeping the training/test split constant

- SHAP Calculation: Compute SHAP values for all models using the same sampled background dataset and the same explanation set

- Descriptor Ranking Analysis: Rank descriptors by mean absolute SHAP value for each model configuration

- Interpretation Consistency Metrics: Quantify ranking consistency using Spearman correlation and top-k descriptor overlap between configurations

- Mechanistic Plausibility Assessment: Evaluate descriptor importance lists against domain knowledge for chemical/biological plausibility
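The consistency metrics in the protocol above can be sketched with NumPy alone. The importance vectors here are hypothetical mean absolute SHAP values for six descriptors under two hyperparameter configurations. In a real analysis they would come from the SHAP calculation step, and a library routine such as `scipy.stats.spearmanr` would handle ties more carefully than this simple version.

```python
import numpy as np

def rank_descending(importance):
    # Rank 0 = most important descriptor (assumes no exact ties).
    order = np.argsort(-np.asarray(importance))
    ranks = np.empty_like(order)
    ranks[order] = np.arange(len(order))
    return ranks

def spearman(imp_a, imp_b):
    # Spearman correlation is the Pearson correlation of the ranks.
    ra, rb = rank_descending(imp_a), rank_descending(imp_b)
    return float(np.corrcoef(ra, rb)[0, 1])

def topk_overlap(imp_a, imp_b, k=3):
    # Fraction of the top-k descriptors shared by both configurations.
    top_a = set(np.argsort(-np.asarray(imp_a))[:k].tolist())
    top_b = set(np.argsort(-np.asarray(imp_b))[:k].tolist())
    return len(top_a & top_b) / k

# Hypothetical mean |SHAP| per descriptor for two model configurations.
shallow = [0.42, 0.31, 0.12, 0.08, 0.05, 0.02]
deep = [0.30, 0.35, 0.04, 0.15, 0.10, 0.06]

rho = spearman(shallow, deep)
overlap = topk_overlap(shallow, deep, k=3)
print(f"Spearman rho = {rho:.2f}, top-3 overlap = {overlap:.2f}")
```

For these example vectors the rank correlation is 0.6 and two of the top three descriptors are shared, which would flag a moderate shift in the model's reasoning between configurations.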

Protocol 2: Cross-Validation for Interpretability Robustness

- Perform repeated k-fold cross-validation with fixed hyperparameters

- Compute SHAP values for each cross-validation fold

- Assess variance in descriptor importance rankings across folds

- Identify hyperparameters that minimize interpretation variance while maintaining predictive performance

Complementary Interpretability Approaches Beyond SHAP

Model-Agnostic Alternatives

While SHAP provides powerful insights, research indicates limitations to its interpretations, particularly regarding sensitivity to hyperparameters and correlated descriptors [29]. Several complementary approaches provide additional perspectives:

- Feature Agglomeration: Unsupervised grouping of correlated descriptors before modeling reduces SHAP instability [29]

- Partial Dependence Plots (PDP): Visualize the relationship between selected descriptors and the predicted outcome, marginalizing over the other features

- Permutation Importance: Measure the performance decrease when shuffling individual features, providing an alternative importance metric

- Counterfactual Explanations: Identify minimal structural changes that alter predictions (e.g., Molecular Model Agnostic Counterfactual Explanations) [30]
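Of the alternatives listed above, permutation importance is the quickest to try alongside SHAP. The sketch below assumes scikit-learn's `permutation_importance` and uses synthetic data in which only the first two of four hypothetical descriptors carry signal, so the expected ranking is known in advance.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 4))  # four hypothetical descriptors
# Only descriptors 0 and 1 influence the synthetic activity.
y = 3.0 * X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.3, size=300)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0)
model = RandomForestRegressor(n_estimators=100, random_state=0)
model.fit(X_train, y_train)

# Shuffle each descriptor on held-out data and record the drop in R^2.
result = permutation_importance(model, X_test, y_test,
                                n_repeats=10, random_state=0)
ranking = np.argsort(-result.importances_mean)
for idx in ranking:
    print(f"descriptor {idx}: {result.importances_mean[idx]:.3f} "
          f"+/- {result.importances_std[idx]:.3f}")
```

Comparing this ranking with the mean absolute SHAP ranking for the same model is a cheap cross-check. Strong disagreement often points to correlated descriptors.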

Model-Specific Interpretability Methods

Certain QSAR models offer built-in interpretability features that complement SHAP analysis:

- Random Forest Feature Importance: Gini importance or mean decrease in impurity provide native feature rankings

- Decision Tree Visualization: Direct inspection of decision paths in individual trees

- Linear Model Coefficients: For regularized linear models, coefficients provide direct feature weights

A comparative study on anti-inflammatory activity prediction found that combining multiple interpretability approaches provided more robust insights than relying on any single method [25].

Table 3: Essential Research Reagent Solutions for Hyperparameter-Interpretability Studies

| Tool/Category | Specific Examples | Function in Analysis | Implementation Notes |
|---|---|---|---|
| QSAR Modeling Libraries | Scikit-learn, XGBoost, LightGBM | Provide ML algorithms with hyperparameter control | Ensure version consistency across experiments |
| Interpretability Frameworks | SHAP, LIME, ALIBI | Generate feature importance scores | SHAP supports most major ML libraries |
| Molecular Descriptor Calculation | PaDEL, RDKit, Mordred | Compute structural descriptors from molecules | Standardize descriptor set before comparisons |
| Hyperparameter Optimization | Optuna, Hyperopt, GridSearchCV | Systematic hyperparameter exploration | Use same search space for fair comparisons |
| Visualization Tools | Matplotlib, Seaborn, Plotly | Create plots of SHAP values and descriptor rankings | Customize for chemical relevance |
| Chemical Representation | SMILES, Molecular fingerprints | Standardize molecular input format | RDKit handles conversion and normalization |

Visualization Frameworks for Hyperparameter-Interpretability Relationships

The diagram illustrates how hyperparameter settings influence both model training and SHAP calculation, ultimately affecting mechanistic interpretations derived from QSAR models. The dashed line represents the often-overlooked direct influence of hyperparameters on interpretation outcomes.

Best Practices for Hyperparameter Selection with Interpretability in Mind

Interpretability-Aware Tuning Strategies

Based on the reviewed literature, the following practices optimize both predictive performance and interpretability:

- Multi-Objective Optimization: Include interpretation stability metrics alongside accuracy metrics during hyperparameter tuning

- Consistency Validation: Validate that important descriptors align with domain knowledge across hyperparameter settings

- Regularization Prioritization: Favor slightly stronger regularization to reduce overfitting and improve descriptor importance stability [24]

- Ensemble Interpretations: Combine SHAP analyses from multiple reasonable hyperparameter configurations to identify robust descriptor importances

Documentation and Reporting Standards

For reproducible interpretability analysis, document these hyperparameter details:

- Complete hyperparameter settings for all models

- SHAP computation parameters (background data size, sampling method)

- Version information for all software libraries

- Descriptor importance rankings for major model configurations

Hyperparameters in QSAR models serve as critical mediators between predictive performance and interpretability, directly influencing which molecular descriptors are identified as important through SHAP analysis. The documented cases demonstrate that alternative hyperparameter choices can lead to different mechanistic interpretations of the same underlying structure-activity relationships [26] [29].

Future research directions should develop hyperparameter tuning methods specifically optimized for interpretability stability, standardized benchmarks for evaluating interpretation robustness, and integration of domain knowledge directly into the hyperparameter selection process. As QSAR applications expand into new domains like environmental toxicology and material science [26] [31], the relationship between hyperparameters and interpretability will become increasingly important for building scientifically plausible and regulatory-acceptable models.

Researchers should treat hyperparameter selection not merely as an optimization problem but as an integral part of scientific interpretation in QSAR modeling. By applying the methodologies and best practices outlined in this guide, scientists can ensure their models provide both accurate predictions and chemically meaningful insights that advance drug discovery and environmental safety assessment.

From Theory to Practice: Strategies and Tools for Hyperparameter Optimization

In modern drug discovery, Quantitative Structure-Activity Relationship (QSAR) modeling has become an indispensable tool for predicting the biological activity and physicochemical properties of molecules from their structural descriptors [9] [32]. The effectiveness of these computational models hinges on the careful selection of hyperparameters—the configuration settings that control the learning process of machine learning algorithms. Hyperparameter tuning is not merely a technical refinement but a crucial step that determines the predictive accuracy, generalizability, and ultimately the success of computational drug discovery pipelines [9] [33]. As QSAR models evolve from classical statistical approaches to sophisticated artificial intelligence (AI) methods, including deep learning and ensemble techniques, the hyperparameter search space grows exponentially, necessitating efficient and intelligent optimization strategies [9] [34].

The integration of AI in drug discovery has transformed QSAR modeling, enabling the screening of billions of compounds and significantly accelerating the identification of therapeutic candidates [9]. However, this advancement comes with the challenge of configuring complex models where hyperparameters control fundamental aspects such as model capacity, convergence behavior, and regularization strength. The choice of optimization technique directly impacts resource utilization, model performance, and the ability to meet critical deadlines in pharmaceutical research and development [35]. This technical review examines the three cornerstone methodologies—Grid Search, Random Search, and Bayesian Optimization—within the context of QSAR research, providing researchers with practical insights for selecting and implementing these approaches in computational drug discovery.

Hyperparameter Optimization Methodologies

Grid Search: The Systematic Approach

Grid Search represents the most straightforward approach to hyperparameter tuning, employing a brute-force methodology that systematically explores a predefined set of hyperparameters [36] [35]. The technique operates by constructing a multidimensional grid where each axis corresponds to a different hyperparameter, and each point in the grid represents a specific combination of hyperparameter values. The algorithm exhaustively trains and evaluates a model for every possible combination within this grid, typically using cross-validation to assess performance [36].

The implementation of Grid Search in QSAR studies typically involves defining a parameter grid specifying the values for each hyperparameter. For instance, when optimizing a Random Forest classifier for a QSAR classification task, the grid might include parameters such as n_estimators (number of trees), max_depth (maximum tree depth), min_samples_split (minimum samples required to split a node), and min_samples_leaf (minimum samples required at a leaf node) [36]. A key advantage of Grid Search is its comprehensive nature—it guarantees finding the best combination within the specified parameter space. However, this completeness comes at a significant computational cost, as the total number of model evaluations grows exponentially with each additional hyperparameter, a phenomenon known as the "curse of dimensionality" [36] [37].
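A grid over the four Random Forest hyperparameters named above might look like the following sketch. It assumes scikit-learn's `GridSearchCV`, synthetic classification data in place of real descriptors and activity labels, and deliberately small value lists so the example runs quickly. The specific values are illustrative, not recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for descriptors (X) and active/inactive labels (y).
X, y = make_classification(n_samples=200, n_features=20,
                           n_informative=5, random_state=0)

param_grid = {
    "n_estimators": [25, 50],
    "max_depth": [3, None],
    "min_samples_split": [2, 10],
    "min_samples_leaf": [1, 5],
}

search = GridSearchCV(RandomForestClassifier(random_state=0),
                      param_grid, cv=3, scoring="roc_auc")
search.fit(X, y)

n_candidates = len(search.cv_results_["params"])
print(f"{n_candidates} combinations evaluated")
print("best params:", search.best_params_)
print(f"best CV ROC AUC: {search.best_score_:.3f}")
```

Two values for each of four hyperparameters already yields 2⁴ = 16 combinations, each fitted once per fold. Adding a third value per hyperparameter would raise that to 81, which is the exponential growth described above.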

Table 1: Grid Search Implementation Analysis

| Aspect | Implementation Details |
|---|---|
| Search Pattern | Exhaustive, systematic exploration of all specified combinations |
| Parameter Space Handling | Discrete, predefined values for each hyperparameter |
| Computational Complexity | Grows exponentially with additional parameters (O(n^k)) |
| Best For | Small parameter spaces (typically 2-4 dimensions) |
| QSAR Application Example | Preliminary screening of hyperparameters for classical models like SVM or RF |

Random Search: The Stochastic Alternative

Random Search addresses the computational inefficiency of Grid Search through a probability-based approach [36] [35]. Rather than exhaustively evaluating all possible combinations, Random Search samples hyperparameter configurations randomly from specified distributions over the parameter space. This method allows for a more flexible exploration of the hyperparameter landscape, particularly beneficial for continuous parameters where Grid Search is limited to discrete values [36].

In practical QSAR applications, Random Search defines probability distributions for each hyperparameter rather than discrete values. For continuous parameters like learning rates or regularization coefficients, uniform or log-uniform distributions are typically specified to ensure appropriate sampling across scales [36]. The number of iterations (n_iter) is predetermined based on computational resources and time constraints. Research has demonstrated that Random Search often outperforms Grid Search in efficiency, finding comparable or superior models with significantly fewer iterations because it doesn't waste resources on unimportant parameters [36] [35]. This makes it particularly valuable for QSAR models with high-dimensional hyperparameter spaces, where some parameters have minimal impact on performance while others are critical determinants of model accuracy.
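Sampling from log-uniform distributions can be sketched with scikit-learn's `RandomizedSearchCV` and `scipy.stats.loguniform`. The example below tunes `C` and `gamma` of an RBF support vector regressor on synthetic data. The distribution bounds and the `n_iter` budget are assumptions chosen for illustration.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_regression
from sklearn.model_selection import RandomizedSearchCV
from sklearn.svm import SVR

# Synthetic stand-in for descriptors and a continuous activity endpoint.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0,
                       random_state=0)
y = (y - y.mean()) / y.std()  # put the target on a unit scale for SVR

param_distributions = {
    "C": loguniform(1e-2, 1e3),      # sampled evenly across orders of magnitude
    "gamma": loguniform(1e-4, 1e0),
}

search = RandomizedSearchCV(SVR(kernel="rbf"), param_distributions,
                            n_iter=20, cv=3, random_state=0)
search.fit(X, y)

print(f"{len(search.cv_results_['params'])} configurations sampled")
print("best params:", search.best_params_)
print(f"best CV R2: {search.best_score_:.3f}")
```

The cost is fixed by `n_iter` regardless of how many hyperparameters are added, which is the linear scaling that makes random search attractive for larger spaces.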

Table 2: Random Search Performance Characteristics

| Characteristic | Grid Search | Random Search |
|---|---|---|
| Search Strategy | Exhaustive | Stochastic sampling |
| Parameter Space | Discrete values | Continuous distributions |
| Computational Efficiency | Low (exponential growth) | High (linear growth with iterations) |
| Optimal For | Small parameter spaces | Medium to large parameter spaces |
| Coverage Guarantee | Complete within specified grid | Probabilistic |

Bayesian Optimization: The Intelligent Strategy

Bayesian Optimization represents a paradigm shift in hyperparameter tuning by employing a probabilistic, adaptive approach that leverages information from previous evaluations to guide the search process [37] [35] [38]. Unlike Grid and Random Search, which treat each hyperparameter configuration independently, Bayesian Optimization builds a surrogate model of the objective function (typically using Gaussian Processes or Tree-structured Parzen Estimators) and uses an acquisition function to decide which hyperparameters to evaluate next [37] [38].

The Bayesian Optimization process iterates through a sequence of steps: first, using the surrogate model to approximate the unknown objective function; second, applying an acquisition function (such as Expected Improvement or Upper Confidence Bound) to identify the most promising hyperparameters to evaluate next; and third, updating the surrogate model with new results [37] [35]. This adaptive learning mechanism enables Bayesian Optimization to focus computational resources on promising regions of the hyperparameter space while avoiding unpromising areas. In QSAR applications, particularly those involving computationally expensive deep learning models, this approach can reduce the number of required iterations by a factor of 5-7 compared to traditional methods while achieving comparable or superior performance [37] [39]. The efficiency gains are especially valuable in drug discovery contexts where model training involves large chemical databases or complex neural architectures.

Diagram 1: Bayesian optimization iterative process for QSAR model tuning
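The three-step loop described above (fit the surrogate, maximize the acquisition function, evaluate and update) can be sketched in a few lines. This is an illustrative toy, not the implementation used in the cited work: the one-dimensional objective is a hypothetical stand-in for a validation loss as a function of log10(learning rate), and the acquisition function is maximized over a fixed candidate grid for simplicity.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr):
    """Hypothetical validation loss as a function of log10(learning rate)."""
    return (log_lr + 3.0) ** 2 + 0.1 * np.sin(5.0 * log_lr)


def expected_improvement(candidates, gp, best_y, xi=0.01):
    """Expected Improvement for a minimization problem."""
    mu, sigma = gp.predict(candidates, return_std=True)
    sigma = np.maximum(sigma, 1e-9)          # avoid division by zero
    improvement = best_y - mu - xi
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
low, high = -5.0, -1.0
X_obs = rng.uniform(low, high, size=(3, 1))  # three random initial evaluations
y_obs = objective(X_obs).ravel()
candidates = np.linspace(low, high, 400).reshape(-1, 1)

for _ in range(12):
    # Step 1: fit the surrogate model to all evaluations so far.
    gp = GaussianProcessRegressor(
        kernel=Matern(nu=2.5), alpha=1e-6, normalize_y=True, random_state=0
    )
    gp.fit(X_obs, y_obs)
    # Step 2: pick the candidate with the highest acquisition value.
    ei = expected_improvement(candidates, gp, y_obs.min())
    x_next = candidates[np.argmax(ei)].reshape(1, -1)
    # Step 3: evaluate the objective there and add the result to the data.
    X_obs = np.vstack([X_obs, x_next])
    y_obs = np.append(y_obs, objective(x_next).ravel())

best_log_lr = X_obs[np.argmin(y_obs), 0]
print(f"best log10(lr) = {best_log_lr:.3f}, loss = {y_obs.min():.4f}")
```

In a real QSAR workflow, objective would train a model and return a cross-validated error, which is why spending a cheap surrogate fit to choose each expensive evaluation can pay off.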

Comparative Analysis of Optimization Techniques

Performance and Efficiency Metrics

The three hyperparameter optimization techniques demonstrate markedly different performance characteristics when applied to QSAR modeling scenarios. Quantitative evaluations indicate that Bayesian Optimization typically reaches comparable or superior model performance with roughly 5-7x fewer iterations than alternative methods [37] [39]. This efficiency advantage stems from its ability to leverage information from previous evaluations to make informed decisions about promising regions of the hyperparameter space.

Grid Search, while guaranteed to find the optimal combination within a specified discrete space, becomes computationally prohibitive as the dimensionality of the hyperparameter space increases. For example, a grid search with only 5 hyperparameters, each with 5 possible values, requires 3,125 model evaluations—a substantial computational burden for complex QSAR models [36]. Random Search provides a middle ground, offering better scalability than Grid Search while maintaining simplicity of implementation. However, its stochastic nature means that results may vary between runs, and it cannot leverage information from previous evaluations to refine its search [36] [35].
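The count in the example above can be checked directly. In the sketch below, the hyperparameter names and values are hypothetical; only the 5 x 5 shape of the grid comes from the text. The 5-fold multiplier is an added assumption to show how cross-validation further increases the number of model fits.

```python
from itertools import product
from math import prod

# Hypothetical grid: 5 hyperparameters with 5 candidate values each.
grid = {
    "learning_rate": [0.001, 0.003, 0.01, 0.03, 0.1],
    "n_estimators": [100, 200, 300, 400, 500],
    "max_depth": [2, 3, 4, 5, 6],
    "subsample": [0.6, 0.7, 0.8, 0.9, 1.0],
    "min_samples_leaf": [1, 2, 4, 8, 16],
}

# Closed-form count: product of the number of values per hyperparameter.
n_combinations = prod(len(values) for values in grid.values())

# Enumerating the full grid gives the same number.
n_enumerated = sum(1 for _ in product(*grid.values()))

# With k-fold cross-validation, every combination is fitted k times.
n_folds = 5
n_fits = n_combinations * n_folds

print(n_combinations)  # 3125 = 5**5
print(n_fits)          # 15625
```

Adding a sixth hyperparameter with five values would multiply both numbers by five again, which is the exponential growth noted in Table 1.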

Table 3: Comprehensive Comparison of Hyperparameter Optimization Methods

| Criterion | Grid Search | Random Search | Bayesian Optimization |
|---|---|---|---|
| Search Strategy | Exhaustive | Random sampling | Model-guided adaptive |
| Computational Efficiency | Low | Medium | High |
| Parameter Space Type | Discrete | Continuous or discrete | Continuous or discrete |
| Theoretical Guarantees | Optimal in grid | Probabilistic | Sublinear regret bounds [38] |
| Scalability | Poor (>4 parameters) | Good | Excellent |
| Implementation Complexity | Low | Low | Medium-High |
| Typical Iterations Needed | O(n^k) | 50-100 | Roughly 5-7x fewer than alternatives [37] [39] |
| Best for QSAR Applications | Classical models with few hyperparameters | Medium-complexity models with limited resources | Deep learning, ensemble methods, large chemical spaces |

Implementation Considerations for QSAR Workflows

Implementing hyperparameter optimization in QSAR pipelines requires careful consideration of several practical factors. The choice of technique should align with the specific characteristics of the QSAR problem, including dataset size, model complexity, computational resources, and project timelines [35]. For classical QSAR approaches utilizing Multiple Linear Regression (MLR) or Partial Least Squares (PLS) with a limited number of hyperparameters, Grid Search may be sufficient and advantageous due to its simplicity and determinism [32].

For more complex QSAR models employing deep neural networks or ensemble methods with extensive hyperparameter spaces, Bayesian Optimization provides significant advantages. Recent research demonstrates successful applications of Bayesian Optimization in QSAR pipelines for various targets, including NF-κB inhibitors and BCRP inhibitors [32] [33]. The integration of tools like Optuna or scikit-optimize with popular QSAR platforms enables efficient implementation of Bayesian Optimization, even for researchers with limited expertise in optimization algorithms [36] [35]. A hybrid approach, in which a coarse Grid Search first identifies promising regions and Bayesian Optimization then refines the search within them, has been shown to be particularly effective in QSAR applications [33].

Experimental Protocols and Research Applications

Protocol: Bayesian Optimization for Deep Learning QSAR Models

The following protocol outlines the implementation of Bayesian Optimization for hyperparameter tuning in deep learning-based QSAR models, adapted from recent research [40] [33]:

Objective Function Definition: Define an objective function that takes hyperparameters as input and returns the cross-validation performance of a QSAR model. For classification tasks, use metrics such as Matthews Correlation Coefficient (MCC) or Area Under the ROC Curve (AUC). For regression tasks, use Root Mean Square Error (RMSE) or R² [33].

Search Space Configuration: Define the hyperparameter search space including learning rate (log-uniform distribution between 10⁻⁵ and 10⁻¹), number of hidden layers (integer uniform between 1 and 5), units per layer (integer uniform between 32 and 512), dropout rate (uniform between 0.1 and 0.5), and batch size (categorical from 32, 64, 128, 256) [33].

Surrogate Model and Acquisition Function: Select a Gaussian Process surrogate model with Matern kernel and Expected Improvement acquisition function to balance exploration and exploitation [40].

Iteration and Convergence: Run the optimization for a predetermined budget (typically 50-100 iterations) or until performance plateaus (less than 1% improvement over 10 consecutive iterations) [33].

Validation: Train the final model with the optimal hyperparameters on the complete training set and evaluate on a held-out test set to estimate generalization performance [32] [33].
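The search space and stopping rule from the protocol above can be written down concretely. The sketch below uses only the Python standard library and makes several assumptions that should be read as such: placeholder_score is a hypothetical stand-in for a cross-validated MCC or AUC, configurations are proposed by random sampling where a Bayesian optimization library would propose them from its surrogate model, and the plateau rule is interpreted as "the best score has improved by less than 1% relative to its value 10 iterations earlier".

```python
import math
import random

BATCH_SIZES = [32, 64, 128, 256]


def sample_config(rng):
    """Draw one configuration from the search space given in the protocol."""
    return {
        # Log-uniform on [1e-5, 1e-1]: sample the exponent uniformly.
        "learning_rate": 10 ** rng.uniform(-5, -1),
        "n_hidden_layers": rng.randint(1, 5),      # inclusive bounds
        "units_per_layer": rng.randint(32, 512),   # inclusive bounds
        "dropout": rng.uniform(0.1, 0.5),
        "batch_size": rng.choice(BATCH_SIZES),
    }


def has_plateaued(best_history, patience=10, min_rel_improvement=0.01):
    """True if the best score gained < 1% over the last `patience` iterations."""
    if len(best_history) <= patience:
        return False
    old, new = best_history[-patience - 1], best_history[-1]
    return (new - old) < min_rel_improvement * max(abs(old), 1e-12)


def placeholder_score(config):
    """Hypothetical stand-in for cross-validated MCC/AUC (higher is better)."""
    lr_penalty = 0.1 * abs(math.log10(config["learning_rate"]) + 3)
    dropout_penalty = 0.2 * abs(config["dropout"] - 0.3)
    return 1.0 - lr_penalty - dropout_penalty


rng = random.Random(0)
best_history, best_config = [], None

for iteration in range(100):             # upper bound on the budget
    config = sample_config(rng)          # a BO library would propose this point
    score = placeholder_score(config)    # replace with real model training
    if not best_history or score > best_history[-1]:
        best_history.append(score)
        best_config = config
    else:
        best_history.append(best_history[-1])
    if has_plateaued(best_history):
        break

print(f"stopped after {len(best_history)} iterations")
print("best configuration:", best_config)
```

After the loop ends, the final step of the protocol still applies: the model is retrained with best_config on the complete training set and scored once on the held-out test set.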

Protocol: Grid Search for Traditional QSAR Models

For classical QSAR models, Grid Search remains a viable and straightforward option:

Parameter Grid Definition: Create a discrete grid of hyperparameter values based on empirical knowledge and literature recommendations. For Support Vector Machines, include C values (e.g., 0.1, 1, 10, 100), kernel types (linear, RBF), and gamma values (0.001, 0.01, 0.1, 1) [36] [32].

Cross-Validation Setup: Implement k-fold cross-validation (typically 5-fold) with stratified sampling for classification tasks to ensure representative distribution of activity classes in each fold [32].

Exhaustive Evaluation: Train and evaluate a model for each hyperparameter combination in the grid, recording performance metrics for each fold.

Optimal Parameter Selection: Identify the hyperparameter combination that delivers the best average cross-validation performance.

Model Validation: Apply the tuned model to an external test set to assess predictive ability on unseen data, ensuring the model's applicability domain is clearly defined [32].
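The Grid Search protocol above maps closely onto scikit-learn's GridSearchCV. The following is a minimal sketch under stated assumptions: the data are synthetic, descriptor scaling is added inside the pipeline as a common practice for SVMs rather than something the protocol specifies, and MCC is chosen as the selection metric. The grid is split into two parts because gamma has no effect on a linear kernel, so pairing them would only repeat identical fits.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import make_scorer, matthews_corrcoef
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic, imbalanced stand-in for descriptors and active/inactive labels.
X, y = make_classification(
    n_samples=300, n_features=20, n_informative=8, weights=[0.7, 0.3], random_state=0
)
# Hold out an external test set before any tuning takes place.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Scaling lives inside the pipeline so it is re-fitted within each CV fold.
pipeline = make_pipeline(StandardScaler(), SVC())

# Grid values from the protocol; gamma is only searched for the RBF kernel.
param_grid = [
    {"svc__kernel": ["linear"], "svc__C": [0.1, 1, 10, 100]},
    {
        "svc__kernel": ["rbf"],
        "svc__C": [0.1, 1, 10, 100],
        "svc__gamma": [0.001, 0.01, 0.1, 1],
    },
]

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(
    pipeline, param_grid, cv=cv, scoring=make_scorer(matthews_corrcoef)
)
search.fit(X_train, y_train)   # exhaustive evaluation, then refit on all training data

test_mcc = matthews_corrcoef(y_test, search.predict(X_test))
print("best parameters:", search.best_params_)
print("mean CV MCC:", round(search.best_score_, 3))
print("external test MCC:", round(test_mcc, 3))
```

The external test set is touched only once, after the best combination has been selected, which keeps the reported test performance independent of the tuning process.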

Research Reagent Solutions: Computational Tools for QSAR Hyperparameter Optimization

Table 4: Essential Computational Tools for Hyperparameter Optimization in QSAR Research

| Tool/Platform | Function | QSAR Application |
|---|---|---|
| Scikit-learn (Python) | Provides GridSearchCV and RandomizedSearchCV | Classical ML algorithms for QSAR (SVM, RF, PLS) |
| Optuna (Python) | Bayesian optimization framework | Deep learning QSAR models, large hyperparameter spaces |
| H2O.ai (R/Python) | Automated machine learning with built-in tuning | High-throughput QSAR screening of compound libraries |
| Caret (R) | Unified interface for training and tuning models | Traditional QSAR modeling with multiple algorithms |
| mlrMBO (R) | Model-based optimization for hyperparameter tuning | Bayesian optimization for QSAR models in R workflows |

Hyperparameter optimization represents a critical component in the development of robust and predictive QSAR models for drug discovery. The three core techniques—Grid Search, Random Search, and Bayesian Optimization—offer distinct trade-offs between computational efficiency, implementation complexity, and effectiveness across different QSAR scenarios [36] [35]. As the field progresses toward increasingly complex AI-driven QSAR approaches, including deep neural networks and graph-based representations, Bayesian Optimization and its variants are poised to become the standard for hyperparameter tuning due to their superior efficiency and performance [9] [34].

Future developments in hyperparameter optimization for QSAR will likely focus on multi-fidelity optimization methods that leverage cheaper approximations of the objective function, meta-learning approaches that transfer knowledge from previous QSAR tasks to new problems, and integration with automated QSAR platforms that streamline the entire model development pipeline [34]. Furthermore, the emergence of quantum-inspired optimization algorithms may offer additional acceleration for exploring complex hyperparameter landscapes [34]. As these advanced techniques mature, they will empower drug discovery researchers to build more accurate and reliable QSAR models while significantly reducing computational costs and development timelines, ultimately accelerating the delivery of novel therapeutics.

Leveraging Automated Machine Learning (AutoML) for Efficient QSAR Workflows

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational drug discovery, enabling researchers to predict the biological activity of compounds from their chemical structures. The fundamental premise of QSAR—that molecular structure determines activity—has driven six decades of methodological evolution, from simple linear regression to increasingly sophisticated machine learning (ML) approaches [41] [42]. However, building robust QSAR models requires navigating complex decisions regarding algorithm selection, feature engineering, and hyperparameter optimization, creating significant bottlenecks in research workflows.

Automated Machine Learning (AutoML) has emerged as a transformative solution to these challenges, offering systematic automation of the end-to-end ML pipeline. As evidenced by bibliometric analyses, AutoML has experienced remarkable growth with an annual publication growth rate of 87.76%, reflecting surging academic and industrial interest [43]. In QSAR modeling, AutoML frameworks streamline the process of building predictive models by automatically selecting algorithms, optimizing hyperparameters, and generating validated solutions—dramatically reducing the time and specialized expertise required while enhancing model performance [44].

This technical guide examines the integration of AutoML into QSAR workflows, with particular emphasis on the critical role of hyperparameter optimization. By providing structured protocols, comparative analyses, and implementation frameworks, we equip researchers with the methodologies needed to leverage AutoML for accelerated, reproducible, and regulatory-compliant drug discovery.

Foundations of QSAR and the Hyperparameter Challenge

Essential Components of QSAR Modeling

QSAR modeling rests on three fundamental pillars that collectively determine model performance and applicability:

Datasets: High-quality, curated datasets form the foundation of reliable QSAR models. These datasets contain chemical structures and associated biological activity measurements, typically expressed as IC₅₀, Ki, or binary activity classifications. The quality, diversity, and size of training data significantly influence model generalizability [41]. For robust model development, datasets must encompass diverse chemical structures representing the application domain while maintaining rigorous data quality standards.

Molecular Descriptors: Descriptors are mathematical representations that encode chemical structure information into numerical values usable by ML algorithms. They range from simple 1D descriptors (molecular weight, atom counts) to complex 2D (topological indices), 3D (molecular shape, electrostatic potentials), and even 4D descriptors (accounting for conformational flexibility) [9]. The selection and engineering of appropriate descriptors is crucial, as poor descriptor choice leads to the "garbage in, garbage out" phenomenon [41].

Mathematical Models: The algorithms that establish quantitative relationships between descriptors and biological activity span from classical statistical methods (Multiple Linear Regression, Partial Least Squares) to advanced machine learning techniques (Random Forests, Support Vector Machines, Deep Neural Networks) [9] [45]. Each algorithm class possesses distinct strengths, weaknesses, and inductive biases suited to different QSAR tasks.