Hyperparameter Tuning for Materials Science Machine Learning: A Practical Guide for Researchers

Abstract

This article provides a comprehensive guide to hyperparameter tuning, tailored for researchers and professionals in materials science and drug development. It covers foundational concepts, core optimization algorithms like Grid Search, Random Search, and Bayesian Optimization, and their practical application in predicting material properties. The guide addresses common pitfalls such as overfitting and loss of model interpretability, offering troubleshooting strategies and best practices for rigorous model validation. By connecting methodological theory with real-world case studies from supercapacitors, metal forming, and alloy discovery, this resource aims to equip scientists with the knowledge to build more accurate, reliable, and interpretable machine learning models, thereby accelerating materials innovation.

Why Hyperparameters Matter: The Foundation of Reliable Materials Science ML

In the burgeoning field of materials informatics, the effective application of machine learning (ML) hinges on a nuanced understanding of a model's internal components. Central to this understanding is the critical distinction between model parameters and model hyperparameters. This distinction governs how models are built, trained, and optimized, directly impacting the success of data-driven materials discovery. This guide provides an in-depth technical exploration of these concepts, framed specifically for the workflows and challenges encountered in materials science research.

Core Definitions: Parameters vs. Hyperparameters

At its core, the difference between parameters and hyperparameters lies in how they are determined during the machine learning process.

Model Parameters: These are the internal variables of the model that are learned directly from the training data during the training process. They are not set manually but are estimated by the optimization algorithm (e.g., Gradient Descent, Adam) to map input features to the target output. Model parameters define the specific representation of the relationship inherent in your dataset [1] [2] [3].

Model Hyperparameters: These are the configuration variables that are set before the training process begins. They control the very structure of the model and the learning process itself. Hyperparameters are not learned from the data; instead, they must be tuned by the researcher to achieve the best performance for a given task [1] [2] [3].

The table below provides a consolidated comparison for clarity.

Table 1: Fundamental Differences Between Model Parameters and Hyperparameters

| Aspect | Model Parameters | Model Hyperparameters |
|---|---|---|
| Definition | Internal variables learned from the data. | External configuration set before training. |
| Purpose | Used to make predictions on new data. | Control the learning process and model structure. |
| Determination | Estimated automatically by optimization algorithms. | Set manually or via automated tuning. |
| Examples | Weights, biases, coefficients. | Learning rate, number of layers, number of estimators. |
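The distinction in the table can be made concrete with a small sketch. It assumes scikit-learn and NumPy are available and uses a synthetic dataset as a stand-in for real materials data; the descriptor count and the coefficients in `true_coef` are illustrative choices, not values from any cited study.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Synthetic stand-in for a materials dataset: 3 descriptors -> 1 property.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
true_coef = np.array([2.0, -1.0, 0.5])          # illustrative ground truth
y = X @ true_coef + 0.1 * rng.normal(size=200)

# Hyperparameter: the regularization strength is chosen before training.
model = Ridge(alpha=1.0)

# Parameters: coefficients and intercept are estimated from the data by fit().
model.fit(X, y)

print("hyperparameter alpha:", model.alpha)
print("learned coefficients:", model.coef_)
print("learned intercept:", model.intercept_)
```

Here `alpha` never changes during `fit()`, whereas `coef_` and `intercept_` do not exist until training has run.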

The Critical "Why": Impact on Model Performance and Workflow

Understanding this distinction is not merely academic; it is fundamental to building effective and reliable ML models in materials science.

Role of Model Parameters: The learned parameters encapsulate the patterns found in your training data, such as the complex relationships between material composition, processing conditions, and a target property (e.g., tensile strength, band gap). The final values of these parameters determine how the model will perform on unseen experimental or computational data [1] [3].

Role of Model Hyperparameters: Hyperparameters act as the control knobs for the learning algorithm. They directly influence how efficiently and effectively the model parameters are learned. A poor choice of hyperparameters can lead to underfitting (where the model is too simple to capture trends) or overfitting (where the model memorizes the training data and fails to generalize) [1]. For instance, setting the learning rate too high might prevent the model from converging to a good solution, while setting it too low makes training unnecessarily slow.
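The learning-rate behavior described above can be illustrated with a toy problem. The sketch below minimizes f(w) = w² with plain gradient descent; the objective, the starting point, and the three learning rates are assumptions chosen for illustration only.

```python
def gradient_descent(lr, steps=50, w0=1.0):
    """Minimize f(w) = w**2 from w0 and return the final distance |w| from the optimum."""
    w = w0
    for _ in range(steps):
        grad = 2.0 * w        # derivative of w**2
        w = w - lr * grad     # standard gradient descent update
    return abs(w)


for lr in (1.1, 0.1, 0.001):
    print(f"lr={lr}: |w| after 50 steps = {gradient_descent(lr):.3g}")
```

Each update multiplies w by (1 - 2·lr), so a learning rate of 1.1 makes the iterate grow at every step, 0.1 shrinks it quickly toward zero, and 0.001 leaves it close to its starting value after 50 steps.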

The process of finding the optimal set of hyperparameters is known as Hyperparameter Optimization (HPO). In materials science, where datasets can be small and computationally expensive to generate, efficient HPO is crucial for maximizing the value of available data and accelerating discovery cycles [4] [5].

Hyperparameter Optimization in Practice: Methods and Protocols

Given that hyperparameters cannot be learned from data, researchers must employ systematic strategies to find the best configurations. The following workflow and methods form the backbone of modern HPO.

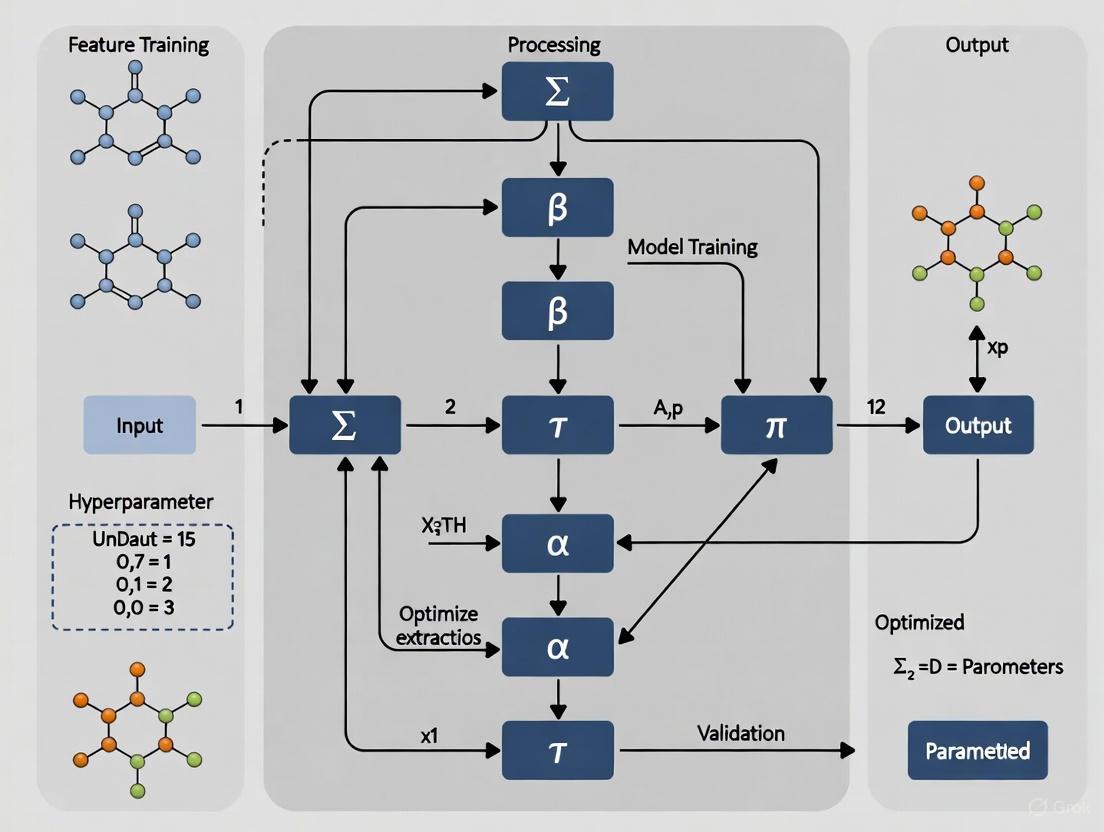

Diagram: Hyperparameter Optimization (HPO) Workflow

Common Hyperparameter Optimization Algorithms

Several algorithms exist for navigating the hyperparameter space, each with its own strengths and computational trade-offs [5] [6].

Table 2: Comparison of Common Hyperparameter Optimization Methods

| Method | Core Principle | Strengths | Weaknesses |
|---|---|---|---|
| Grid Search (GS) | Exhaustively searches over a predefined set of hyperparameter values. [6] | Guaranteed to find the best combination within the grid; simple to implement and parallelize. [6] | Computationally expensive and infeasible for high-dimensional spaces; performance depends on a well-chosen grid. [6] |
| Random Search (RS) | Randomly samples hyperparameter combinations from specified distributions. [6] | More efficient than GS, especially when some hyperparameters are less important; requires less processing time. [6] | Does not guarantee a global optimum; can still be inefficient in very large search spaces. [6] |
| Bayesian Optimization (BO) | Builds a probabilistic surrogate model to predict model performance and guides the search intelligently. [4] [6] | Highly sample-efficient; requires fewer evaluations to find good hyperparameters; superior computational efficiency. [4] [6] | More complex to implement; can have higher overhead per iteration. [6] |
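To make the cost difference between grid and random search tangible, the following sketch counts evaluations for a hypothetical three-hyperparameter search space. The hyperparameter names, value lists, and budget are illustrative assumptions; only the Python standard library is used.

```python
import itertools
import random

# Hypothetical search space for a gradient-boosting model.
grid = {
    "learning_rate": [0.01, 0.03, 0.1, 0.3],
    "max_depth": [3, 5, 7, 9],
    "n_estimators": [100, 300, 500, 1000],
}

# Grid search: every combination is evaluated.
grid_configs = list(itertools.product(*grid.values()))
print("grid search evaluations:", len(grid_configs))   # 4 * 4 * 4 = 64

# Random search: a fixed budget, with every hyperparameter drawn afresh per trial.
rng = random.Random(0)
budget = 16
random_configs = [
    {
        "learning_rate": 10 ** rng.uniform(-2.0, -0.5),  # log-uniform draw
        "max_depth": rng.randint(3, 9),
        "n_estimators": rng.randint(100, 1000),
    }
    for _ in range(budget)
]
distinct_lr = len({c["learning_rate"] for c in random_configs})
print("random search evaluations:", len(random_configs))
print("distinct learning rates tried:", distinct_lr)
```

The grid only ever tests four learning rates regardless of how many runs it spends, while random search tests a new value in almost every trial. This is one reason random search tends to do well when only a few hyperparameters matter.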

Experimental Protocol for Hyperparameter Tuning

A typical HPO experiment, as applied in studies ranging from predicting heart failure outcomes to materials property prediction, follows a structured protocol [6]:

- Define the Search Space: For each hyperparameter (e.g., learning rate, number of layers, regularization strength), specify a range or list of possible values. This requires domain knowledge and an understanding of the model.

- Choose an Optimization Algorithm: Select an HPO method such as GS, RS, or BO based on the size of the search space and available computational budget.

- Select a Performance Metric: Choose an appropriate evaluation metric (e.g., R² for regression, AUC for classification, mean absolute error) that aligns with the research goal.

- Implement Cross-Validation: To ensure robustness and avoid overfitting, the performance of each hyperparameter set is typically evaluated using k-fold cross-validation (e.g., 10-fold cross-validation) on the training data [6].

- Execute the Search: The HPO algorithm iteratively proposes hyperparameter combinations, trains the model, and evaluates its performance.

- Validate the Best Model: Once the search is complete, the best hyperparameter set is used to train a final model on the entire training set, and its performance is confirmed on a held-out test set.
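The six protocol steps above can be sketched with scikit-learn. This is a minimal illustration under stated assumptions: the data are synthetic, the model (a random forest), the search ranges, the 5-fold setting, and the budget of 10 trials are arbitrary choices, and random search stands in for whichever HPO method a study would actually select.

```python
import numpy as np
from scipy.stats import randint
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import RandomizedSearchCV, train_test_split

# Synthetic stand-in for a composition/processing -> property dataset.
rng = np.random.default_rng(0)
X = rng.uniform(size=(300, 5))
y = 3.0 * X[:, 0] + np.sin(4.0 * X[:, 1]) + 0.1 * rng.normal(size=300)

# Hold out a test set that the search never sees.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Define the search space (integer ranges, upper bound exclusive).
param_distributions = {
    "n_estimators": randint(50, 201),
    "max_depth": randint(2, 11),
    "min_samples_split": randint(2, 11),
}

# Choose the algorithm (random search), the metric (R^2), and k-fold CV.
search = RandomizedSearchCV(
    RandomForestRegressor(random_state=0),
    param_distributions=param_distributions,
    n_iter=10,
    scoring="r2",
    cv=5,
    random_state=0,
)

# Execute the search on the training data only.
search.fit(X_train, y_train)

# Validate: with the default refit=True, the best configuration has already
# been retrained on the full training set; score it once on the test set.
test_r2 = r2_score(y_test, search.best_estimator_.predict(X_test))
print("best hyperparameters:", search.best_params_)
print("cross-validated R^2:", round(search.best_score_, 3))
print("held-out test R^2:", round(test_r2, 3))
```

Keeping the test set out of the search matters: the cross-validated score is used to pick the configuration, so it is optimistically biased, and only the held-out score estimates performance on unseen data.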

The Materials Science Context: Tools and Applications

The principles of HPO are universally applicable, but their implementation in materials science is often facilitated by specialized software and frameworks.

The Scientist's Toolkit: HPO in Materials Informatics

Table 3: Key Resources for Hyperparameter Optimization in Materials Science Research

| Tool / Category | Example(s) | Function in Materials Informatics |
|---|---|---|
| Automated ML Frameworks | MatSci-ML Studio, Automatminer, MatPipe [4] | Provide end-to-end workflows, often with integrated HPO capabilities, lowering the barrier for domain experts. [4] |
| HPO Libraries | Optuna [4], Scikit-learn [6] | Provide tuning algorithms for models built in Python: Optuna offers Bayesian-style samplers with pruning, and Scikit-learn offers grid and random search. |
| Model Libraries | Scikit-learn, XGBoost, LightGBM, CatBoost [4] | Offer a wide array of models whose performance is critically dependent on effective hyperparameter tuning. |
| Probabilistic Surrogate Models | Gaussian Process Regressor, Bayesian Neural Networks [7] | Used as surrogate models in active learning (AL) loops or for uncertainty quantification, themselves requiring HPO. |

Case in Point: HPO in Action

The importance of HPO is evident in real-world materials science applications. For example, the MatSci-ML Studio toolkit automates hyperparameter optimization using the Optuna library, which employs efficient Bayesian optimization to identify optimal model configurations [4]. This automation is vital for enabling materials scientists with limited coding expertise to build high-performing models for predicting properties from composition-process-property relationships [4].

Furthermore, in advanced applications like optimizing Graph Neural Networks (GNNs) for molecular property prediction in cheminformatics, HPO and Neural Architecture Search (NAS) are crucial for managing model complexity and computational cost [8]. Similarly, optimizing Convolutional Neural Networks (CNNs) requires systematic HPO to navigate their numerous architectural and optimization-related hyperparameters [5].

The clear separation between model parameters and hyperparameters is a foundational concept in machine learning. For materials scientists and drug development professionals, mastering this distinction—and the subsequent practice of hyperparameter optimization—is key to unlocking the full potential of data-driven research. By leveraging modern tools and protocols for HPO, researchers can systematically develop more accurate, robust, and predictive models, thereby accelerating the design and discovery of novel materials with tailored properties.

The Critical Impact of Hyperparameters on Model Performance and Generalization

In the domain of materials science machine learning (ML), the configuration of hyperparameters is not merely a technical preliminary but a fundamental determinant of research outcomes. Hyperparameters are the configuration variables that control the very behavior of machine learning algorithms [9]. Their selection dictates a model's ability to generalize from training data to unseen experimental results, a capability paramount for accelerating material discovery and optimizing complex formulations. The nested nature of hyperparameter optimization—where each evaluation requires training a model—presents unique challenges, including complex, heterogeneous search spaces and computationally expensive evaluation procedures [9]. Within materials science, where data is often scarce and costly to acquire from synthesis or characterization [10], efficient hyperparameter tuning becomes critical for building robust predictive models for properties such as ultimate tensile strength in alloys [4] or stress fields in composite materials [11]. This guide examines the profound impact of hyperparameters on model performance and generalization, providing materials scientists with structured experimental data, detailed protocols, and advanced frameworks to navigate this complex landscape.

Experimental Evidence from Materials Science

Case Study: Physics-Informed Deep Learning Networks

The critical role of bespoke hyperparameter optimization (HPO) is vividly demonstrated in physics-informed deep learning. A seminal study investigating Physics-Based Regularization (PBR) for predicting stress fields in high elastic contrast composites revealed that independently fine-tuning hyperparameters for each unique loss function implementation was essential for achieving peak performance [11].

Table 1: Hyperparameter Impact on Physics-Informed Network Accuracy

| Model / Loss Function Type | Key Hyperparameters | Optimization Method | Impact on Performance |
|---|---|---|---|
| Baseline Model (No PBR) | Learning Rate, Number of Epochs | Separate Fine-Tuning | Baseline for comparison [11] |
| Physics-Based Regularization (PBR) Loss 1 | Learning Rate, Loss Weight λ₁ | Separate Fine-Tuning | Enforced physical constraint more accurately than baseline [11] |
| Physics-Based Regularization (PBR) Loss 2 | Learning Rate, Loss Weight λ₂ | Separate Fine-Tuning | Faster convergence of stress equilibrium [11] |

The study concluded that assessing the relative effectiveness of different deep learning models requires this careful, individualized tuning process, as each loss formulation and dataset required different optimal learning rates and loss weights [11].
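To see why a loss weight behaves as a hyperparameter, it helps to write a physics-regularized objective in a generic form. The expression below is an assumed, schematic form rather than the exact loss used in the cited study; the symbols $\theta$, $\mathcal{L}_{\text{data}}$, and $\mathcal{L}_{\text{phys}}$ are introduced here purely for illustration.

$$
\mathcal{L}_{\text{total}}(\theta) = \mathcal{L}_{\text{data}}(\theta) + \lambda \, \mathcal{L}_{\text{phys}}(\theta)
$$

Here $\theta$ denotes the network weights (learned parameters), $\mathcal{L}_{\text{data}}$ measures the mismatch with reference stress fields, $\mathcal{L}_{\text{phys}}$ penalizes violation of a physical constraint, and $\lambda \ge 0$ is the loss weight. Changing $\lambda$ rescales the gradient contribution of the physics term, so a learning rate that works well for one value of $\lambda$ may not work well for another, which is consistent with the need to tune each loss formulation separately.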

Benchmarking Active Learning Strategies with AutoML

The interplay between model selection and hyperparameter optimization is further clarified in the context of Active Learning (AL) for small-sample regression. A comprehensive benchmark study evaluated 17 different AL strategies using an Automated Machine Learning (AutoML) framework across multiple materials datasets [10]. The study underscored that AutoML automates the process of model and hyperparameter selection, which is crucial for reliable performance when labeled data is scarce [10]. The key findings are summarized below.

Table 2: Performance of Select Active Learning Strategies in AutoML (Early-Stage Data Acquisition)

| Active Learning Strategy | Underlying Principle | Performance vs. Random Sampling | Key Characteristics |
|---|---|---|---|
| LCMD | Uncertainty-Driven | Outperforms | Effective in data-scarce regimes [10] |
| Tree-based-R | Uncertainty-Driven | Outperforms | Effective in data-scarce regimes [10] |
| RD-GS | Diversity-Hybrid | Outperforms | Combines representativeness and diversity [10] |
| GSx, EGAL | Geometry-Only | Matches or slightly exceeds | Pure diversity heuristics [10] |

The benchmark revealed that early in the acquisition process, uncertainty-driven and diversity-hybrid strategies clearly outperformed geometry-only heuristics and random sampling by selecting more informative samples [10]. As the labeled set grew, the performance gap narrowed, indicating diminishing returns from AL under AutoML and a reduced relative impact of the initial hyperparameter and strategy choices [10].
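As a rough illustration of what "uncertainty-driven" selection means, the sketch below queries the pool point on which the trees of a random forest disagree most. This is a generic heuristic written for this guide, not an implementation of LCMD, Tree-based-R, or any other strategy from the benchmark; the pool, the `run_experiment` oracle, and all sizes are hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
X_pool = rng.uniform(-3.0, 3.0, size=(200, 1))   # unlabeled candidate experiments


def run_experiment(x):
    """Hypothetical oracle standing in for synthesis or characterization."""
    return np.sin(x[:, 0])


# Start from a handful of randomly chosen labeled points.
labeled = [int(i) for i in rng.choice(len(X_pool), size=5, replace=False)]

for _ in range(10):
    model = RandomForestRegressor(n_estimators=50, random_state=0)
    model.fit(X_pool[labeled], run_experiment(X_pool[labeled]))

    # Spread of the per-tree predictions serves as an uncertainty proxy.
    per_tree = np.stack([tree.predict(X_pool) for tree in model.estimators_])
    uncertainty = per_tree.std(axis=0)
    uncertainty[labeled] = -np.inf               # never re-query a labeled point

    labeled.append(int(np.argmax(uncertainty)))  # "run" the most uncertain candidate

print("indices queried:", labeled)
```

In an AutoML setting the model refit inside the loop would itself be re-selected and re-tuned at each round, which is part of what makes such benchmarks expensive.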

Methodologies for Hyperparameter Optimization

A Taxonomy of HPO Algorithms

Hyperparameter optimization techniques can be broadly categorized into several families, each with distinct strengths and weaknesses. The selection of an appropriate method depends on factors such as the computational budget, the size and nature of the search space, and the cost of function evaluations [5] [9].

Implementing Bayesian Optimization

Bayesian Optimization (BO) is a powerful model-based approach for optimizing expensive black-box functions. It operates by constructing a probabilistic surrogate model, typically a Gaussian Process (GP), to approximate the response function [9]. An acquisition function, such as Expected Improvement (EI), uses this model to decide which hyperparameters to evaluate next by balancing exploration and exploitation [9]. The process can be broken down into the following detailed protocol:

- Define the Search Space: Specify all hyperparameters and their domains (continuous, integer, categorical). For conditional hyperparameters (e.g., the number of layers only matters if a deep network is chosen), define the dependencies [9].

- Select a Surrogate Model: Choose a probabilistic model. Gaussian Process regressors are common, but alternatives exist, such as random forests (as in SMAC) and the density-based Tree-structured Parzen Estimator (TPE).

- Choose an Acquisition Function: Common choices include Expected Improvement (EI), Upper Confidence Bound (UCB), or Probability of Improvement (PI).

- Initialize with a Design of Experiments: Start by evaluating a small number (e.g., 10-20) of randomly selected hyperparameter configurations to build an initial surrogate model.

- Iterate until Convergence:
  - Fit the surrogate model to all observations $\{(H, L)\}$ collected so far, where $H$ is a hyperparameter set and $L$ is the resulting validation loss.
  - Find the hyperparameters $H_{\text{next}}$ that maximize the acquisition function.
  - Evaluate the true validation loss $L_{\text{next}}$ by training the model with $H_{\text{next}}$.
  - Add the new observation $(H_{\text{next}}, L_{\text{next}})$ to the dataset.

- Final Output: After the budget is exhausted, return the hyperparameter set $H^*$ that achieved the lowest validation loss $L$.

This framework was famously used to optimize the hyperparameters of the AlphaGo system, demonstrating its capability in high-stakes scenarios [9].
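The protocol above can be sketched in a few dozen lines. The example assumes NumPy, SciPy, and scikit-learn are available, tunes a single hyperparameter (log10 of a learning rate), and replaces real model training with a toy `validation_loss` function whose minimum is placed at -3 by construction. The Matern kernel, the candidate grid, and the budget are illustrative choices.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def validation_loss(log_lr):
    """Toy stand-in for 'train a model, return validation loss'; minimum at -3."""
    return (log_lr + 3.0) ** 2 + 0.5


def expected_improvement(mu, sigma, best):
    """Expected Improvement for minimization; sigma is clipped to avoid 0-division."""
    sigma = np.maximum(sigma, 1e-9)
    z = (best - mu) / sigma
    return (best - mu) * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
candidates = np.linspace(-6.0, -1.0, 201).reshape(-1, 1)   # search space

# Initial design: a few random configurations.
H = rng.uniform(-6.0, -1.0, size=(4, 1))
L = np.array([validation_loss(h[0]) for h in H])

for _ in range(15):                                        # evaluation budget
    # Fit the surrogate to all observations collected so far.
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), normalize_y=True, alpha=1e-6)
    gp.fit(H, L)

    # Maximize the acquisition function over the candidate set.
    mu, sigma = gp.predict(candidates, return_std=True)
    h_next = candidates[np.argmax(expected_improvement(mu, sigma, L.min()))]

    # Evaluate the true loss and add the observation.
    l_next = validation_loss(h_next[0])
    H = np.vstack([H, h_next])
    L = np.append(L, l_next)

h_star = H[np.argmin(L), 0]
print("best log10(learning rate):", round(float(h_star), 3))
print("best validation loss:", round(float(L.min()), 3))
```

Maximizing the acquisition function over a fixed candidate grid keeps the sketch short. Practical libraries use continuous optimizers and handle mixed or conditional search spaces.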

Table 3: Key Research Reagent Solutions for HPO in Materials Science

| Tool / Resource Name | Type | Primary Function | Relevance to Materials Science |
|---|---|---|---|
| MatSci-ML Studio [4] | Automated ML Toolkit | Provides a GUI for end-to-end ML workflow, including automated HPO and model training. | Democratizes ML for domain experts; incorporates SHAP interpretability and multi-objective optimization for material design. |
| Automatminer/MatPipe [4] | Python Framework | Automates featurization and model benchmarking from composition or structure. | Enables high-throughput model benchmarking for computational materials scientists. |
| Optuna [4] | HPO Library | Enables efficient Bayesian optimization with pruning algorithms. | Integrated into tools like MatSci-ML Studio for automated hyperparameter tuning. |
| Universal ML Interatomic Potentials (uMLIPs) [12] | Pre-trained Models | MLIPs like M3GNet, MACE, and CHGNet offer pre-optimized architectures for atomistic simulations. | Serve as direct replacements for DFT calculations at a fraction of the cost; reduce need for architecture HPO. |
| LLM-based Active Learning (LLM-AL) [7] | Novel AL Framework | Uses large language models as surrogate models for experiment selection in an iterative few-shot setting. | Aims to provide a generalizable, tuning-free alternative to conventional AL, mitigating the cold-start problem. |

Advanced Topics and Future Directions

The Frontier of Tuning-Free Optimization

Recent research explores paradigms that reduce reliance on traditional HPO. The introduction of Large Language Models for Active Learning (LLM-AL) demonstrates a promising alternative. This framework leverages the pretrained knowledge and in-context learning capabilities of LLMs to propose experiments directly from text-based descriptions, operating effectively in a few-shot setting without fine-tuning or explicit hyperparameter tuning for a surrogate model [7]. Benchmarks across diverse materials datasets showed that LLM-AL could reduce the number of experiments needed to reach top-performing candidates by over 70%, consistently outperforming traditional ML models like Random Forest and Gaussian Process Regressors [7]. This suggests a path toward more generalizable and accessible optimization tools for experimental science.

Hyperparameter Optimization in Physics-Informed Learning

As identified in the study on physics-informed deep learning networks, altering the loss function to incorporate physical knowledge changes the optimization landscape [11]. This makes hyperparameters like the learning rate and, crucially, the weights of individual physics-based loss terms, extremely sensitive. Future work in this area is likely to focus on dynamic and adaptive loss balancing methods to manage the competition between multiple loss terms during training [11]. Furthermore, the development of more sophisticated optimization algorithms, such as MetaOptimize, which dynamically adjusts meta-parameters like learning rates during training by tracking intrinsic properties like gradient autocorrelation, points toward a future of more automated and stable training processes for complex models [13].

Essential Hyperparameters Across Common ML Models in Materials Science

The application of machine learning (ML) in materials science has revolutionized the process of discovering and optimizing new functional materials, from high-performance alloys and energy storage materials to novel catalysts and photovoltaics [14]. However, the performance of these ML models is critically dependent on the careful configuration of their hyperparameters, the settings that govern the learning process itself [15] [16]. Unlike model parameters that are learned from data, hyperparameters are set before training begins and control aspects such as model complexity, learning speed, and convergence behavior [16]. Proper hyperparameter tuning is not merely a technical refinement; it is a crucial step that determines whether a sophisticated algorithm will succeed in extracting meaningful structure-property relationships from often limited and costly materials data [15]. Without systematic tuning, researchers cannot tell whether a poor model outcome stems from the algorithm itself or from suboptimal configuration choices [15].

This guide provides materials scientists with a comprehensive framework for hyperparameter optimization, focusing on the most impactful parameters across common ML models used in the field. We integrate foundational tuning principles with advanced strategies like Bayesian optimization and AutoML that have demonstrated significant success in recent materials informatics research [17] [10]. By structuring this information within practical workflows and providing experimentally-validated protocols, we aim to equip researchers with the methodologies needed to maximize the predictive accuracy of their ML-driven materials discovery efforts.

Core Hyperparameter Concepts

Definitions and Significance

In machine learning, a clear distinction exists between parameters and hyperparameters. Parameters are values that the model learns automatically during training from the data, such as weights and biases in a neural network or split points in a decision tree [15] [16]. In contrast, hyperparameters are configuration variables that guide the learning process itself and are set by the practitioner before training begins [15] [16]. Common examples include the learning rate in neural networks, the maximum depth in tree-based models, and the regularization strength in support vector machines.

The significance of hyperparameter tuning in materials science cannot be overstated. Well-tuned hyperparameters directly lead to improved model accuracy by guiding the model to learn efficiently from often limited experimental or computational datasets [16]. They play a critical role in avoiding overfitting (where the model memorizes training data noise and fails to generalize) and underfitting (where the model is too simple to capture underlying patterns) [16]. Given the high costs associated with both computational screening and experimental synthesis in materials science, efficient hyperparameter tuning also enables better resource utilization, ensuring that research cycles are not wasted on suboptimal models [16].

Hyperparameter Tuning Workflows

The process of hyperparameter optimization typically follows a structured workflow that can be implemented through various strategies. The diagram below illustrates this general process and the key optimization algorithms available at each stage.

Diagram: General Hyperparameter Optimization Workflow

This workflow forms the foundation for most tuning operations, whether implemented manually or through automated frameworks. For materials science applications, where dataset sizes are often limited due to high acquisition costs, Bayesian optimization has demonstrated particular promise by achieving higher performance with reduced computation time compared to traditional methods like grid search [17]. Furthermore, the emergence of Automated Machine Learning (AutoML) frameworks has begun to transform this process, automating model selection, hyperparameter tuning, and feature engineering to significantly improve the efficiency of materials informatics [14] [10].

Model-Specific Hyperparameters

Neural Networks and Deep Learning

Deep learning architectures, including Graph Neural Networks (GNNs) and Convolutional Neural Networks (CNNs), have shown remarkable success in predicting material properties from complex input representations such as crystal structures, molecular graphs, and microstructural images [14] [18]. These models contain several hyperparameters that critically influence their performance.

Table: Essential Hyperparameters for Neural Networks in Materials Science

| Hyperparameter | Description | Impact on Learning | Common Values/Range | Materials Science Application Notes |
|---|---|---|---|---|
| Learning Rate | Step size for weight updates during optimization | Too high: unstable training, divergence. Too low: slow convergence, local minima [16]. | 10⁻⁶ to 0.1 (log scale) [15] | Most critical parameter to tune first [15]; often needs values <0.001 for predicting material properties [15] |
| Number of Layers | Depth of the neural network | Too few: unable to capture complex patterns. Too many: overfitting, vanishing gradients [16]. | 2-10+ | Deeper networks beneficial for complex structure-property relationships [14] |
| Batch Size | Number of samples processed before updating parameters | Smaller: noisy updates, better generalization. Larger: stable convergence, memory intensive [16]. | 32, 64, 128, 256 | Important for handling materials datasets of varying sizes [16] |
| Activation Function | Determines neuron output (non-linearity) | Choice affects learning capability and gradient flow [5]. | ReLU, Sigmoid, Tanh, Leaky ReLU | ReLU variants common for materials property prediction [5] |
| Dropout Rate | Fraction of neurons randomly disabled during training | Regularization to prevent overfitting [5]. | 0.2-0.5 | Particularly useful with limited experimental data [5] |

For neural networks applied to materials science problems, the learning rate is almost universally the most important hyperparameter to optimize first [15]. When tuning learning rates, it is crucial to vary them by orders of magnitude (e.g., [0.01, 0.001, 0.0001, 0.00001]) rather than in linear increments, because suitable values can lie several orders of magnitude apart [15]. Advanced strategies like learning rate schedules (step decay, exponential decay) and adaptive optimizers (Adam, RMSprop) can further enhance training stability and efficiency [16].
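Searching the learning rate on a logarithmic scale can be done with either a log-spaced grid or log-uniform random sampling. The sketch below shows both using only the standard library; the range of 10⁻⁶ to 10⁻¹ follows the table above, while the sample count and seed are arbitrary.

```python
import math
import random

# Log-spaced grid: one value per order of magnitude from 1e-1 down to 1e-6.
log_grid = [10.0 ** -k for k in range(1, 7)]
print("grid:", log_grid)

# Log-uniform sampling: draw the exponent uniformly, then exponentiate.
rng = random.Random(0)
samples = [10.0 ** rng.uniform(-6.0, -1.0) for _ in range(1000)]

# Count samples per decade: [1e-6, 1e-5), [1e-5, 1e-4), ..., [1e-2, 1e-1].
decades = [0] * 5
for lr in samples:
    idx = min(int(math.floor(math.log10(lr))) + 6, 4)
    decades[idx] += 1
print("samples per decade:", decades)
```

Each decade receives roughly the same number of samples. A uniform draw between 10⁻⁶ and 10⁻¹ would instead place about 90% of the samples in the top decade and almost none below 10⁻³.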

In practice, for predicting electronic and mechanical properties of materials under realistic finite-temperature conditions, Graph Neural Networks (GNNs) have demonstrated exceptional performance when trained on physically-informed datasets [18]. Recent research on predicting properties of anti-perovskite materials found that GNN performance was significantly enhanced when training configurations were generated using phonon-informed sampling rather than random atomic displacements, despite using fewer data points [18].

Tree-Based Models

Tree-based ensemble methods, including Random Forests and Gradient Boosting Machines (XGBoost, LightGBM, CatBoost), are extensively used for materials property prediction due to their strong performance on tabular datasets and relative ease of tuning [10] [4]. These models are particularly valuable for establishing baseline performance and for applications where model interpretability is important.

Table: Essential Hyperparameters for Tree-Based Models in Materials Science

| Hyperparameter | Description | Impact on Performance | Common Values/Range | Tuning Considerations |
|---|---|---|---|---|
| Number of Trees/Estimators | Number of trees in the ensemble | More trees: better performance, but diminishing returns and increased computation [4] | 100-1000 | Important for convergence; monitor OOB error for guidance [4] |
| Maximum Depth | Maximum depth of individual trees | Deeper: more complex patterns, risk of overfitting. Shallower: faster training, underfitting [4] | 3-20+ | Critical for controlling model complexity [4] |
| Minimum Samples Split | Minimum samples required to split an internal node | Higher values: prevent overfitting, create simpler trees [4] | 2-10+ | Useful regularization for small materials datasets [4] |
| Learning Rate (Boosting) | Shrinkage factor for subsequent trees in boosting | Smaller values: more robust but require more trees [4] | 0.01-0.3 | Interacts with number of trees; lower rate needs more trees [4] |
| Feature Sampling Rate | Fraction of features considered for each split | Lower values: more diverse trees, reduces overfitting [4] | 0.5-1.0 | Important for high-dimensional feature spaces [4] |

Tree-based models have been successfully applied across diverse materials science domains. For example, in predicting band gaps of low-symmetry perovskites, gradient boosting and support vector regressors achieved mean absolute errors as low as 0.18 eV [10]. Similarly, in modeling ultimate tensile strength of Al-Si-Cu-Mg-Ni alloys, Random Forest models achieved R² values of 0.84, with further improvements possible through ensemble methods like AdaBoost (R² = 0.94) [4].
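The suggestion in the table to monitor out-of-bag (OOB) error when choosing the number of trees can be sketched as follows. The example assumes scikit-learn and uses synthetic data; the feature count, target function, and tree counts are illustrative and unrelated to the alloy or perovskite studies cited above.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic stand-in for a tabular materials dataset with 6 descriptors.
rng = np.random.default_rng(0)
X = rng.uniform(size=(300, 6))
y = 2.0 * X[:, 0] - X[:, 1] ** 2 + 0.1 * rng.normal(size=300)

oob_scores = {}
for n_trees in (50, 100, 200, 400):
    forest = RandomForestRegressor(
        n_estimators=n_trees,
        oob_score=True,      # score each sample using only trees that did not see it
        bootstrap=True,      # OOB estimates require bootstrap sampling
        random_state=0,
    )
    forest.fit(X, y)
    oob_scores[n_trees] = forest.oob_score_   # R^2 on out-of-bag predictions

for n_trees, score in oob_scores.items():
    print(f"{n_trees:4d} trees: OOB R^2 = {score:.3f}")
```

Once the OOB score stops improving, adding trees mostly adds computation. Because the estimate comes for free from the bootstrap, it is a convenient first check before running a full cross-validated search.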

Support Vector Machines (SVM)

Support Vector Machines remain valuable for many materials informatics tasks, particularly with smaller datasets where their strong theoretical foundations provide good generalization performance.

Table: Essential Hyperparameters for Support Vector Machines

| Hyperparameter | Description | Impact on Performance | Kernel-Specific Considerations |
|---|---|---|---|
| C (Regularization) | Trade-off between margin maximization and error minimization | Low C: smoother decision boundary, may underfit. High C: fits training points more closely, may overfit [5] | Affects all kernels similarly [5] |
| Gamma (γ) | Inverse radius of influence for samples | Low gamma: far-reaching influence, smoother decision boundary. High gamma: local influence, captures complexity, may overfit [5] | Critical for RBF kernel; interpretable range varies with data [5] |
| Kernel Type | Determines transformation of feature space | Linear: efficient for separable data. RBF: powerful for non-linear relationships [5] | Choice depends on data structure and feature relationships [5] |
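A joint search over C and gamma is commonly run on logarithmic grids, with feature scaling inside the cross-validation loop. The sketch below assumes scikit-learn and applies support vector regression to synthetic data; the grids, dataset, and scoring metric are illustrative choices rather than recommendations from the cited sources.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Small synthetic regression problem with two descriptors.
rng = np.random.default_rng(0)
X = rng.uniform(-2.0, 2.0, size=(150, 2))
y = np.sin(2.0 * X[:, 0]) + 0.5 * X[:, 1] + 0.05 * rng.normal(size=150)

# Scaling lives inside the pipeline so each CV fold is scaled on its own training part.
pipeline = make_pipeline(StandardScaler(), SVR(kernel="rbf"))

# make_pipeline names the SVR step "svr", hence the "svr__" prefix.
param_grid = {
    "svr__C": [0.1, 1.0, 10.0, 100.0],
    "svr__gamma": [0.01, 0.1, 1.0, 10.0],
}

search = GridSearchCV(pipeline, param_grid, cv=5, scoring="neg_mean_absolute_error")
search.fit(X, y)

print("best hyperparameters:", search.best_params_)
print("cross-validated MAE:", round(-search.best_score_, 3))
```

Because gamma is defined relative to distances between samples, its useful range shifts with feature scaling, which is why the scaler and the SVR are tuned as a single pipeline.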

Advanced Optimization Methodologies

Bayesian Optimization

Bayesian optimization has emerged as a powerful methodology for hyperparameter tuning in materials science applications, particularly when dealing with computationally expensive model evaluations. Unlike grid or random search, which treat each hyperparameter configuration independently, Bayesian optimization constructs a probabilistic model of the objective function and uses it to select the most promising hyperparameters to evaluate next [17].

This approach has demonstrated significant advantages in applied ML studies, and the same reasoning carries over to materials informatics. In a study focused on predicting actual evapotranspiration using machine learning models, Bayesian optimization not only achieved higher performance but also substantially reduced computation time compared to traditional grid search [17]. The efficiency gains make Bayesian optimization particularly valuable for tuning complex deep learning models like LSTMs and GRUs, which have shown superior performance for materials property prediction but typically require extensive hyperparameter optimization [17].

The optimization process typically involves:

- Surrogate Model: A Gaussian process or tree-based model approximates the unknown function mapping hyperparameters to model performance.

- Acquisition Function: A criterion (e.g., Expected Improvement, Probability of Improvement) balances exploration and exploitation to select the next hyperparameter set.

- Iterative Refinement: The surrogate model is updated with new evaluations until convergence or budget exhaustion.
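
The three components above can be sketched in a few lines. This is a minimal illustration, not the procedure used in [17]: the one-dimensional `objective` is a hypothetical stand-in for an expensive training run, the surrogate is scikit-learn's `GaussianProcessRegressor`, and the acquisition function is Expected Improvement written for a minimization problem. The search range, kernel choice, and iteration budget are all assumptions.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr):
    """Hypothetical validation loss as a function of log10(learning rate)."""
    return (log_lr + 3.0) ** 2 + 0.1 * np.sin(5.0 * log_lr)


def expected_improvement(mu, sigma, best_y, xi=0.01):
    """Expected Improvement acquisition function for a minimization problem."""
    sigma = np.maximum(sigma, 1e-9)  # avoid division by zero
    improvement = best_y - mu - xi
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
low, high = -6.0, 0.0
candidates = np.linspace(low, high, 500).reshape(-1, 1)
tried = np.zeros(len(candidates), dtype=bool)

# Start from a few random evaluations.
X = rng.uniform(low, high, size=(3, 1))
y = objective(X[:, 0])
n_initial = len(y)

for _ in range(15):
    # Surrogate model: Gaussian process fitted to all evaluations so far.
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), alpha=1e-6, normalize_y=True)
    gp.fit(X, y)

    # Acquisition function: score every candidate that has not been tried yet.
    mu, sigma = gp.predict(candidates, return_std=True)
    ei = expected_improvement(mu, sigma, y.min())
    ei[tried] = -np.inf
    idx = int(np.argmax(ei))
    tried[idx] = True

    # Iterative refinement: evaluate the chosen point and extend the data.
    X = np.vstack([X, candidates[idx]])
    y = np.append(y, objective(candidates[idx, 0]))

best_log_lr = float(X[np.argmin(y), 0])
print(f"best log10(learning rate) = {best_log_lr:.3f}, loss = {y.min():.4f}")
```

In practice the loop stops when the evaluation budget is exhausted, as here, or when the best value stops improving. Dedicated libraries handle multi-dimensional and mixed-type search spaces, which this sketch does not.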

Automated Machine Learning (AutoML) and Active Learning

The integration of Automated Machine Learning (AutoML) with active learning strategies represents a cutting-edge approach for addressing the data scarcity challenges common in materials science [10]. AutoML frameworks automate the entire model development process, including hyperparameter tuning, model selection, and feature engineering, significantly reducing the manual effort required from researchers [10] [4].

When combined with active learning, which strategically selects the most informative data points to label, this approach enables the construction of robust predictive models while substantially reducing the volume of labeled data required [10]. A recent benchmarking study evaluated 17 active learning strategies within an AutoML framework for materials science regression tasks and found that uncertainty-driven and diversity-hybrid strategies outperform random sampling, especially in the early acquisition stages when labeled data is most limited [10].

Tools like MatSci-ML Studio are making these advanced methodologies more accessible to materials researchers by providing intuitive graphical interfaces that encapsulate comprehensive, end-to-end ML workflows [4]. These platforms integrate multi-strategy feature selection, automated hyperparameter optimization using libraries like Optuna, and model interpretation capabilities, significantly lowering the technical barrier for implementing advanced ML strategies in materials research [4].

Experimental Protocols and Case Studies

Case Study: Hyperparameter Optimization for Predicting Actual Evapotranspiration

A comprehensive study on predicting actual evapotranspiration (AET) using machine learning provides a robust protocol for hyperparameter optimization in materials-inspired prediction tasks [17]. This research compared deep learning models (LSTM, GRU, CNN) against classical machine learning approaches (SVR, RF) using two different input combinations of meteorological and soil features.

Experimental Protocol:

- Data Preparation: Two input combinations were developed: (i) features selected through Pearson correlation, tolerance, and VIF scores to address multicollinearity; (ii) more accessible features for practical applicability.

- Model Selection: Both deep learning and classical ML models were implemented for comparison.

- Hyperparameter Optimization: Bayesian optimization and grid search were systematically compared for tuning model hyperparameters.

- Performance Evaluation: Models were evaluated using multiple statistical indicators (RMSE, MSE, MAE, R²) to ensure comprehensive assessment.
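
The four indicators named in the evaluation step follow directly from their definitions. The sketch below computes them with NumPy; the four observed and predicted values are made-up numbers for illustration, not data from [17].

```python
import numpy as np


def regression_metrics(y_true, y_pred):
    """Return MSE, RMSE, MAE and R^2 for a set of predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residuals = y_true - y_pred
    mse = float(np.mean(residuals ** 2))
    ss_res = float(np.sum(residuals ** 2))                 # residual sum of squares
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))  # total sum of squares
    return {
        "MSE": mse,
        "RMSE": mse ** 0.5,
        "MAE": float(np.mean(np.abs(residuals))),
        "R2": 1.0 - ss_res / ss_tot,
    }


# Hypothetical observed and predicted values.
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

For these numbers the MSE is 0.025, the RMSE is about 0.158, the MAE is 0.15, and R² is 0.98. Reporting several indicators together helps because RMSE penalizes large errors more strongly than MAE, while R² expresses error relative to the variance of the target.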

Results and Implications: The study demonstrated that Bayesian optimization consistently achieved higher performance with reduced computation time compared to grid search [17]. Among the models, LSTM networks achieved the best results (R² = 0.8861 with five predictors and R² = 0.8467 with four predictors), slightly outperforming SVR (R² = 0.8456) when fewer predictors were used [17]. This case study underscores the importance of selecting appropriate optimization strategies and suggests that deep learning methods, particularly when combined with advanced hyperparameter tuning, can deliver strong performance on complex scientific prediction tasks of the kind encountered in materials research.

Case Study: Active Learning with AutoML for Small-Sample Regression

A benchmark study on integrating active learning with AutoML provides valuable insights for hyperparameter optimization under data constraints common in materials science [10]. This research evaluated 17 active learning strategies together with a random-sampling baseline across 9 materials formulation design datasets.

Methodology:

- Pool-based Active Learning Framework: Initial dataset comprised a small set of labeled samples and a large pool of unlabeled samples.

- Iterative Sampling: Different AL strategies performed multi-step sampling, with an AutoML model fitted at each step.

- Strategy Comparison: Methods based on uncertainty estimation, expected model change maximization, diversity, and representativeness were evaluated.

- Performance Metrics: Model accuracy was tracked using MAE and R² as the labeled set expanded.
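
A pool-based loop of this kind can be sketched as follows. This is a simplified stand-in, not the benchmark's implementation: a random forest replaces the AutoML model, the spread of per-tree predictions serves as the uncertainty estimate (it is not the LCMD or RD-GS strategy), and the dataset, batch size, and number of steps are synthetic assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error

rng = np.random.default_rng(0)

# Synthetic stand-in for a formulation dataset (hypothetical, 4 features).
X = rng.uniform(-1.0, 1.0, size=(300, 4))
y = np.sin(3.0 * X[:, 0]) + X[:, 1] ** 2 + 0.05 * rng.normal(size=300)

X_pool, y_pool = X[:200], y[:200]  # pool; a label is "revealed" only when queried
X_test, y_test = X[200:], y[200:]  # held-out set used to track MAE

labeled = rng.choice(200, size=10, replace=False).tolist()  # small initial labeled set
mae_history = []

for step in range(10):
    # Fit a model on the currently labeled samples.
    model = RandomForestRegressor(n_estimators=100, random_state=0)
    model.fit(X_pool[labeled], y_pool[labeled])
    mae_history.append(mean_absolute_error(y_test, model.predict(X_test)))

    # Uncertainty: standard deviation of the individual trees' predictions.
    unlabeled = np.setdiff1d(np.arange(200), labeled)
    per_tree = np.stack([tree.predict(X_pool[unlabeled]) for tree in model.estimators_])
    uncertainty = per_tree.std(axis=0)

    # Query the 5 most uncertain pool points and add them to the labeled set.
    query = unlabeled[np.argsort(uncertainty)[-5:]]
    labeled.extend(query.tolist())

print([round(m, 3) for m in mae_history])
```

Replacing the query line with a random draw from `unlabeled` gives the random-sampling baseline against which such strategies are compared.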

Key Findings:

- Early Acquisition Phase: Uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) strategies clearly outperformed geometry-only heuristics and the random baseline [10].

- Diminishing Returns: As the labeled set grew, the performance gap narrowed and all methods eventually converged [10].

- Practical Implication: The best data-acquisition strategy depends on the amount of labeled data available; uncertainty-based methods are particularly effective in the data-scarce scenarios common in materials science [10].

Table: Essential Tools and Resources for Hyperparameter Optimization in Materials Science

| Tool/Resource | Type | Key Functionality | Applicability to Materials Science |

|---|---|---|---|

| Optuna | Hyperparameter Optimization Framework | Efficient Bayesian optimization with pruning algorithms [4] | Integrated in MatSci-ML Studio for automated hyperparameter tuning [4] |

| MatSci-ML Studio | GUI-based ML Toolkit | End-to-end workflow with automated HPO, feature selection, and SHAP interpretability [4] | Democratizes ML for materials scientists with limited coding expertise [4] |

| AutoGluon/TPOT/H2O.ai | AutoML Frameworks | Automated model selection, hyperparameter tuning, and feature engineering [14] | Reduces repetitive work in model design and parameterization [14] |

| Automated "A-Lab" Platforms | Experimental ML Integration | Literature-mined recipes coupled with active learning for autonomous synthesis [10] | Successfully synthesized 41 previously unreported inorganic compounds in 17 days [10] |

| SHAP Analysis | Model Interpretation | Explains model predictions by quantifying feature importance [19] | Reveals decisive design levers (e.g., valence-charge variance for band gap prediction) [10] |

Hyperparameter optimization represents a critical bridge between theoretical machine learning capabilities and practical materials science applications. As the field continues to evolve, several key principles emerge for researchers seeking to maximize the effectiveness of their ML-driven materials discovery efforts:

First, prioritize the most impactful hyperparameters, with the learning rate representing the universal starting point for any model involving gradient-based optimization [15]. Second, select optimization strategies appropriate for your computational budget and dataset size, with Bayesian optimization generally providing superior efficiency for complex models [17]. Third, leverage emerging AutoML and active learning frameworks to navigate the data scarcity challenges inherent in materials science [10] [4].

The integration of physical knowledge into the hyperparameter optimization process—whether through physically-informed training data generation [18], physics-constrained model architectures, or domain-aware search spaces—represents a particularly promising direction for future research. As demonstrated by recent advances, models trained on physically-representative datasets (e.g., phonon-informed configurations) can achieve higher accuracy and robustness with significantly fewer data points [18].

By adopting the systematic approaches and methodologies outlined in this guide, materials researchers can significantly enhance the predictive performance of their machine learning models, ultimately accelerating the discovery and development of next-generation functional materials for energy, electronics, medicine, and beyond.

Connecting Hyperparameter Tuning to Trust and Interpretability in Scientific AI

In the pursuit of scientific discovery, fields like materials science and drug development are increasingly leveraging sophisticated machine learning (ML) models. The exceptional accuracy of these models, however, often comes at the cost of explainability, creating a significant barrier to their adoption in high-stakes research environments. The most accurate models, such as deep neural networks (DNNs), can function as "black boxes," making it difficult for scientists to trust their predictions or extract actionable physical insights [19]. This technical guide posits that hyperparameter tuning—often viewed merely as a performance optimization step—is a critical, though underutilized, mechanism for bridging the gap between model accuracy and model trustworthiness. Framed within the broader thesis of establishing hyperparameter tuning fundamentals for materials science ML research, this paper will demonstrate how a deliberate tuning strategy, moving beyond brute-force accuracy maximization, is foundational to building interpretable and reliable scientific AI systems.

Hyperparameter Tuning: From Black Box to Trusted Scientific Tool

Defining the Role of Hyperparameters in Model Trust

Hyperparameters are the external configurations set prior to the training process that govern the learning algorithm itself. Examples include the learning rate, the number of layers in a neural network, the regularization strength, and the dropout ratio [16] [20]. In scientific contexts, the choice of these values directly influences a model's tendency to overfit or underfit, thus controlling its ability to generalize and produce reliable predictions on new, experimental data.

The connection to trust is direct: a model that generalizes well is inherently more trustworthy. Proper hyperparameter tuning systematically manages the bias-variance trade-off, preventing a model from being either too simple to capture key patterns (high bias, underfitting) or too complex, causing it to memorize noise and artifacts in the training data (high variance, overfitting) [20]. For instance, tuning regularization parameters or the dropout ratio directly controls model complexity, safeguarding against overfitting and leading to more robust and reliable predictions [21] [20]. This process is not just about achieving a high score on a validation set; it is about ensuring the model learns the underlying physical principles of the data rather than superficial correlations.
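
The effect of a regularization hyperparameter on the training and validation error can be seen in a small numerical experiment. The sketch below uses closed-form ridge regression on synthetic data; the sample sizes, noise level, and the grid of `alpha` values are assumptions chosen to mimic a small-data setting.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical small-data setting: 30 training samples, 20 features,
# of which only the first 3 actually influence the target.
n_train, n_val, n_features = 30, 200, 20
true_w = np.zeros(n_features)
true_w[:3] = [2.0, -1.0, 0.5]


def make_data(n):
    X = rng.normal(size=(n, n_features))
    return X, X @ true_w + 0.5 * rng.normal(size=n)


X_train, y_train = make_data(n_train)
X_val, y_val = make_data(n_val)


def fit_ridge(X, y, alpha):
    """Closed-form ridge solution: w = (X^T X + alpha I)^-1 X^T y."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def mse(X, y, w):
    return float(np.mean((X @ w - y) ** 2))


results = []
for alpha in (1e-3, 1e-1, 1.0, 10.0, 100.0, 1000.0):
    w = fit_ridge(X_train, y_train, alpha)
    results.append((alpha, mse(X_train, y_train, w), mse(X_val, y_val, w)))

for alpha, train_err, val_err in results:
    print(f"alpha={alpha:>8}: train MSE={train_err:.3f}  validation MSE={val_err:.3f}")
```

The training error can only grow as `alpha` increases, so it cannot guide the choice on its own. The validation error is the quantity to tune against, and a large gap between the two at small `alpha` is the signature of overfitting described above.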

The Explainability-Accuracy Trade-off and the Path Forward

A well-documented challenge in modern ML is the trade-off between model accuracy and model explainability [19]. Simple, transparent models like linear regression are easy to interpret but often lack the expressive power for complex scientific tasks. Conversely, highly complex models like DNNs offer state-of-the-art accuracy but are notoriously difficult to explain. This creates a dilemma for researchers who require both high predictive power and causal understanding.

Hyperparameter tuning sits at the heart of this conflict. The pursuit of maximum accuracy can lead to extremely complex models that are incomprehensible. However, a more nuanced tuning strategy can help navigate this trade-off. By treating hyperparameters that control model complexity (e.g., network depth, number of trees in a forest) as scientific levers, researchers can methodically explore the Pareto frontier of accuracy versus interpretability. Often, a slightly less complex model with a minimal accuracy drop can be significantly more interpretable, fostering greater trust and yielding more scientific insights. Explainable Artificial Intelligence (XAI) techniques can then be applied more effectively to these "right-sized" models [19].
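
One way to make this exploration explicit is to record a complexity proxy alongside the validation error for every tuned configuration and keep only the non-dominated ones. The sketch below does this for a handful of hypothetical results; the labels, depths, and RMSE values are invented for illustration.

```python
# Hypothetical tuning results: (label, complexity proxy, validation RMSE).
candidates = [
    ("depth=2", 2, 0.31),
    ("depth=4", 4, 0.22),
    ("depth=6", 6, 0.23),
    ("depth=8", 8, 0.20),
    ("depth=16", 16, 0.20),
]


def pareto_front(models):
    """Keep models that no other model matches or beats on both criteria."""
    front = []
    for name, complexity, error in models:
        dominated = any(
            c <= complexity and e <= error and (c < complexity or e < error)
            for _, c, e in models
        )
        if not dominated:
            front.append(name)
    return front


front = pareto_front(candidates)
print(front)
```

Here the front contains `depth=2`, `depth=4`, and `depth=8`. The `depth=16` model is dropped because it is more complex than `depth=8` without being more accurate, which is exactly the kind of configuration a trust-oriented tuning strategy would avoid.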

Quantitative Comparison of Tuning Methodologies in Scientific Research

Selecting an appropriate hyperparameter optimization (HPO) algorithm is crucial for both efficiency and final model quality. The table below summarizes the core HPO methods, their mechanisms, and their performance in scientific applications.

Table 1: Comparison of Hyperparameter Tuning Methodologies

| Method | Core Mechanism | Key Advantages | Key Disadvantages | Reported Performance in Research |

|---|---|---|---|---|

| Grid Search [21] [22] | Exhaustive search over a discrete grid of values | Systematic, comprehensive, simple to implement | Computationally prohibitive for high-dimensional spaces, inefficient | Often used as a baseline; generally outperformed by more efficient methods |

| Random Search [21] [22] | Random sampling from specified distributions | More computationally efficient than grid search, better at locating promising regions in large spaces | Does not use information from past experiments to inform next sample, can still be wasteful | A strong and practical baseline, especially when some hyperparameters are more important than others [21] |

| Bayesian Optimization [21] [17] [23] | Sequential model-based optimization using a probabilistic surrogate model (e.g., Gaussian Process) | Highly sample-efficient, uses past results to guide future searches, faster convergence to optimum | Sequential nature can be harder to parallelize, higher computational overhead per iteration | AET Prediction (LSTM): Bayesian Opt. achieved RMSE=0.0230, R²=0.8861, outperforming grid search [17]. Drug Discovery (Ensemble): Used to fine-tune models, contributing to R² of 0.92 [23] |

| Multi-Strategy Parrot Optimizer (MSPO) [24] | Metaheuristic algorithm enhanced with Sobol sequence, nonlinear decreasing inertia weight, and chaotic parameters | Enhanced global exploration, avoids premature convergence, high optimization precision | Newer method, less established in broad scientific communities | Breast Cancer Image Classification (ResNet18): Surpassed other optimizers in accuracy, precision, recall, and F1-score [24] |

The evidence from recent scientific studies strongly favors advanced optimization techniques. In a study predicting actual evapotranspiration, Bayesian optimization not only achieved higher performance with an LSTM model but also did so with reduced computation time compared to grid search [17]. Similarly, in AI-driven drug discovery, Bayesian optimization was employed to fine-tune state-of-the-art models like Graph Neural Networks and Stacking Ensembles, which achieved exceptional accuracy in predicting pharmacokinetic parameters [23]. For image classification in medical applications, novel metaheuristic algorithms like MSPO have demonstrated superior ability to optimize model architecture, leading to tangible improvements in diagnostic accuracy [24].

Experimental Protocols for Trust-Centric Hyperparameter Tuning

The Incremental Tuning Strategy for Scientific Insight

A scientific approach to tuning prioritizes long-term insight over short-term validation error improvements. This involves an incremental strategy where researchers build understanding through rounds of targeted experiments [25]. The core of this methodology is the classification of hyperparameters for a given experimental goal:

- Scientific Hyperparameters: The variables whose effect on performance you are actively trying to measure (e.g., "Does a model with more hidden layers have lower validation error?" where the number of layers is scientific).

- Nuisance Hyperparameters: Variables that must be optimized over to ensure a fair comparison of scientific hyperparameters (e.g., the learning rate, which must be tuned separately for each model architecture).

- Fixed Hyperparameters: Variables held constant for a round of experiments, accepting that conclusions may be limited to these settings [25].

This classification forces a deliberate experimental design. For example, to fairly compare the effect of different activation functions (scientific hyperparameters), one must tune nuisance hyperparameters like the learning rate separately for each function, rather than using a single fixed value.
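
The difference between a fair and an unfair comparison can be shown with a toy example. In the sketch below, `validation_error` is a hypothetical stand-in for training a model and measuring its validation error; the preferred learning rates and error floors are invented so that the two activations favor different learning rates.

```python
import math


def validation_error(activation, learning_rate):
    """Hypothetical stand-in for a full training run (values are invented)."""
    preferred_lr = {"relu": 1e-2, "tanh": 1e-3}[activation]
    floor = {"relu": 0.10, "tanh": 0.12}[activation]
    return floor + 0.05 * abs(math.log10(learning_rate / preferred_lr))


activations = ["relu", "tanh"]             # scientific hyperparameter
learning_rates = [1e-4, 1e-3, 1e-2, 1e-1]  # nuisance hyperparameter

# Fair comparison: tune the learning rate separately for each activation.
tuned = {
    act: min(validation_error(act, lr) for lr in learning_rates)
    for act in activations
}

# Unfair comparison: a single fixed learning rate shared by both activations.
fixed_lr = 1e-3
fixed = {act: validation_error(act, fixed_lr) for act in activations}

print("tuned per activation:", tuned)
print("fixed learning rate: ", fixed)
```

With the shared learning rate, `tanh` appears better only because the fixed value happens to suit it. Once the nuisance hyperparameter is tuned per activation, the ranking reverses.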

A Protocol for Connecting Tuning to Interpretability

The following workflow provides a detailed protocol for a tuning experiment aimed at enhancing both performance and interpretability.

Table 2: The Scientist's Toolkit for Trust-Centric Hyperparameter Tuning

| Tool / Concept | Function in the Protocol | Application Example |

|---|---|---|

| Validation Set [21] | Used to evaluate and compare different hyperparameter configurations during tuning, preventing information leakage from the test set. | Holding out a portion of materials data (e.g., spectral images) to assess model generalization after each tuning round. |

| Bayesian Optimization [21] [17] | An intelligent search algorithm that models the performance landscape and suggests the most promising hyperparameters to evaluate next. | Efficiently searching the hyperparameter space of a Graph Neural Network for molecular property prediction. |

| Learning Rate Scheduler [16] | Dynamically adjusts the learning rate during training, which can improve convergence and help escape local minima. | Using a step decay schedule to fine-tune a pre-trained CNN on a new set of material micrographs. |

| Saliency Maps [19] | An XAI technique that highlights which regions of an input (e.g., an image) were most important for the model's prediction. | Visualizing which parts of a molecular structure or material microstructure the tuned model focuses on to predict a property. |

| Concept Visualization [19] | An XAI technique that identifies and visualizes the high-level concepts a model has learned. | Determining if a CNN trained on catalyst images has learned to recognize pore structures or surface defects. |

Step 1: Establish a Baseline and a Goal. Begin with a simple, untuned model configuration. The goal for the first round of experiments should be scoped, for example: "Understand the impact of model depth (a scientific hyperparameter) on validation error and prediction consistency, while tuning the learning rate and weight decay (nuisance hyperparameters)."

Step 2: Design and Execute the Experiment. Choose an efficient HPO method like Bayesian optimization. The search space for the scientific and nuisance hyperparameters should be defined based on domain knowledge. For interpretability analysis, plan to save the predictions and, if applicable, generate explanation maps (e.g., saliency maps) for the best-performing models from different architectural depths.

Step 3: Analyze Performance and Explanations. Evaluate the results not just on raw accuracy (e.g., R², RMSE), but also on signs of robust learning, such as smooth training curves and minimal gap between training and validation loss [25]. Then, apply XAI techniques to the candidate models. A model that is both accurate and whose explanations align with domain knowledge (e.g., a model that bases its prediction of material strength on known microstructural features) is inherently more trustworthy.

Step 4: Adopt, Refine, or Iterate. If a model configuration yields improved performance and its explanations are physically plausible, adopt the change. If the explanations are nonsensical despite good accuracy, it may indicate the model is relying on spurious correlations, and the configuration should be rejected or investigated further. This decision point is where tuning directly feeds into building trust.

Figure: Workflow relating hyperparameter tuning, model performance, and the pillars of trust.

Hyperparameter tuning must be elevated from a mere technical pre-processing step to a core component of the scientific methodology in AI-driven research. As demonstrated, the strategic selection of tuning algorithms and the adoption of an incremental, insight-driven experimental protocol do not only maximize a model's predictive accuracy but also directly enhance its generalization, robustness, and interpretability. These factors are the fundamental pillars of trust. For researchers in materials science and drug development, where models inform critical and costly experimental decisions, fostering this trust is not optional—it is essential. By rigorously connecting hyperparameter tuning to trust and interpretability, we can unlock the full potential of AI as a reliable partner in scientific discovery.

Core Tuning Algorithms and Their Application in Materials Informatics

Hyperparameter tuning represents a critical step in the development of robust machine learning models for scientific research. This technical guide provides an in-depth examination of grid search, a systematic hyperparameter optimization technique, contextualized specifically for applications in materials science and drug development. We present the fundamental principles of grid search, detailed experimental protocols from case studies, quantitative performance comparisons with alternative methods, and practical implementation guidelines. The content is structured to equip researchers with both the theoretical foundation and practical tools necessary to effectively implement grid search methodologies within their machine learning pipelines, thereby enhancing model performance and predictive accuracy in materials informatics applications.

In machine learning, hyperparameters are configuration variables whose values are set prior to the commencement of the learning process, unlike model parameters which are learned during training [26]. These hyperparameters control critical aspects of the learning algorithm's behavior, such as model complexity, learning rate, and regularization strength. The process of identifying the optimal set of hyperparameter values that maximize model performance on a given task is known as hyperparameter optimization [27] [22]. For materials science researchers employing machine learning for tasks such as predicting material properties or optimizing synthesis parameters, proper hyperparameter tuning is not merely a refinement step but a fundamental necessity to ensure model reliability and scientific validity.

Several hyperparameter optimization strategies have been developed, ranging from simple manual search to sophisticated Bayesian approaches [28]. Among these, grid search stands as one of the most widely used and conceptually straightforward methods [29]. Its exhaustive nature provides a systematic framework for exploring the hyperparameter space, making it particularly valuable in scientific domains where reproducibility and thorough exploration are paramount. This guide focuses exclusively on grid search methodology, implementation considerations, and its application within materials science research contexts.

Theoretical Foundations of Grid Search

Core Principles and Algorithm

Grid search, also known as parameter sweep, is an exhaustive search strategy that systematically explores a predefined subset of the hyperparameter space [27]. The algorithm operates by constructing a multidimensional grid where each dimension corresponds to a different hyperparameter, and each point in the grid represents a specific combination of hyperparameter values [30]. The learning algorithm is then trained and evaluated at every point in this grid, with performance typically measured using cross-validation on the training set or evaluation on a hold-out validation set [27].

Mathematically, for a set of hyperparameters \( H_1, H_2, \ldots, H_n \) with corresponding value sets \( V_1, V_2, \ldots, V_n \), the search space \( S \) is the Cartesian product: \[ S = V_1 \times V_2 \times \cdots \times V_n \] The algorithm evaluates the model performance function \( f \) for each element \( (v_1, v_2, \ldots, v_n) \) in \( S \), ultimately selecting the combination that optimizes the predefined evaluation metric [27] [30].
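
The Cartesian product can be enumerated directly with the Python standard library. The value sets below are hypothetical choices for three SVM hyperparameters and serve only to illustrate the construction.

```python
from itertools import product

# Hypothetical value sets V_1, V_2, V_3 for three SVM hyperparameters.
value_sets = {
    "C": [0.1, 1, 10, 100],
    "gamma": [0.001, 0.01, 0.1],
    "kernel": ["linear", "rbf"],
}

# S = V_1 x V_2 x V_3: every combination is one configuration to evaluate.
search_space = [
    dict(zip(value_sets, combo)) for combo in product(*value_sets.values())
]

print(len(search_space))  # 4 * 3 * 2 = 24 configurations
print(search_space[0])    # {'C': 0.1, 'gamma': 0.001, 'kernel': 'linear'}
```

Grid search then evaluates the performance function once for each of these 24 dictionaries.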

Comparison with Other Optimization Methods

Grid search occupies a specific position within the landscape of hyperparameter optimization techniques. Unlike random search, which samples parameter combinations randomly from specified distributions [31] [28], grid search employs a deterministic, exhaustive approach. While more computationally expensive than random search in high-dimensional spaces, grid search provides comprehensive coverage of the predefined parameter grid and is guaranteed to find the optimal combination within that grid [31].

More advanced methods such as Bayesian optimization take a probabilistic approach, building a surrogate model of the objective function to guide the search toward promising regions [27] [28]. These methods can be more efficient but are also more complex to implement and interpret. The table below summarizes key comparisons between these approaches:

Table 1: Comparison of Hyperparameter Optimization Methods

| Method | Search Strategy | Advantages | Limitations | Best Use Cases |

|---|---|---|---|---|

| Grid Search | Exhaustive search over specified grid | Guaranteed to find best point in grid; simple to implement; easily parallelized | Computationally expensive; suffers from curse of dimensionality | Small parameter spaces (2-4 parameters); discrete parameters; when completeness is valued over speed |

| Random Search | Random sampling from specified distributions | More efficient for high-dimensional spaces; better for continuous parameters | May miss optimal combinations; no guarantee of finding best parameters | Larger parameter spaces; when computational budget is limited |

| Bayesian Optimization | Sequential model-based optimization | More sample-efficient; learns from previous evaluations | Complex to implement; difficult to parallelize; computationally intensive per iteration | Expensive model evaluations; limited evaluation budget |
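
For contrast with the grid-based approach, random search draws each configuration independently from per-hyperparameter distributions under a fixed evaluation budget. The following sketch shows only the sampling step; the hyperparameter names, ranges, and budget are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)


def sample_configuration():
    """Draw one configuration; the ranges are illustrative assumptions."""
    return {
        "learning_rate": float(10 ** rng.uniform(-5, -1)),  # log-uniform on [1e-5, 1e-1]
        "n_estimators": int(rng.integers(50, 501)),         # uniform integer in [50, 500]
        "max_depth": int(rng.integers(2, 11)),              # uniform integer in [2, 10]
    }


n_iterations = 50  # evaluation budget, chosen independently of the number of hyperparameters
configurations = [sample_configuration() for _ in range(n_iterations)]

distinct_rates = len({cfg["learning_rate"] for cfg in configurations})
print(f"{distinct_rates} distinct learning rates in {n_iterations} evaluations")
```

A three-dimensional grid with a comparable budget could afford only three or four values per hyperparameter, whereas random sampling tries a different value of every hyperparameter in each evaluation. This is why random search tends to do well when only a few hyperparameters matter.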

Grid Search Implementation Methodology

Workflow and Process

The implementation of grid search follows a systematic workflow that ensures thorough exploration of the hyperparameter space.

Figure: Grid Search Algorithm Workflow

The workflow consists of five key phases:

- Parameter Grid Definition: Researchers specify the hyperparameters to be optimized and the values to be explored for each parameter [30] [22]. This critical step requires domain knowledge to establish appropriate value ranges.

- Model Training Iteration: For each hyperparameter combination in the grid, the algorithm instantiates a new model with those hyperparameters and trains it on the training data [26].

- Cross-Validation Performance Assessment: Each model's performance is evaluated using k-fold cross-validation, which involves partitioning the training data into k subsets, training the model k times (each time using k-1 subsets for training and the remaining subset for validation), and averaging the performance metrics across all folds [26] [28].

- Exhaustive Search: The training and cross-validation phases are repeated for all possible combinations of hyperparameters in the defined grid [27] [29].

- Optimal Model Selection: After all combinations have been evaluated, the algorithm selects the hyperparameter configuration that yielded the best average performance during cross-validation [30] [26].
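
The five phases can be written out by hand in a few lines, which makes the mechanics visible before relying on a library. The sketch below is an illustration under stated assumptions: the data are synthetic, the model is a polynomial ridge regression solved in closed form, and the grid over `degree` and `alpha` is arbitrary.

```python
from itertools import product

import numpy as np

rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=60)
y = np.sin(3.0 * x) + 0.1 * rng.normal(size=60)  # synthetic target


def features(x, degree):
    """Polynomial features 1, x, ..., x^degree."""
    return np.vander(x, degree + 1, increasing=True)


def fit_ridge(X, y, alpha):
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def cv_mse(degree, alpha, k=5):
    """Phase 3: average validation MSE over k folds."""
    indices = np.arange(len(x))
    scores = []
    for fold in np.array_split(indices, k):
        train = np.setdiff1d(indices, fold)
        w = fit_ridge(features(x[train], degree), y[train], alpha)  # phase 2
        pred = features(x[fold], degree) @ w
        scores.append(np.mean((pred - y[fold]) ** 2))
    return float(np.mean(scores))


# Phase 1: define the grid.
grid = {"degree": [1, 3, 5, 7], "alpha": [1e-4, 1e-2, 1.0]}

# Phase 4: evaluate every combination in the grid.
results = {
    (degree, alpha): cv_mse(degree, alpha)
    for degree, alpha in product(grid["degree"], grid["alpha"])
}

# Phase 5: select the configuration with the lowest mean CV error.
best_config = min(results, key=results.get)
print("best (degree, alpha):", best_config, "CV MSE:", round(results[best_config], 4))
```

Because the samples were generated in random order, contiguous folds are acceptable here. Real datasets that are sorted or grouped should be shuffled or split by group before folding.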

Practical Implementation with Scikit-Learn

The scikit-learn library provides the GridSearchCV class, which implements grid search with cross-validation [30].

The GridSearchCV class provides several key parameters that control the search behavior. The cv parameter determines the cross-validation splitting strategy, while scoring defines the performance metric to optimize. The n_jobs parameter enables parallelization by specifying the number of jobs to run in parallel, with -1 indicating usage of all available processors [30] [26].
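
A typical use of GridSearchCV might look like the following sketch. The dataset is synthetic, and the estimator (an RBF support vector regressor behind a `StandardScaler`), the grid values, and the scoring metric are illustrative assumptions rather than recommendations from the cited sources.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

rng = np.random.default_rng(0)
X = rng.uniform(-2.0, 2.0, size=(120, 3))  # synthetic descriptors
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] + 0.1 * rng.normal(size=120)

# Scaling lives inside the pipeline so it is refitted on each training fold.
pipeline = make_pipeline(StandardScaler(), SVR(kernel="rbf"))

# Step names created by make_pipeline are the lowercased class names.
param_grid = {
    "svr__C": [0.1, 1, 10, 100],
    "svr__gamma": [0.01, 0.1, 1.0],
}

search = GridSearchCV(
    pipeline,
    param_grid,
    cv=5,                                   # 5-fold cross-validation
    scoring="neg_root_mean_squared_error",  # scikit-learn maximizes, hence the sign
    n_jobs=1,                               # -1 would use all available processors
)
search.fit(X, y)

print("best parameters:", search.best_params_)
print("best CV RMSE:", -search.best_score_)
```

After fitting, `search.best_estimator_` holds the pipeline refitted on the full training data with the selected hyperparameters, and `search.cv_results_` contains the per-configuration scores for further analysis.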

Experimental Protocols and Case Studies

Case Study: Grid Search in Additive Manufacturing

A comprehensive study applied grid search to optimize a Multilayer Perceptron (MLP) model for predicting critical quality metrics in Fused Filament Fabrication (FFF) additive manufacturing [32]. The experimental protocol was designed to systematically investigate the effects of hyperparameters on model performance.

Table 2: Hyperparameter Grid for Additive Manufacturing Case Study

| Hyperparameter | Values Tested | Role in Model | Optimal Value Found |

|---|---|---|---|

| Number of Neurons | Multiple values in hidden layer | Control model capacity and representation power | Not reported in the abstract of [32] |

| Learning Rate | Multiple values tested | Control step size during optimization | Not reported in the abstract of [32] |

| Number of Epochs | Multiple values tested | Determine training duration | Not reported in the abstract of [32] |

| Train-Test Ratio | Two different ratios | Affect validation methodology | Not reported in the abstract of [32] |

The dataset comprised five dominant input parameters (layer thickness, build orientation, extrusion temperature, building temperature, and print speed) and three output parameters (dimensional accuracy, porosity, and tensile strength). The experimental results demonstrated that grid search successfully identified optimal hyperparameter configurations that significantly improved model performance as measured by RMSE and computational time [32].

Case Study: HVAC System Performance Prediction

In a large-scale study on predicting HVAC heating coil performance, researchers employed grid search to optimize artificial neural network architectures [33]. The experimental design involved an extensive hyperparameter search across five key dimensions:

Table 3: Hyperparameter Configuration for HVAC Performance Prediction

| Hyperparameter | Values Tested | Experimental Range | Optimal Configuration |

|---|---|---|---|

| Number of Epochs | Multiple values | Varied systematically | 500 epochs |

| Network Size | Multiple configurations | Varied number of hidden layers | 17 hidden layers |

| Network Shape | Different architectures | Various topological arrangements | Left-triangle architecture |

| Learning Rate | Multiple values | Different precision levels | 5 × 10⁻⁵ |

| Optimizer | Different algorithms | Various optimization methods | Adam optimizer |

The experimental protocol involved developing 288 unique hyperparameter configurations, with each configuration tested three times, resulting in a total of 864 artificial neural network models [33]. This repetition improved the statistical reliability of the results. The best-performing model achieved a mean squared error of 0.469, demonstrating the importance of systematic hyperparameter tuning in complex scientific domains.

Performance Analysis and Computational Considerations

The computational requirements of grid search grow exponentially with the number of hyperparameters, a phenomenon known as the curse of dimensionality [27] [29]. For a grid search with \(k\) hyperparameters, each with \(n\) possible values, the total number of model evaluations is \(n^k\). This exponential relationship highlights the importance of carefully selecting which hyperparameters to include in the search and how many values to test for each.
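
A short calculation shows how quickly the cost grows. The choice of five values per hyperparameter and 5-fold cross-validation is an assumption for illustration; the last line reproduces the model count reported for the HVAC study above.

```python
def grid_size(values_per_hyperparameter, n_hyperparameters):
    """Number of grid points when every hyperparameter has the same number of values."""
    return values_per_hyperparameter ** n_hyperparameters


# Five candidate values per hyperparameter, for 2, 4 and 6 hyperparameters.
sizes = {k: grid_size(5, k) for k in (2, 4, 6)}
print(sizes)  # {2: 25, 4: 625, 6: 15625}

# With 5-fold cross-validation, every grid point costs five model fits.
fits_with_cv = {k: 5 * n for k, n in sizes.items()}
print(fits_with_cv)  # {2: 125, 4: 3125, 6: 78125}

# HVAC case study: 288 configurations, each trained three times.
hvac_models = 288 * 3
print(hvac_models)  # 864
```

Going from two to six hyperparameters multiplies the number of fits by 625, which is why restricting the grid to the most influential hyperparameters matters more than refining the values of any single one.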

In a direct comparison between grid search and random search for a random forest classifier on the Iris dataset, grid search evaluated 180 parameter combinations in 352.0 seconds, while random search with 50 iterations achieved comparable accuracy in only 75.5 seconds—approximately 21% of the computation time [28]. This efficiency advantage of random search becomes more pronounced as the dimensionality of the hyperparameter space increases.

Grid Search in Materials Science Research

Adaptation to Materials Informatics

For materials science researchers, grid search offers a systematic approach to optimizing machine learning models for various applications, including predicting material properties, optimizing synthesis parameters, and discovering new materials with targeted characteristics. The exhaustive nature of grid search aligns well with scientific methodology, providing comprehensive exploration of the hyperparameter space and generating valuable insights into model behavior across different parameter regions.

When applying grid search to materials informatics problems, researchers should consider:

Prioritizing Hyperparameters: Focus on hyperparameters with the greatest expected impact on model performance for the specific materials domain.

Domain-Informed Value Ranges: Leverage domain knowledge to set appropriate value ranges rather than relying on generic defaults.

Computational Budget Planning: Allocate sufficient computational resources for the expected number of model evaluations.

Performance Metric Selection: Choose evaluation metrics that align with materials science objectives, such as accuracy in property prediction or robustness across material classes.
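The four considerations above might translate into code roughly as follows. This is a sketch under stated assumptions: the data are synthetic stand-ins for a material property dataset, kernel ridge regression is just one plausible model choice, and the log-spaced ranges are placeholders for ranges a domain expert would set.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.kernel_ridge import KernelRidge
from sklearn.model_selection import GridSearchCV, ParameterGrid
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for descriptors (X) and a target property (y).
X, y = make_regression(n_samples=120, n_features=8, noise=5.0, random_state=0)

pipeline = make_pipeline(StandardScaler(), KernelRidge(kernel="rbf"))

# Prioritization: only the two hyperparameters expected to matter most.
# Value ranges: log-spaced, since both act multiplicatively (assumed ranges).
param_grid = {
    "kernelridge__alpha": np.logspace(-3, 1, 5),
    "kernelridge__gamma": np.logspace(-3, 0, 4),
}

# Budget planning: number of fits = grid size x number of CV folds.
n_folds = 5
n_fits = len(ParameterGrid(param_grid)) * n_folds
print(f"Planned model fits: {n_fits}")

# Metric selection: mean absolute error in the units of the target property.
search = GridSearchCV(
    pipeline,
    param_grid,
    cv=n_folds,
    scoring="neg_mean_absolute_error",
    n_jobs=1,  # set to -1 to use all available CPU cores
)
search.fit(X, y)

best_mae = -search.best_score_  # scikit-learn maximizes, so the MAE is negated
print(f"Best parameters: {search.best_params_}, CV MAE: {best_mae:.2f}")
```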

Research Reagent Solutions: Computational Tools

The experimental implementation of grid search requires specific computational tools and libraries that constitute the essential "research reagents" for hyperparameter optimization:

Table 4: Essential Computational Tools for Grid Search Implementation

| Tool/Library | Function | Application Context | Key Features |
|---|---|---|---|
| Scikit-learn | Machine learning library | Primary implementation platform | GridSearchCV class with cross-validation |
| NumPy/SciPy | Numerical computing | Search space definition and mathematical operations | Array operations, statistical distributions |
| Python | Programming language | Algorithm implementation and execution | Extensive ecosystem, scientific computing support |
| Parallel Processing | Computational resource | Accelerating grid search execution | Multi-core CPU utilization (n_jobs parameter) |
| Cross-Validation | Evaluation framework | Model performance assessment | K-fold, stratified, or leave-one-out methods |

Advanced Grid Search Techniques

Successive Halving and Hybrid Approaches

To address the computational limitations of standard grid search, advanced techniques such as HalvingGridSearchCV implement successive halving algorithms [30]. This approach allocates more resources to promising hyperparameter combinations by:

- Evaluating all candidates with a small amount of resources initially

- Selecting the top-performing candidates for further evaluation

- Increasing resources iteratively while reducing the number of candidates

- Repeating until the optimal configuration is identified
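The steps listed above can be observed directly with scikit-learn's `HalvingGridSearchCV`, which is still flagged as experimental and needs an explicit enabling import. The sketch below uses synthetic data and an assumed grid; the number of training samples serves as the resource that grows between rounds.

```python
# HalvingGridSearchCV is experimental in scikit-learn and must be enabled first.
from sklearn.experimental import enable_halving_search_cv  # noqa: F401
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import HalvingGridSearchCV

# Synthetic data; a real study would use its own features and labels.
X, y = make_classification(n_samples=600, n_features=10, random_state=0)

# Illustrative grid with 4 x 3 = 12 candidate configurations.
param_grid = {
    "max_depth": [2, 4, 8, None],
    "min_samples_split": [2, 5, 10],
}

search = HalvingGridSearchCV(
    RandomForestClassifier(n_estimators=20, random_state=0),
    param_grid,
    factor=3,               # keep roughly the top third of candidates each round
    resource="n_samples",   # the resource that increases is the training set size
    cv=3,
    random_state=0,
)
search.fit(X, y)

# Each round uses more samples and fewer candidates than the previous one.
for round_idx, (n_cand, n_res) in enumerate(zip(search.n_candidates_, search.n_resources_)):
    print(f"round {round_idx}: {n_cand} candidates, {n_res} samples each")
print("Selected configuration:", search.best_params_)
```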

[Figure: Advanced Grid Search Optimization Methods. Relationship between standard grid search and these advanced methods.]

Domain-Specific Optimization Strategies

Researchers in other fields have developed specialized grid search variants that incorporate domain-specific knowledge. The GridsearchWEF (Weighted Error Function) method, for instance, uses a weighted error function to improve performance in financial time series forecasting [34]. Similar domain-informed approaches could be adapted for materials science applications, such as:

- Multi-objective optimization for balancing multiple material properties

- Constraint-aware search that incorporates physical constraints or domain knowledge

- Transfer learning approaches that leverage hyperparameters from related materials systems

Grid search remains a fundamental hyperparameter optimization technique that offers significant value for materials science research. Its exhaustive nature ensures comprehensive exploration of the defined search space, while its conceptual simplicity promotes reproducibility and interpretability. Despite computational limitations in high-dimensional spaces, grid search provides a robust baseline against which more complex optimization methods can be compared.

For materials researchers implementing grid search, we recommend: (1) beginning with a coarse grid to identify promising regions of the hyperparameter space, (2) leveraging domain knowledge to prioritize the most impactful hyperparameters, (3) utilizing parallel computing resources to mitigate computational costs, and (4) considering hybrid approaches that combine grid search with successive halving or random search for more efficient optimization. When implemented systematically, grid search significantly enhances model performance and reliability, ultimately accelerating materials discovery and development.
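Recommendation (1), a coarse grid followed by refinement, might look like the sketch below. Synthetic data and a ridge regressor are used as assumed stand-ins, and the choice of one decade on either side of the coarse optimum is an illustrative convention rather than a rule.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for a materials dataset.
X, y = make_regression(n_samples=150, n_features=10, noise=10.0, random_state=0)

# Stage 1: coarse grid, one value per decade over eight orders of magnitude.
coarse = GridSearchCV(Ridge(), {"alpha": np.logspace(-4, 4, 9)}, cv=5)
coarse.fit(X, y)
best_coarse = coarse.best_params_["alpha"]

# Stage 2: fine grid spanning one decade on either side of the coarse optimum.
center = np.log10(best_coarse)
fine_grid = np.logspace(center - 1, center + 1, 9)
fine = GridSearchCV(Ridge(), {"alpha": fine_grid}, cv=5)
fine.fit(X, y)

print(f"coarse: alpha={best_coarse:.4g}, CV R^2={coarse.best_score_:.4f}")
print(f"fine:   alpha={fine.best_params_['alpha']:.4g}, CV R^2={fine.best_score_:.4f}")
```

The two stages together cost 18 configurations, whereas a single grid at the fine resolution over the full range would need 33.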

In the field of materials science, machine learning (ML) has emerged as a transformative tool for accelerating the discovery and development of new materials, from high-performance alloys to novel pharmaceutical compounds [35]. The performance of these ML models is critically dependent on their hyperparameters—the configuration settings that govern the learning process itself [8]. These are not learned from data but must be set prior to training. Examples include the learning rate for neural networks, the depth of a decision tree, or the regularization strength in a support vector machine.

Traditional methods for hyperparameter tuning, such as manual search and Grid Search, often become prohibitively inefficient, especially as the number of hyperparameters (the dimensionality) grows. Manual search relies heavily on researcher intuition and iterative trial-and-error, a process that is not only time-consuming but difficult to replicate systematically [36]. Grid Search, which involves evaluating a predefined set of points across all hyperparameters, suffers from the "curse of dimensionality"; its computational cost grows exponentially with the number of hyperparameters, making it unsuitable for high-dimensional problems [36].

This article frames Random Search within the broader context of hyperparameter optimization (HPO) as a powerful and efficient alternative. Its fundamental strength lies in its ability to cover a broader range of values for each hyperparameter within a fixed computational budget, often leading to the discovery of superior model configurations faster than its grid-based counterpart.

Theoretical Foundations of Random Search

The Curse of Dimensionality in Hyperparameter Space

To understand the efficiency of Random Search, one must first appreciate the geometry of high-dimensional spaces. In a hyperparameter space with ( D ) dimensions, a Grid Search with ( k ) divisions along each axis requires ( k^D ) model evaluations. For example, with just 10 hyperparameters and 5 divisions each, this results in ( 5^{10} = 9{,}765{,}625 ) configurations, or nearly 10 million. Crucially, this exhaustive approach becomes highly redundant if some hyperparameters have little to no impact on the model's performance for a given dataset. The grid expends massive computational resources re-evaluating the same few values of these unimportant parameters.

The Random Search Algorithm

Random Search addresses this inefficiency by sampling hyperparameter configurations stochastically. Given a budget of ( N ) trials, it samples each configuration independently from a predefined probability distribution (e.g., uniform or log-uniform) over the hyperparameter space.

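The theoretical justification is that, for a fixed computational budget ( N ), Random Search has a higher probability of finding a high-performing region of the search space because it does not spend evaluations on points that repeat the same values along each axis. A grid with ( N ) points tests only about ( N^{1/D} ) distinct values per hyperparameter, whereas Random Search samples every dimension independently and therefore tests ( N ) distinct values of each hyperparameter. When only a few hyperparameters matter, this gives much denser coverage of the important dimensions for the same number of iterations.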
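The coverage argument can be made concrete by counting how many distinct values each strategy tries per hyperparameter under the same budget. The sketch below assumes three hyperparameters scaled to the unit interval and a budget of 27 evaluations, chosen so that the grid is exactly 3 x 3 x 3.

```python
import numpy as np

rng = np.random.default_rng(0)
n_dims = 3    # D hyperparameters, each scaled to [0, 1]
budget = 27   # N evaluations, so the grid has 3 values per axis

# Grid search: the Cartesian product of 3 values along each axis.
axis_values = np.linspace(0.0, 1.0, 3)
mesh = np.meshgrid(*[axis_values] * n_dims)
grid_points = np.array(mesh).reshape(n_dims, -1).T   # shape (27, 3)

# Random search: N independent uniform draws in the same cube.
random_points = rng.uniform(0.0, 1.0, size=(budget, n_dims))

grid_distinct = [len(np.unique(grid_points[:, d])) for d in range(n_dims)]
random_distinct = [len(np.unique(random_points[:, d])) for d in range(n_dims)]

print("distinct values per hyperparameter (grid):  ", grid_distinct)
print("distinct values per hyperparameter (random):", random_distinct)
```

If only one of the three hyperparameters affects performance, the grid has effectively run 3 informative experiments and the random search 27.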

Convergence Properties

Theoretical studies on the convergence of stochastic optimization algorithms provide a formal basis for Random Search. Research has shown that, given a continuous problem function and a discrete-time stochastic process, the Asymptotic Convergence Rate (ACR) is a key metric for analyzing performance [37]. The ACR is defined as the optimal constant ( C ) controlling the asymptotic behavior of the expected approximation errors, satisfying ( E[|f(X_t) - f^\star|] \le C^t \cdot E[|f(X_0) - f^\star|] ) for large ( t ), where ( f^\star ) denotes the optimal value of ( f ) [37]. A condition of ( ACR < 1 ) indicates linear convergence, characterized by an exponential decrease in error.
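To see what a rate below 1 implies in practice, the bound can be rearranged into an iteration count. The short derivation below is an idealized illustration: it assumes the inequality holds from the first iteration with a fixed constant ( 0 < C < 1 ), and the values ( C = 0.9 ) and a 1% error target are made up for the example.

```latex
% Assumption: the bound holds for all t >= 0 with a constant 0 < C < 1.
\[
C^t \, E\bigl[|f(X_0) - f^\star|\bigr] \le \varepsilon
\quad\Longleftrightarrow\quad
t \ge \frac{\ln\bigl(E[|f(X_0) - f^\star|] / \varepsilon\bigr)}{\ln(1/C)}
\]
% Example: C = 0.9, target error equal to 1% of the initial expected error.
\[
t \ge \frac{\ln 100}{\ln(1/0.9)} \approx \frac{4.605}{0.1054} \approx 43.7
\quad\Longrightarrow\quad
t = 44 \text{ iterations.}
\]
```

Because ( t ) depends only logarithmically on the target error, each further factor-of-100 reduction costs the same additional 44 iterations under these assumptions.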

While some complex algorithms may achieve a lower ACR, Random Search provides a robust and theoretically sound baseline. Its convergence is guaranteed under general assumptions, and it serves as a natural benchmark against which the progress of more advanced sequential optimization algorithms can be measured [37].

Random Search in Practice: Methodologies and Protocols

Experimental Protocol for Implementing Random Search

Implementing Random Search for a materials science ML problem involves a standardized workflow. The following protocol details the essential steps:

Define the Hyperparameter Search Space: This is a critical first step. For each hyperparameter, specify a probability distribution.

- Continuous (e.g., learning rate): Use a log-uniform distribution over an interval like [1e-5, 1e-1].

- Integer (e.g., number of layers): Use a uniform distribution over a set like {2, 3, 4, 5, 6}.

- Categorical (e.g., optimizer type): Use a discrete uniform distribution over choices like {'Adam', 'SGD', 'RMSprop'}.

Set the Computational Budget: Determine the number of trials ( N ) based on available computational resources. A typical ( N ) might range from 50 to 200.

Execute the Random Search Loop: For ( i = 1 ) to ( N ):

- (a) Sample a Configuration: Draw a new set of hyperparameters ( \theta_i ) from the defined distributions.

- (b) Train and Validate: Train the model using ( \theta_i ) and evaluate its performance on a held-out validation set. The primary metric could be validation accuracy, F1-score, or mean squared error, depending on the problem.

- (c) Store Result: Record the tuple ( (\theta_i, \text{performance}_i) ).

Select the Optimal Configuration: After all ( N ) trials, select the hyperparameter set ( \theta^* ) that achieved the best validation performance.

Final Evaluation: Train a final model on the combined training and validation data using ( \theta^* ), and report its performance on a completely held-out test set.
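The protocol above can be written as a short loop. The following is a minimal sketch, not a reference implementation: the data are synthetic, a gradient boosting regressor stands in for whatever model a study would use, and the search space (including the learning-rate interval, which differs from the neural network example above) is assumed for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Synthetic stand-in data, split into train / validation / test (60 / 20 / 20).
X, y = make_regression(n_samples=300, n_features=10, noise=10.0, random_state=0)
X_trainval, X_test, y_trainval, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X_trainval, y_trainval, test_size=0.25, random_state=0)


def sample_configuration(rng):
    """Step 1: draw one configuration from the (assumed) search space."""
    return {
        "learning_rate": float(10 ** rng.uniform(-3, 0)),    # log-uniform on [1e-3, 1]
        "max_depth": int(rng.integers(2, 7)),                # uniform over {2, ..., 6}
        "max_features": str(rng.choice(["sqrt", "log2"])),   # categorical
    }


def fit_model(config, X_fit, y_fit):
    """Train a model with a given configuration."""
    model = GradientBoostingRegressor(n_estimators=50, random_state=0, **config)
    return model.fit(X_fit, y_fit)


# Step 2: computational budget (kept small so the sketch runs quickly).
n_trials = 20

# Step 3: sample, train, validate, and store each result.
results = []
for _ in range(n_trials):
    config = sample_configuration(rng)
    model = fit_model(config, X_train, y_train)
    val_mse = mean_squared_error(y_val, model.predict(X_val))
    results.append((config, val_mse))

# Step 4: select the configuration with the lowest validation error.
best_config, best_val_mse = min(results, key=lambda item: item[1])

# Step 5: retrain on train + validation data and report once on the test set.
final_model = fit_model(best_config, X_trainval, y_trainval)
test_mse = mean_squared_error(y_test, final_model.predict(X_test))

print(f"Best configuration: {best_config}")
print(f"Validation MSE: {best_val_mse:.1f}, test MSE: {test_mse:.1f}")
```

Because the trials are independent, the loop body can be distributed across workers without any change to the logic.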

Comparative Analysis: Random Search vs. Grid Search

The following table compares Grid Search and Random Search on key characteristics, based on empirical findings from the literature [36].

| Feature | Grid Search | Random Search |
|---|---|---|
| Search Strategy | Exhaustive over a grid | Stochastic sampling |
| Scalability | Poor; exponential cost ( O(k^D) ) | Good; linear cost ( O(N) ) |
| Dimensionality | Inefficient for high-dimensional spaces | More efficient for high-dimensional spaces |
| Parallelization | Embarrassingly parallel | Embarrassingly parallel |
| Best Use Case | Low-dimensional spaces (2-3 parameters) with important parameters known | Medium- to high-dimensional spaces, or when important parameters are unknown |

Workflow Visualization

[Figure: logical workflow of a Random Search experiment, highlighting its iterative and stochastic nature.]

The Scientist's Toolkit for Hyperparameter Optimization

Successful application of HPO in a research environment requires a suite of software tools and conceptual frameworks. The table below details key "research reagents" — the essential materials and software — for conducting rigorous hyperparameter optimization experiments.

| Tool / Concept | Type | Function in HPO |
|---|---|---|
| Scikit-learn | Software Library | Provides simple implementations of GridSearchCV and RandomizedSearchCV for classical ML models [35]. |
| TensorFlow / PyTorch | Software Library | Deep learning frameworks used to build and train complex models like GNNs; their computational graphs enable efficient gradient-based learning [35]. |
| Bayesian Optimization | Algorithm | A sequential model-based optimization technique that builds a probabilistic model to direct the search towards promising configurations [36]. |
| Support Vector Machine (SVM) | ML Model | A classifier whose performance is highly sensitive to hyperparameters like regularization (C) and kernel parameters, making it a common benchmark for HPO [36]. |
| Graph Neural Network (GNN) | ML Model | A specialized architecture for graph-structured data, crucial in cheminformatics and materials science; its performance is highly sensitive to architectural hyperparameters [8]. |
| Validation Set | Data | A held-out subset of training data used to evaluate the performance of a model trained with a specific hyperparameter set, guiding the HPO process [35]. |

Within the demanding context of materials science and drug discovery, where computational resources are valuable and model performance is paramount, Random Search establishes itself as a fundamental and efficient strategy for hyperparameter tuning. Its ability to navigate high-dimensional spaces more effectively than Grid Search, coupled with its simplicity and ease of parallelization, makes it an indispensable tool in the modern computational researcher's arsenal. While more sophisticated methods like Bayesian Optimization offer further refinements, Random Search provides a robust, theoretically grounded baseline against which they can be judged. By adopting Random Search, scientists and researchers can significantly accelerate their ML-driven discovery pipelines, optimizing the performance of their models to uncover new materials and therapeutics with greater speed and confidence.