Identifying and Mitigating Key Sources of Error in Computational Biomechanics Models

Abstract

This article provides a comprehensive analysis of the primary sources of error in computational biomechanics models, a critical field for drug development, medical device innovation, and understanding human physiology. It systematically explores foundational errors in model conceptualization and input parameters, methodological challenges in multiscale modeling and AI integration, strategies for troubleshooting and optimizing subject-specific models, and rigorous frameworks for model validation. Aimed at researchers and scientists, the content synthesizes recent advances, including the use of Virtual Human Twins and deep learning, to offer actionable insights for improving model accuracy, reliability, and clinical translation in biomedical research.

Fundamental Sources of Error: From Model Conception to Input Parameters

In computational biomechanics, models are powerful tools for simulating the mechanical behavior of biological tissues, supplementing experimental investigations, and predicting outcomes in scenarios where direct experimentation is not feasible [1]. The credibility of these simulations, however, depends heavily on the accuracy of the material properties assigned to the tissues being modeled. Inaccurate material properties are a fundamental source of error: they compromise the predictive power of models and can lead to erroneous conclusions in both basic science and clinical applications [1] [2]. The pitfalls of applying non-human or generic tissue data are particularly pronounced because the mechanical characteristics of biological tissues vary widely between species and between individual subjects.

The field relies on verification and validation (V&V) processes to build confidence in computational simulations. Verification ensures that the mathematical equations are solved correctly ("solving the equations right"), while validation determines whether the right equations are being solved for the real-world physics ("solving the right equations") [1] [2]. The use of inaccurate material properties constitutes a critical modeling error that no degree of verification can rectify, as it introduces a fundamental disconnect between the computational representation and the physical system it intends to simulate [2]. When models are designed to inform patient-specific diagnoses or evaluate targeted treatments, these errors can have profound effects, moving beyond theoretical incorrectness to potentially impact healthcare decisions [1].

This technical guide examines the sources, implications, and mitigation strategies for errors arising from the application of non-human and generic tissue data, framing the discussion within the broader context of error sources in computational biomechanics research.

The Scope and Impact of the Problem

Systematic Errors from Non-Human Data in Preclinical Models

The reliance on non-human animal models in preclinical drug development is a significant source of error due to fundamental biological differences. These differences encompass the structure, size, and regenerative capacity of organs and tissues, as well as physiological variations in metabolism, immunology, and drug transport [3]. Consequently, approximately 75% of drugs that emerge from preclinical studies fail in phase II or phase III human clinical trials due to lack of efficacy or safety concerns [3]. While large animal models can improve predictive value, molecular, genetic, cellular, anatomical, and physiological differences persist, creating a continuous demand for preclinical models based on human tissues [3].

Reconstruction Errors in Evolutionary Biomechanics

The challenge of soft tissue reconstruction presents a parallel problem in evolutionary biomechanics, where researchers must estimate muscle properties from skeletal fossils. A 2021 study objectively tested this by modeling the masticatory system in extant rodents. The research found that predictions from models using reconstructed soft tissue properties—methods typical in fossil studies—varied widely. In the worst cases, these models failed to correctly capture even qualitative differences between macroevolutionary morphotypes, despite using the same skeletal morphology that is typically available for extinct species [4]. This demonstrates that incorrectly reconstructed soft tissue parameters can fundamentally alter functional interpretations, potentially leading to incorrect inferences about evolutionary adaptations.

Sample Size and Variability in Tissue Characterization

Experiments on human tissues face their own difficulty: adequate sampling. A 2023 investigation into sample size considerations for soft tissues demonstrated that obtaining stable estimates of material properties requires careful consideration of intrinsic tissue variation. The study found that stable estimates of means and medians for scalp skin and dura mater properties could be achieved with sample sizes below 30 at a ±20% tolerance with 80% conformity, but that tighter tolerances or higher conformity requirements dramatically increased the necessary sample size [5]. Underpowered studies used to define "generic" human tissue properties may therefore yield data with unacceptable uncertainty for precise computational modeling.

Table 1: Sample Size Requirements for Stable Estimation of Soft Tissue Biomechanical Properties (Based on [5])

| Parameter Type | ±20% Tolerance, 80% Conformity | ±10% Tolerance, 80% Conformity | ±20% Tolerance, 95% Conformity |
|---|---|---|---|
| Mean/Median | <30 samples | Significantly higher | Significantly higher |
| Coefficient of Variation | Rarely achieved at any sample size | Rarely achieved at any sample size | Rarely achieved at any sample size |
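The tolerance and conformity criteria above can be explored with a simple resampling check. The sketch below is one possible reading of those criteria, not the procedure used in [5]: `conformity` and `minimum_stable_n` are hypothetical helper names, and the data are synthetic lognormal values generated only for illustration.

```python
import random
import statistics


def conformity(values, n, tolerance=0.20, draws=1000, seed=0):
    """Fraction of random subsamples of size n whose mean lies within
    +/- tolerance (relative) of the full-sample mean."""
    rng = random.Random(seed)
    reference = statistics.fmean(values)
    hits = 0
    for _ in range(draws):
        subsample_mean = statistics.fmean(rng.sample(values, n))
        if abs(subsample_mean - reference) <= tolerance * abs(reference):
            hits += 1
    return hits / draws


def minimum_stable_n(values, tolerance=0.20, required=0.80):
    """Smallest subsample size whose conformity reaches the required level."""
    for n in range(2, len(values) + 1):
        if conformity(values, n, tolerance) >= required:
            return n
    return None


# Synthetic, right-skewed "stiffness" values; NOT data from the cited study.
_rng = random.Random(42)
synthetic = [_rng.lognormvariate(0.0, 0.4) for _ in range(60)]

print("n for +/-20% tolerance:", minimum_stable_n(synthetic, tolerance=0.20))
print("n for +/-10% tolerance:", minimum_stable_n(synthetic, tolerance=0.10))
```

Because the same subsamples are drawn for every tolerance, tightening the tolerance can only keep or increase the required sample size, which mirrors the trend reported in the table.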

Species-Specific Variations in Tissue Architecture

The mechanical behavior of biological tissues emerges from their complex hierarchical architecture and composition, which varies significantly between species. For instance, the arrangement of collagen fibers, proteoglycan content, cellular density, and vascularization patterns can differ substantially, leading to variations in nonlinearity, anisotropy, viscoelasticity, and failure properties. Applying material properties derived from animal models to human tissues ignores these fundamental architectural differences, introducing systematic errors that can propagate through computational simulations.

Inadequate Representation of Pathological Conditions

Generic tissue data often fails to capture the alterations in material behavior associated with disease states, aging, or individual genetic variations. Osteoporotic bone, atherosclerotic arteries, osteoarthritic cartilage, and scar tissue each possess distinct mechanical properties that deviate significantly from healthy baseline values. Computational models that utilize "normal" tissue properties to simulate pathological conditions contain inherent inaccuracies that limit their clinical utility and predictive capability.

Dynamic and Time-Dependent Property Changes

Biological tissues are not static materials; their properties change over time due to growth, remodeling, fatigue, and adaptation. Computational models that assume static material properties fail to capture these dynamic processes. This limitation is particularly relevant in simulations of long-term implant performance, tissue engineering constructs, and disease progression, where temporal changes in mechanical behavior significantly influence outcomes.

Quantitative Evidence of Error Propagation

Case Study: Soft Tissue Reconstruction in Rodent Mastication

The rodent masticatory system case study provides quantitative evidence of how reconstruction errors impact functional predictions [4]. Researchers compared biomechanical models built from measured soft tissue properties with models built from reconstructed properties. The "baseline" models using measured data reproduced the differences in muscle proportions, bite force, and bone stress expected between sciuromorph, myomorph, and hystricomorph rodents. Models using reconstructed properties, however, showed substantial deviations:

- Muscle force miscalculation: Errors in reconstructed muscle volume and fiber length directly affected physiological cross-sectional area (PCSA) calculations, a key determinant of muscle force generation capacity [4].

- Bite force inaccuracies: Multi-body dynamics analysis revealed significant errors in predicted maximal incisor bite forces when reconstructed soft tissue properties were used [4].

- Incorrect bone stress patterns: Finite element analyses demonstrated that reconstructed properties failed to accurately predict both the magnitude and distribution of stress in craniofacial bones during mastication [4].

The inter-investigator variability in muscle volume reconstruction further compounded these errors, highlighting the subjective nature of current reconstruction methods [4].
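To make the PCSA pathway concrete, the sketch below uses one common definition of PCSA (muscle volume times the cosine of the pennation angle, divided by fiber length) and an assumed specific tension to convert it to force. The function names, the specific tension of 30 N/cm², and the example error magnitudes are illustrative assumptions, not values from [4].

```python
import math


def pcsa_cm2(volume_cm3, fiber_length_cm, pennation_deg=0.0):
    """PCSA = volume * cos(pennation angle) / fiber length."""
    return volume_cm3 * math.cos(math.radians(pennation_deg)) / fiber_length_cm


def max_isometric_force_n(pcsa, specific_tension_n_per_cm2=30.0):
    """Peak muscle force as PCSA times an assumed specific tension."""
    return pcsa * specific_tension_n_per_cm2


# Hypothetical muscle: measured values versus a reconstruction that
# overestimates volume by 20% and underestimates fiber length by 10%.
measured = pcsa_cm2(volume_cm3=1.0, fiber_length_cm=1.0)
reconstructed = pcsa_cm2(volume_cm3=1.2, fiber_length_cm=0.9)

force_error_pct = 100.0 * (
    max_isometric_force_n(reconstructed) / max_isometric_force_n(measured) - 1.0
)
print(f"Force overestimate: {force_error_pct:.1f}%")
```

Because volume sits in the numerator and fiber length in the denominator, errors of opposite sign in the two inputs compound rather than cancel.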

Machine Learning Interatomic Potentials and Material Property Prediction

Even sophisticated machine learning approaches face challenges in accurately predicting material properties. Studies of machine learning interatomic potentials (MLIPs) have revealed that low average errors in energy and force predictions do not guarantee accurate reproduction of atomic dynamics or related physical properties [6]. For instance, an MLIP for aluminum reported a low mean absolute error for forces (0.03 eV Å⁻¹) yet predicted the activation energy of aluminum vacancy diffusion with an error of 0.1 eV relative to the DFT reference value of 0.59 eV, a deviation of roughly 17% [6]. The discrepancy remained even though vacancy structures were included in the training dataset, demonstrating that a model with apparently good overall performance can still be inaccurate for specific configurations.

Table 2: Documented Discrepancies Between Computational Predictions and Reference Values

| System Studied | Reported Error Metric | Documented Discrepancy | Impact |
|---|---|---|---|
| Aluminum MLIP [6] | MAE force: 0.03 eV Å⁻¹ | Activation energy error: 0.1 eV (Reference: 0.59 eV) | Inaccurate prediction of diffusion properties |
| Rodent Masticatory Models [4] | Low geometric reconstruction error | Failure to capture qualitative functional differences between morphotypes | Incorrect evolutionary functional inferences |
| Silicon MLIPs [6] | RMSE force: <0.3 eV Å⁻¹ | Errors in defect formation energies and migration barriers | Inaccurate modeling of material defects |

Methodological Protocols for Mitigating Errors

Experimental Protocol for Tissue-Specific Material Characterization

To establish accurate, tissue-specific material properties, researchers should implement comprehensive experimental protocols:

Tissue Sourcing and Preparation:

- Source human tissues through ethical donation programs when possible, with appropriate demographic and health history documentation.

- For animal tissues, clearly document species, strain, age, sex, and anatomical location.

- Implement standardized preparation protocols to maintain tissue hydration and prevent degradation during testing.

Mechanical Testing:

- Perform multi-axial mechanical testing to capture anisotropic behavior when applicable.

- Implement stress relaxation and creep tests to characterize time-dependent properties.

- Conduct cyclic loading to assess preconditioning effects and fatigue behavior.

- Use environmental chambers to maintain physiological temperature and hydration during testing.

Microstructural Analysis:

- Correlate mechanical properties with histological analysis of tissue structure.

- Use advanced imaging (e.g., multiphoton microscopy, micro-CT) to quantify organizational parameters.

Constitutive Model Fitting:

- Select appropriate constitutive models that capture the essential features of the tissue's mechanical behavior.

- Use optimization algorithms to determine material parameters that best fit experimental data.

- Validate fitted models against test data not used in the fitting process.
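The fitting and hold-out validation steps above can be sketched as follows. The example assumes a Fung-type exponential law, stress = a (exp(b · strain) − 1), chosen here only because it is a common form for soft tissue; the parameter names, the grid search, and the noise-free synthetic data are illustrative assumptions rather than a recommended procedure.

```python
import math


def model_stress(strain, a, b):
    """Exponential stress-strain law: a * (exp(b * strain) - 1)."""
    return a * (math.exp(b * strain) - 1.0)


def fit_exponential(strains, stresses, b_grid):
    """Grid search over b; for each b the best a follows in closed form."""
    best = None
    for b in b_grid:
        basis = [math.exp(b * e) - 1.0 for e in strains]
        denom = sum(f * f for f in basis)
        if denom == 0.0:
            continue
        a = sum(s * f for s, f in zip(stresses, basis)) / denom
        sse = sum((s - a * f) ** 2 for s, f in zip(stresses, basis))
        if best is None or sse < best[2]:
            best = (a, b, sse)
    if best is None:
        raise ValueError("no usable data points for fitting")
    return best[0], best[1]


def rmse(strains, stresses, a, b):
    """Root-mean-square error of the model against a data set."""
    squared = [(s - model_stress(e, a, b)) ** 2 for e, s in zip(strains, stresses)]
    return math.sqrt(sum(squared) / len(squared))


# Synthetic test curve (placeholder parameters, no measurement noise).
strains = [0.02 * i for i in range(16)]
stresses = [model_stress(e, 0.05, 12.0) for e in strains]

# Even-indexed points are used for fitting, odd-indexed points are held out.
fit_e, fit_s = strains[0::2], stresses[0::2]
val_e, val_s = strains[1::2], stresses[1::2]

a_fit, b_fit = fit_exponential(fit_e, fit_s, [0.5 * k for k in range(1, 61)])
print(a_fit, b_fit, rmse(val_e, val_s, a_fit, b_fit))
```

With real data, a gradient-based optimizer and a separate test specimen or loading mode would usually replace the grid search and the interleaved split used here.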

Protocol for Validating Soft Tissue Reconstructions in Evolutionary Biomechanics

Based on the rodent masticatory study [4], the following protocol provides a framework for validating soft tissue reconstruction methods:

Establish Baseline with Measured Data:

- Select extant species with known morphological and functional differences.

- Measure muscle architecture parameters (volume, fiber length, pennation angle) through dissection or medical imaging.

- Develop computational models using measured data to establish baseline functional predictions (e.g., bite forces, joint reactions).

Apply Reconstruction Methods:

- Have multiple investigators independently reconstruct soft tissue parameters using only skeletal morphology.

- Apply different reconstruction approaches (e.g., muscle scarring, phylogenetic bracketing) to assess method-dependent variability.

Quantitative Comparison:

- Compare functional outputs from reconstruction-based models against baseline models.

- Assess both quantitative accuracy and ability to capture qualitative patterns between taxa.

- Calculate error metrics for specific functional parameters (e.g., bite force error, stress distribution differences).
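The quantitative comparison step can be sketched as below: percent error per taxon, plus a check of whether the rank order between taxa (the qualitative pattern) survives reconstruction. The taxon labels and bite force values are invented placeholders, not results from [4].

```python
def percent_errors(baseline, reconstructed):
    """Signed percent error of each reconstructed output versus baseline."""
    return {
        taxon: 100.0 * (reconstructed[taxon] - baseline[taxon]) / baseline[taxon]
        for taxon in baseline
    }


def ranking(outputs):
    """Taxa ordered from the largest to the smallest output."""
    return sorted(outputs, key=outputs.get, reverse=True)


def preserves_ranking(baseline, reconstructed):
    """True if the reconstruction keeps the same ordering between taxa."""
    return ranking(baseline) == ranking(reconstructed)


# Placeholder bite forces in newtons for three hypothetical taxa.
baseline_bite = {"taxon_a": 40.0, "taxon_b": 30.0, "taxon_c": 35.0}
reconstructed_bite = {"taxon_a": 33.0, "taxon_b": 36.0, "taxon_c": 35.0}

print(percent_errors(baseline_bite, reconstructed_bite))
print("Qualitative pattern preserved:",
      preserves_ranking(baseline_bite, reconstructed_bite))
```

In this made-up case no single error exceeds 20%, yet the ordering between taxa is reversed, which is the kind of qualitative failure the protocol is designed to detect.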

Verification and Validation Framework for Computational Models

Implement a rigorous V&V framework to quantify and mitigate errors [1] [2]:

Verification Procedures:

- Code verification: Compare numerical solutions against analytical solutions for simplified problems.

- Calculation verification: Perform mesh convergence studies to ensure discretization errors are acceptable (typically <5% change in solution outputs with mesh refinement) [1].
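A mesh convergence check of this kind can be sketched in a few lines. The 5% threshold follows the text; the function names and the peak stress values are hypothetical placeholders standing in for outputs from successively refined meshes.

```python
def relative_changes(outputs):
    """Relative change in the output between successive mesh refinements."""
    return [
        abs(fine - coarse) / abs(fine)
        for coarse, fine in zip(outputs, outputs[1:])
    ]


def first_converged_level(outputs, threshold=0.05):
    """Index of the first mesh whose output differs from the previous,
    coarser mesh by less than the threshold; None if never reached."""
    for level, change in enumerate(relative_changes(outputs), start=1):
        if change < threshold:
            return level
    return None


# Placeholder peak stresses (MPa) from four meshes, coarsest first.
peak_stress = [10.0, 12.5, 13.4, 13.6]

print([round(c, 3) for c in relative_changes(peak_stress)])
print("Converged at mesh index:", first_converged_level(peak_stress))
```

The check should be repeated for every output of interest, since a global quantity such as total strain energy often converges on a coarser mesh than a local peak stress.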

Validation Experiments:

- Design experiments specifically for validation purposes, independent of those used for parameter estimation.

- Compare model predictions with experimental measurements at multiple locations and under varied loading conditions.

- Quantify agreement using both global metrics (e.g., RMS error) and local comparisons at critical regions.
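The distinction between global and local agreement can be illustrated with a minimal sketch. The function names, the strain values, and the choice of "critical" location are assumptions made for the example.

```python
import math


def rms_error(predicted, measured):
    """Global root-mean-square error over all measurement locations."""
    squared = [(p - m) ** 2 for p, m in zip(predicted, measured)]
    return math.sqrt(sum(squared) / len(squared))


def local_errors(predicted, measured, critical_indices):
    """Signed error at selected locations of particular interest."""
    return {i: predicted[i] - measured[i] for i in critical_indices}


# Placeholder strain values (in percent) at four gauge locations.
measured_strain = [1.0, 2.0, 3.0, 4.0]
predicted_strain = [1.0, 2.0, 3.0, 6.0]

print("Global RMS error:", rms_error(predicted_strain, measured_strain))
print("Error at critical site:",
      local_errors(predicted_strain, measured_strain, [3]))
```

Here the global metric is half the size of the error at the critical location, which is why both views are reported.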

Sensitivity Analysis:

- Perform systematic sensitivity analyses to identify parameters with the greatest influence on model outputs [1].

- Focus validation efforts on accurately determining these high-sensitivity parameters.

- Use uncertainty quantification methods to propagate parameter uncertainties to model predictions.
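The sensitivity and uncertainty steps can be sketched on a deliberately simple stand-in model. The example below uses the tip deflection of a cantilever beam, F L³ / (3 E I), purely as a toy output; the parameter values, the 10% coefficient of variation, and the Gaussian sampling are assumptions chosen for illustration.

```python
import random
import statistics


def tip_deflection(force, length, modulus, inertia):
    """Cantilever tip deflection: F * L^3 / (3 * E * I)."""
    return force * length ** 3 / (3.0 * modulus * inertia)


def normalized_sensitivity(model, params, name, rel_step=1e-4):
    """Percent change in output per percent change in one parameter,
    estimated with a central finite difference."""
    up, down = dict(params), dict(params)
    up[name] = params[name] * (1.0 + rel_step)
    down[name] = params[name] * (1.0 - rel_step)
    return (model(**up) - model(**down)) / (2.0 * rel_step * model(**params))


def propagate(model, params, name, cv, draws=5000, seed=0):
    """Monte Carlo propagation of Gaussian uncertainty in one parameter."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(draws):
        sample = dict(params)
        sample[name] = rng.gauss(params[name], cv * params[name])
        outputs.append(model(**sample))
    return statistics.fmean(outputs), statistics.stdev(outputs)


# Placeholder inputs in SI units (N, m, Pa, m^4).
nominal = {"force": 10.0, "length": 0.1, "modulus": 1.0e9, "inertia": 1.0e-10}

for key in nominal:
    print(key, round(normalized_sensitivity(tip_deflection, nominal, key), 3))

mean_out, sd_out = propagate(tip_deflection, nominal, "modulus", cv=0.10)
print("Output coefficient of variation:", round(sd_out / mean_out, 3))
```

The ranking of normalized sensitivities indicates where characterization effort is best spent; in a full finite element model the same loop would wrap a solver call instead of a closed-form expression.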

Table 3: Research Reagent Solutions for Tissue Biomechanics

| Tool/Technology | Function | Application Notes |
|---|---|---|
| Biaxial Testing Systems | Characterizes anisotropic mechanical behavior under complex loading | Essential for soft tissues with fiber reinforcement (e.g., arteries, skin) |
| Micro-CT/MRI Scanners | Non-destructive 3D geometry acquisition and microstructural analysis | Enables patient-specific modeling and structure-function correlation |
| Inverse Finite Element Methods | Extracts material parameters from complex experimental tests | Powerful for parameterizing constitutive models from heterogeneous strain data |
| Digital Image Correlation (DIC) | Full-field surface strain measurement during mechanical testing | Provides comprehensive data for model validation beyond point measurements |
| Machine Learning Interatomic Potentials | Bridges accuracy of quantum methods with scale of classical simulations | Requires careful validation of dynamics and rare events [6] |
| Data Augmentation Techniques | Expands limited biomechanical datasets for machine learning | Improves model robustness; must preserve biomechanical plausibility [7] |

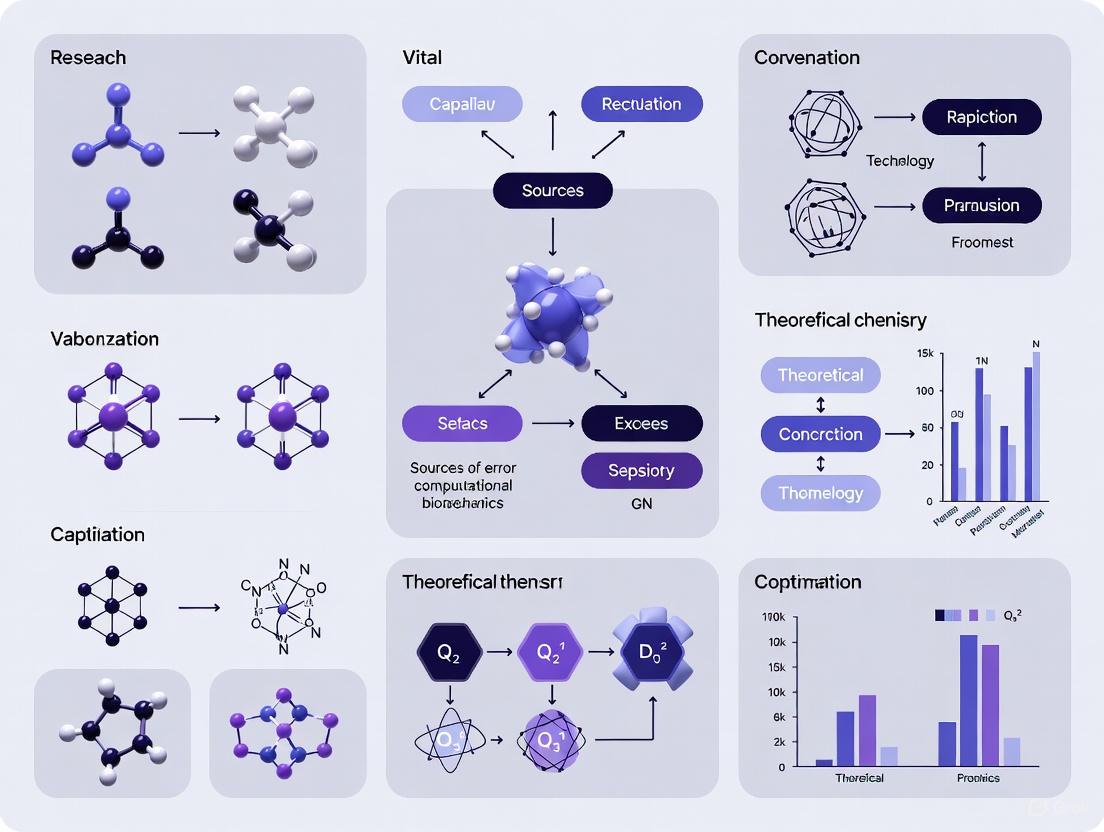

Visualization of Error Propagation and Mitigation Workflows

Workflow for Computational Model Validation

Diagram 1: The verification and validation workflow for computational models, highlighting the distinction between solving equations correctly and solving the correct equations [1] [2].

Error Propagation from Inaccurate Material Properties

Diagram 2: Propagation pathways showing how inaccurate material properties lead to various mechanical miscalculations and ultimately result in significant practical consequences.

The use of non-human and generic tissue data introduces significant errors in computational biomechanics that can compromise research conclusions, clinical applications, and evolutionary inferences. These errors stem from fundamental species-specific differences, inadequate representation of pathological conditions, and insufficient characterization of human tissue variability. As demonstrated through multiple case studies, these inaccuracies can persist even in sophisticated modeling approaches that show good performance on general error metrics.

Addressing these challenges requires a multi-faceted approach: rigorous validation against targeted experiments, implementation of comprehensive sensitivity analyses, development of species-specific and condition-specific material databases, and careful consideration of sample size requirements in tissue characterization studies. Furthermore, emerging technologies such as machine learning interatomic potentials and data augmentation techniques offer promising avenues for improvement but must be applied with careful attention to their limitations and validation needs.

By recognizing the pitfalls of applying non-human and generic tissue data, and implementing the methodological frameworks outlined in this guide, researchers can significantly improve the accuracy and reliability of computational biomechanics models, ultimately enhancing their utility for scientific discovery and clinical application.

In computational biomechanics, the fidelity of a model's geometric representation is a primary determinant of its predictive power. Geometric oversimplification—the abstraction of complex, patient-specific anatomical shapes into idealized forms—represents a critical source of error that can compromise the translational potential of computational simulations. As biomechanical models increasingly inform clinical decision-making and drug development processes, understanding and quantifying the impact of these simplifications becomes paramount. This whitepaper examines how geometric abstraction influences predictive accuracy across multiple biomechanical domains, providing researchers with methodological frameworks for evaluating and mitigating associated errors.

The drive toward simplification often stems from practical constraints: computational cost limitations, insufficiently detailed imaging data, or the unavailability of patient-specific tissue properties. However, when models sacrifice geometric fidelity for computational convenience, the resulting simulations may fail to capture critical biomechanical phenomena. For instance, trunk biomechanics research demonstrates that oversimplified geometric models can introduce significant errors in inverse dynamic analyses of lifting tasks, particularly for subjects with atypical morphologies [8]. Similarly, in soft tissue modeling, representing complex organs with simplified geometries neglects crucial anatomical features that govern mechanical behavior under load. By systematically examining case studies and quantitative evidence, this analysis establishes geometric oversimplification as a fundamental challenge requiring coordinated methodological advancement.

Quantitative Evidence: Measuring the Impact of Simplification

Comparative Error Analysis in Trunk Biomechanics

Research in trunk biomechanics provides compelling quantitative evidence of how geometric simplification impacts predictive accuracy. A seminal study evaluating different trunk modeling approaches during lifting tasks revealed that oversimplified models introduce substantial errors in calculated net muscular moments at the L5/S1 joint [8]. The investigation compared five linked segment models differing primarily in how the trunk was represented geometrically and parametrically, analyzing four distinct lifting tasks across twenty-one male subjects.

Table 1: Error Analysis of Trunk Modeling Approaches in Inverse Dynamic Analysis

| Modeling Parameter | Traditional Approach | Enhanced Approach | Error Reduction |
|---|---|---|---|
| Anthropometric Model | Proportional model using height and mass | Geometric model accounting for individual variations | Significant reduction, especially for subjects with larger abdomen |
| COM Positioning | Located on straight line between hips and shoulders | Adjusted according to trunk depth percentage | Notable error reduction across all subject morphologies |
| Trunk Partitioning | Two segments (pelvis, thoracolumbar) | Three segments (additional abdominal segment) | Improved moment estimation, particularly during asymmetric tasks |
| Morphology Consideration | One-size-fits-all approach | Grouping by antero-posterior diameter to height ratio | Greatest improvement for subjects with non-standard trunk geometry |

The findings demonstrated that all three geometric modeling parameters significantly influenced moment calculation errors. Specifically, using a geometric trunk model instead of a proportional anthropometric model reduced errors by better accounting for interindividual variability in abdominal region morphology. Similarly, proper antero-posterior positioning of the center of mass (COM) and implementing a three-segment trunk model both contributed to more accurate moment estimations [8]. The research notably found that subjects with a larger abdomen (characterized by higher antero-posterior diameter to height ratios) experienced the greatest error reductions with enhanced geometric modeling, highlighting the particular importance of geometric fidelity for non-standard morphologies.
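A static, single-segment calculation gives a feel for why antero-posterior COM placement matters. This is a simplified illustration, not the inverse dynamic analysis used in [8]: the gravitational moment about L5/S1 is taken as mass × g × horizontal offset, and the segment mass, trunk depths, and `anterior_fraction` are hypothetical placeholders.

```python
G = 9.81  # gravitational acceleration, m/s^2


def static_moment_nm(segment_mass_kg, com_offset_m):
    """Gravitational moment about a joint for a horizontal COM offset."""
    return segment_mass_kg * G * com_offset_m


def com_offset_with_depth(line_offset_m, trunk_depth_m, anterior_fraction):
    """Shift the COM forward of the hip-shoulder line by a fraction of
    trunk depth (the fraction is an assumed, illustrative value)."""
    return line_offset_m + anterior_fraction * trunk_depth_m


def moment_difference_nm(mass_kg, line_offset_m, trunk_depth_m, anterior_fraction):
    """Moment change caused by moving the COM off the hip-shoulder line."""
    shifted = com_offset_with_depth(line_offset_m, trunk_depth_m, anterior_fraction)
    return static_moment_nm(mass_kg, shifted) - static_moment_nm(mass_kg, line_offset_m)


# Two hypothetical subjects with the same trunk mass but different depth.
slim = moment_difference_nm(30.0, 0.15, 0.20, 0.10)
large_abdomen = moment_difference_nm(30.0, 0.15, 0.30, 0.10)

print(f"Moment difference, slim trunk: {slim:.2f} N·m")
print(f"Moment difference, deep trunk: {large_abdomen:.2f} N·m")
```

Under these assumptions the neglected moment grows in proportion to trunk depth, which is consistent with the larger error reductions reported for subjects with a larger abdomen.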

Consequences in Soft Object Perception and Tissue Modeling

Beyond traditional biomechanics, the impact of geometric representation extends to computational models of visual perception and soft tissue mechanics. Research on soft object perception reveals that human visual systems employ sophisticated physics-based reasoning to interpret deformable objects, a capability that simplistic geometric models fail to capture [9]. The "Woven" model, which incorporates physics-based simulations to infer probabilistic representations of cloths, outperforms both deep neural networks and simplified geometric approaches in predicting human perceptual performance, particularly for estimating properties like stiffness and mass across different scene configurations [9].

In clinical biomechanics, the tension between geometric fidelity and practical constraints is particularly acute. Researchers note that obtaining patient-specific mechanical properties of soft tissues remains a fundamental obstacle in patient-specific modeling [10]. Advanced imaging techniques such as MR and ultrasound elastography offer pathways toward better characterization, but a complementary approach is to reformulate the computational problem so that its solution is only weakly sensitive to variations in mechanical properties [10]. For example, in image-guided neurosurgery, displacement-zero traction problems can predict intraoperative organ configurations without detailed tissue properties by leveraging preoperative images and limited intraoperative data [10].

Methodological Frameworks: Experimental Protocols for Quantification

Protocol for Evaluating Trunk Model Geometric Fidelity

The experimental protocol from trunk biomechanics research provides a robust template for quantifying geometric simplification effects [8]:

Subject Selection and Grouping:

- Recruit subjects representing diverse morphologies (e.g., varying antero-posterior diameter to height ratios)

- Establish subgroups based on morphological characteristics to evaluate model performance across population variability

Experimental Tasks:

- Implement both simple and complex lifting tasks to stress model capabilities

- Include asymmetric lifting conditions to evaluate model performance under non-idealized scenarios

- Standardize task execution while capturing three-dimensional motion data

Data Collection Apparatus:

- Use multi-camera motion capture systems (five cameras in the reference study)

- Implement force platforms to measure ground reaction forces

- Employ dynamometric instrumentation to capture hand forces during lifting tasks

Model Comparison Framework:

- Test identical datasets across multiple modeling approaches

- Compare geometric versus proportional anthropometric models

- Evaluate different trunk segmentation strategies (2-segment vs. 3-segment)

- Assess center of mass positioning methods (hip-shoulder line vs. trunk depth percentage)

Error Quantification:

- Calculate moment errors at critical joints (e.g., L5/S1)

- Implement multiple error metrics to capture different aspects of model performance

- Conduct statistical analysis to determine significance of differences between modeling approaches

Digital Twin Development for Volumetric Error Compensation

Recent advances in digital twin technology offer methodologies for addressing geometric and thermal errors in complex systems. Research on large machine tools demonstrates a unified approach to volumetric error compensation that treats geometric and thermal errors as a single time-varying error source [11]. The experimental protocol involves:

Sensor Network Implementation:

- Strategic distribution of temperature sensors throughout the structure (50 sensors in the referenced study)

- Automated artifact-based calibration procedures capable of characterizing volumetric error variation over time

- Continuous monitoring of thermal state and positional accuracy

Model Training and Validation:

- Conduct distinct thermal tests spanning multiple days for training and validation

- Employ phenomenological models trained on experimental volumetric calibration data

- Incorporate temperature measurements and axis positions as model inputs

- Deploy validated digital twins in control systems to apply real-time corrections

This approach demonstrates how iterative model refinement based on empirical data can compensate for both geometric inaccuracies and thermally induced errors in a unified framework [11].
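The train-then-validate pattern described above can be sketched with a one-input linear model. The referenced study used many temperature sensors and axis positions as inputs [11]; the single temperature input, the function names, and the numbers below are simplifying assumptions for illustration only.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def residuals_after_compensation(x, y, slope, intercept):
    """Error left over once the model prediction is subtracted."""
    return [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]


# Placeholder training test: temperature (deg C) and positioning error (um).
train_temp = [20.0, 22.0, 24.0, 26.0]
train_error = [10.0, 20.0, 30.0, 40.0]

# Placeholder validation test recorded separately from the training test.
valid_temp = [21.0, 25.0]
valid_error = [16.0, 34.0]

slope, intercept = fit_line(train_temp, train_error)
residual = residuals_after_compensation(valid_temp, valid_error, slope, intercept)

print("Uncompensated max error:", max(abs(e) for e in valid_error))
print("Compensated max error:", max(abs(r) for r in residual))
```

Evaluating the residual on data from a separate test, rather than on the training data, is what indicates whether the correction will hold up once deployed.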

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Tools for Geometric Fidelity in Biomechanics

| Tool/Category | Function | Representative Examples |
|---|---|---|
| Motion Capture Systems | Capture three-dimensional kinematic data during dynamic tasks | Multi-camera systems with force platforms [8] |
| Statistical Shape Models (SSM) | Generate population-based anatomical variations from limited data | Personalized 3D foot models from sensor data [12] |
| Finite Element (FE) Simulation | High-fidelity stress/strain analysis in complex geometries | Personalized foot models for bone stress prediction [12] |
| Digital Twin Frameworks | Dynamic virtual representations updated with sensor data | Volumetric thermal error compensation for machine tools [11] |
| Inertial Measurement Units (IMUs) | Capture motion data outside laboratory environments | Nine-axis sensors for running biomechanics [12] |
| Probabilistic Programming | Incorporate uncertainty quantification into physical simulations | Woven model for soft object perception [9] |

The following diagram illustrates the relationship between modeling approaches and their typical outcomes in biomechanical simulations:

Modeling Pathways and Outcomes

Geometric oversimplification remains a pervasive challenge in computational biomechanics with demonstrable impacts on predictive accuracy across multiple domains. The evidence presented indicates that enhanced geometric modeling—through geometric anthropometric models, appropriate segmentation, and proper center of mass positioning—significantly reduces errors in biomechanical simulations [8]. Furthermore, emerging approaches like digital twin frameworks [11] and physics-informed models [9] offer promising pathways for balancing computational efficiency with predictive accuracy.

For researchers and drug development professionals, the findings underscore several critical considerations. First, model validation must include subjects with diverse morphologies, as geometric simplifications disproportionately impact non-standard anatomies. Second, investment in personalized geometric representation—whether through statistical shape modeling or patient-specific finite element meshes—yields substantial returns in predictive accuracy. Finally, the development of problems formulated to be weakly sensitive to uncertain parameters offers a complementary approach when perfect geometric fidelity remains elusive [10]. As computational biomechanics continues its translational journey toward clinical application and drug development, acknowledging and addressing geometric oversimplification will be essential for building trustworthy, predictive simulations that reliably inform critical decisions.

The accuracy of computational biomechanics models is fundamentally dependent on the precise definition of musculotendon parameters, particularly optimal fiber length (OFL) and tendon slack length (TSL). These parameters are central to Hill-type muscle models, which are widely used in musculoskeletal simulations to estimate muscle forces, joint loads, and metabolic energy consumption [13] [14]. Despite their critical importance, OFL and TSL remain exceptionally challenging to determine accurately for individual subjects, creating a significant source of error in model predictions [15] [16].

The determination of these parameters exists within the broader context of model verification and validation (V&V), a framework essential for building confidence in computational simulations [17] [1]. In this context, errors in muscle parameter specification represent a form of model form error—the discrepancy between the mathematical representation and the true biological system [18] [17]. This technical guide examines the specific challenges associated with defining OFL and TSL, quantifies their impact on model predictions, details current methodological approaches, and provides a toolkit for researchers navigating these complexities in computational biomechanics research.

The Critical Role of OFL and TSL in Muscle Modeling

Physiological Definitions and Biomechanical Significance

Within Hill-type muscle models, optimal fiber length (OFL) and tendon slack length (TSL) govern the fundamental force-length-velocity relationships that determine muscle force production:

- Optimal Fiber Length (OFL) is the length at which a muscle fiber can generate its maximum isometric force. At this length, the overlap between actin and myosin filaments is ideal for maximum cross-bridge formation [13] [14].

- Tendon Slack Length (TSL) is the length at which the tendon begins to develop tension when stretched. Below this length, the tendon contributes negligibly to force transmission [13] [19].

These parameters collectively determine the operating range of a muscle—the range of joint angles over which a muscle can effectively generate force [13] [19]. Inaccuracies in their specification propagate through musculoskeletal simulations, affecting predictions of muscle forces, joint moments, and body dynamics [20] [14].
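How errors in these two parameters reach the predicted force can be sketched with a stripped-down model. The example assumes a Gaussian-shaped active force-length curve, a rigid tendon held at its slack length, and no pennation; the `width` value, the function names, and the muscle dimensions are illustrative assumptions, not a specific published muscle model.

```python
import math


def active_force_length(fiber_length, optimal_fiber_length, width=0.5):
    """Normalized active force as a Gaussian of normalized fiber length;
    equals 1.0 when the fiber is at its optimal length."""
    normalized = fiber_length / optimal_fiber_length
    return math.exp(-((normalized - 1.0) / width) ** 2)


def fiber_length_rigid_tendon(musculotendon_length, tendon_slack_length):
    """Fiber length when the tendon is rigid and at slack length."""
    return musculotendon_length - tendon_slack_length


# Hypothetical long-tendon muscle, lengths in metres.
MTU_LENGTH, OFL, TSL = 0.30, 0.05, 0.25

nominal_force = active_force_length(
    fiber_length_rigid_tendon(MTU_LENGTH, TSL), OFL)

# Same pose, but with OFL overestimated by 5%.
force_ofl_error = active_force_length(
    fiber_length_rigid_tendon(MTU_LENGTH, TSL), OFL * 1.05)

# Same pose, but with TSL overestimated by 5%.
force_tsl_error = active_force_length(
    fiber_length_rigid_tendon(MTU_LENGTH, TSL * 1.05), OFL)

print(f"nominal: {nominal_force:.3f}")
print(f"5% OFL error: {force_ofl_error:.3f}")
print(f"5% TSL error: {force_tsl_error:.3f}")
```

With these placeholder dimensions the tendon is five times longer than the fiber, so a 5% error in TSL shifts the fiber length by 25% of OFL and costs far more force than the same relative error in OFL. The size of the effect depends on the tendon-to-fiber length ratio of the muscle being modeled.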

Quantifying Sensitivity: Impact on Model Predictions

Comprehensive sensitivity analyses reveal that muscle force estimations exhibit varying degrees of sensitivity to different musculotendon parameters. The following table summarizes the relative sensitivity of force estimation to key Hill-type model parameters:

Table 1: Sensitivity of muscle force estimation to musculotendon parameters

| Parameter | Relative Impact on Force Estimation | Primary Effect on Muscle Function |

|---|---|---|

| Tendon Slack Length (TSL) | Highest sensitivity | Determines the transition between tendon compliance and force development, dramatically shifting the force-length curve [14]. |

| Optimal Fiber Length (OFL) | High sensitivity | Directly defines the peak and width of the force-length relationship [13] [20]. |

| Maximum Isometric Force | Moderate sensitivity | Scales the maximum force capacity without altering the fundamental force-length relationship [14]. |

| Pennation Angle | Least sensitivity | Affects the transmission of fiber force to the tendon, generally having a smaller impact than OFL or TSL [14]. |

Recent experimental validation studies have quantified the magnitude of errors that can occur in practice. When comparing model predictions to intraoperative measurements of gracilis muscle dynamics, researchers found substantial errors: individual fiber length errors reached 20% and passive force errors were as high as 37%, even when using subject-specific modeling approaches [15] [16]. These findings highlight the profound impact that parameter uncertainties can have on the predictive capability of musculoskeletal models.

Methodological Approaches and Their Limitations

Current Methods for Parameter Determination

Researchers have developed multiple methodological approaches to estimate OFL and TSL, each with distinct advantages and limitations:

Table 2: Comparison of methods for determining musculotendon parameters

| Method | Description | Key Advantages | Documented Limitations |

|---|---|---|---|

| Linear Scaling | Scales parameters from a generic model based on segment lengths, preserving OFL/TSL ratios [21]. | Simple to implement; requires minimal data [19]. | Assumes linear relationships that may not reflect biological reality; OFL does not always correlate linearly with leg length [21]. |

| Functional Scaling (Winby et al.) | Maps the operating range of muscle fiber lengths from a generic model to a scaled model [19]. | Maintains force-generating characteristics across subjects [13] [19]. | Originally limited to single joints; may not fully address multi-articular muscles [13]. |

| Optimization Techniques (Modenese et al.) | Uses optimization to adjust parameters, maintaining muscles' operating range between models [13]. | Can be applied to complete 3D limb models; suitable for models built from medical images [13]. | Relies on the quality of the reference model; may not capture true intersubject variability [15]. |

| Experiment-Guided Tuning | Leverages experimental data (e.g., ultrasound, passive moments) to tune parameters [20]. | Directly incorporates experimental observations; improves agreement with measured fiber lengths [20]. | Time-intensive; requires collection of experimental data [20]. |
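As an illustration of the simplest row in the table, the sketch below applies one scale factor to both OFL and TSL so that their ratio is preserved. It assumes the scale factor is the ratio of subject to generic musculotendon length; the class and function names are hypothetical and do not correspond to any particular software API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MusculotendonParams:
    optimal_fiber_length: float  # m
    tendon_slack_length: float   # m

def linear_scale(generic, generic_mtu_length, subject_mtu_length):
    """Scale OFL and TSL by the same factor, which preserves the OFL/TSL ratio."""
    scale = subject_mtu_length / generic_mtu_length
    return MusculotendonParams(
        optimal_fiber_length=generic.optimal_fiber_length * scale,
        tendon_slack_length=generic.tendon_slack_length * scale,
    )

# Hypothetical generic muscle and a subject whose musculotendon path is 10% longer.
generic = MusculotendonParams(optimal_fiber_length=0.05, tendon_slack_length=0.25)
subject = linear_scale(generic, generic_mtu_length=0.30, subject_mtu_length=0.33)
print(subject)
```

Because both parameters inherit the same factor, any subject whose fiber-to-tendon proportions differ from the generic model cannot be represented, which is the limitation noted in the table.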

The Subject-Specific Modeling Paradigm: Promise and Limitations

The development of subject-specific models represents a significant advancement in addressing parameter uncertainties. By incorporating individual anatomical measurements, these models have demonstrated improved accuracy compared to generic models [15] [21]. However, they introduce their own methodological challenges:

Creating truly subject-specific models requires extensive data collection, including medical imaging, motion analysis, and sometimes intraoperative measurements [15]. Even with such comprehensive approaches, significant errors persist. A 2023 study demonstrated that incorporating all subject-specific values reduced errors but still resulted in individual fiber length errors up to 20% and passive force errors up to 37% [15] [16]. This suggests fundamental limitations in both our measurement techniques and our mathematical representations of muscle physiology.

Experimental Protocols for Parameter Identification

Intraoperative Measurement Protocol

Direct measurement of musculotendon parameters represents the gold standard for validation, though it is highly invasive. Recent studies have established methodologies for intraoperative data collection:

- Surgical Context: Data collection during gracilis free functional muscle transfer procedures for elbow flexion restoration [15] [16].

- Parameter Measurement: Direct measurement of gracilis muscle-tendon unit length, optimal fiber length, and tendon slack length using intraoperative calipers and laser diffraction [16].

- Validation Approach: Comparison of model predictions to directly measured passive forces and fiber lengths across multiple joint positions [15].

- Sample Size: Thirty-two of the thirty-four invited participants provided informed consent [15].

This protocol revealed that the modeling parameter "tendon slack length" did not correlate with any real-world anatomical length, highlighting fundamental discrepancies between model representations and biological reality [15] [16].

Experiment-Guided Tuning Protocol

Non-invasive approaches have been developed that leverage multiple experimental data sources to tune musculotendon parameters:

- Imaging Data: Use of ultrasound imaging to measure fiber lengths in specific muscles (soleus, gastrocnemii, vasti) during controlled poses [20].

- Passive Moment Characterization: Measurement of joint passive moment-angle relationships across ankle, knee, and hip joints to inform passive force-length curves [20].

- Tuning Process: Adjustment of optimal fiber length, tendon slack length, and tendon stiffness to match reported fiber lengths from ultrasound and passive force-length relationships to match joint moment-angle relationships [20].

- Validation Metrics: Evaluation of tuned parameters by comparing simulated muscle excitations to EMG signals and metabolic rates to measured energy costs [20].

This approach demonstrated that with tuned parameters, muscles contracted more isometrically, and soleus's operating range was better estimated than with linearly scaled parameters [20].

Table 3: Key research reagents and computational tools for musculotendon parameter research

| Tool/Resource | Function/Application | Example Implementations |

|---|---|---|

| OpenSim Platform | Open-source software for creating and analyzing musculoskeletal models and simulations [21]. | Provides implementations of multiple lower limb models (Hamner, Rajagopal, Lai-Arnold) with different parameter sets [21]. |

| Muscle Parameter Optimization Tool | Implements algorithms to estimate OFL and TSL using optimization techniques [13]. | Tool available at https://simtk.org/home/optmusclepar implementing Modenese et al. algorithm [13]. |

| Ultrasound Imaging | Non-invasive measurement of muscle fiber lengths and pennation angles in vivo [20]. | Used to track fascicle length changes during dynamic tasks to inform parameter tuning [20]. |

| Intraoperative Measurement Setup | Direct measurement of muscle-tendon properties during surgical procedures [15]. | Calibration of model parameters against direct biological measurements [15] [16]. |

| Bayesian Validation Metrics | Quantitative framework for comparing model predictions with experimental data under uncertainty [17]. | Calculation of Bayes factors to assess model confidence considering various error sources [17]. |

Emerging Solutions and Future Directions

Hybrid Methodologies and Error Reduction Strategies

The limitations of individual approaches have led to the development of hybrid methodologies that combine multiple data sources:

Experiment-guided computational tuning represents a promising direction that leverages both experimental observations and computational optimization [20]. This approach tunes optimal fiber length, tendon slack length, and tendon stiffness to match reported fiber lengths from ultrasound imaging while also ensuring that passive moment-angle relationships match experimental data [20]. Studies implementing this methodology have demonstrated improved estimation of muscle excitation patterns and more physiologically plausible fiber length operating ranges [20].

The implementation of Bayesian validation frameworks provides a structured approach to quantify and manage errors in musculoskeletal models [17]. These frameworks explicitly recognize that both model predictions and experimental measurements contain uncertainties, and they provide metrics to assess confidence in model predictions while accounting for these uncertainties [17] [1].
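A minimal sketch of a Bayes-factor comparison is given below. It is not the specific metric of [17]; it assumes Gaussian measurement noise with known standard deviation, a null hypothesis that the true mean equals the model prediction, and an alternative in which the true mean is drawn from a Gaussian prior centered on the prediction. All names and numbers are hypothetical.

```python
import math

def normal_pdf(x, mean, variance):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2.0 * variance)) / math.sqrt(2.0 * math.pi * variance)

def bayes_factor(measurements, prediction, meas_sd, prior_sd):
    """Bayes factor B01: values above 1 favor H0 (true mean equals the prediction).

    H1 assumes the true mean follows N(prediction, prior_sd**2).
    """
    n = len(measurements)
    sample_mean = sum(measurements) / n
    var_of_mean = meas_sd ** 2 / n
    like_h0 = normal_pdf(sample_mean, prediction, var_of_mean)
    like_h1 = normal_pdf(sample_mean, prediction, var_of_mean + prior_sd ** 2)
    return like_h0 / like_h1

predicted_force = 100.0  # N, hypothetical model output
agreeing = bayes_factor([98.0, 101.0, 102.0, 99.0], predicted_force, meas_sd=5.0, prior_sd=20.0)
conflicting = bayes_factor([130.0, 128.0, 133.0, 129.0], predicted_force, meas_sd=5.0, prior_sd=20.0)
print(f"agreeing data:    B01 = {agreeing:.2f}")
print(f"conflicting data: B01 = {conflicting:.2e}")
```

The measurement standard deviation enters the result directly, so the same discrepancy between prediction and data counts for less when the experiment itself is noisy.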

Fundamental Challenges and Research Needs

Despite these advances, fundamental challenges remain in the precise determination of subject-specific muscle parameters:

- Tendon Slack Length Definition: Experimental evidence indicates that the modeling parameter "tendon slack length" does not correlate with any single real-world anatomical length, suggesting a fundamental discrepancy between model representations and biological reality [15] [16].

- Inter-Subject Variability: Current approaches struggle to capture the full extent of physiological variation between individuals, particularly in clinical populations where muscle architecture may be substantially altered [20].

- Parameter Interdependence: The high sensitivity of force predictions to tendon slack length, combined with the difficulty in its accurate determination, creates a persistent source of error in model predictions [14].

The accurate determination of subject-specific optimal fiber length and tendon slack length remains a significant challenge in computational biomechanics, representing a major source of error in musculoskeletal models. While current methodologies—from linear scaling to experiment-guided tuning—have progressively improved parameter estimation, substantial errors persist even in state-of-the-art subject-specific models. The sensitivity of force predictions to these parameters, particularly tendon slack length, means that these errors have profound effects on model outputs and their clinical or research applications.

Future progress will likely come from continued development of hybrid approaches that integrate multiple data sources within rigorous validation frameworks. The scientific community must acknowledge and quantify these uncertainties, particularly when models inform clinical decision-making or surgical planning. Only through transparent acknowledgment of these limitations and continued refinement of parameter identification techniques can computational biomechanics fulfill its potential to accurately represent and predict human movement.

In computational biomechanics, models are powerful tools for simulating the mechanical behavior of biological tissues, either to supplement experimental investigations or to stand in when direct experimentation is not possible [1]. These models play crucial roles in both basic science and patient-specific applications, such as diagnosis and evaluation of targeted treatments [1]. However, confidence in computational simulations is only justified when investigators have verified the mathematical foundation of the model and validated the results against sound experimental data [1].

A particularly challenging aspect of model development lies in the accurate representation of boundary and loading conditions, which define how forces are applied to and distributed within the model. Errors in these representations can profoundly impact model predictions, potentially leading to false conclusions in basic science or adverse outcomes in clinical applications [1]. This technical guide examines the sources, impacts, and mitigation strategies for boundary and loading condition errors within the broader context of error sources in computational biomechanics research.

The V&V Framework

Verification and validation (V&V) form the cornerstone of credible computational biomechanics. Verification is "the process of determining that a computational model accurately represents the underlying mathematical model and its solution," while validation is "the process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended uses of the model" [1]. Succinctly, verification is "solving the equations right" (mathematics) and validation is "solving the right equations" (physics) [1].

For the purpose of error analysis, error is defined as the difference between a simulation or experimental value and the truth [1]. The intended use of the model dictates the stringency of error analysis required, with clinical applications demanding far more extensive examination than basic science investigations [1].

Errors in computational biomechanics models arise from multiple sources, which can be categorized as follows:

- Model Form Error: Discrepancies between the mathematical model and true physics

- Numerical Error: Errors arising from computational implementation

- Input Uncertainty: Errors in model parameters, geometry, and boundary conditions

This guide focuses primarily on the last category, particularly errors in force representations, while acknowledging their interaction with other error sources.

The Critical Role of Boundary and Loading Conditions

Defining Boundary and Loading Conditions

In computational biomechanics, boundary conditions specify how the model interacts with its environment at its boundaries, while loading conditions define the forces, pressures, or displacements applied to the model. In biological systems, these often represent complex in-vivo forces generated by muscles, gravitational loading, contact interactions, or fluid-structure interactions.

Errors in boundary and loading conditions arise from several sources:

- Oversimplification of Anatomy: Replacing complex anatomical structures with simplified representations

- Inaccurate Muscle Force Estimation: Approximating complex muscle activation patterns

- Incomplete Characterization of Joint Mechanics: Simplifying joint kinematics and kinetics

- Incorrect Tissue Material Properties: Using inappropriate constitutive models

- Measurement Limitations: Technological constraints in quantifying in-vivo forces

Case Studies in Boundary and Loading Condition Errors

Spinal Biomechanics: Sensitivity to Kinematic Inputs

In spinal biomechanics, recent advances have enabled the development of pure displacement-control trunk models that estimate spinal loads without calculating muscle forces. These models are driven by measured in-vivo displacements from medical imaging rather than traditional force-control approaches [22].

A Monte Carlo analysis investigated the sensitivity of musculoskeletal (MS) and finite element (FE) spine models to errors in image-based vertebral displacement measurements [22]. The study revealed substantial task-dependent sensitivities to errors in measured vertebral translations, with potentially dramatic effects on model predictions:

Table 1: Impact of Vertebral Translation Errors on Spinal Model Predictions

| Error Level | Translation Error (SD) | Rotation Error (SD) | Impact on L5-S1 IDPs | Impact on Compression/Shear Forces |

|---|---|---|---|---|

| Low | 0.1 mm | 0.2° | Minimal change | Minimal change |

| Medium | 0.2 mm | 0.4° | Moderate change (SD ~0.7 MPa) | Noticeable directional changes |

| High | 0.3 mm | 0.6° | Substantial change (SD ~1.05 MPa) | Force direction reversal in some cases |

The results demonstrated that outputs of both MS and FE models were considerably more sensitive to errors in measured vertebral translations than rotations [22]. This finding is particularly significant given that current measurement errors in image-based kinematics are reported to be approximately 0.4-0.9° and 0.2-0.3 mm in vertebral displacements [22]. The authors concluded that "measured vertebral translations are currently not accurate enough to drive biomechanical models when estimating spinal loads" [22].
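The general shape of such a Monte Carlo analysis can be sketched as follows. The "model" here is a toy linear spring, not the MS or FE models of [22]: the stiffness constants, the nominal pose, and all function names are hypothetical, and only the error standard deviations are taken from the table above.

```python
import random
import statistics

K_TRANS_N_PER_MM = 1000.0  # hypothetical translational stiffness
K_ROT_NM_PER_DEG = 2.0     # hypothetical rotational stiffness

def toy_segment_loads(translation_mm, rotation_deg):
    """Toy linear stand-in for a spine model: force from translation, moment from rotation."""
    return K_TRANS_N_PER_MM * translation_mm, K_ROT_NM_PER_DEG * rotation_deg

def monte_carlo_output_sd(trans_sd_mm, rot_sd_deg, n_samples=20000, seed=0):
    """Propagate Gaussian measurement errors through the toy model; return output SDs."""
    rng = random.Random(seed)
    forces, moments = [], []
    for _ in range(n_samples):
        translation = 0.5 + rng.gauss(0.0, trans_sd_mm)  # nominal 0.5 mm (assumed)
        rotation = 5.0 + rng.gauss(0.0, rot_sd_deg)      # nominal 5 degrees (assumed)
        force, moment = toy_segment_loads(translation, rotation)
        forces.append(force)
        moments.append(moment)
    return statistics.stdev(forces), statistics.stdev(moments)

ERROR_LEVELS = {"low": (0.1, 0.2), "medium": (0.2, 0.4), "high": (0.3, 0.6)}
results = {name: monte_carlo_output_sd(*sds) for name, sds in ERROR_LEVELS.items()}
for name, (force_sd, moment_sd) in results.items():
    print(f"{name:>6}: force SD = {force_sd:6.1f} N, moment SD = {moment_sd:4.2f} N·m")
```

In this toy, the relative sensitivity to translations and rotations is fixed by the assumed stiffnesses and says nothing about real spines. In a real study the toy function would be replaced by a full model evaluation per sample.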

Cardiovascular Modeling: Challenges in Patient-Specific Boundary Conditions

In cardiovascular fluid dynamics, specifying patient-specific inlet and outlet conditions presents significant challenges [23]. Often, only the time-varying flow rate or pressure is known, necessitating approximations that introduce error:

Inlet Flow Approximation: The Womersley equation for unsteady pulsatile flow in a rigid straight cylindrical vessel is commonly used, but this velocity profile fails to capture the complexity of pulsatile inlet flow fields arising from vessel curvature, short entrance lengths, and pulse-wave reflections [23].

Outlet Conditions: The downstream conditions can significantly affect the solution, particularly when dealing with truncated vascular networks where the impact of distal vasculature must be approximated [23].

These limitations become particularly problematic when using computational models to diagnose cardiovascular disease severity or guide surgical treatments, where accurate prediction of parameters like fractional flow reserve is essential [23].
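The shape of a Womersley velocity profile is governed by the dimensionless Womersley number, α = R·sqrt(ωρ/μ), where R is the vessel radius, ω the angular frequency of the pulsation, ρ the fluid density, and μ the dynamic viscosity. The short sketch below computes it; the default blood density and viscosity and the example vessel dimensions are typical textbook-order values assumed for illustration, not values from [23].

```python
import math

def womersley_number(radius_m, pulse_rate_hz, density_kg_m3=1060.0, viscosity_pa_s=0.0035):
    """Womersley number alpha = R * sqrt(omega * rho / mu) for pulsatile flow in a tube."""
    omega = 2.0 * math.pi * pulse_rate_hz  # angular frequency in rad/s
    return radius_m * math.sqrt(omega * density_kg_m3 / viscosity_pa_s)

# Hypothetical vessels at a heart rate of 72 beats per minute (1.2 Hz).
alpha_large = womersley_number(radius_m=0.0125, pulse_rate_hz=1.2)  # aorta-sized vessel
alpha_small = womersley_number(radius_m=0.0015, pulse_rate_hz=1.2)  # small artery
print(f"large vessel alpha = {alpha_large:.1f}, small vessel alpha = {alpha_small:.1f}")
```

Larger values of α correspond to flatter, more plug-like profiles with thin oscillatory boundary layers, while small values approach a quasi-steady parabolic profile. The same idealized inlet assumption therefore behaves differently across vessel sizes.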

Foot Biomechanics: From External Forces to Internal Stresses

In running biomechanics, understanding internal bone stresses is crucial for preventing stress fractures, yet most models focus on predicting external forces (e.g., ground reaction forces) or joint kinetics, which may not fully capture internal mechanical stresses [24]. Previous studies have shown that external load metrics often exhibit weak correlations with internal tibial bone stress [24].

A recent study developed a digital twin framework for predicting metatarsal bone stresses in runners, integrating personalized finite element models with deep learning predictions [24]. The approach highlighted the disconnect between easily measurable external forces and clinically relevant internal stresses, emphasizing the need for models that can accurately bridge this gap through appropriate boundary condition representation.

Methodological Approaches for Error Mitigation

Experimental Protocols for Validation

Comprehensive Sensitivity Analysis: Prior to validation experiments, sensitivity studies help identify critical parameters that most significantly impact model outputs [1]. This allows experimentalists to design validation studies that tightly control these quantities of interest.

Multi-Modal Experimental Validation: Combining different experimental techniques provides more comprehensive validation data. For spine biomechanics, this may include combining motion capture, mechanical loading rigs, strain gauges, and digital image correlation [25].

Hierarchical Validation Approach: Implementing validation at multiple levels, from tissue-level properties to organ-level responses, helps isolate sources of error [25].

Table 2: Methodologies for Quantifying and Mitigating Boundary Condition Errors

| Methodology | Application Examples | Key Benefits | Limitations |

|---|---|---|---|

| Monte Carlo Analysis | Assessing sensitivity to kinematic measurement errors [22] | Quantifies output uncertainty from input variability | Computationally intensive |

| Domain Adaptation with LSTM | Predicting bone stress from wearable sensors [24] | Translates external measurements to internal stresses | Requires extensive training data |

| Error Fields Customization | Robotic movement training with personalized error augmentation [26] | Adapts to individual error patterns | Complex implementation |

| Intravital 3D Bioprinting | Direct force measurement in morphogenesis [27] | Direct quantification of tissue-level forces | Specialized equipment required |

Computational Techniques for Improved Force Representation

Constitutive Model Refinement: Developing more sophisticated material models that better capture tissue behavior under complex loading conditions [1].

Fluid-Structure Interaction: Implementing coupled fluid-structure models that more accurately represent physiological loading conditions in cardiovascular systems [23].

Personalized Geometry Reconstruction: Using statistical shape modeling and free-form deformation techniques to create patient-specific anatomical models [24].

Emerging Solutions and Future Directions

Deep Learning Integration

Deep learning approaches show significant promise for addressing challenges in boundary and loading condition specification:

Image Segmentation Acceleration: Convolutional neural networks can reduce the time required for image segmentation while improving accuracy [23].

Boundary Condition Prediction: Neural networks can learn to infer appropriate boundary conditions from limited clinical data [23].

Model Order Reduction: Deep learning surrogates can accelerate computationally intensive simulations, enabling more comprehensive parameter studies [23].

Advanced Force Measurement Technologies

Novel technologies are emerging to directly quantify forces in biological systems:

Intravital Mechano-Sensory Hydrogels (iMeSH): Spring-like force sensors fabricated by intravital three-dimensional bioprinting directly in developing embryos allow direct quantification of morphogenetic forces [27]. These sensors have been used to measure compression forces exceeding hundreds of nanonewtons during neural tube closure [27].

Error Field Customization: Robotic training systems that customize error augmentation based on individual error statistics show promise for personalized rehabilitation approaches [26].

Model Sharing and Reproducibility

The biomechanics community increasingly recognizes the importance of sharing computational models and related resources to enhance reproducibility and enable repurposing of models [28]. Infrastructure to host modeling and simulation projects has been developed, and scientific journals are beginning to encourage sharing of data, models, and software [28].

Visualizing Error Propagation in Computational Biomechanics

The following diagram illustrates the relationship between boundary condition errors and their impact on computational model predictions:

Table 3: Computational Tools for Addressing Boundary Condition Challenges

| Tool Category | Specific Tools | Primary Application |

|---|---|---|

| Multibody Dynamics | SIMM, SD/Fast, Open Dynamics Engine, ADAMS, LifeMOD, Simulink, SimMechanics [29] | Movement simulation, neuromusculoskeletal models |

| Finite Element Analysis | ABAQUS, ANSYS, CMISS [29] | Continuum mechanics of organs and tissues |

| Mesh Generation | TrueGrid, Cubit, Hypermesh, TetGen, NETGEN [29] | Creating 3D geometries for FEA |

| Image to Geometry Conversion | 3D Slicer, 3D-Doctor, Amira, MATLAB [29] | Converting 2D medical images to 3D models |

| Personalized Modeling | Statistical Shape Models, Free-Form Deformation techniques [24] | Patient-specific model development |

Boundary and loading condition errors represent a significant challenge in computational biomechanics, with potentially profound implications for both basic science and clinical applications. The case studies presented demonstrate that even small errors in force representations can dramatically alter model predictions, particularly in sensitive applications like spinal load estimation [22] or cardiovascular diagnostics [23].

Addressing these challenges requires a multi-faceted approach combining rigorous verification and validation protocols [1], advanced measurement technologies [27], sophisticated computational techniques [23], and community-wide efforts to enhance model sharing and reproducibility [28]. As computational biomechanics continues to advance toward real-time clinical applications, the accurate representation of in-vivo forces will remain a critical frontier in the field's development.

Methodological Challenges in Multiscale Modeling and AI Integration

In computational biomechanics, the pursuit of personalized simulations presents a fundamental challenge: balancing the demand for high accuracy against the constraints of computational time. Personalized models, particularly those derived from patient-specific medical imaging data, are increasingly crucial for applications in surgical planning, implant design, and drug development [30] [1]. These models account for inter-individual variability in anatomy and tissue properties, offering the potential for highly accurate predictions [30]. However, this enhanced predictive capability comes at a significant computational cost. The fidelity of a model—determined by its geometric complexity, material properties, and boundary conditions—directly influences its computational expense. This article examines the core trade-offs between accuracy and time in Finite Element Analysis (FEA) for personalized simulations, framed within the critical context of identifying and managing sources of error in computational biomechanics research.

Foundational Concepts: Error, Verification, and Validation

A systematic understanding of error is a prerequisite for managing the accuracy-time trade-off. In computational mechanics, error is defined as the difference between a simulated value and the true physical value [1]. Two processes are essential for building confidence in model predictions: verification and validation.

- Verification addresses the question, "Are we solving the equations correctly?" It is a mathematical process of ensuring the computational model correctly implements the underlying mathematical model and its solution algorithms [1]. This involves code verification against benchmark problems with known analytical solutions and calculation verification, typically through mesh convergence studies [1].

- Validation addresses the question, "Are we solving the correct equations?" It is the process of determining how well the computational model represents reality from the perspective of its intended use by comparing its predictions with experimental data [31] [1].

For personalized biomechanical models, a significant source of error stems from the subject-specific data used to construct them. The resolution of medical image data can introduce geometric inaccuracies during 3D reconstruction, while the assignment of material properties often relies on literature-based values that may not reflect the specific patient's tissue characteristics [1]. These uncertainties must be quantified through sensitivity analyses.

Table 1: Glossary of Key Terminology in Computational Error Analysis

| Term | Definition | Relevance to Accuracy-Time Trade-off |

|---|---|---|

| Verification | Process of ensuring the computational model correctly implements the mathematical model [1]. | A verified model is a prerequisite for meaningful accuracy assessments. Incomplete verification wastes computational resources. |

| Validation | Process of determining how well a model represents the real world from the perspective of its intended use [1]. | Establishes the model's predictive credibility. Validation experiments are essential but time-consuming. |

| Sensitivity Analysis | Study of how variation in model inputs affects the outputs [1]. | Identifies which parameters require precise specification, allowing simplification of less sensitive components to save time. |

| Mesh Convergence | Ensuring the FE solution does not change significantly with further mesh refinement [1]. | Finer meshes generally improve accuracy, but computation time grows steeply, typically faster than linearly in the number of degrees of freedom. |

| Uncertainty Quantification | The process of characterizing and reducing uncertainties in model predictions. | Critical for assessing the reliability of a personalized simulation, adding to the overall computational burden. |

Quantifying the Trade-offs: Accuracy, Time, and Model Complexity

The relationship between model complexity, accuracy, and solution time is not linear. Small increases in fidelity can lead to large increases in computational cost. The primary factors contributing to this trade-off are mesh density, material model complexity, and the degree of personalization.

The Impact of Discretization: Mesh Convergence

The finite element method relies on discretizing a continuous domain into a mesh of simple elements. The fineness of this mesh is a primary lever controlling accuracy and time. A mesh that is too coarse (under-discretized) produces an overly stiff solution that does not capture stress concentrations, while an excessively fine mesh consumes disproportionate computational resources for diminishing returns in accuracy [1]. A mesh convergence study is the standard verification step for finding this balance: the mesh is iteratively refined until the change in a key output variable (e.g., peak stress) falls below a predefined threshold, often suggested as less than 5% [1].
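The refinement loop can be sketched on a problem small enough to check by hand. The example below assumes a one-dimensional tapered bar, fixed at one end and loaded axially at the tip, discretized with two-node linear elements; the geometry, normalized units, and function names are hypothetical. The analytical tip displacement is used only to show how far the "converged" mesh still is from the exact answer.

```python
import math

TAPER = 0.95  # cross-section shrinks linearly to 5% of its root area (assumed geometry)

def tip_displacement(n_elems, length=1.0, e_mod=1.0, area0=1.0, load=1.0):
    """Tip displacement of a tapered bar using n linear elements in series."""
    h = length / n_elems
    u_tip = 0.0
    for i in range(n_elems):
        x_mid = (i + 0.5) * h
        area_mid = area0 * (1.0 - TAPER * x_mid / length)
        stiffness = e_mod * area_mid / h  # exact element stiffness for a linear area variation
        u_tip += load / stiffness         # elements act as springs in series under a tip load
    return u_tip

def refine_until_converged(tol=0.05, max_doublings=12):
    """Double the element count until the relative change in tip displacement is below tol."""
    n = 1
    previous = tip_displacement(n)
    for _ in range(max_doublings):
        n *= 2
        current = tip_displacement(n)
        if abs(current - previous) / abs(current) < tol:
            return n, current
        previous = current
    raise RuntimeError("mesh did not converge within the allowed refinements")

n_conv, u_conv = refine_until_converged()
u_exact = math.log(1.0 / (1.0 - TAPER)) / TAPER  # analytical solution in normalized units
print(f"converged with {n_conv} elements: u = {u_conv:.4f}, exact = {u_exact:.4f}")
```

The coarse meshes underestimate the displacement, consistent with the overly stiff behavior of under-discretized models. Meeting the change-between-meshes threshold does not by itself guarantee the same level of error against the exact solution, which is why the threshold should be chosen with the intended use in mind.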

Material and Geometric Nonlinearities

Biological tissues exhibit complex, nonlinear mechanical behaviors. Modeling these behaviors with sophisticated constitutive laws (e.g., hyperelastic, viscoelastic) is more accurate than simple linear models but requires significantly more computational effort due to the need for iterative solution techniques [31] [1]. Similarly, geometric nonlinearities, which arise when a structure undergoes large deformations, further increase the computational cost. The decision to include these nonlinearities is a direct trade-off between physical realism and simulation time.

Table 2: Computational Cost and Accuracy of Common Modeling Choices

| Modeling Aspect | Low-Cost / Less Accurate Approach | High-Cost / More Accurate Approach | Impact on Computational Time |

|---|---|---|---|

| Mesh Density | Coarse mesh with few elements. | Fine, converged mesh; adaptive meshing. | Steep, typically faster-than-linear growth in solver time as the number of degrees of freedom increases. |

| Material Model | Linear elastic, isotropic. | Nonlinear, anisotropic, viscoelastic. | Significant increase due to iterative solvers and complex state evaluations. |

| Geometry | Template or simplified anatomy (e.g., MNI152 head model) [30]. | Patient-specific geometry from high-resolution MRI/CT. | Increase due to complex mesh generation and more irregular geometry. |

| Physics | Quasi-static analysis. | Dynamic analysis; coupled physics (e.g., fluid-structure interaction). | Large increase from time-stepping and solving multiple physical fields. |

| Solver | Direct solver for linear problems. | Iterative solver with preconditioning for nonlinear problems. | Varies; iterative solvers can be more efficient for large, sparse systems. |

Methodologies for Quantitative Error Assessment

To rationally navigate the accuracy-time trade-off, researchers must employ rigorous methodologies for quantitative error assessment. These methodologies provide the data needed to decide if a model is "good enough" for its intended purpose.

Experimental Validation Protocols

Validation requires high-quality experimental data that captures the essential physics the model intends to predict. A well-designed validation experiment for a biomechanical model should:

- Replicate Boundary Conditions: The experimental setup must accurately mimic the loading and constraints defined in the simulation [31].

- Measure Quantities of Interest: The experimental outputs (e.g., strain, displacement, force) should be the same as the primary outputs of the simulation.

- Quantify Discrepancy: Use metrics like the L²-norm of the difference between the simulated and experimental data fields to provide a scalar measure of error [32]. For example, one study on forging processes highlighted that even advanced FE code-simulations could not accurately capture all nonlinear behaviors, underscoring the need for rigorous, quantitative comparison with physical data [31].
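A discrete version of this discrepancy measure can be sketched as below, assuming the simulated and measured fields have been sampled at the same locations. The function name and the strain values are hypothetical, and normalizing by the norm of the measured field is one common choice rather than a requirement.

```python
import math

def relative_l2_error(simulated, measured):
    """Discrete L2 norm of (simulated - measured), normalized by the L2 norm of measured."""
    if len(simulated) != len(measured):
        raise ValueError("fields must be sampled at the same points")
    diff_norm = math.sqrt(sum((s - m) ** 2 for s, m in zip(simulated, measured)))
    ref_norm = math.sqrt(sum(m ** 2 for m in measured))
    return diff_norm / ref_norm

# Hypothetical strain values (microstrain) at five matched gauge locations.
measured_strain = [410.0, 525.0, 630.0, 480.0, 390.0]
simulated_strain = [395.0, 540.0, 655.0, 470.0, 402.0]
error = relative_l2_error(simulated_strain, measured_strain)
print(f"relative L2 error = {error:.3f}")
```

A single scalar like this is convenient for tracking model revisions, but it hides where in the field the disagreement occurs, so it is usually reported alongside pointwise comparisons.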

The Statistical Finite Element (statFEM) Approach

A modern approach to error analysis is the statistical Finite Element (statFEM) method. statFEM provides a probabilistic framework that synthesizes measurement data with a finite element model. It uses a Gaussian process prior to model the discrepancy between the simulation and the true system response. This approach allows for a rigorous quantification of uncertainty in model predictions, accounting for both errors in the model itself and noise in the measurement data [32]. Error estimates in statFEM show polynomial rates of convergence in the numbers of measurement points and finite element basis functions, directly linking model refinement to predictive accuracy [32].

Emerging Strategies for Balancing Accuracy and Time

Several advanced strategies are being developed to break away from the traditional accuracy-time dichotomy.

Machine Learning as a Surrogate

Machine learning (ML) is increasingly used to create data-driven surrogate models. These surrogates learn the mapping between input parameters (e.g., geometry, load) and output fields (e.g., stress, strain) from a set of high-fidelity FE simulations. Once trained, the surrogate can make near-instantaneous predictions, offering speedups of several orders of magnitude for specific scenarios [33]. There are two predominant approaches:

- Direct Surrogate Modeling: A model (often a deep neural network) directly predicts the quantity of interest.

- Reduced-Order Models (ROMs): The high-dimensional system is projected onto a lower-dimensional subspace in which the solution can be computed efficiently [33].

The primary challenges remain the generalizability of these models beyond their training data and the significant computational cost required to generate the training dataset.
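The projection step behind reduced-order models can be sketched with proper orthogonal decomposition (POD), one common way to build the low-dimensional subspace. The source does not say which reduction technique is used, so POD is an assumption here, as are the synthetic snapshot data and the function name. The sketch covers only the basis construction and projection, not a full reduced solver.

```python
import numpy as np

def pod_basis(snapshots, energy=0.999):
    """Return an orthonormal basis capturing the requested energy fraction.

    snapshots: array of shape (n_dof, n_snapshots), one FE solution per column.
    """
    u, s, _ = np.linalg.svd(snapshots, full_matrices=False)
    cumulative = np.cumsum(s ** 2) / np.sum(s ** 2)
    # Smallest number of modes whose cumulative energy reaches the target.
    n_modes = min(int(np.searchsorted(cumulative, energy)) + 1, len(s))
    return u[:, :n_modes]

# Synthetic "high-fidelity" snapshots: two spatial shapes scaled by the load.
x = np.linspace(0.0, 1.0, 50)
loads = np.linspace(0.5, 2.0, 10)
snapshots = (np.outer(np.sin(np.pi * x), loads)
             + 0.3 * np.outer(np.sin(2.0 * np.pi * x), loads ** 2))

basis = pod_basis(snapshots)
reduced = basis.T @ snapshots          # coordinates in the reduced subspace
reconstructed = basis @ reduced        # lift back to the full space
rel_error = np.linalg.norm(snapshots - reconstructed) / np.linalg.norm(snapshots)
print(f"modes kept: {basis.shape[1]}, relative reconstruction error: {rel_error:.2e}")
```

The reconstruction error measured here applies only to the training snapshots. Error on unseen loads or geometries has to be checked separately, which is the generalizability concern raised above.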

Physics-Informed and Scientific Machine Learning (SciML)

To improve the generalizability of pure data-driven models, Scientific Machine Learning (SciML) incorporates physical laws (e.g., partial differential equations for conservation of momentum) directly into the learning process [33]. This "physics-informed" approach steers model predictions toward physically plausible solutions, even in regions of the parameter space not covered by training data. This hybridization of CFD/FEA solvers with data-driven models is a crucial step toward deploying reliable, fast models for engineering design [33].
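A minimal sketch of how a physical law can enter the training objective is shown below. It assumes a 1D elastic bar in equilibrium, `stiffness * u'' + body_force = 0`, on a uniform grid, and evaluates the loss for a candidate displacement field rather than training a network. The governing equation, the weighting, and all names are illustrative assumptions, not the formulation used in [33].

```python
import numpy as np

def physics_informed_loss(u_pred, x, u_data, data_idx, body_force,
                          stiffness=1.0, weight=1.0):
    """Data misfit plus mean squared residual of stiffness*u'' + f = 0."""
    # Data term: match the sparse measurements.
    data_loss = np.mean((u_pred[data_idx] - u_data) ** 2)
    # Physics term: central-difference second derivative at interior nodes.
    h = x[1] - x[0]
    u_xx = (u_pred[2:] - 2.0 * u_pred[1:-1] + u_pred[:-2]) / h ** 2
    residual = stiffness * u_xx + body_force[1:-1]
    physics_loss = np.mean(residual ** 2)
    return data_loss + weight * physics_loss

x = np.linspace(0.0, 1.0, 101)
body_force = np.ones_like(x)
exact = 0.5 * x * (1.0 - x)                 # satisfies u'' = -1, u(0) = u(1) = 0
data_idx = np.array([0, 50, 100])           # three hypothetical sensor locations
u_data = exact[data_idx]

# A field that fits the three data points but violates equilibrium elsewhere.
wrong = 0.125 * np.sin(np.pi * x)

loss_exact = physics_informed_loss(exact, x, u_data, data_idx, body_force)
loss_wrong = physics_informed_loss(wrong, x, u_data, data_idx, body_force)
print(f"consistent field: {loss_exact:.2e}, inconsistent field: {loss_wrong:.2e}")
```

Both candidate fields match the sparse data almost exactly, so a purely data-driven loss could not tell them apart. The physics term is what penalizes the second field, and that is how the added constraint helps outside the region covered by data.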

The following workflow diagram illustrates how these modern methodologies integrate with traditional FEA to optimize the balance between accuracy and computational time.

The Scientist's Toolkit: Essential Research Reagents

Navigating the computational trade-offs in FEA requires a suite of software and methodological tools. The table below details key "research reagents" essential for conducting rigorous studies in this field.

Table 3: Essential Computational Tools for Personalized FEA

| Tool / Reagent | Function | Role in Managing Accuracy-Time Trade-off |
|---|---|---|
| Automated Segmentation Software | Converts medical images (MRI, CT) into 3D geometric models of anatomical structures [30]. | Reduces time for model personalization; accuracy of segmentation directly impacts model fidelity. |
| Mesh Generation Software | Creates the finite element mesh from the 3D geometry. | Allows for control over mesh density and quality, directly influencing the accuracy and computational cost. |
| FE Software with Nonlinear Solvers | Solves the system of equations governing the physics of the problem (e.g., Abaqus, FEBio). | The choice of solver (implicit/explicit) and its settings can drastically affect solution time for complex problems. |
| Statistical Finite Element (statFEM) Code | Probabilistic framework that synthesizes FEA with measurement data [32]. | Quantifies uncertainty, allowing informed decisions about model refinement and reliability of predictions. |
| Machine Learning Libraries (e.g., PyTorch, TensorFlow) | Enables the development of surrogate models and physics-informed neural networks [33]. | Used to create fast-running models that approximate high-fidelity FEA, bypassing the original computational cost. |
| Validation Experiment Kit | Physical setup for measuring biomechanical quantities (e.g., force, strain, displacement) [31] [1]. | Provides the ground-truth data required to validate models and quantify error, closing the loop on model development. |

The trade-off between accuracy and computational time is a central challenge in personalized finite element analysis. Effectively managing this trade-off requires a disciplined approach centered on the principles of verification, validation, and error quantification. While increasing model complexity generally improves accuracy, it incurs a heavy computational penalty. Emerging strategies, particularly statistical finite element methods and physics-informed machine learning, offer promising pathways to transcend this traditional trade-off by providing fast, quantifiably reliable predictions. For researchers in biomechanics and drug development, adopting these rigorous methodologies is not merely a technical exercise but a fundamental requirement for building credible, clinically relevant computational models.

In computational biomechanics and drug development, the adoption of deep learning models is often hampered by two interconnected challenges: significant prediction errors and profound opacity in decision-making. These black-box AI systems expose only their inputs and outputs, while their internal workings remain obscure, complicating their application in mission-critical research such as surgical planning or pharmaceutical development [34]. This opacity is not merely an inconvenience; it masks potential biases, impedes model debugging, and can lead to overconfident predictions on novel data, thereby introducing substantial risks in scientific and clinical contexts [34] [35] [36]. The core of the problem lies in the inherent complexity of deep neural networks, which can comprise hundreds or thousands of layers, each containing numerous neurons. While this architecture enables the identification of complex, non-linear patterns, it also renders the model's reasoning process virtually impossible for humans to decipher through direct inspection [34].

The drive for explainability is particularly urgent in computationally intensive fields like biomechanics, where models inform critical decisions. For instance, in augmented reality (AR)-guided surgical navigation, inaccurate deformation modeling of organs can lead to misalignment between preoperative models and intraoperative anatomy, directly compromising patient safety [37]. Similarly, in drug-target interaction (DTI) prediction, traditional deep learning models lack probability calibration, often producing high prediction probabilities even in low-confidence situations. This "overconfidence" can push false positives into experimental validation stages, wasting valuable resources and potentially delaying the entire drug discovery pipeline [36]. Therefore, understanding and mitigating these limitations is not an academic exercise but a necessary step toward building reliable, trustworthy, and deployable AI systems in computational life sciences.

Quantitative Evidence of Deep Learning Limitations

Recent rigorous benchmarking studies have provided sobering evidence that the performance of complex deep learning models can often be matched or even surpassed by deliberately simple baselines. A 2024 study critically evaluated five foundation models and two other deep learning models for predicting transcriptome changes after genetic perturbations, comparing them against simplistic baselines like a 'no change' model and an 'additive' model [38].

Table 1: Benchmarking Performance of Deep Learning Models vs. Simple Baselines in Genetic Perturbation Prediction

| Model Category | Representative Models | Key Finding | Performance on Double Perturbation Prediction | Performance on Unseen Perturbation Prediction |
|---|---|---|---|---|
| Foundation Models | scGPT, scFoundation | Failed to outperform simple additive baseline for double perturbations [38] | Higher prediction error (L2 distance) than additive baseline [38] | Unable to consistently outperform mean prediction or linear models [38] |
| Other Deep Models | GEARS, CPA | Particularly uncompetitive in double perturbation benchmark [38] | All models had substantially higher prediction error than additive baseline [38] | GEARS performed similarly to linear models using its own pretrained embeddings [38] |
| Simple Baselines | 'No change', 'Additive' | Set competitive performance benchmarks despite their simplicity [38] | Additive model used sum of individual logarithmic fold changes [38] | Linear model with perturbation data pretraining consistently outperformed foundation models [38] |

This benchmarking exercise revealed that none of the sophisticated deep learning models could outperform the simple additive baseline for predicting double perturbation effects. Furthermore, when predicting the effects of unseen perturbations, none consistently outperformed the simple mean prediction or a straightforward linear model [38]. These findings align with other benchmarks in different domains. For example, in rice leaf disease detection, models like InceptionV3 and EfficientNetB0 achieved high classification accuracies but demonstrated poor feature selection capabilities, indicating they were learning from irrelevant image features rather than pathologically significant patterns—a phenomenon known as the Clever Hans effect [39]. This reliance on spurious correlations severely limits a model's reliability when deployed in real-world agricultural settings [39].

Experimental Protocols for Model Evaluation

Benchmarking Genetic Perturbation Prediction

The protocol for evaluating genetic perturbation prediction models provides a robust template for rigorous assessment. The study utilized data where 100 individual genes and 124 pairs of genes were upregulated in K562 cells using a CRISPR activation system [38].

Methodology:

- Data Preparation: Expression data for 19,264 genes under 224 perturbations plus a control were used. The double perturbations were split, with 62 used for training and 62 held out for testing [38].

- Model Fine-tuning: All models were fine-tuned on all 100 single perturbations and the 62 training double perturbations. The analysis was run five times with different random partitions for robustness [38].

- Evaluation Metric: The primary metric was the L2 distance between predicted and observed expression values for the 1,000 most highly expressed genes. This was supplemented by examining Pearson delta and L2 distances for other gene subsets [38].

- Interaction Prediction: Genetic interactions were operationalized as double perturbation phenotypes that differed from the additive expectation more than expected under a Normal distribution null model. True-positive rates and false discovery proportions were calculated across prediction thresholds [38].
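The additive baseline and the primary metric described above can be sketched in a few lines. The sketch assumes log-scale expression vectors and ranks genes by control expression to pick the most highly expressed ones; the exact ranking criterion in [38] is not stated here, so that choice, the function names, and the synthetic data are assumptions.

```python
import numpy as np

def additive_prediction(control, single_a, single_b):
    """Control expression plus the two single-perturbation log fold changes."""
    return control + (single_a - control) + (single_b - control)

def l2_top_expressed(predicted, observed, control, n_top=1000):
    """L2 distance restricted to the n_top genes with highest control expression."""
    top = np.argsort(control)[::-1][:n_top]
    return float(np.linalg.norm(predicted[top] - observed[top]))

# Synthetic log-expression profiles for illustration only.
rng = np.random.default_rng(0)
n_genes = 5000
control = rng.normal(2.0, 1.0, n_genes)
single_a = control + rng.normal(0.0, 0.2, n_genes)
single_b = control + rng.normal(0.0, 0.2, n_genes)
# Simulated double perturbation: additive effect plus a small deviation.
observed_double = (additive_prediction(control, single_a, single_b)
                   + rng.normal(0.0, 0.05, n_genes))

additive_error = l2_top_expressed(
    additive_prediction(control, single_a, single_b), observed_double, control)
no_change_error = l2_top_expressed(control, observed_double, control)
print(f"additive: {additive_error:.2f}, no change: {no_change_error:.2f}")
```

Any learned model would be scored with the same `l2_top_expressed` call, so a deep model justifies its cost in this benchmark only if its error falls below that of the additive baseline.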

Quantitative Evaluation of Explainable AI (XAI)

For tasks like medical image analysis, a comprehensive three-stage methodology, supplemented by an overfitting analysis, moves beyond mere classification accuracy to assess model reliability through Explainable AI (XAI) [39].

Methodology:

- Traditional Performance Evaluation: Models are first assessed using standard metrics like accuracy, precision, recall, and F1-score [39].

- Qualitative XAI Analysis: Techniques like Local Interpretable Model-agnostic Explanations (LIME) or Grad-CAM generate heatmaps to visualize the image regions the model considered important for its decision. This is assessed through visual inspection [39].

- Quantitative XAI Analysis: The similarity between the XAI heatmap and a ground-truth region of interest is measured using metrics such as Intersection over Union (IoU) and the Dice Similarity Coefficient (DSC). This provides an objective measure of whether the model focuses on clinically relevant features [39].

- Overfitting Ratio Calculation: A novel metric quantifies the model's reliance on insignificant features, with a higher ratio indicating poorer reliability for real-world application [39].
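The quantitative XAI step can be sketched as follows. The snippet binarizes a saliency heatmap and compares it with a ground-truth region of interest using IoU and DSC. The threshold of 0.5 and the function name are assumptions. The overfitting ratio is not implemented because its definition is not given here.

```python
import numpy as np

def iou_and_dice(heatmap, roi_mask, threshold=0.5):
    """IoU and Dice between a thresholded heatmap and a binary ROI mask."""
    pred = np.asarray(heatmap, dtype=float) >= threshold
    truth = np.asarray(roi_mask).astype(bool)
    intersection = np.logical_and(pred, truth).sum()
    union = np.logical_or(pred, truth).sum()
    total = pred.sum() + truth.sum()
    # Two empty masks are treated as a perfect match by convention.
    iou = intersection / union if union else 1.0
    dice = 2.0 * intersection / total if total else 1.0
    return float(iou), float(dice)

# Toy 2x2 example: the model attends to the top row, the ROI is the left column.
heatmap = np.array([[0.9, 0.8],
                    [0.1, 0.2]])
roi = np.array([[1, 0],
                [1, 0]])
iou, dice = iou_and_dice(heatmap, roi)
print(f"IoU = {iou:.3f}, DSC = {dice:.3f}")
```

In the toy case only one of the three pixels in the union is shared, so both scores are low even though a classifier using this heatmap could still report high accuracy.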

Table 2: Three-Stage Protocol for Evaluating Deep Learning Model Reliability [39]

| Stage | Purpose | Key Actions | Output Metrics |
|---|---|---|---|
| 1. Traditional Evaluation | Assess classification performance | Train and test models on labeled datasets | Accuracy, Precision, Recall, F1-score |
| 2. Qualitative XAI Analysis | Visualize model decision basis | Apply XAI techniques (e.g., LIME) to generate heatmaps | Saliency maps highlighting important regions |
| 3. Quantitative XAI Analysis | Objectively measure feature alignment | Calculate similarity between heatmaps and ground-truth regions | IoU, DSC, Specificity, Matthews Correlation Coefficient (MCC) |
| Overfitting Analysis | Quantify reliance on insignificant features | Measure model's attention to irrelevant image areas | Overfitting Ratio (lower is better) |

Frameworks for Quantifying Uncertainty and Improving Interpretability

Evidential Deep Learning for Reliable Predictions

To address overconfidence in predictions, particularly for novel data, Evidential Deep Learning (EDL) offers a framework for uncertainty quantification. Applied to drug-target interaction prediction, EDL models like EviDTI integrate multiple data dimensions—drug 2D graphs, 3D structures, and target sequence features—and output both a prediction probability and an uncertainty estimate [36]. This is achieved by replacing the standard softmax output layer with an evidence layer that parameterizes a Dirichlet distribution, allowing the model to express its confidence level explicitly [36]. In practical terms, this means that when the model encounters a drug-target pair that is structurally different from its training data, it can output a high uncertainty score, signaling to researchers that the prediction requires further validation. This uncertainty information can prioritize which DTIs to advance to costly experimental validation, thereby increasing the efficiency of the drug discovery process [36].
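The evidence layer can be illustrated with the commonly used evidential formulation, in which non-negative evidence `e_k` defines Dirichlet parameters `alpha_k = e_k + 1`, the expected class probability is `alpha_k / S` with `S = sum(alpha)`, and the uncertainty is `K / S` for `K` classes. Whether EviDTI uses exactly this parameterization or a softplus activation is an assumption; the sketch shows only the output transformation, not the network or its training loss.

```python
import numpy as np

def evidential_output(logits):
    """Map raw network outputs to Dirichlet-based probabilities and uncertainty."""
    logits = np.asarray(logits, dtype=float)
    evidence = np.logaddexp(0.0, logits)        # softplus keeps evidence >= 0
    alpha = evidence + 1.0                      # Dirichlet concentration parameters
    strength = alpha.sum(axis=-1, keepdims=True)
    prob = alpha / strength                     # expected class probabilities
    n_classes = logits.shape[-1]
    uncertainty = n_classes / strength.squeeze(-1)   # in (0, 1]; 1 means no evidence
    return prob, uncertainty

# Hypothetical binary interaction logits: [no interaction, interaction].
confident_prob, confident_u = evidential_output([-10.0, 10.0])
unfamiliar_prob, unfamiliar_u = evidential_output([-20.0, -20.0])
print(f"confident pair:  p = {confident_prob.round(3)}, u = {confident_u:.3f}")
print(f"unfamiliar pair: p = {unfamiliar_prob.round(3)}, u = {unfamiliar_u:.3f}")
```

When the network produces almost no evidence for any class, the probabilities fall back to near uniform and the uncertainty approaches 1. A softmax layer, by contrast, always normalizes to a probability vector that carries no separate measure of how much evidence supports it.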

Data-Driven Computational Mechanics