Learning Medicinal Chemistry Intuition: How AI Predicts Expert Evaluations in Drug Discovery

Abstract

This article explores the emerging field of computational prediction for medicinal chemist evaluations, a critical bottleneck in drug discovery. We cover the foundational principles of capturing chemical intuition, detailing how machine learning models, particularly preference learning algorithms, are trained on expert feedback to prioritize compounds. The methodological section examines the application of these AI proxies in real-world tasks like compound prioritization, motif rationalization, and biased de novo molecular design. We also address significant challenges including data quality, cognitive biases, and model interpretability, providing strategies for troubleshooting and optimization. Finally, for researchers and drug development professionals, the article presents validation frameworks and comparative analyses against traditional rule-based methods, and assesses the real-world impact of these models on accelerating lead optimization and improving clinical success rates.

The Quest to Quantify Chemistry Intuition: Foundations and Motivation

Defining Medicinal Chemistry Intuition in the Lead Optimization Process

Medicinal chemistry intuition represents the complex, experience-based knowledge that guides chemists in making critical decisions during lead optimization in drug discovery. This expertise, traditionally developed over years of practice, enables medicinal chemists to prioritize which compounds to synthesize and evaluate based on a subtle balance of activity, ADMET properties, and synthetic feasibility [1]. The lead optimization process is an inherently arduous endeavor where the collective input of medicinal chemists is weighed to achieve desired molecular property profiles [1]. While this human intuition has long been regarded as an art form, recent computational advances are now successfully capturing, quantifying, and even predicting these expert evaluations through artificial intelligence and machine learning approaches. This guide compares the emerging computational methodologies that aim to replicate and augment medicinal chemistry intuition, examining their experimental foundations, performance metrics, and practical applications in modern drug discovery pipelines.

Computational Approaches to Quantifying Chemistry Intuition

Defining the Experimental Framework

Research efforts to computationally capture medicinal chemistry intuition employ carefully designed experimental protocols that collect and model expert decision-making. The core methodology involves presenting chemists with compound pairs and recording their preferences, then using this data to train machine learning models that can predict these preferences [1]. These studies typically involve several key phases:

Data Collection Design: Researchers use pairwise comparison-based studies to minimize cognitive biases like the "anchoring effect" that plagued earlier Likert-scale approaches [1]. This method, inspired by multiplayer game ranking systems, frames compound evaluation as a preference learning problem rather than absolute scoring.

Participant Selection: Studies typically involve diverse chemistry experts, including wet-lab, computational, and analytical chemists. For example, one published study engaged 35 Novartis chemists who provided over 5,000 annotations over several months [1], while another Sanofi study involved 92 researchers with diverse scientific expertise [2].

Model Training: Collected preferences train machine learning models, typically using neural networks or Bayesian classifiers, to predict chemist choices. These models learn implicit scoring functions that capture the subtleties of medicinal chemistry intuition [1] [3].
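The pairwise framing described above can be illustrated with a minimal sketch. It assumes a linear scoring function trained with a logistic (Bradley-Terry style) loss on feature differences, which is a simplification and not the neural network used in [1]. The two-dimensional features, the hidden `true_w` preference, and all function names are hypothetical and exist only to generate synthetic "chemist" choices.

```python
import math
import random


def score(w, x):
    """Implicit score of one compound: a plain dot product (assumed form)."""
    return sum(wi * xi for wi, xi in zip(w, x))


def train_pairwise_scorer(pairs, n_features, epochs=200, lr=0.1):
    """Fit w so that sigmoid(score(a) - score(b)) approximates P(a preferred).

    pairs: list of (features_a, features_b, label); label is 1 if the
    annotator picked a and 0 if they picked b.
    """
    w = [0.0] * n_features
    for _ in range(epochs):
        for xa, xb, label in pairs:
            diff = [a - b for a, b in zip(xa, xb)]
            z = max(-30.0, min(30.0, score(w, diff)))  # clamp for stability
            p = 1.0 / (1.0 + math.exp(-z))
            # Gradient of the log-loss with respect to w is (p - label) * diff.
            for j in range(n_features):
                w[j] -= lr * (p - label) * diff[j]
    return w


# Synthetic demo: a hidden preference generates the pairwise labels.
rng = random.Random(0)
true_w = [1.0, -2.0]  # hypothetical "intuition", for illustration only
compounds = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(40)]
pairs = []
for _ in range(300):
    a, b = rng.sample(compounds, 2)
    pairs.append((a, b, 1 if score(true_w, a) > score(true_w, b) else 0))

w = train_pairwise_scorer(pairs, n_features=2)
agreement = sum(
    (score(w, a) > score(w, b)) == bool(label) for a, b, label in pairs
) / len(pairs)
```

Once trained, `score(w, x)` can rank any single compound, which is the sense in which pairwise labels yield an implicit scoring function. Real expert labels are noisy and inconsistent, so agreement on actual annotations would be expected to be well below what this noise-free toy achieves.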

Table 1: Key Experimental Parameters in Intuition Capture Studies

| Parameter | Novartis Study [1] | NIH Probes Study [3] | Sanofi Study [2] |
|---|---|---|---|
| Participants | 35 chemists | 1 experienced medicinal chemist (>40 years) | 92 researchers |
| Data Points | 5,000+ pairwise comparisons | 300+ NIH chemical probes evaluated | Lead optimization exercise |
| Evaluation Method | Pairwise comparisons | Binary classification (desirable/undesirable) | Collective intelligence exercise |
| Model Type | Neural network with active learning | Bayesian classifiers | Collective intelligence agent |
| Key Metrics | AUROC, Fleiss' κ, Cohen's κ | Accuracy compared to rule-based filters | ADMET endpoint prediction |

Performance Validation and Agreement Metrics

Quantifying how well computational models capture human intuition requires robust validation metrics. Research studies employ both inter-rater agreement statistics (to measure consensus among chemists) and machine learning performance metrics (to evaluate prediction accuracy).

In the Novartis study, inter-rater agreement measured by Fleiss' κ showed moderate agreement between chemists (κF₁=0.4, κF₂=0.32), while intra-rater agreement measured by Cohen's κ showed fair consistency in individual chemist decisions (κC₁=0.6, κC₂=0.59) [1]. These values indicate that while medicinal chemists demonstrate consistent personal preferences, there remains significant variability between different experts' intuition.
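For readers unfamiliar with the two statistics, the sketch below computes Cohen's κ (two sets of ratings over the same items, for example one chemist answering repeated pairs twice) and Fleiss' κ (several raters per item) using their standard textbook definitions. The function names and toy inputs are illustrative assumptions; this is not the analysis code from [1].

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two equally long lists of categorical ratings."""
    n = len(ratings_a)
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    # Chance agreement from each rater's marginal category frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (observed - expected) / (1 - expected)


def fleiss_kappa(counts):
    """Fleiss' kappa.

    counts[i][j] is the number of raters who put item i in category j;
    every row must sum to the same number of raters (at least 2).
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    n_cats = len(counts[0])
    p_cat = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_cats)
    ]
    per_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(per_item) / n_items
    p_exp = sum(p * p for p in p_cat)
    return (p_bar - p_exp) / (1 - p_exp)


# Toy example: the same rater answering four repeated pairs twice
# (1 = preferred the left compound, 0 = preferred the right one).
first_pass = [1, 1, 0, 0]
second_pass = [1, 0, 0, 0]
intra = cohens_kappa(first_pass, second_pass)

# Toy example: three raters, two items, two categories, full agreement.
inter = fleiss_kappa([[3, 0], [0, 3]])
```

Both statistics correct raw agreement for the agreement expected by chance, which is why they can be much lower than the simple fraction of matching answers.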

For predictive performance, the Novartis study reported steady improvement in area under the receiver-operating characteristic (AUROC) curve values as more data became available, starting from 0.6 and surpassing 0.74 with 5,000 available pairs [1]. This performance continued to improve without plateauing, suggesting that additional data could further enhance model accuracy.
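As a reminder of what the AUROC figure measures, the following sketch computes it directly from its rank interpretation: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as one half. The labels and scores are made-up placeholders, not data from [1].

```python
def auroc(labels, scores):
    """AUROC via pairwise comparison of positive and negative scores."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Placeholder predictions for four annotated pairs.
toy_labels = [1, 1, 0, 0]
toy_scores = [0.9, 0.4, 0.6, 0.1]
toy_auc = auroc(toy_labels, toy_scores)
```

A value of 0.5 corresponds to random guessing and 1.0 to perfect ranking, so the reported rise from 0.6 to above 0.74 reflects a model that is better than chance but still far from reproducing every expert choice.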

Comparative Analysis of Computational Methodologies

Approach Comparison: Techniques and Applications

Multiple computational strategies have emerged to capture and replicate medicinal chemistry intuition, each with distinct methodological foundations and application strengths.

Table 2: Comparison of Computational Intuition Capture Approaches

| Approach | Methodological Foundation | Key Advantages | Limitations | Representative Study |
|---|---|---|---|---|
| Preference Machine Learning | Pairwise comparisons with neural networks | Captures subtle preferences; minimizes cognitive bias | Requires extensive data collection | Novartis Study [1] |
| Bayesian Classification | Expert binary classifications with Bayesian models | Interpretable models; works with smaller datasets | Depends on single expert perspective | NIH Probes Study [3] |
| Collective Intelligence | Aggregation of diverse expert opinions | Outperforms individuals for ADMET endpoints | Complex to coordinate multiple experts | Sanofi Study [2] |
| Rule-Based Filtering | Structural alerts and property rules | Transparent and easily implementable | Misses subtleties of chemical intuition | PAINS, REOS, Lilly Rules [3] |

Performance Across Drug Discovery Tasks

Different computational approaches demonstrate varying strengths across specific lead optimization tasks. The Sanofi collective intelligence study revealed that for most ADMET endpoints except hERG inhibition, collective intelligence outperformed artificial intelligence models [2]. This highlights the complementary value of human expertise and computational approaches in complex prediction tasks.

The Novartis preference learning approach demonstrated particular utility in compound prioritization, motif rationalization, and biased de novo drug design [1]. The learned scoring function captured aspects of chemistry intuition not covered by standard in silico metrics such as the quantitative estimate of drug-likeness (QED), with which it showed only moderate correlation (Pearson r < 0.4) [1].
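The comparison with QED rests on the Pearson correlation coefficient. A minimal sketch of that calculation follows; the two value lists are placeholders chosen only to make the code runnable and are not taken from [1].

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equally long numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (norm_x * norm_y)


# Placeholder values: hypothetical learned scores and QED values
# for the same five compounds.
learned_scores = [0.2, 1.5, -0.7, 0.9, 0.1]
qed_values = [0.61, 0.55, 0.48, 0.72, 0.80]
r = pearson_r(learned_scores, qed_values)
```

A low |r| between a learned score and an existing metric suggests the two encode different information, which is the argument made above for the learned score adding something beyond QED.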

Bayesian models developed to predict an expert chemist's evaluation of NIH chemical probes achieved accuracy comparable to other drug-likeness measures and filtering rules, successfully identifying problematic probes based on criteria including excessive literature references, lack of published data, and predicted chemical reactivity [3].

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagents and Computational Solutions

| Tool Category | Specific Solutions | Function in Intuition Research |
|---|---|---|
| Compound Databases | ZINC, ChEMBL, DrugBank [4] | Provide annotated compounds for preference studies and model training |
| Cheminformatics | RDKit [1], CDD Vault [3] | Calculate molecular properties and generate descriptors |
| Modeling Platforms | DeepChem [4], MolSkill [1] | Implement machine learning for preference prediction |
| Validation Tools | PAINS [3], QED [3], BadApple [3] | Benchmark learned models against established filters |
| Data Collection | Custom annotation platforms [1] | Present compound pairs and record chemist preferences |

Experimental Workflows in Intuition Capture Studies

The process of capturing and computationalizing medicinal chemistry intuition follows a systematic workflow that integrates human feedback with machine learning optimization.

Integration Pathways: From Intuition to Optimization

The application of captured medicinal chemistry intuition extends throughout the lead optimization process, integrating with established computational medicinal chemistry workflows.

Medicinal chemistry intuition, once considered an ineffable human expertise, is now being successfully captured, quantified, and augmented through computational approaches. Preference-based machine learning, Bayesian classification, and collective intelligence methodologies each offer distinct advantages for specific lead optimization challenges. The experimental evidence demonstrates that these computational proxies can replicate expert decision-making with increasing accuracy, providing objective validation for the subtle patterns underlying chemical intuition. As these approaches continue to evolve, integrating captured intuition with both traditional and contemporary drug discovery workflows promises to accelerate lead optimization cycles while preserving the valuable expertise that experienced medicinal chemists bring to drug development. The future of medicinal chemistry lies not in replacing human intuition, but in amplifying it through computational partnership.

The pharmaceutical industry stands at a pivotal juncture, grappling with Eroom's Law: the paradoxical observation that drug discovery costs rise exponentially despite technological advancements [5]. While artificial intelligence and computational methods promise to revolutionize therapeutic development, their ultimate impact hinges on a critical, often overlooked component: the systematic integration of expert human judgment. This guide examines how capturing and formalizing medicinal chemistry expertise transforms computational prediction from a black-box oracle into a reliable, interpretable partner in the drug discovery process.

The stakes could not be higher. Traditional discovery consumes over $2 billion and 10-15 years per approved drug, with approximately 90% of candidates failing in clinical trials [6] [7]. Computational approaches offer acceleration, but their true potential emerges only when they embody the nuanced decision-making frameworks of experienced scientists. This analysis compares emerging methodologies that bridge this human-AI divide, providing researchers with objective performance data and implementation frameworks to enhance their discovery pipelines.

Comparative Analysis: Computational Approaches with Expert Integration

Table 1: Performance Comparison of Expert-Informed Computational Approaches in Drug Discovery

| Methodology | Key Performance Metrics | Expert Integration Mechanism | Limitations |
|---|---|---|---|
| Expert-Defined Bayesian Networks [8] | Reduced causality assessment time from days to hours; High concordance with expert judgement | Explicit encoding of expert-defined probabilistic relationships | Limited to domains with well-established causal knowledge |
| Multi-Agent Co-Scientist (DiscoVerse) [9] | Near-perfect recall (≥0.99) with precision (0.71-0.91) on pharmaceutical queries | Role-specialized agents mirroring scientist workflows (preclinical, clinical, strategic) | Requires extensive historical organizational data |
| Large Quantitative Models (LQMs) [10] | Physics-based molecular simulations; Prediction of binding affinity, efficacy, toxicity | Grounded in first principles of physics, chemistry, and biology | High computational resource requirements |
| AI-Driven Predictive Platforms [6] | Identification of novel drug targets; Established immuno-oncology pipeline | Continuous refinement through learning from experimental failures | Platform-specific expertise may not generalize |
| Foundation Models for Biology [5] | Pattern detection across genomic, transcriptomic, proteomic datasets | Training on massive biological datasets to uncover fundamental "rules" | Limited success stories; biological complexity challenges model accuracy |

Table 2: Quantitative Performance Benchmarks Across Discovery Stages

| Discovery Stage | Traditional Approach Success Rate | Expert-Informed Computational Approach | Improvement Documented |
|---|---|---|---|
| Target Identification | ~5% of targets yield clinical candidates [5] | AI platforms with expert curation | 50-fold hit enrichment reported [11] |
| Lead Optimization | 6-12 months per cycle [11] | AI-guided retrosynthesis and DMTA cycles | Reduction to weeks [11] |
| Toxicity Prediction | 30% of failures due to toxicity [8] | Bayesian networks with expert causality assessment | High concordance with expert judgment [8] |
| Clinical Trial Design | High attrition from poor patient selection | Digital twins and AI-optimized trials [12] | Real-time adjustments based on ongoing data [13] |

Experimental Protocols and Methodologies

Expert-Defined Bayesian Network for Causality Assessment

Protocol Overview: This methodology formalizes expert judgment into a probabilistic framework for adverse drug reaction assessment [8].

Detailed Methodology:

- Expert Knowledge Elicitation: Structured interviews with pharmacovigilance experts identify key variables and their relationships

- Network Structure Definition: Construction of directed acyclic graphs capturing conditional dependencies between variables

- Parameter Estimation: Combination of expert-defined priors with historical data on drug safety profiles

- Validation Framework: Comparison of network outputs against independent expert judgments on test cases

- Iterative Refinement: Incorporation of new evidence and expert feedback to update network structure and parameters

Performance Metrics: Processing time reduction from days to hours while maintaining high concordance with expert judgment [8]
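To make the network-definition and parameter steps above concrete, here is a deliberately tiny sketch of an expert-defined Bayesian network with two parent nodes feeding one causality node, evaluated by exact enumeration. The node names, the structure, and every probability are illustrative placeholders rather than values or variables from [8].

```python
from itertools import product

# Hypothetical structure: temporal plausibility -> causal <- positive dechallenge.
# Prior probability that each parent variable is True (placeholder numbers).
prior = {"temporal": 0.5, "dechallenge": 0.4}

# Expert-elicited conditional table: P(causal = True | temporal, dechallenge).
cpt_causal = {
    (True, True): 0.90,
    (True, False): 0.50,
    (False, True): 0.30,
    (False, False): 0.05,
}


def p_causal(temporal=None, dechallenge=None):
    """P(causal = True) given any observed parents; None means unobserved.

    Unobserved parents are marginalised out using their priors, which is
    valid here because the two parents are independent root nodes.
    """
    total = 0.0
    for t, d in product([True, False], repeat=2):
        if temporal is not None and t != temporal:
            continue
        if dechallenge is not None and d != dechallenge:
            continue
        weight = 1.0
        if temporal is None:
            weight *= prior["temporal"] if t else 1.0 - prior["temporal"]
        if dechallenge is None:
            weight *= prior["dechallenge"] if d else 1.0 - prior["dechallenge"]
        total += weight * cpt_causal[(t, d)]
    return total


baseline = p_causal()                          # nothing observed
with_timing = p_causal(temporal=True)          # timing fits, dechallenge unknown
with_both = p_causal(temporal=True, dechallenge=True)
```

The validation step in the protocol would then compare outputs such as `with_both` against independent expert assessments of the same cases, and the refinement step would adjust the table entries where the two disagree.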

Multi-Agent Pharmaceutical Co-Scientist Evaluation

Protocol Overview: DiscoVerse implements a multi-agent system for reverse translation using historical pharmaceutical data [9].

Detailed Methodology:

- Corpus Curation: Assembly of a corpus covering 180 molecules from research repositories, totaling 0.87 billion tokens and spanning four decades

- Agent Specialization: Development of role-specific agents (preclinical, clinical, strategic) mirroring scientist workflows

- Semantic Retrieval Implementation: Cross-document linking with attention to terminology drift and synonymy

- Human-in-the-Loop Validation: Blinded expert evaluation of source-linked outputs for accuracy and utility

- Recall and Precision Calculation: Quantitative assessment on seven benchmark queries covering discontinuation rationale and organ-specific toxicity

Performance Metrics: Near-perfect recall (≥0.99) with precision ranging from 0.71-0.91 across pharmaceutical queries [9]
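The recall and precision figures quoted above follow the usual set-based definitions, sketched below for a single query. The document identifiers are hypothetical; the actual benchmark queries and relevance judgments are those described in [9].

```python
def precision_recall(retrieved, relevant):
    """Set-based precision and recall for one query."""
    retrieved, relevant = set(retrieved), set(relevant)
    true_positives = len(retrieved & relevant)
    precision = true_positives / len(retrieved) if retrieved else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical query: the system returns four documents, three of which
# the expert reviewers judged relevant, and no relevant document is missed.
returned_docs = ["doc_A", "doc_B", "doc_C", "doc_D"]
expert_relevant = ["doc_A", "doc_B", "doc_C"]
precision, recall = precision_recall(returned_docs, expert_relevant)
```

High recall with lower precision, as reported, means the system rarely misses relevant evidence but returns some extra material that a human reviewer still has to filter.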

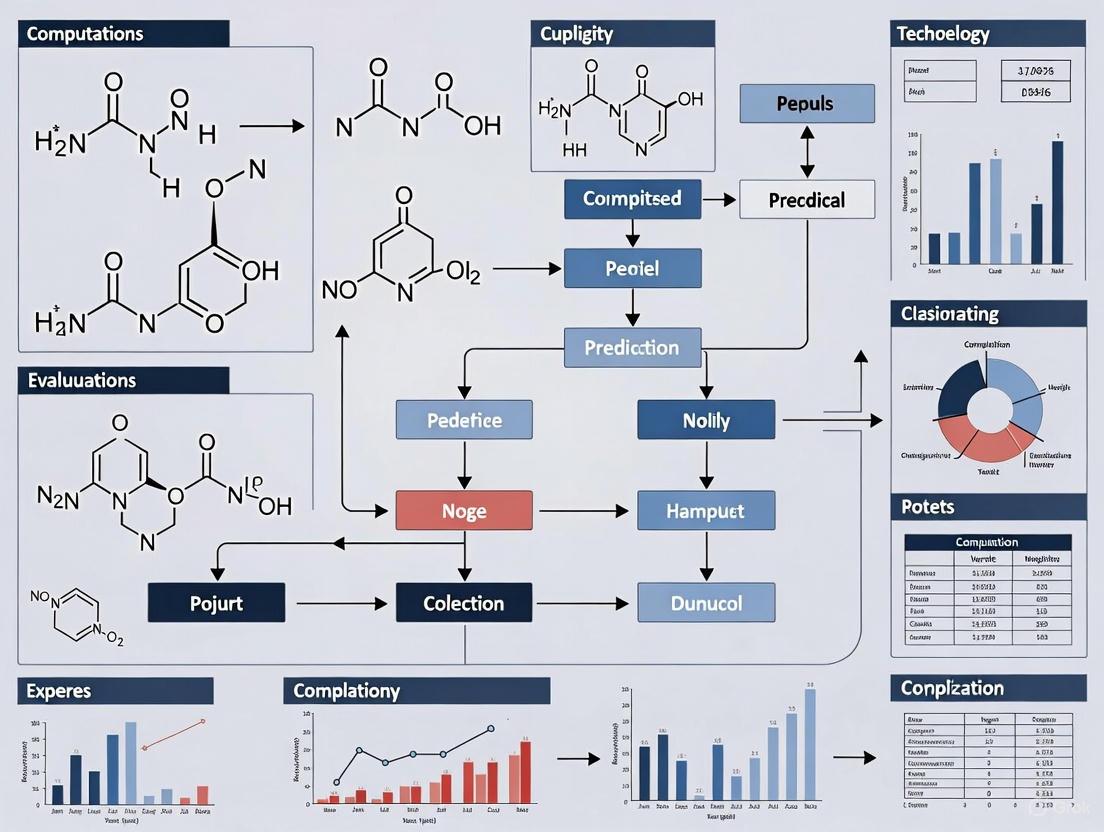

Visualization of Expert-Informed Computational Workflows

Multi-Agent Pharmaceutical Co-Scientist Architecture

Figure 1: Multi-Agent Pharmaceutical Co-Scientist Architecture. This diagram illustrates how the DiscoVerse system orchestrates specialized agents that mirror pharmaceutical scientist workflows, with each agent accessing historical knowledge bases to generate evidence-based answers [9].

Expert Judgment Integration in Computational Workflow

Figure 2: Expert Judgment Integration Workflow. This diagram illustrates the continuous feedback loop between expert knowledge, computational models, and experimental data that enables iterative refinement of predictive systems in drug discovery [8] [9].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Platforms for Expert-Informed Computational Discovery

| Tool/Platform | Function | Expert Integration Features |
|---|---|---|
| CETSA (Cellular Thermal Shift Assay) [11] | Validates direct target engagement in intact cells and tissues | Provides quantitative, system-level validation bridging biochemical potency and cellular efficacy |
| DiscoVerse Multi-Agent System [9] | Semantic retrieval and synthesis across historical pharmaceutical data | Role-specialized agents mirroring scientist workflows; preserves institutional memory |
| Bioptimus Foundation Model [5] | Universal AI foundation model for biology across multiple scales | Creates comprehensive multiscale representation of human biology from proteins to tissues |
| AI-Driven Predictive Platforms [6] | Target identification and candidate optimization | Continuous refinement through learning from experimental failures across multiple programs |
| vigiMatch Algorithm [8] | Identifies duplicate adverse event reports using ML | Analyzes similarities in patient demographics, drug information, and adverse event descriptions |
| Large Quantitative Models (LQMs) [10] | Physics-based molecular simulations | Grounded in first principles of physics, chemistry, and biology rather than literature patterns |

The integration of expert judgment with computational methodologies represents more than a technical enhancement—it constitutes a fundamental strategic imperative for overcoming Eroom's Law in pharmaceutical R&D. The comparative data presented in this guide demonstrates that approaches which successfully formalize and incorporate human expertise achieve superior performance across multiple metrics: from reduced cycle times and higher prediction accuracy to improved decision-making transparency.

As regulatory frameworks evolve to address AI implementation in drug development, the explainability and auditability afforded by expert-informed systems will become increasingly valuable [12]. The EMA's structured approach and FDA's flexible model both acknowledge the necessity of human oversight in computational applications affecting patient safety [12]. Future innovations will likely focus on enhanced knowledge capture methodologies, more sophisticated human-AI interaction paradigms, and standardized frameworks for validating expert-informed computational systems across the drug discovery lifecycle.

The organizations leading pharmaceutical innovation will be those that recognize expertise not as a competitor to computational efficiency, but as its essential enabler—creating discovery ecosystems where human experience and artificial intelligence operate in continuous, productive dialogue.

The field of drug discovery is undergoing a profound transformation, moving from traditional, intuition-based methods to approaches powered by massive data and computational intelligence. For decades, the painstaking process of identifying and optimizing potential drug candidates relied heavily on the expertise and pattern recognition capabilities of seasoned medicinal chemists. Today, this process is being systematically decoded and scaled through two interconnected paradigms: human-powered crowdsourcing and machine learning algorithms. This evolution represents more than a mere technological shift—it constitutes a fundamental reimagining of how expert decisions are captured, modeled, and ultimately enhanced to accelerate the journey from hypothesis to therapeutic. This guide examines the complementary roles of crowdsourcing and machine learning in modeling medicinal chemistry expertise, providing researchers with a practical framework for leveraging these technologies in computational prediction of medicinal chemist evaluations.

The Foundation: Data Generation through Crowdsourcing

Before machine learning models can emulate expert decisions, they require extensive, high-quality training data. Crowdsourcing platforms have emerged as critical infrastructure for generating the annotated datasets that power modern AI systems in drug discovery.

What is Crowdsourcing in AI Data Services?

Crowdsourcing platforms operate by breaking down large, complex data projects into smaller microtasks distributed to a global network of human workers [14]. This model creates a two-sided marketplace: businesses and AI teams access scalable, cost-effective solutions for data-intensive projects, while workers gain flexible earning opportunities contributing to AI development [14]. The scale of this ecosystem is substantial—leading platforms like Clickworker boast over 7 million registered workers across 136 countries, creating a diverse, on-demand workforce capable of handling tasks at immense scale [14].

Key Crowdsourcing Platforms and Their Capabilities

Table: Comparison of Major Data Crowdsourcing Platforms for AI Drug Discovery Applications

| Platform | AI Data Services | Specialized Capabilities | Workforce Scale | Quality Control Mechanisms |
|---|---|---|---|---|
| LXT (+ Clickworker) | AI training data generation, data annotation, RLHF | Full range of data types (image, video, audio, text); self-service platform & API; managed services | ~7 million workers (post-acquisition) | Qualification tests, gold standard tasks, multi-person validation [14] |
| Appen | Data collection, annotation, validation | User-friendly platform; wide data type coverage | Smaller participant network | Not specified in sources |
| Amazon Mechanical Turk | Data collection, annotation, market research | Quick, efficient data collection; user-friendly interface | Significantly smaller network; limited English skills | Basic platform controls |
| Toloka AI | Data labeling, cleaning, categorization | Covers all data types (image, video, text, audio) | ~200,000 workers | Platform-managed quality assurance |
| Prolific | AI data collection, academic research data | Specialization for research data; pairs with annotation tools | Not specified | Attention checks, representative sampling |

Experimental Protocols in Crowdsourced Data Generation

The quality of crowdsourced data directly impacts the performance of machine learning models trained on it. Rigorous experimental protocols are essential for ensuring data reliability:

Task Design and Instruction Clarity: Projects begin with meticulously designed tasks featuring unambiguous instructions with clear examples of correct and incorrect responses [14]. For drug discovery applications, this might involve precise guidelines for classifying molecular structures or identifying protein binding sites.

Workforce Targeting and Selection: Platforms enable researchers to filter workers by qualifications, demographics, or performance history [14]. For specialized medicinal chemistry tasks, this might involve targeting workers with scientific backgrounds or high accuracy scores on previous chemistry-related tasks.

Multi-Layered Quality Control: Effective implementations combine several quality assurance methods:

- Qualification Tests: Workers must pass unpaid tests demonstrating understanding before accessing paid projects [14].

- Gold Standard Tasks: Pre-labeled test items injected into task flows identify underperforming workers [14].

- Redundancy and Consensus: Having multiple workers (3-5) complete the same task enables consensus validation and identifies discrepancies [14].

Continuous Performance Monitoring: Worker accuracy is tracked throughout projects, with automated removal of those falling below quality thresholds [14].
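The quality-control layers above can be combined in a few lines. The sketch below scores each worker on gold-standard items, drops workers below an accuracy threshold, and takes a majority vote over the remaining answers. The data layout, the 0.8 threshold, and all identifiers are assumptions for illustration; real platforms expose their own mechanisms for this.

```python
from collections import Counter, defaultdict


def worker_accuracy(answers, gold):
    """Accuracy of each worker on gold-standard items.

    answers: list of (worker, item, label); gold: {item: correct_label}.
    """
    correct, seen = Counter(), Counter()
    for worker, item, label in answers:
        if item in gold:
            seen[worker] += 1
            correct[worker] += int(label == gold[item])
    return {worker: correct[worker] / seen[worker] for worker in seen}


def consensus_labels(answers, gold, min_accuracy=0.8):
    """Majority vote over non-gold items, using only trusted workers.

    Workers who never answered a gold item are treated as untrusted.
    Ties resolve to the label that was seen first.
    """
    accuracy = worker_accuracy(answers, gold)
    votes = defaultdict(Counter)
    for worker, item, label in answers:
        if item in gold:
            continue
        if accuracy.get(worker, 0.0) >= min_accuracy:
            votes[item][label] += 1
    return {item: c.most_common(1)[0][0] for item, c in votes.items()}


# Hypothetical annotations: one gold item (g1) and one real item (m1).
gold = {"g1": "reactive"}
answers = [
    ("w1", "g1", "reactive"), ("w2", "g1", "reactive"), ("w3", "g1", "benign"),
    ("w1", "m1", "reactive"), ("w2", "m1", "reactive"), ("w3", "m1", "benign"),
]
labels = consensus_labels(answers, gold)
```

Running the accuracy check continuously, rather than once at the start, corresponds to the performance-monitoring step: a worker whose gold accuracy drifts below the threshold simply stops contributing votes.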

Table: Research Reagent Solutions for Crowdsourced Data Generation

| Solution Type | Specific Examples | Function in Experimental Workflow |
|---|---|---|
| Crowdsourcing Platforms | LXT/Clickworker, Appen, Amazon Mechanical Turk | Infrastructure for task distribution, worker management, and quality control |
| Data Annotation Tools | Bounding box tools, polygon segmentation, semantic segmentation interfaces | Enable precise labeling of images, molecular structures, and chemical data |
| Quality Validation Systems | Gold standard datasets, consensus algorithms, qualification tests | Verify and maintain data accuracy throughout collection process |
| API Integration | REST APIs for major crowdsourcing platforms | Enable seamless integration with existing data pipelines and MLOps workflows |

The Transition: From Human Intelligence to Machine Intelligence

The transition from crowdsourcing to machine learning represents a natural progression in scaling expert decision-making. While crowdsourcing harnesses distributed human intelligence for specific tasks, machine learning aims to capture and replicate the underlying patterns of expert decision-making itself.

The Informacophore Concept: Bridging Human Expertise and Machine Learning

A key conceptual framework emerging in modern drug discovery is the "informacophore"—an evolution of the traditional pharmacophore concept [15]. Where classical pharmacophores represent the spatial arrangement of chemical features essential for molecular recognition based on human-defined heuristics, the informacophore incorporates data-driven insights derived from structure-activity relationships (SARs), computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure [15].

This fusion of structural chemistry with informatics enables a more systematic and bias-resistant strategy for scaffold modification and optimization in medicinal chemistry [15]. The informacophore acts as a bridge between human expertise and machine intelligence—it represents the minimal chemical structure combined with computational descriptors essential for a molecule to exhibit biological activity, effectively encoding the patterns that expert medicinal chemists recognize through experience and intuition [15].

Experimental Evidence: Validating Crowdsourcing for Expert-Like Tasks

Substantial research has validated crowdsourcing as a mechanism for generating expert-level annotations. A World Bank study comparing data collection methods found strong statistical alignment between crowdsourced data and traditional enumerator-collected surveys, with correlation coefficients reaching 0.99 for some commodity price pairs [16]. While this specific study focused on economic data, the methodological validation has important implications for scientific applications: it demonstrates that properly structured crowdsourcing can produce data quality comparable to expert-collected benchmarks.

In drug discovery contexts, researchers have employed similar validation frameworks, using expert medicinal chemists' evaluations as gold standards against which to measure crowdsourced annotations of molecular properties, binding affinities, and toxicity profiles.

The Machine Learning Paradigm: Modeling Expert Decisions

With the foundation of high-quality, crowdsourced training data, machine learning models can begin to directly emulate and scale the decision-making processes of expert medicinal chemists.

Key Machine Learning Applications in Medicinal Chemistry Evaluation

Predictive Modeling of Molecular Properties

Machine learning algorithms can predict key molecular properties that inform medicinal chemists' evaluations, including boiling point, vaporization enthalpy, molecular mass, and refractivity [17]. Researchers have successfully used valency-based topological indices (including Zagreb and atom bond connectivity indices) combined with regression analysis to create predictive models for these physicochemical properties [17]. Statistical metrics from these studies demonstrate significant predictive power, enabling rapid virtual screening of compound libraries.
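For concreteness, the sketch below computes three degree-based topological indices on hydrogen-suppressed molecular graphs, using their standard definitions: the first Zagreb index (sum of squared vertex degrees), the second Zagreb index (sum over edges of the product of endpoint degrees), and the atom-bond connectivity (ABC) index. The edge-list representation and function names are assumptions; the regression step from [17] is not reproduced here.

```python
import math


def degrees(edges):
    """Vertex degrees of an undirected graph given as a list of (u, v) edges."""
    deg = {}
    for u, v in edges:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


def first_zagreb(edges):
    """M1: sum of squared degrees over all vertices."""
    return sum(d * d for d in degrees(edges).values())


def second_zagreb(edges):
    """M2: sum of deg(u) * deg(v) over all edges."""
    deg = degrees(edges)
    return sum(deg[u] * deg[v] for u, v in edges)


def abc_index(edges):
    """ABC: sum of sqrt((deg(u) + deg(v) - 2) / (deg(u) * deg(v))) over edges."""
    deg = degrees(edges)
    return sum(
        math.sqrt((deg[u] + deg[v] - 2) / (deg[u] * deg[v])) for u, v in edges
    )


# Carbon skeletons of two C4H10 isomers (hydrogens omitted).
n_butane = [(0, 1), (1, 2), (2, 3)]
isobutane = [(0, 1), (0, 2), (0, 3)]

butane_indices = (first_zagreb(n_butane), second_zagreb(n_butane))
isobutane_indices = (first_zagreb(isobutane), second_zagreb(isobutane))
```

The two isomers share a molecular formula yet receive different index values, which is what makes such descriptors usable as regression inputs for properties that depend on branching.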

Multi-Criteria Decision Making for Compound Prioritization

Multiple-criteria decision-making (MCDM) methodologies, such as VIseKriterijumska Optimizacija I Kompromisno Rešenje (VIKOR) and Simple Additive Weighting (SAW), enable hierarchical ordering of compounds based on various parameters [17]. This approach directly models how expert chemists balance multiple factors when prioritizing lead compounds. Hierarchical ordering in drug design streamlines discovery by systematically ranking candidates based on criteria including potency, selectivity, toxicity, and synthetic accessibility [17].
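A compact sketch of both ranking schemes is given below. It assumes min-max scaling of each criterion (one common SAW variant; others divide by the column maximum) and the usual VIKOR compromise index Q, where lower is better. The three compounds, their criterion values, and the weights are invented for illustration and do not come from [17].

```python
def normalise(matrix, benefit):
    """Min-max scale each criterion to [0, 1] so that 1 is always best."""
    columns = list(zip(*matrix))
    scaled_rows = []
    for row in matrix:
        scaled = []
        for j, x in enumerate(row):
            lo, hi = min(columns[j]), max(columns[j])
            if hi == lo:
                s = 1.0  # criterion does not discriminate between options
            else:
                s = (x - lo) / (hi - lo)
                if not benefit[j]:
                    s = 1.0 - s  # cost criterion: smaller raw value is better
            scaled.append(s)
        scaled_rows.append(scaled)
    return scaled_rows


def saw_scores(matrix, weights, benefit):
    """Simple Additive Weighting: weighted sum of scaled criteria (higher wins)."""
    return [
        sum(w * s for w, s in zip(weights, row))
        for row in normalise(matrix, benefit)
    ]


def vikor_q(matrix, weights, benefit, v=0.5):
    """VIKOR compromise index Q for each option (lower is better)."""
    regrets = [
        [w * (1.0 - s) for w, s in zip(weights, row)]
        for row in normalise(matrix, benefit)
    ]
    group = [sum(r) for r in regrets]        # S_i: total weighted distance
    individual = [max(r) for r in regrets]   # R_i: worst single criterion

    def rescale(values):
        lo, hi = min(values), max(values)
        return [0.0 if hi == lo else (x - lo) / (hi - lo) for x in values]

    return [
        v * s + (1.0 - v) * r
        for s, r in zip(rescale(group), rescale(individual))
    ]


# Hypothetical candidates: [potency (higher better), toxicity score, synthetic steps].
candidates = [[8.0, 0.2, 5], [7.0, 0.1, 3], [6.0, 0.6, 8]]
weights = [0.5, 0.3, 0.2]
benefit = [True, False, False]

saw = saw_scores(candidates, weights, benefit)
q = vikor_q(candidates, weights, benefit)
best_by_saw = saw.index(max(saw))
best_by_vikor = q.index(min(q))
```

SAW rewards the best average performance, while VIKOR also penalises an option for its single worst criterion through the `individual` term. The parameter `v` controls the balance between the two, and the weights are where a chemist's priorities enter the calculation.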

AI-Enhanced Molecular Modeling and ADMET Prediction

The fusion of artificial intelligence with computational chemistry has revolutionized compound optimization and molecular modeling [18]. Core AI algorithms—including support vector machines, random forests, graph neural networks, and transformers—now support applications in molecular representation, virtual screening, and ADMET (absorption, distribution, metabolism, excretion, and toxicity) property prediction [18]. Platforms like Deep-PK and DeepTox leverage graph-based descriptors and multitask learning to predict pharmacokinetics and toxicity, directly modeling complex expert evaluations of drug candidate viability [18].

Experimental Protocols for ML Modeling of Expert Decisions

Protocol 1: Model Training with Crowdsourced Data

- Dataset Curation: Collect and pre-process expert-level annotations from crowdsourcing platforms, ensuring balanced representation across chemical space.

- Feature Engineering: Compute molecular descriptors (topological indices, fingerprints, 3D descriptors) that capture structurally and electronically relevant features [17] [18].

- Model Selection: Choose appropriate algorithms based on data size and complexity—from random forests for smaller datasets to graph neural networks for complex structure-activity relationships [18].

- Validation Framework: Implement rigorous train-test splits, cross-validation, and external validation sets to prevent overfitting and ensure generalizability [19].
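The validation step in Protocol 1 can be sketched with a plain k-fold split. This version shuffles indices at random and uses only the standard library; the function name and defaults are assumptions. For molecular data, grouping structurally related compounds into the same fold is often preferable to a purely random split, since near-duplicates in both train and test sets can inflate apparent performance.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


# Example: split 10 annotated compounds into 5 folds.
splits = list(k_fold_indices(10, k=5))
```

Each sample appears in exactly one test fold, so averaging a metric over the folds gives an estimate that uses all the data without ever scoring a model on examples it was trained on. An external validation set would be held out before this function is called.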

Protocol 2: Prospective Validation and Iterative Refinement

- Blinded Prediction: Deploy trained models to predict expert evaluations for novel compound libraries not included in training data.

- Experimental Verification: Compare model predictions with actual expert medicinal chemist evaluations and experimental results.

- Error Analysis: Systematically examine prediction errors to identify patterns and limitations in the model.

- Model Retraining: Incorporate new expert evaluations to continuously improve model performance through active learning cycles.
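The retraining step in Protocol 2 mentions active learning. One simple selection rule, uncertainty sampling, is sketched here: the next items sent to experts are those where the current model's predicted probability is closest to 0.5. This is only one possible acquisition strategy and is not claimed to be the one used in any cited study; the compound identifiers and probabilities are placeholders.

```python
def select_most_uncertain(candidates, predict_proba, batch_size=2):
    """Return the candidates whose predicted probability is nearest 0.5."""
    return sorted(candidates, key=lambda c: abs(predict_proba(c) - 0.5))[:batch_size]


# Placeholder model output: P(expert prefers the first compound of the pair).
toy_probabilities = {
    ("cmpd_1", "cmpd_2"): 0.95,
    ("cmpd_3", "cmpd_4"): 0.52,
    ("cmpd_5", "cmpd_6"): 0.10,
    ("cmpd_7", "cmpd_8"): 0.45,
}

next_batch = select_most_uncertain(
    list(toy_probabilities), toy_probabilities.get, batch_size=2
)
```

After experts label `next_batch`, the new annotations are added to the training set and the model is refit, closing the loop described in the protocol.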

Evolution of Expert Decision Modeling Workflow

Comparative Analysis: Performance Across Modeling Approaches

Table: Performance Comparison of Expert Decision Modeling Approaches

| Modeling Approach | Data Requirements | Interpretability | Scalability | Documented Accuracy/Performance |
|---|---|---|---|---|
| Traditional Medicinal Chemistry | Limited structured data; heavy reliance on individual expertise | High: direct human reasoning | Limited by human capacity | Foundation of historical drug discovery; slow and expensive [20] |
| Crowdsourced Evaluation | Large-scale human annotations; quality control protocols | Medium: human decisions with some standardization | High for discrete tasks; limited for complex integration | Correlation of up to 0.99 with enumerator-collected benchmarks in a non-chemistry (commodity price) validation study [16] |
| Machine Learning Models | Extensive training datasets; feature engineering | Variable: simpler models more interpretable than deep learning | Very high: once trained, inference scales with available compute | Reduces preclinical research time by ~2 years; improves multiparameter optimization [20] |
| Hybrid Human-AI Systems | Combined human annotations and algorithmic training | Medium-high: human oversight of AI predictions | High: leverages strengths of both approaches | Emerging as most promising for complex decision environments |

Future Directions and Emerging Applications

The evolution of modeling expert decisions continues to advance toward increasingly integrated and sophisticated approaches. Several promising directions are shaping the next generation of computational tools for medicinal chemistry evaluation:

Hybrid AI-Quantum Frameworks

The convergence of artificial intelligence with quantum chemistry calculations is enabling more accurate prediction of molecular properties and reaction mechanisms [18]. Surrogate models trained on quantum mechanical calculations can approximate complex electronic properties while dramatically reducing computational costs, making expert-level quantum chemical insights more accessible in early drug discovery [18].

Multi-Omics Integration for Context-Aware Predictions

Next-generation models are incorporating diverse biological data streams—including genomics, proteomics, and metabolomics—to create more context-aware predictions of compound efficacy and safety [18]. This multi-omics approach allows models to better emulate how expert chemists integrate diverse biological information when evaluating potential drug candidates.

Generative AI for De Novo Molecular Design

Generative adversarial networks (GANs) and variational autoencoders (VAEs) are increasingly used for de novo drug design, creating novel molecular structures optimized for multiple parameters simultaneously [18]. These systems effectively learn and replicate the creative aspects of expert medicinal chemistry decision-making, generating innovative chemical matter that satisfies complex constraint sets.

The evolution from crowdsourcing to machine learning represents a fundamental transformation in how expert medicinal chemistry decisions are captured, modeled, and scaled. Crowdsourcing provides the essential foundation of high-quality training data, enabling machine learning models to identify complex patterns in expert decision-making that would be difficult to articulate through traditional knowledge representation methods. As these technologies continue to mature and integrate, they promise to augment—rather than replace—medicinal chemistry expertise, freeing researchers from routine evaluation tasks to focus on more creative and complex aspects of drug discovery. The most successful implementations will likely remain hybrid systems that leverage the complementary strengths of human intelligence and artificial intelligence, creating a synergistic relationship that accelerates the development of novel therapeutics while maintaining the essential chemical intuition that has long driven medicinal chemistry innovation.

In the field of computational medicinal chemistry, the journey from compound design to viable therapeutic agent relies on a complex interplay between data-driven algorithms and human expertise. While artificial intelligence and machine learning platforms excel at processing explicit knowledge—codified data from molecular structures, quantitative structure-activity relationships (QSAR), and physicochemical properties—they struggle to capture the tacit, intuitive understanding experienced medicinal chemists develop through years of experimental practice [4]. This tacit knowledge includes the nuanced ability to recognize promising compound profiles, troubleshoot synthesis pathways, and predict biological behavior based on pattern recognition that often defies straightforward articulation [21] [22].

The formalization of this tacit knowledge presents significant challenges, particularly regarding the inherent subjectivity of human experience, the pervasive influence of cognitive biases, and the difficulty in achieving consistent documentation across research teams. These challenges become increasingly critical as the industry seeks to integrate human expertise with computational approaches to accelerate drug discovery [23]. This guide examines these key challenges through the lens of computational medicinal chemistry, providing a structured comparison of their impacts and potential mitigation strategies.

Comparative Analysis of Key Challenges

The table below summarizes the three primary challenges in formalizing tacit knowledge, their specific manifestations in computational medicinal chemistry, and their impact on research outcomes.

Table 1: Key Challenges in Formalizing Tacit Knowledge in Computational Medicinal Chemistry

| Challenge | Manifestation in Medicinal Chemistry | Impact on Research & Development |
|---|---|---|
| Subjectivity [24] [25] | Reliance on individual chemist's intuition for assessing compound "drug-likeness" or synthesis feasibility that varies between experts. | Inconsistent compound selection and prioritization; difficulty replicating success across projects or research teams. |
| Cognitive Bias [24] | Confirmation bias favoring data that aligns with previous successful chemical classes; sunk cost bias persisting with suboptimal lead compounds due to significant prior investment. | Misguided research directions; continued investment in failing compounds; overlooked promising chemical space. |
| Inconsistency [21] [22] | Variable documentation of rationale for compound design choices or experimental adjustments, leading to fragmented knowledge. | Loss of valuable contextual knowledge when team members change; impeded organizational learning and process optimization. |

Experimental Protocols for Studying and Mitigating Challenges

Researchers have developed several methodological approaches to study and address the challenges of formalizing tacit knowledge. The following protocols outline key experimental designs used to evaluate and improve knowledge capture in computational medicinal chemistry environments.

Protocol 1: Community Deliberation for Bias Mitigation

Objective: To counter individual cognitive biases in tacit knowledge by testing individual expertise against the collective judgment of a Community of Practice (CoP) [24].

- Design Complex Reasoning Tasks: Present medicinal chemists with a challenging compound optimization problem involving multiple parameters (e.g., potency, selectivity, metabolic stability).

- Individual Assessment: Ask a group of individual chemists to solve the task independently, documenting their proposed solutions and reasoning.

- Group Deliberation: Assemble a diverse team of chemists, computational scientists, and pharmacologists to deliberate on the same task. The group must work collaboratively to produce a single, agreed-upon solution.

- Outcome Measurement: Compare the success rates of individual versus group solutions. Laboratory evidence suggests groups can achieve an 80% success rate on complex reasoning tasks, compared to approximately 10% for individuals working alone [24].

- Application: Implement structured community processes like Peer Assist, where a project team invites external experts to challenge assumptions and provide insights before a project begins.

Protocol 2: Cross-Cultural Comparison of Tacit Knowledge Acquisition

Objective: To empirically measure how tacit knowledge acquisition varies across different organizational or national cultures, and its subsequent influence on innovation [25].

- Population Sampling: Recruit professionals from the IT and pharmaceutical industries across different countries (e.g., USA and Poland). Sample sizes in a foundational study were n=379 for the US and n=350 for Poland [25].

- Variable Measurement: Use survey instruments to quantify two primary modes of tacit knowledge acquisition:

  - "Learning by doing": Measured through scales assessing experiential experimentation.

  - "Learning by interaction": Measured through scales assessing socialization and collaboration.

- Mediation Analysis: Measure how well the acquired knowledge is translated into innovation (both process and product/service innovation) through the mediators of knowledge awareness (internalization) and willingness to share (externalization).

- Outcome Analysis: Compare the dominant acquisition modes by region and their relative effectiveness in driving innovation. The referenced study demonstrated that in the US, "learning by doing" is dominant, whereas in Poland, "learning by interaction" and critical thinking are more common [25].

Protocol 3: Reality Testing Through Structured Reflection

Objective: To use routine reflection processes as a self-correction mechanism against individual and group subjective bias [24].

- Conduct After-Action Reviews (AAR): Following key experimental milestones, gather the project team to discuss two primary questions in a facilitated session:

  - "What was expected to happen?"

  - "What actually happened?"

- Document Variances: Systematically document and analyze the discrepancies between expectations and reality. This process uses objective outcomes to challenge and refine the team's initial assumptions and tacit understandings.

- Manage Lessons Learned: Formalize the insights gained from the AAR into actionable lessons that can be integrated into future computational workflows or experimental designs, creating a continuous feedback loop that grounds tacit knowledge in empirical evidence.

Workflow Diagram for Knowledge Formalization

The following diagram illustrates a logical workflow for capturing and validating tacit knowledge in computational medicinal chemistry, integrating the experimental protocols described above to mitigate subjectivity, bias, and inconsistency.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key methodological solutions and their functions for researching and formalizing tacit knowledge in a scientific context.

Table 2: Key Reagent Solutions for Tacit Knowledge Research

| Research Reagent Solution | Function in Knowledge Formalization |
|---|---|
| Community of Practice (CoP) [24] | A structured group of professionals with a common interest, used as a forum to test individual tacit knowledge against collective expertise and mitigate cognitive biases. |
| After Action Review (AAR) [24] | A facilitated reflection protocol that compares expected versus actual outcomes, serving as a reality-check against subjective memory and bias. |
| Peer Assist [24] | A pre-project meeting where a team invites external experts to challenge its assumptions and plans, providing corrective input before work begins. |
| AI/ML Integration Platforms [4] [23] | Computational tools (e.g., for QSPR analysis, generative design) that provide a structured, data-driven framework to capture and replicate the decision patterns of expert chemists. |
| Quantitative Survey Instruments [25] | Validated research tools designed to measure tacit knowledge acquisition modes ("learning by doing" vs. "learning by interaction") and their correlation with innovation outcomes. |

The formalization of tacit knowledge represents a critical frontier in computational medicinal chemistry, with the potential to significantly accelerate drug discovery by preserving and scaling invaluable human expertise. While the challenges of subjectivity, bias, and inconsistency are substantial, the experimental protocols and tools outlined provide a robust methodological foundation for addressing them. Success in this endeavor requires a deliberate, multi-faceted strategy that combines technological solutions with cultural and procedural shifts, ultimately creating a more integrated and effective research ecosystem where human intuition and computational power are mutually reinforcing.

In the field of computational medicinal chemistry, the quality and scope of underlying data sources fundamentally determine the predictive accuracy and translational value of research outcomes. The emerging paradigm leverages a synergistic relationship between publicly available chemical probes and proprietary annotations to create robust, predictive models. Chemical probes—selective small-molecule modulators of protein activity—serve as essential reagents for investigating mechanistic and phenotypic aspects of molecular targets through biochemical analyses, cell-based assays, and animal studies [26]. These probes enable researchers to form critical hypotheses about target function and therapeutic potential. Meanwhile, proprietary annotations provide the commercial context, experimental depth, and strategic intelligence necessary to transform basic research findings into viable therapeutic candidates. This comparative guide objectively analyzes the performance characteristics of these complementary data sources within the context of computational prediction of medicinal chemist evaluations, providing researchers with a framework for optimal resource allocation and experimental design.

The ecosystem of chemical data sources spans from open-access repositories to commercially protected intelligence, each with distinct advantages and limitations. The table below summarizes the core characteristics of these complementary resources:

| Data Characteristic | Public Databases (ChEMBL, PubChem) | Proprietary Annotations (Patent Data, Internal R&D) |
|---|---|---|
| Primary Content | Bioactivity data, chemical structures, target information [27] | Structure-activity relationships, manufacturing processes, formulation data [27] |
| Commercial Context | Limited; focuses on basic research findings [27] | Comprehensive; includes strategic intellectual property [27] |
| Result Spectrum | Predominantly positive results (publication bias) [27] | Includes negative data and failed experiments [27] |
| Data Standardization | Variable quality; inconsistent metadata [27] | Highly standardized internal formats [27] |
| Temporal Context | Retrospective; significant publication delays [27] | Forward-looking; includes emerging trends [27] |
| Accessibility | Freely available [27] | Restricted via licensing or internal access [27] |
| Chemical Space Coverage | Broad but incomplete [28] | Targeted to specific therapeutic areas [29] |
| Validation Requirements | Extensive curation needed [28] | Pre-validated for specific applications [26] |

Performance Metrics in Predictive Modeling

The practical impact of data source selection becomes evident when evaluating computational model performance. Public databases, while invaluable for foundational research, introduce specific limitations that affect predictive accuracy:

- Publication Bias Impact: Models trained exclusively on public data demonstrate inflated performance metrics due to the absence of negative results, which compromises their translational predictive power [27].

- Commercial Viability Blind Spots: Public data lacks critical information on synthesis scalability, formulation challenges, and compound stability, leading to discrepancies between computational predictions and practical feasibility [27].

- Standardization Challenges: Inconsistent data quality and metadata representation in public sources necessitate extensive curation efforts, with one analysis showing approximately 15-20% of compounds typically require removal during data cleaning procedures [28].

Proprietary data sources address these limitations by providing comprehensive experimental context, but introduce challenges of accessibility and potential fragmentation across competing organizations [27].

Experimental Protocols for Data Validation and Application

Protocol 1: Chemical Probe Validation for Target Identification

Objective: To experimentally validate tool compounds for probing novel targets identified through computational prediction.

Methodology:

- Probe Selection: Identify selective small-molecule modulators with well-defined mechanism of action from commercial vendors (e.g., Tocris Bioscience, MilliporeSigma) or published literature [26] [29].

- Potency Confirmation: Determine IC50/EC50 values using at least two orthogonal methodologies (e.g., biochemical assays and surface plasmon resonance) [26].

- Selectivity Profiling: Evaluate against related targets (e.g., kinase family panels for kinase inhibitors) to establish specificity [26] [30].

- Cellular Target Engagement: Implement Cellular Thermal Shift Assay (CETSA) to confirm direct binding in physiologically relevant environments [11].

- Phenotypic Validation: Assess functional effects in disease-relevant cell models and measure appropriate biomarker readouts [26].

Expected Outcomes: Establishment of high-quality chemical probes with defined potency, selectivity, and cellular activity profiles for use in computational model training and validation [26].

Protocol 2: High-Throughput Experimentation (HTE) Analysis

Objective: To generate statistically robust datasets for informing computational models of chemical reactivity and compound properties.

Methodology:

- Reaction Selection: Design experiments to cover diverse chemical spaces, including cross-coupling reactions and chiral salt resolutions [31].

- Standardized Screening: Execute reactions using automated platforms with systematic variation of reagents, catalysts, and conditions [31].

- Data Processing: Apply High-Throughput Experimentation Analyser (HiTEA) framework incorporating random forests, Z-score ANOVA-Tukey, and principal component analysis [31].

- Bias Assessment: Identify regions of dataset bias and chemical spaces requiring further investigation [31].

- Model Integration: Incorporate both positive and negative results into computational prediction platforms to balance predictive algorithms [31].

Expected Outcomes: Creation of a comprehensive "reactome" dataset elucidating statistically significant relationships between reaction components and outcomes for training more accurate predictive models [31].

Protocol 3: Computational Tool Benchmarking for Property Prediction

Objective: To evaluate and select optimal computational tools for predicting physicochemical and toxicokinetic properties of novel compounds.

Methodology:

- Dataset Curation: Collect experimental data from literature sources, followed by structural standardization and removal of duplicates, inorganic compounds, and response outliers [28].

- Chemical Space Analysis: Map validation datasets against reference chemical spaces (e.g., approved drugs, industrial chemicals, natural products) using principal component analysis of molecular fingerprints [28].

- Tool Selection: Prioritize software implementing Quantitative Structure-Activity Relationship (QSAR) models with defined applicability domains and batch prediction capabilities (e.g., OPERA, SwissADME) [28].

- Performance Assessment: Evaluate predictive accuracy (R² for regression, balanced accuracy for classification) both inside and outside model applicability domains [28].

- Model Recommendation: Identify best-performing tools for specific chemical properties and compound classes based on external validation results [28].

Expected Outcomes: Establishment of a validated computational toolkit for accurate prediction of key molecular properties, enabling more efficient compound prioritization in early discovery stages [28].
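The performance assessment step in Protocol 3 names two metrics, R² for regression and balanced accuracy for classification, reported inside and outside the applicability domain. The sketch below shows one way these could be computed in plain Python. The function names, the `in_domain` flags, and all numeric values are hypothetical illustrations, not data from the benchmarking study [28].

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (0 or 1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    n_pos = sum(1 for t in y_true if t == 1)
    n_neg = len(y_true) - n_pos
    return 0.5 * (tp / n_pos + tn / n_neg)

def subset(values, mask, keep):
    """Keep the entries whose applicability-domain flag equals `keep`."""
    return [v for v, m in zip(values, mask) if m == keep]

# Hypothetical experimental vs. predicted logP values with domain flags.
measured = [1.2, 2.5, 0.3, 3.1, 4.0, 2.2]
predicted = [1.0, 2.7, 0.5, 2.0, 5.5, 2.1]
in_domain = [True, True, True, False, False, True]

r2_inside = r_squared(subset(measured, in_domain, True),
                      subset(predicted, in_domain, True))
r2_outside = r_squared(subset(measured, in_domain, False),
                       subset(predicted, in_domain, False))
print(f"R2 inside domain: {r2_inside:.2f}, outside domain: {r2_outside:.2f}")
```

Reporting the two subsets separately makes it visible when a tool's headline accuracy depends on compounds that resemble its training set.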

Visualization of Chemical Probe Validation Workflow

Figure 1. Multi-stage workflow for experimental validation of chemical probes prior to computational model integration. The process begins with computational target identification and progresses through sequential experimental validation stages to establish comprehensive probe characteristics [26] [11].

The Scientist's Toolkit: Essential Research Reagent Solutions

The experimental protocols described require specific research reagents and platforms to generate high-quality data for computational models. The table below details key solutions and their applications:

| Research Reagent | Provider Examples | Primary Function | Application in Computational Research |
|---|---|---|---|
| Selective Kinase Inhibitors [30] | Tocris Bioscience, Selleck Chemicals [29] | Target validation for kinase-focused projects | Training models for kinase inhibitor selectivity prediction |
| CETSA Kits [11] | Pelago Biosciences | Cellular target engagement validation | Generating data on cellular target binding for model training |
| Tool Compounds for Dark Kinome [30] | MedChem Express, Cayman Chemical [29] | Probing understudied kinases from dark kinome | Expanding model coverage to chemically unexplored targets |
| Fluorescent Chemical Probes [29] | AAT Bioquest, Abcam [29] | Imaging and real-time monitoring of biological processes | Providing spatial and temporal data for phenotypic models |
| High-Throughput Screening Libraries [31] | Enamine, OTAVA [15] | Large-scale compound profiling | Generating comprehensive structure-activity relationship data |
| QSAR Software Platforms [28] | OPERA, SwissADME | Predicting physicochemical and toxicokinetic properties | Enabling in silico compound prioritization and optimization |

The comparative analysis presented demonstrates that neither public nor proprietary data sources alone suffice for robust computational prediction in medicinal chemistry. Publicly available chemical probes provide essential foundational knowledge and accessibility, while proprietary annotations offer the commercial context and experimental depth necessary for translational success. The most effective research strategies leverage both resources through rigorous experimental validation protocols, including chemical probe characterization, high-throughput experimentation analysis, and computational tool benchmarking. This integrated approach enables the development of predictive models that more accurately reflect the complex realities of drug discovery, ultimately accelerating the identification and optimization of novel therapeutic candidates. As the field advances, the systematic generation and curation of high-quality data will remain the critical factor determining success in computational medicinal chemistry research.

Building the AI Chemist: Machine Learning Methods and Practical Applications

The lead optimization process in drug discovery represents an arduous endeavor where the collective input of numerous medicinal chemists is weighed to achieve a desired molecular property profile. Building the expertise to successfully drive such projects collaboratively is a very time-consuming process that typically spans many years within a chemist's career [32]. Historically, this expertise has remained largely tacit—embedded in the intuition of experienced chemists and prone to subjective biases that affect decision-making [33]. Human decision bias is well-studied in the field of human computation: human characteristics, opinions, cognitive and social biases, as well as the way the human computation task is formulated, can result in biased human feedback [34].

The fundamental challenge lies in the fact that human feedback, whether collected directly or inferred indirectly from behavior, often serves as input to algorithmic decision making. When algorithms fail to account for potential biases in this feedback, the result can be systematically skewed decision-making with tangible impacts on research outcomes [34]. This is particularly problematic in medicinal chemistry, where previous studies have reported only weak agreement between chemists and even inconsistencies in individual chemists' own prior selections, associated with various psychological factors including loss aversion [32].

Preference learning from pairwise comparisons emerges as a promising solution to these challenges. By reformulating compound assessment as a preference learning problem and adopting methodologies that mitigate known cognitive biases, researchers can develop more robust, data-driven models of medicinal chemistry intuition. This approach allows for the distillation of collective expert knowledge while controlling for individual biases, potentially accelerating drug discovery pipelines and improving decision consistency [32] [34].

Methodological Approaches: From Traditional Pairwise Comparisons to Advanced Bias-Aware Models

Foundational Preference Learning Techniques

The Bradley-Terry model stands as a seminal approach in the field of ranking from pairwise comparisons [34]. This probabilistic model, along with related methods such as Thurstone's model, establishes distributional assumptions about the relationship between pairwise comparisons and latent quality scores [34]. These classic approaches enable the recovery of item scores and ranking through maximum likelihood estimation, providing a mathematical framework for aggregating preference data.
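To make the maximum likelihood step concrete, the sketch below fits a Bradley-Terry model, in which the probability that item i is preferred over item j is p_i / (p_i + p_j), using the standard minorization-maximization update. The function name and the toy comparison data are assumptions for illustration. The sketch also assumes every item wins at least one comparison, since otherwise its strength collapses to zero.

```python
from collections import defaultdict

def fit_bradley_terry(comparisons, n_iter=200):
    """Estimate Bradley-Terry strengths from (winner, loser) tuples.

    Uses the classic MM update p_i <- W_i / sum_j n_ij / (p_i + p_j),
    followed by normalization so the strengths sum to 1.
    """
    wins = defaultdict(int)
    pair_counts = defaultdict(int)
    items = set()
    for winner, loser in comparisons:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1
        items.update((winner, loser))

    strength = {i: 1.0 / len(items) for i in items}
    for _ in range(n_iter):
        updated = {}
        for i in items:
            denom = 0.0
            for pair, n in pair_counts.items():
                if i in pair:
                    (j,) = pair - {i}  # the opponent in this pair
                    denom += n / (strength[i] + strength[j])
            updated[i] = wins[i] / denom
        total = sum(updated.values())
        strength = {i: v / total for i, v in updated.items()}
    return strength

# Hypothetical pairwise choices among three compounds A, B, and C.
comparisons = (
    [("A", "B")] * 3 + [("B", "A")] * 1 +
    [("B", "C")] * 3 + [("C", "B")] * 1 +
    [("A", "C")] * 2 + [("C", "A")] * 1
)
strength = fit_bradley_terry(comparisons)
ranking = sorted(strength, key=strength.get, reverse=True)
print(ranking)
```

The recovered strengths play the role of the latent quality scores described above, and sorting by them gives the aggregate ranking.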

Traditional counting and heuristic methods, such as David's score, offer alternative approaches to deriving rankings from pairwise comparisons [34]. Additionally, graph-based interpretations treat pairwise comparisons as directed graphs where nodes represent items and directed edges represent pairwise comparisons. This interpretation enables the application of random walk and spectral-based methods for ranking items, including RankCentrality, SerialRank, and GNNRank [34].

Bias-Aware Ranking Models

Recent methodological advances have focused specifically on addressing evaluator biases in pairwise comparison data. The BARP (Bias-Aware Ranker from Pairwise Comparisons) method extends the classic Bradley-Terry model by incorporating a bias parameter for each evaluator which distorts the true quality score of each item depending on the group the item belongs to [34]. This model enables the disentanglement of true latent scores from evaluators' bias through maximum likelihood estimation, effectively detecting and correcting for systematic biases in evaluator responses.

The BARP approach operates under the assumption that pairwise assessments should reflect the latent true quality scores of items but may be affected by each evaluator's own bias against or in favor of certain groups of items [34]. Unlike many fair ranking methods, BARP does not require designating any group as protected; instead, all groups are treated equivalently and the method can detect and fix bias in favor of or against any group without prior information about evaluator preferences [34].

Active Learning for Efficient Data Collection

Active learning approaches have been successfully applied to preference learning in medicinal chemistry contexts. In the MolSkill implementation, an active learning framework was employed to efficiently collect approximately 5000 annotations from 35 chemists at Novartis over several months [32]. This iterative process allowed for the strategic selection of informative molecule pairs for evaluation, maximizing information gain while minimizing the burden on expert chemists.
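The selection of informative molecule pairs can be sketched with uncertainty sampling, in which the pairs whose predicted preference probability lies closest to 0.5 are sent to the chemists first. The article does not state which acquisition rule MolSkill used, so this rule, the function names, and the scores are assumptions for illustration only.

```python
import math
from itertools import combinations

def preference_probability(score_a, score_b):
    """Probability that molecule a is preferred, from a score difference."""
    return 1.0 / (1.0 + math.exp(-(score_a - score_b)))

def select_uncertain_pairs(scores, candidate_pairs, batch_size):
    """Return the pairs the current model is least sure about."""
    def distance_from_coin_flip(pair):
        a, b = pair
        return abs(preference_probability(scores[a], scores[b]) - 0.5)
    return sorted(candidate_pairs, key=distance_from_coin_flip)[:batch_size]

# Hypothetical current model scores for four unlabeled molecules.
scores = {"mol_a": 2.0, "mol_b": 1.9, "mol_c": -1.0, "mol_d": 0.0}
candidates = list(combinations(scores, 2))
next_batch = select_uncertain_pairs(scores, candidates, batch_size=2)
print(next_batch)
```

In a full cycle, the chemists' answers for `next_batch` would be added to the training set, the model refit, and the selection repeated.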

Table 1: Key Methodological Approaches in Preference Learning

| Method | Key Innovation | Bias Handling | Domain Application |
|---|---|---|---|
| Bradley-Terry Model | Probabilistic ranking from pairwise comparisons | None | General preference learning |
| BARP | Bias parameter for each evaluator | Explicit modeling of group biases | General ranking tasks |
| MolSkill | Active learning from chemist preferences | Implicit through diverse evaluators | Drug discovery |
| POLO | Multi-turn reinforcement learning | Learning from complete trajectories | Molecular optimization |

Experimental Protocols and Implementation

Data Collection Design

The MolSkill study exemplifies a carefully designed protocol for collecting medicinal chemistry preference data [32]. Researchers presented 35 chemists at Novartis with pairs of molecules and asked them to select which of the two they preferred. To mitigate cognitive biases such as anchoring effects that had plagued previous study designs, the approach adopted a pairwise comparison framework well-established in multiplayer game contexts [32]. The experimental design included preliminary rounds to assess inter-rater agreement, with measured Fleiss' κ coefficients of κF1 = 0.4 and κF2 = 0.32 for the first and second rounds respectively, indicating fair-to-moderate agreement between chemists on the commonly used Landis-Koch scale [32].

To evaluate response consistency, researchers included redundant pairs in both preliminary rounds and calculated per-chemist intra-rater agreement using Cohen's κ coefficient, finding κC1 = 0.6 and κC2 = 0.59 for the first and second preliminary rounds respectively [32]. Individual chemists were therefore noticeably more consistent with their own earlier choices than with each other. The design also checked for positional bias: the share of choices for each presentation position was reasonably close to the expected 50% baseline [32].
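Both agreement statistics mentioned here follow the same pattern of observed agreement corrected for chance agreement. The sketch below implements the textbook formulas for Cohen's κ (two sets of ratings, for example a chemist's first and repeated answers) and Fleiss' κ (many raters per item). The toy ratings are hypothetical and are not the study's data.

```python
from collections import Counter

def cohens_kappa(ratings_1, ratings_2):
    """Chance-corrected agreement between two equally long rating lists."""
    n = len(ratings_1)
    observed = sum(a == b for a, b in zip(ratings_1, ratings_2)) / n
    counts_1, counts_2 = Counter(ratings_1), Counter(ratings_2)
    categories = set(counts_1) | set(counts_2)
    expected = sum(counts_1[c] * counts_2[c] for c in categories) / n ** 2
    return (observed - expected) / (1.0 - expected)

def fleiss_kappa(count_table):
    """count_table[i][c] = number of raters who put item i in category c.

    Assumes every item was rated by the same number of raters.
    """
    n_items = len(count_table)
    n_raters = sum(count_table[0])
    n_categories = len(count_table[0])
    p_category = [
        sum(row[c] for row in count_table) / (n_items * n_raters)
        for c in range(n_categories)
    ]
    p_item = [
        (sum(x * x for x in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in count_table
    ]
    p_observed = sum(p_item) / n_items
    p_expected = sum(p * p for p in p_category)
    return (p_observed - p_expected) / (1.0 - p_expected)

# Hypothetical repeat answers from one chemist ("L" = left molecule chosen).
first_pass = ["L", "R", "L", "L", "R", "R"]
second_pass = ["L", "R", "R", "L", "R", "L"]
print(round(cohens_kappa(first_pass, second_pass), 3))

# Hypothetical votes of 3 chemists on 4 pairs: [votes for left, votes for right].
votes = [[3, 0], [2, 1], [1, 2], [0, 3]]
print(round(fleiss_kappa(votes), 3))
```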

Model Architecture and Training

The MolSkill implementation used a simple neural network architecture trained on the pairwise comparison data [32]. Predictive performance was evaluated iteratively using the area under the receiver-operating characteristic (AUROC) curve under different scenarios. Cross-validation results showed steady improvement in pair classification performance as more data became available, rising from an AUROC of about 0.6 to above 0.74 once roughly 5000 pairs were available [32]. Performance showed no signs of plateauing even with the final batch of responses, suggesting potential for further improvement with additional data [32].
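Training on pairwise comparisons can be sketched as follows. A scoring function assigns each molecule a scalar, the probability that molecule a is preferred over molecule b is the logistic function of the score difference, and the weights are fit by gradient descent on the binary cross-entropy. This objective is an assumption in the spirit of Bradley-Terry and RankNet-style models, and a linear scorer stands in for the neural network; the feature vectors are hypothetical placeholders for molecular descriptors.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def score(weights, features):
    """Linear stand-in for the learned scoring network."""
    return sum(w * x for w, x in zip(weights, features))

def train_pairwise_scorer(pairs, n_features, lr=0.1, epochs=200):
    """pairs: list of (features_a, features_b, label), label 1 if a preferred."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for feats_a, feats_b, label in pairs:
            p = sigmoid(score(weights, feats_a) - score(weights, feats_b))
            # Cross-entropy gradient w.r.t. weights: (p - label) * (a - b).
            for k in range(n_features):
                weights[k] -= lr * (p - label) * (feats_a[k] - feats_b[k])
    return weights

# Hypothetical two-descriptor molecules; chemists favor a higher first value.
pairs = [
    ([1.0, 0.2], [0.0, 0.3], 1),
    ([0.2, 0.9], [0.8, 0.1], 0),
    ([0.9, 0.5], [0.1, 0.5], 1),
    ([0.3, 0.4], [0.7, 0.6], 0),
]
weights = train_pairwise_scorer(pairs, n_features=2)
p_first = sigmoid(score(weights, pairs[0][0]) - score(weights, pairs[0][1]))
print(weights, round(p_first, 3))
```

Because the same scorer is applied to both molecules in a pair, the trained model can afterwards score any single molecule, which is what makes it usable as a ranking proxy.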

The POLO framework introduces Preference-Guided Policy Optimization (PGPO), a novel reinforcement learning algorithm that extracts learning signals at two complementary levels: trajectory-level optimization reinforces successful strategies, while turn-level preference learning provides dense comparative feedback by ranking intermediate molecules within each optimization trajectory [35].
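The turn-level signal can be illustrated by turning the intermediate molecules of one optimization trajectory into preference pairs ordered by oracle score. This sketch covers only the pair construction; it does not reproduce the PGPO loss or the trajectory-level update from [35]. The function name, the `margin` parameter, the SMILES strings, and the scores are hypothetical.

```python
from itertools import combinations

def turn_level_preferences(trajectory, margin=0.0):
    """Build (preferred, rejected) pairs from one trajectory.

    trajectory: list of (molecule, oracle_score) in the order proposed.
    Pairs whose scores differ by no more than `margin` are skipped.
    """
    pairs = []
    for (mol_a, score_a), (mol_b, score_b) in combinations(trajectory, 2):
        if abs(score_a - score_b) <= margin:
            continue  # no clear preference between these two turns
        if score_a > score_b:
            pairs.append((mol_a, mol_b))
        else:
            pairs.append((mol_b, mol_a))
    return pairs

# Hypothetical four-turn trajectory with toy oracle scores.
trajectory = [("CCO", 0.2), ("CCN", 0.5), ("CCC", 0.5), ("CCCl", 0.9)]
preference_pairs = turn_level_preferences(trajectory)
print(preference_pairs)
```

A trajectory of T scored molecules yields up to T(T-1)/2 comparisons, which is why this kind of feedback is described as dense relative to a single end-of-trajectory reward.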

Diagram 1: POLO Multi-Turn Optimization Workflow

Bias Detection and Correction Protocols

The BARP methodology employs a systematic approach to bias detection [34]. The model assumes pairwise comparisons follow a probabilistic model where the probability of preferring item i over item j depends on both the latent quality scores of the items and the bias of the evaluator. The log-likelihood of the parameters (items' latent scores and evaluators' bias) is explicitly defined and optimized using an alternating approach [34].
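A bias-aware likelihood of this kind can be sketched as below. The exact functional form used by BARP is not given in this article, so the parameterization here is an assumption: each evaluator's bias is added to the perceived score of items in group 1, and the preference probability is the logistic function of the difference in perceived scores. The alternating optimization itself is not implemented; the example only compares the log-likelihood under two candidate bias values.

```python
import math

def preference_prob(score_i, score_j, group_i, group_j, evaluator_bias):
    """P(i preferred over j) when bias shifts group-1 items (assumed form)."""
    perceived_i = score_i + evaluator_bias * group_i
    perceived_j = score_j + evaluator_bias * group_j
    return 1.0 / (1.0 + math.exp(-(perceived_i - perceived_j)))

def log_likelihood(comparisons, scores, groups, biases):
    """comparisons: list of (evaluator, winner, loser) tuples."""
    total = 0.0
    for evaluator, winner, loser in comparisons:
        p = preference_prob(scores[winner], scores[loser],
                            groups[winner], groups[loser],
                            biases[evaluator])
        total += math.log(p)
    return total

# Hypothetical data: two equally good items, evaluator e1 always picks x.
scores = {"x": 0.0, "y": 0.0}
groups = {"x": 0, "y": 1}  # y belongs to the group e1 may be biased against
comparisons = [("e1", "x", "y")] * 4

ll_unbiased = log_likelihood(comparisons, scores, groups, {"e1": 0.0})
ll_biased = log_likelihood(comparisons, scores, groups, {"e1": -2.0})
print(round(ll_unbiased, 3), round(ll_biased, 3))
```

With the item scores held equal, the negative bias explains the one-sided choices better than no bias does. An alternating scheme would use this comparison repeatedly, updating the biases with scores fixed and then the scores with biases fixed.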

Experimental validation on synthetic data with ground-truth evaluators' bias demonstrated BARP's ability to accurately reconstruct evaluator bias (MSE < 0.3 with evaluators' bias uniformly distributed in [-5,5]) [34]. The ranking produced by BARP was consistently closer to the unbiased ranking than those produced by all baseline methods, with the performance gap widening as evaluator bias increased [34].

Comparative Performance Analysis

Predictive Accuracy and Bias Mitigation

Table 2: Performance Comparison of Preference Learning Methods

| Method | AUROC | Bias Mitigation | Sample Efficiency | Application Context |
|---|---|---|---|---|
| MolSkill | 0.74+ | Implicit through diverse raters | 5000+ comparisons | Drug-likeness prediction |
| BARP | N/A (Outperforms BT model) | Explicit bias modeling | Varies with dataset | General ranking with biased evaluators |
| POLO | N/A | Learning from complete trajectories | 500 oracle evaluations | Multi-property molecular optimization |
| Traditional BT Model | Baseline | No explicit bias handling | Varies with dataset | General preference learning |

Quantitative evaluation of MolSkill showed predictive performance improving steadily with data volume: the AUROC exceeded 0.74 at 5000 pairwise comparisons with no evidence of plateauing, which suggests that additional annotations would yield further gains [32].
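For readers less familiar with the metric, AUROC for pair classification equals the probability that a randomly chosen positive example (here, a pair where the first molecule was chosen) receives a higher predicted score than a randomly chosen negative one. The sketch below computes it directly from that definition. The labels and predicted probabilities are hypothetical.

```python
def auroc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    positives = [s for l, s in zip(labels, scores) if l == 1]
    negatives = [s for l, s in zip(labels, scores) if l == 0]
    correct = 0.0
    for pos in positives:
        for neg in negatives:
            if pos > neg:
                correct += 1.0
            elif pos == neg:
                correct += 0.5
    return correct / (len(positives) * len(negatives))

# Hypothetical held-out pairs: label 1 means the first molecule was chosen.
labels = [1, 0, 1, 0]
predicted = [0.9, 0.8, 0.4, 0.3]
print(auroc(labels, predicted))
```

A value of 0.5 corresponds to random guessing and 1.0 to perfect ordering, which places the reported 0.74 clearly above chance but well short of fully reproducing the chemists' choices.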

The POLO framework was notably sample-efficient in lead optimization tasks, reaching an 84% average success rate on single-property optimization tasks (2.3× better than baselines) and 50% on multi-property tasks using only 500 oracle evaluations [35]. This level of sample efficiency matters most in domains where each experimental validation is expensive.

Relationship to Traditional Metrics

Comparative analysis reveals that preference learning approaches capture aspects of medicinal chemistry intuition orthogonal to traditional cheminformatics metrics. Analysis of the MolSkill scoring function showed Pearson correlation coefficients with established properties generally not surpassing r = 0.4 [32]. The most correlated descriptors included QED (quantitative estimate of drug-likeness), fingerprint density, fraction of allylic oxidation sites, atomic contributions to the van der Waals surface area, and the Hall-Kier kappa value [32].
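The correlation check described above can be sketched in a few lines. The example computes Pearson's r between a learned score and each descriptor, then reports which descriptors stay at or below |r| = 0.4. The descriptor names and all numeric values are hypothetical placeholders, not data from [32].

```python
import math

def pearson_r(xs, ys):
    # Pearson correlation coefficient between two equal-length sequences.
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)

def correlations(descriptors, scores):
    # Map each descriptor name to its correlation with the learned score.
    return {name: pearson_r(values, scores) for name, values in descriptors.items()}

# Hypothetical learned scores and descriptor values for five molecules.
learned_scores = [0.2, 0.5, 0.1, 0.9, 0.7]
descriptors = {
    "qed": [0.3, 0.6, 0.4, 0.7, 0.5],
    "mol_weight": [310.0, 450.0, 290.0, 380.0, 520.0],
}

result = correlations(descriptors, learned_scores)
for name, r in result.items():
    flag = "weak" if abs(r) <= 0.4 else "notable"
    print(f"{name}: r = {r:+.2f} ({flag})")
```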

Independent evaluation of MolSkill against traditional metrics demonstrated its ability to distinguish between different molecular sets. In comparative tests, MolSkill successfully differentiated "odd" molecules from drug-like ChEMBL molecules even after applying standard functional group filters, while QED failed to distinguish these sets after filtering [33].

Diagram 2: Bias Aware Ranking Mechanism

Applications in Drug Discovery Workflows

Compound Prioritization and Lead Optimization

Preference learning models have demonstrated significant utility in routine drug discovery tasks. The learned proxies from pairwise comparison data have been successfully applied to compound prioritization, motif rationalization, and biased de novo drug design [32]. These applications directly address critical challenges in lead optimization, where medicinal chemists must identify which compounds to synthesize and evaluate over subsequent rounds of optimization [32].

The POLO framework specifically targets lead optimization by formulating it as a multi-turn Markov Decision Process (MDP) [35]. In this formulation, the state space encodes the complete conversational context including task instructions, all proposed molecules, and their oracle evaluations, while the action space represents the agent's response in generating new candidate molecules [35]. This approach transforms large language models from one-shot generators into strategic decision-makers that improve through experience.
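The multi-turn formulation can be pictured with a small loop in which the state accumulates every proposal and its oracle score. The sketch below is a toy stand-in, not the POLO implementation. The policy is a hand-written rule rather than a language model, the oracle simply counts carbon atoms in a SMILES string, and every name in it is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

@dataclass
class OptimizationState:
    # State: the task instruction plus every (molecule, oracle score) seen so far.
    task_instruction: str
    history: List[Tuple[str, float]] = field(default_factory=list)

def run_episode(
    instruction: str,
    propose: Callable[[OptimizationState], str],
    oracle: Callable[[str], float],
    budget: int,
) -> OptimizationState:
    state = OptimizationState(instruction)
    for _ in range(budget):
        candidate = propose(state)               # action: generate a new molecule
        score = oracle(candidate)                # one oracle evaluation is spent
        state.history.append((candidate, score)) # transition: context grows
    return state

def toy_oracle(smiles: str) -> float:
    # Toy objective: best score (0) for exactly six carbon atoms.
    return -float(abs(smiles.count("C") - 6))

def toy_policy(state: OptimizationState) -> str:
    # Toy stand-in for the agent: extend the best molecule found so far.
    if not state.history:
        return "C"
    best_smiles, _ = max(state.history, key=lambda item: item[1])
    return best_smiles + "C"

final_state = run_episode("reach six carbons", toy_policy, toy_oracle, budget=8)
best = max(final_state.history, key=lambda item: item[1])
print("best molecule:", best[0], "score:", best[1])
```

The point of the structure is that the policy sees the full trajectory on every turn, so later proposals can exploit earlier oracle feedback within a fixed evaluation budget.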

Bias-Resistant Molecular Evaluation

Beyond direct optimization, preference learning approaches offer promising solutions to the challenge of biased molecular evaluation. Traditional functional group filters struggle to identify "odd" molecules—structures that may be synthetically inaccessible or chemically unstable despite not violating explicit functional group rules [33]. The MolSkill approach demonstrates capability in identifying such molecules where traditional methods fail, effectively capturing subtle aspects of chemical intuition that elude rule-based systems [33].

Bias-aware ranking methods also apply in other domains where human evaluators may exhibit systematic biases. On the IMDB-WIKI-SbS dataset, which comprises pairwise comparisons of face snapshots, the BARP method identified evaluators who systematically misjudged the ages of men relative to women, or the reverse [34]. Similar approaches show promise for mitigating biases in medicinal chemistry evaluations, where subjective preferences may influence compound selection.

Essential Research Reagents and Computational Tools

Table 3: Key Research Tools for Preference Learning Experiments

| Tool/Resource | Function | Application Context |
|---|---|---|
| MolSkill | Preference learning from pairwise comparisons | Drug-likeness prediction |
| BARP Model | Bias-aware ranking from pairwise comparisons | General ranking with biased evaluators |
| POLO Framework | Multi-turn reinforcement learning for optimization | Sample-efficient lead optimization |
| RDKit | Cheminformatics descriptor calculation | Molecular property computation |
| NIBR Filters | Functional group and property filtering | Preprocessing for molecular evaluation |
| ChEMBL Database | Bioactivity data for drug-like molecules | Benchmarking and validation |
| Oracle Evaluation | Property prediction functions | Objective molecular assessment |

The successful implementation of preference learning approaches requires specific computational tools and datasets. The RDKit software package provides essential cheminformatics functionality for computing molecular descriptors and properties relevant to drug discovery [32]. Specialized filtering systems such as the NIBR filters offer standardized approaches for removing compounds with undesirable functional groups or properties prior to analysis [33].

Critical to these approaches are carefully curated molecular datasets for benchmarking and validation. Resources such as the ChEMBL database provide access to millions of compounds with annotated physicochemical and bioactivity data, enabling robust validation of preference learning models [32] [33]. Additionally, standardized oracle functions for property prediction establish objective measures for evaluating molecular optimization performance [35].

Preference learning from pairwise comparisons represents a powerful paradigm for capturing medicinal chemistry intuition while mitigating cognitive biases inherent in expert judgment. The comparative analysis presented demonstrates that approaches such as MolSkill, BARP, and POLO offer distinct advantages for specific applications in drug discovery, from compound prioritization to multi-property optimization.

The experimental protocols and performance metrics detailed provide researchers with practical guidance for implementing these methodologies in their workflows. As the field progresses, the integration of increasingly sophisticated bias-aware ranking methods with active learning strategies promises to further enhance the efficiency and objectivity of medicinal chemistry decision-making, potentially accelerating the discovery of novel therapeutic agents.

The computational prediction of medicinal chemist evaluations has evolved from simple statistical classifiers to sophisticated deep learning architectures. This shift has been driven by the increasing availability of chemical data and the need for more accurate, interpretable models in pharmaceutical research. Early approaches relied heavily on fingerprint-based representations paired with Bayesian methods, which offered simplicity and interpretability but limited predictive power for complex molecular relationships [36]. Deep learning has since introduced models that learn relevant features directly from molecular structures, improving predictions of critical properties including absorption, distribution, metabolism, excretion, and toxicity (ADME/Tox) as well as biological activity profiles [36] [37].

The fundamental challenge in molecular machine learning lies in effectively representing chemical structures for computational analysis. Traditional approaches utilized fixed-length fingerprint representations or molecular descriptors, which required significant domain expertise to engineer and often captured limited structural information [38]. Contemporary graph-based representations naturally preserve molecular topology by representing atoms as nodes and bonds as edges, enabling more sophisticated neural architectures to learn directly from structural data [39] [40]. This evolution from descriptor-based to representation-learning approaches has substantially improved predictive accuracy across diverse pharmaceutical endpoints while introducing new considerations regarding computational requirements, interpretability, and implementation complexity.

Traditional Machine Learning Approaches

Bayesian Classification Models

Bayesian classifiers have served as foundational tools in cheminformatics due to their computational efficiency, interpretability, and strong performance with limited training data. These methods apply Bayes' theorem to calculate the probability that a compound belongs to a particular activity class based on its molecular features. The Laplacian-corrected Bayesian classifier modifies probability estimates to account for rare features that might otherwise lead to overfitting, making it particularly valuable for chemical datasets with sparse feature representations [41].
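A minimal sketch of the Laplacian correction follows, using the commonly cited form in which a feature seen in N_F compounds, A_F of them active, receives the weight log((A_F + 1) / (N_F × base rate + 1)). This formula is assumed here as the standard variant and is not taken from [41]. The feature names and training set are invented for illustration.

```python
import math
from collections import Counter

def train_laplacian_bayes(samples):
    # samples: list of (set of feature names, is_active) pairs.
    n_total = len(samples)
    n_active = sum(1 for _, active in samples if active)
    base_rate = n_active / n_total

    feature_total = Counter()
    feature_active = Counter()
    for features, active in samples:
        for f in features:
            feature_total[f] += 1
            if active:
                feature_active[f] += 1

    # Laplacian-corrected weight: rare features are pulled toward 0 because
    # the +1 terms dominate when the counts are small.
    return {
        f: math.log((feature_active[f] + 1) / (feature_total[f] * base_rate + 1))
        for f in feature_total
    }

def bayes_score(weights, features):
    # Sum of feature weights; features never seen in training contribute 0.
    return sum(weights.get(f, 0.0) for f in features)

# Hypothetical training set: fingerprint features reduced to readable names.
training = [
    ({"amine", "aryl"}, True),
    ({"amine"}, True),
    ({"ester"}, False),
    ({"aryl", "ester"}, False),
]
weights = train_laplacian_bayes(training)
print({f: round(w, 3) for f, w in sorted(weights.items())})
print("score for {amine, aryl}:", round(bayes_score(weights, {"amine", "aryl"}), 3))
```

Because each weight belongs to a named feature, a chemist can read off which substructures push the score up or down, which is the interpretability advantage noted above.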

In pharmaceutical applications, Bayesian models have demonstrated significant utility for predicting specific molecular properties such as hERG channel blockage, a common cause of drug-induced cardiotoxicity. Studies using extended-connectivity fingerprints (ECFP_14) and molecular properties (molecular weight, fractional polar surface area, ALogP, and basic pKa) achieved global accuracy of 91% with sensitivity of 90% and specificity of 92% on test sets [41]. The interpretable nature of Bayesian models allows medicinal chemists to identify specific structural features associated with activity or toxicity, providing valuable guidance for structural optimization during lead compound development.

Support Vector Machines and Random Forests

Support vector machines (SVMs) operate by identifying optimal hyperplanes that separate compounds of different activity classes in high-dimensional descriptor space, while random forests construct multiple decision trees and aggregate their predictions. Both methods typically utilize fingerprint representations such as Functional Class Fingerprints (FCFP6) or extended-connectivity fingerprints [36].

In comprehensive comparisons across multiple drug discovery datasets including solubility, hERG inhibition, and anti-infective activities, SVMs consistently ranked as top-performing traditional methods, second only to deep neural networks [36]. Their robustness to high-dimensional data and ability to model complex decision boundaries made them popular choices for quantitative structure-activity relationship (QSAR) modeling throughout the 2000s and early 2010s. However, both SVMs and random forests remain limited by their dependence on fixed fingerprint representations, which may fail to capture complex structural patterns relevant to biological activity.

Deep Learning Architectures

Deep Neural Networks (DNNs)

Deep neural networks represent the foundational architecture of deep learning, consisting of multiple fully connected layers between input and output. These networks transform input representations through successive nonlinear transformations, enabling them to learn complex hierarchical features from molecular data. In pharmaceutical applications, DNNs typically utilize fingerprint representations as inputs but learn to combine these features in more sophisticated ways than traditional machine learning methods.

Comparative studies have demonstrated that DNNs generally outperform other machine learning methods across diverse pharmaceutical endpoints. Research comparing multiple algorithms across eight datasets relevant to drug discovery found that DNNs achieved superior performance based on normalized rankings of multiple metrics including AUC, F1 score, and Matthews correlation coefficient [36]. The study used FCFP6 fingerprints with 1024 bits as input features, with datasets spanning solubility, probe-likeness, hERG inhibition, KCNQ1 potassium channel activity, and whole-cell screens against the pathogens responsible for bubonic plague, Chagas disease, tuberculosis, and malaria [36].
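For readers less familiar with the threshold-based metrics named above, the following sketch computes F1 score and Matthews correlation coefficient from confusion-matrix counts using their standard definitions. The counts are arbitrary illustrative values, not results from [36].

```python
import math

def classification_metrics(tp, fp, tn, fn):
    # Standard definitions; each ratio falls back to 0.0 when its denominator is 0.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0  # also called sensitivity
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
        "mcc": mcc,
    }

# Illustrative counts for a binary active/inactive classifier.
metrics = classification_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

MCC uses all four cells of the confusion matrix, which is why it is often reported alongside F1 on imbalanced screening datasets.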

Table 1: Performance Comparison Across Model Architectures

| Model Architecture | Representation | Solubility Prediction AUC | hERG Prediction AUC | TB Screen AUC | Interpretability |
|---|---|---|---|---|---|
| Bayesian Classifier | ECFP_14 | 0.85 | 0.91 | 0.82 | High |
| Support Vector Machine | FCFP6 | 0.88 | 0.89 | 0.85 | Medium |
| Random Forest | FCFP6 | 0.86 | 0.87 | 0.83 | Medium |
| Deep Neural Network | FCFP6 | 0.92 | 0.94 | 0.89 | Low |
| Graph Neural Network | Molecular Graph | 0.95 | 0.96 | 0.93 | Medium with GNNExplainer |

Graph Neural Networks (GNNs)

Graph neural networks represent a paradigm shift in molecular machine learning by operating directly on graph-based representations of molecular structure. Unlike fingerprint-based approaches, GNNs preserve the complete topological information of molecules, treating atoms as nodes and bonds as edges in a graph structure [40]. These networks employ message-passing mechanisms where atom representations are iteratively updated by aggregating information from neighboring atoms, effectively learning structural patterns that correlate with molecular properties.

The implementation of GNNs involves several key steps: (1) constructing molecular graphs from SMILES strings using tools like RDKit, (2) initializing node features using atom properties (element type, degree, hybridization, etc.) and edge features using bond characteristics, (3) applying multiple graph convolutional layers to learn increasingly sophisticated representations, and (4) global pooling to generate molecular-level embeddings for property prediction [40]. Recent advances have introduced more sophisticated attention mechanisms that weight neighbor contributions differently, allowing models to focus on the most relevant structural features for specific predictions [39].
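Steps (2) through (4) can be illustrated on a hand-built graph without any deep learning library. The sketch below runs one round of sum-aggregation message passing over the heavy atoms of ethanol and then pools the node vectors into a single molecular embedding. It makes several simplifying assumptions: the graph is written by hand rather than parsed from SMILES with RDKit, node features are a two-element one-hot encoding, edge features are omitted, and the weight matrices are fixed identity matrices standing in for learned parameters.

```python
def matvec(weights, vector):
    # Multiply a matrix (list of rows) by a vector.
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]

def relu(vector):
    return [max(0.0, value) for value in vector]

def message_passing_layer(node_feats, neighbors, w_self, w_nbr):
    # One round: each atom sums its neighbors' features, then combines that
    # message with its own features through two linear maps and a ReLU.
    updated = []
    for v, h_v in enumerate(node_feats):
        aggregated = [0.0] * len(h_v)
        for u in neighbors[v]:
            aggregated = [a + b for a, b in zip(aggregated, node_feats[u])]
        combined = [
            s + m
            for s, m in zip(matvec(w_self, h_v), matvec(w_nbr, aggregated))
        ]
        updated.append(relu(combined))
    return updated

def sum_pool(node_feats):
    # Global pooling: sum node vectors into one molecule-level embedding.
    return [sum(column) for column in zip(*node_feats)]

# Ethanol heavy atoms: C(0)-C(1)-O(2). Features are one-hot [is_carbon, is_oxygen].
node_feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
neighbors = [[1], [0, 2], [1]]

# Identity matrices as placeholders for learned weights.
identity = [[1.0, 0.0], [0.0, 1.0]]

hidden = message_passing_layer(node_feats, neighbors, identity, identity)
embedding = sum_pool(hidden)
print("updated atom vectors:", hidden)
print("molecule embedding:", embedding)
```

After one round, the middle carbon's vector already reflects its oxygen neighbor, while the terminal carbon's does not. Stacking more layers widens each atom's receptive field by one bond per layer.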