Leverage Plots: A Practical Guide for Identifying Influential Points in Biomedical Research


Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for using leverage plots to detect influential observations in regression analysis. It covers foundational concepts distinguishing outliers, leverage, and influence, offers step-by-step methodologies for creating and interpreting diagnostic plots in statistical software, and presents troubleshooting protocols for addressing high-leverage points. The article also explores advanced validation techniques and compares leverage plots with other diagnostic measures, emphasizing their critical role in ensuring model robustness and data integrity for regulatory compliance and high-impact publications.

Understanding Leverage, Outliers, and Influence in Regression Diagnostics

FAQs on Unusual Observations in Linear Regression

Q1: What is the fundamental distinction between an outlier, a leverage point, and an influential observation?

An outlier is a data point whose response (y-value) does not follow the general trend of the rest of the data [1]. Its dependent variable value is unusual given its predictor values.

A data point has high leverage if it has an extreme or "unusual" predictor (x-value) [1]. In multiple regression, this can mean a value that is particularly high or low for one or more predictors, or an unusual combination of predictor values [1]. A leverage point can follow the regression trend and thus may not be an outlier in the y-direction [2].

An influential point is one that unduly influences any part of the regression analysis, such as the estimated slope coefficients, predicted responses, or hypothesis test results [1]. Its removal from the dataset would cause a substantial change in the fitted model [2]. Influential points are often both outliers and high-leverage points [1].

Q2: How can I statistically test for these unusual points in my dataset?

Diagnostic tests help identify different types of unusual observations [2].

- y-Outlier: Check the residual (RESI), standardized residual (SRES), or studentized deleted residual (TRES). A data point is generally considered an outlier if the absolute value of any of these is high compared to others [2].

- x-Outlier (Leverage): Calculate the diagonal elements of the hat matrix (HI or leverage). The sum of all HI values equals p, the number of model parameters including the intercept, so the average leverage is p/n. A common rule is that any HI value exceeding 2*p/n (twice the average leverage) indicates a high-leverage point [2].

- Influential Point: Use DFFITS (labelled DFITS in some software) or Cook's distance. For small to medium datasets, an absolute DFFITS value greater than 1 suggests influence [2]. For Cook's distance, a larger value indicates greater potential influence. Locating the value in an F-distribution with p and n-p degrees of freedom quantifies this: a percentile above 50% indicates a major influence, 20-50% a moderate to high influence, and 10-20% a very small influence [2].

Q3: A high-leverage point is not necessarily an influential point. Can you explain why?

Yes, a high-leverage point is not automatically influential [1]. A point with an extreme x-value has the potential to exert strong influence on the regression line. However, if its observed y-value is consistent with the trend predicted by the other data points (i.e., it sits on or near the regression line formed by the other points), then including it will not significantly alter the slope or intercept [1]. In this case, it is a non-influential high-leverage point. Its main effect might be to artificially inflate the R-squared value and the statistical significance of the relationship, making the model appear stronger than it actually is for the bulk of the data [2].
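The distinction can be checked numerically. The following is a minimal sketch using made-up data (the values and the helper name `fit_line` are illustrative assumptions, not taken from the cited sources). It fits a simple regression to a bulk of points, then adds one extreme-x point either on or off the trend.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = b0 + b1 * x; returns (b0, b1)."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b1 = sxy / sxx
    return y_bar - b1 * x_bar, b1

# Hypothetical bulk data scattered around y = 2 + 0.5 * x
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [2.6, 2.9, 3.6, 3.9, 4.6, 4.9]

_, slope_bulk = fit_line(xs, ys)
# Same extreme x-value (x = 20), two different responses
_, slope_on_trend = fit_line(xs + [20.0], ys + [12.0])   # y agrees with the bulk trend
_, slope_off_trend = fit_line(xs + [20.0], ys + [4.0])   # y far below the bulk trend

print(f"bulk only:       slope = {slope_bulk:.3f}")
print(f"on-trend point:  slope = {slope_on_trend:.3f}")
print(f"off-trend point: slope = {slope_off_trend:.3f}")
```

In this sketch the on-trend point leaves the slope almost unchanged, while the off-trend point at the same x-value pulls it strongly toward zero. Only the second case is influential.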

Q4: What is the single most critical check for an observation that is both an outlier and has high leverage?

An observation that is both an outlier (unusual y) and has high leverage (unusual x) has the highest potential to be influential [1]. The most critical check is to assess its Cook's Distance or DFITs to statistically confirm its influence on the entire regression model [2]. You should refit the regression model with and without this point and compare key outputs like the estimated slope, R-squared, and p-values for a comprehensive view of its impact [1].

Troubleshooting Guide: Diagnosing Unusual Observations

| Symptom | Potential Cause | Diagnostic Check | Next Steps / Resolution |

|---|---|---|---|

| A dramatic change in the slope coefficient when a single point is added/removed. | An influential point that is likely both an outlier and has high leverage [1]. | 1. Check Cook's Distance (large value) [2]. 2. Check Leverage (HI > 2p/n) [2]. 3. Check Standardized Residual (absolute value > 2 or 3). | Investigate the data point for measurement or data entry error. Report results with and without the point if its validity is uncertain. |

| A very high R-squared value, but the model predictions for most data seem poor. | One or more high-leverage points are artificially strengthening the apparent relationship [2]. | Examine a scatter plot for points isolated in the x-direction. Calculate leverage statistics (HI) [2]. | Consider whether the leverage point is within the relevant scope of your research question. Collect more data in the gap to reduce the point's undue influence. |

| The residual plot shows one point with a very large deviation from zero. | A y-outlier [1]. | Check the residual (RESI) and studentized deleted residual (TRES) for that observation [2]. | Verify the data source and process that generated the point. If it is a valid but extreme value, consider robust regression techniques. |

| The model meets all statistical significance criteria, but a single point is responsible for this conclusion. | An influential point that drives the model's significance [1]. | Check if the point is influential using DFITs. Check if the model remains significant without the point. | Transparency is key. Acknowledge the point's role in the analysis. The model may not be generalizable if it relies on a single observation. |

Quantitative Diagnostics Table

The following table summarizes key statistical measures used to identify unusual observations. These values are typically calculated using statistical software.

| Diagnostic Measure | What it Identifies | Common Interpretation Threshold | Statistical Test / Calculation |

|---|---|---|---|

| Standardized Residual (SRES) | y-Outliers | Absolute value > 2 or 3 suggests a potential outlier [2]. | Residual / Standard Error of Residuals |

| Leverage (HI) | x-Outliers / High-Leverage Points | HI > 2*p/n (where p = number of model parameters, including the intercept, and n = sample size) [2]. | Diagonal element of the hat matrix. |

| Cook's Distance (D) | Influential Points | D > 1, or a D value much larger than those of the other points; a value above the 50th percentile of the F-distribution with p and n-p degrees of freedom indicates major influence [2]. | Function of leverage and residual. Measures the change in all fitted values when the i-th point is omitted. |

| DFFITS | Influential Points on Fitted Value | Absolute value > 1 for small/medium datasets [2]. | Standardized difference between the fitted value with and without the i-th observation. |
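For reference, the measures in the table can be written out explicitly. The formulas below are the standard textbook definitions, given here as a sketch. The symbols are assumptions of this note rather than notation from the cited sources: $e_i$ is the raw residual (RESI), $h_{ii}$ the leverage (HI), $r_i$ the standardized residual (SRES), $t_i$ the studentized deleted residual (TRES), $MSE$ the mean squared error of the full fit, and $x_i^{T}$ the $i$-th row of the design matrix $X$.

$$
\begin{aligned}
h_{ii} &= x_i^{T}(X^{T}X)^{-1}x_i \\
r_i &= \frac{e_i}{\sqrt{MSE\,(1-h_{ii})}} \\
t_i &= r_i\sqrt{\frac{n-p-1}{n-p-r_i^{2}}} \\
D_i &= \frac{r_i^{2}}{p}\cdot\frac{h_{ii}}{1-h_{ii}} \\
\mathrm{DFFITS}_i &= t_i\sqrt{\frac{h_{ii}}{1-h_{ii}}}
\end{aligned}
$$

Both $D_i$ and $\mathrm{DFFITS}_i$ multiply a residual term by a leverage term, which is why a point needs an unusual response and an unusual predictor value to score highly on either.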

Experimental Protocol: Identifying Influential Points with Leverage Plots

Objective: To systematically identify and evaluate the impact of outliers, high-leverage points, and influential observations in a linear regression analysis.

1. Data Preparation and Initial Model Fitting

- Collect your dataset and specify your linear regression model.

- Using statistical software (e.g., R, Python, Minitab), fit the initial regression model and obtain the results.

2. Calculation of Diagnostic Statistics

- For every observation in your dataset, calculate and store the following diagnostic statistics:

- Predicted Values (y-hat)

- Residuals (RESI)

- Standardized Residuals (SRES) or Studentized Deleted Residuals (TRES)

- Leverage values (HI) from the hat matrix.

- Cook's Distance

- DFFITS (if available in your software).

3. Graphical Analysis

- Create a residuals vs. fitted values plot to visually check for outliers (points with large vertical deviations from zero) and non-constant variance.

- Create a leverage plot (e.g., studentized residuals vs. leverage) to simultaneously assess outliers and high-leverage points.

- Create an index plot of Cook's Distance to quickly identify the most influential observations.

4. Statistical Threshold Testing

- Apply the interpretation thresholds from the Quantitative Diagnostics Table above to flag candidate outliers, high-leverage points, and influential observations.

5. Influence Assessment and Reporting

- Refit the regression model after excluding the flagged influential points.

- Compare the key model parameters (slope, intercept, R-squared, p-values) between the original and the new model.

- Document the changes. In your thesis or report, clearly state the diagnostic process undertaken and the impact of any unusual observations on your final conclusions.

Research Reagent Solutions: Key Statistical Diagnostics

| Item / Software | Function in Analysis |

|---|---|

| Statistical Software (e.g., R, Python with statsmodels, Minitab) | The primary platform for fitting regression models and computing all diagnostic statistics (residuals, leverage, Cook's D) [2]. |

| Hat Matrix (H) | A crucial mathematical construct whose diagonal elements (HI) are the direct measure of an observation's leverage on its own predicted value [2]. |

| Cook's Distance | A single metric that aggregates the overall influence of a single data point on all regression coefficients, used to flag points that disproportionately affect the model [2]. |

| Standardized & Studentized Residuals | Residuals that have been scaled by their standard error, making it easier to identify outliers in the y-direction by providing a common scale for comparison [2]. |

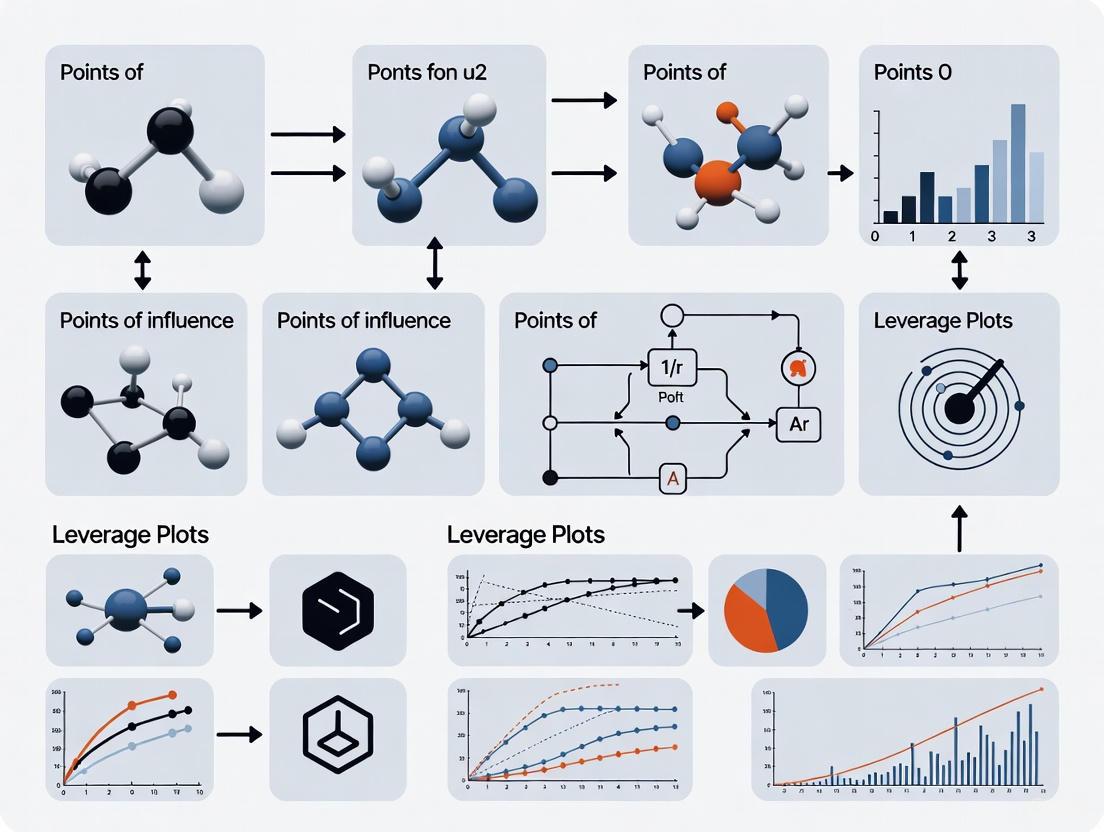

Visualizing the Concepts: A Diagnostic Workflow

The following diagram illustrates the logical process for diagnosing different types of unusual observations in a regression dataset.

Frequently Asked Questions

1. What is the fundamental difference between an outlier and a high leverage point? An outlier is a data point whose response (y-value) does not follow the general trend of the rest of the data, resulting in a large residual [1] [3]. A high leverage point is one with extreme predictor (x) values, which may be unusually high, unusually low, or, in multiple regression, an unusual combination of predictor values [1] [3] [4]. The key difference is that an outlier is unusual in the vertical (y) direction, while a high leverage point is unusual in the horizontal (x) direction.

2. Can a single data point be both an outlier and have high leverage? Yes. A data point that has both an extreme x-value and does not follow the general trend of the data (large residual) is considered both an outlier and a high leverage point [5]. Such a point has a high potential to be influential.

3. What is an influential point, and how does it relate to outliers and leverage? An influential point is a data point that unduly influences any part of a regression analysis, such as the predicted responses, estimated slope coefficients, or hypothesis test results [1] [3]. While outliers and high leverage points have the potential to be influential, they are not always so. The practical test is removal: an influential point is one that, when removed, significantly changes the regression model [5]. An outlier that is also a high leverage point is the most likely to be influential [1] [5].

4. Why is it important to distinguish between these types of unusual points? Identifying and correctly classifying these points is crucial because they can skew the results of a regression analysis in different ways. Understanding whether a point is an outlier, has high leverage, or is influential helps a researcher decide the most appropriate course of action, whether it's investigating the data point for errors, using robust regression techniques, or reporting the findings with and without the point [1] [3].

5. What are some robust regression techniques to use when my data has outliers? Several robust regression techniques are less sensitive to outliers, including [6] [7]:

- Huber Regression: Uses a loss function that is less sensitive to outliers by combining squared loss for small residuals and absolute loss for large residuals.

- RANSAC Regression (RANdom SAmple Consensus): An iterative algorithm that fits a model to random subsets of data, identifying inliers and outliers.

- Theil-Sen Regression: Calculates the slope as the median of all slopes between pairs of points, making it robust to outliers.
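As an illustration of the last technique, here is a minimal pure-Python sketch of the Theil-Sen idea for a single predictor. The function name `theil_sen` and the data are hypothetical. The intercept rule used here (median of `y - slope * x`) is one common convention and is an assumption of this sketch.

```python
from itertools import combinations
from statistics import median

def theil_sen(xs, ys):
    """Slope = median of all pairwise slopes; intercept = median of y - slope * x."""
    slopes = [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in combinations(zip(xs, ys), 2)
        if x2 != x1  # skip pairs with identical x (undefined slope)
    ]
    slope = median(slopes)
    intercept = median(y - slope * x for x, y in zip(xs, ys))
    return intercept, slope

# Hypothetical data on the line y = 1 + 2x, except for one gross outlier at x = 7
xs = [1, 2, 3, 4, 5, 6, 7]
ys = [3, 5, 7, 9, 11, 13, 40]

intercept, slope = theil_sen(xs, ys)
print(intercept, slope)
```

Because most pairwise slopes do not involve the outlier, the median ignores it and the underlying line is recovered. For real analyses, scikit-learn offers `TheilSenRegressor`, `HuberRegressor`, and `RANSACRegressor` in `sklearn.linear_model`.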

Troubleshooting Guides

Guide 1: Diagnosing Unusual Observations in Your Regression

Use the following workflow to systematically identify and classify unusual points in your dataset. This process is integral to validating the assumptions behind your regression analysis.

Diagram 1: A diagnostic workflow for classifying unusual observations.

Experimental Protocol & Diagnostic Measures

After following the workflow, use these specific statistical measures to diagnose each type of point. The following table summarizes the key diagnostic statistics and their interpretation, which should be calculated as part of your experimental protocol.

Table 1: Diagnostic Measures for Unusual Observations [4] [8] [2]

| Point Type | Primary Diagnostic Measure | Interpretation & Common Threshold | Secondary Measures |
|---|---|---|---|
| High Leverage | Leverage ($h_{ii}$) [4] | $h_{ii} > 2p/n$ indicates high leverage, where $p$ is the number of parameters (including intercept) and $n$ is the number of observations [4] [2]. | Partial Leverage [4] |
| Outlier | Standardized Residual ($r_i$) [8] | $\lvert r_i \rvert > 2$ or $3$ suggests an outlier, where $r_i = \frac{e_i}{\sqrt{MSE(1-h_{ii})}}$ and $e_i$ is the raw residual [8]. | Studentized Residuals, Deleted Residuals [2] |
| Influential | Cook's Distance ($D$) [2] | Compare $D$ to an F-distribution with $p$ and $n-p$ degrees of freedom. A value above the 50th percentile indicates major influence [2]. | DFITS [2] |

Protocol Steps:

- Fit the Model: Fit your initial regression model to the full dataset.

- Calculate Leverage: Compute the leverage statistic ($h_{ii}$) for each observation. This is typically the diagonal element of the hat matrix [4].

- Flag High Leverage: Identify points where $h_{ii} > 2p/n$ [4] [2].

- Calculate Residuals: Compute the standardized residuals for each observation [8].

- Flag Outliers: Identify points where the absolute value of the standardized residual exceeds your chosen threshold (e.g., 2 or 3) [8].

- Test for Influence: For points flagged as high leverage, outliers, or both, calculate Cook's Distance. A point is considered influential if its exclusion causes a substantial change in the regression coefficients [1] [2]. This can be tested by visually comparing regression lines with and without the point, or by using the statistical thresholds for Cook's D [2].
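The protocol steps above can be sketched in a few lines of NumPy. This is an illustrative implementation under stated assumptions, not the method of any cited source. The function name `diagnose`, the residual cut-off of 2, and the example data are all hypothetical.

```python
import numpy as np

def diagnose(predictors, y, resid_cut=2.0):
    """Leverage, standardized residuals and Cook's D for an OLS fit with intercept."""
    X = np.column_stack([np.ones(len(y)), predictors])   # add intercept column
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)          # diagonal of the hat matrix
    e = y - X @ (XtX_inv @ X.T @ y)                      # raw residuals
    mse = e @ e / (n - p)
    r = e / np.sqrt(mse * (1 - h))                       # standardized residuals
    cooks = r**2 / p * h / (1 - h)                       # Cook's distance
    return {
        "leverage": h,
        "std_resid": r,
        "cooks_d": cooks,
        "high_leverage": h > 2 * p / n,
        "outlier": np.abs(r) > resid_cut,
    }

# Hypothetical data: ten points on y = 1 + 2x, plus one extreme-x point off the trend
x = np.append(np.arange(1.0, 11.0), 25.0)
y = 1.0 + 2.0 * x
y[-1] = 20.0

res = diagnose(x.reshape(-1, 1), y)
print("high leverage:", np.flatnonzero(res["high_leverage"]))
print("outliers:     ", np.flatnonzero(res["outlier"]))
print("largest Cook's D at index", int(np.argmax(res["cooks_d"])))
```

The last observation is flagged on every criterion. That is the pattern the protocol treats as the strongest candidate for an influential point.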

Guide 2: Addressing Influential Points in Analysis

Once you have diagnosed unusual points, follow this guide to address them.

Step 1: Investigate the Point

- Check for Data Errors: Verify the data was entered and processed correctly. Simple transcription errors are a common cause.

- Understand Context: Determine if the point represents a rare but valid biological event. In drug development, this could indicate a unique responder or a specific experimental condition.

Step 2: Choose an Analytical Strategy The appropriate strategy depends on whether the point is truly an error and the goals of your analysis.

Table 2: Strategies for Handling Unusual Points [6] [7] [9]

| Scenario | Recommended Strategy | Rationale & Implementation |

|---|---|---|

| Point is a data error | Remove the point | The point does not represent the underlying phenomenon and will bias the results. Re-fit the model without the point. |

| Point is valid but influential | Report analyses both with and without the point. | Provides transparency and allows readers to see the impact of a single observation on the conclusions. |

| Model must be robust to outliers | Use Robust Regression (e.g., Huber, RANSAC, Theil-Sen) [6] [7] | These algorithms are designed to be less sensitive to outliers, reducing their influence without manually removing them. |

| The point is a valid high leverage point | Retain the point and acknowledge it extends the model's scope. | A high leverage point that is not an outlier provides important information about the relationship at extreme X-values and improves the estimate of the slope [1]. |

Step 3: Document and Report Always document any unusual points found and the actions taken. In your thesis and publications, report:

- The number and nature of unusual points.

- Diagnostic statistics (e.g., leverage, Cook's D).

- A comparison of results with and without influential points.

- The final chosen model and the rationale for the choice.

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Robust Analysis

| Tool / Solution | Function in Analysis |

|---|---|

| Statistical Software (R, Python) | Platform for calculating diagnostic statistics (leverage, residuals, Cook's D) and fitting robust regression models [6] [7]. |

| Leverage (Hat) Matrix | The mathematical matrix whose diagonal elements ($h_{ii}$) are the direct measure of leverage for each observation [4]. |

| Huber Loss Function | The core function used in Huber Regression, which combines squared and absolute loss to reduce the weight given to outliers during model fitting [6] [7]. |

| RANSAC Algorithm | A non-deterministic, iterative algorithm for separating inliers from outliers in a dataset, effective for datasets with a large proportion of outliers [6] [7]. |

| Cook's Distance Metric | A statistical measure that aggregates the influence of a single data point on all fitted values, used to flag points for further investigation [2]. |

FAQs and Troubleshooting Guide

This guide provides solutions to common issues researchers face when using leverage plots to identify influential points in statistical models, particularly in drug development.

Q1: What does a "Leverage of 1.0" mean in my plot, and is it a problem?

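A leverage value of 1.0 is the maximum possible. It means the fitted value for that observation is determined entirely by its own response, so the model reproduces the point exactly (its residual is zero) regardless of the rest of the data. This typically happens when a model parameter is effectively dedicated to a single observation, for example a category or covariate pattern that occurs only once, and it can distort the overall model.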

- Troubleshooting Steps:

- Investigate the Point: Check your raw data for this observation. Look for data entry errors, measurement malfunctions, or a unique biological outlier that doesn't belong to the population you are modeling.

- Run Diagnostics: Fit your model with and without this high-leverage point. Compare the model coefficients, R-squared, and p-values. If they change dramatically, the point is highly influential.

- Document and Decide: Based on your investigation, decide if the point is a valid but extreme value (which you may keep, but note in your report) or an error (which you should correct or remove). Never remove a point solely for statistical reasons without a scientific justification.
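A leverage of exactly 1.0 is easy to reproduce. The sketch below uses a hypothetical design in which an indicator column is non-zero for only one subject. The variable names and data are illustrative assumptions.

```python
import numpy as np

def leverage(X):
    """Diagonal of the hat matrix H = X (X'X)^{-1} X'."""
    return np.einsum("ij,jk,ik->i", X, np.linalg.inv(X.T @ X), X)

# Hypothetical design: intercept, dose, and an indicator that is 1 for subject 6 only
dose = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
only_subject_6 = np.array([0.0, 0.0, 0.0, 0.0, 0.0, 1.0])
X = np.column_stack([np.ones(6), dose, only_subject_6])
y = np.array([2.1, 2.9, 4.2, 4.8, 6.1, 15.0])

h = leverage(X)
beta = np.linalg.solve(X.T @ X, X.T @ y)
residuals = y - X @ beta

print("leverage of subject 6:", round(float(h[-1]), 6))
print("residual of subject 6:", round(float(residuals[-1]), 6))
```

The indicator coefficient absorbs whatever value subject 6 takes. Its residual is therefore zero, and a residual-based outlier check says nothing about that observation.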

Q2: My leverage plot shows a point with high leverage but a small residual. Should I be concerned?

A point with high leverage but a small residual (close to the regression line) is not necessarily a problem. It means this point is an extreme value in the predictor space, but the model's prediction for it is still accurate. It can strengthen the model's fit rather than distort it.

- Action: Monitor this point, but it typically does not require removal. Its primary effect is to reduce the standard errors of your coefficient estimates, increasing the precision of your model.
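The gain in precision can be seen from the standard results for simple linear regression, shown here as a short illustration. The symbols $\sigma^2$ (error variance) and $S_{xx}$ are notation introduced for this note.

$$
\operatorname{Var}(\hat{\beta}_1) = \frac{\sigma^{2}}{S_{xx}}, \qquad
S_{xx} = \sum_{i=1}^{n}(x_i - \bar{x})^{2}, \qquad
h_{ii} = \frac{1}{n} + \frac{(x_i - \bar{x})^{2}}{S_{xx}}
$$

An observation far from $\bar{x}$ contributes a large term to $S_{xx}$. That is why it has high leverage, and it is also why it shrinks the variance of the slope estimate when its response agrees with the trend.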

Q3: How do I handle a cluster of points with high leverage?

A cluster of high-leverage points suggests a subgroup within your data. This is common in drug development, for example, when data comes from different experimental batches or patient subpopulations.

- Troubleshooting Steps:

- Validate the Subgroup: Determine if the cluster represents a scientifically relevant category (e.g., responders vs. non-responders, a specific dosage group).

- Consider Model Expansion: Your current model might be oversimplified. You may need to include an additional factor or interaction term in your model to account for this subgroup.

- Check for Confounding: Ensure that the cluster is not an artifact of an uncontrolled experimental variable.

Q4: What is the difference between leverage and influence?

While often related, leverage and influence are distinct concepts, as shown in the table below.

- Table: Leverage vs. Influence

| Feature | Leverage | Influence (e.g., Cook's Distance) |
|---|---|---|
| Definition | A point's potential to influence the model, based on its position in the predictor space. | A point's actual impact on the model's coefficients and predictions. |
| Depends On | Only the values of the independent variables (X). | The values of both independent (X) and dependent (Y) variables. |
| What to Look For | Points that are distant from the mean of the predictors. | Points that have high leverage and a large residual (don't fit the trend). |
| Primary Metric | Hat values (diagonals of the hat matrix). | Cook's Distance, DFFITS, DFBETAS. |

The following table summarizes key thresholds for common diagnostic statistics used alongside leverage plots. These are rules of thumb; context is critical.

- Table: Key Diagnostic Statistics and Thresholds

| Diagnostic Statistic | Calculation | Common Threshold for Concern | Interpretation |
|---|---|---|---|
| Leverage ($h_{ii}$) | Diagonal of hat matrix | > 2p/n | Potential for high influence. |
| Cook's Distance (D) | Combined measure of leverage and residual | > 4/n | Significantly influences model coefficients. |
| Studentized Residual | Residual scaled by its standard deviation | Absolute value > 2 | A potential outlier in the Y-direction. |
| DFFITS | Change in predicted value when point is omitted | Absolute value > 2√(p/n) | Influences its own fitted value. |

Here *n* is the number of observations and *p* is the number of model parameters.
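Because most of these cut-offs depend on the sample size and model size, it can help to compute them once per analysis. The helper below is a small sketch. The function name and dictionary keys are hypothetical, and the cut-offs are the rules of thumb from the table above.

```python
import math

def rule_of_thumb_cutoffs(n, p):
    """Rule-of-thumb thresholds for n observations and p model parameters."""
    return {
        "leverage": 2 * p / n,              # twice the average leverage p/n
        "cooks_d": 4 / n,
        "studentized_residual": 2.0,        # compare against the absolute value
        "dffits": 2 * math.sqrt(p / n),     # compare against the absolute value
    }

cutoffs = rule_of_thumb_cutoffs(n=100, p=4)
for name, value in cutoffs.items():
    print(f"{name}: {value:.3f}")
```

With 100 observations and 4 parameters, any leverage above 0.08 or Cook's distance above 0.04 would be worth a closer look. This shows how much stricter the 4/n rule is than the D > 1 rule.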

Experimental Protocol: Identifying Influential Points with Leverage Plots

Objective: To detect and diagnose data points that have a strong potential to influence a linear regression model, ensuring the model's robustness and validity.

Materials:

- Statistical software (e.g., R, Python with `statsmodels`/`scikit-learn`, SAS, JMP).

- Dataset with continuous outcome and predictor variables.

Methodology:

Model Fitting:

- Fit your initial linear regression model to the data: Y ~ X1 + X2 + ... + Xp.

Calculation of Diagnostic Statistics:

- Extract the following statistics for every observation in the dataset:

- Leverage (Hat Values): Measures the potential influence.

- Residuals: The difference between observed and predicted values.

- Cook's Distance: A combined measure of a point's leverage and the size of its residual.


Visualization with Leverage Plots:

- Create a Residuals vs. Leverage plot.

- On this plot, the size of each point can be mapped to its Cook's Distance, creating a bubble plot. This allows for the simultaneous assessment of all three key diagnostics.
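A residuals-versus-leverage bubble plot along these lines might be built as follows. This is a sketch under assumptions: the function names, the marker-size scaling, and the example data are hypothetical. The reference lines use the 2p/n and ±2 rules of thumb. `matplotlib` is imported inside the plotting function so the numerical part runs without it.

```python
import numpy as np

def bubble_plot_data(X, y, max_size=600.0):
    """Leverage, standardized residuals, Cook's D and marker sizes (X includes intercept)."""
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)
    e = y - X @ (XtX_inv @ X.T @ y)
    r = e / np.sqrt((e @ e) / (n - p) * (1 - h))
    d = r**2 / p * h / (1 - h)
    sizes = 20.0 + max_size * d / d.max()    # bubble area grows with Cook's D
    return h, r, d, sizes

def plot_residuals_vs_leverage(X, y):
    import matplotlib.pyplot as plt          # optional dependency, only needed to draw
    h, r, d, sizes = bubble_plot_data(X, y)
    n, p = X.shape
    fig, ax = plt.subplots()
    ax.scatter(h, r, s=sizes, alpha=0.5)
    ax.axvline(2 * p / n, linestyle="--", color="gray")   # leverage rule of thumb
    for cut in (-2, 2):
        ax.axhline(cut, linestyle=":", color="gray")      # residual rule of thumb
    ax.set_xlabel("Leverage")
    ax.set_ylabel("Standardized residual")
    return fig

# Hypothetical data: ten points on y = 1 + 2x, plus one extreme-x point off the trend
x = np.append(np.arange(1.0, 11.0), 25.0)
y = 1.0 + 2.0 * x
y[-1] = 20.0
X = np.column_stack([np.ones_like(x), x])

h, r, d, sizes = bubble_plot_data(X, y)
```

Calling `plot_residuals_vs_leverage(X, y)` draws the figure. For fitted `statsmodels` models, `influence_plot` in `statsmodels.graphics.regressionplots` produces a comparable display.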

Interpretation and Diagnosis:

- Identify points that fall above the leverage threshold.

- Among high-leverage points, focus on those that also have large residuals (far from zero on the y-axis) and/or a large bubble size (high Cook's Distance). These are your most influential points.

Sensitivity Analysis:

- Refit the regression model after removing the flagged influential points.

- Systematically compare the key outputs (coefficients, standard errors, R-squared) of the original and new model to quantify the influence.
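The sensitivity analysis can be sketched as a small comparison helper. The function names, the returned layout (full fit, reduced fit, difference), and the data are illustrative assumptions.

```python
import numpy as np

def ols_summary(X, y):
    """Coefficients, standard errors and R-squared for an OLS fit (X includes intercept)."""
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    e = y - X @ beta
    mse = e @ e / (n - p)
    se = np.sqrt(mse * np.diag(XtX_inv))
    r2 = 1 - (e @ e) / np.sum((y - y.mean()) ** 2)
    return {"coef": beta, "se": se, "r2": r2}

def sensitivity(X, y, flagged):
    """Compare the full fit with a refit that excludes the flagged row indices."""
    keep = np.setdiff1d(np.arange(len(y)), flagged)
    full, reduced = ols_summary(X, y), ols_summary(X[keep], y[keep])
    # For each output: (full-data value, value without flagged points, difference)
    return {k: (full[k], reduced[k], reduced[k] - full[k]) for k in full}

# Hypothetical data: noisy points around y = 1 + 2x, plus one off-trend extreme-x point
x = np.append(np.arange(1.0, 11.0), 25.0)
noise = np.array([0.3, -0.2, 0.1, -0.4, 0.2, -0.1, 0.4, -0.3, 0.1, -0.1, 0.0])
y = 1.0 + 2.0 * x + noise
y[-1] = 20.0
X = np.column_stack([np.ones_like(x), x])

report = sensitivity(X, y, flagged=[10])
for name, (full, reduced, diff) in report.items():
    print(name, np.round(full, 3), np.round(reduced, 3), np.round(diff, 3))
```

Reporting all three columns side by side keeps the comparison transparent and avoids silently replacing one model with the other.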

The workflow for this protocol is outlined in the diagram below.

The Scientist's Toolkit: Research Reagent Solutions

- Table: Essential Materials for Statistical Analysis of Influential Points

| Item | Function/Brief Explanation |
|---|---|
| R Statistical Software | Open-source environment for statistical computing and graphics. Essential for advanced regression diagnostics. |
| `statsmodels` Library (Python) | A Python module that provides classes and functions for estimating many different statistical models and conducting statistical tests and explorations. |
| Diagnostic Plots Package (e.g., `car` in R, `statsmodels.graphics` in Python) | Specialized software libraries designed specifically for creating regression diagnostic plots, including leverage plots and plots of Cook's Distance. |
| Cook's Distance Metric | A quantitative measure that combines leverage and residual information to estimate a point's overall influence on the regression model. |
| Data Visualization Library (e.g., `ggplot2` in R, `matplotlib`/`seaborn` in Python) | Libraries used to create custom, publication-quality plots for visualizing data distributions, model fits, and diagnostic statistics. |

Frequently Asked Questions (FAQs)

1. What is the Hat Matrix in linear regression? The Hat Matrix, denoted as H, is a fundamental mathematical construct in linear regression that projects the vector of observed response values onto the space spanned by the model's predictor variables [10] [11]. It is defined by the formula $H = X(X^{T}X)^{-1}X^{T}$, where $X$ is the data matrix of predictor variables [4] [12]. This matrix puts the "hat" on the observed response vector $y$ to generate the predicted values $\hat{y}$ via the equation $\hat{y} = Hy$ [12] [13]. Its diagonal elements, known as leverage scores, are critical for diagnosing potential influential points in regression analysis.

2. What is a leverage score and what does it measure? A leverage score is the $i$-th diagonal element, $h_{ii}$, of the Hat Matrix [10] [4]. It quantifies the potential influence of the $i$-th observation on its own predicted value, based solely on its position in the predictor variable space [12] [13]. A high leverage score indicates that an observation has an unusual combination of predictor values compared to the rest of the data set, making it distant from the center of the other observations in the $X$-space [10] [12].

3. What is the difference between a high-leverage point and an influential point? This is a critical distinction. A high-leverage point has an unusual or extreme value in its predictor variables (a high $h_{ii}$) [2] [14]. However, if its response value $y_i$ follows the general trend of the other data, its high leverage may not unduly affect the regression model. An influential point, on the other hand, is one that actually does exert a disproportionate effect on the regression results (such as the estimated coefficients, $R^2$, or p-values) when it is included or excluded from the analysis [2] [14] [13]. Not all high-leverage points are influential, and an influential point need not have high leverage, although the most influential points typically combine high leverage with a large residual.

4. How can I calculate leverage scores using statistical software?

After fitting a linear regression model (e.g., using fitlm or stepwiselm in MATLAB), the leverage values can be directly accessed as a diagnostic property of the fitted model object. For a model named mdl, the command would be mdl.Diagnostics.Leverage [10]. In many software environments, you can also use specialized diagnostic plotting functions, such as plotDiagnostics(mdl), to visually inspect the leverage values [10].

5. What are the key mathematical properties of leverage scores? The leverage scores, $h_{ii}$, possess several key properties that are useful for diagnostics [4] [12] [13]:

- Bounded Range: Each $h_{ii}$ is a value between 0 and 1, inclusive.

- Sum Equals Parameters: The sum of all $h_{ii}$ equals $p$, the number of parameters in the regression model (including the intercept). This implies that the mean leverage is always $\bar{h} = p/n$.

- Distance Measure: $h_{ii}$ is a standardized measure of the distance between the $i$-th observation's predictor values and the mean predictor values of all $n$ observations.
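These properties can be verified numerically. The sketch below uses randomly generated data (an assumption made purely for illustration). It checks each property, including the link between leverage and the squared Mahalanobis distance $d_i^2$ of the predictors, $h_{ii} = 1/n + d_i^2/(n-1)$, which holds for a model with an intercept.

```python
import numpy as np

rng = np.random.default_rng(0)
n, k = 30, 2                                   # hypothetical sample size and predictors
Z = rng.normal(size=(n, k))                    # predictor values
X = np.column_stack([np.ones(n), Z])           # design matrix with intercept
y = rng.normal(size=n)
p = X.shape[1]

H = X @ np.linalg.inv(X.T @ X) @ X.T           # hat matrix
h = np.diag(H)                                 # leverage scores
beta = np.linalg.lstsq(X, y, rcond=None)[0]    # OLS coefficients

# Squared Mahalanobis distance of each row of Z from the predictor means
centered = Z - Z.mean(axis=0)
S_inv = np.linalg.inv(np.cov(Z, rowvar=False))
d2 = np.einsum("ij,jk,ik->i", centered, S_inv, centered)

print("H y equals fitted values:", np.allclose(H @ y, X @ beta))
print("range of h:", float(h.min()), float(h.max()))
print("sum of h:", float(h.sum()), "p:", p)
print("distance identity holds:", np.allclose(h, 1 / n + d2 / (n - 1)))
```

With an intercept in the model, the smallest possible leverage is 1/n rather than 0, so the lower end of the bounded range is only reached in models without an intercept.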

Troubleshooting Guides: Identifying and Handling High Leverage Points

Guide 1: How to Diagnose High Leverage Observations

Problem: A researcher suspects that a few observations in their dataset, due to their extreme values in predictor variables, might have an undue potential to influence the regression model.

Solution Protocol: Follow this step-by-step guide to calculate, visualize, and interpret leverage scores.

Step 1: Compute Leverage Scores Fit your linear regression model and extract the leverage values. These are the diagonal elements of the hat matrix $H$ [10] [4].

Step 2: Visual Inspection Create a leverage index plot, which is a simple scatter plot with the observation index $i$ on the x-axis and the leverage value $h_{ii}$ on the y-axis [10]. This helps quickly spot observations with unusually high leverage.

Step 3: Apply Decision Rules Use established statistical rules of thumb to flag high-leverage points. A common practice is to compare each leverage value to a multiple of the average leverage, $\bar{h} = p/n$ [12] [13].

Table 1: Common Thresholds for Identifying High Leverage Points

| Threshold Rule | Formula | Interpretation |

|---|---|---|

| Common Cut-off [12] [13] | $h_{ii} > 3 \times (p/n)$ | Observations exceeding this value are often flagged as "Unusual X" and warrant investigation. |

| Refined Cut-off [12] [13] | $h_{ii} > 2 \times (p/n)$ | A more sensitive threshold. Often used to identify points that are both high-leverage and isolated from other data. |

Step 4: Contextual Analysis Examine the flagged observations in the context of your research. Are these values plausible, or could they be data entry errors? Do they belong to a known but rare sub-population? This step requires domain expertise [2].

Guide 2: How to Determine if a High-Leverage Point is Influential

Problem: A high-leverage point has been identified. The researcher needs to determine if this point is truly influential on the regression results.

Solution Protocol: Use deletion diagnostics to quantify the actual impact of removing the suspect observation.

Step 1: Calculate Influence Measures For each observation $i$ flagged as high-leverage, compute one or more of the following influence measures. These metrics estimate the change in regression outputs when the $i$-th observation is omitted.

Table 2: Key Influence Diagnostics for Regression Analysis

| Diagnostic Measure | What it Quantifies | Common Threshold |

|---|---|---|

| DFBETA / DFBETAS [15] | The change in each regression coefficient $\beta_j$ when the $i$-th observation is removed. DFBETAS is the standardized version. | $\lvert \text{DFBETAS} \rvert > 2/\sqrt{n}$ |

| Cook's Distance [2] | A combined measure of the influence of observation $i$ on all fitted values. | A common rule is to flag points where Cook's D is greater than the 50th percentile of an F-distribution with $p$ and $n-p$ degrees of freedom [2]. |

| DFFITS [2] | The change in the predicted value for observation $i$ when it is removed from the fitting process. | $\lvert \text{DFFITS} \rvert > 1$ (for small/medium datasets) |
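To make the deletion idea concrete, DFBETAS can be computed by brute force, refitting the model once per omitted observation. The sketch below is an illustrative implementation under assumptions: the function name and data are hypothetical, and the scaling uses the leave-one-out residual standard deviation, which is the usual convention. Statistical packages use closed-form shortcuts instead of refitting.

```python
import numpy as np

def dfbetas(X, y):
    """Leave-one-out DFBETAS; returns an (n, p) array (X includes the intercept column)."""
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    scale = np.sqrt(np.diag(XtX_inv))
    out = np.empty((n, p))
    for i in range(n):
        keep = np.arange(n) != i                         # drop observation i
        Xi, yi = X[keep], y[keep]
        beta_i = np.linalg.lstsq(Xi, yi, rcond=None)[0]  # refit without it
        resid_i = yi - Xi @ beta_i
        s_i = np.sqrt(resid_i @ resid_i / (n - 1 - p))   # leave-one-out residual SD
        out[i] = (beta - beta_i) / (s_i * scale)
    return out

# Hypothetical data: noisy points around y = 1 + 2x, plus one off-trend extreme-x point
x = np.append(np.arange(1.0, 11.0), 25.0)
noise = np.array([0.3, -0.2, 0.1, -0.4, 0.2, -0.1, 0.4, -0.3, 0.1, -0.1, 0.0])
y = 1.0 + 2.0 * x + noise
y[-1] = 20.0
X = np.column_stack([np.ones_like(x), x])

db = dfbetas(X, y)
flagged = np.abs(db) > 2 / np.sqrt(len(y))               # threshold from the table
print("rows with a flagged slope DFBETAS:", np.flatnonzero(flagged[:, 1]))
```

Each column of the result corresponds to one coefficient, so the output shows which parameter a given observation distorts, not only that it is influential. In `statsmodels`, comparable values are available from the `dfbetas` attribute of the object returned by `get_influence()` on a fitted OLS model.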

Step 2: Fit Models with and Without the Point As a direct validation, refit the regression model after excluding the high-leverage observation(s). Compare key outputs like coefficient estimates, R-squared, and p-values between the two models [14]. Substantial changes indicate influence.

Step 3: Decision and Reporting

- If the point is not influential, it may be safe to retain it, but its existence should be noted as it can make the model's predictions less precise in its region of the predictor space [2].

- If the point is influential, investigate its origin. If it stems from a data error, correct it. If it is a valid but unusual observation, report your model results both with and without the point to ensure transparency about the fragility of your findings [15] [13].

Visualizing the Analytical Workflow

The following diagram illustrates the logical process for diagnosing and handling unusual observations in a regression analysis.

Table 3: Key Research Reagent Solutions for Leverage Analysis

| Tool / Resource | Function in Analysis | Implementation Example |

|---|---|---|

| Hat Matrix (H) | The core mathematical object whose diagonal elements are the leverage scores. It projects observed responses into predicted values [12] [11]. | ( H = X(X^{T}X)^{-1}X^{T} ) |

| Leverage Vector | A vector containing all diagonal elements ( h_{ii} ) of H. It is the primary input for identifying observations with extreme predictor values [10]. | Accessed via mdl.Diagnostics.Leverage in MATLAB [10]. |

| Index Plot | A simple visualization to quickly scan for observations with unusually high leverage scores compared to others [10]. | Use plotDiagnostics(mdl, 'Leverage') or equivalent in your software. |

| DFBETAS | Standardized values that measure the effect of deleting the ( i )-th observation on each regression coefficient ( \beta_j ). Crucial for pinpointing which parameters are affected [15]. | In R, use dfbetas(model). A common threshold is ( 2/\sqrt{n} ). |

| Cook's Distance | A single, overall measure of the influence of an observation on the entire set of regression coefficients and predictions [2]. | Available in most statistical software regression diagnostics. Flag points with a large Cook's D relative to others. |

Frequently Asked Questions

What is an influential point in regression analysis? An influential point is an observation that, individually, exerts a large effect on a regression model's results—the parameter estimates (β̂) and, consequently, the model's predictions. Influential points are not necessarily problematic, but they warrant follow-up investigation as they can signal data-entry errors or observations that are unrepresentative of the population of interest [15].

How can a single data point significantly change my regression results? A single point can be influential if it has high leverage (an unusual value for a predictor variable) and high outlyingness (an unusual value for the response variable). Such a point can "drag" the entire regression line toward itself. For example, a single misrecorded data point can change a slope estimate from positive to negative, fundamentally altering the interpretation of the relationship between variables [15].

What is the difference between DFBETA and DFBETAS? DFBETA and DFBETAS are metrics used to quantify a point's influence on a specific regression coefficient.

- DFBETA is the raw change in a regression coefficient when the ith observation is removed: DFBETA_ij = β̂_j - β̂_(i)j [15].

- DFBETAS is a standardized version, calculated by dividing the DFBETA by the standard error of the coefficient estimate. This makes DFBETAS values comparable across different models and variables, as they are free from the scale of the variables [15].

How do I interpret an Effect Leverage Plot? An Effect Leverage Plot (also known as a partial regression plot) visualizes the influence of individual points on the test for a specific term in the model [16] [17].

- The Fitted Line (Red): Shows the model with the term included. Its slope is the coefficient estimate for that term.

- The Horizontal Line (Blue): Represents the model without the term (the hypothesis that the coefficient is zero) [16].

- Point Positions: Points that are far from the horizontal line exert more influence on the hypothesis test. A point far from the bulk of the data can have a large influence on the parameter estimate [17].

- Confidence Curves (Shaded Red): If these curves cross the horizontal blue line, the effect is statistically significant. If the blue line lies entirely within the confidence region, the effect is not significant [16].

Is there a definitive cut-off for identifying an influential point?

While no perfect cutoff exists, a common and size-adjusted threshold for |DFBETAS| is 2/√n, where n is the sample size. This threshold helps expose a similar proportion of potentially influential observations regardless of the sample size [15].

The table below shows how this threshold changes with sample size:

| Sample Size (n) | Threshold for |DFBETAS| |

|---|---|

| 50 | ~0.283 |

| 100 | 0.200 |

| 500 | ~0.089 |

| 1000 | ~0.063 |
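The values in the table follow directly from the 2/√n formula. As a quick check, the short sketch below recomputes them; the function name `dfbetas_threshold` is a hypothetical helper.

```python
import math

def dfbetas_threshold(n):
    """Size-adjusted cut-off 2/sqrt(n) for |DFBETAS|."""
    if n <= 0:
        raise ValueError("n must be positive")
    return 2.0 / math.sqrt(n)

# Reproduce the table rows, rounded to three decimals
thresholds = {n: round(dfbetas_threshold(n), 3) for n in (50, 100, 500, 1000)}
```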

Troubleshooting Guides

Issue: Suspecting that influential points are distorting regression coefficients

Investigation Protocol:

- Calculate Influence Statistics: Use statistical software to compute DFBETA or DFBETAS for each observation and each coefficient. Most software packages have built-in functions for this (e.g., dfbeta() and dfbetas() in R) [15].

- Visualize with Effect Leverage Plots: Generate a leverage plot for each predictor variable in your model. These plots will help you see which points might be exerting influence on the test for that specific variable [16].

- Compare to Threshold: Compare the absolute DFBETAS values to the 2/√n threshold. Any observation with a |DFBETAS| value exceeding this threshold for any coefficient should be flagged for further investigation [15].

- Inspect Flagged Points: Manually examine the raw data for the flagged observations. Check for potential data entry errors, measurement errors, or unique characteristics that might make the observation fundamentally different from the rest of your dataset.

Resolution Actions:

- If a data error is found: Correct the error if possible, then re-run the analysis.

- If the point is valid but influential: It is critical to report your model results both with and without the highly influential points. This transparency shows the fragility of your model's results and allows readers to assess the impact of these points on your conclusions [15].

- Consider model re-specification: In some cases, the presence of influential points may indicate that your model is misspecified (e.g., missing a key variable or requiring a different functional form).

Issue: Diagnosing multicollinearity from a Leverage Plot

Symptoms: In an Effect Leverage Plot, the points appear to collapse toward a vertical line or cluster very tightly toward the middle of the plot. This indicates that the predictor variable is highly correlated with other predictors already in the model [16] [17].

Interpretation: This clustering shows that the variable adds little new information, making the slope of the fitted line unstable. The standard error for the coefficient will be inflated, and the parameter estimate can be unreliable [16].

Next Steps: Investigate variance inflation factors (VIFs) for a quantitative measure of multicollinearity. You may need to remove variables, combine them, or use regularization techniques like ridge regression.
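A variance inflation factor for predictor j is 1 / (1 - R²_j), where R²_j comes from regressing that predictor on all the others. The sketch below computes it with NumPy; the function name `vif` and the simulated predictors are hypothetical, and the "VIF above 10" reading used in the comment is a widely quoted rule of thumb rather than something taken from the cited sources.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each column of X (predictors only, no intercept column)."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        target = X[:, j]
        # Regress predictor j on an intercept plus the remaining predictors
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2 = 1.0 - resid @ resid / np.sum((target - target.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out

# Hypothetical predictors: x2 is almost a copy of x1, x3 is unrelated
rng = np.random.default_rng(1)
x1 = rng.normal(size=200)
x2 = x1 + rng.normal(scale=0.05, size=200)
x3 = rng.normal(size=200)
vifs = vif(np.column_stack([x1, x2, x3]))
# x1 and x2 receive very large VIFs (far above 10); x3 stays close to 1
```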

Experimental Protocols & Methodologies

Protocol: Calculating and Interpreting DFBETAS

Objective: To quantitatively assess the influence of each observation on each estimated regression coefficient.

Procedure:

- Fit the Full Model: Regress the response variable Y on all predictor variables (X₁, X₂, ..., Xₚ) using all n observations. Obtain the coefficient estimates β̂_j for each predictor.

- Fit the Reduced Models: For each observation i (from 1 to n), fit the same regression model but with the ith observation omitted. Obtain the new coefficient estimates β̂_(i)j.

- Compute DFBETA: For each observation i and each coefficient j, calculate: DFBETA_ij = β̂_j - β̂_(i)j [15].

- Compute DFBETAS: Standardize the DFBETA values. For linear regression, the formula is: DFBETAS_ij = (β̂_j - β̂_(i)j) / SE(β̂_j), where the standard error is calculated using the mean squared error from the regression with the ith observation deleted [15].

- Identify Influential Points: Flag any observation where |DFBETAS_ij| > 2/√n for any variable j [15].
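The procedure above can be followed literally by refitting the model once per observation. The sketch below does exactly that with NumPy; the function name `dfbetas_loo`, the simulated data, and the planted point at index 7 are hypothetical. It assumes the standard error is formed as the deleted-fit root MSE times the square root of the j-th diagonal element of (XᵀX)⁻¹ from the full design, which is one common reading of the definition.

```python
import numpy as np

def dfbetas_loo(X, y):
    """DFBETAS obtained by explicitly refitting with each observation removed."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    beta_full = xtx_inv @ X.T @ y
    scale = np.sqrt(np.diag(xtx_inv))            # sqrt of (X'X)^{-1}_{jj}
    out = np.empty((n, p))
    for i in range(n):
        keep = np.arange(n) != i
        Xi, yi = X[keep], y[keep]
        beta_i, *_ = np.linalg.lstsq(Xi, yi, rcond=None)
        resid_i = yi - Xi @ beta_i
        s_i = np.sqrt(resid_i @ resid_i / (n - 1 - p))   # root MSE without observation i
        out[i] = (beta_full - beta_i) / (s_i * scale)
    return out

# Hypothetical data with one planted influential point at index 7
rng = np.random.default_rng(2)
x = rng.normal(size=40)
y = 0.5 + 1.5 * x + rng.normal(scale=0.3, size=40)
x[7], y[7] = 5.0, -4.0
X = np.column_stack([np.ones_like(x), x])

d = dfbetas_loo(X, y)
flagged = np.where((np.abs(d) > 2 / np.sqrt(len(y))).any(axis=1))[0]
```

The explicit loop is slow for large n; built-in routines such as R's dfbetas() avoid the refits, but the loop makes each step of the protocol visible.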

Protocol: Constructing and Interpreting an Effect Leverage Plot

Objective: To visually assess the influence of individual points on the significance test for a specific model effect and to spot multicollinearity.

Construction Workflow for a Continuous Predictor X:

Interpretation Guide:

- Significance: The effect of variable X is statistically significant if the confidence curves for the fitted red line cross the horizontal blue line (which represents the hypothesis that the coefficient for X is zero) [16].

- Influence: Points that are horizontally distant from the center of the plot have higher leverage and exert more influence on the test for X. A high-leverage point that is also far from the fitted line can have a large influence on the parameter estimate itself [16] [17].

- Multicollinearity: If the points collapse toward a vertical line, it indicates that X is highly collinear with other predictors in the model [17].
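One common way to obtain the coordinates of such a plot is the added-variable construction: regress both the response and the predictor of interest on all other predictors, then plot the two sets of residuals against each other. The sketch below assumes that construction (software such as JMP may shift or rescale the axes) and checks that the slope through the residuals equals the full-model coefficient. All names and the simulated data are hypothetical.

```python
import numpy as np

def added_variable_coords(X_other, x, y):
    """Residuals of x and of y after regressing each on the other predictors.

    X_other must include the intercept column.
    """
    def residualize(v):
        coef, *_ = np.linalg.lstsq(X_other, v, rcond=None)
        return v - X_other @ coef
    return residualize(x), residualize(y)

# Hypothetical data: x is correlated with a second predictor z
rng = np.random.default_rng(3)
n = 100
z = rng.normal(size=n)
x = 0.6 * z + rng.normal(size=n)
y = 1.0 + 2.0 * x - 1.0 * z + rng.normal(size=n)

X_other = np.column_stack([np.ones(n), z])
x_res, y_res = added_variable_coords(X_other, x, y)   # horizontal and vertical coordinates
slope_av = (x_res @ y_res) / (x_res @ x_res)          # slope of the line through the plot

# Coefficient of x in the full model, for comparison
X_full = np.column_stack([X_other, x])
beta_full, *_ = np.linalg.lstsq(X_full, y, rcond=None)
```

When x is nearly collinear with the other predictors, `x_res` has very little spread, which is the numerical counterpart of points collapsing toward a vertical line.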

The Scientist's Toolkit: Research Reagent Solutions

Essential materials and statistical measures for conducting influence analysis in regression modeling.

| Item / Solution | Function in Analysis |

|---|---|

| DFBETA | Measures the raw change in a coefficient when an observation is omitted; used for direct assessment of influence on parameter estimates [15]. |

| DFBETAS | The standardized version of DFBETA; allows for comparison across different coefficients and models via a common, scale-free threshold [15]. |

| Effect Leverage Plot | A diagnostic plot that visualizes the unique effect of a predictor and the influence of each data point on its significance test [16] [17]. |

| Size-Adjusted Threshold (2/√n) | Provides a sample-size-dependent cut-off for DFBETAS to identify potentially influential points in a consistent manner [15]. |

| Statistical Software (R, JMP, etc.) | Platforms with built-in functions (e.g., dfbetas()) and visualization tools (e.g., Effect Leverage Plots) to efficiently compute diagnostics and create plots [15] [16]. |

Creating and Interpreting Leverage Plots: A Step-by-Step Protocol

Table of Contents

- Introduction to Leverage Plots

- Implementation in SAS

- Implementation in R

- Implementation in Python

- Troubleshooting FAQs

- Research Reagent Solutions

Leverage plots are powerful diagnostic tools in regression analysis for identifying influential observations. Within the context of identifying influential points, it is crucial to distinguish between different types of "unusual" data: outliers (points with large residuals, extreme y-values), high-leverage points (points with extreme x-values), and influential points (points that significantly alter the regression model when removed) [18] [5]. An influential observation is often one that possesses both high leverage and a large residual [18] [19].

The following diagram illustrates the logical relationship between these concepts and the role of leverage plots in the diagnostic process.

Implementation in SAS

SAS provides a direct method for generating partial regression leverage plots through PROC REG, which visualizes the relationship between a specific predictor and the response variable after accounting for all other predictors [20].

Detailed Methodology:

- Data Preparation: Use a DATA step to load and prepare your dataset. Ensure variables are appropriately labeled [20].

- Enable Graphics: Use ods graphics on; to allow the production of graphical output [20].

- Run Regression Model: In PROC REG, use the PLOTS(ONLY)=(PARTIAL) option and the PARTIAL option in the MODEL statement to generate only the partial regression leverage plots [20].

- Interpretation: In each plot, the slope of the fitted line corresponds to the coefficient estimate for that variable in the full model. A near-horizontal line suggests the predictor may not be statistically significant [20].

Example Code:

Implementation in R

In R, you can calculate leverage statistics and create diagnostic plots using base R functions. The process involves fitting a model and then extracting leverage values from the model object [21].

Detailed Methodology:

- Fit Regression Model: Use the lm() function to fit a linear regression model.

- Calculate Leverage: The hatvalues() function applied to the model object returns the leverage statistics for each observation [21].

- Visualize Leverage: Use the plot() function with type = 'h' to create a leverage index plot, which shows each observation's leverage value [21]. Observations with leverage greater than 2*mean(leverage) or 3*mean(leverage) are often flagged for further investigation [19].

- Comprehensive Diagnostics: The plot(model) command automatically generates a series of four diagnostic plots, including a "Residuals vs Leverage" plot which is key for spotting influential points [22].

Example Code:

Implementation in Python

Python's statsmodels library offers a comprehensive suite for statistical modeling, including the creation of various regression diagnostic plots similar to R [22].

Detailed Methodology:

- Import Libraries: Key libraries are statsmodels.api (for OLS regression) and matplotlib.pyplot (for plotting) [22].

- Fit OLS Regression: Use statsmodels.formula.api (or statsmodels.api) to specify and fit an Ordinary Least Squares (OLS) model [22].

- Extract Diagnostic Variables: From the fitted model, extract components like residuals, fitted values, standardized residuals, and leverage values; the leverage values are available as results.get_influence().hat_matrix_diag [22].

- Create Diagnostic Plots: Use matplotlib to create a grid of plots, including a "Residuals vs Leverage" plot. The influence_plot() function (available as sm.graphics.influence_plot() after import statsmodels.api as sm) creates a specialized plot that combines information about leverage and influence (Cook's distance) [22].

Example Code:

Troubleshooting FAQs

FAQ 1: What is the difference between an outlier, a high-leverage point, and an influential point? These terms describe different types of "unusual" data in a regression context. An outlier has an extreme value for the response variable (Y), leading to a large residual as it does not follow the general trend of the data [5]. A high-leverage point has an extreme value for one or more predictor variables (X) [5]. An influential point is one that, if removed, substantially changes the estimate of the regression coefficients (e.g., the slope or intercept) [5]. An influential point is typically both an outlier and a high-leverage point [18].

FAQ 2: My leverage plot doesn't show a reference line for Cook's distance in Python. How can I add it?

The influence_plot in statsmodels does not draw Cook's distance reference lines; it encodes the chosen influence criterion (Cook's distance by default) in the size of each point's marker. For manual plots, you can calculate Cook's distance using results.get_influence().cooks_distance[0]. Reference contours can then be drawn from the relation D = r²·h / (p·(1 − h)) between Cook's distance D, the standardized residual r, the leverage h, and the number of model parameters p, evaluated over a grid of leverage values. If the marker-size encoding is sufficient, the built-in influence_plot is the simpler choice [22].
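Contour curves for a manual residuals-versus-leverage plot can be obtained from the standard identity linking Cook's distance to the internally studentized residual r and the leverage h, namely D = r²·h / (p·(1 − h)). The sketch below solves that identity for r on a grid of leverage values. The function names, the grid, and the value p = 3 are hypothetical choices, and the level 0.5 simply mirrors one of the levels that R's default diagnostic plot draws.

```python
import numpy as np

def cooks_contour(h, level, p):
    """Standardized residual at which Cook's distance equals `level` for leverage h."""
    h = np.asarray(h, dtype=float)
    return np.sqrt(level * p * (1.0 - h) / h)

def cooks_from(r, h, p):
    """Cook's distance from an internally studentized residual r and leverage h."""
    return r**2 * h / (p * (1.0 - h))

h_grid = np.linspace(0.01, 0.9, 50)
upper = cooks_contour(h_grid, level=0.5, p=3)
# Plotting (h_grid, upper) and (h_grid, -upper) on the scatter gives the D = 0.5 contour
```

Points lying outside the pair of curves have a Cook's distance above the chosen level.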

FAQ 3: How do I label specific influential points in my R or Python plot?

In R, after creating the base plot, you can use the text() or points() functions with a logical condition to label points with high leverage or influence. For example, text(leverage, residuals, labels=ifelse(leverage > threshold, row.names(mtcars), ""), pos=4).

In Python, when using matplotlib, you can loop through your data points and use ax.annotate() to add text labels for points that meet your criteria (e.g., high Cook's distance). The influence_plot from statsmodels automatically labels the most influential points [22].

FAQ 4: What are the common thresholds for identifying high-leverage points? There are several rules of thumb:

- A leverage value greater than 2 * (p / n) or 3 * (p / n), where p is the number of predictors (including the intercept) and n is the number of observations, is often considered a high-leverage point [19].

- Huber's guideline suggests that leverage values pᵢᵢ ≤ 0.2 are safe, values 0.2 < pᵢᵢ ≤ 0.5 are risky, and values pᵢᵢ > 0.5 should be avoided or investigated thoroughly [19]. Here pᵢᵢ denotes the leverage of observation i, written hᵢᵢ elsewhere in this guide.

- Note that, for a model that includes an intercept, leverage values always fall between 1/n and 1 [21].
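These rules of thumb are simple enough to encode directly. The sketch below is one hypothetical way to do so; the function names and the band labels "safe", "risky", and "investigate" are illustrative choices, not terminology fixed by the cited sources.

```python
def huber_band(h):
    """Classify a leverage value using the 0.2 / 0.5 guideline."""
    if not 0.0 <= h <= 1.0:
        raise ValueError("leverage must lie in [0, 1]")
    if h <= 0.2:
        return "safe"
    if h <= 0.5:
        return "risky"
    return "investigate"

def leverage_cutoffs(p, n):
    """Return the 2p/n and 3p/n cut-offs for p parameters and n observations."""
    return 2 * p / n, 3 * p / n

bands = [huber_band(h) for h in (0.05, 0.2, 0.35, 0.5, 0.8)]
cutoffs = leverage_cutoffs(3, 60)
```

The two approaches can disagree: with p = 3 and n = 60, a leverage of 0.18 exceeds the 3p/n cut-off of 0.15 yet still falls in the lowest band of the fixed guideline.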

Research Reagent Solutions

The table below lists key software and computational "reagents" essential for conducting research on influential points with leverage plots.

| Research Reagent | Function / Purpose | Key Features / Notes |

|---|---|---|

| SAS PROC REG [20] | Fits linear regression models and generates diagnostic plots, including partial regression leverage plots. | The PARTIAL option in the model statement is specific for creating partial leverage plots. Highly reliable in clinical and pharmaceutical research. |

| R stats Package [21] | Core statistical functions for model fitting (lm) and diagnostics (hatvalues, plot.lm). | Provides fundamental tools for leverage and influence analysis. The base R diagnostic plots are a quick and standard way to assess a model. |

| Python statsmodels [22] | A comprehensive library for estimating and analyzing statistical models. | Its OLS implementation provides detailed summary tables and specialized diagnostic plots (influence_plot), closely mirroring the functionality of R. |

| Cook's Distance [22] [19] | A statistical measure that combines leverage and residual size to quantify an observation's overall influence on the model. | Implemented in both R (cooks.distance) and Python (results.get_influence().cooks_distance[0]). A larger value indicates a more influential point. |

| Hat Values (Leverage) [21] [19] | The diagonal elements of the "hat" matrix. Measure an observation's potential influence based solely on its position in the predictor variable space. | Calculated via hatvalues() in R and get_influence().hat_matrix_diag in Python. It is a key input for identifying high-leverage points. |

Constructing Effect Leverage Plots for Individual Model Terms

Understanding Effect Leverage Plots

An effect leverage plot, also known as a partial regression leverage plot or an added variable plot, is a diagnostic tool that shows the unique, marginal effect of a specific term in your regression model [17]. It answers the question: "What is the effect of adding this particular predictor to a model that already contains all the other predictors?"

The plot visualizes the relationship between the response variable and the predictor of interest, after both have been adjusted for, or "purified" of, the effects of all other predictors in the model [17]. This allows you to see the direct contribution of a single term.

- The Slanted Line: Represents the fitted regression line for the full model with the term included. Its slope is equal to the coefficient estimate for that term in your full model.

- The Horizontal Line: Represents the constrained model without the term. It has a slope of zero for the term in question [17].

- Data Points: Each point is positioned so that its vertical distance to the slanted line is its residual under the full model, while its vertical distance to the horizontal line is the residual it would have if the term were removed from the model.

Points that are far from the horizontal line but close to the slanted line are well-explained by the term. Points that are far from the horizontal line and still distant from the slanted line are outliers for this specific relationship. Points that are distant from the bulk of the data along the x-axis have high leverage on the term's coefficient [17].

The following diagram illustrates the core logical process for creating and interpreting these plots.

Frequently Asked Questions (FAQs)

1. What is the difference between a point with high leverage and an influential point?

While these terms are related, they describe different characteristics of an unusual observation. The table below clarifies the distinctions.

| Feature | Leverage Point | Influential Point |

|---|---|---|

| Definition | A point with an unusual combination of values for the predictor variables (an x-outlier) [2]. | A point that, if removed, causes a substantial change in the regression coefficients, predictions, or other model statistics [15] [2]. |

| Primary Cause | Extreme value in one or more independent variables (high x-value) [2]. | A combination of high leverage and an outlying y-value that does not follow the overall trend [15] [2]. |

| Impact on Model | Increases the apparent strength of the model (can inflate R-squared) and can make the model overly broad. It has little impact on the coefficient estimates if it follows the overall trend [2]. | Unduly influences the model's outcomes, potentially altering the slope, intercept, p-values, and R-squared, which can lead to misleading conclusions [2]. |

| Detection Method | Hat Values (Leverage Statistics): The diagonal elements of the "hat matrix." A common rule of thumb is that a point has high leverage if its hat value exceeds ( \frac{2p}{n} ), where ( p ) is the number of model parameters and ( n ) is the sample size [2]. | DFBETAS: Measures the standardized change in a coefficient when the i-th point is removed. A threshold of ( \mid \text{DFBETAS} \mid > \frac{2}{\sqrt{n}} ) is often used to flag influence [15]. Cook's Distance: Measures the overall influence of a point on all fitted values. Larger values indicate greater influence [2]. |

2. How do I know if a term is significant based on its effect leverage plot?

If the confidence band around the slanted regression line in the effect leverage plot fully encompasses the horizontal reference line (the model without the term), you can conclude that the term does not contribute significantly to the model. This is visually equivalent to a non-significant F-test for the partial effect of that term [17].

3. My effect leverage plot shows points far from the rest. Should I remove them?

Not necessarily. The first step is to investigate [15]. Check for data entry errors or a valid scientific reason (e.g., a unique patient subgroup) that explains the point's unusual nature. Never remove points simply to improve model fit. Always report the presence of highly influential points and any actions taken, as this is key to research transparency. Consider presenting model results both with and without these points to demonstrate the robustness (or fragility) of your findings [15].

Experimental Protocol: Creating and Analyzing Plots

This protocol provides a step-by-step methodology for constructing effect leverage plots and diagnosing influential points, tailored for research in drug development.

Objective: To visualize the marginal effect of individual predictor variables in a multiple regression model and identify observations that unduly influence the parameter estimates.

Materials & Reagents:

- Statistical Software: R (recommended), SAS, or Python with the statsmodels library.

- Computing Environment: Standard desktop computer or server.

- Data: A cleaned dataset containing the continuous response variable (e.g., drug efficacy, IC50 value) and all predictor variables (e.g., dosage, patient biomarkers, chemical descriptors).

Procedure:

- Model Fitting: Fit your full multiple linear regression model containing all terms of interest to the dataset.

- Plot Generation: Use the appropriate function in your statistical software to generate the suite of effect leverage plots (one for each model term).

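- In R: Use the avPlots function from the car package to draw added-variable plots. Base R's termplot function produces related term plots (optionally with partial residuals) rather than added-variable plots.

- Visual Inspection: For each plot, examine the position of the slanted line relative to the horizontal line to gauge the term's significance.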

- Leverage Diagnosis: Identify points with high leverage—those that lie far from the mean of the partial regressor values (along the x-axis).

- Influence Calculation: Calculate influence statistics. The table below outlines key metrics and their diagnostic thresholds.

| Diagnostic Metric | Formula / Rule of Thumb | Interpretation | R Function |

|---|---|---|---|

| Leverage (Hat Value) | ( h_{ii} > \frac{2p}{n} ) | Flags an observation as an x-outlier with potential to influence the model [2]. | hatvalues(model) |

| DFBETAS | ( \mid \text{DFBETAS}_{ij} \mid > \frac{2}{\sqrt{n}} ) | Flags an observation as significantly influencing the j-th coefficient estimate [15]. | dfbetas(model) |

| Cook's Distance | Visual inspection; compare distances. A probability >50% based on F-distribution indicates major influence [2]. | Measures the overall influence of an observation on all fitted values [2]. | cooks.distance(model) |

- Follow-up Analysis: For any observation flagged by the above diagnostics, return to the raw data to verify its accuracy and investigate its scientific validity before deciding on its inclusion or exclusion.

The analytical workflow for this protocol, from data preparation to final decision-making, is summarized in the diagram below.

The Scientist's Toolkit: Key Reagents & Solutions

The following table lists the essential "research reagents" — the statistical diagnostics and functions — required for a robust analysis of leverage and influence.

| Research Reagent | Function / Purpose |

|---|---|

| Effect Leverage Plot | Visually isolates the partial effect of a single model term, showing its unique contribution after accounting for all other variables [17]. |

| Hat Values (Leverage Statistics) | Quantifies how unusual an observation's predictor values are, identifying points with the potential to exert influence on the model fit [2]. |

| DFBETAS | A standardized measure of how much a specific regression coefficient changes when a particular observation is removed. It directly quantifies a point's influence on model parameters [15]. |

| Cook's Distance | Measures the combined influence of an observation on all fitted values across the entire model, providing a single metric for overall impact [2]. |

Frequently Asked Questions

How do I interpret the slope of the line in an effect leverage plot? The solid red line in a leverage plot represents the estimated coefficient for that specific effect in your model [16]. A slope of zero suggests the effect provides no linear explanatory power. A non-zero slope indicates that adding this effect to your model helps explain variation in the response variable. The steepness of the slope is directly related to the parameter estimate for that effect in your regression output [16].

What do the confidence curves tell me about the significance of my effect? The shaded red confidence curves are a visual hypothesis test [16]. To determine significance at your set alpha level (commonly 5%):

- Significant Effect: The confidence curves cross the horizontal blue line (which represents the model without the effect). This visually rejects the null hypothesis that the parameter is zero [16].

- Non-Significant Effect: The confidence region fully contains the horizontal blue line. This indicates there is insufficient evidence to conclude the effect is significant [16].

What does it mean if the points in my leverage plot are clustered tightly in the middle? Tight clustering of points around the center of the horizontal axis often signals multicollinearity [16]. This means the effect you are plotting is highly correlated with other predictors already in the model. In this situation, the slope of the fitted line can be unstable, and the standard errors for the parameter estimate can be inflated [16].

How can I identify which data points are most influential on the effect test? Points that are horizontally distant from the center of the plot exert more leverage on the test for that specific effect [16]. The leverage of a point quantifies how far its x-value is from the mean of all x-values [12]. Points with high leverage have a greater potential to influence the estimated regression coefficient.

Troubleshooting Guide

| Observation | Potential Cause | Next Steps for Investigation |

|---|---|---|

| The confidence curves contain the horizontal line. | The effect is not statistically significant at your chosen alpha level [16]. | Consider the practical relevance of the effect. You may want to remove it to simplify the model. |

| The confidence curves cross the horizontal line. | The effect is statistically significant [16]. | Examine the parameter estimate and p-value in your regression table to confirm. |

| Data points are clustered horizontally near the center. | Potential multicollinearity with other model effects [16]. | Check Variance Inflation Factor (VIF) values for the predictors in your model. |

| One or a few points are far from the others on the horizontal axis. | High-leverage points are present [12]. | Use diagnostics like Cook's distance to determine if these points are overly influential on the model fit [23]. |

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential analytical components for conducting and interpreting leverage plot diagnostics.

| Item | Function |

|---|---|

| Effect Leverage Plot | A diagnostic plot that visualizes the significance and influence of a single model effect, conditional on all other effects already in the model [16]. |

| Hat Matrix (H) | The mathematical matrix used to calculate predicted values and leverages. The diagonal elements of this matrix ((h_{ii})) are the leverage values for each observation [12]. |

| Leverage ((h_{ii})) | A measure between 0 and 1 that quantifies how far an observation's predictor values are from the mean of all predictors. Points with high leverage can unduly influence the model fit [12]. |

| Confidence Curves | The shaded bands on a leverage plot that provide a visual confidence interval for the line of fit. Used to test the hypothesis that the effect's parameter is zero [16]. |

| Cook's Distance | A metric that combines a point's leverage and its residual to measure its overall influence on the regression model. Points with large Cook's distances warrant investigation [23]. |

Experimental Protocol: Visual Diagnosis with Leverage Plots

This workflow outlines the logical process for diagnosing model effects and data issues using a leverage plot. The diagram below provides a visual summary of this diagnostic pathway.

Within the framework of research focused on identifying influential points using leverage plots, calculating and correctly applying diagnostic thresholds is a fundamental skill. For researchers, scientists, and drug development professionals, statistical robustness is paramount. Whether analyzing high-throughput screening data in phenotypic drug discovery or refining clinical trial models, distinguishing between typical observations and statistically influential points ensures the integrity of your conclusions. Leverage, quantified by the hat value ((h_{ii})), measures how extreme an independent variable value is for a particular observation. This technical guide provides the methodologies and troubleshooting knowledge to master the application of the 2p/n rule, a key diagnostic threshold for hat values [4].

Core Concepts: Hat Values and the 2p/n Rule

What is a Hat Value?

In linear regression models, the leverage of the (i^{th}) observation is measured by its hat value, the (i^{th}) diagonal element of the hat matrix (H). The hat matrix is defined as [12]: [ H = X(X^{'}X)^{-1}X^{'} ] where (X) is the (n \times p) matrix of predictor variables (including a column of 1s for the intercept). The predicted response vector is then given by (\hat{y} = Hy), which is why (H) is called the "hat" matrix—it puts the hat on (y) [12].

The hat value (h_{ii}) has a direct interpretation: it quantifies the influence of the observed response (y_i) on its own predicted value (\hat{y}_i) [12]. Key properties of (h_{ii}) include [4]:

- Range: It is a number between 0 and 1, inclusive.

- Sum: The sum of all (h_{ii}) equals (p), the number of parameters (regression coefficients including the intercept). This means the average hat value is (\bar{h} = p/n) [12] [4].

The 2p/n Threshold Rule

A leverage value's raw magnitude is less important than its value relative to other observations. The 2p/n rule states that an observation with a hat value greater than (2p/n) should be considered a high-leverage point [4] [24].

- Purpose: This rule identifies observations whose predictor values are distant from the center of the predictor space, making them potentially influential on the model fit [4].

- Theoretical Basis: The threshold is derived from the mean hat value, (\bar{h} = p/n). A value of (2p/n) is twice the average, a commonly used benchmark for identifying unusual values [4].

- Comparison with Other Rules: Some texts suggest a more conservative threshold of (3p/n) for smaller samples (e.g., (n \leq 30)) [24], while others use (3p/n) as a default flag in statistical software [12]. The rule of thumb is therefore context-dependent. A refined approach is to use (3p/n) as a strict cutoff, and (2p/n) as a threshold for points that are also visually isolated in plots [12].

The table below summarizes these key thresholds.

Table 1: Diagnostic Thresholds for Hat Values

| Threshold | Condition | Interpretation |
|---|---|---|
| (2p/n) | General case | Observation is a high-leverage point [4] [24]. |
| (3p/n) | Small samples ((n \leq 30)) or strict flagging | Observation is a high-leverage point requiring close inspection [12] [24]. |
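As a worked illustration of the threshold arithmetic, the sketch below computes the mean leverage and both cutoffs for a hypothetical study with 50 observations and 3 predictors plus an intercept (so p = 4). The function name `leverage_thresholds` and the example hat value are assumptions made for illustration.

```python
def leverage_thresholds(n, p):
    """Return (mean leverage p/n, 2p/n cutoff, 3p/n cutoff)."""
    if n <= 0 or p <= 0:
        raise ValueError("n and p must be positive")
    mean_h = p / n
    return mean_h, 2 * mean_h, 3 * mean_h

# Hypothetical study: n = 50 observations, 3 predictors + intercept -> p = 4.
mean_h, t2, t3 = leverage_thresholds(n=50, p=4)
print(f"mean leverage = {mean_h:.2f}, 2p/n = {t2:.2f}, 3p/n = {t3:.2f}")

# A hypothetical hat value of 0.20 exceeds 2p/n but not 3p/n.
h_example = 0.20
print("exceeds 2p/n:", h_example > t2)
print("exceeds 3p/n:", h_example > t3)
```

In this example an observation with a hat value of 0.20 would be flagged under the general rule but not under the stricter one, which is the situation where visual inspection of the plot becomes most useful.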

The following workflow diagram illustrates the logical process of calculating hat values and applying these diagnostic thresholds to identify high-leverage points.

Experimental Protocol & Calculation Methodology

This section provides a step-by-step protocol for calculating hat values and applying the 2p/n rule, suitable for replication in statistical software like R or Python.

Protocol: Identification of High-Leverage Points

1. Problem Formulation and Data Preparation

- Objective: To detect observations with extreme values in the predictor variable space that may disproportionately influence a linear regression model.

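- Inputs: A dataset with (n) observations and a set of predictor variables. Throughout, (p) denotes the number of model parameters (regression coefficients, including the intercept), so a model with (k) predictors and an intercept has (p = k + 1).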

- Software Setup: Prepare your statistical computing environment (e.g., R with the base stats package or Python with statsmodels).

2. Model Fitting and Matrix Computation

- Step 1: Construct the (n \times p) design matrix (X). Ensure a column of 1s is included if an intercept is part of the model [12].

- Step 2: Compute the hat matrix (H) using the formula: [ H = X(X^{'}X)^{-1}X^{'} ] Implementation Note: In practice, most statistical software computes the hat values directly, without explicitly forming the full (n \times n) matrix (H), for computational efficiency.

3. Hat Value Extraction

- Step 3: Extract the diagonal elements of (H), (h_{ii}), for (i = 1, ..., n). These are the hat values for each observation [4].

4. Threshold Calculation and Diagnostic Application

- Step 4: Calculate the mean leverage, (\bar{h} = p/n).

- Step 5: Calculate the diagnostic thresholds: (2\bar{h} = 2p/n) and (3\bar{h} = 3p/n) [4] [24].

- Step 6: Compare each (h_{ii}) to the thresholds. Flag any observation where (h_{ii} > 2p/n) as a high-leverage point. For a more conservative list, use (h_{ii} > 3p/n) [12].

5. Documentation and Visualization

- Step 7: Create a leverage plot (index plot of hat values) with horizontal lines at the (2p/n) and (3p/n) thresholds. This visually identifies outliers in the X-space.

- Step 8: Document all flagged observations for further investigation in the influence analysis phase.
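The protocol above can be sketched end to end in Python. This is one possible implementation under stated assumptions, not a prescribed one: it uses NumPy's thin QR factorization to obtain the hat values without forming (H), the dose data are simulated, and the helper names (`hat_values`, `flag_high_leverage`, `plot_leverage`) are hypothetical. The plotting helper assumes matplotlib is installed and imports it only when called.

```python
import numpy as np

def hat_values(X):
    """Hat values via thin QR: h_ii is the squared norm of row i of Q."""
    Q, _ = np.linalg.qr(X)              # reduced QR, Q has shape (n, p)
    return np.sum(Q**2, axis=1)

def flag_high_leverage(X):
    """Return hat values and the indices exceeding the 2p/n and 3p/n cutoffs."""
    n, p = X.shape
    h = hat_values(X)
    over_2 = np.flatnonzero(h > 2 * p / n)
    over_3 = np.flatnonzero(h > 3 * p / n)
    return h, over_2, over_3

def plot_leverage(h, p):
    """Index plot of hat values with threshold lines (requires matplotlib)."""
    import matplotlib.pyplot as plt     # optional dependency, imported lazily
    n = len(h)
    fig, ax = plt.subplots()
    ax.stem(np.arange(n), h)
    ax.axhline(2 * p / n, linestyle="--", label="2p/n")
    ax.axhline(3 * p / n, linestyle=":", label="3p/n")
    ax.set_xlabel("Observation index")
    ax.set_ylabel("Hat value")
    ax.legend()
    return fig

# Hypothetical data: 30 doses between 1 and 10, with one extreme dose planted.
rng = np.random.default_rng(1)
dose = rng.uniform(1, 10, size=30)
dose[7] = 60.0
X = np.column_stack([np.ones_like(dose), dose])   # Step 1: design matrix

h, over_2, over_3 = flag_high_leverage(X)         # Steps 2 to 6
print("flagged at 2p/n:", over_2)
print("flagged at 3p/n:", over_3)
# Step 7 (optional): fig = plot_leverage(h, p=X.shape[1])
```

The QR route is numerically more stable than inverting (X^{'}X) and scales to larger (n), which is the kind of shortcut the implementation note in Step 2 refers to.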

Research Reagent Solutions

Table 2: Essential Components for Leverage Analysis

| Component | Function / Interpretation |
|---|---|
| Design Matrix ((X)) | The structured input of predictor variables. The foundation for all subsequent calculations [12]. |
| Hat Matrix ((H)) | The linear operator that projects the observed response vector (y) onto the predicted vector (\hat{y}). Its diagonal elements are the diagnostics of interest [12] [4]. |
| Hat Value ((h_{ii})) | The diagnostic metric. A value close to 1 indicates extreme leverage, meaning a small change in (y_i) would cause a large shift in (\hat{y}_i) [12]. |
| Threshold ((2p/n)) | The diagnostic criterion. Serves as a benchmark to objectively flag statistically unusual observations in the predictor space [4]. |

Frequently Asked Questions (FAQs)

Q1: An observation in my drug response dataset was flagged as a high-leverage point using the 2p/n rule. Should I automatically remove it? A: No. Removal is not automatic. A high-leverage point is not necessarily a "bad" point. It may be a highly informative observation, such as a calibration sample with an unusually high analyte concentration. Investigate its influence further. If the point also has a large residual, it is likely to be highly influential and can distort your model. Any removal should be justified by domain knowledge and by the point's impact on model parameters [24].

Q2: What is the difference between a high-leverage point, an outlier, and an influential point? A: These are distinct but often related concepts, summarized in the diagram below.

- High-Leverage Point: An outlier in the X-space (detected by (h_{ii} > 2p/n)) [24].

- Outlier: An outlier in the Y-space, meaning it has a large residual (difference between observed and predicted (y)) [24].

- Influential Point: A point that, if omitted, substantially changes the estimated regression coefficients. Such a point often combines high leverage with a large residual [24].
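The three cases can be made concrete with a small, noise-free illustration. The sketch below adds one extra point to a baseline line with slope 2 and reports the refitted slope; the data values and the helper `fit_line` are hypothetical choices made purely for demonstration.

```python
import numpy as np

def fit_line(x, y):
    """Return (intercept, slope) of the least-squares line through (x, y)."""
    X = np.column_stack([np.ones_like(x), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta[0], beta[1]

# Noise-free baseline: ten points on the line y = 1 + 2x.
x = np.arange(10, dtype=float)
y = 1.0 + 2.0 * x

# Each case adds a single hypothetical observation (x_new, y_new).
cases = {
    "high leverage, on trend": (30.0, 61.0),   # extreme x, y follows the line
    "outlier, low leverage": (4.5, 25.0),      # central x, y far above the line
    "high leverage, off trend": (30.0, 20.0),  # extreme x, y far below the line
}

slopes = {}
for label, (x_new, y_new) in cases.items():
    _, slope = fit_line(np.append(x, x_new), np.append(y, y_new))
    slopes[label] = slope
    print(f"{label}: slope = {slope:.3f}")
```

Only the third case, which combines an extreme predictor value with a large deviation from the trend, moves the slope appreciably. The central outlier shifts the intercept but leaves the slope unchanged because it sits at the mean of the predictor.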

Q3: In the context of my research on clinical trial efficiency, how can I use this method? A: When using real-world evidence (RWE) to inform trial design or conducting pharmacogenomics analyses to identify patient subgroups, your regression models are key. Applying the 2p/n rule helps you audit your data. For example, you can identify if a small subset of patients with unique genomic markers or extreme baseline characteristics is having an outsized effect on the model predicting treatment response. This ensures your conclusions about patient stratification are robust and not driven by a few unusual cases [25].

Q4: The 2p/n and 3p/n rules give me different results. Which one should I use for my analysis? A: The choice can depend on your sample size and the desired sensitivity.

- For large samples, (2p/n) is commonly used [4].

- For smaller samples ((n \leq 30)), the (3p/n) rule is sometimes recommended as a more appropriate benchmark [24].

A best practice is to use the (3p/n) threshold as a strict cutoff for "unusual" points, and the (2p/n) threshold to flag points that are less extreme but still warrant attention, especially if they appear isolated in diagnostic plots [12]. Consistency within your field of research is also an important consideration.

Technical Support Center

Troubleshooting Guides & FAQs

This section addresses common challenges researchers face when performing leverage diagnostics in clinical trial data analysis.

FAQ 1: My leverage plot shows several high-leverage points. How can I determine if they are unduly influencing the model's conclusions?

Answer: A high-leverage point does not necessarily equate to a harmful influential point. Follow this diagnostic protocol:

- Calculate Influence Metrics: For each high-leverage point, compute its Cook's distance and DFFITS (Difference in Fits). These metrics quantify how much the regression model changes when a particular observation is omitted.

- Establish Thresholds: Use standard rules of thumb to identify potentially influential points. An observation with a Cook's distance greater than 4/n (where n is the sample size) may be influential. For DFFITS, an absolute value greater than ( 2 \sqrt{(k+1)/n} ) (where k is the number of predictors, so (k + 1 = p)) is often used.

- Visual Inspection: Create a leverage-versus-residual-squared plot (or a similar diagnostic plot) to visualize the relationship between leverage and the goodness of fit for each point.

- Compare Models: Fit your model with and without the flagged points. Compare the coefficients, p-values, and overall model predictions. A significant change indicates that the points are influential and warrant further investigation into their clinical validity [26].
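The influence metrics in this protocol can be computed from the hat values and residuals of a single fit. The following is a minimal NumPy sketch using the standard closed-form expressions for Cook's distance and DFFITS; the simulated data, the planted point at index 24, and the function name `influence_measures` are assumptions made for illustration.

```python
import numpy as np

def influence_measures(X, y):
    """Return hat values, Cook's distances, and DFFITS for an OLS fit."""
    n, p = X.shape
    Q, _ = np.linalg.qr(X)
    h = np.sum(Q**2, axis=1)                     # hat values
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta                             # raw residuals
    s2 = e @ e / (n - p)                         # residual variance estimate
    cooks = (e**2 / (p * s2)) * h / (1 - h) ** 2
    # Leave-one-out variance, used for externally studentized residuals.
    s2_loo = ((n - p) * s2 - e**2 / (1 - h)) / (n - p - 1)
    t = e / np.sqrt(s2_loo * (1 - h))
    dffits = t * np.sqrt(h / (1 - h))
    return h, cooks, dffits

# Hypothetical data: 24 evenly spaced x values plus one extreme, off-trend point.
rng = np.random.default_rng(2)
x = np.append(np.linspace(0, 10, 24), 25.0)
y = 2.0 + 0.5 * x + rng.normal(scale=0.5, size=x.size)
y[24] -= 8.0                                     # push the extreme point off trend

X = np.column_stack([np.ones_like(x), x])
n, p = X.shape
h, cooks, dffits = influence_measures(X, y)

flagged_cooks = np.flatnonzero(cooks > 4 / n)
flagged_dffits = np.flatnonzero(np.abs(dffits) > 2 * np.sqrt(p / n))
print("Cook's distance > 4/n:", flagged_cooks)
print("|DFFITS| > 2*sqrt(p/n):", flagged_dffits)
```

These closed forms give the same values as refitting the model with each observation removed, so they are a convenient screening step before the explicit with-and-without comparison.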

FAQ 2: What are the best practices for visualizing complex clinical data and diagnostic results to communicate findings clearly to a multidisciplinary team?

Answer: Effective visualization is key to communicating complex diagnostic information. The human brain processes images in as little as 13 milliseconds, and people learn more deeply from words and pictures than from words alone [27].

- Simplify and Clarify: Minimize cognitive burden by simplifying reports. Use clear legends, titles, and axis labels. Use color conservatively and purposefully to highlight key findings, not as mere decoration [28].

- Choose the Right Visual: Select visualization types that match your data and the story you need to tell. For hierarchical composite endpoints (HCEs), a Maraca plot can display all outcome components in a single, intuitive visual. To summarize adverse event data, including timing and distribution, the Tendril plot is highly effective [27].

- Ensure Accessibility: All visualizations must have sufficient color contrast. For standard text, the contrast ratio between foreground and background should be at least 4.5:1, and for large-scale text at least 3:1. For enhanced readability, the stricter targets are 7:1 for standard text and 4.5:1 for large-scale text [29] [30].
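Contrast ratios for plot colors can be checked programmatically. The sketch below assumes the ratios quoted above follow the WCAG definition of relative luminance for sRGB colors; the function names and the example grays are illustrative choices.

```python
def relative_luminance(rgb):
    """Relative luminance of an 8-bit sRGB color, assuming the WCAG definition."""
    def linearize(c8):
        c = c8 / 255.0
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """Contrast ratio (lighter + 0.05) / (darker + 0.05), between 1 and 21."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)

white = (255, 255, 255)
# Two hypothetical label colors that look nearly identical on screen.
for name, gray in {"#767676": (118, 118, 118), "#777777": (119, 119, 119)}.items():
    ratio = contrast_ratio(gray, white)
    print(f"{name} on white: {ratio:.2f}:1, meets 4.5:1 = {ratio >= 4.5}")
```

Visually similar grays can fall on opposite sides of the 4.5:1 cutoff, so it is worth checking axis-label and annotation colors numerically before finalizing a figure.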

FAQ 3: Which software tools are best suited for performing leverage diagnostics on large, complex clinical trial datasets?

Answer: The choice of software depends on your team's expertise and the specific analysis needs. The table below summarizes key tools used in clinical data management and analysis:

Table 3: Software Tools for Clinical Data Analysis and Diagnostics

| Tool Name | Type | Primary Function in Diagnostics |
|---|---|---|
| RStudio [31] | Integrated Development Environment (IDE) | Provides a flexible environment for statistical computing and graphics. Ideal for performing custom leverage diagnostics and creating sophisticated plots using packages like stats, influence.ME, and ggplot2. |
| JMP Clinical [32] | Clinical Data Analysis Software | Offers specialized tools for clinical trial safety and efficacy review. Includes capabilities for data visualization, pattern detection, and generating interactive reports that can help identify outliers and influential points. |
| Python with Pandas/Seaborn [31] | Programming Language & Libraries | Powerful for data manipulation (Pandas) and statistical data visualization (Seaborn, Matplotlib). Suitable for building custom diagnostic workflows from the ground up. |
| SAS [31] | Statistical Analysis System | A long-standing standard in the pharmaceutical industry for clinical trial analysis, offering robust procedures for regression diagnostics and influence analysis. |
| Tableau / Power BI [31] | Data Visualization Tools | Best for creating interactive dashboards to visually explore data, identify potential outliers, and share findings with stakeholders who may not have a statistical background. |

FAQ 4: My data comes from multiple sources (eCRF, ePRO, labs). How can I ensure data integrity before running diagnostic analyses?

Answer: Data integrity is the foundation of any valid diagnostic procedure. Implement a multi-layered approach:

- Use a Clinical Data Management System (CDMS): A robust CDMS provides automated data validation rules, ontology enforcement, and quality control processes to ensure accuracy, completeness, and consistency from the point of capture [31].

- Perform Risk-Based Monitoring: Utilize tools within your software (e.g., JMP Clinical) to identify data anomalies at the vendor, site, and patient level. This helps determine the factors responsible for lapses in data quality early on [32].

- Leverage Audit Trails: A CDMS with comprehensive audit trails tracks all data changes, which is crucial for regulatory compliance and for understanding the history of any potentially anomalous data point [31].

Experimental Protocol: Identifying Influential Points with Leverage Plots

This protocol provides a detailed methodology for conducting leverage diagnostics, framed within the context of clinical trial research.

Objective: To identify and assess influential data points in a clinical trial regression analysis that may disproportionately affect the model's parameters and conclusions.

Materials and Reagents:

Table 4: Research Reagent Solutions for Data Analysis

| Item | Function |
|---|---|
| Clinical Dataset | The structured dataset from a clinical trial (e.g., from an EDC system or CDMS), containing patient outcomes, interventions, and covariates [31]. |
| Statistical Software | A computational environment capable of multiple linear regression and advanced diagnostics (e.g., RStudio or SAS) [31]. |
| Data Visualization Package | A software library (e.g., ggplot2 for R, Seaborn for Python) for creating high-quality leverage plots and other diagnostic visualizations [31]. |

Methodology:

Data Preparation and Model Fitting:

- Import Data: Load the cleaned clinical trial dataset from the clinical data repository or warehouse into your statistical software [31].