Low-Data Drug Discovery: Overcoming Data Scarcity with Active Deep Learning

Abstract

This article explores the transformative potential of active deep learning (DL) in accelerating drug discovery within data-scarce environments. Aimed at researchers and drug development professionals, it provides a comprehensive roadmap from foundational concepts to real-world validation. We first define the core challenge of limited data in pharmaceutical research and introduce active learning as a strategic solution. The discussion then progresses to practical methodologies, including novel neural network architectures and multi-task learning frameworks designed for low-data efficiency. The article critically addresses significant hurdles such as model generalizability, the 'black box' problem, and data quality, offering actionable optimization strategies. Finally, we present rigorous validation protocols, benchmarking results, and emerging success stories from the field, synthesizing key takeaways to outline a future where AI-driven discovery is both faster and more accessible.

The Low-Data Challenge in Pharma: Why Traditional AI Falls Short

The drug discovery process represents one of the most financially intensive and high-risk endeavors in modern healthcare, with traditional approaches requiring an average of $1.3-4 billion and 10-12 years to bring a single new therapeutic to market [1] [2]. This inefficiency stems primarily from a startlingly high failure rate, with approximately 90% of drug candidates failing during pre-clinical and clinical stages [1]. At the heart of this crisis lies a fundamental constraint: the severe limitation of high-quality, relevant biological and chemical data needed to make informed decisions early in the discovery pipeline. This data bottleneck forces researchers to operate in low-information environments, where critical decisions about target validation and compound optimization must be made with insufficient evidence, ultimately contributing to late-stage failures that drive up costs and extend timelines.

The traditional drug discovery paradigm relies heavily on repetitive Design-Make-Test-Analyze (DMTA) cycles that generate data slowly and expensively through manual laboratory processes. This approach creates a fundamental constraint where the sheer size of chemical space—estimated at >10^60 synthesizable compounds—stands in stark contrast to the minute fraction that can be physically tested using conventional methods [2]. This data scarcity problem is particularly acute for the approximately 7,000 rare diseases affecting over 350 million people globally, where patient populations are small and research incentives are limited by traditional economic models [1]. The following sections quantify this data bottleneck across multiple dimensions and present emerging computational strategies that are reshaping the economics of therapeutic development.

Quantifying the Data Bottleneck: Economic and Temporal Impacts

The Economic Burden of Traditional Discovery

Table 1: Quantitative Analysis of Drug Discovery Costs and Success Rates

| Parameter | Metric | Source |
|---|---|---|
| Average Development Cost | $1.3-4.0 billion per approved drug | [1] |
| Development Timeline | 10-12 years from discovery to approval | [2] |
| Clinical Failure Rate | ~90% failure in pre-clinical/clinical stages | [1] |
| Hit-to-Lead Timeline | 3-5 years (approximately 26% of total timeline) | [2] |
| Structure Determination | 6 months and $50,000-250,000 per structure | [2] |
| Recent Expenditure Growth | 10.2% increase in 2024 to $805.9 billion total | [3] |

The economic data reveals a sector under significant pressure. Overall pharmaceutical expenditures in the U.S. reached $805.9 billion in 2024, representing a 10.2% increase from the previous year [3]. This growth significantly outpaces inflation and is driven primarily by increased utilization (7.9%) and new drug introductions (2.5%), while drug prices have remained essentially flat (0.2% decrease) [3]. This expenditure environment creates tremendous pressure to improve the efficiency of the discovery process, particularly as the days of blockbuster drugs generating >$1 billion in sales are receding in favor of targeted, personalized medicines with smaller patient populations [2].

The Data Generation Bottleneck in Experimental Processes

Table 2: Experimental Data Generation Bottlenecks in Traditional Workflows

| Process Step | Data Limitation | Impact | Source |
|---|---|---|---|
| Target Identification | Multi-omics data are too high-dimensional to interpret without AI | Correlations become interpretable, but the bottleneck shifts to structural biology | [2] |
| Protein Structure Determination | Physical methods (X-ray, Cryo-EM) slow and expensive | Creates dependency on virtual prediction methods | [2] |
| Compound Screening | Limited to ~2 million compounds vs. >10^60 possible | Severely restricted exploration of chemical space | [2] |
| Hit-to-Lead Optimization | Manual DMTA cycles with sparse, inconsistent data | 3-5 year timeline with high failure rate | [2] |
| Clinical Development | Heterogeneous coding, missing biomarkers in RWE | Undermines downstream analyses and regulatory utility | [4] |

The data generation constraints extend throughout the entire discovery pipeline. In the initial stages, the "data glut" from high-throughput biology techniques (genomics, proteomics, metabolomics) has created information so complex that it requires artificial intelligence to identify meaningful correlations [2]. This then creates a subsequent bottleneck in structural biology, where traditional physical methods for determining protein structure require 6 months and $50,000-250,000 per structure [2]. The compound screening phase represents another critical constraint, with conventional high-throughput screening limited to approximately 2 million compounds from a chemical space exceeding 10^60 possibilities [2]. Even taking 10^60 as the size of chemical space, 2 million compounds correspond to a fraction of 2 × 10^-54, or about 2 × 10^-52 %, of the possibilities.
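The scale of that gap is easy to check directly. The short sketch below uses only the two figures quoted in the text (about 2 million screened compounds and a chemical space of at least 10^60); the variable names are illustrative.

```python
from fractions import Fraction

# Figures quoted in the text; 10**60 is a lower bound on chemical space.
screened_compounds = 2 * 10**6
chemical_space = 10**60

# Exact rational arithmetic keeps the tiny ratio free of rounding surprises.
fraction_explored = Fraction(screened_compounds, chemical_space)
percent_explored = float(fraction_explored * 100)

print(f"Explored: {percent_explored:.1e} % of chemical space")
```

Because 10^60 is a lower bound, the true explored share is at most this value.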

The hit-to-lead optimization phase typically consumes 3-5 years (approximately 26% of the total development timeline) and involves optimizing 15-20 chemical parameters simultaneously, including potency, selectivity, solubility, permeability, and toxicity [2]. This process suffers from what experts have identified as a "molecular discovery bottleneck" where AI cannot function effectively due to insufficient data [2]. The underlying causes include data confidentiality restrictions, inconsistent reporting formats, lack of reproducibility, and the high cost of producing each data point through traditional physical methods [2].

Active Deep Learning: A Paradigm Shift for Low-Data Discovery

Theoretical Framework and Experimental Evidence

Active deep learning represents a fundamental shift from traditional screening approaches by employing an iterative, query-based strategy that selects the most informative compounds for testing, thereby maximizing learning from minimal data. Recent research demonstrates that this approach can achieve up to a sixfold improvement in hit discovery compared to traditional screening methods in low-data scenarios typical of drug discovery [5]. This performance advantage stems from the algorithm's ability to strategically explore chemical space by prioritizing compounds that maximize information gain, rather than testing compounds randomly or based on structural similarity alone.

The methodology operates through a continuous feedback loop where the model's predictions guide the next round of experimental testing, with results further refining the model's understanding. This approach directly addresses the "small data regimes" that typically challenge deep learning approaches in drug discovery [6]. By focusing resources on the most chemically informative regions, active learning overcomes the limitations of conventional virtual screening, which often fails when applied to novel target classes with limited structural information [5].

Experimental Protocol for Active Deep Learning Implementation

Implementation Protocol: Active Deep Learning for Hit Identification

Initial Model Training

- Begin with a modestly sized labeled dataset (typically 50-500 compounds with measured activity against the target)

- Utilize graph neural networks (GNNs) or molecular fingerprint-based architectures

- Quantify predictive uncertainty with an approximate Bayesian method (e.g., Monte Carlo dropout) so that acquisition functions can use it

Compound Selection and Prioritization

- Deploy acquisition functions (e.g., expected improvement, upper confidence bound)

- Apply diversity-based sampling to prevent clustering in familiar chemical space

- Balance exploration of novel scaffolds with exploitation of known active chemotypes

Iterative Experimental Validation

- Synthesize or procure top-ranked compounds from virtual screening (typically 20-100 compounds per cycle)

- Test selected compounds in relevant biological assays

- Incorporate results into training set for model refinement

Termination Criteria

- Continue cycles until identification of compounds meeting potency thresholds (e.g., IC50 < 100 nM)

- Typical campaigns require 3-8 cycles depending on chemical tractability and assay complexity
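The protocol steps above can be sketched as a pool-based loop. This is a minimal illustration under stated assumptions, not the method of the cited study: `toy_assay`, the one-dimensional descriptor, the bootstrap nearest-neighbour ensemble (standing in for a GNN with Bayesian uncertainty), and every parameter value are hypothetical and far smaller than a real campaign. The stopping threshold uses pIC50 = 7, which corresponds to IC50 = 100 nM.

```python
import random
import statistics

def toy_assay(x):
    """Hypothetical oracle: pIC50-like score for a 1-D descriptor x (higher is better)."""
    return 9.0 - 400.0 * (x - 0.73) ** 2

def knn_predict(train, x, k=3):
    """Mean label of the k labeled points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return statistics.fmean([y for _, y in nearest])

def ensemble_predict(train, x, rng, n_models=20):
    """Bootstrap ensemble: mean prediction plus spread as an uncertainty proxy."""
    preds = []
    for _ in range(n_models):
        boot = [rng.choice(train) for _ in train]
        preds.append(knn_predict(boot, x))
    return statistics.fmean(preds), statistics.pstdev(preds)

def run_campaign(pool, oracle, n_init=10, batch_size=5, max_cycles=8,
                 threshold=7.0, kappa=1.0, seed=0):
    rng = random.Random(seed)
    candidates = list(pool)
    rng.shuffle(candidates)
    # Initial model training set: a small random sample with measured activity.
    labeled = [(x, oracle(x)) for x in candidates[:n_init]]
    unlabeled = candidates[n_init:]
    cycles = 0
    # Termination: potency threshold met, cycle budget spent, or pool exhausted.
    while cycles < max_cycles and unlabeled and max(y for _, y in labeled) < threshold:
        scored = []
        for x in unlabeled:
            mean, spread = ensemble_predict(labeled, x, rng)
            scored.append((mean + kappa * spread, x))  # upper confidence bound
        scored.sort(reverse=True)
        picked = [x for _, x in scored[:batch_size]]
        labeled += [(x, oracle(x)) for x in picked]    # "test" the selected batch
        unlabeled = [x for x in unlabeled if x not in picked]
        cycles += 1
    return {"cycles": cycles, "n_measured": len(labeled),
            "best": max(y for _, y in labeled)}

result = run_campaign([i / 200 for i in range(200)], toy_assay)
print(result)
```

Raising `kappa` shifts the loop toward exploration of uncertain regions; setting it to zero makes the selection purely exploitative.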

This protocol was validated in a recent large-scale study that simulated low-data drug discovery scenarios and systematically analyzed six active learning strategies combined with two deep learning architectures across three large molecular libraries [5]. The research identified that the most successful strategies specifically addressed the key determinants of performance in low-data regimes, including appropriate uncertainty quantification and strategic exploration-exploitation balancing.

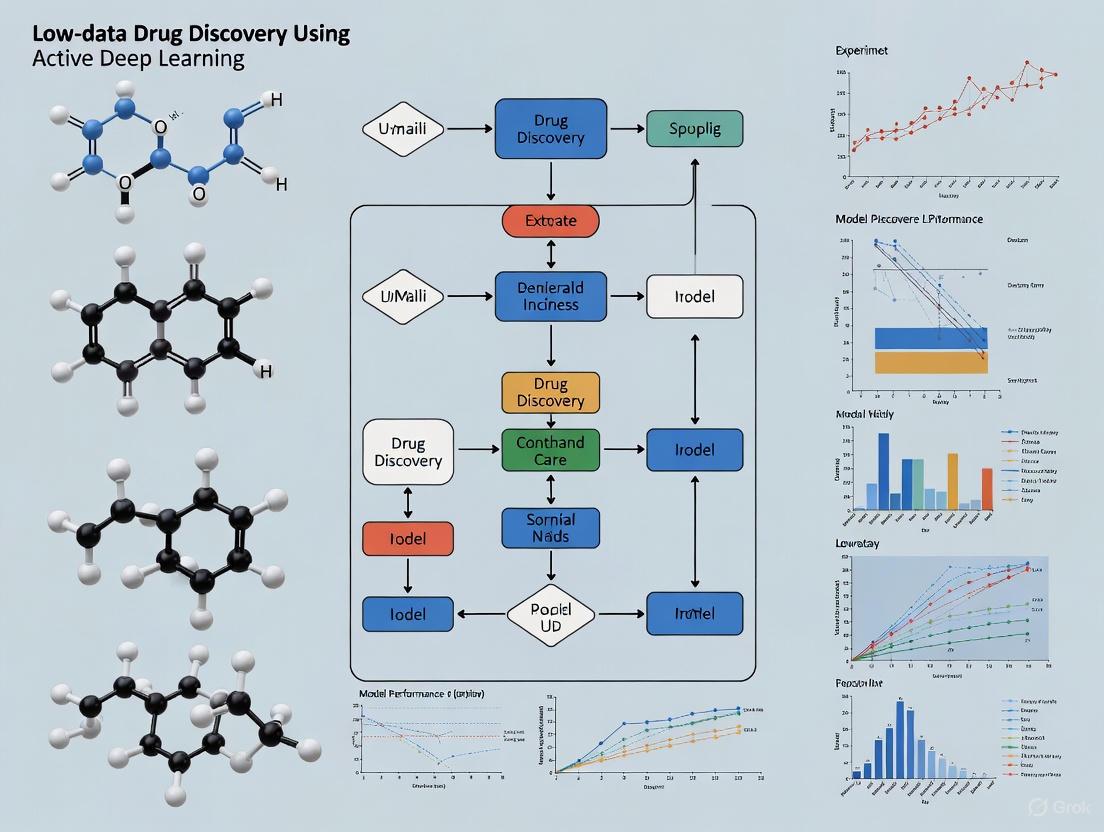

Diagram 1: Active deep learning iterative workflow for low-data drug discovery.

Research Reagent Solutions for Implementation

Table 3: Essential Research Tools for Active Deep Learning Deployment

| Reagent/Tool | Function | Application Context | Source |
|---|---|---|---|
| LIT-PCBA Datasets | Benchmarking libraries for virtual screening | Validation of active learning protocols against standardized metrics | [5] |
| CETSA (Cellular Thermal Shift Assay) | Target engagement validation in intact cells | Confirmation of compound binding in physiologically relevant environments | [7] |
| AutoDock & SwissADME | Molecular docking and ADMET prediction | Preliminary assessment of binding potential and drug-likeness | [7] |
| PyTorch Geometric | Graph neural networks for molecular data | Implementation of deep learning architectures for structure-activity modeling | [5] |
| RDKit | Cheminformatics and molecular handling | Processing and featurization of chemical structures for machine learning | [5] |
| Apheris Federated Learning | Privacy-preserving collaborative modeling | Multi-institutional model training without sharing proprietary data | [4] |
| Ginkgo Datapoints | Automated antibody assay generation | Uniform biophysical data generation for model training | [4] |

The implementation of active deep learning approaches requires specialized tools and datasets. Publicly available benchmarking libraries like LIT-PCBA provide standardized datasets for validating virtual screening protocols [5]. For experimental validation, technologies like CETSA enable confirmation of target engagement in physiologically relevant environments by measuring thermal stabilization of drug targets in intact cells [7]. Computational chemistry tools including AutoDock and SwissADME provide preliminary assessment of binding potential and drug-likeness before synthesis [7].

The technical infrastructure for implementing these approaches relies on specific programming frameworks. PyTorch Geometric enables implementation of graph neural networks for molecular data, while RDKit provides essential cheminformatics capabilities for processing and featurizing chemical structures [5]. For addressing data scarcity through collaboration, federated learning platforms like Apheris enable multi-institutional model training without sharing proprietary data, while automated assay systems like Ginkgo Datapoints generate uniform biophysical data specifically for model training [4].

Emerging Technologies: Bridging the Data Gap

Large Quantitative Models (LQMs) and Physics-Based Simulation

A transformative development in addressing data scarcity is the emergence of Large Quantitative Models (LQMs) that differ fundamentally from language-based AI models [1]. While LLMs are trained on textual data, LQMs are grounded in first principles of physics, chemistry, and biology, allowing them to simulate fundamental molecular interactions and create new knowledge through billions of in silico experiments [1]. This approach represents a transition from data-driven to physics-driven AI, significantly reducing dependency on existing experimental data.

The power of LQMs has been dramatically enhanced by newly available datasets providing information on over one million protein-ligand complexes and 5.2 million 3D structures with annotated experimental potency data [1]. This structural information enables researchers to train AI models for rapid evaluation of potential drug molecules, focusing resources on compounds with the highest likelihood of success. By incorporating quantum mechanical principles, these models can predict molecular behavior at the subatomic level, providing unprecedented accuracy in forecasting how drugs will interact with biological systems [1].

Federated Learning and Data Collaboration Frameworks

To overcome the critical data access limitations imposed by proprietary archives and intellectual property concerns, the field is increasingly adopting collaborative data sharing models [4]. Pharmaceutical companies are implementing federated learning approaches that allow training models on distributed datasets without transferring sensitive proprietary data. Initiatives like OpenFold3 exemplify this approach, with companies including AbbVie, J&J, and Bristol Myers contributing co-folding data while raw structures remain behind corporate firewalls [4].

This "trust by architecture" approach allows aggregated model gradients to flow through centralized nodes while protecting underlying structural data [4]. The resulting models are then returned to each participant for local inference, creating a collaborative advantage while maintaining competitive positioning. These architectures are particularly valuable for addressing the training data void that hampers AI scalability in drug discovery, especially for novel target classes with limited structural information [4].
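The idea of sharing gradients instead of data can be illustrated with a generic federated averaging round. The sketch below is an assumption-laden toy, not a description of how OpenFold3 contributors or Apheris actually operate: the linear model, the two hypothetical `sites`, and the learning rate are invented for illustration, and real deployments add protections such as secure aggregation that are omitted here.

```python
def local_gradient(weights, data):
    """Mean-squared-error gradient for y ~ w*x + b, computed on one site's private data."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return (grad_w, grad_b), n

def federated_round(weights, sites, lr=0.05):
    """Central node: average the site gradients (weighted by sample count) and step."""
    updates = [local_gradient(weights, data) for data in sites]  # only gradients leave a site
    total = sum(n for _, n in updates)
    avg = [sum(g[i] * n for g, n in updates) / total for i in range(2)]
    return tuple(wi - lr * gi for wi, gi in zip(weights, avg))

# Two hypothetical participants; each list stays behind its own firewall.
sites = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]

weights = (0.0, 0.0)
for _ in range(2000):
    weights = federated_round(weights, sites)

print(weights)  # approaches the shared relationship y = 2x + 1
```

Weighting each gradient by its sample count makes the averaged update equal to the gradient on the pooled data, which is why the shared model matches what centralized training would produce in this toy case.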

Diagram 2: Integrated technology solutions addressing data scarcity in drug discovery.

The quantitative evidence presented demonstrates that the high cost of data represents the fundamental bottleneck in traditional drug discovery, with economic impacts measured in billions of dollars and temporal consequences extending over decades. The emergence of active deep learning strategies capable of achieving sixfold improvements in hit discovery efficiency signals a paradigm shift from data-intensive to intelligence-intensive approaches [5]. When integrated with Large Quantitative Models grounded in physical first principles and federated learning frameworks that enable collaborative advantage without intellectual property compromise, these technologies form a powerful toolkit for overcoming the data scarcity challenge [1] [4].

For researchers and drug development professionals, the practical implementation of these approaches requires specialized infrastructure spanning algorithmic frameworks, experimental validation technologies, and collaborative data ecosystems. Organizations that successfully integrate these capabilities position themselves to significantly reduce the 90% failure rate that plagues conventional discovery efforts [1]. As these technologies mature, we anticipate continued acceleration of the discovery timeline, potentially reducing the current 10-12 year development process by years rather than months, while simultaneously expanding the therapeutic landscape to include thousands of diseases currently deemed "undruggable" due to economic rather than scientific constraints [1] [2]. The future of drug discovery lies not in generating more data, but in generating more knowledge from limited data through sophisticated computational intelligence.

Active learning (AL) represents a paradigm shift in machine learning for drug discovery, strategically addressing the field's pervasive challenge of limited labeled data. This technical guide details the core principles, methodologies, and applications of AL, with a specific focus on its role in low-data regimes. By iteratively selecting the most informative data points for labeling, AL maximizes informational gain, significantly accelerating critical tasks such as molecular property prediction, virtual screening, and hit identification. This primer provides a comprehensive overview of AL strategies, benchmarks their performance in real-world drug discovery scenarios, and offers detailed experimental protocols for implementation, serving as an essential resource for computational researchers and drug development professionals.

The primary objective of drug discovery is to identify specific target molecules with desirable characteristics within a vast and ever-expanding chemical space. The traditional experimental approach to this problem has become impractical, prompting the integration of machine learning (ML) algorithms to navigate the complexity and expedite the process [8]. However, the effective application of ML is consistently hindered by the limited availability of labeled data and the resource-intensive nature of its acquisition. Furthermore, challenges such as severe data imbalance and redundancy within labeled datasets further impede model performance [8]. In this context, active learning (AL) has emerged as a compelling solution. AL is a subfield of artificial intelligence characterized by an iterative feedback process that selects the most valuable data for labeling based on model-generated hypotheses, using this newly labeled data to iteratively enhance the model's performance [8]. This approach neatly aligns with the core challenges in drug discovery, making AL a valuable facilitator throughout the drug development pipeline.

The applicability of AL is particularly critical in low-data drug discovery, where the cost of data acquisition—whether through high-throughput screening, synthesis, or clinical experiments—is exceptionally high. Recent studies have demonstrated that AL can achieve up to a sixfold improvement in hit discovery compared with traditional screening methods and can identify 60% of synergistic drug pairs by exploring only 10% of the combinatorial space [9] [10]. By maximizing the informational gain from every experiment, AL enables the construction of robust predictive models and the efficient exploration of chemical space with minimal resource expenditure.

Core Workflow and Query Strategies

The Active Learning Cycle

The AL process is a dynamic feedback loop that begins with an initial model trained on a small set of labeled data. The core of the cycle involves selecting informative data points from a large pool of unlabeled data, querying their labels (e.g., through experimentation), and updating the model with the newly acquired information. This process continues iteratively until a predefined stopping criterion is met, such as a performance target or exhaustion of resources [8] [11]. The following diagram illustrates this continuous cycle.

Fundamental Query Strategies

The "selection function" or query strategy is the intellectual engine of AL, determining which unlabeled instances are most valuable for model improvement. These strategies generally fall into several core categories, each with distinct mechanisms and advantages.

- Uncertainty Sampling: This is one of the earliest and most straightforward AL strategies. The learner selects instances for which it is least certain about the correct label. In regression tasks, this is often implemented using techniques like Monte Carlo Dropout to estimate predictive variance [11] [12].

- Diversity Sampling: Also known as representative sampling, this approach aims to cover the underlying distribution of the data by selecting a set of instances that are maximally diverse from each other. This helps in building a model that generalizes well across the entire input space [13].

- Expected Model Change Maximization: This strategy selects instances that are expected to cause the greatest change in the current model parameters if their labels were known. It is based on the principle that the most influential data points will prompt the most significant learning.

- Hybrid Strategies: Given the complementary strengths of different principles, many modern AL strategies combine them. A common and powerful hybrid approach balances exploration (diversity) and exploitation (uncertainty). For example, a method might select a batch of points that are both uncertain and diverse from each other to avoid redundancy [11] [12].
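The strategies above can be contrasted on a toy pool. The sketch below is illustrative only: the five compounds, their fingerprints (stored as sets of on-bits), and the predicted activity probabilities are hypothetical, and the hybrid rule shown (shortlist by uncertainty, then spread by max-min distance) is one simple way to combine the two principles rather than a specific published method.

```python
def tanimoto_distance(a, b):
    """1 - Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return 1.0 - (len(a & b) / union if union else 1.0)

def uncertainty_sampling(probs, k):
    """Pick the k candidates whose predicted active-probability is closest to 0.5."""
    return sorted(probs, key=lambda name: abs(probs[name] - 0.5))[:k]

def diversity_sampling(fps, k, seed_name):
    """Greedy max-min: repeatedly add the candidate farthest from those already chosen."""
    chosen = [seed_name]
    while len(chosen) < min(k, len(fps)):
        rest = [n for n in fps if n not in chosen]
        chosen.append(max(
            rest,
            key=lambda n: min(tanimoto_distance(fps[n], fps[c]) for c in chosen),
        ))
    return chosen

def hybrid_sampling(probs, fps, k, shortlist=3):
    """Shortlist the most uncertain candidates, then diversify within the shortlist."""
    short = uncertainty_sampling(probs, shortlist)
    return diversity_sampling({n: fps[n] for n in short}, k, seed_name=short[0])

# Hypothetical pool: A and B are near-duplicates, C and D share a scaffold, E is distinct.
fps = {"A": {1, 2, 3, 4}, "B": {1, 2, 3, 5}, "C": {10, 11, 12},
       "D": {10, 11, 13}, "E": {20, 21}}
probs = {"A": 0.52, "B": 0.49, "C": 0.90, "D": 0.60, "E": 0.05}

print(uncertainty_sampling(probs, 2))     # ['B', 'A']: uncertain but redundant
print(diversity_sampling(fps, 3, "A"))    # ['A', 'C', 'E']: broad but ignores the model
print(hybrid_sampling(probs, fps, 2))     # ['B', 'D']: uncertain and dissimilar
```

Pure uncertainty sampling spends both picks on near-duplicates, while the hybrid rule keeps the most uncertain compound and replaces its twin with a structurally different one.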

Table 1: Comparison of Core Active Learning Query Strategies

| Strategy | Mechanism | Advantages | Limitations | Typical Use Case in Drug Discovery |
|---|---|---|---|---|
| Uncertainty Sampling | Selects data points where model prediction is least certain. | Simple to implement; highly effective for refining model decision boundaries. | Can be myopic; may select outliers. | Optimizing lead compounds; refining QSAR models. |
| Diversity Sampling | Selects a set of data points that are maximally dissimilar. | Promotes broad exploration of chemical space; improves model generalization. | Ignores model-specific informativeness. | Initial exploration of a new chemical series or library. |
| Expected Model Change | Selects points that would cause the largest change in the model. | Theoretically powerful for rapid model improvement. | Computationally expensive to calculate for complex models. | Less common in deep learning due to computational cost. |
| Hybrid (e.g., Uncertainty + Diversity) | Balances uncertainty and diversity within a selected batch. | Mitigates outliers; achieves comprehensive batch information content. | Requires tuning of balance parameters. | Most common in practice [12]; batch selection for virtual screening. |

Performance Benchmarking and Quantitative Insights

The efficacy of AL is not merely theoretical; comprehensive benchmarks across materials science and drug discovery demonstrate its significant impact on data efficiency. A large-scale benchmark study evaluating 17 different AL strategies within an Automated Machine Learning (AutoML) framework on materials science regression tasks revealed clear performance hierarchies, as summarized in Table 2 [11].

Table 2: Benchmark of Active Learning Strategies in a Low-Data Regime (Adapted from Scientific Reports, 2025)

| Strategy Type | Example Methods | Early-Stage Performance (Data-Scarce) | Late-Stage Performance (Data-Rich) | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms random baseline and geometry-only heuristics. | Performance gap narrows; converges with other methods. | Highly effective for initial model improvement. |
| Diversity-Hybrid | RD-GS | Clearly outperforms baseline; comparable to top uncertainty methods. | Performance gap narrows; converges with other methods. | Balances exploration and exploitation effectively. |
| Geometry-Only | GSx, EGAL | Underperforms compared to uncertainty and hybrid strategies early on. | Performance gap narrows; converges with other methods. | Useful for coverage but ignores model uncertainty. |
| Random Sampling | Random | Serves as the baseline for comparison. | Serves as the baseline for comparison. | Diminishing returns as labeled set grows. |

The benchmark concluded that early in the data acquisition process, uncertainty-driven and diversity-hybrid strategies are paramount for selecting informative samples and improving model accuracy rapidly. However, as the labeled set grows, the marginal gain from sophisticated AL diminishes, and all strategies tend to converge [11].

In drug discovery specifically, novel AL methods have shown remarkable results. Research from Sanofi developed two novel batch AL methods, COVDROP and COVLAP, which leverage deep learning models. These methods select batches of molecules that maximize the joint entropy (i.e., the log-determinant of the epistemic covariance), ensuring both high uncertainty and diversity within the batch [12]. When tested on public ADMET and affinity datasets, these methods consistently led to better model performance with fewer experiments compared to prior methods like BAIT or k-means sampling. For instance, on a solubility dataset of 9,982 molecules, the COVDROP method achieved a lower RMSE more quickly than other methods, indicating significant potential savings in experimental costs [12].

Experimental Protocols for Drug Discovery

Protocol 1: Batch Active Learning for ADMET Optimization

This protocol details the methodology for applying batch AL to optimize Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, a critical step in lead optimization [12].

- Objective: To train accurate predictive models for ADMET properties with a minimal number of experimental measurements.

- Materials & Reagents:

  - Unlabeled Compound Library: A large virtual library of compounds (e.g., 10,000+ molecules) represented by molecular fingerprints or graph structures.

  - Oracle: An experimental assay or a high-fidelity simulation capable of providing the ground-truth property value (e.g., solubility, permeability) for any selected compound.

  - Initial Training Set: A small, randomly selected set of compounds (e.g., 50-100) with measured properties.

- Computational Methods:

  - Model Architecture: A graph neural network (GNN) or a multilayer perceptron (MLP) suitable for regression or classification.

  - Uncertainty Estimation: Monte Carlo Dropout or Laplace Approximation is used to estimate the epistemic uncertainty of model predictions for each unlabeled compound.

- Procedure:

  - Initialization: Train an initial predictive model on the small labeled dataset.

  - Iteration:

    - (a) Prediction & Covariance: Use the current model to predict the properties and, crucially, the uncertainty for all compounds in the unlabeled pool. For batch selection, compute a covariance matrix that captures the predictive relationships between compounds.

    - (b) Batch Selection: Apply a greedy algorithm to select a batch of compounds (e.g., 30) from the unlabeled pool. The selection criterion is the maximization of the log-determinant of the submatrix of the covariance corresponding to the batch. This maximizes the joint information content (joint entropy) of the batch.

    - (c) Labeling: Submit the selected batch of compounds to the "oracle" (experimental assay) to obtain their true property values.

    - (d) Model Update: Add the newly labeled compounds to the training set and retrain the model.

  - Termination: Repeat the iteration until a desired model performance (e.g., RMSE, R²) is achieved or the experimental budget is exhausted.
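The batch selection step of this protocol can be sketched in a few lines. The following is a simplified illustration, not the COVDROP or COVLAP implementation: it assumes the covariance matrix has already been estimated (for example from Monte Carlo Dropout samples), and the function name and the 4 × 4 toy matrix are hypothetical. It relies on the identity that adding compound i to a batch S multiplies det(cov[S, S]) by the variance of i conditioned on S, so a greedy step simply picks the largest remaining conditional variance.

```python
import math

def greedy_logdet_batch(cov, batch_size):
    """Greedily pick indices whose covariance submatrix has a large log-determinant."""
    n = len(cov)
    cond_var = [cov[i][i] for i in range(n)]  # variance given the current batch
    factors = [[] for _ in range(n)]          # partial (pivoted) Cholesky rows
    selected, logdet = [], 0.0
    for _ in range(min(batch_size, n)):
        remaining = [i for i in range(n) if i not in selected]
        j = max(remaining, key=lambda i: cond_var[i])
        if cond_var[j] <= 1e-12:              # nothing informative left
            break
        selected.append(j)
        logdet += math.log(cond_var[j])
        pivot = math.sqrt(cond_var[j])
        # Condition every other candidate on the newly selected compound.
        for i in remaining:
            if i == j:
                continue
            dot = sum(a * b for a, b in zip(factors[i], factors[j]))
            entry = (cov[i][j] - dot) / pivot
            factors[i].append(entry)
            cond_var[i] -= entry * entry
    return selected, logdet

# Toy covariance: compounds 0 and 1 are both uncertain but strongly correlated.
cov = [
    [1.0, 0.9, 0.0, 0.0],
    [0.9, 1.0, 0.0, 0.0],
    [0.0, 0.0, 0.5, 0.0],
    [0.0, 0.0, 0.0, 0.1],
]

batch, logdet = greedy_logdet_batch(cov, batch_size=2)
print(batch, logdet)
```

Ranking by individual variance alone would pick compounds 0 and 1, whose joint determinant is only 0.19; the greedy log-determinant rule picks 0 and 2 (determinant 0.5), because measuring compound 1 adds little once compound 0 is in the batch.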

The following workflow diagram encapsulates this protocol.

Protocol 2: Active Learning for Synergistic Drug Combination Screening

This protocol outlines the use of AL to efficiently navigate the vast combinatorial space of drug-drug combinations to identify rare synergistic pairs [10].

- Objective: To maximize the discovery of synergistic drug pairs while minimizing the number of combination screenings performed.

- Materials & Reagents:

  - Drug Library: A library of approved or investigational drugs.

  - Cell Line Panel: A set of cancer cell lines with associated genomic features (e.g., gene expression profiles from GDSC).

  - Synergy Screening Platform: A high-throughput system for measuring combination effects (e.g., Bliss synergy score).

- Computational Methods:

  - Model Architecture: A neural network that takes as input the molecular representations of two drugs (e.g., Morgan fingerprints) and the genomic features of the cell line.

  - Selection Strategy: An exploration-exploitation trade-off, such as selecting combinations with the highest predicted synergy (exploitation) or highest uncertainty (exploration).

- Procedure:

  - Pre-training: Pre-train the synergy prediction model on publicly available data (e.g., O'Neil dataset) to obtain a prior model.

  - Iteration:

    - (a) Prediction: Use the current model to predict synergy scores and uncertainties for all unexplored drug-drug-cell line combinations.

    - (b) Priority Selection: Rank combinations based on an acquisition function (e.g., expected improvement, upper confidence bound) that balances high predicted synergy with high uncertainty.

    - (c) Batch Screening: Experimentally test the top-ranked batch of combinations.

    - (d) Model Retraining: Update the model with the new experimental results.

  - Termination: The campaign stops when a sufficient number of synergistic pairs have been identified or the screening budget is spent. Studies show this method can find 300 synergistic combinations in ~1,500 measurements, whereas a random search would require over 8,250 measurements [10].
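The priority selection step can be made concrete with the standard expected improvement formula for a Gaussian prediction. The sketch below is illustrative: the three drug-drug-cell line candidates, their predicted Bliss scores and uncertainties, and the current best score are hypothetical values, not results from the cited study. The last lines reproduce the measurement savings implied by the figures quoted above.

```python
import math

def expected_improvement(mean, std, best_so_far):
    """Expected improvement over best_so_far for a Gaussian prediction (maximization)."""
    if std <= 0.0:
        return max(mean - best_so_far, 0.0)
    z = (mean - best_so_far) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best_so_far) * cdf + std * pdf

# Hypothetical model output: (predicted synergy score, predictive std) per combination.
candidates = {
    ("drug_1", "drug_2", "cell_A"): (12.0, 1.0),  # confident, slightly above best
    ("drug_1", "drug_3", "cell_A"): (9.0, 8.0),   # below best, but very uncertain
    ("drug_2", "drug_3", "cell_B"): (4.0, 0.5),   # confidently poor
}
best_so_far = 10.0

ranked = sorted(
    candidates,
    key=lambda combo: expected_improvement(*candidates[combo], best_so_far),
    reverse=True,
)
for combo in ranked:
    print(combo, round(expected_improvement(*candidates[combo], best_so_far), 3))

# Savings implied by the quoted figures: >8,250 random vs ~1,500 guided measurements.
measurement_savings = 8250 / 1500
print(f"At least {measurement_savings:.1f}x fewer measurements")
```

In this toy ranking the highly uncertain combination is tested first even though its predicted mean is below the current best, which is the exploration behavior the protocol aims for.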

Successful implementation of AL in drug discovery relies on a suite of computational and experimental resources. The table below catalogs key components of the modern AL research stack.

Table 3: Essential Research Reagents and Resources for Active Learning

| Category | Item | Function in Active Learning | Examples/Notes |
|---|---|---|---|
| Software & Libraries | DeepChem | An open-source toolkit for deep learning in drug discovery; provides implementations of AL loops and molecular ML models. | Critical resource; includes graph convolutional primitives and one-shot learning models [12] [14]. |
| Software & Libraries | Automated Machine Learning (AutoML) | Automates the process of model selection and hyperparameter tuning, making AL robust to changing model architectures. | Ensures the surrogate model in the AL loop is always near-optimal [11]. |
| Molecular Representations | Morgan Fingerprints | Circular fingerprints representing the atomic environment within a molecule; used as input features for ML models. | A common and effective 2D representation; outperformed more complex representations in some synergy prediction tasks [10]. |
| Molecular Representations | Graph Convolutions | Learns meaningful representations directly from the molecular graph structure, capturing topological information. | Used with advanced deep learning models for superior predictive performance [12] [14]. |
| Data Sources | Public Bioactivity Databases | Provide initial data for pre-training models and benchmarking AL strategies. | ChEMBL, DrugComb, LIT-PCBA [9] [10]. |
| Data Sources | Genomic Data | Cellular context features that are critical for accurate predictions in tasks like drug synergy and response. | Gene expression profiles from GDSC; as few as 10 key genes can be sufficient [10]. |
| Experimental Systems | High-Throughput Screening (HTS) | Acts as the "oracle" in the AL loop, providing ground-truth labels for selected compounds or combinations. | Must be automated to fit the iterative AL cycle [8]. |
| Experimental Systems | Cell-Based Assays | Measure functional outcomes like permeability, toxicity, or cell viability (e.g., Caco-2, PPBR). | Used for labeling in ADMET and drug response prediction [12] [13]. |

Active learning is a powerful framework that transforms the drug discovery process from a resource-intensive, data-hungry endeavor into a strategic, iterative, and efficient search. By focusing experimental resources on the most informative data points, AL maximizes informational gain and accelerates the journey from target identification to lead optimization. As benchmark studies and novel methodologies consistently show, the integration of AL, particularly deep batch AL and hybrid query strategies, can lead to severalfold improvements in efficiency (up to roughly sixfold in the studies cited above) for tasks ranging from molecular property prediction to synergistic drug combination screening. For researchers operating in the critical low-data environment of drug discovery, the adoption of active learning is no longer an optimization but a necessity for maintaining a competitive and innovative pipeline.

Deep learning has ushered in a transformative era for drug discovery, promising to accelerate target identification, compound screening, and lead optimization. These algorithms demonstrate remarkable capabilities in pattern recognition and predictive modeling when applied to vast chemical and biological datasets [15]. However, the fundamental paradox limiting their widespread adoption lies in the inherent data scarcity that characterizes many critical stages of pharmaceutical research. While deep learning models are notoriously "data-hungry," real-world drug discovery pipelines often struggle to generate sufficient high-quality data, creating a critical gap between theoretical potential and practical application [6] [16].

This discrepancy is particularly pronounced during lead optimization, where researchers must refine candidate molecules with only minimal biological data available [16]. The pharmaceutical industry faces a formidable challenge: traditional deep learning approaches require millions of data points to achieve reliable performance, yet practical constraints often limit experimental validation to merely dozens or hundreds of compounds [16]. This review examines the technical foundations of this data gap, evaluates emerging solutions for low-data learning, and provides a practical framework for implementing these approaches in contemporary drug discovery pipelines.

Quantitative Landscape: Assessing the Data Discrepancy in Real-World Discovery

The disconnect between data requirements and data availability manifests across multiple dimensions of the drug discovery workflow. The following table summarizes key quantitative indicators of this challenge:

Table 1: Data Requirements vs. Reality in Drug Discovery Applications

| Application Area | Typical Deep Learning Data Requirement | Real-World Data Availability | Performance Impact |

|---|---|---|---|

| Synergistic Drug Combination Screening | Hundreds of thousands to millions of drug-cell pairs [10] | ~15,000 measurements (O'Neil dataset); 3.55% synergy rate [10] | Active learning discovers 60% of synergistic pairs while exploring only 10% of the combinatorial space [10] |

| Lead Optimization | Millions of compound-property relationships for robust prediction [16] | Often only dozens to hundreds of characterized compounds [16] | One-shot learning significantly lowers data requirements for meaningful predictions [16] |

| Low-Data Regime Predictions | Standard deep learning fails with small datasets [6] | Often <100 compounds for rare diseases or novel targets [16] | Specialized architectures enable learning from a few hundred compounds [16] |

The data scarcity problem is further compounded by the high dimensionality of biological feature spaces and the extreme class imbalance common in discovery settings. For example, in synergistic drug combination screening, synergistic pairs represent only 1.47-3.55% of all possible combinations, creating significant challenges for standard classification approaches [10].

Technical Foundations: Deep Learning Architectures for Low-Data Environments

One-Shot Learning Methodologies

One-shot learning represents a fundamental shift from traditional deep learning paradigms by focusing on metric learning rather than direct pattern recognition. These approaches learn a meaningful distance metric over the space of possible inputs, allowing them to generalize from minimal examples by comparing new data points to limited available data [16].

The mathematical formalism for one-shot learning in drug discovery involves multiple binary learning tasks, where some proportion of tasks with sufficient data are used to train a model that can then generalize to tasks with limited data [16]. Each task corresponds to an experimental assay with data points \( S = \{(x_i, y_i)\}_{i=1}^{m} \), where \( x_i \) represents compounds and \( y_i \) represents binary experimental outcomes. The goal is to learn a function \( h \) parameterized on support set \( S \) that predicts the probability of any query compound \( x \) being active in the same system [16].
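To make the formalism concrete, the following is a minimal sketch of a support-set predictor in the spirit of matching networks: the query is compared with every support compound, and the support labels are combined with attention weights. It is not the iterative refinement LSTM of [16]; the fixed 3-dimensional embeddings, the cosine metric, and the function names are assumptions chosen for illustration.

```python
import numpy as np

def cosine_similarity(query, support):
    """Cosine similarity between one query vector and each support row."""
    q = query / (np.linalg.norm(query) + 1e-12)
    s = support / (np.linalg.norm(support, axis=1, keepdims=True) + 1e-12)
    return s @ q

def predict_active_probability(query_emb, support_emb, support_labels):
    """Attention-weighted vote of the support labels (assumed form of h)."""
    sims = cosine_similarity(query_emb, support_emb)
    weights = np.exp(sims - sims.max())   # softmax over support compounds
    weights /= weights.sum()
    return float(weights @ support_labels)

# Toy support set S: four compounds in a hypothetical 3-d embedding space.
support_emb = np.array([[1.0, 0.0, 0.0],
                        [0.9, 0.1, 0.0],
                        [0.0, 1.0, 0.0],
                        [0.0, 0.9, 0.1]])
support_labels = np.array([1.0, 1.0, 0.0, 0.0])  # 1 = active, 0 = inactive

query_near_actives = np.array([1.0, 0.05, 0.0])
query_near_inactives = np.array([0.05, 1.0, 0.0])

p_active = predict_active_probability(query_near_actives, support_emb, support_labels)
p_inactive = predict_active_probability(query_near_inactives, support_emb, support_labels)
```

In a trained system the embeddings would come from a learned molecular encoder rather than being fixed by hand, and the quality of the learned metric determines how far such a predictor generalizes.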

A key architectural innovation for one-shot learning in drug discovery is the iterative refinement long short-term memory (LSTM), which modifies the matching-networks architecture to allow sophisticated metrics that trade information between evidence and query molecules [16]. This architecture enables full context embeddings, where embeddings for query compounds and support set elements influence one another, significantly strengthening one-shot learning capabilities [16].

Diagram: One-Shot Learning Architecture for Drug Discovery

Active Learning Frameworks

Active learning provides a complementary approach to data scarcity by strategically selecting the most informative experiments to perform. This creates an iterative cycle where model predictions guide experimental design, and experimental results refine model parameters [10]. In the context of synergistic drug discovery, active learning has demonstrated remarkable efficiency, discovering 60% of synergistic drug pairs while exploring only 10% of the combinatorial space [10].
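The reported efficiency can be restated as an enrichment over random screening. The short calculation below is a sketch under two assumptions that are not stated in [10]: that uniformly random selection recovers synergistic pairs in proportion to the fraction of the space screened, and that the 3.55% base rate quoted above applies to the same search space.

\[
E = \frac{\text{fraction of synergistic pairs found}}{\text{fraction of space screened}} = \frac{0.60}{0.10} = 6
\]

\[
\text{hit rate among tested pairs} = E \cdot p = 6 \times 0.0355 \approx 0.213
\]

Under these assumptions, roughly one in five tested combinations would be synergistic, compared with about one in twenty-eight for random selection.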

The active learning workflow consists of several key components: available data, an AI algorithm to evaluate new samples, and selection criteria for prioritizing experiments [10]. Molecular encoding has limited impact on performance, while cellular environment features significantly enhance predictions [10]. Research shows that as few as 10 carefully selected genes can provide sufficient transcriptional information for effective modeling of inhibition [10].

Diagram: Active Learning Cycle for Drug Discovery

Hybrid and Specialized Architectures

Beyond one-shot and active learning, several specialized architectures have emerged to address data limitations:

Graph Neural Networks (GNNs) leverage the inherent graph structure of molecules, with atoms as nodes and bonds as edges, enabling more efficient learning from limited examples by incorporating domain knowledge directly into the model architecture [15]. These approaches have proven superior to traditional fingerprint-based methods for capturing molecular intricacies, especially with novel compounds featuring unconventional scaffolds [15].
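The atoms-as-nodes, bonds-as-edges idea can be illustrated with a single graph-convolution step. This is a minimal NumPy sketch, not the architecture used in [15]: the two-column atom features, the random weight matrix, and the sum readout are assumptions for illustration.

```python
import numpy as np

def normalized_adjacency(adj):
    """Symmetrically normalize A + I, as in a basic graph convolution."""
    a_hat = adj + np.eye(adj.shape[0])          # add self-loops
    deg_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * deg_inv_sqrt[:, None] * deg_inv_sqrt[None, :]

def graph_conv_layer(a_norm, node_feats, weight):
    """Aggregate neighbor features, apply a linear map, then ReLU."""
    return np.maximum(a_norm @ node_feats @ weight, 0.0)

# Heavy atoms of ethanol (C-C-O) as a 3-node graph.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
# Hypothetical one-hot atom features: columns = [is_carbon, is_oxygen].
node_feats = np.array([[1, 0],
                       [1, 0],
                       [0, 1]], dtype=float)

rng = np.random.default_rng(0)
weight = rng.normal(size=(2, 4))                # untrained, for illustration only

hidden = graph_conv_layer(normalized_adjacency(adj), node_feats, weight)
molecule_embedding = hidden.sum(axis=0)         # order-independent readout
```

Because the readout sums over atoms, the embedding does not depend on the order in which atoms are listed, which is one way the graph structure acts as built-in domain knowledge.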

Transfer learning and multi-task learning allow models to leverage information from related tasks or domains, increasing accuracy in low-data regimes by sharing representations across related prediction tasks [15]. Pre-training on large chemical databases like ChEMBL followed by fine-tuning on specific target data has shown particular promise for mitigating data scarcity [10].

Experimental Protocols: Implementing Low-Data Learning in Practice

One-Shot Learning Implementation

Protocol Title: Iterative Refinement LSTM for Low-Data Compound Activity Prediction

Objective: Predict compound activity in experimental assays with limited training data.

Materials and Methods:

Support Set Construction:

- Curate known active/inactive compounds for related biological targets

- Standardize molecular structures and remove duplicates

- Annotate with canonical SMILES and assay outcomes

Molecular Featurization:

- Implement graph convolutional networks to process molecular structures

- Generate molecular embeddings using message-passing neural networks

- Apply batch normalization to stabilize learning

Model Architecture:

- Implement iterative refinement LSTM for full-context embeddings

- Configure bidirectional LSTM for support set processing

- Initialize attention mechanism for similarity scoring

Training Protocol:

- Use episodic training with random task selection

- Configure Adam optimizer with learning rate 0.001

- Implement early stopping based on validation loss

Evaluation Metrics:

- Calculate precision-recall AUC for imbalanced data

- Compute ROC-AUC for overall performance

- Assess calibration curves for probability accuracy

Expected Outcomes: The protocol should enable meaningful activity predictions for novel compounds using support sets of 10-100 compounds, significantly outperforming random forest baselines and standard deep learning approaches in low-data regimes [16].
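The episodic training step in the protocol above can be sketched as follows. This is an illustrative sampler only: the task dictionary, the compound identifiers, and the function name `sample_episode` are hypothetical, and a real pipeline would draw featurized molecules rather than string IDs.

```python
import random

def sample_episode(tasks, n_support_per_class, n_query, rng):
    """Draw one episode: a random assay, a balanced support set, and queries."""
    task_name = rng.choice(sorted(tasks))
    actives = [c for c, y in tasks[task_name] if y == 1]
    inactives = [c for c, y in tasks[task_name] if y == 0]
    rng.shuffle(actives)
    rng.shuffle(inactives)
    k = n_support_per_class
    support = [(c, 1) for c in actives[:k]] + [(c, 0) for c in inactives[:k]]
    # Queries come from the compounds not used in the support set.
    remaining = [(c, 1) for c in actives[k:]] + [(c, 0) for c in inactives[k:]]
    query = rng.sample(remaining, min(n_query, len(remaining)))
    return task_name, support, query

# Hypothetical assays: each maps to (compound_id, binary outcome) pairs.
tasks = {
    "assay_A": [(f"A{i}", i % 2) for i in range(12)],
    "assay_B": [(f"B{i}", int(i < 4)) for i in range(10)],
}

rng = random.Random(0)
task_name, support, query = sample_episode(tasks, n_support_per_class=2,
                                           n_query=4, rng=rng)
```

Each training step would then embed the support and query compounds, score the queries against the support set, and backpropagate the query loss.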

Active Learning for Synergistic Drug Discovery

Protocol Title: Batch-Aware Active Learning for Drug Combination Screening

Objective: Efficiently identify synergistic drug combinations with minimal experimental effort.

Materials and Methods:

Initial Data Collection:

- Compile existing drug combination screening data

- Encode compounds using Morgan fingerprints (radius 2, 1024 bits)

- Incorporate cellular context using GDSC gene expression profiles

Model Selection and Configuration:

- Implement multilayer perceptron with 3 hidden layers (64 neurons each)

- Configure model to predict Loewe synergy scores

- Initialize with pre-trained weights on related combination datasets

Active Learning Loop:

- Set batch size to 1-2% of total search space per iteration

- Implement uncertainty sampling for query selection

- Dynamically tune the exploration-exploitation balance

- Retrain model after each batch of experimental results

Experimental Validation:

- Conduct combination screening in relevant cell lines

- Measure cell viability using high-throughput assays

- Calculate synergy scores using Loewe or Bliss models

Performance Assessment:

- Track synergistic pair discovery rate vs. experimental effort

- Compare against random screening baseline

- Calculate efficiency gain as experimental savings

Expected Outcomes: This protocol should identify 60% of synergistic combinations with approximately 10% of the experimental effort required for exhaustive screening [10]. Smaller batch sizes typically yield higher synergy discovery rates, with dynamic tuning of exploration-exploitation strategy further enhancing performance.
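The loop described in this protocol can be sketched on synthetic data. Several simplifications are assumed here and are not part of [10]: a bootstrap ensemble of ridge regressions stands in for the multilayer perceptron, an upper-confidence-bound style score (mean plus `kappa` times spread) stands in for the query strategy, and the "oracle" is a simulated synergy score rather than a wet-lab measurement.

```python
import numpy as np

def fit_ridge(x, y, alpha=1.0):
    """Closed-form ridge regression weights."""
    return np.linalg.solve(x.T @ x + alpha * np.eye(x.shape[1]), x.T @ y)

def ensemble_predict(x_train, y_train, x_pool, n_models, rng):
    """Bootstrap ensemble: mean is the prediction, spread is the uncertainty."""
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x_train), size=len(x_train))
        preds.append(x_pool @ fit_ridge(x_train[idx], y_train[idx]))
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

def active_learning_loop(x_pool, oracle, n_init, batch_size, n_rounds, kappa, rng):
    """Label a random seed set, then repeatedly query the top-scoring batch."""
    labeled = [int(i) for i in rng.choice(len(x_pool), size=n_init, replace=False)]
    labels = {i: oracle(i) for i in labeled}
    for _ in range(n_rounds):
        x_train = x_pool[labeled]
        y_train = np.array([labels[i] for i in labeled])
        mean, std = ensemble_predict(x_train, y_train, x_pool, 10, rng)
        score = mean + kappa * std       # kappa sets exploration vs. exploitation
        score[labeled] = -np.inf         # never re-query a measured combination
        batch = [int(i) for i in np.argsort(score)[-batch_size:]]
        for i in batch:
            labels[i] = oracle(i)        # stands in for running the experiment
        labeled.extend(batch)            # the model is refit on the next round
    return labeled, labels

# Synthetic pool of 500 hypothetical drug-pair feature vectors.
rng = np.random.default_rng(0)
x_pool = rng.normal(size=(500, 8))
synergy = x_pool @ rng.normal(size=8) + 0.1 * rng.normal(size=500)

labeled, labels = active_learning_loop(
    x_pool, oracle=lambda i: float(synergy[i]),
    n_init=10, batch_size=10, n_rounds=4, kappa=1.0, rng=rng)
```

With a batch of 10 out of 500 candidates, each round queries 2% of the pool, and the run ends with 10% of the space measured. Raising `kappa` favors uncertain candidates, while lowering it favors candidates already predicted to be synergistic.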

Research Reagent Solutions: Essential Tools for Implementation

Table 2: Key Research Reagents and Computational Tools for Low-Data Drug Discovery

| Resource Category | Specific Tools/Platforms | Function in Low-Data Learning | Implementation Considerations |

|---|---|---|---|

| Deep Learning Frameworks | DeepChem [16], TensorFlow [16] | Provides implementations of graph convolutional networks and one-shot learning architectures | Open-source availability; pre-built layers for molecular machine learning |

| Molecular Representations | Morgan Fingerprints [10], MAP4 [10], Graph Convolutions [15] | Encodes molecular structure for machine learning models | Morgan fingerprints with addition operation show strong performance in low-data regimes [10] |

| Cellular Context Features | GDSC Gene Expression [10], Single-cell RNA-seq | Captures cellular environment for better generalization | As few as 10 genes sufficient for convergence in synergy prediction [10] |

| Experimental Validation Platforms | High-throughput screening, CETSA [7] | Validates target engagement and compound efficacy | Provides quantitative, system-level validation in biologically relevant contexts [7] |

| Active Learning Controllers | RECOVER framework [10], Custom query strategies | Selects most informative experiments to perform | Batch size critically impacts performance; smaller batches often superior [10] |

Discussion: Bridging the Gap Between Promise and Practice

The integration of low-data learning approaches represents a paradigm shift in AI-driven drug discovery. While traditional deep learning methods struggle with the limited datasets typical of pharmaceutical research, one-shot learning, active learning, and specialized architectures offer tangible pathways to practical utility. The critical insight unifying these approaches is their focus on efficient knowledge transfer rather than de novo pattern discovery from massive datasets.

The experimental protocols and architectures presented herein demonstrate that meaningful progress is possible despite data constraints. One-shot learning's ability to leverage related assay data, combined with active learning's strategic experiment selection, creates a powerful framework for accelerating discovery while respecting practical limitations. These approaches are particularly valuable for rare diseases, novel target classes, and personalized medicine applications where data scarcity is most acute.

However, significant challenges remain. Model interpretability continues to be problematic for complex deep learning architectures, raising concerns in regulatory contexts [15]. The integration of heterogeneous data types—from chemical structures to genomic sequences and phenotypic images—poses additional technical hurdles [15]. Future research directions should focus on hybrid approaches that combine physics-based modeling with data-driven learning, enhanced uncertainty quantification for reliable decision support, and standardized benchmarking frameworks for low-data learning methodologies.

The gap between deep learning's potential and data reality in drug discovery remains substantial, but no longer insurmountable. The methodologies outlined in this review provide a roadmap for developing data-efficient AI systems that can deliver meaningful value within the constraints of real-world pharmaceutical research. By embracing one-shot learning, active learning, and specialized architectures, researchers can begin to close this critical gap while maintaining scientific rigor and biological relevance.

The future of AI in drug discovery lies not in increasingly large models trained on increasingly massive datasets, but in smarter, more efficient algorithms that respect the fundamental economics and practicalities of pharmaceutical R&D. The frameworks presented here represent significant steps toward that future—where AI accelerates discovery not despite data limitations, but by strategically working within them.

The application of artificial intelligence (AI) in drug discovery represents a paradigm shift in pharmaceutical research, yet it introduces a critical dependency: the need for vast, high-quality datasets. Modern deep learning approaches, which have demonstrated remarkable success in various domains, are inherently "data-gulping" and may fail to deliver on their promise without sufficient training data [17]. This creates a fundamental tension in drug discovery, where generating high-quality biological and chemical data is often prohibitively expensive, time-consuming, and limited by practical constraints such as patient privacy and rare disease prevalence [18]. Data scarcity—the insufficiency of adequate data for effective model training—becomes a major limiting factor that can reduce model accuracy, increase bias, and ultimately hinder the development of novel therapeutics [18] [19].

In response to these challenges, the field has developed sophisticated methodologies for operating in low-data regimes (contexts where available training data is limited) and for actively mitigating data scarcity through innovative learning paradigms. Among these, active learning cycles have emerged as a powerful strategy for maximizing information gain from minimal data points. This technical guide provides a comprehensive framework for understanding these key concepts and their practical application within drug discovery, offering researchers structured definitions, comparative analyses, experimental protocols, and practical implementations to navigate the data-scarce landscape of modern pharmaceutical research.

Key Definitions and Conceptual Framework

Core Terminology

Low-Data Regime: A learning scenario where the available training dataset is insufficient for standard deep learning models to generalize effectively without specialized techniques. In drug discovery, this often manifests when working with novel target classes, rare diseases, or proprietary chemical series where annotated data may be limited to hundreds or even dozens of examples [20] [19]. The regime is characterized by a high risk of overfitting, where models memorize training examples rather than learning generalizable patterns.

Data Scarcity: A broader condition affecting entire domains or problem spaces, defined by fundamental limitations in acquiring sufficient, high-quality data for machine learning. Causes include high acquisition costs, privacy regulations (e.g., GDPR), logistical challenges, rare events (e.g., uncommon diseases), and proprietary restrictions [18]. In pharmaceutical contexts, data scarcity affects areas like rare disease drug development and complex phenotypic screening where biological replicates are limited.

Active Learning Cycle: An iterative machine learning process that strategically selects the most informative data points for expert annotation from a pool of unlabeled examples. The cycle prioritizes quality over quantity by identifying instances where the model is most uncertain or where labeling would provide maximum information gain, thereby reducing annotation costs and improving model efficiency [18] [17].

Relationship Between Concepts

The relationship between data scarcity, low-data regimes, and active learning is hierarchical and interdependent. Data scarcity describes the fundamental resource constraint present in many scientific domains. This scarcity creates operational low-data regimes for specific machine learning tasks. To address this challenge, researchers employ strategic frameworks like active learning cycles that optimize the use of available data and guide targeted data acquisition.

Table 1: Comparative Analysis of Core Concepts in Data-Limited Drug Discovery

| Concept | Scope | Primary Cause | Typical Manifestation in Drug Discovery | Key Mitigation Strategies |

|---|---|---|---|---|

| Data Scarcity | Domain/Field-wide | Privacy regulations, rare diseases, high acquisition costs, proprietary data restrictions [18] | Rare disease research, novel target classes, complex phenotypic assays | Data augmentation, synthetic data generation, federated learning, transfer learning [18] [17] |

| Low-Data Regime | Task/Model-specific | Limited labeled examples for a specific prediction task [20] | Predicting activity for novel chemical scaffolds, toxicity prediction with limited compounds | Self-supervised learning, few-shot learning, active learning cycles [20] [19] |

| Active Learning Cycle | Process/Methodological | Need to optimize annotation resources and model performance [17] | Iterative compound prioritization in design-make-test-analyze cycles | Uncertainty sampling, diversity sampling, query-by-committee, expected model change [21] |

Technical Approaches for Low-Data Regimes

Self-Supervised Learning (SSL)

Self-supervised learning has emerged as a powerful paradigm for low-data regimes by creating supervisory signals directly from unlabeled data. The core principle involves pre-training models using pretext tasks that do not require manual annotation, followed by fine-tuning on downstream tasks with limited labeled data [20]. This approach is particularly valuable in drug discovery where unlabeled chemical and biological data is often more abundant than labeled data.

Table 2: Comparative Evaluation of Self-Supervised Learning Methods in Low-Data Regimes

| SSL Method | Mechanism | Pretext Task | Strengths | Limitations | Performance in Low-Data Drug Discovery |

|---|---|---|---|---|---|

| MAE (Masked Autoencoders) | Generative | Reconstructs masked portions of input data [20] | High robustness to noisy data; effective representation learning | Requires substantial pre-training data | Moderate performance in very low-data scenarios; improves with domain-specific pre-training |

| SimCLR | Contrastive Learning | Maximizes agreement between differently augmented views of same data instance [20] | Strong performance with limited labeled examples; effective with Vision Transformer architectures | Computationally intensive; requires careful augmentation strategy | Superior performance in limited data regimes with domain-specific adaptations |

| DINO | Self-Distillation | Knowledge distillation between different augmentations of same image [20] | Excellent generalization ability; creates semantically meaningful features | Complex training dynamics | Best transfer learning performance; maintains effectiveness across domains |

| DeepClusterV2 | Clustering-Based | Alternates between clustering representations and using cluster assignments as pseudo-labels [20] | Discovers inherent structure in data; works with unlabeled datasets | Cluster instability; sensitive to hyperparameters | Variable performance; domain-dependent effectiveness |

Few-Shot and Zero-Shot Learning

When labeled data is extremely scarce (typically fewer than 20 examples per class), few-shot learning (FSL) and zero-shot learning (ZSL) approaches become valuable. These methods leverage knowledge transfer from related tasks or domains where data is more abundant. In medical imaging and drug discovery, foundation models pre-trained on large datasets have shown remarkable effectiveness in few-shot scenarios [19].

Recent benchmarking studies demonstrate that BiomedCLIP, a vision-language model pre-trained exclusively on medical data, performs best on average for very small training set sizes in medical imaging tasks [19]. However, with slightly more training examples (typically >5 per class), very large CLIP models pre-trained on massive datasets like LAION-2B achieve superior performance. Interestingly, simple fine-tuning of standard architectures like ResNet-18 pre-trained on ImageNet can remain competitive with more sophisticated approaches when given more than five training examples per class [19].

Data Augmentation and Synthesis

Data augmentation creates expanded training sets by applying carefully designed transformations to existing data, while synthetic data generation creates entirely new examples through algorithmic means. In drug discovery, these approaches help overcome data scarcity by artificially expanding limited datasets:

- Molecular Data Augmentation: Techniques include SMILES enumeration (generating equivalent string representations of the same molecule), atom/bond masking, and scaffold-based generation of analogous structures [18].

- Synthetic Data Generation: Generative Adversarial Networks (GANs) and other generative models can create novel molecular structures with desired properties, though validation remains challenging [18] [17].

- Image-Based Augmentation: For microscopy or histology data, standard computer vision augmentations (rotation, flipping, color adjustment) are combined with domain-specific transformations [18].

Active Learning Cycles: Methodologies and Implementation

Theoretical Foundations

Active learning operates on the principle of maximum information gain: the idea that selectively choosing which data to label can yield better performance with fewer annotations than random selection. The core mathematical framework involves an acquisition function \( a(x, M) \) that scores the utility of labeling candidate instance \( x \) given the current model \( M \). Common acquisition strategies include:

- Uncertainty Sampling: Selects instances where the model's prediction uncertainty is highest, typically measured using entropy, least confidence, or margin-based criteria [17].

- Diversity Sampling: Prioritizes instances that differ from existing training examples to ensure broad coverage of the feature space.

- Expected Model Change: Chooses instances that would cause the greatest change to the current model parameters if their labels were known.

The active learning cycle iteratively applies this acquisition function to select the most informative batch of samples for expert annotation, then updates the model with the newly labeled data, creating a feedback loop that progressively improves model performance while minimizing labeling effort [21].
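The three uncertainty criteria named above can be written as acquisition functions over predicted class probabilities. This is a minimal sketch; the function names and the toy probability matrix are illustrative rather than taken from [17] or [21].

```python
import numpy as np

def least_confidence(probs):
    """1 minus the probability of the most likely class."""
    return 1.0 - probs.max(axis=1)

def margin_score(probs):
    """Negative gap between the top two classes, so small margins score high."""
    top2 = np.sort(probs, axis=1)[:, -2:]
    return -(top2[:, 1] - top2[:, 0])

def entropy(probs):
    """Shannon entropy of the predicted class distribution."""
    p = np.clip(probs, 1e-12, 1.0)
    return -(p * np.log(p)).sum(axis=1)

def select_batch(probs, acquisition, batch_size):
    """Return indices of the highest-scoring candidates, best first."""
    scores = acquisition(probs)
    return np.argsort(scores)[::-1][:batch_size]

# Predicted [inactive, active] probabilities for four unlabeled compounds.
probs = np.array([[0.95, 0.05],
                  [0.55, 0.45],
                  [0.70, 0.30],
                  [0.50, 0.50]])

picked = select_batch(probs, entropy, batch_size=2)
```

For binary problems the three criteria rank candidates identically; they can diverge once there are three or more classes.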

Experimental Protocol: Transcriptomics-Driven Active Learning

A recent breakthrough in Science demonstrates a practical implementation of active learning for phenotypic drug discovery. The framework leverages transcriptomic data to identify modulators of disease phenotypes through the following detailed protocol [21]:

1. Initialization Phase:

- Input: Begin with a diverse library of uncharacterized compounds (typically 10,000-100,000 molecules).

- Baseline Model: Train initial model on any available labeled data (if none, use random sampling for first cycle).

- Feature Representation: Encode compounds using molecular fingerprints (ECFP6), graph neural networks, or pre-trained molecular representations.

2. Active Learning Cycle:

- Query Strategy Implementation: Apply Bayesian optimization to select compounds that maximize expected information gain about disease-reversing activity. The acquisition function combines:

- Predictive Uncertainty: Estimated using Monte Carlo dropout or ensemble methods.

- Diversity Metric: Maximum Euclidean distance to existing labeled examples in latent space.

- Phenotypic Relevance: Incorporation of transcriptomic signature similarity to desired phenotype.

- Batch Selection: Select top 0.5-1% of compounds (typically 50-100 molecules) for experimental validation.

3. Experimental Validation & Labeling:

- Transcriptomic Profiling: Treat model systems (e.g., cell lines, patient-derived organoids) with selected compounds.

- RNA Sequencing: Perform bulk or single-cell RNA-seq to capture comprehensive transcriptional responses.

- Phenotypic Scoring: Quantify desired phenotypic outcome (e.g., disease signature reversal, viability assessment).

- Label Assignment: Assign activity labels based on statistically significant phenotypic improvement versus controls.

4. Model Update:

- Incremental Training: Fine-tune existing model with newly labeled compounds.

- Representation Learning: Optionally update feature representations based on new transcriptomic insights.

- Cycle Repetition: Execute 5-10 complete cycles or until performance plateaus.

This protocol achieved a 13-17x improvement in phenotypic hit rates compared to conventional high-throughput screening in two independent hematological discovery studies [21].
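One way the three acquisition terms from step 2 could be combined is sketched below. This is not the scoring rule of [21]: the equal weights, the min-max rescaling, the use of distance to the nearest labeled compound as the diversity term, and the availability of a predicted transcriptomic signature for every pool compound are all assumptions made for illustration.

```python
import numpy as np

def rescale(values):
    """Min-max rescale to [0, 1] so the three terms are comparable."""
    span = values.max() - values.min()
    return (values - values.min()) / span if span > 0 else np.zeros_like(values)

def combined_acquisition(mc_preds, latent_pool, latent_labeled,
                         signature_pool, target_signature,
                         weights=(1.0, 1.0, 1.0)):
    """Weighted sum of uncertainty, diversity, and phenotypic relevance."""
    # Uncertainty: spread across stochastic forward passes (e.g. MC dropout).
    uncertainty = mc_preds.std(axis=0)
    # Diversity: Euclidean distance to the nearest already-labeled compound.
    dists = np.linalg.norm(
        latent_pool[:, None, :] - latent_labeled[None, :, :], axis=2)
    diversity = dists.min(axis=1)
    # Relevance: cosine similarity of the predicted signature to the target.
    num = signature_pool @ target_signature
    den = (np.linalg.norm(signature_pool, axis=1)
           * np.linalg.norm(target_signature) + 1e-12)
    relevance = num / den
    w_u, w_d, w_r = weights
    return (w_u * rescale(uncertainty) + w_d * rescale(diversity)
            + w_r * rescale(relevance))

# Synthetic stand-ins for model outputs on a pool of 200 compounds.
rng = np.random.default_rng(0)
mc_preds = rng.uniform(size=(20, 200))        # 20 stochastic passes
latent_pool = rng.normal(size=(200, 4))
latent_labeled = rng.normal(size=(15, 4))
signature_pool = rng.normal(size=(200, 10))   # predicted signatures (assumed)
target_signature = rng.normal(size=10)

scores = combined_acquisition(mc_preds, latent_pool, latent_labeled,
                              signature_pool, target_signature)
batch = np.argsort(scores)[-2:]               # top 1% of the pool
```

The weights control how strongly each term drives selection and would normally be tuned, or scheduled across cycles, rather than fixed.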

Research Reagent Solutions

Table 3: Essential Research Reagents for Transcriptomic Active Learning Experiments

| Reagent/Category | Function in Experimental Protocol | Key Considerations for Low-Data Regimes |

|---|---|---|

| DNA-Encoded Libraries (DEL) | Provides diverse chemical starting points for screening [22] | Focus on libraries with high structural diversity to maximize information gain per experiment |

| Cell-Based Disease Models | Biological context for phenotypic screening (e.g., primary cells, patient-derived organoids) [21] | Prioritize models with strong clinical relevance and well-characterized phenotypic readouts |

| RNA-Seq Kits | Transcriptomic profiling of compound effects (bulk or single-cell) | Standardized protocols to minimize technical variability; batching strategies to control costs |

| High-Content Imaging Systems | Multiparametric phenotypic characterization | Automated analysis pipelines to extract maximal information from each experiment |

| Automated Synthesis Platforms | Enables rapid compound iteration based on model predictions [22] | Integration with design software to close the design-make-test-analyze cycle rapidly |

| Multi-Well Assay Platforms | High-throughput phenotypic screening | Miniaturization (1536-well) to reduce reagent consumption and increase throughput |

Integration with Broader Drug Discovery Pipeline

The successful implementation of active learning cycles and low-data regime strategies requires seamless integration with established drug discovery workflows. This integration creates a unified framework that spans from target identification to lead optimization.

The synergy between active learning and other data-scarcity mitigation strategies creates a comprehensive approach to low-data drug discovery. Transfer learning leverages knowledge from data-rich domains (e.g., general chemical space) to bootstrap models for data-poor domains (e.g., novel target classes) [18] [17]. Multi-task learning shares representations across related prediction tasks, effectively increasing the signal available for each individual task. Federated learning enables model training across multiple institutions without sharing proprietary data, thus expanding effective dataset sizes while preserving privacy [18] [17].

The integration of these approaches within an active learning framework creates a powerful ecosystem for drug discovery in low-data regimes. For instance, a foundation model pre-trained on public chemical data can be fine-tuned on proprietary data using active learning strategies that selectively choose the most informative compounds for expensive experimental validation [19]. This approach maximizes the value of each data point while leveraging broader chemical knowledge to compensate for limited private data.

Future Directions and Challenges

Despite significant advances, several challenges remain in applying active learning and low-data regime strategies to drug discovery. The "cold start" problem—how to initialize models with little to no labeled data—still requires careful consideration, often addressed through transfer learning from related domains or sophisticated semi-supervised approaches [20]. Model calibration and uncertainty quantification remain critical in low-data settings, where overconfidence in incorrect predictions can misdirect entire research programs.
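Calibration can be checked with a simple binned statistic. The following is a minimal sketch of an expected calibration error for binary activity predictions, comparing the mean predicted probability with the observed active fraction in each bin; the bin count and function name are illustrative choices, not a prescribed standard.

```python
import numpy as np

def expected_calibration_error(probs, labels, n_bins=10):
    """Weighted mean gap between predicted probability and observed frequency."""
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Assign each prediction to a bin; clip so that p = 1.0 falls in the last bin.
    bin_ids = np.clip(np.digitize(probs, edges[1:-1]), 0, n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        in_bin = bin_ids == b
        if in_bin.any():
            gap = abs(probs[in_bin].mean() - labels[in_bin].mean())
            ece += in_bin.mean() * gap      # weight by the share of samples
    return float(ece)

# A model that says 0.9 for everything but is right only half the time.
overconfident = expected_calibration_error(
    np.array([0.9, 0.9, 0.9, 0.9]), np.array([1, 0, 1, 0]))

# A model whose confident predictions are all correct.
well_calibrated = expected_calibration_error(
    np.array([0.0, 0.0, 1.0, 1.0]), np.array([0, 0, 1, 1]))
```

With only dozens of labeled compounds the per-bin estimates are noisy, so fewer bins and repeated resampling are advisable before drawing conclusions.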

The emergence of foundation models for biology and chemistry offers promising directions for addressing data scarcity [19]. These models, pre-trained on massive diverse datasets, can potentially be adapted to specific drug discovery tasks with minimal fine-tuning. However, recent benchmarking studies highlight the need for further research on foundation models specifically tailored for medical applications and the collection of more diverse datasets to train these models effectively [19].

As the field progresses, the integration of active learning with automated synthesis and screening platforms promises to close the design-make-test-analyze cycle more rapidly [22]. This integration, coupled with continued advances in algorithmic approaches for low-data learning, will be essential for realizing the vision of accelerated therapeutic development, particularly for rare diseases and underserved patient populations where data scarcity remains the most significant barrier to innovation.

Architecting for Efficiency: Active Deep Learning Models and Pipelines

The process of drug discovery is notoriously lengthy, costly, and data-intensive. The high failure rates of candidate compounds are often exacerbated in scenarios where biological or chemical data is scarce, such as for novel targets or rare diseases. In these low-data scenarios, traditional machine learning models struggle to generalize, creating a significant bottleneck. Fortunately, two powerful paradigms in deep learning offer promising solutions: Graph Neural Networks (GNNs) and Multitask Learning (MTL).

GNNs are uniquely suited for drug discovery because they natively operate on graph-structured data, such as the molecular graph of a compound where atoms are nodes and bonds are edges [23] [24]. This allows them to learn rich representations that capture critical structural information. Multitask learning, on the other hand, enables models to leverage information from multiple related tasks simultaneously, effectively augmenting the learning signal for each individual task [25] [26]. When combined, these approaches create robust models capable of making accurate predictions even when data for any single task is limited. This technical guide explores the architectures, methodologies, and experimental protocols that make GNNs and MTL effective for low-data drug discovery.
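A practical detail of multitask learning in drug discovery is that most compounds are measured in only a few assays. The sketch below shows a shared representation feeding one output per task, with a loss that is averaged only over measured (compound, task) pairs. The tiny network, random weights, and label matrix are hypothetical, and no training step is shown.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def multitask_forward(x, shared_w, head_w):
    """Shared hidden layer feeding one logistic head per task."""
    hidden = np.tanh(x @ shared_w)          # representation shared by all tasks
    return sigmoid(hidden @ head_w)         # shape: (n_compounds, n_tasks)

def masked_bce(probs, labels, mask):
    """Binary cross-entropy averaged only over pairs that have a label."""
    p = np.clip(probs, 1e-7, 1.0 - 1e-7)
    losses = -(labels * np.log(p) + (1.0 - labels) * np.log(1.0 - p))
    return float((losses * mask).sum() / mask.sum())

rng = np.random.default_rng(0)
x = rng.normal(size=(6, 5))                 # 6 compounds, 5 features
shared_w = rng.normal(scale=0.5, size=(5, 4))
head_w = rng.normal(scale=0.5, size=(4, 3)) # 3 related assays

# NaN marks compound-assay pairs that were never measured.
raw_labels = np.array([[1, 0, np.nan],
                       [0, np.nan, np.nan],
                       [1, 1, 0],
                       [np.nan, 0, np.nan],
                       [0, 1, np.nan],
                       [1, np.nan, 1]])
mask = (~np.isnan(raw_labels)).astype(float)
labels = np.nan_to_num(raw_labels, nan=0.0)

probs = multitask_forward(x, shared_w, head_w)
loss = masked_bce(probs, labels, mask)
```

Because every task's gradient flows through the same shared layer, a sparsely labeled assay (here the third column, with two labels) still benefits from structure learned on the better-populated ones.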

Graph Neural Networks for Weak Information Scenarios

In real-world settings, the ideal of having complete, high-quality graph data is often not met. Practitioners must contend with weak information scenarios, which include feature loss (missing node features), structural loss (incomplete graph connectivity), and label loss (scarce labeled data) [27]. Standard GNNs, which rely on message-passing and neighbor aggregation mechanisms, can see significant performance degradation under these conditions because their core operations are compromised [27] [28].

Advanced GNN Architectures for Data Scarcity

Recent research has produced specialized GNN architectures designed to overcome these challenges:

- RM-BGNN (Residual Mechanism Bayesian Graph Neural Network): This model addresses all three aspects of weak information. It uses graph structure enhancement and long-distance message propagation to help isolated nodes connect to the main graph, mitigating structural loss. Its dual-channel design maintains both the original local graph structure and a global semantic view. Crucially, it incorporates Bayesian linear layers to handle parameter uncertainty. These layers learn the optimal probability distribution of weights and biases, improving the model's robustness and generalization ability when faced with incomplete data or unknown samples [27].

- Stable-GNN: This architecture tackles the critical problem of Out-of-Distribution (OOD) generalization. Traditional GNNs often fail when the test distribution differs from the training distribution, a common occurrence in low-data regimes. Stable-GNN introduces a sample-reweighting technique that decorrelates features in a random Fourier feature space. This method aims to remove spurious correlations between features, forcing the model to rely on genuinely causal features for its predictions. The result is more stable and reliable performance across different data distributions [29].
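The idea behind the Bayesian linear layers described for RM-BGNN can be sketched as follows. This is a minimal illustration, not the RM-BGNN implementation: each weight is a Gaussian with a mean and a log standard deviation, sampled with the reparameterization trick. Training of these variational parameters is omitted, and the class name, initial values, and sample count are assumptions.

```python
import math
import random
import statistics


class BayesianLinear:
    """Linear layer whose weights are Gaussian distributions, not point values."""

    def __init__(self, in_dim, rng, init_log_sigma=-1.0):
        # Variational parameters (assumed, untrained): mean and log std per weight.
        self.w_mu = [rng.gauss(0.0, 0.5) for _ in range(in_dim)]
        self.w_log_sigma = [init_log_sigma] * in_dim
        self.b_mu = 0.0
        self.b_log_sigma = init_log_sigma
        self.rng = rng

    def forward(self, x):
        """Sample weights via the reparameterization w = mu + sigma * eps."""
        out = self.b_mu + math.exp(self.b_log_sigma) * self.rng.gauss(0.0, 1.0)
        for xi, mu, log_sigma in zip(x, self.w_mu, self.w_log_sigma):
            w = mu + math.exp(log_sigma) * self.rng.gauss(0.0, 1.0)
            out += w * xi
        return out


def predict_with_uncertainty(layer, x, num_samples=200):
    """Monte Carlo estimate of the predictive mean and standard deviation."""
    draws = [layer.forward(x) for _ in range(num_samples)]
    return statistics.fmean(draws), statistics.stdev(draws)


layer = BayesianLinear(in_dim=3, rng=random.Random(0))
mean, std = predict_with_uncertainty(layer, [1.0, 0.5, -0.2])
```

Because each forward pass draws a fresh set of weights, repeated predictions yield a spread as well as a mean. That spread is the parameter uncertainty a model can report when it faces incomplete data or unfamiliar samples.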

The following diagram illustrates the core architecture and data flow of the RM-BGNN model, highlighting its key components for handling weak information.

Diagram: RM-BGNN Architecture for Weak Information Scenarios

Performance of Robust GNN Models

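The table below summarizes the reported accuracy of advanced GNN models against baseline models on node classification under weak information scenarios. Note that Cora, Citeseer, and Pubmed are citation-network benchmarks rather than molecular datasets, so these results indicate general robustness rather than drug-discovery performance directly.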

Table 1: Performance Comparison of GNN Models on Node Classification Tasks (Accuracy %)

| Model | Cora Dataset | Citeseer Dataset | Pubmed Dataset | Key Feature |
|---|---|---|---|---|
| GCN (Baseline) | 81.5 | 70.3 | 79.0 | Standard graph convolution [28] |
| GAT (Baseline) | 83.1 | 72.5 | 79.0 | Attention-based neighbor aggregation [28] |
| Stable-GNN | 85.2 | 74.8 | 80.1 | Feature decorrelation for OOD stability [29] |
| RM-BGNN | 84.7 | 74.3 | 80.1 | Bayesian layers & graph enhancement [27] |

Multitask Learning Frameworks

Multitask Learning provides a powerful alternative, or complement, to architectural innovation for tackling data scarcity. The fundamental premise of MTL is to jointly learn multiple related tasks, sharing representations between them. This acts as a form of inductive transfer and regularization, which can improve generalization and reduce the risk of overfitting on small datasets [25].

The DeepDTAGen Framework

A leading example in drug discovery is DeepDTAGen, a novel MTL framework that simultaneously predicts Drug-Target Binding Affinity (DTA) and generates novel, target-aware drug molecules [25]. This is a significant advancement because it uses a shared feature space for both predictive and generative tasks. The knowledge of ligand-receptor interactions learned during affinity prediction directly informs and conditions the drug generation process, ensuring the generated molecules are biologically relevant.

A major challenge in MTL is gradient conflict, where the gradients from different tasks point in opposing directions, hindering convergence. To solve this, DeepDTAGen introduces the FetterGrad algorithm. This algorithm mitigates gradient conflicts by minimizing the Euclidean distance between the gradients of the different tasks, keeping them aligned during training and preventing one task from dominating the learning process [25].
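Gradient conflict can be made tangible with two toy gradient vectors. The sketch below measures conflict via cosine similarity and Euclidean distance, then applies a PCGrad-style projection to remove the conflicting component. PCGrad is a different published method, used here only as a stand-in; the FetterGrad update rule itself is not reproduced, and the gradient values are invented for illustration.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_similarity(a, b):
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


def project_out_conflict(g_task, g_other):
    """PCGrad-style step (stand-in, not FetterGrad): if g_task points against
    g_other, subtract its component along g_other."""
    overlap = dot(g_task, g_other)
    if overlap >= 0:
        return list(g_task)  # no conflict, leave the gradient untouched
    scale = overlap / dot(g_other, g_other)
    return [x - scale * y for x, y in zip(g_task, g_other)]


# Hypothetical gradients of the shared parameters for the two tasks.
g_affinity = [1.0, 0.0]
g_generation = [-1.0, 1.0]

conflict = cosine_similarity(g_affinity, g_generation)      # negative => conflict
before = euclidean_distance(g_affinity, g_generation)
g_affinity_adjusted = project_out_conflict(g_affinity, g_generation)
after = euclidean_distance(g_affinity_adjusted, g_generation)
```

In this example the adjusted affinity gradient is orthogonal to the generation gradient, and the Euclidean distance between the two task gradients shrinks. This is the kind of alignment the text attributes to FetterGrad.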

Comprehensive MTL Platforms

The Baishenglai (BSL) platform exemplifies the industrial application of MTL. It integrates seven core drug discovery tasks within a unified, modular framework [26]:

- Molecular property profiling

- Drug-target affinity prediction

- Drug-drug interaction prediction

- Drug-cell response prediction

- Molecular generation

- Molecular optimization

- Retrosynthesis pathway prediction

By leveraging shared representations across these tasks with advanced techniques like zero-shot learning and domain adaptation, BSL achieves state-of-the-art performance even in challenging OOD settings, providing a comprehensive solution for virtual drug discovery [26].

Experimental Protocols and Methodologies

Rigorous experimentation is crucial for validating the efficacy of any model in low-data scenarios. The following protocols are standard in the field.

Dataset Splitting and Evaluation Metrics

To accurately simulate low-data and OOD conditions, datasets must be split carefully. The common i.i.d. (independent and identically distributed) random split is often insufficient. Instead, scaffold splitting is used, where molecules are grouped by their core molecular structure (scaffold), and the splits are made to ensure that training and test sets contain distinct scaffolds. This tests the model's ability to generalize to novel chemotypes [26].
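A scaffold split can be sketched in a few lines. The version below is a simplified illustration that assumes a caller-supplied `scaffold_of` function. In practice this would typically wrap a cheminformatics routine such as RDKit's Murcko scaffold computation; here a hand-written lookup table stands in so the example has no dependencies. The function name, the ordering heuristic, and the toy molecules are assumptions.

```python
from collections import defaultdict


def scaffold_split(smiles_list, scaffold_of, test_fraction=0.2):
    """Group molecules by scaffold, then assign whole groups to train or test."""
    groups = defaultdict(list)
    for index, smiles in enumerate(smiles_list):
        groups[scaffold_of(smiles)].append(index)

    # Largest scaffold groups are placed first, so rarer scaffolds tend to
    # land in the test set (a common heuristic, assumed here).
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_cutoff = (1.0 - test_fraction) * len(smiles_list)

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


# Hypothetical scaffold lookup standing in for a real scaffold calculation.
toy_scaffolds = {
    "Cc1ccccc1": "c1ccccc1",
    "Oc1ccccc1": "c1ccccc1",
    "Nc1ccccc1": "c1ccccc1",
    "CC1CCCCC1": "C1CCCCC1",
    "OC1CCCCC1": "C1CCCCC1",
    "Cc1ccncc1": "c1ccncc1",
}
molecules = list(toy_scaffolds)
train_idx, test_idx = scaffold_split(molecules, toy_scaffolds.get)
```

Because whole scaffold groups are assigned together, no scaffold appears on both sides of the split, which is the property that distinguishes this scheme from a random split.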

Key evaluation metrics vary by task:

- Regression Tasks (e.g., DTA Prediction): Mean Squared Error (MSE), Concordance Index (CI), and the modified squared correlation coefficient \( r_m^2 \) are standard [25] [24]. CI is particularly important as it measures the model's ability to correctly rank affinities.

- Generation Tasks: Metrics include Validity (proportion of chemically valid molecules), Uniqueness, and Novelty (fraction of valid molecules not present in the training set) [25] [24].
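The metrics above are simple enough to compute directly. The sketch below gives plain-Python versions of MSE, the concordance index, and the three generation metrics. The `is_valid` predicate is a hypothetical placeholder for a real chemical validity check, and the uniqueness definition (distinct molecules among the valid ones) is an assumption, since conventions differ between papers.

```python
def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs ranked correctly (tied predictions score 0.5)."""
    concordant, comparable = 0.0, 0
    for i in range(len(y_true)):
        for j in range(i + 1, len(y_true)):
            if y_true[i] == y_true[j]:
                continue  # equal true affinities cannot be ranked
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    return concordant / comparable


def generation_metrics(generated, training_set, is_valid):
    """Validity, uniqueness, and novelty of a batch of generated molecules."""
    valid = [s for s in generated if is_valid(s)]
    known = set(training_set)
    return {
        "validity": len(valid) / len(generated),
        "uniqueness": len(set(valid)) / len(valid),  # assumed convention
        "novelty": sum(s not in known for s in valid) / len(valid),
    }


# Toy affinities: only the last pair (7.1 vs 8.4) is ranked the wrong way round.
affinity_true = [5.0, 6.2, 7.1, 8.4]
affinity_pred = [5.3, 6.0, 7.5, 7.4]
ci = concordance_index(affinity_true, affinity_pred)
mse = mean_squared_error(affinity_true, affinity_pred)

# Toy validity check (balanced parentheses only); a real check would parse the SMILES.
balanced = lambda s: s.count("(") == s.count(")")
gen = generation_metrics(["CCO", "CCO", "c1ccccc1", "C(("], ["CCO"], balanced)
```

Note that MSE and CI capture different things: one mis-ranked pair lowers CI regardless of how large the error is, whereas MSE is dominated by the single large miss on the last compound.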

Detailed Protocol: DeepDTAGen DTA Prediction & Generation

The following workflow outlines the experimental procedure for training and evaluating the DeepDTAGen model, showcasing the interaction between its predictive and generative components.

Diagram: DeepDTAGen Multitask Learning Workflow

Procedure:

- Data Preparation: Use benchmark datasets like KIBA, Davis, or BindingDB. Preprocess SMILES strings and protein sequences into standardized formats and split data using scaffold splitting [25].

- Model Initialization: Construct the DeepDTAGen model with a shared encoder (e.g., using GNNs for drugs and CNNs for proteins) and two task-specific heads [25].

- Loss Function Definition: Use Mean Squared Error (MSE) as the DTA prediction loss and cross-entropy over the generated SMILES token sequence as the drug generation loss.

- Training with FetterGrad: Implement the FetterGrad algorithm to compute gradients for both tasks, measure their conflict, and adjust the optimization direction to minimize the Euclidean distance between them [25].

- Validation and Testing: Evaluate the model on held-out test sets, reporting MSE, CI, and \( r_m^2 \) for DTA prediction, and Validity, Uniqueness, and Novelty for the generated molecules.
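The two loss terms named in the procedure can be written out as follows. This is a sketch under stated assumptions: the source does not specify how the losses are combined (FetterGrad operates on their gradients), so the weighted sum and the `gen_weight` parameter are hypothetical, as are the toy vocabulary and probabilities.

```python
import math


def mse_loss(predicted, target):
    """DTA prediction loss: mean squared error over a batch of affinities."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target)


def smiles_cross_entropy(step_probs, target_tokens):
    """Generation loss: mean negative log-probability of each target token."""
    nll = [-math.log(probs[token]) for probs, token in zip(step_probs, target_tokens)]
    return sum(nll) / len(nll)


def multitask_loss(pred_aff, true_aff, step_probs, target_tokens, gen_weight=1.0):
    # gen_weight is a hypothetical knob, not a documented DeepDTAGen parameter.
    return mse_loss(pred_aff, true_aff) + gen_weight * smiles_cross_entropy(
        step_probs, target_tokens
    )


# Toy decoder output: one probability table per generated position.
step_probs = [
    {"C": 0.7, "O": 0.2, "<eos>": 0.1},
    {"C": 0.5, "O": 0.4, "<eos>": 0.1},
    {"C": 0.1, "O": 0.1, "<eos>": 0.8},
]
target_tokens = ["C", "O", "<eos>"]

generation_loss = smiles_cross_entropy(step_probs, target_tokens)
total_loss = multitask_loss([7.0], [7.5], step_probs, target_tokens)
```

Keeping the two terms as separate functions matters in a gradient-alignment setting, because each task's gradient must be computed on its own before any conflict between them can be measured.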

Quantitative Results from Key Studies

The performance of these advanced models is quantified on standard benchmarks, as shown in the table below for DTA prediction.

Table 2: Drug-Target Affinity (DTA) Prediction Performance on Benchmark Datasets

| Model | KIBA (MSE/CI) | Davis (MSE/CI) | BindingDB (MSE/CI) | Approach |
|---|---|---|---|---|
| KronRLS | 0.159 / 0.836 | 0.280 / 0.872 | N/A | Traditional Machine Learning [25] |
| GraphDTA | 0.147 / 0.891 | 0.219 / 0.890 | 0.483 / 0.868 | GNN-based DTA Prediction [25] |
| DeepDTAGen | 0.146 / 0.897 | 0.214 / 0.890 | 0.458 / 0.876 | Multitask Learning (Prediction + Generation) [25] |

Successful implementation of these models requires a suite of computational tools and datasets. The table below lists essential "research reagents" for developing GNNs and MTL models for low-data drug discovery.

Table 3: Essential Research Reagents for Low-Data Drug Discovery with GNNs and MTL

| Resource Category | Specific Tool / Dataset | Function and Utility in Research |
|---|---|---|
| Benchmark Datasets | KIBA, Davis, BindingDB [25] | Standardized benchmarks for training and evaluating Drug-Target Affinity (DTA) prediction models. |
| Molecular Datasets | ESOL, FreeSolv, BBBP, Tox21 [24] | Curated datasets from MoleculeNet for various molecular property prediction tasks (solubility, permeability, toxicity). |
| Software Libraries | PyTorch Geometric, Deep Graph Library (DGL) | Specialized Python libraries for building and training GNN models, offering efficient graph operations and pre-implemented layers. |
| Analysis Tools | RDKit | Open-source cheminformatics toolkit used for processing SMILES, calculating molecular descriptors, and validating generated molecules. |
| Evaluation Metrics | Concordance Index (CI), \( r_m^2 \), Validity/Novelty/Uniqueness [25] [24] | Quantitative metrics essential for objectively measuring model performance on regression, classification, and generation tasks. |

The integration of advanced GNN architectures and multitask learning frameworks represents a paradigm shift in tackling the data scarcity challenges inherent in drug discovery. Models like RM-BGNN and Stable-GNN directly address the weaknesses of standard GNNs in the face of incomplete data and distribution shifts. Simultaneously, MTL frameworks like DeepDTAGen and platforms like Baishenglai demonstrate that sharing representations across related tasks can create a powerful synergistic effect, significantly boosting generalization and predictive accuracy.

The experimental protocols and tools outlined provide a roadmap for researchers to implement and validate these approaches. As these technologies continue to mature, they hold the promise of drastically accelerating the early stages of drug discovery, reducing costs, and opening new avenues for treating diseases with limited available data. The future lies in building even more integrated, robust, and explainable AI systems that can reliably guide scientists from target identification to viable lead compounds.

In drug discovery, the development of effective machine learning models is often hampered by the limited size and molecular diversity of the available data. This is particularly true in early-stage research against novel targets, where labeled data is exceptionally scarce. Active deep learning addresses this challenge by iteratively improving the model during screening through the strategic acquisition of new data [5]. This guide details the core components of the active learning loop, with a specific focus on the query strategies that determine which data points are most informative to label. In simulated low-data scenarios, such strategies have been reported to improve hit discovery by up to sixfold over traditional screening methods [5] [30].

The Active Learning Cycle: A Framework for Iterative Model Improvement

Active learning is an intelligent data labeling strategy that iteratively selects the most informative samples from a pool of unlabeled data for labeling, thereby maximizing model performance with minimal human supervision [31]. It replaces the traditional paradigm of labeling a large dataset upfront with a dynamic, iterative cycle.

The foundational cycle of active learning operates as follows [31] [32]:

- Initialization: Begin with a small, initially labeled dataset.

- Model Training: Train a machine learning model on the current set of labeled data.

- Inference and Query Strategy: Use the trained model to make predictions on a large pool of unlabeled data. Then, apply a query strategy (e.g., uncertainty sampling) to select the most informative data points from this pool.

- Human-in-the-Loop Annotation: The selected data points are labeled by an oracle, typically a human expert or, in drug discovery, an experimental assay.

- Model Update: The newly labeled data points are added to the training set, and the model is retrained.

- Iteration: The inference, annotation, and model update steps are repeated until a predefined performance threshold or labeling budget is met.
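The cycle above can be sketched end to end with a deliberately simple stand-in model. In this illustration each compound is described by a single number, the "model" is a one-dimensional threshold classifier, and uncertainty is the distance to the decision boundary (margin-based uncertainty sampling). All names, the oracle, and the activity cutoff are assumptions; a deep model would derive uncertainty from its own predictive distribution instead.

```python
def train_threshold_model(labeled):
    """Toy 1-D classifier: boundary halfway between the two labeled classes."""
    inactive = [x for x, y in labeled if y == 0]
    active = [x for x, y in labeled if y == 1]
    return (max(inactive) + min(active)) / 2.0


def uncertainty(threshold, x):
    """Margin-based uncertainty: highest for points closest to the boundary."""
    return -abs(x - threshold)


def active_learning_loop(labeled, pool, oracle, budget, batch_size=1):
    labeled, pool = list(labeled), list(pool)
    threshold = train_threshold_model(labeled)            # model training
    while budget > 0 and pool:                            # iteration
        # Inference and query strategy: most uncertain pool points first.
        pool.sort(key=lambda x: uncertainty(threshold, x), reverse=True)
        take = min(batch_size, budget)
        queries, pool = pool[:take], pool[take:]
        # Annotation: the oracle (expert or assay) supplies the labels.
        labeled.extend((x, oracle(x)) for x in queries)
        budget -= len(queries)
        threshold = train_threshold_model(labeled)        # model update
    return threshold, labeled


# Hypothetical setup: compounds are active when their feature exceeds 0.615.
oracle = lambda x: int(x > 0.615)
initial = [(0.0, 0), (1.0, 1)]                  # small initial labeled set
pool = [i / 100 for i in range(1, 100)]         # unlabeled pool
threshold, labeled = active_learning_loop(initial, pool, oracle, budget=10)
```

With only ten queries out of a pool of 99, the loop narrows the decision boundary to the true cutoff, because every query is spent where the current model is least certain rather than on randomly chosen compounds.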

This cycle is powerfully applied in drug discovery. For instance, a generative model workflow can be integrated with two nested active learning cycles [33]. An inner cycle uses chemoinformatic oracles (e.g., for drug-likeness and synthetic accessibility) to refine generated molecules. An outer cycle then uses a physics-based oracle (e.g., molecular docking scores) to select candidates for the final training set, creating a self-improving system that explores novel chemical space while focusing on molecules with high predicted affinity [33].
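The control flow of such a nested scheme can be sketched as follows. This is a loose illustration of the two-cycle structure only, not the workflow of [33]: molecules are replaced by plain numbers, and the generator, the cheap chemoinformatic filter, and the docking-like score are all hypothetical toy functions.

```python
import random


def nested_active_learning(generate, cheap_oracle, expensive_oracle,
                           outer_rounds=3, inner_rounds=2, batch=20, keep=5):
    """Inner cycle filters with a cheap oracle; outer cycle keeps the candidates
    that the expensive oracle scores best (lower is better, by assumption)."""
    training_set = []
    for _ in range(outer_rounds):
        candidates = []
        for _ in range(inner_rounds):
            # The generator is conditioned on everything accepted so far.
            proposals = generate(training_set + candidates, batch)
            candidates.extend(m for m in proposals if cheap_oracle(m))
        fresh = set(candidates) - set(training_set)
        ranked = sorted(fresh, key=expensive_oracle)
        training_set.extend(ranked[:keep])
    return training_set


rng = random.Random(0)


def toy_generate(seeds, n):
    """Stand-in generator: random start, then perturbations of accepted seeds."""
    if not seeds:
        return [round(rng.uniform(-5.0, 15.0), 3) for _ in range(n)]
    return [round(rng.choice(seeds) + rng.gauss(0.0, 1.0), 3) for _ in range(n)]


toy_filter = lambda m: 0.0 <= m <= 10.0      # stand-in for drug-likeness checks
toy_docking = lambda m: (m - 7.0) ** 2       # stand-in for a docking score

selected = nested_active_learning(toy_generate, toy_filter, toy_docking)
```

The design point is the cost asymmetry: the cheap filter runs on every proposal, while the expensive score is computed only for candidates that survive the inner cycle.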

Core Query Strategies: The Engine of Informed Data Selection