Machine Learning in Homogeneous Catalysis: A Comprehensive Guide to Data-Driven Optimization and Design

Abstract

This article provides a comprehensive overview of the transformative role of machine learning (ML) in optimizing homogeneous transition-metal catalysis, a cornerstone of modern synthetic chemistry and pharmaceutical development. Tailored for researchers and drug development professionals, it systematically explores the foundational principles, key ML algorithms, and their practical applications in predicting catalytic activity, enantioselectivity, and reaction outcomes. The content delves into methodological best practices for data handling and model training, addresses common troubleshooting and optimization challenges, and offers a critical comparative analysis of model validation techniques. By synthesizing the latest advances, this guide aims to equip scientists with the knowledge to leverage ML for accelerating catalyst discovery, enhancing mechanistic understanding, and streamlining the development of more efficient and sustainable synthetic routes for drug discovery and beyond.

Laying the Groundwork: Core Concepts and the Rise of AI in Homogeneous Catalysis

The Unique Complexity of Homogeneous Catalytic Systems

Homogeneous catalysis, wherein the catalyst and substrates exist in the same phase (typically liquid), is fundamental to modern chemical synthesis, particularly in the pharmaceutical and fine chemical industries [1]. These systems most often involve organometallic or coordination complexes, where a central metal is surrounded by organic ligands that profoundly influence the catalyst's properties [1]. The core challenge—and opportunity—lies in the fact that a single metal center can produce a wide variety of products from one substrate simply by modifying its ligand environment [1]. This tunability creates a multidimensional optimization problem encompassing chemoselectivity, regioselectivity, diastereoselectivity, and enantioselectivity [1].

Traditional catalyst development relies on empirical, time-consuming trial-and-error approaches. Each new ligand can require days or more to prepare and evaluate, making comprehensive exploration of chemical space impractical [2]. This inefficiency is compounded by the intricate interplay of steric, electronic, and mechanistic factors that govern catalytic performance [3]. Machine learning (ML) emerges as a disruptive technology to navigate this complexity, offering statistical methods to infer functional relationships from data without requiring complete a priori mechanistic understanding [3].

Key Data and Workflow Challenges Addressed by ML

The application of ML in homogeneous catalysis targets several critical bottlenecks in the research workflow. Table 1 summarizes the primary data-related challenges and the corresponding ML-driven solutions.

Table 1: Key Data Challenges in Homogeneous Catalysis and ML Solutions

| Challenge | Impact on Research | ML-Driven Solution |
|---|---|---|
| Vast Chemical Space | Impractical to synthesize and test all possible ligand-catalyst combinations [2]. | Virtual screening of catalyst libraries to prioritize the most promising candidates [4] [3]. |
| High-Dimensional Optimization | Difficult to intuitively balance multiple reaction parameters (ligand, solvent, temperature, etc.) [3]. | Multidimensional pattern recognition to identify optimal reaction conditions [3]. |
| Limited Standardized Data | Scarcity of large, high-quality, publicly available datasets for model training [3]. | Hybrid/semi-supervised learning and transfer learning from computational or related datasets [5] [3]. |
| Complex Structure-Function Relationships | Hard to predict how subtle ligand modifications affect enantioselectivity [2]. | Graph Neural Networks (GNNs) and other algorithms to learn complex structure-activity relationships [2] [5]. |

A typical traditional workflow for ligand optimization is a cyclical, human-intensive process of design, synthesis, and testing. Machine learning, particularly explainable AI, aims to shorten the most time-consuming phases of this cycle.

Machine Learning Approaches and Experimental Protocols

Supervised Learning for Predictive Modeling

Supervised learning is widely used to predict catalytic performance metrics such as reaction yield and enantioselectivity. The process involves training models on labeled datasets where each input (e.g., catalyst structure) is paired with a known output (e.g., % ee) [3]. Key algorithms include Linear Regression, Random Forest, and Graph Neural Networks (GNNs) [3].

Protocol 1: Building a Predictive Model for Enantioselectivity

- Data Curation: Compile a dataset of catalytic reactions containing the SMILES (Simplified Molecular-Input Line-Entry System) representations of the substrate, reagent, and chiral ligand, along with the experimentally determined enantiomeric excess (ee) or enantiomeric ratio (er) [2].

- Feature Engineering/Representation:

- Descriptor-Based Approach: Calculate molecular descriptors (e.g., steric and electronic parameters, Hammett parameters) for the ligand substituents. This can require significant human curation and computational chemistry input [2].

- Graph-Based Approach (Modern): Represent each molecule as a graph, where atoms are nodes (with features like identity, degree, hybridization) and bonds are edges. These graphs are concatenated to form a reaction-level graph, which is fed into a GNN [2]. This approach is more automated and captures complex structural patterns.

- Model Training and Validation: Split the data into training and test sets. Train a selected algorithm (e.g., Random Forest or GNN) to map the input features to the enantioselectivity output. The model's performance is validated on the held-out test set, and its ability to extrapolate to novel ligand structures is critically assessed [2].
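The protocol above names ee or er as the training label. A common choice, not prescribed by the protocol itself, is to convert these to a relative free-energy difference between the competing transition states, ΔΔG‡ = RT ln(er), which assumes selectivity is set under kinetic control. The sketch below shows the conversion; the function names are illustrative.

```python
import math

R = 8.314462618  # gas constant in J/(mol*K)

def ee_to_er(ee_percent):
    """Convert enantiomeric excess (%) to enantiomeric ratio (major/minor)."""
    if not 0 <= ee_percent < 100:
        raise ValueError("ee must lie in [0, 100) to give a finite ratio")
    return (100 + ee_percent) / (100 - ee_percent)

def ee_to_ddg(ee_percent, temperature_k=298.15):
    """Return the ddG (kJ/mol) implied by an ee at the given temperature."""
    return R * temperature_k * math.log(ee_to_er(ee_percent)) / 1000

def ddg_to_ee(ddg_kj_mol, temperature_k=298.15):
    """Inverse conversion: ddG (kJ/mol) back to ee (%)."""
    er = math.exp(ddg_kj_mol * 1000 / (R * temperature_k))
    return 100 * (er - 1) / (er + 1)

# Example: 90% ee corresponds to er = 19:1, roughly 7.3 kJ/mol at 25 °C.
example_er = ee_to_er(90)
example_ddg = ee_to_ddg(90)
```

Because ee saturates near 100%, a model trained on ΔΔG‡ may spread high-selectivity data points more evenly; whether this helps for a given dataset is something to check empirically.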

Generative Models for Inverse Catalyst Design

Beyond predicting the performance of known catalysts, generative models can design entirely new catalyst structures. The CatDRX framework is an example of a reaction-conditioned generative model that creates catalysts for a given reaction [5].

Protocol 2: Inverse Design of Catalysts using a Conditional Variational Autoencoder (CVAE)

- Model Pre-training: Pre-train a Conditional VAE on a broad reaction database (e.g., the Open Reaction Database). The model learns to associate reaction components (reactants, reagents, products) with catalyst structures [5].

- Conditioning and Fine-tuning: For a specific design task, the model is conditioned on the SMILES of the target reaction components. It can be fine-tuned on a smaller, targeted dataset to improve performance for a particular reaction class [5].

- Latent Space Sampling and Optimization: The encoder maps known catalysts into a latent space. Sampling from this space, guided by the reaction condition embedding, allows the decoder to generate novel catalyst structures. Optimization techniques can steer the sampling toward regions of the latent space associated with high predicted performance [5].

- Validation: Generated catalyst candidates are filtered for synthesizability and validated using computational chemistry tools (e.g., DFT calculations of energy profiles) before experimental testing [5].
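The latent-space sampling step can be illustrated with the two generic building blocks of any variational autoencoder: the reparameterized draw z = μ + σ·ε and the KL term that regularizes the latent space. This is a NumPy sketch under stated assumptions, not CatDRX code; the latent size, the condition embedding, and all variable names are hypothetical.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, I) ), summed over latent dimensions."""
    return -0.5 * np.sum(1.0 + log_var - mu**2 - np.exp(log_var), axis=-1)

rng = np.random.default_rng(0)

# Hypothetical encoder outputs for one catalyst (latent size 4) and a
# hypothetical reaction-condition embedding (size 3).
mu = np.array([0.2, -0.1, 0.0, 0.5])
log_var = np.array([-1.0, -0.5, 0.0, -2.0])
condition = np.array([1.0, 0.0, 0.3])

z = reparameterize(mu, log_var, rng)
# A conditional decoder would receive the latent sample joined with the condition.
decoder_input = np.concatenate([z, condition])
kl = kl_to_standard_normal(mu, log_var)
```

In a real model the encoder and decoder would be neural networks and the condition embedding would be learned from the reaction components.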

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2 lists essential computational tools, data resources, and model architectures that form the modern ML-driven catalysis researcher's toolkit.

Table 2: Essential Research Reagent Solutions for ML in Homogeneous Catalysis

| Tool/Resource | Type | Function & Application |
|---|---|---|
| SMILES | Molecular Representation | A string-based notation for representing molecular structures, easily used as input for ML models [2]. |
| Graph Neural Network (GNN) | Model Architecture | Learns directly from molecular graph structures, capturing complex patterns without manual descriptor design [2]. |
| HCat-GNet | Specialized Model | A GNN designed to predict enantioselectivity and absolute stereochemistry using only SMILES inputs [2]. |
| CatDRX | Software Framework | A reaction-conditioned variational autoencoder for generative catalyst design and performance prediction [5]. |
| Open Reaction Database (ORD) | Data Resource | A large, open-access repository of reaction data used for pre-training generalist ML models [5]. |
| Scikit-learn | Software Library | A popular Python library providing implementations of classic ML algorithms like Random Forest and Linear Regression [6]. |
| TensorFlow / PyTorch | Software Library | Deep learning frameworks used to build and train complex neural network models, including GNNs [6]. |

Visualizing the Integrated ML-Driven Workflow

The power of ML is fully realized when it is integrated into a closed-loop, iterative workflow that connects prediction, generation, and experimental validation. This integrated pipeline accelerates the discovery process far beyond traditional methods.

Homogeneous catalysis presents a prime target for machine learning due to its inherent complexity, high-dimensional optimization challenges, and the critical need for more efficient and sustainable research methodologies. The synergy between data-driven algorithms and chemical expertise is transforming the field from a trial-and-error discipline to a more predictive and generative science. As models become more interpretable and integrated into automated workflows, ML is poised to significantly accelerate the discovery and optimization of catalytic reactions for pharmaceutical and industrial applications.

The field of chemistry, particularly catalysis research, is undergoing a profound transformation driven by artificial intelligence (AI), machine learning (ML), and deep learning (DL). In homogeneous catalysis research, where traditional discovery relies on iterative experimental cycles, ML optimization offers a paradigm shift towards data-driven, predictive design. These computational techniques enable researchers to navigate the vast and complex chemical space with unprecedented speed and accuracy, moving beyond trial-and-error approaches to rationally design catalytic systems with tailored properties [7] [8]. This document provides detailed application notes and protocols for integrating these powerful tools into homogeneous catalysis research workflows.

Core Applications and Quantitative Benchmarks

The application of AI/ML in chemistry spans generative molecular design, predictive property modeling, and the development of large-scale benchmark datasets. The table below summarizes key performance metrics for these core applications, providing a benchmark for researchers.

Table 1: Performance Metrics of AI/ML Models in Chemical Research

| Application Area | Model/Dataset Name | Key Performance Metric | Reported Value |
|---|---|---|---|
| Catalyst Property Prediction | AQCat25-EV2 Model [9] | Prediction speed vs. quantum methods | >20,000x faster |
| Catalyst Property Prediction | AQCat25-EV2 Model [9] | Energetics prediction accuracy | Approaches quantum-mechanical methods |
| Catalyst Property Prediction | OC25 Dataset Models [10] | Force prediction error | 0.015 eV/Å |
| Catalyst Property Prediction | OC25 Dataset Models [10] | Energy prediction error | 0.1 eV |
| Generative Chemistry | Deep Learning Architectures [11] | Validity/Uniqueness trade-off | High correlation (AUROC 0.900 with AnoChem [12]) |
| Biomolecular Interaction | AlphaFold 3 [13] | Protein-ligand interface accuracy (pocket-aligned ligand RMSD < 2Å) | Far greater than state-of-the-art docking tools |

Application Notes & Experimental Protocols

Protocol 1: Generative AI for Catalyst Discovery using GANs

Objective: To employ a Generative Adversarial Network (GAN) for the de novo design of novel ligand structures for homogeneous metal catalysts with specified electronic properties.

Background: GANs generate new molecular structures by learning the underlying probability distribution of existing chemical data. In catalysis, they can be conditioned on key performance descriptors, such as adsorption energy, to bias the generation towards promising candidates [7] [11].

Materials:

- Hardware: Computer with a high-performance GPU (e.g., NVIDIA H100).

- Software: Python environment with libraries such as PyTorch or TensorFlow, RDKit.

- Data: A curated dataset of known catalyst ligands and their associated properties (e.g., SMILES strings and corresponding measured or computed adsorption energies) [11].

Procedure:

Data Preprocessing:

- Compile a dataset of molecular structures (e.g., as SMILES strings) for known catalyst ligands.

- Clean the data by removing duplicates and invalid structures.

- Featurize the molecules. For a GAN, this often involves converting SMILES strings into a one-hot encoded matrix or a continuous numerical representation [11].

- If conditioning the model, format the target property data (e.g., adsorption energies) to be used as an input vector alongside the molecular representation.
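The featurization step can be sketched as a token-level one-hot encoding of SMILES strings. The tokenizer below is a deliberately small assumption: it keeps bracket atoms and the two-letter halogens Cl and Br together and treats every other character as its own token, which is not a complete SMILES grammar. The example strings and helper names are illustrative.

```python
import re
import numpy as np

# Bracket atoms first, then two-letter halogens, then any single character.
TOKEN_PATTERN = re.compile(r"\[[^\]]+\]|Br|Cl|.")

def tokenize(smiles):
    """Split a SMILES string into tokens (simplified, see lead-in)."""
    return TOKEN_PATTERN.findall(smiles)

def build_vocab(smiles_list):
    """Map each token to a column index; index 0 is reserved for padding."""
    tokens = sorted({tok for s in smiles_list for tok in tokenize(s)})
    return {tok: i + 1 for i, tok in enumerate(tokens)}

def one_hot(smiles, vocab, max_len):
    """Return a (max_len, vocab_size + 1) matrix with one 1 per row."""
    tokens = tokenize(smiles)
    if len(tokens) > max_len:
        raise ValueError("SMILES is longer than max_len tokens")
    matrix = np.zeros((max_len, len(vocab) + 1), dtype=np.float32)
    for pos, tok in enumerate(tokens):
        matrix[pos, vocab[tok]] = 1.0
    matrix[len(tokens):, 0] = 1.0  # mark the remaining positions as padding
    return matrix

# Toy SMILES strings used only to exercise the encoder.
smiles_list = ["CP(C)C", "c1ccccc1P(c1ccccc1)c1ccccc1", "ClC(Cl)Cl"]
vocab = build_vocab(smiles_list)
max_len = max(len(tokenize(s)) for s in smiles_list)
encoded = np.stack([one_hot(s, vocab, max_len) for s in smiles_list])
```

A conditioning vector, if used, would be passed alongside `encoded` rather than mixed into it.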

Model Architecture & Training:

- Implement a GAN architecture consisting of a Generator and a Discriminator. The Generator creates new molecular representations from a random noise vector (and a conditional property vector), while the Discriminator evaluates whether a given molecule is real (from the dataset) or generated.

- Train the GAN in an adversarial loop: a. The Generator produces a batch of novel molecules. b. The Discriminator is trained on a mixed batch of real and generated molecules. c. The Generator is updated based on the Discriminator's ability to detect its fakes, learning to produce more realistic molecules.

- The training is complete when the Generator produces a high fraction of valid, novel, and unique molecular structures, as measured by benchmarks like those in MOSES or GuacaMol [11].

Candidate Generation & Validation:

- Use the trained Generator to produce a large library of candidate ligand structures.

- Filter the candidates first by chemical validity and synthetic accessibility (using tools like SAscore or the AnoChem framework [12]).

- Employ predictive ML models (see Protocol 2) or first-principles calculations (e.g., DFT) to evaluate the shortlisted candidates for the target catalytic properties.

- Select the top candidates for experimental synthesis and testing.
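The validity, uniqueness, and novelty fractions mentioned in the training step can be computed with a few set operations. The sketch below follows the usual definitions (uniqueness among valid molecules, novelty among unique valid molecules) and takes the validity check as a callback. The `balanced_parentheses` function is only a toy stand-in; a real pipeline would parse each string with a cheminformatics toolkit and compare canonical SMILES.

```python
def generation_metrics(generated, training_set, is_valid):
    """Return validity, uniqueness, and novelty fractions for generated SMILES."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }

def balanced_parentheses(smiles):
    """Toy validity check: non-empty string with balanced branch parentheses."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0 and len(smiles) > 0

# Hypothetical generator output and training set.
generated = ["CP(C)C", "CP(C)C", "CCP(CC)CC", "CP(C", "CN(C)C"]
training_set = ["CP(C)C"]
metrics = generation_metrics(generated, training_set, balanced_parentheses)
```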

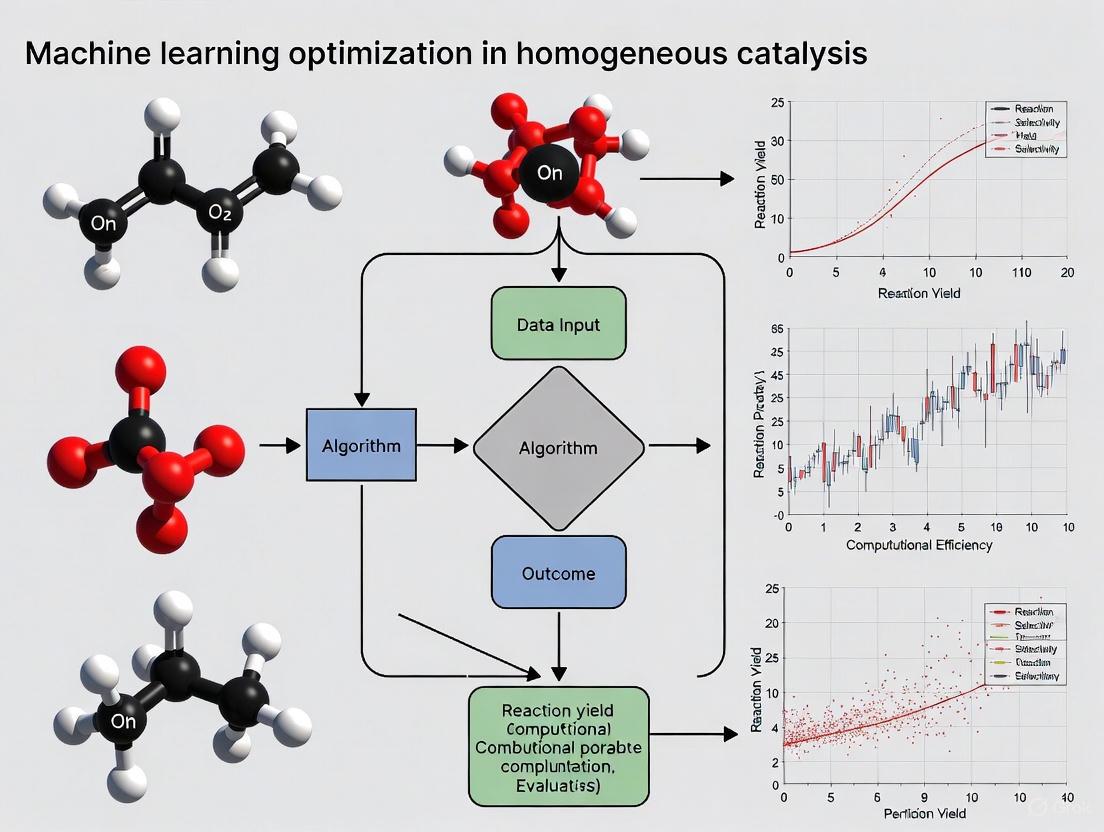

Figure 1: Generative AI Workflow for Catalyst Discovery. This diagram outlines the protocol for using a Generative Adversarial Network (GAN) to design novel catalyst ligands, from data preparation to experimental validation.

Protocol 2: Predictive Modeling of Catalyst Properties using Random Forest and SHAP

Objective: To train a robust machine learning model for predicting key catalytic properties (e.g., turnover frequency, adsorption energy) and interpret the model to identify critical electronic and steric descriptors.

Background: Supervised ML models can learn complex, non-linear relationships between a catalyst's features and its performance. Random Forest is a powerful, ensemble-based method that provides high accuracy and inherent feature importance metrics [7].

Materials:

- Software: Python with scikit-learn, SHAP, pandas, and NumPy libraries.

- Data: A structured dataset where each row is a catalyst (e.g., a metal-ligand complex) and columns include features (descriptors) and a target variable (catalytic property).

Procedure:

Feature Engineering:

- Calculate a comprehensive set of molecular descriptors for each catalyst in your dataset. For homogeneous catalysts, this may include:

- Electronic Descriptors: d-band center, d-band width, HOMO/LUMO energies, natural population analysis charges [7].

- Steric Descriptors: Steric maps, Tolman cone angle, percent buried volume (%VBur).

- General Descriptors: Molecular weight, number of rotatable bonds, etc.

- Ensure the target variable (e.g., adsorption energy) is accurately computed or measured.

Model Training & Validation:

- Split the dataset into training (e.g., 80%) and testing (e.g., 20%) sets.

- Train a Random Forest regressor (for continuous properties) or classifier (for categorical outcomes) on the training set.

- Tune hyperparameters (e.g., n_estimators, max_depth) using cross-validation on the training set.

- Evaluate the final model's performance on the held-out test set using metrics like Mean Absolute Error (MAE) or R² score.
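A minimal scikit-learn sketch of the split, tuning, and evaluation steps is given here. The data are synthetic: the four descriptor columns and the target function are invented for illustration, and the hyperparameter grid is a small example rather than a recommendation.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, r2_score
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic stand-in for a descriptor table: 200 catalysts, 4 descriptors.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4))
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] ** 2 + rng.normal(scale=0.1, size=200)

# 80/20 split; the test set is not touched during tuning.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# 5-fold cross-validated grid search on the training set only.
search = GridSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid={"n_estimators": [50, 100], "max_depth": [None, 5]},
    cv=5,
    scoring="neg_mean_absolute_error",
)
search.fit(X_train, y_train)

# Final evaluation of the refitted best model on the held-out test set.
y_pred = search.predict(X_test)
mae = mean_absolute_error(y_test, y_pred)
r2 = r2_score(y_test, y_pred)
```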

Model Interpretation with SHAP:

- To move from a "black box" to an interpretable model, apply SHapley Additive exPlanations (SHAP).

- Calculate SHAP values for the entire test set. This quantifies the contribution of each feature to every individual prediction.

- Generate summary plots (e.g., beeswarm plots) to visualize the global feature importance and the direction of effect (positive or negative) each feature has on the target property [7].

- Use these insights to formulate new design rules. For example, the model might reveal that a higher d-band filling and a narrower d-band width collectively favor weaker oxygen adsorption [7].
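To make the quantity behind SHAP concrete, the sketch below computes exact Shapley values by enumerating every feature coalition, with absent features replaced by a baseline value. This brute-force version is not the SHAP library and scales exponentially with the number of features; the toy model and its three feature names are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values of predict at x relative to a baseline input."""
    n = len(x)

    def value(subset):
        # Features in the coalition take their real value, others the baseline.
        mixed = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def toy_model(features):
    """Hypothetical model: one additive term plus one pairwise interaction."""
    cone_angle, buried_volume, homo_energy = features
    return 0.5 * cone_angle + 2.0 * buried_volume * homo_energy

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
```

The contributions sum to the difference between the prediction at `x` and at the baseline, and the interaction term is shared equally between the two features involved.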

Protocol 3: Leveraging Large-Scale Datasets for Pre-Trained Models

Objective: To utilize a large-scale, publicly available dataset and its associated pre-trained models for accelerating catalyst discovery for reactions at solid-liquid interfaces, relevant to homogeneous catalysis.

Background: Large-scale datasets like OC25 and AQCat25 provide high-fidelity quantum chemistry calculations that are indispensable for training accurate ML models. Using pre-trained models from these resources can dramatically reduce computational costs and time [10] [14].

Materials:

- Computational Resources: Standard workstation or cloud computing environment.

- Data & Models: The OC25 dataset (for solid-liquid interfaces) or the AQCat25 dataset (which includes spin polarization for magnetic metals) and their corresponding pre-trained baseline models, available on platforms like Hugging Face [10] [9] [14].

Procedure:

Dataset and Model Acquisition:

- Download the OC25 or AQCat25 dataset from its official repository.

- Access the corresponding pre-trained model files and inference code.

System Setup and Simulation:

- Prepare the input files for your catalytic system of interest. This typically involves defining the initial geometry of the catalyst and the adsorbate(s) in a format compatible with the model (e.g., POSCAR, .xyz).

- For solid-liquid interface systems (OC25), specify the solvent environment if required by the model.

Inference and Analysis:

- Run the pre-trained model on your input structure to obtain predictions for key outputs such as total energy, forces on atoms, and possibly solvation energies [10].

- Analyze the results to compare the relative stability of different catalyst configurations or reaction pathways.

- For fine-tuning, you can use transfer learning to adapt the general pre-trained model to your specific, smaller dataset of catalytic systems, potentially improving prediction accuracy for your niche application.

The Scientist's Toolkit: Essential Research Reagents & Materials

In the context of AI-driven catalysis research, "research reagents" extend beyond chemical compounds to include critical datasets, software, and computational resources.

Table 2: Key Research Reagents and Resources for AI-Driven Catalysis

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| OC25 Dataset [10] | Dataset | Provides 7.8M+ DFT calculations for solid-liquid interfaces, enabling model training and simulation of electrocatalytic processes. |
| AQCat25 Dataset [14] | Dataset | Offers 11M+ high-fidelity data points including spin polarization, critical for modeling earth-abundant magnetic metals. |
| AnoChem Framework [12] | Software Tool | Assesses the likelihood of a generative model's output being a "realistic" and synthesizable molecule. |
| NVIDIA H100 GPU [9] | Hardware | Accelerates the training of large generative and predictive models, reducing computation time from months to days. |
| SHAP Library [7] | Software Library | Interprets ML model predictions by quantifying the contribution of each input feature, revealing design rules. |
| Random Forest Algorithm [7] | ML Algorithm | Serves as a robust, interpretable predictive model for linking catalyst descriptors to performance metrics. |
| Generative Adversarial Network (GAN) [7] [11] | DL Architecture | Generates novel, valid molecular structures for catalyst ligands by learning from existing chemical data. |

The integration of machine learning (ML) into catalytic research represents a paradigm shift from traditional trial-and-error methods toward a data-driven scientific discovery process. In homogeneous catalysis, where molecular catalysts operate in the same phase as reactants, ML offers powerful tools to navigate the complex multidimensional parameter spaces that govern catalytic performance. These approaches systematically address the sequence-function relationships in molecular catalysts and the intricate relationships between catalytic structures and their activity, selectivity, and stability. The field has coalesced around three foundational learning paradigms: supervised learning for predictive modeling of catalyst properties, unsupervised learning for pattern discovery in catalytic data, and hybrid approaches that integrate physical principles with data-driven methods [15] [16].

Each paradigm offers distinct capabilities for tackling specific challenges in homogeneous catalysis. Supervised learning excels at building quantitative structure-activity relationships (QSAR) for catalysts when experimental data are available, while unsupervised methods can reveal hidden patterns in large catalytic databases without predefined labels. Hybrid approaches, particularly physics-informed machine learning, embed fundamental chemical principles into data-driven models, enhancing their interpretability and physical consistency [16]. These methodologies are transforming how researchers design molecular catalysts, optimize reaction conditions, and elucidate mechanistic pathways for complex transformations central to pharmaceutical development and fine chemical synthesis.

Supervised Learning in Catalytic Research

Core Principles and Applications

Supervised learning operates on labeled datasets where each input data point is associated with a corresponding output value. In homogeneous catalysis, this typically involves training algorithms on catalyst structures, molecular descriptors, or reaction conditions as inputs, with associated catalytic properties such as turnover frequency, enantioselectivity, or yield as target outputs [15]. The trained model can then predict the performance of unexplored catalysts, dramatically accelerating the discovery process.

This approach has demonstrated remarkable success across various catalytic domains. Recent applications include predicting adsorption energies of reaction intermediates on catalytic surfaces [15], forecasting catalytic activity and selectivity for specific transformations [17], and optimizing reaction conditions for known catalytic systems [16]. In homogeneous catalysis specifically, supervised learning has been deployed to screen ligand libraries for metal complexes, predict the effects of catalyst modifications on performance, and identify promising molecular structures from virtual libraries before synthetic investment [18].

Table 1: Common Supervised Learning Algorithms in Catalytic Research

| Algorithm Category | Specific Methods | Catalytic Applications | Key Advantages |
|---|---|---|---|
| Tree-Based Methods | Random Forest, XGBoost [15] | Catalyst screening [15], Activity prediction [17] | Handles mixed data types, Feature importance ranking |
| Neural Networks | Fully Connected Networks, Graph Neural Networks [15] | Reaction outcome prediction [17], Transition state analysis [19] | High representational power, Captures complex nonlinearities |
| Kernel Methods | Support Vector Machines, Gaussian Process Regression [15] | Performance prediction with uncertainty quantification [20] | Strong theoretical foundations, Uncertainty estimates |

Experimental Protocol: Supervised Learning for Catalyst Optimization

Objective: To implement a supervised learning workflow for predicting and optimizing the performance of homogeneous catalysts in a target transformation.

Materials and Reagents:

- Computational Resources: Workstation with adequate RAM (>16 GB) and CPU/GPU capabilities for ML modeling

- Software Environment: Python with scikit-learn, XGBoost, or similar ML libraries

- Data Collection Tools: Access to catalytic databases (e.g., DigCat) or high-throughput experimentation systems [20] [17]

- Molecular Descriptor Software: RDKit, Dragon, or custom descriptor calculation scripts

- Validation Tools: Laboratory setup for experimental validation of top predictions

Procedure:

Data Curation and Preprocessing

- Compile a dataset of homogeneous catalysts with associated performance metrics (e.g., yield, turnover number, enantiomeric excess) from historical data or literature.

- Calculate molecular descriptors for each catalyst structure, including electronic (e.g., Hammett parameters, frontier orbital energies), steric (e.g., Tolman cone angle, steric maps), and structural features (e.g., bond lengths, coordination geometries) [15].

- Address missing data through imputation or removal and normalize features to standard scales (e.g., zero mean, unit variance).

Model Training and Validation

- Split the dataset into training (70-80%), validation (10-15%), and hold-out test sets (10-15%) using stratified sampling if class imbalance exists.

- Train multiple algorithms (e.g., Random Forest, XGBoost, Neural Networks) on the training set using k-fold cross-validation (typically k=5 or 10) to optimize hyperparameters.

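- Evaluate model performance on the validation set using metrics relevant to the catalytic property (e.g., Mean Absolute Error for continuous properties, Accuracy for classification tasks) and use it to select the final model; then report that model's performance once on the hold-out test set.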
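The split and normalization steps interact: scaling statistics should come from the training set only, so that no information from the validation or test sets leaks into training. A NumPy sketch with a 70/15/15 split is shown here; the synthetic descriptor matrix and the helper names are illustrative.

```python
import numpy as np

def split_indices(n_samples, fractions=(0.7, 0.15, 0.15), seed=0):
    """Shuffle sample indices and cut them into train/validation/test parts."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_train = int(round(fractions[0] * n_samples))
    n_val = int(round(fractions[1] * n_samples))
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]

def fit_scaler(X_train):
    """Compute per-feature mean and standard deviation on the training set."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant descriptors
    return mean, std

def apply_scaler(X, mean, std):
    """Standardize any split with statistics learned from the training set."""
    return (X - mean) / std

# Synthetic descriptor matrix: 100 catalysts, 3 descriptors.
rng = np.random.default_rng(1)
X = rng.normal(loc=5.0, scale=3.0, size=(100, 3))

train_idx, val_idx, test_idx = split_indices(len(X))
mean, std = fit_scaler(X[train_idx])
X_train = apply_scaler(X[train_idx], mean, std)
X_val = apply_scaler(X[val_idx], mean, std)
X_test = apply_scaler(X[test_idx], mean, std)
```

Only the training split is guaranteed to have zero mean and unit variance after scaling; the other two splits will deviate slightly, which is expected.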

Prediction and Experimental Validation

- Deploy the best-performing model to screen a virtual library of candidate catalysts.

- Select top candidates based on predicted performance and diversity considerations.

- Synthesize and experimentally validate the top-predicted catalysts using standard catalytic testing protocols.

- Incorporate the new experimental results into the dataset for model refinement.

Unsupervised Learning in Catalytic Research

Core Principles and Applications

Unsupervised learning operates on unlabeled data, seeking to identify inherent patterns, groupings, or reduced representations without predefined output variables. In homogeneous catalysis, these methods excel at exploring large chemical spaces, identifying natural clusters of catalyst behaviors, and revealing hidden structure-property relationships that might escape human intuition [15].

Principal applications in catalysis include dimensionality reduction techniques such as Principal Component Analysis (PCA) and t-distributed Stochastic Neighbor Embedding (t-SNE) for visualizing high-dimensional catalyst datasets in two or three dimensions [15]. Clustering algorithms like k-means and hierarchical clustering can group catalysts with similar properties or identify outlier compounds. In molecular catalyst design, unsupervised methods have been particularly valuable for analyzing the vast space of possible protein sequences in enzyme engineering [21], exploring ligand diversity in transition metal catalysis, and constructing knowledge graphs of catalytic reactions from literature data.

Table 2: Unsupervised Learning Methods in Catalysis

| Method Category | Specific Techniques | Catalytic Applications | Information Gained |
|---|---|---|---|
| Dimensionality Reduction | PCA, t-SNE, UMAP [15] | Visualization of catalyst libraries [15], Descriptor selection | Intrinsic data dimensionality, Key variance sources |
| Clustering Algorithms | k-means, Hierarchical Clustering, DBSCAN [15] | Catalyst classification [15], Identification of catalyst families | Natural catalyst groupings, Outlier detection |
| Generative Models | Autoencoders, Variational Autoencoders [21] | Latent space representation of catalysts [21], Novel catalyst design | Compressed representations, Data generation |

Experimental Protocol: Unsupervised Exploration of Catalyst Space

Objective: To employ unsupervised learning for exploring and mapping the chemical space of homogeneous catalysts to identify promising regions for further investigation.

Materials and Reagents:

- Computational Resources: Standard workstation with sufficient memory for large dataset handling

- Software: Python with scikit-learn, umap-learn, or specialized cheminformatics platforms

- Catalyst Database: Access to structured catalyst databases or curated literature datasets

- Visualization Tools: Matplotlib, Plotly, or similar visualization libraries

Procedure:

Dataset Compilation

- Assemble a comprehensive set of molecular catalysts relevant to the transformation of interest, including both successful and unsuccessful examples from literature and experimental records.

- Calculate a diverse set of molecular descriptors capturing electronic, steric, and topological features of each catalyst.

Dimensionality Reduction

- Apply Principal Component Analysis (PCA) to identify the principal components that capture maximum variance in the descriptor space.

- Use nonlinear techniques such as t-SNE or UMAP to create low-dimensional embeddings that preserve local neighborhood structure; UMAP tends to retain more of the global structure than t-SNE.

- Visualize the reduced spaces, coloring points by catalytic properties (e.g., activity, selectivity) to identify potential patterns.
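The PCA step can be written in a few lines with a singular value decomposition of the centered descriptor matrix. The sketch below uses synthetic data built from two hidden factors, so two components should explain nearly all variance. In practice, descriptors on different scales would usually be standardized first.

```python
import numpy as np

def pca(X, n_components):
    """Return scores, principal axes, and explained-variance ratios."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal axes, ordered by decreasing singular value.
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    explained_variance = S**2 / (X.shape[0] - 1)
    ratio = explained_variance / explained_variance.sum()
    scores = X_centered @ Vt[:n_components].T
    return scores, Vt[:n_components], ratio[:n_components]

# Synthetic descriptor table: 50 catalysts, 6 descriptors driven by 2 factors.
rng = np.random.default_rng(0)
latent = rng.normal(size=(50, 2))
mixing = rng.normal(size=(2, 6))
X = latent @ mixing + 0.01 * rng.normal(size=(50, 6))

scores, components, ratio = pca(X, n_components=2)
```

The `scores` array gives the 2D coordinates to plot, with points colored by activity or selectivity as described above.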

Cluster Analysis

- Apply clustering algorithms (e.g., k-means, hierarchical clustering) to identify natural groupings within the catalyst dataset.

- Determine optimal cluster numbers using metrics such as silhouette score or gap statistic.

- Characterize each cluster by its representative features and average catalytic properties.
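Choosing the number of clusters by silhouette score can be sketched with scikit-learn as follows. The three synthetic, well-separated groups stand in for catalyst families in a two-dimensional descriptor space; real data are rarely this clean, so the silhouette curve is often flatter.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score

# Three synthetic catalyst "families" in a 2D descriptor space.
rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [6.0, 0.0], [0.0, 6.0]])
X = np.vstack([c + 0.5 * rng.normal(size=(30, 2)) for c in centers])

# Fit k-means for several cluster counts and score each partition.
scores = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(X)
    scores[k] = silhouette_score(X, labels)

best_k = max(scores, key=scores.get)
```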

Knowledge Extraction and Hypothesis Generation

- Analyze cluster compositions to identify structural features associated with high-performance catalysts.

- Formulate hypotheses about promising catalyst scaffolds or modifications based on the unsupervised analysis.

- Prioritize under-explored regions of the catalyst space for future investigation.

Hybrid Approaches in Catalytic Research

Core Principles and Applications

Hybrid approaches integrate multiple machine learning paradigms or combine data-driven methods with physical models to leverage their complementary strengths. In catalysis, these methods have emerged as particularly powerful for addressing the limitations of purely data-driven approaches, especially when dealing with small datasets or requiring physically consistent predictions [16].

Physics-Informed Machine Learning (PIML) and Physics-Informed Neural Networks (PINNs) embed fundamental scientific knowledge—such as conservation laws, kinetic equations, or thermodynamic constraints—directly into the ML architecture [16]. Symbolic regression methods aim to discover mathematically concise relationships that describe catalytic behavior, potentially revealing new fundamental principles [15]. Active learning frameworks, particularly those incorporating Bayesian optimization, strategically guide experimental campaigns by balancing exploration of uncertain regions with exploitation of known promising areas [20].

These hybrid methodologies have demonstrated exceptional utility in optimizing multimetallic catalyst compositions [20], discovering novel catalytic reactions [18], and bridging molecular-level simulations with macroscopic kinetic models [22]. The integration of large language models (LLMs) and vision-language models (VLMs) with robotic experimentation systems represents a particularly advanced hybrid approach, enabling the creation of self-driving laboratories that can navigate complex experimental parameter spaces [20].

Experimental Protocol: Hybrid Active Learning for Catalyst Discovery

Objective: To implement a hybrid active learning workflow combining Bayesian optimization with robotic experimentation for accelerated discovery of homogeneous catalysts.

Materials and Reagents:

- Robotic Platform: Automated synthesis and screening system (e.g., liquid handlers, flow reactors) [20] [23]

- Analysis Equipment: Inline or online analytical instrumentation (e.g., NMR, MS, HPLC) [23]

- Computational Infrastructure: Server with Bayesian optimization software and integration capabilities with robotic systems

- Chemical Reagents: Comprehensive set of catalyst precursors, ligands, and substrates for the target reaction

Procedure:

Experimental Setup and Initial Design

- Define the parameter space for catalyst optimization, including composition variables (e.g., metal/ligand combinations, additives) and process conditions (e.g., temperature, concentration).

- Establish an automated experimental platform with integrated synthesis, reaction, and analysis capabilities.

- Execute a space-filling initial design (e.g., Latin Hypercube Sampling) to gather baseline data across the parameter space.
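
As an illustration of the space-filling step, the following sketch generates a Latin Hypercube design with NumPy. The three parameters and their ranges are hypothetical placeholders; SciPy's `scipy.stats.qmc.LatinHypercube` offers a maintained alternative.

```python
import numpy as np

def latin_hypercube(n_samples, bounds, seed=0):
    """Return an (n_samples, n_dims) design with one point per stratum in each dimension."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)  # shape (n_dims, 2): [low, high]
    n_dims = len(bounds)
    # One random point inside each of n_samples equal-width strata on [0, 1).
    unit = (np.arange(n_samples)[:, None] + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle the strata independently per dimension to decorrelate the columns.
    for d in range(n_dims):
        unit[:, d] = rng.permutation(unit[:, d])
    return bounds[:, 0] + unit * (bounds[:, 1] - bounds[:, 0])

# Hypothetical parameter space: temperature (deg C), catalyst loading (mol%), concentration (M).
bounds = [(25.0, 120.0), (0.5, 10.0), (0.05, 1.0)]
design = latin_hypercube(8, bounds)
print(design.round(2))
```

Each row is one initial experiment. Because every parameter range is divided into as many strata as there are experiments, no region of any single variable is left unsampled.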

Model Training and Candidate Proposal

- Train a probabilistic model (typically Gaussian Process Regression) on all collected data, incorporating uncertainty estimates.

- Use an acquisition function (e.g., Expected Improvement, Knowledge Gradient) to propose the most informative experiments [20].

- Balance exploration of uncertain regions with exploitation of known high-performing areas through appropriate acquisition function tuning.
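
The proposal step can be sketched as follows: a Gaussian Process posterior with a fixed RBF kernel, followed by Expected Improvement over a grid of candidate conditions. The training data, the kernel length scale, the noise level, and the exploration parameter `xi` are all illustrative assumptions; in practice the hyperparameters would be fitted, typically with a dedicated library.

```python
import math
import numpy as np

def rbf_kernel(A, B, length_scale=0.2):
    """Unit-variance squared-exponential kernel."""
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-0.5 * sq / length_scale**2)

def gp_posterior(X_train, y_train, X_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP."""
    K = rbf_kernel(X_train, X_train) + noise * np.eye(len(X_train))
    K_s = rbf_kernel(X_train, X_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)  # prior variance is 1
    return mean, np.sqrt(var)

def expected_improvement(mean, std, best_so_far, xi=0.01):
    """EI for maximization; a larger xi shifts the balance toward exploration."""
    gain = mean - best_so_far - xi
    z = gain / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return gain * cdf + std * pdf

# Hypothetical data: one normalized condition (e.g., scaled temperature) vs. yield fraction.
X_train = np.array([[0.1], [0.4], [0.9]])
y_train = np.array([0.30, 0.72, 0.41])
X_query = np.linspace(0.0, 1.0, 101)[:, None]

# Center the targets so the zero-mean prior is reasonable, then add the offset back.
y_mean = y_train.mean()
mean, std = gp_posterior(X_train, y_train - y_mean, X_query)
ei = expected_improvement(mean + y_mean, std, best_so_far=y_train.max())
next_x = float(X_query[ei.argmax(), 0])
print(f"next condition to test: {next_x:.2f}")
```

The acquisition value is high where the predicted mean is high (exploitation) or where the posterior standard deviation is large (exploration), which is the trade-off the tuning step refers to.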

Automated Experimental Execution

- Translate proposed experimental conditions into robotic execution commands.

- Execute synthesis, reaction, and analysis steps through the automated platform.

- Process analytical data to extract performance metrics (e.g., conversion, selectivity).

Iterative Optimization and Validation

- Incorporate new experimental results into the dataset and update the model.

- Repeat the proposal-execution cycle for a predetermined number of iterations or until performance targets are met.

- Validate optimized catalysts under standard laboratory conditions to confirm performance.

Essential Research Reagents and Computational Tools

The effective implementation of ML approaches in catalytic research requires both chemical reagents and computational resources. The following table details key components of the researcher's toolkit for ML-driven catalyst discovery and optimization.

Table 3: Research Reagent Solutions for ML-Driven Catalysis

| Category | Specific Items | Function in ML Workflow | Implementation Notes |

|---|---|---|---|

| Chemical Building Blocks | Diverse ligand libraries [18], Metal precursors, Substrate arrays | Provides chemical space for exploration and model training | Diversity in electronic and steric properties is crucial |

| Descriptor Generation Tools | RDKit, Dragon, Custom quantum chemistry scripts [15] | Translates molecular structures to machine-readable features | Electronic, steric, and topological descriptors recommended |

| ML Algorithms & Libraries | scikit-learn, XGBoost, PyTorch, TensorFlow [15] | Core modeling infrastructure for prediction and optimization | Ensemble methods often outperform single algorithms |

| Specialized Catalysis Tools | Virtual Ligand-Assisted Screening (VLAS) [18], Transition State Screening (CaTS) [19] | Domain-specific screening and optimization | Incorporates catalytic mechanistic knowledge |

| Automation & Robotics | Liquid handlers, Automated reactors, Inline analytics [20] [23] | Enables high-throughput data generation for ML models | Critical for closing the design-make-test-analyze loop |

Application Note: Strategic Framework for ML in Catalysis

The application of machine learning (ML) in homogeneous catalysis represents a paradigm shift, moving research from empirical trial-and-error to a data-driven discipline [15] [24]. This transition is underpinned by a three-stage developmental framework: initial high-throughput screening, performance modeling with physical descriptors, and finally, the use of advanced techniques like symbolic regression to uncover general catalytic principles [15]. The core value of ML lies in its ability to extract implicit knowledge from data, statistically inferring functional relationships even without exhaustive a priori mechanistic understanding [3]. This allows for the efficient exploration of complex, multidimensional reaction spaces where time and cost constraints severely restrict traditional experimental scope [3].

However, this promise is tempered by three persistent challenges. The vastness of chemical space, exemplified by the thousands of derivatives that can be formulated from a classic system like Vaska's complex, makes comprehensive screening infeasible [25]. Furthermore, research is often conducted under conditions of extreme data scarcity, where experimental constraints limit the volume of high-quality data available for model training [26] [21]. Finally, the deep mechanistic complexity of catalytic cycles, involving intricate interplay of steric, electronic, and kinetic factors, poses a significant barrier to accurate prediction and interpretation [24] [27]. This application note details protocols designed to navigate these specific challenges.

Quantitative Benchmarks and Performance

The following tables summarize key performance metrics from case studies where ML was successfully applied to overcome challenges in catalysis.

Table 1: Performance of ML Models in Predicting Catalytic Activity and Properties

| Catalytic System | ML Algorithm | Key Performance Metric | Computational Efficiency Gain | Reference |

|---|---|---|---|---|

| H₂ Activation in Vaska's Complex Derivatives | Gaussian Process (GP) | MAE < 1.0 kcal/mol | Minutes on a laptop (vs. days for DFT) | [25] |

| Sludge-based Catalytic Degradation of Bisphenols | XGBoost with DV-PJS | Relative deviation from experiment: 3.2% | 58.5% improvement in efficiency | [26] |

| Pd-catalyzed Allylation (C–O Cleavage) | Multiple Linear Regression (MLR) | R² = 0.93 | N/R | [3] |

| Human Left Ventricle Model (Methodology Reference) | XGBoost & Multilayer Perceptron | R² = 0.999 | 3-4 orders of magnitude | [28] |

Table 2: Data Volume Threshold Analysis for Small-Data ML (Based on [26])

| Data Volume (Data Points) | Model Performance (Example RMSE) | Key Finding |

|---|---|---|

| < 400 | High RMSE, unstable | Performance is suboptimal and volatile. |

| ~800 (Optimal Threshold) | Lowest RMSE (ΔRMSE = 0.167 improvement) | Model performance (XGBoost, RF) stabilizes at a high level. |

| > 800 | Low RMSE, stable | No significant performance gain with additional data. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of ML protocols requires a suite of computational and data resources.

Table 3: Key Research Reagent Solutions for ML in Catalysis

| Reagent / Resource | Type | Function & Application | Example Sources |

|---|---|---|---|

| tmQM Dataset | Database | Provides quantum-mechanical properties for transition metal complexes, mitigating data scarcity. | [27] |

| Gaussian Process (GP) Models | Algorithm | Ideal for small-data scenarios; provides uncertainty quantification for Bayesian optimization. | [28] [25] [27] |

| SOAP Descriptors | Molecular Representation | Smooth Overlap of Atomic Positions; captures 3D geometric and chemical information. | [27] |

| Data Volume Prior Judgment Strategy (DV-PJS) | Data Strategy | Determines the minimum data volume required for ML models to reach a performance threshold. | [26] |

| Random Forest / XGBoost | Algorithm | Ensemble methods robust to noise and effective at handling feature interactions in small-sample scenarios. | [26] [3] |

| SHAP (SHapley Additive exPlanations) | Interpretation Framework | Explains model output by quantifying the contribution of each feature to a prediction. | [26] [3] |

Protocol: Overcoming Data Scarcity with a Data Volume Prior Judgment Strategy (DV-PJS)

Background & Principle

Data scarcity is a fundamental bottleneck in environmental and catalytic machine learning [26]. The Data Volume Prior Judgment Strategy (DV-PJS) is a systematic framework designed to mitigate this challenge. It establishes a data volume threshold, identifying the minimum dataset size required for a model to achieve stable, optimal performance without unnecessary data acquisition costs [26]. This protocol adapts the DV-PJS for use in homogeneous catalysis research.

Experimental Materials

- Computing Environment: Standard laptop or workstation capable of running machine learning libraries (e.g., scikit-learn, XGBoost).

- Software & Libraries: Python with pandas, NumPy, scikit-learn, XGBoost.

- Initial Dataset: A curated dataset of catalytic reactions. The protocol is demonstrated with a dataset (D₈₆₅) containing 10 features and 865 data points on sludge-based catalytic degradation [26]. This can be substituted with a homogeneous catalysis dataset.

Step-by-Step Procedure

Data Collection and Curation:

- Collect and preprocess your catalytic dataset (e.g., reaction yields, conditions, catalyst descriptors).

- Ensure data quality through cleaning and normalization. The original study used 10 features including catalyst properties and reaction conditions [26].

Systematic Data Subsetting:

- Split the full dataset into incrementally larger subsets. The original study divided 865 data points into subsets in increments of 100 (e.g., 100, 200, ..., 800) [26].

- For smaller datasets, use smaller increments (e.g., 50 data points) or percentage-based splits.

Iterative Model Training and Validation:

- For each data subset, train and validate multiple ML models (e.g., XGBoost, Random Forest, Stacking models).

- Use consistent validation procedures, such as 5-fold cross-validation, for each model and subset.

- Record the performance metric (e.g., RMSE, MAE) for each model at each data volume.

Threshold Identification and Analysis:

- Plot model performance (e.g., RMSE) against the data volume.

- Identify the inflection point where performance plateaus and stabilizes. In the case study, this threshold was identified at 800 data points for key models [26].

- This inflection point represents the minimum data volume required for optimal performance for that specific catalytic system and feature set.
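
The subsetting, cross-validation, and threshold steps can be sketched as below. Several things here are assumptions made for a self-contained example: the data are synthetic (only the 865 x 10 shape mirrors the case study), closed-form ridge regression stands in for XGBoost or Random Forest, and the plateau rule in `data_volume_threshold` is one simple heuristic rather than the criterion used in [26].

```python
import numpy as np

def ridge_fit_predict(X_tr, y_tr, X_te, lam=1e-3):
    """Closed-form ridge regression with an intercept column."""
    A = np.hstack([X_tr, np.ones((len(X_tr), 1))])
    w = np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ y_tr)
    return np.hstack([X_te, np.ones((len(X_te), 1))]) @ w

def cv_rmse(X, y, n_folds=5, seed=0):
    """Mean RMSE over shuffled k-fold cross-validation."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(X)), n_folds)
    errors = []
    for i in range(n_folds):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(n_folds) if j != i])
        pred = ridge_fit_predict(X[train], y[train], X[test])
        errors.append(np.sqrt(np.mean((pred - y[test]) ** 2)))
    return float(np.mean(errors))

def data_volume_threshold(sizes, rmses, tol=0.01):
    """Smallest size after which no larger subset lowers RMSE by more than tol."""
    for i, n in enumerate(sizes):
        if all(rmses[i] - later <= tol for later in rmses[i + 1:]):
            return n

# Synthetic stand-in dataset: linear signal plus noise.
rng = np.random.default_rng(0)
n_total, n_features = 865, 10
X = rng.standard_normal((n_total, n_features))
y = X @ rng.standard_normal(n_features) + 0.5 * rng.standard_normal(n_total)

sizes = list(range(100, 900, 100))  # 100, 200, ..., 800
rmses = [cv_rmse(X[:n], y[:n]) for n in sizes]
threshold = data_volume_threshold(sizes, rmses)
for n, r in zip(sizes, rmses):
    print(n, round(r, 3))
print("estimated data volume threshold:", threshold)
```

The tolerance `tol` should be set relative to the noise in the cross-validated metric; repeating the cross-validation with several seeds gives a sense of that noise before a threshold is trusted.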

Model Deployment and Verification:

- Select the best-performing model (e.g., XGBoost) and train it on the dataset at the identified threshold data volume.

- Apply the model to predict the performance of new, real-world catalysts and validate predictions experimentally. The original study achieved a relative deviation of only 3.2% between prediction and experiment [26].

Protocol: Navigating Vast Chemical Space for Catalyst Discovery

Background & Principle

The chemical space of possible catalysts, even for a single reaction, is astronomically large [25]. This protocol outlines a hybrid approach, combining Density Functional Theory (DFT) and machine learning to efficiently explore these vast spaces. The principle is to use DFT calculations on a strategically selected subset of complexes to generate high-quality training data. An ML model is then trained to predict properties for the entire chemical space, bypassing the need for prohibitively expensive calculations on every candidate [25] [27].

Experimental Materials

- Computational Resources: Access to high-performance computing (HPC) clusters for DFT calculations.

- Software: DFT software (e.g., Gaussian, VASP, CP2K), RDKit for descriptor generation, ML libraries (scikit-learn, GPyTorch).

- Chemical Space Definition: A defined set of ligand and metal center combinations, such as the 2574 derivatives of Vaska's complex [25].

Step-by-Step Procedure

Define the Target Chemical Space:

- Enumerate a library of catalyst candidates based on permissible ligand and metal variations. The case study defined a space of 2574 complexes derived from Vaska's complex [25].

Generate Initial Training Data with DFT:

- Select a diverse, representative subset of complexes from the full chemical space. Sampling can be random or guided by chemical intuition.

- Perform DFT calculations on this subset to obtain the target property (e.g., H₂-activation barrier, reaction energy).

Feature Engineering and Selection:

- Compute numerical descriptors (features) for all complexes in the chemical space.

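- Use descriptors that encode composition, geometry, and electronic structure, such as SOAP descriptors [27] or RDKit-derived molecular descriptors.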

- Apply feature selection methods (e.g., from Gradient Boosting) to identify the most relevant descriptors [25].

Machine Learning Model Training:

- Train an ML model on the subset of complexes with known DFT-calculated properties.

- Gaussian Process (GP) models are highly recommended for small-data settings as they provide uncertainty estimates, which are crucial for guiding further exploration [25] [27]. Alternative models like Bayesian-optimized Neural Networks or XGBoost can also be used [25].

Predict and Validate Across the Full Space:

- Use the trained model to predict the target property for all complexes in the pre-defined chemical space.

- Identify top-performing catalyst candidates from the ML predictions.

- Validate the predictions by performing DFT calculations on a selection of the top candidates. The case study achieved MAE below 1.0 kcal/mol using only 20% of the data for training [25].
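
The ranking and validation arithmetic in this last step is simple enough to show directly. All numbers below are hypothetical placeholders, not values from [25]; the sketch only illustrates how a shortlist is drawn from the predictions and how the MAE against DFT reference values is computed.

```python
import numpy as np

def top_candidates(predicted_barriers, k):
    """Indices of the k lowest predicted barriers, best first."""
    return np.argsort(predicted_barriers)[:k]

def mean_absolute_error(reference, predicted):
    """Average absolute deviation between reference and predicted values."""
    return float(np.mean(np.abs(np.asarray(reference) - np.asarray(predicted))))

# Hypothetical ML-predicted H2-activation barriers (kcal/mol) for six enumerated complexes.
predicted = np.array([14.2, 9.8, 21.5, 11.1, 8.7, 17.3])
shortlist = top_candidates(predicted, k=3)  # complexes to recompute with DFT

# Hypothetical DFT reference barriers for the shortlisted complexes, in shortlist order.
dft_reference = np.array([9.1, 10.4, 10.6])
mae = mean_absolute_error(dft_reference, predicted[shortlist])
print(shortlist.tolist(), round(mae, 2))
```

Validating only top-ranked candidates gives an optimistic or pessimistic picture depending on where the model errs, so a small random sample from the rest of the space is a useful complement.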

Protocol: Integrating Mechanistic Complexity for Interpretable Prediction

Background & Principle

ML models often risk being "black boxes." This protocol focuses on building interpretability directly into the modeling process, transforming mechanistic complexity from a barrier into a source of insight. By using physically meaningful descriptors and interpretation frameworks, researchers can extract actionable knowledge about the catalytic process, such as identifying the most influential ligand fragments or electronic properties [25] [27]. This aligns with the advanced "theory-oriented interpretation" stage of ML development in catalysis [15].

Experimental Materials

- Descriptor Calculation Tools: Software for computing electronic (e.g., from DFT), steric, and structural descriptors. RDKit is a common choice for molecular descriptors.

- Interpretable ML Models: Models like Random Forest or XGBoost, which have built-in feature importance metrics.

- Model Interpretation Libraries: SHAP (SHapley Additive exPlanations) library for Python.

Step-by-Step Procedure

Dataset Creation with Mechanistic Descriptors:

- Assemble a dataset where the features are not simple fingerprints but descriptors with clear physical or mechanistic meaning. Examples include electronic descriptors (e.g., from DFT), steric descriptors, and structural descriptors.

Model Training with Physical Features:

- Train a tree-based model (e.g., XGBoost, Random Forest) or a Gaussian Process model using the mechanistic descriptor set.

Feature Importance Analysis:

- Use the model's built-in feature importance metric (e.g., impurity-based importance for Random Forest or gain-based importance for XGBoost) to get a preliminary ranking of which mechanistic factors most strongly influence the predicted outcome.

SHAP Analysis for Local Interpretation:

- Calculate SHAP values for the trained model. SHAP values quantify the contribution of each feature to every single prediction, providing both global and local interpretability [26] [3].

- Generate summary plots (e.g., SHAP summary plots) to visualize the global impact and directionality (positive or negative) of each feature.
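
To make concrete what a SHAP value is, the sketch below computes exact Shapley values by enumerating all feature coalitions for a toy model. The surrogate function, the feature names, and the baseline are hypothetical. The enumeration is exponential in the number of features, which is why the SHAP library relies on model-specific or sampling-based algorithms instead.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values; features absent from a coalition take their baseline value."""
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

def toy_barrier(features):
    """Hypothetical surrogate for a barrier (kcal/mol) with one interaction term."""
    cone_angle, electronegativity, atom_radius = features
    return 20.0 - 0.05 * cone_angle + 3.0 * electronegativity + 0.02 * cone_angle * atom_radius

x = [150.0, 2.5, 1.3]         # complex being explained
baseline = [120.0, 2.0, 1.0]  # reference complex
phi = shapley_values(toy_barrier, x, baseline)
print([round(p, 3) for p in phi])
```

The contributions sum to the difference between the prediction for `x` and the prediction for the baseline, and the sign of each value gives the direction in which that feature moves the prediction. The interaction between cone angle and atomic radius is shared between the two features rather than assigned to either one alone.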

Extract Mechanistic Insights:

- Analyze the top descriptors identified by SHAP and feature importance. For example, a study on Vaska's complex derivatives found that features related to chemical composition, atom size, and electronegativity were most determinant for predicting H₂-activation barriers [25].

- Use these insights to formulate or validate hypotheses about the reaction's rate-determining step, catalyst speciation, or the key steric/electronic requirements for high performance.

The development of catalysts has long been a cornerstone of chemical innovation, with profound implications for pharmaceutical synthesis, energy conversion, and sustainable manufacturing. Traditional catalyst discovery has predominantly relied on experimental trial-and-error approaches guided by chemical intuition and prior knowledge—a process that is often time-consuming and resource-intensive [29] [30]. For instance, early catalyst development involved screening over 2,500 compositions to identify an optimal catalyst for ammonia synthesis, exemplifying the inefficiencies of this paradigm [29].

The past decade has witnessed a transformative shift in catalytic science, driven by the integration of machine learning (ML) and artificial intelligence (AI). Where traditional computational tools like density functional theory (DFT) provided valuable insights but remained limited by computational expense, ML approaches now enable researchers to navigate vast chemical spaces with unprecedented efficiency [3] [29]. This evolution has culminated in the emergence of inverse design strategies, where desired catalytic properties guide the computational generation of optimal catalyst structures, fundamentally reversing the traditional discovery workflow [31].

This Application Note examines this paradigm shift within the specific context of homogeneous catalysis research, where the precise tuning of molecular structure profoundly influences catalytic activity and selectivity. We detail the methodological framework supporting this transition, provide practical protocols for implementation, and highlight how ML-driven inverse design is accelerating the development of tailored catalytic solutions for complex chemical transformations.

The Machine Learning Toolkit for Catalysis

The application of ML in catalysis encompasses diverse learning paradigms and algorithms, each suited to specific aspects of catalyst research and development.

Key Machine Learning Paradigms

Table 1: Fundamental Machine Learning Paradigms in Catalysis Research

| Learning Paradigm | Data Requirements | Primary Applications in Catalysis | Advantages | Limitations |

|---|---|---|---|---|

| Supervised Learning | Labeled data (input-output pairs) | Predicting reaction yield, enantioselectivity, or catalytic activity [3] | High predictive accuracy; interpretable results [3] | Requires extensive labeled data; costly data generation [3] |

| Unsupervised Learning | Unlabeled data | Clustering catalysts by molecular descriptor similarity; dimensionality reduction [3] | Reveals hidden patterns without predefined labels [3] | Lower predictive power; more challenging interpretation [3] |

| Hybrid/Semi-supervised Learning | Combination of labeled and unlabeled data | Pre-training on unlabeled structures followed by fine-tuning on small labeled datasets [31] [3] | Improves data efficiency; leverages abundant unlabeled data [3] | Increased model complexity; potential propagation of biases from unlabeled data |

Essential Algorithms for Catalyst Research

ML algorithms extract meaningful patterns from complex catalytic data. Key algorithms include:

Random Forest: An ensemble method comprising multiple decision trees that operates through majority voting (classification) or averaging (regression). This approach enhances predictive stability and accuracy by reducing overfitting, making it particularly valuable for modeling complex, non-linear structure-activity relationships in catalysis [3].

Artificial Neural Networks (ANNs): Well suited to modeling the inherent non-linearity of chemical processes, ANNs have performed strongly in a range of chemical engineering applications, including catalyst optimization [30].

Gaussian Process Regression (GPR): Provides reliable uncertainty estimates alongside predictions, which is crucial for guiding experimental campaigns and active learning cycles [32].

Gradient Boosting Methods (XGBoost, LightGBM): Powerful ensemble techniques that sequentially build models to correct errors from previous ones, often achieving state-of-the-art performance in predictive tasks [32] [33].
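
The sequential error-correction idea behind gradient boosting can be illustrated with a deliberately small NumPy sketch. It uses single-split decision stumps on one feature and squared loss, for which the negative gradient is simply the residual. The volcano-shaped data are synthetic, and the sketch omits the regularization and second-order terms that XGBoost and LightGBM add.

```python
import numpy as np

def fit_stump(x, residual):
    """Best single-threshold split on a 1-D feature under squared error."""
    best = None
    for t in np.unique(x)[:-1]:
        left, right = residual[x <= t], residual[x > t]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if best is None or sse < best[0]:
            best = (sse, t, left.mean(), right.mean())
    return best[1:]

def boost(x, y, n_rounds=100, learning_rate=0.1):
    """Each round fits a stump to the current residuals and adds a damped correction."""
    base = float(y.mean())
    pred = np.full(len(y), base)
    stumps = []
    for _ in range(n_rounds):
        t, left_val, right_val = fit_stump(x, y - pred)
        pred = pred + learning_rate * np.where(x <= t, left_val, right_val)
        stumps.append((t, left_val, right_val))
    return base, stumps

def predict(x, base, stumps, learning_rate=0.1):
    """Sum the base value and all damped stump corrections."""
    out = np.full(len(x), base)
    for t, left_val, right_val in stumps:
        out = out + learning_rate * np.where(x <= t, left_val, right_val)
    return out

# Synthetic volcano-shaped activity curve over one normalized descriptor.
x = np.linspace(0.0, 1.0, 40)
y = 1.0 - (2.0 * x - 1.0) ** 2

base, stumps = boost(x, y)
fitted = predict(x, base, stumps)
mse_base = float(np.mean((y - base) ** 2))
mse_boost = float(np.mean((y - fitted) ** 2))
print(round(mse_base, 4), round(mse_boost, 4))
```

The learning rate trades the number of rounds against the size of each correction; smaller values usually generalize better at the cost of more rounds.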

The diagram below illustrates the relationships between these ML paradigms and algorithms within the catalyst development workflow:

Inverse Design: A New Paradigm

Inverse design represents a fundamental shift from traditional catalyst discovery by starting with desired properties and working backward to identify optimal structures. This approach leverages deep generative models to explore chemical space more efficiently than forward design strategies [31].

Architectural Frameworks for Inverse Design

Several generative architectures have demonstrated particular success in catalyst inverse design:

Variational Autoencoders (VAEs) have emerged as powerful tools for representing catalytic active sites in compressed latent spaces. For instance, a novel topology-based VAE framework (PGH-VAEs) has been developed to enable interpretable inverse design of catalytic active sites. This approach uses persistent GLMY homology—an advanced topological algebraic analysis tool—to quantify three-dimensional structural sensitivity and establish correlations with adsorption properties [31]. The multi-channel architecture separately encodes coordination and ligand effects, allowing the latent space to possess substantial physical meaning and interpretability [31].

Reaction-Conditioned Generative Models address a critical limitation of earlier approaches by incorporating reaction context into the generation process. The CatDRX framework employs a reaction-conditioned variational autoencoder that learns structural representations of catalysts alongside associated reaction components (reactants, reagents, products) [34]. This model is pre-trained on diverse reactions from databases like the Open Reaction Database (ORD) and then fine-tuned for specific downstream applications, enabling generation of catalyst structures tailored to specific reaction environments [34].
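
For orientation, the generic training objective of a conditional VAE can be written as below. This is the textbook form, given here as an assumption about the general model class: x denotes a catalyst representation, c the reaction context (reactants, reagents, products), z the latent vector, and β an optional weighting of the regularization term. The loss actually used in CatDRX [34] may contain additional terms, such as a property-prediction objective.

```latex
\mathcal{L}(\theta, \phi; x, c)
  = \mathbb{E}_{q_\phi(z \mid x, c)}\!\left[ \log p_\theta(x \mid z, c) \right]
  - \beta \, D_{\mathrm{KL}}\!\left( q_\phi(z \mid x, c) \,\|\, p(z \mid c) \right)
```

The first term rewards accurate reconstruction of the catalyst given the latent code and the reaction context; the second keeps the encoder distribution close to the prior, so that sampling z from the prior at generation time yields plausible structures.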

Case Study: Inverse Design of High-Entropy Alloy Catalysts

A compelling demonstration of inverse design utilized the PGH-VAEs framework for interpreting and designing catalytic active sites on IrPdPtRhRu high-entropy alloys (HEAs) for the oxygen reduction reaction [31]. The workflow encompassed:

- Active Site Representation: Employing persistent GLMY homology to create topology-based descriptors enriched with chemical information, enabling unified representation of coordination and ligand effects [31].

- Data Augmentation: Using a semi-supervised ML model trained on approximately 1,100 DFT calculations to predict adsorption energies of newly generated structures, effectively expanding the training dataset [31].

- Inverse Design: Leveraging the trained VAE to generate novel active site structures tailored to specific *OH adsorption energy criteria, enabling targeted optimization of HEA catalysts [31].

This approach achieved a remarkably low mean absolute error (MAE) of 0.045 eV in predicting *OH adsorption energies, demonstrating the precision possible with ML-driven inverse design [31].

Experimental Protocols and Workflows

Implementing ML-guided catalyst development requires structured methodologies. Below, we outline key protocols for inverse design implementation and catalyst evaluation.

Protocol: Inverse Design of Homogeneous Catalysts Using Generative Models

Purpose: To computationally generate novel catalyst candidates with desired properties using reaction-conditioned generative models.

Materials/Software Requirements:

- Chemical representation tools (SMILES, SELFIES, or graph representations)

- Generative model architecture (e.g., VAE, GAN, diffusion model)

- Reaction condition database (e.g., Open Reaction Database)

- High-performance computing resources

- Quantum chemistry software (e.g., for DFT validation)

Procedure:

Data Curation and Preprocessing

- Collect diverse reaction data including catalysts, reactants, products, reagents, and yields [34]

- Standardize molecular representations (e.g., SMILES, graph structures)

- Apply data cleaning to remove inconsistencies and duplicates

Model Pre-training

- Implement reaction-conditioned VAE architecture with separate embedding modules for catalyst and reaction conditions [34]

- Train on broad reaction database (e.g., ORD) to learn general chemical patterns

- Monitor reconstruction loss and property prediction accuracy

Model Fine-tuning

- Transfer pre-trained model to specific catalytic system of interest

- Fine-tune on smaller, targeted dataset with relevant catalytic properties

- Employ transfer learning techniques to prevent catastrophic forgetting

Candidate Generation and Optimization

- Sample from latent space with conditioning on desired reaction context

- Apply optimization techniques (e.g., Bayesian optimization) to steer generation toward target properties

- Implement validity and synthesizability filters to ensure practical relevance

Validation and Experimental Testing

- Validate promising candidates using DFT calculations or molecular dynamics [34]

- Synthesize top candidates and evaluate experimentally

- Incorporate experimental results into iterative model refinement

Troubleshooting Tips:

- If model generates invalid structures, adjust decoder architecture or implement structural constraints

- For poor property prediction, increase diversity of training data or incorporate multi-task learning

- If generated catalysts lack novelty, adjust sampling temperature or explore different regions of latent space

Protocol: High-Throughput Screening of ML-Generated Catalysts

Purpose: To experimentally validate catalyst candidates generated through inverse design approaches.

Materials:

- ML-generated catalyst candidates

- Appropriate solvent systems

- Substrates for target reaction

- Analytical standards

- High-throughput screening platform

Procedure:

Candidate Prioritization

- Rank candidates based on predicted activity, selectivity, and stability

- Apply synthesizability filters and cost considerations

- Select diverse candidates spanning chemical space
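
A minimal sketch of the ranking and filtering logic is shown below. The candidate records, the score weights, and the cost ceiling are hypothetical, and the diversity criterion from the last bullet is left out for brevity; it would typically be applied to the ranked list using a distance measure in descriptor space.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    activity: float       # predicted, scaled to [0, 1]
    selectivity: float    # predicted, scaled to [0, 1]
    stability: float      # predicted, scaled to [0, 1]
    synthesizable: bool   # outcome of a synthesizability filter
    cost_per_mmol: float  # estimated cost in arbitrary currency units

def prioritize(candidates, weights=(0.4, 0.4, 0.2), max_cost=50.0, top_n=2):
    """Drop infeasible candidates, then rank the rest by a weighted score."""
    feasible = [c for c in candidates if c.synthesizable and c.cost_per_mmol <= max_cost]

    def score(c):
        return weights[0] * c.activity + weights[1] * c.selectivity + weights[2] * c.stability

    return sorted(feasible, key=score, reverse=True)[:top_n]

candidates = [
    Candidate("L1", 0.90, 0.80, 0.50, True, 20.0),
    Candidate("L2", 0.95, 0.90, 0.90, False, 10.0),  # fails the synthesizability filter
    Candidate("L3", 0.60, 0.90, 0.80, True, 30.0),
    Candidate("L4", 0.70, 0.70, 0.70, True, 80.0),   # exceeds the cost ceiling
    Candidate("L5", 0.50, 0.60, 0.90, True, 15.0),
]
shortlist = prioritize(candidates)
print([c.name for c in shortlist])
```

Applying the hard filters before scoring keeps an attractive but impractical candidate, such as the hypothetical L2, from occupying a slot in the screening plate.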

Automated Synthesis

- Implement robotic synthesis platforms for parallel catalyst preparation

- Standardize purification and characterization protocols

- Document synthesis yields and characterization data

Performance Evaluation

- Employ high-throughput reaction screening under standardized conditions

- Analyze reactions using automated GC, HPLC, or MS systems

- Determine key performance metrics (conversion, yield, selectivity)
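
The three metrics named in the last step are related by simple arithmetic, sketched here under the assumption of 1:1 substrate-to-product stoichiometry and amounts expressed in the same units (for example mmol from calibrated HPLC or GC data). The input values are hypothetical.

```python
def performance_metrics(substrate_initial, substrate_final, product_formed):
    """Return conversion, yield, and selectivity as fractions (1:1 stoichiometry assumed)."""
    if substrate_initial <= 0:
        raise ValueError("substrate_initial must be positive")
    conversion = (substrate_initial - substrate_final) / substrate_initial
    product_yield = product_formed / substrate_initial
    # Selectivity is the share of converted substrate that ended up as the desired product.
    selectivity = product_yield / conversion if conversion > 0 else 0.0
    return conversion, product_yield, selectivity

conversion, product_yield, selectivity = performance_metrics(
    substrate_initial=1.00, substrate_final=0.20, product_formed=0.72
)
print(f"conversion={conversion:.2f}, yield={product_yield:.2f}, selectivity={selectivity:.2f}")
```

Recording all three values, rather than yield alone, lets a downstream model distinguish an inactive catalyst from an active but unselective one.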

Data Integration

- Incorporate experimental results into ML training dataset

- Retrain models with expanded data

- Identify performance trends and structural motifs

The following workflow illustrates the complete iterative cycle of ML-guided catalyst discovery:

Successful implementation of ML-driven catalyst development requires both computational and experimental resources. The following table details key components of the modern catalyst researcher's toolkit.

Table 2: Essential Research Reagents and Computational Resources for ML-Guided Catalyst Development

| Category | Specific Tool/Resource | Function/Purpose | Application Context |

|---|---|---|---|

| Computational Frameworks | scikit-learn | Provides accessible implementations of classical ML algorithms | Building baseline models for property prediction [30] |

| Computational Frameworks | TensorFlow, PyTorch | Enable development of complex deep learning architectures | Implementing neural networks and generative models [30] |

| Chemical Descriptors | Topological descriptors (e.g., PGH) | Quantify 3D structural features of catalytic active sites | Inverse design of alloy catalysts [31] |

| Chemical Descriptors | Electronic structure descriptors | Capture electronic properties influencing catalytic activity | Predicting adsorption energies and activity trends [33] |

| Generative Architectures | Variational Autoencoders (VAEs) | Learn compressed representations of chemical space | Generating novel catalyst structures [31] [34] |

| Generative Architectures | Reaction-conditioned models | Incorporate reaction context into generation process | Designing catalysts for specific transformations [34] |

| Validation Tools | Density Functional Theory (DFT) | Computational validation of generated catalyst candidates | Predicting adsorption energies and reaction barriers [31] |

| Validation Tools | High-throughput experimentation | Experimental validation of candidate catalysts | Rapid performance assessment [33] |

Data Presentation and Analysis

Quantitative assessment of ML model performance is essential for evaluating their utility in catalyst discovery. The following table summarizes performance metrics from recent influential studies.

Table 3: Performance Metrics of ML Models in Catalysis Research

| Study | Catalytic System | ML Approach | Key Performance Metrics | Experimental Validation |

|---|---|---|---|---|

| Topology-based VAE for HEAs [31] | IrPdPtRhRu high-entropy alloys for ORR | Topology-based variational autoencoder (PGH-VAEs) | MAE of 0.045 eV for *OH adsorption energy prediction | DFT calculations confirming adsorption energies |

| CatDRX Framework [34] | Multiple reaction classes | Reaction-conditioned VAE | Competitive RMSE and MAE in yield prediction vs. baselines | Case studies across different catalyst types |

| Cobalt-based VOC Oxidation Catalysts [30] | Co₃O₄ catalysts for toluene/propane oxidation | Ensemble of 600 ANNs + 8 regression algorithms | Accurate prediction of conversion at 97.5% threshold | Experimental optimization of catalyst composition |

| Dual-Atom Catalyst Design [35] | Graphene-based DACs for CO₂ reduction | DFT-driven ML model | Identification of d-orbital electrons as key activity descriptor | Prediction of Ni-Ni pair as optimal catalyst |

Future Perspectives

The field of ML-guided catalyst discovery continues to evolve rapidly, with several emerging trends shaping its trajectory:

Large Language Models (LLMs) are beginning to demonstrate significant potential in catalyst design. Their ability to process textual representations of catalytic systems offers a natural and interpretable approach to incorporating diverse features [29]. As these models advance, they may enable more effective knowledge extraction from the vast body of scientific literature and more intuitive human-AI collaboration in catalyst design.

Addressing Data Scarcity remains a critical challenge, particularly for specialized catalytic systems. Transfer learning approaches, where models pre-trained on large general chemistry datasets are fine-tuned for specific catalytic applications, show promise in overcoming this limitation [21] [34]. Additionally, techniques such as active learning and semi-supervised approaches can maximize information gain from limited experimental data [31].

Interpretability and Explainability will become increasingly important as ML models grow more complex. Methods such as SHAP (SHapley Additive exPlanations) and the development of inherently interpretable models like the multi-channel PGH-VAEs are crucial for building trust in ML predictions and extracting fundamental scientific insights [31].

The integration of ML-guided catalyst design with automated synthesis and high-throughput experimentation platforms points toward a future of fully autonomous catalyst discovery systems, potentially reducing development timelines from years to months or weeks while opening new frontiers in catalytic science.

From Data to Design: Key Algorithms and Real-World Applications in Catalytic Optimization

The application of machine learning (ML) in homogeneous catalysis represents a paradigm shift in how researchers approach catalyst discovery and optimization. Over the past 15 years, the number of publications combining artificial intelligence with catalysis has increased exponentially, reflecting the growing importance of these techniques in chemical research [36] [37]. This transformation is particularly evident in homogeneous catalysis with transition metal complexes, where ML methods are accelerating the development of more efficient and selective catalytic systems. The complexity of the tasks that can be carried out with AI tools is directly linked to the nature of their components, including datasets, representations, algorithms, and high-throughput experimental and computational facilities [36].

Machine learning has proven especially valuable for addressing the highly complicated problems in catalysis, where multiple target properties require optimization simultaneously [37]. Initially, models were developed to predict key aspects of reaction mechanisms to screen catalyst candidates. Subsequent studies have incorporated experimental data to optimize reaction conditions and yields. More recently, generative AI based on deep learning methods has enabled the inverse design of novel catalysts with predefined target properties [36]. While most studies historically relied on computational data, recent advancements have improved the acquisition of experimental data, enabling AI-driven automated workflows that bridge the gap between prediction and experimental validation [36].

The rich chemistry of transition metals presents particular challenges for ML applications, as discriminative models must predict multiple properties while generative models struggle to produce chemically valid outputs that account for the complexity of metal-ligand bonds and effects beyond the first coordination sphere [37]. Despite these challenges, the field has matured significantly, with applications now spanning prediction of catalytic activity, optimization of reaction conditions, and discovery of new catalytic structures across both experimental and theoretical domains [38].

Essential Machine Learning Algorithms

Algorithm Comparison and Selection Criteria

Selecting the appropriate machine learning algorithm depends on multiple factors, including data characteristics, computational resources, interpretability requirements, and the specific catalytic problem being addressed. No single algorithm performs optimally across all scenarios, making informed selection crucial for research success [39] [40].

Table 1: Comparative Analysis of Essential ML Algorithms for Catalysis Research

| Feature | Random Forest | Support Vector Machine (SVM) | Artificial Neural Network (ANN) | Graph Neural Network (GNN) |
|---|---|---|---|---|
| Primary Mechanism | Ensemble of decision trees [39] | Optimal hyperplane separation [39] | Layered neurons with weighted connections [39] | Message passing on graph structures |
| Learning Type | Supervised [39] | Supervised [39] | Supervised/Unsupervised [39] | Supervised/Unsupervised |
| Interpretability | Relatively interpretable [39] | Less interpretable [39] | Difficult to interpret [39] | Moderate to low interpretability |
| Data Size Efficiency | Efficient with small to medium datasets [40] | Effective with small to medium datasets [39] | Requires large datasets [39] [40] | Requires moderate to large datasets |
| Handling Non-linearity | Native handling [40] | Kernel tricks [40] | Non-linear activation functions [40] | Native graph structure processing |
| Computational Demand | Moderate [39] | Can be computationally expensive [39] | High [39] | High |
| Catalysis Application Example | Descriptor identification for Ni₂P hydrogen evolution [41] | Prediction of reaction outcomes [4] | Catalytic activity prediction [38] | Molecular property prediction |

Algorithm-Specific Methodological Considerations

Random Forest

Random Forest is an ensemble learning method that constructs multiple decision trees during training, with each tree built on a different random subset of the training data [39]. For catalysis applications, it excels at identifying important descriptors from complex feature sets. In practice, the trees are grown with the CART algorithm, and each tree receives a random subset of rows and columns from the data [41]. The final prediction aggregates the outputs of the individual trees (an average for regression, a majority vote for classification), which makes the ensemble less prone to overfitting than any single tree. This method is particularly valuable when working with the smaller datasets common in catalysis research, provided appropriate validation techniques are employed [41].

Support Vector Machines (SVM)

SVMs are discriminative models that find the optimal hyperplane separating data into different classes [39]. For the non-linearly separable data common in catalysis problems, SVMs employ the kernel trick, which implicitly maps the original feature space to a higher-dimensional space where separation becomes feasible [40]. The objective is to identify a decision boundary that maximizes the margin between classes; the points closest to the hyperplane are termed "support vectors" [39]. Training is a quadratic programming problem, in which a quadratic objective is optimized subject to linear constraints on its variables, and it is commonly solved with sequential minimal optimization (SMO) [40]. This approach is especially effective for classification tasks with clear separation margins, and it extends to regression as well.
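To make the kernel trick concrete, the sketch below fits two scikit-learn classifiers to an XOR-style toy set that no straight line can separate. The data points and the hyperparameters (`C=10.0`, `gamma=1.0`) are illustrative assumptions, not values from [39] or [40].

```python
import numpy as np
from sklearn.svm import SVC

# XOR-style toy data: opposite corners share a class, so no single
# straight line separates the two classes in the original 2-D space.
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
y = np.array([0, 1, 1, 0])

# Linear kernel: the decision boundary is a hyperplane in the input space.
linear_svm = SVC(kernel="linear", C=10.0).fit(X, y)

# RBF kernel: implicitly maps the points to a higher-dimensional space
# in which a separating hyperplane exists.
rbf_svm = SVC(kernel="rbf", gamma=1.0, C=10.0).fit(X, y)

linear_acc = linear_svm.score(X, y)
rbf_acc = rbf_svm.score(X, y)
print(f"linear kernel accuracy: {linear_acc:.2f}")
print(f"RBF kernel accuracy:    {rbf_acc:.2f}")
print(f"support vectors in RBF model: {len(rbf_svm.support_)}")
```

The linear model cannot classify all four points correctly, while the RBF model can. On real catalysis data the kernel and its parameters would normally be chosen by cross-validation rather than fixed by hand.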

Artificial Neural Networks (ANN)

ANNs are composed of interconnected layers of artificial neurons that process information through weighted connections and activation functions [38]. A conventional ANN structure includes at least three distinct layers: input, hidden, and output layers, with each layer containing multiple neurons [38]. The fundamental calculation for neuron j is the weighted sum of its inputs plus a bias term, NET_j = ∑_i (w_ij * x_i) + b_j, followed by an activation function such as the sigmoid, f(NET_j) = 1/(1+e^(-NET_j)) [38]. Training relies on gradient descent, with the required gradients computed by backpropagation, to minimize the difference between predicted and actual outputs by adjusting the connection weights [39] [38]. This architecture enables ANNs to learn hierarchical features from raw data automatically, making them valuable for complex pattern recognition in catalysis.
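The weighted-sum and sigmoid calculation described above can be sketched in a few lines of plain Python. The function names, input vector, and weights are hypothetical and chosen only so the arithmetic is easy to follow; no training is performed.

```python
import math


def sigmoid(net):
    """Logistic activation: f(NET) = 1 / (1 + e^(-NET))."""
    return 1.0 / (1.0 + math.exp(-net))


def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the sigmoid."""
    net = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(net)


def layer_output(inputs, weight_rows, biases):
    """Apply one neuron per (weights, bias) pair to the same input vector."""
    return [neuron_output(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Hypothetical two-descriptor input and hand-picked parameters
x = [2.0, 4.0]
hidden = layer_output(
    x,
    weight_rows=[[0.5, -0.25], [1.0, 0.0]],  # one row of weights per neuron
    biases=[0.0, 0.0],
)
print(hidden)
```

The first neuron has NET = 0.5·2 − 0.25·4 = 0 and therefore outputs exactly 0.5; the second has NET = 2 and outputs about 0.88. Stacking such layers and fitting the weights by gradient descent gives the full network.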

Graph Neural Networks (GNN)

GNNs are a specialized class of neural networks designed to operate directly on graph-structured data, making them well suited to molecular representations in catalysis research. Unlike traditional ANNs, GNNs employ message-passing mechanisms in which the nodes of a graph update their representations by aggregating information from their neighbors. This architecture naturally captures molecular topology, bonding patterns, and spatial relationships, all critical factors influencing catalytic behavior. GNNs thus extend the neural network principles outlined above [39] [38] to the structured data representations most relevant to molecular catalysis.
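A single round of message passing can be illustrated without any deep learning library. The sketch below assumes one scalar feature per atom and a fixed mean-aggregation rule; real GNNs use feature vectors and learned weight matrices, and the three-atom fragment and function name here are purely hypothetical.

```python
def message_passing_step(node_features, edges):
    """One round of mean-aggregation message passing on an undirected graph.

    Each node's new feature is the average of its own feature and the
    mean of its neighbours' features (no learned weights in this sketch).
    """
    neighbours = {node: [] for node in node_features}
    for a, b in edges:
        neighbours[a].append(b)
        neighbours[b].append(a)

    updated = {}
    for node, feature in node_features.items():
        if neighbours[node]:
            incoming = [node_features[n] for n in neighbours[node]]
            message = sum(incoming) / len(incoming)
        else:
            message = feature  # an isolated node keeps its own value
        updated[node] = 0.5 * (feature + message)
    return updated


# Hypothetical P-Pd-Cl fragment with one scalar feature per atom
features = {"P": 2.0, "Pd": 4.0, "Cl": 6.0}
bonds = [("P", "Pd"), ("Pd", "Cl")]
after_one_step = message_passing_step(features, bonds)
print(after_one_step)
```

After one step the terminal atoms have moved toward the value of the metal center they are bonded to. Repeating the step lets information travel further along the graph, which is how GNNs pick up effects beyond the immediate bonding environment.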

Experimental Protocols and Implementation

Data Preparation and Preprocessing

Effective implementation of ML algorithms in catalysis research requires meticulous data preparation. The foundation of any successful ML model begins with comprehensive database preparation and appropriate variable selection [38]. The database must be sufficiently large to avoid over-fitting, with dependent variables covering a wide range to ensure robust predictive capability beyond narrow local regions [38]. For catalytic applications, dependent variables typically represent properties that are challenging to measure experimentally or compute theoretically, while independent variables should be easily accessible parameters with potential relationships to the target properties.

For ANN implementations specifically, data preprocessing often includes normalization, handling of missing values, and feature scaling to optimize training performance [38]. For Random Forest and SVM applications, preprocessing requirements are generally less extensive, though removal of near-zero variance descriptors may be necessary to improve model performance [41]. A critical preprocessing function for Random Forest involves eliminating features with minimal variation, as implemented in the following protocol:
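A minimal sketch of such a filter, assuming the descriptors are held in a pandas DataFrame. The function name `remove_low_variance_features` and the example descriptor table are illustrative and are not taken from [41].

```python
import pandas as pd


def remove_low_variance_features(df, threshold=0.05):
    """Return a copy of df without columns whose variance is below threshold."""
    low_variance = []
    for column in df.columns:
        # Series.var() is the sample variance (ddof=1), the pandas default
        if df[column].var() < threshold:
            low_variance.append(column)
    return df.drop(columns=low_variance)


# Hypothetical descriptor table for four catalysts
descriptors = pd.DataFrame({
    "cone_angle": [145.0, 160.0, 132.0, 170.0],
    "metal_charge": [0.50, 0.51, 0.50, 0.49],  # almost constant
    "homo_energy": [-5.2, -6.1, -4.8, -5.9],
})
filtered = remove_low_variance_features(descriptors)
print(list(filtered.columns))
```

Because variance depends on the units of each descriptor, a fixed threshold is only meaningful when the features are on comparable scales.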

This function iterates through dataframe columns, identifying and removing features with variance below a specified threshold (default: 0.05), thereby improving model robustness and computational efficiency [41].

Model Training and Optimization

The training process for ML models in catalysis follows distinct algorithmic approaches tailored to each method. For Neural Networks, training involves adjusting internal parameters (weights and biases) through optimization algorithms like gradient descent and backpropagation [39] [38]. The training objective minimizes the difference between predicted and actual outputs through iterative weight adjustments based on error calculations.

For Random Forest implementations, the training protocol involves constructing multiple decision trees using random subsets of both samples and features [41]. The following code illustrates a standard implementation for catalysis applications:
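A minimal sketch of this workflow with scikit-learn, using synthetic data in place of real catalyst descriptors. The data-generating function, the 80/20 split, and `n_estimators=200` are assumptions made for illustration, not settings reported in [41].

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a descriptor matrix (200 catalysts x 5 descriptors)
rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 5))
# Hypothetical target: depends on descriptors 0 and 1 only, plus small noise
y = 3.0 * X[:, 0] + X[:, 1] ** 2 + rng.normal(0.0, 0.05, size=200)

# 1. Data splitting
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# 2. Model initialization
model = RandomForestRegressor(n_estimators=200, random_state=0)

# 3. Training
model.fit(X_train, y_train)

# 4. Evaluation on held-out data with several metrics
y_pred = model.predict(X_test)
r2 = r2_score(y_test, y_pred)
mae = mean_absolute_error(y_test, y_pred)
rmse = float(np.sqrt(mean_squared_error(y_test, y_pred)))
print(f"R2 = {r2:.3f}, MAE = {mae:.3f}, RMSE = {rmse:.3f}")
```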

This protocol highlights the standard workflow of data splitting, model initialization, training, and comprehensive performance evaluation using multiple metrics [41].

For ANN development, structural optimization is critical. Researchers must systematically vary the number of hidden layers and neurons, comparing the average root mean square error (RMSE) on the testing sets during cross-validation to identify the optimal configuration [38]. The RMSE is calculated as:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(P_i - A_i\right)^2}$$

where $P_i$ is the predicted value, $A_i$ is the actual value, and $n$ is the total number of samples [38]. This metric provides a standardized assessment of model accuracy across different architectural configurations.
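As a worked example of the RMSE defined above, the following plain-Python sketch computes it for four hypothetical predictions whose errors are all +1 or −1.

```python
import math


def rmse(predicted, actual):
    """Root mean square error between predicted (P_i) and actual (A_i) values."""
    if len(predicted) != len(actual) or len(predicted) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical values: every prediction is off by exactly one unit
predicted = [2.0, 4.0, 6.0, 8.0]
actual = [1.0, 5.0, 5.0, 9.0]
error = rmse(predicted, actual)
print(error)
```

Each squared error is 1, so the mean is 1 and the RMSE is 1.0. Because the errors are squared before averaging, a single large miss raises the RMSE more than several small ones.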

Model Validation and Interpretation

Robust validation is essential for reliable ML models in catalysis research. The testing process must utilize data groups not involved in training to properly validate model generalizability [38]. For smaller datasets common in catalysis studies, cross-validation techniques are particularly important to ensure reliable performance estimation [38] [41]. For larger databases, sensitivity analysis may replace cross-validation to reduce computational demands [38].
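For small datasets, k-fold cross-validation can be sketched with scikit-learn as follows. The synthetic data, the choice of five folds, and the Random Forest settings are illustrative assumptions rather than recommendations from [38] or [41].

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score

# Synthetic stand-in for a small catalysis dataset (60 samples x 4 descriptors)
rng = np.random.default_rng(1)
X = rng.uniform(-1.0, 1.0, size=(60, 4))
y = 2.0 * X[:, 0] - X[:, 2] + rng.normal(0.0, 0.05, size=60)

# Each sample is used for testing exactly once across the five folds
cv = KFold(n_splits=5, shuffle=True, random_state=1)
model = RandomForestRegressor(n_estimators=100, random_state=1)

# scikit-learn reports errors as negative scores so that higher is better
neg_rmse = cross_val_score(
    model, X, y, cv=cv, scoring="neg_root_mean_squared_error"
)
fold_rmse = -neg_rmse
print("RMSE per fold:", np.round(fold_rmse, 3))
print(f"mean RMSE: {fold_rmse.mean():.3f} +/- {fold_rmse.std():.3f}")
```

The spread across folds indicates how sensitive the estimate is to which samples are held out, which matters most when only a few dozen data points are available.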

Visualization of results provides critical insights into model performance. The following function generates comprehensive prediction plots:
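A minimal sketch of such a plotting function with Matplotlib. The name `plot_predictions`, its signature, and the synthetic data are assumptions for illustration and are not the implementation from [41].

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so no display is required
import matplotlib.pyplot as plt
import numpy as np


def plot_predictions(y_train, pred_train, y_test, pred_test,
                     y_val=None, pred_val=None):
    """Parity plot of predicted versus actual values for each data split."""
    fig, ax = plt.subplots(figsize=(5, 5))
    ax.scatter(y_train, pred_train, color="blue", label="Training")
    ax.scatter(y_test, pred_test, color="red", label="Testing")
    series = [y_train, pred_train, y_test, pred_test]
    if y_val is not None and pred_val is not None:
        ax.scatter(y_val, pred_val, color="green", label="Validation")
        series += [y_val, pred_val]

    # Ideal fit: the diagonal on which predicted equals actual
    values = np.concatenate([np.ravel(s) for s in series])
    low, high = float(values.min()), float(values.max())
    ax.plot([low, high], [low, high], color="black", label="Ideal fit")

    ax.set_xlabel("Actual value")
    ax.set_ylabel("Predicted value")
    ax.legend()
    return fig, ax


# Synthetic example: predictions are the actual values plus small noise
rng = np.random.default_rng(3)
actual_train = rng.uniform(0.0, 10.0, size=40)
actual_test = rng.uniform(0.0, 10.0, size=10)
fig, ax = plot_predictions(
    actual_train, actual_train + rng.normal(0.0, 0.3, size=40),
    actual_test, actual_test + rng.normal(0.0, 0.5, size=10),
)
```

The returned figure can be written to disk with `fig.savefig(...)`. Points far from the diagonal in the testing set, but not in the training set, are a typical sign of overfitting.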

This visualization compares predicted versus actual values for training (blue), testing (red), and optional validation (green) datasets, with the ideal fit represented by the black line [41].

For Random Forest models, feature importance analysis provides critical mechanistic insights:
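A minimal sketch using the impurity-based importances that scikit-learn exposes on a fitted forest. The descriptor names and the synthetic target, which is dominated by the first descriptor, are hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Hypothetical descriptor names; the target is built so that the first
# descriptor matters most and the last two not at all.
feature_names = ["d_electron_count", "cone_angle", "bite_angle", "noise_descriptor"]
rng = np.random.default_rng(2)
X = rng.uniform(-1.0, 1.0, size=(300, 4))
y = 5.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(0.0, 0.05, size=300)

model = RandomForestRegressor(n_estimators=200, random_state=2).fit(X, y)

# Impurity-based importances: non-negative and normalized to sum to 1
importances = model.feature_importances_
ranking = sorted(
    zip(feature_names, importances), key=lambda pair: pair[1], reverse=True
)
for name, score in ranking:
    print(f"{name:>18s}: {score:.3f}")
```

Impurity-based importances are computed on the training data and can overstate correlated or high-cardinality features, so a check with `sklearn.inspection.permutation_importance` on held-out data is a common complement.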

This analysis identifies which molecular descriptors most significantly influence catalytic properties, guiding fundamental understanding and catalyst design strategies [41].

Workflow Visualization

Figure: ML Workflow for Catalysis Research

This workflow delineates the systematic process for implementing machine learning in catalysis research, beginning with problem definition and progressing through data collection, preprocessing, algorithm selection, model training, validation, and final application to catalyst design. The decision node for model selection highlights key criteria for choosing between Random Forest (small/medium datasets with interpretability requirements), SVM (problems with clear margins and non-linear relationships), ANN (large datasets with complex patterns), and GNN (molecular structures and graph data) [39] [38] [40].

Research Reagent Solutions and Computational Materials

Table 2: Essential Research Tools for ML in Catalysis

| Resource Category | Specific Tools/Platforms | Application in Catalysis Research |
|---|---|---|
| Programming Frameworks | Python, scikit-learn [41] | Model implementation, data preprocessing, and analysis |
| Neural Network Libraries | TensorFlow, PyTorch [38] | Development and training of ANN and GNN architectures |
| Quantum Chemistry Software | Density Functional Theory (DFT) codes [41] | Generation of training data and descriptor calculation |
| Cheminformatics Tools | RDKit, Open Babel | Molecular featurization and descriptor generation |
| Data Management | Pandas, NumPy [41] | Data storage, manipulation, and processing |
| Visualization Libraries | Matplotlib, Plotly [41] | Results plotting and model interpretation |
| High-Throughput Experimentation | Automated reactors, robotic systems [36] | Experimental data generation for model training |

Application Notes for Homogeneous Catalysis

Case Study: ANN for Catalytic Activity Prediction