Mitigating Dataset Bias in Molecular Property Prediction: Strategies for Robust AI in Drug Discovery

Abstract

Dataset bias presents a critical challenge in molecular property prediction, undermining the reliability of AI models in drug discovery and materials science. This article provides a comprehensive guide for researchers and development professionals on identifying, mitigating, and validating solutions for biased training data. Drawing from the latest research, we explore foundational concepts of experimental and selection biases, advanced mitigation techniques including multi-task learning and causal inference, practical troubleshooting for common pitfalls like negative transfer and over-specialization, and rigorous validation frameworks for comparative analysis. By addressing these interconnected aspects, we equip practitioners with the knowledge to build more accurate, generalizable, and trustworthy predictive models that accelerate biomedical innovation.

Understanding the Roots and Impact of Data Bias in Molecular Datasets

Defining Data Bias in Molecular Sciences

What is data bias in the context of molecular property prediction?

Data bias occurs when a dataset used to train machine learning models is incomplete or inaccurate and therefore fails to represent the true distribution of the broader population of interest, which here is chemical space [1] [2]. For molecular sciences, this means that the dataset does not uniformly cover the known universe of biologically relevant small molecules, which can severely limit the predictive power and generalizability of models trained on it [3].

What are the primary categories of data bias affecting molecular research?

Bias can be introduced at various stages of research, from data generation to model application. The table below summarizes the key types relevant to molecular property prediction.

Table 1: Common Types of Data Bias in Molecular Property Prediction

| Bias Type | Definition | Molecular Research Example |

|---|---|---|

| Historical Bias | Data reflects past inequalities or measurement priorities rather than current reality [1] [2]. | Training a toxicity predictor only on drugs that passed clinical trials, ignoring those that failed early due to toxicity [4]. |

| Selection Bias | The dataset is not a representative sample of the target population due to non-random selection [1]. | A dataset like QM9 is biased toward small molecules containing only C, H, N, O, and F, excluding other elements [4]. |

| Coverage Bias | The data does not uniformly cover the relevant structural or property space [3]. | Many public datasets lack uniform coverage of known biomolecular structures, creating "blind spots" for models [3]. |

| Reporting Bias | The frequency of events in the dataset does not match their real-world frequency [2]. | Scientific literature and databases like ChEMBL over-report successful experiments and bioactive compounds, under-reporting negative results [4]. |

Troubleshooting Guides: Identifying Bias in Your Dataset

How can I detect coverage bias in my molecular dataset?

A key method for identifying coverage bias involves assessing the structural diversity of your dataset against a proxy for the "universe of small molecules of biological interest" [3].

- Experimental Objective: To determine if your training set is a structurally representative subset of the broader chemical space of interest.

- Mechanism: Compare the distribution of your dataset against a large, aggregated set of biomolecular structures (e.g., a union of 14 public databases containing over 700,000 structures) using a chemically intuitive distance metric [3].

- Procedure:

- Compute Structural Distances: For molecular pairs, compute the distance using the Maximum Common Edge Subgraph (MCES). This method aligns well with chemical similarity but is computationally hard. An efficient approximation is the myopic MCES (mMCES) distance, which uses fast lower bounds and integer linear programming for close molecules [3].

- Visualize with Dimensionality Reduction: Use Uniform Manifold Approximation and Projection (UMAP) to create a 2D map of the reference "universe" of biomolecular structures. Your dataset can then be projected onto this map to visually identify gaps or over-represented clusters [3].

- Analyze Compound Classes: Color-code the UMAP embedding by compound classes (e.g., using ClassyFire). A biased dataset will show an uneven distribution of these classes compared to the reference set [3].

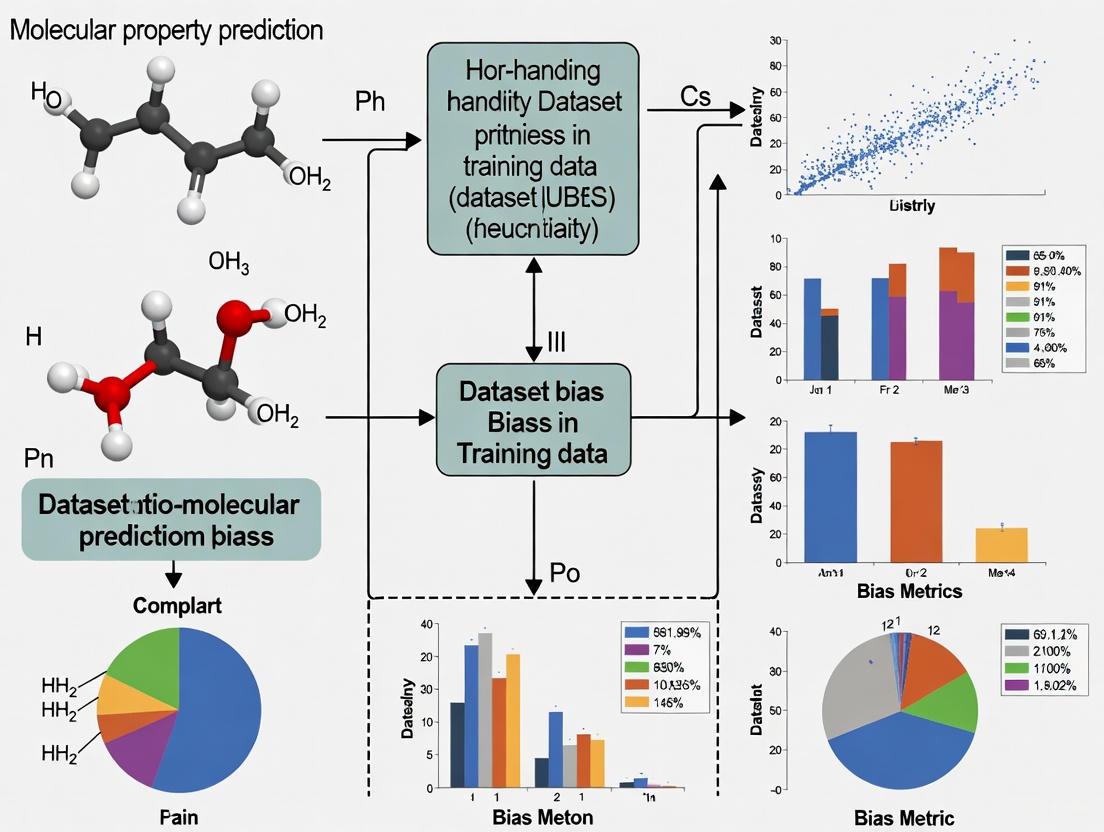

The following diagram illustrates this experimental workflow for detecting coverage bias:

How can I check if my model is being used outside its Applicability Domain (AD)?

The Applicability Domain is the chemical space where a model's predictions are reliable [4]. A molecule is outside the AD if it is structurally too different from the training data.

- Experimental Objective: Establish the boundaries of a model's Applicability Domain to flag predictions with low confidence.

- Mechanism: Define a similarity threshold based on the training data. Any new molecule falling below this threshold is considered outside the AD.

- Procedure:

- Characterize Training Data: Calculate the structural descriptors (e.g., mMCES distances, molecular fingerprints) for all molecules in your training set.

- Define a Threshold: A common approach is to treat molecules that lie within a chosen distance of the training-data mean as inside the AD [4].

- Evaluate New Molecules: For any new molecule, compute its distance to the training set mean or its nearest neighbor in the training set. If the distance exceeds your threshold, the prediction should be treated as unreliable.
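
The nearest-neighbor variant of the procedure above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: fingerprints are represented as sets of on-bit indices, Tanimoto distance stands in for the mMCES distance, and the toy fingerprints and the 0.6 threshold are hypothetical values that would need to be calibrated on real data.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def nearest_neighbor_distance(query: frozenset, training: list) -> float:
    """Distance (1 - Tanimoto) from the query to its closest training molecule."""
    return min(1.0 - tanimoto(query, fp) for fp in training)


def in_applicability_domain(query: frozenset, training: list, threshold: float = 0.6) -> bool:
    """Flag a query as inside the AD if its nearest training neighbor is close enough.

    The threshold is an assumption for illustration only.
    """
    return nearest_neighbor_distance(query, training) <= threshold


# Hypothetical toy fingerprints (sets of on-bit indices).
training_fps = [frozenset({1, 2, 3, 4}), frozenset({2, 3, 4, 5}), frozenset({10, 11, 12})]
similar_query = frozenset({1, 2, 3, 5})   # shares most bits with the training set
novel_query = frozenset({20, 21, 22})     # shares no bits with any training molecule
```

Predictions for queries that fail this check would be treated as unreliable, as described in the last step of the procedure.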

Mitigation Strategies and Protocols

What are the standard techniques to mitigate data bias?

Bias mitigation strategies can be applied at different stages of the machine learning pipeline. The table below classifies these methods.

Table 2: Bias Mitigation Strategies for Molecular Property Prediction

| Stage | Strategy | Application in Molecular Research |

|---|---|---|

| Pre-processing | Adjusting the dataset before model training to remove bias [5]. | Sampling: Use techniques like SMOTE to oversample underrepresented molecular scaffolds or undersample overrepresented ones [6]. Reweighing: Assign higher weights to samples from underrepresented compound classes during training [6] [5]. |

| In-processing | Modifying the learning algorithm itself to increase fairness [5]. | Adversarial Debiasing: Train a model to predict a property while making it impossible for a subsidiary model to predict a protected attribute (e.g., a specific scaffold class) from the features [5]. Adaptive Checkpointing with Specialization (ACS): In Multi-Task Learning, save model parameters best suited for each task to prevent "negative transfer" from imbalanced data [7]. |

| Post-processing | Adjusting model outputs after training [5]. | Reject Option Classification: For low-confidence predictions on out-of-domain molecules, reject the prediction or flag it for expert review [5]. |
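
As a rough illustration of the reweighing idea in Table 2, the sketch below assigns each sample a weight inversely proportional to the frequency of its compound class. This is simple inverse-frequency weighting, used here as a stand-in; it is not the specific reweighing algorithm of any toolkit, and the class labels are hypothetical.

```python
from collections import Counter


def inverse_frequency_weights(class_labels: list) -> list:
    """Return one weight per sample so that every class contributes equally in total.

    weight = n_samples / (n_classes * class_count)
    """
    counts = Counter(class_labels)
    n_samples = len(class_labels)
    n_classes = len(counts)
    return [n_samples / (n_classes * counts[label]) for label in class_labels]


# Hypothetical compound classes: one lipid among three flavonoids.
compound_classes = ["lipid", "flavonoid", "flavonoid", "flavonoid"]
weights = inverse_frequency_weights(compound_classes)
```

The resulting weights would typically be passed to the loss function or to a sampler during training; the weights sum to the number of samples, so the overall loss scale is unchanged.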

Experimental Protocol: Multi-task Learning with Adaptive Checkpointing (ACS) for Imbalanced Data

Multi-task learning (MTL) can help in low-data regimes but suffers from Negative Transfer (NT) when tasks are imbalanced. ACS mitigates this [7].

- Objective: To train a robust multi-task Graph Neural Network (GNN) that shares knowledge between related property prediction tasks without performance degradation on tasks with scarce data.

- Materials:

- Architecture: A shared GNN backbone with task-specific Multi-Layer Perceptron (MLP) heads.

- Dataset: A multi-task dataset with severe label imbalance (e.g., ClinTox, SIDER, Tox21).

- Procedure:

- Train Shared Backbone: Train the entire model (shared GNN + all task-specific heads) on all available tasks.

- Monitor Validation Loss: For each task, independently monitor its validation loss throughout the training process.

- Checkpoint Specialized Models: Whenever the validation loss for a specific task reaches a new minimum, save (checkpoint) the combination of the shared backbone and that task's specific head.

- Deploy Specialized Models: After training, use the checkpointed backbone-head pair for each task, which represents the model state that was optimal for that specific task, free from interference from other tasks.
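
The checkpointing logic in the procedure above can be sketched independently of any deep learning framework. In this minimal sketch the model states and validation losses are hypothetical placeholders supplied as plain Python data; in practice they would come from a GNN training loop, which is not shown.

```python
import copy


def run_acs_checkpointing(epoch_states: list, val_losses_per_epoch: list) -> dict:
    """Keep, for each task, the backbone-head pair from the epoch with its lowest validation loss.

    epoch_states: one dict per epoch, {"backbone": ..., "heads": {task: ...}}
    val_losses_per_epoch: one dict per epoch, {task: validation_loss}
    """
    best_loss = {}
    checkpoints = {}
    for epoch, (state, losses) in enumerate(zip(epoch_states, val_losses_per_epoch)):
        for task, loss in losses.items():
            if loss < best_loss.get(task, float("inf")):
                best_loss[task] = loss
                # Deep-copy so later training updates cannot alter the saved state.
                checkpoints[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(state["backbone"]),
                    "head": copy.deepcopy(state["heads"][task]),
                }
    return checkpoints


# Hypothetical three-epoch run with two tasks; "version" stands in for real weights.
epoch_states = [
    {"backbone": {"version": e}, "heads": {"tox": {"version": e}, "sol": {"version": e}}}
    for e in range(3)
]
val_losses = [
    {"tox": 0.9, "sol": 0.8},
    {"tox": 0.5, "sol": 0.6},
    {"tox": 0.7, "sol": 0.4},  # "tox" has started to degrade while "sol" still improves
]
checkpoints = run_acs_checkpointing(epoch_states, val_losses)
```

In this toy run, the "tox" task keeps the epoch-1 backbone while "sol" keeps the epoch-2 backbone, which is the per-task specialization the procedure describes.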

The workflow for ACS is detailed in the following diagram:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Bias Analysis and Mitigation

| Tool / Resource | Function | Relevance to Bias |

|---|---|---|

| Maximum Common Edge Subgraph (MCES) | A distance measure for quantifying molecular structural similarity [3]. | Core to assessing coverage bias by providing a chemically intuitive measure of how similar or dissimilar two molecules are. |

| UMAP (Uniform Manifold Approximation and Projection) | A dimensionality reduction technique for visualizing high-dimensional data [3]. | Creates 2D "maps" of chemical space, allowing visual identification of gaps and clusters in data distribution. |

| ClassyFire | A web tool for automated chemical classification [3]. | Enables the analysis of data distribution by compound class (e.g., lipids, flavonoids) to identify underrepresentation. |

| AI Fairness 360 (AIF360) | An open-source toolkit containing metrics and algorithms for bias detection and mitigation [2]. | Provides standardized fairness metrics and in-processing/post-processing algorithms to debias models. |

| Graph Neural Network (GNN) | A type of neural network that operates directly on graph structures, such as molecular graphs [7]. | The primary architecture for modern molecular property prediction, capable of being adapted with methods like ACS for bias mitigation. |

| Scaffold Split | A method for splitting data where molecules sharing a common Bemis-Murcko scaffold are kept in the same partition [7]. | Used to create a challenging train/test split that assesses a model's ability to extrapolate to novel molecular structures, revealing generalization bias. |
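
The scaffold split listed in Table 3 can be sketched as a group-wise partition. This is a minimal sketch, assuming the Bemis-Murcko scaffold of each molecule has already been computed (for example with a cheminformatics toolkit such as RDKit) and is supplied as a string; the greedy largest-group-first assignment and the scaffold strings below are illustrative choices, not the only way to implement the split.

```python
from collections import defaultdict


def scaffold_split(scaffolds: list, train_fraction: float = 0.8) -> tuple:
    """Split molecule indices so that no scaffold appears in both partitions."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest scaffold groups first; ties broken by scaffold string for determinism.
    ordered = sorted(groups.items(), key=lambda item: (-len(item[1]), item[0]))

    train_cutoff = train_fraction * len(scaffolds)
    train, test = [], []
    for _, members in ordered:
        if len(train) + len(members) <= train_cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical precomputed scaffolds for ten molecules.
scaffolds = ["c1ccccc1"] * 4 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2 + ["c1ccoc1"]
train_idx, test_idx = scaffold_split(scaffolds, train_fraction=0.8)
```

Because whole scaffold groups are assigned to one side, the test set contains only chemotypes the model has not seen during training.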

Frequently Asked Questions (FAQs)

Q: Why can't I trust a model that performs well on a random train/test split? A: A random split can artificially inflate performance estimates. It often places molecules with very similar scaffolds in both training and test sets, so the model is not truly tested on novel chemistries. Using a scaffold split is a more rigorous evaluation that better simulates real-world performance on new compound classes [3] [4].

Q: My dataset is large (thousands of molecules). Can it still be biased? A: Absolutely. Bias is not solely about size but about representation. A dataset with many thousands of molecules is still biased if it over-represents certain structural classes (like drug-like molecules) and under-represents others (like certain natural products or lipids) [3]. Large datasets are often assembled based on commercial availability or synthetic feasibility, which systematically excludes rare or difficult-to-synthesize compounds [3].

Q: What is the simplest first step to check for dataset bias? A: Perform a visual check. Use UMAP or t-SNE to project your dataset into a 2D space alongside a large, diverse reference set of biomolecules (like the union of multiple public databases). If your dataset occupies only a small, clustered region of the broader reference map, you have strong evidence of coverage bias [3].

Q: How does data bias lead to a "reproducibility crisis" in scientific machine learning? A: Models trained on biased data learn the biases, not the underlying physical principles. A model might appear accurate on its test set but will fail when applied to a different part of chemical space or real-world experimental settings. This leads to published models that cannot be reproduced or generalized, wasting research resources and undermining trust in data-driven approaches [3].

Troubleshooting Guides

Guide 1: Diagnosing Data Distribution Misalignments

Problem: Machine learning model performance is degraded after integrating multiple public ADME datasets.

Explanation: Inconsistent experimental protocols, chemical space coverage, and measurement conditions between data sources create distributional shifts. Naive data aggregation introduces noise rather than improving predictive power [8].

Steps to Diagnose:

- Compare Property Distributions: For each dataset, plot the distribution of the key ADME property (e.g., half-life, clearance). Use statistical tests like the two-sample Kolmogorov-Smirnov (KS) test to quantify differences [8].

- Analyze Chemical Space: Generate molecular fingerprints (e.g., ECFP4) for all compounds. Use dimensionality reduction (UMAP) to project molecules into a 2D space colored by data source to visually identify coverage gaps or clusters [8].

- Check for Annotation Conflicts: Identify molecules present in multiple datasets. For these duplicates, plot the numerical differences in their property annotations. Significant conflicts indicate underlying inconsistencies [8].
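
To make the first diagnostic step concrete, the two-sample KS statistic is the largest gap between the empirical cumulative distribution functions of the two samples. The sketch below computes only the statistic in pure Python; for a p-value, a statistics library such as SciPy (`scipy.stats.ks_2samp`) would normally be used. The endpoint values are hypothetical.

```python
from bisect import bisect_right


def ks_statistic(sample_a: list, sample_b: list) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: max |ECDF_a(x) - ECDF_b(x)|."""
    a, b = sorted(sample_a), sorted(sample_b)
    largest_gap = 0.0
    for value in sorted(set(a) | set(b)):
        # Fraction of each sample that is <= value.
        cdf_a = bisect_right(a, value) / len(a)
        cdf_b = bisect_right(b, value) / len(b)
        largest_gap = max(largest_gap, abs(cdf_a - cdf_b))
    return largest_gap


# Hypothetical log half-life values from two data sources.
source_a = [0.1, 0.2, 0.3, 0.4]
source_b = [0.3, 0.4, 0.5, 0.6]
d_stat = ks_statistic(source_a, source_b)
```

A statistic near 0 suggests similar endpoint distributions, while a value near 1 indicates that the two sources barely overlap.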

Resolution:

- Use tools like AssayInspector to automate this diagnostic process and generate alerts for dissimilar, conflicting, or redundant datasets [8].

- If misalignments are severe, consider building separate models for different data sources or applying robust integration techniques like federated learning instead of simple aggregation [8] [9].

Guide 2: Addressing Task Imbalance in Multi-Task Learning

Problem: A multi-task model for predicting related molecular properties performs poorly on tasks with limited data.

Explanation: Severe task imbalance exacerbates "negative transfer," where updates from data-rich tasks degrade performance on data-poor tasks [7].

Steps to Diagnose:

- Quantify Imbalance: Calculate the number of labeled data points for each task. The imbalance for a task \(i\) can be defined as \( I_i = 1 - \frac{L_i}{\max_j L_j} \), where \( L_i \) is the label count of task \(i\) [7].

- Monitor Task-Specific Performance: During training, track validation loss for each task individually. Observe if the loss for low-data tasks fails to improve or diverges.
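
The imbalance definition above is straightforward to compute from per-task label counts. In this short sketch the task names and counts are hypothetical; a value of 0 marks the most data-rich task and values close to 1 mark tasks with very few labels.

```python
def task_imbalance(label_counts: dict) -> dict:
    """Imbalance per task: 1 - L_i / max_j L_j, where L_i is the label count of task i."""
    largest = max(label_counts.values())
    return {task: 1 - count / largest for task, count in label_counts.items()}


# Hypothetical label counts for three related tasks.
label_counts = {"task_a": 1000, "task_b": 250, "task_c": 29}
imbalance = task_imbalance(label_counts)
```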

Resolution:

- Implement the Adaptive Checkpointing with Specialization (ACS) training scheme [7].

- Use a shared graph neural network (GNN) backbone with task-specific multi-layer perceptron (MLP) heads.

- During training, independently checkpoint the best model parameters (both backbone and head) for each task whenever its validation loss hits a new minimum.

- This allows each task to specialize, mitigating interference from other tasks [7].

Frequently Asked Questions (FAQs)

Q1: What are the most common sources of bias in public ADME data? The most prevalent biases stem from batch effects and annotation inconsistencies [8] [9]. Batch effects arise from differences in experimental protocols, reagents, and measurement conditions across labs [9]. Annotation inconsistencies occur when the same property is defined or measured differently between gold-standard literature sources and large-scale public benchmarks like TDC (Therapeutic Data Commons) [8]. Furthermore, publication bias towards positive results means public data often lacks information on failed compounds, creating a skewed view of chemical space [9].

Q2: How can I assess the consistency of multiple datasets before merging them? A systematic Data Consistency Assessment (DCA) is required prior to modeling. This involves [8]:

- Statistical Comparison: Using descriptive statistics (mean, standard deviation, quartiles) and statistical tests (KS-test for regression, Chi-square for classification) on the endpoint distributions.

- Chemical Space Analysis: Evaluating molecular similarity within and between datasets using Tanimoto coefficients on fingerprints or Euclidean distance on descriptors.

- Overlap and Conflict Analysis: Identifying shared molecules across datasets and quantifying differences in their property annotations.

Tools like AssayInspector are designed to automate this multi-faceted analysis [8].
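
The overlap and conflict analysis can also be done by hand for a quick first look. The sketch below is a simplified illustration and does not reproduce AssayInspector's behavior: it assumes each dataset is a mapping from a canonical molecule identifier (for example a canonical SMILES or InChIKey) to an endpoint value, and the 0.3 tolerance and all values are hypothetical.

```python
def find_annotation_conflicts(dataset_a: dict, dataset_b: dict, tolerance: float = 0.3) -> tuple:
    """Return shared molecule keys and those whose annotations differ by more than tolerance.

    Keys are assumed to be canonicalized identifiers so that identical
    molecules match exactly across datasets.
    """
    shared = sorted(set(dataset_a) & set(dataset_b))
    conflicts = []
    for key in shared:
        difference = dataset_a[key] - dataset_b[key]
        if abs(difference) > tolerance:
            conflicts.append((key, difference))
    return shared, conflicts


# Hypothetical endpoint annotations (e.g., log half-life) from two sources.
dataset_a = {"CCO": 0.50, "c1ccccc1": 1.20, "CC(=O)O": 0.10}
dataset_b = {"CCO": 0.55, "c1ccccc1": 0.40, "CCN": 0.90}
shared, conflicts = find_annotation_conflicts(dataset_a, dataset_b)
```

A high fraction of conflicting shared molecules is a warning sign that the two sources should not be merged without further curation.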

Q3: We have very little labeled data for our target ADME property. What modeling strategies can help? In such ultra-low data regimes, consider these approaches:

- Multi-Task Learning (MTL): Leverage correlations with other, more data-rich, molecular properties to improve generalization [7].

- Adaptive Checkpointing with Specialization (ACS): A specific MTL method that combats negative transfer by saving specialized model checkpoints for each task, proven to work with as few as 29 labeled samples [7].

- Refined Property Profiles: Use pre-trained models built on specific therapeutic classes (e.g., from ATC classification) that may be more relevant to your chemical series than general models, potentially improving prediction accuracy [10].

Q4: How does bias in ADME data specifically impact drug discovery projects? Biased data leads to inaccurate predictive models, which in turn misguides lead optimization. This can cause expensive late-stage failures when ADME liabilities (e.g., rapid clearance, toxicity) are discovered only in preclinical or clinical stages [9] [10]. For instance, a model trained on public data with publication bias might repeatedly suggest molecules with primary amines for an antibiotic project, despite internal data showing this strategy is ineffective [9].

Data Presentation: Analysis of Public Half-Life Datasets

The table below summarizes key statistics from an analysis of five public half-life datasets, revealing significant distributional differences that can introduce bias if naively aggregated [8].

Table 1: Descriptive Statistics of Public Human Intravenous Half-Life Datasets

| Dataset Source | Number of Molecules | Endpoint Mean (logHL) | Endpoint Std Dev | Primary Source | Notable Characteristics |

|---|---|---|---|---|---|

| Obach et al. [8] | 670 | Not Specified | Not Specified | Literature | Used as a benchmark in TDC [8]. |

| Lombardo et al. [8] | 1,352 | Not Specified | Not Specified | Literature | A widely used reference dataset [8]. |

| Fan et al. (2024) [8] | 3,512 | Not Specified | Not Specified | ChEMBL | Gold-standard source used by platforms like ADMETlab 3.0 [8]. |

| DDPD 1.0 [8] | Not Specified | Not Specified | Not Specified | Public Database | Contains experimental PK data for small molecules [8]. |

| e-Drug3D [8] | Not Specified | Not Specified | Not Specified | Public Database | Contains experimental PK data for small molecules [8]. |

Note: The original study found "significant misalignments" and "inconsistent property annotations" between these sources, but the per-source statistical values are not reproduced here. A full analysis would populate the mean, standard deviation, and quartiles for each source [8].

Experimental Protocols

Protocol 1: Systematic Data Consistency Assessment (DCA) with AssayInspector

This protocol outlines the use of the AssayInspector package for a pre-modeling data consistency check [8].

Objective: To identify outliers, batch effects, and distributional discrepancies across multiple molecular property datasets before integration.

Materials:

- Input Data: Two or more datasets containing molecular structures (as SMILES strings) and a property endpoint (regression or classification).

- Software: The AssayInspector Python package (https://github.com/chemotargets/assay_inspector) [8].

- Computational Environment: Python environment with dependencies (RDKit, SciPy, NumPy, Plotly/Matplotlib).

Methodology:

- Data Input and Feature Calculation: Load your datasets. AssayInspector can automatically calculate chemical features (e.g., ECFP4 fingerprints, 1D/2D RDKit descriptors) if not precomputed [8].

- Descriptive Statistics Generation: Run the tool to generate a summary report containing:

- Number of molecules, endpoint mean, standard deviation, min/max, and quartiles for each dataset.

- For regression, it calculates skewness, kurtosis, and identifies outliers [8].

- Statistical Testing: The tool performs pairwise statistical tests between datasets:

- Two-sample KS-test for regression endpoints.

- Chi-square test for classification endpoints [8].

- Visualization and Insight Report:

- Generate property distribution plots and chemical space visualizations via UMAP.

- Produce an insight report with alerts for conflicting annotations, divergent datasets, and significantly different endpoint distributions [8].

Expected Output: A comprehensive report with statistics, visualizations, and actionable alerts to guide data cleaning and informed integration decisions.
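
For classification endpoints, the chi-square comparison in the statistical testing step can be illustrated directly. The sketch below computes the Pearson chi-square statistic for class counts from two datasets; it is a stand-alone illustration, not AssayInspector's internal code, and the class counts are hypothetical. A library routine such as `scipy.stats.chi2_contingency` would normally be used to obtain the p-value.

```python
def chi_square_statistic(counts_a: dict, counts_b: dict) -> tuple:
    """Pearson chi-square statistic and degrees of freedom for a 2 x K table of class counts."""
    classes = sorted(set(counts_a) | set(counts_b))
    total_a = sum(counts_a.values())
    total_b = sum(counts_b.values())
    grand_total = total_a + total_b

    statistic = 0.0
    used_classes = 0
    for cls in classes:
        observed_a = counts_a.get(cls, 0)
        observed_b = counts_b.get(cls, 0)
        column_total = observed_a + observed_b
        if column_total == 0:
            continue  # a class absent from both datasets carries no information
        used_classes += 1
        for observed, row_total in ((observed_a, total_a), (observed_b, total_b)):
            expected = row_total * column_total / grand_total
            statistic += (observed - expected) ** 2 / expected
    return statistic, used_classes - 1


# Hypothetical active/inactive label counts from two sources.
counts_a = {"active": 30, "inactive": 70}
counts_b = {"active": 60, "inactive": 40}
chi2, dof = chi_square_statistic(counts_a, counts_b)
```

With one degree of freedom, a statistic above roughly 3.84 corresponds to p < 0.05, so the two hypothetical sources here would be flagged as having different class balances.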

Protocol 2: Training an ACS Model for Low-Data ADME Prediction

This protocol describes the ACS method to train a robust multi-task model in imbalanced, low-data settings [7].

Objective: To predict an ADME property with very few labels by leveraging related tasks, while mitigating negative transfer.

Materials:

- Data: A multi-task dataset where some tasks have abundant labels and your target task has very few (e.g., tens of samples).

- Model Architecture: A Graph Neural Network (GNN) backbone (e.g., Message Passing Neural Network) with task-specific Multi-Layer Perceptron (MLP) heads [7].

- Training Framework: PyTorch or TensorFlow, configured for checkpointing.

Methodology:

- Model Setup: Initialize one shared GNN backbone and one separate MLP head for each prediction task [7].

- Training Loop:

- For each batch, compute the loss for each task individually (using loss masking for missing labels).

- Update the shared backbone and the respective task heads via backpropagation.

- Adaptive Checkpointing:

- After each epoch, evaluate the model on the validation set for every task.

- For each task, if its validation loss is the lowest observed so far, checkpoint the entire model state (shared backbone + that task's specific head) [7].

- Specialization:

- After training concludes, the final model for each task is its individually checkpointed state, which represents the point where the shared backbone was most specialized for that task without interference from others [7].

Expected Output: A set of task-specialized models that demonstrate improved performance on low-data tasks compared to standard MTL or single-task learning.
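
The loss masking mentioned in the training loop means that a molecule contributes to a task's loss only if it carries a label for that task. Here is a framework-free sketch, assuming regression tasks with mean squared error and using `None` to mark a missing label; the task names and values are hypothetical.

```python
def masked_task_losses(predictions: list, labels: list) -> dict:
    """Mean squared error per task, skipping molecules whose label for that task is missing.

    predictions: one dict per molecule, {task: predicted_value}
    labels: one dict per molecule, {task: true_value or None}
    """
    squared_error_sums = {}
    label_counts = {}
    for predicted, observed in zip(predictions, labels):
        for task, true_value in observed.items():
            if true_value is None:
                continue  # masked: no label, so no contribution to this task's loss
            error = (predicted[task] - true_value) ** 2
            squared_error_sums[task] = squared_error_sums.get(task, 0.0) + error
            label_counts[task] = label_counts.get(task, 0) + 1
    return {task: squared_error_sums[task] / label_counts[task] for task in squared_error_sums}


# Hypothetical batch of two molecules; the first has no half-life label.
predictions = [{"clearance": 1.0, "half_life": 2.0}, {"clearance": 3.0, "half_life": 0.0}]
labels = [{"clearance": 2.0, "half_life": None}, {"clearance": 3.0, "half_life": 1.0}]
losses = masked_task_losses(predictions, labels)
```

In a real training loop the same masking would be applied to tensors before backpropagation, so that unlabeled entries produce no gradient.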

Data Consistency Assessment Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function/Brief Explanation | Example/Reference |

|---|---|---|

| AssayInspector | A Python package for systematic Data Consistency Assessment (DCA) prior to model training. It identifies outliers, batch effects, and annotation conflicts. | [8] |

| ACS Training Scheme | (Adaptive Checkpointing with Specialization) A training scheme for Multi-Task GNNs that mitigates negative transfer, ideal for low-data regimes. | [7] |

| RDKit | An open-source cheminformatics toolkit used to calculate molecular descriptors, fingerprints, and process SMILES strings. | [8] [10] |

| Therapeutic Data Commons (TDC) | A platform providing standardized benchmarks for molecular property prediction, including ADME datasets. Requires careful consistency checks. | [8] |

| Polaris | A benchmarking platform that provides guidelines and certification for high-quality, standardized datasets suitable for machine learning. | [9] |

| Federated Learning | A collaborative learning approach that trains models across multiple decentralized data sources (e.g., different pharma companies) without sharing the raw data. | [8] [9] |

Frequently Asked Questions

Q1: Why does my molecular property prediction model perform well in validation but fail in real-world drug discovery applications? This is a classic sign of dataset bias. The model may have been trained and validated on benchmark datasets like those from MoleculeNet, which can have limited relevance to real-world drug discovery projects. Furthermore, inconsistencies in how these datasets are split for validation can lead to overly optimistic performance metrics that do not hold up in practice [11].

Q2: What are the most common types of bias I should check for in my molecular dataset? The most prevalent biases in molecular data often originate from the data itself and the algorithms used. Key types to investigate include:

- Representation Bias: Occurs when your training data does not adequately cover the chemical space of interest [12].

- Selection Bias: Arises from varying search terms or data sources during data collection, leading to a non-representative sample of molecules [13].

- Confirmation Bias: Happens when researchers consciously or subconsciously select or weight data that confirms their pre-existing beliefs about a molecular pattern [12].

- Systemic Bias: Reflects historical inequalities or practices, such as an over-reliance on data from high-income regions, which can limit the model's applicability to global populations [12].

Q3: How can I detect if different public data sources have inconsistencies before I combine them? Use systematic data consistency assessment (DCA) tools like AssayInspector to identify distributional misalignments and annotation discrepancies between datasets. For example, significant misalignments have been found between gold-standard sources and popular benchmarks like the Therapeutic Data Commons (TDC) for ADME properties such as half-life. Naively integrating such data can introduce noise and degrade model performance [8].

Q4: My model is complex, but its predictions are unreliable. Is this a bias or variance issue? It could be both, as they are connected through the bias-variance tradeoff. A complex model might have low bias (accurately capturing patterns in the training data) but high variance (being overly sensitive to the specific training set, including its noise and biases). This high variance manifests as poor generalizability to new, unseen data [14] [15]. Simplifying the model or increasing the training data size can help, but the root cause may be inherent biases in your data [11].

Q5: What is the impact of "activity cliffs" on model prediction? Activity cliffs occur when small changes in a molecule's structure lead to large changes in its property or activity. These can significantly impact model prediction and are a major challenge for generalization, as models may fail to learn the complex structure-activity relationships they represent [11].
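
One simple way to look for activity cliffs in a dataset is to flag pairs of molecules that are structurally very similar yet differ strongly in activity. The sketch below is illustrative only: fingerprints are represented as sets of on-bit indices, and the similarity and activity-gap thresholds, as well as the toy data, are hypothetical choices rather than established cutoffs.

```python
from itertools import combinations


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def find_activity_cliffs(fingerprints: list, activities: list,
                         min_similarity: float = 0.7, min_activity_gap: float = 2.0) -> list:
    """Return index pairs that are similar in structure but far apart in activity."""
    cliffs = []
    for i, j in combinations(range(len(fingerprints)), 2):
        similarity = tanimoto(fingerprints[i], fingerprints[j])
        gap = abs(activities[i] - activities[j])
        if similarity >= min_similarity and gap >= min_activity_gap:
            cliffs.append((i, j))
    return cliffs


# Hypothetical data: molecules 0 and 1 differ by one bit but by 3.5 activity units.
fingerprints = [
    frozenset({1, 2, 3, 4, 5, 6, 7, 8}),
    frozenset({1, 2, 3, 4, 5, 6, 7, 9}),
    frozenset({20, 21, 22}),
]
activities = [8.5, 5.0, 5.2]
cliff_pairs = find_activity_cliffs(fingerprints, activities)
```

Pairs flagged this way are useful to inspect separately during evaluation, since they are exactly the cases where similarity-driven models tend to fail.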

Troubleshooting Guides

Issue: Model Performance Drops on New, External Data

This is a primary symptom of poor generalizability, often caused by biases in the training data that prevent the model from learning underlying rules applicable to a broader chemical space.

Diagnosis Steps:

- Profile Your Data: Analyze the label distribution and perform a structural analysis of your training set. Check for over-representation of certain molecular scaffolds [11].

- Conduct a Bias Audit: Use frameworks like PROBAST (Prediction model Risk Of Bias ASsessment Tool) to systematically evaluate your model's risk of bias. Studies show that a high percentage of published healthcare AI models have a high risk of bias [12].

- Check Data Consistency: If you've merged datasets, use tools like AssayInspector to generate visualization plots (e.g., property distribution plots, chemical space UMAPs) to detect outliers, batch effects, and significant distributional differences [8].

Solution: Mitigate the identified biases using the following protocol:

Table: Mitigation Strategies for Common Bias Types

| Bias Type | Mitigation Strategy | Key Action |

|---|---|---|

| Representation Bias | Expand and Balance Training Data | Actively source data to cover under-represented regions of chemical space [13]. |

| Selection Bias | Vary Data Sources and Search Terms | Use multiple training sets, especially if using a stock set, to ensure diversity [13]. |

| Algorithmic Bias | Re-calibrate Model Evaluation | Use cross-dataset generalization tests and multiple data splits with explicit random seeds for a more rigorous and statistically sound evaluation [11] [16]. |

| Confirmation Bias | Implement Blind Analysis | During model development and evaluation, blind the analysis to prevent pre-existing beliefs from influencing the interpretation of patterns [12]. |

Diagram: A systematic workflow for diagnosing and mitigating dataset bias.

Issue: Inconsistent Results After Integrating Public Datasets

Integrating public molecular property datasets (e.g., for ADME prediction) can expand chemical space coverage, but distributional misalignments often introduce noise and degrade performance.

Diagnosis Steps:

- Run Statistical Tests: Use a tool like AssayInspector to perform two-sample Kolmogorov-Smirnov (KS) tests on regression endpoints or Chi-square tests on classification endpoints to statistically compare distributions between datasets [8].

- Analyze Shared Compounds: Identify molecules that appear in multiple datasets and check for inconsistent property annotations. These conflicts are a major source of noise [8].

- Visualize Chemical Space: Generate a UMAP projection using molecular descriptors to see if the different datasets occupy distinct or overlapping regions of chemical space [8].

Solution: Follow a rigorous Data Consistency Assessment (DCA) protocol before aggregation:

Experimental Protocol: Data Consistency Assessment with AssayInspector

- Input Data: Compile your target datasets (e.g., Obach et al., Lombardo et al., TDC half-life data) and calculate molecular features (e.g., ECFP4 fingerprints, RDKit 2D descriptors) [8].

- Generate Summary Statistics: Run AssayInspector to produce a tabular summary for each data source, including molecule count, endpoint mean, standard deviation, and quartiles [8].

- Execute Visualization Module:

- Create property distribution plots for all datasets.

- Generate a dataset intersection plot (UpSet plot) to visualize molecular overlap.

- Produce a discrepancy plot to quantify annotation differences for shared compounds.

- Create a chemical space UMAP plot to visualize dataset coverage and alignment [8].

- Review Insight Report: Use the automated report to flag "conflicting datasets," "divergent datasets," and those with "significantly different endpoint distributions" [8].

- Make an Informed Decision: Based on the DCA, decide whether to (a) aggregate the datasets after standardization, (b) use them in a transfer learning setup, or (c) exclude a highly discordant source.

Table: Quantitative Example of Dataset Misalignment in Public Half-Life Data

| Data Source | Molecule Count | Reported Half-Life Mean (hr) | KS Test p-value vs. Gold Standard | Key Finding |

|---|---|---|---|---|

| Obach et al. (Gold Standard) | 670 | ~5.5 | (Reference) | Used in TDC as a benchmark [8]. |

| TDC Benchmark | (Based on Obach) | Varies | N/A | Significant annotation discrepancies vs. primary gold-standard sources identified [8]. |

| Fan et al. 2024 (Gold Standard) | 3,512 | ~7.1 | < 0.05 | Primary source for platforms like ADMETlab 3.0; distribution significantly different [8]. |

The Scientist's Toolkit

Table: Essential Reagents and Tools for Bias-Aware Molecular Modeling

| Tool or Reagent | Function / Explanation | Application in Bias Mitigation |

|---|---|---|

| AssayInspector | A model-agnostic Python package for Data Consistency Assessment (DCA). | Systematically identifies outliers, batch effects, and distributional misalignments between datasets before model training [8]. |

| RDKit | Open-source cheminformatics software. | Calculates standardized molecular descriptors (e.g., 2D features, ECFP fingerprints) to ensure consistent feature representation across studies [11] [8]. |

| Therapeutic Data Commons (TDC) | A platform providing standardized benchmarks for therapeutic ML. | Provides a baseline for model performance; however, requires caution and DCA due to potential misalignments with gold-standard data [8]. |

| OrthoFinder | A phylogenomic orthogroup inference algorithm. | Solves fundamental gene length bias in sequence comparison, dramatically improving inference accuracy—an example of tackling an inherent algorithmic bias [17]. |

| PROBAST Tool | A prediction model Risk Of Bias ASsessment Tool. | Provides a standardized framework to evaluate the risk of bias in predictive model studies, helping to identify methodological weaknesses [12]. |

Diagram: The AI model lifecycle, showing stages where different types of bias can be introduced.

Identifying Distributional Misalignments and Annotation Inconsistencies

Frequently Asked Questions

Q1: Why are my Graphviz nodes not showing their fill color, even though fillcolor is specified?

A: The fillcolor attribute requires the node's style to be set to filled. Without this, the fill color will not be applied [18].

Q2: How can I apply the same style to multiple nodes efficiently?

A: Define nodes in a comma-separated list and apply their style attributes simultaneously [19]. This ensures visual consistency and makes the graph source code easier to maintain.

Q3: How can I create a node label where one word is bold and red, and the rest is black?

A: Use HTML-like labels with <FONT> tags to change color and <B> for bold formatting. Enclose the entire label in angle brackets <> instead of quotes, and set shape to plain or none for best results [20] [21] [22].

Q4: What are the available color formats I can use in Graphviz?

A: Graphviz supports several color formats, as summarized in the table below [23].

| Format Type | Syntax Example | Description |

|---|---|---|

| RGB Hexadecimal | "#ff0000" or "#f00" | Standard web hex colors. |

| RGBA Hexadecimal | "#ff000080" | RGB with an alpha (transparency) channel. |

| HSV/HSVA | "0.0, 1.0, 1.0" | Hue, Saturation, Value (and Alpha). |

| Color Names | "red", "transparent" | X11 color scheme names (case-insensitive). |

Troubleshooting Guides

Problem: Inconsistent Molecular Property Annotations

Description: A scenario where the same molecular structure receives conflicting property labels from different annotators, introducing training noise.

Diagnosis:

- Audit: Perform a random audit of 5% of the dataset, having multiple experts re-annotate the samples.

- Quantify: Calculate the inter-annotator agreement score (e.g., Cohen's Kappa).

- Identify Patterns: Check if inconsistencies correlate with specific molecular sub-structures (e.g., the presence of a "carboxylic acid" group) or specific annotators.
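The "Quantify" step can be illustrated with a small sketch. It assumes two annotators have labelled the same molecules with categorical labels; the function names `cohens_kappa` and `flag_disagreements` and the example labels are hypothetical and are not taken from any tool cited here.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement expected from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (p_observed - p_expected) / (1.0 - p_expected)


def flag_disagreements(ids, labels_a, labels_b):
    """Return the ids of items on which the two annotators disagree."""
    return [i for i, a, b in zip(ids, labels_a, labels_b) if a != b]


# Hypothetical example: four molecules, one disagreement.
ids = ["m1", "m2", "m3", "m4"]
annotator_a = ["toxic", "toxic", "nontoxic", "nontoxic"]
annotator_b = ["toxic", "nontoxic", "nontoxic", "nontoxic"]
kappa = cohens_kappa(annotator_a, annotator_b)
conflicts = flag_disagreements(ids, annotator_a, annotator_b)
```

The flagged ids are the entries that would be routed to the adjudicator, and grouping them by substructure or by annotator supports the "Identify Patterns" step.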

Solution:

- Define Rules: Establish clear, unambiguous annotation guidelines for the problematic substructures.

- Adjudicate: Have a senior chemist re-annotate all conflicting cases.

- Implement Workflow: Use a consensus-based annotation system as diagrammed below.

Experimental Protocol for Consensus Annotation:

- Sample: Select a batch of 100 molecular structures with known annotation conflicts.

- Procedure:

- Two independent annotators (Annotator A and B) label each structure.

- A computational check flags entries with disagreeing labels.

- A third, senior expert (Expert Adjudicator) provides the final label for all flagged entries.

- Validation: Train identical models on the original noisy dataset and the newly adjudicated dataset. Compare performance on a clean, expertly-curated test set.

Resolution Workflow: The following diagram outlines the logical workflow for resolving annotation inconsistencies.

Problem: Bias from Non-Random Data Splits

Description: A model performs well during validation but fails in real-world screening because the training and test sets were split by time, creating a temporal bias. Newer compounds in the test set have different property distributions.

Diagnosis:

- Identify Split Method: Determine if your data was split randomly or by a hidden variable (e.g., compound registration date).

- Analyze Distributions: Use dimensionality reduction (e.g., t-SNE) to visualize the spatial distribution of training and test sets. Look for clear separation.

- Validate: Perform a "cold-start" experiment where the model is trained on older compounds and tested only on newer ones to simulate real-world deployment.

Solution:

- Re-split Data: Implement a scaffold split, where compounds are divided based on their molecular backbone (Bemis-Murcko scaffold) to ensure structural diversity across sets.

- Re-train Model: Train your model on the new, more robust split.

- Re-evaluate: Assess the model's performance on the new test set, which now provides a more realistic estimate of its predictive power.

Experimental Protocol for Scaffold Splitting:

- Input: A dataset of 50,000 molecular SMILES strings.

- Procedure:

- Generate the Bemis-Murcko scaffold for each molecule.

- Group all molecules by their scaffold.

- Randomly assign entire scaffold groups to the training (80%), validation (10%), and test (10%) sets. Because each scaffold group stays intact, molecules sharing a scaffold never appear in more than one split, which limits leakage of close structural analogs.

- Analysis: Compare model performance metrics (AUC-ROC, Precision, Recall) between the temporal split and the scaffold split.
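A minimal sketch of the group-wise assignment step follows. It assumes the Bemis-Murcko scaffold of each molecule has already been computed as a string (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`); the function name `scaffold_split` and the greedy fill strategy are illustrative assumptions, so the resulting split sizes only approximate 80/10/10.

```python
import random
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1, seed=0):
    """Assign whole scaffold groups to train/valid/test.

    `scaffolds` holds one precomputed scaffold string per molecule.
    Returns three lists of molecule indices.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Shuffle the groups (not the molecules) so each group stays intact.
    group_list = list(groups.values())
    random.Random(seed).shuffle(group_list)

    n_total = len(scaffolds)
    train, valid, test = [], [], []
    for members in group_list:
        # Fill train first, then valid; groups that fit neither go to test.
        if len(train) + len(members) <= frac_train * n_total:
            train.extend(members)
        elif len(valid) + len(members) <= frac_valid * n_total:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test


# Hypothetical scaffold labels for ten molecules.
example = ["A"] * 4 + ["B"] * 3 + ["C"] * 2 + ["D"]
train_idx, valid_idx, test_idx = scaffold_split(example)
```

Because assignment happens per group, split proportions drift from the targets when a few scaffolds dominate the dataset; reporting the realised split sizes alongside the metrics keeps the comparison honest.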

Data Splitting Strategy: The diagram below contrasts a biased split with a robust scaffold-based split.

The Scientist's Toolkit

Research Reagent Solutions for Robust Model Training

| Item | Function |

|---|---|

| Bemis-Murcko Scaffold Generator | Extracts the core molecular framework from a compound, enabling the creation of data splits that test for generalization to novel structures [24]. |

| Tanimoto Similarity Calculator | Quantifies the structural similarity between two molecules based on their chemical fingerprints, used to detect data redundancy or leakage. |

| Molecular Descriptor Suite | Generates a standardized set of numerical features (e.g., molecular weight, logP, polar surface area) to facilitate the detection of distributional shifts between datasets. |

| Adversarial Validation Script | A diagnostic tool to check if training and test sets are from the same distribution by training a classifier to distinguish between them. |

| Consensus Annotation Platform | A software interface that manages the workflow of multiple annotators and an expert adjudicator to resolve labeling inconsistencies. |

Troubleshooting Guides and FAQs

How can I determine if my molecular property dataset has significant distributional bias?

Answer: Distributional bias, where data from different sources do not align, can be detected through statistical tests and visualizations.

- Perform Statistical Testing: Use the two-sample Kolmogorov–Smirnov (KS) test to compare the endpoint distributions (e.g., half-life, solubility values) from different datasets. A low p-value suggests a significant difference in distributions [8]. For classification tasks, the Chi-square test can be used to check for differences in class ratios across sources [8].

- Conduct Feature Similarity Analysis: Calculate the chemical similarity between molecules from different datasets. Using Tanimoto similarity for ECFP4 fingerprints or standardized Euclidean distance for RDKit descriptors can reveal if datasets occupy different regions of chemical space [8].

- Visualize with Dimensionality Reduction: Employ UMAP to project your high-dimensional molecular feature data (e.g., from fingerprints or descriptors) into a 2D plot. Visual inspection can quickly reveal if datasets cluster separately or have poor overlap, indicating a distributional misalignment [8].
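The statistical test in the first step can be sketched without external dependencies. The function below computes the two-sample KS statistic and an approximate p-value from the asymptotic Kolmogorov distribution with a common small-sample correction; the name `ks_two_sample` and the example values are assumptions for illustration. In practice a maintained routine such as `scipy.stats.ks_2samp` is likely the better choice.

```python
import math


def ks_two_sample(sample_a, sample_b):
    """Return (D, approximate p-value) for the two-sample KS test."""
    a, b = sorted(sample_a), sorted(sample_b)
    n, m = len(a), len(b)
    if n == 0 or m == 0:
        raise ValueError("both samples must be non-empty")
    i = j = 0
    d = 0.0
    # Walk through the pooled values and track the largest ECDF gap.
    while i < n and j < m:
        x = min(a[i], b[j])
        while i < n and a[i] <= x:
            i += 1
        while j < m and b[j] <= x:
            j += 1
        d = max(d, abs(i / n - j / m))
    # Asymptotic approximation; not exact for small samples.
    n_eff = n * m / (n + m)
    lam = (math.sqrt(n_eff) + 0.12 + 0.11 / math.sqrt(n_eff)) * d
    if lam < 0.2:
        return d, 1.0  # the series is numerically 1 in this range
    p = 2.0 * sum(
        (-1) ** (k - 1) * math.exp(-2.0 * k * k * lam * lam)
        for k in range(1, 101)
    )
    return d, min(max(p, 0.0), 1.0)


# Hypothetical half-life values (hours) from two sources.
source_1 = [1.2, 2.5, 3.1, 4.8, 5.5, 6.0, 7.3]
source_2 = [6.5, 7.9, 8.4, 9.1, 10.2, 11.7, 12.3]
d_stat, p_value = ks_two_sample(source_1, source_2)
```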

What should I do if my model performs well on one dataset but poorly on another?

Answer: This is a classic symptom of dataset bias, where your model may have learned features specific to one data source. This often stems from batch effects or non-biological signals in the training data.

- Audit for Shortcut Learning: Apply a framework like G-AUDIT to systematically test if your model is relying on spurious correlations. This method quantifies the utility (association between a data attribute and the task label) and detectability (how easily the attribute can be inferred from the raw data) of various attributes [25]. Attributes with high utility and detectability pose a high shortcut risk.

- Analyze Metadata: Examine non-molecular metadata, such as the year of collection, image dimensions (for cell-based assays), or clinical site. These can be proxies for experimental conditions and are often overlooked sources of bias. Models can inadvertently learn to predict based on these proxies rather than the underlying biology [25].

- Implement Subgroup Analysis: Move beyond whole-dataset performance metrics. Evaluate your model's performance (accuracy, AUC, F1-score) separately on each data source or demographic subgroup to identify where it fails [26] [27].

Which quantitative metrics should I use to measure bias in my dataset?

Answer: The choice of metric depends on your task (regression or classification) and the aspect of fairness you wish to capture. The table below summarizes key statistical and model-based metrics for quantifying bias.

Table 1: Quantitative Metrics for Bias Detection

| Metric Category | Metric Name | Best For | Interpretation |

|---|---|---|---|

| Statistical Parity | Demographic Parity Difference [28] [27] | Classification | Compares the probability of positive outcomes between groups. A value of 0 indicates perfect parity. |

| Equalized Outcomes | Equalized Odds / Equal Opportunity Difference [28] [27] | Classification | Equalized odds requires similar true positive and false positive rates across groups; equal opportunity requires only similar true positive rates. A value of 0 indicates no bias. |

| Legal & Compliance | Disparate Impact [28] [27] | Classification | Ratio of positive outcome rates between groups. A value below 0.8 may indicate illegal discrimination. |

| Distribution Shift | Two-sample Kolmogorov–Smirnov (KS) Test [8] | Regression | Tests if two datasets come from the same distribution. A low p-value indicates significant distributional difference. |

| Shortcut Learning | G-AUDIT (Utility & Detectability) [25] | All Modalities | Quantifies an attribute's potential to be a shortcut. High scores for both indicate high bias risk. |
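Two of the classification metrics in the table can be computed directly from binary predictions and a group label per sample. The sketch below is illustrative only: the function names are hypothetical, and the "group" could equally be a data source rather than a demographic attribute.

```python
def positive_rate(predictions, groups, group):
    """Fraction of positive (1) predictions within one group."""
    selected = [p for p, g in zip(predictions, groups) if g == group]
    if not selected:
        raise ValueError(f"no samples found for group {group!r}")
    return sum(selected) / len(selected)


def demographic_parity_difference(predictions, groups, group_a, group_b):
    """Difference in positive-prediction rates; 0 means perfect parity."""
    return (positive_rate(predictions, groups, group_a)
            - positive_rate(predictions, groups, group_b))


def disparate_impact(predictions, groups, unprivileged, privileged):
    """Ratio of positive-prediction rates (unprivileged / privileged)."""
    return (positive_rate(predictions, groups, unprivileged)
            / positive_rate(predictions, groups, privileged))


# Hypothetical binary predictions for two groups of four samples each.
preds = [1, 1, 0, 0, 1, 0, 0, 0]
grps = ["A"] * 4 + ["B"] * 4
dpd = demographic_parity_difference(preds, grps, "A", "B")
di = disparate_impact(preds, grps, "B", "A")
```

In this example the disparate impact is 0.5, below the 0.8 threshold quoted in the table.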

My dataset is imbalanced for a protected attribute (e.g., sex). How can I mitigate this bias?

Answer: Bias mitigation should be considered during data preprocessing, model training, or post-processing.

- Preprocessing Techniques:

- Resampling: Use oversampling (e.g., SMOTE) for underrepresented groups or undersampling for overrepresented groups to create a more balanced dataset [6] [28].

- Reweighting: Assign higher weights to samples from underrepresented groups during model training to balance their influence on the loss function [6] [27].

- In-Processing (Algorithm-Centric) Techniques:

- Adversarial Debiasing: Employ a secondary model (adversary) that tries to predict the protected attribute (e.g., sex) from the primary model's representations. The primary model is then trained to maximize task performance while minimizing the adversary's accuracy, thus learning features invariant to the protected attribute [28] [27].

- Fairness-Aware Regularization: Add a penalty term to your model's loss function that directly discourages dependence of the predictions on the protected attribute [27].

- Post-Processing Techniques:

- Reject Option Classification: Adjust the decision threshold for different subgroups to balance outcomes, such as equalizing false positive rates [28].
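As one concrete instance of the reweighting idea, the sketch below assigns each sample a weight inversely proportional to the size of its group, so that every group contributes the same total weight to the loss. The function name `balanced_group_weights` is hypothetical, and this weighting scheme is only one of several reasonable choices.

```python
from collections import Counter


def balanced_group_weights(groups):
    """Per-sample weights n / (k * count(group)).

    n is the number of samples and k the number of distinct groups, so
    each group's weights sum to n / k and the overall sum stays n.
    """
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]


# Hypothetical protected attribute with a 1:3 imbalance.
sex = ["F", "M", "M", "M"]
weights = balanced_group_weights(sex)
```

These weights can be passed as per-sample weights to any loss function or estimator that accepts them.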

Experimental Protocols for Bias Detection

Protocol 1: Data Consistency Assessment (DCA) for Multi-Source Molecular Data

This protocol, inspired by the AssayInspector tool, provides a methodology for identifying inconsistencies before aggregating datasets [8].

- Data Collection & Curation: Gather molecular property datasets from multiple public or proprietary sources (e.g., TDC, ChEMBL, Lombardo et al., Obach et al. for half-life) [8].

- Descriptive Statistics Calculation: For each dataset, compute:

- Number of unique molecules

- Endpoint statistics: mean, standard deviation, quartiles (for regression); class counts and ratios (for classification)

- Chemical diversity metrics

- Statistical Comparison:

- Apply the two-sample KS test pairwise between datasets for regression endpoints.

- Apply the Chi-square test for classification endpoint distributions.

- Chemical Space Analysis:

- Generate ECFP4 fingerprints for all molecules.

- Compute a Tanimoto similarity matrix to assess within-dataset and between-dataset similarity.

- Visualization and Outlier Detection:

- Generate UMAP plots to visualize the combined chemical space of all datasets.

- Create box plots and histograms to overlay endpoint distributions.

- Identify molecules that are structural outliers or have endpoint values far outside the typical range.

- Generate Insight Report: Compile a report highlighting alerts for conflicting annotations, significantly different endpoint distributions, and datasets with low molecular overlap.
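The chemical space analysis step can be sketched in plain Python. The example assumes each fingerprint is available as a collection of on-bit indices, computed elsewhere (for example from RDKit Morgan fingerprints with radius 2, which correspond to ECFP4). The helper names are hypothetical and this is not AssayInspector's implementation.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    # Two empty fingerprints are treated as having zero similarity.
    return len(a & b) / union if union else 0.0


def mean_nearest_neighbor_similarity(query_fps, reference_fps):
    """Average, over query molecules, of the best match in the reference set.

    Low values suggest the query dataset occupies a region of chemical
    space that the reference dataset does not cover.
    """
    best = [max(tanimoto(q, r) for r in reference_fps) for q in query_fps]
    return sum(best) / len(best)


# Hypothetical on-bit sets for two small datasets.
dataset_1 = [{1, 2}, {5}]
dataset_2 = [{1, 2, 3}, {5}]
coverage = mean_nearest_neighbor_similarity(dataset_1, dataset_2)
```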

Protocol 2: Auditing for Shortcut Learning with G-AUDIT

This generalized protocol helps identify which attributes in your data could be exploited as shortcuts [25].

- Define Attributes and Task: List all available attributes: patient demographics (age, sex), molecular descriptors, and metadata (year, data source). Define your primary prediction task (e.g., malignant vs. benign).

- Quantify Utility: For each attribute, measure its utility by calculating the mutual information between the attribute and the task label. This measures how much information the attribute carries about the label.

- Quantify Detectability: For each attribute, measure its detectability by training a model to predict the attribute from the primary input data (e.g., the molecule structure or assay image). The performance (e.g., F1-score) of this predictor is the detectability score.

- Rank and Identify Risks: Rank all attributes on a 2D plot based on their utility and detectability scores. Attributes falling in the high-utility, high-detectability quadrant represent the highest shortcut risk and should be investigated first.

- Calibration (Optional): Introduce a synthetic attribute with a known, strong correlation to the label. Measure the resulting performance drop to estimate a "worst-case" performance degradation for high-risk real attributes.
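The utility step can be illustrated for discrete attributes with a direct mutual information estimate. This is a sketch under the assumption that both the attribute and the task label are categorical (continuous attributes would need binning first). The function name `mutual_information` is hypothetical and this is not the G-AUDIT reference implementation.

```python
import math
from collections import Counter


def mutual_information(attribute, labels):
    """Mutual information (in bits) between two discrete sequences."""
    if len(attribute) != len(labels) or not labels:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(labels)
    joint = Counter(zip(attribute, labels))
    count_attr, count_label = Counter(attribute), Counter(labels)
    mi = 0.0
    for (a, y), count in joint.items():
        p_joint = count / n
        # p(a, y) / (p(a) * p(y)) written in terms of raw counts.
        ratio = count * n / (count_attr[a] * count_label[y])
        mi += p_joint * math.log2(ratio)
    return mi


# Hypothetical example: data source versus task label.
source = ["lab1", "lab1", "lab2", "lab2"]
label = [0, 0, 1, 1]
utility = mutual_information(source, label)
```

Here the data source fully determines the label, so the utility equals the label entropy (1 bit), which would mark the source as a strong shortcut candidate if it is also detectable from the input.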

Workflow Diagrams

Dataset Bias Auditing Workflow

Shortcut Learning Risk Assessment

The Scientist's Toolkit

Table 2: Essential Research Reagents and Tools for Bias Analysis

| Tool / Reagent | Function / Explanation | Application Context |

|---|---|---|

| AssayInspector [8] | A Python package designed for data consistency assessment prior to ML modeling. It generates statistics, visualizations, and diagnostic summaries. | Identifying outliers, batch effects, and distributional misalignments in physicochemical and ADME data. |

| G-AUDIT Framework [25] | A modality-agnostic auditing framework that quantifies the utility and detectability of data attributes to generate hypotheses about shortcut risks. | Systematically uncovering subtle biases in training or testing data, applicable to images, text, and tabular data. |

| ECFP4 / ECFP6 Fingerprints [11] | Circular fingerprints that encode molecular substructures. The standard molecular representation for calculating chemical similarity. | Assessing the overlap and diversity of the chemical space covered by different datasets. |

| RDKit 2D Descriptors [11] | A set of ~200 precomputed molecular descriptors (e.g., MolLogP, PSA, NumHAcceptors) that capture key physicochemical properties. | Providing an alternative feature set for chemical space analysis and model training. |

| SMOTE [6] [28] | A preprocessing technique that generates synthetic examples for the minority class to address representation bias in classification tasks. | Balancing datasets that are imbalanced with respect to a protected attribute or an outcome class. |

| Adversarial Debiasing Network [28] [27] | A neural network architecture that uses an adversary to remove correlation between the model's internal representations and a protected attribute. | In-processing bias mitigation to learn features invariant to sensitive attributes like sex or ethnicity. |

Advanced Techniques for Bias Mitigation in Molecular Machine Learning

Troubleshooting Guide: Common ACS Implementation Issues

Q1: My multi-task model performance is worse than single-task models. What is happening and how can I fix it?

A: You are likely experiencing Negative Transfer (NT), where parameter updates from one task degrade performance on another. This is particularly common in imbalanced molecular datasets where tasks have vastly different numbers of labeled samples [7].

Solution: Implement Adaptive Checkpointing with Specialization (ACS):

- Diagnose the imbalance using the task imbalance metric \( I_i = 1 - \frac{L_i}{\max_j L_j} \), where \( L_i \) is the number of labeled entries for task \( i \) [7].

- Employ task-specific early stopping. Monitor validation loss for each task independently and checkpoint the best backbone-head pair for a task whenever its validation loss reaches a new minimum [7].

- Use a shared GNN backbone with task-specific MLP heads to balance shared representation learning with task specialization [7].
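The imbalance metric from the first step is simple to compute once the number of labeled entries per task is known. In the sketch below the function name `task_imbalance` and the task names are hypothetical.

```python
def task_imbalance(label_counts):
    """Return I_i = 1 - L_i / max_j L_j for every task.

    `label_counts` maps a task name to its number of labeled entries.
    A value of 0 marks the best-covered task; values near 1 mark
    tasks with very few labels relative to the largest task.
    """
    largest = max(label_counts.values())
    return {task: 1.0 - count / largest for task, count in label_counts.items()}


# Hypothetical label counts for two tasks.
imbalance = task_imbalance({"toxicity": 1000, "solubility": 250})
```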

Q2: How do I validate ACS performance on my specific molecular dataset?

A: Follow this rigorous experimental protocol to ensure meaningful results [7] [11]:

- Dataset Splitting: Use Murcko-scaffold splits instead of random splits. This prevents data leakage and over-optimistic performance estimates by ensuring structurally similar molecules are not spread across training and test sets [7] [11].

- Benchmarking: Compare ACS against these baseline training schemes:

- Single-Task Learning (STL): Separate backbone-head pairs for each task.

- MTL without checkpointing: Standard multi-task learning.

- MTL with Global Loss Checkpointing (MTL-GLC): Checkpointing based on combined task loss [7].

- Evaluation: On benchmark datasets like ClinTox, SIDER, and Tox21, ACS should match or surpass these baselines, particularly for low-data tasks [7].

Table: Expected Performance Comparison on Molecular Benchmarks (Average Improvement %)

| Training Scheme | ClinTox | SIDER | Tox21 | Notes |

|---|---|---|---|---|

| ACS (Proposed) | +15.3% (vs STL) | Matches/Surpasses | Matches/Surpasses | Optimal for task imbalance |

| MTL-Global Checkpoint | +5.0% (vs STL) | Near ACS | Near ACS | Suboptimal for severe imbalance |

| MTL (No Checkpoint) | +3.9% (vs STL) | Lower than ACS | Lower than ACS | Susceptible to negative transfer |

| Single-Task (STL) | Baseline | Baseline | Baseline | No parameter sharing |

Q3: I suspect dataset discrepancies are hurting my model. How can I systematically check data quality before training?

A: Data distribution misalignments are a critical challenge, especially when integrating public molecular data [8].

Solution: Implement a pre-training Data Consistency Assessment (DCA) using tools like AssayInspector:

- Identify Distribution Shifts: Use statistical tests (Kolmogorov-Smirnov for regression, Chi-square for classification) and visualizations to detect significant endpoint distribution differences between data sources [8].

- Check Molecular Overlap & Annotations: Analyze dataset intersections and identify molecules present in multiple sources but with conflicting property annotations [8].

- Inspect Chemical Space Coverage: Use UMAP projections to visualize whether different datasets cover similar regions of chemical space [8].

- Generate Insight Reports: Let the tool provide alerts for dissimilar, conflicting, or redundant datasets before finalizing your training set [8].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Implementing ACS in Molecular Property Prediction

| Research Reagent | Function & Explanation | Implementation Example |

|---|---|---|

| Graph Neural Network (GNN) Backbone | Learns general-purpose latent molecular representations from graph-structured data. | Message-passing GNN [7] or architectures combining Graph Attention and GraphSAGE layers [29]. |

| Task-Specific MLP Heads | Process shared representations for individual property predictions. Prevents negative interference. | Separate multi-layer perceptrons for each molecular property (e.g., toxicity, solubility) [7]. |

| Adaptive Checkpointing System | Saves optimal model parameters for each task independently when validation loss minimizes. | Custom training loop that tracks and checkpoints based on per-task validation loss [7]. |

| Data Consistency Assessment Tool | Identifies dataset misalignments and annotation conflicts before model training. | AssayInspector package for statistical comparison and visualization of molecular datasets [8]. |

| Murcko Scaffold Splitter | Creates meaningful train/test splits based on molecular scaffolds for realistic evaluation. | RDKit-based implementation to separate molecules by their core ring-system-and-linker frameworks [7] [11]. |

Experimental Protocols for ACS Validation

Protocol 1: Validating ACS on Public Benchmarks

- Data Preparation: Obtain ClinTox, SIDER, or Tox21 datasets. Apply Murcko-scaffold splitting with published protocols [7].

- Model Architecture:

- Backbone: Implement a message-passing GNN for molecular graphs [7].

- Heads: Attach separate 2-layer MLPs for each classification task.

- Training Regime:

- Use a masked loss function to handle missing labels [7].

- Track validation loss for each task independently.

- For each task, save a checkpoint of the backbone and that task's head whenever its validation loss reaches a new minimum.

- Evaluation: Report ROC-AUC scores and compare against STL, MTL, and MTL-GLC baselines [7].
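The per-task checkpointing logic in the training regime can be sketched independently of any deep learning framework. The class below is an illustrative assumption, not the implementation from [7]: model states are represented as plain dictionaries standing in for framework-specific parameter containers, and `PerTaskCheckpointer` is a hypothetical name.

```python
import copy


class PerTaskCheckpointer:
    """Keep the best (backbone, head) snapshot for each task separately."""

    def __init__(self, tasks):
        self.best_loss = {task: float("inf") for task in tasks}
        self.snapshots = {}

    def update(self, val_losses, backbone_state, head_states):
        """Call after each validation pass; returns the tasks that improved."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep-copy so later training steps cannot alter the snapshot.
                self.snapshots[task] = (
                    copy.deepcopy(backbone_state),
                    copy.deepcopy(head_states[task]),
                )
                improved.append(task)
        return improved


# Hypothetical two-epoch run with two tasks.
ckpt = PerTaskCheckpointer(["tox", "sol"])
ckpt.update({"tox": 0.5, "sol": 0.9}, {"w": 1}, {"tox": {"h": 1}, "sol": {"h": 1}})
ckpt.update({"tox": 0.6, "sol": 0.7}, {"w": 2}, {"tox": {"h": 2}, "sol": {"h": 2}})
```

After the second update, the "tox" snapshot still holds the epoch-1 backbone while "sol" holds the epoch-2 backbone. That is the point of specialization: each task keeps the shared parameters from the moment that suited it best.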

Protocol 2: Systematic Study of Task Imbalance

- Create Artificial Imbalance: Start with a balanced dataset (e.g., ClinTox). Artificially reduce labeled samples for one task while maintaining full labels for the other [7].

- Quantify Imbalance: Calculate the task imbalance factor (I) for the low-data task [7].

- Train Models: Apply STL, MTL, and ACS under identical low-data conditions.

- Analyze Results: Plot performance (e.g., AUC) against imbalance factor (I) to identify the regime where ACS provides maximum benefit [7].

Workflow Visualization: ACS Architecture

ACS Training and Checkpointing Logic

In molecular property prediction, machine learning models often learn from historical experimental data reported in the literature. This data is frequently biased because scientific research does not uniformly sample the chemical space; decisions on which experiments to run or publish are influenced by factors such as cost, synthetic accessibility, and current research trends [30]. This results in training datasets that are not representative of the true chemical space, causing models to overfit to these biased distributions and perform poorly when later applied to molecules outside them [30] [4].

Causal inference provides a framework to overcome these challenges. Unlike traditional methods that learn correlations, causal techniques model the underlying cause-and-effect relationships. Two prominent methods are:

- Inverse Propensity Scoring (IPS): A re-weighting technique that gives more importance to underrepresented molecules in the training data.

- Counterfactual Regression (CFR): A representation learning technique that creates balanced features, making the treated and control distributions look similar [30].

This technical support center provides practical guidance on implementing these methods to build more robust and generalizable molecular property predictors.

Frequently Asked Questions (FAQs)

FAQ 1: What is the core problem that IPS and CFR solve in molecular property prediction?

The core problem is dataset bias. Models are trained on data from past experiments, which is not a random sample of the chemical space. This bias leads to poor generalization when the model is applied to new, more representative sets of molecules [30]. For example, a model trained predominantly on small, rigid molecules may fail to predict properties for large, flexible compounds accurately.

FAQ 2: How does Inverse Propensity Scoring (IPS) correct for selection bias?

IPS corrects bias by assigning a weight to each data point during model training. The weight is the inverse of its "propensity score," which is the estimated probability that a particular molecule was included in the training dataset. Molecules that are rare or less likely to be experimented on (and thus underrepresented) receive higher weights, forcing the model to pay more attention to them [30]. The IPS-weighted loss function is \( L_\text{IPS} = \frac{1}{N} \sum_{i=1}^N w_i \cdot L(y_i, \hat{y}_i) \), where \( w_i = 1 / \hat{p}(G_i) \) is the inverse of the estimated propensity score of molecule \( G_i \).

FAQ 3: What is the key mechanistic difference between IPS and Counterfactual Regression (CFR)?

The key difference lies in their approach:

- IPS is a two-step method that first estimates propensity scores and then uses them to re-weight the loss function in a separate training step.

- CFR is an end-to-end representation learning method. It uses a neural network architecture with a shared feature extractor that is explicitly optimized to create balanced representations where the distributions of "treated" and "control" groups (or different biased subsets) are indistinguishable [30]. This often leads to more robust feature learning.

FAQ 4: My dataset is small and highly biased. Which method should I try first?

For smaller datasets, the IPS approach is often more practical and less computationally intensive. It can be implemented as a wrapper around your existing model training pipeline. For larger datasets or when you suspect complex, multi-faceted bias, CFR may yield better performance because it learns invariant representations directly, though it requires more sophisticated implementation and tuning [30].

FAQ 5: How can I simulate biased data to validate these methods if my original dataset is unbiased?

You can introduce artificial bias by non-randomly sampling from a large, diverse dataset (like QM9). Practical biased sampling scenarios include [30]:

- Size-based bias: Selecting molecules based on the number of heavy atoms.

- Property-based bias: Selecting molecules based on a specific property value (e.g., solubility).

- Structural bias: Selecting molecules that contain or lack certain functional groups.

You can then test if your model, trained on this biased sample, can predict accurately on a held-out, uniformly sampled test set.
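A size-based bias scenario can be simulated with weighted sampling without replacement. The sketch below is a hypothetical setup rather than the sampling scheme of [30]: the threshold of 7 heavy atoms, the 0.9 selection weight, and the function name `biased_sample` are all illustrative assumptions.

```python
import random


def biased_sample(heavy_atom_counts, n_samples, threshold=7, p_small=0.9, seed=0):
    """Draw molecule indices so that small molecules are over-represented.

    Molecules with at most `threshold` heavy atoms get sampling weight
    `p_small`; all others get 1 - p_small. Sampling is without
    replacement, using random keys u ** (1 / weight).
    """
    if not 0.0 < p_small < 1.0:
        raise ValueError("p_small must lie strictly between 0 and 1")
    rng = random.Random(seed)
    keyed = []
    for idx, count in enumerate(heavy_atom_counts):
        weight = p_small if count <= threshold else 1.0 - p_small
        keyed.append((rng.random() ** (1.0 / weight), idx))
    # The n_samples largest keys form the weighted sample.
    keyed.sort(reverse=True)
    return sorted(idx for _, idx in keyed[:n_samples])


# Hypothetical pool: 100 small molecules and 100 larger ones.
counts = [5] * 100 + [9] * 100
train_idx = biased_sample(counts, n_samples=50)
```

The indices not drawn can serve as a pool from which a uniformly sampled test set is taken.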

Troubleshooting Guides

Issue 1: IPS Model Performance is Unstable or Poor

Potential Causes and Solutions:

Cause: Poorly Estimated Propensity Scores

- Solution: The accuracy of IPS hinges on good propensity score estimates. Use domain knowledge to choose relevant molecular descriptors (e.g., molecular weight, polar surface area, presence of key functional groups) for your propensity model. Validate the propensity model by checking if it can distinguish your training set from a uniform sample.

Cause: Extremely Large Weights

- Solution: When a molecule has a very low propensity score, its inverse weight becomes large and can dominate the loss function, leading to high variance. Mitigate this by clipping the weights to a maximum value (e.g., the 95th percentile of all weights) or using stabilized IPS weights.

Cause: Omitted Confounding Variables

- Solution: The propensity model must account for all variables that influence both the selection of a molecule into the dataset and its property. Review the data generation process carefully. If a key confounder is missing from your model, the bias correction will be incomplete.
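The weight-clipping remedy can be sketched as follows. The nearest-rank percentile rule, the lower bound on propensities, and the function name `clipped_ips_weights` are assumptions made for this illustration.

```python
import math


def clipped_ips_weights(propensities, percentile=95.0, floor=1e-6):
    """Inverse-propensity weights capped at a chosen percentile.

    `floor` guards against division by (near-)zero propensities.
    The cap is the nearest-rank percentile of the raw weights.
    """
    raw = [1.0 / max(p, floor) for p in propensities]
    ordered = sorted(raw)
    rank = max(1, math.ceil(percentile * len(ordered) / 100.0))
    cap = ordered[rank - 1]
    return [min(w, cap) for w in raw]


# Hypothetical propensities: one molecule is very unlikely to be selected.
scores = [0.5] * 19 + [0.001]
weights = clipped_ips_weights(scores)
```

Without clipping, the last molecule would carry a weight of 1000 against 2 for the others. Clipping trades a small amount of bias for a large reduction in variance.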

Issue 2: CFR Model Fails to Learn Balanced Representations

Potential Causes and Solutions:

Cause: Inadequate Capacity of the Feature Extractor

- Solution: The shared feature extractor (typically a Graph Neural Network) must be powerful enough to learn complex molecular representations while also satisfying the balancing constraint. Consider using a deeper GNN or a model with more hidden units.

Cause: Improper Tuning of the Balancing Hyperparameter

- Solution: The CFR loss function is \( L_\text{CFR} = \sum_i L(y_i, \hat{y}_i) + \alpha \cdot \text{IPM} \), where IPM is the Integral Probability Metric between the representation distributions. The hyperparameter \( \alpha \) controls the trade-off between prediction accuracy and representation balance. Perform a hyperparameter search over \( \alpha \) using a validation set that reflects the target (unbiased) distribution.

Cause: Gradient Conflict

- Solution: The gradients from the prediction loss and the balancing loss (IPM) may conflict, hindering convergence. Monitor both loss terms during training. Techniques like gradient reversal or using optimizers with adaptive learning rates can help.
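To make the two loss terms concrete, the sketch below combines a mean squared prediction error with a balancing penalty. As a deliberately simple stand-in for the IPM it uses the linear-kernel MMD, that is, the squared distance between the mean representations of two groups. This choice, the averaging of the prediction loss, and all function names are assumptions for illustration, not the formulation of [30].

```python
def mean_vector(rows):
    """Column-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def linear_mmd(reps_a, reps_b):
    """Squared distance between the mean representations of two groups."""
    mu_a, mu_b = mean_vector(reps_a), mean_vector(reps_b)
    return sum((x - y) ** 2 for x, y in zip(mu_a, mu_b))


def cfr_objective(y_true, y_pred, reps_a, reps_b, alpha=1.0):
    """Prediction loss (mean squared error) plus alpha times the penalty."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return mse + alpha * linear_mmd(reps_a, reps_b)


# Hypothetical 2-D representations for two biased subsets.
group_a = [[0.0, 0.0], [2.0, 2.0]]
group_b = [[2.0, 3.0]]
loss = cfr_objective([1.0, 2.0], [1.0, 4.0], group_a, group_b, alpha=0.1)
```

Logging the two terms separately during training, as suggested above, shows whether the balancing penalty is overwhelming the prediction loss for a given value of alpha.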

Experimental Protocols & Data

The following table summarizes the typical performance improvements offered by IPS and CFR across various molecular properties, as measured by Mean Absolute Error (MAE) on an unbiased test set [30].

Table 1: Performance Comparison of Bias Mitigation Techniques on QM9 Properties

| Molecular Property | Baseline MAE | IPS MAE | CFR MAE | Notes |

|---|---|---|---|---|

| zpve (Zero-point vibrational energy) | - | - | - | IPS showed statistically significant improvement in all 4 bias scenarios. |

| u0 (Internal energy at 0K) | - | - | - | IPS showed statistically significant improvement in all 4 bias scenarios. |

| h298 (Enthalpy at 298.15K) | - | - | - | IPS showed statistically significant improvement in all 4 bias scenarios. |

| HOMO-LUMO gap | - | - | - | IPS showed insignificant improvement or failure in some scenarios. |

| mu (Dipole moment) | - | - | - | IPS showed significant improvement in 3 out of 4 scenarios. |

| General Trend | Highest MAE | Solid improvement for many properties | Outperformed IPS on most targets | CFR generally provides more robust performance. |

Detailed Methodology: Implementing IPS with a GNN

This protocol outlines the steps to implement an IPS-based debiasing technique for a Graph Neural Network (GNN) property predictor [30].

Step 1: Propensity Score Estimation

- Input: Your biased training dataset \( \mathscr{D}^\text{train} = \{(G_i, y_i)\}_{i=1}^N \), and a representative (ideally uniform) sample of the chemical space \( \mathscr{D}^\text{representative} \).

- Action: Train a probabilistic classification model (e.g., Logistic Regression or a small GNN) to distinguish between molecules in \( \mathscr{D}^\text{train} \) and \( \mathscr{D}^\text{representative} \).

- Output: For each molecule \( G_i \) in the training set, the propensity score \( \hat{p}(G_i) \) is the predicted probability from this classifier.

Step 2: Model Training with IPS Weights

- Input: Training graphs \( G_i \), target properties \( y_i \), and calculated propensity scores \( \hat{p}(G_i) \).

- Action: Train your primary GNN prediction model \( f: \mathscr{G} \rightarrow \mathbb{R} \) using an IPS-weighted loss function. The weight for the i-th sample is \( w_i = 1 / \hat{p}(G_i) \).

- Loss Function: \( L_\text{IPS} = \frac{1}{N} \sum_{i=1}^N w_i \cdot L(y_i, f(G_i)) \), where \( L \) is a standard regression loss like Mean Squared Error.

- Output: A trained GNN model ( f ) that is robust to the selection bias in the training data.
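The loss from Step 2 can be written out in a few lines. The sketch assumes Mean Squared Error as the base loss and takes the model predictions and propensity scores as plain lists; `ips_weighted_mse` is a hypothetical name. In a real training loop the same expression would be built from framework tensors so that gradients can flow through the predictions.

```python
def ips_weighted_mse(y_true, y_pred, propensities):
    """(1/N) * sum_i w_i * (y_i - f(G_i))^2 with w_i = 1 / p_hat(G_i)."""
    if not (len(y_true) == len(y_pred) == len(propensities)) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for y, f, p in zip(y_true, y_pred, propensities):
        if not 0.0 < p <= 1.0:
            raise ValueError("propensity scores must lie in (0, 1]")
        # Samples that were unlikely to be selected count more.
        total += (y - f) ** 2 / p
    return total / len(y_true)


# Hypothetical targets, predictions and propensity scores.
loss = ips_weighted_mse([1.0, 2.0], [0.0, 0.0], [0.5, 0.25])
```

In this example the second molecule has the lower propensity and the larger error, so it contributes 16 of the 18 units in the weighted sum.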

Workflow Visualization

The following diagram illustrates the logical workflow and key components of the two causal inference methods.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Causal Molecular Property Prediction

| Item Name | Function / Purpose | Key Features / Notes |
|---|---|---|
| Graph Neural Network (GNN) | Core architecture for learning from molecular graphs. Represents atoms as nodes and bonds as edges. | Essential for feature extraction directly from molecular structure. Models like Message Passing Neural Networks (MPNNs) are commonly used [30] [7]. |
| Propensity Estimation Model | A classifier that estimates the probability of a molecule being included in the training set. | Can be a simpler model like Logistic Regression (on molecular fingerprints) or a second GNN. Critical for the IPS method [30]. |
| Integral Probability Metric (IPM) | A distance metric between distributions used in CFR to enforce representation balance. | Common choices are the Wasserstein distance or Maximum Mean Discrepancy (MMD). This is the core of the balancing constraint in CFR [30]. |
| Standard Molecular Datasets | Provide a "uniform" reference distribution for propensity estimation or as unbiased test sets. | QM9 [30] [4]: ~134k small organic molecules with quantum mechanical properties. ZINC [30] [4]: A vast database of commercially available compounds. |
| Deep Learning Framework | The programming environment for building and training models. | PyTorch or TensorFlow are standard. They provide the flexibility needed to implement custom loss functions (like IPS-weighted loss or CFR's joint loss). |
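
To make the Integral Probability Metric row more concrete, the sketch below estimates the squared Maximum Mean Discrepancy between two samples with an RBF kernel. It is a generic NumPy illustration, not the balancing term of any specific CFR implementation; the function names, the `gamma` bandwidth, and the Gaussian toy samples (standing in for learned representations of two groups of molecules) are assumptions.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    # Squared Euclidean distances between every row of a and every row of b.
    sq = np.sum(a**2, axis=1)[:, None] + np.sum(b**2, axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-gamma * np.maximum(sq, 0.0))

def mmd2(x, y, gamma=1.0):
    """Biased (V-statistic) estimate of the squared MMD between samples x and y."""
    return float(rbf_kernel(x, x, gamma).mean()
                 + rbf_kernel(y, y, gamma).mean()
                 - 2.0 * rbf_kernel(x, y, gamma).mean())

rng = np.random.default_rng(0)
# Two samples from the same distribution versus one sample with a shifted mean.
same = mmd2(rng.normal(size=(200, 8)), rng.normal(size=(200, 8)), gamma=0.1)
shifted = mmd2(rng.normal(size=(200, 8)), rng.normal(loc=1.0, size=(200, 8)), gamma=0.1)
```

Here `shifted` comes out clearly larger than `same`. A balancing constraint of this kind penalizes the model when the two groups' representations drift apart in this sense.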

Adversarial and Influence-Based Data Augmentation Strategies

Troubleshooting Guide: FAQs for Experimental Challenges

This guide addresses common technical issues encountered when implementing adversarial and influence-based data augmentation strategies in molecular property prediction.

FAQ 1: How can I address severe class imbalance in a multitask molecular property prediction problem where traditional augmentation fails?

- Problem: Traditional data augmentation techniques are proving ineffective for a classification task with imbalanced data across multiple prediction tasks.

- Solution: Implement the Adversarial Augmentation to Influential Sample (AAIS) framework. This method uses distributionally robust optimization and is less dependent on the initial dataset size and number of tasks [31].

- Protocol: The core methodology involves:

- Influential Sample Identification: During training, use a one-step influence function to identify the data points that have the greatest impact on the model. These points are typically located near the model's decision boundary [31].

- Adversarial Augmentation: Generate new data samples by adversarially augmenting these influential samples.

- Model Retraining: Retrain the Graph Neural Network model with the augmented dataset. This process flattens the decision boundary locally around these critical points, leading to more robust predictions [31].

- Expected Outcome: Application of this method on molecular property benchmarks has shown performance improvements of 1%–15% in AUC and 1%–35% in F1-score [31].

FAQ 2: What strategy can boost model performance when labeled molecular data is scarce for a specific target?

- Problem: A deep learning model for predicting alpha-glucosidase inhibitors suffers from overfitting due to limited labeled data.

- Solution: Integrate data augmentation with transfer learning from pre-trained models [32].

- Protocol:

- SMILES Augmentation: Generate multiple, diverse SMILES string representations for each molecule in your dataset. This increases data variability and acts as a form of data augmentation [32].

- Leverage Pre-trained Models: Start from a pre-trained BERT model (originally designed for natural language processing) that has been adapted to read SMILES strings as a molecular representation. Models such as PC10M-450k, available from repositories like Hugging Face, can serve as a starting point [32].

- Fine-tuning: Fine-tune the pre-trained model on the augmented, task-specific molecular data to predict the target property [32].

FAQ 3: How do I perform data augmentation for a graph neural network when material structure data is limited and computationally expensive to obtain?

- Problem: Predicting properties of High-Entropy Alloys (HEAs) using graph neural networks is limited by the small number of accurate structured data points from DFT calculations.

- Solution: Use the EFTGAN (Elemental Features enhanced and Transferring corrected data augmentation in Generative Adversarial Networks) framework [33].

- Protocol:

- Feature Extraction: Train an Elemental Convolution network (ECNet) to extract elemental feature vectors from the crystal structure graph of your materials [33].

- Data Generation: Train an InfoGAN model to generate new, synthetic elemental feature vectors. The generator's input includes the elemental composition to ensure relevance [33].

- Iterative Refinement: Use an iterative approach in which a multi-layer perceptron predicts targets for the generated features. The generated features and their predicted targets are added to the training set, and the InfoGAN model is updated, until the generated targets stabilize [33].

- Transfer Learning: When using the generated data for augmentation, employ transfer learning. First, train the prediction model on the generated data, then fine-tune it on the original, real data to prevent performance degradation [33].
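
The final transfer-learning step (train on generated data, then fine-tune on real data) can be illustrated with a deliberately small example. The sketch below replaces the graph network and GAN with a linear regressor and synthetic arrays, so it only shows the two-stage schedule, not EFTGAN itself; `train_linear`, the learning rates, and the biased "generated" labels are all illustrative assumptions.

```python
import numpy as np

def train_linear(X, y, w=None, lr=0.05, epochs=200):
    """Gradient descent on mean squared error; `w` carries over earlier weights."""
    w = np.zeros(X.shape[1]) if w is None else w.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w

def mse(w, X, y):
    return float(np.mean((X @ w - y) ** 2))

rng = np.random.default_rng(0)
true_w = np.array([1.0, -2.0, 0.5])

# Small "real" set (stand-in for DFT-labelled structures).
X_real = rng.normal(size=(20, 3))
y_real = X_real @ true_w + 0.05 * rng.normal(size=20)

# Larger "generated" set with systematically biased labels (stand-in for GAN output).
X_gen = rng.normal(size=(500, 3))
y_gen = X_gen @ (true_w + np.array([0.3, 0.3, -0.3])) + 0.05 * rng.normal(size=500)

# Stage 1: train on generated data only.
w_pre = train_linear(X_gen, y_gen)
# Stage 2: fine-tune on the real data with a smaller step size and fewer epochs.
w_ft = train_linear(X_real, y_real, w=w_pre, lr=0.01, epochs=100)

# Noise-free held-out data to compare the two stages.
X_test = rng.normal(size=(1000, 3))
y_test = X_test @ true_w
```

Comparing `mse(w_pre, X_test, y_test)` with `mse(w_ft, X_test, y_test)` shows the fine-tuning stage correcting the systematic error inherited from the generated labels. That is the role the protocol assigns to this step.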

FAQ 4: My virtual screening model has a high false positive rate after augmenting with random negative samples. How can I manage this?

- Problem: Conventional data augmentation for virtual screening, which involves generating random negative samples, leads to an unacceptably high false positive rate.

- Solution: Implement the Negative-Augmented PU-bagging (NAPU-bagging) SVM framework, a semi-supervised learning approach [34].

- Protocol:

- Model Selection: Use a Support Vector Machine (SVM) with ECFP4 fingerprints, which has been shown to match or surpass the performance of more complex deep learning models in this context [34].

- NAPU-bagging:

  - Resample: Create multiple "bags" (subsets) of training data. Each bag contains all known positive samples, a sample of the unlabeled data, and a selection of generated or known negative samples [34].

  - Ensemble Training: Train an ensemble of SVM classifiers, each on one of these bags [34].

  - Averaging: Average the predictions from all classifiers to produce the final output. This ensemble approach manages the false positive rate while maintaining a high recall rate, which is critical for compiling candidate lists in virtual screening [34].

The following tables summarize key quantitative findings from the research cited in this guide.

Table 1: Performance Improvement of AAIS on Molecular Property Prediction

| Metric | Performance Gain | Notes |
|---|---|---|
| AUC | 1%–15% | Improvement observed on benchmark datasets [31] |
| F1-Score | 1%–35% | Particularly effective for imbalanced classification tasks [31] |

Table 2: Comparison of SVM and Deep Learning Models for Drug-Target Prediction

| Model Type / Specific Model | Performance Summary |
|---|---|
| Support Vector Machine (SVM) | Demonstrated superior or comparable performance to all ten DL models tested [34] |
| Deep Learning Models (e.g., DeepDTA, GraphDTA) | Ten different state-of-the-art models were evaluated and generally did not surpass SVM in this specific application [34] |

Experimental Protocols

Protocol 1: Implementing the AAIS Framework

This protocol is adapted from the "Adversarial Augmentation to Influential Sample" method [31].

- Dataset Preparation: Obtain a publicly available molecular graph dataset, such as those from the OGB (Open Graph Benchmark) [31].

- Base Model Training: Begin training a standard Graph Neural Network (GNN) for your target property prediction task.

- Influence Calculation: During training, apply the one-step influence function to identify a subset of training samples that are most influential on the model's current loss.

- Adversarial Augmentation: For each identified influential sample, apply adversarial perturbations to the molecular graph features. The perturbation is designed to maximize the model's loss for that sample, effectively creating harder examples near the decision boundary.

- Combined Training: Add the newly generated adversarial examples to the training set and continue the training process. This forces the model to learn a more robust decision boundary.
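
The loop in Protocol 1 (score samples, perturb the most influential ones, continue training) can be sketched on a toy problem. The example below is a heavily simplified stand-in: a logistic regression on 2-D feature vectors replaces the GNN, the per-sample gradient norm is used as a proxy for the one-step influence function (whose exact form is not given here), and the perturbation is an FGSM-style signed-gradient step. All function names and parameters (`top_k`, `eps`) are assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fit_logreg(X, y, w=None, lr=0.1, epochs=300):
    """Plain gradient descent on the mean logistic loss (no bias term)."""
    w = np.zeros(X.shape[1]) if w is None else w.copy()
    for _ in range(epochs):
        w -= lr * X.T @ (sigmoid(X @ w) - y) / len(y)
    return w

def sample_loss(X, y, w):
    """Per-sample logistic loss under weights w."""
    p = np.clip(sigmoid(X @ w), 1e-12, 1 - 1e-12)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))

def augment_influential(X, y, w, top_k=20, eps=0.1):
    p = sigmoid(X @ w)
    # Influence proxy (assumption): norm of the per-sample loss gradient w.r.t. w.
    score = np.abs(p - y) * np.linalg.norm(X, axis=1)
    idx = np.argsort(score)[-top_k:]
    # FGSM-style perturbation: step along the sign of dLoss/dx = (p - y) * w.
    grad_x = (p[idx] - y[idx])[:, None] * w[None, :]
    return X[idx] + eps * np.sign(grad_x), y[idx], idx

rng = np.random.default_rng(0)
# Toy 2-D features standing in for learned molecular embeddings.
X = np.vstack([rng.normal(-1.0, 1.0, size=(100, 2)), rng.normal(1.0, 1.0, size=(100, 2))])
y = np.concatenate([np.zeros(100), np.ones(100)])

w0 = fit_logreg(X, y)                               # base model
X_adv, y_adv, idx = augment_influential(X, y, w0)   # harder copies of the selected samples
# Continue training on the original data plus the adversarial examples.
w1 = fit_logreg(np.vstack([X, X_adv]), np.concatenate([y, y_adv]), w=w0)
```

Each perturbed sample has a loss at least as high as its original under `w0`, which is what makes it a harder example. Whether retraining on such samples helps a real molecular model depends on details this sketch leaves out.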

Protocol 2: Implementing NAPU-bagging SVM for Virtual Screening

This protocol is adapted from the work on multitarget-directed ligand discovery [34].

- Data Curation: Compile a set of known active compounds (positive samples) for your target of interest. Gather a larger set of compounds with unknown activity (unlabeled data).

- Molecular Representation: Convert all molecular structures into ECFP4 fingerprints.

- Bag Construction: Construct N bags. For each bag:

  - Include all known positive samples.

  - Randomly sample a portion of the unlabeled data.

  - Include a set of generated or confidently predicted negative samples.

- Ensemble Model Training: Train a separate SVM classifier on each of the N bags.

- Prediction and Aggregation: For a new molecule, generate its ECFP4 fingerprint and obtain a prediction score from each of the N SVM classifiers. The final prediction is the average of all scores.
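
The bagging steps above can be sketched with scikit-learn's `SVC`. This is a minimal illustration rather than the published implementation: random binary vectors stand in for ECFP4 fingerprints, the sampled unlabeled compounds are treated as provisional negatives within each bag (an assumption about how the bags are labeled), and the names `napu_bagging_fit`, `napu_bagging_score`, `n_bags`, and `unl_frac` are hypothetical.

```python
import numpy as np
from sklearn.svm import SVC

def napu_bagging_fit(X_pos, X_unl, X_neg, n_bags=10, unl_frac=0.5, seed=0):
    """Train one SVM per bag: all positives vs. sampled unlabeled + negatives."""
    rng = np.random.default_rng(seed)
    n_unl = max(1, int(unl_frac * len(X_unl)))
    models = []
    for _ in range(n_bags):
        unl = X_unl[rng.choice(len(X_unl), size=n_unl, replace=False)]
        X = np.vstack([X_pos, unl, X_neg])
        # Sampled unlabeled compounds are treated as provisional negatives (assumption).
        y = np.concatenate([np.ones(len(X_pos)), np.zeros(len(unl) + len(X_neg))])
        models.append(SVC(kernel="rbf", gamma="scale").fit(X, y))
    return models

def napu_bagging_score(models, X):
    """Average the SVM decision scores over the ensemble."""
    return np.mean([m.decision_function(X) for m in models], axis=0)

rng = np.random.default_rng(1)

def toy_fp(n, active):
    """Random 32-bit vectors; in this toy, actives share four 'on' bits."""
    fp = (rng.random((n, 32)) < 0.2).astype(float)
    if active:
        fp[:, :4] = 1.0
    return fp

X_pos = toy_fp(40, True)
X_unl = np.vstack([toy_fp(20, True), toy_fp(180, False)])  # hidden actives among unlabeled
X_neg = toy_fp(60, False)

models = napu_bagging_fit(X_pos, X_unl, X_neg)
# Score five active-like and five inactive-like query molecules.
scores = napu_bagging_score(models, np.vstack([toy_fp(5, True), toy_fp(5, False)]))
```

Averaging decision scores instead of hard labels keeps the output usable as a ranking, which suits the candidate-list use case described above.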

Research Workflow Diagrams

AAIS for Molecular Property Prediction

EFTGAN for Data Augmentation on Small Datasets

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Description | Example / Source |
|---|---|---|
| OGB Datasets | Publicly available, standardized benchmark datasets for graph property prediction; used for training and evaluation. | OGB (Open Graph Benchmark) website [31] |
| Pre-trained BERT Models | NLP models adapted for molecular SMILES strings; provide a strong foundation for transfer learning after fine-tuning on task-specific data. | Hugging Face repository (e.g., PC10M-450k) [32] |
| Influence Function Computation | A mathematical tool used to identify the training examples most influential on a model's predictions, crucial for targeted augmentation. | One-step influence function as used in AAIS [31] |
| InfoGAN Framework | A variant of Generative Adversarial Networks (GANs) that includes a classifier to generate data with specific attributes or states. | Used in EFTGAN for generating material features [33] |
| ECFP4 Fingerprints | A type of molecular fingerprint that captures circular substructures of a molecule; an effective representation for traditional ML models like SVM. | Used as a superior compound representation method [34] |
| SVM with NAPU-bagging | A robust semi-supervised learning framework combining Support Vector Machines with bagging on positive and unlabeled data to control false positives. | Implementation for virtual screening of multitarget drugs [34] |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is the "over-specialization spiral" in chemical databases? The over-specialization spiral is a self-reinforcing type of selection bias where predictive models, trained on existing data, tend to suggest new experiments that fall strictly within their current applicability domain (the chemical space where they make reliable predictions) [35]. When the dataset is updated with these results and the model is retrained, its focus narrows further, increasingly shifting the data distribution towards already densely populated areas [35]. Despite adding more data, the model's applicability domain can remain static or even shrink, hindering the exploration of new, potentially valuable areas of the chemical space [35].

Q2: How does the CANCELS algorithm technically differ from Active Learning? While both aim to select informative data points, they have fundamental differences in objective and operation, as summarized in the table below.

| Feature | CANCELS | Active Learning |
|---|---|---|
| Primary Goal | Improve overall dataset quality and distribution [35]. | Improve the performance of a specific model [35]. |
| Dependency | Model-free and task-free [35]. | Model-dependent; selections are specific to one model [35]. |
| Scope | Retains a desirable degree of specialization to a research domain without over-expanding [35]. | Can slowly expand the chemical space and may explore beyond the desired specialization [35]. |

Q3: What is the required input format for CANCELS? CANCELS requires two main inputs [35]:

- Your Biased Dataset (B): The existing, potentially specialized collection of chemical compounds.

- A Candidate Pool (P): A broader set of compounds from which CANCELS can select meaningful, feasible candidates for experimentation. The algorithm selects from this pool rather than generating artificial compounds, ensuring that suggestions are interpretable and worth experimental effort [35].

Q4: A key assumption of CANCELS is that the underlying data distribution is Gaussian. What if my data violates this assumption? The Gaussian assumption is a necessary starting point for mitigating bias when no perfect ground-truth dataset is available [35]. The methods CANCELS builds upon include safeguards that test whether a Gaussian fits the data reasonably well and refuse to produce output if the fit is poor [35]. Because the goal is to smooth the data distribution to improve quality, and such distributions are common in nature, the consequences of this assumption are generally benign, even if the true distribution is only approximately Gaussian [35].