Mol2Vec vs. VICGAE: A Performance and Practicality Comparison for Molecular Property Prediction

This article provides a comprehensive comparison of two prominent molecular embedding techniques, Mol2Vec and VICGAE, for predicting key chemical properties.

Abstract

This article provides a comprehensive comparison of two prominent molecular embedding techniques, Mol2Vec and VICGAE, for predicting key chemical properties. Tailored for researchers and drug development professionals, it explores the foundational concepts behind these methods, details their practical implementation, and offers optimization strategies based on recent research. A direct performance validation reveals a critical trade-off: Mol2Vec achieves marginally higher accuracy (R² up to 0.93 for critical temperature), whereas the compact 32-dimensional VICGAE embeddings deliver comparable predictive power with up to a tenfold improvement in computational efficiency. This analysis synthesizes these findings to guide the selection of optimal molecular representation strategies in biomedical research and drug discovery.

Understanding Molecular Embeddings: From Mol2Vec and VICGAE to the Modern Landscape

The Critical Role of Molecular Representation in Drug Discovery and Cheminformatics

The accurate prediction of molecular properties is a cornerstone of modern drug discovery and materials science, enabling the rapid computational screening of millions of compounds and significantly accelerating the development of new therapeutics. The fundamental challenge lies in transforming complex molecular structures into machine-readable numerical representations that preserve essential chemical information. This process, known as molecular embedding, serves as the critical first step upon which all subsequent machine learning (ML) models are built. The choice of representation directly influences the accuracy, efficiency, and overall success of property prediction tasks such as estimating melting points, boiling points, and biological activity [1] [2].

The field has evolved from traditional, hand-crafted descriptors like molecular fingerprints to sophisticated, deep learning-based embedding techniques. These modern methods aim to automatically learn salient features from molecular data, capturing intricate structure-property relationships that are often elusive for rule-based approaches [2] [3]. This guide provides a performance-focused comparison of two prominent molecular embedding techniques—Mol2Vec and VICGAE—evaluating their experimental performance, computational characteristics, and practical applicability for cheminformatics researchers.

Molecular Representation Methods: From Traditional to Modern Embeddings

The Evolutionary Leap in Representation Techniques

The journey of molecular representation began with traditional rule-based methods such as molecular descriptors and fingerprints. The Simplified Molecular-Input Line-Entry System (SMILES) emerged as a widely adopted string-based format, providing a compact and efficient way to encode chemical structures [2]. While computationally efficient, these traditional representations often struggle to capture the subtle and intricate relationships between molecular structure and function, particularly for complex drug discovery tasks like scaffold hopping, which aims to discover new core structures while retaining biological activity [2].

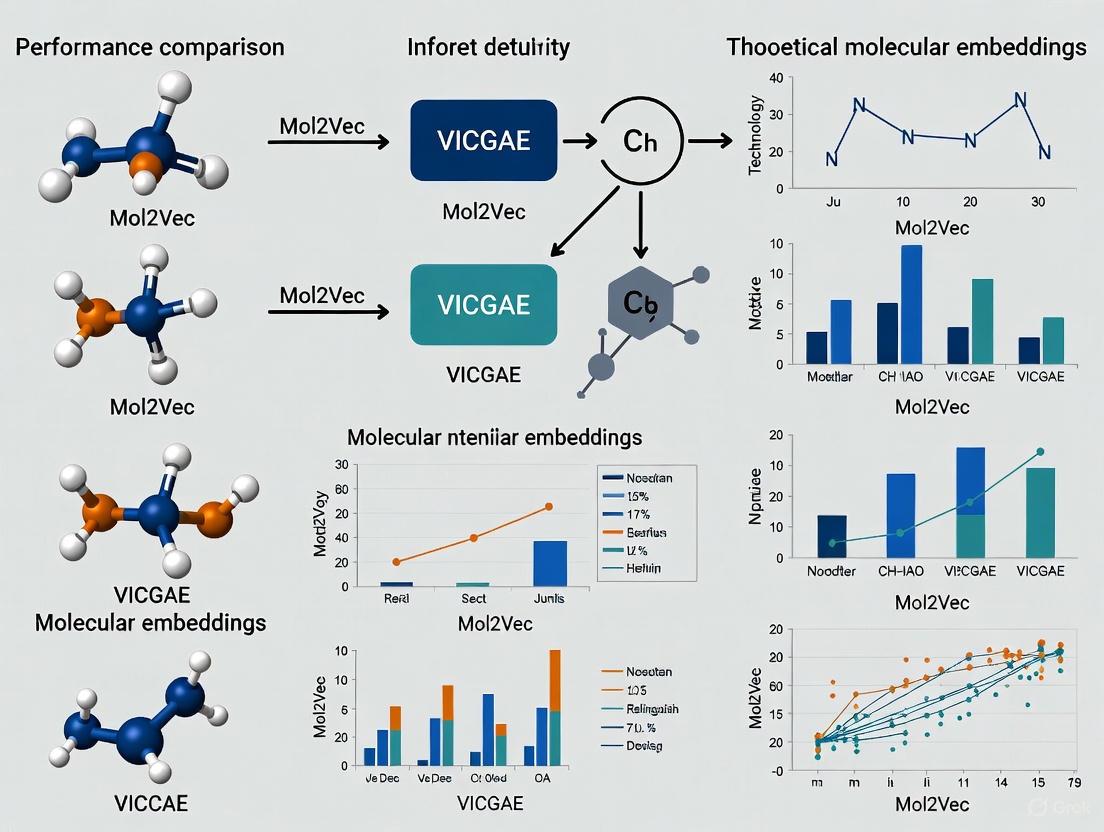

This limitation spurred the development of AI-driven molecular representation methods, which leverage deep learning models to automatically extract and learn intricate features directly from molecular data. As illustrated in Figure 1, these approaches encompass a diverse range of strategies, including language models that treat SMILES strings as a chemical language, graph-based models that operate on the inherent graph structure of molecules, and autoencoder-based architectures that learn compressed, informative representations [2] [3].

Figure 1: Classification of Molecular Representation Methods

Mol2Vec is an unsupervised machine learning approach that generates molecular embeddings by analogy to natural language processing. It treats a molecule as a "sentence" and its substructures (obtained through molecular fragmentation) as "words." Using the Word2Vec algorithm, it learns fixed-length vector representations that capture the contextual relationships between these substructures. The resulting 300-dimensional embeddings encapsulate molecular features in a continuous vector space, enabling algebraic operations that can reveal chemical relationships and similarities [1].
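The "sentence of words" analogy can be made concrete with a toy example. The sketch below assumes, following the original Mol2Vec publication rather than anything stated in this article, that a molecule vector is the sum of the vectors of its substructure "words". The substructure identifiers, the 4-dimensional vectors, and the zero-vector fallback for unseen words are all hypothetical; real Mol2Vec vectors are 300-dimensional and learned by Word2Vec.

```python
from typing import Dict, List

# Toy 4-dimensional vectors for hypothetical substructure identifiers.
# Real Mol2Vec vectors are 300-dimensional and learned from a large corpus.
SUBSTRUCTURE_VECTORS: Dict[str, List[float]] = {
    "id_C_r0": [0.1, 0.0, -0.2, 0.3],
    "id_O_r0": [0.4, -0.1, 0.0, 0.2],
    "id_CO_r1": [-0.3, 0.5, 0.1, 0.0],
}
# Assumption: identifiers missing from the vocabulary contribute a zero vector.
UNSEEN: List[float] = [0.0, 0.0, 0.0, 0.0]


def embed_molecule(sentence: List[str]) -> List[float]:
    """Sum the vectors of all substructure 'words' in a molecule 'sentence'."""
    total = [0.0] * len(UNSEEN)
    for word in sentence:
        vector = SUBSTRUCTURE_VECTORS.get(word, UNSEEN)
        total = [t + v for t, v in zip(total, vector)]
    return total


# A hypothetical three-word "sentence" for a small alcohol.
methanol_like = ["id_C_r0", "id_O_r0", "id_CO_r1"]
embedding = embed_molecule(methanol_like)
```

Because the molecule vector is a plain sum, its length is fixed by the vocabulary's vector size and does not grow with the size of the molecule.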

VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder) represents a different architectural philosophy. It is a deep learning model based on a Gated Recurrent Unit (GRU) Auto-Encoder regularized with variance-invariance-covariance constraints. This architecture learns to compress molecular information into a more compact 32-dimensional embedding. The regularization helps ensure that the learned representations are robust and capture chemically meaningful features while maintaining significantly lower dimensionality compared to Mol2Vec [1] [4].

Experimental Comparison: Performance and Computational Efficiency

Benchmarking Methodology and Protocol

To objectively evaluate the performance of Mol2Vec and VICGAE embeddings, we examine a comprehensive experimental framework implemented using the ChemXploreML platform [1] [4]. The benchmarking protocol follows a rigorous, standardized pipeline to ensure fair comparison:

- Dataset: Models were validated on a dataset curated from the CRC Handbook of Chemistry and Physics, comprising five fundamental molecular properties: melting point (MP), boiling point (BP), vapor pressure (VP), critical temperature (CT), and critical pressure (CP) [1].

- Data Preprocessing: SMILES strings for each compound were canonicalized using RDKit. The dataset was cleaned to remove invalid entries and ensure data integrity [1].

- Model Training: Both embedding techniques were combined with state-of-the-art tree-based ensemble methods, including Gradient Boosting Regression (GBR), XGBoost, CatBoost, and LightGBM [1].

- Evaluation Metrics: Performance was primarily assessed using the coefficient of determination (R²), with complementary analysis of computational efficiency including training time and resource utilization [1] [4].
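For readers less familiar with the headline metric, the coefficient of determination can be computed in a few lines. This is a minimal sketch of the standard definition (one minus the ratio of residual to total sum of squares); the temperature values are invented for illustration and are not taken from the study.

```python
from typing import Sequence


def r2_score(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical critical temperatures in kelvin (illustrative values only).
observed = [500.0, 550.0, 600.0, 650.0]
predicted = [510.0, 540.0, 610.0, 640.0]
score = r2_score(observed, predicted)  # 1 - 400 / 12500 = 0.968
```

An R² of 1.0 means perfect prediction, while 0.0 means the model does no better than always predicting the mean of the observed values.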

The following workflow (Figure 2) illustrates the experimental pipeline used for this comparative analysis:

Figure 2: Molecular Property Prediction Workflow

Quantitative Performance Comparison

The experimental results reveal a nuanced performance landscape where both embedding techniques demonstrate distinct strengths. Table 1 summarizes the key performance metrics across the five molecular properties evaluated in the study.

Table 1: Performance Comparison of Mol2Vec vs. VICGAE Embeddings

| Molecular Property | Best Performing Embedding | R² Score | Key Observation |
|---|---|---|---|
| Critical Temperature (CT) | Mol2Vec | 0.93 | Highest accuracy for well-distributed properties [1] [4] |
| Critical Pressure (CP) | Mol2Vec | ~0.91 | Consistent high performance [1] |
| Boiling Point (BP) | Mol2Vec | ~0.89 | Slightly superior accuracy [1] |
| Melting Point (MP) | Mol2Vec (Marginally) | >0.85 | Modest advantage [1] |
| Vapor Pressure (VP) | Comparable | <0.85 | Similar performance with smaller datasets [1] |

Computational Efficiency Analysis

While accuracy is crucial, computational efficiency often determines practical applicability in research environments. The benchmarking revealed significant differences in this domain, as detailed in Table 2.

Table 2: Computational Efficiency Comparison

| Characteristic | Mol2Vec | VICGAE |
|---|---|---|
| Embedding Dimensionality | 300 dimensions [1] [4] | 32 dimensions [1] [4] |
| Computational Efficiency | Lower | Significantly Improved [1] [4] |
| Memory Footprint | Larger | Smaller |
| Training Speed | Slower | Faster |
| Ideal Use Case | Maximum accuracy scenarios | Large-scale screening, resource-constrained environments [1] |

Implementing molecular representation pipelines requires specific computational tools and datasets. Table 3 catalogs essential research reagents and their functions based on the methodologies examined in the comparative studies.

Table 3: Essential Research Reagents for Molecular Representation Studies

| Resource | Type | Primary Function | Application in Benchmarking |
|---|---|---|---|
| ChemXploreML | Software Platform | Modular desktop application for molecular property prediction [1] [4] | Provided the framework for embedding evaluation and comparison |
| RDKit | Cheminformatics Library | SMILES canonicalization, molecular descriptor calculation [1] | Standardized molecular representations prior to embedding |
| CRC Handbook Dataset | Chemical Database | Source of experimental property data for training and validation [1] | Served as ground truth for model performance assessment |
| PubChem | Chemical Repository | Source of canonical SMILES strings using Compound IDs (CIDs) [5] | Provided molecular structure information |
| Tree-Based Ensemble Methods | Machine Learning Algorithms | Predictive modeling using molecular embeddings [1] | XGBoost, CatBoost, LightGBM used for property prediction |
| UMAP | Dimensionality Reduction | Visualization and exploration of molecular space [1] | Assisted in chemical space analysis and dataset characterization |

The comparative analysis of Mol2Vec and VICGAE reveals that the choice of molecular embedding involves a fundamental trade-off between predictive accuracy and computational efficiency. Mol2Vec achieves marginally superior accuracy for most properties, particularly critical temperature where it reaches an impressive R² of 0.93 [1] [4]. However, this comes at the cost of significantly higher computational resources due to its 300-dimensional embedding space.

VICGAE emerges as a compelling alternative, delivering comparable predictive performance with substantially improved computational efficiency through its compact 32-dimensional representations [1] [4]. This makes VICGAE particularly advantageous for large-scale virtual screening projects or research environments with limited computational resources.

These findings align with broader trends in molecular representation learning, where recent benchmarking studies have surprisingly shown that traditional molecular fingerprints often remain competitive with, or even outperform, more complex neural models [6]. This underscores the importance of rigorous, objective evaluation of embedding techniques tailored to specific research requirements rather than automatically adopting the most complex available method.

For researchers navigating this landscape, the decision framework should consider: (1) the criticality of maximum accuracy versus throughput needs, (2) available computational resources, and (3) dataset characteristics. As the field advances, the integration of these embedding techniques with emerging approaches—including 3D-aware representations, multi-modal learning, and hybrid models—promises to further enhance our ability to map chemical space and accelerate molecular discovery [3].

In cheminformatics and molecular property prediction, converting molecular structures into numerical representations that computers can process (a process known as molecular embedding) is a fundamental challenge. Among the various techniques developed, Mol2Vec, inspired by the natural language processing algorithm Word2Vec, has emerged as a prominent method for generating molecular embeddings [7] [1]. This approach treats molecules as "sentences" composed of molecular substructure "words," creating vector representations that capture essential chemical information.

To evaluate its practical utility, this guide objectively compares Mol2Vec against a newer, more compact embedding technique known as VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder). The comparison is grounded in experimental data from a recent study that implemented both methods within the ChemXploreML desktop application to predict fundamental molecular properties [7] [1] [8]. The analysis focuses on predictive accuracy, computational efficiency, and practical implementation, providing researchers and drug development professionals with actionable insights for selecting appropriate embedding techniques for their projects.

Performance Comparison: Mol2Vec vs. VICGAE

A direct comparison of Mol2Vec and VICGAE was conducted using a dataset from the CRC Handbook of Chemistry and Physics [1]. The study evaluated their performance in predicting five key molecular properties when combined with state-of-the-art tree-based ensemble machine learning models.

Table 1: Summary of Model Performance (R²) by Molecular Property and Embedding Method

| Molecular Property | Mol2Vec (300-dim) | VICGAE (32-dim) | Best Performing Model(s) |
|---|---|---|---|
| Critical Temperature (CT) | 0.93 | Comparable | Gradient Boosting, XGBoost, CatBoost, LightGBM [7] [1] |
| Critical Pressure (CP) | Information missing | Information missing | Gradient Boosting, XGBoost, CatBoost, LightGBM [7] [1] |
| Boiling Point (BP) | Information missing | Information missing | Gradient Boosting, XGBoost, CatBoost, LightGBM [7] [1] |
| Melting Point (MP) | Information missing | Information missing | Gradient Boosting, XGBoost, CatBoost, LightGBM [7] [1] |
| Vapor Pressure (VP) | Information missing | Information missing | Gradient Boosting, XGBoost, CatBoost, LightGBM [7] [1] |

Table 2: Comparative Analysis of Embedding Method Characteristics

| Characteristic | Mol2Vec | VICGAE |
|---|---|---|
| Embedding Dimensionality | 300 dimensions [7] [1] | 32 dimensions [7] [1] |
| Reported Accuracy | Slightly higher accuracy [7] [1] | Comparable performance [7] [1] |
| Computational Efficiency | Less efficient | Up to 10x faster [8] |
| Key Advantage | High predictive accuracy for well-distributed properties [7] | Excellent balance of performance and speed [7] [1] |

Experimental Protocols and Workflow

The comparative data for Mol2Vec and VICGAE were generated through a structured machine learning pipeline. The following workflow diagram illustrates the key stages of this experimental process.

Detailed Experimental Methodology

The experiment followed a rigorous protocol to ensure a fair and meaningful comparison between the two embedding techniques [1]:

- Dataset Curation: A dataset of organic compounds was compiled from the CRC Handbook of Chemistry and Physics, a reliable reference for chemical and physical properties. The dataset included five key molecular properties: melting point (MP), boiling point (BP), vapor pressure (VP), critical temperature (CT), and critical pressure (CP).

- Data Preprocessing and Validation: For each compound, SMILES (Simplified Molecular-Input Line-Entry System) strings were obtained, which provide a textual representation of molecular structure. These strings were canonicalized (standardized) using the RDKit cheminformatics toolkit. The dataset was cleaned to ensure data quality, resulting in final sample sizes ranging from 323 (for vapor pressure) to 6,167 (for melting point) compounds, depending on the property and embedding method.

- Molecular Embedding Generation:

  - Mol2Vec: This method converts a molecule into a numerical vector by first decomposing it into representative substructures (similar to words in a sentence) and then using the Word2Vec algorithm to generate a 300-dimensional embedding that captures the contextual relationships between these substructures [7] [1].

  - VICGAE: This is a Variance-Invariance-Covariance regularized GRU Auto-Encoder. It uses a neural network architecture with Gated Recurrent Units (GRUs) to create a more compact, 32-dimensional representation of the molecule. The regularization helps in learning a robust and efficient embedding [7] [1].

- Machine Learning and Evaluation: The embeddings from both methods were used to train and test four state-of-the-art tree-based ensemble machine learning models: Gradient Boosting Regression (GBR), XGBoost, CatBoost, and LightGBM. The models were optimized using the Optuna framework for hyperparameter tuning. Performance was primarily evaluated using the R² (coefficient of determination) metric to assess prediction accuracy. Computational efficiency was also measured and compared.

Table 3: Key Software and Data Resources for Molecular Embedding Research

| Item Name | Type | Function/Brief Explanation |
|---|---|---|
| ChemXploreML | Desktop Application | A modular desktop application that integrates data preprocessing, multiple embedding techniques (Mol2Vec, VICGAE), ML model training, and visualization in an intuitive, offline-capable interface [7] [8]. |
| RDKit | Cheminformatics Library | An open-source toolkit used for canonicalizing SMILES strings, analyzing molecular structures, and extracting crucial molecular information during data preprocessing [1]. |
| CRC Handbook of Chemistry and Physics | Reference Data | A highly reliable and comprehensive source of experimental data for molecular properties, used as the benchmark dataset for training and validation [1]. |
| Tree-Based Ensemble Models (e.g., XGBoost) | Machine Learning Algorithm | A class of powerful ML models (including GBR, XGBoost, CatBoost, LightGBM) effective at capturing non-linear relationships in high-dimensional molecular data for property prediction [1]. |
| Optuna | Software Library | A framework used for automated hyperparameter optimization, enabling the fine-tuning of machine learning models for maximum predictive performance [1]. |

Technical Mechanisms of Mol2Vec and VICGAE

The following diagram illustrates the core architectural differences between the Mol2Vec and VICGAE embedding processes.

Mol2Vec: This method operates on an analogy from natural language processing [1]. A molecule is first broken down into representative substructures, analogous to words in a sentence. These "sentences" are then fed into a Word2Vec-like neural network. The model learns to place substructures that appear in similar molecular contexts close to each other in the vector space. The result is a high-dimensional (300-dimension) embedding that captures complex structural and functional relationships within the molecule.

VICGAE: This method employs a different, more compact neural network architecture based on a GRU (Gated Recurrent Unit) Autoencoder [7] [1]. The encoder compresses the molecular information into a low-dimensional latent space (32 dimensions). A key feature is its custom Variance-Invariance-Covariance regularization loss function, which ensures the learned embeddings are robust and informative. This architecture is inherently more efficient, leading to its significant speed advantage.

The comparative analysis reveals that both Mol2Vec and VICGAE are powerful techniques for molecular property prediction, yet they cater to slightly different priorities. Mol2Vec, with its higher-dimensional embedding, maintains a slight edge in predictive accuracy for certain properties, making it a robust choice when accuracy is the paramount concern. In contrast, VICGAE offers a compelling alternative by delivering comparable predictive performance with a fraction of the dimensionality and up to an order of magnitude improvement in computational speed.

For researchers engaged in large-scale virtual screening or iterative design cycles where time and computational resources are limiting factors, VICGAE presents a highly efficient and effective solution. For projects where maximizing predictive accuracy for well-characterized properties is the primary goal, Mol2Vec remains a proven and reliable choice. The development of integrated platforms like ChemXploreML, which supports both methods, ultimately democratizes access to these advanced tools, allowing scientists to choose and customize the best embedding and modeling pipeline for their specific research needs.

In molecular machine learning, translating chemical structures into numerical representations (embeddings) is a fundamental step. This guide provides a performance comparison between VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder), a compact, efficiency-focused embedder, and the established Mol2Vec method. The experimental data indicate that VICGAE achieves competitive predictive accuracy while running up to ten times faster, making it a compelling choice for high-throughput screening and resource-constrained environments [1] [8] [9].

Experimental Protocol & Workflow

The comparative data presented in this guide is primarily derived from a study that implemented a standardized machine learning pipeline to ensure a fair evaluation [1] [10]. The core methodology is outlined below.

Key Experimental Components:

- Dataset: Properties (Melting Point, Boiling Point, Vapor Pressure, Critical Temperature, Critical Pressure) for organic compounds were sourced from the CRC Handbook of Chemistry and Physics [1] [10].

- Molecular Representation: Structures were standardized into canonical SMILES strings for Mol2Vec and SELFIES strings for VICGAE [1] [10].

- Embedding Generation:

  - Mol2Vec: An unsupervised method that learns 300-dimensional vectors for atom-centered substructures, inspired by natural language processing [1] [10].

  - VICGAE: A GRU-based autoencoder that generates compact 32-dimensional vectors, regularized to ensure high variance, invariance to trivial transformations, and low covariance between dimensions [1] [10].

- Model Training & Evaluation: Four state-of-the-art tree-based ensemble models (Gradient Boosting, XGBoost, CatBoost, LightGBM) were trained. Performance was robustly assessed using 5-fold cross-validation, reporting the coefficient of determination (R²) and computational time [1].
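The 5-fold cross-validation step can be sketched with the standard library alone. This is an illustration of the general technique, not the study's code: the shuffling, the seed, and the function name `k_fold_indices` are assumptions, and 323 is used only because it matches the smallest sample size reported for vapor pressure.

```python
import random
from typing import Iterator, List, Tuple


def k_fold_indices(
    n_samples: int, k: int = 5, seed: int = 0
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, test) index lists; each sample lands in exactly one test fold."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # assumed: shuffle once before splitting
    folds = [indices[i::k] for i in range(k)]  # k interleaved, near-equal folds
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


splits = list(k_fold_indices(n_samples=323, k=5))
```

In a full pipeline, a model would be fitted on each `train` split and scored on the matching `test` split, and the five R² values would then be averaged.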

Performance Comparison: VICGAE vs. Mol2Vec

The following tables summarize the key quantitative results from the experimental comparison.

Predictive Accuracy (R² Scores)

| Molecular Property | Mol2Vec (300-d) | VICGAE (32-d) | Performance Note |
|---|---|---|---|
| Critical Temperature (CT) | 0.931 | 0.931 | Best performing property [10] |
| Critical Pressure (CP) | 0.92 | 0.92 | Excellent performance [10] |
| Boiling Point (BP) | 0.925 | 0.92 | Very high accuracy [10] |
| Melting Point (MP) | ~0.86 | ~0.86 | Moderate accuracy [10] |
| Vapor Pressure (VP) | ~0.40 | ~0.40 | Most challenging property [10] |

Computational Efficiency

| Metric | Mol2Vec (300-d) | VICGAE (32-d) | Advantage |
|---|---|---|---|
| Embedding Dimensionality | 300 | 32 | VICGAE is ~90% smaller [1] [10] |
| Relative Execution Time | Baseline | Up to 10x Faster | VICGAE is significantly more efficient [1] [8] [9] |
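The dimensionality figure translates directly into storage cost, which a short calculation makes tangible. The sketch below assumes dense 32-bit floats and a hypothetical library of one million molecules; it covers storage only and says nothing about the reported speedup, which also depends on the downstream model.

```python
def embedding_megabytes(n_molecules: int, n_dims: int, bytes_per_value: int = 4) -> float:
    """Size of a dense embedding matrix in megabytes (10**6 bytes)."""
    return n_molecules * n_dims * bytes_per_value / 1e6


n = 1_000_000  # hypothetical screening library size
mol2vec_mb = embedding_megabytes(n, 300)  # 1200.0 MB
vicgae_mb = embedding_megabytes(n, 32)    # 128.0 MB
reduction = 1 - 32 / 300                  # fraction of storage saved, about 0.893
```

The reduction works out to roughly 89%, consistent with the "~90% smaller" figure in the table.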

The Scientist's Toolkit

| Research Reagent / Tool | Function in the Workflow |
|---|---|
| CRC Handbook Dataset | Provides the experimental data for five key molecular properties, serving as the ground truth for model training and validation [1]. |
| RDKit | An open-source cheminformatics toolkit used to canonicalize SMILES strings and extract crucial molecular information during data preprocessing [1]. |
| Mol2Vec Embedder | Generates 300-dimensional molecular vectors by learning from atom-centered substructures, capturing local chemical environments [1] [10]. |
| VICGAE Embedder | Generates compact 32-dimensional molecular vectors from SELFIES strings, optimized for efficiency and global structural representation [1] [10]. |
| Tree-Based Ensemble Models (e.g., XGBoost) | State-of-the-art machine learning algorithms that learn the complex relationship between molecular embeddings and their target properties [1]. |
| Optuna | A hyperparameter optimization framework that uses Bayesian methods to efficiently find the best model settings, moving beyond grid or random search [1] [10]. |

Technical Insights and Analysis

- Architectural Philosophy: The core difference lies in their approach. Mol2Vec is fragment-based, treating molecules as "sentences" of substructures to create a high-dimensional representation rich in local functional group information [10]. VICGAE is sequence-based, using a GRU autoencoder on SELFIES strings to create a dense, low-dimensional representation that captures more global structural features [1] [10].

- The VIC Regularization Advantage: VICGAE's performance stems from its regularization strategy: Variance (encouraging active dimensions), Invariance (to trivial changes), and Covariance (minimizing redundancy between dimensions). This ensures the compact 32-dimensional vector is informationally dense and efficient [10].

- Performance Interpretation: The near-identical R² scores for most properties, despite a 90% reduction in dimensionality, demonstrate that VICGAE's embedding space is highly optimized. Vapor pressure remains challenging for both, likely due to its strong dependence on complex intermolecular forces not fully captured by either structural embedding [10].
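The three regularization terms named above can be written down compactly. The following is a minimal sketch in the style of the general VICReg formulation, not VICGAE's actual loss: the target standard deviation, the epsilon, the scaling of each term, and all function names are assumptions, and a real implementation would use a tensor library and combine the terms with tuned weights.

```python
import math
from typing import List

Matrix = List[List[float]]  # rows = molecules in a batch, columns = embedding dimensions


def column_means(z: Matrix) -> List[float]:
    n = len(z)
    return [sum(row[j] for row in z) / n for j in range(len(z[0]))]


def variance_term(z: Matrix, target_std: float = 1.0, eps: float = 1e-4) -> float:
    """Hinge penalty that is positive when a dimension's std falls below target_std."""
    n, means = len(z), column_means(z)
    penalty = 0.0
    for j, mean in enumerate(means):
        var = sum((row[j] - mean) ** 2 for row in z) / (n - 1)
        penalty += max(0.0, target_std - math.sqrt(var + eps))
    return penalty / len(means)


def covariance_term(z: Matrix) -> float:
    """Sum of squared off-diagonal covariances, divided by the number of dimensions."""
    n, d, means = len(z), len(z[0]), column_means(z)
    total = 0.0
    for i in range(d):
        for j in range(d):
            if i != j:
                cov = sum((r[i] - means[i]) * (r[j] - means[j]) for r in z) / (n - 1)
                total += cov ** 2
    return total / d


def invariance_term(z_a: Matrix, z_b: Matrix) -> float:
    """Batch mean of the squared distance between two embeddings of the same molecules."""
    sq = sum((a - b) ** 2 for ra, rb in zip(z_a, z_b) for a, b in zip(ra, rb))
    return sq / len(z_a)


collapsed = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]                   # every dimension inactive
decorrelated = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]  # active, uncorrelated
```

On these toy batches, `collapsed` incurs a variance penalty close to 1 because no dimension varies, while `decorrelated` incurs neither a variance nor a covariance penalty.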

The choice between VICGAE and Mol2Vec is not about absolute superiority but strategic alignment with project goals.

- Choose Mol2Vec when your primary concern is maximizing predictive accuracy for a well-defined property and computational resources or time are not limiting factors. It remains a powerful and reliable benchmark.

- Choose VICGAE when you need to deploy models in production for high-throughput virtual screening, when working with a limited computational budget, or when rapid iteration and prototyping are essential. Its speedup of up to an order of magnitude, with minimal accuracy loss, makes it an excellent tool for accelerating the pace of discovery.

This comparative analysis demonstrates that VICGAE successfully delivers on its promise as a compact and highly efficient alternative to traditional molecular embedding methods [1] [8] [9].

The transformation of molecular structures into machine-readable numerical representations is a cornerstone of modern computational chemistry and drug discovery. The choice of representation directly influences the success of subsequent tasks, from property prediction to virtual screening. While ECFP (Extended-Connectivity Fingerprints), GNNs (Graph Neural Networks), and Transformers represent significant milestones in this evolution, the ecosystem of molecular embedding models is far more diverse [2]. These models can be broadly categorized by their input modality: string-based (e.g., SMILES), graph-based (2D/3D molecular graphs), and fingerprint-based [11]. Newer approaches, including autoencoders, multimodal models, and those leveraging contrastive learning, continue to emerge, each with distinct theoretical foundations and performance characteristics [2] [12]. Understanding this broader landscape is crucial for researchers navigating the complex trade-offs between model performance, computational efficiency, and interpretability. This guide provides an objective comparison of these key embedding families, contextualized within a broader research thesis comparing Mol2Vec and VICGAE embeddings, to inform model selection for specific scientific applications.

Comparative Performance Analysis of Embedding Models

Rigorous benchmarking studies provide critical insights into the practical performance of various molecular embedding techniques. The following tables summarize key quantitative findings from recent large-scale evaluations and applied research, focusing on performance across common chemical informatics tasks.

Table 1: Benchmarking Results on Molecular Property Prediction Tasks (Therapeutic Data Commons ADMET Benchmark)

| Model Category | Specific Model | Performance Metric | Key Finding | Source |
|---|---|---|---|---|
| Fingerprint + ML | ECFP + XGBoost/RF | State-of-the-Art (SOTA) Coverage | Achieved SOTA in ~75% of benchmarked ADMET datasets | [13] |
| Graph Neural Networks | Various GNNs (GIN, etc.) | SOTA Coverage | Achieved SOTA in ~25% of benchmarked datasets | [13] |
| Pretrained Neural Models | 25 Various Models | Statistical Improvement vs. ECFP | Nearly all showed negligible or no significant improvement over ECFP | [11] [14] |
| Pretrained Neural Models | CLAMP | Statistical Improvement vs. ECFP | Only model performing statistically significantly better than ECFP | [11] [14] |

Table 2: Performance on Specific Prediction Tasks from Applied Studies

| Task | Best Performing Model | Performance | Comparison Models | Source |
|---|---|---|---|---|
| Odor Prediction | Morgan Fingerprint + XGBoost | AUROC: 0.828, AUPRC: 0.237 | Outperformed functional group fingerprints and molecular descriptors | [15] |
| Critical Temp. Prediction | Mol2Vec + Tree Ensembles | R²: 0.93 | Slightly higher accuracy than VICGAE | [1] |
| Similarity Search | CDDD & MolFormer | Higher efficiency & speed vs. ECFP | Evaluated against ECFP in vector database setup | [12] |
| Sterimol Param. Estimation | GT Models + Contextual Training | On par with GNNs | Advantages in speed and flexibility | [16] |

Detailed Experimental Protocols for Model Evaluation

To ensure the reproducibility and rigorous comparison of molecular embedding models, researchers adhere to structured experimental protocols. The workflow below outlines the standard process for a benchmarking study, from dataset curation to performance analysis.

Diagram 1: Standard workflow for benchmarking molecular embeddings.

Dataset Curation and Preprocessing

The foundation of any robust benchmark is high-quality, curated data. Studies typically aggregate molecules from multiple reliable sources, such as the Therapeutic Data Commons (TDC) for ADMET properties, the CRC Handbook for physicochemical properties, or PubChem for general chemical information [13] [1]. The canonical Simplified Molecular Input Line Entry System (SMILES) string for each compound is obtained and standardized using toolkits like RDKit to ensure consistent representation [1] [15]. This step includes validation and cleaning to remove invalid entries, resulting in a final, analysis-ready dataset. For a fair evaluation, the data is typically split into training and test sets, often using stratified sampling to maintain the distribution of key properties across splits [15].

Molecular Representation and Feature Generation

In this critical phase, each molecule in the dataset is converted into one or more numerical representations.

- Traditional Fingerprints: Methods like ECFP are generated using algorithms (e.g., via RDKit) that encode the presence of specific topological substructures within the molecule into a fixed-length bit vector [15] [16].

- Neural Embeddings: Pretrained models (e.g., Mol2Vec, VICGAE, GNNs, Transformers) are used as feature extractors. The molecules are passed through these models without fine-tuning, and the activations of an internal layer (typically the last hidden layer or a pooled layer) are used as the continuous embedding vector, whose dimensionality ranges from a few dozen to several hundred depending on the model [11] [1].

- Hybrid Features: Some studies create a unified feature set by concatenating different types of representations, such as fingerprints with molecular descriptors [15].
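The fingerprint and hybrid-feature ideas above can be illustrated with a toy example. The sketch below folds substructure labels into a fixed-length bit vector by hashing, compares two vectors with the Tanimoto coefficient, and concatenates a fingerprint with descriptors. It is not the ECFP algorithm: real ECFP derives its substructure identifiers from iterated atom neighborhoods, whereas the string labels, the 64-bit length, and the use of CRC32 as the hash are assumptions made for illustration.

```python
import zlib
from typing import Iterable, List


def fold_to_bits(substructures: Iterable[str], n_bits: int = 64) -> List[int]:
    """Hash each substructure label to one position of a fixed-length bit vector."""
    bits = [0] * n_bits
    for label in substructures:
        # CRC32 is deterministic across runs, unlike Python's built-in hash().
        bits[zlib.crc32(label.encode("utf-8")) % n_bits] = 1
    return bits


def tanimoto(a: List[int], b: List[int]) -> float:
    """Shared on-bits divided by on-bits present in either vector."""
    both = sum(x & y for x, y in zip(a, b))
    either = sum(x | y for x, y in zip(a, b))
    return both / either if either else 0.0


# Hypothetical substructure labels for two small alcohols.
ethanol = fold_to_bits(["C", "CC", "CO", "O"])
propanol = fold_to_bits(["C", "CC", "CCC", "CO", "O"])
similarity = tanimoto(ethanol, propanol)

# Hybrid features: fingerprint bits followed by descriptors
# (here molecular weight in g/mol and hydroxyl count for ethanol).
descriptors = [46.07, 1.0]
hybrid_features = ethanol + descriptors
```

Folding keeps the vector length fixed regardless of molecule size, at the cost of occasional collisions where two different substructures set the same bit.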

Model Training, Evaluation, and Analysis

The generated representations are used to train machine learning models for specific prediction tasks. To ensure a fair comparison, a consistent model evaluation framework is applied across all embedding types. For fingerprint and neural embedding features, this typically involves using a standard classifier or regressor like XGBoost, with its hyperparameters optimized via techniques like Bayesian optimization (e.g., with Optuna) [1]. Performance is assessed via robust methods like stratified k-fold cross-validation, and metrics relevant to the task are reported (e.g., AUROC and AUPRC for classification, R² for regression) [15]. Finally, statistical testing models, such as the dedicated hierarchical Bayesian model used in large-scale benchmarks, are employed to determine if performance differences between embedding types are statistically significant [11] [14].

The Scientist's Toolkit: Essential Research Reagents and Solutions

The experimental workflow for evaluating molecular embeddings relies on a suite of software tools and chemical databases. The following table catalogues key "research reagents" essential for work in this field.

Table 3: Essential Software and Data Resources for Molecular Representation Research

| Tool / Resource | Type | Primary Function | Relevance to Embedding Research |

|---|---|---|---|

| RDKit | Cheminformatics Library | SMILES processing, fingerprint generation, descriptor calculation | Fundamental for data preprocessing, feature extraction, and generating baseline fingerprints [1] [15]. |

| Therapeutics Data Commons (TDC) | Data Repository | Curated datasets for drug discovery (e.g., ADMET properties) | Provides standardized benchmarks for fair model evaluation and comparison [13]. |

| Optuna | Python Library | Hyperparameter optimization framework | Crucial for tuning machine learning models (e.g., XGBoost) to ensure optimal performance with different embeddings [1]. |

| XGBoost / LightGBM | ML Algorithm | Gradient boosting for classification and regression | The standard "downstream" model for evaluating the predictive power of static molecular embeddings [13] [1] [15]. |

| PubChem | Chemical Database | Repository of chemical molecules and their properties | A primary source for retrieving SMILES strings and structural information for datasets [1]. |

| Vector Databases | Data Structure | Efficient storage and search of high-dimensional vectors | Enable efficient similarity search and clustering on neural embeddings at scale [12]. |

The expanding universe of molecular embedding models offers researchers a powerful palette of tools for drug discovery and materials science. The experimental data reveals a nuanced reality: while sophisticated neural models like GNNs and Transformers excel in specific areas such as capturing 3D shape or enabling generative design, traditional fingerprints like ECFP remain remarkably competitive, and often superior, for standard property prediction tasks when combined with robust machine learning models like XGBoost [13] [11]. This performance paradox underscores that model selection is not a one-size-fits-all endeavor. Factors such as dataset size, task specificity (e.g., scaffold hopping vs. ADMET prediction), and computational constraints must guide the choice. Framed within the broader research on Mol2Vec and VICGAE, this overview highlights that the quest for a universally superior embedding is ongoing. Future progress will likely hinge on developing models that more effectively encode complex chemical principles, such as 3D geometry and electrostatics, and on their rigorous evaluation against deceptively strong baselines.

The accurate prediction of molecular properties is a cornerstone of modern chemical research and drug development, enabling the rapid screening of compounds and accelerating the discovery of new materials and therapeutics [7]. The fundamental challenge in applying machine learning (ML) to chemistry lies in transforming molecular structures into numerical representations, or embeddings, that computers can process while preserving essential chemical information [2]. Recent years have witnessed a surge in sophisticated embedding techniques, including Mol2Vec and VICGAE, which leverage deep learning to capture complex structural and chemical features [7] [1].

However, a surprising trend has emerged from comprehensive benchmarking studies. Despite the theoretical advantages of these advanced neural models, traditional molecular fingerprints often remain competitive and, in many cases, superior in performance [11]. This guide provides an objective comparison of leading molecular embedding approaches, focusing specifically on the performance of Mol2Vec versus VICGAE embeddings, while contextualizing their results against the enduring benchmark set by traditional fingerprint methods.

Traditional Molecular Fingerprints

Traditional molecular fingerprints represent a class of deterministic feature extraction methods based on identifying specific subgraphs or structural patterns within a molecule [11] [2]. The most prominent example is the Extended Connectivity FingerPrint (ECFP), which encodes circular atom neighborhoods into a fixed-length binary vector through a hashing process [11]. These representations are not task-adaptive but remain widely used in chemoinformatics due to their computational efficiency, interpretability, and consistently strong performance across diverse prediction tasks [11].
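
The hashing step can be pictured with a toy example. The sketch below folds integer substructure identifiers into a fixed-length bit vector by taking each identifier modulo the vector length. The identifiers are made up for illustration; real ECFP implementations derive them from iteratively hashed atom environments.

```python
from typing import Iterable, List


def fold_to_bits(substructure_ids: Iterable[int], n_bits: int = 16) -> List[int]:
    """Fold integer substructure identifiers into a fixed-length bit vector."""
    bits = [0] * n_bits
    for ident in substructure_ids:
        bits[ident % n_bits] = 1  # distinct identifiers may collide on one bit
    return bits


# Hypothetical identifiers: 5 and 21 collide on bit 5 when n_bits is 16.
fingerprint = fold_to_bits([5, 21, 8], n_bits=16)
set_bits = [i for i, b in enumerate(fingerprint) if b]
```

The collision shows why longer vectors (1024 or 2048 bits) are typically used: fewer distinct substructures end up sharing a bit.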

Modern Embedding Approaches

Mol2Vec

Mol2Vec is an unsupervised embedding technique inspired by natural language processing. It treats molecular substructures as "words" and entire molecules as "sentences," generating numerical representations by analyzing the co-occurrence patterns of these chemical substructures in large molecular databases [7] [17]. The resulting embeddings capture chemical context analogously to how word embeddings capture semantic meaning in text [17].
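
To make the "sentence" analogy concrete, the sketch below sums per-substructure vectors into a single molecule vector, which is how Mol2Vec-style embeddings are commonly assembled. The three-dimensional vectors, the token names, and the `UNK` fallback for unseen substructures are illustrative assumptions; a trained model would supply 300-dimensional vectors.

```python
from typing import Dict, List, Sequence

Vector = List[float]


def embed_molecule(sentence: Sequence[str], vectors: Dict[str, Vector],
                   unseen: str = "UNK") -> Vector:
    """Sum the vectors of all substructure tokens in a molecular 'sentence'."""
    dim = len(vectors[unseen])
    total = [0.0] * dim
    for token in sentence:
        vec = vectors.get(token, vectors[unseen])  # fall back for unseen tokens
        total = [t + v for t, v in zip(total, vec)]
    return total


# Hypothetical lookup table; real tokens are hashed substructure identifiers.
toy_vectors = {
    "C_r0": [0.5, 0.0, 1.0],
    "O_r0": [0.0, 1.0, -0.5],
    "CO_r1": [0.25, 0.25, 0.0],
    "UNK": [0.0, 0.0, 0.0],
}
ethanol_sentence = ["C_r0", "C_r0", "O_r0", "CO_r1", "rare_token"]
ethanol_vector = embed_molecule(ethanol_sentence, toy_vectors)
```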

VICGAE (Variance-Invariance-Covariance Regularized GRU Auto-Encoder)

VICGAE is a more recent deep-learning approach. It uses a Gated Recurrent Unit (GRU) auto-encoder regularized with variance-invariance-covariance constraints to generate compact molecular representations [7] [1]. With only 32 dimensions compared to Mol2Vec's 300, VICGAE offers significantly improved computational efficiency while maintaining competitive performance [7] [1].
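
The three regularization terms can be sketched in the style of VICReg-type objectives, which penalize collapsed dimensions (variance), correlated dimensions (covariance), and disagreement between two views of the same sample (invariance). The code below is a plain-Python illustration under that assumption; the threshold `gamma`, the `eps` value, and the function names are hypothetical, and the exact VICGAE loss and its weighting may differ.

```python
import math
from typing import List

Batch = List[List[float]]  # rows are samples, columns are embedding dimensions


def _centered_columns(z: Batch) -> List[List[float]]:
    """Transpose the batch and subtract each dimension's mean."""
    n = len(z)
    cols = []
    for col in zip(*z):
        mean = sum(col) / n
        cols.append([x - mean for x in col])
    return cols


def invariance_term(z_a: Batch, z_b: Batch) -> float:
    """Mean squared difference between embeddings of two views."""
    n, d = len(z_a), len(z_a[0])
    return sum((a - b) ** 2 for ra, rb in zip(z_a, z_b) for a, b in zip(ra, rb)) / (n * d)


def variance_term(z: Batch, gamma: float = 1.0, eps: float = 1e-4) -> float:
    """Hinge penalty on dimensions whose standard deviation falls below gamma."""
    n = len(z)
    penalties = []
    for col in _centered_columns(z):
        var = sum(x * x for x in col) / (n - 1)
        penalties.append(max(0.0, gamma - math.sqrt(var + eps)))
    return sum(penalties) / len(penalties)


def covariance_term(z: Batch) -> float:
    """Sum of squared off-diagonal covariances, divided by the dimension."""
    n = len(z)
    cols = _centered_columns(z)
    d = len(cols)
    total = 0.0
    for i in range(d):
        for j in range(d):
            if i != j:
                cov = sum(a * b for a, b in zip(cols[i], cols[j])) / (n - 1)
                total += cov ** 2
    return total / d


# Toy batches: one collapsed, one well spread and decorrelated.
collapsed = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
spread = [[2.0, -2.0], [-2.0, -2.0], [2.0, 2.0], [-2.0, 2.0]]
```

On these toy batches the collapsed embedding is penalized by the variance term, while the spread, decorrelated batch incurs no variance or covariance penalty.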

Experimental Comparison: Methodology and Protocols

Benchmarking Framework and Dataset

To ensure a fair and rigorous comparison of these molecular representation methods, researchers have developed standardized evaluation protocols. The ChemXploreML framework, developed at MIT, provides a modular desktop application specifically designed for molecular property prediction, allowing systematic comparison of different embedding techniques combined with state-of-the-art machine learning algorithms [7] [1].

The molecular properties dataset for these benchmarks typically originates from reliable references such as the CRC Handbook of Chemistry and Physics, ensuring high-quality ground truth data [1]. Standardized benchmarks evaluate performance across five fundamental molecular properties of organic compounds [7] [1]:

- Melting Point (MP, °C)

- Boiling Point (BP, °C)

- Vapor Pressure (VP, kPa at 25°C)

- Critical Temperature (CT, K)

- Critical Pressure (CP, MPa)

For each compound, SMILES (Simplified Molecular Input Line Entry System) representations are obtained and canonicalized using tools like RDKit to ensure consistent molecular representation [1]. The embeddings (Mol2Vec, VICGAE, or ECFP) are then generated from these standardized representations and used as input to various machine learning models.

Machine Learning Pipeline and Evaluation

The experimental workflow follows a consistent pattern across studies to ensure comparable results [7] [1] [11]:

- Data Preprocessing: Standardization of molecular representations, handling of missing values, and dataset splitting into training and test sets.

- Embedding Generation: Conversion of molecular structures into numerical representations using each method.

- Model Training: Application of multiple machine learning algorithms, typically including tree-based ensemble methods such as Gradient Boosting Regression, XGBoost, CatBoost, and LightGBM.

- Hyperparameter Optimization: Systematic tuning of model parameters using frameworks like Optuna.

- Performance Evaluation: Assessment using metrics such as R² (coefficient of determination) to quantify prediction accuracy.
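
For reference, the R² metric used in the final step can be computed directly from its definition, R² = 1 - SS_res / SS_tot. The sketch below is a plain-Python version with made-up boiling points; in practice a library routine such as scikit-learn's `r2_score` would typically be used instead.

```python
from typing import Sequence


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or len(y_true) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R² is undefined when all true values are identical")
    return 1.0 - ss_res / ss_tot


# Hypothetical boiling points (°C): experimental vs. predicted.
experimental = [60.0, 80.0, 100.0, 120.0]
predicted = [65.0, 75.0, 105.0, 115.0]
score = r_squared(experimental, predicted)

# A model that always predicts the mean scores exactly zero.
baseline = r_squared(experimental, [90.0] * 4)
```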

Table 1: Performance Comparison of Molecular Representation Methods on Key Properties

| Molecular Property | Mol2Vec (R²) | VICGAE (R²) | Traditional Fingerprints (R²) | Notes |

|---|---|---|---|---|

| Critical Temperature | 0.93 [7] | Comparable to Mol2Vec [7] | Often superior in broader benchmarks [11] | Mol2Vec slightly higher accuracy |

| Boiling Point | High [7] | Comparable [7] | Competitive performance [11] | VICGAE offers better computational efficiency |

| Melting Point | High [7] | Comparable [7] | -- | -- |

| Various ADMET Properties | Competitive alone; improved with descriptor augmentation [17] | -- | Often top-performing [11] | Descriptor enrichment boosts Mol2Vec performance |

Table 2: Computational Characteristics of Representation Methods

| Method | Dimensionality | Computational Efficiency | Key Advantages |

|---|---|---|---|

| Traditional Fingerprints (ECFP) | Variable (typically 1024-2048 bits) | High [11] | Proven performance, interpretability, efficiency [11] |

| Mol2Vec | 300 [7] | Moderate [7] | Slightly higher accuracy in specific applications [7] |

| VICGAE | 32 [7] | High (up to 10x faster than Mol2Vec) [7] [9] | Compact representation with comparable performance [7] |

Key Findings and Performance Analysis

Performance Comparison: Mol2Vec vs. VICGAE

Direct comparisons between Mol2Vec and VICGAE reveal a nuanced performance landscape. In evaluations using the ChemXploreML framework, Mol2Vec embeddings (300 dimensions) delivered slightly higher accuracy for certain molecular properties, achieving R² values up to 0.93 for critical temperature predictions [7]. However, VICGAE embeddings (32 dimensions) exhibited comparable prediction performance despite their significantly lower dimensionality, while offering substantially improved computational efficiency—operating up to ten times faster than Mol2Vec in some applications [7] [9].

This efficiency-performance tradeoff presents researchers with a practical choice: Mol2Vec for marginal accuracy gains where computational resources are sufficient, versus VICGAE for large-scale screening where processing speed is prioritized [7]. Both methods demonstrate capability in capturing relevant chemical information for property prediction tasks when combined with modern tree-based ensemble methods [1].
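
One concrete consequence of the dimensionality gap is the size of the feature matrix. The back-of-the-envelope sketch below assumes 32-bit floats and a hypothetical library of one million molecules; it illustrates storage only and does not by itself reproduce the reported speed-up.

```python
def embedding_megabytes(n_molecules: int, n_dims: int, bytes_per_value: int = 4) -> float:
    """Size of a dense embedding matrix in megabytes (10**6 bytes)."""
    return n_molecules * n_dims * bytes_per_value / 1e6


n = 1_000_000  # hypothetical screening library size
mol2vec_mb = embedding_megabytes(n, 300)  # 1200.0 MB
vicgae_mb = embedding_megabytes(n, 32)    # 128.0 MB
ratio = mol2vec_mb / vicgae_mb            # 9.375
```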

The Traditional Fingerprint Benchmark

The most striking insight from recent comprehensive benchmarking studies comes from comparing these modern embeddings against traditional fingerprint methods. In the most extensive comparison to date, evaluating 25 models across 25 datasets, researchers found that "nearly all neural models show negligible or no improvement over the baseline ECFP molecular fingerprint" [11].

This surprising result challenges the prevailing narrative of continuous progress through increasingly complex neural architectures. Among all models evaluated, only the CLAMP model, which is also based on molecular fingerprints, performed statistically significantly better than alternatives [11]. These findings raise important concerns about evaluation rigor in the field and suggest that traditional fingerprints establish a formidable performance benchmark that modern methods struggle to surpass.

Performance Enhancement Strategies

Researchers have developed strategies to enhance the performance of modern embedding methods. For Mol2Vec, specifically, combining the embeddings with classical molecular descriptors and applying feature selection has been shown to significantly improve performance [17]. In ADMET prediction tasks, this descriptor-augmentation approach enabled relatively simple multilayer perceptron (MLP) models to achieve top results in 10 of 16 benchmarks, outperforming more complex models on the Therapeutics Data Commons leaderboard [17].

This enhancement strategy effectively bridges traditional and modern approaches, leveraging both the data-driven representations of deep learning and the chemically meaningful features of traditional descriptors.
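
A minimal sketch of this augmentation, assuming embeddings and descriptors are already available as per-molecule lists of floats. Constant columns are dropped with a simple variance filter, which stands in for whichever feature-selection method a given study uses; all values and the `min_variance` threshold are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def concatenate_features(embeddings: Matrix, descriptors: Matrix) -> Matrix:
    """Append each molecule's descriptor values to its embedding vector."""
    if len(embeddings) != len(descriptors):
        raise ValueError("embeddings and descriptors must cover the same molecules")
    return [e + d for e, d in zip(embeddings, descriptors)]


def select_by_variance(features: Matrix, min_variance: float = 1e-8) -> List[int]:
    """Return indices of columns whose population variance exceeds the threshold."""
    n = len(features)
    keep = []
    for j, col in enumerate(zip(*features)):
        mean = sum(col) / n
        if sum((x - mean) ** 2 for x in col) / n > min_variance:
            keep.append(j)
    return keep


# Toy data: 2-dim embeddings plus [molecular weight, constant flag] descriptors.
emb = [[0.1, 0.9], [0.4, 0.2], [0.7, 0.5]]
desc = [[46.07, 1.0], [78.11, 1.0], [60.10, 1.0]]
combined = concatenate_features(emb, desc)
kept = select_by_variance(combined)
reduced = [[row[j] for j in kept] for row in combined]
```

The reduced matrix keeps both embedding dimensions and the informative descriptor while discarding the constant column.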

Figure 1: Molecular Embedding Benchmarking Workflow

Essential Research Reagents and Computational Tools

Successful implementation of molecular property prediction requires specific computational tools and resources. The following table details key research "reagents" essential for conducting rigorous benchmarking experiments in this field.

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Primary Function | Relevance to Benchmarking |

|---|---|---|---|

| RDKit | Cheminformatics Library | Molecular processing, SMILES canonicalization, descriptor calculation [1] | Fundamental preprocessing and traditional fingerprint generation |

| ChemXploreML | Desktop Application | Modular framework for molecular property prediction [7] [1] | Standardized evaluation of different embedding methods |

| scikit-learn | Machine Learning Library | Traditional ML algorithms, preprocessing, model evaluation [1] | Implementation of baseline models and evaluation metrics |

| XGBoost/LightGBM/CatBoost | Gradient Boosting Frameworks | High-performance tree-based ensemble methods [7] [1] | Primary prediction models for comparing embedding performance |

| Optuna | Hyperparameter Optimization Framework | Automated hyperparameter tuning [1] | Ensuring fair model optimization across different embeddings |

| Therapeutics Data Commons (TDC) | Benchmark Datasets | Standardized ADMET and molecular property datasets [17] | Providing consistent evaluation benchmarks across studies |

| CRC Handbook of Chemistry and Physics | Reference Data | Authoritative source of experimental molecular properties [1] | Ground truth data for training and evaluation |

The comprehensive benchmarking of molecular representation methods reveals a complex performance landscape where traditional fingerprints maintain surprising competitiveness against modern neural approaches. While Mol2Vec and VICGAE offer valid alternatives with specific advantages—slightly higher accuracy for Mol2Vec and significantly better computational efficiency for VICGAE—neither consistently outperforms the established benchmark of traditional ECFP fingerprints across diverse property prediction tasks [7] [11].

These findings suggest several strategic recommendations for researchers and drug development professionals:

Establish Traditional Fingerprints as Baseline: Any development of new molecular representation methods should use traditional fingerprints as a mandatory performance baseline, with claims of improvement requiring rigorous statistical validation [11].

Consider Task-Specific Requirements: For applications where marginal accuracy improvements justify computational costs, Mol2Vec with descriptor augmentation may be beneficial [17]. For high-throughput screening, VICGAE offers an efficient alternative [7].

Prioritize Enhanced Evaluation Practices: The field requires more rigorous evaluation protocols, including broader chemical space coverage, standardized dataset splits, and comprehensive statistical testing to prevent overestimation of marginal improvements [11].

The enduring performance of traditional fingerprints establishes a robust benchmark that continues to challenge sophisticated neural approaches. This reality underscores the importance of methodological rigor and balanced performance-efficiency tradeoffs in molecular property prediction, ensuring that advances in representation learning translate to genuine improvements in chemical research and drug discovery.

Implementing Mol2Vec and VICGAE: A Practical Guide for Real-World Prediction Pipelines

In the field of computational chemistry and drug discovery, the accurate prediction of molecular properties using machine learning (ML) hinges on the quality and consistency of the input data. The process begins with molecular representations, most commonly SMILES (Simplified Molecular-Input Line-Entry System) strings, which provide a compact, text-based method for encoding molecular structures. However, raw SMILES data from chemical databases often contain inconsistencies, errors, and variations that can severely compromise model performance if left unaddressed. The adage "garbage in, garbage out" is particularly relevant here: because drug discovery data are scarce and of inconsistent quality, a thorough initial clean-up is needed to ensure high-quality data for model generation [18].

This guide examines the critical data preprocessing pipeline required to transform raw SMILES strings into standardized molecular inputs, with a specific focus on its role in enabling performance comparisons between two prominent molecular embedding techniques: Mol2Vec and VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder). The standardization of molecular inputs serves as the foundational step that ensures fair and meaningful comparisons between different embedding methodologies, allowing researchers to accurately assess their respective strengths and limitations in molecular property prediction tasks. As we demonstrate through experimental data, the choice of embedding method—Mol2Vec's 300-dimensional representations versus VICGAE's more compact 32-dimensional embeddings—has significant implications for both predictive accuracy and computational efficiency in real-world applications [1].

Molecular Representation Fundamentals

Common Molecular Representation Formats

Before delving into preprocessing protocols, it is essential to understand the various formats available for molecular representation. Each format offers distinct advantages and limitations for machine learning applications:

SMILES (Simplified Molecular-Input Line-Entry System): A line notation that encodes molecular structures using ASCII strings where atoms are represented by their standard chemical symbols. SMILES remains the mainstream molecular representation method due to its human-readability and widespread adoption [18] [2]. A significant challenge with SMILES is that multiple equally valid strings can represent the same molecule (e.g., CCO, OCC, and C(O)C all refer to ethanol), necessitating canonicalization algorithms to produce unique and consistent representations [18].

SELFIES (SELF-referencIng Embedded Strings): A more robust string-based representation designed specifically for ML applications, where virtually every string corresponds to a valid molecule. This addresses the issue of invalid SMILES strings that often arise in generative models [18]. Recent systematic evaluations have shown that while SELFIES offers improved syntactic robustness, SMILES with atomwise tokenization often yields more chemically structured embeddings [19].

InChI (International Chemical Identifier): A non-proprietary identifier for chemical substances developed by IUPAC that provides a standardized representation. Unlike SMILES, InChI is designed to be a persistent identifier rather than a computational feature representation [18].

Molecular Graphs: Represent molecules as graphs with atoms as nodes and bonds as edges, capturing the inherent topology of molecular structures. This representation forms the basis for graph neural networks (GNNs) in cheminformatics [2].

Molecular Fingerprints: Binary vectors that encode the presence or absence of specific molecular substructures or properties. Extended-connectivity fingerprints (ECFP) are among the most widely used fingerprint methods in quantitative structure-activity relationship (QSAR) analyses [2].

The Standardization Imperative

The critical importance of molecular standardization cannot be overstated. Inconsistent molecular representations introduce noise that directly impacts model performance and the validity of comparative studies between embedding methods. Without standardized inputs, performance differences between Mol2Vec and VICGAE could be attributed to representation inconsistencies rather than the intrinsic capabilities of the embedding techniques themselves. As demonstrated in research on chemical language models, design choices including molecular representation format and tokenization strategy meaningfully shape how chemical information is encoded in latent spaces, even when downstream task performance appears similar [19].

Experimental Methodology: Standardized Preprocessing Protocol

Data Collection and Validation

The foundational step in any molecular property prediction study involves curating a high-quality, chemically diverse dataset. In recent studies comparing Mol2Vec and VICGAE embeddings, researchers sourced molecular structures and their associated properties from the CRC Handbook of Chemistry and Physics, a recognized authoritative reference for chemical and physical properties [1]. The dataset encompassed diverse molecular types including hydrocarbons, halogenated compounds, oxygenated species, and heterocyclic molecules, ensuring broad chemical coverage across five key properties: melting point (MP), boiling point (BP), vapor pressure (VP), critical temperature (CT), and critical pressure (CP) [1].

For each compound, SMILES representations were obtained using CAS Registry Numbers primarily through the PubChem REST API, with supplementary retrieval via the NCI Chemical Identifier Resolver using the cirpy Python interface [1]. This meticulous approach to data collection underscores the importance of establishing reliable ground truth before commencing preprocessing operations.
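
Because retrieval is keyed on CAS Registry Numbers, one inexpensive sanity check before querying any service is to verify each number's check digit. The sketch below implements the standard CAS checksum; the helper name `is_valid_cas` is hypothetical, and this check is an optional extra rather than a documented part of the cited workflow.

```python
import re


def is_valid_cas(cas: str) -> bool:
    """Check the format and check digit of a CAS Registry Number."""
    match = re.fullmatch(r"(\d{2,7})-(\d{2})-(\d)", cas.strip())
    if not match:
        return False
    digits = match.group(1) + match.group(2)
    check = int(match.group(3))
    # Weight digits 1, 2, 3, ... starting from the rightmost one.
    total = sum(int(d) * w for w, d in enumerate(reversed(digits), start=1))
    return total % 10 == check


# Ethanol and water are valid; the third entry has a wrong check digit.
candidates = ["64-17-5", "7732-18-5", "64-17-6", "ethanol"]
valid = [c for c in candidates if is_valid_cas(c)]
```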

Molecular Standardization Workflow

The standardization process follows a systematic pipeline implemented using cheminformatics libraries such as RDKit and Datamol [20] [18]. The workflow ensures that all molecular representations are consistent, valid, and optimized for subsequent embedding generation.

The following diagram illustrates the complete molecular standardization workflow from raw inputs to standardized representations:

Standardized Molecular Representations Workflow

Critical Preprocessing Operations

Each step in the preprocessing pipeline serves a specific purpose in ensuring molecular validity and consistency:

Conversion to Mol Object: Transforming SMILES strings into structured molecular objects that encode atomic properties, bonds, and spatial relationships using tools like RDKit [20] [18].

Error Correction: Identifying and rectifying common issues in molecular representations including invalid valences, bond specifications, and ring systems [18]. The dm.fix_mol() function in Datamol addresses these issues through automated correction algorithms.

Sanitization: Ensuring molecular realism through procedures that validate chemical feasibility. This includes adjusting nitrogen aromaticity using the Sanifix algorithm (addressing faulty valence for nitrogen in aromatic rings), charge neutralization (correcting valence issues from incorrect atomic charges), and validation through SMILES conversion cycles [18].

Standardization: Generating canonical representations through a multi-step process:

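- Metal Disconnection: Breaking covalent bonds drawn between metal atoms and the rest of the molecule, so that metal ions and counter-ions that may not be relevant for the target application can be handled separately [18].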

- Normalization: Correcting drawing errors and standardizing functional groups to ensure consistent representation of chemically equivalent moieties [18].

- Reionization: Ensuring proper protonation states by reionizing molecules according to acidity rules, particularly important for molecules with multiple acidic/basic functional groups [18].

- Stereochemistry Assignment: Properly reassigning stereochemical information if missing, using built-in RDKit functionality to force clean recalculation of stereochemistry [18].

Implementation Code

The following code demonstrates the practical implementation of the preprocessing pipeline using Datamol, which can be executed either sequentially or in parallel for large datasets:
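
A minimal sketch of such a pipeline, assuming Datamol's `to_mol`, `fix_mol`, `sanitize_mol`, `standardize_mol`, `to_smiles`, and `parallelized` helpers. The wrapper names `preprocess_smiles` and `preprocess_many` and the chosen flag values are illustrative rather than taken from the cited work, and Datamol is imported inside the functions so the module loads even where it is not installed.

```python
from typing import List, Optional


def preprocess_smiles(smiles: str) -> Optional[str]:
    """Convert, fix, sanitize, and standardize one SMILES string.

    Returns the standardized SMILES, or None if the input cannot be
    parsed or does not survive sanitization.
    """
    import datamol as dm  # lazy import; requires the datamol package

    mol = dm.to_mol(smiles, ordered=True)
    if mol is None:
        return None
    mol = dm.fix_mol(mol)
    mol = dm.sanitize_mol(mol, sanifix=True, charge_neutral=False)
    if mol is None:
        return None
    mol = dm.standardize_mol(
        mol,
        disconnect_metals=False,
        normalize=True,
        reionize=True,
        uncharge=False,
        stereo=True,
    )
    return dm.to_smiles(mol)


def preprocess_many(smiles_list: List[str], n_jobs: int = 1) -> List[Optional[str]]:
    """Apply the pipeline to many SMILES, optionally across several workers."""
    import datamol as dm

    return list(dm.parallelized(preprocess_smiles, smiles_list, n_jobs=n_jobs))
```

With Datamol installed, equivalent inputs such as `OCC` and `CCO` should map to the same standardized string, and raising `n_jobs` spreads the work across processes for large datasets.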

Example of molecular preprocessing implementation using Datamol [20] [18].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of molecular preprocessing and embedding generation requires a curated set of computational tools and libraries. The following table details the essential "research reagents" for conducting comparative studies of molecular embedding techniques:

Table 1: Essential Research Reagents for Molecular Preprocessing and Embedding

| Tool/Library | Type | Primary Function | Application in Preprocessing |

|---|---|---|---|

| RDKit | Cheminformatics Library | Molecular manipulation and analysis | Core functions for chemical standardization, sanitization, and descriptor calculation [1] [18] |

| Datamol | Preprocessing Library | Simplified molecular operations | User-friendly wrapper for RDKit with standardized preprocessing pipelines [20] [18] |

| Mol2Vec | Embedding Algorithm | Molecular representation learning | Generates 300-dimensional molecular embeddings using substructure-based patterns [1] [21] |

| VICGAE | Embedding Algorithm | Molecular representation learning | Produces compact 32-dimensional embeddings via a GRU autoencoder with variance-invariance-covariance regularization [1] |

| Scikit-learn | ML Library | Machine learning workflows | Provides regression algorithms, preprocessing, and model evaluation metrics [1] |

| Optuna | Optimization Framework | Hyperparameter tuning | Enables efficient optimization of model parameters during embedding comparison [1] |

| Dask | Parallel Computing Library | Distributed processing | Accelerates preprocessing of large molecular datasets [1] |

Performance Comparison: Mol2Vec vs. VICGAE Embeddings

Experimental Framework

The comparative evaluation of Mol2Vec and VICGAE embeddings employed a rigorous experimental design to ensure fair assessment across multiple molecular properties. The study utilized tree-based ensemble methods including Gradient Boosting Regression (GBR), XGBoost, CatBoost, and LightGBM to predict the five key molecular properties mentioned previously [1]. This model diversity ensured that observed performance differences could be attributed to the embedding techniques rather than specific model architectures.

The dataset underwent thorough filtering and validation procedures, with initial compounds reduced to validated sets through SMILES canonicalization and standardization. The following table shows the dataset characteristics after preprocessing:

Table 2: Dataset Characteristics After Preprocessing for Embedding Comparison

| Molecular Property | Original Compounds | Validated Compounds (Mol2Vec) | Validated Compounds (VICGAE) | Cleaned Dataset (Mol2Vec) | Cleaned Dataset (VICGAE) |

|---|---|---|---|---|---|

| Melting Point (MP) | 7,476 | 7,476 | 7,200 | 6,167 | 6,030 |

| Boiling Point (BP) | 4,915 | 4,915 | 4,909 | 4,816 | 4,663 |

| Vapor Pressure (VP) | 398 | 398 | 398 | 353 | 323 |

| Critical Pressure (CP) | 777 | 777 | 776 | 753 | 752 |

| Critical Temperature (CT) | 819 | 819 | 818 | 819 | 777 |
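
The retention implied by these counts is easy to tabulate. The short sketch below recomputes, for each property, the percentage of original compounds that remain in the cleaned set; the numbers are copied from the table above and the helper name is illustrative.

```python
# (original, cleaned Mol2Vec, cleaned VICGAE) counts copied from the table.
DATASETS = {
    "MP": (7476, 6167, 6030),
    "BP": (4915, 4816, 4663),
    "VP": (398, 353, 323),
    "CP": (777, 753, 752),
    "CT": (819, 819, 777),
}


def retention_percent(original: int, cleaned: int) -> float:
    """Share of the original compounds that survive cleaning, in percent."""
    return 100.0 * cleaned / original


retention = {
    prop: (retention_percent(orig, m2v), retention_percent(orig, vic))
    for prop, (orig, m2v, vic) in DATASETS.items()
}
for prop, (m2v_pct, vic_pct) in retention.items():
    print(f"{prop}: Mol2Vec {m2v_pct:.1f}%  VICGAE {vic_pct:.1f}%")
```

Laying the percentages side by side makes it easier to see where the two pipelines end up training on different compound sets, which matters when comparing their R² values.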

Performance Metrics and Results

The performance of both embedding techniques was evaluated using R² (coefficient of determination), which measures the fraction of variance in the experimental values that the predictions explain. The following table summarizes the comparative performance across the five molecular properties:

Table 3: Performance Comparison of Mol2Vec vs. VICGAE Embeddings

| Molecular Property | Best Performing Embedding | Key Performance Metrics | Computational Efficiency | Optimal Model Combination |

|---|---|---|---|---|

| Critical Temperature (CT) | Mol2Vec | R² up to 0.93 | Moderate (300 dimensions) | Mol2Vec + Tree-based Ensembles [1] |

| Melting Point (MP) | Mol2Vec | High R² values | Moderate (300 dimensions) | Mol2Vec + Gradient Boosting [1] |

| Boiling Point (BP) | Mol2Vec | High R² values | Moderate (300 dimensions) | Mol2Vec + XGBoost/LightGBM [1] |

| Vapor Pressure (VP) | Mol2Vec | Good predictive accuracy | Moderate (300 dimensions) | Mol2Vec + Ensemble Methods [1] |

| Critical Pressure (CP) | Mol2Vec | Good predictive accuracy | Moderate (300 dimensions) | Mol2Vec + Tree-based Methods [1] |

| All Properties (efficiency priority) | VICGAE | Comparable performance (slightly lower R²) | High (32 dimensions) | VICGAE + Any ML model for efficiency [1] |

Critical Analysis of Comparative Results

The experimental results reveal a nuanced performance landscape between the two embedding techniques. While Mol2Vec's 300-dimensional embeddings delivered marginally higher predictive accuracy across most molecular properties, VICGAE's compact 32-dimensional representations achieved comparable performance with significantly improved computational efficiency [1]. This trade-off between accuracy and efficiency presents researchers with a strategic choice based on their specific application requirements.

For applications where prediction accuracy is paramount and computational resources are sufficient, Mol2Vec provides excellent performance, particularly for critical temperature prediction where it achieved remarkable R² values of up to 0.93 [1]. Conversely, for large-scale screening applications or resource-constrained environments, VICGAE offers a compelling alternative with substantially reduced computational requirements while maintaining competitive predictive capabilities.

Integrated Workflow: From Raw SMILES to Property Prediction

The complete pipeline from raw molecular data to final property prediction involves multiple interconnected stages, each contributing to the overall performance and reliability of the system. The following diagram illustrates this comprehensive workflow:

End-to-End Molecular Property Prediction Workflow

The systematic comparison of Mol2Vec and VICGAE embeddings demonstrates that rigorous data preprocessing is not merely a preliminary step but a critical determinant of success in molecular property prediction. The standardized transformation of SMILES strings into consistent, validated molecular representations enables fair and meaningful evaluation of embedding techniques, revealing their distinct performance characteristics.

Mol2Vec's higher-dimensional embeddings provide slightly superior predictive accuracy for well-distributed molecular properties, making them particularly suitable for applications where precision is paramount. In contrast, VICGAE's compact representations offer significantly improved computational efficiency with only marginally reduced accuracy, presenting an attractive option for large-scale screening and resource-constrained environments [1].

For researchers embarking on molecular property prediction studies, we recommend implementing a thorough preprocessing pipeline following the protocols outlined in this guide. The initial investment in data standardization pays substantial dividends through more reliable model performance, more meaningful comparative analyses, and ultimately, more accurate prediction of molecular properties for drug discovery and materials design. As the field advances, the development of increasingly sophisticated embedding techniques will further emphasize the importance of robust, standardized preprocessing methodologies that ensure fair comparison and optimal performance across diverse chemical spaces.

The accurate prediction of molecular properties is a cornerstone of modern chemical research and drug development. The challenge lies in effectively translating molecular structures into numerical representations, or embeddings, that machine learning (ML) models can process. This guide provides an objective performance comparison of two prominent molecular embedding techniques—Mol2Vec and VICGAE—when integrated with state-of-the-art tree-based ensemble models. Framed within a broader thesis on molecular representation research, we present supporting experimental data, detailed methodologies, and essential toolkits to inform researchers, scientists, and drug development professionals in selecting optimal pipelines for their specific applications.

Performance Comparison: Mol2Vec vs. VICGAE with Tree-Based Ensembles

A direct performance comparison of Mol2Vec and VICGAE embeddings, when used with various tree-based models, was conducted using a dataset from the CRC Handbook of Chemistry and Physics [1] [4] [7]. The following tables summarize the key quantitative results.

Table 1: Dataset Sizes for Different Molecular Properties After Preprocessing

| Molecular Property | Number of Compounds (Mol2Vec) | Number of Compounds (VICGAE) |
|---|---|---|
| Melting Point (MP) | 6,167 | 6,030 |
| Boiling Point (BP) | 4,816 | 4,663 |
| Vapor Pressure (VP) | 353 | 323 |
| Critical Pressure (CP) | 753 | 752 |
| Critical Temperature (CT) | 819 | 777 |
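The counts in Table 1 are lower for VICGAE than for Mol2Vec on every property, so the two embeddings are not evaluated on identical sample sizes. The short sketch below uses only the numbers from Table 1 to express each VICGAE count relative to its Mol2Vec counterpart; the variable and function names are illustrative.

```python
# Compound counts after preprocessing, copied from Table 1 as (Mol2Vec, VICGAE).
counts = {
    "MP": (6167, 6030),
    "BP": (4816, 4663),
    "VP": (353, 323),
    "CP": (753, 752),
    "CT": (819, 777),
}


def size_ratio(mol2vec_n: int, vicgae_n: int) -> float:
    """VICGAE sample size expressed as a fraction of the Mol2Vec sample size."""
    return vicgae_n / mol2vec_n


ratios = {prop: size_ratio(*pair) for prop, pair in counts.items()}

for prop, ratio in ratios.items():
    print(f"{prop}: {ratio:.1%}")
```

The relative difference is largest for vapor pressure, which is also the smallest dataset, so comparisons on that property rest on the least data.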

Table 2: Predictive Performance (R²) of Embedding and Model Combinations for Critical Temperature

| Machine Learning Model | Mol2Vec (300-dim) | VICGAE (32-dim) |
|---|---|---|
| Gradient Boosting Regression (GBR) | 0.92 | 0.90 |
| XGBoost | 0.93 | 0.91 |
| CatBoost | 0.91 | 0.89 |
| LightGBM (LGBM) | 0.92 | 0.90 |

Table 3: Comparative Analysis of Embedding Characteristics

| Characteristic | Mol2Vec | VICGAE |
|---|---|---|
| Embedding Dimensionality | 300 | 32 |
| Representation Type | Unsupervised, Word2Vec applied to sequences of molecular substructures | Data-driven, via a regularized autoencoder |
| Computational Efficiency | Lower (Higher-dimensional) | Significantly Higher (Lower-dimensional) |
| Best for | Tasks demanding peak predictive accuracy | Scenarios prioritizing computational speed and resource efficiency |

The experimental data indicates that Mol2Vec embeddings generally delivered marginally higher accuracy across multiple tree-based models for predicting fundamental molecular properties like critical temperature [1] [7]. However, VICGAE embeddings demonstrated comparable performance with a dramatic reduction in dimensionality (32 vs. 300), resulting in significantly improved computational efficiency [1] [4]. This suggests a trade-off where Mol2Vec may be preferable for maximum accuracy, while VICGAE offers a more efficient alternative with only a slight performance penalty.
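The trade-off can be put in numbers using only Table 2 and the two embedding sizes. The following sketch computes the per-model R² gap for critical temperature and the ratio of feature counts; it is simple arithmetic on the reported values, not a re-run of the experiment.

```python
# R² values for critical temperature, copied from Table 2 as (Mol2Vec, VICGAE).
r2 = {
    "GBR": (0.92, 0.90),
    "XGBoost": (0.93, 0.91),
    "CatBoost": (0.91, 0.89),
    "LightGBM": (0.92, 0.90),
}
MOL2VEC_DIM, VICGAE_DIM = 300, 32

# Round to two decimals because the table values are reported at that precision.
gaps = {model: round(m2v - vic, 2) for model, (m2v, vic) in r2.items()}
dim_ratio = MOL2VEC_DIM / VICGAE_DIM

print(gaps)
print(f"Mol2Vec uses {dim_ratio:.3f}x as many features as VICGAE")
```

Every model shows the same gap of 0.02 in R², while Mol2Vec feeds the models roughly nine times as many features. Runtime depends on more than feature count, so this ratio should be read as an indication rather than a measured speed-up.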

Detailed Experimental Protocols

Dataset Curation and Preprocessing

The molecular properties dataset was sourced from the CRC Handbook of Chemistry and Physics, a reliable reference for chemical data [1] [7]. The workflow began with acquiring canonical SMILES (Simplified Molecular-Input Line-Entry System) strings for each compound using CAS Registry Numbers via the PubChem REST API and the NCI Chemical Identifier Resolver [1]. The RDKit cheminformatics package was then used to canonicalize the SMILES strings, ensuring a standardized representation for each molecule, and to extract crucial molecular information [1]. The dataset was cleaned to remove invalid entries, resulting in the final sample sizes for each molecular property, as shown in Table 1 [1].
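The canonicalize-then-clean step can be sketched as follows. This is a minimal illustration under stated assumptions, not the study's code: `clean_records` and its keep-the-first-value policy for duplicates are hypothetical, and the demo uses a toy lookup table in place of RDKit so that the block runs without extra dependencies. The commented lines show how an RDKit-based canonicalizer could be plugged in.

```python
from typing import Callable, Iterable, Optional


def clean_records(
    records: Iterable[tuple[str, float]],
    canonicalize: Callable[[str], Optional[str]],
) -> dict[str, float]:
    """Canonicalize SMILES, drop invalid entries, keep the first value per molecule."""
    cleaned: dict[str, float] = {}
    for smiles, value in records:
        canonical = canonicalize(smiles)
        if canonical is None:  # structure could not be parsed: drop the entry
            continue
        # Different spellings of one molecule collapse onto the same key.
        cleaned.setdefault(canonical, value)
    return cleaned


# With RDKit installed, a canonicalizer could look like this (not executed here):
#   from rdkit import Chem
#   def rdkit_canonical(smiles):
#       mol = Chem.MolFromSmiles(smiles)
#       return Chem.MolToSmiles(mol) if mol is not None else None

# Stand-in for the demo only: a tiny lookup table, not real chemistry.
TOY_CANONICAL = {"OCC": "CCO", "CCO": "CCO", "C1=CC=CC=C1": "c1ccccc1"}


def toy_canonical(smiles: str) -> Optional[str]:
    return TOY_CANONICAL.get(smiles)


# Illustrative (SMILES, boiling point in °C) pairs; the values are placeholders.
raw = [("OCC", 78.2), ("CCO", 78.2), ("C1=CC=CC=C1", 80.1), ("not_a_smiles", 0.0)]
cleaned = clean_records(raw, toy_canonical)
print(cleaned)
```

Passing the canonicalizer as an argument keeps the cleaning logic independent of the toolkit, which makes it easy to test without a cheminformatics installation.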

Molecular Embedding Generation

- Mol2Vec: This method generates molecular embeddings by applying the Word2Vec natural language processing algorithm to sequences of chemical substructures derived from molecules [1]. It produces a fixed-size 300-dimensional vector for each molecule, based on the presence and context of its constituent substructures.

- VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder): This is a deep learning-based embedding technique that uses a Regularized GRU Auto-Encoder to learn a compressed, latent representation of molecules [1] [7]. Its key advantage is generating a much smaller, 32-dimensional embedding vector while preserving critical chemical information through its variance-invariance-covariance regularization scheme [1].
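For Mol2Vec, the step from substructure vectors to a single molecule vector is a simple aggregation: in the original Mol2Vec formulation, the Word2Vec vectors of a molecule's substructures are summed. The sketch below shows only that aggregation, with a made-up three-dimensional lookup table and hypothetical identifiers; a real model would use learned 300-dimensional vectors and substructure identifiers computed by a cheminformatics toolkit. Skipping unseen substructures is a simplification chosen for this example.

```python
from typing import Mapping, Sequence


def molecule_vector(
    substructure_ids: Sequence[str],
    table: Mapping[str, Sequence[float]],
    dim: int,
) -> list[float]:
    """Sum the vectors of all substructures found in one molecule."""
    total = [0.0] * dim
    for sub_id in substructure_ids:
        vec = table.get(sub_id)
        if vec is None:  # substructure not in the table: skipped in this sketch
            continue
        for i, x in enumerate(vec):
            total[i] += x
    return total


# Toy table with made-up identifiers and values.
toy_table = {"sub_a": [1.0, 0.0, 2.0], "sub_b": [0.5, 1.0, 0.0]}
vec = molecule_vector(["sub_a", "sub_b", "sub_a", "sub_unknown"], toy_table, dim=3)
print(vec)  # [2.5, 1.0, 4.0]
```

Because the result has the same length regardless of molecule size, it can be passed directly to a tree-based regressor as a fixed-width feature row.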

Model Training and Evaluation

The evaluation framework, implemented within the ChemXploreML desktop application, integrated the two embedding techniques with four tree-based ensemble models: Gradient Boosting Regression (GBR), XGBoost, CatBoost, and LightGBM [1] [7]. The workflow involved:

- Embedding Integration: Each molecule in the dataset was converted into its numerical representation using either Mol2Vec or VICGAE.

- Model Training: The tree-based models were trained on these embedded representations to predict target molecular properties.

- Hyperparameter Optimization: Model hyperparameters were tuned using the Optuna framework to ensure optimal performance [1].

- Performance Validation: Model efficacy was primarily evaluated using the R² metric, which measures the proportion of variance in the molecular property that is predictable from the embeddings.
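Since R² is the headline metric throughout this comparison, it helps to see how it is computed. The function below is a dependency-free sketch of the standard definition, one minus the ratio of the residual sum of squares to the total sum of squares; the observed and predicted values are invented for illustration.

```python
from typing import Sequence


def r2_score(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R² is undefined when all true values are identical")
    return 1.0 - ss_res / ss_tot


# Made-up critical temperatures (K) and predictions, for illustration only.
observed = [500.0, 600.0, 700.0, 800.0]
predicted = [510.0, 590.0, 710.0, 790.0]
score = r2_score(observed, predicted)
print(round(score, 3))  # 0.992
```

A model that always predicts the mean of the observed values scores 0, and a perfect model scores 1, which is why values around 0.9 indicate that most of the variance in the property is captured.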

Workflow Visualizations

Figure: Molecular Property Prediction Workflow

Figure: Tree-Based Ensemble Model Architecture

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Software and Data Resources for Molecular Property Prediction

| Resource Name | Type | Primary Function |
|---|---|---|
| ChemXploreML | Desktop Application | Modular framework for building and comparing ML pipelines for molecular property prediction [1] [7]. |
| RDKit | Cheminformatics Library | Open-source software for canonicalizing SMILES, analyzing molecular structures, and descriptor calculation [1]. |
| CRC Handbook of Chemistry and Physics | Reference Data | Source of high-quality, experimental data for key molecular properties like melting/boiling points and critical constants [1] [4]. |
| Mol2Vec | Molecular Embedding | Generates 300-dimensional molecular vectors based on substructure context [1] [7]. |
| VICGAE | Molecular Embedding | Generates compact 32-dimensional molecular embeddings via a regularized autoencoder [1] [7]. |
| XGBoost, CatBoost, LightGBM | Machine Learning Models | Advanced tree-based ensemble algorithms used for regression tasks on embedded molecular data [1]. |

This guide provides an objective performance comparison of the Mol2Vec and VICGAE molecular embedding techniques within the ChemXploreML desktop application. ChemXploreML is a modular tool designed to make machine learning-based molecular property prediction accessible to researchers without extensive programming expertise [1] [8] [22]. The following analysis uses experimental data from its implementation to compare these two core embedding methods.

Experimental Protocols & Workflow

The comparative analysis of Mol2Vec and VICGAE within ChemXploreML follows a structured machine learning pipeline.

Dataset Curation and Preprocessing

The molecular properties dataset was sourced from the CRC Handbook of Chemistry and Physics, a recognized authoritative reference [1]. The dataset comprised five key properties of organic compounds:

- Melting Point (MP, °C)

- Boiling Point (BP, °C)

- Vapor Pressure (VP, kPa at 25°C)

- Critical Temperature (CT, K)

- Critical Pressure (CP, MPa)

SMILES strings for each compound were obtained via the PubChem REST API and the NCI Chemical Identifier Resolver (CIR) [1]. These strings were then canonicalized (standardized) using RDKit, a leading open-source cheminformatics toolkit [1] [22]. The dataset was cleaned and validated, with final sample sizes for each property and embedding method detailed in [1].

Molecular Embedding and Model Training

The core of the experiment involved transforming the molecular structures into numerical representations using the two embedding techniques:

- Mol2Vec: An unsupervised method inspired by natural language processing, generating 300-dimensional vectors [1] [22].

- VICGAE: A deep generative auto-encoder producing more compact 32-dimensional vectors [1] [22].

These embeddings were then used as input for state-of-the-art tree-based ensemble methods, including Gradient Boosting Regression (GBR), XGBoost, CatBoost, and LightGBM (LGBM) [1]. The pipeline leveraged Optuna for hyperparameter optimization and employed N-fold cross-validation (typically 5-fold) to ensure robust performance estimates [1] [22]. The entire workflow, from data loading to model evaluation, was automated within the ChemXploreML application [1].
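The N-fold cross-validation mentioned above partitions the data so that every compound is used for testing exactly once. The sketch below shows one way to generate such splits with the standard library only; the function name, the shuffling seed, and the fold-size policy are assumptions made for illustration and are not taken from ChemXploreML.

```python
import random


def k_fold_indices(n_samples: int, k: int = 5, seed: int = 0):
    """Yield (train_idx, test_idx) pairs; every sample is tested exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Fold sizes differ by at most one when n_samples is not divisible by k.
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size


# 819 is the Mol2Vec critical-temperature sample size reported in Table 1.
folds = list(k_fold_indices(n_samples=819, k=5))
print([len(test) for _, test in folds])  # [164, 164, 164, 164, 163]
```

In a full pipeline, a model would be trained on each `train_idx` set and scored on the matching `test_idx` set, and the five scores would be averaged to give the reported estimate.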

The following diagram illustrates this integrated workflow.

Performance Comparison: Mol2Vec vs. VICGAE

The primary metric for evaluating model performance was the R² score (coefficient of determination), which measures how much of the variance in the measured property is explained by the model's predictions. The following table compares the best results for each molecular property, obtained by pairing each embedding with an optimized tree-based model [1]. A numeric R² is given only for critical temperature; the remaining rows are qualitative.

| Molecular Property | Mol2Vec (300 dim) | VICGAE (32 dim) |
|---|---|---|
| Critical Temperature (CT) | R²: 0.93 | R²: ~0.92 (Comparable) |
| Critical Pressure (CP) | Higher Accuracy | Comparable Performance |
| Boiling Point (BP) | Higher Accuracy | Comparable Performance |
| Melting Point (MP) | Higher Accuracy | Comparable Performance |
| Vapor Pressure (VP) | Higher Accuracy | Comparable Performance |

Analysis of Comparative Performance

- Prediction Accuracy: The Mol2Vec embedding consistently delivered slightly higher predictive accuracy across all five molecular properties [1]. A plausible explanation is its higher-dimensional representation (300 dimensions), which may capture more nuanced chemical information.

- Computational Efficiency: Despite using only 32 dimensions, VICGAE achieved accuracy comparable to Mol2Vec [1]. Its compact embedding also made it significantly more efficient computationally, up to 10 times faster than Mol2Vec in the ChemXploreML pipeline [8] [9]. This balance between accuracy and speed is its key advantage.

The Scientist's Toolkit: Essential Research Reagents

The table below details key computational "reagents" and their functions in building a property prediction pipeline with ChemXploreML.

| Tool/Component | Function in the Pipeline |
|---|---|
| CRC Handbook of Chemistry & Physics | Provides authoritative, experimental data for training and validation [1]. |
| RDKit | Canonicalizes SMILES strings and enables molecular analysis and manipulation [1] [22]. |
| Mol2Vec Embedding | Translates molecular structures into 300-dimension vectors for machine learning [1] [22]. |
| VICGAE Embedding | Generates compact 32-dimension molecular representations for faster computation [1] [22]. |
| Tree-Based Ensemble Models (e.g., XGBoost) | Advanced ML algorithms that learn complex relationships between embeddings and properties [1]. |
| Optuna | Automates and optimizes the process of finding the best model hyperparameters [1] [22]. |

The accurate prediction of molecular properties is a cornerstone in the advancement of drug discovery and materials science. Machine learning (ML) has emerged as a transformative tool for this task, though a significant challenge lies in representing molecular structures as numerical data that algorithms can process. This comparison guide objectively evaluates two prominent molecular embedding techniques, Mol2Vec and VICGAE, in their application to predicting key thermodynamic properties: melting point (MP), boiling point (BP), vapor pressure (VP), critical temperature (CT), and critical pressure (CP). The analysis is based on experimental data and performance metrics, providing researchers with a clear comparison to inform their selection of computational tools.

Experimental Protocols and Methodologies

The core data for this guide is derived from a study that implemented a standardized machine learning pipeline to ensure a fair and reproducible comparison between the Mol2Vec and VICGAE embedding methods [1] [7]. The following section details the key components of the experimental protocol.

Dataset Curation and Preprocessing

The molecular properties dataset was sourced from the CRC Handbook of Chemistry and Physics, a recognized authoritative reference [1]. The dataset comprised organic compounds with recorded properties of MP, BP, VP, CT, and CP. For each compound, SMILES (Simplified Molecular Input Line Entry System) representations were obtained and subsequently canonicalized using the RDKit cheminformatics toolkit. This step ensured a standardized and consistent representation of each molecular structure before the embedding process [1]. The final cleaned dataset sizes varied by property, as detailed in Table 1 of the results section.

Molecular Embedding Techniques

The study focused on two distinct molecular embedding approaches:

- Mol2Vec: This method generates 300-dimensional numerical vectors for molecules by employing principles from natural language processing on molecular substructures [1] [7]. It is designed to capture chemical similarity by analyzing the contexts in which substructures appear.

- VICGAE (Variance-Invariance-Covariance regularized GRU Auto-Encoder): This technique uses a Gated Recurrent Unit (GRU) auto-encoder to produce more compact 32-dimensional molecular embeddings. It is regularized to enforce desirable statistical properties in the latent space [1] [7].
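The "desirable statistical properties" named in VICGAE's title correspond to the three terms of variance-invariance-covariance regularization (VICReg). The source does not state how VICGAE weights or adapts these terms, so the general VICReg objective is given here only as a reference point. For two batches of embeddings $Z$ and $Z'$, each with $n$ rows $z_i$ and $d$ dimensions:

$$
\begin{aligned}
s(Z, Z') &= \frac{1}{n} \sum_{i=1}^{n} \lVert z_i - z'_i \rVert_2^2 && \text{(invariance)} \\
v(Z) &= \frac{1}{d} \sum_{j=1}^{d} \max\!\left(0,\ \gamma - \sqrt{\operatorname{Var}(z^{j}) + \epsilon}\right) && \text{(variance)} \\
c(Z) &= \frac{1}{d} \sum_{j \neq k} \left[ C(Z) \right]_{j,k}^{2}, \quad C(Z) = \frac{1}{n-1} \sum_{i=1}^{n} (z_i - \bar{z})(z_i - \bar{z})^{\top} && \text{(covariance)} \\
\mathcal{L} &= \lambda\, s(Z, Z') + \mu \left[ v(Z) + v(Z') \right] + \nu \left[ c(Z) + c(Z') \right]
\end{aligned}
$$

Here $z^{j}$ denotes the $j$-th embedding dimension across the batch, $\gamma$ is a target standard deviation, $\epsilon$ is a small constant for numerical stability, and $\lambda$, $\mu$, $\nu$ are weighting coefficients. The variance term keeps each dimension from collapsing to a constant, and the covariance term discourages redundant dimensions, which is consistent with the goal of packing chemical information into only 32 dimensions.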

Machine Learning and Validation Pipeline

The embedded molecular data was used to train and evaluate four state-of-the-art tree-based ensemble methods: Gradient Boosting Regression (GBR), XGBoost, CatBoost, and LightGBM [1]. The workflow incorporated robust validation practices, including automated hyperparameter optimization using Optuna and configurable parallelization via Dask to ensure model performance was both optimized and efficient [1].
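To give a feel for what automated hyperparameter optimization does, the sketch below runs a plain random search over a small search space. This is a deliberately simplified stand-in, not Optuna: Optuna's samplers are more sophisticated, and the study's actual search space is not given in the source. The parameter names, their ranges, and the toy objective are all hypothetical; in a real pipeline the objective would return a cross-validated R² for a trained model.

```python
import random
from typing import Callable


def random_search(
    objective: Callable[[dict], float],
    space: dict[str, tuple[float, float]],
    n_trials: int = 50,
    seed: int = 0,
) -> tuple[dict, float]:
    """Sample parameters uniformly and keep the set with the highest score."""
    rng = random.Random(seed)
    best_params: dict = {}
    best_score = float("-inf")
    for _ in range(n_trials):
        params = {name: rng.uniform(low, high) for name, (low, high) in space.items()}
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Hypothetical objective standing in for a cross-validated R². It peaks at
# learning_rate = 0.1 and subsample = 0.8, where it reaches 0.93.
def toy_objective(params: dict) -> float:
    return 0.93 - (params["learning_rate"] - 0.1) ** 2 - (params["subsample"] - 0.8) ** 2


space = {"learning_rate": (0.01, 0.3), "subsample": (0.5, 1.0)}
best_params, best_score = random_search(toy_objective, space, n_trials=200)
print(best_params, round(best_score, 4))
```

Because each trial is independent, the loop is also the natural place for parallelization, which is the role the text assigns to Dask.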

The diagram below illustrates the complete experimental workflow.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table catalogs the key computational tools and datasets that form the essential "research reagents" for replicating this molecular property prediction study.

| Item Name | Type/Version | Function in the Experiment |

|---|---|---|