Molecular Descriptors in QSPR: A Comprehensive Guide for Drug Discovery and ADMET Prediction

Abstract

This article provides a comprehensive overview of the critical role molecular descriptors play in Quantitative Structure-Property Relationship (QSPR) modeling for drug discovery and development. It explores the foundational theory behind the main descriptor types, from simple 0D, 1D, and 2D descriptors (including graph-based topological indices) to 3D and 4D descriptors, and their specific applications in predicting key pharmaceutical properties such as ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity). The content examines methodological advances, including machine learning integration and novel approaches such as q-RASPR, while addressing crucial troubleshooting strategies for descriptor selection, redundancy, and model overfitting. Finally, it synthesizes current validation paradigms and comparative studies to guide researchers in selecting optimal descriptor sets for robust, predictive QSPR models, with the aim of making rational drug design more efficient.

The Building Blocks of QSPR: Understanding Molecular Descriptor Types and Their Fundamental Roles

In the realm of computational chemistry and rational drug design, molecular descriptors are fundamental mathematical representations that translate a molecule's chemical information into quantitative numerical values [1]. These descriptors form the foundational variables in Quantitative Structure-Property Relationship (QSPR) and Quantitative Structure-Activity Relationship (QSAR) models, which predict the physical, chemical, and biological properties of compounds based solely on their molecular structure [2] [3] [4]. By establishing correlations between structural features and observed properties, molecular descriptors enable researchers to accelerate drug discovery, reduce reliance on costly laboratory experiments, and deepen the understanding of structure-property relationships essential for designing novel therapeutics [2].

The utility of QSPR modeling, powered by molecular descriptors, is vividly demonstrated in contemporary research. For instance, studies have successfully employed degree-based topological indices to model and rank antibiotics for treating necrotizing fasciitis, while artificial neural networks (ANN) have been leveraged to predict the physicochemical properties of anti-inflammatory profens with high accuracy ($R^2 = 0.94$) [2] [3]. This guide provides a comprehensive technical examination of molecular descriptors, their computational representation, and their indispensable role in modern QSPR research for scientific and drug development professionals.

Basic Principles and Classification of Molecular Descriptors

Molecular descriptors are numerical values that quantitatively describe the physical and chemical information of molecules; each is produced by an algorithm that converts a molecular structure into one or more numbers [1]. They can be systematically classified based on the dimensionality of the molecular representation they derive from, which also often reflects the computational complexity involved in their calculation [5].

Table 1: Classification of Molecular Descriptors by Dimensionality

| Descriptor Dimension | Description | Key Examples |
|---|---|---|
| 0D Descriptors | Derived from molecular formula; do not require structural or connectivity information. | Atom type counts, molecular weight, bond type counts [5]. |
| 1D Descriptors | Based on counts of specific structural features or functional groups. | Counts of hydrogen bond acceptors (HBA) and donors (HBD), number of rings, presence of specific functional groups (e.g., amide, ester) [5]. |
| 2D Descriptors | Derived from the molecular graph (topological structure), considering atom connectivity but not 3D geometry. | Topological Indices (e.g., Randić, Zagreb), lipophilicity (LogP), Topological Polar Surface Area (TPSA) [2] [5]. |
| 3D Descriptors | Require the three-dimensional geometric structure of the molecule. | Geometrical descriptors, 3D polar surface area, molecular volume [5]. |

A critical distinction exists between topological descriptors (2D) and topographical descriptors (3D). Topological descriptors, akin to a public transportation map, represent the relative connections between atoms (the molecular graph) without specifying precise distances or geometries. In contrast, topographical descriptors are like a topographical map, providing specific information about distances, angles, and spatial arrangements in three dimensions [5].

Key Molecular Descriptors and Their Computational Representation

Fundamental Physicochemical Descriptors

Several 1D and 2D descriptors are critical in drug discovery for predicting a compound's absorption, distribution, metabolism, and excretion (ADME) properties. These are often evaluated against Lipinski's Rule of Five, a heuristic to assess drug-likeness [1].

- Molecular Weight (MW): The mass of the molecule in Daltons (Da). High MW (e.g., >500 Da) can complicate absorption and permeation [1].

- Calculated LogP (cLogP): A computed measure of lipophilicity, defined as the base-10 logarithm of the partition coefficient of the neutral compound between a lipophilic phase (n-octanol) and an aqueous phase (water) [1].

- Hydrogen Bond Acceptors (HBA) & Donors (HBD): HBA counts atoms that can accept hydrogen bonds (chiefly oxygen and nitrogen), while HBD counts hydrogens attached to such atoms (O-H and N-H groups). These counts influence solubility and permeability [1].

- Topological Polar Surface Area (TPSA): The summed surface contributions of polar atoms (oxygen, nitrogen, and their attached hydrogens), computed from tabulated fragment values on the 2D structure so that no 3D geometry is needed. It is a strong predictor of cell permeability, particularly blood-brain barrier penetration [2] [1].
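To make the Rule of Five concrete, the sketch below applies its usual thresholds (MW ≤ 500 Da, cLogP ≤ 5, HBD ≤ 5, HBA ≤ 10) to descriptor values that are assumed to have been computed already, for example by a toolkit such as datamol. The `DescriptorRecord` class, compound names, and numeric values are hypothetical, and the "at most one violation" pass criterion is the common reading of the rule rather than something stated in this article. TPSA is not one of the four Rule-of-Five criteria, so it is left out here.

```python
from dataclasses import dataclass

# Rule-of-Five upper limits: MW <= 500 Da, cLogP <= 5,
# HBD <= 5 (NH + OH count), HBA <= 10 (N + O count).
RULE_OF_FIVE = {"mw": 500.0, "clogp": 5.0, "hbd": 5, "hba": 10}


@dataclass
class DescriptorRecord:
    """Hypothetical container for precomputed descriptors of one compound."""
    name: str
    mw: float     # molecular weight, Da
    clogp: float  # calculated log10 octanol/water partition coefficient
    hbd: int      # hydrogen bond donor count
    hba: int      # hydrogen bond acceptor count


def ro5_violations(rec: DescriptorRecord) -> list[str]:
    """Names of the Rule-of-Five criteria whose limit the compound exceeds."""
    return [key for key, limit in RULE_OF_FIVE.items() if getattr(rec, key) > limit]


def passes_ro5(rec: DescriptorRecord, max_violations: int = 1) -> bool:
    """Common convention: a compound is flagged only if it breaks two or more rules."""
    return len(ro5_violations(rec)) <= max_violations


# Illustrative (made-up) descriptor values.
library = [
    DescriptorRecord("cmpd_A", mw=320.4, clogp=2.8, hbd=2, hba=5),
    DescriptorRecord("cmpd_B", mw=612.7, clogp=5.9, hbd=4, hba=9),
    DescriptorRecord("cmpd_C", mw=515.0, clogp=4.1, hbd=3, hba=8),
]

for rec in library:
    print(rec.name, ro5_violations(rec), passes_ro5(rec))
```

Here cmpd_C exceeds only the molecular-weight limit and still passes, while cmpd_B breaks both the MW and cLogP limits and is flagged.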

Topological Indices

Topological Indices (TIs) are a major class of 2D descriptors derived from graph theory, where atoms are represented as vertices and bonds as edges of a mathematical graph [2]. The "degree" of a vertex (atom) is the number of edges incident to it; in the usual hydrogen-suppressed graph, this is the number of bonded non-hydrogen neighbors. Degree-based TIs are valued for their ease of calculation and strong correlation with physicochemical properties [2].

- Randić Index: Captures the degree of molecular branching [2].

- Zagreb Indices: Characterize molecular stability and connectivity [2].

- Atom-Bond Connectivity (ABC) Index: Effectively models thermodynamic and physicochemical properties [2].

- Discrete Adriatic Indices: Provide additional sensitivity to structural complexity [2].

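These indices are numerical summaries of molecular topology that reflect branching, symmetry, and connectivity, and through their correlation with physicochemical properties they provide information relevant to pharmacological behavior [2]. Because they are computed from the 2D molecular graph, however, they do not encode stereochemistry or precise spatial geometry, which require 3D descriptors.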
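The degree-based indices listed above have simple closed forms over the edge set $E$ of the hydrogen-suppressed graph, with $d_u$ the degree of vertex $u$: Randić $R = \sum_{uv \in E} (d_u d_v)^{-1/2}$, first Zagreb $M_1 = \sum_{v} d_v^2$, second Zagreb $M_2 = \sum_{uv \in E} d_u d_v$, and $ABC = \sum_{uv \in E} \sqrt{(d_u + d_v - 2)/(d_u d_v)}$. The pure-Python sketch below evaluates them for the two C4H10 isomers. The edge-list representation and function names are illustrative choices, not the implementation used in [2].

```python
import math

# Hydrogen-suppressed molecular graph as a list of heavy-atom index pairs.
Edge = tuple[int, int]


def degrees(edges: list[Edge]) -> dict[int, int]:
    """Vertex degree = number of bonded heavy-atom neighbours."""
    deg: dict[int, int] = {}
    for u, v in edges:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


def randic(edges: list[Edge]) -> float:
    d = degrees(edges)
    return sum(1.0 / math.sqrt(d[u] * d[v]) for u, v in edges)


def zagreb_m1(edges: list[Edge]) -> int:
    # Sum of squared vertex degrees (equivalently, sum of d_u + d_v over edges).
    return sum(k * k for k in degrees(edges).values())


def zagreb_m2(edges: list[Edge]) -> int:
    d = degrees(edges)
    return sum(d[u] * d[v] for u, v in edges)


def abc_index(edges: list[Edge]) -> float:
    d = degrees(edges)
    return sum(math.sqrt((d[u] + d[v] - 2) / (d[u] * d[v])) for u, v in edges)


n_butane = [(0, 1), (1, 2), (2, 3)]   # C-C-C-C
isobutane = [(0, 1), (0, 2), (0, 3)]  # central C with three methyls

for name, g in [("n-butane", n_butane), ("isobutane", isobutane)]:
    print(f"{name}: R={randic(g):.3f} M1={zagreb_m1(g)} M2={zagreb_m2(g)} ABC={abc_index(g):.3f}")
```

On these inputs the sketch prints R = 1.914 and M1 = 10 for n-butane versus R = 1.732 and M1 = 12 for isobutane: branching lowers the Randić index while raising the first Zagreb index, which is why different indices can carry complementary information.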

Table 2: Key Descriptors in Drug Discovery and Their Predictive Roles

| Descriptor | Calculation / Source | Primary Predictive Role in QSPR/QSAR |
|---|---|---|
| Molecular Weight (MW) | Calculated from molecular formula [5] | Bioavailability, permeation [1] |
| cLogP | Calculated log10 octanol/water partition coefficient (LogP when measured) [1] | Lipophilicity, membrane permeability [1] |
| HBA / HBD Count | Counts of acceptor atoms (N, O) and of hydrogens attached to N or O [1] | Solubility, permeation (Rule of 5) [1] |
| Topological Polar Surface Area (TPSA) | Calculated from surface areas of polar atoms [1] | Cell permeability, blood-brain barrier penetration [2] |
| Topological Indices (e.g., Randić) | Calculated from the hydrogen-suppressed molecular graph [2] | Physicochemical properties, biological activity [2] |
| Fraction of sp3 Carbons (Fsp3) | Number of sp3-hybridized carbons divided by the total number of carbons [1] | Molecular complexity, solubility |

Methodologies for QSPR Modeling

The development of a robust QSPR model follows a structured workflow that integrates descriptor calculation, model building, and validation [2] [3].

Data Curation and Descriptor Calculation

The initial phase involves curating a structurally diverse dataset of compounds with known experimental properties. Molecular structures are typically drawn using software like KingDraw or retrieved from databases such as PubChem and ChemSpider [2] [3]. These structures are then processed computationally to calculate a wide array of molecular descriptors. For example, libraries like datamol in Python can batch compute numerous descriptors—including MW, LogP, TPSA, and HBD/HBA counts—for entire compound libraries efficiently [1].

Model Building and Validation

After calculating descriptors, statistical or machine learning techniques are applied to build the predictive model. The process involves identifying the most significant descriptors that correlate with the target property.

- Regression Analysis: Traditional QSPR models often use linear, quadratic, or cubic regression to fit the data [2]. For instance, a study on antibiotics for necrotizing fasciitis (NF) used regression to identify significant topological indices for predicting physicochemical properties [2].

- Advanced Machine Learning: More complex models employ techniques like Artificial Neural Networks (ANN). A recent study on profens used an ANN model with topological indices as inputs, achieving excellent predictive ability ($R^2 = 0.94$) and a low mean squared error (MSE of 0.0087) on the test set [3].

- Hybrid Approaches: Novel methods like Quantitative Read-Across Structure-Property Relationship (q-RASPR) combine QSPR with read-across algorithms, sometimes demonstrating superior predictive performance compared to standard QSPR models [4].
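As a minimal illustration of the linear, quadratic, and cubic fits described above, the sketch below fits a polynomial in a single descriptor by ordinary least squares, solving the normal equations directly so that no third-party packages are needed (in practice `numpy.polyfit` or a statistics package would normally be used instead). The descriptor and property values are invented for illustration and are not data from [2].

```python
def fit_polynomial(x, y, degree):
    """Least-squares coefficients [b0, b1, ..., bk] for y = b0 + b1*x + ... + bk*x^k."""
    k = degree + 1
    # Design matrix: one row per compound, columns are powers 0..degree of the descriptor.
    X = [[xi ** p for p in range(k)] for xi in x]
    # Normal equations (X^T X) b = X^T y.
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    rhs = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    return solve_linear_system(A, rhs)


def solve_linear_system(A, b):
    """Gaussian elimination with partial pivoting; fine for tiny, well-posed systems."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    sol = [0.0] * n
    for r in range(n - 1, -1, -1):
        sol[r] = (M[r][n] - sum(M[r][c] * sol[c] for c in range(r + 1, n))) / M[r][r]
    return sol


def predict(coefs, xi):
    return sum(b * xi ** p for p, b in enumerate(coefs))


def r_squared(x, y, coefs):
    """Coefficient of determination on the data the model was fitted to."""
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - predict(coefs, xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


# Hypothetical descriptor values (e.g. a topological index) and property values.
ti = [4.2, 5.1, 6.3, 7.0, 8.4, 9.1, 10.5]
prop = [151.0, 168.5, 190.2, 201.9, 224.8, 233.1, 255.6]

for degree in (1, 2, 3):  # linear, quadratic, cubic
    coefs = fit_polynomial(ti, prop, degree)
    print(degree, [round(b, 4) for b in coefs], round(r_squared(ti, prop, coefs), 4))
```

Because training $R^2$ can only stay the same or increase as the polynomial degree grows, the choice between linear, quadratic, and cubic models should rest on validation statistics rather than on the training fit alone.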

Model validation is critical. This includes internal validation (e.g., Leave-One-Out cross-validation, yielding metrics like $Q^2$) and external validation using a hold-out test set not used in model training (e.g., $Q^2_{F1}$, $Q^2_{F2}$) [4]. Normalization of the feature set is often performed before training to ensure model convergence and stability [3].
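The validation statistics named above can be written out explicitly. With $\hat{y}_{(i)}$ the prediction for compound $i$ from a model refit without it, $Q^2_{LOO} = 1 - \sum_i (y_i - \hat{y}_{(i)})^2 / \sum_i (y_i - \bar{y}_{train})^2$. For an external test set, $Q^2_{F1}$ uses the training-set mean $\bar{y}_{train}$ in the denominator and $Q^2_{F2}$ uses the test-set mean. The sketch below implements these definitions around a simple one-descriptor linear model; the model and function names are illustrative assumptions, and any `fit`/`predict` pair can be passed in instead.

```python
def fit_linear(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b


def predict_linear(model, xi):
    a, b = model
    return a + b * xi


def q2_loo(x, y, fit=fit_linear, predict=predict_linear):
    """Leave-one-out Q^2 = 1 - PRESS / SS_tot over the training set."""
    press = 0.0
    for i in range(len(x)):
        model = fit(x[:i] + x[i + 1:], y[:i] + y[i + 1:])  # refit without compound i
        press += (y[i] - predict(model, x[i])) ** 2
    mean_y = sum(y) / len(y)
    return 1.0 - press / sum((yi - mean_y) ** 2 for yi in y)


def q2_f1(y_test, y_pred, train_mean):
    """External Q^2 with the training-set mean as the reference value."""
    ss_res = sum((a - b) ** 2 for a, b in zip(y_test, y_pred))
    return 1.0 - ss_res / sum((a - train_mean) ** 2 for a in y_test)


def q2_f2(y_test, y_pred):
    """External Q^2 with the test-set mean as the reference value."""
    return q2_f1(y_test, y_pred, sum(y_test) / len(y_test))


# Hypothetical training and hold-out data for a single descriptor.
x_train = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y_train = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2]
x_test, y_test = [2.5, 4.5], [5.0, 9.1]

model = fit_linear(x_train, y_train)
y_pred = [predict_linear(model, xi) for xi in x_test]
train_mean = sum(y_train) / len(y_train)
print(q2_loo(x_train, y_train), q2_f1(y_test, y_pred, train_mean), q2_f2(y_test, y_pred))
```

Keeping `fit` and `predict` as parameters means the same LOO loop works unchanged for a polynomial or neural-network model.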

Multi-Criteria Decision Making (MCDM) in Drug Prioritization

Drug discovery requires balancing multiple, often conflicting, molecular properties. Multi-criteria decision-making (MCDM) methods like TOPSIS and MOORA resolve this complexity by normalizing diverse descriptors, applying criterion-specific weights, and producing composite rankings to systematically prioritize lead compounds [2].
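To show how TOPSIS turns several descriptor-based criteria into one ranking, the sketch below follows the textbook procedure: vector-normalize each criterion, apply weights, locate the ideal and anti-ideal points, and score each candidate by its relative closeness to the ideal. The criteria, weights, and candidate values are hypothetical, and [2] may differ in normalization or weighting details. MOORA (ratio system) would replace the distance step with the weighted sum of normalized benefit criteria minus that of cost criteria.

```python
import math


def topsis(matrix, weights, benefit):
    """Closeness coefficients (0 = worst, 1 = best) for each row of `matrix`.

    matrix[i][j]: value of criterion j for candidate i (no all-zero columns)
    weights[j]:   relative importance of criterion j
    benefit[j]:   True if larger values are better, False if smaller are better
    """
    m, n = len(matrix), len(weights)
    w = [wj / sum(weights) for wj in weights]
    # Vector normalisation of each criterion column, then weighting.
    norms = [math.sqrt(sum(matrix[i][j] ** 2 for i in range(m))) for j in range(n)]
    v = [[w[j] * matrix[i][j] / norms[j] for j in range(n)] for i in range(m)]
    # Ideal (best) and anti-ideal (worst) value of each weighted criterion.
    cols = list(zip(*v))
    best = [max(c) if benefit[j] else min(c) for j, c in enumerate(cols)]
    worst = [min(c) if benefit[j] else max(c) for j, c in enumerate(cols)]
    scores = []
    for row in v:
        d_best, d_worst = math.dist(row, best), math.dist(row, worst)
        total = d_best + d_worst
        # If every candidate is identical, all of them tie at 0.5.
        scores.append(d_worst / total if total > 0 else 0.5)
    return scores


# Hypothetical candidates scored on: predicted property (benefit),
# molecular weight in Da (cost), and a predicted toxicity score (cost).
names = ["cand_1", "cand_2", "cand_3"]
criteria = [
    [0.82, 410.0, 0.2],
    [0.64, 350.0, 0.1],
    [0.91, 520.0, 0.6],
]
scores = topsis(criteria, weights=[0.5, 0.25, 0.25], benefit=[True, False, False])
print(sorted(zip(names, scores), key=lambda t: t[1], reverse=True))
```

A candidate that is best on every criterion scores exactly 1 and one that is worst on every criterion scores 0; the weights decide how trade-offs between the two extremes are resolved.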

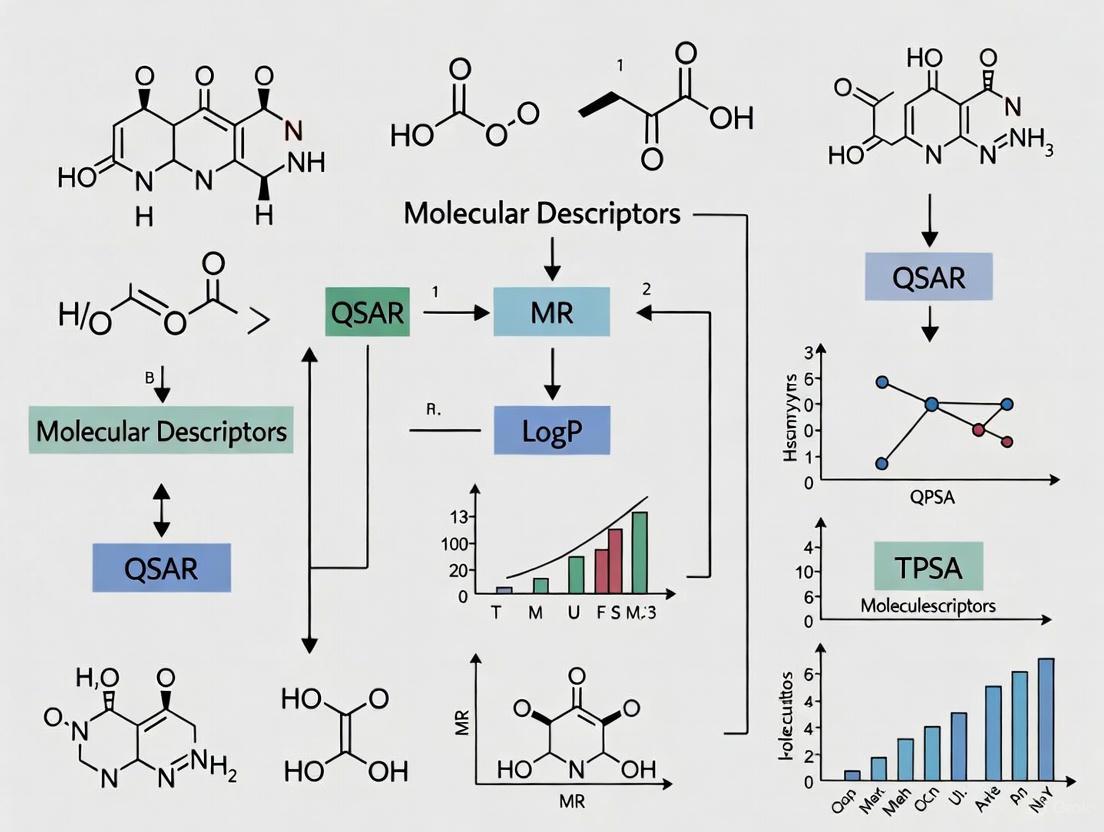

The following diagram illustrates the complete QSPR modeling workflow, from data collection to final application:

Experimental Protocols and Applications

Case Study: QSPR Modeling of Necrotizing Fasciitis Drugs

A recent study exemplifies the integrated QSPR/MCDM approach for evaluating antibiotics against necrotizing fasciitis (NF) [2].

- Objective: To predict physicochemical properties and rank NF antibiotics using degree-based topological indices.

- Materials: Key NF antibiotics included Piperacillin, Vancomycin, Imipenem, Daptomycin, Clindamycin, Ertapenem, and Gentamicin [2].

- Methodology:

- Molecular Representation: Chemical structures of NF drugs were drawn using KingDraw, with data sourced from PubChem and ChemSpider [2].

- Descriptor Calculation: Various valency-based topological indices (e.g., Randić, Zagreb, ABC) were calculated for each molecular structure [2].

- Model Development: Linear, quadratic, and cubic regression models were developed to identify significant relationships between the TIs and the target physicochemical properties [2].

- Ranking: The significant indices were then used as inputs in MCDM techniques (e.g., TOPSIS, MOORA) to generate a comprehensive ranking of the antibiotic candidates [2].

- Outcome: The study demonstrated the potential of combining topological indices with regression and MCDM to predict properties and prioritize antibiotic candidates for NF treatment, supporting rational drug design and repurposing [2].

Case Study: Machine Learning QSPR for Profens

Another protocol showcases the use of advanced machine learning for profen analysis [3].

- Objective: To develop a predictive ANN model for evaluating the principal physicochemical properties of a set of anti-inflammatory drugs (e.g., Ibuprofen, Flurbiprofen, Ketoprofen) based on topological indices.

- Methodology:

- Data Preparation: Molecular descriptors were calculated from molecular structures and used as inputs for the ANN model [3].

- Feature Normalization: The feature set was normalized before training to maintain convergence and stability of the model [3].

- Model Training & Validation: The ANN model was trained and its predictive ability was evaluated based on a high $R^2$ value (0.94) and a low mean squared error (MSE of 0.0087) on the test set [3].

- Outcome: The method showcases the promise of machine learning models to facilitate better virtual screening and assist in rational drug design by making accurate predictions of properties [3].
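The article does not state which normalization scheme or network architecture [3] used, so the sketch below only illustrates two generic steps named in this case study, under a stated assumption: min-max scaling whose parameters are learned from the training set alone (so no test-set information leaks into training), and the mean squared error used to score test-set predictions. Min-max scaling is one common choice among several, and all values are hypothetical.

```python
def fit_minmax(train_rows):
    """Per-feature (min, max) learned from training rows only."""
    return [(min(col), max(col)) for col in zip(*train_rows)]


def apply_minmax(rows, params):
    """Scale each feature with the training min/max; test values may fall outside [0, 1]."""
    return [
        [(x - lo) / (hi - lo) if hi > lo else 0.0 for x, (lo, hi) in zip(row, params)]
        for row in rows
    ]


def mse(y_true, y_pred):
    """Mean squared error between observed and predicted values."""
    return sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


# Hypothetical descriptor rows: [Randic index, first Zagreb index].
train_X = [[5.2, 64.0], [6.1, 78.0], [7.4, 96.0], [8.0, 104.0]]
test_X = [[6.8, 88.0]]

params = fit_minmax(train_X)
print(apply_minmax(train_X, params))
print(apply_minmax(test_X, params))
```

The scaled features would then be fed to the network, and `mse` applied to its test-set predictions gives the kind of error figure reported above.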

Table 3: Key Computational Tools and Resources for QSPR Research

| Tool/Resource | Type | Primary Function in QSPR |
|---|---|---|
| PubChem / ChemSpider | Chemical Database | Sources for molecular structures and associated experimental data [2] [3]. |
| KingDraw | Chemical Drawing Software | Used to draw and represent molecular structures for analysis [2]. |
| datamol | Python Library | Calculates molecular descriptors (e.g., MW, LogP, TPSA) in batch for compound libraries [1]. |
| Topological Indices (TIs) | Mathematical Descriptors | Graph-theoretical descriptors (e.g., Randić) that correlate with physicochemical properties [2]. |
| Artificial Neural Networks (ANN) | Machine Learning Algorithm | Advanced non-linear model for building highly accurate QSPR predictive models [3]. |
| TOPSIS / MOORA | Multi-Criteria Decision Making (MCDM) | Methods for ranking lead compounds by balancing multiple property criteria [2]. |
| Gephi / Cytoscape | Network Visualization Software | Platforms for visualizing complex networks, including molecular interaction networks and analysis results [6] [7]. |

Molecular descriptors are the indispensable language of QSPR research, providing the critical link between a molecule's abstract structure and its tangible physical and biological properties. From simple 0D counts to complex 3D geometrical representations and topological indices, these quantitative measures empower researchers to build predictive models that streamline drug discovery and materials design. The integration of classical regression techniques with advanced machine learning and multi-criteria decision-making frameworks marks the cutting edge of the field. As computational power and algorithms continue to advance, the precision and scope of QSPR modeling will expand further, solidifying the role of molecular descriptors as a cornerstone of rational design in chemistry and pharmacology.

Molecular descriptors are fundamental tools in chemoinformatics and quantitative structure-property relationship (QSPR) research, serving as numerical representations that translate chemical information into a form suitable for mathematical and statistical analysis [8] [9]. They play a crucial role in pharmaceutical sciences, environmental protection policy, health research, and quality control by enabling the prediction of molecular properties and biological activities from structure alone [8] [10]. The transformation of molecules into numerical descriptors allows researchers to establish quantitative relationships that accelerate drug discovery, virtual screening, and molecular design [10] [11].

According to Todeschini and Consonni, a molecular descriptor is "the final result of a logic and mathematical procedure which transforms chemical information encoded within a symbolic representation of a molecule into a useful number or the result of some standardized experiment" [8]. This definition encompasses both experimental measurements and theoretical descriptors derived from symbolic molecular representations [8]. The critical importance of molecular descriptors in modern QSPR/QSAR studies lies in their ability to provide predictive models that can filter compound libraries before synthesis and experimental testing, significantly reducing time and costs in drug development [3] [12].

One of the most fundamental classification systems for molecular descriptors is based on their dimensionality, which reflects the level of structural information encoded in the representation and the complexity of the calculation [8] [9] [13]. This classification system ranges from simple 0D descriptors to complex 4D descriptors, with each level offering distinct advantages and limitations for specific QSPR applications [9] [13]. Understanding this dimensional hierarchy is essential for researchers to select appropriate descriptors that match the information content of the target property being modeled [9].

The Classification of Molecular Descriptors by Dimension

Theoretical Basis for Dimensional Classification

The dimensional classification of molecular descriptors is intrinsically linked to the type of molecular representation used in their calculation algorithm [8] [9]. Each dimensional level incorporates progressively more detailed information about the molecular structure, from basic composition to complex dynamic and interaction properties [13]. This hierarchy represents a trade-off between computational cost, information content, and applicability to different QSPR modeling scenarios [9] [13].

Higher-dimensional descriptors generally contain more structural information but require greater computational resources and may introduce complexities related to molecular conformation and alignment [9] [13]. Conversely, lower-dimensional descriptors are faster to compute and avoid conformational issues but may lack the structural specificity needed for modeling complex biological interactions [9]. The optimal descriptor dimension depends on the specific modeling context, with evidence suggesting that 2D descriptors often perform comparably to 3D descriptors in many QSAR applications while being significantly faster to compute [13].

The following diagram illustrates the hierarchical relationship between different molecular representations and the descriptor dimensions derived from them:

Molecular Representation and Descriptor Dimension Hierarchy

Detailed Analysis of Descriptor Dimensions

0D Descriptors (Constitutional Descriptors)

0D descriptors represent the most fundamental level of molecular description, derived solely from the chemical formula without any information about molecular structure or atom connectivity [9] [13]. These descriptors are calculated from the chemical composition alone and include basic molecular properties that can be obtained without structural knowledge [9]. Also known as count descriptors, they are characterized by simplicity, fast computation, and absence of conformational issues, but typically exhibit high degeneracy (different molecules having the same descriptor value) [9].

Table 1: Common 0D Molecular Descriptors and Their Characteristics

| Descriptor Name | Description | Calculation Method | Application in QSPR |
|---|---|---|---|
| Molecular Weight | Sum of atomic masses of all atoms in molecule | Direct calculation from atomic composition | Correlated with boiling point, solubility, pharmacokinetics |
| Atom Counts | Number of specific atom types (C, H, O, N, etc.) | Counting atoms in chemical formula | Constitutional analysis, property estimation |
| Bond Counts | Number of specific bond types (single, double, triple) | Counting bond types in the connection table, ignoring how the bonds are arranged | Molecular flexibility assessment |
| Molar Refractivity | Measure of molecular polarizability | Based on molecular formula and structure | Estimating intermolecular interactions |

The primary advantage of 0D descriptors is their simplicity and minimal computational cost, which makes them suitable for high-throughput screening and initial molecular profiling [9]. Their main limitations are an inability to distinguish structural isomers and the resulting high degeneracy [9]. In modern QSPR research, 0D descriptors are therefore usually combined with higher-dimensional descriptors that supply the missing structural information [9].
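The formula-only nature of 0D descriptors, and the degeneracy that comes with it, can be seen in a short sketch that parses a flat molecular formula into atom counts and sums standard atomic weights. The parser is a simplifying assumption (no parentheses, charges, or isotopes), and only a handful of elements are tabulated.

```python
import re

# Conventional standard atomic weights for a few common elements.
ATOMIC_WEIGHTS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999,
                  "F": 18.998, "S": 32.06, "Cl": 35.45, "Br": 79.904}


def atom_counts(formula: str) -> dict[str, int]:
    """Parse a flat formula such as 'C13H18O2' (no parentheses or charges)."""
    counts: dict[str, int] = {}
    for symbol, num in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(num) if num else 1)
    return counts


def molecular_weight(formula: str) -> float:
    """Sum of atomic weights (Da) over all atoms in the formula."""
    return sum(ATOMIC_WEIGHTS[s] * n for s, n in atom_counts(formula).items())


print(atom_counts("C13H18O2"), molecular_weight("C13H18O2"))  # ibuprofen
# n-butane and isobutane share the formula C4H10, so these 0D values cannot tell them apart.
print(atom_counts("C4H10"), molecular_weight("C4H10"))
```

For ibuprofen (C13H18O2) this gives roughly 206.3 Da, and the identical output for both C4H10 isomers is exactly the degeneracy described above.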

1D Descriptors (Fragment-Based Descriptors)

1D descriptors incorporate information about molecular substructures and fragments, representing a linear or one-dimensional view of molecular characteristics [9] [13]. These descriptors are derived from a list of structural fragments or functional groups present in the molecule without considering their connectivity or spatial arrangement [9]. This category includes molecular fingerprints, which are binary representations indicating the presence or absence of specific structural features [13] [14].

Table 2: Types of 1D Descriptors and Their Applications

| Descriptor Type | Description | Examples | Common Uses |
|---|---|---|---|
| Substructure Keys | Pre-defined structural fragments encoded as bit strings | MACCS keys (166/960 bits), PubChem fingerprints (881 bits) | Similarity searching, virtual screening |
| Hashed Fingerprints | Structural features hashed into fixed-length bit strings | Morgan fingerprints (ECFP), AtomPairs, Topological torsions | Machine learning, similarity analysis |
| Functional Group Counts | Number of specific functional groups | OH, NH, COOH, aromatic rings counts | Property prediction, metabolic stability |
| Pharmacophore Features | Key pharmacophoric elements | Hydrogen bond donors/acceptors, hydrophobic centers | Virtual screening, lead optimization |

The experimental protocol for calculating 1D descriptors typically involves: (1) molecular structure input (e.g., SMILES string or molecular graph); (2) fragmentation or substructure identification; (3) feature enumeration; and (4) fingerprint encoding or count calculation [14] [11]. For example, MACCS keys use a pre-defined dictionary of 166 structural fragments, where each position in the bit string corresponds to a specific substructural feature [14]. The presence of a feature sets the corresponding bit to 1, while its absence sets it to 0 [14].

1D descriptors, particularly fingerprints, excel in similarity searching, virtual screening, and machine learning applications due to their computational efficiency and ability to capture key molecular features [12] [14]. However, they may overlook stereochemistry and three-dimensional arrangement effects crucial for modeling specific biological interactions [13].
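Steps (3) and (4) of the protocol above, together with the similarity calculation that makes fingerprints useful for searching, can be sketched as follows. The eight-key dictionary is a hypothetical stand-in for a real key set such as the 166 MACCS keys, and fragment identification (steps 1 and 2) is assumed to have been done upstream, for example by substructure matching in a cheminformatics toolkit. Similarity is the Tanimoto coefficient: the number of bits set in both vectors divided by the number set in either.

```python
# Hypothetical key dictionary: bit position -> fragment label.
KEYS = ["carboxylic_acid", "aromatic_ring", "primary_amine", "hydroxyl",
        "halogen", "ketone", "ether", "nitro"]


def encode(fragments: set[str]) -> list[int]:
    """Bit i is 1 if the fragment assigned to key i is present, else 0."""
    return [1 if key in fragments else 0 for key in KEYS]


def tanimoto(a: list[int], b: list[int]) -> float:
    """|A and B| / |A or B| for two equal-length bit vectors (0.0 if both are empty)."""
    both = sum(1 for x, y in zip(a, b) if x and y)
    either = sum(1 for x, y in zip(a, b) if x or y)
    return both / either if either else 0.0


# Hypothetical fragment sets, as if returned by a substructure search.
mol_1 = encode({"carboxylic_acid", "aromatic_ring"})
mol_2 = encode({"carboxylic_acid", "aromatic_ring", "ketone"})
mol_3 = encode({"primary_amine", "hydroxyl"})
print(mol_1, tanimoto(mol_1, mol_2), tanimoto(mol_1, mol_3))
```

Here mol_1 and mol_2 share two of their three set bits (Tanimoto 2/3), while mol_1 and mol_3 share none (Tanimoto 0). The score says nothing about where the fragments sit in the molecule, which is the stereochemical and spatial blind spot noted above.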

2D Descriptors (Topological Descriptors)

2D descriptors are derived from the topological representation of molecules, typically using molecular graphs where atoms correspond to vertices and bonds to edges [8] [9]. These descriptors encode information about atom connectivity and molecular topology without considering three-dimensional geometry [13]. Also known as graph invariants, they are calculated from the hydrogen-depleted molecular graph and represent one of the most extensive classes of molecular descriptors [9].

Topological descriptors capture structural patterns such as branching, cyclicity, and atom adjacency relationships [9]. Common examples include connectivity indices (e.g., Randić, Kier-Hall), the Wiener index, Zagreb indices, and information-theoretic indices derived from graph theory [9]. These descriptors have demonstrated remarkable success in QSPR studies for predicting physicochemical properties, biological activity, and toxicological endpoints [9] [12].
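As a concrete example of a distance-based graph invariant, the Wiener index is the sum of shortest-path distances (counted in bonds) over all pairs of heavy atoms. A minimal breadth-first-search sketch, assuming a connected hydrogen-suppressed graph given as an edge list:

```python
from collections import deque


def wiener_index(n_atoms: int, edges: list[tuple[int, int]]) -> int:
    """Sum of shortest-path bond counts over all unordered heavy-atom pairs."""
    adj: list[list[int]] = [[] for _ in range(n_atoms)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    total = 0
    for src in range(n_atoms):
        # Breadth-first search gives topological distances from src to every atom.
        dist = [-1] * n_atoms
        dist[src] = 0
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for w in adj[u]:
                if dist[w] < 0:
                    dist[w] = dist[u] + 1
                    queue.append(w)
        if min(dist) < 0:
            raise ValueError("graph must be connected")
        total += sum(dist)
    return total // 2  # each pair was counted once from each end


print(wiener_index(4, [(0, 1), (1, 2), (2, 3)]))  # n-butane -> 10
print(wiener_index(4, [(0, 1), (0, 2), (0, 3)]))  # isobutane -> 9
```

The more compact, branched isomer has the smaller Wiener index, which is the kind of structural difference that 0D descriptors cannot detect.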

The methodology for calculating 2D descriptors involves: (1) generating the molecular graph from the connection table; (2) applying graph-theoretical algorithms to compute invariants; (3) weighting atoms and bonds with appropriate properties (e.g., atomic number, bond order); and (4) calculating descriptor values using specific mathematical formulas [9]. For instance, the widely used Morgan algorithm (basis for ECFP fingerprints) iteratively updates atom identifiers based on their connectivity environment, effectively capturing circular substructures around each atom [12] [14].
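The iterative identifier update at the heart of the Morgan/ECFP approach can be sketched in a few lines. This is a deliberately simplified version: initial atom invariants are a hypothetical (atomic number, heavy-atom degree) pair, bond orders and duplicate-environment removal are ignored, and Python's built-in `hash` stands in for the hash function of real implementations, so the resulting bits will not match RDKit or any other toolkit.

```python
def circular_features(invariants, edges, radius=2):
    """Collect ECFP-style atom identifiers for iterations 0..radius.

    invariants: one hashable initial invariant per atom
    edges:      (i, j) heavy-atom bonds of a hydrogen-suppressed graph
    """
    n = len(invariants)
    neighbors = [[] for _ in range(n)]
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    ids = [hash(inv) for inv in invariants]
    features = set(ids)
    for _ in range(radius):
        # Each new id combines the atom's previous id with its sorted neighbour ids,
        # so after k rounds it summarises the environment within k bonds.
        ids = [hash((ids[a], tuple(sorted(ids[b] for b in neighbors[a])))) for a in range(n)]
        features.update(ids)
    return features


def fold(features, n_bits=1024):
    """Fold identifiers into a fixed-length bit vector by taking them modulo n_bits."""
    bits = [0] * n_bits
    for f in features:
        bits[f % n_bits] = 1
    return bits


# Hypothetical invariants (atomic number, heavy-atom degree) for two C4H10 isomers.
n_butane = circular_features([(6, 1), (6, 2), (6, 2), (6, 1)], [(0, 1), (1, 2), (2, 3)])
isobutane = circular_features([(6, 3), (6, 1), (6, 1), (6, 1)], [(0, 1), (0, 2), (0, 3)])
print(len(n_butane), len(isobutane), len(n_butane & isobutane), sum(fold(n_butane)))
```

Symmetry-equivalent atoms (the two terminal carbons of n-butane, the three methyls of isobutane) receive identical identifiers, so each molecule yields only two distinct environments per iteration. The isomers share their radius-0 methyl identifier but diverge from the first iteration onward.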

Comparative studies have shown that 2D descriptors often perform comparably to 3D descriptors in QSAR modeling while being significantly faster to compute and avoiding conformational uncertainties [13]. This makes them particularly valuable for high-throughput virtual screening and large-scale QSPR analyses [12] [13].

3D Descriptors (Geometrical Descriptors)

3D descriptors incorporate spatial and geometrical information derived from the three-dimensional structure of molecules, requiring atomic coordinates (x, y, z) as input [8] [9]. These descriptors capture properties related to molecular size, shape, surface area, volume, and spatial distribution of electronic features [9] [13]. They are essential for modeling properties and interactions that depend on three-dimensional molecular characteristics, such as protein-ligand binding and stereoselective reactions [9].

The calculation of 3D descriptors requires prior generation of three-dimensional molecular structures, typically through molecular mechanics or quantum chemical calculations [15] [13]. This process involves: (1) generating a 3D structure from 2D representation; (2) geometry optimization to obtain low-energy conformation; (3) calculating spatial properties; and (4) deriving descriptor values [13]. Important classes of 3D descriptors include geometrical descriptors (size, shape, volume), WHIM descriptors (Weighted Holistic Invariant Molecular descriptors), 3D-MoRSE descriptors (Molecular Representation of Structures based on Electron diffraction), and quantum chemical descriptors (HOMO/LUMO energies, dipole moment, polarizability) [8] [15].
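Once a 3D structure has been generated and optimized (steps 1 and 2), the remaining steps reduce to arithmetic on coordinates. The sketch below computes two simple geometrical descriptors, an unweighted radius of gyration and the largest interatomic distance, plus one value of the 3D-MoRSE signal $I(s) = \sum_{i<j} A_i A_j \sin(s\,r_{ij})/(s\,r_{ij})$, where $r_{ij}$ is an interatomic distance and $A_i$ an atomic weighting property. The coordinates and weights are hypothetical, and real 3D-MoRSE sets evaluate $I(s)$ at a series of fixed $s$ values rather than at one.

```python
import math


def centroid(coords):
    n = len(coords)
    return tuple(sum(p[k] for p in coords) / n for k in range(3))


def radius_of_gyration(coords):
    """Unweighted RMS distance of the atoms from their centroid (Angstrom)."""
    c = centroid(coords)
    return math.sqrt(sum(math.dist(p, c) ** 2 for p in coords) / len(coords))


def max_interatomic_distance(coords):
    """Largest atom-atom distance (Angstrom); needs at least two atoms."""
    return max(math.dist(p, q) for i, p in enumerate(coords) for q in coords[i + 1:])


def morse_signal(coords, weights, s):
    """3D-MoRSE value I(s) for scattering parameter s (1/Angstrom)."""
    total = 0.0
    for i in range(len(coords)):
        for j in range(i):
            sr = s * math.dist(coords[i], coords[j])
            # sin(x)/x tends to 1 as x -> 0, which covers the s = 0 case.
            total += weights[i] * weights[j] * (math.sin(sr) / sr if sr else 1.0)
    return total


# Hypothetical heavy-atom coordinates (Angstrom) and unit weights.
coords = [(0.0, 0.0, 0.0), (1.54, 0.0, 0.0), (2.05, 1.45, 0.0), (3.59, 1.45, 0.0)]
weights = [1.0] * len(coords)
print(radius_of_gyration(coords), max_interatomic_distance(coords), morse_signal(coords, weights, 1.0))
```

Because every value here depends on the input coordinates, a different conformer of the same molecule gives different descriptors, which is the conformational sensitivity discussed below.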

Recent advances in 3D descriptor methodology include the development of low-cost quantum chemical approaches using DFT/COSMO (Density Functional Theory/Conductor-like Screening Model) computations to determine descriptor scales for volume, hydrogen bond acidity/basicity, and charge asymmetry [15]. These theoretically derived descriptors have shown excellent correlation with empirical scales and good performance in LSER (Linear Solvation Energy Relationship) correlations of solvation-related thermodynamic and kinetic properties [15].
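For orientation, the widely used Abraham form of an LSER expresses a solvation-related property $SP$ (for example, the logarithm of a partition coefficient) as a linear combination of solute descriptors, each multiplied by a system coefficient fitted by multiple linear regression:

$$
SP = c + e\,E + s\,S + a\,A + b\,B + v\,V
$$

Here $E$ is the excess molar refraction, $S$ the dipolarity/polarizability, $A$ and $B$ the overall hydrogen-bond acidity and basicity, and $V$ the McGowan characteristic volume, while $c, e, s, a, b, v$ characterize the phase system. The exact terms and descriptor definitions used with the DFT/COSMO-derived scales in [15] may differ from this general form.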

While 3D descriptors offer higher information content and better discrimination of stereoisomers, they introduce complexities related to conformational analysis, molecular alignment, and computational requirements [9] [13]. The choice of molecular conformation can significantly impact descriptor values and subsequent model performance, making conformational analysis a critical step in 3D-QSAR studies [9].

4D Descriptors (Interaction Field Descriptors)

4D descriptors extend beyond static three-dimensional structure to incorporate ensemble representations or interaction properties, typically derived from molecular dynamics simulations or interaction fields with probe atoms [8] [9] [13]. These descriptors capture information about molecular flexibility, conformational dynamics, and interaction potentials that are crucial for understanding biological activity and receptor binding [9] [13].

The "fourth dimension" in these descriptors can refer to different concepts: (1) multiple molecular conformations (ensemble representation); (2) interaction energies with probe atoms; or (3) temporal evolution in molecular dynamics simulations [9]. Common 4D descriptors include GRID-based descriptors, CoMFA (Comparative Molecular Field Analysis) fields, CoMSIA (Comparative Molecular Similarity Indices Analysis), and Volsurf descriptors [8] [13].

The experimental protocol for 4D descriptor calculation typically involves: (1) generating multiple low-energy conformations; (2) placing the molecule in a 3D grid; (3) calculating interaction energies with various probes at grid points; and (4) extracting descriptive parameters from the interaction fields [13]. For example, in GRID-based methods, a molecule is placed in a 3D lattice, and interaction energies with chemical probes (e.g., water, methyl group, carbonyl oxygen) are computed at each grid point, generating a scalar field that characterizes molecular interaction properties [9] [13].
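Steps (2) to (4) can be illustrated with a toy interaction field. The sketch below places one conformation in a cubic lattice and evaluates, at every node, a Coulomb term plus a Lennard-Jones 12-6 term between the molecule's atoms and a single probe. The energy function, dielectric constant, combining rule, and all parameters are hypothetical simplifications, not those of GRID, CoMFA, or any other program. Repeating the calculation over several conformations would supply the ensemble aspect of step (1).

```python
import math
from itertools import product

COULOMB_K = 332.06  # kcal*A/(mol*e^2): converts q_i*q_j/r to kcal/mol


def probe_energy(point, atoms, probe_q, probe_eps, probe_rmin, dielectric=4.0):
    """Toy Coulomb + Lennard-Jones 12-6 energy (kcal/mol) of one probe at `point`.

    atoms: list of (xyz, charge, eps, rmin) tuples with hypothetical parameters.
    """
    energy = 0.0
    for xyz, q, eps, rmin in atoms:
        r = max(math.dist(point, xyz), 0.5)  # clamp to avoid the singularity at r = 0
        eps_ij = math.sqrt(eps * probe_eps)  # geometric-mean well depth
        rmin_ij = 0.5 * (rmin + probe_rmin)  # arithmetic-mean contact distance
        x6 = (rmin_ij / r) ** 6
        energy += eps_ij * (x6 * x6 - 2.0 * x6)  # LJ 12-6 written with rmin
        energy += COULOMB_K * q * probe_q / (dielectric * r)
    return energy


def interaction_field(atoms, probe, spacing=1.0, margin=4.0):
    """Map each lattice node around the molecule to the probe's interaction energy."""
    coords = [a[0] for a in atoms]
    lo = [min(c[k] for c in coords) - margin for k in range(3)]
    hi = [max(c[k] for c in coords) + margin for k in range(3)]
    axes = [
        [lo[k] + i * spacing for i in range(int((hi[k] - lo[k]) / spacing) + 1)]
        for k in range(3)
    ]
    return {node: probe_energy(node, atoms, *probe) for node in product(*axes)}


# Hypothetical two-atom fragment and a positively charged probe (charge, eps, rmin).
atoms = [((0.0, 0.0, 0.0), -0.4, 0.15, 3.4), ((1.2, 0.0, 0.0), 0.4, 0.10, 3.2)]
field = interaction_field(atoms, probe=(1.0, 0.1, 3.0), spacing=1.0, margin=3.0)
most_favourable = min(field, key=field.get)
print(len(field), most_favourable, field[most_favourable])
```

The resulting dictionary is the scalar field of step (3); descriptors for step (4) are then derived from it, for example by taking the field values as a feature vector or summarizing the most favorable regions.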

4D descriptors are particularly valuable for modeling complex biological interactions where molecular flexibility and dynamic behavior play important roles [9]. However, they require significant computational resources and careful parameterization, limiting their application in high-throughput screening scenarios [13].

Comparative Analysis and Research Applications

Performance Comparison Across Dimensions

The choice of descriptor dimension significantly impacts QSPR model performance, with different dimensions excelling in specific applications. Recent comparative studies provide insights into the relative strengths of various descriptor types:

Table 3: Comparative Performance of Descriptor Dimensions in QSPR Modeling

| Descriptor Dimension | Information Content | Computational Cost | Degeneracy | Best-Suited Applications |
|---|---|---|---|---|
| 0D | Very Low | Very Low | Very High | High-throughput screening, initial filtering |
| 1D | Low | Low | High | Similarity searching, substructure analysis |
| 2D | Medium | Medium | Medium | General QSAR, property prediction, toxicity |
| 3D | High | High | Low | Stereospecific interactions, protein binding |
| 4D | Very High | Very High | Very Low | Complex bioactivity, receptor interactions |

A comprehensive study comparing descriptor performance across multiple ADME-Tox targets demonstrated that traditional 1D, 2D, and 3D descriptors generally outperformed fingerprint-based representations for targets including Ames mutagenicity, P-glycoprotein inhibition, hERG inhibition, hepatotoxicity, blood-brain-barrier permeability, and cytochrome P450 inhibition [12]. The study employed machine learning algorithms (XGBoost and RPropMLP neural network) and found that 2D descriptors frequently produced superior models across multiple endpoints [12].

Notably, 2D descriptors have shown competitive performance compared to 3D descriptors in many QSAR applications while offering advantages in computational efficiency and avoidance of conformational issues [13]. This makes them particularly valuable for large-scale virtual screening and initial property assessment. However, for endpoints strongly dependent on molecular shape and stereochemistry, 3D and 4D descriptors provide enhanced predictive capability despite their higher computational demands [9] [13].

Integrated Workflow for Descriptor Calculation and QSPR Modeling

Modern QSPR research typically employs integrated workflows that combine descriptor calculation with machine learning for predictive model development. The following diagram illustrates a comprehensive QSPR modeling workflow incorporating multiple descriptor dimensions:

Comprehensive QSPR Modeling Workflow with Multi-Dimensional Descriptors

Software tools like QSPRmodeler exemplify this integrated approach, providing open-source platforms that support the entire workflow from raw data preparation and descriptor calculation to machine learning model training and validation [11]. Such tools typically incorporate various descriptor types, including multiple fingerprint representations (Daylight, atom-pair, topological torsion, Morgan, MACCS keys) and molecular descriptors from libraries like Mordred (1825 descriptors) [11].

The following table summarizes key software tools available to researchers for descriptor calculation and QSPR model development:

Table 4: Essential Software Tools for Molecular Descriptor Calculation and QSPR Modeling

| Tool Name | Descriptor Dimensions | Key Features | License | Application Context |
|---|---|---|---|---|
| alvaDesc [8] | 0D-3D, Fingerprints | Comprehensive descriptor calculation, GUI, KNIME integration | Commercial | Pharmaceutical research, regulatory compliance |
| Dragon [8] | 0D-3D, Fingerprints | Extensive descriptor database, well-established | Commercial | General QSAR/QSPR studies |
| Mordred [8] [16] | 0D-3D | 1800+ descriptors, Python library, open source | Open Source | Academic research, method development |
| PaDEL-descriptor [8] | 0D-3D, Fingerprints | Based on CDK, user-friendly | Free | Virtual screening, drug discovery |
| RDKit [8] | 0D-3D, Fingerprints | Comprehensive cheminformatics, Python API | Open Source | Drug discovery, materials science |
| fastprop [16] | 2D (via Mordred) | Deep learning QSPR framework, user-friendly CLI | Open Source | Property prediction, small datasets |
| QSPRmodeler [11] | 0D-3D, Fingerprints | Complete QSPR workflow, multiple ML algorithms | Open Source | Educational use, predictive modeling |

The selection of appropriate software depends on research objectives, computational resources, and technical expertise. Commercial tools like alvaDesc and Dragon offer comprehensive descriptor sets and user-friendly interfaces, while open-source options like RDKit and Mordred provide flexibility and customization for method development [8]. Emerging frameworks like fastprop combine traditional descriptors with deep learning to achieve state-of-the-art performance across datasets of varying sizes [16].

The dimensional classification of molecular descriptors provides a fundamental framework for understanding their information content, computational requirements, and appropriate applications in QSPR research. Each dimensional category—from simple 0D constitutional descriptors to complex 4D interaction field descriptors—offers distinct advantages and limitations that must be considered in the context of specific research goals [9] [13].

The choice of descriptor dimension involves balancing multiple factors: the complexity of the target property, available computational resources, dataset size, and required model interpretability [9] [12]. While higher-dimensional descriptors offer greater structural specificity, they are not universally superior; the optimal descriptor dimension depends on the information content of the property being modeled [9]. Evidence suggests that 2D descriptors frequently provide the best balance of performance and computational efficiency for many QSAR applications [12] [13].

Future directions in descriptor research include the development of novel descriptor sets based on low-cost quantum chemical computations [15], integration of traditional descriptors with deep learning frameworks [16], and creation of standardized workflows that automatically select optimal descriptor dimensions for specific modeling tasks [11]. As QSPR research continues to expand into new chemical domains including salts, ionic liquids, peptides, polymers, and nanostructures, the development of specialized descriptors for these compound classes will remain an active research frontier [8].

The dimensional hierarchy of molecular descriptors continues to provide a conceptual foundation for navigating the complex landscape of molecular representation in QSPR research. By understanding the characteristics and appropriate applications of descriptors at each dimensional level, researchers can make informed decisions that enhance the predictive power and efficiency of their QSPR models across diverse chemical and biological domains.

In the field of quantitative structure-property relationship (QSPR) research, molecular descriptors serve as the fundamental link between a compound's structure and its observable physicochemical or biological properties. Among these descriptors, topological indices hold a distinguished position as numerical representations derived directly from the molecular graph's connectivity [17] [18]. In this framework, atoms are symbolized as vertices and chemical bonds as edges, forming a mathematical structure (G = (V, E)) where (V) is the set of vertices and (E) is the set of edges [17]. The degree of a vertex (\varrho), denoted (\S(\varrho)), is the number of edges incident to it; it provides the foundational information for calculating most degree-based topological indices [17].

The significance of topological indices in modern chemical research is substantial. They facilitate the prediction of crucial molecular properties—such as boiling points, strain energy, stability, and bioactivity—without recourse to resource-intensive experimental procedures [17] [18]. Their application extends across diverse domains, including drug discovery, material science, and environmental chemistry, where they enhance the efficiency of screening compounds for desired attributes [4] [19]. Furthermore, the integration of topological indices with entropy measures offers insights into molecular complexity and information content, while their incorporation into machine learning algorithms is elevating the predictive accuracy of contemporary QSPR models [18] [20].

Topological indices are generally categorized based on the graph-theoretical properties they quantify. The most common classes include degree-based indices, distance-based indices, and eigenvalue-based indices [17]. This guide concentrates on degree-based indices, which are among the most extensively utilized in QSPR studies due to their computational efficiency and strong correlation with numerous molecular properties.

Table 1: Foundational Degree-Based Topological Indices

| Index Name | Mathematical Formulation | Structural Interpretation |
|---|---|---|
| General Randić Index [17] | ( R_{\alpha}(G) = \sum\limits_{\varrho\varphi \in E(G)} (\S(\varrho) \times \S(\varphi))^{\alpha} ) | Captures the influence of molecular branching and atom connectivity. |
| Atom-Bond Connectivity (ABC) Index [17] | ( ABC(G) = \sum\limits_{\varrho\varphi \in E(G)} \sqrt{\frac{\S(\varrho) + \S(\varphi) - 2}{\S(\varrho) \times \S(\varphi)}} ) | Related to the stability of branched alkanes and the energy of molecular graphs. |
| Geometric-Arithmetic (GA) Index [17] | ( GA(G) = \sum\limits_{\varrho\varphi \in E(G)} \frac{2\sqrt{\S(\varrho) \times \S(\varphi)}}{\S(\varrho) + \S(\varphi)} ) | Sums, over all edges, the ratio of the geometric mean to the arithmetic mean of the two end-vertex degrees. |
| First Zagreb Index [17] | ( M_1(G) = \sum\limits_{\varrho\varphi \in E(G)} (\S(\varrho) + \S(\varphi)) ) | Measures the total degree connectivity of the graph; equivalently, the sum of the squared vertex degrees. |
| Second Zagreb Index [17] | ( M_2(G) = \sum\limits_{\varrho\varphi \in E(G)} (\S(\varrho) \times \S(\varphi)) ) | Focuses on the product of degrees of adjacent vertices. |
| Hyper-Zagreb Index [17] | ( HM(G) = \sum\limits_{\varrho\varphi \in E(G)} (\S(\varrho) + \S(\varphi))^2 ) | An extension amplifying the influence of high-degree vertices. |
| First Multiple Zagreb Index [17] | ( PM_1(G) = \prod\limits_{\varrho\varphi \in E(G)} (\S(\varrho) + \S(\varphi)) ) | Multiplicative variant of the first Zagreb index. |
| Second Multiple Zagreb Index [17] | ( PM_2(G) = \prod\limits_{\varrho\varphi \in E(G)} (\S(\varrho) \times \S(\varphi)) ) | Multiplicative variant of the second Zagreb index. |
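Because every index in the table above is a sum or product, over edges, of a function of the two end-vertex degrees, all of them can be evaluated in a single pass over an edge list. The following is a minimal pure-Python sketch, assuming a hydrogen-suppressed graph given as pairs of atom indices; the helper names and the 2-methylbutane example are illustrative choices, not taken from [17].

```python
import math
from collections import defaultdict


def vertex_degrees(edges):
    """Map each vertex of a hydrogen-suppressed graph to its degree."""
    degree = defaultdict(int)
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    return dict(degree)


def degree_based_indices(edges, alpha=-0.5):
    """Evaluate the edge-based degree indices (Randic, ABC, GA, Zagreb family)."""
    degree = vertex_degrees(edges)
    # One (degree of u, degree of v) pair per bond
    pairs = [(degree[u], degree[v]) for u, v in edges]
    return {
        "R_alpha": sum((du * dv) ** alpha for du, dv in pairs),
        "ABC": sum(math.sqrt((du + dv - 2) / (du * dv)) for du, dv in pairs),
        "GA": sum(2 * math.sqrt(du * dv) / (du + dv) for du, dv in pairs),
        "M1": sum(du + dv for du, dv in pairs),
        "M2": sum(du * dv for du, dv in pairs),
        "HM": sum((du + dv) ** 2 for du, dv in pairs),
        "PM1": math.prod(du + dv for du, dv in pairs),
        "PM2": math.prod(du * dv for du, dv in pairs),
    }


# Carbon skeleton of 2-methylbutane (hydrogens suppressed): C0-C1, C1-C2, C2-C3, C1-C4
edges = [(0, 1), (1, 2), (2, 3), (1, 4)]
indices = degree_based_indices(edges)
for name, value in indices.items():
    print(f"{name}: {value:.4f}")
```

Summing (d_u + d_v) over edges gives the same value as summing d_v^2 over vertices (here 1 + 9 + 4 + 1 + 1 = 16), which is a quick sanity check for any M_1 implementation.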

Recent research has led to the development of more sophisticated indices. Neighborhood degree-based indices consider the sum of degrees of adjacent vertices, providing a more detailed characterization of the local molecular environment [21]. These include the redefined third Zagreb index, the forgotten index, and the reduced Zagreb index, which have demonstrated utility in analyzing complex networks such as hexagonal chain structures found in benzenoids and nanotubes [21].
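The neighborhood degree sum of a vertex, that is, the sum of the degrees of its neighbors, is the basic quantity behind these indices. The sketch below is a minimal pure-Python illustration, assuming the same edge-list representation of a hydrogen-suppressed graph. The edge-summed product of neighborhood degree sums is included only as an illustrative neighborhood analogue of M_2; the specific indices named above (redefined third Zagreb, forgotten, reduced Zagreb) are not implemented here, and the function names are hypothetical.

```python
from collections import defaultdict


def neighborhood_degree_sums(edges):
    """delta(v): sum of the degrees of the vertices adjacent to v (simple graph assumed)."""
    neighbors = defaultdict(set)
    for u, v in edges:
        neighbors[u].add(v)
        neighbors[v].add(u)
    degree = {v: len(nbrs) for v, nbrs in neighbors.items()}
    return {v: sum(degree[u] for u in nbrs) for v, nbrs in neighbors.items()}


def neighborhood_m2(edges):
    """Illustrative neighborhood analogue of M2: sum over edges of delta(u) * delta(v)."""
    delta = neighborhood_degree_sums(edges)
    return sum(delta[u] * delta[v] for u, v in edges)


edges = [(0, 1), (1, 2), (2, 3), (1, 4)]  # 2-methylbutane carbon skeleton
print(neighborhood_degree_sums(edges))
print(neighborhood_m2(edges))
```

Compared with the plain degree, delta(v) also "sees" the second coordination shell, which is why such indices can distinguish local environments that share the same vertex degree.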

Experimental and Computational Protocols

The calculation and application of topological indices follow a structured workflow, from graph representation to model building and validation.

Protocol 1: Calculation of Degree-Based Topological Indices

This protocol details the process for computing fundamental degree-based indices from a molecular structure.

- Molecular Graph Construction: Represent the molecule as a hydrogen-suppressed graph (G=(V,E)). Each non-hydrogen atom is a vertex in (V), and each chemical bond is an edge in (E).

- Vertex Degree Assignment: For every vertex (\varrho \in V), determine its degree (\S(\varrho)), which is the number of edges incident to it. In a molecular graph, this corresponds to the number of adjacent non-hydrogen atoms.

- Edge Partitioning: Partition the edge set (E(G)) according to the degrees of the incident vertices. For example, collect into the class (E_{d_i, d_j}) all edges (e=\varrho\varphi) with ((\S(\varrho), \S(\varphi)) = (d_i, d_j)), for every degree pair ((d_i, d_j)) that occurs in the graph.

- Index Calculation: For the target topological index, apply its mathematical formula to the partitioned edge set. For instance, to calculate the Randić index (( \alpha = -1/2 )):

- For each edge (e=\varrho\varphi) in the partition (E_{d_i, d_j}), compute the term ( (\S(\varrho) \times \S(\varphi))^{-1/2} ).

- Sum the computed terms across all edge partitions: ( R_{-1/2}(G) = \sum_{e=\varrho\varphi \in E(G)} (\S(\varrho) \times \S(\varphi))^{-1/2} = \sum_{(d_i, d_j)} |E_{d_i, d_j}| \, (d_i d_j)^{-1/2} ), since every edge in the same class contributes the same term.
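Protocol 1 can be sketched in a few lines of pure Python. The sketch assumes the molecular graph is already available as an edge list of heavy-atom indices (in practice it could be read from a cheminformatics toolkit, which is not used here), and the function names are hypothetical. It builds the edge partition as a count of edges per degree pair and then evaluates the Randić index class by class.

```python
from collections import Counter


def edge_partition(edges):
    """Count edges by the (smaller, larger) degree pair of their end vertices."""
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    return Counter(tuple(sorted((degree[u], degree[v]))) for u, v in edges)


def randic_from_partition(partition, alpha=-0.5):
    """R_alpha = sum over degree classes of |E_{di,dj}| * (di * dj) ** alpha."""
    return sum(count * (di * dj) ** alpha for (di, dj), count in partition.items())


# n-butane carbon skeleton (path graph with 4 vertices)
edges = [(0, 1), (1, 2), (2, 3)]
partition = edge_partition(edges)
print(dict(partition))                             # {(1, 2): 2, (2, 2): 1}
print(round(randic_from_partition(partition), 4))  # 1.9142
```

Grouping edges by degree pair means each distinct term is computed once and multiplied by its class size; for large periodic structures, the class sizes are often written as formulas in the lattice parameters instead of being counted explicitly.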

Protocol 2: QSPR Modeling with Topological Indices

This protocol outlines the use of topological indices as descriptors in a QSPR study to predict molecular properties.

- Data Set Curation: Compile a data set of diverse chemical structures with their experimentally measured property of interest (e.g., heat of formation, bioconcentration factor). Ensure structures are standardized and "QSAR-ready" [22].

- Descriptor Generation: Calculate a suite of topological indices (e.g., Randić, ABC, GA, Zagreb) for every molecule in the data set using tools like QSPRpred [20] or by implementing the calculations from Protocol 1.

- Data Splitting: Divide the data set into a training set (e.g., 70-80%) for model development and a test set (e.g., 20-30%) for external validation.

- Model Building and Validation:

- Training: Employ machine learning algorithms (e.g., Partial Least Squares regression, Random Forest, or neural networks) on the training set to establish a mathematical relationship between the topological indices (predictor variables) and the target property (response variable).

- Validation: Assess model performance using the test set. Key metrics include the coefficient of determination ((R^2)), cross-validated (R^2) ((Q^2)), and the Concordance Correlation Coefficient (CCC) to ensure robustness and predictive ability [23] [4].

- Model Interpretation and Deployment: Analyze the model to identify which topological indices are most significant predictors. The finalized model can then predict the property for new, untested compounds.
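The validation statistics named in Protocol 2 are straightforward to compute. The sketch below assumes a single descriptor and an ordinary least-squares line purely to stay dependency-free; the data are hypothetical, and the function names are illustrative rather than taken from QSPRpred or any other tool. It reports the training R², a leave-one-out Q², the external Q²_F1 (test-set residuals relative to the training-set mean), and Lin's CCC on the test set.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for y = b0 + b1 * x (one descriptor)."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    b1 = sxy / sxx
    return my - b1 * mx, b1


def r_squared(y_obs, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    my = mean(y_obs)
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    ss_tot = sum((o - my) ** 2 for o in y_obs)
    return 1 - ss_res / ss_tot


def q2_loo(x, y):
    """Leave-one-out cross-validated Q^2 (1 - PRESS / SS_tot) for the one-descriptor line."""
    press = 0.0
    for i in range(len(x)):
        b0, b1 = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (b0 + b1 * x[i])) ** 2
    my = mean(y)
    return 1 - press / sum((yi - my) ** 2 for yi in y)


def q2_f1(y_test, y_pred, train_mean):
    """External Q^2_F1: test-set residuals relative to deviations from the training mean."""
    ss_res = sum((o - p) ** 2 for o, p in zip(y_test, y_pred))
    ss_tot = sum((o - train_mean) ** 2 for o in y_test)
    return 1 - ss_res / ss_tot


def ccc(y_obs, y_pred):
    """Lin's concordance correlation coefficient (population moments)."""
    mo, mp, n = mean(y_obs), mean(y_pred), len(y_obs)
    s_op = sum((o - mo) * (p - mp) for o, p in zip(y_obs, y_pred)) / n
    s_oo = sum((o - mo) ** 2 for o in y_obs) / n
    s_pp = sum((p - mp) ** 2 for p in y_pred) / n
    return 2 * s_op / (s_oo + s_pp + (mo - mp) ** 2)


# Hypothetical data: one topological index (x) against a measured property (y)
x_train, y_train = [1, 2, 3, 4, 5], [2.1, 3.9, 6.2, 7.8, 10.1]
x_test, y_test = [1.5, 3.5, 4.5], [3.0, 7.1, 9.0]

b0, b1 = fit_line(x_train, y_train)
r2_train = r_squared(y_train, [b0 + b1 * xi for xi in x_train])
predicted = [b0 + b1 * xi for xi in x_test]
q2_ext = q2_f1(y_test, predicted, mean(y_train))
print(f"R2_train={r2_train:.3f} Q2_LOO={q2_loo(x_train, y_train):.3f} "
      f"Q2_F1={q2_ext:.3f} CCC={ccc(y_test, predicted):.3f}")
print("meets 0.700 thresholds:", r2_train > 0.700 and q2_ext > 0.700)
```

The last line mirrors the 0.700 acceptance thresholds quoted in the soil-adsorption case study below; with real multi-descriptor matrices, a library such as scikit-learn would normally handle the fitting.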

Diagram 1: Workflow for Calculating a Topological Index.

Case Studies and Data Analysis

The practical utility of topological indices is demonstrated through their application in predicting key material and toxicological properties.

Case Study: Predicting Heat of Formation in Titanium Diboride

A 2025 study on a titanium diboride ((TiB_2)) network performed a statistical analysis of various topological indices against the heat of formation, a critical thermodynamic property [17]. The research employed a rational curve-fitting approach to model the relationship. The results revealed strong correlations with the heat of formation: the Atom-Bond Connectivity (ABC) index achieved a Pearson’s correlation coefficient of 0.984 and the Geometric-Arithmetic (GA) index reached 0.972 [17]. This suggests that these indices are informative descriptors of the stability of the (TiB_2) network and can support property estimation without extensive experimental work.
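Pearson's r, the statistic reported in this case study, measures the linear association between index values and the property. A minimal pure-Python sketch follows; the index and property values are invented for illustration and are not the (TiB_2) data of [17], and the rational curve-fitting step of that study is not reproduced.

```python
import math


def pearson_r(x, y):
    """Pearson's product-moment correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical ABC index values for networks of increasing size, and a hypothetical property
abc_values = [10.2, 20.5, 30.9, 41.3, 51.6]
property_values = [152.0, 301.0, 455.0, 598.0, 757.0]
print(round(pearson_r(abc_values, property_values), 3))
```

A high r describes linear association across the sampled structures only; it does not by itself establish that an index will extrapolate to larger or structurally different networks.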

Case Study: Estimating Soil Adsorption and Bioaccumulation

Topological and other structural descriptors are also central to environmental QSPR models. A large-scale study developed models for predicting the soil adsorption coefficient ((\log K_{OC})) using a dataset of 1,477 compounds [23]. The models, built with several machine learning algorithms, met strict acceptance criteria for goodness-of-fit ((R^2_{Train} > 0.700)) and predictive ability ((Q^2_{EXT} > 0.700)), demonstrating the reliability of structural descriptors for estimating chemical mobility and environmental fate [23].

Similarly, a novel quantitative read-across structure–property relationship (q-RASPR) model was developed to predict the bioconcentration factor (BCF) in aquatic organisms [4]. By combining traditional QSPR with read-across algorithms and using 2D molecular descriptors, the model showed robust predictive performance (external validation (Q^2_{F1} = 0.739), CCC = 0.858), offering a reliable tool for screening the bioaccumulative potential of industrial chemicals [4].
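In read-across, a query compound's property is inferred from its most similar training compounds; q-RASPR, as described above, combines such read-across information with conventional descriptors. The sketch below shows only a generic similarity-weighted k-nearest-neighbor read-across step in pure Python. The Euclidean distance, the 1 / (1 + distance) similarity, and the data are illustrative assumptions; the similarity functions and RASPR descriptors of [4] are not reproduced.

```python
import math


def read_across(query, train_x, train_y, k=3):
    """Similarity-weighted mean property of the k nearest training compounds.

    Distance is Euclidean in descriptor space; similarity = 1 / (1 + distance)
    is an illustrative choice, not the similarity function of any cited model.
    """
    ranked = sorted((math.dist(query, x), y) for x, y in zip(train_x, train_y))[:k]
    weights = [1.0 / (1.0 + d) for d, _ in ranked]
    return sum(w * y for w, (_, y) in zip(weights, ranked)) / sum(weights)


# Hypothetical scaled 2D-descriptor vectors and log BCF values
train_x = [[0.1, 0.2], [0.4, 0.1], [0.9, 0.8], [1.0, 1.1]]
train_y = [1.2, 1.5, 3.1, 3.4]
print(round(read_across([0.95, 0.9], train_x, train_y, k=2), 2))
```

Setting `k=1` reduces the estimate to the value of the single nearest neighbor; larger `k` smooths predictions at the cost of including less similar compounds.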

Table 2: Correlation of Topological Indices with Physicochemical Properties

| Descriptor(s) | Property Predicted | Correlation / Performance | Context / Model |
|---|---|---|---|
| ABC Index | Heat of Formation | Pearson's r = 0.984 [17] | Titanium Diboride ((TiB_2)) Network |
| GA Index | Heat of Formation | Pearson's r = 0.972 [17] | Titanium Diboride ((TiB_2)) Network |
| Various 2D Descriptors | Soil Adsorption ((\log K_{OC})) | (Q^2_{EXT} > 0.700) [23] | QSPR Model (1,477 compounds) |
| Various 2D Descriptors | Bioconcentration Factor (BCF) | CCC = 0.858 [4] | q-RASPR Model (1,303 compounds) |

Implementing QSPR studies with topological indices requires access to specific software tools and computational resources.

Table 3: Key Software Tools for QSPR and Molecular Descriptor Calculation

| Tool Name | Type/Function | Key Features | Application in Research |
|---|---|---|---|
| QSPRpred [20] | Open-Source Python Toolkit | Flexible QSPR workflow management, model serialization, includes data pre-processing for deployment. | Enables reproducible model building and benchmarking of different algorithms and descriptors. |
| OPERA [22] | Open-Source QSAR/QSPR Suite | Provides predictions for toxicity, physicochemical, and environmental fate properties based on curated models and data. | Offers readily available, validated models for regulatory-oriented property prediction. |
| Saagar Descriptors [24] | Extensible Molecular Substructure Library | Designed for environmental chemicals, offers interpretable structural features and adapts to new chemical spaces. | Improves prediction accuracy for challenging endpoints like nitrosamine toxicity and mutagenicity. |
| DeepChem [20] | Deep Learning Library | Offers a wide array of featurizers and models for molecular representation, including graph neural networks. | Facilitates the use of modern AI-driven representation learning alongside traditional descriptors. |

Diagram 2: Molecular Representation Pathways in QSPR.

Topological indices provide a powerful, mathematically rigorous framework for translating molecular structure into quantitative descriptors for predictive modeling. As demonstrated by their successful application in material science for predicting the heat of formation in ceramic networks and in environmental chemistry for estimating adsorption and bioaccumulation potential, these graph-theoretical tools are indispensable in the QSPR toolkit. The field continues to evolve, with emerging trends focusing on the integration of neighborhood degree-based indices, the combination of topological indices with entropy measures, and their use within sophisticated, open-source machine learning platforms like QSPRpred. This synergy between classic graph theory and modern computational methods ensures that topological indices will remain a cornerstone of rational molecular design and property prediction.

Molecular descriptors are the foundational language of quantitative structure-property relationship (QSPR) research, translating the intricate architecture of molecules into numerical values that algorithms can process. The evolution of these descriptors from simple, rule-based features to complex, data-driven representations is fundamentally accelerating drug discovery and materials science [19]. This guide details the latest advancements and methodologies in descriptor development, providing a technical roadmap for researchers and development professionals.

The Evolution of Molecular Descriptors: From Classical to AI-Driven

The journey of molecular descriptors reflects a broader shift in computational chemistry towards deeper, more holistic molecular characterization.

Classical Descriptor Regimes

Classical descriptors are categorized by the dimensional aspect of the molecular structure they capture [25].

- 1D Descriptors are derived from the chemical formula and count global molecular properties, such as molecular weight, atom counts, or bond counts.

- 2D Descriptors (Topological Indices) are calculated from the molecular graph, representing atoms as vertices and bonds as edges. They include:

- Connectivity-based indices, such as the Randić index, which captures molecular branching [2].

- Distance-based indices, which are computed from the shortest-path distances between atoms.

- Information-theoretic indices, which are derived from the symmetry of the molecular graph.

- 3D Descriptors encode spatial information, including molecular volume, surface area, and dipole moments [25]. These are crucial for modeling stereospecific interactions.

- 4D Descriptors extend further by accounting for conformational flexibility, using ensembles of molecular structures to provide a more realistic representation under physiological conditions [25].
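Distance-based 2D indices need shortest-path distances rather than vertex degrees. As an illustration, the sketch below computes the Wiener index, the classic distance-based index defined as the sum of topological distances over all pairs of heavy atoms, using breadth-first search on an unweighted edge list. The Wiener index is chosen here only as a representative example, and the helper names are hypothetical.

```python
from collections import defaultdict, deque


def build_adjacency(edges):
    """Adjacency lists for an undirected, unweighted molecular graph."""
    adjacency = defaultdict(list)
    for u, v in edges:
        adjacency[u].append(v)
        adjacency[v].append(u)
    return adjacency


def bfs_distances(adjacency, source):
    """Topological distances (number of bonds) from source, via breadth-first search."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for w in adjacency[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def wiener_index(edges):
    """Sum of shortest-path distances over all unordered pairs of vertices."""
    adjacency = build_adjacency(edges)
    total = sum(sum(bfs_distances(adjacency, s).values()) for s in list(adjacency))
    return total // 2  # every unordered pair is counted once from each end


print(wiener_index([(0, 1), (1, 2), (2, 3)]))          # n-butane: 10
print(wiener_index([(0, 1), (1, 2), (2, 3), (1, 4)]))  # 2-methylbutane: 18
```

Branching shortens distances, so the branched isomer has the smaller Wiener index; this is the kind of structural signal that distance-based indices contribute to QSPR models.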

Table 1: Classical Molecular Descriptor Classifications

| Dimension | Description | Example Descriptors | Key Applications |
|---|---|---|---|
| 1D | Based on chemical formula and elemental composition | Molecular weight, atom counts, bond counts | Preliminary screening, bulk property prediction |
| 2D (Topological) | Derived from the molecular graph structure | Randić index, Zagreb indices, ABC index [2] | QSPR modeling, predicting physicochemical properties [2] |
| 3D | Encode spatial and stereochemical information | Molecular volume, surface area, dipole moment [25] | Protein-ligand docking, activity prediction for chiral compounds |
| 4D | Incorporate conformational flexibility | Ensemble-based descriptors from molecular dynamics snapshots [25] | Refining QSAR models, modeling ligand-receptor interactions |

The Rise of AI-Driven Descriptors

Modern artificial intelligence (AI) has ushered in a paradigm shift from predefined, rule-based descriptors to learned, data-driven representations [19]. These methods use deep learning models to automatically extract salient features directly from molecular data.

- Graph-Based Representations: Molecules are natively represented as graphs. Graph Neural Networks (GNNs) operate on this structure, learning embeddings by passing messages between connected atoms. The resulting "deep descriptors" capture complex, non-local relationships that are difficult to engineer manually [19] [25].

- Language Model-Based Representations: Models like Transformers treat Simplified Molecular-Input Line-Entry System (SMILES) strings or similar notations as a chemical language. By tokenizing these strings, the model learns contextual embeddings for atoms and substructures, capturing syntactic and semantic molecular rules [19].

- Multimodal and Contrastive Learning: The most advanced frameworks integrate multiple representation types (e.g., graphs and SMILES) to create a more robust molecular understanding. Contrastive learning enhances this by ensuring similar molecules have similar representations in a latent space, even if their primary structures differ, directly supporting tasks like scaffold hopping [19].
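The message-passing idea behind GNN-derived descriptors can be shown with a deliberately stripped-down sketch. Real GNNs apply learned weight matrices and nonlinear activations at each step; this toy version, an illustration rather than any specific published architecture, uses plain vector addition so the arithmetic can be followed by hand, then sums the atom vectors into a molecule-level vector. The one-hot atom features are an assumption made for the example.

```python
def message_passing_step(features, edges):
    """One untrained, synchronous update: h_v <- h_v + sum of neighbor vectors h_u."""
    updated = {v: list(h) for v, h in features.items()}
    for u, v in edges:
        for i in range(len(features[u])):
            updated[u][i] += features[v][i]  # message from v to u
            updated[v][i] += features[u][i]  # message from u to v
    return updated


def sum_readout(features):
    """Permutation-invariant molecule-level vector obtained by summing atom vectors."""
    return [sum(column) for column in zip(*features.values())]


# Ethanol heavy atoms C0-C1-O2 with one-hot [is_carbon, is_oxygen] initial features
features = {0: [1, 0], 1: [1, 0], 2: [0, 1]}
edges = [(0, 1), (1, 2)]
h1 = message_passing_step(features, edges)
print(h1)               # {0: [2, 0], 1: [2, 1], 2: [1, 1]}
print(sum_readout(h1))  # [5, 2]
```

Because both the update and the sum readout ignore atom numbering, relabeling the atoms leaves the molecule-level vector unchanged, a property that standard message-passing GNN descriptors share.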

Experimental Protocols for Descriptor Development and Application

Implementing a robust QSPR model requires a disciplined workflow from data standardization to model validation. The following protocols outline the critical stages.

Protocol 1: Creating "QSAR-Ready" Standardized Structures

The quality of molecular descriptors is bounded by the quality of the input structures. An automated standardization workflow is essential for reproducible results [26].

Detailed Methodology:

- Structure Input: Read the molecular structure encoding (e.g., SMILES string) into an in-memory representation [26].

- Desalting: Remove counterions and salts to isolate the parent structure [26].

- Stereochemistry Handling: For 2D QSAR, strip stereochemistry information to focus on connectivity. For 3D QSAR, standardize stereochemical representations [26].

- Tautomer Standardization: Apply rules to normalize the representation of tautomeric forms to a single canonical structure [26].

- Functional Group Standardization: Convert non-standard representations of groups (e.g., nitro groups) into a consistent form [26].

- Valence Correction and Neutralization: Correct any invalid atomic valences and, where possible, neutralize charges [26].

- Deduplication: Identify and remove duplicate structures to prevent bias in the training data [26].

This workflow can be implemented using open-source tools like the KNIME-based "QSAR-ready" workflow, which is available as a standalone resource on GitHub and in Docker containers [26].

Protocol 2: A Modern QSPR Modeling Workflow

This protocol describes the end-to-end process of building a predictive QSPR model using both traditional and AI-driven descriptors [11].

Detailed Methodology:

- Data Curation and Standardization: Begin with a CSV file containing SMILES codes and experimental data. Apply Protocol 1 to standardize all structures [11] [26].

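- Molecular Feature Calculation: Compute molecular fingerprints (e.g., Morgan, atom-pair, topological torsion, or MACCS keys) with RDKit and molecular descriptors with Mordred [11].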

- Feature Preprocessing and Selection:

- Handle inconsistent experimental data by aggregating replicates (e.g., using the arithmetic mean) and filtering out high-variance entries [11].

- Scale descriptors (e.g., to unit variance) and apply Principal Component Analysis (PCA) to reduce dimensionality and multicollinearity [11] [25].

- Use feature selection techniques like LASSO or mutual information ranking to identify the most predictive descriptors [25].

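- Model Training and Hyperparameter Optimization: Train machine learning models (e.g., random forests or support vector machines from scikit-learn) and tune their hyperparameters, for example with Hyperopt's Tree of Parzen Estimators [11].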

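- Model Validation and Serialization: Evaluate the tuned model on held-out data and serialize the final model so that it can be reused to predict new compounds [11].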
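The replicate-handling and scaling steps of this workflow can be sketched without third-party packages. This is a minimal illustration under stated assumptions: SMILES strings are taken to be already standardized (for example by the Protocol 1 workflow), the `max_sd` cutoff of 0.5 is a hypothetical value rather than a QSPRmodeler default, the measurement values are invented, and PCA and LASSO are omitted.

```python
from statistics import mean, pstdev


def aggregate_replicates(records, max_sd=0.5):
    """Average replicate values per structure; drop structures whose replicates disagree.

    records: (smiles, value) pairs whose SMILES are assumed to be standardized already
    max_sd:  hypothetical cutoff on the population standard deviation of the replicates
    """
    groups = {}
    for smiles, value in records:
        groups.setdefault(smiles, []).append(value)
    return {s: mean(vals) for s, vals in groups.items() if pstdev(vals) <= max_sd}


def standardize_columns(rows):
    """Scale every descriptor column to zero mean and unit (population) variance."""
    stats = [(mean(col), pstdev(col)) for col in zip(*rows)]
    return [[(x - m) / s if s > 0 else 0.0 for x, (m, s) in zip(row, stats)] for row in rows]


records = [("CCO", -0.31), ("CCO", -0.29), ("c1ccccc1", 2.13), ("CCN", 0.10), ("CCN", 1.90)]
print(aggregate_replicates(records))  # CCN is dropped: its replicates differ too much
print(standardize_columns([[1, 10], [3, 10]]))  # [[-1.0, 0.0], [1.0, 0.0]]
```

In practice the column means and standard deviations should be estimated on the training set only and then reused to scale the test set, so that no information from the test compounds leaks into model building.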

Modern QSPR Modeling Workflow

A suite of powerful, often open-source, software libraries and platforms has democratized advanced descriptor development and QSPR modeling.

Table 2: Essential Tools for Descriptor Development and QSPR Modeling

| Tool/Resource Name | Type | Primary Function | Key Features |
|---|---|---|---|
| RDKit | Open-source Library | Cheminformatics | Core functionality for reading molecules, calculating fingerprints (Morgan, Atom-Pair) and 2D descriptors [11]. |
| Mordred | Open-source Library | Descriptor Calculation | Calculates a comprehensive set of 1D, 2D, and 3D molecular descriptors (1,825+ descriptors) [11]. |
| QSPRmodeler | Open-source Application | End-to-end QSPR Workflow | Manages the complete pipeline from data prep to model training and serialization, using RDKit and scikit-learn [11]. |
| KNIME Analytics Platform | Workflow Environment | Data Pipelines & Standardization | Graphical environment for building automated "QSAR-ready" and "MS-ready" structure standardization workflows [26]. |
| scikit-learn | Open-source Library | Machine Learning | Provides PCA, model algorithms (RF, SVM), and hyperparameter tuning tools for model building [11]. |
| Hyperopt | Open-source Library | Hyperparameter Optimization | Implements advanced algorithms like Tree of Parzen Estimators for optimizing model parameters [11]. |

Case Study: Topological Indices in Necrotizing Fasciitis Drug Research

A recent study exemplifies the potent application of classical descriptors in modern drug discovery. Researchers used degree-based topological indices (TIs) to model and rank antibiotics for necrotizing fasciitis (NF) [2].

Experimental Methodology:

- Molecular Representation: Molecular structures of NF antibiotics (e.g., piperacillin, vancomycin, imipenem) were drawn using KingDraw, with data sourced from PubChem and ChemSpider [2].

- Descriptor Calculation: A set of valency-based TIs, including the Randić, Zagreb, and Atom-Bond Connectivity (ABC) indices, were calculated for each drug. These indices were selected for their established utility in capturing branching, connectivity, and thermodynamic properties [2].

- QSPR Model Development: Linear, quadratic, and cubic regression analyses were performed to establish relationships between the TIs and key physicochemical properties of the drugs [2].

- Multi-Criteria Decision-Making (MCDM): The significant TIs were used as inputs in MCDM techniques, such as TOPSIS and MOORA, to rank the antibiotics based on a balance of multiple molecular properties [2].

This integrated approach demonstrated that TIs provide a computationally efficient and theoretically robust framework for predicting drug properties and prioritizing therapeutic candidates, supporting the rational design and repurposing of NF therapeutics [2].
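TOPSIS (Technique for Order of Preference by Similarity to Ideal Solution) ranks alternatives by how close they lie to an ideal point, and how far from an anti-ideal point, in a weighted and normalized criteria space. The sketch below is a generic textbook TOPSIS in pure Python; the drugs, criteria, equal weights, and benefit/cost directions are hypothetical, and it does not reproduce the criteria or weighting used in [2]. MOORA is not shown.

```python
import math


def topsis(matrix, weights, benefit):
    """Relative closeness of each alternative to the ideal solution (higher is better).

    matrix:  rows are alternatives (e.g., drugs), columns are criteria
             (e.g., topological index or predicted property values)
    weights: one weight per criterion
    benefit: benefit[j] is True if larger values of criterion j are preferable
    """
    n_crit = len(weights)
    # 1. Vector-normalize each criterion column, then apply the weights
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n_crit)]
    weighted = [[weights[j] * row[j] / norms[j] for j in range(n_crit)] for row in matrix]
    # 2. Ideal (best) and anti-ideal (worst) value of every criterion
    columns = list(zip(*weighted))
    best = [max(c) if benefit[j] else min(c) for j, c in enumerate(columns)]
    worst = [min(c) if benefit[j] else max(c) for j, c in enumerate(columns)]
    # 3. Euclidean distances to both reference points, then relative closeness
    scores = []
    for row in weighted:
        d_best, d_worst = math.dist(row, best), math.dist(row, worst)
        scores.append(d_worst / (d_best + d_worst) if d_best + d_worst > 0 else 0.0)
    return scores


# Hypothetical: three drugs, criterion 0 to maximize, criterion 1 to minimize
scores = topsis([[3, 1], [2, 2], [1, 3]], weights=[0.5, 0.5], benefit=[True, False])
ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
print(ranking)  # [0, 1, 2]
```

The vector normalization makes criteria with different units comparable before weighting; choosing the weights and benefit/cost directions is where domain judgment enters the ranking.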

QSPR Modeling with Topological Indices

Molecular descriptors are the fundamental encoding mechanism that translates chemical structures into quantitative numerical values, enabling the prediction of physicochemical and biological properties through Quantitative Structure-Property Relationship (QSPR) models. This technical guide examines the theoretical foundations, computational methodologies, and practical applications of molecular descriptors in modern chemical research. By exploring diverse descriptor types—from quantum chemical parameters to topological indices—and their implementation in both traditional QSPR and contemporary machine learning frameworks, we demonstrate how descriptors serve as the critical link between molecular structure and observable properties. The comprehensive analysis presented herein, supported by experimental protocols and empirical data, underscores the indispensable role of descriptors in accelerating drug discovery and materials design within research environments.

Quantitative Structure-Property Relationships (QSPRs) represent well-established methodologies for correlating, rationalizing, and predicting property data across diverse chemical domains, including environmental protection, material science, molecular biology, and pharmacology [15]. These relationships typically assume a multilinear form (P = P_0 + \sum_i d_i D_i), where (P) is the experimental property, (D_i) are molecular structure descriptors, (d_i) are system conjugate descriptors obtained through regression, and (P_0) represents the property value in the reference state [15]. Molecular descriptors serve as the essential quantitative encoders that transform structural information into mathematically tractable values, thereby creating a bridge between the discrete world of molecular structures and the continuous realm of property prediction.
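Because the multilinear form (P = P_0 + \sum_i d_i D_i) is linear in the coefficients, (P_0) and the conjugate descriptors (d_i) can be estimated by ordinary least squares. A minimal pure-Python sketch via the normal equations follows, assuming a small, well-conditioned, hypothetical data set; the function names are illustrative, and for real descriptor matrices a numerically stabler routine such as `numpy.linalg.lstsq` would usually be preferred.

```python
def solve(a, b):
    """Solve the square linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_multilinear(descriptors, prop):
    """Least-squares P0 and d_i in P = P0 + sum_i d_i * D_i, via the normal equations."""
    x = [[1.0] + list(row) for row in descriptors]  # leading 1 gives the intercept P0
    k = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * p for r, p in zip(x, prop)) for i in range(k)]
    return solve(xtx, xty)  # [P0, d_1, d_2, ...]


# Hypothetical descriptors (D_1, D_2) and properties generated from P = 1 + 2*D_1 - 0.5*D_2
descriptors = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 1)]
prop = [1.0, 3.0, 0.5, 2.5, 4.5]
p0, d1, d2 = fit_multilinear(descriptors, prop)
print(f"P0={p0:.3f}, d1={d1:.3f}, d2={d2:.3f}")  # P0=1.000, d1=2.000, d2=-0.500
```

With noise-free synthetic data the fit recovers the generating coefficients exactly; with experimental data, the fitted (d_i) are the system-specific quantities that characterize how the property responds to each descriptor.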

When QSPRs specifically address properties linked to molecular solvation and free energy, they are termed Linear Solvation Energy Relationships (LSER) or Linear Free Energy Relationships (LFER) [15]. These approaches rely on descriptors that quantify molecular characteristics such as size, hydrogen-bonding capability, polarity, and polarizability, which collectively capture a molecule's potential for various electrostatic interactions [15]. The evolution of descriptor technology has progressed from empirical parameters derived from experimental measurements to sophisticated theoretical constructs computed through quantum chemical methods, reflecting the continuous advancement of computational chemistry and machine learning in molecular sciences.

Types of Molecular Descriptors and Their Theoretical Basis

Quantum Chemical Descriptors

Quantum chemical descriptors derive from computational approaches based on quantum mechanics, particularly Density Functional Theory (DFT) combined with solvation models like the Conductor-like Screening Model (COSMO). A recent methodology proposes four fundamental descriptors computed through low-cost DFT/COSMO computations: molecular volume ((V_{\text{COSMO}}^*)), hydrogen bond/Lewis acidity ((\alpha_{\text{COSMO}})), basicity ((\beta_{\text{COSMO}})), and charge asymmetry of the nonpolar region ((\delta_{\text{COSMO}})) [15]. These descriptors offer clear physical interpretations related to molecular electronic structure and have demonstrated strong linear correlations with established empirical scales (mostly R² > 0.8, with some above 0.9) despite being completely independent of experimental data [15]. The advantages of such theoretical descriptors include their experiment-independent nature, well-defined physical meanings, and direct connection to essential chemical concepts, which facilitates mechanistic interpretation of QSPR models [15].

Topological Descriptors

Topological indices represent another important class of descriptors that describe structural properties of molecules using mathematical tools from graph theory. These indices simplify complex molecular structures into numerical values that quantify connectivity and complexity patterns [27]. In pharmaceutical QSPR studies, reducible topological indices based on molecular degree have shown significant relationships with key properties like molar mass and collision cross section, with correlation coefficients ranging from 0.7 to 0.9 for molar mass and 0.8 to 0.9 for collision cross section [27]. These indices have proven particularly valuable in analyzing drugs for tuberculosis treatment, establishing statistically significant QSPR models through linear, quadratic, and logarithmic regression analysis [27].

Empirical Descriptor Scales

Several well-established empirical descriptor scales have been developed through targeted experimental measurements. The Kamlet-Taft and Abraham parameters represent the most prominent sets for non-ionic solvents and solutes, respectively [15]. Other significant empirical scales include the Gutmann donor number (DN) and acceptor number (AN) for characterizing nucleophilic and electrophilic ability, Catalan's SA (acidity), SB (basicity), SP (polarizability), and SdP (dipolarity) scales based on solvatochromic measurements, and Laurence's α1 acidity and β1 basicity scales combining solvatochromic measurements with DFT calculations [15]. These empirical approaches rely on various experimental techniques including UV/Vis spectroscopy with solvatochromic dyes, equilibrium constants of acid-base reactions, chromatographic partitioning measurements, dissolution enthalpy measurements, and NMR shift measurements [15].

Table 1: Major Categories of Molecular Descriptors and Their Characteristics

| Descriptor Category | Theoretical Basis | Key Parameters | Representative Applications |
|---|---|---|---|
| Quantum Chemical | Density Functional Theory with solvation models | (V_{\text{COSMO}}^*) (volume), (\alpha_{\text{COSMO}}) (acidity), (\beta_{\text{COSMO}}) (basicity), (\delta_{\text{COSMO}}) (charge asymmetry) | LSER correlations of solvation-related thermodynamic and kinetic properties [15] |
| Topological Indices | Graph theory and mathematical connectivity | Reducible indices based on degree, connectivity metrics | TB drug analysis, correlation with molar mass (R²=0.7-0.9) and collision cross section (R²=0.8-0.9) [27] |
| Empirical Scales | Experimental measurements (spectroscopy, partitioning, calorimetry) | Abraham parameters, Kamlet-Taft parameters, Gutmann DN/AN, Catalan scales | Solvent characterization, partition coefficient prediction, solubility estimation [15] |
| Machine Learning-Oriented | Comprehensive descriptor sets for algorithm training | 1,800+ descriptors from packages like Mordred | Foundation model pre-training, molecular property prediction [28] |

Computational Methodologies for Descriptor Determination

DFT/COSMO Approach for Quantum Chemical Descriptors

The DFT/COSMO methodology represents a cost-effective computational approach for determining theoretical molecular descriptors. The step-by-step protocol involves:

Molecular Geometry Optimization: Begin with an initial molecular structure and perform density functional theory calculations to obtain the optimized molecular geometry at an appropriate level of theory (typically B3LYP/6-31G* or a similar functional and basis set) [15].

COSMO Calculation: Using the optimized geometry, conduct a single-point calculation with the COSMO solvation model to obtain the local screening charge density on the molecular surface [15].

Descriptor Extraction: Process the COSMO output to compute four fundamental descriptors:

- \(V_{\text{COSMO}}^*\): Calculated from the surface area and volume parameters of the COSMO cavity

- \(\alpha_{\text{COSMO}}\): Hydrogen-bond acidity, derived from the screening charge densities in hydrogen-bond donor regions

- \(\beta_{\text{COSMO}}\): Hydrogen-bond basicity, derived from the screening charge densities in hydrogen-bond acceptor regions

- \(\delta_{\text{COSMO}}\): Computed from the variance of screening charge densities in the nonpolar molecular regions [15]

Validation: Compare computed descriptors against established empirical scales for validation, identifying and investigating any significant outliers [15].
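The descriptor-extraction step can be illustrated with a toy calculation on COSMO surface segments. This is a minimal sketch under stated assumptions, not the published definitions of [15]: the `Segment` class, the function `sigma_descriptors`, the area-weighted "excess beyond cutoff" formulas for acidity and basicity, and the 0.0084 e/Å² hydrogen-bond cutoff (the value commonly used in COSMO-RS) are all illustrative choices. It only shows how donor, acceptor, and nonpolar regions can be separated by screening charge density and how a variance-based asymmetry term is formed; the volume descriptor would come from the COSMO cavity output and is not computed here.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hydrogen-bond cutoff on the screening charge density (e/Å^2).
# 0.0084 is the value commonly used in COSMO-RS; it is an assumption here.
SIGMA_HB = 0.0084


@dataclass
class Segment:
    area: float   # surface segment area (Å^2)
    sigma: float  # screening charge density on the segment (e/Å^2)


def sigma_descriptors(segments: List[Segment]) -> Dict[str, float]:
    """Toy acidity, basicity and charge-asymmetry proxies (hypothetical definitions)."""
    # Donor hydrogens (partial positive charge) induce strongly negative screening
    # charge; acceptor atoms (partial negative charge) induce strongly positive charge.
    donor = [s for s in segments if s.sigma < -SIGMA_HB]
    acceptor = [s for s in segments if s.sigma > SIGMA_HB]
    nonpolar = [s for s in segments if abs(s.sigma) <= SIGMA_HB]

    # Area-weighted excess of |sigma| beyond the cutoff in donor/acceptor regions.
    alpha = sum(s.area * (-s.sigma - SIGMA_HB) for s in donor)
    beta = sum(s.area * (s.sigma - SIGMA_HB) for s in acceptor)

    # Area-weighted variance of sigma over the nonpolar surface.
    a_np = sum(s.area for s in nonpolar)
    if a_np > 0:
        mean = sum(s.area * s.sigma for s in nonpolar) / a_np
        delta = sum(s.area * (s.sigma - mean) ** 2 for s in nonpolar) / a_np
    else:
        delta = 0.0

    return {"alpha": alpha, "beta": beta, "delta": delta}


# Made-up segments: one donor-like, one acceptor-like, two nonpolar.
segments = [
    Segment(10.0, -0.015),
    Segment(20.0, 0.012),
    Segment(30.0, 0.002),
    Segment(30.0, -0.002),
]
desc = sigma_descriptors(segments)
```

In practice the segment list would be parsed from the COSMO output file of a real calculation; the thresholds and weighting above are the parts most likely to differ from the actual method.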

This methodology has been applied to a set of 128 non-ionic organic molecules and a set of 47 ions that make up ionic liquids, with good performance in LSER correlations of solvation-related thermodynamic and kinetic properties, including standard vaporization enthalpy, standard hydration enthalpy, air-water and air-IL partition coefficients, and solvent effects on the activation Gibbs energy or rate constant of SN1 and SNAr reactions [15].
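An LSER correlation of this kind is a multiple linear regression of a property on the descriptors. The sketch below assumes a generic form log SP = c + Σ kᵢdᵢ with four descriptor columns standing in for \(V_{\text{COSMO}}^*\), \(\alpha_{\text{COSMO}}\), \(\beta_{\text{COSMO}}\), and \(\delta_{\text{COSMO}}\); the exact equation used in [15] may differ. The descriptor rows are placeholder numbers and the target values are generated from a made-up coefficient vector, so a correct fit should simply recover those coefficients. The helper names `solve` and `fit_lser` are hypothetical.

```python
from typing import List, Sequence


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a small dense system a·x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for k in range(col, n + 1):
                    m[r][k] -= f * m[col][k]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_lser(descriptors: Sequence[Sequence[float]], y: Sequence[float]) -> List[float]:
    """Least-squares fit of y = c + sum_i k_i * d_i via the normal equations; returns [c, k_1, ..., k_p]."""
    x = [[1.0, *row] for row in descriptors]  # prepend intercept column
    p = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(x, y)) for i in range(p)]
    return solve(xtx, xty)


# Placeholder descriptor rows (V*, alpha, beta, delta); not real molecules.
rows = [
    [1.0, 0.0, 0.2, 0.1],
    [1.5, 0.3, 0.1, 0.4],
    [0.8, 0.6, 0.5, 0.2],
    [2.0, 0.1, 0.9, 0.3],
    [1.2, 0.9, 0.3, 0.8],
    [0.5, 0.4, 0.7, 0.6],
]
true_coef = [0.5, 2.0, -1.0, 3.0, 0.25]  # made-up c, v, a, b, d
y = [true_coef[0] + sum(k * d for k, d in zip(true_coef[1:], row)) for row in rows]
coef = fit_lser(rows, y)
```

For real datasets with many compounds, a numerically sturdier solver (QR or SVD, e.g. from NumPy) would be preferable to the normal equations used here for brevity.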

Topological Descriptor Calculation Methods

The computation of topological descriptors employs several distinct methodological approaches:

Edge Partition Methodology: Deconstruct the molecular graph into constituent edges and classify them based on vertex degrees [27].

Degree Counting Method: Calculate vertex degrees (number of connections) for all atoms in the molecular structure [27].

Analytical Techniques: Apply mathematical formulas specific to each topological index to compute final descriptor values [27].

Theoretical Graph Utilities: Utilize graph theory algorithms to process complex molecular connectivity patterns [27].
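The degree-counting and edge-partition steps can be sketched on a hydrogen-suppressed molecular graph given as an edge list. The example below computes three classical degree-based indices (first and second Zagreb indices and the Randić connectivity index); these are standard textbook indices chosen for illustration, not the reducible indices used in [27], and the function names are hypothetical.

```python
from collections import Counter
from math import sqrt
from typing import Dict, List, Tuple

Edge = Tuple[int, int]


def vertex_degrees(edges: List[Edge]) -> Dict[int, int]:
    """Degree counting: number of bonds incident to each heavy atom."""
    deg: Counter = Counter()
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return dict(deg)


def edge_partition(edges: List[Edge], deg: Dict[int, int]) -> Counter:
    """Edge partition: count edges by the (smaller, larger) degree pair of their end atoms."""
    return Counter(tuple(sorted((deg[u], deg[v]))) for u, v in edges)


def degree_indices(edges: List[Edge]) -> dict:
    deg = vertex_degrees(edges)
    m1 = sum(d * d for d in deg.values())                          # first Zagreb index
    m2 = sum(deg[u] * deg[v] for u, v in edges)                    # second Zagreb index
    randic = sum(1.0 / sqrt(deg[u] * deg[v]) for u, v in edges)    # Randić index
    return {"M1": m1, "M2": m2, "R": randic, "partition": edge_partition(edges, deg)}


# Isobutane carbon skeleton: central carbon 1 bonded to carbons 0, 2 and 3.
isobutane = [(0, 1), (1, 2), (1, 3)]
result = degree_indices(isobutane)
```

For isobutane every edge joins a degree-1 and a degree-3 atom, so the partition has a single class (1, 3) with three edges, which is what makes edge-partition formulas convenient: any degree-based index reduces to a sum over a handful of classes.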

These methods have been implemented in QSPR studies of anti-tuberculosis drugs, establishing significant relationships between computed indices and physicochemical properties through regression analysis [27].

Experimental Protocols and Validation Frameworks

QSPR Model Development Protocol

Establishing robust QSPR models requires systematic experimental and computational protocols:

Descriptor Selection and Computation:

- Select appropriate descriptor types based on the target property and molecular dataset

- Compute descriptors using validated methodologies (quantum chemical, topological, or empirical)

- For quantum chemical descriptors, use consistent DFT/COSMO parameters across all molecules [15]
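A common pre-processing step during descriptor selection, not specific to [15], is to discard near-constant descriptors and those highly correlated with one already kept. The sketch below does this greedily in column order; the function names, the variance floor, the 0.95 correlation cutoff, and the toy descriptor table are all assumptions for illustration.

```python
from math import sqrt
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length columns."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def prune_descriptors(table: Dict[str, List[float]],
                      min_var: float = 1e-8,
                      max_abs_r: float = 0.95) -> List[str]:
    """Greedily keep descriptors that vary and are not redundant with ones already kept."""
    kept: List[str] = []
    for name, col in table.items():
        mean = sum(col) / len(col)
        var = sum((v - mean) ** 2 for v in col) / len(col)
        if var < min_var:
            continue  # near-constant: carries no information about the dataset
        if any(abs(pearson(col, table[k])) > max_abs_r for k in kept):
            continue  # redundant with an already-kept descriptor
        kept.append(name)
    return kept


# Toy table: heavy_atoms is exactly proportional to MW, const never changes.
table = {
    "MW": [100.0, 150.0, 200.0, 250.0],
    "heavy_atoms": [7.0, 10.5, 14.0, 17.5],
    "const": [1.0, 1.0, 1.0, 1.0],
    "logP": [1.2, 0.4, 2.5, 1.9],
}
kept = prune_descriptors(table)
```

Because the filter is greedy, the column order decides which member of a correlated pair survives; ordering columns by interpretability or by univariate relevance to the target property is one way to make that choice deliberate.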

Data Set Curation:

Model Training and Validation:

Outlier Analysis and Model Refinement:

Performance Benchmarking Methods

Rigorous benchmarking ensures the practical utility of descriptor-based prediction models:

Comparison with Established Baselines:

- Evaluate novel descriptor approaches against established methods including Ridge Regression, Random Forest, Multi-Layer Perceptron, and specialized architectures like MODNet and CrabNet [29]

- Assess performance across diverse datasets (AFLOW, Matbench, Materials Project, MoleculeNet) covering electronic, mechanical, thermal, and biological properties [29]

Extrapolation Capability Assessment:

- Test model performance on out-of-distribution (OOD) property values that fall outside the training distribution [29]

- Quantify extrapolative precision as the fraction of true top OOD candidates correctly identified among the model's top predicted OOD candidates [29]

- Measure recall of high-performing candidates; Bilinear Transduction has been reported to improve OOD recall by up to 3× over baseline approaches [29]
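The two extrapolation metrics described above can be sketched as set operations on ranked predictions. This assumes that "top OOD candidates" means the k highest values and that "high-performing" means a true value above a chosen threshold; [29] may define the candidate sets differently (for example by percentile of the training distribution). The function names and the toy arrays are hypothetical.

```python
from typing import Sequence, Set


def top_k(values: Sequence[float], k: int) -> Set[int]:
    """Indices of the k largest values."""
    return set(sorted(range(len(values)), key=lambda i: values[i], reverse=True)[:k])


def extrapolative_precision(y_true: Sequence[float], y_pred: Sequence[float], k: int) -> float:
    """Fraction of the model's top-k predicted candidates that are also in the true top k."""
    return len(top_k(y_true, k) & top_k(y_pred, k)) / k


def high_performer_recall(y_true: Sequence[float], y_pred: Sequence[float],
                          threshold: float, k: int) -> float:
    """Fraction of truly high-value candidates (above threshold) found in the predicted top k."""
    hits = {i for i, v in enumerate(y_true) if v > threshold}
    if not hits:
        return 0.0
    return len(hits & top_k(y_pred, k)) / len(hits)


y_true = [1.0, 5.0, 3.0, 9.0, 7.0]
y_pred = [2.0, 4.0, 6.0, 8.0, 1.0]
precision = extrapolative_precision(y_true, y_pred, k=2)          # true top 2: {3, 4}; predicted: {2, 3}
recall = high_performer_recall(y_true, y_pred, threshold=6.0, k=2)
```

Both metrics depend only on rankings, not on absolute prediction error, which is why a model can have a mediocre MAE yet still be useful for screening the extreme tail.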

Statistical Validation:

Table 2: Experimental Validation Metrics for Descriptor-Based Prediction Models

| Validation Metric | Calculation Method | Performance Standards | Application Context |
|---|---|---|---|
| Correlation Coefficient (R²) | Linear regression fit of predicted vs. experimental values | R² > 0.8 for good correlation, R² > 0.9 for excellent correlation [15] | Descriptor scale validation against empirical standards [15] |
| Mean Absolute Error (MAE) | Average absolute difference between predicted and experimental values | Lower values indicate better performance; varies by property type [29] | Model benchmarking across multiple datasets [29] |
| Extrapolative Precision | Ratio of correctly predicted top OOD candidates to total predicted top candidates | 1.8× improvement for materials, 1.5× for molecules compared to baselines [29] | OOD property prediction evaluation [29] |
| Recall of High-Performing Candidates | Proportion of true high-value candidates correctly identified | Up to 3× improvement over baseline methods [29] | Virtual screening applications [29] |
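The first two metrics in the table can be computed with a few lines of standard-library Python. The sketch below takes R² as the squared Pearson correlation between predicted and experimental values, which equals the R² of a simple linear regression of one on the other; note that this differs from 1 − SS_res/SS_tot evaluated on the raw predictions whenever predictions are systematically biased. The rating thresholds follow the table; the function names and example values are hypothetical.

```python
from typing import Sequence


def r_squared(pred: Sequence[float], obs: Sequence[float]) -> float:
    """R^2 of a simple linear fit of predicted vs. experimental values (squared Pearson r)."""
    n = len(obs)
    mp, mo = sum(pred) / n, sum(obs) / n
    sxy = sum((p - mp) * (o - mo) for p, o in zip(pred, obs))
    sxx = sum((p - mp) ** 2 for p in pred)
    syy = sum((o - mo) ** 2 for o in obs)
    return sxy * sxy / (sxx * syy)


def mae(pred: Sequence[float], obs: Sequence[float]) -> float:
    """Mean absolute error between predicted and experimental values."""
    return sum(abs(p - o) for p, o in zip(pred, obs)) / len(obs)


def rate(r2: float) -> str:
    """Qualitative label using the R^2 thresholds listed in the table."""
    if r2 > 0.9:
        return "excellent"
    if r2 > 0.8:
        return "good"
    return "below threshold"


pred = [1.0, 2.0, 3.0, 4.0]
obs = [1.1, 1.9, 3.2, 3.8]
r2, err = r_squared(pred, obs), mae(pred, obs)
```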

Advanced Applications in Drug Discovery and Materials Science

Pharmaceutical QSPR Applications

Molecular descriptors have demonstrated significant utility in pharmaceutical research, particularly in anti-tuberculosis drug development. Reducible topological indices based on molecular degree have established strong correlations with physicochemical properties of TB drugs, with correlation coefficients for molar mass ranging from 0.7 to 0.9 and collision cross section ranging from 0.8 to 0.9 [27]. These QSPR models employed linear, quadratic, and logarithmic regression analysis to establish quantitative relationships between molecular descriptors and properties critical for drug efficacy and delivery [27]. The statistical significance of these correlations was confirmed through p-value and F-test validation across all indices, supporting the robustness of descriptor-based approaches in pharmaceutical design [27].
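Fitting linear, quadratic, and logarithmic models of a property against a single topological index amounts to least squares with different basis functions. The sketch below assumes models of the form P = a + bI, P = a + bI + cI², and P = a + b·ln I, which are common choices in degree-based QSPR studies; whether [27] used exactly these forms is not stated above. The index and property values are synthetic, generated from a logarithmic relation so the logarithmic fit should be exact. The helper names are hypothetical.

```python
from math import log
from typing import Callable, Dict, List, Sequence, Tuple

Basis = List[Callable[[float], float]]


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Gauss-Jordan elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for k in range(col, n + 1):
                    m[r][k] -= f * m[col][k]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit(x: Sequence[float], y: Sequence[float], basis: Basis) -> Tuple[List[float], float]:
    """Least-squares fit on the given basis functions; returns (coefficients, R^2)."""
    rows = [[f(xi) for f in basis] for xi in x]
    p = len(basis)
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    aty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    coef = solve(ata, aty)
    pred = [sum(c * v for c, v in zip(coef, r)) for r in rows]
    mean = sum(y) / len(y)
    ss_res = sum((yi - pi) ** 2 for yi, pi in zip(y, pred))
    ss_tot = sum((yi - mean) ** 2 for yi in y)
    return coef, 1.0 - ss_res / ss_tot


MODELS: Dict[str, Basis] = {
    "linear": [lambda t: 1.0, lambda t: t],
    "quadratic": [lambda t: 1.0, lambda t: t, lambda t: t * t],
    "logarithmic": [lambda t: 1.0, lambda t: log(t)],
}

index = [1.0, 2.0, 3.0, 4.0, 5.0]               # synthetic index values
prop = [3.0 + 2.0 * log(t) for t in index]       # synthetic property
results = {name: fit(index, prop, basis) for name, basis in MODELS.items()}
```

The p-value and F-test checks mentioned above would be computed from the same residual and total sums of squares, with degrees of freedom set by the number of basis functions.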

Machine Learning and Foundation Models

Recent advances have introduced descriptor-based foundation models that leverage large-scale descriptor computation for enhanced molecular property prediction. The CheMeleon model pre-trains on deterministic molecular descriptors from the Mordred package, using a Directed Message-Passing Neural Network to predict these descriptors in a noise-free setting [28]. This strategy uses low-noise molecular descriptors to learn rich molecular representations without relying on noisy experimental data or biased quantum mechanical simulations [28]. When evaluated on 58 benchmark datasets from Polaris and MoleculeACE, CheMeleon achieved a 79% win rate on Polaris tasks, outperforming baselines such as Random Forest (46%), fastprop (39%), and Chemprop (36%), and a 97% win rate on MoleculeACE assays [28]. A t-SNE projection of CheMeleon's learned representations shows clear separation of chemical series, indicating that descriptor-based pre-training captures fine structural differences [28].

Out-of-Distribution Property Prediction

Predicting property values outside the training distribution is a critical application of advanced descriptor methodologies. Bilinear Transduction has been reported to improve extrapolative precision by 1.8× for materials and 1.5× for molecules, and to boost recall of high-performing candidates by up to 3× [29]. The approach exploits analogical input-target relations between training and test sets, enabling generalization beyond the support of the training targets by reparameterizing the prediction problem [29]. Rather than predicting a property value directly from a new candidate, Bilinear Transduction predicts from a known training example together with the difference in representation space between that example and the candidate, which allows predictions to be extended more confidently into the OOD regime [29].
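The reparameterization can be illustrated with a deliberately tiny, one-dimensional toy. It assumes hand-chosen feature maps φ(Δ) = [1, Δ] for the difference between a candidate and an anchor and ψ(x′) = [1, x′] for the anchor, and learns a bilinear form y ≈ φ(Δ)ᵀ W ψ(x′) by least squares over all (anchor, target) pairs of training points. This is not the MatEx implementation and uses no neural networks; the function names and data are hypothetical, and with a linear ground truth ordinary regression would extrapolate equally well, so the example only shows the mechanics of predicting from an anchor plus a difference.

```python
from itertools import permutations
from typing import List, Sequence


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Gauss-Jordan elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for k in range(col, n + 1):
                    m[r][k] -= f * m[col][k]
    return [m[i][n] / m[i][i] for i in range(n)]


def pair_features(anchor: float, delta: float) -> List[float]:
    """Flattened outer product of phi(delta) = [1, delta] and psi(anchor) = [1, anchor]."""
    return [p * q for p in (1.0, delta) for q in (1.0, anchor)]


def fit_bilinear(xs: Sequence[float], ys: Sequence[float]) -> List[float]:
    """Fit the 2x2 bilinear weight matrix (flattened) on all ordered training pairs."""
    feats, targets = [], []
    for i, j in permutations(range(len(xs)), 2):  # anchor i, target j
        feats.append(pair_features(xs[i], xs[j] - xs[i]))
        targets.append(ys[j])
    p = len(feats[0])
    ata = [[sum(f[a] * f[b] for f in feats) for b in range(p)] for a in range(p)]
    aty = [sum(f[a] * t for f, t in zip(feats, targets)) for a in range(p)]
    return solve(ata, aty)


def predict(x_new: float, xs: Sequence[float], w: Sequence[float]) -> float:
    """Average the bilinear prediction over all training anchors."""
    preds = [sum(wi * fi for wi, fi in zip(w, pair_features(xa, x_new - xa))) for xa in xs]
    return sum(preds) / len(preds)


xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [2.0 * x + 1.0 for x in xs]   # synthetic linear ground truth
w = fit_bilinear(xs, ys)
y_ood = predict(5.0, xs, w)        # candidate well outside the training range
```

In the published method the feature maps are learned networks and anchors are chosen so that the difference Δ resembles differences seen during training; averaging over all anchors here is a simplification.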

Research Reagent Solutions: Computational Tools and Databases

Table 3: Essential Research Resources for Descriptor-Based Molecular Property Prediction

| Resource Name | Type | Function/Application | Key Features |
|---|---|---|---|
| ADF/COSMO-RS Module | Software Module | Computation of quantum chemical descriptors using DFT/COSMO approach | Geometry optimization, screening charge density calculation, descriptor extraction [15] |
| Mordred Package | Descriptor Calculation | Generation of 1,800+ molecular descriptors for machine learning | Comprehensive descriptor set, compatibility with ML workflows, deterministic output [28] |
| MatEx Implementation | Algorithm Package | Out-of-distribution property prediction using transductive approaches | Bilinear Transduction method, improved OOD precision (1.8× for materials) [29] |
| AFLOW, Matbench, Materials Project | Materials Databases | Source of experimental and computational property data for model training | High-throughput computational data, diverse material classes, standardized formats [29] |
| MoleculeNet | Molecular Datasets | Curated benchmark datasets for molecular property prediction | SMILES representations, experimental and calculated properties, regression tasks [29] |
| CheMeleon | Foundation Model | Descriptor-based pre-training for molecular property prediction | Directed Message-Passing Neural Network, 79% win rate on Polaris tasks [28] |

Molecular descriptors serve as the fundamental encoding mechanism that translates chemical structures into quantitative numerical representations, enabling the prediction of physicochemical and biological properties through QSPR modeling. The continuous evolution of descriptor methodologies, from empirical parameters to quantum chemical descriptors and topological indices, has significantly expanded our capability to correlate structural features with observable properties. Recent advances in machine learning, particularly descriptor-based foundation models and transductive approaches for out-of-distribution prediction, show that well-designed molecular descriptors remain central to chemical research. As descriptor technologies continue to evolve, they are likely to play an increasingly important role in accelerating the discovery of novel pharmaceuticals and advanced materials through computationally driven design.

From Theory to Practice: Methodological Advances and Real-World Applications of Descriptors in Drug Discovery

Descriptor Selection and Generation Workflows in Modern QSPR Studies

Molecular descriptors are the fundamental variables in Quantitative Structure-Property Relationship (QSPR) studies, serving as numerical representations of molecular structures that enable the mathematical modeling of physicochemical properties and biological activities. These descriptors encode critical information about molecular structure, topology, and electronic features, forming the basis for predicting compound behavior without resource-intensive experimental measurements. In modern drug discovery and environmental chemistry, QSPR modeling has established itself as an indispensable tool for compound prioritization, risk assessment, and property prediction [30] [11].

The evolution of descriptor generation has progressed from simple empirical measurements to sophisticated computational algorithms capable of capturing complex molecular interactions. Contemporary QSPR workflows integrate diverse descriptor types with machine learning (ML) algorithms to build predictive models with enhanced accuracy and generalizability [20] [31]. This technical guide examines current methodologies for descriptor selection and generation, emphasizing practical workflows and their applications within modern QSPR frameworks essential for researchers and drug development professionals.

Classification and Types of Molecular Descriptors

Traditional Descriptor Categories

Molecular descriptors are broadly classified into experimental and theoretical types, with theoretical descriptors further subdivided into structural and quantum chemical descriptors [30]. Structural parameters derive from molecular graphs or topology, while quantum chemical descriptors originate from computational chemistry calculations. Even the pixels of molecular images can serve as descriptors in advanced applications, showing how far the field's boundaries have expanded [30].

Table 1: Classification of Molecular Descriptors in QSPR Studies

| Descriptor Category | Subcategory | Representative Examples | Calculation Basis |
|---|---|---|---|
| Experimental | Solvation Parameters | Excess molar refraction (E), Dipolarity/polarizability (S), Hydrogen-bond acidity (A), Hydrogen-bond basicity (B/B°), Hexadecane-gas partition constant (L) [32] | Chromatographic retention factors, partition constants, solubility measurements |
| Theoretical | Structural/Topological | McGowan's characteristic volume (V), Wiener Index, Atom-Bond Connectivity indices, Geometric-harmonic-Zagreb descriptors [32] [31] | Molecular structure, atomic coordinates, bond connectivity, topological features |
| Theoretical | Quantum Chemical | Heat of formation, orbital energies, electrostatic potentials [30] | Quantum mechanical calculations (DFT, semi-empirical methods) |
| Theoretical | 3D-Molecular Fields | Comparative Molecular Field Analysis (CoMFA) descriptors [33] | Steric and electrostatic interaction fields |
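For reference, the solute descriptors E, S, A, B, V, and L in the table enter the Abraham solvation parameter model, which in its commonly cited form has one equation for transfer between condensed phases (using V) and one for transfer from the gas phase (using L). Here SP is a solute property in a given system, such as a partition coefficient or chromatographic retention factor, and the lowercase system constants c, e, s, a, b, v, and l are obtained by multiple linear regression over solutes with known descriptors. This is the general textbook form; the specific systems and fitted constants in [32] are not reproduced here.

```latex
\log SP = c + eE + sS + aA + bB + vV \qquad \text{(condensed phase to condensed phase)}
\log SP = c + eE + sS + aA + bB + lL \qquad \text{(gas phase to condensed phase)}
```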

Specialized Descriptors for Specific Interactions