Molecular Graph Representations for AI: A Comprehensive Guide for Drug Discovery

Abstract

This article provides a comprehensive overview of molecular graph representations, a cornerstone of modern AI-driven drug discovery. Tailored for researchers and drug development professionals, it explores the fundamental principles of representing molecules as graphs of atoms and bonds, detailing advanced methodologies from Graph Neural Networks (GNNs) to multimodal AI agents. The content addresses critical challenges in model optimization and data quality, offers a comparative analysis of different representation techniques, and highlights their transformative applications in real-world tasks like molecular optimization and scaffold hopping. By synthesizing the latest advancements, this guide serves as an essential resource for leveraging AI to navigate chemical space and accelerate therapeutic development.

From Strings to Graphs: The Foundational Shift in Molecular Representation

Molecular representation serves as the foundational step in computational drug discovery, bridging the gap between chemical structures and their biological properties. Traditional representations, particularly Simplified Molecular Input Line Entry System (SMILES) strings and molecular fingerprints, have enabled significant advances in chemical informatics and quantitative structure-activity relationship (QSAR) modeling. However, these methods face inherent limitations in capturing molecular complexity, leading to constrained performance in modern artificial intelligence (AI) applications. This technical review examines the core shortcomings of these traditional approaches, supported by quantitative benchmarks and experimental data, and contextualizes their role within the evolving landscape of molecular graph representations for AI-driven research.

The choice of molecular representation fundamentally shapes the performance and applicability of AI models in drug discovery. Effective representations must translate molecular structures into machine-readable formats that preserve critical chemical information while facilitating efficient computation [1]. For decades, traditional representations like SMILES and molecular fingerprints have served as the workhorses of cheminformatics, powering everything from virtual screening to similarity searching [2] [3].

However, as drug discovery tasks grow more sophisticated, the limitations of these traditional approaches have become increasingly apparent. Modern AI research requires representations that can capture subtle structure-function relationships, support generative tasks, and enable exploration of chemical space beyond the constraints of predefined rules [1]. This review systematically analyzes the technical limitations of SMILES and molecular fingerprints, providing researchers with a comprehensive framework for understanding their place within the broader ecosystem of molecular graph representations.

SMILES Representations: Syntax and Structural Limitations

The Simplified Molecular Input Line Entry System (SMILES) represents molecules as compact ASCII strings through a depth-first traversal of the molecular graph [4]. While SMILES strings are human-readable and computationally lightweight, they suffer from several critical limitations that impact their utility in AI applications.

Technical Limitations of SMILES

Lack of Canonicalization: A single molecule can generate multiple valid SMILES strings (e.g., ethanol can be represented as CCO, OCC, or C(O)C) [4]. This many-to-one mapping problem introduces unnecessary variance for AI models, requiring canonicalization algorithms that themselves vary across implementations [4].

Syntax Sensitivity and Invalidity: SMILES uses a complex grammar with parentheses for branching and numbers for ring closures. AI models, particularly generative models, often produce syntactically invalid strings with unmatched parentheses or ring identifiers [5]. Studies show that even state-of-the-art deep learning models can struggle with SMILES syntax, generating chemically impossible structures [5].

Limited Structural Expressivity: Basic SMILES representations encode molecular connectivity but often lack stereochemical and isotopic information unless specifically extended to "isomeric SMILES" [4]. This makes them inadequate for representing spatial relationships critical to biological activity.
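To make the syntax-sensitivity point concrete, the sketch below checks only two of the grammar rules mentioned above: balanced branch parentheses and paired single-digit ring-closure labels. The function name `check_smiles_syntax` is hypothetical, and the check is deliberately partial: it skips the contents of bracket atoms, does not handle two-digit `%nn` ring labels, and says nothing about valence, so a string that passes may still be chemically invalid.

```python
def check_smiles_syntax(smiles: str) -> list[str]:
    """Return a list of detected syntax problems (empty list = none detected).

    Illustrative only: covers balanced parentheses and single-digit
    ring-closure pairing, not the full SMILES grammar or valence rules.
    """
    problems = []
    depth = 0            # current branch nesting depth
    open_rings = set()   # ring labels seen an odd number of times so far
    in_bracket = False   # digits inside [...] are isotopes, charges, or H counts
    for ch in smiles:
        if ch == "[":
            in_bracket = True
        elif ch == "]":
            in_bracket = False
        elif in_bracket:
            continue
        elif ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                problems.append("unmatched ')'")
                depth = 0
        elif ch.isdigit():
            # A label opens a ring on first sight and closes it on the second.
            open_rings ^= {ch}
    if depth > 0:
        problems.append("unmatched '('")
    if open_rings:
        problems.append("unclosed ring label(s): " + ",".join(sorted(open_rings)))
    return problems


print(check_smiles_syntax("c1ccccc1"))  # benzene: no problems detected
print(check_smiles_syntax("C1CCCC"))    # ring opened but never closed
```

A generative model that drops a single closing digit or parenthesis fails this kind of check, which is why SMILES-based pipelines typically validate every generated string before further use.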

Impact on AI Model Performance

The fundamental mismatch between the sequential nature of SMILES and the graph-based reality of molecular structure creates significant challenges for AI applications:

Representation Fragility: Minor syntactic changes in SMILES strings can lead to major structural changes, while structurally similar molecules may have vastly different string representations [5].

Training Inefficiency: Models must learn both chemical principles and SMILES-specific syntax, diverting capacity from learning meaningful structure-property relationships [5].

Generation Limitations: Generative models trained on SMILES often produce high rates of invalid structures, requiring post-hoc validation and filtering [5].

Table 1: Comparative Analysis of SMILES Limitations in AI Applications

| Limitation Category | Technical Description | Impact on AI Models |
|---|---|---|
| Non-canonical Representation | Multiple valid strings per molecule | Increased model complexity, redundant learning |
| Syntax Complexity | Parentheses and ring numbering systems | High invalid generation rates in generative AI |
| Limited Stereochemistry | Basic SMILES lacks 3D configuration | Reduced predictive accuracy for stereosensitive properties |
| Sequential Bias | Depth-first traversal imposes artificial atom ordering | Model performance sensitive to input ordering |

Molecular Fingerprints: Structural and Representational Constraints

Molecular fingerprints encode molecular structures as fixed-length bit vectors, where each bit indicates the presence or absence of specific structural patterns or fragments [6] [2]. Despite their computational efficiency and historical success in similarity searching, fingerprints face significant constraints in modern AI applications.

Taxonomy of Fingerprint Limitations

Predefined Representation Space: Traditional fingerprints like Extended Connectivity Fingerprints (ECFP) and MACCS keys employ predefined structural keys or hashing functions that limit their adaptability [6]. This fixed representation cannot capture molecular features beyond their design parameters, creating a fundamental constraint on their expressiveness [1].

Loss of Structural Granularity: The hashing process in circular fingerprints (e.g., ECFP) can lead to bit collisions, where distinct structural features map to the same bit position [6]. This irreversible information loss hampers model interpretability and precision.

Context Insensitivity: Fingerprints typically encode local substructures without capturing their global context or interrelationships within the molecule [1]. This limits their ability to represent complex molecular properties that emerge from holistic structural arrangements.
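The bit-collision effect described above can be illustrated without any cheminformatics toolkit. The following is a toy sketch, not the ECFP algorithm: it assumes substructures are already available as string identifiers (the `substructure_i` names are placeholders) and folds them into a short bit vector by hashing modulo the vector length. The helper names `fold_features` and `count_collisions` are hypothetical.

```python
import zlib


def fold_features(features, n_bits):
    """Map each feature identifier to a bit position via hash modulo n_bits.

    Returns {bit position: [features that landed on it]}.
    """
    bits = {}
    for feat in features:
        pos = zlib.crc32(feat.encode("utf-8")) % n_bits
        bits.setdefault(pos, []).append(feat)
    return bits


def count_collisions(bits):
    """Number of features sharing a bit position with at least one other feature."""
    return sum(len(feats) for feats in bits.values() if len(feats) > 1)


# 20 placeholder substructure identifiers folded into only 8 bits.
features = [f"substructure_{i}" for i in range(20)]
folded = fold_features(features, n_bits=8)
print(len(folded), "bits set;", count_collisions(folded), "features collide")
```

Once two features share a bit, the fingerprint alone cannot tell which one was present, which is the irreversible information loss noted above. Longer vectors reduce the collision rate but do not remove it.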

Experimental Benchmarking of Fingerprint Limitations

Recent systematic evaluations have quantified these limitations across diverse chemical spaces. A 2024 benchmark study analyzed 20 fingerprinting algorithms across 100,000+ natural products, revealing substantial performance variations [6].

Table 2: Fingerprint Performance Variation Across Chemical Spaces (Adapted from [6])

| Fingerprint Category | Representative Examples | Key Strengths | Key Limitations |
|---|---|---|---|
| Path-Based | Atom Pair, Topological | Captures linear atom pathways | Limited 3D perception |
| Circular | ECFP, FCFP | Excellent for drug-like molecules | Struggles with complex natural products |
| Substructure-Based | MACCS, PubChem | Interpretable, predefined features | Fixed vocabulary limits novelty |
| Pharmacophore-Based | PH2, PH3 | Encodes interaction potential | Reduced structural specificity |
| String-Based | MHFP, LINGO | SMILES-derived, alignment-free | Inherits SMILES limitations |

The study demonstrated that no single fingerprint type consistently outperformed others across all tasks and compound classes [6]. For instance, while ECFP is considered the de facto standard for drug-like molecules, other fingerprints matched or surpassed its performance for natural product bioactivity prediction [6]. This highlights the context-dependent nature of fingerprint efficacy and the risk of suboptimal representation selection.

Experimental Protocols: Benchmarking Representation Limitations

Protocol 1: SMILES Validity Analysis

Objective: Quantify the rate of invalid chemical structure generation by AI models trained on SMILES representations.

Methodology:

- Dataset Preparation: Curate a standardized dataset of molecules (e.g., from ChEMBL or ZINC databases) and generate canonical SMILES representations using RDKit [5].

- Model Training: Train sequence-based generative models (e.g., Transformer, LSTM) on the SMILES dataset using standard architectures and hyperparameters.

- Generation and Validation: Generate novel molecular structures from the trained model and validate chemical correctness using cheminformatics toolkits [5].

- Analysis: Calculate the percentage of syntactically and semantically valid molecules, categorizing errors by type (e.g., valence violations, syntax errors).

Key Findings: Studies implementing this protocol have found that SMILES-based generative models can produce invalid structures in 5-15% of cases, with higher rates for complex molecules [5].
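The analysis step of this protocol reduces to a tally over validation outcomes. The sketch below assumes each generated string has already been labeled by a cheminformatics toolkit as `valid` or with an error category; the labels `syntax` and `valence` and the 20-molecule sample are made up for illustration and are not results from the cited studies.

```python
from collections import Counter


def summarize_validity(outcomes):
    """Return {label: percentage of all generated molecules} for each outcome label."""
    total = len(outcomes)
    if total == 0:
        raise ValueError("no generated molecules to summarize")
    counts = Counter(outcomes)
    return {label: 100.0 * n / total for label, n in counts.items()}


# Hypothetical validation labels for 20 generated strings.
outcomes = ["valid"] * 18 + ["syntax"] + ["valence"]
summary = summarize_validity(outcomes)
print(summary)
```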

Protocol 2: Fingerprint Similarity-Diversity Disconnect

Objective: Evaluate the effectiveness of molecular fingerprints in capturing functional similarity across structurally diverse compounds.

Methodology:

- Compound Selection: Select a set of known bioactive compounds with diverse scaffolds but similar biological activities (e.g., different kinase inhibitors) [6].

- Similarity Calculation: Compute pairwise molecular similarities using multiple fingerprint types (ECFP, MACCS, Atom Pair, etc.) and Tanimoto coefficients [6].

- Bioactivity Correlation: Measure the correlation between fingerprint-based similarity and actual bioactivity similarity (e.g., IC50 values, target profiles).

- Statistical Analysis: Perform receiver operating characteristic (ROC) analysis to assess fingerprint performance in identifying compounds with similar bioactivity [6].

Key Findings: Fingerprint performance varies significantly across target classes and compound structural types, with circular fingerprints generally outperforming path-based fingerprints for bioactivity prediction, but with notable exceptions for complex natural products [6].
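The Tanimoto coefficient used in the similarity calculation step is the ratio of shared on-bits to the total number of distinct on-bits. A minimal sketch, assuming fingerprints are stored as Python sets of on-bit indices (the bit indices below are arbitrary):

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto coefficient |A ∩ B| / |A ∪ B| for fingerprints given as on-bit sets."""
    if not a and not b:
        # Convention chosen for this sketch; toolkits may treat two empty
        # fingerprints differently.
        return 1.0
    return len(a & b) / len(a | b)


fp_x = {1, 2, 3}
fp_y = {2, 3, 4}
print(tanimoto(fp_x, fp_y))  # 2 shared bits out of 4 distinct bits
```

Because the coefficient depends only on which bits are set, two compounds with different scaffolds but similar activity can score low under one fingerprint and high under another, which is the disconnect this protocol is designed to measure.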

Table 3: Essential Software and Resources for Molecular Representation Research

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Open-source Cheminformatics | Molecular descriptor and fingerprint calculation | Broad-purpose molecular representation and manipulation [2] |
| Open Babel | Format Conversion Tool | Supports 146+ molecular file formats | Interconversion between representation formats [2] |
| Chemistry Development Kit (CDK) | Java-based Library | Generates 275+ molecular descriptors | Algorithmic implementation of representation methods [2] |
| PaDEL | Descriptor Calculation | Generates 1,875 descriptors and 12 fingerprints | High-throughput descriptor calculation for QSAR [2] |
| t-SMILES | Fragment-based Representation | Converts molecules to tree-based SMILES strings | Advanced string-based representation research [5] |

Visualizing the Representation Landscape

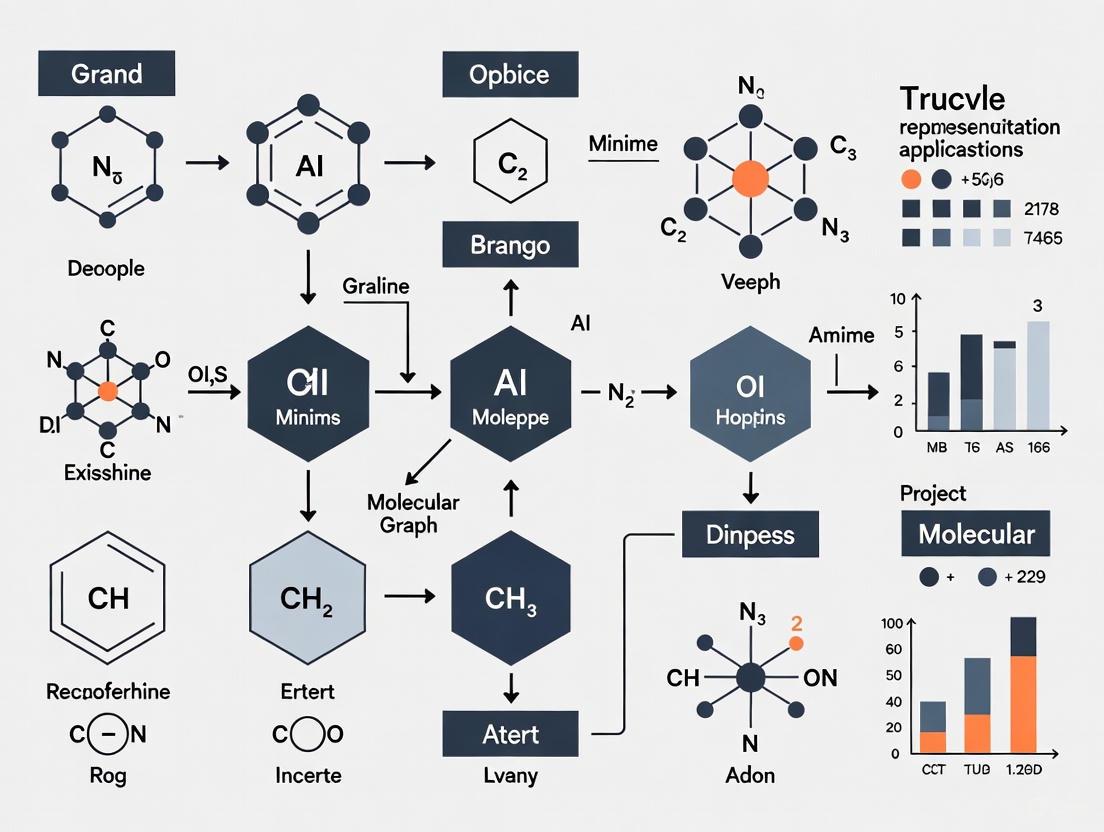

The following diagram illustrates the conceptual relationship between different molecular representation approaches and their positions in the trade-off between structural fidelity and computational efficiency:

Figure: Molecular Representation Taxonomy

The experimental workflow for benchmarking representation limitations typically follows this standardized process:

Figure: Benchmarking Experimental Workflow

Traditional molecular representations have undeniably advanced computational chemistry and drug discovery, but their limitations in structural expressivity, adaptability, and suitability for modern AI applications are increasingly apparent. SMILES representations struggle with syntactic validity and sequential bias, while molecular fingerprints face constraints from predefined feature spaces and irreversible information loss.

The future of molecular representation lies in approaches that transcend these limitations—learned representations that capture molecular features directly from data, graph-based encodings that preserve native structural relationships, and multimodal frameworks that integrate complementary perspectives [1] [5]. As AI continues to transform drug discovery, the evolution of molecular representations will remain fundamental to unlocking new frontiers in chemical space exploration and predictive modeling.

Why Graphs? Representing Molecules as Nodes (Atoms) and Edges (Bonds)

In AI-driven drug discovery, the representation of a molecule is a foundational step that bridges its chemical structure with the prediction of its biological activity and properties. Traditional methods, such as Simplified Molecular-Input Line-Entry System (SMILES) strings, encode molecular structures into linear sequences of characters [1]. While simple and compact, these string-based representations possess significant limitations for artificial intelligence applications. They can struggle to capture the complex, non-linear topology of a molecule, and small changes in the string can correspond to large, meaningful changes in the 3D structure, leading to instability in model predictions [1] [7].

Graph-based representations overcome these limitations by providing a natural and unambiguous model of molecular structure. In this paradigm, a molecule is represented as an undirected graph \(G = (V, E)\), where the set of nodes \(V\) corresponds to atoms, and the set of edges \(E\) corresponds to the chemical bonds between them [7]. This structure natively preserves the relational information and functional substructures within the molecule, making it inherently more suitable for modern deep-learning architectures, particularly Graph Neural Networks (GNNs) [1] [7]. The shift from rule-based, predefined representations to data-driven, graph-based learning represents a cornerstone of modern computational chemistry and drug design [1].
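As a concrete picture of the node and edge sets, the sketch below stores a hydrogen-suppressed molecule as a list of atom labels plus a dictionary of bonds keyed by unordered atom pairs. The class name `MolGraph` and its methods are hypothetical and chosen for illustration; in practice a toolkit such as RDKit builds this structure from a SMILES string.

```python
from dataclasses import dataclass, field


@dataclass
class MolGraph:
    atoms: list                                  # node labels, e.g. element symbols
    bonds: dict = field(default_factory=dict)    # {frozenset({i, j}): bond order}

    def add_bond(self, i, j, order=1):
        # frozenset makes the edge undirected: (i, j) and (j, i) share one key.
        self.bonds[frozenset((i, j))] = order

    def neighbors(self, i):
        """Indices of atoms bonded to atom i, in ascending order."""
        return sorted(j for pair in self.bonds if i in pair for j in pair if j != i)


# Ethanol heavy atoms: C0 - C1 - O2
ethanol = MolGraph(atoms=["C", "C", "O"])
ethanol.add_bond(0, 1)
ethanol.add_bond(1, 2)
print(ethanol.neighbors(1))
```

Unlike the three SMILES spellings of ethanol, this structure does not depend on the order in which the bonds are added or on which atom is listed first in a bond.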

Comparative Analysis of Molecular Representation Methods

The evolution of molecular representation has progressed from manual feature engineering to learned, structural representations. The table below summarizes the core characteristics of these approaches.

Table 1: Comparison of Molecular Representation Methods

| Representation Type | Key Examples | Advantages | Limitations | Suitability for AI Models |
|---|---|---|---|---|
| String-Based | SMILES, SELFIES, IUPAC [1] | Compact, human-readable, simple to generate [1]. | Does not inherently capture molecular topology or spatial relationships; small string changes can lead to large structural changes [1] [7]. | Moderate; can be processed by NLP models (e.g., Transformers) but may not optimally capture structural nuances [1]. |
| Molecular Fingerprints | Extended-Connectivity Fingerprints (ECFPs), MACCS Keys [1] [7] | Computationally efficient, fixed-length, effective for similarity search and QSAR [1]. | Loss of positional and structural information; limited to pre-defined or circular substructures, hampering novel structure discovery [7]. | High for traditional machine learning (e.g., Random Forests, SVMs); lower for deep learning that requires structural data. |
| Graph-Based | Molecular Graphs (Nodes/Edges) [7] | Natively preserves structural and topological information; enables end-to-end learning without manual feature engineering [7]. | Higher computational complexity for graph processing; requires specialized model architectures like GNNs [7]. | Very High; the native input format for Graph Neural Networks, allowing for direct learning on molecular structure. |

Technical Deep Dive: Graph Neural Networks for Molecules

Core Architecture and Message Passing

Graph Neural Networks are a class of deep learning models designed to operate directly on graph data. In the context of molecules, GNNs learn latent representations by aggregating information from a node's local neighborhood through a process called message passing [7].

In a typical message-passing layer, each node's feature vector is updated based on its own current state and the aggregated states of its neighboring nodes connected by edges. This can be summarized in two steps:

- Message Passing: For each node \(i\), a message is computed from each of its neighbors \(j \in \mathcal{N}(i)\).

- Feature Update: Node \(i\) aggregates all messages from its neighbors and updates its own feature vector.

This process allows each atom to incorporate information from its immediate chemical environment, and by stacking multiple GNN layers, the model can capture increasingly complex, long-range interactions within the molecule.
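The two steps can be sketched in a few lines. This is a simplified illustration rather than any particular GNN layer: messages are the raw neighbor vectors, aggregation is a sum, and the update adds the aggregate to the node's own vector, with no learned weights or nonlinearity. The function name `message_passing_step` and the one-hot features are assumptions made for the example.

```python
def message_passing_step(features, neighbors):
    """One round: new vector of node i = own vector + sum of neighbor vectors."""
    updated = {}
    for node, own in features.items():
        agg = [0.0] * len(own)
        for nb in neighbors[node]:
            agg = [a + x for a, x in zip(agg, features[nb])]
        updated[node] = [o + a for o, a in zip(own, agg)]
    return updated


# Ethanol heavy atoms C0 - C1 - O2, one-hot features over (carbon, oxygen).
neighbors = {0: [1], 1: [0, 2], 2: [1]}
h0 = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}

h1 = message_passing_step(h0, neighbors)   # each atom sees its direct neighbors
h2 = message_passing_step(h1, neighbors)   # each atom sees atoms two bonds away
print(h1[0], h2[0])
```

After one round the terminal carbon (node 0) still has a zero in the oxygen channel, because the oxygen is two bonds away. After the second round that channel becomes non-zero, which is the sense in which stacking layers widens each atom's receptive field.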

Enhanced Node and Edge Feature Engineering

The performance of a GNN is heavily dependent on the initial features assigned to nodes (atoms) and edges (bonds). Advanced implementations move beyond basic atom symbols to incorporate richer, chemically-aware features.

For node features, algorithms inspired by Extended-Connectivity Fingerprints (ECFPs) can be used to create circular atomic features that encode both the atom itself and its surrounding chemical context [7]. These features often include the seven Daylight atomic invariants: number of immediate non-hydrogen neighbors, valence minus hydrogens, atomic number, atomic mass, atomic charge, number of attached hydrogens, and aromaticity [7]. This process iteratively incorporates information from an atom's \(r\)-hop neighbors, creating a unique identifier that captures the local substructure.

For edge features, chemical bond types (single, double, triple, aromatic) are incorporated into the graph convolutional layers, allowing the model to distinguish between different bond strengths and electronic properties [7].
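The iterative construction of circular atomic identifiers can be sketched as follows. This is a toy version in the spirit of the ECFP procedure, not the reference algorithm: it uses only two invariants per atom (heavy-atom neighbor count and atomic number) instead of the full set listed above, integer bond orders as edge labels, and a generic hash from the standard library. The names `circular_identifiers` and `_hash` are hypothetical.

```python
import hashlib


def _hash(obj) -> int:
    """Deterministic 64-bit integer hash of an object's repr."""
    digest = hashlib.blake2b(repr(obj).encode("utf-8"), digest_size=8).digest()
    return int.from_bytes(digest, "big")


def circular_identifiers(invariants, neighbors, bond_orders, radius):
    """Return {atom: identifier} after `radius` rounds of neighborhood hashing."""
    ids = {atom: _hash(inv) for atom, inv in invariants.items()}
    for _ in range(radius):
        new_ids = {}
        for atom in ids:
            # Sorted (bond order, neighbor id) pairs make the result independent
            # of the order in which neighbors are listed.
            env = sorted((bond_orders[frozenset((atom, nb))], ids[nb])
                         for nb in neighbors[atom])
            new_ids[atom] = _hash((ids[atom], env))
        ids = new_ids
    return ids


# 1-propanol heavy atoms: C0 - C1 - C2 - O3
invariants = {0: (1, 6), 1: (2, 6), 2: (2, 6), 3: (1, 8)}
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
bond_orders = {frozenset((0, 1)): 1, frozenset((1, 2)): 1, frozenset((2, 3)): 1}

r0 = circular_identifiers(invariants, neighbors, bond_orders, radius=0)
r1 = circular_identifiers(invariants, neighbors, bond_orders, radius=1)
print(r0[1] == r0[2], r1[1] == r1[2])
```

At radius 0 the two interior carbons are indistinguishable because their invariants match. After one iteration their identifiers differ, since one is adjacent to the oxygen and the other is not.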

Table 2: Key Research Reagents and Computational Tools for Molecular GNNs

| Resource Name | Type | Primary Function in Research | Application in Experiments |
|---|---|---|---|
| RDKit [7] | Open-Source Cheminformatics Library | Converts SMILES strings into molecular graph objects; calculates molecular descriptors and fingerprints. | Used for data preprocessing to generate graph-structured inputs from chemical databases. |
| PubChem [7] | Chemical Database | Source for drug SMILES vectors and associated biological assay data. | Provides the raw molecular data (e.g., 223 drugs in XGDP study) for model training and validation [7]. |
| GDSC Database [7] | Pharmacogenomics Database | Provides drug response levels (e.g., IC50 values) for drugs across cancer cell lines. | Serves as the source of ground-truth labels for supervised learning tasks in drug response prediction [7]. |
| CCLE [7] | Genomics Database | Provides gene expression profiles for cancer cell lines. | Used as complementary input data (e.g., processed by a CNN) in multi-modal prediction frameworks like XGDP [7]. |
| USPTO [8] | Chemical Reaction Dataset | Extensive dataset of reactions refined from U.S. patents. | Used for training and evaluating models on molecular reaction prediction tasks [8]. |

Experimental Protocol for Drug Response Prediction with GNNs

The eXplainable Graph-based Drug response Prediction (XGDP) framework demonstrates a detailed methodology for applying GNNs to a critical task in drug discovery [7].

1. Data Acquisition and Preprocessing:

- Drug Data: Acquire drug names from the GDSC database. Retrieve corresponding SMILES strings from PubChem and use RDKit to convert them into molecular graphs [7].

- Cell Line Data: Obtain gene expression data for the corresponding cancer cell lines from the Cancer Cell Line Encyclopedia (CCLE) [7].

- Response Data: Collect drug response levels, typically in IC50 format, from GDSC.

- Data Integration: Merge datasets, resulting in a final data matrix (e.g., 133,212 drug-cell line pairs). To prevent overfitting, reduce the dimensionality of gene expression profiles by leveraging landmark genes (e.g., 956 genes) defined in the LINCS L1000 project [7].

2. Model Architecture and Training:

- GNN Module: Processes the molecular graph of the drug. The model uses a GNN with enhanced circular atomic features as node features and bond types as edge features to learn a latent representation of the drug [7].

- CNN Module: Processes the gene expression vector of the cell line using a Convolutional Neural Network to learn a latent representation of the cellular context [7].

- Integration and Prediction: The latent features from the GNN and CNN are integrated using a cross-attention mechanism. The combined representation is fed into a final prediction layer to estimate the drug response level [7].

- Model Interpretation: Apply explainable AI techniques such as GNNExplainer and Integrated Gradients to interpret the model's predictions. This identifies salient functional groups in the drug and significant genes in the cancer cell line, thereby revealing potential mechanisms of action [7].
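The integration step above relies on cross-attention. The sketch below shows generic single-query scaled dot-product attention, in which a cell-line vector acts as the query and per-atom drug embeddings act as keys and values. It is not the XGDP implementation: there are no learned projection matrices or multiple heads, and the function name `cross_attention` and the toy vectors are assumptions made for illustration.

```python
import math


def cross_attention(query, keys, values):
    """Return (context vector, attention weights) for one query over several keys."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    top = max(scores)                       # subtract max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    norm = sum(exps)
    weights = [e / norm for e in exps]
    width = len(values[0])
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(width)]
    return context, weights


cell_query = [1.0, 0.0]                     # toy cell-line representation
atom_keys = [[1.0, 0.0], [0.0, 1.0]]        # toy per-atom drug embeddings
context, weights = cross_attention(cell_query, atom_keys, atom_keys)
print(weights)
```

The attention weights indicate which atoms contribute most to the combined representation for a given cellular context, which complements the attribution methods mentioned in the interpretation step.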

The following diagram illustrates the end-to-end XGDP workflow.

Advanced Research: Hierarchical and Multimodal Representations

Current research is pushing the boundaries of molecular graph representation beyond flat node-edge structures. A significant advancement is the exploration of hierarchical graph representations, which capture molecular information at multiple levels of granularity—atomic, functional group (motif), and the entire graph level [8]. Studies reveal that different biochemical tasks benefit from different levels of feature abstraction. For instance, while graph-level features might suffice for property prediction, motif-level features can be crucial for tasks like molecular description generation [8]. This finding indicates that current multimodal large language models (LLMs) that use only a single level of graph features may lack a comprehensive understanding of the molecule [8].
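The three levels of granularity can be illustrated with simple pooling. In this sketch, motif-level features are the mean of the atom vectors belonging to each motif and the graph-level feature is the mean over all atoms. Mean pooling, the motif assignment, and the function names are assumptions chosen for illustration; the cited work may derive its levels differently.

```python
def mean_pool(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def hierarchical_features(atom_feats, motifs):
    """atom_feats: list of atom vectors; motifs: {motif name: [atom indices]}."""
    motif_feats = {name: mean_pool([atom_feats[i] for i in idx])
                   for name, idx in motifs.items()}
    return {"atom": atom_feats, "motif": motif_feats, "graph": mean_pool(atom_feats)}


# Ethanol heavy atoms C0 - C1 - O2 with one-hot (carbon, oxygen) features.
atom_feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
motifs = {"ethyl": [0, 1], "hydroxyl": [2]}
levels = hierarchical_features(atom_feats, motifs)
print(levels["motif"], levels["graph"])
```

The graph-level vector blends the hydroxyl signal with the carbons, while the motif level keeps it separate, which hints at why tasks that depend on specific functional groups may benefit from motif-level features.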

Another frontier is the integration of molecular graphs with other data modalities, such as textual knowledge from scientific literature, to create powerful multimodal models. These models, often built on architectures like LLaVA, use a graph encoder to process the molecular structure and a projector to align the graph features with the embedding space of an LLM [8]. This allows the model to leverage the broad world knowledge of the LLM to solve complex chemical challenges, such as predicting reaction outcomes and generating rich molecular descriptions [8].

The following diagram outlines the architecture of a hierarchical, multimodal molecular LLM.

The representation of molecules as graphs of atoms and bonds has emerged as a powerful and natural paradigm for AI research in drug discovery. By natively encoding structural topology, graph representations enable Graph Neural Networks and other advanced models to learn complex structure-property relationships directly from data, surpassing the capabilities of traditional string-based and fingerprint-based methods. The field continues to evolve rapidly, with hierarchical and multimodal approaches offering a path toward more comprehensive molecular AI systems. These advancements promise to significantly accelerate tasks such as drug repurposing, scaffold hopping, and novel drug design, ultimately enhancing the efficiency and precision of therapeutic development.

Molecular Descriptors, Scaffold Hopping, and Chemical Space

Molecular representations, or descriptors, are the foundational, computable definitions of chemical structures that enable machines to interpret, compare, and design molecules. In the context of artificial intelligence (AI) for drug discovery, the choice of molecular representation directly controls a model's ability to navigate chemical space, the vast, multi-dimensional universe of all possible molecules. A core application enabled by effective representations is scaffold hopping, the practice of identifying novel molecular backbones that retain a desired biological activity. This technical guide explores the critical interplay between these three concepts, framing them within a broader thesis on molecular graph representations for AI research. We detail how modern, data-driven descriptors are surpassing traditional fingerprints, provide methodologies for key experiments, and offer a toolkit for researchers to advance their exploratory campaigns.

Molecular Descriptors: The Language of Molecules in Silico

Molecular descriptors translate a molecule's structure into a numerical or symbolic format that can be processed by computational models. They can be categorized by the structural information they encode, which in turn dictates their suitability for specific tasks like property prediction or generative design.

Table 1: Categorization of Key Molecular Descriptors and Representations

| Descriptor Category | Representative Examples | Dimensionality | Key Features Encoded | Primary Applications | Key Strengths | Key Limitations |
|---|---|---|---|---|---|---|
| String-Based | SMILES, SELFIES [9] | 1D | Atom and bond sequence, branching, rings | Molecular generation, database storage | Compact, human-readable | Complex grammar (SMILES); may not explicitly capture complex topology |
| 2D Structural Fingerprints | ECFP, MACCS [10] | 2D | Presence of predefined substructures or atom environments | Virtual screening, similarity search | Fast calculation, interpretable fragments | Hand-crafted features; limited scaffold-hopping potential [10] |
| 2D Graph-Based | Atom Graph, Group Graph [11] | 2D | Atoms (nodes) and bonds (edges) | Property prediction, QSAR/QSPR | Unambiguous structure; preserves connectivity | Can overlook important functional substructures |
| 3D Geometry-Based | WHALES [10], WHIM [10] | 3D | Molecular shape, conformation, partial charge distribution | Scaffold hopping, bioactivity prediction | Encodes pharmacophoric and shape information | Dependent on 3D conformation generation |
| Substructure-Level Graph | Group Graph [11], Junction Tree | 2.5D | Functional groups or substructures (nodes) and their connections | Interpretable QSAR, lead optimization | Enhanced interpretability and efficiency [11] | Requires robust fragmentation rules |

The evolution of descriptors is moving towards more holistic and deep learning-derived representations. For instance, the Weighted Holistic Atom Localization and Entity Shape (WHALES) descriptors capture 3D molecular shape and charge distribution simultaneously, showing superior scaffold-hopping ability in benchmark studies [10]. Concurrently, graph-based representations have become the backbone for modern graph neural networks (GNNs). Innovations like the Group Graph decompose molecules into meaningful substructures (e.g., functional groups, aromatic rings), creating a graph where nodes are substructures and edges are their connections. This representation has been shown to retain molecular structural features with minimal information loss while offering improved interpretability and efficiency in property prediction tasks compared to atom-level graphs [11].

Scaffold Hopping: The Search for Novel Chemotypes

Scaffold hopping is a central medicinal chemistry strategy aimed at discovering novel molecular backbones (scaffolds) that retain or improve the biological activity of a reference compound. This is crucial for exploring uncharted chemical space, improving drug-like properties, and navigating intellectual property landscapes [10] [12]. The success of a scaffold hop often depends on maintaining similar three-dimensional (3D) topology and pharmacophore features, even while the two-dimensional (2D) connectivity of atoms differs significantly.

Experimental Protocols for Scaffold Hopping Evaluation

To rigorously assess the performance of scaffold-hopping methods, researchers typically employ the following methodological frameworks.

Protocol 1: Retrospective Virtual Screening Benchmark

This protocol evaluates a descriptor's ability to identify known actives with diverse scaffolds from a large compound library [10].

- Data Curation: Extract a set of biologically tested compounds from a database like ChEMBL [10] [12]. Filter for a specific protein target with a sufficient number of annotated active compounds (e.g., IC/EC50, Kd/Ki < 1 μM).

- Scaffold Annotation: Apply the Bemis and Murcko (BM) method to define the core scaffold of each molecule [12].

- Similarity Searching: For each active molecule used as a query, perform a similarity search against the entire compound library using the molecular descriptor under investigation (e.g., WHALES, ECFP).

- Performance Metric Calculation: Analyze the top 5% of the ranked list. The key metric is the Scaffold Diversity of Actives (SDA%), calculated as \(\mathrm{SDA\%} = (n_s / n_a) \times 100\), where \(n_s\) is the number of unique BM scaffolds identified and \(n_a\) is the total number of actives retrieved in the top 5% [10]. A higher SDA% indicates a better scaffold-hopping ability, as it retrieves many active compounds with few redundant scaffolds.
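The metric calculation in the last step can be sketched as below. The code assumes the ranked list is already sorted by decreasing similarity to the query and that each entry carries an activity flag and a precomputed Bemis-Murcko scaffold label; the function name `sda_percent`, the integer `top_percent` parameter, and the toy ranking are hypothetical.

```python
def sda_percent(ranked, top_percent=5):
    """Scaffold Diversity of Actives in the top `top_percent` of a ranked list.

    ranked: list of (is_active, scaffold_id), best-ranked first.
    Returns 100 * (unique scaffolds among retrieved actives) / (retrieved actives),
    or 0.0 if no actives fall inside the cutoff.
    """
    cutoff = max(1, len(ranked) * top_percent // 100)
    hits = [scaffold for is_active, scaffold in ranked[:cutoff] if is_active]
    if not hits:
        return 0.0
    return 100.0 * len(set(hits)) / len(hits)


# Toy library of 100 compounds; only the first five fall inside the top 5%.
top = [(True, "A"), (True, "A"), (True, "B"), (False, "C"), (True, "D")]
ranked = top + [(False, "Z")] * 95
print(sda_percent(ranked))  # 3 unique scaffolds among 4 retrieved actives
```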

Protocol 2: Construction of Scaffold Hopping Pairs for Model Training

This protocol, used for supervised deep learning models like DeepHop, involves creating a high-quality dataset of matched molecular pairs for model training [12].

- Data Source and Preprocessing: Process a public bioactivity database (e.g., ChEMBL). Filter for a target family of interest (e.g., kinases). Normalize molecules using RDKit (remove salts, neutralize charges).

- Virtual Profiling: Train a robust quantitative structure-activity relationship (QSAR) model (e.g., a multi-task deep neural network) on the bioactivity data to predict pChEMBL values for all compounds accurately.

- Pair Selection with Similarity Constraints: Identify pairs of compounds (X, Y) meeting strict criteria for a successful hop:

  - Bioactivity Improvement: pChEMBL value of compound Y is significantly higher (e.g., ≥ 1 unit) than compound X for a shared protein target Z [12].

  - 2D Dissimilarity: The Tanimoto similarity of their BM scaffold Morgan fingerprints is low (e.g., ≤ 0.6) [12].

  - 3D Similarity: Their shape and pharmacophoric feature similarity (e.g., SC score) is high (e.g., ≥ 0.6) [12].

- Model Training: Use the resulting pairs ((X, Y) | Z) to train a molecule-to-molecule translation model, such as a multimodal transformer, to generate hopped structure Y from input X and target Z.
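The pair selection criteria amount to three threshold checks applied to every candidate pair. A minimal sketch, assuming the pChEMBL values and the 2D and 3D similarity scores have already been computed elsewhere; the function name `is_hop_pair` and its parameter names are hypothetical, and the default thresholds are the example values quoted in the protocol.

```python
def is_hop_pair(pchembl_x, pchembl_y, scaffold_sim_2d, shape_sim_3d,
                min_gain=1.0, max_2d=0.6, min_3d=0.6):
    """True if (X, Y) satisfies all three scaffold-hopping pair criteria."""
    improved = (pchembl_y - pchembl_x) >= min_gain   # bioactivity improvement
    dissimilar_2d = scaffold_sim_2d <= max_2d        # different 2D scaffolds
    similar_3d = shape_sim_3d >= min_3d              # similar shape/pharmacophore
    return improved and dissimilar_2d and similar_3d


print(is_hop_pair(6.0, 7.5, scaffold_sim_2d=0.4, shape_sim_3d=0.8))  # all criteria met
print(is_hop_pair(6.0, 7.5, scaffold_sim_2d=0.7, shape_sim_3d=0.8))  # scaffolds too similar
```

Keeping the thresholds as parameters makes it easy to study how the size and quality of the training set change as the criteria are tightened or relaxed.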

Key Research Findings and Comparative Performance

Table 2: Comparative Performance of Selected Scaffold-Hopping Methods

| Method Name | Descriptor / Approach Type | Key Performance Metric | Reported Result | Reference |
|---|---|---|---|---|
| WHALES | 3D Holistic Descriptors | SDA% in retrospective screening (30,000 compounds, 182 targets) | Outperformed 7 state-of-the-art descriptors in 89% of targets | [10] |
| DeepHop | Multimodal Transformer (3D structure & protein sequence) | Percentage of generated molecules with improved bioactivity, high 3D similarity, & low 2D similarity | ~70% (1.9x higher than other deep learning and rule-based methods) | [12] |
| Group Graph (GIN) | Substructure-level Graph Neural Network | Accuracy in molecular property prediction | Higher accuracy and ~30% faster runtime than atom-level graph models | [11] |

The following workflow diagram synthesizes the key steps of the prospective scaffold-hopping process as demonstrated by WHALES descriptors for discovering novel RXR modulators [10].

Navigating the Chemical Space

Chemical space is a conceptual framework where each point represents a unique molecule, positioned based on its physicochemical properties and structural features. The objective of computational drug discovery is to efficiently navigate this vast, high-dimensional space to locate regions rich in molecules with desirable bioactivity and drug-like properties. Molecular descriptors serve as the coordinates within this space.

The choice of representation profoundly influences the map of chemical space. Fingerprint-based representations create a space where molecules with similar substructures are clustered, while 3D shape-based descriptors like WHALES create a topology where molecules with similar shapes and pharmacophores are neighbors, enabling the identification of structurally diverse but functionally similar compounds—the very definition of a successful scaffold hop [10]. AI-driven generative models, particularly those using robust string representations like SELFIES or graph-based approaches, are now capable of performing a more exhaustive exploration of this space. SELFIES, based on a formal grammar, guarantees that every random string corresponds to a valid molecular graph, making it exceptionally powerful for de novo molecular design using generative AI, genetic algorithms, and combinatorial approaches without generating invalid structures [9].

Table 3: Key Software and Data Resources for Molecular Representation and Scaffold Hopping

| Tool / Resource Name | Type | Primary Function in Research | Relevance to Field |
|---|---|---|---|
| RDKit | Open-Source Cheminformatics Library | Molecule normalization, 2D/3D conformation generation, fingerprint calculation, scaffold fragmentation | Foundational toolkit for preprocessing, descriptor calculation, and model input preparation [12] [11] |
| WHALES Descriptors | Molecular Descriptor Software | Calculation of 3D holistic descriptors for similarity searching | A specialized tool for scaffold hopping, available via published code from research institutions [10] [13] |
| SELFIES | Molecular String Representation | 100% robust string-based representation for molecular generation | Enables random exploration and AI-driven generative models without syntactic or semantic errors [9] |
| ChEMBL | Bioactivity Database | Source of curated, publicly available bioactivity data for training and benchmarking | Provides the ground truth data for constructing scaffold-hopping pairs and validating methods [10] [12] |
| DeepHop Model | Deep Learning Framework (Multimodal Transformer) | Target-aware molecule-to-molecule translation for scaffold hopping | Represents the state-of-the-art in supervised, target-aware scaffold generation [12] |
| Group Graph Representation | Substructure-Level Graph Model | Building interpretable, efficient graph neural networks for property prediction | A modern molecular representation that balances performance, efficiency, and interpretability [11] |

The synergy between advanced molecular descriptors, sophisticated scaffold-hopping algorithms, and a comprehensive understanding of chemical space is driving a paradigm shift in AI-assisted drug discovery. The transition from traditional, hand-crafted fingerprints to data-driven, holistic 3D descriptors and deep learning-optimized graph representations is enhancing our ability to traverse chemical space creatively and efficiently. As evidenced by the methodologies and results presented, these tools are not merely theoretical but are yielding experimentally validated, novel chemotypes. For researchers, the ongoing challenge is to select and develop representations that best capture the complex physical and topological determinants of bioactivity for their specific application, thereby accelerating the discovery of next-generation therapeutics.

The Role of AI in Transitioning from Rule-Based to Data-Driven Representations

The field of molecular sciences is undergoing a profound transformation, moving from traditional, human-engineered representations to sophisticated, data-driven models powered by artificial intelligence (AI). This paradigm shift is revolutionizing how researchers represent, analyze, and design molecular structures for drug discovery and materials science. Rule-based systems have long served as the foundation of computational chemistry, relying on explicit domain knowledge encoded in the form of logical rules, thresholds, or predefined decision trees [14]. These systems offer high interpretability, deterministic behavior, and ease of implementation in stable environments, making them ideal for regulated industries and safety-critical applications [14]. However, they face significant challenges with scalability, adaptability, and performance in complex or evolving contexts where manual rule creation becomes impractical [14].

In contrast, data-driven approaches leverage machine learning (ML) and deep learning (DL) to automatically learn patterns and relationships from vast molecular datasets. These AI-powered methods excel at detecting hidden anomalies, enabling predictive maintenance, and dynamically adapting to new conditions without explicit programming [14]. The integration of AI has been particularly transformative in molecular representation learning, catalyzing a shift from reliance on manually engineered descriptors to the automated extraction of features using deep learning [15]. This transition enables data-driven predictions of molecular properties, inverse design of compounds, and accelerated discovery of chemical and crystalline materials—including organic molecules, inorganic solids, and catalytic systems [15].

Historical Foundations: Rule-Based Molecular Representations

Traditional Approaches and Their Limitations

Traditional molecular representation methods have laid a strong foundation for computational approaches in drug discovery, primarily relying on string-based formats and predefined rules derived from chemical and physical properties [1]. The most prominent rule-based representations include:

Simplified Molecular Input Line Entry System (SMILES): Introduced in 1988, SMILES translates complex molecular structures into linear strings that can be easily processed by computer algorithms [1] [15]. Despite extensions such as CXSMILES and companion pattern languages such as SMARTS, SMILES has inherent limitations in capturing the full complexity of molecular interactions [1].

Molecular Fingerprints: Techniques like extended-connectivity fingerprints (ECFP) encode substructural information as binary strings or numerical vectors, enabling rapid similarity comparisons and virtual screening of large chemical libraries [1]. These representations are computationally efficient and concise, making them valuable for quantitative structure-activity relationship (QSAR) modeling [1].

Molecular Descriptors: These quantify physical or chemical properties of molecules, such as molecular weight, hydrophobicity, or topological indices, providing interpretable features for machine learning models [1].

The advantages and limitations of these rule-based approaches are summarized in Table 1 below.

Table 1: Comparative Analysis of Rule-Based and Data-Driven Molecular Representations

| Feature | Rule-Based Systems | Data-Driven Systems |
|---|---|---|
| Foundation | Explicit domain knowledge, physical laws, expert systems [14] | Machine learning, deep learning, pattern recognition from data [14] |
| Interpretability | High - every decision can be explained by corresponding rules [14] | Variable - often considered "black boxes" with explainability challenges [14] [16] |
| Adaptability | Low - requires manual intervention to modify rules for new scenarios [14] | High - automatically adapts to new data and patterns [14] |
| Data Dependency | Low - works with limited data using prior knowledge [14] | High - requires substantial training datasets [14] [16] |
| Performance in Complex Scenarios | Limited - struggles with multivariate, non-linear relationships [14] | Excellent - excels at detecting complex, hidden patterns [14] |
| Coverage | Limited to predefined rules and scenarios [14] | Broad - can generalize to novel situations [14] |
| Implementation Complexity | Low to moderate in well-understood contexts [14] | High - requires expertise, computational resources, and infrastructure [14] |
| Ideal Use Cases | Regulated industries, safety-critical applications, contexts where transparency is crucial [14] | Complex molecular systems, predictive modeling, exploration of novel chemical spaces [1] |

The Knowledge Acquisition Bottleneck

Rule-based systems face significant scalability challenges as system complexity increases. Managing hundreds of interdependent rules becomes increasingly difficult, and every update requires manual intervention by experts, which risks introducing errors or inconsistencies [14]. This "knowledge acquisition bottleneck", the difficulty of extracting and formalizing tacit knowledge from domain experts, is a fundamental limitation of rule-based approaches in dynamic and complex molecular environments [14].

The Rise of Data-Driven AI Approaches

Graph Neural Networks for Molecular Representation

Graph Neural Networks (GNNs) have emerged as a powerful framework for molecular representation, naturally aligning with the graph structure of molecules, in which atoms are nodes and chemical bonds are edges [17] [16]. Unlike traditional representations that rely on predefined features, GNNs learn directly from molecular topology, capturing both local and global interactions within molecular structures [17]. Several specialized GNN architectures have demonstrated remarkable success in molecular property prediction:

Graph Isomorphism Networks (GIN): Utilize powerful aggregation functions to capture local substructures effectively, though they are typically limited to 2D topologies without spatial knowledge of molecular geometry [17].

Equivariant GNNs (EGNN): Incorporate 3D coordinates into the learning process while preserving Euclidean symmetries (translation, rotation, and reflection), making them particularly valuable for quantum chemistry tasks where geometric conformation significantly influences molecular behavior [17].

Graph Transformers: Models like Graphormer employ global attention mechanisms that enable scalability to large datasets and long-range dependency modeling, even without explicit 3D information [17].
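
The Euclidean symmetries mentioned for equivariant models can be checked numerically on a toy geometry. The sketch below is not an EGNN implementation; it only demonstrates that squared interatomic distances, the kind of geometric quantity such models feed into their messages, are unchanged by rotation and translation. The coordinates are an approximate water-like geometry chosen for illustration, and all function names are hypothetical.

```python
import math


def rotate_z(point, angle):
    """Rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)


def translate(point, shift):
    """Shift a 3D point by a displacement vector."""
    return tuple(p + d for p, d in zip(point, shift))


def squared_distances(coords):
    """Matrix of squared pairwise distances between all points."""
    n = len(coords)
    return [
        [sum((a - b) ** 2 for a, b in zip(coords[i], coords[j])) for j in range(n)]
        for i in range(n)
    ]


# Approximate water-like toy geometry in angstroms (O, H, H).
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = [translate(rotate_z(p, 1.1), (2.0, -3.0, 0.5)) for p in coords]

before = squared_distances(coords)
after = squared_distances(moved)
max_diff = max(abs(a - b) for ra, rb in zip(before, after) for a, b in zip(ra, rb))
print(max_diff < 1e-9)  # distances agree up to floating-point error
```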

Recent benchmarking studies have demonstrated the superior performance of these GNN architectures compared to traditional fingerprint-based machine learning models. As shown in Table 2, each architecture excels in different molecular prediction tasks based on its structural inductive biases.

Table 2: Performance Benchmarking of GNN Architectures on Molecular Property Prediction Tasks

| Model Architecture | log Kow Prediction (MAE) | log Kaw Prediction (MAE) | log Kd Prediction (MAE) | OGB-MolHIV (ROC-AUC) |
|---|---|---|---|---|
| GIN | 0.24 | 0.31 | 0.28 | 0.781 |
| EGNN | 0.21 | 0.25 | 0.22 | 0.793 |
| Graphormer | 0.18 | 0.27 | 0.24 | 0.807 |

Performance data adapted from comparative analysis of GNN architectures on molecular datasets [17]. Lower MAE values indicate better performance for regression tasks; higher ROC-AUC values indicate better performance for classification.

Kolmogorov-Arnold Networks (KANs) and Graph Integration

A recent breakthrough in molecular representation comes from the integration of Kolmogorov-Arnold Networks (KANs) with graph neural networks [18]. Grounded in the Kolmogorov-Arnold representation theorem, KANs adopt learnable univariate functions on edges instead of fixed activation functions on nodes, enabling more accurate and interpretable modeling of complex functions [18]. The innovative KA-GNN framework integrates Fourier-based KAN modules into all three core components of GNNs: node embedding, message passing, and readout [18].

The Fourier-based formulation enables effective capture of both low-frequency and high-frequency structural patterns in graphs, enhancing the expressiveness of feature embedding and message aggregation [18]. Theoretical analysis demonstrates that this Fourier-KAN architecture possesses strong approximation capabilities, providing rigorous mathematical foundations for its expressive power [18]. Experimental results across seven molecular benchmarks show that KA-GNNs consistently outperform conventional GNNs in both prediction accuracy and computational efficiency, while also offering improved interpretability by highlighting chemically meaningful substructures [18].

Diagram 1: KA-GNN Architecture integrating Kolmogorov-Arnold Networks with Graph Neural Networks for molecular property prediction. The Fourier-based KAN layer enhances all three core GNN components [18].

Experimental Protocols and Methodologies

Benchmarking GNN Architectures for Molecular Property Prediction

Comprehensive evaluation of GNN architectures follows standardized experimental protocols to ensure fair comparison and reproducibility. The typical workflow involves:

Dataset Preparation and Preprocessing:

- Selection of diverse molecular datasets representing different prediction tasks (QM9 for quantum properties, ZINC for drug-like molecules, OGB-MolHIV for bioactivity classification) [17]

- Molecular graph construction with atoms as nodes and bonds as edges

- Node feature normalization to a 0-1 range using atom types

- Dataset splitting with 80% for training and 20% for testing [17]
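
The preprocessing steps above can be sketched with plain Python data structures. This is an illustrative reading rather than the benchmark's actual pipeline: it interprets "normalization to a 0-1 range using atom types" as one-hot encoding, which is an assumption, and the three-element vocabulary `ATOM_TYPES` and the function names are hypothetical.

```python
import random

ATOM_TYPES = ["C", "N", "O"]  # illustrative vocabulary, not a full element list


def one_hot(symbol):
    """Encode an element symbol as a 0/1 vector over ATOM_TYPES."""
    return [1.0 if symbol == t else 0.0 for t in ATOM_TYPES]


def build_graph(atoms, bonds):
    """Build a molecular graph: atoms are nodes, bonds are undirected edges."""
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)
    return {"x": [one_hot(a) for a in atoms], "neighbors": neighbors}


def train_test_split(items, train_fraction=0.8, seed=0):
    """Shuffle with a fixed seed and cut at the requested fraction."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(round(train_fraction * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]


# Ethanol, heavy atoms only: C-C-O.
ethanol = build_graph(["C", "C", "O"], [(0, 1), (1, 2)])
train, test = train_test_split(range(10))
print(ethanol["neighbors"], len(train), len(test))
```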

Model Training Configuration:

- Implementation using deep learning frameworks (PyTorch or TensorFlow)

- Optimization with Adam optimizer and appropriate learning rate scheduling

- Loss function selection based on task type (Mean Squared Error for regression, Cross-Entropy for classification)

- Regularization techniques including dropout and weight decay to prevent overfitting

- Early stopping based on validation performance, which requires holding out a validation subset from the training portion

Evaluation Metrics:

- Regression tasks: Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE)

- Classification tasks: ROC-AUC (Area Under the Receiver Operating Characteristic Curve)
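
For reference, the three metrics can be written out in a few lines. This is a minimal sketch for small lists; the function names are illustrative, and ROC-AUC is computed through its pairwise-ranking interpretation (the probability that a randomly chosen positive is scored above a randomly chosen negative), which is quadratic in dataset size and therefore only suitable for illustration.

```python
import math


def mae(y_true, y_pred):
    """Mean Absolute Error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root Mean Squared Error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def roc_auc(labels, scores):
    """ROC-AUC as the fraction of positive/negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(mae([1.0, 2.0, 3.0], [1.5, 2.0, 2.0]))
print(roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1]))
```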

KA-GNN Implementation Framework

The implementation of Kolmogorov-Arnold Graph Neural Networks requires specific methodological considerations:

Fourier-KAN Layer Construction:

- Replacement of standard MLP transformations with Fourier-based KAN modules

- Implementation of learnable univariate functions using Fourier series basis

- Configuration of harmonic components for optimal frequency pattern capture
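
A Fourier-based KAN layer can be sketched as follows. This is a generic formulation under stated assumptions, not the KA-GNN reference code: each input-output connection carries a learnable univariate function of the form φ(x) = Σ_k [a_k cos(kx) + b_k sin(kx)], and each output sums these functions over the inputs. The class name `FourierKANLayer`, the random initialization, and the default of three harmonics are hypothetical choices, and only the forward pass is shown.

```python
import math
import random


def _random_tensor(rng, out_dim, in_dim, n, scale):
    """Nested list of shape (out_dim, in_dim, n) with Gaussian entries."""
    return [
        [[rng.gauss(0.0, scale) for _ in range(n)] for _ in range(in_dim)]
        for _ in range(out_dim)
    ]


class FourierKANLayer:
    """Forward pass of a layer whose edges carry truncated Fourier series."""

    def __init__(self, in_dim, out_dim, num_harmonics=3, seed=0):
        rng = random.Random(seed)
        scale = 1.0 / math.sqrt(in_dim * num_harmonics)
        self.num_harmonics = num_harmonics
        # Coefficients that would be learned during training.
        self.cos_coef = _random_tensor(rng, out_dim, in_dim, num_harmonics, scale)
        self.sin_coef = _random_tensor(rng, out_dim, in_dim, num_harmonics, scale)

    def forward(self, x):
        """Map an input vector of length in_dim to a vector of length out_dim."""
        out = []
        for a_j, b_j in zip(self.cos_coef, self.sin_coef):
            total = 0.0
            for x_i, a_ji, b_ji in zip(x, a_j, b_j):
                for k in range(1, self.num_harmonics + 1):
                    total += a_ji[k - 1] * math.cos(k * x_i)
                    total += b_ji[k - 1] * math.sin(k * x_i)
            out.append(total)
        return out


layer = FourierKANLayer(in_dim=4, out_dim=2)
print(layer.forward([0.1, -0.3, 0.7, 1.2]))
```

Raising `num_harmonics` adds higher-frequency terms to each edge function, which is the knob the "harmonic components" item above refers to.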

Architectural Variants:

- KA-Graph Convolutional Networks (KA-GCN): Integration of KAN modules into GCN backbones for node embedding and feature updating via residual KANs [18]

- KA-Graph Attention Networks (KA-GAT): Incorporation of edge embeddings initialized using KAN layers, with attention mechanisms enhanced by KAN transformations [18]

Experimental Validation:

- Benchmarking against conventional GNNs across multiple molecular datasets

- Assessment of computational efficiency through parameter counts and training time measurements

- Interpretability analysis via attention visualization and important substructure identification

Successful implementation of AI-driven molecular representation requires access to specialized computational resources, software frameworks, and datasets. Table 3 outlines the essential "research reagents" for experiments in this field.

Table 3: Essential Research Reagents and Resources for AI-Driven Molecular Representation

| Resource Category | Specific Tools & Platforms | Function/Purpose |
|---|---|---|
| Deep Learning Frameworks | PyTorch, TensorFlow, JAX | Model implementation, training, and experimentation [18] [17] |
| Molecular Datasets | QM9, ZINC, OGB-MolHIV, MoleculeNet | Benchmarking and evaluation of molecular property prediction models [17] |
| Cheminformatics Libraries | RDKit, OpenBabel | Molecular graph construction, feature computation, and preprocessing [17] |
| GNN Implementation Libraries | PyTorch Geometric, Deep Graph Library | Prebuilt GNN layers and graph operations for rapid prototyping [18] [17] |
| Specialized Architectures | KA-GNN, Graphormer, EGNN implementations | Advanced model architectures for specific molecular tasks [18] [17] |
| High-Performance Computing | GPU clusters (NVIDIA A100, H100), Cloud computing platforms (AWS, Azure) | Training complex models on large molecular datasets [19] |
| Visualization Tools | Matplotlib, Seaborn, Plotly | Performance analysis and model interpretability visualization [17] |

Diagram 2: Experimental workflow for AI-driven molecular property prediction, encompassing data preparation, model training, and deployment phases.

Future Directions and Emerging Trends

The transition from rule-based to data-driven molecular representations continues to evolve with several promising research directions:

Multi-Modal Molecular Representation: Future frameworks will increasingly integrate multiple representation modalities, including molecular graphs, SMILES strings, 3D geometric information, and quantum mechanical properties [15]. This hybrid approach aims to generate more comprehensive and nuanced molecular representations that capture complex molecular interactions more effectively [15].

Self-Supervised Learning and Pretraining: Techniques that leverage unlabeled molecular data through self-supervised learning (SSL) promise to unearth deeper insights from vast unannotated molecular databases [15]. Approaches like knowledge-guided pre-training of graph transformers integrate domain-specific knowledge to produce robust molecular representations that significantly enhance drug discovery processes [15].

3D-Aware and Equivariant Models: The integration of 3D molecular structures within representation learning frameworks represents a significant advancement beyond traditional 2D graph representations [17] [15]. Methods like 3D Infomax utilize 3D geometries to enhance the predictive performance of GNNs, improving accuracy for geometry-sensitive molecular properties [15].

Explainability and Interpretability: As AI models become more complex, developing methods to interpret their predictions becomes increasingly important for gaining trust from domain experts [18] [16]. Techniques that highlight chemically meaningful substructures and provide transparent reasoning will be essential for widespread adoption in critical applications like drug discovery [18].

The convergence of these advanced AI approaches with traditional computational methods creates a powerful synergistic framework that leverages the strengths of both paradigms. This integration enables researchers to navigate the vast chemical space more efficiently while maintaining the interpretability and reliability required for scientific discovery and therapeutic development [16].

Advanced Architectures and Real-World Applications in Biomedicine

In AI-driven drug discovery, representing a molecule's structure in a format understandable to computers is a foundational challenge. Molecular graph representations have emerged as a powerful solution, explicitly modeling atoms as nodes and bonds as edges [15]. This structure provides a more natural and information-rich encoding of molecular connectivity compared to traditional string-based formats like SMILES (Simplified Molecular-Input Line-Entry System) [1] [15]. The shift from manual descriptor engineering to automated, deep learning-based feature extraction represents a paradigm shift in computational chemistry and materials science, enabling more accurate predictions of molecular properties and the design of novel compounds [15].

Graph Neural Networks (GNNs) form the cornerstone of modern molecular machine learning, capable of directly processing these graph-structured data. Among various GNN architectures, Graph Isomorphism Networks (GIN) are particularly significant due to their high expressive power in distinguishing graph structures, while Variational Autoencoders (VAEs) provide a probabilistic framework for generating novel molecular structures [20] [11]. This technical guide explores these core architectures, their integration, and their practical applications in advancing AI research for drug discovery.

Graph Neural Networks (GNNs)

Architectural Foundations

GNNs are deep learning architectures specifically designed to operate on graph-structured data. They function through a message-passing mechanism where nodes aggregate feature information from their local neighbors, allowing them to capture the complex relational dependencies inherent in molecular structures [21]. In molecular graphs, nodes typically represent atoms with features such as atom type, charge, and hybridization state, while edges represent chemical bonds with features like bond type and conjugation [22].

A crucial property of GNNs in molecular applications is their permutation symmetry: message-passing layers are permutation-equivariant (reordering the nodes reorders the node embeddings in the same way), and graph-level readouts such as sum or mean pooling are permutation-invariant, so the predicted property does not depend on how the atoms are numbered [21]. GNNs also exhibit stability to graph deformations and transferability across scales, meaning that models trained on smaller graphs can maintain performance when applied to larger molecular systems [21].
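
The message-passing mechanism and its behaviour under node reordering can be illustrated on a three-atom toy graph. This is a bare sketch with sum aggregation and no learned weights; the function names and the two-dimensional features are hypothetical.

```python
def message_passing_step(features, neighbors):
    """One round of sum aggregation: each node adds its neighbors' features to its own."""
    updated = []
    for v, h_v in enumerate(features):
        agg = list(h_v)
        for u in neighbors[v]:
            agg = [a + b for a, b in zip(agg, features[u])]
        updated.append(agg)
    return updated


def sum_readout(features):
    """Graph-level embedding: sum node features column by column."""
    return [sum(col) for col in zip(*features)]


def permute_graph(features, neighbors, perm):
    """Relabel nodes so that new index i holds old node perm[i]."""
    old_to_new = {old: new for new, old in enumerate(perm)}
    new_features = [features[old] for old in perm]
    new_neighbors = [[old_to_new[u] for u in neighbors[old]] for old in perm]
    return new_features, new_neighbors


# Toy C-C-O chain; feature = [is_carbon, is_oxygen].
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
neighbors = [[1], [0, 2], [1]]
perm = [2, 0, 1]

h = message_passing_step(features, neighbors)
pf, pn = permute_graph(features, neighbors, perm)
h_perm = message_passing_step(pf, pn)

print(h_perm == [h[old] for old in perm])    # node outputs are reordered, not changed
print(sum_readout(h) == sum_readout(h_perm))  # graph-level output is identical
```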

Key Variants and Innovations

Several GNN variants have been developed with distinct computational mechanisms:

- Graph Convolutional Networks (GCNs) apply convolutional operations to graph data by performing spectral analysis of graphs or using spatial neighborhood aggregation [18].

- Graph Attention Networks (GATs) incorporate attention mechanisms that assign different importance weights to neighbors during message aggregation [18].

- Kolmogorov-Arnold GNNs (KA-GNNs) represent a recent innovation that integrates Kolmogorov-Arnold networks (KANs) into GNN components, replacing traditional multilayer perceptrons (MLPs) with learnable univariate functions [18]. KA-GNNs using Fourier-series-based functions have demonstrated enhanced capability to capture both low-frequency and high-frequency structural patterns in molecular graphs [18].
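
The attention-based weighting used by GATs reduces, at its core, to a softmax over per-neighbor scores followed by a weighted sum. The sketch below shows only that step: in a real GAT the scores are produced by learned parameters, whereas here they are supplied directly, and the function names are hypothetical.

```python
import math


def attention_weights(scores):
    """Softmax over raw neighbor scores (shifted by the max for numerical stability)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(neighbor_features, scores):
    """Weighted sum of neighbor feature vectors using softmax attention weights."""
    weights = attention_weights(scores)
    dim = len(neighbor_features[0])
    return [
        sum(w * f[d] for w, f in zip(weights, neighbor_features))
        for d in range(dim)
    ]


# Two neighbors; the second gets a higher score and so a larger weight.
print(attention_weights([0.0, 2.0]))
print(attend([[1.0, 0.0], [0.0, 1.0]], [0.0, 2.0]))
```

With equal scores the weights are uniform and the result is the plain mean of the neighbor features, which is the behaviour attention generalizes.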

Table 1: Performance Comparison of GNN Architectures on Molecular Property Prediction

| Architecture | Key Innovation | Expressivity | Molecular Benchmark Performance | Computational Efficiency |
|---|---|---|---|---|
| GCN [18] | Spectral graph convolutions | Moderate | Strong baseline | High |
| GAT [18] | Attention-based neighbor weighting | Moderate | Improved on complex targets | Moderate |
| GIN [11] | As powerful as WL test | High (Theoretical upper bound) | Superior on structure-sensitive tasks | High |
| KA-GNN [18] | Fourier-based KAN modules | Very High | State-of-the-art across multiple benchmarks | High (30% runtime reduction reported) |

Graph Isomorphism Networks (GIN)

Theoretical Foundation and Expressivity

The Graph Isomorphism Network is a particularly influential GNN architecture distinguished by its theoretical expressivity. GIN is designed to be as powerful as the Weisfeiler-Lehman (WL) graph isomorphism test in distinguishing non-isomorphic graphs [11]. This theoretical foundation makes GIN particularly suitable for molecular applications where subtle structural differences can significantly impact chemical properties.

The key differentiator of GIN lies in its injective aggregation mechanism during message passing. While standard GNNs may struggle to capture subtle structural differences, GIN's architecture ensures distinct node representations for structurally different neighborhoods through a mathematically provable framework [11]. This capability is crucial for molecular tasks where functional group arrangements or stereochemistry dramatically influence bioactivity.
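
A small numerical example shows why the choice of aggregator matters. In the standard GIN formulation, a node is updated as h_v' = MLP((1 + ε) · h_v + Σ_u h_u), with the sum running over the neighbors u of v. The sketch below uses scalar features and an identity function in place of the MLP, both simplifications made for illustration; the function names are hypothetical.

```python
def aggregate(neighbor_features, how):
    """Combine a multiset of scalar neighbor features with the chosen operator."""
    if how == "sum":
        return sum(neighbor_features)
    if how == "mean":
        return sum(neighbor_features) / len(neighbor_features)
    if how == "max":
        return max(neighbor_features)
    raise ValueError(f"unknown aggregator: {how}")


def gin_update(h_v, neighbor_features, eps=0.0, mlp=lambda z: z):
    """GIN-style update: MLP((1 + eps) * h_v + sum of neighbor features)."""
    return mlp((1.0 + eps) * h_v + sum(neighbor_features))


# Two different neighborhoods built from identical atoms.
two_neighbors = [1.0, 1.0]
three_neighbors = [1.0, 1.0, 1.0]

for how in ("mean", "max", "sum"):
    same = aggregate(two_neighbors, how) == aggregate(three_neighbors, how)
    print(how, "cannot distinguish" if same else "distinguishes")
```

Mean and max collapse the two neighborhoods to the same value, while the sum keeps them apart; this is the intuition behind GIN's sum-based aggregation.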

Molecular Applications and Performance

GIN has demonstrated exceptional performance across various molecular learning tasks. In molecular property prediction, GIN-based models consistently achieve state-of-the-art results by effectively capturing the relationship between molecular structure and function [11]. For drug-drug interaction prediction, GIN's ability to model complex relational patterns enables accurate identification of potential interactions between pharmaceutical compounds [11] [22].

Recent advancements have explored specialized molecular representations optimized for GIN architectures. The group graph representation transforms traditional atom-level graphs into substructure-level graphs where nodes represent chemical functional groups or pharmacophores [11]. This approach has shown particular promise, with GIN models using group graphs demonstrating approximately 30% reduction in runtime while maintaining or improving predictive accuracy compared to atom-level graph representations [11].
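
The idea of collapsing an atom-level graph into a substructure-level graph can be sketched as below. This is not the group graph algorithm from [11], which defines its own fragmentation rules; here the atom-to-group assignment is supplied by hand, and the function name `to_group_graph` and the group labels are hypothetical.

```python
def to_group_graph(bonds, atom_to_group):
    """Collapse an atom-level graph into a substructure-level graph.

    bonds: list of (i, j) atom index pairs.
    atom_to_group: dict mapping each atom index to a group label.
    Returns (sorted group labels, sorted edges between distinct groups).
    """
    group_edges = set()
    for i, j in bonds:
        gi, gj = atom_to_group[i], atom_to_group[j]
        if gi != gj:  # bonds inside a group disappear
            group_edges.add((min(gi, gj), max(gi, gj)))
    return sorted(set(atom_to_group.values())), sorted(group_edges)


# Toluene-like toy: atoms 0-5 form the ring, atom 6 is the methyl carbon.
bonds = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (0, 6)]
atom_to_group = {i: "ring" for i in range(6)}
atom_to_group[6] = "methyl"

nodes, edges = to_group_graph(bonds, atom_to_group)
print(nodes, edges)
```

Seven atoms and seven bonds shrink to two nodes and one edge, which hints at where the runtime savings of substructure-level graphs come from.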

Variational Autoencoders (VAEs) for Molecular Graphs

Architectural Principles

Variational Autoencoders provide a probabilistic framework for learning latent representations of molecular graphs. Unlike standard autoencoders that learn deterministic encodings, VAEs learn the parameters of a probability distribution representing the input data in a compressed latent space [20] [15]. This approach enables generative modeling by sampling from the learned distribution to produce novel molecular structures.

The VAE architecture consists of an encoder network that maps input molecules to a latent distribution, and a decoder network that reconstructs molecules from points in the latent space. The training objective combines reconstruction loss with a regularization term that encourages the learned distribution to match a prior distribution, typically a standard Gaussian [20]. For molecular graphs, both encoder and decoder are typically implemented using GNNs to handle the graph-structured nature of the data.
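
The training objective can be written out for a diagonal Gaussian posterior and a standard Gaussian prior, for which the KL term has the closed form KL = -0.5 · Σ (1 + log σ² - μ² - σ²). The sketch below is framework-free and covers only the loss arithmetic and the reparameterized sampling step; the reconstruction loss is assumed to be computed by the decoder elsewhere, and the function names and the `beta` weight are illustrative.

```python
import math
import random


def reparameterize(mu, logvar, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), where sigma = exp(0.5 * logvar)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


def kl_to_standard_normal(mu, logvar):
    """Closed-form KL divergence from N(mu, diag(exp(logvar))) to N(0, I)."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def vae_loss(reconstruction_loss, mu, logvar, beta=1.0):
    """Reconstruction term plus beta-weighted KL regularizer."""
    return reconstruction_loss + beta * kl_to_standard_normal(mu, logvar)


rng = random.Random(0)
mu, logvar = [0.3, -0.1], [0.0, -0.5]
z = reparameterize(mu, logvar, rng)
print(z, vae_loss(2.0, mu, logvar))
```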

Advanced VAE Frameworks for Molecular Design

Recent research has developed specialized VAE architectures addressing challenges in molecular generation:

- Transformer Graph VAE (TGVAE) combines transformers, GNNs, and VAEs to capture complex structural relationships more effectively than string-based models [20] [23]. TGVAE addresses common issues like over-smoothing in GNN training and posterior collapse in VAEs, resulting in more robust training and generation of chemically valid, diverse molecular structures [20].

- Junction Tree VAEs decompose molecules into substructure junction trees, enabling more chemically meaningful generation by operating at the substructure level rather than individual atoms [11].

- Hierarchical VAEs introduce additional hierarchical structure to the latent space, allowing control over molecular generation at multiple scales from atomic arrangements to functional group compositions [11].

Table 2: Comparative Analysis of Molecular VAE Architectures

| Architecture | Representation | Key Innovation | Generation Quality | Diversity |
|---|---|---|---|---|
| Standard Graph VAE [15] | Molecular graph | Probabilistic latent space | Moderate | Moderate |
| Junction Tree VAE [11] | Substructure tree | Hierarchical generation | High validity | Moderate |
| Hierarchical VAE [11] | Multi-scale graph | Multi-level latent space | High | High |
| Transformer Graph VAE [20] | Graph + Sequence | Hybrid architecture | High validity | High |

Integrated Architectures and Experimental Frameworks

Hybrid Model Architectures

The most advanced molecular AI systems integrate multiple architectural paradigms to leverage their complementary strengths:

- TGVAE exemplifies this approach by combining GNNs for structural feature extraction, transformers for sequence modeling, and VAEs for probabilistic generation [20]. This integration enables the model to capture both local atomic interactions and global molecular patterns while maintaining the benefits of latent space exploration.

- Kolmogorov-Arnold GNNs integrate Fourier-based KAN modules into GNN message passing, node embedding, and readout components [18]. This enhancement provides stronger approximation capabilities and improved interpretability by highlighting chemically meaningful substructures through the learned activation functions.

- Multi-modal fusion architectures combine graph representations with other molecular encodings such as SMILES strings, molecular fingerprints, and 3D structural information to create more comprehensive molecular representations [15] [22].

Experimental Protocols and Methodologies

Benchmarking Molecular Representation Learning

Standardized experimental protocols are essential for evaluating molecular representation learning approaches. Key methodological considerations include:

- Dataset Selection and Splitting: Established molecular benchmarks cover diverse chemical properties including quantum mechanical characteristics, physicochemical properties, and biological activity [18] [11]. Appropriate dataset splitting strategies (random, scaffold-based, or time-based) are crucial for assessing generalization capabilities [22].

- Evaluation Metrics: Comprehensive evaluation should include multiple metrics: prediction accuracy (MAE, RMSE, ROC-AUC), computational efficiency (training/inference time, memory usage), and generative performance (validity, uniqueness, novelty, diversity) [18] [20].

- Baseline Comparisons: Rigorous evaluation requires comparison against established baselines including traditional molecular fingerprints, standard GNN architectures, and state-of-the-art methods from recent literature [18] [11].
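
Of the splitting strategies listed above, the scaffold-based split is the least obvious to implement. One common greedy variant is sketched below, assuming scaffold keys have already been computed for each molecule (for example, as scaffold SMILES from a cheminformatics toolkit). The function name, the toy scaffold labels, and the largest-group-first heuristic are illustrative choices rather than a prescribed protocol.

```python
from collections import defaultdict


def scaffold_split(scaffold_of, train_fraction=0.8):
    """Split molecule ids so that no scaffold appears in both train and test.

    scaffold_of: dict mapping molecule id -> scaffold key (precomputed).
    Groups are assigned greedily, largest first, to train while they fit.
    """
    groups = defaultdict(list)
    for mol_id, scaffold in scaffold_of.items():
        groups[scaffold].append(mol_id)

    # Largest groups first; ties broken by scaffold key for determinism.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    train_budget = train_fraction * len(scaffold_of)
    train, test = [], []
    for _, members in ordered:
        if len(train) + len(members) <= train_budget:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Toy data: scaffold names stand in for real scaffold keys.
scaffold_of = {
    "m1": "benzene", "m2": "benzene", "m3": "benzene",
    "m4": "pyridine", "m5": "pyridine",
    "m6": "indole", "m7": "indole",
    "m8": "furan", "m9": "furan",
    "m10": "thiophene",
}
train_ids, test_ids = scaffold_split(scaffold_of)
print(len(train_ids), sorted(test_ids))
```

Because whole scaffold groups move together, the test set contains only chemotypes the model never saw during training, which is a stricter check on generalization than a random split.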

Model Training and Optimization

Effective training of molecular graph models requires specialized techniques:

- Addressing Oversmoothing: Deep GNNs suffer from oversmoothing, in which node representations become increasingly similar, and eventually indistinguishable, as more message-passing layers are stacked. Solutions include residual connections, dense connections, and regularization techniques [20].

- Preventing Posterior Collapse: In VAEs, posterior collapse occurs when the latent space fails to learn meaningful representations. Approaches include KL annealing, modifying the training objective, and using more expressive decoder networks [20] [23].

- Self-Supervised Pretraining: Leveraging unlabeled molecular data through pretext tasks such as masked component prediction or contrastive learning significantly improves downstream performance on molecular property prediction tasks [15].
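
Two of the techniques above are simple enough to show directly: a linear KL annealing schedule and a residual connection. The sketch is generic and not taken from TGVAE; the function names, the linear warm-up shape, and the step counts are assumptions made for illustration.

```python
def kl_weight(step, warmup_steps, max_weight=1.0):
    """Linear KL annealing: ramp the KL weight from 0 to max_weight over warmup_steps."""
    if warmup_steps <= 0:
        return max_weight
    return max_weight * min(1.0, step / warmup_steps)


def residual_update(h, transformed):
    """Residual connection: add the layer output back onto its input."""
    return [a + b for a, b in zip(h, transformed)]


# The KL term starts switched off and is phased in during early training.
for step in (0, 50, 100, 200):
    print(step, kl_weight(step, warmup_steps=100))

print(residual_update([1.0, 2.0], [0.5, -1.0]))
```

Keeping the KL weight small early on lets the decoder learn to use the latent code before the regularizer pulls the posterior toward the prior, while the residual path preserves each node's own signal as layers are stacked.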

Visualization of Integrated Architecture

Diagram: Molecular generation with Transformer Graph VAE, illustrating the information flow in the hybrid architecture.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Molecular Graph Research

| Tool/Category | Function | Example Implementations |
|---|---|---|
| Graph Neural Network Frameworks | Implementing GNN architectures | PyTorch Geometric, Deep Graph Library (DGL) |
| Molecular Representation Tools | Converting molecules to graph formats | RDKit, OpenBabel |
| Chemical Databases | Sources of molecular structures and properties | PubChem, ChEMBL, ZINC |
| Benchmark Datasets | Standardized evaluation datasets | MoleculeNet, TDC (Therapeutic Data Commons) |
| Specialized Architectures | Reference implementations of advanced models | GraphGPS, GNoME, KA-GNN |
| Analysis and Visualization | Interpreting model predictions and results | ChemPlot, GNNExplainer, Subgraph attention visualization |

Future Directions and Challenges

Despite significant advances, molecular graph representation learning faces several important challenges. Generalization to out-of-distribution compounds remains difficult, with models often struggling when encountering scaffolds different from those in the training data [22]. Improving interpretability is crucial for building trust in AI-driven discoveries and providing meaningful insights to chemists [18] [22]. Data scarcity for specific property endpoints limits model performance, necessitating innovative approaches such as transfer learning and multi-task learning [15].

Promising research directions include 3D-aware graph representations that incorporate spatial molecular geometry [15], physics-informed neural networks that embed fundamental physical principles [15], and cross-modal learning that integrates diverse molecular representations including graphs, sequences, and structural fingerprints [15] [22]. As these architectures continue to evolve, they will further accelerate the discovery of novel therapeutic compounds and materials with tailored properties.

Translating molecular structures into a computer-readable format is a cornerstone of modern computational chemistry and drug discovery. Molecular representations serve as the foundational input for artificial intelligence (AI) models and significantly influence their performance in predicting molecular properties, designing new drugs, and optimizing lead compounds [1]. Atom-level representations, such as the Simplified Molecular-Input Line-Entry System (SMILES) and atom graphs, have been the dominant workhorses, but they often struggle to explicitly capture important chemical substructures like functional groups or pharmacophores. This limitation can produce confusing interpretations in quantitative structure-activity relationship (QSAR) studies, and explanations derived from such representations may fail to reflect what an explainable AI model has actually learned [11].

This whitepaper explores the advancement beyond atom graphs to substructure-level representations, with a particular focus on the novel "group graph" methodology. Framed within a broader thesis on molecular graphs for AI research, we detail how representing molecules as interconnected substructures—rather than as individual atoms—offers enhanced performance, efficiency, and interpretability for AI-driven tasks in scientific research and drug development [11].

The Limitation of Atom-Level and Classical Substructure Representations

Traditional molecular representation methods can be broadly categorized into string-based and graph-based approaches. SMILES is a prime example of a string-based, atom-level representation. While compact and human-readable, SMILES has a complex grammar, and generative models trained on it often produce a high proportion of invalid strings [9]. Furthermore, explanations built on SMILES-based representations can fail to reflect the parameters the model has learned, which makes them unreliable for interpretability [11].

The atom graph representation overcomes some of these issues by providing a unique and unambiguous representation of molecular structure, where atoms are nodes and bonds are edges [11]. However, like SMILES, it operates at the atomic level, which can obscure the higher-order chemical motifs that are critical to a chemist's understanding of molecular properties and interactions.
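To make the atom graph concrete, here is a minimal sketch that encodes a small molecule as a node list plus a symmetric, bond-order-weighted adjacency matrix. The molecule (the heavy atoms of acetic acid) is written out by hand and the helper name `atom_graph` is hypothetical; in practice a toolkit such as RDKit would supply the atoms and bonds.

```python
from typing import List, Tuple


def atom_graph(atoms: List[str], bonds: List[Tuple[int, int, float]]):
    """Return (node labels, adjacency matrix) for an undirected atom graph.

    Each bond is (atom_index_a, atom_index_b, bond_order).
    """
    n = len(atoms)
    adjacency = [[0.0] * n for _ in range(n)]
    for i, j, order in bonds:
        # Bonds are undirected, so the matrix is filled symmetrically.
        adjacency[i][j] = order
        adjacency[j][i] = order
    return atoms, adjacency


# Heavy atoms of acetic acid, CC(=O)O, indexed left to right (toy input).
atoms = ["C", "C", "O", "O"]
bonds = [(0, 1, 1.0), (1, 2, 2.0), (1, 3, 1.0)]

nodes, adjacency = atom_graph(atoms, bonds)
for label, row in zip(nodes, adjacency):
    print(label, row)
```

Every node here is a single atom, which is why a carboxylic acid shows up only implicitly as a pattern of three neighboring nodes rather than as one named unit.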

Classical substructure-level fingerprints, such as the Extended-Connectivity Fingerprints (ECFP), bridge molecular substructure characteristics with global features but typically do not consider the connections between substructures [11]. While methods like the Substructural Connectivity Fingerprint (SCFP) have demonstrated that adding substructural connections can enhance predictive performance [11], they often lose finer-grained structural information retained in the atom graph. Other substructure graph constructions, such as the substructure junction tree from JTVAE or the functional groups (FGS) graph, have been shown to perform worse than the atom graph in property prediction on their own, indicating a loss of essential molecular structural information [11].

Table 1: Comparison of Molecular Representation Methods

| Representation Type | Examples | Key Advantages | Key Limitations |
|---|---|---|---|
| String-Based (Atom-Level) | SMILES, SELFIES [9] | Compact, human-readable, simple to use. | Complex grammar; high invalid generation rate for SMILES (SELFIES is designed so that every string decodes to a valid molecule); poor interpretability. |
| Atom Graph | Molecular Graph | Unambiguous structure; good performance in property prediction. | Obscures important substructures; can be confusing for QSAR. |
| Substructure Fingerprint | ECFP, MACCS | Encodes important substructures; good for similarity search. | Loses structural connectivity information. |
| Advanced Substructure Graph | Junction Tree (JTVAE), FGS Graph | Provides local structural context. | Can perform worse than atom graph; potential information loss. |
| Group Graph | Group Graph (This work) | Retains structural info with minimal loss; high interpretability; efficient. | Relies on predefined fragmentation rules. |

Group Graph: A Novel Substructure-Level Representation

Conceptual Framework and Definition

The group graph is a novel substructure-level molecular representation designed to simultaneously represent molecular local characteristics and global features with minimal information loss [11]. Its core innovation lies in decomposing a molecule into meaningful, non-overlapping chemical substructures, which are then treated as nodes in a new graph. The edges in this graph represent the linkages between these substructures.

This approach offers several conceptual advantages. First, the substructures reflect the diversity and consistency of different molecular datasets, providing a tool for dataset analysis. Second, because all substructures are linked by single bonds and do not share atoms, the group graph holds potential for molecular generation tasks. Finally, like an atom graph, a group graph can be encoded as a node table and adjacency matrix, making it easily adaptable to existing graph-based AI models [11].

Construction Methodology: A Three-Step Protocol

The construction of a group graph follows a systematic, three-step protocol: group matching, substructure extraction, and substructure linking.

Step 1: Group Matching

The process begins by identifying all atoms belonging to "active groups" within the molecule using the open-source cheminformatics package RDKit.

- Aromatic Ring Identification: All aromatic atoms bonded to each other are grouped together as aromatic ring substructures due to their distinctive effects on molecular properties [11].

- Broken Functional Group Matching: Traditional functional groups (e.g., ester, amine) are broken down into smaller units: charged atoms, halogens, and small groups containing only double or triple bonds. For instance, an ester is decomposed into a carbonyl group and an oxygen group. The atom IDs of these broken functional groups are obtained via pattern matching [11].

- Fatty Carbon Grouping: The remaining atoms not assigned to an active group are clustered. Bonded atoms from these remaining nonactive groups are grouped together as fatty carbon chains (e.g., C, CC, CC(C)C) [11].

The output of this step is a complete list of all atom IDs assigned to specific substructures.
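The grouping logic of Step 1 can be sketched in pure Python on a hand-encoded molecule. This is an illustrative approximation, not the published implementation: the aromatic flags and functional-group matches are supplied by hand here (in the described method they come from RDKit aromaticity detection and pattern matching), and the helper names and the rule of skipping overlapping matches are assumptions.

```python
from typing import Dict, Iterable, List, Set, Tuple

Bond = Tuple[int, int]


def connected_components(atom_ids: Set[int], bonds: List[Bond]) -> List[List[int]]:
    """Split atom_ids into components joined by bonds lying entirely inside atom_ids."""
    neighbors: Dict[int, Set[int]] = {a: set() for a in atom_ids}
    for i, j in bonds:
        if i in atom_ids and j in atom_ids:
            neighbors[i].add(j)
            neighbors[j].add(i)
    seen: Set[int] = set()
    components: List[List[int]] = []
    for start in sorted(atom_ids):
        if start in seen:
            continue
        seen.add(start)
        stack, component = [start], []
        while stack:  # depth-first traversal of one component
            atom = stack.pop()
            component.append(atom)
            for nxt in neighbors[atom]:
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        components.append(sorted(component))
    return components


def match_groups(n_atoms: int, bonds: List[Bond], aromatic: Set[int],
                 functional_matches: Iterable[Set[int]]) -> List[Tuple[str, List[int]]]:
    """Assign every atom to exactly one group: aromatic, functional, or fatty carbon."""
    groups = [("aromatic", c) for c in connected_components(set(aromatic), bonds)]
    assigned = set(aromatic)
    for match in functional_matches:
        if assigned.isdisjoint(match):  # assumption: overlapping matches are skipped
            groups.append(("functional", sorted(match)))
            assigned.update(match)
    remaining = set(range(n_atoms)) - assigned
    groups += [("fatty_carbon", c) for c in connected_components(remaining, bonds)]
    return groups


# Methyl benzoate, c1ccccc1C(=O)OC: atoms 0-5 ring, 6 C, 7 =O, 8 O, 9 CH3 (toy input).
bonds = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (5, 6), (6, 7), (6, 8), (8, 9)]
groups = match_groups(10, bonds, aromatic={0, 1, 2, 3, 4, 5},
                      functional_matches=[{6, 7}, {8}])
for kind, atom_ids in groups:
    print(kind, atom_ids)
```

The ester is split into a carbonyl group and a separate oxygen, mirroring the decomposition described in the list above, and the leftover methyl carbon becomes its own fatty carbon group.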

Step 2: Substructure Extraction

Based on the atom IDs from Step 1, the specific substructures (e.g., "N", "O", "C=O", "C1=CC=C2C=CC=CC2=C1") are extracted and added to a substructure vocabulary. Concurrently, the links between these substructures are identified. If two substructures are bonded in the original atom graph, they are considered linked. The specific bonded atom pairs between substructures are recorded as "attachment atom pairs," which will define the edges in the final graph [11].
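The link-finding part of Step 2 can be sketched as follows, assuming the atom groups are already known. The function name `find_attachments` and the output layout (a dictionary keyed by group-index pairs) are hypothetical choices for illustration. Extracting the substructure strings themselves would require a cheminformatics toolkit and is not shown.

```python
from typing import Dict, List, Tuple

Bond = Tuple[int, int]


def find_attachments(groups: List[List[int]],
                     bonds: List[Bond]) -> Dict[Tuple[int, int], List[Bond]]:
    """Map each linked pair of groups to its attachment atom pairs.

    A bond whose two atoms sit in different groups links those groups; the
    atom pair is stored in the same order as the (lower, higher) group key.
    """
    atom_to_group = {atom: g for g, members in enumerate(groups) for atom in members}
    links: Dict[Tuple[int, int], List[Bond]] = {}
    for i, j in bonds:
        gi, gj = atom_to_group[i], atom_to_group[j]
        if gi == gj:
            continue  # bond is internal to one substructure
        key = (min(gi, gj), max(gi, gj))
        pair = (i, j) if gi < gj else (j, i)
        links.setdefault(key, []).append(pair)
    return links


# Methyl benzoate with hand-assigned groups (toy input):
# ring atoms, carbonyl C=O, ester oxygen, methyl carbon.
groups = [[0, 1, 2, 3, 4, 5], [6, 7], [8], [9]]
bonds = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (5, 6), (6, 7), (6, 8), (8, 9)]

links = find_attachments(groups, bonds)
for group_pair, atom_pairs in links.items():
    print(group_pair, atom_pairs)
```

Only three of the ten bonds cross a group boundary, so the ten-atom molecule reduces to four nodes joined by three links.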

Step 3: Substructure Linking

The final group graph is assembled by:

- Defining nodes for each extracted substructure.

- Defining edges for each link between substructures.

- Using the features of the attachment atom pairs as the features of the corresponding edges [11].

This resulting graph is a reduced molecular graph that retains structural features with minimal information loss.
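A minimal sketch of this assembly step is shown below. It takes substructure labels and attachment atom pairs as given and produces a node table, an adjacency matrix, and per-edge features. Using the element symbols of the attachment atoms as the edge feature is an assumption made for brevity (the source only states that features of the attachment atom pairs are used), and `build_group_graph` is a hypothetical name.

```python
from typing import Dict, List, Tuple

AtomPair = Tuple[int, int]


def build_group_graph(labels: List[str],
                      links: Dict[Tuple[int, int], List[AtomPair]],
                      atom_symbols: List[str]):
    """Assemble a group graph as (vocabulary, node table, adjacency, edge features)."""
    # Substructure vocabulary: one integer ID per distinct substructure label.
    vocab = {label: idx for idx, label in enumerate(sorted(set(labels)))}
    node_table = [vocab[label] for label in labels]

    n = len(labels)
    adjacency = [[0] * n for _ in range(n)]
    edge_features = {}
    for (a, b), atom_pairs in links.items():
        adjacency[a][b] = adjacency[b][a] = 1
        # Assumed edge feature: element symbols of each attachment atom pair.
        edge_features[(a, b)] = [(atom_symbols[i], atom_symbols[j]) for i, j in atom_pairs]
    return vocab, node_table, adjacency, edge_features


# Methyl benzoate, reduced to four substructures (toy input).
labels = ["c1ccccc1", "C=O", "O", "C"]
links = {(0, 1): [(5, 6)], (1, 2): [(6, 8)], (2, 3): [(8, 9)]}
atom_symbols = ["C", "C", "C", "C", "C", "C", "C", "O", "O", "C"]

vocab, node_table, adjacency, edge_features = build_group_graph(labels, links, atom_symbols)
print(node_table)
print(adjacency)
print(edge_features)
```

Because the output has the same node-table-plus-adjacency shape as an atom graph, it can be passed to an existing graph model with only the node vocabulary changed.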

The Scientist's Toolkit: Essential Research Reagents

The following table details the key computational tools and datasets required for implementing and experimenting with group graph representations.

Table 2: Key Research Reagents for Group Graph Experiments

| Reagent / Resource | Type | Function in Group Graph Research |
|---|---|---|
| RDKit | Software Library | Open-source cheminformatics used for fundamental tasks like aromaticity detection, pattern matching, and molecular manipulation during group graph construction [11]. |
| Graph Isomorphism Network (GIN) | AI Model | A type of Graph Neural Network considered highly powerful for distinguishing graph structures; used as the primary model to evaluate the performance of the group graph representation in downstream prediction tasks [11]. |
| GDB-17 Dataset | Molecular Dataset | A public dataset containing millions of small, organic molecules used for analyzing the diversity and consistency of the substructure vocabulary generated by the group graph method [11]. |
| BRICS Algorithm | Fragmentation Method | A common rule-based algorithm for fragmenting molecules into retrosynthetically interesting chemical substructures; serves as a benchmark comparison for self-defined fragmentation in group graphs [11]. |
| Dynameomics Database | Simulation Dataset | A large database of protein molecular dynamics simulations; used in related chemical group graph research to validate the representation's utility in analyzing complex biological systems [24]. |

Experimental Analysis and Performance Benchmarking

Quantitative Performance Evaluation

The efficacy of the group graph representation is validated by training a Graph Isomorphism Network (GIN) on the group graph and benchmarking its performance against other representations on standard molecular property prediction tasks and drug-drug interaction prediction.
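For readers unfamiliar with GIN, the sketch below shows one layer as it is commonly formulated: each node's new feature is an MLP applied to (1 + eps) times its own feature plus the sum of its neighbors' features, followed by a sum readout over nodes. The pure-Python lists and the identity function standing in for the MLP are simplifications for illustration; a real experiment would use a trained MLP in a framework such as PyTorch Geometric or DGL, and the exact configuration used in [11] is not reproduced here.

```python
from typing import Callable, List

Vector = List[float]


def gin_layer(features: List[Vector], adjacency: List[List[int]],
              mlp: Callable[[Vector], Vector], eps: float = 0.0) -> List[Vector]:
    """One GIN update: h_v' = mlp((1 + eps) * h_v + sum of neighbor features)."""
    updated = []
    for v, h_v in enumerate(features):
        aggregated = [(1.0 + eps) * x for x in h_v]
        for u, connected in enumerate(adjacency[v]):
            if connected and u != v:
                aggregated = [a + b for a, b in zip(aggregated, features[u])]
        updated.append(mlp(aggregated))
    return updated


def sum_readout(features: List[Vector]) -> Vector:
    """Graph-level embedding: element-wise sum over all node features."""
    return [sum(column) for column in zip(*features)]


# Three-node path graph with toy 2-dimensional node features.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]

identity_mlp = lambda h: h  # stand-in for a learned MLP (assumption)
hidden = gin_layer(features, adjacency, identity_mlp)
print(hidden)
print(sum_readout(hidden))
```

The same layer works unchanged on an atom graph or a group graph. A group graph simply presents fewer nodes and edges to aggregate over, which is consistent with the shorter runtime reported for it.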

Table 3: Performance Benchmark of Molecular Representations with GIN

| Molecular Representation | Prediction Accuracy | Computational Efficiency (Runtime) | Interpretability |
|---|---|---|---|
| Group Graph | High | High (~30% faster than atom graph) | High (Direct substructure correlation) |
| Atom Graph | High | Baseline | Medium (Atom-level, can be confusing) |
| Substructure Junction Tree | Lower than Atom Graph | Not explicitly reported | Medium |
| FGS Graph | Lower than Atom Graph | Not explicitly reported | Medium (Functional group level) |
| ECFP Fingerprint | Lower than Graph-based models [11] | High (Precomputed) | Medium (Substructure presence only) |

Experimental results demonstrate that a GIN trained on the group graph outperforms GINs trained on the atom graph and on other substructure graphs in predicting molecular properties and drug-drug interactions, even without any pretraining [11]. A key finding is that the group graph achieves this higher accuracy while also being more computationally efficient: the runtime of the GIN model decreases by approximately 30% compared to that of the atom graph [11]. This indicates that the group graph is a simplified yet highly informative molecular representation.

Case Study: Interpretability and Application in Lead Optimization

The group graph's substructure-level nature directly facilitates the interpretation of AI model predictions and guides lead optimization in drug discovery.

A salient application is the interpretation of activity cliffs—where small structural changes lead to large property differences. The group graph helps pinpoint the specific substructural changes responsible. Research shows that in 80% of molecule pairs containing activity cliffs, the importance of different substructures, as captured by the group graph model, changed significantly [11]. This allows researchers to focus on the critical substructures driving potency.

Furthermore, the group graph has been successfully used to predict structural modifications for improving specific properties, such as blood-brain barrier permeability (BBBP) [11]. The model can identify which substructures to modify, add, or remove to enhance the desired property, providing a clear, actionable path for medicinal chemists.

Advanced Applications and Future Directions

Integration with Modern AI Architectures

The field of molecular representation is rapidly evolving with the rise of large language models (LLMs). A recent multimodal approach named Llamole (large language model for molecular discovery) from MIT and the MIT-IBM Watson AI Lab demonstrates the next logical step for representations like the group graph [25]. Llamole integrates a base LLM with graph-based AI modules, using the LLM to interpret natural language queries (e.g., "a molecule that inhibits HIV with a molecular weight of 209") and then automatically switching to graph modules to generate the molecular structure and a synthesis plan [25].

This architecture underscores the power of combining the linguistic strength of LLMs with the chemical precision of graph-based representations. Llamole improved the success rate for generating synthesizable molecules that match user specifications from 5% to 35% compared to text-only LLMs, highlighting multimodality as a key to success [25]. The group graph, with its compact and chemically meaningful structure, is ideally suited for integration into such hybrid frameworks.

Beyond Organic Molecules: Solid-State Materials

Graph-based representations are also being increasingly applied to solid-state materials. The core concept remains: atoms are nodes, and edges represent bonds or interactions. However, crystals introduce periodicity, so models must account for interactions that repeat across an effectively infinite lattice [26]. Recent graph-based learning frameworks like SchNet and others have been developed specifically to handle the periodic boundary conditions in crystals, showing considerable performance improvement in predicting properties like formation energy and band gap [26]. This illustrates the generality of the graph-based paradigm across different domains of materials science.
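One simple way to see how periodicity changes graph construction is the minimum-image convention, sketched below for a cubic cell. This is a deliberately simplified illustration, not the neighbor-list code of SchNet or any other framework: it assumes a cubic cell, keeps only the nearest periodic image of each atom pair, and is therefore only valid when the cutoff does not exceed half the cell length. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Position = Tuple[float, float, float]


def minimum_image_distance(a: Position, b: Position, cell_length: float) -> float:
    """Distance between a and the nearest periodic image of b in a cubic cell."""
    total = 0.0
    for x, y in zip(a, b):
        d = x - y
        d -= cell_length * round(d / cell_length)  # wrap into [-L/2, L/2]
        total += d * d
    return math.sqrt(total)


def periodic_edges(positions: List[Position], cell_length: float,
                   cutoff: float) -> List[Tuple[int, int, float]]:
    """Connect atom pairs whose minimum-image distance is within the cutoff."""
    if cutoff > cell_length / 2:
        raise ValueError("minimum-image convention requires cutoff <= cell_length / 2")
    edges = []
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            dist = minimum_image_distance(positions[i], positions[j], cell_length)
            if dist <= cutoff:
                edges.append((i, j, dist))
    return edges


# Atoms 0 and 1 sit on opposite faces of a 10-unit cell, so they are
# 9 units apart inside the cell but only 1 unit apart across the boundary.
positions = [(0.5, 0.0, 0.0), (9.5, 0.0, 0.0), (5.0, 5.0, 5.0)]
edges = periodic_edges(positions, cell_length=10.0, cutoff=2.0)
print(edges)
```

A non-periodic graph builder would miss the edge between atoms 0 and 1 entirely, which is the kind of error that periodic-aware frameworks are designed to avoid.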