Multi-Task Learning for Molecular Property Prediction: A Guide to Methods, Applications, and Best Practices

Multi-task learning (MTL) is transforming molecular property prediction by enabling models to learn multiple properties simultaneously, overcoming the critical challenge of scarce experimental data in drug discovery and materials science.

Abstract

Multi-task learning (MTL) is transforming molecular property prediction by enabling models to learn multiple properties simultaneously, overcoming the critical challenge of scarce experimental data in drug discovery and materials science. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational principles of MTL and its advantages over single-task approaches, particularly in low-data regimes. We delve into advanced methodological frameworks including multi-view representation learning, graph neural networks, and innovative architectures like MolP-PC and DeepDTAGen. The content addresses key optimization challenges such as negative transfer and task imbalance, presenting solutions like adaptive checkpointing and dynamic loss weighting. Finally, we examine rigorous validation paradigms and performance comparisons across benchmark datasets, offering practical insights for implementing MTL in real-world discovery pipelines.

What is Multi-Task Learning in Molecular Science? Core Concepts and Data Challenges

Defining Multi-Task Learning vs. Traditional Single-Task Approaches

The prediction of molecular properties is a cornerstone of modern drug discovery and materials science. For years, the dominant approach has been Traditional Single-Task Learning (STL), which trains separate, isolated models for each individual property prediction task. While straightforward, this paradigm faces significant limitations when labeled data is scarce, as is common in experimental settings due to the high cost and time requirements of molecular assays. In response to these challenges, Multi-Task Learning (MTL) has emerged as a powerful alternative that leverages shared representations and knowledge transfer across related tasks to improve generalization performance, particularly in data-constrained environments [1] [2].

The fundamental distinction between these approaches lies in their learning philosophy. STL follows a "one model, one task" paradigm, where each predictor is trained independently on task-specific data. In contrast, MTL employs a "one model, multiple tasks" framework, simultaneously learning multiple related tasks while exploiting commonalities and differences across them [2]. This shift enables knowledge transfer between tasks, allowing models to overcome data scarcity limitations that frequently plague molecular property prediction. Research has demonstrated that MTL can achieve superior performance compared to STL, especially when tasks are appropriately selected and the model architecture effectively balances shared and task-specific learning [2] [3].

Core Conceptual Frameworks and Architectural Differences

Traditional Single-Task Learning (STL)

The STL framework operates on a fundamental principle of task isolation. Each molecular property prediction task—whether predicting absorption, distribution, metabolism, excretion, toxicity (ADMET), or other physicochemical properties—receives its own dedicated model with separate parameters. These models are typically trained independently without any mechanism for knowledge sharing, even when the target properties may share underlying molecular determinants [2].

STL architectures generally consist of three key components: (1) a molecular representation module that converts molecular structures into machine-readable features (e.g., molecular fingerprints, graph representations, or SMILES strings); (2) a feature extraction backbone (such as Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), or traditional machine learning models); and (3) a task-specific output layer that generates the final property prediction [4] [2]. While this approach benefits from conceptual simplicity and avoids potential negative interference between unrelated tasks, it becomes statistically inefficient when dealing with multiple related properties and struggles significantly in low-data regimes where insufficient training examples are available for individual tasks.

Multi-Task Learning (MTL)

MTL introduces a more integrated approach by designing architectures that explicitly facilitate knowledge transfer between related prediction tasks. Rather than treating each property prediction in isolation, MTL frameworks seek to leverage the inherent relatedness between molecular properties that stem from shared structural determinants and underlying biological mechanisms [1] [2].

The most common MTL architecture employs shared backbone modules combined with task-specific heads. In this configuration, all tasks utilize the same foundational feature extractor (typically a GNN or transformer), which learns a general-purpose molecular representation that captures patterns relevant across multiple properties. These shared representations are then processed by smaller, task-specific neural network heads that refine the general features for each particular prediction target [2] [5]. This design enables the model to leverage collective information from all available tasks while still accommodating task-specific peculiarities.
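The shared-backbone, task-specific-head layout described above can be sketched in a few lines. This is a minimal illustration in plain Python, not the architecture of any cited framework: the class name `SharedBackboneMTL`, the layer sizes, and the use of a single dense layer in place of a GNN or transformer are all assumptions made for brevity.

```python
import random

def linear(x, weights, bias):
    """Dense layer: one output value per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]

def relu(values):
    return [max(0.0, v) for v in values]

def init_layer(n_in, n_out, rng):
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

class SharedBackboneMTL:
    """Hypothetical toy model: one shared feature extractor, one small head per task."""

    def __init__(self, n_features, n_hidden, task_names, seed=0):
        rng = random.Random(seed)
        # Parameters shared by every task (stand-in for a GNN or transformer).
        self.backbone = init_layer(n_features, n_hidden, rng)
        # Separate output parameters for each task.
        self.heads = {name: init_layer(n_hidden, 1, rng) for name in task_names}

    def forward(self, x):
        shared = relu(linear(x, *self.backbone))  # computed once per molecule
        return {name: linear(shared, *head)[0] for name, head in self.heads.items()}

model = SharedBackboneMTL(n_features=4, n_hidden=8, task_names=["HIA", "OB"])
predictions = model.forward([1.0, 0.0, 1.0, 0.5])  # one value per task
```

The shared representation is computed once and reused by every head, so gradients from all tasks update the same backbone parameters. That is where both the knowledge transfer and the potential for interference come from.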

Advanced MTL frameworks have introduced more sophisticated architectural patterns. The "one primary, multiple auxiliaries" paradigm focuses on selecting appropriate auxiliary tasks to boost performance on a primary task of interest, even if this comes at the cost of minor degradation in auxiliary task performance [2]. Another innovative approach, SGNN-EBM, incorporates structured task relationships by applying state graph neural networks on task relation graphs and employing structured prediction with energy-based models [6] [7]. These developments represent a significant evolution beyond simple parameter sharing toward more deliberate, knowledge-driven MTL architectures.

Table 1: Core Architectural Differences Between STL and MTL Approaches

| Aspect | Single-Task Learning (STL) | Multi-Task Learning (MTL) |
|---|---|---|
| Learning Paradigm | "One model, one task" | "One model, multiple tasks" |
| Knowledge Transfer | None between tasks | Explicit sharing across tasks |
| Data Efficiency | Lower, especially with scarce labels | Higher, leverages all available data |
| Parameter Usage | Separate parameters for each task | Shared parameters with task-specific heads |
| Optimal Use Case | Abundant labeled data for each task | Limited data scenarios with related tasks |

Quantitative Performance Comparison

Empirical evaluations across diverse molecular property prediction benchmarks consistently demonstrate the advantages of MTL approaches, particularly in data-constrained environments that mirror real-world drug discovery settings.

In ADMET property prediction, the MTGL-ADMET framework—which employs a "one primary, multiple auxiliaries" paradigm—significantly outperformed both STL and conventional MTL baselines across multiple endpoints. For Human Intestinal Absorption (HIA) prediction, MTGL-ADMET achieved an AUC of 0.981, compared to 0.916 for ST-GCN and 0.972 for ST-MGA [2]. Similarly, for Oral Bioavailability (OB) prediction, it attained an AUC of 0.749, outperforming STL models (0.716 for ST-GCN) and other MTL approaches (0.745 for MGA) [2]. These improvements highlight how strategically selected task groupings in MTL can enhance prediction accuracy for pharmaceutically critical properties.

The DeepDTAGen model for drug-target affinity prediction and target-aware drug generation demonstrates another compelling MTL advantage. On the KIBA dataset, it achieved a Mean Squared Error (MSE) of 0.146, Concordance Index (CI) of 0.897, and \(r_m^2\) of 0.765, outperforming traditional machine learning models like KronRLS (MSE: 0.222) and SimBoost (MSE: 0.222) by substantial margins [4]. Compared to single-task deep learning models, DeepDTAGen also showed improvements, surpassing GraphDTA by 11.35% in \(r_m^2\) while reducing MSE by 0.68% [4]. This performance advantage extended to other benchmarks including Davis and BindingDB datasets, confirming the robustness of the MTL approach across diverse experimental settings.

Recent research on molecular property prediction using improved Graph Transformer networks with multitask joint learning strategies further validates these findings. This approach demonstrated an average improvement of 6.4% and 16.7% over baseline methods on multiple classification and regression datasets, with the multitask strategy boosting prediction accuracy by an additional average of 2.8% and 6.2% compared to single-dataset training [5]. These consistent performance gains across varied experimental setups underscore the fundamental advantages of MTL in capturing shared molecular representations that generalize better across related property prediction tasks.

Table 2: Quantitative Performance Comparison on Benchmark Datasets

| Dataset | Metric | Single-Task Models | Multi-Task Models | Improvement |
|---|---|---|---|---|
| ADMET (HIA) | AUC | 0.916 (ST-GCN) | 0.981 (MTGL-ADMET) | +7.1% |
| ADMET (OB) | AUC | 0.716 (ST-GCN) | 0.749 (MTGL-ADMET) | +4.6% |
| KIBA | MSE | 0.222 (KronRLS) | 0.146 (DeepDTAGen) | -34.2% |
| KIBA | \(r_m^2\) | 0.629 (KronRLS) | 0.765 (DeepDTAGen) | +21.6% |
| Davis | CI | 0.871 (KronRLS) | 0.890 (DeepDTAGen) | +2.2% |
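The Improvement column is a relative change with respect to the baseline value, which the short check below reproduces from the figures in the table. The helper name `relative_change_percent` is introduced here for illustration only. For MSE, lower is better, so a negative change is an improvement.

```python
def relative_change_percent(baseline, new):
    """Relative change of `new` with respect to `baseline`, in percent."""
    return 100.0 * (new - baseline) / baseline

# (label, baseline value, multi-task value), copied from Table 2.
rows = [
    ("ADMET (HIA) AUC", 0.916, 0.981),
    ("ADMET (OB) AUC", 0.716, 0.749),
    ("KIBA MSE", 0.222, 0.146),
    ("KIBA r_m^2", 0.629, 0.765),
    ("Davis CI", 0.871, 0.890),
]

changes = {label: round(relative_change_percent(b, n), 1) for label, b, n in rows}
for label, change in changes.items():
    print(f"{label}: {change:+.1f}%")
```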

Experimental Protocols and Methodologies

Task Selection and Relationship Modeling

A critical factor in successful MTL implementation is the appropriate selection of related tasks. The MTGL-ADMET framework introduces a sophisticated methodology for this purpose, combining status theory with maximum flow algorithms to identify optimal auxiliary tasks for a given primary task [2]. The protocol begins with building a task association network by training individual and pairwise tasks to quantify their relationships. Status theory then identifies "friendly" auxiliary tasks that have potential synergistic relationships with the primary task. Finally, maximum flow algorithms estimate the potential performance increments of MTL compared to STL, enabling the selection of auxiliary tasks that maximize benefits for the primary task even if their own performance might slightly degrade [2]. This systematic approach to task selection represents a significant advancement over ad hoc or intuition-based task grouping.
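The first step of this protocol, building a task association network from individual and pairwise training runs, can be illustrated with a deliberately simplified sketch. The scores below are invented placeholders, and a plain positive-gain threshold stands in for the status theory and maximum flow steps, which are not reproduced here.

```python
# Hypothetical AUC of each task when trained alone.
single_task_auc = {"HIA": 0.90, "OB": 0.70, "CYP3A4": 0.85}

# Hypothetical AUC on the first task when trained jointly with the second.
pairwise_auc = {
    ("HIA", "OB"): 0.92, ("HIA", "CYP3A4"): 0.89,
    ("OB", "HIA"): 0.73, ("OB", "CYP3A4"): 0.71,
    ("CYP3A4", "HIA"): 0.85, ("CYP3A4", "OB"): 0.84,
}

def association_edges(single, pairwise):
    """Directed edge (task, aux) weighted by the gain that aux brings to task."""
    return {(task, aux): score - single[task] for (task, aux), score in pairwise.items()}

def candidate_auxiliaries(primary, edges, min_gain=0.0):
    """Auxiliary tasks whose pairwise training helped the primary task, best first."""
    gains = [(aux, g) for (task, aux), g in edges.items() if task == primary and g > min_gain]
    return [aux for aux, _ in sorted(gains, key=lambda pair: pair[1], reverse=True)]

edges = association_edges(single_task_auc, pairwise_auc)
print(candidate_auxiliaries("OB", edges))
```

Note that the edges are directed: an auxiliary task can help a primary task without the reverse being true, which is why the network is queried per primary task.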

MTL Model Architecture and Training

The architectural design of MTL models requires careful balancing of shared and task-specific components. The MTGL-ADMET framework employs a multi-tiered architecture consisting of: (1) a task-shared atom embedding module that learns general atomic representations across all tasks; (2) a task-specific molecular embedding module that aggregates atom embeddings into molecular representations tailored to each task; (3) a primary task-centered gating module that strategically weights information from auxiliary tasks; and (4) a multi-task predictor that generates final property predictions [2]. This design enables the model to learn both universal molecular patterns that apply across properties and task-specific nuances critical for accurate individual predictions.

Training MTL models introduces unique optimization challenges, particularly gradient conflicts between tasks. The DeepDTAGen framework addresses this through its novel FetterGrad algorithm, which mitigates gradient conflicts by minimizing the Euclidean distance between task gradients [4]. This ensures more aligned learning across tasks and prevents biased optimization where one task dominates the shared representation. The training protocol typically involves alternating between tasks with dynamic weighting adjustments to balance learning rates across objectives [4] [5]. For structured task relationships, the SGNN-EBM approach employs noise-contrastive estimation to efficiently train energy-based models that capture complex inter-task dependencies [6] [7].
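To make the notion of a gradient conflict concrete, the sketch below uses a projection in the style of gradient surgery (PCGrad), which is listed alongside FetterGrad in the resources table. It is not the FetterGrad algorithm, whose details are not given here. Two task gradients conflict when their dot product is negative; the conflicting component is projected out before the gradients are summed.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def project_conflicting(grad, other):
    """Remove from `grad` its component along `other` when the two conflict."""
    overlap = dot(grad, other)
    if overlap >= 0.0:
        return list(grad)  # no conflict: leave the gradient unchanged
    scale = overlap / dot(other, other)
    return [g - scale * o for g, o in zip(grad, other)]

def combine_gradients(task_grads):
    """Project each task gradient against the others' original gradients, then sum."""
    adjusted = []
    for i, grad in enumerate(task_grads):
        g = list(grad)
        for j, other in enumerate(task_grads):
            if i != j:
                g = project_conflicting(g, other)
        adjusted.append(g)
    return [sum(parts) for parts in zip(*adjusted)]

g_affinity = [1.0, 0.0]     # toy gradient of a prediction task
g_generation = [-1.0, 1.0]  # toy gradient of a generation task, conflicts with the first
update = combine_gradients([g_affinity, g_generation])
```

In this toy case the plain sum would be `[0.0, 1.0]`, which makes no progress along the first coordinate; after projection each adjusted gradient no longer opposes the other task.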

Evaluation Metrics and Validation

Comprehensive evaluation of MTL models requires multiple metrics to assess different aspects of performance. For regression tasks like binding affinity prediction, standard metrics include Mean Squared Error (MSE), Concordance Index (CI), and \(r_m^2\) [4]. For classification tasks such as ADMET property classification, Area Under the Receiver Operating Characteristic Curve (AUC) and Area Under the Precision-Recall Curve (AUPR) are commonly employed [2]. Beyond predictive accuracy, MTL models are evaluated on data efficiency (performance as the training set size varies) and on robustness through cold-start tests that assess performance on novel molecular scaffolds [4]. For generative MTL models, additional metrics include validity (proportion of chemically valid molecules), novelty (proportion not present in training data), and uniqueness (proportion of unique molecules) [4].
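Two of the regression metrics named above are simple enough to state directly in code. The sketch below uses the common pairwise definition of the concordance index (the fraction of comparable pairs whose predicted order matches the true order, with prediction ties counted as half); the affinity values are made up for illustration.

```python
def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true, y_pred):
    """Fraction of pairs with different true values that are ranked correctly."""
    concordant = 0.0
    comparable = 0
    for i in range(len(y_true)):
        for j in range(len(y_true)):
            if y_true[i] > y_true[j]:  # each comparable pair is visited once
                comparable += 1
                if y_pred[i] > y_pred[j]:
                    concordant += 1.0
                elif y_pred[i] == y_pred[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")

y_true = [5.0, 6.0, 7.0, 8.0]  # hypothetical measured affinities
y_pred = [5.5, 5.0, 7.5, 8.0]  # hypothetical model outputs
mse = mean_squared_error(y_true, y_pred)
ci = concordance_index(y_true, y_pred)
```

Here five of the six comparable pairs are ordered correctly, so the CI is 5/6 even though the MSE is nonzero: CI measures ranking quality, while MSE measures absolute error.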

Implementation and Practical Considerations

Research Reagent Solutions

Implementing effective MTL approaches requires specific computational tools and datasets. The table below outlines key resources referenced in the literature:

Table 3: Essential Research Reagents for MTL Implementation

| Resource | Type | Function | Example/Reference |
|---|---|---|---|
| Benchmark Datasets | Data | Model training & evaluation | KIBA, Davis, BindingDB, ChEMBL-STRING [4] [6] |
| Graph Neural Networks | Algorithm | Molecular representation learning | GCN, R-GCN, GIN [2] [3] |
| Task Relationship Graphs | Data | Structured MTL optimization | Protein-protein interaction networks [6] [7] |
| Multi-task Optimization | Algorithm | Gradient conflict mitigation | FetterGrad, Gradient Surgery [4] |
| Interpretability Tools | Method | Crucial substructure identification | Attention mechanisms, saliency maps [2] |

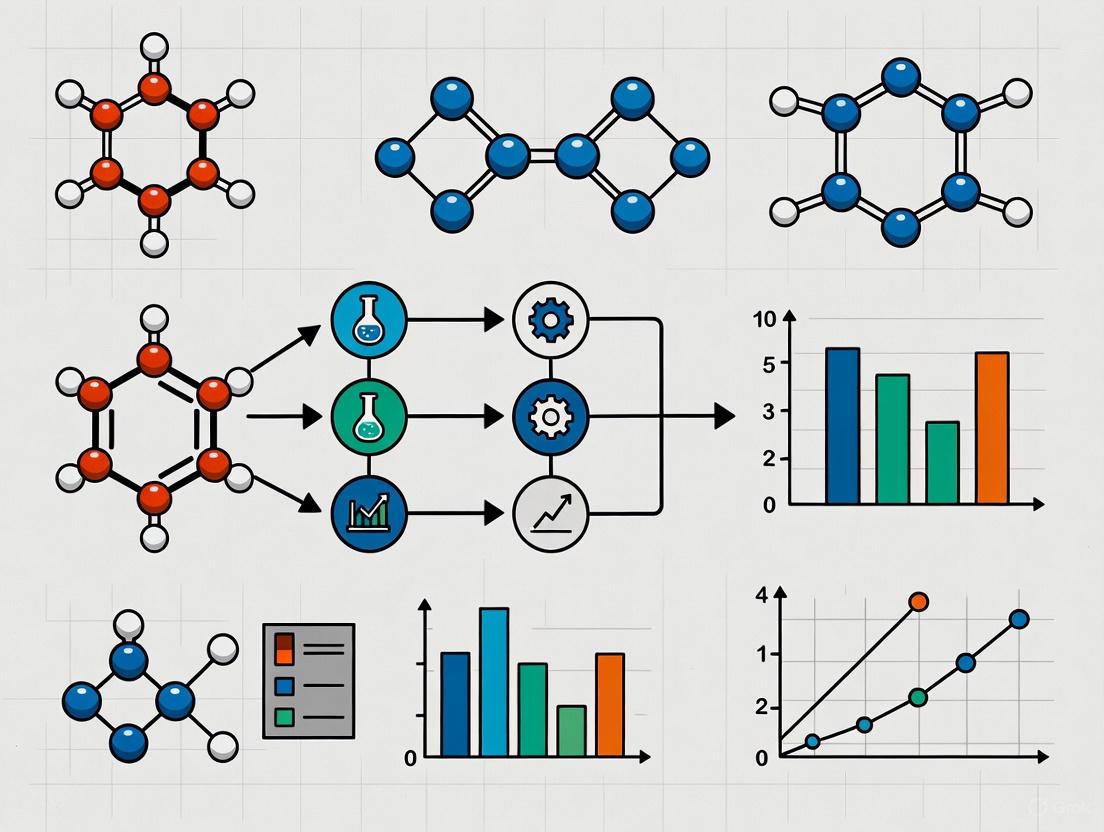

Workflow Visualization

The following diagram illustrates the comparative workflows between single-task and multi-task learning approaches in molecular property prediction:

Molecular Property Prediction Workflow Comparison

Application Guidelines and Recommendations

Based on experimental findings across multiple studies, several practical recommendations emerge for implementing MTL in molecular property prediction. For scenarios with limited labeled data, MTL consistently outperforms STL, with studies showing particular advantage when training data for individual tasks contains fewer than 1,000 compounds [1] [2]. The "one primary, multiple auxiliaries" paradigm is especially effective for prioritizing performance on critical properties while using others as auxiliary tasks [2].

For task selection, leveraging domain knowledge to identify biologically related properties enhances MTL effectiveness. Cytochrome P450 inhibition tasks, for instance, naturally complement distribution and excretion properties due to their interconnected metabolic roles [2]. When explicit task relationships are available (such as protein-protein interaction networks for target-based properties), structured MTL approaches like SGNN-EBM that incorporate these graphs demonstrate superior performance [6] [7].

To address optimization challenges, techniques like FetterGrad that explicitly manage gradient conflicts are recommended, especially when combining tasks with different scales or learning dynamics [4]. Additionally, employing dynamic task weighting during training rather than fixed weights helps balance learning across tasks with varying difficulties or data availability [5].

The comparison between multi-task learning and traditional single-task approaches reveals a fundamental trade-off between specialization and knowledge integration. While STL maintains value in scenarios with abundant, high-quality labeled data for individual tasks, MTL offers compelling advantages in the data-constrained environments typical of drug discovery. By leveraging shared representations and strategic knowledge transfer, MTL frameworks achieve superior data efficiency, enhanced generalization, and improved performance on molecular property prediction tasks [1] [4] [2].

Future research directions in MTL for molecular property prediction include several promising areas. Advanced task relationship modeling incorporating biological knowledge graphs could further enhance task selection and representation sharing [6] [8]. Generative multi-task frameworks that jointly predict properties and design optimized molecular structures represent another frontier, as demonstrated by DeepDTAGen's combined prediction and generation capabilities [4]. Additionally, federated MTL approaches that enable collaborative model training without centralized data sharing could help address privacy and intellectual property concerns in pharmaceutical research [8].

As the field progresses, the integration of MTL with explainable AI techniques will be crucial for building trust and providing mechanistic insights into molecular property predictions [2] [8]. By identifying crucial molecular substructures that influence multiple properties, these interpretable MTL frameworks can guide medicinal chemists in rational molecular design, ultimately accelerating the discovery of safer and more effective therapeutics.

The effectiveness of machine learning (ML) for molecular property prediction is often fundamentally limited by scarce and incomplete experimental datasets [1]. In diverse domains such as pharmaceuticals, solvents, polymers, and energy carriers, the scarcity of reliable, high-quality labels impedes the development of robust molecular property predictors, constraining the pace of artificial intelligence-driven materials discovery and design [9]. This data bottleneck arises from numerous practical constraints: the complex, time-consuming, and costly nature of wet-lab experiments; ethical considerations; and technical limitations in data acquisition [10] [11].

Multi-task Learning (MTL) has emerged as a powerful paradigm to address this critical challenge. Unlike single-task learning (STL), where a model is trained in isolation on a single task, MTL simultaneously learns multiple related tasks by leveraging both task-specific and shared information [12]. Through inductive transfer, MTL leverages training signals from one task to improve another, allowing the model to discover and utilize shared structures for more accurate predictions across all tasks [9]. This approach is particularly valuable in molecular science because different molecular properties often share underlying structural determinants, enabling knowledge transfer between related prediction tasks.

Table 1: Comparative Performance of MTL vs. Single-Task Learning on Molecular Property Prediction Benchmarks

| Model/Dataset | ClinTox (Avg. Improvement) | SIDER (Avg. Improvement) | Tox21 (Avg. Improvement) | Remarks |
|---|---|---|---|---|
| ACS (MTL) | +15.3% vs. STL | Outperforms STL | Outperforms STL | Specifically designed for low-data regimes |
| Standard MTL | +3.9% vs. STL | Moderate gains | Moderate gains | Susceptible to negative transfer |
| MTL-GLC | +5.0% vs. STL | Moderate gains | Moderate gains | Global loss checkpointing |
| MolFCL | Superior on 23 datasets | - | - | Uses contrastive learning and prompts |

The Technical Foundation of Multi-Task Learning for Molecular Properties

Core Architecture of Molecular MTL

The foundational architecture for MTL in molecular property prediction typically combines a shared backbone with task-specific heads. The shared backbone, often a Graph Neural Network (GNN), learns general-purpose latent representations from molecular structures through message passing [9]. These shared representations capture fundamental chemical principles that are relevant across multiple properties. The task-specific components, typically multi-layer perceptron (MLP) heads, then process these shared representations to make predictions for individual properties [9].

This architectural paradigm effectively balances two competing objectives: leveraging commonalities between tasks through shared parameters while maintaining specialized capacity for each task through dedicated heads. The GNN backbone excels at capturing molecular topology through atoms (nodes) and bonds (edges), making it particularly suitable for molecular representation learning [10]. The message-passing mechanism allows information to propagate through the molecular graph, enabling the model to learn complex structural relationships that determine molecular properties.
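A single round of message passing can be written out by hand for a very small molecule. The sketch below is a bare illustration with no learned weights: each atom adds its neighbors' feature vectors to its own, and a sum readout turns the atom vectors into one molecular vector. The two-dimensional one-hot atom features (carbon, oxygen) and the three heavy atoms of ethanol are assumptions chosen to keep the example readable.

```python
def message_passing_step(node_features, edges):
    """One round of sum aggregation over bonded neighbors (no learned weights)."""
    neighbors = {i: [] for i in range(len(node_features))}
    for a, b in edges:  # bonds are undirected
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = []
    for v, h_v in enumerate(node_features):
        message = [0.0] * len(h_v)
        for u in neighbors[v]:
            message = [m + x for m, x in zip(message, node_features[u])]
        updated.append([h + m for h, m in zip(h_v, message)])
    return updated

def readout(node_features):
    """Sum the atom vectors into a single molecule-level vector."""
    return [sum(column) for column in zip(*node_features)]

atoms = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # C, C, O as one-hot [is_C, is_O]
bonds = [(0, 1), (1, 2)]                      # C-C and C-O
hidden = message_passing_step(atoms, bonds)
molecule_vector = readout(hidden)
```

After one step the middle carbon's vector already reflects its oxygen neighbor, while the terminal carbon's does not; stacking further steps widens each atom's receptive field. Practical GNNs insert learned transformations and nonlinearities around the aggregation.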

Critical Challenge: Negative Transfer

A significant obstacle in practical MTL implementation is negative transfer (NT), which occurs when updates driven by one task are detrimental to another [9]. This phenomenon can arise from multiple sources:

- Low task relatedness: When tasks share little underlying structure, forcing parameter sharing can degrade performance [9].

- Capacity mismatch: When the shared backbone lacks sufficient flexibility to support divergent task demands [9].

- Optimization conflicts: When tasks exhibit different optimal learning rates or gradient requirements [9].

- Task imbalance: When certain tasks have far fewer labeled examples than others, limiting their influence on shared parameters [9].

The detrimental effects of NT are particularly pronounced in real-world molecular datasets, which often exhibit severe task imbalance due to heterogeneous data-collection costs [9]. For example, some molecular properties may be expensive or technically challenging to measure, resulting in sparse labels for those tasks.
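Negative transfer is usually diagnosed by comparing each task's multi-task score with its single-task baseline. The following sketch assumes a metric where higher is better (such as AUC); the task names and scores are placeholders, not results from any cited study.

```python
def transfer_deltas(stl_scores, mtl_scores):
    """Per-task change when moving from single-task to multi-task training."""
    return {task: mtl_scores[task] - stl_scores[task] for task in stl_scores}

def negatively_transferred(deltas, tolerance=0.0):
    """Tasks whose score dropped by more than `tolerance` under MTL."""
    return sorted(task for task, delta in deltas.items() if delta < -tolerance)

stl_scores = {"task_a": 0.80, "task_b": 0.75, "task_c": 0.70}  # hypothetical
mtl_scores = {"task_a": 0.84, "task_b": 0.71, "task_c": 0.70}  # hypothetical

deltas = transfer_deltas(stl_scores, mtl_scores)
print(negatively_transferred(deltas))
```

An average over tasks would hide the problem in this toy case, since the gain on one task cancels the loss on another; reporting per-task deltas keeps it visible.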

Advanced MTL Methodologies for Overcoming Data Scarcity

Adaptive Checkpointing with Specialization (ACS)

ACS is a specialized training scheme designed to mitigate negative transfer while preserving the benefits of MTL in low-data regimes [9]. The methodology operates as follows:

- Shared backbone with task-specific heads: A single GNN based on message passing learns general-purpose latent representations, processed by task-specific MLP heads [9].

- Validation loss monitoring: During training, the validation loss of every task is continuously monitored.

- Adaptive checkpointing: The best backbone-head pair is checkpointed whenever the validation loss of a given task reaches a new minimum.

- Specialized model selection: Each task ultimately obtains a specialized backbone-head pair optimized for its specific characteristics.

This approach recognizes that related tasks often reach local minima of validation error at different points in training, making task-specific early stopping crucial [9]. Through this mechanism, ACS protects individual tasks from deleterious parameter updates while promoting inductive transfer among sufficiently correlated tasks.
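The checkpointing logic described above can be sketched independently of any deep learning framework. In the toy below, the model state is a plain dictionary, and `toy_train_step` and `toy_validate` are hypothetical stand-ins for a real training epoch and validation pass, constructed so that the two tasks reach their best validation loss at different epochs.

```python
import copy

def train_with_task_checkpoints(state, train_step, validate, n_epochs):
    """Keep, for every task, the backbone-head pair from its best validation epoch."""
    tasks = list(state["heads"])
    best_loss = {task: float("inf") for task in tasks}
    checkpoints = {}
    for epoch in range(n_epochs):
        train_step(state)             # joint update of backbone and heads
        val_losses = validate(state)  # one validation loss per task
        for task in tasks:
            if val_losses[task] < best_loss[task]:
                best_loss[task] = val_losses[task]
                checkpoints[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(state["backbone"]),
                    "head": copy.deepcopy(state["heads"][task]),
                }
    return checkpoints

def toy_train_step(state):
    state["backbone"][0] += 1.0  # pretend the shared weights drift each epoch

def toy_validate(state):
    w = state["backbone"][0]
    return {"task_a": (w - 2.0) ** 2, "task_b": (w - 4.0) ** 2}

state = {"backbone": [0.0], "heads": {"task_a": [0.0], "task_b": [0.0]}}
checkpoints = train_with_task_checkpoints(state, toy_train_step, toy_validate, n_epochs=6)
```

Each task ends up with its own snapshot of the shared backbone, taken at the epoch that suited it best, rather than a single snapshot chosen by an aggregate criterion. The deep copies matter: without them every checkpoint would alias the live, still-changing parameters.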

Table 2: Performance Comparison of ACS Against Baseline Methods on Molecular Benchmarks

| Method | ClinTox Performance | SIDER Performance | Tox21 Performance | NT Mitigation |
|---|---|---|---|---|
| STL | Baseline | Baseline | Baseline | Not applicable |
| MTL | +3.9% vs. STL | Moderate improvement | Moderate improvement | Limited |
| MTL-GLC | +5.0% vs. STL | Moderate improvement | Moderate improvement | Partial |
| ACS | +15.3% vs. STL | Significant improvement | Significant improvement | Effective |

Fragment-Based Contrastive Learning (MolFCL)

MolFCL integrates knowledge of molecular fragment reactions into a contrastive learning framework [10]. The methodology addresses two key challenges:

- Fragment-based augmentation: Constructing augmented molecular graphs that preserve the original chemical environment by incorporating fragment-fragment interactions using the BRICS algorithm [10].

- Functional group prompt learning: Incorporating functional group knowledge and corresponding atomic signals during fine-tuning to guide molecular property prediction [10].

The contrastive learning framework in MolFCL operates by maximizing the similarity between the original molecular graph and its augmented fragment-based version while minimizing similarity with other molecules in the batch [10]. This approach enables the model to learn effective representations even with limited labeled data by leveraging unlabeled molecular structures.
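The objective described above, pulling a molecule's embedding toward its own augmented view and away from the views of other molecules in the batch, has the general shape of an InfoNCE-style loss. The sketch below is a generic version of that idea and should not be read as MolFCL's exact loss; the cosine similarity, the temperature value, and the two-dimensional embeddings are assumptions.

```python
import math

def cosine(a, b):
    """Cosine similarity of two non-zero vectors."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)

def contrastive_loss(originals, augmented, temperature=0.5):
    """For molecule i, augmented[i] is the positive; other augmented views are negatives."""
    total = 0.0
    for i, z in enumerate(originals):
        logits = [cosine(z, view) / temperature for view in augmented]
        log_denominator = math.log(sum(math.exp(l) for l in logits))
        total += log_denominator - logits[i]  # equals -log softmax(logits)[i]
    return total / len(originals)

originals = [[1.0, 0.0], [0.0, 1.0]]          # toy embeddings of two molecules
aligned_views = [[0.9, 0.1], [0.1, 0.9]]      # each view resembles its own molecule
mismatched_views = [[0.1, 0.9], [0.9, 0.1]]   # views swapped between molecules

loss_aligned = contrastive_loss(originals, aligned_views)
loss_mismatched = contrastive_loss(originals, mismatched_views)
```

The loss is lower when each augmented view is closest to its own molecule, which is the signal that drives representation learning without any property labels.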

Multi-View Fusion and Multi-Task Learning (MolP-PC)

MolP-PC addresses data sparsity and information loss by integrating multiple molecular representations through a unified framework [13]. The key components include:

- Multi-view integration: Combining 1D molecular fingerprints (MFs), 2D molecular graphs, and 3D geometric representations.

- Attention-gated fusion: Employing an attention mechanism to dynamically weight the importance of different representations.

- Multi-task adaptive learning: Utilizing adaptive loss weighting to balance learning across tasks with varying data availability.

This approach significantly enhances predictive performance on small-scale datasets, surpassing single-task models in 41 of 54 tasks in experimental evaluations [13]. The multi-view fusion enables the model to capture complementary information from different molecular representations, mitigating the limitations of any single representation scheme.
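The idea of attention-gated fusion can be illustrated with a small, generic sketch: each view's embedding is scored against a gate vector, the scores are normalized with a softmax, and the fused representation is the weighted sum of the views. This is not MolP-PC's actual fusion module; the gate vector (which would be learned in practice), the view names, and the two-dimensional embeddings are assumptions.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_gated_fusion(view_embeddings, gate_vector):
    """Weight each view by softmax(gate . embedding) and sum the weighted views."""
    names = list(view_embeddings)
    scores = [sum(g * x for g, x in zip(gate_vector, view_embeddings[n])) for n in names]
    weights = softmax(scores)
    fused = [
        sum(w * view_embeddings[n][k] for w, n in zip(weights, names))
        for k in range(len(gate_vector))
    ]
    return fused, dict(zip(names, weights))

views = {
    "fingerprint_1d": [1.0, 0.0],
    "graph_2d": [0.0, 1.0],
    "geometry_3d": [1.0, 1.0],
}
fused_uniform, weights_uniform = attention_gated_fusion(views, gate_vector=[0.0, 0.0])
fused_gated, weights_gated = attention_gated_fusion(views, gate_vector=[2.0, 0.0])
```

With a zero gate vector every view receives the same weight and the fusion reduces to a plain average; a non-zero gate shifts weight toward the views that score highest against it.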

Experimental Protocols and Implementation

Implementing ACS for Ultra-Low Data Regimes

The ACS methodology has been validated on multiple molecular property benchmarks, where it learned accurate models from as few as 29 labeled samples [9]. The implementation protocol consists of:

Data Preparation:

- Utilize molecular datasets with multiple property annotations (e.g., ClinTox, SIDER, Tox21).

- Apply Murcko-scaffold splitting for fair evaluation of generalization capability.

- Explicitly handle missing labels through loss masking rather than imputation.

Model Architecture:

- Implement a message-passing GNN (e.g., D-MPNN) as the shared backbone.

- Design task-specific MLP heads with 1-2 hidden layers.

- Use appropriate activation functions (e.g., ReLU) and normalization layers.

Training Procedure:

- Employ standard optimization algorithms (e.g., Adam) with carefully tuned learning rates.

- Monitor validation loss for each task independently.

- Implement checkpointing logic to save best-performing parameters per task.

- Apply early stopping based on aggregated multi-task performance.

Evaluation Metrics:

- Task-specific metrics (e.g., AUC-ROC for classification, MSE for regression).

- Comparative metrics against single-task baselines.

- Negative transfer quantification through per-task performance deltas.
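The data preparation step above calls for handling missing labels through loss masking rather than imputation. A minimal sketch of a masked binary cross-entropy is shown below: entries marked `None` contribute nothing to the loss, and each task's loss is averaged only over its observed labels. The label layout (one row per molecule, one column per task) and the function name `masked_bce` are assumptions, and the sketch presumes at least one observed label overall.

```python
import math

def masked_bce(probabilities, labels, eps=1e-7):
    """Binary cross-entropy that skips missing labels (None)."""
    n_tasks = len(labels[0])
    sums = [0.0] * n_tasks
    counts = [0] * n_tasks
    for prob_row, label_row in zip(probabilities, labels):
        for t, (p, y) in enumerate(zip(prob_row, label_row)):
            if y is None:
                continue  # masked: no loss and no gradient for this entry
            p = min(max(p, eps), 1.0 - eps)  # guard against log(0)
            sums[t] -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
            counts[t] += 1
    per_task = [s / c if c else None for s, c in zip(sums, counts)]
    observed = [loss for loss in per_task if loss is not None]
    return sum(observed) / len(observed), per_task

labels = [[1, None], [0, 1]]  # molecule 0 has no measurement for task 1
probabilities = [[0.9, 0.2], [0.1, 0.8]]
total_loss, per_task_loss = masked_bce(probabilities, labels)
```

Changing the prediction at a masked position leaves the loss unchanged, so the model is never penalized against a value that was not measured. Imputing a default label would instead inject a systematic error into the sparsest tasks.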

Benchmarking MTL Performance

Experimental validation across multiple benchmarks demonstrates the significant advantages of MTL approaches in data-scarce environments:

- On the ClinTox dataset, ACS shows particularly large gains, improving upon STL, MTL, and MTL-GLC by 15.3%, 10.8%, and 10.4%, respectively [9].

- MolFCL outperforms state-of-the-art baseline models on 23 molecular property prediction datasets, particularly in low-data regimes [10].

- MolP-PC achieves optimal performance in 27 of 54 tasks, with its MTL mechanism significantly enhancing predictive performance on small-scale datasets [13].

These results consistently show that MTL approaches not only improve average performance across tasks but particularly benefit tasks with the most limited data by transferring knowledge from richer tasks.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools for Molecular MTL

| Tool/Resource | Type | Function | Application Example |
|---|---|---|---|
| Graph Neural Networks | Algorithm | Learns molecular representations from graph structure | Message passing for molecular topology [9] |
| BRICS Algorithm | Computational method | Decomposes molecules into meaningful fragments | Fragment-based graph augmentation in MolFCL [10] |
| Task-Specific Heads | Model component | Specializes shared representations for individual tasks | MLP heads for property prediction [9] |
| Attention Mechanisms | Algorithm | Dynamically weights important molecular regions | Multi-view fusion in MolP-PC [13] |
| Contrastive Loss | Optimization | Maximizes similarity between related representations | Fragment-based pre-training in MolFCL [10] |
| Adaptive Checkpointing | Training strategy | Preserves best parameters for each task | Mitigating negative transfer in ACS [9] |

The adoption of Multi-task Learning for molecular property prediction represents a paradigm shift in addressing the fundamental challenge of data scarcity in chemical and pharmaceutical sciences. By leveraging shared representations across related tasks, MTL enables more accurate predictions in low-data regimes, accelerates materials discovery, and reduces reliance on costly experimental measurements.

The methodologies discussed—including Adaptive Checkpointing with Specialization, fragment-based contrastive learning, and multi-view fusion—demonstrate that carefully designed MTL approaches can effectively overcome the negative transfer problem while maximizing knowledge sharing between tasks. As these techniques continue to mature, they hold the potential to dramatically expand the scope of molecular property prediction, particularly for emerging compound classes and poorly characterized properties.

Future research directions include developing more sophisticated task-relatedness measures, creating unified frameworks that combine MTL with transfer learning and generative modeling, and establishing standardized benchmarks for evaluating MTL approaches in molecular sciences. As the field progresses, MTL is poised to become an indispensable tool in the computational molecular scientist's arsenal, fundamentally addressing the data scarcity challenge that has long constrained AI-driven molecular discovery.

Enhanced Predictive Accuracy with Limited Labeled Data

In the fields of drug discovery and materials science, the ability to predict molecular properties accurately is foundational to accelerating research and development. However, the effectiveness of machine learning (ML) models for this task is often critically limited by the scarcity and high cost of obtaining large, experimentally labeled datasets [1] [9]. This data bottleneck impedes the development of robust predictors for diverse properties, from pharmaceutical drug toxicity to the characteristics of sustainable energy carriers [9]. Multi-task Learning (MTL) has emerged as a powerful paradigm to address this fundamental challenge. By enabling a single model to learn multiple related tasks concurrently, MTL facilitates inductive transfer; the model can leverage shared information and patterns across tasks, effectively augmenting the scarce data available for any single task and enhancing predictive accuracy where it is needed most [1].

This technical guide explores the key advantages of MTL in achieving enhanced predictive accuracy with limited labeled data. We will dissect the core mechanisms that enable this improvement, present quantitative evidence of its performance, and provide detailed methodologies for implementing and evaluating MTL approaches, providing researchers and scientists with a comprehensive toolkit for navigating low-data regimes.

Core Mechanisms for Enhanced Accuracy in MTL

The superior performance of MTL in data-scarce environments is not accidental but is driven by specific architectural and optimization strategies designed to maximize knowledge sharing while minimizing interference.

Architectural Innovations for Knowledge Sharing

At its core, MTL for molecular property prediction employs a shared backbone model, typically a Graph Neural Network (GNN), which learns a general-purpose representation of a molecule from its graph structure. This shared representation captures fundamental chemical and structural patterns that are universally relevant across various properties [9]. The shared backbone is then complemented by task-specific heads, often implemented as small Multi-Layer Perceptrons (MLPs), which fine-tune these general representations for the precise prediction of individual properties [9]. This structure allows a task with abundant data to inform and improve the representations used by a task with very little data.

Recent architectural advances have further refined this paradigm. The Multi-Level Fusion Graph Neural Network (MLFGNN) enhances traditional GNNs by integrating both local and global molecular structural information. It combines a Graph Attention Network (GAT) to capture local functional groups with a Graph Transformer to model long-range dependencies within the molecular graph. Furthermore, it incorporates pre-defined molecular fingerprints as a complementary modality of chemical knowledge, which are fused with the graph-based representations using a cross-attention mechanism [14]. This multi-scale, multi-modal approach provides a richer and more robust foundational representation for all tasks.

Mitigating Negative Transfer and Task Interference

A significant risk in naive MTL is negative transfer (NT), where the joint optimization of one task detrimentally affects the performance of another, often due to differences in task relatedness, data distribution, or optimal learning dynamics [9]. To counter this, sophisticated training schemes have been developed.

The Adaptive Checkpointing with Specialization (ACS) method is a prime example. During training, the validation loss for each task is monitored independently. The model checkpoints the best-performing backbone-head pair for a task whenever its validation loss reaches a new minimum. This ensures that each task ultimately obtains a specialized model that has benefited from shared representations early in training but is shielded from later, potentially detrimental, parameter updates driven by other tasks [9].
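The checkpointing rule can be sketched as follows. This is a simplified illustration of per-task checkpointing under stated assumptions, not the reference ACS implementation: `train_step` and `validate` are hypothetical callables supplied by the caller, and the parameter dictionary layout (`"backbone"`, `"heads"`) is invented for the example.

```python
import copy


def train_with_task_checkpoints(params, task_names, n_epochs, train_step, validate):
    """Keep, per task, a snapshot of the parameters at that task's best validation loss.

    `train_step(params)` updates `params` in place for one epoch on all tasks;
    `validate(params, task)` returns that task's validation loss.
    Both callables are hypothetical placeholders.
    """
    best_loss = {task: float("inf") for task in task_names}
    best_params = {}
    for _ in range(n_epochs):
        train_step(params)
        for task in task_names:
            loss = validate(params, task)
            if loss < best_loss[task]:
                best_loss[task] = loss
                # Snapshot backbone and head together so later updates cannot alter them.
                best_params[task] = copy.deepcopy(
                    {"backbone": params["backbone"], "head": params["heads"][task]}
                )
    return best_params, best_loss


# Toy run: the shared parameter drifts upward; each task prefers a different value.
targets = {"a": 2.0, "b": 4.0}
params = {"backbone": [0.0], "heads": {"a": [0.0], "b": [0.0]}}


def toy_train_step(p):
    p["backbone"][0] += 1.0


def toy_validate(p, task):
    return (p["backbone"][0] - targets[task]) ** 2


best_params, best_loss = train_with_task_checkpoints(
    params, ["a", "b"], n_epochs=5, train_step=toy_train_step, validate=toy_validate
)
print(best_params["a"]["backbone"], best_params["b"]["backbone"])
```

In the toy run each task keeps the backbone state from the epoch that suited it best, even though training continued past that point for the other task.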

Another approach is the use of learnable task-weighting schemes. The Quantum-enhanced and task-Weighted MTL (QW-MTL) framework introduces a learnable parameter that dynamically adjusts each task's contribution to the total loss during training. This adaptive balancing prevents tasks with larger datasets or larger gradient magnitudes from dominating the optimization process, allowing low-data tasks to exert appropriate influence on the shared model parameters [15].

Quantitative Evidence of Performance Gains

The theoretical advantages of MTL are borne out by substantial empirical evidence across multiple benchmarks and real-world applications, particularly in ultra-low data regimes.

Benchmark Performance

Extensive controlled experiments on standardized molecular property benchmarks demonstrate that MTL methods consistently outperform single-task learning (STL) baselines. The following table summarizes key results from recent studies:

Table 1: Performance Comparison of MTL vs. Single-Task Learning on Molecular Benchmarks

| Dataset / Model | Description | Key Result | Reference |
|---|---|---|---|
| ACS on ClinTox | 1,478 molecules, 2 tasks (FDA approval, clinical trial toxicity) | ACS outperformed Single-Task Learning (STL) by 15.3% | [9] |
| ACS on MoleculeNet | Aggregated performance across ClinTox, SIDER, and Tox21 datasets | ACS showed an 11.5% average improvement over other node-centric message passing methods | [9] |
| QW-MTL on TDC | 13 ADMET classification tasks from Therapeutics Data Commons | Outperformed strong single-task baselines on 12 out of 13 tasks | [15] |
| MLFGNN | Multiple benchmarks across physical chemistry, biophysics, and physiology | Achieved state-of-the-art performance in 8 out of 11 learning tasks | [14] |
| MfGNN | Evaluations across physical chemistry, biophysics, physiology, and toxicology | Outperformed leading ML/DL models in 8 out of 11 tasks | [16] |

Performance in the Ultra-Low Data Regime

The most compelling evidence for MTL's value comes from its performance when labeled data is exceptionally scarce. In a practical application predicting the properties of sustainable aviation fuel (SAF) molecules, the ACS training scheme enabled the learning of accurate models with as few as 29 labeled samples—a data regime where single-task models typically fail to generalize [9]. This capability dramatically broadens the scope of problems that can be addressed with AI-driven discovery.

Experimental Protocols & Methodologies

To ensure reproducibility and provide a clear roadmap for researchers, this section details the experimental protocols for key MTL studies.

Dataset Curation and Splits

Robust evaluation requires carefully curated datasets and meaningful data splits:

- Benchmark Datasets: Common benchmarks include those from MoleculeNet (e.g., ClinTox, SIDER, Tox21) and the Therapeutics Data Commons (TDC) for ADMET properties [9] [15].

- Splitting Strategy: To avoid inflated performance estimates and better simulate real-world conditions, a Murcko-scaffold split is recommended. This split assigns all molecules that share a core scaffold to the same partition, so the scaffolds in the test set never appear in training. The model is therefore forced to generalize to genuinely novel chemotypes rather than memorize local patterns [9].

- Standardized Evaluation: For reliable comparison, it is crucial to use the official leaderboard-style train-test splits provided by benchmarks like TDC, rather than custom internal splits [15].
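A scaffold-grouped split can be sketched in a few lines. The example assumes the scaffold key for each molecule has already been computed (for instance as a Murcko scaffold SMILES from a cheminformatics toolkit); the function name `scaffold_split`, the greedy largest-group-first ordering, and the example scaffold strings are illustrative choices rather than a prescribed procedure.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both partitions.

    `scaffolds[i]` is a precomputed scaffold key (e.g. a scaffold SMILES) for molecule i.
    """
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Largest scaffold groups go to training first; rarer scaffolds end up in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_budget = len(scaffolds) - round(test_fraction * len(scaffolds))

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_budget:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


scaffolds = [
    "c1ccccc1", "c1ccccc1", "c1ccccc1", "c1ccncc1", "c1ccncc1",
    "C1CCCCC1", "c1ccoc1", "c1ccsc1", "c1ccccc1", "C1CCCCC1",
]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)
print(train_idx, test_idx)
```

Whole groups are moved at once, so the realized test fraction can deviate from the requested one when scaffold groups are large; the guarantee that matters is that train and test share no scaffold.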

Model Architecture and Training Details

Table 2: Key Components of a Modern MTL Framework for Molecules

| Component | Description | Example & Function |
|---|---|---|
| Backbone Model | Shared GNN that processes the molecular graph. | Directed-MPNN (D-MPNN) or Graph Attention Network (GAT). Learns a general molecular representation from atom and bond features [15] [14]. |
| Task-Specific Heads | Small networks attached to the shared backbone for each task. | Multi-Layer Perceptrons (MLPs). Map the shared representation to a task-specific prediction [9]. |
| Feature Enrichment | Additional molecular descriptors to augment the GNN's representation. | Quantum Chemical Descriptors (dipole moment, HOMO-LUMO gap) and Molecular Fingerprints (Morgan, PubChem). Provide physically-grounded and domain-knowledge-informed features [15] [14]. |
| Training Scheme | The method for coordinating the learning of multiple tasks. | Adaptive Checkpointing (ACS) or Learnable Task Weighting. Mitigates negative transfer and balances task learning [9] [15]. |

Implementation Workflow: The general workflow for a modern MTL experiment, such as QW-MTL, involves the following steps [15]:

- Input Representation: A molecule is represented as a graph and/or by its SMILES string.

- Feature Computation: Compute 2D molecular descriptors (e.g., via RDKit) and/or 3D quantum chemical descriptors.

- Graph Encoding: The molecular graph is processed by a shared GNN backbone (e.g., a D-MPNN) to generate an embedding.

- Feature Fusion: The graph embedding is concatenated with the computed molecular descriptors.

- Task-Specific Prediction: The fused representation is passed through the task-specific MLP heads to generate property predictions.

- Loss Calculation & Weighting: The loss for each task is calculated and dynamically weighted using a learnable scheme before being combined to update the shared model parameters.

Diagram 1: High-level architecture of a modern multi-task learning model for molecular property prediction, featuring a shared backbone and task-specific heads with advanced training schemes.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets for MTL Research

| Tool / Resource | Type | Function in Research |
|---|---|---|
| Therapeutics Data Commons (TDC) | Dataset Collection & Benchmark | Provides curated ADMET and other molecular property datasets with standardized train-test splits for fair model evaluation [15]. |
| MoleculeNet | Dataset Collection & Benchmark | A standard benchmark suite for molecular property prediction, encompassing multiple datasets across various domains [9]. |
| RDKit | Cheminformatics Software | An open-source toolkit for Cheminformatics used to compute 2D molecular descriptors and convert SMILES strings into molecular graphs [15]. |
| Chemprop | Deep Learning Framework | A widely-used, open-source GNN implementation (based on D-MPNN) specifically designed for molecular property prediction, serving as a strong baseline and a flexible research platform [15]. |
| Quantum Chemistry Software (e.g., Gaussian, ORCA) | Computational Chemistry Software | Used to calculate 3D quantum chemical descriptors (e.g., dipole moment, HOMO-LUMO gap) that enrich molecular representations with electronic structure information [15]. |

Multi-task learning represents a fundamental shift in approaching molecular property prediction, especially under the constraint of limited labeled data. By promoting knowledge sharing through shared representations and mitigating negative transfer via techniques such as adaptive checkpointing and dynamic loss balancing, MTL delivers enhanced predictive accuracy in the studies reviewed here. The quantitative evidence indicates that MTL not only surpasses single-task baselines across diverse benchmarks but also remains effective in the ultra-low data regime, enabling reliable predictions with only a few dozen labeled examples. As these methodologies continue to mature, they promise to significantly accelerate the pace of discovery in drug development and materials science.

Multi-task learning (MTL) has emerged as a powerful paradigm in machine learning for molecular property prediction, demonstrating particular value in scenarios where experimental data is scarce or costly to obtain. Within drug discovery and materials science, MTL operates on the principle that learning multiple related tasks simultaneously within a single model enables beneficial transfer of information between these tasks. This approach contrasts with single-task learning (STL), which trains separate, isolated models for each prediction target. The fundamental thesis of MTL posits that by leveraging inter-task relationships and shared underlying patterns in molecular data, models can develop more robust, generalized representations that enhance predictive performance, particularly in data-constrained environments that commonly challenge molecular property prediction [1] [9].

The application of MTL in molecular domains typically employs shared backbone architectures—often graph neural networks (GNNs) that naturally represent molecular structures—combined with task-specific output heads. This design allows the model to learn both universal molecular features and task-specific nuances [9] [17]. However, the success of MTL is not universal and depends critically on specific experimental conditions and architectural decisions. This technical guide examines the practical scenarios where MTL demonstrably outperforms single-task approaches, providing researchers with evidence-based frameworks for implementation.

Key Scenarios for MTL Advantage

Ultra-Low Data Regimes

The most consistently documented advantage for MTL appears in ultra-low data regimes, where labeled training samples for a target property are extremely limited. In pharmaceutical and materials science applications, obtaining experimentally measured properties is often resource-intensive, creating precisely these data-scarce conditions.

Empirical Evidence: Research on sustainable aviation fuel (SAF) properties demonstrated that the Adaptive Checkpointing with Specialization (ACS) method, an MTL approach for GNNs, could learn accurate predictive models with as few as 29 labeled samples, a regime in which single-task models typically fail to generalize [9]. In these experiments, ACS consistently surpassed STL performance when task imbalance was present, with the advantage becoming more pronounced as available data decreased.

Mechanistic Explanation: MTL mitigates the overfitting risk that plagues single-task models in low-data scenarios by leveraging auxiliary tasks as implicit regularizers. The shared representations learned across multiple tasks capture more fundamental molecular patterns rather than idiosyncrasies of limited samples [9] [18].

Presence of Sufficiently Related Auxiliary Tasks

MTL provides significant performance improvements when auxiliary tasks share underlying structural relationships with the primary task of interest. Task relatedness facilitates positive knowledge transfer, where learning one task improves performance on another.

Relatedness Dimensions: Molecular tasks can relate through shared structural determinants (e.g., specific functional groups influencing multiple properties) or similar measurement contexts (e.g., toxicity endpoints measured in similar assays) [9] [17]. A study comparing MTL approaches found that "prediction accuracy largely depends on the inter-task relationship, and hard parameter sharing improves the performance when the correlation becomes complex" [17].

Practical Application: In drug discovery, simultaneously predicting various toxicity endpoints (e.g., on the Tox21 dataset) or multiple absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties leverages their shared dependence on fundamental biochemical interactions [19] [20].

Controlled Handling of Task Interference

While unrelated tasks can cause detrimental "negative transfer," advanced MTL methods that strategically manage task interference maintain performance advantages even with diverse task sets.

Adaptive Checkpointing: The ACS method addresses negative transfer by monitoring validation loss for each task during training and checkpointing the best backbone-head pair for each task individually. This approach preserves beneficial transfer while minimizing interference, outperforming standard MTL by 10.8% on ClinTox benchmarks [9].

Gradient-Based Task Grouping: The Task Affinity Groupings (TAG) algorithm measures how one task's gradient update affects other tasks' losses, then groups tasks with high inter-task affinity. This method identifies compatible task groupings without exhaustive search, achieving state-of-the-art performance with 32x faster computation than prior approaches [21].

Data Enrichment Scenarios

MTL effectively utilizes data enrichment through additional molecular targets or properties, even when these auxiliary datasets are sparse or imperfect.

Systematic Enhancement: Research on ViralChEMBL and pQSAR datasets demonstrated that "training data enrichment could be an effective means of enhancing prediction performance in multi-task learning," particularly when the enriched data included unique compounds and targets that expanded the model's chemical space coverage [20].

Practical Recommendation: The degree of improvement depends on training data quality—enrichment with diverse molecular structures and target types provides the greatest benefits for predicting novel compound-target interactions [20].

Quantitative Performance Comparison

Table 1: MTL vs. STL Performance on Molecular Benchmark Datasets

| Dataset | Task Description | STL Performance | MTL Performance | Improvement | Key Conditions |
|---|---|---|---|---|---|
| ClinTox | FDA approval & clinical trial toxicity prediction | Baseline | ACS Method | +15.3% | Handled task imbalance effectively [9] |
| Tox21 | 12 toxicity endpoints | Varies by method | ACS Method | Matched or surpassed state-of-the-art | 5.4x larger dataset with 17.1% missing labels [9] |
| SIDER | 27 side effect targets | Varies by method | ACS Method | Consistent gains | Minimal label sparsity [9] |
| Fuel Ignition Properties | Small, sparse experimental data | Limited by data scarcity | Multi-task GNN | Significant improvement | Used auxiliary data for enhanced prediction [1] |
| QM9 Dataset | Multiple quantum chemical properties | Standard baselines | Multi-task GNN | Progressive improvement with data subsets | Controlled data availability tests [1] |

Table 2: MTL Performance Across Platform Implementations

| Platform / Method | Task Coverage | Key MTL Features | Reported Advantages | Domain Validation |
|---|---|---|---|---|
| ACS (Adaptive Checkpointing) | Multiple property prediction | Task-specific early stopping; shared GNN backbone | 11.5% average improvement vs. node-centric message passing; works with 29 samples [9] | Sustainable aviation fuels; molecular toxicity benchmarks |
| Baishenglai (BSL) | 7 core tasks (generation, DTI, DDI, etc.) | Unified modular framework; OOD generalization | State-of-the-art on multiple benchmarks; discovered novel NMDA receptor modulators [19] | Real-world drug discovery for neurological targets |
| Task Affinity Groupings (TAG) | Flexible task groupings | Gradient-based affinity measurement | 32x faster grouping vs. prior methods; competitive on Taskonomy [21] | Computer vision benchmarks; methodology applicable to molecular domains |
| Data Enrichment MTL | Drug-target interactions | Incorporates diverse training data | Improved prediction of new compound-target interactions [20] | ViralChEMBL; pQSAR datasets |

Experimental Protocols for MTL Implementation

Adaptive Checkpointing with Specialization (ACS)

The ACS method represents a recent advancement in MTL for molecular property prediction, specifically designed to address negative transfer in imbalanced datasets:

Architecture: Employ a shared GNN backbone based on message passing with task-specific multi-layer perceptron (MLP) heads. The shared component learns general-purpose molecular representations while dedicated heads provide task-specific capacity [9].

Training Procedure:

- Monitor validation loss for each task throughout training

- Checkpoint the best backbone-head pair when a task's validation loss reaches a new minimum

- Maintain both task-agnostic and task-specific components throughout training

- For inference, use the specialized backbone-head pair for each task [9]

Implementation Details:

- Use Murcko-scaffold splits for fair evaluation of generalization

- Apply loss masking for missing values rather than imputation

- Balance shared and specialized parameters based on task relatedness
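Loss masking for missing labels, as listed above, can be sketched as follows. The example assumes binary classification tasks with predicted probabilities and uses `None` to mark a missing measurement; the function name `masked_bce` and the data layout are illustrative choices.

```python
import math


def masked_bce(predictions, labels):
    """Mean binary cross-entropy per task, skipping entries whose label is None.

    predictions[i][t] is a probability in (0, 1); labels[i][t] is 0, 1, or None (missing).
    """
    n_tasks = len(labels[0])
    totals = [0.0] * n_tasks
    counts = [0] * n_tasks
    for pred_row, label_row in zip(predictions, labels):
        for t, (p, y) in enumerate(zip(pred_row, label_row)):
            if y is None:
                continue  # missing measurement: contributes no loss and no gradient
            totals[t] += -(y * math.log(p) + (1 - y) * math.log(1 - p))
            counts[t] += 1
    # Tasks with no labels in the batch get None rather than a made-up loss.
    return [total / count if count else None for total, count in zip(totals, counts)]


predictions = [[0.9, 0.2, 0.5], [0.6, 0.7, 0.5]]
labels = [[1, None, None], [0, 1, None]]
per_task_loss = masked_bce(predictions, labels)
print(per_task_loss)
```

Averaging over the observed labels only keeps a sparsely labeled task from being diluted by its missing entries, and avoids training on imputed values that were never measured.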

Task Affinity Grouping (TAG) for Molecular Applications

The TAG approach provides a systematic method for identifying compatible tasks before full MTL training:

Affinity Measurement: For each task pair (i, j), compute the inter-task affinity by:

- Updating shared parameters with respect to task i only

- Measuring the effect on task j's loss

- Undoing the update and repeating for all task pairs [21]
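The three steps above amount to a lookahead measurement, which can be sketched on toy quadratic losses. The affinity used here, one minus the ratio of task j's loss after and before a step on task i, follows the description in this section; the helper `make_quadratic`, the learning rate, and the toy targets are assumptions made purely for illustration.

```python
def make_quadratic(target):
    """Toy task: squared distance of the shared parameters from `target`."""
    def loss(theta):
        return sum((p - t) ** 2 for p, t in zip(theta, target))

    def grad(theta):
        return [2.0 * (p - t) for p, t in zip(theta, target)]

    return loss, grad


def inter_task_affinity(theta, losses, grads, lr=0.1):
    """Return Z where Z[i][j] = 1 - L_j(theta after a step on task i) / L_j(theta).

    Positive values mean a step on task i also lowers task j's loss.
    Assumes every baseline loss is non-zero.
    """
    base = {name: loss(theta) for name, loss in losses.items()}
    affinity = {}
    for i, grad_i in grads.items():
        # Lookahead step on task i only; `theta` itself is never modified,
        # which plays the role of undoing the update.
        lookahead = [p - lr * g for p, g in zip(theta, grad_i(theta))]
        affinity[i] = {j: 1.0 - losses[j](lookahead) / base[j] for j in losses}
    return affinity


tasks = {"A": (1.0, 1.0), "B": (1.0, 0.8), "C": (-1.0, -1.0)}
losses, grads = {}, {}
for name, target in tasks.items():
    losses[name], grads[name] = make_quadratic(target)

theta = [0.0, 0.0]
affinity = inter_task_affinity(theta, losses, grads)
print(affinity["A"])
```

In this toy setup tasks A and B pull the shared parameters in nearly the same direction and show positive mutual affinity, while C pulls the opposite way and shows negative affinity with both, which is the signal a grouping step would use to keep C separate.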

Grouping Algorithm:

- Collect affinity statistics throughout training

- Group tasks that exhibit consistently beneficial relationships

- Avoid grouping tasks with antagonistic interactions

- Balance group sizes based on computational constraints and performance objectives

Molecular Adaptation: For molecular domains, compute affinities across different property types and structural classes to identify optimal groupings [21].

Data Enrichment Protocol

Effective data enrichment for MTL requires strategic selection of auxiliary data:

Enrichment Criteria:

- Prioritize additional molecular targets that expand chemical space coverage

- Include even sparse or weakly related data, which can still provide benefits

- Balance dataset sizes across tasks to minimize imbalance effects [20]

Implementation Steps:

- Identify primary prediction target with limited data

- Select auxiliary properties with shared structural determinants

- Preprocess all datasets to consistent representations (e.g., standardized SMILES)

- Train with multi-task architecture employing appropriate weighting or checkpointing

Architectural Diagrams

Diagram 1: MTL Architecture with Shared Backbone and Task-Specific Heads

Diagram 2: Adaptive Checkpointing with Specialization (ACS) Workflow

Table 3: Essential Resources for MTL Molecular Property Prediction

| Resource Category | Specific Tools/Platforms | Function in MTL Research | Implementation Notes |
|---|---|---|---|
| Benchmark Datasets | QM9, ClinTox, SIDER, Tox21, ViralChEMBL | Provide standardized benchmarks for comparing MTL vs. STL performance | Use scaffold splits for realistic evaluation [1] [9] [20] |
| MTL Platforms | Baishenglai (BSL), ACS Implementation | Integrated frameworks with built-in MTL capabilities | BSL covers 7 core drug discovery tasks; ACS specializes in low-data regimes [19] [9] |
| Deep Learning Frameworks | PyTorch, TensorFlow | Flexible implementation of custom MTL architectures | PyTorch used in multiple referenced studies [20] |
| Molecular Encoders | Graph Neural Networks (GNNs) | Learn shared molecular representations from structure | Message-passing GNNs effective for molecular graphs [1] [9] |
| Pre-trained Models | BioBERT, NCBI BERT, ClinicalBERT | Provide initialization for molecular NLP tasks | Domain-specific BERT variants improve biomedical text mining [22] [23] |
| Task Grouping Tools | TAG Algorithm | Identify compatible tasks for joint training | Gradient-based approach more efficient than exhaustive search [21] |

Multi-task learning demonstrates clear and measurable advantages over single-task approaches for molecular property prediction in specific, well-defined scenarios. The evidence indicates that MTL should be the approach of choice when working with ultra-low data regimes (potentially as few as 29 samples), when sufficiently related auxiliary tasks are available, and when using advanced methods like ACS or TAG that mitigate negative transfer. These approaches enable researchers to overcome the data scarcity challenges that frequently impede molecular discovery and development pipelines.

Successful MTL implementation requires careful attention to task selection, architectural design, and training methodologies. The experimental protocols and resources outlined in this guide provide researchers with practical starting points for leveraging MTL in their molecular property prediction workflows. As MTL methodologies continue to evolve, particularly in handling task imbalance and quantifying task relatedness, their application domains within molecular sciences are likely to expand further, offering enhanced prediction capabilities with reduced experimental data requirements.

The Role of Inter-Task Relationships and Molecular Similarity in Knowledge Transfer

Multi-task learning (MTL) has emerged as a transformative paradigm in molecular property prediction, offering a powerful solution to critical challenges in computational drug discovery. By enabling the simultaneous learning of multiple related tasks, MTL frameworks leverage shared information across different molecular properties to enhance prediction accuracy, improve data efficiency, and generate more robust models. This approach stands in stark contrast to traditional single-task learning methods, which often suffer from data sparsity and limited generalization capabilities, particularly in the data-scarce regimes common to pharmaceutical research [24] [25].

The fundamental premise of MTL rests on the intelligent transfer of knowledge across tasks through shared representations and optimized learning dynamics. The efficacy of this knowledge transfer is governed by two principal factors: the relationships between the tasks themselves and the molecular similarities that underpin the feature representations. Understanding and quantifying these inter-task relationships allows models to prioritize progress on challenging tasks while mitigating destructive gradient interference [24]. Similarly, comprehensive molecular representations that capture diverse structural and electronic characteristics provide the foundational substrate upon which effective knowledge transfer can occur [25] [26].

This technical guide examines the sophisticated mechanisms through which modern MTL architectures harness inter-task relationships and molecular similarity to accelerate molecular property prediction. Through an analysis of cutting-edge frameworks and their experimental validation, we delineate the principles, methodologies, and practical implementations that are establishing new benchmarks in predictive accuracy and interpretability for drug development applications.

Fundamental Mechanisms of Knowledge Transfer in MTL

Inter-Task Relationship Modeling

The core challenge in multi-task learning lies in effectively managing the complex interplay between tasks, which can exhibit either synergistic or antagonistic relationships. Synergistic tasks benefit from shared representations and joint optimization, while antagonistic tasks experience performance degradation when trained together due to conflicting gradient signals [27]. Advanced MTL frameworks address this challenge through dynamic architectures that automatically detect and adapt to these relationships.

The AIM (Adaptive Intervention for Deep Multi-task Learning) framework tackles gradient interference by learning a dynamic policy to mediate conflicts during optimization. This policy, trained jointly with the main network, utilizes dense, differentiable regularizers to produce updates that are geometrically stable and dynamically efficient, prioritizing progress on the most challenging tasks [24]. Similarly, auto-branch MTL models quantify "synergistic effects" between tasks by monitoring how gradient updates for one task affect the loss of others. These models dynamically branch from a hard parameter sharing structure when tasks are deemed antagonistic, preventing negative information transfer while preserving beneficial sharing [27].

Table 1: Quantitative Performance Improvements from MTL Strategies

| Model | Dataset | Performance Improvement | Key Advantage |
|---|---|---|---|
| AIM | QM9 & Protein Degraders | Statistically significant improvements over baselines | Most pronounced in data-scarce regimes |
| MolP-PC | ADMET (54 tasks) | Optimal in 27/54 tasks; surpassed STL in 41/54 tasks | Enhanced performance on small-scale datasets |
| Auto-branch MTL | Alzheimer's Disease Traits | Outperformed Multi-Lasso and STL approaches | Prevented negative transfer between correlated phenotypes |
| MT-GNN | Site-selectivity Prediction | 0.934 average accuracy (±0.007) | Excellent interpolative and extrapolative ability |

Molecular Representation and Similarity

Molecular similarity serves as the fundamental substrate for knowledge transfer in MTL frameworks. Comprehensive molecular representations that capture diverse structural and physicochemical properties enable more effective information sharing across prediction tasks. The MolP-PC framework exemplifies this approach through multi-view fusion that integrates 1D molecular fingerprints (MFs), 2D molecular graphs, and 3D geometric representations, significantly enhancing predictive performance for ADMET properties [25] [28].

Quantum chemical descriptors provide particularly powerful representations for knowledge transfer by encoding essential electronic structure information. The QW-MTL framework incorporates dipole moment, HOMO-LUMO gap, electron distribution, and total energy to create physically-grounded molecular representations that capture properties crucial for ADMET prediction [15]. These quantum-informed features enrich the representation space, enabling more nuanced similarity assessments and more effective knowledge transfer across related molecular properties.

Technical Frameworks and Architectures

Adaptive Optimization Strategies

Gradient conflict management represents a central technical challenge in MTL implementations. The AIM framework addresses this through a novel optimization approach that learns a dynamic policy to mediate gradient conflicts via an augmented objective composed of differentiable regularizers. This policy generates updates that are geometrically stable and prioritize challenging tasks, with the learned policy matrix serving as an interpretable diagnostic tool for analyzing inter-task relationships [24].

Task weighting strategies play an equally critical role in balancing learning across heterogeneous tasks. QW-MTL introduces an exponential task weighting scheme that combines dataset-scale priors with learnable parameters to dynamically balance losses across tasks. This approach adaptively adjusts each task's contribution to the total loss, enabling stable optimization despite variations in task difficulty and data scale [15].
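The idea of combining a dataset-scale prior with a learnable exponential term can be illustrated with a small sketch. The specific form used here, a weight proportional to `(n_max / n_t) ** alpha * exp(beta_t)`, is an assumption chosen for illustration and is not the formula from the QW-MTL paper; `alpha`, `betas`, and the task names are hypothetical, and in real training the `betas` would be optimized alongside the network.

```python
import math


def task_weights(n_samples, betas, alpha=0.5):
    """Hypothetical weights: w_t proportional to (n_max / n_t)**alpha * exp(beta_t).

    The prior term boosts small datasets; exp(beta_t) keeps the learnable factor
    positive. Weights are normalized to sum to the number of tasks.
    """
    n_max = max(n_samples.values())
    raw = {t: (n_max / n) ** alpha * math.exp(betas[t]) for t, n in n_samples.items()}
    scale = len(raw) / sum(raw.values())
    return {t: w * scale for t, w in raw.items()}


def weighted_total_loss(task_losses, weights):
    """Combine per-task losses into the scalar used to update shared parameters."""
    return sum(weights[t] * loss for t, loss in task_losses.items())


n_samples = {"small_task": 600, "large_task": 12000}
betas = {"small_task": 0.0, "large_task": 0.0}  # learnable in practice, fixed here
weights = task_weights(n_samples, betas)
total = weighted_total_loss({"small_task": 0.7, "large_task": 0.4}, weights)
print(weights, total)
```

Normalizing the weights keeps the overall loss scale roughly constant as the learnable terms change, so the optimizer cannot reduce the total loss simply by shrinking every weight toward zero.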

Figure 1: Adaptive MTL Optimization Workflow - Dynamic task weighting based on gradient analysis.

Multi-View Representation Learning

The MolP-PC framework demonstrates the power of multi-view fusion for capturing complementary molecular information. By integrating 1D molecular fingerprints (encodings of molecular structure), 2D molecular graphs (topological connections between atoms), and 3D geometric representations (spatial molecular conformation), the model constructs a comprehensive representation that significantly enhances predictive performance [25] [28]. An attention-gated fusion mechanism dynamically weights the contributions of each representation view, enabling the model to emphasize the most relevant features for specific property prediction tasks.
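A gated fusion of several views can be sketched generically. The example assumes each view has already been projected to the same embedding size and scores each view with a dot product against a gate vector; this is a simplified stand-in for an attention-gated mechanism, not the MolP-PC implementation, and the names `gated_fusion` and `gate_vectors` are hypothetical.

```python
import math


def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gated_fusion(views, gate_vectors):
    """Fuse same-length view embeddings with softmax gates.

    views: mapping from view name to embedding; gate_vectors holds one scoring
    vector per view (learned in practice, fixed here).
    """
    names = list(views)
    scores = [sum(g * v for g, v in zip(gate_vectors[n], views[n])) for n in names]
    gates = dict(zip(names, softmax(scores)))
    dim = len(views[names[0]])
    fused = [sum(gates[n] * views[n][k] for n in names) for k in range(dim)]
    return fused, gates


views = {
    "fingerprint_1d": [0.2, 0.8, 0.1],
    "graph_2d": [0.5, 0.4, 0.9],
    "geometry_3d": [0.3, 0.3, 0.3],
}
gate_vectors = {
    "fingerprint_1d": [1.0, 0.0, 0.0],
    "graph_2d": [0.0, 0.0, 2.0],
    "geometry_3d": [0.0, 0.0, 0.0],
}
fused, gates = gated_fusion(views, gate_vectors)
print(gates, fused)
```

Because the gates depend on the input embeddings, the mix of views can differ from molecule to molecule, which is what distinguishes gated fusion from plain concatenation or averaging.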

The MT-GNN framework extends this approach by incorporating mechanism-informed reaction graphs that embed prior mechanistic knowledge, including condensed Fukui indices (f0, f-, f+) and atomic charges (Qc). These features enrich the molecular representation with electronic structure information that is particularly relevant for predicting reaction outcomes such as site selectivity [26].

Table 2: Molecular Representation Modalities in MTL Frameworks

| Representation Type | Information Captured | Framework Examples | Application Context |
|---|---|---|---|
| 1D Molecular Fingerprints | Structural patterns and substructures | MolP-PC | ADMET property prediction |
| 2D Molecular Graphs | Topological connectivity and functional groups | MolP-PC, MT-GNN | Reaction site selectivity |
| 3D Geometric Representations | Spatial conformation and steric properties | MolP-PC | Molecular interactions |
| Quantum Chemical Descriptors | Electronic structure and properties | QW-MTL, MT-GNN | Physicochemical properties |

Dynamic Architecture Search

The auto-branch MTL approach addresses the challenge of negative transfer by dynamically determining which layers to share between tasks. Beginning with a hard parameter sharing structure where all layers except the last are shared, the model quantifies task similarities and groups tasks using inter-task affinity metrics. The network automatically branches for tasks deemed antagonistic, preserving beneficial parameter sharing while preventing detrimental interference [27].

This approach is particularly valuable for modeling correlated phenotypes in complex diseases such as Alzheimer's, where genetic contributions across phenotypes may be similar, but the relative influence of each genetic factor varies substantially among phenotypes. By maintaining shared representations for synergistic tasks while branching for antagonistic ones, the model achieves superior performance compared to fixed-architecture MTL approaches [27].

Experimental Protocols and Methodologies

Benchmarking and Evaluation Standards

Rigorous evaluation protocols are essential for accurately assessing MTL performance. The QW-MTL framework establishes a standardized benchmarking approach by conducting the first systematic study across all 13 Therapeutics Data Commons (TDC) ADMET classification tasks using official leaderboard-style splits for joint training and evaluation [15]. This represents a significant advancement over prior studies that either evaluated on small task subsets or used custom data splits, which often led to inflated performance estimates.

Cross-validation strategies must be carefully designed to assess both interpolative and extrapolative performance. The MT-GNN framework demonstrates this through extensive validation that includes both interpolation tests (random 90/10 splits) and extrapolation tests where specific functionalization types are treated as external validation sets [26]. This comprehensive evaluation provides a more complete picture of model generalization capabilities.

Ablation Study Design

Ablation studies play a critical role in validating architectural choices and quantifying the contribution of individual components. The MolP-PC framework employs systematic ablations to confirm the significance of multi-view fusion in capturing multi-dimensional molecular information and enhancing model generalization [25]. These studies typically involve:

- Component Exclusion Tests: Removing individual representation modalities (1D, 2D, or 3D) to quantify their contribution to overall performance

- Fusion Mechanism Analysis: Comparing attention-gated fusion against simpler concatenation or averaging approaches

- Task Weighting Validation: Assessing the impact of adaptive task weighting against fixed weighting schemes

Similar ablation methodologies applied to the AIM framework demonstrate that its adaptive intervention mechanism provides the greatest performance gains in data-scarce regimes, where destructive gradient interference is most pronounced [24].
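A component exclusion test can be organized as a small harness like the one below. It is a sketch under stated assumptions: `evaluate` is a hypothetical callback that trains and scores a model restricted to the given views (higher is better), and the toy scores are invented purely to make the example runnable; they are not results from MolP-PC or AIM.

```python
from itertools import combinations

VIEWS = ("1D", "2D", "3D")


def ablation_table(evaluate, views=VIEWS):
    """Score the full model and each leave-one-view-out variant.

    Returns {removed_view: score drop relative to the full model}.
    """
    full = evaluate(views)
    drops = {}
    for kept in combinations(views, len(views) - 1):
        removed = next(v for v in views if v not in kept)
        drops[removed] = full - evaluate(kept)
    return drops


# Toy stand-in for a real training run: each view adds a fixed, made-up gain.
toy_gain = {"1D": 0.02, "2D": 0.10, "3D": 0.05}


def toy_evaluate(active_views):
    return 0.70 + sum(toy_gain[v] for v in active_views)


drops = ablation_table(toy_evaluate)
most_important = max(drops, key=drops.get)
```

The same loop structure extends to fusion-mechanism and task-weighting ablations by swapping what `evaluate` varies.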

Figure 2: Multi-View Molecular Representation - Integrating diverse molecular perspectives.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for MTL in Molecular Property Prediction

| Research Reagent | Function | Example Implementation |
|---|---|---|
| Quantum Chemical Descriptors | Capture electronic properties critical for molecular interactions | Dipole moment, HOMO-LUMO gap, electron distribution, total energy [15] |
| Mechanistic Reaction Graphs | Embed prior mechanistic knowledge into molecular representations | Condensed Fukui indices (f0, f-, f+), atomic charges (Qc) [26] |
| Multi-View Fusion Modules | Integrate complementary molecular representations | Attention-gated fusion of 1D, 2D, and 3D molecular representations [25] |
| Adaptive Task Weighting | Balance learning across heterogeneous tasks | Learnable exponential weighting combining dataset-scale priors with optimization [15] |
| Gradient Conflict Mediation | Manage interference between competing tasks | Dynamic policy learning for geometrically stable updates [24] |
| Auto-branching Architectures | Prevent negative transfer between antagonistic tasks | Dynamic network branching based on inter-task affinity metrics [27] |

Case Studies and Practical Applications

ADMET Property Prediction

The MolP-PC framework demonstrates practical utility in predicting ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties, achieving the best performance in 27 of 54 tasks and surpassing single-task models in 41 of 54 tasks [25] [28]. A case study on the anticancer compound Oroxylin A shows effective generalization in predicting key pharmacokinetic parameters, including half-life (T₀.₅) and clearance (CL). However, the model tends to underestimate volume of distribution (VD) for compounds with high tissue distribution, which highlights an area for continued improvement [25].

The QW-MTL framework further advances this domain, significantly outperforming single-task baselines on 12 out of 13 TDC ADMET classification tasks [15]. This demonstrates how quantum-enhanced representations combined with adaptive task weighting can effectively leverage inter-task relationships to enhance prediction across diverse ADMET endpoints.
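To make the idea of adaptive task weighting concrete, the sketch below combines a dataset-size prior with a per-task exponent. The functional form `(n_max / n_t) ** beta_t`, the direction of the weighting (up-weighting smaller datasets), the task names, and the dataset sizes are all assumptions chosen for illustration; the exact QW-MTL formula is not reproduced here. In a real model each `beta` would be a trainable parameter updated by the optimizer rather than a fixed number.

```python
def task_weights(dataset_sizes, betas):
    """Illustrative exponential weighting: w_t = (n_max / n_t) ** beta_t.

    beta_t = 0 gives every task weight 1; larger beta_t up-weights tasks
    with fewer labeled examples. This form is an assumption, not the
    published QW-MTL scheme.
    """
    n_max = max(dataset_sizes.values())
    return {t: (n_max / n) ** betas[t] for t, n in dataset_sizes.items()}


def weighted_total_loss(task_losses, weights):
    """Sum of per-task losses, each scaled by its task weight."""
    return sum(weights[t] * task_losses[t] for t in task_losses)


# Hypothetical task names and dataset sizes.
sizes = {"task_small": 600, "task_large": 12000}
betas = {"task_small": 0.5, "task_large": 0.5}

weights = task_weights(sizes, betas)
total = weighted_total_loss({"task_small": 0.5, "task_large": 0.2}, weights)
```

With these made-up numbers the small task receives a weight of roughly 4.5 and the large task a weight of 1, so the scarce task is not drowned out by the abundant one.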

Reaction Site-Selectivity Prediction

The MT-GNN framework predicts site selectivity for ruthenium-catalyzed C–H functionalization of arenes with an average accuracy of 0.934 (standard deviation 0.007) [26]. By jointly learning site-selectivity classification alongside molecular property regression tasks (including electron affinity, orbital energies, and steric properties), the model leverages inter-task relationships to enhance predictive accuracy. The embedded reaction graphs connect earlier mechanistic studies to the reaction representation, which supports both interpolation and extrapolation across diverse arene substrates.
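Joint training of a classification task with auxiliary regression tasks usually comes down to a combined objective. The sketch below is a generic example, assuming binary cross-entropy for the site label, mean squared error for the property targets, and a fixed hypothetical `aux_weight`; it is not the loss reported for MT-GNN.

```python
import math


def binary_cross_entropy(p, y, eps=1e-12):
    """Cross-entropy for one predicted probability p and label y in {0, 1}."""
    p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def joint_loss(site_probs, site_labels, prop_preds, prop_targets, aux_weight=0.5):
    """Classification loss plus weighted auxiliary regression loss."""
    cls = sum(
        binary_cross_entropy(p, y) for p, y in zip(site_probs, site_labels)
    ) / len(site_labels)
    reg = sum(
        (a - b) ** 2 for a, b in zip(prop_preds, prop_targets)
    ) / len(prop_targets)
    return cls + aux_weight * reg


# One candidate site predicted at 0.5, one property predicted exactly.
example = joint_loss([0.5], [1], [1.0], [1.0])
```

Because both terms flow through the same shared encoder, gradients from the property regression shape the representation used for site classification.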

Complex Disease Phenotype Prediction

The auto-branch MTL approach demonstrates compelling performance in predicting multiple correlated traits associated with Alzheimer's disease, including cognitive assessments (MMSE, MoCA, ADAS13, CDRSB), functional questionnaires (FAQ), and neuroimaging outcomes (AV45, FDG) [27]. By dynamically branching the network architecture based on inter-task affinity, the model effectively captures the genetic relatedness between phenotypes while respecting their unique characteristics. This approach reveals that while genetic contributions across Alzheimer's phenotypes are similar, the relative influence of each genetic factor varies substantially among phenotypes.

Integrating inter-task relationship analysis with comprehensive molecular similarity metrics marks a substantial change in how molecular property prediction is approached. The frameworks examined in this technical guide show that explicitly modeling task relationships and leveraging multi-view molecular representations outperform single-task approaches on most reported tasks across diverse applications, from ADMET prediction to reaction outcome forecasting.

Future research directions will likely focus on several key areas: (1) developing more sophisticated task relationship quantification methods that can predict synergies without extensive experimentation; (2) creating unified molecular representations that seamlessly integrate structural, electronic, and mechanistic information; and (3) establishing standardized benchmarking protocols that enable fair comparison across MTL approaches.

The combination of adaptive optimization strategies, multi-view molecular representations, and dynamic architecture selection positions MTL as an essential methodology for accelerating scientific discovery in molecular design and drug development. By explicitly addressing the dual challenges of inter-task relationships and molecular similarity, these frameworks create more robust, interpretable, and data-efficient models that leverage the full spectrum of available information to enhance predictive performance.

Architectures and Implementations: Multi-View Fusion and Advanced MTL Frameworks

The accurate prediction of molecular properties is a cornerstone of modern drug discovery and materials science. Traditional machine learning approaches often rely on a single molecular representation, which provides a limited perspective and can struggle to capture the complex, multi-faceted nature of molecular structure and function. In recent years, multi-task learning (MTL) has emerged as a powerful paradigm that leverages shared information across related predictive tasks to improve generalization, which is especially valuable in the data-scarce scenarios common to molecular property prediction [1] [9]. This technical guide explores the integration of MTL with multi-view molecular representation learning, an approach that concurrently processes one-dimensional (1D), two-dimensional (2D), and three-dimensional (3D) molecular data. By fusing information from these complementary perspectives, such models aim to construct a more holistic and informative molecular embedding and thereby improve predictive performance for a broad spectrum of molecular properties within an MTL framework.

The Rationale for Multi-View Molecular Representations

Molecules are complex entities whose properties are determined by factors captured at different structural levels. Relying on a single representation inevitably leads to information loss.

- 1D Representations (SMILES, SELFIES): Simplified Molecular Input Line Entry System (SMILES) strings offer a compact, sequential notation of molecular structure [29]. While well suited to sequence models borrowed from natural language processing, they encode topology only implicitly and are not unique: several different strings can represent the same molecule.

- 2D Representations (Molecular Graphs): These represent atoms as nodes and bonds as edges, explicitly encoding molecular topology and connectivity [29] [30]. Graph Neural Networks (GNNs) operate natively on this structure but may struggle with long-range interactions within the graph [30].

- 3D Representations (Molecular Geometries): This level captures the spatial coordinates of atoms, encoding critical information about stereochemistry, conformation, and intermolecular interactions [31] [30]. Properties like binding affinity and solubility are intimately tied to 3D structure, though obtaining precise geometries can be computationally expensive [30].

Integrating these views allows models to leverage their complementary strengths. For instance, a model can use the robustness of a 2D graph for basic topology, the sequence-level patterns from 1D SMILES, and the spatial awareness of 3D conformation to form a unified, information-rich representation [29] [30]. This is particularly powerful in an MTL context, where different properties may depend more heavily on different structural views.

Core Methodologies and Fusion Architectures

Several advanced architectures have been proposed to effectively integrate multi-view data. The core challenge lies in designing mechanisms that can deeply fuse features from heterogeneous representations.

Multi-View Fusion Networks

A common architectural pattern involves dedicated feature extractors for each molecular view, followed by a fusion module.

- MvMRL Framework: This method employs a multiscale CNN with squeeze-and-excitation (SE) blocks for SMILES sequences, a multiscale GNN for molecular graphs, and a Multi-Layer Perceptron (MLP) for molecular fingerprints [29]. Its distinctive feature is a dual cross-attention component that enables deep, interactive fusion of the extracted features from the three views before the final prediction [29].

- PremuNet Framework: This framework uses a two-branch architecture. The "Low-dimension" branch (PremuNet-L) integrates SMILES (via Transformer), molecular graphs (via GNN), and fingerprint information. The "High-dimension" branch (PremuNet-H) focuses on fusing 2D topological and 3D geometric information. The model is pre-trained with masked self-supervised objectives to learn effective fusion, and features from both branches are concatenated for downstream predictions [30].
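As a rough picture of the "per-view encoders followed by a fusion module" pattern, the sketch below fuses three precomputed view embeddings with softmax gates. It is a deliberately simple stand-in: it is neither the dual cross-attention of MvMRL nor the concatenation used in PremuNet, and the embeddings, the `gate_vector`, and the helper names are assumptions for illustration. In practice the gate parameters would be learned.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gated_fusion(view_embeddings, gate_vector):
    """Fuse same-length view embeddings using softmax gates.

    Each view's gate score is the dot product of its embedding with
    `gate_vector`. Returns the fused vector and the per-view gates.
    """
    names = list(view_embeddings)
    scores = [
        sum(x * g for x, g in zip(view_embeddings[n], gate_vector))
        for n in names
    ]
    gates = softmax(scores)
    dim = len(gate_vector)
    fused = [
        sum(g * view_embeddings[n][d] for g, n in zip(gates, names))
        for d in range(dim)
    ]
    return fused, dict(zip(names, gates))


# Toy 2-dimensional embeddings standing in for encoder outputs.
views = {"1D": [1.0, 0.0], "2D": [0.0, 1.0], "3D": [1.0, 1.0]}
fused, gates = gated_fusion(views, gate_vector=[2.0, 0.0])
```

With a zero `gate_vector` every view receives the same gate and the fusion reduces to plain averaging, which is the kind of simpler baseline that fusion ablations compare against.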

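In the typical workflow of a multi-view fusion network, each molecular view is first encoded by its own feature extractor, the resulting view embeddings are combined by the fusion module, and the fused representation is passed to the prediction layer.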

Multi-Modal Learning with Structured Knowledge

Beyond structural representations, some frameworks incorporate external knowledge.

- MMSA Framework: This self-supervised framework enhances molecular graphs with information from other modalities, such as images. Its key innovation is a structure-awareness module that constructs a hypergraph to model higher-order correlations between molecules and uses a memory bank to store invariant molecular knowledge, improving generalization [31].

- MV-Mol Model: This approach explicitly harvests expertise from structured (knowledge graphs) and unstructured (biomedical texts) sources. It uses text prompts to model different "views" (e.g., microscopic, biological) and a fusion network to extract view-based representations through a two-stage pre-training strategy [32].

Integration with Multi-Task Learning

MTL provides a natural and powerful framework for leveraging multi-view representations. The core idea is to jointly predict multiple molecular properties, allowing a model to learn shared representations that generalize better, particularly for tasks with limited data [1] [9].

MTL Optimization Challenges and Solutions

A significant challenge in MTL is negative transfer, where performance on a task is degraded by learning jointly with other, potentially unrelated tasks [9]. This is often exacerbated by task imbalance, where different properties have vastly different amounts of labeled data [9] [15]. Several strategies have been developed to mitigate this.

- Adaptive Checkpointing with Specialization (ACS): This training scheme maintains a shared backbone with task-specific heads. It monitors validation loss for each task and checkpoints the best backbone-head pair for a task whenever its validation loss hits a new minimum. This protects individual tasks from detrimental parameter updates during joint training [9].

- Learnable Task Weighting: The QW-MTL framework introduces a learnable exponential weighting scheme that dynamically adjusts each task's contribution to the total loss. This balances the learning process across tasks with heterogeneous data scales and difficulties [15].

- Prompt-Guided Multi-Channel Learning: This method pre-trains a model on multiple self-supervised tasks (e.g., molecule distancing, scaffold distancing, context prediction) across different "channels." During fine-tuning, a prompt selection module aggregates these channel representations into a composite, task-specific representation, making the model context-dependent and robust [33].
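The checkpointing rule behind ACS can be sketched in a few lines. This is a minimal, framework-free illustration that follows the description above (save the backbone-head pair for a task whenever that task's validation loss reaches a new minimum); the `ACSCheckpointer` class, the dictionary-based "states", and the loss values are hypothetical and do not reproduce the implementation in [9].

```python
import copy


class ACSCheckpointer:
    """Track the best backbone-head pair per task by validation loss."""

    def __init__(self, task_names):
        self.best_loss = {t: float("inf") for t in task_names}
        self.best_state = {t: None for t in task_names}

    def update(self, backbone_state, head_states, val_losses):
        """Snapshot parameters for every task that just hit a new minimum.

        Returns the list of tasks whose checkpoint was refreshed.
        """
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved


# Made-up validation losses over three epochs of joint training.
history = [
    {"A": 0.90, "B": 0.80},
    {"A": 0.70, "B": 0.85},
    {"A": 0.75, "B": 0.60},
]
ckpt = ACSCheckpointer(["A", "B"])
for epoch, val_losses in enumerate(history):
    backbone = {"epoch": epoch}  # stand-in for shared parameters
    heads = {t: {"epoch": epoch} for t in val_losses}  # stand-in for heads
    ckpt.update(backbone, heads, val_losses)
```

In this toy run, task A keeps the backbone from epoch 1 and task B the one from epoch 2, so later updates that hurt task A do not overwrite its best model.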

The table below summarizes key MTL optimization strategies used with multi-view models.

Table 1: Multi-Task Learning Optimization Strategies for Molecular Property Prediction

| Strategy | Mechanism | Key Advantage | Representative Framework |
|---|---|---|---|
| Adaptive Checkpointing | Saves best model parameters per task during training | Mitigates negative transfer in imbalanced data scenarios [9] | ACS [9] |