Navigating Activity Cliffs: Strategies to Enhance Molecular Property Prediction for Robust Drug Discovery

Abstract

Activity cliffs (ACs), where minute structural changes cause significant potency shifts, present a major challenge for AI-driven molecular property prediction, often leading to model inaccuracies and unreliable guidance for drug design. This article provides a comprehensive resource for researchers and drug development professionals, exploring the foundational concepts of ACs and their impact on predictive modeling. It surveys cutting-edge methodological advances, from contrastive learning to explanation-supervised models, that explicitly incorporate AC awareness. The content further details practical strategies for troubleshooting and optimizing models against AC-induced errors and establishes a rigorous framework for the validation and comparative analysis of AC-robust models. By synthesizing insights from recent literature, this guide aims to equip scientists with the knowledge to build more generalizable and trustworthy predictive models, ultimately accelerating the identification and optimization of lead compounds.

What Are Activity Cliffs? Defining the Fundamental Challenge in SAR Modeling

Core Concepts FAQ

What is a Structure-Activity Relationship (SAR)? A Structure-Activity Relationship (SAR) is the relationship between the chemical structure of a molecule and its biological activity. It is based on the principle that a molecule's biological activity is a direct function of its chemical structure. SAR analysis involves systematically altering a compound's molecular structure and observing the effects on its biological activity to determine which structural elements are essential for binding and activity [1] [2] [3].

What is an Activity Cliff (AC)? An Activity Cliff (AC) occurs when two compounds are highly structurally similar but exhibit a large, unexpected difference in their biological activity [4] [5]. This phenomenon creates a discontinuity in the SAR landscape, defying the intuitive molecular similarity principle which states that similar structures should have similar activities [5].

Why are Activity Cliffs problematic for computational models? Activity Cliffs are a major roadblock for Quantitative Structure-Activity Relationship (QSAR) models and other machine learning approaches for pharmacological activity prediction [5]. Models often struggle to predict ACs because they embed structurally similar molecules close together in their latent space, making it difficult to account for the large differences in their actual biological activity [6]. This leads to significant prediction errors, particularly for "cliffy" compounds [5].

Troubleshooting Common Experimental & Modeling Issues

Issue: My QSAR model performs well overall but fails on specific compound pairs.

- Potential Cause: The model may be encountering Activity Cliffs that it cannot recognize or predict. Standard QSAR models trained with regression loss alone often have low AC-sensitivity [6] [5].

- Solution:

- Diagnose: Analyze your test set to identify Matched Molecular Pairs (MMPs)—pairs of compounds that differ only by a small structural change [4]. Calculate their actual versus predicted activity differences to confirm ACs are the source of error.

- Mitigate: Incorporate an AC-awareness inductive bias into your model. For Graph Neural Networks (GNNs), this can be done by adding a Triplet Soft Margin (TSM) loss to the standard regression loss. The TSM loss penalizes the model when the latent-space distances between an anchor compound, a structurally similar "positive" compound with close activity, and a structurally similar "negative" compound whose activity differs sharply from the anchor do not reflect their true activity relationships [6].
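The diagnosis step can be sketched in plain Python. This is a minimal illustration, not code from the cited work: it assumes fingerprints are already available as sets of "on" bit indices (for example ECFP bits exported from a cheminformatics toolkit), it uses an illustrative Tanimoto cutoff of 0.85 as a stand-in for a full MMP analysis, and the function names are hypothetical.

```python
from itertools import combinations


def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    common = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - common
    return common / union if union else 0.0


def pair_delta_errors(fps, y_true, y_pred, sim_cutoff=0.85):
    """For each structurally similar pair, compare measured and predicted activity
    differences. Returns (i, j, true_delta, pred_delta, abs_error) tuples, worst first."""
    rows = []
    for i, j in combinations(range(len(fps)), 2):
        if tanimoto(fps[i], fps[j]) < sim_cutoff:
            continue  # not similar enough to count as a candidate cliff pair
        d_true = y_true[i] - y_true[j]
        d_pred = y_pred[i] - y_pred[j]
        rows.append((i, j, d_true, d_pred, abs(d_true - d_pred)))
    return sorted(rows, key=lambda r: r[4], reverse=True)


# Toy data: compounds 0 and 1 share 19 of 21 bits but differ by 2.5 log units.
fps = [set(range(1, 21)), set(range(1, 20)) | {21}, {100, 101, 102}]
y_true = [8.0, 5.5, 6.0]  # measured pIC50
y_pred = [6.9, 6.6, 6.0]  # predictions that smooth over the cliff
for i, j, d_true, d_pred, err in pair_delta_errors(fps, y_true, y_pred):
    print(f"pair ({i}, {j}): measured {d_true:.2f}, predicted {d_pred:.2f}, error {err:.2f}")
```

Pairs at the top of this list, where the measured difference is large but the predicted one is small, are the cliffs the model is smoothing over.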

Issue: I have observed an Activity Cliff in my data. How can I exploit it for lead optimization?

- Potential Cause: This is not a failure but an opportunity. ACs reveal small structural modifications with large biological impacts and are rich sources of pharmacological information [4] [5].

- Solution:

- Rationalize: If structural data (e.g., protein-ligand co-crystals) is available for both compounds in the pair, analyze the binding modes to establish a rationale. Common reasons for ACs include: the formation of new H-bond or ionic interactions, the displacement of key water molecules in the binding site, or changes in stereochemistry that alter interactions [4].

- Apply: Use this transformation as a proposed modification in a different chemical series. Studies have shown that transferring Activity Cliff information in this way improves activity in 60% of cases and significantly increases the chance of producing a compound in the top 10% most active [4].

Issue: My dataset is very small, which limits my ability to build a robust SAR.

- Potential Cause: Data scarcity is a common obstacle in molecular property prediction, affecting domains like pharmaceuticals and materials science [7].

- Solution: Employ multi-task learning (MTL) or few-shot learning techniques. MTL can leverage correlations among related molecular properties to improve predictive performance when data for a single task is limited. For severe data imbalance, use specialized training schemes like Adaptive Checkpointing with Specialization (ACS), which has been shown to enable accurate predictions with as few as 29 labeled samples [7]. For few-shot scenarios, context-informed meta-learning approaches that extract both property-shared and property-specific molecular features can also be effective [8].

Key Experimental Protocols

Protocol 1: Systematic SAR Exploration through Functional Group Alteration

This classic medicinal chemistry approach probes the importance of specific functional groups in a lead compound [1].

- Design Analogs: Plan the synthesis of analogs where a single functional group (e.g., a hydroxyl or carbonyl) is systematically altered.

- Probe H-Bond Interactions:

- To test if a hydroxyl group acts as an H-bond donor, synthesize analogs where the OH is replaced with -H or -OCH₃. A significant drop in activity suggests the OH is important and likely donates an H-bond to the protein [1].

- To test if a carbonyl group acts as an H-bond acceptor, synthesize analogs where the C=O is replaced with C=CH₂, CH₂, or reduced to an alcohol (CH-OH). A loss of activity indicates the carbonyl oxygen is likely accepting an H-bond [1].

- Test and Interpret: Measure the biological activity of all synthesized analogs. Interpret the results to map the SAR, identifying essential and non-essential structural features for activity. Avoid introducing multiple simultaneous changes, as this complicates interpretation [1].

Protocol 2: Evaluating and Mitigating Activity Cliffs in QSAR Models

This computational protocol assesses and improves a model's handling of Activity Cliffs.

- Identify Activity Cliffs in Dataset:

- From your dataset, generate Matched Molecular Pairs (MMPs) or identify highly similar pairs using a fingerprint-based similarity method (e.g., Tanimoto similarity on ECFP fingerprints) [4] [5].

- Define a threshold for "large activity difference" (e.g., a 100-fold change in IC₅₀ or Kᵢ) [4]. Pairs satisfying both structural similarity and activity difference criteria are ACs.

- Benchmark Model Performance:

- Apply your QSAR model to predict the activities of all compounds.

- Evaluate the model's AC-sensitivity: its ability to correctly classify pairs of similar compounds as ACs or non-ACs when the activities of both compounds are unknown [5].

- Implement AC-Informed Learning (e.g., ACANet):

- Model: Use a Graph Neural Network (GNN) as the base architecture.

- Loss Function: Replace the standard regression loss with an AC-Awareness (ACA) loss:

ACA Loss = Regression Loss (e.g., MAE) + α * Triplet Soft Margin (TSM) Loss

- Triplet Mining: During training, for a batch of molecules, mine high-value activity cliff triplets (HV-ACTs). Each triplet consists of an anchor compound (A), a structurally similar positive compound (P), and a structurally similar negative compound (N), where the activity difference between A and N is significantly larger than between A and P [6].

- Training: The TSM loss penalizes the model if the distance in the latent space between A and N is not greater than the distance between A and P, forcing the model to learn representations that reflect the sharp activity changes [6].
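A minimal, framework-free sketch of triplet mining and of the ACA loss follows. It illustrates the idea under stated assumptions and is not the ACANet implementation: embeddings are plain lists, latent distance is Euclidean, the TSM term uses the common soft-margin form log(1 + exp(d(A,P) - d(A,N))), and the neighbour sets, the `cliff_gap` threshold and all function names are hypothetical. In a real GNN the same expression would be computed on tensors so that it can be back-propagated.

```python
import math
from itertools import permutations


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def euclidean(u, v) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def mine_cliff_triplets(similar, y, cliff_gap=1.0):
    """Mine (anchor, positive, negative) index triplets.

    similar[a] holds the indices of compounds structurally similar to anchor a.
    A triplet is kept when the anchor-negative activity gap exceeds the
    anchor-positive gap by at least cliff_gap (log units)."""
    triplets = []
    for a in range(len(y)):
        for p, n in permutations(sorted(similar[a]), 2):
            if abs(y[a] - y[n]) - abs(y[a] - y[p]) >= cliff_gap:
                triplets.append((a, p, n))
    return triplets


def aca_loss(emb, y_true, y_pred, triplets, alpha=1.0):
    """ACA loss = MAE + alpha * mean soft-margin triplet term."""
    mae = sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)
    if not triplets:
        return mae
    tsm = sum(
        softplus(euclidean(emb[a], emb[p]) - euclidean(emb[a], emb[n]))
        for a, p, n in triplets
    ) / len(triplets)
    return mae + alpha * tsm


y = [7.0, 7.2, 4.0]                  # compound 2 is the cliff partner
similar = [{1, 2}, {0, 2}, {0, 1}]   # all three are structural neighbours
triplets = mine_cliff_triplets(similar, y)
collapsed = [[0.0, 0.0], [0.1, 0.0], [0.2, 0.0]]  # cliff partner embedded close by
separated = [[0.0, 0.0], [0.1, 0.0], [5.0, 0.0]]  # cliff partner pushed away
print(triplets)
print(aca_loss(collapsed, y, y, triplets), aca_loss(separated, y, y, triplets))
```

With perfect regression predictions the MAE term is zero, so the difference between the two printed losses comes entirely from the triplet term: the embedding that keeps the cliff partner close to the anchor is penalized more.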

Essential Research Reagent Solutions

The table below lists key computational and analytical "reagents" for SAR and Activity Cliff research.

| Item Name | Type/Function | Key Application in Research |
|---|---|---|
| Extended-Connectivity Fingerprints (ECFPs) | Molecular Representation / A circular fingerprint that captures atomic environments and molecular features. [5] | Standard molecular representation for similarity searching, QSAR modeling, and identifying structurally similar pairs for AC analysis. |
| Graph Neural Network (GNN) | Machine Learning Model / A neural network that operates directly on graph structures, such as molecular graphs. [7] [6] | Base architecture for modern molecular property prediction; can be augmented with AC-awareness. |
| Matched Molecular Pair (MMP) | Analytical Concept / A pair of compounds that differ only by a single, well-defined structural transformation. [4] [5] | A rigorous method to define "small structural change" when identifying and analyzing Activity Cliffs. |
| ACANet Framework | Software/Method / A GNN-based model incorporating a novel AC-Awareness loss function. [6] | A ready-to-use solution for improving model performance on datasets with prevalent Activity Cliffs. |
| Domain of Applicability (DA) | Validation Tool / The chemical space region defined by the model's training data where reliable predictions are expected. [9] | Critical for determining when a model's predictions for new, unseen molecules can be trusted, especially near ACs. |

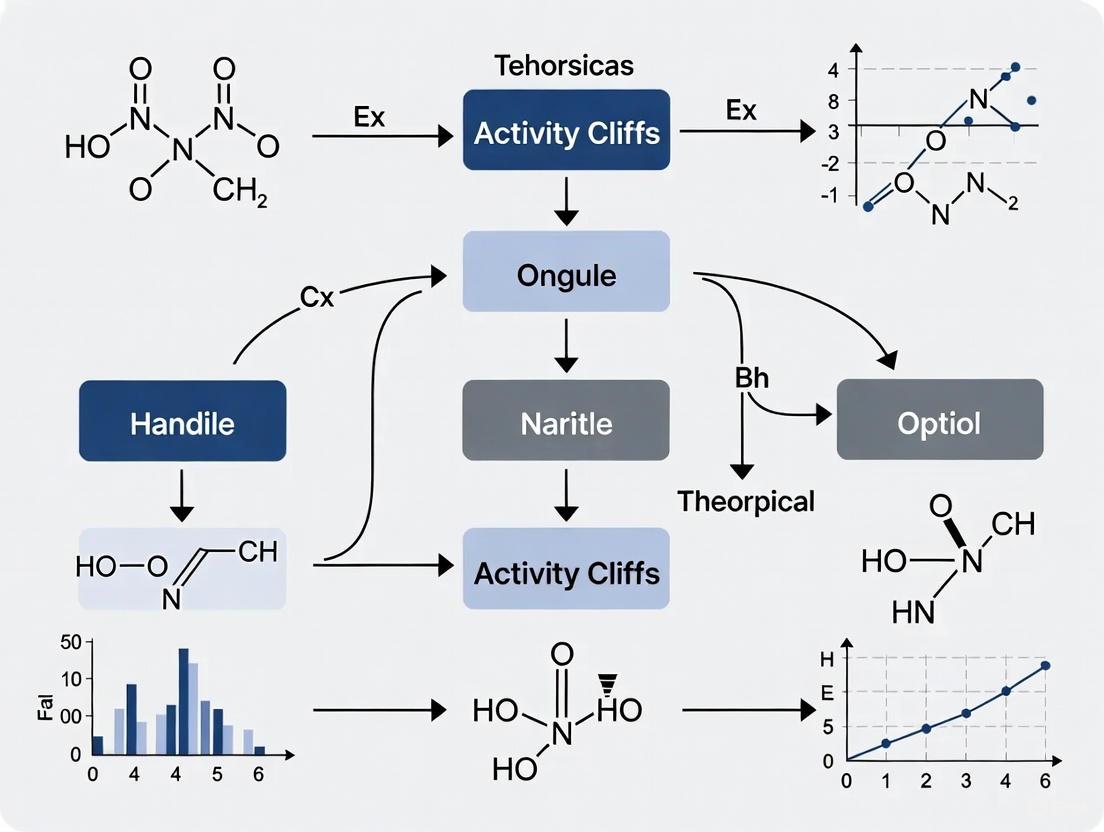

Workflow and Model Architecture Diagrams

[Diagrams: SAR to AC Analysis Workflow; AC-Aware GNN Model (ACANet)]

In molecular property prediction and drug discovery, activity cliffs (ACs) represent a significant challenge and source of valuable information. They are generally defined as pairs or groups of structurally similar compounds that are active against the same target but have large differences in potency [10]. This article provides technical support for researchers grappling with the complexities of ACs, offering troubleshooting guides, experimental protocols, and resources to navigate this critical aspect of structure-activity relationship (SAR) analysis.

FAQs: Understanding Activity Cliffs

1. What is the fundamental definition of an activity cliff?

An activity cliff is formed by structurally similar compounds that are active against the same target but exhibit a large potency difference [10]. Such pairs capture chemical modifications that strongly influence biological activity and represent instances of SAR discontinuity, which can be detrimental for traditional QSAR modeling but highly informative for understanding key structural drivers of potency [11] [10].

2. What are the key criteria for quantifying activity cliffs?

Two primary criteria must be considered [10]:

- Molecular Similarity: This can be assessed using molecular fingerprints and the Tanimoto coefficient (ranging from 0 to 1), shared molecular scaffolds, or the formation of Matched Molecular Pairs (MMPs)—pairs of compounds distinguished by a chemical modification at a single site [10] [12].

- Potency Difference: A difference of at least two orders of magnitude (100-fold) is often applied, as this is considered significant in medicinal chemistry. Target set-dependent potency difference thresholds can also be used to focus on the most significant variations within a specific dataset [12].
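Both criteria can be combined in a few lines. The sketch below is illustrative only: fingerprints are assumed to be sets of on-bit indices (for example ECFP4 bits generated elsewhere), nanomolar IC50 values are converted to pIC50 so that a 100-fold difference corresponds to 2 log units, and the 0.85 Tanimoto cutoff and the function names are hypothetical choices rather than fixed standards.

```python
import math
from itertools import combinations


def pic50_from_nm(ic50_nm: float) -> float:
    """Convert an IC50 in nM to pIC50 (-log10 of the molar IC50)."""
    return 9.0 - math.log10(ic50_nm)


def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient on sets of on-bit indices."""
    common = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - common
    return common / union if union else 0.0


def find_activity_cliffs(fps, potencies, sim_cutoff=0.85, min_delta=2.0):
    """Return (i, j, Tc, delta) for pairs meeting both AC criteria:
    Tanimoto similarity >= sim_cutoff and |delta pIC50| >= min_delta."""
    cliffs = []
    for i, j in combinations(range(len(fps)), 2):
        tc = tanimoto(fps[i], fps[j])
        delta = abs(potencies[i] - potencies[j])
        if tc >= sim_cutoff and delta >= min_delta:
            cliffs.append((i, j, tc, delta))
    return cliffs


fps = [set(range(1, 21)), set(range(1, 20)) | {21}, set(range(1, 18)) | {30, 31, 32}]
potencies = [pic50_from_nm(v) for v in (10.0, 2000.0, 15.0)]  # toy IC50 values in nM
for i, j, tc, delta in find_activity_cliffs(fps, potencies):
    print(f"AC: {i}-{j}  Tc={tc:.2f}  delta pIC50={delta:.2f}")
```

Working on the log scale keeps the potency criterion symmetric: a 200-fold IC50 ratio becomes a difference of log10(200), about 2.3 units, regardless of which compound is the more potent one.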

3. Why do activity cliffs pose a problem for QSAR models?

QSAR models are often based on the principle that similar structures have similar activities. ACs directly defy this principle, creating steep discontinuities in the SAR landscape that most machine learning algorithms struggle to predict accurately [5]. This frequently leads to significant prediction errors for "cliffy" compounds [5] [13].

4. What new categories of activity cliffs have been identified recently?

The AC concept has evolved to include more complex categories:

- Multi-site ACs (msACs) and Dual-site ACs (dsACs): ACs consisting of analog pairs with different substitutions at multiple sites (msACs), most of which are at two sites (dsACs) [12].

- 3D-cliffs and Interaction cliffs: ACs defined based on the three-dimensional similarity of bound ligands and their interaction patterns with the target protein, providing insight for structure-based design [10].

Troubleshooting Guides

Issue 1: Low Sensitivity in Detecting Activity Cliffs with Standard QSAR Models

Problem: Your QSAR model performs well on general compounds but fails to correctly predict the large potency differences for structurally similar pairs.

Solutions:

- Employ Pairwise Modeling: Instead of predicting individual activities, train models to directly predict activity cliff values (like SALI - Structure-Activity Landscape Index) for pairs of molecules. This approach can be more robust, especially for smaller datasets [14].

- Integrate Advanced Deep Learning: Utilize modern deep learning frameworks specifically designed for ACs. For example, the ACtriplet model integrates triplet loss and pre-training to improve discrimination of ACs [13]. The SCAGE framework uses self-conformation-aware pre-training on ~5 million compounds to enhance performance on structure-activity cliff benchmarks [15].

- Use Graph-Based Representations: Consider using Graph Isomorphism Networks (GINs) as molecular representations. Research indicates they can be competitive with or superior to classical fingerprints like ECFPs for AC-classification tasks [5].

Issue 2: Defining Meaningful Similarity Thresholds for Cliff Identification

Problem: It is difficult to choose a single, subjective Tanimoto coefficient threshold to define structural similarity for ACs.

Solutions:

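- Adopt Substructure-Based Criteria: Move beyond whole-molecule similarity metrics. Use chemically intuitive criteria such as Matched Molecular Pairs (MMPs), retrosynthetic MMPs (RMMPs), or shared molecular scaffolds [10] [12].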

- Seek Consensus: Identify "consensus activity cliffs" that are recognized independently of different molecular representations and similarity calculations [10].

- Leverage 3D Information: For compounds with known or modeled binding modes, define similarity based on 3D structural overlap or protein-ligand interaction fingerprints ("interaction cliffs") [10].

Issue 3: Handling Coordinated Activity Cliffs in Datasets

Problem: Activity cliffs in your dataset are not isolated pairs but occur in coordinated groups, complicating analysis.

Solutions:

- Implement Network Analysis: Represent your dataset as an AC network where nodes are compounds and edges represent pairwise AC relationships. This helps visualize and analyze clusters of compounds involved in multiple cliffs, revealing more SAR information than isolated pairs [10].

- Identify AC Generators: Within the network, identify highly connected nodes (hubs) or "AC generators" – compounds that form cliffs with high frequency. Analyzing these can pinpoint critical structural features [10].
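A dependency-free sketch of both steps follows. It assumes the cliff pairs have already been identified (for example with the similarity and potency criteria described earlier), stores the network as adjacency sets, treats connected components as coordinated cliff clusters, and uses a hypothetical degree cutoff of 3 to call a compound an AC generator. A graph library such as NetworkX offers equivalent operations.

```python
from collections import defaultdict


def build_ac_network(cliff_pairs):
    """Undirected AC network: nodes are compounds, edges are AC relationships."""
    graph = defaultdict(set)
    for i, j in cliff_pairs:
        graph[i].add(j)
        graph[j].add(i)
    return graph


def ac_generators(graph, min_degree=3):
    """Compounds involved in at least min_degree cliffs, most connected first."""
    hubs = [(node, len(nbrs)) for node, nbrs in graph.items() if len(nbrs) >= min_degree]
    return sorted(hubs, key=lambda item: (-item[1], item[0]))


def cliff_clusters(graph):
    """Connected components of the AC network (groups of coordinated cliffs)."""
    seen, clusters = set(), []
    for start in graph:
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:  # iterative depth-first search
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(graph[node] - component)
        seen |= component
        clusters.append(component)
    return clusters


pairs = [("A", "B"), ("A", "C"), ("A", "D"), ("E", "F")]
net = build_ac_network(pairs)
print(ac_generators(net))  # compound A forms three cliffs
print(cliff_clusters(net))
```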

Experimental Protocols & Methodologies

Protocol 1: Calculating the Structure-Activity Landscape Index (SALI)

The SALI is a quantitative measure to characterize activity cliffs for a pair of compounds [14].

Formula:

SALI(i,j) = |A_i - A_j| / (1 - sim(i,j))

Where A_i and A_j are the activities (e.g., pIC50, pKi) of molecules i and j, and sim(i,j) is their structural similarity (typically a Tanimoto coefficient using fingerprints like BCI or CDK fingerprints) [14].

Methodology:

- Calculate Pairwise Similarity: For all compound pairs in your dataset, compute the structural similarity sim(i,j) using a chosen fingerprint.

- Compute Activity Difference: Calculate the absolute difference in activity, |A_i - A_j|.

- Compute SALI Value: Use the formula above to calculate the SALI value for each pair. A higher SALI value indicates a more significant activity cliff.

- Handle Infinite Values: If a pair has a similarity of 1.0 (identical fingerprints), the denominator becomes zero, leading to an infinite SALI. In such cases, replace the infinite value with the highest non-infinite SALI value in the dataset [14].
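The protocol translates directly into code. In this sketch the similarity function is passed in as a parameter (any fingerprint-based Tanimoto implementation would do), activities are assumed to be on a log scale such as pIC50, and the treatment of a pair that is identical in both fingerprint and activity (0/0) as SALI = 0 is an added assumption, not part of the cited protocol.

```python
import math
from itertools import combinations


def sali_values(activities, sim):
    """SALI for every pair (i, j) with i < j.

    activities: log-scale potencies (e.g., pIC50).
    sim: callable (i, j) -> similarity in [0, 1].
    Infinite values (sim == 1 with a non-zero activity difference) are replaced
    by the largest finite SALI in the dataset."""
    raw = {}
    for i, j in combinations(range(len(activities)), 2):
        s = sim(i, j)
        diff = abs(activities[i] - activities[j])
        if s >= 1.0:
            # identical fingerprints: infinite SALI, or 0 when activities also match (assumption)
            raw[(i, j)] = math.inf if diff > 0 else 0.0
        else:
            raw[(i, j)] = diff / (1.0 - s)
    finite = [v for v in raw.values() if math.isfinite(v)]
    cap = max(finite) if finite else 0.0
    return {pair: (cap if math.isinf(v) else v) for pair, v in raw.items()}


similarity = {(0, 1): 0.9, (0, 2): 1.0, (1, 2): 0.5}  # toy precomputed similarities
pic50 = [8.0, 6.0, 7.5]
scores = sali_values(pic50, lambda i, j: similarity[(i, j)])
for pair, value in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(pair, round(value, 2))
```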

Protocol 2: Building a Pairwise Random Forest Model for Activity Cliff Prediction

This protocol outlines a method to prospectively identify whether a new molecule will form an activity cliff with existing molecules [14].

Methodology:

- Create Pairwise Dataset: From a training set of N molecules, generate a new dataset of N(N-1)/2 objects, where each object is a unique pair of molecules [14].

- Define Dependent Variable: The SALI value for each pair is the target variable (dependent variable) for the model [14].

- Generate Independent Variables (Descriptors): For each pair of molecules (i, j), create a descriptor vector by aggregating the descriptors of the individual molecules. Common aggregation functions include:

- Arithmetic Mean (f_mean)

- Absolute Difference (f_diff)

- Geometric Mean (f_geom) [14]

- Model Training: Train a Random Forest model on this pairwise dataset. The Random Forest algorithm is suitable as it resists overfitting and implicitly performs feature selection [14].

- Prospective Prediction: For each new molecule, create pairs with all molecules in the original set, compute the aggregated descriptors, and use the model to predict the SALI values. Rank the new molecules by their highest predicted SALI to prioritize those most likely to form cliffs [14].
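The data-preparation part of this protocol is sketched below in plain Python. The element-wise formulas used for f_mean, f_diff and f_geom are an interpretation of the three function names, the descriptor vectors and the SALI dictionary are assumed to exist already, and the function names are hypothetical. The resulting `X` and `y` could then be passed to a random-forest regressor such as scikit-learn's `RandomForestRegressor`.

```python
import math
from itertools import combinations


def aggregate(x_i, x_j, how="diff"):
    """Combine two per-molecule descriptor vectors into one pair descriptor."""
    if how == "mean":
        return [(a + b) / 2 for a, b in zip(x_i, x_j)]
    if how == "diff":
        return [abs(a - b) for a, b in zip(x_i, x_j)]
    if how == "geom":
        # only defined for non-negative descriptors; math.sqrt raises otherwise
        return [math.sqrt(a * b) for a, b in zip(x_i, x_j)]
    raise ValueError(f"unknown aggregation: {how!r}")


def build_pair_dataset(descriptors, sali, how="diff"):
    """N molecules -> N(N-1)/2 rows of (aggregated descriptors, SALI target).

    sali maps index pairs (i, j) with i < j to their SALI value."""
    X, y = [], []
    for i, j in combinations(range(len(descriptors)), 2):
        X.append(aggregate(descriptors[i], descriptors[j], how))
        y.append(sali[(i, j)])
    return X, y


def query_rows(new_descriptor, descriptors, how="diff"):
    """Pair a new molecule with every training molecule for prospective prediction."""
    return [aggregate(new_descriptor, d, how) for d in descriptors]


descriptors = [[1.0, 4.0], [3.0, 1.0], [2.0, 9.0]]  # toy descriptor vectors
sali = {(0, 1): 5.0, (0, 2): 1.0, (1, 2): 2.0}
X, y = build_pair_dataset(descriptors, sali)
print(X, y)
print(query_rows([0.0, 0.0], descriptors, how="mean"))
```

With a fitted model, a new molecule's cliff-forming score would be the maximum of `model.predict(query_rows(...))`, and candidate molecules are ranked by that score.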

Quantitative Data Tables

Table 1: Key Quantitative Metrics for Activity Cliff Analysis

| Metric Name | Formula | Application & Interpretation | Reference |
|---|---|---|---|
| SALI (Structure-Activity Landscape Index) | SALI(i,j) = \|A_i - A_j\| / (1 - sim(i,j)) | Quantifies the steepness of the activity cliff. Higher values indicate more significant cliffs. | [14] |
| Tanimoto Coefficient (Tc) | Tc = c / (a + b - c), where a and b are the numbers of bits set in fingerprints A and B, and c is the number of bits they share | Measures 2D structural similarity (range 0 to 1). Requires a threshold (e.g., Tc > 0.85) to define "similar" compounds. | [10] |
| Potency Difference Threshold | ≥ 100-fold potency difference, i.e., ΔpIC50 ≥ 2 log units | A common criterion to define a "large" potency difference in medicinal chemistry. | [12] |

Table 2: Performance of Different Modeling Approaches on Activity Cliffs

| Modeling Approach | Key Feature | Reported Outcome / Advantage | Reference |
|---|---|---|---|
| Pairwise Random Forest | Predicts SALI values directly from pairs of molecular descriptors. | Can prioritize molecules for their cliff-forming ability, enabling prospective identification. | [14] |
| ACtriplet | Integrates triplet loss (from face recognition) with molecular pre-training. | Significantly improves deep learning performance on 30 benchmark AC datasets. | [13] |
| SCAGE | Self-conformation-aware graph transformer pre-trained on ~5M compounds. | Achieves significant performance improvements on 30 structure-activity cliff benchmarks. | [15] |
| QSAR Models (ECFPs, GINs) | Repurposed to predict activities of pairs individually and classify cliffs. | GINs are competitive/superior to ECFPs for AC-classification; models often fail to predict ACs when activities of both compounds are unknown. | [5] |

Table 3: Key Computational Tools and Datasets for Activity Cliff Research

| Item Name | Type | Function & Application | Reference |
|---|---|---|---|
| ChEMBL Database | Public Repository | A major source of bioactive molecules and activity data for extracting datasets and identifying cliffs. | [14] [12] [5] |
| BCI / CDK Fingerprints | Molecular Descriptor | 1051-bit BCI or 1024-bit CDK path fingerprints for calculating structural similarity (Tc) in SALI and other analyses. | [14] |
| Matched Molecular Pair (MMP) Algorithm | Computational Method | Systematically identifies pairs of compounds that differ only at a single site, providing a chemically intuitive similarity criterion for cliffs (MMP-cliffs). | [10] [12] |
| Retrosynthetic MMP (RMMP) Algorithm | Computational Method | Generates MMPs based on retrosynthetic rules, increasing the chemical interpretability of the identified cliffs. | [10] [12] |
| Triplet Loss Function | Machine Learning Component | Used in models like ACtriplet to better learn representations that distinguish between similar molecules with different properties. | [13] |

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Poor Generalization on Novel Molecular Scaffolds

Problem: Your model, trained to predict a rare molecular property, performs well on molecular scaffolds seen during training but fails to generalize to novel scaffold types.

Explanation: This is a classic symptom of cross-molecule generalization under structural heterogeneity [16]. Models tend to overfit the limited structural patterns in small training datasets, lacking the inductive bias to handle diverse molecular graphs. Furthermore, standard graph neural networks (GNNs) often produce latent spaces that prioritize structural similarity, which can be misleading when small structural changes lead to large activity differences (activity cliffs) [6].

Solution Steps:

- Analyze Scaffold Splits: Ensure your dataset is split by molecular scaffold (e.g., using Bemis-Murcko scaffolds) rather than randomly. This provides a more realistic assessment of model performance on structurally novel compounds [7].

- Integrate an AC-Informed Loss: Incorporate an Activity Cliff-aware (ACA) loss function into your GNN training. This adds a contrastive learning component that penalizes the model when structurally similar molecules with large activity differences are embedded close together in the latent space [6].

- Employ a Multi-Task Framework: Use a Multi-Task Learning (MTL) setup, such as the Adaptive Checkpointing with Specialization (ACS) scheme. ACS trains a shared backbone GNN across multiple related properties but checkpoints task-specific models to mitigate negative interference, effectively leveraging knowledge from other properties to improve generalization on the rare property of interest [7].
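A scaffold split only requires grouping molecules by scaffold and assigning whole groups to one side. The sketch assumes scaffold strings have already been computed (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`); the greedy rule of filling the training set with the largest scaffold groups first, the 20% test fraction, and the function name are illustrative choices.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.

    scaffolds: one scaffold key per molecule (e.g., a Bemis-Murcko SMILES).
    Larger scaffold groups fill the training set first, so rarer scaffolds
    tend to end up in the test set."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_target = round(len(scaffolds) * (1 - test_fraction))
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_target:
            train.extend(group)
        else:
            test.extend(group)
    return sorted(train), sorted(test)


scaffolds = ["c1ccccc1"] * 4 + ["C1CCNCC1"] * 3 + ["c1ccncc1"] * 2 + ["C1CC1"]
train_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, test_idx)
```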

Guide 2: Resolving Performance Degradation from Noisy or Imbalanced Molecular Labels

Problem: Training for a rare property is unstable, and model performance is poor, likely due to the combination of very few labels and significant noise or imbalance in the annotated data.

Explanation: In ultra-low data regimes, the impact of label noise and class imbalance is severely magnified. A single mislabeled example can drastically alter the model's learned decision boundary. This is a common issue with molecular activity data from public databases, which can contain abnormal entries, duplicate records, and severe value imbalances [17] [16].

Solution Steps:

- Implement Noise-Adaptive Filtering: Integrate a Noise-Adaptive Resilience Module that uses attention mechanisms to dynamically assign lower weights to suspected noisy or mislabeled examples during training. This can be combined with consistency checks across different data augmentations [18].

- Apply Rigorous Data Denoising: Before training, systematically clean your molecular dataset. This involves removing null values, handling duplicate records, and potentially filtering out activity annotations that fall outside a credible biological range [16].

- Leverage Semi-Supervised Learning: Use techniques like pseudo-labeling on unlabeled molecular data to expand the effective training set. Consistency regularization can then be applied to improve robustness by enforcing stable predictions across different augmentations of the same molecule [18] [17].
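The denoising step can be sketched as follows. The plausible value range, the median rule for merging duplicates, and the extra check that discards compounds whose replicate measurements disagree by more than one log unit are illustrative assumptions rather than prescriptions from the cited work; values are assumed to be on a log scale such as pIC50, and the function name is hypothetical.

```python
import math
from collections import defaultdict
from statistics import median


def clean_activity_records(records, low=3.0, high=12.0, max_spread=1.0):
    """Clean (compound_id, value) records with log-scale activity values.

    1. drop missing or NaN values;
    2. drop values outside the plausible range [low, high];
    3. merge duplicates by their median, skipping compounds whose replicates
       span more than max_spread log units (treated as unreliable)."""
    by_compound = defaultdict(list)
    for compound_id, value in records:
        if value is None or math.isnan(value):
            continue
        if not low <= value <= high:
            continue
        by_compound[compound_id].append(value)
    cleaned = {}
    for compound_id, values in by_compound.items():
        if max(values) - min(values) > max_spread:
            continue
        cleaned[compound_id] = median(values)
    return cleaned


records = [
    ("a", 7.0), ("a", 7.4),   # replicates, merged by median
    ("b", None),              # missing value
    ("c", 15.0),              # outside the plausible range
    ("d", 5.0), ("d", 8.0),   # replicates disagree by 3 log units
    ("e", float("nan")),
    ("f", 6.2),
]
print(clean_activity_records(records))
```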

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between standard Transfer Learning and Few-Shot Learning (FSL) for molecular property prediction?

While both leverage prior knowledge, Transfer Learning typically involves fine-tuning a model pre-trained on a large, general-purpose dataset (e.g., ChEMBL) on a smaller, specific target dataset. This fine-tuning step often still requires a "reasonable" amount of target data. In contrast, Few-Shot Learning is designed for extreme data scarcity—scenarios with as few as one to five examples per class. FSL models, often based on meta-learning, are explicitly trained in a "learning to learn" paradigm, optimizing them to adapt quickly to new tasks with minimal data, a common requirement for rare property prediction [19] [20].

FAQ 2: How can I quantitatively evaluate the risk of "negative transfer" in a Multi-Task Learning setup for my properties?

Negative transfer occurs when updates from one task degrade the performance of another. You can evaluate this risk by comparing the performance of three training schemes on your dataset:

- Single-Task Learning (STL): A dedicated model for your property of interest.

- Standard MTL: A single shared model trained on all properties simultaneously.

- Advanced MTL (e.g., ACS): A method that mitigates negative transfer.

A significant performance drop in standard MTL compared to STL indicates negative transfer. The ACS method, for instance, has been shown to outperform standard MTL by an average of 7.16% on challenging molecular benchmarks, effectively countering this issue [7].
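The comparison reduces to a per-task relative change. In the sketch below the task names and scores are toy placeholders, the metric direction must be declared (ROC-AUC: higher is better; RMSE: lower is better), the zero tolerance is an assumption, and the function name is hypothetical. An ACS or other advanced MTL run can be compared against STL in exactly the same way.

```python
def negative_transfer_report(stl, mtl, higher_is_better=True, tolerance=0.0):
    """Compare per-task test scores of single-task (stl) and multi-task (mtl) models.

    stl, mtl: dicts task -> metric value on the same test split.
    Returns the relative change of MTL vs STL (positive = MTL better) and the
    tasks whose change is below -tolerance, i.e. candidates for negative transfer."""
    report, flagged = {}, []
    for task, stl_score in stl.items():
        change = (mtl[task] - stl_score) / abs(stl_score)
        if not higher_is_better:
            change = -change  # e.g. RMSE: a lower MTL error is an improvement
        report[task] = change
        if change < -tolerance:
            flagged.append(task)
    return report, flagged


stl_auc = {"tox_a": 0.80, "tox_b": 0.70}  # toy placeholder scores
mtl_auc = {"tox_a": 0.84, "tox_b": 0.63}
report, flagged = negative_transfer_report(stl_auc, mtl_auc)
print(report, flagged)
```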

FAQ 3: Beyond model architectures, what data-centric strategies can improve Few-Shot Learning for rare properties?

Data-centric strategies are crucial. Key approaches include:

- Data Augmentation: For molecular graphs, this can involve creating "valid" variations of a molecule through atomic masking, bond deletion, or subtree removal. For image-based representations, standard techniques like rotation and flipping can be used [21] [22].

- Synthetic Data Generation: Generative models, particularly Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs), can generate synthetic molecular representations or data points that augment your scarce labeled dataset, providing more examples for the model to learn from [19].

- Weakly Supervised Learning: If precise activity value annotations are scarce, you can train models using weaker forms of supervision, such as binary labels (e.g., "active" vs. "inactive") or ranking pairs, which are often easier to obtain [17].
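Two of the graph augmentations mentioned above are sketched here on a deliberately simple graph encoding: a list of atom symbols plus a list of bonds as index pairs. This encoding, the mask token, and the function names are assumptions for illustration. A real pipeline would apply the same ideas to a toolkit's molecule or graph objects and check that each augmented graph is still sensible, since removing a bond can, for example, split a molecule into fragments.

```python
import random

MASK = "[MASK]"  # hypothetical placeholder token for a masked atom


def mask_atoms(atoms, mask_rate=0.15, rng=None):
    """Copy of the atom list with roughly mask_rate of atoms replaced by MASK.
    Bonds are untouched, so the graph topology is preserved."""
    if not atoms:
        return []
    rng = rng or random.Random()
    n_mask = max(1, round(mask_rate * len(atoms)))
    chosen = set(rng.sample(range(len(atoms)), n_mask))
    return [MASK if i in chosen else atom for i, atom in enumerate(atoms)]


def drop_random_bond(bonds, rng=None):
    """Copy of the bond list with one randomly chosen bond deleted."""
    if not bonds:
        return []
    rng = rng or random.Random()
    k = rng.randrange(len(bonds))
    return bonds[:k] + bonds[k + 1:]


# heavy-atom graph of ethanolamine (N-C-C-O)
atoms = ["C", "C", "O", "N"]
bonds = [(0, 1), (1, 2), (0, 3)]
rng = random.Random(0)
print(mask_atoms(atoms, mask_rate=0.25, rng=rng))
print(drop_random_bond(bonds, rng=rng))
```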

Experimental Protocols & Data

Table 1: Comparison of Core Few-Shot and Low-Data Learning Methods

| Method | Core Mechanism | Best Suited For | Key Advantage | Reported Performance Gain |
|---|---|---|---|---|
| ACANet [6] | Contrastive learning with ACA loss to separate activity cliffs. | Datasets with prevalent activity cliffs, low-sample size regimes. | Explicitly models structure-activity discontinuities. | 31.4% improved label coherence in latent space; 7.54% avg. improvement over MAE baseline on LSSNS datasets. |
| ACS (Adaptive Checkpointing with Specialization) [7] | Multi-task learning with task-specific checkpointing to mitigate negative transfer. | Multi-property prediction with severe task imbalance (ultra-low data for some tasks). | Prevents performance degradation from unrelated tasks. | Achieves accurate prediction with as few as 29 labels; outperforms standard MTL. |
| Model-Agnostic Meta-Learning (MAML) [18] [19] | Optimizes model initial parameters for fast adaptation to new tasks with few gradient steps. | Rapid adaptation to novel molecular properties or targets with very few examples. | Model-agnostic and highly flexible. | Foundational method; enables quick adaptation but can be sensitive to initialization. |
| Prototypical Networks [19] | Classifies based on distance to class prototypes in an embedding space. | Classification tasks where a representative "prototype" for a property class can be defined. | Simple and efficient; no fine-tuning needed for new tasks. | Effective for few-shot classification where embedding space is well-structured. |
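The prototype rule for Prototypical Networks is simple enough to show directly. In this sketch the embeddings are toy 2-D vectors standing in for the output of a trained encoder (for example a GNN, not shown), each prototype is the mean of its class's support embeddings, and classification uses squared Euclidean distance with a softmax over negative distances, as in the original prototypical-network formulation. Labels and function names are placeholders.

```python
import math


def prototypes(support):
    """support: dict label -> list of support embeddings (the few labelled shots).
    Each class prototype is the element-wise mean of its support embeddings."""
    protos = {}
    for label, vectors in support.items():
        dim = len(vectors[0])
        protos[label] = [sum(v[k] for v in vectors) / len(vectors) for k in range(dim)]
    return protos


def classify(query, protos):
    """Nearest-prototype label plus softmax probabilities over negative squared distances."""
    neg_sq_dist = {
        label: -sum((q - p) ** 2 for q, p in zip(query, proto))
        for label, proto in protos.items()
    }
    shift = max(neg_sq_dist.values())  # subtract the max for numerical stability
    weights = {label: math.exp(v - shift) for label, v in neg_sq_dist.items()}
    total = sum(weights.values())
    probs = {label: w / total for label, w in weights.items()}
    return max(probs, key=probs.get), probs


support = {
    "active": [[1.0, 1.0], [1.2, 0.8]],
    "inactive": [[-1.0, -1.0], [-0.8, -1.2]],
}
protos = prototypes(support)
label, probs = classify([0.9, 1.0], protos)
print(label, probs)
```

Because new classes only require new support examples to average, no gradient steps are needed at test time, which is the "no fine-tuning" advantage listed in the table.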

Table 2: Key Research Reagent Solutions for Data-Scarce Molecular Modeling

| Item / Resource | Function in Experiment | Key Application in Addressing Data Scarcity |
|---|---|---|
| Graph Neural Network (GNN) [6] [7] | Learns vector representations (embeddings) directly from molecular graph structure. | Base architecture for extracting features without manual engineering, essential for learning from limited data. |
| Triplet Soft Margin (TSM) Loss [6] | A component of the ACA loss that pulls an anchor molecule closer to a "positive" (similar activity) and pushes it away from a "negative" (dissimilar activity). | Injects "activity cliff awareness" into the model, improving sensitivity to critical activity changes. |
| Multi-Task Learning (MTL) Framework [7] | A training paradigm where a single model learns multiple related tasks (properties) simultaneously. | Allows a rare property task to leverage informational signals from other, better-represented property tasks. |
| Benchmark Datasets (e.g., LSSNS, HSSMS, Tox21, SIDER) [6] [7] | Standardized collections of molecules and properties for training and evaluation. | Provides realistic, scaffold-split benchmarks to fairly assess model generalization in low-data settings. |

Workflow Diagrams

Diagram 1: ACANet Workflow for Activity Cliff-Informed Learning

Diagram 2: Adaptive Checkpointing with Specialization (ACS) Logic

Frequently Asked Questions (FAQs)

Q1: What exactly is an "activity cliff" in the context of drug discovery? An activity cliff (AC) is a pair of structurally similar molecules that exhibit a large, unexpected difference in their biological activity or potency against the same target [23] [24]. This phenomenon defies the core principle of medicinal chemistry—the molecular similarity principle—which states that similar molecules should have similar properties [5]. A classic example involves two inhibitors of blood coagulation factor Xa, where the simple addition of a hydroxyl group leads to a nearly 1,000-fold increase in inhibition [5].

Q2: Why are activity cliffs such a significant problem for machine learning models? Most machine learning (ML) and deep learning (DL) models for molecular property prediction operate on the assumption of a smooth structure-activity relationship (SAR) landscape [23]. Activity cliffs represent sharp discontinuities in this landscape. Models tend to make analogous predictions for structurally similar molecules, an approach that fails for activity cliff compounds because they are statistical outliers. Consequently, both traditional and deep learning models show a significant drop in prediction accuracy for these molecules [25] [23] [5]. In fact, neither enlarging the training set nor increasing model complexity reliably improves predictive accuracy for these challenging compounds [25].

Q3: Do more complex deep learning models handle activity cliffs better than simpler machine learning methods? Surprisingly, no. Extensive benchmarking has revealed that traditional machine learning methods based on molecular descriptors often outperform more complex deep learning models when predicting the properties of activity cliff compounds [23] [26] [5]. This indicates that the superior approximation power of deep neural networks does not, in itself, resolve the fundamental challenges posed by SAR discontinuities.

Q4: What are the best practices for evaluating my model's performance on activity cliffs? It is recommended to go beyond standard overall performance metrics. You should:

- Incorporate dedicated "activity-cliff-centered" metrics during model development and evaluation [23]. The MoleculeACE (Activity Cliff Estimation) benchmarking platform is specifically designed for this purpose [23].

- Use structure-based docking scores as an evaluation oracle where possible. Docking scores have been shown to reflect activity cliffs more faithfully than simpler scoring functions, giving a more realistic assessment of a model's utility in a drug design pipeline [25].

Q5: Are there specific modeling techniques designed to address activity cliffs? Yes, novel approaches are emerging. These include:

- Activity Cliff-Aware Reinforcement Learning (ACARL): A framework that explicitly identifies activity cliffs using a quantitative Activity Cliff Index (ACI) and incorporates them into the reinforcement learning process via a contrastive loss function, guiding the generation of molecules in high-impact SAR regions [25].

- Explainable AI (XAI) for rationalizing predictions: XAI methods can "open the black box" of ML models by estimating how specific chemical structures contribute to a model's output. They have been applied, for example, to explain predictions for targeted protein degraders, including activity cliffs [27].

- Specialized deep learning models: Models like ACtriplet combine advanced training strategies, such as triplet loss (originally popularized in face recognition) and pre-training, to increase a model's sensitivity to the fine structural differences that cause activity cliffs [13].

Troubleshooting Guides

Issue 1: Poor Model Performance on Activity Cliff Compounds

Symptoms:

- High overall model accuracy, but large errors on specific, structurally similar compound pairs.

- Model predictions are overly smooth and fail to capture sharp jumps in activity present in the experimental data.

Diagnosis and Solutions:

- Diagnosis: Confirm the presence and density of activity cliffs in your dataset.

- Solution 1: Data Curation and Analysis

- Calculate pairwise structural similarities (e.g., using Tanimoto similarity on ECFP4 fingerprints) and potency differences (e.g., ΔpKi or ΔpIC50) for all compounds in your dataset [23] [24].

- Identify activity cliff pairs. A common definition is a pair with high structural similarity (e.g., Tanimoto similarity ≥ 0.85) and a large potency difference (e.g., a difference > 100-fold, or a class-dependent statistically significant difference) [24].

- Use this analysis to stratify your test set to specifically evaluate performance on "cliffy" compounds.

- Solution 2: Model and Feature Selection

- Try simpler models first. Benchmark traditional machine learning models like Support Vector Machines (SVM) or Random Forests (RF) using extended-connectivity fingerprints (ECFP) against more complex deep learning models [23] [24] [5].

- Consider advanced representations. For deep learning models, graph-based representations (e.g., Graph Isomorphism Networks) have shown promise for directly tackling activity cliff prediction tasks and can be competitive with or superior to classical representations [5].
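The pair analysis described in Solution 1 can be sketched in a few lines of Python. This is a minimal, dependency-free sketch: it assumes fingerprints have already been computed (for example, ECFP4-style Morgan fingerprints from RDKit) and are represented here as sets of on-bit indices, and it uses the example thresholds from the text (Tanimoto ≥ 0.85, ΔpKi ≥ 2.0). The function names are illustrative, not part of any library.

```python
from itertools import combinations


def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def find_cliff_pairs(fps, pkis, sim_threshold=0.85, delta_threshold=2.0):
    """Return (i, j, similarity, delta_pki) for every pair meeting both criteria."""
    pairs = []
    for i, j in combinations(range(len(fps)), 2):
        sim = tanimoto(fps[i], fps[j])
        delta = abs(pkis[i] - pkis[j])
        if sim >= sim_threshold and delta >= delta_threshold:
            pairs.append((i, j, sim, delta))
    return pairs


def cliff_compounds(pairs):
    """Indices of compounds that participate in at least one cliff pair."""
    return {idx for i, j, _, _ in pairs for idx in (i, j)}


# Toy example: compounds 0 and 1 share 19 of 21 bits but differ by 2.5 log units.
fps = [set(range(20)), set(range(19)) | {25}, set(range(100, 120))]
pkis = [8.5, 6.0, 7.0]
pairs = find_cliff_pairs(fps, pkis)
print(pairs)                   # one pair: (0, 1, ~0.905, 2.5)
print(cliff_compounds(pairs))  # {0, 1}
```

The all-pairs loop is quadratic in the number of compounds, which is usually acceptable for single-target datasets of a few thousand molecules; the set returned by `cliff_compounds` can then be used to stratify the test set.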

Issue 2: Generating Molecules that Fail to Exploit Activity Cliffs

Symptoms:

- A generative model produces molecules with high predicted affinity but limited structural diversity.

- The model gets stuck in local minima and cannot discover small structural modifications that lead to large potency gains.

Diagnosis and Solutions:

- Diagnosis: The model's objective function does not prioritize or recognize the value of SAR discontinuities.

- Solution: Implement an Activity Cliff-Aware Generative Framework

- Integrate an Activity Cliff Index (ACI): Implement a metric that quantifies the intensity of SAR discontinuities by comparing structural similarity with differences in predicted biological activity [25].

- Incorporate a Contrastive Loss: Modify the reinforcement learning (RL) reward function to include a contrastive loss term that actively prioritizes learning from activity cliff compounds. This amplifies the reward signal for generating molecules in high-impact SAR regions [25].

- Use a predictive oracle: Employ structure-based docking software, which better captures activity cliffs, as the scoring function (oracle) for the RL environment instead of simpler, smoother functions [25].
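The exact ACI formula used by ACARL is not given here. As a hedged stand-in, the sketch below implements the Structure-Activity Landscape Index (SALI), a long-established index that likewise combines structural similarity with activity difference, SALI = |A_i − A_j| / (1 − sim(i, j)). Treat it as an illustration of the idea behind an activity cliff index, not as the ACARL implementation; the function names are hypothetical.

```python
import math


def sali(activity_i: float, activity_j: float, similarity: float) -> float:
    """Structure-Activity Landscape Index: |A_i - A_j| / (1 - sim(i, j))."""
    if not 0.0 <= similarity <= 1.0:
        raise ValueError("similarity must lie in [0, 1]")
    delta = abs(activity_i - activity_j)
    if similarity == 1.0:
        # Identical representations: flat if activities agree, infinitely steep otherwise.
        return 0.0 if delta == 0 else math.inf
    return delta / (1.0 - similarity)


def rank_by_sali(pairs):
    """pairs: (id_i, id_j, activity_i, activity_j, similarity) tuples.
    Returns (id_i, id_j, sali) sorted from the steepest to the flattest pair."""
    scored = [(a, b, sali(ya, yb, s)) for a, b, ya, yb, s in pairs]
    return sorted(scored, key=lambda t: t[2], reverse=True)


ranked = rank_by_sali([("m1", "m2", 8.0, 6.0, 0.9), ("m3", "m4", 7.0, 6.5, 0.5)])
print(ranked[0][:2])  # ('m1', 'm2'): 2 log units at similarity 0.9 gives SALI ~20
```

High-SALI pairs mark the steep regions of the SAR landscape, which is the kind of signal an activity-cliff-aware reward would aim to emphasize.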

Experimental Protocols & Benchmarking

Protocol 1: Benchmarking Model Performance on Activity Cliffs

Objective: To rigorously evaluate and compare the performance of different ML/DL models on activity cliff compounds.

Materials:

- Dataset: A curated set of compounds with consistent bioactivity data (e.g., Ki or IC50) for a specific target from a source like ChEMBL [23].

- Software: A cheminformatics toolkit (e.g., RDKit) for standardizing molecules and calculating descriptors/fingerprints.

Methodology:

- Data Preprocessing: Standardize molecular structures (remove salts, neutralize charges) and curate activity data to remove duplicates and outliers [23].

- Activity Cliff Identification:

- Generate all possible pairwise comparisons of compounds in the dataset.

- Calculate the Tanimoto similarity using ECFP4 fingerprints for each pair.

- Calculate the absolute difference in potency (e.g., ΔpKi = |pKi(A) - pKi(B)|).

- Define activity cliff pairs using a predefined threshold (e.g., Tanimoto ≥ 0.85 and ΔpKi ≥ 2.0, equivalent to a 100-fold potency difference) [24].

- Model Training and Evaluation:

- Split the dataset into training and test sets. To avoid data leakage, use the Advanced Cross-Validation (AXV) approach, which ensures that no individual compound is shared between training and test compound pairs [24].

- Train multiple models (e.g., SVM, Random Forest, GNN) on the training set.

- Evaluate model performance on the entire test set and separately on the subset of compounds identified as part of activity cliffs. Key metrics include Mean Absolute Error (MAE) and Root Mean Square Error (RMSE).
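A minimal sketch of the final evaluation step, assuming each test compound has already been flagged as a cliff or non-cliff compound (for example, by checking whether it takes part in any activity cliff pair). It reports MAE and RMSE for the full test set and for each subset; the function names are illustrative.

```python
import math


def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def stratified_errors(y_true, y_pred, is_cliff):
    """(MAE, RMSE) on the whole test set and on its cliff / non-cliff subsets."""
    report = {"all": (mae(y_true, y_pred), rmse(y_true, y_pred))}
    for name, flag in (("cliff", True), ("non_cliff", False)):
        idx = [k for k, c in enumerate(is_cliff) if c == flag]
        if idx:  # skip empty subsets instead of dividing by zero
            yt = [y_true[k] for k in idx]
            yp = [y_pred[k] for k in idx]
            report[name] = (mae(yt, yp), rmse(yt, yp))
    return report


report = stratified_errors(
    y_true=[7.0, 8.0, 6.0, 9.0],
    y_pred=[7.0, 7.5, 6.0, 7.0],
    is_cliff=[False, True, False, True],
)
print(report["all"][0], report["cliff"][0])  # 0.625 1.25
```

In this toy case the error on cliff compounds is twice the overall error, which is the kind of gap the expected outcome below describes.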

Expected Outcome: Most models will show a significant increase in error (worse MAE/RMSE) on the activity cliff subset compared to the general test set. Simpler models may outperform deep learning models on this specific task [23] [5].

Protocol 2: Predicting Activity Cliffs from Matched Molecular Pairs (MMPs)

Objective: To build a classifier that can directly predict whether a pair of analogous compounds forms an activity cliff.

Materials: As in Protocol 1.

Methodology:

- Generate Matched Molecular Pairs (MMPs): An MMP is a pair of compounds that differ only by a chemical change at a single site (e.g., a substituent exchange). Use an algorithm (e.g., the Hussain and Rea algorithm) to fragment molecules in your dataset and generate all possible MMPs [24].

- Label MMPs: Classify each MMP as an "AC" (e.g., ΔpKi ≥ 2.0) or a "non-AC" (e.g., ΔpKi < 1.0) [24].

- Feature Representation for Pairs: Represent each MMP using a concatenated fingerprint that encodes the common core structure, the unique features of the first substituent, and the unique features of the second substituent [24].

- Model Building and Evaluation: Train a binary classifier (e.g., SVM, Random Forest) to distinguish between AC and non-AC pairs. Evaluate using standard classification metrics like AUC-ROC.
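The "Label MMPs" and "Feature Representation for Pairs" steps can be sketched as follows. The sketch assumes the common core and the two substituents have already been fingerprinted into sets of on-bit indices of a fixed length (`n_bits`, an assumed parameter), and it applies the example labels from the text (AC if ΔpKi ≥ 2.0, non-AC if ΔpKi < 1.0). Pairs falling between the two thresholds are returned as `None` and left out of training, which is our reading of the gap between the two criteria; all names are hypothetical.

```python
def label_mmp(delta_pki, ac_threshold=2.0, non_ac_threshold=1.0):
    """1 = activity cliff, 0 = non-cliff, None = in-between pair left out of training."""
    if delta_pki >= ac_threshold:
        return 1
    if delta_pki < non_ac_threshold:
        return 0
    return None


def pair_features(core_bits, sub_a_bits, sub_b_bits, n_bits=1024):
    """Concatenate core | substituent A | substituent B fingerprints (each given as a
    set of on-bit indices in [0, n_bits)) into one 0/1 vector of length 3 * n_bits."""
    vec = [0] * (3 * n_bits)
    for block, bits in enumerate((core_bits, sub_a_bits, sub_b_bits)):
        for b in bits:
            if not 0 <= b < n_bits:
                raise ValueError(f"bit {b} outside [0, {n_bits})")
            vec[block * n_bits + b] = 1
    return vec


x = pair_features({0}, {1}, {2}, n_bits=4)
print([i for i, v in enumerate(x) if v])  # [0, 5, 10]
print(label_mmp(2.3), label_mmp(0.4), label_mmp(1.5))  # 1 0 None
```

Because the two substituent blocks are kept in a fixed order, the same MMP written as (A, B) or (B, A) yields different vectors; sorting the substituents canonically before encoding is one way to remove that dependence.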

Expected Outcome: With a proper data split that excludes molecular overlap (using AXV), you can build a robust classifier to directly predict activity cliffs, providing a tool for rational compound optimization [24].

Data Presentation

Table 1: Frequency of Activity Cliffs Across Various Drug Targets

This table illustrates that activity cliffs are a common occurrence across a wide range of biological targets, highlighting the ubiquity of the challenge. Data is sourced from a benchmark study of 30 macromolecular targets [23].

| Target Name | Target Type | Total Molecules (n) | Activity Cliff Compounds in Test Set (%) |
|---|---|---|---|
| Orexin Receptor 2 (OX2R) | GPCR | 1,471 | 52% |
| Ghrelin Receptor (GHSR) | GPCR | 682 | 49% |
| Coagulation Factor X (FX) | Protease | 3,097 | 43% |
| Kappa Opioid Receptor (KOR) agonism | GPCR | 955 | 42% |
| Peroxisome Proliferator-Activated Receptor delta (PPARδ) | Nuclear Receptor | 1,125 | 42% |
| Mu-Opioid Receptor (MOR) | GPCR | 3,142 | 35% |
| Dopamine D3 Receptor (D3R) | GPCR | 3,657 | 40% |
| Serotonin 1a Receptor (5-HT1A) | GPCR | 3,317 | 35% |
| Androgen Receptor (AR) | Nuclear Receptor | 659 | 23% |
| Glycogen Synthase Kinase-3 β (GSK3) | Kinase | 856 | 18% |
| Dual Specificity Protein Kinase CLK4 | Kinase | 731 | 9% |
| Janus Kinase 1 (JAK1) | Kinase | 615 | 8% |

Table 2: Comparison of Machine Learning Methods for Activity Cliff Prediction

This table summarizes the performance of various methods on the task of classifying pairs of molecules as activity cliffs or non-cliffs, based on a large-scale study across 100 activity classes [24]. Performance is measured by Area Under the Receiver Operating Characteristic Curve (AUC), where 1.0 is perfect.

| Model Type | Specific Model | Key Features | Average Performance (AUC) | Notes |
|---|---|---|---|---|
| Kernel Method | Support Vector Machine (SVM) | MMP kernel, fingerprint representation | Best (by small margin) | Robust across many classes [24] |
| Instance-Based | k-Nearest Neighbour (k-NN) | Simple, similarity-based | High | Competitive with complex methods [24] |
| Tree-Based | Random Forest (RF) | Ensemble of decision trees | High | |
| Deep Learning | Graph Neural Network (GNN) | Learns representations from molecular graphs | Variable | Does not consistently outperform simpler methods [24] [26] |
| Deep Learning | Convolutional Neural Network (CNN) | Operates on 2D images of molecule pairs | High (in some studies) | Performance can be influenced by data leakage [24] [5] |

Workflow and Conceptual Diagrams

Figure: Activity Cliff-Aware Molecular Generation

Figure: Model Evaluation Workflow with Activity Cliffs

Table: Key Research Reagents and Resources

| Category | Item / Resource | Function / Description | Key Utility |
|---|---|---|---|
| Data Sources | ChEMBL Database | A large-scale, open-source bioactivity database containing binding constants (Ki, IC50) for millions of compounds and thousands of targets [25] [23] [24]. | Primary source for curating datasets for model training and benchmarking. |
| Molecular Representation | Extended Connectivity Fingerprints (ECFP4) | A circular fingerprint that captures atom-centered substructural features up to a bond diameter of 4, providing a numerical representation of molecular structure [23] [26]. | Standard for calculating molecular similarity and as input features for traditional ML models. |
| Molecular Representation | Matched Molecular Pairs (MMPs) | A formalized representation of a pair of compounds that differ only at a single site, ideal for systematically studying and defining activity cliffs [25] [24]. | Enables precise identification and analysis of activity cliffs by isolating the effect of specific chemical changes. |
| Evaluation Software | Structure-Based Docking | Software (e.g., AutoDock Vina, Glide) that predicts how a small molecule binds to a protein target and provides a docking score approximating binding affinity [25]. | Provides a more realistic oracle for generative models and evaluation, as it better reflects activity cliffs than simple functions. |
| Benchmarking Platform | MoleculeACE (Activity Cliff Estimation) | An open-access benchmarking platform designed to evaluate model performance specifically on activity cliff compounds [23]. | Provides standardized metrics and datasets to steer community efforts toward addressing this key limitation. |

Frequently Asked Questions (FAQs)

FAQ 1: What is an Activity Cliff and why is it important in drug discovery?

An Activity Cliff (AC) is formed by a pair of structurally similar compounds that are active against the same target but have a large difference in potency [24] [28]. From a medicinal chemistry perspective, ACs are highly relevant because they capture small chemical modifications with large consequences for specific biological activities, providing critical insights for compound optimization and understanding structure-activity relationships (SAR) [24] [28]. For computational chemists, ACs represent a major source of prediction error in Quantitative Structure-Activity Relationship (QSAR) modeling, as they create discontinuities in the SAR landscape that are difficult for machine learning models to capture [5] [23].

FAQ 2: What are the standard criteria for defining an Activity Cliff?

Defining an AC requires specifying two key criteria [28]:

- Structural Similarity Criterion: This is often defined using the Matched Molecular Pair (MMP) formalism. An MMP is a pair of compounds that share a common core structure and are only distinguished by substituents at a single site (a chemical transformation) [24] [28]. An MMP-based AC is termed an MMP-cliff [24].

- Potency Difference Criterion: While a 100-fold potency difference has been a commonly used heuristic [24] [28], more refined approaches use statistically significant, activity class-dependent potency differences derived from the distribution of potency differences within each class (e.g., the mean potency difference across all MMPs of the class plus two standard deviations) [24].

FAQ 3: How prevalent are Activity Cliffs in real-world databases like ChEMBL?

Activity Cliffs are a common phenomenon in chemical databases. A large-scale analysis across 100 activity classes from ChEMBL confirmed their widespread presence [24]. Furthermore, a benchmark study on 30 macromolecular targets found that the proportion of activity cliff compounds in test sets varied significantly, ranging from 7% to 52% across different targets, as detailed in Table 1 [23]. This indicates that the prevalence of ACs is target-dependent but can be substantial.

FAQ 4: My QSAR model has good overall performance but fails on Activity Cliff compounds. Why?

This is a common and widely reported issue [5] [23] [29]. Most standard QSAR and machine learning models are built on the principle that similar structures have similar activities. Activity Cliffs are a direct exception to this rule. Studies have consistently shown that both traditional and deep learning models experience a significant drop in performance when predicting the potency of compounds involved in ACs [5] [23] [30]. This failure mode underscores the need for specialized model evaluation and development for AC-rich datasets.

FAQ 5: What is "data leakage" in the context of Activity Cliff prediction, and how can I avoid it?

Data leakage occurs when compound pairs (MMPs) from the same activity class are randomly divided into training and test sets, and individual compounds are shared between MMPs in both sets [24]. This leads to high similarity between some training and test instances, artificially inflating model performance. To avoid this, use an Advanced Cross-Validation (AXV) approach [24]:

- Randomly select a hold-out set of 20% of the compounds before generating MMPs.

- Assign an MMP to the test set only if both of its compounds are in the hold-out set.

- Assign an MMP to the training set only if neither of its compounds is in the hold-out set.

- Discard any MMP where only one compound is in the hold-out set [24].
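The AXV procedure above can be sketched as a filter over MMPs generated from the full compound set. This is equivalent to choosing the hold-out compounds first, since only the compound assignments determine where each pair goes. Compound identifiers and the function name are illustrative.

```python
import random


def axv_split(compounds, mmps, holdout_fraction=0.2, seed=0):
    """Advanced cross-validation (AXV) split of matched molecular pairs.

    compounds: list of compound IDs; mmps: list of (id_a, id_b) tuples.
    Returns (train_mmps, test_mmps). Pairs with exactly one held-out compound
    are discarded so that no compound occurs on both sides of the split.
    """
    rng = random.Random(seed)
    n_holdout = round(holdout_fraction * len(compounds))
    holdout = set(rng.sample(list(compounds), n_holdout))
    train, test = [], []
    for a, b in mmps:
        a_out, b_out = a in holdout, b in holdout
        if a_out and b_out:
            test.append((a, b))
        elif not a_out and not b_out:
            train.append((a, b))
        # otherwise: one compound on each side -> discard
    return train, test


compounds = [f"c{k}" for k in range(10)]
mmps = [(compounds[i], compounds[j]) for i in range(10) for j in range(i + 1, 10)]
train, test = axv_split(compounds, mmps)
print(len(train), len(test))  # 28 1 (2 compounds held out; 16 straddling pairs dropped)
```

Note that AXV discards a sizeable share of pairs (here 16 of 45), which is the price paid for a leakage-free test set.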

Troubleshooting Guides

Problem: Low AC-Sensitivity in QSAR Models

Scenario: You have built a regression model to predict compound potency. While its overall accuracy is acceptable, it consistently fails to predict the large potency differences for structurally similar pairs (ACs).

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient AC examples in training data. | Calculate the percentage of ACs in your dataset. Compare your model's Mean Absolute Error (MAE) on all test compounds versus the subset involved in ACs [23]. | Intentionally include more AC pairs in the training set. Use data augmentation techniques specific to ACs. |
| Model architecture is not suited for capturing SAR discontinuities. | Benchmark your model against a simple baseline (e.g., Random Forest with ECFP4 fingerprints) [23] [30]. Try a different molecular representation (e.g., graph-based features) [5]. | Implement models with explicit inductive biases for ACs, such as AC-informed contrastive learning (ACANet) [6] or ACtriplet [13]. |
| Standard regression loss functions (e.g., MSE) do not penalize AC errors enough. | Inspect the model's predictions specifically for high-similarity compound pairs. | Incorporate a dedicated loss function term that penalizes errors on ACs, such as a Triplet Soft Margin (TSM) loss that enforces correct relative distances in the latent space for similar compounds with different activities [6]. |
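The Triplet Soft Margin idea can be illustrated with one common soft-margin formulation of the triplet loss, log(1 + exp(d(A, P) − d(A, N))), averaged over triplets. This is a sketch assuming Euclidean distances between latent embeddings given as plain lists; the exact TSM definition used by ACANet [6] may differ in its distance function or reduction, and the function names are hypothetical.

```python
import math


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return x + math.log1p(math.exp(-x)) if x > 0 else math.log1p(math.exp(x))


def triplet_soft_margin_loss(triplets):
    """Mean soft-margin triplet loss over (anchor, positive, negative) embeddings.
    Low when each anchor is closer to its similar-activity positive than to its
    structurally similar but activity-different negative (the cliff partner)."""
    losses = [softplus(euclidean(a, p) - euclidean(a, n)) for a, p, n in triplets]
    return sum(losses) / len(losses)


anchor, positive, negative = [0.0, 0.0], [0.0, 0.0], [3.0, 4.0]
good = triplet_soft_margin_loss([(anchor, positive, negative)])  # d(A,P)=0, d(A,N)=5
bad = triplet_soft_margin_loss([(anchor, negative, positive)])   # roles swapped
print(round(good, 4), round(bad, 4))  # 0.0067 5.0067
```

Unlike a hard-margin triplet loss, the soft-margin version never becomes exactly zero, so well-separated triplets still contribute a small gradient.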

Problem: Inconsistent Activity Cliff Identification

Scenario: You are mining a database like ChEMBL for ACs, but the number of cliffs you find varies wildly when you slightly change your similarity or potency difference thresholds.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Over-reliance on a single, fixed similarity metric (e.g., Tanimoto on ECFP4). | Re-run your AC identification using different similarity criteria (e.g., MMP formalism, scaffold-based categorization) [28]. | Use an intuitive, substructure-based similarity criterion like the MMP formalism [24] [28]. Combine multiple similarity perspectives for a well-rounded definition [23] [30]. |
| Using a universal, fixed potency difference threshold (e.g., 100-fold). | Analyze the distribution of potency differences among the MMPs of your specific activity class and calculate its mean and standard deviation. | Use a statistically significant, activity class-dependent potency difference criterion. A robust method is to define the threshold as the mean MMP potency difference plus two standard deviations for that specific class [24]. |
| Data quality issues leading to "fake" cliffs. | Check for duplicates, salts, and mixtures. Assess the consistency of structural annotations and the reliability of experimental values (e.g., standard deviation for multiple measurements) [23]. | Rigorously curate your dataset before analysis. Use an established standardization pipeline (e.g., the ChEMBL structure pipeline) [5]. |

Experimental Protocols & Data

Standard Protocol for Identifying Activity Cliffs in ChEMBL

This protocol provides a step-by-step guide for the large-scale identification and analysis of Activity Cliffs from the ChEMBL database [24] [23].

1. Data Extraction and Curation:

- Source: ChEMBL database (e.g., version 29) [24] [23].

- Filters: Select compounds with molecular mass < 1000 Da, a target confidence score of 9, and target relationship type 'D' (direct interaction). Prefer numerically specified equilibrium constants (Ki or Kd values) for accuracy [24] [28].

- Activity Classes: Group compounds by individual protein targets to form activity classes [24].

- Curation: Remove duplicates, salts, and mixtures. Standardize structures (e.g., using the ChEMBL structure pipeline) and check for data consistency [5] [23].

2. Activity Cliff Definition:

- Structural Similarity: Generate Matched Molecular Pairs (MMPs) using a molecular fragmentation algorithm [24].

- Typical Parameters: Maximum substituent size: 13 non-hydrogen atoms. Core must be at least twice as large as a substituent. Maximum difference in non-hydrogen atoms between exchanged substituents: 8 [24].

- Potency Difference: For each activity class, calculate a class-dependent threshold. A recommended method is: Threshold = Mean(ΔpKi) + 2 × Standard Deviation(ΔpKi), computed over the potency differences of all MMPs in the class [24].

- An MMP with a potency difference ≥ this threshold is an AC (MMP-cliff).

- An MMP with a potency difference < 10-fold (∆pKi < 1) can be classified as a non-AC for building classification models [24].

3. Data Partitioning (Avoiding Leakage):

- Use the Advanced Cross-Validation (AXV) method to prevent data leakage from compound overlap between training and test MMPs [24].

- Before MMP generation, randomly hold out 20% of the unique compounds.

- An MMP is placed in the test set only if both its compounds are in the hold-out set, and in the training set only if neither is; MMPs with exactly one held-out compound are discarded [24].
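The MMP size rules and the class-dependent threshold from step 2 can be sketched as small helpers. Assumptions: heavy-atom counts for the core and both substituents are already available from the fragmentation step, the core-size rule is read as "core heavy atoms ≥ 2 × the larger substituent", and the class threshold is computed over the potency differences (ΔpKi) of all MMPs in the class using the sample standard deviation. The function names are hypothetical.

```python
import statistics


def passes_size_rules(core_atoms, sub_a_atoms, sub_b_atoms,
                      max_sub=13, max_sub_diff=8, core_ratio=2.0):
    """Transformation-size restrictions (heavy-atom counts) quoted in step 2."""
    larger = max(sub_a_atoms, sub_b_atoms)
    if larger > max_sub:
        return False
    if abs(sub_a_atoms - sub_b_atoms) > max_sub_diff:
        return False
    return core_atoms >= core_ratio * larger


def class_threshold(mmp_deltas):
    """Class-dependent cliff threshold: mean + 2 sample SD of the class's MMP delta-pKi values."""
    return statistics.mean(mmp_deltas) + 2 * statistics.stdev(mmp_deltas)


def label_mmps(mmp_deltas, non_ac_cutoff=1.0):
    """Label each MMP of one class: 'AC', 'non-AC', or None (in between, not used)."""
    threshold = class_threshold(mmp_deltas)
    return ["AC" if d >= threshold else "non-AC" if d < non_ac_cutoff else None
            for d in mmp_deltas]


print(passes_size_rules(20, 5, 3), passes_size_rules(10, 6, 4))  # True False
deltas = [0.1] * 9 + [1.5, 3.0]
print(label_mmps(deltas)[-2:])  # [None, 'AC']
```

With a class-dependent threshold, the cutoff for this toy class lands near 2.36 log units rather than the fixed 2.0 implied by the 100-fold rule.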

Quantitative Prevalence of Activity Cliffs

The following table summarizes the statistical prevalence of Activity Cliffs across different targets, as found in a benchmark study of 30 macromolecular targets from ChEMBL [23].

Table 1: Prevalence of Activity Cliff Compounds Across Various Targets [23]

| Target Name | Activity Type | Total Compounds (n) | Test Set Compounds (nTEST) | % Cliff Compounds (% cliffTEST) |
|---|---|---|---|---|
| Orexin Receptor 2 (OX2R) | Ki | 1471 | 297 | 52% |
| Ghrelin Receptor (GHSR) | EC50 | 682 | 139 | 48% |
| Coagulation Factor X (FX) | Ki | 3097 | 621 | 44% |
| Kappa Opioid Receptor (KOR) agonism | EC50 | 955 | 193 | 42% |
| Peroxisome Proliferator-Activated Receptor delta (PPARδ) | EC50 | 1125 | 225 | 42% |
| Cannabinoid Receptor 1 (CB1) | EC50 | 1031 | 208 | 36% |
| Mu-opioid Receptor (MOR) | Ki | 3142 | 630 | 35% |
| Serotonin 1a Receptor (5-HT1A) | Ki | 3317 | 666 | 35% |
| Dopamine D3 Receptor (D3R) | Ki | 3657 | 734 | 39% |
| Androgen Receptor (AR) | Ki | 659 | 134 | 24% |
| Dopamine Transporter (DAT) | Ki | 1052 | 213 | 25% |
| Glycogen Synthase Kinase-3 β (GSK3) | Ki | 856 | 173 | 18% |
| Janus Kinase 2 (JAK2) | Ki | 976 | 197 | 12% |
| Dual Specificity Protein Kinase CLK4 | Ki | 731 | 149 | 9% |
| Janus Kinase 1 (JAK1) | Ki | 615 | 126 | 7% |

Benchmarking Model Performance on Activity Cliffs

When evaluating predictive models, it is critical to measure their performance specifically on AC compounds. Benchmarking studies reveal a general performance drop on ACs. The table below shows a comparison of best-performing models from different categories on a set of 30 targets [23].

Table 2: Benchmarking Model Performance on Activity Cliff Compounds [23]

| Model Category | Example Model | Average RMSE (All Compounds) | Average RMSE (Cliff Compounds) | Key Finding |
|---|---|---|---|---|
| Classical Machine Learning | Random Forest (with molecular descriptors) | Lower | Lower | Classical methods based on engineered descriptors often outperform more complex deep learning models on ACs [23] [30]. |
| Deep Learning (Graph-based) | Graph Neural Networks (GNNs) | Higher | Higher | Graph-based models can struggle with ACs, potentially due to their strong bias for structural similarity in the latent space [6] [23]. |
| Deep Learning (Sequence-based) | LSTMs (on SMILES) | Intermediate | Intermediate | Can perform decently but generally do not surpass classical methods [30]. |
| AC-Informed Models | ACANet [6], ACtriplet [13] | Varies | Lowest | Models incorporating explicit AC-awareness through contrastive or triplet loss show improved performance on AC prediction tasks [6] [13]. |

Workflow Visualization

Figure: Activity Cliff Analysis Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources

| Item | Function / Description | Example / Reference |
|---|---|---|
| ChEMBL Database | A large, open-source bioactivity database containing curated compounds, targets, and experimental data for extracting activity classes [24] [31]. | https://www.ebi.ac.uk/chembl/ |
| Molecular Standardization Tool | Software to ensure consistent molecular representation by removing salts, neutralizing charges, and generating canonical tautomers. Critical for avoiding "fake" cliffs [5] [23]. | ChEMBL Structure Pipeline [5], RDKit |
| MMP Generation Algorithm | A computational method to systematically identify Matched Molecular Pairs (MMPs) from a set of compounds, providing an intuitive structural similarity criterion [24] [29]. | Molecular Fragmentation Algorithm [24] |
| Molecular Fingerprints | Bit-string representations of molecular structure used for similarity searching and as features for machine learning models. | ECFP4 (Extended Connectivity Fingerprints) [24] [23] |
| Benchmarking Platform | A dedicated framework to evaluate model performance on activity cliffs, ensuring proper data splits and metrics. | MoleculeACE (Activity Cliff Estimation) [23] [30] |
| AC-Informed Model Code | Implementation of novel algorithms designed to improve AC prediction, often using contrastive or triplet loss. | ACANet [6], ACtriplet [13] |

Building Cliff-Aware Models: From Contrastive Learning to Explainable AI

Troubleshooting Guide and FAQs

This section addresses common challenges researchers face when implementing ACANet and related activity cliff-informed models, providing targeted solutions to ensure robust experimental outcomes.

FAQ 1: What constitutes a valid "activity cliff triplet," and how can I efficiently mine them from my dataset?

Answer: A valid activity cliff triplet consists of an anchor molecule (A), a positive example (P), and a negative example (N). The key is that the anchor is structurally similar to the negative but has a significantly different activity, while the anchor and positive have similar activities. Structurally similar pairs are typically identified using molecular fingerprint comparisons (like ECFP4) with a high Tanimoto similarity score (often >0.85). From these structurally similar pairs, you then identify those with a large activity difference (e.g., pIC50 difference >1.0 log unit) to form the (A, N) pair. The positive example (P) is a molecule with activity similar to the anchor but is not required to be structurally similar.

Table: Activity Cliff Triplet Selection Criteria

| Triplet Component | Structural Relationship | Activity Relationship |
|---|---|---|
| Anchor (A) & Positive (P) | No strict requirement | Similar activity (e.g., pIC50 difference < 0.5) |
| Anchor (A) & Negative (N) | High similarity (e.g., Tanimoto > 0.85) | Large activity difference (e.g., pIC50 difference > 1.0) |
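Triplet selection following the criteria in the table can be sketched as an exhaustive, offline search. The thresholds are the example values from the table, fingerprints are assumed to be precomputed sets of on-bit indices, and the function names are hypothetical. Because every anchor is combined with every qualifying positive and negative, the number of triplets grows quickly, so in practice one would sample from these candidates or mine them per mini-batch.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def mine_cliff_triplets(fps, activities, sim_threshold=0.85,
                        cliff_delta=1.0, positive_delta=0.5):
    """Exhaustively enumerate (anchor, positive, negative) index triplets:
    anchor/negative: Tanimoto > sim_threshold and |activity difference| > cliff_delta;
    anchor/positive: |activity difference| < positive_delta (no structural requirement)."""
    n = len(fps)
    triplets = []
    for a in range(n):
        negatives = [j for j in range(n) if j != a
                     and tanimoto(fps[a], fps[j]) > sim_threshold
                     and abs(activities[a] - activities[j]) > cliff_delta]
        if not negatives:
            continue  # this molecule has no cliff partner, so it cannot be an anchor
        positives = [j for j in range(n) if j != a
                     and abs(activities[a] - activities[j]) < positive_delta]
        triplets.extend((a, p, neg) for neg in negatives for p in positives)
    return triplets


fps = [set(range(20)), set(range(19)) | {25}, set(range(50, 60))]
pic50 = [8.0, 6.5, 7.9]
print(mine_cliff_triplets(fps, pic50))  # [(0, 2, 1)]
```

Here molecule 1 is a structural near-duplicate of molecule 0 with a 1.5 log-unit drop (the negative), while molecule 2 is structurally unrelated but equipotent (the positive).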

FAQ 2: My model's performance on activity cliffs is not improving despite using the ACA loss. What could be wrong?

Answer: This issue often stems from improperly tuned hyperparameters of the ACA loss function. The ACA loss contains critical hyperparameters like cliff_lower, cliff_upper, and the balancing parameter alpha that controls the weight of the contrastive loss versus the task-specific loss (e.g., MAE for regression). We recommend a systematic, data-driven approach to optimize them as follows [32]:

- Use your training set and cross-validation to first determine the optimal `cliff_lower` and `cliff_upper` thresholds that best define activity cliffs for your specific data.

- In a separate cross-validation loop, optimize the `alpha` parameter to balance the contribution of the metric learning and task learning losses.

- The ACANet package provides built-in functions (`opt_cliff_by_cv` and `opt_alpha_by_cv`) to automate this process.
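To make the role of alpha concrete, the combined objective can be written in the following general form. This is an assumed form consistent with the description above (a task loss plus an alpha-weighted metric-learning term, here a soft-margin triplet loss over a set of mined triplets T with latent distance d); consult [34] [35] or the ACANet code for the exact definition, including how cliff_lower and cliff_upper enter triplet selection.

$$
\mathcal{L}_{\text{ACA}} = \mathcal{L}_{\text{task}} + \alpha \, \mathcal{L}_{\text{TSM}}, \qquad
\mathcal{L}_{\text{TSM}} = \frac{1}{|\mathcal{T}|} \sum_{(a,p,n) \in \mathcal{T}} \log\!\left(1 + e^{\, d(a,p) - d(a,n)}\right)
$$

Under this form, setting α = 0 recovers plain task-loss training, while larger values push the latent space harder toward separating cliff partners at the possible expense of the task loss.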

FAQ 3: How can I handle the high computational cost of triplet mining and contrastive learning on large molecular datasets?

Answer: To manage computational demands:

- Online Triplet Mining: Implement online triplet selection within each mini-batch during training rather than pre-computing all possible triplets for the entire dataset. This is more efficient and allows the model to focus on challenging triplets [32].

- Efficient Similarity Search: For the initial pre-screening of structural analogs, use fast similarity search techniques (e.g., bit-count bounds that rule out pairs which cannot reach the Tanimoto threshold), so that exact similarities are computed only for the remaining candidate pairs.

- Distributed Training: Leverage multi-GPU training setups if available, as the contrastive learning framework is inherently amenable to data parallelism.
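A bit-count pre-filter can be sketched as follows. It relies on the fact that the Tanimoto coefficient of two fingerprints cannot exceed min(|a|, |b|) / max(|a|, |b|), since the intersection is at most the smaller set and the union at least the larger one. Sorting fingerprints by bit count lets the inner loop stop as soon as this bound drops below the threshold. Fingerprints are assumed to be sets of on-bit indices; the function name is illustrative.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def similar_pairs(fps, threshold=0.85):
    """Return (pairs, n_exact): pairs are (i, j, sim) with sim >= threshold, and
    n_exact counts the exact Tanimoto evaluations that were actually performed."""
    order = sorted(range(len(fps)), key=lambda k: len(fps[k]))  # ascending bit count
    pairs, n_exact = [], 0
    for pos, i in enumerate(order):
        for j in order[pos + 1:]:
            small, large = len(fps[i]), len(fps[j])
            if large and small / large < threshold:
                break  # later fingerprints are at least as large, so the bound only shrinks
            n_exact += 1
            sim = tanimoto(fps[i], fps[j])
            if sim >= threshold:
                pairs.append((min(i, j), max(i, j), sim))
    return pairs, n_exact


fps = [set(range(20)), set(range(19)) | {25}, set(range(5))]
pairs, n_exact = similar_pairs(fps)
print([p[:2] for p in pairs], n_exact)  # [(0, 1)] 1
```

Of the three possible pairs, the two involving the 5-bit fingerprint are skipped without computing an intersection.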

FAQ 4: What steps can I take if my graph neural network backbone fails to learn meaningful molecular representations when integrated with ACA?

Answer: First, verify that your GNN backbone performs satisfactorily on a standard molecular property prediction task without the ACA loss. If it does, the issue likely lies in the integration. Ensure the latent space dimensions are sufficient to capture both structural and activity-related information. It is also crucial to monitor both the regression/classification loss and the contrastive loss during training to ensure one is not overpowering the other; adjusting the alpha parameter can rectify this. Consider using a pre-trained GNN encoder as a starting point before fine-tuning with the ACA objective.

Experimental Protocols and Methodologies

This section provides detailed, step-by-step protocols for key experiments involving ACANet, ensuring reproducibility and clarity.

Protocol 1: Implementing the ACANet Training Workflow

Objective: To train an ACANet model for robust molecular property prediction, specifically enhancing performance on activity cliffs.

Materials: A dataset of molecules (represented as SMILES strings or graphs) with associated bioactivity values (e.g., pIC50, Ki); the ACANet codebase [32]; a Python environment with deep learning libraries (PyTorch, PyTorch Geometric).

Procedure:

- Data Preprocessing: Standardize molecules from SMILES and featurize them into molecular graphs with node (atom) and edge (bond) features.

- Hyperparameter Optimization:

- Cliff Threshold Tuning: Use `clf.opt_cliff_by_cv(Xs_train, y_train, total_epochs=50, n_repeats=3)` to determine the optimal activity cliff thresholds (`cliff_lower`, `cliff_upper`) via cross-validation [32].

- Alpha Tuning: Use `clf.opt_alpha_by_cv(Xs_train, y_train, total_epochs=100, n_repeats=3)` to find the optimal loss balancing parameter `alpha` [32].

- Model Training: With optimized parameters, train the final model using k-fold cross-validation: `clf.cv_fit(Xs_train, y_train, verbose=1)`.

- Model Evaluation: Make predictions on the test set using the ensemble of cross-validated models: `test_pred = clf.cv_predict(Xs_test)`. Evaluate performance using standard metrics (MAE, RMSE, R² for regression; AUC-ROC, Accuracy for classification) and specifically analyze performance on identified activity cliff pairs.

Protocol 2: Benchmarking ACANet Against Standard GNNs

Objective: To quantitatively compare the performance of ACANet against a standard Graph Neural Network without activity cliff awareness.

Materials: As in Protocol 1.

Procedure:

- Dataset Splitting: Split the data into training, validation, and test sets. Ensure the test set contains a held-out collection of known activity cliff pairs.

- Baseline Model Training: Train a standard GNN model (e.g., AttentiveFP, GCN, or MPNN) using only the regression or classification loss (MAE or Cross-Entropy) on the training set.

- ACANet Training: Train the ACANet model following Protocol 1.

- Performance Comparison: Evaluate both models on the entire test set and, crucially, on the subset of activity cliff pairs. Use the metrics mentioned in Protocol 1.

- Latent Space Visualization: Use dimensionality reduction techniques (like t-SNE or UMAP) to project the latent representations of the test set molecules from both models. Visually inspect whether ACANet better separates molecules by activity rather than just by structure.

Table: Example Benchmark Results on a Public Dataset (Hypothetical Data)

| Model | Overall Test MAE (↓) | MAE on Activity Cliffs (↓) | Overall R² (↑) |
|---|---|---|---|
| Standard GNN (Baseline) | 0.52 | 1.25 | 0.72 |
| ACANet | 0.48 | 0.89 | 0.78 |

The Scientist's Toolkit: Research Reagent Solutions

This table details the essential computational tools and data resources required for implementing activity cliff-informed contrastive learning.

Table: Key Research Reagents and Resources

| Item Name | Function/Brief Explanation | Example/Source |
|---|---|---|
| Molecular Graph Encoder | The backbone GNN that learns representations from molecular structure. | AttentiveFP [33], DMPNN [33], or other GNN architectures. |
| Activity Cliff Triplets | The core data components (anchor A, positive P, negative N) for the contrastive loss. | Mined from your proprietary dataset or public databases like ChEMBL. |
| ACA Loss Function | The custom loss function that combines task loss and metric learning. | Implemented as described in [34] [35], with tunable parameters cliff_lower, cliff_upper, and alpha. |
| ACANet Software Package | A high-level implementation of the model for easy training and evaluation. | Available on GitHub (shenwanxiang/ACANet) [32]. |
| Curated Benchmark Datasets | Standardized datasets with known activity cliffs for model validation. | Datasets from MoleculeNet and ChEMBL used in the original study [33]. |
| Chemical Featurization Toolkit | Software to convert SMILES strings into featurized molecular graphs. | RDKit (a core dependency in most graph-based molecular ML pipelines) [33]. |
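To make the ACA loss entry concrete, here is a minimal sketch of a loss that combines a regression task loss with a cliff-aware triplet term. As a simplification, it assumes triplets are mined within a batch from label differences only: a positive has a label within `cliff_lower` of the anchor, and a negative differs from the anchor by more than `cliff_upper`. The hinge form, the Euclidean distance, and the `margin` parameter are illustrative assumptions; this is not the ACANet package's implementation, whose exact loss is described in [34] [35].

```python
import math


def euclidean(u, v):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_term(embeddings, labels, cliff_lower, cliff_upper, margin):
    """Mean hinge triplet loss over every valid (anchor, positive, negative) in the batch.

    Positive: label within `cliff_lower` of the anchor.
    Negative: label more than `cliff_upper` away from the anchor.
    """
    losses = []
    n = len(labels)
    for a in range(n):
        for p in range(n):
            if p == a or abs(labels[a] - labels[p]) >= cliff_lower:
                continue
            for q in range(n):
                if abs(labels[a] - labels[q]) <= cliff_upper:
                    continue
                d_ap = euclidean(embeddings[a], embeddings[p])
                d_aq = euclidean(embeddings[a], embeddings[q])
                # Zero loss once the negative is at least `margin` farther than the positive.
                losses.append(max(0.0, d_ap - d_aq + margin))
    return sum(losses) / len(losses) if losses else 0.0


def aca_style_loss(preds, labels, embeddings, *, cliff_lower, cliff_upper, alpha, margin=1.0):
    """Task MAE plus an alpha-weighted triplet term (hypothetical combination)."""
    task = sum(abs(p - y) for p, y in zip(preds, labels)) / len(labels)
    return task + alpha * triplet_term(embeddings, labels, cliff_lower, cliff_upper, margin)
```

When a cliff pair's embeddings collapse onto the same point, the triplet term is at its largest, which is exactly the structure-dominated latent space the contrastive objective is meant to break up.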

Workflow and Latent Space Visualization

The following diagram illustrates the core conceptual shift enabled by ACANet, moving from a structure-dominated latent space to an activity-informed one.

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the ACES-GNN framework compared to standard GNNs? ACES-GNN integrates explanation supervision directly into the Graph Neural Network training objective, forcing the model to align its attributions with chemically grounded, activity-cliff-based explanations. Unlike standard "black-box" GNNs or post-hoc explanation methods, it simultaneously enhances both predictive accuracy and the chemical plausibility of its explanations by learning to focus on the minor structural differences that cause large potency changes in activity cliff pairs [36].

Q2: Why do traditional QSAR models and standard GNNs often fail with Activity Cliffs (ACs)? Traditional models frequently overemphasize the shared structural features between similar molecules, making them insensitive to the small modifications that cause significant potency differences. This leads to poor "intra-scaffold" generalization and an inability to correctly predict or explain the drastic activity changes characteristic of ACs [36] [5].

Q3: How does ACES-GNN define "ground-truth" explanations for model supervision? The ground-truth explanation is derived from the substructures that differ between the two molecules of an activity cliff pair (the uncommon atoms). The framework assumes that the sum of the attribution values for these uncommon atoms should reflect the direction of the activity difference. Specifically, for a pair of molecules $m_i$ and $m_j$ where $y_i > y_j$, the sum of attributions for the uncommon atoms in $m_i$ should be greater than that in $m_j$ [36].
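The constraint in Q3 can be written as a pairwise penalty. The sketch below is one simple way to encode it, assuming the uncommon atom indices for each molecule are precomputed (for example from a maximum common substructure search) and that attributions are per-atom scores. The hinge form and the function names are hypothetical and may differ from the loss used in ACES-GNN [36]. `direction_agreement` computes the fraction of cliff pairs that satisfy the constraint, a convenient way to monitor whether explanation supervision is taking effect.

```python
def uncommon_sum(attributions, uncommon_atoms):
    """Sum of atom attributions over the atoms not shared with the cliff partner."""
    return sum(attributions[k] for k in uncommon_atoms)


def explanation_penalty(attr_i, unc_i, y_i, attr_j, unc_j, y_j):
    """Hinge penalty: zero when the uncommon-atom attribution difference has the
    same sign as the activity difference y_i - y_j, positive otherwise."""
    direction = 1.0 if y_i > y_j else -1.0
    diff = uncommon_sum(attr_i, unc_i) - uncommon_sum(attr_j, unc_j)
    return max(0.0, -direction * diff)


def direction_agreement(pairs):
    """Fraction of cliff pairs whose attribution difference matches the activity direction.

    Each element of `pairs` is (attr_i, unc_i, y_i, attr_j, unc_j, y_j).
    A zero attribution difference counts as disagreement.
    """
    agree = 0
    for attr_i, unc_i, y_i, attr_j, unc_j, y_j in pairs:
        diff = uncommon_sum(attr_i, unc_i) - uncommon_sum(attr_j, unc_j)
        agree += diff * (y_i - y_j) > 0
    return agree / len(pairs)
```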

Q4: My model's predictions are accurate, but the explanations seem chemically unreasonable. How can ACES-GNN help? This is a classic symptom of the "Clever Hans" effect, where a model makes correct predictions for the wrong reasons. ACES-GNN directly addresses this by using explanation supervision to penalize chemically implausible rationales during training. This aligns the model's internal decision-making logic with domain knowledge, ensuring that accurate predictions are based on meaningful structural features [36].

Q5: Which GNN architectures and attribution methods are compatible with the ACES-GNN framework? The ACES-GNN framework is designed to be adaptable. The original study validated it using the Message-Passing Neural Network (MPNN) architecture and gradient-based attribution methods. However, the framework is not restricted to these and can be integrated with various GNN backbones and attribution techniques [36].

Troubleshooting Guides

Issue 1: Poor Explanation Quality Despite Good Predictive Performance

Problem: Your model achieves high predictive accuracy on the main task, but the generated explanations (e.g., highlighted molecular substructures) do not align with known chemical rationale or activity cliff data.

Solutions:

- Verify Ground-Truth Labels: Ensure your activity cliff pairs are correctly identified. Use multiple similarity metrics (ECFP, scaffold, SMILES Levenshtein) with a high similarity threshold (e.g., >0.9) and a large potency difference (e.g., 10x) [36].

- Increase Explanation Loss Weight: The ACES-GNN loss function combines a prediction loss and an explanation loss. If explanations are poor, try increasing the weight (lambda) of the explanation loss term to force the model to prioritize rationale alignment [36].

- Check Attribution Stability: For each cliff pair, verify that the sum of attributions on the uncommon atoms preserves the direction of the activity difference, the criterion ACES-GNN uses for validation. If this check fails for many pairs, the model is not properly learning from the explanation supervision [36].
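The labeling criteria in the first bullet can be sketched without any cheminformatics dependency once fingerprints are precomputed. The sketch assumes each molecule is a dict with hypothetical keys: `ecfp` and `scaffold_ecfp` as sets of on-bit indices (for example from RDKit Morgan fingerprints of the molecule and of its scaffold), `smiles`, and `pact`, the potency on a -log10 scale, so a 10-fold difference equals 1.0 log unit. Here a pair counts as similar when any one metric exceeds the threshold; requiring all three is a stricter alternative.

```python
from itertools import combinations


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not bits_a and not bits_b:
        return 0.0
    inter = len(bits_a & bits_b)
    return inter / (len(bits_a) + len(bits_b) - inter)


def levenshtein(s, t):
    """Edit distance between two strings (insertions, deletions, substitutions)."""
    prev = list(range(len(t) + 1))
    for i, cs in enumerate(s, start=1):
        curr = [i]
        for j, ct in enumerate(t, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (cs != ct)))
        prev = curr
    return prev[-1]


def smiles_similarity(s, t):
    """Levenshtein distance normalized to a 0-1 similarity by the longer string."""
    longest = max(len(s), len(t))
    return 1.0 - levenshtein(s, t) / longest if longest else 1.0


def find_cliff_pairs(mols, sim_threshold=0.9, min_log_diff=1.0):
    """Return (i, j) index pairs that are highly similar yet differ >= min_log_diff in potency."""
    pairs = []
    for i, j in combinations(range(len(mols)), 2):
        a, b = mols[i], mols[j]
        similar = (
            tanimoto(a["ecfp"], b["ecfp"]) > sim_threshold
            or tanimoto(a["scaffold_ecfp"], b["scaffold_ecfp"]) > sim_threshold
            or smiles_similarity(a["smiles"], b["smiles"]) > sim_threshold
        )
        if similar and abs(a["pact"] - b["pact"]) >= min_log_diff:
            pairs.append((i, j))
    return pairs
```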

Issue 2: Model Fails to Learn from Activity Cliffs

Problem: The model's performance on activity cliff molecules does not improve, or it shows low sensitivity to the small structural changes that define cliffs.

Solutions:

- Data Augmentation: Explicitly enrich your training batches with activity cliff pairs. This ensures the model is frequently exposed to these critical cases during training.

- Latent Space Inspection: Analyze the model's latent space. If the two members of a cliff pair are embedded almost on top of each other, the model is encoding their shared structure but not the small modification that drives the potency difference, so it cannot predict different values for them. Consider incorporating contrastive learning, as in ACANet, which reorganizes the latent space so that distances between cliff partners reflect their activity difference [34].

- Representation Analysis: Compare different molecular representations. While ECFPs are strong for general QSAR, graph isomorphism features (like those in GINs) can be more effective for AC-related tasks, as they can better capture the subtle topological differences critical for cliffs [5].
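One way to implement the enrichment strategy in Issue 2 is a batch builder that reserves a fixed number of slots per batch for cliff pairs. In this sketch (the function name and the `pairs_per_batch` parameter are hypothetical), both members of each sampled pair are placed in the same batch so that pairwise losses, such as the contrastive or explanation terms discussed earlier, can be computed within the batch. Cliff pairs are resampled for every batch, which oversamples them relative to the rest of the data.

```python
import random


def cliff_enriched_batches(n_samples, cliff_pairs, batch_size, pairs_per_batch, seed=0):
    """Return one epoch of index batches, each topped up with `pairs_per_batch` cliff pairs."""
    if 2 * pairs_per_batch >= batch_size:
        raise ValueError("batch_size must leave room for non-cliff samples")
    rng = random.Random(seed)
    order = list(range(n_samples))
    rng.shuffle(order)
    step = batch_size - 2 * pairs_per_batch  # slots left for ordinary samples
    batches = []
    for start in range(0, n_samples, step):
        batch = order[start:start + step]
        k = min(pairs_per_batch, len(cliff_pairs))
        for i, j in rng.sample(cliff_pairs, k):
            batch.extend((i, j))  # keep both cliff partners together
        # A cliff member may already be in the random chunk; drop duplicates, keep order.
        batches.append(list(dict.fromkeys(batch)))
    return batches
```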

Issue 3: Poor Overall Predictive Performance

Problem: The model exhibits poor predictive performance on both standard molecules and activity cliffs.

Solutions:

- Hyperparameter Tuning: Systematically tune key hyperparameters. The table below summarizes the core components and recommendations based on the ACES-GNN study and related research.

| Component | Recommendation | Considerations |
|---|---|---|
| GNN Backbone | Message-Passing Neural Network (MPNN) [36] | A well-established and widely used architecture. |
| Molecular Representation | Graph Isomorphism Network (GIN) features [5] | Competitive with/superior to ECFPs for AC-classification. |
| Similarity Metric | ECFP Tanimoto > 0.9 [36] | For global substructure similarity. |
| Attribution Method | Gradient-based methods [36] | Integrated into the training loop for efficiency. |

- Data Quality Check: Investigate your dataset's "modelability." Datasets with a very high density of activity cliffs are inherently more difficult. The performance drop for cliffy compounds is a known challenge, even for advanced models [5].

- Integrated Learning: Consider frameworks that fuse multiple information sources. For instance, combining structural features from pre-trained GNNs with knowledge-based features extracted from Large Language Models (LLMs) can provide a more robust representation and improve overall performance [37].
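A quick proxy for the cliff density mentioned in the data quality check is the fraction of compounds that take part in at least one cliff pair, together with how many cliff partners each compound has. This is a simple descriptive statistic, not a formal modelability index, and the function names are hypothetical; the cliff pairs are assumed to come from a labeling step such as the similarity and potency criteria in Issue 1.

```python
from collections import Counter


def cliff_density(n_compounds, cliff_pairs):
    """Fraction of compounds that participate in at least one activity cliff pair."""
    involved = {k for pair in cliff_pairs for k in pair}
    return len(involved) / n_compounds


def cliff_partner_counts(cliff_pairs):
    """Number of cliff partners per compound; high counts flag cliff 'hubs'."""
    counts = Counter()
    for i, j in cliff_pairs:
        counts[i] += 1
        counts[j] += 1
    return counts
```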

Experimental Protocols & Data

ACES-GNN Experimental Workflow

The following diagram illustrates the key stages in implementing and validating the ACES-GNN framework.

Quantitative Performance of ACES-GNN

The ACES-GNN framework was validated across 30 pharmacological targets. The table below summarizes the key quantitative findings from the study [36].

| Metric Category | Evaluation Result | Implication |
|---|---|---|
| Explainability Improvement | 28 out of 30 datasets showed improved explainability scores. | The framework is highly effective at generating better explanations across diverse targets. |
| Dual Improvement | 18 out of 30 datasets showed gains in both explainability and predictivity. | Evidence that better explanations can correlate with better predictions. |
| AC Prediction Correlation | A positive correlation was observed between improved prediction of ACs and improved explanation for ACs. | Supports the core thesis that supervising explanations enhances model performance on challenging cases. |

The Scientist's Toolkit: Research Reagent Solutions

| Research Reagent / Resource | Function in Experiment |
|---|---|
| ChEMBL Database [36] [5] | A primary source for curated bioactivity data (e.g., Ki, IC50) of small molecules against various pharmacological targets. Used to construct benchmark datasets. |
| Extended Connectivity Fingerprints (ECFPs) [36] [5] | A circular fingerprint that captures radial, atom-centered substructures. Used to quantify molecular similarity for identifying Activity Cliff pairs (Tanimoto similarity > 0.9). |
| RDKit [5] | An open-source cheminformatics toolkit. Used for standardizing molecular structures (SMILES), computing descriptors, generating ECFPs, and handling molecular graphs. |
| Message-Passing Neural Network (MPNN) [36] | A type of Graph Neural Network architecture that operates on graph structures by passing messages between nodes (atoms) and edges (bonds). Serves as a backbone for ACES-GNN. |
| Graph Isomorphism Network (GIN) [5] | A GNN architecture with strong theoretical grounding in graph isomorphism. Can be used as an alternative molecular representation that is competitive for AC-related tasks. |
| GNNExplainer & Gradient-based Methods [36] | Explainable AI (XAI) techniques used to generate atom-level attributions, highlighting the substructures the model deems important for its prediction. |

FAQs: Core Concepts and Definitions

Q1: What is the role of chemical prior knowledge, specifically functional groups, in modern molecular property prediction? Functional groups are specific atoms or groups of atoms with distinct chemical properties that play a crucial role in determining molecular characteristics and biological activity. Integrating this knowledge into AI models helps them learn more interpretable and generalizable representations. For instance, explicitly annotating functional groups at the atomic level allows models to better understand molecular activity and rationalize structure-activity relationships, which is particularly valuable for analyzing challenging cases like activity cliffs [15].

Q2: Why are activity cliffs a significant problem in drug discovery, and how can integrating chemical knowledge help? Activity cliffs are formed by pairs of structurally similar compounds that exhibit large differences in potency against the same target. They pose a major challenge for standard quantitative structure-activity relationship predictions because small chemical modifications lead to dramatic potency changes [24]. Integrating chemical knowledge, such as functional groups and fragment reactions, helps models explain these cliffs by highlighting the specific substructures responsible for the drastic activity change, thereby bridging the gap between prediction and chemical interpretation [38] [39].

Q3: What are some common molecular representations that incorporate substructure-level information? Beyond atom-level graphs, several representations leverage substructures:

- Group Graph: A molecular representation where nodes are chemically meaningful substructures (like broken functional groups and aromatic rings), and edges represent the connections between them. This retains molecular features with minimal information loss and enhances interpretability [40].

- MMP (Matched Molecular Pair): A representation of two compounds that share a common core but differ by a substituent at a single site. It is an intuitive formalism for analyzing and predicting activity cliffs [24].

- Junction Tree: Decomposes a molecule into a tree whose nodes are substructure clusters (rings and non-ring bonds), facilitating operations in generative models [40].
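MMP-based cliff detection reduces to grouping compounds by a shared core. The sketch assumes a single-cut fragmentation has already been run (RDKit's `rdMMPA` module is one option) and yields `(compound_index, core, substituent)` records. Pairs that share a core but carry different substituents are MMPs, and those whose potency difference reaches `min_log_diff` are flagged as MMP-cliffs. The threshold is left to you; the record format and the function name are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def mmp_cliffs(records, potencies, min_log_diff):
    """Return sorted (i, j) compound pairs that form an MMP with a large potency gap.

    records: iterable of (compound_index, core, substituent) from single-cut fragmentation.
    potencies: potency per compound on a log scale (e.g. pKi).
    """
    by_core = defaultdict(list)
    for idx, core, sub in records:
        by_core[core].append((idx, sub))
    cliffs = set()
    for members in by_core.values():
        for (i, sub_i), (j, sub_j) in combinations(members, 2):
            if i != j and sub_i != sub_j and abs(potencies[i] - potencies[j]) >= min_log_diff:
                cliffs.add((min(i, j), max(i, j)))  # dedupe pairs found via several cores
    return sorted(cliffs)
```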

Troubleshooting Common Experimental Issues

Q4: Our model fails to learn meaningful functional group representations. What strategies can improve this?

- Implement Explicit Functional Group Annotation: Use a functional group annotation algorithm that assigns a unique functional group label to every atom in a molecule. This provides strong, atom-level supervisory signals during pre-training, guiding the model to recognize these critical chemical features [15].

- Incorporate Multi-Task Pre-training: Pre-train your model with a functional group prediction task alongside other tasks such as molecular fingerprint prediction and 3D bond angle prediction. This encourages the model to learn semantics that link molecular structure to function [15].

- Utilize Transfer Learning: Start with a model pre-trained on a large corpus for a related task, such as functional group prediction from molecular images. The features learned can then be transferred and fine-tuned for your specific activity cliff prediction task, often leading to improved accuracy [39].
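A minimal sketch of atom-level functional group annotation, assuming the group matches have already been found (for example with SMARTS substructure searches) and arrive as `(label, atom_indices)` tuples. To guarantee one label per atom, it lets larger groups override the smaller groups they contain, so the hydroxyl oxygen of a carboxylic acid is labeled as part of the acid. That priority rule and the function name are assumptions for illustration; the annotation algorithm in [15] may resolve overlaps differently.

```python
def annotate_atoms(n_atoms, matches):
    """Assign exactly one functional-group label per atom.

    matches: list of (label, atom_indices) tuples. Matches are applied from
    smallest to largest, so larger groups overwrite the smaller groups they
    overlap; ties keep input order (the later match wins). Unmatched atoms get 'none'.
    """
    labels = ["none"] * n_atoms
    for label, atoms in sorted(matches, key=lambda m: len(m[1])):
        for a in atoms:
            labels[a] = label
    return labels


# Acetic acid, CC(=O)O: atoms 0 (C), 1 (C), 2 (=O), 3 (-OH).
acetic_acid = annotate_atoms(4, [("hydroxyl", (3,)), ("carboxylic_acid", (1, 2, 3))])
```

The resulting per-atom labels can serve directly as targets for an atom-level functional group prediction head during pre-training.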

Q5: When working with graph-based models, our model's performance on activity cliffs is poor. What architectural or data-centric improvements can be made?

- Adopt an Explanation-Supervised Framework: Use frameworks like ACES-GNN (Activity-Cliff-Explanation-Supervised GNN) that integrate explanation supervision directly into the training process. This aligns the model's attributions with chemist-friendly interpretations of activity cliffs, simultaneously improving both predictive accuracy and interpretability [38].