Navigating the FDA's AI Model Credibility Assessment Framework: A Guide for Drug Development Professionals


Abstract

This article provides a comprehensive guide to the U.S. Food and Drug Administration's (FDA) risk-based credibility assessment framework for artificial intelligence (AI) models used in drug and biological product development. Aimed at researchers, scientists, and regulatory affairs professionals, it details the seven-step process for establishing model trustworthiness, from defining the Context of Use (COU) to lifecycle maintenance. The content covers foundational principles, practical application methodologies, strategies for troubleshooting and optimization, and validation best practices, empowering teams to integrate AI confidently into regulatory submissions and ensure compliance with evolving FDA expectations.

Understanding the FDA's Push for AI Credibility in Drug Development

The pharmaceutical industry is undergoing a profound transformation driven by artificial intelligence (AI). This technological integration is not merely an efficiency improvement but a fundamental reshaping of drug development paradigms. The global AI in pharmaceutical market, valued at $1.94 billion in 2025, is projected to surge to $16.49 billion by 2034, expanding at a formidable compound annual growth rate (CAGR) of 27% [1]. This explosive growth is catalyzed by the pressing need to address soaring drug development costs, which can reach $2.6 billion per approved drug, and timelines extending beyond 14 years [2].

Concurrently, the U.S. Food and Drug Administration (FDA) is establishing a robust regulatory framework to ensure that this innovation aligns with rigorous standards for patient safety and product efficacy. The agency's motivation stems from a sharp increase in regulatory submissions incorporating AI: it received more than 500 drug and biological product submissions with AI components from 2016 to 2023 [3] [4]. The FDA's approach is crystallizing around a core principle: model credibility assessment, a risk-based framework designed to build trust in AI model performance for specific contexts of use in the drug development lifecycle [4] [5]. This article examines the quantitative landscape of AI adoption in pharma and deconstructs the FDA's science-based response to these technological advancements.

Quantitative Analysis of AI in the Pharmaceutical Market

The expansion of AI in pharma is not uniform; it manifests with varying intensity across technologies, applications, and geographies. The following tables provide a detailed breakdown of the market dynamics, offering researchers a granular view of the field's trajectory.

Table 1: AI in Pharmaceutical Market Growth Trajectory (2025-2034)

| Metric | Value | Notes |
|---|---|---|
| 2025 Market Size | USD 1.94 Billion | Base year [1] |
| 2026 Market Size | USD 2.51 Billion | [1] |
| 2034 Projected Market Size | USD 16.49 Billion | [1] |
| CAGR (2025-2034) | 27% | [1] |
| U.S. Market Size (2025) | USD 510 Million | [1] |
| U.S. Market Size (2034) | USD 4,350 Million | Rising at a CAGR of 27.30% [1] |
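As a quick arithmetic check on Table 1, the compound annual growth rate can be recomputed from the start and end values. This is a minimal sketch; the function name `cagr` is illustrative, and the inputs are simply the 2025 and 2034 global figures from the table.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Global figures from Table 1 (USD billions); 2025 -> 2034 spans nine compounding years.
global_rate = cagr(1.94, 16.49, 2034 - 2025)
print(f"Implied global CAGR: {global_rate:.1%}")
```

The implied rate comes out close to the reported 27%, so the table's start value, end value, and growth rate are mutually consistent to rounding.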

Table 2: Market Segment Analysis (2024 Share & Growth)

| Segment | Leading Category (2024 Share) | High-Growth Category (CAGR) |
|---|---|---|
| Technology | Machine Learning (38.78%) | Generative AI (43.12%) [6] |
| Offering | Software Platforms (46.15%) | AI-as-a-Service (42.97%) [6] |
| Application | Drug Discovery (34.91%) | Pharmacovigilance & Safety Monitoring (42.81%) [6] |
| Deployment | Public Cloud (68.56%) | On-Premise/Hybrid (43.25%) [6] |
| Drug Type | Small Molecules (66%) | Large Molecules (Solid growth) [1] |
| Regional Leadership | North America (42.19% share) | Asia-Pacific (43.54% CAGR) [1] [6] |

The data reveals several key trends. Technologically, machine learning remains the foundational workhorse, while generative AI is experiencing explosive growth, enabling novel tasks like de novo molecular design [6]. Geographically, North America's dominance is anchored by substantial venture funding and regulatory clarity from the FDA, whereas the Asia-Pacific region's rapid growth is fueled by state-backed initiatives in countries like China and a cost-advantaged research infrastructure in India [1] [6]. The brisk growth in pharmacovigilance applications underscores a key FDA motivation: leveraging AI for enhanced post-market safety monitoring and real-world evidence analysis [6] [5].

The FDA's Regulatory Motivation: Ensuring Safety and Efficacy in an Evolving Landscape

The FDA's regulatory posture is not an impediment to innovation but a foundational element for its sustainable and trustworthy integration. The agency's actions are motivated by several interrelated factors.

Responding to Exponential Adoption and Novel Risks

The primary catalyst for the FDA's focused guidance is the unprecedented surge in AI-enabled drug development submissions. This volume necessitates clear principles for industry sponsors. Beyond volume, the nature of AI introduces novel challenges that traditional regulatory frameworks were not designed to address [7] [5]. The FDA has identified specific technical challenges:

- Data Variability: Risk of bias from non-representative training data [5].

- Limited Transparency: The "black box" problem complicates understanding model inferences [5].

- Model Drift: Performance degradation over time as real-world data evolves [5].

- Contextual Credibility: An AI model's reliability is intrinsically tied to its specific Context of Use (COU) [4].
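Model drift in particular lends itself to a simple quantitative check. The sketch below uses the Population Stability Index (PSI), a common monitoring statistic that is not named in the FDA material; it is shown only as one possible way to compare the distribution of an input at training time with what is seen later. The bin proportions are made-up illustrative values.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions, each given as proportions per bin."""
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # guard against log(0) for empty bins
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical proportions of patients per biomarker quartile.
training_bins = [0.25, 0.25, 0.25, 0.25]
production_bins = [0.10, 0.20, 0.30, 0.40]
psi = population_stability_index(training_bins, production_bins)
print(f"PSI = {psi:.3f}")
```

A PSI of zero means the two distributions match. Any alert threshold would need to be pre-specified and justified for the COU rather than taken from a generic rule of thumb.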

Establishing the Model Credibility Assessment Framework

In January 2025, the FDA issued a pivotal draft guidance, "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products" [4] [8] [5]. This document outlines a risk-based credibility assessment framework for establishing trust in an AI model's output for a specific COU. This framework is the operational core of the FDA's strategy, ensuring that models used in critical decision-making for safety, effectiveness, or quality are rigorously evaluated.

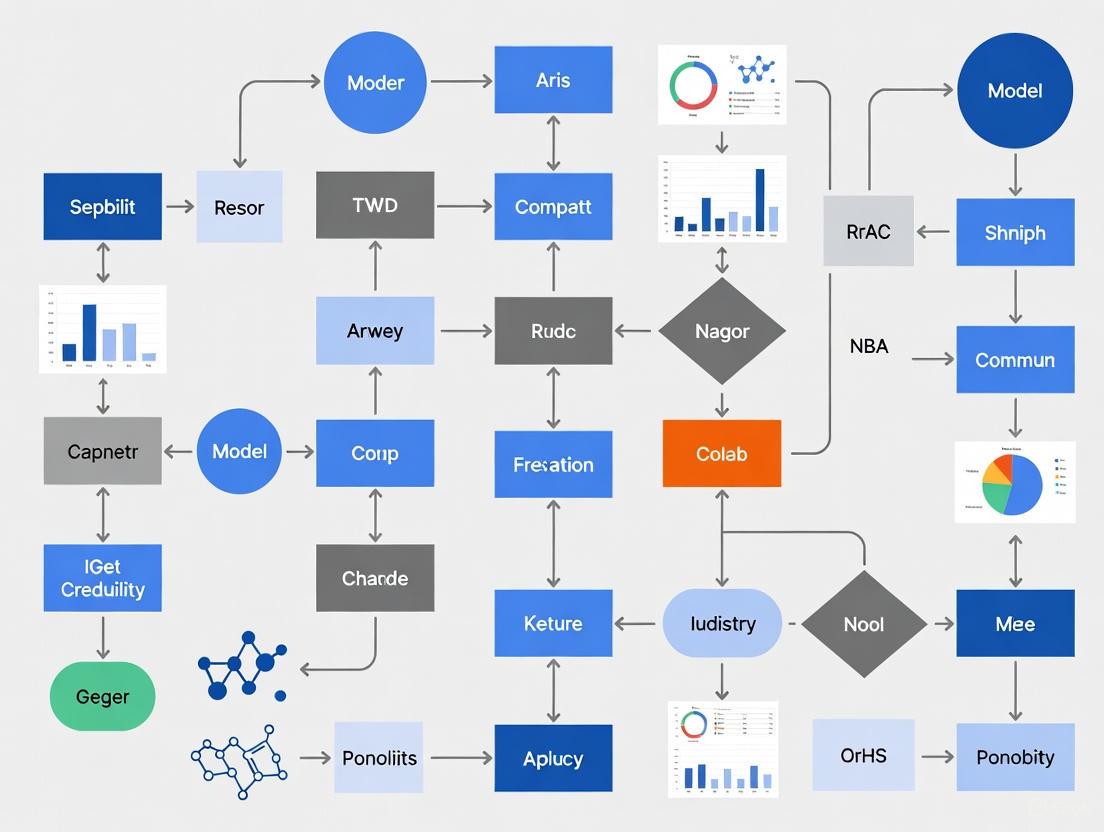

The following diagram illustrates the integrated workflow of this framework, from defining the context of use to lifecycle management, highlighting its recursive, evidence-driven nature.

Diagram Title: FDA AI Model Credibility Assessment Workflow

This framework necessitates a disciplined, documented approach from researchers. The credibility of a model is established through activities commensurate with the risk of an erroneous output influencing a regulatory decision. These activities span the entire model lifecycle, from initial development and validation to post-deployment monitoring, creating a continuous feedback loop for model governance.

Experimental Protocols and Methodologies for AI in Drug Development

The implementation of AI in pharmaceutical research requires rigorous, standardized protocols to ensure the generation of reliable and credible data. Below are detailed methodologies for two critical applications: AI-driven clinical trial optimization and generative molecular design.

Protocol: AI-Driven Adaptive Clinical Trial Design

Objective: To optimize clinical trial efficiency, reduce duration by up to 10%, and improve the probability of success through AI-enabled patient stratification and adaptive protocols [2] [6].

Materials & Workflow:

- Data Acquisition & Curation:
  - Inputs: De-identified Electronic Health Records (EHRs), genomic databases, historical clinical trial data, and real-world data (RWD).
  - Curation: Implement strict data harmonization protocols and ALCOA+ (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, Available) principles to ensure data integrity [8].
- Predictive Modeling:
  - Algorithm Selection: Employ machine learning models (e.g., Gradient Boosting Machines, Random Forests) or deep learning networks to identify complex, non-linear biomarkers predictive of treatment response.
  - Training: Models are trained to segment patient populations into subgroups based on predicted efficacy and safety profiles.
- Simulation & Protocol Adaptation:
  - In-silico Trial: Run extensive simulations using the predictive model to test various trial entry criteria and endpoint definitions.
  - Adaptive Logic: Pre-define rules for protocol adjustments (e.g., sample size re-estimation, arm dropping) based on interim data analysis fed into the AI model.
- Execution & Monitoring:
  - Patient Recruitment: Use the validated model to screen and match eligible patients from ongoing clinical feeds.
  - Real-Time Analysis: Continuously monitor incoming trial data for early efficacy or safety signals, triggering adaptive protocols as defined.
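The "Adaptive Logic" step calls for rules that are fixed before interim data arrive. The sketch below shows what one such pre-defined arm-dropping rule could look like in code. It is deliberately naive: the class `ArmInterim`, the function `arms_to_drop`, the minimum enrollment, and the futility margin are all hypothetical, and a real adaptive design would rely on formally justified statistical boundaries rather than a raw comparison of response rates.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class ArmInterim:
    """Interim summary for one trial arm (hypothetical structure)."""
    name: str
    enrolled: int
    responders: int

    @property
    def response_rate(self) -> float:
        return self.responders / self.enrolled if self.enrolled else 0.0


def arms_to_drop(
    arms: Sequence[ArmInterim],
    control_name: str,
    min_enrolled: int = 30,        # assumed: no decision before 30 patients
    futility_margin: float = 0.0,  # assumed: must beat control by more than this
) -> List[str]:
    """Names of treatment arms flagged for dropping under the pre-defined rule."""
    control = next(a for a in arms if a.name == control_name)
    flagged = []
    for arm in arms:
        if arm.name == control_name or arm.enrolled < min_enrolled:
            continue  # never drop control; skip arms without enough data
        if arm.response_rate - control.response_rate <= futility_margin:
            flagged.append(arm.name)
    return flagged


interim = [
    ArmInterim("control", enrolled=40, responders=12),
    ArmInterim("low_dose", enrolled=40, responders=11),
    ArmInterim("mid_dose", enrolled=20, responders=2),
    ArmInterim("high_dose", enrolled=40, responders=20),
]
print(arms_to_drop(interim, control_name="control"))
```

Encoding the rule as a pure function of the interim summary makes it straightforward to version, test, and document before the trial starts.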

Protocol: Generative AI for De Novo Molecular Design

Objective: To accelerate the discovery of novel drug candidates by generating and optimizing molecular structures with desired properties, reducing discovery timelines from years to months [2] [5].

Materials & Workflow:

- Data Foundation:
  - Inputs: High-quality, annotated datasets of chemical structures (e.g., ChEMBL, ZINC), associated bioactivity data (IC50, Ki), and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties.
- Model Architecture & Training:
  - Architecture: Utilize generative models such as Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs). These are often combined with Reinforcement Learning (RL) to optimize for multi-parameter objectives.
  - Training Loop: The generator creates novel molecules, while the discriminator critiques them against real molecular data and desired property profiles. The model is trained to maximize a reward function that incorporates drug-likeness (e.g., Lipinski's Rule of Five), synthetic accessibility, and predicted target affinity.
- In-Silico Validation:
  - Virtual Screening: The generated library of molecules is screened against the target protein structure (e.g., from AlphaFold predictions) using molecular docking simulations.
  - ADMET Prediction: Machine learning classifiers predict the pharmacokinetic and toxicity profiles of top candidates to prioritize molecules with a higher probability of preclinical success.
- Experimental Validation:
  - Compound Synthesis: Top-ranking in-silico candidates are synthesized by medicinal chemistry teams.
  - In-Vitro Assays: Synthesized compounds undergo biochemical and cell-based assays to confirm target engagement and efficacy, creating a critical feedback loop to refine the generative model.
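The reward function mentioned in the training loop can be sketched as a weighted sum of its three ingredients. The Rule of Five cutoffs below are the standard ones (molecular weight at most 500, logP at most 5, at most 5 hydrogen-bond donors, at most 10 acceptors). Everything else is an assumption made for illustration: the `Descriptors` container, the 1-to-10 synthetic accessibility scale, the 0-to-1 affinity score, and the weights. In practice the descriptors would come from a cheminformatics toolkit and upstream predictive models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    """Pre-computed properties of one generated molecule (hypothetical container)."""
    mol_weight: float               # Daltons
    log_p: float                    # octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int
    synthetic_accessibility: float  # assumed scale: 1 (easy) to 10 (hard)
    predicted_affinity: float       # assumed scale: 0 (none) to 1 (strong)


def lipinski_violations(d: Descriptors) -> int:
    """Count how many of the four Rule of Five criteria are violated."""
    return sum([
        d.mol_weight > 500,
        d.log_p > 5,
        d.h_bond_donors > 5,
        d.h_bond_acceptors > 10,
    ])


def reward(d: Descriptors, w_drug: float = 0.3, w_synth: float = 0.2,
           w_affinity: float = 0.5) -> float:
    """Weighted multi-objective reward in [0, 1]; the weights are illustrative."""
    drug_likeness = 1.0 - lipinski_violations(d) / 4
    synth_score = (10.0 - d.synthetic_accessibility) / 9.0  # 1 -> 1.0, 10 -> 0.0
    return w_drug * drug_likeness + w_synth * synth_score + w_affinity * d.predicted_affinity


ideal = Descriptors(350.0, 2.5, 2, 5, 1.0, 1.0)
bulky = Descriptors(650.0, 6.0, 2, 5, 5.5, 0.8)
print(round(reward(ideal), 3), round(reward(bulky), 3))
```

A scalar reward of this kind is easy to plug into an RL loop, but the choice of weights encodes a trade-off that should be documented alongside the model.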

The Scientist's Toolkit: Essential Research Reagent Solutions

Successfully implementing the aforementioned protocols requires a suite of specialized tools and platforms. The following table details key solutions that form the modern AI researcher's toolkit in drug development.

Table 3: Essential Research Reagent Solutions for AI Pharma

| Solution Category | Specific Examples | Function & Application |
|---|---|---|
| AI-Driven Discovery Platforms | Exscientia's Centaur Chemist, Insilico Medicine's PandaOmics | Accelerates target identification and de novo molecular design using generative AI and deep learning [2]. |
| Clinical Trial Optimization Suites | Johnson & Johnson's Trials360.ai, TrialGPT | Automates patient recruitment, predicts trial dropout, and optimizes trial design using real-world data analytics [2]. |
| Data & Model Governance Platforms | Integrated Software Suites (e.g., from USDM, SAS) | Provides unified environments for data ingestion, model training, validation, and compliance documentation, ensuring ALCOA+ principles [6] [8]. |
| High-Performance Computing (HPC) | Cloud AIaaS (e.g., AWS, Google Cloud), On-premise GPU Clusters | Offers elastic scaling for data-heavy model training. On-premise solutions address escalating cloud costs and data sovereignty [6]. |
| Predictive Protein Folding Tools | AlphaFold 3, Genie | Accurately predicts protein structures from amino acid sequences, unlocking previously "undruggable" targets for AI-based screening [2] [6]. |

The choice between cloud-based and on-premise computing is a critical strategic decision, heavily influenced by cost, data governance requirements, and the need for specialized hardware like quantum-classical hybrids for advanced molecular simulation [6].

Integrated Workflow: From Discovery to Regulatory Submission

The true power of AI is realized when its applications are integrated into a cohesive, end-to-end workflow. This integration, governed by the credibility framework, creates a seamless pipeline from initial discovery to regulatory submission. The lifecycle involves continuous iteration and validation, with credibility evidence generated at each stage to support regulatory filings.

Diagram Title: AI Integration in Drug Development Lifecycle

This integrated view demonstrates how the FDA's model credibility framework overlays the entire drug development lifecycle. It is not a final-stage checklist but a continuous process, ensuring that every AI-derived insight supporting a regulatory claim is robust, well-documented, and fit for its intended purpose.

The rising tide of AI in pharmaceuticals is undeniable, characterized by dramatic market growth and transformative applications across the R&D spectrum. This technological shift, however, is matched by an equally significant evolution in regulatory science. The FDA's motivation is clear: to foster an environment where groundbreaking innovation can flourish without compromising the fundamental commitments to patient safety and drug efficacy. The development and implementation of the risk-based model credibility assessment framework is the cornerstone of this effort. For researchers and drug development professionals, the path forward involves embracing this framework not as a hurdle, but as an integral component of building trustworthy, robust, and ultimately successful AI-driven therapies. Proactive engagement with these regulatory principles, including early dialogue with the FDA, will be a critical determinant of success in this new era [4] [8].

In modern drug development, computational models—from physiologically based pharmacokinetic (PBPK) models to artificial intelligence (AI) algorithms—play an increasingly critical role in informing key decisions. Their value and reliability are not absolute but are intrinsically tied to three interconnected concepts: Context of Use (COU), Model Credibility, and Model Risk. Establishing a rigorous framework for defining and assessing these concepts is fundamental to ensuring that models can be trusted to support regulatory decisions about the safety, efficacy, and quality of drug and biological products [4] [9]. This guide provides an in-depth examination of these core concepts, detailing their definitions, interactions, and the practical methodologies used to evaluate them within a model credibility assessment framework.

Core Concept Definitions

Context of Use (COU)

The Context of Use (COU) is a precise description that defines the specific role and scope of a model and how its outputs will be used to address a particular question in drug development or regulatory review [10] [9]. It is the foundational element upon which all assessments of model credibility and risk are built.

- Formal Definition: A concise statement that specifies how a model will be applied to inform a specific question, decision, or concern [11] [9]. The COU describes the model's purpose, the inputs it will use, the outputs it will generate, and how those outputs will be interpreted within a defined decision-making process.

- Key Components: According to the U.S. Food and Drug Administration (FDA), a COU for a biomarker (a concept that extends to models) typically includes two components: the BEST biomarker category (e.g., predictive, prognostic) and the biomarker’s intended use in drug development [11]. A COU is generally structured as:

  [BEST biomarker category] to [drug development use].

- Examples of Intended Use in Drug Development:
  - Defining patient inclusion or exclusion criteria for clinical trials [11].
  - Supporting clinical dose selection [11].
  - Evaluating treatment response [11].
  - Predicting the effects of drug-drug interactions (DDIs) in specific patient populations [9].
  - Predicting drug pharmacokinetics in pediatric patients based on adult data [9].

Model Credibility

Model Credibility refers to the trust, established through the collection of evidence, in the predictive capability of a computational model for a specific Context of Use [9] [12]. It is not an inherent property of a model but is assessed relative to a defined COU.

- Fundamental Principle: Credibility is established through a structured process of Verification, Validation, and Uncertainty Quantification (VVUQ), culminating in an assessment of the model's applicability to the COU [10].
  - Verification: The process of ensuring that the computational model correctly implements the underlying mathematical model and its solution. It answers the question, "Did we build the model right?" [10] [9]. This includes code verification and calculation verification [10].
  - Validation: The process of determining the degree to which a model is an accurate representation of the real world from the perspective of its intended uses. It answers the question, "Did we build the right model?" [9] [12]. This involves comparing model predictions with independent experimental or clinical data (comparator data) [9].
  - Uncertainty Quantification: The process of identifying, characterizing, and quantifying sources of uncertainty, both due to inherent variability (aleatoric uncertainty) and lack of knowledge (epistemic uncertainty) [10].
- Credibility Factors: The ASME VV-40:2018 standard breaks down VVUQ activities into 13 credibility factors across different activities, as shown in Table 1 [9].

Table 1: Model Credibility Factors from the ASME VV-40:2018 Standard

| Activity | Credibility Factor |
|---|---|
| Verification | Software Quality Assurance |
| Verification | Numerical Code Verification |
| Verification | Discretization Error |
| Verification | Numerical Solver Error |
| Verification | Use Error |
| Validation | Model Form |
| Validation | Model Inputs |
| Validation | Test Samples |
| Validation | Test Conditions |
| Validation | Equivalency of Input Parameters |
| Validation | Output Comparison |
| Applicability | Relevance of the Quantities of Interest |
| Applicability | Relevance of the Validation Activities to the Context of Use |

Model Risk

Model Risk is the potential for an adverse outcome resulting from a decision that was based, at least in part, on an incorrect or misleading model output [9] [13]. In regulated industries, this encompasses risk to patient safety, drug quality, and the reliability of regulatory conclusions.

- Risk-Informed Framework: Model risk is not assessed in isolation; it is a function of the model's COU and is formally evaluated as a combination of two factors [10] [9]:
  - Model Influence: The contribution of the model's output relative to other evidence (e.g., clinical trial data, in vitro studies) when answering the question of interest. A model used as the primary evidence has higher influence than one used for supportive evidence [9].
  - Decision Consequence: The significance of the impact if an incorrect decision is made based on the model. Consequences can include patient harm, ineffective treatment, or significant resource loss [9] [13].
- Risk-Based Credibility: The level of model risk directly determines the rigor and extent of VVUQ activities required to establish sufficient credibility. A high-risk model necessitates more extensive and rigorous credibility evidence than a low-risk model [10] [14].
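The combination of model influence and decision consequence is often pictured as a risk matrix. The sketch below encodes one such matrix. Neither the three-level scale nor the score cutoffs come from the FDA guidance or ASME VV-40; they are assumptions chosen only to show how the two factors combine into a single risk level that a team would then justify in its own assessment.

```python
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def model_risk(influence: Level, consequence: Level) -> Level:
    """Combine model influence and decision consequence into a risk level.

    Uses a simple product score (1 to 9) with illustrative cutoffs.
    """
    score = int(influence) * int(consequence)
    if score >= 6:
        return Level.HIGH
    if score >= 3:
        return Level.MEDIUM
    return Level.LOW


# Hypothetical case: the model is the primary evidence (high influence) for a
# decision whose error could cause patient harm (high consequence).
print(model_risk(Level.HIGH, Level.HIGH).name)
# Same model used only as supportive evidence for a low-consequence decision.
print(model_risk(Level.LOW, Level.LOW).name)
```

Whatever scale is used, the point is that the resulting level, not the model type, drives how much credibility evidence is expected.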

The Interrelationship of COU, Credibility, and Risk

The relationship between Context of Use, Model Credibility, and Model Risk forms a dynamic, risk-informed assessment framework. This logical flow can be visualized as a process diagram, illustrating how these core concepts interact within a credibility assessment workflow.

Figure 1: A workflow diagram illustrating the risk-informed model credibility assessment process. The process begins with defining the question and COU, which drives the risk assessment. The level of risk then determines the rigor of the required Verification, Validation, and Uncertainty Quantification (VVUQ) activities needed to establish credibility for the specific COU.

The COU is the primary driver of the entire process. A clearly defined COU is essential because:

- It scopes the validation effort, determining what real-world phenomena the model must accurately represent [10].

- It directly influences the model risk by defining the model's influence and the consequences of a wrong decision [9].

- It determines the applicability of any previous validation studies to the current question. A model validated for one COU may not be credible for another without further evidence [10] [9].

Frameworks and Regulatory Guidance

The ASME VV-40:2018 Standard

The American Society of Mechanical Engineers (ASME) VV-40:2018 standard, "Assessing Credibility of Computational Modeling and Simulation Results through Verification and Validation: Application to Medical Devices," provides a widely recognized risk-informed framework for credibility assessment [10] [9]. While initially developed for medical devices, its principles are directly applicable to drug development models, including PBPK and AI models [10] [9].

The core of the ASME framework is a risk-based approach where the overall model risk, informed by the COU, sets the requirements for model credibility. This determines the necessary rigor of VVUQ activities to ensure the model is fit-for-purpose [10].

FDA Guidance on AI and Modeling

The FDA has incorporated these principles into its regulatory approach for advanced models. The 2025 draft guidance, "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," outlines a detailed, risk-based credibility assessment framework for AI models [4] [14].

The FDA's framework is a seven-step process that mirrors the logical flow shown in Figure 1 [14]:

1. Define the question of interest.
2. Define the COU for the AI model.
3. Assess the AI model risk (based on model influence and decision consequence).
4. Develop a plan to establish credibility.
5. Execute the plan.
6. Document the results in a credibility assessment report.
7. Determine the adequacy of the AI model for the COU.
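Because the seven steps are sequential, a project team may find it useful to track them as an ordered checklist. The following is a minimal sketch of that idea; the step wording is taken from the list above, while the names `STEPS` and `next_step` are hypothetical.

```python
from typing import Optional, Set

STEPS = (
    "Define the question of interest",
    "Define the COU for the AI model",
    "Assess the AI model risk",
    "Develop a plan to establish credibility",
    "Execute the plan",
    "Document the results in a credibility assessment report",
    "Determine the adequacy of the AI model for the COU",
)


def next_step(completed: Set[int]) -> Optional[int]:
    """Return the 1-based number of the first step not yet completed.

    Steps are treated as strictly ordered: a later step marked complete
    does not let the process skip an earlier open one.
    """
    for number in range(1, len(STEPS) + 1):
        if number not in completed:
            return number
    return None  # all seven steps are done


done = {1, 2}
step = next_step(done)
print(f"Next: step {step}, {STEPS[step - 1]}")
```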

The guidance emphasizes that a model's credibility is specific to its COU and that the level of evidence required should be commensurate with the model's risk [4] [14].

Table 2: Key Regulatory and Standards Documents for Model Credibility

| Document / Standard | Issuing Body | Primary Focus | Core Contribution |
|---|---|---|---|
| ASME VV-40:2018 [10] [9] | American Society of Mechanical Engineers | Credibility of Computational Models (Medical Devices) | Risk-informed credibility assessment framework; defines key terminology and credibility factors. |
| FDA Draft Guidance on AI (2025) [4] [14] | U.S. Food and Drug Administration | AI in Drug & Biological Products | 7-step risk-based credibility process for AI models in drug development and regulatory submissions. |
| Supervisory Guidance SR 11-7 [13] | U.S. Federal Reserve | Model Risk Management (Financial) | Foundational MRM framework; principles are analogous to regulatory needs in pharma. |

Experimental Protocols for Establishing Credibility

The credibility of a model is established through targeted experiments and analyses. The following protocols detail key methodologies for the verification, validation, and uncertainty quantification of computational models.

Code Verification Protocol

Objective: To ensure the computational model is implemented correctly and is free of coding errors and numerical inaccuracies.

Methodology:

- Software Quality Assurance: Implement version control, coding standards, and automated regression testing suites [10].

- Unit Testing: Develop and run tests for individual software components (functions, subroutines) to verify they produce expected outputs for a range of inputs [10].

- Numerical Code Verification: Use problems with known analytical solutions to verify that the code solves the underlying equations correctly. This identifies procedural and approximation errors [10].

- Calculation Verification: For problems without analytical solutions, perform convergence studies to estimate numerical discretization errors (e.g., from mesh resolution or time-stepping) [10].
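Numerical code verification and convergence studies can be illustrated on a toy problem with a known analytical solution. The sketch below assumes a one-compartment elimination model, dC/dt = -kC, solved with forward Euler; the model, the parameter values, and the function names are illustrative choices, not part of any cited protocol. Forward Euler is first-order accurate, so halving the time step should roughly halve the error.

```python
import math


def euler_concentration(c0: float, k: float, t_end: float, n_steps: int) -> float:
    """Integrate dC/dt = -k*C from 0 to t_end with forward Euler."""
    dt = t_end / n_steps
    c = c0
    for _ in range(n_steps):
        c += dt * (-k * c)
    return c


def exact_concentration(c0: float, k: float, t_end: float) -> float:
    """Analytical solution C(t) = C0 * exp(-k*t)."""
    return c0 * math.exp(-k * t_end)


c0, k, t_end = 100.0, 0.2, 10.0  # illustrative values
exact = exact_concentration(c0, k, t_end)

# Convergence study: refine the time step and record the error at t_end.
errors = [abs(euler_concentration(c0, k, t_end, n) - exact) for n in (100, 200, 400, 800)]

# Observed order of accuracy between successive refinements (expected: about 1).
observed_orders = [math.log2(errors[i] / errors[i + 1]) for i in range(len(errors) - 1)]
print([f"{e:.4f}" for e in errors])
print([f"{p:.3f}" for p in observed_orders])
```

If the observed order departs clearly from the theoretical one, that points to an implementation error or a step size outside the asymptotic range, which is exactly what this kind of check is meant to expose.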

Model Validation Protocol

Objective: To determine the degree to which the model is an accurate representation of the real world for the specific COU.

Methodology:

- Define Validation Hierarchy: Establish a hierarchy of validation tiers, from the component level (e.g., physiological, pathological layers) to the integrated system level [10].
- Select Comparator Data: Identify and procure high-quality experimental or clinical data that is independent of the data used for model development or training. The test conditions of the comparator data should be relevant to the COU [9].
- Conduct Output Comparison: Quantitatively compare model predictions against the comparator data. This should include [9] [14]:
  - Goodness-of-fit analyses (e.g., plots of predicted vs. observed values).
  - Calculation of performance metrics relevant to the COU (e.g., ROC curves, sensitivity, specificity, precision, recall, F1 score for AI models; prediction error for PBPK models).
- Apply Acceptance Criteria: Assess whether the comparison meets pre-specified, scientifically justified acceptance criteria. The stringency of these criteria should be aligned with the model risk [9].
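For a binary classifier, the output comparison and acceptance steps reduce to computing metrics on independent comparator data and checking them against thresholds fixed in advance. The sketch below shows this with plain Python. The labels, predictions, and acceptance thresholds are invented for illustration; real criteria would be pre-specified in the credibility assessment plan and scaled to the model risk.

```python
from typing import Dict, Sequence


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Sensitivity (recall), specificity, precision, and F1 for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    def ratio(num: float, den: float) -> float:
        return num / den if den else 0.0  # avoid division by zero

    sensitivity = ratio(tp, tp + fn)
    precision = ratio(tp, tp + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": ratio(tn, tn + fp),
        "precision": precision,
        "f1": ratio(2 * precision * sensitivity, precision + sensitivity),
    }


def meets_criteria(metrics: Dict[str, float], criteria: Dict[str, float]) -> bool:
    """True if every metric named in `criteria` reaches its minimum value."""
    return all(metrics[name] >= minimum for name, minimum in criteria.items())


# Hypothetical hold-out labels and model predictions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
metrics = classification_metrics(y_true, y_pred)

# Hypothetical pre-specified acceptance criteria.
acceptance = {"sensitivity": 0.70, "specificity": 0.80}
print(metrics, meets_criteria(metrics, acceptance))
```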

Uncertainty Quantification and Sensitivity Analysis Protocol

Objective: To identify, characterize, and quantify the impact of uncertainties on the model's outputs.

Methodology:

- Parameter Uncertainty Analysis: Identify key model inputs and parameters that are uncertain. Propagate these uncertainties through the model (e.g., via Monte Carlo simulation) to quantify their impact on the output and determine the overall uncertainty in predictions [10] [13].

- Sensitivity Analysis: Perform local (one-factor-at-a-time) or global (varying all factors simultaneously) sensitivity analyses to determine which input parameters most significantly influence the model output. This helps prioritize efforts for parameter estimation and identifies critical assumptions [13].

- Backtesting: For models predicting a temporal outcome, test the model using historical data and compare its output to known past results [13].
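Parameter uncertainty propagation and local sensitivity analysis can both be demonstrated on a small pharmacokinetic example. The sketch below assumes a one-compartment intravenous bolus model, C(t) = (Dose/V) * exp(-(CL/V) * t), with log-normally distributed clearance and volume. The model choice, the nominal values, and the variability levels are all illustrative assumptions.

```python
import math
import random
import statistics
from typing import Dict, List


def concentration(dose: float, clearance: float, volume: float, t: float) -> float:
    """One-compartment IV bolus concentration at time t."""
    return (dose / volume) * math.exp(-(clearance / volume) * t)


def monte_carlo(n: int, seed: int = 0) -> List[float]:
    """Propagate assumed log-normal uncertainty in CL and V to C(4 h)."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    samples = []
    for _ in range(n):
        cl = rng.lognormvariate(math.log(5.0), 0.3)   # L/h, assumed spread
        v = rng.lognormvariate(math.log(50.0), 0.2)   # L, assumed spread
        samples.append(concentration(100.0, cl, v, 4.0))
    return samples


def oat_sensitivity(base: Dict[str, float], rel_step: float = 0.10) -> Dict[str, float]:
    """Local one-at-a-time sensitivity: relative output change per relative input change."""
    reference = concentration(**base)
    result = {}
    for name in ("clearance", "volume"):
        bumped = dict(base)
        bumped[name] *= 1 + rel_step
        result[name] = (concentration(**bumped) - reference) / reference / rel_step
    return result


samples = sorted(monte_carlo(5000))
median = statistics.median(samples)
lower = samples[int(0.025 * len(samples))]   # approximate 2.5th percentile
upper = samples[int(0.975 * len(samples))]   # approximate 97.5th percentile
sensitivity = oat_sensitivity({"dose": 100.0, "clearance": 5.0, "volume": 50.0, "t": 4.0})
print(f"median={median:.2f}, 95% interval=({lower:.2f}, {upper:.2f})")
print(sensitivity)
```

In this setup both sensitivities are negative, and the output reacts more strongly to volume than to clearance at the chosen time point. A global method that varies all inputs together would be needed to capture interactions.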

The Scientist's Toolkit: Key Reagents for Credibility Assessment

Successfully implementing a credibility framework requires a suite of methodological and procedural "reagents." The table below details essential components for designing and executing a credibility assessment plan.

Table 3: Essential Components of a Model Credibility Assessment Toolkit

| Tool / Component | Function in Credibility Assessment |
|---|---|
| Credibility Assessment Plan | A master document outlining the COU, model risk, and the specific VVUQ activities, goals, and acceptance criteria to be used [14]. |
| Independent Test Dataset | A hold-out dataset, not used in model training or tuning, which serves as the gold standard for objective validation [9] [14]. |
| Performance Metrics | Quantitative measures (e.g., ROC curves, prediction error, sensitivity) used to objectively evaluate model agreement with comparator data [14]. |
| Uncertainty Quantification (UQ) Scripts | Computational scripts (e.g., for Monte Carlo simulation, sensitivity analysis) to automate the process of propagating input uncertainties [10]. |
| Version Control System | Software (e.g., Git) to manage model code, documentation, and data changes, ensuring reproducibility and facilitating code verification [10]. |
| Credibility Assessment Report | The final summary documenting the execution of the plan, results, deviations, and the final determination of model credibility for the COU [14]. |

The rigorous assessment of computational models is a cornerstone of modern, evidence-based drug development. The concepts of Context of Use, Model Credibility, and Model Risk form an interdependent triad that structures this assessment. A clearly articulated COU is the indispensable starting point, as it defines the purpose and scope for which a model is applied. This COU then informs the level of model risk, which in turn dictates the rigor of the VVUQ activities required to establish sufficient credibility. Regulatory guidance and international standards are converging on this risk-informed, "fit-for-purpose" paradigm, providing frameworks that ensure models are not judged by a single universal standard, but by a standard of evidence proportionate to the decision they support. For researchers and drug development professionals, mastering the definition, application, and interconnection of these core concepts is critical for leveraging models to accelerate the delivery of safe and effective therapies.

The U.S. Food and Drug Administration (FDA) has issued its first draft guidance providing a risk-based framework for establishing the credibility of artificial intelligence (AI) models used in drug and biological product development [4]. This guidance, titled "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," addresses the "trust" in the performance of an AI model for a particular context of use (COU) [14]. The framework applies specifically to AI intended to support regulatory decisions about a product's safety, effectiveness, or quality, while explicitly excluding applications limited to drug discovery and operational efficiencies [14]. This scope definition creates critical boundaries for researchers and developers implementing AI within the regulatory landscape, ensuring model credibility assessment focuses on areas with direct impact on patient safety and product quality.

What's In: Regulated Applications Requiring Credibility Assessment

Clinical Development Applications

AI models used in clinical development phases fall squarely within the guidance's scope when they inform regulatory decisions about safety and effectiveness. The FDA specifies these applications require rigorous credibility assessment due to their direct impact on patient outcomes and regulatory conclusions [14]. Specific in-scope applications include:

- Patient Outcome Prediction: AI models that predict individual patient responses to investigational therapies [4]

- Risk Stratification: Models identifying clinical trial participants at low risk for known adverse reactions, potentially reducing monitoring requirements [14]

- Disease Progression Modeling: AI approaches that improve understanding of predictors of disease progression [4]

- Clinical Trial Data Analysis: Processing and analysis of large datasets, including real-world data sources or data from digital health technologies [4]

- Endpoint Assessment: Models that evaluate or predict clinical trial endpoints and outcomes [15]

Manufacturing and Quality Control Applications

The guidance explicitly encompasses AI applications within the manufacturing phase where they impact drug quality or process reliability [14]. These applications require credibility assessment to ensure consistent product quality and compliance with Current Good Manufacturing Practice (CGMP) standards [16]. Key manufacturing applications include:

- Process Control: AI models monitoring and controlling manufacturing processes in real-time

- Quality Assurance: Systems that predict product quality attributes or identify potential deviations [14]

- Automated Quality Testing: AI-enabled visual inspection systems for detecting product defects or ensuring proper fill volumes in injectable drugs [14]

Postmarketing Safety Applications

AI applications used in the postmarketing phase to monitor product safety or effectiveness remain within the scope of the credibility assessment framework [14]. These include:

- Safety Signal Detection: Models analyzing real-world evidence to identify potential adverse events

- Effectiveness Monitoring: AI systems assessing product performance in broader patient populations post-approval

- Risk Evaluation and Mitigation Strategies (REMS): AI components supporting approved REMS programs [15]

What's Out: Excluded Applications and Operations

Drug Discovery and Early Research

The guidance explicitly excludes AI applications limited to early drug discovery and preliminary research activities [14]. These excluded applications represent areas where AI does not directly support regulatory decisions about specific products. Out-of-scope discovery applications include:

- Target Identification: AI models predicting potential drug targets based on biological pathways

- Compound Screening: Virtual screening of compound libraries for potential activity

- Lead Optimization: Computational models optimizing chemical structures for binding affinity

- Preclinical Compound Selection: AI tools prioritizing candidates for further development without generating data for regulatory submissions

Operational and Administrative Functions

AI applications focused solely on improving operational efficiencies without impacting product quality or safety evidence fall outside the guidance scope [14]. These excluded operational applications include:

- Workflow Optimization: AI systems streamlining internal document management processes [14]

- Resource Allocation: Predictive models for clinical trial site selection or patient recruitment that do not generate regulatory evidence [14]

- Regulatory Submission Mechanics: AI tools assisting with the assembly, formatting, or submission of regulatory documents without affecting scientific content [14]

- Administrative Automation: Natural language processing for extracting information from internal documents not submitted to FDA

The Model Credibility Assessment Framework

The Seven-Step Assessment Process

The FDA's risk-based framework for AI model credibility assessment consists of a comprehensive seven-step process that sponsors are expected to follow [14]:

Figure: FDA AI Model Credibility Assessment Process

Step 1: Define the Question of Interest - Clearly articulate the specific question, decision, or concern addressed by the AI model, such as whether clinical trial participants can be considered low risk for specific adverse reactions [14].

Step 2: Define the Context of Use (COU) - Specify the scope and role of the AI model, including what will be modeled and how outputs will inform regulatory decisions, noting whether other evidence will be used alongside model outputs [14].

Step 3: Assess AI Model Risk - Evaluate risk through two dimensions: model influence (amount of AI-generated evidence relative to other evidence) and decision consequence (impact of incorrect output) [14].

Step 4: Develop Credibility Assessment Plan - Create a comprehensive plan describing the model architecture, development data, training methodology, and evaluation strategy, incorporating FDA feedback [14].

Step 5: Execute the Plan - Implement the credibility assessment activities as defined in the credibility assessment plan [14].

Step 6: Document Assessment Results - Generate a credibility assessment report detailing the model's performance and any deviations from the planned activities [14].

Step 7: Determine Adequacy for COU - Make a final determination about whether the AI model is appropriate for its intended context of use [14].

Risk Assessment Matrix

The framework employs a risk-based approach where regulatory scrutiny corresponds to the determined risk level. Model risk is assessed by combining model influence and decision consequence [14].

Table: AI Model Risk Assessment Matrix

| Decision Consequence | Low Model Influence | Medium Model Influence | High Model Influence |

|---|---|---|---|

| Low Impact | Low Risk | Low-Medium Risk | Medium Risk |

| Medium Impact | Low-Medium Risk | Medium Risk | Medium-High Risk |

| High Impact | Medium Risk | Medium-High Risk | High Risk |
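The matrix above can be encoded as a simple lookup so that the risk tier is derived consistently from the two inputs. This is a minimal sketch: the names `Level`, `RISK_MATRIX`, and `model_risk` are illustrative and do not come from the guidance, and the cell values are transcribed from the table above.

```python
from enum import IntEnum


class Level(IntEnum):
    """Ordinal scale used for both model influence and decision consequence."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2


# Rows are indexed by decision consequence, columns by model influence.
# Values are transcribed from the risk assessment matrix in this article.
RISK_MATRIX = (
    ("Low", "Low-Medium", "Medium"),          # low-impact decisions
    ("Low-Medium", "Medium", "Medium-High"),  # medium-impact decisions
    ("Medium", "Medium-High", "High"),        # high-impact decisions
)


def model_risk(influence: Level, consequence: Level) -> str:
    """Return the risk tier for a model influence / decision consequence pair."""
    return RISK_MATRIX[consequence][influence]


if __name__ == "__main__":
    print(model_risk(Level.HIGH, Level.MEDIUM))
```

Keeping the matrix as data rather than nested conditionals makes it straightforward to review against the source table and to adjust if a sponsor agrees on different tiers with the agency.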

Lifecycle Management and Regulatory Engagement

AI Model Lifecycle Maintenance

The guidance emphasizes the importance of ongoing lifecycle maintenance for AI models, particularly since data-driven models can autonomously adapt without human intervention [14]. Key lifecycle management components include:

Table: Lifecycle Maintenance Requirements

| Component | Description | Risk-Based Application |

|---|---|---|

| Performance Monitoring | Continuous tracking of model performance metrics against established benchmarks | Frequency and rigor scaled based on model risk level |

| Change Management | Formal processes for documenting and evaluating modifications to AI models or their data sources | Major changes affecting performance may require FDA notification |

| Retesting Triggers | Predetermined conditions that initiate model reevaluation and validation | Trigger thresholds vary based on model risk and impact |

| Quality System Integration | Incorporation of AI model maintenance into existing pharmaceutical quality systems | Documentation requirements correspond to model risk level |

Regulatory Engagement Mechanisms

The FDA recommends early and frequent engagement regarding AI implementation in drug development programs [14]. Multiple pathways exist for sponsor-agency interaction:

- Formal Meetings: Requesting dedicated meetings to discuss AI use in specific development programs [14]

- Center for Clinical Trial Innovation (C3TI): Engaging through this center for innovative trial designs incorporating AI [14]

- Complex Innovative Trial Design Meeting Program (CID): Utilizing this program for complex trial designs involving AI components [14]

- Drug Development Tools (DDTs): Qualifying AI models as drug development tools through appropriate pathways [14]

- Emerging Technology Program (ETP): Engaging for advanced manufacturing technologies incorporating AI [14]

Experimental Protocols and Methodologies

Model Credibility Assessment Protocol

A standardized experimental approach to model credibility assessment should include these critical methodological components:

Table: Credibility Assessment Methodology

| Assessment Area | Key Experimental Protocols | Documentation Requirements |

|---|---|---|

| Model Development Data | Data provenance assessment, quality validation, characterization of training/tuning datasets | Data management practices, dataset characteristics, bias evaluation |

| Model Training | Supervised/unsupervised learning documentation, performance metrics with confidence intervals | Regularization techniques, training parameters, quality control procedures |

| Model Evaluation | Independent testing dataset validation, agreement analysis between predicted/observed data | Performance metrics (ROC curve, sensitivity, predictive values) |

| Applicability Assessment | Domain of validity determination, extrapolation boundary definition | Rationale for chosen evaluation methods, limitation documentation |
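As an illustration of the "performance metrics with confidence intervals" entry above, the sketch below computes sensitivity, specificity, and predictive values from a confusion matrix, each with a Wilson score interval. The choice of the Wilson interval and the function names are assumptions made for this example; the guidance does not prescribe a particular interval method.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return centre - half, centre + half


def _rate(successes: int, total: int) -> tuple[float, tuple[float, float]]:
    """Point estimate paired with its Wilson interval."""
    return successes / total, wilson_interval(successes, total)


def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Sensitivity, specificity, PPV and NPV, each as (estimate, (lower, upper))."""
    return {
        "sensitivity": _rate(tp, tp + fn),
        "specificity": _rate(tn, tn + fp),
        "ppv": _rate(tp, tp + fp),
        "npv": _rate(tn, tn + fn),
    }


if __name__ == "__main__":
    # Hypothetical confusion matrix from an independent test set.
    for name, (estimate, (lower, upper)) in diagnostic_metrics(80, 10, 90, 20).items():
        print(f"{name}: {estimate:.3f} (95% CI {lower:.3f} to {upper:.3f})")
```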

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table: Essential Materials for AI Model Credibility Assessment

| Tool/Resource | Function | Regulatory Standard |

|---|---|---|

| FDA Environmental Assessment Technical Handbook | Provides technical assistance documents for environmental fate testing protocols | Referenced for physical/chemical properties and depletion mechanisms [17] |

| EPA OPPTS Harmonized Test Guidelines | Standardized methods for environmental effects testing (850 series) and fate testing (835 series) | Accepted validated methods for environmental impact assessment [17] |

| OECD Testing Guidelines | Internationally recognized chemical testing protocols for degradation and accumulation studies | Accepted standardized approaches for environmental effects [17] |

| Structure-Activity Relationships (SAR) Programs | Prediction models for substance fate and effects when experimental data is unavailable | Evaluated for applicability to specific substances [17] |

| Training Data Validation Tools | Software solutions for verifying data quality, independence, and relevance to context of use | Critical for establishing model reliability and reproducibility [14] |

The FDA's guidance on AI in drug development establishes clear boundaries for model credibility assessment, focusing regulatory oversight on applications with direct impact on product safety, effectiveness, and quality. By implementing the structured seven-step framework and maintaining robust lifecycle management practices, sponsors can navigate the evolving regulatory expectations while leveraging AI's transformative potential in clinical research and medical product development. The exclusion of drug discovery and operational applications provides important clarity for research planning, while the comprehensive inclusion of clinical, manufacturing, and postmarketing applications ensures patient protection throughout the product lifecycle.

In the development of artificial intelligence (AI) and machine learning (ML) models for critical domains like drug development, establishing model credibility is a fundamental prerequisite for regulatory acceptance and clinical deployment. Model credibility is defined as the justified trust in a model's performance for a specific context of use [4]. As AI systems increasingly support decisions in healthcare, finance, and other high-stakes environments, addressing core challenges related to data integrity, model transparency, and performance sustainability has become paramount. The U.S. Food and Drug Administration (FDA) and other regulatory bodies now emphasize that AI credibility must be systematically demonstrated through rigorous assessment frameworks [4]. This technical guide examines three foundational challenges—data bias, black-box nature, and model drift—within the context of model credibility assessment, providing researchers and drug development professionals with experimental protocols, detection methodologies, and mitigation strategies to ensure AI systems remain reliable, equitable, and interpretable throughout their lifecycle.

Understanding and Mitigating Data Bias

Data bias represents one of the most insidious challenges in AI development, particularly in healthcare and pharmaceutical applications where biased data can lead to inequitable patient outcomes and compromised drug safety. Data bias occurs when systematic errors in training data adversely affect model behavior, potentially leading to discriminatory or inaccurate predictions [18]. In drug development contexts, biased data can skew clinical trial predictions, misrepresent drug efficacy across demographic groups, and ultimately undermine regulatory confidence in AI-supported submissions.

Typology and Characterization of Data Biases

Table: Common Types of Data Bias in AI Models for Drug Development

| Bias Type | Definition | Impact Example in Drug Development |

|---|---|---|

| Sampling Bias | Occurs when training data doesn't represent the target population [19]. | AI model trained predominantly on data from middle-aged male patients provides inaccurate predictions for women and elderly populations [18]. |

| Historical Bias | Data reflects past inequalities or biases present during collection [18]. | AI clinical trial recruitment tool perpetuates underrepresentation of minority groups based on historical enrollment patterns. |

| Measurement Bias | Inconsistent or inaccurate data measurement across groups [19]. | Medical imaging algorithms perform differently across skin tones due to training primarily on lighter-skinned individuals [19]. |

| Exclusion Bias | Important data is systematically omitted from datasets [18]. | Economic predictions for drug pricing skew toward wealthier areas due to exclusion of data from low-income regions [18]. |

Experimental Protocol for Data Bias Detection

Objective: Systematically identify and quantify data bias in clinical AI model training datasets.

Materials and Methodology:

- Dataset Characterization: Profile training data demographics against target population using statistical descriptors (means, variances, distributions) for all protected attributes (age, gender, race, socioeconomic status).

- Representation Analysis: Conduct disparity testing using Chi-squared tests to compare subgroup proportions in training data against real-world population benchmarks.

- Feature Correlation Analysis: Calculate correlation matrices between protected attributes and model features to identify potential proxy discrimination.

- Performance Disparity Assessment: Implement stratified evaluation metrics (accuracy, precision, recall, F1-score) across all demographic subgroups using cross-validation techniques.
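The performance disparity step above can be sketched as a per-subgroup metric table. This is a minimal illustration for binary labels (0/1) on a single held-out split; it omits the cross-validation loop mentioned in the protocol, and the function name and input layout are assumptions made for the example.

```python
from collections import defaultdict


def stratified_metrics(y_true, y_pred, groups):
    """Accuracy, precision, recall and F1 per subgroup for binary labels (0/1)."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        # "t"/"f" marks whether the prediction was correct,
        # "p"/"n" marks whether the prediction was positive.
        key = ("t" if truth == pred else "f") + ("p" if pred == 1 else "n")
        counts[group][key] += 1

    results = {}
    for group, c in counts.items():
        n = sum(c.values())
        predicted_pos = c["tp"] + c["fp"]
        actual_pos = c["tp"] + c["fn"]
        precision = c["tp"] / predicted_pos if predicted_pos else 0.0
        recall = c["tp"] / actual_pos if actual_pos else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        results[group] = {
            "n": n,
            "accuracy": (c["tp"] + c["tn"]) / n,
            "precision": precision,
            "recall": recall,
            "f1": f1,
        }
    return results


if __name__ == "__main__":
    # Hypothetical labels, predictions, and subgroup membership.
    y_true = [1, 1, 0, 0, 1, 0, 1, 0]
    y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    for group, metrics in stratified_metrics(y_true, y_pred, groups).items():
        print(group, metrics)
```

Large gaps between subgroups in any of these metrics would be candidates for the significance testing described in the validation framework.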

Validation Framework:

- Employ bias detection tools such as IBM's AI Fairness 360 open-source toolkit [18]

- Establish statistical significance thresholds (p < 0.05) for disparity measurements

- Implement Bonferroni correction for multiple hypothesis testing across subgroups
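The representation analysis and the Bonferroni step can be combined in a short sketch. As a simplification, each subgroup is tested against its population benchmark with a one-degree-of-freedom chi-squared test (subgroup versus everyone else, equivalent to a two-sided z-test for a proportion) rather than a single omnibus test, which keeps the example within the standard library. The function name and the benchmark shares are hypothetical.

```python
import math


def representation_tests(sample_counts, population_shares, alpha=0.05):
    """Test each subgroup's share of the training data against a benchmark share.

    sample_counts: mapping of subgroup -> count in the training data.
    population_shares: mapping of subgroup -> benchmark proportion, strictly
        between 0 and 1.
    A subgroup is flagged when its p-value falls below alpha / number of subgroups.
    """
    n = sum(sample_counts.values())
    threshold = alpha / len(sample_counts)  # Bonferroni-adjusted significance level
    results = {}
    for group, observed in sample_counts.items():
        share = population_shares[group]
        expected = n * share
        # Chi-squared statistic for "in subgroup" vs "not in subgroup" (1 df).
        chi2 = (observed - expected) ** 2 / (n * share * (1 - share))
        # Survival function of a chi-squared variable with 1 degree of freedom.
        p_value = math.erfc(math.sqrt(chi2 / 2))
        results[group] = {
            "observed_share": observed / n,
            "expected_share": share,
            "chi2": chi2,
            "p_value": p_value,
            "flagged": p_value < threshold,
        }
    return results


if __name__ == "__main__":
    # Hypothetical training-set counts and population benchmarks.
    counts = {"female": 300, "male": 700}
    benchmark = {"female": 0.5, "male": 0.5}
    for group, row in representation_tests(counts, benchmark).items():
        print(group, row)
```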

Bias Mitigation Strategies for Regulatory Submissions

The FDA's draft guidance on AI in drug development emphasizes comprehensive bias mitigation strategies throughout the model lifecycle [4]. Effective approaches include:

- Representative Data Collection: Ensure training data encompasses the full spectrum of demographic, clinical, and contextual variability expected in the target population [18]. For global drug development, this requires multinational, multi-ethnic recruitment strategies.

- Synthetic Data Generation: Artificially generate data to augment underrepresented subgroups when real-world data is insufficient or unavailable [18].

- Algorithmic Fairness Interventions: Implement preprocessing techniques (reweighting, resampling), in-processing constraints (fairness regularization), and post-processing adjustments (threshold optimization) to minimize disparate impact.

- Diverse Development Teams: Foster multidisciplinary teams with representatives from various backgrounds to identify potential biases that homogeneous teams might overlook [18].
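Of the preprocessing interventions listed above, reweighting is the simplest to illustrate. The sketch below assigns each training instance the weight P(group) × P(label) / P(group, label), a common reweighing scheme that makes group membership and outcome statistically independent in the weighted data. The function name is illustrative, and the toy data are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Instance weights that decorrelate group membership from the label.

    Each instance receives P(group) * P(label) / P(group, label), estimated
    from the empirical counts in the training data.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


if __name__ == "__main__":
    # Hypothetical data: group A has mostly positive labels, group B mostly negative.
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    labels = [1, 1, 1, 0, 1, 0, 0, 0]
    for g, y, w in zip(groups, labels, reweighing_weights(groups, labels)):
        print(g, y, round(w, 3))
```

The resulting weights can be passed to any learner that accepts per-sample weights; under-represented group and label combinations are up-weighted and over-represented ones down-weighted.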

Resolving the Black-Box Nature of AI Models

The "black-box problem" refers to the lack of transparency in how complex AI models arrive at their predictions, creating significant barriers to trust and adoption in regulated environments like drug development [20]. As AI models grow in complexity—particularly deep learning architectures—their decision-making processes become increasingly opaque, making it difficult for researchers, clinicians, and regulators to understand the rationale behind critical predictions. Explainable AI (XAI) has emerged as a critical discipline to address this challenge, with the XAI market projected to reach $9.77 billion in 2025, reflecting its growing importance across healthcare and pharmaceutical sectors [20].

Explainability Frameworks and Methodologies

Transparency vs. Interpretability: A fundamental distinction in XAI differentiates model transparency from interpretability. Transparency refers to understanding how a model works internally—its architecture, algorithms, and training data—while interpretability focuses on understanding why a model makes specific predictions [20]. Linear regression and decision trees are inherently interpretable, while complex models like neural networks require post-hoc explanation techniques [21].

Global vs. Local Explainability:

- Global Interpretability: Explains the overall behavior of the model across the entire dataset [21]. Techniques include feature importance rankings, regression coefficients, and model distillation.

- Local Interpretability: Explains individual predictions for specific data instances [21]. SHAP and LIME are prominent local explanation methods that illuminate why a particular patient was identified as high-risk or why a specific molecular structure was predicted to be therapeutic.

SHAP (SHapley Additive exPlanations) Protocol

SHAP represents a game theory-based approach that explains individual predictions by quantifying the marginal contribution of each feature to the final prediction [21]. The methodology operates on the principle of fairly distributing "credit" for a prediction among input features, analogous to fairly distributing a pizza bill among people based on what they ate [21].

Experimental Workflow for SHAP Analysis:

Figure: SHAP Explanation Workflow

Implementation Protocol:

- Model Preparation: Train and validate the target AI model using standard ML workflows.

- Background Data Selection: Choose a representative sample from training data to establish baseline expectations.

- SHAP Value Computation: For each prediction instance, calculate Shapley values using model-appropriate estimators (KernelSHAP for model-agnostic applications, TreeSHAP for tree-based models).

- Explanation Visualization: Generate force plots, summary plots, or dependence plots to communicate feature contributions intuitively to clinical researchers.

- Validation: Correlate SHAP explanations with domain knowledge to ensure biological and clinical plausibility.
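To make the "fair credit" idea concrete, the sketch below computes exact Shapley values by enumerating every feature coalition for a toy model. It is not the SHAP library: it uses a single baseline vector instead of a background dataset, and brute-force enumeration is only practical for a handful of features, which is why estimators such as KernelSHAP and TreeSHAP exist. The model `risk_score` and all values are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2^n in the number of features, so this is for illustration only.
    """
    n = len(instance)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {i}) - value(coalition))
        contributions.append(total)
    return contributions


def risk_score(x):
    """Hypothetical model with two linear terms and one interaction term."""
    return 0.5 * x[0] + 2.0 * x[1] + x[0] * x[2]


if __name__ == "__main__":
    patient = [2.0, 1.0, 3.0]
    reference = [0.0, 0.0, 0.0]
    phi = shapley_values(risk_score, patient, reference)
    print(phi, sum(phi), risk_score(patient) - risk_score(reference))
```

The contributions sum to the difference between the prediction for the instance and the prediction for the baseline, which is the additivity property that force plots visualize. Note how the interaction term is split evenly between the two features involved.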

The Scientist's Toolkit: Explainability Research Reagents

Table: Essential Tools for AI Explainability in Drug Development

| Tool/Technique | Function | Application Context |

|---|---|---|

| SHAP | Quantifies feature contribution for individual predictions using game theory [21]. | Explaining why a patient is predicted to respond to a specific drug therapy. |

| LIME | Creates local surrogate models to approximate black-box model behavior [20]. | Interpreting image classification for medical diagnostics. |

| IBM AI Explainability 360 | Open-source toolkit with comprehensive algorithms for model interpretability [20]. | Implementing multiple explanation methods across drug discovery pipeline. |

| Partial Dependence Plots | Visualizes relationship between feature and prediction while marginalizing other features. | Understanding dose-response relationships in pharmacokinetic modeling. |

Monitoring and Managing Model Drift

Model drift describes the degradation of AI model performance over time due to changes in the underlying data distribution or relationship between inputs and outputs [22]. In pharmaceutical applications, where models may be deployed across extended clinical trials or post-market surveillance, drift represents a critical threat to model credibility and patient safety. Effective drift detection and management ensures AI systems maintain their predictive validity throughout their operational lifespan, a requirement emphasized in the FDA's approach to AI credibility assessment [4].

Drift Typology and Detection Metrics

Table: Model Drift Types and Detection Methods

| Drift Type | Definition | Detection Methods | Statistical Thresholds |

|---|---|---|---|

| Feature Drift | Change in distribution of input features over time [22]. | Population Stability Index (PSI), Kolmogorov-Smirnov test [22] [23]. | PSI < 0.1: No change; PSI 0.1-0.25: Moderate change; PSI > 0.25: Significant change [22]. |

| Concept Drift | Change in relationship between input features and target variable [22]. | Performance monitoring (accuracy, F1-score), delayed model performance analysis [22]. | Performance drop > 5% relative to baseline with p < 0.05 in statistical testing. |

| Target Drift | Change in distribution of the outcome variable being predicted [22]. | Label distribution analysis, PSI on target variable [22]. | PSI > 0.25 indicates significant shift in outcome patterns. |
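The PSI thresholds in the table can be applied with a short calculation. The sketch below bins a baseline sample into equal-width bins, compares bin shares with a production sample, and maps the result to the bands above. The bin count, the small floor `eps` used to avoid taking the logarithm of zero, and the function names are assumptions made for this example; quantile-based bins are an equally common choice.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a production sample of one feature.

    Bin edges are equal-width over the baseline range; production values
    outside that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # constant baseline: everything falls into a single bin

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = math.floor((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below is defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def psi_band(psi):
    """Map a PSI value to the interpretation bands used in the table above."""
    if psi < 0.1:
        return "no change"
    if psi <= 0.25:
        return "moderate change"
    return "significant change"


if __name__ == "__main__":
    baseline = [float(v) for v in range(100)]
    shifted = [v + 50 for v in baseline]  # hypothetical production sample
    psi = population_stability_index(baseline, shifted)
    print(round(psi, 3), psi_band(psi))
```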

Experimental Design for Continuous Drift Monitoring

Objective: Establish an automated monitoring framework to detect model drift in production environments.

Materials and Methodology:

- Baseline Establishment: Characterize feature distributions, model performance metrics, and outcome patterns from original validation datasets.

- Monitoring Infrastructure: Implement automated logging pipelines to capture feature values, predictions, and ground truth labels in near-real-time [23].

- Statistical Testing Framework: Apply appropriate statistical tests (PSI, KS-test, Chi-square) to compare incoming production data against established baselines [23].

- Alerting Mechanism: Configure threshold-based alerts with business-defined sensitivity levels to notify stakeholders when drift exceeds acceptable boundaries [23].
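The statistical testing and alerting steps above can be sketched with a two-sample Kolmogorov-Smirnov check. This minimal version compares the KS statistic with the standard large-sample critical value c(α)·sqrt((n + m) / (n·m)), where c(α) = sqrt(−ln(α/2) / 2), instead of computing an exact p-value. The function names and the returned dictionary layout are illustrative.

```python
import math
from bisect import bisect_right


def ks_statistic(baseline, production):
    """Largest gap between the two empirical cumulative distribution functions."""
    a, b = sorted(baseline), sorted(production)
    d = 0.0
    for v in a + b:
        cdf_a = bisect_right(a, v) / len(a)
        cdf_b = bisect_right(b, v) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def ks_drift_alert(baseline, production, alpha=0.05):
    """Flag drift when the KS statistic exceeds the large-sample critical value."""
    n, m = len(baseline), len(production)
    c_alpha = math.sqrt(-0.5 * math.log(alpha / 2))
    critical = c_alpha * math.sqrt((n + m) / (n * m))
    d = ks_statistic(baseline, production)
    return {"statistic": d, "critical_value": critical, "drift": d > critical}


if __name__ == "__main__":
    baseline = list(range(100))
    production = [v + 50 for v in baseline]  # hypothetical shifted feature
    print(ks_drift_alert(baseline, production))
```

In a monitoring pipeline, a check like this would run per feature on each logging window, with the significance level chosen according to the model's risk tier.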

Validation Protocol:

- Compare drift detection sensitivity across multiple statistical methods

- Establish correlation between detected drift and business KPIs

- Implement A/B testing framework to validate drift impact on model performance

Drift Detection System Architecture

Figure: Drift Management Workflow

Integrated Framework for Model Credibility Assessment

Establishing comprehensive model credibility requires integrating solutions for data bias, black-box transparency, and model drift into a unified framework aligned with regulatory expectations. The FDA emphasizes that AI credibility must be demonstrated through a risk-based approach specific to the model's context of use [4]. This integrated assessment framework provides a structured methodology for pharmaceutical researchers to document and validate AI model trustworthiness throughout the development lifecycle.

Credibility Assessment Protocol

Documentation Requirements:

- Data Provenance: Complete characterization of training data sources, collection methodologies, and demographic representations.

- Bias Audit Trail: Comprehensive documentation of bias testing results, mitigation strategies employed, and residual risk assessment.

- Explainability Validation: Evidence that model explanations align with biological mechanisms and clinical expertise.

- Drift Monitoring Plan: Specification of monitoring frequency, detection methods, alert thresholds, and response procedures.

Validation Framework:

- Conduct pre-deployment benchmarking against established clinical standards

- Implement continuous performance validation against prospective datasets

- Maintain version control for model updates with change justification documentation

Regulatory Alignment and Best Practices

The FDA's framework emphasizes sponsor responsibility for demonstrating AI model credibility through appropriate supporting evidence [4]. Key alignment strategies include:

- Early Engagement: Pursue regulatory feedback on AI credibility assessment plans during pre-submission phases [4].

- Context-Appropriate Rigor: Match validation intensity to the model's risk level and decision-criticality within the drug development process.

- Transparent Documentation: Maintain comprehensive records of model development, validation, and monitoring activities suitable for regulatory review.

- Multi-Stakeholder Validation: Incorporate perspectives from clinicians, statisticians, computational biologists, and regulatory affairs specialists throughout model development.

As AI systems assume increasingly critical roles in drug development and regulatory decision-making, addressing data bias, black-box opacity, and model drift becomes essential to establishing and maintaining model credibility. The frameworks, protocols, and methodologies presented in this technical guide provide researchers and pharmaceutical professionals with actionable approaches to these fundamental challenges. By implementing systematic bias detection, comprehensive explainability, and continuous drift monitoring, organizations can develop AI systems worthy of trust in high-stakes healthcare environments. The FDA's increasing focus on AI credibility underscores that these are not merely technical considerations but fundamental requirements for regulatory acceptance of AI-supported drug submissions [4]. Through rigorous application of these principles, the pharmaceutical industry can harness AI's transformative potential while ensuring patient safety, regulatory compliance, and equitable therapeutic development.

Implementing the FDA's 7-Step Credibility Assessment Framework

Within the model credibility assessment framework for drug development, pinpointing the Question of Interest is the foundational first step. It anchors the context of use (COU), a precise description of how the model's output will be used to inform a specific regulatory or research decision [4]. A clearly articulated COU is critical because it directly determines the level of evidence and validation required to establish model credibility, ensuring that the artificial intelligence (AI) model is fit for purpose and that its outputs can reliably support decisions about the safety, efficacy, and quality of drug and biological products [4].

The Role and Definition of the Question of Interest

The Question of Interest translates a broader research or clinical need into a specific, answerable question for the AI model. It defines the model's purpose within the drug development workflow and provides the benchmark against which the model's performance and utility are measured.

Table: Core Concepts of the Question of Interest

| Concept | Definition | Role in Credibility Assessment |

|---|---|---|

| Question of Interest | The specific scientific or clinical question the AI model is designed to answer [4]. | Serves as the starting point for defining the model's context of use and guides all subsequent development and validation activities. |

| Context of Use (COU) | A detailed specification of how the model output will be used to inform a regulatory or development decision [4]. | Directly links the model's task to a decision-making process. The COU determines the level of rigor required for the credibility assessment plan. |

| Model Credibility | The trust in the performance of an AI model for a particular context of use [4]. | The ultimate goal, achieved through a body of evidence demonstrating the model is suitable for its intended purpose. |

Methodological Framework for Defining the Question

A systematic, multi-step methodology is recommended to ensure the Question of Interest is precisely defined, actionable, and aligned with regulatory expectations.

Assemble a Cross-Disciplinary Team

The process begins with forming a team comprising pharmaceutical physicians, drug development scientists, biostatisticians, and regulatory affairs specialists [24]. This ensures the question is clinically relevant, scientifically sound, and regulatorily compliant.

Draft the Specific Question

The team refines the broad research need into a specific, measurable, and unambiguous question. The FINER criteria (Feasible, Interesting, Novel, Ethical, Relevant) provide a useful framework for this refinement. For example, a vague prompt like "predict patient survival" is refined to: "What is the predicted probability of 12-month progression-free survival for patients with metastatic non-small cell lung cancer receiving Drug X, based on baseline tumor genomics, clinical characteristics, and early radiographic changes?"

Formalize the Context of Use

The drafted question is then formalized into a COU statement. This statement explicitly defines the intended application, the specific model outputs, and how those outputs will inform a decision (e.g., "to identify patient subpopulations for a Phase III trial enrichment strategy") [4].

Align with Regulatory Guidance

Early engagement with regulatory agencies like the FDA is encouraged [4]. Discussing the proposed COU and credibility assessment plan with the agency helps align the development strategy with current regulatory standards and expectations.

Document and Approve

The final Question of Interest and COU must be documented in a formal model development plan. This document should be approved by all key stakeholders and serve as a reference throughout the model's lifecycle.

Experimental Protocols for Scoping and Feasibility

Before full model development, preliminary experiments are conducted to scope the problem and assess feasibility.

Protocol 1: Literature-Based Feasibility Analysis

- Objective: To determine if sufficient, high-quality data exists to answer the proposed Question of Interest.

- Methodology: Execute a systematic literature review and data landscape assessment. Quantify the volume, source, and quality of available real-world data (RWD) or clinical trial data. Pre-define minimum data requirements for model development.

- Outputs: A feasibility report with a summary of available datasets, key variables, and identified data gaps.

Protocol 2: Exploratory Data Analysis (EDA)

- Objective: To understand the underlying distributions, relationships, and potential biases within the identified data sources.

- Methodology: Generate summary statistics (means, medians, standard deviations) for all candidate variables. Create visualizations (histograms, scatter plots, correlation matrices) to assess variable relationships and data quality. Analyze missing data patterns.

- Outputs: An EDA report with statistics and visualizations, informing feature selection and preprocessing strategies.
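A first pass at Protocol 2 can be run with the standard library alone before moving to a fuller toolset. The sketch below reports the count, missing fraction, mean, median, and standard deviation for each candidate variable. The record layout (a list of dictionaries, with `None` or an absent key marking a missing value) and the variable names are assumptions made for this example.

```python
import statistics


def summarize(records, variables):
    """Summary statistics and missing-data fraction for each numeric variable."""
    report = {}
    for var in variables:
        values = [record.get(var) for record in records]
        present = [v for v in values if v is not None]
        summary = {
            "n": len(present),
            "missing_fraction": 1 - len(present) / len(values),
        }
        if present:
            summary["mean"] = statistics.mean(present)
            summary["median"] = statistics.median(present)
            # Sample standard deviation needs at least two observations.
            summary["stdev"] = statistics.stdev(present) if len(present) > 1 else 0.0
        report[var] = summary
    return report


if __name__ == "__main__":
    # Hypothetical patient records; "alt" is missing for two patients.
    records = [
        {"age": 50, "alt": 30.0},
        {"age": 60, "alt": None},
        {"age": 70, "alt": 50.0},
        {"age": 80},
    ]
    for variable, summary in summarize(records, ["age", "alt"]).items():
        print(variable, summary)
```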

A Risk-Based Framework for Credibility Planning

The level of rigor required to establish credibility is determined by the COU's impact on decision-making. The following table outlines a risk-based framework for planning credibility activities, directly informed by the COU.

Table: Risk-Based Credibility Planning for Different Contexts of Use

| Context of Use (COU) Scenario | Risk Level | Recommended Credibility Activities |

|---|---|---|

| Informing a primary endpoint in a Phase III trial [4] | High | Extensive internal and external validation, sensitivity analysis, complete documentation, and rigorous uncertainty quantification. |

| Identifying a biomarker for patient stratification [4] | Medium to High | Robust performance evaluation on held-out data, external validation if possible, and detailed analysis of model fairness across subgroups. |

| Exploratory analysis for hypothesis generation [4] | Low | Standard internal validation (e.g., cross-validation), baseline performance metrics, and minimal documentation. |

The Scientist's Toolkit: Essential Reagents and Solutions

The following table details key resources and methodologies required for the initial phase of AI model development.

Table: Research Reagent Solutions for Pinpointing the Question of Interest

| Item / Solution | Function / Description | Application in Protocol |

|---|---|---|

| Structured Question Frameworks | Provides a checklist (e.g., FINER, PICO) to ensure the Question of Interest is specific, answerable, and relevant. | Used during the initial drafting and refinement of the research question. |

| Regulatory Guidance Documents | Official documents from agencies like the FDA that outline expectations for AI use in drug development [4]. | Informing the COU definition and ensuring alignment with regulatory standards for credibility assessment. |

| Data Landscape Assessment Tools | Scripts and protocols for auditing available data sources for volume, quality, and completeness. | Executing the Literature-Based Feasibility Analysis (Protocol 1). |

| Exploratory Data Analysis (EDA) Software | Statistical software (e.g., R, Python with Pandas/Seaborn) for generating summary statistics and visualizations. | Conducting the Exploratory Data Analysis (Protocol 2) to understand data structure and quality. |

| Cross-Disciplinary Team Charter | A formal document defining team roles, responsibilities, and decision-making processes. | Ensuring effective collaboration among scientists, clinicians, and regulatory experts throughout the scoping phase. |

The Context of Use (COU) is a formal definition that specifies how an Artificial Intelligence (AI) model is intended to be employed to address a specific question within the drug development lifecycle [4]. It provides the critical scope and boundaries for the model's application, forming the foundation upon which its credibility is assessed [14]. A precisely defined COU is indispensable because it directly determines the level of rigor and the specific validation activities required to establish trust in the model's output for a given regulatory decision [4]. The U.S. Food and Drug Administration (FDA) emphasizes that defining the COU is a pivotal step in its risk-based framework for evaluating AI models used in drug and biological product submissions [4] [14].

Core Components of a COU Definition

A comprehensive COU description must explicitly address several key components. The table below summarizes each component with a description and an example.

Table 1: Core Components of a Context of Use (COU) Definition

| Component | Description | Example |

|---|---|---|

| Question of Interest | The specific decision, concern, or problem the AI model is designed to address [14]. | "Can clinical trial participants with a specific biomarker profile be identified as low-risk for a known adverse reaction and not require inpatient monitoring after dosing?" [14] |

| Model Inputs & Outputs | The data fed into the model and the predictions, recommendations, or decisions it generates [14]. | Inputs: Patient vitals, biomarker data. Outputs: A risk classification (e.g., low/high risk). |

| Scope & Boundaries | The specific conditions, population, and process stage under which the model is applied [14]. | "For the Phase III clinical trial of [Drug X] in adult patients with [Disease Y] to identify low-risk patients for outpatient monitoring." |

| Role in Decision-Making | Clarifies whether the model output is the sole evidence or is used in conjunction with other information [14]. | "The model output will be used alongside clinical judgment to inform the monitoring protocol." |
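The components in Table 1 can be captured as a structured record so that a draft COU is checked for completeness before it is carried into risk assessment. The following is a minimal sketch only: the class name `ContextOfUse`, its field names, and the `missing_components` helper are illustrative assumptions, not terms defined in the FDA guidance.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class ContextOfUse:
    """Hypothetical record mirroring the COU components in Table 1."""
    question_of_interest: str
    model_inputs: List[str]
    model_outputs: List[str]
    scope_and_boundaries: str
    role_in_decision_making: str

    def missing_components(self) -> List[str]:
        """Return the names of components left empty (blank text or empty list)."""
        missing = []
        for f in fields(self):
            value = getattr(self, f.name)
            if isinstance(value, str):
                value = value.strip()
            if not value:
                missing.append(f.name)
        return missing


cou = ContextOfUse(
    question_of_interest=(
        "Can participants with a specific biomarker profile be identified "
        "as low-risk and not require inpatient monitoring after dosing?"
    ),
    model_inputs=["patient vitals", "biomarker data"],
    model_outputs=["risk classification (low/high)"],
    scope_and_boundaries="",  # deliberately blank to demonstrate the check
    role_in_decision_making="Used alongside clinical judgment.",
)
print(cou.missing_components())
```

A check like this only confirms that each component has been filled in; whether the content is specific enough for the intended regulatory decision remains a matter of expert review.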

Methodological Protocol for COU Development

Developing a COU is a structured process that integrates into the broader model credibility assessment framework. The following workflow details the key steps and their relationships.

Define the Question of Interest

The process begins with a clear, concise statement of the problem. The question must be specific, actionable, and relevant to a regulatory decision about a drug's safety, effectiveness, or quality [14]. In a manufacturing context, an example could be determining whether a drug's vials meet established fill volume specifications [14].

Specify Model Inputs and Outputs

Detail the data types, formats, and sources used as model inputs. Simultaneously, define the nature of the model's output, whether it is a prediction, classification, probability score, or a recommended decision [14].

Delineate Model Scope and Boundaries

Explicitly state the model's operational domain. This includes the specific patient population, manufacturing process stage, type of drug product, and the precise point in the clinical workflow where the model will be deployed [14].

Define the Role in Regulatory Decision-Making

Articulate how the model's output will be used. Specify if it will be the primary evidence, supportive evidence, or used in conjunction with other non-clinical or clinical data to inform the final decision. This directly influences the model's risk classification [14].

Integrate into the Broader Credibility Assessment

The COU is not developed in isolation. It is the second step in the FDA's proposed seven-step credibility assessment framework [14]. The defined COU directly informs the subsequent step of AI Model Risk Assessment, which combines Model Influence (the weight of the AI evidence relative to other evidence) and Decision Consequence (the impact of an incorrect output) [14].

Document the COU in the Credibility Assessment Plan

The fully articulated COU must be formally documented in the Credibility Assessment Plan. This plan is submitted to the FDA for review and discussion, ideally through early engagement meetings [14].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key components and methodologies referenced in the FDA's guidance that are essential for establishing AI model credibility.

Table 2: Key Reagents and Methods for AI Model Credibility Assessment

| Item / Method | Function / Purpose |

|---|---|

| Training & Tuning Datasets | Data used to build the AI model (training) and to explore optimal values of hyperparameters and architectures (tuning). Characterizing these datasets is fundamental [14]. |

| Performance Metrics | Quantitative measures to evaluate model performance. Examples include the ROC curve, recall (sensitivity), positive/negative predictive values, and F1 scores [14]. |

| Reference Method | The established, validated method used as a benchmark to compare and evaluate the agreement of the AI model's predictions against observed data [14]. |

| Life Cycle Maintenance Plan | A risk-based plan for ongoing monitoring and management of the AI model after deployment to ensure it remains fit for its COU, including performance metrics and retesting triggers [14]. |

| Credibility Assessment Report | A self-contained document summarizing the results of the credibility assessment, included in a regulatory submission or held for FDA inspection [14]. |
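To make the Performance Metrics row concrete, the sketch below computes recall, positive and negative predictive values, and the F1 score from confusion-matrix counts. The function name `classification_metrics` and the example counts are assumptions chosen for illustration; they do not come from the guidance or from any real model.

```python
def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute recall, PPV, NPV, and F1 from confusion-matrix counts."""
    def ratio(numerator, denominator):
        # Return NaN instead of raising when a metric is undefined.
        return numerator / denominator if denominator else float("nan")

    return {
        "recall": ratio(tp, tp + fn),          # sensitivity
        "ppv": ratio(tp, tp + fp),             # positive predictive value
        "npv": ratio(tn, tn + fn),             # negative predictive value
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
    }


# Hypothetical counts for a binary low-risk/high-risk classifier.
metrics = classification_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Which of these metrics matters most depends on the COU: for a model that exempts patients from monitoring, for example, a missed high-risk patient is likely to weigh more heavily than a false alarm.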

The COU is the linchpin connecting the initial question to the entire validation strategy. Its definition directly dictates the necessary credibility activities. The following diagram illustrates this critical path and the options available if the initial credibility is deemed inadequate.

Crafting a precise and comprehensive Context of Use is a critical, foundational step in the credibility assessment of AI models for drug development. A well-defined COU sets the stage for a targeted risk assessment, dictates the appropriate level of validation rigor, and facilitates effective communication with regulatory agencies. Following a structured methodology to define the COU's components ensures that the AI model is developed and evaluated with a clear understanding of its intended purpose, ultimately supporting its reliable use in informing regulatory decisions on drug safety, efficacy, and quality.

Within a model credibility assessment framework, risk assessment is a critical step for ensuring the reliability and regulatory acceptance of models used in drug development. The assessment evaluates two core dimensions: Model Influence, the degree to which the model's outputs drive key development decisions, and Decision Consequence, the potential patient, commercial, and regulatory outcomes if those decisions rest on flawed model projections. The "fit-for-purpose" principle, emphasized in modern Model-Informed Drug Development (MIDD), dictates that the rigor of the assessment be proportional to the model's impact and the associated risks [25]. A model guiding first-in-human (FIH) dosing, for example, demands a more exhaustive risk evaluation than one used for preliminary internal candidate screening. This guide provides a technical roadmap for researchers and scientists to execute the assessment, with structured data, experimental protocols, and visualization tools.

Quantitative Framework for Risk Assessment

The risk assessment is structured around a two-dimensional evaluation of Model Influence and Decision Consequence. The table below maps each combination of the two dimensions to an overall risk level.

Table 1: Risk Assessment Matrix: Model Influence vs. Decision Consequence

| Decision Consequence | Low Influence (Informational) | Medium Influence (Supporting) | High Influence (Decisive) |

|---|---|---|---|

| Low (e.g., Internal candidate screening) | Low Risk | Low Risk | Moderate Risk |

| Medium (e.g., Trial design optimization) | Low Risk | Moderate Risk | High Risk |

| High (e.g., FIH dose selection, registration trial go/no-go) | Moderate Risk | High Risk | Critical Risk |
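The matrix above can be encoded as a simple lookup so that risk levels are assigned consistently across projects. This is a minimal sketch: the `RISK_MATRIX` dictionary and the `model_risk` function are hypothetical names, and the level labels ("low", "medium", "high") are shorthand for the row and column headings of the table.

```python
# Outer key: Decision Consequence; inner key: Model Influence.
RISK_MATRIX = {
    "low":    {"low": "Low Risk",      "medium": "Low Risk",      "high": "Moderate Risk"},
    "medium": {"low": "Low Risk",      "medium": "Moderate Risk", "high": "High Risk"},
    "high":   {"low": "Moderate Risk", "medium": "High Risk",     "high": "Critical Risk"},
}


def model_risk(decision_consequence: str, model_influence: str) -> str:
    """Look up the overall risk level for one consequence/influence pair."""
    try:
        return RISK_MATRIX[decision_consequence.lower()][model_influence.lower()]
    except KeyError:
        raise ValueError("levels must be 'low', 'medium', or 'high'") from None


# Example: a decisive model used for FIH dose selection.
print(model_risk(decision_consequence="high", model_influence="high"))
```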

Key Risk Parameters and Metrics

Table 2: Key Quantitative Parameters for Model Risk Assessment

| Parameter | Description | Measurement/Metric |

|---|---|---|

| Model Influence Score | Degree to which model output dictates development decisions. | Ordinal scale (e.g., 1-5: Low-High); based on predefined criteria for decision gates. |

| Decision Consequence Severity | Impact of an incorrect decision derived from the model. | Ordinal scale (1-5) assessing patient safety, financial loss (>$100M), and program delay (>12 months). |

| Uncertainty & Variability | Key sources of unpredictability in model inputs and outputs. | Coefficient of Variation (CV%), confidence/credible intervals, sensitivity indices. |

| Credibility Evidence Level | Strength of evidence supporting model credibility. | Score based on verification, validation, and qualification activities (e.g., 0-100%). |
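As a small worked example of the Uncertainty & Variability metric, the coefficient of variation expresses the sample standard deviation as a percentage of the mean. The sketch below uses only the Python standard library; the function name `cv_percent` and the simulated values are assumptions for illustration.

```python
import statistics


def cv_percent(values):
    """Coefficient of variation: 100 * sample standard deviation / mean."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV% is undefined when the mean is zero")
    return 100 * statistics.stdev(values) / mean


# Hypothetical simulated exposure values (arbitrary units).
simulated_auc = [10.0, 12.0, 8.0, 10.0]
print(f"CV% = {cv_percent(simulated_auc):.2f}")
```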

Experimental Protocols for Risk Assessment

A robust risk assessment requires structured methodologies to evaluate model components and their impact. The following protocols provide a detailed, executable approach.

Protocol for Sensitivity Analysis

Objective: To identify and rank model input parameters that contribute most significantly to output variability, informing uncertainty and risk.

- Parameter Selection: Identify all variable input parameters (e.g., kinetic rate constants, physiological parameters, baseline disease states).

- Define Distributions: Assign probability distributions to each parameter (e.g., Normal, Log-Normal, Uniform) based on prior knowledge or experimental data.

- Generate Input Matrix: Use a sampling technique (e.g., Latin Hypercube Sampling, Sobol' sequences) to generate a large set (N=1,000-10,000) of input parameter combinations.

- Model Execution: Run the model for each parameter set in the input matrix and record the key outputs (e.g., AUC, efficacy response, predicted survival).

- Calculate Sensitivity Indices: Compute global sensitivity indices, such as Sobol' indices, to quantify the contribution of each input parameter and their interactions to the total output variance.

- Interpretation: Rank parameters by their first-order and total-effect indices. Parameters with high total-effect indices are prioritized as key sources of uncertainty and potential risk factors.
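The protocol above can be sketched in a few lines of standard-library Python. Several simplifications are assumed here and should be stated plainly: inputs are drawn by plain Monte Carlo sampling from Uniform(0, 1) rather than by Latin Hypercube or Sobol' sequences, the indices are estimated with commonly used pick-freeze estimators (a Saltelli-type first-order estimator and a Jansen-type total-effect estimator), and `toy_model` is a hypothetical additive function standing in for a real pharmacometric model. Dedicated packages would normally be used in practice.

```python
import random


def sobol_indices(model, n_params, n_samples=4000, seed=0):
    """Estimate first-order and total-effect Sobol' indices by pick-freeze sampling."""
    rng = random.Random(seed)
    # Two independent input matrices, A and B, with Uniform(0, 1) entries.
    A = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    B = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    y_a = [model(row) for row in A]
    y_b = [model(row) for row in B]

    # Center outputs on the pooled mean to reduce estimator variance.
    pooled = y_a + y_b
    mean = sum(pooled) / len(pooled)
    y_a = [y - mean for y in y_a]
    y_b = [y - mean for y in y_b]
    variance = sum(y * y for y in y_a + y_b) / (2 * n_samples - 1)

    first_order, total_effect = [], []
    for i in range(n_params):
        # Rows of A with column i replaced by the matching entry of B.
        y_ab = [model(a[:i] + [b[i]] + a[i + 1:]) - mean for a, b in zip(A, B)]
        v_i = sum(yb * (yab - ya)
                  for ya, yb, yab in zip(y_a, y_b, y_ab)) / n_samples
        vt_i = sum((ya - yab) ** 2
                   for ya, yab in zip(y_a, y_ab)) / (2 * n_samples)
        first_order.append(v_i / variance)
        total_effect.append(vt_i / variance)
    return first_order, total_effect


def toy_model(x):
    """Hypothetical additive model; analytic first-order indices are 16/21, 4/21, 1/21."""
    return 4 * x[0] + 2 * x[1] + 1 * x[2]


s1, st = sobol_indices(toy_model, n_params=3)
# Rank parameters by total-effect index, most influential first.
ranking = sorted(range(3), key=lambda i: st[i], reverse=True)
for i in ranking:
    print(f"x{i}: first-order={s1[i]:.2f}, total-effect={st[i]:.2f}")
```

Because the toy model is purely additive, first-order and total-effect indices should roughly agree; a large gap between the two for a real model would point to interaction effects among parameters.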

Protocol for Scenario Analysis

Objective: To evaluate model performance and decision robustness under various plausible but distinct future states or assumptions.

- Scenario Definition: Develop 3-5 distinct scenarios that represent critical uncertainties (e.g., "Best Case," "Worst Case," "Alternate Mechanism of Action," "Changing Standard of Care").

- Parameter Adjustment: For each scenario, systematically adjust the relevant model input parameters and/or structural assumptions to reflect the defined conditions.

- Model Execution & Output Analysis: Run the model under each scenario. Record and compare the key outputs and the resulting decisions (e.g., "Go," "No-Go," "Dose X selected").

- Robustness Evaluation: Assess the stability of the decision across all scenarios. A decision that flips in more than one scenario indicates high risk and low robustness, requiring further investigation or model refinement.
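The scenario protocol can be illustrated with a small harness that runs a model under each scenario and reports which decisions differ from a reference case. Everything named here is an assumption made for illustration: the toy response model, the 0.30 go/no-go threshold, the parameter values, and the use of a "Base Case" as the reference against which flips are counted.

```python
def toy_response(efficacy, dropout):
    """Hypothetical model: expected response after accounting for dropout."""
    return efficacy * (1 - dropout)


def decide(response, threshold=0.30):
    """Hypothetical decision rule applied to the model output."""
    return "Go" if response >= threshold else "No-Go"


def scenario_analysis(model, decide_fn, scenarios, baseline="Base Case"):
    """Run the model per scenario; return decisions and scenarios that flip."""
    decisions = {name: decide_fn(model(**params))
                 for name, params in scenarios.items()}
    reference = decisions[baseline]
    flips = [name for name, d in decisions.items() if d != reference]
    return decisions, flips


scenarios = {
    "Base Case": {"efficacy": 0.50, "dropout": 0.20},
    "Best Case": {"efficacy": 0.60, "dropout": 0.10},
    "Worst Case": {"efficacy": 0.35, "dropout": 0.30},
    "Changing Standard of Care": {"efficacy": 0.40, "dropout": 0.20},
}

decisions, flips = scenario_analysis(toy_response, decide, scenarios)
# Per the protocol, a flip in more than one scenario signals low robustness.
high_risk = len(flips) > 1
print(decisions)
print("Flipped scenarios:", flips, "| high risk:", high_risk)
```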

Protocol for Credibility Evaluation

Objective: To systematically assess the level of confidence in the model's predictive capability for its specific Context of Use (COU).

- Define Verification & Validation Targets: Based on the COU, establish quantitative targets for model verification (e.g., code works as intended, numerical accuracy) and validation (e.g., predicts external dataset within 20% error).

- Execute Verification: Check model code, unit conversions, and parameter identifiability to ensure the model is technically correct.

- Execute Validation: Compare model predictions against a dedicated external validation dataset not used for model development. Use pre-specified goodness-of-fit plots and statistical tests.

- Document & Score: Document all evidence and score the model against the pre-defined credibility targets. This score feeds directly into the Credibility Evidence Level parameter listed in Table 2.
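The validation and scoring steps can be sketched as a check of external-dataset predictions against a pre-specified error target. The 20% relative-error target mirrors the example in the protocol; scoring the model as the fraction of points within target is an assumption made here for illustration, as are the function name `validation_score` and the data values.

```python
def validation_score(predicted, observed, max_rel_error=0.20):
    """Fraction of external-validation points predicted within the error target."""
    if len(predicted) != len(observed) or not observed:
        raise ValueError("predicted and observed must be non-empty and equal length")
    if any(o == 0 for o in observed):
        raise ValueError("relative error is undefined for zero observations")
    within = sum(
        abs(p - o) / abs(o) <= max_rel_error
        for p, o in zip(predicted, observed)
    )
    return within / len(observed)


# Hypothetical external validation data (not used for model development).
observed = [100.0, 50.0, 20.0, 80.0]
predicted = [110.0, 45.0, 30.0, 78.0]

score = validation_score(predicted, observed)
print(f"Points within 20% error: {score:.0%}")
```

Whatever scoring rule is chosen, it should be fixed before validation data are examined so that the target cannot be adjusted to fit the result.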

Visualizing the Risk Assessment Workflow

The following diagram illustrates the logical workflow and key decision points in the risk assessment process.

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Model Risk Assessment

| Item | Function in Risk Assessment |

|---|---|

| Global Sensitivity Analysis Software (e.g., SAS, R sensitivity package, Simulo) | Automates the computation of global sensitivity indices (e.g., Sobol') to identify and rank influential model parameters, a core component of uncertainty quantification [25]. |

| Modeling & Simulation Platform (e.g., NONMEM, Monolix, GastroPlus, Simbiology) | Provides the core environment for developing, verifying, and executing models for scenario analysis and virtual population simulations [25]. |