Navigating the High-Dimensional Maze: Advanced Strategies for Hyperparameter Optimization in Chemistry and Drug Discovery

Abstract

The optimization of hyperparameters in chemical and pharmaceutical models is plagued by the curse of dimensionality, where high-dimensional spaces exponentially increase computational cost and complicate the search for optimal solutions. This article provides a comprehensive guide for researchers and drug development professionals, synthesizing the latest advancements in tackling this challenge. We explore the foundational principles of dimensionality reduction and survey cutting-edge methodological frameworks, including Bayesian optimization, nature-inspired metaheuristics, and deep learning-based feature extraction. The article further delivers practical troubleshooting advice for overcoming common pitfalls and establishes a rigorous framework for the validation and comparative analysis of different optimization strategies. By integrating foundational knowledge with applied techniques and benchmarking insights, this work serves as an essential resource for accelerating and de-risking the optimization process in computational chemistry and AI-driven drug discovery.

Understanding the Curse of Dimensionality in Chemical Hyperparameter Spaces

Frequently Asked Questions

What is the "curse of dimensionality" in simple terms?

The "curse of dimensionality" describes phenomena that arise when analyzing data in high-dimensional spaces but do not occur in low-dimensional settings. As dimensionality increases, the volume of the space grows so fast that the available data becomes sparse. This sparsity makes it difficult to find meaningful patterns, and the amount of data needed for reliable results often grows exponentially with dimensionality [1].

Why does adding more parameters create exponential complexity?

With each additional parameter, the number of possible combinations increases multiplicatively. For example, d binary parameters already yield 2^d combinations, and d parameters with k discrete values each yield k^d. This "combinatorial explosion" means that sampling a 10-dimensional unit hypercube at a spacing of 0.01 between points requires 100^10 = 10^20 sample points, far more than the 100 points needed for a 1-dimensional unit interval [1].
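The arithmetic behind this explosion is easy to check directly. The short sketch below counts the points in a full grid; the helper name `grid_points` is purely illustrative and not taken from any cited source.

```python
def grid_points(points_per_axis: int, n_dims: int) -> int:
    """Number of points in a full grid with the same resolution on every axis."""
    return points_per_axis ** n_dims


# A spacing of 0.01 on a unit interval gives about 100 points per axis.
one_dim = grid_points(100, 1)
ten_dim = grid_points(100, 10)

# Ten binary (on/off) parameters give 2^10 combinations.
binary_ten = grid_points(2, 10)

print(f"1-D grid:  {one_dim:,} points")
print(f"10-D grid: {ten_dim:,} points")
print(f"10 binary parameters: {binary_ten:,} combinations")
```

Even at a rate of one objective evaluation per microsecond, exhaustively visiting the 10-dimensional grid would take millions of years, which is why exhaustive grid search is rarely an option beyond a handful of parameters.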

How does high dimensionality affect optimization in chemical research?

In dynamic optimization problems solved by numerical backward induction, the objective function must be computed for each combination of parameter values. This becomes computationally prohibitive when the "state variable" dimension is large. In chemical kinetics, optimizing parameters for models with tens to hundreds of parameters requires sophisticated approaches like DeePMO's iterative sampling-learning-inference strategy [2] [1].

What are the practical signs of dimensionality problems in my experiments?

Key indicators include: algorithms requiring exponentially more data to maintain accuracy, increased computational time becoming prohibitive, difficulty distinguishing between similar parameter sets, and optimization methods getting stuck in local optima rather than finding global solutions [1] [3].

Troubleshooting Guides

Problem: Optimization Algorithm Performance Degradation with High-Dimensional Parameters

Symptoms

- Algorithm convergence slows significantly or stalls entirely

- Solutions get trapped in local minima rather than global optima

- Results become unpredictable with small parameter changes

- Computational time increases exponentially with added parameters

Diagnosis Procedure

- Dimensionality Assessment: Count the number of tunable parameters in your model

- Data Sparsity Check: Evaluate if your data points are sufficient for the parameter space volume

- Algorithm Analysis: Determine if your current method scales well with dimensionality

Resolution Steps

- Implement Bayesian Optimization: This sequential model-based approach uses a surrogate function to estimate the posterior distribution of your objective function and an acquisition function to determine which parameters to sample next [3].

- Adopt Iterative Strategies: Use frameworks like DeePMO that employ iterative sampling-learning-inference cycles to efficiently explore high-dimensional parameter spaces [2].

- Apply Dimensionality Reduction: Utilize techniques like PCA or feature selection to reduce parameter space while preserving essential information [4].

- Hybrid Approaches: Combine multiple optimization methods to balance exploration and exploitation in high-dimensional spaces [3].

Verification

- Monitor convergence rates across iterations

- Compare results with known benchmarks or reduced-dimension models

- Test stability with different initial parameter values

Problem: Combating Data Sparsity in High-Dimensional Chemical Parameter Space

Symptoms

- Insufficient data coverage across parameter combinations

- Poor generalization from training to validation sets

- Inability to detect meaningful patterns or correlations

- High variance in model performance with different data subsets

Diagnosis Procedure

- Calculate the ratio of data points to parameter dimensions

- Analyze distribution of data points across parameter space

- Evaluate performance consistency across different regions of parameter space

Resolution Steps

- Strategic Sampling: Implement active learning approaches that focus sampling on the most informative regions of parameter space [3].

- Transfer Learning: Leverage knowledge from related chemical systems with better data coverage [2].

- Hybrid Modeling: Combine physical models with data-driven approaches to reduce pure data dependence [2].

- Multi-fidelity Optimization: Use cheaper, lower-fidelity experiments or simulations to guide higher-fidelity experiments [3].

Verification

- Perform cross-validation with different data partitions

- Test predictive accuracy on holdout parameter sets

- Compare interpolation vs. extrapolation performance

Optimization Methods for High-Dimensional Chemical Problems

Table 1: Comparison of Optimization Algorithms for High-Dimensional Spaces

| Algorithm | Key Hyperparameter | Functional Space Compatibility | Best Use Cases in Chemistry |
|---|---|---|---|
| Gradient Descent | Step size (γ) | Continuous and convex | Simple reaction optimization with smooth landscapes |
| Simulated Annealing | Acceptance rate (r) | Discrete and multi-optima | Molecular conformation searching, crystal structure prediction |
| Bayesian Optimization | Exploitation/exploration rate (λ) | Discrete and unknown | Complex kinetic parameter optimization, materials synthesis [3] |
| DeePMO Framework | Sampling-learning-inference cycles | High-dimensional kinetic models | Chemical kinetic model optimization across multiple fuel types [2] |

Table 2: Dimensionality Reduction Techniques for Chemical Data

| Technique | Data Type | Key Advantage | Chemical Application Example |
|---|---|---|---|
| Principal Component Analysis (PCA) | Continuous numerical | Preserves maximum variance | Spectral data analysis, compositional space mapping |
| Feature Selection | Mixed types | Maintains interpretability | Identifying critical reaction parameters |
| Autoencoders | Complex patterns | Learns nonlinear mappings | Molecular representation learning |
| t-SNE | High-dimensional visualization | Preserves local structure | Chemical space visualization [4] |

Experimental Protocols

Protocol: Bayesian Optimization for Chemical Synthesis Conditions

Purpose: To efficiently optimize multiple synthesis parameters while minimizing experimental trials through sequential model-based decision making.

Materials

- High-throughput experimentation platform

- Characterization equipment for objective function measurement

- Bayesian optimization software (e.g., Ax, BoTorch, Dragonfly) [3]

Procedure

- Define Parameter Space: Identify critical synthesis parameters and their valid ranges

- Establish Objective Function: Create quantitative metric for synthesis success

- Initial Design: Perform limited initial experiments (typically 5-10× parameter count)

- Iterative Optimization Cycle:

  - Train surrogate model (typically Gaussian Process) on all available data

  - Calculate acquisition function (e.g., Expected Improvement) across parameter space

  - Select next experiment point maximizing acquisition function

  - Perform experiment and measure objective function

  - Add result to dataset and repeat until convergence or budget exhaustion
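The procedure above can be sketched in a few lines of Python. This is a minimal illustration, not the workflow of Ax, BoTorch, or Dragonfly: it assumes scikit-learn's `GaussianProcessRegressor` as the surrogate, a hand-written Expected Improvement function, and random candidate sampling in place of a proper acquisition optimizer. The function `run_experiment` is a hypothetical stand-in for a real measurement, with an arbitrary optimum chosen only so the example runs.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def run_experiment(x):
    """Hypothetical objective to maximize (stand-in for a lab measurement)."""
    return float(-np.sum((x - 0.3) ** 2))


def expected_improvement(candidates, gp, best_y, xi=0.01):
    """Expected Improvement for maximization, evaluated at candidate points."""
    mu, sigma = gp.predict(candidates, return_std=True)
    sigma = np.maximum(sigma, 1e-9)  # avoid division by zero
    z = (mu - best_y - xi) / sigma
    return (mu - best_y - xi) * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
n_params = 2  # parameters scaled to the unit interval [0, 1]

# Initial design: 5x the parameter count, sampled at random.
X = rng.uniform(0, 1, size=(5 * n_params, n_params))
y = np.array([run_experiment(x) for x in X])
initial_best = y.max()

for _ in range(15):  # experimental budget
    # 1. Train the surrogate on all data collected so far.
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), normalize_y=True,
                                  random_state=0)
    gp.fit(X, y)
    # 2. Score random candidates with the acquisition function.
    candidates = rng.uniform(0, 1, size=(2000, n_params))
    ei = expected_improvement(candidates, gp, y.max())
    # 3. Pick the most promising candidate and "run" the experiment.
    x_next = candidates[np.argmax(ei)]
    # 4. Add the result to the dataset.
    X = np.vstack([X, x_next])
    y = np.append(y, run_experiment(x_next))

best_x, best_y = X[np.argmax(y)], y.max()
print("best conditions:", best_x, "objective:", best_y)
```

In a real campaign the loop body would pause at step 3 until the experiment is complete, and a dedicated library would typically handle acquisition optimization, noise modeling, and constraints.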

Validation

- Compare against random search or grid search efficiency

- Verify optimal conditions with replicate experiments

- Test robustness to initial design variations

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools for High-Dimensional Optimization

| Tool/Software | Function | Application in Chemical Research |
|---|---|---|
| DeePMO Framework | Kinetic parameter optimization | Optimizing parameters in chemical kinetic models for fuels and mixtures [2] |
| Bayesian Optimization Libraries (Ax, BoTorch, Dragonfly) | Sequential global optimization | Materials synthesis condition optimization, molecular design [3] |
| Gaussian Process Models | Surrogate modeling | Emulating complex experiments and predicting outcomes across parameter space [3] |
| Dimensionality Reduction (PCA, t-SNE) | Feature space compression | Visualizing and navigating high-dimensional chemical spaces [4] |

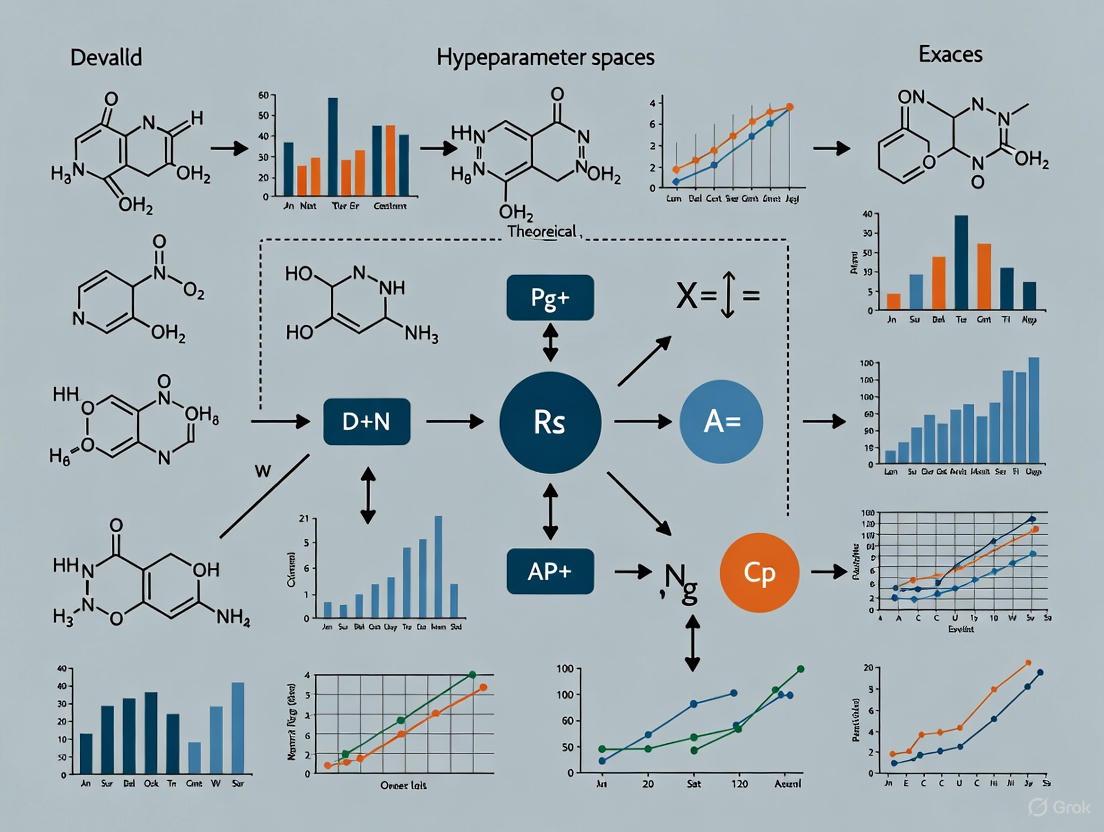

Workflow Visualization

In modern chemistry and drug discovery, researchers increasingly rely on high-dimensional data, from molecular descriptors to optimized reaction conditions. This high-dimensional space, often characterized by many variables (P) relative to the number of observations (N) - the P >> N problem - introduces a phenomenon known as the Curse of Dimensionality [5]. This "curse" describes a set of challenges that arise when analyzing data in high-dimensional spaces, leading to computational bottlenecks, model overfitting, and spurious results that can severely disrupt chemical workflows [5] [6]. This technical support guide details specific issues and solutions to help researchers navigate these challenges.

Frequently Asked Questions (FAQs)

FAQ 1: What exactly is the Curse of Dimensionality in simple terms? The Curse of Dimensionality refers to the set of problems that emerge when working with data in high-dimensional spaces. As the number of dimensions (variables) increases, data becomes incredibly sparse [7]. The volume of space grows so fast that available data becomes insufficient, making it difficult to find meaningful patterns. Key consequences include points becoming far apart from each other and the center of the distribution, and distances between all pairs of points becoming similar, which breaks down many statistical and machine learning methods [5].

FAQ 2: How does high dimensionality directly impact my QSAR models? High dimensionality can severely impair Quantitative Structure-Activity Relationship (QSAR) model performance. It leads to increased computational cost and longer training times [6]. More critically, it escalates the risk of overfitting, where a model learns noise and random fluctuations in the training data instead of the underlying relationship, resulting in poor generalization to new, unseen molecules [8] [6]. This is particularly problematic when the dimensionality of your feature vectors (e.g., from structural fingerprints) is on the order of 10^4 or more [8].

FAQ 3: My clustering results for cell populations or chemical compounds seem meaningless. Could dimensionality be the cause? Yes. In high dimensions, traditional clustering algorithms struggle because the concept of "nearest neighbors" becomes meaningless as all pairwise distances converge to be the same [5] [7]. Clusters that are distinct in lower dimensions can completely disappear or become spurious when analyzed in the full high-dimensional space. One study showed that clear clusters from two normal distributions in one dimension became a single, random grouping when 99 noise variables were added [5].
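The convergence of pairwise distances can be observed numerically. The sketch below is an illustration on uniformly random points, not a reproduction of the study cited above. It computes the relative contrast, (max distance − min distance) / min distance, for increasing dimensionality; the function name `relative_contrast` is an illustrative choice.

```python
import numpy as np
from scipy.spatial.distance import pdist


def relative_contrast(n_dims, n_points=200, seed=0):
    """(max - min) / min over all pairwise Euclidean distances."""
    rng = np.random.default_rng(seed)
    points = rng.uniform(size=(n_points, n_dims))
    distances = pdist(points)  # condensed vector of pairwise distances
    return (distances.max() - distances.min()) / distances.min()


contrast = {d: relative_contrast(d) for d in (2, 100, 1000)}
for d, value in contrast.items():
    print(f"{d:>5} dimensions: relative contrast = {value:.2f}")
```

As the dimension grows, the contrast shrinks toward zero: the nearest and farthest neighbors of a point end up at almost the same distance, which is what undermines nearest-neighbor and density-based clustering.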

FAQ 4: What are the most effective techniques to overcome this challenge? The two primary strategies are feature selection and feature extraction [6].

- Feature Selection: Identifies and retains the most relevant features, discarding irrelevant or redundant ones (e.g., using `SelectKBest`).

- Feature Extraction: Transforms original high-dimensional data into a lower-dimensional space. Common techniques include Principal Component Analysis (PCA), t-SNE, and UMAP [9] [6]. Autoencoders, a type of neural network, are also powerful non-linear feature extractors [8].

Table 1: Comparison of Common Dimensionality Reduction Techniques

| Technique | Type | Key Strengths | Key Limitations | Common Use Cases |
|---|---|---|---|---|
| PCA [9] [10] | Linear | Computationally efficient; preserves global variance; easily interpretable. | Assumes linear relationships; may miss complex non-linear structures. | Exploratory data analysis, data pre-processing for linearly separable data. |
| t-SNE [9] [10] | Non-linear | Excellent at preserving local neighborhoods and revealing local clusters. | Computationally intensive; difficult to interpret axes; global structure not preserved. | Visualizing high-dimensional data in 2D/3D, like chemical space maps. |
| UMAP [9] [10] | Non-linear | Better at preserving global structure than t-SNE; faster. | Hyperparameters can significantly impact results; can be harder to interpret than PCA. | Visualizing chemical space, pre-processing for clustering. |
| Autoencoders [8] | Non-linear | Highly flexible; can learn complex, non-linear manifolds. | "Black box" nature; requires significant data and computational resources for training. | Navigating complex, non-linearly separable toxicological spaces. |

Troubleshooting Guides

Problem 1: Poor Clustering Performance in High-Dimensional Biological Data

Symptoms: Unclear cluster boundaries in flow cytometry or single-cell RNA-seq data; clusters do not correspond to known biological populations; results are not reproducible.

Root Cause: The statistical "empty space phenomenon" where data sparsity in high dimensions makes density-based clustering unreliable [7]. Distance metrics become uninformative.

Solution: Implement Automated Projection Pursuit (APP) Clustering [7].

- Concept: Instead of clustering directly in high dimensions, automatically search for lower-dimensional projections that reveal clear cluster structures.

- Protocol: The APP algorithm works as follows:

Validation: Apply this method to a biologically validated ground truth dataset. For example, using a mixture of WT-GFP and RAG-KO mouse cells, any B and T lymphocytes identified by the pipeline should exclusively express GFP. Lymphocytes lacking GFP indicate misclassification, allowing you to quantify the pipeline's accuracy [7].

Problem 2: Overfitting in a High-Dimensional QSAR Model

Symptoms: The model has near-perfect accuracy on training data but performs poorly on the test set or new experimental data.

Root Cause: The model has too much capacity relative to the data, learning noise instead of the true signal. This is a direct consequence of the curse of dimensionality [6].

Solution: Apply a rigorous dimensionality reduction pipeline before model training [6].

- Preprocessing: Clean your data by removing constant features and imputing missing values.

- Feature Selection: Use a method like `SelectKBest` to select the top k features most related to your target variable (e.g., mutagenicity).

- Feature Extraction: Further reduce dimensionality using PCA or a non-linear technique like an autoencoder, especially if the data is not linearly separable [8].

- Model Training & Evaluation: Train your model on the reduced-dimension data and validate on a held-out test set.
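A minimal sketch of these four steps as a single scikit-learn `Pipeline` is shown below. It assumes synthetic data from `make_classification` in place of real fingerprints and mutagenicity labels, and the specific settings (`k=50`, 10 principal components, logistic regression) are illustrative assumptions rather than values from the cited workflow.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.feature_selection import SelectKBest, VarianceThreshold, f_classif
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a descriptor matrix: 300 "molecules", 500 features.
X, y = make_classification(n_samples=300, n_features=500, n_informative=15,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),          # fill missing values
    ("drop_constant", VarianceThreshold(threshold=0.0)),   # remove constant features
    ("scale", StandardScaler()),                           # zero mean, unit variance
    ("select", SelectKBest(score_func=f_classif, k=50)),   # feature selection
    ("pca", PCA(n_components=10)),                         # feature extraction
    ("model", LogisticRegression(max_iter=1000)),
])

# Every step is fitted on the training split only, which avoids leaking
# information from the held-out test set into the feature selection.
pipeline.fit(X_train, y_train)
train_acc = pipeline.score(X_train, y_train)
test_acc = pipeline.score(X_test, y_test)
print(f"train accuracy: {train_acc:.2f}, test accuracy: {test_acc:.2f}")
```

A large gap between training and test accuracy after this reduction suggests that `k` or the number of components is still too high for the amount of data available.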

Table 2: Essential Materials for a Mutagenicity QSAR Workflow [8]

| Research Reagent / Tool | Function in the Workflow |
|---|---|
| 2014 AQICP Dataset | Provides standardized, open-source mutagenicity data (Classes A, B, C) for model training and benchmarking. |
| RDKit (Cheminformatics Package) | Calculates molecular descriptors and fingerprints (e.g., Morgan fingerprints) from SMILES strings. |
| scikit-learn (ML Library) | Provides implementations for data splitting, preprocessing, feature selection, PCA, and model training. |
| StandardScaler | A preprocessing step to standardize features by removing the mean and scaling to unit variance, crucial for distance-based algorithms. |
| Principal Component Analysis (PCA) | A linear dimensionality reduction technique to transform high-dimensional features into a lower-dimensional space while retaining most variance. |

The workflow for this solution can be summarized as follows:

Problem 3: Navigating and Visualizing High-Dimensional Chemical Space

Symptoms: Inability to create interpretable 2D/3D maps of a chemical library; difficulty identifying structural neighborhoods or outliers.

Root Cause: Standard linear projections may not capture complex, non-linear relationships between molecular structures.

Solution: Systematically compare and optimize non-linear dimensionality reduction techniques for chemical space analysis [9].

- Descriptor Calculation: Represent molecules using high-dimensional descriptors like Morgan fingerprints (1024 dimensions), MACCS keys, or neural network embeddings (e.g., ChemDist) [9].

- Hyperparameter Optimization: Conduct a grid-based search to optimize the parameters of different DR methods. Use the percentage of preserved nearest neighbors (e.g., PNN~20~) from the original high-dimensional space as the optimization metric [9].

- Method Comparison: Evaluate optimized models using multiple neighborhood preservation metrics (Q~NN~, AUC, LCMC, trustworthiness) on both in-sample data and a Leave-One-Library-Out (LOLO) scenario to test generalizability [9].

- Visual Diagnostic: Use scatterplot diagnostics (scagnostics) to quantitatively assess the visual interpretability of the resulting chemical space maps.

Expected Outcome: Studies show that non-linear methods like UMAP and t-SNE generally outperform PCA in preserving local neighborhoods, which is critical for understanding structural similarities. However, PCA can be sufficient for approximately linearly separable data and offers greater explainability [9] [10].

Troubleshooting Guides & FAQs

FAQ: Clustering and the "Smooth Elbow" Problem

Q: What is the "smooth elbow" problem in high-dimensional clustering, and why is it a major issue in chemistry research?

A: The "smooth elbow" problem occurs when using methods like the elbow curve to determine the optimal number of clusters (the k-hyperparameter) in a dataset. Instead of a clear bend, the curve is smooth, making the correct k-value unclear and subjective [11]. This is a significant issue because the performance of clustering algorithms, crucial for analyzing chemical structures or spectroscopic data, depends heavily on selecting the correct k-hyperparameter [11]. An incorrect choice can lead to misleading groupings and invalidate experimental conclusions.

Q: How can I identify the correct number of clusters when the traditional elbow method fails?

A: When the traditional elbow method fails, consider ensemble-based techniques. One advanced method involves an ensemble of a self-adapting autoencoder and internal validation indexes [11]. The optimal k-value is determined through a voting scheme that considers:

- The number of clusters visualized in the autoencoder's latent space.

- The k-value suggested by an ensemble internal validation index score.

- A value that generates a derivative of 0 or close to 0 at the elbow point on the curve [11].

Q: What is the "Curse of Dimensionality" and how does it affect computational chemistry?

A: The "Curse of Dimensionality" refers to the severe challenges that arise when working with data where the number of variables, or dimensions, is very large [12]. In chemistry, this can apply to problems involving the spatial arrangement of molecules or the vast parameter space of a reaction. In these high-dimensional spaces, traditional sampling and calculation methods become ineffective because the volume of space grows exponentially with dimensions, making it like "a blindfolded person, walking around drunk in the energy landscape" – you have very little information about the overall structure [12].

Q: Are there improved sampling techniques for navigating high-dimensional energy landscapes?

A: Yes, recent research has developed more efficient sampling techniques. One such method systematically tests the limits of a basin of attraction in an energy landscape rather than relying on random sampling. This technique, related to methods used in biomolecular simulations, can find extremely rare configurations that brute-force methods would almost never locate, making it far more effective for high-dimensional problems like chemical structure prediction [12].

Troubleshooting Guide: High-Dimensional Data Analysis

| Problem | Symptom | Probable Cause | Solution |
|---|---|---|---|
| Unclear Cluster Count | A smooth elbow curve with no distinct point; inconsistent clustering results. | High-dimensional data causing traditional metrics to fail [11]. | Implement an ensemble technique combining a self-adapting autoencoder with internal validation indexes [11]. |
| Ineffective Sampling | Computational models fail to find low-energy molecular configurations or rare reaction pathways. | The "Curse of Dimensionality"; brute-force sampling is too inefficient for the vast parameter space [12]. | Apply advanced sampling algorithms like the Multistate Bennett Acceptance Ratio to systematically explore basin limits [12]. |
| Inconsistent Validation | Different internal validation indexes (e.g., Silhouette, Dunn) suggest different optimal k-values. | Each index has different strengths and can be inconsistent in high-dimensional spaces [11]. | Use a voting scheme across multiple indexes and other metrics, such as autoencoder visualization, to find a consensus k-value [11]. |

Table 1: Internal Validation Indexes for Cluster Evaluation

This table summarizes common metrics used to evaluate clustering performance when the true labels are unknown.

| Index Name | Objective | Higher Is Better? |
|---|---|---|
| Silhouette Index | Measures how similar an object is to its own cluster compared to other clusters. | Yes (Range: -1 to 1) |
| Davies-Bouldin Index | Measures the average similarity between each cluster and its most similar one. | No (Lower value indicates better separation) |
| Calinski-Harabasz Index | Ratio of between-clusters dispersion to within-cluster dispersion. | Yes |
| Dunn Index | Ratio of the smallest distance between observations not in the same cluster to the largest intra-cluster distance. | Yes |

Table 2: Enhanced Color Contrast Requirements for Visualization (WCAG 2.1 Level AAA)

Ensuring diagrams and visualizations are accessible is critical for clear scientific communication.

| Element Type | Definition | Minimum Contrast Ratio |
|---|---|---|
| Small Text | Text smaller than 18pt (24px) or 14pt bold (19px). | 7:1 [13] |
| Large Text | Text that is at least 18pt (24px) or 14pt bold (19px). | 4.5:1 [13] |

Experimental Protocols

Detailed Methodology: Ensemble Technique for k-Hyperparameter Tuning

This protocol outlines the procedure for addressing the smooth elbow problem in high-dimensional chemical datasets [11].

1. Objective: To determine the optimal number of clusters (k) in a high-dimensional dataset where the traditional elbow method produces a smooth, unclear curve.

2. Materials and Instruments:

- High-dimensional dataset (e.g., from chemical assays, molecular descriptors, or spectroscopic analysis).

- Computational environment with Python/R and necessary libraries (e.g., scikit-learn for k-means and validation indexes, TensorFlow/PyTorch for autoencoder construction).

3. Procedure:

- Step 1: Data Preprocessing. Standardize the original dataset to have a mean of zero and a standard deviation of one [11].

- Step 2: Base k-means Modeling. Run the k-means algorithm for a range of k values (e.g., from 2 to 20). For each k, run the algorithm with several centroid initializations and select the result with the lowest sum of squared distances [11].

- Step 3: Traditional Elbow Plot. Plot the average dispersion (within-cluster sum of squares) against the number of clusters (k) to visualize the smooth elbow [11].

- Step 4: Autoencoder Dimensionality Reduction. Train a self-adapting autoencoder on the standardized data. Visualize the data in the reduced latent space of the autoencoder to estimate the number of visible, distinct groupings [11].

- Step 5: Internal Validation Index Calculation. Calculate a set of internal validation indexes (e.g., Silhouette, Dunn, Davies-Bouldin, Calinski-Harabasz) for the same range of k-values [11].

- Step 6: Ensemble Voting Scheme. Determine the final optimal k-value through a voting scheme that considers:

- The number of clusters suggested by the autoencoder's latent space visualization.

- The k-value most frequently recommended by the ensemble of internal validation indexes.

- The k-value at which the derivative of the elbow curve is 0 or closest to 0 [11].
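A simplified sketch of the voting idea follows. It is not the published ensemble: the autoencoder vote is omitted, the Dunn index is left out because scikit-learn does not provide it, and the elbow vote uses the largest second difference of the inertia curve as a stand-in heuristic. The data are synthetic blobs with four known clusters so that the outcome can be checked.

```python
from collections import Counter

import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import (calinski_harabasz_score, davies_bouldin_score,
                             silhouette_score)
from sklearn.preprocessing import StandardScaler

# Step 1: standardized data (synthetic, four true clusters).
X, _ = make_blobs(n_samples=400, n_features=20, centers=4, random_state=0)
X = StandardScaler().fit_transform(X)

k_values = list(range(2, 11))
inertia, silhouette, calinski, davies = [], [], [], []
for k in k_values:
    # Step 2: k-means with several initializations; scikit-learn keeps the
    # run with the lowest within-cluster sum of squares.
    km = KMeans(n_clusters=k, n_init=10, random_state=0).fit(X)
    inertia.append(km.inertia_)
    # Step 5: internal validation indexes for this k.
    silhouette.append(silhouette_score(X, km.labels_))
    calinski.append(calinski_harabasz_score(X, km.labels_))
    davies.append(davies_bouldin_score(X, km.labels_))

votes = [
    k_values[int(np.argmax(silhouette))],  # higher is better
    k_values[int(np.argmax(calinski))],    # higher is better
    k_values[int(np.argmin(davies))],      # lower is better
    # Elbow heuristic (assumption): k with the sharpest bend in the curve.
    k_values[1 + int(np.argmax(np.diff(inertia, n=2)))],
]

# Step 6: majority vote across the individual recommendations.
best_k = Counter(votes).most_common(1)[0][0]
print("votes:", votes, "-> selected k =", best_k)
```

On real high-dimensional chemical data the individual votes will often disagree, which is exactly the situation the ensemble is meant to resolve; ties in the vote would need an explicit tie-breaking rule.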

4. Validation:

- Validate the consistency and performance of the selected k-value using statistical tests such as Cochran’s Q test, ANOVA, and McNemar’s score [11].

Workflow Visualization: Solving the Smooth Elbow Problem

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for High-Dimensional Analysis

| Research Reagent (Tool/Metric) | Function in High-Dimensional Chemistry Research |
|---|---|
| k-Means Clustering Algorithm | A partition-based algorithm used to group data points into distinct, non-overlapping clusters based on similarity [11]. |
| Internal Validation Indexes | Metrics (e.g., Silhouette, Dunn) used to evaluate the quality of a clustering result when true labels are unknown [11]. |
| Self-Adapting Autoencoder | A type of neural network used for non-linear dimensionality reduction, helping to visualize and identify cluster structures in high-dimensional data [11]. |
| Multistate Bennett Acceptance Ratio (MBAR) | An advanced statistical method used in biomolecular simulations to calculate free energies and improve sampling efficiency in high-dimensional spaces [12]. |
| Elbow Method | A heuristic technique used to estimate the optimal number of clusters (k) by identifying the "elbow" point on a plot of distortion vs. k [11]. |

Core Concepts FAQ

What is the fundamental difference between PCA and autoencoders for dimensionality reduction?

Principal Component Analysis (PCA) is a linear statistical technique that performs an orthogonal transformation to convert a set of observations of possibly correlated variables into a set of values of linearly uncorrelated variables called principal components. It works by projecting data onto the axes of maximum variance, with the first principal component capturing the greatest variance, the second (orthogonal to the first) the second-most, and so on [14] [15]. In contrast, an autoencoder is a non-linear neural network designed for unsupervised learning that compresses input data into a lower-dimensional latent space (encoding) and then reconstructs the original data from this compressed representation (decoding) [16] [17]. The key distinction is that PCA can only learn linear relationships, while autoencoders can learn complex, non-linear patterns in data.
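The linear side of this comparison can be made concrete with a short NumPy sketch. It builds PCA from a singular value decomposition on synthetic data (six correlated features generated from two hidden factors, an assumption made purely for illustration) and shows that the components are orthogonal, ordered by variance, and usable as a linear encoder and decoder.

```python
import numpy as np

rng = np.random.default_rng(0)

# Six observed features driven by two hidden factors plus small noise.
latent = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 6))
X = latent @ mixing + 0.05 * rng.normal(size=(200, 6))

# PCA: center the data, then take the SVD of the centered matrix.
mean = X.mean(axis=0)
Xc = X - mean
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)

# Rows of Vt are the principal axes, ordered by explained variance.
explained_variance_ratio = S**2 / np.sum(S**2)

# "Encode" to 2 dimensions and "decode" back, using only linear maps.
scores = Xc @ Vt[:2].T
reconstruction = scores @ Vt[:2] + mean
mse = np.mean((X - reconstruction) ** 2)

print("explained variance ratio:", np.round(explained_variance_ratio, 3))
print("reconstruction MSE with 2 components:", round(float(mse), 5))
```

An autoencoder replaces the two matrix products in the encode and decode steps with learned non-linear networks, so it can follow curved manifolds that no single linear projection captures.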

When should I choose PCA over an autoencoder for my chemical dataset?

Choose PCA when:

- Your data has primarily linear relationships or is linearly separable [16].

- You need a computationally efficient solution for initial exploratory data analysis [16] [10].

- Interpretability is crucial, as principal components are easier to understand than latent features from autoencoders [16] [10].

- You have a relatively small dataset or limited computational resources [17].

Choose autoencoders when:

- Your chemical data contains complex non-linear relationships [16] [17].

- High-quality data reconstruction is important for your application [16].

- You have sufficient computational resources and a large enough dataset to train a neural network effectively [16] [18].

- You're working with large molecular structures with 3D complexity, such as natural products [18].

How do I evaluate whether my dimensionality reduction is preserving meaningful chemical information?

Evaluate neighborhood preservation using these key metrics [9]:

- Percentage of Preserved Nearest Neighbors (PNN~k~): Measures how many of the original k-nearest neighbors remain neighbors in the reduced space.

- Co-k-nearest neighbor size (Q~NN~): Assesses the overlap between neighborhoods in original and latent spaces.

- Trustworthiness and Continuity: Evaluate whether the reduced space preserves local and global structure.

- Visual interpretability through quantitative scatterplot diagnostics (scagnostics) [9].
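As a rough illustration of the first and third metrics, the sketch below computes a preserved-nearest-neighbor fraction by hand and calls scikit-learn's `trustworthiness` function. The data are synthetic blobs rather than molecular fingerprints, and `preserved_neighbors` is an illustrative implementation that may differ in detail from the definition used in [9].

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.manifold import trustworthiness
from sklearn.neighbors import NearestNeighbors


def knn_indices(X, k):
    """Indices of the k nearest neighbors of every point (self excluded)."""
    # Calling kneighbors() without a query excludes each point from its own list.
    return NearestNeighbors(n_neighbors=k).fit(X).kneighbors(return_distance=False)


def preserved_neighbors(X_high, X_low, k=20):
    """Mean fraction of high-dimensional k-NN that are also k-NN after reduction."""
    high, low = knn_indices(X_high, k), knn_indices(X_low, k)
    shared = [len(set(a) & set(b)) for a, b in zip(high, low)]
    return float(np.mean(shared) / k)


# Synthetic stand-in for a 64-dimensional descriptor matrix.
X_high, _ = make_blobs(n_samples=300, n_features=64, centers=5, random_state=0)
X_low = PCA(n_components=2, random_state=0).fit_transform(X_high)

pnn_20 = preserved_neighbors(X_high, X_low, k=20)
trust_20 = trustworthiness(X_high, X_low, n_neighbors=20)
print(f"PNN_20 = {pnn_20:.2f}, trustworthiness = {trust_20:.2f}")
```

Both values lie between 0 and 1, with 1 meaning that every neighborhood survives the reduction unchanged; comparing them across PCA, t-SNE, and UMAP embeddings of the same descriptors gives a like-for-like ranking.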

For chemical applications, you should also validate that structurally similar compounds cluster together in the latent space and that the reduction supports your specific goal, such as quantitative structure-activity relationship (QSAR) modeling or virtual screening [9] [19].

Troubleshooting Guides

Problem: Poor reconstruction accuracy with autoencoder on molecular data

Symptoms: High reconstruction loss, invalid molecular structures output, or failure to capture key structural features in latent space.

Solution:

- Address chemical representation issues: If using SMILES strings, implement SMILES enumeration during training to ensure the latent space represents molecules rather than specific string serializations [19]. Consider switching to graph-based representations for complex molecules [18].

- Optimize architecture: For large molecular structures, use specialized architectures like NP-VAE (Natural Product-oriented Variational Autoencoder) that combine molecular decomposition algorithms with tree-structured neural networks [18].

- Regularize effectively: Add appropriate regularization (dropout, L2) to prevent overfitting, especially with limited training data [16] [19].

- Incorporate chemical constraints: Ensure the decoder outputs chemically valid structures by incorporating validity checks or using fragment-based generation approaches [18].

Verification: Check reconstruction accuracy and validity rates on test compounds. For the St. John et al. dataset benchmark, NP-VAE achieved higher reconstruction accuracy (generalization ability) compared to previous models like CVAE, JT-VAE, and HierVAE [18].

Problem: Dimensionality reduction fails to separate chemical classes meaningfully

Symptoms: Overlapping clusters in latent space visualization, poor performance in downstream classification tasks, inability to distinguish between known chemical classes.

Solution:

- Re-evaluate feature representation: For chemical data, test different molecular descriptors (Morgan fingerprints, MACCS keys, neural network embeddings) as each captures different aspects of molecular similarity [9].

- Adjust hyperparameters: For UMAP and t-SNE, carefully optimize parameters like number of neighbors and minimum distance, as these significantly affect clustering results [9].

- Consider method complementarity: Use both PCA and non-linear methods (UMAP, t-SNE) as they may reveal different aspects of chemical space. PCA often provides better explainability for linear relationships, while UMAP can yield clearer clustering for non-linear patterns [10].

- Validate with known chemical similarities: Ensure the method preserves neighborhood relationships for compounds with known structural or activity similarities [9].

Verification: Calculate neighborhood preservation metrics and compare across methods. For the ChEMBL dataset, non-linear methods generally outperform PCA in neighborhood preservation, with UMAP and t-SNE showing particularly strong performance [9].
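
The neighborhood preservation check described above can be sketched with NumPy alone. This is a minimal illustration, not the benchmark code from [9]: the data are synthetic stand-ins for fingerprints, PCA is computed by SVD, and the helper names `knn_indices` and `preserved_neighbors` are hypothetical.

```python
import numpy as np

def knn_indices(X, k):
    """Indices of the k nearest neighbours of each row (Euclidean, self excluded)."""
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    np.fill_diagonal(d2, np.inf)  # a point is never its own neighbour
    return np.argsort(d2, axis=1)[:, :k]

def preserved_neighbors(X_high, X_low, k=20):
    """Mean fraction of each point's k nearest neighbours kept in the embedding."""
    nn_high = knn_indices(X_high, k)
    nn_low = knn_indices(X_low, k)
    shared = [len(set(a) & set(b)) for a, b in zip(nn_high, nn_low)]
    return float(np.mean(shared)) / k

rng = np.random.default_rng(0)
# Synthetic stand-in for fingerprints: 5 "chemical classes" of 40 compounds in 50 dimensions.
centres = rng.normal(scale=5.0, size=(5, 50))
X = np.repeat(centres, 40, axis=0) + rng.normal(size=(200, 50))

# PCA to 2 components via SVD of the centred data.
Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
X_pca = Xc @ Vt[:2].T
X_random = rng.normal(size=(200, 2))  # embedding unrelated to X, as a baseline

pnn_pca = preserved_neighbors(X, X_pca)
pnn_random = preserved_neighbors(X, X_random)
print(f"PNN_20  PCA: {pnn_pca:.2f}   random baseline: {pnn_random:.2f}")
```

The same `preserved_neighbors` call can score any embedding (for example one from UMAP or t-SNE), which makes it easy to compare methods on equal terms.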

Problem: PCA components lack chemical interpretability in my application

Symptoms: Difficulty relating principal components to meaningful chemical properties, inability to explain variance in terms of structural features.

Solution:

- Correlate with chemical descriptors: Calculate correlation coefficients between principal components and known molecular descriptors (steric, electronic, physicochemical properties).

- Analyze loading contributions: Examine which original features contribute most significantly to each principal component and interpret these in chemical terms [20].

- Use complementary techniques: Employ Hierarchical Cluster Analysis (HCA) in tandem with PCA to identify sample groupings, then determine which variables drive these clusters [20].

- Leverage domain knowledge: Map PCA results onto known chemical concepts - for example, in organometallic catalysis, PCA of ligand spaces often separates steric and electronic effects [10].

Verification: The variance explained by each component should align with chemically meaningful separations. In catalysis studies, PCA successfully clustered ligands based on intuitive combinations of steric and electronic properties [10].
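
As an illustration of the loading and correlation checks above, the following sketch builds a synthetic ligand table in which two hidden factors (labeled steric and electronic) drive five descriptors. The descriptor names and data are hypothetical placeholders, not values from [10] or [20].

```python
import numpy as np

rng = np.random.default_rng(1)
n = 300
# Two hidden factors generate five hypothetical ligand descriptors.
steric = rng.normal(size=n)
electronic = rng.normal(size=n)
names = ["cone_angle", "buried_volume", "sterimol_B5", "tolman_ep", "homo_energy"]
X = np.column_stack([
    steric + 0.1 * rng.normal(size=n),
    steric + 0.1 * rng.normal(size=n),
    steric + 0.1 * rng.normal(size=n),
    electronic + 0.1 * rng.normal(size=n),
    electronic + 0.1 * rng.normal(size=n),
])

# Standardise, then PCA via SVD: rows of Vt are the component loadings.
Z = (X - X.mean(axis=0)) / X.std(axis=0)
U, S, Vt = np.linalg.svd(Z, full_matrices=False)
scores = U * S                       # sample coordinates on each component
explained = S ** 2 / np.sum(S ** 2)  # fraction of variance per component

for i in range(2):
    ranked = sorted(zip(names, Vt[i]), key=lambda pair: -abs(pair[1]))
    top = ", ".join(f"{name} ({w:+.2f})" for name, w in ranked[:3])
    r_steric = np.corrcoef(scores[:, i], steric)[0, 1]
    r_elec = np.corrcoef(scores[:, i], electronic)[0, 1]
    print(f"PC{i + 1}: {explained[i]:.0%} variance | top loadings: {top} | "
          f"r(steric)={r_steric:+.2f}, r(electronic)={r_elec:+.2f}")
```

In real data the hidden factors are unknown, so the correlation step would use measured or computed reference descriptors in place of `steric` and `electronic`.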

Experimental Protocols & Workflows

Protocol: Benchmarking Dimensionality Reduction Methods for Chemical Space Analysis

Purpose: Systematically compare PCA, t-SNE, and UMAP for visualizing and analyzing chemical libraries [9].

Materials and Software:

- Chemical datasets: Curated subsets from databases like ChEMBL [9] or DrugBank [18]

- Molecular descriptors: RDKit (for Morgan fingerprints, MACCS keys) [9]

- Dimensionality reduction implementations: scikit-learn (PCA), OpenTSNE (t-SNE), umap-learn (UMAP) [9]

- Evaluation metrics: Neighborhood preservation metrics (PNNk, QNN, trustworthiness, continuity) [9]

Procedure:

Data Preparation:

- Select representative chemical datasets covering diverse structural classes

- Calculate multiple molecular representations (e.g., Morgan fingerprints, MACCS keys, neural embeddings)

- Standardize features by removing zero-variance features and applying standardization

Hyperparameter Optimization:

- Perform grid-based search for each DR method

- Optimize using percentage of preserved nearest neighbors (default: k=20) as primary metric

- For UMAP: vary n_neighbors (5-50), min_dist (0.0-0.5)

- For t-SNE: vary perplexity (5-100), learning rate

Model Evaluation:

- Apply optimized models to full dataset

- Calculate comprehensive neighborhood preservation metrics

- Assess visual interpretability using scagnostics

- Evaluate both in-sample and out-of-sample performance (e.g., Leave-One-Library-Out scenario)

Interpretation:

- Compare methods based on quantitative metrics and visual cluster separation

- Relate results to chemical domain knowledge

- Select optimal method based on specific application requirements

Workflow: Constructing a Chemical Latent Space with Variational Autoencoders

Purpose: Build a continuous, interpretable latent space for large molecular structures with 3D complexity [18].

Materials and Software:

- Specialized VAE architecture: NP-VAE (handles chirality and large structures) [18]

- Chemical datasets: DrugBank, natural product libraries [18]

- Representation: Graph-based molecular representation with fragment decomposition [18]

- Training infrastructure: GPU acceleration recommended for training deep architectures [18]

Procedure:

Data Preprocessing:

- Curate diverse chemical libraries including complex natural products

- Decompose molecular structures into fragment units using specialized algorithm

- Convert to tree structures incorporating stereochemical information

- Apply data augmentation to handle multiple conformer representations

Model Configuration:

- Implement NP-VAE architecture combining Tree-LSTM with ECFP information

- Configure encoder to map molecular graphs to latent distribution parameters

- Design decoder to reconstruct molecules from latent representations

- Incorporate regularization to ensure smooth, continuous latent space

Training Process:

- Train on large-scale chemical datasets (e.g., 76,000 training compounds)

- Monitor reconstruction accuracy and validity on validation set

- Employ transfer learning from general chemical space to specific domains

- Optimize for both reconstruction fidelity and latent space regularity

Latent Space Exploration:

- Project diverse compounds into latent space for visualization

- Interpolate between known active compounds to generate novel structures

- Optimize compounds for target properties by navigating latent space

- Validate generated structures through docking studies or expert evaluation

Workflow Visualization

Diagram 1: Autoencoder Architecture for Chemical Data

Diagram 2: Chemical Space Mapping Workflow

Performance Comparison Tables

Table 1: Method Comparison for Chemical Applications

| Method | Linearity | Computational Complexity | Interpretability | Best For Chemical Data Types | Key Limitations |

|---|---|---|---|---|---|

| PCA | Linear | Low | High | Linearly separable data, small molecules [16] [10] | Cannot capture non-linear relationships [16] |

| t-SNE | Non-linear | Medium | Medium | Visualizing local neighborhood structure [9] | Global structure not preserved, computational cost [9] |

| UMAP | Non-linear | Medium | Medium | Clear clustering of chemical subsets [9] [10] | More challenging to interpret than PCA [10] |

| Autoencoders | Non-linear | High | Low | Large molecular structures, 3D complexity [18] | Requires large datasets, prone to overfitting [16] [18] |

| Variational Autoencoders | Non-linear | High | Low | Generating novel compound structures [18] | Complex training, requires specialized architectures [18] |

Table 2: Neighborhood Preservation Performance on ChEMBL Dataset

| Method | Preserved Nearest Neighbors (PNNk) | Trustworthiness | Continuity | Visual Clustering Quality | Training Time (Relative) |

|---|---|---|---|---|---|

| PCA | 62-75% [9] | Moderate | High | Good for linear relationships [10] | 1x (fastest) |

| t-SNE | 78-88% [9] | High | Moderate | Excellent local structure [9] | 5-10x |

| UMAP | 82-92% [9] | High | High | Clear, chemically meaningful clusters [9] [10] | 3-7x |

| VAE (NP-VAE) | ~90% [18] | High | High | Depends on architecture and training [18] | 20-50x |

Table 3: Reconstruction Performance on Molecular Datasets

| Model | Reconstruction Accuracy | Validity Rate | Handles Large Molecules | Chirality Awareness | Recommended Use Cases |

|---|---|---|---|---|---|

| CVAE | Low [18] | Low [18] | No | No | Basic small molecules |

| JT-VAE | Moderate [18] | High [18] | Limited | Partial | Small drug-like molecules |

| HierVAE | High [18] | High [18] | Yes | No | Polymers, repeating structures |

| NP-VAE | Highest [18] | 100% [18] | Yes | Yes | Natural products, complex 3D structures |

Research Reagent Solutions

Table 4: Essential Computational Tools for Chemical Dimensionality Reduction

| Tool/Resource | Type | Function | Implementation Notes |

|---|---|---|---|

| RDKit | Cheminformatics library | Calculate molecular descriptors (Morgan fingerprints, MACCS keys) [9] | Open-source, essential for preprocessing chemical data |

| scikit-learn | Machine learning library | Implement PCA and other linear methods [9] | Standardized API, good for baseline implementations |

| OpenTSNE | Dimensionality reduction library | Optimized t-SNE implementation [9] | Better performance than standard scikit-learn t-SNE |

| umap-learn | Dimensionality reduction library | UMAP implementation for non-linear reduction [9] | Requires careful hyperparameter tuning for chemical data |

| NP-VAE | Specialized neural architecture | Handle large molecules with 3D complexity [18] | Custom implementation needed, handles chirality |

| ChEMBL Database | Chemical database | Source of diverse molecular structures for training and benchmarking [9] | Curated bioactivity data, useful for validation |

Identifying Sloppiness and Effective Parameters in Chemical Models

Frequently Asked Questions (FAQs)

What is a "sloppy model" in chemical kinetics? A sloppy model is a high-dimensional model where the cost function (like χ² measuring fit to data) is highly sensitive to changes in a few parameter combinations but largely insensitive to many others. This creates a situation where numerous parameter sets can fit the data equally well, making it difficult to identify unique parameter values from the available experimental data [21].

What are the practical consequences of sloppiness for my research? Sloppiness can lead to significant practical challenges, including large uncertainties in parameter estimates, poor model predictive power for novel conditions, and difficulty in extracting meaningful mechanistic insights from data. Essentially, your model might fit your existing data well but fail to make accurate predictions for new experiments [21].

Can I still use a sloppy model for prediction? Yes, but with caution. While many parameter combinations may be consistent with your data, the system's behavior is often well-constrained. The collective model parameters can define system behavior better than independent measurements of each parameter. Predictions that depend on well-informed parameter directions will be reliable, whereas those sensitive to sloppy directions will be highly uncertain [21].

How does high-dimensionality worsen sloppiness? High-dimensional parameter spaces (ranging from tens to hundreds of parameters) exacerbate sloppiness by increasing the number of potential parameter interactions and compensatory effects. This makes it computationally expensive and often infeasible to explore the entire parameter space thoroughly, a common scenario in complex chemical kinetic models [2].

What is the difference between model sloppiness and global sensitivity analysis? While both assess how outputs depend on inputs, their focus differs. Global sensitivity analysis typically measures the sensitivity of model outputs to changes in parameter values. In contrast, sloppiness analysis captures the sensitivity of the model-data fit, revealing which parameter combinations are informed—or constrained—by a specific dataset [22].

Troubleshooting Guides

Issue 1: Poor Model Performance Despite Extensive Calibration

Problem: Your model has been calibrated to a dataset, but its predictions are inaccurate when applied to new conditions or validation experiments. This suggests the model may be sloppy, with poorly constrained parameters.

Solution Steps:

- Perform a Sloppiness Analysis: Calculate the Hessian matrix (matrix of second-order partial derivatives) of your cost function (e.g., χ²) at the best-fit parameters. Analyze its eigenvalues [21] [22].

- Diagnose the Eigenvalue Spectrum: A hallmark of sloppiness is eigenvalues that span many orders of magnitude (e.g., >10⁶). The eigenvectors with the smallest eigenvalues correspond to parameter combinations that are poorly informed by the data [21].

- Identify Informed and Sloppy Directions: Use this analysis to distinguish between:

- Stiff (Informed) Directions: Eigenvectors with large eigenvalues. These parameter combinations are well-constrained by your data.

- Sloppy (Uninformed) Directions: Eigenvectors with very small eigenvalues. These parameter combinations have little impact on the model-data fit [21].

Resolution:

- Design Complementary Experiments: Use experimental design methodologies to find new experiments that specifically target the sloppy parameter directions. The ideal new experiment will provide strong constraints on directions orthogonal to those already informed by existing data [21].

- Incorporate Diverse Data Types: Calibrate your model against a wider variety of performance metrics (e.g., ignition delay, laminar flame speed, reactor data) to constrain more parameters [2].
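
The eigenvalue diagnosis in the solution steps above can be sketched on a toy problem. The example assumes a sum-of-exponentials model with unit measurement error and uses the Gauss-Newton approximation, in which the χ² Hessian at the best fit is proportional to JᵀJ. The rate constants are made-up values, not taken from [21].

```python
import numpy as np

# Toy model: y(t) = sum_i exp(-k_i * t), parameterised by log k_i.
t = np.linspace(0.1, 5.0, 50)
k_best = np.array([1.0, 1.3, 1.7, 2.2, 2.9])  # assumed best-fit rates

# Jacobian of the model output with respect to log k_i.
J = -(k_best[None, :] * t[:, None]) * np.exp(-k_best[None, :] * t[:, None])

# Eigenvalues of J^T J are the squared singular values of J;
# rows of Vt are the corresponding parameter combinations.
_, s, Vt = np.linalg.svd(J, full_matrices=False)
eigvals = s ** 2                 # sorted from stiffest to sloppiest
span = eigvals[0] / eigvals[-1]

print("eigenvalues:", np.array2string(eigvals, precision=2))
print(f"spectrum spans {np.log10(span):.1f} orders of magnitude")
print("stiffest direction :", np.round(Vt[0], 2))
print("sloppiest direction:", np.round(Vt[-1], 2))
```

Working through the SVD of J rather than forming JᵀJ explicitly avoids small negative eigenvalues caused by round-off when the spectrum is very wide.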

Issue 2: Navigating a High-Dimensional Parameter Space

Problem: The parameter space is too large to explore efficiently, making optimization infeasible.

Solution Steps:

- Implement an Iterative Strategy: Adopt a sampling-learning-inference cycle, as used in the DeePMO framework.

- Sample: Select multiple parameter sets from the high-dimensional space.

- Learn: Train a surrogate model (like a deep neural network) to map parameters to performance metrics.

- Inference: Use the surrogate model to guide the search for optimal parameters [2].

- Use a Hybrid Neural Network: Employ a deep learning architecture that can handle both sequential (e.g., time-series) and non-sequential data types common in chemical simulations [2].

- Apply Feature Adaptation in Bayesian Optimization: For Bayesian optimization (BO), use a framework like Feature Adaptive BO (FABO). FABO dynamically identifies the most informative molecular or material features during the optimization process, reducing the effective dimensionality of the problem without prior knowledge [23].

Resolution: This iterative, AI-guided approach efficiently explores high-dimensional spaces, significantly boosting optimization performance for complex chemical models [2] [23].

Issue 3: Deciding When and How to Reduce Model Complexity

Problem: Your model is overly complex, making it difficult to interpret, calibrate, and compute.

Solution Steps:

- Use Sloppiness Analysis for Strategic Reduction: Analyze model sloppiness to identify mechanisms (groups of parameters) that weakly inform model predictions. This helps pinpoint which parts of the model can be simplified or removed [22].

- Compare Model Predictions: Validate a sloppiness-informed model reduction by comparing the reduced model's predictions against those of the original, more complex model. The key is to preserve predictive accuracy while removing complexity [22].

- Choose an Analysis Method:

- Non-Bayesian (Hessian-based): Use standard optimization to find a best-fit parameter set and compute the Hessian there. This is effective when the best fit is easily identified [22].

- Bayesian: Use when the likelihood surface is complex without a well-defined peak. This method uses posterior distributions to account for parameter uncertainty across the entire parameter space [22].

Resolution: Systematically reduce your model by removing mechanisms associated with sloppy parameter combinations. This results in a conceptually simpler model that retains predictive power and mechanistic interpretability [22].

Experimental Protocols & Data

Protocol 1: Sloppiness Analysis for Model Reduction

Objective: To strategically reduce a complex model by identifying and removing mechanisms that have little impact on model predictions.

Methodology:

- Model Calibration: Calibrate the model to your dataset using either:

- Frequentist approach: Standard optimization to find a single best-fit parameter set.

- Bayesian approach: Markov Chain Monte Carlo (MCMC) sampling to obtain posterior distributions for parameters [22].

- Compute the Sensitivity Matrix:

- For the frequentist approach, compute the Hessian matrix of the χ² cost function at the best-fit parameters.

- For the Bayesian approach, compute the Hessian of the log-posterior or use the covariance matrix of the posterior samples [22].

- Eigenvalue Decomposition: Perform an eigenvalue decomposition of the sensitivity matrix. The eigenvalues (λᵢ) indicate the sensitivity, and eigenvectors (νᵢ) define the parameter combinations [21].

- Identify Sloppy Mechanisms: Map eigenvectors with the smallest eigenvalues back to the model's mechanistic components (e.g., specific reaction pathways or physical processes).

- Reduce Model: Remove the mechanistic components identified as "sloppy" from the model structure.

- Validate: Ensure the reduced model's predictions remain consistent with the original model and experimental data [22].
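
For the Bayesian branch of this protocol, one possible sketch takes posterior samples, inverts their covariance to obtain a sensitivity matrix, and reads off the sloppiest eigenvector. The samples here are drawn from a made-up Gaussian standing in for MCMC output, and the parameter names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
names = ["log_k1", "log_k2", "log_k3"]

# Stand-in for MCMC output: log_k1 is tightly constrained, the sum log_k2 + log_k3
# is moderately constrained, and the difference log_k2 - log_k3 is barely constrained.
directions = np.array([[1.0, 0.0, 0.0],
                       [0.0, 1.0, 1.0],
                       [0.0, 1.0, -1.0]])
directions /= np.linalg.norm(directions, axis=1, keepdims=True)
std_devs = np.array([0.01, 0.05, 2.0])
true_cov = (directions.T * std_devs ** 2) @ directions
samples = rng.multivariate_normal(np.zeros(3), true_cov, size=5000)

# Sensitivity matrix: inverse of the posterior sample covariance.
sensitivity = np.linalg.inv(np.cov(samples, rowvar=False))
eigvals, eigvecs = np.linalg.eigh(sensitivity)  # ascending: sloppiest first
sloppy = eigvecs[:, 0]

print("eigenvalues (sloppy -> stiff):", np.array2string(eigvals, precision=3))
for name, weight in zip(names, sloppy):
    print(f"  {name}: {weight:+.2f}")
```

Here the sloppiest eigenvector loads almost equally, with opposite signs, on `log_k2` and `log_k3`, which suggests that only their combination is informed by the data and that the two steps are candidates for lumping.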

Protocol 2: Iterative Deep Learning for Parameter Optimization (DeePMO)

Objective: To optimize parameters in high-dimensional chemical kinetic models.

Methodology:

- Initial Sampling: Use an initial sampling method (e.g., Latin Hypercube) to generate parameter sets across the high-dimensional space.

- Numerical Simulation: Run high-fidelity numerical simulations (e.g., for ignition delay, flame speed) for each parameter set.

- Train Hybrid DNN: Train a hybrid Deep Neural Network (DNN). This network should combine:

- A fully connected network for non-sequential data.

- A multi-grade network for sequential data [2].

- Inference and Guidance: Use the trained DNN as a fast surrogate model to predict performance metrics for new parameter sets, guiding the selection of more promising candidates for the next iteration.

- Iterate: Repeat the sampling-learning-inference cycle until convergence to an optimal parameter set [2].
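
The sampling-learning-inference cycle can be sketched in a few lines. This is not the DeePMO implementation from [2]: the expensive simulation is replaced by a cheap analytic function, and the hybrid DNN is replaced by ridge regression on quadratic features so that the example runs with NumPy alone. All function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(3)
dim = 6
target = rng.uniform(0.2, 0.8, size=dim)

def simulate(theta):
    """Cheap stand-in for an expensive kinetic simulation; returns a misfit."""
    return float(np.sum((theta - target) ** 2) + 0.005 * np.sum(np.sin(6.0 * theta)))

def latin_hypercube(n, d, rng):
    """One sample per stratum in every dimension, strata shuffled independently."""
    u = (rng.random((n, d)) + np.arange(n)[:, None]) / n
    for j in range(d):
        u[:, j] = rng.permutation(u[:, j])
    return u

def features(X):
    return np.hstack([np.ones((len(X), 1)), X, X ** 2])

def fit_surrogate(X, y, ridge=1e-6):
    """Ridge regression on quadratic features (a simple stand-in for the hybrid DNN)."""
    Phi = features(X)
    w = np.linalg.solve(Phi.T @ Phi + ridge * np.eye(Phi.shape[1]), Phi.T @ y)
    return lambda X_new: features(X_new) @ w

# Initial sampling and simulation.
X = latin_hypercube(30, dim, rng)
y = np.array([simulate(x) for x in X])
best_history = [y.min()]

for iteration in range(5):
    surrogate = fit_surrogate(X, y)                       # learn
    global_cands = rng.random((2000, dim))
    local_cands = np.clip(X[np.argmin(y)] + 0.05 * rng.normal(size=(500, dim)), 0, 1)
    cands = np.vstack([global_cands, local_cands])
    picks = cands[np.argsort(surrogate(cands))[:5]]       # infer: most promising candidates
    X = np.vstack([X, picks])                             # simulate only the picks
    y = np.concatenate([y, [simulate(x) for x in picks]])
    best_history.append(y.min())

print("best misfit per iteration:", np.round(best_history, 4))
```

Only five true simulations are run per iteration, while the surrogate screens 2,500 candidates, which is the source of the efficiency gain in this kind of loop.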

Table 1: Key Parameter Ranges in Featured Studies

| Study / Model Context | Number of Parameters | Key Performance Metrics | Optimization Method |

|---|---|---|---|

| Chemical Kinetic Models (Methane, n-Heptane, etc.) [2] | Tens to hundreds | Ignition delay, laminar flame speed, heat release rate | DeePMO (Iterative DNN) |

| EGF/NGF Signaling Pathway Model [21] | 48 | Time-course concentration/activity data | Multi-experiment design informed by sloppiness |

| Coral Calcification Model [22] | Information not specified | Calcification rates | Sloppiness analysis for reduction |

Table 2: Comparison of Sloppiness Analysis Methods

| Feature | Non-Bayesian (Hessian-based) Analysis | Bayesian Analysis |

|---|---|---|

| Core Requirement | A single, well-defined best-fit parameter set | Posterior distribution of parameters |

| Best Suited For | Models where optimization reliably finds a global minimum | Models with complex likelihood surfaces (e.g., multiple minima, flat ridges) |

| Computational Cost | Generally lower | Higher (requires MCMC sampling) |

| Advantage | Simplicity and computational speed | Comprehensively accounts for parameter uncertainty |

Workflow and Pathway Diagrams

Sloppiness Analysis for Model Reduction

Iterative Deep Learning Optimization (DeePMO)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational Tools for Managing Sloppiness

| Tool / Solution | Function | Application Context |

|---|---|---|

| Hessian Matrix Calculation | Quantifies the local curvature of the cost function around the best-fit parameters, forming the basis for sloppiness analysis. | Identifying stiff and sloppy parameter combinations in a calibrated model [21] [22]. |

| Hybrid Deep Neural Network (DNN) | Acts as a surrogate model to quickly map high-dimensional parameters to system performance, bypassing expensive simulations. | High-dimensional kinetic parameter optimization (e.g., DeePMO framework) [2]. |

| Bayesian Optimization (BO) | A sample-efficient global optimization method that uses a probabilistic surrogate model and an acquisition function to balance exploration and exploitation. | Optimizing black-box functions in drug discovery and materials science [23] [24]. |

| Feature Adaptive BO (FABO) | A Bayesian Optimization framework that dynamically selects the most relevant material features during the optimization process. | Molecular and material discovery tasks where the optimal representation is unknown a priori [23]. |

| Multi-Objective Bayesian Optimization (MBO) | Extends BO to handle multiple, often competing, objectives (e.g., accuracy, fairness, computational cost). | Designing governance-ready models where predictive power must be balanced with other constraints [25]. |

Advanced Algorithms and Frameworks for Efficient Hyperparameter Search

Frequently Asked Questions (FAQs)

Q1: What makes Bayesian Optimization (BO) particularly suitable for high-dimensional problems in chemistry and drug development?

BO is well-suited for these challenges because it efficiently navigates complex, high-dimensional parameter spaces where traditional methods like grid search fail. It uses a probabilistic surrogate model, typically a Gaussian Process (GP), to approximate the expensive black-box function (like a chemical reaction yield or a material property) and an acquisition function to intelligently select the next experiment by balancing exploration and exploitation [26] [27]. For very high-dimensional spaces, advanced techniques like the Sparse Axis-Aligned Subspace Bayesian Optimization (SAASBO) can be employed. SAASBO uses a sparsity-inducing prior that assumes only a subset of the parameters are truly important, effectively identifying a lower-dimensional, relevant subspace within the larger parameter space, which dramatically improves sample efficiency [27] [28].

Q2: My BO algorithm is not converging to a good solution. What could be wrong?

Several factors can cause poor convergence. The table below outlines common issues and potential solutions.

Table: Troubleshooting Poor Convergence in Bayesian Optimization

| Problem | Potential Causes | Recommended Solutions |

|---|---|---|

| Slow or Failed Convergence | Inadequate surrogate model for the problem complexity [27]. | For high-dimensional problems (>20 parameters), switch to a model designed for sparsity like SAASBO [28]. |

| | Acquisition function overly biased towards exploration or exploitation. | Test different functions (e.g., UCB, EI) and adjust their parameters (e.g., beta for UCB) [27]. |

| | Initial data points are uninformative. | Use a space-filling design (e.g., Sobol sequence) for the initial set of experiments [28]. |

| High Computational Overhead | Gaussian Process (GP) surrogate model becomes slow with many data points. | For large datasets (>1000 points), consider scalable GP approximations or other surrogate models like Random Forests [27]. |

| | Optimization of the acquisition function is costly in high dimensions. | Use a local search or a multi-start optimization strategy for the acquisition function [29]. |

Q3: How do I handle a mix of continuous (e.g., temperature) and categorical (e.g., solvent type) parameters?

BO can naturally handle mixed parameter spaces. The key is to choose a kernel function for the GP surrogate that can compute similarities between different data types. For categorical parameters, a common approach is to use a separate kernel (like a Hamming kernel) for the categorical dimensions and combine it with a standard kernel (like the Matern kernel) for the continuous dimensions [29]. Frameworks like Ax or COMBO provide built-in support for defining such mixed search spaces [28].
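
A minimal sketch of such a combined kernel is shown below, assuming a Matern-5/2 kernel on the continuous inputs multiplied by an exponentiated Hamming-distance kernel on the categorical inputs. The function names, length scale, `gamma` value, and example data are illustrative assumptions and do not reproduce the API of Ax or COMBO.

```python
import numpy as np

def matern52(Xa, Xb, lengthscale=0.5):
    """Matern-5/2 kernel on continuous inputs."""
    d = np.sqrt(((Xa[:, None, :] - Xb[None, :, :]) ** 2).sum(-1)) / lengthscale
    s = np.sqrt(5.0) * d
    return (1.0 + s + s ** 2 / 3.0) * np.exp(-s)

def hamming_kernel(Ca, Cb, gamma=1.0):
    """exp(-gamma * number of categorical dimensions that differ)."""
    mismatches = (Ca[:, None, :] != Cb[None, :, :]).sum(-1)
    return np.exp(-gamma * mismatches)

def mixed_kernel(Xa, Ca, Xb, Cb):
    """Product kernel: similar only if both continuous and categorical parts are similar."""
    return matern52(Xa, Xb) * hamming_kernel(Ca, Cb)

solvents = {"water": 0, "ethanol": 1, "dmso": 2}
# Continuous inputs scaled to [0, 1]: temperature, concentration.
X_cont = np.array([[0.20, 0.5], [0.25, 0.5], [0.20, 0.5], [0.90, 0.1]])
X_cat = np.array([[solvents["water"]], [solvents["water"]],
                  [solvents["dmso"]], [solvents["ethanol"]]])

K = mixed_kernel(X_cont, X_cat, X_cont, X_cat)
print(np.round(K, 3))
```

In the printed matrix, experiments 0 and 1 (same solvent, nearby temperature) are more similar than experiments 0 and 2 (identical temperature, different solvent), which is the behavior a GP surrogate needs in a mixed search space.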

Q4: We have a small dataset from initial experiments. Is BO still applicable?

Yes, BO is particularly powerful in low-data regimes. Its probabilistic nature allows it to quantify uncertainty and make informed decisions even with limited data [30]. Starting with a small dataset from a Design of Experiments (DoE) is a valid and common strategy. The BO algorithm will then sequentially suggest the most informative experiments to perform next, rapidly improving the model with each iteration [31] [30].

Troubleshooting Guides

Issue: Optimization in High-Dimensional Spaces is Inefficient

This is a common challenge when parameterizing complex models, such as a coarse-grained force field with over 40 parameters [32] or tuning a deep learning model with 23 hyperparameters [28].

Diagnosis Steps:

- Dimensionality Check: Determine the number of parameters you are optimizing. Problems with more than 20 parameters are generally considered high-dimensional and may require specialized methods.

- Sparsity Assessment: Evaluate whether it is likely that only a fraction of the parameters significantly impact the outcome. This is often the case in complex chemical or material systems [27].

Resolution Methods:

- Use a Sparsity-Promoting Method: Implement the SAASBO algorithm. It places a sparse prior on the inverse length scales of the GP kernel, automatically identifying and focusing on the most relevant parameters [28].

- Adaptive Subspace Identification: Employ frameworks like MolDAIS, which actively identify task-relevant subspaces from large libraries of molecular descriptors during the optimization process [27].

Verification of Fix: You should observe that the algorithm finds a good solution in significantly fewer iterations. For example, one study optimized a 41-parameter model in under 600 iterations [32], while another achieved a state-of-the-art result by optimizing 23 hyperparameters in 100 iterations [28].

Issue: Dealing with Permutation-Based Parameters

This issue arises in problems where the outcome depends on the sequence or ordering of components, such as in molecular sequences or experimental protocols [29].

Diagnosis Steps:

- Identify Parameter Type: Confirm that your parameters represent an order or a sequence (e.g., the order of addition in a chemical synthesis, the sequence of operations in an automated pipeline).

Resolution Methods:

- Use a Permutation-Specific Kernel: Replace the standard kernel with one designed for permutation spaces, such as the Mallows kernel [29].

- Employ a Scalable Kernel for Large Permutations: For large-scale permutation problems, the Merge Kernel, derived from the merge sort algorithm, offers a scalable alternative to the Mallows kernel with a computational complexity of O(n log n) instead of O(n²), making it more efficient for large n [29].

Verification of Fix: The BO algorithm should efficiently propose new permutations that improve the objective function, effectively solving sequence-dependent optimization tasks.
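
To make the permutation-kernel idea concrete, here is a small sketch of the Mallows kernel in its common form, exp(-λ · d), where d is the Kendall tau distance (the number of item pairs that two orderings rank in opposite order). The pair count below is the straightforward O(n²) version. The Merge Kernel from [29] is not implemented here, and the reagent labels are hypothetical.

```python
import math
from itertools import combinations

def kendall_tau_distance(p, q):
    """Number of item pairs ranked in opposite order by permutations p and q."""
    pos_p = {item: i for i, item in enumerate(p)}
    pos_q = {item: i for i, item in enumerate(q)}
    return sum(
        1
        for a, b in combinations(p, 2)
        if (pos_p[a] - pos_p[b]) * (pos_q[a] - pos_q[b]) < 0
    )

def mallows_kernel(p, q, lam=0.5):
    """Similarity between two orderings; equals 1.0 when they are identical."""
    return math.exp(-lam * kendall_tau_distance(p, q))

# Hypothetical order-of-addition experiment with four reagents.
base = ["A", "B", "C", "D"]
swapped = ["A", "B", "D", "C"]      # one adjacent swap
reverse = ["D", "C", "B", "A"]      # fully reversed

for other in (base, swapped, reverse):
    d = kendall_tau_distance(base, other)
    print("".join(other), "distance:", d, "kernel:", round(mallows_kernel(base, other), 4))
```

A GP built on this kernel treats orderings that differ by a few swaps as strongly correlated, so an observation for one sequence informs predictions for its near neighbors.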

Experimental Protocol: High-Dimensional Parameterization of a Coarse-Grained Model

This protocol details the methodology for using BO to parameterize a high-dimensional coarse-grained (CG) molecular model, as demonstrated for the copolymer Pebax-1657 [32].

1. Problem Formulation and Objective Definition

- System: Define the molecular system to be modeled (e.g., Pebax-1657 with 50 polymer chains in an amorphous configuration) [32].

- Objective Function: Formulate an objective function that quantifies the discrepancy between the CG model's predictions and reference data. This is often a weighted sum of relative errors. For example [32]:

- Target 1: Density from atomistic simulations.

- Target 2: Radius of gyration.

- Target 3: Glass transition temperature.

- Parameters: Identify all parameters of the CG force field to be optimized simultaneously. In the referenced study, this involved 41 parameters [32].
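
A weighted sum of relative errors, as described in the objective function step above, might look like the following sketch. The property names, reference values, and weights are placeholders chosen for easy arithmetic and are not the values used in [32].

```python
def cg_objective(predicted, reference, weights):
    """Weighted sum of relative errors between CG predictions and reference data."""
    return sum(
        weights[name] * abs(predicted[name] - reference[name]) / abs(reference[name])
        for name in reference
    )

# Placeholder targets and weights (illustrative only).
reference = {"density": 1.00, "radius_of_gyration": 20.0, "glass_transition_T": 200.0}
weights = {"density": 1.0, "radius_of_gyration": 1.0, "glass_transition_T": 2.0}

# Properties computed from one hypothetical CG simulation.
predicted = {"density": 1.05, "radius_of_gyration": 18.0, "glass_transition_T": 210.0}

score = cg_objective(predicted, reference, weights)
print(f"objective = {score:.3f}")  # 0.05 + 0.10 + 2 * 0.05
```

Using relative errors keeps properties with very different units and magnitudes on a comparable scale, while the weights encode which targets matter most.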

2. Bayesian Optimization Setup

- Surrogate Model: Select a Gaussian Process (GP) model. For high-dimensional problems (like 41 parameters), use the SAASBO prior to induce sparsity [32] [28].

- Acquisition Function: Choose an acquisition function such as Expected Improvement (EI) or Upper Confidence Bound (UCB) to guide the selection of the next parameter set to evaluate [27].

- Initial Sampling: Generate a small initial batch of parameter sets (e.g., 5-10) using a space-filling sampling method like Sobol sequences to build the initial GP model [28].

3. Iterative Optimization Loop

The core of the experiment is a closed-loop cycle, which can be visualized as follows:

Diagram: BO-MD Workflow. The iterative loop coupling Bayesian optimization with molecular dynamics simulations.

For each iteration in the loop:

- Run Molecular Dynamics (MD) Simulation: Evaluate the current parameter set by running an MD simulation of the CG model [32].

- Calculate Objective Function: Compute the physical properties from the simulation trajectory and evaluate the objective function by comparing them to the target properties [32].

- Update Surrogate Model: Add the new (parameters, objective value) pair to the dataset and update the GP model [32] [27].

- Propose Next Experiment: Optimize the acquisition function to identify the most promising parameter set to evaluate next [27].

- Check Convergence: Repeat until a stopping criterion is met (e.g., maximum iterations, objective value threshold, or minimal improvement over several iterations).
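
The loop above can be sketched end to end with a small hand-written GP and Expected Improvement. Several simplifications are assumed: the MD simulation and objective are replaced by a cheap placeholder function, the GP uses a fixed RBF kernel rather than the SAASBO prior, the initial design is uniform random rather than Sobol, and the acquisition function is maximized over random candidates. It is a toy illustration of the cycle, not the setup used in [32].

```python
import numpy as np
from math import erf, sqrt, pi

def rbf_kernel(A, B, lengthscale=0.2):
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / lengthscale ** 2)

def gp_posterior(X, y, X_new, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean, unit-variance GP."""
    K = rbf_kernel(X, X) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    K_s = rbf_kernel(X, X_new)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)

def expected_improvement(mean, std, best):
    """EI for minimisation: expected amount by which a point beats `best`."""
    z = (best - mean) / std
    cdf = 0.5 * (1.0 + np.vectorize(erf)(z / sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / sqrt(2.0 * pi)
    return (best - mean) * cdf + std * pdf

def run_simulation_and_score(params):
    """Placeholder for 'run MD, compute properties, evaluate objective'."""
    return float(np.sum((params - 0.3) ** 2))

rng = np.random.default_rng(4)
X = rng.random((5, 2))                               # initial design (uniform random here)
y = np.array([run_simulation_and_score(x) for x in X])
initial_best = y.min()

for iteration in range(15):
    y_scaled = (y - y.mean()) / (y.std() + 1e-12)    # match the GP's unit-variance prior
    candidates = rng.random((500, 2))
    mean, std = gp_posterior(X, y_scaled, candidates)            # update surrogate
    ei = expected_improvement(mean, std, y_scaled.min())
    x_next = candidates[np.argmax(ei)]                           # propose next experiment
    X = np.vstack([X, x_next])                                   # evaluate and store
    y = np.append(y, run_simulation_and_score(x_next))

print(f"best objective: {initial_best:.4f} -> {y.min():.4f}")
```

In practice a maintained library would handle kernel hyperparameter fitting and acquisition optimization; the point of the sketch is only to show how the five steps connect.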

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential computational "reagents" and their functions for implementing Bayesian Optimization in chemistry and materials science research.

Table: Essential Components for a Bayesian Optimization Framework

| Component / Tool | Function | Example Use-Case |

|---|---|---|

| Sparse Axis-Aligned Subspace BO (SAASBO) | A BO algorithm that uses a sparsity-inducing prior to efficiently handle high-dimensional parameter spaces (>20 parameters) [28]. | Optimizing 23 hyperparameters of a deep learning model for materials property prediction [28]. |

| Gaussian Process (GP) Surrogate Model | A probabilistic model that approximates the unknown objective function and provides predictions with uncertainty estimates [27]. | Modeling the relationship between formulation parameters and tablet tensile strength [31]. |

| Expected Improvement (EI) Acquisition Function | A criterion that selects the next point to evaluate by balancing the potential value of a point (exploitation) and the uncertainty of the model (exploration) [27]. | Suggesting the next set of conditions for a pharmaceutical reaction to maximize yield [33]. |

| Adaptive Experimentation (Ax) Platform | An open-source framework for designing and optimizing experiments, including implementations of SAASBO and other BO algorithms [28]. | Serving as the backbone for a self-driving lab platform to automate materials discovery [28]. |

| Merge Kernel | A scalable kernel function for permutation spaces, derived from merge sort, with O(n log n) complexity [29]. | Optimizing the sequence of operations in an automated chemical synthesis pipeline [29]. |

Frequently Asked Questions (FAQs)

1. Why does my Aquila Optimizer (AO) algorithm converge prematurely on high-dimensional chemical data?

The standard Aquila Optimizer can struggle with narrow exploration capabilities and a tendency to converge prematurely to local optima when dealing with high-dimensional optimization problems, which is common in complex chemical equilibrium scenarios [34]. This often manifests as the algorithm returning the same suboptimal solution across multiple independent runs.

2. How can I improve Manta Ray Foraging Optimization (MRFO) performance for parameter estimation in photovoltaic models?

The standard MRFO uses a fixed somersault factor and relies solely on the current best solution during the somersault foraging phase. This can lead to premature convergence. Enhancements like an adaptive somersault factor and a hierarchical guidance mechanism have shown significant improvements, achieving up to a 97.62% success rate in parameter estimation for complex photovoltaic models [35].

3. My Chameleon Swarm Algorithm (CSA) is trapped in local optima during feature selection for medical data. What can I do?

The CSA is susceptible to local optima entrapment due to insufficient diversity and an imbalance between its exploitation and exploration phases [36] [37]. This is a common issue when dealing with high-dimensional feature selection problems in medical datasets. Incorporating a randomized control parameter based on Lévy flight can help avoid stagnation and early convergence [37].

4. What is a key strategy to balance exploration and exploitation in these algorithms?

A widely used and effective strategy is Opposition-Based Learning (OBL). OBL enhances population diversity by simultaneously evaluating current solutions and their opposites, which helps achieve a better balance between exploring new regions and exploiting promising areas of the search space [34].
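
In its basic form, the opposite of a solution x within bounds [lower, upper] is lower + upper - x. The sketch below applies one such step to a random population and keeps the best half of the combined set. The selection rule and the toy objective are illustrative choices, not the specific variant used in [34].

```python
import numpy as np

def opposition_based_step(population, lower, upper, fitness):
    """Evaluate each solution and its opposite; keep the best half of the union."""
    opposite = lower + upper - population
    combined = np.vstack([population, opposite])
    scores = np.array([fitness(x) for x in combined])
    keep = np.argsort(scores)[: len(population)]
    return combined[keep], scores[keep]

def sphere(x):
    """Toy objective with its minimum at x = 2 in every dimension."""
    return float(np.sum((x - 2.0) ** 2))

rng = np.random.default_rng(5)
lower, upper = -5.0, 5.0
population = rng.uniform(lower, upper, size=(20, 10))
before = min(sphere(x) for x in population)

population, scores = opposition_based_step(population, lower, upper, sphere)
print(f"best fitness before: {before:.2f}  after: {scores[0]:.2f}")
```

Because the original solutions remain in the candidate pool, the best fitness can never get worse after the step, while the opposites give the search a chance to land in regions the current population does not cover.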

5. Can hybridizing two algorithms benefit the optimization of complex chemical equilibrium problems?

Yes. Hybridization can create a synergetic interaction that compensates for the individual deficiencies of each algorithm. For instance, integrating the Sine-Cosine Optimizer into the Aquila Optimizer has been shown to overcome exploitative limitations and effectively solve highly nonlinear and non-convex free energy surfaces in chemical equilibrium problems [38].

Troubleshooting Guides

Issue 1: Premature Convergence in High-Dimensional Spaces

Symptoms: The algorithm stagnates early, returning a local optimum instead of the global solution. The population diversity drops rapidly within the first few iterations.

Solution A: Integrate Enhanced Exploration Strategies

- Opposition-Based Learning (OBL): Generate opposite positions for a subset of the population to maintain diversity [34].

- Mutation Search Strategy (MSS): Introduce random mutations to explore new search regions and escape local optima [34].

- Lévy Flight: Incorporate Lévy flight to promote larger, more diverse jumps in the search space [35] [36].
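As a rough sketch of the Lévy flight component listed above, heavy-tailed step lengths are often drawn with Mantegna's algorithm. The cited papers may use a different variant; the stability index `beta = 1.5`, the step `scale`, and the function names here are assumptions chosen for illustration.

```python
import math
import random


def levy_sigma(beta):
    """Scale of the numerator Gaussian in Mantegna's algorithm."""
    num = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    den = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    return (num / den) ** (1 / beta)


def levy_step(beta=1.5, rng=random):
    """Draw one Levy-distributed step: u / |v|^(1/beta)."""
    u = rng.gauss(0.0, levy_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # guard against division by zero
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1 / beta)


def levy_perturb(position, lower, upper, scale=0.01, beta=1.5, rng=random):
    """Move each coordinate by a scaled Levy step, clipped to the bounds."""
    moved = []
    for x, lo, hi in zip(position, lower, upper):
        step = scale * (hi - lo) * levy_step(beta, rng)
        moved.append(min(max(x + step, lo), hi))
    return moved


rng = random.Random(42)
steps = [levy_step(rng=rng) for _ in range(1000)]
candidate = levy_perturb([0.0, 0.0], [-5.0, -5.0], [5.0, 5.0], rng=rng)
```

Most draws are small, but occasional very large steps appear; these rare long jumps are what help a stagnating population leave a local basin.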

Solution B: Implement Adaptive Parameters

- Replace fixed parameters with adaptive ones. For MRFO, use a nonlinear cosine adjustment parameter or an adaptive somersault factor to dynamically balance global and local search based on the iteration count [35] [39].
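To make the idea of an adaptive somersault factor concrete, the sketch below replaces the fixed factor of standard MRFO with a cosine schedule that decays over the iterations. The schedule, its bounds `s_min` and `s_max`, and the function names are illustrative assumptions; they are not the formulas used in [35] or [39]. The update rule follows the commonly stated MRFO somersault step, `x + S * (r2 * best - r3 * x)`.

```python
import math
import random


def somersault_factor(iteration, max_iter, s_min=0.5, s_max=2.0):
    """Cosine decay from s_max (iteration 0) to s_min (iteration max_iter).

    Hypothetical schedule: large early values favour global search,
    small late values favour local refinement.
    """
    progress = iteration / max_iter
    return s_min + (s_max - s_min) * 0.5 * (1.0 + math.cos(math.pi * progress))


def somersault_update(position, best, factor, rng=random):
    """Somersault foraging step around the current best solution."""
    r2, r3 = rng.random(), rng.random()
    return [x + factor * (r2 * b - r3 * x) for x, b in zip(position, best)]


max_iter = 100
factors = [somersault_factor(t, max_iter) for t in range(max_iter + 1)]
rng = random.Random(1)
moved = somersault_update([1.0, -2.0], [0.5, 0.5], factors[10], rng)
```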

Table: Strategy Performance for Premature Convergence

| Strategy | Key Mechanism | Reported Performance Improvement |
|---|---|---|
| Opposition-Based Learning (OBL) | Enhances solution diversity by evaluating opposites | Achieved best average ranking of 1.625 in clustering problems [34] |
| Lévy Flight | Enables long-range, exploratory jumps | Integral part of improved hybrid algorithms [38] [36] |
| Adaptive Somersault Factor (MRFO) | Dynamically balances exploration/exploitation | Achieved 73.15% average win rate on CEC2017 benchmarks [35] |

Issue 2: Poor Solution Accuracy and Slow Convergence

Symptoms: The algorithm fails to find a solution close to the known optimum, or it takes an impractically long time to converge, especially with complex, non-convex objective functions.

Solution A: Employ Hybrid Algorithms

- Combine the strengths of two algorithms. A Lévy flight-assisted hybrid Sine-Cosine Aquila optimizer (AQSCA) has been developed to address the exploitative limitations of the standard AO, showing superior accuracy in solving chemical equilibrium problems through Gibbs free energy minimization [38].

Solution B: Utilize Advanced Mutation and Learning Operators

- Fractional Derivative Mutation: Used in an enhanced MRFO (NIFMRFO) to continually improve individual quality, increasing population diversity and search precision [39].

- Consumption Operator from AEO: Integrating this operator from the Artificial Ecosystem-Based Optimization algorithm into the CSA (creating mCSA) can significantly boost its global search strategy [37].

Table: Enhanced Algorithm Performance on Benchmark Problems

| Algorithm | Key Enhancement | Test Domain | Result |
|---|---|---|---|
| LOBLAO (Enhanced AO) | Opposition-Based Learning, Mutation Search Strategy | Benchmark functions & data clustering | Outperformed original AO and state-of-the-art algorithms [34] |
| HGMRFO (Enhanced MRFO) | Hierarchical guidance, adaptive somersault factor | CEC2017 benchmark functions | Average win rate of 73.15% [35] |
| mCSAMWL (Enhanced CSA) | Morlet wavelet mutation, Lévy flight | 97 benchmark functions & engineering problems | Superior for unimodal and multimodal functions [36] |

Issue 3: Population Diversity Breakdown

Symptoms: The individuals in the population become very similar to each other, halting progress and limiting the exploration of the search space.

Solution A: Introduce Hierarchical and Interaction Mechanisms

- Hierarchical Guidance Mechanism: This structures the population into layers, guiding individual searches through layered interactions rather than just following the global best. This prevents over-reliance on a single leader and preserves diversity [35].

Solution B: Apply Chaos and Randomization Techniques

- Chaotic Maps: Use chaotic numbers (e.g., from Ikeda Map) instead of uniformly distributed random numbers to initialize the population and control parameters, fostering better stochastic behavior [38].

- Information Interaction Strategy: In MRFO, an information interaction strategy among random individuals can help share knowledge across the population, speeding up convergence and maintaining diversity [39].
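Chaotic initialization can be illustrated with a short sketch. Note the substitution: instead of the Ikeda map mentioned above, this example uses the simpler one-dimensional logistic map `x <- 4x(1 - x)`, which is a common choice for chaotic sequences on [0, 1]. The seed value `x0 = 0.7` and the function names are assumptions for illustration.

```python
def logistic_sequence(n, x0=0.7):
    """Return n values of the logistic map x <- 4x(1 - x), all in [0, 1]."""
    values = []
    x = x0
    for _ in range(n):
        x = 4.0 * x * (1.0 - x)
        values.append(x)
    return values


def chaotic_population(pop_size, dim, lower, upper, x0=0.7):
    """Build a population by scaling a chaotic sequence into the bounds.

    Replaces uniform random draws with logistic-map values.
    """
    chaos = logistic_sequence(pop_size * dim, x0)
    population = []
    for i in range(pop_size):
        row = chaos[i * dim:(i + 1) * dim]
        population.append([lo + c * (hi - lo)
                           for c, lo, hi in zip(row, lower, upper)])
    return population


lower, upper = [-5.0] * 3, [5.0] * 3
population = chaotic_population(4, 3, lower, upper)
```

The sequence is deterministic for a given `x0`, so runs are reproducible; seeds that map onto fixed points of the logistic map (for example 0, 0.5, 0.75, or 1) should be avoided.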

Experimental Protocols for Performance Validation

Protocol 1: Benchmarking with Standard Test Functions

Objective: To quantitatively evaluate the robustness, convergence speed, and accuracy of a metaheuristic algorithm.

Methodology:

- Select Benchmark Suites: Use recognized standard test functions from IEEE CEC2017, CEC2019, or CEC2020 [34] [35] [37]. These suites include unimodal, multimodal, hybrid, and composition functions.

- Define Algorithm Configurations: Test the standard algorithm alongside its enhanced versions (e.g., AO vs. LOBLAO).

- Set Experimental Parameters: Run each algorithm over multiple independent runs (e.g., 30 runs) to account for stochasticity. Use a fixed population size and a maximum number of iterations (e.g., 500 iterations) [40].

- Metrics: Record the mean fitness, standard deviation, and convergence curves, and perform statistical tests such as the Wilcoxon rank-sum test and Friedman's mean rank test [34] [36] [39].
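The protocol above can be sketched as a small benchmarking harness. This is a simplified illustration under stated assumptions: the sphere function stands in for a CEC test function, a plain random search stands in for the metaheuristic under test, and the names `benchmark` and `random_search` are hypothetical. The run count (30) and iteration budget (500) follow the example values given in the protocol.

```python
import random
import statistics


def sphere(x):
    """Stand-in unimodal test function (not a CEC suite function)."""
    return sum(v * v for v in x)


def random_search(objective, dim, lower, upper, max_iter, rng):
    """Placeholder optimizer: returns best fitness and convergence curve."""
    best = float("inf")
    curve = []
    for _ in range(max_iter):
        candidate = [rng.uniform(lower, upper) for _ in range(dim)]
        best = min(best, objective(candidate))
        curve.append(best)
    return best, curve


def benchmark(optimizer, objective, dim=5, lower=-100.0, upper=100.0,
              runs=30, max_iter=500, base_seed=0):
    """Run independent seeded trials and summarize final fitness."""
    finals, curves = [], []
    for run in range(runs):
        rng = random.Random(base_seed + run)  # one seed per run
        best, curve = optimizer(objective, dim, lower, upper, max_iter, rng)
        finals.append(best)
        curves.append(curve)
    return {"mean": statistics.mean(finals),
            "std": statistics.stdev(finals),
            "finals": finals,
            "curves": curves}


report = benchmark(random_search, sphere)
```

The per-run final values in `report["finals"]` are what a pairwise significance test would consume; if SciPy is available, `scipy.stats.ranksums` and `scipy.stats.friedmanchisquare` implement the Wilcoxon rank-sum and Friedman tests named in the protocol.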

Protocol 2: Evaluating on Real-World Engineering and Scientific Problems

Objective: To assess the practical applicability of the algorithm.

Methodology:

- Problem Selection:

  - Chemical Equilibrium: Formulate the problem as the minimization of Gibbs Free Energy, a highly non-linear and non-convex challenge [38].

  - Photovoltaic Parameter Estimation: Estimate unknown parameters for six multimodal photovoltaic models to match experimental current-voltage characteristics [35].

  - Data Clustering: Use the algorithm to minimize the within-cluster sum of squares for datasets [34].

  - Feature Selection: Maximize classification accuracy while minimizing the number of selected features on UCI datasets [37].

- Comparison: Compare results against state-of-the-art algorithms and known solutions.

- Metrics: For chemical problems, measure the consistency of finding the minimum objective value. For PV models, use success rate. For feature selection, use accuracy and number of features [35] [37].
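As one concrete instance of the problem formulations above, the data clustering objective can be written as a fitness function that a metaheuristic minimizes directly. The sketch assumes each candidate solution is a flat vector encoding `k` centroids; the encoding and the function names are assumptions for illustration, not taken from [34].

```python
def decode_centroids(flat, dim):
    """Split a flat solution vector into centroids of length 'dim'."""
    return [flat[i:i + dim] for i in range(0, len(flat), dim)]


def wcss(flat_solution, points, dim):
    """Within-cluster sum of squares.

    Each point is assigned to its nearest centroid, and the squared
    Euclidean distances are summed. Lower is better.
    """
    centroids = decode_centroids(flat_solution, dim)
    total = 0.0
    for p in points:
        total += min(sum((a - b) ** 2 for a, b in zip(p, c))
                     for c in centroids)
    return total


points = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
good = wcss([0.0, 1.0, 10.0, 1.0], points, dim=2)   # centroids at cluster means
poor = wcss([5.0, 1.0, 5.0, 1.0], points, dim=2)    # both centroids in the middle
```

With this encoding the search space has `k * dim` continuous dimensions, so the dimensionality of the optimization problem grows with both the number of clusters and the number of features.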

The Scientist's Toolkit: Essential Algorithmic Components

Table: Key Research Reagent Solutions for Metaheuristic Algorithms

| Research Reagent | Function & Explanation |
|---|---|
| Opposition-Based Learning (OBL) | A strategy to enhance population diversity by considering the opposite of current solutions, aiding in balancing exploration and exploitation [34]. |
| Lévy Flight | A random walk strategy that occasionally generates long steps, facilitating escape from local optima and improving global exploration [35] [36]. |
| Adaptive Parameter Control | Dynamically adjusts algorithm parameters (e.g., somersault factor) during the search to automatically balance exploration and exploitation based on progress [35] [39]. |
| Chaotic Maps | Generates chaotic sequences for initialization and parameter control, introducing high-level randomness to improve search efficiency [38]. |
| Mutation Strategies (MSS, Wavelet) | Introduces controlled random changes to solutions, preventing premature convergence and helping to explore new regions of the search space [34] [36]. |

Workflow and Signaling Diagrams

[Diagram: Metaheuristic Enhancement and Validation Workflow]

[Diagram: High-Dimensional Optimization Challenge and Solution Strategy]

Frequently Asked Questions (FAQs)

Q1: My stacked autoencoder model for chemical data is overfitting. What are the primary strategies to improve generalization?

Overfitting in stacked autoencoders is commonly addressed through several techniques. Regularization methods such as L1 regularization applied to the activity of hidden layers can help prevent overfitting by encouraging sparsity in the learned representations [41]. Using a semi-supervised autoencoder (SSAE) architecture, where a classifier is attached to the latent space and trained simultaneously with the autoencoder, can enhance feature extraction specifically for your classification task and lead to denser, more separable cluster distributions in the latent space [42]. Furthermore, integrating adaptive optimization methods like Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO) can automatically find hyperparameter values that balance model complexity and prevent overfitting or underfitting [43].

Q2: What are the primary hyperparameters to tune in a stacked autoencoder, and how do they impact performance?

The performance of a stacked autoencoder is highly sensitive to its architecture and training hyperparameters. The most impactful ones are summarized in the table below.

Table 1: Key Hyperparameters for Stacked Autoencoders

| Hyperparameter | Impact on Model Performance | Recommended Tuning Approach |
|---|---|---|
| Number of Layers & Neurons | Controls model capacity and feature hierarchy; too many can cause overfitting [41]. | Use optimization algorithms like Cultural Algorithm or HSAPSO [44] [43]. |
| Learning Rate | Governs convergence speed and stability; inappropriate values prevent the model from finding an optimal solution [44]. | Adaptive learning rate strategies or Bayesian Optimization [44] [45]. |
| Activation Function | Introduces non-linearity; 'relu' is common for hidden layers [41]. | Consider 'sigmoid' for output layer to match normalized input data [41]. |
| Regularization Factor | Prevents overfitting by penalizing large weights [44] [41]. | Tune via global optimization methods to find the right penalty strength [44]. |

Q3: How can I handle high-dimensional, sparse chemical data like FTIR spectra or transcriptional profiles with autoencoders?

For high-dimensional, sparse data, standard autoencoders may struggle to extract meaningful features. A Mahalanobis distance metric can be incorporated into the autoencoder's loss function. This method focuses on reducing the difference between the original data distribution and the reconstructed distribution, which improves the linear separability of the extracted features in the latent space [46]. Another powerful approach is multi-view or multimodal learning, which integrates diverse data sources (e.g., SMILES, knowledge graphs, transcriptional profiles) into a unified model. Techniques like adaptive modality dropout can dynamically regulate the contribution of each data source during training, preventing dominant but less informative modalities from overwhelming the learning process [47] [48].

Troubleshooting Guides

Issue: Poor Feature Extraction and Reconstruction Accuracy

Symptoms

- High reconstruction loss on both training and validation sets.

- The extracted features perform poorly in downstream tasks (e.g., drug classification).

Diagnosis and Resolution

- Check Model Architecture:

  - Problem: The autoencoder may be too shallow to capture the complex, non-linear relationships in chemical data.

  - Solution: Implement a deeper, stacked architecture. For example, one successful protocol uses three autoencoders stacked sequentially, where the input to each subsequent autoencoder is the concatenation of the input and output of the previous one. This has been shown to achieve approximately 90% reconstruction accuracy on complex signals [41].

- Verify Training Procedure:

  - Problem: Inadequate training due to an improper loss function or optimizer.

  - Solution: Use a loss function suitable for your data, such as Mean Squared Error (MSE), and optimizers like Adam [41]. For an enhanced approach, use a joint loss function as in semi-supervised autoencoders, which combines reconstruction loss (MSE) and classification loss (Categorical Cross-Entropy). This ensures both accurate reconstruction and latent features that are discriminative for the target task [42].

  - Solution: For hyperparameter tuning, employ advanced global optimization methods. A novel method based on the Cultural Algorithm, a multi-island scheme, and parallelism has been demonstrated to effectively escape local optima and find near-optimal hyperparameters in a large search space [44].
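The joint loss used by semi-supervised autoencoders can be sketched for a single sample as follows. The source states only that reconstruction (MSE) and classification (categorical cross-entropy) losses are combined; the additive form with a weighting term `cls_weight` is an assumption for illustration, as are the function names. In practice this would be computed on batches inside a deep learning framework rather than in plain Python.

```python
import math


def mse(x, x_hat):
    """Mean squared reconstruction error for one sample."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def categorical_cross_entropy(y_true, y_prob, eps=1e-12):
    """Cross-entropy between a one-hot label and predicted probabilities."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(y_true, y_prob))


def joint_loss(x, x_hat, y_true, y_prob, cls_weight=1.0):
    """Reconstruction loss plus weighted classification loss.

    cls_weight is a hypothetical hyperparameter controlling how strongly
    the latent space is pushed toward class separability.
    """
    return mse(x, x_hat) + cls_weight * categorical_cross_entropy(y_true, y_prob)


# One sample: imperfect reconstruction, uncertain two-class prediction.
loss = joint_loss(x=[1.0, 0.0], x_hat=[0.0, 0.0],
                  y_true=[1.0, 0.0], y_prob=[0.5, 0.5], cls_weight=2.0)
```

Because both terms backpropagate through the shared encoder, the weighting becomes one more hyperparameter to tune alongside those discussed above.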

Issue: Model Fails to Converge or Training is Unstable

Symptoms

- Training loss fluctuates wildly or does not decrease over epochs.

- The model produces nonsensical or highly noisy outputs.

Diagnosis and Resolution

- Inspect Learning Dynamics: