Navigating the Unknown: Advanced Computational Strategies for Deep Uncertainty in Drug Development

Abstract

This article provides a comprehensive guide to computational strategies for decision-making under deep uncertainty (DMDU), tailored for researchers and professionals in drug development. It explores the foundational principles of DMDU, where system models and outcome probabilities cannot be agreed upon. The piece delves into specific methodological approaches like exploratory modeling and adaptive planning, alongside modern computational techniques such as deep active optimization. It addresses common troubleshooting and optimization challenges, including managing high-dimensional data and escaping local optima. Finally, it offers frameworks for the rigorous validation and comparative analysis of these models, synthesizing key takeaways to enhance robustness and efficiency in biomedical research and clinical decision-making.

Understanding Deep Uncertainty: Core Concepts and Imperatives for Biomedical Research

FAQ: What is Deep Uncertainty and How Does it Differ from Traditional Risk?

A: Deep uncertainty exists when decision-makers and stakeholders cannot agree on or determine:

- A single, "best" model structure to describe the system.

- The probability distributions that represent key uncertainties within the models.

- The relative importance (weights) of different performance objectives or outcomes [1].

This contrasts sharply with traditional risk analysis, where these elements are assumed to be known or can be reliably estimated. In conditions of deep uncertainty, the standard practice of creating a "best estimate" model and using it to find an "optimal" policy is not just unreliable but potentially dangerous, as such policies may perform very poorly under conditions not captured by that single model [1]. This is a common challenge when modeling complex adaptive systems, such as those involving interacting adaptive agents, where perpetual novelty is an inherent feature [1].

The Scientist's Toolkit: Essential Methods for Deep Uncertainty Research

The following table summarizes key methodological approaches for conducting research under deep uncertainty.

| Method / Reagent | Primary Function & Application |
|---|---|
| Exploratory Modeling & Analysis (EMA) | A computational technique that runs simulation models over a wide ensemble of plausible assumptions and scenarios, rather than a single best estimate. It helps map the decision landscape and test policy robustness [1]. |
| Robust Decision Making (RDM) | A framework that uses computer models to systematically identify policy options that perform adequately across a vast range of future scenarios, even if not optimally in any single one [2] [3]. |
| Vulnerability Analysis | An emerging technique that applies machine learning to large ensembles of simulation runs to discover the concise conditions (scenarios) that lead to critical, decision-relevant outcomes [4] [2]. |
| Ensemble Modeling | Using a collection of alternative plausible models to capture more information about the system than any single model can. This can include ensembles of model structures, parameters, or futures [1]. |
| Censored Regression (e.g., Tobit Model) | A statistical tool from survival analysis adapted for uncertainty quantification. It allows models to learn from censored data (observations known only to be above or below a certain threshold), which is common in pharmaceutical experiments [5]. |
| Minimax Regret (MMR) | A decision criterion from formal decision theory that selects the policy which minimizes the maximum "regret" (the difference between the outcome of a chosen policy and the best possible outcome) across all considered scenarios [6]. |

Detailed Experimental Protocols

Protocol 1: Designing an Exploratory Modeling & Analysis (EMA) Experiment

This protocol is used to stress-test policies or hypotheses against a wide range of deeply uncertain futures [1].

- Define the System and Objectives: Clearly state the system of interest and the key performance metrics (e.g., efficacy, cost, safety) by which strategies will be judged.

- Develop an Ensemble of Models: Instead of one model, create a set of models that represent contested structural assumptions (e.g., different agent behaviors, disease progression mechanisms).

- Specify the Uncertain Input Space: Identify all key uncertain parameters. For those with contested probabilities, define plausible ranges instead of fixed distributions.

- Generate Scenario Ensembles: Use techniques like Latin Hypercube sampling to generate a large number of scenarios, each combining different model structures and parameter values from the defined ranges.

- Run Simulations: Execute the entire ensemble of models across the thousands of generated scenarios.

- Analyze for Robustness: Use statistical and visualization tools (e.g., scenario discovery, policy landscapes) to identify policies that perform well across many scenarios and to pinpoint the conditions (vulnerabilities) under which they fail [4].
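The steps of Protocol 1 can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the two dose-response functions, the parameter names `potency` and `tox_rate`, their ranges, and the satisficing threshold are all hypothetical stand-ins for a real model ensemble.

```python
import random

def latin_hypercube(ranges, n_samples, seed=0):
    """Draw n_samples points; each parameter's range is split into
    n_samples equal strata and every stratum is used exactly once."""
    rng = random.Random(seed)
    columns = {}
    for name, (low, high) in ranges.items():
        # One random point inside each stratum, then shuffle the strata order
        points = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(points)
        columns[name] = [low + p * (high - low) for p in points]
    return [{name: columns[name][i] for name in ranges} for i in range(n_samples)]

# Hypothetical structural alternatives: two contested dose-response shapes
def linear_response(dose, potency):
    return min(1.0, potency * dose)

def saturating_response(dose, potency):
    return potency * dose / (1.0 + potency * dose)

MODELS = {"linear": linear_response, "saturating": saturating_response}

def robustness(dose, scenarios, threshold=0.5):
    """Fraction of (model, scenario) pairs in which efficacy net of a
    toxicity penalty meets the satisficing threshold."""
    hits, total = 0, 0
    for model in MODELS.values():
        for s in scenarios:
            score = model(dose, s["potency"]) - s["tox_rate"] * dose
            hits += score >= threshold
            total += 1
    return hits / total

# Plausible ranges instead of probability distributions (assumed values)
ranges = {"potency": (0.5, 3.0), "tox_rate": (0.0, 0.2)}
scenarios = latin_hypercube(ranges, n_samples=500)
for dose in (0.5, 1.0, 2.0):
    print(f"dose={dose}: robust in {robustness(dose, scenarios):.0%} of cases")
```

Comparing the robustness fraction across candidate doses, rather than optimizing against one best-estimate model, is the point of the exercise; a real study would replace the toy models with the simulation ensemble from step 2.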

Protocol 2: Quantifying Uncertainty with Censored Data in Drug Discovery

This protocol enhances uncertainty quantification (UQ) in preclinical research where experimental labels are often censored [5].

- Data Preparation and Censoring Identification:

  - Collect experimental data (e.g., from pharmaceutical assays for quantitative structure-activity relationships).

  - Identify and label censored data points. These are values not known precisely but known to be above (right-censored) or below (left-censored) a detection threshold.

- Model Selection and Adaptation:

  - Select a base model for UQ (e.g., ensemble method, Bayesian neural network, Gaussian process).

  - Adapt the model's loss function using the Tobit model framework to incorporate the information from censored labels, rather than treating them as missing data.

- Model Training and Validation:

  - Train the adapted model on the dataset containing both precise and censored values.

  - Use temporal validation techniques, where the model is trained on past data and tested on more recent data, to assess performance under potential distribution shifts [5].

- Uncertainty Propagation and Decision Support:

  - Generate predictions that include a confidence interval, reflecting the total uncertainty from both precise and censored data.

  - Use these calibrated uncertainty estimates to inform decisions on which drug candidates to prioritize for further experimental testing.

Diagram: Censored Data UQ Workflow
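The loss adaptation described in Protocol 2 can be illustrated with a Tobit-style negative log-likelihood for a single label. The sketch assumes the base model outputs a Gaussian predictive mean `mu` and standard deviation `sigma`; the function name `tobit_nll` and the `censor` labels are illustrative choices, not taken from [5].

```python
import math

def _log_norm_pdf(z):
    return -0.5 * z * z - 0.5 * math.log(2.0 * math.pi)

def _log_norm_cdf(z):
    # erfc keeps more precision in the lower tail than 1 + erf;
    # the floor avoids log(0) for extreme z
    return math.log(max(0.5 * math.erfc(-z / math.sqrt(2.0)), 1e-300))

def tobit_nll(y, mu, sigma, censor="none"):
    """Negative log-likelihood of one label under N(mu, sigma^2).
    censor: 'none'  -> y was measured exactly
            'right' -> the true value is only known to be >= y
            'left'  -> the true value is only known to be <= y"""
    z = (y - mu) / sigma
    if censor == "none":
        return -(_log_norm_pdf(z) - math.log(sigma))
    if censor == "right":
        return -_log_norm_cdf(-z)  # -log P(Y >= y)
    if censor == "left":
        return -_log_norm_cdf(z)   # -log P(Y <= y)
    raise ValueError(f"unknown censoring type: {censor}")

# Hypothetical assay labels: (value, censoring type)
labels = [(6.2, "none"), (5.0, "left"), (9.0, "right")]
total = sum(tobit_nll(y, mu=6.0, sigma=1.0, censor=c) for y, c in labels)
print(f"total NLL = {total:.3f}")
```

A censored label contributes the probability mass on the permitted side of the threshold instead of a point density, so a prediction that already lies well inside the permitted region incurs almost no penalty.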

Quantitative Data & Benchmarks

Uncertainty Benchmarks in Preclinical Scaling

The table below summarizes typical prediction uncertainties for key human pharmacokinetic (PK) parameters when scaled from preclinical data, a common source of deep uncertainty in drug discovery [7].

| PK Parameter | Common Prediction Methods | Typical Uncertainty (Fold Error) | Key Notes & Sources |
|---|---|---|---|
| Clearance (CL) | Allometric scaling, In vitro-in vivo extrapolation (IVIVE) | ~3-fold | Best allometric methods predict only ~60% of compounds within 2-fold of human value; IVIVE success rates vary widely (20-90%) [7]. |
| Volume of Distribution at Steady State (Vss) | Allometric scaling, Oie-Tozer equation | ~3-fold | Dependent on physicochemical properties; allometry can be reasonable due to physiological basis [7]. |
| Oral Bioavailability (F) | Biopharmaceutics Classification System (BCS), Physiologically Based PK (PBPK) modeling | Highly variable by BCS class | High uncertainty for low-solubility/low-permeability drugs (BCS II-IV); species differences in gut physiology are a major source of uncertainty [7]. |
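For readers who want to compute the fold-error metric used in the table, a minimal sketch follows. It uses the common symmetric definition (the larger of predicted/observed and observed/predicted); the clearance values are made up purely for illustration.

```python
def fold_error(predicted, observed):
    """Symmetric fold error: always >= 1, where 1 means a perfect prediction."""
    return max(predicted / observed, observed / predicted)

def fraction_within(pairs, fold=2.0):
    """Share of (predicted, observed) pairs whose fold error is <= fold."""
    errors = [fold_error(p, o) for p, o in pairs]
    return sum(e <= fold for e in errors) / len(errors)

# Hypothetical (predicted, observed) human clearance values, same units
pairs = [(10, 12), (5, 20), (30, 28), (8, 3), (15, 15)]
print(f"within 2-fold: {fraction_within(pairs):.0%}")
print(f"within 3-fold: {fraction_within(pairs, fold=3.0):.0%}")
```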

FAQ: How Do I Choose the Right Method for My Problem?

A: The choice of method depends on the primary sources of deep uncertainty in your research and your decision-making goal.

- Use Exploratory Modeling (EMA) and RDM when you face multiple, contested model structures and parameter futures, and your goal is to find a robust strategy that is satisfactory across many possibilities [1] [3].

- Use Vulnerability Analysis when you need to move beyond assessing robustness and want to identify the specific, critical conditions under which your system or policy fails [4].

- Use Minimax Regret (MMR) when you need a defensible decision rule for problems with high-stakes, irreversible outcomes and contested probabilities, such as climate policy or major infrastructure investments [6].

- Employ Censored Regression and advanced UQ when your primary challenge is imperfect, limited, or threshold-based data, as is common in early-stage drug discovery and clinical trials [5] [8].

Diagram: Method Selection Logic Flow
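Of the methods listed above, minimax regret is the simplest to show end to end. The sketch below assumes payoffs where higher is better; the policy names, scenario names, and payoff values are hypothetical.

```python
def minimax_regret(payoffs):
    """payoffs[policy][scenario] = outcome (higher is better).
    Returns the policy with the smallest worst-case regret and all regrets."""
    scenarios = list(next(iter(payoffs.values())))
    # Best achievable outcome in each scenario, over all policies
    best = {s: max(p[s] for p in payoffs.values()) for s in scenarios}
    max_regret = {
        name: max(best[s] - outcome[s] for s in scenarios)
        for name, outcome in payoffs.items()
    }
    choice = min(max_regret, key=max_regret.get)
    return choice, max_regret

# Hypothetical go/no-go decision with two contested efficacy scenarios
payoffs = {
    "advance_now":     {"high_efficacy": 100, "low_efficacy": -40},
    "run_extra_study": {"high_efficacy": 70,  "low_efficacy": 10},
    "stop_program":    {"high_efficacy": 0,   "low_efficacy": 0},
}
choice, regrets = minimax_regret(payoffs)
print(choice, regrets)
```

No probabilities are assigned to the scenarios, which is why the rule is attractive when likelihoods are contested; the price is sensitivity to which scenarios and policies are included in the table.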

FAQ: What are Common Pitfalls in Visualizing and Communicating Deep Uncertainty?

A: A major pitfall is relying on single, "best-estimate" forecasts or simple Monte Carlo analyses that only capture a narrow band of uncertainty. This can profoundly mislead decision-makers [1].

- Pitfall 1: The Best-Estimate Forecast. Presenting a single prediction path implies a level of certainty that does not exist in complex adaptive systems and is almost certain to be wrong [1].

- Pitfall 2: Incomplete Monte Carlo. Varying only well-understood quantitative parameters while ignoring deep structural uncertainties (e.g., model architecture, exogenous shocks) creates a false sense of security by grossly underestimating the true range of possible outcomes [1].

- Solution: Use Policy Landscapes and Scenario Discovery. Instead of hiding from divergent outcomes, exploit them. Use graphical depictions that show the pattern of policy performance across the full range of alternative assumptions. This helps decision-makers understand the vulnerabilities and trade-offs of different choices, engaging their tacit knowledge in the process [1].

In computational model research, deep uncertainty exists when researchers cannot determine the precise structure of a model, its key parameters, or the probability distributions that represent outcomes. This technical support guide addresses the three primary sources of deep uncertainty that impact computational modeling: system complexity, diverse stakeholders, and dynamic change. When working with complex biological systems, drug development pathways, or public health interventions, your models must account for multiple interacting components that create nonlinear behaviors and emergent properties. Engaging diverse stakeholders introduces varying perspectives, priorities, and knowledge systems that can significantly alter model assumptions and outcomes. Meanwhile, dynamic change ensures that both the systems you study and their regulatory contexts evolve throughout your research timeline. This guide provides practical troubleshooting advice to navigate these uncertainty sources while maintaining scientific rigor in your computational experiments.

Troubleshooting System Complexity Challenges

Frequently Asked Questions

Q: How can I determine if my model is capturing essential complexity without becoming unmanageably complicated? A: Focus on the specificity of the research question rather than on comprehensive representation. Implement the Meikirch model approach, which defines health as a balanced state across physical, emotional, social, and cognitive domains [9]. If adding components does not significantly change your key outputs, you have likely reached sufficient complexity. Use sensitivity analysis to identify parameters with minimal impact on outcomes.

Q: What strategies exist for managing high-volume, multi-source data in complex biological models? A: Implement the Complex Network Electronic Knowledge Research (CoNEKTR) model, which facilitates collaborative, real-time data use and knowledge translation across environments [9]. This approach integrates systems thinking and complexity theory through structured steps including group brainstorming, qualitative data analysis, thematic identification, and online feedback incorporation. Ensure your data infrastructure can handle the volume, velocity, and variety of inputs while maintaining data integrity.

Q: How can I address drug prescription complexity in pharmacological models? A: Account for the multiple factors influencing prescription patterns, including patient perceptions, physician financial goals, information overload, diagnostic uncertainties, and affordability constraints [9]. Incorporate clinical decision support systems that provide real-time alerts about drug interactions and dosage adjustments. Model these factors as probabilistic rather than deterministic inputs.

Diagnostic Table: System Complexity Assessment

Table 1: Protocol Complexity Tool Domains and Scoring

| Complexity Domain | Assessment Questions | Low Complexity (0) | Medium Complexity (0.5) | High Complexity (1) |
|---|---|---|---|---|
| Operational Execution | Number of procedures per visit | <5 | 5-10 | >10 |
| Regulatory Oversight | Number of regulatory bodies | 1 | 2-3 | >3 |
| Patient Burden | Visit frequency per month | <2 | 2-4 | >4 |
| Site Burden | Data points collected per patient | <100 | 100-500 | >500 |
| Study Design | Number of primary endpoints | 1 | 2 | >2 |

Source: Adapted from BMC Medical Research Methodology Protocol Complexity Tool [10]
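The scoring in Table 1 can be applied mechanically. The sketch below encodes the cutoffs from the table; the dictionary keys are invented for this example, and summing the five domain scores into one total is an assumption made here for illustration, not something the table itself specifies.

```python
# (low_cutoff, high_cutoff): below low -> 0, above high -> 1, otherwise 0.5
THRESHOLDS = {
    "procedures_per_visit": (5, 10),
    "regulatory_bodies": (2, 3),
    "visits_per_month": (2, 4),
    "data_points_per_patient": (100, 500),
    "primary_endpoints": (2, 2),
}

def score_domain(value, low, high):
    """Map a raw protocol attribute onto the 0 / 0.5 / 1 scale of Table 1."""
    if value < low:
        return 0.0
    if value > high:
        return 1.0
    return 0.5

def complexity_score(protocol):
    """Return per-domain scores and their (assumed) unweighted sum."""
    scores = {k: score_domain(protocol[k], *THRESHOLDS[k]) for k in THRESHOLDS}
    return scores, sum(scores.values())

# Hypothetical trial protocol
example = {
    "procedures_per_visit": 12,
    "regulatory_bodies": 1,
    "visits_per_month": 3,
    "data_points_per_patient": 250,
    "primary_endpoints": 2,
}
scores, total = complexity_score(example)
print(scores, total)
```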

Managing Stakeholder-Driven Uncertainty

Frequently Asked Questions

Q: Which stakeholders should I engage throughout model development? A: Engage policy-makers, researchers, community representatives, public health professionals, healthcare providers, and individuals with lived experience of the condition being modeled [11]. The specific combination depends on your model's purpose, but inclusive representation ensures contextual accuracy. Begin stakeholder mapping early in the process to identify all relevant groups.

Q: What are effective methodologies for incorporating stakeholder input during model conceptualization? A: Participatory workshops have proven most effective during problem mapping, model conceptualization, and validation phases [11] [12]. These sessions should use catalytic questions to drive generative thinking, document path dependencies, and identify emergent patterns. Supplement workshops with individual interviews to capture perspectives that might not emerge in group settings.

Q: How can I manage timeline extensions caused by stakeholder engagement processes? A: Implement a structured participatory modeling framework with clear milestones and decision points [11]. The 4P framework (Purpose, Partnership, Processes, Product) helps standardize reporting and manage expectations [11]. Allocate 15-20% additional time in project planning specifically for stakeholder engagement activities, and establish clear protocols for incorporating feedback without endless revision cycles.

Research Reagent Solutions: Stakeholder Engagement Tools

Table 2: Essential Methodological Reagents for Participatory Modeling

| Research Reagent | Primary Function | Application Context |
|---|---|---|
| Participatory Workshops | Facilitate collaborative problem mapping and model conceptualization | Early model development stages to establish structure and parameters |
| Causal Loop Diagrams | Visualize feedback mechanisms and system interactions | Understanding complex interdependencies between model components |
| 4P Framework | Standardize reporting of participatory modeling processes | Ensuring consistent methodology across research teams and timepoints |
| Stakeholder Mapping Matrix | Identify relevant stakeholders and their influence levels | Project initiation to ensure comprehensive representation |
| Transparent Consensus-Building Protocols | Resolve conflicting stakeholder perspectives | Model validation and parameter finalization phases |

Source: Adapted from BMC Public Health scoping review on stakeholder involvement [11] [12]

Addressing Dynamic Change in Computational Models

Frequently Asked Questions

Q: What is the critical distinction between "dynamics of change" and "change in dynamics"? A: Dynamics of change refers to how a system self-regulates on a short time scale, while change in dynamics describes how those regulatory patterns themselves evolve over longer time scales [13] [14]. For example, minute-to-minute emotional regulation represents dynamics of change, while how this regulation strategy develops from adolescence to midlife represents change in dynamics.

Q: What experimental designs best capture multi-timescale phenomena? A: Implement measurement burst designs featuring intensive measurement periods separated by longer intervals [13]. These designs combine the temporal density needed to estimate short-term dynamics with the longitudinal span necessary to track developmental changes. Ensure your sampling frequency aligns with the hypothesized timescales of both change processes.

Q: How can I model individual differences in dynamic processes? A: Incorporate individual differences in equilibrium values, fluctuation amplitudes, and regulatory parameters [13]. Represent these differences through random effects in your models, allowing parameters like frequency, damping, and attractor strength to vary across individuals or contexts while estimating population-level patterns.

Dynamic Modeling Experimental Protocol

Protocol Title: Measuring Change in Dynamics Using Burst Designs

Purpose: To capture both short-term regulatory dynamics and longer-term developmental changes in system behavior.

Materials and Equipment:

- Time-series data collection platform (e.g., ecological momentary assessment software)

- Computational resources for differential equation modeling

- Statistical software capable of multilevel modeling (e.g., R, OpenMx)

- Data visualization tools

Procedure:

- Study Design: Implement a burst measurement design with at least three measurement bursts, each containing a minimum of 30 closely-spaced observations [13].

- Data Collection: Collect time-intensive repeated measurements during each burst, ensuring sufficient density to capture the hypothesized regulatory processes.

- Model Specification:

  - Estimate within-burst dynamics using differential or difference equation models

  - Allow key parameters (equilibrium values, damping rates, fluctuation amplitudes) to vary across individuals

  - Model between-burst changes in these parameters as longitudinal trajectories

- Model Estimation: Use maximum likelihood or Bayesian estimation to simultaneously estimate within-burst dynamics and between-burst changes.

- Validation: Test model assumptions through residual analysis and sensitivity testing.

Troubleshooting:

- If models fail to converge, simplify the random effects structure

- If burst-to-burst changes are undetectable, increase the number of bursts or the interval between them

- If within-burst dynamics are poorly estimated, increase the density of measurements within each burst
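A simplified, two-stage version of the burst-design analysis is sketched below. It is deliberately cruder than the simultaneous multilevel estimation the protocol calls for: each burst is fitted separately with a first-order difference equation, and the change in the regulation parameter across bursts is then summarized by a straight-line slope. The simulated data and all parameter values are assumptions for illustration.

```python
import random

def fit_ar1(series):
    """OLS fit of x[t+1] = c + phi * x[t]; returns (equilibrium, phi)."""
    x, y = series[:-1], series[1:]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    phi = sxy / sxx
    c = my - phi * mx
    return c / (1.0 - phi), phi  # equilibrium is the fixed point c / (1 - phi)

def slope(values):
    """Least-squares slope of values against burst index 0, 1, 2, ..."""
    n = len(values)
    t_mean = (n - 1) / 2
    v_mean = sum(values) / n
    num = sum((t - t_mean) * (v - v_mean) for t, v in enumerate(values))
    den = sum((t - t_mean) ** 2 for t in range(n))
    return num / den

def simulate_burst(mu, phi, n_obs, noise_sd, rng):
    """Generate one burst: return to equilibrium mu with persistence phi."""
    x = [mu + 3.0]
    for _ in range(n_obs - 1):
        x.append(mu + phi * (x[-1] - mu) + rng.gauss(0.0, noise_sd))
    return x

rng = random.Random(42)
true_phis = [0.8, 0.6, 0.4]  # regulation speeds up from burst to burst
fits = [fit_ar1(simulate_burst(10.0, p, 30, 0.5, rng)) for p in true_phis]
phis = [phi for _, phi in fits]
print("estimated phi per burst:", [round(p, 2) for p in phis])
print("change in dynamics (slope of phi):", round(slope(phis), 2))
```

Here `phi` captures the dynamics of change within a burst, and the slope of `phi` across bursts captures the change in dynamics. With only 30 noisy observations per burst the per-burst estimates are imprecise, which is one reason the protocol recommends joint estimation.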

Integrated Workflow for Managing Deep Uncertainty

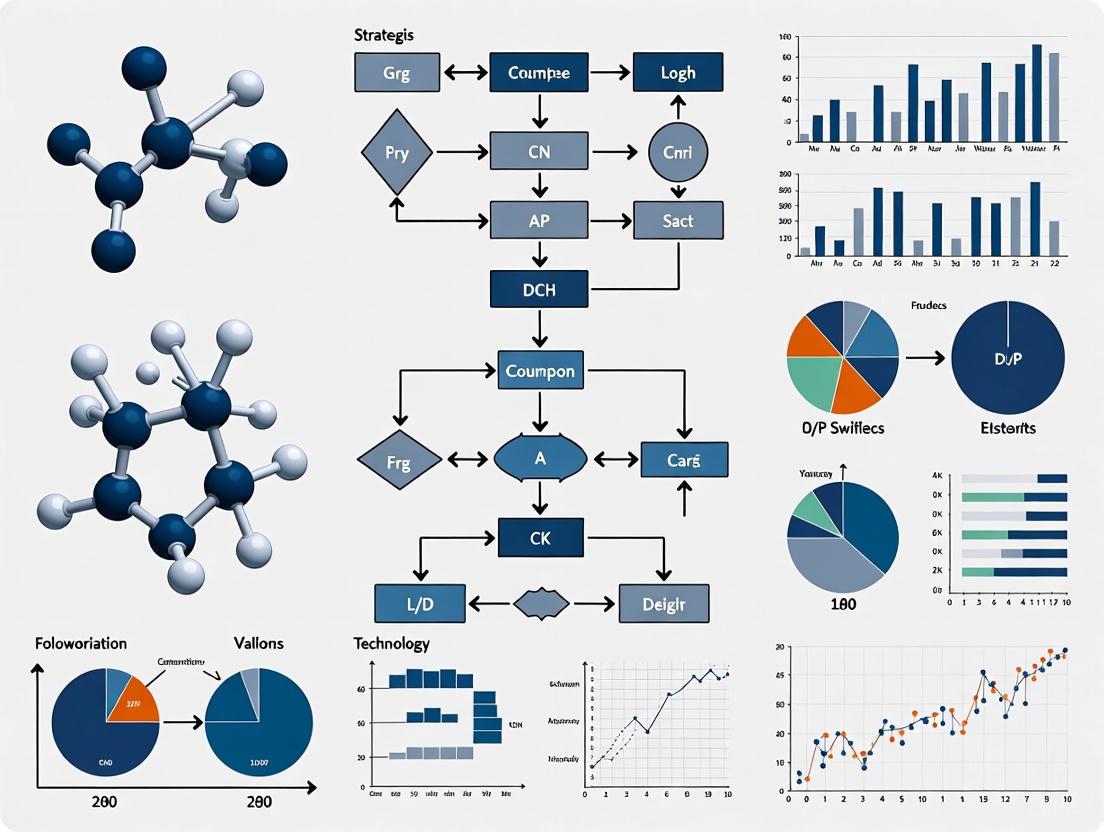

Diagram 1: Integrated workflow for managing deep uncertainty in computational models

Advanced Technical Support: Uncertainty Quantification Methods

Frequently Asked Questions

Q: What is the fundamental distinction between data uncertainty and model uncertainty? A: Data uncertainty (aleatory uncertainty) originates from inherent randomness and stochasticity in data, such as sensor noise or conflicting evidence between training labels. This uncertainty is typically irreducible. Model uncertainty (epistemic uncertainty) arises from lack of knowledge during model training, including limited training samples, suboptimal model architecture, or out-of-distribution samples [15].

Q: Which uncertainty quantification methods are most suitable for deep learning models in biological contexts? A: For model uncertainty, consider Bayesian neural networks, Monte Carlo dropout, or deep ensembles. For data uncertainty, implement direct probabilistic forecasting or quantile regression [15]. In biological contexts where both types coexist, hybrid approaches that combine Bayesian methods with probabilistic loss functions typically perform best.

Q: How can I determine whether poor model performance stems from data or model uncertainty? A: Conduct ablation studies where you systematically vary training data quantity and model complexity. If performance improves substantially with more data but not with model architecture changes, you're likely facing model uncertainty. If performance remains consistently poor regardless of data quantity or model changes, data uncertainty is the probable cause [15].

Uncertainty Diagnostic Protocol

Protocol Title: Differentiating Uncertainty Sources in Computational Models

Purpose: To identify whether poor model performance primarily stems from data uncertainty or model uncertainty.

Materials:

- Training dataset with documented provenance

- Computational resources for model training

- Uncertainty quantification software library (e.g., TensorFlow Probability, Pyro)

Procedure:

- Data Segmentation: Partition data into representative training, validation, and test sets

- Model Variation: Train multiple model architectures on identical data

- Data Scaling: Systematically increase training data quantity while monitoring performance

- Uncertainty Decomposition: Apply methods that separately quantify data and model uncertainty

- Source Attribution: Analyze which uncertainty type dominates in different prediction contexts

Interpretation Guidelines:

- Performance that improves with more data → Primarily model uncertainty

- Performance that plateaus despite more data → Primarily data uncertainty

- High variance across model architectures → Significant model uncertainty

- Consistent errors across architectures → Significant data uncertainty
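The uncertainty decomposition step can be illustrated with the variance decomposition commonly used for deep ensembles: the average of the members' predicted variances approximates data uncertainty, and the spread of the members' predicted means approximates model uncertainty. The sketch assumes each ensemble member returns a Gaussian mean and variance for the same input; the numbers are hypothetical.

```python
from statistics import fmean, pvariance

def decompose(member_means, member_variances):
    """Split total predictive variance for one input into
    (data uncertainty, model uncertainty, total)."""
    aleatoric = fmean(member_variances)  # average noise the members predict
    epistemic = pvariance(member_means)  # disagreement between members
    return aleatoric, epistemic, aleatoric + epistemic

# Hypothetical predictions from a 4-member ensemble for two compounds
in_distribution = decompose([5.1, 5.0, 5.2, 5.1], [0.30, 0.28, 0.31, 0.29])
novel_scaffold = decompose([4.0, 6.5, 5.2, 7.1], [0.30, 0.33, 0.29, 0.31])
for name, (alea, epi, total) in [("in-distribution", in_distribution),
                                 ("novel scaffold", novel_scaffold)]:
    print(f"{name}: data={alea:.3f} model={epi:.3f} total={total:.3f}")
```

When the model term dominates, as for the second compound, more training data or a better architecture may help; when the data term dominates, the interpretation guidelines above suggest improving the measurements instead.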

Successfully navigating deep uncertainty in computational modeling requires methodological sophistication and strategic planning. By systematically addressing system complexity through structured assessment tools, engaging diverse stakeholders through participatory approaches, and accounting for dynamic change through appropriate temporal designs, your research can produce robust findings despite fundamental uncertainties. The troubleshooting guides and protocols provided here offer practical pathways to strengthen your computational models against the challenges posed by these three sources of deep uncertainty. Continue to document and share your experiences with these methods to advance collective knowledge in uncertainty-aware computational research.

Contrasting DMDU with Traditional Probabilistic Risk Analysis

Core Conceptual Differences

FAQ: What is the fundamental philosophical difference between DMDU and PRA?

The fundamental difference lies in how each framework treats the knowability of the future. Probabilistic Risk Analysis (PRA) operates under the assumption that future risks can be characterized using probability distributions derived from historical data and known system models [16]. In contrast, Decision Making under Deep Uncertainty (DMDU) is applied when experts and stakeholders cannot agree on appropriate models or the probability distributions for key parameters, often because the future is fundamentally unpredictable or the system is too complex [17] [18]. DMDU addresses conditions of deep uncertainty, where the set of possible outcomes is unknown or their likelihoods cannot be predicted [17].

FAQ: When should a researcher choose a DMDU approach over a traditional PRA?

A researcher should select a DMDU approach when facing transformational changes or novel systems where past data is not a reliable guide to the future. This includes planning for long-term challenges like climate change adaptation, designing infrastructure for unprecedented conditions, or developing strategies for emerging technologies [19] [18]. PRA is more suitable for well-understood systems with ample historical data, where the mechanisms involved are stable and can be modeled with confidence, such as calculating the annual likelihood of a car crash based on historical statistics [19].

The table below summarizes the key conceptual distinctions:

| Feature | Traditional Probabilistic Risk Analysis (PRA) | Decision Making under Deep Uncertainty (DMDU) |
|---|---|---|
| Core Question | "What is most likely to happen, and what is its risk?" | "How can we make a decision that performs well across many plausible futures?" [20] |
| View of the Future | A single, predictable future or a set of futures with known probabilities. | Multiple plausible futures, often with unknown or contested likelihoods [17]. |
| Primary Goal | Optimization: Find the most efficient solution for the most probable future. | Robustness: Find strategies that perform adequately across the widest range of futures [18]. |
| Handling of Uncertainty | Characterizes uncertainty as quantifiable risk using probability distributions. | Acknowledges deep uncertainty where probabilities are unknown, unreliable, or disputed [19] [18]. |
| Typical Approach | "Predict-then-Act": Make a best-estimate prediction, then optimize the decision for it [20]. | Iterative Stress-Testing: Test proposed strategies across many futures to find and fill gaps [20]. |

Methodologies and Workflows

Experimental Protocol: DMDU Robust Decision Making (RDM)

Robust Decision Making (RDM) is a key DMDU methodology originally developed by RAND Corporation [21]. Its workflow is iterative and exploratory, cycling through the steps below until a robust strategy emerges.

Protocol Steps:

- Propose a Strategy: Begin with an initial policy or plan, even if preliminary [20].

- Run Computational Experiments: Use simulation models to evaluate the proposed strategy not just for a few scenarios, but for hundreds or thousands of plausible future states of the world (SOWs), generating a large database of performance outcomes [20] [21].

- Vulnerability Analysis: Mine the resulting database to identify the specific combinations of future conditions (e.g., climate, economic growth, technology adoption) under which the proposed strategy fails to meet its performance goals [20].

- Trade-off and Iteration: Use this information to revise the strategy, filling performance gaps. The process is repeated, testing new proposals until a robust strategy—one that performs well over the broadest range of scenarios—is identified [20] [18].
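The vulnerability analysis step is often summarized with two numbers for a candidate rule describing where a strategy fails: coverage (the share of all failures the rule captures) and density (the share of cases inside the rule that are failures). The sketch below computes both for a hand-written rule; the toy failure model, the parameter names `effect` and `dropout`, and the rule itself are hypothetical, and a real analysis would search for the rule algorithmically.

```python
def coverage_density(cases, rule):
    """cases: list of (scenario_dict, failed_bool); rule: predicate on a scenario.
    Returns (coverage, density) of the rule as a description of failures."""
    in_box = [failed for scenario, failed in cases if rule(scenario)]
    total_failures = sum(failed for _, failed in cases)
    captured = sum(in_box)
    coverage = captured / total_failures if total_failures else 0.0
    density = captured / len(in_box) if in_box else 0.0
    return coverage, density

# Hypothetical database of runs: the strategy fails when the effective
# treatment effect (effect size reduced by dropout) falls below 0.3
cases = []
for i in range(1, 11):        # effect size 0.1 .. 1.0
    for j in range(0, 10):    # dropout 0.00 .. 0.45
        scenario = {"effect": i / 10, "dropout": j * 0.05}
        failed = scenario["effect"] * (1 - scenario["dropout"]) < 0.3
        cases.append((scenario, failed))

cov, dens = coverage_density(cases, lambda s: s["effect"] <= 0.3)
print(f"rule 'effect <= 0.3': coverage={cov:.0%}, density={dens:.0%}")
```

A good scenario description has both high coverage and high density while using few conditions, so that it stays interpretable for decision-makers.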

Experimental Protocol: Adaptive Pathways

Another critical DMDU method is Adaptive Pathways, which focuses on designing flexible strategies that can evolve over time.

Protocol Steps:

- Map the Decision Space: Identify a long-term goal and the key uncertainties that could affect it.

- Develop Adaptive Pathways: Create a timeline of actions, where initial decisions are made with the explicit understanding that the plan will adapt as new information is gathered. This involves building a sequence of actions and identifying tipping points or thresholds that would trigger a shift from one action to the next [20].

- Monitor and Adapt: Establish a monitoring system to track the key indicators. As conditions change and thresholds are approached, decision-makers execute the pre-planned contingency actions, thus avoiding crisis-driven responses and keeping the plan on track to meet long-term objectives [20].
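The monitoring logic of an adaptive pathway can be expressed as an ordered list of pre-agreed triggers. The sketch below is one simple way to encode it; the indicator `resistance_rate`, the thresholds, and the action names are hypothetical, and real pathways usually branch rather than follow a single sequence.

```python
from dataclasses import dataclass

@dataclass
class Trigger:
    indicator: str      # name of the monitored quantity
    threshold: float    # value at which the pathway shifts
    next_action: str    # pre-planned action to switch to

def run_pathway(initial_action, triggers, observations):
    """Step through observations in time order and switch to the next
    pre-planned action the first time its trigger threshold is reached."""
    action = initial_action
    pending = list(triggers)  # triggers are assumed to be ordered
    history = []
    for t, obs in enumerate(observations):
        if pending and obs.get(pending[0].indicator, 0.0) >= pending[0].threshold:
            action = pending.pop(0).next_action
        history.append((t, action))
    return history

triggers = [
    Trigger("resistance_rate", 0.10, "add_combination_arm"),
    Trigger("resistance_rate", 0.25, "switch_to_backup_compound"),
]
observations = [{"resistance_rate": r} for r in (0.02, 0.06, 0.12, 0.18, 0.27)]
for t, action in run_pathway("monotherapy", triggers, observations):
    print(t, action)
```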

The Scientist's Toolkit: Key DMDU Reagents and Solutions

The table below details essential conceptual "reagents" and analytical tools used in DMDU experiments.

| Research Reagent | Function in the DMDU Experiment |
|---|---|
| Plausible Futures (States of the World) | Serves as the test medium. These are multiple, divergent scenarios used to stress-test strategies, replacing the single "best-estimate" future [17]. |
| Robustness Metrics | The measuring instrument. Quantitative or qualitative indicators used to evaluate how well a strategy performs across the many futures (e.g., satisficing criteria, regret metrics) [20]. |
| Exploratory Modeling | The core experimental apparatus. A modeling technique that runs simulations numerous times to explore the implications of many different assumptions, rather than to predict a single outcome [18] [22]. |
| Decision-Support Database | The data repository. A structured database that stores the results of thousands of model runs, allowing analysts to query and identify conditions under which policies fail [21]. |
| Adaptive Policy | The target output. A policy or strategy designed with a built-in capacity to change over time in response to how the future unfolds [20]. |

Troubleshooting Common Experimental Challenges

FAQ: Our DMDU analysis is producing an overwhelming number of scenarios, leading to "analysis paralysis." How can we simplify?

This is a common challenge. The solution is not to reduce the number of scenarios initially explored, but to use computer-assisted analysis to identify the most critical ones. Employ statistical methods (like scenario discovery) on your results database to cluster futures where your strategy fails. This will pinpoint the few key combinations of uncertain factors (e.g., "high climate sensitivity & low economic growth") that truly drive vulnerability, allowing you to focus your planning on these critical scenarios [20] [21].

FAQ: How can we gain stakeholder buy-in when DMDU does not provide a single, "guaranteed" answer?

Reframe the objective of the analysis. The goal of DMDU is not to predict the future but to build confidence in a decision despite an uncertain future [20]. Communicate the value of a robust, low-regret strategy that avoids catastrophic failures across many possibilities. Furthermore, the DMDU process itself builds consensus by allowing stakeholders with different beliefs about the future to agree on a plan for different reasons, as it demonstrates the strategy's viability across their various viewpoints [19] [18].

FAQ: Our traditional models are deterministic. Can we still apply DMDU principles?

Yes. A highly effective first step is to conduct a Decision Scaling analysis. This method starts with your existing model. Instead of relying on extensive new climate or economic projections, you systematically test your system's performance against a wide range of possible future climate or socio-economic stresses (e.g., from dry to wet, or from low to high demand). This creates a "climate stress test" for your decision, identifying the thresholds where your system fails, without requiring a complete model overhaul [20].
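A stress test of this kind needs nothing more than a loop around the existing deterministic model. The sketch below sweeps one stress factor and reports the first level at which performance becomes unacceptable; the supply model, its capacity figure, and the demand multipliers are hypothetical placeholders for whatever model is already in use.

```python
def stress_test(model, stress_levels, is_acceptable):
    """Run a deterministic model at each stress level and return all results
    plus the first level (in the order given) that is unacceptable."""
    results = [(level, model(level)) for level in stress_levels]
    failing = [level for level, out in results if not is_acceptable(out)]
    return results, (failing[0] if failing else None)

# Hypothetical deterministic model: surplus doses under a demand multiplier
def surplus_doses(demand_multiplier, capacity=1250, baseline_demand=1000):
    return capacity - baseline_demand * demand_multiplier

levels = [m / 10 for m in range(8, 16)]  # demand from 0.8x to 1.5x baseline
results, threshold = stress_test(surplus_doses, levels, lambda s: s >= 0)
for level, surplus in results:
    print(f"demand x{level}: surplus {surplus:+.0f}")
print("first failing stress level:", threshold)
```

The output of interest is the threshold, not a forecast: the analysis then asks how plausible it is that conditions reach that level, which is a question stakeholders can debate without first agreeing on a single projection.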

The Critical Need for Robust and Adaptive Plans in Drug Discovery

The drug discovery process is inherently characterized by deep uncertainty. From unpredictable clinical outcomes to complex biological systems, researchers face a landscape where traditional linear planning often falls short. Robust and adaptive plans are no longer a luxury but a critical necessity for success. This technical support center provides practical guidance for implementing adaptive strategies and troubleshooting common experimental challenges, framed within the context of decision-making under deep uncertainty (DMDU). The approaches outlined here help researchers manage the profound and persistent uncertainties transforming how we discover, develop, and evaluate new therapeutics [23] [24].

Understanding Adaptive Frameworks in Clinical Development

What are Adaptive Clinical Trial Designs?

An adaptive clinical trial design allows for prospectively planned modifications to one or more aspects of the study design based on accumulating data from subjects in that trial [25]. This approach contrasts with conventional static designs, where all parameters are fixed before the trial begins. The U.S. Food and Drug Administration (FDA) emphasizes that such modifications must be prospectively planned in the protocol to maintain trial validity and integrity [26] [25].

Advantages of Adaptive Designs

- Increased Efficiency: Potentially reduces the number of patients needed and shortens development timelines [26] [25]

- Ethical Benefits: Exposes fewer subjects to suboptimal treatments through early stopping rules [25]

- Enhanced Flexibility: Allows evaluation of a broad range of doses, regimens, and populations with opportunity to discontinue suboptimal choices [25]

- Better Resource Allocation: Enables sample size re-estimation based on interim results to avoid underpowered or excessively large studies [25]

Types of Adaptive Designs

| Design Type | Key Characteristics | Common Applications |

|---|---|---|

| Group Sequential Design | Pre-planned interim analyses with stopping rules for efficacy/futility | Well-understood design used for years in clinical research [26] |

| Adaptive Dose-Finding | Modifies dose assignments based on accumulating safety/efficacy data | Early-phase studies to identify optimal dosing [26] |

| Sample Size Re-estimation | Adjusts sample size based on interim effect size estimates | Avoids underpowered studies or excessive enrollment [25] |

| Umbrella Trials | Tests multiple targeted therapies simultaneously within a single disease | Oncology, with patient stratification by biomarkers [27] |

| Basket Trials | Tests a single therapy across multiple diseases sharing a molecular trait | Precision medicine approaches [27] |

| Platform Trials | Open-ended frameworks where arms can be added/removed over time | Long-term therapeutic evaluation in evolving standards of care [27] |

Technical Support Center: Troubleshooting Guides

FAQ: Adaptive Trial Implementation Challenges

Q: What are the key operational challenges in implementing adaptive trials?

A: Adaptive trials place significant strain on operational infrastructure. Key challenges include:

- Pharmacy Complexity: Managing different drug formulations, dose schedules, and storage requirements across multiple arms [27]

- Coordination Burden: Navigating shifting eligibility criteria and re-consenting patients amid protocol changes [27]

- Regulatory Submissions: Frequent IRB submissions and updates across multiple stakeholders [27]

- Resource Intensity: Platform trials representing 30-40% of a portfolio can consume over 50% of investigational drug service staffing hours [27]

Q: How can we control statistical error in adaptive designs?

A: Controlling Type I error (false positives) is a key regulatory concern [26]. Strategies include:

- Prospective Planning: Pre-specifying the number, timing of interim analyses, and statistical methods [25]

- Error Rate Adjustment: Using statistical methodologies that account for multiple looks at the data [26] [25]

- Clinical Trial Simulations: Running hypothetical trials under various assumptions to estimate error rates [25]
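
A small simulation illustrates why multiple looks need an adjustment. The sketch below assumes a two-look design with the interim analysis at half the information, a two-sided test, and normally distributed test statistics; it compares reusing the unadjusted 1.96 critical value at both looks with the Pocock constant for two looks (2.178). The function name and simulation settings are illustrative choices, not taken from the cited guidance.

```python
import random
from statistics import NormalDist


def simulate_type1_error(critical_z, n_sims=20000, seed=1):
    """Estimate the two-sided false-positive rate of a two-look design under H0."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_sims):
        z_stage1 = rng.gauss(0.0, 1.0)  # statistic from the first half of the data
        z_stage2 = rng.gauss(0.0, 1.0)  # independent statistic from the second half
        z_interim = z_stage1
        z_final = (z_stage1 + z_stage2) / 2 ** 0.5  # pooled statistic at the final look
        if abs(z_interim) > critical_z or abs(z_final) > critical_z:
            rejections += 1
    return rejections / n_sims


naive_z = NormalDist().inv_cdf(0.975)  # about 1.96, reused at both looks
pocock_z = 2.178                       # Pocock critical value for 2 looks, alpha = 0.05

naive_rate = simulate_type1_error(naive_z)
adjusted_rate = simulate_type1_error(pocock_z)
print(f"Unadjusted looks: {naive_rate:.3f}  Pocock-adjusted: {adjusted_rate:.3f}")
```

Under these assumptions the unadjusted design rejects a true null hypothesis noticeably more often than the nominal 5%, while the adjusted boundary brings the rate back to roughly the intended level.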

Q: What defines a "well-understood" versus "less well-understood" adaptive design?

A: The FDA classification distinguishes:

- Well-Understood Designs: Group sequential designs with established statistical methodology [26]

- Less Well-Understood Designs: Adaptive dose-finding and seamless phase I/II or II/III designs, whose statistical methods are less well established; these designs should be used with caution [26]

Troubleshooting Guide: Common Experimental Issues

Q: My TR-FRET assay shows no assay window. What should I check?

A: When there's no assay window:

- First, verify proper instrument setup and configuration [28]

- Confirm correct emission filters are being used, as filter choice can "make or break" TR-FRET assays [28]

- Test your microplate reader's TR-FRET setup using reagents already purchased for your assay [28]

Q: Why am I getting different EC50/IC50 values between labs for the same compound?

A: Differences in EC50/IC50 values between labs commonly result from:

- Variations in stock solution preparation (typically at 1 mM) [28]

- Differences in cell permeability or compound efflux mechanisms [28]

- The compound targeting different kinase forms (inactive vs. active) across assay types [28]

Q: My sequencing library yields are consistently low. What are the potential causes?

A: Low library yield can result from several issues:

| Root Cause | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality | Enzyme inhibition from contaminants or degraded nucleic acids | Re-purify input sample; ensure high purity (260/230 > 1.8) [29] |

| Quantification Errors | Underestimating input concentration leads to suboptimal enzyme stoichiometry | Use fluorometric methods (Qubit) rather than UV for template quantification [29] |

| Fragmentation Issues | Over- or under-fragmentation reduces adapter ligation efficiency | Optimize fragmentation parameters; verify distribution before proceeding [29] |

| Ligation Problems | Poor ligase performance or wrong molar ratios reduce adapter incorporation | Titrate adapter:insert molar ratios; ensure fresh ligase and buffer [29] |

Methodologies and Workflows for Adaptive Planning

Statistical Considerations for Adaptive Designs

Proper statistical methodology is crucial for maintaining trial integrity. The FDA emphasizes controlling the overall Type I error rate at a pre-specified level of significance [26]. For group sequential designs, this involves setting efficacy and futility boundaries at interim analyses. For example, one might set boundaries at α1=0.005 for efficacy and β1=0.40 for futility at interim, with α2=0.0506 at the final analysis to control overall Type I error at 5% [26].

Sample Size Calculation for Adaptive Trials

Traditional sample size calculations must be adapted for interim analyses. The table below gives the sample sizes required at various power levels, assuming a placebo failure rate of 50% and a test-arm failure rate of 25%, i.e., an absolute difference of 25 percentage points [26]:

| Randomization Ratio | Placebo Failure Rate | Test Failure Rate | Power 80% | Power 85% | Power 90% |

|---|---|---|---|---|---|

| 1:1 | 50% | 25% | 110 (55 per arm) | 126 (63 per arm) | 148 (74 per arm) |

| 2:1 | 50% | 25% | 132 (88 test, 44 placebo) | 150 (100 test, 50 placebo) | 174 (116 test, 58 placebo) |
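
The figures in the table can be reproduced with a standard fixed-sample calculation. The sketch below assumes a two-sided test at α = 0.05 and the unpooled normal approximation for two proportions; these assumptions are inferred from the numbers rather than stated in the source, and the calculation does not include any inflation for interim analyses. The function name is illustrative.

```python
from math import ceil
from statistics import NormalDist


def sample_size_two_proportions(p_control, p_test, power, ratio=1.0, alpha=0.05):
    """Return (n_test, n_control) for a two-sided comparison of two proportions.

    ratio is n_test / n_control. Uses the unpooled normal approximation.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    # Variance of the difference in proportions, per control-arm subject.
    variance = p_control * (1 - p_control) + p_test * (1 - p_test) / ratio
    n_control = ceil((z_alpha + z_beta) ** 2 * variance / (p_control - p_test) ** 2)
    return ceil(ratio * n_control), n_control


for ratio in (1.0, 2.0):
    for power in (0.80, 0.85, 0.90):
        n_test, n_control = sample_size_two_proportions(0.50, 0.25, power, ratio)
        print(f"ratio {ratio:.0f}:1, power {power:.0%}: "
              f"{n_test + n_control} total ({n_test} test, {n_control} placebo)")
```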

Data Quality Assessment in Screening Assays

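The Z'-factor is a key metric for assessing data quality in assays [28]. It accounts for both the assay window size and data variation. In its standard form, Z' = 1 − 3(σ_p + σ_n) / |μ_p − μ_n|, where μ and σ denote the mean and standard deviation of the positive (p) and negative (n) control signals.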

Assays with Z'-factor > 0.5 are considered suitable for screening. The relationship between assay window and Z'-factor plateaus quickly—above a 5-fold assay window, large increases in window size yield only incremental Z'-factor improvements [28].
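
As a quick check on plate data, the Z'-factor can be computed directly from control wells using the standard definition Z' = 1 − 3(σ_p + σ_n) / |μ_p − μ_n|. The control values below are hypothetical emission ratios chosen only for illustration.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor from replicate positive and negative control readings."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    return 1 - 3 * (sd_p + sd_n) / abs(mu_p - mu_n)


# Hypothetical control wells (e.g., TR-FRET emission ratios).
positive = [10.1, 9.8, 10.3, 9.9, 10.0, 10.2]
negative = [2.1, 1.9, 2.0, 2.2, 1.8, 2.0]

score = z_prime(positive, negative)
print(f"Z'-factor = {score:.2f}", "(suitable for screening)" if score > 0.5 else "(optimize assay)")
```

Because the standard deviations sit in the numerator, tightening replicate variability often improves Z' more than widening an already large assay window.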

Visualization of Adaptive Trial Workflows

[Figure: Adaptive Trial Decision Pathway]

[Figure: Operational Implementation of Adaptive Trials]

The Scientist's Toolkit: Essential Research Reagents

Key Reagent Solutions for Robust Assay Development

| Reagent/Tool | Function | Application Notes |

|---|---|---|

| TR-FRET Assay Reagents | Time-Resolved Fluorescence Energy Transfer detection | Use exact recommended emission filters; test instrument setup before experiments [28] |

| LanthaScreen Eu/Tb Donors | Long-lifetime lanthanide donors for TR-FRET | Donor signal serves as internal reference; ratio accounts for pipetting variances [28] |

| Z'-LYTE Assay System | Kinase activity measurement via differential cleavage | Ratio not linear between 0-100% phosphorylation; refer to protocol for calculations [28] |

| Development Reagents | Enzyme-based detection for biochemical assays | Titrate for optimal concentration; over-development affects Ser/Thr phosphopeptides [28] |

| NGS Library Prep Kits | Next-generation sequencing library construction | Check bead:sample ratios carefully; over-drying beads reduces efficiency [29] |

| Quality Control Assays | Sample integrity verification | Use fluorometric quantification (Qubit) over absorbance for accurate template measurement [29] |

Implementing robust and adaptive plans in drug discovery requires both strategic frameworks and practical troubleshooting expertise. By understanding the principles of adaptive trial design, recognizing common experimental challenges, and implementing systematic troubleshooting approaches, researchers can better navigate the deep uncertainties inherent in drug development. The methodologies and guidelines presented here provide a foundation for building more resilient, efficient, and successful drug discovery programs that can adapt to evolving scientific information while maintaining statistical and operational integrity.

Exploratory Modeling and Analysis (EMA) is a research methodology that uses computational experiments to analyze complex and uncertain issues, developed primarily for model-based decision support under deep uncertainty [30]. Deep uncertainty describes a situation where analysts and decision-makers cannot agree on a single model structure, the probability distributions for key parameters, or the valuation of outcomes [30]. Unlike traditional predictive modeling, which seeks to find the most likely future, EMA systematically explores plausible futures by running models thousands of times under different assumptions and parameter values [30]. This approach is particularly valuable for generating foresights, studying systemic transformations, and designing robust policies and plans in the face of a plethora of uncertainties [30].

FAQs: Core Concepts of EMA

1. What is the fundamental difference between predictive modeling and exploratory modeling?

Predictive modeling operates under the assumption that a system's mechanisms are sufficiently well-known and agreed upon to forecast its future state accurately. In contrast, exploratory modeling acknowledges deep uncertainty and does not attempt to make a single prediction. Instead, it uses computational models as "scenario generators" to map out the range of plausible outcomes given various uncertainties, ranging from parametric to structural and methodological [30].

2. What types of uncertainties can EMA handle?

EMA is designed to handle multiple deep and irreducible uncertainties simultaneously [30]. These can be categorized as:

- Parametric Uncertainty: Uncertainty about the precise values of key parameters within a given model structure.

- Structural Uncertainty: Uncertainty about the correct model structure itself (e.g., different causal relationships).

- Model Method Uncertainty: Uncertainty arising from the choice of different modeling methods (e.g., System Dynamics vs. Agent-Based Modeling) [30].

3. How is EMA applied in policy analysis and strategic planning?

EMA supports Decision Making under Deep Uncertainty (DMDU) by helping researchers and policymakers systematically explore a wide range of possible future scenarios [3]. It aids in developing adaptive strategic plans by identifying plausible external conditions that would cause a plan to perform poorly, allowing for iterative plan improvement [30]. This helps in designing policies that are robust across many futures, rather than optimal for a single, predicted future.

Troubleshooting Common EMA Experiment Issues

The following table addresses frequent technical challenges encountered when setting up and running EMA experiments.

| Common Issue | Probable Cause | Solution & Troubleshooting Steps |

|---|---|---|

| Model Interface Failures | Incorrectly defined model input parameters or output outcomes. | 1. Verify that all Uncertainties (model inputs) and Levers (policy controls) are correctly defined using the appropriate parameter classes (e.g., RealParameter, IntegerParameter) [31]. 2. Ensure all Outcomes (model outputs) are specified correctly to capture the performance metrics of interest [31]. 3. Check the connector (e.g., for Vensim, Excel, NetLogo) for correct variable naming and model file paths [31]. |

| Uninformative or Incoherent Results | The sampling strategy does not adequately explore the uncertainty space. | 1. Switch from plain Monte Carlo (random) sampling to a space-filling design such as Latin Hypercube or Sobol sequences for more even coverage of the parameter space [31]. 2. Increase the number of experimental runs (scenarios) to improve the coverage of the plausible future space. 3. Revisit the defined ranges of your uncertain parameters to ensure they are wide enough to capture plausible extremes. |

| Poor Performance or Long Run Times | The computational burden of running thousands of model evaluations sequentially. | 1. Utilize the parallel Evaluators provided by the EMA Workbench to distribute experiments across multiple CPU cores or a computing cluster [31]. 2. If possible, simplify the underlying simulation model to reduce its individual execution time. 3. Consider using adaptive sampling techniques that focus computational resources on the most interesting regions of the uncertainty space. |

| Difficulty Identifying Robust Policies | Inability to effectively analyze the high-dimensional output from thousands of runs. | 1. Employ the specialized analysis tools in the EMA Workbench, such as the Patient Rule Induction Method (PRIM) or Classification and Regression Trees (CART), to identify key scenarios and policy levers [31]. 2. Use Parallel Coordinate Plots to visualize the relationships between input parameters, policy levers, and outcome metrics across all scenarios [31]. 3. Apply Regional Sensitivity Analysis to determine which uncertain parameters are most critical to the model's outcomes [31]. |
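
Sampling design largely determines how well an ensemble covers the uncertainty space. The sketch below is a minimal, standard-library illustration of Latin Hypercube sampling feeding a model; it is not the EMA Workbench's own sampler or API. The model `toy_model` and the parameter names and ranges are hypothetical.

```python
import random


def latin_hypercube(ranges, n_samples, seed=0):
    """Sample so that each parameter's range is split into n_samples equal
    strata and every stratum is drawn from exactly once."""
    rng = random.Random(seed)
    columns = {}
    for name, (low, high) in ranges.items():
        strata = list(range(n_samples))
        rng.shuffle(strata)  # decouple the strata order across parameters
        width = (high - low) / n_samples
        columns[name] = [low + (s + rng.random()) * width for s in strata]
    return [{name: columns[name][i] for name in ranges} for i in range(n_samples)]


def toy_model(response_rate, dropout_rate):
    """Hypothetical stand-in for a simulation model: responders per 100 enrolled."""
    return 100 * (1 - dropout_rate) * response_rate


# Assumed uncertainty ranges for illustration only.
uncertainties = {"response_rate": (0.1, 0.6), "dropout_rate": (0.05, 0.4)}
scenarios = latin_hypercube(uncertainties, n_samples=200)
outcomes = [toy_model(**scenario) for scenario in scenarios]
print(f"{len(outcomes)} runs, outcome range {min(outcomes):.1f} to {max(outcomes):.1f}")
```

Each scenario is an independent model run, so the loop that produces `outcomes` is also the natural place to introduce parallel evaluation when individual runs are slow.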

Methodologies for Key EMA Experiments

Experiment 1: Discovering Optimal Resource Allocations in Business Processes

This methodology uses EMA to automate the discovery of resource allocation policies that improve process performance, a technique known as SimodR [32].

- 1. Problem Formulation: Define the multi-objective optimization function. Common objectives include minimizing waiting time, flow time, and cost, while balancing resource workload [32].

- 2. Model Discovery & Configuration:

- 3. Constraint Definition (C1): Define a model that constrains resource allocation to activities they are enabled to execute based on historical data and organizational rules [32].

- 4. Scenario Generation (C2): Generate multiple "what-if" scenarios by applying different resource allocation policies, such as:

- Preference Policy: Allocates resources to activities they most frequently execute.

- Collaboration Policy: Allocates resources to enhance coordination between them [32].

- 5. Optimization (C3): Use an evolutionary multi-objective optimization algorithm (e.g., NSGA-II) to search through the generated scenarios and discover the optimal resource allocations based on the user-defined objectives [32].

- 6. Trade-off Analysis: Compare the performance trade-offs of the different optimal what-if scenarios to inform decision-making [32].

The workflow for this methodology is outlined below.

Experiment 2: Developing Adaptive Policies for Strategic Planning

This methodology, drawn from an airport planning case study, uses EMA to iteratively improve a strategic plan under deep uncertainty [30].

- 1. Develop Initial Plan: Formulate an initial strategic plan based on current best estimates and understanding.

- 2. Identify Critical Uncertainties: Identify the key external factors (e.g., fuel prices, passenger demand, regulations) that are deeply uncertain and critical to the plan's performance.

- 3. Generate Scenarios: Use exploratory modeling to generate a large ensemble of plausible future states of the world, defined by different combinations of the critical uncertainties.

- 4. Evaluate Plan Performance: Run the simulation model for each scenario to evaluate the performance of the strategic plan across all futures.

- 5. Identify Vulnerabilities: Analyze the results to identify specific scenarios or "vulnerability regions" in the uncertainty space where the plan performs poorly.

- 6. Adapt the Plan: Modify the initial plan to make it more robust against the identified vulnerabilities. This often involves adding adaptive triggers—specific conditions that, when met, signal the need to implement a pre-defined contingency action.

- 7. Iterate: Repeat steps 4-6 to iteratively test and improve the adaptive plan until a satisfactory level of robustness is achieved [30].

The iterative nature of this process is visualized in the following diagram.

The Scientist's Toolkit: Essential Research Reagents for EMA

The following table details key computational tools and components essential for conducting EMA.

| Tool / Component | Function & Purpose in EMA |

|---|---|

| EMA Workbench | The core Python package that provides the foundational classes and functions for setting up, designing, and performing computational experiments on one or more models [31]. |

| Model Connectors | Interfaces that allow the EMA Workbench to control simulation models built in different environments, such as Vensim, NetLogo, Excel, and Simio [31]. |

| Sampling Methods | Algorithms (e.g., Monte Carlo, Latin Hypercube) that generate the set of input parameters for the experiments, determining how the space of uncertainties is explored [31]. |

| Multi-Objective Optimizer | An optimization algorithm, such as NSGA-II or epsilon-NSGA-II, used to search for optimal policy configurations by balancing multiple, often competing, performance objectives [32]. |

| PRIM & CART Analyzers | Analysis techniques (Patient Rule Induction Method and Classification and Regression Trees) used to analyze the high-dimensional output of EMA experiments. They help identify regions in the uncertainty space that lead to desirable or undesirable outcomes [31]. |

| Parallel Coordinate Plot | A visualization technique for high-dimensional data. It is used to display all experimental runs, showing how combinations of input parameters and levers map to specific outcome values, revealing critical trade-offs [31]. |

From Theory to Bench: DMDU Frameworks and Computational Model Applications

Troubleshooting Guide: Common Experimental Challenges and Solutions

This guide addresses frequent issues encountered when implementing Robust Decision Making (RDM) and Dynamic Adaptive Policy Pathways (DAPP) in computational modeling research.

Table 1: RDM Troubleshooting Guide

| Challenge | Symptom | Solution | Preventive Measures |

|---|---|---|---|

| Identifying Critical Uncertainties [33] | Model outcomes change drastically with minor input variations; stakeholders cannot agree on key drivers. | Use exploratory modeling and global sensitivity analysis to systematically test which uncertainties most affect outcomes [33]. | Engage diverse stakeholders early in a joint sense-making process to map the uncertainty landscape [33]. |

| Defining Robustness [18] [33] | No single strategy performs acceptably across all considered future states; persistent "cause for regret." | Shift from seeking an optimal outcome to satisficing. Define robustness as satisfactory performance across the widest range of plausible futures [18]. | Clearly define minimum performance thresholds for key objectives before running models [33]. |

| Policy Paralysis [34] | An overabundance of scenarios and trade-off analyses leads to an inability to choose any strategy. | Use scenario discovery algorithms to identify and focus on the critical scenarios where a proposed policy fails [34]. | Frame the goal as finding a robust, adaptive strategy rather than a single, perfect, static solution [18]. |

Table 2: DAPP Troubleshooting Guide

| Challenge | Symptom | Solution | Preventive Measures |

|---|---|---|---|

| Identifying Adaptation Tipping Points (ATPs) [34] | Uncertainty about when a policy will fail or an opportunity will arise in a changing environment. | Conduct bottom-up stress tests or top-down scenario analyses to find the conditions where performance drops below a target threshold [34]. | Use a combination of model-based assessments and expert stakeholder judgment to define ATPs [34]. |

| Designing a Monitoring System [35] | Difficulty selecting which indicators (signposts) to monitor and determining actionable thresholds (triggers). | Use RDM's scenario discovery to pinpoint key factors constituting vulnerabilities. These factors form the basis for technical signposts [35]. | Develop a signpost map alongside the pathways map to visualize indicator interactions, hierarchy, and data quality [35]. |

| Pathway Lock-in [34] | Early actions inadvertently eliminate future options, creating irreversible commitments. | During pathway design, explicitly screen for and label path dependencies. Include actions specifically designed to keep long-term options open [34]. | Evaluate pathways not just on immediate goals but on their capacity to maintain flexibility and avoid premature closure of options [34]. |

Frequently Asked Questions (FAQs)

Q1: What is the core philosophical difference between RDM and DAPP?

RDM is an analytical approach that emphasizes stress-testing strategies against a vast ensemble of plausible futures to identify their vulnerabilities and conditions for failure [18] [36]. Its primary question is: "Under what future conditions does my policy perform poorly?" In contrast, DAPP is a planning framework that emphasizes dynamic adaptation over time [37] [34]. Its primary question is: "What sequence of actions should I take, and how will I know when to switch from one to another?" RDM helps you understand the "what-if," while DAPP helps you plan the "what-when."

Q2: Can RDM and DAPP be used together?

Yes, they are highly complementary. Research shows that using RDM to support DAPP can create a more powerful, unified framework [38] [36] [35]. RDM's computational strength can be used to iteratively develop and stress-test potential actions and pathways intended for a DAPP plan. Furthermore, the vulnerabilities identified through RDM analysis directly inform the monitoring system in DAPP by highlighting the most critical factors to use as signposts and triggers [35].

Q3: What is an Adaptation Tipping Point (ATP), and how is it determined?

An ATP is the condition under which a current policy or action can no longer meet its predefined objectives due to changing circumstances [34]. It marks the point of failure, after which a new action is required. ATPs are determined by first setting performance targets (e.g., "the annual probability of flooding must stay below 1 in 10,000"). Analysts then use models to stress-test the policy under changing conditions (e.g., rising sea levels, increased runoff) until the point where it no longer meets that target [34].

Q4: How do these methods move beyond the traditional "predict-then-act" paradigm?

Traditional methods demand accurate predictions to design optimal policies. DMDU methods like RDM and DAPP reject this as often unachievable and dangerous under deep uncertainty [18]. Instead, they:

- Consider a wide range of plausible futures instead of relying on a single forecast.

- Seek robustness—strategies that perform adequately across many futures—rather than a brittle, optimal strategy for one predicted future.

- Explicitly design for adaptation over time, building flexibility and learning into the plan itself [18] [33].
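
Two simple ways to put numbers on robustness are the satisficing fraction (the share of futures in which a strategy meets a minimum threshold) and maximum regret (the worst shortfall relative to the best strategy in each future). The sketch below uses hypothetical strategy names and payoffs; neither metric is prescribed by the cited sources as the single correct definition.

```python
def satisficing_fraction(outcomes, threshold):
    """Share of futures in which the outcome meets the minimum threshold."""
    return sum(o >= threshold for o in outcomes) / len(outcomes)


def max_regret(performance):
    """performance maps strategy -> list of outcomes, one per future."""
    n_futures = len(next(iter(performance.values())))
    best = [max(outs[f] for outs in performance.values()) for f in range(n_futures)]
    return {
        name: max(best[f] - outs[f] for f in range(n_futures))
        for name, outs in performance.items()
    }


# Hypothetical payoffs (higher is better) of three strategies in four futures.
performance = {
    "optimize_for_forecast": [100, 40, 10, 5],
    "robust": [70, 65, 60, 55],
    "hedge": [80, 60, 35, 30],
}

regrets = max_regret(performance)
satisficing = {name: satisficing_fraction(outs, threshold=50) for name, outs in performance.items()}
print("Max regret:", regrets)
print("Satisficing fraction:", satisficing)
```

In this toy data the strategy tuned to the first future has the largest regret and the lowest satisficing fraction, and the two metrics rank the remaining strategies differently, which is why the robustness definition should be agreed on before the models are run.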

Experimental Protocols & Methodologies

Protocol 1: Core Robust Decision Making (RDM) Analysis

This protocol outlines the key steps for conducting an RDM analysis, based on the established framework [18] [33].

- Problem Framing & Ensemble Generation: Collaborate with stakeholders to define the system, objectives, and decisions. Identify the deeply uncertain factors and use them to generate a large ensemble of plausible future scenarios [33].

- Propose Candidate Strategies: Develop one or more candidate policies or strategies to be tested.

- Model-Based Experimentation: Run computational models to simulate the performance of each candidate strategy across the entire ensemble of futures. This is the exploratory modeling phase [33].

- Scenario Discovery & Vulnerability Analysis: Use statistical algorithms (e.g., PRIM) on the resulting database of model runs to identify the key future scenarios under which a given strategy fails to meet its goals [34] [36].

- Trade-off & Iteration: Present the vulnerabilities and trade-offs of different strategies to decision-makers. Use this insight to iteratively refine and discover new, more robust strategies [18].
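
The scenario discovery step can be illustrated with a simplified greedy peeling routine in the spirit of PRIM. This sketch omits the pasting step and the full peeling trajectory that complete PRIM implementations provide, and it is not the EMA Workbench API. The factor names, the synthetic ensemble, and the failure rule are hypothetical.

```python
import random


def prim_peel(points, fails, alpha=0.1, min_support=0.05):
    """Greedy peeling: shrink a box to concentrate failing cases.

    Returns (box_limits, density, coverage).
    """
    names = list(points[0])
    inside = list(range(len(points)))
    box = {n: (min(p[n] for p in points), max(p[n] for p in points)) for n in names}

    def density(indices):
        return sum(fails[i] for i in indices) / len(indices)

    while density(inside) < 1.0 and len(inside) * (1 - alpha) >= min_support * len(points):
        candidates = []
        for name in names:
            ordered = sorted(inside, key=lambda i: points[i][name])
            k = max(1, int(alpha * len(ordered)))
            candidates.append((density(ordered[k:]), name, ordered[k:]))    # peel low end
            candidates.append((density(ordered[:-k]), name, ordered[:-k]))  # peel high end
        best_density, best_name, best_inside = max(candidates, key=lambda c: c[0])
        if best_density <= density(inside):
            break  # no single peel improves the box any further
        inside = best_inside
        kept = [points[i][best_name] for i in inside]
        box[best_name] = (min(kept), max(kept))

    coverage = sum(fails[i] for i in inside) / sum(fails)
    return box, density(inside), coverage


# Synthetic ensemble: the strategy is assumed to fail when the placebo response
# is high AND the effect size is low (both normalized to the range 0 to 1).
rng = random.Random(42)
points = [{"placebo_response": rng.random(), "effect_size": rng.random()} for _ in range(500)]
fails = [p["placebo_response"] > 0.7 and p["effect_size"] < 0.3 for p in points]

box, box_density, box_coverage = prim_peel(points, fails)
print(box, f"density={box_density:.2f}", f"coverage={box_coverage:.2f}")
```

Density is the share of cases inside the box that fail, and coverage is the share of all failures the box captures; a useful scenario description scores well on both while staying simple enough to communicate.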

Protocol 2: Constructing Dynamic Adaptive Policy Pathways (DAPP)

This protocol describes the process for creating an adaptive plan using the DAPP approach [37] [34].

- Participatory Problem Framing: Define the system, specify quantitative and qualitative objectives, and identify major uncertainties. This establishes a "definition of success" [34].

- Assess Vulnerabilities & Identify ATPs: Evaluate the current system or proposed actions against an ensemble of futures to identify Adaptation Tipping Points (ATPs)—the conditions under which performance becomes unacceptable [34].

- Identify Contingent Actions: Brainstorm a set of potential policy actions that can address the identified vulnerabilities and seize opportunities.

- Design & Evaluate Pathways: Assemble the actions into sequences, or pathways. A new action is activated once its predecessor reaches its ATP. Visualize these pathways in a pathways map (similar to a metro map) to illustrate options, path dependencies, and lock-in possibilities [34].

- Develop an Adaptive Plan: Select a preferred set of initial actions and long-term options. Establish a monitoring system with signposts (indicators) and triggers (decision points) to signal when to implement the next action on a pathway or reassess the plan [37] [34].
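
The monitoring logic in the final step can be expressed compactly. The sketch below assumes a trigger that fires only after a signpost has exceeded its threshold for several consecutive observations, which avoids reacting to a single noisy reading; the signpost, threshold, and data are hypothetical.

```python
def first_trigger_year(observations, threshold, consecutive=3):
    """Return the first year in which the signpost has exceeded the threshold
    for `consecutive` observations in a row, or None if that never happens."""
    run = 0
    for year, value in observations:
        run = run + 1 if value > threshold else 0
        if run >= consecutive:
            return year
    return None


# Hypothetical signpost: annual prevalence (%) of resistance to a marketed therapy.
resistance_prevalence = [
    (2025, 3.1), (2026, 5.4), (2027, 4.8), (2028, 5.2),
    (2029, 5.9), (2030, 6.3), (2031, 6.8),
]

trigger_year = first_trigger_year(resistance_prevalence, threshold=5.0, consecutive=3)
print("Switch to the next action on the pathway in:", trigger_year)
```

Here the isolated exceedance in 2026 does not fire the trigger, while the sustained run starting in 2028 does.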

Framework Visualization

[Figure: DAPP Adaptive Planning Workflow]

[Figure: RDM and DAPP Integration]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Analytical Tools for DMDU Research

| Tool / "Reagent" | Function in DMDU Analysis | Example Application |

|---|---|---|

| Ensemble of Plausible Futures | A computational representation of hundreds or thousands of possible future states, spanning deeply uncertain factors. Used to stress-test strategies instead of relying on a single prediction [18] [33]. | In water resource planning, an ensemble could combine different climate projections, population growth rates, and economic scenarios [38] [36]. |

| Exploratory Models | Simulation models not used for prediction, but for running "what-if" experiments across the ensemble of futures. They are a prosthesis for the imagination [33]. | A system dynamics model of a drug supply chain could be used to explore resilience to various disruption scenarios. |

| Scenario Discovery Algorithms (e.g., PRIM) | Data mining techniques used to analyze the output of exploratory models. They concisely identify the subset of future conditions where a proposed policy fails [34] [36]. | After running a model 10,000 times, PRIM can find that "Policy A fails only when sea-level rise exceeds X AND demand drops below Y" [35]. |

| Adaptation Pathways Map | A visual decision-support tool (like a metro map) that shows different sequences of actions available over time and the conditions that trigger switching between them [37] [34]. | Visualizing the trade-offs and timing of different flood defense strategies (e.g., levees vs. managed retreat) for a coastal city [34]. |

| Signposts and Triggers | Signposts are monitored indicators of change (e.g., sea level, disease incidence). Triggers are pre-agreed thresholds in these indicators that activate a contingency action [37] [34]. | A signpost is the annual rate of sea-level rise. A trigger is when that rate consistently exceeds 5 mm/year, activating a plan to upgrade a water treatment plant [35]. |

The Role of Joint Sense-Making in Multi-Stakeholder Drug Development Teams

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center provides solutions for researchers working with computational models in multi-stakeholder drug development environments. The guidance below addresses common challenges in implementing joint sense-making approaches, framed within computational models research for deep uncertainty.

Frequently Asked Questions

Q1: What is joint sense-making in the context of multi-stakeholder drug development?

A1: Joint sense-making refers to the structured process where diverse stakeholders in drug development, including regulatory agencies, HTA bodies, payers, patients, and drug developers, synthesize information and quantify uncertainties to align perspectives. This process is particularly critical at the "Go/No-Go" decision between phase II and phase III trials, where success must be defined beyond efficacy alone to include regulatory approval, market access, and financial viability perspectives [39].

Q2: Why do existing quantitative methodologies for decision-making often fail in multi-stakeholder contexts?

A2: Current evidence-based quantitative methodologies frequently assess evidence without fully considering the range of stakeholder perspectives. They typically focus narrowly on overall drug efficacy and financial considerations while under-integrating other criteria such as safety profiles, patient preferences, and market dynamics. This limits their ability to address diverse stakeholder priorities and needs [39].

Q3: How can we better incorporate Real-World Data (RWD) into joint sense-making frameworks?

A3: The integration of RWD remains underutilized in current decision frameworks. Our review identifies this as a critical gap and suggests that adopting RWD could support more comprehensive and adaptive decision-making by providing broader evidence bases that reflect diverse stakeholder considerations and real-world conditions [39].

Q4: What computational approaches support joint sense-making under deep uncertainty?

A4: Foundational to this work are biologically plausible models that link neural structure and dynamics to spatial cognition, including open-source toolkits for simulating realistic navigation and hippocampal activity. These platforms enable rapid prototyping of models that jointly capture behavioral trajectories and neural representations, which can be analogously applied to stakeholder decision pathways in drug development [40].

Troubleshooting Common Experimental Challenges

Issue: Difficulty aligning stakeholder priorities in computational models

Symptoms: Models consistently favor one stakeholder perspective (e.g., financial returns over patient safety) or fail to reach consensus in simulated decision scenarios.

Troubleshooting Steps:

- Isolate Priority Variables: Identify and separate weighting factors for each stakeholder group in your model [39].

- Implement Multi-Criteria Optimization: Apply utility-based approaches that explicitly balance clinical, commercial, and regulatory objectives rather than single-objective optimization [39].

- Validate with Stakeholder Input: Test model outputs with representative stakeholders to ensure alignment with real-world priorities.

- Document Weighting Rationale: Clearly record the evidence base for priority assignments to facilitate model adjustment and transparency.

Issue: Poor model performance when transitioning from early to late-stage trial simulations

Symptoms: Inaccurate probability of success (PoS) predictions; failure to anticipate regulatory or market access hurdles.

Troubleshooting Steps:

- Expand Success Definitions: Broaden PoS concepts beyond efficacy alone to include regulatory approval, market access, and financial viability metrics [39].

- Incorporate Target Product Profile (TPP) Framework: Use TPP as a strategic roadmap to align model parameters with desired labeling goals and development targets [39].

- Implement Bayesian Hybrid Approaches: Combine frequentist and Bayesian methodologies to better quantify uncertainties across stakeholder perspectives [39].

- Test with Historical Data: Validate models against known drug development outcomes to calibrate transition probability estimates.

Experimental Protocols & Methodologies

Protocol: Multi-Stakeholder Decision Framework Implementation

Purpose: To create a computational framework that integrates diverse stakeholder perspectives for drug development decisions under deep uncertainty.

Methodology:

- Stakeholder Mapping: Identify all relevant stakeholders (regulatory, HTA, payers, patients, developers) and their primary decision criteria [39].

- Criteria Weighting: Assign quantitative weights to different success factors (efficacy, safety, cost, access) based on stakeholder importance.

- Probability of Success (PoS) Modeling: Develop extended PoS calculations that incorporate multiple success definitions beyond statistical significance [39].

- Uncertainty Quantification: Implement Bayesian methods to quantify uncertainties across all stakeholder criteria.

- Decision Optimization: Apply multi-criteria optimization algorithms to identify development pathways that balance stakeholder needs.

Validation Approach:

- Compare model predictions against historical drug development outcomes

- Conduct sensitivity analyses on stakeholder weighting assumptions

- Test with hypothetical development scenarios across therapeutic areas

Protocol: Computational Model Calibration for Deep Uncertainty

Purpose: To ensure computational models remain robust under conditions of deep uncertainty in multi-stakeholder environments.

Methodology:

- Parameter Space Exploration: Systematically vary input parameters across plausible ranges to identify critical decision thresholds.

- Scenario Analysis: Test model performance under best-case, worst-case, and expected scenarios for each stakeholder group.

- Robustness Testing: Evaluate how decisions change with variations in stakeholder priority weightings.

- Convergence Validation: Ensure the model reaches stable joint sense-making outcomes across multiple simulation runs.
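One possible way to combine the scenario analysis and robustness testing steps above is a minimax-regret comparison. This is a common robustness criterion in the DMDU literature, swapped in here for illustration rather than prescribed by this protocol. The decisions, scenarios, and payoff values are hypothetical.

```python
import numpy as np

decisions = ["advance", "adaptive_trial", "stop"]
scenarios = ["best_case", "expected", "worst_case"]

# Hypothetical payoffs (arbitrary units): rows are decisions, columns are scenarios.
payoff = np.array([
    [120.0, 40.0, -80.0],  # advance
    [90.0, 35.0, -20.0],   # adaptive_trial
    [0.0, 0.0, 0.0],       # stop
])

# Regret: shortfall relative to the best available decision in each scenario.
regret = payoff.max(axis=0) - payoff

# Worst-case regret of each decision across all scenarios.
max_regret = regret.max(axis=1)

# The robust choice minimizes the worst-case regret.
robust_choice = decisions[int(max_regret.argmin())]
```

With these illustrative numbers the choice is `adaptive_trial`: it is never the best option in any single scenario, but it is never far from the best either. This is the kind of trade-off a single best-estimate model tends to hide.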

| Stakeholder | Primary Concerns | Secondary Factors | Decision Influence Weight |
|---|---|---|---|
| Drug Developers | Clinical outcomes, Risk mitigation | Resource allocation, Timelines | 35% |
| Regulatory Authorities | Patient safety, Efficacy evidence | Ethical standards, Labeling claims | 25% |
| HTA Bodies & Payers | Cost-effectiveness, Comparative benefit | Budget impact, Formulary placement | 20% |
| Patients | Quality of life, Side-effect management | Treatment access, Daily burden | 15% |
| Investors | Financial returns, Market potential | Competitive landscape, Exit opportunities | 5% |
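The influence weights in the stakeholder table above can be stress-tested with a simple sensitivity analysis. The sketch below perturbs the weights with a Dirichlet distribution centred on the table values and records how often the preferred option stays the same. The per-stakeholder option scores, the concentration factor of 50, and the sample count are all illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Influence weights from the stakeholder table: developers, regulators,
# HTA bodies and payers, patients, investors.
base_weights = np.array([0.35, 0.25, 0.20, 0.15, 0.05])

# Hypothetical scores: rows are development options, columns are stakeholders.
scores = np.array([
    [0.9, 0.5, 0.4, 0.6, 0.9],  # option 0: commercially attractive
    [0.6, 0.8, 0.7, 0.7, 0.4],  # option 1: favoured by regulators and payers
])

# Option preferred under the tabulated weights.
base_choice = int((scores @ base_weights).argmax())

# Perturbed weight vectors; each sample is non-negative and sums to 1.
# A larger concentration factor keeps samples closer to the base weights.
samples = rng.dirichlet(base_weights * 50, size=2000)

# Preferred option under each perturbed weight vector.
choices = (samples @ scores.T).argmax(axis=1)

# Fraction of perturbations that leave the preferred option unchanged.
stability = float((choices == base_choice).mean())
```

A stability value well below 1 would indicate that the decision hinges on the exact weighting, which is a signal to revisit the weighting rationale with stakeholders before relying on the result.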

| Success Category | Metrics | Typical Weight in Decision | Data Sources |
|---|---|---|---|
| Regulatory Approval | Meeting safety requirements, Labeling goals | 30% | Phase II data, TPP, Regulatory feedback |
| Market Access | HTA endorsement, Payer reimbursement | 25% | Comparative effectiveness, Cost analyses |
| Financial Viability | ROI, Profitability, Peak sales | 25% | Market research, Pricing models |
| Competitive Performance | Market share, Differentiation | 20% | Competitive intelligence, Treatment landscape |

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Resource | Function | Application in Stakeholder Modeling |
|---|---|---|
| RatInABox Toolkit | Simulates realistic navigation and neural activity patterns | Provides foundational algorithms for modeling decision pathways and stakeholder interaction patterns [40] |
| Bayesian Hybrid Frameworks | Combines frequentist and Bayesian statistical approaches | Enables quantification of uncertainties across diverse stakeholder perspectives and success criteria [39] |
| Target Product Profile (TPP) | Strategic document outlining desired drug characteristics | Serves as alignment tool between developer targets and regulatory expectations [39] |
| Multi-Criteria Decision Analysis | Optimizes decisions across multiple competing objectives | Balances clinical, commercial, and regulatory objectives in development pathway selection [39] |
| Real-World Data (RWD) Integration | Incorporates evidence from non-trial settings | Enhances prediction accuracy for market access and post-approval success factors [39] |

Experimental Workflow & System Architecture Diagrams

[Diagram: Joint Sense-Making Workflow]

[Diagram: System Architecture]

Leveraging Deep Active Optimization (e.g., DANTE) for High-Dimensional Problem Solving

DANTE Troubleshooting Guide: Frequently Asked Questions

This FAQ addresses common challenges researchers face when implementing the Deep Active Optimization with Neural-Surrogate-Guided Tree Exploration (DANTE) pipeline for high-dimensional problems under deep uncertainty.

Q1: Our DANTE pipeline is consistently converging to local optima rather than the global optimum in our high-dimensional drug binding affinity optimization. What key mechanisms should we verify?

A: DANTE incorporates two mechanisms for escaping local optima, and both should be verified in your implementation. First, ensure conditional selection is properly implemented. This mechanism prevents value deterioration by comparing the Data-Driven Upper Confidence Bound (DUCB) of the root node against that of its leaf nodes. The root node is replaced only if a leaf node has a higher DUCB, which keeps the search moving in promising directions [41]. Second, confirm local backpropagation is functioning correctly. Unlike traditional Monte Carlo Tree Search, which updates the entire path, local backpropagation updates visitation counts only between the root and the selected leaf node. This creates local DUCB gradients that help the algorithm progressively "climb away" from local optima, in what resembles a ladder-like escape mechanism [41]. Experiments on synthetic functions indicate that disabling these mechanisms can require up to 50% more data points to reach global optima [41].
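When checking these two mechanisms, it can help to isolate them in a toy setting. The sketch below is not the published DANTE implementation: `ucb_score` is a generic UCB-style formula used as a stand-in for DUCB (whose exact form is given in [41]), and the `Node` class, function names, and one-level tree are hypothetical simplifications.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Node:
    value: float     # surrogate-predicted objective at this node
    visits: int = 0  # visitation count, used as the uncertainty measure


def ucb_score(node: Node, total_visits: int, c: float = 1.0) -> float:
    """Generic UCB-style score; a stand-in for DUCB, not the formula from [41]."""
    bonus = math.sqrt(math.log(total_visits + 1) / (node.visits + 1))
    return node.value + c * bonus


def conditional_select(root: Node, leaves: List[Node], c: float = 1.0) -> Node:
    """Return the best leaf only if its score beats the root's; otherwise keep the root."""
    total = root.visits + sum(leaf.visits for leaf in leaves)
    best_leaf = max(leaves, key=lambda n: ucb_score(n, total, c))
    if ucb_score(best_leaf, total, c) > ucb_score(root, total, c):
        return best_leaf
    return root


def local_backpropagate(root: Node, selected: Node) -> None:
    """Update visit counts only for the root and the selected leaf (one-level tree)."""
    root.visits += 1
    if selected is not root:
        selected.visits += 1
```

A quick check of the conditional-selection logic: with the exploration weight `c` set to 0, a root of value 1.0 should be kept when every leaf has a lower value and replaced when a leaf has a higher one. If your implementation replaces the root in the first case, value deterioration is likely.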

Q2: What strategies does DANTE employ to manage the "curse of dimensionality" when dealing with 1,000-2,000 dimensional feature spaces, such as in complex alloy design?

A: DANTE's architecture specifically addresses high-dimensional challenges through its deep neural surrogate model and tree exploration strategy. The deep neural network surrogate replaces traditional machine learning models (like Bayesian methods or decision trees) that struggle with high-dimensional, nonlinear distributions [41]. This surrogate approximates the complex solution space more effectively. Simultaneously, the neural-surrogate-guided tree exploration (NTE) uses a frequentist approach where the number of visits to a state measures uncertainty, avoiding exponential partition growth that plagues traditional methods in high dimensions [41]. This combination enables DANTE to handle 2,000-dimensional problems where existing approaches are typically confined to 100 dimensions [41].

Q3: How does DANTE achieve sample efficiency in resource-intensive experiments like peptide design where data points are costly?

A: DANTE operates effectively with limited data through its closed-loop active optimization framework. The method requires only a small initial dataset (approximately 200 points) with small sampling batch sizes (≤20) [41]. It achieves this by iteratively selecting the most informative data points for evaluation rather than relying on large pre-existing datasets. The neural surrogate guides this selection process, focusing evaluation resources on regions of the search space with highest potential payoff. This approach minimizes the required samples while still finding superior solutions, demonstrated by its 9-33% performance improvements in real-world applications like peptide binder design with fewer data points than state-of-the-art methods [41].
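The closed-loop structure described above can be sketched in a few lines. This is a heavily simplified illustration under stated assumptions: a ridge regression on quadratic features stands in for the deep neural surrogate, greedy ranking of random candidates stands in for the tree exploration, and `objective` is a toy function replacing the costly experiment. Only the initial dataset size (200) and batch size (20) echo the figures quoted from [41].

```python
import numpy as np


def objective(x: np.ndarray) -> np.ndarray:
    """Hypothetical costly experiment (toy: best value at 0.5 in every dimension)."""
    return -np.sum((x - 0.5) ** 2, axis=-1)


def fit_surrogate(X: np.ndarray, y: np.ndarray, ridge: float = 1e-3):
    """Ridge regression on [1, x, x^2]; a stand-in for the deep neural surrogate."""
    def features(Z: np.ndarray) -> np.ndarray:
        return np.hstack([np.ones((len(Z), 1)), Z, Z ** 2])

    F = features(X)
    w = np.linalg.solve(F.T @ F + ridge * np.eye(F.shape[1]), F.T @ y)
    return lambda Z: features(Z) @ w


def active_optimize(dim=5, n_init=200, batch=20, rounds=5, seed=0):
    rng = np.random.default_rng(seed)
    X = rng.uniform(0, 1, size=(n_init, dim))  # small initial dataset
    y = objective(X)
    history = [float(y.max())]
    for _ in range(rounds):
        predict = fit_surrogate(X, y)           # retrain surrogate on all data
        candidates = rng.uniform(0, 1, size=(5000, dim))
        # Evaluate only the candidates the surrogate ranks highest.
        top = candidates[np.argsort(predict(candidates))[-batch:]]
        X = np.vstack([X, top])
        y = np.concatenate([y, objective(top)])
        history.append(float(y.max()))
    return X, y, history


X, y, history = active_optimize()
```

Each round spends only `batch` real evaluations, so the total experimental budget here is 200 + 5 × 20 = 300 points. The greedy ranking has no exploration term, which is exactly the gap that visitation-count-based exploration is meant to fill.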

Q4: In the context of Decision Making Under Deep Uncertainty (DMDU), how does DANTE address non-stationary objective functions when environmental conditions shift?

A: While the core DANTE paper focuses on static optimization, its architecture aligns with DMDU principles by seeking robust solutions over multiple scenarios. The DMDU paradigm emphasizes policies that perform well across numerous plausible futures rather than optimizing for a single best-estimate future [18]. DANTE's ability to explore diverse regions of complex search spaces through its tree exploration makes it suitable for this framework. For non-stationary environments, researchers can implement DANTE within an adaptive management context, where the model is periodically retrained with new data reflecting changed conditions, leveraging its sample efficiency for rapid adaptation to shifting dynamics.
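The periodic retraining idea can be sketched as a sliding-window buffer that discards stale observations and triggers a retrain at a fixed interval. The class name, the `retrain_fn` callback, and the default window and interval are hypothetical; nothing here comes from the DANTE paper itself.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple

Observation = Tuple[float, float]  # (input, measured outcome), simplified to scalars


class AdaptiveRetrainer:
    """Keeps only recent observations and retrains the surrogate periodically."""

    def __init__(
        self,
        retrain_fn: Callable[[List[Observation]], None],
        window: int = 200,
        retrain_every: int = 20,
    ) -> None:
        self.retrain_fn = retrain_fn
        self.buffer: Deque[Observation] = deque(maxlen=window)  # oldest data drops out
        self.retrain_every = retrain_every
        self._since_last = 0
        self.retrain_count = 0

    def observe(self, x: float, y: float) -> None:
        """Record a new observation and retrain once enough new data has arrived."""
        self.buffer.append((x, y))
        self._since_last += 1
        if self._since_last >= self.retrain_every:
            self.retrain_fn(list(self.buffer))
            self.retrain_count += 1
            self._since_last = 0
```

The window length controls how quickly old conditions are forgotten. A short window adapts faster to shifts but leaves the surrogate with less data, so the appropriate value depends on how quickly the environment is expected to change.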

Q5: What are the computational complexity considerations when scaling DANTE to massive problem sizes, and how can they be mitigated?

A: DANTE's computational burden primarily comes from the deep neural surrogate training and the tree search process. For large-scale problems, the stochastic rollout component with local backpropagation helps manage complexity by limiting updates to relevant portions of the search tree [41]. Implementation should focus on efficient parallelization of the neural network training and tree evaluation steps. The method has demonstrated practical feasibility across multiple real-world domains, including materials science and drug discovery, indicating its computational requirements are manageable relative to the experimental costs they aim to reduce [41].

Table 1: Key Experimental Parameters for DANTE Application Domains

| Application Domain | Dimensionality Range | Initial Data Points | Batch Size | Performance Improvement over SOTA |
|---|---|---|---|---|
| Synthetic Functions | 20 - 2,000 dimensions | ~200 | ≤20 | Achieved global optimum in 80-100% of cases [41] |
| Real-world Problems (Computer Science, Physics) | Not specified | Same as other methods | Not specified | Outperformed other methods by 10-20% in benchmark metrics [41] |
| Resource-Intensive Tasks (Alloy Design, Peptide Design) | High-dimensional (exact not specified) | Fewer than SOTA | Not specified | 9-33% improvement with fewer data points [41] |

Table 2: DANTE Component Validation Protocol

| Component | Validation Method | Key Performance Indicators |
|---|---|---|
| Conditional Selection | Compare with ablation study (DANTE without conditional selection) | Data points required to reach global optimum; Value deterioration rate [41] |
| Local Backpropagation | Monitor escape trajectories from known local optima | Success rate in escaping local optima; Convergence speed [41] |
| Deep Neural Surrogate | Benchmark against traditional models (Bayesian methods, decision trees) | Prediction accuracy on high-dimensional, nonlinear functions; Generalization error [41] |
| Overall Pipeline | Application to synthetic functions with known optima | Success rate in finding global optimum; Sample efficiency [41] |

The Scientist's Toolkit: DANTE Research Reagent Solutions

Table 3: Essential Components for DANTE Implementation

| Component | Function | Implementation Notes |
|---|---|---|
| Deep Neural Surrogate Model | Approximates high-dimensional, nonlinear solution space; replaces traditional ML models that struggle with complexity [41] | Use DNN architecture appropriate for data type (e.g., CNN for spatial data, FCN for tabular); requires careful architecture selection [41] |
| Neural-Surrogate-Guided Tree Exploration (NTE) | Guides exploration-exploitation trade-off using visitation counts and DUCB; avoids exponential partition growth in high dimensions [41] | Implementation differs from traditional MCTS; focuses on noncumulative rewards [41] |
| Data-Driven UCB (DUCB) | Balances exploration and exploitation using number of visits as uncertainty measure [41] | Key innovation: uses visitation frequency rather than traditional Bayesian uncertainty [41] |
| Conditional Selection Module | Prevents value deterioration by selectively advancing to higher-value nodes [41] | Critical for maintaining search progress; compares root vs. leaf DUCB values [41] |
| Local Backpropagation Mechanism | Enables escape from local optima by creating local DUCB gradients [41] | Updates visitation counts only between the root and the selected leaf rather than along the entire search path [41] |

DANTE System Workflow Visualization

[Diagram: DANTE Optimization Pipeline]

DANTE vs. Traditional Methods for High-Dimensional Problems

Table 4: Method Comparison in High-Dimensional Context

| Method | Maximum Effective Dimensionality | Data Requirements | Assumptions about Objective Function | Local Optima Avoidance |
|---|---|---|---|---|
| DANTE | 2,000 dimensions [41] | Limited data (initial points ~200) [41] | Treats as black box; no gradient/convexity assumptions [41] | Explicit mechanisms: conditional selection + local backpropagation [41] |
| Traditional Bayesian Optimization | ~100 dimensions [41] | Considerably more data needed [41] | Often relies on kernel methods and prior distributions [41] | Primarily uncertainty-based acquisition functions [41] |
| Reinforcement Learning with MCTS | Limited in data-scarce environments [41] | Extensive training data required [41] | Requires cumulative reward structure [41] | Designed for sequential decision-making [41] |
| One-at-a-Time Feature Screening | Poor performance in high dimensions [42] | Inadequate for complexity [42] | Independent feature effects | Prone to false positives/negatives [42] |

[Diagram: DANTE Local Optima Escape Mechanism]

FAQs: Navigating Deep Uncertainty in Computational Design

Q1: What computational strategies exist for designing peptide binders under deep uncertainty when structural data is limited?