Navigating the Vast Chemical Space: How Machine Learning is Revolutionizing Drug Discovery


Abstract

The exploration of chemical space, estimated to contain more than 10⁶⁰ drug-like molecules, is both a monumental challenge and an opportunity for modern drug discovery. This article reviews how machine learning (ML) is transforming that exploration. We cover the foundational concepts of biologically active chemical space and the data limitations that have historically impeded progress. The review details current methodological applications, from generative models and Bayesian optimization to high-throughput experimentation workflows. We examine troubleshooting strategies for data scarcity, model generalization, and optimization challenges, and compare validation frameworks and clinical progress from leading AI-driven drug discovery platforms. Written for researchers and drug development professionals, this synthesis aims to give a clear picture of both the current capabilities and the future trajectory of ML in accelerating the journey from chemical design to clinical candidate.

The Frontier of Chemical Space: Defining the Challenge and Opportunity in Drug Discovery

The chemical space of drug-like molecules is one of the largest and most complex frontiers in modern science, with estimates placing its size at more than 10⁶⁰ compounds [1]. This scale presents both extraordinary opportunity and profound challenge for drug discovery and development. Traditional experimental methods, which physically synthesize and screen compounds, cannot explore more than a minuscule fraction of this space. The emergence of artificial intelligence and machine learning has changed how researchers approach the problem, enabling navigation of chemical spaces that extend far beyond enumerable compound libraries [2] [3]. This technical guide examines the quantitative dimensions of drug-like chemical space, the methodologies for its exploration, and the AI-driven tools transforming this landscape within the broader context of machine learning research.

Quantifying the Vastness of Chemical Space

The Fundamental Scale Problem

The fundamental challenge in chemical space exploration stems from the combinatorial explosion that occurs when considering possible atomic arrangements. The estimate of >10⁶⁰ drug-like molecules arises from considering all possible stable compounds that could theoretically exhibit pharmacological activity [1]. This number is not merely theoretical but has practical implications: if one could evaluate a billion compounds per second, it would still take vastly longer than the age of the universe to exhaustively search this space.
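The exhaustive-search claim can be checked with a few lines of arithmetic. The sketch below assumes the lower-bound size of 10⁶⁰ molecules and the rate of one billion evaluations per second from the text; the age of the universe (about 1.38×10¹⁰ years) is an approximate value supplied here for comparison.

```python
# Back-of-envelope check of the exhaustive-search claim.
SPACE_SIZE = 10**60               # lower-bound estimate of drug-like molecules
RATE_PER_SECOND = 10**9           # one billion evaluations per second
SECONDS_PER_YEAR = 365.25 * 24 * 3600
AGE_OF_UNIVERSE_YEARS = 1.38e10   # approximate value, assumed for this comparison

# Total search time in years, then expressed in multiples of the universe's age.
search_years = SPACE_SIZE / RATE_PER_SECOND / SECONDS_PER_YEAR
universe_lifetimes = search_years / AGE_OF_UNIVERSE_YEARS

print(f"search time: {search_years:.1e} years")
print(f"equivalent to {universe_lifetimes:.1e} ages of the universe")
```

Under these assumptions the search would take on the order of 10⁴³ years, which is why sampling and generative approaches replace enumeration.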

Table 1: Scale of Chemical Space Representations

| Chemical Space Type | Estimated Size | Reference |
|---|---|---|
| Total drug-like chemical space | >10⁶⁰ molecules | [1] |
| GDB-17 enumerated library | 166 billion molecules | [2] |
| CHIPMUNK computational library | 95 million compounds | [2] |
| Generative AI explorable space (MolGen) | 10¹⁴ - 10²⁹ molecules | [1] |
| Commercially available screening compounds | Billions (deliverable in weeks) | [3] |

Comparative Scales in Chemical Space Exploration

The disconnect between theoretically possible and practically accessible chemical space has driven innovation in sampling and enumeration methods. Current databases and libraries represent only infinitesimal fractions of the total chemical space, creating significant bias in our understanding of molecular properties and structure-activity relationships [4].

Table 2: Diversity Metrics in Chemical Space Sampling

| Sampling/Method | Diversity Metric | Value | Context |
|---|---|---|---|
| Anyo Lab's MolGen (1B sample) | Tanimoto dissimilarity (ECFP4) | 0.889 | Full molecules [1] |
| 19 chemical libraries (18M compounds) | Extended Tanimoto index | Optimal | RDKit fingerprints [5] |
| Fragment libraries | Molecular complexity | Low | MW <300 Da, minimal features [2] |
| Representative Random Sampling | Valence-based partitioning | Comprehensive | Unbiased sampling [4] |

Methodologies for Quantification and Sampling

Species Estimation Techniques from Ecology

Adapting ecological species estimation methods has emerged as a powerful approach for quantifying chemical space. Researchers have applied three primary estimators to large molecular samples:

- Chao1: Estimates lower bound of species richness [1]

- ACE (Abundance-based Coverage Estimator): Models species diversity based on sample coverage [1]

- Good-Turing: Estimates population frequencies of species [1]

When applied to 1 billion generated molecules, these estimators yielded predictions of 1×10¹⁰, 7.9×10⁹, and 2.5×10⁹ unique molecules respectively, but failed to converge, indicating that even billion-molecule samples are insufficient for comprehensive chemical space characterization [1].
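To make the estimators concrete, the sketch below computes two of them on a toy sample of SMILES strings. It uses the bias-corrected form of Chao1 and the Good-Turing estimate of the probability mass of unseen molecules; ACE is omitted for brevity. The function name and the sample are illustrative assumptions, not taken from the cited study.

```python
from collections import Counter

def richness_estimates(sample):
    """Return observed richness, a Chao1 estimate, and the Good-Turing unseen mass."""
    counts = Counter(sample)                   # molecule -> times it was generated
    freq_of_freq = Counter(counts.values())    # k -> number of molecules seen k times
    s_obs = len(counts)                        # distinct molecules observed
    f1 = freq_of_freq.get(1, 0)                # singletons
    f2 = freq_of_freq.get(2, 0)                # doubletons
    # Bias-corrected Chao1; remains defined when there are no doubletons.
    chao1 = s_obs + f1 * (f1 - 1) / (2 * (f2 + 1))
    # Good-Turing: estimated probability that the next draw is a new molecule.
    unseen_mass = f1 / len(sample)
    return s_obs, chao1, unseen_mass

# Toy sample of generated SMILES strings (illustrative only).
sample = ["CCO", "CCO", "CCN", "c1ccccc1", "CCN", "CC(=O)O", "CCCl", "CCO"]
s_obs, chao1, unseen_mass = richness_estimates(sample)
print(s_obs, chao1, unseen_mass)
```

A large singleton count relative to the sample size, as in this toy case, is the signature of an under-sampled space: the estimate keeps growing as more molecules are drawn, which is consistent with the non-convergence reported above.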

Extrapolation Modeling for Chemical Space Estimation

Beyond ecological estimators, researchers have developed logarithmic modeling approaches that plot the unique fraction of molecules against the number of generated molecules. This relationship appears linear on a logarithmic x-axis and enables extrapolation to estimate a lower bound of 1×10¹⁴ explorable molecules for specific generative systems [1]. A more sophisticated "quadratic-exponential" function, α·(10ˣ)² + β·10ˣ, fitted to scaffold data enables even larger extrapolations, with estimates reaching as high as 10²⁶ molecules for advanced generative systems [1].
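The log-linear part of this approach can be sketched as an ordinary least-squares fit of unique fraction against log₁₀ of the sample size. The observations below are invented toy numbers, and extrapolating the line to the point where the unique fraction reaches zero is an assumption of this sketch rather than the confirmed procedure of the cited work, so the output does not reproduce the published estimates.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Toy, made-up observations: (molecules generated, fraction that were unique).
observations = [(1e6, 0.98), (1e7, 0.94), (1e8, 0.90), (1e9, 0.86)]
xs = [math.log10(n) for n, _ in observations]   # logarithmic x-axis
ys = [unique for _, unique in observations]

slope, intercept = fit_line(xs, ys)
# Assumed extrapolation target: sample size at which the fitted line hits zero.
log10_n_at_zero = -intercept / slope
print(f"slope per decade: {slope:.3f}")
print(f"unique fraction reaches zero near 10^{log10_n_at_zero:.1f} molecules")
```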

Representative Random Sampling (RRS)

The RRS methodology addresses the critical challenge of bias in chemical space sampling by generating approximately uniform random samples from a defined chemical space without full enumeration [4]. The approach operates through a multi-stage process:

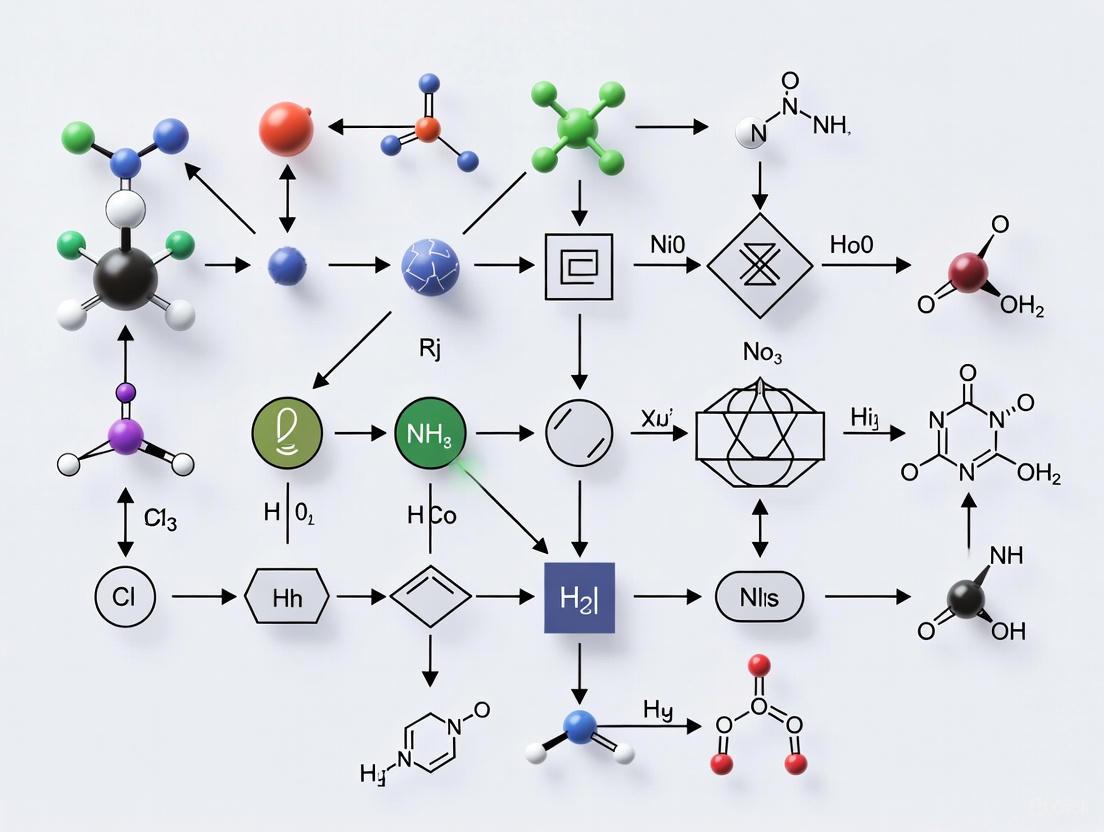

Figure 1: RRS Methodology for Unbiased Chemical Space Sampling

The RRS method considers atoms of different valences as distinct atom types, forming ordered sets of valence types. For each valence type, multiple atom types are counted as valence type multiplicity. This abstraction enables efficient sampling by first estimating the total number of molecular graphs for each sum formula within a search space, then uniformly randomly sampling from that space through formula selection followed by Markov Chain Monte Carlo sampling within that chemical formula [4].
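The two-stage idea (pick a formula in proportion to its number of molecular graphs, then sample within it) can be illustrated on a tiny hand-enumerated space. In this sketch the per-formula graph counts are exact list lengths rather than estimates, and a uniform `choice` stands in for the Markov Chain Monte Carlo step; `TOY_SPACE` and `sample_molecule` are hypothetical names.

```python
import random
from collections import Counter

# Hypothetical toy space: each sum formula maps to its constitutional isomers.
TOY_SPACE = {
    "C2H6O": ["CCO", "COC"],
    "C3H8O": ["CCCO", "CC(C)O", "CCOC"],
    "C2H4O": ["CC=O", "C=CO", "C1CO1"],
    "CH4O": ["CO"],
}

def sample_molecule(rng):
    """Draw one molecule approximately uniformly from the whole toy space."""
    formulas = list(TOY_SPACE)
    # Stage 1: weight each formula by its graph count (estimated in real RRS).
    weights = [len(TOY_SPACE[f]) for f in formulas]
    formula = rng.choices(formulas, weights=weights, k=1)[0]
    # Stage 2: sample within the formula (stand-in for the MCMC step).
    return rng.choice(TOY_SPACE[formula])

rng = random.Random(0)
draws = Counter(sample_molecule(rng) for _ in range(90_000))
for smiles, count in sorted(draws.items()):
    print(f"{smiles:8s} {count}")
```

Weighting the formula choice by graph count is what makes the combined draw uniform over molecules; choosing formulas uniformly instead would over-represent molecules whose formula has few isomers.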

AI-Driven Exploration Architectures

Generative Model Architectures

Deep generative models have transformed chemical space exploration by generating novel molecules through complex, non-transparent processes that bypass direct structural similarity constraints [6]. Five key architectures dominate current research:

- Recurrent Neural Networks (RNNs): Process sequential molecular representations

- Variational Autoencoders (VAEs): Learn latent representations of chemical space

- Generative Adversarial Networks (GANs): Pit generator against discriminator

- Normalizing Flows (NF): Enable exact likelihood calculation

- Transformers: Utilize self-attention mechanisms for sequence generation [6]

Relationship Between AI Models and Chemical Space

The integration of AI models has created a new paradigm in chemical space navigation, moving beyond traditional library-based approaches to generative exploration.

Figure 2: AI-Driven Chemical Space Exploration Workflow

These models employ various molecular representations including SMILES, SELFIES, graph representations, and internal notations that significantly impact their ability to explore chemical space [6]. Each architecture offers different trade-offs in terms of novelty, synthetic accessibility, and property optimization capabilities.

Experimental Protocols for Chemical Space Characterization

Scaffold Diversity Analysis Protocol

Purpose: To quantify the structural diversity of chemical space samples through scaffold analysis.

Procedure:

- Generate large molecule sample (≥1B molecules recommended)

- Apply multiple scaffolding techniques in parallel:

  - True Murcko Scaffolds: Extract ring systems and linkers without double-bonded atoms

  - RDKit Murcko Scaffolds: True Murcko scaffolds including double-bonded atoms

  - Generic Scaffolds: True Murcko scaffolds with all atoms and bonds as carbon and single bonds

- Calculate unique scaffolds for each technique

- Track scaffold discovery rate versus sample size

- Fit quadratic-exponential function to extrapolate maximum scaffold diversity [1]

Validation: The protocol should yield consistently high diversity metrics, with Tanimoto dissimilarity values >0.85 indicating strong diversity [1].
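The diversity metric in the validation step can be computed as follows. This is a minimal sketch that assumes each fingerprint is represented as a set of on-bit indices; the four fingerprints are toy values, not real ECFP4 output.

```python
from itertools import combinations

def tanimoto_dissimilarity(a, b):
    """Return 1 - |A & B| / |A | B| for fingerprints given as sets of on-bits."""
    union = len(a | b)
    if union == 0:
        return 0.0   # two empty fingerprints are treated as identical
    return 1.0 - len(a & b) / union

def mean_pairwise_dissimilarity(fingerprints):
    """Average Tanimoto dissimilarity over all unordered pairs."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto_dissimilarity(a, b) for a, b in pairs) / len(pairs)

# Toy fingerprints as sets of on-bit indices (illustrative only).
fingerprints = [{1, 5, 9, 12}, {1, 5, 20, 33}, {7, 8, 40, 41}, {2, 9, 12, 50}]
diversity = mean_pairwise_dissimilarity(fingerprints)
print(f"mean pairwise Tanimoto dissimilarity: {diversity:.3f}")
```

The number of pairs grows quadratically with sample size, so for very large samples the mean would in practice be estimated from a random subset of pairs rather than computed exhaustively.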

Unbiased Sampling Validation Protocol

Purpose: To verify the representativeness of chemical space sampling methods.

Procedure:

- Define chemical space constraints (elements, molecular size, stoichiometries)

- Implement Representative Random Sampling (RRS) with valence type partitioning

- Generate chemical formulae through integer partitioning

- Apply Markov Chain Monte Carlo sampling within formulae

- Compare property distributions with known biased datasets

- Assess transferability of machine learning models trained on sampled data [4]

Validation: Successful sampling demonstrates even coverage of chemical space without software-induced biases or toolchain preferences [4].
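The formula-generation step of the procedure can be sketched by enumerating every way to distribute a fixed number of heavy atoms over a set of element types. Strictly, the code enumerates ordered splits (compositions) because each position corresponds to a specific element. The element alphabet is a hypothetical choice, and hydrogens and valence feasibility checks are left out.

```python
def compositions(total, parts):
    """Yield every way to write `total` as an ordered sum of `parts` non-negative integers."""
    if parts == 1:
        yield (total,)
        return
    for first in range(total + 1):
        for rest in compositions(total - first, parts - 1):
            yield (first,) + rest

ELEMENTS = ("C", "N", "O")   # hypothetical heavy-atom alphabet for this sketch

def heavy_atom_formulas(n_atoms):
    """Yield heavy-atom formulas with exactly `n_atoms` atoms, e.g. 'C2N1O1'."""
    for counts in compositions(n_atoms, len(ELEMENTS)):
        yield "".join(f"{el}{c}" for el, c in zip(ELEMENTS, counts) if c)

formulas = list(heavy_atom_formulas(4))
print(len(formulas), formulas)
```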

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Chemical Space Exploration

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| Fragment Libraries | Physical/Virtual Compound Collection | Provides low molecular weight compounds for FBDD | Target druggability assessment, hit identification [2] [7] |
| GDB-17 | Computational Library | 166 billion enumerated molecules for virtual screening | Chemical space reference, de novo design [2] |
| MindlessGen | Molecular Generator | Creates "mindless" molecules through random atomic placement | Benchmarking, method validation [8] |
| Extended Similarity Indices | Analytical Metric | Quantifies fingerprint-based diversity of large libraries | Chemical library network analysis [5] |
| Synthetic Accessibility Score (SAS) | Assessment Filter | Predicts synthetic feasibility (scores >6 indicate challenge) | Compound prioritization, library design [2] |
| ADMET Prediction Tools | Property Filter | Estimates absorption, distribution, metabolism, excretion, toxicity | Drug-likeness optimization, toxicity screening [2] |
| Chemical Library Networks (CLNs) | Visualization Framework | Represents chemical space relationships between large libraries | Library comparison, diversity analysis [5] |

Future Directions and Challenges

The future of chemical space exploration lies in developing what researchers have termed EAST methodologies: Efficient, Accurate, Scalable, and Transferable approaches that minimize energy consumption and data storage while creating robust machine learning models [9]. Key challenges include overcoming the inherent biases in existing chemical databases, improving the interpretability of generative models, and establishing better benchmarks for chemical space coverage [6] [4]. The integration of quantum-mechanical methods with machine learning techniques promises to enhance the accuracy of property predictions across broader regions of chemical space [9]. As these methodologies mature, they will progressively unlock the immense potential of the unexplored chemical universe for drug discovery and materials science.

For decades, the field of synthetic chemistry has been constrained by a fundamental bottleneck: the manual, labor-intensive nature of chemical experimentation. This artisanal approach has limited the pace of discovery and innovation, confining researchers to exploring only a minuscule fraction of the estimated 10⁶⁰ synthesizable small molecules that constitute the vastness of chemical space [10]. Traditional one-variable-at-a-time (OVAT) methodologies, while valuable, are inherently slow, resource-intensive, and prone to human error and irreproducibility [11] [12].

The convergence of automation, high-throughput experimentation (HTE), and artificial intelligence (AI) is now fundamentally reshaping this landscape. This paradigm shift is moving synthetic chemistry from a craft-based discipline to a data-driven science, enabling researchers to navigate chemical space with unprecedented speed and precision. This transition is critical for addressing complex challenges across multiple fields, from the development of sustainable energy materials to the accelerated discovery of new pharmaceutical therapies [13] [14] [15]. This whitepaper examines the core technologies driving this revolution, the experimental protocols that make it possible, and the emerging toolkit that is redefining the role of the modern chemical researcher.

The Manual Bottleneck in Historical Context

The practice of synthetic chemistry has long been characterized by manual operations conducted by highly trained chemists. While this approach has yielded extraordinary progress, it introduces significant limitations that constitute the historical bottleneck.

- Time and Labor Intensity: Organic synthesis remains time- and labor-intensive, and its results vary with differences in technique between laboratories [12].

- Reproducibility Challenges: Manual operation leads to inconsistent reproducibility, which hinders reliable progress toward intelligent automation [12].

- Limited Exploration Scope: The traditional OVAT method contrasts sharply with HTE, which allows the exploration of multiple factors simultaneously [11]. This limitation is particularly acute when considering the vastness of chemical space, which remains largely unexplored due to the physical and temporal constraints of manual experimentation [10].

The first significant step toward automation came in the 1960s with Merrifield's automated system for solid-phase peptide synthesis [12]. However, widespread adoption of automated approaches has been gradual, particularly in academic settings where access to dedicated HTE infrastructure and staff support remains limited [11].

Core Technologies Reshaping Synthetic Chemistry

Automated High-Throughput Experimentation (HTE)

Modern HTE is a method of scientific inquiry in which miniaturized reactions are run and evaluated in parallel, allowing researchers to explore multiple factors simultaneously rather than one at a time [11].

Key Characteristics and Applications:

- Accelerated Data Generation: HTE enables accelerated data generation, providing a wealth of information that can be leveraged to access target molecules, optimize reactions, and inform reaction discovery while enhancing cost and material efficiency [11].

- Reaction Optimization and Discovery: HTE has emerged as a powerful tool for reaction optimization where multiple variables are simultaneously varied to identify optimal conditions to achieve high yield and selectivity of a product. More recently, HTE has been applied to reaction discovery, expanding its role beyond optimization to identifying unique transformations [11].

- Material Efficiency: The unique advantages of HTE include low consumption, low risk, high efficiency, high reproducibility, high flexibility, and good versatility [16].
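The contrast between OVAT and parallel screening can be made concrete by laying out a full-factorial plate. The factor names and levels below are hypothetical placeholders chosen so that the design fills a 96-well plate.

```python
from itertools import product

# Hypothetical reaction factors and levels (placeholders, not from the source).
factors = {
    "catalyst": ["Pd-A", "Pd-B", "Ni-A", "Cu-A"],
    "ligand": ["L1", "L2", "L3", "L4"],
    "base": ["K2CO3", "Cs2CO3", "Et3N"],
    "solvent": ["DMF", "MeCN"],
}

# Full-factorial design: one well for every combination of levels.
plate = [dict(zip(factors, combo)) for combo in product(*factors.values())]

# OVAT: one baseline run plus one run per alternative level of each factor.
ovat_runs = 1 + sum(len(levels) - 1 for levels in factors.values())

print(f"full-factorial wells: {len(plate)}")
print(f"OVAT runs: {ovat_runs}")
print(plate[0])
```

OVAT needs far fewer runs, but it only varies each factor around a single baseline, so it cannot reveal interactions such as a ligand that works well with only one of the catalysts. The factorial plate covers those combinations in a single parallel experiment.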

Table 1: HTE System Components and Functions

| System Component | Function | Example Technologies |
|---|---|---|
| Reaction Platform | Miniaturized, parallel reaction execution | Microtiter plates (up to 1536 reactions) [11], Chemspeed ISynth [17] |
| Automated Synthesis | Robotic execution of chemical reactions | Synthesis machines with >5000 commercial building blocks [12] |
| Inline Monitoring | Real-time reaction analysis | Inline NMR, IR spectroscopy [12] |
| Analytical Interface | Orthogonal measurement acquisition | UPLC-MS, benchtop NMR [17] |

Artificial Intelligence and Machine Learning

AI and machine learning have become indispensable tools for navigating chemical space, particularly when integrated with automated experimentation platforms.

Key Applications:

- Property Prediction: Machine learning has eased molecular property prediction by learning from existing data to make rapid predictions for new molecules. Tools like ChemXploreML make these critical predictions accessible to chemists without requiring advanced programming skills [18].

- Active Learning for Efficient Exploration: Advanced AI models can explore massive chemical spaces with minimal starting data. For instance, researchers developed an active learning model that explored a virtual search space of one million potential battery electrolytes starting from just 58 data points, ultimately identifying four distinct new electrolyte solvents that rival state-of-the-art electrolytes in performance [13].

- Foundation Models for Chemical Discovery: Scientific foundation models trained on large unlabeled datasets offer a path toward navigating chemical space across application domains. The MIST (Molecular Insight SMILES Transformers) family of molecular foundation models, with up to 1.8 billion parameters, can be fine-tuned to predict hundreds of structure-property relationships across diverse chemical domains [10].

Robotic and Autonomous Systems

The physical execution of chemistry is being transformed by robotic systems that can operate continuously and with precision exceeding human capabilities.

Modular Robotic Platforms: Recent advances have demonstrated laboratories integrated with mobile robots that operate equipment and make decisions in a human-like way. These modular workflows combine mobile robots, automated synthesis platforms, liquid chromatography–mass spectrometers, and benchtop NMR spectrometers, allowing robots to share existing laboratory equipment with human researchers without monopolizing it or requiring extensive redesign [17].

Autonomous Decision-Making: Autonomy requires more than automation; it requires agents, algorithms, or artificial intelligence to record and interpret analytical data and to make decisions based on them. This is the key distinction between automated experiments, where researchers make the decisions, and autonomous experiments, where this is done by machines [17].

Experimental Protocols in Automated Chemistry

Protocol 1: Autonomous Exploration of Chemical Space

This protocol outlines the workflow for using active learning to explore chemical space for new materials, as demonstrated in the discovery of novel battery electrolytes [13].

Procedure:

- Initial Data Curation: Begin with a small set of high-quality experimental data (e.g., 58 data points for electrolyte discovery) [13].

- Model Training: Train an active learning model on the available data, incorporating uncertainty estimation.

- Candidate Prediction: Use the model to predict properties and select the most promising candidates for experimental validation.

- Automated Synthesis: Employ automated synthesis platforms to physically create the selected candidates.

- Experimental Validation: Test the synthesized compounds in real-world applications (e.g., cycling batteries to assess cycle life).

- Data Integration: Feed experimental results back into the training dataset.

- Iterative Refinement: Repeat the cycle from model training through data integration over multiple campaigns (e.g., 7 campaigns of ~10 electrolytes each) until performance targets are met [13].
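The loop in this protocol can be sketched in a few dozen lines. Everything specific here is an assumption made for illustration: the candidates are single numbers rather than molecules, `run_experiment` is a toy function standing in for synthesis and battery cycling, and the surrogate is a nearest-neighbor predictor whose uncertainty is simply the distance to the closest tested candidate. Only the starting size (58) and the campaign structure (7 rounds of 10) follow the text.

```python
import math
import random

def run_experiment(x):
    """Stand-in for synthesis plus testing: a hidden toy response surface."""
    return math.exp(-((x - 0.7) ** 2) / 0.005)

def predict(x, labeled):
    """Nearest-neighbor surrogate returning (predicted value, uncertainty)."""
    nearest_x, nearest_y = min(labeled.items(), key=lambda item: abs(item[0] - x))
    return nearest_y, abs(nearest_x - x)

rng = random.Random(42)
pool = [rng.random() for _ in range(1000)]   # virtual candidates (toy descriptors)
labeled = {x: run_experiment(x) for x in rng.sample(pool, 58)}   # initial data
best_initial = max(labeled.values())

KAPPA = 2.0   # weight on uncertainty; a hypothetical tuning parameter

for campaign in range(7):
    unlabeled = [x for x in pool if x not in labeled]

    def score(x):
        # Favor candidates that look good or that the model knows little about.
        mean, uncertainty = predict(x, labeled)
        return mean + KAPPA * uncertainty

    batch = sorted(unlabeled, key=score, reverse=True)[:10]
    for x in batch:
        labeled[x] = run_experiment(x)   # feed results back into the dataset

best_found = max(labeled.values())
print(f"experiments run: {len(labeled)}")
print(f"best initial: {best_initial:.3f}, best after 7 campaigns: {best_found:.3f}")
```

Picking the top ten scores independently can place several picks next to each other; batch-aware selection strategies address this, but are beyond what this sketch shows.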

Protocol 2: Modular Robotic Workflow for Exploratory Synthesis

This protocol describes the setup for autonomous exploratory synthesis using mobile robots and modular instrumentation [17].

Procedure:

- Reaction Setup: Program the automated synthesis platform (e.g., Chemspeed ISynth) to perform parallel reactions.

- Sample Preparation: On completion of synthesis, the platform takes aliquots of each reaction mixture and reformats them separately for MS and NMR analysis.

- Robotic Transport: Mobile robots handle samples and transport them to the appropriate analytical instruments.

- Orthogonal Analysis: Perform both UPLC-MS and benchtop NMR spectroscopy analyses autonomously.

- Data Integration: Save resulting data in a central database for processing.

- Heuristic Decision-Making: Process orthogonal measurement data through a decision-maker that applies binary pass/fail grading based on experiment-specific criteria.

- Workflow Progression: Reactions that pass both analyses proceed to the next synthetic step or scale-up, mimicking human decision protocols [17].
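The pass/fail gate at the end of this workflow might look like the sketch below. The specific criteria are assumptions: the MS check passes when any observed peak falls within a tolerance of the expected m/z, and the NMR outcome is supplied as an already-graded flag. The class and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Analysis:
    reaction_id: str
    ms_peaks: list       # observed m/z values from UPLC-MS
    expected_mz: float   # m/z of the target product ion
    nmr_pass: bool       # outcome of a separate NMR criterion, supplied as-is

def ms_pass(analysis, tolerance=0.5):
    """Pass if any observed peak lies within `tolerance` of the expected m/z."""
    return any(abs(peak - analysis.expected_mz) <= tolerance
               for peak in analysis.ms_peaks)

def decide(analysis):
    """Binary gate: a reaction advances only if both techniques pass."""
    return "advance" if ms_pass(analysis) and analysis.nmr_pass else "halt"

batch = [
    Analysis("rxn-01", [180.1, 302.2], 302.0, True),
    Analysis("rxn-02", [180.1, 250.4], 302.0, True),
    Analysis("rxn-03", [301.8], 302.0, False),
]
decisions = {a.reaction_id: decide(a) for a in batch}
print(decisions)
```

Requiring agreement between two orthogonal measurements is the conservative choice: a reaction that shows the right mass but fails the NMR check is held back rather than scaled up.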

Protocol 3: High-Throughput Screening of Material Libraries

This protocol is used for exploring vast compositional spaces, such as in the development of all-inorganic perovskites for photovoltaics [14].

Procedure:

- Training Data Generation: Perform high-fidelity computational calculations (e.g., DFT with PBEsol) on thousands of representative structures to create a training dataset.

- Machine Learning Model Training: Train surrogate models (e.g., Crystal Graph Convolutional Neural Networks) to predict key properties like decomposition energy and bandgap.

- Chemical Space Exploration: Use trained models to explore expanded compositional spaces (e.g., 41,400 B-site-alloyed ABX₃ MHPs) by accessing all possible configurations for each composition.

- Stability Assessment: Identify the most stable atomic configurations using ML-predicted decomposition energies with added mixing entropy terms.

- Experimental Validation: Select promising candidates for synthesis and experimental validation based on computational predictions [14].
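The mixing entropy term in the stability assessment can be illustrated with the ideal-solution expression S_mix = −k_B Σ xᵢ ln xᵢ over the site fractions of the alloyed site. This is a sketch under the assumption of ideal mixing; how the cited work combines the term with the predicted decomposition energy, and with which sign convention, is not specified in the text, so the code returns only the −T·S_mix contribution.

```python
import math

K_B_EV_PER_K = 8.617333262e-5   # Boltzmann constant in eV/K

def mixing_free_energy(fractions, temperature_k):
    """Return -T * S_mix in eV per mixed site, assuming ideal mixing."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("site fractions must sum to 1")
    # Ideal configurational entropy; empty sites (x = 0) contribute nothing.
    entropy = -K_B_EV_PER_K * sum(x * math.log(x) for x in fractions if x > 0)
    return -temperature_k * entropy

# Example: a 50/50 alloy on the B site at 300 K.
term = mixing_free_energy([0.5, 0.5], 300.0)
print(f"-T*S_mix = {term:.4f} eV per B site")
```

For an equimolar two-component site at room temperature the term is roughly −0.018 eV per site, which is small but can matter when candidate compositions differ by only a few tens of meV.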

The Scientist's Toolkit: Essential Research Reagents and Platforms

The transition to automated chemical exploration requires a new set of tools and platforms that extend beyond traditional laboratory equipment.

Table 2: Essential Research Reagents and Platforms for Automated Chemical Exploration

| Tool/Platform | Function | Key Features |
|---|---|---|
| ChemXploreML [18] | User-friendly desktop app for molecular property prediction | No programming skills required; operates offline; uses molecular embedders |
| iChemFoundry [16] | Intelligent automated platform for high-throughput synthesis | Low consumption, high reproducibility, good versatility |
| MIST Models [10] | Molecular foundation models for property prediction | Up to 1.8B parameters; trained on 6B molecules; predicts 400+ structure-property relationships |
| Chemputer [12] | Automated synthesis platform driven by natural language processing | Extracts procedures from publications; converts to executable commands |
| Mobile Robotic Agents [17] | Autonomous sample transport and handling | Shares existing lab equipment; no extensive redesign required |
| Active Learning Algorithms [13] | Efficient exploration of chemical space with minimal data | Identifies promising candidates after few iterations; incorporates uncertainty |

Data Management and Analysis

The massive datasets generated by HTE and automated platforms necessitate robust data management practices. Effective data management consistent with FAIR principles (Findable, Accessible, Interoperable, and Reusable) is key to establishing HTE's utility [11]. This includes:

- Standardized Protocols: Development of customized workflows and diverse analysis methods for greater accessibility and shareability [11].

- Data Visualization: Implementation of data visualization software for efficient data evaluation [11].

- Centralized Databases: Use of central databases for storing multimodal analytical data from orthogonal characterization techniques [17].

Table 3: Quantitative Performance of Automated Chemistry Platforms

| Platform/Technology | Throughput Capacity | Reported Accuracy/Performance | Key Metric |
|---|---|---|---|
| Ultra-HTE [11] | 1536 reactions simultaneously | Significantly accelerated data generation | Broadened examination of reaction chemical space |
| Active Learning Electrolyte Search [13] | 1M virtual compounds from 58 points | 4 new electrolytes rivaling state-of-the-art | High accuracy with minimal data input |
| Mobile Robotic Chemist [17] | 688 reactions over 8 days | Autonomous decision-making based on orthogonal data | Comprehensive exploratory synthesis |
| Electrocatalyst Testing [12] | 942 tests on 109 catalysts in 55 hours | Efficient discovery of novel electroorganic processes | Rapid screening of catalyst libraries |
| CGCNN for Perovskites [14] | 41,400 B-site-alloyed MHPs | Identified 10 promising photon absorbers | Accelerated materials discovery |

The transformation of synthetic chemistry from an artisanal practice to an automated, data-driven science represents a fundamental shift in how researchers explore chemical space. The integration of high-throughput experimentation, artificial intelligence, and robotic automation has created a powerful new paradigm that is overcoming the historical bottlenecks that have long constrained discovery and innovation.

This convergence enables researchers to navigate the vastness of chemical space with unprecedented efficiency, moving beyond serendipity to systematic exploration. The development of user-friendly AI tools, modular robotic platforms, and active learning methodologies is making these advanced capabilities accessible to a broader range of scientists, promising to accelerate discoveries across pharmaceuticals, materials science, and sustainable energy.

As these technologies continue to evolve and become more integrated, they will increasingly liberate chemists from routine manual tasks, allowing them to focus on higher-level creative problem-solving and hypothesis generation. The future of synthetic chemistry lies in this collaborative partnership between human expertise and automated intelligence, working together to explore the immense possibilities of chemical space.

The fundamental challenge at the heart of artificial intelligence (AI) in chemistry is the staggering vastness of chemical space contrasted with the extreme scarcity of high-quality experimental data. Chemical space (the theoretical space encompassing all possible molecules and compounds) is estimated to contain 10⁶⁰ to 10¹⁰⁰ potentially stable structures, a number that dwarfs the number of stars in the observable universe. However, publicly available chemical databases contain only about 10⁸ to 10⁹ curated compounds and associated data points [19] [20]. This disparity creates a "data deficit" of monumental proportions, severely impeding the development and application of AI models that typically require massive, high-quality datasets to make accurate predictions.

Unlike domains where AI has flourished, such as image recognition or natural language processing, chemical data is characterized by its high cost, slow generation, and inherent complexity. Each experimental data point in chemistry and materials science can require months of time and tens of thousands of dollars to produce [21]. Furthermore, the data that does exist often suffers from systemic issues: publication bias favoring positive results, inconsistent experimental protocols, and a lack of standardized reporting formats [19] [21]. This combination of factors creates a fundamental bottleneck for AI progress in chemistry, as models trained on limited or biased data struggle to generalize across the immense, unexplored regions of chemical space that hold the greatest potential for discovery.

Quantifying the Data Deficit in Chemical Databases

The scale of the data challenge becomes clear when examining the contents of major chemical databases. While these repositories represent monumental curation efforts, their size remains infinitesimal compared to the theoretical chemical space.

Table 1: Key Chemical and Bioactivity Databases and Their Scale

| Database | Unique Compounds | Experimental Data Points | Primary Data Types |
|---|---|---|---|
| ChEMBL | ~1.6 million | ~14 million | Bioactivity data from literature and HTS assays [19] |
| PubChem | >60 million | >157 million | Bioactivity data from HTS assays [19] |
| Reaxys | >74 million | >500 million | Literature-mined property, activity, and reaction data [19] |
| SciFinder (CAS) | >111 million | >80 million | Experimental properties, NMR spectra, reaction data [19] |
| AZ IBIS (AstraZeneca In-House) | Not Specified | >150 million | In-house SAR data points [19] |

The data scarcity problem is further compounded by significant data quality issues. Chemical data, particularly when automatically extracted from literature and patents, can be quite "noisy" [19]. Sources of error include biological assay variability, the presence of "frequent hitters" or Pan-Assay Interference Compounds (PAINS) that produce false positives, and a lack of standard annotation for biological endpoints and modes of action [19]. Without careful curation and filtering, AI models trained on this data risk learning these artifacts rather than genuine structure-property relationships.
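One simple curation step is to flag compounds that appear active across an implausibly large share of assays. The sketch below is a hit-rate heuristic only; it is not a PAINS substructure filter, and the thresholds, record layout, and identifiers are hypothetical.

```python
from collections import defaultdict

def flag_frequent_hitters(records, min_assays=3, max_hit_rate=0.5):
    """Return compounds tested in >= min_assays assays with a hit rate above max_hit_rate."""
    tested = defaultdict(int)
    active = defaultdict(int)
    for compound, _assay, is_active in records:
        tested[compound] += 1
        active[compound] += int(is_active)
    return {
        compound
        for compound in tested
        if tested[compound] >= min_assays
        and active[compound] / tested[compound] > max_hit_rate
    }

# Toy screening records: (compound id, assay id, active?)
records = [
    ("cmpd-A", "assay-1", True), ("cmpd-A", "assay-2", True),
    ("cmpd-A", "assay-3", True), ("cmpd-A", "assay-4", False),
    ("cmpd-B", "assay-1", True), ("cmpd-B", "assay-2", False),
    ("cmpd-B", "assay-3", False),
    ("cmpd-C", "assay-1", True), ("cmpd-C", "assay-2", True),
]
flagged = flag_frequent_hitters(records)
print(flagged)
```

The minimum-assay requirement keeps compounds with too little evidence (such as `cmpd-C` here) from being flagged on the basis of one or two results.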

Case Study: Active Learning to Overcome Data Scarcity in Electrolyte Discovery

A pioneering study from the University of Chicago Pritzker School of Molecular Engineering provides a compelling blueprint for addressing the data deficit. Researchers demonstrated that an active learning framework could explore a virtual search space of one million potential battery electrolytes starting from just 58 initial data points [13].

Experimental Protocol and Workflow

The methodology combined iterative AI prediction with physical experimental validation, creating a closed-loop discovery system.

Table 2: Key Research Reagents and Materials for Active Learning Campaign

| Reagent/Material Category | Specific Examples/Properties | Function in the Experimental Workflow |
|---|---|---|
| Electrolyte Solvents | Four novel solvents identified (not named) | The target molecules for discovery; the core components of the battery electrolyte. |
| Anode-Free Lithium Metal Battery Cells | Custom-built test cells | The experimental platform for validating AI-predicted electrolyte performance in a real-world device. |
| Chemical Starting Materials | Various, based on AI suggestions | Used to synthesize the proposed electrolyte candidates for experimental testing. |
| Cycle Life Testing Equipment | Battery cycling instrumentation | To measure the primary performance metric: whether a battery has a long cycle life. |

The experimental workflow followed a structured, iterative process that tightly integrated computational prediction with laboratory validation.

Diagram 1: Active Learning Workflow for Electrolyte Discovery

The key to this approach was its handling of model uncertainty. The AI model provided predictions with associated uncertainty estimates. In early cycles, with minimal data, predictions were less accurate. By prioritizing the testing of candidates that would most reduce this uncertainty, the model rapidly improved its understanding of the chemical space [13]. In total, the team ran seven active learning campaigns, testing approximately 10 electrolytes in each, before converging on four new electrolytes that rivaled state-of-the-art performance [13]. This methodology directly addressed the data deficit by maximizing the informational value of each expensive, time-consuming experiment.

Technical Strategies for Mitigating the Data Deficit

Researchers have developed several sophisticated ML strategies to operate effectively in low-data regimes. These methods either maximize the utility of existing data or incorporate scientific knowledge to guide the learning process.

Table 3: Machine Learning Strategies to Overcome Data Scarcity in Chemistry

| Method | Core Principle | Application in Chemistry | Key Limitations |
|---|---|---|---|
| Active Learning (AL) | Iteratively selects the most informative data points for experimental labeling [22]. | Accelerated discovery of battery electrolytes [13]; virtual screening. | Requires physical experiments in the loop; initial model is highly uncertain. |
| Transfer Learning (TL) | Uses knowledge from a pre-trained model on a large, source dataset to improve learning on a small, target dataset [22]. | Predicting molecular properties; de novo drug design using models pre-trained on large compound libraries. | Risk of negative transfer if source and target domains are dissimilar. |
| Multi-Task Learning (MTL) | Simultaneously learns several related tasks, sharing representations between them to improve generalization [22]. | Predicting multiple biological activities or material properties from shared molecular representations. | Requires identifying related tasks; complex model architecture. |
| Data Augmentation (DA) & Synthesis | Generates artificial training examples by manipulating existing data or creating entirely new, realistic data [22]. | Creating synthetic data for rare diseases; exploring "mindless" molecules for benchmark generation [8]. | For DA, validating the chemical validity of transformed structures is non-trivial. |
| Federated Learning (FL) | Enables collaborative model training across institutions without sharing proprietary data, thus enlarging the effective training set [22]. | Training predictive models on proprietary compound libraries from multiple pharmaceutical companies. | Complex implementation; potential for communication bottlenecks. |

The following diagram illustrates the logical relationships and typical application flow between these strategies within a chemical AI project.

Diagram 2: Strategies to Mitigate Chemical Data Scarcity

Beyond these algorithmic strategies, integrating physical knowledge and constraints is critical for improving model performance and interpretability in data-scarce environments. For example, incorporating known physical laws (e.g., energy conservation, symmetry constraints) or chemical rules (e.g., valency, reaction rules) directly into model architectures provides a strong inductive bias [20] [21]. This approach is exemplified by Equivariant Neural Networks (ENNs), which respect physical symmetries by construction: their outputs stay the same (invariance) or transform predictably (equivariance) when the input molecule is translated or rotated, leading to more physically meaningful and data-efficient learning [20]. Furthermore, recent generative models explicitly incorporate constraints such as viable synthetic pathways and atomic van der Waals radii to avoid generating unrealistic or unsynthesizable molecules [20] [22].
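To make the symmetry argument concrete, the sketch below checks that a descriptor built from interatomic distances does not change when a molecule is rotated and translated. This is a hand-built invariant feature, not an equivariant neural network, and the water-like coordinates are approximate and purely illustrative. The point is only that a model fed such features cannot be confused by the arbitrary orientation of its input, so it needs no training data to learn that fact.

```python
import math


def rotate_z(coords, angle):
    """Rotate 3D points about the z-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in coords]


def translate(coords, shift):
    """Shift every point by the same vector."""
    return [(x + shift[0], y + shift[1], z + shift[2]) for x, y, z in coords]


def distance_descriptor(coords):
    """Sorted list of all pairwise interatomic distances."""
    dists = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            dists.append(math.dist(coords[i], coords[j]))
    return sorted(dists)


# Approximate, illustrative water-like geometry (angstroms): O, H, H
water = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = translate(rotate_z(water, 1.1), (3.0, -2.0, 5.0))

same = all(
    math.isclose(a, b, abs_tol=1e-9)
    for a, b in zip(distance_descriptor(water), distance_descriptor(moved))
)
print("descriptor unchanged after rotation + translation:", same)
```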

The "data deficit" in chemistry is not an insurmountable barrier but rather a defining constraint that shapes the development of AI in the molecular sciences. The path forward lies not in waiting for the impossible accumulation of "big data," but in the continued innovation of data-efficient, scientifically grounded AI methods. The future will be driven by models that seamlessly integrate physical knowledge, strategically guide experiments through active learning, and leverage shared knowledge via transfer and multi-task learning. As these approaches mature, they will progressively unlock the immense, unexplored regions of chemical space, ultimately accelerating the discovery of next-generation materials, drugs, and sustainable technologies.

The systematic definition of the Biologically Relevant Chemical Space (BioReCS) represents a paradigm shift in modern drug discovery. This whitepaper delineates the core principles, methodologies, and computational frameworks essential for mapping and modulating the entirety of disease-relevant targets. As the field grapples with the immense scale of the potential chemical universe, the integration of machine learning (ML) with physics-based simulations and quantitative systems pharmacology is forging new pathways to explore previously inaccessible regions of target space. We provide a technical guide detailing the experimental and in silico protocols for target identification, validation, and perturbation, with a specific focus on leveraging Large Quantitative Models (LQMs) and sustainable ML to accelerate the discovery of novel therapeutic modalities against both established and underexplored target classes.

The "Biologically Relevant Chemical Space" (BioReCS) is formally defined as a multidimensional space encompassing all molecules with biological activity—both beneficial and detrimental—where molecular properties define coordinates and relationships between compounds [23]. This space includes diverse application areas such as drug discovery, agrochemistry, and natural product research. The fundamental goal of comprehensive target modulation requires a holistic understanding of this space, which extends beyond traditional small molecules to include peptides, proteolysis-targeting chimeras (PROTACs), macrocycles, and metallodrugs [23].

The exploration of BioReCS is inherently challenged by its vast scale and heterogeneity. Current estimates suggest that the potential chemical universe contains between 10⁶⁰ and 10¹⁰⁰ possible compounds [23], while the human genome contains approximately 20,000 protein-coding genes, only a fraction of which have been successfully targeted therapeutically. A systematic study of target spaces specifically for protein and peptide drugs has revealed that these targets possess distinct characteristics compared to those of small-molecule drugs, necessitating specialized predictive models and exploration strategies [24].

Table 1: Key Dimensions of the Biologically Relevant Chemical Space (BioReCS)

| Dimension | Description | Representative Compound Classes |
|---|---|---|
| Structural Space | Variations in molecular architecture, including atomic composition, bond types, and stereochemistry. | Small molecules, macrocycles, peptides, metallodrugs. |
| Functional Space | Spectrum of biological activities, from therapeutic to toxic effects. | Agonists, antagonists, inhibitors, degraders (e.g., PROTACs). |
| Target Space | The universe of biomolecules (proteins, nucleic acids) with which compounds can interact. | Enzymes, receptors, ion channels, protein-protein interactions. |
| Physicochemical Space | Properties governing drug-like behavior (e.g., lipophilicity, solubility, polar surface area). | Compounds adhering to Rule of 5, beyond Rule of 5 (bRo5) space. |
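The distinction between Rule of 5 and bRo5 space in the last row can be expressed as a simple filter. The sketch below applies Lipinski's four thresholds (molecular weight ≤ 500 Da, logP ≤ 5, at most 5 hydrogen-bond donors, at most 10 hydrogen-bond acceptors) to precomputed properties. The `Properties` container, the `classify` helper, and the example values are hypothetical; in practice the properties would come from a cheminformatics toolkit.

```python
from dataclasses import dataclass


@dataclass
class Properties:
    mol_weight: float        # molecular weight in Da
    logp: float              # octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def ro5_violations(p):
    """Count how many of Lipinski's four criteria a compound violates."""
    checks = [
        p.mol_weight > 500,
        p.logp > 5,
        p.h_bond_donors > 5,
        p.h_bond_acceptors > 10,
    ]
    return sum(checks)


def classify(p, max_violations=1):
    """Label a compound as Ro5-compliant (at most one violation) or bRo5."""
    return "Ro5" if ro5_violations(p) <= max_violations else "bRo5"


# Illustrative property values, not measured data.
small_molecule = Properties(mol_weight=180.2, logp=1.2, h_bond_donors=1, h_bond_acceptors=4)
macrocycle_like = Properties(mol_weight=1200.0, logp=3.0, h_bond_donors=5, h_bond_acceptors=12)

print(classify(small_molecule), classify(macrocycle_like))
```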

Mapping the Target Universe: From Known to Underexplored Territories

Target identification and validation are crucial initial steps in defining the biologically active space. Bioinformatics analyses leveraging the characteristics of known successful targets have proven effective in improving the efficiency of target selection [24]. Comparative studies between targets for different drug modalities (small molecules, protein drugs, peptide drugs) reveal significant differences in their genomic and proteomic features, which can be captured by machine learning models for genome-wide target prediction [24].

The target universe can be categorized into heavily explored and underexplored subspaces. Heavily explored regions are well-represented in public databases such as ChEMBL and PubChem, which contain extensive biological activity annotations for primarily small organic molecules [23]. In contrast, several critical target classes remain underexplored:

- Metal-containing molecules and metallodrugs: Often excluded from standard chemoinformatic analyses due to modeling challenges [23].

- Macrocycles and beyond Rule of 5 (bRo5) compounds: Including protein-protein interaction (PPI) modulators and PROTACs [23].

- Dark Chemical Matter: Compounds that repeatedly fail to show activity in high-throughput screens, providing crucial negative data for defining non-biologically relevant chemical space [23].

Table 2: Key Public Compound Databases for Exploring BioReCS

| Database Name | Primary Focus | Application in Target Space Exploration |
|---|---|---|
| ChEMBL [23] | Bioactive small molecules with drug-like properties. | Identifying structure-activity relationships; target annotation. |
| PubChem [23] | Chemical substances and their biological activities. | Large-scale bioactivity data for machine learning model training. |
| InertDB [23] | Curated and AI-generated inactive compounds. | Defining boundaries of non-bioactive chemical space. |
| Dark Chemical Matter [23] | Compounds inactive across numerous HTS assays. | Mapping regions of chemical space lacking biological activity. |

Figure 1: Mapping the Target Universe. This diagram categorizes the biological target space into heavily explored and underexplored territories, highlighting key compound classes within each domain.

Computational Frameworks for Exploring BioReCS with Machine Learning

Large Quantitative Models (LQMs) and Physics-Based AI

A transformative approach to exploring BioReCS involves Large Quantitative Models (LQMs), which represent a breakthrough beyond traditional language models. Unlike Large Language Models (LLMs) trained on textual data, LQMs are grounded in first principles of physics, chemistry, and biology, allowing them to simulate fundamental molecular interactions and create new knowledge through billions of in silico simulations [25]. This physics-driven approach is particularly valuable for diseases where limited experimental data is available.

LQMs leverage quantum mechanics to understand and predict molecular behavior at the subatomic level. When integrated with AI and quantum-inspired algorithms on GPU-powered computing architectures, these models can explore a much larger chemical space and discover novel compounds that meet specific pharmacological criteria but do not yet exist in scientific literature [25]. This capability is crucial for targeting traditionally "undruggable" targets in areas such as cancer and neurodegenerative diseases.

Sustainable Machine Learning (SusML) for Chemical Space Exploration

The rising demand for computationally efficient exploration of chemical spaces has driven the development of sustainable ML approaches. The core challenge lies in developing methodologies that are Efficient, Accurate, Scalable, and Transferable (EAST), minimizing energy consumption and data storage while creating robust ML models [9] [26]. Key focus areas include:

- Data-efficient ML-based computational methods that maximize information extraction from limited experimental data.

- Inverse property-to-structure problems that enable de novo design of molecules with desired biological activities.

- Universal molecular descriptors that work across diverse compound classes, from small molecules to biomacromolecules [23].

Quantitative and Systems Pharmacology (QSP) Modeling

Quantitative and Systems Pharmacology (QSP) provides an integrative approach that combines physiology and pharmacology to model the dynamic interactions between drugs and biological systems [27]. QSP operates through sophisticated mathematical models, frequently represented as Ordinary Differential Equations (ODEs), that capture mechanistic details of pathophysiology across multiple scales.

The QSP approach follows a "learn and confirm" paradigm, where experimental findings are systematically integrated into models to generate testable hypotheses [27]. These models enable researchers to:

- Predict clinical trial outcomes and optimize dosing based on preclinical data.

- Execute "what-if" experiments to evaluate combination therapies.

- Identify inconsistencies in biological assumptions through mathematical rigor.

- Integrate knowledge horizontally (across multiple pathways) and vertically (across multiple time and space scales) [27].

Figure 2: QSP Modeling Workflow. This diagram outlines the iterative "learn and confirm" paradigm of Quantitative and Systems Pharmacology, from initial objective definition through model refinement.

Experimental Protocols for Target Space Exploration and Validation

Protocol: Genome-Wide Target Prediction for Protein and Peptide Drugs

Objective: To identify and validate novel targets in the human genome specifically amenable to modulation by protein and peptide therapeutics.

Methodology:

- Data Curation: Compile a comprehensive set of known successful protein and peptide drug targets from databases such as ChEMBL and PubChem [23] [24]. Include negative data (inactive compounds) from sources like InertDB and Dark Chemical Matter to define non-bioactive regions [23].

- Feature Extraction: Calculate molecular descriptors and features that distinguish successful targets, including genomic, structural, and physicochemical properties. For ionizable compounds, account for pH-dependent ionization states, as this significantly impacts bioactivity but is often overlooked in standard analyses [23].

- Model Training: Implement separate machine learning classifiers (e.g., Random Forest, Support Vector Machines, or Neural Networks) for protein and peptide drug targets based on their distinct characteristics [24].

- Validation: Employ rigorous cross-validation and external validation sets to assess model performance. Utilize tools like the POPPIT (Predictor Of Protein and PeptIde drugs' therapeutic Targets) web server for target prediction and annotation [24].

Protocol: In Silico Target Deconvolution Using LQMs

Objective: To identify the biological targets of compounds with observed phenotypic effects but unknown mechanisms of action.

Methodology:

- Compound Representation: Encode the query compound using 3D molecular descriptors that capture electronic properties and stereochemistry.

- Library Screening: Screen the query compound against a large-scale database of protein structures, such as the dataset containing over one million protein-ligand complexes with annotated experimental potency data [25].

- Binding Affinity Prediction: Use LQMs grounded in quantum mechanics to simulate and predict protein-ligand binding interactions with high accuracy [25].

- Triaging: Rank potential targets based on calculated binding affinities, phylogenetic conservation, and known biological pathways. Prioritize targets for experimental confirmation.

Protocol: QSP Model Development for Target Prioritization

Objective: To build a mechanistic mathematical model that contextualizes target modulation within a broader physiological system, predicting both efficacy and potential side effects.

Methodology:

- Define Project Scope and Objectives: Establish clear research questions, such as predicting the effect of a novel inhibitor on a specific disease pathway [27].

- Identify Model Components: Based on physiology, identify crucial "states" to track (e.g., plasma glucose, insulin concentrations) and the flows between them [27].

- Mathematical Formalization: Translate biological mechanisms into a system of Ordinary Differential Equations (ODEs). The level of granularity (e.g., inclusion of intracellular signaling) should match the model objectives [27].

- Parameter Estimation and Validation: Fit model parameters to experimental data (e.g., from IVGTT tests). Validate the model against independent datasets not used in parameterization [27].

- Simulation and Hypothesis Generation: Run "what-if" simulations to predict the outcomes of target modulation, including combination therapies and patient variability [27].
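The formalization and simulation steps can be sketched with a deliberately small model. The two states below (drug amount at the absorption site and in plasma), the rate constants `ka` and `ke`, and the doses are illustrative assumptions, far simpler than a real QSP model. The example integrates the ODEs with a hand-written fourth-order Runge-Kutta step, checks the result against the known closed-form solution for this linear system, and runs a "what-if" simulation with a doubled dose.

```python
import math


def derivatives(state, ka, ke):
    """Flows between states: first-order absorption, then first-order elimination."""
    gut, plasma = state
    return (-ka * gut, ka * gut - ke * plasma)


def rk4_step(state, dt, ka, ke):
    """Advance the state by one classical Runge-Kutta (RK4) step."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = derivatives(state, ka, ke)
    k2 = derivatives(shifted(state, k1, dt / 2), ka, ke)
    k3 = derivatives(shifted(state, k2, dt / 2), ka, ke)
    k4 = derivatives(shifted(state, k3, dt), ka, ke)
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def simulate(dose, ka=1.0, ke=0.2, t_end=24.0, dt=0.01):
    """Return the plasma amount at every time step from 0 to t_end (hours)."""
    state = (dose, 0.0)
    trajectory = [state[1]]
    for _ in range(round(t_end / dt)):
        state = rk4_step(state, dt, ka, ke)
        trajectory.append(state[1])
    return trajectory


def analytic_plasma(dose, t, ka=1.0, ke=0.2):
    """Closed-form solution of the same two-state linear model."""
    return dose * ka / (ka - ke) * (math.exp(-ke * t) - math.exp(-ka * t))


baseline = simulate(100.0)
what_if = simulate(200.0)  # "what-if": double the dose
print(f"plasma amount at 24 h: {baseline[-1]:.3f} vs doubled dose {what_if[-1]:.3f}")
```

Comparing the numerical trajectory with an analytic solution is only possible for simple linear models like this one; for realistic nonlinear QSP models, validation against independent experimental data takes its place.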

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Resources for Exploring BioReCS

| Tool/Resource | Type | Function in Research |
|---|---|---|
| POPPIT Web Server [24] | Bioinformatics Tool | Provides target prediction specifically for protein and peptide drugs, along with functional annotations for identified targets. |
| ChEMBL Database [23] | Bioactivity Database | Offers curated bioactivity data on small molecules, essential for building structure-activity relationship models and understanding known target spaces. |
| LQMs (Large Quantitative Models) [25] | Computational Model | Enables physics-based simulation of molecular interactions for accurate prediction of binding affinity and de novo drug design. |
| Universal Molecular Descriptors (e.g., MAP4) [23] | Chemoinformatic Tool | Provides consistent molecular representations across diverse compound classes (small molecules, peptides, biomolecules) for unified chemical space analysis. |
| QSP Modeling Software (e.g., specialized ODE solvers) [27] | Mathematical Modeling Platform | Allows for the construction and simulation of mechanistic models that integrate drug pharmacokinetics and pharmacodynamics with disease pathophysiology. |
| Protein-Ligand Complex Database [25] | Structural Database | Supplies 3D structures and annotated potency data for training and validating AI models for target prediction and binding affinity estimation. |

The comprehensive definition of the Biologically Active Space is an ongoing endeavor that requires continued methodological innovation. Future progress will depend on several key developments: the creation of more universal molecular descriptors that seamlessly span traditional small molecules, peptides, and metallodrugs [23]; the wider adoption of sustainable ML practices to make large-scale chemical space exploration more computationally feasible [9] [26]; and the deeper integration of LQMs into clinical trial design, potentially through simulated interactions on virtual humans [25].

The integration of these advanced computational approaches—QSP, LQMs, and sustainable ML—is transforming the exploration of BioReCS from a fragmented, serendipity-driven process into a systematic, physics-informed engineering discipline. By leveraging these frameworks, researchers can accelerate the identification and validation of disease-relevant targets across the entire spectrum of the target universe, ultimately enabling the modulation of all therapeutically relevant nodes in human disease networks. This holistic approach promises to unlock novel therapeutic modalities for previously intractable diseases, reshaping the future of drug discovery.

The exploration of chemical space, estimated to contain over 10^60 drug-like molecules, represents one of the most significant challenges in modern drug discovery and materials science [28]. Traditional experimental methods are impossibly slow and resource-intensive for navigating this vastness. Artificial intelligence (AI), particularly machine learning (ML), has transitioned from a theoretical promise to a tangible force by providing the computational means to traverse this immense search space efficiently. This paradigm shift is moving AI from a supportive tool to a core driver of discovery, enabling researchers to identify novel materials and therapeutic candidates with unprecedented speed and precision [29] [30] [31]. The integration of AI into the scientific workflow marks a fundamental change in research methodology, compressing discovery timelines from years to weeks and expanding the explorable universe of molecules beyond human cognitive limits [32] [30].

Quantifying the Shift: From Promise to Tangible Impact

The transition of AI is demonstrated by concrete metrics and clinical advancements. The following table summarizes key quantitative evidence of this progress across discovery stages.

Table 1: Quantitative Evidence of AI's Impact in Chemical Discovery

| Domain | Key Performance Metric | Traditional Approach | AI-Driven Approach | Source |
|---|---|---|---|---|
| Battery Electrolyte Discovery | Data points required to explore 1M candidates | Infeasible (months per data point) | 58 initial data points | [29] |
| Virtual Screening | Computational cost reduction | Baseline (full library docking) | >1,000-fold | [28] |
| Small-Molecule Design | Design cycle time & compounds required | ~5 years, thousands of compounds | ~70% faster, 10x fewer compounds | [30] |
| Clinical Pipeline | AI-derived molecules in clinical stages (2016-2024) | Nearly zero | >75 molecules | [30] |
| Toxicity & Reactivity Prediction | Prediction speed | Hours to days | 0.82 ms per sample | [33] |

The tangible impact of AI extends beyond accelerated discovery to concrete clinical progress. By the end of 2024, over 75 AI-derived molecules had reached clinical stages, a remarkable leap from nearly zero just a few years prior [30]. Notable examples include Insilico Medicine's generative-AI-designed drug for idiopathic pulmonary fibrosis, which progressed from target discovery to Phase I trials in just 18 months, and the TYK2 inhibitor zasocitinib, which advanced into Phase III trials [30]. These milestones provide the first clear evidence that AI can compress the traditional multi-year discovery timeline and produce viable clinical candidates.

Core Methodologies: The Technical Engine of the Shift

The paradigm shift is powered by specific, sophisticated methodologies that enable efficient navigation of chemical space.

Active Learning with Minimal Data

A key innovation is the use of active learning to overcome the data scarcity that often plagues novel research areas. In a landmark study for battery electrolyte discovery, researchers started with only 58 initial data points to explore a virtual search space of one million potential electrolytes [29].

The active learning cycle creates a closed-loop, iterative process of prediction and validation.

This methodology is particularly powerful because it incorporates real-world experimental validation at its core, creating a "trust but verify" approach where the AI's predictions are continuously refined against physical reality [29]. The model acknowledges its own uncertainty initially and uses experimental feedback to improve its accuracy, ultimately identifying four distinct new electrolyte solvents that rival state-of-the-art performance after seven iterative campaigns [29].

Machine Learning-Guided Virtual Screening

For ultra-large chemical libraries containing billions of compounds, a hybrid methodology combining machine learning with molecular docking has proven exceptionally effective. This workflow addresses the fundamental challenge that screening multi-billion-scale libraries with traditional docking alone is computationally prohibitive [28].

Table 2: Key Components of ML-Guided Virtual Screening

| Component | Function | Implementation Example |
|---|---|---|
| Machine Learning Classifier | Learns to identify top-scoring compounds based on a subset of docking data. | CatBoost algorithm trained on 1 million compounds [28] |
| Molecular Descriptors | Represents chemical structures in machine-readable format. | Morgan2 fingerprints (ECFP4) [28] |
| Conformal Prediction Framework | Controls error rate and handles dataset imbalance; selects compounds from full library. | Mondrian conformal predictors [28] |
| Molecular Docking | Detailed structure-based scoring of ML-prioritized compounds. | Docking of reduced compound set (e.g., 10% of library) [28] |

The workflow employs conformal prediction to control the error rate of selections, ensuring that the percentage of incorrectly classified compounds does not exceed a predefined significance level (e.g., 8-12%) [28]. This approach demonstrated sensitivity values of 0.87-0.88, meaning it could identify close to 90% of the virtual active compounds by docking only approximately 10% of the ultralarge library [28].
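The class-conditional ("Mondrian") idea can be sketched in a few lines. This is not the implementation from [28]: the calibration probabilities and labels below are made up, and the underlying classifier is assumed to exist already and to output a probability that a compound is a virtual active. For each candidate label, the predictor computes a p-value against calibration compounds of that class only, which is what lets it cope with class imbalance, and keeps every label whose p-value exceeds the chosen significance level.

```python
def p_value(cal_scores, test_score):
    """Share of calibration scores at least as nonconforming as the test score."""
    n_greater_equal = sum(1 for s in cal_scores if s >= test_score)
    return (n_greater_equal + 1) / (len(cal_scores) + 1)


def mondrian_predict(cal_probs, cal_labels, test_prob_active, significance):
    """Return the set of labels (1 = virtual active, 0 = inactive) not rejected.

    cal_probs: predicted P(active) for each calibration compound.
    cal_labels: true labels of the calibration compounds.
    """
    # Nonconformity = 1 - probability assigned to the true class,
    # stored separately per class (the "Mondrian" part).
    scores = {0: [], 1: []}
    for prob, label in zip(cal_probs, cal_labels):
        scores[label].append(1 - prob if label == 1 else prob)

    test_scores = {1: 1 - test_prob_active, 0: test_prob_active}
    return {
        label
        for label in (0, 1)
        if p_value(scores[label], test_scores[label]) > significance
    }


# Made-up calibration set: four actives, six inactives.
cal_probs = [0.9, 0.8, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1, 0.15, 0.05]
cal_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]

print(mondrian_predict(cal_probs, cal_labels, 0.85, 0.25))  # {1}: confident active
print(mondrian_predict(cal_probs, cal_labels, 0.50, 0.25))  # {0, 1}: cannot decide
print(mondrian_predict(cal_probs, cal_labels, 0.02, 0.25))  # {0}: confident inactive
```

The significance level of 0.25 is used here only because the toy calibration set is tiny; with a handful of calibration compounds, the smallest achievable p-value is too large for the 8-12% levels quoted above. In a screening setting, only compounds whose prediction set is exactly `{1}` would be passed on to docking.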

Chemical Language Models and Advanced Architectures

The emergence of large-scale chemical language models represents a third transformative methodology. Models like Compound-GPT are trained on Simplified Molecular Input Line Entry System (SMILES) representations, treating chemical structures as a language to be learned [33].

These models leverage transformer architectures to capture intricate molecular patterns that have eluded prior computational approaches, including stereochemical configurations and chiral isomers [33]. After pre-training on a broad corpus of 267,381 compounds, the model can be fine-tuned for specific downstream tasks such as predicting reaction rate constants or toxicity, demonstrating superior performance over traditional machine learning methods [33].
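Before a transformer can treat SMILES as a language, each string has to be split into tokens. The sketch below shows one simple way to do this with a regular expression; it is an illustrative tokenizer, not the one used by Compound-GPT, and it covers only common SMILES syntax. Bracket atoms such as `[C@H]` are kept as single tokens, so the stereochemical markers mentioned above reach the model intact.

```python
import re

# Order matters: bracket atoms and two-letter halogens must be tried
# before the single-letter fallback.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms, e.g. [C@H], [NH4+]
    r"|Br|Cl"                # two-letter organic-subset atoms
    r"|%\d{2}"               # two-digit ring-closure labels
    r"|[A-Za-z]"             # single-letter atoms (aliphatic or aromatic)
    r"|\d"                   # single-digit ring closures
    r"|[=#\-+()/\\.@:~*$]"   # bonds, branches, and other symbols
)


def tokenize(smiles):
    """Split a SMILES string into tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognised characters in {smiles!r}")
    return tokens


print(tokenize("C[C@H](N)C(=O)O"))
print(tokenize("ClCCBr"))
```

The resulting token list would then be mapped to integer IDs and fed to the model; the two enantiomers of a chiral molecule differ only in a `@` versus `@@` marker inside one such token.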

The interpretability of these models is enhanced through attention mechanisms that identify which parts of a molecule contribute most to its properties, aligning remarkably well with quantum chemical calculations and providing chemists with actionable insights, not just predictions [33].

The Scientist's Toolkit: Essential Research Reagents and Solutions

The implementation of these AI methodologies relies on a suite of specialized computational tools and resources that form the modern scientist's toolkit for chemical space exploration.

Table 3: Essential Research Reagents for AI-Driven Chemical Space Exploration

| Tool Category | Specific Examples | Function & Application |
|---|---|---|
| Chemical Libraries | Enamine REAL Space, ZINC15 [28] | Provide billions of make-on-demand compounds for virtual screening; foundational datasets for model training. |
| Molecular Representations | Morgan2 Fingerprints (ECFP4), SMILES Strings, CDDD Descriptors [28] [33] | Encode molecular structures into machine-readable formats for AI model input. |
| AI Platforms & Models | CatBoost, Compound-GPT, Deep Neural Networks, RoBERTa [28] [33] | Core algorithms for classification, prediction, and generation of novel chemical structures. |
| Conformal Prediction | Mondrian Conformal Predictors [28] | Provide statistical guarantees for model predictions and handle imbalanced datasets in virtual screening. |
| Docking & Simulation | Molecular Docking Software, Physics-Based Simulations [28] [30] | Validate AI predictions through structure-based scoring and provide training data for AI models. |
| Automation & Robotics | Automated Synthesis Platforms, High-Throughput Screening [29] [34] | Close the design-make-test-analysis loop by physically validating AI predictions at scale. |

Integrated Workflow: Navigating from Prediction to Validation

The power of modern AI-driven discovery lies in the integration of these methodologies into cohesive workflows. The following diagram illustrates how leading platforms connect computational predictions with experimental validation:

This integrated workflow exemplifies the "design-make-test-analyze" cycle that has become central to AI-driven discovery. Companies like Exscientia have implemented this approach, reporting design cycles approximately 70% faster than traditional methods while requiring 10x fewer synthesized compounds [30]. The critical enhancement is the closed-loop nature of the process, where experimental results continuously refine the AI models, creating a self-improving discovery system [29] [30].

Future Directions and Challenges

Despite substantial progress, several frontiers remain for AI in chemical discovery. A significant challenge is moving beyond single-parameter optimization to multi-criteria design, where compounds must satisfy multiple requirements simultaneously, including efficacy, safety, and synthesizability [29] [35]. Future AI models will need to further filter the best-performing candidates across this multi-dimensional optimization landscape [29].
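One common way to frame multi-criteria design is Pareto filtering: a candidate is kept if no other candidate is at least as good on every objective and strictly better on at least one. The sketch below applies this to made-up scores for efficacy, safety, and synthesizability (higher is better in each case). The compound names and numbers are hypothetical, and the cited studies do not necessarily use this approach.

```python
def dominates(a, b):
    """True if score tuple `a` is at least as good as `b` everywhere
    and strictly better on at least one objective (higher is better)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    return {
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    }


# Hypothetical scores: (efficacy, safety, synthesizability)
candidates = {
    "cmpd_A": (0.9, 0.4, 0.7),
    "cmpd_B": (0.6, 0.8, 0.6),
    "cmpd_C": (0.5, 0.3, 0.5),  # worse than cmpd_A on all three objectives
    "cmpd_D": (0.7, 0.7, 0.9),
}

print(sorted(pareto_front(candidates)))  # ['cmpd_A', 'cmpd_B', 'cmpd_D']
```

The surviving candidates represent different trade-offs rather than a single winner, which is why a further ranking or human judgment step is still needed afterwards.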

Another frontier is the development of truly generative AI that can create novel molecular structures from scratch rather than extrapolating from existing databases [29]. This would mean "we're no longer limited by the existing literature" and could discover molecules "that do not exist in any database" [29]. Such capability would dramatically expand the explorable chemical space.

A further critical challenge is ensuring that models generalize beyond their training data distribution. "Unfamiliarity" metrics help identify when a model is operating outside its reliable domain, which guards against overconfident predictions on structurally novel molecules [36]. Additionally, the field must overcome data fragmentation and establish robust governance frameworks to ensure AI-driven discoveries are transparent, explainable, and ethically implemented [34] [30].

As the field matures, the focus is shifting from pure automation to augmented intelligence, where AI serves as an intelligent partner that extends human cognitive capabilities rather than simply replacing human labor [37]. This human-AI collaboration, leveraging the respective strengths of human intuition and machine scale, represents the most promising path forward for exploring the vast, uncharted territories of chemical space.

ML in Action: Core Algorithms and Real-World Applications in Chemistry

The process of drug discovery has traditionally been a costly and time-consuming endeavor, characterized by high attrition rates and timelines that often exceed a decade, with costs now surpassing $2.3 billion per approved drug [38]. A fundamental challenge underpinning this inefficiency is the sheer vastness of the chemical space, estimated to contain over 10^60 synthesizable organic molecules, making exhaustive exploration impossible. Machine learning (ML), and particularly generative artificial intelligence (AI), has emerged as a disruptive paradigm to address this challenge, enabling the algorithmic navigation and construction of chemical and proteomic spaces through data-driven modeling [39]. This technical guide delineates the core architectures, methodologies, and applications of generative AI in molecular design, framing them within the critical research initiative of sustainably exploring this vast chemical space. The overarching goal is the development of Efficient, Accurate, Scalable, and Transferable (EAST) methodologies that minimize energy consumption and data storage while creating robust ML models, a key focus of contemporary research workshops like SusML [9] [26].

Generative AI flips the traditional discovery process through inverse design—moving from a defined set of desired properties back to the molecular structure that fulfills them, instead of screening existing libraries [40]. This approach is catalyzing a paradigm shift in structure-based drug discovery, accelerating the identification of novel bioactive small molecules and functional proteins. The following sections provide an in-depth examination of the generative model architectures powering this revolution, the experimental workflows for their implementation, and the translational milestones demonstrating their real-world impact.

Generative AI Architectures for Molecular Design

Several deep generative model architectures have been developed to tackle the inverse design problem, each with distinct strengths and applications in molecular science. The choice of architecture is often intertwined with the molecular representation, which can be text-based, graph-based, or 3D structural.

Table 1: Key Generative AI Architectures in Molecular Design

| Architecture | Core Principle | Molecular Representation | Key Applications | Exemplary Tools/Models |
|---|---|---|---|---|
| Variational Autoencoders (VAEs) [39] [41] | Learns a compressed, continuous latent representation (latent space) of input data; new molecules are generated by sampling from this space. | SMILES, Graphs | De novo molecule generation, molecular optimization, exploring continuous chemical space. | |
| Generative Adversarial Networks (GANs) [39] [42] | Two neural networks, a generator and a discriminator, are trained adversarially; the generator creates new instances while the discriminator evaluates their authenticity. | SMILES, Graphs, 2D Images | Generating 2D architectural representations, molecular design. | |
| Autoregressive Models (RNNs/Transformers) [39] [41] | Models the probability of a sequence token-by-token; each new token is generated based on all previous tokens in the sequence. | SMILES (Text) | De novo design, R-group replacement, linker design, scaffold hopping. | REINVENT 4, DrugEx |
| Diffusion Models [39] | Iteratively refines a molecule from noise to a valid structure through a denoising process, guided by property constraints. | 3D Point Clouds, SMILES, Graphs | De novo protein engineering, 3D molecular conformation generation, binding affinity prediction. | RFdiffusion, FrameDiff, DiffDock |

Molecular Representation: The Foundation of AI Models

The representation of a molecule for an AI model is a critical first step, directly influencing how the molecule is generated and what properties can be learned [40].

- Text-based (e.g., SMILES): Molecules are represented as simplified molecular-input line-entry system (SMILES) strings, which are sequences of characters denoting atoms and bonds. This allows the use of natural language processing models like RNNs and Transformers [41] [40]. Generation resembles writing a sentence one character at a time.

- Graph-based: Represents molecules natively as graphs with atoms as nodes and bonds as edges. This structural view aligns well with a chemist's intuition and is powerful for generating molecular connectivity [40].

- 3D Point Clouds: Encodes the spatial coordinates of atoms, which is vital for modeling binding interactions, pharmacophore matching, and shape complementarity in structure-based design [40]. Generation in this space resembles sculpting a shape.
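To make the text-based representation concrete, the sketch below splits SMILES strings into tokens and maps them to integer ids, which is the form a sequence model consumes. The regular expression is a simplified assumption that covers common cases rather than the full SMILES grammar, and the helper names (`tokenize_smiles`, `build_vocab`, `encode`) are illustrative and do not come from any of the cited tools.

```python
import re

# Simplified token pattern (an assumption for illustration, not a full SMILES grammar).
# Alternatives are ordered so multi-character tokens win over single characters.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms, e.g. [NH3+] or [C@@H]
    r"|Br|Cl"                # two-letter halogens
    r"|%\d{2}"               # two-digit ring closures, e.g. %12
    r"|[A-Za-z]"             # one-letter atoms (lower case = aromatic)
    r"|\d"                   # one-digit ring closures
    r"|[=#$:/\\\-+().@~*]"   # bonds, branches, disconnections, wildcards
)


def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in {smiles!r}")
    return tokens


def build_vocab(smiles_list):
    """Map special tokens plus every token seen in the corpus to an integer id."""
    seen = sorted({tok for s in smiles_list for tok in tokenize_smiles(s)})
    return {tok: idx for idx, tok in enumerate(["<pad>", "<bos>", "<eos>"] + seen)}


def encode(smiles, vocab):
    """Wrap the token ids in begin/end markers, as a sequence model would expect."""
    body = [vocab[tok] for tok in tokenize_smiles(smiles)]
    return [vocab["<bos>"]] + body + [vocab["<eos>"]]


if __name__ == "__main__":
    print(tokenize_smiles("C[C@@H](N)C(=O)O"))  # alanine, with a bracketed stereocentre
    vocab = build_vocab(["CCO", "ClCCBr"])
    print(encode("CCO", vocab))
```

Treating `Cl`, `Br`, and bracket atoms as single tokens keeps the model from having to learn that `C` followed by `l` is one atom, which is a common design choice for SMILES language models.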

Experimental Protocols and Workflows

This section details the standard methodologies for implementing generative AI in molecular design projects, from building the foundational model to optimizing for specific properties.

Building a Foundation Model: Training a Prior

The first step in many generative molecular design pipelines is the creation of an unbiased "prior" model. This model learns the fundamental rules of chemical syntax and the distribution of known chemical space.

Protocol:

- Data Curation: Assemble a large dataset of valid molecular structures, typically in the range of 1-10 million molecules, from public (e.g., ChEMBL, ZINC) or proprietary databases. Represent these molecules as SMILES strings or graphs.

- Model Selection: Choose a generative architecture, such as a Recurrent Neural Network (RNN) or Transformer.

- Teacher-Forcing Training: Train the selected model using a teacher-forcing strategy [41]. The model is tasked with predicting the next token in a sequence given all previous tokens, and its weights are updated to minimize the negative log-likelihood (NLL) of the sequences in the training set. For a sequence T of length l, the joint probability is given by Equation 1:

$$P(T) = \prod_{i=1}^{l} P(t_i \mid t_{i-1}, t_{i-2}, \ldots, t_1) \tag{1}$$

The quantity minimized for each sequence is therefore $-\log P(T) = -\sum_{i=1}^{l} \log P(t_i \mid t_{i-1}, \ldots, t_1)$ [41].

- Validation: The trained prior model should generate a high percentage of valid, unique molecules that are novel structures rather than memorized copies of the training set.
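The negative log-likelihood objective from the teacher-forcing step can be illustrated without a neural network. The sketch below substitutes a count-based bigram model, an assumption made purely for brevity: it conditions only on the previous token, whereas an RNN or Transformer conditions on the whole prefix. The three-molecule corpus and all function names are hypothetical.

```python
import math
from collections import Counter, defaultdict

BOS, EOS = "<bos>", "<eos>"


def fit_bigram(sequences, alphabet, alpha=1.0):
    """Estimate P(next | previous) from counts with add-alpha smoothing."""
    counts = defaultdict(Counter)
    for seq in sequences:
        tokens = [BOS] + list(seq) + [EOS]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    targets = sorted(set(alphabet) | {EOS})  # tokens the model may emit

    def prob(nxt, prev):
        total = sum(counts[prev].values()) + alpha * len(targets)
        return (counts[prev][nxt] + alpha) / total

    return prob, targets


def sequence_nll(seq, prob):
    """NLL(T) = -sum_i log P(t_i | t_{i-1}).

    Teacher forcing: the *true* previous token is always used as context,
    never the model's own earlier prediction.
    """
    tokens = [BOS] + list(seq) + [EOS]
    return -sum(math.log(prob(nxt, prev)) for prev, nxt in zip(tokens, tokens[1:]))


if __name__ == "__main__":
    corpus = ["CCO", "CCN", "CCC"]  # toy character-level SMILES
    prob, _ = fit_bigram(corpus, alphabet="CNO")
    for smiles in ("CCO", "OOO"):
        print(smiles, round(sequence_nll(smiles, prob), 3))
```

A sequence that resembles the training data ("CCO") receives a lower NLL than one that does not ("OOO"). The same quantity is what gradient descent reduces when a neural prior is trained.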

Molecular Optimization with Reinforcement Learning (RL)

A powerful method for biasing the generative model towards molecules with desired properties is through reinforcement learning, as implemented in platforms like REINVENT 4 [41].

Protocol:

- Define the Scoring Function (Agent's Goal): Create a composite scoring function S(M) that quantifies the desirability of a generated molecule M. This function can incorporate multiple parameters:

- Primary Target Activity: Predictions from a QSAR model or docking score.

- ADMET Properties: Predictions for absorption, distribution, metabolism, excretion, and toxicity.

- Synthetic Accessibility: Score from a dedicated model (e.g., integrated with SYNTHIA [38]).

- Other Properties: Selectivity, physicochemical properties.

- Initialize the Agent: Start with the pre-trained prior model as the initial agent.

- RL Sampling and Update Loop:

- The agent (generative model) samples a batch of molecules.

- Each molecule is scored by the scoring function S(M).

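- The agent's weights are updated to increase the likelihood of generating sequences (SMILES strings) that lead to high scores and decrease the likelihood of those that lead to low scores. In REINVENT, this is done by adding a scaled score to the prior's log-likelihood to form an augmented target and penalizing the agent's deviation from it [41]:

$$\log P_{\text{aug}}(T) = \log P_{\text{prior}}(T) + \sigma\, S(M), \qquad \text{Loss} = \bigl(\log P_{\text{aug}}(T) - \log P_{\text{agent}}(T)\bigr)^{2}$$

where T is the SMILES sequence of molecule M, $\log P(T) = \sum_i \log P(t_i \mid t_{<i})$, and σ is a scaling factor.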

This workflow creates a closed-loop system where the AI iteratively proposes molecules and learns from the feedback provided by the scoring function.
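The scoring and update steps above can be sketched numerically. The example assumes two things that the protocol does not spell out. First, component scores are pre-scaled to [0, 1] and combined by a weighted geometric mean, which is one of several possible aggregations. Second, the update uses the augmented-likelihood loss from the REINVENT line of work, in which σ·S(M) is added to the prior's log-likelihood and the agent is penalized for deviating from that target. All names and numbers are illustrative.

```python
import math


def composite_score(components, weights):
    """Weighted geometric mean of component scores, each already in [0, 1].

    Any component at zero drives the whole score to zero, so a molecule
    cannot compensate for a failed criterion with strength elsewhere.
    """
    if any(components[name] <= 0.0 for name in weights):
        return 0.0
    total_weight = sum(weights.values())
    log_sum = sum(w * math.log(components[name]) for name, w in weights.items())
    return math.exp(log_sum / total_weight)


def augmented_likelihood_loss(agent_loglik, prior_loglik, score, sigma):
    """Squared gap between the agent and the score-augmented prior log-likelihood."""
    augmented = prior_loglik + sigma * score
    return (augmented - agent_loglik) ** 2


if __name__ == "__main__":
    # Hypothetical predictions for one sampled molecule.
    components = {"activity": 0.8, "admet": 0.5, "synthesizability": 0.9}
    weights = {"activity": 2.0, "admet": 1.0, "synthesizability": 1.0}
    s = composite_score(components, weights)
    print("S(M) =", round(s, 3))

    # The loss falls as the agent assigns the high-scoring molecule more
    # probability than the prior did, which is the direction training pushes.
    for agent_loglik in (-30.0, -27.0, -25.0):
        loss = augmented_likelihood_loss(agent_loglik, prior_loglik=-30.0, score=s, sigma=10.0)
        print(agent_loglik, round(loss, 2))
```

With a score of zero the augmented target equals the prior, so the agent is pulled back toward the prior for poor molecules. This anchoring is what keeps the agent generating chemically sensible SMILES while it is being biased.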

Integrated AI-Driven Design-Make-Test-Analyze (DMTA) Cycle

Generative AI is most powerful when integrated into an automated DMTA cycle [41].

Protocol:

- Design: Use a generative AI model (e.g., AIDDISON [38]), optimized via RL, to propose a library of novel molecules predicted to have the desired property profile.

- Make: Assess the synthetic feasibility of the top-ranked virtual molecules using retrosynthesis analysis software (e.g., SYNTHIA [38]). This bridges the gap between virtual design and practical synthesis.

- Test: Synthesize and test the prioritized compounds in relevant biological and physicochemical assays.

- Analyze: Feed the experimental results (both successes and failures) back into the AI models to refine the scoring function and improve the next round of design. This creates a continuous learning loop that dramatically accelerates the optimization process.

The following diagram illustrates the logical workflow and data flow of this integrated cycle.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software and Tools for AI-Driven Molecular Design

| Tool/Platform | Type | Primary Function | Application in Workflow |
|---|---|---|---|
| REINVENT 4 [41] | Open-source Software | A generative AI framework for de novo molecular design using RNNs/Transformers. | Core generative engine for molecular optimization via RL, TL, and CL. |
| AIDDISON [38] | Web-based Platform | Integrates AI/ML and CADD for hit identification and lead optimization. | Unified platform for virtual screening, generative design, and property filtering. |
| SYNTHIA [38] | Retrosynthesis Software | AI-powered retrosynthetic analysis to evaluate synthetic accessibility. | Downstream synthesis planning for AI-generated molecules. |
| DiffDock [39] | Algorithmic Model | AI-augmented molecular docking for binding pose and affinity prediction. | Structure-based scoring in the inverse design workflow. |
| RFdiffusion [39] | Algorithmic Model | Diffusion-based de novo protein design and engineering. | Generation of novel functional proteins and binders. |

Case Studies and Translational Milestones

Generative AI has moved from a theoretical concept to a tool producing tangible preclinical and clinical candidates.

Case Study: AI-Driven Design of Tankyrase Inhibitors

A concrete application demonstrating the integrated workflow is the design of tankyrase inhibitors, a class with potential anticancer activity [38].

Methodology:

- Starting Point: A known tankyrase inhibitor was used as a reference structure.

- Generative Exploration: AIDDISON's generative models and virtual screening were used to explore the chemical space around the reference. This involved similarity searches, pharmacophore screening, and generative models to produce a diverse set of candidate molecules.

- Multi-parameter Optimization: The generated molecules were filtered based on properties and docked into the target protein's binding site. The most promising structures were prioritized based on a combination of predicted affinity and optimal ADMET profiles.

- Synthesis Validation: The top-ranked virtual hits were sent to SYNTHIA for retrosynthetic analysis. This step ensured that the proposed molecules were synthetically accessible, identifying necessary reagents and feasible reaction pathways before any lab work began.

Outcome: This AI-driven workflow accelerated the identification of novel, synthetically accessible lead candidates for tankyrase, enabling a more thorough and efficient exploration of the chemical space than traditional methods [38].

Field Advancement: AI-Designed Molecules in Clinical Trials

The field has achieved significant translational milestones. AI-designed molecules have entered Phase I clinical trials within as little as 12 months of program initiation, compared with the several years typical of traditional programs [38]. In 2024, the role of AI in molecular science was recognized when the Nobel Prize in Chemistry was awarded for breakthroughs in protein structure prediction and computational protein design [43].

The future of generative AI in molecular design will be shaped by several converging trends. The synthesis of generative models with closed-loop automation and robotic synthesis platforms will enable fully autonomous molecular design ecosystems, drastically shortening discovery timelines [41] [43]. Furthermore, the convergence with quantum computing promises to unlock high-accuracy quantum chemistry-informed neural potentials for even more precise predictions [39].

A critical and growing focus is on sustainability. The community is increasingly aware of the computational cost of training large AI models. The push for Efficient, Accurate, Scalable, and Transferable (EAST) methodologies aims to minimize energy consumption and data storage requirements while maintaining robust performance, making the sustainable exploration of chemical space a central tenet of future research [9] [26].

In conclusion, generative AI has fundamentally altered the landscape of molecular design. By framing the problem as one of inverse design and leveraging deep generative architectures, it allows researchers to navigate a chemical space far too large to enumerate. Integrating these models into automated workflows, together with synthesis-aware design, shortens the path from a biological target to optimized lead candidates. This is not a replacement for human expertise but a partnership with it, in both medicine and materials science [40] [43].

The exploration of chemical space for drug discovery faces an unprecedented data challenge. While make-on-demand chemical libraries now provide access to over 70 billion readily synthesizable molecules [28], the total potential drug-like chemical space is estimated to exceed 10^60 compounds [44]. This vastness renders traditional virtual screening approaches computationally intractable, creating an urgent need for more efficient methods that can navigate this expansive territory. Structure-based virtual screening using molecular docking has proven valuable for identifying starting points for drug discovery, but screening billion-compound libraries with conventional docking requires monumental computational resources [28] [45]. This technical guide examines how the integration of machine learning with molecular docking is transforming ultra-large virtual screening (ULVS), enabling researchers to efficiently explore previously inaccessible regions of chemical space and identify novel bioactive compounds with high probability of success.

Core Methodologies for ML-Accelerated Docking

Machine Learning-Guided Docking with Conformal Prediction

The combination of machine learning classification with conformal prediction provides a robust framework for prioritizing compounds for docking. In this workflow, a classifier is first trained to identify top-scoring compounds based on molecular docking of a subset (typically 1 million compounds) to the target protein [28]. The Mondrian conformal prediction framework then applies class-specific confidence levels to make selections from the multi-billion-scale library, significantly reducing the number of compounds requiring explicit docking scoring [28].

Experimental Protocol:

- Initial Docking: Perform molecular docking of 1 million randomly selected compounds from the library against the target protein.

- Classifier Training: Train a classification algorithm (CatBoost recommended) using molecular descriptors (Morgan2 fingerprints optimal) with the top 1% of docking scores as the active class.

- Model Calibration: Use 20% of the training data for calibration to ensure validity for both majority and minority classes.

- Conformal Prediction: Apply the calibrated classifier with optimal significance level (ε) to the entire library, dividing compounds into virtual active, virtual inactive, both, or null sets.

- Final Docking: Perform explicit docking only on the predicted virtual active set, typically 10-15% of the full library [28].

This approach has demonstrated the ability to reduce computational cost by more than 1,000-fold while maintaining high sensitivity (0.87-0.88) in identifying true actives [28].
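The conformal prediction step in the protocol above can be sketched in plain Python. The example assumes an inductive, class-conditional (Mondrian) setup in which the nonconformity score is one minus the classifier's predicted probability for the hypothesized class. The classifier itself is replaced by hand-written probabilities, so every number and function name here is hypothetical.

```python
from collections import defaultdict

SET_NAMES = {
    frozenset({1}): "virtual active",
    frozenset({0}): "virtual inactive",
    frozenset({0, 1}): "both",
    frozenset(): "null",
}


def calibrate(cal_probs, cal_labels):
    """Collect calibration nonconformity scores separately per true class.

    Keeping one list per class is the Mondrian step: it gives validity for
    the rare active class as well as for the abundant inactive class.
    """
    scores = defaultdict(list)
    for p_active, label in zip(cal_probs, cal_labels):
        p_true = p_active if label == 1 else 1.0 - p_active
        scores[label].append(1.0 - p_true)
    return scores


def p_value(class_scores, alpha):
    """Fraction of calibration scores at least as nonconforming as alpha."""
    at_least = sum(1 for s in class_scores if s >= alpha)
    return (at_least + 1) / (len(class_scores) + 1)


def predict_set(p_active, scores, epsilon):
    """Keep every label whose class-conditional p-value exceeds epsilon."""
    labels = set()
    for label, p_label in ((1, p_active), (0, 1.0 - p_active)):
        if p_value(scores[label], 1.0 - p_label) > epsilon:
            labels.add(label)
    return frozenset(labels)


if __name__ == "__main__":
    # Hypothetical classifier outputs P(active) on a held-out calibration set.
    cal_probs = [0.9, 0.8, 0.7, 0.6, 0.1, 0.2, 0.3, 0.4]
    cal_labels = [1, 1, 1, 1, 0, 0, 0, 0]
    scores = calibrate(cal_probs, cal_labels)
    for p in (0.95, 0.5, 0.05):
        print(p, SET_NAMES[predict_set(p, scores, epsilon=0.25)])
```

Only compounds assigned to the virtual active set would be passed on to explicit docking. Lowering ε enlarges the prediction sets, so more compounds land in "both", while raising it pushes more into "null".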

Active Learning-Based Screening Platforms

Active learning techniques create target-specific screening pipelines that iteratively select compounds for docking based on predictions from continuously updated models. The OpenVS platform implements this approach with a two-stage docking protocol [45] [46]:

Virtual Screening Express (VSX) Mode:

- Designed for rapid initial screening

- Utilizes rigid receptor docking

- Processes millions of compounds quickly

- Serves as initial filter for promising compounds

Virtual Screening High-Precision (VSH) Mode:

- Implements full receptor flexibility

- Includes sidechain and limited backbone movement

- Provides accurate binding pose prediction and ranking

- Used for final ranking of top hits
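The iterative selection idea behind active-learning screens, introduced at the start of this subsection, can be sketched generically. This is not OpenVS code: the one-dimensional "descriptor", the `dock` stand-in, and the nearest-neighbour surrogate are all hypothetical simplifications chosen so the loop runs with the standard library alone.

```python
import random


def dock(x):
    """Hypothetical stand-in for an expensive docking run (lower is better)."""
    return (x - 0.73) ** 2


def knn_predict(x, labelled, k=3):
    """Surrogate model: mean score of the k nearest already-docked compounds."""
    nearest = sorted(labelled, key=lambda item: abs(item[0] - x))[:k]
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(library, n_init=20, batch=20, rounds=5, seed=0):
    """Dock a random seed set, then repeatedly dock the best-predicted batch."""
    rng = random.Random(seed)
    docked = {x: dock(x) for x in rng.sample(library, n_init)}
    for _ in range(rounds):
        labelled = list(docked.items())  # the surrogate is refreshed every round
        pool = [x for x in library if x not in docked]
        pool.sort(key=lambda x: knn_predict(x, labelled))
        for x in pool[:batch]:
            docked[x] = dock(x)
    return docked


if __name__ == "__main__":
    library = [i / 1000 for i in range(1000)]  # toy library of 1,000 "compounds"
    docked = active_learning_screen(library)
    best = min(docked, key=docked.get)
    print(f"docked {len(docked)} of {len(library)}; best descriptor {best}")
```

The loop shown is purely exploitative. Practical pipelines usually mix in exploration, for example by sampling some compounds at random or by ranking on model uncertainty, so the surrogate does not lock onto the first promising region.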