Optimizing Active Learning for Free Energy Calculations: A Guide to Efficient Drug Discovery

Active learning (AL) is transforming the application of free energy perturbation (FEP) calculations in drug discovery by drastically reducing computational costs.

Optimizing Active Learning for Free Energy Calculations: A Guide to Efficient Drug Discovery

Abstract

Active learning (AL) is transforming the application of free energy perturbation (FEP) calculations in drug discovery by drastically reducing computational costs. This article explores how AL iteratively combines machine learning with physics-based simulations to prioritize the most informative compounds for FEP evaluation. We cover the foundational principles of AL-FEP integration, detail practical methodologies and real-world applications, address key optimization strategies and troubleshooting for robust performance, and validate these approaches through comparative analysis of recent successes. Aimed at researchers and drug development professionals, this guide provides a comprehensive framework for leveraging AL to accelerate lead optimization and explore vast chemical spaces more efficiently.

What is Active Learning in Free Energy Calculations? Core Concepts and Workflow

Defining the Active Learning (AL) Cycle for FEP

This guide provides technical support for researchers implementing Active Learning (AL) cycles for Free Energy Perturbation (FEP) in drug discovery. Active Learning FEP (AL-FEP) combines computationally intensive but highly accurate FEP calculations with faster, approximate machine learning models to efficiently explore vast chemical spaces. This iterative process helps prioritize the most promising compounds for synthesis and testing, significantly accelerating lead optimization in pharmaceutical research [1] [2].

Frequently Asked Questions (FAQs)

1. What is the core benefit of using an AL cycle with FEP? AL-FEP addresses the key limitation of standard FEP: its high computational cost, which restricts the number of compounds that can be evaluated. By using machine learning models trained on initial FEP results to pre-screen large compound libraries, AL-FEP allows you to identify the most valuable compounds for subsequent, more accurate FEP calculations. This enables the exploration of thousands to millions of compounds with high accuracy at a fraction of the computational cost [1] [3].

2. What are the main stages of a single AL cycle? A typical AL cycle consists of four key stages [1]:

- Selection and FEP Calculation: A small subset of molecules is selected from a large library for accurate FEP calculation.

- Model Training: A machine learning model (e.g., 3D-QSAR) is trained on the FEP-generated binding affinity data.

- Prediction and Prioritization: The trained model rapidly predicts the binding affinity for the entire compound library, prioritizing new candidates.

- Iteration: The highest-priority candidates from the model are added to the next round of FEP validation, and the cycle repeats, continuously improving the model.

3. How many compounds should I select for FEP in each AL cycle? The number of compounds selected per cycle significantly impacts performance. Selecting too few can hurt the model's learning. While the optimal number can be project-dependent, systematic studies suggest that under well-optimized conditions, it is possible to identify 75% of the top 100 molecules by sampling only 6% of a large dataset [2]. Another study recommends selecting enough compounds to balance exploration of chemical space with exploitation of current knowledge [4].

4. How do I choose an initial set of compounds to start the AL cycle? The method for selecting the initial sample is a key design choice. The performance of AL can be sensitive to the starting set, particularly when exploring diverse chemical series. It is recommended to use a strategy that ensures good initial chemical diversity to build a robust model from the first cycle [2] [4].

5. When should the AL cycle be terminated? The AL cycle typically runs iteratively until a predefined stopping criterion is met. This can be when the model's predictions stop improving (i.e., no more potent compounds are being discovered), when a target number of top hits have been identified and validated, or when the computational budget is exhausted [1].

Troubleshooting Guides

Poor Model Performance and Enrichment

Problem: The machine learning model trained on FEP data shows poor predictive power, failing to enrich subsequent rounds with higher-potency compounds.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Insufficient initial FEP data | Check model performance metrics (e.g., R²) after the first cycle. | Increase the number of molecules in the initial FEP sample. Ensure the initial set has adequate chemical diversity [2] [4]. |

| Selecting too few compounds per cycle | Monitor the diversity of compounds selected in each cycle. | Increase the batch size of molecules selected for FEP in each AL iteration [2]. |

| Inappropriate explore-exploit balance | Analyze if the search is stuck in a local potency maximum or wandering randomly. | Adjust the acquisition function to balance exploring new chemical regions (exploration) with refining known potent areas (exploitation) [4]. |

| Underlying FEP inaccuracies | Validate FEP predictions against any available experimental data for a small compound set. | Review the FEP setup (e.g., force field, simulation length, protein structure) to ensure the training data is reliable [1]. |

Inefficient Exploration of Chemical Space

Problem: The workflow fails to discover new, diverse chemical scaffolds and only optimizes within a narrow chemical space.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Overly restrictive core changes | Check if the compound pool includes core hops and diverse bioisosteres. | For earlier-stage projects aiming for scaffold discovery, ensure the compound library includes molecules with core changes and adjust the AL protocol to be more exploratory [4] [5]. |

| Biased initial compound set | Review the chemical diversity of the starting molecules. | Manually curate the initial set to cover multiple, distinct chemotypes relevant to your target. |

| Acquisition function favoring exploitation | The selection process may be overly weighted towards predicted potency. | Tune the acquisition function parameters to give more weight to chemical diversity and uncertainty in the model's predictions [4]. |

Experimental Protocols and Data

Key Performance Data from AL-FEP Studies

The following table summarizes quantitative findings from retrospective studies on AL-FEP, which can serve as benchmarks for your own experiments.

| Study Focus | Key Parameter Tested | Optimal Performance / Finding | Dataset Size |

|---|---|---|---|

| Impact of AL design choices [2] | Molecules sampled per iteration | Identified 75% of top 100 molecules by sampling only 6% of the dataset. | 10,000 molecules |

| Impact of AL protocol and diversity [4] | Compound selection strategy & explore-exploit ratio | Performance and optimal parameters depend on the project goal (maximize potency vs. broad-range prediction). | Historic GSK project data |

| Prioritizing bioisosteres [5] | 3D-QSAR with AL-FEP | The workflow could rapidly locate the strongest-binding bioisosteric replacements with modest computational cost. | 500 bioisosteres |

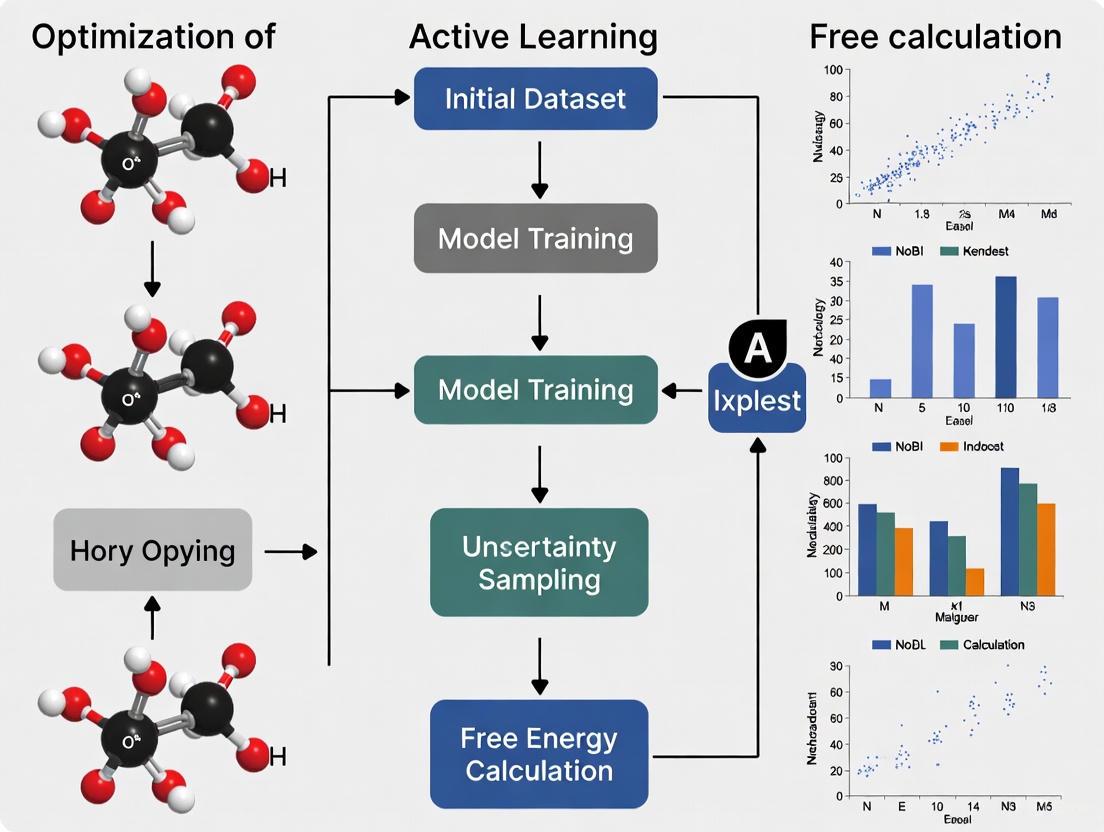

Standardized Workflow Diagram

The diagram below illustrates the logical flow and iterative nature of a standard Active Learning cycle for FEP.

Decision Guide for AL Protocol Tuning

This flowchart provides a systematic approach for diagnosing and resolving common performance issues in your AL-FEP setup.

The table below lists key computational tools and methodological components essential for setting up and running an AL-FEP workflow.

| Item / Resource | Function in AL-FEP Workflow | Notes |

|---|---|---|

| FEP Software (e.g., Flare FEP, FEP+ [1] [3]) | Generates high-accuracy binding affinity data for training the ML model. | The core physics-based simulation engine. Requires careful setup of force fields, water models, and simulation length [1]. |

| Machine Learning Model (e.g., 3D-QSAR [5]) | Learns from FEP data to make fast affinity predictions across the chemical library. | Model choice (e.g., Random Forests, Neural Networks) is often less critical than other AL parameters [2]. |

| Compound Library | The vast chemical space to be explored (e.g., bioisosteres, virtual compounds) [5]. | Can be generated via bioisostere replacement (e.g., using Spark) or virtual screening (e.g., using Blaze) [1]. |

| Acquisition Function | Balances exploration of new chemical space with exploitation of known potent regions. | Critical for selecting the next batch of compounds for FEP. Common functions include Upper Confidence Bound (UCB) and Expected Improvement (EI) [2] [4]. |

| High-Performance Computing (HPC) with GPUs | Provides the computational power to run multiple FEP calculations in parallel. | RBFE for a series of 10 ligands can take ~100 GPU hours; ABFE can take ~1000 hours [1]. |

Bridging Machine Learning and Physics-Based Simulations

Frequently Asked Questions (FAQs)

Q1: What is the most critical factor for success when applying Active Learning to Free Energy Perturbation (FEP) calculations? Research indicates that the number of molecules sampled in each Active Learning (AL) iteration is the most significant factor impacting performance. Sampling too few molecules per iteration can substantially hurt performance and prevent the model from effectively exploring the chemical space. In contrast, the study found AL performance to be largely insensitive to the specific machine learning method or acquisition function used [2].

Q2: My FEP calculations are not performing well with default settings for a particular target system. Is there an automated way to optimize the protocol? Yes, the FEP Protocol Builder (FEP-PB) tool addresses this exact problem. It uses an active learning workflow to iteratively search the protocol parameter space, automatically developing accurate FEP protocols for systems where default settings fail. This approach can generate robust protocols in a fraction of the time required for manual optimization [6].

Q3: How can I ensure my generative AI model produces synthesizable and novel molecules with high predicted affinity? Implement a nested active learning framework.

- Inner AL Cycle: Uses chemoinformatic oracles (drug-likeness, synthetic accessibility) to filter generated molecules.

- Outer AL Cycle: Uses physics-based oracles (molecular docking, free energy calculations) to evaluate and prioritize molecules with high predicted affinity. This iterative process allows the generative model to continuously refine its output based on feedback from both chemical and physical evaluators, successfully exploring novel chemical spaces for targets like CDK2 and KRAS [7].

Q4: What are the proven performance benchmarks for AL in free energy calculations? In an exhaustive study on a dataset of 10,000 congeneric molecules, under optimal AL conditions, researchers successfully identified 75% of the top 100 molecules by sampling only 6% of the full dataset. This demonstrates the profound efficiency gains achievable by optimizing the AL strategy for free energy calculations [2].

Troubleshooting Guides

Issue 1: Poor Performance of Default FEP Settings

Problem: Your FEP calculations for a specific target system are yielding inaccurate predictions and poor correlation with experimental data, even with established force fields and standard protocols.

Solution: Implement an Active Learning-based protocol optimizer.

| Step | Action | Objective | Key Parameter/Metric |

|---|---|---|---|

| 1 | Define Parameter Space | Identify tunable parameters in the FEP pipeline (e.g., simulation length, lambda spacing, force field options). | Creates a multidimensional search space. |

| 2 | Initial Sampling | Use the FEP-PB tool to select an initial set of protocol parameters for evaluation. | Establishes a baseline for model training. |

| 3 | Active Learning Loop | Iteratively run FEP calculations, evaluate performance, and select the next most informative protocols to test. | Minimizes total computational cost by focusing on high-potential protocols. |

| 4 | Protocol Validation | Apply the newly optimized protocol to a independent test set of molecules. | Validates predictive accuracy (target: ~1 kcal/mol error). |

This automated workflow rapidly generated accurate FEP protocols for challenging systems like MCL1 and p97, which were previously not amenable to calculations with default settings [6].

Issue 2: Generative Model Producing Impractical Molecules

Problem: Your generative AI model for molecular design produces molecules that are not synthesizable, have poor drug-likeness, or lack novelty (are too similar to known compounds).

Solution: Integrate a dual-cycle Active Learning framework to guide the generation process.

The following workflow diagram illustrates the nested AL cycles that iteratively refine molecule generation using chemoinformatic and physics-based oracles:

Key Checks and Actions:

- For Synthesizability: Integrate a synthetic accessibility (SA) score predictor as an oracle in the inner AL cycle. Molecules failing the SA threshold are discarded [7].

- For Novelty: In the inner cycle, calculate the similarity of generated molecules against the cumulative set of already-generated molecules. Prioritize molecules with lower similarity scores to explore new chemical space [7].

- For Target Engagement: Use physics-based oracles like molecular docking or absolute binding free energy (ABFE) calculations in the outer AL cycle to ensure generated molecules have a high predicted affinity for the biological target [7].

Issue 3: Active Learning Failing to Converge or Find Top Candidates

Problem: Your AL workflow is not efficiently identifying the best molecules in the chemical space, leading to slow convergence or sub-optimal results.

Solution: Systematically audit and optimize your AL design choices.

| Common Cause | Diagnostic Check | Corrective Action |

|---|---|---|

| Insufficient Batch Size | Check if performance plateaus or is unstable. Are too few molecules selected per iteration? | Increase the number of molecules sampled per AL iteration. This is the most critical factor. [2] |

| Poor Initial Sampling | Evaluate the diversity and representativeness of the initial training set. | Use a method like maximin or k-means++ for initial sample selection to ensure broad coverage of the chemical space. |

| Uninformative Acquisition Function | Analyze if the model is stuck in exploitation (only refining known areas) or exploration (random search). | Test different acquisition functions (e.g., UCB, EI, PI), though studies show this is less critical than batch size. Balance exploration vs. exploitation. [2] |

| Model Inaccuracy | Monitor the predictive model's error on a hold-out test set. | Ensure the machine learning model (e.g., Random Forest, Gaussian Process) is retrained with newly acquired data in each AL cycle. |

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and methodologies central to integrating machine learning with physics-based simulations in drug discovery.

| Item Name | Function / Purpose | Key Application Note |

|---|---|---|

| FEP Protocol Builder (FEP-PB) | Automated tool that uses Active Learning to optimize parameters for Free Energy Perturbation calculations. | Critical for systems where default FEP settings fail. Rapidly generates predictive models for challenging targets like MCL1. [6] |

| VAE-AL Generative Workflow | A generative model (Variational Autoencoder) nested within Active Learning cycles for molecular design. | Generates novel, synthesizable, high-affinity molecules. Successfully applied to design CDK2 and KRAS inhibitors. [7] |

| Physics-Based Oracles | Molecular modeling methods (e.g., docking, absolute binding free energy calculations) used to evaluate generated molecules. | Provides a more reliable estimate of target engagement than data-driven methods alone, especially in low-data regimes. [7] |

| Chemoinformatic Oracles | Computational filters for drug-likeness (e.g., Lipinski's rules), synthetic accessibility, and molecular similarity. | Used in the inner AL cycle to ensure generated molecules are practical and novel. [7] |

| Active Learning Controller | The algorithm that selects the most informative data points (molecules or protocols) for the next round of evaluation. | Optimizing the batch size (molecules per iteration) is the most significant factor for achieving high performance. [2] |

Frequently Asked Questions

Q1: What are the most common causes of poor convergence in active learning cycles for free energy calculations? Poor convergence often stems from inadequate initial training data, poor collective variable (CV) selection, or insufficient sampling of rare binding events. To mitigate this, ensure your initial dataset, while small, is diverse and representative of the chemical space. For path-based methods, carefully choose CVs that accurately describe the binding pathway, as simple metrics like distance may fail for complex processes [8].

Q2: How can I balance the exploration of new chemical space with the exploitation of known hit compounds? Implement a balanced acquisition strategy. The ChemScreener workflow, for example, uses ensemble uncertainty to prioritize compounds predicted to be active while also selecting some molecules with high uncertainty to explore novel chemistry. This approach increased hit rates from 0.49% in primary screens to an average of 5.91% in case studies [9].

Q3: Our FEP+ protocol is not performing well for a challenging protein-ligand system. What steps should we take? Use a tool like FEP+ Protocol Builder, which employs an active learning workflow to iteratively search the protocol parameter space. This automates the optimization of settings for systems that do not work with default parameters, saving researcher time and increasing the success rate of FEP+ calculations [10].

Q4: What is the typical computational savings when using Active Learning Glide versus docking an entire ultra-large library? Active Learning Glide can recover approximately 70% of the top-scoring hits found by exhaustive docking while requiring only 0.1% of the computational cost and time [10].

Computational Performance Data

The following table summarizes key quantitative benefits of integrating active learning with free energy calculations, as demonstrated in recent research and commercial platforms.

| Method / Workflow | Key Performance Metric | Computational Savings / Efficiency Gain | Context / Library Size |

|---|---|---|---|

| Active Learning Glide [10] | Hit Recovery | ~70% of top hits recovered | Compared to exhaustive docking of ultra-large libraries (billions of compounds) |

| Active Learning Glide [10] | Cost & Time Reduction | 0.1% of compute cost and time | Achieved by docking only a fraction of the library |

| ChemScreener [9] | Hit Rate Enrichment | Increased from 0.49% (primary HTS) to avg. 5.91% | Five iterative screens on WDR5 protein (1,760 compounds tested) |

| Generative AI & Active Learning [11] | Lead Candidate Discovery | Lead candidate identified in 21 days | From generative AI to in vitro and in vivo testing |

| Physics-based & ML Screening [11] | Clinical Candidate Selection | Candidate selected after 10 months and 78 molecules synthesized | Computational screen of 8.2 billion compounds |

Detailed Experimental Protocols

Protocol 1: Active Learning Glide for Ultra-Large Virtual Screening

This protocol is designed to identify potent hits from billion-compound libraries using a combination of docking and machine learning [10].

- Library Preparation: Start with an ultra-large virtual library of readily accessible, drug-like small molecules [11].

- Initial Sampling: Perform physics-based molecular docking (e.g., with Glide) on a small, randomly selected subset of the library (e.g., 0.01%).

- Model Training & Prediction: Train a machine learning (ML) model on the docking scores and molecular descriptors from the initial set. Use this model to predict scores for the entire unscreened library.

- Iterative Batch Selection: Select the next batch of compounds based on a balanced acquisition function (e.g., prioritizing both high predicted scores and high model uncertainty). Dock this new batch.

- Model Update & Convergence: Incorporate the new docking results into the training data and update the ML model. Repeat steps 4 and 5 until the top-ranking compounds stabilize or a predefined number of iterations is reached.

- Output: A final list of top-scoring compounds, recovering a high percentage of the hits that would have been found by a prohibitively expensive exhaustive dock.

Protocol 2: ChemScreener's Multi-Task Active Learning for Hit Discovery

This protocol is tailored for early drug discovery with limited initial data, using multi-task learning and a balanced-ranking strategy [9].

- Assay Design & Initialization: Establish a primary high-throughput screening assay (e.g., HTRF). Begin with a small, diverse set of compounds for initial testing.

- Multi-Task Model Training: Train an ensemble of deep learning models on the initial bioactivity data. The "multi-task" aspect allows the model to learn from related assays or general molecular properties.

- Balanced-Ranking Acquisition: For each subsequent screening cycle, rank the remaining compounds in the library using an acquisition function that balances:

- Exploitation: Selecting compounds with the highest predicted activity.

- Exploration: Selecting compounds where the model's predictions have the highest uncertainty (ensemble disagreement).

- Iterative Screening & Model Retraining: Screen the selected compounds (e.g., 352 compounds per cycle in the WDR5 study). Add the new experimental results to the training data and retrain the model.

- Hit Validation & Progression: After several cycles, consolidate hits and their close analogs. Validate confirmed hits in secondary and counter-screens (e.g., dose-response, DSF for binding confirmation). Advance diverse scaffold series for further development.

Workflow and Pathway Visualizations

Active Learning Docking Cycle

Integrated Drug Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in the Workflow |

|---|---|

| Ultra-Large Virtual Libraries (e.g., ZINC20, GDB-17-derived) [11] | Billions of "on-demand" synthesizable compounds provide the vast chemical space for virtual screening. |

| Molecular Docking Software (e.g., Glide) [10] | Provides the initial, physics-based binding affinity scores for compounds used to train the active learning model. |

| Free Energy Perturbation (FEP+) Software [10] | Offers high-accuracy binding affinity predictions for lead optimization, used to validate and refine hits from initial screens. |

| Path Collective Variables (PCVs) [8] | Sophisticated collective variables used in path-based free energy calculations to map the protein-ligand binding pathway accurately. |

| Balanced-Ranking Acquisition Function [9] | The algorithm that decides which compounds to test next, balancing the need to find active compounds (exploit) and learn about the chemical space (explore). |

Core Concepts: Exploitation and Exploration

In Active Learning (AL), the exploration-exploitation trade-off is a fundamental challenge. The goal is to use a limited labeling budget to query the most informative data points from a pool of unlabeled data.

- Exploitation aims to maximize a domain-specific objective given the current knowledge of the model. In drug discovery, this often means selecting compounds predicted to have the highest binding affinity to a target protein. This is also known as a greedy acquisition strategy [12].

- Exploration focuses on reducing the uncertainty of the learning model itself. This involves selecting data points where the model's prediction is most uncertain, thereby improving the model's overall understanding of the underlying data distribution [13] [12].

A balanced approach is often necessary. Purely exploitative strategies might miss more potent compounds in unexplored chemical spaces, while purely exploratory strategies may be inefficient for directly optimizing the desired objective, such as finding the highest-affinity binder [13] [12].

The following diagram illustrates a general AL workflow that can incorporate both exploitative and exploratory strategies:

Frequently Asked Questions & Troubleshooting

1. How do I choose between an exploitative or exploratory strategy for my FEP project?

The optimal choice depends on your project's stage and goals.

- Use an exploitative (greedy) strategy when you are in later stages of lead optimization and have a reasonably accurate model. This focuses resources on the most promising regions of chemical space to find the best candidates quickly [12].

- Use an exploratory (uncertainty) strategy in the early stages of a project or when exploring a new chemical series. This helps build a robust and generalizable model by sampling diverse structures [12].

- Use a balanced strategy to avoid the pitfalls of either extreme. For example, a "narrowing" strategy starts with broad exploration for the first few AL iterations before switching to exploitation, which has been shown to efficiently identify potent binders [12].

2. My AL model seems to get stuck in a local optimum, repeatedly selecting similar compounds. What should I do?

This is a common issue with overly exploitative strategies. To encourage more diversity in selected compounds:

- Incorporate exploration explicitly: Switch to an uncertainty-based acquisition function or use a mixed strategy that selects some candidates based on high uncertainty [12].

- Adjust the batch size: Select more compounds per AL iteration. Research has shown that using larger batch sizes (e.g., 60-100 molecules per iteration) significantly improves the recall of top binders by ensuring better coverage of the chemical space in each cycle [2].

- Use molecular descriptors that capture diversity: Ensure your model uses descriptors like molecular fingerprints (e.g., from RDKit) that effectively represent the chemical space. These have been shown to outperform other descriptors for broadly exploring the chemical library [12].

3. What is the impact of the initial training set on the AL process?

The initial set of labeled data is critical.

- Problem: A small or non-representative initial set can lead the model to make poor predictions from the start, causing the AL strategy to query suboptimal candidates.

- Solution: If possible, start with an initial dataset that provides broad coverage of the chemical space you intend to explore. Some methods use clustering or density-based sampling on the unlabeled pool to select a diverse initial set [13].

Experimental Protocols for AL in Free Energy Calculations

The table below summarizes a generalized protocol for implementing an AL cycle to optimize compounds using free energy calculations.

- Goal: To efficiently identify high-affinity ligands by guiding the selection of compounds for costly RBFE calculations.

- Surrogate Model: A Quantitative Structure-Activity Relationship (QSAR) model that predicts binding affinity based on molecular features [12].

| Protocol Step | Key Details & Considerations |

|---|---|

| 1. Initial Setup | Define your chemical library. Select an initial training set of compounds with known binding affinities (from experiments or preliminary FEP calculations). Train the initial QSAR model [12]. |

| 2. Iterative Active Learning Cycle | |

| a. Model Prediction | Use the current QSAR model to predict binding affinities and their uncertainties for all compounds in the unlabeled pool [12]. |

| b. Acquisition Function | Apply the chosen strategy (e.g., greedy, uncertainty, or mixed) to select the next batch of compounds for FEP calculation. A common practice is to select the top 20 predicted binders from each of the best-performing models [12]. |

| c. Experiment (FEP Calculation) | Perform RBFE calculations on the selected compounds. This provides the "ground truth" labels for the model [12] [14]. |

| d. Model Update | Add the new FEP data to the training set. Retrain the QSAR model to incorporate the new knowledge [12]. |

| 3. Termination & Validation | Stop when a stopping criterion is met (e.g., a sufficient number of high-affinity binders have been identified, or model performance plateaus). Synthesize and experimentally test the top-predicted compounds [14]. |

The Scientist's Toolkit: Research Reagents & Materials

This table lists key computational "reagents" and tools used in building an AL framework for free energy calculations.

| Item | Function in the Experiment |

|---|---|

| Chemical Library | A virtual collection of compounds to be screened. This is the search space from which the AL algorithm selects candidates [12]. |

| Molecular Descriptors/Fingerprints | Numerical representations of chemical structure (e.g., RDKit fingerprints, PLEC fingerprints). These are the input features for the QSAR model [12]. |

| Surrogate QSAR Model | A machine learning model (e.g., Random Forest, Gaussian Process) that learns the relationship between molecular features and binding affinity. It provides fast predictions to guide the AL cycle [12]. |

| Acquisition Function | The algorithm that balances exploration and exploitation to decide which compounds to test next. Examples include greedy, uncertainty, and expected improvement [13] [12]. |

| FEP/RBFE Calculation Engine | The physics-based simulation software (e.g., Schrodinger's FEP+, OpenMM) that provides high-accuracy binding affinity data for the selected compounds, used to label data and validate predictions [14]. |

Implementing AL-FEP: Workflows, Tools, and Real-World Case Studies

The AL-FEP (Active Learning for Free Energy Perturbation) workflow integrates advanced computational simulations with an iterative learning loop to optimize compounds, such as antibodies or small molecules, for properties like binding affinity. This method efficiently navigates vast chemical spaces by prioritizing the most promising candidates for computationally expensive calculations [1] [15].

The following diagram illustrates the core cyclic process of the AL-FEP workflow.

Troubleshooting Guides

Problem 1: Poor Prediction Accuracy from the Surrogate Model

Issue: The surrogate model's predictions do not correlate well with subsequent high-cost FEP calculations.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Insufficient or poor-quality initial data | Check the size and diversity of the starting dataset. | Start with a minimum of 10-20 diverse compounds with reliable affinity data. Use clustering to ensure structural diversity [15]. |

| Inadequate representation of molecules | Evaluate the feature set or embeddings used for the model. | Use a protein Language Model (pLM) to generate sequence embeddings, capturing complex biophysical properties [15]. |

| Model overfitting | Plot learning curves to see if validation performance plateaus or worsens. | Employ Parameter-Efficient Fine-Tuning (PEFT). This adapts a large pLM to your specific task with limited data, reducing overfitting risk [15]. |

Problem 2: High Computational Cost and Slow Workflow Iteration

Issue: The time and resources required for each AL-FEP cycle are prohibitive.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Standard FEP calculations are too expensive | Profile the computation time of a single FEP simulation. | Implement an automated lambda window scheduling algorithm. This avoids calculating too many or too few windows, optimizing GPU time [1]. |

| Inefficient candidate selection | Review the number of candidates evaluated by FEP in each cycle. | Use the surrogate model to score a large virtual library, but only run FEP on the top 5-10% of candidates that are also "informative" for the model [15]. |

| Overly large molecular systems | Check the number of atoms in the simulated system (e.g., protein, membrane, water). | For membrane-bound targets (like GPCRs), test if truncating distant parts of the protein system significantly impacts results, as this can drastically reduce simulation time [1]. |

Problem 3: Handling Charged Ligands and Hydration Effects

Issue: FEP calculations involving formal charge changes or specific water molecules yield unreliable results with high hysteresis.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Charge changes in perturbations | Identify if ligands in the perturbation map have different formal charges. | Introduce a counterion to neutralize the charged ligand, keeping the net formal charge consistent across the simulation. Run longer simulation times for these transformations to improve reliability [1]. |

| Inconsistent hydration environment | Check for high hysteresis between forward and reverse transformations in a perturbation. | Use hydration analysis techniques like 3D-RISM or GIST to identify poorly hydrated regions. Employ sampling methods like GCNCMC to ensure stable and consistent water placement during the simulation [1]. |

Problem 4: Optimized Compounds Have Poor Developability

Issue: The workflow successfully improves binding affinity (e.g., lowers Flex ddG energy) but yields compounds with undesirable properties for therapeutics.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Single-objective optimization | The optimization target is solely binding affinity. | Implement multi-objective optimization. Incorporate metrics like AbLang2 perplexity (to maintain "natural" antibody sequence traits), hydropathicity, and instability index as simultaneous optimization goals [15]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between Relative Binding Free Energy (RBFE) and Absolute Binding Free Energy (ABFE), and when should I use each?

- RBFE calculates the binding energy difference between two similar ligands. It is highly accurate for congeneric series but is typically limited to perturbations involving a small number of atom changes (e.g., ~10 atoms). It is best suited for lead optimization where you are making small, systematic changes to a molecule [1].

- ABFE calculates the absolute binding energy of a single ligand independently. It is not restricted by the need for similar ligands, making it ideal for virtual screening and hit identification where molecules can be structurally diverse. However, ABFE calculations are computationally more demanding (~10x more GPU hours than RBFE) and may have residual errors due to unaccounted protein conformational changes [1].

Q2: How does Active Learning specifically improve upon a standard FEP workflow?

Standard FEP might involve running calculations on a large, pre-defined set of compounds. Active Learning introduces an intelligent, iterative cycle. A surrogate model selects the most "informative" compounds for the next round of FEP calculations, balancing exploration of uncertain regions of chemical space with exploitation of known high-affinity areas. This means you can achieve better results with far fewer expensive FEP calculations compared to a brute-force approach [15].

Q3: My project involves covalent inhibitors. Can the AL-FEP workflow handle them?

Modeling covalent inhibitors is challenging because it requires specialized force field parameters to correctly describe the bond formation between the ligand and the protein. Standard force fields often lack these parameters. While industry-wide efforts are ongoing to develop reliable methods, you should currently approach covalent systems with caution and be prepared to invest significant effort in parameterization [1].

Q4: What are the minimum computational resources required to start with an AL-FEP project?

A typical RBFE study for a congeneric series of about 10 ligands might require approximately 100 GPU hours. In contrast, an equivalent ABFE study would be far more demanding, likely requiring around 1000 GPU hours. The exact needs depend on system size, simulation length, and the number of compounds evaluated [1].

Experimental Protocols

Protocol 1: Setting Up a Relative Binding Free Energy (RBFE) Calculation

This protocol details the steps for a standard RBFE calculation between two similar ligands [1].

- System Preparation:

- Obtain the 3D structures of the protein and both ligands (Ligand A and Ligand B).

- Ensure both ligands are in the same protonation state. If formal charges differ, add a neutralizing counterion.

- Parameterize the ligands using a force field like Open Force Field (OpenFF), and check for any poorly described torsion angles that may require optimization using Quantum Mechanics (QM) calculations.

- Perturbation Map Generation:

- Define the transformation from Ligand A to Ligand B. An automated tool is often used to map the atoms between the two molecules.

- Use an automated lambda window scheduling algorithm to determine the optimal number of intermediate steps (windows) for the transformation. This prevents wasted computational effort.

- Simulation Setup:

- Set up the simulation boxes for both the bound (protein-ligand complex) and unbound (ligand in solvent) states for both endpoints.

- For transformations involving charge changes, plan for longer simulation times to ensure proper equilibration.

- Production Run and Analysis:

- Run the molecular dynamics simulations for each lambda window.

- Use analysis software (e.g., built-in tools in software like Flare FEP, Schrodinger's FEP+, OpenFE) to calculate the relative free energy difference (ΔΔG) between Ligand A and Ligand B.

Protocol 2: Incorporating Active Learning for Multi-Objective Optimization

This protocol extends a standard FEP workflow with an Active Learning loop for balancing affinity and developability [15].

- Initialization:

- Start with a wild-type antibody sequence and a small set of known variant sequences with measured binding affinity data.

- Fine-tune a protein Language Model (pLM) on this initial data using Parameter-Efficient Fine-Tuning (PEFT) and a learning-to-rank objective. This creates your initial surrogate model.

- Candidate Generation and Selection:

- Generate candidate sequences by sampling directly from the probability distribution of the fine-tuned pLM.

- For each candidate, calculate multiple objective scores:

- Predicted Binding Affinity: From the surrogate model.

- AbLang2 Perplexity: Measures how "natural" the antibody sequence is.

- Developability Metrics: Calculate hydropathicity, instability index, and isoelectric point from the sequence.

- Select the next set of compounds for FEP evaluation using a multi-objective selection criterion like hypervolume maximization, which balances all these objectives.

- Iteration:

- Run FEP (or a cheaper proxy like Flex ddG) on the selected compounds to obtain a more reliable binding affinity score.

- Add the new data (sequence and measured affinity) to the training set.

- Update (fine-tune) the surrogate model with the expanded dataset.

- Repeat steps 2 and 3 until a stopping criterion is met (e.g., no further improvement after several cycles or a target affinity is reached).

The Scientist's Toolkit: Research Reagent Solutions

| Item/Resource | Function in AL-FEP Workflow | Key Considerations |

|---|---|---|

| Open Force Field (OpenFF) Initiative | Provides accurate, chemically transferable force fields for small molecules, essential for correctly modeling ligand energetics and dynamics [1]. | Check for parameter coverage for novel functional groups or metal ions in your system. |

| Protein Language Models (pLMs) | Acts as a pre-trained surrogate model; generates meaningful embeddings for protein/antibody sequences and can be fine-tuned for fitness prediction with limited data [15]. | Models like AbLang2 are specifically trained on antibody sequences (OAS database) and are ideal for antibody engineering projects [15]. |

| Grand Canonical Monte Carlo (GCNCMC) | A sampling technique that allows water molecules to be inserted and deleted during simulation, ensuring the binding site is correctly and consistently hydrated [1]. | Critical for reducing hysteresis in RBFE calculations where water displacement or rearrangement is a key factor. |

| Automated Lambda Scheduler | Dynamically determines the optimal number and spacing of intermediate states (lambda windows) for a given alchemical transformation [1]. | Prevents both inaccurate results (too few windows) and wasted computational resources (too many windows). |

| Active Learning Framework (e.g., ALLM-Ab) | Provides the algorithmic backbone for the iterative cycle of selection, evaluation, and model updates. Manages the trade-off between exploration and exploitation [15]. | Look for frameworks that support multi-objective optimization to balance affinity with developability early in the design process. |

FEgrow is an open-source Python package designed to build and optimize congeneric series of ligands directly within a protein's binding pocket [16]. Its primary role in structure-based drug design is to address a critical bottleneck: the creation of reliable initial binding poses for ligands, which is a fundamental prerequisite for successful free energy calculations [16] [17]. By growing user-defined R-groups from a constrained core of a known hit compound, FEgrow maximizes the use of structural biology data and incorporates medicinal chemistry expertise, thereby reducing reliance on less accurate docking algorithms [16] [18].

This case study frames the use of FEgrow within a broader thesis on optimizing active learning for free energy calculations research. The integration of active learning allows for a more efficient exploration of the vast combinatorial space of possible chemical groups, significantly accelerating the hit identification and optimization process [19] [18]. We will demonstrate its application in targeting the SARS-CoV-2 Main Protease (Mpro), a key viral replication enzyme and a prominent drug target [20].

Research Reagent Solutions

The following table details the essential computational tools and data required to set up and run an FEgrow experiment for Mpro inhibitor optimization.

| Resource Name | Type/Source | Function in the Workflow |

|---|---|---|

| Protein Data Bank (PDB) | Database (e.g., PDB ID: 7EN8) | Source of the initial receptor structure (SARS-CoV-2 Mpro) and a known ligand-core complex [21]. |

| Ligand Core | User-defined (from a known hit) | The central scaffold whose binding mode is fixed during R-group growth [16] [18]. |

| R-group Library | FEgrow (provided ~500 groups) or user-defined | A collection of functional groups to be grown from the core's attachment point [16]. |

| Linker Library | FEgrow (provided ~2000 linkers) | A collection of flexible chemical linkers to connect the core and R-group [18]. |

| RDKit | Software Library | Handles core merging, conformer generation (ETKDG method), and maximum common substructure search [16] [18]. |

| OpenMM | Software Library | Performs structural optimization of ligand conformers within a rigid protein using molecular mechanics [18]. |

| ANI Neural Network Potential | Machine Learning Potential | Provides accurate intramolecular energetics for the ligand during optimization (hybrid ML/MM) [16]. |

| gnina | Software Tool | A convolutional neural network used to score and predict binding affinities of the low-energy poses [16] [18]. |

The process of building and optimizing ligands with FEgrow follows a structured, modular pathway. The diagram below illustrates the key stages from input preparation to final output.

FEgrow in Action: SARS-CoV-2 Mpro Case Study

Experimental Protocol for Mpro Inhibitor Elaboration

A typical FEgrow experiment to optimize Mpro inhibitors involves the following detailed methodology [16] [18]:

System Preparation:

- Receptor: Obtain the crystal structure of SARS-CoV-2 Mpro (e.g., PDB code 7EN8). Prepare the protein structure by adding hydrogen atoms and assigning protonation states at pH 7 using software like Open Babel. The key catalytic dyad residues are Cys145 and His41 [20].

- Ligand Core: Define the core scaffold from a known inhibitor (e.g., a fragment from a crystallographic screen). The core must include a specified hydrogen atom as the attachment point for growth.

Ligand Building and Conformer Generation:

- Select an R-group from the provided library or a custom list. A linker from the library can also be chosen to connect the core and R-group.

- FEgrow uses RDKit to merge the core and R-group/linker at the defined attachment point.

- An ensemble of 3D conformers for the new ligand is generated using the ETKDG algorithm. Crucially, harmonic distance restraints (with a force constant of 10^4 kcal/mol/Ų) are applied to atoms in the common core to maintain the original bioactive conformation [16].

Conformer Optimization and Scoring:

- Generated conformers are filtered to remove any that sterically clash with the rigid protein binding pocket.

- The remaining conformers undergo structural optimization via energy minimization in OpenMM. In a hybrid ML/MM approach, the ligand's intramolecular energetics are described by the ANI machine learning potential, while its non-bonded interactions with the static protein are handled by a classical force field (AMBER FF14SB for the protein) [16] [18].

- The low-energy optimized structures are scored using the

gninaconvolutional neural network scoring function to predict binding affinity [18].

Active Learning Integration (Advanced Workflow):

- To efficiently search the vast combinatorial space of linkers and R-groups, the workflow can be interfaced with an active learning cycle [19] [18].

- A batch of compounds is grown, built, and scored with FEgrow.

- The results train a machine learning model, which then predicts the scores for the remaining unexplored chemical space.

- The next batch of compounds is selected based on the model's predictions, iteratively improving the quality of designs while minimizing computational cost [18].

Troubleshooting Common Experimental Issues

FAQ 1: My grown conformers consistently show high energy or steric clashes after optimization. What steps can I take?

- A: This is often related to the initial conformer generation or the optimization parameters.

- Check Core Restraints: Verify that the atoms of the ligand core are correctly identified and strongly restrained during conformer generation. This ensures the known binding mode is preserved.

- Adjust Conformer Sampling: Increase the number of conformers generated by the ETKDG algorithm to better sample the rotational space of the added R-group and linker.

- Review Optimization Parameters: Ensure the hybrid ML/MM potential is correctly configured. The ANI potential for the ligand provides a more accurate energy surface for unusual chemistries compared to traditional force fields [16].

FAQ 2: The gnina scoring function ranks a compound as high-affinity, but subsequent free energy calculations suggest poor binding. What could be the cause?

- A: Discrepancies between different scoring methods are not uncommon.

- Pose Reliability: The gnina score is dependent on the input pose. Verify that the optimized pose from FEgrow is physically reasonable by visually inspecting key interactions (e.g., with the S1/S2 pockets of Mpro and the catalytic dyad).

- Scoring Function Limitations: Remember that gnina is a docking-style scoring function. It is excellent for rapid screening but is an approximation. Use it for relative ranking within a congeneric series, not as an absolute predictor of affinity [16] [18].

- Input for Free Energy Calculations: Ensure the structures output by FEgrow have been properly prepared (e.g., parameterized) for the subsequent free energy calculation software (e.g., SOMD) [16].

FAQ 3: How can I integrate purchasable compounds from on-demand libraries into my FEgrow active learning campaign?

- A: This is a powerful feature for ensuring synthetic tractability.

- Seed Chemical Space: The FEgrow workflow can be configured to "seed" the initial or subsequent batches of the active learning cycle with molecules from on-demand libraries (e.g., the Enamine REAL database) that contain the defined core substructure [18].

- Treat as Flexible: In this mode, the entire molecule outside of the rigid core is treated as flexible during the FEgrow building and optimization process, allowing you to evaluate purchasable analogs directly [18].

Results and Validation

Key Findings from Prospective Application

In a prospective study targeting SARS-CoV-2 Mpro, researchers used the active learning-driven FEgrow workflow to prioritize 19 compound designs for purchase and experimental testing [19] [22] [18]. The results were promising:

- Hit Rate: Three of the 19 tested compounds showed weak but detectable activity in a fluorescence-based Mpro enzyme assay, validating the workflow's ability to generate biologically active molecules [18].

- Validation of Design Strategy: The study also demonstrated that the fully automated workflow could identify novel designs with high similarity to potent inhibitors discovered by the open-science COVID Moonshot effort, using only initial fragment screen data [18].

Performance of Optimized Workflow

The integration of active learning with FEgrow represents a significant performance enhancement. The table below summarizes the key metrics of this optimized workflow.

| Workflow Component | Performance Metric | Outcome/Benefit |

|---|---|---|

| Automation & Parallelization | Throughput of compound building and scoring | Enabled automated de novo design on HPC clusters via a new API [18]. |

| Active Learning | Search efficiency in combinatorial chemical space | Identified promising inhibitors by evaluating only a fraction of the total space, reducing computational cost [18]. |

| On-demand Library Seeding | Synthetic tractability of final designs | Directly generated suggestions for purchasable compounds (e.g., from Enamine REAL database), bridging virtual design and real-world testing [18]. |

This case study demonstrates that FEgrow is a robust, open-source platform for optimizing lead compounds within a protein binding pocket, effectively preparing them for rigorous free energy calculations. Its application to SARS-CoV-2 Mpro inhibitor design, especially when coupled with an active learning framework, provides a validated blueprint for accelerating early-stage drug discovery [16] [18]. The successful identification of active Mpro inhibitors underscores the value of combining structural biology data, hybrid ML/MM optimization, and machine-learning-driven search strategies.

Future developments in FEgrow will likely focus on incorporating a wider array of optimization algorithms and scoring functions, further enhancing its accuracy and flexibility [18]. For the computational drug discovery community, adopting and contributing to such open-source, modular tools is crucial for advancing the field of free energy calculations and achieving more predictive, efficient, and reliable molecular design.

Experimental Workflow & Methodology

Core Generative AI and Active Learning Workflow

Our established methodology integrates a generative variational autoencoder (VAE) with a physics-based active learning (AL) framework to design novel inhibitors [7]. The workflow consists of several interconnected stages, as illustrated below.

Diagram 1: Generative AI with Nested Active Learning Workflow

Key Experimental Steps [7]:

Data Representation & Initial Training:

- Represent training molecules as tokenized SMILES strings converted into one-hot encoding vectors.

- Pre-train the VAE on a general molecular dataset to learn viable chemical structures.

- Fine-tune the VAE on a target-specific training set (e.g., known CDK2 or KRAS binders) to increase initial target engagement.

Nested Active Learning Cycles:

- Inner AL Cycle (Chemoinformatics Oracle): Generated molecules are evaluated for drug-likeness, synthetic accessibility (SA), and novelty (dissimilarity from training set). Molecules meeting predefined thresholds are added to a temporal-specific set and used to fine-tune the VAE.

- Outer AL Cycle (Affinity Oracle): After several inner cycles, accumulated molecules undergo molecular docking. Those with favorable docking scores are promoted to a permanent-specific set, which is used for subsequent VAE fine-tuning, directly steering generation toward high-affinity candidates.

Candidate Selection:

Key Quantitative Results from the CDK2 Case Study

The application of this workflow for CDK2 inhibitor discovery yielded the following experimental outcomes [7]:

Table 1: Experimental Validation of Generated CDK2 Inhibitors

| Metric | Result | Experimental Method |

|---|---|---|

| Molecules Synthesized | 9 | Chemical synthesis |

| Molecules with in vitro activity | 8 | Bioassay |

| Molecules with nanomolar potency | 1 | Dose-response bioassay |

| Novel scaffolds generated | Multiple, distinct from known CDK2 inhibitors | Chemical similarity analysis |

Frequently Asked Questions (FAQs) & Troubleshooting

On Generative Model Performance

Q1: Our generative model produces molecules with poor synthetic accessibility (SA). How can we improve this?

- Problem: The VAE decoder generates chemically invalid or highly complex structures.

- Solution: Integrate a synthetic accessibility (SA) predictor as a filter within the Inner AL Cycle. Molecules with poor SA scores should be rejected before fine-tuning. This iteratively teaches the VAE to prioritize synthetically feasible structures [7]. Reinforce the generation with SA-focused reward functions if using reinforcement learning.

Q2: The generated molecules lack novelty and are too similar to the training set.

- Problem: The model suffers from mode collapse or fails to explore new chemical space.

- Solution: Actively promote dissimilarity during the AL cycles. In the inner cycle, enforce a minimum Tanimoto dissimilarity threshold against the cumulative set of previously generated molecules. This explicitly penalizes the generation of redundant structures and encourages exploration [7].

On Active Learning and Free Energy Calculations

Q3: How can we configure the AL cycles for targets with very little training data, like KRAS?

- Problem: Sparse target-specific data limits the initial model's ability to generate active compounds.

- Solution: Leverage the physics-based oracles in the outer cycle. For low-data targets like KRAS, the docking score oracle provides a critical, reliable guide that is less dependent on existing bioactivity data. Extend the initial pre-training phase on a larger, general bioactivity corpus before fine-tuning on the small target-specific set [7].

Q4: Our free energy calculations are unstable or show poor convergence. What are the key parameters to check?

- Problem: Unreliable Absolute Binding Free Energy (ABFE) or Thermodynamic Integration (TI) results.

- Solution: Follow these practical guidelines derived from optimized protocols [24] [23]:

- Restraint Selection: Use an algorithm that incorporates protein-ligand hydrogen bonds to choose pose restraints, improving numerical stability and convergence [23].

- Simulation Length: For TI, sub-nanosecond simulations can be sufficient for many systems, but some (e.g., TYK2) may require longer equilibration (~2 ns) [24].

- Perturbation Size: Avoid large perturbations with |ΔΔG| > 2.0 kcal/mol, as they exhibit significantly higher errors [24].

- Annihilation Protocol & Scaling: Optimize the ligand annihilation protocol and the order in which interactions (electrostatics, Lennard-Jones, restraints) are scaled to minimize error and improve precision [23].

Q5: How can we use free energy calculations to optimize kinome-wide selectivity?

- Problem: A lead compound is potent but inhibits many off-target kinases.

- Solution: Implement a hierarchical selectivity profiling strategy using free energy calculations [14]:

- Use Ligand-based Relative Binding Free Energy (L-RB-FEP+) calculations to predict potency against the primary target (e.g., Wee1) and key off-targets (e.g., PLK1).

- Employ Protein Residue Mutation Free Energy (PRM-FEP+) calculations. This efficiently estimates the impact of mutating a "selectivity handle" residue in your primary target (e.g., the gatekeeper residue) to match the sequence of various off-target kinases, approximating binding across the kinome without simulating every individual off-target structure [14].

On Biological Context and Validation

Q6: What are the relevant cellular pathways and biomarkers for CDK2 and KRAS inhibition?

The diagrams below summarize the key signaling pathways and cellular responses for the two targets.

Diagram 2: Core Oncogenic KRAS Signaling Pathway [25]

Diagram 3: Cellular Context Determines Response to CDK2 Inhibition [26]

Q7: How can we validate the mechanism of action and address potential resistance?

- For KRAS: In pancreatic ductal adenocarcinoma (PDAC) models, combination therapy is often essential. KRAS inhibition (e.g., with MRTX1133) can reverse chemotherapy resistance promoted by therapy-induced senescence. Combining KRASG12D inhibition with gemcitabine reduces senescence-associated β-galactosidase (SA-β-gal) signal and sensitizes cells to treatment [27].

- For CDK2: Response is highly context-dependent. Use biomarkers like P16INK4A loss and Cyclin E1 overexpression to identify models sensitive to pure G1 arrest. In CDK2-independent models, inhibitors may induce a 4N arrest; in these cases, combination strategies, such as with CDK4/6 inhibitors or depletion of mitotic regulators, can be effective [26]. CRISPR screens have identified CDK2 loss as a mechanism of resistance, underscoring the need for combination therapies [26].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Experimental Reagents

| Item / Reagent | Function / Role in Workflow | Example / Note |

|---|---|---|

| Variational Autoencoder (VAE) | Core generative model; maps molecules to latent space and generates novel structures. | Balances rapid sampling, interpretable latent space, and stable training [7]. |

| SMILES Representation | Standardized string-based molecular representation for model input. | Requires tokenization and one-hot encoding [7]. |

| Chemoinformatic Oracles | Filters in the Inner AL Cycle for drug-likeness and synthetic accessibility (SA). | Critical for ensuring generated molecules are synthesizable and have drug-like properties [7]. |

| Molecular Docking | Physics-based affinity oracle in the Outer AL Cycle for initial affinity assessment. | Provides a rapid, structure-based score to prioritize molecules [7]. |

| PELE (Protein Energy Landscape Exploration) | Advanced simulation for refining binding poses and assessing stability. | Used for in-depth evaluation of protein-ligand complexes before synthesis [7]. |

| ABFE (Absolute Binding Free Energy) Calculations | High-accuracy prediction of binding affinity for final candidate selection. | Optimized protocols are crucial for stability and convergence [23]. |

| Thermodynamic Integration (TI) | A specific method for relative binding free energy calculations. | An automated workflow using AMBER20 and alchemlyb can be implemented [24]. |

| MRTX1133 | Experimental non-covalent KRASG12D inhibitor. | Used in vitro to validate KRAS targeting and combination strategies [27]. |

| Gemcitabine | Standard chemotherapy agent for pancreatic cancer. | Used in combination studies with KRAS inhibitors to overcome resistance [27]. |

Integrating AL into Existing FEP Pipelines

Free Energy Perturbation (FEP) is a physics-based computational technique renowned for its high accuracy in predicting protein-ligand binding affinities, a critical task in rational drug design. [3] However, its computational expense and low throughput have traditionally limited its application to smaller congeneric series, typically involving perturbations of fewer than 10 atoms. [28] Active Learning (AL) is a machine learning strategy that addresses this bottleneck. By iteratively selecting the most informative compounds for costly FEP calculations, AL creates a feedback loop that efficiently explores vast chemical spaces, making FEP a powerful tool for earlier stages of drug discovery, such as hit identification and large-scale library profiling. [1] [3]

This guide provides troubleshooting and best practices for researchers integrating AL into their FEP workflows.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a standard FEP workflow and an Active Learning FEP workflow?

A standard FEP workflow is typically a single-shot calculation on a pre-defined, congeneric set of ligands. In contrast, an Active Learning FEP workflow is an iterative cycle. It starts with an initial set of FEP calculations on a small, diverse subset of a larger compound library. A machine learning model (like a 3D-QSAR model) is trained on this FEP data and is then used to rapidly predict the binding affinities for the entire remaining library. The most promising or uncertain compounds from this large set are then selected for the next round of FEP calculations. This process repeats, with the model being retrained each time, continuously refining its predictions and guiding the exploration of chemical space. [1]

Q2: My Active Learning model is not improving after the first few iterations. What could be wrong?

This is a common challenge, often referred to as model stagnation.

- Lack of Diversity in Initial Training Set: If the initial compounds used for the first FEP cycle are too similar, the ML model cannot learn the broader structure-activity relationships (SAR) of the chemical space. Ensure your initial selection is chemically diverse.

- Exploration vs. Exploitation Imbalance: The selection strategy for the next FEP batch might be too greedy, only picking compounds predicted to be the very best (exploitation). Incorporate strategies that also select compounds where the model is most uncertain (exploration) to improve the model in under-sampled regions.

- Inaccurate FEP Ground Truth: The entire AL cycle relies on the accuracy of the FEP calculations. If the initial FEP results are unreliable due to protein structure issues, incorrect protonation states, or poor ligand poses, the ML model will learn from noisy or incorrect data. Always validate your FEP setup with known experimental data first. [1]

Q3: Can Active Learning FEP handle charged ligands or large conformational changes in the binding site?

This remains a significant challenge. Standard Relative FEP (RBFE), which is often used in AL cycles, struggles with formal charge changes due to numerical issues, though recent advances allow it by using counterions and longer simulation times. [1] Furthermore, most FEP methods treat the protein as largely rigid, meaning they cannot sample large backbone or loop movements. If your ligand series induces different protein conformations, they should be treated in separate FEP experiments. [28] For these complex cases, Absolute FEP (ABFE) might be considered, as it allows the use of different protein structures for different ligands, but it is computationally much more demanding. [1]

Q4: What are the key metrics to monitor for a successful Active Learning FEP campaign?

Monitor both the performance of the FEP calculations and the ML model:

- FEP Metrics: Hysteresis (the difference between forward and reverse transformations) should be low (< 1 kcal/mol), and calculated free energies should match experimental values for known compounds (root-mean-square error, or RMSE, ideally < 1.0 kcal/mol). [3] [28]

- ML Model Metrics: Track predictive performance on a held-out test set using R² and mean absolute error (MAE). The model's performance should improve over iterations. Ultimately, the success of a prospective campaign is measured by the experimental validation of the newly designed compounds. [1]

Troubleshooting Guide

| Problem Area | Specific Issue | Potential Causes | Recommended Solutions |

|---|---|---|---|

| Workflow & Setup | AL cycle fails to enrich for active compounds. | Initial training set is too small or not diverse; poor ML model choice. | Start with a larger, structurally diverse initial FEP set; use project-specific ML models. [1] |

| The ML model predictions and subsequent FEP results are inconsistent. | The machine learning model has learned artifacts or is overfitted. | Retrain the ML model with the new FEP data; check for chemical domain overlap between training and prediction sets. | |

| FEP Simulations | High hysteresis in FEP calculations. | Inadequate sampling, insufficient lambda windows, or unstable ligand binding poses. [1] | Use automated lambda scheduling; [1] extend simulation time; check ligand pose stability with MD prior to FEP. [28] |

| Poor correlation with experimental data for known ligands. | Incorrect protein/ligand protonation states; inaccurate force field parameters; poor initial ligand pose. | Re-evaluate system setup (e.g., with constant pH MD); use QM calculations to refine ligand torsion parameters; [1] validate ligand docking. | |

| System Preparation | The protein structure becomes unstable during simulation. | Missing loops or side-chain atoms; unphysical contacts in the initial structure. [28] | Use a well-prepared protein structure with missing loops modeled and side-chains filled in. Relax the initial model. [28] |

| Hydration of the binding site is inconsistent. | Water molecules in the binding site are not properly sampled, leading to hysteresis. [1] | Use techniques like 3D-RISM to analyze hydration sites and Grand Canonical Monte Carlo (GCNCMC) to sample water placement. [1] |

Active Learning FEP Experimental Protocol

The following diagram and table outline the core workflow and essential components for running an Active Learning FEP experiment.

Table: Essential Research Reagents & Computational Tools for AL-FEP

| Item Name | Function / Purpose in the Workflow | Key Considerations |

|---|---|---|

| Protein Structure | Provides the 3D model for the FEP simulation. Can be experimental (from PDB) or computational (e.g., AlphaFold2). [29] | Check for accuracy, especially in loops and binding pocket sidechains. AI-predicted models may have conformational biases. [29] |

| Compound Library | The large set of molecules to be explored (e.g., virtual screening hits, enumerated analogs). | The library's size and diversity determine the benefit of using AL. Ensure synthetic feasibility is considered. |

| FEP Software | Performs the core physics-based binding affinity calculations (e.g., Schrödinger FEP+, Cresset Flare FEP, OpenFE). [1] [3] | Validate setup with known ligands. Monitor hysteresis and sampling. Leverage automated lambda scheduling. [1] |

| ML/QSAR Model | The machine learning model that learns from FEP data to predict the larger library. | The model can be a 3D-QSAR method or other project-specific model. It must be retrained each iteration with new FEP data. [1] |

| Selection Criterion | The algorithm for choosing the next batch of compounds for FEP (e.g., predicted potency, model uncertainty, diversity). | Balancing "exploitation" (best predicted compounds) with "exploration" (high uncertainty) is key to avoiding local minima. [1] |

Step-by-Step Methodology:

System Preparation:

- Protein Preparation: Obtain a high-quality 3D structure of the target. For AI-generated models (like AlphaFold2), be aware that they may represent an "average" conformation and might not be suitable for ligands requiring a specific state (active/inactive). [29] Add missing hydrogen atoms, assign protonation states for key residues (e.g., His, Asp, Glu), and model any missing loops.

- Ligand Preparation: Generate the large compound library for exploration. For the initial set, ensure chemical diversity. Prepare 3D structures and assign appropriate protonation states. It is recommended that all ligands in a single RBFE calculation have the same formal charge where possible. [28]

Initial FEP Cycle:

- Select Initial Subset: Choose a small (e.g., 20-50), structurally diverse set of compounds from the large library for the first round of FEP.

- Run and Validate FEP: Perform FEP calculations on this initial set. Critically, validate the results against any available experimental data to ensure the computational model is accurate. High hysteresis or poor correlation with experiment must be addressed before proceeding.

Active Learning Loop:

- Train ML Model: Train a machine learning model (e.g., a 3D-QSAR model) using the FEP-calculated binding affinities from all compounds run so far.

- Predict and Select: Use the trained ML model to predict the affinities for the entire remaining compound library. Apply your selection criterion (e.g., top-ranked by prediction, or those with high prediction uncertainty) to choose the next batch of compounds for FEP.

- Iterate: Run FEP on the newly selected batch, add the results to the training set, and retrain the ML model. Repeat this process until the model's predictions are satisfactory and the chemical space has been sufficiently explored.

Output and Analysis:

- The final output is a prioritized list of compounds from the large library, with binding affinities predicted by a well-trained ML model (for speed) and validated by targeted FEP calculations (for accuracy). The top candidates can be recommended for synthesis and experimental testing.

Optimizing AL-FEP Performance: Key Parameters and Common Pitfalls

Frequently Asked Questions

Q1: What is the most common mistake researchers make when setting the batch size in an Active Learning campaign for free energy calculations? A1: The most common mistake is selecting a batch size that is too small for the initial cycle, especially when dealing with a diverse chemical space. A small initial batch provides an inadequate representation of the underlying data distribution, which can prevent the model from learning the broad structure-activity relationships essential for identifying top binders. This initial misstep can compromise the performance of all subsequent learning cycles [30].

Q2: My model performance has plateaued despite continued Active Learning cycles. Could batch size be a factor? A2: Yes. Using a batch size that is too small in subsequent cycles can prevent the model from acquiring the diverse and informative data needed to refine its predictions and escape performance plateaus. While small initial batches are detrimental, very large batches in later cycles may be inefficient. Adjusting the batch size after the initial exploration phase can help reinvigorate model learning [30].

Q3: How does the choice of batch size influence the exploration-exploitation balance? A3: Batch size is a critical lever for managing exploration and exploitation.

- Small Batches: Lean towards exploitation. With fewer samples selected per cycle, the strategy is more likely to greedily choose molecules similar to current top candidates, potentially missing novel chemotypes.

- Large Batches: Encourage exploration. A larger batch can encompass a more diverse set of molecules, helping the model to map out the chemical space more broadly and reduce the risk of getting stuck in a local optimum [31] [30].

Q4: Are there any hardware limitations I should consider when increasing my batch size? A4: Absolutely. A larger batch size requires more memory (RAM) to process the data. Exceeding your available memory will cause the program to crash. Furthermore, while modern GPUs are optimized for parallel computation of large batches, the optimal size for your specific hardware should be determined through empirical testing, starting from a known stable value (e.g., 32) and scaling up until you approach memory limits [32].

Troubleshooting Guides

Issue: Poor Recall of Top Binders in Early AL Cycles

Potential Cause: Inadequate initial batch size for the chemical space's diversity. Solution:

- Increase the Initial Batch Size: For the very first cycle of AL, use a larger batch to seed the model. Benchmarking studies have shown that a larger initial batch significantly increases the recall of top binders [30].

- Justify the Cost: Frame this larger initial investment as essential for building a robust foundational model, which will make all subsequent cycles more efficient and effective.

Issue: Slow or Inefficient Model Improvement After Initial Cycles

Potential Cause: Suboptimal batch size in the main AL loop. Solution:

- Implement a Staged Strategy: Do not use the same batch size throughout the campaign. After a large initial batch, switch to a smaller batch size for subsequent cycles. Evidence suggests that smaller batch sizes (e.g., 20 or 30 compounds) are desirable after the initial batch [30].

- Leverage Advanced Algorithms: Use batch selection methods that explicitly account for diversity and model uncertainty to maximize the informational value of each selected batch, regardless of its size. Methods like BADGE or those that maximize joint entropy are designed for this purpose [31] [33].

Issue: Model Overfitting to the Current Batch of Data

Potential Cause: The batch size is too large, and the learning rate is not properly tuned. Solution:

- Re-evaluate Batch Size and Learning Rate Coupling: The learning rate and batch size are deeply connected. A larger batch size provides a more stable gradient estimate, which often allows you to safely increase the learning rate. A common rule of thumb is to double the learning rate if you double the batch size [32].

- Monitor Validation Performance: Closely track the model's performance on a held-out validation set. If performance on the validation set starts to degrade while performance on the training data improves, it is a sign of overfitting, and you should consider reducing the batch size or learning rate.

The table below consolidates key evidence from published studies on the impact of batch size in Active Learning for drug discovery applications.

Table 1: Empirical Evidence on Batch Size Impact from Benchmarking Studies

| Study Context | Key Finding on Batch Size | Performance Metric | Recommended Value |

|---|---|---|---|

| Affinity Prediction (TYK2, USP7, D2R, Mpro targets) [30] | A larger initial batch size increases recall of top binders. | Recall of top 2%/5% binders | Larger initial batch; 20-30 for subsequent cycles |

| Relative Binding Free Energy (RBFE) Calculations [2] | Performance is largely insensitive to ML method but is significantly hurt by sampling too few molecules per iteration. | Identification of top 100 molecules | Best performance: sample 6% of library per iteration |

| ADMET & Affinity Modeling [31] | New batch selection methods (COVDROP, COVLAP) that maximize joint entropy outperform random and other batch methods. | RMSE, Model Accuracy | Method dependent; batch size fixed at 30 for benchmarking |

Experimental Protocol: Benchmarking Batch Size for a New AL Campaign

When applying Active Learning to a new target or chemical library, use the following protocol to empirically determine an effective batch size strategy.

Objective: To identify an optimal batch size schedule that maximizes the identification of high-affinity ligands while minimizing computational cost.

Materials:

- Unlabeled Compound Library: Your virtual screening library.

- Labeling Oracle: The method for obtaining binding affinities (e.g., RBFE calculation, docking score, experimental assay).

- Machine Learning Model: Such as Gaussian Process (GP) regression or a fine-tuned graph neural network (e.g., Chemprop).

- Computing Resources: Adequate memory and processing power for the planned batch sizes.

Methodology:

- Initialization:

- Split your compound library into a pool for AL sampling and a held-out test set for final evaluation.

- Define your primary success metric (e.g., Recall@Top2%, RMSE).

Systematic Comparison:

- Run parallel AL campaigns, varying the batch sizes while keeping the total number of acquired samples constant.

- Test a range of initial batch sizes (e.g., 50, 100, 200).

- Test a range of subsequent batch sizes (e.g., 10, 20, 30, 50).

- For each configuration, run multiple iterations to account for stochastic variability.

Analysis:

- Plot your success metric (e.g., Recall) against the number of cycles or total samples acquired for each batch size configuration.

- Identify the schedule that achieves the highest performance curve. The results will often indicate that a larger initial batch followed by smaller subsequent batches is most effective [30].

Workflow and Relationship Diagrams

Active Learning Batch Optimization

Batch Size Impact on Learning