Optimizing Chemical ML Models: A Comprehensive Guide to Cross-Validation and Hyperparameter Tuning

Abstract

This article provides a complete framework for applying cross-validation and hyperparameter tuning to chemical machine learning applications in drug discovery and pharmaceutical development. Tailored for researchers and drug development professionals, it covers foundational concepts, practical methodologies, advanced optimization techniques using bio-inspired algorithms like Firefly and Dragonfly, and validation strategies for ensuring model generalizability. The guide addresses real-world challenges including data scarcity, class imbalance, and computational constraints, while demonstrating how these techniques enhance predictive accuracy in critical applications from pharmacokinetic prediction to pharmaceutical process optimization.

Understanding Cross-Validation and Hyperparameter Tuning in Chemical Machine Learning

The Critical Role of Model Generalization in Pharmaceutical Applications

In pharmaceutical research and development, where the stakes are high, machine learning (ML) has introduced powerful tools for accelerating drug discovery and formulation. The global market for machine learning in the pharmaceutical industry, forecast to grow by USD 10.2 billion between 2024 and 2029, reflects the scale of investment and confidence in these technologies [1]. However, translating predictive models from experimental settings to real-world applications hinges on one critical property: model generalization. This article explores how advanced hyperparameter tuning and validation frameworks underpin robust, generalizable ML models in pharmaceutical applications. It focuses specifically on predicting drug solubility and activity coefficients, which are key parameters in formulation development.

Generalization ensures that a model maintains its predictive performance when applied to new, unseen data, a non-negotiable requirement when model predictions inform critical decisions in drug development pipelines. Despite technical advancements, studies note that "even the best-performing models exhibit an error rate exceeding 10%, underscoring the ongoing need for careful human oversight in clinical settings" [2]. This reality highlights the imperative for methodological rigor in model development, particularly through sophisticated hyperparameter optimization and robust validation protocols that reliably estimate real-world performance.

The Generalization Imperative in Pharmaceutical ML

Model generalization represents the ultimate test of a predictive model's utility in pharmaceutical workflows. A model that performs well on its training data but fails on novel data can lead to costly missteps in candidate selection, clinical trial design, and formulation development. The challenge of generalization is particularly acute in pharmaceutical applications due to several domain-specific factors:

- Data Limitations: While pharmaceutical datasets can be large, they often suffer from sparsity, noise, and irregular sampling, especially in real-world healthcare data [3].

- High-Dimensional Spaces: Molecular descriptor data used in drug formulation typically involves numerous features (e.g., 24 input variables in one recent study [4]), creating complex optimization landscapes.

- Regulatory Scrutiny: Pharmaceutical applications often require demonstrated model robustness and reliability for regulatory approval, necessitating transparent validation approaches.

The consequences of poor generalization are not merely statistical but can directly impact patient outcomes and resource allocation. A phenomenon termed "overtuning" – a form of overfitting at the hyperparameter optimization level – has been identified as a significant risk, particularly in small-data regimes [5]. Overtuning occurs when excessive optimization of validation error leads to selecting hyperparameters that do not translate to improved generalization performance. Research indicates this occurs in approximately 10% of cases, sometimes resulting in worse performance than default configurations [5].

Methodological Framework for Robust Generalization

Hyperparameter Optimization Algorithms

Hyperparameter optimization (HPO) methods systematically search for optimal model configurations that maximize performance while ensuring robustness. Three primary approaches dominate current practice:

- Grid Search (GS): This brute-force method exhaustively evaluates all possible combinations within a predefined hyperparameter grid. While comprehensive, GS becomes computationally prohibitive for large hyperparameter spaces [6].

- Random Search (RS): RS randomly samples hyperparameter combinations from defined distributions, proving more efficient than GS for high-dimensional spaces [7].

- Bayesian Optimization (BO): BO builds a probabilistic model of the objective function to guide the search toward promising configurations, dramatically reducing the number of evaluations needed [8].
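To make the difference between the first two strategies concrete, the sketch below runs scikit-learn's `GridSearchCV` and `RandomizedSearchCV` on the same model with the same budget of 16 candidates. It is a minimal illustration under stated assumptions. Synthetic data from `make_regression` stands in for molecular descriptors, and an RBF-kernel SVR is the hypothetical model being tuned. Bayesian optimization usually comes from a separate library and is not shown here.

```python
"""Grid search vs. random search with an equal candidate budget (sketch).

Assumptions: synthetic regression data stands in for molecular descriptors,
and an RBF-kernel SVR inside a scaling pipeline is the model being tuned.
"""
from scipy.stats import loguniform
from sklearn.datasets import make_regression
from sklearn.model_selection import GridSearchCV, KFold, RandomizedSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

X, y = make_regression(n_samples=120, n_features=10, noise=10.0, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=0)
model = make_pipeline(StandardScaler(), SVR(kernel="rbf"))

# Grid search: every combination of a fixed lattice (4 x 4 = 16 candidates).
grid = GridSearchCV(
    model,
    param_grid={"svr__C": [0.1, 1, 10, 100], "svr__gamma": [1e-3, 1e-2, 1e-1, 1]},
    cv=cv,
    scoring="neg_root_mean_squared_error",
)
grid.fit(X, y)

# Random search: the same budget of 16 candidates, sampled from continuous
# log-uniform distributions instead of a fixed lattice.
rand = RandomizedSearchCV(
    model,
    param_distributions={"svr__C": loguniform(1e-1, 1e3),
                         "svr__gamma": loguniform(1e-4, 1e0)},
    n_iter=16,
    cv=cv,
    scoring="neg_root_mean_squared_error",
    random_state=0,
)
rand.fit(X, y)

print("grid  :", grid.best_params_, round(-grid.best_score_, 2))
print("random:", rand.best_params_, round(-rand.best_score_, 2))
```

Both searches cost the same number of model fits. Random search, however, tries 16 distinct values of each hyperparameter rather than 4, which is why it tends to cope better when only a few hyperparameters really matter.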

Validation Strategies

Cross-validation strategies provide the critical framework for estimating model generalization during development:

- K-Fold Cross-Validation: The dataset is partitioned into k subsets (folds), with each fold serving as a validation set while the remaining k-1 folds are used for training [3].

- Nested Cross-Validation: Also known as double cross-validation, this approach uses an outer loop for performance estimation and an inner loop for hyperparameter optimization, providing nearly unbiased performance estimates [3].

- Subject-Wise vs Record-Wise Splitting: For data with multiple records per subject, subject-wise splitting ensures all records from one subject reside in either training or validation splits, preventing data leakage [3].
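Nested cross-validation can be expressed in scikit-learn by placing a tuned estimator (`GridSearchCV`) inside an outer `cross_val_score`. The following is a minimal sketch. The synthetic dataset, the `Ridge` model, and the `alpha` grid are hypothetical choices made only for illustration.

```python
"""Nested cross-validation: the inner loop tunes, the outer loop estimates performance.

A minimal sketch; the dataset, the Ridge model, and the alpha grid are
hypothetical stand-ins chosen for illustration.
"""
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score

X, y = make_regression(n_samples=150, n_features=20, noise=15.0, random_state=1)

inner_cv = KFold(n_splits=3, shuffle=True, random_state=1)  # hyperparameter selection
outer_cv = KFold(n_splits=5, shuffle=True, random_state=2)  # performance estimation

# The inner search is refit from scratch on each outer training split, so the
# outer test fold never influences which alpha is chosen.
tuned_ridge = GridSearchCV(
    Ridge(),
    param_grid={"alpha": np.logspace(-3, 3, 13)},
    cv=inner_cv,
    scoring="neg_root_mean_squared_error",
)
outer_scores = -cross_val_score(
    tuned_ridge, X, y, cv=outer_cv, scoring="neg_root_mean_squared_error"
)

# The spread across outer folds is a rough estimate of performance variance.
print(f"nested CV RMSE: {outer_scores.mean():.2f} +/- {outer_scores.std():.2f}")
```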

Table 1: Comparison of Hyperparameter Optimization Methods

| Method | Search Strategy | Computational Efficiency | Best Use Cases |
|---|---|---|---|
| Grid Search | Exhaustive search over all combinations | Low for large spaces; becomes computationally prohibitive | Small hyperparameter spaces where exhaustive search is feasible |
| Random Search | Random sampling from parameter distributions | Higher than Grid Search; more efficient for high-dimensional spaces | Models with many hyperparameters where some are more important than others |
| Bayesian Optimization | Builds surrogate model to guide search | Highest; reduces evaluations needed by 30-50% | Complex models with expensive evaluations; limited computational budgets |

Comparative Analysis of HPO Methods in Healthcare Applications

Performance Benchmarking Studies

Recent comparative studies across healthcare domains offer useful evidence for method selection. In an analysis of heart failure prediction models, researchers evaluated Grid Search (GS), Random Search (RS), and Bayesian Search (BS) across three machine learning algorithms [6]. Under 10-fold cross-validation, Random Forest models proved the most robust, with an average AUC improvement of 0.03815. Support Vector Machines, by contrast, showed signs of overfitting, with a slight AUC decline (-0.0074) [6].

The study further revealed critical differences in computational efficiency, with Bayesian Search consistently requiring less processing time than both Grid and Random Search methods [6]. This efficiency advantage makes Bayesian approaches particularly valuable in pharmaceutical applications where model complexity and dataset sizes continue to grow.

In environmental research on predicting actual evapotranspiration, Bayesian optimization demonstrated superior performance for tuning deep learning models, with the LSTM model reaching R²=0.8861 [8]. The authors noted that "Bayesian optimization demonstrated higher performance and reduced computation time" compared with grid search approaches [8].

Pharmaceutical Formulation Case Study

A recent pharmaceutical study illustrates these methods in the prediction of drug solubility and activity coefficients (gamma), two critical parameters in formulation development [4]. The research employed three base models (Decision Tree, K-Nearest Neighbors, and Multilayer Perceptron), each enhanced with the AdaBoost ensemble method. Their hyperparameters were then tuned with the Harmony Search (HS) algorithm.

Table 2: Performance of Optimized Models in Pharmaceutical Formulation Prediction

| Model | Prediction Task | R² Score | Mean Squared Error (MSE) | Mean Absolute Error (MAE) |
|---|---|---|---|---|
| ADA-DT | Drug solubility | 0.9738 | 5.4270E-04 | 2.10921E-02 |
| ADA-KNN | Gamma (activity coefficient) | 0.9545 | 4.5908E-03 | 1.42730E-02 |

The optimized ADA-DT model for drug solubility prediction achieved remarkable performance (R²=0.9738), while the ADA-KNN model for gamma prediction also demonstrated strong predictive capability (R²=0.9545) [4]. This success was attributed to the integration of ensemble learning with advanced feature selection and hyperparameter optimization, highlighting how methodological rigor directly translates to predictive accuracy in pharmaceutical applications.
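The core Harmony Search loop is short enough to sketch directly. The version below covers only the basic algorithm: a harmony memory, memory consideration with rate `hmcr`, pitch adjustment with rate `par`, and replacement of the worst stored harmony. The objective, bounds, and settings are hypothetical, and the settings used in the cited study [4] are not reproduced. In a tuning setting, `objective()` would decode a vector into hyperparameters (for example, AdaBoost's number of estimators or the tree depth) and return a cross-validated error.

```python
"""Minimal Harmony Search (HS) for continuous minimisation (illustrative sketch).

The objective, bounds, and settings (hms, hmcr, par, bandwidth) are
hypothetical; they are not the configuration used in the cited study.
"""
import random


def harmony_search(objective, bounds, hms=10, hmcr=0.9, par=0.3,
                   bandwidth=0.05, n_iter=2000, seed=0):
    rng = random.Random(seed)
    # Harmony memory: hms random solutions and their objective values.
    memory = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(hms)]
    scores = [objective(h) for h in memory]

    for _ in range(n_iter):
        new = []
        for d, (lo, hi) in enumerate(bounds):
            if rng.random() < hmcr:
                # Memory consideration: reuse this dimension from a stored harmony.
                value = memory[rng.randrange(hms)][d]
                if rng.random() < par:
                    # Pitch adjustment: small random shift scaled to the range.
                    value += rng.uniform(-1.0, 1.0) * bandwidth * (hi - lo)
            else:
                # Random selection: draw a fresh value from the full range.
                value = rng.uniform(lo, hi)
            new.append(min(max(value, lo), hi))

        score = objective(new)
        worst = max(range(hms), key=scores.__getitem__)
        if score < scores[worst]:
            # Replace the worst stored harmony when the new one improves on it.
            memory[worst], scores[worst] = new, score

    best = min(range(hms), key=scores.__getitem__)
    return memory[best], scores[best]


# Usage: minimise a shifted quadratic whose optimum is at (0.3, -1.2).
best_x, best_f = harmony_search(
    lambda v: (v[0] - 0.3) ** 2 + (v[1] + 1.2) ** 2,
    bounds=[(-5.0, 5.0), (-5.0, 5.0)],
)
print(best_x, best_f)
```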

Integrated Workflows for Real-World Deployment

The NACHOS Framework

For real-world deployment, researchers have developed integrated frameworks that combine multiple methodological advances. The NACHOS (Nested and Automated Cross-validation and Hyperparameter Optimization using Supercomputing) framework integrates Nested Cross-Validation (NCV) and Automated Hyperparameter Optimization (AHPO) within a parallelized high-performance computing environment [9].

This approach addresses a critical limitation of conventional validation – the failure to quantify variance in test performance metrics when using a single fixed test set [9]. By integrating these methodologies, NACHOS provides a "scalable, reproducible, and trustworthy framework for DL model evaluation and deployment in medical imaging" [9], with principles directly applicable to pharmaceutical applications.

Experimental Protocol for Robust Pharmaceutical ML

Based on the analyzed studies, a robust experimental protocol for pharmaceutical ML applications should include:

- Data Preprocessing: Handle missing values using appropriate imputation techniques (MICE, kNN, or Random Forest imputation), remove outliers using methods like Cook's distance, and apply feature scaling [4] [6].

- Feature Selection: Implement Recursive Feature Elimination (RFE) or other selection methods to identify the most predictive molecular descriptors [4].

- Model Selection: Test multiple algorithms (e.g., Decision Trees, SVM, Random Forest, XGBoost) given their complementary strengths [6].

- Hyperparameter Optimization: Employ Bayesian Optimization for efficiency, with Random Search as a viable alternative for complex spaces [8] [6].

- Validation: Implement nested cross-validation for unbiased performance estimation, with appropriate splitting strategies (subject-wise for patient data) [3] [9].

- Performance Quantification: Report multiple metrics (AUC, accuracy, sensitivity, specificity, calibration measures) and variance estimates [6] [9].
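Several of these steps can be chained in a scikit-learn `Pipeline`, so that imputation, scaling, and feature selection are refit on every training split rather than on the full dataset. The following is a minimal sketch under stated assumptions:

- Synthetic data with about 5% missing values is used.
- Median imputation (`SimpleImputer`) is a simpler stand-in for the MICE, kNN, or Random Forest imputation named above (`KNNImputer` could be swapped in).
- RFE is wrapped around a linear model, and a random forest is the final estimator.
- Cook's-distance outlier removal is left out, because it does not fit the transformer interface.

```python
"""Leakage-safe imputation, scaling, RFE, and model tuning inside nested CV (sketch).

Assumptions: synthetic data with ~5% missing values; SimpleImputer as a stand-in
for MICE/kNN imputation; RFE around LinearRegression; RandomForestRegressor as
the final model. Outlier removal is not included.
"""
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import RFE
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import GridSearchCV, KFold, cross_validate
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X, y = make_regression(n_samples=200, n_features=24, n_informative=6,
                       noise=5.0, random_state=0)
X[rng.random(X.shape) < 0.05] = np.nan  # inject ~5% missing descriptor values

pipe = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("select", RFE(LinearRegression())),
    ("model", RandomForestRegressor(n_estimators=50, random_state=0)),
])

# Inner loop: tune the number of selected features and the tree depth.
search = GridSearchCV(
    pipe,
    param_grid={"select__n_features_to_select": [4, 8, 12],
                "model__max_depth": [None, 5]},
    cv=KFold(n_splits=3, shuffle=True, random_state=0),
    scoring="neg_root_mean_squared_error",
)
# Outer loop: report several metrics and their spread across folds.
results = cross_validate(
    search, X, y,
    cv=KFold(n_splits=5, shuffle=True, random_state=1),
    scoring=("r2", "neg_mean_absolute_error"),
)
r2 = results["test_r2"]
print(f"R2 per outer fold: {np.round(r2, 3)}; mean {r2.mean():.3f} +/- {r2.std():.3f}")
```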

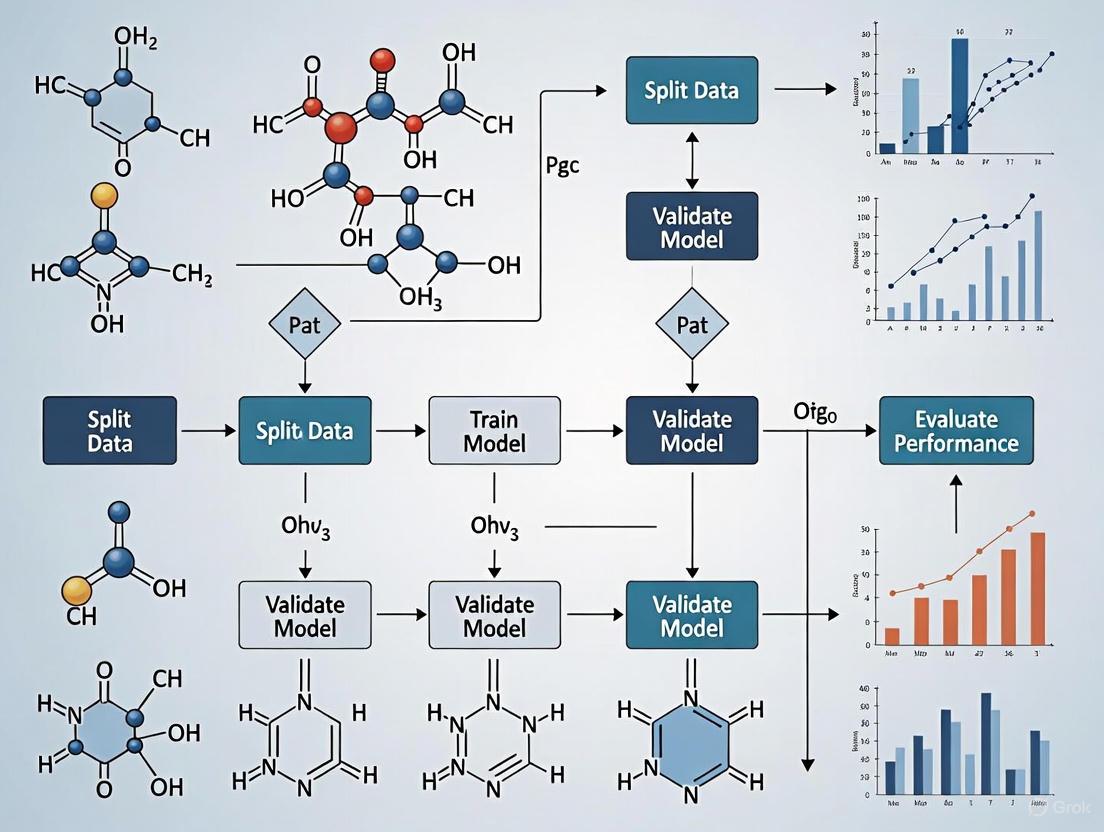

Diagram 1: End-to-end workflow for developing generalizable ML models in pharmaceutical applications, integrating data preparation, model development with HPO, and rigorous validation.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Methodological "Reagents" for Pharmaceutical ML Research

| Tool/Technique | Function | Application Context |
|---|---|---|
| Bayesian Optimization | Efficient hyperparameter search using surrogate models | Optimizing complex models with limited computational budget; recommended for deep learning architectures |
| Nested Cross-Validation | Unbiased performance estimation with hyperparameter tuning | Model evaluation for regulatory submissions; quantifying performance variance |
| Recursive Feature Elimination | Iterative feature selection by eliminating weakest performers | Identifying critical molecular descriptors from high-dimensional data |
| Harmony Search Algorithm | Music-inspired metaheuristic optimization algorithm | Pharmaceutical formulation optimization when combined with ensemble methods |
| Subject-Wise Data Splitting | Ensuring all records from one subject are in same split | Preventing data leakage in patient-derived datasets with multiple measurements |
| Cook's Distance | Statistical measure for identifying influential outliers | Improving dataset quality by removing anomalous observations in molecular data |
| AdaBoost Ensemble | Boosting algorithm combining multiple weak learners | Enhancing performance of base models (DT, KNN, MLP) for solubility prediction |
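Of the tools above, Cook's distance is the easiest to show as a formula. For an ordinary least-squares fit with $p$ parameters, $D_i = \frac{e_i^2}{p\,s^2}\cdot\frac{h_{ii}}{(1-h_{ii})^2}$, where $e_i$ is the residual, $h_{ii}$ the leverage, and $s^2 = \mathrm{SSE}/(n-p)$. The NumPy sketch below computes it. The data and the common $4/n$ cut-off are illustrative assumptions; this threshold is a rule of thumb, not one taken from the cited studies.

```python
"""Cook's distance for an ordinary least-squares fit, using NumPy only (sketch).

D_i = e_i^2 / (p * s^2) * h_ii / (1 - h_ii)^2, with s^2 = SSE / (n - p).
The synthetic data and the 4/n cut-off are illustrative assumptions.
"""
import numpy as np


def cooks_distance(X, y):
    X1 = np.column_stack([np.ones(len(X)), X])    # add an intercept column
    n, p = X1.shape
    hat = X1 @ np.linalg.pinv(X1.T @ X1) @ X1.T   # hat (projection) matrix
    h = np.diag(hat)                              # leverages h_ii
    resid = y - hat @ y                           # OLS residuals e_i
    s2 = resid @ resid / (n - p)                  # residual variance estimate
    return resid**2 / (p * s2) * h / (1 - h) ** 2


rng = np.random.default_rng(0)
X = rng.normal(size=(30, 2))
y = X @ np.array([1.5, -2.0]) + rng.normal(scale=0.3, size=30)
y[7] += 5.0                                       # plant one anomalous observation

d = cooks_distance(X, y)
flagged = np.flatnonzero(d > 4 / len(y))
print("flagged indices:", flagged)
```

The closed form is equivalent to refitting the model without each point and measuring how far all fitted values move, which is why it flags observations that single-handedly pull the fit.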

The critical role of model generalization in pharmaceutical applications demands methodological rigor throughout the ML development pipeline. The comparative evidence reviewed here indicates that Bayesian Optimization offers better computational efficiency while maintaining performance, with Random Search as a viable alternative [6]. Integrating ensemble methods with advanced HPO, as demonstrated in drug solubility and activity-coefficient prediction, achieved high predictive accuracy (R²>0.95) [4].

Furthermore, frameworks like NACHOS that combine nested cross-validation, automated HPO, and high-performance computing address the crucial need to quantify and reduce variance in performance estimation [9]. For pharmaceutical researchers and developers, these methodologies provide the foundation for building trustworthy ML models that generalize reliably to novel data – ultimately accelerating drug discovery and formulation while maintaining scientific rigor and regulatory compliance.

As the field evolves, awareness of subtle challenges like overtuning – particularly in small-data regimes – will become increasingly important [5]. By adopting these sophisticated validation and optimization approaches, pharmaceutical researchers can harness the full potential of machine learning while ensuring their models deliver reliable, generalizable predictions for real-world application.

Defining Hyperparameters vs. Model Parameters in Chemical Contexts

Core Definitions and Conceptual Framework

In the realm of chemical machine learning (ML), understanding the distinction between model parameters and hyperparameters is fundamental to developing robust, predictive models. These two elements play distinct but interconnected roles in the learning process.

Model parameters are the internal variables of a model that are learned directly from the training data during the optimization process. They are not set manually but are estimated by the learning algorithm to map input features (e.g., molecular descriptors, spectroscopic data) to outputs (e.g., reaction yields, property predictions) [10] [11]. In the context of chemical ML, common examples include the weights and biases in a neural network [11] [12] or the coefficients in a linear regression model relating molecular structure to activity [11].

Hyperparameters, in contrast, are external configuration variables that are set before training and control how the learning algorithm operates [10] [13] [12]. They are not learned during model fitting itself, although they are usually tuned against validation performance, and must be chosen by the researcher. Examples critical to chemical ML include the learning rate of an optimization algorithm, the number of hidden layers in a neural network, or the number of trees in a random forest model [10] [12].

The relationship between them is hierarchical: hyperparameters control the process through which model parameters are learned [12]. The choice of hyperparameters directly influences which model parameters are ultimately obtained and thus the overall performance and generalizability of the final model [10].
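Ridge regression makes this hierarchy easy to see. The regularization strength `alpha` is fixed before fitting (a hyperparameter), and the weights are then computed from the data in closed form, $w = (X^\top X + \alpha I)^{-1} X^\top y$ (the model parameters). The NumPy sketch below uses hypothetical synthetic "descriptor" data and omits the intercept for brevity.

```python
"""Hyperparameter (alpha) vs. learned parameters (weights) in ridge regression.

A NumPy-only sketch on hypothetical synthetic data; no intercept, for brevity.
"""
import numpy as np

rng = np.random.default_rng(42)
X = rng.normal(size=(40, 5))                   # e.g. 40 molecules x 5 descriptors
true_w = np.array([2.0, -1.0, 0.0, 0.5, 3.0])
y = X @ true_w + rng.normal(scale=0.5, size=40)


def fit_ridge(X, y, alpha):
    """Return the model parameters (weights) learned for a given alpha."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)


for alpha in (0.01, 10.0, 1000.0):             # hyperparameter: chosen, not learned
    w = fit_ridge(X, y, alpha)                 # parameters: learned from the data
    print(f"alpha={alpha:>7}: ||w|| = {np.linalg.norm(w):.3f}")
```

The same data yields different learned weights for each `alpha`: larger values shrink the weight vector. This is exactly why the hyperparameter choice shapes the final model.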

Table 1: Fundamental Differences Between Model Parameters and Hyperparameters

| Aspect | Model Parameters | Hyperparameters |
|---|---|---|
| Origin | Learned from data [10] [11] | Set by the researcher [10] [13] |
| Objective | Define the model's mapping function for predictions [10] | Control the learning process and model structure [10] [13] |
| Examples in Chemical ML | Weights in a NN, regression coefficients [11] | Learning rate, number of layers, number of clusters [10] |

Hyperparameter Tuning and Validation in Chemical ML

In low-data regimes common to chemical research, such as predicting reaction outcomes or molecular properties with only dozens of data points, hyperparameter tuning becomes critically important to mitigate overfitting while maintaining model capacity [14].

The Challenge of Overfitting in Small Chemical Datasets

Chemical ML applications often involve small datasets, sometimes containing only 18 to 44 data points [14]. Such datasets are highly susceptible to overfitting, where a model learns noise or spurious correlations in the training data, impairing its ability to generalize to new, unseen data [14]. Non-linear models, which can capture complex structure-property relationships, are particularly prone to this issue without careful regularization and hyperparameter selection [14].

Advanced Tuning Workflows: The ROBERT Framework

Recent research has introduced automated workflows specifically designed for chemical ML in low-data scenarios. The ROBERT software, for instance, employs Bayesian hyperparameter optimization using a specialized objective function designed to minimize overfitting [14].

The core innovation in this workflow is a combined Root Mean Squared Error (RMSE) metric that evaluates a model's generalization capability by averaging performance across both interpolation and extrapolation cross-validation (CV) methods [14]:

- Interpolation is assessed via a 10-times repeated 5-fold CV process.

- Extrapolation is evaluated using a selective sorted 5-fold CV approach, which partitions data based on the target value and considers the highest RMSE between top and bottom partitions [14].

This dual approach favors models that perform well within the range of the training data while penalizing those that extrapolate poorly to unseen regions, a crucial capability for predicting new chemical entities or reactions [14].
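The combined metric can be approximated in a few lines of scikit-learn. This is an illustrative re-implementation based only on the description above, not the ROBERT source code. It rests on three assumptions. Interpolation RMSE is the mean over a 10-times repeated 5-fold CV. Extrapolation RMSE sorts samples by target value, cuts them into 5 contiguous folds, scores only the lowest and highest folds (training on the rest each time), and keeps the worse of the two. Finally, the two values are combined by a plain average.

```python
"""Combined interpolation/extrapolation RMSE (illustrative sketch, not ROBERT's code).

Assumptions: interpolation = mean RMSE over 10x repeated 5-fold CV;
extrapolation = worse RMSE of the bottom and top folds of a target-sorted
5-fold split; combined = plain average of the two.
"""
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import RepeatedKFold
from sklearn.tree import DecisionTreeRegressor


def rmse(y_true, y_pred):
    return float(np.sqrt(mean_squared_error(y_true, y_pred)))


def interpolation_rmse(model, X, y, seed=0):
    cv = RepeatedKFold(n_splits=5, n_repeats=10, random_state=seed)
    scores = []
    for train, test in cv.split(X):
        fitted = clone(model).fit(X[train], y[train])
        scores.append(rmse(y[test], fitted.predict(X[test])))
    return float(np.mean(scores))


def extrapolation_rmse(model, X, y, n_folds=5):
    order = np.argsort(y)
    folds = np.array_split(order, n_folds)     # contiguous blocks of target values
    worst = 0.0
    for test in (folds[0], folds[-1]):         # bottom and top partitions only
        train = np.setdiff1d(order, test)
        fitted = clone(model).fit(X[train], y[train])
        worst = max(worst, rmse(y[test], fitted.predict(X[test])))
    return worst


def combined_rmse(model, X, y):
    return 0.5 * (interpolation_rmse(model, X, y) + extrapolation_rmse(model, X, y))


# Usage on a small, hypothetical 40-point dataset.
X, y = make_regression(n_samples=40, n_features=5, noise=10.0, random_state=0)
for name, m in [("ridge", Ridge(alpha=1.0)), ("tree", DecisionTreeRegressor(random_state=0))]:
    print(name, round(combined_rmse(m, X, y), 2))
```

A decision tree cannot predict outside the range of targets it was trained on, so its extrapolation term is inflated. This is the kind of weakness the combined score is meant to expose.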

Diagram 1: Hyperparameter optimization workflow for chemical ML. The process iteratively evaluates hyperparameter sets using a combined metric of interpolation and extrapolation performance.

Performance Benchmarking: Linear vs. Non-Linear Models

Benchmarking studies on diverse chemical datasets (ranging from 18-44 data points) have demonstrated that properly tuned non-linear models can perform on par with or outperform traditional multivariate linear regression (MVL) - the historical standard in low-data chemical research [14].

In these studies, neural network (NN) models performed as well as or better than MVL in half of the tested examples, while non-linear algorithms achieved the best results for predicting external test sets in five out of eight examples [14]. This demonstrates that with appropriate hyperparameter tuning, more complex models can be successfully deployed even in data-limited chemical applications.

Experimental Protocols and Workflows

Detailed Methodology for Hyperparameter Optimization

The following protocol outlines the hyperparameter tuning process as implemented in automated chemical ML workflows [14]:

Data Preparation and Splitting

- Reserve 20% of the initial data (or a minimum of four data points) as an external test set, using an "even" distribution split to ensure balanced representation of target values.

- Perform all hyperparameter optimization exclusively on the remaining 80% training/validation data to prevent data leakage.
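One way to realize an "even" split is sketched below. It assumes that "even" means picking test points at evenly spaced positions along the sorted target values, so that the test set spans the whole y-range. ROBERT's exact rule may differ. The 20% fraction and the minimum of four points follow the protocol above.

```python
"""An "even" train/test split for small regression datasets (illustrative sketch).

Assumption: test points are taken at evenly spaced positions along the sorted
target values; the exact rule used by ROBERT may differ.
"""
import math

import numpy as np


def even_split(y, test_fraction=0.2, min_test=4):
    n = len(y)
    n_test = max(min_test, math.ceil(test_fraction * n))
    order = np.argsort(y)                                   # indices sorted by target
    positions = np.linspace(0, n - 1, n_test).round().astype(int)
    test_idx = np.sort(order[positions])                    # includes min and max y
    train_idx = np.setdiff1d(np.arange(n), test_idx)
    return train_idx, test_idx


y = np.random.default_rng(0).normal(size=18)                # an 18-point dataset
train_idx, test_idx = even_split(y)
print(len(train_idx), len(test_idx))                        # 14 4
```

Because the lowest and highest targets always land in the test set, the external evaluation also probes the edges of the data range. A purely random split of 18 points can easily miss them.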

Objective Function Definition

- Define a combined RMSE metric that incorporates both interpolation and extrapolation performance:

  - Interpolation RMSE: Calculate using 10-times repeated 5-fold cross-validation on the training/validation data.

  - Extrapolation RMSE: Compute using a selective sorted 5-fold CV approach, where data is sorted by target value and partitioned, considering the highest RMSE between top and bottom partitions.

- The final objective function averages these two RMSE values.

Bayesian Optimization Loop

- Initialize the optimization with a defined search space for all hyperparameters.

- For each iteration:

  - Select a hyperparameter set using Bayesian optimization.

  - Train the model with the selected hyperparameters.

  - Evaluate the model using the combined RMSE objective function.

  - Update the optimization algorithm with the results.

- Continue until convergence criteria are met or maximum iterations reached.

Final Model Selection

- Select the hyperparameter set that minimizes the combined RMSE metric.

- Train the final model on the entire training/validation set using these optimal hyperparameters.

- Evaluate final model performance on the held-out test set.

Comprehensive Model Evaluation Framework

Beyond standard performance metrics, advanced chemical ML workflows employ a scoring system out of ten points, based on three key aspects [14]:

Predictive Ability and Overfitting (up to 8 points):

- Evaluation of 10× 5-fold CV and external test set performance using scaled RMSE.

- Assessment of the difference between these RMSE values to detect overfitting.

- Measurement of extrapolation ability using the lowest and highest folds in sorted CV.

Prediction Uncertainty (1 point):

- Analysis of the average standard deviation of predicted values across different CV repetitions.

Detection of Spurious Models (1 point):

- Evaluation of RMSE differences after data modifications (y-shuffling, one-hot encoding).

- Comparison against a baseline error based on the y-mean test.
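The two spurious-model checks can be illustrated with scikit-learn. In y-shuffling, the same model is trained on randomly permuted targets, which should make it clearly worse. In the y-mean baseline, `DummyRegressor` always predicts the training mean, and a genuine model should beat it. This is a minimal sketch on hypothetical synthetic data. The model choice and the simple comparisons are illustrative, not ROBERT's scoring rules.

```python
"""Two spurious-model checks: y-shuffling and a y-mean baseline (sketch).

The synthetic data, the random forest, and the pass/fail comparisons are
illustrative assumptions, not ROBERT's scoring rules.
"""
import numpy as np
from sklearn.datasets import make_regression
from sklearn.dummy import DummyRegressor
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score

X, y = make_regression(n_samples=60, n_features=8, n_informative=3,
                       noise=5.0, random_state=3)
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scoring = "neg_root_mean_squared_error"
model = RandomForestRegressor(n_estimators=100, random_state=0)

real = -cross_val_score(model, X, y, cv=cv, scoring=scoring).mean()

# y-shuffling: break the X-y relationship; a genuine model should get much worse.
y_shuffled = np.random.default_rng(0).permutation(y)
shuffled = -cross_val_score(model, X, y_shuffled, cv=cv, scoring=scoring).mean()

# y-mean baseline: always predict the training-fold mean of y.
baseline = -cross_val_score(DummyRegressor(strategy="mean"), X, y,
                            cv=cv, scoring=scoring).mean()

print(f"real {real:.1f} | y-shuffled {shuffled:.1f} | y-mean {baseline:.1f}")
```

A model whose real RMSE is close to its y-shuffled or y-mean RMSE is probably fitting noise, whatever its training error looks like.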

Table 2: Hyperparameter Categories and Their Impact in Chemical ML

| Category | Function | Chemical ML Examples | Impact on Model |
|---|---|---|---|
| Architecture Hyperparameters [13] | Control model structure and complexity | Number of layers in NN, number of trees in RF [13] | Determines capacity to capture complex structure-activity relationships |
| Optimization Hyperparameters [13] | Govern parameter learning process | Learning rate, batch size, number of epochs [10] [13] | Affects stability, speed, and convergence of training |
| Regularization Hyperparameters [13] | Prevent overfitting | L1/L2 strength, dropout rate [13] [15] | Controls model simplicity/generality trade-off |

Essential Research Reagents and Computational Tools

Successful implementation of hyperparameter tuning in chemical ML requires both computational tools and conceptual frameworks. The following table details key "research reagents" for this domain.

Table 3: Essential Research Reagent Solutions for Chemical ML Hyperparameter Tuning

| Tool/Concept | Function | Application Context |
|---|---|---|
| Bayesian Optimization [14] | Efficiently navigates hyperparameter space to find optimal configurations | Hyperparameter tuning for non-linear models (NN, RF, GB) on small chemical datasets |
| Combined RMSE Metric [14] | Objective function balancing interpolation and extrapolation performance | Prevents overfitting by evaluating model performance on both seen and unseen data regions |
| Cross-Validation Protocols (10× 5-fold CV, Sorted CV) [14] | Robust validation strategies that mitigate dataset splitting effects | Provides reliable performance estimates for small chemical datasets where single splits are unstable |
| Automated ML Workflows (e.g., ROBERT) [14] | Integrated pipelines for data curation, hyperparameter optimization, and model evaluation | Reduces human bias and enables reproducible model development in chemical ML |
| Pre-selected Hyperparameter Sets [16] | Default hyperparameter configurations that avoid over-optimization | Provides starting points for small datasets where extensive tuning risks overfitting |

In chemical machine learning, the distinction between model parameters and hyperparameters is not merely theoretical but has profound practical implications for model performance and generalizability. The proper tuning of hyperparameters through advanced workflows that explicitly address the challenges of small datasets enables researchers to harness the power of non-linear models while mitigating overfitting risks. As automated tools and specialized validation protocols continue to evolve, they promise to make sophisticated ML approaches more accessible and reliable for chemical discovery applications, from reaction outcome prediction to molecular property optimization. The integration of robust hyperparameter tuning practices represents an essential component in the modern chemoinformatics toolkit, ultimately expanding the possibilities for data-driven chemical research even in low-data regimes.

In computational chemistry and drug development, the reliability of a machine learning (ML) model is paramount. Model validation ensures that predictions for properties like chemical activity, toxicity, or hydrogen dispersion are accurate and generalizable, preventing costly errors in research and development [17]. Cross-validation is a statistical technique used to evaluate the performance and generalization ability of a machine learning model by partitioning data into subsets, training the model on some subsets, and testing it on the others [18]. This process is crucial for assessing how well a model will perform on unseen data, preventing overfitting, and guiding model selection and hyperparameter tuning [19] [18].

This guide objectively compares the most common cross-validation techniques, from the simple holdout method to advanced k-fold approaches, providing a framework for researchers to select the most appropriate validation strategy for their chemical ML applications.

Hold-Out Validation

The hold-out method, also known as train-test split, is the most straightforward validation technique. It involves randomly splitting the dataset into two parts: a training set and a testing set. A typical ratio is 70% for training and 30% for testing, though this can vary [18]. The model is trained once on the training set and evaluated once on the testing set.

Key Characteristics:

- Simplicity: The method is straightforward and easy to implement [18].

- Computational Efficiency: It requires only one training and testing cycle, making it less computationally intensive than repetitive methods [18].

- Drawbacks: The evaluation can have high variance if the single split is not representative of the overall data distribution. It also uses only a portion of the data for training, which can be inefficient, especially with smaller datasets [20] [18].
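The variance problem is easy to demonstrate. The sketch below scores the same model on 20 different 70/30 hold-out splits that differ only in `random_state`. The small synthetic dataset and the `Ridge` model are hypothetical choices.

```python
"""How much a single hold-out estimate moves with the random split (sketch).

The small synthetic dataset and the Ridge model are hypothetical choices.
"""
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

X, y = make_regression(n_samples=50, n_features=10, noise=40.0, random_state=0)

scores = []
for seed in range(20):
    # 70/30 split; only the random seed changes between iterations.
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=seed)
    model = Ridge(alpha=1.0).fit(X_tr, y_tr)
    scores.append(r2_score(y_te, model.predict(X_te)))

scores = np.array(scores)
print(f"hold-out R2 ranges from {scores.min():.2f} to {scores.max():.2f}")
```

Any single one of these splits would have been reported as "the" performance. The spread shows how much that number depends on luck when the dataset is small.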

K-Fold Cross-Validation

K-fold cross-validation provides a more robust estimate of model performance by dividing the dataset into k equal-sized folds (subsets). The model is trained and tested k times. In each iteration, k-1 folds are used for training, and the remaining fold is used for testing. This process rotates until each fold has served as the test set once [20] [19]. The final performance metric is the average of the scores from all k iterations.

Key Characteristics:

- Comprehensive Data Use: Every data point is used for testing exactly once and for training in the other k-1 iterations, maximizing data utility [19].

- Reduced Variance: Averaging results across multiple splits provides a more stable and reliable performance estimate than a single train-test split [20] [19].

- Computational Cost: Requires training the model k times, which can be computationally expensive for large datasets or complex models [20].
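The rotation itself needs no library. The illustrative pure-Python sketch below shuffles the indices once, cuts them into k nearly equal folds, and yields each fold once as the test set, with the remaining k-1 folds as the training set. The k fold scores would then be averaged.

```python
"""The k-fold rotation written out by hand (pure Python, illustrative sketch)."""
import random


def kfold_indices(n_samples, k, seed=0):
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    # Spread the remainder so that fold sizes differ by at most one.
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = idx[start:start + size]                 # current fold = test set
        train = idx[:start] + idx[start + size:]       # the other k-1 folds
        yield train, test
        start += size


splits = list(kfold_indices(n_samples=10, k=3))
for train, test in splits:
    print(len(train), len(test))                       # 6 4, then 7 3, then 7 3
```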

Table 1: Core Comparison of Hold-Out vs. K-Fold Cross-Validation

| Feature | Hold-Out Method | K-Fold Cross-Validation |
|---|---|---|
| Data Split | Single split into training and testing sets [20]. | Dataset divided into k folds; each fold used once as a test set [20]. |
| Training & Testing | Model is trained once and tested once [20]. | Model is trained and tested k times [20]. |
| Bias & Variance | Higher bias if the split is not representative; results can vary significantly [20] [18]. | Lower bias; more reliable performance estimate; variance depends on k [20] [19]. |
| Execution Time | Faster [20]. | Slower, especially for large datasets, as the model is trained k times [20]. |
| Best Use Case | Very large datasets or when a quick evaluation is needed [20] [18]. | Small to medium-sized datasets where an accurate performance estimate is critical [20]. |

Specialized Cross-Validation Techniques

For specific data structures, standard k-fold may not be optimal. Scikit-learn offers advanced variants [21]:

- StratifiedKFold: Preserves the percentage of samples for each class in every fold, which is crucial for imbalanced datasets [20] [21].

- GroupKFold: Ensures that the same group (e.g., molecules from the same experiment) is not present in two different folds. This prevents data leakage and overoptimistic performance estimates [21].

- StratifiedGroupKFold: Combines the constraints of both GroupKFold and StratifiedKFold, aiming to return stratified folds while keeping groups intact [21].
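The guarantees of the first two splitters can be checked directly. In this minimal sketch, the labels and groups are made up; a group could be, for example, the experiment or patient each record came from. The sketch verifies that `StratifiedKFold` keeps the class ratio in every test fold and that `GroupKFold` never places one group on both sides of a split.

```python
"""Checking what StratifiedKFold and GroupKFold guarantee (sketch with made-up data)."""
import numpy as np
from sklearn.model_selection import GroupKFold, StratifiedKFold

y = np.array([0] * 16 + [1] * 4)       # imbalanced labels: 20% positives
groups = np.repeat(np.arange(10), 2)   # 10 groups (e.g. experiments), 2 records each
X = np.zeros((len(y), 1))              # features do not affect how splits are made

# StratifiedKFold: each test fold keeps the 80/20 class ratio.
for _, test in StratifiedKFold(n_splits=4, shuffle=True, random_state=0).split(X, y):
    print("positives in test fold:", int(y[test].sum()), "of", len(test))

# GroupKFold: no group ever appears in both the training and the test side.
for train, test in GroupKFold(n_splits=5).split(X, y, groups):
    assert set(groups[train]).isdisjoint(groups[test])
```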

Experimental Protocols and Data Presentation

A Chemical ML Case Study: Predicting Hydrogen Dispersion

A study on hydrogen leakage and dispersion prediction optimized several ML models, including a deep neural network (DNN), using Genetic Algorithms (GA). The performance of these GA-optimized models (GA-ML) was then verified using k-fold cross-validation to ensure reproducibility and reliability [17].

Methodology:

- Data Generation: A comprehensive dataset of 6,561 leakage scenarios was generated using the PHAST simulation software, covering a wide range of operating conditions and meteorological factors [17].

- Model Optimization: Hyperparameters of five different ML models were optimized using Genetic Algorithms to minimize human bias and ensure optimal performance [17].

- Model Validation: The optimized models were evaluated using k-fold cross-validation. Their performance was compared using statistical metrics like R² (coefficient of determination), MSE (Mean Squared Error), and MAE (Mean Absolute Error) [17].

Results: The GA-optimized Deep Neural Network (GA-DNN) model was identified as the best-performing model for predicting hydrogen dispersion distance. The use of k-fold cross-validation provided a statistically sound basis for this conclusion, demonstrating the model's robustness and generalizability across different data splits [17].
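The GA step that precedes cross-validation in this kind of study can be sketched in pure Python. The following is a minimal real-valued genetic algorithm with tournament selection, blend crossover, Gaussian mutation, and elitism. The encoding, objective, and GA settings are hypothetical, not those of the hydrogen-dispersion study [17]. In practice, `objective()` would decode each individual into, for example, layer and neuron counts, and return a cross-validated error.

```python
"""A minimal real-valued genetic algorithm (GA) for hyperparameter-style search.

Illustrative sketch: the objective, bounds, and GA settings are hypothetical.
"""
import random


def genetic_minimise(objective, bounds, pop_size=30, generations=60,
                     mutation_rate=0.2, mutation_scale=0.1, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]

    def tournament(scored):
        # Pick the fitter of two random individuals (lower objective wins).
        a, b = rng.sample(scored, 2)
        return a[1] if a[0] <= b[0] else b[1]

    for _ in range(generations):
        scored = sorted(((objective(ind), ind) for ind in pop), key=lambda t: t[0])
        next_pop = [scored[0][1], scored[1][1]]      # elitism: keep the best two
        while len(next_pop) < pop_size:
            p1, p2 = tournament(scored), tournament(scored)
            child = []
            for (lo, hi), g1, g2 in zip(bounds, p1, p2):
                w = rng.random()
                gene = w * g1 + (1 - w) * g2         # blend (arithmetic) crossover
                if rng.random() < mutation_rate:
                    gene += rng.gauss(0.0, mutation_scale * (hi - lo))  # Gaussian mutation
                child.append(min(max(gene, lo), hi))
            next_pop.append(child)
        pop = next_pop

    best = min(pop, key=objective)
    return best, objective(best)


# Usage: a toy objective with its optimum at (0.3, -1.2).
best_x, best_f = genetic_minimise(
    lambda v: (v[0] - 0.3) ** 2 + (v[1] + 1.2) ** 2,
    bounds=[(-5.0, 5.0), (-5.0, 5.0)],
)
print(best_x, best_f)
```

The configuration found this way should still be assessed with k-fold cross-validation, as in the study. The GA fitness itself is an optimistic estimate, because it was the quantity being optimized.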

Table 2: Qualitative Comparison of K-Fold and Hold-Out Validation

| Aspect | K-Fold Cross-Validation | Hold-Out Validation |
|---|---|---|
| Performance Estimate Reliability | More reliable; averages multiple splits [19]. | Less reliable; depends on a single split [18]. |
| Overfitting Detection | Helps detect overfitting; a large gap between training and validation performance is a clear sign [19]. | Less effective at detecting overfitting [18]. |
| Data Efficiency | High; all data used for training and validation [20] [19]. | Lower; only a portion of data is used for training [20] [18]. |
| Optimal for Hyperparameter Tuning | Yes; provides a reliable way to select optimal hyperparameters [19] [18]. | Not ideal; can lead to overfitting to a specific validation set [18]. |

Implementation with Scikit-Learn

The following code snippets illustrate how to implement k-fold cross-validation in Python, using the scikit-learn library.

Method 1: Using cross_val_score for a Single Metric

This is the most straightforward method for quick evaluation with one primary metric.
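A minimal sketch of this method follows. It assumes a synthetic regression dataset and a random forest model, both hypothetical stand-ins for a chemical dataset and model.

```python
"""Method 1: one metric with cross_val_score (sketch on synthetic data)."""
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score

X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)
model = RandomForestRegressor(n_estimators=100, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=42)  # shuffle before splitting

scores = cross_val_score(model, X, y, cv=cv, scoring="r2")
print("R2 per fold:", scores.round(3))
print(f"mean R2: {scores.mean():.3f} +/- {scores.std():.3f}")
```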

Method 2: Using cross_validate for Multiple Metrics

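For a fuller evaluation, cross_validate computes several metrics in one pass and also returns per-fold fit and score times, with optional training-set scores (return_train_score=True) and fitted estimators (return_estimator=True).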
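A sketch of Method 2, again assuming synthetic data and a random-forest regressor. It reports R², MSE, and MAE together and keeps the training-fold scores, so the training/validation gap mentioned in Table 2 can be read off directly:

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, cross_validate

X, y = make_regression(n_samples=200, n_features=20, noise=10.0, random_state=0)
model = RandomForestRegressor(n_estimators=100, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=42)

# scikit-learn scorers follow "greater is better", so error metrics are negated
scoring = {
    "r2": "r2",
    "mse": "neg_mean_squared_error",
    "mae": "neg_mean_absolute_error",
}

results = cross_validate(
    model, X, y, cv=cv, scoring=scoring,
    return_train_score=True,  # also score the training folds to expose overfitting
)

for name in scoring:
    sign = -1 if name in ("mse", "mae") else 1  # flip error metrics back to positive
    train = sign * results[f"train_{name}"].mean()
    test = sign * results[f"test_{name}"].mean()
    print(f"{name}: train {train:.3f}, validation {test:.3f}")

print(f"Mean fit time per fold: {results['fit_time'].mean():.2f} s")
```

A training R² far above the validation R² is the overfitting signal; the per-fold arrays in `results` can also be plotted to compare models.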


Workflow Visualization

The following diagram illustrates the logical workflow for selecting and applying a cross-validation strategy in a chemical ML project, from data preparation to model selection.

Cross-Validation Strategy Selection Workflow

The Scientist's Toolkit: Research Reagent Solutions

This section details key computational tools and methodologies used in modern cross-validation experiments, analogous to essential reagents in a wet lab.

Table 3: Essential Tools for Cross-Validation Experiments

| Tool / Solution | Function in Validation | Example in Practice |
|---|---|---|
| Scikit-Learn (sklearn) | Provides a unified API for various cross-validation splitters, model training, and metrics calculation [19] [21]. | KFold, StratifiedKFold, and GroupKFold classes for data splitting; cross_val_score for evaluation. |
| Genetic Algorithms (GA) | A metaheuristic optimization technique used to find optimal model hyperparameters, minimizing human bias before cross-validation [17]. | Optimizing the number of layers and neurons in a DNN for hydrogen dispersion prediction [17]. |
| Statistical Metrics (R², MSE) | Quantify the model's performance and generalizability. Using multiple metrics provides a comprehensive view [19] [17]. | R² measures the proportion of variance explained; MSE penalizes larger errors more heavily. Both are averaged over k-folds. |
| Simulation Software (PHAST, FLACS) | Generates comprehensive datasets for chemical phenomena where real-world experimental data is scarce or dangerous to obtain [17]. | Creating a dataset of 6,561 hydrogen leakage scenarios to train and validate ML models [17]. |
| Visualization Libraries (Matplotlib) | Helps in visualizing cross-validation behavior, model performance across folds, and comparing different models [19] [21]. | Plotting individual fold scores and average performance for multiple models to aid in comparison and selection [19]. |

The choice between hold-out and k-fold cross-validation is a trade-off between computational efficiency and estimation reliability. For initial exploratory analysis with very large datasets, the hold-out method offers a quick and simple check. However, for robust model evaluation, hyperparameter tuning, and especially with limited datasets common in chemical ML research, k-fold cross-validation is the gold standard. Its ability to maximize data usage, provide a reliable performance average, and help detect overfitting makes it an indispensable tool for researchers and scientists aiming to build generalizable and trustworthy predictive models.

Why Chemical Data Requires Specialized Validation Strategies

In the field of chemical machine learning, the path from predictive models to reliable scientific insights is paved with unique challenges. Chemical data possesses inherent characteristics—from severe class imbalances to structured experimental designs and significant measurement noise—that render standard machine learning validation protocols insufficient. These domain-specific complexities necessitate specialized validation strategies to prevent overoptimistic performance estimates, ensure model generalizability, and ultimately build trust in predictions that guide critical decisions in drug discovery and materials science. This guide examines the core challenges and provides a structured comparison of validation methodologies tailored to chemical data.

Unique Challenges in Chemical Data

Chemical data exhibits several distinctive characteristics that fundamentally complicate machine learning validation:

Data Imbalance: In many chemical applications, crucial positive samples are extremely rare. Drug discovery datasets typically contain vastly more inactive compounds than active ones, while successful reaction outcomes are often outnumbered by unsuccessful attempts. Models trained on such imbalanced data tend to be biased toward the majority class without specialized handling [22] [23].

Structured Data Collection: Chemical data frequently originates from carefully designed experiments (Design of Experiments, DOE) with fixed factor combinations. This structured nature violates the common machine learning assumption of independent and identically distributed data, making standard random cross-validation problematic [24].

High Experimental Noise: Biochemical measurements, including ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties and reaction yields, often exhibit significant experimental error. This aleatoric uncertainty creates a fundamental performance ceiling that proper validation must acknowledge [25] [26].

Feature Complexity: Molecular representations range from simple descriptors to complex learned embeddings, with performance heavily dependent on the specific chemical domain and endpoint being modeled [26].

Specialized Validation Methodologies

Addressing Data Imbalance

Techniques specifically adapted for chemical data imbalance go beyond standard machine learning approaches:

Informed Sampling: The Synthetic Minority Over-sampling Technique (SMOTE) generates synthetic minority-class samples by interpolating between neighboring minority samples in feature space; its variants Borderline-SMOTE and SVM-SMOTE concentrate this generation near the class boundary. In materials science, SMOTE has been successfully integrated with Extreme Gradient Boosting (XGBoost) to predict mechanical properties of polymer materials and screen for hydrogen evolution reaction catalysts [22].
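To make the interpolation step concrete, here is a deliberately simplified, NumPy-only sketch of basic SMOTE. The function `smote_like_oversample` is hypothetical and is not the imbalanced-learn implementation; it also omits the boundary-focused logic of Borderline-SMOTE and SVM-SMOTE. In practice, `imblearn.over_sampling.SMOTE`, `BorderlineSMOTE`, or `SVMSMOTE` would be the usual choices, applied to training folds only:

```python
import numpy as np


def smote_like_oversample(X_min, n_new, k=5, rng=None):
    """Simplified SMOTE: interpolate between each chosen minority sample
    and one of its k nearest minority-class neighbours."""
    rng = np.random.default_rng(rng)
    X_min = np.asarray(X_min, dtype=float)
    # Pairwise Euclidean distances among minority samples
    d = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)  # a sample is never its own neighbour
    k = min(k, len(X_min) - 1)
    neighbours = np.argsort(d, axis=1)[:, :k]

    synthetic = np.empty((n_new, X_min.shape[1]))
    for i in range(n_new):
        a = rng.integers(len(X_min))       # random minority sample
        b = neighbours[a, rng.integers(k)]  # one of its k nearest neighbours
        gap = rng.random()                  # position along the segment a -> b
        synthetic[i] = X_min[a] + gap * (X_min[b] - X_min[a])
    return synthetic


rng = np.random.default_rng(0)
X_minority = rng.normal(size=(12, 4))  # 12 hypothetical minority compounds, 4 descriptors
X_synth = smote_like_oversample(X_minority, n_new=30, k=5, rng=1)
print(X_synth.shape)  # (30, 4)
```

Because every synthetic point lies on a segment between two real minority samples, it stays within the span of the observed minority data; whether that span is chemically sensible depends on the descriptors used.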

Negative Data Utilization: Incorporating information from unsuccessful experiments (negative data) through reinforcement learning can significantly improve model performance, especially when positive examples are scarce. This approach has demonstrated state-of-the-art performance in reaction prediction with as few as 20 positive data points supported by negative data [23].

The following workflow illustrates the integration of these specialized techniques into a validation framework for imbalanced chemical data:

Robust Performance Estimation

Proper validation strategies must account for the structured nature of chemical data and provide statistical rigor:

Scaffold Splitting: For molecular data, splitting by chemical scaffold (core molecular structure) provides a more challenging and realistic assessment of generalizability compared to random splitting, as it tests a model's ability to predict properties for novel chemotypes [26] [27].
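Scaffold splitting maps naturally onto scikit-learn's `GroupKFold` once every compound carries a scaffold label. In the sketch below the scaffold labels are hypothetical strings and the features are random; with real structures, Bemis-Murcko scaffold SMILES could be generated with RDKit (for example `rdkit.Chem.Scaffolds.MurckoScaffold.MurckoScaffoldSmiles`) and passed as `groups`:

```python
import numpy as np
from sklearn.model_selection import GroupKFold

rng = np.random.default_rng(0)
n = 60
X = rng.normal(size=(n, 8))  # stand-in for molecular descriptors
y = rng.normal(size=n)       # stand-in for a measured property
# Hypothetical scaffold labels: 12 scaffolds with 5 compounds each
scaffolds = np.array([f"scaffold_{i % 12}" for i in range(n)])

cv = GroupKFold(n_splits=4)
for fold, (train_idx, test_idx) in enumerate(cv.split(X, y, groups=scaffolds)):
    train_scaf = set(scaffolds[train_idx])
    test_scaf = set(scaffolds[test_idx])
    # No scaffold appears on both sides of the split
    assert train_scaf.isdisjoint(test_scaf)
    print(f"Fold {fold}: {len(test_scaf)} held-out scaffolds, {len(test_idx)} compounds")
```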

Nested Cross-Validation: A nested approach, with hyperparameter tuning in the inner loop and performance estimation in the outer loop, prevents optimistic bias in model evaluation. This is particularly important for complex models with many parameters [27].
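A compact sketch of nested cross-validation with scikit-learn, assuming an SVM classifier, a small illustrative hyperparameter grid, and synthetic imbalanced data. Wrapping `GridSearchCV` inside `cross_val_score` makes the inner search rerun on every outer training set:

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=200, n_features=15, weights=[0.8, 0.2],
                           random_state=0)

# Scaling sits inside the pipeline so it is refit on each training fold (no leakage)
pipe = make_pipeline(StandardScaler(), SVC())
param_grid = {"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop: hyperparameter search on each outer training set
search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="roc_auc")

# Outer loop: score the tuned model on folds never seen during tuning
nested_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")
print(f"Nested CV ROC-AUC: {nested_scores.mean():.3f} ± {nested_scores.std():.3f}")
```

The outer scores estimate the performance of the whole tuning procedure; each outer fold may select different hyperparameters, so a final model is typically obtained by rerunning the search on all available data.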

Statistical Significance Testing: Using appropriate statistical tests like Tukey's Honest Significant Difference (HSD) for method comparison accounts for multiple testing and provides confidence intervals that facilitate practical decision-making [25].
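A sketch of a Tukey HSD comparison using `scipy.stats.tukey_hsd` (SciPy 1.8 or later) on repeated cross-validation scores from three assumed models on synthetic data. One caveat: the test treats each model's fold scores as an independent group, whereas scores obtained on shared splits are correlated, so the result is an approximation rather than an exact paired analysis:

```python
import numpy as np
from scipy.stats import tukey_hsd
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

X, y = make_classification(n_samples=300, n_features=20, random_state=0)
# A fixed random_state means every model is scored on the same 25 splits
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=5, random_state=0)

models = {
    "logreg": LogisticRegression(max_iter=1000),
    "rf": RandomForestClassifier(n_estimators=50, random_state=0),
    "knn": KNeighborsClassifier(),
}
scores = {name: cross_val_score(m, X, y, cv=cv, scoring="roc_auc")
          for name, m in models.items()}
for name, s in scores.items():
    print(f"{name}: mean ROC-AUC {np.mean(s):.3f}")

res = tukey_hsd(*scores.values())
ci = res.confidence_interval(confidence_level=0.95)
names = list(scores)
for i in range(len(names)):
    for j in range(i + 1, len(names)):
        # statistic[i, j] is the difference in mean score (group i minus group j)
        print(f"{names[i]} - {names[j]}: diff {res.statistic[i, j]:+.3f}, "
              f"95% CI [{ci.low[i, j]:+.3f}, {ci.high[i, j]:+.3f}], "
              f"p = {res.pvalue[i, j]:.3g}")
```

A confidence interval that excludes zero but spans only a tiny difference may be statistically significant without being practically important, which is the distinction the interval makes visible.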

The following table compares key validation approaches for chemical data:

| Validation Method | Application Context | Advantages | Limitations |
|---|---|---|---|
| Leave-One-Out CV (LOOCV) | Small designed experiments [24] | Preserves design structure, low bias | High variance with unstable procedures |
| k-Fold Cross-Validation | General chemical datasets [24] | Balance of bias and variance | May disrupt designed experiment structure |
| Scaffold Split CV | Molecular property prediction [26] [27] | Tests generalization to novel chemotypes | More challenging performance targets |
| Reinforcement Learning with Negative Data | Reaction prediction with limited positives [23] | Leverages failed experiment information | Requires carefully characterized negative data |

Meaningful Performance Metrics

Standard metrics can be misleading for chemical data, necessitating domain-aware alternatives:

Precision-Recall Analysis: For imbalanced classification tasks common in virtual screening, the Area Under the Precision-Recall Curve (PR-AUC) provides a more informative performance measure than ROC-AUC, as it focuses on the minority class of interest [27].
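The gap between the two metrics is easy to reproduce with a hypothetical screen of 10,000 compounds containing 1% actives and a moderately discriminative score (all values synthetic). `average_precision_score` is used as the PR-AUC summary; unlike ROC-AUC, whose random baseline is 0.5, its random baseline equals the active fraction:

```python
import numpy as np
from sklearn.metrics import average_precision_score, roc_auc_score

rng = np.random.default_rng(0)
# Hypothetical screen: 100 actives among 10,000 compounds
y_true = np.zeros(10_000, dtype=int)
y_true[:100] = 1
# Imperfect model scores: actives are shifted upwards but overlap with inactives
y_score = rng.normal(loc=0.0, scale=1.0, size=y_true.size)
y_score[y_true == 1] += 2.0

roc = roc_auc_score(y_true, y_score)
ap = average_precision_score(y_true, y_score)
print(f"ROC-AUC: {roc:.3f}")
print(f"PR-AUC (average precision): {ap:.3f}")
print(f"PR-AUC random baseline (active fraction): {y_true.mean():.3f}")
```

A ROC-AUC above 0.9 can coexist with a much lower PR-AUC: ROC-AUC is dominated by the large pool of correctly ranked inactives, while precision directly counts the inactives that crowd into the top of the ranking, which is where a virtual screen spends its experimental budget.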

Statistical Comparison Protocols: Rigorous method comparison should include effect sizes with confidence intervals rather than relying solely on point estimates or "dreaded bold tables" that highlight best performers without significance testing [25].

The experimental impact of proper metric selection is evident in benchmark studies:

| Study Context | Standard Metric | Domain-Appropriate Metric | Impact on Conclusion |
|---|---|---|---|
| Virtual Screening [27] | ROC-AUC | PR-AUC | Changed model ranking, better reflected practical utility |
| ADMET Prediction [26] | Single Test Set R² | Cross-Validation with Statistical Testing | Identified statistically insignificant "improvements" |
| Method Comparison [25] | Mean Performance | Tukey's HSD with Confidence Intervals | Distinguished practically significant differences |

Experimental Protocols for Chemical ML Validation

Protocol 1: Handling Data Imbalance in Chemical Classification

Application Context: Predicting compound activity, toxicity, or material properties with imbalanced data distributions.

Methodology:

- Data Characterization: Quantify imbalance ratio and analyze minority class distribution

- Strategic Sampling: Apply Borderline-SMOTE to generate synthetic samples along class boundaries, preserving minority class characteristics [22]

- Validation Strategy: Implement stratified cross-validation to maintain class proportions across folds

- Performance Assessment: Evaluate using PR-AUC, F1-score, and balanced accuracy alongside confusion matrix analysis

- Statistical Testing: Use statistical tests that correct for multiple comparisons (e.g., Tukey's HSD) across repeated dataset splits to confirm that improvements are significant [25]
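The protocol above could be sketched as follows, assuming synthetic data with roughly 5% positives and a random-forest classifier. Simple random duplication of minority samples stands in for Borderline-SMOTE (imbalanced-learn's `BorderlineSMOTE` inside an `imblearn.pipeline.Pipeline` would be the closer match), and the statistical-testing step is left out for brevity. The key point is that resampling touches only the training fold, so every test fold keeps its natural class balance:

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (average_precision_score, balanced_accuracy_score,
                             confusion_matrix, f1_score)
from sklearn.model_selection import StratifiedKFold

X, y = make_classification(n_samples=1000, n_features=20, weights=[0.95, 0.05],
                           random_state=0)
# Step 1: characterize the imbalance
print(f"Imbalance ratio (minority:majority) = 1:{(y == 0).sum() / (y == 1).sum():.0f}")

rng = np.random.default_rng(0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)  # step 3
metrics = {"pr_auc": [], "f1": [], "bal_acc": []}
cm_total = np.zeros((2, 2), dtype=int)

for train_idx, test_idx in cv.split(X, y):
    X_tr, y_tr = X[train_idx], y[train_idx]
    # Step 2 (stand-in): oversample the minority class in the TRAINING fold only
    minority = np.flatnonzero(y_tr == 1)
    extra = rng.choice(minority, size=(y_tr == 0).sum() - minority.size, replace=True)
    X_bal = np.vstack([X_tr, X_tr[extra]])
    y_bal = np.concatenate([y_tr, y_tr[extra]])

    model = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_bal, y_bal)
    proba = model.predict_proba(X[test_idx])[:, 1]
    pred = (proba >= 0.5).astype(int)

    # Step 4: imbalance-aware metrics on the untouched test fold
    metrics["pr_auc"].append(average_precision_score(y[test_idx], proba))
    metrics["f1"].append(f1_score(y[test_idx], pred, zero_division=0))
    metrics["bal_acc"].append(balanced_accuracy_score(y[test_idx], pred))
    cm_total += confusion_matrix(y[test_idx], pred, labels=[0, 1])

for name, vals in metrics.items():
    print(f"{name}: {np.mean(vals):.3f} ± {np.std(vals):.3f}")
print("Pooled confusion matrix (rows = true, cols = predicted):\n", cm_total)
```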

Illustrative Example: In polymer material property prediction, researchers combined nearest neighbor interpolation with Borderline-SMOTE to balance datasets, enabling more accurate prediction of mechanical properties that would otherwise be obscured by data imbalance [22].

Protocol 2: Incorporating Negative Data in Low-Data Regimes

Application Context: Reaction prediction, catalyst design, or any chemical application where successful outcomes are rare.

Methodology:

- Data Curation: Collect both successful (positive) and unsuccessful (negative) experimental results

- Reward Model Design: Develop a reward function that appropriately weights positive and negative examples

- Reinforcement Learning Tuning: Fine-tune base models using policy gradient methods to maximize expected reward [23]

- Validation Framework: Use rigorous cross-validation with careful separation of positive and negative examples

- Generalization Testing: Evaluate on external datasets or temporal splits to assess real-world performance

Experimental Results: In reaction prediction, this approach achieved state-of-the-art performance using only 20 positive data points supported by negative data, significantly outperforming standard fine-tuning approaches [23].

Essential Research Reagents for Chemical ML Validation

The following tools and methodologies constitute the essential "research reagents" for robust chemical machine learning validation:

| Research Reagent | Function | Example Implementations |
|---|---|---|
| SMOTE Variants | Addresses class imbalance through intelligent oversampling | Borderline-SMOTE, SVM-SMOTE, RF-SMOTE [22] |
| Scaffold Splitting | Assesses model generalization to novel chemical structures | RDKit-based scaffold implementation [26] [27] |
| Statistical Comparison Framework | Determines significance of performance differences | Tukey's HSD test, paired t-tests with multiple testing correction [25] |
| Negative Data Integration | Leverages unsuccessful experiments to improve models | Reinforcement learning with reward model [23] |
| Multi-Metric Evaluation | Comprehensive performance assessment | PR-AUC, ROC-AUC, balanced accuracy [27] |
| Nested Cross-Validation | Provides unbiased performance estimation | Outer loop for testing, inner loop for hyperparameter tuning [27] |

Comparative Performance Analysis

The effectiveness of specialized chemical validation strategies is demonstrated through comparative experimental data:

| Validation Strategy | Chemical Application | Performance Impact | Statistical Significance |
|---|---|---|---|
| Standard Random CV | Polymer Elastic Response [28] | Baseline performance | Reference |
| SMOTE + XGBoost | Polymer Material Properties [22] | Improved minority class recall | p < 0.05 via Tukey's HSD [25] |
| Reinforcement Learning with Negative Data | Reaction Prediction [23] | +15% accuracy in low-data regime | p < 0.01 via paired testing |
| Scaffold Split vs Random Split | ADMET Prediction [26] | 20-30% performance drop highlighting overoptimism | Practical significance established |

The relationship between chemical data challenges and appropriate specialized solutions can be visualized as follows:

Chemical data demands specialized validation strategies that acknowledge its unique characteristics—severe imbalance, structured collection, significant noise, and complex feature representations. Through informed sampling techniques like SMOTE, strategic incorporation of negative data, appropriate performance metrics like PR-AUC, and rigorous statistical comparison protocols, researchers can develop models that deliver reliable, generalizable predictions. The experimental protocols and comparative data presented in this guide provide a foundation for robust chemical machine learning validation, enabling more trustworthy predictions that accelerate discovery in drug development and materials science.

The integration of artificial intelligence (AI) and machine learning (ML) has ushered in a transformative era for pharmaceutical research and development. These technologies are accelerating the discovery of novel therapeutic compounds and revolutionizing the design and optimization of drug formulations. By leveraging large, complex datasets, AI-driven approaches enable researchers to predict biological activity, optimize molecular properties, and design dosage forms with enhanced efficacy and stability more rapidly and cost-effectively than traditional methods. A critical factor in the success of these ML models is the implementation of robust hyperparameter tuning and cross-validation strategies to ensure predictive accuracy and generalizability. This guide examines real-world case studies across drug discovery and formulation, comparing the performance of different AI/ML approaches, their experimental protocols, and their tangible impact on pharmaceutical development.

Case Studies in AI-Driven Drug Discovery

Predictive Modeling for Anti-Infective Agents

Experimental Protocol: A comprehensive study trained five machine learning algorithms (Random Forest (RF), Multi-Layer Perceptron (MLP), K-Nearest Neighbors (KNN), eXtreme Gradient Boosting (XGBoost), Naive Bayes (NB)) and six deep learning algorithms (including Graph Convolution Network (GCN) and Graph Attention Network (GAT)) on highly imbalanced PubChem bioassay datasets targeting HIV, Malaria, Human African Trypanosomiasis, and COVID-19 [29]. The core methodology addressed severe class imbalance (active-to-inactive ratios from 1:82 to 1:104) through a novel K-ratio random undersampling (K-RUS) approach, which created specific imbalance ratios (1:50, 1:25, 1:10) for model training [29]. Model performance was assessed via external validation, and the impact of dataset content was investigated by analyzing the chemical similarity between the active and inactive classes [29].

Performance Comparison: The table below summarizes the key findings from the study, highlighting the effect of different resampling techniques on model performance metrics.

Table 1: Performance of ML/DL Models on Imbalanced Drug Discovery Datasets Using Different Resampling Techniques [29]

| Dataset (Original Imbalance Ratio) | Resampling Technique | Key Performance Outcome | Optimal Model(s) |
|---|---|---|---|
| HIV (1:90) | Random Undersampling (RUS) | Highest ROC-AUC, Balanced Accuracy, MCC, and F1-score | Multiple (RF, XGBoost, GCN) |
| Malaria (1:82) | Random Undersampling (RUS) | Best MCC values and F1-score | Multiple (RF, XGBoost, GCN) |
| Trypanosomiasis | Random Undersampling (RUS) | Best scores across multiple metrics | Multiple (RF, XGBoost, GCN) |
| COVID-19 (1:104) | SMOTE | Highest MCC and F1-score | Multiple (RF, XGBoost, GCN) |
| All Datasets | K-RUS (1:10 IR) | Consistently significant performance enhancement | Multiple |

The study concluded that a moderate imbalance ratio (IR) of 1:10, achieved via K-RUS, generally provided the best balance between true positive and false positive rates across all models and datasets, outperforming conventional resampling methods like SMOTE and ADASYN [29].
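A minimal sketch of K-ratio random undersampling as described above: keep every active and randomly retain `k` inactives per active. The function `k_ratio_undersample` is hypothetical, not the study's code, and the 1:90 example labels are synthetic. In a real workflow it would be applied to training data only, leaving the external validation set at its natural ratio:

```python
import numpy as np


def k_ratio_undersample(y, k, rng=None):
    """Hypothetical K-RUS sketch: return sorted indices that keep all actives
    (label 1) plus k randomly chosen inactives (label 0) per active."""
    rng = np.random.default_rng(rng)
    y = np.asarray(y)
    actives = np.flatnonzero(y == 1)
    inactives = np.flatnonzero(y == 0)
    n_keep = min(inactives.size, k * actives.size)
    kept_inactives = rng.choice(inactives, size=n_keep, replace=False)
    return np.sort(np.concatenate([actives, kept_inactives]))


# Synthetic 1:90 dataset (100 actives, 9,000 inactives) reduced to the study's ratios
y = np.array([1] * 100 + [0] * 9_000)
for k in (50, 25, 10):
    idx = k_ratio_undersample(y, k, rng=0)
    n_act = int(y[idx].sum())
    print(f"1:{k} -> {n_act} actives, {idx.size - n_act} inactives")
```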

Robust Antimalarial Prediction with Random Forest

Experimental Protocol: Researchers developed a robust random forest (RF) model to predict antiplasmodial activity from a large dataset of ~15,000 molecules from ChEMBL tested at multiple doses against Plasmodium falciparum blood stages [30]. "Actives" were strictly defined as having IC50 < 200 nM (N=7039) and "inactives" as IC50 > 5000 nM (N=8079); the wide gap between the two thresholds reduces label noise, since compounds with intermediate potency fall into neither class [30]. The dataset was split into 80% for training/internal validation and 20% as a held-out external test set [30]. The workflow was implemented on the KNIME platform, and nine different molecular fingerprints were evaluated, with Avalon fingerprints (RF-1 model) yielding the best results after hyperparameter optimization [30].

Performance Comparison: The optimized RF model was benchmarked and experimentally validated.

Table 2: Performance of the Optimized Random Forest Model for Antimalarial Prediction [30]

| Model | Accuracy | Precision | Sensitivity | Area Under ROC (AUROC) |
|---|---|---|---|---|
| RF-1 (Avalon MFP) | 91.7% | 93.5% | 88.4% | 97.3% |
| MAIP (Consensus Model, Benchmark) | Comparable to RF-1 | Comparable to RF-1 | Comparable to RF-1 | Comparable to RF-1 |

The study noted that hits identified by the RF-1 model and the benchmark MAIP model from a commercial library did not overlap, suggesting the models are complementary [30]. External experimental validation of six purchased molecules identified two human kinase inhibitors with single-digit micromolar antiplasmodial activity, confirming the model's real-world predictive power [30].
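The labelling rule from this protocol can be sketched in Python (the study itself used KNIME). Compounds whose IC50 falls between the two thresholds belong to neither class and are dropped before the stratified 80/20 split. The IC50 values and the random feature matrix standing in for Avalon fingerprints are hypothetical:

```python
import numpy as np
from sklearn.model_selection import train_test_split


def label_by_ic50(ic50_nM, active_max=200.0, inactive_min=5000.0):
    """Return 1 (active), 0 (inactive), or -1 (intermediate, to be discarded)."""
    ic50_nM = np.asarray(ic50_nM, dtype=float)
    labels = np.full(ic50_nM.shape, -1, dtype=int)
    labels[ic50_nM < active_max] = 1
    labels[ic50_nM > inactive_min] = 0
    return labels


rng = np.random.default_rng(0)
ic50 = 10 ** rng.uniform(0, 5, size=1000)  # hypothetical IC50 values, 1 nM to 100 µM
labels = label_by_ic50(ic50)
keep = labels >= 0                         # drop the 200-5000 nM band
y = labels[keep]
X = rng.random((y.size, 16))               # placeholder for fingerprint features

# 80% training/internal validation, 20% held-out external test, class balance preserved
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)
print(f"kept {y.size} of {ic50.size} compounds; train {y_train.size}, test {y_test.size}")
```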

Case Studies in AI-Optimized Drug Formulation

Smart Formulation: Predicting Stability of Compounded Medicines

Experimental Protocol: The "Smart Formulation" AI platform was designed to predict the Beyond Use Dates (BUDs) of compounded oral solid dosage forms [31]. A curated dataset of 55 experimental BUD values from the Stabilis database was used to train a Tree Ensemble Regression model within the KNIME platform [31]. Each formulation was encoded using molecular descriptors (e.g., LogP), excipient composition, packaging type, and storage conditions [31]. The trained model was then used to predict BUDs for 3166 Active Pharmaceutical Ingredients (APIs) under various scenarios [31].

Performance Comparison: The model's predictive accuracy was validated and its findings on critical stability factors are summarized below.

Table 3: Performance of Smart Formulation Model and Key Stability Factors [31]

| Aspect | Finding | Impact/Correlation |
|---|---|---|
| Predictive Accuracy | Strong correlation with experimental values (R² = 0.9761, p < 0.001) | High model reliability |
| Key Molecular Descriptor | LogP | Significant correlation with BUD (R=0.503, p=0.012) |
| Impact of Excipient Number | Use of two excipients vs. one | Frequently reduced BUDs |
| Stability-Enhancing Excipients | Cellulose, silica, sucrose, mannitol | Associated with improved stability |
| Stability-Reducing Excipients | HPMC, lactose | Contributed to faster degradation |

The platform provides a scalable, cost-effective decision-support tool for pharmacists, helping to mitigate drug shortages and improve the quality of extemporaneous preparations [31].
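With only 55 labelled formulations, any single train/test split leaves few test points and a noisy performance estimate. As a generic illustration (not a description of how the study validated its model), repeated k-fold cross-validation yields a distribution of R² scores rather than a single number. Here scikit-learn's `RandomForestRegressor` is an assumed stand-in for KNIME's Tree Ensemble Regression, and the data are synthetic:

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import RepeatedKFold, cross_val_score

# Synthetic stand-in: 55 formulations x 8 encoded features
X, y = make_regression(n_samples=55, n_features=8, noise=15.0, random_state=0)
model = RandomForestRegressor(n_estimators=200, random_state=0)

# 5-fold CV repeated 10 times with different shuffles -> 50 held-out R² scores
cv = RepeatedKFold(n_splits=5, n_repeats=10, random_state=0)
scores = cross_val_score(model, X, y, cv=cv, scoring="r2")
print(f"R²: mean {scores.mean():.3f}, 5th-95th percentile "
      f"[{np.percentile(scores, 5):.3f}, {np.percentile(scores, 95):.3f}]")
```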

Generative AI for In-Silico Formulation Optimization

Experimental Protocol: A generative AI method was developed to create digital versions of drug products from images of exemplar products [32]. This approach uses a Conditional Generative Adversarial Network (cGAN) architecture, specifically an On-Demand Solid Texture Synthesis (STS) model augmented with Feature-wise Linear Modulation (FiLM) layers [32]. The model is steered by Critical Quality Attributes (CQAs) like particle size and drug loading to generate realistic digital product variations that can be analyzed and optimized in silico [32].

Performance Comparison: The method was validated in two case studies:

- Oral Tablet: Accurately predicted a percolation threshold of 4.2% weight of microcrystalline cellulose in an oral tablet product [32].

- HIV Implant: Successfully generated implant formulations with controlled drug loading and particle size distributions. Comparisons with real samples showed that the synthesized structures exhibited comparable particle size distributions and transport properties in release media [32].

This generative AI method significantly reduces the need for physical manufacturing and experimentation during early-stage formulation development, potentially cutting costs and shortening development cycles [32].

Cross-Validation and Hyperparameter Optimization in Practice

Robust model validation is paramount. A comparative analysis of hyperparameter optimization methods for predictive models on a clinical heart failure dataset offers generalizable insights [6]. The study evaluated Grid Search (GS), Random Search (RS), and Bayesian Search (BS) across Support Vector Machine (SVM), Random Forest (RF), and XGBoost algorithms [6].

Table 4: Comparison of Hyperparameter Optimization Methods on Clinical Data [6]

| Optimization Method | Description | Computational Efficiency | Best Performing Model (AUC) | Robustness to Overfitting |
|---|---|---|---|---|
| Grid Search (GS) | Exhaustive brute-force search over a parameter grid | Low (computationally expensive) | SVM (Initial AUC > 0.66) | Low (SVM showed potential for overfitting) |
| Random Search (RS) | Random sampling of parameter combinations | Moderate | SVM (Initial AUC > 0.66) | Low (SVM showed potential for overfitting) |
| Bayesian Search (BS) | Builds a surrogate model to guide the search | High (consistently less processing time) | RF (Most robust, avg. AUC improvement +0.03815) | High |

The study demonstrated that while SVM initially showed high accuracy, Random Forest models optimized with Bayesian Search demonstrated superior robustness after 10-fold cross-validation, with the highest average AUC improvement and less overfitting [6]. This underscores the necessity of rigorous cross-validation in building reliable models for pharmaceutical applications.
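A sketch contrasting grid and random search under 10-fold cross-validation, assuming a random-forest classifier, synthetic data, and illustrative search spaces. Bayesian search is not shown because it requires a third-party package (for example scikit-optimize's `BayesSearchCV` or Optuna):

```python
import time

from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV, StratifiedKFold

X, y = make_classification(n_samples=300, n_features=12, random_state=0)
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
rf = RandomForestClassifier(n_estimators=30, random_state=0)

grid = {"max_depth": [3, 5, None], "min_samples_leaf": [1, 5, 10]}  # 9 combinations
dist = {"max_depth": [3, 5, None], "min_samples_leaf": randint(1, 11)}

searches = {
    "grid": GridSearchCV(rf, grid, cv=cv, scoring="roc_auc"),
    "random": RandomizedSearchCV(rf, dist, n_iter=5, cv=cv, scoring="roc_auc",
                                 random_state=0),
}
for name, search in searches.items():
    t0 = time.perf_counter()
    search.fit(X, y)
    n_candidates = len(search.cv_results_["params"])
    print(f"{name}: best 10-fold AUC {search.best_score_:.3f} with {search.best_params_} "
          f"({n_candidates} candidates, {time.perf_counter() - t0:.1f} s)")
```

Random search evaluates a fixed budget (`n_iter`) however many parameters are searched, which is why its cost grows more slowly than that of a full grid.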

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 5: Key Software Platforms and Tools for AI-Driven Drug Discovery and Formulation

| Tool/Platform Name | Type | Primary Function in Research |
|---|---|---|
| KNIME Analytics Platform [30] [31] | Data Analytics Platform | Used to build automated workflows for data curation, model training (e.g., Random Forest), and stability prediction without coding. |
| Generative Adversarial Network (GAN) [32] | AI Model Architecture | Generates novel, realistic digital structures of drug formulations (e.g., tablet microstructures) based on exemplar images and target attributes. |
| Tree Ensemble Regression [31] | Machine Learning Algorithm | Predicts continuous outcomes (e.g., Beyond Use Date) by combining predictions from multiple decision trees for improved accuracy. |
| Random Forest (RF) [29] [30] [6] | Machine Learning Algorithm | An ensemble classification algorithm used for predicting biological activity (e.g., antiplasmodial activity) and robust against overfitting. |
| Avalon Molecular Fingerprints [30] | Molecular Representation | A type of chemical fingerprint used to encode molecular structure; proved effective in building predictive models for antimalarial activity. |
| Bayesian Search (BS) [6] | Hyperparameter Optimization Method | Efficiently finds optimal model parameters by building a probabilistic surrogate model, balancing performance and computational cost. |

Experimental Workflow

The following diagram illustrates a standardized, high-level workflow for developing and validating an AI/ML model in pharmaceutical sciences, integrating common elements from the cited case studies.

Practical Implementation of Cross-Validation and Tuning Methods

Selecting Appropriate Cross-Validation Strategies for Chemical Data

In the field of chemical machine learning (ML), where models predict bioactivity, optimize drug formulations, and characterize materials, the reliability of predictive models is paramount. Overfitting remains one of the most pervasive and deceptive pitfalls, leading to models that perform exceptionally well on training data but fail to generalize to real-world scenarios [33]. Although often attributed to excessive model complexity, overfitting frequently results from inadequate validation strategies, faulty data preprocessing, and biased model selection [33]. For researchers, scientists, and drug development professionals, selecting an appropriate cross-validation (CV) strategy is not merely a technical formality but a fundamental determinant of a model's real-world utility. This guide provides a comparative analysis of cross-validation methodologies specifically for chemical data, supported by experimental data and detailed protocols to inform robust model development.

Understanding Cross-Validation and Its Importance in Chemical Applications

The Basic Principles of Cross-Validation

Cross-validation is a set of data sampling methods used to estimate the generalization performance of an algorithm, perform hyperparameter tuning, and select between candidate models [34]. The core principle involves repeatedly partitioning the available data into independent training and testing sets. The model is trained on the training set, and its performance is evaluated on the test set. This process is repeated multiple times, with the performance results averaged over the rounds to produce a more robust estimate of how the model will perform on unseen data [34]. This process helps mitigate overfitting, where a model learns patterns specific to the training data that do not generalize to new data [34].
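The train/evaluate/average loop described above can be written out by hand with scikit-learn's `KFold` splitter; the Ridge model and synthetic data are assumptions for illustration:

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import KFold

X, y = make_regression(n_samples=120, n_features=10, noise=5.0, random_state=0)
cv = KFold(n_splits=5, shuffle=True, random_state=0)

fold_rmse = []
for train_idx, test_idx in cv.split(X):
    model = Ridge(alpha=1.0).fit(X[train_idx], y[train_idx])  # train on k-1 folds
    pred = model.predict(X[test_idx])                         # evaluate on the held-out fold
    fold_rmse.append(np.sqrt(mean_squared_error(y[test_idx], pred)))

# Average over rounds for the final cross-validated estimate
print(f"RMSE per fold: {np.round(fold_rmse, 2)}")
print(f"Cross-validated RMSE: {np.mean(fold_rmse):.2f} ± {np.std(fold_rmse):.2f}")
```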

Domain-Specific Challenges for Chemical Data

Chemical data presents unique validation challenges that necessitate careful strategy selection:

- Data Heterogeneity: Chemical datasets often encompass diverse target classes (e.g., ion channels, receptors, transporters) and assay types (e.g., binding, functional, ADME) [27].

- Limited Data Availability: Many chemical ML studies operate in a "low-data regime," where the risk of overfitting is high [35].

- Complex Data Structure: Data may exhibit strong internal correlations, such as when multiple compounds share common molecular scaffolds, violating the assumption of data independence [27].

- Imbalanced Outcomes: Bioactivity data often shows significant class imbalance, with many more inactive than active compounds for a given target [27] [3].

Comparative Analysis of Cross-Validation Strategies

The table below summarizes the key cross-validation strategies, their mechanisms, and their suitability for various chemical data scenarios.

Table 1: Comparative Analysis of Cross-Validation Strategies for Chemical Data

| Validation Strategy | Key Mechanism | Advantages | Limitations | Ideal Chemical Data Use Cases |
|---|---|---|---|---|
| Holdout Validation [34] | One-time split into training/test sets (e.g., 80/20) | Simple, fast, produces a single model | Performance estimates have high variance with small datasets; susceptible to data representation bias | Preliminary model exploration with very large datasets (>100,000 samples) [27] |
| K-Fold Cross-Validation [34] | Data partitioned into k folds; each fold serves as test set once | More robust performance estimate than holdout; uses all data for evaluation | Standard k-fold can produce optimistically biased estimates if data has inherent groupings (e.g., scaffolds) | Homogeneous data without strong internal grouping structures |
| Stratified K-Fold [3] | Preserves the percentage of samples for each class in every fold | Controls for class imbalance in classification tasks | Primarily addresses imbalance, not other data structures | Bioactivity classification with imbalanced active/inactive compounds [27] |
| Grouped/Scaffold Split CV [27] | Splits data such that all samples from one group (e.g., same molecular scaffold) are in the same fold | The most realistic simulation of real-world generalization for new chemical series; reduces optimistic bias | Can lead to high variance in error estimates if few groups exist | Primary method for bioactivity prediction; essential for model generalizability estimation [27] |
| Nested Cross-Validation [35] | Outer loop for performance estimation, inner loop for hyperparameter tuning | Provides nearly unbiased performance estimates; appropriate for both model selection and evaluation | Computationally intensive; requires careful implementation | Final model evaluation and algorithm selection when dataset size is limited [35] |

Quantitative Performance Comparison

The choice of validation strategy significantly impacts reported model performance. The following table synthesizes findings from a reanalysis of a large-scale comparison of machine learning methods for drug target prediction, highlighting how validation can alter performance conclusions.

Table 2: Impact of Validation Strategy on Model Performance Interpretation

| Study Focus | Reported Finding (Original Validation) | Finding After Re-analysis (Robust Validation) | Key Implication |
|---|---|---|---|
| Deep Learning vs. SVM for Bioactivity Prediction [27] | "Deep learning methods significantly outperform all competing methods" (p < 10⁻⁷) | The performance of support vector machines (SVM) is competitive with deep learning methods. | Apparent superiority of complex models can be an artifact of validation bias. |
| Performance Metric Choice [27] | Conclusion based primarily on Area Under the ROC Curve (AUC-ROC) | AUC-ROC can be misleading; Area Under the Precision-Recall Curve (AUC-PR) is often more relevant for imbalanced bioactivity data. | Metric selection must align with the application context (e.g., virtual screening). |
| Uncertainty Estimation [27] | Performance reported without confidence intervals for many assays | Scaffold-split nested cross-validation reveals high uncertainty in performance estimates, especially for small, imbalanced assays. | Reporting confidence intervals is crucial for realistic performance assessment. |

Experimental Protocols and Workflow Visualization

Detailed Protocol: Nested Cross-Validation for Drug Release Prediction

The following protocol is adapted from a study that successfully predicted drug release from polymeric long-acting injectables using nested cross-validation [35].

1. Problem Formulation and Data Collection:

- Objective: Predict fractional drug release over time based on descriptors of the drug, polymer, and formulation.

- Data Compilation: Assemble a dataset from literature and in-house experiments. The example dataset included 181 drug release profiles with 3783 individual release measurements for 43 unique drug-polymer combinations [35].

- Feature Selection: Define molecular and physicochemical input features based on domain knowledge. Key features included:

- Drug Properties: Molecular weight, topological polar surface area, melting temperature, LogP, pKa.

- Polymer Properties: Molecular weight, lactide-to-glycolide ratio (for PLGA), cross-linking ratio.

- Formulation Parameters: Drug loading capacity, initial drug-to-polymer ratio, surface area-to-volume ratio of the formulation.

- Experimental Conditions: Percentage of surfactant in release media, fractional release at early time points (e.g., 6, 12, 24 hours) [35].

2. Nested Cross-Validation Setup:

- Outer Loop (Performance Estimation): 20% of the drug-polymer groups are randomly held out as a test set. This process is repeated multiple times (e.g., 10) with different random splits to obtain an average performance [35].

- Inner Loop (Model Training & Tuning): The remaining 80% of data undergoes hyperparameter optimization using group k-fold cross-validation (k=10). The groups are defined by drug-polymer combinations to prevent data leakage. A random grid search is used to find the hyperparameters that yield the best average performance across the k-folds [35].

3. Model Training and Evaluation:

- Algorithm Selection: Train and compare a panel of ML algorithms (e.g., Multiple Linear Regression, Random Forest, Gradient Boosting Machines, Support Vector Regressor).

- Performance Metrics: Use relevant metrics such as Root Mean Squared Error (RMSE) for regression or AUC-ROC/AUC-PR for classification.

The workflow for this protocol is visualized below.
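A compact scikit-learn sketch of the same nested scheme is shown here. It assumes synthetic stand-in data (43 hypothetical groups with random descriptors) and an illustrative Random Forest search space, so the numbers it prints say nothing about the study in [35]. GroupShuffleSplit plays the role of the repeated 20% group hold-out, and GroupKFold plays the role of the inner group k-fold.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GroupKFold, GroupShuffleSplit, RandomizedSearchCV

# ASSUMPTION: synthetic stand-in data; 43 hypothetical drug-polymer groups with
# 10 release measurements each and 8 descriptor columns
rng = np.random.default_rng(42)
n_groups, per_group, n_features = 43, 10, 8
groups = np.repeat(np.arange(n_groups), per_group)
X = rng.normal(size=(n_groups * per_group, n_features))
y = X[:, 0] - 0.5 * X[:, 1] + rng.normal(scale=0.3, size=len(groups))

# Illustrative search space (not the one used in [35])
param_distributions = {
    "n_estimators": [25, 50],
    "max_depth": [None, 5, 10],
    "min_samples_leaf": [1, 3, 5],
}

# Outer loop: hold out 20% of the groups, repeated over several random splits
# (5 repeats here to keep the run short; the protocol suggests e.g. 10)
outer = GroupShuffleSplit(n_splits=5, test_size=0.2, random_state=0)
outer_rmse = []
for train_idx, test_idx in outer.split(X, y, groups):
    # Inner loop: randomized search scored by group 10-fold CV on the outer training part only
    search = RandomizedSearchCV(
        RandomForestRegressor(random_state=0),
        param_distributions,
        n_iter=4,
        cv=GroupKFold(n_splits=10),
        scoring="neg_root_mean_squared_error",
        random_state=0,
    )
    search.fit(X[train_idx], y[train_idx], groups=groups[train_idx])
    # The best configuration was refit on the whole outer training part; score it once on unseen groups
    pred = search.predict(X[test_idx])
    outer_rmse.append(np.sqrt(mean_squared_error(y[test_idx], pred)))

print(f"Outer-loop RMSE: {np.mean(outer_rmse):.3f} +/- {np.std(outer_rmse):.3f} "
      f"({len(outer_rmse)} splits)")
```

Only the outer-loop scores serve as the performance estimate; the inner best_score_ values were used to choose hyperparameters and are therefore optimistically biased.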

Detailed Protocol: Scaffold-Split Validation for Bioactivity Prediction

This protocol addresses the critical issue of molecular scaffold bias in chemoinformatics [27].

1. Data Preparation and Scaffold Analysis:

- Compound Standardization: Standardize molecular structures from the dataset (e.g., from ChEMBL).

- Scaffold Assignment: Assign each compound a molecular scaffold (e.g., using Bemis-Murcko scaffolds).

- Data Stratification: Analyze the distribution of compounds across scaffolds. This reveals whether the dataset is dominated by a few common scaffolds.

2. Scaffold-Split Cross-Validation:

- Split by Scaffold, Not by Compound: Partition the data into k folds such that all compounds sharing a scaffold are contained within the same fold. This prevents any compounds from the same scaffold family from being in both the training and test sets for a given CV round.

- Stratification for Imbalance: If possible, perform scaffold splitting in a way that roughly preserves the overall class balance (active/inactive) in each fold.

3. Model Training and Evaluation:

- Training: Train the model on compounds from the training scaffolds.

- Testing: Evaluate the model on the completely unseen scaffolds in the test fold.

- Performance Aggregation: Repeat for all k folds and aggregate the results. The resulting performance is a more realistic estimate of a model's ability to generalize to novel chemical series.

The logical relationship and decision process for implementing a scaffold split is outlined below.

Table 3: Essential Tools for Cross-Validation in Chemical ML

| Tool Category | Specific Tool / Resource | Function in Validation Workflow | Application Note |
|---|---|---|---|
| Cheminformatics Libraries | RDKit [27] | Calculates molecular fingerprints (e.g., ECFP6) and descriptors; performs scaffold analysis. | The de facto standard open-source toolkit for chemical informatics. |
| Machine Learning Frameworks | Scikit-learn [34] [7] | Implements core CV splitters (KFold, StratifiedKFold, GroupKFold) and hyperparameter tuners (GridSearchCV, RandomizedSearchCV). | Excellent for prototyping; provides consistent API for various models and splitters. |
| Advanced Hyperparameter Optimization | Optuna [36] [37] | Bayesian optimization framework with pruning for efficient hyperparameter search in inner CV loops. | Can significantly reduce tuning time (e.g., 6.77 to 108.92x faster) compared to Grid/Random Search [36]. |
| Public Chemical Databases | ChEMBL [27] | Provides large-scale, structured bioactivity data for training and benchmarking predictive models. | Critical for building robust, generalizable models; data heterogeneity must be accounted for in splits. |
| Specialized CV Splitters | GroupKFold (Scikit-learn), custom scaffold splitter | Enforces separation of specific groups (e.g., scaffolds, assay protocols) across training and test sets. | Essential for implementing scaffold-split or other group-based validation strategies. |

The selection of a cross-validation strategy is a foundational decision that profoundly influences the perceived performance, reliability, and ultimate utility of chemical machine learning models. Based on the comparative analysis and experimental data presented, the following recommendations are made for researchers and drug development professionals:

- Default to Grouped/Scaffold Splits: For most chemical prediction tasks, particularly quantitative structure-activity relationship (QSAR) modeling and bioactivity prediction, scaffold-based cross-validation provides the most realistic assessment of a model's ability to generalize to novel chemical matter and should be considered the gold standard [27].

- Use Nested Cross-Validation for Final Evaluation: When performing both hyperparameter tuning and final model evaluation on a single dataset, nested cross-validation is the most rigorous approach, as it prevents optimistic bias from leaking into the performance estimate [35].

- Align Metrics with Application Goals: Do not rely solely on AUC-ROC, especially for imbalanced data common in virtual screening. Complement it with metrics like AUC-PR to gain a comprehensive view of model performance [27].

- Report Uncertainty: Always report confidence intervals or the standard error of performance metrics, especially when dealing with small or imbalanced assay data, to provide a realistic picture of model reliability [27].
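For the uncertainty recommendation, one common option is a percentile bootstrap over the test compounds. The sketch below assumes a single held-out test set and uses average precision as the AUC-PR estimate; the helper bootstrap_ci and the synthetic 10-active assay are hypothetical, for illustration only.

```python
import numpy as np
from sklearn.metrics import average_precision_score


def bootstrap_ci(y_true, y_score, metric=average_precision_score,
                 n_boot=1000, alpha=0.05, seed=0):
    """Hypothetical helper: percentile bootstrap CI for a metric on a fixed test set."""
    rng = np.random.default_rng(seed)
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample compounds with replacement
        if np.unique(y_true[idx]).size < 2:
            continue  # a one-class resample has no defined AUC-PR; skip it
        stats.append(metric(y_true[idx], y_score[idx]))
    lower, upper = np.percentile(stats, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return metric(y_true, y_score), lower, upper


# ASSUMPTION: a small synthetic assay with 10 actives among 200 test compounds
rng = np.random.default_rng(1)
y_test = np.concatenate([np.zeros(190), np.ones(10)])
y_score = np.concatenate([rng.normal(0.0, 1.0, 190), rng.normal(1.5, 1.0, 10)])

point, lower, upper = bootstrap_ci(y_test, y_score)
print(f"AUC-PR = {point:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

With only ten actives the interval is typically wide, which is exactly the information a single point estimate hides.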

By moving beyond simplistic holdout validation and adopting these more robust, domain-aware strategies, the chemical ML community can build more trustworthy, reproducible, and generalizable models that accelerate discovery and development.

In the field of chemical machine learning (ML), where models like Graph Neural Networks (GNNs) are increasingly used for molecular property prediction, drug discovery, and toxicity assessment, hyperparameter tuning represents a critical step in model development. The performance of these models is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task that significantly impacts predictive accuracy and generalizability [38]. Hyperparameters are configuration variables that control the learning process itself, distinct from model parameters which are learned from data during training [39]. Examples include learning rates, regularization parameters, architectural depth, and hidden layer sizes.
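The distinction can be seen directly in scikit-learn: a hyperparameter is passed to the constructor and is visible through get_params() before any data is seen, while parameters such as coef_ exist only after fit. The ridge model and random data below are illustrative stand-ins.

```python
import numpy as np
from sklearn.linear_model import Ridge

# ASSUMPTION: random stand-in data with three descriptors
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.1, size=50)

model = Ridge(alpha=1.0)            # alpha is a hyperparameter: chosen before training
print(model.get_params()["alpha"])  # 1.0, available before any data is seen
print(hasattr(model, "coef_"))      # False: no parameters have been learned yet

model.fit(X, y)
print(model.coef_)                  # learned parameters: one weight per descriptor
```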

Within cheminformatics, where datasets are often characterized by limited samples, high dimensionality, and potential class imbalance, proper hyperparameter optimization becomes even more crucial to avoid overfitting and ensure model robustness [16]. This guide provides a comprehensive examination of GridSearchCV, a systematic approach to hyperparameter optimization, comparing it with alternative methods within the context of chemical ML applications, complete with experimental data and implementation protocols relevant to researchers and drug development professionals.

Understanding GridSearchCV: Core Mechanism and Workflow

Fundamental Principles

GridSearchCV (Grid Search with Cross-Validation) is an exhaustive hyperparameter tuning technique that operates on a simple yet systematic principle: it evaluates every combination of hyperparameters specified in a predefined grid. For each combination, it performs cross-validation to assess model performance and ultimately selects the configuration with the best cross-validated score [40] [41]. Within the defined search space, this guarantees that the best-scoring combination of the discrete values provided is found; the price is that the number of candidates is the product of the number of values per hyperparameter, so each added hyperparameter multiplies the cost.
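Because the grid is the Cartesian product of the value lists, its size can be computed before committing to a search. This sketch uses scikit-learn's ParameterGrid on a hypothetical Random Forest grid; the values are illustrative only.

```python
from sklearn.model_selection import ParameterGrid

# Hypothetical Random Forest grid; values chosen only for illustration
param_grid = {
    "n_estimators": [100, 300, 500],
    "max_depth": [None, 10, 20],
    "min_samples_leaf": [1, 2, 4],
    "max_features": ["sqrt", "log2"],
}

n_candidates = len(ParameterGrid(param_grid))  # 3 * 3 * 3 * 2 = 54 combinations
n_folds = 5
print(n_candidates, n_candidates * n_folds)    # 54 270 (model fits before the final refit)
```

Adding a fifth hyperparameter with three values would triple this to 162 candidates and 810 fits.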

The technique is particularly valuable in chemical ML contexts where the relationship between hyperparameters and model performance may be complex and non-intuitive. For instance, when training GNNs for molecular property prediction, interactions between hyperparameters like graph convolution depth, dropout rate, and learning rate can significantly impact a model's ability to capture relevant chemical patterns [38]. GridSearchCV systematically explores these interactions without relying on random sampling.

Integrated Cross-Validation

A key strength of GridSearchCV is its integration of k-fold cross-validation, which addresses the critical issue of overfitting—a particular concern in cheminformatics where datasets may be small [42]. In this process, the training data is split into k partitions (folds). The model is trained k times, each time using k-1 folds for training and the remaining fold for validation. The performance metric reported is the average across all folds [40] [43]. This approach provides a more robust estimate of model generalization compared to a single train-test split, especially important when working with limited chemical data.
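The k-fold procedure described above can be written out by hand and checked against scikit-learn's cross_val_score, which runs the same loop internally. The synthetic regression data and the Ridge model are assumptions for illustration; passing the same seeded KFold object to both guarantees identical splits.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import r2_score
from sklearn.model_selection import KFold, cross_val_score

# ASSUMPTION: synthetic regression data standing in for a small property dataset
X, y = make_regression(n_samples=100, n_features=5, noise=10.0, random_state=0)
kf = KFold(n_splits=5, shuffle=True, random_state=0)  # seeded, so both uses see identical folds

# Manual k-fold: train on k-1 folds, validate on the remaining fold
fold_scores = []
for train_idx, val_idx in kf.split(X):
    model = Ridge(alpha=1.0).fit(X[train_idx], y[train_idx])
    fold_scores.append(r2_score(y[val_idx], model.predict(X[val_idx])))

# cross_val_score runs the same loop and returns one score per fold
auto_scores = cross_val_score(Ridge(alpha=1.0), X, y, cv=kf, scoring="r2")

print(f"manual mean R^2: {np.mean(fold_scores):.3f}")
print(f"cross_val_score mean R^2: {auto_scores.mean():.3f}")
```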

Table 1: Key Components of GridSearchCV

| Component | Description | Role in Hyperparameter Tuning |
|---|---|---|
| estimator | The machine learning model/algorithm to be tuned | Determines which hyperparameters are available for optimization |
| param_grid | Dictionary with parameter names as keys and lists of parameter settings to try as values | Defines the search space and the specific values to explore |
| scoring | Performance metric (e.g., 'accuracy', 'r2', 'precision') | Quantifies model performance for comparison across parameter combinations |
| cv | Cross-validation strategy (e.g., an integer for k-fold, or a splitter object such as GroupKFold) | Controls the validation methodology for robust performance estimation |
| n_jobs | Number of jobs to run in parallel | Enables parallel processing to accelerate the search process |

GridSearchCV in Practice: Implementation for Chemical ML

Basic Implementation Framework

The implementation of GridSearchCV follows a consistent pattern across different ML frameworks. In scikit-learn, the process involves defining an estimator, specifying the parameter grid, and configuring the cross-validation parameters [44]. The following example demonstrates a typical implementation for a Random Forest model, which could be used in preliminary cheminformatics studies:
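A minimal sketch along those lines, assuming a synthetic, mildly imbalanced classification dataset (make_classification) standing in for a fingerprint or descriptor matrix; the grid values are illustrative, not recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, train_test_split

# ASSUMPTION: synthetic stand-in for a descriptor/fingerprint matrix, ~20% actives
X, y = make_classification(n_samples=300, n_features=20, weights=[0.8, 0.2], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Illustrative grid: 2 x 2 x 2 = 8 candidate settings
param_grid = {
    "n_estimators": [50, 100],
    "max_depth": [None, 5],
    "min_samples_leaf": [1, 3],
}

grid = GridSearchCV(
    estimator=RandomForestClassifier(random_state=0),
    param_grid=param_grid,
    scoring="roc_auc",
    cv=5,        # an integer means stratified 5-fold CV for a classifier
    n_jobs=-1,   # evaluate candidates in parallel on all available cores
)
grid.fit(X_train, y_train)  # 8 candidates x 5 folds = 40 fits, then one refit

print("Best parameters:", grid.best_params_)
print(f"Best mean CV ROC-AUC: {grid.best_score_:.3f}")
print(f"Held-out ROC-AUC: {grid.score(X_test, y_test):.3f}")
```

Scoring the untouched test split once, after the search, keeps the reported figure free of the selection bias contained in best_score_.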

Advanced Configuration for Complex Workflows

For more advanced chemical ML applications, such as those involving pipelines or specialized metrics, GridSearchCV offers additional configuration options. The refit parameter (enabled by default) retrains the best configuration on all of the data passed to fit once the search is complete and exposes it as best_estimator_; when several metrics are supplied, refit names the one used to choose that configuration. Custom scoring functions can optimize for domain-specific objectives relevant to drug discovery, such as balanced accuracy for imbalanced toxicity datasets [44].
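A sketch of a pipeline search with balanced accuracy as the selection metric, assuming synthetic imbalanced data in place of a real toxicity set. Placing the scaler inside the Pipeline means it is refit on each training fold, and the clf__ prefix routes each grid entry to the named pipeline step.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# ASSUMPTION: synthetic data standing in for an imbalanced toxicity set (~10% toxic)
X, y = make_classification(n_samples=400, n_features=15, weights=[0.9, 0.1], random_state=0)

pipe = Pipeline([
    ("scale", StandardScaler()),   # refit on each training fold, so no leakage into validation
    ("clf", LogisticRegression(max_iter=1000)),
])
param_grid = {
    "clf__C": [0.01, 0.1, 1.0, 10.0],       # "<step name>__<parameter>" targets a pipeline step
    "clf__class_weight": [None, "balanced"],
}

search = GridSearchCV(
    pipe,
    param_grid,
    scoring={"bal_acc": "balanced_accuracy", "roc_auc": "roc_auc"},
    refit="bal_acc",   # choose and retrain the best configuration by balanced accuracy
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
)
search.fit(X, y)

print("Best parameters:", search.best_params_)
best_bal_acc = search.cv_results_["mean_test_bal_acc"][search.best_index_]
print(f"Balanced accuracy of the selected configuration: {best_bal_acc:.3f}")
```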

The following diagram illustrates the complete GridSearchCV workflow:

Comparative Analysis: GridSearchCV vs. RandomizedSearchCV

Methodological Differences

While GridSearchCV employs an exhaustive search strategy, RandomizedSearchCV takes a probabilistic approach by sampling a fixed number of parameter settings from specified distributions [41] [39]. This fundamental difference in search methodology leads to distinct performance characteristics and computational requirements. RandomizedSearchCV is particularly advantageous when dealing with continuous hyperparameters or when the importance of different hyperparameters varies significantly, as it can explore a wider range of values without exponential computational cost [41].

In chemical ML applications, where certain hyperparameters (such as learning rate or regularization strength) often matter far more than others, RandomizedSearchCV's ability to sample from continuous distributions (e.g., scipy.stats.expon for regularization parameters) can be particularly beneficial [41]. Because each sampled configuration draws a fresh value for every hyperparameter, the influential ones are probed at many distinct values rather than at a handful of fixed grid points, and less of the budget is spent re-testing the same values of unimportant ones.
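A minimal RandomizedSearchCV sketch using the scipy.stats.expon distributions mentioned above; the SVC estimator, the scale values, and the synthetic data are assumptions chosen for illustration.

```python
from scipy.stats import expon
from sklearn.datasets import make_classification
from sklearn.model_selection import RandomizedSearchCV
from sklearn.svm import SVC

# ASSUMPTION: synthetic binary data; distribution scales are illustrative
X, y = make_classification(n_samples=300, n_features=20, random_state=0)

param_distributions = {
    "C": expon(scale=100),       # continuous: a new value is drawn for every candidate
    "gamma": expon(scale=0.1),
    "kernel": ["rbf"],           # lists are sampled uniformly
}

search = RandomizedSearchCV(
    SVC(),
    param_distributions,
    n_iter=15,        # fixed budget, however finely the distributions are specified
    cv=5,
    random_state=0,   # makes the sampled candidates reproducible
)
search.fit(X, y)
print("Best parameters:", search.best_params_)
print(f"Best mean CV accuracy: {search.best_score_:.3f}")
```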

Quantitative Performance Comparison

Experimental comparisons between these approaches demonstrate clear trade-offs. A study comparing both methods for optimizing a Support Vector Machine classifier found that both reached similar best mean cross-validated accuracy (0.994 for GridSearchCV and 0.987 for RandomizedSearchCV), while RandomizedSearchCV completed its search in 0.78 seconds for 15 candidate parameter settings and GridSearchCV required 4.23 seconds for 60 [45]. This is an 81% reduction in computation time, driven mainly by the smaller number of candidates: per candidate, the cost was similar (about 0.05 versus 0.07 seconds). The study authors noted that the slightly lower score of randomized search was likely due to noise rather than a systematic deficiency [45].
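A comparison of this kind can be reproduced along the following lines. The estimator (an SGDClassifier with hinge loss, i.e. a linear SVM), the bundled digits dataset, and the search ranges are assumptions for illustration and are not claimed to match [45]; absolute timings will depend on hardware.

```python
from time import perf_counter

import numpy as np
from scipy.stats import loguniform, uniform
from sklearn.datasets import load_digits
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

# ASSUMPTION: bundled digits data and a linear SVM (hinge-loss SGD) as stand-ins
X, y = load_digits(return_X_y=True)
clf = SGDClassifier(loss="hinge", penalty="elasticnet", random_state=0)

# Grid: 2 x 10 x 3 = 60 candidates
param_grid = {
    "average": [True, False],
    "l1_ratio": np.linspace(0, 1, num=10),
    "alpha": [0.01, 0.1, 1.0],
}
# Random search: 15 candidates drawn from continuous ranges covering the same space
param_dist = {
    "average": [True, False],
    "l1_ratio": uniform(0, 1),
    "alpha": loguniform(1e-2, 1e0),
}

start = perf_counter()
grid = GridSearchCV(clf, param_grid, cv=5).fit(X, y)
grid_time = perf_counter() - start

start = perf_counter()
rand = RandomizedSearchCV(clf, param_dist, n_iter=15, cv=5, random_state=0).fit(X, y)
rand_time = perf_counter() - start

for name, search, seconds in [("GridSearchCV", grid, grid_time),
                              ("RandomizedSearchCV", rand, rand_time)]:
    n_cand = len(search.cv_results_["params"])
    print(f"{name}: {seconds:.2f} s for {n_cand} candidates, "
          f"best mean CV accuracy {search.best_score_:.3f}")
```

Dividing each wall-clock time by its candidate count separates the effect of the smaller budget from any per-fit difference between the two searches.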

Table 2: Experimental Comparison of GridSearchCV and RandomizedSearchCV

| Metric | GridSearchCV | RandomizedSearchCV | Implications for Chemical ML |
|---|---|---|---|
| Search Strategy | Exhaustive: evaluates all possible combinations | Probabilistic: samples fixed number of combinations | Choice depends on parameter space complexity and computational budget |
| Computation Time | 4.23 seconds for 60 candidates [45] | 0.78 seconds for 15 candidates [45] | Randomized search offers significant speed advantages for initial exploration |
| Best Accuracy Achieved | 0.994 (std: 0.005) [45] | 0.987 (std: 0.011) [45] | Grid search may achieve marginally better performance in some cases |