Optimizing Chemistry ML: A Comprehensive Guide to Hyperparameter Tuning with Optuna

Abstract

This guide provides chemistry researchers and drug development professionals with a comprehensive framework for integrating Optuna into their machine learning workflows. Covering foundational concepts to advanced optimization techniques, it demonstrates how Optuna's efficient hyperparameter tuning can significantly enhance model performance in critical chemical applications such as molecular toxicity prediction, solvent component determination, and reaction pathway optimization. The article includes practical implementation strategies, troubleshooting guidance, and validation methodologies tailored specifically for chemical informatics and pharmaceutical research.

Understanding Optuna's Role in Chemical Machine Learning

The Critical Role of Hyperparameter Optimization in Chemistry ML

In cheminformatics and drug discovery, machine learning (ML) models, particularly Graph Neural Networks (GNNs) and other deep learning architectures, have demonstrated remarkable potential for revolutionizing traditional approaches. However, the performance of these models is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task that significantly impacts predictive accuracy and model reliability [1]. Hyperparameter optimization (HPO) has thus emerged as an indispensable component in developing robust ML workflows for chemical applications, from molecular property prediction to drug-target interaction forecasting.

The necessity for sophisticated HPO in chemical contexts stems from several domain-specific challenges. Chemical datasets often exhibit characteristics such as limited sample sizes relative to the high dimensionality of molecular descriptors, class imbalance in bioactivity data, and complex structure-activity relationships that are difficult to capture without appropriate model configuration [2] [3]. Furthermore, the substantial computational resources required for training complex models on large chemical libraries necessitate efficient HPO strategies that can identify optimal configurations without exhaustive search processes.

Traditional manual hyperparameter tuning approaches, which rely heavily on researcher intuition and iterative experimentation, prove increasingly inadequate as model architectures grow in complexity. This limitation has driven the adoption of automated HPO frameworks like Optuna, which employ state-of-the-art optimization algorithms to efficiently navigate high-dimensional parameter spaces and identify configurations that maximize model performance for specific chemical tasks [4] [5].

Comparative Analysis of Hyperparameter Optimization Methods

Table 1: Comparison of Hyperparameter Optimization Methods in Chemical Machine Learning

| Method | Key Mechanism | Advantages | Limitations | Suitable Chemical Applications |
|---|---|---|---|---|
| Manual Search | Human intuition and experience | Direct researcher control; No specialized tools needed | Time-consuming; Subjective; Non-reproducible | Initial model prototyping; Educational contexts |
| Grid Search | Exhaustive search over predefined parameter grid | Guaranteed to find best combination in grid; Simple implementation | Computationally prohibitive for high dimensions; Inefficient | Small parameter spaces (<5 parameters) with limited ranges |
| Random Search | Random sampling from parameter distributions | Better efficiency than grid search; Parallelizable | May miss important regions; No learning from previous trials | Medium-dimensional spaces with moderate computational budgets |
| Bayesian Optimization | Probabilistic model to guide search toward promising parameters | High sample efficiency; Adaptive sampling | Computational overhead for model updates; Complex implementation | Expensive chemical models (e.g., molecular dynamics) |
| Evolutionary Algorithms | Population-based search inspired by natural selection | Global search capability; Handles mixed parameter types | High computational cost; Many meta-parameters | Complex multi-modal optimization landscapes |

The performance implications of HPO method selection are substantial, particularly in chemical contexts where training data may be limited and models complex. Research demonstrates that advanced HPO methods can yield significant improvements in predictive accuracy for critical cheminformatics tasks. In one comprehensive analysis of Long Short-Term Memory (LSTM) networks for energy scheduling in cyber-physical production systems, Optuna with Bayesian optimization outperformed manual tuning, automated loops, and grid search approaches, establishing itself as the most effective strategy for optimizing time-series forecasting models with complex parameter interactions [4].

Similarly, in quantitative structure-activity relationship (QSAR) modeling and molecular property prediction, automated HPO has proven essential for developing models that generalize well beyond their training data. The performance of Graph Neural Networks (GNNs) - which have emerged as powerful tools for modeling molecular structures - is highly dependent on architectural choices and hyperparameters, making Neural Architecture Search (NAS) and HPO crucial for achieving state-of-the-art results [1].

Optuna: A Framework for Chemical Hyperparameter Optimization

Optuna is an open-source Python library specifically designed for efficient hyperparameter optimization, featuring an intuitive imperative interface that allows users to define parameter spaces using standard Python control structures [5]. Its relevance to chemical ML workflows stems from several distinctive capabilities that address domain-specific challenges.

Core Capabilities for Chemical Applications

Optuna implements sophisticated optimization algorithms, including the Tree-structured Parzen Estimator (TPE), which efficiently explores high-dimensional parameter spaces common in chemical ML applications [6]. This approach is particularly valuable for optimizing complex neural architectures like GNNs and Transformers used in molecular property prediction, where parameter interactions can be intricate and non-linear.

The framework's pruning functionality automatically terminates unpromising trials early in the training process, dramatically reducing computational overhead when optimizing resource-intensive models [5] [6]. This capability is especially beneficial in chemical contexts where model training may involve large molecular datasets or complex architectures requiring substantial computation time.

Optuna's seamless integration with popular ML frameworks including PyTorch, TensorFlow, Keras, and scikit-learn ensures compatibility with established cheminformatics toolkits and workflows [5]. The framework also provides comprehensive visualization tools for analyzing optimization results and hyperparameter importance, facilitating deeper insights into model behavior and chemical structure-activity relationships.

Empirical Validation in Chemical Contexts

In disease prediction studies leveraging molecular and clinical data, Optuna-optimized models have demonstrated superior performance compared to manually tuned alternatives. Research on indigenous disease prediction incorporating Optuna for hyperparameter optimization achieved significant accuracy improvements across multiple classification algorithms including Support Vector Machines (SVM), Random Forests (RF), and gradient boosting methods (XGBoost, LightGBM) [7].

Similar advantages have been observed in ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction, where optimal hyperparameter configuration is crucial for model reliability in safety assessment. The AttenhERG model, based on the Attentive FP algorithm, achieved state-of-the-art accuracy in predicting hERG channel toxicity - a major cause of drug attrition - through careful optimization of architectural hyperparameters [2].

Experimental Protocols for Chemical Hyperparameter Optimization

Protocol 1: Optimizing Graph Neural Networks for Molecular Property Prediction

Application Context: Predicting biochemical properties (e.g., solubility, toxicity, bioactivity) from molecular graph representations using GNNs [1] [2].

Materials and Setup:

- Dataset Preparation: Curate molecular structures with associated experimental measurements; apply appropriate data splitting strategies (scaffold split for robustness assessment, random split for baseline performance) [2].

- Feature Representation: Implement atom and bond featurization capturing chemical attributes (atom type, hybridization, valence, etc.); consider additional molecular descriptors if needed.

- Computational Environment: GPU-accelerated environment (e.g., NVIDIA A100/P100) with Python 3.7+, PyTorch Geometric or Deep Graph Library, RDKit, and Optuna.

Optuna Optimization Procedure:

- Define Objective Function:

- Configure and Execute Optimization:

- Visualization and Analysis:

Validation and Interpretation: Evaluate optimized model on held-out test set; perform applicability domain analysis; assess uncertainty estimates; conduct mechanistic interpretation using explainable AI techniques if needed [2].

Protocol 2: Multi-Task Learning Optimization for Bioactivity Prediction

Application Context: Predicting compound bioactivity across multiple related protein targets using multi-task learning (MTL) architectures [3].

Materials and Setup:

- Dataset Curation: Collect bioactivity data for natural products or synthetic compounds across kinase families or related targets; incorporate evolutionary relatedness metrics (sequence similarity) as task relationships [3].

- Architecture Selection: Implement MTL framework with shared backbone and task-specific heads; consider feature-based MTL (FBMTL) or instance-based MTL (IBMTL) formulations.

- Computational Environment: As in Protocol 1, with additional dependencies for handling protein sequence data and multi-task metrics.

Specialized Optimization Considerations:

- Balance shared and task-specific parameters through careful regularization.

- Incorporate evolutionary relatedness metrics (AA-GSS: amino acid global sequence similarity) to guide parameter sharing [3].

- Employ weighted loss functions to address task imbalance.

Optuna Integration for MTL:

Validation Strategy: Perform per-task evaluation; assess transfer learning benefits; compare against single-task baselines; analyze whether evolutionary relatedness correlates with performance improvements [3].

Table 2: Key Research Reagent Solutions for Chemical Machine Learning

| Resource Category | Specific Tools/Libraries | Function in HPO Workflow | Application Context |
|---|---|---|---|
| Hyperparameter Optimization Frameworks | Optuna, Scikit-Optimize, Weights & Biases | Automated parameter search; Experiment tracking; Visualization | General HPO for all chemical ML tasks |
| Molecular Representation | RDKit, DeepChem, Mordred descriptors, Molecular fingerprints | Convert chemical structures to machine-readable features | Featurization for QSAR, property prediction |
| Deep Learning Architectures | PyTorch Geometric, Deep Graph Library, TensorFlow | Implement GNNs, Transformers, other advanced architectures | Molecular graph learning; Protein-ligand interaction |
| Cheminformatics Datasets | ChEMBL, NPASS, CMNPD, DrugBank, Tox21 | Provide labeled data for training and validation | Model development and benchmarking |
| Specialized Chemical ML Models | ChemProp, Attentive FP, Molecular Transformer | Pre-trained models; Domain-specific architectures | Transfer learning; State-of-the-art benchmarks |
| Visualization and Analysis | Plotly, Matplotlib, Seaborn, t-SNE/UMAP | Result interpretation; Model explainability; Chemical space visualization | Outcome analysis and hypothesis generation |

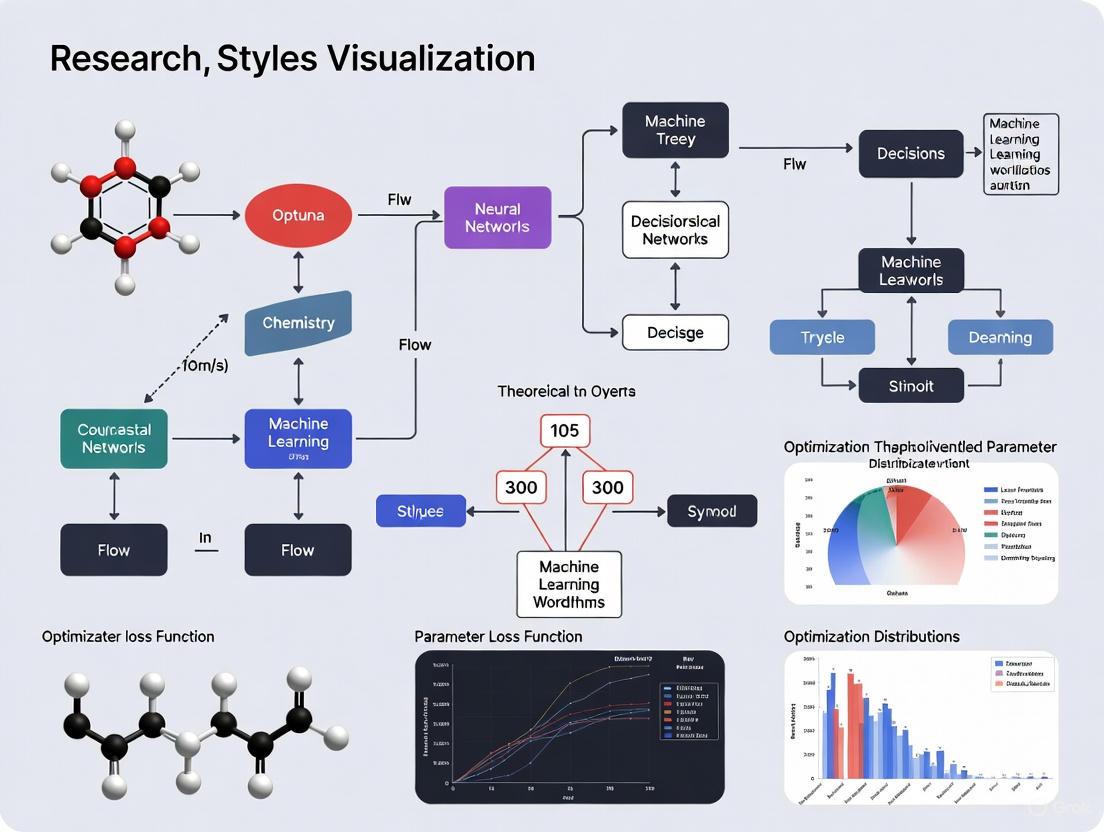

Workflow Visualization

Diagram Title: Chemical Hyperparameter Optimization Workflow

Diagram Title: Optuna Architecture for Chemical ML

Advanced Applications and Future Directions

The application of sophisticated HPO in chemical contexts continues to evolve, with several emerging trends demonstrating particular promise. For multi-task learning scenarios common in drug discovery - where predicting activity across multiple related targets can improve generalization - HPO must balance shared and task-specific parameters while incorporating domain knowledge such as evolutionary relatedness between protein targets [3]. Advanced optimization strategies that explicitly model these relationships can yield significant performance improvements over single-task approaches, particularly for natural product bioactivity prediction where data scarcity is a persistent challenge.

In structure-based drug discovery, HPO plays an increasingly important role in optimizing deep learning approaches for binding affinity prediction, binding site identification, and generative molecular design. The development of novel scoring functions like the Algebraic Graph Learning with Extended Atom-Type Scoring Function (AGL-EAT-Score) and pose prediction methods such as PoLiGenX relies on careful hyperparameter tuning to achieve state-of-the-art performance [2]. These applications often require specialized optimization strategies that account for physical constraints, synthetic accessibility, and multi-objective trade-offs between potency, selectivity, and drug-like properties.

Future directions in chemical HPO include the integration of large language models and transfer learning approaches that leverage pre-trained chemical representations, requiring optimization strategies adapted to fine-tuning rather than training from scratch [8]. Similarly, federated learning approaches that enable collaborative model development across institutions while preserving data privacy present novel HPO challenges that must be addressed to advance pharmaceutical research without compromising proprietary information or patient confidentiality [8]. As autonomous discovery laboratories become more prevalent, real-time HPO integrated with automated experimentation will likely emerge as a critical capability for accelerating the design-make-test-analyze cycle in chemical and pharmaceutical applications.

This application note details the core architectural components of Optuna, a next-generation hyperparameter optimization framework, and their specific application within chemistry-focused machine learning (ML) workflows. For researchers in drug development and materials science, efficient hyperparameter optimization (HPO) is critical for building accurate predictive models for quantitative structure-activity relationship (QSAR) analysis, property prediction, and virtual screening. We provide a structured breakdown of Optuna's Study, Trial, and Objective Function entities, supported by quantitative data summaries, step-by-step experimental protocols, and specialized workflow diagrams. This guide aims to standardize and accelerate the implementation of robust HPO in computational chemistry research.

Core Architectural Components

Optuna's optimization process is built on three fundamental concepts: the Objective Function, the Trial, and the Study [9] [10]. The table below defines these components and their roles in a typical chemistry ML pipeline.

Table 1: Core Components of the Optuna Architecture

| Component | Definition & Role | Key Attributes/Methods (Relevant to Chemistry ML) |
|---|---|---|
| Objective Function | A user-defined function that encapsulates the machine learning experiment. It takes a Trial object as input and returns a numerical value (e.g., validation loss, accuracy) to be minimized or maximized [10]. | `trial.suggest_*()` methods define the hyperparameters; contains the model training/validation logic; returns a performance metric (e.g., RMSE for property prediction, AUC for activity classification). |
| Trial | A single execution of the objective function, representing one set of hyperparameters and its resulting performance [9] [11]. | `trial.number`: unique identifier; `trial.params`: the set of hyperparameters used; `trial.report()`: intermediate reporting (e.g., per-epoch validation loss); `trial.should_prune()`: halts unpromising trials early. |
| Study | Manages the overall optimization process, orchestrating a sequence of trials to find the best hyperparameters. It contains the history of all trials and the best result [12] [13]. | `study.optimize()`: starts the optimization; `study.best_trial` / `study.best_params` / `study.best_value`: access the best results; `study.trials`: list of all FrozenTrial objects for analysis. |

The logical relationship between these components is directed by the Study, which repeatedly instantiates Trial objects to probe the Objective Function [12] [11]. The following diagram illustrates this core orchestration workflow.

Quantitative Data & Component Analysis

To elucidate the properties of these components, the following tables summarize their key methods and attributes, providing a reference for experimental planning.

Table 2: Key Methods of the Trial Object for Hyperparameter Suggestion [9] [5] [11]

| Method | Description | Key Parameters | Example Use Case in Chemistry ML |
|---|---|---|---|
| `suggest_categorical()` | Suggests a value from a list of categories. | name, choices | Selecting the type of molecular fingerprint (e.g., ['ECFP', 'Avalon', 'RDKit']) or the type of model (e.g., ['RandomForest', 'XGBoost', 'SVR']). |
| `suggest_int()` | Suggests an integer value from a bounded range. | name, low, high, (step, log) | Optimizing the max_depth of a Random Forest or the n_estimators in a gradient-boosting model. |
| `suggest_float()` | Suggests a floating-point value from a bounded range. | name, low, high, (step, log) | Tuning the learning rate for a neural network or the C parameter for an SVM, often with log=True. |
| `suggest_discrete_uniform()` | (Deprecated) Suggests a float value from a discretized range. | name, low, high, q | Largely superseded by `suggest_float(..., step=...)`. |

Table 3: Key Attributes and Methods of the Study Object for Analysis [12]

| Attribute/Method | Return Type / Signature | Description |
|---|---|---|
| `best_trial` | FrozenTrial | Returns the single best trial for a single-objective study. |
| `best_params` | dict[str, Any] | A dictionary of the parameters from the best trial. |
| `best_value` | float | The objective value achieved by the best trial. |
| `trials` | list[FrozenTrial] | The list of all trials conducted in the study. |
| `optimize()` | (objective, n_trials) | Executes the optimization loop. |
| `trials_dataframe()` | (attrs=('number', 'value', ...)) | Exports the trial history to a pandas DataFrame for easy analysis. |

Experimental Protocol: Hyperparameter Optimization for a QSAR Model

This protocol details the application of Optuna to optimize a QSAR model for predicting biological activity, a common task in drug discovery [3].

Research Reagent Solutions

Table 4: Essential Software and Libraries for Chemistry ML with Optuna

| Item | Function / Purpose | Installation Command (pip) |
|---|---|---|
| Optuna Core | The main hyperparameter optimization framework. | pip install optuna |
| Optuna-Dashboard | A real-time web dashboard to monitor optimization progress [9]. | pip install optuna-dashboard |
| Scikit-learn | Provides machine learning models and utilities for data splitting and validation. | pip install scikit-learn |
| RDKit | A cheminformatics library for calculating molecular descriptors and fingerprints. | pip install rdkit (the older rdkit-pypi package name is deprecated) |
| XGBoost/LightGBM | High-performance gradient boosting frameworks, often used in QSAR modeling. | pip install xgboost lightgbm |
| Pandas/NumPy | For data manipulation and numerical computations. | pip install pandas numpy |

Step-by-Step Procedure

Problem Definition and Objective Function Setup

- Objective: Minimize the mean squared error (MSE) of a regression model predicting the pIC50 value of a compound series.

- Define the Objective Function:

Study Creation and Configuration

- Create a Study Object: Direct the optimization to minimize the objective value.

Execution and Monitoring

- Run the Optimization for a fixed number of trials.

- Monitor with Optuna-Dashboard: In a separate terminal, run `optuna-dashboard sqlite:///qsar_study.db` to access a web interface for real-time monitoring of the optimization history and parameter importances [9].

Post-Optimization Analysis

- Retrieve and Apply Best Hyperparameters:

- Export Trial History for further analysis:

The following diagram maps this experimental workflow to the core Optuna architecture, highlighting the flow from problem definition to model deployment.

Advanced Configuration for Chemistry Workflows

Pruning for Efficient Resource Utilization

Pruning automatically stops underperforming trials early, saving computational resources—a critical concern when training on large molecular datasets or with complex models like deep neural networks.

Integration Protocol:

- Modify the Objective Function: Use `trial.report()` and `trial.should_prune()` during iterative training (e.g., after each epoch for a neural network).

- Specify a Pruner: When creating the study, a pruner like `optuna.pruners.HyperbandPruner()` or `optuna.pruners.MedianPruner()` can be specified to define the pruning strategy [9] [11].

Multi-Objective Optimization for Inverse Design

Inverse materials design often requires balancing multiple, competing objectives, such as maximizing a compound's efficacy while minimizing its toxicity [14]. Optuna supports multi-objective optimization.

Implementation Protocol:

- Define a Multi-Value Objective Function: The function should return a list or tuple of objective values.

- Create a Multi-Objective Study: Specify multiple directions.

- Analyze the Pareto Front: After optimization, the best solutions are not a single set of parameters but a set of non-dominated solutions (the Pareto front).

The integration of artificial intelligence and machine learning (ML) is fundamentally reshaping chemical research, enabling scientists to navigate complex experimental spaces with unprecedented speed and precision [15]. A critical element in deploying robust ML models is hyperparameter optimization (HPO), a process that fine-tunes the model settings not learned during training to maximize predictive performance. Within this context, Optuna, a state-of-the-art HPO framework, has emerged as a powerful tool for accelerating chemistry-focused ML workflows [16]. By leveraging efficient search algorithms and automated pruning, Optuna directly addresses the core needs of modern chemical research: enhancing computational efficiency, enabling high-throughput automation, and providing the flexibility to adapt to diverse experimental objectives. This document details how Optuna's application brings these benefits to computational chemistry, supported by quantitative data, detailed protocols, and illustrative workflows.

Quantitative Efficiency Gains in Chemical Applications

Empirical studies demonstrate that Optuna significantly outperforms traditional HPO methods in both speed and accuracy, a crucial advantage for computationally intensive chemical simulations and data analysis.

A comparative analysis of HPO methods revealed that Optuna can run 6.77 to 108.92 times faster than traditional Random Search and Grid Search while consistently achieving lower error values across multiple evaluation metrics [17]. This dramatic speedup allows researchers to iterate models more rapidly, shortening development cycles.

In a specific application for determining solvent components in an Acid Gas Removal Unit (AGRU) using a Light Gradient Boosting Machine (LightGBM) model, Optuna not only improved prediction accuracy by 0.4% but also reduced the model's training time by over 50% [18]. The table below summarizes key performance metrics from various chemical applications.

Table 1: Performance Metrics of Optuna in Chemical Workflows

| Application Area | ML Model | Key Performance Improvement | Quantitative Benefit |
|---|---|---|---|
| Solvent Component Determination [18] | LightGBM | Accuracy Increase | +0.4% |
| Solvent Component Determination [18] | LightGBM | Training Time Reduction | >50% |
| Non-Invasive Creatinine Estimation [19] | XGBoost | Model Accuracy | 85.2% |
| Non-Invasive Creatinine Estimation [19] | XGBoost | ROC-AUC Score | 0.80 |
| Hyperparameter Optimization [17] | General ML | Speedup vs. Traditional Methods | 6.77x to 108.92x |

Automated and Parallel Optimization for High-Throughput Chemistry

Automation in chemical research, through high-throughput experimentation (HTE) and autonomous laboratories, generates vast datasets. Optuna integrates seamlessly with these workflows by enabling highly parallel and automated hyperparameter tuning.

The framework supports highly parallel optimization, efficiently handling large batch sizes (e.g., 24, 48, or 96 experiments) that align with standard HTE plate formats [20]. This capability allows for the simultaneous optimization of multiple reaction objectives, such as maximizing yield and selectivity while minimizing cost. Optuna's pruning capabilities automatically halt underperforming trials early, saving valuable computational resources and time [21]. Furthermore, its easy parallelization allows searches to be distributed over multiple threads or processes without significant code modifications, making it suitable for scalable research infrastructures [5].

Tools like DeepMol leverage Optuna to create end-to-end Automated ML (AutoML) pipelines for computational chemistry. These systems automatically traverse thousands of potential configurations of data pre-processing methods, feature engineering techniques, and ML models to identify the most effective pipeline for a given molecular dataset [16].

Flexible Search Spaces and Multi-Objective Optimization

Chemical optimization problems are often complex and involve balancing multiple, sometimes competing, objectives. Optuna's design provides the flexibility needed to model these real-world scenarios accurately.

A key feature is its define-by-run (eager) search space definition, where hyperparameter ranges are declared dynamically inside the objective function using ordinary Python conditionals and loops [5]. This is particularly useful for conditioning certain parameters (e.g., the number of layers in a neural network) on the values of other parameters (e.g., the chosen model type). This allows for a more intuitive and efficient exploration of complex, hierarchical parameter spaces.

For problems with multiple goals, Optuna fully supports multi-objective optimization [22]. For instance, a researcher can simultaneously optimize a model for both highest accuracy and lowest computational complexity [21]. Optuna's multi-objective samplers, such as NSGA-II and NSGA-III, efficiently navigate these trade-offs and identify a set of optimal solutions, known as the Pareto front, providing the scientist with multiple viable options for their specific context.

Experimental Protocols and Implementation

Protocol 1: Optimizing a Solvent Selection Model with LightGBM

This protocol outlines the steps for using Optuna to optimize a LightGBM model for classifying the optimal solvent in an Acid Gas Removal Unit (AGRU) [18].

- Objective: To classify the optimal solvent component from six different solvents using process simulation data.

- Data Preparation:

  - Gather data from a verified process flowsheet (e.g., using Aspen HYSYS software).

  - Features typically include composition data (e.g., CO₂ levels), pressure, and temperature.

  - The target variable is the categorical solvent identifier.

- Optuna Optimization Setup:

  - Define the Objective Function: The function should take an Optuna `trial` object as an argument.

  - Suggest Hyperparameters: Within the function, use the `trial` object to suggest values for LightGBM parameters. Key parameters to optimize often include:

    - `num_leaves`: `trial.suggest_int('num_leaves', 2, 256)`

    - `learning_rate`: `trial.suggest_float('learning_rate', 1e-5, 1e-1, log=True)`

    - `feature_fraction`: `trial.suggest_float('feature_fraction', 0.4, 1.0)`

    - `lambda_l1` and `lambda_l2`: `trial.suggest_float('lambda_l1', 1e-8, 10.0, log=True)` (and likewise for `lambda_l2`)

  - Model Training & Evaluation: Inside the function, train the LightGBM model with the suggested hyperparameters and return the validation accuracy (or another relevant metric).

- Execution:

  - Create a study object to maximize accuracy: `study = optuna.create_study(direction='maximize')`

  - Run the optimization for a specified number of trials (e.g., 100): `study.optimize(objective, n_trials=100)`

- Output Analysis:

  - Analyze `study.best_params` and `study.best_value` to identify the optimal configuration.

  - Use Optuna's visualization tools to plot hyperparameter importance and optimization history.

Protocol 2: Multi-Objective Optimization for a Predictive QSPR Model

This protocol describes how to optimize a model for multiple competing objectives, such as prediction accuracy and model simplicity [21].

- Objective: To train a Random Forest model that balances high predictive accuracy for a molecular property with low model complexity.

- Data Preparation:

  - Use a molecular dataset (e.g., loaded as SMILES strings and converted to fingerprints or descriptors).

  - The target variable could be a quantitative property (regression) or activity (classification).

- Optuna Multi-Objective Setup:

  - Define a Multi-Objective Function: The function should return a tuple of values (e.g., accuracy, complexity).

  - Suggest Hyperparameters: Define the search space for Random Forest parameters like `n_estimators` and `max_depth`.

  - Calculate Competing Metrics: Train the model and return both the cross-validation accuracy and a complexity metric (e.g., `n_estimators * max_depth`).

- Execution:

  - Create a study with multiple directions: `study = optuna.create_study(directions=['maximize', 'minimize'])`

  - Run the optimization: `study.optimize(multi_objective, n_trials=50)`

- Output Analysis:

  - The result is not a single best trial, but a set of Pareto-optimal trials (`study.best_trials`).

  - Visualize the Pareto front to understand the trade-off between accuracy and complexity and select the most suitable model for the project's needs.

Visual Workflow and Research Reagents

Optuna for Chemistry ML Workflow

The following diagram illustrates the typical closed-loop workflow for an Optuna-driven ML optimization in a chemical research context.

The Scientist's Toolkit: Key Research Reagents and Solutions

The following table lists essential computational "reagents" and their roles in building ML models for chemistry, optimized using frameworks like Optuna.

Table 2: Essential Computational Reagents for Chemistry ML Workflows

| Research Reagent | Function in the Workflow |
|---|---|
| Molecular Descriptors | Quantitative representations of molecular structures (e.g., molecular weight, logP, topological indices) that serve as input features for ML models. |
| Chemical Fingerprints | Binary vectors representing the presence or absence of specific substructures or patterns in a molecule, enabling efficient similarity searches and pattern recognition. |
| Reaction Yield Data | The primary experimental outcome or target variable for models aimed at optimizing chemical synthesis conditions. |
| ADMET Properties | (Absorption, Distribution, Metabolism, Excretion, Toxicity) data used as key objectives in predictive models for drug development and safety assessment [16]. |
| Hyperparameter Search Space | The defined range and type of each ML model setting (e.g., learning rate, tree depth) that Optuna explores to find the optimal configuration. |

Application Notes

Optuna, a next-generation hyperparameter optimization framework, is revolutionizing machine learning workflows in chemical research by enabling efficient and automated tuning of complex models [23] [24]. Its define-by-run API and state-of-the-art algorithms allow researchers to dynamically construct search spaces and scale studies from single workstations to large distributed systems, making it particularly valuable for data-driven chemistry [23]. This article details its practical applications in two critical areas: predicting chemical respiratory toxicity and optimizing synthetic reaction conditions, providing structured protocols and resources for scientists.

In toxicity prediction, Optuna facilitates the development of robust QSAR models by identifying optimal hyperparameters for various machine learning algorithms and feature sets. A 2025 study demonstrated this by creating an enhanced respiratory toxicity predictor combining molecular descriptors and TF-IDF features, where Optuna-adjusted models achieved an internal validation accuracy of 88.6% and AUC of 93.2% with Random Forest, significantly outperforming previous approaches [25] [26]. This performance underscores Optuna's ability to handle class-imbalanced datasets processed with techniques like SMOTE, ensuring reliable preclinical safety assessment [25].

For reaction optimization, Optuna integrates with Bayesian optimization workflows to efficiently navigate high-dimensional chemical spaces. In pharmaceutical process development, this enables rapid identification of optimal conditions for challenging transformations like nickel-catalyzed Suzuki couplings and Buchwald-Hartwig aminations, where researchers have identified conditions achieving >95% yield and selectivity in minimal experimental cycles [20]. The Minerva framework exemplifies this, handling batch sizes of 96 and search spaces exceeding 88,000 conditions while accommodating real-world laboratory constraints [20]. Similarly, in ultra-fast flow chemistry, Optuna-based multi-objective optimization balances competing goals like yield and impurity profiles, revealing critical trade-offs and process understanding beyond traditional OFAT approaches [27].

Table 1: Performance Benchmarks of Optuna-Optimized Chemical Applications

| Application Area | Specific Task | Algorithm Optimized | Key Performance Metrics | Reference |

|---|---|---|---|---|

| Toxicity Prediction | Respiratory Toxicity Classification | Random Forest | Internal Validation: 88.6% Accuracy, 93.2% AUC; External Validation: 92.2% Accuracy, 97% AUC | [25] |

| Reaction Optimization | Ni-catalyzed Suzuki Reaction | Bayesian Optimization (Gaussian Process) | Identified conditions with 76% Yield and 92% Selectivity in 96-well HTE campaign | [20] |

| Reaction Optimization | API Synthesis (Suzuki/Buchwald-Hartwig) | Scalable Multi-objective Acquisition Functions (q-NParEgo, TS-HVI) | Identified multiple conditions with >95% Yield and Selectivity | [20] |

Experimental Protocols

Protocol: Optuna for Predicting Chemical Respiratory Toxicity

This protocol outlines the workflow for building an optimized respiratory toxicity prediction model using molecular descriptors and Optuna, based on the methodology from Shehab et al. (2025) [25].

Data Preparation and Feature Engineering

- Data Sourcing: Compile respiratory toxicity data from public sources like PNEUMOTOX or the Hazardous Chemical Information System (HCIS) [25].

- Structure Standardization: Process chemical structures using RDKit or Chython. Convert all structures into canonical SMILES representation and remove duplicates [28].

- Descriptor Calculation:

- Calculate a comprehensive set of molecular descriptors (e.g., using RDKit or the Mordred library) capturing physicochemical properties [28].

- Generate TF-IDF features from the SMILES strings of the compounds, treating them as textual data [25] [26].

- Combine both descriptor sets into a unified feature table.

- Addressing Class Imbalance: Apply the Synthetic Minority Over-sampling Technique (SMOTE) to the training set to generate synthetic samples for the under-represented class, preventing model bias [25].

Hyperparameter Optimization with Optuna

- Objective Function Definition: Define an objective function that takes an Optuna trial object as input. Within this function:

- Use trial.suggest_*() methods (e.g., suggest_categorical, suggest_int, suggest_float) to define the hyperparameter search space for a chosen classifier (e.g., Random Forest, XGBoost) [23].

- Train the model with the proposed hyperparameters on the training set.

- Evaluate the model on a validation set using an appropriate metric (e.g., AUC-ROC, balanced accuracy) as the value to be optimized.

- Study Execution: Create an Optuna study directed towards maximizing the objective metric. Execute the optimization with a specified number of trials (e.g., n_trials=100) to find the best-performing hyperparameter set [23].

- Model Validation: Retrain the final model using the optimized hyperparameters on the entire training set and evaluate its performance on a held-out external test set to estimate generalizability [25].

Protocol: Multi-Objective Reaction Optimization with Optuna and HTE

This protocol describes a machine learning-guided workflow for optimizing chemical reactions with multiple objectives, such as maximizing yield and selectivity, using high-throughput experimentation (HTE) and Optuna, as demonstrated in recent industrial applications [20].

Experimental Setup and Initialization

- Define Search Space: Collaboratively define a discrete combinatorial set of plausible reaction conditions with chemists. This includes categorical variables (e.g., ligand, solvent, additive) and continuous variables (e.g., temperature, concentration) [20].

- Implement Constraints: Programmatically filter out impractical or unsafe condition combinations (e.g., temperatures exceeding solvent boiling points, incompatible reagents) [20].

- Initial Sampling: Use a space-filling sampling algorithm like Sobol sampling to select an initial batch of experiments (e.g., one 96-well plate) that diversely covers the reaction space [20] [27].

Iterative Bayesian Optimization Loop

- High-Throughput Experimentation: Execute the batch of suggested reactions using an automated HTE platform to collect data on the target objectives (e.g., yield, selectivity) [20].

- Surrogate Model Training: Train a Gaussian Process (GP) regressor for each objective using the accumulated experimental data. The GP models the relationship between reaction conditions and outcomes, providing both a prediction and an uncertainty estimate [20] [27].

- Optuna for Acquisition Function Optimization:

- The core of the Bayesian optimization loop is the acquisition function, which uses the GP's predictions to decide the next best experiments to run. For multi-objective problems, functions like q-NParEgo or Thompson Sampling with Hypervolume Improvement (TS-HVI) are effective and scalable to large batch sizes [20].

- Optuna can be employed to optimize the configuration of these acquisition functions or to tune the hyperparameters of the underlying surrogate models for better performance.

- Iteration and Termination: The newly suggested experiments are run, and the cycle repeats. The optimization is typically terminated when the hypervolume of the Pareto front (a measure of multi-objective performance) stops improving for a set number of iterations [20] [27].

Table 2: Research Reagent Solutions for ML-Guided Reaction Optimization

| Reagent / Material | Function in Optimization Workflow | Example/Notes |

|---|---|---|

| High-Throughput Experimentation (HTE) Robotic Platform | Enables highly parallel execution of numerous reactions at miniaturized scales, generating consistent data for ML models. | 96-well plate systems for solid/liquid dispensing [20]. |

| Custom-Coded Reaction Condition Space | A discrete, constrained set of plausible reaction parameters (solvents, catalysts, ligands, etc.) that defines the ML-searchable domain. | Built using chemical intuition and process requirements; filters unsafe/impractical combinations [20]. |

| Gaussian Process (GP) Surrogate Model | A probabilistic ML model that learns from experimental data to predict reaction outcomes and their uncertainty for unexplored conditions. | Key component of Bayesian optimization; guides the search for optima [20] [27]. |

| Multi-Objective Acquisition Function (e.g., q-NParEgo) | An algorithmic strategy that uses the GP's output to suggest the next experiments by balancing exploration and exploitation of multiple goals. | Scalable to large batch sizes (e.g., 96 parallel reactions) [20]. |

| Hypervolume Metric | A single quantitative measure used to track progress and convergence in multi-objective optimization, based on the Pareto front. | Serves as a termination criterion for the optimization campaign [20]. |

Within the domain of chemistry machine learning (ML), where models predict molecular properties, optimize reaction yields, or design novel compounds, hyperparameter tuning is a critical step for achieving robust and predictive performance. This application note provides a detailed guide for researchers and drug development professionals to install and configure Optuna, a next-generation hyperparameter optimization framework [29]. Its define-by-run API and efficient algorithms make it particularly suited for the complex, often nested search spaces encountered in chemical ML workflows, moving beyond the limitations of traditional methods like grid search [30] [31].

Installation

Optuna supports Python 3.9 or newer [32] [29]. The following table summarizes the installation methods for different Python environments.

Table 1: Optuna Installation Methods

| Environment | Installation Command | Notes |

|---|---|---|

| PyPI (pip) | pip install optuna [32] [5] | The recommended method for most users [32]. |

| Anaconda Cloud (conda) | conda install -c conda-forge optuna [32] [29] | Suitable for Conda-based environments. |

| Development Version | pip install git+https://github.com/optuna/optuna.git [32] | Installs the latest, potentially unstable, version from the master branch. |

For chemistry ML workflows that involve specific frameworks like PyTorch or TensorFlow, consider installing Optuna's integration packages for enhanced functionality [33].

Core Concepts & Basic Configuration

Understanding Optuna's core concepts is essential for its proper application.

- Study: An optimization task based on an objective function. A study aims to find the optimal hyperparameters over a series of trials [29].

- Trial: A single execution of the objective function that evaluates a specific set of hyperparameters [29].

- Objective Function: A user-defined function that contains the ML model training and evaluation logic. It takes a trial object as an argument and returns a performance metric (e.g., validation loss, accuracy) to be minimized or maximized [29] [5].

The trial object is used within the objective function to suggest hyperparameters, dynamically constructing the search space using methods like suggest_float(), suggest_int(), and suggest_categorical() [5].

The following diagram illustrates the basic workflow of an Optuna study.

Experimental Protocol: A Basic Hyperparameter Optimization Workflow

This protocol outlines the steps for a fundamental hyperparameter optimization task, applicable to a wide range of chemistry ML models, such as those built with scikit-learn.

Materials and Software Requirements

Table 2: Essential Research Reagent Solutions for Optuna

| Item Name | Function / Purpose | Example / Installation |

|---|---|---|

| Optuna Core | The main framework for defining and running optimization studies. | pip install optuna [32] |

| ML Framework | The machine learning library used to build the model. | PyTorch, TensorFlow, scikit-learn, XGBoost |

| MLflow Tracking | An optional platform for advanced experiment tracking and storage. | pip install mlflow [34] [35] |

| Optuna Dashboard | A web-based dashboard for real-time visualization of optimization results. | pip install optuna-dashboard [29] [36] |

| Data Storage (RDB) | A database backend for persisting study results, enabling analysis and resumption of studies. | SQLite, PostgreSQL |

Step-by-Step Procedure

- Install Optuna: Choose an installation method from Table 1. For a standard Python environment, execute pip install optuna in your terminal [32].

- Define the Objective Function: Create a function that encapsulates your model training and evaluation. The function should:

- Use the trial object to suggest hyperparameter values.

- Instantiate the model with the suggested hyperparameters.

- Train the model on your chemical dataset (e.g., molecular descriptors, fingerprints).

- Evaluate the model on a validation set and return the metric of interest (e.g., mean squared error for property prediction, accuracy for classification).

Code Snippet 1: Example objective function for a RandomForest model [29] [5].

- Create and Run a Study: Instantiate a study object and invoke the optimization process.

- The direction parameter specifies whether to 'minimize' or 'maximize' the objective function's return value.

- The storage parameter allows for persisting trials in a database. Using SQLite is a simple and effective way to save progress.

Code Snippet 2: Creating a study and running the optimization [29] [36].

- Analyze the Results: After optimization, query the study object for the best trial's parameters and value.

Advanced Configuration for Chemistry ML Workflows

For more complex and computationally intensive chemistry ML tasks, advanced configurations are necessary.

Distributed Optimization with MLflow

Large-scale virtual screening and molecular dynamics featurization typically require parallel computation. Optuna can be integrated with MLflow for distributed hyperparameter tuning on a Spark cluster [35].

Code Snippet 3 demonstrates a distributed setup using MLflow as the backend storage and Spark for parallel execution.

Code Snippet 3: Distributed optimization using MLflow and Spark [35].

Visualization with Optuna Dashboard

To gain insights into the optimization process, use optuna-dashboard to launch a local web server that visualizes the study from the SQLite database [29] [36].

Code Snippet 4: Launching the Optuna Dashboard [36].

The dashboard provides real-time charts showing the optimization history, parameter importances, and parallel coordinate plots, which are invaluable for diagnosing model behavior and refining the search space.

This application note has provided a comprehensive guide for researchers to install and configure the Optuna framework within Python environments tailored for chemistry machine learning. By following the detailed protocols for both basic and advanced setups, scientists can systematically and efficiently optimize hyperparameters, thereby accelerating the development of more accurate and robust models in drug discovery and chemical informatics. The integration with distributed computing and visualization tools ensures that Optuna can scale to meet the demands of modern computational chemistry challenges.

In the field of chemical machine learning, the accuracy of predictive models for tasks such as molecular property prediction (MPP) and solvent classification is paramount. The performance of these models is highly sensitive to their hyperparameters, which are configurations not learned during training but set beforehand [37]. Traditional hyperparameter optimization (HPO) methods like Grid Search and Random Search have been widely used but present significant limitations, especially within computational chemistry workflows where data complexity and computational expense are high [38] [37] [39]. Optuna, a modern HPO framework, has emerged as a superior alternative by leveraging Bayesian optimization to find optimal hyperparameters more efficiently [38] [5] [39]. This article details the comparative advantages of Optuna and provides application notes and protocols for its implementation in chemical machine learning research.

Theoretical Background and Comparative Analysis

Core Hyperparameter Tuning Methods

- Grid Search: This method performs an exhaustive search over a pre-defined set of hyperparameter values. It is guaranteed to find the best combination within the grid but is computationally expensive and scales poorly with an increasing number of hyperparameters [38] [40]. For example, a grid with 5 parameters and 5 values each requires evaluating 3,125 combinations.

- Random Search: This method randomly samples a fixed number of hyperparameter combinations from specified distributions. It is more efficient than Grid Search for large parameter spaces but may miss the optimal combination due to its random nature and does not learn from past trials [38] [37] [40].

- Optuna: This framework uses a sequential model-based optimization approach, specifically the Tree-structured Parzen Estimator (TPE), to model the relationship between hyperparameters and model performance. It intelligently suggests new hyperparameters to evaluate based on past results, focusing the search on promising regions of the search space [38] [39]. Key features include:

Quantitative Performance Comparison

The following table summarizes the performance of different HPO methods as demonstrated in various chemical and machine learning studies.

Table 1: Comparative Performance of Hyperparameter Optimization Methods

| Method | Key Principle | Computational Efficiency | Best-Case Accuracy (Example) | Key Advantage | Key Disadvantage |

|---|---|---|---|---|---|

| Grid Search | Exhaustive search over a grid [38] | Low | Baseline | Finds best in-grid combination | Computationally intractable for large spaces [38] [39] |

| Random Search | Random sampling from distributions [38] | Medium | Varies with iterations; can approach optimal [40] | Faster exploration of large spaces | No learning from past trials; may miss optimum [38] [39] |

| Optuna | Bayesian optimization (TPE) [38] [39] | High | ~98.4% (LightGBM for solvent classification) [18] | Learns from trials; highly efficient & accurate [38] [18] [39] | Requires careful setup; black-box internals [38] |

Notably, in a study on classifying solvent components for an acid gas removal unit (AGRU), a LightGBM model optimized with Optuna achieved a final accuracy of 98.4% [18]. Furthermore, the optimization process with Optuna resulted in a training time reduction of over 50% compared to the baseline, highlighting its dual benefit of improving accuracy while enhancing computational efficiency [18].

For molecular property prediction using deep neural networks (DNNs), research has shown that advanced algorithms like Hyperband (available within Optuna) are the most computationally efficient, providing optimal or nearly optimal prediction accuracy [37].

Experimental Protocols and Workflows

Protocol 1: Hyperparameter Tuning for a Solvent Classification Model

This protocol is adapted from a study that used Optuna to optimize a LightGBM model for determining solvent components in an acid gas removal unit [18].

1. Objective: To classify the optimal solvent component from six different chemical solvents using data from verified Aspen HYSYS flowsheet simulations.

2. Materials and Reagents:

* Dataset: Operational data from an acid gas removal unit (AGRU) [18].

* Software: Python, Optuna, LightGBM, Scikit-learn.

3. Procedure:

* Step 1: Data Preparation. Load and preprocess the AGRU dataset. Split the data into training and testing sets.

* Step 2: Define the Objective Function. Create a function that takes an Optuna trial object as an argument.

* Step 3: Suggest Hyperparameters. Within the objective function, use the trial object to suggest values for key LightGBM hyperparameters.

* num_leaves: trial.suggest_int('num_leaves', 2, 256)

* learning_rate: trial.suggest_float('learning_rate', 0.01, 0.3, log=True)

* feature_fraction: trial.suggest_float('feature_fraction', 0.4, 1.0)

* lambda_l1: trial.suggest_float('lambda_l1', 1e-8, 10.0, log=True)

* Step 4: Model Training and Evaluation. Inside the objective function, train a LightGBM model with the suggested hyperparameters and return the cross-validation accuracy.

* Step 5: Optimization. Create an Optuna study and run the optimization for a specified number of trials (e.g., 100).

* Step 6: Validation. Train a final model with the best hyperparameters on the full training set and evaluate its performance on the held-out test set.

4. Conclusion: The Optuna-optimized model achieved 98.4% accuracy, a 0.4% improvement over the baseline, while also reducing training time by more than half [18].

Protocol 2: Optimizing a Deep Neural Network for Molecular Property Prediction

This protocol is derived from methodologies applied to predict bitumen properties and other molecular characteristics using DNNs [37] [41].

1. Objective: To optimize a Deep Neural Network (DNN) for predicting molecular properties (e.g., density, thermal expansion coefficient) from molecular descriptors.

2. Materials and Reagents:

* Dataset: Molecular descriptors and target properties generated from Molecular Dynamics (MD) simulations [41].

* Software: Python, Optuna, Keras/TensorFlow, Scikit-learn.

3. Procedure:

* Step 1: Data Collection. Use a dataset of molecular descriptors derived from MD simulations across a range of bituminous samples and temperatures [41].

* Step 2: Define the Objective Function. The function should take a trial object and define the model architecture and training hyperparameters dynamically.

* Step 3: Suggest Architecture and Hyperparameters.

* Number of layers: n_layers = trial.suggest_int('n_layers', 1, 3)

* Number of units per layer: trial.suggest_int(f'n_units_l{i}', 4, 128, log=True)

* Learning rate: trial.suggest_float('lr', 1e-5, 1e-1, log=True)

* Dropout rate: trial.suggest_float('dropout', 0.0, 0.5)

* Step 4: Model Training and Evaluation. Construct and train the DNN with the suggested parameters. Use a metric like Mean Squared Error (MSE) as the return value for Optuna to minimize.

* Step 5: Optimization with Pruning. Incorporate pruning to halt underperforming trials early. The optimization can be run over many trials (e.g., 2048) on a high-performance computing cluster [41].

* Step 6: Model Selection and Validation. Select the best-performing model configuration and validate it on a test set of unseen molecular compositions.

4. Conclusion: The application of this protocol has resulted in ANN models that accurately reproduce MD-predicted densities with R² > 0.99 and maximum absolute errors below 5% on test data, demonstrating superior generalization and interpolation capabilities [41].

Workflow Visualization

The following diagram illustrates the core iterative workflow of an Optuna optimization study, which is consistent across the protocols described above.

Figure 1: Optuna Hyperparameter Optimization Workflow

This table details key software and resources essential for implementing Optuna in chemical machine learning workflows.

Table 2: Essential Resources for Optuna in Chemical Machine Learning

| Resource Name | Type | Function in Workflow | Relevant Use Case |

|---|---|---|---|

| Optuna [5] | Hyperparameter Optimization Framework | Automates the search for optimal model parameters using efficient Bayesian algorithms. | Core optimization engine for all protocols. |

| LightGBM [18] | Machine Learning Library | A fast, distributed, high-performance gradient boosting framework used for classification and regression. | Solvent component classification in AGRUs [18]. |

| Keras/TensorFlow [41] | Deep Learning Library | Provides high-level building blocks for developing and training deep learning models. | Building and training DNNs for molecular property prediction [41]. |

| Scikit-learn [38] [41] | Machine Learning Library | Provides simple and efficient tools for data mining, analysis, and model evaluation. | Data preprocessing, cross-validation, and baseline model implementation. |

| RDKit [42] | Cheminformatics Software | Provides functionality for working with molecular data, including descriptor calculation and SMILES processing. | Generating molecular features and processing chemical structures [42]. |

| Molecular Dynamics (MD) Simulations [41] | Computational Data Source | Generates atomic-level trajectory data from which molecular descriptors and target properties are computed. | Creating datasets for predicting properties like density and thermal expansion [41]. |

The transition from traditional hyperparameter tuning methods like Grid and Random Search to advanced frameworks like Optuna represents a significant leap forward for machine learning in chemistry. Optuna's key advantages—computational efficiency through intelligent search and pruning, superior accuracy in real-world chemical applications, and practical flexibility with its dynamic search space—make it an indispensable tool for researchers. By adopting the detailed application notes and protocols provided, scientists and drug development professionals can accelerate their research and achieve more accurate, reliable predictive models for molecular property prediction and material design.

Implementing Optuna in Chemical ML Pipelines: From Code to Results

Structuring Chemical ML Objective Functions with Trial Objects

In computational chemistry and drug discovery, machine learning (ML) models have become indispensable tools for predicting molecular properties, optimizing chemical structures, and accelerating materials design [43]. The performance of these models heavily depends on the careful selection of hyperparameters, which governs their learning capacity, generalization ability, and computational efficiency [7]. Optuna, a next-generation hyperparameter optimization framework, addresses this challenge through its define-by-run API and versatile Trial object system, enabling researchers to dynamically construct search spaces tailored to complex chemical problems [23].

This protocol details the structured implementation of objective functions using Optuna's Trial objects specifically for chemical ML applications. We demonstrate how to effectively navigate high-dimensional parameter spaces, manage computational resources, and integrate domain-specific constraints that arise when working with molecular datasets, reaction condition prediction, or spectroscopic property estimation [44] [43]. By providing standardized methodologies and practical examples, we aim to establish reproducible optimization workflows that enhance research productivity and model performance in chemical sciences.

Core Concepts: Optuna Studies, Trials, and Chemical Search Spaces

In Optuna terminology, a study represents a complete optimization task based on an objective function, while a trial corresponds to a single execution of that function with a specific parameter set [23]. For chemical ML applications, each trial typically involves training a model with hyperparameters suggested by the Trial object and evaluating its performance on chemical data (e.g., predicting molecular properties or reaction yields).

The Trial object serves as the primary interface for hyperparameter suggestion during the optimization process. It provides various suggest_*() methods that allow researchers to define diverse search spaces appropriate for different types of chemical ML parameters [11]:

- Continuous parameters: Learning rates, regularization strengths

- Integer parameters: Neural network layers, molecular descriptor dimensions

- Categorical parameters: Model types, activation functions, solvent choices

Table 1: Hyperparameter Types in Chemical Machine Learning

| Parameter Type | Chemical ML Examples | Optuna Suggestion Method |

|---|---|---|

| Continuous | Learning rate, regularization strength | suggest_float() |

| Integer | Number of neural network layers, fingerprint bits | suggest_int() |

| Categorical | Model architecture, solvent environment | suggest_categorical() |

| Logarithmic | Concentration ranges, kinetic constants | suggest_float(log=True) |

For chemical applications, the search space design should incorporate domain knowledge where possible. For instance, when optimizing neural networks for NMR chemical shift prediction [43], learning rates typically span several orders of magnitude (1e-5 to 1e-1), while categorical choices might include different molecular representation schemes (fingerprints, 3D coordinates, or quantum mechanical descriptors).

Basic Protocol: Implementing Chemical ML Objective Functions

Defining the Objective Function Structure

A properly structured objective function for chemical ML follows a consistent pattern: receiving a Trial object as argument, suggesting hyperparameters, configuring the ML model, executing the training process, and returning a performance metric. The following example demonstrates a typical implementation for a molecular property prediction task:

Creating and Running the Optimization Study

After defining the objective function, create a study and run the optimization process:

Table 2: Key Trial Object Methods for Chemical ML Applications

| Method | Application Context | Example Usage |

|---|---|---|

| suggest_categorical() | Model type selection, solvent environment | suggest_categorical("solvent", ["water", "ethanol", "acetonitrile"]) |

| suggest_int() | Neural network layers, fingerprint dimensions | suggest_int("n_layers", 1, 5) |

| suggest_float() | Learning rate, dropout rate | suggest_float("lr", 1e-5, 1e-1, log=True) |

| report() | Intermediate training values | report(validation_loss, step=epoch) |

| should_prune() | Early stopping of unpromising trials | if trial.should_prune(): raise optuna.TrialPruned() |

| set_user_attr() | Storing chemical context | set_user_attr("molecular_dataset", "QM9") |

Advanced Implementation: Conditional Search Spaces and Molecular Representations

Implementing Conditional Hyperparameter Spaces

Complex chemical ML workflows often require conditional parameter spaces, where certain hyperparameters only become relevant based on other choices. Optuna's define-by-run API naturally supports these complex dependencies:

This approach is particularly valuable in chemical contexts where different molecular representation methods (fingerprints, graph neural networks, 3D descriptors) require distinct model architectures and hyperparameters [43].

Chemical-Specific Search Space Design

When designing search spaces for chemical ML applications, consider these domain-specific guidelines:

- Representation-dependent parameters: Graph neural networks for molecules require different hyperparameters than fingerprint-based models

- Scale-aware ranges: Learning rates for large molecular datasets often benefit from logarithmic sampling

- Resource-aware boundaries: Molecular dynamics or quantum chemistry features may constrain model complexity due to computational limits

Managing Large Molecular Data with Artifacts

Chemical ML often involves large datasets, model checkpoints, or molecular structures that exceed practical database sizes. Optuna's artifact module provides a solution for handling these large data associations:

This approach is particularly valuable for preserving snapshots of large chemical models or storing optimized molecular structures discovered during the optimization process [45].

Distributed Optimization for Computational Chemistry

For computationally expensive chemical ML tasks (e.g., quantum property prediction or molecular dynamics feature extraction), distributed optimization across multiple nodes significantly reduces experimentation time:

When combined with cloud-based artifact stores like AWS S3 for large chemical data, this setup enables scalable optimization across research clusters [45].

Practical Applications in Chemical Research

Case Study: Solvent Component Classification with LightGBM

In a recent study on acid gas removal units, researchers used Optuna to optimize LightGBM models for classifying optimal solvent components [18]. The implementation followed this pattern:

This approach achieved 98.4% accuracy in solvent component classification while reducing training time by over 50% compared to default parameters [18].

Chemical Reaction Optimization

For reaction condition optimization, the objective function can incorporate both chemical parameters (catalyst loading, temperature, solvent) and ML hyperparameters:

Visualization and Analysis of Optimization Results

Tracking Chemical ML Optimization Progress

Optuna provides comprehensive visualization tools to analyze optimization progress and hyperparameter importance:

These visualizations help identify which hyperparameters most significantly impact model performance on chemical data, guiding future experimentation and resource allocation.

Inter-Trial Relationship Analysis

For complex chemical search spaces with conditional parameters, understanding relationships between different trials and hyperparameters is crucial:

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Chemical ML with Optuna

| Research Reagent | Function in Chemical ML | Implementation Example |

|---|---|---|

| Optuna Framework | Hyperparameter optimization engine | study = optuna.create_study() |

| Trial Object | Hyperparameter suggestion interface | trial.suggest_float("learning_rate", 1e-5, 1e-1) |

| Molecular Representations | Input features for ML models | Fingerprints, graph structures, 3D coordinates [43] |

| Artifact Store | Large data management (model checkpoints, structures) | FileSystemArtifactStore, Boto3ArtifactStore [45] |

| Pruning Algorithms | Early termination of unpromising trials | MedianPruner, HyperbandPruner |

| Visualization Tools | Optimization analysis and interpretation | plot_optimization_history(), plot_param_importances() |

| Distributed Storage | Parallel experiment coordination | MySQL, PostgreSQL, Redis |

| Chemical ML Libraries | Domain-specific model implementations | Scikit-learn, PyTorch, TensorFlow, DeepChem |

Structured implementation of chemical ML objective functions with Optuna's Trial objects provides a robust methodology for hyperparameter optimization in computational chemistry and drug discovery. By following the protocols outlined in this document—from basic function design to advanced conditional spaces and artifact management—researchers can systematically navigate complex hyperparameter landscapes while incorporating domain-specific knowledge.

The integration of these practices into chemical ML workflows enhances reproducibility, accelerates model development, and ultimately leads to more predictive and reliable models for molecular property prediction, reaction optimization, and materials design. As chemical datasets continue to grow in size and complexity, these structured optimization approaches will become increasingly vital tools in the computational chemist's toolkit.

Defining Search Spaces for Chemical Features and Model Parameters

In chemical machine learning, the performance of predictive models is profoundly influenced by two critical elements: the selection of relevant chemical features and the tuning of model hyperparameters. Effectively navigating these multi-dimensional search spaces is essential for developing accurate, robust, and interpretable models in drug discovery and materials science. This protocol details the application of Optuna, a define-by-run hyperparameter optimization framework, for the simultaneous optimization of feature sets and model parameters within chemistry-focused workflows. By providing a structured methodology, these application notes enable researchers to systematically enhance model performance while gaining insights into feature importance, thereby accelerating chemical research and development.

Theoretical Foundation: Optuna Search Space Definition

Optuna provides an imperative, define-by-run API that allows for dynamic construction of search spaces using standard Python control structures such as conditionals and loops [23]. This flexibility is particularly advantageous in chemical machine learning, where the relevance of certain features or parameters may depend on the chosen algorithm or dataset characteristics.

Core Parameter Suggestion Methods

The framework offers three primary methods for defining parameter ranges [46] [47]:

- suggest_categorical(): For selecting among discrete choices (e.g., algorithm types, descriptor sets)

- suggest_int(): For integer parameters (e.g., number of layers in neural networks, number of trees in ensembles)

- suggest_float(): For continuous parameters (e.g., learning rates, regularization strengths) with optional logarithmic scaling and step discretization

Search Space Sampling Strategies

Optuna implements several state-of-the-art algorithms for efficiently navigating complex parameter spaces [48]:

- TPESampler: A Bayesian optimization approach using the Tree-structured Parzen Estimator, well suited to categorical and mixed parameter spaces

- NSGAIISampler: A multi-objective evolutionary algorithm for optimizing against multiple metrics

- CmaEsSampler: Covariance Matrix Adaptation Evolution Strategy, effective for continuous numerical spaces

Experimental Protocols

Protocol 1: Defining Chemical Feature Search Spaces

Purpose: To systematically evaluate and select optimal chemical descriptors and features for predictive modeling.

Materials:

- Chemical dataset with computed descriptors (e.g., molecular fingerprints, physicochemical properties, structural descriptors)

- Optuna optimization framework

- Target machine learning model

- Cross-validation scheme

Procedure:

Initialize Feature Space: Compile a comprehensive list of available chemical features:

Implement Objective Function:

Optimization Setup:

Troubleshooting:

- For high-dimensional feature spaces (>100 features), consider feature grouping

- If optimization stalls, increase the n_trials parameter or adjust the sampler

- For imbalanced chemical datasets, incorporate appropriate scoring metrics

Protocol 2: Integrated Feature and Hyperparameter Optimization

Purpose: To simultaneously optimize both feature selection and model hyperparameters for maximal predictive performance.

Materials:

- Curated chemical dataset with standardized features

- Computational resources for parallel trial execution

- MLflow or similar framework for experiment tracking

Procedure:

Define Complex Search Space:

Execute Parallel Optimization:

Validation:

- Perform hold-out validation on test set using best parameters

- Compare against baseline models with full feature sets

- Assess feature importance consistency across multiple optimization runs

Data Presentation

Table 1: Optuna Parameter Suggestion Methods for Chemical Machine Learning

| Method | Parameters | Chemical Application Examples | Key Options |

|---|---|---|---|

| suggest_categorical() | name, choices | Algorithm selection, fingerprint types, solvent classes | N/A |

| suggest_int() | name, low, high, step, log | Number of neural network layers, tree depth, fingerprint length | step=1, log=True for exponential scales |

| suggest_float() | name, low, high, step, log | Learning rates, regularization parameters, dropout rates | step=0.1, log=True for orders of magnitude |

Table 2: Search Space Configurations for Common Chemistry ML Tasks

| Task Type | Feature Space | Parameter Space | Recommended Sampler | Typical Trials |

|---|---|---|---|---|

| QSAR Modeling | Molecular descriptors, fingerprints | Model-specific hyperparameters | TPESampler | 100-500 |

| Reaction Yield Prediction | Chemical features, conditions | Neural network architecture | TPESampler | 200-1000 |

| Materials Property Prediction | Structural descriptors, compositions | Ensemble parameters | CmaEsSampler | 500-2000 |

| Spectral Data Analysis | Spectral features, preprocessing choices | CNN/LSTM parameters | TPESampler | 300-1000 |

Workflow Visualization

Figure: Optuna Chemistry Optimization Workflow

Figure: Chemistry Search Space Components

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Example Application |

|---|---|---|

| Optuna Framework | Hyperparameter optimization engine | Coordinate all optimization tasks |

| RDKit | Chemical descriptor calculation | Generate molecular fingerprints and features |

| Scikit-learn | Machine learning algorithms | Implement models for QSAR and property prediction |

| MLflow | Experiment tracking | Log parameters, metrics, and models |

| TPESampler | Bayesian optimization sampler | Efficiently navigate mixed parameter spaces |

| Molecular Databases | Source of chemical structures | Provide training and validation compounds |

| Cross-Validation | Model validation technique | Ensure robust performance estimation |

Advanced Applications in Chemistry

Multi-Objective Optimization for Drug Discovery

In medicinal chemistry applications, researchers often need to balance multiple objectives simultaneously, such as predictive accuracy, model interpretability, and feature parsimony. Optuna's NSGAIISampler enables multi-objective optimization:

Transfer Learning Across Chemical Spaces

Leverage optimization results from similar chemical datasets to accelerate convergence:

The systematic definition of search spaces for chemical features and model parameters represents a critical competency in modern chemical machine learning. By implementing the protocols outlined in these application notes, researchers can significantly enhance the efficiency and effectiveness of their optimization workflows. The integration of Optuna's flexible search space definition with chemistry-specific considerations enables more rapid identification of optimal feature subsets and model configurations, ultimately accelerating the discovery and development of novel compounds and materials. Future directions include the development of chemistry-specific samplers that incorporate domain knowledge and the integration of active learning approaches to further improve optimization efficiency.

The selection of optimal solvent components is a critical rate-limiting step in chemical processes, including acid gas removal in natural gas treatment and the synthesis of pharmaceuticals [18] [49]. Traditional methods for solvent determination rely heavily on experimental trial-and-error or simulation-based approaches, which are often time-consuming, resource-intensive, and limited in their ability to explore complex chemical spaces systematically [18]. With the growing availability of chemical data and computational resources, machine learning (ML) offers a transformative approach to accelerate this discovery process.

This case study details the application of the Light Gradient Boosting Machine (LightGBM) framework, optimized using the Optuna hyperparameter tuning framework, to predict optimal solvent components for acid gas removal units (AGRUs) [18]. Within the broader context of Optuna for chemistry machine learning workflows, this application note serves as a detailed protocol for researchers, scientists, and drug development professionals seeking to implement robust ML pipelines for chemical property prediction and component selection. The integration of LightGBM and Optuna demonstrates a significant improvement in both prediction accuracy and computational efficiency compared to traditional methods and other ML models [18].

Background and Significance

The Solvent Selection Problem in Chemistry

In chemical engineering and pharmaceutical development, solvent selection profoundly influences process efficiency, environmental impact, and cost-effectiveness. In AGRUs, for instance, chemical solvents are extensively employed to remove acid gases like hydrogen sulfide (H₂S) and carbon dioxide (CO₂) from natural gas [18]. The performance of these units is highly dependent on the specific solvent components used. Similarly, in pharmaceutical synthesis, a molecule's solubility in different organic solvents is a key determinant in developing efficient and environmentally friendly production methods [49].

Conventional approaches to solvent selection, such as the Abraham Solvation Model or manual experimentation, have limitations in accuracy and scalability [49]. The ability to predict chemical behavior accurately from data represents a paradigm shift, enabling more rapid and informed decision-making.

The Role of Machine Learning and Hyperparameter Optimization