Optimizing Molecular Property Prediction: A Bayesian Hyperparameter Tuning Guide

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing Bayesian Optimization (BO) to tune machine learning models for molecular property prediction. It covers foundational concepts, from overcoming the limitations of traditional hyperparameter search methods to advanced techniques for high-dimensional molecular descriptor spaces. The scope includes practical methodologies like adaptive feature selection, integration with pretrained molecular representations, and multi-objective optimization for balancing accuracy with other critical metrics like fairness. Drawing on the latest research, the guide also offers troubleshooting strategies for common pitfalls and a comparative analysis of model performance and robustness, providing a complete framework for building more accurate, efficient, and reliable predictive models in drug discovery.

Bayesian Optimization Fundamentals: Moving Beyond Grid and Random Search

The Critical Need for Efficient Hyperparameter Tuning in Molecular ML

The application of machine learning (ML) in molecular science has transformed drug discovery and materials science, enabling the prediction of complex molecular properties from chemical structure. However, the performance of these models is highly sensitive to their hyperparameters, making optimal configuration selection a non-trivial task [1]. Traditional methods like grid and random search are often prohibitively resource-intensive, especially when a single model evaluation can require hours or days of training. Bayesian optimization (BO) has emerged as a powerful sample-efficient strategy for global optimization of black-box functions, making it particularly suited for navigating complex hyperparameter spaces with minimal evaluations [2] [3]. This protocol outlines the application of BO for hyperparameter tuning of molecular property prediction models, providing researchers with a structured framework to enhance model accuracy and robustness while conserving computational resources. By implementing these methods, scientists can systematically improve predictive performance across various molecular optimization tasks, from drug efficacy prediction to materials design.

Performance Analysis of Optimization Methods

Quantitative Comparison of Fingerprint Representations in Gaussian Process Regression

The choice of molecular representation significantly impacts predictive performance in molecular property prediction. The following table summarizes the performance of different fingerprint encodings on protein-ligand docking targets from the DOCKSTRING benchmark, evaluated using Gaussian Process regression [4].

Table 1: Performance Comparison (R²) of Fingerprint Representations on DOCKSTRING Targets

| Target | Protein Family & Difficulty | Exact Fingerprints | Compressed (2048 dim) | Sort&Slice (512 dim) |

|---|---|---|---|---|

| PARP1 | Enzyme (Easy) | 0.635 | 0.620 | 0.635 |

| F2 | Protease (Easy-Medium) | 0.579 | 0.573 | 0.579 |

| KIT | Kinase (Medium) | 0.529 | 0.512 | 0.519 |

| ESR2 | Nuclear Receptor (Hard) | 0.387 | 0.375 | 0.380 |

| PGR | Nuclear Receptor (Hard) | 0.470 | 0.459 | 0.480 |

Exact fingerprints outperform the 2048-dimensional compressed fingerprints on all five targets. The Sort&Slice method, at only 512 dimensions, matches exact fingerprints on PARP1 and F2 and slightly exceeds them on the challenging PGR target (0.480 vs. 0.470) [4].

Performance of Multi-Fidelity Bayesian Optimization

Multi-fidelity BO (MFBO) leverages cheaper, lower-fidelity data sources to accelerate optimization. The following table compares its performance with single-fidelity BO in real-world molecular and materials discovery campaigns [5].

Table 2: Multi-Fidelity vs. Single-Fidelity BO in Discovery Campaigns

| Application Domain | Specific Task | Key Finding | Reported Efficiency Gain |

|---|---|---|---|

| Covalent Organic Frameworks | Xe/Kr separation optimization | MFBO accelerates discovery by leveraging low-fidelity simulations. | Reduces required high-fidelity evaluations by ~30-50% [5]. |

| Drug Molecule Discovery | Multi-property optimization | MFBO outperforms single-fidelity BO in identifying promising drug candidates. | Identifies high-performing molecules 2x faster [5]. |

| Organic Electronics | Reverse Intersystem Crossing (RISC) optimizer | BO identified a molecule with a high RISC rate constant of 1.3 × 10⁸ s⁻¹. | Achieved 22.8% external electroluminescence efficiency at practical luminance [6]. |

Experimental Protocols

Protocol 1: Standard Bayesian Hyperparameter Optimization for a Graph Neural Network

This protocol describes the steps for optimizing a Graph Neural Network (GNN) for molecular property prediction using a standard single-fidelity BO approach [7] [3].

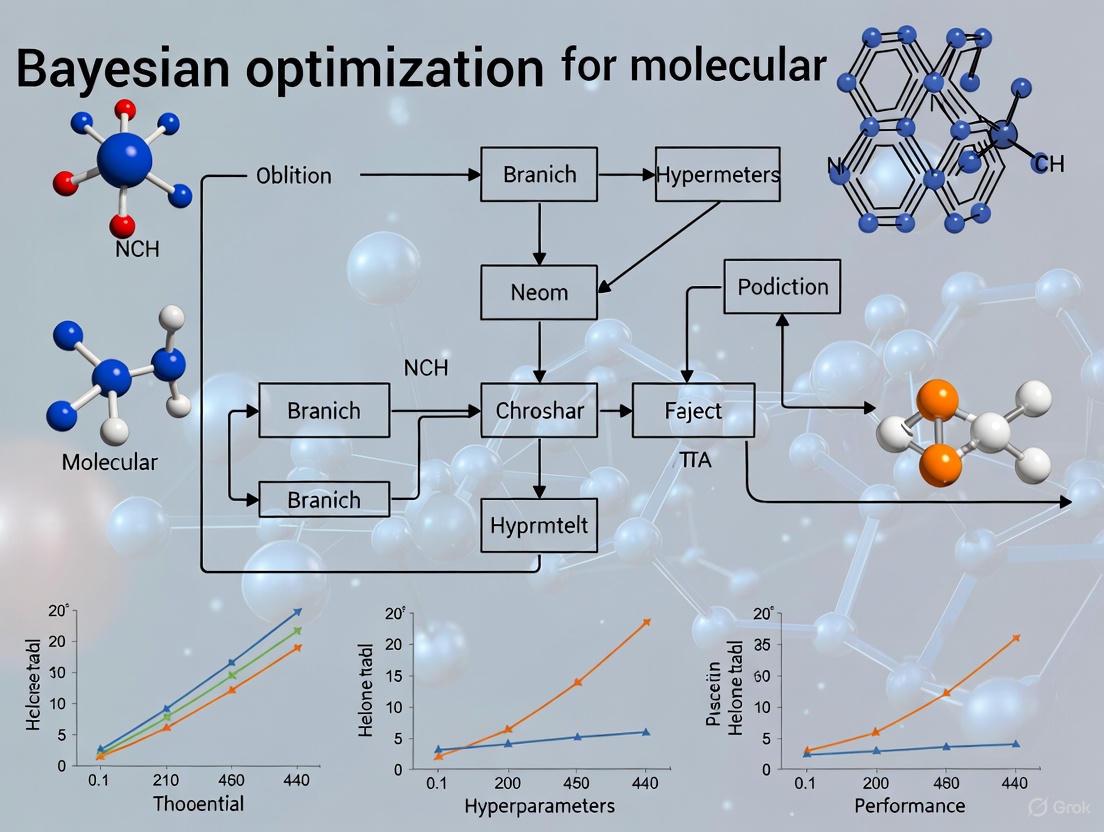

Workflow Diagram: Standard BO for GNN Hyperparameter Tuning

Step-by-Step Procedure:

1. Problem Formulation:

- Define Search Space: Specify the hyperparameters to optimize and their value ranges. For a GNN, this typically includes:

  - hidden_size: Integer, e.g., [64, 128, 256, 512]

  - depth (number of message-passing layers): Integer, e.g., [2, 3, 4, 5, 6]

  - dropout_rate: Continuous, e.g., [0.0, 0.5]

  - learning_rate: Log-continuous, e.g., [1e-5, 1e-2]

- Set Objective Function: The objective is the performance of a GNN trained with a specific hyperparameter set on a validation set (e.g., to maximize R² for regression or AUC for classification) [7] [1].

2. BO Initialization:

- Select 5-10 random hyperparameter configurations from the defined search space and evaluate them to build an initial dataset D = {(x_i, y_i)}, where x_i is a hyperparameter set and y_i is its performance score [2].

3. BO Loop Iteration:

- Surrogate Model Update: Fit a Gaussian Process (GP) model to the current dataset D. The GP models the distribution over the objective function and provides a mean and variance prediction for any hyperparameter set x [4] [2].

- Acquisition Function Maximization: Using the GP surrogate, compute an acquisition function a(x) that balances exploration (high uncertainty) and exploitation (high mean prediction). Common choices include Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI). Find the hyperparameter set x_next that maximizes a(x) using a standard optimizer (e.g., L-BFGS-B).

- Evaluation: Train and evaluate the GNN with x_next to obtain its performance y_next.

- Data Augmentation: Update the dataset: D = D ∪ {(x_next, y_next)} [7].

4. Termination:

- Repeat Step 3 until a predefined computational budget (e.g., 100 iterations) is exhausted or performance converges.

- Output the hyperparameter set x* that achieved the highest performance during the optimization loop.
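The search space and random initialization from this protocol can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the implementation used in the cited work: the dictionary layout, the function names `sample_config` and `initial_design`, and the choice of eight initial points are assumptions made for the example. The learning rate is drawn uniformly in log10 space so that each decade between 1e-5 and 1e-2 is equally likely.

```python
import math
import random

# Hypothetical search space mirroring the ranges listed in the protocol.
SEARCH_SPACE = {
    "hidden_size": ("choice", [64, 128, 256, 512]),
    "depth": ("choice", [2, 3, 4, 5, 6]),
    "dropout_rate": ("uniform", (0.0, 0.5)),
    "learning_rate": ("log_uniform", (1e-5, 1e-2)),
}


def sample_config(space, rng):
    """Draw one hyperparameter configuration from the search space."""
    config = {}
    for name, (kind, spec) in space.items():
        if kind == "choice":
            config[name] = rng.choice(spec)
        elif kind == "uniform":
            low, high = spec
            config[name] = rng.uniform(low, high)
        elif kind == "log_uniform":
            # Sample the exponent uniformly so each decade is equally likely.
            low, high = spec
            config[name] = 10 ** rng.uniform(math.log10(low), math.log10(high))
        else:
            raise ValueError(f"unknown parameter kind: {kind}")
    return config


def initial_design(space, n_init=8, seed=0):
    """Random configurations used to seed the dataset D before the BO loop."""
    rng = random.Random(seed)
    return [sample_config(space, rng) for _ in range(n_init)]


configs = initial_design(SEARCH_SPACE)
for config in configs[:3]:
    print(config)
```

Each sampled configuration would then be passed to the (expensive) train-and-validate routine to obtain its score y_i.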

Protocol 2: Advanced Adaptive Representation with FABO

For novel molecular optimization tasks where the optimal representation is unknown, the Feature Adaptive Bayesian Optimization (FABO) framework dynamically identifies the most informative molecular features during the BO process [8].

Workflow Diagram: Feature Adaptive Bayesian Optimization (FABO)

Step-by-Step Procedure:

Initialization:

- Begin with a complete, high-dimensional representation of molecules or materials (e.g., including both chemical descriptors like RACs and pore geometric characteristics for Metal-Organic Frameworks) [8].

Closed-Loop FABO Cycle:

- Data Labeling: Obtain the target property value (e.g., gas uptake, band gap) for a molecule via experiment or simulation.

- Feature Selection: Use the currently acquired data to select the most relevant features. Two computationally efficient methods are:

- Maximum Relevancy Minimum Redundancy (mRMR): Selects features that maximize relevance to the target while minimizing redundancy among themselves [8].

- Spearman Ranking: A univariate method that ranks features based on the absolute value of their Spearman rank correlation coefficient with the target [8].

- Surrogate Model Update: Update the GP surrogate model using the adapted, lower-dimensional representation of the data.

- Next Experiment Selection: Use an acquisition function (e.g., EI) to select the next most promising molecule to test.

- Iterate until the optimization budget is exhausted [8].
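As a rough illustration of the Spearman ranking step, the sketch below ranks feature columns by the absolute value of their Spearman correlation with the target and keeps the top k. It uses only the standard library; the function names and the toy data are hypothetical, and the FABO authors' implementation may differ in details such as tie handling.

```python
def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation; returns 0.0 if either input is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / (var_a * var_b) ** 0.5


def spearman_select(features, target, k):
    """Indices of the k columns with the largest |Spearman rho| to the target."""
    target_ranks = average_ranks(target)
    scores = []
    for j in range(len(features[0])):
        column = [row[j] for row in features]
        # Spearman rho is the Pearson correlation of the rank vectors.
        rho = pearson(average_ranks(column), target_ranks)
        scores.append((abs(rho), j))
    scores.sort(key=lambda s: (-s[0], s[1]))
    return [j for _, j in scores[:k]]


# Toy data: column 0 increases with the target, column 1 is unrelated,
# column 2 decreases with the target.
X = [[1.0, 5.0, 9.0], [2.0, 1.0, 7.0], [3.0, 4.0, 6.5], [4.0, 2.0, 3.0], [5.0, 3.0, 1.0]]
y = [0.1, 0.4, 0.9, 1.6, 2.5]
selected = spearman_select(X, y, k=2)
print(selected)
```

Because the score uses the absolute correlation, strongly anti-correlated features are retained alongside positively correlated ones.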

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Data Resources for Molecular BO

| Tool/Resource Name | Type | Primary Function | Application Note |

|---|---|---|---|

| BioKernel [2] | No-code Software | User-friendly BO framework for biological experiments. | Ideal for experimental scientists without coding expertise to optimize conditions (e.g., media composition). |

| BIOVIA Pipeline Pilot [9] | Commercial Platform | Integrates BO with Electronic Lab Notebooks (ELN) and DFT calculation workflows. | Streamlines data extraction from ELNs and suggests next experiments; democratizes advanced ML. |

| EDBO+ [9] | Python Package | Bayesian optimization for chemical reaction optimization. | Accessed via Jupyter Notebook in Pipeline Pilot; includes a free web version for academics. |

| QMOF Database [8] | Materials Dataset | Contains DFT-calculated electronic band gaps for ~8,400 MOFs. | Used for benchmarking BO tasks where material chemistry heavily influences the target property. |

| CoRE-2019 Database [8] | Materials Dataset | Gas adsorption properties for ~9,500 MOFs. | Provides data for BO tasks on gas uptake, influenced by pore geometry and/or chemistry. |

| DOCKSTRING Dataset [4] | Molecular Dataset | Docking scores for >260,000 molecules across 58 protein targets. | Serves as a key benchmark for validating molecular property prediction and optimization. |

Bayesian Optimization (BO) is a powerful framework for optimizing expensive black-box functions, making it particularly valuable for molecular property prediction and hyperparameter tuning in drug discovery research. The efficiency of BO hinges on two core computational components: the Gaussian Process (GP) surrogate model, which provides a probabilistic representation of the unknown objective function, and the acquisition function, which guides the sequential sampling strategy by balancing exploration and exploitation [10]. The synergistic relationship between these components enables researchers to navigate complex molecular search spaces with minimal experimental evaluations, significantly accelerating the discovery of promising drug candidates with desired properties.

In molecular optimization campaigns, practitioners face the challenge of identifying optimal molecular structures or synthesis parameters within vast chemical spaces where each evaluation may involve costly experiments or computations. The GP surrogate models this unknown landscape while quantifying uncertainty in predictions, while the acquisition function uses this probabilistic framework to prioritize which experiments or simulations to perform next. This combination has proven successful across diverse applications including metal-organic framework (MOF) discovery, perovskite solar cell development, and pharmaceutical compound optimization [8] [11].

Gaussian Process Surrogates

Fundamental Principles

Gaussian Processes provide a non-parametric, Bayesian approach to surrogate modeling that offers both predictive means and uncertainty estimates. Formally, a GP defines a distribution over functions, completely specified by its mean function \(m(\mathbf{x})\) and covariance kernel \(k(\mathbf{x}, \mathbf{x}')\):

\[ f(\mathbf{x}) \sim \mathcal{GP}(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')) \]

For a given set of observations \(\mathcal{D} = \{(\mathbf{x}_i, y_i)\}_{i=1}^n\) with Gaussian noise \(\epsilon \sim \mathcal{N}(0, \sigma_\epsilon^2)\), the posterior predictive distribution at a new test point \(\mathbf{x}^*\) is Gaussian with closed-form expressions for its mean and variance [10]:

\[ f(\mathbf{x}^*) \mid \mathcal{D} \sim \mathcal{N}(\mu(\mathbf{x}^*), \sigma^2(\mathbf{x}^*)) \]

where, for a zero prior mean, \(\mu(\mathbf{x}^*) = \mathbf{k}_*^\top (\mathbf{K} + \sigma_\epsilon^2 \mathbf{I})^{-1} \mathbf{y}\) and \(\sigma^2(\mathbf{x}^*) = k(\mathbf{x}^*, \mathbf{x}^*) - \mathbf{k}_*^\top (\mathbf{K} + \sigma_\epsilon^2 \mathbf{I})^{-1} \mathbf{k}_*\), with \(\mathbf{K}\) being the kernel matrix between training points and \(\mathbf{k}_*\) the kernel vector between training and test points.
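The posterior mean and variance expressions can be evaluated directly with NumPy. The sketch below assumes a zero prior mean and a squared-exponential kernel with fixed hyperparameters; the function names and toy data are illustrative only, and a Cholesky factorization is used in place of an explicit matrix inverse for numerical stability.

```python
import numpy as np


def rbf_kernel(A, B, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel matrix between the rows of A and B."""
    sq_dist = (
        np.sum(A**2, axis=1)[:, None]
        + np.sum(B**2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    return variance * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / lengthscale**2)


def gp_posterior(X_train, y_train, X_test, noise_var=1e-6):
    """Posterior mean and variance of a zero-mean GP at the rows of X_test."""
    K = rbf_kernel(X_train, X_train) + noise_var * np.eye(len(X_train))
    K_star = rbf_kernel(X_train, X_test)      # each column is one k_* vector
    L = np.linalg.cholesky(K)                 # K + sigma^2 I = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_star.T @ alpha                   # k_*^T (K + sigma^2 I)^{-1} y
    v = np.linalg.solve(L, K_star)
    # k(x*, x*) - k_*^T (K + sigma^2 I)^{-1} k_*
    var = np.diag(rbf_kernel(X_test, X_test)) - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)


X_train = np.array([[0.0], [1.0], [2.0]])
y_train = np.array([0.0, 1.0, 0.5])
X_test = np.array([[1.0], [5.0]])             # one observed point, one far away
mean, var = gp_posterior(X_train, y_train, X_test)
print(mean, var)
```

At the observed input the variance collapses towards the noise level, while far from the data it returns towards the prior variance, which is the behaviour acquisition functions exploit.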

Kernel Selection and Adaptation

The choice of kernel function critically determines the GP's ability to capture the structure of molecular property landscapes. Different kernel functions encode varying assumptions about function smoothness, periodicity, and other properties. For molecular optimization, the Automatic Relevance Determination (ARD) Matérn kernel is often preferred as it can adapt length scales across different dimensions [11].

Recent research has focused on kernel adaptation strategies to improve performance on molecular problems. The BOOST framework implements automated kernel selection through offline evaluation of candidate kernels on available data, identifying the most suitable kernel before committing to expensive experimental evaluations [10]. Similarly, hierarchical GP approaches like BITS for GAPS place priors on kernel hyperparameters to encode physically meaningful structure, which is particularly valuable when integrating known molecular principles with data-driven components [12].

Table 1: Common Kernel Functions in Molecular Bayesian Optimization

| Kernel Name | Mathematical Form | Molecular Application Context |

|---|---|---|

| ARD Matérn 5/2 | \(k(\mathbf{x}, \mathbf{x}') = \sigma^2 \left(1 + \sqrt{5}\,r + \frac{5}{3}r^2\right)\exp\left(-\sqrt{5}\,r\right)\), with \(r = \sqrt{\sum_{d=1}^D \frac{(x_d - x_d')^2}{l_d^2}}\) | Default choice for molecular representations with differing feature relevances [11] |

| ARD RBF | \(k(\mathbf{x}, \mathbf{x}') = \sigma^2 \exp\left(-\frac{1}{2}\sum_{d=1}^D \frac{(x_d - x_d')^2}{l_d^2}\right)\) | Suitable for modeling smooth molecular property landscapes |

| Linear | \(k(\mathbf{x}, \mathbf{x}') = \sigma^2 \mathbf{x}^\top \mathbf{x}'\) | Captures linear relationships in molecular feature spaces |
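To make the role of the per-dimension length scales concrete, here is a small NumPy sketch of the ARD Matérn 5/2 kernel in its standard form. The function name and the example inputs are hypothetical; in practice the length scales would be learned by maximizing the marginal likelihood rather than set by hand.

```python
import numpy as np


def ard_matern52(A, B, lengthscales, variance=1.0):
    """ARD Matern 5/2 kernel matrix between the rows of A and B."""
    ls = np.asarray(lengthscales, dtype=float)
    # Scale each input dimension by its own length scale before measuring distance.
    diff = (A[:, None, :] - B[None, :, :]) / ls
    r = np.sqrt(np.sum(diff**2, axis=-1))
    s = np.sqrt(5.0) * r
    # s**2 / 3 equals (5/3) * r**2, matching the closed form of the kernel.
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)


# Three points in a 2-D feature space. The second feature is given a very
# large length scale, which makes the kernel almost blind to it.
X = np.array([[0.0, 0.0], [0.0, 10.0], [1.0, 0.0]])
K = ard_matern52(X, X, lengthscales=[1.0, 1e6])
print(np.round(K, 4))
```

Points 0 and 1 differ only in the down-weighted feature and are treated as nearly identical, whereas points 0 and 2 differ in the relevant feature and are clearly less correlated.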

Handling Molecular Representations

A significant challenge in molecular BO is identifying appropriate feature representations that balance completeness and compactness. High-dimensional representations can lead to the curse of dimensionality, while oversimplified representations may miss critical chemical information. The Feature Adaptive Bayesian Optimization (FABO) framework addresses this by dynamically adapting material representations throughout optimization cycles [8].

FABO employs feature selection methods like Maximum Relevancy Minimum Redundancy (mRMR) and Spearman ranking to identify the most informative features at each BO cycle. This approach has demonstrated success in MOF discovery tasks, automatically identifying representations that align with chemical intuition for known tasks while maintaining effectiveness for novel optimization challenges [8].

Acquisition Functions

Taxonomy and Selection Guidelines

Acquisition functions form the decision-making component of BO, quantifying the utility of evaluating candidate points based on the GP posterior. They typically balance exploration (sampling uncertain regions) and exploitation (sampling near promising optima). For molecular property optimization, the choice of acquisition function can significantly impact sample efficiency and final solution quality.

Table 2: Acquisition Functions for Molecular Property Optimization

| Acquisition Function | Mathematical Form | Molecular Context Performance |

|---|---|---|

| Upper Confidence Bound (UCB) | \(\alpha_{\text{UCB}}(\mathbf{x}) = \mu(\mathbf{x}) + \beta \sigma(\mathbf{x})\) | Robust performance across various molecular landscapes; recommended as default choice [11] |

| Expected Improvement (EI) | \(\alpha_{\text{EI}}(\mathbf{x}) = \mathbb{E}[\max(f(\mathbf{x}) - f(\mathbf{x}^+), 0)]\) | Widely used; provides balanced exploration-exploitation |

| q-Log Expected Improvement (qLogEI) | Monte Carlo approximation of log EI for batches | Less prone to numerical instability than qEI in batch settings [11] |

| Expected P-box Improvement (EPBI) | Global (GEPBI) and Boundary (BEPBI) variants | Improves surrogate accuracy and excels in locating global optima in complex landscapes [13] |

| Entropy-based Methods | \(\alpha_{\text{Entropy}}(\mathbf{x}) = H[f(\mathbf{x})]\) | Targets regions of high uncertainty and potential information gain [12] |

Recent comparative studies recommend qUCB as a default choice for batch BO in molecular optimization with dimension ≤6, as it demonstrates reliable performance across diverse functional landscapes with reasonable noise immunity [11]. For higher-dimensional problems or when prior landscape knowledge is unavailable, entropy-based acquisition functions or Expected P-box Improvement methods may offer advantages [12] [13].
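For a single candidate with Gaussian posterior mean μ and standard deviation σ, both UCB and EI have simple closed forms. The sketch below evaluates them for a maximization problem using only the standard library. The candidate values are invented for illustration, and the batch variants (qUCB, qLogEI) discussed above rely on Monte Carlo estimates that this example does not cover.

```python
import math


def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def upper_confidence_bound(mu, sigma, beta=2.0):
    """UCB for maximization: posterior mean plus beta standard deviations."""
    return mu + beta * sigma


def expected_improvement(mu, sigma, best_observed):
    """Closed-form EI for maximization under a Gaussian posterior."""
    if sigma <= 0.0:
        return max(mu - best_observed, 0.0)
    z = (mu - best_observed) / sigma
    return (mu - best_observed) * normal_cdf(z) + sigma * normal_pdf(z)


# Two hypothetical candidates given as (posterior mean, posterior std):
# one slightly above the incumbent with low uncertainty, one below it
# but with high uncertainty.
candidates = {"safe": (0.80, 0.02), "uncertain": (0.70, 0.20)}
best = 0.78
scores = {
    name: (upper_confidence_bound(m, s), expected_improvement(m, s, best))
    for name, (m, s) in candidates.items()
}
for name, (ucb, ei) in scores.items():
    print(f"{name}: UCB={ucb:.3f}, EI={ei:.4f}")
```

In this toy case both criteria favour the uncertain candidate, showing how the uncertainty term can outweigh a slightly higher predicted mean.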

Batch Acquisition Strategies

In experimental molecular optimization, evaluating multiple candidates in parallel can significantly reduce campaign duration. Batch BO methods select multiple points simultaneously while maintaining diversity in the batch. Two predominant approaches exist: serial methods using techniques like Local Penalization (LP), and Monte Carlo parallel methods like qUCB and qLogEI [11].

Serial batch methods such as UCB/LP select the first point by maximizing a standard acquisition function, then modify the function to penalize points near already-selected candidates. Monte Carlo methods jointly optimize batches by integrating acquisition functions over the joint probability distribution of multiple points. Empirical comparisons demonstrate that Monte Carlo approaches often achieve faster convergence with less sensitivity to initial conditions, particularly in noisy experimental settings [11].

Emerging Paradigms: Generative Acquisition Functions

Recent innovations have explored using generative models as acquisition functions, creating a paradigm shift in batch BO. Instead of optimizing traditional acquisition functions, these approaches train generative models to produce candidate samples with probabilities proportional to expected utility [14].

This generative BO framework offers several advantages for molecular optimization: scalability to large batches in high-dimensional spaces, ability to handle non-continuous molecular design spaces, and avoidance of difficult acquisition function optimization. Theoretically, these generative approaches asymptotically concentrate at global optima under certain conditions, providing mathematical foundations for their efficacy [14].

Integrated Experimental Protocols

Protocol 1: Standard Bayesian Optimization for Molecular Property Prediction

This protocol outlines the standard procedure for implementing BO with GP surrogates and acquisition functions for molecular property optimization.

Materials and Software Requirements:

- Python-based BO frameworks (BoTorch, Emukit, or GPyOpt)

- Molecular representation toolkit (RDKit or similar)

- Computational resources for surrogate model training

Procedure:

- Define molecular search space: Specify the range of molecular features, descriptors, or synthesis parameters to be optimized.

- Select initial design: Generate 10d-20d initial points (where d is dimension) using Latin Hypercube Sampling to ensure space-filling properties.

- Choose GP kernel: Select an ARD Matérn 5/2 kernel as default for molecular problems.

- Specify acquisition function: Choose UCB with β=2 as a robust default for initial experiments.

- For each BO iteration:

  a. Train GP hyperparameters by maximizing the marginal likelihood

  b. Optimize the acquisition function to identify the next evaluation point(s)

  c. Evaluate the objective function (experiment or simulation) at the selected point(s)

  d. Update the dataset with the new observations

- Continue until experimental budget is exhausted or convergence criteria are met.

Validation:

- Compare final optimized molecular properties against baseline approaches

- Assess convergence rate by plotting best objective value versus number of iterations
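Two pieces of this protocol are easy to illustrate without any BO library: the space-filling initial design and the best-so-far trace used for the convergence plot. The following standard-library sketch is one simple way to write them; the bounds and the example score list are placeholders. Libraries such as SciPy also provide Latin Hypercube samplers, which may be preferable in practice.

```python
import random


def latin_hypercube(n_points, bounds, seed=0):
    """One sample per stratum in every dimension, strata shuffled independently."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_points))
        rng.shuffle(strata)
        width = (high - low) / n_points
        # Place one point uniformly at random inside each stratum.
        columns.append([low + (s + rng.random()) * width for s in strata])
    # Transpose so that each row is one design point.
    return [list(point) for point in zip(*columns)]


def running_best(values):
    """Best objective value seen so far at each iteration (maximization)."""
    best, trace = float("-inf"), []
    for v in values:
        best = max(best, v)
        trace.append(best)
    return trace


bounds = [(0.0, 1.0), (-5.0, -2.0)]   # hypothetical 2-D search space (d = 2)
design = latin_hypercube(n_points=10 * len(bounds), bounds=bounds)
trace = running_best([0.2, 0.5, 0.4, 0.7])
print(len(design), trace)
```

Plotting the output of `running_best` against the iteration index gives the convergence curve described in the validation step.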

Protocol 2: Feature Adaptive Bayesian Optimization (FABO)

For molecular optimization tasks where the optimal feature representation is unknown, the FABO protocol dynamically adapts representations during optimization [8].

Additional Requirements:

- Feature selection methods (mRMR or Spearman ranking implementations)

- Comprehensive initial molecular feature set

Procedure:

- Initialize with complete representation: Begin with a comprehensive set of molecular features (e.g., RAC descriptors for MOFs combined with geometric properties).

- Collect initial data: Evaluate an initial set of 10-20 molecules using Latin Hypercube Sampling in the full feature space.

- For each BO cycle:

  a. Perform feature selection using mRMR or Spearman ranking on the current data

  b. Project the molecular library into the selected feature subspace

  c. Train the GP surrogate model in the adapted feature space

  d. Select the next candidate(s) using an acquisition function (EI or UCB)

  e. Evaluate the selected candidate(s) and update the dataset

- Continue for predetermined number of cycles or until performance plateaus.

Validation:

- Compare against fixed-representation BO using the same total experimental budget

- Analyze selected features for alignment with chemical intuition in known tasks
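The mRMR step can be sketched as a greedy loop that repeatedly adds the feature with the best trade-off between relevance to the target and redundancy with the features already chosen. The version below is an assumption-laden simplification: it uses absolute Pearson correlation for both relevance and redundancy and the difference form of the criterion, whereas published mRMR variants (and the FABO implementation) may use other estimators such as mutual information or F-statistics. It also assumes no feature column is constant.

```python
import numpy as np


def mrmr_select(X, y, k):
    """Greedy mRMR: maximize relevance minus mean redundancy with chosen features."""
    n_features = X.shape[1]
    relevance = np.array(
        [abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(n_features)]
    )
    redundancy = np.abs(np.corrcoef(X, rowvar=False))  # feature-feature |correlation|
    selected = [int(np.argmax(relevance))]
    while len(selected) < k:
        remaining = [j for j in range(n_features) if j not in selected]
        scores = [relevance[j] - redundancy[j, selected].mean() for j in remaining]
        selected.append(remaining[int(np.argmax(scores))])
    return selected


# Synthetic data: column 1 is a near-duplicate of column 0, column 2 carries
# independent information about the target.
rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)
X = np.column_stack([a, a + 0.01 * rng.normal(size=200), b])
y = a + 0.5 * b
selected = mrmr_select(X, y, k=2)
print(selected)
```

A purely univariate ranking would pick both near-duplicate columns; the redundancy penalty instead steers the second pick to the independent feature.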

Protocol 3: Automated Configuration with BOOST Framework

The BOOST protocol automates the selection of optimal kernel-acquisition function pairs for specific molecular optimization problems [10].

Additional Requirements:

- Multiple kernel and acquisition function candidates

- Sufficient initial data for offline evaluation (typically 20-50 points)

Procedure:

- Define candidate sets: Specify candidate kernels (Matérn, RBF, linear) and acquisition functions (UCB, EI, EPBI).

- Partition available data: Split initial data into reference and query subsets.

- Evaluate configurations: For each kernel-acquisition pair:

  a. Perform internal BO runs using the reference subset

  b. Track the iterations required to reach target performance on the query subset

- Select optimal configuration: Choose the kernel-acquisition pair with minimum iterations to target.

- Execute main BO: Apply selected configuration to the complete optimization problem.

- Optional: Periodically re-evaluate configuration selection as more data accumulates.

Validation:

- Compare against best fixed configuration across multiple molecular tasks

- Assess computational overhead of configuration selection versus BO performance gains

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Molecular Bayesian Optimization

| Tool/Reagent | Function/Purpose | Implementation Notes |

|---|---|---|

| Gaussian Process Regression | Probabilistic surrogate modeling with uncertainty quantification | Use ARD Matérn 5/2 kernel as default; optimize hyperparameters via marginal likelihood maximization |

| Upper Confidence Bound (UCB) | Acquisition function balancing exploration and exploitation | Set exploration parameter β=2 for initial experiments; adjust based on problem characteristics |

| Expected Improvement (EI) | Alternative acquisition function for optimization | Prefer log-transformed version (LogEI) for numerical stability in batch settings |

| Molecular Representations | Convert chemical structures to numerical features | Use comprehensive feature sets (e.g., RACs for MOFs) with adaptive selection |

| Batch Acquisition Methods | Enable parallel experimental evaluation | Prefer Monte Carlo methods (qUCB) over serial approaches for noisy experimental conditions |

| Feature Selection Algorithms | Dynamically adapt molecular representations | Implement mRMR or Spearman ranking within FABO framework |

| Kernel-Acquisition Selectors | Automate configuration selection | Apply BOOST framework for problem-specific optimization |

| Generative Models | Alternative acquisition for high-dimensional batches | Use for complex molecular spaces with discrete or combinatorial structure |

Gaussian Process surrogates and acquisition functions form the computational backbone of Bayesian optimization for molecular property prediction. The synergistic combination of flexible probabilistic modeling with intelligent sampling strategies enables researchers to efficiently navigate complex chemical spaces with minimal experimental iterations. Emerging approaches including feature adaptation, automated configuration selection, and generative acquisition functions continue to enhance the capabilities of BO in molecular discovery campaigns. For practitioners in drug development, following the structured protocols outlined in this document while leveraging the appropriate toolkit components will maximize the effectiveness of BO-driven molecular optimization efforts.

Why Traditional Grid and Random Search Fall Short

In the field of molecular property prediction, the performance of machine learning models depends crucially on their hyperparameter settings [15]. Hyperparameter optimization is the process of selecting the best set of hyperparameters that control the learning process of an algorithm, ultimately finding the tuple that produces the optimal model by minimizing a predefined loss function on independent data [16]. In drug discovery and materials science, where predicting properties like solubility, toxicity, and binding affinity is essential, proper hyperparameter tuning can significantly impact the accuracy and reliability of predictions, thereby accelerating the research and development pipeline.

Traditional methods for hyperparameter optimization, namely grid search and random search, have been widely adopted for their simplicity and straightforward implementation. However, these methods exhibit significant limitations, particularly when applied to the complex, high-dimensional search spaces common in molecular informatics. This article examines the technical shortcomings of these traditional approaches and presents Bayesian optimization as a superior alternative, providing detailed protocols for its implementation in molecular property prediction research.

The Fundamental Limitations of Traditional Methods

Grid Search: The Curse of Dimensionality

Grid search, or parameter sweep, involves performing a brute-force search over a manually specified subset of the hyperparameter space [16]. In practice, this method requires researchers to define a finite set of "reasonable" values for each hyperparameter, and the algorithm then evaluates every possible combination in the Cartesian product of these sets.

Key Limitations:

- Exponential Computational Growth: The number of evaluations required grows exponentially with the number of hyperparameters, a phenomenon known as the "curse of dimensionality" [16]. For a model with multiple continuous hyperparameters, the computational cost quickly becomes prohibitive.

- Manual Discretization Requirements: Since machine learning parameter spaces often include real-valued or unbounded parameters, researchers must manually set boundaries and discretization levels before applying grid search, potentially missing optimal values that fall between the chosen points [16].

- Resource Inefficiency: The method expends equal computational resources on all regions of the parameter space, including areas with predictably poor performance, making it computationally wasteful especially for expensive-to-train models like deep neural networks used in molecular property prediction [17].
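The exponential growth is easy to verify by counting. The sketch below enumerates a modest grid over four GNN hyperparameters; the specific grid values and the one-hour-per-run training cost are illustrative assumptions, not figures from the cited studies.

```python
import itertools


def grid_size(values_per_parameter, n_parameters):
    """Number of grid points when every parameter takes the same number of values."""
    return values_per_parameter ** n_parameters


# Hypothetical grid over four hyperparameters.
grid = {
    "hidden_size": [64, 128, 256, 512],
    "depth": [2, 3, 4, 5, 6],
    "dropout_rate": [0.0, 0.1, 0.2, 0.3, 0.4, 0.5],
    "learning_rate": [1e-5, 1e-4, 1e-3, 1e-2],
}
combinations = list(itertools.product(*grid.values()))
n_runs = len(combinations)            # 4 * 5 * 6 * 4 combinations
days_at_one_hour_each = n_runs / 24   # assumes one hour of training per run

print(n_runs, days_at_one_hour_each)
print(grid_size(5, 4), grid_size(5, 8))
```

Even this coarse grid needs 480 training runs, or 20 days of sequential compute at one hour per run, and doubling the number of hyperparameters from four to eight at five values each raises the count from 625 to 390,625.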

Random Search: Undirected Exploration

Random search replaces the exhaustive enumeration of all combinations with random sampling of the parameter space [16]. While conceptually simple, this method aims to overcome some limitations of grid search by exploring a wider range of parameter values without the constraints of a fixed grid.

Key Limitations:

- Lack of Directionality: Random search explores the parameter space without learning from previous evaluations, potentially wasting computational resources on non-promising regions [17].

- Unpredictable Convergence: The probability of finding optimal parameters depends entirely on chance, with no guarantee of improvement over successive iterations [17].

- Inefficient Resource Allocation: Similar to grid search, random search does not prioritize more promising regions of the parameter space based on previous results, making it inefficient for computationally expensive evaluations [18].
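A minimal random search makes the lack of feedback explicit: every draw is independent of the results seen so far. The sketch below uses a toy one-dimensional objective as a stand-in for a validation score. It also includes the standard calculation of how likely n independent uniform draws are to land at least once in the best-performing fraction of the search space, which explains why random search can work tolerably well yet offers no per-iteration improvement guarantee.

```python
import random


def random_search(objective, sample, n_trials, seed=0):
    """Evaluate n_trials independent random configurations and keep the best."""
    rng = random.Random(seed)
    best_x, best_y = None, float("-inf")
    for _ in range(n_trials):
        x = sample(rng)      # each draw ignores all earlier results
        y = objective(x)
        if y > best_y:
            best_x, best_y = x, y
    return best_x, best_y


def prob_hit_top_fraction(n_trials, fraction=0.05):
    """Chance that at least one of n uniform draws lands in the top fraction."""
    return 1.0 - (1.0 - fraction) ** n_trials


# Toy objective with its maximum at x = 0.3 (hypothetical validation score).
best_x, best_y = random_search(
    objective=lambda x: -((x - 0.3) ** 2),
    sample=lambda rng: rng.uniform(0.0, 1.0),
    n_trials=60,
)
print(best_x, best_y, round(prob_hit_top_fraction(60), 3))
```

With 60 trials the chance of hitting the top 5% of the space at least once is roughly 95%, but the method cannot concentrate later trials around a promising region once it has found one.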

Table 1: Comparative Analysis of Traditional Hyperparameter Optimization Methods

| Evaluation Dimension | Grid Search | Random Search |

|---|---|---|

| Search Strategy | Exhaustive enumeration of all combinations | Random sampling from parameter space |

| Computational Efficiency | Low; becomes computationally prohibitive with increasing dimensions | Medium; dependent on number of iterations but generally better than grid search |

| Dimensionality Handling | Poor; severely impacted by curse of dimensionality | Better; can explore more values in continuous spaces |

| Exploration-Exploitation Balance | No balance; purely exhaustive | No balance; purely exploratory |

| Adaptation to Problem Structure | None; treats all parameters equally | None; no learning from previous evaluations |

| Best Use Case | Small parameter spaces with limited dimensions | Preliminary exploration of parameter spaces |

Bayesian Optimization: A Superior Alternative

Theoretical Foundation

Bayesian optimization is a sequential design strategy for global optimization of black-box functions that don't have known analytical expressions or are expensive to evaluate [18]. In the context of hyperparameter optimization, the objective function maps hyperparameters to a performance metric (e.g., validation accuracy or loss) that we wish to optimize. Unlike traditional methods, Bayesian optimization constructs a probabilistic model of the objective function and uses it to select the most promising hyperparameters to evaluate next [17].

The core components of Bayesian optimization include:

- Probabilistic Surrogate Model: Typically a Gaussian Process (GP) that approximates the unknown objective function, providing both a prediction of the function value at any point and an estimate of the uncertainty around that prediction [18]. The Gaussian Process defines a distribution over functions, where predictions at unobserved points follow a normal distribution characterized by mean and variance.

- Acquisition Function: A decision-making tool that guides the selection of the next hyperparameter combination by balancing exploration (sampling uncertain regions) and exploitation (sampling regions with high predicted performance) [17] [18]. Common acquisition functions include:

- Expected Improvement (EI): Quantifies the expected amount of improvement over the current best observed value [18].

- Upper Confidence Bound (UCB): Balances exploitation (high mean) and exploration (high variance) using a tunable parameter κ, written as β in the UCB formula elsewhere in this guide [18].

- Probability of Improvement (PI): Measures the probability that a new point will improve upon the current best value [18].

Diagram 1: Bayesian Optimization Iterative Workflow. The process begins with initial random sampling, then iteratively updates the surrogate model, optimizes the acquisition function, and evaluates promising hyperparameters until convergence.
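The iterative workflow can be condensed into a short NumPy sketch on a one-dimensional toy problem. Everything specific here is an assumption made for illustration: the objective is a cheap stand-in for "train the model and return a validation score", the GP uses a fixed-lengthscale RBF kernel instead of fitted hyperparameters, and the acquisition function is maximized over a fixed candidate grid rather than with a gradient-based optimizer.

```python
import math

import numpy as np


def rbf(A, B, lengthscale=0.2):
    """RBF kernel matrix between two 1-D arrays of inputs."""
    d2 = (A[:, None] - B[None, :]) ** 2
    return np.exp(-0.5 * d2 / lengthscale**2)


def gp_predict(x_obs, y_obs, x_query, noise_var=1e-4):
    """Zero-mean GP posterior mean and standard deviation at x_query."""
    K = rbf(x_obs, x_obs) + noise_var * np.eye(len(x_obs))
    K_s = rbf(x_obs, x_query)
    mean = K_s.T @ np.linalg.solve(K, y_obs)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)
    return mean, np.sqrt(np.maximum(var, 1e-12))


def expected_improvement(mean, std, best):
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def objective(x):
    # Hypothetical stand-in for an expensive train-and-validate run.
    return math.exp(-((x - 0.65) ** 2) / 0.02) + 0.3 * math.exp(-((x - 0.2) ** 2) / 0.01)


def bayes_opt(n_init=4, n_iter=15, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 201)            # candidate pool for the acquisition search
    x_obs = rng.uniform(0.0, 1.0, size=n_init)   # initial random sampling
    y_obs = np.array([objective(x) for x in x_obs])
    for _ in range(n_iter):
        centred = y_obs - y_obs.mean()           # centre so the zero-mean prior is reasonable
        mean, std = gp_predict(x_obs, centred, grid)          # update the surrogate
        ei = expected_improvement(mean, std, centred.max())   # score every candidate
        x_next = grid[int(np.argmax(ei))]                     # most promising candidate
        x_obs = np.append(x_obs, x_next)                      # evaluate and augment the data
        y_obs = np.append(y_obs, objective(x_next))
    best = int(np.argmax(y_obs))
    return x_obs[best], y_obs[best]


best_x, best_y = bayes_opt()
print(best_x, best_y)
```

For real hyperparameter tuning, the grid search over candidates would be replaced by an acquisition optimizer and the GP hyperparameters would be refit at every iteration, as established BO libraries do.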

Comparative Performance Advantages

Bayesian optimization has demonstrated superior performance compared to traditional methods across various applications in molecular property prediction and drug discovery:

- Accelerated Convergence: In molecular property prediction tasks, Bayesian optimization typically requires significantly fewer evaluations to find optimal hyperparameters compared to grid or random search [3] [19]. For instance, in toxicity prediction tasks, researchers achieved equivalent toxic compound identification with 50% fewer iterations compared to conventional approaches [19].

- Effective High-Dimensional Navigation: Bayesian optimization efficiently handles complex, high-dimensional hyperparameter spaces common in molecular informatics, such as those encountered in graph neural networks and transformer models for molecular representation [8].

- Adaptability to Complex Landscapes: The method excels at optimizing non-convex, noisy objective functions with multiple local minima, which are characteristic of hyperparameter response surfaces in deep learning models for molecular property prediction [3] [19].

Table 2: Bayesian Optimization vs. Traditional Methods in Molecular Property Prediction

| Performance Metric | Grid Search | Random Search | Bayesian Optimization |

|---|---|---|---|

| Evaluations Needed for Convergence | Exponential with dimensions | Linear with dimensions | Sublinear; often 10-100x fewer than grid search |

| Handling of High-Dimensional Spaces | Poor | Moderate | Good with adaptive techniques |

| Noise Robustness | Low | Low | High (through probabilistic modeling) |

| Adaptation to Search Space Geometry | None | None | High (learns spatial structure) |

| Theoretical Guarantees | None | Probabilistic only | Convergence guarantees available |

| Molecular Property Prediction Performance | Variable; often suboptimal | Moderate | State-of-the-art in multiple studies [3] [19] |

Experimental Protocols for Molecular Property Prediction

Protocol 1: Bayesian Optimization for Deep Learning Models

This protocol outlines the implementation of Bayesian optimization for tuning deep learning models in molecular property prediction, adapted from studies demonstrating successful application in pharmaceutical research [3] [19].

Materials and Reagents:

Table 3: Essential Research Reagent Solutions for Molecular Property Prediction

| Reagent/Resource | Specification | Function in Experimental Protocol |

|---|---|---|

| Molecular Dataset | Tox21, ClinTox, or custom molecular property data | Provides labeled data for model training and evaluation |

| Molecular Representations | SMILES strings, molecular fingerprints, graph representations, or learned embeddings | Encodes molecular structure for machine learning input |

| Deep Learning Framework | TensorFlow, PyTorch, or JAX | Provides infrastructure for model building and training |

| Bayesian Optimization Library | Scikit-optimize, KerasTuner, Ax, or BoTorch | Implements optimization algorithms and surrogate models |

| Computational Resources | GPU-enabled workstations or compute clusters | Accelerates model training and hyperparameter evaluation |

Procedure:

Problem Formulation:

- Define the hyperparameter search space based on the model architecture. For neural networks, this typically includes learning rate (log-uniform, 1e-5 to 1e-2), batch size (categorical, 16-128), number of layers (integer, 2-8), and dropout rate (uniform, 0.1-0.5) [3] [18].

- Select an appropriate objective function (e.g., validation AUC, RMSE, or recall) that aligns with the molecular prediction task.
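As an illustration of the search space described in this step, the sketch below draws configurations using only the Python standard library. The specific batch-size choices (16, 32, 64, 128) and the function name `sample_config` are assumptions made for the example; the ranges follow the text above.

```python
import random

def sample_config(rng):
    """Draw one hyperparameter configuration from the search space."""
    return {
        # Log-uniform: sample the exponent uniformly, then exponentiate
        "learning_rate": 10 ** rng.uniform(-5, -2),
        # Categorical choice (assumed power-of-two values in 16-128)
        "batch_size": rng.choice([16, 32, 64, 128]),
        # Integer range, inclusive on both ends
        "num_layers": rng.randint(2, 8),
        # Plain uniform
        "dropout": rng.uniform(0.1, 0.5),
    }

rng = random.Random(0)
initial_design = [sample_config(rng) for _ in range(8)]
for cfg in initial_design[:3]:
    print(cfg)
```

Sampling the learning rate on a log scale matters because equal steps in the exponent, not in the raw value, tend to produce comparable changes in training behavior.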

Initial Design:

- Generate 5-10 initial points using random sampling or space-filling designs to build an initial surrogate model [18].

- For molecular data, ensure initial points cover diverse regions of the parameter space to avoid premature convergence.

Surrogate Model Selection:

- Choose a Gaussian Process with a Matérn kernel for continuous parameters. The Matérn family is stationary but, unlike the squared-exponential kernel, does not assume an infinitely differentiable objective, which suits the rougher response surfaces common in hyperparameter tuning [18].

- For high-dimensional spaces (>20 parameters), consider using a Random Forest or Tree-structured Parzen Estimator (TPE) as the surrogate model instead [15].

Acquisition Function Optimization:

- Select Expected Improvement (EI) as it generally provides robust performance across diverse molecular prediction tasks [18].

- Optimize the acquisition function using L-BFGS or multi-start gradient-based methods to find the next candidate hyperparameters.

Iterative Evaluation and Update:

- Train the model with the proposed hyperparameters and evaluate on the validation set.

- Update the surrogate model with the new (hyperparameters, performance) observation.

- Repeat the acquisition, evaluation, and update steps until convergence or depletion of the computational budget (typically 50-200 iterations) [3].

Validation and Testing:

- Train the final model with the best-found hyperparameters on the complete training set.

- Evaluate on the held-out test set using appropriate metrics for molecular property prediction (AUC-ROC, RMSE, etc.).
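The procedure above can be sketched end to end on a toy problem. The example below is a simplified illustration, not the implementation used in the cited studies: it tunes a single hyperparameter (log10 of the learning rate), uses a hand-written zero-mean GP with a Matérn-5/2 kernel of fixed length scale, and maximizes EI over a candidate grid instead of using L-BFGS. The function `toy_validation_score` is a hypothetical stand-in for training a model and returning its validation score.

```python
import math
import numpy as np

def matern52(a, b, length_scale=0.5):
    """Matérn-5/2 kernel between two 1-D arrays of points."""
    d = np.abs(a[:, None] - b[None, :]) / length_scale
    s = math.sqrt(5.0) * d
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Zero-mean GP posterior mean and standard deviation at x_query."""
    K = matern52(x_train, x_train) + noise * np.eye(len(x_train))
    k_star = matern52(x_train, x_query)            # shape (n, m)
    mean = k_star.T @ np.linalg.solve(K, y_train)
    var = 1.0 - np.sum(k_star * np.linalg.solve(K, k_star), axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """Closed-form EI for maximization."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def toy_validation_score(log_lr):
    """Hypothetical objective with its peak (0.9) at log10(lr) = -3.2."""
    return -(log_lr + 3.2) ** 2 + 0.9

rng = np.random.default_rng(0)
grid = np.linspace(-5.0, -2.0, 301)                # candidate log10(lr) values
x_obs = rng.uniform(-5.0, -2.0, size=5)            # initial random design
y_obs = np.array([toy_validation_score(x) for x in x_obs])

for _ in range(15):                                # evaluation budget
    offset = y_obs.mean()                          # center targets for the zero-mean GP
    mean, std = gp_posterior(x_obs, y_obs - offset, grid)
    ei = expected_improvement(mean, std, (y_obs - offset).max())
    x_next = grid[np.argmax(ei)]                   # most promising candidate
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, toy_validation_score(x_next))

best_log_lr = x_obs[np.argmax(y_obs)]
print(f"best log10(lr) = {best_log_lr:.2f}, score = {y_obs.max():.3f}")
```

In practice a library such as those listed in Table 3 would also fit the kernel hyperparameters at each iteration and handle mixed (categorical, integer) search spaces; both are omitted here for brevity.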

Protocol 2: Feature Adaptive Bayesian Optimization (FABO) for Molecular Representations

The Feature Adaptive Bayesian Optimization (FABO) framework addresses the critical challenge of molecular representation selection in Bayesian optimization, which significantly impacts optimization efficiency [8].

Procedure:

Comprehensive Feature Initialization:

- Begin with a complete, high-dimensional representation of molecules, incorporating both chemical (e.g., RACs, molecular fingerprints) and geometric features [8].

- For metal-organic frameworks (MOFs), include pore geometry characteristics alongside chemical descriptors.

Integrated Feature Selection:

- At each Bayesian optimization cycle, apply a feature selection method (e.g., mRMR or Spearman ranking) to the data acquired so far to identify the most relevant features [8].

- Dynamically adapt the molecular representation throughout optimization cycles based on selected features.

Task-Specific Representation Learning:

Validation of Adapted Representations:

- Compare automatically selected features with chemical intuition for known tasks to validate the adaptive process.

- Assess optimization performance against fixed-representation baselines to quantify improvement.

Diagram 2: Feature Adaptive Bayesian Optimization (FABO) Workflow. This enhanced framework dynamically selects the most informative molecular features during optimization, improving efficiency, especially for novel molecular optimization tasks where optimal representations are unknown.

Applications in Molecular Property Prediction and Drug Discovery

Bayesian optimization has demonstrated significant impact across various molecular informatics applications:

Molecular Property Prediction: In predicting key pharmaceutical properties including water solubility, lipophilicity, hydration energy, electronic properties, blood-brain barrier permeability, and inhibition constants, Bayesian optimization combined with dynamic batch size tuning yielded state-of-the-art performance [3]. The approach consistently outperformed traditional methods in both accuracy and computational efficiency.

Toxicity Prediction: For Tox21 and ClinTox datasets, Bayesian active learning approaches achieved equivalent toxic compound identification with 50% fewer iterations compared to conventional methods [19]. This demonstrates the method's particular advantage in data-efficient scenarios common early in drug discovery.

Molecular Optimization and Materials Discovery: Bayesian optimization guides the discovery of high-performing molecules and materials by efficiently navigating complex chemical spaces [8]. The FABO framework has successfully identified optimal metal-organic frameworks (MOFs) for specific applications like CO2 adsorption and electronic band gap optimization [8].

Experimental Design in Drug Discovery: Bayesian experimental design formalizes compound selection by modeling uncertainties in predictions and using acquisition functions like Bayesian Active Learning by Disagreement (BALD) and Expected Predictive Information Gain (EPIG) to prioritize the most informative samples for experimental testing [19].

Traditional grid and random search methods fall short in molecular property prediction due to their computational inefficiency, poor scalability with dimensionality, and inability to learn from previous evaluations. Bayesian optimization addresses these limitations through probabilistic modeling and intelligent decision-making, significantly accelerating hyperparameter optimization while achieving superior model performance.

The experimental protocols presented herein provide researchers with practical frameworks for implementing Bayesian optimization in molecular informatics workflows. By adopting these advanced optimization techniques, drug discovery scientists and computational chemists can enhance the predictive accuracy of their models while making more efficient use of valuable computational resources, ultimately accelerating the journey from molecular design to viable therapeutic candidates.

Establishing the Bayesian Optimization Workflow for Molecular Data

Bayesian optimization (BO) is a powerful, sample-efficient strategy for the global optimization of expensive black-box functions. In molecular discovery, where evaluating properties through experiments or simulations is costly and time-consuming, BO provides a principled framework to navigate complex chemical spaces with minimal resources [2]. Its effectiveness hinges on a core workflow: using a probabilistic surrogate model to approximate the unknown objective function and an acquisition function to intelligently guide the selection of subsequent experiments by balancing exploration and exploitation [8] [2]. This application note details the establishment of a robust BO workflow, specifically tailored for molecular property prediction and optimization, providing researchers with detailed protocols and key considerations.

Core Workflow and Components

The standard Bayesian optimization cycle for molecular data involves four key iterative steps, as illustrated below.

Molecular Representation

The first critical step is converting molecular structures into numerical feature vectors. The choice of representation significantly influences BO performance by affecting the surrogate model's ability to learn meaningful patterns [8]. The table below summarizes common molecular representations used in BO pipelines.

Table 1: Common Molecular Representations for Bayesian Optimization

| Representation | Description | Key Advantages | Considerations |

|---|---|---|---|

| Morgan Fingerprints [20] | Bit vectors encoding molecular substructures within a specified radius around each atom. | Computationally efficient; works well with the Tanimoto kernel [20] | Can be high-dimensional |

| Revised Autocorrelation Calculations (RACs) [8] | Captures chemical nature by relating atomic properties (e.g., electronegativity) across the molecular graph. | Provides chemically intuitive descriptors; effective for complex materials like MOFs [8] | Requires a graph representation of the material |

| mRMR-Selected Features [8] | A subset of features chosen by the Maximum Relevancy Minimum Redundancy algorithm during BO. | Dynamically adapts to the task; improves compactness and efficiency [8] | Requires an initial, complete feature set |

| LLM-Generated Embeddings [21] | Dense vector representations generated by a Large Language Model pre-trained on chemical data. | Leverages vast prior knowledge; can improve sample efficiency [21] | Performance depends on the LLM's pre-training data |

Surrogate Modeling with Gaussian Processes

The Gaussian Process (GP) is the most widely used surrogate model in BO due to its flexibility and native uncertainty quantification [22] [2]. A GP defines a distribution over functions, fully described by a mean function ( m(\mathbf{x}) ) and a covariance kernel function ( k(\mathbf{x}, \mathbf{x}') ):

[ f(\mathbf{x}) \sim \mathcal{GP}(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')) ]

Given a dataset ( \mathcal{D}_n = \{(\mathbf{x}_i, y_i)\}_{i=1}^{n} ), the posterior predictive distribution at a new point ( \mathbf{x} ) is Gaussian with closed-form mean ( \mu_n(\mathbf{x}) ) and variance ( \sigma_n^2(\mathbf{x}) ) [22]:

[ \mu_n(\mathbf{x}) = \mathbf{k}_n(\mathbf{x})^\top (K_n + \sigma^2 I)^{-1} \mathbf{y} ]

[ \sigma_n^2(\mathbf{x}) = k(\mathbf{x}, \mathbf{x}) - \mathbf{k}_n(\mathbf{x})^\top (K_n + \sigma^2 I)^{-1} \mathbf{k}_n(\mathbf{x}) ]

The kernel function is critical; for molecular fingerprints, the Tanimoto kernel has been shown to be a high-performing choice [20].
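The posterior mean and variance can be computed directly with a few lines of linear algebra. The sketch below assumes a zero prior mean (as the closed-form expressions do), binary fingerprints, and a fixed noise variance; the 8-bit fingerprints and property values are made up for illustration.

```python
import numpy as np

def tanimoto_kernel(A, B):
    """Tanimoto similarity between rows of two binary fingerprint matrices."""
    inter = A @ B.T                                   # shared on-bits
    union = A.sum(axis=1)[:, None] + B.sum(axis=1)[None, :] - inter
    # Define the similarity of two all-zero fingerprints as 1
    return np.where(union > 0, inter / np.maximum(union, 1), 1.0)

def gp_posterior(X_train, y_train, X_query, noise_var=1e-2):
    """Posterior mean and variance of a zero-mean GP at X_query."""
    A = tanimoto_kernel(X_train, X_train) + noise_var * np.eye(len(X_train))
    k_star = tanimoto_kernel(X_train, X_query)        # shape (n, m)
    mean = k_star.T @ np.linalg.solve(A, y_train)
    prior_var = np.diag(tanimoto_kernel(X_query, X_query))
    var = prior_var - np.sum(k_star * np.linalg.solve(A, k_star), axis=0)
    return mean, var

# Hypothetical 8-bit fingerprints and property values
X_train = np.array([[1, 1, 0, 0, 1, 0, 0, 0],
                    [0, 1, 1, 0, 0, 1, 0, 0],
                    [0, 0, 0, 1, 0, 0, 1, 1]], dtype=float)
y_train = np.array([0.2, 0.5, 0.9])
X_query = np.array([[1, 1, 0, 0, 1, 0, 0, 0],   # identical to the first training molecule
                    [0, 0, 0, 0, 0, 1, 1, 0]],  # only weakly similar to any training molecule
                   dtype=float)

mean, var = gp_posterior(X_train, y_train, X_query)
print("mean:", mean.round(3), "variance:", var.round(3))
```

The query that duplicates a training molecule receives a variance close to the noise level, while the dissimilar query keeps most of its prior variance; this difference is what the acquisition function exploits.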

Acquisition Functions

The acquisition function ( \alpha(\mathbf{x}) ) uses the surrogate's posterior to score the utility of evaluating a candidate ( \mathbf{x} ). The following diagram illustrates how different functions balance exploration and exploitation.

Table 2: Common Acquisition Functions for Molecular Optimization

| Function | Formula | Use Case |

|---|---|---|

| Expected Improvement (EI) [8] | ( EI(\mathbf{x}) = \mathbb{E}[\max(0, f_{min} - Y)] ) | General-purpose optimization for finding minima/maxima. |

| Upper Confidence Bound (UCB) [8] | ( UCB(\mathbf{x}) = \mu(\mathbf{x}) + \kappa \sigma(\mathbf{x}) ) | Explicit control of exploration (κ) vs. exploitation. |

| Target-oriented EI (t-EI) [23] | ( t\text{-}EI(\mathbf{x}) = \mathbb{E}[\max(0, \lvert y_{t.min} - t \rvert - \lvert Y - t \rvert)] ) | Finding materials with a specific target property value ( t ). |

| EHVI (Multi-objective) [22] | ( EHVI(\mathbf{x}) = \mathbb{E}[HVI(Y, \mathcal{P})] ) | Optimizing multiple competing objectives simultaneously. |
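The difference between standard EI and target-oriented EI is easiest to see numerically. The following sketch estimates both expectations by Monte Carlo sampling from a Gaussian posterior; the band-gap target of 1.5 eV, the observed values, and the two candidate predictions are hypothetical.

```python
import numpy as np

def mc_expected_improvement(mu, sigma, f_min, n_samples=20000, seed=0):
    """Monte Carlo estimate of E[max(0, f_min - Y)] (minimization form)."""
    y = np.random.default_rng(seed).normal(mu, sigma, size=n_samples)
    return float(np.maximum(0.0, f_min - y).mean())

def mc_target_ei(mu, sigma, y_closest, target, n_samples=20000, seed=0):
    """Monte Carlo estimate of E[max(0, |y_closest - t| - |Y - t|)]."""
    y = np.random.default_rng(seed).normal(mu, sigma, size=n_samples)
    gain = abs(y_closest - target) - np.abs(y - target)
    return float(np.maximum(0.0, gain).mean())

target = 1.5        # desired band gap in eV (assumed)
y_closest = 1.8     # observed value closest to the target so far (assumed)
f_min = 1.8         # smallest observed value so far (assumed)

cand_a = (1.5, 0.1)  # predicted to sit on the target
cand_b = (0.8, 0.1)  # predicted far below the target

tei_a = mc_target_ei(*cand_a, y_closest, target)
tei_b = mc_target_ei(*cand_b, y_closest, target)
ei_a = mc_expected_improvement(*cand_a, f_min)
ei_b = mc_expected_improvement(*cand_b, f_min)
print(f"t-EI: A={tei_a:.3f}, B={tei_b:.3f} | EI: A={ei_a:.3f}, B={ei_b:.3f}")
```

Plain EI in its minimization form rewards candidate B for being small, whereas t-EI rewards candidate A for being close to the target, which is the behavior wanted when a specific property value is sought.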

Advanced BO Strategies and Protocols

Feature Adaptive Bayesian Optimization (FABO)

For novel optimization tasks where the optimal molecular representation is unknown a priori, the FABO framework dynamically identifies the most informative features during the BO process [8].

Protocol: Implementing FABO

- Initialization: Start with a complete, high-dimensional representation of the molecule (e.g., a large set of RACs and geometric descriptors).

- Iteration Cycle: At each BO cycle:

a. Feature Selection: Using only the data acquired so far, apply a feature selection method.

- mRMR: Selects features that have maximum relevance to the target property while maintaining minimum redundancy among themselves [8].

- Spearman Ranking: A univariate method that ranks features by the absolute value of their Spearman rank correlation coefficient with the target [8].

b. Model Fitting: Fit the GP surrogate model using the adaptively selected, lower-dimensional feature set.

c. Candidate Proposal: Use an acquisition function on the updated model to propose the next experiment.
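The Spearman-ranking variant of the feature selection step can be sketched in a few lines. This is an illustrative implementation, not the FABO authors' code: ranks are computed by sorting (ties are broken by position, which is a simplification), and the synthetic data, in which the target depends only on features 2 and 4, is an assumption of the example.

```python
import numpy as np

def ordinal_rank(values):
    """Ranks 0..n-1; ties are broken by position (a simplification)."""
    order = np.argsort(values, kind="stable")
    ranks = np.empty(len(values), dtype=float)
    ranks[order] = np.arange(len(values))
    return ranks

def spearman_select(X, y, k):
    """Return indices of the k features with the largest |Spearman rho|."""
    y_rank = ordinal_rank(y)
    scores = np.array([
        abs(np.corrcoef(ordinal_rank(X[:, j]), y_rank)[0, 1])
        for j in range(X.shape[1])
    ])
    top = np.argsort(-scores)[:k]
    return top, scores

# Synthetic data: the target depends (non-linearly) on features 2 and 4 only
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 6))
y = np.exp(X[:, 2]) - 1.5 * X[:, 4]

top, scores = spearman_select(X, y, k=2)
print("selected features:", sorted(top.tolist()))
print("scores:", scores.round(2))
```

Because the method is rank-based, it picks up the monotone but non-linear dependence on feature 2; being univariate, however, it cannot account for redundancy between features, which is the gap mRMR addresses.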

Multi-Fidelity Bayesian Optimization

This approach leverages experimental assays of differing costs and fidelities (e.g., docking scores → single-point inhibition → dose-response IC50) to maximize the use of a limited budget [20].

Protocol: Multifidelity BO for Drug Discovery

- Define Fidelities and Costs:

- Low-fidelity: Docking score (Cost: 0.01 units).

- Medium-fidelity: Single-point percent inhibition (Cost: 0.2 units).

- High-fidelity: Dose-response IC50 (Cost: 1.0 unit) [20].

- Set Budget: Allocate a total cost budget per iteration (e.g., 10.0 units) [20].

- Model and Select: Use a multi-task GP to model all fidelities jointly. The acquisition function (e.g., Expected Improvement with Targeted Variance Reduction) selects the next (molecule, fidelity) pair that maximizes the expected improvement per unit cost at the high-fidelity level [20].
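The "improvement per unit cost" idea can be illustrated with a simple greedy allocation. The sketch below is not the acquisition function from [20]; it assumes that a benefit estimate for each (molecule, fidelity) pair is already available (the numbers are hypothetical), and simply ranks pairs by benefit divided by cost until the budget is spent. The cost values follow the protocol above.

```python
# Assay costs from the protocol above (arbitrary units)
COSTS = {"docking": 0.01, "single_point": 0.2, "dose_response": 1.0}

def select_batch(benefit, budget):
    """Greedily pick (molecule, fidelity) pairs by benefit per unit cost."""
    ranked = sorted(
        benefit,
        key=lambda pair: benefit[pair] / COSTS[pair[1]],
        reverse=True,
    )
    chosen, spent = [], 0.0
    for mol, fid in ranked:
        if spent + COSTS[fid] <= budget + 1e-12:   # skip pairs that do not fit
            chosen.append((mol, fid))
            spent += COSTS[fid]
    return chosen, spent

# Hypothetical expected gains at the high-fidelity level
benefit = {
    ("mol_A", "docking"): 0.002,
    ("mol_A", "dose_response"): 0.30,
    ("mol_B", "single_point"): 0.05,
    ("mol_B", "dose_response"): 0.12,
    ("mol_C", "docking"): 0.0005,
}

chosen, spent = select_batch(benefit, budget=1.25)
print(chosen, round(spent, 2))
```

Note how the cheap docking evaluations are still selected after the expensive assay, because their cost is negligible relative to the remaining budget.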

Multi-Objective Bayesian Optimization

Molecular discovery requires balancing multiple properties. Pareto-based MOBO directly seeks a set of non-dominated solutions.

Protocol: Pareto-based MOBO with EHVI

- Define Objectives: Specify the multiple properties to optimize (e.g., potency, solubility, synthetic accessibility).

- Surrogate Modeling: Model each objective with a separate GP, or use a multi-output GP.

- Acquisition: Use the Expected Hypervolume Improvement (EHVI) acquisition function. EHVI quantifies the expected increase in the volume of objective space that is dominated by the current Pareto front after evaluating a new candidate [22].

- Selection: Choose the candidate that maximizes EHVI. This approach has been shown to outperform simple scalarization (weighted sum of objectives), especially in low-data regimes, by providing better Pareto front coverage and chemical diversity [22].
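For two objectives, the quantities in this protocol are simple enough to compute by hand. The sketch below, written under the assumption that both objectives are maximized and that predictions for the two objectives are independent Gaussians, extracts the Pareto front, computes the dominated hypervolume relative to a reference point, and estimates EHVI by Monte Carlo sampling. The observed points and candidate predictions are hypothetical.

```python
import numpy as np

def pareto_front(points):
    """Return the non-dominated rows of `points` (all objectives maximized)."""
    points = np.asarray(points, dtype=float)
    keep = []
    for i, p in enumerate(points):
        others = np.delete(points, i, axis=0)
        dominated = np.any(np.all(others >= p, axis=1) & np.any(others > p, axis=1))
        if not dominated:
            keep.append(p)
    return np.array(keep)

def hypervolume_2d(points, ref):
    """Area dominated by 2-D `points` and bounded below by `ref`."""
    pts = np.maximum(np.asarray(points, dtype=float), ref)   # clip to the reference box
    pts = pts[np.argsort(-pts[:, 0], kind="stable")]         # objective 1, descending
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:                                       # dominated points add nothing
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area

def mc_ehvi(mean, std, observed, ref, n_samples=2000, seed=0):
    """Monte Carlo estimate of the expected hypervolume improvement."""
    rng = np.random.default_rng(seed)
    base = hypervolume_2d(observed, ref)
    draws = rng.normal(mean, std, size=(n_samples, 2))
    gains = [hypervolume_2d(np.vstack([observed, d]), ref) - base for d in draws]
    return float(np.mean(gains))

# Hypothetical (potency, solubility) scores, both scaled to [0, 1]
observed = np.array([[0.2, 0.9], [0.6, 0.6], [0.9, 0.1], [0.4, 0.4]])
ref = np.array([0.0, 0.0])

front = pareto_front(observed)
hv = hypervolume_2d(observed, ref)
ehvi_good = mc_ehvi([0.8, 0.7], 0.05, observed, ref)   # likely to extend the front
ehvi_poor = mc_ehvi([0.3, 0.3], 0.01, observed, ref)   # likely to be dominated
print(len(front), round(hv, 3), round(ehvi_good, 3), round(ehvi_poor, 3))
```

For more than two objectives or for batch selection, exact and gradient-based EHVI implementations in dedicated libraries are preferable to this brute-force estimate.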

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Description | Example Use in BO Workflow |

|---|---|---|

| QMOF Database [8] | A database of over 8,000 MOFs with computed electronic properties. | Source of initial data and a search space for optimizing electronic properties like band gap. |

| CoRE-MOF Database [8] | A database of thousands of MOF structures. | Used as a search space for optimizing gas adsorption properties (e.g., CO2 uptake). |

| Tox21 & ClinTox Datasets [19] | Public benchmarks for computational toxicology, containing chemical compounds with toxicity labels. | Used to benchmark active learning and BO models for predicting molecular toxicity. |

| MolBERT / pretrained LLMs [19] [21] | Transformer models pre-trained on large molecular corpora. | Used to generate high-quality molecular representations (embeddings) for the surrogate model. |

| Gaussian Process (GP) Model | The core probabilistic surrogate model for BO. | Models the relationship between molecular representation and target property. |

| mRMR Python Package [8] | A software implementation of the Maximum Relevancy Minimum Redundancy feature selection algorithm. | Integrated into the FABO framework for dynamic feature selection. |

| Bayesian Optimization Software (e.g., BoTorch, GPyOpt) | Libraries providing implementations of BO loops, GPs, and acquisition functions. | Used to build and execute the end-to-end BO workflow. |

Implementing Adaptive Bayesian Optimization for Molecular Representations

Framing Molecular Property Prediction as a Bayesian Optimization Problem

Molecular property prediction is a critical task in fields such as drug discovery and materials science. However, optimizing these properties presents significant challenges due to the vastness of chemical space, the high cost of experiments or simulations, and the complex, often non-linear relationships between molecular structures and their target properties. Bayesian Optimization (BO) has emerged as a powerful, sample-efficient framework for navigating these complex search spaces. This framework is particularly valuable when function evaluations—such as experimental measurements or detailed simulations—are expensive. By leveraging probabilistic surrogate models and intelligent acquisition functions, BO can guide the search for optimal molecules with desired properties while minimizing the number of required evaluations.

This document provides application notes and detailed protocols for implementing BO in molecular property prediction and optimization. It is structured for researchers and development professionals who aim to integrate these methods into their molecular discovery pipelines.

Theoretical Foundations

The Bayesian Optimization Cycle

Bayesian Optimization is a sequential design strategy for optimizing black-box functions that are expensive to evaluate. In the context of molecular property prediction, the goal is to find a molecule ( m^* ) that maximizes a target property ( F(m) ) from a large set of candidate molecules [24]: [ m^* = \arg \max_{m \in \mathcal{M}} F(m) ]

The BO process relies on two core components:

- A probabilistic surrogate model that approximates the unknown objective function ( F(m) ) and provides uncertainty estimates. Gaussian Processes (GPs) are a common choice due to their strong uncertainty quantification capabilities [8] [4].

- An acquisition function that uses the surrogate's predictions to decide which molecule to evaluate next by balancing exploration (sampling uncertain regions) and exploitation (sampling regions with high predicted performance) [8] [24].

Common acquisition functions include Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI) [24].

The Critical Role of Molecular Representation

A crucial aspect of applying BO to molecular problems is how molecules are converted into a numerical feature vector, or representation. The choice of representation significantly influences the efficiency of the optimization process [8]. An ideal representation must balance completeness (capturing relevant chemical information) and compactness (low dimensionality to avoid the "curse of dimensionality") [8]. High-dimensional representations can lead to poor BO performance, while overly simplified representations may miss key features governing the property of interest. This challenge has led to the development of adaptive and task-aware representation methods, which are discussed in later sections.

Key Methodologies and Experimental Protocols

This section details specific BO frameworks and provides protocols for their implementation.

Feature Adaptive Bayesian Optimization (FABO)

The FABO framework addresses the representation challenge by dynamically identifying the most informative features during the BO cycles [8].

Workflow and Protocol

The following diagram illustrates the closed-loop FABO workflow, which integrates feature selection directly into the optimization cycle.

Protocol Steps:

- Initialization: Begin with a small, initial dataset of molecules with known property values. Start with a complete, high-dimensional representation for each molecule (e.g., including both chemical and geometric features).

- Data Labeling: Perform an expensive experiment or simulation (e.g., measuring CO2 uptake or calculating an electronic band gap) to obtain the target property value for one or more molecules [8].

- Feature Selection: Using only the data acquired during the BO campaign, perform feature selection to refine the molecular representation. The FABO study used two methods:

- Maximum Relevancy Minimum Redundancy (mRMR): Selects features that have high relevance to the target variable while minimizing redundancy among themselves [8]. The score for a candidate feature ( d_i ) is calculated as:

Relevance(d_i, y) - Redundancy(d_i, {d_j, d_k, ...})

where Relevance is computed using the F-statistic.

- Spearman Ranking: A univariate method that ranks features based on the absolute value of their Spearman rank correlation coefficient with the target variable [8].

- Model Update: Update the Gaussian Process surrogate model using the accumulated labeled data and the newly adapted, lower-dimensional feature representation.

- Candidate Selection: Using an acquisition function (e.g., EI or UCB), select the next most promising molecule to evaluate from the pool of unlabeled candidates [8].

- Iteration: Repeat steps 2-5 until a stopping criterion is met (e.g., evaluation budget exhausted or a performance target is achieved).
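The mRMR step of this protocol can be sketched as a greedy loop. Several details below are assumptions of the sketch rather than statements about the FABO implementation: redundancy is taken as the mean absolute Pearson correlation with the features already selected, and the F-statistic relevance is rescaled by its maximum so that the two terms of the difference are on comparable scales. The synthetic data contains one near-duplicate feature to show the effect of the redundancy penalty.

```python
import numpy as np

def f_statistic(x, y):
    """F-statistic of a univariate linear regression of y on x."""
    r = np.corrcoef(x, y)[0, 1]
    r2 = min(r * r, 1.0 - 1e-12)
    return r2 / (1.0 - r2) * (len(y) - 2)

def mrmr_select(X, y, k):
    """Greedy mRMR: maximize relevance minus redundancy at each step."""
    n_features = X.shape[1]
    relevance = np.array([f_statistic(X[:, j], y) for j in range(n_features)])
    relevance = relevance / relevance.max()          # rescaling (assumption)
    corr = np.abs(np.corrcoef(X, rowvar=False))      # feature-feature |Pearson|
    selected = [int(np.argmax(relevance))]
    while len(selected) < k:
        best_j, best_score = None, -np.inf
        for j in range(n_features):
            if j in selected:
                continue
            redundancy = corr[j, selected].mean()
            score = relevance[j] - redundancy
            if score > best_score:
                best_j, best_score = j, score
        selected.append(best_j)
    return selected

rng = np.random.default_rng(0)
n = 200
x0 = rng.normal(size=n)
x1 = x0 + 0.01 * rng.normal(size=n)   # near-duplicate of x0
x2 = rng.normal(size=n)               # independent, informative
x3 = rng.normal(size=n)               # pure noise
X = np.column_stack([x0, x1, x2, x3])
y = x0 + 0.8 * x2 + 0.1 * rng.normal(size=n)

selected = mrmr_select(X, y, k=2)
print("selected features:", selected)
```

A purely univariate ranking would pick the two near-duplicate features first; the redundancy term steers the second pick toward the independent informative feature instead.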

Application Notes

- Case Study Performance: FABO was benchmarked on optimizing Metal-Organic Frameworks (MOFs) for CO2 adsorption (at low and high pressure) and electronic band gap. In all cases, FABO effectively reduced feature space dimensionality and accelerated the discovery of top-performing materials, outperforming both random search and BO with fixed, expert-chosen representations [8].

- Interpretability: For known tasks (e.g., band gap optimization, which is heavily influenced by chemistry), FABO automatically identified features that aligned with human chemical intuition. This validates its utility for novel tasks where such prior knowledge is unavailable [8].

Molecular Descriptors with Actively Identified Subspaces (MolDAIS)

The MolDAIS framework adaptively identifies task-relevant subspaces within large libraries of precomputed molecular descriptors [24].

Workflow and Protocol

Protocol Steps:

- Featurization: Represent each molecule in the search space using a comprehensive library of molecular descriptors. These can range from simple atom counts to complex graph-derived or quantum-informed features [24].

- Surrogate Modeling with Sparse Priors: Train a Gaussian Process surrogate model using a sparsity-inducing prior, such as the Sparse Axis-Aligned Subspace (SAAS) prior. This prior actively suppresses the influence of irrelevant descriptors during inference [24].

- Subspace Identification: The model automatically learns a compact, property-relevant subspace of descriptors as new data is acquired.

- Candidate Selection & Evaluation: Use an acquisition function to select the next molecule for expensive evaluation. Update the dataset with the new measurement.

- Iteration: Repeat steps 2-4. The model iteratively revises its hypothesis about the relevant subspace, improving sample efficiency [24].

Application Notes

- Performance: MolDAIS has been shown to consistently outperform state-of-the-art methods based on molecular graphs, SMILES strings, and learned embeddings. It can identify near-optimal candidates from chemical libraries of over 100,000 molecules using fewer than 100 property evaluations [24].

- Scalability: For larger-scale applications, MolDAIS offers screening variants based on Mutual Information (MI) and the Maximal Information Coefficient (MIC) as faster alternatives to the full SAAS prior, while retaining adaptivity and interpretability [24].

Integrating Pretrained Models and Active Learning

Leveraging large-scale pretrained models as molecular feature encoders can significantly enhance BO performance, especially in low-data regimes common to drug discovery [19] [25].

Protocol: Bayesian Active Learning with a Pretrained Model

- Representation Learning: Obtain molecular representations using a model (e.g., a Transformer-based BERT model) that has been pretrained on a large corpus of unlabeled molecules (e.g., 1.26 million compounds) [19]. This step is performed once, before the active learning cycle.

- Initial Model Training: Train a Bayesian model (e.g., a Bayesian Neural Network or a GP with a Tanimoto kernel) on a small initial labeled dataset, using the fixed, pretrained representations as input [19].

- Bayesian Active Learning Cycle:

a. Uncertainty Estimation: Use the Bayesian model to predict the mean and uncertainty for all molecules in the unlabeled pool.

b. Acquisition: Rank the unlabeled molecules using a Bayesian acquisition function. The Bayesian Active Learning by Disagreement (BALD) function is a common choice; it favors points where predictions made under different plausible parameter settings disagree most, i.e., where a label would be most informative about the model parameters [19]:

BALD(x) = H[y|x, D] - E_{p(φ|D)}[H[y|x, φ]]

where H denotes entropy: the first term is the entropy of the posterior predictive distribution, and the second is the expected entropy of predictions made with individual parameter draws φ.

c. Labeling: Select the top-ranked molecule(s) for expensive experimental labeling.

d. Model Update: Retrain the Bayesian model on the augmented labeled dataset.

- Iteration: Repeat step 3 until the budget is exhausted.
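For a binary toxicity label, BALD can be estimated from a handful of posterior samples (for example, stochastic forward passes of a Bayesian neural network). The sketch below assumes such samples are already available as a matrix of predicted probabilities; the numbers are invented to contrast three typical cases.

```python
import numpy as np

def binary_entropy(p):
    """Entropy (in nats) of a Bernoulli distribution with parameter p."""
    p = np.clip(p, 1e-12, 1.0 - 1e-12)
    return -(p * np.log(p) + (1.0 - p) * np.log(1.0 - p))

def bald_scores(prob_samples):
    """prob_samples[s, i] = P(toxic) for molecule i under posterior draw s."""
    predictive_entropy = binary_entropy(prob_samples.mean(axis=0))
    expected_entropy = binary_entropy(prob_samples).mean(axis=0)
    return predictive_entropy - expected_entropy

prob_samples = np.array([
    # mol 0: every draw says 0.5 (inherently ambiguous)
    # mol 1: draws disagree strongly
    # mol 2: draws agree and are confident
    [0.50, 0.95, 0.99],
    [0.50, 0.05, 0.98],
    [0.50, 0.90, 0.99],
    [0.50, 0.10, 0.97],
])

scores = bald_scores(prob_samples)
next_index = int(np.argmax(scores))
print(scores.round(3), "-> label molecule", next_index)
```

Molecule 0 has maximal predictive entropy but a BALD score of zero, because all parameter draws agree; only molecule 1, where the draws disagree, is worth the labeling cost. This is the distinction between BALD and plain uncertainty sampling.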

Application Notes

- Efficiency Gains: This approach has been demonstrated to achieve equivalent toxic compound identification on the Tox21 and ClinTox datasets with 50% fewer iterations compared to conventional active learning that does not use pretrained representations [19].

- Uncertainty Quantification: Pretrained representations generate a structured embedding space that enables more reliable uncertainty estimation, which is critical for effective sample acquisition [19].

Performance Data and Benchmarking

The following tables summarize quantitative results from key studies cited in this document, providing a basis for comparing the performance of different BO approaches.

Table 1: Performance of Adaptive Representation Methods in Molecular Optimization

| Framework | Task Description | Key Result | Comparison Baseline |

|---|---|---|---|

| FABO [8] | MOF discovery for CO2 uptake & band gap | Outperformed random search and fixed-representation BO; automatically identified chemically intuitive features. | Fixed expert-chosen representations, Random Search |

| MolDAIS [24] | Single- and multi-objective molecular property optimization | Identified near-optimal candidates from >100k molecules in <100 evaluations; outperformed graph, string, and embedding-based methods. | State-of-the-art MPO baselines (Graphs, SMILES, Embeddings) |

Table 2: Performance of Pretrained Models in Bayesian Active Learning

| Method | Dataset | Key Result | Evaluation Metric |

|---|---|---|---|

| Pretrained BERT + BALD [19] | Tox21, ClinTox | Achieved equivalent task performance with 50% fewer iterations. | Iterations to target performance |

| CPBayesMPP (Contrastive Prior) [26] | Multiple MoleculeNet regression tasks | Improved prediction accuracy and uncertainty calibration; enhanced active learning efficiency. | RMSE, Uncertainty Calibration, OOD Detection |

| Exact vs. Compressed Fingerprints [4] | 5 DOCKSTRING targets | Exact fingerprints yielded small, consistent improvements in GP prediction accuracy (R² gains of 0.006 to 0.017). | R², MSE, MAE |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Representations for Molecular BO

| Tool / Resource | Type | Function in Bayesian Optimization | Example/Reference |

|---|---|---|---|

| Gaussian Process (GP) | Surrogate Model | Models the objective function and provides uncertainty estimates for acquisition. | [8] [4] [24] |

| Molecular Fingerprints (ECFP) | Fixed Representation | Creates a fixed-length vector representation of molecular structure for the surrogate model. | Extended Connectivity Fingerprints (ECFPs) [4] |

| Revised Autocorrelation Calculations (RACs) | Descriptor Set | Represents material chemistry by relating atomic properties across the crystal graph. | Used for MOF representation in FABO [8] |

| mRMR / Spearman Ranking | Feature Selection | Dynamically selects relevant features from a large pool during BO cycles. | Used in the FABO framework [8] |

| SAAS Prior | Bayesian Prior | Induces axis-aligned sparsity in the surrogate model to identify relevant feature subspaces. | Core component of the MolDAIS framework [24] |

| Pretrained Molecular Models (e.g., BERT, Graph Transformers) | Learned Representation | Provides high-quality, context-aware molecular features to improve surrogate models in low-data regimes. | MolBERT [19], SCAGE [25] |

| Epistemic Neural Networks (ENNs) | Surrogate Model | Enables scalable sampling from joint predictive distributions, facilitating efficient Batch BO. | Used for batch optimization of binding affinity [27] |

Molecular representations form the foundational layer upon which modern computational chemistry and drug discovery are built. Translating the intricate structure of a molecule into a numerical form that machine learning (ML) models can process is a critical first step in predicting molecular properties and optimizing candidate compounds [28] [29]. Within the specific context of implementing Bayesian optimization for molecular property prediction, the choice of representation is not merely a preprocessing step; it is a hyperparameter that directly influences the efficiency and success of the optimization campaign [30] [8]. An optimal representation captures the essential features relevant to the target property, enabling the Bayesian optimization algorithm to better model the structure-property relationship and navigate the complex molecular search space effectively [8].

Molecular representations broadly fall into two categories: molecular descriptors and molecular fingerprints. Descriptors are numerical values that capture specific physical, chemical, or topological properties of a molecule, ranging from simple atom counts to complex quantum mechanical calculations [31] [29]. Fingerprints, conversely, are typically binary or count vectors that encode the presence or absence of specific substructural patterns or atomic environments within the molecule, providing a holistic, albeit sometimes less interpretable, structural representation [28] [32]. The subsequent sections will dissect these representations, provide protocols for their generation, and demonstrate their application within a Bayesian optimization framework.

Classification and Application of Molecular Descriptors

Molecular descriptors can be systematically categorized based on the nature of the structural information they encode and their computational requirements. This categorization is crucial for selecting the right descriptor for a given task, especially when computational cost is a concern [29].

Table 1: Categorization of Key Molecular Descriptors

| Descriptor Class | Description | Example Descriptors | Required Input | Application in Property Prediction |
|---|---|---|---|---|
| Constitutional [31] [29] | Basic counts of atoms, bonds, and other molecular features. | Molecular Weight, Number of H-Bond Donors/Acceptors, Rotatable Bonds [33]. | 2D Structure | Lipinski's Rule of 5 for bioavailability [33]. |
| Topological [31] [29] | Graph-invariants derived from the molecular connectivity. | Wiener Index, Balaban Index, Topological Polar Surface Area (TPSA) [31]. | 2D Structure | Predicting boiling points, modeling polar interactions relevant to permeability [29]. |
| Geometric [31] [29] | Descriptors of the molecule's 3D shape and spatial properties. | Molecular Volume, Surface Area, Moment of Inertia [31] [29]. | 3D Conformation | Crucial for modeling ligand-receptor interactions and shape-based similarity [29]. |
| Electronic [31] | Properties related to the molecule's electron distribution. | Partial Charges, HOMO-LUMO Gap, Dipole Moment [31]. | 3D Conformation / QM Calculation | Predicting chemical reactivity and intermolecular interaction energies. |

The following workflow diagram outlines the general process for generating these different classes of descriptors from a molecular structure.

Protocol 1: Calculating Molecular Descriptors with RDKit

This protocol details the steps to compute a comprehensive set of molecular descriptors using the RDKit library in Python, a standard toolkit in cheminformatics [31].

Materials:

- Software: Python environment (e.g., Jupyter Notebook, Google Colab).

- Library: RDKit (pip install rdkit).

- Input: Molecular structures in SMILES format.

Procedure:

- Environment Setup: Import the necessary modules from RDKit.

- Molecule Initialization: Convert a SMILES string into an RDKit molecule object.

- 3D Coordinate Generation: For geometric descriptors, generate a 3D conformation.

- Descriptor Calculation: Compute descriptors individually or in batch.

- Batch Processing: For a list of molecules, iterate the calculation to create a dataset for model training.
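
The procedure above can be sketched as follows. This is a minimal illustration rather than a reference implementation: the function names (`compute_descriptors`, `passes_rule_of_five`, `batch_descriptors`) and the particular descriptors chosen are assumptions made for this example, and the Rule of 5 thresholds are the commonly quoted ones (molecular weight ≤ 500, logP ≤ 5, at most 5 H-bond donors and 10 acceptors).

```python
from rdkit import Chem
from rdkit.Chem import AllChem, Crippen, Descriptors, Lipinski, rdMolDescriptors


def compute_descriptors(smiles: str) -> dict:
    """Compute a few 2D and 3D descriptors for one SMILES string."""
    # Molecule initialization: MolFromSmiles returns None on a parse failure.
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")

    # Constitutional and topological descriptors need only the 2D graph.
    desc = {
        "MolWt": Descriptors.MolWt(mol),
        "LogP": Crippen.MolLogP(mol),
        "HBD": Lipinski.NumHDonors(mol),
        "HBA": Lipinski.NumHAcceptors(mol),
        "RotatableBonds": Lipinski.NumRotatableBonds(mol),
        "TPSA": rdMolDescriptors.CalcTPSA(mol),
    }

    # Geometric descriptors need a 3D conformer: add hydrogens, embed, relax.
    mol3d = Chem.AddHs(mol)
    if AllChem.EmbedMolecule(mol3d, randomSeed=42) == 0:  # -1 signals failure
        AllChem.MMFFOptimizeMolecule(mol3d)
        desc["RadiusOfGyration"] = rdMolDescriptors.CalcRadiusOfGyration(mol3d)
        desc["PMI1"] = rdMolDescriptors.CalcPMI1(mol3d)  # smallest principal moment
    return desc


def passes_rule_of_five(desc: dict) -> bool:
    """Check the four Lipinski criteria on a descriptor dictionary."""
    return (desc["MolWt"] <= 500 and desc["LogP"] <= 5
            and desc["HBD"] <= 5 and desc["HBA"] <= 10)


def batch_descriptors(smiles_list):
    """Batch processing: one descriptor dictionary per input molecule."""
    return [compute_descriptors(s) for s in smiles_list]
```

A call such as `batch_descriptors(["CCO", "c1ccccc1"])` returns a list of dictionaries that can be loaded into a table for model training. The 3D entries are simply absent when conformer embedding fails, so downstream code should treat them as optional.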

Engineering and Utilizing Molecular Fingerprints

Fingerprints provide a powerful alternative to predefined descriptors by algorithmically enumerating structural features from the molecule itself. The two primary types are structural keys and hashed fingerprints [28].

Structural Keys, such as the MACCS keys and PubChem fingerprints, use a predefined dictionary of structural fragments. Each bit in the fingerprint corresponds to a specific fragment; the bit is set to 1 if the fragment is present in the molecule and 0 otherwise [28]. Hashed Fingerprints, such as the Extended-Connectivity Fingerprints (ECFP), do not require a predefined library. Instead, they use a hashing algorithm to map the circular atomic neighborhoods found in the molecule, up to a given radius, into a fixed-length bit vector [28] [32]. ECFP is particularly renowned for its effectiveness in similarity searching and virtual screening.
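
To make the distinction concrete, the toy sketch below contrasts the two encodings. It is not how RDKit or any real fingerprint is implemented: the fragment labels are made-up strings, and `zlib.crc32` stands in for the fingerprint's hash function purely because it is deterministic.

```python
import zlib


def structural_key(fragments_present, dictionary):
    """One bit per dictionary entry: 1 if that fragment occurs in the molecule."""
    return [1 if frag in fragments_present else 0 for frag in dictionary]


def hashed_fingerprint(features, n_bits=16):
    """Fold arbitrary feature labels into a fixed-length bit vector.

    No dictionary is needed, but two different features can hash to the same
    position (a collision), which is the information loss hashing introduces.
    """
    bits = [0] * n_bits
    for feat in features:
        bits[zlib.crc32(feat.encode("utf-8")) % n_bits] = 1
    return bits


# Hypothetical fragment labels for a single molecule.
molecule_fragments = {"C=O", "OH", "aromatic ring"}
key = structural_key(molecule_fragments, ["C=O", "NH2", "OH"])
folded = hashed_fingerprint(molecule_fragments, n_bits=16)
```

Here `key` is `[1, 0, 1]`: the aromatic ring is lost because it is not in the dictionary. The hashed vector has a bit for every feature, but with a small `n_bits` several features may share one bit.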

Table 2: Comparison of Common Structural Fingerprints

| Fingerprint | Type | Length | Basis of Representation | Common Use Cases |
|---|---|---|---|---|
| MACCS Keys [28] | Structural Key | 166 / 960 bits | Predefined SMARTS patterns. | Rapid similarity screening, molecular clustering. |
| PubChem Fingerprint [28] | Structural Key | 881 bits | Predefined substructure list. | Similarity searching in PubChem database. |
| ECFP (e.g., ECFP4) [32] | Hashed (Circular) | Configurable (e.g., 1024, 2048) | Circular atom environments up to radius 2. | Machine learning, structure-activity modeling, virtual screening. |
| RDKit Topological Fingerprint [34] | Hashed (Path-based) | Configurable | Enumeration of linear and branched subgraphs. | General-purpose similarity and machine learning. |

The generation of a hashed fingerprint like ECFP involves an iterative process of characterizing atomic environments, as shown below.

Protocol 2: Generating Extended-Connectivity Fingerprints (ECFP)

This protocol outlines the steps to create ECFP representations, which are a cornerstone for modern molecular machine learning [32].

Materials:

- Software: Python environment.

- Library: RDKit.

- Input: Molecular structures in SMILES format.

Procedure:

- Molecule Initialization: Convert a SMILES string to an RDKit molecule object.

- ECFP Generation: Use RDKit's GetMorganFingerprintAsBitVect function. The key parameters are the radius (often 2 for ECFP4) and the final vector length.

- Vector Conversion: Convert the fingerprint object into a numpy array or list for use in ML models.

- Advanced Consideration - Sort & Slice: To avoid information loss from hashing collisions, a "Sort & Slice" method can be employed. This involves generating a long, unfolded fingerprint, ranking the features by their frequency across a training set, and then selecting the top L most frequent features to form a collision-free vector [32].
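
A minimal sketch of this protocol follows. Two choices here are assumptions of the example rather than part of the protocol: it uses RDKit's `rdFingerprintGenerator` interface, which recent RDKit releases recommend in place of `GetMorganFingerprintAsBitVect`, and the helper names (`ecfp_bits`, `unfolded_features`, `sort_and_slice`) are invented for illustration. The Sort & Slice part follows the verbal description above and is not taken from the cited implementation [32].

```python
from collections import Counter

import numpy as np
from rdkit import Chem
from rdkit.Chem import rdFingerprintGenerator


def _mol(smiles):
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    return mol


def ecfp_bits(smiles, radius=2, n_bits=2048):
    """Folded Morgan bit vector (radius 2 corresponds to ECFP4) as a NumPy array."""
    gen = rdFingerprintGenerator.GetMorganGenerator(radius=radius, fpSize=n_bits)
    return gen.GetFingerprintAsNumPy(_mol(smiles))


def unfolded_features(smiles, radius=2):
    """Set of unhashed-to-length Morgan feature identifiers for one molecule."""
    gen = rdFingerprintGenerator.GetMorganGenerator(radius=radius)
    return set(gen.GetSparseCountFingerprint(_mol(smiles)).GetNonzeroElements())


def sort_and_slice(train_smiles, L, radius=2):
    """Build a featurizer that keeps the L most frequent training-set features."""
    counts = Counter()
    for s in train_smiles:
        counts.update(unfolded_features(s, radius))  # count molecules per feature
    # Rank by frequency (descending); break ties by identifier for determinism.
    ranked = sorted(counts, key=lambda f: (-counts[f], f))
    vocab = {f: i for i, f in enumerate(ranked[:L])}

    def featurize(smiles):
        vec = np.zeros(len(vocab), dtype=np.uint8)
        for f in unfolded_features(smiles, radius):
            if f in vocab:  # features outside the vocabulary are dropped
                vec[vocab[f]] = 1
        return vec

    return featurize
```

In use, `ecfp_bits("CCO")` yields a 2048-dimensional 0/1 array, while `sort_and_slice(train_smiles, L=1024)` returns a function that maps any SMILES string to a vector in which each position corresponds to exactly one substructure identifier.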

Integrating Representations into Bayesian Optimization

Bayesian optimization (BO) is a powerful strategy for globally optimizing black-box functions that are expensive to evaluate, making it ideal for guiding molecular discovery where property assays or simulations are resource-intensive [8]. A critical challenge in BO is the curse of dimensionality; high-dimensional representations (like long fingerprint vectors) can severely hamper the performance of the Gaussian Process surrogate models typically used in BO [8].

The Feature Adaptive Bayesian Optimization (FABO) framework addresses this by dynamically selecting the most relevant features for the optimization task at each BO cycle [8]. FABO starts with a full, high-dimensional feature set (e.g., a concatenation of multiple fingerprints and descriptors) and uses feature selection algorithms like Maximum Relevancy Minimum Redundancy (mRMR) or Spearman ranking to identify and use only the most informative features for the surrogate model, thus creating a compact, task-specific representation [8].

Protocol 3: Implementing Feature Adaptive Bayesian Optimization (FABO)

This protocol describes the steps for setting up a FABO campaign for a molecular optimization task, such as maximizing CO₂ uptake in Metal-Organic Frameworks (MOFs) or optimizing the solubility of organic molecules [8].

Materials:

- Software: Python with Bayesian optimization libraries (e.g., Scikit-Optimize, GPyOpt) and feature selection libraries (e.g., mrmr).

- Input: A database or search space of molecules, each represented by a comprehensive set of initial features (e.g., ECFP, MACCS, geometric descriptors).

Procedure:

- Problem Formulation:

- Search Space: Define the pool of candidate molecules (e.g., a database of 10,000 MOFs).

- Objective Function: Define the property to optimize (e.g., CO₂ uptake at 16 bar).

- Initial Feature Set: Represent each molecule with a comprehensive set of features (e.g., 500+ dimensions combining chemical and geometric descriptors [8]).

- Initialization: Select a small, random set of molecules from the search space (e.g., 5-10) and evaluate their performance using the expensive objective function.

- FABO Cycle: Iterate until a budget (e.g., number of experiments) is exhausted:

- A. Feature Selection: Using only the data collected so far, apply a feature selection algorithm (e.g., mRMR) to the full feature set to select the top k most relevant features for the current task.

- B. Model Training: Train a Gaussian Process Regressor (GPR) surrogate model using the evaluated molecules, but only on the k selected features.

- C. Candidate Selection: Use an acquisition function (e.g., Expected Improvement, EI) on the surrogate model to propose the next molecule(s) for evaluation. The acquisition function balances exploration and exploitation.

- D. Evaluation: Evaluate the proposed molecule(s) using the expensive objective function and add the new data to the training set.

- Output: Return the best-performing molecule found during the optimization campaign.
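
The cycle can be sketched end to end with NumPy alone. This is an illustrative simplification under stated assumptions, not the published FABO code [8]: feature selection uses Spearman ranking (one of the two options named above) instead of mRMR, the Gaussian Process has a fixed RBF length scale and noise level rather than fitted hyperparameters, and all function names are hypothetical.

```python
import math

import numpy as np


def _avg_ranks(a):
    """Ranks with ties averaged, so constant features get zero correlation."""
    a = np.asarray(a, dtype=float)
    less = (a[:, None] > a[None, :]).sum(axis=1)
    equal = (a[:, None] == a[None, :]).sum(axis=1)
    return less + (equal - 1) / 2.0


def spearman_top_k(X, y, k):
    """Step A: indices of the k features with the largest |Spearman rho| to y."""
    yc = _avg_ranks(y)
    yc = yc - yc.mean()
    scores = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        xc = _avg_ranks(X[:, j])
        xc = xc - xc.mean()
        denom = math.sqrt(float((xc ** 2).sum() * (yc ** 2).sum()))
        scores[j] = abs(float((xc * yc).sum())) / denom if denom > 0 else 0.0
    return np.argsort(-scores, kind="stable")[:k]


def gp_posterior(X_tr, y_tr, X_new, length_scale, noise=1e-4):
    """Step B: posterior mean and std of an RBF GP with fixed hyperparameters."""
    def rbf(A, B):
        d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
        return np.exp(-0.5 * d2 / length_scale ** 2)

    y_mean, y_std = y_tr.mean(), (y_tr.std() or 1.0)
    L = np.linalg.cholesky(rbf(X_tr, X_tr) + noise * np.eye(len(X_tr)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, (y_tr - y_mean) / y_std))
    K_s = rbf(X_tr, X_new)
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - (v ** 2).sum(axis=0), 1e-12, None)
    return (K_s.T @ alpha) * y_std + y_mean, np.sqrt(var) * y_std


def expected_improvement(mu, sigma, best):
    """Step C: closed-form Expected Improvement for a maximization problem."""
    z = (mu - best) / sigma
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2 * math.pi)
    cdf = 0.5 * (1 + np.vectorize(math.erf, otypes=[float])(z / math.sqrt(2)))
    return (mu - best) * cdf + sigma * pdf


def fabo(X_full, objective, n_init=5, budget=20, k=5, seed=0):
    """Search a finite pool; objective(i) is the expensive score of member i."""
    rng = np.random.default_rng(seed)
    std = X_full.std(axis=0)
    Z_full = (X_full - X_full.mean(axis=0)) / np.where(std > 0, std, 1.0)
    evaluated = [int(i) for i in rng.choice(len(X_full), size=n_init, replace=False)]
    y = [objective(i) for i in evaluated]
    while len(evaluated) < budget:
        y_arr = np.array(y, dtype=float)
        feats = spearman_top_k(Z_full[evaluated], y_arr, k)          # A
        seen = set(evaluated)
        cand = np.array([i for i in range(len(X_full)) if i not in seen])
        mu, sigma = gp_posterior(Z_full[evaluated][:, feats], y_arr,
                                 Z_full[cand][:, feats], math.sqrt(k))  # B
        ei = expected_improvement(mu, sigma, y_arr.max())            # C
        nxt = int(cand[np.argmax(ei)])
        evaluated.append(nxt)                                        # D
        y.append(objective(nxt))
    best = int(np.argmax(y))
    return evaluated[best], y[best]
```

Note that the selected feature subset is recomputed on every pass through the loop, so the surrogate's input space can change as data accumulate. In a real campaign the GP hyperparameters would normally be refit each cycle, and `objective` would wrap the experiment or simulation.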

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software and Libraries for Molecular Representation and Optimization

| Tool / Reagent | Type | Primary Function | License |
|---|---|---|---|
| RDKit [28] [31] | Cheminformatics Library | Core functionality for molecule handling, descriptor calculation, and fingerprint generation. | Open-Source |
| KerasTuner / Optuna [30] | Hyperparameter Optimization Library | Tuning hyperparameters of deep learning models for molecular property prediction; supports Hyperband and Bayesian optimization. | Open-Source |
| MOE (Molecular Operating Environment) [35] | Integrated Software Suite | Comprehensive platform for molecular modeling, simulation, and QSAR, including descriptor calculation. | Commercial |
| Schrödinger Suite [35] | Integrated Software Suite | Advanced physics-based modeling, including FEP and molecular docking, for high-accuracy property prediction. | Commercial |
| DataWarrior [35] | Cheminformatics Software | Open-source program for data visualization, analysis, and descriptor calculation. | Open-Source |
| mRMR Python Package [8] | Feature Selection Library | Implements the Maximum Relevancy Minimum Redundancy algorithm for dynamic feature selection in frameworks like FABO. | Open-Source |

Dynamic Feature Selection with FABO and MolDAIS Frameworks

Dynamic Feature Selection (DFS) represents a paradigm shift from traditional static feature selection by adapting the selected feature subset to each individual sample. Within molecular property prediction, this approach is invaluable, as the most informative molecular descriptors or features for predicting a specific property can vary significantly from one compound to another. When combined with Bayesian optimization (BO) frameworks, DFS becomes a powerful tool for navigating complex molecular spaces, especially in the data-scarce scenarios common in early-stage drug discovery. This document details the application notes and experimental protocols for implementing DFS within two advanced Bayesian frameworks: Feature Adaptive Bayesian Optimization (FABO) and Molecular Descriptors with Actively Identified Subspaces (MolDAIS).

Theoretical Framework and Definitions

Dynamic Feature Selection (DFS)

DFS is a sample-adaptive process where features are selected sequentially based on the specific characteristics of each instance. Unlike classical methods that apply a uniform feature set, DFS customizes feature selection per sample. The core problem is formalized as follows: given an input feature vector x = (x1, …, xM) and a target label y, the goal is to design a policy that, starting with no features, progressively selects a small subset of features S from the complete set of M features to make an accurate prediction for y while minimizing the number of features acquired [36] [37].

A principled measure for selecting features in DFS is the Conditional Mutual Information (CMI), which quantifies the information a candidate feature xi provides about the target y given the currently observed features xS. It is defined as:

I(y; xi | xS) = H(y | xS) - H(y | xS, xi)

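where H denotes conditional entropy. Because H(y | xS) does not depend on the candidate feature, selecting the feature with the largest CMI is equivalent to selecting the one that leaves the smallest expected remaining uncertainty H(y | xS, xi) [37].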
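
As a worked example of the definition, the sketch below estimates CMI from empirical counts on a small discrete dataset. The helper names and the XOR toy data are assumptions made for illustration; practical DFS methods estimate these quantities with learned models rather than by counting [37].

```python
import math
from collections import Counter


def conditional_entropy(samples, target, given):
    """Empirical H(target | given) in bits; `given` is a list of feature names."""
    n = len(samples)
    joint = Counter((tuple(s[g] for g in given), s[target]) for s in samples)
    context = Counter(tuple(s[g] for g in given) for s in samples)
    return -sum(c / n * math.log2(c / context[ctx]) for (ctx, _), c in joint.items())


def cmi(samples, target, candidate, observed):
    """I(target; candidate | observed) = H(y | x_S) - H(y | x_S, x_i)."""
    return (conditional_entropy(samples, target, observed)
            - conditional_entropy(samples, target, observed + [candidate]))


# Toy data: y = x1 XOR x2, every input combination equally likely.
data = [{"x1": a, "x2": b, "y": a ^ b} for a in (0, 1) for b in (0, 1)]

alone = cmi(data, "y", "x1", [])          # x1 by itself says nothing about y
after_x1 = cmi(data, "y", "x2", ["x1"])   # x2 resolves y once x1 is known
```

On this dataset `alone` is 0 bits and `after_x1` is 1 bit, which shows why the value of a feature has to be judged conditionally on what has already been observed.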

Bayesian Optimization (BO) in Molecular Design

Bayesian Optimization is a sample-efficient framework for optimizing expensive black-box functions. In molecular design, the "function" is often a complex experimental outcome, such as binding affinity or toxicity. BO uses a surrogate model, typically a Gaussian Process (GP), to model the objective function and an acquisition function to guide the selection of which sample to evaluate next [22].
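
As a concrete instance of an acquisition function, Expected Improvement (EI) has a closed form when the surrogate is a GP. The notation below is introduced for this example and assumes a maximization problem: μ(x) and σ(x) are the posterior mean and standard deviation, f⁺ is the best objective value observed so far, and Φ and φ are the standard normal CDF and PDF.

```latex
\mathrm{EI}(x) =
\begin{cases}
  \bigl(\mu(x) - f^{+}\bigr)\,\Phi(z) + \sigma(x)\,\varphi(z), & \sigma(x) > 0,\\[4pt]
  \max\bigl(\mu(x) - f^{+},\, 0\bigr), & \sigma(x) = 0,
\end{cases}
\qquad
z = \frac{\mu(x) - f^{+}}{\sigma(x)}
```

The first term rewards candidates whose predicted mean already exceeds the incumbent (exploitation), and the second rewards candidates with large predictive uncertainty (exploration).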

For multi-objective problems common in drug discovery (e.g., balancing potency and safety), Multi-Objective Bayesian Optimization (MOBO) is used. Instead of scalarizing objectives, MOBO aims to discover the Pareto front—the set of optimal trade-off solutions [22].
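
The Pareto front of a finite set of evaluated candidates can be computed directly from the definition of dominance. The sketch below assumes every objective is to be maximized; the function name and the two-objective scores (read them as, say, potency and safety) are hypothetical.

```python
def pareto_front(points):
    """Return indices of non-dominated points (all objectives maximized)."""
    def dominates(a, b):
        # a dominates b if it is at least as good everywhere and better somewhere.
        return (all(x >= y for x, y in zip(a, b))
                and any(x > y for x, y in zip(a, b)))

    return [
        i for i, p in enumerate(points)
        if not any(dominates(q, p) for j, q in enumerate(points) if j != i)
    ]


# Hypothetical (objective 1, objective 2) scores for five candidates.
scores = [(0.9, 0.2), (0.7, 0.6), (0.4, 0.9), (0.5, 0.5), (0.3, 0.3)]
front = pareto_front(scores)
```

Here `front` is `[0, 1, 2]`: the last two candidates are each beaten on both objectives by `(0.7, 0.6)`, while the first three represent different trade-offs, none of which is strictly better than another.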

Featured Frameworks: FABO and MolDAIS

The MolDAIS Framework

MolDAIS (Molecular Descriptors with Actively Identified Subspaces) is a framework that adapts sparse axis-aligned subspace (SAAS) Bayesian optimization for use with libraries of molecular descriptors [38].

- Core Insight: Molecular properties typically depend on a handful of physicochemical features rather than complex combinations of all possible descriptors.