Optimizing Molecular Property Prediction: A Guide to Stochastic Gradient Descent in Drug Discovery



Abstract

Molecular property prediction is a cornerstone of modern drug discovery, yet it is frequently hampered by scarce and expensive experimental data. This article explores how Stochastic Gradient Descent (SGD) and its advanced variants serve as critical optimization engines to overcome these data limitations. We provide a foundational understanding of SGD's role, detail its application in cutting-edge multi-task and meta-learning architectures, and offer a practical guide to troubleshooting common optimization challenges like noise and convergence. Through a comparative analysis of validation strategies and performance benchmarks on real-world datasets, this article equips researchers and drug development professionals with the knowledge to build more accurate, efficient, and robust predictive models, ultimately accelerating the pace of AI-driven therapeutic development.

The Bedrock of Optimization: Why SGD is Fundamental to Molecular AI

Troubleshooting Guide: SGD for Molecular Property Prediction

This guide addresses common challenges researchers face when implementing Stochastic Gradient Descent (SGD) for molecular property prediction tasks.

Frequently Asked Questions

Q1: My model's loss is decreasing very slowly during training. What could be the issue?

A: Slow convergence is frequently tied to your learning rate configuration.

- Overly Small Learning Rate: A learning rate that is too small leads to tiny, inefficient steps toward the minimum [1]. Gradually increase the learning rate and monitor the loss curve.

- Inadequate Learning Rate Schedule: A fixed learning rate can be inefficient. Implement a learning rate schedule (e.g., time-based decay, step decay) to reduce the learning rate over time for more stable convergence [2] [1].

- High Gradient Variance: The inherent noise in SGD can cause oscillations that slow overall progress. Increasing your mini-batch size can average out the noise, leading to more stable and direct convergence [3] [4].
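The two schedules named above can be written as small pure functions. This is a minimal sketch: the function names and the parameters `decay`, `drop`, and `epochs_per_drop` are illustrative assumptions, not taken from any particular library.

```python
def time_based_decay(lr0: float, decay: float, epoch: int) -> float:
    """Time-based decay: lr = lr0 / (1 + decay * epoch)."""
    return lr0 / (1.0 + decay * epoch)


def step_decay(lr0: float, drop: float, epochs_per_drop: int, epoch: int) -> float:
    """Step decay: multiply the rate by `drop` once every `epochs_per_drop` epochs."""
    return lr0 * (drop ** (epoch // epochs_per_drop))


# Print both schedules side by side for a few epochs.
for epoch in (0, 10, 20, 30):
    print(epoch, time_based_decay(0.1, 0.05, epoch), step_decay(0.1, 0.5, 10, epoch))
```

Time-based decay shrinks the rate smoothly, while step decay holds it constant between drops. Which one suits a given molecular dataset is an empirical question, best settled on the validation loss curve.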

Q2: The training loss is oscillating wildly and will not stabilize. How can I fix this?

A: Oscillation is a classic symptom of a learning rate that is too high or a batch size that is too small.

- Learning Rate Too High: A large learning rate causes the algorithm to overshoot the minimum [1]. Reduce the learning rate until the loss curve becomes smoother.

- Mini-Batch Size Too Small: Training with a single sample per update (strict SGD) introduces high variance [3] [5]. Switch to mini-batch SGD. Start with a common batch size (e.g., 32 or 64) and tune from there [6] [7].

- Data Not Shuffled: If your data is ordered, failing to shuffle it before each epoch can introduce pathological gradients that cause instability. Ensure you shuffle your training set at the start of every epoch [2] [7].

Q3: For molecular graph data, should I use Batch GD, SGD, or Mini-batch SGD?

A: For the large-scale datasets common in molecular property prediction, Mini-batch SGD is the default and most recommended choice. The following table summarizes the key differences:

Table: Comparison of Gradient Descent Variants for Molecular Property Prediction

| Variant | Data Used Per Step | Key Feature | Best for Molecular Prediction? |

|---|---|---|---|

| Batch GD | Entire dataset [3] [5] | Stable, slow convergence; high memory cost [4] | No, too slow for large molecular datasets [7] |

| Stochastic GD (SGD) | One sample [2] [6] | Noisy, fast updates; can escape local minima [3] [1] | Rarely used in practice due to high noise [5] |

| Mini-batch GD | Small random subset (e.g., 32 samples) [3] [7] | Balanced speed & stability; works well with GPUs [3] [7] | Yes, ideal for large molecular graphs and SMILES strings [8] |

Q4: How can I help my model escape poor local minima when optimizing complex molecular property functions?

A: The noise in SGD's updates is a primary mechanism for escaping local minima [1] [4]. While potentially disruptive for convergence, this stochasticity helps the model jump out of shallow minima and potentially find better solutions in complex, non-convex loss landscapes, which are common in molecular prediction tasks [4]. Using a small mini-batch size preserves some of this beneficial noise.

Q5: Which optimizer should I choose for my molecular property prediction model: SGD or Adam?

A: The choice involves a trade-off between generalization and speed.

- SGD with Momentum: Often generalizes better and may find wider, flatter minima that are more robust. This can be preferable for well-explored molecular datasets where ultimate performance is critical [5].

- Adam: Converges faster due to its adaptive learning rates and is often the best initial choice. However, its aggressive, per-parameter adjustments can sometimes lead to overfitting or convergence to sharp minima [5].

- Recommendation: Start with Adam for rapid prototyping. For final model training and if you suspect overfitting, try SGD with Momentum (e.g., Nesterov Accelerated Gradient) and a learning rate schedule to see if it improves validation performance [2] [5].
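To make the difference between the two optimizers concrete, the sketch below writes out a single update step of each on plain Python lists. It assumes one common momentum convention (velocity = beta * velocity + gradient) and the standard bias-corrected Adam update; the function names and default values are illustrative.

```python
import math


def sgd_momentum_step(w, grad, velocity, lr=0.01, beta=0.9):
    """One SGD-with-momentum update; returns (new_w, new_velocity)."""
    new_v = [beta * v + g for v, g in zip(velocity, grad)]
    new_w = [wi - lr * vi for wi, vi in zip(w, new_v)]
    return new_w, new_v


def adam_step(w, grad, m, v, t, lr=0.001, b1=0.9, b2=0.999, eps=1e-8):
    """One Adam update at step t (1-indexed); returns (new_w, new_m, new_v)."""
    new_m = [b1 * mi + (1 - b1) * g for mi, g in zip(m, grad)]      # first moment
    new_v = [b2 * vi + (1 - b2) * g * g for vi, g in zip(v, grad)]  # second moment
    m_hat = [mi / (1 - b1 ** t) for mi in new_m]                    # bias correction
    v_hat = [vi / (1 - b2 ** t) for vi in new_v]
    new_w = [wi - lr * mh / (math.sqrt(vh) + eps)
             for wi, mh, vh in zip(w, m_hat, v_hat)]
    return new_w, new_m, new_v
```

On the first step, Adam's update is close to `lr` in magnitude whatever the gradient scale, whereas the momentum step scales linearly with the gradient. This per-parameter normalization is what the answer above calls Adam's aggressive adjustment.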

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for a Molecular Property Prediction Pipeline with SGD

| Research Reagent | Function / Explanation | Example Use in Molecular Context |

|---|---|---|

| Mini-batch Data Loader | Efficiently loads and shuffles small subsets of data, reducing memory overhead and enabling GPU parallelism [3] [7]. | Crucial for handling large datasets of molecular graphs or augmented SMILES strings [8]. |

| Learning Rate Scheduler | Systematically reduces the learning rate during training to enable precise convergence to a minimum [2] [1]. | Prevents oscillation near the end of training on tasks like predicting toxicity or solubility. |

| SGD with Momentum | Accelerates convergence and dampens oscillations in relevant directions by accumulating a velocity vector from past gradients [2] [5]. | Helps navigate the complex loss landscape of a Graph Neural Network (GNN) predicting drug bioactivity. |

| Adaptive Optimizers (Adam, RMSProp) | Use per-parameter learning rates computed from running gradient statistics: Adam tracks first and second moments, RMSProp the second moment only [2] [5]. | A good default for initial experiments, e.g., training a multimodal model on molecular graphs and text [9]. |

| Data Augmentation | Artificially expands the training set by creating modified versions of existing data [8]. | Generating multiple valid SMILES strings for the same molecule to improve model generalization [8]. |

| Influence Function | Identifies which training samples most significantly influence the model's parameters and predictions [10]. | Pinpoints key molecular structures in the training set that are most responsible for a specific property prediction. |

Experimental Protocol: Implementing Mini-batch SGD for a Molecular Regression Task

This protocol outlines the steps to train a simple molecular property predictor (e.g., predicting solubility) using a linear model and mini-batch SGD.

1. Hypothesis: A linear relationship exists between molecular features and the target property.

2. Objective: Minimize the Mean Squared Error (MSE) loss between predicted and actual property values.

Methodology:

- Data Preparation: Represent molecules using feature vectors (e.g., molecular weight, number of aromatic rings, etc.) and normalize the features. Split data into training/validation sets.

- Parameter Initialization: Initialize the model's weight vector and bias term randomly [6] [1].

- Training Loop: For a predefined number of epochs:

  - Shuffle: Shuffle the training data to ensure randomness [2] [7].

  - Create Mini-batches: Split the shuffled data into batches of size B (e.g., 32).

  - Iterate over Batches: For each mini-batch:

    - Forward Pass: Compute predictions: y_hat = X_batch * w + b.

    - Compute Loss: Calculate MSE for the mini-batch.

    - Backward Pass (Gradient Calculation): Calculate the gradients of the loss with respect to parameters w and b [6] [5].

    - Parameter Update: Update the parameters using the SGD rule: w := w - learning_rate * grad_w and b := b - learning_rate * grad_b [1] [7].

  - Validation: After each epoch, calculate the loss on the validation set to monitor for overfitting.
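The protocol can be sketched end to end in plain Python. This is an illustration under stated assumptions, not a reference implementation: the data are synthetic and noise-free, the two features stand in for normalized molecular descriptors, and the names `train_linear_sgd` and `mse` are hypothetical.

```python
import random


def train_linear_sgd(X, y, lr=0.1, batch_size=32, epochs=100, seed=0):
    """Fit y ~ X.w + b with mini-batch SGD on the MSE loss."""
    rng = random.Random(seed)
    n, d = len(X), len(X[0])
    w = [rng.gauss(0.0, 0.01) for _ in range(d)]    # random initialization
    b = rng.gauss(0.0, 0.01)
    idx = list(range(n))
    for _ in range(epochs):
        rng.shuffle(idx)                             # reshuffle every epoch
        for start in range(0, n, batch_size):
            batch = idx[start:start + batch_size]
            grad_w, grad_b = [0.0] * d, 0.0
            for i in batch:
                y_hat = sum(wj * xj for wj, xj in zip(w, X[i])) + b  # forward pass
                err = y_hat - y[i]
                for j in range(d):
                    grad_w[j] += 2.0 * err * X[i][j] / len(batch)    # dMSE/dw_j
                grad_b += 2.0 * err / len(batch)                     # dMSE/db
            w = [wj - lr * gj for wj, gj in zip(w, grad_w)]          # SGD update
            b -= lr * grad_b
    return w, b


def mse(X, y, w, b):
    """Mean squared error of the linear model on (X, y)."""
    return sum((sum(wj * xj for wj, xj in zip(w, xi)) + b - yi) ** 2
               for xi, yi in zip(X, y)) / len(X)


# Synthetic stand-in for normalized descriptors and a property that is linear in them.
rng = random.Random(42)
X = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(200)]
y = [1.5 * x0 - 2.0 * x1 + 0.3 for x0, x1 in X]
w, b = train_linear_sgd(X[:160], y[:160])            # 160 training molecules
print("validation MSE:", mse(X[160:], y[160:], w, b))
```

For brevity the validation loss is computed once after training. A fuller version would evaluate it inside the epoch loop, as the protocol describes.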

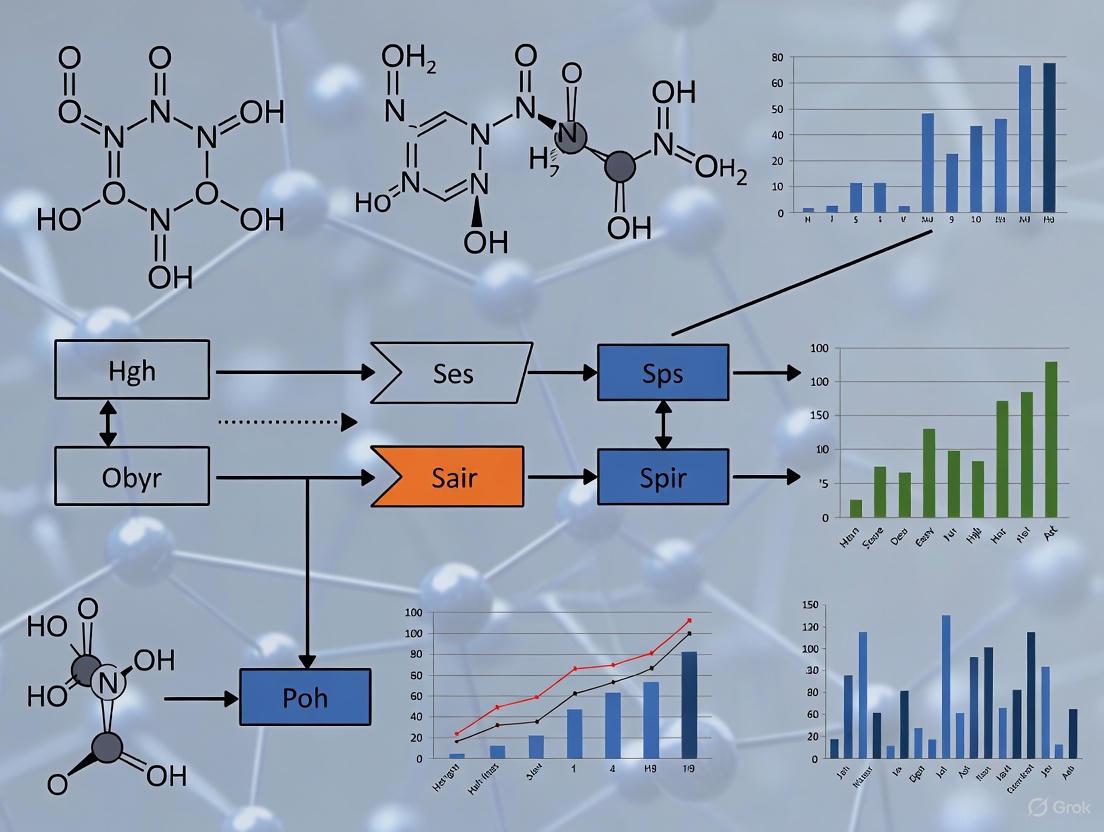

The following workflow diagram visualizes this iterative process:

The Data Scarcity Challenge in Molecular Property Prediction

Troubleshooting Guides and FAQs

Troubleshooting Common Experimental Issues

Problem 1: Negative Transfer in Multi-Task Learning

- Symptoms: Model performance on a specific task degrades during multi-task training. The validation loss for a task with limited data is significantly higher than its single-task equivalent.

- Causes: This occurs when gradient updates from a data-rich task are detrimental to a data-poor task, often due to low task relatedness or severe task imbalance [11].

- Solutions:

- Implement Adaptive Checkpointing with Specialization (ACS): Use a training scheme that combines a shared task-agnostic backbone (GNN) with task-specific heads. Monitor the validation loss for each task independently and checkpoint the best backbone-head pair for a task whenever its validation loss reaches a new minimum. This preserves the benefits of inductive transfer while shielding tasks from detrimental interference [11].

- Employ a Task Similarity Estimator: Before training, use a framework like MoTSE (Molecular Tasks Similarity Estimator) to estimate task similarity. Use this to guide which source tasks are most suitable for transfer learning to your data-scarce target task [12].

- Leverage Loss Masking: For missing labels, which are common in multi-task setups, use loss masking instead of imputation or complete-case analysis to avoid suboptimal outcomes and better utilize available data [11].
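Loss masking can be illustrated with a small sketch. It assumes missing labels are encoded as NaN and that tasks are weighted equally when averaged; both are assumptions made for this example, and the function names are hypothetical.

```python
import math


def masked_mse_per_task(preds, labels):
    """Per-task MSE over observed labels only; NaN marks a missing label."""
    n_tasks = len(labels[0])
    sums, counts = [0.0] * n_tasks, [0] * n_tasks
    for pred_row, label_row in zip(preds, labels):
        for t, (p, target) in enumerate(zip(pred_row, label_row)):
            if not math.isnan(target):       # skip missing labels
                sums[t] += (p - target) ** 2
                counts[t] += 1
    return [s / c if c else None for s, c in zip(sums, counts)]


def masked_mse(preds, labels):
    """Average of the per-task losses, ignoring tasks with no labels in the batch."""
    per_task = [loss for loss in masked_mse_per_task(preds, labels) if loss is not None]
    return sum(per_task) / len(per_task) if per_task else 0.0
```

Because missing entries contribute nothing to the loss, they contribute no gradient either. Every molecule with at least one measured property can therefore stay in the training set.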

Problem 2: Poor Model Convergence with SGD-based Optimizers

- Symptoms: Training loss is unstable or decreases very slowly. Final model performance on validation set is poor and inconsistent across different random seeds.

- Causes: The default optimization strategy may be unsuitable for the model architecture (e.g., Message Passing Neural Networks) or the characteristics of the molecular dataset [13].

- Solutions:

- Switch to Adaptive Optimizers: Replace SGD or SGD with momentum with adaptive gradient-based optimizers like AdamW or AMSGrad. Empirical studies on MPNNs show these provide better convergence stability and predictive accuracy [13].

- Tune Hyperparameters Systematically: When using adaptive optimizers, pay close attention to the learning rate and weight decay. A systematic study found that a learning rate of 1e-4 and weight decay of 1e-4 were effective starting points for MPNNs, but these should be tuned for your specific setup [13].

- Validate with Multiple Runs: Conduct multiple independent training runs (e.g., 5 times) with fixed hyperparameters to ensure the observed performance is stable and not a one-off result [13].

Problem 3: Overfitting on Small Datasets

- Symptoms: The model achieves near-perfect training accuracy but performs poorly on the validation or test set. This is especially prevalent in the "ultra-low data regime" (e.g., fewer than 100 labeled samples) [11].

- Causes: The model capacity is too high for the amount of available training data, causing it to memorize noise and specific samples rather than learning generalizable patterns.

- Solutions:

- Integrate KANs into GNNs: Replace Multi-Layer Perceptrons (MLPs) in your GNN with Kolmogorov–Arnold Networks (KANs). KA-GNNs have demonstrated superior parameter efficiency, which can lead to improved generalization, especially on small datasets [14].

- Use Fourier-Based KANs: Implement KANs using Fourier series as the basis for its pre-activation functions. This enhances function approximation and has strong theoretical guarantees, helping the model learn more meaningful patterns from limited data [14].

- Generate Synthetic Mediator Trajectories (SMTs): For time-series molecular data, use complex multi-scale mechanism-based simulation models to generate synthetic multi-dimensional molecular time series data. This can augment your training set and help minimize overfitting by exposing the model to a wider range of plausible biological scenarios [15].

Problem 4: Inaccurate Performance Estimation

- Symptoms: A model reported to have high performance on benchmark datasets fails to generalize to your internal data or real-world applications.

- Causes: Inflated performance estimates can result from improper dataset splitting, such as random splits that fail to account for temporal or spatial disparities. This overstates model performance compared to more realistic time-split or scaffold-based evaluations [11] [16].

- Solutions:

- Use Rigorous Data Splits: Use a Murcko-scaffold split rather than a random split for molecular property prediction tasks. A scaffold split places all molecules that share a core scaffold in the same partition, so the test set contains core structures absent from training and gives a more realistic estimate of a model's ability to generalize to novel chemotypes [11].

- Account for Activity Cliffs: Be aware that activity cliffs—where small structural changes lead to large property changes—can significantly impact model prediction and inflate performance if not properly accounted for in the data split [16].

- Report Statistical Rigor: When reporting results, use multiple data splits and seeds. Do not rely on mean values from only 3-fold splits, as this may hide statistical noise. Provide standard deviations and use rigorous statistical analysis [16].
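A scaffold split reduces to a group-wise split once each molecule has a scaffold identifier. The sketch below assumes those identifiers are already computed, for example as Murcko scaffold SMILES from a cheminformatics toolkit such as RDKit. Assigning the largest scaffold groups to training first is one common convention, used here as an assumption.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8):
    """Group-wise split: all molecules sharing a scaffold land in one partition.

    `scaffolds` holds one precomputed scaffold identifier per molecule.
    Returns (train_indices, test_indices).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first, so rare scaffolds tend to end up in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_train_target = frac_train * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


# Toy example with letters standing in for scaffold SMILES.
toy_scaffolds = ["A", "A", "A", "B", "B", "C", "D", "A", "B", "E"]
train_idx, test_idx = scaffold_split(toy_scaffolds)
print("train:", train_idx, "test:", test_idx)
```

No scaffold appears on both sides of the split, which is the property that makes the resulting test score a measure of generalization to unseen chemotypes.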

Frequently Asked Questions (FAQs)

Q1: What is the minimum amount of data required to train a reliable molecular property predictor?

A1: There is no universal minimum, as it depends on the complexity of the property and the model. However, recent methods like ACS (Adaptive Checkpointing with Specialization) have demonstrated the ability to learn accurate models for predicting sustainable aviation fuel properties with as few as 29 labeled samples, a scenario where single-task learning and conventional MTL fail [11].

Q2: How can I determine if two molecular property prediction tasks are "related" enough for Multi-Task Learning (MTL) or Transfer Learning?

A2: Task relatedness is a complex, open theoretical question [11]. Instead of relying on intuition, use an interpretable computational framework like MoTSE (Molecular Tasks Similarity Estimator). It provides an accurate estimation of task similarity, which has been shown to serve as useful guidance to improve the prediction performance of transfer learning on molecular properties [12].

Q3: My model uses SMILES strings. Are graph-based representations fundamentally better?

A3: Not necessarily. Extensive benchmarking studies show that representation learning models (on SMILES or graphs) often exhibit limited performance gains over traditional fixed representations (like fingerprints) on many datasets. The key element is often the dataset size. For smaller datasets, fixed representations like ECFP fingerprints can be very competitive and sometimes superior. Graph-based models tend to excel when datasets are very large [16].

Q4: Can I use Generative Adversarial Networks (GANs) to create synthetic molecular data to overcome data scarcity?

A4: For simple molecular structures, statistical methods like GANs can be useful. However, for generating complex, multi-dimensional molecular time series data (e.g., for forecasting disease trajectories), statistical and data-centric ML methods are often insufficient. The recommended approach is to use complex multi-scale mechanism-based simulation models to generate synthetic mediator trajectories (SMTs) that account for the underlying biological mechanisms [15].

Experimental Protocols & Data

Protocol 1: Implementing ACS for Multi-Task Learning in Low-Data Regimes

This protocol outlines the ACS method to mitigate negative transfer [11].

- Architecture Setup:

- Use a single Graph Neural Network (GNN) based on message passing as a shared, task-agnostic backbone.

- Attach independent Multi-Layer Perceptron (MLP) heads for each specific property prediction task.

- Training Loop:

- Train the entire model (shared backbone + all task heads) on all available labeled data.

- For each batch, calculate the loss only for tasks where labels are present (using loss masking for missing labels).

- Adaptive Checkpointing:

- Throughout training, continuously monitor the validation loss for each individual task.

- For a given task, whenever its validation loss hits a new minimum, checkpoint the current state of the shared backbone and its corresponding task-specific head.

- This results in a specialized backbone-head pair for each task, saved at its optimal point in training.

- Inference:

- For predicting a specific molecular property, use the dedicated specialized model (backbone-head pair) that was checkpointed for that task.
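The checkpointing logic of this protocol can be sketched independently of any deep learning framework. The class below is a hypothetical illustration, not the reference ACS implementation from [11]: model states are represented as plain dictionaries, and the name `ACSCheckpointer` is an assumption.

```python
import copy


class ACSCheckpointer:
    """Keeps, for each task, the backbone-head pair seen at that task's best validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.best_state = {task: None for task in task_names}

    def update(self, val_losses, backbone_state, head_states):
        """Checkpoint every task whose validation loss reached a new minimum.

        Returns the list of tasks that were checkpointed in this call.
        """
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep copies, so later training steps cannot alter the checkpoint.
                self.best_state[task] = (copy.deepcopy(backbone_state),
                                         copy.deepcopy(head_states[task]))
                improved.append(task)
        return improved
```

Calling `update` after every validation pass yields one specialized backbone-head pair per task. At inference time, the pair stored under `best_state[task]` is the one to load for that task.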

Protocol 2: Systematic Evaluation of Optimizers for MPNNs

This protocol provides a methodology for selecting the best optimizer, a key component of SGD-based research [13].

- Model and Data Selection:

- Choose a standard Message Passing Neural Network (MPNN) architecture.

- Select benchmark molecular datasets for binary classification (e.g., NCI-1, BACE).

- Optimizer Comparison:

- Test a range of optimizers, including SGD, SGD with Momentum, Adagrad, RMSprop, Adam, NAdam, AMSGrad, and AdamW.

- Experimental Rigor:

- For each optimizer, train the model from scratch five times with the same hyperparameters and training settings to ensure reproducibility.

- Use a fixed learning rate (e.g., 1e-4), weight decay (e.g., 1e-4), and SinLU activation function for a fair comparison.

- Evaluation:

- Analyze training stability, convergence behavior, and final classification accuracy using metrics like AUC-ROC and F1-score. The optimizer delivering the most stable and highest average performance should be selected.
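The repeated-run comparison can be organized with a small harness. In this sketch `train_and_eval` is a hypothetical callable supplied by the user, which trains one model with the given optimizer name and seed and returns a single validation metric. Nothing here is specific to a particular framework.

```python
import statistics


def compare_optimizers(train_and_eval, optimizer_names, seeds=(0, 1, 2, 3, 4)):
    """Run each optimizer once per seed and summarize the metric as (mean, std).

    `train_and_eval(name, seed)` must return a float, e.g. validation AUC-ROC.
    """
    summary = {}
    for name in optimizer_names:
        scores = [train_and_eval(name, seed) for seed in seeds]
        summary[name] = (statistics.mean(scores), statistics.stdev(scores))
    return summary


def rank_by_mean(summary):
    """Optimizer names sorted from highest to lowest mean metric."""
    return sorted(summary, key=lambda name: summary[name][0], reverse=True)
```

Reporting the standard deviation next to the mean shows whether the gap between two optimizers exceeds the run-to-run noise, which five seeds can only estimate roughly.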

Quantitative Data on Optimizer Performance for MPNNs

The table below summarizes findings from a systematic study evaluating optimizers on molecular classification tasks [13].

Table 1: Optimizer Performance on Molecular Property Prediction with MPNNs

| Optimizer | Key Characteristics | Reported Performance on NCI-1/BACE | Recommendation for Low-Data |

|---|---|---|---|

| AdamW | Adaptive, decoupled weight decay | High stability, top-tier accuracy | Strongly Recommended |

| AMSGrad | Adaptive, addresses Adam's convergence issues | High stability, top-tier accuracy | Strongly Recommended |

| NAdam | Adaptive, combines Adam and Nesterov momentum | Good performance | Recommended |

| Adam | Adaptive, computes individual learning rates | Good performance | Recommended |

| RMSprop | Adaptive, for non-stationary objectives | Moderate performance | Consider |

| Adagrad | Adaptive, suits sparse data | Moderate performance | Consider |

| SGD w/ Momentum | Classical, uses momentum for acceleration | Lower performance & stability | Not Recommended |

| SGD | Classical, simple gradient descent | Lowest performance & stability | Not Recommended |

Visualizations

Workflow for Adaptive Checkpointing with Specialization (ACS)

KA-GNN Architecture Integrating KAN Modules

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Molecular Property Prediction Research

| Resource Name | Type | Primary Function | Relevance to Data Scarcity |

|---|---|---|---|

| MoleculeNet Benchmarks [11] [16] | Dataset Suite | Standardized benchmarks (e.g., ClinTox, SIDER, Tox21) for fair model comparison. | Provides common ground for evaluating low-data methods using scaffold splits. |

| ACS (Adaptive Checkpointing) [11] | Algorithm/Training Scheme | Mitigates negative transfer in MTL by checkpointing task-specific models. | Enables accurate prediction with as few as 29 labeled samples. |

| KA-GNN (Kolmogorov-Arnold GNN) [14] | Model Architecture | Integrates KAN modules into GNNs for enhanced expressivity and parameter efficiency. | Improves generalization and accuracy on small datasets. |

| MoTSE (Molecular Tasks Similarity Estimator) [12] | Computational Framework | Accurately estimates similarity between molecular property prediction tasks. | Guides effective transfer learning by identifying related source tasks. |

| Message Passing Neural Network (MPNN) [13] | Model Architecture | A flexible framework for learning on graph-structured molecular data. | A standard architecture for systematic studies on optimizers like SGD variants. |

| RDKit [17] [16] | Cheminformatics Library | Computes 2D molecular descriptors and generates fingerprints (e.g., Morgan/ECFP). | Provides robust fixed representations that are competitive on small datasets. |

| DeepChem [17] | Deep Learning Library | Provides end-to-end tools for molecular property prediction, including GNNs and datasets. | Offers implemented state-of-the-art models to tackle data scarcity. |

Graph Neural Networks (GNNs) have emerged as a powerful tool for molecular property prediction in computational chemistry and drug discovery. They pair naturally with Stochastic Gradient Descent (SGD) because molecules map directly onto graphs, with atoms as nodes and chemical bonds as edges, so the model operates on the structural information that determines molecular properties. The message-passing mechanism, in which nodes aggregate information from their neighbors, is built from differentiable operations, so gradients of the loss with respect to all parameters can be obtained by backpropagation and used in SGD's iterative updates. This allows complex structure-property relationships to be learned directly from molecular graphs without hand-crafted features, which makes GNNs a natural architectural choice for molecular machine learning with SGD-based optimization.

Essential Research Reagents for GNN Molecular Experiments

Table 1: Key Research Reagent Solutions for GNN Molecular Experiments

| Reagent Category | Specific Examples | Function & Purpose |

|---|---|---|

| Benchmark Datasets | QM9, NCI-1, BACE | Provides standardized molecular data with computed or experimental properties for model training and validation [18] [13] [19] |

| Graph Representation Tools | SMILES Parsers, RDKit | Converts molecular structures into graph representations with node and edge features [13] [19] |

| GNN Architectures | Message Passing Neural Networks (MPNNs), Graph Convolutional Networks (GCNs) | Core model architectures that process graph-structured molecular data through neighborhood aggregation [13] [19] |

| Optimization Algorithms | SGD, Adam, AdamW, AMSGrad | Optimization methods for updating model parameters during training; choice significantly impacts convergence and performance [13] |

| Property Prediction Heads | Fully Connected Layers, Global Pooling Layers | Maps from learned graph embeddings to target molecular properties through readout functions [19] |

| Evaluation Metrics | Mean Absolute Error (MAE), Binary Cross-Entropy, Accuracy | Quantifies model performance on molecular property prediction tasks [18] [13] |

Key Experimental Protocols & Methodologies

Direct Inverse Design with Gradient Ascent

Recent advances have demonstrated that pre-trained GNN property predictors can be inverted to generate molecules with desired properties through gradient-based optimization. This methodology, termed Direct Inverse Design (DIDgen), leverages the differentiable nature of GNNs to optimize molecular graphs directly toward target properties [18].

Experimental Protocol:

- Pre-training: First, train a GNN property predictor on a large molecular dataset (e.g., QM9) to accurately predict target properties from molecular graphs

- Graph Construction: Represent the molecular graph using a symmetric adjacency matrix A (bond orders) and feature matrix F (atom types)

- Constrained Optimization: Apply gradient ascent to optimize the molecular graph toward a target property while enforcing chemical validity constraints:

- Use sloped rounding function: $[x]_{sloped} = [x] + a(x-[x])$, where $[x]$ denotes conventional rounding and $a$ is a small slope, to keep near-integer bond orders differentiable

- Define atoms by their valence (sum of bond orders) to ensure chemical validity

- Penalize valences exceeding 4 in the loss function [18]

- Validation: Verify generated molecules using high-fidelity methods like Density Functional Theory (DFT) to confirm properties

This approach hits target properties with comparable or better success rates than state-of-the-art generative models while producing more diverse molecules, achieving generation times of approximately 2-12 seconds per molecule depending on the target [18].
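The two constraints in the protocol can be illustrated with scalar Python functions. The sloped rounding follows the form $[x] + a(x-[x])$. The squared-excess form of the valence penalty is an assumption made for this sketch, since the text only states that valences above 4 are penalized.

```python
def sloped_round(x: float, a: float = 0.1) -> float:
    """Sloped rounding: [x] + a * (x - [x]).

    Stays within a * 0.5 of the nearest integer but has slope a almost
    everywhere, so gradients do not vanish as they would with plain rounding.
    Python's round() resolves exact .5 ties to the nearest even integer.
    """
    nearest = float(round(x))
    return nearest + a * (x - nearest)


def valence_penalty(adjacency, max_valence: float = 4.0) -> float:
    """Sum of squared excess valence; valence is the row sum of bond orders."""
    penalty = 0.0
    for row in adjacency:
        excess = sum(row) - max_valence
        if excess > 0:
            penalty += excess ** 2
    return penalty


# Three atoms: atom 0 has bond orders 2 and 3, so its valence is 5.
bonds = [[0, 2, 3],
         [2, 0, 0],
         [3, 0, 0]]
print(sloped_round(1.3), valence_penalty(bonds))
```

In an actual gradient-ascent loop these functions would be written in an automatic differentiation framework, so that the penalty and the rounding slope enter the backward pass.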

Optimizer Comparison Framework for Molecular MPNNs

A systematic methodology for evaluating different optimizers in Message Passing Neural Networks (MPNNs) for molecular classification provides crucial insights for SGD-based training [13].

Experimental Protocol:

- Dataset Preparation: Use benchmark molecular datasets (NCI-1, BACE) with binary classification tasks

- Model Configuration: Implement MPNN with consistent architecture across all experiments

- Optimizer Comparison: Test eight optimizers: SGD, SGD with momentum, Adagrad, RMSprop, Adam, NAdam, AMSGrad, and AdamW

- Training Regime: Train each model for 100 epochs with fixed hyperparameters (learning rate: 1e-4, weight decay: 1e-4) using SinLU activation function

- Evaluation: Assess performance using multiple metrics with 5 repeated runs for statistical significance [13]

Table 2: Optimizer Performance Comparison on Molecular Classification Tasks

| Optimizer | NCI-1 Accuracy | BACE Accuracy | Training Stability | Convergence Speed |

|---|---|---|---|---|

| AdamW | 80.4% | 85.2% | High | Fast |

| AMSGrad | 79.8% | 84.7% | High | Medium |

| Adam | 78.9% | 83.5% | Medium | Fast |

| NAdam | 79.2% | 84.1% | Medium | Fast |

| RMSprop | 76.5% | 81.3% | Medium | Medium |

| Adagrad | 74.2% | 79.8% | Low | Slow |

| SGD+Momentum | 75.1% | 80.6% | Medium | Slow |

| SGD | 72.8% | 78.4% | Low | Slow |

The results demonstrate that adaptive gradient-based optimizers (AdamW, AMSGrad) consistently outperform traditional SGD in molecular classification tasks, achieving better accuracy with improved training stability [13].
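The size of the gap is easier to judge when each optimizer is expressed as a gain over plain SGD. The sketch below only re-uses the accuracies listed in the comparison table above; the helper name `gain_over` is illustrative.

```python
# Accuracies (%) copied from the optimizer comparison table above: (NCI-1, BACE).
accuracy = {
    "AdamW": (80.4, 85.2),
    "AMSGrad": (79.8, 84.7),
    "NAdam": (79.2, 84.1),
    "Adam": (78.9, 83.5),
    "RMSprop": (76.5, 81.3),
    "SGD+Momentum": (75.1, 80.6),
    "Adagrad": (74.2, 79.8),
    "SGD": (72.8, 78.4),
}


def gain_over(baseline, table):
    """Accuracy difference of each optimizer relative to `baseline`, in percentage points."""
    base = table[baseline]
    return {name: tuple(round(a - b, 1) for a, b in zip(acc, base))
            for name, acc in table.items() if name != baseline}


gains = gain_over("SGD", accuracy)
for name, (nci1, bace) in gains.items():
    print(f"{name:>13}: +{nci1} (NCI-1), +{bace} (BACE) percentage points")
```

These differences are absolute percentage points, not relative improvements.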

Technical Support Center: Troubleshooting GNN-SGD Experiments

Frequently Asked Questions (FAQs)

Q1: Why does my GNN model fail to converge when using basic SGD for molecular property prediction?

A: Basic SGD often struggles with GNN training due to the complex, non-convex loss landscapes common in molecular property prediction. The issue frequently stems from inappropriate learning rates or the presence of pathological curvature. Several solutions exist:

- Switch to adaptive optimizers: AdamW and AMSGrad have demonstrated superior performance for MPNNs, with accuracy roughly 6 to 8 percentage points higher than vanilla SGD on the NCI-1 and BACE benchmarks [13]

- Implement learning rate scheduling: Combine with gradient clipping to stabilize training

- Verify gradient flow: Use diagnostic tools to check for vanishing/exploding gradients, particularly deep in the network where message passing occurs

Q2: How can I enforce chemical validity when generating molecules through gradient-based optimization?

A: Maintaining chemical validity during gradient-based molecular generation requires explicit constraints:

- Valence constraints: Define atoms by their valence (sum of bond orders) and penalize violations in the loss function [18]

- Differentiable rounding: Use the sloped rounding function $[x]_{sloped} = [x] + a(x-[x])$, where $[x]$ denotes conventional rounding and $a$ is a small slope, to preserve gradients while achieving near-integer bond orders [18]

- Bond constraints: Prevent atoms from forming more than 4 bonds by blocking gradient updates that would increase valence beyond this limit

- Element mapping: Use additional weight matrices to differentiate between elements sharing the same valence state

Q3: What causes over-smoothing in deep GNNs for molecular graphs, and how can I mitigate it?

A: Over-smoothing occurs when node representations become indistinguishable after multiple message-passing layers, losing atomic-level information crucial for molecular property prediction. This is particularly problematic for larger molecules [19] [20]:

- Architectural solutions: Implement skip connections, gated updates, and residual pathways to preserve atomic information

- Depth optimization: Limit message passing steps to approximately log(n) for n atoms, as deeper networks rarely benefit molecular graphs

- Alternative frameworks: Consider multi-grained contrastive learning with MLPs as an alternative to very deep GNNs for certain tasks [20]
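Over-smoothing can be reproduced on a toy graph without any learned weights. The sketch below applies repeated mean aggregation to scalar node features on a five-atom chain, then repeats the experiment with a simple skip connection that mixes the input features back in at every layer. That mixing rule is one of several possible skip-connection variants and is chosen here as an assumption.

```python
def mean_aggregate(features, neighbors):
    """One message-passing step: each node averages itself and its neighbors."""
    out = []
    for node, nbrs in enumerate(neighbors):
        group = [features[node]] + [features[j] for j in nbrs]
        out.append(sum(group) / len(group))
    return out


def run_layers(features, neighbors, n_layers, residual=0.0):
    """Apply n_layers of mean aggregation, mixing back a fraction of the input features."""
    h = list(features)
    for _ in range(n_layers):
        agg = mean_aggregate(h, neighbors)
        h = [(1 - residual) * a + residual * x0 for a, x0 in zip(agg, features)]
    return h


def spread(features):
    """Max minus min feature value: a crude measure of how distinguishable nodes are."""
    return max(features) - min(features)


chain = [[1], [0, 2], [1, 3], [2, 4], [3]]     # adjacency list of a 5-atom chain
x = [1.0, 0.0, 0.0, 0.0, 0.0]                  # only the first atom is marked
plain = run_layers(x, chain, n_layers=50)
skip = run_layers(x, chain, n_layers=50, residual=0.2)
print("spread without skip:", spread(plain))
print("spread with skip:   ", spread(skip))
```

Without the skip connection all nodes converge to nearly the same value, which is the loss of atomic-level information described above. With it, the marked atom remains distinguishable at any depth.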

Troubleshooting Guide: Common GNN-SGD Experimental Issues

Problem: Poor Generalization to Out-of-Distribution Molecules

Symptoms: Good performance on validation split from same dataset but poor performance on external test sets or newly generated molecules

Diagnosis and Solutions:

- Issue: GNNs trained on standard benchmarks (e.g., QM9) often fail to generalize to out-of-distribution molecules due to dataset bias

- Verification: Create a separate test set with diverse molecular scaffolds not represented in training data

- Solution:

- Apply stronger regularization techniques (dropout, weight decay)

- Use domain adaptation approaches or augment training data with diverse molecular structures

- Verify predictions with DFT calculations, as GNN proxies can show significant errors (≈0.8 eV MAE) compared to DFT ground truth [18]

Problem: Training Instability with Deep MPNN Architectures

Symptoms: Loss oscillations, NaN values, or performance degradation with increasing layers

Diagnosis and Solutions:

- Issue: Numerical instability from exponential growth of neighborhood size with network depth

- Solution:

- Normalize node features and use gradient clipping (max norm ≈ 1.0)

- Implement residual connections between MPNN layers

- Switch to more stable optimizers - AdamW shows significantly better training stability for MPNNs [13]

- Consider simplified architectures like Principal Neighborhood Aggregation (PNA) to maintain feature distinction [20]
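Gradient clipping by global norm, as suggested in the first solution above, can be sketched in a few lines. Gradients are represented here as a list of flat lists, one per parameter tensor, and the function name is illustrative.

```python
import math


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale all gradients so their joint L2 norm does not exceed max_norm.

    Returns (clipped_grads, original_norm).
    """
    total = math.sqrt(sum(g * g for vec in grads for g in vec))
    if total <= max_norm:
        return grads, total              # already within the limit
    scale = max_norm / total
    return [[g * scale for g in vec] for vec in grads], total
```

Because every component is scaled by the same factor, the update direction is preserved and only its length is capped. This bounds the size of any single step and so limits the jumps that can otherwise end in NaN losses.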

Problem: Inefficient Molecular Generation with Gradient-Based Methods

Symptoms: Slow convergence, chemically invalid structures, or limited diversity in generated molecules

Diagnosis and Solutions:

- Issue: Unconstrained gradient optimization produces invalid molecular graphs

- Solution:

- Implement valence-aware constraints throughout optimization [18]

- Combine property target with diversity penalties in loss function

- Use curriculum learning, starting with simpler targets before progressing to complex ones

- Benchmark against state-of-the-art baselines - DIDgen achieves 2-12 seconds per molecule depending on target complexity [18]

Workflow Visualization: GNN-SGD for Molecular Property Prediction

GNN-SGD Molecular Optimization

Advanced Methodologies: Kernel-Graph Alignment in GNN Training

Recent research on GNN training dynamics has revealed the phenomenon of kernel-graph alignment, where the Neural Tangent Kernel (NTK) implicitly aligns with the graph adjacency matrix during optimization. This alignment explains why GNNs successfully generalize on homophilic graphs but struggle with heterophilic relationships common in certain molecular systems [21].

Experimental Protocol for Analyzing Training Dynamics:

- Setup: Monitor function evolution during GNN training rather than just parameter updates

- Kernel Computation: Calculate the NTK for GNNs (node-level GNTK) throughout training

- Alignment Measurement: Quantify correlation between the NTK and graph adjacency matrix

- Generalization Analysis: Connect alignment patterns to generalization performance across different graph homophily levels

This analytical framework explains why GNNs naturally excel at molecular property prediction where neighboring atoms tend to have interdependent properties (homophily), while suggesting limitations for molecular systems with opposite relationships between connected atoms [21].
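The alignment-measurement step can be illustrated with one common choice of metric: the normalized Frobenius inner product between a kernel matrix and the adjacency matrix. Whether [21] uses exactly this normalization is an assumption here, and the matrices below are toy values rather than real NTKs, so read this as a sketch of the measurement only.

```python
import math

def frobenius_inner(a, b):
    """Sum of element-wise products of two equally shaped matrices (lists of rows)."""
    return sum(x * y for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b))

def kernel_alignment(kernel, adjacency):
    """Normalized Frobenius inner product; 1.0 means the matrices match up to scale."""
    denom = math.sqrt(frobenius_inner(kernel, kernel) * frobenius_inner(adjacency, adjacency))
    return frobenius_inner(kernel, adjacency) / denom if denom > 0 else 0.0

# Toy 3-atom chain 0-1-2 (hypothetical example graph).
adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
# A kernel that only couples bonded atoms versus one that only couples atoms 0 and 2.
aligned_kernel = [[0.0, 2.0, 0.0], [2.0, 0.0, 2.0], [0.0, 2.0, 0.0]]
misaligned_kernel = [[0.0, 0.0, 1.0], [0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]

print(kernel_alignment(aligned_kernel, adjacency))
print(kernel_alignment(misaligned_kernel, adjacency))
```

Tracking this scalar at several checkpoints during training gives a simple curve that can be compared against validation performance on graphs with different homophily levels.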

Troubleshooting Guide: Optimization Challenges in Molecular Property Prediction

This guide addresses common optimization challenges you may encounter when using Stochastic Gradient Descent (SGD) and its variants for training Graph Neural Networks (GNNs) on molecular property prediction tasks.

FAQ: How can I tell if my optimization is stuck in a local minimum?

- Diagnosis: A local minimum is a point where the value of the cost function is lower than all other points in its immediate vicinity, but it is not the lowest point possible (the global minimum) [22]. In practice, you may observe that your training loss plateaus at a suboptimal value, and the performance of your model (e.g., in AUC or F1-score) is lower than expected, even after many training epochs [22].

- Solutions:

- Introduce Stochasticity: The inherent noise in minibatch SGD can help dislodge parameters from shallow local minima [22]. Ensure you are using a shuffled dataset and an appropriate batch size.

- Use Momentum-Based Optimizers: Employ optimizers with momentum. Momentum helps the optimizer build up speed in directions with persistent, consistent gradients, allowing it to traverse flat regions and potentially escape local minima [23].

- Adversarial Augmentation: For molecular property prediction, consider using methods like the Adversarial Augmentation to Influential Sample (AAIS). This technique identifies data points near the decision boundary and augments them, which can locally flatten the loss landscape and improve generalization, helping the model find a more robust minimum [10].

FAQ: What are the signs of being trapped at a saddle point, and how can I escape?

- Diagnosis: A saddle point is a location where the gradients of the function are zero, but it is neither a local minimum nor a maximum [22] [24]. In high-dimensional spaces typical of deep learning, saddle points are more common than local minima [22]. You will observe that the training loss stops improving and the gradients become very close to zero, which makes a saddle point difficult to distinguish from a flat region.

- Solutions:

- Second-Order Information: Saddle points can often be identified by the Hessian matrix, which is indefinite at a saddle point [24]. While computing the full Hessian is often infeasible, using optimizers that approximate second-order information can be beneficial.

- Momentum and Adaptive Learning Rates: As with local minima, momentum is a powerful tool. By accumulating past gradients, the optimizer can continue to move through saddle points where the instantaneous gradient is zero but the accumulated velocity is not [23] [25]. Adaptive optimizers like Adam also help navigate these regions effectively.
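The momentum argument above can be made concrete with a small sketch. It uses the classical (heavy-ball) update and a toy loss f(x, y) = x² − y², which has a saddle point at the origin; the function names, learning rate, and starting velocity are illustrative assumptions.

```python
def momentum_step(params, grads, velocity, lr=0.1, momentum=0.9):
    """One SGD-with-momentum update: v <- momentum * v - lr * g, then p <- p + v."""
    new_velocity = [momentum * v - lr * g for v, g in zip(velocity, grads)]
    new_params = [p + v for p, v in zip(params, new_velocity)]
    return new_params, new_velocity

def saddle_grad(params):
    """Gradient of f(x, y) = x**2 - y**2, which is zero at the origin."""
    x, y = params
    return [2 * x, -2 * y]

# The parameters sit exactly on the saddle, so the instantaneous gradient is zero,
# but the optimizer still carries velocity accumulated from earlier steps.
params, velocity = [0.0, 0.0], [0.0, 0.05]
params, velocity = momentum_step(params, saddle_grad(params), velocity)
print(params)  # the y-coordinate has moved away from the saddle
```

Plain SGD would leave the parameters at the origin in this situation, because its update depends only on the current gradient.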

FAQ: My training is unstable with oscillating or exploding loss. Is this a high curvature problem?

- Diagnosis: High curvature in the loss landscape, often manifested as "ravines," can cause the optimization path to oscillate wildly. This leads to an unstable training loss that may spike or fluctuate dramatically [23]. In severe cases, you may see NaN values in your loss due to numerical instability [26].

- Solutions:

- Adjust Learning Rate: A high learning rate is a primary cause of overshooting and oscillation in areas of high curvature [23]. Implement a learning rate schedule that reduces the learning rate over time to allow for finer convergence [25].

- Gradient Clipping: This technique caps the magnitude of gradients during the backward pass, preventing parameter updates from becoming too large and destabilizing training. This is particularly useful for handling steep cliffs in the loss landscape.

- Normalize Inputs: Ensure your input data (e.g., molecular features) is normalized. Subtracting the mean and dividing by the standard deviation can make the loss landscape easier to traverse [26].
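Input normalization is commonly done as z-score standardization: each feature column has its mean subtracted and is then divided by its standard deviation. The sketch below uses only the standard library; the function name and the two example descriptors are hypothetical.

```python
import statistics

def standardize_columns(rows):
    """Z-score each feature column of a list-of-rows feature matrix."""
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    # A constant column has zero spread; fall back to 1.0 to avoid dividing by zero.
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]

# Hypothetical descriptors per molecule: [molecular weight, logP].
features = [[180.2, 1.2], [46.1, -0.3], [310.4, 3.5]]
scaled = standardize_columns(features)
print(scaled)
```

In a real pipeline the means and standard deviations should be computed on the training split only and then reused for validation and test data, so that no information leaks across splits.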

Experimental Protocol: Adversarial Augmentation for Molecular Property Prediction

The following protocol is based on the AAIS method, which has been shown to improve model prediction performance by 1%–15% in AUC and 1%–35% in F1-score on benchmark molecular property prediction tasks [10].

1. Objective: Improve the generalization and robustness of GNNs for molecular property prediction, particularly in scenarios with imbalanced data.

2. Methodological Workflow: The adaptive adversarial augmentation process involves identifying influential samples and using them to generate new training data.

3. Key Reagents & Computational Materials: The following table lists the essential components required to implement the AAIS protocol.

| Research Reagent / Solution | Function in the Experiment |

|---|---|

| Benchmark Dataset (e.g., from OGB) [10] | Provides standardized, publicly available molecular graphs with property labels for training and evaluation. |

| Graph Neural Network (GNN) [10] | Serves as the primary model architecture for learning representations from molecular graph structures. |

| One-Step Influence Function [10] | A computational tool to efficiently identify which training samples most significantly influence model training and lie near the decision boundary. |

| Distributionally Robust Optimization [10] | The adversarial framework used to generate augmented samples that are robust to distribution shifts. |

4. Quantitative Performance Expectations: The table below summarizes the reported performance gains from applying the AAIS method.

| Evaluation Metric | Reported Performance Improvement |

|---|---|

| AUC (Area Under the Curve) | 1% – 15% increase [10] |

| F1-Score | 1% – 35% increase [10] |

Core Optimization Algorithms & Concepts:

- Stochastic Gradient Descent (SGD): An iterative optimization algorithm that updates model parameters using a subset (minibatch) of the data. The inherent noise helps in escaping local minima [22] [25].

- Momentum: A technique that accelerates SGD by accumulating a velocity vector in directions of persistent gradient descent, helping to overcome local minima and saddle points [23] [25].

- Adaptive Learning Rate Optimizers (e.g., Adam): Algorithms that adjust the learning rate for each parameter individually, which can improve convergence in landscapes with high curvature or noisy gradients [23].

- Influence Functions: A methodology from robust statistics used to approximate the effect of a training sample on the model's predictions and parameters, central to the AAIS method [10].

Diagnostic & Troubleshooting Procedures:

- Overfit a Single Batch: A critical sanity check. If your model cannot overfit a very small batch of data (e.g., drive the loss close to zero), it likely has an implementation bug, such as an incorrect loss function or data preprocessing error [26].

- Bias-Variance Decomposition: A framework to diagnose model performance issues. High bias (underfitting) suggests the model is too simple, while high variance (overfitting) suggests the model is too complex and has memorized the training data [26].
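The single-batch sanity check can be sketched on a deliberately tiny model. The example fits y = w·x + b to a handful of points with plain gradient descent and reports whether the loss reaches (near) zero; the function name, tolerance, and toy data are assumptions made for illustration, and a GNN would replace the linear model in practice.

```python
def can_overfit_batch(batch, lr=0.05, steps=2000, tol=1e-6):
    """Fit y = w * x + b to one small batch; return (reached_tolerance, final_loss)."""
    w, b = 0.0, 0.0
    n = len(batch)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        gw = sum(2 * (w * x + b - y) * x for x, y in batch) / n
        gb = sum(2 * (w * x + b - y) for x, y in batch) / n
        w -= lr * gw
        b -= lr * gb
    loss = sum((w * x + b - y) ** 2 for x, y in batch) / n
    return loss < tol, loss

# A clean batch that the model can represent exactly (y = 2x + 1).
clean_batch = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
# A batch with a preprocessing error: the same input carries two different labels.
corrupted_batch = [(1.0, 0.0), (1.0, 1.0)]

print(can_overfit_batch(clean_batch))
print(can_overfit_batch(corrupted_batch))
```

A failure on the clean batch would point to a bug in the model or loss code, while a failure that appears only on real data often points to the data pipeline, as in the conflicting-label case above.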

Architectures and Algorithms: Implementing SGD in Predictive Models

Frequently Asked Questions

Q1: My multi-task GNN model is overfitting on smaller molecular property datasets. How can I improve its generalization?

A1: Overfitting in low-data regimes is a common challenge. You can address this through data augmentation and leveraging auxiliary data.

- Adversarial Augmentation: Implement the AAIS (Adversarial Augmentation to Influential Sample) framework. It identifies data points near the decision boundary that significantly influence model training and performs adaptive augmentation. This locally flattens the decision boundary, leading to more robust predictions and can improve performance by 1%–15% in AUC and 1%–35% in F1-score on imbalanced molecular property tasks [10].

- Multi-Task Data Augmentation: Augment your training by using multi-task learning with even sparse or weakly related auxiliary molecular property data. Controlled experiments show that this approach can enhance prediction quality for your primary task when data is scarce [27].

Q2: The labels for different molecular properties in my dataset are incomplete. How can I train a multi-task model with missing labels?

A2: Missing labels are a major obstacle that impairs model performance due to insufficient supervision.

- Missing Label Imputation: Frame the problem as predicting missing edges on a bipartite graph that models molecule-task co-occurrence relationships. Train a dedicated GNN to impute these missing labels by learning the complex patterns in this graph. Selecting reliable pseudo-labels based on prediction uncertainty can then provide your main multi-task GNN with enough supervision to achieve state-of-the-art performance [28].

Q3: How does the choice of optimizer, specifically SGD, influence the performance and characteristics of my multi-task GNN model?

A3: The optimizer is not a "black box" component and significantly impacts model selection and outcomes [29].

- SGD vs. Liblinear: When using LASSO logistic regression (which can be part of a GNN's node classification head), models fit with SGD generally perform more robustly across different regularization strengths. In contrast, models using the liblinear optimizer (with coordinate descent) tend to perform best at high regularization strengths (resulting in sparse models with 100-1000 non-zero features) and can overfit more easily with low regularization [29].

- SGD's Flexibility: SGD requires tuning the learning rate but often generalizes better across a wider range of model complexities. This makes it a strong choice for multi-task learning where the optimal regularization may vary between tasks [29].

Q4: How can I design a GNN architecture that effectively leverages both node-level and graph-level information for multi-task learning?

A4: A multi-task representation learning (MTRL) architecture can effectively integrate these information levels.

- Joint Training: Design an architecture that couples the graph classification task (e.g., predicting a molecule's property) with supervised node classification (e.g., predicting atom types or other node features). This enforces node-level representations to take full advantage of available node labels, which in turn leads to more informative graph-level representations after aggregation [30].

- Loss Function: Use a weighted sum of the graph classification loss and the node classification loss during backpropagation. This allows the model to capture fine-grained node features and graph-level features synchronously, improving generalization through collaborative training [30].

Experimental Protocols & Performance Data

Protocol 1: Comparing Optimizers for Sparse Model Selection

This protocol is based on a study comparing optimization approaches for logistic regression models on biological data, providing key insights applicable to GNN model heads [29].

- Objective: To compare the performance and model sparsity of LASSO logistic regression models trained using Stochastic Gradient Descent (SGD) and the liblinear (coordinate descent) optimizer.

- Dataset: Preprocessed TCGA Pan-Cancer Atlas RNA-seq data with 16,148 input genes for predicting driver gene mutation status [29].

- Data Splitting: "Valid" cancer types are split into train (75%) and test (25%) sets. The training data is further split into "subtrain" (66%) for model training and "holdout" (33%) for hyperparameter selection. All splits are stratified by cancer type [29].

- Hyperparameter Tuning:

- For liblinear, the regularization parameter C is tuned over a logarithmic scale from (10^{-3}) to (10^{7}) (21 values). Higher C means less regularization.

- For SGD, the regularization parameter α is tuned over a logarithmic scale from (10^{-7}) to (10^{3}) (21 values), and the learning rate must also be tuned. Lower α means less regularization [29].
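The two hyperparameter grids can be generated as follows. The sketch assumes the 21 values are evenly spaced in log10 (half a decade apart), which the ranges and counts above suggest but the text does not state explicitly; `log_grid` is a hypothetical helper, and NumPy users would typically reach for `numpy.logspace` instead.

```python
def log_grid(low_exp, high_exp, num):
    """Return num values evenly spaced in log10 from 10**low_exp to 10**high_exp, inclusive."""
    step = (high_exp - low_exp) / (num - 1)
    return [10 ** (low_exp + i * step) for i in range(num)]

# liblinear: larger C means weaker regularization.
c_grid = log_grid(-3, 7, 21)
# SGD: smaller alpha means weaker regularization.
alpha_grid = log_grid(-7, 3, 21)

print(len(c_grid), c_grid[0], c_grid[-1])
print(len(alpha_grid), alpha_grid[0], alpha_grid[-1])
```

Because C and α act in opposite directions, the two grids cover comparable regularization strengths only when one of them is read in reverse order.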

- Key Quantitative Findings: The table below summarizes the typical performance and characteristics of the two optimizers based on the study's results [29].

Table 1: Comparison of Optimizer Characteristics for Sparse Model Fitting

| Optimizer | Underlying Method | Optimal Performance Region | Sparsity at Best Performance | Key Tuning Parameters | Robustness to Low Regularization |

|---|---|---|---|---|---|

| SGDClassifier | Stochastic Gradient Descent | Wide range of regularization strengths | Varies broadly (less sensitive) | Learning Rate, α | High (less prone to overfitting) |

| LogisticRegression | Coordinate Descent (liblinear) | High regularization strengths | Best with high sparsity (100-1000 features) | C | Low (can overfit easily) |

Protocol 2: Adaptive Adversarial Augmentation for Imbalanced Data (AAIS)

This protocol outlines the steps for implementing the AAIS framework to improve model performance on imbalanced molecular property prediction tasks [10].

- Objective: To enhance model generalization and address class imbalance by strategically augmenting influential data points near the decision boundary.

- Core Mechanism: The framework uses distributionally robust optimization and a novel one-step influence function to identify and augment training samples that have a significant impact on the model's learning process. This adaptive augmentation flattens the decision boundary locally, leading to more robust predictions [10].

- Model: The method is applied to Graph Neural Networks for graph-level molecular property prediction tasks.

- Key Quantitative Findings: Evaluation on benchmark molecular property datasets showed consistent performance improvements, as summarized below [10].

Table 2: Performance Improvement with Adaptive Adversarial Augmentation (AAIS)

| Metric | Reported Improvement | Primary Cause of Improvement |

|---|---|---|

| AUC | 1% - 15% | Local flattening of the decision boundary and robust optimization. |

| F1-Score | 1% - 35% | Effective mitigation of class imbalance through strategic augmentation. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets for Multi-Task GNNs on Molecular Properties

| Item Name | Function / Application | Access / Reference |

|---|---|---|

| QM9 Dataset | A standard benchmark dataset for validating multi-task GNNs on quantum mechanical properties of small molecules [27]. | [Ruddigkeit et al., J. Chem. Inf. Model.; Ramakrishnan et al., Sci. Data] [27] |

| OGB (Open Graph Benchmark) | Provides standardized and scalable benchmark datasets and tasks for molecular property prediction, such as those used in the AAIS study [10]. | https://ogb.stanford.edu/ [10] |

| AAIS Framework | A tool for performing adaptive adversarial data augmentation to improve performance on imbalanced molecular classification tasks [10]. | GitHub Repository [10] |

| Multi-task GNN Codebase | Code and data from a systematic study on multi-task learning and data augmentation for molecular property prediction [27]. | GitLab Repository [27] |

Workflow and Architecture Diagrams

The following diagram illustrates a high-level architecture for a multi-task GNN that leverages node-level information to enhance graph-level molecular property prediction.

Multi-Task GNN Architecture

This diagram visualizes the experimental setup for comparing optimizer performance, as described in Protocol 1.

Optimizer Comparison Workflow

Adaptive Checkpointing and Specialization (ACS) to Mitigate Negative Transfer

Troubleshooting Guide: Common ACS Experimental Issues

This guide addresses specific problems researchers may encounter when implementing the Adaptive Checkpointing and Specialization (ACS) method for molecular property prediction.

1. Problem: Performance degradation on low-data tasks during multi-task training.

- Question: "I am training a multi-task Graph Neural Network (GNN) on an imbalanced dataset. While performance on data-rich tasks is good, the results for tasks with very few labeled samples (e.g., fewer than 30) are poor. What is happening?"

- Answer: This is a classic symptom of Negative Transfer (NT), where gradient updates from data-rich tasks interfere with and degrade the learning of data-scarce tasks. The ACS framework is specifically designed to mitigate this [11]. Ensure you are using the ACS checkpointing strategy, which saves a specialized model for each task when its validation loss hits a new minimum, rather than using a single shared model for all tasks [31] [11].

2. Problem: Selecting the correct checkpoint for model evaluation.

- Question: "The ACS training process generates multiple model checkpoints. How do I determine which one to use for final evaluation and inference on a specific molecular property prediction task?"

- Answer: Unlike standard training, ACS does not produce a single "final" model. For each task (molecular property), you should use the task-specific specialized checkpoint that was saved when that task's validation loss was at its minimum [11]. The training code should track and record the best-performing backbone-head pair for each task individually [31].

3. Problem: High variance in model performance on ultra-low-data tasks.

- Question: "When predicting a new molecular property with only 29 labeled samples, my model's performance is unstable across different training runs. Is this expected?"

- Answer: Achieving stable performance in the ultra-low data regime is challenging. The cited research demonstrates that ACS can learn accurate models with as few as 29 samples, but some variance is inherent [31] [11]. To improve stability:

- Ensure your model architecture combines a shared GNN backbone with task-specific multi-layer perceptron (MLP) heads [11].

- Implement a rigorous K-fold cross-validation strategy, as done in the original work, to get a reliable performance estimate [31].

- Verify that your dataset split accounts for molecular scaffold integrity to avoid inflated performance estimates [11].

The following table summarizes the quantitative performance of ACS compared to other training schemes on molecular property benchmark datasets, demonstrating its effectiveness in mitigating negative transfer [11].

Table 1: Average Performance Comparison of Training Schemes on Molecular Benchmarks

| Training Scheme | Key Characteristics | Average Performance Relative to STL | Key Advantage |

|---|---|---|---|

| Single-Task Learning (STL) | Separate model for each task; no parameter sharing. | Baseline (0% improvement) | No risk of negative transfer. |

| Multi-Task Learning (MTL) | Single shared model for all tasks. | +3.9% improvement | Basic inductive transfer between tasks. |

| MTL with Global Loss Checkpointing (MTL-GLC) | Saves a single model based on overall validation loss. | +5.0% improvement | Better than standard MTL. |

| Adaptive Checkpointing with Specialization (ACS) | Saves a specialized model for each task based on its own validation loss. | +8.3% improvement | Effectively mitigates negative transfer, optimal for imbalanced data. |

Note: The performance gain was particularly pronounced on the ClinTox dataset, where ACS outperformed STL by 15.3% [11].

Experimental Protocol: Implementing ACS for Molecular Property Prediction

This protocol outlines the key steps for implementing the ACS training scheme as described in the original research [31] [11].

1. Model Architecture Setup:

- Backbone: Construct a shared Graph Neural Network (GNN) based on message passing to learn general-purpose molecular representations from input graphs [11].

- Heads: Attach separate, task-specific Multi-Layer Perceptrons (MLPs) to the backbone. Each head is responsible for predicting a specific molecular property [11].

2. Training Loop with Adaptive Checkpointing:

- Input: A multi-task dataset with potentially severe task imbalance (some properties have far fewer labels than others) [11].

- Procedure:

- For each training iteration, forward propagate data through the shared GNN backbone and the respective task-specific heads.

- Calculate the loss for each task individually. Use loss masking to handle any missing labels for certain molecules [11].

- Perform backpropagation and update the model parameters.

- Critical ACS Step: On a validation set, continuously monitor the validation loss for each task individually.

- Whenever the validation loss for a specific task reaches a new minimum, checkpoint (save) the shared backbone parameters along with that task's specific head parameters [11].

- Output: After training completes, you will have a collection of specialized models—one optimal backbone-head pair for each molecular property task.
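The checkpointing step in this procedure can be sketched with a small bookkeeping class. Everything here is a framework-free illustration: `TaskCheckpointer`, the dictionary-based parameters, and the toy validation losses are hypothetical stand-ins, not the reference ACS implementation from [11].

```python
import copy

class TaskCheckpointer:
    """Keeps, per task, the backbone/head snapshot with the lowest validation loss so far."""

    def __init__(self, task_names):
        self.best_loss = {name: float("inf") for name in task_names}
        self.best_state = {name: None for name in task_names}

    def update(self, backbone_params, head_params, val_losses):
        """val_losses maps task name to its current validation loss; returns improved tasks."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep copies so that later training steps cannot alter the snapshot.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_params),
                    "head": copy.deepcopy(head_params[task]),
                }
                improved.append(task)
        return improved

# Toy run: the backbone "weight" simply records the epoch at which it was saved.
checkpointer = TaskCheckpointer(["toxicity", "solubility"])
backbone = {"w": [0.0]}
heads = {"toxicity": {"w": [0.0]}, "solubility": {"w": [0.0]}}
val_history = [
    {"toxicity": 0.90, "solubility": 0.80},
    {"toxicity": 0.70, "solubility": 0.85},
    {"toxicity": 0.75, "solubility": 0.60},
]
for epoch, val_losses in enumerate(val_history, start=1):
    backbone["w"][0] = float(epoch)  # stand-in for a real parameter update
    checkpointer.update(backbone, heads, val_losses)

print(checkpointer.best_loss)
```

In this toy run the two tasks end up with snapshots from different epochs, which is the point of the scheme: each task keeps the shared backbone as it was when that task was served best.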

Workflow Visualization: ACS Training Scheme

The following diagram illustrates the logical flow and key components of the ACS methodology.

Table 2: Key Computational Tools and Datasets for ACS Experiments

| Item Name | Function / Description | Relevance to ACS |

|---|---|---|

| Message Passing GNN | The core neural architecture for learning from graph-structured molecular data [11]. | Serves as the shared, task-agnostic backbone in the ACS framework. |

| Multi-Layer Perceptron (MLP) Heads | Task-specific output networks that map general features to property predictions [11]. | Provide specialized learning capacity for each molecular property, key to avoiding interference. |

| MoleculeNet Benchmarks | Standardized datasets (e.g., ClinTox, SIDER, Tox21) for evaluating molecular property prediction [11]. | Used to validate ACS performance against state-of-the-art methods. |

| Murcko Scaffold Split | A method for splitting datasets based on molecular scaffolds to prevent data leakage [11]. | Ensures a more realistic and challenging evaluation, highlighting ACS's advantages. |

| K-Fold Cross-Validation | A resampling procedure used to evaluate a model's performance on limited data samples. | Provides a robust estimate of performance, especially crucial in ultra-low data regimes [31]. |

Few-Shot and Meta-Learning Strategies for Ultra-Low Data Regimes

Frequently Asked Questions & Troubleshooting Guides

This technical support resource addresses common challenges researchers face when applying few-shot and meta-learning strategies within Stochastic Gradient Descent (SGD) frameworks for molecular property prediction.

FAQ: Negative Transfer in Multi-Task Learning

Q: During multi-task training with SGD, updates for one molecular property (e.g., toxicity) are degrading performance on another (e.g., solubility). What is this phenomenon and how can it be mitigated?

A: This is a classic case of Negative Transfer (NT), which occurs when gradient updates from one task are detrimental to another due to task dissimilarity or data imbalance [11].

Troubleshooting Guide:

- Diagnosis: Monitor the validation loss for each task independently throughout the SGD training process. If the loss for a task consistently increases after parameter updates, it is likely suffering from NT.

- Solution: Implement the Adaptive Checkpointing with Specialization (ACS) strategy [11].

- Use a shared graph neural network (GNN) backbone to learn general molecular representations.

- Employ task-specific multi-layer perceptron (MLP) heads for each property.

- Throughout SGD training, independently checkpoint the best-performing model state (backbone and head) for each task whenever its validation loss hits a new minimum.

- This allows synergistic learning where possible while protecting individual tasks from harmful updates.
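The diagnosis step (watching each task's validation loss independently) can be automated with a simple rule. The sketch below flags a task when its validation loss has risen in each of the last few epochs; the function name and the `patience` threshold are assumptions chosen for illustration, not part of the ACS method.

```python
def tasks_with_rising_loss(val_history, patience=3):
    """Flag tasks whose validation loss increased in each of the last `patience` epochs.

    val_history maps a task name to its list of per-epoch validation losses.
    """
    flagged = []
    for task, losses in val_history.items():
        recent = losses[-(patience + 1):]
        enough_history = len(recent) == patience + 1
        if enough_history and all(later > earlier for earlier, later in zip(recent, recent[1:])):
            flagged.append(task)
    return flagged

# Hypothetical per-epoch validation losses for two properties trained jointly.
history = {
    "toxicity": [0.90, 0.70, 0.60, 0.55, 0.50],
    "solubility": [0.80, 0.60, 0.65, 0.70, 0.78],
}
print(tasks_with_rising_loss(history))
```

A flagged task is only a candidate for negative transfer; the same pattern can come from ordinary overfitting, so it is worth comparing against a single-task baseline before drawing conclusions.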

FAQ: Poor Cross-Property Generalization

Q: My meta-learning model, trained on a set of molecular properties, fails to generalize to new, unseen properties. What could be the issue?

A: This often stems from the cross-property generalization under distribution shifts challenge, where different properties may have weak correlations or different underlying biochemical mechanisms [32].

Troubleshooting Guide:

- Diagnosis: Evaluate whether the new property involves molecular structures or substructures that are underrepresented in your meta-training tasks.

- Solution: Adopt a context-informed heterogeneous meta-learning framework, such as CFS-HML [33] [34].

- Explicitly Separate Knowledge: Use a GNN encoder to capture property-specific knowledge (relevant to specific substructures) and a self-attention encoder to capture property-shared knowledge (fundamental molecular commonalities).

- Heterogeneous Meta-Learning: Structure your SGD outer-loop to jointly update all parameters, while the inner-loop updates focus on property-specific features. This optimizes the model to quickly adapt to new property contexts.

FAQ: Overfitting on Small Support Sets

Q: When adapting to a new few-shot task with only a handful of labeled molecules, my model severely overfits the small support set.

A: This is a fundamental risk in few-shot learning, where the model memorizes the limited data rather than learning a generalizable pattern [32].

Troubleshooting Guide:

- Solution 1: Leverage Bayesian Meta-Learning. Implement a framework like Meta-Mol [35], which combines a graph isomorphism encoder with a Bayesian meta-learning strategy. This provides uncertainty estimates and regularizes task-specific adaptation, reducing overfitting risks.

- Solution 2: Use Adaptive Adversarial Augmentation. Apply the AAIS method to generate synthetic but influential training examples [10].

- Use an influence function to identify data points that significantly impact the SGD training.

- Perform adversarial data augmentation near these influential points, which effectively flattens the loss landscape and improves robustness.

- Solution 3: Prioritize Interpretable Models. For simpler property relationships, a model like LAMeL (Linear Algorithm for Meta-Learning) can be effective [36]. It uses a meta-learned linear model, which is less prone to overfitting on very small datasets and offers greater interpretability.

Performance Comparison of Key Methods

The following table summarizes the quantitative performance and characteristics of several advanced strategies for the low-data regime.

Table 1: Comparison of Few-Shot and Meta-Learning Methods for Molecular Property Prediction

| Method Name | Core Approach | Reported Performance Gain | Key Application Context |

|---|---|---|---|

| ACS (Adaptive Checkpointing) [11] | Multi-task GNN with task-specific checkpointing | Outperformed single-task learning by 8.3% on average; achieved accurate predictions with only 29 samples. | Mitigating negative transfer in multi-task settings with imbalanced data. |

| CFS-HML [33] [34] | Heterogeneous meta-learning with separate property-specific/shared encoders | Substantial improvement in predictive accuracy, with more significant gains using fewer samples. | Improving cross-property generalization in few-shot scenarios. |

| Meta-Mol [35] | Bayesian Model-Agnostic Meta-Learning with hypernetworks | Significantly outperforms existing models on several benchmarks. | Low-data drug discovery, reducing overfitting. |

| LAMeL [36] | Meta-learning for linear models | 1.1- to 25-fold improvement over standard ridge regression. | Scenarios requiring high interpretability alongside accuracy. |

| AAIS [10] | Adaptive adversarial data augmentation | Improved model performance by 1%–15% in AUC and 1%–35% in F1-score. | Handling class imbalance in molecular classification tasks. |

Detailed Experimental Protocols

Protocol 1: Implementing ACS for Multi-Task Learning

This protocol is designed to mitigate negative transfer when predicting multiple molecular properties concurrently [11].

Model Architecture:

- Backbone: Construct a shared Message Passing Neural Network (MPNN) to generate a latent representation for each molecule.

- Heads: Attach separate, task-specific MLP heads to the backbone's output for each property to be predicted.

Training Procedure with SGD:

- Input: Imbalanced multi-task dataset (e.g., ClinTox, SIDER, Tox21).

- Procedure: For each training iteration (batch):

- Perform a forward pass through the shared MPNN backbone.

- For each task, compute the loss only on available labels (using loss masking for missing data).

- Compute the combined gradient and update the shared backbone and all task heads via SGD.

- Adaptive Checkpointing: After each epoch, evaluate the model on the validation set for each task. For any task where the validation loss is the lowest seen so far, save a checkpoint of the shared backbone parameters and its corresponding task head.

Final Model Selection: After training, the best-performing model for each task is its individually checkpointed backbone-head pair.
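The loss-masking step in this protocol can be sketched as follows. Missing labels are represented by `None`, and each task's loss is averaged only over the molecules that actually carry a label for it; the function names, the `None` convention, and the toy values are illustrative assumptions.

```python
import math

def masked_bce(predictions, labels, eps=1e-7):
    """Mean binary cross-entropy over labelled entries only; None marks a missing label."""
    terms = []
    for p, y in zip(predictions, labels):
        if y is None:
            continue  # masked out: contributes neither loss nor gradient
        p = min(max(p, eps), 1 - eps)  # keep log() finite
        terms.append(-(y * math.log(p) + (1 - y) * math.log(1 - p)))
    return sum(terms) / len(terms) if terms else 0.0

def multitask_loss(preds_by_task, labels_by_task):
    """Per-task masked losses, keyed by task name."""
    return {task: masked_bce(preds_by_task[task], labels_by_task[task]) for task in preds_by_task}

# Three molecules, two tasks; the second task is labelled for one molecule only.
preds = {"ClinTox": [0.9, 0.2, 0.6], "SIDER": [0.5, 0.7, 0.1]}
labels = {"ClinTox": [1, 0, 1], "SIDER": [None, 1, None]}
print(multitask_loss(preds, labels))
```

Averaging over labelled entries rather than over the full batch keeps a sparsely labelled task from having its loss scaled down simply because most of its labels are missing.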

Protocol 2: Heterogeneous Meta-Learning for Few-Shot Properties (CFS-HML)

This protocol enables a model to quickly adapt to new molecular property prediction tasks with very few examples [33] [34].

Problem Formulation: Organize data into a set of tasks. Each task ( T_t ) is a 2-way K-shot classification problem (e.g., active vs. inactive for a property) with a support set ( S_t ) (K labeled examples per class) and a query set ( Q_t ) (unlabeled samples for evaluation).

Model Architecture:

- Property-Specific Encoder: A GIN (Graph Isomorphism Network) processes the molecular graph to create an embedding ( g_{t,i} ), capturing substructures relevant to specific properties.

- Property-Shared Encoder: A self-attention block acts on a set containing ( g_{t,i} ) and learnable class embeddings (( c_t^0 ), ( c_t^1 )) to produce a context-aware, property-shared embedding.

- Relation Learning Module: Infers relationships between molecules based on their property-shared features to aid label propagation.

Meta-Training with SGD:

- Outer Loop (Joint Update): Sample a batch of tasks. For each task, perform the inner loop adaptation. Then, compute the total loss across all query sets of the batch and update all model parameters (both encoders) via SGD. This is the meta-optimization step.

- Inner Loop (Task-Specific Adaptation): For a given task, compute the property-specific embeddings for the support set. Use these to rapidly adapt (e.g., via a few gradient steps) the parameters of the property-specific classifier. The inner loop focuses on quick specialization.
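The split between inner and outer loops can be illustrated on a toy regression problem. This is not the CFS-HML architecture: it is a generic first-order meta-learning sketch in which a shared slope `w` plays the role of property-shared parameters and a per-task offset `b` plays the role of the property-specific classifier. All names, step sizes, and data are assumptions.

```python
import random

def mse_grads(w, b, data):
    """Gradients of mean squared error for the model y_hat = w * x + b."""
    n = len(data)
    gw = sum(2 * (w * x + b - y) * x for x, y in data) / n
    gb = sum(2 * (w * x + b - y) for x, y in data) / n
    return gw, gb

def inner_adapt(w, b_init, support, inner_lr=0.1, steps=5):
    """Inner loop: adapt only the task-specific offset b on the support set."""
    b = b_init
    for _ in range(steps):
        _, gb = mse_grads(w, b, support)
        b -= inner_lr * gb
    return b

def meta_train(tasks, meta_lr=0.05, iterations=200, batch_size=4, seed=0):
    """Outer loop: update the shared slope and the offset initialization from query losses."""
    rng = random.Random(seed)
    w, b_init = 0.0, 0.0
    for _ in range(iterations):
        batch = rng.sample(tasks, k=min(batch_size, len(tasks)))
        gw_total = gb_total = 0.0
        for support, query in batch:
            b_task = inner_adapt(w, b_init, support)
            # First-order approximation: b_task is treated as a constant here.
            gw, gb = mse_grads(w, b_task, query)
            gw_total += gw
            gb_total += gb
        w -= meta_lr * gw_total / len(batch)
        b_init -= meta_lr * gb_total / len(batch)
    return w, b_init

def make_task(offset, slope=2.0):
    """Toy task y = slope * x + offset with separate support and query inputs."""
    support = [(x, slope * x + offset) for x in (-1.0, 0.0, 1.0)]
    query = [(x, slope * x + offset) for x in (-2.0, 2.0)]
    return support, query

tasks = [make_task(offset) for offset in (-3.0, -1.0, 0.5, 2.0, 4.0)]
w, b_init = meta_train(tasks)

# Adapt to an unseen task using its support set only.
new_support, _ = make_task(10.0)
b_new = inner_adapt(w, b_init, new_support, steps=50)
print(w, b_new)
```

The outer loop recovers the structure shared by all tasks (the slope), so that adapting to a new task only requires fitting the small task-specific part from a few support examples.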

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Datasets for FSMPP Research

| Reagent / Resource | Type | Function in Experiment | Example / Source |

|---|---|---|---|

| Graph Neural Network (GNN) | Model Architecture | Encodes the molecular graph structure into a numerical representation. | MPNN [11], GIN [34], Graph Isomorphism Encoder [35] |

| Meta-Learning Algorithm | Learning Framework | Optimizes the model for fast adaptation to new tasks with limited data. | MAML [37], Heterogeneous Meta-Learning [34] |

| Molecular Benchmark Datasets | Data | Provides standardized tasks and splits for training and fair evaluation. | MoleculeNet (ClinTox, SIDER, Tox21) [11], ChEMBL [32] |

| Large-Scale Quantum Chemistry Data | Pre-training Data | Provides foundational knowledge of atomic-level interactions for pre-training models. | Open Molecules 2025 (OMol25) [38] |

| Foundation Models for Atoms | Pre-trained Model | Serves as a powerful initialization for downstream property prediction tasks. | Universal Model for Atoms (UMA) [38] |

Workflow Diagram: ACS and Heterogeneous Meta-Learning

The diagram below illustrates the core workflows for two primary strategies discussed in this guide: Adaptive Checkpointing with Specialization (ACS) and context-informed heterogeneous meta-learning (CFS-HML).

Diagram 1: Comparing ACS and CFS-HML Workflows. (A) ACS uses a shared backbone with task-specific heads and independent checkpointing to combat negative transfer. (B) CFS-HML uses separate encoders for property-specific and shared knowledge, fused for robust few-shot predictions.

Technical Support Center

This support center provides troubleshooting guidance and best practices for researchers employing Stochastic Gradient Descent (SGD) in low-data molecular property prediction, based on the Adaptive Checkpointing with Specialization (ACS) method.

Troubleshooting Guides

1. Problem: Model Performance is Degraded by Negative Transfer

- Description: In Multi-Task Learning (MTL), updates from one task detrimentally affect the performance of another, a phenomenon known as Negative Transfer (NT). This is often exacerbated by severe task imbalance, where tasks have vastly different amounts of labeled data [11].

- Solution: Implement the Adaptive Checkpointing with Specialization (ACS) training scheme.

- Methodology: Use a shared Graph Neural Network (GNN) backbone with task-specific Multi-Layer Perceptron (MLP) heads [11].

- Procedure: Continuously monitor the validation loss for each individual task during training. Save a checkpoint of the backbone and head parameters every time a new minimum validation loss is reached for a given task. This provides each task with a specialized model that benefits from shared representations while being protected from harmful parameter updates [11].

- Relevant Experimental Protocol: The ACS method was validated on molecular property benchmarks like ClinTox, SIDER, and Tox21, using a Murcko-scaffold split. It demonstrated an average 11.5% improvement over node-centric message passing methods and was particularly effective when tasks were imbalanced [11].

2. Problem: Unstable or Slow Convergence with SGD

- Description: The model's loss function fluctuates wildly or decreases too slowly during training. This is common in SGD due to the noise from per-example gradients and an improperly tuned learning rate [39] [6].

- Solutions:

- Implement Adaptive Optimizers: Use variants like Adam or RMSprop that adapt the learning rate for each parameter, which can be more stable than vanilla SGD [39].

- Apply Gradient Clipping: Cap the magnitude of gradients (by value or by norm) to prevent exploding gradients, a known issue in deep networks [39].

- Use Learning Rate Schedules: Systematically decrease the learning rate over time to balance speed and stability in convergence [39].

- Relevant Experimental Protocol: When facing slow convergence in a regression model, implementing a learning rate schedule can significantly improve convergence speed. For exploding gradients in Recurrent Neural Networks (RNNs), applying gradient clipping has been shown to stabilize training [39].
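Two of the remedies above, gradient clipping and a learning rate schedule, can be sketched in a few lines of plain Python. The function names `clip_by_global_norm` and `step_decay`, and the default decay settings, are illustrative assumptions rather than part of any cited method; deep learning frameworks ship their own equivalents.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradients so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

def step_decay(initial_lr, epoch, drop=0.5, epochs_per_drop=10):
    """Multiply the learning rate by `drop` once every `epochs_per_drop` epochs."""
    return initial_lr * (drop ** (epoch // epochs_per_drop))

# Example: one clipped SGD step at epoch 25 on hypothetical parameters.
params = [0.5, -1.0]
grads = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
lr = step_decay(0.1, epoch=25)
params = [p - lr * g for p, g in zip(params, grads)]
```

Clipping by norm preserves the gradient direction and only shrinks its length, which is usually preferable to clipping each component independently.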

3. Problem: Poor Generalization from Ultra-Low Data Tasks

- Description: The model fails to make accurate predictions on the query set for tasks with very few (e.g., 29) labeled examples.

- Solution: Leverage a heterogeneous meta-learning framework to capture both property-shared and property-specific knowledge [34].

- Methodology:

- Property-Specific Encoder: Use a GNN (e.g., GIN) to generate embeddings focused on substructures relevant to the specific property [34].

- Property-Shared Encoder: Use a self-attention mechanism on a set of features (including molecular and class embeddings) to capture fundamental, task-common structures [34].

- Meta-Learning: Employ a two-loop training process. The inner loop updates parameters of the property-specific features within individual tasks, while the outer loop jointly updates all parameters across tasks [34].

Frequently Asked Questions (FAQs)

Q1: What are the key benefits of using Stochastic Gradient Descent (SGD) for molecular property prediction?

A: SGD is computationally efficient and scalable to large datasets because it calculates parameter updates based on small batches of data rather than the entire dataset [6]. Its noisy updates can also help escape local minima in non-convex optimization problems, which are common in complex molecular models [6]. Furthermore, it is well-suited for online learning scenarios where new data arrives incrementally [6].

Q2: How does ACS mitigate Negative Transfer compared to standard MTL?

A: Standard MTL shares all parameters across tasks throughout training, which can lead to persistent interference. In contrast, ACS uses a shared backbone but employs task-specific checkpointing. This allows each task to "freeze" its optimal shared parameters during training, effectively balancing inductive transfer with protection from detrimental updates. On benchmarks, ACS outperformed standard MTL and MTL with global loss checkpointing, showing particular strength in imbalanced scenarios [11].

Q3: Our dataset has a high ratio of missing labels. How is this handled?

A: A practical alternative to methods like imputation is loss masking. This technique involves computing the loss and parameter updates only for the tasks where label data is present for a given molecule, thereby allowing the model to fully utilize the available data without making assumptions about missing values [11].
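As a rough illustration of loss masking, the sketch below averages a squared-error loss over only the (molecule, task) entries that have a label. It assumes missing labels are encoded as `None` and uses a hypothetical helper name, `masked_mse`; a classification setup would apply the same mask to a cross-entropy loss instead.

```python
def masked_mse(predictions, labels):
    """Mean squared error over labeled entries only.

    predictions: list of per-molecule lists, one prediction per task.
    labels: same shape, with None wherever a task label is missing.
    """
    total, count = 0.0, 0
    for pred_row, label_row in zip(predictions, labels):
        for pred, label in zip(pred_row, label_row):
            if label is None:
                continue  # missing label: contributes no loss and no gradient
            total += (pred - label) ** 2
            count += 1
    return total / count if count else 0.0

# Two molecules, two tasks; each molecule is labeled for only one task.
example_loss = masked_mse([[1.0, 2.0], [3.0, 4.0]], [[0.0, None], [None, 4.0]])
```

Dividing by the number of labeled entries, rather than by the full batch size, keeps the loss scale comparable across batches with different amounts of missing data.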

Q4: What is a key architectural consideration for GNNs in few-shot molecular prediction?

A: It is critical to account for the fact that molecular relationships are not fixed but vary by property task. Two molecules that share a label in one task may have opposite properties in another. Therefore, models should be designed to separate property-shared knowledge (fundamental molecular commonalities) from property-specific knowledge (contextual, task-relevant substructures) [34].

Experimental Protocols & Data

Summary of ACS Performance on Molecular Benchmarks [11]

Table: Average Performance Improvement of ACS over Baseline Methods

| Baseline Method | Average Performance Improvement | Key Observation |

|---|---|---|

| Single-Task Learning (STL) | +8.3% | Shows benefit of inductive transfer over no sharing. |

| Multi-Task Learning (MTL) | Outperformed by ACS | Highlights ACS's effectiveness in mitigating NT. |

| MTL with Global Loss Checkpointing (MTL-GLC) | Outperformed by ACS | Demonstrates superiority of task-specific checkpointing. |

Protocol: Implementing ACS for Molecular Property Prediction

- Model Architecture: Use a shared GNN backbone with task-specific MLP heads, one head per property [11].

- Training Procedure:

- Train the model on all tasks simultaneously.

- For each task i, monitor its validation loss throughout the training process.

- Implement a checkpointing system where, for each task, you save the state of the shared backbone and the task-specific head whenever the validation loss for task i hits a new minimum.

- This results in a specialized model (backbone-head pair) for each task upon completion of training [11].

- Application to SAF Properties: This protocol was successfully deployed to predict 15 physicochemical properties of Sustainable Aviation Fuel (SAF) molecules, achieving accurate predictions with as few as 29 labeled samples [11].
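The checkpointing step of this protocol can be sketched as follows. This is an illustrative outline, not the reference ACS code: the helper `update_task_checkpoints`, the task names `"tox"` and `"sol"`, the dictionary-based "parameters", and the validation losses are all made up so the example runs without a deep learning framework.

```python
import copy

def update_task_checkpoints(best_loss, checkpoints, val_losses, backbone, heads, step):
    """Save a (backbone, head) snapshot for every task that reached a new minimum."""
    for task, loss in val_losses.items():
        if loss < best_loss.get(task, float("inf")):
            best_loss[task] = loss
            checkpoints[task] = {
                "step": step,
                "backbone": copy.deepcopy(backbone),
                "head": copy.deepcopy(heads[task]),
            }
    return best_loss, checkpoints

# Toy run with placeholder parameters and validation losses (not real results).
backbone = {"w": 0.0}
heads = {"tox": {"w": 0.0}, "sol": {"w": 0.0}}
fake_val_losses = [
    {"tox": 0.9, "sol": 0.8},
    {"tox": 0.7, "sol": 0.9},  # "sol" got worse: its checkpoint stays at step 0
    {"tox": 0.8, "sol": 0.6},  # "tox" got worse: its checkpoint stays at step 1
]
best_loss, checkpoints = {}, {}
for step, val_losses in enumerate(fake_val_losses):
    backbone["w"] += 1.0  # stand-in for an SGD update of the shared backbone
    for head in heads.values():
        head["w"] += 0.5  # stand-in for an SGD update of each head
    update_task_checkpoints(best_loss, checkpoints, val_losses, backbone, heads, step)
```

The deep copies matter: training keeps mutating the live backbone, so each task's snapshot must be isolated from later updates that may be harmful to that task.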

Workflow Visualization

Diagrams: ACS Training Workflow; SGD Challenges & Solutions.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Low-Data Molecular Property Prediction

| Research Reagent / Tool | Function in the Experiment |

|---|---|

| Graph Neural Network (GNN) | Serves as the primary molecular encoder, learning latent representations from the natural graph structure of molecules [11] [34]. |

| Multi-Layer Perceptron (MLP) Heads | Act as task-specific predictors, taking the general representations from the shared GNN backbone and mapping them to individual property predictions [11]. |

| Adaptive Checkpointing | A training scheme component that saves optimal model parameters per task to mitigate Negative Transfer in imbalanced multi-task settings [11]. |

| Meta-Learning Framework | A training strategy that simulates few-shot learning tasks to enhance a model's ability to generalize from very limited data [34]. |

| Influence Function | A tool used to identify influential data points in a dataset, which can guide adversarial data augmentation to improve model robustness and performance [10]. |

Beyond Vanilla SGD: Overcoming Pitfalls and Enhancing Performance

Taming Noisy Updates and Oscillations with SGD Momentum

Troubleshooting Guide: SGD Momentum

Q1: My training loss is oscillating heavily and converges slowly. Is Momentum the right solution?

A: Yes, this is a primary use case for Momentum. Momentum is specifically designed to accelerate convergence and dampen oscillations in ravines—areas where the loss surface curves more steeply in one dimension than in another [40]. It works by accumulating a velocity vector in directions of persistent reduction, smoothing out noisy or oscillating gradients [41] [40].

Recommended Solution: Implement SGD with Momentum. A standard initial configuration is a momentum term (γ) of 0.9 and a learning rate that is often set lower than what you would use for vanilla SGD [42] [40].

Experimental Protocol:

- Initialize your model parameters, θ, and the velocity vector, v = 0.

- For each training iteration t:

  - Compute the gradient on a mini-batch: g_t = ∇_θ J(θ)

  - Update the velocity vector: v_t = γ * v_{t-1} + η * g_t

  - Update the parameters: θ = θ - v_t

- Monitor the training loss. The path should be smoother and converge faster compared to vanilla SGD.
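The update rules above can be traced on a small "ravine" problem. The sketch below assumes a hypothetical quadratic loss that is 50 times steeper in one coordinate than the other, and it uses the exact gradient in place of a mini-batch gradient so the run is deterministic; the function names and hyperparameters are illustrative.

```python
def loss(theta):
    """Toy ravine: J(theta) = 0.5 * (theta[0]**2 + 50 * theta[1]**2)."""
    return 0.5 * (theta[0] ** 2 + 50.0 * theta[1] ** 2)

def grad(theta):
    """Exact gradient of the toy loss (stands in for a mini-batch gradient)."""
    return [theta[0], 50.0 * theta[1]]

def sgd_momentum(theta, lr=0.02, gamma=0.9, steps=200):
    """Apply v_t = gamma * v_{t-1} + lr * g_t, then theta = theta - v_t."""
    theta = list(theta)
    v = [0.0] * len(theta)  # velocity starts at zero
    for _ in range(steps):
        g = grad(theta)
        v = [gamma * vi + lr * gi for vi, gi in zip(v, g)]
        theta = [ti - vi for ti, vi in zip(theta, v)]
    return theta

start = [1.0, 1.0]
with_momentum = loss(sgd_momentum(start, gamma=0.9))
vanilla = loss(sgd_momentum(start, gamma=0.0))  # gamma = 0 reduces to plain SGD
```

On this toy objective, the run with γ = 0.9 ends at a lower loss than the γ = 0 run for the same learning rate and step budget, because the velocity keeps building along the shallow coordinate.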

Q2: How does Nesterov Accelerated Gradient (NAG) provide an advantage over standard Momentum?

A: Standard Momentum can be slow to react if the gradient changes direction. NAG, or Nesterov Momentum, is a "look-ahead" variant that corrects this. It first makes a jump in the direction of the accumulated velocity, then calculates the gradient from this approximated future position, and finally makes a correction [40]. This reduces overshooting and leads to more responsive updates, especially when the algorithm needs to slow down before an upward slope [42] [40].

Recommended Solution: Replace standard Momentum with Nesterov Momentum for improved stability and performance.

Experimental Protocol: The update rules for NAG are:

- Partial Update: θ_lookahead = θ_{t-1} - γ * v_{t-1}

- Gradient Calculation: Compute the gradient at the look-ahead point: g_t = ∇_θ J(θ_lookahead)

- Velocity Update: v_t = γ * v_{t-1} + η * g_t

- Parameter Update: θ_t = θ_{t-1} - v_t
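A minimal sketch of these four NAG steps follows, again on a hypothetical quadratic loss with an exact gradient standing in for the mini-batch gradient. The function names, starting point, and hyperparameters are assumptions chosen only to keep the example small and deterministic.

```python
def loss(theta):
    """Toy ravine: J(theta) = 0.5 * (theta[0]**2 + 50 * theta[1]**2)."""
    return 0.5 * (theta[0] ** 2 + 50.0 * theta[1] ** 2)

def grad(theta):
    """Exact gradient of the toy loss (stands in for a mini-batch gradient)."""
    return [theta[0], 50.0 * theta[1]]

def nesterov(theta, lr=0.02, gamma=0.9, steps=200):
    theta = list(theta)
    v = [0.0] * len(theta)
    for _ in range(steps):
        # Partial update: jump ahead along the accumulated velocity.
        lookahead = [ti - gamma * vi for ti, vi in zip(theta, v)]
        # Gradient calculation at the look-ahead point, not at theta itself.
        g = grad(lookahead)
        # Velocity update, then parameter update.
        v = [gamma * vi + lr * gi for vi, gi in zip(v, g)]
        theta = [ti - vi for ti, vi in zip(theta, v)]
    return theta

final_theta = nesterov([1.0, 1.0])
final_loss = loss(final_theta)
```

The only difference from standard Momentum is where the gradient is evaluated; the velocity and parameter updates are unchanged.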

Q3: My model gets stuck in flat regions or local minima. Can Momentum help?

A: Yes. The inertia provided by Momentum helps the optimizer coast across flat spots of the search space where the gradient is close to zero [41]. Furthermore, the noise introduced by stochastic gradients, combined with Momentum, can help the model escape shallow local minima [1] [43].

Q4: How should I tune the momentum hyperparameter (γ) for molecular property prediction tasks?

A: The optimal value can be dataset-dependent. Systematic studies on molecular graph datasets suggest that adaptive optimizers like Adam often outperform basic SGD with Momentum [13]. However, if tuning Momentum, start with values between 0.8 and 0.99 [41] [40]. A higher value (e.g., 0.99) allows for a stronger influence from past gradients.

Experimental Protocol for Comparison:

- Fix a learning rate and model architecture.

- Train multiple identical models, varying only the momentum value (e.g., [0.0, 0.5, 0.9, 0.99]).

- Track key metrics like training loss, validation accuracy, and convergence speed.

- Select the value that provides the most stable and accurate performance on your validation set.
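The comparison protocol can be sketched as a small sweep. To stay self-contained, this example swaps a real model and validation set for a hypothetical quadratic loss and tracks only the final training loss; in practice you would record validation metrics for each run and select on those.

```python
def final_loss(gamma, lr=0.02, steps=200):
    """Run SGD with Momentum on a toy loss and return the loss after `steps` updates."""
    theta = [1.0, 1.0]
    v = [0.0, 0.0]
    for _ in range(steps):
        g = [theta[0], 50.0 * theta[1]]  # exact gradient of the toy loss
        v = [gamma * vi + lr * gi for vi, gi in zip(v, g)]
        theta = [ti - vi for ti, vi in zip(theta, v)]
    return 0.5 * (theta[0] ** 2 + 50.0 * theta[1] ** 2)

# Fixed learning rate and "architecture"; only the momentum value varies.
momentum_grid = [0.0, 0.5, 0.9, 0.99]
results = {gamma: final_loss(gamma) for gamma in momentum_grid}
best_gamma = min(results, key=results.get)
```

Which value wins depends on the learning rate and the step budget, so the ranking on this toy objective should not be read as a recommendation for molecular datasets.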

Performance Data for Optimizer Selection

The following table summarizes quantitative findings from a systematic study comparing optimizers on molecular property prediction tasks using Message Passing Neural Networks (MPNNs). This data can guide your initial optimizer selection [13].

Table 1: Optimizer Performance on Molecular Classification Tasks (MPNNs)

| Optimizer | Key Principle | Test Accuracy (%) (NCI-1 Dataset) | Test Accuracy (%) (BACE Dataset) | Remarks |

|---|---|---|---|---|

| SGD with Momentum | Accumulates an exponentially decaying sum of past gradients [40]. | 78.41 | 81.33 | More stable than SGD; can be sensitive to learning rate [13]. |

| Adam | Combines Momentum and adaptive learning rates per parameter [43]. | 80.15 | 83.77 | Often provides robust performance and fast convergence [13]. |

| AdamW | Decouples weight decay from gradient updates, improving generalization [13]. | 81.92 | 85.46 | Showed superior generalization in this study [13]. |

| NAdam | Incorporates Nesterov momentum into Adam [13]. | 80.33 | 84.11 | Can offer benefits of both look-ahead and adaptive learning rates [13]. |

| RMSprop | Adapts learning rate based on a moving average of squared gradients [42]. | 79.87 | 83.02 | Good for non-stationary objectives and online learning [42]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for an SGD Momentum Experiment

| Research Reagent | Function / Explanation |

|---|---|