Optimizing SVM Hyperparameter Tuning: A Computational Complexity Guide for Biomedical Research


Abstract

This article provides a comprehensive analysis of hyperparameter optimization (HPO) for Support Vector Machines (SVM), with a specific focus on computational complexity and practical applications in biomedical and clinical research. It explores foundational SVM hyperparameters like C, gamma, and kernel functions, and systematically compares traditional methods (Grid Search, Random Search) with advanced techniques (Bayesian Optimization, Evolutionary Algorithms). The guide addresses critical troubleshooting challenges, including managing high-dimensional search spaces and avoiding overfitting. Through validation strategies like k-fold cross-validation and performance benchmarking, it offers actionable insights for researchers and drug development professionals to build robust, efficient, and high-performing predictive models for complex biomedical data.

SVM Hyperparameters and Computational Complexity: Core Concepts for Researchers

Frequently Asked Questions

What is the fundamental role of the C hyperparameter? `C` is the regularization parameter [1]. It controls the trade-off between fitting the training data closely (low training error) and keeping the margin wide, which tends to lower the error on unseen data [2]. A high `C` value pushes the model toward a "hard margin": it prioritizes classifying every training point correctly, which can produce a complex model that overfits the data. A low `C` value gives a "soft margin" that tolerates some misclassifications in exchange for a simpler, more generalizable model [1].

How does the gamma parameter influence an SVM model with an RBF kernel? The `gamma` parameter defines how far the influence of a single training example reaches [2]. It is a key hyperparameter for the Radial Basis Function (RBF) kernel. A low `gamma` means a single example has a far-reaching influence, resulting in a smoother, less complex decision boundary. A high `gamma` limits the influence of each example to its nearby region, leading to a more complex, wiggly boundary that can capture finer details but also risks overfitting [2].

Can I tune the C and kernel parameters independently? No. The kernel parameters (like `gamma` for the RBF kernel) and the `C` parameter interact and should not be tuned in isolation [3]. Ignoring this interaction can lead to suboptimal tuning and poor model performance [2]. The optimal value of `C` often depends on the chosen `gamma` and vice versa, which is why they are typically optimized jointly using techniques like grid search [3].

My dataset is very large, making hyperparameter tuning slow. What can I do? For large datasets (e.g., millions of samples), a full hyperparameter search can be prohibitively slow. Some practical approaches include:

- Subsampling: Perform initial hyperparameter optimization on a smaller, representative subset of your data to identify promising parameter ranges before running a more refined search on the full dataset [3].

- Reduce CV Folds: Using a 2-fold cross-validation for the search can be a good compromise between speed and reliability [3].

- Efficient Search Algorithms: Instead of a brute-force grid search, use more efficient optimization methods like Bayesian Optimization, which attempts to minimize the number of evaluations needed [3].

What are some best practices for tuning these hyperparameters?

- Use Logarithmic Scales: The optimal values for `C` and `gamma` often span a wide range, so it is best to search for them on a logarithmic scale (e.g., 2^{-5}, 2^{-3}, ..., 2^{15}) [2].

- Scale Your Data: SVM is sensitive to the scale of input features. Always ensure your data is scaled (e.g., standardized or normalized) before training, as this directly impacts the effect of `gamma` and `C` [2].

- Combine with Cross-Validation: Use techniques like k-fold cross-validation during a grid or random search to evaluate hyperparameter performance robustly and avoid overfitting to the training set [2].
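As a rough illustration of the first two practices, the sketch below builds base-2 logarithmic grids and compares an RBF SVM with and without feature scaling. The dataset (`load_breast_cancer`), the fixed values `C=1.0` and `gamma=0.01`, the `gamma` grid bounds, and all variable names are assumptions chosen for the example, not settings taken from the cited sources.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)

# Base-2 logarithmic grids: each step multiplies the value by 4.
C_grid = 2.0 ** np.arange(-5, 16, 2)      # 2^-5, 2^-3, ..., 2^15
gamma_grid = 2.0 ** np.arange(-15, 4, 2)  # 2^-15, 2^-13, ..., 2^3 (assumed bounds)

# Same C and gamma, with and without standardization.
raw_svm = SVC(kernel="rbf", C=1.0, gamma=0.01)
scaled_svm = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma=0.01))

# 5-fold cross-validated accuracy; the scaler is re-fit on each training fold.
raw_acc = cross_val_score(raw_svm, X, y, cv=5).mean()
scaled_acc = cross_val_score(scaled_svm, X, y, cv=5).mean()

print(f"unscaled: {raw_acc:.3f}  scaled: {scaled_acc:.3f}")
```

Putting the scaler inside the pipeline keeps the validation folds out of the scaling statistics, so the cross-validated score is not contaminated by them.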

Troubleshooting Common Experimental Issues

Problem: The model is overfitting the training data.

- Potential Causes:

  - `gamma` is too high, so each training example influences only its immediate neighborhood.

  - `C` is too high, so the margin is too narrow.

- Solutions:

  - Decrease the value of the `gamma` parameter.

  - Decrease the value of the `C` parameter to allow for a wider, more generalizable margin.

  - Expand your hyperparameter search to include lower ranges for these parameters.

Problem: The model is underfitting and performs poorly even on the training data.

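- Potential Causes:

  - `gamma` is too low, so the decision boundary is too smooth.

  - `C` is too low, so too many training errors are tolerated.

  - The kernel is too simple for the structure in the data.

- Solutions:

  - Increase the value of the `gamma` parameter.

  - Increase the value of the `C` parameter.

  - Consider using a more complex kernel function (e.g., moving from linear to RBF).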
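One way to tell these two failure modes apart is to compare training and cross-validated accuracy over a range of `gamma` values. The following is a minimal sketch using scikit-learn's `validation_curve`; the dataset, the `gamma` range, and the 0.05 and 0.9 thresholds used for labelling are illustrative assumptions rather than established cut-offs.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import validation_curve
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)
pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0))

gammas = np.logspace(-5, 2, 8)  # 1e-5 ... 1e2
train_scores, cv_scores = validation_curve(
    pipe, X, y, param_name="svc__gamma", param_range=gammas, cv=5
)

train_mean = train_scores.mean(axis=1)
cv_mean = cv_scores.mean(axis=1)

for g, tr, va in zip(gammas, train_mean, cv_mean):
    # Heuristic labels (assumed thresholds): a large train/CV gap hints at
    # overfitting, a low training score hints at underfitting.
    if tr - va > 0.05:
        label = "possible overfit"
    elif tr < 0.9:
        label = "possible underfit"
    else:
        label = "ok"
    print(f"gamma={g:.0e}  train={tr:.3f}  cv={va:.3f}  {label}")
```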

Problem: The hyperparameter optimization process is taking too long.

- Potential Causes:

  - The search space (range of values for `C`, `gamma`, etc.) is too large or granular.

  - The model training itself is slow due to a large dataset or complex kernel.

  - Using an inefficient search strategy like a full grid search with many folds of cross-validation.

- Solutions:

  - Start with a coarse search on a wide logarithmic scale to identify a promising region, then perform a finer search in that region [3].

  - Use a faster optimization algorithm like Bayesian Optimization, Random Search, or evolutionary algorithms instead of a full Grid Search [4] [3].

  - As a preliminary step, tune the model on a smaller, stratified sample of your data [3].
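The coarse-to-fine idea can be sketched in a few lines of plain Python. The helper names `log_grid` and `refine_around` are hypothetical, and the "best" coarse value is hard-coded here in place of a real search result.

```python
import math

def log_grid(low, high, num):
    """Return `num` values evenly spaced on a log10 scale from low to high."""
    lo, hi = math.log10(low), math.log10(high)
    step = (hi - lo) / (num - 1)
    return [10 ** (lo + i * step) for i in range(num)]

def refine_around(best, coarse_grid, num=5):
    """Build a finer log grid spanning the coarse neighbours of `best`."""
    i = coarse_grid.index(best)
    low = coarse_grid[max(i - 1, 0)]
    high = coarse_grid[min(i + 1, len(coarse_grid) - 1)]
    return log_grid(low, high, num)

coarse_C = log_grid(1e-3, 1e3, 7)         # one value per decade
best_C = coarse_C[4]                      # stand-in for the coarse winner (C = 10)
fine_C = refine_around(best_C, coarse_C)  # 5 values between C = 1 and C = 100

print([round(c, 3) for c in fine_C])
```

The same refinement would be applied to `gamma`, and the fine grids would then be searched jointly, since the two parameters interact.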

Experimental Protocols & Data Presentation

The following table summarizes a real-world experimental methodology for SVM hyperparameter optimization, as applied to the classification of wheat genotypes [5].

Table 1: Summary of Experimental Protocol for SVM Hyperparameter Optimization

| Aspect | Protocol Description |

|---|---|

| Objective | To classify 302 wheat genotypes into different yield classes (low, medium, high) using 14 morphological attributes and optimize SVM hyperparameters for maximum accuracy [5]. |

| Kernels Evaluated | Linear, Radial Basis Function (RBF), Sigmoid, Polynomial (degrees 1, 2, 3) [5]. |

| Optimization Methods | Grid Search (GS), Random Search (RS), Genetic Algorithm (GA), Differential Evolution (DE), Particle Swarm Optimization (PSO) [5]. |

| Performance Metric | Classification Accuracy [5]. |

| Key Finding (Best Kernel) | The RBF kernel achieved the highest accuracy at 93.2% among individual kernels [5]. |

| Key Finding (Ensemble) | A Weighted Accuracy Ensemble (EWA) of all six kernels further improved the accuracy to 94.9% [5]. |

| Key Finding (Best Optimizer) | Particle Swarm Optimization (PSO) was highly effective, helping the RBF-SVM model achieve a test set accuracy of 94.9%, a significant gain over the baseline [5]. |

This workflow outlines the general process for systematically tuning an SVM model, incorporating the experimental steps from the cited study [5].

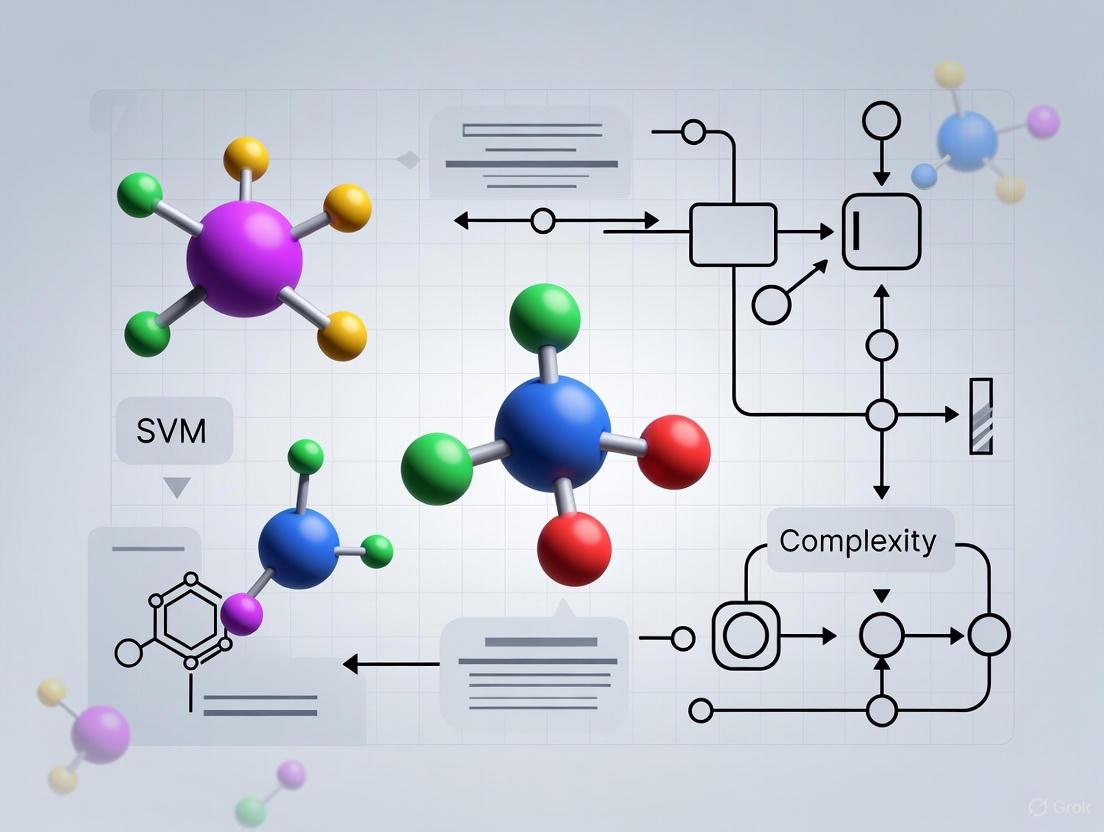

Diagram 1: SVM Hyperparameter Tuning Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for SVM Hyperparameter Optimization

| Tool / Resource | Function in the Research Process |

|---|---|

| Grid Search | A systematic method for searching a predefined set of hyperparameters. It is comprehensive but can be computationally expensive [5]. |

| Random Search | Evaluates random combinations of hyperparameters from given distributions. Often more efficient than Grid Search for a similar computational budget [4]. |

| Bayesian Optimization | A sophisticated, sequential model-based optimization technique that uses past results to suggest the next most promising hyperparameters, leading to faster convergence [3]. |

| Particle Swarm Optimization (PSO) | A population-based stochastic optimization algorithm inspired by social behavior. Effectively used to tune SVM parameters like C and gamma for improved accuracy [4] [5]. |

| Genetic Algorithm (GA) | An evolutionary algorithm that uses selection, crossover, and mutation to find optimal hyperparameters. Known to have lower temporal complexity in some comparisons [4]. |

| Hyperopt / Optuna | Advanced, open-source libraries specifically designed for hyperparameter optimization. They can efficiently handle complex search spaces and are known to improve SVM classification accuracy [6]. |

| Radial Basis Function (RBF) Kernel | A powerful, commonly used kernel that can model complex, non-linear decision boundaries. Often the default or first choice for many applications [2] [5]. |

The effect of the gamma parameter in the RBF kernel can be visualized conceptually in the decision boundary.

Diagram 2: Effect of Gamma Parameter on Model Complexity

The Critical Link Between Hyperparameter Tuning and Model Generalization

Frequently Asked Questions

What is the primary goal of hyperparameter tuning in machine learning? The primary goal is to find the optimal set of hyperparameters that minimizes a predefined loss function on a given dataset, thereby maximizing the model's generalization performance on unseen data [7]. Effective tuning helps the model learn better patterns and avoid overfitting or underfitting [8].

Why is the generalization of a model important, especially in critical fields like drug development? A model that generalizes well delivers consistent and reliable results when applied to new, unseen data [9]. In drug development, where models inform high-stakes decisions, poor generalization due to overfitting can lead to inaccurate predictions that fail in real-world clinical settings, resulting in significant financial and time losses.

What is overfitting and how does hyperparameter tuning help prevent it?

Overfitting occurs when a model learns the training data too well, including its noise and outliers, but performs poorly on new data [10]. Hyperparameter tuning helps prevent this by controlling the model's capacity. For instance, tuning parameters like the SVM's C or gamma can enforce a smoother decision boundary that captures the underlying pattern rather than the noise [9] [11].

What are the computational trade-offs between different hyperparameter optimization methods? The choice of method involves a direct trade-off between computational cost and the likelihood of finding the optimal hyperparameters [4]. Grid Search is computationally intensive but exhaustive, while Random Search is more efficient for large search spaces. Bayesian Optimization aims to find a good solution with fewer evaluations, and population-based methods like the Genetic Algorithm can offer a favorable balance between computational time and performance [8] [4] [7].

How does the bias-variance tradeoff relate to hyperparameter tuning? The goal of hyperparameter tuning is to balance the bias-variance tradeoff [9]. Bias is the error from erroneous assumptions in the model (leading to underfitting), while variance is the error from sensitivity to small fluctuations in the training set (leading to overfitting). Good hyperparameter tuning optimizes for both low bias and low variance to create an accurate and consistent model [9].

Troubleshooting Guides

Guide 1: Diagnosing and Remedying Poor Generalization in SVM Models

This guide addresses the common issue of a Support Vector Machine (SVM) model that performs well on training data but poorly on validation or test data.

Symptoms:

- High accuracy on training data, but significantly lower accuracy on test data.

- The model's decision boundary is overly complex and tightly fits the training data points.

Diagnosis: Overfitting. The model has high variance and has likely learned the noise in the training data rather than the generalizable pattern [9].

Remedial Actions:

- Tune Regularization Hyperparameter `C`: The `C` parameter controls the trade-off between achieving a low error on the training data and a wider margin. A high `C` value creates a strict, complex boundary that risks overfitting.

- Adjust Kernel Influence with `gamma`: The `gamma` parameter defines how far the influence of a single training example reaches. A high `gamma` value means only nearby points have influence, leading to complex, localized boundaries.

- Validate with Nested Cross-Validation: A standard validation score can be overly optimistic if the same set was used for hyperparameter tuning. Use nested cross-validation to get an unbiased estimate of the model's generalization performance [7].
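Nested cross-validation can be expressed compactly in scikit-learn by passing a `GridSearchCV` object to `cross_val_score`. This is a minimal sketch; the dataset, the grid values, and the fold counts are assumptions made for illustration.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)
pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
param_grid = {"svc__C": [0.1, 1, 10, 100], "svc__gamma": [1e-3, 1e-2, 1e-1]}

# Inner loop tunes C and gamma; outer loop estimates generalization.
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

tuned_svm = GridSearchCV(pipe, param_grid, cv=inner_cv)

# Each outer fold re-runs the whole inner search on its training part,
# so the outer test fold never influences the chosen hyperparameters.
nested_scores = cross_val_score(tuned_svm, X, y, cv=outer_cv)

print(f"nested CV accuracy: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")
```

The price is compute: the inner search runs once per outer fold, so the total number of fits is roughly the outer fold count times the cost of a single search.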

Guide 2: Managing the Computational Cost of Hyperparameter Optimization

This guide helps researchers select an optimization strategy that balances computational complexity with model performance, a critical concern for large datasets or complex models like deep neural networks.

Symptoms:

- Hyperparameter tuning takes an impractically long time to complete.

- The cost of experimentation exceeds computational budgets.

Diagnosis: Inefficient Search Strategy. The chosen method for exploring the hyperparameter space is not suitable for the problem's dimensionality or the cost of model evaluation [4].

Remedial Actions:

- Replace Grid Search with Randomized Search: For a large number of hyperparameters, Grid Search suffers from the "curse of dimensionality." Randomized Search often yields comparable results in a fraction of the time by sampling a fixed number of parameter combinations from specified distributions [8] [7].

- Adopt Bayesian Optimization: This is a smarter, sequential approach that builds a probabilistic model of the objective function to balance exploration and exploitation. It typically finds a good set of hyperparameters in fewer evaluations than Grid or Random Search [8] [7].

- Consider Evolutionary Algorithms: For very complex search spaces, algorithms like the Genetic Algorithm (GA) have been shown to achieve good performance with lower temporal complexity compared to some other methods [4].

Experimental Protocols & Data

Protocol 1: SVM Hyperparameter Tuning with GridSearchCV

This is a standard methodology for exhaustively searching a predefined hyperparameter space [12].

1. Problem Definition: Optimize an SVM classifier for a binary classification task (e.g., classifying cell features as malignant or benign).

2. Data Preparation: Load and split data into 70% training and 30% testing sets.

3. Hyperparameter Grid Definition: Define the set of values to explore for each key hyperparameter.

4. Model Initialization and Search: Initialize the SVM model and the GridSearchCV object with 5-fold cross-validation.

5. Model Fitting: Execute the search on the training data. The process trains and validates an SVM for every combination in param_grid.

6. Best Model Evaluation: Select the model with the best cross-validation score and evaluate its final performance on the held-out test set [12].
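The six steps above might look as follows in scikit-learn. This is a sketch under stated assumptions: the Breast Cancer Wisconsin data bundled with scikit-learn stands in for the malignant/benign task, the grid values are illustrative, and the `StandardScaler` step is an addition not listed in the protocol.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Steps 1-2: binary task (malignant vs. benign), stratified 70/30 split.
X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=42
)

# Step 3: hyperparameter grid (illustrative values).
param_grid = {
    "svc__C": [0.1, 1, 10, 100],
    "svc__gamma": [1, 0.1, 0.01, 0.001],
}

# Step 4: SVM (with scaling, an assumed extra step) and 5-fold GridSearchCV.
pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
grid = GridSearchCV(pipe, param_grid, cv=5, scoring="accuracy")

# Step 5: 16 combinations x 5 folds = 80 fits, plus one refit of the best model.
grid.fit(X_train, y_train)

# Step 6: score the refit best model on the held-out test set.
test_accuracy = grid.score(X_test, y_test)
print(grid.best_params_, f"cv={grid.best_score_:.3f}", f"test={test_accuracy:.3f}")
```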

Protocol 2: Comparing Hyperparameter Optimization Algorithms

This protocol outlines a comparative experiment to evaluate the efficiency of different tuning methods [4].

1. Fixed Dataset and Model: Select a standard dataset (e.g., Breast Cancer Wisconsin) and a fixed model algorithm (e.g., SVM).

2. Define Search Space: Establish a common hyperparameter search space for all methods (e.g., C: log-uniform from 1e-5 to 1e5, gamma: log-uniform from 1e-5 to 1e5).

3. Execute Optimization Algorithms: Run different optimization techniques (e.g., Grid Search, Random Search, Bayesian Optimization, Genetic Algorithm) with the same resource constraints (e.g., maximum number of iterations or time).

4. Measure Outcomes: For each method, record the best validation score achieved and the total computational time taken.

5. Analyze and Compare: Compare the methods based on their final performance and computational cost.
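A small harness for this comparison could be sketched as below, restricted to Grid Search and Random Search under an equal budget of 36 candidates. The search ranges are narrower than the example range in step 2 to keep the run short, and the helper `run_search` and all variable names are hypothetical.

```python
import time

import numpy as np
from scipy.stats import loguniform
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)
pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

def run_search(search):
    """Fit a search object and record its best CV score, wall time, and budget."""
    start = time.perf_counter()
    search.fit(X, y)
    return {
        "best_cv_score": search.best_score_,
        "seconds": time.perf_counter() - start,
        "n_candidates": len(search.cv_results_["params"]),
    }

# Grid Search: 6 x 6 = 36 candidates on a log-spaced grid.
grid = GridSearchCV(
    pipe,
    {"svc__C": np.logspace(-2, 3, 6), "svc__gamma": np.logspace(-5, 0, 6)},
    cv=3,
)

# Random Search: 36 candidates drawn log-uniformly from the same ranges.
rand = RandomizedSearchCV(
    pipe,
    {"svc__C": loguniform(1e-2, 1e3), "svc__gamma": loguniform(1e-5, 1e0)},
    n_iter=36,
    cv=3,
    random_state=0,
)

results = {"grid": run_search(grid), "random": run_search(rand)}
for name, r in results.items():
    print(f"{name:>6}: score={r['best_cv_score']:.3f}  time={r['seconds']:.1f}s")
```

Timings from a single run on one machine are noisy, so a fair comparison would repeat each method with several seeds and report the spread.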

Table 1: Computational Complexity of Hyperparameter Tuning Methods

| Method | Computational Approach | Key Advantage | Key Disadvantage | Typical Use Case |

|---|---|---|---|---|

| Grid Search [8] | Exhaustive search over a specified set of values | Guaranteed to find the best combination within the grid | Computationally expensive, suffers from curse of dimensionality | Small, well-understood hyperparameter spaces |

| Random Search [8] [7] | Random sampling from specified distributions | More efficient for spaces with low intrinsic dimensionality; faster than Grid Search | May miss the optimal point; results can vary between runs | Larger search spaces where some parameters are less important |

| Bayesian Optimization [8] [7] | Sequential model-based optimization using a surrogate function | Finds good solutions in fewer evaluations; balances exploration and exploitation | Higher computational overhead per iteration; complex to implement | Expensive-to-evaluate models (e.g., deep neural networks) |

| Genetic Algorithm [4] [7] | Population-based evolutionary search | Good for complex, non-differentiable spaces; can escape local minima | Can require many function evaluations; has several hyperparameters of its own (e.g., population size, mutation rate) | Large, complex search spaces with mixed data types |

Table 2: SVM Hyperparameters and Their Impact on Generalization

| Hyperparameter | Function | Effect of Low Value | Effect of High Value | Tuning Recommendation |

|---|---|---|---|---|

| C (Regularization) [9] [11] | Controls the trade-off between a wide margin and classifying all points correctly. | Simpler model, smoother decision boundary. May underfit. | Complex model, tight decision boundary. May overfit. | Start with a logarithmic scale (e.g., 0.001, 0.01, 0.1, 1, 10, 100). |

| gamma (Kernel) [12] [11] | Defines the reach of a single training example. | Far reach, smoother boundary. The model is more generalized. | Short reach, complex boundary. The model is more localized and prone to overfitting. | Use a logarithmic scale. Low gamma often improves generalization. |

| kernel [11] | Transforms data into a higher dimension to find a separating hyperplane. | Linear kernel is simple but may not capture complex patterns. | Non-linear kernels (e.g., RBF) can model complex patterns but risk overfitting. | Use a linear kernel for linearly separable data; RBF for non-linear problems. |

Workflow Visualization

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Tools for Hyperparameter Optimization Research

| Tool / Solution | Function | Application Context |

|---|---|---|

| GridSearchCV (Scikit-learn) | Exhaustive search over a parameter grid with cross-validation. | Ideal for initial exploration of small, discrete hyperparameter spaces. Provides a robust baseline [8] [12]. |

| RandomizedSearchCV (Scikit-learn) | Randomized search over parameters from distributions. | The preferred baseline for larger search spaces. More efficient than grid search for spaces where some parameters are less important [8] [7]. |

| Bayesian Optimization Libraries (e.g., Optuna, Hyperopt) | Implements sequential model-based optimization. | Essential for optimizing expensive-to-train models (e.g., deep neural networks, large SVMs) where evaluation budget is limited [8]. |

| Support Vector Machine (SVM) | A powerful supervised learning model for classification and regression. | The core algorithm under investigation. Its performance is highly sensitive to the C, gamma, and kernel hyperparameters [12] [9]. |

| Nested Cross-Validation | An outer cross-validation loop for performance estimation, with an inner loop for hyperparameter tuning. | The gold-standard method for obtaining an unbiased estimate of a model's generalization error after hyperparameter tuning [7]. |

Defining Computational Complexity in Hyperparameter Optimization

Frequently Asked Questions

1. What is computational complexity in the context of hyperparameter optimization (HPO), and why does it matter? Computational complexity in HPO refers to the computational resources—primarily time and processing power—required to find the optimal set of hyperparameters for a machine learning model. It matters because many HPO methods involve evaluating a model hundreds or thousands of times with different hyperparameter configurations. For models that are expensive to train, such as Support Vector Machines (SVMs) on large datasets or deep learning models, this process can become prohibitively slow and computationally intensive [13] [4]. Efficient HPO is thus crucial for practical research and development.

2. My HPO process is taking too long. What are the primary factors contributing to this? Several key factors can slow down your HPO:

- Model Evaluation Cost: The single biggest cost is often the time it takes to train and evaluate your model once [13] [14]. Complex models like SVMs with non-linear kernels or deep neural networks contribute directly to this.

- Search Space Dimensionality: The number of hyperparameters you are tuning defines the dimension of the search space. As dimensionality grows, the number of possible combinations explodes, a phenomenon known as the "curse of dimensionality" [13].

- Choice of HPO Algorithm: Naive methods like Grid Search are computationally inefficient because they evaluate every possible combination in the search space. Random Search is better but can still be wasteful. More sophisticated algorithms like Bayesian Optimization are designed to find good parameters with fewer evaluations [15] [4].

- Size of the Dataset: Larger datasets typically mean longer model training times for each hyperparameter configuration evaluated [14].
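The arithmetic behind these factors is simple enough to check directly. The sketch below counts model fits for a full grid search versus a fixed random-search budget; the function names and the example sizes (10 values per hyperparameter, 5 folds, 60 random draws) are illustrative assumptions.

```python
from math import prod

def grid_search_fits(values_per_param, cv_folds):
    """Model fits for a full grid search (excluding the final refit)."""
    return prod(values_per_param) * cv_folds

def random_search_fits(n_iter, cv_folds):
    """Model fits for a random search with a fixed number of draws."""
    return n_iter * cv_folds

# With 10 candidate values per hyperparameter, cost grows tenfold per added dimension.
for n_params in (2, 3, 4):
    fits = grid_search_fits([10] * n_params, cv_folds=5)
    print(f"{n_params} hyperparameters -> {fits} fits")

# A random search budget stays fixed regardless of dimensionality.
print("random search:", random_search_fits(n_iter=60, cv_folds=5), "fits")
```

Multiplying the fit count by the time of a single training run gives a quick estimate of total tuning time before any search is launched.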

3. How can I reduce the computational cost of HPO without sacrificing model performance? You can employ several strategies:

- Use Sequential Model-Based Optimization: Adopt Bayesian Optimization methods, which use information from past evaluations to decide the next most promising hyperparameters to test, significantly reducing the number of trials needed [16] [15] [14].

- Implement Multi-Stage Tuning: Decompose the problem. For example, you could first tune on a small subset of your data to weed out poor performers, and then do a finer-grained search on the full dataset with the most promising candidates [13].

- Leverage Early Stopping: Use algorithms that can automatically stop the evaluation of poorly performing hyperparameter sets early, before a full model training cycle is complete [15]. This is known as pruning.

- Parallelize Your Experiments: Use HPO frameworks that can run multiple hyperparameter trials in parallel across multiple GPUs or compute nodes [15].

4. For tuning an SVM, which optimization algorithms are most computationally efficient?

While the best algorithm can depend on your specific dataset and search space, research provides some guidance. One study found that a Genetic Algorithm (GA) demonstrated lower temporal complexity than swarm intelligence algorithms such as Particle Swarm Optimization (PSO) and Whale Optimization [4]. However, Bayesian Optimization frameworks like Optuna and Hyperopt are generally recommended for their sample efficiency and are widely used for tuning SVM hyperparameters like the regularization parameter C and kernel coefficient gamma [15] [6].

5. Are there specific HPO frameworks that help manage computational cost? Yes, several modern frameworks are designed with computational efficiency in mind:

- Ray Tune: A Python library that supports distributed HPO and can integrate with various search algorithms (e.g., HyperOpt, Bayesian Optimization) to scale experiments without code changes [15].

- Optuna: A framework that defines search spaces dynamically and features efficient sampling and pruning algorithms, which automatically stop unpromising trials [15] [6].

- HyperOpt: A library that uses Tree of Parzen Estimators (TPE), a Bayesian optimization algorithm, to search spaces efficiently [15] [6].

Troubleshooting Guides

Problem: HPO is not converging to a good solution in a reasonable time.

Solution: This is often a sign of an overly large search space or an inefficient search strategy.

- Refine the Search Space: Start with a wider search over a smaller number of critical parameters. For SVM, this typically means `C` and `gamma`. Once you narrow down a good region, you can perform a finer-grained search [6].

- Inspect the Search History: Use the visualization tools in frameworks like Optuna to analyze the completed trials. This can reveal if the search is stuck in a local optimum or if certain hyperparameters have no impact on performance, allowing you to adjust the space.

- Switch the Search Algorithm: If you are using Random Search, switch to a more efficient method like Bayesian Optimization (e.g., using Optuna's `TPESampler` or HyperOpt) [15] [6].

Problem: Each model evaluation (trial) takes an extremely long time.

Solution: Address the cost of the objective function.

- Use a Data Subset: For the initial search phase, train your model on a smaller, representative subset of your full dataset. This can quickly eliminate a large number of bad hyperparameter choices [13] [14].

- Enable Pruning: Implement a pruning algorithm like Hyperband or ASHA (Asynchronous Successive Halving Algorithm) in Ray Tune or Optuna. These algorithms automatically stop underperforming trials early, saving substantial compute time [15].

- Check for Hardware Acceleration: Ensure that your model training is leveraging available hardware. Note that scikit-learn's `SVC` runs on the CPU only, so GPU acceleration requires a GPU-enabled SVM implementation (for example, RAPIDS cuML or ThunderSVM).

Problem: Tuning a system with multiple, interdependent controllers or models is computationally infeasible. Solution: This is a high-dimensionality problem common in MIMO systems.

- Decompose the Problem: Apply a multi-stage tuning framework. Identify control loops or model components that can be decoupled and tune them independently or in a specific sequence. Research has shown this can reduce computational time by over 80% [13].

- Leverage Decomposition Methods: For SVM, inherent algorithmic optimizations exist. However, the principle remains: break down a complex tuning task into smaller, manageable sub-tasks to reduce the dimensional space of the overall optimization problem [13].

Experimental Protocols & Data

Table 1: Comparison of HPO Algorithm Computational Performance

This table summarizes findings from the literature on the computational efficiency of different HPO methods when applied to models like SVM.

| Optimization Algorithm | Reported Computational Complexity / Performance | Key Characteristics |

|---|---|---|

| Grid Search | Not explicitly quantified, but cited as computationally expensive and inefficient [15] [4]. | Exhaustively searches all combinations; complexity grows exponentially with parameters. |

| Random Search | Faster than Grid Search, but can be slow to converge to the optimum [15] [4]. | Randomly samples the search space; less prone to dimensionality curse than Grid Search. |

| Genetic Algorithm (GA) | Found to have lower temporal complexity than PSO, Whale Optimization, and Ant Bee Colony in one study [4]. | A metaheuristic inspired by natural selection. |

| Particle Swarm Optimization (PSO) | Higher temporal complexity than GA in a comparative study [4]. | A population-based metaheuristic inspired by social behavior of birds. |

| Bayesian Optimization (BO) | Demonstrated higher performance and reduced computation time compared to Grid Search [16]. A multi-stage BO framework showed an 86% decrease in computational time and a 36% decrease in sample complexity [13]. | Sequential model-based optimization; sample-efficient. |

Table 2: HPO Framework Capabilities for Managing Computational Cost

A comparison of popular tools to help select the right framework for your experiment.

| Framework | Key Efficiency Features | Supported Algorithms | Best For |

|---|---|---|---|

| Optuna | Define-by-run API, efficient pruning algorithms, parallel distributed optimization [15]. | Grid Search, Random Search, Bayesian (TPE), GA [15]. | Research requiring dynamic search spaces and automated early stopping. |

| Ray Tune | Scalable distributed computing, seamless parallelization, integration with many libraries [15]. | Ax/Botorch, HyperOpt, BayesOpt, ASHA (pruning) [15]. | Large-scale experiments that need to run on clusters or multiple GPUs. |

| HyperOpt | Bayesian optimization via TPE, designed for awkward search spaces [15]. | Random Search, TPE, Adaptive TPE [15]. | Standard Bayesian optimization with conditional parameters. |

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" – the software tools and algorithms – essential for conducting efficient HPO experiments within computational complexity research.

| Tool / Algorithm | Function in the HPO Experiment |

|---|---|

| Bayesian Optimization (BO) | The core search algorithm that builds a probabilistic model of the objective function to guide the search for the optimal hyperparameters [16] [14]. |

| Tree-structured Parzen Estimator (TPE) | A specific type of Bayesian optimization algorithm used by HyperOpt and Optuna that models `p(x \| y)` and `p(y)` to determine promising hyperparameters [15]. |

| Pruning (Early Stopping) Algorithms | "Reagents" that automatically halt the evaluation of underperforming trials before completion, dramatically reducing wasted computation [15]. |

| Multi-Stage Tuning Framework | A methodological approach that decomposes a high-dimensional tuning task into sequential, lower-dimensional subtasks, drastically reducing sample complexity [13]. |

| Ray Tune Scheduler (e.g., ASHA) | A system component that manages parallel trial execution and implements early stopping policies, enabling efficient resource utilization [15]. |

Workflow Visualization

The diagram below illustrates a multi-stage hyperparameter optimization workflow designed to reduce computational complexity.

The following diagram maps the logical relationship between HPO strategies, the problems they solve, and the resulting impact on computational complexity.

Experimental Protocol: Multi-Stage HPO for SVM with Bayesian Optimization

Objective: To efficiently tune an SVM's C and gamma hyperparameters while minimizing computational cost.

Methodology:

- Stage 1 - Broad Exploration:

  - Tool: Optuna with `TPESampler`.

  - Data: Use a 20% stratified random subset of the full training data.

  - Search Space: Define a wide log-uniform range for `C` (e.g., `1e-5` to `1e5`) and `gamma` (e.g., `1e-5` to `1e2`).

  - Trials: Run 50 trials. Enable the `MedianPruner` to stop underperforming trials after a few epochs/iterations (if the SVM implementation is iterative) or based on intermediate validation scores.

  - Output: A set of promising hyperparameter regions.

- Stage 2 - Focused Refinement:

  - Tool: Continue with Optuna, using the same study.

  - Data: Use the full 100% of the training data.

  - Search Space: Refine the search space based on the top 20% of performers from Stage 1. For example, if the best `C` values were between 1 and 100, set a new log-uniform range of `1e0` to `1e2`.

  - Trials: Run an additional 30 trials on the refined, high-fidelity search space.

  - Output: The best overall hyperparameter set.

Validation: Evaluate the final model from Stage 2 on a held-out test set that was not used during the tuning process. This protocol leverages the sample efficiency of Bayesian Optimization and the cost-saving benefits of a multi-stage, pruning-enabled approach [13] [15] [6].

Why Computational Efficiency is Paramount in Biomedical Datasets

Frequently Asked Questions (FAQs)

Q1: Why are my SVM hyperparameter optimization runs taking so long, and how can I speed them up? The computational challenge is often due to the complex and high-dimensional nature of biomedical data. Traditional optimization methods like Grid Search are computationally expensive. Switching to population-based or bio-inspired optimization algorithms can significantly reduce execution time. For instance, one study reported that the Genetic Algorithm achieved lower temporal complexity than swarm intelligence algorithms such as Particle Swarm Optimization or the Ant Bee Colony Algorithm when tuning SVM hyperparameters [4].

Q2: What are the main data-related challenges that impact computational efficiency in biomedical research? Biomedical data is often characterized by several features that directly strain computational resources [17] [18] [19]:

- High Dimensionality: Datasets with a vast number of features (e.g., from genomics or proteomics) increase computational demands and complicate modeling.

- Heterogeneity: Integrating diverse data types—such as genomic sequences, clinical records, and medical images—requires sophisticated and computationally heavy preprocessing and fusion techniques.

- Data Scale: The explosive growth of biomedical Big Data necessitates using Big Data platforms like Apache Spark and cloud computing infrastructure (AWS, Google Cloud) for storage and processing [17].

Q3: How does model complexity contribute to computational costs and irreproducibility? Complex AI models, particularly deep learning architectures with many layers and parameters, have substantial computational demands. This complexity increases the risk of overfitting and raises computational costs, which can deter independent verification and hinder reproducibility. For example, training a model like AlphaFold required 264 hours on specialized hardware (TPUs), making it resource-intensive for others to replicate [20].

Q4: My optimization results are inconsistent across runs. How can I improve reproducibility? Irreproducibility can stem from several sources [20]:

- Inherent Non-Determinism: AI models using Stochastic Gradient Descent (SGD) or random weight initialization can converge to different local minima in each run.

- Data Preprocessing Variability: Techniques like normalization, feature selection, and handling of missing data can introduce randomness if not meticulously standardized.

- Hardware Variations: Parallel processing on GPUs/TPUs can produce non-deterministic results because the order of floating-point operations can differ between runs.

Mitigation strategies include setting random seeds, carefully documenting all preprocessing steps, and using containerization (e.g., Docker) to create consistent software environments.

Troubleshooting Guides

Issue: Prohibitively Long Training Times for SVM on High-Dimensional Omics Data

Background: Machine learning on datasets with thousands of genes or proteins is a computational bottleneck, especially during the hyperparameter optimization phase.

Solution: Implement efficient hyperparameter optimization algorithms and leverage distributed computing.

Step-by-Step Resolution:

- Profile the Problem: Identify the most computationally expensive part of your pipeline using profiling tools. This is often the model selection and hyperparameter tuning step.

- Select an Efficient Optimizer: Replace Grid Search with more efficient algorithms. The following table summarizes the computational characteristics of different optimizers, as identified in research [4]:

Table 1: Comparison of Hyperparameter Optimization Algorithms for SVM

| Optimization Algorithm | Computational Complexity | Key Characteristic | Best Suited For |

|---|---|---|---|

| Grid Search | Very High | Exhaustively searches all combinations | Small, low-dimensional parameter spaces |

| Random Search | High | Randomly samples parameter space | Faster broad search than Grid Search |

| Genetic Algorithm (GA) | Lower (found to have lower temporal complexity) [4] | Bio-inspired, uses selection, crossover, mutation | Complex, high-dimensional search spaces |

| Particle Swarm Optimization (PSO) | Medium | Bio-inspired, particles move through search space | Continuous optimization problems |

| Whale Optimization | Medium | Bio-inspired, mimics bubble-net hunting | |

| Ant Bee Colony Algorithm | Medium | Bio-inspired, mimics foraging behavior | |

- Validate and Compare: Use a hold-out test set or nested cross-validation to ensure that the model optimized with a faster algorithm (like GA) still maintains high predictive accuracy.

- Scale Computations: For extremely large datasets, use Big Data platforms like Apache Spark which can distribute the hyperparameter search across a computing cluster, or leverage cloud computing services [17].


Issue: Failure to Integrate Multimodal Data (e.g., Clinical, Imaging, and Genomics)

Background: Integrating data from different sources (EHRs, medical images, omics) is crucial for precision medicine but poses major challenges in data fusion, interoperability, and computational load [17] [19].

Solution: Adopt standardized data models and multimodal representation learning methods.

Step-by-Step Resolution:

- Data Harmonization: Use common data models and ontologies to standardize data from different sources. Examples include the OMOP Common Data Model or the Human Phenotype Ontology (HPO) to ensure semantic interoperability [17].

- Utilize Specialized Platforms: Employ data integration platforms like the I2B2 (Informatics for Integrating Biology & the Bedside) framework, which uses ETL (Extract, Transform, Load) processes to aggregate clinical and pre-clinical data into a translational data warehouse [17].

- Apply Multimodal AI: Implement deep learning models designed for multimodal data. These models can learn joint representations from different data types (e.g., using one sub-network for images and another for genomic data, fused in a later layer) for tasks like improved patient stratification [19].

- Address Data Privacy: For sensitive data, consider privacy-preserving techniques like federated learning, where models are trained across multiple decentralized data sources without sharing the data itself [17].


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Computational Biomedicine

| Tool / Resource | Category | Function | Reference |

|---|---|---|---|

| Apache Spark | Big Data Platform | Distributed processing of large-scale genomic, clinical, and imaging data. | [17] |

| I2B2 Framework | Data Warehouse & Analytics | Integrates and analyzes heterogeneous biomedical data; provides query and visualization tools. | [17] |

| OMOP CDM | Data Standard | Common data model for standardizing observational health data from different sources. | [17] |

| GA, PSO, WO | Hyperparameter Optimizer | Bio-inspired algorithms for efficiently searching optimal model parameters. | [4] |

| Federated Learning | Privacy-Preserving ML | A technique to train machine learning models across decentralized data without sharing raw data. | [17] |

| Digital Twin Generator | Clinical Trial Tool | AI-driven models that simulate individual patient disease progression to optimize clinical trial design. | [21] |

Experimental Protocol: Hyperparameter Optimization of SVM using a Genetic Algorithm

Objective: To optimize the hyperparameters of a Support Vector Machine (SVM) for a high-dimensional biomedical classification task (e.g., cancer subtype classification from RNA-seq data) while minimizing computational time.

1. Materials and Data Preparation

- Dataset: A matrix of gene expression values (e.g., from TCGA) with rows as samples and columns as features (genes). The target variable is the disease class.

- Preprocessing:

- Split the data into training (70%), validation (15%), and hold-out test (15%) sets.

- Perform standard normalization (e.g., z-score) on the gene expression features after splitting: estimate the mean and standard deviation on the training set only, then apply them to the validation and test sets to prevent data leakage [20].

2. Optimization Setup

- SVM Hyperparameter Search Space:

  - C (regularization parameter): Log-uniform distribution between 1e-3 and 1e3.

  - gamma (kernel coefficient for RBF kernel): Log-uniform distribution between 1e-4 and 1e1.

- Genetic Algorithm (GA) Configuration:

- Population size: 50 individuals.

- Generations: 100.

- Crossover rate: 0.8.

- Mutation rate: 0.1.

- Selection method: Tournament selection.

- Fitness function: Maximize the average accuracy on 5-fold cross-validation performed on the training set.

3. Execution and Validation

- Initialization: Randomly initialize a population of hyperparameter sets (C, gamma).

- Evaluation: For each individual in the population, train an SVM model with its hyperparameters and evaluate its performance using the cross-validation fitness function.

- Evolution: Create a new generation by applying selection, crossover, and mutation operators based on fitness scores.

- Termination: Repeat the Evaluation and Evolution steps until the maximum number of generations is reached or convergence is achieved.

- Final Model Training: Train a final SVM model using the best-found hyperparameters on the entire training set.

- Reporting: Evaluate the final model's performance on the held-out test set to get an unbiased estimate of its generalization ability. Report the test accuracy, precision, recall, and the total computational time.
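The loop described in this protocol can be sketched with the standard library alone. This is an illustrative sketch under stated assumptions, not the implementation used in [4]: individuals are encoded as (log10 C, log10 gamma), the blend crossover, Gaussian mutation, and single-elite carry-over are choices made here, and toy_fitness is a made-up stand-in for the 5-fold cross-validation accuracy that a real run would compute by training an SVM.

```python
import random

BOUNDS = [(-3.0, 3.0), (-4.0, 1.0)]  # log10(C), log10(gamma), as in the protocol

def toy_fitness(ind):
    # Stand-in for 5-fold CV accuracy; peaks at C = 10, gamma = 0.01 (made up).
    log_c, log_gamma = ind
    return 1.0 - 0.02 * (log_c - 1.0) ** 2 - 0.03 * (log_gamma + 2.0) ** 2

def tournament(pop, scores, rng, k=3):
    # Pick k random individuals and return the fittest of them.
    contenders = rng.sample(range(len(pop)), k)
    return pop[max(contenders, key=lambda i: scores[i])]

def crossover(a, b, rng):
    # Blend crossover: each gene is a random convex combination of the parents.
    child = []
    for x, y in zip(a, b):
        w = rng.random()
        child.append(w * x + (1.0 - w) * y)
    return child

def mutate(ind, rng, rate, sigma=0.5):
    # Gaussian perturbation applied per gene with probability `rate`,
    # followed by clipping to the search bounds.
    out = []
    for gene, (lo, hi) in zip(ind, BOUNDS):
        if rng.random() < rate:
            gene += rng.gauss(0.0, sigma)
        out.append(min(hi, max(lo, gene)))
    return out

def run_ga(fitness, pop_size=50, generations=100, cx_rate=0.8, mut_rate=0.1, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(pop_size)]
    for _ in range(generations):
        scores = [fitness(ind) for ind in pop]
        best = pop[max(range(pop_size), key=lambda i: scores[i])]
        new_pop = [list(best)]  # elitism: carry the best individual over unchanged
        while len(new_pop) < pop_size:
            p1 = tournament(pop, scores, rng)
            p2 = tournament(pop, scores, rng)
            child = crossover(p1, p2, rng) if rng.random() < cx_rate else list(p1)
            new_pop.append(mutate(child, rng, mut_rate))
        pop = new_pop
    scores = [fitness(ind) for ind in pop]
    best_idx = max(range(pop_size), key=lambda i: scores[i])
    return pop[best_idx], scores[best_idx]

best, best_score = run_ga(toy_fitness)
best_c, best_gamma = 10 ** best[0], 10 ** best[1]
print(f"C={best_c:.3g}, gamma={best_gamma:.3g}, fitness={best_score:.4f}")
```

Searching in log10 space mirrors the log-uniform ranges of the protocol. Replacing toy_fitness with a function that returns mean cross-validated accuracy turns the sketch into the actual tuning loop, at which point the fitness call dominates the runtime.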


HPO Methodologies: From Grid Search to Bayesian Optimization

FAQs and Troubleshooting Guide

This guide addresses common challenges researchers face when using GridSearchCV for Support Vector Machine (SVM) hyperparameter optimization within computationally intensive fields like drug development.

Why is my GridSearchCV taking an extremely long time to complete?

Exhaustive Grid Search can become computationally expensive as the parameter space grows. The computation time scales with the number of hyperparameter combinations, the number of cross-validation folds, and the dataset size [22] [23].

Solutions and Best Practices:

- Reduce Search Space: Start with a coarse grid across a wide parameter range. Once a promising region is identified, perform a finer search within that area [23]. For example, instead of searching many values at once, first test C = [0.1, 1, 10, 100] and gamma = [0.001, 0.01, 0.1].

- Decrease Cross-Validation Folds: Using 5-fold or 3-fold cross-validation instead of 10-fold can provide a 2-3x speedup with a minimal impact on performance estimation [23].

- Enable Parallel Processing: Set the n_jobs parameter to -1 to utilize all available processor cores [23].

- Use Faster Alternatives: For larger parameter spaces, consider RandomizedSearchCV or the more advanced HalvingGridSearchCV, which uses successive halving to quickly eliminate poor parameter combinations [22] [23].
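To make the scaling concrete, the number of model fits in an exhaustive search is the number of parameter combinations multiplied by the number of folds. The short sketch below counts fits for the coarse grid mentioned above and for a denser grid; the helper count_fits and the denser grid are illustrative assumptions, and the count ignores the one extra refit that GridSearchCV performs on the full training set by default.

```python
from itertools import product

def count_fits(param_grid, cv_folds):
    """Number of model fits for an exhaustive grid: combinations x folds."""
    n_combinations = 1
    for values in param_grid.values():
        n_combinations *= len(values)
    return n_combinations * cv_folds

coarse_grid = {"C": [0.1, 1, 10, 100], "gamma": [0.001, 0.01, 0.1]}
dense_grid = {
    "C": [10 ** e for e in range(-3, 4)],      # 7 values
    "gamma": [10 ** e for e in range(-4, 2)],  # 6 values
}

coarse_fits = count_fits(coarse_grid, cv_folds=5)   # 12 combinations x 5 folds
dense_fits = count_fits(dense_grid, cv_folds=10)    # 42 combinations x 10 folds
print(coarse_fits, dense_fits)

# The combinations an exhaustive search would enumerate for the coarse grid
combos = list(product(coarse_grid["C"], coarse_grid["gamma"]))
print(len(combos))
```

The coarse grid with 5 folds needs 60 fits, while the denser grid with 10 folds needs 420, a sevenfold increase before dataset size is even considered.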

My SVM model with GridSearchCV is not converging or produces poor results. What is wrong?

The performance and convergence of SVM models are highly sensitive to data scaling and the choice of hyperparameters [24].

Solutions and Best Practices:

- Normalize Your Data: SVM algorithms are not scale-invariant. Scaling features to a similar range (e.g., using StandardScaler or MinMaxScaler) is often essential for the model to converge properly and perform well [24]. Note that scikit-learn's Normalizer rescales each sample (row) to unit norm rather than each feature, so it is not a substitute for feature scaling.

- Review Parameter Definitions: Ensure the parameter grid is correctly specified. A misplaced comma or incorrect parameter name can lead to unexpected behavior [24].

- Check for Warnings: Look for scikit-learn warnings, such as "F-score is ill-defined," which indicates that the model predicted no samples for at least one class under a particular parameter combination [24].

How do I specify parameters correctly for GridSearchCV?

A common error is incorrectly passing the model-building function.

Solution:

When using GridSearchCV with wrappers like KerasClassifier, pass the function name, not the result of calling it. Use build_fn=create_model instead of build_fn=create_model() [25].
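The difference between passing a function and passing its result can be shown without Keras. In the sketch below, create_model and fit_with_factory are hypothetical stand-ins: the wrapper plays the role of a search utility that must build a fresh model for every candidate, which is only possible if it receives the function object itself. The error raised here is specific to this sketch and is not the message a real wrapper would produce.

```python
def create_model():
    """Hypothetical model factory; a real one would build and compile a network."""
    return {"layers": 2, "units": 64}

def fit_with_factory(build_fn):
    # A search wrapper calls build_fn itself, once per candidate,
    # so it must receive the function object rather than a finished model.
    if not callable(build_fn):
        raise TypeError("build_fn must be a callable, got " + type(build_fn).__name__)
    return build_fn()

# Correct: pass the function name without parentheses
model = fit_with_factory(build_fn=create_model)

# Incorrect: create_model() is evaluated first, so a dict is passed instead
try:
    fit_with_factory(build_fn=create_model())
    error_message = None
except TypeError as exc:
    error_message = str(exc)

print(model)
print(error_message)
```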

The diagram below illustrates the exhaustive search mechanism of GridSearchCV and its associated challenges.

The table below summarizes strategies to manage the computational complexity of GridSearchCV.

| Strategy | Description | Expected Impact on Computation Time |

|---|---|---|

| Reduce CV Folds [23] | Decrease the number of cross-validation folds (e.g., from 10 to 5 or 3). | High (directly proportional reduction) |

| Coarse-to-Fine Search [23] | Use a broad, coarse grid first, then refine search in the best-performing region. | Very High (reduces total combinations) |

| Parallel Computation [23] | Use n_jobs=-1 to run parameter fits in parallel on all available cores. | High (scales with number of cores) |

| Alternative Algorithms [22] [23] | Use RandomizedSearchCV or HalvingGridSearchCV for larger spaces. | Medium to High (avoids exhaustive search) |

Experimental Protocol: SVM Hyperparameter Tuning with GridSearchCV

This protocol details the methodology for using GridSearchCV to optimize an SVM model, as demonstrated in heart disease prediction research [12] [26].

1. Problem Definition and Data Preparation: The goal is to classify medical data (e.g., patient symptoms, lab results) to predict the presence of a disease like heart disease or COVID-19 [27] [26]. After data collection, the dataset is split into training and testing sets, typically with a 70/30 or 80/20 ratio [12].

2. Preprocessing and Feature Scaling:

Critical Step: Features must be scaled. Models like SVM require data to be on a similar scale for optimal performance and convergence. Use StandardScaler or MinMaxScaler from scikit-learn, fitted on the training data only [24].

3. Define the Model and Parameter Grid: Instantiate an SVM model and define a parameter grid to search. The example below explores two different kernels and their key parameters [22] [12].

4. Configure and Execute GridSearchCV:

Set up the GridSearchCV object with the model, parameter grid, scoring metric, and cross-validation strategy. Using n_jobs=-1 enables parallel processing [23] [28].

5. Evaluate the Optimized Model: After fitting, the best model can be accessed and used for final evaluation on the held-out test set [12].
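Steps 2 to 5 can be sketched with scikit-learn as follows. This is a minimal example under stated assumptions, not the code from the cited studies: the data are synthetic (make_classification stands in for a clinical table), the step names scaler and svc are arbitrary, and the grid values are illustrative. Placing the scaler inside a Pipeline means it is re-fitted on the training portion of every fold, which keeps validation data out of the scaling statistics.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for a tabular clinical dataset (assumption, not real data)
X, y = make_classification(n_samples=300, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Scaling lives inside the pipeline so it is re-fitted on each training fold
pipe = Pipeline([("scaler", StandardScaler()), ("svc", SVC())])

# Two kernels with their own parameters; pipeline steps use step__param names
param_grid = [
    {"svc__kernel": ["linear"], "svc__C": [0.1, 1, 10, 100]},
    {"svc__kernel": ["rbf"], "svc__C": [0.1, 1, 10, 100], "svc__gamma": [0.001, 0.01, 0.1]},
]

search = GridSearchCV(pipe, param_grid, scoring="accuracy", cv=5, n_jobs=-1)
search.fit(X_train, y_train)

print("Best parameters:", search.best_params_)
print("Best CV accuracy:", round(search.best_score_, 3))

# best_estimator_ is refitted on the whole training set (refit=True by default)
test_accuracy = search.best_estimator_.score(X_test, y_test)
print("Held-out test accuracy:", round(test_accuracy, 3))
```

Using a list of two dictionaries avoids fitting gamma values for the linear kernel, where gamma has no effect, so the search evaluates 16 candidates instead of 24.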

The Scientist's Toolkit: Essential Research Reagents

The table below lists key computational "reagents" for hyperparameter optimization experiments.

| Item / Software | Function in Experiment |

|---|---|

| Scikit-learn (sklearn) [22] | Provides the core machine learning library, including SVM implementations, GridSearchCV, and data preprocessing tools. |

| Parameter Grid (param_grid) [22] | The defined search space. It is a dictionary where keys are hyperparameter names and values are lists of settings to try. |

| Cross-Validation (CV) [22] | A resampling technique used to robustly estimate the performance of a model on unseen data, helping to detect overfitting. |

| Scoring Metric (e.g., 'accuracy', 'f1') [28] | The metric used to evaluate the performance of each parameter combination and select the best model. |

| NumPy & Pandas [27] | Fundamental packages for scientific computing and data manipulation in Python, used for handling datasets and numerical operations. |

Troubleshooting Guide

Q1: Why does my RandomizedSearchCV return NaN scores for some parameter combinations?

This occurs when certain hyperparameter values cause the model to fail during training or evaluation. A common reason is specifying hyperparameter values that are invalid for the underlying estimator [29]. For example, in an XGBoost model, values for colsample_bytree and subsample that exceed 1.0 are invalid and will cause the model to error, resulting in a NaN score for that fold [29]. The search will continue, but these failed fits waste computational resources.

- Solution: Carefully review the documentation for your estimator to ensure all hyperparameter values in your defined search space are valid. For instance, keep colsample_bytree and subsample greater than 0 and no larger than 1 [29].

Q2: I get an "Invalid parameter" error. How do I fix it?

This error typically arises from a parameter name mismatch, especially when using RandomizedSearchCV with a Pipeline [30]. The hyperparameters must be specified in the format stepname__parameterName (with a double underscore).

- Solution: Use the estimator.get_params().keys() method to get the correct list of parameter names for your pipeline or estimator [30]. For a pipeline step, ensure you prefix the parameter with the name of the step. For example, for a logistic regression step named 'logreg', use 'logreg__C' instead of just 'C'.
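The naming rule can be checked directly on a small pipeline. The sketch below assumes a two-step pipeline with steps named scaler and logreg (names chosen here for illustration) and shows that the prefixed name is a valid parameter while the bare name is rejected.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

pipe = Pipeline([("scaler", StandardScaler()), ("logreg", LogisticRegression())])

valid_names = set(pipe.get_params().keys())
print("logreg__C" in valid_names)  # name prefixed with the step name
print("C" in valid_names)          # bare name is not a pipeline parameter

pipe.set_params(logreg__C=0.5)     # accepted

try:
    pipe.set_params(C=0.5)         # rejected: no such parameter on the pipeline
    rejected = False
except ValueError:
    rejected = True
print(rejected)
```

The same prefixed names are what a search object expects as keys of its parameter grid or parameter distributions when the estimator is a pipeline.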

Q3: Why does RandomizedSearchCV sometimes not find the absolute best hyperparameters?

This is an inherent trade-off of the method. RandomizedSearchCV evaluates a fixed number (n_iter) of random parameter combinations [31]. It is possible that the single best combination in the entire space is not among those randomly selected. However, empirical evidence shows it often finds a combination that performs nearly as well as the global optimum, but with significantly less computation [31].

- Solution: Increase the n_iter parameter to sample more combinations, which increases the likelihood of finding a better model at the cost of longer runtime [31]. The optimal value for n_iter is a balance between computational cost and model quality.
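A simple probability argument helps in choosing n_iter. Under the simplifying assumption (made here, not taken from [31]) that candidates are drawn independently and uniformly, and that "good enough" means landing in the best 5% of the search space, the chance that at least one of n_iter draws lands in that region is 1 - 0.95^n_iter. The helper name below is hypothetical.

```python
def prob_hit_top_fraction(n_iter, top_fraction):
    """Chance that at least one of n_iter independent uniform draws
    lands in the best `top_fraction` of the search space."""
    return 1.0 - (1.0 - top_fraction) ** n_iter

for n in (10, 30, 60, 100):
    print(n, round(prob_hit_top_fraction(n, 0.05), 3))
```

Under this model roughly 60 draws already give about a 95% chance of sampling from the top 5% region, which is why a moderate n_iter is often sufficient even when the exact optimum is missed.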

Essential Experimental Protocol for SVM Research

This protocol outlines the use of RandomizedSearchCV for tuning a Support Vector Machine (SVM) within a computational complexity research context.

Define Model and Parameter Distributions

Instantiate the SVM model and define the hyperparameter search space as probability distributions, not just lists. For an SVM with an RBF kernel, key parameters to tune are C (regularization) and gamma (kernel coefficient).

Configure and Execute RandomizedSearchCV

Configure the search with cross-validation, a scoring metric, and the number of iterations.

Evaluate and Report Results

After fitting, extract and analyze the best model and all results.

Final Model Assessment

Evaluate the best model's performance on a held-out test set to estimate its generalization error.
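The four steps of this protocol can be sketched as follows. The example is a minimal illustration under stated assumptions: the data are synthetic, the step names scaler and svc are arbitrary, n_iter=20 is chosen only to keep the run short, and the log-uniform ranges are borrowed from the search spaces used elsewhere in this guide rather than prescribed by this protocol.

```python
from scipy.stats import loguniform
from sklearn.datasets import make_classification
from sklearn.model_selection import RandomizedSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data (assumption, not a real biomedical dataset)
X, y = make_classification(n_samples=300, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# 1. Model and parameter distributions (continuous, not lists)
pipe = Pipeline([("scaler", StandardScaler()), ("svc", SVC(kernel="rbf"))])
param_distributions = {
    "svc__C": loguniform(1e-3, 1e3),
    "svc__gamma": loguniform(1e-4, 1e1),
}

# 2. Configure and run the search
search = RandomizedSearchCV(
    pipe,
    param_distributions,
    n_iter=20,
    scoring="accuracy",
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    random_state=0,
    n_jobs=-1,
)
search.fit(X_train, y_train)

# 3. Inspect the best candidate; cv_results_ holds every sampled combination
print(search.best_params_, round(search.best_score_, 3))

# 4. Final assessment on the held-out test set
test_accuracy = search.score(X_test, y_test)
print(round(test_accuracy, 3))
```

Fixing random_state on both the splitter and the search makes the sampled candidates and the fold assignment repeatable across runs.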

Computational Efficiency Data

The following table quantifies the efficiency of RandomizedSearchCV compared to an exhaustive GridSearchCV [31].

Table: RandomizedSearchCV vs. GridSearchCV Efficiency Comparison

| Metric | GridSearchCV | RandomizedSearchCV |

|---|---|---|

| Search Strategy | Exhaustive: tests all combinations | Stochastic: tests a fixed number (n_iter) of random combinations |

| Computational Complexity | Multiplicative: n1 * n2 * ... * n_M models [31] | Fixed budget: n_iter models, regardless of the number of hyperparameters [31] |

| Best Model Performance | Finds the best combination within the grid | Finds a combination that is often very close to the best, with high probability [31] |

| Optimal for Large Spaces | Becomes computationally intractable [31] | Highly efficient, better trade-off between resources and performance [32] |

Research Reagent Solutions

Table: Essential Components for a RandomizedSearchCV Experiment

| Component | Function / Description |

|---|---|

| RandomizedSearchCV (scikit-learn) | The core class that implements the random search with cross-validation [33]. |

| param_distributions | A dictionary defining the hyperparameter search space, which can include probability distributions (e.g., scipy.stats.loguniform) for continuous parameters [33]. |

| n_iter | A critical hyper-hyperparameter controlling the number of random parameter sets sampled. Governs the trade-off between computational cost and search quality [31]. |

| Cross-Validation Object (e.g., StratifiedKFold) | Used to define the resampling procedure for evaluating model performance, ensuring robust estimates [33]. |

| Scoring Metric (e.g., 'accuracy', 'neg_mean_absolute_error') | The performance metric used to evaluate and compare the candidate models [33]. |

Workflow and Logic Diagrams

[Figures not reproduced: RandomizedSearchCV Experimental Workflow; Parameter Search Strategy Trade-Offs]

Frequently Asked Questions (FAQs)

FAQ 1: When should I use Bayesian Optimization over other hyperparameter tuning methods like Grid Search? Bayesian Optimization (BO) is best-suited for situations where you need to optimize a black-box function that is expensive to evaluate and you have a limited evaluation budget. This is common when tuning hyperparameters for machine learning models like Support Vector Machines (SVMs), where each training cycle can take minutes or hours. In contrast to Grid Search, which evaluates every possible combination in a predefined set, BO builds a probabilistic model to guide the search towards promising hyperparameters, dramatically reducing the number of function evaluations required [34] [35] [36]. It is particularly effective for optimization over continuous domains of less than 20 dimensions [37].

FAQ 2: What is the role of the surrogate model and the acquisition function in BO? The BO process relies on two key components:

- Surrogate Model: A probabilistic model, often a Gaussian Process (GP), used to approximate the expensive, black-box objective function. The GP provides a posterior distribution that gives a prediction of the function's value (mean) and a measure of uncertainty (variance) at any unobserved point [34] [38] [36].

- Acquisition Function: A function that uses the surrogate's posterior to decide the next point to evaluate by balancing exploration (sampling in regions of high uncertainty) and exploitation (sampling where the predicted mean is high) [35] [36]. Common acquisition functions include Expected Improvement (EI), Probability of Improvement (PI), and Upper Confidence Bound (UCB) [34] [35].
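As an illustration of how an acquisition function trades off mean and uncertainty, the sketch below implements the closed-form Expected Improvement for a maximization problem using only the standard library. The function signature and the example numbers are assumptions made for this sketch; mu and sigma would normally come from the surrogate's posterior at a candidate point.

```python
import math

def expected_improvement(mu, sigma, best_so_far, xi=0.01):
    """Expected Improvement for a maximization problem.

    mu, sigma: surrogate posterior mean and standard deviation at a candidate.
    best_so_far: best objective value observed so far.
    xi: exploration margin; larger values favour uncertain regions.
    """
    improvement = mu - best_so_far - xi
    if sigma <= 0.0:
        # No uncertainty left: the improvement is deterministic
        return max(improvement, 0.0)
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + sigma * pdf

# Same predicted mean, different uncertainty
ei_certain = expected_improvement(mu=0.90, sigma=0.01, best_so_far=0.92)
ei_uncertain = expected_improvement(mu=0.90, sigma=0.05, best_so_far=0.92)
print(ei_certain, ei_uncertain)
```

Both candidates are predicted to score below the current best, yet the more uncertain one receives a much larger EI, which is the exploration behaviour described above.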

FAQ 3: My BO algorithm seems to be converging slowly. What could be the issue? Slow convergence can often be attributed to several factors:

- Poorly chosen acquisition function: The parameter controlling the exploration-exploitation trade-off (e.g., ξ in EI, κ in UCB) might be set suboptimally. A value that is too high leads to excessive exploration, while a value that is too low can cause the algorithm to get stuck in a local optimum [35].

- Inadequate initial points: The number of initial, random points (num_initial_points) may be too small to build a good initial surrogate model. A common default is 3 times the number of dimensions in your hyperparameter space [34].

- High-dimensional search space: BO's performance can degrade in very high-dimensional spaces (e.g., >20). In such cases, consider frameworks that decompose the problem into smaller-dimensional subtasks [13].

FAQ 4: Can Bayesian Optimization handle discrete or mixed hyperparameter types? Yes, advanced BO methods can optimize over discrete and mixed spaces. One approach is Probabilistic Reparameterization (PR), which maximizes the expectation of the acquisition function over a probability distribution defined by continuous parameters. This allows the use of standard gradient-based optimizers and has been shown to achieve state-of-the-art performance on problems with discrete parameters [39].

Troubleshooting Common Experimental Issues

Problem: Inconsistent or poor results after hyperparameter tuning with BO.

- Potential Cause 1: Noisy objective function evaluations.

  - Solution: Ensure that your model training process is deterministic by fixing random seeds. If noise is inherent to the process (e.g., due to small dataset size), use a Gaussian Process surrogate model that explicitly accounts for noise (e.g., by setting the alpha parameter in scikit-learn's GaussianProcessRegressor) [36].

- Potential Cause 2: The search space is incorrectly defined.

Problem: The optimization process is taking too long to complete.

- Potential Cause 1: The objective function is extremely expensive.

  - Solution: Consider using a multi-fidelity optimization approach, if applicable, where the objective is first approximated using cheaper, lower-fidelity evaluations (e.g., training on a subset of data or for fewer epochs) to guide the search before moving to high-fidelity, expensive evaluations [37].

- Potential Cause 2: The internal optimization of the acquisition function is inefficient.

  - Solution: The acquisition function is optimized in every iteration to suggest the next point. Use an efficient optimizer like L-BFGS-B and restart the optimization from several random initial points (n_restarts) to avoid poor local maxima [36].

Experimental Protocol: Hyperparameter Tuning for an SVM Model

This protocol details the application of BO for tuning a Support Vector Machine (SVM) classifier, as demonstrated in a study for Parkinson's Disease classification [41].

Objective

To find the hyperparameters of an SVM model that maximize the classification accuracy (or an alternative metric like F1-score) on a validation set.

Key Components and Reagents

Table: Essential Components for a Bayesian Optimization Experiment

| Component/Reagent | Function in the Experiment |

|---|---|

| Objective Function | The function to be optimized. In this case, it is the process of training an SVM with a given set of hyperparameters and returning a performance metric (e.g., validation accuracy) [41]. |

| Search Space | The defined range of values for each SVM hyperparameter to be tuned (e.g., C, gamma, kernel) [40]. |

| Gaussian Process (GP) | The surrogate model that approximates the objective function. It requires a mean function and a kernel (e.g., Matérn kernel) to model covariance [36]. |

| Expected Improvement (EI) | The acquisition function used to select the next hyperparameter set to evaluate, balancing exploration and exploitation [34] [36]. |

| Optimization Library | Software such as bayes_opt, hyperopt, or KerasTuner that implements the BO loop [40]. |

Step-by-Step Methodology

Define the Objective Function:

- Create a function svm_objective(C, gamma) that:

  a. Takes hyperparameters (e.g., regularization C, kernel coefficient gamma) as input.

  b. Instantiates and trains an SVM model using these hyperparameters on the training data.

  c. Evaluates the model on the validation set and returns the performance score (e.g., accuracy) [41].

Specify the Search Space:

- Define the bounds for each continuous hyperparameter. For example:

  - C: Log-uniform distribution between 1e-3 and 1e3

  - gamma: Log-uniform distribution between 1e-4 and 1e1

- For discrete hyperparameters like kernel, define the list of choices (e.g., ['rbf', 'poly']) [40].

Initialize and Run the Bayesian Optimization:

- Select a BO library and configure it with the objective function and search space.

- Set the number of initial random points (init_points) and the total number of iterations (n_iter). A typical starting point is 5-10 initial points [34].

- Execute the optimization loop. The library will automatically manage the surrogate model (GP), acquisition function (EI), and candidate selection.

Output and Validation:

- After the optimization finishes, retrieve the set of hyperparameters that yielded the best objective function value.

- Perform a final evaluation of the model trained with these optimal hyperparameters on a held-out test set to estimate its generalization performance [41].
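For readers who want to see what a BO library does internally, the loop can be sketched by hand with scikit-learn's Gaussian Process. This is a simplified illustration under several assumptions, not the setup of the referenced study: the data are synthetic, the objective takes log10-scaled inputs so that the search is uniform in log space, and the acquisition function is maximized by scoring 500 random candidates rather than with a gradient-based optimizer such as L-BFGS-B. The final test-set evaluation is left out for brevity.

```python
import numpy as np
from scipy.stats import norm
from sklearn.datasets import make_classification
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)
X, y = make_classification(n_samples=300, n_features=20, n_informative=8, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

LOW = np.array([-3.0, -4.0])   # log10 lower bounds for C and gamma
HIGH = np.array([3.0, 1.0])    # log10 upper bounds for C and gamma

def svm_objective(log_c, log_gamma):
    """Train an RBF SVM and return its validation accuracy."""
    model = make_pipeline(StandardScaler(), SVC(C=10.0 ** log_c, gamma=10.0 ** log_gamma))
    model.fit(X_train, y_train)
    return model.score(X_val, y_val)

def expected_improvement(mu, sigma, best, xi=0.01):
    """Vectorized EI for maximization."""
    sigma = np.maximum(sigma, 1e-9)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm.cdf(z) + sigma * norm.pdf(z)

# Initial random design
init_points, n_iter = 5, 15
points = rng.uniform(LOW, HIGH, size=(init_points, 2))
scores = np.array([svm_objective(*p) for p in points])

for _ in range(n_iter):
    # Surrogate: GP with a Matern kernel; alpha adds a small noise term
    gp = GaussianProcessRegressor(
        kernel=Matern(nu=2.5), alpha=1e-4, normalize_y=True, random_state=0
    )
    gp.fit(points, scores)
    # Acquisition: score random candidates with EI and evaluate the best one
    candidates = rng.uniform(LOW, HIGH, size=(500, 2))
    mu, sigma = gp.predict(candidates, return_std=True)
    next_point = candidates[np.argmax(expected_improvement(mu, sigma, scores.max()))]
    points = np.vstack([points, next_point])
    scores = np.append(scores, svm_objective(*next_point))

best_idx = int(np.argmax(scores))
best_c, best_gamma = 10.0 ** points[best_idx]
print(f"best C={best_c:.3g}, gamma={best_gamma:.3g}, validation accuracy={scores[best_idx]:.3f}")
```

Only 20 SVM fits are performed in total, which is the point of the method: the surrogate is cheap to query, so the expensive objective is called only where EI suggests it is worthwhile.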

Expected Outcomes and Metrics

In the referenced study, BO was used to tune an SVM model on a dataset with 195 instances and 23 features. The performance was measured using accuracy, F1-score, recall, and precision. The results demonstrated that the BO-tuned SVM achieved a top accuracy of 92.3%, outperforming other machine learning models [41].

Table: Sample Results from an SVM-BO Experiment [41]

| Model | Hyperparameter Tuning | Accuracy | F1-Score | Recall | Precision |

|---|---|---|---|---|---|

| SVM | Without BO | Not Reported | Not Reported | Not Reported | Not Reported |

| SVM | With BO | 92.3% | Not Reported | Not Reported | Not Reported |

| Random Forest | With BO | <92.3% | Not Reported | Not Reported | Not Reported |

| Logistic Regression | With BO | <92.3% | Not Reported | Not Reported | Not Reported |

Workflow and Conceptual Diagrams

[Figures not reproduced: Bayesian Optimization Workflow; Acquisition Function Logic]

Evolutionary and Swarm Intelligence Algorithms (GA, PSO)

This technical support center provides troubleshooting guides and FAQs for researchers applying Evolutionary and Swarm Intelligence Algorithms, particularly within the context of hyperparameter optimization for Support Vector Machines (SVM).

Frequently Asked Questions (FAQs)

Algorithm Selection & Fundamentals

Q1: What are the key differences between Genetic Algorithms (GA) and Particle Swarm Optimization (PSO) for hyperparameter optimization?

A1: The choice between GA and PSO depends on the problem's nature and the desired search behavior. The table below summarizes their core differences.

Table: Comparison between GA and PSO

| Feature | Genetic Algorithm (GA) | Particle Swarm Optimization (PSO) |

|---|---|---|

| Inspiration | Darwinian principles of natural selection and evolution [42] | Social behavior of bird flocks or fish schools [43] |

| Core Mechanism | Operations on a population of chromosomes (solutions) via selection, crossover, and mutation [44] | Velocity and position updates of particles guided by personal and swarm bests [43] [45] |

| Solution Representation | Chromosomes (e.g., strings of genes representing parameters) [44] | Particles with position and velocity in the search-space [43] |

| Primary Strengths | Robust global search; good for discrete and mixed parameter spaces [42] [44] | Simpler implementation, fewer parameters; efficient convergence on continuous problems [45] |

| Common Challenges | Can be computationally expensive; risk of premature convergence with poor tuning [46] | Can get stuck in local optima; sensitive to parameter settings like inertia weight [45] |

Q2: When should I prefer PSO over GA for optimizing SVM hyperparameters?

A2: PSO is often preferred when the hyperparameter space is primarily continuous (e.g., the SVM regularization parameter C and kernel coefficient gamma). It is generally easier to implement and has fewer parameters to tune [45]. GA may be more suitable for problems with discrete or categorical hyperparameters or when the fitness landscape is highly complex and requires the disruptive exploration provided by crossover and mutation [44].

Parameter Tuning & Configuration

Q3: What are the best practices for tuning a Genetic Algorithm's parameters?

A3: Tuning is critical to balance exploration and exploitation [46]. The following guidelines offer a starting point:

- Population Size: Start with a size between 20 and 100 for small-to-medium problems. Complex problems may require 100 to 1000 individuals [46].

- Mutation Rate: Typical values range from 0.001 to 0.1. A good heuristic is 1 / (chromosome length) [46].

- Crossover Rate: This is typically set between 0.6 and 0.9 [46].

- Selection & Elitism: Use strategies like tournament selection and preserve the top 1-5% of elite individuals to ensure good solutions are not lost [46].

Q4: How do I set the inertia weight and acceleration coefficients for PSO?

A4: These parameters control the trade-off between exploration and exploitation [45].

- Inertia Weight (w): A higher weight (e.g., close to 1) promotes global exploration, while a lower weight (e.g., 0.4-0.6) favors local exploitation. It must be less than 1 to prevent divergence [43] [45].

- Cognitive (c1) & Social (c2) Coefficients: These determine a particle's attraction to its personal best and the swarm's global best position. Typical values for both are in the range [1, 3] [43]. Higher c1 encourages individual learning, while higher c2 promotes convergence toward the swarm's best find [45].

Table: PSO Parameter Guidelines

| Parameter | Function | Typical Values / Ranges | Effect of Higher Value |
|---|---|---|---|
| Inertia Weight (w) | Balances global and local search [45] | 0.4 - 0.9 [43] | More global exploration [45] |
| Cognitive Coefficient (c1) | Attraction to particle's own best position [45] | [1, 3] (often ~2) [43] | More individual learning [45] |
| Social Coefficient (c2) | Attraction to swarm's best position [45] | [1, 3] (often ~2) [43] | More social collaboration [45] |
| Swarm Size | Number of candidate solutions [43] | 20 - 40 [45] | Broader search space exploration [45] |

Troubleshooting Common Experimental Issues

Q5: My optimization is converging to a suboptimal solution too quickly. What can I do?

A5: This indicates premature convergence.

- In GA: Increase the population size to enhance diversity [46]. Increase the mutation rate to reintroduce lost genetic material [46]. Use fitness scaling (e.g., rank-based) to control selection pressure [46].

- In PSO: Increase the inertia weight to encourage more exploration [45]. Consider using a local best (lBest) topology like a ring topology, where particles only share information with immediate neighbors, slowing the spread of information and preventing premature swarm convergence [43] [45]. You can also try adaptive parameter strategies that increase mutation or inertia when stagnation is detected [46] [45].
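The ring (lBest) topology mentioned above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function name ring_local_best, the radius argument, and the sample positions and scores are all assumptions made for the example. Each particle looks only at its immediate ring neighbors when choosing which best position to follow.

```python
from typing import List, Sequence


def ring_local_best(
    pbest_positions: Sequence[Sequence[float]],
    pbest_scores: Sequence[float],
    radius: int = 1,
) -> List[Sequence[float]]:
    """Return, for each particle, the best personal-best position among its ring neighbors.

    Higher score is better. Particle i sees indices i-radius..i+radius,
    wrapping around the ends of the swarm.
    """
    n = len(pbest_positions)
    local_best = []
    for i in range(n):
        neighbors = [(i + offset) % n for offset in range(-radius, radius + 1)]
        winner = max(neighbors, key=lambda j: pbest_scores[j])
        local_best.append(pbest_positions[winner])
    return local_best


# Hypothetical swarm of four particles with (C, gamma) positions and validation scores.
positions = [(1.0, 0.1), (10.0, 0.01), (100.0, 0.001), (0.1, 1.0)]
scores = [0.80, 0.91, 0.85, 0.78]
lbest = ring_local_best(positions, scores)
```

In the velocity update, lbest[i] would take the place of the single global best, so a good solution found by one particle reaches distant particles only after several iterations.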

Q6: The optimization process is taking too long. How can I improve its speed?

A6: To improve performance:

- Reduce Population/Swarm Size: A smaller population or swarm will require fewer fitness evaluations per generation/iteration [46] [45].

- Implement Early Termination: Terminate the run if the fitness does not improve over a set number of generations (e.g., 50-100) [46].

- Optimize Fitness Evaluation: The fitness function (often SVM model training) is the computational bottleneck. Ensure this code is highly optimized [47].

- Adjust PSO Parameters: Lowering the inertia weight can lead to faster convergence, though it may increase the risk of finding a local optimum [45].
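Early termination on stagnation can be implemented with a small helper like the one below. It is a sketch under assumptions: the class name StagnationStopper, the min_delta tolerance, and the sample fitness history are illustrative and not taken from any particular framework. Fitness is assumed to be maximized.

```python
class StagnationStopper:
    """Signal termination when the best fitness has not improved for `patience` generations."""

    def __init__(self, patience: int = 50, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.stale = 0  # generations since the last improvement

    def should_stop(self, generation_best: float) -> bool:
        if generation_best > self.best + self.min_delta:
            self.best = generation_best
            self.stale = 0
        else:
            self.stale += 1
        return self.stale >= self.patience


# Hypothetical per-generation best fitness values; patience is shortened for the demo.
stopper = StagnationStopper(patience=3)
history = [0.70, 0.75, 0.75, 0.74, 0.75, 0.80]
stopped_at = None
for generation, best in enumerate(history):
    if stopper.should_stop(best):
        stopped_at = generation
        break
```

With a patience of 3 the loop stops at generation 4 and never sees the later improvement to 0.80, which illustrates why a larger patience (such as the 50-100 generations suggested above) is usually safer.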

Q7: How do I handle a failed evaluation (e.g., invalid parameter set) during a run?

A7: Most robust optimization frameworks have error-handling mechanisms. A standard approach is to catch the error, log an appropriate message, and assign a penalizing fitness value (e.g., a very high cost) to the invalid candidate solution [42]. The algorithm will then naturally favor valid parameters in subsequent generations/iterations.
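A minimal sketch of this pattern, for an optimizer that minimizes a cost. The names safe_cost, toy_cost, and PENALTY are hypothetical, and the toy cost function only stands in for "1 - cross-validated accuracy"; a real fitness function would train an SVM.

```python
import logging
import math

logger = logging.getLogger("hpo")
PENALTY = 1e9  # very high cost assigned to invalid candidates (assumed value)


def safe_cost(cost_fn, params):
    """Evaluate cost_fn(params); return PENALTY if it raises or yields a non-finite value."""
    try:
        cost = cost_fn(params)
    except (ValueError, ArithmeticError) as exc:
        logger.warning("Evaluation failed for %s: %s", params, exc)
        return PENALTY
    if not math.isfinite(cost):
        logger.warning("Non-finite cost for %s", params)
        return PENALTY
    return cost


def toy_cost(params):
    # Hypothetical stand-in for "1 - cross-validated accuracy".
    if params["C"] <= 0:
        raise ValueError("C must be strictly positive")
    return 1.0 / (1.0 + params["C"])


valid = safe_cost(toy_cost, {"C": 1.0})
invalid = safe_cost(toy_cost, {"C": -1.0})
```

Using a large finite penalty rather than infinity keeps any arithmetic the optimizer performs on fitness values (for example, rank scaling or averaging) well defined.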

Experimental Protocols & Methodologies

This section provides detailed workflows for implementing GA and PSO, particularly for SVM hyperparameter optimization.

Workflow for Hyperparameter Optimization using a Genetic Algorithm

The following diagram illustrates the complete experimental protocol for a GA.

Figure: GA Hyperparameter Optimization Workflow

Detailed Methodology:

- Chromosome Representation: Encode the SVM hyperparameters (e.g., C, gamma, degree) into a chromosome. This could be a binary string, a vector of real numbers, or a mix, depending on the parameter type [44].

- Initialization: Generate an initial population of chromosomes randomly within the defined search boundaries [42].

- Fitness Evaluation: The most critical and computationally expensive step. For each chromosome (set of hyperparameters), train an SVM model and evaluate its performance using a metric like accuracy or mean squared error. This metric becomes the individual's fitness score [44].

- Selection: Select parent chromosomes for reproduction, with a bias towards higher fitness. Common methods include tournament selection and roulette wheel selection [46].

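- Genetic Operators: Apply crossover to recombine the selected parents and mutation to introduce random changes, producing the offspring that form the next generation.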

- Termination: Check if a termination criterion is met. This can be a maximum number of generations, a target fitness value, or stagnation in improvement [42] [46]. If not met, return to Step 3.
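The GA steps above can be sketched with the standard library alone. This is an illustration under stated assumptions, not the protocol's actual code: chromosomes are real-valued vectors [log10(C), log10(gamma)], toy_fitness is a hypothetical surrogate with a known peak at C=10, gamma=0.01 that stands in for training and scoring an SVM, and the operator choices (tournament selection, blend crossover, Gaussian mutation, one elite) are only one reasonable combination.

```python
import random

BOUNDS = [(-5.0, 5.0), (-5.0, 2.0)]  # search range for log10(C), log10(gamma)


def toy_fitness(chrom):
    # Hypothetical stand-in for cross-validated SVM accuracy.
    log_c, log_gamma = chrom
    return 1.0 / (1.0 + (log_c - 1.0) ** 2 + (log_gamma + 2.0) ** 2)


def tournament(pop, fits, rng, k=3):
    # Pick k random individuals and return the fittest one.
    contenders = rng.sample(range(len(pop)), k)
    return pop[max(contenders, key=lambda i: fits[i])]


def crossover(a, b, rng):
    # Blend each gene of the two parents with a random weight.
    child = []
    for x, y in zip(a, b):
        t = rng.random()
        child.append(t * x + (1 - t) * y)
    return child


def mutate(chrom, rng, rate, sigma=0.5):
    # Perturb each gene with probability `rate`, then clip to the bounds.
    out = []
    for gene, (lo, hi) in zip(chrom, BOUNDS):
        if rng.random() < rate:
            gene += rng.gauss(0.0, sigma)
        out.append(min(max(gene, lo), hi))
    return out


def run_ga(fitness, pop_size=30, generations=40, cx_rate=0.8,
           mut_rate=0.1, n_elite=1, seed=0):
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(pop_size)]
    for _ in range(generations):
        fits = [fitness(c) for c in pop]  # the expensive step in practice
        ranked = sorted(range(pop_size), key=lambda i: fits[i], reverse=True)
        next_pop = [pop[i][:] for i in ranked[:n_elite]]  # elitism
        while len(next_pop) < pop_size:
            p1, p2 = tournament(pop, fits, rng), tournament(pop, fits, rng)
            child = crossover(p1, p2, rng) if rng.random() < cx_rate else p1[:]
            next_pop.append(mutate(child, rng, mut_rate))
        pop = next_pop
    fits = [fitness(c) for c in pop]
    best = max(range(pop_size), key=lambda i: fits[i])
    return pop[best], fits[best]


best_chrom, best_fit = run_ga(toy_fitness)
best_C, best_gamma = 10 ** best_chrom[0], 10 ** best_chrom[1]
```

Searching over exponents rather than raw values means a mutation step of fixed size moves C and gamma by a constant factor, which suits parameters that span many orders of magnitude. The termination criterion here is simply a fixed number of generations.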

Workflow for Hyperparameter Optimization using Particle Swarm Optimization

The following diagram illustrates the experimental protocol for PSO.

Figure: PSO Hyperparameter Optimization Workflow

Detailed Methodology:

- Initialization: Initialize a swarm of particles. Each particle's position represents a potential set of SVM hyperparameters. Initialize particle velocities randomly [43].

- Fitness Evaluation: For each particle's position (hyperparameters), train an SVM model and calculate its performance as the fitness [45].

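- Update Bests: Update each particle's personal best position, and the swarm's global best position, whenever the new fitness is better than the stored one.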

- Termination Check: Check criteria similar to GA (max iterations, fitness threshold) [43].

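- Update Velocity and Position: If not terminated, update each particle i using the following core equations [43], given here in their standard form:

$$v_i(t+1) = w\,v_i(t) + c_1 r_1 \left(p_i - x_i(t)\right) + c_2 r_2 \left(g - x_i(t)\right)$$

$$x_i(t+1) = x_i(t) + v_i(t+1)$$

Here x_i and v_i are the position and velocity of particle i, p_i is its personal best position, g is the swarm's global best position, w is the inertia weight, c1 and c2 are the cognitive and social coefficients, and r1 and r2 are random numbers drawn uniformly from [0, 1].

- Loop: Return to Step 2 with the updated particle positions.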
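The PSO loop described above can be sketched with the standard library, using the standard global-best velocity and position updates. The assumptions are the same as for any toy example: positions are [log10(C), log10(gamma)], toy_fitness is a hypothetical surrogate for cross-validated SVM accuracy with its peak at C=10, gamma=0.01, and the values w=0.7, c1=c2=1.5 are simply one choice inside the ranges given in the guidelines table.

```python
import random

BOUNDS = [(-5.0, 5.0), (-5.0, 2.0)]  # search range for log10(C), log10(gamma)


def toy_fitness(pos):
    # Hypothetical stand-in for cross-validated SVM accuracy.
    return 1.0 / (1.0 + (pos[0] - 1.0) ** 2 + (pos[1] + 2.0) ** 2)


def run_pso(fitness, swarm_size=20, iterations=50, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    dim = len(BOUNDS)
    # Random initial positions and small random initial velocities.
    x = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(swarm_size)]
    v = [[0.1 * rng.uniform(-(hi - lo), hi - lo) for lo, hi in BOUNDS]
         for _ in range(swarm_size)]
    pbest = [p[:] for p in x]
    pbest_fit = [fitness(p) for p in x]
    g = max(range(swarm_size), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]

    for _ in range(iterations):
        for i in range(swarm_size):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v[i][d] = (w * v[i][d]
                           + c1 * r1 * (pbest[i][d] - x[i][d])   # cognitive pull
                           + c2 * r2 * (gbest[d] - x[i][d]))     # social pull
                lo, hi = BOUNDS[d]
                x[i][d] = min(max(x[i][d] + v[i][d], lo), hi)    # clip to bounds
            f = fitness(x[i])
            if f > pbest_fit[i]:
                pbest[i], pbest_fit[i] = x[i][:], f
                if f > gbest_fit:  # global best is refreshed as soon as it is beaten
                    gbest, gbest_fit = x[i][:], f
    return gbest, gbest_fit


gbest, gbest_fit = run_pso(toy_fitness)
best_C, best_gamma = 10 ** gbest[0], 10 ** gbest[1]
```

This variant updates the global best inside the particle loop (asynchronous update). Updating it once per iteration after all particles have moved is an equally common choice.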

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table details key computational "reagents" and their functions for experiments in this field.

Table: Essential Components for GA and PSO Experiments

| Item | Function / Description | Considerations for SVM-HPO |
|---|---|---|
| Fitness Function | The objective function to be optimized (maximized or minimized). | Typically the validation accuracy or a related performance metric (e.g., F1-Score) of the SVM model trained with a specific hyperparameter set. Cross-validation is often used for a robust estimate [6]. |
| Search Space | The defined domain for each hyperparameter to be optimized. | For SVM, this includes bounds for C (e.g., [1e-5, 1e5]), gamma (e.g., [1e-5, 1e2]), and kernel-specific parameters. It can be continuous, discrete, or categorical. |
| Parameter Encoding | The method for representing a solution for the algorithm. | In GA, this is a chromosome (e.g., a list of values). In PSO, this is a particle's position vector [44]. The encoding must be mapped to the hyperparameter search space. |
| Algorithm Parameters | The control parameters of the optimization algorithm itself. | GA: Population size, crossover/mutation rates [46]. PSO: Swarm size, inertia weight, c1, c2 [45]. These require tuning for optimal performance. |
| Validation Strategy | The method used to evaluate the fitness of a candidate solution. | K-fold cross-validation (e.g., 5-fold) is standard to avoid overfitting and ensure the model generalizes well, providing a reliable fitness score [6]. |
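As an illustration of how the fitness function, encoding, and validation strategy fit together, the sketch below scores one candidate (log10(C), log10(gamma)) with 5-fold cross-validation using scikit-learn. The synthetic dataset, the function name svm_cv_fitness, and the choice to standardize features inside a pipeline are assumptions made for the example.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic placeholder data (assumption): 300 samples, 20 features, 3 classes.
X, y = make_classification(n_samples=300, n_features=20, n_informative=8,
                           n_classes=3, random_state=0)


def svm_cv_fitness(log10_C: float, log10_gamma: float, cv: int = 5) -> float:
    """Mean k-fold accuracy of an RBF SVM; inputs are exponents, so the search is log-scaled."""
    model = make_pipeline(
        StandardScaler(),  # fitted inside each fold, so no information leaks from validation data
        SVC(kernel="rbf", C=10 ** log10_C, gamma=10 ** log10_gamma),
    )
    return cross_val_score(model, X, y, cv=cv, scoring="accuracy").mean()


score = svm_cv_fitness(log10_C=0.0, log10_gamma=-2.0)
```

A GA chromosome or PSO position vector would be passed to this function as its two arguments, and the returned mean accuracy would serve as the fitness.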

FAQs on Hyperopt and Optuna

Q1: What are the primary differences between Hyperopt's and Optuna's search space definition?

Hyperopt uses a define-and-run approach, where you must declare the entire search space upfront using a domain-specific language (DSL) before optimization begins. This often involves complex, nested dictionaries to handle conditional parameters [48]. In contrast, Optuna uses a define-by-run approach, allowing you to construct the search space dynamically within the objective function using standard Python code. This offers greater flexibility for complex, conditional hyperparameters, such as adding layers to a neural network only if a specific model type is chosen [48] [49].

Q2: How can I stop unpromising trials early to save computational resources?

Both frameworks support early stopping, but Optuna provides more integrated and versatile pruning mechanisms [50] [49]. You can use pruners like the MedianPruner or SuccessiveHalvingPruner (ASHA). To enable pruning, report intermediate values during the trial with trial.report(metric, step), then call trial.should_prune() and raise optuna.TrialPruned when it returns True [49]. Hyperopt's approach to early stopping is less direct and often requires manual implementation or is handled through its SparkTrials for distributed computing [51].

Q3: I am using Hyperopt, and the parameters logged in my experiment tracker are indices, not the actual values. How can I fix this?

This is a common issue with Hyperopt's hp.choice() function: for parameters defined with hp.choice(), the result returned by fmin contains the index of the chosen option in the list, not the option itself. To retrieve the actual parameter values, pass the search space and the best result to hyperopt.space_eval() after optimization; it converts the indices back to the original values in your search space [51].

Q4: Which framework is better for large-scale, distributed hyperparameter optimization?

Both support distributed optimization, but their approaches differ. Hyperopt uses SparkTrials to parallelize trials across an Apache Spark cluster [51]; it is recommended to avoid using SparkTrials on autoscaling clusters [51]. Optuna uses a central database (e.g., MySQL or PostgreSQL) as a storage backend. You can create a study on a central machine and then have multiple workers independently run trials, all reading from and writing to the shared database [49].

Q5: My objective function occasionally returns NaN, causing the optimization to fail. What should I do?

A reported loss of NaN means that the objective function returned NaN for that trial. This does not crash the entire optimization process; other runs will continue. To prevent it, review your hyperparameter search space: very large parameter values, for instance, can cause numerical instability. Narrowing the range or sampling on a log scale (e.g., using suggest_loguniform instead of suggest_uniform for a parameter like the learning rate) can often resolve this [51].

Troubleshooting Guides

Issue 1: Handling Conditional Hyperparameters

Problem: Your model has hyperparameters that are only relevant under specific conditions.

- In Optuna: Use standard Python control flow (if-else statements) within your objective function. This is a core feature of its define-by-run API [50] [49].

- In Hyperopt: You must define conditional spaces using nested hp.choice functions, which can become complex [50].

Issue 2: Optimization Process is Too Slow

Problem: Each trial takes a long time, and the overall optimization is not making efficient progress.

- Implement Pruning (Optuna): The most effective strategy is to use Optuna's pruning to halt underperforming trials early. Ensure your training loop reports intermediate metrics [49].

- Use a Bayesian Sampler: Random search ignores the results of earlier trials. For faster convergence, use a Bayesian optimization algorithm such as the Tree-structured Parzen Estimator (TPE): pass algo=tpe.suggest in Hyperopt, and use create_study(sampler=optuna.samplers.TPESampler()) in Optuna, where TPE is already the default sampler [50] [52].

- Reduce Dataset Size for Initial Experiments: Start with a smaller subset of your data to quickly test different hyperparameter combinations. Once you identify promising ranges, you can perform a final tuning run on the full dataset [51].

Issue 3: Reproducibility and Persisting Results

Problem: You need to restart your script but don't want to lose optimization progress.

- In Optuna: Use the storage parameter when creating a study. This allows you to reload the study later and continue optimization [49].

- In Hyperopt: Persistence is less streamlined. You can save the trials object manually using Python's pickle module after each iteration and pass the reloaded object back to fmin to continue, but you have to manage the saved state yourself.

Experimental Protocols for SVM Hyperparameter Optimization

This section details a methodology for applying Hyperopt and Optuna to optimize a Support Vector Machine (SVM) classifier, a common task in computational biology and drug development [6].

1. Problem Definition and Dataset Setup

The objective is to perform multi-class classification using an SVM model. The protocol uses a public dataset containing 20 features (e.g., technical specifications of mobile phones) to predict a price class label [6]. The dataset is split into 70% for training, 15% for validation, and 15% for testing. A 5-fold cross-validation strategy is employed on the training set to ensure model robustness and generalizability [6].

2. Hyperparameter Search Space Definition

The critical hyperparameters for SVM and their corresponding search spaces are defined as follows [6]:

| Hyperparameter | Description | Search Space (Optuna) | Search Space (Hyperopt) |
|---|---|---|---|
| Kernel | Specifies the function to map data to a higher dimension [6]. | trial.suggest_categorical('kernel', ['linear', 'poly', 'rbf']) | hp.choice('kernel', ['linear', 'poly', 'rbf']) |
| Regularization (C) | Controls the trade-off between achieving a low error and a simple model [6]. | trial.suggest_loguniform('C', 1e-4, 1e4) | hp.loguniform('C', np.log(1e-4), np.log(1e4)) |
| Kernel Coefficient (γ) | Defines how far the influence of a single training example reaches [6]. | trial.suggest_loguniform('gamma', 1e-5, 1e2) | hp.loguniform('gamma', np.log(1e-5), np.log(1e2)) |
| Degree (d) | Only used by the polynomial kernel [6]. | trial.suggest_int('degree', 2, 5) | hp.choice('degree', range(2, 6)) |

3. Core Optimization Workflow

The optimization follows a structured process to find the best hyperparameters. The diagram below illustrates the high-level steps that are common to both Hyperopt and Optuna.

4. Implementation Code

Using Optuna:

Using Hyperopt:

5. Evaluation and Analysis