Overcoming Data Imbalance: Advanced Active Learning Strategies for Chemical Library Design



Abstract

This article addresses the critical challenge of data imbalance in chemical libraries, where active compounds are significantly outnumbered by inactive ones, leading to biased machine learning models in drug discovery. We explore the foundational principles of this imbalance and its impact on predictive accuracy. The content delves into advanced methodological solutions, including strategic data sampling, active learning frameworks, and hybrid AI approaches that integrate generative models with physics-based simulations. A practical troubleshooting guide is provided for optimizing model performance and addressing common pitfalls like synthetic accessibility. Finally, we present rigorous validation protocols and comparative analyses of various techniques, showcasing successful real-world applications in targeting proteins like SARS-CoV-2 Mpro and CDK2. This comprehensive guide equips researchers with the strategies needed to enhance the efficiency and success rates of AI-driven drug discovery campaigns.

The Data Imbalance Problem: Understanding its Impact on Chemical Library Screening

Frequently Asked Questions

1. What is data imbalance, and why is it so common in drug discovery? Data imbalance refers to a situation in classification tasks where the target classes have an uneven distribution of observations. In drug discovery, this typically means that active compounds (e.g., those with a desired biological effect) are significantly outnumbered by inactive compounds [1] [2]. This is not an exception but the norm, primarily due to:

- Inherent Biological Odds: The likelihood that a random compound will be active against a specific biological target is naturally very low [1].

- Experimental Bias: High-throughput screening (HTS) campaigns are designed to test thousands of compounds, but the vast majority will show no activity, leading to a dataset where inactive samples form the overwhelming majority [2].

- Rarity of Events: The outcomes of interest, such as a compound being non-toxic or having a specific therapeutic effect, are often rare events by nature [3].

2. What are the practical consequences of ignoring data imbalance in my model? Training a model on imbalanced data without addressing the skew leads to models that are biased and of limited practical use [1] [4]. Key consequences include:

- Biased Predictions: The model will become biased toward predicting the majority class (inactive compounds) as it can achieve high accuracy by simply always predicting "inactive" [4] [3].

- Poor Generalization for the Minority Class: The model will fail to learn the characteristics of the active compounds, leading to very low sensitivity (high false negative rate). This means potentially promising drug candidates are incorrectly filtered out [1] [5].

- Misleading Performance Metrics: Reliance on overall accuracy is deceptive. A model could be 99% accurate by predicting all compounds as inactive, yet be useless for identifying active molecules [4] [3].

3. How can I detect if my dataset is imbalanced? Imbalance can be quantified using the Imbalance Ratio (IR), the ratio of the number of majority class samples to the number of minority class samples [2]. Calculate it by dividing the number of inactive compounds by the number of active compounds. A dataset with more than 10 inactive compounds per active compound is often considered significantly imbalanced and requires attention [2]. This article writes the IR as active:inactive, so 1:90 means one active compound for every 90 inactive ones. For example, in one study on anti-pathogen activity, datasets had IRs ranging from 1:82 to 1:104 [2].
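The calculation above can be sketched in a few lines. This is a minimal illustration that assumes labels are encoded as 1 (active) and 0 (inactive); the function name `imbalance_ratio` is hypothetical and not taken from any library.

```python
def imbalance_ratio(labels):
    """Return (n_active, n_inactive, inactive_per_active) for 0/1 labels."""
    n_active = sum(1 for y in labels if y == 1)
    n_inactive = len(labels) - n_active
    if n_active == 0:
        raise ValueError("no active compounds: the IR is undefined")
    return n_active, n_inactive, n_inactive / n_active


# Toy screening outcome: 10 actives among 910 tested compounds.
labels = [1] * 10 + [0] * 900
n_act, n_inact, ir = imbalance_ratio(labels)
print(f"{n_act} actives, {n_inact} inactives, IR = 1:{ir:.0f} (active:inactive)")
```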

4. What are the most effective strategies to handle data imbalance? Solutions can be categorized into three main types, which can also be combined [1] [2] [6]:

- Data-Level Methods: Adjusting the training data composition.

- Algorithm-Level Methods: Modifying the learning algorithm to compensate for the imbalance.

- Hybrid Approaches: Combining data-level and algorithm-level techniques, such as using SMOTE alongside a cost-sensitive Random Forest [2].

5. Which evaluation metrics should I use instead of accuracy? When dealing with imbalanced data, it is crucial to move beyond accuracy. A comprehensive evaluation should include multiple metrics [4] [3] [5]:

- Precision: Measures how many of the predicted active compounds are truly active.

- Recall (Sensitivity): Measures how many of the truly active compounds were correctly identified.

- F1-Score: The harmonic mean of precision and recall, providing a single balanced metric [4].

- Matthews Correlation Coefficient (MCC): A more robust metric that considers all four corners of the confusion matrix and is well-suited for imbalanced datasets [2] [6].

- Area Under the Precision-Recall Curve (AUPR): Often more informative than the ROC curve when the positive class is rare [7].
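To make the contrast with accuracy concrete, the sketch below computes accuracy, precision, recall, F1, and MCC directly from confusion-matrix counts. It assumes 0/1 labels and returns 0.0 where a metric is undefined (a convention chosen here for illustration); in practice, scikit-learn provides equivalents such as `sklearn.metrics.matthews_corrcoef`.

```python
import math


def imbalance_metrics(y_true, y_pred):
    """Accuracy, precision, recall, F1 and MCC for binary 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}


# A model that always predicts "inactive" on a 1:99 dataset.
y_true = [1] * 10 + [0] * 990
always_inactive = [0] * 1000
metrics = imbalance_metrics(y_true, always_inactive)
print(metrics)
```

The always-inactive model scores 99% accuracy while recall, F1, and MCC are all zero, which is the failure mode the metrics above are meant to expose.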

Quantitative Impact of Data Imbalance

The tables below summarize key quantitative findings from recent research, illustrating the prevalence and performance impact of data imbalance.

Table 1: Examples of Imbalance Ratios (IR) in Published Drug Discovery Studies

| Data Source / Study | Prediction Target | Reported Imbalance Ratio (IR) | Citation |
|---|---|---|---|
| PubChem Bioassay | Anti-HIV activity | ~1:90 (e.g., 1093 active vs ~100,000 inactive) | [2] |
| PubChem Bioassay | Anti-Malaria activity | ~1:82 | [2] |
| Opioid Risk Prediction | Opioid use disorder | Up to 1:1000 | [3] |
| General Drug Discovery | Active vs. Inactive compounds | Commonly ranges from 1:10 to over 1:1000 | [1] [6] |

Table 2: Performance Comparison of Models With and Without Balancing on a Highly Imbalanced Dataset (Example: HIV Bioassay, IR ~1:90)

| Model / Technique | Evaluation Metric | Original Data | With Random Undersampling (RUS) | With SMOTE |
|---|---|---|---|---|
| Random Forest | MCC | < 0 (-0.04) | ~0.60 (Significant improvement) | Moderate improvement |
| Random Forest | Recall | Very Low | Significantly boosted | Increased |
| Random Forest | ROC-AUC | Moderate | Highest observed | Slight increase |
| General Trend | Precision | High | Decreased, but balanced with recall | Maintained or slightly decreased |

Experimental Protocols for Handling Imbalance

Protocol 1: Optimizing the Imbalance Ratio via K-Ratio Random Undersampling

This protocol is based on a study that systematically tested the effect of different imbalance ratios [2].

- Data Preparation: Start with your imbalanced dataset and calculate the initial IR.

- Define Target Ratios: Instead of aiming for a perfect 1:1 balance, define a series of target IRs (e.g., 1:50, 1:25, 1:10) where the majority class is undersampled to these ratios [2].

- Apply Random Undersampling (RUS): For each target IR, randomly select a subset of the majority class (inactive compounds) to achieve the desired ratio with the full minority class.

- Model Training and Evaluation: Train your chosen machine learning model (e.g., Random Forest, SVM, GNN) on each of the resampled datasets.

- Performance Analysis: Evaluate models using a suite of metrics (F1-score, MCC, Balanced Accuracy, ROC-AUC, Precision, Recall). Research found that a moderate IR of 1:10 often provided an optimal balance, significantly enhancing model performance without excessive information loss from undersampling [2].
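The undersampling step of this protocol can be sketched as follows. This is a minimal illustration under stated assumptions: labels are 1 (active) and 0 (inactive), `k` is the number of inactives kept per active, and the function name `k_ratio_undersample` is hypothetical. The imbalanced-learn package offers a production-grade alternative in `RandomUnderSampler`.

```python
import random


def k_ratio_undersample(X, y, k, seed=0):
    """Keep every active and a random subset of inactives so that the
    resampled set has roughly k inactives per active (IR of 1:k)."""
    rng = random.Random(seed)
    active_idx = [i for i, label in enumerate(y) if label == 1]
    inactive_idx = [i for i, label in enumerate(y) if label == 0]
    n_keep = min(len(inactive_idx), k * len(active_idx))
    kept = active_idx + rng.sample(inactive_idx, n_keep)
    rng.shuffle(kept)  # avoid leaving all actives at the front
    return [X[i] for i in kept], [y[i] for i in kept]


# Toy data: compound indices as stand-in features, original IR of 1:90.
X = list(range(910))
y = [1] * 10 + [0] * 900
for k in (50, 25, 10):
    X_res, y_res = k_ratio_undersample(X, y, k)
    print(f"target 1:{k} -> {sum(y_res)} actives, {len(y_res) - sum(y_res)} inactives")
```

Each resampled set would then be used to train and evaluate a separate model, and the ratio with the best validation MCC or F1-score retained.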

Protocol 2: Combining SMOTE Oversampling with a Random Forest Classifier

This is a widely used data-level method for balancing chemical datasets [1] [5].

- Data Split: Split your dataset into training and test sets. Important: Apply resampling only to the training set to avoid data leakage and over-optimistic performance on the test set.

- Apply SMOTE: Use the SMOTE algorithm on the training data. SMOTE generates synthetic samples for the minority class by interpolating between existing minority class instances that are close in feature space [1] [4].

- Train Classifier: Train a Random Forest classifier on the SMOTE-balanced training dataset.

- Validate: Use the pristine, untouched test set (which retains the original, real-world imbalance) to evaluate the model's performance using metrics like MCC, F1-score, and AUC [5]. This protocol has been successfully applied in areas like Drug-Induced Liver Injury (DILI) prediction, achieving high sensitivity and specificity [5].
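The interpolation idea behind SMOTE can be illustrated with a simplified sketch. It is not the reference algorithm: it assumes continuous descriptors stored as lists of floats, uses Euclidean distance, and the name `smote_sketch` is hypothetical. For real work, `imblearn.over_sampling.SMOTE` from imbalanced-learn is the usual choice. Consistent with the protocol, the function should be called on the minority rows of the training split only.

```python
import math
import random


def smote_sketch(minority, n_new, k=5, seed=0):
    """Create n_new synthetic points by interpolating between a random
    minority sample and one of its k nearest minority neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        others = [p for p in minority if p is not base]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        partner = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> partner
        synthetic.append([b + gap * (q - b) for b, q in zip(base, partner)])
    return synthetic


# Toy training-set actives described by two continuous descriptors.
train_actives = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
synthetic = smote_sketch(train_actives, n_new=5, k=2)
print(synthetic)
```

Note that interpolating between binary fingerprints produces fractional bit values, so descriptor choice matters when applying this kind of oversampling to chemical data.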

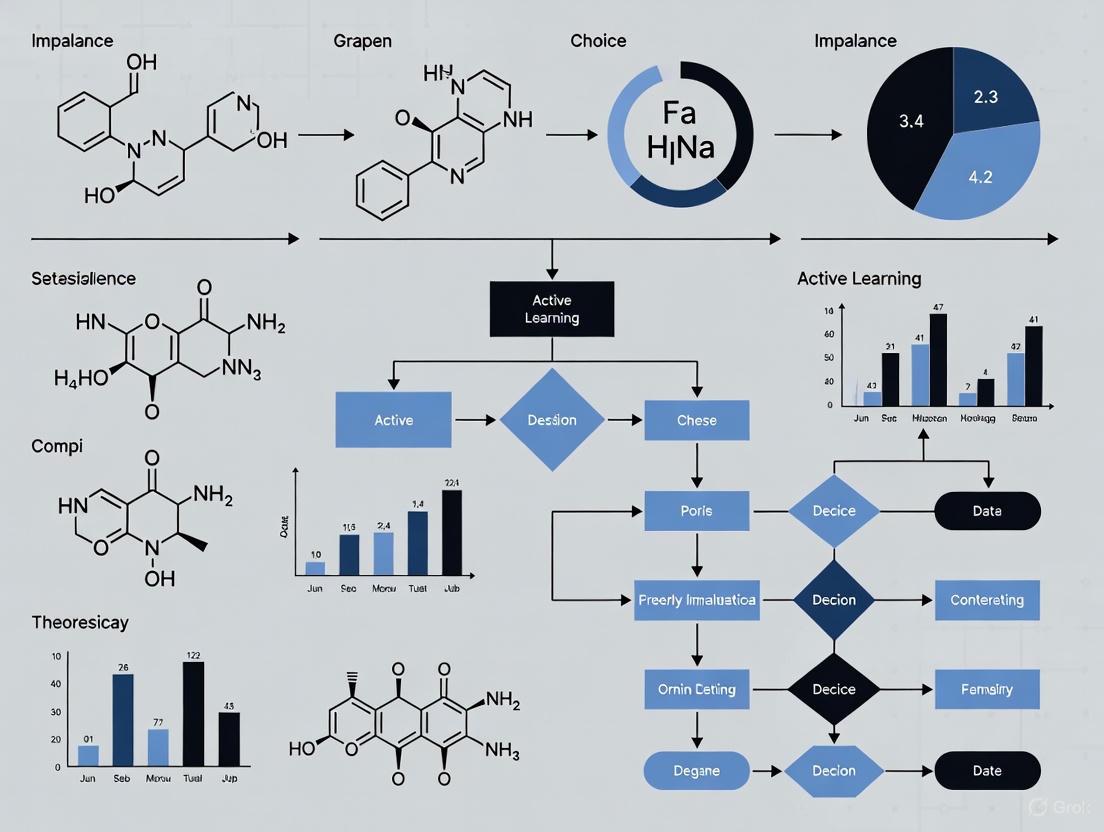

Workflow Diagram: Handling Imbalanced Data in Drug Discovery

The diagram below illustrates a conceptual workflow for tackling imbalanced datasets, integrating both data-level and algorithm-level solutions.

Table 3: Essential Software and Algorithmic Tools

| Tool / Technique | Type | Primary Function | Application Note |
|---|---|---|---|
| SMOTE | Data-Level / Oversampling | Generates synthetic samples for the minority class to balance the dataset. | Effective but can introduce noisy samples; variants like Borderline-SMOTE may perform better [1]. |
| Random Undersampling (RUS) | Data-Level / Undersampling | Randomly removes samples from the majority class to balance the dataset. | Simple and effective; can lead to loss of useful information [1] [2]. |
| Cost-Sensitive Learning | Algorithm-Level | Assigns a higher misclassification cost to the minority class during model training. | Implemented in classifiers like Random Forest and SVM via 'class_weight' parameters [2] [6]. |
| BalancedBaggingClassifier | Algorithm-Level / Ensemble | An ensemble method that balances the data via undersampling during the bootstrap sampling process. | Directly addresses imbalance within the ensemble framework [4]. |
| CTGAN | Data-Level / Advanced Augmentation | A deep learning model (GAN) that generates high-quality synthetic tabular data. | Particularly useful for complex, high-dimensional data where SMOTE may be insufficient [8]. |
| MCC & F1-Score | Evaluation Metric | Robust metrics for model performance evaluation on imbalanced data. | Should be used as primary metrics instead of accuracy [2] [6] [4]. |

Frequently Asked Questions (FAQs)

1. What is data imbalance in the context of PubChem BioAssay data? Data imbalance refers to the significant disparity in the number of active (positive) versus inactive (negative) compound samples in high-throughput screening (HTS) datasets. In PubChem, this typically manifests as a very small number of active compounds (the minority class) and a very large number of inactive compounds (the majority class), with Imbalance Ratios (IRs) often ranging from 1:50 to over 1:100 [2]. This is an inherent feature of HTS, as most tested compounds will not show activity against a specific biological target [2].

2. Why is data imbalance a critical problem for AI-driven drug discovery? When trained on highly imbalanced data, machine learning (ML) and deep learning (DL) models become heavily biased toward predicting the majority class (inactive compounds). They fail to effectively learn the features associated with the minority class (active compounds), leading to poor predictive performance for the very compounds researchers are trying to identify—the hits. This bias can severely limit the robustness and real-world applicability of these models [1] [2].

3. What are some common technical artifacts in HTS data that can mimic true activity? A substantial proportion of initial hits from HTS can be artifacts caused by assay interference. Compounds may interfere with the assay technology itself (e.g., by fluorescing in fluorescence-based assays) or exhibit non-selective binding, leading to false positives. These artifacts further complicate the identification of truly active compounds and contribute to data quality issues [9] [10].

4. What key information is missing from PubChem that complicates data quality control? A significant limitation for secondary data analysis is that the PubChem BioAssay database often lacks crucial plate-level metadata for each screened compound. This includes batch number, plate ID, and well position (row and column). Without this information, researchers cannot fully investigate or correct for common sources of technical variation like batch effects or positional (edge) effects within plates, which are known to cause false positives and negatives [9].

Troubleshooting Guide: Addressing Data Imbalance and Artifacts

| Potential Cause | Recommended Solution | Underlying Principle |
|---|---|---|
| Severe Class Imbalance | Apply resampling techniques to the training data. Random Undersampling (RUS) of the majority (inactive) class has been shown to be particularly effective for PubChem data, with an optimal Imbalance Ratio (IR) of 1:10 (active:inactive) suggested [2]. | Resampling rebalances the dataset, preventing the model from being overwhelmed by the inactive class and forcing it to learn features from the active compounds [1]. |
| Algorithmic Bias | Use cost-sensitive learning or select algorithms robust to imbalance. In one study, Random Forest combined with RUS yielded strong performance [2]. | These methods assign a higher cost to misclassifying the minority class, directly adjusting the model's learning process to pay more attention to active compounds [1]. |
| Assay Interference | Implement in silico filters to identify and remove compounds likely to cause assay interference, such as pan-assay interference compounds (PAINS) [11] [10]. | This is a data-cleaning step that removes false positives from the training set, allowing the model to learn from true structure-activity relationships rather than assay artifacts. |

Symptom: Inconsistent or non-reproducible results from a published PubChem assay.

| Potential Cause | Recommended Solution | Underlying Principle |
|---|---|---|
| Technical Variation (Batch/Plate Effects) | If plate metadata is available, apply normalization methods like percent inhibition or z-score transformation plate-by-plate [9]. | Normalization accounts for systematic technical differences between plates and batches, ensuring that compound activity is measured relative to its own plate's controls [12] [9]. |
| Insufficient Metadata in PubChem | Attempt to obtain the full source dataset, including plate layout, directly from the original screening center, as this metadata is not always fully available in PubChem [9]. | A full analysis of assay quality and technical effects is impossible without plate-level information. Obtaining the complete dataset is essential for rigorous secondary analysis. |
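The plate-by-plate z-score transformation mentioned in the table can be sketched as below. This is an illustration under assumptions: each record is a hypothetical `(plate_id, compound_id, raw_signal)` tuple, every plate has at least two wells, and the plate mean and standard deviation are taken over all wells on the plate rather than over dedicated control wells.

```python
from collections import defaultdict
from statistics import mean, stdev


def plate_zscores(records):
    """Map compound_id -> z-score of its signal within its own plate."""
    by_plate = defaultdict(list)
    for plate_id, compound_id, signal in records:
        by_plate[plate_id].append((compound_id, signal))
    zscores = {}
    for wells in by_plate.values():
        signals = [s for _, s in wells]
        mu, sigma = mean(signals), stdev(signals)
        for compound_id, s in wells:
            zscores[compound_id] = (s - mu) / sigma if sigma > 0 else 0.0
    return zscores


# Plate B reads about 100 units higher than plate A (a batch offset).
records = [
    ("A", "a1", 10.0), ("A", "a2", 12.0), ("A", "a3", 14.0),
    ("B", "b1", 110.0), ("B", "b2", 112.0), ("B", "b3", 114.0),
]
z = plate_zscores(records)
print(z)
```

After normalization, compounds in matching positions of the two plates receive the same z-score, so the constant plate offset no longer masquerades as activity.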

Experimental Protocols for Handling Imbalanced HTS Data

Protocol 1: K-Ratio Random Undersampling (K-RUS) for Model Training

This protocol is designed to optimize the Imbalance Ratio (IR) in training data to improve model performance for identifying active compounds [2].

- Data Acquisition: Download a bioassay dataset from the PubChem FTP site or via the Power User Gateway (PUG). The data should include PubChem Compound IDs (CID) and confirmed activity outcomes (active/inactive) [13].

- Calculate Initial Imbalance Ratio (IR): Determine the original IR of your dataset (e.g., 1:90, meaning 1 active compound for every 90 inactive ones).

- Apply K-RUS: Instead of balancing to a 1:1 ratio, use Random Undersampling (RUS) to reduce the majority class (inactives) to specific, less severe ratios. Research suggests testing IRs of 1:50, 1:25, and 1:10.

- Model Training and Validation: Train your ML/DL models (e.g., Random Forest, Multi-Layer Perceptron) on these resampled datasets. Evaluate performance using metrics robust to imbalance, such as the Matthews Correlation Coefficient (MCC), F1-score, and Balanced Accuracy, not just overall accuracy [2].

- Selection: Choose the IR that yields the best performance on a held-out validation set. Studies indicate a moderate IR of 1:10 often provides an optimal balance [2].

Protocol 2: Data Quality Assessment for PubChem BioAssays

Before using any PubChem dataset for modeling, perform a quality assessment to gauge its reliability [9].

- Retrieve Summary Statistics: For your assay of interest (AID), extract the provided summary data, which may include raw readouts, percent inhibition, and calculated z'-factors on a per-plate basis.

- Analyze z'-factor Distribution: The z'-factor is a measure of assay quality. Create boxplots of z'-factors by assay run date. Look for strong variation by date, which indicates potential batch effects and inconsistencies in assay performance over time [9].

- Check for Available Metadata: Determine if batch, plate, and well-position information is available. If not, note that the ability to correct for technical artifacts is severely limited.

- Decision Point: If the z'-factor indicates poor or highly variable assay quality (e.g., z' < 0.5), or if critical metadata is missing, consider selecting a different, more robust assay for your analysis to ensure reliable results.
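The z'-factor check in this protocol can be sketched with the commonly used definition, 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, computed from positive and negative control wells. The per-run control readings below are invented toy values, and the 0.5 cut-off is the one quoted in the decision point above.

```python
from statistics import mean, stdev


def z_prime(pos_controls, neg_controls):
    """z'-factor from positive and negative control well signals."""
    mu_p, mu_n = mean(pos_controls), mean(neg_controls)
    if mu_p == mu_n:
        raise ValueError("control means are identical: z' is undefined")
    spread = stdev(pos_controls) + stdev(neg_controls)
    return 1 - 3 * spread / abs(mu_p - mu_n)


# Hypothetical control readings grouped by assay run date.
runs = {
    "run-1": ([100, 102, 98, 100], [10, 12, 8, 10]),    # tight controls
    "run-2": ([100, 140, 60, 100], [10, 50, -30, 10]),  # noisy controls
}
quality = {date: z_prime(pos, neg) for date, (pos, neg) in runs.items()}
flagged = [date for date, value in quality.items() if value < 0.5]
print(quality, flagged)
```

Plotting the per-run values as boxplots by date, as the protocol suggests, would then show whether low-quality runs cluster in time.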

Workflow Visualization

The following diagram illustrates the interconnected challenges of HTS data and the pathway to creating more reliable predictive models.

Pathway from HTS Data to Robust Models

Research Reagent Solutions

The table below lists key computational tools and resources essential for working with imbalanced PubChem data.

| Resource/Tool | Function | Application Context |
|---|---|---|
| PubChem BioAssay | Primary public repository for HTS data, containing compound structures, bioactivity results, and assay descriptions [13] [14]. | Source of raw, imbalanced screening data for model training and analysis. |
| SMOTE & ADASYN | Oversampling techniques that generate synthetic samples for the minority class to balance datasets [1]. | Data-level approach to mitigate imbalance; can be less effective than undersampling for extreme IRs in PubChem [2]. |
| Random Undersampling (RUS) | A data-level method that randomly removes samples from the majority class to achieve a desired Imbalance Ratio [1] [2]. | A simple but highly effective technique for handling severe imbalance in HTS data, as demonstrated with PubChem assays [2]. |
| Pan-Assay Interference Compounds (PAINS) Filters | A set of structural filters designed to identify compounds with known promiscuous, assay-interfering behavior [11]. | Critical for data cleaning to remove false positives from training sets before model building. |
| Cost-Sensitive Learning | An algorithmic-level approach that assigns a higher misclassification cost to the minority class during model training [1]. | Embeds the solution to imbalance directly into the learning algorithm, used in methods like Weighted Random Forest. |

Performance Data

The following table summarizes quantitative findings on the impact of data imbalance and resampling from recent research.

| Dataset / Condition | Original Imbalance Ratio (IR) | Key Performance Metric (MCC/F1-Score) | Optimal Resampling Method & Ratio |
|---|---|---|---|
| HIV Bioassay (AID) | 1:90 | MCC < 0 (Poor) | Random Undersampling (RUS) at 1:10 IR [2] |
| Malaria Bioassay (AID) | 1:82 | Better than HIV, but suboptimal | Random Undersampling (RUS) at 1:10 IR [2] |
| COVID-19 Bioassay (AID) | 1:104 | Performance degraded with all resampling | SMOTE (Best among tested, but overall poor) [2] |
| Theoretical Optimal | N/A | Maximizes MCC & F1-score | Moderate Imbalance (1:10) via K-RUS [2] |

FAQ: What are typical class imbalance ratios in real-world chemical library screening?

In real-world chemical screens, the number of inactive compounds (majority class) vastly outnumbers the active compounds (minority class). The following table summarizes a documented example from a study on protein-protein interaction inhibitors (iPPIs).

| Dataset Type | Number of Compounds | Approximate Imbalance Ratio (Active:Inactive) | Domain | Citation |
|---|---|---|---|---|
| Protein-Protein Interaction Inhibitors (iPPIs) | 3,248 iPPIs vs. ~566,000 non-iPPIs | ~1:174 | Cheminformatics, Drug Discovery | [11] |

FAQ: What metrics should I use to evaluate model performance on imbalanced chemical data?

When dealing with imbalanced datasets, standard accuracy can be highly misleading. A model that simply predicts the majority class for all examples will achieve high accuracy but is practically useless. The following evaluation metrics provide a more reliable assessment of performance on the minority class [15].

| Metric | Formula | Interpretation & Use Case |
|---|---|---|
| Precision | \( \frac{TP}{TP + FP} \) | Measures the reliability of positive predictions. Crucial when the cost of false positives (e.g., pursuing inactive compounds) is high. |
| Recall (Sensitivity) | \( \frac{TP}{TP + FN} \) | Measures the ability to find all positive samples. Vital when missing a true active compound (false negative) is unacceptable. |
| F1-Score | \( 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \) | The harmonic mean of precision and recall. Provides a single score to balance the two concerns. |
| AUC-ROC | Area Under the ROC Curve | Measures the model's overall ability to distinguish between the active and inactive classes across all classification thresholds. |
| AUC-PR | Area Under the Precision-Recall Curve | More informative than ROC when the class is severely imbalanced, as it focuses directly on the performance of the minority class [15]. |
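One common estimator of the area under the precision-recall curve is average precision: the mean of the precision values obtained at the rank of each true active when compounds are sorted by predicted score. The sketch below assumes 0/1 labels and breaks score ties arbitrarily; scikit-learn provides `sklearn.metrics.average_precision_score` for practical use.

```python
def average_precision(y_true, scores):
    """Mean precision at the rank of each active (label 1)."""
    n_pos = sum(y_true)
    if n_pos == 0:
        raise ValueError("no positives: average precision is undefined")
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank  # precision among the top `rank` compounds
    return total / n_pos


# Two actives among ten compounds, ranked 1st and 3rd by the model.
y_true = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
ap = average_precision(y_true, scores)
prevalence = sum(y_true) / len(y_true)
print(f"average precision = {ap:.3f}, random baseline = {prevalence:.2f}")
```

Unlike ROC-AUC, whose random baseline is always 0.5, the baseline for this metric is the fraction of actives, which is why it is more sensitive to performance on a rare class.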

FAQ: What experimental strategies can I use to handle severe data imbalance?

A combination of data-level, algorithmic, and strategic labeling approaches can mitigate the effects of severe imbalance.

Data-Level Resampling Techniques

Resampling methods directly adjust the training set to create a more balanced class distribution [1] [15].

Oversampling the Minority Class: This involves creating synthetic examples of the minority class to increase its representation.

- SMOTE (Synthetic Minority Over-sampling Technique): Generates new synthetic samples by interpolating between existing minority class instances in feature space [1]. This helps to mitigate overfitting that can occur from simple duplication.

- Advanced SMOTE Variants: Methods like Borderline-SMOTE (focuses on samples near the decision boundary) and ADASYN (adaptively generates samples based on learning difficulty) can improve upon basic SMOTE by being more strategic about which samples to synthesize [15] [1].

Undersampling the Majority Class: This involves reducing the number of majority class samples.

- Random Undersampling (RUS): Randomly removes samples from the majority class. While simple and efficient, it risks discarding potentially useful information [1].

- NearMiss: Uses a distance-based approach to select majority class samples that are closest to the minority class, aiming to preserve the underlying distribution characteristics [1].

Algorithmic-Level and Strategic Approaches

- Cost-Sensitive Learning: This approach modifies the learning algorithm to assign a higher penalty for misclassifying minority class examples than majority class examples, forcing the model to pay more attention to the rare class [15].

- Ensemble Methods: Combining multiple models, often with resampling techniques (e.g., EasyEnsemble), can significantly improve performance on imbalanced data [15].

- Active Learning: This is a powerful strategy for imbalanced scenarios, especially within a limited labeling budget. Instead of labeling data randomly, an active learning algorithm intelligently selects the most "informative" data points for annotation. This is highly effective for identifying rare classes, as it can strategically query uncertain examples that are likely to be from the minority class, thereby building a more balanced and informative training set efficiently [16] [17].
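The cost-sensitive idea above can be made concrete with inverse-frequency class weights, computed as n_samples / (n_classes × class_count). This is the same heuristic scikit-learn applies for `class_weight="balanced"`; the helper names below are hypothetical and the weighted error is only one simple way to show the effect.

```python
from collections import Counter


def balanced_class_weights(y):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = Counter(y)
    return {label: len(y) / (len(counts) * c) for label, c in counts.items()}


def weighted_error(y_true, y_pred, weights):
    """Misclassification rate where each sample counts by its class weight."""
    wrong = sum(weights[t] for t, p in zip(y_true, y_pred) if t != p)
    return wrong / sum(weights[t] for t in y_true)


# 1:9 toy dataset and a model that always predicts "inactive".
y_true = [1] * 10 + [0] * 90
always_inactive = [0] * 100
weights = balanced_class_weights(y_true)
plain = sum(t != p for t, p in zip(y_true, always_inactive)) / len(y_true)
costly = weighted_error(y_true, always_inactive, weights)
print(weights, plain, costly)
```

Under the weighted objective the always-inactive model is no better than chance, so a learner minimizing it can no longer ignore the active class.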

FAQ: My model has high accuracy but poor recall for the active class. How do I troubleshoot this?

This is a classic symptom of a model failing to learn the characteristics of the minority class. Follow this systematic troubleshooting workflow to diagnose and address the issue.

Troubleshooting Steps:

- Verify Evaluation Metric: Immediately stop using accuracy as your primary metric. Switch to Precision, Recall, F1-Score, and AUC-PR to get a true picture of your model's performance on the active class [15].

- Inspect Data Balance: Calculate the imbalance ratio in your training data. Ratios of 100:1 or higher are common and require specialized techniques [11].

- Apply Resampling: Implement a resampling technique like SMOTE to create a more balanced training set, or use Random Undersampling if the dataset is very large [1].

- Adjust Decision Threshold: By default, the decision threshold for classification is 0.5. Lowering this threshold makes the model more "sensitive": more compounds are predicted "active," which raises recall, usually at the cost of lower precision.

- Explore Advanced Algorithms: If the problem persists, employ algorithms or frameworks designed for imbalance, such as cost-sensitive learning, ensemble methods that resample internally (e.g., EasyEnsemble, BalancedBaggingClassifier), or active learning.
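The threshold adjustment in the steps above can be explored with a small sketch. It assumes the model outputs a probability of being active for each compound; the probabilities below are invented toy values and the helper name is hypothetical.

```python
def recall_precision_at(y_true, probs, threshold):
    """Recall and precision when predicting 'active' for prob >= threshold."""
    preds = [1 if p >= threshold else 0 for p in probs]
    tp = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, preds) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 0)
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    return recall, precision


# Three actives followed by seven inactives, with toy model probabilities.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
probs = [0.8, 0.4, 0.3, 0.6, 0.45, 0.35, 0.32, 0.1, 0.1, 0.05]
for threshold in (0.5, 0.3):
    recall, precision = recall_precision_at(y_true, probs, threshold)
    print(f"threshold {threshold}: recall={recall:.2f}, precision={precision:.2f}")
```

In practice the threshold should be chosen on a validation set, for example by picking the value that maximizes F1 or meets a minimum recall target, and never on the test set.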

The Scientist's Toolkit: Key Research Reagents & Materials

The following table lists essential computational "reagents" and tools for conducting research on imbalanced chemical data.

| Tool / Material | Function / Explanation | Example Use Case |
|---|---|---|
| Standardized Chemical Datasets | Publicly available datasets with known imbalance, used for benchmarking. | NCI-60 cancer cell line screening panel [16]. |
| Resampling Algorithms (e.g., SMOTE) | Software packages that implement oversampling and undersampling to rebalance datasets. | imbalanced-learn (Python scikit-learn-contrib library). |
| Active Learning Framework | A computational system for iterative, strategic data labeling. | The DIRECT algorithm for imbalance and label noise [17]. |
| Performance Metrics | Software functions to calculate metrics beyond accuracy. | Scikit-learn's classification_report (outputs precision, recall, F1). |
| Molecular Descriptors | Numerical representations of chemical structures. | ECFP fingerprints (circular fingerprints), physicochemical properties [11] [16]. |

Experimental Protocol: Active Learning for Imbalanced Chemical Data

This protocol outlines the methodology for applying an active learning strategy to efficiently identify active compounds in a large, imbalanced chemical library, as conceptualized in recent literature [16] [17].

Detailed Methodology:

Problem Setup and Initialization:

- Objective: To identify a maximally informative and balanced subset of compounds for experimental labeling from a vast library where actives are rare [16].

- Initial Seed: Start by randomly selecting a very small subset (e.g., 0.5-1%) of the chemical library and obtaining their labels (e.g., "active" or "inactive" from a high-throughput assay). This creates the initial labeled set \( L_0 \) and a large pool of unlabeled data \( U_0 \) [17].

Iterative Active Learning Loop: Repeat the following steps until a stopping criterion is met (e.g., annotation budget is exhausted or model performance plateaus).

- Model Training: Train a classification model (e.g., a deep neural network or Random Forest) on the current labeled set \( L_t \). The model should be capable of providing uncertainty estimates.

- Query Strategy (Core of the Experiment): Use an active learning algorithm to select the most informative candidates from the unlabeled pool \( U_t \). For imbalanced data, algorithms like DIRECT are designed to find the optimal separation threshold between classes, preferentially selecting uncertain examples that are likely to improve the model's decision boundary and representation of the minority class [17].

- Expert Labeling: The selected candidate compounds are sent for experimental testing (e.g., biochemical assays) to obtain their true labels.

- Database Update: The newly labeled compounds are removed from \( U_t \) and added to \( L_t \), creating the updated set \( L_{t+1} \).

Output and Analysis:

- Final Model: The model trained on the final labeled set \( L_{\text{final}} \).

- Hit List: A curated list of predicted active compounds, which is expected to be more comprehensive and derived from a more efficient use of resources compared to random screening [16].

- Performance Validation: Evaluate the final model on a held-out test set using the metrics in the table above (F1-Score, AUC-PR, etc.) to quantify its performance.
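The loop described in this protocol can be sketched with plain uncertainty sampling, which queries the compounds whose predicted probability of activity is closest to 0.5. This is not the DIRECT algorithm cited above. Everything in the example is an assumption made for illustration: compounds are reduced to a single integer descriptor, the "assay" is a hidden threshold, the toy model places its boundary midway between the highest known inactive and the lowest known active, and the seed is evenly spaced rather than random so the run is reproducible.

```python
import math


def active_learning_loop(pool, seed_items, oracle, fit, predict_proba,
                         batch_size, budget):
    """Pool-based active learning with uncertainty sampling."""
    seed_set = set(seed_items)
    labeled = [(x, oracle(x)) for x in seed_items]        # L_0
    unlabeled = [x for x in pool if x not in seed_set]    # U_0
    spent = len(labeled)
    while unlabeled and spent < budget:
        model = fit(labeled)                              # train on L_t
        # Most uncertain first: probability closest to 0.5.
        unlabeled.sort(key=lambda x: abs(predict_proba(model, x) - 0.5))
        n_query = min(batch_size, budget - spent)
        batch, unlabeled = unlabeled[:n_query], unlabeled[n_query:]
        labeled.extend((x, oracle(x)) for x in batch)     # assay, then update
        spent += len(batch)
    return fit(labeled), labeled


def fit_boundary(labeled):
    """Toy model: midpoint between highest inactive and lowest active."""
    actives = [x for x, y in labeled if y == 1]
    inactives = [x for x, y in labeled if y == 0]
    if not actives or not inactives:
        return None  # cannot place a boundary yet
    return (max(inactives) + min(actives)) / 2


def proba_active(boundary, x):
    if boundary is None:
        return 0.5  # maximally uncertain about everything
    return 1 / (1 + math.exp(-(x - boundary) / 10))


def assay(x):
    """Hidden ground truth: 5% of the library is active."""
    return 1 if x >= 950 else 0


pool = list(range(1000))
model, labeled = active_learning_loop(pool, pool[::50], assay, fit_boundary,
                                      proba_active, batch_size=10, budget=80)
n_actives = sum(y for _, y in labeled)
print(f"labeled {len(labeled)} compounds, {n_actives} active; boundary = {model}")
```

In this toy setting the queries concentrate around the activity boundary, so the labeled set ends up with a higher share of actives than the 5% a random selection would be expected to yield. Real campaigns would replace the toy model with a classifier on molecular descriptors and the oracle with experimental testing.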

Troubleshooting Guides

Guide 1: Addressing Poor Model Performance on Minority Classes

Problem: Your model shows high overall accuracy but fails to identify active compounds (the minority class) in your chemical library.

Symptoms:

- High number of false negatives for active compounds.

- Model predictions are strongly biased towards the inactive (majority) class.

- Metrics like accuracy are high, but recall and F1-score for the active class are low.

Solutions:

- Apply Strategic Sampling: Use the K-Ratio Random Undersampling (K-RUS) technique. Instead of a balanced 1:1 ratio, experiment with moderate imbalance ratios like 1:10 (active to inactive), which has been shown to significantly enhance model performance and stability in chemical risk assessment [18] [2].

- Implement Algorithm-Level Adjustments: Use cost-sensitive learning to assign a higher misclassification penalty to the minority class. Alternatively, employ ensemble methods like stacking, which combines multiple models to improve generalization and manage variability [18] [1].

- Utilize Active Learning: Integrate an active learning framework that uses selection strategies (e.g., uncertainty sampling) to iteratively select the most informative unlabeled data points for labeling. This reduces the need for large-scale labeled data and improves model focus on critical examples [18] [19].

Guide 2: Managing High Data Acquisition Costs in Active Learning

Problem: The active learning cycle is slow and expensive because the objective function (e.g., molecular growing and scoring) is computationally intensive.

Symptoms:

- Inability to screen large chemical spaces efficiently.

- Long waiting times for molecular docking or scoring results.

Solutions:

- Optimize the Initial Training Set: Seed your active learning model with compounds from on-demand chemical libraries (e.g., Enamine REAL database) or use known fragment hits to start the cycle from a more informed point [19].

- Leverage Hybrid Scoring: Combine fast, approximate scoring functions (like docking scores) with more accurate but expensive ones (like free energy calculations). Use the fast scores for initial screening in the active learning loop and reserve costly calculations for the most promising candidates [19].

- Automate and Parallelize: Use workflows with Application Programming Interfaces (APIs) on High-Performance Computing (HPC) clusters to automate the building and scoring of compound suggestions, significantly accelerating the process [19].
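The hybrid scoring idea above amounts to a two-stage funnel: rank everything with the cheap score, then spend the expensive calculation only on a shortlist. The sketch below uses invented stand-in scoring functions (`toy_docking`, `toy_free_energy`) and assumes lower scores are better, as with docking energies; it does not call any real docking or free-energy software.

```python
def two_stage_screen(candidates, cheap_score, expensive_score, n_expensive):
    """Rank all candidates cheaply, rescore only the best n_expensive."""
    shortlist = sorted(candidates, key=cheap_score)[:n_expensive]
    rescored = [(c, expensive_score(c)) for c in shortlist]
    return sorted(rescored, key=lambda pair: pair[1])


expensive_calls = []  # records which compounds got the costly calculation


def toy_docking(compound):
    return compound["dock"]


def toy_free_energy(compound):
    expensive_calls.append(compound["id"])
    return 0.5 * compound["dock"] + compound["penalty"]


library = [{"id": i, "dock": -float(i % 10), "penalty": 0.1 * i}
           for i in range(20)]
hits = two_stage_screen(library, toy_docking, toy_free_energy, n_expensive=3)
print([(c["id"], round(score, 2)) for c, score in hits])
print(f"expensive evaluations: {len(expensive_calls)} of {len(library)}")
```

Within an active learning cycle, the rescored shortlist would feed back into the training set, and the independent expensive evaluations are natural candidates for parallel execution on a cluster.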

Frequently Asked Questions (FAQs)

FAQ 1: What are the main sources of bias in AI models for drug discovery? Bias in AI models can originate from multiple stages of the pipeline:

- Data Collection & Labeling: If the training data from sources like high-throughput screening (HTS) bioassays is not diverse or representative, the model will inherit these biases [20] [21] [22]. Historical data often contains underrepresentation of active compounds [2] [1]. Human annotators can also introduce subjective biases during data labeling [20].

- Model Training: Algorithms trained on imbalanced datasets naturally become biased toward predicting the majority class (e.g., inactive compounds) because it minimizes the overall error rate [23] [1]. The design of the algorithm itself can inadvertently prioritize certain features over others [24] [25].

- Human & Systemic Factors: The assumptions and cognitive biases of developers can seep into the model design [24] [21]. Systemic biases exist when institutions operate in ways that disadvantage certain groups, which can be reflected in the data and subsequent models [21].

FAQ 2: Why can't I just trust high accuracy scores from my model? In imbalanced datasets, a high accuracy score is often misleading. For example, if inactive compounds make up 95% of your data, a model that simply predicts "inactive" for every compound will be 95% accurate, but it will have completely failed to identify any active compounds. Therefore, you must rely on metrics that are sensitive to class imbalance, such as MCC (Matthews Correlation Coefficient), AUPRC (Area Under the Precision-Recall Curve), and F1-score [18] [2]. These provide a more realistic picture of model performance on the minority class.
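
The 95% example can be checked directly from confusion-matrix counts. The sketch below uses made-up labels (5 actives, 95 inactives) and plain Python. Returning 0 when the MCC or F1 denominator is zero is a common convention that is assumed here.

```python
import math


def confusion_counts(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(tp, tn, fp, fn):
    return (tp + tn) / (tp + tn + fp + fn)


def f1_score(tp, tn, fp, fn):
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def mcc(tp, tn, fp, fn):
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Assumed convention: MCC is 0 when any marginal count is zero.
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Toy data: 5 actives (1) and 95 inactives (0).
y_true = [1] * 5 + [0] * 95
always_inactive = [0] * 100  # a "model" that never predicts an active

counts = confusion_counts(y_true, always_inactive)
print("accuracy:", accuracy(*counts))
print("F1 (active class):", f1_score(*counts))
print("MCC:", mcc(*counts))
```

Accuracy rewards the trivial model, while F1 and MCC both drop to zero because no active compound is recovered.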

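FAQ 3: What is the difference between data-level and algorithm-level solutions to imbalance?

- Data-Level Methods modify the dataset itself to create a more balanced distribution. This includes oversampling the minority class (e.g., random oversampling, SMOTE) and undersampling the majority class (e.g., random undersampling, NearMiss).

- Algorithm-Level Methods modify the learning algorithm to reduce bias toward the majority class. This includes cost-sensitive learning, which penalizes minority-class errors more heavily, and ensemble methods designed for imbalanced data.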

FAQ 4: How does Active Learning specifically help with data imbalance? Active Learning (AL) directly tackles imbalance by intelligently selecting which data points to label. Instead of randomly labeling a large dataset where actives are rare, AL uses strategies like uncertainty sampling to query the most informative examples from the unlabeled pool. This often leads to the selective labeling of minority class instances that the model finds most challenging, thereby efficiently improving the model with fewer labeled examples and focusing resources on the critical areas of chemical space [18] [19].

The following table summarizes key quantitative findings from recent studies on handling imbalanced data in AI-driven chemical discovery.

Table 1: Performance of Different Sampling Techniques on Imbalanced Chemical Data

| Sampling Technique | Dataset / Context | Key Performance Metrics | Findings and Notes |

|---|---|---|---|

| K-Ratio Undersampling (K-RUS) [2] | HIV Bioassay (IR: 1:90) | MCC: ~0.45, ROC-AUC: ~0.85 | A moderate imbalance ratio of 1:10 significantly enhanced performance. RUS outperformed ROS. |

| Random Undersampling (RUS) [2] | Malaria Bioassay (IR: 1:82) | Best MCC and F1-score | RUS yielded the best MCC values and F1-score compared to other techniques. |

| Active Stacking-Deep Learning [18] | Thyroid-Disrupting Chemicals | MCC: 0.51, AUROC: 0.824, AUPRC: 0.851 | Achieved superior stability under severe class imbalance and required up to 73.3% less labeled data. |

| NearMiss Undersampling [2] | Various Bioassays | High Recall, Low Precision | Achieved the highest recall but low performances on other metrics. Can lead to information loss [1]. |

| SMOTE [2] | COVID-19 Bioassay (IR: 1:104) | Highest MCC & F1-score | For extremely imbalanced datasets, synthetic oversampling can be more effective than random methods. |

Table 2: Essential Research Reagents & Computational Tools

| Item Name | Type | Function in Experiment |

|---|---|---|

| U.S. EPA ToxCast Data [18] | Dataset | Provides high-throughput in vitro assay data for training and validating toxicity prediction models. |

| PubChem Bioassays [2] | Dataset | A key source of experimental biochemical activity data used to create imbalanced datasets for training AI models. |

| RDKit [18] [19] | Software Library | Used for cheminformatics tasks, including processing SMILES strings, calculating molecular fingerprints, and generating conformers. |

| Molecular Fingerprints [18] | Molecular Representation | A set of 12 distinct fingerprints (e.g., ECFP, topological) used to convert molecular structures into numerical features for model input. |

| Enamine REAL Database [19] | On-Demand Library | A vast catalog of readily available compounds used to seed the chemical search space and prioritize purchasable candidates. |

| FEgrow Software [19] | Workflow Tool | An open-source package for building and scoring congeneric series of ligands in protein binding pockets, integrated with active learning. |

| gnina [19] | Scoring Function | A convolutional neural network scoring function used within FEgrow to predict the binding affinity of designed compounds. |

Detailed Experimental Protocols

Protocol 1: Implementing K-Ratio Random Undersampling (K-RUS)

Objective: To optimize the imbalance ratio (IR) in a training dataset to improve model performance on the minority class without resorting to a fully balanced (1:1) dataset.

Background: Traditional resampling to a 1:1 ratio can sometimes lead to overfitting or loss of important majority class information. The K-RUS method aims to find a more effective, moderate imbalance ratio [2].

Methodology:

- Data Preparation: Curate your training set from a source like PubChem Bioassays or the EPA ToxCast program. Preprocess the data by removing invalid entries, standardizing structures (e.g., with RDKit), and eliminating duplicates [18] [2].

- Define Initial Imbalance: Calculate the original Imbalance Ratio (IR) as (Number of Active Compounds) : (Number of Inactive Compounds).

- Apply K-RUS: Instead of undersampling to a 1:1 ratio, randomly remove inactive compounds to achieve less aggressive target ratios. Studies suggest testing ratios like:

- 1:50

- 1:25

- 1:10 (often found to be optimal) [2]

- Model Training and Evaluation: Train your chosen machine learning or deep learning models (e.g., Random Forest, Deep Neural Networks) on these K-RUS adjusted datasets. Evaluate performance using metrics robust to imbalance: Matthews Correlation Coefficient (MCC), Area Under the Precision-Recall Curve (AUPRC), and F1-score [2].
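
The undersampling step in this protocol can be sketched as follows. This is an illustrative implementation under simple assumptions, not the code from [2]: `k_ratio_undersample` is a hypothetical helper, labels are 1 for active and 0 for inactive, and the toy dataset has an original IR of 1:90.

```python
import random


def k_ratio_undersample(labels, k, seed=0):
    """Keep every active and at most k randomly chosen inactives per active.

    Returns the sorted indices of the retained compounds.
    """
    rng = random.Random(seed)
    actives = [i for i, y in enumerate(labels) if y == 1]
    inactives = [i for i, y in enumerate(labels) if y == 0]
    n_keep = min(len(inactives), k * len(actives))
    return sorted(actives + rng.sample(inactives, n_keep))


# Toy training labels: 10 actives, 900 inactives (IR 1:90).
labels = [1] * 10 + [0] * 900
for k in (50, 25, 10):
    idx = k_ratio_undersample(labels, k)
    n_act = sum(labels[i] for i in idx)
    print(f"target 1:{k} -> {n_act} actives, {len(idx) - n_act} inactives")
```

Apply the function to the training split only. The test set should keep its original class distribution so that the reported metrics reflect the real screening scenario.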

Protocol 2: Active Learning for Compound Prioritization

Objective: To efficiently search a vast combinatorial chemical space and prioritize the most promising compounds for synthesis or purchase using an iterative active learning cycle.

Background: Exhaustive screening of all possible compounds is computationally prohibitive. Active learning reduces this cost by iteratively selecting the most informative candidates for evaluation [19].

Methodology:

- Initialization:

- Seed Library: Start with an initial set of compounds. This can be a small random sample or, more effectively, a set seeded with known fragment hits or compounds from on-demand libraries like the Enamine REAL database that match a desired substructure [19].

- Expensive Evaluation: Use your primary objective function (e.g., FEgrow for building and scoring, molecular docking, free energy calculations) to evaluate this initial set.

- Active Learning Cycle: Repeat for a set number of iterations or until performance plateaus:

- Model Training: Train a machine learning model (e.g., a regression model to predict the scoring function output) on all compounds evaluated so far.

- Prediction and Selection: Use the trained model to predict the scores of all unevaluated compounds in the library. Select the next batch of compounds based on a selection strategy:

- Uncertainty Sampling: Choose compounds where the model is most uncertain.

- Margin Sampling: Select compounds with the smallest difference between the two most probable predicted classes (applicable when the model outputs class probabilities rather than a single score).

- Entropy Sampling: Pick compounds with the highest predictive entropy [18].

- Expensive Evaluation & Update: Evaluate the newly selected batch with the expensive objective function and add them to the training set.

- Output: The final model and the evaluated compounds, ranked by their scores, provide a prioritized list for experimental testing.
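
The cycle above can be sketched with a deliberately tiny toy. Everything here is an assumption made for illustration: compounds are reduced to one numeric descriptor, `expensive_score` is a made-up objective standing in for docking or free energy calculations, the "model" is a 1-nearest-neighbour surrogate, and the distance to the nearest evaluated compound serves as the uncertainty proxy.

```python
import random


def expensive_score(x):
    # Hypothetical objective (higher is better); stands in for docking/FEP.
    return -(x - 0.7) ** 2


def nearest(x, evaluated):
    # (distance, score) of the closest already-evaluated compound.
    return min((abs(x - xe), s) for xe, s in evaluated.items())


def active_learning(library, n_seed=3, batch_size=2, n_cycles=4, seed=0):
    rng = random.Random(seed)
    # Initialization: evaluate a small random seed set.
    evaluated = {x: expensive_score(x) for x in rng.sample(library, n_seed)}
    for _ in range(n_cycles):
        pool = [x for x in library if x not in evaluated]
        if not pool:
            break
        # Uncertainty sampling: pick the compounds farthest from known data.
        pool.sort(key=lambda x: nearest(x, evaluated)[0], reverse=True)
        for x in pool[:batch_size]:
            evaluated[x] = expensive_score(x)  # expensive evaluation + update
    ranked = sorted(evaluated.items(), key=lambda kv: kv[1], reverse=True)
    # Surrogate predictions for compounds that were never evaluated.
    predicted = {x: nearest(x, evaluated)[1] for x in library if x not in evaluated}
    return ranked, predicted


library = [i / 20 for i in range(21)]  # 21 toy "compounds"
ranked, predicted = active_learning(library)
print("evaluated:", len(ranked), "best descriptor:", ranked[0][0])
```

Only 11 of the 21 compounds receive the expensive evaluation in this run. In a real campaign the surrogate would be a trained regression or classification model, and its predictions would be combined with an uncertainty estimate when choosing each batch.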

Workflow and Pathway Visualizations

[Figure: Active Learning Workflow for Drug Discovery]

[Figure: The Domino Effect of Skewed Data]

[Figure: Solutions to Break the Chain of Bias]

Frequently Asked Questions (FAQs)

Q1: Why is class imbalance a critical problem in machine learning for infectious disease research? Class imbalance, where one class (e.g., non-toxic compounds) significantly outnumbers another (e.g., toxic compounds), is a major challenge. Models trained on such data can appear accurate but fail critically at predicting the minority class, which in toxicity prediction could mean missing harmful chemicals. This is particularly problematic in studies of infectious disease targets, where the cost of a false negative is exceptionally high [18].

Q2: What is Active Learning (AL) and how can it help with limited and imbalanced data? Active Learning is a sub-field of AI that enhances ML models by iteratively selecting the most informative data points for training. Instead of requiring a large, fully-labeled dataset upfront, an AL algorithm selects unlabeled examples for which it requests labels, typically from a human expert. This approach is especially useful when unlabeled data is plentiful but acquiring labels is challenging, time-consuming, or costly. It allows researchers to efficiently explore chemical space and prioritize biochemical screenings even with limited data [18].

Q3: What are some common data-level methods to handle class imbalance? A primary data-level method is strategic sampling, which involves modifying the training data to achieve a more balanced distribution. This can include [18]:

- Oversampling: Increasing the number of samples in the minority class.

- Undersampling: Reducing the number of samples in the majority class.

These techniques help prevent models from being biased toward the majority class.

Q4: Are complex methods like SMOTE always better than simple random sampling for imbalance? Not necessarily. Recent evidence suggests that for strong classifiers like XGBoost, simply tuning the prediction probability threshold can be as effective as using complex oversampling techniques like SMOTE. For weaker learners, simpler methods like random oversampling often provide similar benefits to SMOTE but with less complexity. It is recommended to start with strong classifiers and threshold tuning before exploring more complex resampling methods [26].

Q5: What is stacking ensemble learning and what are its benefits? Stacking ensemble learning is a powerful technique that combines predictions from multiple base models (e.g., a CNN, a BiLSTM, and a model with an attention mechanism) to build a more accurate and robust final model. This "stack model" learns to optimally combine the base predictions, which improves overall generalization and performance on challenging tasks like toxicity prediction with imbalanced data [18].

Troubleshooting Guides

Problem 1: Poor Model Performance on the Minority Class

- Symptoms: High overall accuracy, but very low recall or precision for the active/toxic compound class.

- Solutions:

- Implement Strategic Sampling: Use techniques like random oversampling or undersampling to rebalance your training data. Research has shown that dividing training data into k-ratios can effectively balance the distribution between toxic and non-toxic compounds [18].

- Use Strong Classifiers: Benchmark your problem against robust algorithms like XGBoost or CatBoost, which are less sensitive to class imbalance [26].

- Tune the Decision Threshold: Move away from the default 0.5 probability threshold for classification. Optimize the threshold based on your project's need for high recall or high precision [26].

- Adopt an Ensemble Method: Employ a stacking ensemble framework that leverages multiple models. For instance, a stack combining CNN, BiLSTM, and an attention mechanism can capture complementary patterns and significantly improve predictions for the minority class [18].
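
Threshold tuning, as recommended above, can be sketched in a few lines. The validation labels and predicted probabilities below are made up, and maximizing F1 is only one possible criterion. A project that prioritizes recall or precision would optimize a different metric.

```python
def f1_at_threshold(y_true, probs, thr):
    """F1 for the active class when predicting active if prob >= thr."""
    tp = sum(1 for t, p in zip(y_true, probs) if t == 1 and p >= thr)
    fp = sum(1 for t, p in zip(y_true, probs) if t == 0 and p >= thr)
    fn = sum(1 for t, p in zip(y_true, probs) if t == 1 and p < thr)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def best_threshold(y_true, probs, grid=None):
    """Return the first threshold on the grid with the highest F1."""
    grid = grid or [i / 100 for i in range(5, 100, 5)]
    return max(grid, key=lambda thr: f1_at_threshold(y_true, probs, thr))


# Toy validation set: 3 actives, 7 inactives, with typically low
# predicted probabilities for the rare class.
y_val = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
p_val = [0.45, 0.35, 0.32, 0.40, 0.20, 0.15, 0.10, 0.10, 0.05, 0.05]

thr = best_threshold(y_val, p_val)
print("F1 at 0.5:", f1_at_threshold(y_val, p_val, 0.5))
print("best threshold:", thr, "F1:", round(f1_at_threshold(y_val, p_val, thr), 3))
```

At the default 0.5 cutoff no compound is called active, so F1 is zero. Choose the threshold on a validation split and report final metrics on a separate test set, otherwise the tuned threshold will look better than it is.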

Problem 2: High Costs of Data Labeling in Experimental Validation

- Symptoms: Labeling all available data from high-throughput assays is prohibitively expensive or slow.

- Solutions:

- Deploy an Active Learning Framework: Integrate an AL system that uses a selection strategy (e.g., uncertainty sampling) to query the most informative samples for labeling. This can reduce the amount of labeled data required by up to 73.3% while maintaining high performance [18].

- Validate with Functional Assays: Remember that computational predictions are a starting point. Use biological functional assays (e.g., enzyme inhibition, cell viability) to empirically validate the activity of compounds flagged by your model. This creates an iterative feedback loop for improving the AL model [27].

Problem 3: Choosing a Selection Strategy for Active Learning

- Symptoms: Uncertainty about which data points to select for labeling in an AL cycle.

- Solutions:

- Uncertainty Sampling: Select instances where the model's prediction probability is closest to 0.5 (most uncertain). This has been shown to offer superior stability under severe class imbalance [18].

- Margin Sampling: Select instances where the difference between the top two predicted probabilities is smallest.

- Entropy Sampling: Select instances where the class probability distribution has the highest entropy (greatest uncertainty).
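
The three strategies can be written as scoring functions over a predicted probability of the active class. The compound IDs and probabilities below are hypothetical. The sketch covers the binary case only, where the three criteria are all monotone in the distance of the probability from 0.5.

```python
import math


def least_confidence(p):
    # Uncertainty sampling: 1 - probability of the most likely class.
    return 1.0 - max(p, 1.0 - p)


def margin(p):
    # Gap between the two class probabilities; SMALLER means more informative.
    return abs(p - (1.0 - p))


def entropy(p):
    # Shannon entropy (bits) of the two-class distribution.
    return -sum(q * math.log2(q) for q in (p, 1.0 - p) if q > 0.0)


def select_batch(pool_probs, batch_size, score=entropy, largest=True):
    """Return the IDs of the batch_size most informative pool compounds."""
    order = sorted(pool_probs, key=lambda cid: score(pool_probs[cid]), reverse=largest)
    return order[:batch_size]


# Hypothetical predicted P(active) for five unlabeled compounds.
pool_probs = {"cpd-1": 0.02, "cpd-2": 0.48, "cpd-3": 0.91, "cpd-4": 0.60, "cpd-5": 0.10}

print(select_batch(pool_probs, 2, score=least_confidence))
print(select_batch(pool_probs, 2, score=margin, largest=False))
print(select_batch(pool_probs, 2, score=entropy))
```

For binary classification the three strategies select the same compounds, so the choice matters mainly for multi-class problems, where least confidence, margin, and entropy can rank candidates differently.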

Performance Data and Method Comparison

Table 1: Performance of Active Stacking-Deep Learning on an Imbalanced Toxicity Dataset

This table summarizes the results of a study using an active stacking-deep learning framework with strategic sampling for predicting thyroid-disrupting chemicals, demonstrating effective handling of data imbalance [18].

| Metric | Performance |

|---|---|

| Matthews Correlation Coefficient (MCC) | 0.51 |

| Area Under ROC Curve (AUROC) | 0.824 |

| Area Under PR Curve (AUPRC) | 0.851 |

| Reduction in Labeled Data Needed | Up to 73.3% |

Table 2: Comparison of Methods for Handling Class Imbalance

This table compares different approaches to managing imbalanced datasets, based on recent evaluations [26].

| Method | Description | Best Use Case |

|---|---|---|

| Threshold Tuning | Adjusting the default classification probability threshold (0.5) to a more optimal value. | Primary method when using strong classifiers (XGBoost, CatBoost). |

| Cost-Sensitive Learning | Modifying the learning algorithm to assign a higher cost to misclassifying the minority class. | A strong alternative to resampling. |

| Random Oversampling | Randomly duplicating examples from the minority class. | Simple, effective baseline; useful with weak learners. |

| SMOTE | Generating synthetic minority class samples in feature space. | Can be tested with weak learners, but often no better than random. |

| Random Undersampling | Randomly removing examples from the majority class. | When the dataset is very large and reducing training time is beneficial. |

| Balanced Ensemble Methods | Using algorithms like Balanced Random Forests or EasyEnsemble. | Can outperform standard ensembles in some scenarios. |

Detailed Experimental Protocols

Protocol 1: Active Stacking-Deep Learning with Strategic k-Sampling

This protocol is adapted from a study on predicting chemical toxicity for thyroid-disrupting chemicals, which is analogous to targeting infectious disease mechanisms [18].

Data Curation and Preprocessing:

- Source: Collect data from high-throughput in vitro assays (e.g., from the U.S. EPA ToxCast program).

- Clean: Remove entries with invalid or missing SMILES notations. Convert SMILES to a standardized canonical form using a toolkit like RDKit.

- Filter: Exclude inorganic compounds (lacking carbon atoms), mixtures (SMILES containing "."), and duplicate entries.

- Split: Create an initial training set and a separate test set, ensuring no molecules overlap.

Molecular Feature Calculation:

- Compute a diverse set of molecular fingerprints (e.g., 12 distinct types) from the canonical SMILES strings. These should capture different structural aspects: predefined substructures, topological features, electrotopological states, and atom-pair relationships.

Initial Model Training with Strategic Sampling:

- Divide the initial training data into k-ratios to create balanced subsets for training base models.

- Train multiple deep learning base models (e.g., a Convolutional Neural Network (CNN), a Bidirectional Long Short-Term Memory (BiLSTM), and a model with an attention mechanism) on these subsets using the different molecular fingerprints.

Build the Stacking Ensemble:

- Generate Out-of-Fold (OOF) predictions from each of the base models.

- Use these OOF predictions as input features to train a second-level meta-learner (the stack model) that learns to combine the base predictions optimally.

Iterate with Active Learning:

- Selection: Use the trained stack model to predict on a large pool of unlabeled data. Apply a selection strategy (e.g., uncertainty sampling) to identify the most informative samples for which to request labels.

- Update: Add the newly labeled data to the training set.

- Retrain: Retrain the stacking ensemble model on the updated, larger training set.

- Repeat this cycle until a performance plateau or labeling budget is reached.

Protocol 2: Validation via Molecular Docking

- Objective: To computationally validate the predictions of the ML model, especially for highly toxic compounds [18].

- Procedure:

- Select compounds predicted to be active (toxic) by the model.

- Obtain the 3D structure of the target protein (e.g., Thyroid Peroxidase for the case study, or a relevant target like a viral protease for COVID-19).

- Perform molecular docking simulations to predict the binding affinity and pose of the candidate compounds within the target's active site.

- Analyze the results; strong binding interactions for model-predicted actives reinforce the reliability of the machine learning framework.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools

| Item | Function / Explanation |

|---|---|

| U.S. EPA ToxCast Database | A source of high-throughput in vitro screening data used to curate training sets for chemical toxicity prediction [18]. |

| RDKit | An open-source cheminformatics toolkit used for processing SMILES notations, calculating molecular descriptors, and working with chemical data [18]. |

| Molecular Fingerprints | Numerical representations of molecular structure. Using a diverse set (e.g., 12 types) helps capture different aspects of chemistry for the model [18]. |

| imbalanced-learn Python Library | A library offering numerous resampling techniques (oversampling, undersampling) for handling class imbalance. Use it for benchmarking, but prioritize strong classifiers and threshold tuning [26]. |

| Molecular Docking Software | Tools (e.g., AutoDock Vina, GOLD) used for computational validation of predicted active compounds by simulating their binding to a protein target [18]. |

| Biological Functional Assays | Wet-lab experiments (e.g., enzyme inhibition, cell viability) that are essential for empirically validating the activity of compounds identified by computational models [27]. |

Workflow and Pathway Diagrams

[Figure: Active Learning Workflow]

[Figure: Strategic Sampling for Stacking]

Strategic Solutions: Data-Level and Algorithmic Approaches for Balanced Active Learning

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is the core problem that data-level resampling techniques aim to solve in chemical library research?

Data-level resampling techniques address the problem of class imbalance. In machine learning for drug discovery, such as in Drug-Target Interaction (DTI) prediction, class imbalance occurs when one class (e.g., non-binders) is represented by a vastly larger number of samples than the other class (e.g., binders) [28]. This imbalance can cause standard learning algorithms, which often assume balanced class distributions, to become biased toward the majority class, leading to poor predictive performance for the critical minority class [28]. Resampling techniques modify the dataset itself to achieve a more balanced class distribution before it is presented to the learning algorithm.

Q2: How does handling class imbalance relate to Active Learning workflows for ultra-large chemical libraries?

In Active Learning, you work with prohibitively expensive scoring functions (like molecular docking) and iteratively select compounds for labeling [29] [30]. Class imbalance is inherent because the fraction of high-scoring "hits" (the minority class) in a random library is often tiny. While Active Learning intelligently selects data points to label, resampling can play a crucial role within the machine learning model's training cycle. After a batch of compounds is scored by the expensive function, the resulting training set for the machine learning model is likely imbalanced. Applying resampling to this set can improve the model's ability to recognize the sparse but critical "hits," thereby enhancing the next cycle of compound selection [30].

Q3: What are the two main categories of data-level resampling methods?

The two main categories are Oversampling and Undersampling [28].

- Oversampling balances the dataset by adding new instances to the minority class.

- Undersampling balances the dataset by discarding instances from the majority class.

Practical Implementation & Technique Selection

Q4: My Random Forest model on a moderately imbalanced DTI dataset is ignoring the minority class. What is a strong resampling technique to try first?

Based on comparative studies, SVM-SMOTE paired with a Random Forest classifier has been shown to record high F1 scores for moderately imbalanced activity classes [28]. It can be a reliable go-to resampling method for such scenarios.

Q5: I've heard Random Undersampling (RUS) is fast, but when should I avoid it?

Be cautious with Random Undersampling when your dataset is highly imbalanced [28]. In the cited DTI study, RUS severely degraded model performance under these conditions because balancing the classes discards a massive amount of majority-class data, potentially throwing away valuable information and making the model's learning unreliable [28]. Less aggressive undersampling to a moderate ratio (e.g., 1:10) has performed well in other bioassay studies [2], so the outcome depends on how much data is removed.

Q6: Are there learning methods that are inherently more robust to class imbalance?

Yes, deep learning methods like Multilayer Perceptrons (MLPs) have demonstrated a degree of inherent robustness. In DTI prediction studies, MLPs recorded high F1 scores across various activity classes even when no resampling method was applied to the imbalanced dataset [28]. However, this does not preclude the potential for further performance gains by combining deep learning with resampling techniques.

Troubleshooting Common Experimental Issues

Q7: After applying SMOTE, my model's overall accuracy increased, but it still fails to predict true binders. What is going wrong?

This is a classic sign of a persistent class imbalance problem or improperly synthesized samples. High overall accuracy can be misleading when the class distribution is skewed. Focus on metrics that are more sensitive to minority class performance, such as F1-score, Precision, or Recall for the positive class [28]. The issue may be that the synthetic samples generated by SMOTE are not meaningful representatives of the true minority class in your specific chemical space. Consider trying alternative oversampling techniques like ADASYN, which adaptively generates samples based on the density of minority class examples, or revisiting the representativeness of your features [28].

Q8: My resampling experiment is yielding inconsistent results across different runs. How can I stabilize this?

Ensure you are correctly implementing the resampling technique within a cross-validation framework [28]. The resampling should be applied after splitting the data into training and validation folds to prevent information from the validation set leaking into the training process. This means the resampling is performed only on the training fold within each cross-validation cycle. Using a fixed random seed can also help in achieving reproducible results for comparison purposes.

Comparative Analysis of Resampling Techniques

Table 1: Summary and Comparison of Core Resampling Techniques

| Technique | Category | Core Mechanism | Key Advantages | Key Disadvantages | Ideal Context in Chemical Library Screening |

|---|---|---|---|---|---|

| Random Undersampling (RUS) | Undersampling | Randomly discards majority class instances until balance is achieved. | Computationally fast. Reduces dataset size, speeding up model training. | Severe information loss [28]. Can lead to model underfitting and poor generalization, especially in highly imbalanced datasets [28]. | Generally not recommended, particularly for highly imbalanced datasets where data is precious. |

| Random Oversampling (ROS) | Oversampling | Randomly duplicates minority class instances until balance is achieved. | Simple to implement. No information loss from the majority class. | High risk of model overfitting because the model sees exact copies of the same minority samples multiple times. | Can be a quick baseline, but be wary of overfitting on the duplicated chemical structures. |

| SMOTE (Synthetic Minority Oversampling Technique) | Oversampling | Creates synthetic minority samples by interpolating between existing minority instances in feature space. | Reduces risk of overfitting compared to ROS. Introduces new, plausible variations of minority class examples. | Can generate noisy samples if the minority class is not well-clustered. Does not consider the majority class, potentially creating samples in majority class regions. | Useful when the "active" chemical compounds form a coherent cluster in the descriptor/fingerprint space. |

| ADASYN (Adaptive Synthetic Sampling) | Oversampling | An extension of SMOTE that adaptively generates more synthetic data for minority class examples that are harder to learn. | Focuses on the difficulty of learning minority samples, potentially improving model performance at class boundaries. | Can be more complex to implement and tune than SMOTE. Similar to SMOTE, it may amplify noise if present. | Beneficial when the boundary between binders and non-binders is complex and some binders are "harder" to identify. |
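
The interpolation mechanism behind SMOTE can be sketched as follows. This is a simplified illustration with a hypothetical `smote_like` helper and three made-up 4-bit "fingerprints". For real work, an established implementation such as the one in imbalanced-learn is the safer choice.

```python
import random


def smote_like(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating between a minority
    sample and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of `base` (squared Euclidean distance).
        neighbours = sorted(
            (m for m in minority if m is not base),
            key=lambda m: sum((a - b) ** 2 for a, b in zip(base, m)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> other
        synthetic.append([a + gap * (b - a) for a, b in zip(base, other)])
    return synthetic


# Toy minority class: three binary fingerprints of "active" compounds.
actives = [[1.0, 0.0, 1.0, 1.0], [1.0, 1.0, 1.0, 0.0], [0.0, 0.0, 1.0, 1.0]]
new = smote_like(actives, n_new=4)
for vec in new:
    print([round(v, 2) for v in vec])
```

Bits shared by both parents are preserved, while differing bits become fractional values. A synthetic point therefore does not correspond to a real molecule or a valid binary fingerprint, which is one reason the usefulness of SMOTE depends on the chosen representation.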

Experimental Protocols & Workflows

General Protocol for Evaluating Resampling Techniques

This protocol provides a framework for comparing the effectiveness of different resampling methods in a cheminformatics context.

- Dataset Preparation: Start with your labeled chemical dataset (e.g., compounds with known binding activity). Represent compounds using features such as molecular fingerprints (e.g., Morgan Fingerprints) or physicochemical descriptors [29].

- Define Performance Metrics: Select metrics that are robust to imbalance. F1-score for the minority class is highly recommended, along with precision, recall, and potentially AUC-ROC [28].

- Establish a Cross-Validation Scheme: Use k-fold cross-validation to ensure a robust evaluation. Critical: Apply the resampling technique only to the training folds within each cross-validation loop. The validation fold must be left untouched to provide an unbiased performance estimate.

- Model Training and Evaluation: For each training fold, apply the resampling technique (e.g., RUS, ROS, SMOTE, ADASYN). Train your chosen machine learning model(s) (e.g., Random Forest, Gaussian Naïve Bayes) on the resampled training data. Validate the model on the unaltered validation fold and record the performance metrics.

- Result Aggregation and Comparison: Aggregate the results across all cross-validation folds for each resampling technique and model combination. Use the aggregated metrics, particularly the F1-score, to determine the most effective strategy.
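
The critical cross-validation step can be sketched as below: resampling touches only the training indices of each fold. The helpers `kfold_indices` and `random_oversample` are hypothetical, the labels are a toy set, and the split is a plain shuffled k-fold. A stratified split is usually preferable when actives are this rare.

```python
import random


def kfold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and deal them into k folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def random_oversample(indices, labels, seed=0):
    """Duplicate minority-class indices until both classes are equal in size."""
    rng = random.Random(seed)
    pos = [i for i in indices if labels[i] == 1]
    neg = [i for i in indices if labels[i] == 0]
    minority, majority = (pos, neg) if len(pos) < len(neg) else (neg, pos)
    if not minority:  # nothing to duplicate in this fold
        return list(indices)
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    return list(indices) + extra


labels = [1] * 6 + [0] * 54  # toy dataset, IR 1:9
folds = kfold_indices(len(labels), k=3)

for f, val_idx in enumerate(folds):
    train_idx = [i for g, fold in enumerate(folds) if g != f for i in fold]
    train_bal = random_oversample(train_idx, labels, seed=f)  # training fold only
    # A model would be fitted on train_bal and scored on the untouched val_idx.
    n_pos = sum(labels[i] for i in train_bal)
    print(f"fold {f}: train {n_pos} actives / {len(train_bal) - n_pos} inactives, "
          f"validation actives {sum(labels[i] for i in val_idx)} of {len(val_idx)}")
```

Because duplicates are drawn only from training indices, no copy of a validation compound can leak into training. Resampling before the split would break that guarantee.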

Detailed Methodology: A Cited SMOTE Experiment

The following workflow is derived from a comparative study on DTI prediction [28].

Objective: To compare the effectiveness of several resampling techniques, including SMOTE and RUS, in improving the binary classification performance of machine learning models for predicting drug-target interactions across ten cancer-related activity classes from BindingDB.

Key Experimental Details:

- Dataset: Ten cancer-related activity classes from BindingDB [28].

- Molecular Representation: Chemical compounds were represented as molecular fingerprints, specifically Extended-Connectivity Fingerprints (ECFP), or as SMILES strings [28].

- Resampling Techniques Tested: Random Undersampling (RUS), Random Oversampling (ROS), SMOTE, and SVM-SMOTE [28].

- Learning Algorithms: Included Random Forest (RF) and Gaussian Naïve Bayes (GNB) as standard machine learning models, and Multilayer Perceptron (MLP) as a deep learning baseline [28].

- Key Findings:

- RUS was found to be unreliable, severely affecting model performance, especially with high imbalance [28].

- SVM-SMOTE paired with RF or GNB achieved high F1-scores across severely and moderately imbalanced classes [28].

- The Multilayer Perceptron (MLP) deep learning model recorded high F1-scores even without resampling, demonstrating inherent robustness to imbalance [28].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Resampling Experiments in Cheminformatics

| Item / Resource | Function / Purpose |

|---|---|

| RDKit | An open-source cheminformatics toolkit used for computing molecular descriptors, generating fingerprints (e.g., Morgan Fingerprints), and handling SMILES strings [29]. |

| imbalanced-learn (scikit-learn-contrib) | A Python library providing a wide range of resampling techniques, including implementations of ROS, SMOTE, and ADASYN, integrated with the scikit-learn API. |

| Molecular Fingerprints (e.g., ECFP) | A way to represent a molecular structure as a bit string, capturing key structural features. This numeric representation is essential for both machine learning and interpolation in techniques like SMOTE [28] [29]. |

| scikit-learn | A core Python library for machine learning. It provides the classifiers (Random Forest, etc.), metrics (F1-score, etc.), and data splitting utilities needed for the experimental pipeline [29]. |

| BindingDB / ChEMBL | Public databases containing chemical and biological information for a vast number of compounds and protein targets. Used as a source for building imbalanced datasets for DTI prediction [28]. |

| Active Learning Framework | A custom or pre-built framework for iteratively selecting compounds from an ultra-large library for expensive scoring, which is the broader context where resampling is applied [29] [30]. |

[Figure: Resampling Technique Mechanisms Visualized]

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary algorithm-level strategies to combat class imbalance without changing the data itself? At the algorithm level, the two foremost strategies are Cost-Sensitive Learning (CSL) and Ensemble Methods. CSL directly modifies the learning algorithm to assign a higher penalty for misclassifying minority class instances, forcing the model to pay more attention to them [31]. Ensemble methods combine multiple models to create a more robust and accurate predictor. When specifically designed for imbalanced data, they can integrate techniques like strategic sampling or cost-sensitive weighting to improve minority class recognition [18] [32].

FAQ 2: When should I choose Cost-Sensitive Learning over data-level methods like resampling? Cost-Sensitive Learning is particularly advantageous when the training set is noisy or when there is a significant mismatch between the class distributions in your training and real-world test data [33]. It preserves the original data distribution, avoiding the potential overfitting that can occur with oversampling or the loss of informative data from undersampling [31]. CSL is also computationally efficient as it does not increase the size of the dataset [31].

FAQ 3: How do ensemble methods like stacking improve performance on imbalanced chemical data? Stacking is an ensemble technique that uses a meta-learner to optimally combine the predictions of multiple base models. In imbalanced chemical data scenarios, this leverages the strengths of diverse models (e.g., CNNs for spatial features and BiLSTMs for sequential relationships), which collectively can capture more complex patterns from the minority class [18]. When combined with strategic sampling within an Active Learning framework, stacking has been shown to achieve high performance (e.g., AUROC of 0.824) while requiring up to 73.3% less labeled data [18].

FAQ 4: Can Cost-Sensitive Learning and ensemble methods be combined? Yes, this is a powerful and common approach. You can create a cost-sensitive ensemble by applying CSL to the base learners within an ensemble framework. For example, an Adaptive Cost-Sensitive Learning (AdaCSL) algorithm can be used with neural networks to adaptively adjust the loss function, reducing the cost of misclassification on the test set [33]. Another method is to use Error Correcting Output Codes (ECOC) with cost-sensitive baseline classifiers to handle multiclass imbalanced problems [34].

FAQ 5: My dataset has both class imbalance and label noise. What should I consider? The coexistence of class imbalance and label noise is a particularly challenging scenario. Label noise can severely impede the identification of optimal decision boundaries and lead to model overfitting [35]. In these cases, algorithm-level methods like cost-sensitive learning and certain robust ensemble methods can be beneficial. A systematic review suggests that the effectiveness of any algorithm is dataset-dependent, but deep learning methods may excel on complex datasets with these issues, while resampling approaches can be competitive with lower computational cost [35].

Troubleshooting Guides

Problem 1: The model reports high overall accuracy but fails to identify minority-class (active/toxic) compounds.

Explanation This is a classic sign of a model biased towards the majority class. In chemical risk assessment, the minority class (active/toxic compounds) is often the class of interest, and this failure can have severe consequences [18]. Standard learning algorithms are designed to maximize overall accuracy and may ignore the minority class when it is severely underrepresented [31].

Solution Steps

- Implement Cost-Sensitive Learning: Assign a higher misclassification cost to the minority class. The goal is to minimize the high-cost errors, making the model more sensitive to the toxic compounds [31].

- Adopt an Advanced Ensemble Method: Use a stacking ensemble with strategic data sampling. For example, divide your training data into k-ratios to achieve a more balanced distribution during the training of base models [18].

- Select Appropriate Metrics: Stop using accuracy as your primary metric. Instead, use metrics that are robust to imbalance, such as AUROC (Area Under the Receiver Operating Characteristic Curve), AUPRC (Area Under the Precision-Recall Curve), and MCC (Matthews Correlation Coefficient) [18]. Also monitor recall and precision for the minority class specifically.
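To make the metrics point concrete, the following minimal sketch scores a degenerate classifier that labels every compound inactive. It uses only the standard library; the helper names are hypothetical and the class counts are illustrative. AUROC and AUPRC require ranked scores rather than hard labels and are not covered here.

```python
import math

def confusion_counts(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary labels where 1 = active."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn

def imbalance_report(y_true, y_pred):
    """Accuracy alongside minority-class recall/precision and MCC."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": (tp + tn) / len(y_true),
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        # MCC is conventionally reported as 0 when the denominator vanishes
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
    }

y_true = [1] * 229 + [0] * 1257          # illustrative imbalance
always_inactive = [0] * len(y_true)      # model that ignores the minority class
report = imbalance_report(y_true, always_inactive)
print(report)
```

The classifier reaches roughly 85% accuracy while its minority-class recall and MCC are both zero, which is exactly the failure that accuracy alone hides.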

Prevention Tip Proactively address class imbalance during the experimental design phase, not as an afterthought. Choose algorithm-level solutions like CSL or ensembles from the start when you know your dataset is imbalanced.

Problem 2: The performance degrades significantly when applying the model to a real-world dataset with a different class ratio.

Explanation This issue often stems from a mismatch between the class distributions in your training set and the real-world (test) data. A model trained on a dataset with one imbalance ratio may not generalize well to a population with a different ratio [33].

Solution Steps

- Employ an Adaptive Cost-Sensitive Algorithm: Use methods like the Adaptive Cost-Sensitive Learning (AdaCSL) algorithm. AdaCSL adaptively adjusts the loss function to bridge the difference in class distributions between subgroups of training and validation samples, which usually leads to better performance on the test data [33].

- Utilize Active Learning (AL): Incorporate an AL framework. An uncertainty-based AL strategy can iteratively select the most informative samples from the unlabeled real-world data for labeling and model updating. This allows the model to adapt to the new distribution and has shown superior stability under severe class imbalance [18].

- Validate with Realistic Test Sets: During development, evaluate your model on test sets constructed with varying active-to-inactive ratios (e.g., 1:2, 1:3, up to 1:6) to simulate real-world scenarios and assess model robustness [18].
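One simple way to build such ratio-controlled test sets is to keep every inactive compound and subsample the actives. The sketch below assumes that construction; the source does not state how its ratio test sets were assembled, and the function name and compound identifiers are hypothetical.

```python
import random

def make_ratio_test_set(actives, inactives, ratio, seed=0):
    """Subsample actives so that active:inactive is approximately 1:ratio."""
    n_active = min(len(actives), len(inactives) // ratio)
    rng = random.Random(seed)            # fixed seed for reproducible subsets
    return rng.sample(actives, n_active), list(inactives)

# Placeholder identifiers sized like the 196-active / 202-inactive test set
actives = [f"active_{i}" for i in range(196)]
inactives = [f"inactive_{i}" for i in range(202)]

test_sets = {r: make_ratio_test_set(actives, inactives, r) for r in range(2, 7)}
for r, (act, ina) in test_sets.items():
    print(f"1:{r} -> {len(act)} actives, {len(ina)} inactives")
```

Evaluating the same trained model on each subset shows how quickly precision-sensitive metrics degrade as actives become rarer.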

Problem 3: High computational cost and complexity of ensemble methods.

Explanation Combining multiple models inherently increases computational time and memory requirements. This can become a bottleneck, especially with large chemical libraries [32].

Solution Steps

- Apply Ensemble Pruning: Use selective ensemble learning (ensemble pruning) to identify a subset of base learners that maintains or even outperforms the performance of the entire ensemble. This reduces the ensemble size and computational load [32].

- Use Optimization-Based Pruning: Implement metaheuristic-based pruning methods (e.g., population-based or trajectory-based algorithms). These optimization techniques efficiently select the most diverse and complementary subset of base learners to form a compact, powerful ensemble [32].

- Leverage Efficient Base Learners: Choose computationally efficient base algorithms for your ensemble. For example, tree-based models like Random Forest or XGBoost are often faster to train than deep neural networks and can be very effective [34].
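The pruning idea can be illustrated with greedy forward selection, a much simpler stand-in for the metaheuristic methods mentioned above. The sketch assumes each base learner has already produced 0/1 predictions on a validation set that contains both classes; the model names and predictions are invented for illustration.

```python
def majority_vote(pred_lists):
    """Combine 0/1 predictions from several models; ties go to class 1."""
    n_models = len(pred_lists)
    return [1 if 2 * sum(votes) >= n_models else 0 for votes in zip(*pred_lists)]

def balanced_accuracy(y_true, y_pred):
    """Mean of recall and specificity (assumes both classes are present)."""
    pos = [p for t, p in zip(y_true, y_pred) if t == 1]
    neg = [p for t, p in zip(y_true, y_pred) if t == 0]
    return 0.5 * (sum(pos) / len(pos) + (len(neg) - sum(neg)) / len(neg))

def greedy_prune(val_preds, y_val, max_size):
    """Add, one at a time, the model that most improves the ensemble score."""
    selected, best_score = [], float("-inf")
    remaining = set(val_preds)
    while remaining and len(selected) < max_size:
        scored = []
        for name in sorted(remaining):
            members = [val_preds[m] for m in selected + [name]]
            scored.append((balanced_accuracy(y_val, majority_vote(members)), name))
        score, name = max(scored, key=lambda s: s[0])
        if score <= best_score:          # stop when no candidate helps
            break
        selected.append(name)
        remaining.remove(name)
        best_score = score
    return selected, best_score

y_val = [1, 1, 1, 0, 0, 0, 0, 0]
val_preds = {                            # hypothetical validation predictions
    "cnn":    [1, 1, 0, 0, 0, 0, 0, 1],
    "bilstm": [1, 0, 1, 0, 0, 0, 1, 0],
    "attn":   [0, 1, 1, 0, 0, 1, 0, 0],
    "noisy":  [0, 0, 0, 1, 1, 0, 0, 0],
}
selected, score = greedy_prune(val_preds, y_val, max_size=4)
print(selected, score)
```

In this toy case the three complementary models are kept and the uninformative one is dropped, because adding it no longer improves the validation score.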

Detailed Methodology: Active Stacking-Deep Learning with Strategic Sampling

This protocol is adapted from a study focused on predicting Thyroid-Disrupting Chemicals (TDCs) [18].

1. Data Curation and Preprocessing

- Source: U.S. EPA ToxCast program and CompTox Chemical Dashboard.

- Training Set: Start with 1519 chemicals. Preprocess by:

  - Removing entries with missing/invalid SMILES.

  - Converting SMILES to a standardized canonical form using RDKit.

  - Filtering out inorganic compounds (no carbon atoms) and mixtures (SMILES containing ".").

  - Removing duplicates. Final training set: 1486 compounds (1257 inactive, 229 active).

- Test Set: Start with 1863 chemicals from the CCTE_Simmons_AUR_TPO assay. Apply the same preprocessing and remove duplicates present in the training set. Final test set: 398 chemicals (196 active, 202 inactive). For robustness testing, create additional test sets with imbalance ratios from 1:2 to 1:6.
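The curation steps above could be sketched roughly as follows. This is not the study's actual code: `curate`, `parse_with_rdkit`, and the `exclude` parameter are hypothetical names, and the parsing step is passed in as a function so the filtering logic can be exercised without RDKit installed. The RDKit calls used (`Chem.MolFromSmiles`, `Chem.MolToSmiles`, `GetAtoms`, `GetAtomicNum`) are standard.

```python
def parse_with_rdkit(smiles):
    """Return (canonical_smiles, has_carbon), or None if RDKit cannot parse it."""
    from rdkit import Chem  # imported lazily; only needed for the default parser
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    has_carbon = any(atom.GetAtomicNum() == 6 for atom in mol.GetAtoms())
    return Chem.MolToSmiles(mol), has_carbon

def curate(smiles_list, parse=parse_with_rdkit, exclude=()):
    """Apply the curation steps; `exclude` holds canonical SMILES to drop."""
    seen = set(exclude)
    kept = []
    for smi in smiles_list:
        if not smi or "." in smi:                  # missing entry or mixture
            continue
        parsed = parse(smi.strip())
        if parsed is None:                         # invalid SMILES
            continue
        canonical, has_carbon = parsed
        if not has_carbon or canonical in seen:    # inorganic or duplicate
            continue
        seen.add(canonical)
        kept.append(canonical)
    return kept
```

With RDKit available, a call such as `train = curate(raw_train_smiles)` followed by `test = curate(raw_test_smiles, exclude=train)` would also remove test compounds that already appear in the training set; `raw_train_smiles` and `raw_test_smiles` are placeholder names for the input lists.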

2. Molecular Feature Calculation

- Calculate 12 distinct molecular fingerprints from the canonical SMILES representations using RDKit. These fingerprints span categories such as predefined substructures, topology-derived substructures, electrotopological state indices, and atom pair relationships [18].

3. Model Architecture and Training

- Base Models: A stacking ensemble using three deep learning architectures:

  - Convolutional Neural Network (CNN) for spatial feature extraction.

  - Bidirectional Long Short-Term Memory (BiLSTM) to capture molecular relationships.

  - Attention Mechanism to focus on the most relevant features.

- Stacking Procedure: The Out-of-Fold (OOF) predictions from the base models are used as input features for a second-layer meta-learner that makes the final prediction.

- Strategic k-Sampling: The training data is divided into k-ratios to achieve a more balanced data distribution during the training of the base models.

- Active Learning Framework:

  - Start with a small, random subset of the training data (e.g., 10%).

  - Use a selection strategy (e.g., uncertainty sampling) to query the most informative samples from the unlabeled pool.

  - Retrain the model with the newly labeled data and iterate.
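The active learning steps above (seed with about 10% of the data, query by uncertainty, retrain, repeat) can be sketched as a small loop. To keep the example self-contained, a toy one-descriptor threshold model stands in for the stacking ensemble; `fit`, `predict`, `oracle`, and the descriptor values are illustrative assumptions, not part of the cited protocol.

```python
import math
import random

def uncertainty_query(probs, batch_size):
    """Return the pool ids whose predicted P(active) lies closest to 0.5."""
    return sorted(probs, key=lambda i: abs(probs[i] - 0.5))[:batch_size]

def active_learning(n_samples, oracle, fit, predict,
                    seed_frac=0.10, batch_size=10, n_rounds=5, seed=0):
    rng = random.Random(seed)
    n_seed = max(1, round(seed_frac * n_samples))
    labels = {i: oracle(i) for i in rng.sample(range(n_samples), n_seed)}
    for _ in range(n_rounds):
        pool = [i for i in range(n_samples) if i not in labels]
        if not pool:
            break
        model = fit(labels)                        # retrain on all labels so far
        probs = {i: predict(model, i) for i in pool}
        for i in uncertainty_query(probs, batch_size):
            labels[i] = oracle(i)                  # the "expensive" labeling step
    return labels

xs = [i / 99 for i in range(100)]                  # one toy descriptor per compound
oracle = lambda i: int(xs[i] > 0.8)                # hidden ground truth (20 actives)

def fit(labels):
    """Toy model: threshold halfway between the two class means."""
    act = [xs[i] for i, y in labels.items() if y == 1]
    ina = [xs[i] for i, y in labels.items() if y == 0]
    if not act or not ina:
        return 0.5                                 # fallback until both classes are seen
    return 0.5 * (sum(act) / len(act) + sum(ina) / len(ina))

def predict(threshold, i):
    return 1.0 / (1.0 + math.exp(-20.0 * (xs[i] - threshold)))

labeled = active_learning(len(xs), oracle, fit, predict)
print(len(labeled), "labeled;", sum(labeled.values()), "actives found")
```

Swapping `fit` and `predict` for a real model leaves the loop unchanged, which is the main reason for passing them in as functions.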

4. Performance Metrics

- Primary metrics should include Matthews Correlation Coefficient (MCC), Area Under the Receiver Operating Characteristic Curve (AUROC), and Area Under the Precision-Recall Curve (AUPRC), as they are informative for imbalanced classification [18].

The following table summarizes quantitative results from key studies employing algorithm-level innovations.

Table 1: Performance of Algorithm-Level Methods on Imbalanced Data

| Method | Application Domain | Key Results | Citation |
|---|---|---|---|
| Active Stacking-Deep Learning | Thyroid-Disrupting Chemical Prediction | MCC: 0.51, AUROC: 0.824, AUPRC: 0.851. Achieved with up to 73.3% less labeled data. Performance remained stable across varying test ratios. | [18] |
| Adaptive Cost-Sensitive Learning (AdaCSL) | General Binary Classification (e.g., disease severity) | Superior cost results on several datasets compared to other approaches. Also shown to improve accuracy by reducing local training-test class distribution mismatch. | [33] |
| Ensemble with ECOC & CSL | Lithology Log Classification (Imbalanced Multiclass) | An ensemble of RF and SVM with ECOC and CSL achieved a Kappa statistic of 84.50% and mean F-measures of 91.04% on blind well data. | [34] |

Workflow Visualization

Active Stacking Ensemble Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Handling Imbalanced Data

| Tool / Reagent | Function / Purpose | Example Use Case |
|---|---|---|
| RDKit | An open-source cheminformatics toolkit. Used for calculating molecular fingerprints and processing SMILES strings. [18] | Generating 12 distinct molecular fingerprints (e.g., substructure, topological) from SMILES notations as model inputs. [18] |
| Cost-Sensitive Loss Functions (e.g., AdaCSL) | An adaptive algorithm that modifies the loss function to assign higher costs for minority class misclassification. | Minimizing overall misclassification cost when there is a mismatch between training and test set distributions. [33] |
| Error Correcting Output Code (ECOC) | A decomposition technique to break down a multiclass problem into multiple binary classification problems. | Enabling the application of binary cost-sensitive learning and ensemble methods to multiclass imbalanced lithofacies classification. [34] |
| Metaheuristic Algorithms (e.g., GA, PSO) | High-level optimization algorithms used for ensemble pruning. | Selecting an optimal subset of base learners from a large ensemble to reduce computational complexity while maintaining performance. [32] |
| Active Learning Query Strategies (Uncertainty, Margin, Entropy) | Methods to identify the most informative data points from an unlabeled pool for expert labeling. | Efficiently expanding the training set for a toxicity prediction model while minimizing labeling effort and cost. [18] [36] |

Active learning (AL) represents a transformative approach in computational chemistry and drug discovery, enabling researchers to navigate vast chemical spaces efficiently. By iteratively selecting the most informative data points for evaluation, AL protocols minimize resource-intensive calculations and experiments. This technical support center addresses key challenges, particularly data imbalance, encountered when implementing AL for chemical library research, providing troubleshooting guides and detailed protocols to support researchers, scientists, and drug development professionals.

FAQs and Troubleshooting Guides

FAQ 1: What is an Active Learning Cycle and How Does it Address Data Imbalance?

An Active Learning cycle is an iterative process where a machine learning model selectively queries an oracle (e.g., experimental assay or computational simulation) to label the most informative data points from a large, unlabeled pool. This closed-loop framework integrates data generation, model training, and informed data selection [37] [19] [38].

For imbalanced data sets, where inactive compounds vastly outnumber active ones, standard AL strategies can fail by ignoring the minority class. Strategic sampling within the AL framework is a key technique to address this. It involves partitioning training data to achieve a more balanced distribution between toxic and nontoxic compounds, forcing the model to learn from the rare but critical active compounds and significantly improving predictive performance for the minority class [18].
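The source does not spell out how the training data is partitioned, so the sketch below shows only one plausible reading of strategic sampling: split the majority (inactive) class into k chunks and pair each chunk with the full set of actives, giving k roughly balanced training subsets. The function name and the integer compound ids are hypothetical.

```python
import random

def k_balanced_subsets(actives, inactives, k, seed=0):
    """Split inactives into k chunks and pair each chunk with all actives."""
    rng = random.Random(seed)
    shuffled = list(inactives)
    rng.shuffle(shuffled)
    chunks = [shuffled[i::k] for i in range(k)]    # k near-equal, disjoint chunks
    return [(list(actives), chunk) for chunk in chunks]

# Placeholder ids sized like a 229-active / 1257-inactive training set
actives = list(range(229))
inactives = list(range(229, 1486))

subsets = k_balanced_subsets(actives, inactives, k=5)
for act, ina in subsets:
    print(f"{len(act)} actives vs {len(ina)} inactives")
```

Each base model can then be trained on a different subset, so every inactive compound is still used once while no single model sees the full 1:5.5 imbalance.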

FAQ 2: Which Compound Selection Strategy Should I Use for My Imbalanced Chemical Library?

The optimal selection strategy depends on your primary goal: maximizing immediate performance or exploring the chemical space to find novel actives. The following table compares common strategies:

| Selection Strategy | Primary Goal | Key Advantage | Consideration for Imbalanced Data |
|---|---|---|---|
| Greedy [37] | Exploitation / Performance | Selects top predicted binders; quickly finds high-affinity compounds. | High risk of getting stuck in a small region of chemical space, potentially missing novel scaffolds. |
| Uncertainty [37] [18] | Exploration / Model Improvement | Selects ligands with the largest prediction uncertainty; improves model robustness. | May select many inactive compounds in imbalanced sets; can be inefficient for finding actives. |
| Mixed (e.g., Top-N Uncertain) [37] | Balanced Approach | Selects the most uncertain predictions from a pool of top candidates. Balances exploration and exploitation. | Effective at finding potent compounds while exploring chemical space; good general-purpose choice. |
| Narrowing [37] | Phased Approach | Starts broad (exploration) and switches to greedy (exploitation) after initial rounds. | Helps build a diverse initial model before focusing on performance, which can help cover the minority class. |
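The four strategies in the table differ only in how they rank candidates, which a short sketch can make explicit. It assumes each compound has a predicted score (higher is better) and an uncertainty estimate. The function names, the `shortlist_factor` and `explore_rounds` parameters, and the use of uncertainty sampling as the "broad" phase of narrowing are all illustrative assumptions rather than details taken from [37].

```python
def greedy(preds, n):
    """Top-n compounds by predicted score."""
    return sorted(preds, key=lambda c: preds[c][0], reverse=True)[:n]

def uncertainty(preds, n):
    """Top-n compounds by prediction uncertainty."""
    return sorted(preds, key=lambda c: preds[c][1], reverse=True)[:n]

def mixed(preds, n, shortlist_factor=3):
    """Most uncertain compounds among the top (shortlist_factor * n) predictions."""
    shortlist = greedy(preds, shortlist_factor * n)
    return sorted(shortlist, key=lambda c: preds[c][1], reverse=True)[:n]

def narrowing(preds, n, round_idx, explore_rounds=3):
    """Explore during the first rounds, then switch to greedy selection."""
    return uncertainty(preds, n) if round_idx < explore_rounds else greedy(preds, n)

# compound id -> (predicted score, uncertainty); values are made up
preds = {
    "A": (9.1, 0.2), "B": (8.7, 0.9), "C": (8.5, 0.5),
    "D": (6.0, 1.5), "E": (5.2, 0.1), "F": (4.0, 1.2),
}
print("greedy:     ", greedy(preds, 2))
print("uncertainty:", uncertainty(preds, 2))
print("mixed:      ", mixed(preds, 2, shortlist_factor=2))
print("narrowing:  ", narrowing(preds, 2, round_idx=0), narrowing(preds, 2, round_idx=3))
```

On this toy input, greedy and uncertainty pick disjoint sets, while the mixed strategy takes one compound from each, which is the exploration-exploitation balance the table describes.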

FAQ 3: My Model is Biased Towards the Majority Class. How Can I Improve It?