Overcoming Data Scarcity: A Practical Guide to Few-Shot Learning for Molecular Property Prediction

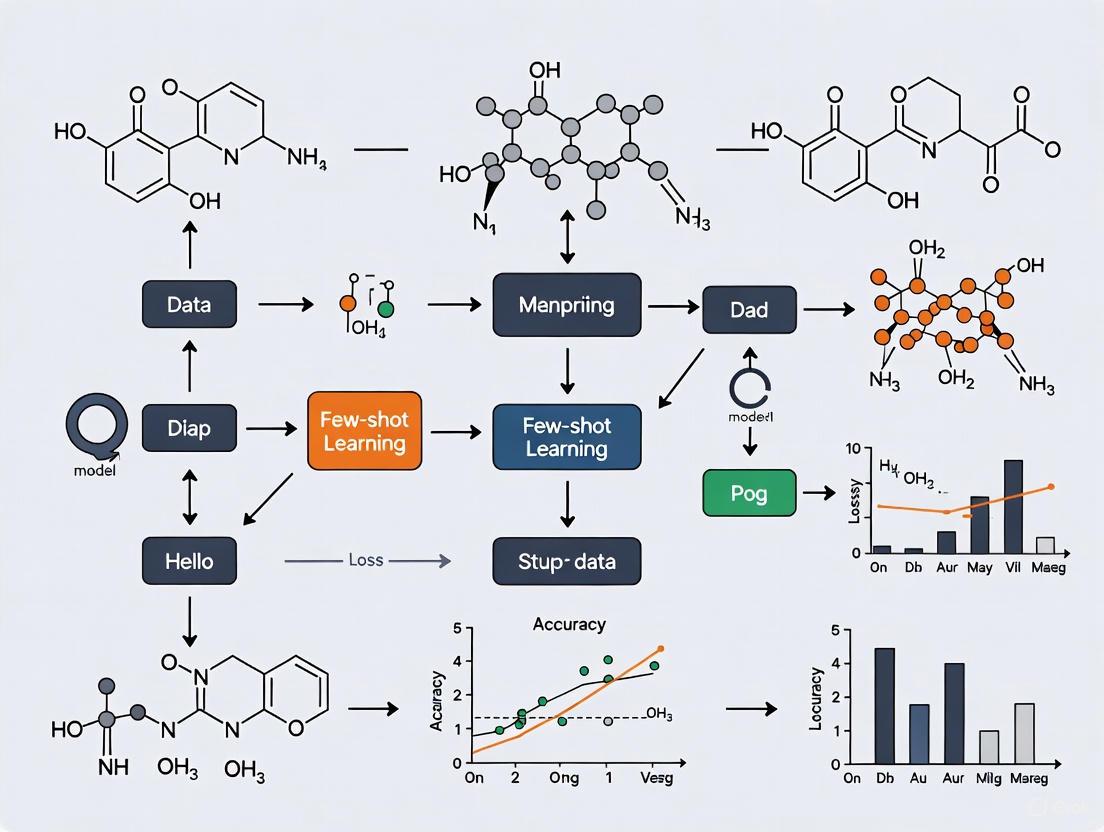

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing few-shot learning (FSL) for molecular property prediction (MPP) with limited data. We first explore the foundational challenges of data scarcity and distribution shifts in real-world molecular datasets. We then detail state-of-the-art methodological approaches, including meta-learning strategies and hybrid molecular representations, offering a practical framework for application. The guide further addresses common troubleshooting and optimization techniques to enhance model robustness and generalization. Finally, we present a comparative analysis of current methods using established benchmarks and evaluation protocols, validating their performance and providing insights for selecting the right approach for specific tasks in early-stage drug discovery.

The Data Scarcity Problem: Core Challenges and FS-MPP Fundamentals

Understanding the Real-World Need for Few-Shot Learning in Drug Discovery

Molecular property prediction (MPP) is a pivotal task in early-stage drug discovery, aimed at identifying innovative therapeutics with optimized absorption, metabolism, and excretion, along with low toxicity and potent pharmacological activity [1]. Traditional drug discovery methods are notoriously resource-intensive, often requiring over a decade and costing billions of dollars, yet clinical success rates remain modest at approximately 10% [1]. This inefficiency has driven the adoption of artificial intelligence (AI) to supplement or even replace traditional experimental methods in early phases, effectively filtering out molecules with a high likelihood of failing in clinical trials [1].

However, a significant obstacle impedes conventional AI approaches: the severe scarcity of high-quality, labeled molecular data. This scarcity arises from the high costs and complexity of wet-lab experiments needed to determine molecular properties [2]. Analysis of real-world molecular databases reveals critical data challenges. For instance, in Figure 2 from the FSMPP survey, the distribution of molecular activity annotations in the ChEMBL database shifts dramatically after removing abnormal entries like null values and duplicate records, revealing issues with annotation quality [2]. Furthermore, the analysis of IC50 distributions for the top-5 most frequently annotated targets shows severe imbalances and value ranges spanning several orders of magnitude [2]. These limitations lead to models that overfit the small portion of annotated training data but fail to generalize to new molecular structures or properties—an archetypal manifestation of the few-shot problem [2].

Few-shot molecular property prediction (FSMPP) has emerged as a paradigm for learning from only a handful of labeled examples, directly addressing this data scarcity challenge [3] [2]. FSMPP formulates MPP as a multi-task learning problem in which models must generalize across both molecular structures and property distributions with limited supervision, which allows rapid adaptation to new tasks even when high-quality labels are scarce [2]. This capability is particularly valuable in therapeutic areas with limited data, such as rare diseases or newly discovered protein targets [2].

Table 1: Core Challenges in Few-Shot Molecular Property Prediction

| Challenge Category | Specific Challenge | Impact on Model Performance |
|---|---|---|
| Cross-Property Generalization | Distribution shifts across different property prediction tasks [2] | Hinders effective knowledge transfer due to varying label spaces and biochemical mechanisms |
| Cross-Molecule Generalization | Structural heterogeneity across molecules [2] | Causes overfitting to limited structural patterns in training data |
| Data Quality | Scarce and low-quality molecular annotations [2] | Limits supervised learning effectiveness and model generalization |
| Task Relationships | Negative transfer in multi-task learning [4] | Performance drops when updates from one task detrimentally affect another |

Key Methodological Approaches in Few-Shot Molecular Property Prediction

Taxonomy of FSMPP Methods

Research in FSMPP has produced diverse methodological approaches that can be systematically categorized into three levels [2]:

- Data-level methods focus on enhancing the quality and quantity of molecular data through techniques such as data augmentation and the use of external chemical knowledge.

- Model-level methods aim to develop more expressive architectures for molecular representation learning, including graph neural networks and multi-modal fusion models.

- Learning paradigm-level methods employ meta-learning and transfer learning strategies to enable models to quickly adapt to new tasks with limited data.

Representative FSMPP Frameworks

Several innovative frameworks exemplify the advancement of FSMPP methodologies:

The Context-informed Few-shot Molecular Property Prediction via Heterogeneous Meta-Learning (CFS-HML) approach employs a dual-pathway architecture to capture both property-specific and property-shared molecular features [5] [1]. This method uses graph neural networks (GNNs) as encoders of property-specific knowledge to capture contextual information about diverse molecular substructures, while simultaneously employing self-attention encoders as extractors of generic knowledge for shared properties [5] [1]. A heterogeneous meta-learning strategy updates parameters of property-specific features within individual tasks (inner loop) and jointly updates all parameters (outer loop), enabling the model to effectively capture both general and contextual information [1].

PG-DERN (Property-Guided Few-Shot Learning with Dual-View Encoder and Relation Graph Learning Network) introduces a dual-view encoder to learn meaningful molecular representations by integrating information from both node and subgraph perspectives [6]. The framework incorporates a relation graph learning module to construct a relation graph based on molecular similarity, improving the efficiency of information propagation and prediction accuracy [6]. Additionally, it uses a property-guided feature augmentation module to transfer information from similar properties to novel properties, enhancing the comprehensiveness of molecular feature representation [6].

Adaptive Checkpointing with Specialization (ACS) addresses the challenge of negative transfer in multi-task learning, which occurs when updates from one task detrimentally affect another [4]. This approach integrates a shared, task-agnostic graph neural network backbone with task-specific trainable heads, adaptively checkpointing model parameters when negative transfer signals are detected [4]. This design promotes inductive transfer among sufficiently correlated tasks while protecting individual tasks from deleterious parameter updates, enabling accurate predictions with as few as 29 labeled samples [4].

Table 2: Essential Components in FSMPP Research

| Component Category | Specific Element | Function in FSMPP |
|---|---|---|
| Molecular Encoders | Graph Neural Networks (GNNs) [5] [1] | Capture spatial structures and property-specific substructures of molecules |
| Molecular Encoders | Self-Attention Encoders [5] [1] | Extract fundamental structures and commonalities across molecules |
| Meta-Learning Strategies | MAML-based Optimization [6] | Learn well-initialized meta-parameters for fast adaptation |
| Meta-Learning Strategies | Heterogeneous Meta-Learning [5] [1] | Separate optimization of property-shared and property-specific knowledge |
| Relation Learning Modules | Adaptive Relational Learning [5] [1] | Infer molecular relations for effective label propagation |
| Relation Learning Modules | Relation Graph Learning [6] | Construct similarity-based graphs to improve information propagation |

Experimental Protocols and Workflows

Protocol 1: Implementing Context-Informed Meta-Learning

The CFS-HML framework demonstrates an effective protocol for context-informed few-shot learning [5] [1]:

Step 1: Molecular Representation Encoding

- Property-Specific Embedding: Process each molecule using a GNN-based encoder (e.g., GIN) to obtain property-specific molecular embeddings that capture relevant substructures [1]. These embeddings serve as carriers of property-specific knowledge parameterized by \(W_g\) [1].

- Property-Shared Embedding: Apply self-attention mechanisms on molecular features combined with class-shared embeddings to capture fundamental structures and commonalities across molecules [1]. This component addresses the limitation of property-specific encoders that may weaken task-shared properties [1].

Step 2: Relational Graph Construction

- Compute similarity metrics between molecular embeddings in the support set to construct a relation graph [1].

- Implement an adaptive relational learning module to estimate pairwise molecular relations specific to the target property, enabling effective propagation of limited labels through the relation graph [5] [1].
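
The relation-graph step can be illustrated with a minimal sketch. It assumes cosine similarity as the similarity metric and a fixed top-k neighbourhood; neither choice is stated in the source, and the function names `cosine` and `relation_graph` are hypothetical. The learned, property-specific relation module of CFS-HML is not reproduced here.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def relation_graph(embeddings, k=2):
    """Return {i: [(j, similarity), ...]} keeping each node's k most similar neighbours."""
    graph = {}
    for i, u in enumerate(embeddings):
        sims = [(j, cosine(u, v)) for j, v in enumerate(embeddings) if j != i]
        sims.sort(key=lambda t: t[1], reverse=True)
        graph[i] = sims[:k]
    return graph

# Toy support-set embeddings (made-up values): two clusters of two molecules each.
support = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
graph = relation_graph(support, k=1)
```

In this toy input each molecule is linked to the other member of its cluster, which is the structure a label-propagation step would then exploit.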

Step 3: Heterogeneous Meta-Learning Optimization

- Inner Loop Update: For each task, compute loss on the support set and update property-specific parameters with gradient descent while keeping property-shared parameters fixed [1].

- Outer Loop Update: Compute loss on the query set and jointly update all model parameters, including both property-shared and property-specific components [5] [1].

- Repeat these episodic training steps across multiple tasks to learn transferable knowledge [5].
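
The inner and outer loops above can be sketched on a toy problem. The example below is an illustration under strong assumptions, not the CFS-HML implementation: a scalar linear model `y = w_shared * x + w_spec` stands in for the encoders, the outer update uses a first-order approximation (gradients are taken at the adapted parameters and applied to the meta-parameters), and all names and hyperparameters are hypothetical.

```python
def grads(w_shared, w_spec, data):
    """Gradients of the mean squared error for the toy model y = w_shared * x + w_spec."""
    n = len(data)
    g_shared = sum(2 * (w_shared * x + w_spec - y) * x for x, y in data) / n
    g_spec = sum(2 * (w_shared * x + w_spec - y) for x, y in data) / n
    return g_shared, g_spec

def meta_train(tasks, inner_lr=0.1, outer_lr=0.05, inner_steps=3, epochs=200):
    w_shared, w_spec = 0.0, 0.0  # meta-parameters
    for _ in range(epochs):
        for support, query in tasks:
            # Inner loop: adapt only the property-specific parameter on the
            # support set while the property-shared parameter stays fixed.
            adapted = w_spec
            for _ in range(inner_steps):
                _, g_spec = grads(w_shared, adapted, support)
                adapted -= inner_lr * g_spec
            # Outer loop: the query-set loss at the adapted parameters
            # updates all meta-parameters (first-order approximation).
            g_shared, g_spec = grads(w_shared, adapted, query)
            w_shared -= outer_lr * g_shared
            w_spec -= outer_lr * g_spec
    return w_shared, w_spec

def make_task(bias):
    """Toy task: shared slope 2.0, task-specific bias."""
    support = [(x, 2.0 * x + bias) for x in (-1.0, 0.0, 1.0)]
    query = [(x, 2.0 * x + bias) for x in (-0.5, 0.5)]
    return support, query

tasks = [make_task(-1.0), make_task(1.0)]
w_shared, w_spec = meta_train(tasks)
```

Here the shared slope is recovered across tasks, while the task-specific bias settles at an initialization between the two tasks from which a few inner steps can adapt.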

Protocol 2: Property-Guided Few-Shot Learning with PG-DERN

The PG-DERN framework provides an alternative protocol emphasizing property guidance and dual-view encoding [6]:

Step 1: Dual-View Molecular Encoding

- Node-Level Encoding: Extract atom-level features and relationships using standard GNN message passing to capture local structural information [6].

- Subgraph-Level Encoding: Identify and encode meaningful molecular substructures that correlate with chemical properties, providing a more global perspective [6].

- Integrate both views through attention mechanisms or concatenation to form comprehensive molecular representations [6].

Step 2: Property-Guided Feature Augmentation

- Identify source properties with sufficient data that are positively correlated with the target novel property [6].

- Transfer information from these related properties to augment the feature representation of molecules with the novel property [6].

- Implement transfer mechanisms such as feature projection or cross-property attention to ensure relevant knowledge transfer [6].

Step 3: Meta-Learning with Relation Graphs

- Construct a relation graph based on molecular similarity in the embedded space to facilitate efficient information propagation [6].

- Employ a MAML-based meta-learning strategy to learn well-initialized model parameters that enable fast adaptation to new properties [6].

- Fine-tune the meta-parameters on the target few-shot task using gradient-based optimization with a carefully tuned learning rate [6].

The Scientist's Toolkit: Essential Research Reagents

Implementing effective FSMPP requires specific computational "reagents" and resources. The following table details essential components for building and evaluating few-shot learning models for molecular property prediction.

Table 3: Key Research Reagent Solutions for FSMPP

| Reagent Category | Specific Resource | Function and Application |
|---|---|---|
| Benchmark Datasets | FS-Mol [7] | Standardized few-shot learning dataset of molecules for fair benchmarking |
| Benchmark Datasets | MoleculeNet [5] [4] | Benchmark containing multiple molecular property prediction tasks |
| Molecular Encoders | GIN (Graph Isomorphism Network) [1] | Property-specific molecular graph encoder that captures spatial structures |
| Molecular Encoders | Pre-GNN [5] | Pre-trained graph neural network for transfer learning in molecular tasks |
| Meta-Learning Algorithms | MAML (Model-Agnostic Meta-Learning) [6] | Optimization-based meta-learning for fast adaptation to new tasks |
| Meta-Learning Algorithms | Heterogeneous Meta-Learning [5] [1] | Specialized algorithm that separately optimizes different knowledge types |
| Evaluation Frameworks | N-Way K-Shot Classification [8] | Standard evaluation protocol measuring model performance with K examples per class |
| Evaluation Frameworks | Cross-Property Generalization Metrics [2] | Evaluation measures for model transferability across different molecular properties |
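
The N-way K-shot protocol listed in the table can be made concrete with a small episode sampler. This is a sketch for the 2-way (binary active/inactive) case, assuming molecules are given as `(molecule_id, label)` pairs; the function name `sample_episode` and the toy data are hypothetical.

```python
import random

def sample_episode(labeled, k_shot, n_query, rng):
    """Split one property's labeled molecules into a K-shot support set and a query set.

    labeled: list of (molecule_id, label) pairs with binary labels 0/1.
    """
    support, query = [], []
    for cls in (0, 1):
        pool = [item for item in labeled if item[1] == cls]
        if len(pool) < k_shot + n_query:
            raise ValueError(f"class {cls} has too few molecules")
        picked = rng.sample(pool, k_shot + n_query)
        support += picked[:k_shot]   # K examples per class
        query += picked[k_shot:]     # held-out molecules of the same task
    return support, query

rng = random.Random(0)
labeled = [(f"mol{i}", i % 2) for i in range(40)]  # made-up balanced task
support, query = sample_episode(labeled, k_shot=5, n_query=8, rng=rng)
```

Support and query sets are disjoint by construction, so query performance measures adaptation from the K labeled examples rather than memorization.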

Few-shot learning represents a transformative approach to molecular property prediction that directly addresses the critical data scarcity challenges in drug discovery. By enabling models to generalize from limited labeled examples through advanced meta-learning strategies, dual-pathway encoding architectures, and cross-property knowledge transfer, FSMPP significantly reduces the resource barriers associated with traditional drug discovery approaches [5] [2] [1]. The experimental protocols and methodologies outlined in this document provide researchers with practical frameworks for implementing these approaches in real-world scenarios.

The future of FSMPP research points toward several promising directions, including the development of more sophisticated relational learning modules that better capture biochemical similarities, integration with large language models for enhanced molecular representation learning, and more effective negative transfer mitigation strategies in multi-task learning environments [2] [4]. As these methodologies continue to mature, few-shot learning is poised to dramatically accelerate the pace of artificial intelligence-driven molecular discovery and design, particularly in domains with severe data constraints such as rare diseases and novel therapeutic targets [2].

In the field of few-shot molecular property prediction (FSMPP), cross-property generalization under distribution shifts represents a fundamental obstacle to developing robust and widely applicable artificial intelligence models. This challenge arises from the inherent biochemical reality that different molecular properties—such as toxicity, solubility, or biological activity—are governed by distinct underlying mechanisms and structure-property relationships [2]. When a model trained on a set of source properties encounters new target properties with different data distributions, its performance often degrades significantly due to distribution shifts and weak inter-task correlations [2] [9].

The practical implications of this challenge are substantial for drug discovery and materials science. In real-world scenarios, researchers frequently need to predict novel molecular properties where only minimal labeled data is available, and the new property of interest may be biochemically distinct from previously encountered properties [4]. This creates a pressing need for methodological approaches that can maintain predictive accuracy despite significant shifts in the property space and the underlying data distributions that govern molecular behavior.

Problem Formulation and Mechanisms

Formal Definition of Distribution Shifts

Distribution shifts in FSMPP manifest through two primary mechanisms that undermine conventional machine learning assumptions:

Covariate Shift: Occurs when the feature distribution of molecules differs between training and testing scenarios, despite consistent input feature spaces [10]. For example, a model trained predominantly on planar aromatic compounds may struggle when predicting properties of complex three-dimensional macrocycles.

Concept/Semantic Shift: Arises when the fundamental relationship between molecular structures and their properties changes across tasks [10]. This is particularly problematic in molecular science since different properties (e.g., toxicity vs. solubility) often follow different biochemical principles.
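
In the standard notation of the distribution-shift literature, the two mechanisms can be written as follows. Here \(x\) denotes a molecule (or its features) and \(y\) a property label; the subscripts are labels introduced for illustration rather than notation from the cited works.

```latex
\begin{align*}
\text{Covariate shift:} \quad & p_{\mathrm{train}}(x) \neq p_{\mathrm{test}}(x),
  \qquad p_{\mathrm{train}}(y \mid x) = p_{\mathrm{test}}(y \mid x) \\
\text{Concept shift:} \quad & p_{\mathrm{src}}(y \mid x) \neq p_{\mathrm{tgt}}(y \mid x)
\end{align*}
```

Under covariate shift only the population of molecules changes, whereas under concept shift the same molecule may map to a different label distribution because the property itself has changed.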

Root Causes in Molecular Data

The underlying causes of these distribution shifts in molecular data are multifaceted. Task imbalance is pervasive, where certain properties have far fewer labeled examples than others due to variations in experimental cost and complexity [4]. Additionally, low task relatedness occurs when properties with weak biochemical correlations are learned jointly, leading to gradient conflicts during optimization [4]. Temporal and spatial disparities in data collection further compound these issues, as measurement techniques and instrumental conditions evolve over time [4].

Methodological Solutions

Heterogeneous Meta-Learning Approaches

The Context-informed Few-shot Molecular Property Prediction via Heterogeneous Meta-Learning (CFS-HML) framework addresses distribution shifts by explicitly separating the learning of property-shared and property-specific knowledge [5]. This approach employs:

- Graph Neural Networks (GNNs) combined with self-attention encoders to extract and integrate both property-specific and property-shared molecular features

- An adaptive relational learning module that infers molecular relationships based on property-shared features

- A heterogeneous meta-learning strategy that updates parameters of property-specific features within individual tasks (inner loop) while jointly updating all parameters (outer loop) [5]

This dual-pathway architecture enables the model to capture both general molecular patterns that transfer across properties and context-specific information crucial for particular property predictions.

Multi-Task Learning with Negative Transfer Mitigation

Adaptive Checkpointing with Specialization (ACS) represents another significant advancement, specifically designed to counteract negative transfer in multi-task learning scenarios [4]. The ACS methodology includes:

- A shared task-agnostic backbone (GNN) for learning general-purpose latent representations

- Task-specific multi-layer perceptron (MLP) heads that provide specialized learning capacity for each property

- Adaptive checkpointing of model parameters when negative transfer signals are detected, preserving optimal performance for each task [4]

This approach allows synergistic knowledge transfer among sufficiently correlated properties while shielding individual tasks from detrimental parameter updates that occur when properties are biochemically dissimilar.

Parameter-Efficient Adaptation

The PACIA framework addresses the overfitting problem common in few-shot scenarios through parameter-efficient adaptation [11]. Key innovations include:

- A unified GNN adapter that generates a minimal set of adaptive parameters to modulate the message passing process

- A hierarchical adaptation mechanism that adapts the encoder at task-level and the predictor at query-level using the unified GNN adapter [11]

- Significant reduction in trainable parameters compared to conventional fine-tuning approaches, thereby enhancing generalization in low-data regimes

Table 1: Comparison of Methodological Approaches to Cross-Property Generalization

| Method | Core Mechanism | Key Advantages | Applicable Scenarios |
|---|---|---|---|
| CFS-HML [5] | Heterogeneous meta-learning with separate property-shared/specific encoders | Explicitly handles distribution shifts; Combines general and contextual knowledge | Scenarios with mixed related and unrelated properties |
| ACS [4] | Multi-task learning with adaptive checkpointing and specialization | Mitigates negative transfer; Robust to task imbalance | Practical settings with severe data imbalance across properties |
| PACIA [11] | Parameter-efficient GNN adaptation with hierarchical mechanism | Reduces overfitting; Computationally efficient | Ultra-low data regimes with limited computational resources |

Experimental Protocols and Evaluation

Benchmark Datasets and Evaluation Metrics

Rigorous evaluation of cross-property generalization requires carefully designed benchmarks and appropriate metrics. Established molecular datasets include:

- ClinTox: Distinguishes FDA-approved drugs from compounds that failed clinical trials due to toxicity

- SIDER: Contains 27 binary classification tasks for side effect prediction

- Tox21: Comprises 12 in-vitro nuclear-receptor and stress-response toxicity endpoints [4]

Critical to proper evaluation is the use of Murcko-scaffold splits rather than random splits, as this better simulates real-world scenarios where models encounter novel molecular scaffolds not seen during training [4]. This approach prevents inflated performance estimates that occur when structurally similar molecules appear in both training and test sets.
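
A scaffold split can be sketched as a grouping problem: every molecule that shares a scaffold is assigned to the same partition. The example assumes scaffold keys have already been computed (for instance as Murcko scaffold SMILES from a cheminformatics toolkit such as RDKit) and are passed in as strings. The function name `scaffold_split` and the largest-groups-first ordering are illustrative assumptions, not the protocol of the cited work.

```python
from collections import defaultdict

def scaffold_split(scaffolds, train_frac=0.8):
    """Group molecule indices by scaffold and assign whole groups to train or test.

    scaffolds: one scaffold key (for example a scaffold SMILES string) per molecule.
    """
    groups = defaultdict(list)
    for idx, key in enumerate(scaffolds):
        groups[key].append(idx)
    # Largest scaffold groups are placed first; ties are broken by first index.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_train_target = train_frac * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train += group
        else:
            test += group
    return train, test

# Made-up scaffold keys: three scaffold families of sizes 5, 3, and 2.
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2
train, test = scaffold_split(scaffolds, train_frac=0.8)
```

Because whole groups move together, no scaffold appears on both sides of the split, which is the property that prevents the inflated estimates described above.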

Implementation Protocol for CFS-HML

The experimental workflow for implementing and evaluating the CFS-HML approach follows these key stages:

- Molecular Representation: Encode molecules using graph representations where atoms constitute nodes and bonds constitute edges

- Feature Extraction:

  - Process molecular graphs through GIN or Pre-GNN encoders for property-specific knowledge

  - Extract property-shared features using self-attention encoders

- Meta-Training:

  - Inner Loop: Update property-specific parameters for individual tasks using support sets

  - Outer Loop: Jointly update all parameters across tasks using query sets

- Relation Learning: Apply adaptive relational learning to molecular representations based on property-shared features

- Evaluation: Assess performance on query sets from novel properties with limited labeled examples [5]

Implementation Protocol for ACS

For the ACS method, the experimental protocol emphasizes mitigation of negative transfer:

- Backbone Initialization: Initialize a shared GNN backbone with message-passing architecture

- Task-Specific Head Construction: Implement separate MLP heads for each molecular property

- Training with Checkpointing:

  - Monitor validation loss for every task independently

  - Checkpoint the best backbone-head pair whenever a task achieves a new validation loss minimum

- Specialized Model Creation: After training, obtain a specialized model for each task comprising the best-performing backbone-head combination [4]

- Cross-Property Evaluation: Evaluate each specialized model on its corresponding property, particularly focusing on low-data scenarios
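
The checkpointing rule in the protocol above can be sketched independently of any deep-learning framework. The example assumes training has already produced one validation-loss dictionary and one parameter snapshot per epoch; the function name `checkpoint_per_task`, the task names, and the loss values are made up for illustration.

```python
import copy

def checkpoint_per_task(val_losses_per_epoch, params_per_epoch):
    """Keep, for each task, the backbone-head pair from its lowest-validation-loss epoch.

    val_losses_per_epoch: list of {task: loss} dicts, one per epoch.
    params_per_epoch: list of {"backbone": ..., "heads": {task: ...}} snapshots.
    """
    best_loss, checkpoints = {}, {}
    for epoch, (losses, params) in enumerate(zip(val_losses_per_epoch, params_per_epoch)):
        for task, loss in losses.items():
            # A new validation minimum for this task triggers a checkpoint.
            if loss < best_loss.get(task, float("inf")):
                best_loss[task] = loss
                checkpoints[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(params["backbone"]),
                    "head": copy.deepcopy(params["heads"][task]),
                }
    return checkpoints

# Made-up validation losses: "tox" is best at epoch 1, "sol" at epoch 2.
losses = [
    {"tox": 0.90, "sol": 0.80},
    {"tox": 0.60, "sol": 0.85},
    {"tox": 0.70, "sol": 0.50},
]
params = [{"backbone": [e], "heads": {"tox": [e, 0], "sol": [e, 1]}} for e in range(3)]
ckpt = checkpoint_per_task(losses, params)
```

Each task ends up with its own specialized model, so a later update that helps one property while hurting another does not overwrite the second property's best parameters.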

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Reagents for Cross-Property Generalization Research

| Research Reagent | Function | Implementation Examples |
|---|---|---|
| Graph Neural Networks (GNNs) | Encodes molecular structure information into latent representations | GIN, Pre-GNN, Message Passing Neural Networks [5] [4] |
| Self-Attention Encoders | Captures global dependencies and property-shared molecular features | Transformer-based architectures with multi-head attention [5] |
| Meta-Learning Frameworks | Enables adaptation to new properties with limited data | MAML, ProtoNets, heterogeneous meta-learning algorithms [5] [12] |
| Adaptive Checkpointing | Preserves best-performing model parameters for each property | Validation loss monitoring with task-specific checkpointing [4] |
| Molecular Benchmarks | Provides standardized evaluation across diverse properties | MoleculeNet, ChEMBL, Tox21, SIDER, ClinTox [5] [4] |

Performance Analysis and Quantitative Findings

Comparative Performance Across Methods

Experimental results demonstrate the substantial advantages of specialized approaches for cross-property generalization. The ACS method, for instance, shows an 11.5% average improvement relative to other methods based on node-centric message passing across multiple MoleculeNet benchmarks [4]. When compared specifically to single-task learning (STL) approaches, ACS achieves an 8.3% average performance gain, highlighting the benefits of effective inductive transfer while mitigating negative transfer [4].

The CFS-HML framework demonstrates particularly strong performance in scenarios with significant distribution shifts between training and target properties. By explicitly modeling both property-shared and property-specific knowledge, this approach achieves enhanced predictive accuracy with fewer training samples, with performance improvements becoming more pronounced as data scarcity increases [5].

Impact of Data Regime on Method Selection

The relative effectiveness of different approaches varies significantly with the available data quantity:

Table 3: Performance Across Data Regimes

| Data Regime | Recommended Approach | Performance Characteristics | Practical Considerations |
|---|---|---|---|
| Ultra-Low Data (≤ 50 samples) | ACS with adaptive checkpointing [4] | Achieves accurate predictions with as few as 29 labeled samples | Specialized for severe task imbalance; minimal data requirements |
| Standard Few-Shot (50-500 samples) | CFS-HML with heterogeneous meta-learning [5] | Balanced performance across properties; handles distribution shifts | Requires sufficient tasks for meta-learning; computationally intensive |
| Cross-Domain Transfer | PACIA with parameter-efficient adaptation [11] | Strong generalization to novel property spaces | Minimal retraining required; reduced overfitting risk |

Cross-property generalization under distribution shifts remains an active research area with several promising directions for advancement. Future work may focus on developing more sophisticated task-relatedness measures to guide knowledge transfer, creating unified frameworks that combine the strengths of meta-learning and multi-task learning approaches, and improving explainability to provide biochemical insights into why certain transfer strategies succeed or fail [2] [4].

The methodologies and experimental protocols outlined in this document provide researchers with practical tools to address one of the most persistent challenges in data-driven molecular science. By implementing these approaches, scientists and drug development professionals can significantly enhance their ability to predict novel molecular properties even when labeled data is severely limited and property distributions shift substantially.

In few-shot molecular property prediction (FSMPP), cross-molecule generalization under structural heterogeneity presents a fundamental obstacle. This challenge arises from the tendency of models to overfit the limited structural patterns present in a small number of training molecules, thereby failing to generalize to structurally diverse compounds encountered during testing [3] [2]. The core of this problem lies in the immense structural diversity of chemical space, where molecules sharing a target property can exhibit significantly different topological structures, functional groups, and substructural patterns. When only a few labeled examples are available, models often memorize specific structural features rather than learning the underlying biochemical principles that govern property expression, leading to poor performance on novel molecular scaffolds [2] [7].

The structural heterogeneity problem is particularly pronounced in real-world drug discovery applications, where researchers frequently need to predict properties for novel compound classes with limited available data. This challenge fundamentally limits the practical application of AI models in early-stage drug discovery, especially for rare diseases or newly discovered targets where annotated data is naturally scarce [2] [13]. Overcoming this limitation requires specialized approaches that can extract transferable molecular representations robust to structural variations while maintaining sensitivity to property-determining substructures.

Quantitative Evidence of Structural Heterogeneity

Table 1: Experimental Evidence of Structural Heterogeneity Challenges in Molecular Datasets

| Evidence Type | Dataset/Condition | Key Finding | Impact on Generalization |
|---|---|---|---|
| Structural Diversity [13] | MUV, DUD-E datasets | Active compounds are structurally distinct from inactives | Models struggle with structurally novel actives |
| Scaffold Distribution [2] | ChEMBL database analysis | Severe imbalance in molecular activity annotations | Models bias toward dominant scaffolds |
| Value Range [2] | IC50 distributions (top-5 targets) | Wide value ranges across several orders of magnitude | Difficulty learning consistent structure-property relationships |
| Performance Gap [13] | Tox21 vs. MUV/DUD-E | Better performance when structural diversity is lower | Highlights context-dependent few-shot learning effectiveness |

Methodological Approaches for Enhanced Generalization

Table 2: Methodological Solutions for Cross-Molecule Generalization

| Method Category | Core Principle | Representative Approaches | Key Innovations |
|---|---|---|---|
| Enhanced Graph Architectures | Integrate advanced neural modules into GNNs to capture diverse structural patterns | KA-GNN [14], KA-GCN, KA-GAT [14] | Fourier-based KAN layers for expressive feature transformation; replacement of MLPs with Kolmogorov-Arnold networks |
| Meta-Learning Frameworks | Optimize models for rapid adaptation to new molecular tasks with limited data | Context-informed Meta-Learning [5], Meta-MGNN [7] | Heterogeneous meta-learning with inner/outer loops; property-specific and property-shared encoders |
| Causal & Invariant Learning | Discover invariant molecular substructures that causally determine properties | Soft Causal Learning [15], Rationale-based Models [15] | Graph information bottleneck to disentangle environments; cross-attention for environment-invariance interactions |
| Multimodal Fusion | Combine multiple molecular representations for richer characterization | AdaptMol [7], Property-Aware Relations [7] | Adaptive fusion of sequence and topological data; property-aware molecular encoders |
| Self-Supervised Pretraining | Leverage unlabeled molecules to learn transferable structural representations | Meta-MGNN [7], SMILES-BERT [7] | Structure and attribute-based self-supervision; large-scale unsupervised pretraining |

Kolmogorov-Arnold Graph Neural Networks (KA-GNNs)

KA-GNNs represent a significant architectural advancement for addressing structural heterogeneity by integrating Kolmogorov-Arnold networks (KANs) into all core components of graph neural networks: node embedding, message passing, and readout [14]. Unlike traditional GNNs that use fixed activation functions, KA-GNNs employ learnable univariate functions on edges, enabling more expressive transformation of molecular features while maintaining parameter efficiency. The Fourier-series-based formulation within KA-GNNs enhances the model's ability to capture both low-frequency and high-frequency structural patterns in molecular graphs, which is crucial for handling structurally diverse compounds [14].

The KA-GNN framework implements two specific variants: KA-Graph Convolutional Networks (KA-GCN) and KA-Graph Attention Networks (KA-GAT), which replace conventional MLP-based transformations with Fourier-based KAN modules. In KA-GCN, node embeddings are initialized by processing both atomic features and neighboring bond features through KAN layers, effectively encoding both atomic identity and local chemical context. Message passing incorporates residual KANs instead of standard MLPs, enabling more adaptive feature updating. KA-GAT extends this approach by additionally incorporating edge embeddings processed through KAN layers, allowing more nuanced attention mechanisms that can better handle structural diversity [14].
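
The idea of learnable univariate functions on edges, parameterized by a Fourier series, can be illustrated with a small forward pass. This is a sketch of a generic Fourier-series KAN layer, assuming each edge function is a truncated sum of sines and cosines without a bias term; it is not the exact layer of [14], and the function name `fourier_kan_layer` and the coefficient values are hypothetical.

```python
import math

def fourier_kan_layer(x, a, b):
    """Apply a Fourier-series KAN layer to one input vector.

    x: list of d_in inputs.
    a, b: coefficient lists indexed [output j][input i][harmonic index].
    Each edge (i -> j) carries its own univariate function
    phi_ji(t) = sum_k a[j][i][k-1] * cos(k * t) + b[j][i][k-1] * sin(k * t),
    and output j is the sum of phi_ji(x[i]) over all inputs i.
    """
    out = []
    for a_j, b_j in zip(a, b):
        total = 0.0
        for x_i, a_ji, b_ji in zip(x, a_j, b_j):
            for k, (a_k, b_k) in enumerate(zip(a_ji, b_ji), start=1):
                total += a_k * math.cos(k * x_i) + b_k * math.sin(k * x_i)
        out.append(total)
    return out

# Two inputs, one output, two harmonics per edge (arbitrary coefficients).
a = [[[1.0, 0.0], [0.0, 0.0]]]
b = [[[0.0, 0.0], [0.5, 0.0]]]
y = fourier_kan_layer([0.0, math.pi / 2], a, b)
```

In a trained model the coefficients `a` and `b` would be learned, and higher harmonics would let each edge function respond to finer-grained variation in its input.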

Context-Informed Heterogeneous Meta-Learning

This approach addresses structural heterogeneity through a dual-encoder framework that separately captures property-shared and property-specific molecular features [5]. Graph neural networks, particularly Graph Isomorphism Networks (GIN), serve as encoders of property-specific knowledge to capture contextual information, while self-attention encoders extract generic knowledge shared across properties. The meta-learning algorithm employs a heterogeneous optimization strategy where parameters for property-specific features are updated within individual tasks (inner loop), while all parameters are jointly updated across tasks (outer loop) [5].

A key innovation is the adaptive relational learning module that infers molecular relations based on property-shared features. This allows the model to construct a contextual understanding of how structurally diverse molecules relate to one another with respect to the target property. The final molecular embedding is refined through alignment with property labels in the property-specific classifier, enhancing the model's ability to recognize property-determining substructures across diverse molecular scaffolds [5].

Soft Causal Learning for OOD Generalization

Soft causal learning addresses structural heterogeneity from a causal perspective by explicitly modeling molecular environments and their interactions with invariant substructures [15]. This approach recognizes that strict invariant rationale models often fail in molecular domains because property associations are complex and cannot be fully explained by invariant subgraphs alone. The framework incorporates chemistry theories through a graph growth generator that simulates expanded molecular environments, enabling systematic exposure to structural variations during training [15].

The method employs a Graph Information Bottleneck (GIB) objective to disentangle environmental factors from the whole molecular graph, separating environmental influences from core invariant features. A cross-attention-based soft causal interaction module then enables dynamic interactions between environments and invariances, allowing the model to adaptively weigh the contribution of environmental factors based on the specific molecular context. This approach performs particularly well in out-of-distribution (OOD) scenarios where test molecules exhibit structural shifts from the training distribution [15].

Experimental Protocols

Protocol: Evaluating KA-GNNs on Structural Heterogeneity

Objective: Assess the capability of Kolmogorov-Arnold Graph Neural Networks to generalize across structurally diverse molecules in few-shot settings.

Materials:

- Benchmark Datasets: Seven molecular property prediction benchmarks (e.g., Tox21, MUV, DUD-E) [14] [13]

- Model Variants: KA-GCN and KA-GAT implementing Fourier-based KAN layers [14]

- Baselines: Conventional GCNs, GATs, and other FSMPP approaches [14]

- Evaluation Metrics: ROC-AUC, PR-AUC, F1 score, and computational efficiency measures [14]

Procedure:

Data Partitioning:

- Implement scaffold splitting to separate molecules with different structural frameworks

- Ensure training and test sets contain distinct molecular scaffolds to evaluate cross-scaffold generalization

- Create few-shot tasks with 1, 5, and 10 shots per property class

Model Configuration:

- Initialize node embeddings using KAN layers processing atomic features and neighborhood bond features

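- Implement message passing with Fourier-based KAN modules using a truncated Fourier-series formulation of the form $\phi(x) = \sum_{k=1}^{K} \left(a_k \cos(kx) + b_k \sin(kx)\right)$, where parameters aₖ and bₖ are learned during training [14]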

- Configure readout layers with KAN-based global pooling instead of traditional MLP readouts

Training Protocol:

- Optimize using task-specific episodic training matching the few-shot evaluation setting

- Apply regularization techniques appropriate for small-data scenarios

- Employ early stopping based on validation performance on structurally dissimilar molecules

Interpretation Analysis:

- Visualize attention weights to identify chemically meaningful substructures

- Analyze which molecular regions contribute most to property predictions

- Correlate activated KAN basis functions with known chemical features
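The data partitioning step of this protocol can be sketched as follows. This is a minimal illustration, assuming that a scaffold string (for example, a Murcko scaffold SMILES) has already been computed for every molecule with a cheminformatics toolkit. The function name `scaffold_split` and the largest-groups-to-training heuristic are choices made for this example, not details taken from [14].

```python
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2):
    """Split molecules so that no scaffold appears in both train and test.

    records: list of (molecule_id, scaffold) pairs, scaffolds precomputed.
    Returns (train_ids, test_ids).
    """
    groups = defaultdict(list)
    for mol_id, scaffold in records:
        groups[scaffold].append(mol_id)

    # Largest scaffold groups are placed first, so common scaffolds end up in
    # training and rarer scaffolds in the test set.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_cutoff = len(records) - int(round(test_fraction * len(records)))

    train_ids, test_ids = [], []
    for group in ordered:
        if len(train_ids) + len(group) <= train_cutoff:
            train_ids.extend(group)
        else:
            test_ids.extend(group)
    return train_ids, test_ids
```

Because whole scaffold groups are assigned to one side, the realized test fraction can deviate from `test_fraction` when a few scaffolds dominate the dataset.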

Protocol: Heterogeneous Meta-Learning for Structural Diversity

Objective: Validate the effectiveness of context-informed heterogeneous meta-learning for generalizing across structurally heterogeneous molecules.

Materials:

- Dataset: FS-Mol or similar few-shot molecular benchmark [5] [7]

- Encoder Architectures: GIN for property-specific features, self-attention for property-shared features [5]

- Relational Module: Graph attention networks for adaptive relation learning

Procedure:

Meta-Training Setup:

- Construct multiple few-shot tasks from source domain data

- Ensure each task contains structurally diverse positive and negative examples

- Balance tasks to include both similar and dissimilar molecular scaffolds

Dual-Encoder Training:

- Train property-specific encoder (GIN) to extract contextual molecular features

- Train property-shared encoder (self-attention) to extract transferable features

- Implement heterogeneous meta-learning with:

- Inner loop: Update property-specific parameters within individual tasks

- Outer loop: Jointly update all parameters across tasks [5]

Relational Learning:

- Compute molecular similarity graphs based on property-shared features

- Apply adaptive relational learning to refine molecular embeddings

- Align final embeddings with property labels using contrastive learning

Evaluation:

- Test on novel tasks with structurally distinct molecules

- Ablate components to assess contribution to cross-scaffold generalization

- Compare with prototypical and matching networks as baselines
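The inner-loop and outer-loop split described in this protocol can be illustrated on a deliberately tiny model. The sketch below assumes a scalar predictor `y_hat = shared * x + specific`, where `shared` stands in for property-shared parameters and `specific` for property-specific ones. It uses a first-order approximation in the outer loop (gradients are taken at the adapted parameters). All names, learning rates, and the toy model itself are assumptions for illustration and do not reproduce the method of [5].

```python
def mse_grads(shared, specific, data):
    """Gradients of mean squared error for y_hat = shared * x + specific."""
    n = len(data)
    g_shared = sum(2 * (shared * x + specific - y) * x for x, y in data) / n
    g_specific = sum(2 * (shared * x + specific - y) for x, y in data) / n
    return g_shared, g_specific


def heterogeneous_meta_step(shared, specific_init, tasks,
                            inner_lr=0.1, outer_lr=0.05, inner_steps=3):
    """One meta-update over a batch of (support, query) tasks."""
    g_shared_total, g_specific_total = 0.0, 0.0
    for support, query in tasks:
        # Inner loop: adapt ONLY the property-specific parameter on the support set.
        specific = specific_init
        for _ in range(inner_steps):
            _, g_spec = mse_grads(shared, specific, support)
            specific -= inner_lr * g_spec

        # Outer loss: evaluate the adapted model on the query set.
        g_sh, g_sp = mse_grads(shared, specific, query)
        g_shared_total += g_sh
        g_specific_total += g_sp  # first-order approximation for specific_init

    # Outer loop: jointly update all parameters across tasks.
    n = len(tasks)
    new_shared = shared - outer_lr * g_shared_total / n
    new_specific_init = specific_init - outer_lr * g_specific_total / n
    return new_shared, new_specific_init
```

In a usage scenario where every task shares the same slope but has its own offset, repeated calls drive `shared` toward the common slope while the per-task offset is recovered inside the inner loop.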

Visualization of Methodologies

Workflow: KA-GNN Architecture for Structural Generalization

Workflow: Heterogeneous Meta-Learning Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for Cross-Molecule Generalization Research

| Research Reagent | Function | Example Implementation |
|---|---|---|
| FSMPP Benchmarks | Standardized evaluation of generalization capabilities | FS-Mol [7], Meta-MolNet [7], MoleculeNet [5] |
| Graph Neural Network Libraries | Foundation for implementing novel GNN architectures | PyTorch Geometric, Deep Graph Library (DGL), TensorFlow GNN |
| Meta-Learning Frameworks | Support for few-shot learning algorithm development | Learn2Learn, Higher, TorchMeta |
| Molecular Featurization | Conversion of raw molecules to machine-readable formats | RDKit (for fingerprints, descriptors), OGB (standardized graph conversion) |
| KAN Implementation | Specialized modules for Kolmogorov-Arnold Networks | PyKAN, public implementations of GraphKAN [14] |
| Causal Learning Tools | Environments for causal discovery and invariance learning | DoWhy, CausalML, custom GIB implementations [15] |

Analyzing Data Scarcity and Quality in Molecular Databases like ChEMBL

Molecular property prediction (MPP) is a critical task in early-stage drug discovery, aiding in the identification of biologically active compounds with favorable drug-like properties. However, the real-world application of AI-assisted MPP is severely constrained by the scarcity and low quality of experimental molecular annotations. This application note frames these challenges within the context of implementing few-shot molecular property prediction (FSMPP), a paradigm designed to learn from only a handful of labeled examples. We analyze the inherent issues in public databases like ChEMBL and provide structured protocols to navigate these limitations, enabling robust model development even under significant data constraints.

The core challenge stems from the high cost and complexity of wet-lab experiments, which result in a fundamental lack of large-scale, high-quality labeled data for training supervised models. This creates a few-shot problem, where models risk overfitting to the limited annotated data and fail to generalize to new molecular structures or properties. Specifically, FSMPP must overcome two key generalization challenges: (1) cross-property generalization under distribution shifts, where each property prediction task may have a different data distribution and weak correlation to others, and (2) cross-molecule generalization under structural heterogeneity, where models must avoid overfitting to the structural patterns of a few training molecules and generalize to structurally diverse compounds [2].

Quantitative Analysis of Data Scarcity and Quality

A systematic analysis of the ChEMBL database reveals the depth of the data scarcity and quality issues. ChEMBL, a manually curated database of bioactive molecules with drug-like properties, encompasses more than 2.5 million compounds and 16,000 targets [16]. Despite its scale, the data is characterized by significant noise and imbalance.

Table 1: Quantitative Analysis of Data Challenges in ChEMBL

| Challenge Category | Specific Findings | Impact on Model Development |
|---|---|---|
| Data Quality Issues | Presence of abnormal entries (null values, duplicate records) creating a different distribution between raw and denoised molecular activity annotations [2]. | Leads to poorly calibrated models that learn from artifacts instead of true structure-activity relationships. |
| Severe Value Imbalances | Analysis of IC50 distributions for the top-5 most frequently annotated targets shows severe imbalances and ranges spanning several orders of magnitude [2]. | Hinders model convergence and can bias predictions towards frequently observed value ranges. |
| Annotation Scarcity | Real-world molecules have scarce property annotations due to high experimental costs, creating a few-shot learning environment [2]. | Prevents the effective use of data-hungry deep learning models, necessitating specialized few-shot approaches. |
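Because IC50 values span several orders of magnitude, a common way to tame the range is to work on a negative log scale (pIC50) before modeling. The sketch below shows that conversion for values reported in nanomolar, plus an optional binarization into active/inactive labels. The conversion formula is standard, but its use here and the threshold of 6.0 (1 µM) are illustrative assumptions and are not prescribed by the cited analysis [2].

```python
import math


def ic50_nm_to_pic50(ic50_nm):
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in molar)."""
    if ic50_nm is None or ic50_nm <= 0:
        raise ValueError("IC50 must be a positive number")
    # 1 nM = 1e-9 M, so -log10(ic50_nm * 1e-9) = 9 - log10(ic50_nm).
    return 9.0 - math.log10(ic50_nm)


def binarize(pic50, threshold=6.0):
    """Label a compound active (1) if its pIC50 meets the threshold.

    The default threshold is an illustrative assumption; suitable
    cutoffs are assay- and target-dependent.
    """
    return 1 if pic50 >= threshold else 0
```

On this scale a tenfold change in potency corresponds to one unit, so values that originally ranged from nanomolar to high micromolar fall within a span of a few units.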

These quantitative findings underscore that existing molecular datasets are often insufficient for supervised deep learning. The next section outlines protocols designed to extract reliable knowledge from such challenging data environments.

Experimental Protocols for Data Curation and Few-Shot Learning

Protocol 1: Data Curation and Preprocessing from ChEMBL

A rigorous data cleaning strategy is the first and most critical step in building reliable FSMPP models. The following protocol, adapted from state-of-the-art methodologies, ensures the construction of a high-quality training set from raw ChEMBL data [17] [18].

Application Note: This protocol is designed to remove noise, standardize molecular representation, and reduce confounding factors, thereby creating a more reliable foundation for few-shot learning.

- Data Retrieval: Download target-specific compound activity data from the ChEMBL database (e.g., version ChEMBL28 or ChEMBL34) [17] [18].

- Initial Filtering: Discard compounds without a SMILES notation or with invalid chemical structures.

- Standardization:

- Chemical Space Filtering:

- Deduplication: Remove duplicate entries based on canonical SMILES to prevent data leakage [17].

- Size-Based Filtering: Discard SMILES strings in the bottom or top 5% of the character length distribution to exclude overly simple or complex molecules that could complicate training [17].
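The filtering, deduplication, and size-based steps above can be sketched in plain Python as shown below. The sketch assumes the SMILES strings have already been validated and canonicalized with a cheminformatics toolkit, since plain string comparison cannot detect chemically identical but differently written SMILES. The function name `curate_smiles` and the tie-handling at the trim boundaries are assumptions made for this example.

```python
def curate_smiles(smiles_list, trim_fraction=0.05):
    """Drop empty entries, remove duplicates, and trim length outliers.

    smiles_list: iterable of canonical SMILES strings (may contain None or "").
    trim_fraction: share of molecules removed at EACH end of the
                   character-length distribution.
    """
    # Initial filtering: discard missing or empty entries.
    cleaned = [s.strip() for s in smiles_list if s and s.strip()]

    # Deduplication: keep the first occurrence, preserving input order.
    unique = list(dict.fromkeys(cleaned))

    # Size-based filtering: remove the shortest and longest trim_fraction.
    k = int(trim_fraction * len(unique))
    by_length = sorted(unique, key=len)
    kept = set(by_length[k:len(by_length) - k])
    return [s for s in unique if s in kept]
```

Deduplicating before splitting the data into tasks or folds matters because a duplicate that lands on both sides of a split leaks label information into the evaluation.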

The following workflow diagram visualizes this multi-step curation process:

Protocol 2: Implementing a Few-Shot Molecular Property Prediction Model

Once a curated dataset is available, the following protocol details the implementation of a meta-learning-based FSMPP model, incorporating insights from recent research [5] [19].

Application Note: This protocol uses meta-learning to simulate few-shot scenarios during training, allowing the model to learn a generalizable initialization that can rapidly adapt to new properties with minimal data.

Problem Formulation (Task Generation):

- Formulate the problem as an N-way, K-shot learning task. For example, in a 2-way K-shot setting, each task involves distinguishing between two classes (e.g., active/inactive for a specific assay), with only K labeled examples per class [19].

- Split the curated data into a meta-training set (for learning across many tasks) and a meta-testing set (for evaluating on novel, unseen tasks) [19].

Model Architecture Setup (Dual-Input Model):

- Graph Neural Network (GNN) Encoder: Represent a molecule as a graph $G = (V, E)$, where the nodes $V$ are atoms and the edges $E$ are bonds. Use a message-passing GNN (e.g., AttentiveFP) to generate an embedding that captures topological information [19].

- Fingerprint Module: To complement the graph representation, compute a mixed molecular fingerprint. This can include MACCS (substructure), ErG (pharmacophore), and PubChem fingerprints for a comprehensive view of chemical features [19].

- Feature Fusion: Concatenate the GNN embedding and fingerprint vector. Pass the fused representation through a fully connected layer to create a unified molecular representation [19].

Meta-Training with ProtoMAML:

- Employ a meta-learning strategy like ProtoMAML, which combines prototype networks with the Model-Agnostic Meta-Learning (MAML) algorithm [19].

- Inner Loop (Task-Specific Adaptation): For each task in a training batch, compute a prototype (mean vector) for each class using the support set. The model parameters are then updated with a few gradient steps to minimize the loss on the support set, using the prototypes for classification [19].

- Outer Loop (Meta-Optimization): After the inner-loop adaptation, evaluate the model on the task's query set. The key meta-objective is to learn initial model parameters that, after just a few inner-loop steps, lead to low error on the query sets of new tasks [19].
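The inner-loop idea of using prototypes for classification can be sketched as follows. In the usual ProtoMAML construction, the output layer is initialized from the class prototypes so that its logits match negative squared Euclidean distances up to a class-independent term (weights `2 * c_k`, bias `-||c_k||^2`), and is then refined by gradient steps on the support set. The sketch operates on fixed, precomputed embeddings and adapts only this output layer. Adapting the encoder and differentiating through the inner loop for the outer update are omitted, and all function names are assumptions for illustration rather than the implementation in [19].

```python
import math


def class_means(support):
    """support: dict mapping class label -> list of embedding vectors."""
    return {c: [sum(col) / len(vecs) for col in zip(*vecs)]
            for c, vecs in support.items()}


def init_head_from_prototypes(protos):
    """Linear layer equivalent to negative squared Euclidean distance."""
    weights = {c: [2.0 * v for v in p] for c, p in protos.items()}
    biases = {c: -sum(v * v for v in p) for c, p in protos.items()}
    return weights, biases


def softmax_probs(x, weights, biases):
    logits = {c: sum(w * x_i for w, x_i in zip(weights[c], x)) + biases[c]
              for c in weights}
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {c: math.exp(v - m) for c, v in logits.items()}
    z = sum(exps.values())
    return {c: e / z for c, e in exps.items()}


def adapt_head(support, steps=3, lr=0.1):
    """Prototype initialization followed by a few cross-entropy gradient steps."""
    weights, biases = init_head_from_prototypes(class_means(support))
    examples = [(x, c) for c, vecs in support.items() for x in vecs]
    n = len(examples)
    for _ in range(steps):
        grad_w = {c: [0.0] * len(w) for c, w in weights.items()}
        grad_b = {c: 0.0 for c in weights}
        for x, y in examples:
            probs = softmax_probs(x, weights, biases)
            for c in weights:
                err = probs[c] - (1.0 if c == y else 0.0)  # dLoss/dlogit_c
                grad_b[c] += err / n
                for i, x_i in enumerate(x):
                    grad_w[c][i] += err * x_i / n
        for c in weights:
            biases[c] -= lr * grad_b[c]
            weights[c] = [w - lr * g for w, g in zip(weights[c], grad_w[c])]
    return weights, biases
```

Starting from the prototype solution means the classifier is already sensible before any gradient step, which is helpful when only K examples per class are available.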

The architecture and workflow of this model are illustrated below:

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of the aforementioned protocols relies on a suite of software tools and computational resources. The following table details the key components of the research toolkit.

Table 2: Essential Research Reagents & Computational Tools

| Tool/Resource Name | Type | Primary Function in Protocol |
|---|---|---|
| ChEMBL Database [16] | Data Repository | Primary source of raw bioactivity data for small molecules. |
| RDKit [17] [20] | Cheminformatics Toolkit | Used for SMILES sanitization, fingerprint generation (ECFP), scaffold analysis (Murcko scaffolds), and conformer generation. |
| Open Babel [17] | Chemical Toolbox | Assists in format conversion and generating canonical SMILES representations. |
| KNIME [17] | Workflow Platform | Provides a visual environment for building and executing the data curation workflow, integrating RDKit and Open Babel nodes. |
| TensorFlow/PyTorch [17] [19] | Deep Learning Framework | Backend for implementing and training GNNs and meta-learning algorithms (e.g., ProtoMAML). |
| Optuna [17] | Hyperparameter Tuning | Framework for performing Bayesian optimization to find the best model architecture parameters. |
| GitHub Repository (e.g., VeGA, AttFPGNN-MAML) [17] [19] | Code Resource | Provides open-source implementations of state-of-the-art models for reference and adaptation. |

This application note has detailed the critical challenges of data scarcity and quality in molecular databases like ChEMBL and has provided structured protocols to address them within a few-shot learning framework. By adopting the rigorous data curation practice outlined in Protocol 1, researchers can build a more reliable foundation from noisy public data. Subsequently, by implementing the FSMPP model from Protocol 2, which leverages hybrid molecular representations and meta-learning, it is possible to develop predictive tools that generalize effectively to novel molecular properties with very limited labeled examples. This combined approach provides a viable path toward accelerating drug discovery in data-sparse scenarios, such as for novel targets or rare diseases.

Few-Shot Molecular Property Prediction (FS-MPP) has emerged as a critical methodology in computational drug discovery to address the fundamental challenge of data scarcity in molecular property annotation. Traditional deep learning models for molecular property prediction require large amounts of labeled data, but real-world drug discovery faces a significant bottleneck: acquiring molecular property data through wet-lab experiments is costly, time-consuming, and often results in limited labeled examples for novel targets or rare properties [2] [21]. FS-MPP reframes this challenge as a few-shot learning problem, enabling models to make accurate predictions for new molecular properties using only a handful of labeled examples [2].

The FS-MPP task is formally defined as an N-way K-shot problem within a meta-learning framework [21] [19]. In this formulation, each "task" represents the prediction of a specific molecular property (e.g., toxicity, metabolic stability, target binding). For each task, the model has access to a "support set" containing K labeled examples for each of N classes (typically active/inactive for binary classification), and must predict labels for a "query set" of unlabeled molecules from the same property task [19]. This approach stands in contrast to conventional molecular property prediction, which trains a separate model for each property using large datasets.
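As a minimal sketch of this formulation, the function below draws one episode for a single property: K support molecules and a fixed number of query molecules per class, with no overlap between the two sets. The function name `sample_episode`, the `n_query` parameter, and the dictionary-based input layout are assumptions for illustration.

```python
import random


def sample_episode(task_data, k_shot, n_query, rng):
    """Sample one N-way K-shot episode for a single property task.

    task_data: dict mapping class label -> list of molecules (e.g. SMILES).
    Returns (support, query), each a list of (molecule, label) pairs.
    """
    support, query = [], []
    for label, molecules in task_data.items():
        if len(molecules) < k_shot + n_query:
            raise ValueError(f"class {label!r} has too few molecules")
        # Sampling without replacement keeps support and query disjoint.
        chosen = rng.sample(molecules, k_shot + n_query)
        support += [(m, label) for m in chosen[:k_shot]]
        query += [(m, label) for m in chosen[k_shot:]]
    return support, query
```

For a binary assay, `task_data` has two keys (active and inactive), so a 2-way 2-shot episode yields four support molecules in total.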

Critical Challenges in FS-MPP Implementation

Cross-Property Generalization Under Distribution Shifts

A fundamental challenge in FS-MPP arises from the distribution shifts across different molecular properties [2]. Each property prediction task corresponds to a distinct structure-property mapping, often with only weak biochemical correlation to other tasks and with different label spaces and underlying mechanisms. This heterogeneity creates severe distribution shifts that hinder effective knowledge transfer across properties [2]. For instance, the structural features that determine blood-brain barrier penetration may bear little relation to those that predict BACE-1 enzyme inhibition, even though both properties matter in drug discovery [22].

Cross-Molecule Generalization Under Structural Heterogeneity

The structural diversity of molecules presents another significant challenge [2]. Models tend to overfit the limited structural patterns available in few-shot training examples and fail to generalize to structurally diverse compounds during testing. This problem is exacerbated by the complex topological nature of molecular graphs, where small structural changes can dramatically alter properties [2] [23]. The inability to capture meaningful molecular semantics from limited examples remains a persistent obstacle in FS-MPP implementation.

Methodological Approaches to FS-MPP

Table 1: Primary Methodological Approaches in FS-MPP

| Approach Category | Core Mechanism | Key Algorithms | Strengths |
|---|---|---|---|
| Metric-Based Methods | Learns similarity measures in embedding space | Prototypical Networks [21], Matching Networks [24] | Simple implementation, no fine-tuning needed |
| Optimization-Based Methods | Learns optimal initial parameters for rapid adaptation | MAML [19] [25], Meta-Mol [25] | Strong cross-task generalization |
| Relation Graph Methods | Models molecule-property relationships via graph structures | HSL-RG [23], KRGTS [22] | Captures local molecular similarities |
| Attribute-Guided Methods | Incorporates high-level molecular attributes/fingerprints | APN [21], AttFPGNN-MAML [19] | Leverages domain knowledge |

Heterogeneous Meta-Learning Framework

Recent advances have introduced heterogeneous meta-learning strategies that update parameters of property-specific features within individual tasks in the inner loop while jointly updating all parameters in the outer loop [5]. This approach employs graph neural networks combined with self-attention encoders to effectively extract and integrate both property-specific and property-shared molecular features [5]. The Context-informed Few-shot Molecular Property Prediction via Heterogeneous Meta-Learning (CFS-HML) exemplifies this approach, capturing both general and contextual information to substantially improve predictive accuracy [5].

Knowledge-Enhanced Relation Graphs

The KRGTS framework addresses FS-MPP by constructing knowledge-enhanced molecule-property relation graphs that capture local molecular similarities through molecular substructures (scaffolds and functional groups) [22]. It introduces the notion that relations between properties are relative rather than absolute, and it employs task sampling modules to select the auxiliary tasks most relevant to the target task [22]. By quantifying relationships between molecular properties, KRGTS limits the noise introduced by weakly related tasks and enables more efficient meta-knowledge learning.

Attribute-Guided Prototype Networks

The Attribute-guided Prototype Network (APN) leverages human-defined molecular attributes as high-level concepts to guide graph-based molecular encoders [21]. APN incorporates an attribute extractor that obtains molecular fingerprint attributes from 14 types of molecular fingerprints (including circular-based, path-based, and substructure-based) and deep attributes from self-supervised learning methods [21]. The Attribute-Guided Dual-channel Attention module then learns the relationship between molecular graphs and attributes to refine both local and global molecular representations.

Diagram 1: High-level workflow of the FS-MPP meta-learning framework, showing the relationship between training phases and core components.

Experimental Protocols and Benchmarking

Standardized Evaluation Framework

Table 2: Benchmark Datasets for FS-MPP Evaluation

| Dataset | Molecules | Properties | Key Characteristics | Common Evaluation Splits |
|---|---|---|---|---|
| Tox21 | ~8,000 | 12 | Toxicology assays | 9 training, 3 testing properties |
| SIDER | ~1,400 | 27 | Drug side effects | 21 training, 6 testing properties |
| MUV | ~93,000 | 17 | Virtual screening data | 12 training, 5 testing properties |
| FS-Mol | ~234,000 | ~5,000 | Large-scale benchmark | Multiple few-shot splits |

FS-MPP evaluation follows a rigorous episodic training paradigm where models are trained on a diverse set of molecular properties and tested on completely held-out properties [19]. The standard protocol involves:

- Task Construction: Sample multiple N-way K-shot tasks from training properties

- Meta-Training: Iteratively update model parameters across diverse training tasks

- Meta-Testing: Evaluate on completely unseen target properties using support-query splits

- Performance Metrics: Report ROC-AUC and PR-AUC across multiple test tasks [21] [22]
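The reporting step can be sketched as follows for ROC-AUC. The implementation uses the rank-based definition: the probability that a randomly chosen positive receives a higher score than a randomly chosen negative, with ties counted as one half. It is written in plain Python for clarity. In practice a library routine would normally be used, and PR-AUC would be computed alongside it. The function names are assumptions for illustration.

```python
def roc_auc(labels, scores):
    """ROC-AUC via pairwise comparison of positive and negative scores."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mean_task_auc(task_results):
    """Average ROC-AUC over test tasks.

    task_results: list of (labels, scores) pairs, one per held-out task.
    """
    aucs = [roc_auc(labels, scores) for labels, scores in task_results]
    return sum(aucs) / len(aucs)
```

Averaging per-task scores, rather than pooling all predictions, gives each held-out property equal weight regardless of how many query molecules it has.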

The FS-Mol dataset has emerged as a comprehensive benchmark specifically designed for few-shot drug discovery, providing standardized training/validation/test splits and evaluation protocols [19] [7].

Implementation Protocol: Attribute-Guided Prototype Network

A detailed protocol for implementing the APN framework [21] includes:

Step 1: Molecular Attribute Extraction

- Extract fingerprint attributes using 14 different fingerprint types (7 circular-based, 5 path-based, 2 substructure-based)

- Generate deep attributes using self-supervised learning methods on molecular graphs

- Formulate single, dual, and triplet fingerprint attributes to capture multi-level molecular characteristics

Step 2: Molecular Graph Encoding

- Implement graph neural network (GNN) backbone (e.g., GIN, GAT) to process molecular graphs

- Generate initial atom-level and molecular-level representations through message passing

Step 3: Attribute-Guided Dual-Channel Attention

- Apply local attention to refine atomic representations using attribute guidance

- Apply global attention to enhance molecular representations with attribute semantics

- Fuse attribute-aware representations with original graph representations

Step 4: Prototype Computation and Classification

- Compute class prototypes as centroids of support set embeddings in attribute-guided space

- Classify query molecules based on distance to prototypes in this enhanced space
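Step 4 can be sketched independently of how the embeddings are produced. The example below assumes each molecule has already been mapped to a fixed-length vector (in APN this would be the attribute-guided representation) and uses unweighted centroids with squared Euclidean distance. The function names and the choice of distance are assumptions for illustration and may differ from the implementation in [21].

```python
def class_prototypes(support):
    """Compute one centroid per class.

    support: list of (embedding, label) pairs, embeddings of equal length.
    """
    by_class = {}
    for vec, label in support:
        by_class.setdefault(label, []).append(vec)
    return {label: [sum(col) / len(vecs) for col in zip(*vecs)]
            for label, vecs in by_class.items()}


def classify(query_vec, prototypes):
    """Return the label of the nearest prototype (squared Euclidean distance)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(prototypes, key=lambda label: sq_dist(query_vec, prototypes[label]))
```

No parameters are updated at test time in this scheme: adapting to a new property only requires recomputing the prototypes from its support set.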

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for FS-MPP Implementation

| Resource Category | Specific Tools | Function in FS-MPP | Access Information |
|---|---|---|---|
| Benchmark Datasets | Tox21, SIDER, MUV, FS-Mol | Standardized evaluation and benchmarking | MoleculeNet, TDC platforms |
| Molecular Encoders | Graph Neural Networks (GIN, GAT, MPNN) | Learning molecular representations from graph structure | PyTorch Geometric, Deep Graph Library |
| Meta-Learning Libraries | Torchmeta, Learn2Learn | Implementing MAML and relation networks | Open-source Python packages |
| Fingerprint Tools | RDKit, OpenBabel | Generating molecular fingerprint attributes | Open-source cheminformatics packages |
| Evaluation Frameworks | FS-Mol evaluation protocol | Standardized few-shot performance assessment | GitHub: microsoft/FS-Mol |

The field of FS-MPP continues to evolve with several promising research directions. Cross-domain generalization aims to transfer knowledge across molecular domains with different distributions [21]. Uncertainty quantification in few-shot predictions remains critical for reliable drug discovery applications [25]. The integration of large language models for molecular representation shows potential for enhancing few-shot reasoning capabilities [7]. Additionally, Bayesian meta-learning approaches with hypernetworks offer avenues for more robust task-specific adaptation [25].

In conclusion, the formulation of FS-MPP as a specialized few-shot learning problem addresses the fundamental data scarcity challenges in molecular property prediction. Through meta-learning frameworks, relation graphs, and attribute-guided approaches, FS-MPP enables predictive modeling for novel molecular properties with minimal labeled data, significantly accelerating early-stage drug discovery and virtual screening processes.

Building FS-MPP Models: Meta-Learning, Architectures, and Molecular Representations

Few-shot Molecular Property Prediction (FS-MPP) has emerged as a critical discipline in response to the pervasive challenge of scarce and low-quality molecular annotations in early-stage drug discovery and materials design [2]. Due to the high cost and complexity of wet-lab experiments, real-world molecular datasets often suffer from severe data limitations, making it difficult to apply standard supervised deep learning models effectively [2]. The FS-MPP paradigm addresses this fundamental constraint by enabling models to learn from only a handful of labeled examples, typically formulated as a multi-task learning problem that requires simultaneous generalization across diverse molecular structures and property distributions [2].

The core challenges in FS-MPP stem from two distinct generalization problems. First, cross-property generalization under distribution shifts occurs when models must transfer knowledge across heterogeneous prediction tasks where each property may follow different data distributions or embody fundamentally different biochemical mechanisms [3] [2]. Second, cross-molecule generalization under structural heterogeneity arises when models risk overfitting to the limited structural patterns in the training set and fail to generalize to structurally diverse compounds [3] [2]. These dual challenges necessitate specialized approaches that can extract and transfer knowledge effectively from scarce supervision.

This application note presents a unified taxonomy of FS-MPP methods organized across three fundamental levels: data, model, and learning paradigms. By systematically categorizing existing strategies and providing detailed experimental protocols, we aim to equip researchers and drug development professionals with practical frameworks for implementing FS-MPP in resource-constrained scenarios, thereby accelerating early-stage discovery pipelines where labeled data is inherently limited.

A Unified Taxonomy of FS-MPP Methods

The proposed taxonomy organizes FS-MPP methods into three hierarchical levels based on their primary approach to addressing data scarcity. This classification enables researchers to better understand the methodological landscape and select appropriate strategies for their specific challenges.

Data-Level Methods

Data-level approaches focus on augmenting or enriching the available molecular representations to enhance model generalization without increasing the number of labeled examples. These methods operate on the principle that better feature representations or artificially expanded datasets can compensate for limited supervision.

Molecular Representation Enhancement: These methods leverage diverse molecular featurization strategies to capture complementary structural information. The Attribute-guided Prototype Network (APN), for instance, combines high-level molecular fingerprints with deep learning, extracting both traditional fingerprint attributes (e.g., RDK5, RDK6, HashAP) and deep attributes generated through self-supervised learning frameworks like Uni-Mol [26]. This multi-source representation has yielded significant performance improvements, with path-based fingerprint attributes proving particularly effective [26].

Multi-Modal Data Integration: Advanced frameworks integrate multiple molecular representations to capture comprehensive structural information. The SGGRL model, for example, simultaneously leverages sequence (SMILES), graph (2D topology), and geometry (3D conformation) characteristics of molecules [27]. This multi-modal approach consistently outperforms single-modality baselines by capturing complementary structural information that enhances generalization in low-data regimes.

Data Augmentation Techniques: Methods like Mix-Key employ strategic data augmentation by focusing on crucial molecular features including scaffolds and functional groups [27]. This structured augmentation creates synthetic training examples that preserve chemically meaningful patterns while increasing dataset diversity.

Table 1: Quantitative Performance Comparison of Data-Level Methods on Benchmark Datasets

| Method | Key Features | Tox21 (5-shot ROC-AUC) | SIDER (ROC-AUC) | MUV (PR-AUC) |
|---|---|---|---|---|
| APN (with Uni-Mol attributes) | Combines fingerprint & deep attributes | 80.40% | 78.69% | 69.23% |
| APN (three-fingerprint combination) | HashAP + Avalon + ECFP4 fingerprints | 84.46% | - | - |
| SGGRL | Sequence, graph, geometry fusion | Superior to most baselines | - | - |

Model-Level Methods

Model-level approaches design specialized architectures that inherently support few-shot learning through inductive biases tailored to molecular data characteristics. These methods focus on creating structural priors that guide effective generalization from limited examples.

Attribute-Guided Prototype Networks: The APN framework incorporates an Attribute-Guided Dual-channel Attention (AGDA) module that employs both local and global attention mechanisms to optimize atomic-level and molecular-level representations [26]. The local attention module guides the model to focus on important local structural information, while the global attention module captures overall molecular characteristics. Experimental validation through ablation studies has confirmed that removing either attention module significantly reduces performance, with the global attention proving particularly critical [26].

Geometry-Enhanced Architectures: These models explicitly incorporate 3D structural information to enhance predictive accuracy. Geometry-enhanced molecular representation learning uses geometric data in graph neural networks to predict molecular properties, while GeomGCL utilizes geometric graph contrastive learning across 2D and 3D views [27]. These approaches demonstrate that geometric information provides valuable inductive biases that significantly improve generalization in data-scarce scenarios.

Context-Informed Heterogeneous Encoders: The Context-informed Few-shot Molecular Property Prediction via Heterogeneous Meta-Learning approach employs graph neural networks combined with self-attention encoders to extract both property-specific and property-shared molecular features [5]. This architecture uses an adaptive relational learning module to infer molecular relations based on property-shared features, with final molecular embeddings improved by aligning with property labels in property-specific classifiers.

Table 2: Ablation Study Results for APN Model Components on Tox21 Dataset

| Model Variant | 5-shot ROC-AUC | 10-shot ROC-AUC | Performance Impact |
|---|---|---|---|
| Complete APN | 80.40% | 84.54% | Baseline |
| Without Global Attention (w/o G) | Significant reduction | Significant reduction | Critical component |
| Without Local Attention (w/o L) | Moderate reduction | Moderate reduction | Supporting component |
| Without Similarity (w/o S) | Reduced | Reduced | Important component |
| Without Weighted Prototypes (w/o W) | Reduced | Reduced | Important component |

Learning Paradigm-Level Methods

Learning paradigm approaches modify the fundamental training procedure to optimize for few-shot scenarios, often drawing inspiration from meta-learning and other specialized optimization strategies.

Heterogeneous Meta-Learning: This strategy employs a dual-update mechanism where property-specific features are updated within individual tasks in the inner loop, while all parameters are jointly updated in the outer loop [5]. This approach enables the model to effectively capture both general molecular characteristics and property-specific contextual information, leading to substantial improvements in predictive accuracy, particularly with very limited training samples.
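The dual-update idea can be illustrated with a deliberately small sketch. Everything in it is an assumption made for illustration, not the cited method: the model is a toy regressor y_hat = w * x + b in which `w` stands in for the property-shared parameters and `b` for the property-specific ones, the synthetic tasks share a slope of 2.0 and differ only in their offset, and the outer update uses a first-order approximation.

```python
import random

def mse_grads(w, b, xs, ys):
    """Return (loss, dL/dw, dL/db) for the toy model y_hat = w * x + b."""
    n = len(xs)
    errs = [w * x + b - y for x, y in zip(xs, ys)]
    loss = sum(e * e for e in errs) / n
    gw = sum(2 * e * x for e, x in zip(errs, xs)) / n
    gb = sum(2 * e for e in errs) / n
    return loss, gw, gb

def sample_task(rng, k_support=5, k_query=10):
    """Hypothetical task family: shared slope 2.0, task-specific offset."""
    offset = rng.uniform(-3.0, 3.0)
    def draw(n):
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return xs, [2.0 * x + offset for x in xs]
    return draw(k_support), draw(k_query)

def heterogeneous_meta_train(steps=2000, tasks_per_batch=4, inner_lr=0.3,
                             outer_lr=0.05, inner_steps=3, seed=0):
    rng = random.Random(seed)
    w, b = 0.0, 0.0  # w: shared parameter, b: property-specific parameter
    for _ in range(steps):
        gw_sum = gb_sum = 0.0
        for _ in range(tasks_per_batch):
            (xs, ys), (xq, yq) = sample_task(rng)
            # Inner loop: only the property-specific parameter adapts.
            b_task = b
            for _ in range(inner_steps):
                _, _, gb = mse_grads(w, b_task, xs, ys)
                b_task -= inner_lr * gb
            # Outer loop: query-set gradients for ALL parameters
            # (first-order approximation, an assumption of this sketch).
            _, gw, gb = mse_grads(w, b_task, xq, yq)
            gw_sum += gw
            gb_sum += gb
        w -= outer_lr * gw_sum / tasks_per_batch
        b -= outer_lr * gb_sum / tasks_per_batch
    return w, b

w, b = heterogeneous_meta_train()
print(f"shared slope w = {w:.3f}, specific init b = {b:.3f}")
```

In this toy setting the shared parameter can only be learned in the outer loop, because the inner loop never touches it; the property-specific offset is re-fitted from the support set of every task.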

Knowledge-Enhanced Task Sampling: Frameworks like KRGTS (Knowledge-enhanced Relation Graph and Task Sampling) incorporate chemical domain knowledge into the meta-learning process through two specialized modules: the Knowledge-enhanced Relation Graph module and the Task Sampling module [27]. This structured approach to task construction and sampling demonstrates superior performance compared to standard meta-learning methods by ensuring tasks reflect chemically meaningful relationships.

Multi-Task Pre-training and Fine-tuning: Self-supervised pre-training on large unlabeled molecular datasets followed by task-specific fine-tuning has emerged as a powerful paradigm. Uni-Mol serves as a universal 3D molecular representation learning framework that can be pre-trained on diverse molecular structures and then adapted to specific property prediction tasks with limited labeled data [26]. Pre-training of this kind broadens both the representational capacity of molecular representation learning and the range of tasks to which it can be applied.

Experimental Protocols and Methodologies

This section provides detailed protocols for implementing and evaluating FS-MPP methods, enabling researchers to replicate state-of-the-art approaches in their own workflows.

Protocol 1: Implementing Attribute-Guided Prototype Networks

Objective: Implement and evaluate the Attribute-guided Prototype Network (APN) for few-shot molecular property prediction.

Materials and Reagents:

- Molecular Datasets: Tox21, SIDER, and MUV from MoleculeNet benchmark

- Fingerprint Types: 14 molecular fingerprints including RDK5, RDK6, HashAP, Avalon, ECFP4, FCFP2

- Deep Attribute Extractors: Uni-Mol (unimol_10conf) for generating 3D molecular conformations

- Computational Framework: Python with PyTorch or TensorFlow

- Evaluation Metrics: ROC-AUC, F1-Score, PR-AUC

Procedure:

- Data Preparation:
  - Partition datasets into meta-training and meta-testing sets using task sampling strategies
  - For each few-shot task, select N classes (properties) with K support samples and Q query samples per class
  - Generate multiple molecular representations including SMILES sequences, 2D graphs, and 3D conformations

- Molecular Attribute Extraction:
  - Extract traditional fingerprint attributes using cheminformatics libraries (RDKit)
  - Generate deep attributes using self-supervised learning models (Uni-Mol with 10 conformations)
  - For fingerprint combinations, evaluate single, double, and triple combinations to identify optimal feature sets

- Model Architecture Configuration:
  - Implement molecular encoder using Graph Attention Networks (GAT)
  - Construct Attribute-Guided Dual-channel Attention (AGDA) module with:
    - Local attention mechanism for atomic-level representations
    - Global attention mechanism for molecular-level representations
  - Design prototype computation network with weighted aggregation

- Training Protocol:
  - Optimize model over series of training tasks (episodic training)
  - Use support set to derive prototypes for each class
  - Use query set to optimize parameters of molecular encoder and AGDA module
  - Employ similarity computation between query molecules and prototypes for final prediction

- Evaluation:
  - Assess performance on held-out test tasks using multiple metrics
  - Conduct ablation studies to validate contribution of individual components
  - Compare against baseline methods (Siamese networks, Attention LSTM, Iterative LSTM, MetaGAT)
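The episodic steps in this procedure (sample a support and query set, derive class prototypes from the support set, classify query molecules by similarity to the prototypes) can be sketched as follows. This is a plain prototypical-network baseline, not APN itself: it uses unweighted mean prototypes and squared Euclidean distance, omits the encoder and the AGDA module, and runs on synthetic 2-D vectors that stand in for molecular embeddings. All function names are hypothetical.

```python
import random

def sample_episode(pool, k_shot, q_query, rng):
    """pool maps class label -> list of embedding vectors for one property.
    Each class needs at least k_shot + q_query molecules."""
    support, query = {}, {}
    for label, items in pool.items():
        chosen = rng.sample(items, k_shot + q_query)
        support[label] = chosen[:k_shot]
        query[label] = chosen[k_shot:]
    return support, query

def prototypes(support):
    """Unweighted mean embedding per class (APN uses weighted aggregation)."""
    protos = {}
    for label, vecs in support.items():
        dim = len(vecs[0])
        protos[label] = [sum(v[d] for v in vecs) / len(vecs) for d in range(dim)]
    return protos

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def classify(vec, protos):
    """Assign the label of the nearest prototype."""
    return min(protos, key=lambda label: sq_dist(vec, protos[label]))

def episode_accuracy(pool, k_shot, q_query, rng):
    support, query = sample_episode(pool, k_shot, q_query, rng)
    protos = prototypes(support)
    hits = total = 0
    for label, vecs in query.items():
        for v in vecs:
            hits += classify(v, protos) == label
            total += 1
    return hits / total

# Synthetic stand-in for one binary property: actives (1) and inactives (0).
rng = random.Random(0)
pool = {
    1: [[rng.gauss(1.0, 0.3), rng.gauss(1.0, 0.3)] for _ in range(50)],
    0: [[rng.gauss(-1.0, 0.3), rng.gauss(-1.0, 0.3)] for _ in range(50)],
}
acc = episode_accuracy(pool, k_shot=5, q_query=10, rng=rng)
print(f"query accuracy on one 5-shot episode: {acc:.2f}")
```

In a trainable version, the query-set loss computed from these distances would be backpropagated into the encoder and attention modules once per episode.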

Troubleshooting:

- If performance plateaus, experiment with different fingerprint combinations

- For overfitting on small support sets, increase regularization in attention modules

- If training is unstable, adjust learning rates for different components separately

Protocol 2: Heterogeneous Meta-Learning for FS-MPP

Objective: Implement context-informed few-shot molecular property prediction via heterogeneous meta-learning.

Materials and Reagents:

- Molecular Datasets: Benchmark datasets from MoleculeNet

- Feature Encoders: Graph Isomorphism Network (GIN) and Pre-GNN for property-specific knowledge

- Self-Attention Encoders: Transformer architectures for property-shared knowledge

- Computational Framework: Python with deep learning libraries

Procedure:

- Dual-Feature Extraction:
  - Implement graph neural networks (GIN) to capture property-specific molecular substructures
  - Employ self-attention encoders to extract property-shared molecular commonalities
  - Fuse both feature types for comprehensive molecular representation

- Adaptive Relational Learning:
  - Implement module to infer molecular relations based on property-shared features
  - Construct molecular relation graphs to capture pairwise similarities
  - Use graph propagation to refine molecular embeddings

- Heterogeneous Optimization:
  - Implement inner loop updates for property-specific parameters within individual tasks
  - Configure outer loop updates for all parameters across tasks
  - Align final molecular embeddings with property labels in property-specific classifiers

- Evaluation and Validation:
  - Compare performance against standard meta-learning baselines
  - Assess sample efficiency by varying number of shots (1, 5, 10) in support sets
  - Analyze cross-property generalization capabilities
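The adaptive relational learning step can be pictured with a small, non-learned sketch. It assumes cosine similarity as the pairwise relation, a softmax over each row to form the relation graph, and a single propagation step that mixes every embedding with the weighted average of its neighbors. The cited approach learns this module, so the functions, the `temperature` and `mix` parameters, and the toy embeddings below are illustrative assumptions only.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def relation_graph(embeddings, temperature=0.5):
    """Row-stochastic adjacency from a softmax over pairwise cosine
    similarity, with self-edges excluded. Needs at least two molecules."""
    n = len(embeddings)
    adj = []
    for i in range(n):
        scores = [
            cosine(embeddings[i], embeddings[j]) / temperature
            if j != i else float("-inf")
            for j in range(n)
        ]
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]  # exp(-inf) == 0.0
        total = sum(exps)
        adj.append([e / total for e in exps])
    return adj

def propagate(embeddings, adj, mix=0.5):
    """One propagation step: blend each embedding with its neighborhood."""
    n, dim = len(embeddings), len(embeddings[0])
    refined = []
    for i in range(n):
        neigh = [sum(adj[i][j] * embeddings[j][d] for j in range(n))
                 for d in range(dim)]
        refined.append([(1 - mix) * embeddings[i][d] + mix * neigh[d]
                        for d in range(dim)])
    return refined

# Toy property-shared features for four molecules (two similar pairs).
emb = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
adj = relation_graph(emb)
refined = propagate(emb, adj)
for row in refined:
    print([round(v, 3) for v in row])
```

Lower `temperature` values concentrate each row of the graph on the most similar molecules, while `mix` controls how strongly the neighborhood overrides the original embedding.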

Visual Representations of FS-MPP Frameworks

The following diagrams provide visual representations of key FS-MPP frameworks and workflows to facilitate implementation and understanding of the core methodologies.

[Figure: Attribute-Guided Prototype Network (APN) architecture]

Successful implementation of FS-MPP methods requires access to specific datasets, software tools, and computational resources. The following table summarizes key components of the FS-MPP research toolkit.

Table 3: Essential Research Reagents and Resources for FS-MPP

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Benchmark Datasets | Tox21, SIDER, MUV from MoleculeNet | Standardized benchmarks for evaluating FS-MPP performance across diverse molecular properties |
| Molecular Fingerprints | RDK5, RDK6, HashAP, Avalon, ECFP4, FCFP2 | Traditional cheminformatics representations capturing structural patterns and features |
| Deep Learning Frameworks | Uni-Mol, Graph Neural Networks (GAT, GIN) | Self-supervised and supervised models for extracting deep molecular representations |
| Evaluation Metrics | ROC-AUC, F1-Score, PR-AUC | Standardized metrics for assessing predictive performance in few-shot scenarios |
| Meta-Learning Libraries | PyTorch, TensorFlow with meta-learning extensions | Frameworks for implementing episodic training and optimization algorithms |
| Conformational Generators | Distance geometry, Energy minimization | Tools for generating 3D molecular conformations for geometric learning approaches |
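Two of the evaluation metrics listed in Table 3 are simple enough to sketch without any dependencies. The version below is a minimal illustration: ROC-AUC is computed as the fraction of (active, inactive) pairs that the model ranks correctly, with ties counted as half, and `mean_task_auc` is a hypothetical helper that averages the score over test tasks. In practice an established library implementation would normally be preferred.

```python
def roc_auc(labels, scores):
    """Pairwise (Mann-Whitney) ROC-AUC for binary labels in {0, 1}."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def f1_score(labels, preds):
    """F1 for the positive class from hard 0/1 predictions."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def mean_task_auc(tasks):
    """Average ROC-AUC over a list of (labels, scores) query sets."""
    return sum(roc_auc(labels, scores) for labels, scores in tasks) / len(tasks)

# Toy query set: two actives and two inactives with predicted scores.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
print(roc_auc(labels, scores), f1_score(labels, [1, 0, 1, 0]))
```

The pairwise formulation is quadratic in the number of query molecules, which is acceptable for the small query sets typical of few-shot evaluation.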

The unified taxonomy presented in this application note provides a structured framework for understanding and implementing Few-shot Molecular Property Prediction methods across data, model, and learning paradigm levels. By systematically addressing the dual challenges of cross-property generalization under distribution shifts and cross-molecule generalization under structural heterogeneity, FS-MPP methods enable effective molecular property prediction in real-world scenarios where labeled data is inherently scarce.

The experimental protocols and visual workflow diagrams offer practical guidance for researchers and drug development professionals seeking to incorporate these approaches into their discovery pipelines. As the field continues to evolve, emerging trends including foundation models for structured data, more sophisticated multi-modal learning approaches, and enhanced meta-learning algorithms promise to further advance the capabilities of FS-MPP, ultimately accelerating early-stage drug discovery and materials design in data-constrained environments.

Optimization-based meta-learning, particularly Model-Agnostic Meta-Learning (MAML), provides a framework for models to quickly adapt to new tasks with minimal data. This is achieved by learning an initial parameter set that can be fine-tuned to a new task in a few gradient descent steps. The core MAML algorithm operates through a bi-level optimization process: an inner loop for task-specific adaptation and an outer loop for meta-updates that learn a generally useful initialization [28]. This "learning to learn" paradigm is particularly valuable in fields like drug discovery, where labeled molecular property data is scarce and costly to obtain [29] [30].

In the context of molecular property prediction, this approach directly addresses the critical challenge of data sparseness. Traditional deep learning models require large amounts of annotated data, which is often unavailable for early-stage drug discovery projects [29]. MAML and its variants enable researchers to build predictive models that generalize effectively from only a few labeled examples, significantly accelerating the identification of promising drug candidates.

Core Principles of MAML

Algorithmic Framework

The MAML algorithm is designed to find an initial set of parameters, θ, from which a model can efficiently adapt to any new task from a given distribution. A single task in the context of few-shot molecular property prediction typically represents learning to predict a specific molecular property (e.g., solubility, protein inhibition) given only a handful of labeled molecules.

The optimization process consists of two distinct cycles:

- Inner Loop (Task-Specific Adaptation): For each task $T_i$ in a sampled batch, the model's parameters are copied from $\theta$ to $\theta'_i$. Using the small support set of $T_i$, one or more gradient update steps are performed on $\theta'_i$. This yields a task-adapted parameter set. The update rule for a single step is $\theta'_i = \theta - \alpha \nabla_\theta \mathcal{L}_{T_i}(f_\theta)$, where $\alpha$ is the inner-loop learning rate and $\mathcal{L}_{T_i}$ is the loss on task $T_i$ [31] [28].

- Outer Loop (Meta-Optimization): The performance of the adapted parameters $\theta'_i$ is evaluated on the query set of $T_i$. The key insight of MAML is that the meta-gradient is computed with respect to the original parameters $\theta$, not the adapted ones $\theta'_i$. This pushes $\theta$ to a region in parameter space from which efficient adaptation is possible. The meta-update is $\theta \leftarrow \theta - \beta \nabla_\theta \sum_i \mathcal{L}_{T_i}(f_{\theta'_i})$, where $\beta$ is the meta-learning rate [28].
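The two loops can be traced end to end on a model small enough to differentiate by hand. The sketch below assumes a one-parameter regressor f(x) = θ·x with a mean-squared-error loss and synthetic tasks whose true slope is drawn from [1, 3]; none of this comes from the cited work. Because the model is one-dimensional, the second-order term reduces to the scalar factor (1 − α·H), where H is the second derivative of the support loss. The meta-gradient is averaged over the batch rather than summed, which only rescales β.

```python
import random

def loss_grad_hess(theta, xs, ys):
    """MSE loss of f(x) = theta * x with its first and second derivative."""
    n = len(xs)
    loss = sum((theta * x - y) ** 2 for x, y in zip(xs, ys)) / n
    grad = sum(2 * x * (theta * x - y) for x, y in zip(xs, ys)) / n
    hess = sum(2 * x * x for x in xs) / n
    return loss, grad, hess

def sample_task(rng, k_support=5, k_query=10):
    """Hypothetical task: predict y = slope * x for a task-specific slope."""
    slope = rng.uniform(1.0, 3.0)
    def draw(n):
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return xs, [slope * x for x in xs]
    return draw(k_support), draw(k_query)

def adapt(theta, xs, ys, alpha):
    """Inner loop: one gradient step on the support set."""
    _, grad, _ = loss_grad_hess(theta, xs, ys)
    return theta - alpha * grad

def meta_gradient(theta, support, query, alpha):
    """d/d(theta) of the query loss evaluated at the adapted parameter."""
    xs, ys = support
    xq, yq = query
    _, _, hess_s = loss_grad_hess(theta, xs, ys)
    theta_i = adapt(theta, xs, ys, alpha)
    _, grad_q, _ = loss_grad_hess(theta_i, xq, yq)
    # Chain rule through the inner step: d(theta_i)/d(theta) = 1 - alpha * H.
    return (1.0 - alpha * hess_s) * grad_q

def maml_train(meta_steps=1000, batch=4, alpha=0.5, beta=0.05, seed=0):
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(meta_steps):
        total = 0.0
        for _ in range(batch):
            support, query = sample_task(rng)
            total += meta_gradient(theta, support, query, alpha)
        theta -= beta * total / batch  # outer loop (meta-update)
    return theta

theta = maml_train()
print(f"meta-learned initialization: {theta:.3f}")
```

For real networks the factor (1 − α·H) becomes a Hessian-vector product, which automatic differentiation frameworks compute by backpropagating through the inner update.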

MAML Variants for Enhanced Performance

The canonical MAML algorithm can be computationally expensive due to the need for second-order derivatives in the meta-gradient calculation. Several variants have been developed to address this and other limitations:

- FOMAML (First-Order MAML): This approximation drops the second-order terms in the meta-gradient. The gradient of the query loss is evaluated at the adapted parameters $\theta'_i$ and applied directly to $\theta$, as if the inner update did not depend on $\theta$. This simplifies and speeds up computation, often with minimal performance loss [31].

- Reptile: This algorithm abandons the computation of a meta-gradient altogether. Instead, it runs several gradient steps on each sampled task and then moves the initial parameters a fraction of the way toward the resulting task-adapted parameters, averaged over the tasks in the batch [31].

- iMAML (Implicit MAML): This method adds a regularization term to the inner loop that keeps the adapted parameters close to the initial parameters. The meta-gradient can then be obtained by implicit differentiation, so it depends only on the solution of the inner optimization and not on the path taken to reach it. This removes the need to differentiate through the inner optimization steps and improves computational efficiency [31].
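The difference between the first two variants is easiest to see in code. The sketch below makes the same kind of toy assumptions as before (a one-parameter model f(x) = θ·x, squared error, synthetic tasks with slopes in [1, 3]) and shows a single FOMAML update next to a single Reptile update. The function names and hyperparameters are illustrative, and iMAML is left out because it requires solving a linear system for the implicit gradient.

```python
import random

def grad(theta, xs, ys):
    """Gradient of the MSE loss for f(x) = theta * x."""
    return sum(2 * x * (theta * x - y) for x, y in zip(xs, ys)) / len(xs)

def sample_task(rng, n=10):
    """Hypothetical task: y = slope * x with a task-specific slope."""
    slope = rng.uniform(1.0, 3.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return xs, [slope * x for x in xs]

def inner_sgd(theta, xs, ys, alpha, steps):
    for _ in range(steps):
        theta -= alpha * grad(theta, xs, ys)
    return theta

def fomaml_step(theta, rng, alpha=0.5, beta=0.02):
    xs, ys = sample_task(rng)
    xs_s, ys_s, xs_q, ys_q = xs[:5], ys[:5], xs[5:], ys[5:]
    theta_i = inner_sgd(theta, xs_s, ys_s, alpha, steps=1)
    # First-order shortcut: the query gradient at theta_i is applied to theta
    # directly, without the (1 - alpha * Hessian) correction of full MAML.
    return theta - beta * grad(theta_i, xs_q, ys_q)

def reptile_step(theta, rng, alpha=0.5, epsilon=0.02, inner_steps=3):
    xs, ys = sample_task(rng)  # no support/query split is needed
    theta_i = inner_sgd(theta, xs, ys, alpha, inner_steps)
    # Move the initialization a fraction of the way toward the adapted weights.
    return theta + epsilon * (theta_i - theta)

def run(step_fn, n_steps=3000, seed=0):
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(n_steps):
        theta = step_fn(theta, rng)
    return theta

print(f"FOMAML init:  {run(fomaml_step):.3f}")
print(f"Reptile init: {run(reptile_step):.3f}")
```

Neither update needs second derivatives, which is why both scale to deep molecular encoders more easily than full MAML.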

MAML Variants in Molecular Property Prediction

AttFPGNN-MAML

The AttFPGNN-MAML architecture is a specialized variant designed to tackle the unique challenges of molecular representation in few-shot learning [30].