Overcoming Data Scarcity: Advanced Strategies for Robust Molecular Property Prediction


Abstract

This article addresses the critical challenge of data scarcity in molecular property prediction, a major bottleneck in AI-driven drug discovery and materials science. We explore the foundational causes of performance degradation in low-data regimes, including task imbalance and negative transfer. The content provides a comprehensive overview of cutting-edge methodological solutions such as multi-task learning, transfer learning, and data augmentation, alongside practical troubleshooting advice for mitigating common pitfalls like dataset bias and model overfitting. Furthermore, we present a rigorous framework for model validation and comparative analysis, emphasizing performance on out-of-distribution data to ensure real-world applicability. Tailored for researchers, scientists, and drug development professionals, this guide synthesizes the latest research to equip readers with strategies for building accurate and reliable predictive models even with limited labeled data.

The Data Scarcity Challenge: Understanding the Foundations and Impact on Molecular AI

Defining the Ultra-Low Data Regime in Molecular Property Prediction

Troubleshooting Guides and FAQs

Frequently Asked Questions

What defines the "ultra-low data regime" in molecular property prediction? The ultra-low data regime refers to scenarios where the number of labeled molecular data points is exceptionally small, severely limiting the effectiveness of standard machine learning models. This data scarcity affects diverse domains like pharmaceuticals, solvents, polymers, and energy carriers. In practical terms, this can mean having as few as 29 labeled samples for a given property, making traditional single-task learning approaches unreliable [1].

Why is multi-task learning (MTL) particularly susceptible to failure in low-data conditions? MTL leverages correlations among related molecular properties to improve predictive performance. However, in low-data regimes, imbalanced training datasets often degrade its efficacy through a problem called negative transfer (NT). NT occurs when parameter updates driven by one task are detrimental to another, often due to low task relatedness, gradient conflicts, or data distribution mismatches [1].

How can I identify if my model is suffering from negative transfer? Key indicators of negative transfer include:

- A significant performance drop in one or more tasks after introducing shared parameter training, compared to single-task models.

- Unstable convergence or high variance in validation loss for specific tasks during multi-task training.

- The model's inability to reach a reasonable performance baseline for a task that it learns effectively in isolation.

Are pre-trained models or meta-learning better than MTL for ultra-low data? While pre-trained models and meta-learning are viable few-shot learning approaches, they have limitations in ultra-low data regimes. Meta-learning often requires a large number of training tasks for effective generalization, and pre-trained models need extensive, computationally expensive pre-training. Traditional supervised MTL methods, especially those designed to mitigate NT, can perform reliably even with as few as two tasks and without large-scale pre-training [1].

Troubleshooting Common Experimental Problems

Problem: Poor model generalization on a specific molecular property task.

- Potential Cause: Severe task imbalance, where the problematic task has far fewer labels than others, limiting its influence on shared model parameters.

- Solution: Implement a training scheme like Adaptive Checkpointing with Specialization (ACS). This method uses a shared graph neural network (GNN) backbone with task-specific heads and checkpoints the best model parameters for each task individually whenever that task's validation loss reaches a new minimum, protecting it from detrimental updates from other tasks [1].

Problem: Unstable training and performance degradation when adding new tasks.

- Potential Cause: Gradient conflicts arising from optimizing shared parameters for dissimilar tasks.

- Solution:

  - Architecture Design: Ensure your model uses task-specific heads in addition to a shared backbone. This provides specialized capacity for each task.

  - Training Strategy: Adopt adaptive checkpointing, which saves specialized model snapshots for each task, effectively balancing inductive transfer with protection from negative interference [1].

Problem: Model performance is inflated during validation but fails in real-world applications.

- Potential Cause: Inappropriate dataset splitting. Random splits can create artificially high structural similarity between training and test sets.

- Solution: Use a time-split or Murcko-scaffold split for evaluation. This better reflects real-world prediction scenarios where models predict properties for novel molecular structures, providing a more realistic performance estimate [1].
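The grouping logic behind a scaffold split can be sketched as follows. This is a minimal illustration, not the procedure used in [1]: it assumes each molecule already has a scaffold key (for example a Murcko scaffold SMILES computed with a cheminformatics toolkit, which is not shown), and the function name `scaffold_split` and the toy scaffold strings are hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split sample indices so that no scaffold appears in both sets.

    `scaffolds` holds one precomputed scaffold key per molecule.
    """
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Largest scaffold groups are assigned to training first, so the test
    # set ends up holding the rarer chemotypes.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    train_cutoff = (1.0 - test_fraction) * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


# Toy scaffold keys, one per molecule (illustrative only).
scaffolds = ["c1ccccc1", "c1ccccc1", "c1ccccc1", "c1ccncc1", "c1ccncc1",
             "C1CCCCC1", "C1CCCCC1", "c1ccoc1", "c1ccsc1", "c1cc[nH]c1"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)
```

Because whole scaffold groups are assigned to one side, the test molecules never share a core structure with the training molecules, which is what makes the resulting estimate harder and more realistic than a random split.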

Problem: Handling datasets with a high ratio of missing labels.

- Potential Cause: Standard data imputation methods can reduce generalization, while complete-case analysis wastes data.

- Solution: Employ loss masking during training. This technique excludes missing labels from the loss calculation, allowing the model to learn from all available data without potentially biased imputation [1].
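A minimal sketch of loss masking, assuming missing labels are encoded as `None` and a mean-squared-error loss; the function name `masked_mse` and the toy values are illustrative. In a deep learning framework the same idea is usually expressed with a boolean mask tensor.

```python
def masked_mse(predictions, labels):
    """Mean squared error over labeled entries only; None marks a missing label."""
    total, count = 0.0, 0
    for pred_row, label_row in zip(predictions, labels):
        for pred, label in zip(pred_row, label_row):
            if label is None:
                continue  # missing label: contributes nothing to the loss
            total += (pred - label) ** 2
            count += 1
    return total / count if count else 0.0


# Two molecules, two tasks; each molecule is labeled for only one task.
predictions = [[0.5, 1.0], [2.0, 3.0]]
labels = [[1.0, None], [None, 1.0]]
loss = masked_mse(predictions, labels)
```

Only the two labeled entries enter the average, so neither molecule has to be dropped and no label has to be imputed.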

Experimental Protocols & Methodologies

Protocol: Adaptive Checkpointing with Specialization (ACS)

Objective: To train a robust multi-task graph neural network for molecular property prediction that mitigates negative transfer, especially in ultra-low data and imbalanced task scenarios.

Materials: See "Research Reagent Solutions" table for key computational tools.

Methodology:

- Model Architecture:

  - Shared Backbone: A single Graph Neural Network (GNN) based on message passing to learn general-purpose molecular representations [1].

  - Task-Specific Heads: Dedicated Multi-Layer Perceptrons (MLPs) for each molecular property task, which take the backbone's latent representations as input.

- Training Procedure:

  - The shared backbone and all task-specific heads are trained jointly.

  - The validation loss for every task is monitored throughout the training process.

  - A model checkpoint (saving the state of both the shared backbone and the specific task's head) is created whenever the validation loss for a particular task reaches a new minimum.

  - This results in a specialized, final model for each task, comprising the shared backbone parameters that were most beneficial for it and its own specific head.
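The checkpointing rule described above can be sketched without any deep learning framework. This is an illustration under stated assumptions, not the reference implementation from [1]: model parameters are stood in for by plain dictionaries, the validation losses are made up, and the class name `PerTaskCheckpointer` is hypothetical.

```python
import copy


class PerTaskCheckpointer:
    """Keep, for every task, the backbone and head seen at its best validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.best_state = {task: None for task in task_names}

    def update(self, val_losses, backbone_state, head_states):
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep copies, so later training steps cannot alter the snapshot.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved


# Made-up validation losses for three epochs of joint training.
simulated_val_losses = [
    {"tox": 0.90, "solubility": 0.80},
    {"tox": 0.70, "solubility": 0.85},
    {"tox": 0.75, "solubility": 0.60},
]
checkpointer = PerTaskCheckpointer(["tox", "solubility"])
backbone = {"w": 0.0}
heads = {"tox": {"w": 0.0}, "solubility": {"w": 0.0}}
for epoch, val_losses in enumerate(simulated_val_losses):
    # Stand-in for one epoch of joint training: the parameters simply change.
    backbone["w"] = float(epoch)
    for head in heads.values():
        head["w"] = float(epoch)
    checkpointer.update(val_losses, backbone, heads)
```

In this toy run the toxicity task keeps the epoch-1 backbone while the solubility task keeps the epoch-2 backbone, which is the sense in which each task ends up with its own specialized backbone-head pair.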

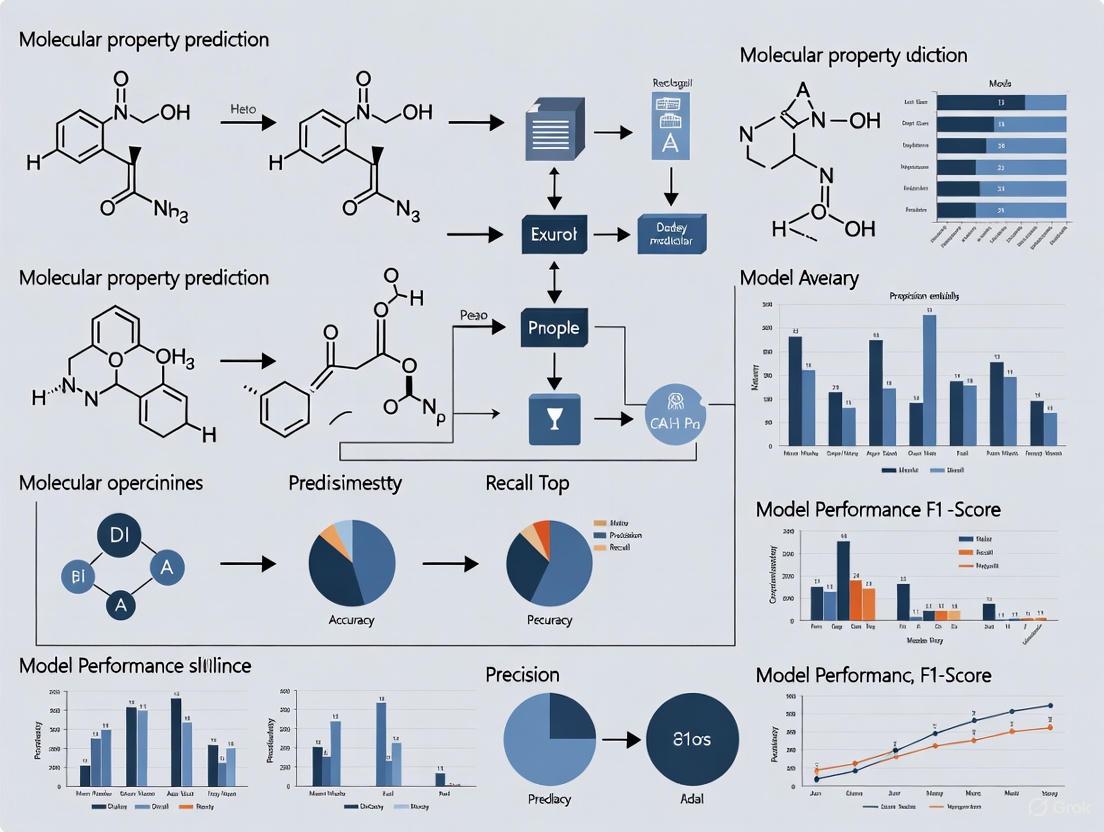

The following workflow diagram illustrates the ACS training procedure:

Quantitative Performance Comparison

The effectiveness of the ACS method is demonstrated by its performance on standard benchmarks compared to other approaches.

Table 1: Model Performance Comparison on MoleculeNet Benchmarks (Area Under the Curve) [1]

| Model / Dataset | ClinTox | SIDER | Tox21 | Average |

|---|---|---|---|---|

| ACS (Proposed) | ~90.3 | ~63.9 | ~76.8 | ~77.0 |

| D-MPNN | ~87.5 | ~62.6 | ~76.5 | ~75.5 |

| Other MTL Models | ~81.9 | ~58.2 | ~74.8 | ~71.6 |

| Single-Task Learning (STL) | ~78.3 | ~57.4 | ~73.2 | ~69.6 |

Table 2: Comparative Performance of Training Schemes on ClinTox [1]

| Training Scheme | Description | Performance (AUC) |

|---|---|---|

| ACS | Multi-task learning with adaptive checkpointing & specialization. | ~90.3 |

| MTL-GLC | Multi-task learning with global loss checkpointing. | ~81.7 |

| MTL | Standard multi-task learning without checkpointing. | ~81.5 |

| STL | Single-task learning (separate model for each task). | ~78.3 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Molecular Property Prediction

| Item / Resource | Function / Purpose |

|---|---|

| Graph Neural Network (GNN) | The core architecture for learning directly from molecular graph structures, representing atoms as nodes and bonds as edges [1]. |

| Message Passing | A key mechanism in GNNs where nodes (atoms) iteratively aggregate information from their neighbors to build meaningful molecular representations [1]. |

| Multi-Layer Perceptron (MLP) | A fully-connected neural network used as a "task-specific head" to map the general GNN representations to predictions for a specific property [1]. |

| Murcko Scaffold Splitting | A method to split datasets based on molecular scaffolds (core structures), ensuring that training and test sets contain distinct chemotypes for a more realistic evaluation of generalization [1]. |

| QM9 Dataset | A public dataset of quantum mechanical properties for ~133,000 small organic molecules, commonly used for benchmarking models [2]. |

| MoleculeNet Benchmark | A collective benchmark for molecular property prediction, encompassing several datasets like Tox21, SIDER, and ClinTox for standardized evaluation [1]. |

Visualizing the Negative Transfer Problem and Solution

The core challenge in low-data MTL is negative transfer. The following diagram illustrates its causes and how ACS provides a solution.

Troubleshooting Guides

FAQ 1: What is negative transfer and how can I detect it in my molecular property prediction experiments?

Answer: Negative transfer occurs when sharing information between tasks during Multi-Task Learning (MTL) ends up degrading performance on one or more tasks, rather than improving it. This phenomenon is a major obstacle in molecular property prediction, where tasks may have conflicting gradients or insufficient relatedness [3] [1].

In practice, you can detect negative transfer by monitoring these key indicators during your experiments:

- Task Performance Divergence: One task's validation loss decreases while another's increases or stagnates over epochs [3].

- Gradient Conflict: Gradient vectors from different tasks point in opposing directions during optimization [3].

- Validation Loss Patterns: The shared backbone model fails to find a unified representation that minimizes loss across all tasks simultaneously [1].

For molecular property prediction, a clear sign of negative transfer is when your MTL model performs worse on a target property than a simpler single-task model trained exclusively on that property's data [1].
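The gradient-conflict indicator can be checked with a cosine similarity between per-task gradients of the shared parameters. The sketch below assumes those gradients have already been flattened into plain lists of floats; the task names, gradient values, and the zero threshold are illustrative choices rather than values from [3].

```python
import math


def cosine_similarity(grad_a, grad_b):
    """Cosine of the angle between two flattened gradient vectors."""
    dot = sum(a * b for a, b in zip(grad_a, grad_b))
    norm_a = math.sqrt(sum(a * a for a in grad_a))
    norm_b = math.sqrt(sum(b * b for b in grad_b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def conflicting_pairs(task_grads, threshold=0.0):
    """Return task pairs whose gradients point in opposing directions."""
    names = sorted(task_grads)
    pairs = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            if cosine_similarity(task_grads[first], task_grads[second]) < threshold:
                pairs.append((first, second))
    return pairs


# Toy gradients of the shared backbone, one vector per task.
task_grads = {
    "tox": [1.0, 0.5],
    "solubility": [-1.0, -0.4],
    "logp": [0.9, 0.6],
}
conflicts = conflicting_pairs(task_grads)
```

A pair that shows up here repeatedly over many training steps is a candidate source of negative transfer; an occasional negative cosine on a single batch is not by itself conclusive.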

FAQ 2: What practical strategies can I implement to mitigate negative transfer when working with scarce molecular data?

Answer: Several effective strategies have been developed to mitigate negative transfer, particularly crucial in the low-data regimes common to molecular property prediction:

Adaptive Checkpointing with Specialization (ACS): This training scheme monitors validation loss for each task and checkpoints the best backbone-head pair whenever a task reaches a new minimum. This approach has demonstrated accurate predictions with as few as 29 labeled molecular samples [1].

Gradient Modulation Techniques: Methods like Gradient Adversarial Training (GREAT) explicitly include an adversarial loss term that encourages gradients from different tasks to have statistically indistinguishable distributions, reducing conflict [3].

Exponential Moving Average Loss Weighting: This technique scales losses based on their observed magnitudes, dynamically adjusting task contributions throughout training to balance their influence [4].

Multi-gate Mixture-of-Experts (MMOE): This architecture uses separate gating networks for each task, allowing models to selectively utilize shared experts. This is particularly beneficial when task correlations are low [5].
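To make the gating idea concrete, the sketch below mixes the outputs of shared experts with a separate softmax gate per task. It is a simplified illustration: in an actual MMOE model the gate logits are produced by a learned layer applied to the input, whereas here they are fixed numbers, and the names `mix_experts` and `gate_logits` are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def mix_experts(expert_outputs, logits):
    """Weighted sum of expert output vectors using one task's gate."""
    weights = softmax(logits)
    dim = len(expert_outputs[0])
    return [
        sum(weight * output[d] for weight, output in zip(weights, expert_outputs))
        for d in range(dim)
    ]


# Two shared experts, each emitting a 2-dimensional representation.
expert_outputs = [[1.0, 0.0], [0.0, 1.0]]
# One gate per task: task_a uses both experts equally, task_b favors expert 0.
gate_logits = {"task_a": [0.0, 0.0], "task_b": [10.0, -10.0]}
mixed = {task: mix_experts(expert_outputs, logits)
         for task, logits in gate_logits.items()}
```

Because each task has its own gate, two weakly related tasks can rely on different experts instead of competing for a single shared representation.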

Table 1: Comparison of Negative Transfer Mitigation Strategies

| Method | Key Mechanism | Best Suited Scenarios | Reported Advantages |

|---|---|---|---|

| ACS [1] | Task-specific checkpointing of best parameters | Severe task imbalance with very low data (e.g., <30 samples) | 11.5% average improvement on molecular benchmarks vs. node-centric message passing |

| GradNorm [5] | Gradient normalization for loss balancing | Tasks with different loss scales or convergence speeds | Prioritizes lagging tasks; outperforms equal-weighting baselines |

| MMOE [5] | Separate gating networks per task | Loosely correlated tasks with potential conflicts | Superior to shared experts when task correlation is low |

| EMA Loss Weighting [4] | Exponential moving average of loss magnitudes | Dynamic task balancing without complex optimization | Achieves comparable/higher performance vs. best-performing methods |

FAQ 3: How does task imbalance specifically harm MTL performance in molecular property prediction, and how can I address it?

Answer: Task imbalance—where certain molecular properties have far fewer labeled examples than others—harms MTL performance by allowing high-data tasks to dominate gradient updates, potentially leading to overfitting on those tasks while underfitting scarce-data tasks [1]. This is particularly problematic in molecular datasets where different properties may have dramatically different measurement costs and availability.

To address task imbalance:

- Dynamic Temperature-based Sampling: Adjust sampling probabilities using a temperature coefficient updated each epoch based on model performance across tasks [3].

- Loss Masking for Missing Labels: Simply eliminate loss computation for missing labels rather than using imputation, which can introduce bias [1].

- Gradient-Blending: Calculate task weights based on generalization and overfitting rates, penalizing tasks that show signs of overfitting [5].
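One common form of temperature-based sampling draws a task with probability proportional to its label count raised to the power 1/T. The sketch below shows only this static formula with T passed in by the caller; how the temperature is updated each epoch in [3] is not reproduced here, and the label counts are invented for illustration.

```python
def sampling_probabilities(label_counts, temperature):
    """Task sampling probabilities proportional to count ** (1 / temperature)."""
    scaled = {
        task: count ** (1.0 / temperature)
        for task, count in label_counts.items()
    }
    total = sum(scaled.values())
    return {task: value / total for task, value in scaled.items()}


# Hypothetical label counts: one data-rich task and one ultra-low-data task.
label_counts = {"tox": 7000, "fuel_property": 29}
proportional = sampling_probabilities(label_counts, temperature=1.0)
flattened = sampling_probabilities(label_counts, temperature=5.0)
```

With T = 1 the scarce task is sampled in proportion to its size (well under 1% of draws here); raising T flattens the distribution so the scarce task is visited more often.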

Table 2: Quantitative Performance of MTL Methods Under Data Scarcity

| Experimental Setting | Dataset | Method | Performance | Comparative Advantage |

|---|---|---|---|---|

| Ultra-low data regime (29 samples) | Sustainable Aviation Fuel properties [1] | ACS | Accurate predictions attainable | Unachievable with single-task or conventional MTL |

| Task imbalance scenario | ClinTox [1] | ACS | 15.3% improvement over STL | Effective NT mitigation in imbalanced molecular data |

| Multi-task vs. single-task | QM9 subsets [2] | MTL Graph Neural Networks | Outperforms STL in low-data conditions | Systematic framework for data augmentation |

| Rare disease mortality prediction | EHR data [6] | Ada-SiT | Effective with hundreds of tasks with insufficient data | Addresses both data insufficiency and task diversity |

Experimental Protocols

Protocol 1: Implementing Adaptive Checkpointing with Specialization (ACS) for Molecular Property Prediction

Objective: To implement the ACS training scheme that effectively mitigates negative transfer while preserving MTL benefits in low-data molecular property prediction.

Materials:

- Molecular Dataset: Such as ClinTox, SIDER, or Tox21 from MoleculeNet [1]

- Graph Neural Network: Message-passing architecture for molecular representation

- Task-Specific MLP Heads: Separate heads for each molecular property

- Validation Set: For monitoring task-specific performance

Methodology:

- Architecture Setup:

- Implement a shared GNN backbone based on message passing for general-purpose molecular representations.

- Attach task-specific Multi-Layer Perceptron (MLP) heads for each target property.

- Training Procedure:

- During training, monitor validation loss for every task independently.

- Checkpoint the best backbone-head pair whenever any task reaches a new minimum validation loss.

- Continue training until all tasks have shown minimal improvement over multiple epochs.

- Specialization:

- After training, each task retains its specialized backbone-head pair that achieved optimal performance.

- This ensures that the final model for each property benefits from shared representations without being harmed by negative transfer from other tasks.

This protocol has been validated on molecular property benchmarks, showing particular effectiveness in scenarios with severe task imbalance, such as predicting sustainable aviation fuel properties with as few as 29 labeled samples [1].

Protocol 2: Dynamic Loss Balancing with Exponential Moving Average for Multi-Task Molecular Optimization

Objective: To implement exponential moving average (EMA) loss weighting that dynamically balances task contributions during MTL training.

Materials:

- Multi-task Molecular Dataset: With varying label availability across properties

- Deep MTL Architecture: With shared encoder and task-specific decoders

- EMA Calculator: For tracking loss magnitudes across tasks

Methodology:

- Initialization:

- Set initial loss weights based on inverse frequency of labeled samples per task.

- Initialize EMA variables for each task's loss magnitude.

- Training Loop:

- For each batch, compute task-specific losses.

- Update EMA of loss magnitudes for each task.

- Calculate new loss weights as inversely proportional to the EMA values.

- Compute weighted total loss and perform backpropagation.

- Validation & Adjustment:

- Monitor relative task performance during validation.

- Adjust EMA smoothing parameters if certain tasks consistently lag.

- Because each task's loss is scaled by the inverse of its own running magnitude, all tasks contribute to shared parameter updates at a comparable scale, so no single task dominates merely because its raw loss is larger.

This approach has demonstrated comparable or superior performance to more complex optimization-based weighting schemes on multiple molecular property datasets [4].
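The training-loop steps of this protocol can be sketched as a small helper that tracks an exponential moving average (EMA) of each task's loss and returns weights inversely proportional to it. This is a sketch under assumptions rather than the scheme from [4]: the decay value, the normalization of the weights to sum to the number of tasks, and the class name `EmaLossWeighter` are illustrative, and the EMA is seeded with the first observed loss instead of label-frequency-based initial weights.

```python
class EmaLossWeighter:
    """Track an EMA of each task's loss and derive inverse-magnitude weights."""

    def __init__(self, task_names, decay=0.9):
        self.decay = decay
        self.ema = {task: None for task in task_names}

    def update(self, losses):
        for task, loss in losses.items():
            previous = self.ema[task]
            if previous is None:
                self.ema[task] = loss  # seed with the first observation
            else:
                self.ema[task] = self.decay * previous + (1.0 - self.decay) * loss

    def weights(self, eps=1e-8):
        inverse = {task: 1.0 / (value + eps) for task, value in self.ema.items()}
        # Normalize so the weights sum to the number of tasks.
        scale = len(inverse) / sum(inverse.values())
        return {task: value * scale for task, value in inverse.items()}


weighter = EmaLossWeighter(["tox", "solubility"])
batch_losses = {"tox": 2.0, "solubility": 0.5}
weighter.update(batch_losses)
task_weights = weighter.weights()
total_loss = sum(task_weights[task] * batch_losses[task] for task in batch_losses)
```

After weighting, both tasks contribute roughly the same amount to `total_loss` even though their raw losses differ by a factor of four.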

Research Reagent Solutions

Table 3: Essential Computational Tools for MTL in Molecular Property Prediction

| Research Reagent | Function | Example Applications | Implementation Notes |

|---|---|---|---|

| Graph Neural Networks | Learn molecular structure representations | Message-passing for molecular graph input [1] | Base architecture for shared backbone in molecular MTL |

| Task-Specific MLP Heads | Process shared representations for specific properties | Predict individual molecular properties from shared GNN output [1] | Enable specialization while sharing base representations |

| Gradient Conflict Detection | Identify opposing gradient directions between tasks | Monitor negative transfer during training [3] | Implement cosine similarity between task gradients |

| Validation Loss Tracking | Monitor task-specific performance throughout training | Trigger checkpointing in ACS [1] | Essential for detecting negative transfer patterns |

| Meta-Learning Frameworks | Learn parameter initializations for fast adaptation | Ada-SiT for rare disease prediction [6] | Particularly valuable for few-shot learning scenarios |

Workflow Visualization

MTL Negative Transfer Mitigation Workflow: This diagram illustrates the comprehensive workflow for detecting and mitigating negative transfer in molecular property prediction, incorporating checkpointing and specialization strategies.

Task Imbalance Causes and Solutions: This diagram shows the relationship between causes and effects of task imbalance in molecular data, along with effective mitigation strategies to ensure balanced learning across properties.

The Critical Role of Data Quality and Distribution in Model Generalization

FAQs: Troubleshooting Model Generalization with Scarce Molecular Data

FAQ 1: My molecular property prediction model performs well on the training set but generalizes poorly to new data. What could be wrong?

Poor generalization often stems from inadequate data quality or quantity. In the context of scarce molecular data, this can be due to:

- Data Scarcity: The model may be overfitting due to an insufficient number of labeled molecules for the target property [1].

- Improper Dataset Splitting: Using random splits instead of time-split or scaffold-aware splits can lead to over-optimistic performance estimates. Temporal and spatial disparities in data distribution can cause models to learn non-generalizable patterns [1] [7].

- Lack of Uncertainty Quantification (UQ): Without UQ, it's difficult to assess the confidence in predictions and distinguish between reliable and unreliable results [8].

FAQ 2: How can I improve my model when I have very few labeled molecules for my primary property of interest?

Leverage Multi-Task Learning (MTL). MTL can improve predictive performance by leveraging correlations among related molecular properties, thus mitigating the data bottleneck for your primary task [1] [2].

However, MTL can suffer from Negative Transfer (NT), where updates from one task degrade performance on another. To mitigate this, use methods like Adaptive Checkpointing with Specialization (ACS), which combines a shared backbone network with task-specific heads and saves the best model for each task individually during training [1].

FAQ 3: How do I know if my molecular dynamics (MD) simulation has produced reliable, well-sampled data for training?

Follow a reproducibility and reliability checklist for simulations [7]:

- Convergence Analysis: Perform at least three independent simulations starting from different configurations. Use statistical analysis to show that the properties being measured have converged.

- Statistical Uncertainty Reporting: Always report uncertainties (e.g., standard error of the mean) for any derived observable. Use methods that account for correlations in time-series data [8].

- Code and Parameter Disclosure: Provide simulation parameters, input files, and final coordinate files to allow others to reproduce your results [7].
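Block averaging is one standard way to account for time correlation when estimating the uncertainty of a mean from a single trajectory. The sketch below is a minimal version that assumes the block size is chosen longer than the correlation time; the function name and the toy series are illustrative and not taken from [8].

```python
import math
import statistics


def block_standard_error(series, block_size):
    """Standard error of the mean estimated from non-overlapping block means."""
    n_blocks = len(series) // block_size
    if n_blocks < 2:
        raise ValueError("need at least two complete blocks")
    block_means = [
        statistics.fmean(series[i * block_size:(i + 1) * block_size])
        for i in range(n_blocks)
    ]
    return statistics.stdev(block_means) / math.sqrt(n_blocks)


# Strongly correlated toy series: the observable lingers at each value.
series = [1.0] * 4 + [3.0] * 4 + [1.0] * 4 + [3.0] * 4
naive_sem = statistics.stdev(series) / math.sqrt(len(series))
blocked_sem = block_standard_error(series, block_size=4)
```

The naive estimate treats all 16 frames as independent and therefore reports a smaller uncertainty than the blocked estimate, which is the typical way correlated data lead to overconfident error bars.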

FAQ 4: What are the best practices for quantifying and reporting uncertainty in my predictions?

A tiered approach is recommended [8]:

- Feasibility Checks: Perform back-of-the-envelope calculations to determine if the computation is feasible.

- Semi-Quantitative Checks: Check for adequate sampling and data quality.

- Uncertainty Estimation: Only after the above steps, construct formal estimates of observables and their uncertainties.

For statistical analysis, key terms are defined by the International Vocabulary of Metrology (VIM) [8]:

- Arithmetic Mean: The estimate of the true expectation value.

- Experimental Standard Deviation: The estimate of the true standard deviation of the data.

- Experimental Standard Deviation of the Mean: The standard uncertainty of the mean, often called the "standard error."

Data Presentation: Key Quantitative Findings

Table 1: Performance Comparison of Training Schemes on Molecular Property Benchmarks (Data sourced from [1])

| Training Scheme | Brief Description | Average Performance vs. Single-Task Learning (STL) | Key Advantage |

|---|---|---|---|

| Single-Task Learning (STL) | Separate model for each task; no parameter sharing. | Baseline (0% improvement) | Maximum learning capacity per task. |

| Multi-Task Learning (MTL) | Single shared model trained on all tasks simultaneously. | +3.9% improvement | Basic inductive transfer between tasks. |

| MTL with Global Loss Checkpointing (MTL-GLC) | MTL, saving one model when the average validation loss across all tasks is lowest. | +5.0% improvement | Mitigates some overfitting. |

| Adaptive Checkpointing with Specialization (ACS) | MTL with a shared backbone and task-specific heads, saving the best model for each task individually. | +8.3% improvement | Effectively mitigates negative transfer; optimal for task imbalance. |

Table 2: Best Practices for Uncertainty Quantification in Molecular Simulations [8]

| Term | VIM Definition | Common/Alias Name | Formula |

|---|---|---|---|

| Arithmetic Mean | An estimate of the (true) expectation value of a random quantity. | Sample Mean | \( \bar{x} = \frac{1}{n}\sum_{j=1}^{n} x_j \) |

| Experimental Standard Deviation | An estimate of the (true) standard deviation of a random variable. | Sample Standard Deviation | \( s(x) = \sqrt{\frac{\sum_{j=1}^{n}(x_j - \bar{x})^2}{n-1}} \) |

| Experimental Standard Deviation of the Mean | An estimate of the standard deviation of the distribution of the arithmetic mean. | Standard Error | \( s(\bar{x}) = \frac{s(x)}{\sqrt{n}} \) |
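The three quantities in the table map directly onto Python's standard library. The sketch below assumes the samples are independent (for example, one value per independent replicate); the sample values are made up.

```python
import math
import statistics


def summarize(samples):
    """Return the arithmetic mean, experimental standard deviation, and standard error."""
    n = len(samples)
    mean = statistics.fmean(samples)
    std = statistics.stdev(samples)  # uses the n - 1 denominator
    sem = std / math.sqrt(n)
    return mean, std, sem


# One observable value from each of four independent replicates.
replicate_values = [1.0, 2.0, 3.0, 4.0]
mean, std, sem = summarize(replicate_values)
```

For correlated samples, such as consecutive frames of one trajectory, the standard error computed this way is too small and a correlation-aware method should be used instead.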

Experimental Protocols

Protocol 1: Implementing Adaptive Checkpointing with Specialization (ACS)

Objective: To train a multi-task Graph Neural Network (GNN) that is robust to negative transfer, especially in ultra-low data regimes and with imbalanced tasks [1].

Methodology:

- Architecture: Use a single GNN based on message passing as a shared, task-agnostic backbone. Attach task-specific Multi-Layer Perceptron (MLP) heads to this backbone for each property to be predicted.

- Training:

- Train the entire model (shared backbone + all task heads) on all available tasks simultaneously.

- For each task, use loss masking to handle missing labels.

- Checkpointing:

- Monitor the validation loss for every task throughout the training process.

- For each task, independently save a checkpoint of the backbone and its corresponding task-specific head whenever that task's validation loss achieves a new minimum.

- Specialization: After training, you will have a specialized model (backbone-head pair) for each task, which represents the point in training where it performed best, shielded from detrimental updates from other tasks.

Application: This method has been validated on benchmarks like ClinTox, SIDER, and Tox21, and has shown practical utility in predicting sustainable aviation fuel properties with as few as 29 labeled samples [1].

Protocol 2: Convergence and Reproducibility Checklist for Molecular Simulations

Objective: To ensure molecular simulation data is reliable, converged, and reproducible before being used for model training or analysis [7].

Methodology:

- Independent Replicates: Perform a minimum of three independent simulations, starting from different initial configurations.

- Convergence Analysis:

- Conduct time-course analysis (e.g., plotting observables over time) to detect a lack of convergence.

- For properties of interest, perform statistical analysis across the independent replicates to demonstrate convergence. When presenting representative snapshots, show quantitative analysis to prove they are representative.

- Uncertainty Quantification: For any reported observable, calculate and report the experimental standard deviation of the mean (standard error) as the standard uncertainty [8].

- Documentation and Reproducibility:

- Justify the choice of model, resolution, and force field for the system of interest.

- Deposit all simulation parameters, input files, and final coordinate files in a public repository.

- Make any custom code central to the analysis publicly available.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Molecular Property Prediction

| Tool / Resource | Function / Purpose |

|---|---|

| Graph Neural Network (GNN) | Learns general-purpose latent representations from molecular graph structures [1]. |

| Multi-Layer Perceptron (MLP) Head | Acts as a task-specific predictor on top of a shared representation backbone [1]. |

| MoleculeNet Benchmarks | Standardized datasets (e.g., ClinTox, SIDER, Tox21) for benchmarking model performance [1]. |

| Murcko Scaffold Split | A method for splitting molecular datasets that groups molecules by their core structure, providing a more challenging and realistic assessment of generalization [1]. |

| Directed Message Passing Neural Network (D-MPNN) | A variant of GNN that propagates messages along directed edges to reduce redundant updates; a strong baseline model [1]. |


Troubleshooting Guide: Overcoming Data Scarcity

Frequently Asked Questions

What are the primary consequences of data scarcity in my molecular property prediction models? Data scarcity leads to several critical failures in model performance. Your models will likely suffer from poor generalization to new molecular scaffolds, inaccurate predictions for rare but important molecular classes, and high variance in performance metrics. In practice, this translates to failed experimental validation when synthesized compounds don't exhibit predicted properties [9] [10]. The fundamental issue is that deep learning algorithms are typically "data hungry" and require large amounts of high-quality data to train millions of parameters effectively [9].

Why does my model perform well during validation but fail with real-world compounds? This common problem often stems from inappropriate dataset splitting. When you use random splits instead of scaffold-aware splits, your model is tested on molecules structurally similar to those in the training set, creating inflated performance estimates [9]. In real-world drug discovery programs, molecular design changes dramatically over the project timeline, creating a distribution mismatch that models trained on limited data cannot handle [9]. Always use scaffold splits or time-series splits to better simulate real-world performance.

How can I improve model performance when I have fewer than 100 labeled samples? Conventional deep learning approaches typically fail in this ultra-low data regime. Instead, implement multi-task learning (MTL) with Adaptive Checkpointing with Specialization (ACS), which leverages correlations among related molecular properties to improve predictive performance [1]. Research demonstrates that ACS consistently surpasses single-task learning and conventional MTL, achieving accurate predictions with as few as 29 labeled samples [1]. Additionally, consider traditional machine learning methods like random forests as a baseline, since they frequently outperform deep learning in low-data scenarios [9].

What metrics should I use to properly evaluate models trained on scarce, imbalanced data? Avoid relying solely on the area under the receiver operating characteristic curve (AUC-ROC), as it can be overly optimistic with imbalanced datasets [9]. Instead, use the precision-recall curve, which focuses on the minority class and provides a more realistic performance assessment [9]. For regression tasks, avoid binning continuous bioactivity readouts into classifiers, as this results in significant information loss [9].
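The gap between the two metrics is easy to reproduce on a toy imbalanced dataset. The sketch below computes ROC AUC by pairwise ranking and average precision (a common summary of the precision-recall curve) in plain Python; the scores and labels are invented, and the average-precision helper assumes there are no tied scores.

```python
def roc_auc(labels, scores):
    """Probability that a random positive is scored above a random negative."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def average_precision(labels, scores):
    """Mean of the precision values at the rank of each positive (no ties assumed)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, precision_sum = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits


# 20 molecules, only 2 actives, ranked 3rd and 6th by the model.
scores = list(range(20, 0, -1))
labels = [0, 0, 1, 0, 0, 1] + [0] * 14
auc = roc_auc(labels, scores)
ap = average_precision(labels, scores)
```

Here the ROC AUC is about 0.83 while the average precision is about 0.33: the many easy negatives inflate the former, whereas the latter reflects that most top-ranked molecules are inactive.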

How can I access more data without compromising intellectual property or violating privacy? Federated learning approaches allow you to leverage data from multiple institutions without surrendering IP or moving raw data [10]. In these frameworks, aggregated gradients flow through secure nodes while original structures remain behind corporate firewalls [10]. Alternatively, explore collaborative data-sharing initiatives like OpenFold3, where multiple companies contribute co-folding data to create enhanced shared models [10].

Performance Comparison of ML Approaches in Low-Data Regimes

Table 1: Quantitative performance comparison of machine learning methods under data scarcity conditions

| Method | Minimum Data Requirement | Best Use Scenario | Reported Performance Advantage | Key Limitations |

|---|---|---|---|---|

| Random Forests (with circular fingerprints) [9] | Low (≈50 samples) | Benchmarking new methods, initial project phases | Matches or outperforms deep learning on the BACE, BBBP, ESOL, and Lipop datasets [9] | Requires careful feature engineering; may not capture complex molecular interactions |

| Multi-task Learning with Adaptive Checkpointing (ACS) [1] | Ultra-low (29+ samples) [1] | Multiple related properties available, severe task imbalance | 11.5% average improvement over node-centric message passing methods; 8.3% improvement over single-task learning [1] | Requires multiple related tasks; more complex implementation |

| Single-Task Learning [1] | Moderate (100+ samples) | Single well-defined property with sufficient data | Baseline performance; outperformed by MTL-ACS in most scenarios [1] | No knowledge transfer between tasks; requires more data per property |

| Conventional Deep Learning (Transformers, GNNs) [9] | High (1000+ samples) [9] | Large, diverse datasets with extensive labels | Becomes competitive only on the HIV dataset, with >1000 training examples [9] | Data-hungry; prone to overfitting with scarce data |

Data Requirements for Different AI Applications

Table 2: Data scarcity challenges and solutions across research domains

| Application Domain | Primary Data Scarcity Challenge | Proven Solutions | Real-World Impact |

|---|---|---|---|

| Small Molecule Drug Discovery [9] [10] | Sparse coverage of chemical space; protein-ligand structures sparse for many disease targets [10] | Multi-task learning; federated learning; traditional ML (Random Forests) [9] [1] | Target identification compressed from 12 months to 3 months (Exscientia) [10] |

| Materials Innovation [1] | Limited labeled data for novel materials (polymers, energy carriers) [1] | Adaptive Checkpointing with Specialization (ACS); synthetic data generation | Accurate prediction of sustainable aviation fuel properties with only 29 labeled samples [1] |

| Clinical Trial Optimization [11] | Heterogeneous patient populations; ethical constraints on data collection | AI-driven patient stratification; multi-omics integration [11] | Identifying patient subgroups likely to respond to specific therapies |

| Opioid Use Disorder Treatment [11] | Multifactorial disease complexity; limited patient data for subpopulations | Multiomics data integration; AI-driven simulations of human biology [11] | Identifying novel molecular targets for precision therapies |

Experimental Protocols

Protocol 1: Multi-Task Learning with Adaptive Checkpointing for Ultra-Low Data Scenarios

Purpose: To enable accurate molecular property prediction when labeled data is severely limited (as few as 29 samples) [1].

Materials and Equipment:

- Molecular dataset with multiple property annotations

- Graph Neural Network framework (PyTorch Geometric or DGL)

- Validation set with scaffold split (critical for realistic assessment)

Procedure:

- Architecture Setup: Implement a shared GNN backbone based on message passing with task-specific multi-layer perceptron (MLP) heads [1].

- Training Configuration: Use a Murcko-scaffold split to separate training and validation sets, ensuring structurally distinct molecules are in the validation set [9] [1].

- Checkpointing Mechanism: Monitor validation loss for each task independently. Checkpoint the best backbone-head pair whenever a task's validation loss reaches a new minimum [1].

- Specialization: After training, obtain a specialized backbone-head pair for each task, combining shared knowledge with task-specific optimization [1].
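The scaffold split in the Training Configuration step can be sketched as a group-wise split. This is a minimal version of a common greedy recipe, not the exact procedure of [1] or [9]. It assumes the scaffold string for each molecule has already been computed (for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`); the `scaffolds` list below is a hypothetical input.

```python
from collections import defaultdict


def scaffold_split(scaffolds, valid_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.

    `scaffolds` holds one precomputed scaffold string per molecule.
    """
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Largest scaffold groups fill the training set first, so rarer
    # scaffolds end up in validation and the split stays demanding.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_total = len(scaffolds)
    train_cutoff = n_total - round(valid_fraction * n_total)

    train, valid = [], []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            valid.extend(group)
    return train, valid


# Hypothetical scaffold strings for ten molecules.
scaffolds = ["c1ccccc1"] * 5 + ["c1ccncc1"] * 3 + ["C1CCCCC1", "c1ccoc1"]
train_idx, valid_idx = scaffold_split(scaffolds, valid_fraction=0.2)
print(train_idx, valid_idx)
```

Because whole scaffold groups are assigned to one side, the validation molecules are structurally distinct from the training molecules, which is the property the protocol relies on.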

Validation:

- Test on clinically relevant benchmarks (ClinTox, SIDER, Tox21) using scaffold splits [1]

- Compare against single-task learning and conventional MTL baselines

- Report precision-recall curves in addition to AUC-ROC for imbalanced datasets [9]

Protocol 2: Federated Learning for Multi-Institutional Data Collaboration

Purpose: To leverage distributed molecular data sources without compromising intellectual property or privacy [10].

Materials and Equipment:

- Secure computational nodes at each participating institution

- Federated learning platform (Apheris, OpenFold3)

- Standardized molecular representation format

Procedure:

- Local Model Training: Each institution trains models on their proprietary data behind institutional firewalls [10].

- Gradient Aggregation: Only model gradients (not raw data) are shared with a central aggregator [10].

- Global Model Update: The aggregator combines gradients to update a global model, then redistributes updated parameters [10].

- Iterative Refinement: Repeat steps 1-3 until model convergence, maintaining data privacy throughout [10].
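The procedure above can be sketched with a deliberately tiny model. The example assumes a one-parameter linear model and three hypothetical institutions whose data are plain Python lists. It only illustrates the flow of gradients and parameters; real platforms add secure aggregation, authentication, and privacy safeguards that are not shown here.

```python
def local_gradient(weight, data):
    """Gradient of the mean squared error for the 1-D model y = weight * x."""
    return sum(2 * (weight * x - y) * x for x, y in data) / len(data)


def federated_round(weight, client_datasets, learning_rate=0.05):
    """One round: clients report gradients, the aggregator averages them."""
    total = sum(len(data) for data in client_datasets)
    aggregated = sum(
        local_gradient(weight, data) * len(data) / total  # weight by sample count
        for data in client_datasets
    )
    return weight - learning_rate * aggregated


# Three hypothetical institutions; the raw (x, y) pairs are only ever
# touched inside local_gradient, never by the aggregator.
clients = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0)],
    [(0.5, 1.0), (1.5, 3.0), (2.5, 5.0)],
]

weight = 0.0
for _ in range(200):
    weight = federated_round(weight, clients)
print(f"global weight after training: {weight:.4f}")
```

All toy data follow y = 2x, so the shared weight should approach 2 even though no institution sees another's data.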

Validation:

- Ensure updated models maintain or improve performance when returned to each participant for local inference [10]

- Verify that raw molecular structures never leave institutional firewalls [10]

- Benchmark against models trained on isolated institutional data

Experimental Workflow Visualization

[Figure: ML approach selection workflow]

[Figure: ACS training methodology]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational tools for overcoming data scarcity in molecular research

| Tool/Resource | Function | Application Context | Key Features |

|---|---|---|---|

| Multiomics Advanced Technology (MAT) Platform [11] | Integrates genomic, transcriptomic, proteomic, and metabolomic data | Target identification, mechanism of action studies | Simulates human biology using multiomic inputs; models drug-disease interactions in silico [11] |

| Adaptive Checkpointing with Specialization (ACS) [1] | Multi-task learning framework that mitigates negative transfer | Molecular property prediction with limited labeled data | Combines shared backbone with task-specific heads; checkpoints best parameters per task [1] |

| Federated Learning Platforms (e.g., Apheris) [10] | Enables collaborative model training without data sharing | Multi-institutional research with IP constraints | "Trust by architecture" design; gradients shared while raw data remains secured [10] |

| Random Forests with Circular Fingerprints [9] | Traditional ML approach for low-data regimes | Initial project phases, benchmarking deep learning methods | Competitive performance on BACE, BBBP, ESOL, and Lipop datasets with limited data [9] |

| Synthetic Data Generation [12] | Creates artificial training data that mimics real statistical properties | Addressing edge cases, rare molecular classes | Generates diverse molecular representations; helps rebalance imbalanced datasets [12] |

| MoleculeNet Benchmarks [9] [1] | Standardized datasets for method comparison | Model validation and performance assessment | Includes ClinTox, SIDER, Tox21 with scaffold splits for realistic evaluation [9] [1] |

Proven Techniques and Architectures for Low-Data Molecular Modeling

Leveraging Multi-Task Learning (MTL) and Adaptive Checkpointing (ACS) to Share Knowledge

This technical support center provides targeted troubleshooting guides and FAQs for researchers employing Multi-Task Learning (MTL) and Adaptive Checkpointing with Specialization (ACS) to improve model performance on scarce molecular property data. The guidance is framed within a thesis context focused on overcoming data bottlenecks in molecular property prediction for drug discovery and materials science.

Troubleshooting Guide: Common MTL and ACS Implementation Issues

Problem: Negative Transfer Degrading Model Performance

- Symptoms: Performance on one or more tasks is significantly worse in MTL compared to Single-Task Learning (STL).

- Possible Causes & Solutions:

- Cause 1: Low Task Relatedness. The auxiliary tasks selected are not sufficiently correlated with your primary task of interest, leading to conflicting gradient updates [1].

- Solution: Implement a task selection algorithm before MTL training. Use status theory and maximum flow analysis on a task association network to adaptively identify friendly auxiliary tasks for your primary task [13].

- Cause 2: Severe Task Imbalance. Tasks with abundant data dominate the shared parameter updates, overwhelming the learning signal from low-data tasks [1] [14].

- Solution: Adopt the ACS training scheme. ACS monitors validation loss for each task independently and checkpoints the best backbone-head pair for a task whenever its validation loss reaches a new minimum, effectively shielding tasks from detrimental updates [1].

- Cause 3: Architectural/Optimization Mismatch. A single shared backbone lacks the capacity to learn representations for all tasks, or tasks have conflicting optimal learning rates [1].

- Solution: Use an architecture with a shared backbone (for general representations) and dedicated task-specific heads (for specialized learning). ACS naturally incorporates this design [1].

Problem: Poor Generalization in Ultra-Low-Data Regimes

- Symptoms: Model performance is unsatisfactory when labeled data for a molecular property is very scarce (e.g., fewer than 100 samples).

- Possible Causes & Solutions:

- Cause 1: Overfitting on Small Training Set.

- Cause 2: Failure to Transfer Knowledge Across Properties.

Problem: Inefficient or Unstable MTL Training

- Symptoms: Training is slow, consumes excessive GPU memory, or validation losses for different tasks are highly volatile.

- Possible Causes & Solutions:

- Cause 1: Inefficient Handling of Missing Labels.

- Solution: Use loss masking for missing labels. This is a more practical and effective alternative to imputation or complete-case analysis, as it allows the model to utilize all available data without introducing bias or reducing generalization [1].

- Cause 2: Memory Overflow with Large Models and Batches.

- Solution: Implement adaptive memory management frameworks like Adacc, which combine activation checkpointing (recomputation) and adaptive tensor compression to reduce GPU memory footprint without significantly sacrificing training throughput or model accuracy [16].

Frequently Asked Questions (FAQs)

Q1: When should I use MTL over Single-Task Learning (STL) for molecular property prediction? A: Prefer MTL when you have multiple property endpoints to predict, especially when the labeled data for one or more of these properties is scarce. MTL exploits commonalities and differences across tasks to learn better representations, effectively augmenting the data for low-resource tasks [2] [13]. STL may be sufficient only when you have a single, well-defined property with a large amount of high-quality labeled data.

Q2: What is the key innovation of Adaptive Checkpointing with Specialization (ACS)? A: ACS is a training scheme designed to mitigate Negative Transfer in MTL. It combines a shared, task-agnostic backbone with task-specific heads. Its key innovation is to independently track the validation loss for each task and checkpoint the model parameters (both backbone and the corresponding head) whenever a task achieves a new best validation loss. This ensures each task gets a specialized model that has benefited from shared learning without being harmed by later conflicting updates from other tasks [1].

Q3: How can I select the best auxiliary tasks for my primary task of interest? A: Moving beyond random or heuristic selection is recommended. A robust method involves two steps:

- Building a Task Association Network: Train individual and pairwise task models to quantify the relationship between tasks [13].

- Applying Status Theory and Maximum Flow: Use these complex network science tools on the association network to identify which auxiliary tasks provide the greatest potential performance boost to your primary task, forming an optimal "primary-auxiliaries" group [13].

Q4: My molecular dataset has many missing property values. How should I handle this? A: The recommended approach is loss masking. During the loss calculation, simply ignore (mask) the contributions from missing labels. This allows the model to learn from all available data points without the need for potentially biased data imputation methods [1].
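A minimal sketch of loss masking, assuming missing labels are encoded as `None` and using mean squared error for simplicity. The function name and data are illustrative; deep learning frameworks usually implement the same idea with a 0/1 mask tensor multiplied into the per-label loss.

```python
def masked_mse(predictions, labels):
    """Mean squared error over observed labels only; None marks a missing label."""
    squared_errors = [
        (p - y) ** 2
        for pred_row, label_row in zip(predictions, labels)
        for p, y in zip(pred_row, label_row)
        if y is not None  # missing labels contribute nothing to the loss
    ]
    if not squared_errors:
        return 0.0
    return sum(squared_errors) / len(squared_errors)


# Three molecules, two properties; several labels are missing.
predictions = [[0.5, 1.0], [2.0, 3.0], [1.0, 0.0]]
labels = [[1.0, None], [2.0, 1.0], [None, None]]

loss = masked_mse(predictions, labels)
print(f"masked loss: {loss:.4f}")
```

Only the three observed labels enter the average, so molecules with partial annotations still contribute to training without any imputed values.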

Q5: Can these methods work with very few labeled molecules, like in rare disease research? A: Yes. The combination of MTL and ACS is particularly powerful in the "ultra-low data regime." Research has shown that ACS can enable the training of accurate Graph Neural Network models for predicting fuel ignition properties with as few as 29 labeled samples, a scenario where traditional STL would fail [1].

Protocol: Implementing ACS for Molecular Property Prediction

This protocol outlines the steps to implement the ACS training scheme as validated on molecular benchmark datasets [1].

Model Architecture Setup:

- Implement a shared Graph Neural Network (GNN) backbone (e.g., based on message passing) to generate general-purpose latent molecular representations.

- For each property (task), attach a dedicated task-specific head, typically a Multi-Layer Perceptron (MLP), which takes the shared representation as input.

Training Loop with Adaptive Checkpointing:

- Train the model (shared backbone + all task heads) on your multi-task dataset.

- After each epoch, evaluate the model on the validation set and record the loss for each individual task.

- For each task, independently check: If the current validation loss is the lowest ever recorded for that task, save a checkpoint of the shared backbone parameters along with the parameters of that task's specific head. This creates a specialized model snapshot for that task.

Final Model Selection:

- At the end of training, the model used for each task is the checkpoint saved for it during the training loop. It captures the point at which the shared backbone and that task's head were jointly best on validation data, before later updates driven by other tasks could degrade them.
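The checkpointing logic of this protocol can be sketched independently of any deep learning framework. In the example below, the class name `AdaptiveCheckpointer`, the dictionary "parameters", and the validation losses are all hypothetical stand-ins chosen for illustration; it is a sketch of the idea described in [1], not the authors' implementation.

```python
import copy


class AdaptiveCheckpointer:
    """Keeps, per task, the backbone + head snapshot with the lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.best_state = {task: None for task in task_names}

    def update(self, epoch, val_losses, backbone, heads):
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep copies so later training cannot alter the snapshot.
                self.best_state[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(backbone),
                    "head": copy.deepcopy(heads[task]),
                }


# Simulated run: parameters are plain dicts and the losses are made up.
backbone = {"w": 0.0}
heads = {"tox": {"w": 0.0}, "sol": {"w": 0.0}}
history = [
    {"tox": 0.9, "sol": 0.8},
    {"tox": 0.6, "sol": 0.5},
    {"tox": 0.7, "sol": 0.4},  # "tox" degrades while "sol" keeps improving
]

checkpointer = AdaptiveCheckpointer(heads)
for epoch, val_losses in enumerate(history, start=1):
    backbone["w"] += 1.0  # stand-in for one epoch of training
    for head in heads.values():
        head["w"] += 1.0
    checkpointer.update(epoch, val_losses, backbone, heads)

for task, state in checkpointer.best_state.items():
    print(task, "best epoch:", state["epoch"])
```

In the simulated run, the "tox" task keeps its epoch-2 snapshot even though training continues to benefit "sol", which is the shielding effect the scheme is designed to provide.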

Quantitative Data from Key Studies

Table 1: Performance comparison of ACS against other training schemes on molecular benchmark datasets (ClinTox, SIDER, Tox21). Performance is measured by the average area under the receiver operating characteristic curve (AUC) for classification tasks [1].

| Training Scheme | Average Performance (AUC) | Key Characteristic |

|---|---|---|

| Single-Task Learning (STL) | Baseline | Separate model for each task; no parameter sharing. |

| MTL (no checkpointing) | +3.9% vs. STL | Standard multi-task learning, shared parameters. |

| MTL with Global Loss Checkpointing | +5.0% vs. STL | Checkpoints model when average validation loss across all tasks is minimal. |

| ACS (Adaptive Checkpointing) | +8.3% vs. STL | Independently checkpoints best model for each task, mitigating negative transfer. |

Table 2: Performance of the MTGL-ADMET model on selected ADMET endpoints compared to other GNN-based MTL models. Results show the average AUC over 10 independent runs [13].

| Endpoint (Primary Task) | ST-GCN | MT-GCN | MGA | MTGL-ADMET |

|---|---|---|---|---|

| HIA (Absorption) | 0.916 | 0.899 | 0.911 | 0.981 |

| Oral Bioavailability | 0.716 | 0.728 | 0.745 | 0.749 |

| P-gp Inhibition | 0.916 | 0.895 | 0.901 | 0.928 |

Workflow and System Diagrams

[Figure: ACS training and specialization workflow]

[Figure: Adaptive auxiliary task selection logic]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key computational "reagents" and resources for MTL/ACS experiments in molecular property prediction.

| Item / Resource | Type | Function / Purpose | Example / Source |

|---|---|---|---|

| Benchmark Datasets | Data | Provides standardized datasets for training and fair evaluation of models. | MoleculeNet (ClinTox, SIDER, Tox21) [1] [13], QM9 [2] |

| Graph Neural Network (GNN) | Model Architecture | Learns representations directly from molecular graph structures; the core model for molecular property prediction. | Message Passing Neural Networks [1], Graph Convolutional Networks (GCN) [13] |

| Multi-Layer Perceptron (MLP) | Model Component | Serves as task-specific "head" in MTL, mapping shared representations to property-specific predictions. | Standard fully-connected neural networks [1] |

| Adaptive Checkpointing (ACS) | Training Algorithm | Mitigates negative transfer in MTL by saving task-specific best models during training. | Implementation as described in [1] |

| Status Theory & Max Flow | Algorithm | Automates the selection of beneficial auxiliary tasks for a given primary task. | Core component of the MTGL-ADMET framework [13] |

| Loss Masking | Training Technique | Handles missing property labels in datasets without imputation, using available data efficiently. | Common practice in MTL implementations [1] |

Harnessing Transfer Learning and Δ-ML for Accurate Predictions with Small Datasets

FAQs and Troubleshooting Guides

FAQ: Core Concepts

Q1: What are Transfer Learning and Δ-ML in the context of molecular property prediction?

A1: Transfer Learning and Δ-ML (Delta-Machine Learning) are powerful techniques designed to overcome the challenge of small datasets in molecular property prediction.

- Transfer Learning involves first pre-training a model on a large, often generic, source dataset (e.g., a large molecular database) to learn fundamental chemical patterns. This pre-trained model is then fine-tuned on your smaller, specific target dataset (e.g., your experimental data), which allows it to achieve high performance without requiring a massive amount of target-specific data [17]. This approach has shown significant success in areas like predicting HOMO-LUMO gaps and solvation energies [18] [19].

- Δ-ML, or delta-learning, is a specific technique where a machine learning model is not trained to predict the target property directly. Instead, it learns to predict the difference (delta) between a high-level, accurate calculation and a lower-level, computationally cheaper method. The final prediction is the sum of the low-level method's result and the ML-predicted correction. This approach is typically more robust and accurate than a pure ML model, though it is slower at prediction time because the low-level calculation must still be run for every new molecule [20].

Q2: Why should I use these methods instead of training a model directly on my data?

A2: When working with scarce molecular property data, training a model from scratch often leads to overfitting, where the model memorizes the limited training examples but fails to generalize to new molecules. Transfer Learning and Δ-ML mitigate this by leveraging prior knowledge.

- Transfer Learning provides the model with a strong foundational understanding of chemistry from the large source dataset, which it refines with your specific data. This leads to better generalization, reduced training time, and lower data requirements [17].

- Δ-ML builds on the physical understanding embedded in quantum mechanical (QM) calculations. By learning only the correction term, the model's task is simplified, which enhances robustness and accuracy, especially when the low-level method already provides a reasonable approximation [20].

Q3: What is "negative transfer" and how can I avoid it?

A3: Negative transfer occurs when the knowledge from a source dataset or pre-training task is not relevant to your target task and ends up harming the model's performance after fine-tuning [21] [17]. For example, pre-training a model to predict protein-ligand binding affinity might not be helpful for a target task of predicting inorganic catalyst properties.

To avoid negative transfer:

- Quantify Transferability: Prior to fine-tuning, use metrics to select the most relevant source model. The Principal Gradient-based Measurement (PGM) is a computation-efficient method that quantifies the relatedness between source and target tasks by analyzing gradient directions, helping you choose a source model that will likely lead to positive transfer [21].

- Use Chemically Relevant Source Data: Prefer source models pre-trained on molecular datasets that are chemically similar to your target domain (e.g., organic molecules for organic photovoltaics) [18] [21].

Troubleshooting Guide: Common Experimental Issues

Q1: I have fine-tuned a pre-trained model, but its performance is poor. What could be wrong?

A1: Poor performance after fine-tuning can stem from several issues. Follow this diagnostic checklist:

Symptom: High validation loss from the beginning of fine-tuning.

- Potential Cause 1: Negative Transfer. The source task and your target task are not sufficiently related.

- Solution: Use a transferability metric like PGM to select a more relevant source dataset for pre-training [21].

- Potential Cause 2: Severe Data Mismatch. The distribution of your small dataset (e.g., range of molecular weights, elemental composition) is very different from the source data.

- Solution: Visually inspect the data distributions or use domain adaptation techniques. Consider using a more generic source model or incorporating data from an intermediate, related domain.

Symptom: Performance plateaus quickly or the model overfits.

- Potential Cause 1: Incorrect Fine-Tuning Strategy. You might be updating too many layers of the network with too little data.

- Solution: Freeze the initial layers of the pre-trained model (which capture general chemical features) and only fine-tune the top layers. You can also try using a lower learning rate for the fine-tuning phase [17].

- Potential Cause 2: Extreme Data Imbalance. Your small dataset might have very few examples of a critical class (e.g., active compounds).

- Solution: Employ techniques like failure horizons (labeling the last 'n' observations before a failure event as positive) to artificially balance time-series data, or use synthetic data generation methods like Generative Adversarial Networks (GANs) [22].

Q2: My Δ-ML model is not providing the expected accuracy boost. What steps should I take?

A2: The effectiveness of Δ-ML depends on the relationship between the computational methods used.

- Potential Cause: The low-level method is too inaccurate. If the baseline calculation (e.g., a semi-empirical method) is wildly incorrect, the machine learning model may struggle to learn a consistent correction function.

- Solution: Choose a low-level method that, while computationally cheap, still provides a qualitatively correct description of the molecular system. The Δ-ML approach works best when the "delta" is a smooth and learnable function [20].

Q3: How do I handle a very small dataset with severe class imbalance?

A3: Data scarcity and imbalance often go hand-in-hand. A multi-pronged approach is needed:

- Strategy 1: Data-Level Solutions.

- Synthetic Data Generation: Use models like Generative Adversarial Networks (GANs) to generate synthetic molecular data that follows the same patterns as your real, limited data. This directly addresses data scarcity [22].

- Algorithmic Adjustment: For run-to-failure data, use the failure horizons technique. Instead of labeling only the final point as a failure, label a window of observations leading up to the failure. This increases the number of positive examples and helps the model learn precursor signals [22].

- Strategy 2: Leveraging External Data.

- Transfer Learning: This is the primary method to inject external knowledge. Pre-training on a large, balanced dataset equips the model with robust features, making it less prone to overfitting on your small, imbalanced dataset [17].

Experimental Protocols and Methodologies

Protocol 1: Implementing a Transfer Learning Workflow with PGM Guidance

This protocol details how to apply transfer learning for molecular property prediction, using the PGM method to select the best source model.

1. Principle: Transfer knowledge from a model pre-trained on a large, labeled source dataset (Dataset_S) to a model for a data-scarce target task (Dataset_T). The PGM method quantifies transferability by calculating the distance between the principal gradients of Dataset_S and Dataset_T, which approximates their task-relatedness without requiring full model training [21].

2. Materials:

- Hardware: A computer with a CUDA-compatible GPU is recommended.

- Software: Python environment with deep learning libraries (e.g., TensorFlow, PyTorch), RDKit for cheminformatics, and the Spektral library for graph neural networks [23].

- Data: Your target dataset (Dataset_T) and one or more candidate source datasets (e.g., from MoleculeNet) [21].

3. Step-by-Step Procedure:

Step 1: Data Preparation.

- Convert all molecular structures (from both source and target sets) into a graph representation. This typically includes an adjacency matrix (representing atomic bonds) and a node feature matrix (representing atom types and properties) [23].

- Normalize the target property values for both source and target datasets to a mean of zero and a standard deviation of one [18] [19].
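The normalization step can be sketched with the standard library alone. The helper names and target values below are illustrative. One assumption worth stating: the mean and standard deviation are computed on the training targets of each dataset and reused to map predictions back to physical units.

```python
from statistics import mean, pstdev


def fit_scaler(values):
    """Return (mean, std) computed on the training targets only."""
    return mean(values), pstdev(values)


def standardize(values, mu, sigma):
    return [(v - mu) / sigma for v in values]


def unstandardize(values, mu, sigma):
    """Map standardized predictions back to the original units."""
    return [v * sigma + mu for v in values]


train_targets = [2.0, 4.0, 6.0, 8.0]  # illustrative property values
mu, sigma = fit_scaler(train_targets)
scaled = standardize(train_targets, mu, sigma)
restored = unstandardize(scaled, mu, sigma)
print(scaled, restored)
```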

Step 2: Source Model Selection via PGM.

- For each candidate source dataset, calculate its principal gradient. This is done by initializing a model, performing a small number of training steps (or a single epoch), and calculating the average gradient direction.

- Calculate the principal gradient for your target dataset in the same manner.

- Compute the distance (e.g., cosine distance) between the principal gradient of each source and the target.

- Select the source dataset with the smallest PGM distance for your main transfer learning experiment [21].
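The selection logic of Step 2 can be illustrated with a heavily simplified stand-in. The sketch assumes a two-parameter linear model in place of a GNN, takes a single gradient evaluation at a shared initialization as the "principal gradient", and uses made-up datasets. The published PGM procedure [21] is more elaborate, so this shows only the comparison step.

```python
import math


def mean_gradient(weights, data):
    """Average MSE gradient of a linear model y = w . x at the given weights."""
    grad = [0.0] * len(weights)
    for features, target in data:
        error = sum(w * x for w, x in zip(weights, features)) - target
        for i, x in enumerate(features):
            grad[i] += 2 * error * x / len(data)
    return grad


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


init = [0.0, 0.0]  # shared initialization used for every dataset
target_data = [([1.0, 0.0], 2.0), ([0.0, 1.0], 1.0)]
sources = {  # hypothetical candidate source datasets
    "related": [([1.0, 0.0], 1.8), ([0.0, 1.0], 1.2)],
    "unrelated": [([1.0, 0.0], -2.0), ([0.0, 1.0], 0.5)],
}

target_grad = mean_gradient(init, target_data)
distances = {
    name: cosine_distance(mean_gradient(init, data), target_grad)
    for name, data in sources.items()
}
best_source = min(distances, key=distances.get)
print(distances, "->", best_source)
```

The source whose gradient points in nearly the same direction as the target's gets the smallest distance and would be chosen for pre-training.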

Step 3: Pre-training.

- Train a model (e.g., a Graph Neural Network) from scratch on the selected source dataset until the validation performance converges.

Step 4: Fine-Tuning.

- Take the pre-trained model and replace its final prediction layer to match the output of your target task.

- Train (fine-tune) this model on your target dataset (Dataset_T). It is common practice to use a lower learning rate during this phase to avoid catastrophic forgetting of the pre-trained features [17].

The following workflow diagram summarizes this protocol:

Protocol 2: Setting Up a Δ-ML (Delta-Learning) Experiment

This protocol outlines the steps to create a Δ-ML model for correcting molecular property calculations.

1. Principle: A machine learning model is trained to predict the error (delta) of a low-level quantum mechanical (QM) method relative to a high-level, more accurate reference method. The final, improved prediction is the sum of the low-level result and the ML-predicted delta [20].

2. Materials:

- Software: A computational chemistry package (e.g., Gaussian, ORCA, PySCF) for QM calculations and a machine learning framework (e.g., TensorFlow, PyTorch).

- Data: A dataset of molecular structures with properties calculated at both a high-level (e.g., CCSD(T)) and a low-level (e.g., a semi-empirical method) of theory.

3. Step-by-Step Procedure:

Step 1: Generate Reference Data.

- For a set of training molecules, calculate the target property using both the high-level reference method (Property_high) and the fast, low-level method (Property_low).

- Compute the delta (correction) for each molecule: Δ = Property_high - Property_low [20].

Step 2: Train the ML Model.

- Use the molecular structures as input features (e.g., using molecular descriptors or graph representations).

- Train a machine learning model (e.g., a Gradient Boosting Regressor or a Graph Neural Network) to predict the Δ value.

- The model's learning objective is to minimize the difference between the true Δ and its prediction.

Step 3: Deploy the Δ-ML Model.

- For a new, unknown molecule:

- Calculate Property_low using the fast, low-level method.

- Use the trained ML model to predict the correction term, Δ_predicted.

- Obtain the final, corrected property: Property_final = Property_low + Δ_predicted.
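The three steps of this protocol can be traced end to end on a toy problem. Everything in the sketch is an assumption made for illustration: the two "levels of theory" are simple analytic functions of a one-dimensional descriptor, and the ML model is an ordinary least-squares line rather than a gradient boosting model or GNN.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return slope, mean_y - slope * mean_x


# Hypothetical stand-ins for the two levels of theory.
def property_low(x):
    return 2.0 * x


def property_high(x):
    return 2.0 * x + 0.3 * x + 1.0  # low-level result plus a systematic error


# Step 1: reference data and deltas (delta = high - low).
train_x = [0.0, 1.0, 2.0, 3.0]
deltas = [property_high(x) - property_low(x) for x in train_x]

# Step 2: train the correction model on the deltas.
slope, intercept = fit_line(train_x, deltas)


# Step 3: correct the cheap calculation for a new molecule.
def property_final(x):
    delta_predicted = slope * x + intercept
    return property_low(x) + delta_predicted


print(property_low(5.0), property_final(5.0), property_high(5.0))
```

Because the error of the cheap method is smooth in this toy case, the corrected value matches the expensive reference far better than the low-level result does, without running the high-level method for the new input.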

The logical relationship of the Δ-ML method is illustrated below:

Data Presentation

The table below summarizes key quantitative results from studies on transfer learning for molecular property prediction, demonstrating its effectiveness on small datasets.

Table 1: Performance of Transfer Learning on Small Molecular Datasets

| Target Dataset (Property) | Source Dataset Used for Pre-training | Model Architecture | Key Performance Metric | Result with Transfer Learning | Result from Scratch | Reference |

|---|---|---|---|---|---|---|

| HOPV (HOMO-LUMO gaps) | Large dataset from low-level QM | PaiNN (Message Passing NN) | Mean Absolute Error (MAE) | Significantly improved accuracy after fine-tuning | Lower accuracy | [18] [19] |

| FreeSolv (Solvation Energies) | Large dataset from low-level QM | PaiNN (Message Passing NN) | Mean Absolute Error (MAE) | Less successful (due to task complexity) | N/A | [18] [19] |

| Various from MoleculeNet | Most related source per PGM map | Graph Neural Network (GNN) | AUC-ROC / RMSE | Performance strongly correlated with PGM transferability score | Lower performance without proper source selection | [21] |

| Predictive Maintenance Data | Synthetic data from GAN | ANN / Random Forest | Accuracy | ANN: 88.98% (with synthetic data) | Lower without addressing data scarcity | [22] |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Resources for Experiments

| Item Name | Type | Function / Application | Key Notes |

|---|---|---|---|

| Message Passing Neural Networks (e.g., PaiNN) | Model Architecture | Learns directly from molecular graph structures; highly effective for molecular property prediction. | Outperformed other models on small datasets like HOPV in benchmarks [18] [19]. |

| Spektral Library | Software / Framework | A Python library for graph neural networks, based on Keras/TensorFlow. | Provides functions to convert molecules from SMILES/SDF files into graph formats suitable for NN input [23]. |

| MoleculeNet | Data Resource | A benchmark collection of molecular datasets for various property prediction tasks. | Serves as a key resource for finding source datasets for pre-training and for benchmarking [21]. |

| Principal Gradient-based Measurement (PGM) | Algorithm / Metric | Quantifies transferability between source and target tasks prior to fine-tuning. | Computationally efficient method to select the best source model and avoid negative transfer [21]. |

| Generative Adversarial Network (GAN) | Model Architecture | Generates synthetic molecular data to augment small, scarce datasets. | Used to address data scarcity in predictive modeling, increasing model accuracy significantly [22]. |

| RDKit | Software / Cheminformatics | An open-source toolkit for cheminformatics. | Used for processing molecular structures, generating fingerprints, and handling SDF files in the data preparation pipeline [23]. |

Frequently Asked Questions

This section addresses common challenges researchers face when integrating diverse molecular representations.

Q1: How can I effectively fuse 1D, 2D, and 3D molecular representations when some data is missing?

A: Utilize a dual-branch architecture like PremuNet. The PremuNet-L branch processes low-dimensional features (SMILES strings, molecular fingerprints, and 2D graphs), while the PremuNet-H branch handles high-dimensional features (2D topologies and 3D geometries). For missing 3D structures, employ self-supervised pre-training on large-scale datasets containing both 2D and 3D information. This allows the model to infer 3D features from 2D structures during downstream tasks, ensuring robust performance even when explicit 3D coordinates are unavailable [24].

Q2: What strategies can mitigate negative transfer in Multi-Task Learning (MTL) with imbalanced molecular data?

A: Adaptive Checkpointing with Specialization (ACS) is designed for this scenario. ACS uses a shared graph neural network (GNN) backbone with task-specific heads. During training, it monitors validation loss for each task and checkpoints the best backbone-head pair when a task achieves a new minimum loss. This approach preserves the benefits of inductive transfer between related tasks while shielding individual tasks from detrimental parameter updates caused by severe task imbalance [1].

Q3: How can I incorporate domain knowledge, like molecular motifs, into a deep learning model?

A: Implement a Fingerprint-enhanced Hierarchical GNN (FH-GNN). Construct a hierarchical molecular graph that integrates atom-level, motif-level, and graph-level information. Process this graph using a Directed Message-Passing Neural Network (D-MPNN). Simultaneously, encode traditional molecular fingerprints. Finally, use an adaptive attention mechanism to fuse the hierarchical graph features with the fingerprint features, creating a comprehensive molecular embedding that balances learned and expert-curated knowledge [25].
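The fusion step can be illustrated with a generic softmax-weighted sum of two feature streams. This is a simplified sketch, not the FH-GNN attention module from [25]. In a trained model the scores would come from a learned layer; here they are passed in by hand, and all names and values are hypothetical.

```python
import math


def attention_fuse(streams, scores):
    """Softmax the per-stream scores and return the weighted sum of the streams."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]  # subtract max for stability
    total = sum(exps)
    weights = [e / total for e in exps]
    fused = [
        sum(w * stream[i] for w, stream in zip(weights, streams))
        for i in range(len(streams[0]))
    ]
    return fused, weights


graph_features = [1.0, 0.0, 2.0]        # e.g., hierarchical graph embedding
fingerprint_features = [0.0, 1.0, 0.0]  # e.g., projected fingerprint embedding

fused, weights = attention_fuse(
    [graph_features, fingerprint_features], scores=[0.0, 0.0]
)
print(weights, fused)
```

With equal scores both streams receive the same weight; raising one score shifts the fused embedding toward that stream, which is how the model can favor learned or expert-curated features per molecule.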

Q4: What are practical methods for molecular property prediction with very few labeled samples?

A: Leverage few-shot learning frameworks like MolFeSCue. This approach uses pre-trained molecular models for initial representation and combines them with a dynamic contrastive loss function. Contrastive learning helps extract meaningful molecular representations from imbalanced datasets by guiding the model to generate similar embeddings for molecules within the same class and dissimilar ones for different classes, which is crucial when labeled data is scarce [26].
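To make the contrastive objective concrete, the sketch below implements the classic margin-based pairwise contrastive loss. It is not the dynamic contrastive loss used in MolFeSCue [26]; the embeddings and labels are toy values chosen only to show that well-separated classes receive a lower loss.

```python
import math


def contrastive_loss(embeddings, labels, margin=1.0):
    """Mean pairwise loss: same-class pairs are pulled together, and
    different-class pairs are pushed at least `margin` apart."""
    total, pairs = 0.0, 0
    for i in range(len(embeddings)):
        for j in range(i + 1, len(embeddings)):
            distance = math.dist(embeddings[i], embeddings[j])
            if labels[i] == labels[j]:
                total += distance ** 2
            else:
                total += max(0.0, margin - distance) ** 2
            pairs += 1
    return total / pairs


labels = [0, 0, 1, 1]
clustered = [[0.0, 0.0], [0.1, 0.0], [2.0, 0.0], [2.1, 0.0]]  # classes apart
mixed = [[0.0, 0.0], [2.0, 0.0], [0.1, 0.0], [2.1, 0.0]]      # classes interleaved

clustered_loss = contrastive_loss(clustered, labels)
mixed_loss = contrastive_loss(mixed, labels)
print(f"clustered: {clustered_loss:.4f}, mixed: {mixed_loss:.4f}")
```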

Troubleshooting Guides

Problem: Model Performance Degradation with High Data Imbalance

- Symptoms: The model performs well on tasks or classes with abundant data but fails on those with few samples.

- Diagnosis: This is often caused by negative transfer in MTL or a model bias towards majority classes.

- Solutions:

- Implement ACS Training: Adopt the ACS scheme to specialize model parameters for individual tasks, preventing them from being overwritten by updates from data-rich tasks [1].

- Apply Contrastive Loss: Use a contrastive loss function, as in MolFeSCue, to improve the separation of molecular representations in the embedding space, which helps the model distinguish under-represented classes more effectively [26].

- Fuse Expert Features: Integrate molecular fingerprints into your model. FH-GNN shows that combining GNNs with fingerprints provides strong priors that enhance prediction accuracy, especially when data is limited [25].

Problem: Inefficient or Ineffective Fusion of Multi-Modal Molecular Data

- Symptoms: The model's performance does not improve, or even degrades, after combining features from different molecular representations (e.g., 1D SMILES, 2D graph, 3D geometry).

- Diagnosis: The fusion method may be too simplistic (e.g., naive concatenation) or the model struggles to align features from different modalities.

- Solutions:

- Adopt Structured Fusion: Follow the PremuNet framework, which uses a GNN to interactively fuse atomic features from a SMILES-Transformer with 2D graph information. For final prediction, concatenate the fused features with molecular fingerprint and PremuNet-H branch features [24].

- Use Adaptive Attention: Employ an adaptive attention mechanism, like in FH-GNN, to dynamically weight the importance of different feature streams (e.g., hierarchical graph features vs. fingerprint features) during fusion [25].

- Leverage Pre-training: Use self-supervised pre-training on large datasets to teach the model the relationships between different molecular modalities (e.g., 2D and 3D structures) before fine-tuning on the target task with data scarcity [24].

Experimental Protocols & Data

Table 1: Summary of Key Methodologies for Data-Scarce Molecular Property Prediction

| Method Name | Core Architecture | Fusion Strategy | Key Mechanism for Data Scarcity | Best Suited For |
|---|---|---|---|---|
| ACS [1] | GNN with shared backbone & task-specific heads | Checkpointing best model states per task | Adaptive Checkpointing with Specialization | Multi-task learning with severe task imbalance |
| PremuNet [24] | Dual-branch (PremuNet-L & PremuNet-H) | Concatenation of features from both branches | Multi-representation pre-training | Fusing 1D, 2D, and inferred 3D molecular information |
| FH-GNN [25] | D-MPNN on hierarchical graphs + fingerprints | Adaptive attention mechanism | Integrating molecular fingerprints and motif information | Leveraging domain knowledge and hierarchical structures |
| MolFeSCue [26] | Pre-trained models + contrastive learning | Few-shot learning framework | Dynamic contrastive loss for class imbalance | Few-shot learning and highly imbalanced datasets |

Protocol 1: Implementing ACS for Multi-Task Learning

- Model Setup: Construct a GNN backbone (e.g., based on message passing) shared across all tasks. Attach separate Multi-Layer Perceptron (MLP) heads for each specific prediction task [1].

- Training Loop: Train the entire model on all tasks simultaneously.

- Validation & Checkpointing: Continuously monitor the validation loss for each individual task. For a given task, whenever its validation loss hits a new minimum, save a checkpoint of the shared backbone parameters paired with that task's specific head.

- Specialization: After training, each task is served by its best-performing saved backbone-head pair, creating a specialized model that benefits from shared learning without suffering from negative transfer late in training [1].
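The checkpointing logic in Protocol 1 can be sketched independently of any deep learning framework. In the sketch below, the class name `ACSCheckpointer`, the dict-based "parameters", and the validation curves are all hypothetical stand-ins; in a real setup the snapshots would be the state of the GNN backbone and the task's MLP head, and the losses would come from a validation set.

```python
import copy

class ACSCheckpointer:
    """Track, per task, the backbone/head snapshot with the lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {t: float("inf") for t in task_names}
        self.best_state = {t: None for t in task_names}

    def update(self, task, val_loss, backbone_state, head_state):
        """Save a snapshot if `task` reached a new validation minimum."""
        if val_loss < self.best_loss[task]:
            self.best_loss[task] = val_loss
            # Deep copies so later training updates cannot alter the snapshot.
            self.best_state[task] = (copy.deepcopy(backbone_state),
                                     copy.deepcopy(head_state))
            return True
        return False

# Toy run: parameters are plain dicts and validation losses are made up.
ckpt = ACSCheckpointer(["tox", "solubility"])
backbone = {"w": 0.0}
heads = {"tox": {"b": 0.0}, "solubility": {"b": 0.0}}
val_curves = {"tox": [0.9, 0.6, 0.7, 0.8], "solubility": [1.2, 1.0, 0.8, 0.5]}

for epoch in range(4):
    backbone["w"] += 1.0          # stand-in for one joint training step
    for task in heads:
        heads[task]["b"] += 0.1   # stand-in for a head update
        ckpt.update(task, val_curves[task][epoch], backbone, heads[task])

print(ckpt.best_state["tox"][0], ckpt.best_state["solubility"][0])
```

In this toy run the "tox" task keeps the backbone from the second epoch, before its validation loss starts rising, while "solubility" keeps the final one. Each task is therefore served by a different snapshot of the same shared backbone.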

Protocol 2: Pre-training and Fine-Tuning PremuNet

- PremuNet-L Branch:

- Input: SMILES string.

- Process: Use a SMILES-Transformer to get atomic and molecular-level embeddings. Pass atomic embeddings to a GNN that uses the 2D molecular graph for information fusion. Combine the GNN's graph representation, the Transformer's molecular-level embedding, and a molecular fingerprint vector [24].

- PremuNet-H Branch:

- Pre-training: Train this branch on large datasets with both 2D and 3D data using self-supervised learning (e.g., masked feature prediction). This teaches the model the relationship between 2D topology and 3D geometry [24].

- Fine-tuning: For downstream tasks lacking 3D data, use the pre-trained PremuNet-H branch to generate 3D-informed features from 2D structures alone.

- Fusion for Prediction: Concatenate the final feature vectors from the PremuNet-L and PremuNet-H branches and feed them into a prediction layer (e.g., MLP) for the target property [24].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Resources for Molecular Property Prediction Experiments

| Reagent / Resource | Function / Description | Relevance to Data-Scarce Scenarios |
|---|---|---|
| MoleculeNet Benchmarks [1] [25] | A standardized collection of molecular datasets for fair model evaluation. | Provides critical benchmarks like ClinTox, SIDER, and Tox21 to validate methods in low-data regimes. |
| Directed-MPNN (D-MPNN) [25] | A graph neural network that propagates messages along directed edges to reduce redundant updates. | Effectively captures complex molecular structures from limited data, as used in FH-GNN and ACS. |
| Molecular Fingerprints [25] | Expert-curated binary vectors representing the presence/absence of specific chemical substructures. | Provides strong prior knowledge, compensating for lack of data and improving model generalization (e.g., in FH-GNN). |
| BRICS Algorithm [25] | A method for fragmenting molecules into chemically meaningful motifs or substructures. | Enables the construction of hierarchical molecular graphs, enriching the model's input with local functional group information. |
| Dynamic Contrastive Loss [26] | A loss function that pulls representations of similar molecules closer and pushes dissimilar ones apart. | Directly addresses class imbalance by improving feature separation, which is crucial in the MolFeSCue framework. |

Frequently Asked Questions (FAQs)

FAQ: How can geometric deep learning (GDL) models overcome the challenge of scarce molecular property data? Incorporating precise 3D structural information and physical inductive biases allows GDL models to learn more from less data. By explicitly modeling fundamental physical interactions (like covalent bonds and non-covalent forces), the model relies less on vast amounts of labeled data and more on the underlying physics of the molecular system [27] [28].

FAQ: My model performs well on small molecules but fails on macromolecules. What could be wrong? This is often due to scalability issues or an overly simplistic molecular representation. Standard GNNs can become computationally expensive for large systems. Consider a framework like PAMNet, which uses a multiplex graph to separately and efficiently handle local and non-local interactions, making it scalable from small molecules to large complexes like proteins and RNAs [28].

FAQ: Why is my model not invariant to rotation and translation of the input molecule? Your model likely lacks E(3)-invariant operations. For predicting scalar properties (e.g., energy), ensure that all input features (like interatomic distances and angles) and the operations within the network are E(3)-invariant. Frameworks that explicitly preserve this symmetry will produce consistent results regardless of the molecule's orientation in space [28].
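A quick way to check the invariance requirement is to move a molecule rigidly and confirm that the input features do not change. The sketch below does this for interatomic distances; the helper names and the toy water-like coordinates are assumptions for illustration, and only a rotation about the z-axis plus a translation are tested.

```python
import math

def rotate_z(p, theta):
    """Rotate a 3D point about the z-axis by `theta` radians."""
    x, y, z = p
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y, z)

def translate(p, t):
    """Shift a 3D point by the vector `t`."""
    return tuple(a + b for a, b in zip(p, t))

def pairwise_distances(coords):
    """All interatomic distances in a fixed pair order (an invariant feature set)."""
    n = len(coords)
    return [math.dist(coords[i], coords[j])
            for i in range(n) for j in range(i + 1, n)]

# Toy water-like geometry in Å, not a real optimized structure.
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = [translate(rotate_z(p, 1.1), (3.0, -2.0, 5.0)) for p in coords]

d0 = pairwise_distances(coords)
d1 = pairwise_distances(moved)
same = all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(d0, d1))
print(same)
```

Raw Cartesian coordinates fail this check, which is why feeding them directly into a network without symmetry-aware operations leads to orientation-dependent predictions.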

FAQ: Are covalent bonds the only important interactions for molecular graph representation? No. Recent research demonstrates that molecular graphs constructed only from non-covalent interactions (based on Euclidean distance) can achieve comparable or even superior performance to traditional covalent-bond-based graphs in property prediction tasks. This highlights the critical role of non-covalent interactions and suggests moving beyond the covalent-only paradigm [27].

Troubleshooting Guides

Issue 1: Poor Model Generalization on Scarce Data

Problem: Model performance drops significantly when tested on molecular types or properties not well-represented in the training data.

Diagnosis and Resolution:

| Step | Action | Key Technical Details |
|---|---|---|
| 1 | Enrich Molecular Representation | Move beyond covalent graphs. Incorporate non-covalent interactions by adding edges between atoms within specific distance thresholds (e.g., 4-6 Å) [27]. |
| 2 | Incorporate Physical Inductive Biases | Use a physics-aware model like PAMNet. Separately model local (bond, angle, dihedral) and non-local (van der Waals, electrostatic) interactions, mirroring molecular mechanics [28]. |
| 3 | Leverage Multi-Scale Information | Represent the molecule as a multiplex graph with separate layers for local and global interactions. Use a fusion module (e.g., attention pooling) to learn the importance of each interaction type [28]. |

Issue 2: Inefficient Training on Large-Scale Tasks or Macromolecules

Problem: Training is slow and memory-intensive, especially with large molecules or massive virtual screening libraries.

Diagnosis and Resolution:

| Step | Action | Key Technical Details |
|---|---|---|
| 1 | Optimize Geometric Operations | Avoid expensive angular computations on all atom pairs. Frameworks like PAMNet only use computationally intensive angular information for local interactions and simpler distance-based messages for abundant non-local interactions [28]. |
| 2 | Use Appropriate Cutoff Distances | Define local and global interaction layers using cutoff distances. This creates sparse graphs, reducing the number of edges and messages that need to be computed [28]. |
| 3 | Apply Efficient Message Passing | Ensure the GDL framework is designed for efficiency. PAMNet, for instance, avoids the O(Nk^2) complexity of full angular messaging, leading to faster computation and lower memory use [28]. |
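The effect of cutoffs on cost can be made tangible by counting messages on a toy point cloud. The sketch below is only a counting exercise, not PAMNet's implementation; it assumes N is the number of atoms and k the average number of neighbors within the cutoff, and the random coordinates and function names are hypothetical.

```python
import math
import random

def neighbor_lists(coords, cutoff):
    """For each atom, the indices of other atoms closer than `cutoff`."""
    n = len(coords)
    return [[j for j in range(n)
             if j != i and math.dist(coords[i], coords[j]) < cutoff]
            for i in range(n)]

def message_counts(coords, cutoff):
    """Return (pair_messages, angle_triplets) for a given cutoff."""
    nbrs = neighbor_lists(coords, cutoff)
    # Distance-only messages: one per directed edge, roughly N * k.
    pairs = sum(len(ns) for ns in nbrs)
    # Angular messages: one per ordered pair of neighbors of each atom, roughly N * k^2.
    triplets = sum(len(ns) * (len(ns) - 1) for ns in nbrs)
    return pairs, triplets

random.seed(0)
# Toy point cloud in a 12 Å box, not a real molecule.
coords = [tuple(random.uniform(0.0, 12.0) for _ in range(3)) for _ in range(60)]

for cutoff in (2.0, 5.0, 8.0):
    pairs, triplets = message_counts(coords, cutoff)
    print(cutoff, pairs, triplets)
```

The triplet count grows much faster than the pair count as the cutoff increases, which is the motivation for restricting angular terms to a short local cutoff and using distance-only messages for longer-range interactions.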

Issue 3: Inaccurate Prediction of E(3)-Equivariant Properties

Problem: The model fails to correctly predict vectorial properties (like dipole moments) that should transform consistently with the input molecule, for example rotating when the molecule is rotated.

Diagnosis and Resolution:

| Step | Action | Key Technical Details |
|---|---|---|
| 1 | Verify Input Features | For equivariant tasks, the model needs both invariant scalar features (e.g., atom types) and equivariant geometric vectors (e.g., direction vectors) [28]. |
| 2 | Select Correct Architecture | Choose a model capable of E(3)-equivariant transformations. These models update geometric vectors using operations inspired by quantum mechanics to ensure they transform correctly with the molecule [28]. |
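A simple sanity test for equivariance is to compare "transform the input, then predict" with "predict, then transform the output". The sketch below applies that test to a point-charge dipole, used here as a stand-in for a model's vector output; the partial charges, coordinates, and helper names are assumptions for illustration.

```python
import math

def rotate_z(v, theta):
    """Rotate a 3D vector about the z-axis by `theta` radians."""
    x, y, z = v
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y, z)

def dipole(charges, coords):
    """Point-charge dipole: sum over atoms of q_i * r_i."""
    return tuple(sum(q * r[k] for q, r in zip(charges, coords)) for k in range(3))

def close(u, v, tol=1e-9):
    """Component-wise comparison of two vectors."""
    return all(math.isclose(a, b, abs_tol=tol) for a, b in zip(u, v))

charges = [-0.8, 0.4, 0.4]   # toy partial charges summing to zero
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
theta, shift = 0.7, (3.0, -2.0, 5.0)

mu = dipole(charges, coords)
mu_rotated_input = dipole(charges, [rotate_z(r, theta) for r in coords])
mu_shifted_input = dipole(
    charges, [tuple(a + b for a, b in zip(r, shift)) for r in coords])

# Rotating the input rotates the output in the same way.
rotation_ok = close(mu_rotated_input, rotate_z(mu, theta))
# Translating a neutral molecule leaves the dipole unchanged.
translation_ok = close(mu_shifted_input, mu)
print(rotation_ok, translation_ok)
```

Replacing `dipole` with a trained model's prediction function gives a cheap regression test: if the rotation check fails, the architecture or its input features are breaking the symmetry.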

Experimental Protocols & Data

Key Experiment: Benchmarking Molecular Graph Representations

Objective: To evaluate whether non-covalent molecular graphs can outperform the de facto standard of covalent-bond-based graphs [27].

Methodology:

- Graph Construction: For each molecule, multiple graphs are constructed.

- Covalent Graph (I = [0, 2) Å): Only covalent bonds.

- Non-Covalent Graphs: Edges defined by Euclidean distance ranges: [2, 4) Å, [4, 6) Å, [6, 8) Å, [8, ∞) Å.

- Model Training: Identical GDL models are trained using each graph representation.

- Evaluation: Model performance is compared on benchmark datasets (BACE, ClinTox, SIDER, Tox21, HIV, ESOL).
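The graph construction step can be sketched as a filter on pairwise distances. The code below is a minimal illustration, assuming each graph is defined purely by a half-open distance interval [lo, hi); the function name `edges_in_interval` and the toy chain coordinates are hypothetical, and the original study [27] may build its covalent graph from bond annotations instead of a distance cutoff.

```python
import math

def edges_in_interval(coords, lo, hi):
    """Return index pairs (i, j), i < j, whose distance d satisfies lo <= d < hi."""
    edges = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            d = math.dist(coords[i], coords[j])
            if lo <= d < hi:
                edges.append((i, j))
    return edges

# Toy 3D coordinates in Å: a straight chain with 1.5 Å spacing, not a real conformer.
coords = [(1.5 * i, 0.0, 0.0) for i in range(6)]

intervals = {
    "[0,2)": (0.0, 2.0),
    "[2,4)": (2.0, 4.0),
    "[4,6)": (4.0, 6.0),
    "[6,8)": (6.0, 8.0),
    "[8,inf)": (8.0, math.inf),
}

# One edge list per distance interval; each defines a separate molecular graph.
graphs = {name: edges_in_interval(coords, lo, hi)
          for name, (lo, hi) in intervals.items()}
for name, edges in graphs.items():
    print(name, edges)
```

Because the intervals are disjoint and cover all distances, every atom pair lands in exactly one graph, so the same model can be trained on each edge set and the results compared directly.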

Quantitative Results: The table below uses illustrative (hypothetical) ROC-AUC values to show the reported trend that non-covalent graphs often match or exceed the performance of covalent graphs [27].

| Dataset | Covalent Graph [0,2) Å | Non-Covalent [4,6) Å | Non-Covalent [8,∞) Å |
|---|---|---|---|
| BACE | 0.850 | 0.881 | 0.852 |
| ClinTox | 0.910 | 0.935 | 0.915 |
| HIV | 0.780 | 0.801 | 0.782 |

Key Experiment: Evaluating the PAMNet Framework

Objective: To validate the accuracy and efficiency of the PAMNet framework across diverse molecular systems [28].

Methodology:

- Tasks: Predict small molecule properties, RNA 3D structures, and protein-ligand binding affinities.

- Baselines: Compare against state-of-the-art GNNs specific to each task.